A Comparative Analysis of Model Quality Assessment Tools for Drug Development in 2025

This article provides a comprehensive comparative analysis of model quality assessment tools tailored for researchers, scientists, and drug development professionals.

Abstract

This article provides a comprehensive comparative analysis of model quality assessment tools tailored for researchers, scientists, and drug development professionals. It explores the foundational principles of Model-Informed Drug Development (MIDD) and the 'fit-for-purpose' paradigm [citation:3]. The analysis covers a wide spectrum of methodologies, from quantitative systems pharmacology (QSP) and physiologically based pharmacokinetic (PBPK) modeling [citation:3] to emerging AI evaluation platforms [citation:7] and expert-in-the-loop services [citation:2]. A practical framework is presented for troubleshooting common model failures, optimizing workflows, and validating tools through comparative analysis of leading platforms, empowering teams to select the right tools to enhance model reliability, accelerate development timelines, and support regulatory decision-making.

Foundations of Model Quality: Understanding MIDD and the Fit-for-Purpose Paradigm

Defining Model Quality Assessment in Drug Development

In modern drug development, the reliance on quantitative models for critical decision-making has made rigorous model quality assessment (MQA) indispensable. Model-Informed Drug Development (MIDD) represents a foundational framework that integrates quantitative models to optimize drug development and support regulatory decisions across all stages, from early discovery to post-market surveillance [1]. The core principle of "fit-for-purpose" (FFP) underscores that model evaluation must be closely aligned with the specific Question of Interest (QOI) and Context of Use (COU) [1]. Essentially, a model's quality is not an abstract property but its fitness to reliably address a specific development need, such as first-in-human dose prediction or clinical trial optimization.

The need for standardized MQA is particularly acute for complex mechanistic models like Quantitative Systems Pharmacology (QSP), where establishing confidence among stakeholders remains a significant challenge [2]. Without consistent evaluation standards, model predictions may be met with skepticism, limiting their adoption and impact. This guide provides a comparative analysis of MQA methodologies across different model types used in drug development, offering researchers a structured approach to evaluating model credibility and performance.

Comparative Analysis of Model Assessment Across Paradigms

Core Evaluation Metrics by Model Type

Different modeling paradigms require specialized evaluation metrics tailored to their structure, purpose, and application context. The table below summarizes key metrics across major model categories used in pharmaceutical development.

Table 1: Model Evaluation Metrics by Modeling Paradigm

| Model Type | Primary Application | Key Quantitative Metrics | Diagnostic Graphics | Domain-Specific Considerations |
|---|---|---|---|---|
| PopPK/PD Models [3] | Precision dosing, Exposure-response | MAE, RMSE, MPE, GMFE, Forecast accuracy | Observed vs. Predicted plots, Visual Predictive Checks | Bayesian forecasting performance, Covariate selection validity |
| QSP/PBPK Models [2] [4] | Mechanistic prediction, DDI risk | Sensitivity indices, Uncertainty quantification, GMFE | Parameter identifiability plots, Sobol analysis | Model credibility assessment, Risk-informed evaluation |
| Machine Learning (Biopharma) [5] | Compound screening, Toxicity prediction | Precision-at-K, Rare event sensitivity, Pathway impact metrics | ROC curves, Enrichment plots | Class imbalance handling, Biological relevance validation |
| Clinical Trial Simulation [1] [6] | Trial optimization, Probability of success | Hazard ratios, Predictive accuracy, Type I/II error rates | Kaplan-Meier plots, Funnel plots | Historical benchmarking adequacy, Development path aggregation |
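Several of the quantitative metrics in Table 1 have simple closed forms. The sketch below computes MAE, RMSE, MPE, GMFE and the share of predictions within ±20% of the observed value in plain Python. Definitions vary between groups (MPE, for instance, is sometimes computed on absolute rather than relative errors, or with the opposite sign), so the conventions here are assumptions: errors are taken as predicted minus observed, so a positive MPE indicates over-prediction. The example data are made up.

```python
import math

def mae(pred, obs):
    """Mean absolute error, in the units of the observations."""
    return sum(abs(p - o) for p, o in zip(pred, obs)) / len(obs)

def rmse(pred, obs):
    """Root mean squared error, in the units of the observations."""
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(pred, obs)) / len(obs))

def mpe(pred, obs):
    """Mean percentage error (bias) relative to each observation, in %."""
    return 100 * sum((p - o) / o for p, o in zip(pred, obs)) / len(obs)

def gmfe(pred, obs):
    """Geometric mean fold error: 10 ** mean(|log10(pred / obs)|); 1.0 is perfect."""
    return 10 ** (sum(abs(math.log10(p / o)) for p, o in zip(pred, obs)) / len(obs))

def pct_within(pred, obs, tol=0.20):
    """Percentage of predictions within +/- tol (relative) of the observation."""
    hits = sum(abs(p - o) <= tol * o for p, o in zip(pred, obs))
    return 100 * hits / len(obs)

# Hypothetical observed and predicted concentrations (mg/L), illustration only.
observed = [10.0, 20.0, 40.0]
predicted = [11.0, 18.0, 60.0]
for name, fn in [("MAE", mae), ("RMSE", rmse), ("MPE %", mpe),
                 ("GMFE", gmfe), ("% within 20%", pct_within)]:
    print(f"{name}: {fn(predicted, observed):.3f}")
```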

Assessment Methodologies for Different Prediction Contexts

For models used in clinical decision support, especially for Model-Informed Precision Dosing (MIPD), the evaluation approach must match the intended clinical application. The table below compares three fundamental approaches for evaluating PopPK models, each with distinct strengths and limitations.

Table 2: Performance Assessment Approaches for PopPK Models in Precision Dosing

| Assessment Approach | Prediction Type | TDM Data Usage | Key Interpretation | Clinical Relevance |
|---|---|---|---|---|
| Population Predictions [3] | Forward-looking forecast | No TDM used | Tests model without therapeutic drug monitoring | Represents baseline performance without patient feedback |
| Individual Fitted Predictions [3] | Backward-looking fit | All available TDM | Measures model fit to historical data | Overestimates clinical performance due to data overfitting |
| Individual Forecasted Predictions [3] | Forward-looking forecast | Iterative TDM incorporation | Gold standard for real-world forecasting | Best mimics clinical practice; most relevant for MIPD |
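The three approaches in Table 2 differ only in which TDM samples inform the prediction of a given sample. A minimal sketch of that bookkeeping, assuming samples are indexed 0..n-1 in chronological order, makes the source of optimism in fitted predictions explicit: only the fitted approach conditions on the very observation it is scored against. The function names are hypothetical.

```python
def conditioning_sets(n_samples):
    """For each chronologically ordered TDM sample i (0-based), list the TDM
    samples each assessment approach conditions on when predicting sample i."""
    idx = list(range(n_samples))
    return {
        # Population predictions: no TDM feedback at all.
        "population": [[] for _ in idx],
        # Individual fitted: every sample, including sample i itself.
        "individual_fitted": [list(idx) for _ in idx],
        # Individual forecasted: only samples collected before sample i.
        "individual_forecasted": [idx[:i] for i in idx],
    }

def uses_own_observation(sets):
    """True for an approach whose prediction of sample i already 'saw' sample i."""
    return {name: any(i in s for i, s in enumerate(per_sample))
            for name, per_sample in sets.items()}

sets = conditioning_sets(3)
print(sets["individual_forecasted"])   # [[], [0], [0, 1]]
print(uses_own_observation(sets))
```

For the first sample, the forecasted approach has no earlier TDM and reduces to a population prediction, which is why forecasting performance is usually scored from the second sample onward.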

Experimental Protocols for Model Evaluation

Protocol 1: Forecasted Performance Evaluation for PopPK Models

Purpose: To assess the real-world predictive performance of a PopPK model for clinical dosing applications by simulating how the model would perform in actual clinical practice with sequential TDM data [3].

Materials:

- Population PK Model: Structural model with parameter estimates and variance components

- Validation Dataset: Rich TDM data with multiple samples per patient across different dosing intervals

- Software Capability: Bayesian estimation algorithms (e.g., NONMEM, Monolix, InsightRX Nova)

- Computational Scripts: Custom scripts for iterative forecasting analysis

Procedure:

1. Data Sequencing: For each patient, sort TDM samples chronologically and label them sequentially (TDM1, TDM2, ..., TDMn)

2. Initial Bayesian Estimation: Using only TDM1, compute individual PK parameter estimates via Bayesian feedback

3. First Forecast: Using the estimated parameters from Step 2, predict concentrations at TDM2 timepoints

4. Iterative Updating: Incorporate TDM2 into Bayesian estimation, update parameters, and forecast TDM3

5. Performance Calculation: Repeat until all samples are forecasted, then compute bias (MPE) and accuracy (RMSE) between forecasted and observed values

6. Comparative Analysis: Compare forecasting performance across candidate models to select the optimal one for clinical implementation
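The procedure above can be sketched end to end for a single patient. The example assumes a one-compartment IV bolus model with log-normal between-subject variability on CL and V and an exponential residual error; all parameter values are illustrative assumptions, and the brute-force grid search stands in for the MAP (Bayesian feedback) estimation that NONMEM, Monolix or a dedicated MIPD tool would perform. Forecasts are scored with MPE and RMSE as in the Performance Calculation step.

```python
import math

# Hypothetical population PK model: one-compartment, IV bolus at t = 0.
# All values are illustrative assumptions, not from any published model.
POP = {"CL": 5.0, "V": 50.0}     # typical clearance (L/h) and volume (L)
OMEGA = {"CL": 0.3, "V": 0.2}    # between-subject SD of the etas (log scale)
SIGMA = 0.15                     # residual SD on the log scale (exponential error)
DOSE = 1000.0                    # mg

def conc(t, cl, v, dose=DOSE):
    """Predicted concentration (mg/L) at time t (h)."""
    return dose / v * math.exp(-cl / v * t)

def map_estimate(samples):
    """Bayesian feedback: MAP estimates of CL and V from (time, conc) samples.

    Minimises sum(((ln obs - ln pred) / SIGMA)^2) + sum((eta / OMEGA)^2)
    by brute-force search over a grid of etas (adequate for 2 parameters).
    """
    grid = [i / 20 for i in range(-30, 31)]   # etas from -1.5 to 1.5, step 0.05
    best = None
    for eta_cl in grid:
        for eta_v in grid:
            cl, v = POP["CL"] * math.exp(eta_cl), POP["V"] * math.exp(eta_v)
            ofv = (eta_cl / OMEGA["CL"]) ** 2 + (eta_v / OMEGA["V"]) ** 2
            for t, c_obs in samples:
                ofv += ((math.log(c_obs) - math.log(conc(t, cl, v))) / SIGMA) ** 2
            if best is None or ofv < best[0]:
                best = (ofv, cl, v)
    return best[1], best[2]

def forecast_performance(tdm):
    """Fit on TDM1..TDMk, forecast TDM(k+1); return pairs, MPE (%), RMSE (mg/L)."""
    tdm = sorted(tdm)                                # chronological order
    pairs = []
    for k in range(1, len(tdm)):
        cl, v = map_estimate(tdm[:k])                # update on earlier samples only
        t_next, c_next = tdm[k]
        pairs.append((conc(t_next, cl, v), c_next))  # (forecast, observed)
    n = len(pairs)
    mpe = 100 * sum((p - o) / o for p, o in pairs) / n
    rmse = math.sqrt(sum((p - o) ** 2 for p, o in pairs) / n)
    return pairs, mpe, rmse

# Synthetic, noise-free patient whose true CL and V differ from the population.
tdm = [(t, conc(t, 7.0, 45.0)) for t in (2.0, 6.0, 12.0, 24.0)]
pairs, mpe, rmse = forecast_performance(tdm)
print(f"MPE = {mpe:.1f} %, RMSE = {rmse:.3f} mg/L")
```

In a real evaluation this loop would run over every patient in the validation dataset, and the pooled forecast errors for each candidate model would feed the Comparative Analysis step.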

Interpretation: Models with lower forecast RMSE and an MPE closer to zero are preferred for clinical decision support. A forecast accuracy above 80% (the share of forecasts falling within ±20% of the observed value) is often considered acceptable, although the clinical context may warrant a different threshold [3].

Protocol 2: Credibility Assessment for QSP/PBPK Models

Purpose: To establish confidence in QSP or PBPK model predictions through a risk-informed credibility assessment framework that aligns with the model's context of use [2] [4].

Materials:

- Documented QSP/PBPK Model: Complete mathematical specification with all equations and parameters

- Parameter Pedigree Table: Documentation of parameter sources and reliability assessments

- Validation Datasets: External clinical or preclinical data not used in model development

- Sensitivity Analysis Tools: Software for local (e.g., OAT) and global (e.g., Sobol, Morris) sensitivity analysis
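As an illustration of the local one-at-a-time (OAT) approach listed above, the sketch below computes normalised sensitivity coefficients by perturbing one parameter at a time. The model output is a hypothetical stand-in (a one-compartment concentration at 12 h) chosen because its coefficients are known analytically (S_CL = -CL·t/V = -1.2 and S_V = CL·t/V - 1 = 0.2 at the values used), which makes the numerical result easy to check. Global methods such as Sobol or Morris instead sample all parameters jointly across their ranges and are usually run with a dedicated package.

```python
import math

def model_output(params, t=12.0, dose=1000.0):
    """Hypothetical model output: concentration at time t for a one-compartment
    IV bolus model (stands in for any QSP/PBPK prediction of interest)."""
    return dose / params["V"] * math.exp(-params["CL"] / params["V"] * t)

def oat_sensitivity(f, params, rel_step=0.01):
    """Local, one-at-a-time (OAT) normalised sensitivity coefficients.

    S_p = (dY / Y) / (dp / p), approximated by a central difference that
    perturbs parameter p by +/- rel_step while all others stay fixed.
    """
    y0 = f(params)
    coeffs = {}
    for name, value in params.items():
        up = {**params, name: value * (1 + rel_step)}
        down = {**params, name: value * (1 - rel_step)}
        coeffs[name] = (f(up) - f(down)) / (2 * rel_step * y0)
    return coeffs

sens = oat_sensitivity(model_output, {"CL": 5.0, "V": 50.0})
print(sens)
```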

Procedure:

1. Context of Use Definition: Explicitly document the specific questions the model will address and the risks associated with incorrect predictions

2. Verification Activities:
   - Implement mass-balance checks for PBPK models
   - Conduct peer code reviews
   - Run unit tests for model components

3. Validation Activities:
   - Compare model predictions to available clinical data
   - Calculate GMFE for exposure metrics (AUC, Cmax)
   - Generate observed vs. predicted plots for key biomarkers

4. Uncertainty Quantification:
   - Perform local sensitivity analysis for key parameters
   - Conduct global sensitivity analysis using Sobol or Morris methods
   - Assess parameter identifiability using profile likelihood or Markov chain Monte Carlo methods

5. Documentation: Compile evidence from steps 1-4 into a credibility assessment report
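The mass-balance check listed under Verification Activities can be automated. The sketch below assumes a hypothetical two-compartment model written in amounts and integrated with explicit Euler steps; it tracks the cumulative eliminated amount so that, at every step, central + peripheral + eliminated should equal the administered dose to within floating-point error. A model that drops or double-counts a flux fails the check, as the deliberately broken variant shows. Rate constants and tolerances are illustrative assumptions.

```python
def simulate(dose, k10, k12, k21, dt=0.01, t_end=24.0):
    """Explicit-Euler simulation of a hypothetical two-compartment model.

    Amounts (not concentrations) are tracked, including the cumulative amount
    eliminated, so that every step can be checked for mass balance.
    """
    central, peripheral, eliminated = dose, 0.0, 0.0
    history = []
    for _ in range(int(round(t_end / dt))):
        out_elim = k10 * central * dt       # central -> eliminated
        out_c2p = k12 * central * dt        # central -> peripheral
        out_p2c = k21 * peripheral * dt     # peripheral -> central
        central += out_p2c - out_c2p - out_elim
        peripheral += out_c2p - out_p2c
        eliminated += out_elim
        history.append((central, peripheral, eliminated))
    return history

def mass_balance_error(history, dose):
    """Largest relative deviation of (central + peripheral + eliminated) from dose."""
    return max(abs(c + p + e - dose) / dose for c, p, e in history)

history = simulate(dose=100.0, k10=0.2, k12=0.5, k21=0.3)
print(f"max relative mass-balance error: {mass_balance_error(history, 100.0):.1e}")

# A deliberately broken variant that "loses" the eliminated amount fails the check.
broken = [(c, p, 0.0) for c, p, _ in history]
print(f"broken model error: {mass_balance_error(broken, 100.0):.2f}")
```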

Interpretation: Model credibility is established when validation demonstrates a GMFE below 2 (i.e., predictions within 2-fold of observed values, on a geometric-mean basis) for exposure metrics and sensitivity analysis confirms that predictions are robust to parameter uncertainty; higher-risk applications call for stricter standards [4].

Model Credibility Assessment Workflow

Protocol 3: Domain-Specific Evaluation for ML Models in Drug Discovery

Purpose: To evaluate machine learning models for drug discovery applications using metrics that address the domain-specific challenges of imbalanced datasets and rare event prediction [5].

Materials:

- Curated Compound Dataset: Annotated with activity labels and relevant molecular descriptors

- Benchmark Datasets: Publicly available datasets (e.g., ChEMBL, PubChem) for comparative assessment

- ML Framework: TensorFlow, PyTorch, or scikit-learn with appropriate model architectures

- Evaluation Scripts: Custom implementations for domain-specific metrics

Procedure:

1. Data Preparation:
   - Split data into training, validation, and test sets (typically 70/20/10)
   - Preserve imbalance characteristics across splits to maintain real-world distribution

2. Baseline Establishment: Train and evaluate baseline models using traditional metrics (accuracy, F1-score, ROC-AUC)

3. Domain-Specific Evaluation:
   - Calculate Precision-at-K (K=1%, 5%, 10%) to assess ranking capability for virtual screening
   - Compute Rare Event Sensitivity by focusing on recall for minority classes (active compounds)
   - Apply Pathway Impact Metrics to evaluate biological relevance of predictions using enrichment analysis

4. Comparative Analysis: Compare domain-specific metrics across different algorithms (e.g., Random Forest vs. Neural Networks)

5. Ablation Studies: Systematically remove input features to assess model robustness and feature importance
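The ranking and rare-event metrics in the Domain-Specific Evaluation step can be computed in a few lines. This sketch assumes K is expressed as a fraction of the ranked library (K = 1% becomes k_frac = 0.01) and reads "rare event sensitivity" as recall on the active class at a chosen score threshold; both are interpretations, since definitions differ between publications. Labels are 1 for active and 0 for inactive, and the toy data are made up.

```python
def precision_at_k(scores, labels, k_frac):
    """Precision among the top k_frac (e.g. 0.01 for 1 %) of compounds by score.

    labels are 1 for active and 0 for inactive; ties keep their input order.
    """
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    k = max(1, round(k_frac * len(ranked)))
    return sum(label for _, label in ranked[:k]) / k

def minority_recall(scores, labels, threshold):
    """Rare-event sensitivity: recall on the active (minority) class at a threshold."""
    actives = [s for s, y in zip(scores, labels) if y == 1]
    if not actives:
        return float("nan")
    return sum(s >= threshold for s in actives) / len(actives)

# Toy screening result: 10 compounds, 3 actives (illustration only).
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.0]
labels = [1, 0, 1, 0, 0, 0, 0, 0, 0, 1]
print(precision_at_k(scores, labels, 0.2), minority_recall(scores, labels, 0.65))
```

A useful reference point: a random ranking has an expected Precision-at-K equal to the proportion of actives in the library (0.3 in the toy data), so Precision-at-K is most informative when reported alongside that base rate.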

Interpretation: ML models with high Precision-at-K (>0.8 for K=1%) and improved rare event sensitivity compared to baselines are preferred. Pathway impact should show statistically significant enrichment in relevant biological pathways [5].

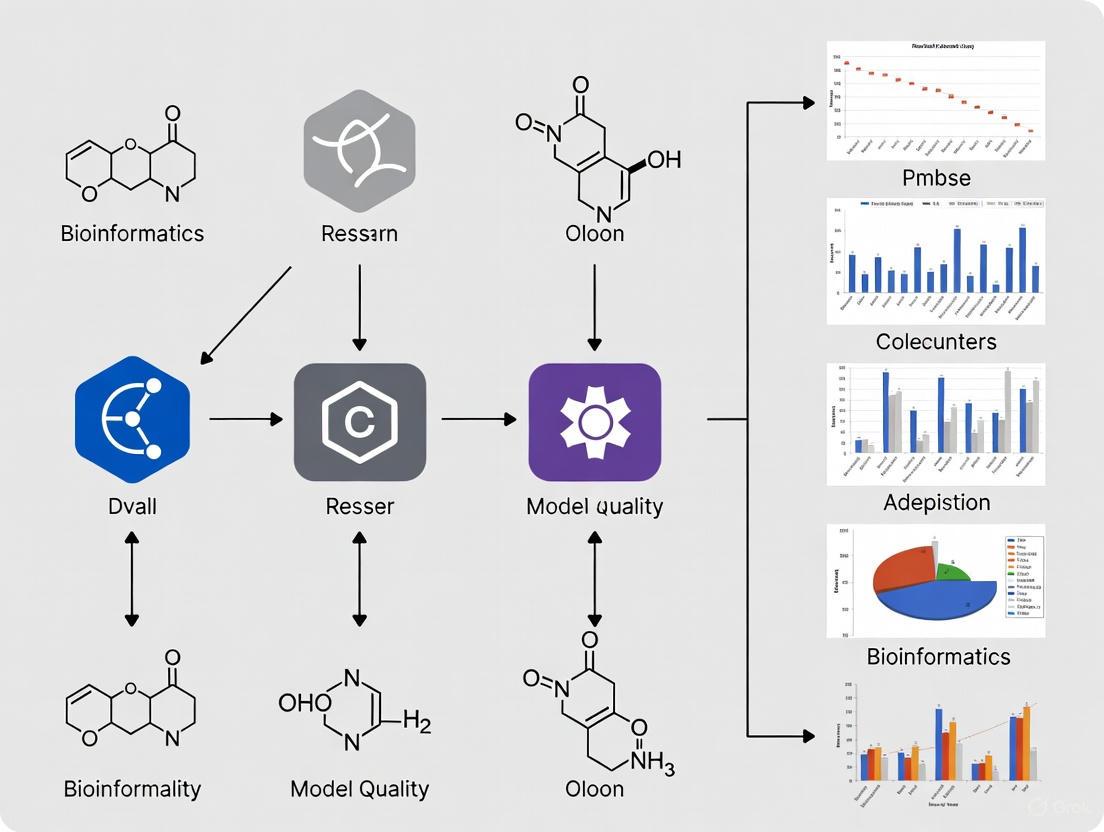

Visualization of Model Evaluation Frameworks

The Right Question, Right Model, Right Analysis Framework

Essential Research Reagent Solutions

Table 3: Key Research Reagents and Tools for Model Quality Assessment

| Reagent/Tool Category | Specific Examples | Primary Function in MQA |
|---|---|---|
| Modeling & Simulation Platforms [1] [4] | NONMEM, Monolix, MATLAB, R (e.g., mrgsolve), Python, Open Systems Pharmacology Suite | Core infrastructure for model development, parameter estimation, and simulation capabilities |
| Sensitivity Analysis Tools [2] [4] | Sobol analysis implementations, Morris method scripts, Parameter identifiability algorithms | Quantification of parameter influence on model outputs and identification of non-identifiable parameters |
| Performance Metrics Calculators [3] [4] | Custom scripts for MAE, RMSE, GMFE, Forecast accuracy, Precision-at-K | Standardized computation of quantitative performance metrics across different model types |
| Credibility Assessment Frameworks [2] [4] | ASME V&V 40 risk-informed framework, Pedigree tables for parameter sourcing | Structured approach for assessing model credibility based on context of use and risk assessment |
| Specialized ML Evaluation Packages [5] | Custom implementations for rare event sensitivity, Pathway enrichment analysis, Precision-at-K | Domain-specific evaluation of ML models addressing biopharma challenges like class imbalance |

Model quality assessment in drug development requires a multifaceted approach tailored to specific model types and their contexts of use. The comparative analysis presented in this guide demonstrates that while fundamental principles of verification and validation apply universally, the specific metrics and methodologies vary significantly across PopPK, QSP/PBPK, and ML modeling paradigms. The "fit-for-purpose" principle remains paramount—evaluation strategies must align with the specific questions a model intends to address and the consequences of potential prediction errors. As modeling approaches continue to evolve and integrate artificial intelligence, standardized assessment frameworks will become increasingly crucial for building stakeholder confidence and ensuring reliable model-informed decisions throughout the drug development lifecycle.

The Critical Role of Model-Informed Drug Development (MIDD)

Model-Informed Drug Development (MIDD) is a quantitative framework that uses exposure-based, biological, and statistical models derived from preclinical and clinical data to improve the quality, efficiency, and cost-effectiveness of drug development decision-making [7] [8]. MIDD approaches maximize information extracted from collected data to enhance confidence in drug targets, endpoints, and regulatory decisions while allowing extrapolation to unstudied situations and populations [9]. Within the broader context of model quality assessment tools research, evaluating the predictive performance, robustness, and context of use for various MIDD approaches becomes paramount for establishing their credibility in regulatory and development decision-making [10].

The fundamental principle of MIDD involves creating a knowledge base from integrated models of compound, mechanism, and disease-level data, which enables greater efficiency in drug development programs [11] [8]. This approach stands in contrast to traditional development methods that often rely on sequential trial-and-error experimentation. By viewing individual trials as building blocks of a cumulative knowledge base, MIDD enables the design of programs optimized for information maximization and uncertainty minimization [11].

Comparative Analysis of Major MIDD Approaches

Taxonomy of MIDD Methodologies

MIDD encompasses a diverse set of quantitative approaches that can be broadly categorized into top-down and bottom-up methodologies [9]. Top-down approaches typically include population pharmacokinetic/pharmacodynamic (PopPK/PD) modeling and simulation, model-based meta-analysis (MBMA), and exposure-response modeling. These methods often work backward from observed clinical data to identify patterns and relationships. In contrast, bottom-up or mechanistic approaches include physiologically-based pharmacokinetic (PBPK) modeling, quantitative systems pharmacology (QSP), and semi-mechanistic PK/PD modeling, which build predictions from first principles of biology and physiology [9].

The choice between these approaches depends on the specific question of interest, available data, and decision context. Top-down methods are particularly valuable when substantial clinical data exists and researchers need to understand relationships between variables or optimize dosing regimens. Bottom-up approaches prove most beneficial in early development when clinical data is limited, or when researchers need to understand complex biological systems and their interactions with therapeutic interventions [9].

Quantitative Comparison of MIDD Approaches

Table 1: Comparison of Primary MIDD Approaches and Their Applications

| MIDD Approach | Primary Applications | Key Inputs Required | Typical Outputs | Regulatory Acceptance |
|---|---|---|---|---|
| Population PK/PD [9] | Dose-response relationships, Subject variability, Dose regimen optimization | Sparse PK samples, PD measurements, Patient covariates | Parameter estimates of variability, Model-based dosing recommendations | Well-established, Expected in late-stage programs |
| PBPK Modeling [9] | Drug-drug interactions, Special populations, Formulation development, First-in-human dosing | Physicochemical properties, In vitro metabolism data, Physiological parameters | PK predictions in unstudied populations, DDI risk assessment | Standard for DDI and specific populations |
| QSP [9] | New modalities, Combination therapy, Target selection, Safety risk qualification | Pathway information, Biomarker data, Drug mechanism data | Systems-level drug effects, Biomarker strategies, Combination rationale | Emerging, Case-by-case basis |
| MBMA [9] | Comparator analysis, Trial design optimization, Go/no-go decisions | Curated clinical trial databases, Literature data | Indirect treatment comparisons, Competitive positioning | Support for trial design and positioning |

Table 2: Performance Metrics of MIDD Impact on Drug Development

| Development Aspect | Traditional Approach | MIDD-Enhanced Approach | Impact Evidence |
|---|---|---|---|
| Dose Selection Strategy [11] | Parallel Phase III trials with limited dose information | Dose-finding trial followed by confirmatory trials | Higher probability of appropriate dose selection (KMco vs DinosaurRX) |
| Development Timeline [9] | Direct to late-stage development | Iterative learning-confirming cycles | Average savings of 10 months per program (Pfizer data) |
| Proof of Mechanism Success [9] | Standard development pathway | Mechanism-based biosimulation | 2.5x higher chance of positive proof of mechanism (AstraZeneca data) |
| Phase III Success Rate [11] | Assumed a priori treatment effect | Evidence synthesis and risk mitigation | Addresses the 55% failure rate attributed to inadequate efficacy |

Experimental Protocols and Methodologies

Model Development and Validation Workflow

The development and application of MIDD approaches follow systematic protocols to ensure robustness and regulatory acceptance. The FDA's Model-Informed Drug Development Paired Meeting Program outlines a structured approach that begins with defining the question of interest and context of use [7]. This includes a detailed assessment of model risk, considering both the weight of model predictions in the totality of data and the potential risk of making an incorrect decision [7].

A critical component is model evaluation, which the ICH M15 draft guidance emphasizes through a harmonized framework for assessing evidence derived from MIDD [10]. This includes verification (ensuring the model is implemented correctly), validation (ensuring the model accurately represents the real-world system), and qualification (establishing the model's suitability for a specific context of use) [10]. The guidance recommends that model development should follow general recommendations in conjunction with current accepted standards and scientific practices for specific modeling and simulation methods [10].

MIDD Workflow and Regulatory Integration

The workflow diagram above illustrates the iterative learning process fundamental to MIDD, emphasizing how models inform decisions throughout development. This process aligns with regulatory expectations outlined in the FDA's MIDD Paired Meeting Program, which encourages early discussion of MIDD approaches to inform specific drug development programs [7].

Research Reagent Solutions for MIDD Implementation

Table 3: Essential Research Tools and Resources for MIDD Applications

| Tool Category | Specific Solutions | Function in MIDD | Implementation Considerations |
|---|---|---|---|
| Data Curation Platforms [9] | Clinical trial databases (e.g., Codex), Literature curation tools | Supports MBMA by providing highly curated clinical trial data for indirect comparisons | Requires standardized data structure and quality control processes |
| Modeling Software [9] [8] | PBPK platforms, PopPK/PD tools, QSP frameworks | Enables mechanism-based biosimulation and pharmacokinetic/pharmacodynamic modeling | Selection depends on specific question of interest and development stage |
| Simulation Environments [11] [8] | Clinical trial simulators, Statistical computing environments | Facilitates assessment of trial operating characteristics and probabilistic determinations | Must balance computational efficiency with model complexity |
| Regulatory Submission Templates [7] [10] | ICH M15-aligned documentation frameworks | Standardizes evidence presentation for regulatory assessment | Early alignment with regulatory expectations through FDA MIDD Program |
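As an example of how a simulation environment assesses trial operating characteristics, the sketch below estimates power and type I error for a hypothetical two-arm trial with normally distributed outcomes by Monte Carlo simulation. The design (sample size, effect size, standard deviation, two-sided z-test at α = 0.05) is an illustrative assumption; real clinical trial simulations would typically draw outcomes from exposure-response or disease-progression models instead.

```python
import math
import random
from statistics import NormalDist

def _mean_var(xs):
    """Sample mean and unbiased sample variance."""
    m = sum(xs) / len(xs)
    return m, sum((x - m) ** 2 for x in xs) / (len(xs) - 1)

def simulate_trial(n_per_arm, effect, sd, rng):
    """One hypothetical two-arm trial with normal outcomes; returns the z statistic."""
    m_c, v_c = _mean_var([rng.gauss(0.0, sd) for _ in range(n_per_arm)])
    m_t, v_t = _mean_var([rng.gauss(effect, sd) for _ in range(n_per_arm)])
    return (m_t - m_c) / math.sqrt(v_c / n_per_arm + v_t / n_per_arm)

def rejection_rate(n_per_arm, effect, sd, n_sims=2000, alpha=0.05, seed=1):
    """Share of simulated trials that reject H0 with a two-sided z-test.

    With effect = 0 this estimates the type I error rate; otherwise it
    estimates power (1 - type II error rate) for the assumed effect size.
    """
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    rejections = sum(abs(simulate_trial(n_per_arm, effect, sd, rng)) > z_crit
                     for _ in range(n_sims))
    return rejections / n_sims

power = rejection_rate(n_per_arm=100, effect=0.4, sd=1.0)
type1 = rejection_rate(n_per_arm=100, effect=0.0, sd=1.0)
print(f"estimated power = {power:.3f}, estimated type I error = {type1:.3f}")
```

For these settings the normal-approximation power is about 0.81, so the simulated estimate should fall close to that value, and the type I error estimate should sit near the nominal 0.05.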

Regulatory Landscape and Quality Assessment

Regulatory Framework and Standards

The regulatory landscape for MIDD has evolved significantly, with major regulatory bodies establishing formal pathways for MIDD integration into drug development. The FDA's MIDD Paired Meeting Program, conducted under PDUFA VII, provides sponsors the opportunity to meet with Agency staff to discuss MIDD approaches in medical product development [7]. This program focuses particularly on dose selection, clinical trial simulation, and predictive safety evaluation [7].

Internationally, the ICH M15 draft guidance on general principles for Model-Informed Drug Development represents a significant step toward harmonized approaches to MIDD assessment [10]. This guidance discusses multidisciplinary principles of MIDD and provides recommendations on MIDD planning, model evaluation, and evidence documentation, promoting consistent and transparent evaluation of MIDD evidence to inform regulatory decision-making [10].

Model Quality Assessment Framework

The assessment of model quality in MIDD follows a risk-based framework that considers both the model influence (weight of model predictions in the totality of data) and the decision consequence (potential risk of making an incorrect decision) [7]. This framework acknowledges that not all models require the same level of validation, with the necessary evaluation rigor proportional to the model's potential impact on development and regulatory decisions.
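The two-factor framework can be expressed as a small lookup that combines model influence and decision consequence into a model-risk level. The additive scoring and cut-offs below are illustrative assumptions, not values taken from ICH M15 or FDA guidance; a sponsor would define its own matrix for a given context of use.

```python
LEVELS = ("low", "medium", "high")

def model_risk(influence, consequence):
    """Combine model influence and decision consequence into a model-risk level.

    The additive score (0..4) and the cut-offs are hypothetical, chosen only
    to show that higher influence or consequence never lowers the risk.
    """
    score = LEVELS.index(influence) + LEVELS.index(consequence)
    if score <= 1:
        return "low"
    if score == 2:
        return "medium"
    return "high"

# Print the full 3x3 matrix: rows are influence, columns are consequence.
for infl in LEVELS:
    print(infl.ljust(7), [model_risk(infl, cons) for cons in LEVELS])
```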

The ICH M15 guidance provides a harmonized framework for assessing evidence derived from MIDD, focusing on model credibility through evaluation of its scientific basis, technical performance, and relevance to the specific context of use [10]. This represents a crucial advancement in model quality assessment tools research, establishing standardized criteria for evaluating MIDD approaches across regulatory jurisdictions.

The continued evolution of MIDD approaches points toward increased integration of artificial intelligence and machine learning methods, expanded use of quantitative systems pharmacology for complex biological systems, and greater emphasis on model-based extrapolation to special populations [12] [9]. As recognized in regulatory guidance, the appropriate use of MIDD enables greater efficiency in drug development while harmonized approaches to assessment promote consistent and transparent evaluation of MIDD evidence [10].

The critical role of MIDD in modern drug development is now firmly established, with the framework moving from a "nice-to-have" capability to a "regulatory essential" component of comprehensive drug development programs [9]. Through continued refinement of model quality assessment tools and methodologies, MIDD approaches will play an increasingly vital role in bridging knowledge gaps, optimizing development efficiency, and ultimately delivering better medicines to patients through more informed decision-making.

Understanding the 'Fit-for-Purpose' Framework for Tool Selection

In the rapidly evolving field of drug discovery, selecting the right software tool is a critical determinant of research success. With the integration of advanced artificial intelligence and machine learning technologies, modern platforms have dramatically accelerated development cycles and improved prediction accuracy [13]. Against this backdrop, the 'Fit-for-Purpose' Framework emerges as a systematic methodology for evaluating and selecting tools based on how well they address specific research needs rather than abstract feature comparisons [14]. This framework provides researchers with a structured approach to identify solutions that deliver optimal performance for their specific use cases, technical environment, and organizational constraints.

This guide implements this framework through a comparative analysis of leading AI-driven drug discovery platforms, providing experimental data and methodological details to facilitate informed decision-making for research professionals engaged in model quality assessment.

Core Principles of the Fit-for-Purpose Framework

The Fit-for-Purpose Framework shifts tool selection from feature-centric checklists to a holistic assessment of how well a tool's capabilities align with research objectives. The framework emphasizes two fundamental characteristics for data and tools: reliability (trustworthiness and credibility) and relevancy (appropriateness for the specific research question) [14].

This methodology involves:

- Articulating Specific Research Questions: Clearly defining the scientific problems and objectives.

- Identifying Minimum Criteria: Determining the essential capabilities needed to validly address the research questions.

- Systematic Assessment: Evaluating candidate tools against these criteria with a focus on practical implementation.

- Operational Considerations: Accounting for logistical factors including data access, timelines, and integration requirements [14].

The framework further classifies evaluation metrics into four distinct categories to clarify their role in assessment:

- Fitness Criteria: The thresholds customers use for selection, with minimum acceptable and exceptional performance levels.

- Health Indicators: Important vital signs that should be monitored within a healthy range but aren't primary selection factors.

- Improvement Drivers: Temporary metrics with specific targets used to motivate and measure enhancements.

- Vanity Metrics: Measurements that may serve emotional needs but don't correlate with improved business performance or customer satisfaction [15].
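The four metric categories can be made operational when screening candidate tools. In this sketch, only fitness criteria gate the decision, each with a minimum-acceptable and an exceptional level; health indicators and vanity metrics are simply not passed to the screening function. The criterion names, thresholds and pilot results are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class FitnessCriterion:
    """A selection threshold with a minimum-acceptable and an exceptional level."""
    name: str
    minimum: float
    exceptional: float
    higher_is_better: bool = True

    def grade(self, value):
        # Flip signs for "lower is better" metrics (e.g. turnaround time).
        sign = 1 if self.higher_is_better else -1
        v, lo, hi = sign * value, sign * self.minimum, sign * self.exceptional
        if v < lo:
            return "fails"
        return "exceptional" if v >= hi else "acceptable"

def screen(results, criteria):
    """Grade one candidate tool; failing any fitness criterion disqualifies it."""
    grades = {c.name: c.grade(results[c.name]) for c in criteria}
    return all(g != "fails" for g in grades.values()), grades

# Hypothetical criteria and pilot-study results, for illustration only.
criteria = [
    FitnessCriterion("precision_at_1pct", minimum=0.5, exceptional=0.8),
    FitnessCriterion("turnaround_days", minimum=14, exceptional=3, higher_is_better=False),
]
print(screen({"precision_at_1pct": 0.82, "turnaround_days": 5}, criteria))
print(screen({"precision_at_1pct": 0.45, "turnaround_days": 2}, criteria))
```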

Comparative Analysis of AI Drug Discovery Platforms

Applying the Fit-for-Purpose Framework reveals significant differentiation among leading platforms. The table below summarizes key platforms and their specialized capabilities:

Table 1: AI Drug Discovery Platform Specializations

| Platform | Primary Specialization | Best For | Standout Feature |
|---|---|---|---|
| Atomwise | Hit Identification | Fast, accurate small molecule screening | AtomNet deep learning for virtual screening [13] |
| Insilico Medicine | End-to-End Discovery | Full drug discovery lifecycle | Generative chemistry models for molecule design [13] |
| Schrödinger | Structure-Based Design | Enterprise-level research | Physics-based + ML simulations for accuracy [13] |
| DeepMind AlphaFold | Target Discovery | Protein structure prediction | Near-exact protein folding predictions [13] |
| Exscientia | Automated Optimization | AI-driven molecular design | Automated design-make-test-analyze cycles [13] |
| DeepMirror | Hit-to-Lead Optimization | Accelerating lead optimization phases | Generative AI engine for molecular property prediction [16] |
| Cresset | Protein-Ligand Modeling | Understanding molecular interactions | Free Energy Perturbation (FEP) enhancements [16] |

Quantitative Performance Comparison

Platform performance varies significantly across different computational tasks. The following table summarizes experimental results from benchmark studies and vendor demonstrations:

Table 2: Experimental Performance Metrics Across Platforms

| Platform/Technology | Experimental Task | Reported Result | Experimental Context |
|---|---|---|---|
| Generative AI (DeepMirror) | Hit-to-Lead Optimization | 6x acceleration | Real-world scenarios, relative to traditional timelines [16] |
| AI-Enabled Workflows | Language-model efficiency (MMLU) | 142x parameter reduction | Microsoft's Phi-3-mini (3.8B parameters) reaching the same MMLU threshold (>60%) as the 540B-parameter PaLM [17] |
| Deep Graph Networks | Analog Generation & Potency | 4,500x potency improvement; 26,000+ virtual analogs | Sub-nanomolar MAGL inhibitors from initial hits [18] |
| AI-Enhanced Screening | Hit Enrichment | 50x boost vs. traditional methods | Integration of pharmacophoric features with protein-ligand data [18] |
| OpenAI o1 Model | Complex Reasoning | 74.4% vs. 9.3% (GPT-4o) | International Mathematical Olympiad qualifying exam [17] |

Experimental Protocols for Platform Evaluation

Benchmarking Methodology for Generative AI Platforms

Standardized experimental protocols are essential for meaningful cross-platform comparisons. The following workflow outlines a comprehensive evaluation methodology for generative AI in drug discovery:

Diagram 1: Generative AI platform evaluation workflow.

Key Performance Metrics and Measurement Approaches

- Molecular Generation Diversity: Measured using Tanimoto similarity coefficients and scaffold analysis to evaluate structural novelty of generated compounds versus training data.

- Property Prediction Accuracy: Quantified through root mean square error (RMSE) and correlation coefficients for ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) endpoints compared to experimental values.

- Binding Affinity Validation: Assessed using computational methods (Free Energy Perturbation, molecular docking) and experimental techniques (CETSA for cellular target engagement) [18].

- Synthetic Accessibility: Evaluated using synthetic complexity scores and manual assessment by medicinal chemists.
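A minimal sketch of the diversity and novelty measures named under Molecular Generation Diversity, assuming binary fingerprints are represented as sets of on-bit indices. In practice these fingerprints would come from a cheminformatics toolkit (for example, RDKit Morgan fingerprints); the toy sets here are placeholders. Internal diversity is taken as one minus the mean pairwise Tanimoto similarity, and novelty as the nearest-neighbour similarity to the training set, which are common but not universal definitions.

```python
from itertools import combinations

def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity of two binary fingerprints as on-bit sets."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def internal_diversity(fps):
    """One minus the mean pairwise Tanimoto similarity within a generated set."""
    pairs = list(combinations(fps, 2))
    if not pairs:
        raise ValueError("need at least two fingerprints")
    return 1 - sum(tanimoto(a, b) for a, b in pairs) / len(pairs)

def nearest_training_similarity(fp, training_fps):
    """Highest Tanimoto similarity to any training compound; lower = more novel."""
    return max(tanimoto(fp, t) for t in training_fps)

# Toy fingerprints (sets of on-bit indices), for illustration only.
generated = [{1, 4, 7, 9}, {1, 4, 8, 9}, {2, 5, 11}]
training = [{1, 4, 7, 9, 12}, {3, 6}]
print(f"internal diversity: {internal_diversity(generated):.2f}")
for fp in generated:
    print(sorted(fp), f"nearest training similarity: {nearest_training_similarity(fp, training):.2f}")
```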

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful implementation of AI drug discovery platforms requires integration with specialized experimental reagents and materials. The following table details key components of the modern drug discovery toolkit:

Table 3: Essential Research Reagents and Materials for Experimental Validation

| Reagent/Material | Function/Purpose | Application Context |
|---|---|---|
| CETSA (Cellular Thermal Shift Assay) | Validates direct drug-target engagement in intact cells and tissues [18] | Confirmation of binding in physiologically relevant environments |
| Target Protein Libraries | Provides structures for virtual screening and docking studies | Structure-based drug design and target identification |
| Compound Libraries | Sources for hit identification and lead optimization | High-throughput screening and virtual screening |
| ADMET Prediction Tools | Estimates pharmacokinetic and toxicity properties early in discovery | Prioritization of compounds for synthesis and testing |
| Molecular Probes | Investigates target function and binding mechanisms | Biochemical and cellular assay development |
| Analytical Standards | Ensures quality control and data reliability | Chromatography and mass spectrometry applications |

Platform-Specific Experimental Data and Workflows

Schrödinger's Physics-Based Modeling Platform

Schrödinger integrates advanced computational methods with specialized experimental workflows:

Diagram 2: Schrödinger physics-based modeling workflow.

Experimental Outcome: Schrödinger's FEP+ implementation achieves high accuracy in binding affinity predictions, with benchmark studies demonstrating correlation coefficients (R²) exceeding 0.8 between computed and experimental binding free energies across diverse target classes [16].
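A benchmark threshold such as R² above 0.8 is straightforward to check on one's own data. This sketch computes R² as the squared Pearson correlation between computed and experimental binding free energies, together with the RMSE in kcal/mol that FEP studies often report alongside it; the numbers are hypothetical and not taken from any Schrödinger benchmark.

```python
import math

def r_squared(x, y):
    """Squared Pearson correlation between computed and experimental values."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy ** 2 / (sxx * syy)

def rmse(x, y):
    """Root mean squared error, e.g. in kcal/mol for binding free energies."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)) / len(x))

# Hypothetical computed vs experimental binding free energies (kcal/mol).
computed = [-9.1, -8.4, -10.2, -7.6, -8.9]
experimental = [-9.4, -8.0, -10.0, -7.9, -9.3]
r2 = r_squared(computed, experimental)
print(f"R^2 = {r2:.2f}, RMSE = {rmse(computed, experimental):.2f} kcal/mol, "
      f"meets R^2 > 0.8: {r2 > 0.8}")
```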

DeepMirror's Generative AI Approach

DeepMirror employs foundational models that automatically adapt to user data for molecular generation and optimization:

Methodology: The platform utilizes deep generative models trained on chemical and biological data to propose novel molecular structures with optimized properties. The system incorporates:

- Transfer Learning: Models pre-trained on large public databases and fine-tuned with project-specific data.

- Multi-Objective Optimization: Simultaneous optimization of potency, selectivity, and ADMET properties.

- Reinforcement Learning: Reward functions shaped by predictive models and medicinal chemistry rules.
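The multi-objective and reinforcement-learning components above can be illustrated with a reward function that combines predicted properties with rule-based penalties. The sketch below is hypothetical and is not DeepMirror's reward: it assumes property predictions are already scaled to [0, 1], uses a simple weighted sum (one of several scalarization choices; Pareto-based methods are an alternative), and borrows two Lipinski rule-of-five limits (molecular weight ≤ 500 Da, ≤ 5 hydrogen-bond donors) as the medicinal chemistry rules. The weights and penalty sizes are arbitrary illustrative values.

```python
from dataclasses import dataclass


@dataclass
class Predictions:
    """Hypothetical predictive-model outputs for one candidate, each scaled to [0, 1]."""
    potency: float
    selectivity: float
    admet: float  # 1.0 means no predicted ADMET liabilities


def rule_penalty(mol_weight: float, h_bond_donors: int) -> float:
    """Illustrative medicinal-chemistry penalty using two rule-of-five limits."""
    penalty = 0.0
    if mol_weight > 500:
        penalty += 0.2
    if h_bond_donors > 5:
        penalty += 0.2
    return penalty


def reward(pred: Predictions, mol_weight: float, h_bond_donors: int,
           weights: tuple = (0.5, 0.2, 0.3)) -> float:
    """Weighted-sum scalarization of the objectives minus rule penalties, floored at 0."""
    w_pot, w_sel, w_admet = weights
    score = w_pot * pred.potency + w_sel * pred.selectivity + w_admet * pred.admet
    return max(0.0, score - rule_penalty(mol_weight, h_bond_donors))


# A potent but heavy candidate loses reward for exceeding 500 Da.
print(reward(Predictions(potency=0.9, selectivity=0.7, admet=0.6),
             mol_weight=540, h_bond_donors=3))
```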

Experimental Results: In an antimalarial drug program, DeepMirror's platform demonstrated a significant reduction in ADMET liabilities while maintaining target potency [16].

The Fit-for-Purpose Framework provides a structured methodology for matching platform capabilities to specific research requirements. When applied to AI drug discovery tools, the framework yields the following selection guidelines:

- Choose Atomwise for rapid, deep learning-based hit identification through virtual screening of billions of compounds [13].

- Select Insilico Medicine for end-to-end discovery pipelines with strong generative chemistry capabilities [13].

- Utilize Schrödinger for high-accuracy structure-based design requiring physics-based simulations and molecular docking [16] [13].

- Use DeepMind's AlphaFold for accurate protein structure prediction to support target identification and characterization [13].

- Apply Exscientia for automated molecular design with integrated design-make-test-analyze cycles [13].

- Employ DeepMirror for AI-accelerated hit-to-lead optimization with generative molecular design [16].

The most effective tool selection strategy involves mapping specific research requirements against platform specializations, then validating choices through controlled pilot studies that measure relevant fitness criteria. This approach ensures selected tools deliver optimal performance for the intended research context while providing measurable experimental evidence to support implementation decisions.
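As a minimal sketch of the first step (mapping requirements to platform specializations), the selection guidelines can be encoded as a lookup table. The requirement keys and the `shortlist` helper are hypothetical names introduced for illustration; the platform assignments only transcribe the guideline list, and the resulting shortlist is a starting point for pilot studies rather than a decision.

```python
# Platform specializations transcribed from the selection guidelines.
# The requirement keys are hypothetical labels chosen for this sketch.
PLATFORM_SPECIALIZATIONS = {
    "virtual_screening_hit_id": "Atomwise",
    "end_to_end_generative_pipeline": "Insilico Medicine",
    "physics_based_structure_design": "Schrödinger",
    "protein_structure_prediction": "AlphaFold",
    "automated_dmta_design": "Exscientia",
    "hit_to_lead_generative_optimization": "DeepMirror",
}


def shortlist(requirements):
    """Map each requirement to a candidate platform; report unmatched needs separately."""
    matched, unmatched = {}, []
    for req in requirements:
        platform = PLATFORM_SPECIALIZATIONS.get(req)
        if platform is None:
            unmatched.append(req)  # no listed platform covers this need
        else:
            matched[req] = platform
    return matched, unmatched


print(shortlist(["protein_structure_prediction", "hit_to_lead_generative_optimization"]))
```

Keeping unmatched requirements visible, rather than silently dropping them, flags gaps that the pilot study or an additional tool must cover.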

Aligning Tools with Questions of Interest (QOI) and Context of Use (COU)

Strategic selection of assessment tools is paramount in clinical and qualitative research. The process is anchored by two foundational concepts: the Concept of Interest (COI) and the Context of Use (COU). The COI is formally defined as "the aspect of an individual’s clinical, biological, physical, or functional state, or experience that the assessment is intended to capture" [19]. In practice, it is the specific "thing" researchers aim to measure, such as fatigue or pain level, and it should be directly informed by patient input on what matters to them [19].

The COU, by contrast, is "a statement that fully and clearly describes the way the medical product development tool is to be used and the medical product development-related purpose of the use" [19]. It specifies the situation in which the assessment instrument will be deployed, including the target patient population and the setting [19]. Aligning quality assessment tools with the COI and COU is a critical first step in the iterative development of any Clinical Outcome Assessment (COA): it ensures that the selected tools are fit-for-purpose and that the resulting data are credible, dependable, and transferable [19] [20].

Comparative Analysis of Quality Assessment Tools

A diverse ecosystem of quality assessment tools exists to serve different research questions and study designs. The choice of tool must be matched to the specific domain (e.g., diagnosis, prognosis, intervention) and the type of evidence being assessed (e.g., a prediction model versus a single test) [21].

Tool Selection Guidance Framework

To navigate the complex landscape of quality assessment, researchers can use a structured set of questions to identify the most appropriate tool [21].

Catalog of Quality Assessment Tools

The table below provides a categorized overview of prominent quality assessment tools available to researchers [22]:

Table 1: Quality Assessment Tools by Study Design

| Study Design | Assessment Tools |
|---|---|
| Randomized Controlled Trials (RCTs) | Cochrane Risk of Bias (ROB) 2.0, CASP RCT Checklist, Jadad Scale, CEBM RCT Tool, JBI RCT Checklist [22] |
| Observational Studies | Newcastle-Ottawa Scale (NOS), CASP Cohort/Case-Control Checklists, JBI Cohort/Case-Control Checklists, STROBE Checklist [22] |
| Diagnostic Studies | QUADAS-2, CASP Diagnostic Checklist, JBI Diagnostic Test Accuracy Checklist [21] [22] |
| Systematic Reviews | AMSTAR, CASP Systematic Review Checklist, ROBIS, JBI Critical Appraisal Checklist for Systematic Reviews [22] |
| Qualitative Research | CASP Qualitative Checklist, JBI Qualitative Checklist, Evaluative Tools for Qualitative Studies (ETQS) [20] [22] |
| Economic Evaluations | CASP Economic Evaluation Checklist, Consensus Health Economic Criteria (CHEC) List [22] |
| Mixed Methods / Other | McGill Mixed Methods Appraisal Tool (MMAT), LEGEND Evidence Evaluation Tools [22] |

Experimental Performance Data in Qualitative Research Appraisal

The emergence of Artificial Intelligence (AI) as a potential tool for augmenting research processes presents a new dimension for comparative analysis. A recent study evaluated the performance of five AI models in assessing the quality of qualitative research using three standardized tools [20].

Experimental Protocol for AI Model Evaluation

Objective: To evaluate and compare the performance of five AI models (GPT-3.5, Claude 3.5, Sonar Huge, GPT-4, and Claude 3 Opus) in assessing the quality of qualitative health research using the Critical Appraisal Skills Programme (CASP), Joanna Briggs Institute (JBI) checklist, and Evaluative Tools for Qualitative Studies (ETQS) [20].

Methodology:

- Model Selection: Five AI models with diverse architectures and capabilities were selected (see Table 2 for details) [20].

- Input Material: Each model was tasked with appraising three peer-reviewed qualitative papers in health and physical activity research [20].

- Assessment Framework: Models generated assessments based on the three standardized tools (CASP, JBI, ETQS) [20].

- Data Analysis: The study analyzed systematic affirmation bias (the tendency to answer "Yes" to checklist questions), interrater reliability among models, and tool-dependent disagreements. A sensitivity analysis evaluated the impact of excluding specific models on agreement levels [20].
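The interrater-reliability and sensitivity analyses described above can be sketched as follows. This is a from-scratch implementation of Krippendorff's α for nominal labels (built from the coincidence matrix), plus a leave-one-rater-out loop that mirrors the model-exclusion analysis. It is not the study's code; the rater names and answers are hypothetical, and for published work a vetted statistical package is preferable.

```python
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(ratings):
    """Krippendorff's alpha for nominal labels.

    ratings: dict mapping rater name -> list of labels aligned by item;
    None marks a missing rating. Returns nan when alpha is undefined.
    """
    raters = list(ratings)
    n_items = len(ratings[raters[0]])
    coincidence = Counter()
    for i in range(n_items):
        values = [ratings[r][i] for r in raters if ratings[r][i] is not None]
        m = len(values)
        if m < 2:
            continue  # items rated by fewer than two raters cannot be paired
        # Every ordered pair of ratings from different raters, weighted 1/(m-1).
        for a, b in permutations(values, 2):
            coincidence[(a, b)] += 1.0 / (m - 1)
    marginals = Counter()
    for (a, _), weight in coincidence.items():
        marginals[a] += weight
    n = sum(marginals.values())
    disagree_obs = sum(w for (a, b), w in coincidence.items() if a != b)
    disagree_exp = sum(marginals[a] * marginals[b]
                       for a in marginals for b in marginals if a != b)
    if disagree_exp == 0:
        return float("nan")  # no variation in labels: alpha is undefined
    return 1.0 - (n - 1) * disagree_obs / disagree_exp


def leave_one_rater_out(ratings):
    """Sensitivity analysis: change in alpha when each rater is excluded in turn."""
    baseline = krippendorff_alpha_nominal(ratings)
    deltas = {}
    for excluded in ratings:
        subset = {r: v for r, v in ratings.items() if r != excluded}
        deltas[excluded] = krippendorff_alpha_nominal(subset) - baseline
    return baseline, deltas


# Hypothetical checklist answers from three raters on four items.
answers = {
    "model_a": ["Yes", "Yes", "No", "Cannot tell"],
    "model_b": ["Yes", "No", "No", "Cannot tell"],
    "model_c": ["Yes", "Yes", "No", "No"],
}
baseline, deltas = leave_one_rater_out(answers)
print(f"baseline alpha = {baseline:.3f}")
for rater, delta in sorted(deltas.items(), key=lambda kv: -kv[1]):
    print(f"excluding {rater}: {delta:+.3f}")
```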

The Scientist's Toolkit: Key Research Reagents

- AI Large Language Models (GPT-3.5, GPT-4, Claude 3.5, etc.): Function as automated qualitative appraisers; process textual data from research papers to generate quality assessments based on predefined criteria [20].

- Standardized Quality Checklists (CASP, JBI, ETQS): Act as structured evaluation frameworks; provide a consistent set of methodological criteria against which AI models or human raters assess research quality [20].

- Peer-Reviewed Qualitative Research Papers: Serve as the test substrate; provide standardized, real-world input material for benchmarking the performance of different assessment methods (AI or human) [20].

- Interrater Reliability Metrics (Krippendorff’s α): Function as a statistical consistency gauge; measure the degree of agreement between multiple raters (e.g., AI models) beyond what is expected by chance [20].

Quantitative Performance Results

The experimental data reveal marked variations in AI model performance and consensus.

Table 2: AI Model Affirmation Bias and Characteristics [20]

| AI Model | Developer | "Yes" Response Rate | Key Characteristics |
|---|---|---|---|
| Claude 3.5 | Anthropic | 85.4% (164/192) | Exhibited the highest rate of affirmation bias |
| GPT-3.5 | OpenAI | 81.3% (156/192) | Showed near-perfect alignment with Claude 3.5 |
| Sonar Huge | Perplexity AI | 79.2% (76/96 for 1 paper) | Open-source model with greater variability |
| Claude 3 Opus | Anthropic | 75.9% (145/191) | Lower affirmation rate than Claude 3.5 |
| GPT-4 | OpenAI | 59.9% (115/192) | Significant divergence, with high uncertainty ("Cannot tell": 35.9%) |
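The percentages in Table 2 follow directly from the reported counts. The short sketch below recomputes them; the counts are transcribed from the table, the `affirmation_rate` helper is an illustrative name, and two decimals are printed because the table rounds to one (for example, 156/192 is exactly 81.25%). Note that Sonar Huge's denominator covers a single paper.

```python
# ("Yes" responses, total responses) transcribed from Table 2.
YES_COUNTS = {
    "Claude 3.5": (164, 192),
    "GPT-3.5": (156, 192),
    "Sonar Huge": (76, 96),  # one paper only
    "Claude 3 Opus": (145, 191),
    "GPT-4": (115, 192),
}


def affirmation_rate(yes: int, total: int) -> float:
    """Share of checklist items answered "Yes", as a percentage."""
    return 100.0 * yes / total


# Print models from highest to lowest affirmation rate.
for model, (yes, total) in sorted(YES_COUNTS.items(),
                                  key=lambda kv: affirmation_rate(*kv[1]),
                                  reverse=True):
    print(f"{model:<14}{affirmation_rate(yes, total):6.2f}%")
```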

Table 3: Interrater Reliability by Assessment Tool [20]

| Assessment Tool | Baseline Agreement (Krippendorff’s α) | Maximum Agreement After Model Exclusion | Model Whose Exclusion Increased Agreement Most |
|---|---|---|---|
| CASP | 0.653 | 0.784 (+20%) | GPT-4 |
| JBI | 0.477 | 0.561 (+18%) | Sonar Huge |
| ETQS | 0.376 | 0.409 (+9%) | GPT-4 or Claude 3 Opus |

The workflow and results of this comparative experiment are summarized below:

Discussion and Synthesis

Key Performance Differentiators

The comparative data indicate that the choice of assessment tool significantly influences the consistency of appraisal outcomes. The CASP tool produced the highest baseline consensus among AI models (α = 0.653), suggesting that its structure lends itself to more consistent interpretation than the JBI and ETQS tools [20]. Two proprietary models, GPT-3.5 and Claude 3.5, showed remarkably high alignment (Cramér's V = 0.891), whereas open-source models and GPT-4 exhibited greater variability [20]. Both the tool and the appraiser, therefore, introduce variability.
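For readers unfamiliar with the alignment statistic, the sketch below computes Cramér's V (without bias correction) from two raters' aligned categorical answers, using the chi-square statistic of their contingency table. The rater answers are hypothetical. One caveat worth keeping in mind: V measures association rather than agreement, so two raters who systematically swap labels can still reach V = 1, which is why agreement coefficients such as Krippendorff's α are reported alongside it.

```python
from collections import Counter
from math import sqrt


def cramers_v(x, y):
    """Cramér's V (no bias correction) for two aligned sequences of categorical labels."""
    if len(x) != len(y) or not x:
        raise ValueError("inputs must be non-empty and the same length")
    n = len(x)
    rows, cols = sorted(set(x)), sorted(set(y))
    observed = Counter(zip(x, y))
    row_tot, col_tot = Counter(x), Counter(y)
    # Pearson chi-square over every cell of the contingency table.
    chi2 = 0.0
    for r in rows:
        for c in cols:
            expected = row_tot[r] * col_tot[c] / n
            chi2 += (observed[(r, c)] - expected) ** 2 / expected
    k = min(len(rows), len(cols)) - 1
    if k == 0:
        return float("nan")  # undefined when either rater uses a single category
    return sqrt(chi2 / (n * k))


# Hypothetical answers from two AI raters on the same checklist items.
rater_1 = ["Yes", "Yes", "No", "Cannot tell", "Yes", "No"]
rater_2 = ["Yes", "Yes", "No", "No", "Yes", "No"]
print(f"Cramér's V = {cramers_v(rater_1, rater_2):.3f}")
```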

A central finding across studies is the critical importance of aligning the tool with the COI and COU. Research indicates that an overly rigid application of quality criteria may fail to capture the diversity of qualitative research approaches [20]. The AI study concluded that while AI models enhance efficiency, they struggle with nuanced, context-dependent interpretation, particularly for specific ETQS criteria [20]. This underscores the necessity of a hybrid framework that leverages AI's scalability while retaining human expertise for final interpretive judgment.

Implications for Research Practice

For researchers, scientists, and drug development professionals, this analysis underscores several critical practices. First, the Tool Selection Guidance Framework described above provides a logical starting point for ensuring that the QOI and COU drive the selection process. Second, when designing studies or systematic reviews, consider the inherent variability of different appraisal tools and raters (human or AI), as this can affect the synthesis of evidence. Finally, AI-assisted appraisal of qualitative research is promising for efficiency, but it is not a substitute for human contextual judgment. The future of rigorous quality assessment lies in collaborative human-AI workflows that leverage the strengths of both.

In the rapidly evolving field of artificial intelligence, particularly within high-stakes domains like drug development, rigorous model evaluation is paramount. The performance of AI and large language models (LLMs) is no longer measured by single-dimension metrics but through a multifaceted lens focusing on four critical dimensions: accuracy, factuality, robustness, and safety [23]. These dimensions form the cornerstone of reliable AI systems, ensuring they perform as intended in controlled environments and maintain reliability when deployed in real-world scenarios characterized by unpredictable inputs, adversarial conditions, and evolving data distributions [24] [25].

For researchers, scientists, and drug development professionals, understanding these quality dimensions transcends academic interest—it represents a fundamental requirement for regulatory compliance, patient safety, and successful clinical application. As AI becomes increasingly integrated into drug discovery pipelines, diagnostic tools, and treatment optimization systems, the comparative analysis of assessment methodologies enables professionals to select appropriate tools, implement effective validation protocols, and ultimately build trustworthy AI solutions that accelerate therapeutic advancements while mitigating potential risks [26] [1].

Comparative Analysis of Evaluation Tools

The market offers diverse tools specializing in different aspects of model quality assessment. The following comparison summarizes the capabilities of leading platforms across our key quality dimensions, providing researchers with a practical reference for tool selection.

Table 1: Comprehensive Comparison of AI Model Evaluation Tools

| Tool Name | Primary Focus | Accuracy & Factuality Metrics | Robustness Testing Capabilities | Safety & Alignment Features | Drug Development Applications |
|---|---|---|---|---|---|
| Confident AI (DeepEval) | LLM Evaluation | Answer relevancy, factual consistency, G-Eval framework [27] | - | Bias detection, toxicity assessment [27] | - |
| Galileo | GenAI Evaluation | ChainPoll methodology, hallucination detection, factuality without ground truth [28] | - | Contextual appropriateness, real-time guardrails [28] | - |
| MLflow | Lifecycle Management | Traditional ML metrics, LLM-as-judge evaluators (v3.0+) [28] | - | - | Experiment tracking for research pipelines [29] |
| iMerit | Human-in-the-Loop | Expert-guided factual consistency, reasoning evaluation [30] | Red-teaming, edge case testing, multimodal evaluation [30] | Bias testing, toxicity detection, safety alignment [30] | Medical AI validation, clinical data assessment [30] |
| Arize AI/Phoenix | Monitoring & Observability | QA correctness, hallucination tracking [29] | Data drift monitoring, performance segmentation [29] | Toxicity assessment [29] | - |
| RAGAS | Retrieval-Augmented Generation | Accuracy, answer correctness, faithfulness [29] | Context precision/recall, context relevance [29] | - | - |
| Humanloop | LLM Development | Accuracy, tone, coherence scoring [30] | - | Cultural safety, toxicity assessments [30] | - |
| Encord Active | Computer Vision | Automated quality scoring [30] | Performance heatmaps, error discovery [30] | - | Medical imaging validation [30] |

Experimental Protocols for Quality Assessment

Robustness Evaluation Methodology

Robustness testing evaluates model performance under suboptimal or adversarial conditions that mimic real-world challenges [24]. Standardized protocols ensure consistent, reproducible assessments across different models and applications.

Table 2: Standardized Robustness Testing Protocol

| Test Category | Methodology | Measurement Metrics | Domain Applications |
|---|---|---|---|
| Out-of-Distribution (OOD) | Evaluate on data statistically different from training distribution [24] | Performance degradation (accuracy drop), confidence calibration [24] | Generalizing to new patient populations, novel molecular structures [1] |
| Input Corruption & Noise | Introduce synthetic noise, typos, or perturbations to inputs [24] [23] | Accuracy retention rate, success under perturbation [24] | Handling clinical note variations, sensor noise in medical devices [24] |
| Adversarial Examples | Apply gradient-based or heuristic attacks (e.g., via IBM Adversarial Robustness Toolbox) [25] | Attack success rate, robustness accuracy [25] | Security-sensitive applications (e.g., patient data access systems) [25] |
| Stress Testing | Systematically vary input complexity/size under constrained resources [29] | Latency, throughput, failure rate under load [29] | Clinical trial simulation at scale, molecular screening pipelines [26] |
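The Input Corruption & Noise row can be sketched as a small harness: perturb each input with synthetic typos, re-score the model, and report accuracy retention as the ratio of perturbed to clean accuracy. This is a minimal illustration under stated assumptions, not a standard benchmark: the character-substitution noise model, the `accuracy_retention` helper, and the keyword classifier standing in for a clinical-note model are all hypothetical.

```python
import random
import string


def inject_typos(text: str, rate: float, rng: random.Random) -> str:
    """Replace each letter with a random lowercase letter with probability `rate`."""
    chars = list(text)
    for i, ch in enumerate(chars):
        if ch.isalpha() and rng.random() < rate:
            chars[i] = rng.choice(string.ascii_lowercase)
    return "".join(chars)


def accuracy(model, inputs, labels) -> float:
    return sum(model(x) == y for x, y in zip(inputs, labels)) / len(labels)


def accuracy_retention(model, inputs, labels, rate=0.05, seed=0):
    """Return (clean accuracy, perturbed accuracy, retention ratio)."""
    rng = random.Random(seed)  # fixed seed keeps the perturbation reproducible
    clean = accuracy(model, inputs, labels)
    noisy = accuracy(model, [inject_typos(x, rate, rng) for x in inputs], labels)
    retention = noisy / clean if clean else float("nan")
    return clean, noisy, retention


def toy_model(note: str) -> str:
    """Hypothetical keyword classifier standing in for a clinical-note model."""
    return "adverse_event" if "nausea" in note.lower() else "none"


notes = ["Patient reports nausea after dose 2", "No complaints at follow-up",
         "Mild nausea resolved", "Routine visit, vitals normal"]
labels = ["adverse_event", "none", "adverse_event", "none"]
print(accuracy_retention(toy_model, notes, labels, rate=0.2))
```

Sweeping `rate` over several values yields a degradation curve, which is usually more informative than a single retention figure.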

The following diagram illustrates the sequential workflow for implementing a comprehensive robustness evaluation protocol:

Factuality and Hallucination Detection

For LLMs used in drug discovery documentation or clinical guideline synthesis, factuality assessment is critical. The following protocol details methodology for quantifying factual accuracy:

Confident AI/DeepEval Implementation:

- Dataset Curation: Compile ~10-100 input/expected output pairs with domain expert validation [27]

- Metric Selection: Apply answer relevancy, factual consistency, and contextual recall metrics

- Evaluation Execution: Automated testing via Python SDK with continuous integration

- Benchmarking: Compare model versions across consistent dataset to track improvements
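The workflow above (fixed golden dataset, per-case metric, pass threshold, version comparison) can be sketched without any vendor SDK. The example below does not use DeepEval; its lexical-recall metric is a deliberately crude stand-in for LLM-judged factual consistency, and the `benchmark` helper, the golden pairs, and the two "model versions" are hypothetical.

```python
import re


def _tokens(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def lexical_recall(output: str, expected: str) -> float:
    """Crude stand-in metric: fraction of expected-answer tokens found in the output."""
    expected_tokens = _tokens(expected)
    if not expected_tokens:
        return 1.0
    return len(expected_tokens & _tokens(output)) / len(expected_tokens)


def benchmark(generate, dataset, threshold=0.7):
    """Score one model version on a fixed golden dataset.

    Returns the mean score and the inputs whose score fell below `threshold`.
    """
    scores, failures = [], []
    for case in dataset:
        score = lexical_recall(generate(case["input"]), case["expected_output"])
        scores.append(score)
        if score < threshold:
            failures.append(case["input"])
    return sum(scores) / len(scores), failures


# Hypothetical golden pairs; in practice these would be expert-validated.
golden = [
    {"input": "Which enzyme metabolizes midazolam?", "expected_output": "CYP3A4"},
    {"input": "What does PBPK stand for?",
     "expected_output": "physiologically based pharmacokinetic"},
]


def model_v1(question: str) -> str:
    return "It is metabolized mainly by CYP2D6."


def model_v2(question: str) -> str:
    if "midazolam" in question:
        return "CYP3A4"
    return "Physiologically based pharmacokinetic modeling"


for name, gen in [("v1", model_v1), ("v2", model_v2)]:
    print(name, benchmark(gen, golden))
```

In a continuous-integration job, the same call would typically be wrapped in an assertion so that a regression in mean score fails the build.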

MLflow 3.0 with LLM-as-Judge:

- Judge Model Configuration: Establish baseline evaluator (e.g., GPT-4, Claude 3) with predefined criteria [28]

- Multi-Dimensional Scoring: Evaluate factuality, groundedness, and retrieval relevance simultaneously

- Threshold Establishment: Define minimum acceptable scores for production deployment

- Trace Analysis: Correlate factuality failures with specific reasoning steps in model outputs
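The threshold-establishment step can be expressed as a simple deployment gate over per-example judge scores. The sketch is library-agnostic and does not call MLflow: it assumes the LLM judge has already produced one score per dimension per example on a 1-5 scale, and the dimension names, thresholds, and `deployment_gate` helper are hypothetical.

```python
from statistics import mean

# Hypothetical minimum mean judge scores on a 1-5 scale.
THRESHOLDS = {"factuality": 4.0, "groundedness": 4.0, "retrieval_relevance": 3.5}


def deployment_gate(judge_scores, thresholds=THRESHOLDS):
    """judge_scores: one dict per evaluated example, mapping dimension -> judge score.

    Returns (passed, report) where report maps dimension -> (mean score, met threshold?).
    """
    report = {}
    for dimension, minimum in thresholds.items():
        avg = mean(example[dimension] for example in judge_scores)
        report[dimension] = (avg, avg >= minimum)
    passed = all(ok for _, ok in report.values())
    return passed, report


scores = [
    {"factuality": 5, "groundedness": 4, "retrieval_relevance": 4},
    {"factuality": 3, "groundedness": 4, "retrieval_relevance": 3},
]
print(deployment_gate(scores))
```

Gating on per-dimension means is one design choice; a stricter gate could also require that no individual example falls below a floor, which catches rare but severe factuality failures that a mean can hide.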

Safety and Alignment Assessment

Safety evaluation extends beyond technical metrics to encompass ethical considerations, particularly crucial in healthcare applications:

Red Teaming Protocol [30] [25]:

- Threat Modeling: Identify potential misuse scenarios and vulnerability areas

- Automated Testing: Deploy toolkits (e.g., Microsoft PyRIT, IBM Adversarial Robustness Toolbox) for systematic vulnerability scanning [25]

- Manual Expert Testing: Domain specialists craft creative prompts to uncover novel failure modes

- Bias and Toxicity Assessment: Evaluate outputs across demographic segments and measure toxicity scores [30] [27]

- Privacy Auditing: Conduct membership inference and model inversion attacks to detect data leakage [25]
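The bias and toxicity step ("evaluate outputs across demographic segments") reduces to grouping a per-output score by segment and comparing the group means. The minimal sketch below reports per-segment means and the largest between-segment gap; the segment labels, toxicity scores, and `segment_metric_gap` helper are hypothetical, and any acceptable-gap threshold would need to be set per application.

```python
from collections import defaultdict


def segment_metric_gap(records, metric):
    """records: iterable of (segment, example); metric maps an example to a score.

    Returns the mean score per segment and the largest between-segment gap.
    """
    by_segment = defaultdict(list)
    for segment, example in records:
        by_segment[segment].append(metric(example))
    means = {seg: sum(vals) / len(vals) for seg, vals in by_segment.items()}
    gap = max(means.values()) - min(means.values())
    return means, gap


# Hypothetical toxicity scores (0 = benign, 1 = toxic) for outputs grouped by patient age band.
outputs = [
    ("18-40", {"toxicity": 0.02}), ("18-40", {"toxicity": 0.04}),
    ("41-65", {"toxicity": 0.03}), ("65+", {"toxicity": 0.11}),
    ("65+", {"toxicity": 0.09}),
]
means, gap = segment_metric_gap(outputs, lambda ex: ex["toxicity"])
print(means, f"max gap = {gap:.2f}")
```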

The Scientist's Toolkit: Research Reagent Solutions

Implementing comprehensive quality assessments requires specialized tools and frameworks. The following table catalogs essential "research reagents" for model evaluation laboratories.

Table 3: Essential Research Reagents for Model Quality Assessment

| Reagent Category | Specific Tools/Frameworks | Primary Function | Application Context |
|---|---|---|---|
| Evaluation Frameworks | DeepEval, RAGAS, HELM [27] [29] [23] | Provide standardized metrics and testing pipelines | Benchmarking model capabilities across defined dimensions |
| Monitoring Platforms | Arize AI, Galileo, LangSmith [29] [28] | Track model performance in production environments | Detecting performance degradation, data drift in deployed systems |
| Adversarial Testing Tools | IBM Adversarial Robustness Toolbox, Microsoft PyRIT [25] | Generate adversarial examples and conduct security testing | Assessing model robustness against malicious inputs |
| Human-in-the-Loop Systems | iMerit, Scale AI, Surge AI [30] | Incorporate expert human judgment into evaluation workflows | Complex subjective tasks, domain-specific validation |
| Bias/Fairness Toolkits | AI Fairness 360, Fairlearn | Detect and mitigate algorithmic bias | Ensuring equitable performance across patient demographics |
| Uncertainty Quantification | Bayesian frameworks, temperature scaling [24] [23] | Measure model calibration and confidence reliability | Safety-critical applications requiring reliable confidence scores |
| Data Quality Assurance | Encord Active, Scale Nucleus [30] | Validate training and evaluation dataset quality | Maintaining data integrity throughout model lifecycle |

Interdimensional Relationships and Tradeoffs

The four quality dimensions interact in complex ways, requiring careful balancing during model development. The following diagram visualizes these relationships and the strategies needed to optimize across multiple dimensions.

Strategic Optimization Approaches

Accuracy-Robustness Tradeoff Management: The perennial tension between accuracy and robustness necessitates strategic approaches. Ensemble methods like bagging (e.g., Random Forests) demonstrate how combining multiple models can reduce variance and improve stability without sacrificing accuracy [24]. In deep learning contexts, techniques such as adversarial training explicitly trade minor reductions in clean accuracy for substantial gains in robustness against manipulated inputs [24] [25].

Factuality-Safety Synergy: Models with strong factuality foundations tend to exhibit better safety characteristics, because harmful behaviors often correlate with factual errors [23]. Retrieval-augmented generation (RAG) architectures ground responses in retrieved sources, which can reduce hallucination-induced safety incidents [30] [29].

Calibration as a Bridge: Well-calibrated confidence scores enable more effective human-AI collaboration, particularly in high-stakes drug development decisions [23]. When models accurately convey uncertainty through appropriate confidence levels, human experts can focus attention on potentially erroneous outputs, creating a hybrid system that leverages both AI efficiency and human judgment [23].
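Calibration can be quantified with expected calibration error (ECE), the bin-weighted mean gap between accuracy and average confidence, and the human-AI handoff described above can be approximated by routing low-confidence outputs to expert review. The sketch below implements standard equal-width-bin ECE; the review threshold of 0.8 and the `route_for_review` helper are hypothetical and would need to be tuned against the cost of expert time and of missed errors.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Equal-width binned ECE: sum over bins of (bin share) * |accuracy - mean confidence|."""
    n = len(confidences)
    ece = 0.0
    for b in range(n_bins):
        lo, hi = b / n_bins, (b + 1) / n_bins
        # Bins are (lo, hi]; a confidence of exactly 0.0 goes into the first bin.
        idx = [i for i, c in enumerate(confidences)
               if lo < c <= hi or (b == 0 and c == 0.0)]
        if not idx:
            continue
        acc = sum(correct[i] for i in idx) / len(idx)
        conf = sum(confidences[i] for i in idx) / len(idx)
        ece += len(idx) / n * abs(acc - conf)
    return ece


def route_for_review(confidences, threshold=0.8):
    """Indices of outputs whose confidence falls below a (hypothetical) review threshold."""
    return [i for i, c in enumerate(confidences) if c < threshold]


# Hypothetical model confidences and whether each prediction was correct (1) or not (0).
conf = [0.95, 0.92, 0.85, 0.60, 0.55, 0.97]
hits = [1, 1, 0, 1, 0, 1]
print(f"ECE = {expected_calibration_error(conf, hits):.3f}")
print("send to expert review:", route_for_review(conf))
```

Temperature scaling, listed among the uncertainty-quantification tools, is one way to lower ECE before setting such a threshold.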

The comparative analysis of model quality assessment tools reveals a maturing ecosystem with increasing specialization across the four key dimensions. No single tool dominates all categories; rather, researchers must assemble complementary toolkits that address their specific application requirements, particularly in specialized domains like drug development where regulatory compliance and patient safety impose additional constraints [31] [1].

The most effective quality assurance strategies combine automated evaluation frameworks with human expert oversight, continuous monitoring in production environments, and rigorous adversarial testing [30] [25]. As AI systems grow more sophisticated and deeply integrated into healthcare and scientific discovery, the frameworks for assessing accuracy, factuality, robustness, and safety must similarly evolve—maintaining rigorous standards while adapting to new challenges posed by generative AI, agentic systems, and multimodal models. For researchers and drug development professionals, this comprehensive approach to quality assessment isn't merely a technical consideration but an ethical imperative that ensures AI technologies deliver on their promise to advance human health safely and reliably.

The Impact of Poor Model Quality on Development Timelines and Decision-Making

In the high-stakes field of drug development, the quality of quantitative models is not an academic concern—it is a critical factor that directly impacts the efficiency of bringing new therapies to patients and the quality of the decisions made along the way. Model-Informed Drug Development (MIDD) employs a range of quantitative techniques to guide objective decision-making, from discovery through post-market surveillance [1]. The strategic application of these models has been demonstrated to yield significant time and cost savings; one portfolio-wide analysis reported annualized average savings of approximately 10 months of cycle time and $5 million per program [32]. Conversely, poor model quality can erode these benefits, leading to delayed timelines, misallocated resources, and flawed decision-making.

Quantitative Evidence: The Cost of Poor Quality

The link between model quality and development efficiency is quantifiable. The following data, derived from industry analysis, show the savings that high-quality MIDD can deliver; by implication, these are the benefits put at risk when model quality is compromised.

Table 1: Documented Impact of High-Quality MIDD on Development Efficiency [32]

| MIDD Activity | Impact on Development | Estimated Time Savings | Estimated Cost Savings |
|---|---|---|---|
| Trial Waivers | Waiver of dedicated clinical studies (e.g., organ impairment, drug-drug interaction) | 9-18 months per study waived | $0.4M - $2M per study waived |
| Sample Size Reduction | Optimization of patient numbers in clinical trials | Varies by trial phase and size | Direct correlation with reduced patient count and trial costs |
| Informed "No-Go" Decisions | Early termination of non-viable programs | Avoids years of futile development | Avoids millions in downstream costs |
| Portfolio-Wide Application | Aggregate savings across all development programs | ~10 months per program annually | ~$5 million per program annually |
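As a worked example of the trial-waiver row, direct cost savings scale with the number of waived studies, so the per-study range can simply be multiplied. The sketch uses only the per-study range from Table 1; the `waiver_cost_savings` helper is illustrative. Time savings are deliberately not summed, on the assumption that waived studies might otherwise have run in parallel or off the critical path, so calendar savings need not add up across studies.

```python
# Per-study cost range transcribed from Table 1 (trial waivers row), in US dollars.
COST_PER_WAIVED_STUDY_USD = (0.4e6, 2.0e6)


def waiver_cost_savings(n_waived: int) -> tuple:
    """Return the (low, high) direct cost savings for n waived studies."""
    low, high = COST_PER_WAIVED_STUDY_USD
    return n_waived * low, n_waived * high


low, high = waiver_cost_savings(3)
print(f"3 waived studies: ${low / 1e6:.1f}M to ${high / 1e6:.1f}M")
```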

Table 2: Consequences of Poor Model Quality on Development Outcomes

| Aspect of Poor Quality | Impact on Development Timeline | Impact on Decision-Making |
|---|---|---|
| Incomplete or Non-Granular Data [33] | Delays for additional data collection; need for new studies to resolve ambiguities | Inability to compare programs accurately; flawed assessment of Probability of Technical and Regulatory Success (PTRS) |
| Inconsistent or Non-Interoperable Data [33] | Time lost reconciling data sources and terminology | Misguided investment and portfolio prioritization decisions |
| Flawed Model Assumptions or Structure | Regulatory agency questions requiring model re-development and re-submission | Incorrect dose selection or patient stratification, leading to failed clinical trials |
| Lack of Contextual Richness [33] | Inability to extrapolate findings to new indications or populations, requiring new models | Failure to understand the root cause of past failures, leading to repetition of mistakes |

Experimental Protocols for Model Quality Assessment

To ensure model quality, researchers employ structured assessment protocols. The methodology below details two critical approaches: one for assessing controlled intervention studies that provide input data for models, and another for establishing a "fit-for-purpose" framework for the models themselves.

Protocol 1: Quality Assessment of Controlled Intervention Studies

This protocol is based on the NHLBI's Quality Assessment of Controlled Intervention Studies tool, which is used to evaluate the internal validity of clinical trials—a primary data source for many drug development models [34].

- Objective: To assess the methodological rigor and risk of bias in controlled intervention studies, ensuring that data used for model-building is reliable.

- Key Criteria for Assessment:

  - Randomization Adequacy: Was the allocation sequence randomly generated? [34]

  - Allocation Concealment: Could trial staff predict treatment assignments? [34]

  - Blinding: Were participants, care providers, and outcome assessors blinded to group assignment? [34]

  - Group Similarity at Baseline: Were the groups similar on key characteristics that could affect outcomes? [34]

  - Drop-out Rates: Was the overall drop-out rate ≤20% and the differential drop-out rate ≤15 percentage points? [34]

  - Adherence: Did participants adhere to the intervention protocols? [34]

  - Outcome Assessment: Were outcomes assessed using valid, reliable measures applied consistently? [34]

  - Intent-to-Treat Analysis: Were all randomized participants analyzed in their original assigned groups? [34]

- Quality Rating: Studies are rated as Good, Fair, or Poor based on the number of criteria met and the presence of critical flaws (e.g., high differential dropout). A "Poor" rating indicates high risk of bias [34].
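The drop-out criterion is the most mechanical item in the protocol and can be checked directly from enrollment counts. The sketch below applies the two thresholds stated above (overall ≤20%, differential ≤15 percentage points); the arm names and counts are hypothetical, and the `dropout_check` helper covers only this criterion, since the overall Good/Fair/Poor rating remains a reviewer judgment.

```python
def dropout_check(allocated: dict, completed: dict) -> dict:
    """Apply the drop-out thresholds: overall <= 20% and differential <= 15 percentage points.

    allocated / completed: dicts mapping treatment arm -> participant counts.
    """
    arm_rates = {arm: 100.0 * (allocated[arm] - completed[arm]) / allocated[arm]
                 for arm in allocated}
    total_allocated = sum(allocated.values())
    overall = 100.0 * (total_allocated - sum(completed.values())) / total_allocated
    differential = max(arm_rates.values()) - min(arm_rates.values())
    return {
        "arm_dropout_pct": arm_rates,
        "overall_pct": overall,
        "differential_pp": differential,
        "overall_ok": overall <= 20.0,
        "differential_ok": differential <= 15.0,
    }


# Hypothetical two-arm trial: overall drop-out is 20% (acceptable),
# but the arms differ by 20 percentage points (flagged).
print(dropout_check({"drug": 100, "placebo": 100}, {"drug": 90, "placebo": 70}))
```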

Protocol 2: A "Fit-for-Purpose" Model Quality Framework

This protocol outlines a strategic approach to ensure models are developed to a standard appropriate for their specific role in decision-making [1].

- Objective: To guide the development and evaluation of MIDD tools to ensure they are "fit-for-purpose" for their defined Context of Use (COU).

- Key Workflow Steps:

  - Define the Question of Interest (QOI): Precisely articulate the scientific or clinical question the model must answer (e.g., "What is the recommended Phase 2 dose?") [1].

  - Establish the Context of Use (COU): Define the specific application of the model's results for informed decision-making, including all intended audiences (e.g., internal project teams, regulators) [1].

  - Select MIDD Tool: Choose the quantitative methodology (e.g., PBPK, QSP, Exposure-Response) that is best suited to address the QOI within the COU [1].

  - Develop and Evaluate Model: Build the model, ensuring it undergoes rigorous verification (does the model code work as intended?) and validation (does the model accurately represent the real-world system?) [1].

  - Assess Model Influence and Risk: Evaluate the model's impact on the decision and the potential consequences of the model being wrong. This determines the level of evidence needed for model acceptance [1].
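The final step weighs the model's influence on the decision against the consequence of a wrong decision. A 3 × 3 risk matrix is one common way to express that combination; the scoring rule below (sum of ordinal levels mapped to low/medium/high) is a hypothetical illustration, not a regulatory standard, and real programs should use the matrix agreed with their reviewers.

```python
# Ordinal scores for the two inputs of the risk assessment.
LEVELS = {"low": 1, "medium": 2, "high": 3}


def model_risk(influence: str, consequence: str) -> str:
    """Hypothetical 3x3 risk matrix combining model influence and decision consequence."""
    score = LEVELS[influence] + LEVELS[consequence]  # ranges from 2 to 6
    if score <= 3:
        return "low"
    if score == 4:
        return "medium"
    return "high"


# A model that largely drives a first-in-human dose choice (high influence, high
# consequence) needs far more supporting evidence than an exploratory one.
for pair in [("low", "low"), ("medium", "medium"), ("high", "high")]:
    print(pair, "->", model_risk(*pair))
```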

The Scientist's Toolkit: Key Reagents for Model-Informed Drug Development

The effective application of MIDD relies on a suite of sophisticated quantitative tools. The following table details key "reagents" in the modeler's toolkit, explaining their primary function in the development process.

Table 3: Essential Tools for Model-Informed Drug Development [32] [1]

| Tool/Analysis | Primary Function in Drug Development |
|---|---|
| Physiologically Based Pharmacokinetic (PBPK) Modeling | Simulates drug absorption, distribution, metabolism, and excretion based on physiology; often used to support waivers for clinical drug-drug interaction or organ impairment studies [32] [1]. |
| Population PK (PPK) Analysis | Describes the sources and correlates of variability in drug exposure among individuals from a target patient population [1]. |
| Exposure-Response (ER) Modeling | Characterizes the relationship between drug exposure (e.g., concentration) and both efficacy and safety outcomes to inform dose selection and optimization [1]. |
| Quantitative Systems Pharmacology (QSP) | Integrates disease biology and drug mechanisms to predict drug behavior and treatment effects in virtual patient populations; useful for target identification and trial design [1]. |
| Model-Based Meta-Analysis (MBMA) | Quantifies the drug's expected efficacy and safety by integrating and comparing data from multiple compounds and clinical trials within a therapeutic area [1]. |
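To illustrate what an exposure-response model looks like in practice, the sketch below evaluates the sigmoid Emax model, a standard functional form for ER analysis: E = E0 + Emax·Cʰ / (EC50ʰ + Cʰ). The parameter values are hypothetical; in a real analysis E0, Emax, EC50, and the Hill coefficient h would be estimated from trial data.

```python
def emax_response(conc: float, e0: float, emax: float, ec50: float, hill: float = 1.0) -> float:
    """Sigmoid Emax model: E = E0 + Emax * C^h / (EC50^h + C^h)."""
    if conc < 0 or ec50 <= 0:
        raise ValueError("concentration must be >= 0 and EC50 must be > 0")
    c_h = conc ** hill
    return e0 + emax * c_h / (ec50 ** hill + c_h)


# Hypothetical parameters: baseline effect 5, maximal drug effect 60, EC50 of 2 mg/L.
for conc in [0.0, 0.5, 2.0, 8.0, 32.0]:
    print(f"C = {conc:5.1f} mg/L -> E = {emax_response(conc, 5.0, 60.0, 2.0):.1f}")
```

At C = EC50 the drug effect is half of Emax regardless of the Hill coefficient, which is why EC50 is often the key parameter for dose selection.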

The quality of models in drug development is inextricably linked to program success. High-quality, "fit-for-purpose" models, built on complete, consistent, and context-rich data, demonstrably compress development timelines and sharpen decision-making. They enable smarter trial designs, inform go/no-go decisions, and can even replace certain clinical studies. Conversely, poor model quality introduces risk and uncertainty, leading to delays, increased costs, and flawed decisions that can ultimately deprive patients of new therapies. A disciplined approach to model quality assessment is not a bureaucratic hurdle but a fundamental prerequisite for efficient and effective drug development.

Methodologies in Practice: A Landscape of Assessment Tools and Platforms

Taxonomy of Model Quality Assessment Tools

This comparative analysis systematically classifies and evaluates model quality assessment tools across biomedical and computational domains. By developing a comprehensive taxonomy grounded in established frameworks and current tool capabilities, this guide provides researchers, scientists, and drug development professionals with structured methodologies for selecting appropriate assessment strategies. The taxonomy distinguishes tools by application domain, methodological approach, and quality dimensions addressed, supported by experimental data and protocol details to facilitate informed tool selection for specific research contexts.

Model quality assessment encompasses the methodologies and tools used to evaluate the reliability, validity, and usefulness of computational models across scientific domains. In drug development and biomedical research, these assessments are critical for establishing trust in models that inform diagnostic, prognostic, and therapeutic decisions. The fundamental challenge in this domain stems from the conceptual distinction between traditional software verification and model evaluation: whereas software is verified against precise specifications, models are evaluated based on their fit for purpose and predictive utility rather than binary correctness [35]. This paradigm, encapsulated by statistician George Box's aphorism that "all models are wrong, but some are useful," necessitates sophisticated assessment frameworks that can quantify a model's practical value amid inevitable approximation [35].

The taxonomy presented herein addresses the pressing need for structured guidance in navigating the diverse ecosystem of quality assessment tools. With the proliferation of computational models in biomedical research, researchers face significant challenges in selecting appropriate evaluation methodologies that align with their specific model types and research objectives. This guide systematically classifies assessment approaches, provides comparative analysis of tool capabilities, and details experimental protocols to establish a rigorous foundation for model evaluation in scientific and pharmaceutical contexts.

Taxonomy Framework and Classification

Our taxonomy classifies model quality assessment tools along three primary dimensions: application domain, methodological approach, and quality attributes addressed. This framework adapts the CREATE (Classification Rubric for Evidence Based Practice Assessment Tools in Education) framework for broader model assessment contexts, enabling consistent characterization of tools across diverse domains [36].

The foundational taxonomy structure organizes assessment approaches according to their primary application domains, which include evidence-based medicine, data quality management, computational model evaluation, and study methodological quality. Each domain addresses distinct quality dimensions through specialized methodologies. For instance, evidence-based medicine assessment tools typically evaluate competence across the five 'A's': asking, acquiring, appraising, applying, and assessing impact, with current tools predominantly focusing on the appraising step [36]. Similarly, tools for data quality assessment monitor dimensions such as completeness, accuracy, consistency, timeliness, validity, and uniqueness through automated validation checks and anomaly detection [37] [38].

Table 1: Taxonomy of Model Quality Assessment Tools by Domain and Primary Function

| Domain | Tool Examples | Primary Function | Quality Dimensions Addressed |
|---|---|---|---|
| Evidence-Based Medicine | Fresno Test, Berlin Tool | Evaluate EBM competence and teaching effectiveness | Knowledge gain, Skills application, Critical appraisal [36] |
| Data Quality | Great Expectations, Deequ, Monte Carlo | Automated data validation and monitoring | Completeness, Accuracy, Consistency, Timeliness, Validity, Uniqueness [37] [38] |
| Computational Models | CASP Assessment, I-TASSER | Protein structure prediction accuracy | Template-based modeling accuracy, Free modeling reliability, Alignment precision [39] |
| Study Methodology | NHLBI Quality Assessment Tool | Appraise study design and risk of bias | Randomization adequacy, Blinding, Drop-out rates, Adherence, Outcome measurement validity [34] |
| AI/ML Models | iMerit, Scale AI, Braintrust | Human-in-the-loop model evaluation | Factual consistency, Bias, Toxicity, Hallucinations, Edge case performance [30] [40] |

Methodological approaches are further categorized as checklist-based assessments (e.g., NHLBI's Quality Assessment of Controlled Intervention Studies), metric-based evaluations (e.g., prediction accuracy measures), human-in-the-loop validation (e.g., iMerit's expert-guided workflows), and automated monitoring systems (e.g., Monte Carlo's machine learning-powered anomaly detection) [34] [30] [37]. Each approach offers distinct advantages for specific assessment contexts, with checklist-based methods providing standardized critical appraisal frameworks, metric-based approaches enabling quantitative comparisons, human-in-the-loop validation capturing nuanced quality aspects, and automated systems offering continuous monitoring capabilities.

Diagram 1: Taxonomy of model quality assessment tools showing primary classification dimensions.

Comparative Analysis of Assessment Tools

Experimental Data and Performance Metrics

Rigorous evaluation of assessment tools requires standardized metrics across domains. In evidence-based medicine (EBM) assessment, a systematic review identified 12 validated tools, only 6 of which were classified as high quality. The criteria included interrater reliability, objective outcome measures, and at least three types of established validity evidence [36]. All six high-quality tools assessed the 'appraise' step of EBM practice, and none assessed the 'assess' step, which reveals significant gaps in comprehensive EBM evaluation [36].

In computational structure prediction, the Critical Assessment of Protein Structure Prediction (CASP) experiment provides robust comparative data. The assessment employs global distance test scores and model quality assessment programs to evaluate prediction accuracy. Analysis of CASP7 results demonstrated that top-performing template-based modeling methods (I-TASSER and Robetta) improved upon the best available templates for most targets, with automated servers achieving performance comparable to human-expert groups for over 90% of easy template-based modeling targets [39]. Alignment accuracy remains a critical challenge, with sequence identity below 20% potentially resulting in approximately 50% of residues being misaligned [39].
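Alignment accuracy is commonly scored as the fraction of residue pairs in a reference (structure-based) alignment that the sequence alignment reproduces. The sketch below is minimal and assumes that each alignment is supplied as a set of hypothetical (target index, template index) pairs. It does not model any shift tolerance that assessors may apply.

```python
def alignment_accuracy(model_pairs, reference_pairs):
    """Fraction of reference-aligned residue pairs reproduced by the model alignment.

    Each alignment is an iterable of (target_index, template_index) pairs.
    Exact matches only; no shift tolerance is applied (a simplifying assumption).
    """
    reference = set(reference_pairs)
    if not reference:
        raise ValueError("reference alignment is empty")
    # Count reference pairs that also appear in the model alignment.
    correct = reference & set(model_pairs)
    return len(correct) / len(reference)


# Toy example: two of four reference pairs are shifted by one residue.
reference = {(0, 0), (1, 1), (2, 2), (3, 3)}
model = {(0, 0), (1, 1), (2, 3), (3, 4)}
print(alignment_accuracy(model, reference))
```

In this toy case, a one-residue register shift in half of the aligned region halves the score. Errors of this kind become common at low sequence identity.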

Table 2: Performance Metrics Across Assessment Tool Categories

| Tool Category | Primary Metrics | Experimental Results | Limitations |
|---|---|---|---|
| EBM Assessment Tools | Validity evidence types, Reliability coefficients, Educational domains covered | 6 of 12 tools met high-quality threshold; All assessed 'appraise' step; None assessed 'assess' step [36] | Limited coverage of all EBM steps; Few address attitudes, behaviors, or patient benefits [36] |
| Data Quality Tools | Data-error ratio, Number of empty values, Time-to-value, Rule effectiveness | Great Expectations: 300+ pre-built checks; Soda: 25+ built-in metrics; Tools reduce issue investigation time by 40-60% [37] [38] | Open-source tools require engineering resources; Commercial solutions involve licensing costs [37] [38] |
| Protein Structure Prediction | GDT_TS, RMSD, Alignment accuracy | Zhang-server outperformed Robetta in CASP7; >90% of easy targets had server models among top 6; <20% sequence identity yields ~50% misalignment [39] | Accuracy decreases significantly with lower sequence similarity; Alignment errors propagate to model quality [39] |
| LLM Observability | Latency, Token usage, Cost, Factual consistency, Hallucination rate | Braintrust reports 80x faster queries than traditional databases; Tracks 13+ AI frameworks; Automatic cost tracking across models [40] | Implementation overhead; Requires integration with production systems; Specialized expertise needed [40] |
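The latency, token, and cost metrics listed for LLM observability can be illustrated with a small aggregation over logged calls. This is a library-agnostic sketch and not Braintrust's API. The `TraceRecord` fields, model names, and per-1,000-token prices are hypothetical placeholders, and latency uses a nearest-rank 95th percentile.

```python
import math
from dataclasses import dataclass


@dataclass
class TraceRecord:
    model: str            # hypothetical model identifier
    latency_ms: float
    prompt_tokens: int
    completion_tokens: int


# Hypothetical prices in USD per 1,000 tokens (input, output); substitute real rates.
PRICES_PER_1K = {"model-a": (0.5, 1.5), "model-b": (3.0, 15.0)}


def percentile(values, q):
    """Nearest-rank percentile for q in (0, 100]."""
    ordered = sorted(values)
    rank = math.ceil(q / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def summarize(records):
    """Per-model call count, p95 latency, total tokens, and estimated cost."""
    summary = {}
    for name in {r.model for r in records}:
        rows = [r for r in records if r.model == name]
        in_price, out_price = PRICES_PER_1K[name]
        cost = sum(
            r.prompt_tokens / 1000 * in_price + r.completion_tokens / 1000 * out_price
            for r in rows
        )
        summary[name] = {
            "calls": len(rows),
            "p95_latency_ms": percentile([r.latency_ms for r in rows], 95),
            "total_tokens": sum(r.prompt_tokens + r.completion_tokens for r in rows),
            "cost_usd": round(cost, 6),
        }
    return summary


print(summarize([TraceRecord("model-a", 100, 1000, 500), TraceRecord("model-a", 300, 2000, 0)]))
```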

Tool-Specific Capabilities and Applications

Evidence-Based Medicine Assessment Tools: High-quality tools like the Fresno Test and Berlin Tool focus on evaluating EBM knowledge and skills through scenario-based testing and multiple-choice questions. These tools demonstrate robust psychometric properties but remain limited in assessing the complete EBM process cycle [36].

Data Quality Assessment Platforms: Modern tools combine rule-based validation with automated anomaly detection. Monte Carlo uses machine learning-powered anomaly detection to learn baseline data patterns and automatically flag deviations, and it provides data lineage tracing for root cause analysis [37]. Great Expectations offers an open-source alternative with 300+ pre-built expectations for data validation, while Soda Core uses a YAML-based interface accessible to non-technical users [38]. These tools typically reduce time spent fixing data issues by approximately 40%, addressing a major productivity bottleneck in data teams [37].

Computational Model Assessment: The CASP experiment employs blind prediction challenges to objectively evaluate protein structure modeling techniques. Assessment covers both template-based modeling (comparative modeling and fold recognition) and free modeling (de novo and ab initio approaches) [39]. Quality assessment programs in this domain estimate model quality without reference to the native structure. This lets users judge a model's practical utility for biological applications even when no experimental structure is available.

AI/ML Model Evaluation Services: Specialized providers like iMerit offer human-in-the-loop evaluation for complex AI systems, assessing factors including factual consistency, reasoning validity, bias, toxicity, and multimodal alignment [30]. These services employ domain experts and structured evaluation workflows through platforms like Ango Hub, enabling quality assessment for models in specialized domains including healthcare and drug development.
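Structured expert evaluation ultimately produces per-item judgments that must be rolled up into criterion-level rates, such as a factual-consistency failure rate. The sketch below is minimal and does not represent iMerit's or Ango Hub's API. It assumes a hypothetical record format of (item, criterion, expert, passed) and resolves each item by majority vote. Ties count as failures, which is a conservative policy chosen here for illustration.

```python
from collections import defaultdict


def criterion_failure_rates(judgments):
    """judgments: iterable of (item_id, criterion, expert_id, passed) tuples.

    Each (item, criterion) pair is resolved by majority vote across experts;
    ties are treated as failures (an assumed, conservative policy).
    Returns {criterion: fraction of items judged as failing}.
    """
    votes = defaultdict(list)
    for item_id, criterion, _expert, passed in judgments:
        votes[(item_id, criterion)].append(passed)

    failed = defaultdict(int)
    total = defaultdict(int)
    for (_item, criterion), ballots in votes.items():
        total[criterion] += 1
        passes = sum(ballots)
        if passes * 2 <= len(ballots):  # no strict majority of passes
            failed[criterion] += 1
    return {c: failed[c] / total[c] for c in total}


judgments = [
    ("q1", "factual_consistency", "e1", True),
    ("q1", "factual_consistency", "e2", True),
    ("q2", "factual_consistency", "e1", False),
    ("q2", "factual_consistency", "e2", True),
    ("q1", "toxicity", "e1", True),
]
print(criterion_failure_rates(judgments))
```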

Experimental Protocols and Methodologies

Protocol for EBM Assessment Tool Validation

The validation of evidence-based medicine assessment tools follows a rigorous methodological protocol derived from systematic review criteria [36]:

Participant Recruitment: Include medical professionals and students across training levels (undergraduate, postgraduate, practicing clinicians) with varying EBM expertise.

Tool Administration: Implement tools in controlled settings with standardized instructions and time limits where appropriate.

Validity Evidence Collection: Establish multiple forms of validity evidence including:

- Content validity through expert review of item relevance

- Response process validity through cognitive interviews

- Internal structure through factor analysis

- Relations to other variables through correlation with experience levels

- Consequences through sensitivity to educational interventions

Reliability Assessment: Calculate internal consistency (Cronbach's alpha) and interrater reliability (intraclass correlation coefficients) for applicable tools.
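Cronbach's alpha can be computed from a respondents-by-items score matrix with the standard formula α = k/(k − 1) × (1 − Σσᵢ²/σ_X²). Here k is the number of items, σᵢ² is the variance of item i, and σ_X² is the variance of respondents' total scores. The following is a minimal sketch using only the standard library, with sample variances used throughout. Interrater ICCs are not shown.

```python
from statistics import variance


def cronbach_alpha(scores):
    """Cronbach's alpha for a list of respondents, each a list of k item scores."""
    k = len(scores[0])
    if k < 2 or any(len(row) != k for row in scores):
        raise ValueError("need at least two items and equal-length rows")
    # Variance of each item across respondents.
    item_vars = [variance([row[i] for row in scores]) for i in range(k)]
    # Variance of each respondent's total score.
    total_var = variance([sum(row) for row in scores])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


print(cronbach_alpha([[1, 2], [2, 1], [3, 3]]))
```

Perfectly parallel items give α = 1. Items that disagree for some respondents lower the total-score variance relative to the item variances and therefore lower α.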

Educational Impact Evaluation: Assess outcomes across the seven EBM learning domains: reaction to the educational experience, attitudes, self-efficacy, knowledge, skills, behaviors, and patient benefits.

This protocol ensures comprehensive psychometric evaluation, with tools requiring demonstration of at least three types of validity evidence, established reliability, and objective outcome measures to achieve high-quality classification [36].
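The high-quality classification rule can be encoded as a simple check: at least three types of validity evidence, established reliability, and objective outcome measures. The `ToolEvidence` record and its field names are hypothetical and were chosen for illustration. The threshold of three comes from the criterion described above.

```python
from dataclasses import dataclass, field


@dataclass
class ToolEvidence:
    name: str
    validity_types: set[str] = field(default_factory=set)  # e.g. "content", "internal structure"
    reliability_established: bool = False
    objective_outcomes: bool = False


def is_high_quality(tool: ToolEvidence, min_validity_types: int = 3) -> bool:
    """Apply the three-part high-quality criterion to one assessment tool."""
    return (
        len(tool.validity_types) >= min_validity_types
        and tool.reliability_established
        and tool.objective_outcomes
    )


tool = ToolEvidence("Example tool", {"content", "internal structure", "relations to other variables"}, True, True)
print(is_high_quality(tool))
```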

CASP Protein Structure Prediction Assessment

The Critical Assessment of Protein Structure Prediction employs a blind evaluation protocol conducted biennially [39]:

Target Selection: Organizers select protein sequences with soon-to-be-solved or recently solved but unpublished structures.

Prediction Phase: Participating groups worldwide submit structure predictions during a prediction season of approximately three months.

Evaluation Methodology: The Prediction Center performs automated comparisons using:

- Global Distance Test (GDT) to measure structural similarity

- Root-mean-square deviation (RMSD) calculations

- Model Quality Assessment Programs (MQAPs) for estimation without native structure reference
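The GDT and RMSD comparisons can be sketched as follows. This minimal version assumes that the model and native Cα coordinates are already superposed and matched residue by residue. The official GDT procedure searches over many superpositions to maximize the count at each cutoff, so this single-superposition value is only a lower bound. GDT_TS averages the percentage of residues within 1, 2, 4, and 8 Å.

```python
import math


def _distances(model, native):
    """Per-residue distances between matched (x, y, z) coordinates."""
    if len(model) != len(native):
        raise ValueError("model and native must cover the same residues")
    return [math.dist(m, n) for m, n in zip(model, native)]


def rmsd(model, native):
    """Root-mean-square deviation over matched residues (no superposition step)."""
    d = _distances(model, native)
    return math.sqrt(sum(x * x for x in d) / len(d))


def gdt_ts(model, native, cutoffs=(1.0, 2.0, 4.0, 8.0)):
    """Single-superposition GDT_TS: mean percentage of residues within each cutoff."""
    d = _distances(model, native)
    fractions = [sum(x <= c for x in d) / len(d) for c in cutoffs]
    return 100 * sum(fractions) / len(cutoffs)


native = [(0, 0, 0), (1, 0, 0), (2, 0, 0), (3, 0, 0)]
model = [(0, 0.5, 0), (1, 1.5, 0), (2, 3.0, 0), (3, 10.0, 0)]
print(gdt_ts(model, native), rmsd(model, native))
```

The toy example shows why the two metrics are reported together. A single badly placed residue (10 Å off) dominates RMSD, while GDT_TS still credits the three residues that are close.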

Assessment Categorization: Predictions are classified by difficulty:

- Template-Based Modeling (comparative modeling and fold recognition)

- Free Modeling (knowledge-based de novo and ab initio approaches)

Independent Analysis: Assessors analyze anonymized predictions with results presented at community workshops and published in special journal issues.

This protocol provides objective, community-wide evaluation standards that have driven significant methodological advances in protein structure prediction over two decades [39].

Diagram 2: CASP protein structure prediction assessment protocol workflow.

Data Quality Tool Implementation Protocol

Implementing data quality assessment tools follows a standardized protocol for validation rule development and monitoring:

Data Profiling: Analyze dataset structure, value distributions, and patterns to understand normal data characteristics.
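A basic profiling pass can be sketched in plain Python. This is not any particular tool's profiler. It assumes that records arrive as a list of dictionaries and reports, for each column, the null rate, the number of distinct non-null values, and the most common value. The column names in the example are hypothetical.

```python
from collections import Counter


def profile(rows):
    """Per-column null rate, distinct non-null count, and most common value."""
    columns = sorted({key for row in rows for key in row})
    report = {}
    for col in columns:
        values = [row.get(col) for row in rows]  # missing keys count as nulls
        non_null = [v for v in values if v is not None]
        report[col] = {
            "null_rate": 1 - len(non_null) / len(values),
            "distinct": len(set(non_null)),
            "top_value": Counter(non_null).most_common(1)[0][0] if non_null else None,
        }
    return report


rows = [{"id": 1, "dose_mg": 10}, {"id": 2, "dose_mg": None}, {"id": 3, "dose_mg": 10}, {"id": 4}]
print(profile(rows)["dose_mg"])
```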

Expectation Definition: Create validation rules using:

- Declarative checks in frameworks like Great Expectations' 300+ pre-built expectations

- Custom rules for domain-specific requirements using SQL or Python

- YAML-based configurations in tools like Soda Core for non-technical users
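Declarative expectations can be illustrated with plain Python. The sketch below does not use the Great Expectations or Soda APIs. It shows the general pattern: each rule is a named predicate over a batch of records, and a suite is evaluated into a pass/fail report. The helper names and the dose range are hypothetical.

```python
def expect_not_null(column):
    """Rule: every record has a non-null value in `column`."""
    return (f"{column} is not null",
            lambda rows: all(r.get(column) is not None for r in rows))


def expect_unique(column):
    """Rule: values in `column` are unique across records."""
    def check(rows):
        values = [r.get(column) for r in rows]
        return len(values) == len(set(values))
    return (f"{column} is unique", check)


def expect_between(column, low, high):
    """Rule: non-null values in `column` fall within [low, high]."""
    return (f"{low} <= {column} <= {high}",
            lambda rows: all(low <= r[column] <= high
                             for r in rows if r.get(column) is not None))


def validate(rows, expectations):
    """Evaluate each (name, predicate) rule and return {name: passed}."""
    return {name: check(rows) for name, check in expectations}


rows = [{"subject_id": "S1", "dose_mg": 10},
        {"subject_id": "S2", "dose_mg": 500},
        {"subject_id": "S3", "dose_mg": None}]
suite = [expect_not_null("dose_mg"), expect_unique("subject_id"), expect_between("dose_mg", 0, 400)]
print(validate(rows, suite))
```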

Monitoring Implementation:

- Integrate checks into data pipelines via orchestration tools (Airflow, dbt)

- Configure automated anomaly detection using machine learning (Monte Carlo, Bigeye)

- Establish data lineage mapping for root cause analysis
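Automated anomaly detection learns a baseline and flags deviations from it. Commercial platforms such as Monte Carlo use machine learning for this step. As a much simpler stand-in, the sketch below applies a trailing-window z-score to a metric series such as daily row counts. The window length and the threshold of 3 standard deviations are assumptions that would need tuning.

```python
from statistics import mean, stdev


def flag_anomalies(series, window=7, z_threshold=3.0):
    """Indices whose value deviates from the trailing-window mean by more than
    z_threshold sample standard deviations (a simple statistical baseline)."""
    flagged = []
    for i in range(window, len(series)):
        baseline = series[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma == 0:
            # Constant baseline: any change at all is treated as anomalous.
            if series[i] != mu:
                flagged.append(i)
        elif abs(series[i] - mu) / sigma > z_threshold:
            flagged.append(i)
    return flagged


daily_row_counts = [100, 102, 98, 101, 99, 100, 103, 40, 101]
print(flag_anomalies(daily_row_counts))
```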

Alerting Configuration: Set up notifications through Slack, email, or PagerDuty with appropriate threshold tuning to balance sensitivity and alert fatigue.
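One common way to trade sensitivity against alert fatigue is to notify only after a check has failed several runs in a row. The sketch below assumes this consecutive-failure policy and is one option among several. It returns the run indices at which an alert would fire, once per failure streak. Dispatch to Slack, email, or PagerDuty is omitted.

```python
def alert_points(breaches, min_consecutive=2):
    """Indices where an alert fires: the run at which a streak of failed checks
    first reaches min_consecutive. Fires at most once per streak."""
    alerts, run = [], 0
    for i, failed in enumerate(breaches):
        run = run + 1 if failed else 0  # reset the streak on any passing run
        if run == min_consecutive:
            alerts.append(i)
    return alerts


check_results = [True, False, True, True, True, False, True, True]  # True = check failed
print(alert_points(check_results))
```

Raising `min_consecutive` suppresses isolated failures but delays detection of real incidents. The value is a tuning choice rather than a fixed rule.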

Remediation Workflows: Create standardized processes for investigating and resolving data quality issues, including prioritization based on business impact.

This protocol enables systematic data quality management, with implementations typically reducing time spent on data issue investigation by 40% according to industry reports [37].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagent Solutions for Model Quality Assessment

| Reagent/Tool | Function | Application Context |
|---|---|---|
| NHLBI Quality Assessment Tool | Systematic appraisal of controlled intervention studies | Critical evaluation of clinical trial methodology and risk of bias [34] |
| CREATE Framework | Taxonomy classification rubric for evidence-based practice | Standardized characterization of EBP assessment tools by domain and outcome [36] |
| Great Expectations Library | 300+ pre-built data validation checks | Automated testing of data quality across completeness, accuracy, and consistency dimensions [38] |
| CASP Evaluation Suite | Protein structure prediction accuracy metrics | Objective comparison of modeling approaches through blind challenges [39] |
| Ango Hub Platform | Custom model evaluation workflows with expert-in-the-loop | Structured human evaluation for complex AI models in specialized domains [30] |
| Braintrust Observability | LLM monitoring with cost tracking and quality assessment | Production monitoring of AI system behavior, performance, and output quality [40] |