A Complete Step-by-Step Guide to PCA for RNA-seq Data Analysis: From Basics to Advanced Applications

Abstract

This comprehensive guide provides researchers, scientists, and drug development professionals with a complete protocol for performing Principal Component Analysis (PCA) on RNA-seq data. Covering foundational concepts through advanced applications, we detail the entire workflow from data preprocessing and normalization to visualization and interpretation using established tools like DESeq2. The article addresses critical quality control measures, troubleshooting common pitfalls, and validation techniques to ensure robust analysis. With practical examples and optimization strategies, this resource enables reliable exploratory analysis and meaningful biological insights from high-dimensional transcriptomic data, supporting applications in biomarker discovery, experimental quality assessment, and clinical research.

Understanding PCA Fundamentals and RNA-seq Data Preparation

What is PCA? Core Concepts and Mathematical Principles

Core Concepts of PCA

Principal Component Analysis (PCA) is a dimensionality reduction technique that simplifies complex datasets by transforming a large set of variables into a smaller one that still contains most of the information from the original set [1]. This method is foundational in data analysis and machine learning, particularly valuable for exploring and visualizing high-dimensional data [2] [3].

The Purpose of Dimensionality Reduction

Large datasets with many variables present significant challenges: they are difficult to visualize, computationally intensive to process, and often contain correlated variables or noise that can obscure meaningful patterns [2]. PCA addresses these issues by:

- Reducing computational requirements and improving efficiency for subsequent analysis [2] [4].

- Minimizing noise by filtering out less significant variations in the data [2] [3].

- Enabling visualization of high-dimensional data in 2D or 3D spaces [3].

- Mitigating multicollinearity by creating new, uncorrelated variables for downstream statistical analysis [3] [4].

Understanding Principal Components

Principal Components (PCs) are new variables constructed as linear combinations of the original variables [1]. They are designed to be uncorrelated (orthogonal) and are ordered so that the first component captures the maximum possible variance in the data, the second captures the next highest variance while being uncorrelated with the first, and so on [1] [5].

Geometrically, principal components represent the directions of maximum variance in the data. Think of them as new axes that provide the optimal angle to view and evaluate data, making differences between observations more apparent [1]. The first principal component (PC1) is the axis along which data points show the greatest spread, the second component (PC2) is perpendicular to PC1 and captures the next best direction of spread, and this continues for all subsequent components [3].

Table: Key Characteristics of Principal Components

| Characteristic | Description |
|---|---|
| Orthogonality | All principal components are perpendicular (uncorrelated) to each other [1]. |
| Variance Ranking | Components are ordered by the amount of variance they explain, from highest to lowest [1]. |
| Interpretation | PCs are less interpretable than original variables as they lack direct real-world meaning [1]. |
| Completeness | There are as many PCs as original variables, but only the first few are typically used [1]. With n observations and p variables, at most min(n - 1, p) components carry nonzero variance; in RNA-seq, where genes far outnumber samples, the number of samples sets this limit. |

Mathematical Principles of PCA

The mathematical foundation of PCA lies in linear algebra and statistical concepts including standardization, covariance, and eigenvectors/eigenvalues [2].

The PCA Algorithm: A Step-by-Step Mathematical Workflow

The computation of principal components follows a systematic, five-step process.

Step 1: Standardization and Centering. The goal is to standardize the range of the continuous input variables so that each contributes equally to the analysis [1]. Without this step, variables with larger ranges would dominate those with smaller ranges, leading to biased results [1]. Standardization subtracts the variable's mean from each value and divides by the variable's standard deviation [1] [4]:

$$ Z = \frac{X - \mu}{\sigma} $$

where $\mu$ is the mean of the feature and $\sigma$ is its standard deviation [4]. This puts all variables on the same scale, with a mean of 0 and a standard deviation of 1 [3].
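A minimal NumPy sketch of Step 1, assuming a data matrix with observations as rows and variables as columns. It uses the sample standard deviation (denominator n - 1) so that it agrees with the covariance formula in Step 2; the toy values are invented for illustration.

```python
import numpy as np

def standardize(X):
    """Z-score each column (variable) of X: subtract its mean, divide by its SD."""
    X = np.asarray(X, dtype=float)
    mu = X.mean(axis=0)
    sigma = X.std(axis=0, ddof=1)  # sample standard deviation (n - 1 denominator)
    return (X - mu) / sigma

# Toy data: 4 observations (rows) of 2 variables on very different scales.
X = np.array([[1.0, 100.0],
              [2.0, 300.0],
              [3.0, 200.0],
              [4.0, 400.0]])
Z = standardize(X)
print(Z.mean(axis=0))          # each column now has mean 0 (up to rounding)
print(Z.std(axis=0, ddof=1))   # and standard deviation 1
```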

Step 2: Covariance Matrix Computation. The covariance matrix describes how the variables vary from their means relative to one another [1], which identifies correlated variables that may contain redundant information [1]. For a dataset with $p$ variables, the covariance matrix is a symmetric $p \times p$ matrix whose diagonal elements are the variances of each variable and whose off-diagonal elements are the covariances between variable pairs [1]. The covariance between two features $x_1$ and $x_2$ over $n$ observations is calculated as [4]:

$$ \operatorname{cov}(x_1, x_2) = \frac{\sum_{i=1}^{n} (x_{1i} - \bar{x}_1)(x_{2i} - \bar{x}_2)}{n-1} $$

The sign of the covariance indicates the nature of the relationship: positive (variables increase together), negative (one increases when the other decreases), or zero (no linear relationship) [1] [3].
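The covariance formula can be checked numerically. This sketch assumes rows are observations and uses invented toy values; it builds the p × p matrix from centered cross-products divided by n - 1 and compares the result with NumPy's np.cov.

```python
import numpy as np

def covariance_matrix(X):
    """p x p sample covariance matrix of the columns of X (rows = observations)."""
    X = np.asarray(X, dtype=float)
    n = X.shape[0]
    centered = X - X.mean(axis=0)            # subtract each variable's mean
    return centered.T @ centered / (n - 1)   # summed cross-products / (n - 1)

X = np.array([[2.0, 4.1, 0.5],
              [3.0, 6.2, 0.1],
              [4.0, 7.9, 0.4],
              [5.0, 10.1, 0.2]])
C = covariance_matrix(X)
print(np.allclose(C, np.cov(X, rowvar=False)))  # agrees with NumPy's estimator
print(np.allclose(C, C.T))                      # symmetric; variances on the diagonal
print(C[0, 1] > 0)                              # x1 and x2 increase together
```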

Step 3: Eigendecomposition. The eigenvectors and eigenvalues of the covariance matrix are calculated to determine the principal components [1]. The eigenvectors give the directions of the axes with the most variance (the principal components themselves), while the eigenvalues give the amount of variance carried by each principal component [1] [2]. For a square matrix $A$, a non-zero eigenvector $\mathbf{v}$ and its corresponding eigenvalue $\lambda$ satisfy [4]:

$$ A\mathbf{v} = \lambda\mathbf{v} $$
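A small sketch of Step 3 using NumPy's np.linalg.eigh, which is intended for symmetric matrices such as a covariance matrix and returns eigenvalues in ascending order. The 2 × 2 matrix is a made-up example of two standardized variables with correlation 0.8, whose eigenvalues are 1.8 and 0.2.

```python
import numpy as np

# Covariance (here: correlation) matrix of two standardized variables (toy values).
C = np.array([[1.0, 0.8],
              [0.8, 1.0]])

# eigh handles symmetric matrices; eigenvalues come back in ascending order
# and the matching eigenvectors are the *columns* of the second result.
eigenvalues, eigenvectors = np.linalg.eigh(C)

for lam, v in zip(eigenvalues, eigenvectors.T):
    print(lam, v, np.allclose(C @ v, lam * v))  # checks C v = lambda v
```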

Step 4: Feature Selection. In this step, we decide which principal components to keep. The eigenvectors are ranked by their eigenvalues in descending order, and only the most significant components (those with the highest eigenvalues) are retained [1]. The retained eigenvectors form a feature vector: a matrix containing the selected eigenvectors as its columns [1]. The choice of how many components to retain involves a trade-off between dimensionality reduction and information preservation, and is often guided by a scree plot, which shows the proportion of total variance explained by each component [3].
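Ranking and selection can be sketched as follows. The 90% cumulative-variance cutoff is a hypothetical choice made for illustration (a scree plot is the usual visual guide), and the diagonal covariance matrix is a toy example whose eigenvalues 6, 3 and 1 explain 60%, 30% and 10% of the variance.

```python
import numpy as np

def select_components(C, min_cumulative=0.90):
    """Rank eigenvectors of covariance C by eigenvalue and keep the smallest k whose
    cumulative share of variance reaches min_cumulative (a hypothetical cutoff)."""
    eigenvalues, eigenvectors = np.linalg.eigh(C)
    order = np.argsort(eigenvalues)[::-1]                 # descending eigenvalues
    eigenvalues, eigenvectors = eigenvalues[order], eigenvectors[:, order]
    explained = eigenvalues / eigenvalues.sum()           # scree-plot heights
    cumulative = np.cumsum(explained)
    # Small tolerance so that e.g. 0.6 + 0.3 (stored as 0.8999...) counts as 0.9.
    k = int(np.argmax(cumulative >= min_cumulative - 1e-12)) + 1
    return eigenvectors[:, :k], explained

C = np.diag([6.0, 3.0, 1.0])
W, explained = select_components(C)
print(explained)   # proportion of variance per component
print(W.shape)     # (3, 2): two eigenvectors reach 90%
```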

Step 5: Data Projection (Recasting the Data). The final step projects the dataset onto the new principal component axes by multiplying the standardized data by the feature vector (the matrix of retained eigenvectors) [1]. The result is a lower-dimensional dataset that captures the most significant patterns in the original data [1].
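Putting the five steps together, a compact sketch (assuming NumPy and simulated data) projects the standardized data onto the top k eigenvectors. The checks at the end confirm two properties stated above: each score column has a variance equal to its eigenvalue, and the scores are uncorrelated.

```python
import numpy as np

def pca_project(X, k):
    """Steps 1-5 on a data matrix X (rows = observations); returns scores and eigenvalues."""
    X = np.asarray(X, dtype=float)
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)   # Step 1: standardize
    C = Z.T @ Z / (Z.shape[0] - 1)                     # Step 2: covariance (Z is centered)
    eigenvalues, eigenvectors = np.linalg.eigh(C)      # Step 3: eigendecomposition
    order = np.argsort(eigenvalues)[::-1][:k]          # Step 4: keep the k largest
    W = eigenvectors[:, order]                         #         feature vector
    return Z @ W, eigenvalues[order]                   # Step 5: project the data

rng = np.random.default_rng(0)
X = rng.normal(size=(50, 4))
X[:, 1] += 2 * X[:, 0]                                 # make two variables correlated
T, lams = pca_project(X, k=2)
print(T.shape)                                         # (50, 2)
print(np.allclose(T.var(axis=0, ddof=1), lams))        # score variance = eigenvalue
print(abs(np.cov(T, rowvar=False)[0, 1]) < 1e-10)      # scores are uncorrelated
```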

Key Mathematical Outputs and Their Interpretation

Eigenvalues and Variance Explained. The proportion of total variance explained (PVE) by the $k$-th principal component is its eigenvalue divided by the sum of all $p$ eigenvalues [5]:

$$ \text{PVE}_k = \frac{\lambda_k}{\sum_{j=1}^{p} \lambda_j} $$

For example, in a dataset with only two variables, whose eigenvalues for PC1 and PC2 are $\lambda_1$ and $\lambda_2$:

- Variance explained by PC1 = $\frac{\lambda_1}{\lambda_1 + \lambda_2}$

- Variance explained by PC2 = $\frac{\lambda_2}{\lambda_1 + \lambda_2}$ [1]

In practical applications, the first few components often capture the majority of the variance in the dataset [1].

Principal Components as Linear Combinations. Each principal component is expressed as a linear combination of the original variables. For example:

$$ \mathrm{PC}_1 = w_{11}X_1 + w_{12}X_2 + \cdots + w_{1p}X_p $$

$$ \mathrm{PC}_2 = w_{21}X_1 + w_{22}X_2 + \cdots + w_{2p}X_p $$

where the weights $w_{ij}$ are derived from the eigenvectors (the $i$-th eigenvector supplies the weights of the $i$-th component), and $X_j$ are the standardized original variables [5].
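The weights can be inspected directly to see which variables drive a component. In this sketch the variable names and simulated data are hypothetical: var_a and var_b are strongly correlated and var_c is independent noise, so PC1 should load almost entirely on the first two. It also confirms that a PC1 score is simply the weighted sum shown above.

```python
import numpy as np

rng = np.random.default_rng(1)
names = ["var_a", "var_b", "var_c"]            # hypothetical variable names
base = rng.normal(size=100)
X = np.column_stack([base + 0.1 * rng.normal(size=100),
                     base + 0.1 * rng.normal(size=100),
                     rng.normal(size=100)])    # var_c is unrelated noise
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
eigenvalues, eigenvectors = np.linalg.eigh(np.cov(Z, rowvar=False))
w1 = eigenvectors[:, np.argmax(eigenvalues)]   # weights w_11 ... w_1p of PC1

# PC1 score of the first observation: w_11 X_1 + w_12 X_2 + w_13 X_3.
score = sum(w1[j] * Z[0, j] for j in range(Z.shape[1]))
print(np.isclose(score, Z[0] @ w1))

# Variables with the largest absolute weight contribute most to PC1.
ranking = [names[j] for j in np.argsort(-np.abs(w1))]
print(ranking)
```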

Table: Mathematical Elements of PCA

| Element | Mathematical Role | Interpretation in PCA |
|---|---|---|
| Covariance Matrix | $\mathbf{\Sigma} = \frac{1}{n-1} \mathbf{X}^T \mathbf{X}$, with $\mathbf{X}$ column-centered [2] | Captures variable relationships and variances [1]. |
| Eigenvectors | $\mathbf{\Sigma}\mathbf{v} = \lambda \mathbf{v}$ [2] | Directions of maximum variance (principal components) [1] [3]. |
| Eigenvalues | $\lambda$ in the equation above [2] | Amount of variance carried by each component [1] [3]. |
| Data Projection | $\mathbf{T} = \mathbf{X}\mathbf{W}$ [5] | Transformed data (scores) in the new coordinate system; the columns of $\mathbf{W}$ are the retained eigenvectors. |
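The covariance and projection rows of this table can be connected through the singular value decomposition (SVD) of the centered data matrix, which is how many implementations compute PCA in practice (R's prcomp documentation, for instance, states that it uses an SVD rather than an eigendecomposition of the covariance matrix). This sketch on simulated data checks that each eigenvalue equals a squared singular value divided by n - 1 and that the scores T = XW equal U S.

```python
import numpy as np

rng = np.random.default_rng(2)
X = rng.normal(size=(20, 5))
Xc = X - X.mean(axis=0)          # column-center: required for Sigma = X^T X / (n - 1)
n = Xc.shape[0]

# Route 1: eigendecomposition of the covariance matrix (eigenvalues sorted descending).
Sigma = Xc.T @ Xc / (n - 1)
eigenvalues = np.sort(np.linalg.eigvalsh(Sigma))[::-1]

# Route 2: SVD of the centered data, Xc = U S V^T (singular values already descending).
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
W = Vt.T                         # columns: principal axes (eigenvectors of Sigma)
T = Xc @ W                       # scores, T = XW

print(np.allclose(s**2 / (n - 1), eigenvalues))  # lambda_k = s_k^2 / (n - 1)
print(np.allclose(T, U * s))                      # T = XW = U S
```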

Application Note: PCA for RNA-Seq Data Analysis

In RNA sequencing (RNA-seq) experiments, PCA is an essential tool for quality control, exploratory analysis, and visualizing sample relationships based on gene expression patterns [6] [7].

Workflow for RNA-Seq PCA

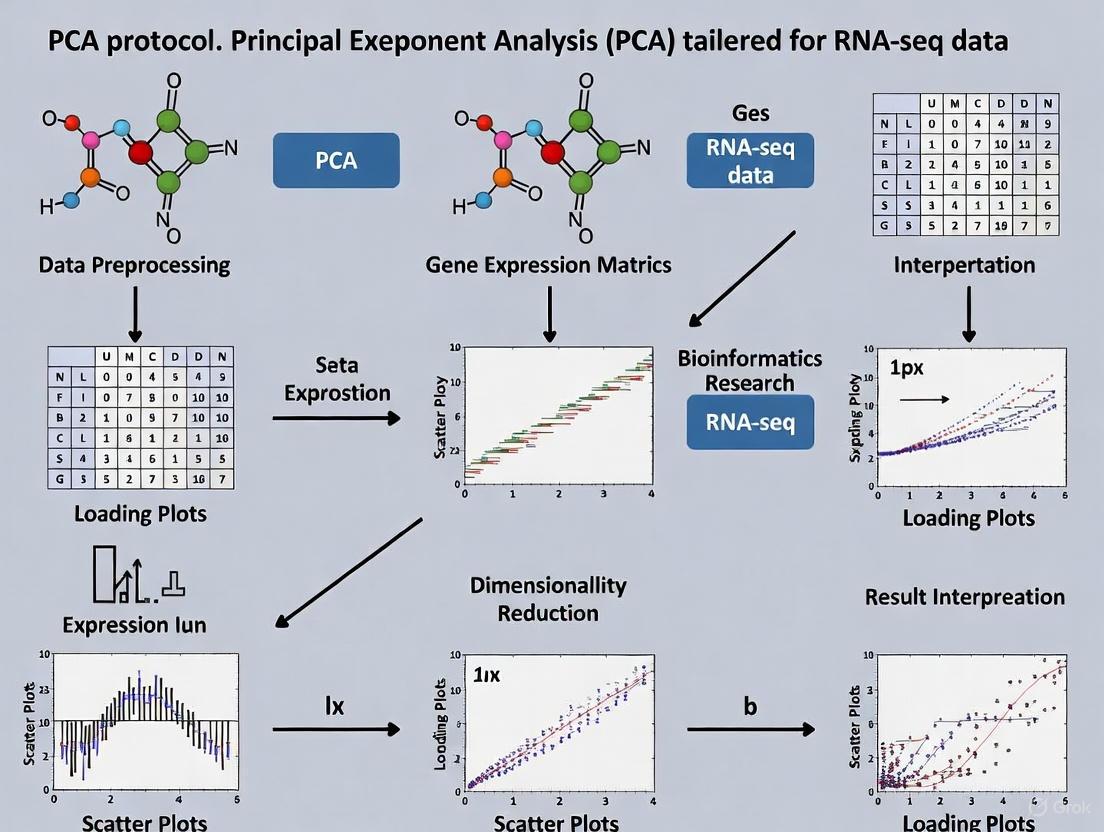

The following diagram illustrates the complete protocol for applying PCA to RNA-seq data, from raw counts to visualization and interpretation.

Experimental Protocol: PCA Implementation for RNA-Seq Data

Purpose: To reduce the dimensionality of gene expression data and visualize global expression patterns, sample relationships, and potential batch effects or outliers [6] [7].

Sample Preparation and Data Generation

- RNA Extraction: Isolate high-quality RNA from samples (e.g., cells, tissues). For the example murine alveolar macrophage dataset, RNA integrity number (RIN) should be >7.0 [6].

- Library Preparation and Sequencing: Convert RNA to cDNA, prepare libraries using appropriate kits (e.g., NEBNext Ultra DNA Library Prep Kit for Illumina), and sequence on a platform such as Illumina NextSeq 500 [6].

- Read Processing: Demultiplex raw sequencing data (bcl2fastq), align reads to a reference genome (e.g., mm10 for mouse) using aligners like TopHat2, and generate raw counts per gene using tools such as HTSeq [6].

Computational Methods

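- Data Preprocessing:

  - Low-count filtering: Remove genes with minimal expression, which mostly add noise. A common threshold keeps only genes with 10 or more reads in total across all samples [7].

  - Normalization: Account for differences in library size and other technical variation. For RNA-seq data, the methods in packages such as DESeq2 are commonly used [7].

- PCA Implementation in R: With DESeq2, normalized counts are typically transformed with vst() or rlog() and passed to plotPCA(), which by default computes the PCA on the 500 most variable genes (ntop = 500) and returns a ggplot2 plot, or the underlying coordinates when returnData = TRUE.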
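The preprocessing and PCA steps above can be sketched end to end in Python with NumPy, as a language-neutral illustration rather than a port of DESeq2. Assumptions to note: the log2(x + 1) transform is a crude stand-in for vst() or rlog(), the 500-gene selection mirrors the ntop default of plotPCA(), genes are centered but not scaled (a common choice for log-scale expression), and the count data are simulated.

```python
import numpy as np

def rnaseq_pca(counts, ntop=500, min_total=10):
    """PCA sketch for a genes x samples matrix of raw counts.
    Returns (sample scores, percent variance per PC)."""
    counts = np.asarray(counts, dtype=float)
    # 1. Low-count filter: keep genes with >= min_total reads summed over all samples.
    counts = counts[counts.sum(axis=1) >= min_total]
    # 2. Median-of-ratios size factors, using only genes with no zero counts.
    log_counts = np.log(counts[np.all(counts > 0, axis=1)])
    log_ratios = log_counts - log_counts.mean(axis=1, keepdims=True)
    size_factors = np.exp(np.median(log_ratios, axis=0))
    normalized = counts / size_factors
    # 3. log2(x + 1): a crude stand-in for vst()/rlog(), not equivalent to them.
    logged = np.log2(normalized + 1)
    # 4. Keep the ntop genes with the highest variance across samples.
    top = np.argsort(logged.var(axis=1))[::-1][:ntop]
    # 5. Samples are the observations: transpose, center each gene (no scaling), SVD.
    Y = logged[top].T
    Y = Y - Y.mean(axis=0)
    U, s, _ = np.linalg.svd(Y, full_matrices=False)
    return U * s, 100 * s**2 / np.sum(s**2)

# Simulated data (hypothetical): 1,000 genes, 3 control and 3 treated samples,
# with the first 100 genes four-fold higher in the treated group.
rng = np.random.default_rng(3)
base = rng.gamma(shape=2.0, scale=50.0, size=1000)
fold = np.ones(1000)
fold[:100] = 4.0
means = np.column_stack([base] * 3 + [base * fold] * 3)
counts = rng.poisson(means)
scores, percent_var = rnaseq_pca(counts)
print(np.round(percent_var[:2], 1))   # % variance on PC1 and PC2
print(np.sign(scores[:, 0]))          # PC1 should separate controls from treated
```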

Interpretation of Results

- Sample Grouping: Samples that cluster together in PCA space have similar gene expression profiles [6].

- Variance Explained: The percentage values on axes indicate how much of the total gene expression variability is captured by each PC [6] [7].

- Outlier Detection: Samples far from main clusters may indicate quality issues or biologically distinct states [6].

- Batch Effects: Strong separation along any PC correlated with technical factors (e.g., processing date) rather than biological variables indicates potential batch effects [6].

The Scientist's Toolkit: Essential Reagents and Computational Tools

Table: Key Research Reagent Solutions for RNA-seq PCA Analysis

| Item | Function/Application | Example Products/Tools |
|---|---|---|
| RNA Isolation Kit | Extract high-quality RNA from biological samples | PicoPure RNA Isolation Kit [6] |
| Library Prep Kit | Prepare sequencing libraries from RNA | NEBNext Poly(A) mRNA Magnetic Isolation Kit, NEBNext Ultra DNA Library Prep Kit [6] |
| Sequencing Platform | Generate raw sequencing reads | Illumina NextSeq 500 [6] |
| Alignment Software | Map sequences to reference genome | TopHat2 [6] |
| Gene Counting Tool | Generate count data per gene | HTSeq [6] |
| Statistical Environment | Data analysis and PCA implementation | R programming language [7] |
| Analysis Packages | Specialized methods for RNA-seq data | DESeq2, ggplot2 [7] |

Advanced Applications in Drug Discovery and Development

PCA has proven particularly valuable in drug discovery, where it helps researchers identify patterns in complex chemical and biological data [8] [9].

Molecular Descriptor Analysis for Compound Optimization

In a study investigating quercetin analogues for improved blood-brain barrier (BBB) permeability, PCA was applied to identify which molecular descriptors contribute most significantly to BBB penetration [9]. Researchers calculated numerous molecular descriptors related to membrane permeability for 34 quercetin analogues and used PCA to transform these descriptors into a reduced set of principal components [9].

The analysis revealed that descriptors related to intrinsic solubility and lipophilicity (logP) were primarily responsible for clustering four quercetin analogues (trihydroxyflavones) with the highest predicted BBB permeability [9]. This application demonstrates how PCA can guide lead optimization in neuroprotective drug development by highlighting structural characteristics most relevant to desired pharmacokinetic properties.

Protein Dynamics and Ligand Binding Analysis

In drug discovery projects, PCA is frequently used to analyze Molecular Dynamics (MD) simulations of protein-ligand complexes [8]. By applying PCA to the 3D coordinates of protein trajectories, researchers can:

- Identify the dominant collective motions of proteins and how they are affected by ligand binding [8].

- Compare conformational spaces explored by proteins under different conditions (e.g., with different ligands bound) [8].

- Detect structural convergence and identify distinct conformational states that might be missed by conventional analyses like RMSD [8].

For example, in an analysis of a dimeric protein, PCA revealed an allosteric effect between subunits: when one binding site was unoccupied, the protein explored a significantly different conformational space than when both sites were occupied [8]. Such insights are valuable for understanding mechanisms of drug action and designing more effective therapeutics.
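A minimal sketch of trajectory PCA, assuming the frames have already been superposed on a reference structure to remove overall rotation and translation (that step is not shown). Coordinates are flattened to a frames × 3N matrix, centered on the mean structure, and decomposed by SVD; the synthetic trajectory, with one built-in oscillating collective motion, is hypothetical.

```python
import numpy as np

def trajectory_pca(coords, k=2):
    """coords: frames x atoms x 3, already superposed on a reference structure.
    Returns per-frame projections onto the top-k collective motions and their variances."""
    frames = coords.reshape(coords.shape[0], -1)     # frames x (3 * atoms)
    centered = frames - frames.mean(axis=0)          # displacement from the mean structure
    _, s, Vt = np.linalg.svd(centered, full_matrices=False)
    variances = s**2 / (frames.shape[0] - 1)         # eigenvalues of the coordinate covariance
    return centered @ Vt[:k].T, variances[:k]

# Synthetic trajectory: 100 frames of 10 atoms oscillating along one fixed direction plus noise.
rng = np.random.default_rng(5)
reference = rng.normal(size=(10, 3))
mode = rng.normal(size=(10, 3))
mode /= np.linalg.norm(mode)
t = np.linspace(0, 4 * np.pi, 100)
coords = reference + np.sin(t)[:, None, None] * mode + 0.01 * rng.normal(size=(100, 10, 3))

projections, variances = trajectory_pca(coords)
print(projections.shape, variances)   # PC1 should dominate
```

Comparing such projections between simulations (for instance, with and without a bound ligand) is one way to compare the conformational spaces they explore.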

Technical Considerations and Limitations

While PCA is a powerful technique, researchers should be aware of its limitations and appropriate use cases:

- Linearity Assumption: PCA is a linear technique and may not capture complex nonlinear relationships in data [4]. For nonlinear data, alternatives like Kernel PCA may be more appropriate [2].

- Interpretation Challenges: Principal components are mathematical constructs that may not have straightforward biological interpretations, as they are linear combinations of all original variables [1] [4].

- Sensitivity to Scaling: PCA results are heavily influenced by data scaling, making proper standardization crucial [1] [4].

- Information Loss: While dimensionality reduction is beneficial, discarding components with low eigenvalues inevitably sacrifices some information [1] [4].

- Variance vs. Relevance: PCA focuses on directions of maximum variance, but these may not always be the most biologically relevant patterns [6].

For RNA-seq data specifically, proper experimental design is essential to minimize batch effects that can dominate the true biological signal in PCA results [6]. Strategies include processing control and experimental samples together, harvesting samples at the same time of day, and minimizing variations in RNA isolation procedures [6].

RNA sequencing (RNA-seq) has become the primary method for transcriptome analysis since its introduction in 2008, generating unprecedented detail about the RNA landscape and enabling comprehensive understanding of gene expression, regulatory networks, and biological processes [6] [10]. The technique involves converting RNA into cDNA, followed by fragmentation, adapter ligation, and high-throughput sequencing to produce "reads" that must be computationally processed and interpreted [6]. The enormous datasets generated by RNA-seq present significant bioinformatics challenges, requiring researchers to understand the principles underlying each processing step to avoid misinterpretation and draw meaningful biological conclusions [6].

The transformation of raw sequencing data into an analysis-ready format represents a critical phase in RNA-seq experiments. This process involves multiple computational steps that progressively refine the data quality and structure until it becomes suitable for sophisticated analyses such as differential expression and dimensionality reduction techniques like Principal Component Analysis (PCA) [6]. Proper processing ensures that biological signals are preserved and enhanced while technical artifacts and noise are minimized. This protocol outlines the complete workflow from raw sequencing outputs to structured datasets ready for exploratory analysis, with particular emphasis on preparation for PCA, which serves as a powerful quality control and hypothesis-generation tool in transcriptomic studies.

RNA-seq Experimental Design and Data Generation

Experimental Considerations

Robust RNA-seq analysis begins with proper experimental design. Key considerations include minimizing batch effects, which can introduce technical variation that confounds biological signals. Batch effects can originate from multiple sources including different researchers, temporal factors, environmental conditions, RNA isolation procedures, library preparation techniques, and sequencing runs [6]. To mitigate these effects, researchers should harvest controls and experimental conditions simultaneously, process samples in randomized orders when possible, use intra-animal and littermate controls, perform RNA isolation and library preparation for all samples on the same day, and sequence all samples in a single run when feasible [6].

Sample quality is paramount throughout this process. RNA integrity should be verified using metrics such as RNA Integrity Number (RIN), with values above 7.0 indicating high-quality RNA suitable for library preparation [6]. For library construction, researchers must select appropriate kits based on their specific needs—standard mRNA kits for abundant RNA, specialized low-input kits for limited starting material, and rRNA depletion methods when seeking to retain non-coding RNA species [11].

Sequencing Output and Raw Data Structure

The initial output from RNA-seq experiments consists of raw sequencing reads stored in FASTQ format. These files contain both sequence information and quality scores for each base call (Phred scores) [12]. A typical FASTQ file contains four lines per read: a sequence identifier, the nucleotide sequence, a separator line, and quality scores encoded in ASCII format [12].

The data structure at this stage includes:

- Read identifiers: Unique identifiers for each sequence read

- Nucleotide sequences: The actual DNA sequences derived from RNA transcripts

- Quality scores: Probability-based scores indicating confidence in each base call

- Adapter sequences: Artificial sequences added during library preparation that may need removal
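The four-line FASTQ layout and its quality encoding can be illustrated with a small parser. This sketch assumes the Phred+33 encoding used by current Illumina pipelines (quality = ASCII code - 33) and unwrapped four-line records; the record shown is invented. It also shows the Phred relationship Q = -10 log10(p), under which Q30 corresponds to a 0.1% error probability.

```python
import io

def read_fastq(handle):
    """Yield (identifier, sequence, qualities) from a four-line-per-record FASTQ stream."""
    while True:
        header = handle.readline().rstrip("\n")
        if not header:
            return                                # end of file
        seq = handle.readline().rstrip("\n")
        plus = handle.readline().rstrip("\n")
        qual = handle.readline().rstrip("\n")
        if not header.startswith("@") or not plus.startswith("+") or len(seq) != len(qual):
            raise ValueError(f"malformed FASTQ record: {header!r}")
        # Phred+33: quality score = ASCII code of the character minus 33.
        yield header[1:], seq, [ord(c) - 33 for c in qual]

def error_probability(q):
    """Phred score Q corresponds to an error probability of 10^(-Q/10)."""
    return 10 ** (-q / 10)

example = "@read1\nACGT\n+\nII5#\n"
for name, seq, quals in read_fastq(io.StringIO(example)):
    print(name, seq, quals)      # read1 ACGT [40, 40, 20, 2]
print(error_probability(30))     # Q30 -> 0.001
```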

Primary Data Processing and Quality Control

Quality Assessment and Read Trimming

The initial quality assessment of raw FASTQ files uses tools like FastQC to evaluate key metrics including Phred quality scores, adapter contamination, GC content, duplication rates, and length distribution [11] [12]. This assessment identifies potential issues that might compromise downstream analyses.

Following quality assessment, reads typically undergo trimming and filtering to remove low-quality bases, adapter sequences, and contaminants. This step is crucial as inaccuracies and artifacts at this stage can propagate through the entire analysis pipeline. Commonly used tools include fastp and Trimmomatic, which offer flexible parameters for adapter removal and quality filtering [11] [10].

Table 1: Quality Control Tools and Their Applications

| Tool | Purpose | Key Features | Best For |
|---|---|---|---|
| FastQC | Quality control | Generates visual quality reports | Initial assessment of raw reads |
| fastp | Trimming and filtering | Fast processing, integrated QC | Comprehensive preprocessing |
| Trimmomatic | Trimming | Flexible parameters, thorough trimming | Customized adapter removal |
| MultiQC | Aggregate reporting | Combines multiple QC reports | Projects with many samples |

A typical fastp command for paired-end data trims both reads of each pair, automatically detects adapters, sets a minimum read length of 25 bases, and generates both HTML and JSON reports for quality assessment [12].
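The fastp invocation described above can be assembled programmatically, which helps when looping over many samples. The options shown are standard fastp flags (-i/-I and -o/-O for paired input and output, --detect_adapter_for_pe, -l for the minimum length, -h and -j for the reports), but the helper function and file-naming scheme are hypothetical, and the exact command used in [12] may differ.

```python
import shlex

def fastp_command(r1, r2, out1, out2, sample, min_length=25):
    """Argument list for a paired-end fastp run (all file names are placeholders)."""
    return [
        "fastp",
        "-i", r1, "-I", r2,            # input read 1 and read 2
        "-o", out1, "-O", out2,        # trimmed output read 1 and read 2
        "--detect_adapter_for_pe",     # enable adapter auto-detection for paired-end data
        "-l", str(min_length),         # --length_required: discard shorter reads
        "-h", f"{sample}.fastp.html",  # HTML report
        "-j", f"{sample}.fastp.json",  # JSON report
    ]

cmd = fastp_command("S1_R1.fastq.gz", "S1_R2.fastq.gz",
                    "S1_R1.trimmed.fastq.gz", "S1_R2.trimmed.fastq.gz", sample="S1")
print(shlex.join(cmd))
# To execute (requires fastp on PATH): subprocess.run(cmd, check=True)
```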

Read Alignment and Quantification

Alignment-Based Approaches

Trimmed reads are aligned to a reference genome or transcriptome using splice-aware aligners that can handle the gaps created by intron splicing in eukaryotic transcripts [13]. The alignment process produces Sequence Alignment Map (SAM) or Binary Alignment Map (BAM) files that contain mapping locations and CIGAR strings describing alignment characteristics [13].

Table 2: Comparison of RNA-seq Alignment Tools

| Aligner | Strengths | Limitations | Best Application |
|---|---|---|---|
| STAR | Accurate spliced alignment, fast | High memory requirements | Complex transcriptomes, novel junction detection |
| HISAT2 | Fast, memory-efficient | Less accurate for complex splicing | Large datasets, standard splicing |
| TopHat2 | Established method | Slower than newer alternatives | Legacy compatibility |

A typical STAR alignment command runs on multiple threads, reads compressed input files, writes unsorted BAM output, and generates both genome and transcriptome alignments [12].
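Similarly, the STAR invocation can be built as an argument list. The flags correspond to the behavior described above (--runThreadN, --readFilesCommand zcat for gzipped input, --outSAMtype BAM Unsorted, and --quantMode TranscriptomeSAM for the additional transcriptome-coordinate BAM); the paths, thread count, and helper name are placeholders. On systems where zcat does not read .gz files (such as macOS), "gunzip -c" is a common substitute.

```python
import shlex

def star_command(genome_dir, r1, r2, prefix, threads=8):
    """Argument list for a paired-end STAR alignment (paths are placeholders)."""
    return [
        "STAR",
        "--runThreadN", str(threads),            # number of threads
        "--genomeDir", genome_dir,               # pre-built STAR genome index
        "--readFilesIn", r1, r2,                 # read 1 and read 2
        "--readFilesCommand", "zcat",            # decompress gzipped FASTQ on the fly
        "--outSAMtype", "BAM", "Unsorted",       # unsorted BAM for genome alignments
        "--quantMode", "TranscriptomeSAM",       # also write a transcriptome-coordinate BAM
        "--outFileNamePrefix", prefix,
    ]

cmd = star_command("star_index/", "S1_R1.trimmed.fastq.gz", "S1_R2.trimmed.fastq.gz", "S1_")
print(shlex.join(cmd))
```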

Pseudoalignment and Quantification

As an alternative to traditional alignment, pseudoaligners such as Salmon and kallisto use k-mer matching to assign reads to transcripts without computing full base-level alignments, which makes them dramatically faster [11] [13]. These tools decompose reference transcripts into k-mers (short subsequences) and index them; kallisto, for example, represents the transcriptome as a graph in which k-mers serve as nodes and assigns each read the set of transcripts compatible with its k-mers [13].
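The core idea of k-mer-based assignment can be shown with a toy index. This is a deliberate simplification rather than how Salmon or kallisto are implemented: it maps every k-mer to the transcripts that contain it and reports a read as compatible with the transcripts that contain all of its k-mers. The sequences and k = 5 are invented for illustration.

```python
def kmers(seq, k):
    """All distinct k-mers (substrings of length k) in seq."""
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}

def build_index(transcripts, k):
    """Map each k-mer to the set of transcript names that contain it."""
    index = {}
    for name, seq in transcripts.items():
        for km in kmers(seq, k):
            index.setdefault(km, set()).add(name)
    return index

def compatible_transcripts(read, index, k):
    """Transcripts containing every k-mer of the read (empty set if none)."""
    result = None
    for km in kmers(read, k):
        hits = index.get(km, set())
        result = set(hits) if result is None else result & hits
    return result if result is not None else set()

transcripts = {"tA": "ACGTACGGT", "tB": "ACGTACCTT"}   # toy sequences
index = build_index(transcripts, k=5)
print(sorted(compatible_transcripts("ACGTAC", index, 5)))  # ['tA', 'tB']: shared prefix
print(sorted(compatible_transcripts("GTACGG", index, 5)))  # ['tA']: unique to tA
print(sorted(compatible_transcripts("TTTTTT", index, 5)))  # []: no match
```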

The quantification step generates count data representing the abundance of each transcript or gene in the sample. These counts are typically stored in matrix format with genes as rows and samples as columns. For alignment-based approaches, tools like HTSeq count the number of reads overlapping each genomic feature [6], while pseudoaligners use expectation-maximization algorithms to estimate transcript abundances [13].
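The expectation-maximization step can be sketched for reads grouped by the set of transcripts they are compatible with. This simplified version ignores transcript and fragment lengths, which real quantifiers account for, and the read counts are invented: with 60 reads unique to tA, 20 unique to tB and 20 shared, the estimates converge to 0.75 and 0.25.

```python
def em_abundance(class_counts, n_iter=1000, tol=1e-10):
    """class_counts: dict mapping a tuple of compatible transcripts -> read count.
    Returns the estimated fraction of reads per transcript (lengths ignored)."""
    transcripts = sorted({t for cls in class_counts for t in cls})
    theta = {t: 1.0 / len(transcripts) for t in transcripts}   # uniform start
    total = sum(class_counts.values())
    for _ in range(n_iter):
        # E-step: split each class's reads among its transcripts in proportion to theta.
        expected = {t: 0.0 for t in transcripts}
        for cls, count in class_counts.items():
            denom = sum(theta[t] for t in cls)
            for t in cls:
                expected[t] += count * theta[t] / denom
        # M-step: the new abundances are the expected shares of all reads.
        new_theta = {t: expected[t] / total for t in transcripts}
        converged = max(abs(new_theta[t] - theta[t]) for t in transcripts) < tol
        theta = new_theta
        if converged:
            break
    return theta

theta = em_abundance({("tA",): 60, ("tB",): 20, ("tA", "tB"): 20})
print(theta)
```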

Data Transformation and Normalization

Count Normalization Methods

Raw count data requires normalization to account for technical variations such as sequencing depth and gene length, enabling meaningful comparisons between samples. Different normalization methods address specific technical artifacts:

- TPM (Transcripts Per Million): Normalizes for both sequencing depth and gene length, allowing comparison within and between samples [11]

- FPKM/RPKM (Fragments/Reads Per Kilobase of transcript per Million mapped reads): Similar to TPM, but sequencing depth is normalized before gene length, so per-sample totals differ and between-sample comparisons are less reliable [11]

- DESeq2's Median of Ratios: Assumes most genes are not differentially expressed; each sample's size factor is the median, across genes, of the ratio of its count to that gene's geometric mean across all samples [7]

- edgeR's Trimmed Mean of M-values (TMM): Trims extreme values before calculating normalization factors, robust against highly differentially expressed genes [6]
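Two of these normalizations can be sketched directly. The TPM function divides by gene length before scaling each sample to one million; the size-factor function follows the median-of-ratios idea in simplified form (genes with any zero count are skipped when forming the reference). The small matrix is invented, with the second sample sequenced at exactly twice the depth of the first.

```python
import numpy as np

def tpm(counts, lengths_bp):
    """Transcripts per million for a genes x samples count matrix."""
    counts = np.asarray(counts, dtype=float)
    per_kb = counts / (np.asarray(lengths_bp, dtype=float)[:, None] / 1_000)  # length first
    return per_kb / per_kb.sum(axis=0) * 1_000_000                           # then depth

def median_of_ratios(counts):
    """Size factors: per-sample median ratio of counts to each gene's geometric mean."""
    counts = np.asarray(counts, dtype=float)
    log_counts = np.log(counts[np.all(counts > 0, axis=1)])   # skip genes with any zero
    log_geo_means = log_counts.mean(axis=1, keepdims=True)
    return np.exp(np.median(log_counts - log_geo_means, axis=0))

counts = np.array([[10, 20],
                   [20, 40],
                   [30, 60]])
lengths = np.array([1000, 2000, 500])
print(tpm(counts, lengths).sum(axis=0))   # each column sums to one million
print(median_of_ratios(counts))           # about [0.707, 1.414]: a 2x depth ratio
```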

The choice of normalization method significantly affects downstream analysis, particularly differential expression and dimensionality reduction. For PCA, variance-stabilizing transformations such as DESeq2's regularized logarithm (rlog) or its variance stabilizing transformation (VST) are particularly valuable because they largely remove the dependence of the variance on the mean that is characteristic of count data [7].

Low-Count Filtering and Data Cleaning

Prior to analysis, genes with low counts are typically filtered out as they contribute mostly noise rather than biological signal. The filterByExpr function in edgeR provides a robust method for this filtering by keeping only genes with a minimum number of counts in a sufficient number of samples [11]. In a typical analysis, this filtering might retain approximately 58% of genes while removing those with consistently low expression [11].
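A simplified filter of this kind is sketched below. Note that edgeR's filterByExpr applies its threshold on the counts-per-million scale and derives the minimum number of samples from the experimental design; this sketch instead uses raw counts with fixed, hypothetical defaults and is not a reimplementation of it.

```python
import numpy as np

def filter_low_counts(counts, min_count=10, min_samples=3):
    """Keep genes (rows) with at least min_count reads in at least min_samples samples.
    Hypothetical fixed thresholds on raw counts, for illustration only."""
    counts = np.asarray(counts)
    keep = (counts >= min_count).sum(axis=1) >= min_samples
    return counts[keep], keep

counts = np.array([[0, 5, 12, 15],     # >= 10 reads in only 2 samples: removed
                   [20, 30, 25, 40],   # >= 10 reads in all 4 samples: kept
                   [11, 0, 0, 50]])    # >= 10 reads in only 2 samples: removed
filtered, keep = filter_low_counts(counts)
print(keep)
```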

Additional data cleaning may involve checking for outliers using PCA or hierarchical clustering, examining the percentage of reads mapping to ribosomal RNA genes (indicating potential contamination), and verifying that sample relationships reflect expected biological groupings rather than technical batches [11].

Analysis-Ready Data Structure for PCA

Data Formatting for PCA

The final analysis-ready dataset for PCA typically takes the form of a normalized, transformed expression matrix with genes as rows and samples as columns. After quality filtering, a typical dataset might contain 10,000 to 20,000 genes across multiple samples [7]. The data should be free of technical artifacts, with normalization applied to address sequencing depth and composition biases. Because samples are the observations in a sample-level PCA, this matrix is transposed (samples as rows, genes as columns) before the components are computed.

In preparation for PCA, the expression matrix is often transformed using variance-stabilizing techniques that make the data more amenable to linear dimensionality reduction methods. The DESeq2 package provides built-in functions for both normalization and transformation specifically designed for RNA-seq count data [7].

Workflow Integration

The complete workflow from raw data to PCA-ready format can be visualized as a sequential process with multiple checkpoints:

Diagram 1: RNA-seq Data Processing Workflow for PCA. This diagram illustrates the sequential steps required to transform raw sequencing data into an analysis-ready format suitable for Principal Component Analysis.

The Scientist's Toolkit: Essential Research Reagents and Computational Tools

Table 3: Essential Research Reagents and Computational Tools for RNA-seq Analysis

| Category | Tool/Reagent | Function | Application Notes |
|---|---|---|---|
| Quality Control | FastQC | Quality assessment of raw reads | Provides visual reports for key metrics including Phred scores and adapter contamination [11] [12] |
| Trimming | fastp | Read trimming and filtering | Fast processing with integrated quality control, suitable for most applications [10] [12] |
| Alignment | STAR | Spliced alignment of reads | Accurate for complex transcriptomes, generates both genome and transcriptome alignments [11] [12] |
| Quantification | Salmon | Alignment-free quantification | Extremely fast, useful for large datasets and isoform-level analysis [11] [13] |
| Normalization | DESeq2 | Statistical normalization | Uses median of ratios method, ideal for differential expression analysis [7] |
| Visualization | ggplot2 | Data visualization | Flexible plotting system for creating publication-quality figures [7] |
| Reference Genome | GRCh38 (human) | Alignment reference | Use most recent version with appropriate annotations [11] [12] |
| Gene Annotation | GENCODE | Gene model annotations | Ensure compatibility with reference genome version [12] |

Quality Assessment and Validation

Post-Processing Quality Metrics

After processing, the quality of the analysis-ready data should be verified using multiple approaches. Qualimap provides comprehensive quality metrics for aligned reads, including the distribution of reads across genomic features (exonic, intronic, intergenic), which should show strong enrichment for exonic regions in standard mRNA-seq experiments [12]. Additional metrics include the alignment rate (typically >80%), the uniformity of coverage along transcripts, and the complexity of the library [11].

PCA itself serves as a powerful quality assessment tool. When applied to the processed data, it should reveal whether biological replicates cluster together and whether the major sources of variation correspond to expected biological conditions rather than technical batches [6] [7]. Samples that appear as outliers in PCA may indicate potential quality issues that require further investigation.
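Outlier screening in PC space can be made explicit with a simple distance rule. The rule below (flag a sample whose distance from the median position exceeds three times the median distance) and its threshold are hypothetical heuristics, not an established standard; flagged samples still need to be checked against QC metrics before any exclusion. The score values are invented.

```python
import numpy as np

def flag_pca_outliers(scores, factor=3.0):
    """scores: samples x PCs (e.g. PC1 and PC2). Returns a boolean mask of samples whose
    Euclidean distance from the median position exceeds factor x the median distance."""
    scores = np.asarray(scores, dtype=float)
    center = np.median(scores, axis=0)                 # robust to the outlier itself
    dist = np.linalg.norm(scores - center, axis=1)
    return dist > factor * np.median(dist)

scores = np.array([[0.10, 0.00],
                   [-0.10, 0.10],
                   [0.00, -0.10],
                   [0.05, 0.05],
                   [5.00, 5.00]])   # invented PC1/PC2 scores; the last sample sits far away
print(flag_pca_outliers(scores))
```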

Batch Effect Detection and Correction

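PCA is particularly effective for visualizing batch effects, which occur when technical variation dominates biological signal [6] [14]. In a PCA plot, batch effects typically appear as a clear separation of samples processed in different batches, often along the first principal component. When batch effects are detected, correction methods such as ComBat or removeBatchEffect can be applied before proceeding with downstream analysis [14]. The limma documentation notes that removeBatchEffect is not intended for use before linear modelling; for differential expression, batch is better included as a factor in the model design, and the corrected matrix reserved for visualization such as PCA.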

The effectiveness of batch correction can be validated by examining PCA plots before and after correction. Successful correction should reduce the separation between batches while maintaining biological differences of interest. However, it is always preferable to minimize batch effects through proper experimental design rather than relying solely on computational correction [6].
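The before/after comparison can be sketched on simulated data. The correction here is a simple per-batch mean-centering of each gene, used as a stand-in; it is neither ComBat nor removeBatchEffect, and it is only appropriate when batch is not confounded with biology (the design below is balanced, with both groups in each batch). The check uses the correlation between PC1 scores and the batch or group labels.

```python
import numpy as np

def center_by_batch(logexpr, batches):
    """Shift each batch so every gene has the same mean in every batch.
    logexpr: samples x genes on a log scale. Simplified stand-in for batch correction."""
    Y = np.asarray(logexpr, dtype=float).copy()
    batches = np.asarray(batches)
    overall = Y.mean(axis=0)
    for b in np.unique(batches):
        rows = batches == b
        Y[rows] = Y[rows] - Y[rows].mean(axis=0) + overall
    return Y

def pc1_scores(Y):
    """PC1 scores of the samples (rows) after centering each gene."""
    Yc = Y - Y.mean(axis=0)
    U, s, _ = np.linalg.svd(Yc, full_matrices=False)
    return U[:, 0] * s[0]

def abs_corr(a, b):
    return abs(np.corrcoef(a, b)[0, 1])

# Simulated design: 8 samples, 2 batches x 2 biological groups (balanced).
rng = np.random.default_rng(4)
batch = np.array([0, 0, 0, 0, 1, 1, 1, 1])
group = np.array([0, 0, 1, 1, 0, 0, 1, 1])
Y = rng.normal(0.0, 0.3, size=(8, 300))
Y[:, :200] += 2.0 * batch[:, None]      # technical shift in 200 genes
Y[:, 200:250] += 2.0 * group[:, None]   # biological effect in 50 genes

before = pc1_scores(Y)
after = pc1_scores(center_by_batch(Y, batch))
print(abs_corr(before, batch), abs_corr(before, group))  # PC1 tracks batch
print(abs_corr(after, batch), abs_corr(after, group))    # PC1 tracks group
```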

The transformation of raw RNA-seq data into an analysis-ready format represents a critical multi-step process that progressively enhances data quality and biological interpretability. Each stage—from quality control and trimming through alignment, quantification, and normalization—prepares the data for sophisticated analytical techniques like Principal Component Analysis. Proper execution of these steps ensures that the resulting expression matrix accurately reflects biological reality rather than technical artifacts, enabling meaningful insights into transcriptomic regulation.

The structured approach outlined in this protocol emphasizes quality assessment at multiple checkpoints, appropriate tool selection based on experimental needs, and thorough validation of the final dataset. By following this comprehensive workflow, researchers can confidently proceed to dimensionality reduction, differential expression analysis, and other advanced computational methods, secure in the knowledge that their data foundation is robust and analytically sound.

In the context of transcriptomics research, particularly when preparing data for Principal Component Analysis (PCA), the preprocessing steps of quality control (QC) and normalization form the critical foundation for all subsequent biological interpretations. RNA sequencing (RNA-Seq) has revolutionized the study of transcriptomes by enabling comprehensive, genome-wide quantification of RNA abundance with lower background noise and finer resolution than previous methods like microarrays [15]. However, the reliability of conclusions drawn from RNA-Seq data, including PCA visualizations that reveal sample clustering and patterns, is directly dependent on the quality of preprocessing [16]. Without rigorous QC and appropriate normalization, technical variations can obscure true biological signals, leading to misinterpretations that compromise research validity, especially in critical applications like drug development and biomarker discovery [15] [16].

This protocol outlines a systematic approach to RNA-seq data preprocessing, emphasizing how each step influences the integrity of downstream PCA. We provide detailed methodologies for quality assessment, data cleaning, and normalization strategies, enabling researchers to transform raw sequencing data into robust datasets capable of revealing meaningful biological insights through dimension reduction techniques.

RNA-seq Workflow: From Raw Data to Analysis-Ready Information

The journey from raw sequencing outputs to analysis-ready data involves multiple critical steps that progressively enhance data quality and comparability. The following diagram illustrates the complete workflow, highlighting how each preprocessing stage contributes to preparing data for downstream applications like PCA:

Comprehensive Quality Control Protocol

Three-Stage Quality Control Implementation

Quality control in RNA-seq analysis is not a single step but a continuous process applied at multiple stages to ensure data integrity. Systematic QC practices help detect technical artifacts early, preventing misleading biological conclusions [16].

Stage 1: Raw Data Quality Control

The initial QC assessment evaluates FASTQ files obtained directly from the sequencer, providing the first indication of data quality and potential technical issues [15] [16].

Protocol Steps:

- Run FastQC on all FASTQ files to generate quality metrics

- Use MultiQC to aggregate and compare results across all samples

- Critical metrics to assess:

  - Per-base sequence quality (Phred scores)

  - Adapter contamination levels

  - GC content distribution

  - Sequence duplication levels

  - Overrepresented sequences

Quality Thresholds:

- Base quality: Phred score ≥ Q30 (indicating 0.1% error rate) [16]

- Adapter contamination: Minimal presence (<5% of reads)

- GC content: Should match organism expectations without unusual bimodal distributions

Table 1: Key QC Metrics and Interpretation Guidelines

| QC Metric | Optimal Range | Potential Issue | Corrective Action |
|---|---|---|---|
| Per-base Sequence Quality | Q30 or higher across all bases | Quality drops at read ends | Increase trimming at low-quality regions |
| Adapter Contamination | <5% of reads | High adapter content (>10%) | More aggressive adapter trimming |
| GC Content | Organism-specific normal distribution | Unusual bimodal distribution | Potential contamination; investigate sources |
| Sequence Duplication | <20-50% depending on organism | Very high duplication (>70%) | PCR over-amplification; consider duplicate removal |
| rRNA Content | <10% in mRNA-seq | High rRNA percentage (>30%) | Inadequate rRNA depletion during library prep |

Stage 2: Preprocessing and Data Cleaning

After initial assessment, raw reads undergo cleaning to remove technical artifacts while preserving biological signal [15].

Tools and Parameters:

- Trimmomatic: For adapter removal and quality trimming

- Cutadapt: Specifically designed for adapter sequence removal

- fastp: All-in-one FASTQ preprocessor with comprehensive reporting

Implementation Protocol:
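A minimal Python sketch illustrates what quality trimming does to a single read. It follows the idea behind Trimmomatic's SLIDINGWINDOW and MINLEN steps but is not Trimmomatic's code; the Phred+33 encoding, 4-base window, Q20 threshold, and 36-base minimum length are illustrative assumptions, and the function names are hypothetical.

```python
def phred_scores(quality: str, offset: int = 33) -> list[int]:
    """Decode a FASTQ quality string into integer Phred scores (Phred+33 assumed)."""
    return [ord(ch) - offset for ch in quality]


def sliding_window_trim(seq: str, quality: str, window: int = 4,
                        min_mean_q: float = 20.0) -> tuple[str, str]:
    """Cut the read at the start of the first window whose mean Phred score is below min_mean_q."""
    scores = phred_scores(quality)
    for start in range(len(scores) - window + 1):
        if sum(scores[start:start + window]) / window < min_mean_q:
            return seq[:start], quality[:start]
    return seq, quality


def passes_min_length(seq: str, min_length: int = 36) -> bool:
    """Discard reads that became too short after trimming."""
    return len(seq) >= min_length


# Hypothetical read whose last four bases have very low quality ('#' = Q2, 'I' = Q40).
seq, qual = sliding_window_trim("ACGTACGTAC", "IIIIII####")
print(seq, qual, passes_min_length(seq))  # prints: ACGTA IIIII False
```

Note that the window cut also removes one high-quality base (position 6) because the window starting there already averages below Q20; this is the usual trade-off of window-based trimming.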

Critical Consideration: Balance between removing technical artifacts and preserving biological data. Over-trimming reduces sequencing depth and statistical power, while under-trimming leaves artifacts that distort downstream analysis [15].

Stage 3: Post-Alignment Quality Control

After reads are aligned to a reference genome or transcriptome, additional QC metrics become available to assess alignment quality and potential biases [15] [17].

Assessment Protocol:

Mapping Rate Evaluation:

- Minimum acceptable: >70% uniquely mapped reads

- Optimal: >80% uniquely mapped reads

- Investigate samples with mapping rates <70% for potential contamination or reference mismatches [16]

Alignment Distribution Analysis:

- Evaluate gene body coverage uniformity using RSeQC

- Check for 5' or 3' bias that might indicate library preparation issues

- Assess splice junction detection rates

Duplicate Read Analysis:

- Use Picard Tools to mark and assess PCR duplicates

- High duplication rates may indicate low input material or over-amplification

Batch Effect Detection and Mitigation

Batch effects arising from experimental conditions (different library preparation dates, sequencing runs, or technicians) can introduce systematic variations that obscure biological signals [6] [16].

Detection Methods:

- Principal Component Analysis (PCA) coloring samples by potential batch variables

- Hierarchical clustering to identify sample groupings by technical factors

- Correlation analysis between technical covariates and principal components
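The correlation check can be sketched as follows, assuming PC scores with samples in rows (for example from prcomp() in R or an SVD in NumPy) and one numeric technical covariate per sample, such as sequencing depth. Because the sign of a principal component is arbitrary, the magnitude of r is what matters. The function name and values are hypothetical.

```python
import numpy as np


def pc_covariate_correlations(scores, covariate):
    """Pearson correlation of each principal component with one numeric covariate.

    scores: samples x components matrix of PC scores (samples in rows).
    covariate: one numeric technical value per sample, e.g. sequencing depth.
    """
    scores = np.asarray(scores, dtype=float)
    covariate = np.asarray(covariate, dtype=float)
    return np.array([np.corrcoef(scores[:, k], covariate)[0, 1]
                     for k in range(scores.shape[1])])


# Hypothetical example: four samples, two PCs, depth in millions of reads.
scores = np.array([[2.0, 0.1], [1.0, -0.2], [-1.0, 0.3], [-2.0, -0.2]])
depth_millions = np.array([40.0, 35.0, 20.0, 15.0])
print(np.round(pc_covariate_correlations(scores, depth_millions), 3))
```

Here PC1 tracks sequencing depth almost perfectly while PC2 is unrelated to it, which is the pattern that would prompt a closer look at depth-related normalization.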

Mitigation Strategies:

- Include biological replicates to distinguish technical from biological variation

- Randomize sample processing across experimental batches

- Utilize statistical batch correction methods (ComBat, limma) when necessary, applied after normalization but before downstream analysis [18]

RNA-seq Normalization Strategies

Understanding Normalization Requirements

Normalization adjusts raw count data to account for technical variations, enabling meaningful biological comparisons. The diagram below illustrates the three primary normalization contexts in RNA-seq analysis:

Normalization Methods: Protocol and Applications

Different normalization methods address specific technical biases and are suited for particular analytical contexts. The choice of method significantly impacts downstream analysis, including PCA outcomes and differential expression results [19] [18].

Within-Sample Normalization Methods

These methods enable comparison of expression levels between different genes within the same sample by accounting for gene length and sequencing depth variations [18].

FPKM/RPKM Protocol:

- Formula: FPKM = [Fragment Count / (Gene Length in kb × Total Fragments in Millions)]

- Application: Gene expression comparisons within a single sample

- Limitation: Not suitable for between-sample comparisons due to composition effects [20]

TPM (Transcripts Per Million) Protocol:

- Calculation Steps:

  - Divide read counts by transcript length in kilobases (yielding reads per kilobase)

  - Sum all per-kilobase values for the sample

  - Divide each per-kilobase value by the sample sum and multiply by 10⁶

- Advantage: Sum of all TPMs is identical across samples, enabling more accurate cross-sample comparisons than FPKM [18] [20]

- Application: Both within-sample and between-sample comparisons when used with additional between-sample normalization
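A NumPy sketch contrasting the two within-sample measures, using a hypothetical two-gene, two-sample matrix and gene lengths in base pairs. It shows the property noted above: TPM columns always sum to 10⁶, whereas FPKM column sums differ between samples.

```python
import numpy as np


def fpkm(counts, lengths_bp):
    """FPKM = fragments / (gene length in kb x total fragments in millions).

    counts: genes x samples matrix of fragment (or read) counts.
    lengths_bp: gene lengths in base pairs, one per gene.
    """
    counts = np.asarray(counts, dtype=float)
    length_kb = np.asarray(lengths_bp, dtype=float)[:, None] / 1e3
    total_millions = counts.sum(axis=0) / 1e6
    return counts / (length_kb * total_millions)


def tpm(counts, lengths_bp):
    """TPM following the three calculation steps listed above."""
    counts = np.asarray(counts, dtype=float)
    rpk = counts / (np.asarray(lengths_bp, dtype=float)[:, None] / 1e3)  # 1: reads per kilobase
    rpk_sum = rpk.sum(axis=0)                                             # 2: per-sample sum
    return rpk / rpk_sum * 1e6                                            # 3: scale to 10^6


counts = np.array([[100, 100], [100, 300]])   # hypothetical: 2 genes x 2 samples
lengths = np.array([1000, 2000])
print(fpkm(counts, lengths).sum(axis=0))      # column sums differ between samples
print(tpm(counts, lengths).sum(axis=0))       # every column sums to 1e6
```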

Between-Sample Normalization Methods

These methods enable robust comparisons of gene expression across different samples by accounting for library size differences and composition effects [18].

TMM (Trimmed Mean of M-values) Protocol:

- Assumption: Most genes are not differentially expressed [19]

- Implementation:

  - Select a reference sample (edgeR's default is the sample whose upper quartile of library-size-scaled counts is closest to the mean upper quartile across samples)

  - Calculate log-fold-changes (M-values) and average log-expression levels (A-values) for all genes relative to the reference

  - Trim extreme values: by default, 30% from each end of the M-values and 5% from each end of the A-values

  - Calculate the scaling factor from the precision-weighted mean of the remaining M-values

- Tools: Available in edgeR package [19]
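The TMM steps can be sketched in Python for one sample against a given reference. This is a simplified illustration, not edgeR's calcNormFactors(): it trims with quantiles rather than ranks, takes the reference sample as given, and does not rescale the factors so that their product across samples is 1, as edgeR does. The 30%/5% trimming fractions follow edgeR's defaults; the function name and counts are hypothetical.

```python
import numpy as np


def tmm_factor(sample, ref, logratio_trim=0.3, sum_trim=0.05):
    """Simplified TMM scaling factor of `sample` relative to `ref` (1-D count vectors)."""
    sample = np.asarray(sample, dtype=float)
    ref = np.asarray(ref, dtype=float)
    n_s, n_r = sample.sum(), ref.sum()
    keep = (sample > 0) & (ref > 0)              # M and A are undefined for zero counts
    ys, yr = sample[keep], ref[keep]
    m = np.log2((ys / n_s) / (yr / n_r))         # M-values: log fold changes
    a = 0.5 * np.log2((ys / n_s) * (yr / n_r))   # A-values: average log expression
    # Approximate variance of each M-value; its inverse serves as a precision weight.
    var = (n_s - ys) / (n_s * ys) + (n_r - yr) / (n_r * yr)
    # Quantile-based trimming: 30% of M from each end, 5% of A from each end.
    m_lo, m_hi = np.quantile(m, [logratio_trim, 1 - logratio_trim])
    a_lo, a_hi = np.quantile(a, [sum_trim, 1 - sum_trim])
    core = (m >= m_lo) & (m <= m_hi) & (a >= a_lo) & (a <= a_hi)
    w = 1.0 / var[core]
    return 2 ** (np.sum(w * m[core]) / np.sum(w))


ref = np.full(100, 100.0)        # hypothetical reference: 100 genes with 100 counts each
sample = ref.copy()
sample[0] = 10_000.0             # one gene soaks up about half of the sample's reads
f = tmm_factor(sample, ref)
print(f, sample.sum() * f)       # effective library size returns to the reference's 10,000
```

The example mirrors the "most genes are not differentially expressed" assumption: a single dominant gene inflates the raw library size, and the trimmed, weighted mean over the unchanged genes corrects for it.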

RLE (Relative Log Expression) Protocol:

- Assumption: Most genes are not differentially expressed [19]

- Implementation:

  - Calculate geometric mean for each gene across all samples

  - Compute ratio of each sample's counts to these geometric means

  - Calculate median of these ratios for each sample (size factor)

  - Divide raw counts by sample-specific size factors

- Tools: Default method in DESeq2 package [19]
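The four RLE (median-of-ratios) steps translate almost line by line into NumPy. This sketch assumes a genes × samples count matrix; genes with a zero count in any sample are skipped because their geometric mean is zero, as DESeq2 does by default. For real analyses, use DESeq2's estimateSizeFactors(); the function name here is hypothetical.

```python
import numpy as np


def median_of_ratios_size_factors(counts):
    """RLE / median-of-ratios size factors for a genes x samples count matrix."""
    counts = np.asarray(counts, dtype=float)
    with np.errstate(divide="ignore"):
        log_counts = np.log(counts)                       # log(0) -> -inf
    log_geo_means = log_counts.mean(axis=1)               # step 1: log geometric mean per gene
    usable = np.isfinite(log_geo_means)                   # skip genes with a zero in any sample
    log_ratios = log_counts[usable] - log_geo_means[usable, None]   # step 2: ratios (log scale)
    return np.exp(np.median(log_ratios, axis=0))          # step 3: median ratio per sample


counts = np.array([[10, 20], [100, 200], [5, 10], [0, 3]])   # hypothetical; sample 2 = 2x depth
size_factors = median_of_ratios_size_factors(counts)
normalized = counts / size_factors                           # step 4
print(size_factors)
```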

Table 2: Comparative Analysis of RNA-seq Normalization Methods

| Method | Type | Corrects For | Best Applications | Limitations |
|---|---|---|---|---|
| TPM | Within-sample | Sequencing depth, Gene length | Within-sample comparisons, RNA-seq with varying transcript lengths | Less accurate for between-sample DE analysis |
| FPKM/RPKM | Within-sample | Sequencing depth, Gene length | Single-sample gene expression comparisons | Problematic for between-sample comparisons [20] |
| TMM | Between-sample | Library size, RNA composition | Differential expression, PCA visualization | Assumes most genes not DE; sensitive to extreme outliers |
| RLE (DESeq2) | Between-sample | Library size, RNA composition | Differential expression, Small sample sizes | Similar assumptions to TMM; performance affected by many DE genes |
| Quantile | Between-sample | Distribution differences | Making expression distributions similar across samples | Assumes technical variation causes distribution differences |

Advanced Normalization: Batch Effect Correction

When integrating multiple datasets or dealing with batch effects, additional normalization is required to remove unwanted technical variation while preserving biological signals [18].

ComBat Protocol:

- Principle: Empirical Bayes method that adjusts for known batch effects

- Implementation:

  - Apply within-dataset normalization first (e.g., TMM or RLE)

  - Identify batch variables (sequencing date, facility, etc.)

  - Use ComBat to estimate and remove batch-specific effects

  - Verify effectiveness using PCA visualization colored by batch
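ComBat itself should be run from an established implementation such as the sva package. To make the idea of removing a batch-specific shift concrete, here is a deliberately simplified Python sketch that only replaces each gene's per-batch mean with its overall mean (a location-only adjustment). Unlike ComBat, it does not adjust batch-specific variances or shrink estimates with empirical Bayes. The function name and data are hypothetical, and the input is assumed to be normalized, log-scale expression.

```python
import numpy as np


def center_batches(log_expr, batch):
    """Remove per-gene batch shifts from a genes x samples log-expression matrix.

    Each gene's batch mean is replaced by that gene's overall mean; values
    within a batch keep their spread. Location-only: no scale adjustment and
    no empirical Bayes shrinkage (both are part of ComBat).
    """
    log_expr = np.asarray(log_expr, dtype=float)
    batch = np.asarray(batch)
    grand_mean = log_expr.mean(axis=1, keepdims=True)
    adjusted = log_expr.copy()
    for b in np.unique(batch):
        cols = batch == b
        batch_mean = log_expr[:, cols].mean(axis=1, keepdims=True)
        adjusted[:, cols] -= batch_mean - grand_mean
    return adjusted


# Hypothetical gene measured in two batches that differ by a constant offset of 10.
expr = np.array([[1.0, 2.0, 11.0, 12.0]])
print(center_batches(expr, ["A", "A", "B", "B"]))
```

Because this kind of adjustment erases every difference between batches, it also erases biology when batch and condition are confounded; in that situation, or for differential expression, including batch as a covariate in the statistical model is the safer choice.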

Surrogate Variable Analysis (SVA):

- Application: Detect and adjust for unknown sources of technical variation

- Implementation: Identify hidden factors that correlate with expression but not primary variables of interest

Table 3: Essential Computational Tools for RNA-seq Quality Control and Normalization

| Tool | Primary Function | Key Features | Application Context |
|---|---|---|---|
| FastQC | Raw data quality assessment | Comprehensive quality metrics, HTML reports | Initial QC of FASTQ files [15] [16] |
| MultiQC | Aggregate QC reports | Summarizes multiple tools and samples, Comparative visualization | Study-level quality assessment [15] |
| Trimmomatic | Read trimming | Adapter removal, Quality-based trimming, Leading/trailing base cutting | Pre-alignment data cleaning [15] |
| STAR | Read alignment | Spliced alignment, Fast processing, High accuracy | Mapping reads to reference genome [15] [17] |
| featureCounts | Read quantification | Fast processing, Multiple annotation formats, Strand-specific counting | Generating count matrices from aligned reads [15] [17] |
| DESeq2 | Normalization & DE analysis | RLE normalization, Negative binomial model, Multiple testing correction | Between-sample normalization, Differential expression [19] |
| edgeR | Normalization & DE analysis | TMM normalization, Robust statistical framework | Between-sample normalization, Differential expression [19] |
| Qualimap | Post-alignment QC | Mapping quality analysis, Coverage biases, Junction detection | Comprehensive alignment assessment [15] |

Integration with PCA: From Preprocessing to Visualization

The ultimate goal of rigorous preprocessing is to enable biologically meaningful PCA visualizations that accurately reflect sample relationships rather than technical artifacts.

Preprocessing Impact on PCA Outcomes

Effective normalization is crucial for PCA because:

- Technical variations can dominate principal components, obscuring biological patterns

- Inadequate normalization may cause samples to cluster by technical factors (batch, sequencing depth) rather than biological conditions

- Properly normalized data should show biological replicates clustering together in PCA space

Protocol: PCA Implementation After Preprocessing

Step 1: Data Preparation

- Start with normalized count matrix (e.g., TMM- or RLE-normalized counts)

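- Filter lowly expressed genes (e.g., keep genes with ≥10 counts in at least as many samples as there are in the smallest experimental group)

- Apply a variance-stabilizing transformation (e.g., DESeq2's vst() or rlog()) if using count-based methods; note that these functions take the raw counts stored in a DESeqDataSet and apply size-factor normalization internally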
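Both filtering rules used in this document (at least 10 counts in a minimum number of samples, or at least 10 counts in total) can be expressed as a boolean mask over genes. A minimal NumPy sketch, with a hypothetical function name and count matrix:

```python
import numpy as np


def low_count_filter(counts, min_count=10, min_samples=None):
    """Boolean mask of genes to keep from a genes x samples count matrix.

    min_samples=None: keep genes whose total count is >= min_count.
    min_samples=k:    keep genes with >= min_count reads in at least k samples
                      (k is often the size of the smallest experimental group).
    """
    counts = np.asarray(counts)
    if min_samples is None:
        return counts.sum(axis=1) >= min_count
    return (counts >= min_count).sum(axis=1) >= min_samples


counts = np.array([[0, 0, 1, 2],        # hypothetical 4 genes x 4 samples
                   [5, 5, 0, 0],
                   [12, 15, 0, 30],
                   [20, 20, 20, 20]])
print(low_count_filter(counts))                  # total >= 10
print(low_count_filter(counts, min_samples=2))   # >= 10 in at least 2 samples
filtered = counts[low_count_filter(counts, min_samples=2)]
```

The per-sample rule is stricter: the second gene passes the total-count rule (5 + 5 = 10) but is dropped when 10 counts are required in each of at least two samples.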

Step 2: PCA Computation

- Use standard statistical packages (prcomp in R, scikit-learn in Python)

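- Center each gene to mean zero (essential; prcomp() centers by default)

- Scale to unit variance when genes have very different expression ranges (prcomp()'s scale. argument is FALSE by default); note that scaling also gives low-variance, mostly noisy genes the same weight as informative ones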
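A minimal NumPy sketch of this step, assuming the input is already filtered and transformed (genes in rows, samples in columns). It transposes so that samples are rows, centers each gene, optionally scales, and uses the singular value decomposition; explained variance ratios come from the squared singular values. It illustrates the computation and is not a replacement for prcomp() or scikit-learn's PCA; the function name is hypothetical.

```python
import numpy as np


def pca(expr, n_components=2, scale=False):
    """PCA of a genes x samples matrix of transformed values (e.g. VST or log-CPM).

    Returns sample scores (samples x n_components) and the fraction of total
    variance explained by each returned component.
    """
    x = np.asarray(expr, dtype=float).T          # samples in rows, genes in columns
    x = x - x.mean(axis=0)                       # center every gene (essential)
    if scale:
        sd = x.std(axis=0, ddof=1)
        x = x[:, sd > 0] / sd[sd > 0]            # unit variance; constant genes dropped
    u, s, _ = np.linalg.svd(x, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]
    explained = s**2 / np.sum(s**2)
    return scores, explained[:n_components]


# Hypothetical rank-one data: 3 genes x 4 samples varying along a single direction.
expr = np.outer([1.0, 2.0, 3.0], [1.0, -1.0, 2.0, -2.0])
scores, explained = pca(expr)
print(scores.shape, np.round(explained, 6))      # PC1 carries essentially all variance
```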

Step 3: Result Interpretation

- Examine variance explained by each principal component

- Color samples by biological conditions and technical factors to identify potential confounders

- Assess whether biological replicates cluster more tightly than samples from different conditions
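One way to put a number on the replicate check is to compare the average distance between samples of the same condition with the average distance between samples of different conditions, computed on the PC scores. The ratio below is an illustrative heuristic, not a standard named statistic: values well below 1 mean replicates cluster more tightly than samples from different conditions. It assumes at least two samples per group; the function name and data are hypothetical.

```python
import numpy as np


def within_between_ratio(scores, groups):
    """Mean within-group distance divided by mean between-group distance in PC space."""
    scores = np.asarray(scores, dtype=float)
    groups = np.asarray(groups)
    diffs = scores[:, None, :] - scores[None, :, :]
    dist = np.linalg.norm(diffs, axis=-1)                  # samples x samples distances
    same = groups[:, None] == groups[None, :]
    not_self = ~np.eye(len(groups), dtype=bool)
    within = dist[same & not_self].mean()
    between = dist[~same].mean()
    return within / between


# Hypothetical PC1/PC2 scores for two conditions with two replicates each.
scores = np.array([[0.0, 0.0], [0.0, 1.0], [10.0, 0.0], [10.0, 1.0]])
print(within_between_ratio(scores, ["control", "control", "treated", "treated"]))
```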

Quality control and normalization are not mere technical formalities but fundamental processes that determine the validity of all subsequent RNA-seq analyses, including PCA. By implementing the systematic protocols outlined in this document—incorporating multi-stage quality assessment, appropriate normalization strategies, and batch effect management—researchers can transform raw sequencing data into reliable datasets capable of revealing meaningful biological insights. The stringent application of these preprocessing steps ensures that PCA visualizations and other downstream analyses reflect true biological signals rather than technical artifacts, ultimately supporting robust scientific conclusions in transcriptomics research and drug development.

RNA sequencing (RNA-Seq) has revolutionized transcriptomic research by enabling genome-wide quantification of RNA abundance, but the resulting count data presents significant statistical challenges for analysis [21] [15]. The raw count matrix generated from RNA-Seq experiments exhibits characteristic properties that complicate direct application of statistical methods: counts are restricted to non-negative integers, the variance depends on the mean (heteroskedasticity), and technical variations in sampling efficiency between samples create systematic biases [22] [23]. Data transformation methods address these challenges by converting raw counts into continuous, normalized values with stable variance, making the data amenable to downstream statistical analyses and visualization techniques such as Principal Component Analysis (PCA) [24] [25].

The fundamental need for transformation arises from the statistical properties of RNA-Seq data. Without transformation, a few highly expressed genes can dominate the variance structure, potentially obscuring biologically relevant patterns [25]. As demonstrated in single-cell RNA-Seq studies, when data is not properly transformed, "PC1 will basically coincide with a single gene" that has extreme expression differences between samples [25]. Effective transformation methods stabilize variance across the dynamic range of expression and remove technical artifacts, enabling more biologically meaningful interpretation of data structure through dimensionality reduction techniques like PCA [7].

Theoretical Foundations of RNA-Seq Data Transformation

Characteristics of Raw Count Data

RNA-Seq data originates from counting sequencing reads mapped to genomic features, resulting in a genes × samples matrix of non-negative integers [22] [23]. These raw counts possess several challenging statistical properties. First, they are discrete, non-negative integers rather than continuous measurements [22] [23]. Second, they exhibit mean-variance dependence (heteroskedasticity): variance increases with mean expression, so highly expressed genes show greater absolute variability than lowly expressed genes, violating the constant-variance assumption of many standard statistical methods [24] [22] [25]. Third, counts are subject to compositional biases, where a few highly expressed genes can consume a large fraction of the total sequencing depth, creating spurious differences between samples [21].

The mathematical relationship between mean and variance in RNA-Seq data is typically modeled using a negative binomial distribution [24], which accounts for overdispersion (extra-Poisson variation) through the mean-variance relationship: Var[Y] = μ + αμ², where Y represents the count, μ is the mean expression, and α is the dispersion parameter [22] [23]. This quadratic mean-variance relationship has important implications for data transformation and downstream analysis.
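A quick simulation makes the quadratic mean-variance relationship concrete. The sketch draws negative binomial counts with NumPy, whose (n, p) parameterization maps to mean μ and dispersion α via n = 1/α and p = n/(n + μ); α = 0.1 and the three means are arbitrary illustrative values, and the function name is hypothetical.

```python
import numpy as np


def simulate_nb_counts(mu, alpha, size, seed=0):
    """Draw negative binomial counts with mean mu and Var = mu + alpha * mu**2.

    NumPy parameterizes the NB by (n, p); n = 1/alpha and p = n / (n + mu)
    give exactly that mean and variance.
    """
    n = 1.0 / alpha
    p = n / (n + mu)
    return np.random.default_rng(seed).negative_binomial(n, p, size=size)


alpha = 0.1                                       # hypothetical dispersion
for mu in (10, 100, 1000):
    y = simulate_nb_counts(mu, alpha, size=200_000)
    # Columns: mean, simulated mean, simulated variance, theoretical mu + alpha * mu^2.
    print(mu, round(y.mean(), 1), round(y.var(), 1), mu + alpha * mu**2)
```

The simulated variance tracks μ + αμ², so at high expression it grows roughly with μ², which is why untransformed high-count genes dominate the variance that PCA maximizes.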

The Necessity of Transformation for PCA

Principal Component Analysis (PCA) is a dimensionality reduction technique that identifies axes of maximum variance in high-dimensional data [26]. When applied to untransformed RNA-Seq counts, PCA results become dominated by technical artifacts rather than biological signals [25]. The inherent mean-variance relationship means that highly expressed genes contribute disproportionately to principal components, regardless of their biological relevance.

As demonstrated in empirical studies, log-transformation of RNA-Seq data redistributes the variance structure, requiring more principal components to achieve the same explained variance but providing a more biologically meaningful representation [25]. Without transformation, "there is one gene that alone explains above 40% of the variance" in some datasets, whereas proper transformation allows multiple biological factors to contribute to the variance structure [25].

Transformation Methods: Principles and Applications

The Shifted Logarithm (logCPM)

The shifted logarithm transformation, commonly implemented as log-counts-per-million (logCPM), applies a logarithmic transformation to counts after scaling by library size and adding a pseudo-count [22] [27]. The transformation follows the formula:

log(y/s + y₀)

where y represents the raw counts, s is a size factor (typically accounting for library size differences), and y₀ is a pseudo-count added to avoid undefined values when y = 0 [22] [23]. The choice of pseudo-count significantly impacts the transformation. The familiar form log(L·y/u + 1), where u is the sample's total count and L a fixed scale such as 10⁶, equals the shifted logarithm with s = u/ū and y₀ = ū/L plus the constant log(L/ū), where ū is the average total count. For a typical total of about 5,000 counts per cell, conventional CPM (L = 10⁶) therefore implies y₀ = 0.005, while Seurat's L = 10,000 implies y₀ = 0.5 [22]. For optimal performance with typical RNA-Seq data, researchers recommend parameterizing the shifted logarithm in terms of overdispersion using y₀ = 1/(4α), where α represents the typical overdispersion [22].
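The link between log(normalized count + 1) and the shifted logarithm can be checked numerically. Assuming size factors s = u/ū (u: a sample's total count, ū: the average total), log(L·y/u + 1) equals log(L/ū) + log(y/s + ū/L), so the implied pseudo-count is y₀ = ū/L. The counts, totals, and α below are hypothetical.

```python
import numpy as np


def shifted_log(y, s, y0):
    """Shifted logarithm log(y/s + y0)."""
    return np.log(y / s + y0)


counts = np.array([0.0, 3.0, 50.0])            # hypothetical counts of one gene in three samples
totals = np.array([4000.0, 5000.0, 6000.0])     # hypothetical total counts per sample
L = 10_000                                      # Seurat-style scale factor
u_bar = totals.mean()
s = totals / u_bar                              # size factors centred on 1

log_cpm_style = np.log(L * counts / totals + 1)            # "log(normalized + 1)" form
shifted = shifted_log(counts, s, y0=u_bar / L)             # implied pseudo-count: 0.5 here
print(log_cpm_style - shifted)                 # same offset log(L / u_bar) for every sample

alpha = 0.05                                   # hypothetical typical overdispersion
print(shifted_log(counts, s, y0=1 / (4 * alpha)))  # overdispersion-based pseudo-count, y0 = 5
```

Because the two forms differ only by a constant, they produce identical PCA results after centering; what changes the analysis is the size of the implied pseudo-count relative to typical counts.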

Table 1: Comparison of logCPM Implementations

| Implementation | Library Size Factor (L) | Implied Pseudo-count | Typical Use Cases |
|---|---|---|---|
| Conventional CPM | 1,000,000 | 0.005 | Bulk RNA-Seq visualization |
| Seurat | 10,000 | 0.5 | Single-cell RNA-Seq |
| Parameterized by overdispersion | Variable | 1/(4α) | Differential expression analysis |

Variance Stabilizing Transformation (VST)

Variance Stabilizing Transformation (VST) employs a more sophisticated approach based on the delta method to explicitly address heteroskedasticity [22] [23]. For the negative binomial distribution with mean μ and overdispersion α, the theoretically optimal VST is given by:

g(y) = 1/√α · acosh(2αy + 1)

This transformation is designed to stabilize the variance across the dynamic range of expression values [22]; because it rests on a delta-method approximation, the stabilization is less exact for very low counts. In practice, VST is implemented in packages like DESeq2, whose vst() function estimates gene-wise dispersions, fits a trend of dispersion against mean expression, and derives the transformation from that fitted dispersion-mean relationship [24]. The resulting transformed data has approximately homoskedastic variance, satisfying the assumptions of many statistical tests and linear modeling approaches.
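For the acosh transformation, the delta method predicts Var[g(Y)] ≈ g′(μ)² · Var[Y] = 1 when Var[Y] = μ + αμ². The sketch below checks this with a numerical derivative and confirms that for very small α the transformation approaches the square-root form 2√y used for Poisson data. It illustrates the formula only and is not DESeq2's vst(), which works from a fitted dispersion trend; α = 0.1 and the function names are assumptions.

```python
import numpy as np


def acosh_vst(y, alpha):
    """g(y) = 1/sqrt(alpha) * acosh(2*alpha*y + 1) for NB overdispersion alpha."""
    y = np.asarray(y, dtype=float)
    return np.arccosh(2.0 * alpha * y + 1.0) / np.sqrt(alpha)


def delta_method_variance(mu, alpha, h=1e-4):
    """Approximate Var[g(Y)] as g'(mu)^2 * Var[Y], with Var[Y] = mu + alpha*mu^2."""
    slope = (acosh_vst(mu + h, alpha) - acosh_vst(mu - h, alpha)) / (2.0 * h)
    return slope**2 * (mu + alpha * mu**2)


for mu in (1.0, 10.0, 100.0, 1000.0):
    print(mu, delta_method_variance(mu, alpha=0.1))   # close to 1 for every mean

print(acosh_vst(4.0, 1e-6), 2 * np.sqrt(4.0))          # small alpha: g(y) is close to 2*sqrt(y)
```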

Pearson Residuals

An alternative approach based on generalized linear models uses Pearson residuals for variance stabilization [22] [23]. The formula for Pearson residuals is:

r_gc = (y_gc - μ̂_gc) / √(μ̂_gc + α̂_g · μ̂_gc²)

where y_gc represents the count for gene g in cell c, μ̂_gc is the predicted mean from a gamma-Poisson GLM, and α̂_g is the estimated dispersion parameter [22]. This approach simultaneously accounts for library size differences and mean-variance relationships while providing normalized residuals that can be used for downstream analysis. Hafemeister and Satija [22] developed this method specifically to address the limitation of delta-method transformations for lowly expressed genes.
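A simplified Pearson-residual computation follows. Instead of fitting a gamma-Poisson GLM per gene, it assumes the expected count μ̂_gc is the gene's total times the cell's total divided by the grand total, uses one assumed dispersion α = 0.01 for all genes, and clips residuals to ±√(number of cells); all three choices are illustrative assumptions, and the function name is hypothetical. Genes with zero total counts must be removed first, because their expected count is zero.

```python
import numpy as np


def pearson_residuals(counts, alpha=0.01):
    """Clipped Pearson residuals for a genes x cells count matrix (simplified)."""
    y = np.asarray(counts, dtype=float)
    # Expected counts from gene totals x cell totals / grand total (stands in for the GLM fit).
    mu = np.outer(y.sum(axis=1), y.sum(axis=0)) / y.sum()
    residuals = (y - mu) / np.sqrt(mu + alpha * mu**2)
    limit = np.sqrt(y.shape[1])                       # clip to +/- sqrt(number of cells)
    return np.clip(residuals, -limit, limit)


counts = np.array([[0, 0, 0, 100], [10, 10, 10, 10]])   # hypothetical 2 genes x 4 cells
print(np.round(pearson_residuals(counts), 2))
```

When a matrix exactly matches the expected-count model, all residuals are zero; large residuals flag genes whose counts deviate from what library size alone predicts.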

Comparative Analysis of Transformation Methods

Table 2: Properties of RNA-Seq Data Transformation Methods

| Method | Variance Stabilization | Handling of Zeros | Computational Complexity | Optimal Use Cases |
|---|---|---|---|---|
| logCPM | Moderate | Pseudo-count avoids undefined values | Low | Exploratory analysis, visualization |
| VST | Strong | Handles zeros naturally | Medium | Differential expression, PCA |
| Pearson Residuals | Strong for moderately high expression | Limited by clipping | High | Single-cell RNA-Seq, clustering |
| acosh Transformation | Strong across all expression levels | Handles zeros naturally | Medium | Bulk and single-cell RNA-Seq |

Empirical comparisons of these transformation methods reveal that despite the theoretical advantages of more sophisticated approaches, the simple shifted logarithm with appropriate parameterization often performs comparably or better in benchmarks [22]. However, each method has distinct strengths: VST and Pearson residuals better stabilize variance for lowly expressed genes, while logCPM remains computationally efficient and interpretable [22] [23].

Practical Implementation for PCA Applications

Integrated Workflow for RNA-Seq Data Transformation and PCA

Figure 1: RNA-Seq Data Transformation and PCA Workflow

Step-by-Step Protocol for DESeq2-Based Transformation and PCA

Preprocessing and Transformation

- Filter lowly expressed genes: Remove genes with minimal expression across samples. A common threshold is keeping genes with at least 10 counts total or in a minimum number of samples [7].

- Create DESeqDataSet object: Incorporate count data, sample information, and design formula.

- Apply transformation: Use either the vst() or rlog() function in DESeq2 for variance stabilization.

- Extract transformed values: Use assay() to obtain the transformed matrix for downstream analysis.

PCA Implementation

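- Compute PCA: Apply the prcomp() function to the transposed transformed matrix (e.g., t(assay(vsd)), where vsd is the object returned by vst()), because prcomp() expects samples in rows while assay() returns genes in rows. Alternatively, DESeq2's plotPCA() computes and plots the PCA directly, using the 500 most variable genes by default.

- Visualize sample relationships: Create a scatter plot of the first two principal components.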

- Color by experimental conditions: Use metadata variables (e.g., treatment, cell type) to annotate points.

- Interpret variance explained: Check the proportion of variance explained by each principal component.

Quality Control Considerations

- Examine the PCA plot for batch effects and outliers.

- Ensure sufficient separation between biological conditions of interest.

- Verify that technical replicates cluster together.

Research Reagent Solutions

Table 3: Essential Tools for RNA-Seq Data Transformation and PCA

| Tool/Category | Specific Software/Package | Function |
|---|---|---|
| Quality Control | FastQC, MultiQC | Assess read quality, adapter contamination, GC bias |
| Alignment | HISAT2, STAR | Map reads to reference genome |
| Quantification | featureCounts, HTSeq-count | Generate count matrices from aligned reads |
| Transformation | DESeq2, edgeR, limma | Implement VST, logCPM, and related methods |
| Visualization | ggplot2, pheatmap | Create PCA plots, heatmaps, and other visualizations |
| Differential Expression | DESeq2, edgeR, limma-voom | Identify statistically significant expression changes |

Applications in Drug Development and Biomedical Research

Proper data transformation enables more accurate biological interpretation in pharmaceutical and clinical research settings. In drug development, transformed RNA-Seq data facilitates identification of gene expression signatures associated with drug response, resistance mechanisms, and patient stratification [15]. For example, PCA applied to properly transformed data can reveal distinct molecular subtypes of tumors that may respond differently to targeted therapies [7].

In a prostate cancer study analyzing pre- and post-androgen deprivation therapy (ADT) samples, PCA applied to transformed data successfully separated samples based on treatment status, revealing key transcriptional changes induced by therapy [7]. Such analyses provide insights into drug mechanisms of action and potential resistance pathways, guiding combination therapy strategies and biomarker development.

The choice of transformation method impacts sensitivity to detect biologically relevant signals. While logCPM offers computational efficiency for large-scale drug screening applications, VST may provide enhanced power to detect subtle expression changes in preclinical models, potentially identifying more candidate biomarkers or drug targets.

Data transformation methods including VST, logCPM, and Pearson residuals serve as critical preprocessing steps that enable biologically meaningful application of PCA to RNA-Seq data. By addressing the statistical challenges inherent to count-based sequencing data, these methods stabilize variance, reduce the influence of technical artifacts, and enhance detection of biologically relevant patterns. The optimal choice of transformation depends on specific research goals, dataset characteristics, and analytical priorities, with each method offering distinct advantages for different applications in basic research and drug development.

Principal Component Analysis (PCA) is a fundamental statistical technique used to simplify the complexity of high-dimensional data by transforming it into a lower-dimensional space while preserving the key patterns of variation [26]. In the context of RNA sequencing (RNA-seq), where each sample contains expression values for tens of thousands of genes, PCA serves as an indispensable tool for quality control, outlier detection, and understanding the major sources of variation in the dataset [28] [15].

RNA-seq has revolutionized transcriptomic research by enabling genome-wide quantification of mRNA levels in cells and tissues [29] [15]. However, the data generated from these experiments presents significant analytical challenges due to its high-dimensional nature, where the number of genes (features) far exceeds the number of samples (observations). PCA addresses this challenge by identifying the principal components (PCs)—new orthogonal variables that capture the directions of maximum variance in the data [26] [30]. The first principal component (PC1) accounts for the largest possible variance, followed by PC2, which captures the next highest variance under the constraint of being orthogonal to PC1, and so on [26].

This application note explores the critical dual role of PCA in RNA-seq analysis: assessing sample similarity based on global gene expression patterns and detecting technical artifacts known as batch effects. We provide a comprehensive, step-by-step protocol framed within a broader thesis on PCA applications in genomic research, specifically tailored for researchers, scientists, and drug development professionals working with transcriptomic data.

Theoretical Foundations of PCA in RNA-seq

Mathematical Principles

The mathematical foundation of PCA relies on eigendecomposition of the covariance matrix or singular value decomposition (SVD) of the centered data matrix [30]. For an RNA-seq dataset with samples as columns and genes as rows, PCA identifies linear combinations of the original genes that define new axes of variation. The explained variance ratio indicates how much of the total variability in the original data each principal component captures, while the cumulative explained variance ratio represents the total variance explained by the first m components [26].

When RNA-seq data is represented as a matrix where rows correspond to genes and columns to samples, PCA transforms this high-dimensional data into a new coordinate system that highlights the dominant patterns of expression variation across samples [26]. The principal components are calculated such that PC1 represents the direction of maximum variance, PC2 captures the next highest variance while being orthogonal to PC1, and subsequent components continue this pattern while maintaining orthogonality [30].
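The equivalence of the two computational routes, and the definitions of explained and cumulative variance ratios, can be checked numerically on a small random matrix (hypothetical data: 50 genes × 6 samples). With tens of thousands of genes the gene × gene covariance matrix becomes very large, which is one reason PCA implementations typically work from the SVD of the data matrix instead.

```python
import numpy as np

rng = np.random.default_rng(1)
expr = rng.normal(size=(50, 6))          # hypothetical: 50 genes x 6 samples
x = expr.T - expr.T.mean(axis=0)         # samples x genes, each gene centered

# Route 1: singular values of the centered data matrix.
s = np.linalg.svd(x, compute_uv=False)
pc_variances_svd = s**2 / (x.shape[0] - 1)

# Route 2: eigenvalues of the gene x gene covariance matrix (largest first).
cov = np.cov(x, rowvar=False)
eigenvalues = np.sort(np.linalg.eigvalsh(cov))[::-1][: len(s)]

explained_ratio = pc_variances_svd / pc_variances_svd.sum()
cumulative = np.cumsum(explained_ratio)   # cumulative explained variance for the first m PCs
print(np.round(explained_ratio, 3), np.round(cumulative, 3))
```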

Preprocessing Requirements for RNA-seq Data

Proper normalization is essential before applying PCA to RNA-seq data. Raw read counts must be normalized to account for differences in sequencing depth and library composition between samples [15]. The most common approach involves:

- Counts Per Million (CPM) calculation: Uses the effective library sizes as calculated by the TMM (Trimmed Mean of M-values) normalization [28].

- Log transformation: Applying log2 transformation to the CPM values to reduce the influence of extreme values [28].

- Z-score normalization: Mean centering and scaling to unit variance across samples for each gene [28].
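These three steps can be sketched in NumPy. Effective library sizes are taken as library size × normalization factor (edgeR's definition, with the factors assumed to come from TMM), and a prior count of 1 is added before the log2, which is an assumption here: edgeR's cpm(..., log = TRUE) handles the prior count differently. Genes with zero variance must be filtered out beforehand to avoid division by zero. The function name and values are hypothetical.

```python
import numpy as np


def log_cpm_zscores(counts, norm_factors, prior_count=1.0):
    """genes x samples counts -> per-gene z-scores of log2(CPM + prior_count)."""
    counts = np.asarray(counts, dtype=float)
    eff_lib = counts.sum(axis=0) * np.asarray(norm_factors, dtype=float)  # effective library sizes
    cpm = counts / eff_lib * 1e6                                           # counts per million
    log_cpm = np.log2(cpm + prior_count)                                   # log2 transform
    mean = log_cpm.mean(axis=1, keepdims=True)
    sd = log_cpm.std(axis=1, ddof=1, keepdims=True)
    return (log_cpm - mean) / sd                                           # z-score per gene


counts = np.array([[10, 20, 30], [5, 5, 40], [100, 80, 60]])   # hypothetical 3 genes x 3 samples
norm_factors = np.array([1.05, 0.97, 0.98])                      # hypothetical TMM factors
z = log_cpm_zscores(counts, norm_factors)
print(np.round(z, 2))
```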

Additionally, filtering out lowly expressed genes is crucial as they contribute mostly noise rather than biological signal to the analysis [7]. A common approach is to "filter out the genes that have not been expressed or that have low expression counts since these genes are likely to add noise rather than useful signal to our analysis" [7]. For instance, keeping only genes with 10 or more reads total across all samples effectively removes uninformative genes [7].

PCA for Sample Similarity Assessment

Visualizing Global Expression Patterns

PCA enables researchers to visualize the overall similarity between samples based on their global gene expression profiles. When samples cluster closely together in PCA space, it indicates they have similar expression patterns across thousands of genes, while distant points represent samples with divergent expression profiles [26] [28]. This visualization provides immediate insights into data quality, reproducibility of biological replicates, and potential outliers that may require further investigation.

In practice, after performing PCA on RNA-seq data, researchers typically create a two-dimensional scatter plot using the first two principal components (PC1 vs. PC2), which together capture the largest proportion of variability in the dataset [26]. The explained variance ratios for each component are indicated in parentheses on the axis labels, allowing assessment of how well the 2D representation reflects the complete expression landscape [26]. For example, if PC1 explains 38.57% of variance and PC2 explains 19.55%, the cumulative explained variance would be 58.12%, meaning the plot captures over half of the total variability in the original high-dimensional data [26].
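The axis labels and cumulative percentage in this example can be produced directly from the explained variance ratios. A small helper, with a hypothetical name and the ratios from the example above:

```python
import numpy as np


def pc_axis_labels(explained_ratio, n=2):
    """Axis labels such as 'PC1 (38.57%)' from explained variance ratios."""
    return [f"PC{i + 1} ({100 * r:.2f}%)" for i, r in enumerate(explained_ratio[:n])]


ratios = np.array([0.3857, 0.1955])      # the example values from the text
print(pc_axis_labels(ratios))            # ['PC1 (38.57%)', 'PC2 (19.55%)']
print(round(float(np.cumsum(ratios)[-1]) * 100, 2))   # 58.12
```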

Interpreting Sample Clusters

The grouping of samples in PCA plots provides valuable biological insights. Biological replicates (samples from the same experimental condition) should cluster together, indicating technical reproducibility and biological consistency [15]. Conversely, samples from different conditions (e.g., treated vs. control, different tissue types, or different disease states) may form distinct clusters, revealing systematic differences in gene expression patterns driven by the experimental conditions [26] [28].

Unexpected clustering patterns can reveal previously unappreciated relationships between samples or potential issues in sample labeling or processing. For instance, if samples from the same biological group do not cluster together, it may indicate problems with sample processing, hidden covariates, or excessive technical variation that needs to be addressed before proceeding with differential expression analysis [15].

PCA for Batch Effect Detection

Understanding Batch Effects

Batch effects represent systematic technical variations introduced during different stages of the RNA-seq workflow, including sample collection, library preparation, sequencing runs, or different personnel handling the samples [31] [32]. These non-biological variations can significantly compromise data quality and lead to false conclusions if not properly identified and addressed [33] [32].

Common sources of batch effects in transcriptomics include [32]:

- Sample Preparation Variability: Different protocols, technicians, or enzyme efficiency

- Sequencing Platform Differences: Machine type, calibration, or flow cell variation

- Library Prep Artifacts: Reverse transcription efficiency, amplification cycles

- Reagent Batch Effects: Different lot numbers, chemical purity variations

- Environmental Conditions: Temperature, humidity, handling time

Identifying Batch Effects through PCA

PCA serves as a powerful diagnostic tool for detecting batch effects by revealing whether samples cluster primarily by technical factors rather than biological conditions [31]. When batch effects are present, the PCA plot typically shows clear separation of samples processed in different batches, often along the first or second principal component, which would otherwise be expected to separate samples by biological group [31] [32].

This visualization approach allows researchers to identify the presence and magnitude of batch effects before proceeding with differential expression analysis. The impact of batch effects can be substantial: "clustering algorithms might group samples by batch rather than by true biological similarity," leading to false discoveries in downstream analyses [31].
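To put a number on how strongly a known categorical batch variable drives each component, one option is the fraction of each PC's variance explained by the batch labels (eta squared from a one-way ANOVA on the PC scores). A minimal sketch, assuming PC scores with samples in rows; the function name and scores are hypothetical.

```python
import numpy as np


def batch_r_squared(scores, batch):
    """Fraction of each PC's variance explained by a categorical batch label (eta squared)."""
    scores = np.asarray(scores, dtype=float)
    batch = np.asarray(batch)
    grand_mean = scores.mean(axis=0)
    total_ss = ((scores - grand_mean) ** 2).sum(axis=0)
    between_ss = np.zeros(scores.shape[1])
    for b in np.unique(batch):
        rows = batch == b
        between_ss += rows.sum() * (scores[rows].mean(axis=0) - grand_mean) ** 2
    return between_ss / total_ss


# Hypothetical scores: PC1 separates the two sequencing runs, PC2 does not.
scores = np.array([[5.0, 1.0], [6.0, -1.0], [-5.0, 1.0], [-6.0, -1.0]])
print(batch_r_squared(scores, ["run1", "run1", "run2", "run2"]))
```

A value close to 1 on PC1 or PC2, as for PC1 here, corresponds to the "clear separation by known batch variable" pattern in the table below.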

Table: Common Batch Effect Signatures in PCA Results

| PCA Pattern | Interpretation | Recommended Action |
|---|---|---|
| Clear separation by known batch variable | Significant batch effect present | Apply batch correction methods |
| Mixing of samples across batches | Minimal batch effect | Proceed with analysis |
| Separation by unknown factor | Potential hidden batch effect | Investigate experimental metadata |
| Biological groups separate within batches | Both biological signal and batch effect present | Include batch in statistical models |
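The patterns in this table are usually judged by eye. To put a number on them, one simple option (not part of the protocol cited above) is to ask how much of each principal component's variance is explained by a known batch label, using a one-way ANOVA R². The minimal sketch below assumes PC scores have already been computed (samples in rows); the function name `pc_batch_r2` is hypothetical. Values near 1 on PC1 or PC2 correspond to the "clear separation by known batch variable" row.

```python
import numpy as np

def pc_batch_r2(scores, batch):
    """Fraction of each PC's variance explained by a categorical label (one-way ANOVA R^2).

    scores: (n_samples, n_pcs) array of PC scores
    batch:  sequence of n_samples labels, e.g. batch IDs
    """
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(batch)
    grand_mean = scores.mean(axis=0)
    total_ss = ((scores - grand_mean) ** 2).sum(axis=0)
    between_ss = np.zeros(scores.shape[1])
    for label in np.unique(labels):
        group = scores[labels == label]
        # between-group sum of squares: group size times squared shift of the group mean
        between_ss += len(group) * (group.mean(axis=0) - grand_mean) ** 2
    return between_ss / total_ss

# Toy example: PC1 splits batch A from batch B, PC2 does not
scores = np.array([[-2.0, 0.1], [-2.2, -0.1], [2.1, 0.2], [2.1, -0.2]])
r2 = pc_batch_r2(scores, ["A", "A", "B", "B"])
```

The same function can be called with the biological condition instead of the batch label, which helps distinguish the last row of the table (both signals present) from the first.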

Computational Protocols

Research Reagent Solutions

Table: Essential Computational Tools for PCA in RNA-seq Analysis

| Tool/Package | Application | Key Function |
|---|---|---|
| FastQC [29] [15] | Quality Control | Assesses raw sequence quality before alignment |
| Trimmomatic [29] [15] | Read Preprocessing | Removes adapter sequences and low-quality bases |
| STAR [34] [15] | Read Alignment | Splice-aware alignment to reference genome |
| HISAT2 [29] [15] | Read Alignment | Alternative splice-aware aligner |
| Salmon [34] | Quantification | Alignment-free transcript quantification |
| featureCounts [29] | Quantification | Assigns reads to genomic features |
| DESeq2 [7] | Normalization/PCA | Performs variance-stabilizing transformation and PCA |
| limma [31] | Batch Correction | Removes batch effects using linear models |
| ComBat-seq [33] [31] | Batch Correction | Adjusts for batch effects in count data |
| ggplot2 [29] [7] | Visualization | Creates publication-quality PCA plots |

Step-by-Step PCA Protocol for RNA-seq Data

Step 1: Data Preparation and Quality Control

Begin with raw FASTQ files from sequencing and perform quality assessment using FastQC to identify potential issues with adapter contamination, unusual base composition, or duplicated reads [29] [15]. Clean the reads by trimming adapter sequences and low-quality bases using Trimmomatic [29] [15].

Step 2: Read Alignment and Quantification

Align the cleaned reads to a reference genome using a splice-aware aligner such as STAR or HISAT2 [29] [34]. Alternatively, use pseudoalignment tools like Salmon for faster quantification [34]. Generate a count matrix where rows represent genes and columns represent samples, containing the number of reads mapped to each gene [29] [15].

Step 3: Data Normalization and Filtering

Normalize the raw counts to account for differences in sequencing depth between samples using methods such as DESeq2's median of ratios or edgeR's TMM (Trimmed Mean of M-values) [15] [7]. Filter out lowly expressed genes that may contribute noise rather than biological signal—a common approach is to "keep only genes with 10 or more reads total" across all samples [7].
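To make the normalization and filtering steps concrete, here is a minimal NumPy sketch of the median-of-ratios idea and the "10 or more reads total" filter. It is an illustration under simplifying assumptions, not a replacement for DESeq2's own `estimateSizeFactors()`: each gene's geometric mean across samples serves as a pseudo-reference, genes with a zero in any sample are skipped, and each sample's size factor is the median of its count-to-reference ratios. The function names are hypothetical.

```python
import numpy as np

def median_of_ratios_size_factors(counts):
    """Size factors for a genes x samples matrix of raw counts (median-of-ratios idea)."""
    counts = np.asarray(counts, dtype=float)
    with np.errstate(divide="ignore"):
        log_counts = np.log(counts)  # log(0) -> -inf
    log_geo_means = log_counts.mean(axis=1)  # per-gene log geometric mean across samples
    usable = np.isfinite(log_geo_means)  # genes with a zero in any sample are skipped
    log_ratios = log_counts[usable] - log_geo_means[usable, None]
    return np.exp(np.median(log_ratios, axis=0))  # one factor per sample

def filter_low_counts(counts, min_total=10):
    """Boolean mask of genes with at least `min_total` reads summed over all samples."""
    return np.asarray(counts).sum(axis=1) >= min_total

# Toy data: sample 2 was sequenced twice as deeply as sample 1
counts = np.array([[10, 20], [20, 40], [30, 60], [0, 5]])
size_factors = median_of_ratios_size_factors(counts)  # approx. [0.707, 1.414]
keep = filter_low_counts(counts)
normalized = counts[keep] / size_factors  # divide each column by its sample's factor
```

Because the median is taken over many genes, a handful of strongly differentially expressed genes has little influence on the size factors, which is the main reason this approach is preferred over simple total-count scaling.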

Step 4: PCA Computation

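Perform the principal component analysis on the normalized, filtered, and log-transformed expression data; on untransformed counts, PCA would be dominated by a few highly expressed genes. In R, this can be accomplished with the base prcomp() function or with the DESeq2 package: a variance-stabilizing transformation (vst() or rlog()) is applied first, and plotPCA() then computes principal components on the transformed data, by default using the 500 most variable genes [7].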
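The computation itself is small. The sketch below follows the same recipe as described for plotPCA (keep the most variable genes, center each gene, decompose) but uses a NumPy SVD instead of R. It assumes the input is already on a log scale; `np.log2(normalized + 1)` is used here as a simpler stand-in for DESeq2's variance-stabilizing transformation, which is an assumption, not the same transform. The function name `pca_samples` is hypothetical.

```python
import numpy as np

def pca_samples(log_expr, ntop=500):
    """PCA of samples from a genes x samples matrix of log-scale expression.

    Returns (scores, explained): sample coordinates on each PC and the
    fraction of total variance captured by each PC.
    """
    x = np.asarray(log_expr, dtype=float)
    # keep the ntop genes with the highest variance across samples
    top = np.argsort(x.var(axis=1, ddof=1))[::-1][:ntop]
    data = x[top].T  # samples x genes
    centered = data - data.mean(axis=0)  # center each gene (no scaling)
    u, s, _ = np.linalg.svd(centered, full_matrices=False)
    scores = u * s  # sample coordinates; the sign of each PC is arbitrary
    explained = s ** 2 / np.sum(s ** 2)
    return scores, explained

# Toy example: two genes separate samples 1-2 from samples 3-4
log_expr = np.array([
    [0.0, 0.0, 5.0, 5.0],
    [1.0, 1.0, 6.0, 6.0],
    [2.0, 2.0, 2.0, 2.0],
    [0.1, -0.1, 0.1, -0.1],
])
scores, explained = pca_samples(log_expr)
```

Multiplying `explained` by 100 gives the "percent variance" values usually printed on the PC1 and PC2 axis labels.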

Step 5: Visualization and Interpretation

Create a PCA plot showing samples projected onto the first two principal components. Color points by biological conditions and/or batch variables to assess data structure and identify potential batch effects [7]. Examine the percentage of variance explained by each component to understand how well the low-dimensional projection represents the original data [26].

RNA-seq PCA Analysis Workflow

Batch Effect Correction Methods

When PCA reveals significant batch effects, several computational approaches can be employed to mitigate their impact:

ComBat-seq: Specifically designed for RNA-seq count data, ComBat-seq uses an empirical Bayes framework to adjust for batch effects while preserving biological signals [33] [31]. It employs a negative binomial model for count data adjustment and can select a reference batch with the smallest dispersion, preserving count data for the reference batch while adjusting other batches toward the reference [33].

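limma removeBatchEffect: This function applies linear-model-based correction and operates on log-scale normalized expression values rather than raw counts [31]. It fits naturally into the limma-voom workflow for differential expression analysis [31], but the limma documentation describes it as intended for exploratory displays such as PCA plots; for differential expression testing itself, batch is better included as a covariate in the linear model.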
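The linear-model idea behind this kind of correction can be sketched in a few lines. The NumPy version below is an illustration, not limma's code. It assumes additive batch effects on log-scale data, codes batch with sum-to-zero contrasts, fits each gene by least squares together with an optional biological design matrix, and subtracts only the fitted batch term so that the biological effects in the design are protected. The function name `remove_batch_effect` is hypothetical.

```python
import numpy as np

def remove_batch_effect(log_expr, batch, design=None):
    """Subtract fitted additive batch effects from log-scale data (genes x samples)."""
    y = np.asarray(log_expr, dtype=float)
    labels = np.asarray(batch)
    levels = np.unique(labels)
    n_samples = y.shape[1]
    # Sum-to-zero coding: one column per batch except the last, which is coded -1 everywhere
    xb = np.zeros((n_samples, len(levels) - 1))
    for j, level in enumerate(levels[:-1]):
        xb[labels == level, j] = 1.0
    xb[labels == levels[-1], :] = -1.0
    # Biological design to protect (intercept-only if none is given)
    design = np.ones((n_samples, 1)) if design is None else np.asarray(design, dtype=float)
    full = np.hstack([design, xb])
    # Least-squares fit for all genes at once: coef has shape (n_columns, n_genes)
    coef, *_ = np.linalg.lstsq(full, y.T, rcond=None)
    batch_coef = coef[design.shape[1]:]
    # Remove only the batch part of the fitted model
    return y - (xb @ batch_coef).T

# Batch-only shift: batch B is 2 units higher than batch A
simple = remove_batch_effect([[1, 1, 3, 3]], ["A", "B"][0:1] * 2 + ["B"] * 2)

# Condition effect (samples 3-4) crossed with batch (A, B, A, B): batch is removed, condition kept
design = [[1, 0], [1, 0], [1, 1], [1, 1]]
protected = remove_batch_effect([[0, 2, 4, 6]], ["A", "B", "A", "B"], design=design)
```

In the second example the batch offset is removed while the 4-unit difference between conditions survives, which is why the design matrix matters: leaving it out would let the batch term absorb part of the biological signal whenever batch and condition are unbalanced.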

Mixed Linear Models (MLM): For complex experimental designs, mixed linear models can include both fixed and random effects, which makes them suitable for scenarios with nested or hierarchical batch effects [31].

Table: Comparison of Batch Effect Correction Methods

| Method | Data Type | Mechanism | Strengths | Limitations |
|---|---|---|---|---|
| ComBat-seq [33] [31] | Count data | Negative binomial model, empirical Bayes | Preserves biological signals, reference batch approach | Requires known batch information |
| limma removeBatchEffect [31] [32] | Normalized data | Linear modeling | Efficient, integrates with DE analysis workflows | Assumes known, additive batch effects |
| SVA [32] | Various | Surrogate variable analysis | Captures hidden batch effects | Risk of removing biological signal |
| Mixed Linear Models [31] | Normalized data | Random effects for batch | Handles complex designs | Computationally intensive |

After applying batch effect correction, it is essential to repeat the PCA to verify that batch effects have been successfully mitigated without removing biological variation of interest [31]. The corrected PCA plot should show mixing of samples across batches while maintaining separation by biological groups [31] [32].

Advanced Applications and Considerations

Quantitative Assessment of Batch Effects

Beyond visual inspection of PCA plots, several quantitative metrics can be used to assess batch effect correction quality [32]:

- Average Silhouette Width (ASW): Measures how similar samples are to their own cluster compared to other clusters

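- Adjusted Rand Index (ARI): Assesses the agreement between two clusterings, for example clusters found after correction versus known biological groups

- Local Inverse Simpson's Index (LISI): Quantifies how many batches (or biological groups) are effectively represented in each sample's local neighborhood; high LISI on batch labels indicates good mixing, while low LISI on biological labels indicates preserved separation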

- k-nearest neighbor Batch Effect Test (kBET): Tests whether batches are well-mixed in the local neighborhood of each sample
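As an example of how such a metric is computed, the sketch below implements the standard silhouette width on PCA coordinates with NumPy. The function name `average_silhouette_width` is hypothetical, and it assumes Euclidean distance and at least two distinct labels. Computed with batch labels, values near 1 mean samples still group by batch (undesirable after correction) and values near or below 0 mean batches are mixed; computed with biological labels, high values are what one hopes to keep.

```python
import numpy as np

def average_silhouette_width(coords, labels):
    """Mean silhouette width of samples (rows of coords) grouped by labels."""
    x = np.asarray(coords, dtype=float)
    labs = np.asarray(labels)
    # pairwise Euclidean distances between samples
    dist = np.sqrt(((x[:, None, :] - x[None, :, :]) ** 2).sum(axis=-1))
    widths = []
    for i in range(len(x)):
        same = labs == labs[i]
        same[i] = False
        if not same.any():
            widths.append(0.0)  # silhouette of a singleton group is defined as 0
            continue
        a = dist[i, same].mean()  # mean distance within own group
        b = min(dist[i, labs == other].mean()  # nearest other group
                for other in np.unique(labs) if other != labs[i])
        widths.append((b - a) / max(a, b))
    return float(np.mean(widths))

# PC1/PC2 coordinates of four samples
coords = [[0, 0], [0, 1], [10, 0], [10, 1]]
separated = average_silhouette_width(coords, ["A", "A", "B", "B"])  # batch drives PC1
mixed = average_silhouette_width(coords, ["A", "B", "A", "B"])      # batches interleaved
```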

These metrics provide objective criteria for evaluating the success of batch effect correction methods and complement visual assessment of PCA plots [32].

Experimental Design to Minimize Batch Effects

The most effective approach to managing batch effects is through proper experimental design that minimizes their impact from the outset [32]. Key strategies include:

- Randomization: Distributing samples from different biological groups across processing batches

- Balancing: Ensuring each condition is represented within each processing batch

- Replication: Including at least two replicates per group per batch for robust statistical modeling

- Consistency: Using consistent reagents and protocols throughout the study
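Randomization and balancing can be combined when samples are allocated to processing batches. The sketch below is one simple way to do it, not a prescribed method: samples are shuffled within each condition (randomization) and then dealt round-robin across batches (balancing), so every batch receives a near-equal share of every condition. The function name `assign_batches` and the fixed seed are illustrative assumptions.

```python
import random
from collections import defaultdict

def assign_batches(samples, n_batches, seed=0):
    """samples: list of (sample_id, condition) pairs. Returns {sample_id: batch_index}."""
    rng = random.Random(seed)  # fixed seed so the allocation can be reproduced
    by_condition = defaultdict(list)
    for sample_id, condition in samples:
        by_condition[condition].append(sample_id)
    assignment = {}
    for condition in sorted(by_condition):
        ids = list(by_condition[condition])
        rng.shuffle(ids)  # randomize order within the condition
        for k, sample_id in enumerate(ids):
            assignment[sample_id] = k % n_batches  # round-robin keeps batches balanced
    return assignment

# Eight samples, two conditions, two processing batches
samples = [(f"Sample0{i + 1}", "Treated" if i < 4 else "Untreated") for i in range(8)]
plan = assign_batches(samples, n_batches=2, seed=1)
```

With four samples per condition and two batches, each batch ends up with two treated and two untreated samples, which also satisfies the "at least two replicates per group per batch" guideline above.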

Proactive experimental design significantly reduces reliance on post-hoc computational correction and produces more reliable results [32].

PCA Visualization Process

PCA serves as an indispensable tool in RNA-seq data analysis, providing critical insights into sample similarity and technical artifacts such as batch effects. By projecting high-dimensional gene expression data into a lower-dimensional space, PCA enables researchers to visualize global patterns, assess data quality, identify outliers, and detect unwanted technical variation that could compromise downstream analyses.

The integration of PCA throughout the RNA-seq analytical workflow—from initial quality assessment to post-correction validation—ensures that biological interpretations are based on true biological signals rather than technical artifacts. When combined with appropriate experimental design and batch correction methods, PCA significantly enhances the reliability, reproducibility, and biological relevance of RNA-seq studies.

As transcriptomic technologies continue to evolve and study designs grow in complexity, the role of PCA in quality assessment and batch effect detection remains fundamental. Researchers should incorporate PCA as a standard component of their RNA-seq analytical pipeline to maximize the value of their transcriptomic data and draw robust biological conclusions.

Hands-On PCA Protocol: From Raw Data to Visualization

The initial step in any RNA-seq analysis, including Principal Component Analysis (PCA), requires two fundamental components: the count matrix and the sample metadata. The count matrix is a numerical table where rows represent genomic features (genes or transcripts) and columns represent individual samples. Each entry contains the raw expression count for a specific feature in a specific sample [15] [35]. The sample metadata is a data frame where rows correspond to samples and columns describe the experimental conditions (e.g., treatment, time point, cell type) and other technical factors (e.g., batch, sequencing lane) [35]. Proper preparation of these components is critical, as the accuracy of all downstream analyses, including PCA, depends on the integrity of this input data.

The Count Matrix: Structure and Properties

The gene-level count matrix summarizes how many sequencing reads were mapped to each gene in each sample, where a larger number of reads indicates higher gene expression [15]. It is generated after a multi-step computational preprocessing pipeline that converts raw sequencing files (FASTQ) into a table of summarized expression values [34].

Table: Characteristics of an RNA-seq Count Matrix

| Aspect | Description |
|---|---|
| Data Structure | Rows = Genes/Transcripts, Columns = Individual Samples [35] |
| Matrix Entries | Raw read counts (integer values) for a specific gene in a specific sample [35] |
| Data Distribution | Typically modeled with a Negative Binomial distribution, as the mean is less than the variance [36] |
| Key Feature | Raw counts cannot be directly compared between samples without normalization due to differences in sequencing depth [15] |

In principle, RNA-seq count data could be modeled with a Poisson distribution, but the Poisson distribution assumes that the mean equals the variance. In real RNA-seq data there is always biological variation across replicates, and genes with larger average expression levels tend to have larger observed variances, so the variance exceeds the mean. The Negative Binomial model accommodates this extra variability (mean < variance) and is the model commonly used for RNA-seq counts [36].
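The difference between the two models is easiest to see in their mean-variance relationships. Written in the dispersion parameterization used by DESeq2, for the count $K$ of a gene with mean $\mu$ and dispersion $\alpha$:

```latex
\begin{aligned}
\text{Poisson:}\quad & \operatorname{Var}(K) = \mu \\
\text{Negative binomial:}\quad & \operatorname{Var}(K) = \mu + \alpha\,\mu^{2}, \qquad \alpha > 0
\end{aligned}
```

For example, a gene with mean 100 and $\alpha = 0.1$ has variance $100 + 0.1 \times 100^{2} = 1100$, eleven times what a Poisson model would allow. The quadratic term is what makes the variance grow faster than the mean for highly expressed genes.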

The Sample Metadata: Structuring Experimental Design

The metadata file links the biological and technical reality of the experiment to the abstract count matrix. It is essential for both specifying statistical models in differential expression testing and for interpreting the results of unsupervised analyses like PCA.

A correctly formatted metadata data frame might look like this:

Table: Example Structure of a Sample Metadata File

| Sample | Condition | TimePoint | Batch |
|---|---|---|---|
| Sample01 | Treated | Day1 | A |
| Sample02 | Treated | Day1 | B |
| Sample03 | Treated | Day5 | A |
| Sample04 | Treated | Day5 | B |
| Sample05 | Untreated | Day1 | A |
| Sample06 | Untreated | Day1 | B |
| Sample07 | Untreated | Day5 | A |
| Sample08 | Untreated | Day5 | B |

When all samples were sequenced in the same run and only a single characteristic is being compared, the metadata is simpler, containing just one column, for example "Condition" with values like "treated" and "untreated" [35]. The row names of the metadata data frame must exactly match the column names of the count matrix, and they must be in the same order [35].
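Because a mismatch or a silent reordering would attach the wrong labels to samples on a PCA plot, it is worth checking this correspondence explicitly before any analysis (in R, a common idiom is `all(rownames(coldata) == colnames(counts))`). The Python sketch below does the same check and returns the metadata reordered to follow the count matrix. It represents the metadata as a plain dictionary keyed by sample name, an assumption made to keep the example dependency-free; the function name `align_metadata` is hypothetical.

```python
def align_metadata(count_columns, metadata):
    """Reorder metadata to match count-matrix columns; fail loudly on any mismatch.

    count_columns: sample names in the order of the count-matrix columns
    metadata: dict mapping sample name -> dict of attributes (Condition, Batch, ...)
    """
    missing = set(count_columns) - set(metadata)  # in counts, absent from metadata
    extra = set(metadata) - set(count_columns)    # in metadata, absent from counts
    if missing or extra:
        raise ValueError(f"sample mismatch: missing={sorted(missing)}, extra={sorted(extra)}")
    return [(name, metadata[name]) for name in count_columns]

metadata = {
    "Sample01": {"Condition": "Treated", "Batch": "A"},
    "Sample02": {"Condition": "Treated", "Batch": "B"},
}
rows = align_metadata(["Sample02", "Sample01"], metadata)  # follows the count-matrix order
```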

Generating Count Matrices: Experimental Protocols

The journey from raw sequencing data to a count matrix involves several key steps, each with specific tools and quality control checkpoints. The general workflow is as follows [15] [29]:

Quality Control (QC): The first step identifies potential technical errors, such as leftover adapter sequences, unusual base composition, or duplicated reads, using tools like FastQC, with MultiQC to aggregate the reports across samples. It is critical to review QC reports to ensure errors are removed without over-trimming good reads [15] [29].

Read Trimming: This step cleans the data by removing low-quality parts of the reads and leftover adapter sequences that can interfere with accurate mapping. Tools like Trimmomatic, Cutadapt, or fastp are commonly used [15] [29].

Alignment (Mapping): Cleaned reads are aligned (mapped) to a reference genome or transcriptome using software such as STAR, HISAT2, or TopHat2. This step identifies which genes or transcripts are being expressed in the samples [15] [29]. An alternative is pseudo-alignment with Kallisto or Salmon, which estimate transcript abundances without full base-by-base alignment. These methods are faster and use less memory, making them well-suited for large datasets [15] [34].

Post-Alignment QC and Quantification: Post-alignment QC assesses alignment quality with tools like Qualimap or Picard and removes reads that are poorly aligned or mapped to multiple locations, for example with SAMtools. This step is essential because incorrectly mapped reads can artificially inflate read counts and distort expression comparisons. The final step is read quantification, where the number of reads mapped to each gene is counted by tools like featureCounts or HTSeq-count, producing a raw count matrix [15] [29].
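The quantification output still has to be turned into the genes x samples matrix described earlier. As an illustration, the sketch below parses a featureCounts table, assuming its usual tab-separated layout: a leading comment line starting with `#`, a header row, six annotation columns (Geneid, Chr, Start, End, Strand, Length), and then one count column per input file. The function name and the small example table are hypothetical.

```python
import csv
import io

def read_featurecounts(handle):
    """Return (gene_ids, sample_names, counts) from a featureCounts output table."""
    lines = [line for line in handle if not line.startswith("#")]  # drop the command comment
    reader = csv.reader(lines, delimiter="\t")
    header = next(reader)
    sample_names = header[6:]  # columns after Geneid, Chr, Start, End, Strand, Length
    gene_ids, counts = [], []
    for fields in reader:
        if not fields:
            continue  # tolerate blank lines
        gene_ids.append(fields[0])
        counts.append([int(value) for value in fields[6:]])
    return gene_ids, sample_names, counts

EXAMPLE = (
    "# Program:featureCounts\n"
    "Geneid\tChr\tStart\tEnd\tStrand\tLength\tS1.bam\tS2.bam\n"
    "GeneA\tchr1\t100\t500\t+\t401\t12\t30\n"
    "GeneB\tchr2\t900\t1500\t-\t601\t0\t7\n"
)
genes, samples, counts = read_featurecounts(io.StringIO(EXAMPLE))
```

The resulting column names are the input file names (here `S1.bam`, `S2.bam`), so they usually need to be renamed to the sample identifiers used in the metadata before the two are matched.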

Practical Protocol: Using Automated Pipelines