A Comprehensive Guide to Histone ChIP-seq Data Processing: From Raw Reads to Biological Insights

Abstract

This article provides a complete framework for processing and analyzing histone ChIP-seq data, tailored for researchers and drug development professionals. It covers foundational concepts of histone modifications and the specific challenges of broad peak calling, followed by a step-by-step methodological pipeline from quality control to peak annotation. The guide also addresses critical troubleshooting for data quality issues and explores validation techniques and comparisons with emerging methods like CUT&Tag. By synthesizing current standards from consortia like ENCODE with practical optimization tips, this resource enables robust epigenetic analysis for biomedical research.

Understanding Histone Biology and ChIP-seq Fundamentals

Histone post-translational modifications (PTMs) are dynamic chemical alterations to the histone proteins that form the nucleosome, the fundamental repeating unit of chromatin. These modifications—including acetylation, methylation, phosphorylation, and ubiquitination—play a pivotal role in the epigenetic regulation of genome activity by altering chromatin structure and creating docking sites for specific effector proteins [1] [2]. The precise combination and genomic distribution of these marks help define the functional state of chromatin, influencing critical processes such as gene transcription, DNA repair, and replication [3]. From a technical perspective, chromatin immunoprecipitation coupled with high-throughput sequencing (ChIP-seq) has become the predominant method for mapping the genomic locations of these modifications on a genome-wide scale, providing snapshots of the epigenomic landscape across different cell types, developmental stages, and disease states [3] [4].

The Encyclopedia of DNA Elements (ENCODE) Consortium has established a foundational framework for categorizing histone modifications based on their characteristic enrichment patterns observed in ChIP-seq data [1] [5]. This framework classifies marks into two primary categories: broad domains and narrow peaks. This distinction is not merely morphological; it reflects fundamental differences in biological function, regulatory mechanisms, and, crucially, the analytical strategies required for accurate detection and interpretation [5] [6]. Understanding this classification is a prerequisite for designing robust ChIP-seq experiments and implementing appropriate bioinformatic processing pipelines for histone research.

Classification and Characteristics of Broad and Narrow Marks

The distinction between broad and narrow histone marks is based on the spatial scale of their enrichment across the genome, which correlates strongly with their functional roles.

Narrow marks are characterized by highly localized, punctate enrichment patterns, typically spanning a few nucleosomes. These marks are often associated with specific regulatory elements. For example, H3K4me3 is a classic narrow mark found at active promoters, while H3K9ac and H3K27ac are hallmarks of active enhancers [5] [3]. Their sharp, defined signals make them amenable to detection with standard peak-calling algorithms originally developed for transcription factor binding sites [6].

In contrast, broad domains cover extensive genomic regions, potentially encompassing entire gene bodies or large chromatin segments. These marks are typically linked to repressive chromatin states or transcriptional elongation. H3K27me3, a mark of facultative heterochromatin deposited by the Polycomb Repressive Complex 2, and H3K36me3, associated with the gene bodies of actively transcribed genes, are canonical examples of broad marks [1] [3] [7]. Others include H3K9me3 (constitutive heterochromatin) and H3K79me2/3 [5]. Their widespread and often low-level enrichment poses significant challenges for analysis, as they can evade detection by peak callers tuned for sharp, focal signals [8] [7].

Table 1: Characteristics of Common Histone Modifications

| Histone Mark | Type | Primary Genomic Location | Associated Biological Function |
|---|---|---|---|
| H3K4me3 | Narrow | Promoters | Transcriptional activation |
| H3K27ac | Narrow | Enhancers, Promoters | Transcriptional activation |
| H3K9ac | Narrow | Enhancers, Promoters | Transcriptional activation |
| H3K27me3 | Broad | Gene bodies | Polycomb-mediated repression |
| H3K9me3 | Broad | Gene bodies, repetitive regions | Constitutive heterochromatin |
| H3K36me3 | Broad | Gene bodies | Transcriptional elongation |
| H3K4me1 | Narrow/Intermediate | Enhancers | Enhancer identification |

Experimental Workflow for Histone ChIP-seq

Generating high-quality maps of histone modifications requires a meticulously executed ChIP-seq protocol. The following detailed methodology is adapted from established standards [3].

Key Reagents and Materials

Table 2: Essential Research Reagents for Histone ChIP-seq

| Reagent / Material | Function / Description | Example |
|---|---|---|
| Crosslinking Reagent | Stabilizes protein-DNA interactions in living cells. | Formaldehyde (37%) |
| Cell Lysis Buffer | Lyses the cell membrane while leaving nuclei intact. | PIPES, KCl, Igepal |
| Nuclei Lysis Buffer | Disrupts nuclei and releases chromatin. | Tris-HCl, EDTA, SDS |
| Sonication Device | Shears chromatin into fragments of 200–700 bp. | Bioruptor (Diagenode) |
| ChIP-grade Antibodies | Immunoprecipitate the histone modification of interest. | Anti-H3K27me3 (CST #9733S) |
| Protein A/G Beads | Capture the antibody-chromatin complex. | Magnetic or sepharose beads |
| IP Dilution Buffer | Dilutes chromatin to reduce SDS concentration before IP. | Tris-HCl, NaCl, Igepal, deoxycholate |
| Elution Buffer | Releases immunoprecipitated DNA from beads. | NaHCO₃, SDS |
| DNase-free RNase A | Degrades RNA in the sample. | 10 mg/ml |
| DNA Purification Kit | Purifies the final ChIP DNA for sequencing. | QIAquick PCR Purification Kit |

Detailed Step-by-Step Protocol

- Crosslinking: Treat cells with 1% formaldehyde for 8–10 minutes at room temperature to crosslink histones to DNA. Quench the reaction with 125 mM glycine.

- Chromatin Preparation: Harvest cells and wash with PBS. Resuspend the cell pellet in Cell Lysis Buffer supplemented with protease inhibitors (e.g., PMSF, aprotinin, leupeptin) to isolate nuclei. Pellet nuclei and resuspend in Nuclei Lysis Buffer.

- Chromatin Shearing: Sonicate the chromatin to fragment DNA to an average size of 200–500 bp. This is critical and must be optimized for each cell type and sonicator. Use a Bioruptor or equivalent sonicator with multiple cycles (e.g., 30 seconds ON, 30 seconds OFF for 15–20 cycles).

- Chromatin Quality Control: Reverse crosslinks for a small aliquot of sheared chromatin and purify the DNA. Analyze the fragment size distribution using a Bioanalyzer; a successful shearing should yield a smear centered around 300 bp.

- Immunoprecipitation (IP): Dilute the sheared chromatin 10-fold in IP Dilution Buffer. Add 1–5 µg of a validated, ChIP-grade antibody specific to your histone mark of interest (see Table 2 for examples). Incubate overnight at 4°C with rotation.

- Capture and Washes: Add Protein A/G beads to capture the antibody-chromatin complexes. Wash the beads sequentially with low-salt, high-salt, and LiCl wash buffers, followed by a final TE buffer wash to remove non-specifically bound material.

- Elution and Decrosslinking: Elute the immunoprecipitated complexes from the beads using Elution Buffer. Add 5 M NaCl and incubate at 65°C overnight to reverse the crosslinks.

- DNA Purification: Treat the sample with DNase-free RNase A and Proteinase K. Purify the DNA using a silica membrane-based kit (e.g., QIAquick). The purified DNA is now ready for library preparation.

Library Preparation and Sequencing

Following the ChIP assay, sequencing libraries are constructed from the purified IP DNA and the input control DNA. This process involves end-repair, dA-tailing, adapter ligation, and PCR amplification to create molecules compatible with the sequencing platform [3]. The ENCODE Consortium provides specific sequencing depth standards to ensure sufficient data quality: 20 million usable fragments per replicate for narrow marks and 45 million usable fragments per replicate for broad marks. H3K9me3 is a notable exception: because it is enriched in repetitive regions, where many reads cannot be placed uniquely, its 45 million target is counted in total mapped reads rather than usable fragments [5].
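The depth targets above are easy to encode as a pre-analysis check. The following is a minimal sketch, assuming the narrow/broad assignments given in this guide; the dictionary and function names are hypothetical and not part of any ENCODE tool.

```python
# Depth targets quoted in the text (usable fragments per replicate).
MIN_FRAGMENTS = {"narrow": 20_000_000, "broad": 45_000_000}

# Mark classification as given in this guide; extend as needed.
# Note: for H3K9me3 the text counts the target in total mapped reads.
MARK_TYPE = {
    "H3K4me3": "narrow", "H3K27ac": "narrow", "H3K9ac": "narrow",
    "H3K27me3": "broad", "H3K36me3": "broad", "H3K9me3": "broad",
}


def depth_shortfall(mark: str, fragments: int) -> int:
    """Return how many more fragments are needed (0 if the target is met)."""
    target = MIN_FRAGMENTS[MARK_TYPE[mark]]
    return max(0, target - fragments)


if __name__ == "__main__":
    # A broad-mark replicate with 30 million fragments is 15 million short.
    print(depth_shortfall("H3K27me3", 30_000_000))
```

Running the check per replicate, before peak calling, makes it obvious when a broad-mark library has been sequenced only to narrow-mark depth.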

Computational Analysis and Data Processing

The raw sequencing data (FASTQ files) must be processed through a bioinformatic pipeline to identify regions significantly enriched for the histone mark.

Primary Data Processing

High-quality reads are first mapped to a reference genome (e.g., hg19 or GRCh38) using aligners like Bowtie [1]. It is critical to remove reads that align to "blacklist" regions, which are genomic areas associated with repetitive sequences and artifactual signals [1]. Quality control metrics, such as the Fraction of Reads in Peaks (FRiP) score, library complexity (NRF, PBC1/2), and strand cross-correlation, should be assessed to ensure experimental validity [5].

Peak and Domain Calling Strategies

The choice of algorithm for identifying enriched regions depends heavily on whether the target is a narrow or broad mark.

- Analysis of Narrow Marks: For marks like H3K4me3 and H3K27ac, general-purpose peak callers such as MACS2 are widely used and effective. These tools are designed to detect sharp, focal enrichments against a local background [1] [6].

- Analysis of Broad Marks: The diffuse nature of marks like H3K27me3 and H3K36me3 requires specialized tools. Algorithms like SICER and Rseg aggregate reads across larger genomic windows to improve sensitivity for broad, low-signal domains [6] [7]. More recently, methods like hiddenDomains and PBS (Probability of Being Signal) have been developed that can simultaneously handle both narrow peaks and broad domains, simplifying the analysis of datasets containing mixed signal types [6] [8].
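The window-aggregation idea behind broad-domain callers can be illustrated with a deliberately simplified sketch. It counts reads in fixed windows, marks windows above a raw count threshold as enriched, and merges enriched windows separated by small gaps. The function name, the thresholds, and the use of a raw count cutoff are assumptions for illustration; tools such as SICER score windows and domains with a statistical background model rather than a fixed cutoff.

```python
from collections import Counter


def call_domains(read_starts, window=200, min_reads=3, max_gap=1):
    """Merge enriched windows into domains on a single chromosome.

    read_starts: iterable of 0-based read start positions.
    A window is "enriched" if it holds at least min_reads reads; enriched
    windows separated by at most max_gap non-enriched windows are merged.
    Returns a list of half-open (start, end) intervals.
    """
    counts = Counter(pos // window for pos in read_starts)
    enriched = sorted(w for w, n in counts.items() if n >= min_reads)
    domains = []
    for w in enriched:
        # Extend the current domain if the gap to it is small enough.
        if domains and w - domains[-1][1] <= max_gap + 1:
            domains[-1][1] = w
        else:
            domains.append([w, w])
    return [(first * window, (last + 1) * window) for first, last in domains]


if __name__ == "__main__":
    reads = [10, 20, 30, 210, 220, 230, 450, 610, 620, 630, 1210, 1220, 1230]
    print(call_domains(reads))
```

Allowing gaps is what lets a diffuse mark be reported as one domain instead of many fragmented peaks; setting `max_gap=0` in the example splits the first domain in two.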

Table 3: Comparison of Peak Calling Algorithms for Histone Modifications

| Algorithm | Primary Strength | Ideal for Mark Type | Key Reference |
|---|---|---|---|
| MACS2 | Sensitive detection of narrow peaks | Narrow (H3K4me3, H3K27ac) | [1] [6] |
| SICER | Identifies broad domains by spatial clustering | Broad (H3K27me3, H3K36me3) | [6] |
| Rseg | Segmentation-based approach for broad marks | Broad (H3K27me3, H3K9me3) | [6] [7] |
| hiddenDomains | Simultaneously calls both peaks and domains | Mixed / Broad | [6] |
| PBS (Probability of Being Signal) | Bin-based method; compares signals across datasets | Both (Especially Broad) | [8] |

Advanced Analysis: Differential Enrichment

Comparing histone modification landscapes between conditions (e.g., disease vs. healthy) requires differential analysis tools. For broad marks, methods like histoneHMM use a bivariate Hidden Markov Model to classify genomic regions as modified in both samples, unmodified in both, or differentially modified, outperforming general-purpose tools in this specific context [7].

Visualization and Data Interpretation

Effective visualization is key to interpreting ChIP-seq data and generating biological insights. Tools like the SeqCode toolkit facilitate the creation of standardized, publication-quality graphics [9].

- Genome Browser Tracks: Visualizing signal (e.g., as BigWig files) in a genome browser allows for the inspection of enrichment patterns at specific genomic loci, confirming the broad or narrow nature of the mark and its relationship to genes and other regulatory elements [5] [9].

- Aggregate Plots (Meta-plots): These plots show the average signal of a histone mark across a defined set of genomic features, such as transcription start sites (TSS) or gene bodies. They are invaluable for confirming expected behaviors—for instance, H3K4me3 sharply peaks at the TSS, while H3K36me3 is enriched across gene bodies [9].

- Heatmaps: Heatmaps display the signal intensity across a set of regions (e.g., all promoters), clustered by similarity. They provide a powerful way to visualize the heterogeneity of histone modification patterns across different genomic elements and to correlate these patterns with gene expression data [9].
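The computation behind an aggregate plot is a position-wise average of signal windows centered on each feature. The sketch below assumes a binned signal track held in a plain list and ignores strand, which a real meta-plot would handle by flipping windows for minus-strand genes; the function name is hypothetical.

```python
def aggregate_profile(signal, anchors, flank=3):
    """Average signal in a window of +/- flank bins around each anchor.

    signal: list of per-bin signal values for one chromosome.
    anchors: bin indices of the features (for example, TSS bins).
    Anchors without a complete window are skipped.
    Returns a list of length 2 * flank + 1 (offsets -flank ... +flank).
    """
    width = 2 * flank + 1
    totals = [0.0] * width
    used = 0
    for a in anchors:
        if a - flank < 0 or a + flank >= len(signal):
            continue  # window would run off the chromosome
        for i in range(width):
            totals[i] += signal[a - flank + i]
        used += 1
    if used == 0:
        raise ValueError("no anchor has a complete window")
    return [t / used for t in totals]


if __name__ == "__main__":
    track = [0, 0, 1, 5, 1, 0, 0, 2, 6, 2, 0]
    print(aggregate_profile(track, anchors=[3, 8, 0], flank=1))
```

The rows that are averaged here are the same rows a heatmap would display individually, so the two views can be produced from one matrix.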

The fundamental dichotomy between broad domains and narrow peaks provides an essential framework for the experimental and computational analysis of histone modifications. This classification directly informs critical decisions throughout the ChIP-seq pipeline, from the required sequencing depth and antibody validation to the choice of peak-calling algorithms and visualization strategies. A thorough understanding of these distinct categories, their biological correlates, and their specific technical requirements is indispensable for any researcher aiming to generate and interpret high-quality epigenomic maps. As the field progresses, the development of more sophisticated analytical methods that seamlessly handle both signal types, along with standardized visualization and reporting standards, will further empower scientists to decipher the complex language of histone modifications in health and disease.

Chromatin Immunoprecipitation followed by sequencing (ChIP-seq) is a powerful technique that allows researchers to analyze DNA-protein interactions on a genome-wide scale. In the context of histone research, this method is indispensable for capturing a snapshot of the epigenetic landscape, revealing how post-translational modifications to histones—such as methylation, acetylation, phosphorylation, and ubiquitination—influence gene expression, cell identity, and disease states [10]. The core principle of ChIP-seq involves the cross-linking and immunoprecipitation of chromatin complexes, enabling the selective isolation of DNA regions bound by histones bearing specific modifications. Subsequent high-throughput sequencing of this purified DNA provides a comprehensive map of histone-mark enrichment across the genome [11] [12].

This technical guide details the core ChIP-seq workflow, framed within a broader thesis on basic data processing pipelines for histone research. It is structured to provide researchers, scientists, and drug development professionals with a thorough understanding of both the wet-lab experimental procedures and the foundational bioinformatic principles required to generate and interpret high-quality histone ChIP-seq data.

Core Principles and Experimental Design

A successful ChIP-seq experiment hinges on careful planning and the inclusion of appropriate controls. The primary consideration is the choice of a high-specificity antibody that recognizes the histone modification of interest. Antibodies for ChIP must not only bind their target effectively but also demonstrate minimal cross-reactivity with similar epitopes to avoid misleading results [10]. For example, an antibody intended to pull down H3K9me2 should not significantly recognize H3K9me1 or H3K9me3, as these marks can have opposing effects on gene expression [10].

The inclusion of robust experimental controls is non-negotiable for accurate data interpretation. Essential controls include:

- Input DNA: Chromatin sample taken before immunoprecipitation. This controls for biases in chromatin accessibility and sequencing efficiency [10] [12].

- Mock IP (No-Antibody Control): An immunoprecipitation reaction performed without an antibody. This identifies DNA fragments that bind non-specifically to the beads or other components of the IP system [10].

- Positive and Negative Loci: Known genomic regions that are expected to be enriched or not enriched for the histone mark, respectively. These are used to validate the success and specificity of the ChIP via qPCR [10].
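qPCR at positive and negative loci is commonly summarized as percent of input. The sketch below uses the widely used percent-input calculation and assumes perfect amplification efficiency (one doubling per cycle); the function and parameter names are illustrative.

```python
import math


def percent_input(ct_ip: float, ct_input: float, input_fraction: float) -> float:
    """Percent-of-input enrichment from qPCR Ct values.

    input_fraction: fraction of the chromatin saved as input (0.01 for 1%).
    Assumes 100% amplification efficiency.
    """
    # Adjust the input Ct to what 100% of the chromatin would have given.
    adjusted_input = ct_input - math.log2(1.0 / input_fraction)
    return 100.0 * 2.0 ** (adjusted_input - ct_ip)


if __name__ == "__main__":
    # IP signal one cycle earlier than a 1% input corresponds to 2% of input.
    print(round(percent_input(ct_ip=24.0, ct_input=25.0, input_fraction=0.01), 3))
```

A successful ChIP shows a clearly higher percent input at the positive locus than at the negative locus and in the mock IP.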

Finally, the experimental design must account for biological replication (isogenic or anisogenic) to ensure the reproducibility of findings and provide an estimate of technical and biological variability. The ENCODE consortium, a leader in setting ChIP-seq standards, recommends a minimum of two biological replicates for reliable results [5].

Step-by-Step Technical Protocol

Stage 1: Cross-Linking and Cell Harvesting

The workflow begins with the stabilization of protein-DNA interactions in live cells using formaldehyde. Formaldehyde is a reversible, zero-length crosslinker that penetrates cells and creates covalent bonds between histones and DNA, as well as between proteins in close complex, thereby preserving in vivo interactions [10] [13].

Detailed Protocol:

- Cross-linking: For cells in culture, add formaldehyde directly to the growth medium to a final concentration of 1%. Incubate for 10 minutes at room temperature or 37°C with gentle agitation [11] [14]. Critical Note: Cross-linking time must be optimized. Under-crosslinking fails to preserve interactions, while over-crosslinking (e.g., 60 minutes) can dramatically increase non-specific background by trapping soluble proteins near open chromatin, leading to false positives [13].

- Quenching: Stop the cross-linking reaction by adding glycine to a final concentration of 125 mM and incubating for 5 minutes at room temperature. Glycine neutralizes the formaldehyde [11].

- Cell Harvesting: Wash the cells twice with ice-cold PBS to remove residual cross-linker. For adherent cells, use a cell scraper to detach them from the flask. Pellet cells by centrifugation (e.g., 1,500 x g for 5 mins at 4°C) [11]. The cell pellet can be frozen at -80°C for storage at this stage [10].

Safety Note: All steps involving formaldehyde should be performed in a fume hood, and waste should be disposed of according to local regulations [11].

Stage 2: Chromatin Isolation and Fragmentation

After cross-linking, the next critical step is to isolate the chromatin and shear the DNA into manageable fragments. This process reduces cytoplasmic background and generates DNA fragments of a size suitable for immunoprecipitation and sequencing.

Detailed Protocol:

- Nuclear Isolation: Resuspend the cell pellet in a nuclear extraction buffer (e.g., 50 mM HEPES-NaOH pH=7.5, 140 mM NaCl, 1 mM EDTA, 10% Glycerol, 0.5% NP-40, 0.25% Triton X-100) supplemented with protease inhibitors. Incubate on ice for 15 minutes with rocking to lyse the cells and isolate nuclei. A second incubation in a different buffer (e.g., 10 mM Tris-HCl pH=8.0, 200 mM NaCl, 1 mM EDTA, 0.5 mM EGTA) helps to remove residual detergents [11].

- Chromatin Fragmentation (Sonication): Pellet the nuclei and resuspend them in an appropriate sonication buffer. The buffer composition may differ for histone versus non-histone targets [11]. Shear the DNA using a sonicator. The goal is to achieve an average fragment size of 150–300 bp for histone targets [11]. Critical Note: Sonication conditions (duration, power, pulse number) are highly dependent on the cell type, sonicator model, and sample volume and must be empirically optimized. Keep samples on ice at all times to prevent heat denaturation, and avoid foaming [10] [14]. After sonication, pellet the cell debris by high-speed centrifugation (e.g., 17,000 x g for 15 mins at 4°C) and retain the supernatant, which contains the sheared chromatin [11].

Alternative Method: Chromatin can also be fragmented using enzymatic digestion with Micrococcal Nuclease (MNase), which is highly reproducible and more amenable to processing multiple samples. However, MNase has a sequence bias and preferentially cleaves internucleosomal regions, which may not provide truly randomized fragments [10].

Stage 3: Immunoprecipitation

This stage involves the specific pulldown of the cross-linked histone-DNA complexes using an antibody against the target histone modification.

Detailed Protocol:

- Bead Preparation: Magnetic beads (Protein A, Protein G, or a 50:50 mix) are washed and blocked with a blocking buffer (e.g., 0.5% BSA in RIPA-150) to prevent non-specific binding. The primary antibody is then bound to the beads by incubating for approximately 6 hours or overnight at 4°C with gentle rotation. The amount of antibody required can vary; a typical starting point is 4 µg for histone targets [11].

- Immunoprecipitation: The prepared sheared chromatin is added to the antibody-bound beads and incubated overnight at 4°C with rotation. This allows the antibody-bead complex to capture the target histone-DNA complexes from the solution [11].

- Washing: The bead-antibody-chromatin complex is subjected to a series of washes with cold buffers of increasing stringency (e.g., RIPA buffer, RIPA with high salt, LiCl buffer, and TE buffer) to remove non-specifically bound chromatin [11] [13].

Stage 4: DNA Purification and Library Preparation

The final wet-lab stages involve the recovery of the purified DNA and preparation of a sequencing library.

Detailed Protocol:

- Cross-link Reversal and DNA Elution: The immunoprecipitated complexes are resuspended in a buffer (e.g., TE + 0.25% SDS) and incubated with Proteinase K overnight at 65°C. This step reverses the formaldehyde cross-links and digests the proteins [13].

- DNA Purification: The DNA is purified from the eluate using a commercial PCR purification kit or phenol-chloroform extraction. The final DNA is eluted in a small volume of water or TE buffer [14].

- Library Preparation and Sequencing: The purified DNA is used to construct a sequencing library, which involves end-repair, adapter ligation, and PCR amplification. The library is then sequenced on an appropriate high-throughput platform. The ENCODE consortium recommends a minimum of 20 million usable fragments per replicate for narrow histone marks like H3K4me3 and 45 million for broad marks like H3K27me3, with read lengths of at least 50 base pairs [5].

Essential Materials and Reagents

The following table summarizes the key reagents and materials required for a successful ChIP-seq experiment.

Table 1: Research Reagent Solutions for ChIP-seq

| Reagent/Material | Function/Description | Key Considerations |
|---|---|---|
| Formaldehyde | Cross-linking agent to covalently stabilize protein-DNA interactions. | Concentration (typically 1%) and incubation time (typically 10 min) are critical and must be optimized. Handle in a fume hood [11] [13]. |
| ChIP-grade Antibody | Binds specifically to the histone modification of interest for immunoprecipitation. | Specificity is paramount. Prefer antibodies validated for ChIP. Polyclonal or oligoclonal antibodies may recognize multiple epitopes [10]. |
| Protein A/G Magnetic Beads | Solid-phase matrix for binding antibody-target complexes. | Magnetic beads facilitate easy washing and buffer changes. A 50:50 mix of Protein A and G ensures broad antibody species coverage [11]. |
| Sonication Equipment | Instrument to shear chromatin into fragments of desired size (150-300 bp for histones). | Requires extensive optimization for each cell type and model. Alternative: Micrococcal Nuclease (MNase) for enzymatic digestion [11] [10]. |
| Protease Inhibitors | Added to all buffers to prevent proteolytic degradation of target proteins and complexes. | Essential for maintaining complex integrity during cell lysis and chromatin preparation [11] [10]. |
| Lysis & Wash Buffers | Series of buffers for cell lysis, nuclear isolation, and stringent washing of immunoprecipitates. | Typically contain detergents (SDS, Triton X-100), salts, and buffering agents. Recipes are target-specific [11] [14]. |

ChIP-seq Data Analysis Workflow

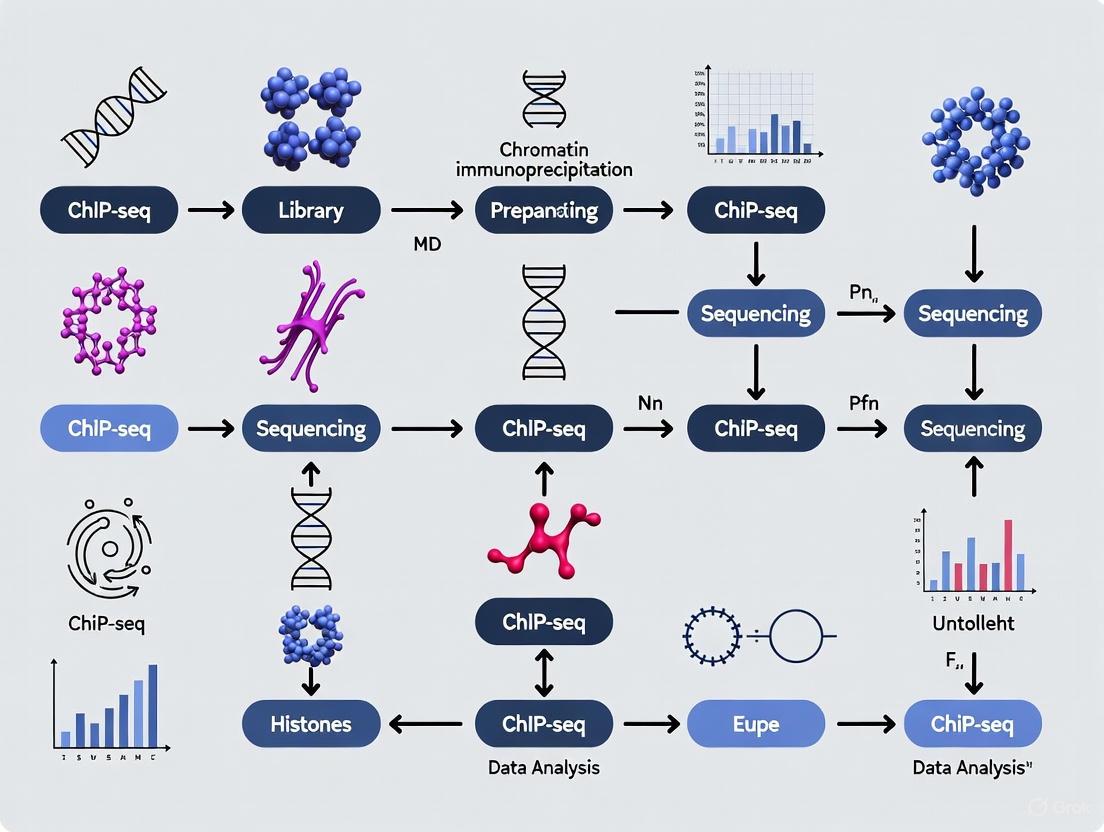

The computational analysis of ChIP-seq data transforms raw sequencing reads into interpretable maps of histone enrichment. The ENCODE consortium and other groups have developed standardized pipelines for this purpose [5] [12]. The following diagram illustrates the key steps in the ChIP-seq data analysis workflow for histone marks.

Diagram 1: ChIP-seq Data Analysis Workflow

From Raw Data to Alignment

- Quality Assessment and Read Trimming: Raw sequencing data in FASTQ format is first assessed for quality using tools like FastQC. Adapter sequences and low-quality bases are trimmed to ensure clean data for alignment [15] [12].

- Alignment to Reference Genome: The trimmed reads are mapped to a reference genome (e.g., GRCh38, mm10) using aligners such as Bowtie2, BWA, or STAR. The output is a BAM file containing the genomic coordinates of each read [12]. A key quality control metric is the proportion of uniquely mapped reads, with a ratio over 50% generally indicating good library quality [12].

- Post-Alignment Processing: This includes filtering to retain only uniquely mapped reads, removing PCR duplicates to mitigate amplification bias, and calculating quality metrics like the Non-Redundant Fraction (NRF) and PCR Bottlenecking Coefficients (PBC1 & PBC2). Preferred values are NRF > 0.9 and PBC1 > 0.9 [5].
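The library complexity metrics named above follow directly from how often each mapped position is observed: NRF is distinct positions over total reads, PBC1 is positions seen exactly once over distinct positions, and PBC2 is positions seen exactly once over positions seen exactly twice. A minimal sketch, assuming reads have already been filtered to unique mappers and reduced to a position key (the function name is hypothetical):

```python
from collections import Counter


def library_complexity(read_positions):
    """Compute (NRF, PBC1, PBC2) from uniquely mapped reads.

    read_positions: iterable of hashable keys, e.g. (chrom, start, strand).
    """
    counts = Counter(read_positions)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no reads supplied")
    distinct = len(counts)
    m1 = sum(1 for n in counts.values() if n == 1)  # positions seen exactly once
    m2 = sum(1 for n in counts.values() if n == 2)  # positions seen exactly twice
    nrf = distinct / total
    pbc1 = m1 / distinct
    pbc2 = m1 / m2 if m2 else float("inf")
    return nrf, pbc1, pbc2


if __name__ == "__main__":
    reads = [("chr1", p, "+") for p in (100, 100, 250, 300, 410, 500, 500, 620)]
    print(library_complexity(reads))
```

These values should be computed before duplicate removal, since the metrics are meant to describe how much duplication the library contains.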

Peak Calling and Advanced Analysis

- Peak Calling: This critical step identifies genomic regions with significant enrichment of ChIP-seq signals compared to a background model (input DNA control) [12]. For histone marks, which can exhibit either narrow (punctate) or broad (diffuse) enrichment patterns, specialized peak callers are used. The ENCODE histone pipeline generates signal tracks (e.g., bigWig files showing fold-change over control) and calls peaks (BED files) [5]. For broad marks like H3K27me3 that are challenging for standard peak callers, alternative methods like bin-based approaches (e.g., Probability of Being Signal - PBS) can be more effective [16].

- Downstream Analysis and Integration: The final stage involves biological interpretation. This can include:

  - Annotation: Associating peaks with genomic features like promoters, enhancers, or gene bodies.

  - Motif Analysis: Discovering enriched DNA sequences within peaks.

  - Integration: Correlating histone modification patterns with other data types, such as gene expression (RNA-seq) to link marks to transcriptional output, or with genetic variants from GWAS to provide functional context for disease-associated SNPs [4] [12] [16].
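Peak annotation reduces to comparing peak coordinates with gene coordinates. The sketch below labels a peak by its midpoint, with an assumed promoter definition of 1 kb on either side of the TSS; the function name, the flank size, and the promoter-first priority are illustrative choices, and dedicated packages such as ChIPseeker offer far richer annotation.

```python
def annotate_peak(peak_start, peak_end, genes, promoter_flank=1000):
    """Label a peak as 'promoter', 'gene_body' or 'intergenic'.

    genes: list of (gene_start, gene_end, strand) on the same chromosome,
    with gene_start < gene_end and strand given as '+' or '-'.
    The peak midpoint decides the label; promoter takes priority.
    """
    mid = (peak_start + peak_end) // 2
    label = "intergenic"
    for gene_start, gene_end, strand in genes:
        # The TSS is the gene start on '+' and the gene end on '-'.
        tss = gene_start if strand == "+" else gene_end
        if abs(mid - tss) <= promoter_flank:
            return "promoter"
        if gene_start <= mid <= gene_end:
            label = "gene_body"
    return label


if __name__ == "__main__":
    genes = [(10_000, 20_000, "+"), (30_000, 40_000, "-")]
    print(annotate_peak(9_800, 10_400, genes))
    print(annotate_peak(14_000, 16_000, genes))
```

For broad domains that span several features, labeling by midpoint alone loses information, so reporting the fraction of the domain overlapping each feature class is often more informative.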

Table 2: Common Tools for ChIP-seq Data Analysis

| Analysis Step | Tool Examples | Primary Function |
|---|---|---|
| Read Mapping | Bowtie2, BWA, STAR | Aligns sequencing reads to a reference genome. |
| Peak Calling | MACS2, SICER, Homer | Identifies statistically significant regions of enrichment. |
| Quality Control | FastQC, ChIPQC, PICARD | Assesses read quality, mapping efficiency, and library complexity. |
| Signal Visualization | IGV, UCSC Genome Browser | Visualizes alignment and enrichment tracks across the genome. |
| Advanced Analysis | Cistrome, ChIPseeker | Integrative platforms for peak annotation, comparison, and enrichment analysis. |

The ChIP-seq workflow, from cross-linking to sequencing and data analysis, is a complex but robust methodology that provides unparalleled insight into the epigenomic landscape. A successful experiment depends on the meticulous execution of each wet-lab step—particularly cross-linking, sonication, and immunoprecipitation—coupled with rigorous bioinformatic analysis that accounts for the unique characteristics of histone modifications. By adhering to established standards and controls, and by thoughtfully integrating ChIP-seq data with other genomic datasets, researchers can leverage this powerful technique to uncover the fundamental mechanisms of gene regulation in development, health, and disease.

Chromatin Immunoprecipitation followed by sequencing (ChIP-seq) has become the cornerstone method for genome-wide profiling of protein-DNA interactions and epigenetic marks. While the initial wet-lab procedures for histone modifications and transcription factor (TF) binding studies share fundamental similarities, their analytical pathways diverge significantly to address their distinct biological characteristics. Histone modifications often manifest as broad domains of enrichment across the genome, while transcription factor binding sites typically present as punctate, localized peaks. This fundamental difference necessitates specialized bioinformatic approaches for accurate signal detection and interpretation. The ENCODE Consortium has formally acknowledged this distinction by developing and maintaining two separate processing pipelines for these data types [5] [17]. This guide provides an in-depth technical comparison of these analytical methodologies, framed within the context of a standard ChIP-seq data processing pipeline for histone research, to equip researchers with the knowledge to select and implement the appropriate analysis strategy for their experimental goals.

Core Computational Pipelines and Peak Calling

Mapping and Initial Signal Processing

Both histone and transcription factor ChIP-seq pipelines commence with a shared initial workflow for processing raw sequencing data into aligned genomic signals. This process begins with FASTQ files containing the raw sequence reads. These reads are quality-checked and aligned to a reference genome (e.g., GRCh38 or mm10) to produce a BAM file containing the mapped reads [5] [17]. A critical step in both pipelines is the generation of signal tracks, which provide a nucleotide-resolution visualization of enrichment. These are typically stored as bigWig files and represent two key statistical transformations: the fold-change over control (often an input DNA sample) and the signal p-value, which tests the null hypothesis that the observed signal could originate from the control sample [5] [17]. Despite this shared starting point, the subsequent analytical steps diverge dramatically to accommodate the different spatial distributions of the biological signals.
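The fold-change-over-control track can be pictured as a depth-normalized ratio per genomic bin. The following is a simplified sketch, assuming binned read counts, global library-size scaling, and a pseudocount to avoid division by zero; production pipelines rely on the peak caller's own background model, so this shows the idea rather than the ENCODE computation.

```python
def fold_change_track(chip_counts, control_counts, pseudocount=1.0):
    """Per-bin fold change of ChIP over control after depth scaling.

    Both inputs are equal-length lists of read counts per bin.
    The control is scaled to the ChIP library size before the ratio is taken.
    """
    if len(chip_counts) != len(control_counts):
        raise ValueError("tracks must cover the same bins")
    control_total = sum(control_counts)
    if control_total == 0:
        raise ValueError("control track is empty")
    scale = sum(chip_counts) / control_total
    return [
        (chip + pseudocount) / (ctrl * scale + pseudocount)
        for chip, ctrl in zip(chip_counts, control_counts)
    ]


if __name__ == "__main__":
    chip = [18, 2, 0, 20]
    control = [4, 4, 4, 8]
    print([round(x, 3) for x in fold_change_track(chip, control)])
```

Scaling matters because the input library is frequently sequenced to a different depth than the ChIP library; without it, every bin would appear enriched or depleted by the same constant factor.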

Peak Calling for Transcription Factors

The analysis of transcription factor ChIP-seq data focuses on identifying precise, punctate binding sites. The ENCODE pipeline for TFs utilizes the Irreproducible Discovery Rate (IDR) framework to assess reproducibility between biological replicates, which is a cornerstone of a robust TF analysis [17]. This method ranks binding events from replicates and identifies those that are consistent across replicates, effectively filtering out irreproducible peaks. The pipeline outputs three sets of peaks to cater to different analytical needs:

- Conservative IDR Peaks: A high-confidence set of peaks derived from IDR analysis.

- Optimal IDR Peaks: The largest set of peaks from IDR analysis that maintains reproducibility.

- Relaxed Peaks: More permissive peak calls from individual replicates or pooled reads, which contain more false positives and are intended for input into the IDR statistical comparison rather than direct biological interpretation [17].

Peak Calling for Histone Modifications

In contrast, the histone ChIP-seq pipeline is engineered to capture both punctate and broad chromatin domains. It employs a different strategy for handling replicates, relying on a "naive overlap" method [5]. The pipeline generates:

- Relaxed Peak Calls: Initial, permissive peak calls from individual replicates and pooled reads.

- Replicated Peaks: The final set of peaks, which are those from the pooled set that are either observed in both true biological replicates or in two pseudoreplicates (random partitions of the pooled data) [5].
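The naive overlap rule can be sketched as an interval filter: a pooled peak is kept when it is supported by a peak in each of two replicate peak sets. The 50% overlap threshold, the single-chromosome input, and the function names are assumptions made for illustration, not details taken from the pipeline description above.

```python
def overlap_fraction(a, b):
    """Fraction of half-open interval a = (start, end) covered by b."""
    shared = min(a[1], b[1]) - max(a[0], b[0])
    return max(0, shared) / (a[1] - a[0])


def supported(peak, others, min_frac):
    """True if some interval overlaps peak by min_frac of either interval."""
    return any(
        overlap_fraction(peak, o) >= min_frac or overlap_fraction(o, peak) >= min_frac
        for o in others
    )


def replicated_peaks(pooled, rep1, rep2, min_frac=0.5):
    """Keep pooled peaks supported by both replicate peak sets."""
    return [
        p for p in pooled
        if supported(p, rep1, min_frac) and supported(p, rep2, min_frac)
    ]


if __name__ == "__main__":
    pooled = [(100, 200), (500, 600), (900, 1000)]
    rep1 = [(120, 210), (510, 590)]
    rep2 = [(90, 180), (950, 1200)]
    print(replicated_peaks(pooled, rep1, rep2))
```

To mirror the "true replicates or pseudoreplicates" rule described above, the same function can be called once with the true replicate peak sets and once with the pseudoreplicate peak sets, taking the union of the two results.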

This approach is more suitable for the extended regions of enrichment typical of many histone marks. Furthermore, specialized tools or analytical strategies are often required for challenging broad marks like H3K27me3, which can evade detection by standard peak callers. One such method is the Probability of Being Signal (PBS), a bin-based approach that divides the genome into non-overlapping 5 kb bins, fits a gamma distribution to the background, and calculates, for each bin, a probability between 0 and 1 that the bin contains true signal. This method is particularly effective for identifying broad, low-enrichment regions and facilitates comparison across multiple datasets [16].
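The bin-based idea can be illustrated with a short sketch that scores each bin against a gamma distribution fitted to background values. This is not the published PBS implementation: the method-of-moments fit, the caller-supplied background bins, the use of the gamma CDF as the score, and all function names are assumptions made for illustration.

```python
import math


def gamma_cdf(x, shape, scale):
    """Gamma CDF via the power series of the regularized incomplete gamma."""
    if x <= 0:
        return 0.0
    z = x / scale
    log_pref = shape * math.log(z) - z - math.lgamma(shape)
    if z > shape and log_pref < -700:
        return 1.0  # far right tail; avoids overflow in the series
    term = total = 1.0 / shape
    n = 1
    while term > 1e-16 * total:
        term *= z / (shape + n)
        total += term
        n += 1
    return min(1.0, total * math.exp(log_pref))


def signal_probabilities(bin_values, background_values):
    """Score each bin by the fitted background CDF at its value.

    background_values: bins the caller treats as background (an assumption;
    how background bins are chosen is not specified here).
    """
    mean = sum(background_values) / len(background_values)
    var = sum((v - mean) ** 2 for v in background_values) / len(background_values)
    if mean <= 0 or var <= 0:
        raise ValueError("background needs positive mean and variance")
    shape = mean * mean / var  # method-of-moments estimates
    scale = var / mean
    return [gamma_cdf(v, shape, scale) for v in bin_values]


if __name__ == "__main__":
    background = [4, 5, 6, 5, 4, 6, 5, 5]
    print(signal_probabilities([4, 5, 40], background))
```

Because every bin receives a score on the same 0 to 1 scale, tracks from different experiments can be compared bin by bin without first agreeing on a set of called peaks.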

Table 1: Comparison of Peak Calling and Replicate Analysis

| Feature | Transcription Factor ChIP-seq | Histone Modification ChIP-seq |
|---|---|---|
| Primary Peak Type | Punctate (narrow) [5] | Broad or mixed (broad & narrow) [5] |
| Replicate Analysis Method | Irreproducible Discovery Rate (IDR) [17] | Naive overlap and pseudoreplicates [5] |
| Key Outputs | Conservative & Optimal IDR peaks [17] | Replicated peaks from pooled reads [5] |
| Handling of Broad Domains | Not optimal; pipeline is designed for punctate binding | Specialized for long chromatin domains [5] |
| Alternative Methods | - | Bin-based methods (e.g., PBS) for challenging broad marks [16] |

Experimental Design and Quality Control

Sequencing Depth and Library Complexity

A critical factor in experimental design is determining the required sequencing depth, which varies significantly between the two ChIP-seq types due to the different genomic coverage of their targets.

- Transcription Factors: The ENCODE standard recommends 20 million usable fragments per biological replicate to adequately cover punctate binding sites [17].

- Histone Modifications: The required depth depends on whether the mark is narrow or broad.

  - Narrow marks (e.g., H3K4me3, H3K27ac): Require 20 million usable fragments per replicate [5].

  - Broad marks (e.g., H3K27me3, H3K36me3): Require 45 million usable fragments per replicate to sufficiently cover the extended domains [5]. An exception is H3K9me3, which is enriched in repetitive regions; tissues and primary cells profiling this mark should target 45 million total mapped reads per replicate [5].

Both pipeline types rigorously assess library complexity using the same set of metrics to ensure the library is not overly dominated by PCR duplicates. The preferred values are a Non-Redundant Fraction (NRF) > 0.9, PBC1 > 0.9, and PBC2 > 10 [5] [17]. Another key quality metric is the Fraction of Reads in Peaks (FRiP), which should generally be greater than 1% for a successful experiment [18].
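The three complexity metrics follow from simple counts of how many reads share each genomic location. The sketch below assumes the usual definitions (NRF is distinct locations divided by total reads, PBC1 is locations with exactly one read divided by distinct locations, PBC2 is locations with exactly one read divided by locations with exactly two); the function name and the `(chrom, position, strand)` read representation are illustrative choices, not part of any pipeline.

```python
from collections import Counter


def library_complexity(read_locations):
    """Compute NRF, PBC1 and PBC2 from uniquely mapped read locations.

    `read_locations` is an iterable of hashable keys, one per read,
    e.g. (chrom, five_prime_position, strand).
    """
    counts = Counter(read_locations)
    total_reads = sum(counts.values())
    if total_reads == 0:
        raise ValueError("no reads supplied")
    distinct = len(counts)                            # locations with >= 1 read
    m1 = sum(1 for c in counts.values() if c == 1)    # exactly one read
    m2 = sum(1 for c in counts.values() if c == 2)    # exactly two reads
    return {
        "NRF": distinct / total_reads,
        "PBC1": m1 / distinct,
        "PBC2": m1 / m2 if m2 else float("inf"),
    }


# Toy library: eight singleton locations plus one location hit twice.
reads = [("chr1", pos, "+") for pos in range(100, 900, 100)]
reads += [("chr1", 5000, "-"), ("chr1", 5000, "-")]
metrics = library_complexity(reads)
passes = metrics["NRF"] > 0.9 and metrics["PBC1"] > 0.9 and metrics["PBC2"] > 10
```

In this toy library NRF is exactly 0.9, so the strict `>` thresholds quoted above are not met, which is the kind of borderline case that warrants a closer look at duplication levels.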

Control Samples and Antibody Validation

The use of appropriate control samples is paramount for accurate background correction and peak calling. The most common control is a Whole Cell Extract (WCE) or "input" DNA, which is sheared chromatin taken prior to immunoprecipitation [19]. A mock immunoprecipitation with a non-specific antibody like IgG is also used. For histone modification studies specifically, an alternative control is a Histone H3 (H3) pull-down, which maps the underlying distribution of nucleosomes. Research has shown that while an H3 control is generally more similar to the histone mark ChIP-seq signal, the differences between H3 and WCE controls have a negligible impact on the quality of a standard analysis [19].

Antibody specificity is a cornerstone of any ChIP-seq experiment. The ENCODE consortium mandates rigorous antibody characterization. For transcription factors, this includes primary characterization via immunoblot or immunofluorescence, followed by secondary validation through methods like factor knockdown, independent ChIP experiments, or binding site motif analyses. For histone modifications, validation includes peptide binding tests or immunoreactivity analysis in cell lines with relevant enzyme knockdowns [18].

Table 2: Experimental Standards and QC Metrics

| Parameter | Transcription Factor ChIP-seq | Histone Modification ChIP-seq |
|---|---|---|
| Recommended Sequencing Depth | 20 million usable fragments/replicate [17] | Narrow marks: 20M, Broad marks: 45M fragments/replicate [5] |
| Replicate Concordance Metric | Irreproducible Discovery Rate (IDR) [17] | Overlap of peaks from replicates or pseudoreplicates [5] |
| Key QC Metrics | NRF > 0.9, PBC1 > 0.9, PBC2 > 10, FRiP > 1% [17] [18] | NRF > 0.9, PBC1 > 0.9, PBC2 > 10, FRiP > 1% [5] [18] |
| Common Control Samples | Input DNA (WCE) or IgG [19] [17] | Input DNA (WCE), IgG, or Histone H3 pull-down [19] |
| Antibody Validation | Immunoblot, knockdown, motif analysis [18] | Peptide binding, immunoblot, analysis in mutant lines [18] |

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution and interpretation of a ChIP-seq experiment rely on a suite of critical reagents and materials.

- Specific Antibodies: The core of the assay. Must be rigorously validated for specificity for the target transcription factor or histone modification [18].

- Control Samples:

  - Whole Cell Extract (WCE/Input): Sheared chromatin prior to IP; controls for technical biases [19] [17].

  - IgG Control: A mock IP with non-specific immunoglobulin; controls for non-specific antibody binding [19].

  - Histone H3 Control: Specific to histone mark studies; controls for underlying nucleosome occupancy [19].

- Chromatin Shearing Reagents & Equipment: For standard ChIP-seq, this involves formaldehyde for cross-linking and sonication devices (e.g., Covaris sonicator) for DNA fragmentation [19] [20]. Native protocols use micrococcal nuclease (MNase) for digestion [20].

- Magnetic Beads (Protein A/G): Used to immunoprecipitate the antibody-target protein-DNA complex [19] [20].

- Library Preparation Kits: Kits tailored to the specific method (ChIP-seq, CUT&RUN, CUT&Tag) and sequencing platform (e.g., Illumina) [19] [21].

- Spike-in Controls: Synthetic DNA or chromatin added to the sample before immunoprecipitation; used for normalization between samples, especially when comparing different conditions or cell types [18].
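As an illustration of how spike-in controls can be used for normalization, the sketch below applies one common convention: each sample's signal is multiplied by a factor inversely proportional to the number of reads that align to the spike-in genome. This convention is an assumption made here for illustration (the text above does not prescribe a formula), and the function names are hypothetical.

```python
def spike_in_scale_factor(spike_in_reads: int) -> float:
    """Scale factor = 1 / (spike-in reads in millions). Assumed convention."""
    if spike_in_reads <= 0:
        raise ValueError("spike-in read count must be positive")
    return 1_000_000 / spike_in_reads


def scale_signal(bin_counts, spike_in_reads):
    """Apply the spike-in scale factor to raw per-bin read counts."""
    factor = spike_in_scale_factor(spike_in_reads)
    return [count * factor for count in bin_counts]


# Two samples with identical raw counts but different spike-in recovery.
# The sample with fewer spike-in reads had relatively more target chromatin,
# so its scaled signal is higher.
control = scale_signal([10, 40], spike_in_reads=1_000_000)
treated = scale_signal([10, 40], spike_in_reads=2_000_000)
```

The value of the approach is that a global change in the mark, which read-depth normalization would erase, remains visible after scaling.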

Advanced and Emerging Methodologies

The field of chromatin profiling continues to evolve, with new methods addressing limitations of traditional ChIP-seq.

CUT&RUN (Cleavage Under Targets and Release Using Nuclease) and CUT&Tag (Cleavage Under Targets and Tagmentation) are two prominent techniques. These are performed in situ under native chromatin conditions, eliminating the need for cross-linking and extensive fragmentation. They use a target-specific antibody to recruit pA-MNase (CUT&RUN) or pA-Tn5 transposase (CUT&Tag) to the target site, where the enzyme then cleaves or tagments the DNA, respectively [21]. The key advantages of these methods are a dramatic reduction in required cell number (as low as 10³ for CUT&RUN and 10⁴ for CUT&Tag), a significantly streamlined workflow (1-2 days), and an extremely high signal-to-noise ratio with low background [21]. CUT&Tag is particularly well-suited for profiling histone modifications, while CUT&RUN may offer more stable performance for certain transcription factors [21].

Other advanced ChIP-seq variants include:

- ChIP-exo: Uses an exonuclease to trim DNA bound to the protein of interest, yielding single-base-pair precision in mapping binding sites and a vastly improved signal-to-noise ratio [18].

- Indexing-first ChIP (iChIP): Uses a barcoding strategy to index chromatin fragments before immunoprecipitation, enabling multiplexing of samples and reducing variability, which is valuable for studying rare cell populations [20].

The choice between a histone-focused and a transcription factor-focused ChIP-seq analysis pipeline is dictated by the fundamental nature of the protein-DNA interaction under investigation. Transcription factors, with their punctate binding, demand a rigorous statistical framework like IDR to identify discrete, reproducible binding events. In contrast, histone modifications, which can form broad, diffuse domains across the chromatin, require an analytical strategy capable of capturing these extended regions, such as overlap-based replication checks or bin-based probabilistic methods. These analytical paths, supported by distinct experimental standards for sequencing depth and replication, ensure the accurate interpretation of the complex language of chromatin regulation. As the field advances, methodologies like CUT&RUN and CUT&Tag offer powerful alternatives, particularly for limited samples, but the core analytical principles distinguishing the analysis of punctate binding from broad domains remain foundational.

Chromatin Immunoprecipitation followed by sequencing (ChIP-seq) has become a cornerstone technique for mapping the genomic locations of histone modifications, transcription factors, and other DNA-associated proteins. A well-designed ChIP-seq experiment is the critical foundation upon which all subsequent data analysis rests. Within the context of a basic ChIP-seq data processing pipeline for histone research, flaws in experimental design can introduce biases and artifacts that are impossible to fully correct computationally. This guide details the three essential pillars of experimental design—sequencing depth, replicates, and controls—to ensure the generation of biologically meaningful and statistically robust data.

Determining Sequencing Depth for Histone Marks

A key consideration in experimental design is the minimum number of sequenced reads required to obtain statistically significant results. Insufficient depth leads to failure in detecting genuine enrichment regions, while excessive sequencing is cost-ineffective. The required depth is not a fixed number but depends heavily on the nature of the histone mark and the genome size.

The Impact of Depth on Saturation

In a ChIP-seq experiment, the number of detected enriched regions increases with sequencing depth but eventually plateaus. The point of sufficient sequencing depth is defined as the number of reads at which detected enrichment regions increase by less than 1% for an additional million reads [22]. Research on deep-sequenced datasets in human and fly has shown that:

- For the fly genome, sufficient depth is often reached at under 20 million reads for many marks [22].

- For the more complex human genome, analysis suggests 40–50 million reads as a practical minimum for most broad marks, with some datasets showing no clear saturation point even at high depths [22].
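The saturation criterion above (fewer than 1% additional enriched regions per additional million reads) can be checked on a subsampling curve. The sketch below is a minimal illustration; the function name, the input layout, and the example numbers are hypothetical and not taken from the cited study.

```python
def sufficient_depth(curve, max_gain_per_million=0.01):
    """Return the first depth (in millions of reads) at which the relative
    gain in detected regions per extra million reads drops below the
    threshold, or None if the curve never saturates.

    `curve` is a list of (reads_in_millions, n_regions) sorted by depth,
    typically produced by calling peaks on subsampled data.
    """
    for (d0, n0), (d1, n1) in zip(curve, curve[1:]):
        if n0 == 0 or d1 <= d0:
            continue  # cannot compute a relative gain for this step
        gain_per_million = (n1 - n0) / n0 / (d1 - d0)
        if gain_per_million < max_gain_per_million:
            return d0
    return None


# Made-up subsampling results for a broad mark.
curve = [(10, 20000), (20, 30000), (30, 34000), (40, 35000), (50, 35200)]
depth = sufficient_depth(curve)

# A curve that keeps growing never reaches the criterion.
unsaturated = sufficient_depth([(10, 1000), (20, 2000), (30, 4000)])
```

For the made-up curve, the step from 30 to 40 million reads adds about 0.3% more regions per million reads, so 30 million is reported as sufficient.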

Depth Recommendations by Mark Type

The ENCODE consortium, a leading authority in the field, provides specific guidelines for sequencing depth, differentiating between mark types and accounting for special cases [5]. The following table summarizes these key recommendations:

Table 1: Recommended Sequencing Depth for Histone ChIP-seq Experiments

| Histone Mark Type | Examples | Recommended Depth (per replicate) | Notes |
|---|---|---|---|
| Broad Marks | H3K27me3, H3K36me3, H3K4me1, H3K9me2 | 45 million usable fragments | Usable fragments are uniquely mapped, non-duplicate reads [5]. |
| Narrow Marks | H3K4me3, H3K27ac, H3K9ac | 20 million usable fragments | Point-source factors like some transcription factors also fall in this category [5]. |
| Exception (H3K9me3) | H3K9me3 | 45 million total mapped reads | Enriched in repetitive regions; standard "usable fragments" metric is relaxed to "total mapped reads" to account for multi-mapping reads [5] [23]. |
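These recommendations are easy to encode as a lookup that a QC script can consult. The sketch below covers only the marks named in the table; the function names are hypothetical, and any mark outside the table raises an error rather than guessing a category.

```python
# Marks and targets copied from the table above; nothing else is assumed.
BROAD_MARKS = {"H3K27me3", "H3K36me3", "H3K4me1", "H3K9me2"}
NARROW_MARKS = {"H3K4me3", "H3K27ac", "H3K9ac"}


def depth_target(mark: str):
    """Return (minimum count per replicate, what is being counted)."""
    if mark == "H3K9me3":
        return 45_000_000, "total mapped reads"
    if mark in BROAD_MARKS:
        return 45_000_000, "usable fragments"
    if mark in NARROW_MARKS:
        return 20_000_000, "usable fragments"
    raise KeyError(f"no depth recommendation recorded for {mark}")


def meets_depth_target(mark: str, observed_count: int) -> bool:
    """True if a replicate reaches the recommended depth for its mark."""
    minimum, _unit = depth_target(mark)
    return observed_count >= minimum


ok_narrow = meets_depth_target("H3K4me3", 22_000_000)
ok_broad = meets_depth_target("H3K27me3", 30_000_000)
```

Note that the caller must supply the right kind of count: usable fragments for most marks, total mapped reads for H3K9me3.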

Additional Design Factors

- Genome Size and Complexity: The required depth scales with genome size, but the relationship is not linear; it depends on the total genomic coverage of the specific mark [22].

- Peak-Calling Algorithm: In the benchmarking study cited here, the five peak-calling algorithms tested agreed poorly on broad enrichment profiles, especially at lower depths. Ensuring sufficient depth and selecting an appropriate algorithm are both essential for robust conclusions [22].

- Paired-End vs. Single-End Sequencing: While single-end sequencing may be sufficient for point-source factors, investigating broader occupancy patterns benefits from paired-end data, as it provides a direct measure of fragment size and improves mapping confidence [24].

The Imperative of Replicates and Controls

Biological Replication

Biological replicates are independent biological samples (e.g., different cell cultures) that capture random biological variation. The ENCODE consortium mandates two or more biological replicates for all ChIP-seq experiments to ensure findings are reproducible and not attributable to random chance or unique conditions in a single sample [5] [25]. Some experts suggest that three is a minimum for rigorous statistical analysis of occupancy patterns between different conditions, and if small differences in occupancy are expected, increasing the number of replicates provides more statistical power than simply sequencing deeper [24].

Input Controls

Control experiments are crucial for distinguishing true enrichment from experimental artifacts and background noise. The most common and recommended control is the input chromatin, which consists of sonicated, non-immunoprecipitated DNA sequenced to characterize the background signal from the native chromatin [25] [24].

- Purpose: Input DNA controls for genome-wide variation in chromatin accessibility and fragmentation, as well as for sequencing bias [25].

- Experimental Design: The input control must be derived from the same cell type or tissue as the ChIP experiment. Furthermore, each biological replicate of a ChIP experiment should have its own matching input control that is processed and sequenced separately; pooling of inputs is not recommended [24].

- Sequencing Depth: The input control should be sequenced to at least the same depth as the ChIP samples to ensure the background signal is characterized with sufficient precision to model local fluctuations [24].

Detailed Methodologies and Protocols

Antibody Validation and Immunoprecipitation

The quality of a ChIP experiment is governed by the specificity of the antibody. The ENCODE consortium employs a rigorous, two-test system for antibody characterization [25].

- Primary Characterization (for transcription factors): This is typically an immunoblot analysis on protein lysates. The guideline states that the primary reactive band should contain at least 50% of the signal observed on the blot and ideally correspond to the expected size of the protein. If immunoblot fails, immunofluorescence demonstrating the expected nuclear staining pattern can serve as an alternative primary test [25].

- Secondary Characterization: A successful ChIP experiment itself, confirmed by an independent method such as comparison to published data or qPCR on known target sites, serves as the secondary validation [25].

- Reporting: All antibody characterization data, including the source, catalog number, and lot number, must be thoroughly reported to allow users of the data to judge its quality [25].

Library Preparation and Quality Control

After immunoprecipitation, the enriched DNA is prepared into a sequencing library. Key considerations and quality checks include:

- Library Complexity: This measures the uniqueness of the sequenced DNA fragments and is critical for determining if sufficient starting material was used. The ENCODE consortium uses the Non-Redundant Fraction (NRF) and PCR Bottlenecking Coefficients (PBC1 and PBC2). Preferred values are NRF > 0.9, PBC1 > 0.9, and PBC2 > 10 [5]. Low complexity indicates over-amplification or insufficient starting material, which can severely limit peak detection.

- Cross-Correlation Analysis: This quality metric, used by the ENCODE consortium, assesses a ChIP-seq experiment by calculating the correlation between read density on the forward and reverse strands as one strand is shifted relative to the other. A strong signal with a clear peak at a shift equal to the fragment length is indicative of a high-quality experiment [25].

- FRiP Score: The Fraction of Reads in Peaks (FRiP) is the proportion of all mapped reads that fall into identified peak regions. It is a straightforward measure of enrichment and signal-to-noise ratio. While there is no universal threshold, a higher FRiP score (e.g., >1%) generally indicates a more successful IP [5].
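The cross-correlation check in the list above can be made concrete with a toy calculation. The sketch below is a pure-Python illustration, not the tool used by any consortium: it builds per-position counts of read 5' ends on each strand from simulated fragments, then correlates the forward profile with the reverse profile at increasing shifts. All names and the simulated data are hypothetical.

```python
import random


def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if constant)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / (var_x * var_y) ** 0.5


def strand_cross_correlation(fwd, rev, max_shift):
    """Map each shift s to corr(fwd[i], rev[i + s]) over all valid i."""
    n = len(fwd)
    return {s: pearson(fwd[: n - s], rev[s:]) for s in range(max_shift + 1)}


# Simulate fragments whose two 5' ends lie `offset` bp apart: one read on
# the forward strand at the fragment start, one on the reverse strand at
# the far end.
rng = random.Random(0)
genome_len, offset = 3000, 150
fwd = [0] * genome_len
rev = [0] * genome_len
for _ in range(400):
    start = rng.randrange(0, genome_len - offset)
    fwd[start] += 1
    rev[start + offset] += 1

profile = strand_cross_correlation(fwd, rev, max_shift=300)
best_shift = max(profile, key=profile.get)  # estimated fragment length
```

In this idealized simulation every fragment has the same length, so the profile has a single sharp maximum at the simulated offset. Real data give a broader peak around the mean fragment length, often accompanied by a second, artifactual peak at the read length.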

Table 2: The Scientist's Toolkit - Essential Research Reagents and Materials

| Item | Function / Explanation |
|---|---|
| Specific Antibody | Binds to the target protein or histone modification for immunoprecipitation; requires rigorous validation for specificity [25]. |
| Input Chromatin DNA | Sonicated, non-immunoprecipitated DNA used as a control to account for background noise and technical biases [24]. |
| Formaldehyde | A cross-linking agent that covalently binds proteins to DNA in living cells, preserving in vivo interactions [25]. |
| Protein A/G Beads | Used to bind the antibody and facilitate the pulldown of the antibody-target complex. |
| Unique Molecular Identifiers (UMIs) | Short nucleotide barcodes ligated to chromatin fragments before IP; enable accurate deduplication and quantification in multiplexed protocols [26]. |
| Spike-in Chromatin | A foreign chromatin (e.g., from D. melanogaster) added in known quantities to the sample; allows for normalization and quantitative comparisons between samples [26]. |

Workflow Visualization

The following diagram illustrates the key decision points and components in a ChIP-seq experimental design, integrating the concepts of replicates, controls, and depth.

A meticulously planned ChIP-seq experiment is a non-negotiable prerequisite for generating high-quality data that can yield biologically valid insights, especially within a pipeline designed for histone research. Adherence to the core principles outlined in this guide—employing an adequate number of biological replicates, using properly sequenced input controls, and selecting a sequencing depth appropriate for the specific histone mark—will significantly enhance the robustness, reproducibility, and interpretability of your research outcomes. As sequencing technologies and analytical methods continue to evolve, these foundational elements of experimental design will remain paramount.

The Encyclopedia of DNA Elements (ENCODE) and Cistrome represent two pivotal resources in the field of functional genomics, providing comprehensive reference maps of functional elements in animal and human genomes. These consortium-driven projects have dramatically accelerated research in gene regulation, epigenetics, and disease mechanisms by providing standardized, high-quality data and analysis tools to the scientific community.

ENCODE is a landmark international research project that aims to comprehensively identify functional elements in the human and mouse genomes. These elements include genes, transcriptional regulatory regions, and chromatin structural elements. A core strength of ENCODE lies in its rigorous data standards and uniform processing pipelines, which ensure consistency and reproducibility across thousands of datasets [17] [5] [27]. The project provides extensive ChIP-seq data for transcription factors, histone modifications, and chromatin-associated proteins across diverse cell types and biological conditions.

Cistrome is an integrated platform that collects, processes, and analyzes publicly available ChIP-seq, DNase-seq, and ATAC-seq data from multiple sources, including GEO, ENCODE, and the Roadmap Epigenomics Project [28]. The Cistrome Data Browser currently hosts approximately 47,000 human and mouse samples, nearly double its previous release, making it one of the most comprehensive resources for cis-regulatory information [28]. Unlike ENCODE, which generates primary data, Cistrome focuses on reprocessing public data with uniform analytical pipelines and providing user-friendly tools for data exploration and interpretation.

Table 1: Core Features of ENCODE and Cistrome Resources

| Feature | ENCODE | Cistrome |
|---|---|---|
| Primary Focus | Generate high-quality reference data | Reprocess and integrate public data |
| Data Types | ChIP-seq, RNA-seq, ATAC-seq, DNase-seq | ChIP-seq, DNase-seq, ATAC-seq |
| Species Covered | Human, Mouse | Human, Mouse |
| Data Scale | ~31 TB total data volume [27] | ~47,000 samples [28] |
| Key Innovations | Uniform processing pipelines, rigorous standards | Quality control metrics, toolkit functions |
| Data Access | UCSC genome browser, dedicated portal [27] | Cistrome DB, toolkit interfaces [28] |

Integration with Histone ChIP-seq Research

For researchers investigating histone modifications, both ENCODE and Cistrome provide essential resources for experimental design, data analysis, and interpretation. Histone ChIP-seq represents a central method in epigenomic research, enabling genome-wide analysis of histone modifications that determine chromatin state and function [4]. These modifications serve as critical regulators of gene expression patterns during development, differentiation, and disease progression.

The analytical challenges specific to histone ChIP-seq differ significantly from transcription factor ChIP-seq. Histone marks often exhibit broad domains of enrichment (e.g., H3K27me3) that evade detection by peak callers optimized for punctate transcription factor binding sites [16] [5]. Furthermore, comparing signal across multiple histone modification profiles is complicated by shifting nucleosome positions and normalization artifacts resulting from differing read depths, ChIP efficiencies, and target sizes [16]. ENCODE and Cistrome address these challenges through specialized processing pipelines and analytical approaches.

ENCODE's histone pipeline is specifically optimized for proteins that associate with DNA over longer regions or domains, employing different statistical treatments and peak-calling approaches compared to their transcription factor pipeline [5]. The consortium has established distinct sequencing depth requirements for different histone mark categories: narrow marks (e.g., H3K4me3, H3K27ac) require 20 million usable fragments per replicate, while broad marks (e.g., H3K27me3, H3K36me3) require 45 million usable fragments [5].

Cistrome's reprocessing approach ensures consistent analysis of histone modification data across diverse sources using the ChiLin pipeline, which maps reads to reference genomes and identifies statistically significant peaks [28]. The platform provides quality control metrics specifically relevant to histone marks, including genomic distribution characteristics that help researchers identify potentially problematic datasets.

Data Processing Pipelines and Standards

ENCODE Processing Pipelines

ENCODE has established distinct uniform processing pipelines for transcription factor and histone ChIP-seq data, sharing mapping steps but differing in peak calling and statistical treatment of replicates [17] [5].

The histone ChIP-seq pipeline generates two primary types of output: signal tracks and peak calls. Signal tracks are provided in bigWig format, representing fold change over control and signal p-value at nucleotide resolution [5]. Peak calls are provided in BED format (broadPeak for histone marks), which includes genomic coordinates and statistical measures of enrichment [5]. For replicated experiments, the pipeline generates a conservative set of peaks observed in both replicates or in pseudoreplicates derived from pooled reads [5].

ENCODE's quality control framework includes multiple metrics to assess data quality. Library complexity is measured using the Non-Redundant Fraction (NRF > 0.9) and PCR Bottlenecking Coefficients (PBC1 > 0.9, PBC2 > 10) [5]. The Fraction of Reads in Peaks (FRiP) provides a measure of enrichment efficiency, though specific thresholds vary by target type [5]. Experimental guidelines mandate at least two biological replicates, antibody validation, and matched input controls [5].
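Of the metrics in this framework, FRiP is the most direct to compute: count the mapped reads whose position falls inside any called peak and divide by the total. The sketch below is a minimal illustration that assumes non-overlapping, half-open peak intervals and represents each read by a single `(chrom, position)` pair; the function name is hypothetical.

```python
from bisect import bisect_right
from collections import defaultdict


def frip(reads, peaks):
    """Fraction of reads falling in peaks.

    reads: list of (chrom, position), one entry per mapped read.
    peaks: list of (chrom, start, end), half-open and non-overlapping.
    """
    if not reads:
        raise ValueError("no reads supplied")
    intervals = defaultdict(list)
    for chrom, start, end in peaks:
        intervals[chrom].append((start, end))
    starts = {}
    for chrom, ivs in intervals.items():
        ivs.sort()
        starts[chrom] = [s for s, _ in ivs]

    in_peaks = 0
    for chrom, pos in reads:
        if chrom not in starts:
            continue
        # Rightmost peak whose start is <= pos, if any.
        i = bisect_right(starts[chrom], pos) - 1
        if i >= 0 and pos < intervals[chrom][i][1]:
            in_peaks += 1
    return in_peaks / len(reads)


peaks = [("chr1", 100, 200), ("chr1", 500, 800)]
reads = [("chr1", 150), ("chr1", 199), ("chr1", 200),
         ("chr1", 600), ("chr2", 150)]
score = frip(reads, peaks)  # 3 of the 5 reads lie inside a peak
```

Because FRiP depends on the peak set as well as the reads, scores are only comparable between samples processed with the same peak caller and settings.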

Cistrome Processing and Quality Control

Cistrome employs a uniform reprocessing strategy using the ChiLin pipeline, which uses BWA for read alignment to hg38 or mm10 genomes and MACS2 for peak calling [28]. This approach mitigates the inconsistencies that arise when combining data analyzed with different algorithms and parameters.

The platform provides six quality control metrics that address different aspects of data quality [28]:

- Read quality: Based on median FASTQ read quality

- Mapping quality: Percentage of reads mapping to unique genomic loci

- PCR bottleneck coefficient (PBC): Estimates read duplication through PCR amplification

- FRiP score: Fraction of non-mitochondrial reads in peak regions

- Peaks with 10-fold enrichment: Number of peaks with significant enrichment

- Union DHS overlap: Percentage of peaks overlapping DNase hypersensitive sites

These metrics are visualized with intuitive color coding (green for high quality, red for lower quality), enabling researchers to quickly assess dataset suitability for their specific applications [28].

Diagram 1: Histone ChIP-seq workflow showing integration points with ENCODE and Cistrome resources

Analytical Tools and Toolkit Functions

Cistrome Toolkit Capabilities

The Cistrome DB Toolkit provides three powerful functions that enable researchers to extract biological insights from integrated ChIP-seq data [28]:

Gene-centric queries ("What factors regulate your gene of interest?"): This function identifies transcription factors likely to regulate a specific gene based on regulatory potential scores that weigh the influence of binding sites by their distance to the transcription start site. The tool calculates short (1 kb), mid-range (10 kb), and long-range (100 kb) influence scores, enabling researchers to identify potential regulators based on high-confidence peaks with 5-fold enrichment over background [28].

Interval-based queries ("What factors bind in your interval?"): This functionality allows researchers to identify transcription factors, histone modifications, or chromatin accessibility features present in any genomic interval up to 2 Mb. This is particularly valuable for interpreting non-coding regions identified through GWAS or other genomic approaches.

Cistrome similarity searches ("What factors have significant binding overlap with your peak set?"): Using the GIGGLE algorithm, this tool identifies ChIP-seq samples with significant overlap with user-provided peak sets, enabling comparison with existing data and hypothesis generation about co-regulatory factors [28].

CistromeGO for Functional Enrichment Analysis

CistromeGO is a specialized webserver that performs functional enrichment analysis of transcription factor ChIP-seq peaks [29]. It employs two working modes:

- Solo mode: Uses only ChIP-seq peak data to calculate regulatory potential (RP) scores for genes, weighting peaks by their distance from transcription start sites.

- Ensemble mode: Integrates ChIP-seq peaks with differential expression data from TF perturbation experiments to distinguish direct targets from secondary effects [29].

A key innovation in CistromeGO is its automatic classification of transcription factors as promoter-dominant (e.g., MYC) or enhancer-dominant (e.g., AR, ESR1) based on the distribution of their binding sites relative to promoters [29]. This classification determines the distance parameters used in regulatory potential calculations, with promoter-dominant TFs using a 1 kb half-decay distance and enhancer-dominant TFs using a 10 kb half-decay distance by default [29].
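To make the role of the half-decay distance concrete, the sketch below scores a gene by summing peak weights that halve with every half-decay distance from the transcription start site. The exact weighting function used by CistromeGO is not given here, so this exponential form is an assumption, and the function name is hypothetical.

```python
def regulatory_potential(tss: int, peak_centers, half_decay_bp: float) -> float:
    """Sum of peak weights, where a peak `d` bp from the TSS contributes
    2 ** (-d / half_decay_bp). Assumed form, for illustration only."""
    return sum(2 ** (-abs(center - tss) / half_decay_bp)
               for center in peak_centers)


tss = 10_000
peak_centers = [10_000, 11_000, 20_000]  # 0 bp, 1 kb and 10 kb from the TSS

# Promoter-dominant setting (1 kb): the distal peak contributes almost nothing.
rp_promoter = regulatory_potential(tss, peak_centers, half_decay_bp=1_000)

# Enhancer-dominant setting (10 kb): the distal peak still counts for half.
rp_enhancer = regulatory_potential(tss, peak_centers, half_decay_bp=10_000)
```

With the 1 kb setting the peak 10 kb away contributes roughly 0.001 to the score, while with the 10 kb setting it contributes 0.5, which is why the choice of half-decay distance matters for enhancer-bound factors.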

Advanced Analytical Approaches

For histone modification data, advanced analytical methods have been developed to address specific challenges. The Probability of Being Signal (PBS) method uses a bin-based approach to identify enriched regions in ChIP-seq data by dividing the genome into non-overlapping 5 kb bins and estimating a global background distribution [16]. This approach is particularly effective for broad histone marks like H3K27me3 that often evade detection by conventional peak callers [16].

The PBS method transforms data into universally normalized values between 0 and 1, representing the probability that a bin contains true signal [16]. This facilitates comparison across datasets and integration with other data types, such as GWAS SNPs, providing biological context for interpretation of histone modification patterns.

Table 2: Analytical Tools for Histone ChIP-seq Data Interpretation

| Tool/Platform | Primary Function | Key Features | Use Cases |
|---|---|---|---|
| Cistrome DB Toolkit | Query and compare regulatory data | GIGGLE search algorithm, regulatory potential scores | Identify regulators of genes, find factors in genomic intervals |
| CistromeGO | Functional enrichment analysis | TF type classification, ensemble mode with expression data | Identify biological processes from ChIP-seq peaks |
| PBS Method | Signal detection for broad marks | Bin-based approach, global background estimation | Analyze H3K27me3 and other broad histone marks |
| H3NGST | Automated pipeline | End-to-end analysis, mobile accessibility | Rapid analysis without bioinformatics expertise [30] |

Practical Applications and Research Protocols

Accessing and Utilizing ENCODE Data

Researchers can access ENCODE data through multiple pathways. The UCSC Genome Browser provides visualization tracks for most ENCODE data, which can be located using the Track Search tool with GEO sample accession numbers (GSM) [27]. For analytical use, files can be downloaded in formats such as BED (peak calls) and bigWig (signal tracks) through the ENCODE portal or directly via rsync protocols [27].

When interpreting ENCODE histone data, it is important to understand that ChIP-seq peak files are typically stored in ENCODE narrowPeak or broadPeak formats. Both extend BED6 with signalValue, pValue, and qValue fields; narrowPeak adds a tenth column giving the point-source (summit) offset, which broadPeak omits [27]. The "score" field in ENCODE tables (0-1000) determines display intensity in browsers and is proportional to maximum signal strength across cell lines [27].
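A small parser shows how the two peak formats differ in practice. The sketch below reads one tab-separated line and accepts either nine columns (broadPeak) or ten (narrowPeak); the class and function names are hypothetical, and the example lines are made up.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class EncodePeak:
    chrom: str
    start: int            # 0-based
    end: int              # exclusive
    name: str
    score: int            # 0-1000, used for browser display
    strand: str
    signal_value: float
    p_value: float        # -log10 scale; -1 means not assigned
    q_value: float        # -log10 scale; -1 means not assigned
    summit_offset: Optional[int] = None  # narrowPeak only, relative to start


def parse_peak_line(line: str) -> EncodePeak:
    """Parse one narrowPeak (10 columns) or broadPeak (9 columns) line."""
    fields = line.rstrip("\n").split("\t")
    if len(fields) not in (9, 10):
        raise ValueError(f"expected 9 or 10 columns, got {len(fields)}")
    return EncodePeak(
        chrom=fields[0],
        start=int(fields[1]),
        end=int(fields[2]),
        name=fields[3],
        score=int(fields[4]),
        strand=fields[5],
        signal_value=float(fields[6]),
        p_value=float(fields[7]),
        q_value=float(fields[8]),
        summit_offset=int(fields[9]) if len(fields) == 10 else None,
    )


broad = parse_peak_line("chr1\t1000\t5000\tpeak_1\t650\t.\t12.5\t30.2\t27.8\n")
narrow = parse_peak_line("chr2\t10\t60\t.\t0\t.\t5.0\t-1\t-1\t25")
```

Treating the summit as optional lets the same downstream code handle both formats, which is convenient when a project mixes narrow and broad marks.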

ENCODE and Cistrome provide valuable guidance for designing histone ChIP-seq experiments. Based on consortium recommendations:

- Biological replicates: At least two biological replicates are required for robust results [5]

- Antibody validation: Antibodies must be characterized according to ENCODE standards [5]

- Sequencing depth: 20 million usable fragments per replicate for narrow histone marks, 45 million for broad marks [5]

- Controls: Input DNA controls with matching characteristics are essential [5]

For researchers analyzing their own data, H3NGST provides a fully automated, web-based platform that performs end-to-end ChIP-seq analysis without requiring bioinformatics expertise [30]. Users need only provide a BioProject ID, and the system automatically retrieves data, performs quality control, alignment, peak calling, and annotation using established tools like BWA-MEM and HOMER [30].

Integration with Genomic Annotations

A critical application of ENCODE and Cistrome data is the functional annotation of genomic regions identified through other approaches. For example, bQTL mapping (binding quantitative trait loci) integrates chromatin footprinting data with genetic variation to identify variants that affect transcription factor binding [31]. In maize, this approach demonstrated that genetic variation at transcription factor binding sites captures the majority of heritable trait variation across 72% of 143 phenotypes [31], highlighting the power of integrating functional genomic data with genetic studies.

Similarly, histone modification data from these resources can help prioritize non-coding variants identified through GWAS by determining whether they fall within regulatory regions marked by specific histone modifications in relevant cell types.

Table 3: Key Research Reagent Solutions for Histone ChIP-seq Studies

| Resource Type | Specific Examples | Function & Application |
|---|---|---|
| Reference Datasets | ENCODE histone modification tracks, Cistrome processed samples | Experimental design, data validation, comparative analysis |
| Quality Control Tools | ChiLin pipeline metrics, ENCODE standards | Assessing data quality, identifying potential issues |
| Analysis Pipelines | ENCODE uniform processing pipelines, H3NGST | Standardized data processing, reproducible analysis |
| Antibody Validation | ENCODE antibody characterization standards | Ensuring specificity in histone modification detection |
| Genome Browsers | UCSC Genome Browser with ENCODE tracks, WashU Epigenome Browser | Data visualization, integration with annotations |
| Functional Annotation | CistromeGO, Regulatory Potential scores | Connecting binding sites to gene regulation and function |
| Motif Analysis | HOMER motif enrichment, Cistrome motif scanning | Identifying enriched sequence patterns in binding sites |
| Data Retrieval Tools | SRA prefetch, fasterq-dump, rsync with UDR protocol | Efficient access to public sequencing data |

ENCODE and Cistrome have transformed the landscape of epigenomic research by providing comprehensive, standardized resources for interpreting histone modifications and regulatory elements. Their rigorous data standards, uniform processing pipelines, and intuitive toolkits enable researchers to contextualize their findings within a broader biological framework. As these resources continue to expand—with Cistrome now containing approximately 47,000 human and mouse samples [28]—they offer increasingly powerful platforms for generating hypotheses, validating experimental results, and translating genomic observations into biological insights. The integration of these public data resources with experimental histone ChIP-seq research creates a synergistic relationship that accelerates discovery in gene regulation, disease mechanisms, and therapeutic development.

Step-by-Step Histone ChIP-seq Processing Pipeline

Raw Data Quality Control with FastQC and Adapter Trimming

In the context of a basic ChIP-seq data processing pipeline for histone research, the initial steps of raw data quality control and adapter trimming are critical for ensuring the validity of all subsequent analyses. High-throughput sequencing data, by its nature, contains biases and artifacts that can confound the identification of broad histone marks such as H3K27me3 or H3K36me3. For epigenetic studies aimed at drug development, rigorous initial QC is a non-negotiable standard to ensure that the resulting chromatin state annotations are biologically accurate and reproducible. This guide provides an in-depth technical protocol for assessing raw sequence data quality using FastQC and for performing necessary read cleaning, forming the foundational steps upon which reliable histone ChIP-seq analysis is built.

FastQC: A Practical Guide to Quality Assessment

FastQC provides a simple way to run quality control checks on raw sequence data from high-throughput sequencing pipelines. It offers a modular set of analyses that give a quick impression of whether the data has any problems before further analysis [32].

FastQC is a Java-based application that requires a Java Runtime Environment (JRE). The tool is considered stable and mature, and it is freely available under the GPL v3 or later license. It can import data directly from BAM, SAM, or FastQ files (any variant) and operates offline, which allows for automated generation of reports without running an interactive application [32].

Key Functions of FastQC:

- Provides a quick overview of potential problem areas in sequencing data

- Generates summary graphs and tables for rapid data assessment

- Exports results to HTML-based permanent reports

- Supports offline operation for automated pipeline integration

Interpreting Key FastQC Modules

Understanding the output of FastQC's various analysis modules is crucial for diagnosing data quality issues. The "per base sequence quality" graph is particularly important, as it shows the distribution of quality scores at each position across all reads. Quality scores (Q scores) are calculated as Q = -10 log₁₀ P, where P is the probability that an incorrect base was called. A Q score of 30 corresponds to a 1 in 1000 chance of an incorrect base call, and scores of 30 or above are generally considered good quality for most sequencing experiments [33].
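The relationship between Q and P can be made concrete with a short sketch. The helper names are hypothetical, and the decoding function assumes the Phred+33 ASCII encoding used by current Illumina FASTQ files; older encodings would need a different offset.

```python
import math


def phred_to_error_prob(q):
    """Convert a Phred quality score Q to the error probability P."""
    return 10 ** (-q / 10)


def error_prob_to_phred(p):
    """Convert an error probability P to a Phred quality score Q."""
    return -10 * math.log10(p)


def decode_phred33(quality_string):
    """Decode a FASTQ quality string, assuming a Phred+33 ASCII offset."""
    return [ord(ch) - 33 for ch in quality_string]


# Q20 and Q30 are the thresholds most often quoted for trimming and QC.
for q in (20, 30):
    print(q, phred_to_error_prob(q))

# Per-base qualities for a short, made-up quality string.
print(decode_phred33("II5!"))
```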

The following table summarizes the core FastQC modules and their interpretation:

Table 1: Key FastQC Modules and Their Interpretation in a ChIP-seq Context

| Module Name | What It Measures | Ideal Outcome for Histone ChIP-seq |

|---|---|---|

| Per Base Sequence Quality | Distribution of quality scores (Q) at each read position | All positions have median Q > 28, no degradation at ends. |

| Per Sequence Quality Scores | Average quality per read (not per base) | A single, sharp peak at high quality (Q > 30). |

| Per Base Sequence Content | Percentage of A/T/G/C bases at each position | Flat lines, indicating random base composition. Deviations at starts may indicate contaminants. |

| Adapter Content | Proportion of sequences containing adapter oligonucleotides | Little to no adapter sequence present. If high, trimming is required. |

| Overrepresented Sequences | Sequences that appear more frequently than expected | No single sequence makes up a significant portion of the library. |

For histone ChIP-seq data, special attention should be paid to the "per base sequence content" module. Abnormalities here can indicate PCR bias or the presence of contaminants that may interfere with the accurate detection of broad chromatin domains. Similarly, high levels of duplication ("Sequence Duplication Levels" module) are common in histone ChIP-seq due to genuine enrichment, but extreme values can indicate technical issues with library complexity [32] [33].
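Module results can also be screened programmatically. The sketch below parses the text of a FastQC `summary.txt` file, which, as far as we are aware, holds one tab-separated line per module in the order status, module name, file name; this layout should be checked against your own FastQC output. The function names are hypothetical, and tolerating duplication warnings by default is an assumption that reflects the point above about genuine enrichment.

```python
def parse_fastqc_summary(text):
    """Map each module name to its status (PASS, WARN or FAIL).

    Assumes tab-separated lines of the form: status, module, file name.
    """
    results = {}
    for line in text.splitlines():
        if not line.strip():
            continue
        status, module, _filename = line.split("\t", 2)
        results[module] = status
    return results


def modules_needing_review(results, tolerated=("Sequence Duplication Levels",)):
    """List modules flagged WARN or FAIL, skipping the tolerated ones."""
    return sorted(
        module
        for module, status in results.items()
        if status in ("WARN", "FAIL") and module not in tolerated
    )


# Made-up summary text for a hypothetical sample.
example = (
    "PASS\tBasic Statistics\tsample.fastq.gz\n"
    "PASS\tPer base sequence quality\tsample.fastq.gz\n"
    "WARN\tPer base sequence content\tsample.fastq.gz\n"
    "WARN\tSequence Duplication Levels\tsample.fastq.gz\n"
    "FAIL\tAdapter Content\tsample.fastq.gz\n"
)
print(modules_needing_review(parse_fastqc_summary(example)))
```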

Adapter Trimming and Data Cleaning

The Necessity of Trimming

Read trimming is an essential preprocessing step that removes both low-quality bases and adapter sequence. Base quality typically decreases towards the 3' end of reads due to the sequencing process. If these poor-quality bases are retained, they can reduce the accuracy of downstream mapping algorithms. Furthermore, adapter sequence appears in the read data when the DNA fragment being sequenced is shorter than the read length, so that the sequencer reads through the insert into the adapter. Removing these artifacts is crucial to maximize the number of reads that can be successfully aligned to the reference genome [33].

Trimming Tools and Workflow

Several tools are available for trimming and filtering low-quality reads. Popular packages include CutAdapt and Trimmomatic, which can be run from the command line or through web-based platforms like Galaxy. These tools typically require the user to specify a quality threshold (commonly set to 20); low-quality bases falling below this value are trimmed from the read ends. Reads can then be filtered to remove those shorter than a minimum length (e.g., <20 bases) after trimming [33].
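As one possible illustration, the sketch below assembles a single-end CutAdapt command line using the `-q` (quality cutoff), `-m` (minimum length), `-a` (3' adapter), and `-o` (output) options. The file names are hypothetical, and the default adapter is an assumption: it is the commonly used prefix of Illumina TruSeq adapters and should be replaced with the sequence that matches your library preparation kit.

```python
import shlex

# Assumed adapter: common prefix of Illumina TruSeq adapters.
ASSUMED_ADAPTER = "AGATCGGAAGAGC"


def cutadapt_command(in_fastq, out_fastq, adapter=ASSUMED_ADAPTER,
                     quality=20, min_length=20):
    """Build an argument list for single-end trimming with CutAdapt."""
    return [
        "cutadapt",
        "-q", str(quality),     # trim low-quality bases from the 3' end
        "-m", str(min_length),  # discard reads shorter than this after trimming
        "-a", adapter,          # 3' adapter sequence to remove
        "-o", out_fastq,        # trimmed output file
        in_fastq,
    ]


# Hypothetical file names; print the command rather than running it.
cmd = cutadapt_command("H3K27me3_rep1.fastq.gz", "H3K27me3_rep1.trimmed.fastq.gz")
print(shlex.join(cmd))
```

The returned list can be passed to `subprocess.run(cmd, check=True)` on a system where CutAdapt is installed.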

The general workflow for read cleaning is as follows:

- Quality-based Trimming: Trim bases from the 3' end (and sometimes 5' end) that fall below a specified quality threshold.

- Adapter Removal: Remove any adapter sequences present in the reads. For Illumina sequencing, common adapter sequences are well-documented and provided with the tools.

- Length Filtering: Discard reads that become too short after trimming to be uniquely mapped.

After trimming, it is best practice to rerun FastQC on the cleaned read files to verify that data quality has improved, paying particular attention to whether adapter sequence has been successfully removed [33].
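The three cleaning steps can be sketched in a few lines of pure Python. This is a deliberately simplified illustration and not the algorithm implemented by CutAdapt or Trimmomatic: it removes trailing bases below the threshold, requires exact adapter matches, and uses hypothetical function names and a hypothetical `min_overlap` parameter.

```python
def quality_trim_3prime(seq, quals, threshold=20):
    """Remove trailing bases whose quality is below the threshold."""
    end = len(seq)
    while end > 0 and quals[end - 1] < threshold:
        end -= 1
    return seq[:end], quals[:end]


def remove_adapter(seq, quals, adapter, min_overlap=3):
    """Cut the read at an exact adapter match or a partial match at the 3' end."""
    idx = seq.find(adapter)
    if idx != -1:
        return seq[:idx], quals[:idx]
    # Look for an adapter prefix that forms the end of the read.
    for k in range(min(len(adapter) - 1, len(seq)), min_overlap - 1, -1):
        if seq.endswith(adapter[:k]):
            return seq[:len(seq) - k], quals[:len(seq) - k]
    return seq, quals


def clean_read(seq, quals, adapter, threshold=20, min_length=20):
    """Quality trim, remove adapter, then drop reads that end up too short."""
    seq, quals = quality_trim_3prime(seq, quals, threshold)
    seq, quals = remove_adapter(seq, quals, adapter)
    if len(seq) < min_length:
        return None  # read is discarded by the length filter
    return seq, quals


# Made-up read: 24-base insert, adapter read-through, two low-quality bases.
adapter = "AGATCGGAAGAGC"
read = "ACGT" * 6 + adapter + "TT"
quals = [35] * (24 + len(adapter)) + [5, 5]
print(clean_read(read, quals, adapter))
```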

Table 2: Essential Tools for ChIP-seq Raw Data Processing

| Tool Name | Primary Function | Key Parameters / Inputs | Application in Histone ChIP-seq |

|---|---|---|---|

| FastQC [32] | Quality Control Visualization | BAM, SAM, or FASTQ file | Initial and post-trimming assessment of data quality. |

| CutAdapt [33] | Adapter/Quality Trimming | Quality threshold (e.g., 20), adapter sequences, min length. | Removes adapter contamination and low-quality ends. |

| Trimmomatic [33] | Quality Trimming & Adapter Removal | Quality threshold, sliding window, adapter file. | Flexible read trimming to improve mappability. |

| Bowtie2/BWA [34] | Read Alignment | Reference genome (e.g., GRCh38), FASTQ files. | Maps cleaned reads to a reference genome for peak calling. |

Integrated Workflow and Visualization

Quality control and preprocessing follow a logical sequence in which the output of each step informs the next. The following diagram illustrates this integrated workflow, from raw data to analysis-ready aligned reads, highlighting the feedback loop provided by rerunning FastQC after trimming.

Figure: ChIP-seq QC and Trimming Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution of a histone ChIP-seq experiment, from bench to data analysis, relies on a suite of critical reagents and tools. The following table details these essential components, spanning both wet-lab and computational domains.

Table 3: Essential Research Reagents and Tools for Histone ChIP-seq

| Category | Item | Function / Purpose | Example / Note |

|---|---|---|---|

| Experimental | Validated Antibody [25] | Immunoprecipitation of target histone mark. | Must be characterized for ChIP; check ENCODE guidelines. |

| Experimental | Input Control DNA [5] | Control for background signal & open chromatin. | Sonicated, non-immunoprecipitated genomic DNA. |

| Experimental | Cross-linking Agent | Fixes protein-DNA interactions in place. | Typically formaldehyde. |

| Experimental | Library Prep Kit | Prepares immunoprecipitated DNA for sequencing. | Must be compatible with sequencing platform. |

| Sequencing | NGS Platform | Generates raw sequence reads. | Illumina, Oxford Nanopore, etc. |

| Sequencing | Adapter Oligos | Ligated to DNA fragments for sequencing. | Platform-specific (e.g., Illumina TruSeq). |

| Computational | FastQC [32] [33] | Quality control of raw sequence data. | First step after receiving FASTQ files. |

| Computational | Trimming Tool (e.g., CutAdapt) [33] | Removes adapters and low-quality bases. | Critical for data cleanliness. |

| Computational | Aligner (e.g., Bowtie2, BWA) [34] | Maps reads to a reference genome. | Requires a reference genome (e.g., GRCh38). |

| Computational | Peak Caller (e.g., MACS2) [34] | Identifies enriched genomic regions. | MACS2 is commonly used for broad histone marks. |

Within the basic ChIP-seq processing pipeline for histone research, rigorous raw data quality control using FastQC and subsequent adapter trimming are not optional steps but fundamental prerequisites. They ensure that the data entering the alignment and peak-calling stages is of sufficient integrity to produce biologically meaningful results. For researchers and drug development professionals, adhering to this detailed protocol establishes a foundation of data quality, ultimately supporting robust discoveries in chromatin biology and epigenetics.

Read Alignment to Reference Genomes using Bowtie2

In chromatin immunoprecipitation followed by sequencing (ChIP-seq) for histone research, the accurate alignment of sequencing reads to a reference genome is a critical early step in data analysis, following quality control and trimming. This process enables researchers to identify genomic regions enriched for specific histone modifications, thereby illuminating the epigenetic landscape of cells. Bowtie2 has emerged as a preferred alignment tool in major consortium pipelines, including ENCODE, for its efficiency in handling histone ChIP-seq data, which often exhibits broader enrichment patterns than transcription factor studies. This technical guide describes how to implement Bowtie2 within a standardized ChIP-seq processing workflow, detailing best practices for genome indexing, read alignment, output processing, and quality assessment tailored to histone research applications.

ChIP-seq technology combines chromatin immunoprecipitation with massively parallel DNA sequencing to identify the genome-wide locations of DNA-associated proteins, including histones carrying specific modifications [5] [34]. Histone ChIP-seq investigates targets that associate with DNA over extended genomic regions or domains, resulting in characteristic broad enrichment patterns [5]. The alignment of sequenced reads to a reference genome is the foundational computational step in this process, transforming raw sequence data into genomic coordinates that reveal protein-DNA interaction sites.

Bowtie2, an ultrafast and memory-efficient alignment tool, uses an FM index built on the Burrows-Wheeler Transform to map sequencing reads to reference genomes [35]. This index enables rapid alignment while keeping memory requirements low, making it well suited to large ChIP-seq datasets. Unlike its predecessor Bowtie, Bowtie2 supports gapped and local alignment in addition to end-to-end and paired-end alignment, accommodating the diverse sequencing strategies employed in modern epigenomics research [35]. The tool performs best with reads of at least 50 base pairs, though the ENCODE pipeline specifications accept read lengths as low as 25 base pairs [5].
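To give a feel for how an index based on the Burrows-Wheeler Transform supports exact matching, the toy sketch below builds the transform from sorted rotations and counts pattern occurrences with backward search. It is an illustration of the general technique only, not Bowtie2's implementation: real FM indexes use sampled occurrence tables and suffix arrays rather than the linear scans used here, and the function names are hypothetical.

```python
def bwt(text):
    """Burrows-Wheeler transform via sorted rotations.

    Appends '$' as a terminator; the input must not already contain it.
    """
    text += "$"
    rotations = sorted(text[i:] + text[:i] for i in range(len(text)))
    return "".join(rotation[-1] for rotation in rotations)


def count_occurrences(bwt_string, pattern):
    """Count exact matches of pattern in the original text by backward search."""
    # first_col_start[c] = number of characters in the text smaller than c.
    counts = {}
    for ch in bwt_string:
        counts[ch] = counts.get(ch, 0) + 1
    first_col_start = {}
    total = 0
    for ch in sorted(counts):
        first_col_start[ch] = total
        total += counts[ch]

    def occ(ch, i):
        # Occurrences of ch in bwt_string[:i]; a real index precomputes this.
        return bwt_string[:i].count(ch)

    lo, hi = 0, len(bwt_string)  # half-open range of matching suffixes
    for ch in reversed(pattern):
        if ch not in first_col_start:
            return 0
        lo = first_col_start[ch] + occ(ch, lo)
        hi = first_col_start[ch] + occ(ch, hi)
        if lo >= hi:
            return 0
    return hi - lo


# Made-up reference sequence and query, for illustration only.
reference = "ACGTACGTTACG"
print(count_occurrences(bwt(reference), "ACG"))
```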

Within the context of histone research, accurate read alignment enables the identification of chromatin domains marked by specific histone modifications, such as the broad domains of H3K27me3 (associated with facultative heterochromatin) or the more focal H3K4me3 signal at active promoters [5]. The output of this alignment process serves as critical input for subsequent analytical steps, including peak calling, signal visualization, and chromatin state segmentation models that classify functional genomic regions [5].
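The indexing and alignment steps can be sketched as two command lines, assembled here as Python argument lists. The options shown (`-x`, `-U`, `-1`/`-2`, `-S`, `-p`, `--local`) come from the Bowtie2 command-line interface; the file names, the default thread count, and the helper function names are hypothetical, and the parameter choices are assumptions rather than ENCODE pipeline settings.

```python
import shlex


def bowtie2_build_command(reference_fasta, index_prefix):
    """Build the argument list that indexes a reference genome."""
    return ["bowtie2-build", reference_fasta, index_prefix]


def bowtie2_align_command(index_prefix, out_sam, reads_1, reads_2=None,
                          threads=4, local=False):
    """Build the argument list for single-end or paired-end alignment."""
    cmd = ["bowtie2", "-p", str(threads), "-x", index_prefix]
    if local:
        cmd.append("--local")  # local alignment instead of end-to-end
    if reads_2 is None:
        cmd += ["-U", reads_1]                 # single-end (unpaired) reads
    else:
        cmd += ["-1", reads_1, "-2", reads_2]  # paired-end mates
    cmd += ["-S", out_sam]                     # SAM output file
    return cmd


# Hypothetical file names; the commands are printed, not executed.
print(shlex.join(bowtie2_build_command("GRCh38.fa", "GRCh38_index")))
print(shlex.join(bowtie2_align_command(
    "GRCh38_index", "H3K27me3_rep1.sam", "H3K27me3_rep1.trimmed.fastq.gz")))
```

Either list can be passed to `subprocess.run(cmd, check=True)` where Bowtie2 is installed; the resulting SAM file is typically converted to sorted BAM before peak calling.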

Bowtie2 Implementation in ChIP-seq Pipelines

Integration with Standardized Processing Workflows

The ENCODE Consortium, which has established comprehensive standards for ChIP-seq data processing, specifies Bowtie2 as a core component in both transcription factor and histone analysis pipelines [5] [17]. These standardized workflows ensure consistency and reproducibility across experiments, particularly important in histone research where broad enrichment patterns require specialized analytical approaches. The histone ChIP-seq pipeline specifically resolves both punctate binding and extended chromatin domains bound by multiple instances of the target protein or modification [5].