A Comprehensive Guide to Mitigating Batch Effects in Transcriptomics: From Foundational Concepts to Advanced Correction Strategies

Abstract

This article provides a systematic framework for researchers, scientists, and drug development professionals to understand, address, and validate batch effect correction in transcriptomics studies. Covering both bulk and single-cell RNA-seq data, it explores the profound negative impacts of technical variations on data interpretation and reproducibility. The content details established and emerging computational methods like ComBat, Harmony, and STACAS, while offering practical guidance for troubleshooting common pitfalls such as overcorrection and confounded designs. A strong emphasis is placed on rigorous validation using both visual and quantitative metrics to ensure biological signals are preserved, ultimately empowering researchers to produce reliable and reproducible transcriptomic data for biomedical discovery.

Understanding Batch Effects: The Hidden Threat to Transcriptomic Data Integrity

What is a Batch Effect?

In transcriptomics, a batch effect refers to systematic, non-biological variations introduced into gene expression data due to technical inconsistencies during the experimental process [1]. These are technical biases that can confound data analysis and are unrelated to the biological questions being studied [2]. Even biologically identical samples may show significant differences in gene expression due to these technical influences, which can impact both bulk and single-cell RNA-seq data [1].

What Causes Batch Effects?

Batch effects can originate from multiple sources throughout the experimental workflow [1] [3]:

- Sample Preparation Variability: Differences in protocols, technicians, or enzyme efficiency.

- Sequencing Platform Differences: Machine type, calibration, or flow cell variation.

- Library Prep Artifacts: Variations in reverse transcription or amplification cycles.

- Reagent Batch Effects: Different lot numbers or chemical purity variations.

- Environmental Conditions: Temperature, humidity, or handling time.

- Temporal Factors: Experiments conducted on different days or across months.

- Personnel Differences: Different individuals handling samples.

Table 1: Common Sources of Batch Effects in Transcriptomics

| Category | Examples | Applies To |

|---|---|---|

| Sample Preparation | Different protocols, technicians, enzyme efficiency | Bulk & single-cell RNA-seq |

| Sequencing Platform | Machine type, calibration, flow cell variation | Bulk & single-cell RNA-seq |

| Library Prep | Reverse transcription, amplification cycles | Mostly bulk RNA-seq |

| Reagent Batch | Different lot numbers, chemical purity variations | All types |

| Environmental | Temperature, humidity, handling time | All types |

| Single-cell/Spatial Specific | Slide prep, tissue slicing, barcoding methods | scRNA-seq & spatial transcriptomics |

How to Detect Batch Effects in Your Data

Visual Detection Methods

Principal Component Analysis (PCA): Run PCA on the uncorrected data and inspect a scatter plot of the top principal components. If samples separate by batch rather than by biological source along these components, a batch effect is present [4].

t-SNE/UMAP Plot Examination: Visualize cells on a t-SNE or UMAP plot, labeling them by sample group and by batch, both before and after batch correction. When batch effects are uncorrected, cells from the same batch tend to cluster together instead of grouping by biological similarity [1] [4].

Quantitative Metrics

Several quantitative metrics can assess batch effect presence and correction quality [1]:

- Average Silhouette Width (ASW): Measures clustering tightness and separation.

- Adjusted Rand Index (ARI): Assesses similarity between two clusterings.

- Local Inverse Simpson's Index (LISI): Evaluates batch mixing while preserving biological identity.

- k-nearest neighbor Batch Effect Test (kBET): Tests whether batches are well-mixed in the local neighborhood.
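
As a rough illustration of how one of these metrics behaves, the sketch below computes the average silhouette width with batch labels as the grouping. It is a minimal NumPy implementation on simulated data, not the routine used by any particular package; the function name `average_silhouette_width`, the toy embedding, and the per-batch centering used as a stand-in for correction are all assumptions made for this example. A value near 1 means samples sit in tight, batch-specific groups, while a value near 0 suggests batches are mixed.

```python
import numpy as np

def average_silhouette_width(X, labels):
    """Mean silhouette width over all points (Euclidean distance, >= 2 groups)."""
    X = np.asarray(X, dtype=float)
    labels = np.asarray(labels)
    groups = np.unique(labels)
    # Pairwise Euclidean distance matrix (n x n).
    dist = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1))
    scores = []
    for i in range(len(X)):
        own = labels == labels[i]
        own[i] = False  # exclude the point itself from its own group
        if not own.any():
            scores.append(0.0)  # singleton group: silhouette is defined as 0
            continue
        a = dist[i, own].mean()  # mean distance to the rest of the own group
        # smallest mean distance to any other group
        b = min(dist[i, labels == g].mean() for g in groups if g != labels[i])
        denom = max(a, b)
        scores.append((b - a) / denom if denom > 0 else 0.0)
    return float(np.mean(scores))

# Toy embedding: 20 samples per batch in 5 dimensions; batch "B" is shifted.
rng = np.random.default_rng(0)
batch = np.array(["A"] * 20 + ["B"] * 20)
embedding = rng.normal(size=(40, 5))
embedding[batch == "B", 0] += 10.0  # simulated batch effect on one axis

asw_before = average_silhouette_width(embedding, batch)

# Crude stand-in for a correction step (assumption): center each batch separately.
corrected = embedding.copy()
for b in np.unique(batch):
    corrected[batch == b] -= corrected[batch == b].mean(axis=0)

asw_after = average_silhouette_width(corrected, batch)
print(f"batch ASW before: {asw_before:.2f}, after: {asw_after:.2f}")
```

When the same function is applied with cell-type or condition labels instead of batch labels, a high value is desirable, so the two readings are usually reported side by side.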

Batch Effect Correction Methods

Statistical Correction Approaches

Various statistical techniques have been developed to correct for batch effects in transcriptomic datasets [1] [3]:

Table 2: Common Batch Effect Correction Methods

| Method | Strengths | Limitations | Best For |

|---|---|---|---|

| ComBat/ComBat-seq | Simple, widely used; adjusts known batch effects using empirical Bayes; ComBat-seq preserves count data [1] [5] | Requires known batch info; may not handle nonlinear effects [1] | Bulk RNA-seq with known batches [1] |

| SVA | Captures hidden batch effects; suitable when batch labels are unknown [1] | Risk of removing biological signal; requires careful modeling [1] | Complex designs with unknown batches [1] |

| limma removeBatchEffect | Efficient linear modeling; integrates with DE analysis workflows [1] | Assumes known, additive batch effect; less flexible [1] | Bulk RNA-seq with linear models [1] |

| Harmony | Iteratively clusters cells across batches; works well with Seurat workflows [1] [4] | May oversimplify complex biological variation [1] | Single-cell RNA-seq [1] [4] |

| fastMNN | Identifies mutual nearest neighbors across batches [1] [3] | Computationally intensive for large datasets [1] | Complex single-cell structures [1] |

| Scanorama | Performs nonlinear manifold alignment across batches [1] [4] | Python-based (may require workflow adjustment for R users) [1] | Data from different platforms [1] |
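
To make the "known, additive batch effect" assumption in Table 2 concrete, here is a minimal sketch of linear-model batch removal in Python. It follows the general idea behind linear approaches (fit condition and batch terms together, then subtract only the fitted batch term) but is not the limma or ComBat implementation; the function names, the simulated data, and the effect sizes are assumptions chosen for illustration.

```python
import numpy as np

def one_hot(labels, n):
    """Treatment-coded dummy columns (first level dropped)."""
    levels = np.unique(labels)
    cols = [(labels == lv).astype(float) for lv in levels[1:]]
    return np.column_stack(cols) if cols else np.empty((n, 0))

def remove_additive_batch(expr, batch, condition):
    """expr: genes x samples on a log scale; batch, condition: per-sample labels."""
    expr = np.asarray(expr, dtype=float)
    batch, condition = np.asarray(batch), np.asarray(condition)
    n = expr.shape[1]
    batch_cols = one_hot(batch, n)
    k = batch_cols.shape[1]
    if k == 0:
        return expr.copy()  # a single batch: nothing to remove
    # Center the batch columns so each gene keeps its overall mean.
    batch_cols = batch_cols - batch_cols.mean(axis=0)
    design = np.column_stack([np.ones(n), one_hot(condition, n), batch_cols])
    # Least-squares fit of every gene at once: coef has shape (n_terms, n_genes).
    coef, *_ = np.linalg.lstsq(design, expr.T, rcond=None)
    batch_part = batch_cols @ coef[-k:]  # fitted batch contribution per sample
    return expr - batch_part.T

# Simulated balanced design: 2 batches x 2 conditions x 3 replicates.
rng = np.random.default_rng(1)
batch = np.array(["b1"] * 6 + ["b2"] * 6)
condition = np.array((["ctrl"] * 3 + ["trt"] * 3) * 2)
expr = 5.0 + rng.normal(scale=0.1, size=(4, 12))
expr[0, condition == "trt"] += 2.0  # true biological effect on gene 0
expr[:, batch == "b2"] += 3.0       # additive batch shift on every gene

corrected = remove_additive_batch(expr, batch, condition)
batch_gap = (corrected[:, batch == "b2"].mean(axis=1)
             - corrected[:, batch == "b1"].mean(axis=1))
condition_gap = (corrected[0, condition == "trt"].mean()
                 - corrected[0, condition == "ctrl"].mean())
print("residual batch gap per gene:", np.round(batch_gap, 6))
print("condition effect on gene 0:", round(float(condition_gap), 2))
```

Including the condition in the design is what protects the biological effect here; if batch and condition were perfectly confounded, the two terms could not be separated and this approach would not be appropriate.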

Experimental Design Strategies

The best way to manage batch effects is to minimize them during experimental design [1]:

- Randomization: Randomize samples across batches so each condition is represented within each processing batch.

- Balancing: Balance biological groups across time, operators, and sequencing runs.

- Consistency: Use consistent reagents and protocols throughout the study.

- Control Samples: Include pooled quality control samples and technical replicates across batches.

- Avoid Group Processing: Never process all samples of one condition together.
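
The randomization and balancing points above can be sketched as a small stratified assignment routine. This is one possible scheme using only the Python standard library, under the assumption that each sample has a single condition label; the function name `assign_batches` and the sample identifiers are hypothetical.

```python
import random
from collections import Counter, defaultdict

def assign_batches(samples, n_batches, seed=0):
    """samples: list of (sample_id, condition). Returns {sample_id: batch_index}."""
    rng = random.Random(seed)
    by_condition = defaultdict(list)
    for sample_id, condition in samples:
        by_condition[condition].append(sample_id)
    assignment = {}
    offset = 0
    for condition in sorted(by_condition):
        ids = by_condition[condition]
        rng.shuffle(ids)  # random order within each condition
        # Deal shuffled samples round-robin so each batch gets a near-equal share.
        for i, sample_id in enumerate(ids):
            assignment[sample_id] = (i + offset) % n_batches
        offset += len(ids)  # carry the offset so leftovers do not pile up in batch 0
    return assignment

samples = ([(f"ctrl_{i}", "control") for i in range(6)]
           + [(f"trt_{i}", "treated") for i in range(6)])
condition_of = dict(samples)
assignment = assign_batches(samples, n_batches=3, seed=42)

# Count how many samples of each condition landed in each batch.
layout = Counter((batch, condition_of[s]) for s, batch in assignment.items())
for key in sorted(layout):
    print(key, layout[key])
```

Fixing the seed and saving the resulting table alongside the sample sheet keeps the assignment reproducible and documents the batch variable for later modeling.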

Troubleshooting Guide: Common Batch Effect Issues

FAQ: Frequently Asked Questions

Q1: What's the difference between normalization and batch effect correction? A: Normalization operates on the raw count matrix and corrects for differences in sequencing depth, library size, and amplification bias. Batch effect correction addresses technical variation that arises from different sequencing platforms, processing times, reagents, processing conditions, or laboratories [4].

Q2: Can batch correction remove true biological signal? A: Yes. Overcorrection may remove real biological variation if batch effects are correlated with the experimental condition. Always validate correction results using both visual and quantitative methods [1] [6].

Q3: Do I always need batch correction? A: If samples cluster by batch in PCA/UMAP plots or show known batch-driven trends, correction is highly recommended. For single-batch studies with consistent processing, correction may not be necessary [1].

Q4: How many batches or replicates are needed? A: At least two replicates per group per batch is ideal. More batches allow more robust statistical modeling [1].

Q5: What metrics indicate successful correction? A: Visual clustering by biological condition rather than batch, replicate consistency, and quantitative scores like kBET, ARI, or silhouette width approaching ideal values [1].

Signs of Overcorrection

One common issue in batch correction is overcorrection, which can be identified by these signs [4]:

- A large share of cluster-specific markers consists of genes that are highly expressed across many cell types rather than in one cluster.

- Substantial overlap among markers specific to clusters.

- Notable absence of expected cluster-specific markers.

- Scarcity or absence of differential expression hits associated with pathways expected based on sample composition.
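
Two of these signs, overlapping markers and missing expected markers, can be screened for with simple set operations. The sketch below is only an illustration: the marker lists are invented for the example, and the Jaccard index is one reasonable overlap measure rather than a threshold-backed test from the cited sources.

```python
from itertools import combinations

def marker_overlap(markers):
    """markers: {cluster: set of marker genes}. Returns {(c1, c2): Jaccard index}."""
    overlap = {}
    for a, b in combinations(sorted(markers), 2):
        union = markers[a] | markers[b]
        overlap[(a, b)] = len(markers[a] & markers[b]) / len(union) if union else 0.0
    return overlap

def missing_expected(markers, expected):
    """Expected marker genes that were not recovered for each cluster."""
    return {c: expected[c] - markers.get(c, set()) for c in expected}

# Hypothetical marker sets reported after an aggressive correction.
markers = {
    "T cells": {"CD3E", "RPL13", "RPS18"},
    "B cells": {"MS4A1", "RPL13", "RPS18"},
}
# Hypothetical markers one would expect from the sample composition.
expected = {
    "T cells": {"CD3E", "CD3D"},
    "B cells": {"MS4A1", "CD79A"},
}

overlap = marker_overlap(markers)
missing = missing_expected(markers, expected)
print(overlap)
print(missing)
```

High overlap driven by ribosomal genes, together with expected markers going missing, would be a reason to revisit the correction settings rather than proof of overcorrection on its own.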

Batch Effect Correction Workflow

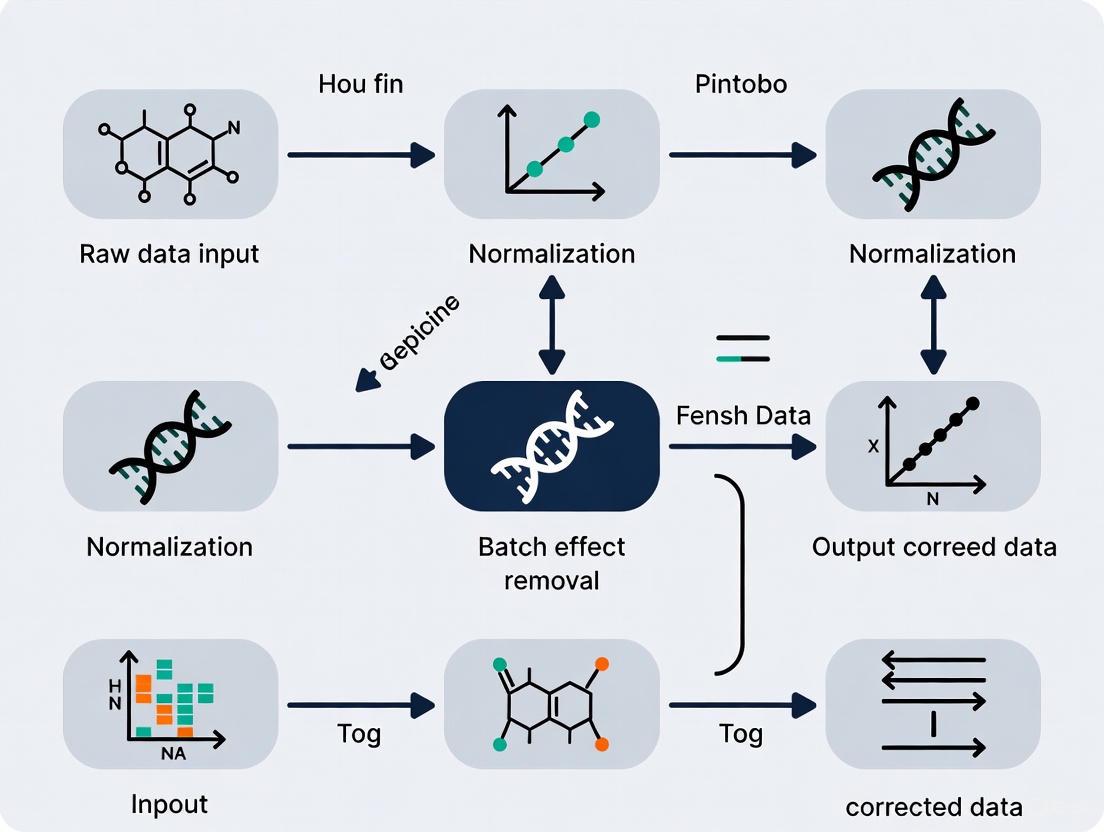

The following diagram illustrates a standard workflow for detecting and correcting batch effects in transcriptomics data:

Research Reagent Solutions

Table 3: Key Materials and Tools for Batch Effect Management

| Item | Function | Application Notes |

|---|---|---|

| Pooled QC Samples | Monitor technical variation across batches [1] | Include in every batch for consistency tracking |

| Technical Replicates | Assess reproducibility [1] | Process identical samples across different batches |

| Standardized Reagent Lots | Minimize lot-to-lot variability [1] [3] | Use same lot for entire study when possible |

| Automated Sample Processing | Reduce personnel-induced variation [3] | Minimize manual handling steps |

| RNA Integrity Tools | Assess sample quality pre-sequencing [7] | Use metrics like TIN score [7] |

| Batch Correction Software | Computational removal of technical variation [1] | Choose method based on data type and design |

Advanced Topics in Batch Effects

Single-cell vs. Bulk RNA-seq Considerations

Batch effects are more complex in single-cell RNA-seq data due to [8] [9]:

- Lower RNA input and higher dropout rates

- Higher proportion of zero counts and low-abundance transcripts

- Increased cell-to-cell variations

- More severe technical variations than bulk RNA-seq

Machine Learning Approaches

Recent advances include machine learning and deep learning methods for batch effect correction [6] [2]:

- Autoencoders: Learn complex nonlinear projections of high-dimensional data

- Quality-aware correction: Uses machine-predicted sample quality for batch detection

- Deep transfer learning: Discovers hidden high-resolution cellular subtypes

Multi-omics Integration Challenges

Batch effects become increasingly complex in multi-omics studies because [8] [9]:

- Different omics types have different distributions and scales

- Data are measured on different platforms

- Integration requires careful handling of technical variations across modalities

Batch effects remain a persistent challenge in transcriptomic research, with potential to lead to incorrect conclusions and irreproducible results if not properly addressed [8] [9]. Through proper experimental design, rigorous detection methods, appropriate correction strategies, and thorough validation, researchers can effectively manage these technical variations. By minimizing technical noise, scientists can ensure the biological accuracy, reproducibility, and impact of their transcriptomic analyses.

FAQs on Batch Effects in Transcriptomics

What are batch effects, and why are they a critical concern in transcriptomics? Batch effects are technical variations introduced during experimental procedures that are unrelated to the biological questions being studied. In transcriptomics, they can dilute true biological signals, reduce the statistical power of an analysis, and, in the worst cases, lead to incorrect scientific conclusions and irreproducible research [8]. Tackling them is essential for ensuring data reliability.

What are the most common stages where batch effects originate in an RNA-seq workflow? Batch effects can arise at virtually every stage of a transcriptomics study. Key sources include the initial study design, sample collection and preservation, RNA extraction, library preparation, and the sequencing run itself [8] [10]. A flaw in the study design, such as processing all control samples in one batch and all treatment samples in another, is a particularly critical source of confounding [8].

Can batch effects be completely avoided through experimental design? While a well-designed experiment is the most effective defense, it is often impossible to eliminate batch effects entirely, especially in large, multi-center, or longitudinal studies [11]. Therefore, a combination of careful experimental planning and subsequent computational correction is typically required to mitigate their impact.

Troubleshooting Guides

Guide 1: Addressing Batch Effects from Sample Preservation and RNA Extraction

Problem: RNA degradation or modification during sample preservation leads to poor data quality and introduces significant technical variation between batches.

Investigation & Solution:

- Check RNA Integrity Number (RIN): Always assess RNA quality using a method like Bioanalyzer. Low RIN values or high degradation are red flags.

- Identify Source: Determine if degradation occurred due to delayed fixation, improper storage, or repeated freeze-thaw cycles.

- Apply Corrective Measures:

  - For FFPE samples, which are prone to nucleic acid cross-linking and fragmentation, use optimized RNA extraction protocols and consider higher RNA input for library preparation [10].

  - For frozen samples, minimize freeze-thaw cycles and ensure consistent storage conditions across all samples. Use high-quality, consistent reagent lots for RNA extraction, such as the mirVana miRNA isolation kit, which has been reported to produce high-yield, high-quality RNA [10].

Guide 2: Mitigating Library Preparation Biases

Problem: Biases introduced during library construction, such as those from mRNA enrichment, fragmentation, and PCR amplification, create non-biological differences between samples processed in different batches.

Investigation & Solution:

- Identify Bias Type: Common issues include 3'-end capture bias from poly(A) enrichment, non-random RNA fragmentation, and preferential amplification of certain transcripts during PCR.

- Apply Corrective Measures:

  - For mRNA enrichment: Consider using rRNA depletion instead of poly(A) selection, especially for non-polyadenylated transcripts or degraded samples [10].

  - For fragmentation: Use chemical treatment (e.g., zinc) rather than enzymatic methods (RNase III) for more random fragmentation [10].

  - For PCR amplification: Reduce the number of amplification cycles where possible. Use high-fidelity polymerases like Kapa HiFi and consider PCR additives like TMAC or betaine for AT/GC-rich genomes [10]. For ultra-low input samples, evaluate multiple displacement amplification (MDA) as an alternative [10].

Guide 3: Correcting for Sequencing Platform Variations

Problem: Technical variations between different sequencing runs, flow cells, or platforms can manifest as batch effects.

Investigation & Solution:

- Monitor Sequencing Metrics: Check for batch-specific differences in metrics like total reads, mapping rates, GC content, and insert sizes.

- Apply Corrective Measures:

  - Wet-lab strategy: Whenever possible, multiplex libraries from different experimental groups and sequence them across the same flow cells to spread out technical variation [11].

  - Computational strategy: Apply batch effect correction algorithms (BECAs) such as Harmony, Mutual Nearest Neighbors (MNN), or Seurat's integration method to the final count matrix [11]. These tools aim to align the data from different batches in a shared space, removing technical variation while preserving biology.

Data Presentation

| Stage | Source of Bias | Description of Issue | Suggested Improvement |

|---|---|---|---|

| Sample Preservation | Formalin-fixed, paraffin-embedded (FFPE) tissue | Causes nucleic acid cross-linking, fragmentation, and chemical modifications [10]. | Use non-cross-linking organic fixatives; for FFPE, use high RNA input and random priming in RT [10]. |

| RNA Extraction | TRIzol (phenol-chloroform) method | Can lead to loss of small RNAs, especially at low concentrations [10]. | Use high RNA concentrations or avoid TRIzol; use silica-column-based kits (e.g., mirVana kit) [10]. |

| Library Preparation | mRNA Enrichment (3'-end bias) | Poly(A) selection can introduce 3'-end capture bias [10]. | Use ribosomal RNA (rRNA) depletion kits instead [10]. |

| Library Preparation | RNA Fragmentation | Enzymatic fragmentation (e.g., RNase III) is not completely random, reducing library complexity [10]. | Use chemical treatment (e.g., zinc) or fragment cDNA post-reverse transcription [10]. |

| Library Preparation | PCR Amplification | Preferential amplification of cDNA with neutral GC%; biases propagate through cycles [10]. | Use high-fidelity polymerases (e.g., Kapa HiFi); reduce cycle number; use additives (TMAC/betaine) for extreme GC% [10]. |

| Library Preparation | Low Input RNA | Low quantity/quality starting material has strong, harmful effects on downstream analysis [10]. | Use specialized low-input protocols; increase input material if possible. |

Table 2: Key Research Reagent Solutions for Mitigating Batch Effects

| Reagent / Kit | Primary Function | Role in Batch Effect Mitigation |

|---|---|---|

| mirVana miRNA Isolation Kit | RNA extraction and purification | Provides high-yield, high-quality RNA from various sample types, reducing sample-specific variation [10]. |

| NEBNext UltraExpress Library Prep Kits | DNA/RNA library preparation | Streamlines workflow, reduces hands-on time and consumables (fewer tips/tubes), enhancing reproducibility [12]. |

| Sera-Mag SpeedBead Magnetic Beads | Sample clean-up and size selection | Engineered with a core-shell design for high yields and tight size distributions, improving NGS consistency [12]. |

| CRISPR-based Depletion Solutions | Removal of non-informative RNA (e.g., rRNA) | Increases library complexity and informative read depth by highly specific removal of unwanted transcripts [12]. |

| Kapa HiFi Polymerase | PCR amplification during library prep | Reduces PCR bias through high-fidelity amplification, leading to more uniform coverage [10]. |

Experimental Protocols

Protocol 1: Best Practices for a Batch-Effect-Aware RNA-seq Study Design

Objective: To design a transcriptomics experiment that minimizes the introduction of batch effects from the outset.

Methodology:

- Randomization: Randomly assign samples from different biological groups (e.g., control vs. treatment) across all processing batches. Do not process all samples from one group in a single batch.

- Replication: Include technical replicates (the same sample processed multiple times) to help estimate the level of technical noise.

- Balancing: Ensure that potential confounding factors (e.g., age, sex, sample source) are balanced across batches. If a known batch variable exists (e.g., different reagent lots), ensure it is not perfectly correlated with a biological group of interest.

- Blocking: If the number of samples is too large for a single batch, process the samples in smaller, balanced blocks (batches) and record all batch variables meticulously.
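
Before any samples are processed, the planned layout can be checked for the confounding described above. The following is a minimal standard-library sketch; the function name `confounding_report` and the lot and condition labels are hypothetical, and the rule used (every batch containing exactly one condition) is a simple heuristic for complete confounding, not a formal test.

```python
from collections import defaultdict

def confounding_report(batches, conditions):
    """Flag designs where batch and biological condition cannot be separated."""
    seen = defaultdict(set)  # batch -> set of conditions present in it
    for b, c in zip(batches, conditions):
        seen[b].add(c)
    all_conditions = set(conditions)
    # Complete confounding: more than one condition overall, but only one per batch.
    fully_confounded = (len(all_conditions) > 1
                        and all(len(cs) == 1 for cs in seen.values()))
    # Batches that lack at least one of the study's conditions.
    incomplete = sorted(b for b, cs in seen.items() if cs != all_conditions)
    return {"fully_confounded": fully_confounded,
            "batches_missing_conditions": incomplete}

confounded = confounding_report(
    ["lot1", "lot1", "lot2", "lot2"],
    ["control", "control", "treated", "treated"],
)
balanced = confounding_report(
    ["lot1", "lot1", "lot2", "lot2"],
    ["control", "treated", "control", "treated"],
)
print(confounded)
print(balanced)
```

A design that reports missing conditions in some batches is not necessarily unusable, but it is worth rebalancing before data generation, since that is far cheaper than attempting to separate the effects afterwards.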

Protocol 2: Computational Batch Effect Correction Using Seurat Integration

Objective: To merge multiple single-cell or bulk RNA-seq datasets and remove technical batch effects.

Methodology (as cited in the community resources):

- Data Preprocessing: Independently preprocess each dataset (batch) by normalizing and identifying highly variable features.

- Anchor Identification: Identify "anchors" between pairs of datasets. These are mutual nearest neighbors—cells or samples that are most similar across batches, presumed to represent the same biological state [11].

- Data Integration: Use these anchors to harmonize the datasets, effectively removing the technical batch effects. This results in a corrected gene expression matrix where cells/samples cluster by biological type rather than by batch [11].

- Validation: Visually inspect integrated data using dimensionality reduction plots (e.g., UMAP, t-SNE) to confirm that batches are mixed and biological signals are preserved.
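
The anchor identification step rests on the idea of mutual nearest neighbors, which can be illustrated in a few lines of NumPy. This sketch shows only that core idea on simulated two-dimensional data; it is not Seurat's anchor-finding procedure, which additionally works in a reduced shared space and scores and filters anchors. The function name, the choice of `k`, and the simulated cell types are assumptions for the example.

```python
import numpy as np

def mutual_nearest_neighbors(A, B, k=3):
    """Return (i, j) pairs where A[i] and B[j] are in each other's k nearest neighbours."""
    A, B = np.asarray(A, dtype=float), np.asarray(B, dtype=float)
    # Distances between every cell in A (rows) and every cell in B (columns).
    dist = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1))
    nn_of_a = np.argsort(dist, axis=1)[:, :k]    # rows: cells in A -> k nearest in B
    nn_of_b = np.argsort(dist, axis=0)[:k, :].T  # rows: cells in B -> k nearest in A
    return [(i, int(j)) for i in range(len(A))
            for j in nn_of_a[i] if i in nn_of_b[j]]

# Two simulated cell types per batch; the second batch carries a technical shift.
rng = np.random.default_rng(2)
types = np.array(["X"] * 10 + ["Y"] * 10)
centers = np.where(types[:, None] == "X", 0.0, 10.0)
batch1 = centers + rng.normal(size=(20, 2))
batch2 = centers + 1.0 + rng.normal(size=(20, 2))  # +1.0 is the simulated batch effect

anchors = mutual_nearest_neighbors(batch1, batch2, k=3)
same_type = [types[i] == types[j] for i, j in anchors]
print(f"{len(anchors)} anchor pairs, {sum(same_type)} link the same cell type")
```

Because the simulated batch shift is small relative to the distance between cell types, the anchors connect matching types; when a cell type is absent from one batch, mutual pairing is what keeps it from being forced onto an unrelated population.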

Workflow Visualizations

Batch Effect Sources in Transcriptomics Workflow

Batch Effect Mitigation Strategies

Batch effects are systematic technical variations introduced during the processing of samples in separate groups or at different times. These non-biological variations are notoriously common in transcriptomics and other omics studies and represent a significant threat to the reliability and reproducibility of your research. When uncorrected, they can obscure true biological signals, lead to false discoveries, and render findings irreproducible across laboratories. This technical support guide provides clear methodologies to identify, troubleshoot, and correct for batch effects, ensuring the integrity of your transcriptomics data and conclusions.

FAQ: Understanding the Impact of Batch Effects

Q1: What exactly are batch effects in transcriptomics? Batch effects are systematic, non-biological variations in gene expression data introduced by technical inconsistencies. These can occur at virtually any stage of an experiment, including during sample collection, library preparation, sequencing runs, or data analysis. Common causes include processing samples on different days, using different reagent lots, different sequencing machines, or different personnel [1] [8]. Even biologically identical samples processed in different batches can show significant differences in their expression profiles due to these technical influences.

Q2: What makes batch effects such a high-stakes problem? The stakes are high because batch effects can directly lead to incorrect conclusions and irreproducible research, which can waste resources, invalidate findings, and even impact clinical decisions.

- Misleading Outcomes: Batch effects can cause statistical models to falsely identify genes as differentially expressed (false positives) or mask true biological signals (false negatives) [1] [8]. In one documented clinical trial, a change in RNA-extraction solution caused a shift in gene-based risk calculations, leading to incorrect treatment classifications for 162 patients [8] [13].

- The Reproducibility Crisis: A survey in Nature found that 90% of researchers believe there is a reproducibility crisis. Batch effects arising from reagent variability and experimental bias are major contributors to this problem, which has resulted in retracted papers and discredited research [8]. For example, a high-profile study on a serotonin biosensor had to be retracted when its key results could not be reproduced with a new batch of a common reagent (fetal bovine serum) [8].

Q3: How can I tell if my data has batch effects? Batch effects can be detected through both visual and quantitative means:

- Visual Methods: Use dimensionality reduction plots like PCA, t-SNE, or UMAP. If your samples cluster primarily by processing date, sequencing lane, or other technical factors—rather than by the biological conditions you are comparing—this is a strong indicator of batch effects [1] [14].

- Quantitative Metrics: Several metrics provide a less biased assessment:

  - kBET (k-nearest neighbor Batch Effect Test): Measures how well cells from different batches mix among their nearest neighbors.

  - ASW (Average Silhouette Width): Evaluates the compactness of batch clusters.

  - LISI (Local Inverse Simpson's Index): Assesses the diversity of batches in local neighborhoods [1] [14].

It is recommended to use a combination of visual and quantitative methods for robust validation [1].
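
As a sketch of what a neighborhood-diversity score measures, the code below computes a simplified, unweighted variant of the Local Inverse Simpson's Index: for each cell it takes the k nearest neighbors and reports the inverse Simpson's index of their batch labels. The published LISI weights neighbors with a perplexity-based kernel, so this version is an approximation for illustration only; the function name `simple_lisi`, the value of `k`, and the simulated data are assumptions.

```python
import numpy as np

def simple_lisi(X, labels, k=10):
    """Unweighted inverse Simpson's index of labels among each point's k nearest neighbours."""
    X = np.asarray(X, dtype=float)
    labels = np.asarray(labels)
    dist = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1))
    np.fill_diagonal(dist, np.inf)  # a cell is not its own neighbour
    neighbours = np.argsort(dist, axis=1)[:, :k]
    scores = np.empty(len(X))
    for i, idx in enumerate(neighbours):
        _, counts = np.unique(labels[idx], return_counts=True)
        p = counts / counts.sum()          # label proportions in the neighbourhood
        scores[i] = 1.0 / np.sum(p ** 2)   # 1 = one batch only, 2 = two batches evenly mixed
    return scores

rng = np.random.default_rng(3)
batch = np.array(["A"] * 30 + ["B"] * 30)
mixed = rng.normal(size=(60, 2))   # both batches drawn from the same distribution
separated = mixed.copy()
separated[batch == "B"] += 20.0    # simulated strong batch effect

lisi_mixed = simple_lisi(mixed, batch).mean()
lisi_separated = simple_lisi(separated, batch).mean()
print(f"mean score, mixed: {lisi_mixed:.2f}; separated: {lisi_separated:.2f}")
```

With two batches the score ranges from 1 (neighborhoods contain a single batch) to 2 (batches evenly mixed); computing the same score on cell-type labels checks that biological identity has stayed local.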

Q4: Can correcting for batch effects accidentally remove real biological signals? Yes, this phenomenon, known as over-correction, is a significant risk. It occurs when the correction method is too aggressive or when batch effects are completely confounded with the biological groups of interest (e.g., all control samples were processed in one batch and all treatment samples in another) [14] [13]. Signs of over-correction include:

- Distinct cell types clustering together on a UMAP plot.

- A complete overlap of samples from very different biological conditions.

- Cluster-specific markers being dominated by commonly expressed genes, like ribosomal genes [14].

Q5: What is the single most important step to minimize batch effects? The best strategy is prevention through rigorous experimental design. It is far more effective to minimize batch effects at the source than to rely solely on computational correction later. Key practices include:

- Randomization: Randomly assign samples from all biological groups to each processing batch.

- Balancing: Ensure each batch contains a balanced representation of all biological conditions.

- Consistency: Use the same protocols, reagents, and equipment throughout the study.

- Replication: Include at least two biological replicates per group per batch to allow for robust statistical modeling [1] [8] [15].

Troubleshooting Guide: Detecting and Correcting Batch Effects

How to Detect Batch Effects in Your Dataset

Follow this workflow to systematically assess the presence and severity of batch effects.

Protocol: Visual Detection with Dimensionality Reduction

- Data Input: Start with your raw, uncorrected gene expression count matrix.

- Dimensionality Reduction: Perform Principal Component Analysis (PCA) on the data.

- Visualization: Create a scatter plot of the first two principal components (PC1 vs. PC2).

- Coloration: Color the data points by the known technical batch (e.g., processing date).

- Interpretation: Observe the clustering pattern. If data points group strongly by their batch color, rather than by biological condition, batch effects are present [1] [14].

How to Correct for Batch Effects

A variety of computational methods exist. The choice depends on your data type (bulk vs. single-cell) and the structure of your batch information. The table below summarizes popular tools.

Table 1: Comparison of Common Batch Effect Correction Methods

| Method | Data Type | Strengths | Limitations | Key Reference |

|---|---|---|---|---|

| ComBat | Bulk RNA-seq | Uses empirical Bayes framework; adjusts for known batch variables; simple and widely used. | Requires known batch info; may not handle nonlinear effects well. | [1] |

| SVA | Bulk RNA-seq | Captures hidden batch effects (when batch labels are unknown). | Risk of removing biological signal; requires careful modeling. | [1] |

| limma removeBatchEffect | Bulk RNA-seq | Efficient linear modeling; integrates well with differential expression workflows. | Assumes known, additive batch effects; less flexible. | [1] |

| Harmony | Single-cell RNA-seq | Fast runtime; integrates cells in a shared embedding; good for complex datasets. | Performance may vary with sample imbalance. | [1] [11] [14] |

| Seurat Integration | Single-cell RNA-seq | Popular and well-supported within the Seurat ecosystem; good performance. | Can have low scalability with very large datasets. | [11] [14] |

| Ratio-Based Scaling | Multi-omics | Highly effective when batch and biology are confounded; uses a reference material for scaling. | Requires profiling a common reference material in every batch. | [13] |
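
The ratio-based approach in the last row can be illustrated with a small, fully confounded toy example. This is a sketch under stated assumptions, not the procedure from [13]: data are on a log2 scale (so a ratio becomes a subtraction), each batch contains at least one aliquot of the same reference material, and the function name and numbers are invented for the example.

```python
import numpy as np

def ratio_to_reference(log_expr, batch, is_reference):
    """log_expr: genes x samples (log2). Returns log2 ratios to the mean of
    the reference samples profiled in the same batch."""
    log_expr = np.asarray(log_expr, dtype=float)
    batch = np.asarray(batch)
    is_reference = np.asarray(is_reference, dtype=bool)
    out = np.empty_like(log_expr)
    for b in np.unique(batch):
        in_batch = batch == b
        ref = in_batch & is_reference
        if not ref.any():
            raise ValueError(f"batch {b!r} has no reference sample")
        ref_mean = log_expr[:, ref].mean(axis=1, keepdims=True)
        out[:, in_batch] = log_expr[:, in_batch] - ref_mean
    return out

# Confounded toy design: batch 1 holds only controls, batch 2 only treated samples.
# Columns: ref, ctrl, ctrl | ref, trt, trt. Batch 2 carries a +3 shift on both genes.
batch = np.array(["b1", "b1", "b1", "b2", "b2", "b2"])
is_reference = np.array([True, False, False, True, False, False])
group = np.array(["ref", "ctrl", "ctrl", "ref", "trt", "trt"])
log_expr = np.array([
    [5.0, 6.0, 6.0, 8.0, 11.0, 11.0],   # gene 0: true treatment effect of +2
    [7.0, 7.0, 7.0, 10.0, 10.0, 10.0],  # gene 1: no treatment effect
])

ratios = ratio_to_reference(log_expr, batch, is_reference)
raw_effect = log_expr[:, group == "trt"].mean(axis=1) - log_expr[:, group == "ctrl"].mean(axis=1)
scaled_effect = ratios[:, group == "trt"].mean(axis=1) - ratios[:, group == "ctrl"].mean(axis=1)
print("raw treated - control:   ", raw_effect)
print("scaled treated - control:", scaled_effect)
```

In the raw data the batch shift is indistinguishable from a treatment effect on gene 1; expressing each sample relative to the shared reference removes the shift without needing to model batch and condition separately.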

Protocol: Executing Batch Correction with Harmony on Single-Cell Data

This protocol outlines the steps for using Harmony, a widely used and effective integration tool.

- Preprocessing: Generate a PCA embedding of your single-cell RNA-seq data using your standard workflow (e.g., in Seurat or Scanpy).

- Input: Provide the PCA matrix and a metadata vector specifying the batch for each cell to the Harmony `RunHarmony()` function.

- Integration: Harmony will iteratively correct the PCA coordinates to maximize the diversity of batches within local cell neighborhoods.

- Output: The function returns a new, integrated embedding (e.g., "harmony" dimensions).

- Downstream Analysis: Use this corrected Harmony embedding for all downstream analyses, such as UMAP visualization and clustering [11].

Table 2: Key Research Reagent Solutions for Batch Effect Mitigation

| Item | Function | Best Practice Guidance |

|---|---|---|

| Reference Materials | A commercially available or internally standardized sample (e.g., from a cell line) processed in every batch. | Enables ratio-based correction methods, which are powerful in confounded scenarios [13]. |

| Validated Reagent Lots | Consumables like enzymes, kits, and buffers used for RNA extraction and library prep. | Purchase in large, single lots for the entire study to minimize variability [1] [15]. |

| RNA Integrity Number (RIN) Standard | A measure of RNA quality (e.g., via Bioanalyzer). | Only proceed with samples having a RIN > 7 to ensure high-quality input and reduce technical noise [15]. |

| Sample Multiplexing Kits | Kits that allow barcoding and pooling of samples from different biological groups into a single sequencing library. | Dramatically reduces batch effects by ensuring pooled samples are processed together through library prep and sequencing [14]. |

| Internal Spike-In Controls | Exogenous RNAs added to each sample in known quantities. | Helps control for technical variation in RNA capture efficiency and sequencing depth [8]. |

Batch effects are systematic technical variations introduced during different stages of high-throughput experiments, unrelated to the biological questions being studied. In transcriptomics, these effects arise from inconsistencies in sample processing, sequencing platforms, reagent lots, personnel, or environmental conditions [1]. When unaddressed, batch effects can distort gene expression data, leading to incorrect conclusions, irreproducible findings, and misguided clinical decisions [8].

This technical support guide presents real-world case studies demonstrating the profound consequences of batch effects in both clinical and cross-species research. By examining these instances and providing actionable troubleshooting guidance, we aim to equip researchers and drug development professionals with strategies to safeguard their analyses against technical artifacts, thereby enhancing data reliability and translational impact.

Case Study 1: Batch Effects in a Clinical Trial

The Problem: Incorrect Patient Stratification

In a clinical trial for a cancer therapy, researchers used gene expression profiles to calculate a risk score for patients, which was intended to guide chemotherapy decisions. During the trial, a change was made to the RNA-extraction solution used in processing patient samples [8].

- The Batch Effect: The change in reagent introduced a significant technical shift in the resulting gene expression data.

- The Consequence: This batch effect directly altered the gene-based risk calculation. Consequently, 162 patients were misclassified, leading to 28 patients receiving incorrect or unnecessary chemotherapy regimens [8]. This case starkly illustrates how a technical artifact can directly impact patient care and clinical outcomes.

Troubleshooting Guide & FAQ: Clinical Genomics

Q: How can a simple reagent change cause such a major problem? A: Gene expression measurements are highly sensitive. Different reagent lots can have subtle variations in efficiency or purity, which systematically alter the measured intensity of thousands of genes. If this technical variation is confounded with a biological group (e.g., all post-change samples are from a specific patient group), the analysis model cannot distinguish technical from biological effects [1] [8].

Q: What are the best practices to prevent this in our clinical study design? A: Proactive experimental design is the most effective strategy [1] [8].

- Randomization: Process samples from all patient groups in every batch.

- Balancing: Ensure that biological conditions of interest (e.g., treatment vs. control) are equally represented across processing batches, sequencing runs, and personnel.

- Reagent Consistency: Use the same lot of critical reagents (e.g., extraction kits, enzymes) for the entire study whenever possible.

- Quality Control (QC) Samples: Include pooled control samples or technical replicates across all batches to monitor technical variation.

Q: Our clinical trial is already completed, and we suspect a batch effect. How can we diagnose it? A: The following diagnostic workflow can help identify the presence of batch effects:

Experimental Protocol: Diagnosing Batch Effects with PCA

Objective: To visually assess whether technical batches are a major source of variation in your gene expression dataset.

Materials Needed:

- Normalized gene expression matrix (e.g., library-size-normalized, log-transformed RNA-seq counts)

- Metadata file listing the technical batch and biological group for each sample.

- Statistical software (R/Python) with PCA capabilities.

Methodology:

- Data Input: Load your normalized expression matrix and metadata.

- Dimensionality Reduction: Perform Principal Component Analysis (PCA) on the expression matrix. This reduces the high-dimensional gene data into a few key components that explain the most variance.

- Visualization:

  - Generate a PCA plot where points are colored by their technical batch (e.g., sequencing run, processing date). If samples from the same batch cluster tightly together and separate from other batches, a batch effect is likely [1].

  - Generate a second PCA plot where points are colored by the biological condition (e.g., disease state, treatment). In an ideal, batch-free world, samples should cluster primarily by biology.

- Interpretation: Compare the two plots. If the first plot (colored by batch) shows clearer clustering than the second (colored by biology), you have strong evidence of a batch effect that requires correction before any downstream analysis [1].
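The protocol above can be sketched with NumPy alone. The data below are simulated purely for illustration, and the helper names `pca_scores` and `r_squared` are assumptions introduced here. The R-squared summary is one simple way to put a number on what the two colored plots show; it is not part of the cited protocol.

```python
import numpy as np

def pca_scores(expr, n_components=2):
    """PCA via SVD. expr is a samples x genes matrix of normalized values."""
    centered = expr - expr.mean(axis=0)          # center each gene
    u, s, _ = np.linalg.svd(centered, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]
    explained = (s ** 2) / np.sum(s ** 2)        # variance fraction per PC
    return scores, explained[:n_components]

def r_squared(pc, labels):
    """Fraction of variance along one PC explained by a grouping."""
    labels = np.asarray(labels)
    total = np.sum((pc - pc.mean()) ** 2)
    within = sum(np.sum((pc[labels == g] - pc[labels == g].mean()) ** 2)
                 for g in np.unique(labels))
    return 1.0 - within / total

# Simulated data (not from the cited studies): 12 samples, 200 genes,
# with a strong batch shift and a weaker biological shift.
rng = np.random.default_rng(0)
batch = np.array([0] * 6 + [1] * 6)
group = np.array([0, 1] * 6)
expr = rng.normal(size=(12, 200))
expr[batch == 1, :100] += 3.0     # technical shift affecting 100 genes
expr[group == 1, 100:120] += 1.0  # biological shift affecting 20 genes

scores, explained = pca_scores(expr)
r2_batch = r_squared(scores[:, 0], batch)
r2_group = r_squared(scores[:, 0], group)
print(f"PC1 variance explained by batch: {r2_batch:.2f}, by group: {r2_group:.2f}")
```

The columns of `scores` are the coordinates to plot. Coloring them once by `batch` and once by `group` reproduces the two diagnostic plots described in the methodology.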

Case Study 2: Batch Effects in Cross-Species Analysis

The Problem: Mistaking Technical Bias for Biological Difference

A prominent study initially reported that gene expression differences between humans and mice were greater than the differences between tissues within the same species [8]. This finding suggested profound evolutionary divergence.

- The Batch Effect: A more rigorous re-analysis revealed a critical confounder: the human and mouse data were generated in separate studies, three years apart, using different experimental designs [8].

- The Consequence: The so-called "species-specific" signal was largely driven by technical batch effects related to the time of data generation. After applying appropriate batch-effect correction, the data showed a more biologically plausible pattern: samples clustered by tissue type rather than by species [8]. This case highlights how batch effects can lead to fundamentally incorrect biological interpretations.

Troubleshooting Guide & FAQ: Cross-Species & Multi-Center Studies

Q: How can we differentiate true biological signals from batch effects in integrative studies? A: This is a central challenge. The key is to use both negative and positive controls [8].

- Negative Controls: Use housekeeping genes or other features expected to be stable across your batches. If these show systematic variation by batch, it indicates a technical effect.

- Positive Controls: Ensure known biological differences (e.g., clear cell-type markers) are preserved after any correction.

- Validation: Orthogonal validation of key findings using a different, independent method or dataset is crucial.

Q: What batch correction methods are suitable for complex integrations, like cross-species data? A: Methods that allow for the use of prior biological knowledge can be particularly effective. Semi-supervised methods like STACAS leverage initial cell-type annotations to guide integration, helping to preserve biological variance while removing technical batch effects [16]. Other advanced tools like Harmony and Scanorama are also widely used for integrating diverse datasets [1] [11].

Q: How can we design a multi-center study to minimize batch effects from the start? A: Consortium-level standardization is essential [8].

- Standardized Protocols: All participating centers should use the same, meticulously detailed protocols for sample collection, processing, and sequencing.

- Reference Materials: Circulate a common reference sample to all centers to quantify and later correct for inter-center technical variation.

- Balanced Design: If possible, ensure that each center processes samples from all biological groups being compared.

Quantitative Impact of Batch Effect Correction

The table below summarizes the consequences and corrective outcomes from the featured case studies.

Table 1: Summary of Real-World Batch Effect Case Studies

| Case Study | Source of Batch Effect | Impact of Uncorrected Effect | Outcome After Correction |

|---|---|---|---|

| Clinical Trial [8] | Change in RNA-extraction reagent | 28 patients received incorrect chemotherapy; misclassification of 162 patients | (Case highlighted the problem; correction would prevent misclassification) |

| Cross-Species Study [8] | Data generated 3 years apart in separate studies | False conclusion: species differences > tissue differences | Correct conclusion: clustering by tissue type over species |

The following table lists essential methodological solutions and their specific functions for addressing batch effects.

Table 2: Research Reagent Solutions & Computational Tools

| Tool / Solution Name | Function / Purpose | Applicable Context |

|---|---|---|

| Pooled QC Samples [8] | A control sample included in every batch to monitor and model technical variation across runs. | All omics studies (Transcriptomics, Proteomics) |

| ComBat & ComBat-seq [1] [5] | Empirical Bayes frameworks to adjust for known batch effects in both normalized (ComBat) and raw count (ComBat-seq) data. | Bulk RNA-seq data |

| Harmony [1] [11] | Integrates cells across batches by iteratively correcting a low-dimensional embedding, suitable for complex single-cell data. | Single-cell RNA-seq, scATAC-seq |

| STACAS [16] | A semi-supervised integration method that uses prior cell type knowledge to guide batch correction, preserving biological variance. | Single-cell RNA-seq (especially with imbalanced cell types) |

| RECODE/iRECODE [17] | Reduces technical noise and batch effects in high-dimensional single-cell data while preserving the full dimensionality of the data. | scRNA-seq, scHi-C, Spatial Transcriptomics |

Integrated Workflow: From Experiment to Validated Analysis

A robust transcriptomics study requires vigilance against batch effects at every stage. The following workflow synthesizes the key steps covered in this guide.

Distinguishing Batch Effects in Bulk versus Single-Cell RNA-Seq

Batch effects are systematic technical variations introduced during the processing of samples in separate groups, and they represent a significant challenge in transcriptomics studies [4] [8]. These non-biological variations can arise from differences in sequencing platforms, reagents, personnel, laboratory conditions, or processing times, potentially confounding downstream analyses and leading to irreproducible findings [8]. While batch effects impact both bulk and single-cell RNA sequencing (scRNA-seq) technologies, their characteristics, implications, and correction strategies differ substantially between these approaches. Understanding these distinctions is crucial for researchers, scientists, and drug development professionals aiming to generate reliable and interpretable transcriptomic data. This guide provides a technical framework for distinguishing and addressing batch effects across these two sequencing modalities within the broader context of mitigating technical artifacts in transcriptomics research.

FAQ: Understanding Batch Effects in Transcriptomics

What fundamentally causes batch effects in RNA-seq experiments? Batch effects stem from the inherent inconsistency in the relationship between the true analyte concentration in a sample and the final instrument readout across different experimental conditions [8]. This technical variation can be introduced at virtually every stage of a high-throughput study, from sample collection and preparation to sequencing and data processing [8].

How do the challenges of batch effects differ between bulk and single-cell RNA-seq? The challenges are more pronounced in scRNA-seq due to its higher technical sensitivity. scRNA-seq methods have lower RNA input, higher dropout rates (where nearly 80% of gene expression values can be zero), and greater cell-to-cell variation compared to bulk RNA-seq [4] [8]. These factors make single-cell data more susceptible to technical variations, and the data's sparsity complicates correction efforts [4].

Can I use the same method to correct batch effects in both bulk and single-cell data? While the purpose of batch correction is the same—to mitigate technical variations—the algorithms are often not directly interchangeable [4]. Techniques developed for bulk RNA-seq may be insufficient for single-cell data due to the latter's large size (thousands of cells versus a dozen samples) and significant sparsity [4]. Conversely, single-cell specific methods might be excessive for the simpler structure of bulk RNA-seq experiments [4].

What are the signs of overcorrection in batch effect removal? Overcorrection occurs when batch effect removal also strips away genuine biological signal. Key signs include [4]:

- Cluster-specific markers comprising genes with widespread high expression (e.g., ribosomal genes).

- Substantial overlap among markers for different clusters.

- Absence of expected canonical cell-type markers.

- Scarcity of differential expression hits in pathways expected based on the sample composition.

How can I assess the effectiveness of a batch correction method? Effectiveness can be evaluated visually and quantitatively. Visual assessment involves examining PCA, t-SNE, or UMAP plots before and after correction to see if cells group by biological condition rather than batch [4]. Quantitative metrics include [4]:

- k-nearest neighbor batch effect test (kBET)

- Graph integration Local Inverse Simpson's Index (graph iLISI)

- Adjusted Rand Index (ARI)

- Normalized Mutual Information (NMI)
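As an illustration of one of these metrics, the Adjusted Rand Index can be computed directly from its pair-counting definition. The sketch below uses only the standard library; the labels are hypothetical, and in practice an established implementation from a statistics package would normally be preferred.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(labels_a, labels_b):
    """Adjusted Rand Index between two labelings of the same items."""
    n = len(labels_a)
    pairs = Counter(zip(labels_a, labels_b))
    sum_cells = sum(comb(c, 2) for c in pairs.values())
    sum_a = sum(comb(c, 2) for c in Counter(labels_a).values())
    sum_b = sum(comb(c, 2) for c in Counter(labels_b).values())
    expected = sum_a * sum_b / comb(n, 2)
    max_index = (sum_a + sum_b) / 2
    if max_index == expected:  # degenerate case, e.g. a single cluster in both
        return 1.0
    return (sum_cells - expected) / (max_index - expected)

# Hypothetical labels for eight cells after integration.
cell_type = ["T", "T", "T", "T", "B", "B", "B", "B"]
batch     = ["b1", "b2", "b1", "b2", "b1", "b2", "b1", "b2"]
clusters  = [0, 0, 0, 0, 1, 1, 1, 1]

ari_bio = adjusted_rand_index(clusters, cell_type)   # agreement with biology
ari_batch = adjusted_rand_index(clusters, batch)     # agreement with batch
```

A high ARI against cell-type labels together with an ARI near or below zero against batch labels is the pattern one hopes to see after a successful correction.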

Comparative Analysis: Bulk vs. Single-Cell RNA-Seq Batch Effects

Table 1: Key Differences in Batch Effects Between Bulk and Single-Cell RNA-Seq

| Characteristic | Bulk RNA-Seq | Single-Cell RNA-Seq |

|---|---|---|

| Primary Data Structure | Gene expression matrix (samples × genes) | Gene expression matrix (cells × genes), with extreme sparsity |

| Technical Variation Scale | Moderate, affects entire sample profiles | High, with increased sensitivity to technical noise [8] |

| Data Sparsity | Low | High (approximately 80% zero values) [4] |

| Typical Correction Unit | Entire samples | Individual cells |

| Key Correction Challenge | Preserving inter-sample biological variance while removing technical variation | Distinguishing technical effects from true cellular heterogeneity in sparse data |

Table 2: Commonly Used Batch Effect Correction Methods and Their Applications

| Method Name | Primary Application | Key Algorithmic Approach | Input Data Type |

|---|---|---|---|

| ComBat-seq/ComBat-ref [5] [18] | Bulk RNA-Seq | Empirical Bayes framework with negative binomial model | Raw count matrix |

| Harmony [4] [19] | Single-Cell RNA-Seq | Iterative clustering with soft k-means and linear correction | Normalized count matrix |

| Seurat [4] | Single-Cell RNA-Seq | Canonical Correlation Analysis (CCA) and Mutual Nearest Neighbors (MNN) as anchors | Normalized count matrix |

| MNN Correct [4] [20] | Single-Cell RNA-Seq | Mutual Nearest Neighbors detection and linear correction | Normalized count matrix |

| LIGER [4] | Single-Cell RNA-Seq | Integrative non-negative matrix factorization (NMF) | Normalized count matrix |

| sysVI (cVAE-based) [21] | Single-Cell RNA-Seq (substantial effects) | Conditional Variational Autoencoder with VampPrior and cycle-consistency | Raw count matrix |

Experimental Protocols for Batch Effect Assessment and Correction

Protocol 1: Detecting Batch Effects in Single-Cell RNA-Seq Data

Principle: Visually identify whether systematic technical variations are causing cells to cluster by batch rather than biological origin [4].

Procedure:

- Data Preparation: Begin with a raw count matrix (cells × genes) and corresponding metadata specifying batch labels and biological conditions.

- Quality Control: Filter out low-quality cells and genes. Normalize the data for sequencing depth.

- Dimensionality Reduction: Perform Principal Component Analysis (PCA) on the normalized data.

- Visualization:

  - Create a scatter plot of the top two principal components.

  - Color cells by their batch of origin.

  - Shape or facet cells by their biological condition.

- Interpretation: If cells from the same biological condition but different batches form separate clusters in the PCA plot, a batch effect is likely present.

Protocol 2: Correcting Batch Effects in Bulk RNA-Seq Using ComBat-ref

Principle: Employ a reference batch with the smallest dispersion to guide the adjustment of other batches, preserving statistical power for differential expression analysis [5] [18].

Procedure:

- Input Data Preparation: Compile a raw count matrix (samples × genes) and a design matrix specifying batch and biological conditions.

- Dispersion Estimation: Use the edgeR package in R to estimate a pooled (shrunk) dispersion parameter for each batch.

- Reference Batch Selection: Identify and select the batch with the smallest dispersion as the reference.

- Model Fitting: Apply the ComBat-ref algorithm, which uses a negative binomial generalized linear model:

  $$\log(\mu_{ijg}) = \alpha_g + \gamma_{ig} + \beta_{c_j g} + \log(N_j)$$

  where $\mu_{ijg}$ is the expected expression of gene $g$ in sample $j$ from batch $i$, $\alpha_g$ is the global expression background, $\gamma_{ig}$ is the batch effect, $\beta_{c_j g}$ is the biological condition effect, and $N_j$ is the library size.

- Data Adjustment: Adjust count data from non-reference batches toward the reference batch using cumulative distribution function matching.

- Output: A corrected integer count matrix suitable for downstream differential expression analysis with tools like edgeR or DESeq2.
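The reference-batch selection step can be illustrated with a rough stand-in for the dispersion estimate. The sketch below is not the ComBat-ref implementation and does not use edgeR: it swaps in a simple method-of-moments estimate (variance = mean + dispersion × mean²), ignores library-size differences, and runs on simulated counts. The function names are hypothetical.

```python
import numpy as np

def moment_dispersion(counts):
    """Rough pooled negative binomial dispersion for one batch.

    counts: genes x samples array of raw counts from a single batch.
    Uses var = mu + phi * mu^2 per gene, then takes the median across genes.
    """
    mu = counts.mean(axis=1)
    var = counts.var(axis=1, ddof=1)
    keep = mu > 0
    phi = np.maximum(var[keep] - mu[keep], 0.0) / mu[keep] ** 2
    return float(np.median(phi))

def pick_reference_batch(counts, batch_labels):
    """Return the batch label with the smallest estimated dispersion."""
    batch_labels = np.asarray(batch_labels)
    disp = {b: moment_dispersion(counts[:, batch_labels == b])
            for b in np.unique(batch_labels)}
    return min(disp, key=disp.get), disp

# Simulated counts: batch "A" is less dispersed than batch "B".
rng = np.random.default_rng(1)
mu = rng.uniform(50, 500, size=300)

def nb_counts(mu, phi, n_samples):
    # Negative binomial drawn as a gamma-Poisson mixture with dispersion phi.
    lam = rng.gamma(shape=1.0 / phi, scale=mu[:, None] * phi,
                    size=(mu.size, n_samples))
    return rng.poisson(lam)

counts = np.hstack([nb_counts(mu, 0.02, 8), nb_counts(mu, 0.20, 8)])
labels = ["A"] * 8 + ["B"] * 8
reference, dispersions = pick_reference_batch(counts, labels)
```

In this simulation the less noisy batch is chosen as the reference, which mirrors the principle stated above: adjusting noisier batches toward the least dispersed one.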

Workflow Visualization

Diagram 1: Generalized batch effect correction workflow for RNA-seq data.

Diagram 2: Methodological differences in batch correction for bulk versus single-cell RNA-seq.

The Scientist's Toolkit: Essential Research Reagents and Computational Tools

Table 3: Key Research Reagent Solutions for Batch Effect Mitigation

| Reagent/Tool | Function | Considerations for Batch Effect Mitigation |

|---|---|---|

| Sequencing Kits & Reagents | Library preparation and sequencing | Use the same lot numbers across all samples in a study; document all kit versions and lot numbers [11] |

| RNA Extraction Kits | Isolation of high-quality RNA | Consistency in RNA extraction methods and solutions is critical; changes can introduce significant batch effects [8] |

| Enzymes (Reverse Transcriptase) | cDNA synthesis | Enzyme efficiency variations can introduce technical bias; use consistent sources and lots [11] |

| Cell Culture Reagents (e.g., FBS) | Cell growth and maintenance | Reagent batch variability can affect gene expression; document all reagent lots and sources [8] |

| Single-Cell Partitioning Reagents | Cell isolation and barcoding | Critical for scRNA-seq; consistency in partitioning technology and chemistry reduces technical variation [11] |

| Computational Tools (R/Python) | Data analysis and correction | Document software versions; use established batch correction packages like Harmony, ComBat-seq [4] [19] |

Successfully distinguishing and addressing batch effects in bulk versus single-cell RNA-seq requires both rigorous experimental design and appropriate computational correction strategies. For bulk RNA-seq, methods like ComBat-ref that operate directly on count data and preserve statistical power for differential expression are often optimal [5]. For single-cell RNA-seq, integration methods like Harmony that correct embeddings while preserving biological heterogeneity have demonstrated superior performance with minimal introduction of artifacts [19]. When confronting substantial batch effects across systems—such as in cross-species or protocol integration—emerging approaches like sysVI that combine VampPrior with cycle-consistency constraints show promise for maintaining biological fidelity while removing technical variation [21] [22]. By applying these specialized approaches within a framework of careful experimental planning and post-correction validation, researchers can reliably mitigate the confounding influence of batch effects and draw robust biological conclusions from their transcriptomics studies.

A Practical Toolkit: Batch Effect Correction Algorithms and Their Applications

What are the main categories of batch effect correction algorithms (BECAs)?

Batch effect correction methods can be broadly classified into several categories, each with distinct underlying principles and use cases. The table below summarizes the main approaches.

| Method Category | Key Principle | Representative Algorithms | Typical Use Case Scenarios |

|---|---|---|---|

| Model-Based | Uses statistical models to estimate and adjust for batch-specific biases. | ComBat [23] [8] [24], limma [24] | Balanced study designs; when batch and biological factors are not confounded [23]. |

| Ratio-Based | Scales feature values relative to those from a common reference material processed in the same batch. | Ratio-G [23] | Confounded scenarios; multiomics studies; when reference materials are available [23]. |

| Integration (Dimensionality Reduction) | Embeds cells or samples into a common low-dimensional space where batch effects are minimized. | Harmony [23] [25] [4], MNN Correct [4] [11], LIGER [4] [11], Seurat [4] [11] | Single-cell RNA-seq data integration; large-scale atlas projects [4] [21]. |

| Deep Learning | Uses neural networks to learn a batch-invariant representation of the data. | scGen [4], sysVI (cVAE-based) [21] | Complex, non-linear batch effects; integrating datasets with substantial technical or biological differences (e.g., across species) [21]. |

The following diagram illustrates the logical workflow for selecting and applying these major correction approaches.

How do I choose the right method for my experiment?

The choice of batch effect correction method depends heavily on your experimental design, the type of omics data, and the severity of the batch effects.

Key Considerations for Method Selection

- Study Design (Balanced vs. Confounded): This is a critical factor.

  - Balanced Design: Samples from different biological groups are evenly distributed across batches. Many BECAs, including ComBat and Harmony, can be effective in this scenario [23].

  - Confounded Design: Biological groups are completely processed in separate batches (e.g., all controls in one batch and all treatments in another). In this challenging case, ratio-based methods are often the most effective and sometimes the only viable option, as they can leverage reference materials to disentangle technical noise from biological signal [23].

- Data Type (Bulk vs. Single-Cell):

  - Bulk Omics: Model-based (ComBat, limma) and ratio-based methods are commonly used [23] [8].

  - Single-Cell RNA-seq: Methods designed for high-dimensional, sparse data are preferred. A 2025 benchmark study found that Harmony was the only method that consistently performed well without introducing detectable artifacts, while other popular methods like MNN, scVI, and LIGER often altered the data considerably [25]. Seurat is also widely used for scRNA-seq integration [4] [11].

- Data Completeness: For large-scale studies with many missing values (incomplete omic profiles), a 2025 study introduced Batch-Effect Reduction Trees (BERT), which outperforms other imputation-free methods like HarmonizR in both data retention and computational speed [24].

How can I detect if my data has batch effects?

Batch effects can be identified through visualization and quantitative metrics.

| Method | Description | How to Interpret |

|---|---|---|

| Principal Component Analysis (PCA) | A dimensionality reduction technique that projects data onto the directions of maximum variance [4]. | If samples cluster strongly by batch, rather than by biological group, in the first few principal components, a batch effect is likely present [4]. |

| t-SNE/UMAP Plot Examination | Non-linear dimensionality reduction techniques used to visualize high-dimensional data in 2D or 3D [23] [4]. | Before correction, cells from the same batch may cluster together unnaturally. After successful correction, cells should cluster by biological cell type or group, with batches mixed within clusters [4]. |

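| Quantitative Metrics | Numerical scores that measure the degree of batch mixing and biological preservation. | Metrics include the k-nearest neighbor batch effect test (kBET), adjusted Rand index (ARI), and graph integration Local Inverse Simpson's Index (graph iLISI) [4]. The direction of "better" depends on the metric: a lower kBET rejection rate and a higher iLISI indicate better batch mixing, while a higher ARI against known cell-type labels indicates better preservation of biology. Benchmarks often rescale such scores to a 0 to 1 range in which values closer to 1 indicate better integration. |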
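To make the idea behind LISI-style scores concrete, the sketch below computes an unweighted inverse Simpson's index of batch labels in each cell's neighborhood. It is a simplification introduced here for illustration: the published LISI uses perplexity-based neighbor weights, and the function name `simple_lisi`, the choice of `k`, and the simulated embeddings are all assumptions.

```python
import numpy as np

def simple_lisi(embedding, labels, k=10):
    """Unweighted inverse Simpson's index of labels among each cell's k neighbors.

    Published LISI uses perplexity-based neighbor weights; this version
    weights all k neighbors equally, so values are only roughly comparable.
    """
    labels = np.asarray(labels)
    # Pairwise Euclidean distances (fine for small examples only).
    diff = embedding[:, None, :] - embedding[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=2))
    np.fill_diagonal(dist, np.inf)            # exclude each cell from its own neighbors
    neighbors = np.argsort(dist, axis=1)[:, :k]
    scores = np.empty(len(labels))
    for i, idx in enumerate(neighbors):
        _, counts = np.unique(labels[idx], return_counts=True)
        p = counts / counts.sum()
        scores[i] = 1.0 / np.sum(p ** 2)      # 1 = one label, up to the number of labels
    return scores

# Simulated 2-D embeddings with two batches of 100 cells each.
rng = np.random.default_rng(2)
batch = np.array([0] * 100 + [1] * 100)
separated = rng.normal(size=(200, 2)) + np.where(batch[:, None] == 1, 8.0, 0.0)
mixed = rng.normal(size=(200, 2))

lisi_before = simple_lisi(separated, batch).mean()
lisi_after = simple_lisi(mixed, batch).mean()
```

When batches are fully separated, every neighborhood contains a single batch and the score sits at 1. When two batches are well mixed, it moves toward 2, the number of batches.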

What are the signs of overcorrection, and how can I avoid it?

Overcorrection occurs when a batch effect correction algorithm removes not only technical variation but also genuine biological signal. This can lead to misleading conclusions.

- Loss of Biological Markers: Canonical, well-established cell-type-specific markers (e.g., a specific T-cell marker) are absent from the differential expression analysis after correction.

- Non-Specific Markers: The list of identified cluster-specific markers becomes dominated by genes that are universally highly expressed (e.g., ribosomal genes) rather than specific to a cell type.

- Blurred Clusters: There is a substantial overlap in the marker genes between clusters that are known to be biologically distinct.

- Missing Pathways: Differential expression analysis fails to identify pathways that are expected to be active given the known biological conditions and cell types in the experiment.
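Several of these warning signs can be screened for automatically once per-cluster marker lists are available. The sketch below is a hypothetical helper, not part of any cited tool: the function name, the marker lists, and the example clusters are illustrative, and the ribosomal check relies on the human gene-symbol convention that ribosomal protein genes begin with RPS or RPL.

```python
def marker_diagnostics(cluster_markers, canonical_markers):
    """Flag possible overcorrection from per-cluster marker gene lists.

    cluster_markers: dict cluster name -> list of top marker genes.
    canonical_markers: dict cluster name -> set of expected marker genes.
    """
    report = {}
    for cluster, genes in cluster_markers.items():
        genes = list(genes)
        # Human ribosomal protein genes conventionally start with RPS or RPL.
        ribo = [g for g in genes if g.upper().startswith(("RPS", "RPL"))]
        expected = canonical_markers.get(cluster, set())
        others = set().union(
            *(set(v) for c, v in cluster_markers.items() if c != cluster)
        )
        report[cluster] = {
            "ribosomal_fraction": len(ribo) / len(genes),
            "canonical_found": sorted(expected & set(genes)),
            "shared_with_other_clusters": len(set(genes) & others) / len(genes),
        }
    return report

# Hypothetical marker lists after correction.
markers = {
    "T cells": ["CD3D", "CD3E", "IL7R", "RPS12"],
    "B cells": ["RPL13", "RPS12", "RPS27", "RPL10"],
}
canonical = {"T cells": {"CD3D", "CD3E"}, "B cells": {"MS4A1", "CD79A"}}
report = marker_diagnostics(markers, canonical)
```

Here the "B cells" cluster would raise concern: its markers are entirely ribosomal and none of its expected canonical markers appear.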

How to Avoid Overcorrection

- Use Reference Materials: When available, using ratio-based methods with well-characterized reference materials can help preserve biological truth [23].

- Benchmark Methods: Test multiple correction algorithms and compare the results. A method that retains known biological signals while effectively mixing batches is ideal.

- Incremental Correction: Avoid using the strongest possible correction strength. Some methods, like those based on conditional Variational Autoencoders (cVAE), can lose biological information if the regularization forcing integration is too strong [21].

What experimental protocols and reagents are key for mitigating batch effects?

Proactive experimental design is the most effective way to minimize batch effects. The following table lists essential reagents and materials used in the Quartet Project, which provides a framework for quality control in multiomics studies [23].

| Research Reagent / Material | Function in Mitigating Batch Effects |

|---|---|

| Multiomics Reference Materials (RMs) | Commercially available or in-house standardized materials derived from well-characterized cell lines (e.g., B-lymphoblastoid cells). They are processed alongside study samples in every batch to serve as a technical baseline for ratio-based correction [23]. |

| Standardized Nucleic Acid Extraction Kits | Using the same lot of RNA/DNA extraction kits across all batches minimizes variability introduced during sample preparation [26]. |

| RNA Stabilization Reagents | Reagents like DNA/RNA Shield preserve sample integrity at the point of collection, preventing degradation-driven batch effects, especially in multi-center studies [27]. |

| Standardized Library Prep Kits | Using consistent lots of library preparation kits (e.g., NEBNext RNA Library Prep Kits) ensures uniform adapter ligation, fragmentation, and amplification, reducing technical noise between batches [28]. |

Detailed Protocol: Implementing Ratio-Based Correction with Reference Materials

This protocol is adapted from large-scale multiomics studies and is highly effective for confounded batch-group scenarios [23].

Experimental Design:

- Select one or more well-characterized multiomics reference materials (RMs). In the Quartet Project, four reference materials are used [23].

- Design your experiment so that every batch includes multiple replicates (e.g., 3) of the chosen RM(s) alongside your study samples.

Sample Processing:

- Process all samples (study samples and RMs) in the same batch identically, using the same reagents, equipment, and protocols to the greatest extent possible [11].

Data Generation:

- Generate your omics data (e.g., transcriptomics, proteomics) for all samples in the batch.

Data Transformation (Ratio Calculation):

- For each feature (e.g., a gene's expression value) in each study sample, transform the absolute value into a ratio relative to the average value of that feature in the RM replicates from the same batch.

- Formula:

Ratio(Sample) = Absolute_Value(Sample) / Mean(Absolute_Value(RM_Replicates))

Data Integration:

- The resulting ratio-based values for all study samples across all batches can now be integrated for downstream analysis, as the technical variation has been scaled out relative to the common reference.
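The ratio calculation above translates almost directly into code. The following is a minimal sketch on toy numbers; the function name `ratio_correct` and the input layout (samples in rows, a boolean flag marking reference-material replicates) are assumptions made for the example.

```python
import numpy as np

def ratio_correct(values, batch, is_reference):
    """Divide each sample by the mean reference-material profile of its batch.

    values: samples x features array of positive measurements.
    batch: batch label per sample; is_reference: True for RM replicates.
    Returns ratio-scaled values for the study (non-reference) samples only.
    """
    values = np.asarray(values, dtype=float)
    batch = np.asarray(batch)
    is_reference = np.asarray(is_reference, dtype=bool)
    ratios = np.empty_like(values)
    for b in np.unique(batch):
        in_batch = batch == b
        rm_mean = values[in_batch & is_reference].mean(axis=0)
        ratios[in_batch] = values[in_batch] / rm_mean
    return ratios[~is_reference]

# Toy example: batch 2 reads every feature 2x higher than batch 1.
values = np.array([
    [10.0, 100.0],   # batch 1, RM replicate
    [10.0, 100.0],   # batch 1, RM replicate
    [20.0,  50.0],   # batch 1, study sample
    [20.0, 200.0],   # batch 2, RM replicate
    [20.0, 200.0],   # batch 2, RM replicate
    [40.0, 100.0],   # batch 2, study sample (same biology as above)
])
batch = [1, 1, 1, 2, 2, 2]
is_rm = [True, True, False, True, True, False]
corrected = ratio_correct(values, batch, is_rm)
```

Both study samples end up with the same ratio profile even though their raw values differ twofold, because the batch-wide scaling cancels against the reference replicates. Many workflows additionally log-transform the ratios before analysis.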

How do I handle massive datasets or those with many missing values?

For large-scale data integration tasks involving thousands of datasets or data with substantial missing values, a 2025 study introduced Batch-Effect Reduction Trees (BERT) [24].

- Principle: BERT decomposes a large data integration task into a binary tree of smaller, pairwise batch-effect correction steps using established methods like ComBat or limma. This allows for efficient parallel processing [24].

- Advantages over Previous Methods:

- Retains More Data: BERT was shown to retain up to five orders of magnitude more numeric values compared to the previous state-of-the-art method, HarmonizR [24].

- Computational Efficiency: It leverages multi-core systems for up to an 11x runtime improvement [24].

- Handles Covariates: It can account for experimental conditions (covariates) and use reference samples to correct datasets with severely imbalanced designs [24].

ComBat and Empirical Bayes Frameworks for Known Batch Variables

Frequently Asked Questions (FAQs)

Fundamental Concepts

Q1: What is the core principle behind ComBat's Empirical Bayes approach? ComBat uses an Empirical Bayes framework to adjust for batch effects by pooling information across all genes to estimate batch-specific parameters (mean and variance). This approach is particularly powerful for small sample sizes, as it "shrinks" the batch effect estimates towards a common value, making the corrections more stable and reliable [1] [29].

Q2: How does ComBat differ from simply including batch as a covariate in a linear model? While including batch as a covariate in a one-step linear model is a valid approach, ComBat's two-step method offers a richer adjustment. ComBat models and corrects for both additive (location) and multiplicative (scale) batch effects across batches, not just the mean. Furthermore, its Empirical Bayes shrinkage provides more robust performance, especially with many batches or small batch sizes [30] [31].
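To make the location and scale distinction concrete, the sketch below applies a plain per-gene location/scale adjustment. It is deliberately not ComBat: there is no Empirical Bayes shrinkage and no protection of biological covariates, so it would also remove biology that differs between batches. The function name and simulated data are assumptions for illustration.

```python
import numpy as np

def location_scale_adjust(data, batch):
    """Per-gene location/scale batch adjustment without Empirical Bayes shrinkage.

    data: genes x samples matrix of normalized, continuous values.
    Each batch is standardized gene by gene, then rescaled to the pooled
    within-batch standard deviation and the overall gene mean.
    """
    data = np.asarray(data, dtype=float)
    batch = np.asarray(batch)
    grand_mean = data.mean(axis=1, keepdims=True)
    batches = np.unique(batch)
    # Pooled within-batch variance per gene.
    resid_sq = np.zeros(data.shape[0])
    for b in batches:
        block = data[:, batch == b]
        resid_sq += ((block - block.mean(axis=1, keepdims=True)) ** 2).sum(axis=1)
    pooled_sd = np.sqrt(resid_sq / (data.shape[1] - len(batches)))[:, None]

    adjusted = np.empty_like(data)
    for b in batches:
        cols = batch == b
        block = data[:, cols]
        mean_b = block.mean(axis=1, keepdims=True)         # additive (location) effect
        sd_b = block.std(axis=1, ddof=1, keepdims=True)    # multiplicative (scale) effect
        adjusted[:, cols] = (block - mean_b) / sd_b * pooled_sd + grand_mean
    return adjusted

# Simulated data: batch 1 is shifted by +2 and its spread inflated 3x.
rng = np.random.default_rng(3)
batch = np.array([0] * 10 + [1] * 10)
data = rng.normal(size=(50, 20))
data[:, batch == 1] = data[:, batch == 1] * 3.0 + 2.0
adjusted = location_scale_adjust(data, batch)
```

A covariate-only linear model would remove the +2 shift but leave the threefold difference in spread. With only a few samples per batch, the per-gene `mean_b` and `sd_b` estimates become noisy, which is the situation the Empirical Bayes shrinkage described above is meant to stabilize.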

Q3: When should I use ComBat versus ComBat-seq? The choice depends on your data type:

- ComBat: Use on already normalized and continuous data, such as log-transformed microarray data or normalized RNA-seq data (e.g., log-CPM, TPM) [32] [33].

- ComBat-seq: Use on raw RNA-seq count data. It employs a negative binomial model, which is more appropriate for counts, and outputs a corrected count matrix suitable for downstream differential expression tools like DESeq2 and edgeR [5] [33].

Practical Implementation & Troubleshooting

Q4: I am getting errors regarding my data matrix. What are the input requirements? ComBat expects your data to be a cleaned and normalized genomic measure matrix (e.g., gene expression) with specific dimensions:

- The matrix should be in a "probe x sample" or "gene x sample" format [32].

- Ensure all values are numerical. Check for and remove any non-numeric characters, NA values, or genes with zero variance across all samples.

- For standard ComBat, the data should be pre-normalized using appropriate methods (e.g., RMA for microarrays, voom for RNA-seq) to ensure genes have similar overall means and variances [34] [29].
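A pre-flight check along these lines can catch most input problems before correction is attempted. The sketch below is a generic NumPy cleaning step, not part of any ComBat package; the function name and the toy matrix are assumptions.

```python
import numpy as np

def clean_matrix(expr, gene_names):
    """Drop genes with missing/non-finite values or zero variance.

    expr: genes x samples array; gene_names: one name per row.
    """
    expr = np.asarray(expr, dtype=float)   # raises if entries are non-numeric
    finite = np.isfinite(expr).all(axis=1)
    variable = np.zeros(expr.shape[0], dtype=bool)
    variable[finite] = expr[finite].var(axis=1) > 0
    keep = finite & variable
    kept_names = [g for g, k in zip(gene_names, keep) if k]
    return expr[keep], kept_names

expr = np.array([
    [5.1, 4.8, 5.3, 5.0],      # fine
    [2.0, np.nan, 2.2, 2.1],   # contains a missing value
    [3.0, 3.0, 3.0, 3.0],      # zero variance
    [7.2, 6.9, 7.5, 7.1],      # fine
])
cleaned, kept = clean_matrix(expr, ["geneA", "geneB", "geneC", "geneD"])
```

Dropping genes with missing values is the simplest option; whether to impute instead is a study-specific decision.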

Q5: How do I specify a reference batch, and why would I want to?

You can specify a reference batch using the ref.batch parameter [32]. This is useful when you have a batch you consider a "gold standard" (e.g., a control batch, the largest batch, or a batch from a primary study). All other batches are then adjusted towards this reference, preserving the biological signal in the reference batch. This can be particularly helpful in meta-analyses [5].

Q6: What is the difference between parametric and non-parametric priors in ComBat?

- Parametric (par.prior=TRUE): Assumes the batch effects follow specific distributions (a normal prior for the additive effects and an inverse-gamma prior for the multiplicative effects). It is faster and is the default, recommended for most use cases [32] [29].

- Non-parametric (par.prior=FALSE): Does not assume a specific distribution for the batch effects. It is more flexible but computationally slower. Use this if a prior plot (generated with prior.plots=TRUE) shows that the empirical distribution of batch effects does not fit the parametric model well [29].

Q7: After using ComBat, my downstream differential expression analysis shows exaggerated significance or reduced power. Why? This is a known pitfall of two-step batch correction methods like ComBat. The adjustment process introduces a correlation structure between samples within the same batch. If this induced correlation is ignored in the downstream linear model, it can lead to inflated false positive rates (exaggerated significance) or, in some cases, loss of power. The solution is to use a downstream method that accounts for this correlation, such as Generalized Least Squares (GLS) with the estimated correlation matrix [30].

Troubleshooting Guide

| Problem | Potential Cause | Solution |

|---|---|---|

| Convergence errors or model fitting failures. | Highly unbalanced design or very small batch sizes (e.g., n<2). | Check group distribution across batches. If a group is absent from a batch, correction may be impossible. Consider combining small batches if justified. |

| "Missing value" or "NA" errors. | The input data matrix contains NA, NaN, or non-numeric values. | Perform thorough data cleaning and imputation or removal of genes/samples with excessive missing values before correction [29]. |

| Persistent batch clustering in PCA after correction. | Overly strong batch effects confounded with biological conditions. | Verify that your experimental design is not fully confounded. Validate correction using quantitative metrics (kBET, LISI). Try non-parametric priors [1] [35]. |

| Loss of biological signal after correction (overcorrection). | Batch variable is highly correlated with the biological variable of interest. | Re-specify the mod argument to include a known biological covariate to protect it during correction. Always validate results visually and quantitatively [1] [32]. |

| Corrected data shows different results in R vs. Python. | Slight differences in optimization routines and random number generation between R's sva and Python's pyComBat. | Differences are typically negligible for downstream analysis. For RNA-seq, ComBat-seq and pyComBat produce identical integer outputs [33]. |
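Several rows in the table above come down to checking how biological groups are distributed across batches. A quick cross-tabulation, sketched below with the standard library only, makes confounded or incomplete designs visible before any correction is run. The function name and the example labels are hypothetical.

```python
from collections import Counter

def design_table(batches, groups):
    """Cross-tabulate batch by biological group and flag problem designs."""
    counts = Counter(zip(batches, groups))
    batch_levels = sorted(set(batches))
    group_levels = sorted(set(groups))
    table = {b: {g: counts.get((b, g), 0) for g in group_levels}
             for b in batch_levels}
    # Fully confounded: more than one group exists, yet every batch
    # contains samples from exactly one group.
    fully_confounded = len(group_levels) > 1 and all(
        sum(n > 0 for n in row.values()) == 1 for row in table.values()
    )
    # Missing cells: some group is absent from some batch.
    missing = [(b, g) for b in batch_levels for g in group_levels
               if table[b][g] == 0]
    return table, fully_confounded, missing

# Hypothetical design: all controls in run1, all treated samples in run2.
batches = ["run1"] * 4 + ["run2"] * 4
groups = ["control"] * 4 + ["treated"] * 4
table, confounded, missing = design_table(batches, groups)
```

If `confounded` is true, batch and biology cannot be separated by model-based correction alone, and the reference-material strategies discussed elsewhere in this guide become relevant.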

Experimental Protocols & Workflows

Standard Workflow for Bulk RNA-seq Data Using ComBat-seq

This protocol is designed to correct for known batch effects in raw RNA-seq count data.

1. Prerequisite Software and Data Preparation

2. Data Preprocessing and Filtering Filter out lowly expressed genes to reduce noise.
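One common way to carry out this filtering step is a counts-per-million (CPM) threshold. The sketch below is an illustration in NumPy rather than the workflow's original code; the function name and the thresholds (`min_cpm`, `min_samples`) are assumptions that should be tuned to the study's sequencing depth and group sizes.

```python
import numpy as np

def filter_low_expression(counts, min_cpm=1.0, min_samples=3):
    """Keep genes with CPM >= min_cpm in at least min_samples samples.

    counts: genes x samples array of raw counts. The thresholds are
    illustrative defaults, not values prescribed by this guide.
    """
    counts = np.asarray(counts)
    lib_sizes = counts.sum(axis=0)
    cpm = counts / lib_sizes * 1e6
    keep = (cpm >= min_cpm).sum(axis=1) >= min_samples
    return counts[keep], keep

counts = np.array([
    [500, 620, 480, 550],               # well expressed
    [0,   1,   0,   0],                 # essentially absent
    [30,  25,  41,  38],                # moderately expressed
    [999470, 999354, 999479, 999412],   # filler so each library sums to 1e6
])
filtered, keep = filter_low_expression(counts)
```

The function returns the retained rows as raw counts, so the filtered matrix can still be passed to count-based correction such as ComBat-seq.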

3. Applying ComBat-seq Correction

Apply the batch effect correction directly to the raw counts. The group parameter is used to protect the biological signal of interest.

4. Post-Correction Validation Use Principal Component Analysis (PCA) to visually assess the success of the correction.

- Successful Correction: Samples should no longer cluster primarily by batch and should instead group by biological condition (e.g., treatment vs. control) [36].
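For the PCA check, the following sketch computes principal component scores with NumPy's SVD on a log-scale expression matrix. The simulated data, the shift of 5 units between batches, and the function name `pca_scores` are illustrative assumptions; in practice the input would be the log-transformed matrix before and after correction.

```python
import numpy as np


def pca_scores(log_expr, n_components=2):
    """PCA on a genes x samples matrix of log-scale expression values.

    Returns (samples x n_components scores, explained variance ratios).
    """
    x = np.asarray(log_expr, dtype=float).T      # samples x genes
    x = x - x.mean(axis=0)                       # center each gene
    u, s, _ = np.linalg.svd(x, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]
    explained = s**2 / np.sum(s**2)
    return scores, explained[:n_components]


# Simulated example: 50 genes, 8 samples, batch 1 shifted by 5 units.
rng = np.random.default_rng(0)
batch = np.array([0, 0, 0, 0, 1, 1, 1, 1])
uncorrected = rng.normal(0.0, 0.5, size=(50, 8)) + 5.0 * batch

scores, explained = pca_scores(uncorrected)
for label, pc1 in zip(batch, scores[:, 0]):
    print(f"batch {label}: PC1 = {pc1:.2f}")
```

Plotting the first two columns of `scores`, colored once by batch and once by biological condition, gives the visual comparison described above.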

Advanced Workflow: Accounting for Induced Correlation in Downstream Analysis

As highlighted in the FAQs, a naive two-step approach can bias inference. This advanced workflow mitigates that risk.

1. Perform ComBat Adjustment

Generate the batch-corrected data matrix as usual.

2. Estimate the Induced Sample Correlation Matrix

The ComBat adjustment process introduces a known correlation structure, defined by the formula $\text{Correlation} = I - X(X^\top X)^{-1} X^\top$, where $X$ is the batch design matrix and $I$ is the identity matrix [30].

3. Conduct Downstream Analysis with Correlation Adjustment

Incorporate the correlation matrix into your differential expression analysis using Generalized Least Squares (GLS).
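The matrix in step 2 can be computed directly from the batch labels. The sketch below assumes that X is a one-hot batch indicator matrix with no intercept or additional covariates, which is a simplification made for this example; the function name is hypothetical.

```python
import numpy as np


def induced_correlation(batch):
    """Return I - X (X^T X)^{-1} X^T for a one-hot batch design matrix X."""
    batch = np.asarray(batch)
    levels = np.unique(batch)
    # One column per batch, one row per sample (assumed design, no covariates).
    x = (batch[:, None] == levels[None, :]).astype(float)
    projection = x @ np.linalg.inv(x.T @ x) @ x.T
    return np.eye(len(batch)) - projection


m = induced_correlation([0, 0, 1, 1, 1])
print(np.round(m, 3))
```

Because this matrix is a projection, it is singular and cannot be inverted directly. A GLS step therefore needs a generalized inverse or some form of regularization, and that choice should follow the cited method [30] rather than this sketch.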

Method Selection and Comparison Tables

Comparison of ComBat Variants and Related Methods

| Method | Input Data Type | Key Features | Strengths | Limitations |

|---|---|---|---|---|

| ComBat [1] [32] | Normalized, continuous data (Microarray, log-CPM) | Empirical Bayes, adjusts mean and variance. | Powerful for small batches, widely used and validated. | Not designed for raw counts; can introduce non-integer values. |

| ComBat-seq [5] [33] | Raw count data (RNA-seq) | Negative binomial model, outputs integers. | Preserves count structure, ideal for DESeq2/edgeR. | May have lower power than ComBat-ref in some scenarios. |

| ComBat-ref [5] | Raw count data (RNA-seq) | Selects a low-dispersion batch as reference. | High statistical power, controls FDR effectively. | Newer method, requires evaluation in diverse datasets. |

| limma removeBatchEffect [1] [34] | Normalized, continuous data | Linear model-based adjustment. | Fast, integrated into limma workflow. | Only adjusts for additive mean effects. |

| SVA [1] [30] | Normalized or count data | Estimates hidden batch effects (surrogate variables). | Does not require known batch labels. | Risk of overcorrection if surrogate variables capture biology. |
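To make the "additive mean effects" entry in the table concrete, the sketch below removes per-batch mean shifts from log-scale data. It is a simplified illustration of an additive adjustment, not limma's implementation: it ignores biological covariates, so it would also remove biology that differs between batches. The function name is hypothetical.

```python
import numpy as np


def remove_batch_means(log_expr, batch):
    """Subtract each batch's deviation from the average batch mean, per gene.

    log_expr: genes x samples matrix of log-scale values.
    batch: one label per sample.
    """
    x = np.asarray(log_expr, dtype=float)
    batch = np.asarray(batch)
    levels = np.unique(batch)
    # genes x batches matrix of per-batch means
    batch_means = np.stack([x[:, batch == b].mean(axis=1) for b in levels], axis=1)
    center = batch_means.mean(axis=1)            # average of the batch means
    corrected = x.copy()
    for j, b in enumerate(levels):
        shift = (batch_means[:, j] - center)[:, None]
        corrected[:, batch == b] -= shift
    return corrected


example = np.array([[1.0, 2.0, 11.0, 12.0]])
print(remove_batch_means(example, [0, 0, 1, 1]))
```

Only the location of each batch changes; within-batch spread is left untouched, which is the limitation the table notes in contrast to ComBat's mean and variance adjustment.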

Key Parameters for the ComBat Function

| Parameter | Description | Recommendation |

|---|---|---|

| dat | Input genomic data matrix (genes x samples). | Must be cleaned and normalized for standard ComBat. |

| batch | Factor or vector indicating batch membership. | Required. Ensure at least 2 samples per batch. |

| mod | Model matrix for biological covariates to preserve. | Highly recommended to include to prevent overcorrection. |

| par.prior | Whether to use parametric priors. | TRUE (faster) unless prior plots show poor fit. |

| prior.plots | Whether to produce plots to check prior fit. | Use TRUE to diagnose if parametric prior is suitable. |

| ref.batch | Specifies a batch to which others are adjusted. | Use if a specific batch should serve as a benchmark. |

| mean.only | If TRUE, only corrects mean batch effects. | Set to FALSE to correct for mean and variance. |

Visual Workflows and Diagrams

[Figure: ComBat Empirical Bayes Workflow]

[Figure: Batch Correction Decision Guide]

| Item | Function in Context of Batch Correction |

|---|---|

| Technical Replicates | Samples from the same biological source processed across different batches. Essential for quantifying the magnitude of batch effects and validating correction methods [31]. |

| Pooled Quality Control (QC) Samples | A standardized sample (e.g., a reference RNA) run in every batch. Allows for direct modeling of technical variation and instrument drift across batches [1]. |

| Negative Control Genes | A set of genes assumed not to be influenced by the biological conditions of interest (e.g., housekeeping genes). Used by some methods (e.g., RUV) to estimate the factor of unwanted variation [31]. |

| Reference Batch | A specific batch selected as a benchmark (e.g., the largest batch, or one from the primary study). In ComBat-ref, the batch with the smallest dispersion is chosen to enhance statistical power in downstream analysis [5]. |

| Balanced Experimental Design | The practice of distributing all biological conditions of interest evenly across all batches. This is the single most important preventative measure to minimize confounding and make batch effects correctable [1] [35]. |

Batch effects are systematic, non-biological variations introduced into transcriptomics data by technical inconsistencies, such as differences in reagent lots, sequencing platforms, operators, or sample processing days. These effects can mask true biological signals and lead to false conclusions in differential expression analysis [1]. The ratio-based scaling method, also known as Ratio-G, is a batch-effect correction algorithm (BECA) that mitigates these technical variations by scaling the absolute feature values of study samples relative to those of concurrently profiled reference materials [23] [13].

This method is particularly effective in confounded scenarios, where the biological groups of interest coincide completely with batches and many other BECAs cannot distinguish technical variation from true biological difference. By expressing data relative to a stable benchmark, Ratio-G provides a robust mechanism for data integration and cross-batch comparability [23] [13].

Experimental Protocol and Workflow

Prerequisites and Experimental Design

Before implementing Ratio-G, ensure proper experimental design:

- Reference Material Selection: Establish stable, well-characterized reference materials. In the Quartet Project, researchers used immortalized B-lymphoblastoid cell lines (LCLs) from a family quartet as comprehensive reference materials [23] [13].

- Batch Planning: Include the same reference material in every batch of your experiment.

- Replication: Process multiple technical replicates of reference materials within each batch (typically 3 replicates) [13].

- Randomization: Balance biological groups across batches when possible, though Ratio-G remains effective even in completely confounded designs.

Step-by-Step Methodology

Table: Detailed Ratio-G Implementation Steps

| Step | Procedure | Technical Specifications | Quality Control Checkpoints |

|---|---|---|---|

| 1. Reference Material Processing | Process reference samples alongside study samples in each batch | Use identical library preparation protocols; maintain consistent RNA input amounts | Confirm RNA integrity numbers (RIN > 8.0 for reference materials) |

| 2. Data Generation | Generate transcriptomics data using your standard platform | Maintain consistent sequencing depth across batches; minimum 30 million reads per sample | Check sequencing quality metrics (Q-score > 30, GC content consistency) |

| 3. Expression Quantification | Generate expression values (FPKM, TPM, or count data) | Use standardized quantification pipelines (e.g., STAR, Kallisto) | Confirm correlation between technical replicates (R² > 0.95) |

| 4. Ratio Transformation | For each feature (gene) in each sample: Calculate ratio = Study sample value / Reference material value | Use median of reference material replicates as denominator; apply log2 transformation post-ratio | Check for division by zero; apply pseudocount if necessary |

| 5. Data Integration | Combine ratio-scaled values from multiple batches | Create unified expression matrix of ratio values | Perform PCA to confirm batch mixing |
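Step 4 of the table can be sketched in a few lines. The example below divides each study value by the per-gene median of the reference replicates from the same batch and then applies a log2 transform, as the table specifies. The function name `ratio_scale`, the default pseudocount of 1, and the toy numbers are assumptions for illustration only.

```python
import numpy as np


def ratio_scale(study, reference, pseudocount=1.0):
    """Log2 ratio of study samples to the median reference profile.

    study: genes x study samples (one batch).
    reference: genes x reference replicates profiled in the same batch.
    """
    study = np.asarray(study, dtype=float)
    reference = np.asarray(reference, dtype=float)
    ref_median = np.median(reference, axis=1, keepdims=True)
    # The pseudocount guards against division by zero for unexpressed genes.
    return np.log2((study + pseudocount) / (ref_median + pseudocount))


# Toy data: batch 2 measures the same samples with a 2x multiplicative bias.
study_b1 = [[10, 20], [100, 200]]
ref_b1 = [[9, 10, 12], [50, 48, 55]]
study_b2 = [[20, 40], [200, 400]]
ref_b2 = [[18, 20, 24], [100, 96, 110]]

scaled_b1 = ratio_scale(study_b1, ref_b1, pseudocount=0.0)
scaled_b2 = ratio_scale(study_b2, ref_b2, pseudocount=0.0)
print(scaled_b1)
print(scaled_b2)
```

With the pseudocount set to zero, a purely multiplicative batch bias cancels exactly because it affects numerator and denominator equally. With a non-zero pseudocount the cancellation is only approximate, most noticeably for low-expression genes.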

[Figure: Workflow Visualization]

Performance Evaluation and Comparison

Quantitative Performance Metrics

Table: Ratio-G Performance Comparison with Other BECAs

| Performance Metric | Ratio-G Method | ComBat | limma removeBatchEffect | SVA |

|---|---|---|---|---|

| Signal-to-Noise Ratio | Superior improvement in confounded scenarios [23] | Moderate | Moderate | Variable |

| False Discovery Rate | Effectively controls FDR in DE analysis [13] | May introduce false positives | May introduce false positives | Risk of overcorrection |

| Data Retention | Retains all numeric values after transformation [13] | May lose features with missing values | May lose features with missing values | Depends on missing data pattern |

| Confounded Scenario Performance | Maintains effectiveness [23] [13] | Limited effectiveness | Limited effectiveness | Limited effectiveness |

| Computational Efficiency | High (simple calculation) [13] | Moderate (empirical Bayes) | High (linear models) | High (surrogate variable estimation) |

| Biological Signal Preservation | High when reference is appropriate [13] | Risk of removing biological signal | Risk of removing biological signal | High risk of removing biological signal |

Validation Methods for Ratio-G Implementation

After applying Ratio-G, confirm successful batch effect correction using:

- Principal Component Analysis (PCA): Visualize pre- and post-correction plots to confirm samples no longer cluster by batch [1].

- Average Silhouette Width (ASW): Quantify batch mixing with target ASWbatch < 0.25 and biological separation with ASWlabel > 0.5 [24].

- Differential Expression Consistency: Confirm consistent DE results across batches for known markers.

- Positive Control Genes: Verify that expression patterns of housekeeping genes align with expectations.
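The average silhouette width from the list above can be computed as follows. This is a minimal sketch using Euclidean distances on sample coordinates (for example, the top principal components); it returns the raw silhouette on the scale from -1 to 1, and whether the thresholds quoted above assume this scale or a rescaled variant is not stated here, so treat the comparison as an assumption. At least two distinct labels are required.

```python
import numpy as np


def average_silhouette_width(points, labels):
    """Mean silhouette width over all samples (Euclidean distance)."""
    x = np.asarray(points, dtype=float)          # samples x features
    labels = np.asarray(labels)
    dist = np.linalg.norm(x[:, None, :] - x[None, :, :], axis=2)
    widths = []
    for i in range(len(x)):
        same = labels == labels[i]
        same[i] = False                          # exclude the sample itself
        if not same.any():
            widths.append(0.0)                   # singleton cluster
            continue
        a = dist[i, same].mean()                 # cohesion within own cluster
        b = min(                                 # nearest other cluster
            dist[i, labels == other].mean()
            for other in np.unique(labels)
            if other != labels[i]
        )
        widths.append((b - a) / max(a, b))
    return float(np.mean(widths))


coords = [[0, 0], [0, 1], [10, 0], [10, 1]]
asw_separated = average_silhouette_width(coords, ["b1", "b1", "b2", "b2"])
asw_mixed = average_silhouette_width(coords, ["b1", "b2", "b1", "b2"])
print(asw_separated, asw_mixed)
```

Running the function once with batch labels and once with biological labels gives the two quantities referred to as ASWbatch and ASWlabel: a low value for batch labels and a high value for biological labels indicate good mixing with preserved biology.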

Troubleshooting Guide: Common Issues and Solutions

Reference Material Issues

Problem: High variability in reference material measurements across replicates.

- Potential Cause: Degradation of reference material or inconsistent processing.

- Solution:

  - Check RNA quality of reference material (RIN > 8.0)

  - Increase number of technical replicates (minimum 3)

  - Use median value instead of mean for ratio calculation

  - Establish new reference material batch if variability persists

Problem: Reference material shows extreme values for specific genes.

- Potential Cause: Biological differences between reference and study samples.

- Solution:

  - Filter out genes with extreme values in reference material

  - Use multiple reference materials to identify consistent patterns

  - Apply modified ratio using percentile normalization instead of raw values

Data Quality Issues

Problem: Poor batch effect correction after Ratio-G application.

- Potential Cause: Batch effects overwhelming biological signal or inappropriate reference.

- Solution:

  - Confirm reference material is processed identically to test samples

  - Check for confounding between biological groups and batches

  - Combine Ratio-G with other methods (e.g., pre-filtering with Harmony)

  - Validate with positive control genes with known expression patterns

Problem: Introduction of missing values after ratio transformation.

- Potential Cause: Low-expression genes in reference material creating division artifacts.

- Solution:

  - Apply minimum expression threshold to both numerator and denominator

  - Use pseudocount addition before ratio calculation

  - Filter genes with consistently low expression across batches

Integration Challenges

Problem: Inconsistent results when integrating more than 5 batches.

- Potential Cause: Drift in reference material performance or experimental conditions.

- Solution:

  - Implement quality control charts for reference material performance

  - Use cross-batch normalization with subset of samples

  - Consider hierarchical Ratio-G application for large-scale studies
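A quality control chart for reference material performance can be as simple as the sketch below. It assumes one summary value per batch (for example, the median log2 expression of the reference material), sets control limits from an initial set of baseline batches, and flags later batches that fall outside them. The baseline size, the 3-sigma rule, and the function names are illustrative assumptions.

```python
from statistics import mean, stdev


def control_limits(baseline, n_sigma=3.0):
    """Lower and upper limits from baseline batches (mean +/- n_sigma * SD)."""
    center = mean(baseline)
    spread = stdev(baseline)
    return center - n_sigma * spread, center + n_sigma * spread


def flag_drift(values, baseline_size=5, n_sigma=3.0):
    """Return indices of batches after the baseline that fall outside limits."""
    lower, upper = control_limits(values[:baseline_size], n_sigma)
    return [
        i
        for i, value in enumerate(values)
        if i >= baseline_size and not (lower <= value <= upper)
    ]


# One summary value of the reference material per batch (toy numbers).
reference_summary = [10.0, 10.1, 9.9, 10.05, 9.95, 10.02, 10.9, 10.0]
flagged = flag_drift(reference_summary)
print(flagged)
```

Flagged batches are candidates for closer inspection of the reference material before their ratios are integrated with the rest of the study.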

Comparison with Other Batch Effect Correction Methods

While Ratio-G demonstrates particular strength in confounded scenarios, understanding its position in the BECA landscape helps researchers select appropriate methods:

Table: Method Selection Guide for Different Experimental Scenarios

| Experimental Scenario | Recommended Method | Rationale | Implementation Considerations |

|---|---|---|---|

| Completely Confounded Design | Ratio-G | Reported as the only evaluated method that effectively handles batch-group confounding [23] [13] | Requires reference materials; simple implementation |

| Balanced Batch Design | ComBat, limma, or Ratio-G | All methods perform well with balanced designs [13] | Ratio-G provides most conservative correction |

| Single-Cell RNA-seq | Harmony or fastMNN with Ratio-G adaptation | Handles sparsity and cellular heterogeneity [1] [8] | Modified ratio approach needed for sparse data |

| Longitudinal Studies | Ratio-G with time-matched references | Preserves temporal biological signals [8] | Requires reference at each time point |

| Multi-Omics Integration | Ratio-G across platforms | Consistent approach across data types [23] [13] | Platform-specific reference materials ideal |

The Scientist's Toolkit: Essential Research Reagents

Table: Key Research Reagents for Ratio-G Implementation

| Reagent/Material | Function in Ratio-G Protocol | Specifications & Quality Controls |

|---|---|---|

| Reference Material | Serves as denominator in ratio calculation; normalizes technical variations | Stable, well-characterized (e.g., Quartet LCLs [13]); high RNA quality (RIN > 8.0); multiple aliquots |