A Comprehensive Guide to Principal Component Analysis (PCA) for Transcriptomics Data Exploration


Abstract

This guide provides a comprehensive framework for applying Principal Component Analysis (PCA) to transcriptomics data, from foundational concepts to advanced applications. It covers the essential role of PCA in overcoming the curse of dimensionality inherent in gene expression studies, where thousands of genes (variables) are measured across far fewer samples. The article details methodological best practices for data preprocessing, normalization, and component selection, while addressing common troubleshooting scenarios like batch effects and overfitting. By integrating validation strategies and comparing PCA with alternative dimensionality reduction techniques, this resource empowers researchers and drug development professionals to extract robust biological insights, improve data visualization, and enhance downstream analytical outcomes in their transcriptomics workflows.

Understanding PCA and Its Critical Role in Transcriptomics

Principal Component Analysis (PCA) is a fundamental dimension reduction technique that transforms high-dimensional data into a lower-dimensional form while preserving essential patterns and structures. For biologists working with transcriptomics data, PCA serves as a powerful exploratory tool that simplifies complex datasets containing thousands of gene expressions into manageable visualizations. This technique identifies principal components—new, uncorrelated variables that capture the maximum variance in the data. By projecting samples into a reduced space defined by these components, PCA enables researchers to identify sample relationships, detect outliers, assess batch effects, and uncover underlying biological structures without prior hypotheses. This overview provides both conceptual and practical guidance for applying PCA in biological research, with particular emphasis on transcriptomics applications.

The Core Concept of PCA

What is Dimension Reduction?

High-throughput biological technologies, such as RNA sequencing and microarray platforms, routinely generate datasets where the number of measured variables (genes, transcripts) far exceeds the number of biological samples. This "large d, small n" characteristic presents significant challenges for analysis and visualization [1]. PCA addresses this problem through linear transformation, converting potentially correlated variables into a smaller set of uncorrelated variables called principal components that successively maximize variance [2]. The first principal component (PC1) captures the direction of maximum variance in the data, with each subsequent component accounting for the next highest variance while being uncorrelated with previous components [3].

Geometric Interpretation

Geometrically, PCA represents a rotation of the original coordinate system to create new axes aligned with the directions of maximum variance [4]. Imagine a cloud of data points in multidimensional space; PCA identifies the primary axes of this cloud, with the first axis (PC1) oriented along the longest extent of the cloud, the second axis (PC2) along the next longest perpendicular extent, and so on. This rotation provides the "best angle" to view and evaluate data, making differences between observations more visible [5]. The technique is particularly valuable for visualizing relationships between samples in transcriptomics studies, where each sample represents a point in a space with thousands of dimensions (genes).

Key Properties of Principal Components

Principal components possess several mathematically important properties: (1) They are linear combinations of the original variables weighted by loadings [2]; (2) Different PCs are orthogonal (uncorrelated) to each other [1]; (3) The variance explained decreases with each subsequent component, with the first few components typically capturing most information [3]; and (4) The total variance of all components equals the total variance in the original dataset [2].
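The four properties listed above can be checked numerically. The following is a minimal sketch using NumPy on a small random matrix; the data, the seed, and the variable names are illustrative assumptions rather than part of any cited method.

```python
import numpy as np

rng = np.random.default_rng(0)
# Toy matrix: 20 samples (rows) x 5 variables (columns), two of them correlated.
X = rng.normal(size=(20, 5))
X[:, 1] += 0.8 * X[:, 0]
Xc = X - X.mean(axis=0)  # center each variable

cov = np.cov(Xc, rowvar=False)
eigvals, eigvecs = np.linalg.eigh(cov)
order = np.argsort(eigvals)[::-1]  # sort components by decreasing variance
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

# (1) Each PC score is a linear combination of the variables, weighted by loadings.
scores = Xc @ eigvecs

# (2) Loadings are orthonormal and the resulting scores are uncorrelated.
orthonormal = bool(np.allclose(eigvecs.T @ eigvecs, np.eye(5)))
uncorrelated = bool(np.allclose(np.cov(scores, rowvar=False), np.diag(eigvals)))

# (3) Variance explained never increases from one component to the next.
decreasing = bool(np.all(np.diff(eigvals) <= 0))

# (4) The component variances sum to the total variance of the original data.
total_preserved = bool(np.isclose(eigvals.sum(), np.trace(cov)))
```

All four flags evaluate to true for any real-valued data matrix, since they follow from the eigendecomposition of a symmetric covariance matrix rather than from the particular data used here.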

Mathematical Foundation

The PCA Algorithm: A Step-by-Step Process

The computation of principal components follows a standardized mathematical procedure:

Standardization and Centering Data: The aim is to standardize the range of continuous initial variables so each contributes equally to analysis. This is done by subtracting the mean and dividing by the standard deviation for each value of each variable, transforming all variables to comparable scales [5]. For RNA-seq data, this typically involves calculating log counts per million (CPM) values followed by Z-score normalization across samples for each gene [6].

Covariance Matrix Computation: The standardized data is used to compute a covariance matrix that quantifies how every pair of variables varies together. This symmetric matrix has variances along the diagonal and covariances in off-diagonal elements; because the data were standardized to unit variance, it is numerically identical to the correlation matrix [5].

Eigen Decomposition: Eigenvectors and eigenvalues of the covariance matrix are calculated. The eigenvectors (principal components) indicate the directions of maximum variance, while eigenvalues represent the magnitude of variance along each component [5]. This can be achieved through eigendecomposition of the covariance matrix or singular value decomposition (SVD) of the centered data matrix [2].

Component Selection: Eigenvectors are ranked by their corresponding eigenvalues in descending order. Researchers select a subset of components that capture sufficient variance, often using scree plots or cumulative variance thresholds to determine the optimal number [3].

Data Projection: The original data is projected onto the selected principal components to create new coordinates (scores) in the reduced-dimensional space [5].
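The five steps above can be sketched in a few lines of NumPy. This is an illustrative implementation under simple assumptions (a samples x variables matrix with no constant columns); the function name pca_eig and the toy data are hypothetical.

```python
import numpy as np

def pca_eig(X, n_components):
    """PCA by eigendecomposition; X has samples in rows and variables in columns."""
    # Step 1: standardize each variable (assumes no variable is constant).
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    # Step 2: covariance matrix of the standardized data (variables x variables).
    C = np.cov(Z, rowvar=False)
    # Step 3: eigendecomposition; eigh is appropriate because C is symmetric.
    eigvals, eigvecs = np.linalg.eigh(C)
    # Step 4: rank components by eigenvalue and keep the leading ones.
    order = np.argsort(eigvals)[::-1][:n_components]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    # Step 5: project the standardized data onto the retained components.
    scores = Z @ eigvecs
    return eigvals, eigvecs, scores

rng = np.random.default_rng(1)
# Toy data: 12 samples, 6 variables on deliberately different scales.
X = rng.normal(size=(12, 6)) * np.array([1, 5, 10, 1, 2, 3])
eigvals, loadings, scores = pca_eig(X, n_components=2)
```

The variance of each column of scores equals the corresponding eigenvalue, which is a convenient sanity check when implementing the procedure by hand.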

Covariance vs. Correlation Matrix

PCA can be performed using either the covariance matrix or correlation matrix. The covariance matrix preserves the original scale and units of measurement, making it sensitive to variables with large variances. In contrast, the correlation matrix standardizes all variables to unit variance, giving equal weight to all variables regardless of their original scales [4]. The choice depends on research objectives and data characteristics; correlation-based PCA is preferred when variables are on different scales or when researchers wish to avoid dominance by high-variance variables.

Table 1: Comparison of PCA Approaches Based on Data Preprocessing

| Processing Method | Data Transformation | When to Use | Advantages | Limitations |

|---|---|---|---|---|

| Covariance Matrix | Center only | Variables have similar scales and variances; preserving original data structure is important | Maintains original data relationships; simpler interpretation | Can be dominated by high-variance variables |

| Correlation Matrix | Center and scale | Variables have different units or scales; avoiding dominance by high-variance variables | Equal contribution from all variables; better for heterogeneous data | Removes natural variance differences that may be biologically meaningful |
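The practical difference between the two approaches can be seen on a small simulated example. In this sketch (all values and names are assumptions for illustration), two variables share a common signal while a third is pure noise measured on a much larger scale.

```python
import numpy as np

rng = np.random.default_rng(2)
n = 200
shared = rng.normal(size=n)  # common signal behind the first two variables
X = np.column_stack([
    shared + 0.3 * rng.normal(size=n),  # variable 0: signal, unit scale
    shared + 0.3 * rng.normal(size=n),  # variable 1: signal, unit scale
    100.0 * rng.normal(size=n),         # variable 2: unrelated noise, large scale
])

def first_pc(matrix):
    """Return the eigenvector with the largest eigenvalue of a symmetric matrix."""
    eigvals, eigvecs = np.linalg.eigh(matrix)
    return eigvecs[:, np.argmax(eigvals)]

pc1_cov = first_pc(np.cov(X, rowvar=False))       # covariance-based PCA
pc1_cor = first_pc(np.corrcoef(X, rowvar=False))  # correlation-based PCA
```

With the covariance matrix, PC1 points almost entirely along the large-scale noise variable; with the correlation matrix, PC1 is instead dominated by the two variables that share a signal.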

Key Outputs of PCA

Three primary outputs result from PCA:

Eigenvalues: Represent the amount of variance explained by each principal component. Larger eigenvalues indicate components that capture more information from the original dataset [7].

Loadings: The weight of each original variable on each principal component, given by the eigenvectors (some software additionally scales them by the square root of the corresponding eigenvalue). Higher absolute loadings signify greater contribution of a variable to that component [7].

Scores: The coordinates of each sample in the new principal component space, obtained by projecting original data onto the principal components [2].

Practical Application in Transcriptomics

Standard Workflow for RNA-Seq Data

Applying PCA to transcriptomics data requires specific preprocessing steps to handle count-based measurements:

Normalization: Calculate counts per million (CPM) or similar normalized values to account for differing library sizes [6].

Transformation: Apply log₂ transformation to stabilize variance across the dynamic range of expression values.

Filtering: Remove genes with zero expression across all samples or invalid values [6].

Standardization: Perform Z-score normalization (mean-centering and scaling to unit variance) across samples for each gene [6].

PCA Implementation: Apply PCA using statistical software, typically via the prcomp() function in R or similar tools in other programming environments [7].
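As one possible Python counterpart to this workflow, the sketch below chains the five steps using NumPy only. The function name rnaseq_pca, the pseudocount of 1, and the simulated counts are assumptions made for illustration; real analyses would usually rely on an established package for normalization.

```python
import numpy as np

def rnaseq_pca(counts, n_components=2, prior_count=1.0):
    """counts: raw count matrix with genes in rows and samples in columns."""
    counts = np.asarray(counts, dtype=float)
    # Normalization: counts per million, correcting for library size.
    cpm = counts / counts.sum(axis=0) * 1e6
    # Transformation: log2 with a pseudocount so zero counts stay finite.
    log_cpm = np.log2(cpm + prior_count)
    # Filtering: drop genes with no counts at all or no variation across samples.
    keep = (counts.sum(axis=1) > 0) & (log_cpm.std(axis=1) > 0)
    log_cpm = log_cpm[keep]
    # Standardization: z-score each gene across samples.
    z = (log_cpm - log_cpm.mean(axis=1, keepdims=True)) / log_cpm.std(
        axis=1, ddof=1, keepdims=True
    )
    # PCA: SVD of the samples x genes matrix (each gene column is already centered).
    U, s, Vt = np.linalg.svd(z.T, full_matrices=False)
    scores = U[:, :n_components] * s[:n_components]
    var_ratio = (s**2 / np.sum(s**2))[:n_components]
    return scores, var_ratio, keep

# Simulated example: 200 genes, 6 samples, half the genes 4x higher in samples 4-6.
rng = np.random.default_rng(5)
base = rng.uniform(50, 500, size=200)
fold = np.ones((200, 6))
fold[:100, 3:] = 4.0
counts = rng.poisson(base[:, None] * fold)
counts = np.vstack([counts, np.zeros((1, 6), dtype=counts.dtype)])  # add an all-zero gene
scores, var_ratio, keep = rnaseq_pca(counts)
```

In this simulation the two sample groups fall on opposite sides of PC1, and the all-zero gene is removed by the filtering step before standardization.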

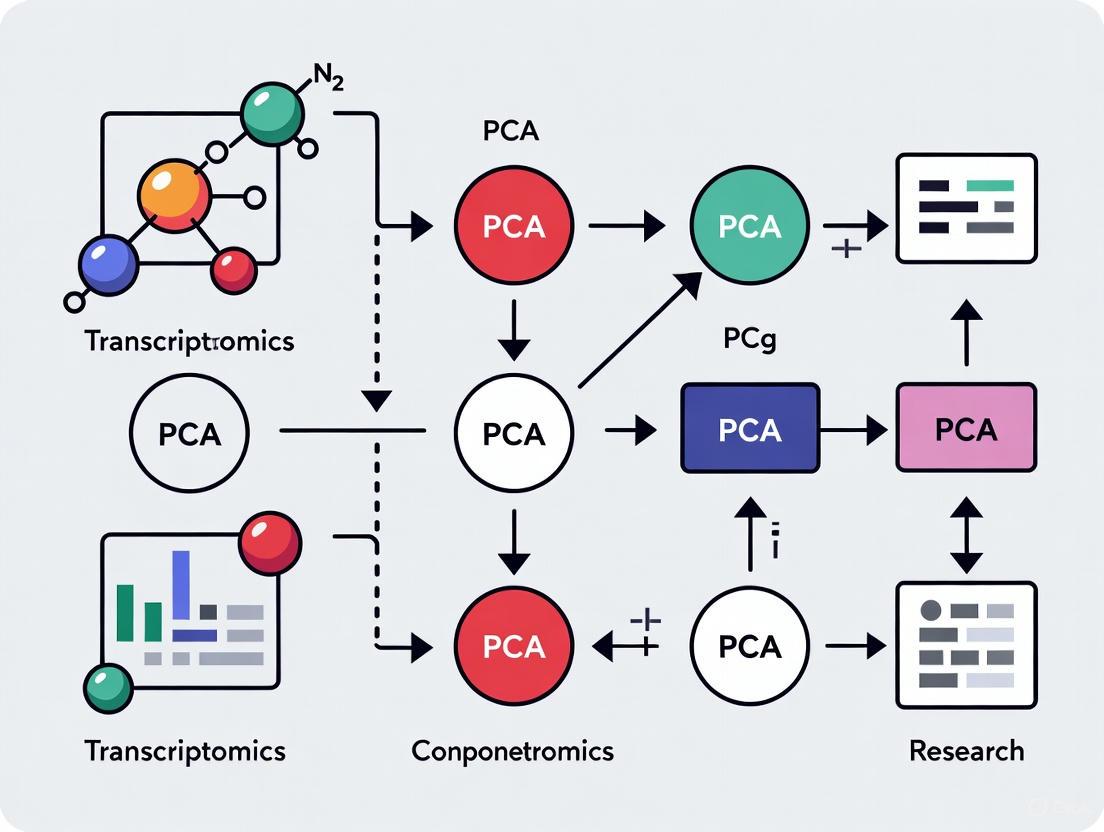

The following diagram illustrates the complete PCA workflow for RNA-seq data analysis:

Interpreting PCA Results

PCA results are typically visualized through:

Scree Plots: Display the proportion of total variance explained by each principal component, helping determine how many components to retain. The "elbow" point often indicates the optimal number [3].

PCA Plots: Scatterplots of samples using the first two or three principal components as axes. Similar samples cluster together, while dissimilar samples separate [7].

Loading Plots: Visualize the contribution of original variables to the principal components, highlighting genes with strongest influence on sample separation.
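The quantities behind a scree plot and a loading plot are simple to compute once eigenvalues and loadings are available. The sketch below uses hypothetical eigenvalues, loadings, and gene names purely for illustration.

```python
import numpy as np

def variance_explained(eigenvalues):
    """Proportion of total variance per component (the y-axis of a scree plot)."""
    eigenvalues = np.asarray(eigenvalues, dtype=float)
    return eigenvalues / eigenvalues.sum()

def top_loading_genes(loadings, gene_names, k=3):
    """Names of the k genes with the largest absolute loading on one component."""
    order = np.argsort(np.abs(loadings))[::-1][:k]
    return [gene_names[i] for i in order]

# Hypothetical values for a four-gene example.
eigenvalues = [6.0, 2.5, 1.0, 0.5]
ratios = variance_explained(eigenvalues)
pc1_loadings = np.array([0.10, -0.80, 0.55, 0.05])
genes = ["geneA", "geneB", "geneC", "geneD"]
drivers = top_loading_genes(pc1_loadings, genes, k=2)
```

Ranking by absolute value matters here: a strongly negative loading (geneB in this example) contributes as much to sample separation as a strongly positive one.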

Table 2: Critical Steps in PCA Interpretation for Transcriptomics

| Interpretation Step | Purpose | Key Questions | Common Patterns |

|---|---|---|---|

| Variance Assessment | Determine information content | How much variance do the first few components capture? Is the reduced representation adequate? | First 2-3 components typically explain 30-50% of total variance in heterogeneous datasets [8] |

| Sample Clustering | Identify sample relationships | Do biological replicates cluster together? Are there unexpected outliers or batch effects? | Tight clusters of replicates indicate technical reliability; separation may reflect biological conditions |

| Component Annotation | Relate PCs to biology | What biological factors (cell type, treatment, batch) correlate with component separation? | PC1 often separates major cell types, PC2 may reflect treatment effects or other biological variables |

| Gene Influence | Identify driving genes | Which genes contribute most to each component? Are they biologically relevant? | High-loading genes often belong to coordinated pathways or biological processes |

Advanced Considerations & Limitations

When PCA Performs Poorly

Despite its utility, PCA has limitations that researchers must recognize:

- Linear Assumptions: PCA is a linear technique that may fail to capture complex nonlinear relationships in data [3].

- Variance Maximization: By focusing on maximum variance, PCA may emphasize technical artifacts or batch effects rather than biologically relevant signals [9].

- Distributional Assumptions: PCA summarizes the data using only variances and covariances, which describe its structure fully only when variables are approximately normally distributed, a condition frequently violated in genomic data [9].

- Sample Composition Sensitivity: PCA results depend heavily on sample composition. The inclusion or exclusion of specific sample types can dramatically alter component directions [8].

Beyond Standard PCA: Advanced Variants

Several PCA extensions address specific analytical challenges:

Sparse PCA: Incorporates variable selection to identify a subset of informative genes, improving interpretability by focusing on biologically relevant features [1].

Supervised PCA: Integrates outcome variables to guide dimension reduction, potentially increasing sensitivity for detecting biologically meaningful patterns [1].

Independent Principal Component Analysis (IPCA): Combines PCA with Independent Component Analysis (ICA) to better separate biological signals from noise, particularly for data following super-Gaussian distributions [9].

Functional PCA: Adapted for time-course gene expression data, capturing dynamic patterns across multiple time points [1].

Comparison to Alternative Methods

PCA is one of several dimension reduction techniques available to biologists:

- PCA vs. t-SNE/UMAP: While PCA is linear and preserves global structure, t-SNE and UMAP are nonlinear methods that excel at preserving local neighborhoods but may distort global relationships.

- PCA vs. Factor Analysis: Both methods perform dimension reduction, but factor analysis focuses on latent variables representing underlying constructs, while PCA creates components that maximize variance explanation [3].

- PCA vs. LDA: Linear Discriminant Analysis (LDA) is supervised and requires class labels, while PCA is unsupervised and ignores class information [3].

The Scientist's Toolkit

Table 3: Essential Computational Tools for PCA in Biological Research

| Tool/Resource | Function | Application Context | Implementation |

|---|---|---|---|

| R prcomp() | PCA computation | General purpose PCA analysis | Standard R function, uses SVD |

| Python sklearn | Machine learning library | Integration with ML pipelines | PCA class in sklearn.decomposition |

| Z-score normalization | Data standardization | Preparing data for correlation-based PCA | Scale genes to mean=0, variance=1 |

| CPM/TMM normalization | RNA-seq specific processing | Accounting for library size differences | edgeR, DESeq2, or custom scripts |

| Scree plot | Variance visualization | Determining component retention | Plot eigenvalues in descending order |

| Cumulative variance plot | Information assessment | Evaluating variance capture | Plot running total of variance explained |

Principal Component Analysis remains a cornerstone technique for exploratory analysis of high-dimensional biological data. Its power to reduce thousands of gene expressions to manageable visualizations makes it indispensable for quality assessment, pattern recognition, and hypothesis generation in transcriptomics research. While practitioners must remain aware of its limitations—particularly its sensitivity to data composition and variance structure—appropriate application provides invaluable insights into the underlying structure of complex biological systems. As genomic technologies continue to evolve, PCA and its advanced variants will maintain their relevance as essential tools in the biologist's computational arsenal.

Transcriptomics technologies, such as RNA sequencing (RNA-seq) and microarrays, enable the comprehensive measurement of gene expression levels across thousands of genes simultaneously [10]. While this provides a powerful window into biological systems, it creates a significant computational challenge known as the curse of dimensionality [11] [12]. This phenomenon describes the problems that arise when analyzing data in high-dimensional spaces, where the number of features (genes) far exceeds the number of observations (samples) [11]. In practice, transcriptomic datasets commonly measure more than 20,000 genes across fewer than 100 samples, creating a scenario where P (variables) ≫ N (observations) [11]. This high dimensionality leads to data sparsity, increased noise, computational inefficiency, and heightened risk of overfitting in downstream analyses [12] [13] [14].

Principal Component Analysis (PCA) serves as a fundamental computational technique to mitigate these challenges by reducing the dimensionality of transcriptomic data while preserving its essential biological information [13]. This guide explores the theoretical foundation of the curse of dimensionality in transcriptomics, details how PCA provides a solution, and presents practical protocols and evidence from recent research demonstrating its critical role in deriving meaningful biological insights.

Understanding the Curse of Dimensionality in Transcriptomic Data

Fundamental Concepts and Mathematical Challenges

In transcriptomics, each measured gene represents a dimension in the data space [11]. The curse of dimensionality manifests through several interconnected problems:

- Data Sparsity: As dimensionality increases, data points become increasingly scattered through the high-dimensional space. The volume of space grows exponentially with dimensions, making the available data insufficient to densely populate the space [14]. This sparsity makes it difficult to detect meaningful patterns or clusters.

- Distance Concentration: In high-dimensional spaces, the distances between points become more similar, compromising the effectiveness of distance-based algorithms commonly used in clustering and classification [13].

- Increased Computational Complexity: Analyzing high-dimensional data requires significant computational resources and time. For example, spatial transcriptomics methods analyzing 100,000+ locations pose substantial computational burdens that necessitate efficient algorithms [15].

- Overfitting and Spurious Correlations: With thousands of genes and limited samples, machine learning models may memorize noise rather than learn true biological signals, leading to poor generalization on new data [14].
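Distance concentration, listed above, is easy to observe in simulation. The sketch below draws uniformly random points and compares the spread of distances from one reference point as the number of dimensions grows; the point counts, dimensions, and function name are arbitrary choices for illustration.

```python
import numpy as np

def relative_contrast(n_points, n_dims, rng):
    """(max - min) / min of Euclidean distances from the first point to all others."""
    X = rng.uniform(size=(n_points, n_dims))
    d = np.linalg.norm(X[1:] - X[0], axis=1)
    return (d.max() - d.min()) / d.min()

rng = np.random.default_rng(3)
# 2 dimensions, 100 dimensions, and a gene-scale 20,000 dimensions.
contrast = {dims: relative_contrast(200, dims, rng) for dims in (2, 100, 20000)}
```

The relative contrast shrinks as dimensionality increases: at gene-scale dimensionality the nearest and farthest neighbors are almost equally far away, which is why distance-based clustering is usually run on a reduced representation such as the leading principal components.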

Consequences for Transcriptomics Analysis

The curse of dimensionality particularly impacts key transcriptomics applications:

- Cell Type Identification: High dimensionality and sparsity obscure the true biological variation between cell types or states [12].

- Differential Expression Analysis: Standard statistical tests lose power when correcting for thousands of simultaneous hypothesis tests [16].

- Drug Response Prediction: High-dimensional pharmacotranscriptomic data complicates the identification of robust drug response signatures [17] [18].

- Spatial Transcriptomics: Emerging technologies generating data at subcellular resolution for hundreds of thousands of locations create unprecedented computational demands [15] [19].

Table 1: Impact of Dimensionality on Transcriptomic Data Analysis

| Aspect | Low-Dimensional Scenario | High-Dimensional Challenge |

|---|---|---|

| Data Distribution | Dense, meaningful distances | Sparse, concentrated distances |

| Computational Load | Manageable | High memory/time requirements |

| Statistical Power | Sufficient for sample size | Diminished by multiple testing |

| Pattern Discovery | Clear clustering | Obscured patterns, noise dominance |

| Risk of Overfitting | Low | High without regularization |

Figure 1: The cascade of analytical challenges resulting from high-dimensional transcriptomic data.

PCA as a Computational Solution: Theory and Workflow

Algorithmic Foundations of Principal Component Analysis

PCA addresses the curse of dimensionality by transforming the original high-dimensional gene expression data into a lower-dimensional space of uncorrelated variables called principal components (PCs) [11] [13]. These PCs are linear combinations of the original genes, ordered such that the first component (PC1) captures the maximum possible variance in the data, PC2 captures the next highest variance while being orthogonal to PC1, and so on [12]. Mathematically, PCA works by:

- Standardizing the data to have zero mean and unit variance for each gene [13].

- Computing the covariance matrix to understand how genes vary together.

- Performing eigendecomposition of the covariance matrix to obtain eigenvectors (principal components) and eigenvalues (amount of variance explained by each PC) [13].

- Selecting the top K eigenvectors based on their corresponding eigenvalues to create a lower-dimensional projection of the original data.
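When genes far outnumber samples, the eigendecomposition in the steps above is usually obtained through a singular value decomposition of the centered data, which avoids building the genes x genes covariance matrix. The sketch below, on random data with assumed dimensions, checks that both routes give the same variances and scores.

```python
import numpy as np

rng = np.random.default_rng(4)
n_samples, n_genes = 8, 500  # far more genes than samples
X = rng.normal(size=(n_samples, n_genes))
Xc = X - X.mean(axis=0)  # center each gene

# Route 1: eigenvalues of the 500 x 500 covariance matrix.
C = Xc.T @ Xc / (n_samples - 1)
eigvals = np.linalg.eigvalsh(C)[::-1][: n_samples - 1]

# Route 2: SVD of the centered 8 x 500 data matrix.
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
svd_vals = (s**2 / (n_samples - 1))[: n_samples - 1]

# Scores from the SVD equal the projection of the data onto the eigenvectors.
scores = U * s
```

Only n_samples - 1 components carry variance after centering, so the comparison is restricted to those; the rows of Vt are the corresponding eigenvectors (loadings).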

Practical Implementation Workflow

For transcriptomic data, PCA implementation typically follows this standardized workflow:

Table 2: Standardized PCA Workflow for Transcriptomic Data

| Step | Procedure | Considerations for Transcriptomics |

|---|---|---|

| 1. Preprocessing | Quality control, normalization, and filtering of raw count data. | Apply RNA Integrity Number (RIN) cutoff >6 [10]. For FFPE tissues, use DV200 >70% [10]. |

| 2. Feature Selection | Identify highly variable genes for PCA input. | Use statistical measures (e.g., highly deviant genes) to focus on biologically relevant genes [12]. |

| 3. Data Scaling | Standardize data to have zero mean and unit variance. | Prevents highly expressed genes from dominating the PCA [13]. |

| 4. PCA Computation | Perform eigendecomposition using optimized algorithms. | Use singular value decomposition (SVD) for computational efficiency with large matrices [12]. |

| 5. Component Selection | Choose the number of PCs for downstream analysis. | Consider the "elbow" in scree plot or cumulative variance >90% [13]. |

| 6. Data Projection | Project original data onto selected PCs. | Resulting coordinates in PC space serve as input for clustering, visualization, etc. |

Figure 2: Standardized PCA workflow for transcriptomic data analysis, highlighting critical steps for addressing high-dimensionality.

Advanced PCA Applications in Modern Transcriptomics

Spatial Transcriptomics and Enhanced PCA Variants

Standard PCA has been extended with spatial awareness to address the unique challenges of spatial transcriptomics (ST) data. These specialized methods integrate spatial coordinates with gene expression patterns:

- RASP (Randomized Spatial PCA): Employs randomized linear algebra for computational efficiency, achieving orders-of-magnitude faster processing while maintaining performance comparable to slower methods like BASS, GraphST, and STAGATE [15]. RASP incorporates spatial smoothing using k-nearest neighbors thresholds and supports integration of non-transcriptomic covariates.

- GraphPCA: Utilizes graph-constrained PCA with a closed-form solution that preserves spatial neighborhood structures through penalty terms, demonstrating superior performance in spatial domain detection and robustness to noise [19].

- PCAUFE (PCA-based Unsupervised Feature Extraction): Effectively identifies disease-related genes from datasets with small sample sizes but many variables, successfully applied to COVID-19 patient blood data to identify 123 critical genes including immune-related pathways [16].

Benchmarking Studies and Performance Evidence

Recent comprehensive benchmarking studies demonstrate PCA's utility across diverse transcriptomics applications:

Table 3: Performance Comparison of Dimensionality Reduction Methods in Transcriptomics

| Application Context | Top-Performing Methods | PCA's Relative Performance | Key Findings |

|---|---|---|---|

| Drug-Induced Transcriptomics (CMap dataset) [18] | t-SNE, UMAP, PaCMAP, TRIMAP | Lower clustering performance | PCA performed relatively poorly in preserving biological similarity compared to nonlinear methods. |

| Spatial Domain Detection (10x Visium DLPFC) [19] | GraphPCA, STAGATE, SpatialPCA | Foundation for specialized methods | GraphPCA, based on constrained PCA, outperformed deep learning methods in accuracy and interpretability. |

| Cell Type Identification (Mouse Ovary MERFISH) [15] | RASP, PCA, SEDR | Highly competitive (ARI: 0.58) | Standard PCA outperformed several spatially-aware methods, surpassed only by RASP (ARI: 0.69). |

| COVID-19 Feature Selection (Blood transcriptomics) [16] | PCAUFE | Superior to SAM and LIMMA | PCA-based selection identified minimal gene sets (123 genes) with high classification accuracy (AUC >0.9). |

In spatial transcriptomics analyses, RASP has demonstrated particular effectiveness, achieving the highest Adjusted Rand Index (ARI: 0.69) for cell type identification in complex mouse ovary MERFISH data while being 1-3 orders of magnitude faster than competing methods [15]. The method's performance is influenced by parameter selection, with optimal kNN thresholds and inverse distance power values (β=2) being crucial for maintaining spatial fidelity while enabling accurate domain detection [15].
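For readers unfamiliar with the Adjusted Rand Index used in these comparisons, the sketch below shows how the metric is computed from two label vectors using the standard pair-counting formula. It is a plain-Python illustration with made-up labels, not code from the benchmarked methods, and it does not reproduce the reported values.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(labels_true, labels_pred):
    """Chance-corrected agreement between two clusterings of the same items."""
    n = len(labels_true)
    # Pairs of items placed together in both clusterings, and in each one separately.
    sum_both = sum(comb(c, 2) for c in Counter(zip(labels_true, labels_pred)).values())
    sum_true = sum(comb(c, 2) for c in Counter(labels_true).values())
    sum_pred = sum(comb(c, 2) for c in Counter(labels_pred).values())
    expected = sum_true * sum_pred / comb(n, 2)  # agreement expected by chance
    max_index = (sum_true + sum_pred) / 2
    if max_index == expected:  # degenerate case, e.g. one cluster in both labelings
        return 1.0
    return (sum_both - expected) / (max_index - expected)

# Identical partitions with swapped label names, and a fully crossed partition.
ari_same = adjusted_rand_index([0, 0, 1, 1], [1, 1, 0, 0])
ari_crossed = adjusted_rand_index([0, 0, 1, 1], [0, 1, 0, 1])
```

An ARI of 1 indicates identical partitions regardless of how clusters are named, values near 0 indicate chance-level agreement, and negative values indicate agreement worse than chance.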

Experimental Protocols and Research Toolkit

Implementation Protocol for Transcriptomic PCA

For researchers implementing PCA in transcriptomic studies, the following detailed protocol ensures robust results:

Software Environment Setup

- Programming Language: Python (scanpy, scikit-learn) or R (Seurat)

- Quality Control Metrics: RNA Integrity Number (RIN >6), mitochondrial gene percentage, detected genes per cell [10]

- Normalization Methods: Shifted logarithm, fragments per kilobase of transcript per million mapped reads (FPKM), or counts per million (CPM)

Step-by-Step PCA Procedure

- Input Data Preparation: Begin with normalized count matrix (cells × genes) following quality control filtering

- Highly Variable Gene Selection: Identify 2,000-5,000 most variable genes using Seurat's FindVariableFeatures or Scanpy's pp.highly_variable_genes [12]

- Data Scaling: Center to zero mean and scale to unit variance using StandardScaler or sc.pp.scale [13]

- PCA Computation: Execute PCA with ARPACK solver for efficiency: sc.pp.pca(adata, svd_solver="arpack", use_highly_variable=True, n_comps=50) [12]

- Component Selection: Plot scree plot of explained variance and select components where cumulative variance >90% or identify the "elbow" point [13]

- Downstream Application: Use selected PCs for clustering, visualization (UMAP/t-SNE), or differential expression testing
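The procedure above can be mirrored in plain NumPy to make each step explicit. This is a simplified sketch, not the Scanpy or Seurat implementation: genes are ranked by raw variance as a stand-in for the dispersion-based selection those packages use, and the function name hvg_pca and all parameter defaults are assumptions.

```python
import numpy as np

def hvg_pca(X, n_top_genes=2000, n_comps=50, var_threshold=0.9):
    """X: normalized, log-transformed expression matrix (cells x genes)."""
    # Highly variable gene selection: keep the genes with the largest variance.
    gene_var = X.var(axis=0, ddof=1)
    hvg_idx = np.argsort(gene_var)[::-1][: min(n_top_genes, X.shape[1])]
    Xh = X[:, hvg_idx]
    # Data scaling: zero mean and unit variance per gene (constant genes left at 0).
    sd = Xh.std(axis=0, ddof=1)
    sd[sd == 0] = 1.0
    Z = (Xh - Xh.mean(axis=0)) / sd
    # PCA computation via SVD, keeping at most n_comps components.
    U, s, Vt = np.linalg.svd(Z, full_matrices=False)
    n_comps = min(n_comps, len(s))
    scores = U[:, :n_comps] * s[:n_comps]
    var_ratio = s**2 / np.sum(s**2)
    # Component selection: smallest number of PCs reaching the cumulative
    # variance threshold, capped at the n_comps that were computed.
    n_keep = int(np.searchsorted(np.cumsum(var_ratio), var_threshold) + 1)
    n_keep = min(n_keep, n_comps)
    return scores[:, :n_keep], var_ratio[:n_comps], hvg_idx

# Toy example: 100 cells, 300 genes, the first 50 genes made more variable.
rng = np.random.default_rng(6)
X = rng.normal(size=(100, 300))
X[:, :50] *= 3.0
scores, var_ratio, hvg_idx = hvg_pca(X, n_top_genes=50, n_comps=10)
```

The returned scores matrix is what would typically be passed on to clustering or to UMAP/t-SNE. Note that with noisy data a 90% cumulative variance threshold can require many components, in which case the cap set by n_comps determines how many are kept.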

The Researcher's Toolkit for PCA in Transcriptomics

Table 4: Essential Computational Tools for PCA in Transcriptomic Research

| Tool/Resource | Function | Application Context |

|---|---|---|

| Scanpy [12] | Python-based single-cell analysis toolkit | Standard PCA implementation and integration with other preprocessing steps |

| Seurat [19] | R package for single-cell genomics | PCA with automated variable feature selection and dimension selection |

| GraphPCA [19] | Interpretable dimension reduction for ST | Spatial transcriptomics with graph-constrained PCA |

| RASP [15] | Randomized Spatial PCA | Large-scale ST datasets with >100,000 locations |

| PCAUFE [16] | Unsupervised feature extraction | Identifying critical genes from small sample sizes with many variables |

The curse of dimensionality presents a fundamental challenge in transcriptomic data analysis, where the abundance of gene expression measurements threatens to obscure meaningful biological signals. PCA remains an essential computational strategy to overcome this challenge through its mathematically principled approach to dimensionality reduction. While standard PCA provides a robust foundation, specialized variants like RASP, GraphPCA, and PCAUFE extend its utility to emerging applications in spatial transcriptomics, drug development, and precision medicine. As transcriptomics technologies continue to evolve toward higher resolutions and larger sample sizes, PCA-based methods will remain indispensable tools for extracting biological insights from high-dimensional gene expression data.

How PCA Captures Biological and Technical Variance in Gene Expression Data

In the analysis of gene expression data, researchers are often confronted with the "curse of dimensionality", where the number of measured genes (variables) vastly exceeds the number of biological samples (observations) [11]. Principal Component Analysis (PCA) serves as a fundamental dimensionality reduction technique that transforms high-dimensional transcriptomic data into a lower-dimensional space while preserving the most relevant patterns of variance [3]. This linear transformation identifies orthogonal principal components (PCs) that are ordered by the amount of variance they explain from the original dataset, with the first component (PC1) capturing the largest source of variance, the second (PC2) the next largest, and so on [7] [3]. In transcriptomics, these sources of variance can represent both biologically meaningful signals (e.g., differences between cell types, disease states, or developmental stages) and technical artifacts (e.g., batch effects, platform differences, or sample processing variations) [20] [21]. The ability to distinguish between these sources makes PCA an indispensable tool for quality assessment, exploratory data analysis, and understanding the underlying structure of gene expression data.

Table 1: Key Characteristics of PCA in Transcriptomic Studies

| Aspect | Description | Importance in Transcriptomics |

|---|---|---|

| Dimensionality Reduction | Transforms thousands of gene measurements into fewer composite variables | Enables visualization and analysis of high-dimensional data [11] |

| Variance Maximization | Each principal component captures the maximum possible variance in the data | Prioritizes the most influential sources of variation [3] |

| Orthogonality | Components are perpendicular (uncorrelated) in the transformed space | Ensures independent axes of variation for interpretation [22] |

| Unsupervised Nature | Does not require pre-specified sample groups | Reveals inherent data structure without prior biological assumptions [3] |

Fundamental Concepts and Mathematical Foundation

The Mathematics of PCA

PCA operates through a systematic mathematical procedure that begins with the standardization of expression data, where each gene's expression values are centered to a mean of zero and scaled to unit variance to ensure equal contribution from all genes regardless of their original measurement scales [3]. The core of PCA involves eigendecomposition of the covariance matrix, which identifies the principal axes (eigenvectors) that capture the directions of maximum variance in the data, with corresponding eigenvalues representing the amount of variance explained by each component [22] [3]. Algebraically, for a data matrix X with n samples and p genes, PCA computes a new set of variables (principal components) that are linear combinations of the original genes: $PC_i = e_{i1}X_1 + e_{i2}X_2 + \dots + e_{ip}X_p$, where $X_j$ is the standardized expression of gene j and $e_i = (e_{i1}, \dots, e_{ip})$ is the eigenvector corresponding to the i-th largest eigenvalue [22].
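Written out in matrix form, the procedure described above amounts to the following relations. Here $\mathbf{Z}$ denotes the $n \times p$ standardized data matrix whose columns are the genes $X_1, \dots, X_p$; the symbols $\mathbf{Z}$, $\mathbf{C}$, and $\lambda_i$ are notation introduced for this summary rather than taken from the cited sources.

```latex
\begin{aligned}
\mathbf{C} &= \frac{1}{n-1}\,\mathbf{Z}^{\top}\mathbf{Z}
  && \text{(covariance matrix of the standardized genes)} \\
\mathbf{C}\,\mathbf{e}_i &= \lambda_i\,\mathbf{e}_i,
  \qquad \lambda_1 \ge \lambda_2 \ge \dots \ge \lambda_p \ge 0
  && \text{(eigendecomposition)} \\
PC_i &= \mathbf{Z}\,\mathbf{e}_i = e_{i1}X_1 + e_{i2}X_2 + \dots + e_{ip}X_p
  && \text{(scores on component } i\text{)} \\
\frac{\operatorname{Var}(PC_i)}{\sum_{j=1}^{p}\operatorname{Var}(PC_j)}
  &= \frac{\lambda_i}{\sum_{j=1}^{p}\lambda_j}
  && \text{(fraction of variance explained)}
\end{aligned}
```

Because the eigenvectors are orthonormal, $\operatorname{Var}(PC_i) = \lambda_i$, so the last line is what a scree plot displays for each component.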

Biological vs. Technical Variance in Gene Expression

In single-cell and bulk transcriptomic studies, technical variability arises from multiple sources that can be categorized as inter-cell variability (e.g., differences in cell cycle stage, cell size) and within-cell variability (e.g., amplification biases, low RNA capture efficiency, stochastic sampling) [20]. These technical artifacts manifest systematically in PCA visualizations, often clustering samples by processing batch, sequencing date, or other technical parameters rather than biological conditions [21]. Conversely, biological variance represents true differences in gene expression patterns driven by underlying biological processes such as cell type identity, developmental trajectory, disease state, or response to experimental perturbations [20]. The challenge in interpreting PCA results lies in distinguishing these sources, particularly since biological and technical factors can be confounded in experimental designs.
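One simple way to ask whether a component reflects a technical or a biological factor is to measure how much of its variance is explained by a known sample annotation. The sketch below computes a one-way ANOVA style R² between PC scores and a categorical label on simulated data with a strong batch effect and a weaker condition effect; the design, effect sizes, and function name are assumptions for illustration.

```python
import numpy as np

def variance_explained_by_factor(scores, labels):
    """Fraction of variance in one PC's scores explained by a categorical factor."""
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels)
    total_ss = np.sum((scores - scores.mean()) ** 2)
    between_ss = sum(
        np.sum(labels == g) * (scores[labels == g].mean() - scores.mean()) ** 2
        for g in np.unique(labels)
    )
    return between_ss / total_ss

# Simulated balanced design: 12 samples, 2 batches x 2 conditions, 300 genes.
rng = np.random.default_rng(7)
batch = np.array([0] * 6 + [1] * 6)
condition = np.array([0, 0, 0, 1, 1, 1] * 2)
X = rng.normal(size=(12, 300))
X[batch == 1, :120] += 4.0          # strong technical shift on 120 genes
X[condition == 1, 120:200] += 2.5   # weaker biological shift on 80 other genes

Xc = X - X.mean(axis=0)
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
scores = U * s

r2_pc1_batch = variance_explained_by_factor(scores[:, 0], batch)
r2_pc1_condition = variance_explained_by_factor(scores[:, 0], condition)
r2_pc2_condition = variance_explained_by_factor(scores[:, 1], condition)
```

In this simulation PC1 tracks batch and PC2 tracks condition, which is only cleanly separable because the design is balanced; when batch and condition are confounded, the same check cannot tell the two sources apart.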

Figure 1: Standard PCA Workflow for Gene Expression Analysis

PCA Applications for Biological Discovery in Transcriptomics

Characterizing Cellular Heterogeneity and Development

PCA has proven particularly valuable for interrogating cellular heterogeneity in seemingly homogeneous cell populations, revealing subpopulations that may represent distinct functional states or lineage commitments [20]. In developmental biology, PCA enables the reconstruction of pseudotemporal ordering of cells along differentiation trajectories by capturing the dominant axes of transcriptional change in snapshot data from unsynchronized cell populations [20]. This approach has been successfully applied to map developmental hierarchies in various systems, including embryonic stem cell differentiation, myeloid progenitor commitment, and T helper cell development, where it has helped identify transient intermediate states and branching decision points [20]. The technology has been especially transformative for identifying rare cell types that would be obscured in bulk analyses, such as dormant neural cells activated upon brain injury or novel progenitor populations during vertebrate development [20].

Analyzing Complex Tissues and Disease States

In complex tissues and disease contexts, PCA provides a powerful approach for unraveling tissue-specific expression patterns and disease-associated heterogeneity. Large-scale studies applying PCA to heterogeneous gene expression datasets have consistently identified dominant components corresponding to major tissue types, with the first three PCs often separating hematopoietic cells, neural tissues, and proliferative/cancer phenotypes [8]. However, contrary to earlier assumptions that higher components primarily represent noise, recent evidence demonstrates that biologically relevant information extends beyond the first few PCs [8]. For instance, the fourth PC in a comprehensive analysis of 7,100 samples specifically separated liver and hepatocellular carcinoma samples from other tissues, revealing that tissue-specific information often resides in these higher components [8]. This has important implications for study design, as the detection of specific biological signals in PCA depends strongly on the representation of relevant sample types in the dataset.

Table 2: Biological Insights Revealed Through PCA in Transcriptomic Studies

| Biological Application | PCA Utility | Key Findings |

|---|---|---|

| Tumor Heterogeneity | Distinguishes malignant and non-malignant cells; reveals intra-tumoral diversity | Identification of distinct cancer subclones with differential treatment responses [20] |

| Developmental Biology | Reconstructs differentiation trajectories from snapshot data | Mapping of branching decisions in embryonic development [20] |

| Rare Cell Identification | Detects low-abundance cell populations in complex tissues | Discovery of dormant neural stem cells activated after injury [20] |

| Cross-Tissue Analysis | Identifies tissue-specific expression patterns in large datasets | Separation of hematopoietic, neural, and liver tissues in distinct components [8] |

Technical Variance and Batch Effects in PCA

In single-cell RNA-Seq data, technical variability introduces substantial challenges for interpretation, primarily stemming from the minimal starting material (RNA from individual cells) that requires significant amplification [20]. This amplification process introduces multiple biases, including 3' end enrichment where sequences from the 3' end of transcripts are overrepresented, and preferential amplification of certain transcripts or mRNA fragments based on sequence composition [20]. Additional sources of technical variance include capture efficiency differences between cells or samples, library preparation artifacts, and sequencing depth variations, all of which can manifest as prominent patterns in PCA that may obscure biological signals [20] [21]. The problem is particularly pronounced in single-cell transcriptomics, where the necessary amplification of starting material introduces additional biases that result in many missing values (either technical zeros or true biological absence of expression), with currently limited ability to discriminate between these possibilities [20].

Batch Effects and Experimental Artifacts

Batch effects represent systematic technical variations introduced during sample collection, processing, or measurement that can substantially confound biological interpretation [22] [21]. In PCA visualizations, batch effects are typically identified when samples cluster according to technical parameters (processing date, sequencing lane, laboratory personnel) rather than biological variables of interest [21]. The presence of strong batch effects can completely mask true biological outcomes, making it difficult to detect meaningful associations even when biological conditions are evenly distributed across batches [21]. The impact of batch effects on PCA results is not merely theoretical; studies have demonstrated that the fourth principal component in large gene expression datasets sometimes correlates with array quality metrics rather than biological annotations, clearly representing measurement noise rather than biological variation [8].

Figure 2: Sources of Biological and Technical Variance in Gene Expression Data

Experimental Design and Methodological Protocols

Standard PCA Implementation for Transcriptomics

The implementation of PCA for gene expression analysis follows a systematic protocol to ensure robust and interpretable results. For RNA-seq data analysis using R, the standard approach begins with data preprocessing to normalize read counts and transform the data (typically using variance-stabilizing or log2 transformations) to satisfy the assumptions of PCA [7]. The core computation applies the prcomp() function to a transposed expression matrix in which rows represent samples and columns represent genes, with the result stored in an object such as sample_pca.

The center = TRUE parameter ensures data is mean-centered, while scale = TRUE applies unit variance scaling to give equal weight to all genes regardless of their original expression ranges [7]. Following PCA computation, key results are extracted including: (1) PC scores (sample_pca$x) representing sample coordinates in the new PC space; (2) Eigenvalues (sample_pca$sdev^2) indicating variance explained by each component; and (3) Loadings (sample_pca$rotation) reflecting gene contributions to each PC [7].
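For readers working in Python, the three outputs listed above have close analogues in scikit-learn. The sketch below is an assumed equivalent rather than the R call itself: the matrix expr is simulated, all variable names are illustrative, and the signs of individual components may differ between implementations.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(0)
# Simulated log-expression matrix: 12 samples (rows) x 50 genes (columns).
expr = rng.normal(loc=5.0, scale=2.0, size=(12, 50))

# Analogue of center = TRUE, scale = TRUE: z-score each gene (column).
# ddof=1 matches the sample standard deviation that R's sd() reports.
z = (expr - expr.mean(axis=0)) / expr.std(axis=0, ddof=1)

pca = PCA()
scores = pca.fit_transform(z)          # analogue of sample_pca$x
eigenvalues = pca.explained_variance_  # analogue of sample_pca$sdev^2
loadings = pca.components_.T           # analogue of sample_pca$rotation (genes x PCs)
```

Because every gene has unit variance after scaling, the eigenvalues sum to the number of genes, which is a convenient sanity check on the preprocessing.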

Advanced PCA Variations and Extensions

Several specialized PCA variations have been developed to address specific challenges in transcriptomic data analysis. Independent Principal Component Analysis (IPCA) combines the advantages of both PCA and Independent Component Analysis (ICA), using ICA as a denoising process of the loading vectors produced by PCA to better highlight important biological entities [23]. This approach makes the assumption that biologically meaningful components can be obtained after removing noise from the associated loading vectors, resulting in improved sample clustering and enhanced biological interpretability [23]. The sparse IPCA (sIPCA) extension incorporates a built-in variable selection procedure through soft-thresholding on the independent loading vectors, enabling automatic identification of biologically relevant genes [23]. Another advanced approach, Principal Variance Component Analysis (PVCA), hybridizes PCA with variance components analysis to estimate and partition total variability into biological, technical, and other sources, providing quantitative assessment of batch effects and other confounding factors [22].

Table 3: Experimental Considerations for PCA in Transcriptomic Studies

| Experimental Factor | Impact on PCA Results | Recommendations |

|---|---|---|

| Sample Representation | PCA components reflect dominant sample types in dataset | Balance sample types to avoid overrepresentation biases [8] |

| Data Scaling | Without proper scaling, highly expressed genes dominate components | Always scale genes to unit variance when expression ranges vary [7] |

| Batch Effects | Technical batches can become dominant components in PCA | Include batch balance in design; use PVCA to quantify batch effects [22] |

| Sample Size | Small sample sizes reduce stability of component estimation | Include sufficient biological replicates for robust PCA [8] |

Table 4: Essential Research Reagents and Computational Tools for PCA in Transcriptomics

| Resource Category | Specific Tools/Reagents | Function/Purpose |

|---|---|---|

| Computational Packages | R prcomp(), limma, scatterplot3d | Core PCA computation and visualization [24] [7] |

| Batch Effect Assessment | PVCA R Script, SAS Macro | Quantifies and partitions sources of variability [22] |

| Advanced PCA Methods | mixOmics (IPCA, sIPCA) | Implements independent and sparse PCA variants [23] |

| Visualization Tools | ggplot2, scatterplot3d, plotly | Creates publication-quality PCA visualizations [7] |

| Data Preprocessing | DESeq2, edgeR, Seurat | Normalizes raw count data before PCA application [7] |

Interpretation Guidelines and Limitations

Best Practices for Interpreting PCA Results

Effective interpretation of PCA in transcriptomic studies requires both computational rigor and biological insight. The initial step involves variance assessment through scree plots, which display the proportion of total variance explained by each successive component, allowing researchers to identify an appropriate cutoff for biologically relevant components [7] [3]. The visualization of sample clusters in PC space (typically PC1 vs. PC2, PC2 vs. PC3, etc.) should be systematically compared with experimental metadata to identify both expected biological groupings and potential technical confounders [21] [7]. For biological interpretation of components, researchers should examine the gene loadings (weights) that contribute most strongly to each PC, as these high-loading genes often reveal the biological processes driving sample separation along that component [7] [8]. This gene-level analysis can be complemented with gene set enrichment analysis to identify functional themes associated with each component.

Limitations and Complementary Approaches

While PCA provides powerful exploratory capabilities, it has important limitations that researchers must acknowledge. As a linear technique, PCA may fail to capture complex nonlinear relationships in gene expression data, potentially missing biologically important patterns that would be detected by nonlinear methods like t-SNE or UMAP [21] [3]. PCA also prioritizes high-variance signals, meaning that biologically important but low-variance phenomena (e.g., subtle transcriptional changes or rare cell types) may be overlooked in favor of more dominant variation sources [8]. Additionally, the interpretation of components can be challenging when biological and technical factors are confounded, requiring careful experimental design and potentially the application of specialized methods like PVCA to disentangle these sources [22]. For these reasons, PCA should be viewed as one component in a comprehensive transcriptomic analysis workflow rather than a complete solution, ideally complemented by clustering methods, differential expression analysis, and other specialized bioinformatic approaches.

Principal Component Analysis (PCA) is a foundational dimensionality reduction method in statistics and machine learning, strategically designed to transform a large set of correlated variables into a smaller, more manageable set of uncorrelated variables called principal components. This transformation preserves the most significant patterns and structures within the original data [25]. The core objective of PCA is rank reduction, which allows researchers to project high-dimensional data into a lower-dimensional space, facilitating visualization, analysis, and interpretation without a critical loss of information [4]. In fields like transcriptomics, where datasets often encompass thousands of genes (variables) across multiple samples, PCA is an indispensable tool for initial data exploration, quality control, and identifying overarching patterns such as batch effects or the dominant sources of biological variation.

The mathematical foundation of PCA can be viewed through three complementary lenses: a rotation procedure, an eigenvalue decomposition method, and a technique for finding linear combinations [4]. Conceptually, PCA works by identifying the directions of maximum variance in the original data space. The first principal component (PC1) is the axis along which the data shows the greatest variability. The second principal component (PC2) is then defined as the direction with the next highest variance, subject to the constraint of being orthogonal (uncorrelated) to the first. This process continues for all subsequent components [26]. The resulting principal components are, therefore, a new set of orthogonal axes, sorted in descending order of the variance they capture from the original dataset [27].

Key Outputs of PCA and Their Interpretation

Principal Components and Loadings

The principal components themselves are linear combinations of the original variables. The weights used in these combinations are known as loadings (or eigenvectors), which indicate the contribution and direction of each original variable to a particular principal component [25] [4].

- Components Matrix: The matrix of loadings, often called the components_ attribute in libraries like scikit-learn, contains the principal axes in feature space. These vectors are sorted in decreasing order of their corresponding explained_variance_ [28].

- Interpreting Loadings: For a given principal component, original variables with high absolute loading values have a strong influence on that component. The sign of the loading (positive or negative) indicates the direction of the correlation. For instance, in a transcriptomics study, genes with high positive loadings on PC1 are co-expressed and vary similarly across samples, while genes with high negative loadings show an inverse relationship.

Explained Variance and Variance Ratio

A critical part of interpreting PCA is understanding how much information each principal component retains from the original dataset.

- Explained Variance: The explained_variance_ attribute in scikit-learn provides the amount of variance explained by each of the selected components. This value is equivalent to the eigenvalues of the covariance matrix [28]. It represents the absolute amount of variability captured by each component.

- Explained Variance Ratio: The explained_variance_ratio_ is often more intuitive, as it represents the proportion of the total variance in the original dataset that is explained by each principal component [29]. The total variance is the sum of the variances of all original variables (or the sum of all eigenvalues) [30].

Table 1: Key Metrics for Determining the Number of Components to Retain

| Metric | Definition | Interpretation | How to Access in scikit-learn |

|---|---|---|---|

| Explained Variance | Absolute amount of variance captured by each component (Eigenvalues). | Useful for knowing the absolute contribution of each component. | pca.explained_variance_ |

| Explained Variance Ratio | Fraction of total variance explained by each component. | Helps assess the relative importance of each component. | pca.explained_variance_ratio_ |

| Cumulative Variance Ratio | Running total of explained variance ratio as components are added. | Determines the total information retained with k components. | pca.explained_variance_ratio_.cumsum() |

The relationship between these metrics is straightforward. The explained variance ratio of a component is its eigenvalue divided by the sum of all eigenvalues of the covariance matrix [29]. This means that if you have a dataset with a total variance of 10, and the first principal component has an explained variance of 6, then its explained variance ratio is 0.6 (or 60%).
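The relationship can be checked numerically. The following is a minimal sketch on simulated data (the matrix X and all variable names are illustrative) that compares scikit-learn's reported values with the eigenvalues of the sample covariance matrix.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(1)
# 30 observations of 5 variables with deliberately different spreads.
X = rng.normal(size=(30, 5)) * np.array([3.0, 2.0, 1.0, 0.5, 0.2])

pca = PCA().fit(X)

# Eigenvalues of the sample covariance matrix, sorted in descending order.
cov_eigenvalues = np.sort(np.linalg.eigvalsh(np.cov(X, rowvar=False)))[::-1]

# Ratio = eigenvalue / sum of all eigenvalues.
manual_ratio = cov_eigenvalues / cov_eigenvalues.sum()
cumulative = pca.explained_variance_ratio_.cumsum()
```

Here manual_ratio should agree with pca.explained_variance_ratio_ up to floating-point error, and the last entry of cumulative should be 1 because all components are retained.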

Determining the Number of Components

Choosing the right number of principal components (k) to retain is a balance between dimensionality reduction and information preservation. Two common strategies are:

- The Kaiser Criterion: Retain all components with an eigenvalue (explained variance) greater than 1 [25]. This means the component captures more variance than a single original variable (assuming the data was standardized).

- Percentage of Variance Explained: Retain the smallest number of components such that their cumulative explained variance ratio meets a predefined threshold, such as 95% or 99% of the total variance [31]. This is a more flexible and commonly used approach. In scikit-learn, this can be automated by setting PCA(n_components=0.95, svd_solver='full') [31].

A Scree Plot, which plots the explained variance or variance ratio against the component number, is a valuable visual aid for this decision. The "elbow" of the plot, the point after which the curve flattens and additional components add little explained variance, often indicates a suitable cutoff point [26].
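Both selection rules are short to express in code. The sketch below uses simulated data with three latent factors (an assumption made purely for illustration) and compares a manual threshold calculation with scikit-learn's built-in selection.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(2)
# Simulated data: 3 latent factors drive 20 observed variables, plus noise.
latent = rng.normal(size=(100, 3))
weights = rng.normal(size=(3, 20))
X = latent @ weights + 0.3 * rng.normal(size=(100, 20))

Xz = StandardScaler().fit_transform(X)
pca = PCA().fit(Xz)

# Kaiser criterion: count eigenvalues greater than 1 (data are standardized).
k_kaiser = int((pca.explained_variance_ > 1.0).sum())

# Variance threshold: smallest k whose cumulative ratio exceeds 95%.
cumulative = pca.explained_variance_ratio_.cumsum()
k_threshold = int(np.searchsorted(cumulative, 0.95, side="right") + 1)

# scikit-learn applies the same rule when n_components is a float in (0, 1).
k_auto = int(PCA(n_components=0.95, svd_solver="full").fit(Xz).n_components_)
```

The two rules need not agree: the Kaiser count depends only on individual eigenvalues, while the threshold rule depends on how quickly the cumulative ratio rises.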

The Biplot: An Integrated Visualization Tool

A biplot is a powerful, consolidated visualization that simultaneously displays both the scores (the coordinates of the samples in the principal component space) and the loadings (the contributions of the original variables) on the same plot [25] [27]. This allows for a unified interpretation of the relationships between samples and variables.

Interpreting a Biplot

Interpreting a biplot involves analyzing the positions and directions of both data points and variable arrows:

- Data Points (Scores): The position of each sample (e.g., a single cell or tissue sample in transcriptomics data) is determined by its scores on the principal components. Points that are close to each other represent observations with similar values across the original variables [25]. In a transcriptomics context, this can reveal clusters of samples with similar gene expression profiles.

- Variable Vectors (Loadings/Arrows): The arrows represent the original variables (e.g., genes). The direction of the vector indicates the axis that the variable is most aligned with. The length of the vector is proportional to the amount of variance the variable contributes to the displayed principal components—a longer arrow means the variable is better represented in the two-dimensional subspace [25] [26].

- Angles Between Vectors: The cosine of the angle between two variable vectors approximates their correlation [25] [27].

  - Small Acute Angle: The two variables are highly positively correlated.

  - 90-Degree Angle: The two variables are uncorrelated.

  - Obtuse Angle (close to 180°): The two variables are highly negatively correlated.

Table 2: A Guide to Interpreting a Biplot

| Element to Observe | What it Signifies | Example Interpretation |

|---|---|---|

| Clustering of Data Points | Samples with similar profiles. | Different cell types or experimental conditions forming distinct clusters. |

| Length of a Variable Arrow | How well the variable is represented by the current PCs. | A gene with a long arrow is a major driver of the variance shown in the plot. |

| Angle between two Variables | Correlation between variables. | Two genes pointing in the same direction are positively correlated in their expression. |

| Position of a Point relative to an Arrow | The value of that sample for the variable. | A sample located in the direction a variable arrow points will have a high value for that variable. |
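The angle rule can be illustrated without drawing anything. The sketch below builds three simulated variables (hypothetical genes g1, g2, g3), computes the biplot arrows as eigenvectors scaled by the square root of their eigenvalues, which is one common convention among several, and compares the cosine between arrows with the actual correlations.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(3)
n = 200
g1 = rng.normal(size=n)
g2 = 0.9 * g1 + 0.3 * rng.normal(size=n)   # positively correlated with g1
g3 = -0.9 * g1 + 0.3 * rng.normal(size=n)  # negatively correlated with g1
X = np.column_stack([g1, g2, g3])

# Standardize with ddof=1 so the covariance of Z is the correlation matrix.
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

pca = PCA(n_components=2).fit(Z)
scores = pca.transform(Z)  # sample coordinates (the points of the biplot)
# Variable arrows: eigenvectors scaled by the square root of their eigenvalues.
arrows = pca.components_.T * np.sqrt(pca.explained_variance_)

def cosine(u, v):
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

corr = np.corrcoef(X, rowvar=False)
cos_12 = cosine(arrows[0], arrows[1])  # compare with corr[0, 1]
cos_13 = cosine(arrows[0], arrows[2])  # compare with corr[0, 2]
```

The match is approximate because only two components are displayed; the approximation degrades when the plotted components explain a small share of the variance.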

The following diagram summarizes the workflow for generating and interpreting a standard PCA, culminating in the creation of a biplot.

Application in Transcriptomics Data Exploration

PCA is extensively used in transcriptomics to tackle the "curse of dimensionality," where the number of genes (features) vastly exceeds the number of samples. It helps in visualizing global gene expression patterns, identifying outliers, and understanding the primary factors driving variation in the data.

Experimental Protocol: PCA on a Transcriptomics Dataset

The following is a generalized protocol for applying PCA to a transcriptomics data matrix, where rows represent samples (e.g., cells, tissues) and columns represent genes.

Data Preprocessing:

- Input: A count matrix (e.g., from RNA-seq or spatial transcriptomics platforms).

- Normalization: Normalize raw counts to account for sequencing depth (e.g., using TPM, FPKM, or library size normalization methods like DESeq2's median of ratios).

- Filtering: Filter out genes with very low expression across all samples to reduce noise.

- Transformation: Apply a variance-stabilizing transformation (e.g., log2(1+x)) to make the data more homoscedastic.

- Standardization: Center the data by subtracting the mean of each gene, and scale it by dividing by its standard deviation. This creates a z-score for each gene per sample, which is equivalent to running PCA on the correlation matrix and prevents highly expressed genes from dominating the analysis purely due to their scale [25] [26]. In scikit-learn, the PCA class centers the data by default but does not scale it, so preprocessing with StandardScaler is often required.

Model Fitting and Component Extraction:
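A minimal sketch of the preprocessing and fitting steps, assuming a simulated count matrix, counts-per-million normalization, and an illustrative filtering threshold (none of these choices come from the protocol itself):

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(4)
# Simulated raw counts: 20 samples (rows) x 500 genes (columns).
counts = rng.poisson(lam=rng.gamma(2.0, 10.0, size=500), size=(20, 500))

# Normalization: counts per million to correct for library size.
cpm = counts / counts.sum(axis=1, keepdims=True) * 1e6

# Filtering: keep genes with non-trivial expression (threshold is illustrative).
keep = cpm.mean(axis=0) > 1.0
# Transformation: log2(1 + x).
log_expr = np.log2(1.0 + cpm[:, keep])

# Standardization + PCA; PCA centers but does not scale, hence StandardScaler.
model = make_pipeline(StandardScaler(), PCA(n_components=5))
scores = model.fit_transform(log_expr)   # samples x 5 PCs
pca = model.named_steps["pca"]
loadings = pca.components_.T             # genes x 5 PCs
var_ratio = pca.explained_variance_ratio_
```

Wrapping the scaler and PCA in one pipeline keeps the two steps together, so the same centering and scaling are applied if new samples are later projected with model.transform.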

Visualization and Analysis:

- Scree Plot: Plot the explained_variance_ratio_ to decide on the number of components (k) to retain.

- 2D/3D Score Plots: Plot the sample scores (the transformed data) on the first 2 or 3 principal components. Color the points by experimental conditions (e.g., disease state, treatment, cell type) to visually identify clusters or outliers.

- Biplot: Create a biplot to overlay the gene loadings (as arrows) onto the score plot. This reveals which genes are the primary drivers of the separation between sample clusters observed in the score plot.

Advanced PCA Applications in Transcriptomics

Recent methodological advances have extended PCA's utility in transcriptomics:

- Spatial Transcriptomics Alignment and Integration: A significant challenge in spatial transcriptomics is aligning and integrating multiple tissue slices (2D) to reconstruct a holistic 3D view of the tissue architecture. A 2025 review categorized 24 computational tools that address this, some of which leverage statistical mapping methods, including PCA-based approaches, to align slices across datasets, individuals, and experiments [32]. This integration is vital for capturing spatial relationships and gradients of gene expression that are lost in isolated 2D analysis.

- Kernel PCA for Non-linear Integration: Standard PCA is a linear technique. For more complex, non-linear relationships, Kernel PCA has been developed. For instance, the KSRV framework uses Kernel PCA with a radial basis function (RBF) kernel to integrate single-cell RNA-seq data with spatial transcriptomics data. This non-linear alignment in a shared latent space allows for the accurate prediction of spliced and unspliced transcripts at each spatial location, enabling the inference of spatial RNA velocity and the reconstruction of differentiation trajectories directly within the tissue context [33].
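To show what an RBF kernel adds over linear PCA, the sketch below uses scikit-learn's KernelPCA on a toy dataset of two concentric rings. It is not the KSRV implementation; the dataset, the gamma value, and the separation helper are all assumptions chosen for illustration.

```python
import numpy as np
from sklearn.datasets import make_circles
from sklearn.decomposition import PCA, KernelPCA

# Two concentric rings: a structure that no single linear axis can separate.
X, ring = make_circles(n_samples=300, factor=0.3, noise=0.05, random_state=0)

linear_scores = PCA(n_components=2).fit_transform(X)
# gamma is an assumed, hand-picked kernel width for this toy example.
kernel_scores = KernelPCA(n_components=2, kernel="rbf", gamma=3.0).fit_transform(X)

def separation(scores, labels):
    """Gap between group means on the first component, in pooled-SD units."""
    a, b = scores[labels == 0, 0], scores[labels == 1, 0]
    pooled_sd = np.sqrt((a.var() + b.var()) / 2.0)
    return float(abs(a.mean() - b.mean()) / pooled_sd)

linear_gap = separation(linear_scores, ring)
kernel_gap = separation(kernel_scores, ring)
```

Both rings are centered on the origin, so the first linear component cannot distinguish them, whereas the kernel projection can place the inner and outer ring at different positions. The result is sensitive to gamma, which in practice needs tuning.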

The diagram below illustrates this advanced application of Kernel PCA for spatial transcriptomics.

The Scientist's Toolkit: Essential Reagents and Computational Solutions

Table 3: Key Computational Tools for PCA in Transcriptomics Research

| Tool / Solution | Function / Purpose | Application Context |

|---|---|---|

| scikit-learn (Python) | A comprehensive machine learning library with a robust PCA class for dimensionality reduction. | General-purpose PCA on any numerical matrix, including gene expression data. [29] [28] |

| PRECISE | A domain adaptation framework used to align distributions of different datasets before dimensionality reduction. | Integrating single-cell and spatial transcriptomics data by mitigating batch effects. [33] |

| KSRV Framework | A Kernel PCA-based framework for inferring spatial RNA velocity. | Predicting unmeasured spliced/unspliced counts in spatial data to study differentiation dynamics. [33] |

| factoextra (R) | An R package dedicated to elegant and easy visualization of multivariate analyses. | Generating scree plots, biplots, and other PCA-related visualizations. [26] |

| StandardScaler | A preprocessing module to standardize features by removing the mean and scaling to unit variance. | Essential preparation step for PCA on a correlation matrix. [26] |

| Cumulative Variance Ratio | The sum of explained_variance_ratio_ for the first k components, calculated via .cumsum(). | Objectively determining the number of components to retain for a given variance threshold. [31] |

Interpreting the outputs of PCA—the components, explained variance, and biplots—is a critical skill for extracting meaningful biological insights from high-dimensional transcriptomics data. A firm grasp of what loadings, variance metrics, and biplot elements represent allows researchers to move beyond a "black-box" application of the algorithm. By following standardized protocols for preprocessing and analysis, and by leveraging advanced extensions like Kernel PCA, scientists can effectively use PCA to reveal the underlying structure of their data, identify key driver genes, generate hypotheses, and build a solid foundation for more complex, targeted analyses. In the context of modern spatial transcriptomics, these techniques are evolving to handle non-linear integrations, opening new avenues for understanding cellular dynamics in a native tissue context.

A Step-by-Step PCA Workflow for Transcriptomics Data

Principal Component Analysis (PCA) is a fundamental dimensionality reduction technique that transforms a collection of correlated variables into a smaller set of uncorrelated variables called principal components, which are ranked by the variance they explain [34] [35]. In transcriptomics, where datasets commonly contain measurements for over 20,000 genes across far fewer samples, PCA enables researchers to visualize and identify the strongest patterns and relationships between variables [11] [35]. However, the reliability of PCA is profoundly dependent on proper data preprocessing and normalization. Without these critical preliminary steps, technical artifacts can dominate biological signals, leading to misleading interpretations and flawed scientific conclusions [36] [37] [38].

The core mathematical foundation of PCA rests on the Singular Value Decomposition (SVD) of the data matrix, which identifies the linear subspaces that best represent the data in the least-squares sense [34] [39]. This computational approach makes PCA particularly sensitive to measurement scales and data distribution. Variables on larger scales can disproportionately influence the principal components, while skewed distributions can distort the variance structure that PCA seeks to capture [34] [40]. In the context of transcriptomics, where raw gene expression counts exhibit substantial technical variability due to factors like sequencing depth and library preparation protocols, normalization becomes not merely beneficial but essential for meaningful analysis [37] [38].

Theoretical Foundation: Why Preprocessing Matters for PCA

Mathematical Sensitivity of PCA

PCA operates by identifying directions of maximum variance in the data through eigen decomposition of the covariance matrix or SVD of the data matrix [34] [39]. This mathematical foundation makes the technique particularly sensitive to several data characteristics:

- Scale Dependency: Variables with larger scales and variances dominate the principal components regardless of their biological significance [40]. In transcriptomics, highly expressed genes can overshadow subtle but biologically important expression changes unless proper scaling is applied.

- Coordinate System Orientation: Linear subspaces must pass through the origin [40]. Data not centered around zero may be poorly approximated, making centering essential.

- Variance Structure: PCA captures variability in the sum of squares sense [40]. Skewed distributions or heteroscedasticity (non-constant variance) can distort the true biological variance structure [34] [41].
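The scale dependency described above is easy to reproduce. In this sketch, three simulated genes are constructed (names and magnitudes are hypothetical): one on a large scale carrying only noise, and two on a small scale sharing a common signal.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(5)
n = 500
# Gene A: large scale, pure noise. Genes B and C: small scale, shared signal.
signal = rng.normal(size=n)
gene_a = 1000.0 + 100.0 * rng.normal(size=n)
gene_b = 5.0 + signal + 0.2 * rng.normal(size=n)
gene_c = 5.0 + signal + 0.2 * rng.normal(size=n)
X = np.column_stack([gene_a, gene_b, gene_c])

# Without scaling, PC1 points almost entirely along the high-variance gene A.
pc1_raw = PCA(n_components=1).fit(X).components_[0]

# After z-scoring, PC1 reflects the correlated pair B and C instead.
pc1_scaled = PCA(n_components=1).fit(StandardScaler().fit_transform(X)).components_[0]
```

Inspecting the absolute values of pc1_raw and pc1_scaled shows the weight shifting from gene A to genes B and C once every gene contributes unit variance.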

Challenges Specific to Transcriptomics Data

Transcriptomics data presents unique challenges that necessitate careful preprocessing:

- Sequencing Depth Variation: Cells or samples have differing total numbers of sequencing reads, making direct comparison of raw counts invalid [37]. Without normalization, a cell with twice the sequencing depth would appear to have all genes doubled in expression.

- Abundance of Zeros: Single-cell RNA-sequencing datasets contain an unusually high abundance of zeros due to both biological and technical factors [38].

- Overdispersion: Gene expression data exhibits high cell-to-cell variability derived from both biological factors and measurement inefficiencies [38].

- Compositional Nature: Gene expression data is compositional; an increase in one gene's count necessarily decreases the relative proportion of all other genes [38].

The diagram below illustrates the critical decision points in preprocessing transcriptomics data for PCA:

Normalization Methods for Transcriptomics Data

Within-Sample Normalization

Within-sample normalization addresses differences in sequencing depth between cells or samples, making counts comparable. The table below summarizes the primary approaches:

Table 1: Within-Sample Normalization Methods for Transcriptomics Data

| Method | Mathematical Formula | Use Case | Advantages | Limitations |

|---|---|---|---|---|

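| Library Size Normalization | $x'_{ij} = 10^6 \times x_{ij} / N_j$, where $x_{ij}$ is the count of gene $i$ in sample $j$ and $N_j$ is the total count in sample $j$ | Bulk RNA-seq; basic comparison | Simple calculation; intuitive interpretation | Does not address compositionality; sensitive to highly expressed genes |

| DESeq2's Median of Ratios | $s_j = \operatorname{median}_i \left[ x_{ij} / \left( \prod_{k=1}^{m} x_{ik} \right)^{1/m} \right]$, with normalized counts $x_{ij} / s_j$ over $m$ samples | Bulk and single-cell RNA-seq | Robust to outliers; handles compositionality | Assumes most genes are not differentially expressed |

| TPM (Transcripts Per Million) | $\mathrm{TPM}_{ij} = 10^6 \times (x_{ij} / \ell_i) / \sum_g (x_{gj} / \ell_g)$, where $\ell_i$ is the length of gene $i$ | Full-length protocols; isoform analysis | Accounts for both depth and gene length | Complex calculation; requires length information |

| SCTransform | Pearson residuals $z_{ij} = (x_{ij} - \mu_{ij}) / \sqrt{\mu_{ij} + \mu_{ij}^2 / \theta_i}$ from a regularized negative binomial regression on sequencing depth | Single-cell RNA-seq with UMI | Simultaneously normalizes and selects features; handles technical noise | Computationally intensive; complex implementation |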
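As a rough illustration of the first two rows, the sketch below computes counts per million and median-of-ratios size factors with NumPy on simulated counts. It is a simplified stand-in for what DESeq2 does, not a reimplementation; the simulated depths and the rule for excluding genes with zeros are assumptions.

```python
import numpy as np

rng = np.random.default_rng(6)
# Simulated counts: 6 samples (rows) x 200 genes (columns); same underlying
# expression profile, but each sample is sequenced to a different depth.
base = rng.gamma(shape=2.0, scale=50.0, size=200) + 1.0
depth = np.array([0.5, 1.0, 1.0, 2.0, 2.0, 4.0])
counts = rng.poisson(base * depth[:, None])

# Library size normalization (counts per million).
cpm = counts / counts.sum(axis=1, keepdims=True) * 1e6

# Median-of-ratios size factors, using genes with nonzero counts in every sample.
expressed = (counts > 0).all(axis=0)
log_counts = np.log(counts[:, expressed])
log_geo_mean = log_counts.mean(axis=0)  # per-gene log geometric mean
size_factors = np.exp(np.median(log_counts - log_geo_mean, axis=1))
normalized = counts / size_factors[:, None]
```

The recovered size factors should be roughly proportional to the simulated depths, so the ratio between the deepest and shallowest sample is close to 8.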

Variance-Stabilizing Transformations

Variance-stabilizing transformations address heteroscedasticity, where the variance depends on the mean, which is characteristic of count data:

Table 2: Variance-Stabilizing Transformation Methods

| Transformation | Formula | Variance Assumption | Primary Applications |

|---|---|---|---|

| Log Transformation | $y = \log(x + 1)$ | Variance ∝ Mean² | Right-skewed distributions; RNA-seq data [41] |

| Square Root Transformation | $y = \sqrt{x}$ | Variance ∝ Mean (Poisson) | Count data; ecology studies [41] |

| Box-Cox Transformation | $y = (x^{\lambda} - 1)/\lambda$ for $\lambda \neq 0$; $y = \log x$ for $\lambda = 0$ | Flexible power transformation | Regression analysis; automated parameter selection [34] [41] |

| Power Transformation | $y = x^{\lambda}$ | Adjustable skewness reduction | Preprocessing pipelines; predictive modeling [41] |

The selection of an appropriate variance-stabilizing transformation depends on the relationship between mean and variance in the data, as illustrated below:
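A small simulation can make the mean-variance relationship concrete. The sketch below, using assumed distributions and parameters, draws Poisson counts (variance proportional to the mean) and gamma-distributed values with a constant coefficient of variation (variance proportional to the squared mean), then applies the matching transformation to each.

```python
import numpy as np

rng = np.random.default_rng(7)
means = np.array([5.0, 20.0, 80.0, 320.0])

# Poisson counts: variance grows in proportion to the mean.
poisson = rng.poisson(means, size=(5000, 4))
var_raw = poisson.var(axis=0)
var_sqrt = np.sqrt(poisson).var(axis=0)  # roughly constant across means

# Gamma values with constant coefficient of variation:
# variance grows with the square of the mean.
shape = 4.0
gamma = rng.gamma(shape, means / shape, size=(5000, 4))
var_log = np.log(gamma).var(axis=0)      # roughly constant across means
```

Before transformation the variances span well over an order of magnitude; after the appropriate transformation they are nearly equal across the four mean levels.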

Experimental Protocols for Preprocessing Evaluation

Standardized Preprocessing Workflow

A robust preprocessing protocol for transcriptomics data prior to PCA should include these critical steps:

Quality Control and Filtering

- Remove genes expressed in fewer than a minimum number of cells (e.g., < 10 cells)

- Exclude cells with unusually high or low numbers of detected genes

- Filter cells with excessive mitochondrial read percentage (indicates poor cell quality)

Normalization for Sequencing Depth

- Calculate size factors for each cell using the median of ratios method [38] or library size normalization

- Apply normalization by dividing counts by cell-specific size factors

- Log-transform normalized counts using y = log2(normalized count + 1)

Feature Selection

- Identify highly variable genes using mean-variance relationship

- Select 2,000-5,000 most variable genes for downstream PCA

- This focuses the analysis on biologically informative genes and reduces technical noise

Scaling and Centering

- Center each gene to have mean zero across cells

- Scale each gene to have unit variance (Z-score standardization)

- This ensures equal weight for all genes in PCA regardless of expression level
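The workflow above can be sketched end to end in NumPy. This is a simplified illustration on simulated counts: the cell-level and mitochondrial filters are omitted, library size factors stand in for median of ratios, and genes are ranked by raw variance rather than by a fitted mean-variance trend.

```python
import numpy as np

rng = np.random.default_rng(8)
n_cells, n_genes = 300, 1000
# Simulated UMI counts (cells x genes) with gene-specific mean expression.
gene_means = rng.gamma(shape=0.5, scale=2.0, size=n_genes)
counts = rng.poisson(gene_means, size=(n_cells, n_genes))

# 1. Quality control: drop genes detected in fewer than 10 cells.
detected_in = (counts > 0).sum(axis=0)
counts = counts[:, detected_in >= 10]

# 2. Depth normalization (library size factors) followed by log2(x + 1).
size_factors = counts.sum(axis=1) / counts.sum(axis=1).mean()
log_norm = np.log2(counts / size_factors[:, None] + 1.0)

# 3. Feature selection: keep the most variable genes. Ranking by raw variance
#    is a simplification; dedicated tools model the mean-variance trend.
n_top = 200  # illustrative; the text suggests 2,000-5,000 for real data
top = np.argsort(log_norm.var(axis=0))[::-1][:n_top]
hvg = log_norm[:, top]

# 4. Center and scale each gene (z-score) before PCA.
scaled = (hvg - hvg.mean(axis=0)) / hvg.std(axis=0)
```

The resulting scaled matrix has zero mean and unit variance per gene and can be passed directly to a PCA routine.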

Performance Evaluation Metrics

After applying PCA to normalized data, researchers should assess preprocessing effectiveness using these quantitative metrics:

Table 3: Metrics for Evaluating Normalization Performance

| Metric | Calculation Method | Interpretation | Optimal Value |

|---|---|---|---|

| Silhouette Width | Measures clustering cohesion and separation based on Euclidean distance in PCA space | Higher values indicate better-defined clusters | Values > 0.5 indicate strong cluster structure |

| PCA Signal-to-Noise | Ratio of biological to technical variance captured in principal components | Higher ratios indicate successful removal of technical noise | Study-dependent; should be maximized |

| k-Nearest Neighbor Batch-Effect Test | Quantifies batch integration by testing whether the batch composition of each cell's nearest neighbors matches the overall batch composition | Lower rejection rates indicate better mixing of batches | P-value > 0.05 suggests no significant batch effect |

| Highly Variable Genes | Number of genes identified as highly variable after normalization | Appropriate number suggests preservation of biological variance | Typically 2,000-5,000 for scRNA-seq |
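
To make the first metric in Table 3 concrete, the sketch below computes the mean silhouette width from Euclidean distances between samples in PCA space. The function name `mean_silhouette` is hypothetical, and the dense pairwise distance matrix limits it to modest sample counts; for larger data a library implementation would be the more practical choice.

```python
import numpy as np

def mean_silhouette(points, labels):
    """Mean silhouette width for samples x components PCA scores.

    labels: one cluster (or cell-type) label per sample.
    """
    points = np.asarray(points, dtype=float)
    labels = np.asarray(labels)
    unique = np.unique(labels)
    if unique.size < 2:
        raise ValueError("silhouette width needs at least two clusters")

    # Dense pairwise Euclidean distances (O(n^2) memory).
    diff = points[:, None, :] - points[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))

    widths = np.zeros(points.shape[0])
    for i in range(points.shape[0]):
        own = labels == labels[i]
        n_own = own.sum()
        if n_own == 1:
            continue  # singleton clusters get a width of 0 by convention
        # a: mean distance to the other members of the same cluster.
        a = dist[i, own].sum() / (n_own - 1)
        # b: mean distance to the nearest other cluster.
        b = min(dist[i, labels == u].mean() for u in unique if u != labels[i])
        denom = max(a, b)
        widths[i] = (b - a) / denom if denom > 0 else 0.0
    return widths.mean()
```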

The Scientist's Toolkit: Essential Research Reagents and Computational Tools

Table 4: Essential Tools for Transcriptomics Preprocessing and PCA

| Tool Name | Category | Primary Function | Application in Workflow |

|---|---|---|---|

| UMI (Unique Molecular Identifier) | Molecular Barcode | Corrects PCR amplification biases by uniquely tagging mRNA molecules | Experimental; during library preparation [38] |

| Spike-in RNA (ERCC) | External RNA Controls | Creates standard baseline for counting and normalization | Added during cell lysis; enables technical noise estimation [38] |

| SCTransform | Computational Method | Normalizes using regularized negative binomial regression | Replaces log-normalization; selects features and removes technical effects [38] |

| Scanpy | Python Toolkit | Comprehensive single-cell analysis including PCA | End-to-end analysis from preprocessing to visualization [38] |

| Seurat | R Toolkit | Integrative single-cell analysis platform | Normalization, scaling, PCA, and downstream clustering [38] |

| Scikit-learn Pipeline | Python Framework | Concatenates preprocessing and PCA steps | Ensures consistent transformation of training and test data [42] |

Proper data preprocessing and normalization form the indispensable foundation for reliable PCA in transcriptomics research. Without these critical steps, technical artifacts can dominate biological signals, leading to flawed interpretations and irreproducible findings. The choice of normalization strategy must be guided by the specific experimental design, sequencing technology, and biological question. By implementing rigorous preprocessing protocols and evaluating their effectiveness using appropriate metrics, researchers can ensure that their PCA visualizations and downstream analyses capture meaningful biological variation rather than technical confounding. As transcriptomics technologies continue to evolve, with increasingly complex experimental designs and larger datasets, the principles outlined in this guide will remain essential for extracting biologically meaningful insights from high-dimensional gene expression data.

Best Practices for Data Standardization and Scaling

In transcriptomics research, data standardization and scaling are not merely optional preprocessing steps but foundational operations that determine the success of all subsequent analyses. High-throughput transcriptomic technologies, whether RNA-seq or microarrays, generate datasets where the number of variables (genes) dramatically exceeds the number of observations (samples)—a classic challenge known as the "curse of dimensionality" [11]. Principal Component Analysis (PCA) serves as an essential dimensionality reduction technique in this context, transforming correlated gene expression variables into a smaller set of uncorrelated principal components that capture the essential biological variation in the data [43]. However, the effectiveness of PCA is profoundly dependent on appropriate data standardization, as technical variances from library size, sequencing depth, and other experimental factors can easily obscure biological signals if not properly addressed [44] [45].

This technical guide provides a comprehensive framework for data standardization and scaling practices optimized for PCA in transcriptomics research. We integrate established methodologies with emerging approaches to empower researchers in making informed decisions about preprocessing their gene expression data, with particular emphasis on applications in drug discovery and biomedical research where accurate biological interpretation is paramount [43].

Core Concepts: Standardization vs. Scaling

In the context of transcriptomics data analysis, standardization and scaling represent distinct but complementary mathematical operations applied to gene expression measurements prior to dimensionality reduction:

Scaling typically refers to adjustments that normalize for technical variations in sampling depth or library size across different samples, ensuring that expression levels are comparable between experimental runs [44] [46]. These methods operate primarily on the columns (samples) of the expression matrix.

Standardization transforms the distribution of expression values for each gene (rows) to have consistent statistical properties, most commonly by centering (subtracting the mean) and scaling (dividing by standard deviation) to create z-scores. This prevents highly expressed genes from disproportionately influencing the analysis simply due to their magnitude [43].

The fundamental goal of both operations is to create a transformed dataset where technical artifacts are minimized, biological signals are enhanced, and the underlying assumptions of multivariate techniques like PCA are satisfied. Proper application of these techniques enables PCA to fulfill its role as what Karl Pearson described as the "best fitting" summary of multidimensional data [43].

Methodologies for Transcriptomics Data

Between-Sample Normalization (Scaling) Methods

Between-sample normalization methods primarily address differences in sequencing depth and library size across samples. These techniques apply global scaling factors to make expression measurements comparable:

Table 1: Between-Sample Normalization Methods for Transcriptomics Data

| Method | Description | Mathematical Formula | Use Case | Advantages/Limitations |

|---|---|---|---|---|

| TPM (Transcripts Per Million) | Within-sample normalization that accounts for gene length and sequencing depth | ( \mathrm{TPM}_g = \frac{R_g / L_g}{\sum_j (R_j / L_j)} \times 10^6 ) where (R_g): read count, (L_g): gene length | Gene expression quantification | Standardized for comparisons, but shows high variability in metabolic models [44] |

| FPKM (Fragments Per Kilobase Million) | Similar to TPM but different operation order | ( \mathrm{FPKM}_g = \frac{R_g \times 10^9}{L_g \times T} ) where (R_g): fragment count, (L_g): gene length in base pairs, (T): total mapped fragments | Paired-end RNA-seq | Length-normalized; high variability in metabolic models [44] |

| TMM (Trimmed Mean of M-values) | Between-sample normalization using a reference sample | ( \log_2(\mathrm{TMM}_k) = \frac{\sum_{g \in G} w_{gk} M_{gk}}{\sum_{g \in G} w_{gk}} ) where (M_{gk}): log expression ratio of sample k to the reference, (w_{gk}): weight, (G): genes remaining after trimming | Differential expression | Low variability in metabolic models; good for capturing disease genes [44] |

| RLE (Relative Log Expression) | Median-based normalization assuming most genes not DE | ( SF_k = \mathrm{median}_g \frac{Y_{gk}}{(\prod_{j=1}^{S} Y_{gj})^{1/S}} ) where (SF_k): size factor for sample k, (Y_{gk}): count of gene g in sample k, (S): number of samples | Differential expression | Low variability; high accuracy for disease-associated genes [44] |

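| GeTMM (Gene Length Corrected TMM) | Combines TMM with gene length correction | ( \mathrm{GeTMM}_{gk} = \frac{R_{gk} / L_g}{\mathrm{TMM}_k \sum_j (R_{jk} / L_j)} \times 10^6 ) where (R_{gk}): read count, (L_g): gene length, (\mathrm{TMM}_k): TMM scaling factor computed on the length-scaled counts | Cross-sample comparison | Combines length correction with between-sample normalization [44] |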
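
The length-based measures and the RLE size factors can be sketched in a few lines of NumPy. This is an illustration under stated assumptions rather than a substitute for established packages: the function names are hypothetical, matrices are genes x samples, gene lengths are in base pairs, and the RLE sketch uses only genes with nonzero counts in every sample so that the geometric mean is defined.

```python
import numpy as np

def tpm(counts, lengths_bp):
    """Transcripts per million: length-scale first, then rescale to 1e6."""
    counts = np.asarray(counts, dtype=float)
    rate = counts / np.asarray(lengths_bp, dtype=float)[:, None]
    return rate / rate.sum(axis=0) * 1e6

def fpkm(counts, lengths_bp):
    """Fragments per kilobase per million mapped fragments."""
    counts = np.asarray(counts, dtype=float)
    lengths = np.asarray(lengths_bp, dtype=float)[:, None]
    return counts * 1e9 / (lengths * counts.sum(axis=0))

def rle_size_factors(counts):
    """Median-of-ratios (RLE) size factor for each sample."""
    counts = np.asarray(counts, dtype=float)
    expressed = (counts > 0).all(axis=1)  # geometric mean needs positives
    log_counts = np.log(counts[expressed])
    log_geo_mean = log_counts.mean(axis=1, keepdims=True)
    # Median over genes of the log ratio to the per-gene geometric mean.
    return np.exp(np.median(log_counts - log_geo_mean, axis=0))
```

Dividing each sample's counts by its RLE size factor gives values that are comparable across samples, whereas TPM and FPKM are scaled within each sample.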

Within-Gene Standardization Methods

Within-gene standardization addresses the substantial differences in expression levels across different genes, ensuring that each gene contributes appropriately to the analysis regardless of its absolute expression level:

Z-score Standardization: This method transforms each gene's expression values to have a mean of zero and standard deviation of one across samples: ( Z_g = \frac{X_g - \mu_g}{\sigma_g} ) where (X_g) is the expression value for gene g, (\mu_g) is the mean expression of gene g across all samples, and (\sigma_g) is the standard deviation. This approach is particularly valuable when genes have different measurement units or scales.

Robust Z-score: For datasets with potential outliers, a modified approach using median and median absolute deviation (MAD) provides more robust standardization: ( Z_{robust,g} = \frac{X_g - \mathrm{median}(X_g)}{\mathrm{MAD}(X_g)} ).

Log Transformation: Frequently applied before standardization to handle the severe skewness in count-based transcriptomics data: ( X_{log} = \log_2(X + 1) ) where the pseudocount of 1 handles zero values. This transformation helps stabilize variance across the dynamic range of expression values.
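
A minimal NumPy sketch of the two standardizations follows, assuming a genes x samples matrix that has already been log-transformed. The function names are hypothetical, and mapping constant genes to zero instead of dividing by zero is a choice made for this sketch. The robust version uses the raw MAD, as in the formula above; some implementations additionally multiply the MAD by 1.4826 to make it comparable to a standard deviation under normality.

```python
import numpy as np

def zscore_genes(expr):
    """Center and scale each gene (row) to mean 0 and unit variance."""
    expr = np.asarray(expr, dtype=float)
    mu = expr.mean(axis=1, keepdims=True)
    sd = expr.std(axis=1, ddof=1, keepdims=True)
    sd[sd == 0] = 1.0  # constant genes become all zeros
    return (expr - mu) / sd

def robust_zscore_genes(expr):
    """Center each gene on its median and scale by its MAD."""
    expr = np.asarray(expr, dtype=float)
    med = np.median(expr, axis=1, keepdims=True)
    mad = np.median(np.abs(expr - med), axis=1, keepdims=True)
    mad[mad == 0] = 1.0  # avoid division by zero for near-constant genes
    return (expr - med) / mad
```

In the robust version an outlying sample keeps a large score while the remaining samples stay on a sensible scale, which is the behavior the MAD is meant to provide.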

Advanced and Emerging Approaches

Recent methodological advances have introduced more sophisticated normalization frameworks that address specific challenges in transcriptomics data:

Biwhitened PCA (BiPCA): A theoretically grounded framework that adaptively rescales rows and columns of count data to standardize noise variances across both dimensions [45]. This approach overcomes fundamental difficulties with handling count noise in omics data by first transforming the data to satisfy homoscedastic spectral properties before applying PCA, effectively separating biological signal from technical noise.

SpaNorm: A spatially-aware normalization method specifically designed for spatial transcriptomics data that concurrently models library size effects and underlying biology [46]. Unlike global scaling methods, SpaNorm computes spatially smooth location- and gene-specific scaling factors, addressing the region-specific library size biases that commonly confound spatial analyses.

Non-Differentially Expressed Gene (NDEG) Normalization: An approach that uses stably expressed genes as reference features for normalization, potentially improving cross-platform integration and machine learning performance [47]. By selecting genes with low F-values from ANOVA (p > 0.85) as normalization factors, this method aims to remove technical variance while preserving biological signals.

Experimental Protocols and Workflows

Comprehensive Preprocessing Pipeline for RNA-seq Data

A robust standardization workflow for transcriptomics data analysis should systematically address both technical artifacts and biological variability:

RNA-seq Preprocessing Workflow

Step 1: Quality Control and Filtering

- Remove genes with zero counts across all samples

- Filter out genes with low expression (e.g., retain only genes with at least 10 counts in at least 10% of samples)

- Identify and address outliers through sample-level clustering

- Assess potential batch effects using PCA visualization

Step 2: Normalization Method Selection

- For differential expression analysis: Select between-sample methods (TMM, RLE)

- For cross-sample comparison: Consider TPM or FPKM for length normalization

- For metabolic modeling: Prefer RLE, TMM, or GeTMM which show lower variability and better accuracy for disease-associated genes [44]

- For spatial transcriptomics: Implement spatially-aware methods like SpaNorm [46]

Step 3: Covariate Adjustment

- Identify technical covariates (batch, processing date) and biological covariates (age, sex)

- For Alzheimer's disease data: Adjust for age, gender, and post-mortem interval [44]

- For cancer datasets: Adjust for age, gender, and tumor purity

- Use linear models to regress out covariate effects before downstream analysis

Step 4: Transformation and Standardization

- Apply log2 transformation to count data: ( X_{log} = \log_2(X + 1) )

- Standardize each gene to z-scores across samples

- Validate distribution properties after transformation

Step 5: PCA and Interpretation

- Perform PCA on the standardized data matrix

- Select optimal number of components using cumulative variance explanation (target: 70-80%) [48]

- Interpret components through loading analysis of influential genes

- Validate biological coherence of component patterns
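
Step 5 can be illustrated with a PCA computed through the singular value decomposition, choosing the number of components from the cumulative explained variance. The sketch assumes a standardized genes x samples matrix; the name `pca_svd` and the default `var_target=0.8` (the upper end of the 70-80% range above) are choices made for this example.

```python
import numpy as np

def pca_svd(z, var_target=0.8):
    """PCA of a genes x samples matrix, treating samples as observations.

    Returns sample scores, gene loadings, the explained-variance ratio of
    every component, and the number of components k needed to reach
    `var_target` cumulative variance.
    """
    x = np.asarray(z, dtype=float).T   # samples x genes
    x = x - x.mean(axis=0)             # center each gene across samples
    u, s, vt = np.linalg.svd(x, full_matrices=False)

    explained = s ** 2 / np.sum(s ** 2)
    cumulative = np.cumsum(explained)
    # First component count whose cumulative variance reaches the target.
    k = min(int(np.searchsorted(cumulative, var_target)) + 1, s.size)

    scores = u[:, :k] * s[:k]   # sample coordinates on the first k PCs
    loadings = vt[:k].T         # gene weights defining each PC
    return scores, loadings, explained, k
```

Genes with the largest absolute values in a column of `loadings` are the ones that most influence that component, which is the starting point for the loading analysis mentioned above.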

Normalization Selection Framework for Specific Applications

The optimal choice of normalization strategy depends on the specific research question and data characteristics. The following decision framework guides method selection:

Normalization Strategy Selection

Covariate Adjustment Protocol

Systematic covariate adjustment significantly improves normalization performance, particularly in clinical transcriptomics studies:

Protocol:

Identify Potential Covariates

- Technical factors: batch, processing date, RNA quality metrics

- Biological factors: age, sex, ethnicity, body mass index

- Disease-specific: post-mortem interval (neurodegenerative studies), tumor stage (cancer studies)

Assess Covariate Impact

- Perform PCA on unadjusted data

- Correlate principal components with potential covariates

- Identify significant associations (p < 0.05)

Implement Adjustment

- Use linear regression: ( Y_{adjusted} = Y - \sum_i (\beta_i \times X_i) )

- Where (Y) is expression values, (X_i) are covariates, and (\beta_i) are coefficients

- Apply to normalized expression data before downstream analysis

Validate Adjustment

- Confirm reduced correlation between PCs and technical covariates

- Verify preservation of biological signal through known biomarkers

Studies demonstrate that covariate adjustment increases accuracy in capturing disease-associated genes across normalization methods [44].
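
The assessment and adjustment steps of the protocol can be sketched with ordinary least squares. The code below is illustrative only: the function names are hypothetical, expression is assumed to be a normalized genes x samples matrix, and covariates are assumed to be numeric (categorical factors such as batch would first need dummy coding). Covariates are centered before subtraction so that each gene keeps its overall mean.

```python
import numpy as np

def pc_covariate_correlation(scores, covariate):
    """Pearson correlation of each PC (column of scores) with a covariate."""
    scores = np.asarray(scores, dtype=float)
    covariate = np.asarray(covariate, dtype=float)
    return np.array([np.corrcoef(scores[:, j], covariate)[0, 1]
                     for j in range(scores.shape[1])])

def regress_out(expr, covariates):
    """Remove linear covariate effects: Y_adjusted = Y - sum_i(beta_i * X_i).

    expr: genes x samples; covariates: samples x p numeric matrix.
    """
    y = np.asarray(expr, dtype=float).T            # samples x genes
    c = np.asarray(covariates, dtype=float)
    if c.ndim == 1:
        c = c[:, None]
    c = c - c.mean(axis=0)                         # centered covariates
    design = np.column_stack([np.ones(c.shape[0]), c])
    beta, *_ = np.linalg.lstsq(design, y, rcond=None)
    adjusted = y - c @ beta[1:]                    # keep intercept, drop effects
    return adjusted.T
```

Recomputing the PC-covariate correlations on the adjusted matrix is one way to carry out the validation step, since correlations with technical covariates should shrink toward zero.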

Quantitative Comparison of Normalization Methods

Performance Benchmarking in Metabolic Modeling

Recent benchmarking studies provide quantitative comparisons of normalization method performance in specific biological applications:

Table 2: Normalization Method Performance in Genome-Scale Metabolic Modeling