A Comprehensive Guide to qPCR Biomarker Validation: From Foundational Principles to Clinical Application

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for the rigorous validation of biomarker genes using qPCR. Covering the entire workflow from foundational concepts and assay design to troubleshooting, optimization, and final validation, it addresses the critical lack of standardization that often hinders the translation of research findings into clinical practice. By integrating current consensus guidelines, practical troubleshooting strategies, and comparative analyses with emerging technologies like dPCR, this guide aims to bridge the gap between Research Use Only (RUO) assays and certified In Vitro Diagnostics (IVD), ultimately supporting the development of robust, clinically relevant biomarker tests.

The Pillars of qPCR Biomarker Validation: Laying the Groundwork for Success

In the era of personalized oncology and precision medicine, biomarkers have become indispensable tools that enable a more individualized approach to patient care. According to the FDA-NIH Biomarker Working Group, a biomarker is defined as "a characteristic that is objectively measured and evaluated as an indicator of normal biological processes, pathogenic processes, or pharmacological response to a therapeutic agent" [1]. These measurable indicators are categorized based on their specific clinical applications, with prognostic and predictive biomarkers representing two fundamentally distinct categories that serve different purposes in clinical decision-making [2] [3].

The critical distinction between prognostic and predictive biomarkers lies in their relationship to treatment effects. Prognostic biomarkers provide information about the natural history of the disease regardless of therapy, while predictive biomarkers identify patients who are more likely to experience a favorable or unfavorable effect from a specific medical product or environmental agent [3]. This differentiation is not merely academic; it has direct implications for therapeutic decision-making, clinical trial design, and drug development strategies. Understanding this distinction is particularly crucial in oncology, where biomarkers guide increasingly targeted therapies [2].

Validating biomarker genes by quantitative PCR (qPCR) is a core methodological step in biomarker development. The accuracy and reliability of biomarker measurements depend heavily on rigorous technical validation, which is especially important for molecular biomarkers detected using qPCR-based methods [4]. This article compares prognostic and predictive biomarkers, presents experimental data supporting their applications, and details the qPCR validation protocols essential for their implementation in clinical research and practice.

Theoretical Foundations: Distinguishing Prognostic and Predictive Biomarkers

Prognostic Biomarkers: Defining Disease Trajectory

Prognostic biomarkers provide information about the overall cancer outcome in patients, including the likelihood of disease recurrence, progression, or death, independent of specific therapeutic interventions [2] [3]. These biomarkers offer insights into the intrinsic aggressiveness of a disease and help clinicians identify patients with different outcome risks. Essentially, prognostic biomarkers answer the question: "What is the likely course of my disease regardless of treatment?"

The National Institutes of Health BEST Resource clarifies that "prognostic biomarkers are used to identify the likelihood of a clinical event, disease recurrence, or progression in patients who have the disease or medical condition of interest" [3]. These biomarkers are often identified from observational data and are regularly used to identify patients more likely to have a particular outcome. For example, in multiple myeloma, the Revised International Staging System utilizes β2-microglobulin, lactate dehydrogenase, and high-risk cytogenetics to stratify patients into prognostic groups [1].

Predictive Biomarkers: Informing Treatment Response

Predictive biomarkers help optimize therapy decisions by providing information on the likelihood of response to a given chemotherapeutic agent [2]. These biomarkers identify individuals who are more likely than similar individuals without the biomarker to experience a favorable or unfavorable effect from exposure to a medical product [3]. Predictive biomarkers essentially answer the question: "Will this specific treatment work for me?"

According to the FDA-NIH Biomarker Working Group, to identify a predictive biomarker, there generally should be a comparison of a treatment to a control in patients with and without the biomarker [3]. The ideal predictive biomarker demonstrates what statisticians call a qualitative treatment-by-biomarker interaction, where there is a clear benefit of the experimental treatment in one biomarker subgroup but a clear lack of benefit, or potential harm, in the other biomarker subgroup [3]. A prominent example is the BRAF V600E mutation, which predicts response to BRAF inhibitors like vemurafenib in late-stage melanoma [3].

Key Conceptual Differences

The fundamental distinction between these biomarker types lies in their relationship to treatment. A biomarker that appears to predict response to a particular therapy in a single-arm study might actually be prognostic if the same survival differences according to biomarker status exist with standard therapy [3]. This underscores the necessity of controlled studies for properly identifying predictive biomarkers.
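One common way to formalize this distinction, offered here as an illustrative sketch rather than as the method of the cited sources, is a regression model with a treatment-by-biomarker interaction term. The symbols below are assumptions introduced for illustration: $T \in \{0,1\}$ is the treatment arm, $B \in \{0,1\}$ is biomarker status, $h_0(t)$ is a baseline hazard, and the Cox-type form is only one possible choice of model.

```latex
h(t \mid T, B) = h_0(t)\,\exp\!\left(\beta_T\,T + \beta_B\,B + \beta_{TB}\,T B\right)
```

Under this sketch, a purely prognostic biomarker corresponds to $\beta_B \neq 0$ with $\beta_{TB} = 0$, while a predictive biomarker corresponds to $\beta_{TB} \neq 0$. In a single-arm study every patient has $T = 1$, so only the sum $\beta_B + \beta_{TB}$ can be estimated and the two effects cannot be separated, which is why a control arm is needed.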

Some biomarkers can serve both prognostic and predictive functions, which complicates their classification and application [2]. For instance, MGMT promoter methylation in glioblastoma and circulating tumor cells in various cancers have demonstrated both prognostic and predictive potential, making their clinical interpretation more complex [2].

Table 1: Fundamental Differences Between Prognostic and Predictive Biomarkers

| Characteristic | Prognostic Biomarkers | Predictive Biomarkers |
|---|---|---|
| Primary Question | What is the likely disease course? | Will this specific treatment work? |
| Relationship to Treatment | Independent of specific therapies | Dependent on specific therapies |
| Study Design for Identification | Observational studies | Randomized controlled trials |
| Clinical Utility | Patient stratification by risk level | Treatment selection |
| Example Biomarkers | BRCA mutations in breast cancer, CTCs | BRAF V600E, HER2 amplification |
| Impact on Clinical Decision | Determines intensity of monitoring/treatment | Determines choice of specific therapeutic agent |

Clinical Applications and Comparison Data

Prognostic Biomarker Applications

Prognostic biomarkers enable the monitoring of advances in anticancer therapy, assessment of tumor stage and potential malignancy, and prognosis of disease remission in individual cases [2]. These biomarkers can be classified into several molecular categories based on their biological characteristics:

DNA Mutations and Polymorphisms: Mutations in genes involved in DNA repair, such as BRCA1, BRCA2, ATM, and TP53, predispose patients to an increased risk of developing breast cancer and provide prognostic information [2]. These germline mutations may be inherited and contribute to the inactivation of DNA repair proteins, thereby influencing disease course. Similarly, germline mutations in the APC gene predispose patients to familial adenomatous polyposis, characterized by an increased probability of developing gut polyps and tumors [2].

Gene Expression Signatures: Multi-gene expression assays have been developed to provide prognostic information across various cancer types. In breast cancer, the MammaPrint test utilizes a 70-gene panel to assess tumor dynamics and stratify patients into groups with high and low risk of relapse [2]. Similarly, the Oncotype DX test consists of a 21-gene panel that assesses the probability of breast cancer recurrence within 10 years, evaluating genes related to proliferation, invasiveness, and hormonal response [2].

MicroRNA Profiles: miRNAs are small, noncoding RNA molecules that regulate gene expression and can serve as prognostic indicators. For example, in hepatocellular carcinoma, overexpression of miRNA-255 increases the activity of the Wnt signaling pathway, while the presence of miRNA-155 suggests a high level of malignancy, potential for metastasis, and poor prognosis [2]. In colorectal cancer, miRNA-362-3p overexpression leads to cell cycle arrest and inhibits tumor cell growth and migration, with high expression levels correlating with better patient prognosis [2].

DNA Methylation Patterns: Changes in DNA methylation represent another category of prognostic biomarkers. Hypermethylation of tumor suppressor gene promoters can lead to loss of gene expression and is associated with tumor progression [2]. For example, methylation patterns of the RASSF1A gene have been used to determine the time of tumor relapse and survival [2].

Predictive Biomarker Applications

Predictive biomarkers are primarily used to optimize therapy decisions by identifying patients who are likely to respond to specific treatments. These biomarkers have become particularly important in oncology with the development of targeted therapies:

Targeted Therapy Selection: Predictive biomarkers enable the selection of patients for specific targeted therapies. The development of BRAF inhibitors for melanoma treatment in patients with BRAF V600E mutation-positive tumors represents a classic example of a predictive biomarker application [3]. Similarly, HER2 amplification in breast cancer predicts response to HER2-targeted therapies like trastuzumab.

Therapy Intensification or Reduction: In multiple myeloma, the Spanish myeloma group demonstrated that high-risk smoldering multiple myeloma patients identified using specific biomarkers had delayed progression and improved overall survival when treated with lenalidomide and dexamethasone compared with observation alone, showing the predictive utility of their biomarker model [1].

Treatment Resistance Prediction: Some predictive biomarkers can identify patients who are unlikely to respond to certain therapies, thereby avoiding unnecessary toxicity and costs. For example, KRAS mutations in colorectal cancer predict resistance to EGFR-targeted therapies.

Table 2: Comparative Clinical Applications of Prognostic and Predictive Biomarkers

| Application Domain | Prognostic Biomarkers | Predictive Biomarkers |
|---|---|---|
| Diagnosis | Facilitate cancer diagnosis, usually with non-invasive methods | Not typically used for diagnosis |
| Risk Stratification | Identify patients with different outcome risks (e.g., recurrence) | Identify patients likely to respond to specific treatments |
| Treatment Planning | Inform intensity of treatment (e.g., adjuvant therapy decisions) | Inform selection of specific therapeutic agents |
| Clinical Trial Design | Enrich trial populations with high-risk patients | Enrich trial populations with likely responders |
| Disease Monitoring | Assess therapy advances, tumor stage, and malignancy | Monitor response to targeted therapies |
| Clinical Decision Impact | "How aggressively should we treat?" | "Which treatment should we use?" |

Biomarker Performance Comparison Framework

A standardized statistical framework for comparing biomarker performance has been developed to evaluate biomarkers based on predefined criteria, including precision in capturing change over time and clinical validity [5]. This framework operationalizes performance criteria through specific measures and uses statistical techniques for inference-based comparisons of biomarker performance.

In this comparative framework, precision refers to the ability of a biomarker to capture change over time with small variance relative to the estimated change, while clinical validity refers to the association with cognitive change and clinical progression [5]. Such standardized comparisons are essential for identifying the most promising biomarkers for specific clinical contexts.

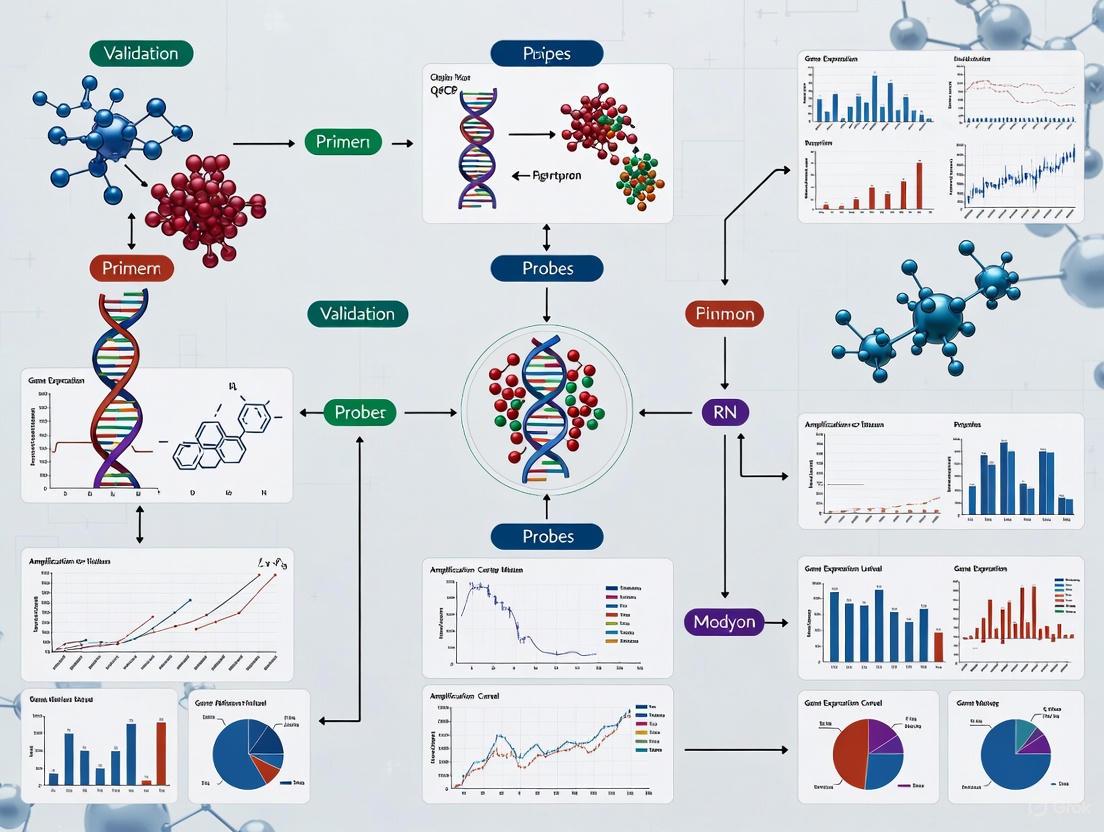

Diagram 1: Biomarker Performance Evaluation Framework. This diagram illustrates the key components of the standardized framework for comparing biomarker performance, focusing on precision in capturing change and clinical validity [5].

qPCR Experimental Protocols for Biomarker Validation

Sample Acquisition and Preanalytical Processing

The validation of biomarker genes by qPCR requires rigorous attention to preanalytical conditions, as these factors significantly impact assay reproducibility and reliability. According to consensus guidelines for the validation of qRT-PCR assays, sample acquisition, processing, and storage represent critical initial steps in the validation workflow [4].

Sample Collection and Stabilization: Biological samples for DNA biomarker analysis are mainly collected from tissue obtained after biopsy or from blood cells containing nuclei [2]. For RNA-based biomarkers, proper stabilization is essential immediately after collection to prevent degradation. The choice of anticoagulant in blood collection tubes (e.g., EDTA, heparin) can affect downstream qPCR analysis, and consistency in collection methodology is paramount across study populations.

RNA Extraction and Quality Control: RNA purification represents a crucial step in qRT-PCR assays. The consensus guidelines emphasize the importance of standardized RNA extraction protocols and rigorous quality control measures [4]. RNA integrity should be assessed using appropriate methods such as capillary electrophoresis, with minimum RNA integrity number thresholds established for inclusion in analysis. The use of reference genes for normalization requires careful selection based on stability across sample types.

Sample Storage Conditions: Standardized storage conditions including temperature, duration, and freeze-thaw cycles must be defined and consistently implemented. The EU-CardioRNA COST Action consortium guidelines recommend documenting all storage parameters and establishing stability profiles for biomarkers under various storage conditions [4].

qPCR Assay Design and Optimization

Target Selection and Assay Design: Appropriate target selection is fundamental to successful qPCR biomarker validation. Assays should be designed to avoid known polymorphisms, secondary structures, and genomic repeats. The consensus guidelines recommend designing amplicons between 70-150 base pairs, with primer melting temperatures typically between 58-62°C and GC content of 40-60% [4]. Specificity should be verified through sequence alignment tools and confirmed experimentally using melt curve analysis or sequencing.
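The design ranges above can be turned into a quick screening check. The following is a minimal sketch, not a substitute for a dedicated primer design tool: the function names and the example primer sequence are hypothetical, and the melting temperature uses the Wallace rule, a rough approximation chosen here only for simplicity (nearest-neighbor thermodynamic models are generally preferred for real designs).

```python
def gc_content(seq: str) -> float:
    """Return the GC content of a DNA sequence as a percentage."""
    seq = seq.upper()
    return 100.0 * sum(base in "GC" for base in seq) / len(seq)


def wallace_tm(seq: str) -> float:
    """Rough Tm estimate in deg C using the Wallace rule: 2*(A+T) + 4*(G+C).

    This is an approximation assumed for illustration only.
    """
    seq = seq.upper()
    at = seq.count("A") + seq.count("T")
    gc = seq.count("G") + seq.count("C")
    return 2.0 * at + 4.0 * gc


def check_design(primer: str, amplicon_length: int) -> dict:
    """Compare one primer and its amplicon length with the ranges in the text."""
    gc = gc_content(primer)
    tm = wallace_tm(primer)
    return {
        "gc_percent": gc,
        "tm_estimate_c": tm,
        "gc_ok": 40.0 <= gc <= 60.0,              # GC content of 40-60%
        "tm_ok": 58.0 <= tm <= 62.0,              # primer Tm of 58-62 deg C
        "amplicon_ok": 70 <= amplicon_length <= 150,  # amplicon of 70-150 bp
    }


# Hypothetical 20-mer primer and a 120 bp amplicon.
report = check_design("AGCTGACCTGAGGTCATCGA", amplicon_length=120)
```

A check like this only flags candidates that fall outside the numeric ranges; specificity still has to be confirmed by sequence alignment and experimentally, as described above.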

Experimental Design and Validation: The validation of qPCR assays for clinical research requires a fit-for-purpose approach that determines the appropriate level of validation based on the context of use [4]. Key analytical validation parameters include:

- Analytical specificity: The ability of the test to distinguish target from nontarget analytes

- Analytical sensitivity: The minimum detectable concentration or limit of detection

- Analytical precision: Closeness of repeated measurements to each other

- Analytical trueness: Closeness of measured values to true values

Controls and Reference Genes: Appropriate controls are essential for reliable qPCR results. These include positive controls, negative controls, no-template controls, and internal controls. Reference genes for normalization must be carefully selected based on stability across experimental conditions and sample types [4]. The consensus guidelines recommend using multiple reference genes and employing algorithms such as geNorm or NormFinder to determine the most stable references.
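To illustrate what a stability ranking of reference genes involves, the sketch below computes a geNorm-style M value: for each candidate gene, the average standard deviation of its pairwise log-ratios with every other candidate across samples. It is a simplified illustration, not the geNorm software itself. It assumes roughly 100% amplification efficiency so that Cq differences stand in for log2 expression ratios, and the gene names and Cq values are hypothetical.

```python
from statistics import mean, stdev


def genorm_m(cq: dict[str, list[float]]) -> dict[str, float]:
    """Return a geNorm-style stability value M per gene (lower = more stable).

    cq maps gene name -> Cq values, one per sample, in the same sample order.
    Assumes ~100% efficiency, so Cq differences approximate log2 ratios.
    """
    genes = list(cq)
    m_values = {}
    for gene_j in genes:
        pairwise_sd = []
        for gene_k in genes:
            if gene_k == gene_j:
                continue
            # Per-sample log-ratio between the two genes (as a Cq difference).
            diffs = [a - b for a, b in zip(cq[gene_j], cq[gene_k])]
            pairwise_sd.append(stdev(diffs))
        m_values[gene_j] = mean(pairwise_sd)
    return m_values


# Hypothetical Cq values for three candidate reference genes in four samples.
candidates = {
    "REF_A": [18.0, 18.5, 19.0, 18.2],
    "REF_B": [17.0, 17.5, 18.0, 17.2],  # tracks REF_A closely
    "REF_C": [20.0, 22.0, 19.0, 23.0],  # varies independently
}
stability = genorm_m(candidates)
ranked = sorted(stability, key=stability.get)  # most stable first
```

In this made-up data set REF_C receives the highest M value and would be the first candidate to exclude; real studies need more samples and should confirm the ranking with an established tool.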

qPCR Validation Parameters and Acceptance Criteria

The validation of qPCR assays for biomarker application requires establishing and meeting predefined performance criteria for key analytical parameters:

Efficiency and Dynamic Range: Amplification efficiency should be between 90-110%, with a coefficient of determination (R²) of >0.980 for standard curves. The dynamic range should cover at least 3-5 orders of magnitude, encompassing the expected biological range of the biomarker [4].
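Amplification efficiency is usually derived from the slope of a standard curve of Cq against log10 template quantity, using E = 10^(-1/slope) - 1. The sketch below shows that calculation with an ordinary least-squares fit; the function name and the dilution series are hypothetical and chosen only to illustrate the arithmetic.

```python
def standard_curve(log10_quantity: list[float], cq: list[float]) -> dict:
    """Fit Cq = slope * log10(quantity) + intercept by least squares.

    Returns slope, intercept, R^2 and amplification efficiency in percent.
    """
    n = len(cq)
    mean_x = sum(log10_quantity) / n
    mean_y = sum(cq) / n
    sxx = sum((x - mean_x) ** 2 for x in log10_quantity)
    syy = sum((y - mean_y) ** 2 for y in cq)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(log10_quantity, cq))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = (sxy ** 2) / (sxx * syy)
    # A slope of about -3.32 corresponds to doubling every cycle (100%).
    efficiency_percent = (10 ** (-1.0 / slope) - 1.0) * 100.0
    return {
        "slope": slope,
        "intercept": intercept,
        "r_squared": r_squared,
        "efficiency_percent": efficiency_percent,
    }


# Hypothetical 10-fold dilution series: 10^6 down to 10^2 copies per reaction.
curve = standard_curve([6, 5, 4, 3, 2], [15.0, 18.32, 21.64, 24.96, 28.28])
passes = 90.0 <= curve["efficiency_percent"] <= 110.0 and curve["r_squared"] > 0.980
```

A steeper slope (more negative than about -3.6) gives an efficiency below 90%, while a shallower slope (less negative than about -3.1) gives an efficiency above 110%, which often points to inhibition or pipetting problems in the dilution series.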

Limit of Detection and Quantification: The limit of detection should be established as the lowest concentration at which the target can be reliably detected, while the limit of quantification represents the lowest concentration that can be precisely measured [4]. These parameters are typically determined using serial dilutions of standards with known concentrations.

Precision and Reproducibility: Repeatability (intra-assay precision) and reproducibility (inter-assay precision) should be evaluated using multiple replicates across different runs, operators, and days [4]. The coefficient of variation for replicate measurements should generally not exceed 15-25%, depending on the biomarker context of use.
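As a rough illustration of how these precision figures can be computed, the sketch below calculates an intra-assay CV for each run and an inter-assay CV from the run means. Two assumptions are worth flagging: the input values are quantities on a linear scale (for example, copies per reaction) rather than raw Cq values, since Cq is logarithmic; and taking the CV of run means is a simplification of a full variance-components analysis. The function names and data are hypothetical.

```python
from statistics import mean, stdev


def cv_percent(values: list[float]) -> float:
    """Coefficient of variation (sample SD / mean) as a percentage."""
    return 100.0 * stdev(values) / mean(values)


def precision_summary(runs: dict[str, list[float]]) -> tuple[dict[str, float], float]:
    """Return (intra-assay CV per run, inter-assay CV across run means)."""
    intra = {run: cv_percent(values) for run, values in runs.items()}
    inter = cv_percent([mean(values) for values in runs.values()])
    return intra, inter


# Hypothetical quantities (copies per reaction) for one sample, three runs.
runs = {
    "day1": [980.0, 1010.0, 1005.0],
    "day2": [1100.0, 1080.0, 1120.0],
    "day3": [950.0, 940.0, 970.0],
}
intra_cv, inter_cv = precision_summary(runs)
repeatability_ok = all(cv < 15.0 for cv in intra_cv.values())
reproducibility_ok = inter_cv < 20.0
```

The acceptance limits used in the last two lines are placeholders taken from the general ranges in the text; the appropriate limit depends on the biomarker's context of use.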

Robustness: Assay performance should be evaluated under varying conditions, including different reagent lots, instrument platforms, and operator expertise to establish robustness [4].

Table 3: Essential qPCR Validation Parameters for Biomarker Assays

| Validation Parameter | Description | Recommended Acceptance Criteria |
|---|---|---|
| Amplification Efficiency | How efficiently the target is amplified | 90-110% |
| Dynamic Range | Range of concentrations that can be accurately quantified | 3-5 orders of magnitude |
| Limit of Detection | Lowest concentration reliably detected | Dependent on biomarker and context |
| Limit of Quantification | Lowest concentration precisely quantified | CV < 25% at LOQ |
| Precision (Repeatability) | Intra-assay variability | CV < 15% |
| Precision (Reproducibility) | Inter-assay variability | CV < 20% |
| Specificity | Ability to distinguish target from nonspecific sequences | Single peak in melt curve analysis |
| Robustness | Performance under varying conditions | Consistent results across variables |
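The numeric criteria in Table 3 lend themselves to an automated pass/fail check once the validation experiments are complete. The sketch below encodes only the rows that have a numeric or countable criterion; the dictionary keys and example values are hypothetical, and the context-dependent rows (limit of detection, robustness) are deliberately left out.

```python
def check_acceptance(metrics: dict) -> dict:
    """Compare measured assay metrics with the Table 3 acceptance criteria."""
    return {
        "amplification_efficiency": 90.0 <= metrics["efficiency_percent"] <= 110.0,
        "dynamic_range": metrics["dynamic_range_log10"] >= 3.0,   # at least 3 orders
        "limit_of_quantification": metrics["cv_at_loq_percent"] < 25.0,
        "repeatability": metrics["intra_assay_cv_percent"] < 15.0,
        "reproducibility": metrics["inter_assay_cv_percent"] < 20.0,
        "specificity": metrics["melt_curve_peaks"] == 1,          # single melt peak
    }


# Hypothetical results for one assay.
assay_metrics = {
    "efficiency_percent": 97.5,
    "dynamic_range_log10": 5.0,
    "cv_at_loq_percent": 22.0,
    "intra_assay_cv_percent": 6.3,
    "inter_assay_cv_percent": 21.4,
    "melt_curve_peaks": 1,
}
outcome = check_acceptance(assay_metrics)
failed = [name for name, ok in outcome.items() if not ok]
```

Reporting the failed criteria by name, rather than a single overall verdict, makes it easier to see which part of the assay needs re-optimization.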

Diagram 2: qPCR Biomarker Validation Workflow. This diagram outlines the key steps in the qPCR validation process for biomarker applications, from sample collection through analytical validation [4].

Research Reagent Solutions for Biomarker Validation

The successful validation of biomarker genes by qPCR requires specific research reagents and materials designed to ensure reproducibility, accuracy, and precision. The following table details essential solutions for biomarker validation studies:

Table 4: Essential Research Reagent Solutions for qPCR Biomarker Validation

| Reagent/Material | Function | Application Notes |
|---|---|---|
| Stabilized Blood Collection Tubes | Preserve RNA/DNA integrity during sample transport | PAXgene, Tempus, or similar systems; critical for transcriptomic biomarkers |
| Quality-Controlled Nucleic Acid Extraction Kits | Isolate high-quality RNA/DNA from various sample types | Select based on sample matrix; include DNase treatment for RNA workflows |
| qPCR Master Mixes | Provide optimized buffer conditions, enzymes, dNTPs for amplification | Select based on detection chemistry; SYBR Green or probe-based |
| Validated Primer/Probe Sets | Specifically amplify target biomarker sequences | Designed to avoid polymorphisms; optimized for efficiency and specificity |
| Reference Gene Panels | Normalize for variation in input material and efficiency | Multiple stable genes; tissue-specific validation required |
| RNA/DNA Quality Assessment Kits | Evaluate nucleic acid integrity and quantity | Fluorometric quantification; capillary electrophoresis for RIN |
| Positive Control Templates | Verify assay performance and sensitivity | Synthetic genes or confirmed positive samples |
| Standard Curve Materials | Establish quantification range and efficiency | Serial dilutions of synthetic templates or characterized reference samples |

Comparative Analysis of Biomarker Performance in Clinical Studies

Case Study: Multiple Myeloma Biomarkers

Multiple myeloma provides an instructive case study for comparing prognostic and predictive biomarker applications. The International Staging System utilizes the prognostic biomarkers albumin and β2-microglobulin to stratify patients into three stages with significantly different overall survival [1]. The Revised International Staging System incorporates high-risk cytogenetics in addition to β2-microglobulin and lactate dehydrogenase, demonstrating improved prognostic capability [1].

While these staging systems provide valuable prognostic information, they currently lack predictive utility for treatment selection. As noted in recent reviews, "despite new and improved biomarkers for determining the overall prognosis of MM patients, there is currently insufficient information to routinely utilise predictive biomarkers to select initial treatment for MM, intensify treatment for high-risk MM, reduce treatment for low-risk MM or for changing to an alternative treatment strategy altogether" [1].

Case Study: Breast Cancer Biomarkers

In breast cancer, several biomarker tests illustrate the distinction between prognostic and predictive applications. The MammaPrint 70-gene signature provides primarily prognostic information, stratifying patients into high and low risk of recurrence categories regardless of estrogen receptor, progesterone receptor, and HER2 status [2]. In contrast, the Oncotype DX 21-gene recurrence score provides both prognostic information and predictive insights regarding potential benefit from chemotherapy [2].

The TargetPrint test, which analyzes the expression of 80 transcripts, provides molecular distinction of breast cancers into basal, luminal, and ERBB2 types, while the TheraPrint test offers predictive information on the expression of 56-125 genes identified as predictive biomarkers [2]. Significant differences in the expression of BCL2, CDH3, GRB7, KRT6B, and KRT17 genes have been observed between patients responding and not responding to treatment, highlighting their potential predictive value [2].

Emerging Biomarker Technologies and Applications

Circulating Tumor Cells and Liquid Biopsies: The detection of circulating tumor cells in peripheral blood represents an emerging approach with both prognostic and potential predictive applications [2]. The presence of CTCs is generally associated with a poor prognosis, but CTCs may also provide information about treatment response and resistance mechanisms.

Wastewater-Based Epidemiology: An emerging application of biomarker analysis involves wastewater surveillance for population health monitoring. Recent research has demonstrated the feasibility of using machine learning models for classifying C-reactive protein concentrations in wastewater samples, with the Cubic Support Vector Machine achieving classification accuracies of 64.88% to 65.48% for distinguishing five concentration classes [6]. This approach represents a novel application of biomarker analysis at the population rather than individual level.

Imaging Biomarkers: In multiple myeloma, imaging modalities including whole-body low-dose CT, MRI, and FDG PET/CT have been incorporated into diagnostic and response assessment criteria [1]. The metabolic response and number of focal lesions on PET/CT in newly diagnosed patients after treatment have demonstrated independent prognostic value [1].

The distinction between prognostic and predictive biomarkers represents a fundamental concept in precision medicine, with significant implications for clinical decision-making and therapeutic development. Prognostic biomarkers provide information about disease natural history and outcome probabilities, while predictive biomarkers inform the likelihood of response to specific treatments. This comparative analysis demonstrates that while some biomarkers serve dual purposes, their optimal application requires understanding their distinct clinical utilities.

Validating biomarker genes by qPCR provides a critical methodological foundation for biomarker implementation. As outlined in this review, rigorous technical validation following established guidelines is essential for generating reliable, reproducible biomarker data. The standardized framework for comparing biomarker performance offers a systematic approach for evaluating biomarkers based on precision in capturing change and clinical validity.

Future directions in biomarker development will likely focus on integrating multiple biomarker types, developing standardized validation protocols across platforms, and establishing robust bioinformatic pipelines for complex data interpretation. As biomarker science continues to evolve, the distinction between prognostic and predictive applications will remain essential for translating biomarker research into improved patient outcomes across diverse disease contexts, particularly in oncology where biomarker-guided therapy has become standard practice.

In the field of qPCR-based biomarker research, the distinction between Research Use Only (RUO) and In Vitro Diagnostic (IVD) products represents more than just a regulatory classification—it defines a critical validation gap that researchers must navigate to ensure reliable, reproducible results. RUO products are designed solely for scientific research with no intended medical purpose and are exempt from most regulatory controls [7] [8]. In contrast, IVD products are medical devices used for clinical diagnosis, patient monitoring, or determining predisposition to disease, and must undergo extensive validation and regulatory approval by authorities such as the FDA and European Commission under the In Vitro Diagnostic Regulation (IVDR) [9] [8].

This distinction creates a significant validation chasm. While RUO products offer flexibility for exploratory research, IVD products provide the rigorous standardization necessary for clinical decision-making. For researchers validating biomarker genes by qPCR, understanding this divide is essential for selecting appropriate reagents and assays throughout the research continuum—from initial discovery to clinical application [4].

Regulatory and Intended Use Frameworks

Regulatory Landscapes and Requirements

The regulatory requirements for RUO and IVD products differ substantially, directly impacting their development, validation, and permissible applications.

Table 1: Regulatory Requirements Comparison

| Regulatory Aspect | RUO Products | IVD Products |
|---|---|---|
| Intended Use | Research purposes only; no medical purpose [7] | Diagnosis, monitoring, or prediction of disease [9] |
| Regulatory Body Oversight | Exempt from most regulatory controls [8] | FDA (U.S.), EMA/EU IVDR (Europe), and other national bodies [9] |
| Quality System Regulations | Not subject to 21 CFR 820 [8] | Subject to Quality System Regulations (21 CFR 820) [8] |
| Premarket Requirements | None [8] | Premarket notification or approval required [8] |
| Registration and Listing | Not required [8] | Required [8] |
| Post-Market Surveillance | Not required [9] | Required [9] [8] |
| Adverse Event Reporting | Not required [8] | Required [8] |

Intended Use and Legal Definitions

The fundamental distinction between RUO and IVD lies in their intended purpose, a concept with significant legal and practical implications. According to the IVDR, IVD products must have a medical purpose, providing information about physiological or pathological processes, congenital impairments, predisposition to medical conditions, safety and compatibility with potential recipients, prediction of treatment response, or monitoring therapeutic measures [7]. RUO products explicitly lack any medical purpose, restricting their use to basic research, pharmaceutical discovery, and identification of chemical substances or ligands in biological specimens [7].

This intended use distinction carries through to labeling requirements. RUO products must bear the disclaimer: "For Research Use Only. Not for use in diagnostic procedures" [10] [8]. IVD products must include specific instructions for use, limitations, and appropriate regulatory markings such as the CE mark in Europe [9]. Using RUO products for clinical diagnostics constitutes misuse, potentially exposing manufacturers and laboratories to liability while jeopardizing patient safety [7] [8].

Performance Characteristics and Validation Data

Analytical Performance Comparison

The performance differential between RUO and IVD products can be quantified through key analytical metrics. A comparative study of nine commercial RT-qPCR kits for SARS-CoV-2 detection demonstrated significant variation in analytical sensitivity between different kits, with limits of detection at 95% probability (LOD95%) ranging from approximately 3.5 to 6.4 copies per reaction for the most sensitive kits [11]. While this study compared IVD and RUO kits, it highlights the critical importance of independent validation regardless of regulatory status.

Table 2: Performance Characteristics of SARS-CoV-2 RT-qPCR Kits

| Kit Identifier | Manufacturer | Regulatory Status | LOD95% (ORF 1ab) | LOD95% (N Gene) | Cross-reactivity with other coronaviruses |
|---|---|---|---|---|---|
| Kit-1 (DAAN) | DAAN | IVD (CE-IVD, NMPA EUA) | 3.5 copies/reaction | 5.6 copies/reaction | None detected [11] |
| Kit-2 (Huirui) | Huirui | RUO | 4.6 copies/reaction | 6.4 copies/reaction | None detected [11] |
| Kit-7 (Geneodx) | Geneodx | IVD (CE-IVD, NMPA EUA) | ~3-4 fold higher than Kit-1 & 2 | ~3-4 fold higher than Kit-1 & 2 | None detected [11] |

These data show that regulatory status alone does not predict analytical performance, as some RUO kits demonstrated sensitivity comparable to IVD products. However, IVD products undergo standardized validation against certified reference materials, providing more reliable performance characteristics [11].

Variability and Reproducibility

A critical differentiator between RUO and IVD products lies in their variability and lot-to-lot consistency. IVD assays, particularly those cleared through processes like the FDA's 510(k) pathway, typically demonstrate low inter-lot variability (below 5%), as they are manufactured in high volumes with tightly controlled reagent quality [12]. Conversely, RUO kits typically show higher variability, ranging from 10% to 25% or more, due to smaller batch production volumes and more manual processes in 96-well plate formats [12].

This variability has direct implications for biomarker validation studies. The EU-CardioRNA COST Action consortium highlights that lack of technical standardization remains a huge obstacle in translating qPCR-based tests to clinical applications, citing contradictory results between studies investigating the same miRNAs as biomarkers [4]. For example, in coronary artery disease biomarker research, miR-21 was reported as upregulated in two studies but downregulated in another, demonstrating how variable assay performance contributes to irreproducible findings [4].

Diagram 1: Manufacturing scale impact on RUO and IVD product variability

Experimental Validation Protocols

Validation Methodologies for qPCR Assays

Proper validation of qPCR assays for biomarker research requires rigorous experimental protocols. The EU-CardioRNA COST Action consortium recommends a comprehensive approach addressing multiple performance parameters [4]:

Analytical Sensitivity and Limit of Detection (LOD)

- Conduct serial dilutions of certified reference material (CRM) to determine the minimum detectable concentration

- For SARS-CoV-2 detection, studies used CRM derived from genomic RNA with specified copy numbers for ORF1ab, N, and E genes [11]

- Perform 20-30 replicates at each dilution near the expected LOD to establish LOD95% (limit of detection at 95% probability) [11]

- Use droplet digital PCR (ddPCR) to independently verify template concentration and quality [11]
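The hit-rate step in the list above can be sketched in a few lines of Python. This is a minimal illustration, not the procedure used in [11]: the function names, the rule that every higher dilution must also pass, and the replicate data are all assumptions made for the example.

```python
from typing import Dict, List, Optional


def hit_rate(calls: List[bool]) -> float:
    """Fraction of replicates called positive at one dilution level."""
    return sum(calls) / len(calls)


def lod95_by_hit_rate(
    results: Dict[float, List[bool]], threshold: float = 0.95
) -> Optional[float]:
    """Lowest concentration whose hit rate meets the threshold.

    Walks from the highest concentration downward and stops at the first
    level that fails, so an isolated pass below a failing level is ignored
    (an assumption of this sketch).
    """
    lod = None
    for conc in sorted(results, reverse=True):
        if hit_rate(results[conc]) >= threshold:
            lod = conc
        else:
            break
    return lod


# Hypothetical data: copies per reaction -> detection calls for 20 replicates
example = {
    100.0: [True] * 20,
    50.0: [True] * 20,
    25.0: [True] * 19 + [False],
    12.5: [True] * 15 + [False] * 5,
    6.25: [True] * 8 + [False] * 12,
}

print(lod95_by_hit_rate(example))
```

A probit or logistic fit across the dilution series gives a smoother LOD95% estimate with a confidence interval; the simple hit-rate rule is shown here only because it maps directly onto the replicate counts described above.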

Analytical Specificity

- Test cross-reactivity with related genetic targets; for SARS-CoV-2, this included testing against six other human coronaviruses and respiratory viruses [11]

- Evaluate potentially interfering substances that may be present in clinical samples

Amplification Efficiency and Linear Dynamic Range

- Generate standard curves using at least 5 serial dilutions spanning the expected working range

- Acceptable amplification efficiency typically falls between 90% and 110% [4]

- Calculate correlation coefficients (R²) to demonstrate linearity, with values >0.98 generally acceptable
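As a rough sketch of how these standard-curve metrics are usually derived, the Python below fits Cq against log10 template amount by ordinary least squares and converts the slope to an efficiency with the standard relation E = 10^(-1/slope) - 1. The function name and the Cq values are hypothetical and chosen only for illustration.

```python
from typing import Sequence, Tuple


def fit_standard_curve(
    log10_copies: Sequence[float], cq: Sequence[float]
) -> Tuple[float, float, float, float]:
    """Least-squares fit of Cq versus log10(copies).

    Returns (slope, intercept, r_squared, efficiency_percent).
    """
    n = len(log10_copies)
    mean_x = sum(log10_copies) / n
    mean_y = sum(cq) / n
    sxx = sum((x - mean_x) ** 2 for x in log10_copies)
    syy = sum((y - mean_y) ** 2 for y in cq)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(log10_copies, cq))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = sxy ** 2 / (sxx * syy)
    # A slope of about -3.32 corresponds to perfect doubling (100 %)
    efficiency_percent = (10 ** (-1 / slope) - 1) * 100
    return slope, intercept, r_squared, efficiency_percent


# Hypothetical 10-fold dilution series: 10^6 down to 10^2 copies
log10_copies = [6, 5, 4, 3, 2]
cq_values = [17.1, 20.4, 23.8, 27.1, 30.5]

slope, intercept, r2, eff = fit_standard_curve(log10_copies, cq_values)
print(f"slope={slope:.3f} R2={r2:.4f} efficiency={eff:.1f}%")
print("within 90-110% and R2 > 0.98:", 90 <= eff <= 110 and r2 > 0.98)
```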

Precision and Reproducibility

- Assess repeatability through multiple replicates within the same run

- Evaluate intermediate precision across different days, operators, and equipment

- For IVD applications, conduct reproducibility studies across multiple sites [4]

Certified Reference Materials and Standardization

The use of certified reference materials (CRMs) is essential for proper validation of qPCR assays. In SARS-CoV-2 assay validation, the use of CRM (GBW(E)091099) containing SARS-CoV-2 genomic RNA with specified copy number concentrations for target genes enabled direct comparison of nine different RT-qPCR kits [11]. This approach revealed approximately 3 to 4-fold differences in LOD95% between kits, demonstrating that commercial kits can vary significantly in sensitivity despite targeting the same viral genes [11].

For biomarker gene validation, researchers should:

- Use CRMs when available for absolute quantification

- Establish laboratory-specific reference materials for long-term study consistency

- Implement standardized nucleic acid quantification methods across all experiments

- Document all normalization procedures, including reference gene selection and validation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for qPCR Biomarker Validation

| Reagent/Material | Function | RUO vs. IVD Considerations |

|---|---|---|

| Certified Reference Materials (CRMs) | Provide standardized template for absolute quantification and assay comparison | Essential for both RUO and IVD; enables cross-platform comparisons [11] |

| Primer/Probe Sets | Target-specific amplification and detection | RUO: Flexible for optimization; IVD: Predetermined, validated sequences [4] |

| Reverse Transcriptase | cDNA synthesis from RNA templates | RUO: Multiple options available; IVD: Often part of integrated system [4] |

| qPCR Master Mix | Provides enzymes, buffers, dNTPs for amplification | RUO: Can optimize components; IVD: Standardized formulation [12] |

| RNA Storage Solution | Preserves RNA integrity during storage | Critical pre-analytical factor affecting both RUO and IVD results [4] |

| Positive/Negative Controls | Monitor assay performance and contamination | Both require; IVD includes standardized controls with defined values [8] |

| Internal Control Genes | Normalization of sample-to-sample variation | Must be validated for specific sample type and experimental conditions [4] |

Decision Framework and Research Applications

Selecting the Appropriate Product Category

Choosing between RUO and IVD products requires careful consideration of the research context and objectives. The following decision framework can guide researchers:

Diagram 2: Decision framework for RUO, Clinical Research, and IVD assays

RUO Applications are appropriate for:

- Basic research to identify potential biomarker candidates

- Assay development and optimization

- Exploratory studies with constantly changing parameters

- Target discovery and preliminary validation

Clinical Research (CR) Assays represent an intermediate category between RUO and IVD, undergoing more thorough validation than typical RUO products but not reaching the full certification of IVD assays [4]. These are suitable for:

- Biomarker validation studies in clinical trials

- Studies where standardized protocols are needed across multiple sites

- Research intended to support regulatory submissions

IVD Applications are required for:

- Clinical diagnosis, patient management, or treatment decisions

- Tests used for patient stratification in interventional trials

- Applications where results directly impact patient care

The Concept of "Fit-for-Purpose" Validation

The level of validation required for qPCR biomarker assays should follow a "fit-for-purpose" (FFP) approach, defined as "a conclusion that the level of validation associated with a medical product development tool is sufficient to support its context of use" [4]. This concept recognizes that the stringency of validation should match the intended application of the biomarker, from early discovery through clinical qualification.

For qPCR-based biomarker validation, the FFP approach includes establishing:

- Analytical precision (closeness of measurements to each other)

- Analytical sensitivity (minimum detectable concentration)

- Analytical specificity (ability to distinguish target from nontarget sequences)

- Analytical trueness (closeness to true value) [4]

The context of use (COU) determines which performance characteristics require the most rigorous evaluation. For example, a biomarker intended for patient stratification in a clinical trial demands more extensive validation than one used for exploratory research [4].

The critical validation gap between RUO and IVD products presents both challenges and opportunities for researchers validating biomarker genes by qPCR. RUO products offer the flexibility needed for discovery-phase research, while IVD products provide the standardization essential for clinical application. The emerging category of Clinical Research assays helps bridge this gap, offering an intermediate level of validation appropriate for biomarker qualification studies [4].

Successful navigation of this landscape requires researchers to clearly define their context of use, implement appropriate validation protocols, and select reagents matched to their research phase. By understanding the distinctions, limitations, and appropriate applications of RUO and IVD products, researchers can generate more reliable, reproducible data that effectively advances biomarker discovery along the translational pathway from bench to bedside.

In the field of molecular diagnostics, the transition of biomarker genes from research discoveries to clinically applicable tools necessitates rigorous analytical validation. For quantitative polymerase chain reaction (qPCR) assays, this process ensures that measurements of gene expression are reliable, reproducible, and accurate. The core parameters of analytical trueness, precision, sensitivity, and specificity form the foundation of this validation framework, providing researchers and drug development professionals with the confidence to make critical decisions based on experimental data. These parameters are not merely academic exercises but essential components for meeting regulatory standards and ensuring that biomarker data can reliably inform diagnostic and therapeutic development.

The CardioRNA consortium guidelines emphasize that the lack of technical standardization remains a significant obstacle in translating qPCR-based tests to clinical applications. Establishing clear validation protocols bridges the gap between research use only (RUO) and in vitro diagnostics (IVD), impacting clinical management including diagnosis, prognosis, prediction of therapeutic response, and toxicity evaluation [13]. In biotech and pharmaceutical development, the balance between assay precision and sensitivity often tilts toward precision because it directly impacts data turnaround times, cost-efficiency, and experimental repeats. Highly precise assays minimize inter-assay variability, ensuring results obtained at different times or by different operators are comparable—a critical factor for generating reliable data [14].

Defining the Core Validation Parameters

Analytical Trueness

Analytical trueness refers to the closeness of agreement between the average value obtained from a large series of test results and an accepted reference value. It indicates how accurately a qPCR assay measures the true concentration or expression level of a target biomarker gene. Trueness is typically assessed through correlation studies with established methods or reference materials. In practical terms, for biomarker validation, trueness ensures that the expression levels measured by a qPCR assay genuinely reflect the biological reality, preventing misinterpretation of upregulation or downregulation patterns.

A study on ddPCR quantification of miR-192-5p in hepatocellular carcinoma demonstrated excellent trueness, showing a strong correlation (R=0.92) with reverse transcription qPCR (RT-qPCR) measurements. This level of agreement with an established method provides confidence in the assay's ability to deliver accurate absolute quantification of the microRNA biomarker in liquid biopsies [15]. Such correlation with orthogonal methods is a standard approach for establishing trueness in molecular assays.

Precision

Precision describes the closeness of agreement between independent test results obtained under stipulated conditions. Unlike trueness, precision does not reflect accuracy to a true value but rather the reproducibility of measurements. Precision is typically evaluated at three levels: repeatability (intra-assay precision), intermediate precision (inter-assay precision), and reproducibility (between laboratories). For qPCR biomarker assays, precision ensures that results remain consistent across multiple runs, different operators, various instruments, and over time.

The same ddPCR assay for miR-192-5p showed an intra-batch coefficient of variation (CV) ranging from 2.31% to 21.63% and an inter-batch CV of 17.54% [15]. In biomarker validation, CV values below 20-25% are generally considered acceptable, with lower values preferred, so the upper end of the reported intra-batch range sits close to that limit. The precision of an assay directly impacts its reliability in longitudinal studies where biomarker levels are monitored over time to assess disease progression or treatment response.

Sensitivity

Sensitivity in analytical validation encompasses two related concepts: the ability of an assay to detect low quantities of the target (detection limit) and its ability to distinguish between different concentrations (quantification limit). The limit of blank (LoB), limit of detection (LoD), and limit of quantification (LoQ) are key parameters defining analytical sensitivity. LoB represents the highest apparent analyte concentration expected to be found in replicates of a blank sample, LoD is the lowest analyte concentration that can be reliably detected but not necessarily quantified, and LoQ is the lowest concentration that can be reliably quantified with acceptable precision and trueness.

For low-abundance biomarkers, such as circulating microRNAs in liquid biopsies, sensitivity becomes particularly critical. The ddPCR miR-192-5p assay demonstrated a LoB of 1.75 copies/μL, LoD of 3.33 copies/μL, and LoQ of 13.45 copies/μL, with a linear range extending from 13.45 to 129,693 copies/μL (R²=0.9965) [15]. This exceptional sensitivity enables reliable detection and quantification of the biomarker even at very low concentrations typically encountered in liquid biopsy samples.

Specificity

Specificity refers to the ability of an assay to measure solely the analyte of interest without interference from other components in the sample. In qPCR biomarker assays, specificity is primarily determined by the design of primers and probes to target unique sequences of the biomarker gene, avoiding cross-reactivity with similar sequences, pseudogenes, or unrelated transcripts. Specificity ensures that the measured signal genuinely originates from the target biomarker rather than background noise or similar sequences.

Methodologies to enhance specificity include the use of locked nucleic acid (LNA)-modified probes, as demonstrated in the ddPCR miR-192-5p assay, where the LNA modification improved positive droplet counts by 32% [15]. Specificity can be compromised by substances that inhibit polymerase activity or by similar genetic sequences; thus, validation must include testing for potential interferents. The miR-192-5p assay, for instance, was shown to tolerate low hemoglobin and triglycerides but was affected by bilirubin, highlighting the importance of understanding matrix effects [15].

Comparative Performance Data Across Platforms and Applications

Table 1: Comparative Analytical Performance of qPCR and dPCR Platforms in Biomarker Validation

| Platform | Trueness (Correlation with Reference) | Precision (CV %) | Sensitivity (LoD/LoQ) | Specificity Enhancements | Applications in Literature |

|---|---|---|---|---|---|

| qPCR | Not explicitly quantified | Varies with optimization | Varies with target | Standard TaqMan probes | Multiplex biomarker panels [16] |

| ddPCR with LNA | R=0.92 vs RT-qPCR [15] | Intra-batch: 2.31-21.63% Inter-batch: 17.54% [15] | LoD: 3.33 copies/μL LoQ: 13.45 copies/μL [15] | LNA probes increase specificity & signal by 32% [15] | Liquid biopsy miRNA quantification [15] |

| RT-qPCR | Used as reference method | Dependent on optimization | Varies with extraction method & target | Specific primer design | Validation of MDD biomarkers MRPS11, SHMT2 [17] |

Table 2: Sensitivity Comparison Across Molecular Detection Methods

| Method | Analytical Sensitivity | Factors Influencing Sensitivity | Impact on Biomarker Validation |

|---|---|---|---|

| qrtPCR (quantitative real-time PCR) | Variable; not inherently superior to conventional PCR [18] | Primer design, target reiteration, reaction optimization [18] | Claims of superior sensitivity must be assay-specific, not technology-general [18] |

| cnPCR (conventional PCR) | Can exceed qrtPCR for some targets [18] | Higher reaction volumes may dilute inhibitors [18] | More sensitive for some pathogens (e.g., Toxoplasma: 0.5 genome equivalent) [18] |

| ddPCR | Absolute quantification without standard curves | Digital counting of individual molecules | Superior for low-abundance targets and liquid biopsies [15] |

Experimental Protocols for Parameter Validation

Protocol for Establishing Sensitivity: LoB, LoD, and LoQ

The determination of sensitivity parameters follows a standardized experimental approach that can be applied to qPCR biomarker assays:

Limit of Blank (LoB) Determination: Measure multiple replicates (n≥20) of a blank sample (without template or with non-target template). Calculate the mean and standard deviation (SD). LoB is defined as the mean blank value + 1.645 * SD of the blank.

Limit of Detection (LoD) Determination: Prepare samples with low concentrations of the target analyte (approximately 2-5 times the LoB). Test multiple replicates (n≥20) of these low-concentration samples. LoD is the lowest concentration where ≥95% of samples test positive. The miR-192-5p study established an LoD of 3.33 copies/μL using this approach [15].

Limit of Quantification (LoQ) Determination: Prepare a dilution series of the target analyte and test multiple replicates at each concentration. LoQ is the lowest concentration that can be measured with an acceptable imprecision (typically CV <20-25%) and trueness (deviation from reference value <20-25%). The ddPCR assay achieved an LoQ of 13.45 copies/μL with excellent linearity (R²=0.9965) across a wide dynamic range [15].
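The LoB formula and the LoQ acceptance rule described above can be expressed as a short Python sketch. It assumes the sample standard deviation (n - 1 denominator), uses the upper 25% limits for both CV and bias, and runs on invented replicate values; none of these choices come from [15].

```python
import statistics
from typing import Dict, List, Optional


def limit_of_blank(blank_values: List[float]) -> float:
    """LoB = mean of blank replicates + 1.645 * SD of blank replicates."""
    return statistics.mean(blank_values) + 1.645 * statistics.stdev(blank_values)


def limit_of_quantification(
    replicates: Dict[float, List[float]],
    max_cv_pct: float = 25.0,
    max_bias_pct: float = 25.0,
) -> Optional[float]:
    """Lowest nominal concentration meeting both the CV and the bias limit.

    Levels are checked from high to low and the search stops at the first
    failure (an assumption of this sketch).
    """
    loq = None
    for nominal in sorted(replicates, reverse=True):
        values = replicates[nominal]
        mean = statistics.mean(values)
        cv_pct = statistics.stdev(values) / mean * 100
        bias_pct = (mean - nominal) / nominal * 100
        if cv_pct <= max_cv_pct and abs(bias_pct) <= max_bias_pct:
            loq = nominal
        else:
            break
    return loq


# Hypothetical measurements in copies/uL
blanks = [0.0] * 10 + [2.0] * 10  # 20 blank replicates
dilutions = {
    5.0: [2.0, 8.0, 5.0, 9.0, 3.0],
    10.0: [9.0, 11.0, 10.0, 12.0, 8.0],
    50.0: [48.0, 52.0, 50.0, 49.0, 51.0],
}

print(f"LoB = {limit_of_blank(blanks):.2f} copies/uL")
print(f"LoQ = {limit_of_quantification(dilutions)} copies/uL")
```

In practice each dilution level would carry many more replicates than the five shown here, in line with the n≥20 recommendation for the LoB and LoD steps.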

Protocol for Establishing Precision

Precision validation follows a nested experimental design to evaluate different levels of variability:

Repeatability (Intra-assay Precision): Within a single run, analyze multiple replicates (n≥3-5) of at least two samples (low and high concentrations). Calculate the mean, SD, and CV for each sample. The ddPCR miR-192-5p assay demonstrated intra-batch CVs ranging from 2.31% to 21.63% [15].

Intermediate Precision (Inter-assay Precision): Across different runs (different days, different operators, possibly different instruments), analyze multiple replicates of at least two samples (low and high concentrations). The previously mentioned study showed an inter-batch CV of 17.54% [15].

Reproducibility: Conducted between different laboratories using the same protocol and sample types. While not always feasible in early validation, it's essential for multicenter studies and regulatory submissions.
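One common way to separate repeatability from intermediate precision in such a nested design is a one-way analysis of variance with run as the grouping factor. The sketch below assumes a balanced design (equal replicates per run) and values on a linear scale such as copies/μL rather than raw Cq; the function name and the data are hypothetical.

```python
import math
import statistics
from typing import Dict, List


def precision_components(runs: List[List[float]]) -> Dict[str, float]:
    """Estimate repeatability and intermediate precision as %CV.

    runs: one inner list of replicate measurements per run (balanced).
    """
    n = len(runs[0])
    if any(len(run) != n for run in runs):
        raise ValueError("this sketch assumes the same replicate count per run")
    grand_mean = statistics.mean(v for run in runs for v in run)
    run_means = [statistics.mean(run) for run in runs]
    # Within-run mean square: pooled variance of replicates
    ms_within = statistics.mean(statistics.variance(run) for run in runs)
    # Between-run mean square for a balanced one-way layout
    ms_between = n * statistics.variance(run_means)
    # Between-run variance component, truncated at zero
    var_between = max(0.0, (ms_between - ms_within) / n)
    return {
        "repeatability_cv": math.sqrt(ms_within) / grand_mean * 100,
        "intermediate_cv": math.sqrt(ms_within + var_between) / grand_mean * 100,
    }


# Hypothetical sample measured in triplicate on three different days
runs = [
    [100.0, 102.0, 98.0],
    [110.0, 108.0, 112.0],
    [95.0, 97.0, 93.0],
]
result = precision_components(runs)
print({name: round(value, 2) for name, value in result.items()})
```

Intermediate precision is never smaller than repeatability in this decomposition, because it adds the between-run component to the within-run variance.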

Protocol for Evaluating Interference and Specificity

Specificity and interference testing ensures the assay accurately measures the target despite potential confounding factors:

Specificity Testing: Test the assay against samples containing possible cross-reactive analytes (similar gene sequences, pseudogenes, or related isoforms). For the miR-192-5p assay, this included testing against similar microRNA sequences to ensure no cross-reactivity [15].

Interference Testing: Spike samples with potential interferents at clinically relevant concentrations. Common interferents include hemoglobin (hemolysis), lipids (lipemia), bilirubin (icterus), and substances common to the sample matrix. The miR-192-5p assay demonstrated tolerance to low hemoglobin and triglycerides but sensitivity to bilirubin [15].
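A simple way to summarize a spiking experiment is percent recovery of the spiked sample relative to an unspiked control. The following sketch assumes a ±20% tolerance, which is an illustrative choice rather than a limit taken from [15], and the measurements are invented.

```python
import statistics
from typing import List


def percent_recovery(control: List[float], spiked: List[float]) -> float:
    """Mean signal of the spiked sample as a percentage of the control."""
    return statistics.mean(spiked) / statistics.mean(control) * 100


def shows_interference(
    control: List[float], spiked: List[float], tolerance_pct: float = 20.0
) -> bool:
    """True when recovery deviates from 100 % by more than the tolerance."""
    return abs(percent_recovery(control, spiked) - 100) > tolerance_pct


# Hypothetical copies/uL for one sample, unspiked and spiked with interferents
control = [100.0, 104.0, 96.0]
spiked_samples = {
    "hemoglobin": [98.0, 101.0, 95.0],
    "bilirubin": [62.0, 58.0, 66.0],
}

for interferent, values in spiked_samples.items():
    recovery = percent_recovery(control, values)
    flagged = shows_interference(control, values)
    print(f"{interferent}: recovery {recovery:.0f}%, interference={flagged}")
```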

Sample Integrity Studies: Evaluate how sample quality affects assay performance. A multiplex qPCR-array for bladder cancer biomarkers demonstrated robust performance across different RNA quality thresholds (DV200 >15%), input levels (5-100 ng), and despite necrosis in samples [16].

Visualizing Validation Workflows and Relationships

Figure 1: Comprehensive qPCR Assay Validation Workflow. This diagram illustrates the iterative process of analytical validation, highlighting the interconnected nature of the four core parameters and the necessity of meeting acceptance criteria for each before an assay can be considered validated.

Figure 2: Factors Influencing Core Validation Parameters. This diagram demonstrates how pre-analytical, assay design, and technical factors collectively impact the four core validation parameters, highlighting the multidimensional nature of assay validation.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Research Reagents and Materials for qPCR Biomarker Validation

| Reagent/Material | Function in Validation | Considerations for Core Parameters |

|---|---|---|

| LNA-modified Probes | Enhance hybridization specificity and stability | Improve sensitivity (lower LoD) and specificity; demonstrated 32% increase in positive droplet counts in ddPCR [15] |

| High-Quality Polymerase | Catalyzes DNA amplification | Impacts precision (CV%) and sensitivity; choice affects resistance to inhibitors in sample matrix |

| Standardized Master Mix | Provides optimized reaction environment | Improves precision by reducing inter-assay variability; enables automation [14] |

| Reference Materials | Establish trueness through correlation | Certified reference materials essential for establishing analytical trueness |

| Quality Control Panels | Monitor assay performance over time | Include samples at multiple concentrations for precision monitoring |

| Inhibition Resistance Additives | Counteract PCR inhibitors in samples | Improve robustness and precision in complex matrices [18] |

| Automated Nucleic Acid Extraction Systems | Standardize sample preparation | Improve precision by reducing pre-analytical variability; essential for clinical translation [14] |

The validation of qPCR assays for biomarker genes requires a comprehensive, integrated approach addressing all four core parameters—trueness, precision, sensitivity, and specificity. As demonstrated across multiple studies, successful validation involves not only optimizing each parameter individually but understanding their interrelationships and collective impact on assay performance. The ddPCR assay for miR-192-5p exemplifies how attention to all these parameters creates a clinically relevant test, with its combination of excellent trueness (R=0.92), precision (CV 2.31-21.63%), sensitivity (LoD 3.33 copies/μL), and enhanced specificity through LNA probes [15].

The multiplex qPCR array for bladder cancer biomarkers further demonstrates how robust validation across these parameters enables assays that perform reliably across different sample types (FFPE and fresh-frozen), RNA qualities, and operators [16]. Such robustness is essential for clinical implementation where standardized protocols must deliver consistent results despite variations in sample collection and processing.

Ultimately, thorough analytical validation of these core parameters forms the foundation for any subsequent clinical validation of biomarker genes. It ensures that observed differences in gene expression reflect biology rather than technical variability, enabling researchers and drug development professionals to make confident decisions in both basic research and clinical translation. As regulatory guidance evolves, with the FDA pointing sponsors to apply specific criteria for biomarker data associated with regulatory approvals [14], comprehensive analytical validation becomes increasingly critical for successful translation of qPCR biomarker assays from research tools to clinical diagnostics.

The Importance of Context of Use (COU) and the Fit-for-Purpose (FFP) Validation Concept

In the development of biomarker genes for qPCR research, the Context of Use (COU) and Fit-for-Purpose (FFP) validation concepts are foundational pillars that ensure scientific rigor and regulatory relevance. The COU is defined as a concise description of the biomarker's specified application in drug development, detailing the biomarker category and its intended purpose [19]. This clarity of purpose is critical, as "no context [means] no validated assay" [20]. The FFP approach, accepted by regulatory agencies, posits that the level of validation must be sufficient to support the assay's COU [4] [21]. For qPCR-based biomarker development, this means tailoring validation stringency to whether the data will support internal decision-making, regulatory submissions, or clinical patient management.

The relationship between COU and FFP is synergistic. The COU defines the "what" and "why" of the biomarker application, while FFP determines the "how" of the validation process [20]. This framework is particularly crucial for qPCR biomarker assays, where technical standardization remains a significant obstacle to clinical translation [4]. Without clearly defined COU and appropriate FFP validation, qPCR-based biomarkers risk generating irreproducible data that fails to advance either scientific understanding or therapeutic development.

Defining Context of Use for Biomarker Genes

Biomarker Categories and Their Contexts of Use

The FDA-NIH BEST Resource defines several biomarker categories, each with distinct COUs that dictate different validation requirements [19]. Understanding these categories is the first step in establishing an appropriate COU for qPCR-based biomarker genes.

Table: Biomarker Categories and Contexts of Use in Drug Development

| Biomarker Category | Definition | Example COU in qPCR Research | Regulatory Impact |

|---|---|---|---|

| Diagnostic | Identifies presence or absence of a disease [19] | Using mRNA expression patterns to diagnose specific cancer subtypes | High - may support product labeling |

| Prognostic | Defines likelihood of clinical event, disease recurrence or progression [19] | qPCR signature predicting disease aggressiveness in neuroblastoma [22] | Moderate to High - trial enrichment |

| Predictive | Identifies individuals more likely to respond to a specific treatment [19] | EGFR mutation status predicting response to targeted therapies in NSCLC | High - patient selection |

| Pharmacodynamic/Response | Shows biological response to therapeutic intervention [19] | mRNA level changes following drug treatment indicating target engagement | Variable - proof of mechanism |

| Safety | Indicates potential for toxicity or adverse effects [19] | Detecting gene expression changes associated with organ toxicity | Moderate to High - risk monitoring |

| Monitoring | Assesses status of disease or medical condition [19] | Serial measurement of viral load genes during antiviral therapy | Moderate - treatment adjustment |

Elements of a Well-Defined COU

A comprehensively defined COU includes three key elements: (1) what specific aspect of the biomarker is measured and in what form, (2) the clinical purpose of the measurements, and (3) the interpretation and decision/action based on the measurements [4]. For qPCR biomarker genes, this translates to specifying the exact RNA targets (e.g., specific mRNA or noncoding RNA), the biological matrix (e.g., FFPE tissue, plasma), the clinical decision the biomarker will inform (e.g., patient stratification), and the actionable outcomes (e.g., treatment selection) [4].

The COU directly influences every aspect of qPCR assay development: it dictates the necessary technical performance characteristics, which in turn determine the validation experiments required.

Implementing Fit-for-Purpose Validation

The Fit-for-Purpose Validation Framework

Fit-for-purpose validation recognizes that biomarker assays require different levels of validation stringency based on their COU [23]. This approach is "scientifically-driven" and aims to "produce robust and reproducible data" appropriate for the intended application [24]. For qPCR-based biomarker genes, the FFP approach manifests throughout the method validation process, with increasing rigor as the biomarker progresses toward regulatory submission.

The FFP continuum ranges from exploratory assays used for internal decision-making to fully validated assays supporting regulatory claims [25]. This continuum aligns with the drug development pipeline, where early discovery may utilize qualified assays, while later-phase trials require fully validated methods [21]. The transition point between qualification and validation depends on factors including whether the data will support regulatory submission and what type of conclusions will be drawn from the results [21].

Validation Parameters for qPCR Biomarker Assays

The technical validation of qPCR biomarker methods progresses through discrete stages: definition of purpose and assay selection, reagent assembly and validation planning, experimental performance verification, in-study validation, and finally routine use with quality monitoring [23]. At each stage, different parameters are evaluated with stringency dictated by the COU.

Table: Fit-for-Purpose Validation Parameters for qPCR Biomarker Assays

| Validation Parameter | Exploratory COU | Advanced COU | Regulatory COU |

|---|---|---|---|

| Accuracy/Trueness | Limited assessment | Assessment with surrogate matrices | Full demonstration of closeness to true value [4] |

| Precision | Intra-assay CV <25-30% [23] | Intra- & inter-assay CV <20-25% | Intra- & inter-assay CV <15-20% with total error assessment [23] |

| Analytical Sensitivity (LOD) | Estimated from dilution series | Statistically determined with confidence intervals | Full determination with biological matrix [4] |

| Analytical Specificity | Basic evaluation | Testing of related transcripts | Comprehensive assessment including isoforms [4] |

| Assay Range | 2-3 log range | 3-4 log range with LLOQ/ULOQ | Full dynamic range with accuracy profiles [23] |

| Parallelism | May be omitted | Recommended with surrogate matrices | Required with clinical samples [20] |

| Sample Stability | Limited conditions | Multiple conditions & timepoints | Comprehensive under all handling conditions |

For qPCR assays specifically, additional technical parameters require validation based on the COU. These include amplification efficiency (ideally 90-110%), linear dynamic range, impact of different amplification curve analysis methods [22], and the selection of appropriate reference genes for normalization [4]. The mathematical approach used for Cq determination and efficiency correction can significantly impact results and must be consistent throughout a study [22].
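To illustrate why efficiency correction matters, the sketch below uses one widely applied efficiency-corrected model for relative quantification, commonly called the Pfaffl method. The source does not say which model a given study should use, so this choice, the function names, and the Cq values are assumptions made for illustration.

```python
def amplification_factor(efficiency_pct: float) -> float:
    """Convert percent efficiency to a per-cycle factor (100 % -> 2.0)."""
    return 1 + efficiency_pct / 100


def efficiency_corrected_ratio(
    cq_target_control: float,
    cq_target_test: float,
    cq_ref_control: float,
    cq_ref_test: float,
    eff_target_pct: float = 100.0,
    eff_ref_pct: float = 100.0,
) -> float:
    """Relative expression of the target in test versus control samples.

    ratio = E_target ** dCq_target / E_ref ** dCq_ref,
    where dCq = Cq(control) - Cq(test) for each gene.
    """
    e_target = amplification_factor(eff_target_pct)
    e_ref = amplification_factor(eff_ref_pct)
    delta_target = cq_target_control - cq_target_test
    delta_ref = cq_ref_control - cq_ref_test
    return e_target ** delta_target / e_ref ** delta_ref


# Hypothetical Cq values: target drops by 2 cycles, reference gene is unchanged
assumed_perfect = efficiency_corrected_ratio(25.0, 23.0, 20.0, 20.0)
measured_90 = efficiency_corrected_ratio(25.0, 23.0, 20.0, 20.0, eff_target_pct=90.0)
print(f"assuming 100% efficiency: {assumed_perfect:.2f}-fold")
print(f"using a measured 90% efficiency: {measured_90:.2f}-fold")
```

The same two-cycle shift yields a different fold change depending on the efficiency assumed, which is why the analysis method needs to stay fixed throughout a study.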

Practical Application in qPCR Research

Experimental Protocols for Key Validation Experiments

Accuracy and Precision Assessment for qPCR Biomarker Assays

For qPCR biomarker assays, accuracy and precision are typically evaluated using quality control samples at low, medium, and high concentrations across multiple runs [23]. The experimental protocol involves:

- Prepare quality control samples in appropriate matrix at three concentrations spanning the assay range

- Analyze replicates (n≥5) of each QC concentration within a single run for intra-assay precision

- Repeat analysis across multiple runs (≥3) on different days by different analysts for inter-assay precision

- Calculate %CV for precision assessment: %CV = (Standard Deviation/Mean) × 100

- For accuracy, compare measured concentrations to known values: %Bias = [(Measured - Expected)/Expected] × 100

For exploratory COUs, 25-30% CV may be acceptable, while advanced COUs require 20-25%, and regulatory COUs need <15-20% CV except at LLOQ where 20% is acceptable [23].
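The %CV and %Bias formulas can be applied to QC replicates as in the following sketch. It takes the upper end of each CV range quoted above as the pass/fail limit and applies the same limit to absolute bias; both choices, along with the names and the QC data, are assumptions made for illustration.

```python
import statistics
from typing import Dict, List

# Upper bounds of the CV ranges quoted in the text, used here as limits
CV_LIMITS_PCT = {"exploratory": 30.0, "advanced": 25.0, "regulatory": 20.0}


def percent_cv(values: List[float]) -> float:
    """%CV = (standard deviation / mean) * 100, using the sample SD."""
    return statistics.stdev(values) / statistics.mean(values) * 100


def percent_bias(values: List[float], expected: float) -> float:
    """%Bias = ((measured mean - expected) / expected) * 100."""
    return (statistics.mean(values) - expected) / expected * 100


def evaluate_qc(values: List[float], expected: float, cou: str) -> Dict[str, object]:
    """Compute %CV and %Bias for one QC level and compare with the COU limit."""
    limit = CV_LIMITS_PCT[cou]
    cv = percent_cv(values)
    bias = percent_bias(values, expected)
    return {
        "cv_pct": cv,
        "bias_pct": bias,
        "passes": cv <= limit and abs(bias) <= limit,
    }


# Hypothetical QC samples: level -> (replicate measurements, expected value)
qc_levels = {
    "low": ([60.0, 100.0, 140.0], 100.0),
    "high": ([950.0, 1050.0, 1000.0, 980.0, 1020.0], 1000.0),
}

for level, (values, expected) in qc_levels.items():
    print(level, evaluate_qc(values, expected, "regulatory"))
```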

Establishing Analytical Sensitivity and Specificity

The limit of detection (LOD) for qPCR assays is determined through serial dilution experiments:

- Prepare serial dilutions of the target template in appropriate matrix

- Analyze multiple replicates (n≥10) at each dilution level

- Determine the lowest concentration where ≥95% of replicates are detected

- For limit of quantification (LLOQ), establish the lowest concentration where precision (%CV) ≤20-25% and accuracy (%Bias) ±20-25% depending on COU

Analytical specificity for qPCR assays involves testing against related genetic targets and potentially testing the impact of homologous sequences on amplification efficiency [4]. For miRNA biomarkers, this is particularly important due to sequence similarities within families.

Research Reagent Solutions for qPCR Biomarker Validation

Successful validation of qPCR biomarker assays requires careful selection and quality control of research reagents. The following table outlines essential materials and their functions in the validation process.

Table: Key Research Reagent Solutions for qPCR Biomarker Validation

| Reagent Category | Specific Examples | Function in Validation | Critical Quality Parameters |
|---|---|---|---|
| Nucleic Acid Extraction Kits | Column-based, magnetic bead, organic extraction | Isolate target RNA with consistent yield and purity; significantly impacts results [4] | Yield, purity (A260/280), integrity (RIN), absence of inhibitors |
| Reverse Transcriptase Enzymes | Moloney murine leukemia virus (M-MLV), engineered variants | Convert RNA to cDNA with consistent efficiency; major source of variability [4] | Processivity, fidelity, efficiency with difficult templates, inhibitor tolerance |
| qPCR Master Mixes | SYBR Green, TaqMan, digital PCR mixes | Provide optimal environment for amplification with minimal variability [22] | Amplification efficiency, specificity, inhibitor tolerance, uniform Cq values |
| Reference Gene Panels | Housekeeping genes (GAPDH, ACTB), stable non-coding RNAs | Normalize technical and biological variation; critical for accurate quantification [4] | Stable expression across sample types, minimal variability under experimental conditions |
| Quality Control Materials | Synthetic RNA standards, pooled patient samples, reference materials | Monitor assay performance across validation and study samples [20] | Commutability with native analyte, stability, well-characterized concentration |

Workflow for qPCR Biomarker Validation

Implementing a comprehensive validation strategy for qPCR biomarker assays requires coordination across multiple experimental phases. The following workflow illustrates the progression from pre-analytical considerations through analytical validation, emphasizing the critical role of COU in decision-making at each stage.

Regulatory Considerations and Industry Perspectives

Pathways to Regulatory Acceptance

Engaging with regulatory agencies early in the biomarker development process is critical for successful qualification. The FDA provides several pathways for regulatory acceptance, including Early Engagement (Critical Path Innovation Meetings), the IND application process, and the Biomarker Qualification Program (BQP) [19]. The BQP offers a structured framework for development and regulatory acceptance of biomarkers for a specific COU, involving three stages: Letter of Intent, Qualification Plan, and Full Qualification Package [19].

For qPCR-based biomarkers, understanding the distinction between Research Use Only, Clinical Research Assays, and In Vitro Diagnostic assays is essential [4]. Clinical Research Assays fill the gap between RUO and IVD, undergoing more thorough validation without reaching certified IVD status. These are similar to Laboratory-Developed Tests but are specifically tailored for clinical research applications [4].

Current Challenges and Consensus Gaps

Despite general acceptance of FFP principles, the pharmaceutical industry lacks consensus on minimum validation standards for biomarker assays [20]. A pre-workshop survey revealed that 75% of respondents agreed there should be minimum standards, yet workshop discussions demonstrated less agreement on how to implement such standards [20]. This highlights the ongoing evolution of biomarker validation practices.

For qPCR-based biomarkers specifically, major challenges include the lack of harmonized reference values, poor standardization of pre-analytical variables, and underpowered studies leading to irreproducible results [4]. The CardioRNA consortium notes that for circulating miRNA biomarkers, contradictory findings between studies are common, with technical analytical aspects contributing significantly to variability [4].

Addressing Reproducibility Challenges in Biomarker Research

Reproducibility remains a fundamental challenge in biomarker research, impacting the translation of promising molecular signatures into clinically viable tools. Inconsistent results from quantitative PCR (qPCR) validation studies often stem from methodological variability, including differences in data analysis techniques, assay sensitivity, and platform performance [26]. The widespread reliance on the 2^(−ΔΔCT) method, for instance, frequently overlooks critical factors such as variability in amplification efficiency and reference gene stability, potentially compromising findings before they enter clinical validation [26]. This section compares the experimental approaches and technologies central to robust biomarker validation, providing performance data and standardized protocols to improve the reliability of qPCR-based biomarker research.

Comparative Analysis of qPCR Data Analysis Methods

The choice of statistical methodology for analyzing qPCR data significantly influences the robustness and reproducibility of biomarker validation studies. The following comparison outlines the performance of traditional versus advanced analytical approaches.

Table 1: Comparison of qPCR Data Analysis Methods

| Method | Key Principle | Impact on Reproducibility | Statistical Considerations |
|---|---|---|---|
| 2^(−ΔΔCT) | Relative quantification based on threshold cycle (CT) differences and assumed amplification efficiency [26] | Lowers reproducibility due to variability in amplification efficiency and reference gene stability [26] | Limited statistical power; susceptible to efficiency variability |
| ANCOVA (Analysis of Covariance) | Flexible multivariable linear modeling that accounts for covariates like efficiency [26] | Enhances rigor and reproducibility; P-values not affected by variability in amplification efficiency [26] | Greater statistical power and robustness across diverse experimental conditions [26] |

Evidence from large-scale analyses indicates that ANCOVA generally offers greater statistical power and robustness compared to 2^(−ΔΔCT), with simulations supporting its applicability across diverse experimental conditions [26]. The adoption of ANCOVA is part of a broader movement toward improved practices that facilitate rigor and reproducibility in qPCR research.
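To make the efficiency assumption concrete, the sketch below computes a fold change with the standard 2^(−ΔΔCT) formula and compares it with an efficiency-corrected ratio. The corrected ratio is the widely used Pfaffl-style formula, swapped in here for illustration; it is not the ANCOVA approach of [26]. All function names and CT values are hypothetical.

```python
def efficiency_from_slope(slope):
    """Amplification efficiency from a standard-curve slope (Cq vs. log10 input).

    Returns a fraction: 1.0 means perfect doubling per cycle (slope of about -3.32).
    """
    return 10 ** (-1.0 / slope) - 1.0


def fold_change_ddct(ct_target_treated, ct_ref_treated,
                     ct_target_control, ct_ref_control):
    """Classic 2^(-ddCT): assumes 100% efficiency for both assays."""
    ddct = ((ct_target_treated - ct_ref_treated)
            - (ct_target_control - ct_ref_control))
    return 2.0 ** (-ddct)


def fold_change_efficiency_corrected(ct_target_treated, ct_ref_treated,
                                     ct_target_control, ct_ref_control,
                                     eff_target, eff_ref):
    """Pfaffl-style ratio using assay-specific efficiencies (as fractions)."""
    target_ratio = (1.0 + eff_target) ** (ct_target_control - ct_target_treated)
    ref_ratio = (1.0 + eff_ref) ** (ct_ref_control - ct_ref_treated)
    return target_ratio / ref_ratio


# Hypothetical mean CT values.
cts = dict(ct_target_treated=24.0, ct_ref_treated=18.0,
           ct_target_control=26.0, ct_ref_control=18.0)

naive = fold_change_ddct(**cts)
corrected = fold_change_efficiency_corrected(**cts, eff_target=0.90, eff_ref=1.00)
print(f"2^(-ddCT) fold change:           {naive:.2f}")
print(f"efficiency-corrected (E = 90%):  {corrected:.2f}")
```

With a two-cycle difference, a target assay running at 90% rather than 100% efficiency changes the estimated fold change by roughly 10%, and the discrepancy grows with the CT difference.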

Performance Comparison of PCR Platforms for Biomarker Research

Selecting appropriate instrumentation is crucial for generating reliable, reproducible data in biomarker validation workflows. The market offers various PCR systems with distinct capabilities suited to different research needs and throughput requirements.

Table 2: Performance Comparison of Selected PCR Platforms (2025)

| Platform | Technology | Key Applications in Biomarker Research | Sensitivity & Precision | Throughput |
|---|---|---|---|---|
| Bio-Rad QX200 AutoDG Droplet Digital PCR | Droplet Digital PCR (ddPCR) [27] | Absolute quantification, rare mutation detection, copy number variation (CNV) analysis, minimal residual disease (MRD) monitoring [27] | High sensitivity for low-abundance targets; absolute quantification without standard curves [27] | Automated droplet generation reduces hands-on time and variability [27] |
| Applied Biosystems QuantStudio 3 | Real-Time PCR (qPCR) [27] | Gene expression, pathogen detection, genotyping [27] | Reliable for routine quantification; supports multiplexing up to 4 dyes [27] | 96-well and 384-well formats; suitable for moderate throughput [27] |
| Bio-Rad CFX Opus96 | Real-Time PCR (qPCR) [27] | Gene expression, genotyping, pathogen detection [27] | High-performance thermal cycling and optical detection; factory-calibrated optics [27] | 96-well format; enhanced connectivity for modern lab environments [27] |

Recent comparative studies highlight the importance of platform selection for specific applications. A 2025 study comparing digital PCR platforms found that the QX200 droplet digital PCR (ddPCR) system had a limit of detection (LOD) of approximately 0.17 copies/μL input, while the QIAcuity One nanoplate digital PCR (ndPCR) system had an LOD of 0.39 copies/μL input [28]. Both platforms showed high precision, with coefficients of variation (CV) of 6-13% for ddPCR and 7-11% for ndPCR across dilution series above the limit of quantification [28].
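Digital PCR reaches absolute quantification without a standard curve by counting positive partitions and applying a Poisson correction. The sketch below shows that calculation together with the %CV used to summarize precision. The partition volume of 0.85 nL is an assumed nominal value for illustration only; the instrument documentation should be consulted for the actual figure, and the counts are hypothetical.

```python
import math
from statistics import mean, stdev


def copies_per_partition(positive, total):
    """Poisson-corrected mean copies per partition from the positive fraction."""
    if not 0 <= positive < total:
        raise ValueError("need 0 <= positive < total partitions")
    return -math.log(1.0 - positive / total)


def concentration_copies_per_ul(positive, total, partition_volume_nl):
    """Target concentration in the reaction mix (copies/uL)."""
    lam = copies_per_partition(positive, total)
    return lam / (partition_volume_nl * 1e-3)  # convert nL to uL


def percent_cv(values):
    """Coefficient of variation across replicate measurements, in percent."""
    return 100.0 * stdev(values) / mean(values)


# Hypothetical run: 1,500 positive partitions out of 15,000 accepted.
ASSUMED_PARTITION_VOLUME_NL = 0.85  # assumption, not taken from the source
conc = concentration_copies_per_ul(1500, 15000, ASSUMED_PARTITION_VOLUME_NL)
print(f"{conc:.1f} copies/uL")

# Hypothetical replicate concentrations at one dilution level.
print(f"CV = {percent_cv([10.0, 12.0, 11.0]):.1f}%")
```

The Poisson term matters because a positive partition may contain more than one template copy; simply dividing positives by total volume would underestimate the concentration as the positive fraction rises.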

Experimental Protocols for Enhanced Reproducibility

ANCOVA Implementation for qPCR Data Analysis

The transition from 2^(−ΔΔCT) to ANCOVA represents a significant advancement in addressing reproducibility challenges in qPCR-based biomarker research [26].

Protocol: ANCOVA Implementation for qPCR Data Analysis

- Data Preparation: Start with raw fluorescence data rather than pre-processed CT values. Share raw data and analysis scripts to enhance reproducibility [26].

- Efficiency Calculation: Calculate amplification efficiency for each assay using standard curves or linear regression of raw fluorescence data.

- Model Specification: Implement a multivariable linear model with expression value as the dependent variable. Include factors such as treatment group, efficiency, and sample covariates as independent variables.

- Model Validation: Check model assumptions, including homogeneity of variances, normality of residuals, and linearity of the relationship between the covariates and the response.

- Result Interpretation: Obtain P-values and confidence intervals from the ANCOVA model that account for efficiency variability, unlike the traditional 2^(−ΔΔCT) method.

This approach enhances statistical power and generates more reliable results for biomarker validation studies [26].
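The model specification step can be illustrated with a small ordinary least squares fit. The sketch below assumes one particular ANCOVA layout, with the target-gene Cq as the response, treatment group as a 0/1 factor, and the reference-gene Cq as the covariate; this layout, the function names, and the data are assumptions for illustration and may differ from the model in [26]. It estimates coefficients only. In practice a statistics package would be used to obtain standard errors, P-values, and residual diagnostics.

```python
def solve_linear_system(a, b):
    """Solve a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_ols(design, response):
    """Least-squares coefficients via the normal equations (X'X) b = X'y."""
    k = len(design[0])
    xtx = [[sum(row[i] * row[j] for row in design) for j in range(k)]
           for i in range(k)]
    xty = [sum(row[i] * y for row, y in zip(design, response))
           for i in range(k)]
    return solve_linear_system(xtx, xty)


def ancova_coefficients(target_cq, ref_cq, treated):
    """Fit target_cq = b0 + b1 * treated + b2 * ref_cq."""
    design = [[1.0, float(g), r] for g, r in zip(treated, ref_cq)]
    intercept, group_effect, ref_slope = fit_ols(design, target_cq)
    return {"intercept": intercept,
            "group_effect_cq": group_effect,  # adjusted Cq shift, treated vs. control
            "ref_slope": ref_slope}


# Hypothetical data: 4 control and 4 treated samples.
treated = [0, 0, 0, 0, 1, 1, 1, 1]
ref_cq = [18.0, 18.5, 19.0, 18.2, 18.1, 18.8, 19.2, 18.4]
target_cq = [23.0, 23.5, 24.0, 23.2, 21.1, 21.8, 22.2, 21.4]

fit = ancova_coefficients(target_cq, ref_cq, treated)
print({name: round(value, 3) for name, value in fit.items()})
```

A negative `group_effect_cq` means the target amplifies earlier in treated samples after adjusting for the reference gene, which corresponds to higher expression. Because the reference-gene slope is estimated rather than fixed at 1, the model does not force the two assays to behave identically.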

Validation Protocol for Biomarker Assay Sensitivity

Protocol: Determination of Assay Limit of Detection (ALOD) and Process Limit of Detection (PLOD)

- Sample Preparation: Prepare serial dilutions of the target analyte in the appropriate biological matrix (e.g., gamma-irradiated JEV in piggery wastewater for environmental biomarkers) [29].

- Extraction and Amplification: Perform nucleic acid extraction followed by RT-qPCR analysis using the candidate biomarker assays.

- Data Collection: Run sufficient replicates at each dilution level to establish statistical confidence (typically n ≥ 5).

- ALOD Calculation: Determine the lowest concentration where the target is detected in ≥95% of replicates. For the ACDP JEV G4 assay, this was 2.20–5.70 copies/reaction [29].

- PLOD Calculation: Establish the lowest concentration detectable in the entire process, accounting for extraction efficiency. For the ACDP JEV G4 assay, this was 72–282 copies/10 mL of piggery wastewater [29].

- Recovery Efficiency: Calculate percentage recovery across concentrations; the ACDP JEV G4 assay showed 14.9–26.6% recovery efficiency [29].
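The recovery and process-level calculations in this protocol reduce to simple volume scaling. The sketch below is a rough illustration under assumed volumes (50 µL eluate, 5 µL template per reaction) and hypothetical copy numbers; none of these figures come from [29]. The projected PLOD is only a plausibility check, since the protocol determines PLOD empirically from spiked samples taken through the whole process.

```python
def copies_in_eluate(copies_per_reaction, elution_volume_ul, template_volume_ul):
    """Scale copies measured in one reaction up to the whole eluate."""
    return copies_per_reaction * elution_volume_ul / template_volume_ul


def recovery_percent(recovered_copies, spiked_copies):
    """Percentage of spiked target recovered after extraction."""
    return 100.0 * recovered_copies / spiked_copies


def projected_process_lod(alod_copies_per_reaction, elution_volume_ul,
                          template_volume_ul, recovery_fraction):
    """Rough PLOD estimate (copies per extracted sample) from ALOD and recovery."""
    at_eluate_level = copies_in_eluate(alod_copies_per_reaction,
                                       elution_volume_ul, template_volume_ul)
    return at_eluate_level / recovery_fraction


# Hypothetical example: 1,000 copies spiked into a 10 mL sample,
# 20 copies measured per reaction.
recovered = copies_in_eluate(20.0, elution_volume_ul=50.0, template_volume_ul=5.0)
recovery = recovery_percent(recovered, spiked_copies=1000.0)
plod = projected_process_lod(3.0, 50.0, 5.0, recovery / 100.0)
print(f"recovery: {recovery:.1f}%")
print(f"projected PLOD: {plod:.0f} copies per 10 mL sample")
```

If the empirically determined PLOD is much higher than this projection, inhibition or losses that vary with concentration are worth investigating.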

Workflow for Machine Learning-Enhanced Biomarker Discovery

The integration of traditional machine learning with experimental validation represents a powerful approach for identifying robust biomarker signatures.

Diagram 1: Biomarker discovery workflow combining machine learning and qPCR validation. This workflow, applied to pancreatic cancer research, identified a five-gene signature validated across 14 datasets [30].

Protocol: Machine Learning-Guided Biomarker Validation

- Data Collection and Normalization: Systematically gather transcriptomic data from public repositories (e.g., GEO database). Normalize using GC-Robust Multi-array Average (gcRMA) algorithm followed by log2 transformation [30].

- Meta-Analysis: Apply random-effects meta-analysis (DerSimonian-Laird method) to combine gene expression effect sizes across multiple datasets using Hedges' g effect size calculations [30].

- Signature Identification: Identify significant genes based on effect size (ES > 2) and false discovery rate (FDR < 0.01) [30].

- Experimental Validation: Recruit patient cohorts (e.g., 30 patients with confirmed disease and 25 healthy controls) [30]. Collect peripheral blood samples under standardized conditions (early morning after overnight fast) [30].

- RNA Extraction and qPCR: Isolate total RNA (RIN > 7) and perform qPCR analysis with appropriate internal controls (e.g., GAPDH) [30].

- Performance Assessment: Evaluate diagnostic performance using AUC statistics. The five-gene pancreatic cancer signature achieved an AUC of 0.83 in peripheral blood validation [30].
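The statistical steps of this protocol (Hedges' g effect sizes, DerSimonian-Laird pooling, and AUC) can be sketched with standard textbook formulas. This is an illustrative outline using only the Python standard library, not the pipeline used in [30]; the function names and all numbers are hypothetical.

```python
import math


def hedges_g(mean_case, sd_case, n_case, mean_ctrl, sd_ctrl, n_ctrl):
    """Return (g, variance): bias-corrected standardized mean difference."""
    df = n_case + n_ctrl - 2
    pooled_sd = math.sqrt(((n_case - 1) * sd_case ** 2
                           + (n_ctrl - 1) * sd_ctrl ** 2) / df)
    d = (mean_case - mean_ctrl) / pooled_sd
    j = 1.0 - 3.0 / (4.0 * df - 1.0)  # small-sample correction factor
    var_d = (n_case + n_ctrl) / (n_case * n_ctrl) + d ** 2 / (2.0 * (n_case + n_ctrl))
    return j * d, j ** 2 * var_d


def dersimonian_laird(effects, variances):
    """Return (pooled effect, standard error, tau^2) for a random-effects model."""
    w = [1.0 / v for v in variances]
    fixed = sum(wi * e for wi, e in zip(w, effects)) / sum(w)
    q = sum(wi * (e - fixed) ** 2 for wi, e in zip(w, effects))
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - (len(effects) - 1)) / c)  # between-study variance
    w_star = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * e for wi, e in zip(w_star, effects)) / sum(w_star)
    return pooled, math.sqrt(1.0 / sum(w_star)), tau2


def auc(case_scores, control_scores):
    """Probability that a random case scores above a random control (ties = 0.5)."""
    wins = sum((c > h) + 0.5 * (c == h)
               for c in case_scores for h in control_scores)
    return wins / (len(case_scores) * len(control_scores))


# Hypothetical per-dataset summaries for one gene:
# (mean_case, sd_case, n_case, mean_ctrl, sd_ctrl, n_ctrl)
datasets = [(9.1, 1.0, 20, 7.0, 1.1, 18),
            (8.7, 0.9, 35, 6.9, 1.0, 30),
            (9.4, 1.2, 15, 6.8, 1.3, 15)]
effects, variances = zip(*(hedges_g(*d) for d in datasets))
pooled, se, tau2 = dersimonian_laird(effects, variances)
print(f"pooled g = {pooled:.2f} (SE {se:.2f}), tau^2 = {tau2:.3f}")

# Hypothetical signature scores from a qPCR validation cohort.
print(f"AUC = {auc([3.1, 2.8, 3.6, 2.2], [1.9, 2.4, 2.0, 2.9]):.2f}")
```

The AUC here is the rank-based (Mann-Whitney) form, which needs no threshold choice and handles tied scores by counting them as half a win.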

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagent Solutions for Biomarker Validation

| Reagent/Category | Specific Examples | Function in Biomarker Research |
|---|---|---|
| Nucleic Acid Isolation Kits | AllPrep DNA/RNA Mini Kit (Qiagen), AllPrep DNA/RNA FFPE Kit (Qiagen) [31] | Simultaneous DNA/RNA extraction from limited samples; maintains nucleic acid integrity for paired analysis |
| Library Preparation Kits | TruSeq stranded mRNA kit (Illumina), SureSelect XTHS2 DNA/RNA kits (Agilent) [31] | Preparation of sequencing-ready libraries from RNA and DNA; enables multi-omics biomarker discovery |
| Reverse Transcription Systems | SuperScript III First-Strand Synthesis System (Invitrogen) [30] | High-efficiency cDNA synthesis from RNA templates; critical for gene expression biomarker studies |
| qPCR Master Mixes | SYBR Green Master Mix (Applied Biosystems) [30] | Sensitive detection of amplified products; enables quantitative assessment of biomarker expression |
| Restriction Enzymes | HaeIII, EcoRI [28] | Enhance accessibility of target genes; improve precision of copy number quantification in dPCR |
| Reference Materials | Synthetic oligonucleotides, cell line DNA at varying purities [31] [28] | Analytical validation controls; enable standardization across platforms and laboratories |

Emerging Trends and Future Directions

The field of biomarker research continues to evolve with several emerging trends poised to address ongoing reproducibility challenges:

Artificial Intelligence Integration: By 2025, AI and machine learning are expected to play an expanded role in biomarker analysis through predictive analytics, automated data interpretation, and personalized treatment planning [32]. These technologies will enhance the identification of robust biomarker signatures from complex datasets.

Multi-Omics Approaches: The integration of genomics, proteomics, metabolomics, and transcriptomics data provides comprehensive biomarker profiles that better reflect disease complexity [32]. Combined RNA and DNA sequencing assays have demonstrated enhanced detection of clinically actionable alterations in 98% of cases [31].

Advanced Validation Frameworks: The 2025 FDA Biomarker Guidance emphasizes that biomarker assays require tailored validation approaches despite similar parameters to drug assays [33]. There is growing recognition that biomarker assays must demonstrate suitability for measuring endogenous analytes rather than relying solely on spike-recovery approaches used in drug concentration analysis [33].

Liquid Biopsy Advancements: By 2025, liquid biopsies are expected to become standard tools with enhanced sensitivity and specificity [32]. These non-invasive approaches facilitate real-time monitoring of disease progression and treatment response, particularly valuable for cancers where tissue acquisition is challenging [30].