A Comprehensive Guide to the GATK Somatic Variant Calling Workflow: From Best Practices to Clinical Application

This article provides a complete overview of the GATK somatic variant calling workflow for researchers, scientists, and drug development professionals.

A Comprehensive Guide to the GATK Somatic Variant Calling Workflow: From Best Practices to Clinical Application

Abstract

This article provides a complete overview of the GATK somatic variant calling workflow for researchers, scientists, and drug development professionals. It covers the foundational principles of the Genome Analysis Toolkit (GATK) and its Mutect2 caller, detailing the step-by-step methodology for accurate SNV and indel discovery in tumor samples. The content explores advanced troubleshooting for common issues like low allele fraction variants and offers optimization strategies for challenging data types, including FFPE samples. Furthermore, it presents a comparative analysis of Mutect2's performance against other callers, guidelines for validation, and the integration of regulatory and quality assurance frameworks essential for clinical translation and compliant biomarker development.

Understanding Somatic Variants and the GATK Ecosystem

The Biological and Clinical Significance of Somatic Mutations in Cancer

Somatic mutations represent the cornerstone of cancer evolution, serving as the fundamental genetic alterations that drive the transformation of normal cells into malignant tumors. These acquired changes, distinct from inherited germline variants, accumulate throughout an individual's lifetime due to interactions with environmental mutagens, endogenous processes, and random replication errors. Within the context of cancer genomics research, the accurate identification and interpretation of somatic mutations through robust bioinformatic workflows like the GATK (Genome Analysis Toolkit) somatic variant calling pipeline is paramount for understanding oncogenesis, tumor heterogeneity, and therapeutic vulnerabilities. This in-depth technical review examines the biological mechanisms through which somatic mutations influence cancer development, explores cutting-edge detection methodologies, and discusses the growing clinical implications for precision oncology, with particular emphasis on the critical role of accurate variant calling in advancing cancer research and drug development.

The transformation of a normal cell into a cancer cell occurs through a sequence of discrete genetic events, establishing cancer properly as a genetic disease of somatic cells [1]. The analogy between organismal evolution and cancer evolution is compelling: in both scenarios, mutation drives change, but Darwinian selection enables clones with a growth advantage to expand, providing a larger target for subsequent mutations [1]. Somatic mutations can be triggered by diverse factors including environmental carcinogens, endogenous metabolic processes, or spontaneous stochastic events during DNA replication [1].

The search for molecular lesions in tumors has been revolutionized by powerful technologies such as microarrays for global gene expression analysis and deep sequencing for comprehensive genome analysis [1]. While next-generation sequencing provides unprecedented insights, it also presents the challenge of distinguishing meaningful driver mutations from biologically inert passenger mutations—a computational challenge that somatic variant calling workflows must overcome through sophisticated filtering and annotation approaches [2] [3].

Biological Significance of Somatic Mutations

Driver versus Passenger Mutations

In the genomic landscape of any cancer, somatic mutations can be categorized into two fundamental classes:

- Driver mutations: These genetic alterations confer selective growth advantage and are directly implicated in cancer development. They occur in cancer genes and have been positively selected during tumor evolution [2]. The ratio of non-synonymous to synonymous substitutions in protein-coding sequences can help estimate the extent of selection pressure on these mutations [2].

- Passenger mutations: These do not contribute to cancer development but represent the imprints of mutational mechanisms that have generated them, providing insights into cancer etiology and pathogenesis without being subject to selection pressures [2].

Mutation Order and Cancer Evolution

Emerging evidence demonstrates that the temporal sequence in which mutations are acquired significantly impacts cancer biology, clinical presentation, and therapeutic response [4]. This represents a paradigm shift from viewing cancer as merely the sum of its mutational parts to understanding it as a dynamically evolving system where historical contingency shapes future trajectories.

In chronic myeloproliferative neoplasms (MPNs), the order of acquisition of JAK2 V617F and TET2 mutations dramatically influences stem and progenitor cell behavior, clonal evolution, and clinical phenotypes [4]. Similar order effects have been observed in mouse models of adrenocortical tumors, where expression of oncogenic Ras before loss of p53 produced highly malignant, metastatic tumors, while the reverse order resulted only in benign tumors [4]. This temporal dependency introduces additional complexity into variant interpretation and underscores the limitations of static mutational catalogs.

Clonal Evolution and Intratumor Heterogeneity

Cancers evolve through a process of sequential subclonal evolution, with competition between subclones and branching evolutionary patterns [4]. Instead of representing a homogeneous mass of genetically identical cells, tumors comprise families of clonally diverse cells with common ancestral origins [4]. This genetic diversity has profound clinical implications, as the pool of genetically diverse clones frequently serves as a source of therapy-resistant populations [4].

Table 1: Key Classes of Somatic Mutations in Cancer

| Mutation Type | Functional Impact | Example Genes | Detection Challenge |

|---|---|---|---|

| Oncogenic activation | Gain-of-function promoting proliferation | PDGFRA, KIT, MET, RET | Distinguishing from benign hypermutation |

| Tumor suppressor loss | Loss-of-function removing growth constraints | p53, APC, VHL, CDKN2A | Identifying haploinsufficient effects |

| Genome stability defects | Compromised DNA repair accelerating mutation rate | ATM, BLM, BRCA2, MSH2 | Interpreting in context of mutational signatures |

| Epigenetic regulator alteration | Changed expression landscape without DNA sequence change | EZH2, DNMT3A, TET2 | Functional interpretation without coding change |

Methodological Approaches: From Detection to Interpretation

Evolution of Somatic Mutation Detection Technologies

The sensitivity and accuracy of somatic mutation detection have progressed dramatically, from early methods with limited throughput to contemporary approaches capable of identifying mutations present in microscopic clones:

- Traditional sequencing: Initially limited to PCR-based resequencing of coding exons to identify base substitutions and small indels [2].

- Whole-genome/exome sequencing: Enabled comprehensive cataloguing of somatic mutations across cancer genomes, revealing remarkable insights into mutational processes [2].

- Error-corrected sequencing: Newer methods like NanoSeq achieve error rates below 5 errors per billion base pairs, enabling detection of mutations in single DNA molecules and revealing the extensive clonal landscapes of normal tissues [5].

The application of targeted NanoSeq to 1,042 non-invasive oral epithelium samples and 371 blood samples demonstrated an extremely rich selection landscape, with 46 genes under positive selection in oral epithelium and evidence of negative selection in essential genes [5]. This approach allows multivariate regression models to study how exposures and cancer risk factors alter the acquisition or selection of somatic mutations [5].

GATK Somatic Variant Calling Workflow

The GATK somatic variant calling pipeline represents a standardized approach for identifying somatic mutations when contrasting tumor and normal samples from the same individual [3]. The workflow encompasses several critical stages:

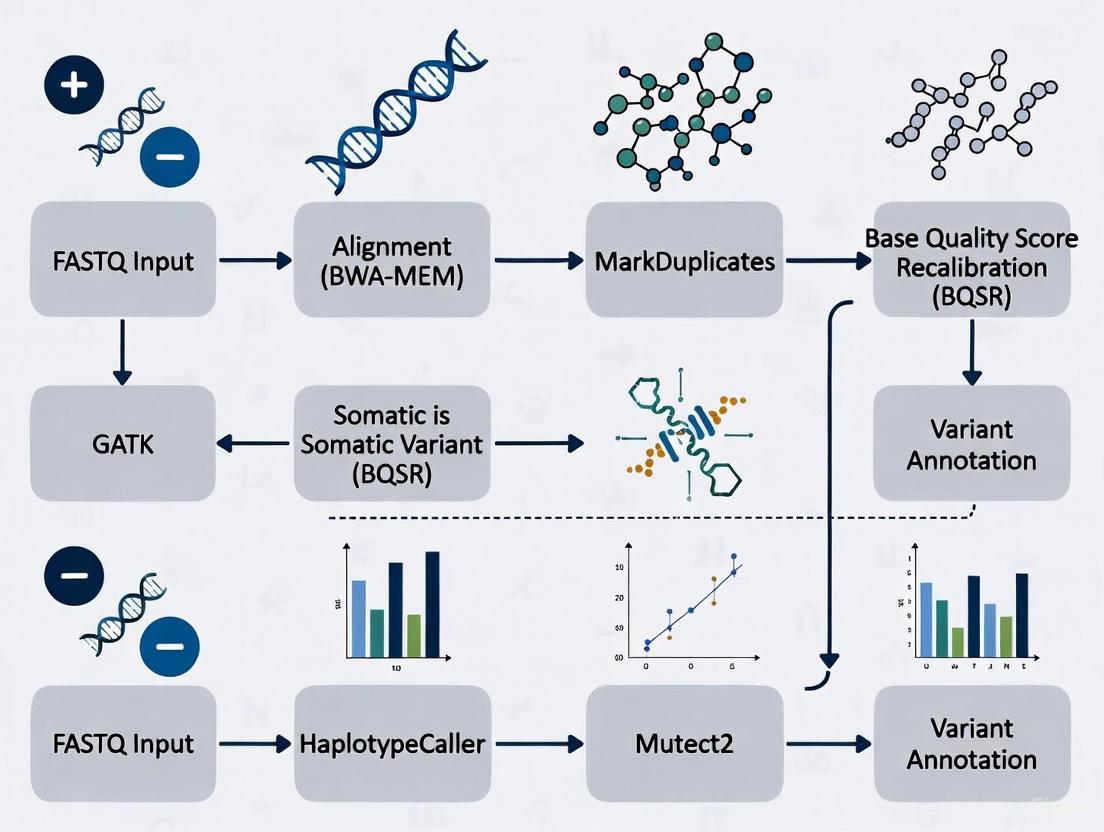

Diagram 1: GATK somatic variant calling workflow. The pipeline processes tumor and normal samples through alignment, quality control, and specialized somatic calling with Mutect2, incorporating additional resources like Panels of Normals (PoN) and population allele frequencies to improve accuracy.

Key Workflow Components

- Tumor-Normal Analysis: The core approach compares a tumor sample against a matched normal sample from the same individual to identify tumor-specific variants [3].

- Tumor-Only Mode: When matched normal samples are unavailable, the pipeline can operate in tumor-only mode, though this requires additional filtering strategies to address increased false positives [3].

- Panel of Normals (PoN): A critical resource especially important for tumor-only analysis, the PoN contains artifacts and common germline variants identified across multiple normal samples, allowing their systematic removal from tumor results [3].

- External Resources: Integration of population allele frequency data (e.g., gnomAD) and contamination information helps distinguish true somatic variants from germline polymorphisms or technical artifacts [3].

Comparative Analysis of Variant Calling Tools

Table 2: Performance Comparison of Somatic Variant Calling Tools

| Tool | Primary Algorithm | Optimal Use Case | Strengths | Limitations |

|---|---|---|---|---|

| GATK Mutect2 | Bayesian modeling with local assembly | Tumor-normal pairs with matched normal | High accuracy for SNVs/indels, comprehensive filtering | Computational intensity, parameter complexity |

| Strelka2 | Bayesian statistics with local realignment | Sensitive low-frequency somatic detection | Optimized for low VAF, fast operation | Less comprehensive than GATK ecosystem |

| Illumina DRAGEN | Hardware-accelerated graph alignment | Clinical applications requiring speed | Extreme speed, high accuracy | Proprietary hardware dependency |

| VarScan2 | Heuristic/statistical hybrid | Resource-constrained environments | Flexible threshold setting | Higher false positive rate without tuning |

Multiple benchmarking studies have demonstrated that GATK and DRAGEN typically perform best in SNV and indel detection, achieving high F-scores, while Strelka2 shows particular advantage in detecting low allele frequency somatic mutations [6]. The choice of tool depends on specific research requirements, with GATK offering comprehensive functionality and an active community, Strelka2 providing sensitivity for low-frequency variants, and DRAGEN delivering unprecedented speed through hardware acceleration [6].

Research Reagents and Experimental Solutions

Table 3: Essential Research Reagents for Somatic Mutation Analysis

| Reagent/Resource | Function | Application Notes |

|---|---|---|

| Panel of Normals (PoN) | Filters common artifacts and germline variants | Critical for tumor-only mode; should be cohort-specific |

| Population AF databases (gnomAD) | Identifies common polymorphisms | Reduces false positives from population variants |

| BWA-MEM aligner | Maps sequencing reads to reference genome | Optimal for short-read Illumina data |

| Control DNA samples | Quality monitoring and cross-experiment standardization | Enables batch effect correction |

| Targeted capture panels | Enrichment for cancer-related genes | Enables deeper sequencing at lower cost |

| Reference genomes (GRCh38) | Standardized genomic coordinate system | Important to use same version across samples |

Clinical Implications and Therapeutic Applications

Mutational Signatures as Exposure Fingerprints

The patterns of somatic mutations in cancer genomes can serve as fingerprints of past mutagen exposures, providing powerful insights into cancer etiology [2]. For example, the comprehensive catalogue of somatic mutations from a malignant melanoma revealed a dominant mutational signature reflective of DNA damage due to ultraviolet light exposure, a known risk factor for this cancer type [2]. Specifically, the majority of somatic base substitutions were C>T/G>A transitions, with a high frequency of CC>TT/GG>AA dinucleotide changes—a pattern characteristic of ultraviolet light exposure [2].

Similar mutational signatures have been identified for tobacco smoke, aristolochic acid, and other environmental carcinogens, creating opportunities for connecting specific exposures to individual cancers [7]. This information is becoming increasingly valuable for understanding lifestyle factors that influence cancer risk, ultimately impacting clinical decisions and public health strategies [7].

Clonal Hematopoiesis and Cancer Predisposition

Recent sensitive sequencing approaches have revealed that as we age, many tissues become colonized by microscopic clones carrying somatic driver mutations [5]. Some of these clones represent a first step toward cancer, while others may contribute to ageing and other diseases [5]. In blood, targeted NanoSeq has identified 14 genes under positive selection, all known clonal hematopoiesis drivers, with approximately 11.9 non-synonymous mutations in these driver genes per donor [5]. This clonal architecture represents a premalignant state that significantly influences cancer risk and may contribute to non-malignant age-associated diseases.

Therapeutic Implications and Resistance Mechanisms

The identification of specific somatic mutations has direct implications for targeted therapy approaches:

- Oncogene-directed therapies: Drugs targeting specific driver mutations in genes like BRAF (V600E) in melanoma or EGFR mutations in lung cancer represent paradigm shifts in cancer treatment [2].

- Mutation order effects: The sequence in which mutations are acquired can influence response to targeted therapies, suggesting that therapeutic strategies may need to account for evolutionary history [4].

- Resistance mechanisms: The pool of genetically diverse subclones within tumors frequently serves as a source of therapy-resistant populations, necessitating combination approaches or evolutionary therapies [4].

Emerging Technologies and Approaches

The field of somatic mutation analysis continues to evolve rapidly, with several promising technological developments:

- Single-molecule sequencing: Methods like NanoSeq with error rates below 5×10^-9 errors per base pair enable accurate mutation detection from single DNA molecules, permitting quantification of mutation rates and signatures in any tissue [5].

- Multivariate regression models: These statistical approaches enable mutational epidemiology studies examining how exposures and cancer risk factors alter the acquisition or selection of somatic mutations [5].

- Integration of epigenetic analyses: Combining mutational data with information on epigenetic changes provides a more comprehensive view of the molecular alterations driving cancer [1].

Somatic mutations represent the fundamental drivers of cancer development, with their identification and interpretation being essential for both biological understanding and clinical application. The GATK somatic variant calling workflow and related bioinformatic pipelines provide robust frameworks for distinguishing true somatic variants from technical artifacts and germline polymorphisms. As detection technologies continue to improve, particularly with the advent of single-molecule sequencing methods, our appreciation of the complex clonal architecture of both tumors and normal tissues has deepened.

The biological and clinical significance of somatic mutations extends from their roles in oncogenesis and tumor evolution to their utility as biomarkers for targeted therapies and indicators of past mutagen exposures. For researchers and drug development professionals, accurate somatic variant calling remains an essential capability, enabling the discovery of new cancer genes, the identification of therapeutic targets, and the development of personalized treatment approaches based on the unique mutational profile of each patient's cancer. As we continue to refine our analytical methods and expand our understanding of mutation order effects, clonal evolution, and mutational signatures, somatic mutation analysis will undoubtedly remain central to cancer research and clinical oncology.

The Genome Analysis Toolkit (GATK) is a structured programming framework developed by the Broad Institute's Data Sciences Platform to enable efficient analysis of high-throughput sequencing data. Established as the industry standard for variant discovery, GATK provides a comprehensive software package with a primary focus on identifying SNPs, indels, and other genetic variants with exceptional accuracy and reliability. Its powerful processing engine and robust architecture make it capable of scaling from small-scale research projects to massive genomic initiatives like the 1000 Genomes Project and The Cancer Genome Atlas. GATK's status as the benchmark in genomic analysis is further solidified by its best practices workflows, which provide battle-tested protocols optimized for computational efficiency and reproducible results across diverse sequencing platforms and organisms.

Technical Architecture and Core Framework

GATK is built on a sophisticated technical foundation that separates data management from analytical algorithms, allowing researchers to focus on biological interpretation rather than computational challenges.

MapReduce Programming Paradigm

GATK implements a functional programming philosophy based on the MapReduce model, which divides computations into discrete, independent pieces that can be efficiently parallelized [8]. This architecture encompasses:

- Traversals: Data access patterns that prepare and present genomic data to analysis modules

- Walkers: Analysis modules that contain the map and reduce methods for processing data

- Data Bundles: Associated genomic information packaged by traversals for consumption by walkers

This separation of data access patterns from analysis algorithms enables the GATK core team to optimize the framework continuously for correctness, stability, and CPU/memory efficiency while providing automatic parallelization capabilities [8].

Supported Platforms and Requirements

GATK is designed to run on Linux and POSIX-compatible platforms, including MacOS X, with no native support for Windows systems [9]. The major system requirement is Java 1.8, with some tools having additional R or Python dependencies. For ease of deployment, the Broad Institute recommends using Docker containers, with an official image available on Dockerhub [9].

The framework has been engineered to support both traditional compute environments and modern cloud infrastructures, leveraging Apache Spark for parallelization and optimized cloud usage [9]. This flexibility allows researchers to scale analyses efficiently across local clusters or cloud platforms like AWS, Google Cloud, and Microsoft Azure [10].

Data Format Support and Compatibility

GATK utilizes the SAM/BAM format as the standard for representing sequencing reads, employing a production-quality SAM library for parsing and querying capabilities [8]. The toolkit has been extensively tested with data from multiple sequencing platforms, including:

- Illumina sequencing technology

- Applied Biosystems SOLiD System

- 454 Life Sciences (Roche)

- Complete Genomics

The framework supports BAM files with alignments from most next-generation sequence aligners and can accommodate various genotyping and variation formats, including VCF, GLF, and the GELI text format [8].

GATK as the Industry Standard

Historical Development and Adoption

GATK emerged to address the computational challenges posed by massive NGS datasets, with the original 2010 publication highlighting its capability to process the "nearly five terabases" of data from the 1000 Genomes Project pilot [8]. The toolkit's structured approach to genomic data analysis filled a critical development gap between sequencing output and biologically interpretable results.

The framework has evolved through multiple generations, with GATK4 representing a significant architectural redesign featuring:

- Apache Spark integration for distributed computing

- Cloud-native execution capabilities

- Expanded variant calling scope beyond germline variants

- Bundled Picard toolkit utilities with harmonized syntax [9]

Industry Partnerships and Ecosystem

GATK's position as the industry standard is reinforced through strategic collaborations with technology leaders, including:

- Intel: Co-development of Cromwell (workflow execution engine) and GenomicsDB (variant storage database) [10]

- Cloudera: Implementation of GATK4 on Apache Spark framework for accelerated genomic research [10]

- Cloud Providers: Partnerships with AWS, Google, IBM, and Microsoft to enable cloud-based GATK access via software-as-a-service models [10]

These collaborations have resulted in performance improvements of two orders of magnitude over previous GATK versions, enabling faster iterative analysis and propelling genomic innovation [10].

Licensing and Accessibility

GATK4 is distributed as open-source software under a BSD 3-clause "New" or "Revised" license [9], which permits:

- Commercial use

- Modification

- Distribution

- Private use

This permissive licensing model has facilitated widespread adoption across academic, clinical, and commercial environments, with over 31,000 registered users benefiting from the toolkit [10].

Somatic Variant Calling Workflow: Methods and Protocols

The GATK Best Practices workflow for somatic short variant discovery (SNVs + Indels) provides a comprehensive, battle-tested protocol for identifying somatic mutations in cancer genomics.

Input Requirements and Data Preparation

The somatic variant discovery workflow requires BAM files for each input tumor and normal sample, which must be pre-processed according to GATK Best Practices for data pre-processing [11]. Key input specifications include:

- Coordinate-sorted BAM files with proper indexing

- Reference genome in FASTA format with sequence dictionary and index

- Optional but recommended: Germline resource (e.g., gnomAD) and panel of normals (PoN)

Step-by-Step Experimental Protocol

Candidate Variant Calling with Mutect2

Purpose: Identify potential somatic SNVs and indels via local de novo assembly of haplotypes.

Methodology:

Technical Details: Mutect2 uses a Bayesian somatic genotyping model that differs from the original MuTect algorithm [12]. The tool performs:

- Local assembly of haplotypes in active regions showing evidence of variation

- Pair-HMM alignment of reads to candidate haplotypes to generate likelihood matrices

- Log odds calculation for alleles being somatic variants versus sequencing errors

Mutect2 can operate in three primary modes:

- Tumor-normal mode: For matched tumor-normal pairs (highest accuracy)

- Tumor-only mode: When only tumor sample is available

- Mitochondrial mode: Optimized for high-depth mitochondrial DNA analysis [12]

Contamination Estimation

Purpose: Estimate cross-sample contamination fraction for each tumor sample.

Tools: GetPileupSummaries, CalculateContamination

Methodology:

Technical Details: CalculateContamination is specifically designed to work without a matched normal, even in samples with significant copy number variation, and makes no assumptions about the number of contaminating samples [11].

Orientation Bias Artifact Modeling

Purpose: Model sequencing artifacts related to read orientation, particularly critical for FFPE tumor samples.

Tool: LearnReadOrientationModel

Methodology:

Technical Details: This step uses the F1R2 counts output from Mutect2 to learn prior probabilities of single-stranded substitution errors for each trinucleotide context prior to sequencing [11].

Variant Filtering

Purpose: Remove false positive calls resulting from correlated errors, alignment artifacts, and other technical artifacts.

Tool: FilterMutectCalls

Methodology:

Technical Details: FilterMutectCalls implements several filtering approaches:

- Hard filters for alignment artifacts

- Probabilistic models for strand and orientation bias artifacts

- Polymerase slippage artifact detection

- Germline variant and contamination filtering

- Bayesian model for overall SNV and indel mutation rate and allele fraction spectrum [11]

The tool automatically sets filtering thresholds to optimize the F score (harmonic mean of sensitivity and precision) [11].

Variant Annotation

Purpose: Add biological and clinical context to filtered somatic variants.

Tool: Funcotator

Methodology:

Technical Details: Funcotator adds comprehensive annotations including:

- Variant classifications (23 distinct types)

- Gene information (affected gene, protein change prediction)

- Database annotations from GENCODE, dbSNP, gnomAD, COSMIC, and other sources [11]

The tool supports both VCF and MAF output formats and features extensible, user-configurable data sources, including cloud-based resources via Google Cloud Storage [11].

Key Research Reagent Solutions

Table: Essential Computational Tools and Resources for GATK Somatic Variant Calling

| Resource Category | Specific Tool/Resource | Function and Purpose |

|---|---|---|

| Variant Caller | Mutect2 [11] [12] | Core somatic variant caller using Bayesian model and local assembly |

| Workflow Management | Cromwell [10] | Portable workflow execution engine for genomic pipelines |

| Variant Storage | GenomicsDB [10] | Optimized database for joint analysis of large variant datasets |

| Data Processing | Picard Toolkit [9] | Data manipulation and QC utilities bundled with GATK4 |

| Germline Resource | gnomAD [11] [12] | Population allele frequencies for germline variant filtering |

| Panel of Normals | CreateSomaticPanelOfNormals [11] | Aggregate normal samples to identify technical artifacts |

| Functional Annotation | Funcotator [11] | Comprehensive variant annotation with clinical and biological context |

| Quality Control | GetPileupSummaries/CalculateContamination [11] | Cross-sample contamination estimation and quality metrics |

Technical Specifications and Performance

GATK Architecture Components

Computational Performance and Scaling

GATK4 represents a significant advancement in computational efficiency through:

- Apache Spark Integration: Enables distributed memory parallelization across compute clusters [10]

- Cloud Optimization: Native support for cloud storage and computing environments [9]

- GenomicsDB Innovation: Provides unprecedented scalability for variant data storage and processing [10]

Benchmarking demonstrates that GATK4 running on Spark with Cloudera Enterprise can perform genomic analysis two orders of magnitude faster than previous GATK versions [10], dramatically reducing iteration time for research and development.

The Genome Analysis Toolkit maintains its position as the industry standard for genomic variant discovery through continuous innovation, comprehensive documentation, and robust community support. Its somatic variant calling workflow, centered on Mutect2 and complementary tools, provides researchers with a rigorously validated methodology for detecting cancer-associated mutations with high confidence. As genomic data continues to grow in scale and complexity, GATK's evolving architecture—with cloud-native execution, Spark-based parallelization, and optimized storage solutions—ensures it remains capable of addressing the computational challenges of modern biomedical research. The toolkit's permissive open-source license, extensive documentation, and integration with commercial and cloud platforms make advanced genomic analysis accessible to researchers across academia, clinical diagnostics, and pharmaceutical development.

The Genome Analysis Toolkit (GATK) Mutect2 is a sophisticated computational tool designed to identify somatic short variants—single nucleotide variants (SNVs) and insertions/deletions (indels)—in cancer sequencing data. Developed by the Broad Institute, Mutect2 represents a significant advancement over its predecessor by incorporating local assembly of haplotypes to detect somatic mutations in one or more tumor samples, with or without a matched normal sample [11] [13]. This capability is crucial for cancer research and drug development, as identifying tumor-specific mutations enables researchers to understand tumor biology, identify therapeutic targets, and guide personalized treatment strategies [14] [15].

In cancer genomics, somatic variant discovery presents unique challenges distinct from germline variant calling. Tumor samples often contain a mixture of cancerous and normal cells, leading to variable mutation allele frequencies. Additionally, artifacts from formalin-fixed, paraffin-embedded (FFPE) samples, tumor heterogeneity, and cross-sample contamination further complicate accurate variant detection [14] [16]. Mutect2 addresses these challenges through a comprehensive probabilistic framework that combines local assembly, powerful filtering, and artifact identification to distinguish true somatic variants from sequencing errors and technical artifacts.

Core Algorithm and Technical Architecture

Local Assembly and Variant Calling

Mutect2 employs an sophisticated approach to variant calling based on local de novo assembly of haplotypes, similar to the GATK HaplotypeCaller but optimized for somatic variant discovery. When Mutect2 encounters a genomic region showing evidence of variation, it performs the following key operations [11]:

- Local Assembly: Discards existing read alignment information and completely reassembles reads in the active region to generate candidate haplotypes. This assembly-based approach is particularly effective for detecting complex variants and indels that may be missed by alignment-based methods.

- Pair-HMM Alignment: Aligns each read to each candidate haplotype using the Pair-Hidden Markov Model (Pair-HMM) algorithm to generate a matrix of alignment likelihoods.

- Bayesian Somatic Likelihoods Model: Applies a specialized Bayesian model to calculate the log odds of alleles being true somatic variants versus sequencing errors. This model forms the statistical foundation for initial variant identification.

Advanced Filtering Framework

Following initial variant calling, Mutect2 employs a multi-tiered filtering approach to remove false positives through the FilterMutectCalls tool. The filtering framework has evolved significantly in recent versions, replacing multiple individual thresholds with a unified approach that filters based on a single quantity: the probability that a variant is a true somatic mutation [17]. Key aspects include:

- Automatic Threshold Optimization: FilterMutectCalls automatically determines the optimal filtering threshold to maximize the F-score (the harmonic mean of sensitivity and precision), eliminating the need for manual threshold tuning [17].

- Somatic Clustering Model: Implements a Dirichlet process binomial mixture model to account for subclonal allele fractions in the tumor. This model clusters variants by their allele fractions, helping to distinguish true somatic variants from artifacts based on their expected frequency distribution [17].

- Artifact-Specific Detection: Incorporates specialized probabilistic models to identify and filter strand bias artifacts, polymerase slippage artifacts, germline variants, and contamination.

Table 1: Key Technical Components of the Mutect2 Algorithm

| Component | Function | Technical Approach |

|---|---|---|

| Variant Calling | Identify candidate somatic variants | Local de novo assembly followed by Pair-HMM alignment and Bayesian likelihood calculation |

| Contamination Estimation | Estimate cross-sample contamination | GetPileupSummaries and CalculateContamination tools using common germline variants |

| Orientation Bias Correction | Correct for strand-specific artifacts | LearnReadOrientationModel using F1R2 counts from Mutect2 |

| Variant Filtering | Remove false positive calls | Unified probabilistic framework optimizing F-score with specialized artifact models |

Complete Mutect2 Workflow

The standard Mutect2 workflow consists of several interconnected steps, each addressing specific challenges in somatic variant discovery.

Figure 1: Complete GATK Mutect2 workflow for somatic variant discovery

Call Candidate Variants

The initial variant calling step uses Mutect2 with appropriate resources to generate raw candidate somatic variants:

Essential resources for this step include [11] [17] [18]:

- Reference genome: The standard reference sequence for alignment (e.g., GRCh38)

- Panel of Normals (PoN): A VCF file containing artifacts identified in normal samples, crucial for filtering systematic technical artifacts

- Germline resource: A population database (e.g., gnomAD) of common germline variants to help distinguish true somatic variants from rare germline polymorphisms

- Intervals: Genomic regions to target for variant calling (essential for exome and targeted sequencing)

Calculate Contamination

Cross-sample contamination can significantly impact variant calling accuracy, particularly for low-frequency variants. Mutect2 uses a two-step process to estimate and account for contamination [11] [18]:

Unlike other contamination tools, CalculateContamination is designed to work effectively even in samples with significant copy number variation and without matched normal samples [11].

Learn Orientation Bias Artifacts

Sequencing artifacts that occur on a single DNA strand before sequencing are common in FFPE samples and certain sequencing platforms. Mutect2 includes a specialized workflow to identify and correct these artifacts [17]:

This step uses the F1R2 counts output from Mutect2 to learn prior probabilities of single-stranded substitution errors for each trinucleotide context, which is particularly important for FFPE tumor samples and Illumina NovaSeq data [17].

Filter Variants

The FilterMutectCalls step integrates all available information to filter false positive variants:

This step accounts for correlated errors, alignment artifacts, strand and orientation bias, polymerase slippage, germline variants, and contamination. It also incorporates the learned somatic clustering model to refine variant probabilities based on the observed allele fraction spectrum in the tumor [11] [17].

Annotate Variants

Functional annotation is the final step in the workflow, adding biological context to the identified variants:

Funcotator adds gene-level information, variant classifications, protein change predictions, and annotations from various datasources including GENCODE, dbSNP, gnomAD, and COSMIC [11]. The output can be generated in either VCF or Mutation Annotation Format (MAF), facilitating integration with downstream analysis tools.

Performance Characteristics and Benchmarking

Understanding the performance characteristics of Mutect2 is essential for proper experimental design and data interpretation. Independent benchmarking studies have evaluated Mutect2 against other somatic variant callers across various sequencing depths and variant allele frequencies.

Table 2: Performance Comparison of Somatic Variant Callers at Different Mutation Frequencies

| Mutation Frequency | Sequencing Depth | Mutect2 F-Score | Strelka2 F-Score | Recommended Use Cases |

|---|---|---|---|---|

| ≥20% (High) | 200X | 0.94-0.95 | 0.95-0.96 | Standard tumor samples with high purity |

| 10-20% (Medium) | 300X | 0.90-0.94 | 0.89-0.93 | Mixed samples with stromal contamination |

| 5-10% (Low) | 500X | 0.65-0.95 | 0.64-0.94 | Low-purity tumors, subclonal mutations |

| 1% (Very Low) | 500-800X | 0.32-0.50 | 0.27-0.37 | Early-stage lesions, liquid biopsies |

Impact of Sequencing Depth and Mutation Frequency

Systematic evaluations reveal that sequencing depth and mutation frequency significantly impact variant calling performance [15]:

- For mutations with ≥20% allele frequency, sequencing depths of 200X are sufficient to detect >95% of variants with high precision (>95%)

- For lower frequency mutations (5-10%), increasing sequencing depth to 500X improves recall, but precision may decrease slightly

- At very low mutation frequencies (1%), even high sequencing depths (800X) yield limited recall (<50%), suggesting that improving experimental methods may be more effective than simply increasing sequencing depth

Comparison with Other Somatic Callers

When compared with other widely used somatic variant callers, Mutect2 demonstrates specific strengths and limitations [14] [15]:

- Mutect2 generally shows higher sensitivity for low-frequency variants compared to VarScan2 and Torrent Variant Caller, particularly in Ion Torrent sequencing data

- Compared to Strelka2, Mutect2 performs slightly better at lower mutation frequencies (<10%), while Strelka2 has a small advantage at higher frequencies (≥20%)

- Strelka2 typically executes 17-22 times faster than Mutect2, making it more suitable for time-sensitive clinical applications

- Mutect2 shows particularly strong performance in challenging samples such as FFPE specimens and those with significant contamination

Implementation Considerations

Computational Requirements and Scaling

The computational intensity of Mutect2 varies significantly based on sample type, sequencing depth, and genome size. For whole genome sequencing data, the workflow can be distributed across multiple computational nodes using scatter-gather approaches [17] [19]:

This parallelization strategy significantly reduces execution time for large datasets, though it requires careful management of intermediate files.

Panel of Normals Creation

A critical component for optimal Mutect2 performance is creating a panel of normals (PoN) specific to your sequencing platform and processing pipeline [17] [18]:

The PoN should ideally include 20-40 normal samples processed using the same experimental and computational methods as your tumor samples. Even an imperfect PoN is significantly better than no PoN, as it effectively captures systematic artifacts specific to your sequencing pipeline [18].

Tumor-Only Analysis

For samples without matched normal tissue, Mutect2 can operate in tumor-only mode with appropriate adjustments to filtering parameters. In this scenario, the germline resource and panel of normals become even more critical for distinguishing somatic variants from rare germline polymorphisms [14]. Additional caution is warranted for variants with allele frequencies around 50%, which likely represent heterozygous germline variants rather than somatic mutations.

Research Reagent Solutions

Table 3: Essential Research Reagents and Resources for Mutect2 Analysis

| Resource Type | Specific Examples | Function in Workflow | Availability |

|---|---|---|---|

| Reference Genome | GRCh38, b37 | Baseline for read alignment and variant calling | GATK Resource Bundle |

| Germline Resource | af-only-gnomad.vcf | Population allele frequencies to filter common germline variants | GATK Resource Bundle |

| Panel of Normals | Laboratory-specific PoN | Identifies systematic technical artifacts | Created in-house from normal samples |

| Common Variants Set | chr17smallexaccommon3_grch38.vcf | Known common variants for contamination estimation | GATK Resource Bundle |

| Functional Annotation Data Sources | GENCODE, dbSNP, COSMIC, gnomAD | Provides biological context for identified variants | Funcotator Data Sources |

GATK Mutect2 provides a comprehensive, robust solution for somatic SNV and indel discovery in cancer genomics research. Its assembly-based approach, combined with sophisticated filtering for various artifact types, enables sensitive and specific detection of somatic variants across a wide range of allele frequencies and sample types. The complete workflow—from raw variant calling through contamination estimation, orientation bias correction, and functional annotation—provides researchers with a standardized method for generating high-quality somatic variant calls.

For optimal performance, researchers should ensure adequate sequencing depth based on expected mutation frequencies, create sequencing platform-specific panels of normals, and carefully interpret variants in the context of sample purity and potential technical artifacts. As cancer research increasingly focuses on subclonal mutations and low-frequency variants, proper implementation of the Mutect2 workflow becomes ever more critical for generating biologically meaningful results that can inform drug development and clinical decision-making.

The Importance of the GATK Best Practices Workflows for Reproducibility

In the field of genomics, reproducibility stands as a fundamental requirement for scientific validity, clinical application, and drug development. The Genome Analysis Toolkit (GATK) Best Practices provide the genomic community with standardized, rigorously validated workflows for variant discovery in high-throughput sequencing data. Developed and maintained by the Broad Institute, these workflows represent evolving methodologies that address the substantial technical challenges inherent in sequencing data analysis. For researchers, scientists, and drug development professionals, adherence to these practices is not merely a convenience but a critical factor in ensuring that results are reliable, comparable, and interpretable across different studies and institutions. This technical guide examines the structural components, performance benchmarks, and methodological frameworks that establish GATK Best Practices as a cornerstone for reproducible genomic research, with particular focus on somatic variant calling.

Understanding the GATK Best Practices Framework

Definition and Scope

The GATK Best Practices are defined as the reads-to-variants workflows used at the Broad Institute, providing step-by-step recommendations for variant discovery analysis [20]. These workflows are specifically tailored to particular applications and experimental designs, primarily focusing on:

- Whole genomes and exomes, with adaptability for gene panels and RNAseq [20]

- Different types of variation, including single nucleotide variants (SNVs), insertions/deletions (indels), and copy number variants (CNVs) [21] [22]

- Various experimental designs, from single samples to large cohorts [23]

A critical aspect of the framework is its acknowledgment of limitations. The Broad Institute explicitly notes that these workflows are developed and validated primarily for human data sequenced with Illumina technology, and adaptation may be required for other organisms or technologies [20].

The Three-Phase Analysis Structure

All GATK Best Practices workflows follow a consistent pattern comprising two or three main analysis phases, which creates a standardized approach that enhances reproducibility [20]:

Data Pre-processing: This initial phase converts raw sequence data (FASTQ or uBAM files) into analysis-ready BAM files through alignment to a reference genome and data cleanup operations to correct technical biases.

Variant Discovery: This core phase identifies genomic variation in one or more individuals and applies appropriate filtering methods. The output is typically in VCF format, though some variant classes like CNVs may use other structured formats [21] [20].

Additional Processing: Depending on the application, this may include filtering and annotation using resources of known variation, truth sets, and other metadata to assess and improve accuracy [20].

Quantitative Benchmarks: Establishing Performance Standards

Reproducibility requires not only standardized methods but also predictable performance. Independent benchmarking studies provide crucial quantitative data on the expected accuracy and efficiency of GATK workflows.

Performance of GATK-gCNV for Rare Variants

The GATK-gCNV algorithm enables sensitive discovery of rare copy number variants from sequencing read-depth information. Benchmarking against orthogonal technologies demonstrates its robust performance in exome sequencing data [21].

Table 1: Performance metrics for GATK-gCNV based on validation with 7,962 exomes from autism quartet families

| Metric | Performance | Validation Method |

|---|---|---|

| Recall of rare coding CNVs | Up to 95% | Matched WGS and microarray data |

| Resolution | >2 exons | 7,962 samples with matched WGS |

| Precision Validation Rate | 97% of de novo CNVs | Five orthogonal technologies |

| Application Scale | 197,306 individuals | UK Biobank exome dataset |

Comparative Benchmarking of Variant Callers

A comprehensive 2022 benchmark of state-of-the-art variant calling pipelines evaluated GATK alongside other tools across 14 "gold standard" genome datasets [24]. The study found that while actively developed tools like DeepVariant, Clair3, and Strelka2 also performed well, GATK maintained competitive performance, with accuracy depending more on the variant caller than the read aligner when using established aligners like BWA-MEM [24].

Computational Performance and Optimization

For reproducible workflows, consistent computational performance is essential for practical implementation. Research into GATK optimization reveals significant improvements across versions [25]:

Table 2: Computational performance comparisons between GATK versions

| Version | Speed Improvement | Recommended Use Case | Cost per Sample (Cloud) |

|---|---|---|---|

| GATK3.8 | 29.3% reduction in execution time | Time-critical situations; parallel processing on multiple nodes | $41.60 (4 c5.18xlarge instances) |

| GATK4 | 16.9% reduction in execution time | Cost-effective routine analyses; large population studies | $2.60 (single c5.18xlarge instance) |

GATK4's architecture makes it particularly suitable for large-scale studies, with the ability to process approximately 1.18 samples per hour on a single cloud instance, enabling population-scale analyses while maintaining reproducibility [25].

Experimental Protocols: Implementing Reproducible Workflows

The Somatic Variant Calling Workflow with Mutect2

For somatic mutation detection in cancer research, GATK's Mutect2 represents the industry standard for identifying tumor-specific variants [22]. The detailed protocol ensures reproducible detection of low-frequency variants present in as few as 5% of reads.

Diagram 1: Somatic variant calling workflow with Mutect2

The Mutect2 workflow employs several critical reference resources to ensure accurate variant detection:

- Panel of Normals (PON): Contains common technical artifacts observed across many normal samples, enabling their subtraction from tumor samples [22]

- Germline Resource: Population allele frequencies from gnomAD help distinguish somatic from germline variants [22]

- Common Variants: Used for contamination estimation between tumor-normal pairs [22]

The matched tumor-normal approach provides maximum specificity by eliminating patient-specific germline variants that would otherwise appear as false positive somatic mutations [22].

Germline Short Variant Calling Workflow

The germline variant calling workflow follows a structured three-stage process that emphasizes data quality and joint calling across samples [23]:

Diagram 2: Germline short variant discovery workflow

The germline workflow incorporates several sophisticated statistical models that enhance reproducibility:

- Base Quality Score Recalibration (BQSR): Corrects systematic errors in base quality scores assigned by sequencers, using covariates encoded in read groups [23]

- Joint Calling: Leverages data from multiple samples to improve genotype inference sensitivity, boost statistical power, and reduce technical artifacts [23]

- Variant Quality Score Recalibration (VQSR): Employs Gaussian mixture models to classify variants based on annotation values clustering using training sets of high-confidence variants [23]

Reproducible implementation of GATK workflows requires specific reference files and computational resources. The following table details these essential components:

Table 3: Essential research reagents and resources for GATK workflows

| Resource Type | Specific Examples | Function in Workflow |

|---|---|---|

| Reference Genome | GRCh37, GRCh38 (hg38) | Standardized coordinate system for read alignment and variant calling [22] [24] |

| Known Sites Resources | dbSNP, gnomAD, 1000 Genomes | Population allele frequencies for distinguishing somatic from germline variants [26] [22] |

| Panel of Normals (PON) | 1000g_pon.hg38.vcf.gz | Database of technical artifacts observed across normal samples [22] |

| Contamination Resources | smallexaccommon_3.hg38.vcf.gz | Common variants for estimating cross-sample contamination [22] |

| High-Confidence Callsets | Genome in a Bottle (GIAB) | Gold-standard truth sets for validation and benchmarking [24] |

Implementation and Validation Strategies

Computational Infrastructure Recommendations

Deploying GATK workflows with reproducible performance requires appropriate computational infrastructure. Based on systematic testing, the following configurations are recommended [27]:

- CPU Architecture: x86_64 with minimum AVX instruction set for PairHMM library acceleration, with AVX2 recommended for Smith-Waterman acceleration [27]

- Memory Allocation: Sensible heap sizes (4-8GB) to balance Java memory management with native library requirements [27]

- Storage Solutions: Fast storage systems to mitigate I/O bottlenecks, with CRAM format recommended for efficient data compression [27]

Validation and Concordance Assessment

The GATK Concordance tool provides quantitative measures of reproducibility by comparing variant calls against truth sets [28]. Key metrics include:

- True Positives (TP): Variants correctly identified in both test and truth datasets

- False Positives (FP): Variants identified in test data but absent in truth set

- False Negatives (FN): Variants present in truth set but missed in test data

- Recall and Precision: Calculated as TP/(TP+FN) and TP/(TP+FP) respectively [28]

Proper interpretation of these metrics requires ensuring that compared variant sets are on the same genome build, as build discrepancies can artificially inflate false positive and false negative counts [28].

The GATK Best Practices workflows represent an essential framework for achieving reproducibility in genomic variant discovery. Through standardized methodologies, comprehensive benchmarking, detailed experimental protocols, and validated computational infrastructure, these workflows provide the scientific community with a reliable foundation for both research and clinical applications. The continued evolution of these practices—including the recent development of tools like GATK-gCNV for copy number variant detection and Mutect2 for somatic analysis—ensures that the framework adapts to new challenges while maintaining the rigorous standards required for reproducible science. For drug development professionals and researchers, adherence to these practices provides the consistency and reliability necessary to translate genomic discoveries into meaningful clinical applications.

The identification of somatic variants using the GATK framework requires a carefully curated set of input files and resources. Unlike germline variant discovery, somatic calling presents unique challenges, including the need to distinguish true somatic events from technical artifacts and germline variants present at low allele fractions. The GATK somatic short variant discovery workflow is specifically designed to identify somatic short variants (SNVs and Indels) in one or more tumor samples from a single individual, with or without a matched normal sample [11]. Success in this endeavor hinges on the quality and appropriateness of core inputs—primarily pre-processed BAM files, germline reference resources, and a Panel of Normals (PoN). These elements form the foundational layer upon which the variant calling process is built, directly impacting the sensitivity and specificity of the final results. Proper understanding and preparation of these prerequisites are essential for researchers, scientists, and drug development professionals aiming to generate reliable data for downstream analysis in cancer genomics.

Core Input Requirements: The Essential Components

Primary Input: Analysis-Ready BAM Files

The primary input for the GATK somatic variant discovery workflow is sequence data in BAM file format. The workflow requires BAM files for each input tumor and, if available, matched normal sample [11]. It is critical that these input BAMs have undergone comprehensive pre-processing as described in the GATK Best Practices for data pre-processing. This pre-processing typically includes steps such as alignment to a reference genome, duplicate marking, base quality score recalibration (BQSR), and indel realignment, which collectively ensure that the data is in an optimal state for variant calling. The matched normal sample, typically derived from healthy tissue (often blood), serves as a crucial control to identify germline variants present in the patient and filter them from the final somatic callset.

Beyond the primary sequence data, the somatic workflow depends on several key resources to function accurately and effectively. The requirements for these resources vary based on the specific use case and availability of a matched normal sample.

Table: Core Resource Requirements for Somatic Variant Calling

| Resource Type | Mandatory/Optional | Description & Purpose | Use Case |

|---|---|---|---|

| Reference Genome | Mandatory | A FASTA file of the reference sequence (e.g., GRCh38, b37/hg19) and its associated index (fai) and dictionary (dict) files. |

Universal; required for all analysis steps. |

| Germline Resource | Highly Recommended | A sites-only VCF containing population allele frequencies (e.g., gnomAD). Used to classify variants as potential germline events. | Essential for tumor-only mode; highly beneficial for tumor-normal pairs. |

| Panel of Normals (PoN) | Highly Recommended | A sites-only VCF of artifacts common across a set of normal samples. Filters recurrent technical artifacts. | Universal; significantly reduces false positives. |

| Matched Normal BAM | Conditional | A BAM file from a normal tissue of the same individual. The most effective resource for removing patient-specific germline variants. | Tumor-Normal Pair mode; not used in Tumor-Only mode. |

The Panel of Normals (PoN): A Cornerstone for Artifact Filtering

Definition and Purpose of a PoN

A Panel of Normals (PoN) is a critical resource in somatic variant analysis whose primary purpose is to capture recurrent technical artifacts present in sequencing data [29]. It is created from a collection of normal samples—derived from healthy tissue believed to be free of somatic alterations—that have been processed using the same experimental and analytical protocols intended for the tumor samples. The PoN works by identifying sites where variants consistently appear across multiple normal samples. Since these normals are from healthy tissue, true somatic variants should not be present. Therefore, variants appearing in the PoN are classified as systematic errors—arising from factors like sequencing chemistry, mapping ambiguities, or other platform-specific biases—and are filtered out from the tumor sample results. This process dramatically increases the specificity of the final somatic callset by removing false positives that would otherwise appear as recurrent artifacts across multiple samples in a cohort.

Construction and Best Practices for PoNs

Creating an effective Panel of Normals is a multi-step process that requires careful sample selection and processing. The established method for short variants involves running Mutect2 on each normal sample individually and then combining the results [29] [17].

Table: Methodology for Creating a Short Variant Panel of Normals

| Step | Tool | Action | Key Parameters |

|---|---|---|---|

| 1. Call Variants on Normals | Mutect2 |

Run in tumor-only mode on each normal BAM. | --max-mnp-distance 0 |

| 2. Consolidate Calls | GenomicsDBImport |

Import the per-normal VCFs into a GenomicsDB datastore. | Interval list is required. |

| 3. Create PoN | CreateSomaticPanelOfNormals |

Generate the final sites-only VCF from the datastore. | Optional: --germline-resource to exclude germline sites. |

Several best practices govern the creation and use of a PoN. The most important selection criterion for normals is technical similarity to the tumor samples; they should come from the same exome or genome preparation methods, sequencing technology, and analysis software versions [29]. Biologically, samples should ideally come from young, healthy subjects to minimize the chance of including an undiagnosed tumor. While there is no absolute minimum, a PoN with at least 40 samples is recommended in practice, with larger panels (hundreds of samples) providing greater power to identify rare artifacts [29]. For researchers without the resources to build a custom PoN, the GATK team provides public panels of normals for common reference genomes (e.g., hg38 and b37) as part of the GATK resource bundle, which can be directly used or serve as a starting point [29].

In tumor-only analyses, where a matched normal sample is unavailable, a germline resource becomes essential. This resource is a sites-only VCF containing population allele frequencies, such as a subset of gnomAD [17] [30]. Mutect2 uses the allele frequencies from this resource to calculate the prior probability that a variant is germline, allowing it to filter out common germline polymorphisms that would otherwise appear as false positive somatic calls [31]. When a matched normal is available, the germline resource still provides valuable power to distinguish somatic from germline variants, especially in regions with low coverage in the normal sample.

The reference genome is a mandatory input for all steps in the workflow, from variant calling with Mutect2 to filtering and annotation [17] [30]. The GATK resource bundle provides reference sequences and a wide array of supporting files for common human genome builds like GRCh38/hg38 and b37/hg19 [30]. These bundles include reference FASTA files, index files, known variant sites from projects like dbSNP and the 1000 Genomes Project, which are used for base quality recalibration in pre-processing. While the bundle is hosted on Google Cloud storage, its files can be accessed via a web browser with a Google account or downloaded for local analysis.

Diagram 1: A simplified workflow of the GATK4 Mutect2 somatic variant calling pipeline, showing the integration points for its key inputs, including BAM files, reference genome, germline resource, and Panel of Normals.

Table: Essential Materials and Resources for GATK Somatic Variant Calling

| Item | Category | Critical Function | Example/Format |

|---|---|---|---|

| Pre-processed BAM Files | Primary Data | Analysis-ready aligned sequences for tumor and (optionally) matched normal samples. | Coordinate-sorted, duplicate-marked, BQSR-applied BAM. |

| Reference Genome | Core Reference | Standard sequence for read alignment and variant comparison. | GRCh38 or b37 FASTA file with .fai and .dict indices. |

| Germline Resource VCF | Filtering Resource | Provides population allele frequencies to flag common germline variants. | gnomAD subset (sites-only VCF). |

| Panel of Normals (PoN) | Filtering Resource | Captures recurrent technical artifacts to remove systematic false positives. | Sites-only VCF generated from normal samples. |

| Known Sites Resources | Pre-processing | Used for Base Quality Score Recalibration (BQSR) during data pre-processing. | dbSNP, Mills indels, 1000G indels (VCF format) [30]. |

The reliability of a somatic variant analysis is fundamentally determined by the quality and appropriateness of its inputs. The journey from raw BAM files to a confident somatic callset is underpinned by several non-negotiable prerequisites: meticulously pre-processed sequence data, a high-quality reference genome, and robust filtering resources like a Panel of Normals and a germline resource. These components work in concert to empower the GATK Mutect2 workflow to distinguish true somatic mutations from the vast background of technical artifacts and inherited germline variation. For researchers in drug development and clinical science, a rigorous approach to gathering these inputs is not merely a technical formality but a critical step in ensuring that subsequent biological insights and therapeutic decisions are based on a foundation of accurate genomic data.

A Step-by-Step Guide to the Mutect2 Somatic Calling Pipeline

The Genome Analysis Toolkit (GATK) somatic variant calling workflow represents the industry standard for identifying somatic single nucleotide variants (SNVs) and insertions/deletions (indels) in cancer genomics research [9]. This end-to-end pipeline transforms raw sequencing data from tumor and normal samples into a high-confidence set of annotated somatic variants suitable for downstream biological interpretation. The GATK Best Practices for somatic short variant discovery provide a battle-tested framework that optimizes the balance between sensitivity (finding true variants) and specificity (excluding false positives) [32] [20]. This technical guide details the core components, experimental protocols, and analytical methodologies comprising a production-grade somatic variant calling workflow, providing researchers and drug development professionals with the foundational knowledge required to implement robust variant detection in cancer studies.

Core Workflow Components and Process

The GATK somatic variant discovery workflow follows a structured, multi-phase approach that systematically processes sequencing data through quality control, alignment, preprocessing, variant calling, and filtration stages [20]. Each phase employs specialized tools and methodologies designed to address specific technical challenges in somatic variant detection.

Table 1: Core Workflow Phases in Somatic Variant Discovery

| Phase | Primary Input | Primary Output | Key Tools | Main Purpose |

|---|---|---|---|---|

| Data Pre-processing | Raw FASTQ files | Analysis-ready BAM files | BWA, Picard, GATK | Convert raw reads into aligned, quality-processed data [33] [34] |

| Variant Discovery | Analysis-ready BAMs | Raw variant calls (VCF) | Mutect2 | Identify potential somatic variants [12] |

| Filtering & Annotation | Raw variant calls | Filtered, annotated VCF | FilterMutectCalls, Funcotator | Remove false positives and add biological context [12] [17] |

The computational foundation of this workflow leverages an industrial-strength infrastructure and engine that handles data access, conversion, and traversal, with high-performance computing features including parallelization using Apache Spark and optimized usage of cloud infrastructure [9]. This engineering enables the processing of projects of any scale, from targeted gene panels to whole genomes.

Figure 1: Comprehensive Somatic Variant Calling Workflow. The end-to-end process transforms raw sequencing data into filtered, annotated variants using a structured, multi-phase approach with integrated quality control and external resources.

Successful implementation of the somatic variant calling workflow requires both computational tools and carefully curated biological resources. These components work in concert to distinguish true somatic mutations from technical artifacts and germline variation.

Table 2: Essential Research Reagents and Computational Resources

| Resource Category | Specific Examples | Purpose & Function | Implementation Notes |

|---|---|---|---|

| Reference Genome | GRCh37, GRCh38 | Standardized coordinate system for read alignment and variant reporting | Must include sequence, dictionary, and index files [34] |

| Germline Resource | gnomAD, 1000 Genomes | Provides population allele frequencies to distinguish somatic from germline variants | VCF format with population AF annotations; critical for tumor-only mode [12] [34] |

| Panel of Normals (PON) | Broad Institute PON, custom-built PON | Identifies systematic technical artifacts present in normal samples | Created from Mutect2 calls on multiple normal samples; sites present in ≥2 samples included [17] [34] |

| Known Variants | dbSNP, COSMIC | Context for variant interpretation and filtering | Helps distinguish common polymorphisms from potential somatic events [34] |

| Intervals/Targets | WGS, WES, panel intervals | Defines genomic regions for analysis in targeted sequencing | BED or interval list format; essential for exome/panel sequencing [34] |

The germline resource and Panel of Normals serve distinct but complementary functions. The germline resource contains population frequency data for common polymorphisms, enabling Mutect2 to distinguish potential somatic mutations from inherited variants [12]. In contrast, the Panel of Normals specifically captures systematic technical artifacts that arise from sequencing methodologies, mapping biases, or sample preparation protocols [34]. When constructing a custom Panel of Normals, the GATK Best Practices workflow recommends running Mutect2 in tumor-only mode on each normal sample, then combining the results using GenomicsDBImport and CreateSomaticPanelOfNormals to generate a sites-only VCF suitable for reuse [17].

Detailed Experimental Protocols and Methodologies

Data Pre-processing and Quality Control

The initial phase transforms raw sequencing data into analysis-ready BAM files through a series of computational steps that improve data quality and mitigate technical artifacts:

- Read Alignment: Process raw FASTQ files using BWA-MEM to align sequencing reads to the reference genome [33] [34]. This tool efficiently handles the large volumes of sequencing data generated by modern instruments while accounting for genetic variation.

- Duplicate Marking: Identify and flag PCR duplicates using Picard MarkDuplicates to prevent artificial inflation of variant supporting reads [33]. PCR duplicates typically represent 5-15% of sequencing reads in a standard exome capture [35].

- Base Quality Score Recalibration (BQSR): Apply GATK's BQSR to empirically adjust base quality scores using an established error model [33]. This step corrects for systematic technical errors in base quality scores, though evaluations suggest improvements may be marginal for modern sequencing technologies [35] [34].

- Quality Control Metrics: Generate comprehensive QC reports using tools such as Picard and VerifyBAMID to confirm sample identity, check for contamination, and verify that sufficient sequencing coverage was achieved [35]. For paired tumor-normal samples, confirm expected genetic relationships using the KING algorithm [35].

Somatic Variant Calling with Mutect2

The Mutect2 algorithm represents the core somatic variant discovery engine in the GATK workflow. Unlike germline callers that assume fixed ploidy, Mutect2 employs a Bayesian somatic genotyping model that accommodates the varying allele fractions characteristic of tumor samples due to factors such as tumor purity, subclonality, and copy number alterations [12] [31].

Fundamental Mutect2 Execution Modes:

Tumor with Matched Normal (Recommended):

This mode provides the highest specificity by leveraging the matched normal to directly identify and filter germline variants [12].

Tumor-Only Mode:

Tumor-only analysis requires both a germline resource and Panel of Normals to compensate for the absence of a matched normal, though it produces higher false positive rates [12].

Mitochondrial Mode:

The

--mitochondriaflag automatically optimizes parameters for calling variants in mitochondrial DNA, including setting appropriate initial LOD thresholds and allele frequency priors [12].

Advanced Filtering Strategies

Post-calling filtration represents a critical step in the somatic workflow, leveraging multiple orthogonal approaches to remove false positives while retaining true biological variants:

Read Orientation Artifact Filtering: For samples susceptible to strand-specific artifacts (e.g., FFPE tumors or NovaSeq data), implement a three-step orientation bias filter [17]:

- Step 1: Generate F1R2 counts during Mutect2 execution using the

--f1r2-tar-gzargument - Step 2: Learn the orientation model using

LearnReadOrientationModel - Step 3: Apply the learned model during filtering with

FilterMutectCalls --ob-priors

- Step 1: Generate F1R2 counts during Mutect2 execution using the

Contamination Estimation: Calculate cross-sample contamination using GetPileupSummaries and CalculateContamination [17]. This is particularly important for large sequencing batches where sample mixing may occur.

Probabilistic Filtering: FilterMutectCalls employs a unified probabilistic framework that calculates the probability each variant is a true somatic mutation [17]. The tool automatically determines the optimal threshold that maximizes the F-score (harmonic mean of sensitivity and precision), though users can adjust the sensitivity-precision balance using the

-f-score-betaparameter.

Technical Considerations and Benchmarking

Computational Requirements and Optimization

Somatic variant calling represents a computationally intensive process, with a typical whole-genome sequencing sample requiring approximately 84.5 hours on a 36-core machine using standard implementation [33]. Several strategies can significantly improve computational efficiency:

- Parallelization Frameworks: Leverage Spark-based implementations such as Halvade Somatic to distribute workload across multiple cores and nodes, reducing runtime by 4.3× on a single node and up to 62.4× across multiple nodes [33].

- Scattering by Genomic Region: Execute Mutect2 concurrently across multiple genomic intervals, then merge results using MergeMutectStats [17].

- Cloud-Native Implementation: Utilize WDL workflows with Cromwell execution engine for portable, scalable analysis across cloud and local compute environments [20].

Performance Validation and Benchmarking

Rigorous validation using established benchmarking resources represents a critical component of clinical-grade variant calling [35]. Key benchmarking resources include:

- Genome in a Bottle (GIAB): Provides consensus "ground truth" variant calls for reference samples, though primarily focused on germline variation [35].

- Synthetic Diploid (Syndip): Offers less biased benchmarking across challenging genomic regions through synthetic datasets with known variant positions [35].

- Tumor-Normal Benchmark Sets: Utilize well-characterized cancer cell lines with orthogonal validation to establish sensitivity and specificity metrics.

When evaluating pipeline performance, employ sophisticated comparison tools that account for subtle differences in variant representation and focus analysis on high-confidence genomic regions where benchmark truth sets are most reliable [35].

The GATK somatic variant calling workflow provides a comprehensive, production-grade framework for detecting cancer-associated mutations from high-throughput sequencing data. Through its structured approach encompassing data pre-processing, variant discovery, and sophisticated filtering, the workflow achieves an optimal balance between sensitivity for true somatic variants and specificity against technical artifacts and germline polymorphisms. The integration of external resources such as germline databases and panels of normals further enhances the biological accuracy of the resulting variant calls. As sequencing technologies continue to evolve and new capabilities such as long-read single-molecule sequencing emerge, the fundamental principles underlying this workflow—rigorous quality control, appropriate use of reference resources, and comprehensive validation—will remain essential components of reliable somatic variant discovery in both research and clinical settings.

In the context of cancer genomics, identifying somatic mutations—acquired variants present in tumor cells but absent from the germline—is fundamental for understanding tumorigenesis, progression, and therapeutic targeting. The Genome Analysis Toolkit's (GATK) Mutect2 is a specialized tool designed to call somatic short mutations (single nucleotide variants and indels) via local assembly of haplotypes [12]. Its operation differs fundamentally from germline variant callers, as it employs a Bayesian somatic genotyping model tailored to the unique characteristics of cancer genomes, such as variable purity, heterogeneous subclones, and aneuploidy [31]. This technical guide details the core algorithmic step of calling candidate variants, which forms the foundation of the GATK somatic short variant discovery workflow [11].

The Core Algorithm: Local Re-assembly of Haplotypes

Mutect2, like the HaplotypeCaller, operates on the principle that accurate variant discovery, especially for indels in repetitive regions, requires moving beyond the initial read alignments. It does this by performing local de-novo assembly of haplotypes within genomic regions that show evidence of variation, known as ActiveRegions [36] [11]. This process allows Mutect2 to reconstruct the true biological sequences present in the sample, leading to superior accuracy compared to methods that rely solely on pre-aligned reads.

The following diagram illustrates the multi-stage workflow of the local assembly and variant evaluation process.

Reference Graph Assembly

The process begins by constructing a reference assembly graph, which is a directed DeBruijn graph [36]. The reference sequence for the ActiveRegion is decomposed into a succession of kmers (small sequence subunits 'k' bases long), where each kmer overlaps the previous one by k-1 bases. This creates a graph where nodes represent unique kmers and edges represent the sequential relationship between them. By default, Mutect2 builds two separate graphs using kmer sizes of 10 and 25, then selects the most effective one [36]. A hash table of unique kmers is also built to track node positions.

Threading Reads and Graph Refinement

Once the initial graph is built, each read is "threaded" through it [36]. The algorithm compares each read's kmers against the hash table. Matching kmers are mapped to their existing nodes, while new, unique kmers (indicating potential variation) are added as new nodes. Edges are created or reinforced based on read support; the weight of an edge is increased by one for every read that supports that connection. After all reads are processed, the graph undergoes pruning to remove sections supported by very few reads (default: fewer than 2 reads), which are likely stochastic sequencing errors [36]. This step is crucial for reducing noise and maintaining computational efficiency.

Haplotype Determination and Site Identification

After pruning, the program identifies the most plausible haplotypes by traversing all possible paths in the cleaned graph [36]. Each path's likelihood is scored based on the transition probabilities of its edges, which are derived from the supporting read counts. To limit computational load, Mutect2 selects a maximum number of the best-scoring haplotypes (default: 128 per kmer size) [36]. Finally, each candidate haplotype is aligned to the original reference sequence using the Smith-Waterman algorithm to reconstruct a CIGAR string and identify the precise locations of potential variants (SNVs and indels) relative to the reference [36]. This yields a super-set of candidate variant sites for the final step.

Evaluating Evidence for Candidate Variants

The list of candidate haplotypes and sites must now be rigorously evaluated against the read data. This is done using the PairHMM (Pair Hidden Markov Model) algorithm [37].

Mutect2 aligns each individual read to every candidate haplotype (including the reference) using the PairHMM, which produces a likelihood score expressing the probability of observing the read given a specific haplotype, while accounting for base quality scores [37]. This generates a matrix of per-read likelihoods for each haplotype. These haplotype-level likelihoods are then marginalized over individual alleles to produce per-read likelihoods for each candidate allele at a given site [37]. For each read and each candidate allele, the algorithm selects the highest likelihood among all haplotypes supporting that allele. The total evidence for an allele is the product of all per-read likelihoods supporting it. Sites with sufficient evidence for at least one non-reference allele are passed on as candidate somatic variants [37].

Key Parameters and Research Reagents

The following table summarizes the function of key resources and parameters used in a standard Mutect2 experiment, which are essential for achieving high-specificity somatic calls [12] [17].

Table 1: Essential research reagents and parameters for Mutect2 analysis

| Item | Type | Function / Purpose |

|---|---|---|

| Reference Genome | Reagent | A reference sequence in FASTA format and its index, used for read alignment and variant calling [12]. |