A Modern RNA-seq Data Analysis Workflow: From Raw Reads to Biological Insights

Abstract

This article provides a comprehensive, step-by-step guide to RNA-seq data analysis, tailored for researchers, scientists, and drug development professionals. It covers the foundational principles of the workflow, from experimental design and quality control to read alignment and quantification. The guide then delves into methodological applications for differential expression analysis, offers best practices for troubleshooting and optimizing pipelines, and concludes with strategies for validating results and comparing computational tools. By integrating current best practices and tool comparisons, this resource empowers users to perform robust, reproducible transcriptomic analyses that yield reliable biological insights.

Laying the Groundwork: Core Principles and Experimental Design for RNA-seq

The primary goal of the initial RNA-seq data processing stage is to transform raw sequencing data into a gene count matrix, a table where rows represent genes, columns represent samples, and integer values indicate the number of sequencing reads assigned to each gene in each sample [1] [2]. This count matrix is the fundamental input for downstream statistical analyses, such as the identification of differentially expressed genes [3].

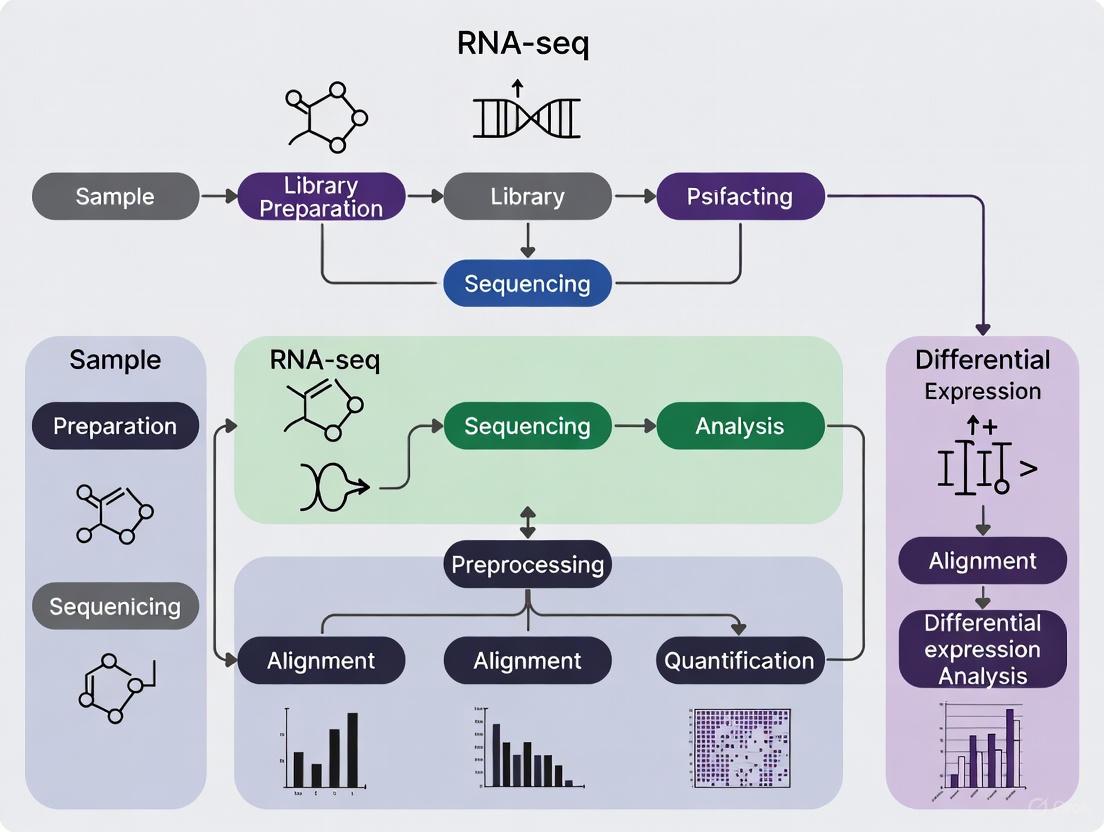

The journey begins after RNA has been extracted from biological samples, reverse-transcribed into complementary DNA (cDNA), and sequenced on a high-throughput platform [1]. The following diagram illustrates the core steps in this transformation process.

Detailed Methodologies for Core Workflow Steps

Primary Analysis: From BCL to FASTQ

The first computational step involves converting raw data from the sequencer into sequence reads.

- Base Calling and Demultiplexing: Sequencing instruments initially generate data in proprietary binary base call (BCL) formats. Using tools like `bcl2fastq`, these files are converted into standard FASTQ format files. During this process, demultiplexing is performed, where sequenced reads are sorted into individual sample-specific FASTQ files based on their unique index (barcode) sequences [4].

- FASTQ Format: The FASTQ file is the standard output, storing each read across four lines: a sequence identifier, the nucleotide sequence, a separator line, and a string of quality scores encoding the confidence of each base call [5] [2]. For paired-end sequencing, two FASTQ files (R1 and R2) are generated per sample.
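To make the four-line record structure concrete, here is a minimal Python sketch of a FASTQ reader. It assumes Phred+33 quality encoding (quality character code minus 33), which is common for current Illumina output but is not stated in the text above; the function and record names are illustrative only.

```python
import io
from typing import Iterator, List, NamedTuple, TextIO


class FastqRecord(NamedTuple):
    identifier: str
    sequence: str
    qualities: List[int]


def parse_fastq(handle: TextIO) -> Iterator[FastqRecord]:
    """Yield one record per four-line FASTQ entry (assumes Phred+33)."""
    while True:
        header = handle.readline()
        if not header:
            return  # end of file
        if not header.strip():
            continue  # tolerate blank lines between records
        sequence = handle.readline().rstrip("\n")
        separator = handle.readline()
        quality = handle.readline().rstrip("\n")
        if not header.startswith("@") or not separator.startswith("+"):
            raise ValueError("malformed FASTQ record")
        if len(sequence) != len(quality):
            raise ValueError("sequence and quality lengths differ")
        # Phred+33: subtract 33 from each character code to get the score.
        scores = [ord(char) - 33 for char in quality]
        yield FastqRecord(header[1:].strip(), sequence, scores)


# Hypothetical single-read example.
example = io.StringIO("@read1\nACGT\n+\nII5!\n")
records = list(parse_fastq(example))
print(records[0].qualities)
```

For real data, dedicated parsers handle compressed input and edge cases more robustly; this sketch only shows how the four lines relate to each other.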

Quality Control and Read Trimming

Assessing and cleaning the raw reads is crucial to ensure the reliability of subsequent analysis.

- Quality Control with FastQC: Tools like FastQC provide an initial overview of data quality, highlighting potential issues such as leftover adapter sequences, unusual base composition, or regions of low quality scores [1] [5]. This generates an HTML report for visual inspection.

- Read Trimming with Trimmomatic or Cutadapt: Based on the QC report, reads are cleaned using tools like Trimmomatic or Cutadapt. This step removes adapter sequences, trims low-quality bases from the ends of reads, and filters out very short reads [1] [5] [4]. This process improves the accuracy of read alignment in the next step.
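The idea behind quality trimming and length filtering can be sketched in a few lines of Python. This is a deliberately simplified 3'-end trimmer, not the algorithm used by Trimmomatic or Cutadapt; the thresholds and the function name are assumptions chosen for illustration.

```python
from typing import List, Optional, Tuple


def trim_3prime(
    sequence: str,
    qualities: List[int],
    min_quality: int = 20,
    min_length: int = 20,
) -> Optional[Tuple[str, List[int]]]:
    """Remove trailing low-quality bases; return None if the read is too short."""
    end = len(sequence)
    # Walk backwards from the 3' end while bases fall below the threshold.
    while end > 0 and qualities[end - 1] < min_quality:
        end -= 1
    if end < min_length:
        return None  # read is filtered out
    return sequence[:end], qualities[:end]


# Hypothetical read whose last two bases are low quality.
kept = trim_3prime("ACGTAC", [30, 30, 30, 30, 10, 5], min_quality=20, min_length=3)
dropped = trim_3prime("ACGTAC", [30, 30, 30, 30, 10, 5], min_quality=20, min_length=5)
```

Production trimmers additionally match and remove adapter sequences and keep paired-end mates synchronized, which this sketch does not attempt.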

Alignment and Quantification

This is the core step where reads are mapped to a reference to determine their genomic origin.

- Alignment-Based Workflow: In this traditional approach, cleaned FASTQ reads are aligned to a reference genome using "splice-aware" aligners such as STAR or HISAT2 [1] [5] [3]. These tools can handle reads that span intron-exon junctions. The output is typically in SAM/BAM format. The aligned reads are then counted per gene using tools like featureCounts, which produces the raw counts for the matrix [1] [5].

- Lightweight/Pseudoalignment-Based Workflow: As a faster and often more accurate alternative, tools like Salmon and Kallisto perform quantification directly from the FASTQ files without generating a full base-by-base alignment [1] [6] [2]. They use statistical models to rapidly estimate transcript abundances, incorporating uncertainty in read assignment. The output is a table of estimated, possibly fractional, transcript-level counts (sometimes called "pseudocounts") that can be aggregated into a gene-level count matrix.
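The aggregation of transcript-level estimates into gene-level values can be illustrated with a short Python sketch. The transcript and gene identifiers below are hypothetical, and the plain summation shown here is a simplification: dedicated import tools may also carry transcript-length information forward for downstream modelling.

```python
from collections import defaultdict
from typing import Dict


def aggregate_to_genes(
    transcript_counts: Dict[str, float],
    tx2gene: Dict[str, str],
) -> Dict[str, float]:
    """Sum estimated transcript counts for each gene in the mapping."""
    gene_counts: Dict[str, float] = defaultdict(float)
    for transcript_id, count in transcript_counts.items():
        gene_id = tx2gene.get(transcript_id)
        if gene_id is None:
            continue  # transcript missing from the annotation mapping
        gene_counts[gene_id] += count
    return dict(gene_counts)


# Hypothetical identifiers and estimated counts for one sample.
transcript_counts = {"tx1": 10.5, "tx2": 4.5, "tx3": 7.0, "tx4": 1.0}
tx2gene = {"tx1": "geneA", "tx2": "geneA", "tx3": "geneB"}
gene_counts = aggregate_to_genes(transcript_counts, tx2gene)
```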

Post-Alignment QC and Aggregated Reporting

A final quality check is performed before analysis.

- Post-Alignment QC: After alignment, tools like Qualimap analyze the BAM files to check for biases such as DNA or rRNA contamination, 5'-3' bias, and coverage uniformity across genes [2].

- Aggregated Reporting with MultiQC: When dealing with multiple samples, MultiQC is invaluable. It aggregates results from FastQC, Trimmomatic, Salmon, STAR, Qualimap, and others into a single HTML report, allowing for easy comparison of QC metrics across all samples [1] [2].

Key Data Formats and Normalization Techniques

Essential File Formats in RNA-seq

The workflow involves several standard file formats, each serving a distinct purpose.

Table 1: Key File Formats in the RNA-seq Processing Workflow

| Format | Description | Primary Use Case |

|---|---|---|

| FASTQ [5] [2] | Stores nucleotide sequences and their corresponding per-base quality scores. | Output from demultiplexing; input for alignment/quantification tools. |

| SAM/BAM [1] [3] | SAM (Sequence Alignment Map) is a text-based format for aligned reads; BAM is its binary, compressed version. | Output from genome aligners like STAR and HISAT2. |

| Gene Count Matrix [1] [3] | A table where rows are genes, columns are samples, and values are raw integer counts of aligned reads. | The final output of the preprocessing workflow and primary input for differential expression analysis. |

Common Normalization Methods

Raw count data must be normalized to remove technical biases before comparative analysis. Different methods correct for different factors.

Table 2: Common Normalization Methods for RNA-seq Count Data

| Method | Sequencing Depth Correction | Gene Length Correction | Library Composition Correction | Suitable for DE Analysis |

|---|---|---|---|---|

| CPM [1] | Yes | No | No | No |

| RPKM/FPKM [1] | Yes | Yes | No | No |

| TPM [1] | Yes | Yes | Partial | No |

| Median-of-Ratios (DESeq2) [1] | Yes | No | Yes | Yes |

| TMM (edgeR) [1] | Yes | No | Yes | Yes |
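The differences between these methods are easier to see in code. The sketch below implements CPM, TPM, and median-of-ratios size factors in plain Python. It is an illustration of the arithmetic only, not the DESeq2 or edgeR implementation, and all function names and example values are assumptions.

```python
import math
from statistics import median
from typing import List


def cpm(counts: List[float]) -> List[float]:
    """Counts per million for one sample: corrects for depth only."""
    total = sum(counts)
    return [count / total * 1e6 for count in counts]


def tpm(counts: List[float], lengths_kb: List[float]) -> List[float]:
    """Transcripts per million: length-normalize first, then scale to 1e6."""
    rates = [count / length for count, length in zip(counts, lengths_kb)]
    scale = sum(rates)
    return [rate / scale * 1e6 for rate in rates]


def size_factors(matrix: List[List[float]]) -> List[float]:
    """Median-of-ratios size factors; matrix[gene][sample] holds raw counts."""
    n_samples = len(matrix[0])
    log_ratios: List[List[float]] = [[] for _ in range(n_samples)]
    for row in matrix:
        if any(count <= 0 for count in row):
            continue  # genes with a zero count have no usable geometric mean
        log_geo_mean = sum(math.log(count) for count in row) / n_samples
        for sample, count in enumerate(row):
            log_ratios[sample].append(math.log(count) - log_geo_mean)
    # Each sample's factor is the median ratio to the per-gene geometric mean.
    return [math.exp(median(ratios)) for ratios in log_ratios]


# Hypothetical matrix: sample 2 was sequenced exactly twice as deeply.
matrix = [[10, 20], [100, 200], [50, 100], [0, 3]]
factors = size_factors(matrix)
normalized = [[count / f for count, f in zip(row, factors)] for row in matrix]
```

Because the size factor is a median across genes, a handful of very highly expressed genes has little influence on it, which is how this approach corrects for library composition where CPM does not.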

The Scientist's Toolkit: Essential Research Reagents and Software

A successful RNA-seq experiment relies on a combination of laboratory reagents and bioinformatics software.

Table 3: Essential Reagents and Software for RNA-seq Analysis

| Category | Tool/Reagent | Function |

|---|---|---|

| Wet-Lab Reagents | Poly(dT) Oligos / Ribo-Zero | Enriches for mRNA by selecting polyadenylated transcripts or depleting abundant ribosomal RNA [7] [2]. |

| Wet-Lab Reagents | Fragmentation Enzymes/Reagents | Shears RNA or cDNA into uniform fragments of optimal size for sequencing [2]. |

| Wet-Lab Reagents | Reverse Transcriptase | Synthesizes first- and second-strand cDNA from the RNA template [2]. |

| Core Bioinformatics Software | FastQC [5] [2] | Performs initial quality control checks on raw FASTQ files. |

| Core Bioinformatics Software | Trimmomatic/Cutadapt [1] [5] | Trims adapter sequences and low-quality bases from reads. |

| Core Bioinformatics Software | STAR/HISAT2 [1] [5] [3] | Aligns reads to a reference genome (splice-aware). |

| Core Bioinformatics Software | Salmon/Kallisto [1] [6] [2] | Performs fast, alignment-free quantification of transcript abundances. |

| Core Bioinformatics Software | featureCounts [1] [5] | Counts the number of reads mapping to each genomic feature (e.g., gene). |

| Quality Control & Visualization | Qualimap [1] [2] | Provides quality control metrics for aligned data in BAM files. |

| Quality Control & Visualization | MultiQC [1] [2] | Aggregates results from multiple tools and samples into a single report. |

| Downstream Analysis | DESeq2 / edgeR / limma [1] [3] [6] | Performs statistical testing for differentially expressed genes using the count matrix. |

In the structured workflow of RNA-seq data analysis, the steps taken before sequencing—the experimental design—are arguably the most critical. They form the foundation upon which all subsequent computational analyses and biological conclusions are built. A poorly designed experiment can introduce biases and technical artifacts that even the most advanced statistical methods cannot later remedy [8]. As one perspective notes, consulting a statistician after an experiment is complete is often merely asking them "to conduct a post mortem examination... [to] say what the experiment died of" [8]. This guide details the core principles of experimental design—appropriate replication, strategic controls, and proactive management of batch effects—within the context of a complete RNA-seq research workflow. Adhering to these principles ensures that resources are used efficiently and that the resulting data provides a valid and reliable basis for scientific discovery, particularly in critical applications like drug development [9].

The Pillars of Sound Experimental Design

The reliability of any RNA-seq experiment rests on three key pillars: adequate replication to measure biological variability, appropriate controls to calibrate measurements, and careful design to minimize confounding technical noise.

Replication: Biological vs. Technical and Sample Size

Replication is fundamental for capturing the natural variation in biological systems and for assessing the reliability of observed effects. It is crucial to distinguish between the two primary types of replicates:

- Biological Replicates are independent biological samples (e.g., different individuals, animals, or separately cultured cell lines) representing the same experimental condition. They are essential for capturing biological variability and ensuring that findings are generalizable beyond the specific samples used [9].

- Technical Replicates involve multiple measurements of the same biological sample (e.g., splitting one RNA sample across multiple library preps or sequencing runs). They help assess technical variation introduced by the laboratory workflow but do not provide information about biological variability [9].

For most experiments aiming to make generalizable biological inferences, biological replication is the priority. While technical replicates can be useful for optimizing protocols, they cannot substitute for biological replicates [9].

Determining an adequate sample size is a critical step. An underpowered study, with too few biological replicates, is unlikely to detect genuine differential expression. Power analysis is a statistical method used to optimize sample size, balancing resource constraints with the need for reliable results [8]. While a common recommendation is a minimum of three biological replicates per condition, more may be needed for studies expecting subtle expression changes or working with highly variable samples [9]. Pilot studies are an excellent way to gather preliminary data on variability to inform sample size decisions for the main experiment [9].
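As a rough illustration of what a power calculation involves, the Python sketch below uses a commonly quoted normal approximation for count data, n = 2(z_{1-α/2} + z_{power})² (1/μ + σ²) / (ln Δ)², where μ is the average read depth for a gene, σ is the biological coefficient of variation, and Δ is the fold change to detect. This formula is an assumption brought in for illustration and is not taken from the references cited above; the parameter values are hypothetical, and a real study should use a dedicated power-analysis tool informed by pilot data.

```python
import math
from statistics import NormalDist


def samples_per_group(
    depth: float,
    cv: float,
    effect: float,
    alpha: float = 0.05,
    power: float = 0.8,
) -> int:
    """Approximate biological replicates per group (normal approximation)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_power = NormalDist().inv_cdf(power)
    # Variance term: Poisson counting noise (1/depth) plus biological CV^2.
    variance = 1 / depth + cv ** 2
    n = 2 * (z_alpha + z_power) ** 2 * variance / math.log(effect) ** 2
    return math.ceil(n)


# Hypothetical scenario: 20 reads per gene, CV of 0.4, two-fold change.
n_two_fold = samples_per_group(depth=20, cv=0.4, effect=2.0)
n_four_fold = samples_per_group(depth=20, cv=0.4, effect=4.0)
```

The sketch makes one design point visible: once sequencing depth is moderate, the biological variability term dominates, so adding replicates tends to buy more power than adding reads.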

Table 1: Types of Replicates in RNA-seq Experiments

| Replicate Type | Definition | Purpose | Example |

|---|---|---|---|

| Biological Replicate | Independent biological samples or entities [9] | To assess biological variability and ensure findings are reliable and generalizable [9] | 3 different animals or cell samples in each experimental group (treatment vs. control) [9] |

| Technical Replicate | The same biological sample, measured multiple times [9] | To assess and minimize technical variation (e.g., from sequencing runs or lab workflows) [9] | 3 separate RNA sequencing experiments for the same RNA sample [9] |

Controls: Positive, Negative, and Spike-Ins

Controls are reference points that allow researchers to validate their experimental protocols and interpret results correctly.

- Positive and Negative Controls: These are fundamental for verifying that an experiment worked as expected.

- Positive controls are samples where a known effect is expected. For a drug treatment experiment, this could be a well-characterized compound known to induce a specific transcriptional response.

- Negative controls are samples where no effect is expected. A common example is an "untreated" or "vehicle control" group, which is exposed to the solvent used to deliver a drug but not the active compound itself. Comparing treated samples to the appropriate negative control is essential for identifying drug-specific effects [9].

- Spike-in Controls: These are synthetic RNA sequences (e.g., SIRVs) added in known quantities to each sample at the start of the workflow. They serve as an internal standard to monitor the performance of the entire assay, including dynamic range, sensitivity, and quantification accuracy across samples and batches [9] [10]. They are particularly valuable for large-scale experiments to ensure data consistency and for normalizing samples where global transcript levels are expected to change [9].

Batch Effects: The Hidden Confounder

A batch effect is a systematic, non-biological variation introduced into data due to technical differences in sample processing [10]. These effects can arise from numerous sources, including different library preparation dates, reagent lots, personnel, or sequencing machines [10]. Even biologically identical samples processed in different batches can show significant differences in gene expression profiles, which can obscure true biological signals or create false positives [10] [11].

Table 2: Common Causes of Batch Effects in Transcriptomics

| Category | Examples | Applies To |

|---|---|---|

| Sample Preparation | Different protocols, technicians, enzyme efficiency | Bulk & single-cell RNA-seq [10] |

| Sequencing Platform | Machine type, calibration, flow cell variation | Bulk & single-cell RNA-seq [10] |

| Library Prep Artifacts | Reverse transcription efficiency, amplification cycles | Mostly bulk RNA-seq [10] |

| Reagent Batches | Different lot numbers, chemical purity variations | All types [10] |

| Environmental Conditions | Temperature, humidity, handling time | All types [10] |

The most reliable strategy for managing batch effects is to prevent them during experimental design. This involves:

- Randomization: Avoid processing all samples from one condition together. Instead, randomize the processing order of samples from all conditions across batches [8] [10].

- Balancing: Ensure that each biological group is represented within each processing batch. This prevents "confounding," where a technical batch is perfectly correlated with a biological condition, making it impossible to separate the two [8] [11].

- Metadata Tracking: Meticulously record all technical and processing information (dates, reagent lots, personnel, etc.) to use as covariates during statistical analysis [12].
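The randomization and balancing steps above can be sketched as a small Python helper that spreads each condition across batches and then shuffles the processing order within each batch. The sample names, the fixed seed, and the round-robin scheme are assumptions for illustration; any scheme that keeps every condition represented in every batch serves the same purpose.

```python
import random
from collections import defaultdict
from typing import Dict, List, Tuple

Sample = Tuple[str, str]  # (sample_id, condition)


def assign_batches(
    samples: List[Sample], n_batches: int, seed: int = 0
) -> List[List[Sample]]:
    """Distribute each condition evenly across batches in random order."""
    rng = random.Random(seed)  # fixed seed so the layout can be recorded
    by_condition: Dict[str, List[str]] = defaultdict(list)
    for sample_id, condition in samples:
        by_condition[condition].append(sample_id)

    batches: List[List[Sample]] = [[] for _ in range(n_batches)]
    for condition in sorted(by_condition):
        ids = by_condition[condition]
        rng.shuffle(ids)  # randomize which sample lands in which batch
        for index, sample_id in enumerate(ids):
            batches[index % n_batches].append((sample_id, condition))

    for batch in batches:
        rng.shuffle(batch)  # randomize processing order within the batch
    return batches


# Hypothetical design: three controls and three treated samples.
samples = [
    ("s1", "control"), ("s2", "control"), ("s3", "control"),
    ("s4", "treated"), ("s5", "treated"), ("s6", "treated"),
]
batches = assign_batches(samples, n_batches=3, seed=1)
```

Recording the seed and the resulting layout alongside the other metadata makes the batch variable available as a covariate later.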

When batch effects cannot be avoided, computational correction methods are required. Several tools are available, each with strengths and limitations.

Table 3: Common Batch Effect Correction Methods for Transcriptomics Data

| Method | Strengths | Limitations |

|---|---|---|

| ComBat | Simple, widely used; adjusts known batch effects using an empirical Bayes framework [10] [13] | Requires known batch info; may not handle nonlinear effects well [10] |

| limma removeBatchEffect | Efficient linear modeling; integrates well with differential expression analysis workflows [10] [6] | Assumes known, additive batch effects; less flexible [10] |

| SVA (Surrogate Variable Analysis) | Captures hidden batch effects; suitable when batch labels are unknown [10] | Risk of removing biological signal; requires careful modeling [10] |

| Harmony | Effective for single-cell data; iteratively aligns cells in a shared embedding space [10] [11] | Designed for cell embeddings, not raw count matrices [10] |
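To show what an additive correction for a known batch does in principle, the Python sketch below centers each batch's values for one gene on the overall mean. It is a simplified location adjustment on log-scale expression values, not the ComBat or limma implementation, and the values and batch labels are hypothetical.

```python
from statistics import mean
from typing import Dict, List


def center_batches(values: List[float], batches: List[str]) -> List[float]:
    """Remove additive batch shifts for one gene (log-scale expression)."""
    grand_mean = mean(values)
    batch_means: Dict[str, float] = {}
    for label in set(batches):
        members = [v for v, b in zip(values, batches) if b == label]
        batch_means[label] = mean(members)
    # Subtract each batch's own mean, then restore the overall level.
    return [v - batch_means[b] + grand_mean for v, b in zip(values, batches)]


# Hypothetical log-expression values: batch B sits three units above batch A.
values = [5.0, 6.0, 8.0, 9.0]
batches = ["A", "A", "B", "B"]
corrected = center_batches(values, batches)
```

The sketch also shows why balanced designs matter: if batch A held only controls and batch B only treated samples, this adjustment would erase the treatment effect together with the batch shift.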

Integrating Design into the RNA-seq Workflow

A robust RNA-seq project is a multi-stage process, from hypothesis formulation to data interpretation. The following workflow diagrams illustrate how experimental design principles are integrated into the key wet-lab and computational phases.

Experimental Design and Wet-Lab Workflow

This diagram outlines the critical pre-sequencing steps where careful planning prevents future problems.

Computational Analysis with Batch Effect Management

This diagram maps the core bioinformatics steps, highlighting where batch effects are detected and corrected.

The Scientist's Toolkit: Essential Reagents and Materials

Successful execution of a well-designed RNA-seq experiment relies on key reagents and materials. The following table details critical components for generating high-quality libraries and data.

Table 4: Key Research Reagent Solutions for RNA-seq

| Item | Function | Application Notes |

|---|---|---|

| Spike-in Controls (e.g., SIRVs) | Synthetic RNA sequences added to samples to monitor technical performance, normalization, and quantification accuracy [9] [10]. | Essential for large-scale studies and experiments where global changes in transcript levels are expected [9]. |

| RNA Extraction Kit | Isolates total RNA from biological material (cells, tissues, FFPE blocks) [9]. | Must be selected based on sample type and the RNA species of interest (e.g., small RNAs) [9]. |

| rRNA Depletion / mRNA Enrichment Kits | Enriches for coding RNA by removing abundant ribosomal RNA (rRNA) or selecting for polyadenylated mRNA [9]. | Choice depends on the organism and study goals. rRNA depletion is often used for degraded samples (e.g., FFPE) [9]. |

| Library Preparation Kit | Converts RNA into a sequence-ready DNA library. Includes reverse transcription, adapter ligation, and PCR amplification steps [9]. | 3'-end focused kits (e.g., QuantSeq) are cost-effective for large-scale gene expression studies, while whole transcriptome kits are needed for isoform analysis [9]. |

| Strandedness Control Reagents | Reagents within the library prep kit that preserve the information about which DNA strand was the original RNA template [6]. | Critical for accurately assigning reads to genes, especially in regions where genes overlap on opposite strands. |

The path to robust and interpretable RNA-seq data begins long before the sequencing run. A rigorous experimental design, characterized by sufficient biological replication, thoughtfully selected controls, and proactive strategies to mitigate batch effects, is not an optional preliminary step but the very foundation of scientific validity. By integrating these principles into every stage of the research workflow—from the initial hypothesis to the final computational analysis—researchers can ensure their experiments yield meaningful biological insights, accelerate discovery, and make reliable contributions to the scientific record, particularly in high-stakes fields like drug development.

Quality control (QC) of raw sequencing reads represents the foundational first step in any RNA sequencing (RNA-seq) data analysis workflow. This initial assessment is crucial for identifying potential problems in sequencing data that could compromise downstream analysis results and biological interpretations. High-throughput sequencing data inevitably contains various artifacts, including adapter contamination, low-quality bases, and overrepresented sequences, which must be detected and addressed before proceeding with alignment and differential expression analysis. Within the broader context of RNA-seq methodology, rigorous QC serves as an essential checkpoint that ensures the reliability and reproducibility of subsequent findings, particularly in pharmaceutical development and clinical research applications where conclusions may inform drug discovery decisions.

The establishment of robust QC procedures has become increasingly important as RNA-seq technologies evolve and sample sizes expand. Batch effects and subtle technical biases can easily confound biological results if not detected early in the analysis process [14]. This technical guide provides a comprehensive framework for implementing quality assessment of raw sequencing reads using two complementary tools: FastQC for individual sample evaluation and MultiQC for aggregated project-level visualization. When properly integrated into RNA-seq workflows, these tools enable researchers to quickly identify problematic samples, make informed decisions about read processing parameters, and document quality metrics for regulatory compliance or publication purposes.

Theoretical Foundation: Sequencing Quality Metrics and Their Interpretation

Essential Quality Control Metrics

Quality assessment of high-throughput sequencing data relies on multiple complementary metrics that collectively provide a comprehensive picture of data quality. The most fundamental metrics include per-base sequence quality, which assesses Phred quality scores across all sequencing cycles; per-base sequence content, which examines nucleotide representation at each position; GC content distribution, which compares the observed GC distribution to a theoretical model; sequence duplication levels, which identify overrepresented sequences; and adapter contamination, which quantifies the presence of adapter sequences in read data [15] [16]. Each metric provides unique insights into different potential problems that could affect downstream analysis.

The interpretation of these metrics requires understanding both the sequencing technology employed and the biological system under investigation. For instance, certain sequence content biases are expected in RNA-seq data due to the non-random nature of transcript sequences, while other patterns may indicate technical artifacts requiring remediation. Similarly, the expected duplication level varies depending on sequencing depth and transcriptome complexity, with highly expressed genes naturally exhibiting higher duplication rates. Proper interpretation therefore necessitates considering both the technical context of library preparation and sequencing methodology alongside the biological context of the sample source [17].

Implications for Downstream Analysis

Quality issues undetected at the raw read stage can propagate through the entire analysis pipeline, potentially leading to erroneous biological conclusions. Adapter contamination can cause misalignment of reads to incorrect genomic locations, while systematic quality drops at read ends may reduce mapping rates and coverage uniformity. GC bias can skew expression estimates, particularly for genes with extreme GC content, and overrepresented sequences may indicate contamination that could compromise sample integrity interpretations [15]. These issues are particularly critical in drug development contexts, where inaccurate expression measurements could mislead target identification or biomarker discovery efforts.

The consequences of poor quality data extend beyond immediate analytical concerns to encompass resource allocation and experimental planning. Datasets with fundamental quality issues may be unsuitable for their intended purposes, necessitating costly resequencing and potentially delaying research timelines. Comprehensive initial QC provides the evidence base for these decisions, enabling researchers to distinguish between minor issues that can be addressed computationally and major problems requiring wet-lab intervention. This proactive approach to quality management ultimately enhances research efficiency and reliability across the RNA-seq workflow [17].

Experimental Protocols: Implementing FastQC and MultiQC

FastQC Analysis of Individual Samples

FastQC provides a comprehensive quality assessment of raw sequencing data through a series of modular analyses. The tool accepts input in various formats, including FASTQ, BAM, and SAM files, and generates both graphical summaries and text-based reports for each input file [15]. The implementation protocol begins with quality assessment of individual sequencing files before proceeding to multi-sample aggregation.

Step-by-Step Protocol:

- Tool Installation: FastQC requires Java Runtime Environment (JRE). Download the software from the Babraham Bioinformatics website and install according to platform-specific instructions [15].

- Basic Execution: For a single FASTQ file, use the command: `fastqc sample.fastq.gz`. This generates an HTML report and a ZIP file containing detailed data.

- Batch Processing: To process multiple files simultaneously: `fastqc sample1.fastq.gz sample2.fastq.gz sample3.fastq.gz`.

- Output Interpretation: Open the generated HTML file in a web browser to visualize quality metrics. Examine each module for warnings or failures that indicate potential quality issues.

FastQC provides multiple configuration options to customize analysis based on specific needs. The --nogroup option disables base grouping for long reads, providing higher resolution quality assessment. The --extract option automatically unzips output files, while --format allows explicit specification of file format when auto-detection fails [15]. For large-scale analyses, FastQC can be integrated into automated pipelines with results parsed from the text output files rather than manual inspection of HTML reports.
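Parsing the text output for automated pipelines might look like the following Python sketch. It assumes the tab-separated `summary.txt` layout found inside the FastQC ZIP archive (status, module name, file name on each line); the helper names and the example content are illustrative.

```python
from typing import Dict, List, Sequence


def parse_fastqc_summary(text: str) -> Dict[str, str]:
    """Map each FastQC module name to its PASS/WARN/FAIL status."""
    results: Dict[str, str] = {}
    for line in text.splitlines():
        if not line.strip():
            continue  # skip empty lines
        status, module, _filename = line.split("\t")
        results[module] = status
    return results


def flagged_modules(
    results: Dict[str, str], levels: Sequence[str] = ("WARN", "FAIL")
) -> List[str]:
    """Return module names whose status needs a closer look."""
    return sorted(name for name, status in results.items() if status in levels)


# Hypothetical summary content for one sample.
summary_text = (
    "PASS\tBasic Statistics\tsample.fastq.gz\n"
    "WARN\tPer base sequence content\tsample.fastq.gz\n"
    "FAIL\tAdapter Content\tsample.fastq.gz\n"
)
flags = flagged_modules(parse_fastqc_summary(summary_text))
```

A pipeline could use the flagged list to decide whether a sample proceeds directly to alignment or is routed through trimming first.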

MultiQC for Aggregated Quality Reporting

MultiQC addresses the significant challenge of consolidating and comparing QC results across multiple samples by automatically scanning directories for analysis logs and generating unified reports [18] [14]. The tool recognizes output from numerous bioinformatics tools, with FastQC being one of its most prominently supported formats.

Step-by-Step Protocol:

- Tool Installation: Install MultiQC from the Python Package Index using: `pip install multiqc` [14].

- Basic Execution: Navigate to the directory containing FastQC reports and execute: `multiqc .` (the dot indicates the current directory). MultiQC will recursively search for recognized output files [19] [20].

- Output Customization: Use the `--filename` parameter to assign a custom name to the output report: `multiqc --filename project_qc_report .` [20].

- Report Interpretation: Open the generated HTML file to explore interactive plots and summary tables. Use the navigation menu to jump to specific sections and the toolbox to highlight samples of interest.

MultiQC provides numerous advanced options for handling complex project structures. The --dirs flag prefixes sample names with directory paths to distinguish between samples with identical names processed in different contexts. The --ignore option excludes specific files or patterns from analysis, while --file-list processes only explicitly listed files [20]. These features make MultiQC adaptable to diverse project organizations and naming conventions commonly encountered in large-scale RNA-seq studies.
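When MultiQC is called from a script rather than typed by hand, it can help to assemble the command from named settings. The Python sketch below builds an argument list using only the options mentioned above (`--filename`, `--ignore`, `--dirs`); the function name and defaults are assumptions, and the final `subprocess.run` call is left commented out because it requires MultiQC to be installed.

```python
import subprocess
from typing import List, Optional, Sequence


def build_multiqc_command(
    search_dir: str = ".",
    report_name: Optional[str] = None,
    ignore_patterns: Sequence[str] = (),
    prefix_dirs: bool = False,
) -> List[str]:
    """Assemble a MultiQC command line as an argument list."""
    command = ["multiqc"]
    if report_name:
        command += ["--filename", report_name]
    for pattern in ignore_patterns:
        command += ["--ignore", pattern]  # one flag per excluded pattern
    if prefix_dirs:
        command.append("--dirs")  # prefix sample names with directory paths
    command.append(search_dir)
    return command


command = build_multiqc_command(".", report_name="project_qc_report")
# To execute (requires MultiQC on the PATH):
# subprocess.run(command, check=True)
```

Passing the arguments as a list rather than a single shell string avoids quoting problems with directory names that contain spaces.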

Integrated Workflow for Comprehensive Quality Assessment

A robust quality assessment protocol combines both tools in a sequential workflow that provides both granular and holistic views of data quality. The integrated approach begins with individual file assessment using FastQC, proceeds to aggregated reporting with MultiQC, and culminates in informed decision-making about necessary quality remediation steps.

Table 1: FastQC Analysis Modules and Interpretation Guidelines

| Module Name | Function | Potential Issues Detected | Interpretation Guidelines |

|---|---|---|---|

| Per-base sequence quality | Assesses Phred quality scores across all bases | Quality drops at read ends, consistently low quality | Scores below 20 indicate potential problems for variant calling |

| Per-base sequence content | Examines nucleotide proportion per cycle | Library-specific biases, random hexamer priming issues | First 10-15 bases often show bias in RNA-seq data |

| Per-sequence GC content | Compares observed vs. theoretical GC distribution | Contamination, PCR biases | Sharp peaks may indicate contamination; shifts suggest biases |

| Sequence duplication levels | Identifies overrepresented sequences | PCR over-amplification, low complexity libraries | High duplication in RNA-seq expected for highly expressed genes |

| Adapter content | Quantifies adapter sequence presence | Adapter contamination requiring trimming | Any significant adapter content (>1%) warrants trimming |

| Overrepresented sequences | Flags sequences with unexpected high frequency | Contaminants, PCR artifacts | BLAST significant hits to identify source |

The output from this integrated workflow provides both detailed individual metrics and project-wide trends that inform subsequent processing steps. The General Statistics table in MultiQC reports provides a concise overview of key metrics across all samples, enabling rapid identification of outliers [18]. Interactive plots facilitate exploration of specific quality aspects, while the toolbox enables sample highlighting, renaming, and filtering to focus on specific sample subsets [18]. This comprehensive approach ensures that quality assessment captures both individual sample issues and systematic patterns affecting multiple samples.

Table 2: Essential Research Reagents and Computational Tools for Quality Assessment

| Tool/Resource | Function | Application Context | Key Considerations |

|---|---|---|---|

| FastQC | Quality control analysis of raw sequencing data | Initial assessment of individual sequencing files | Java-dependent; multiple input format support |

| MultiQC | Aggregate results from multiple tools and samples | Project-level quality overview and comparison | Supports >50 bioinformatics tools; customizable output |

| Trim Galore | Adapter trimming and quality filtering | Read preprocessing based on QC results | Wrapper around Cutadapt and FastQC |

| Cutadapt | Adapter removal from sequencing reads | Specific adapter contamination issues | Precise adapter sequence specification required |

| fastp | Integrated QC and preprocessing | Streamlined workflow for large datasets | Performs filtering, trimming, and QC simultaneously |

| Reference genomes | Species-specific sequence databases | Context for contamination detection | Must match studied organism and assembly version |

Successful quality assessment requires not only the primary analysis tools but also appropriate computational infrastructure. FastQC benefits from sufficient memory allocation, particularly for large datasets, with a --memory option available to control usage [15]. MultiQC efficiently handles projects with hundreds of samples, automatically switching to static plots for large sample numbers to maintain browser responsiveness [14]. For institutional sequencing facilities or large-scale drug development projects, MultiQC plugins enable integration with Laboratory Information Management Systems (LIMS) for automated metadata incorporation and result tracking [14].

Alternative tools offer complementary capabilities for specific use cases. Falco provides a high-speed FastQC emulation that generates equivalent results with improved performance, particularly valuable for high-throughput environments [21]. FastQ Screen extends quality assessment beyond technical metrics to sequence origin, detecting contamination from other species by mapping reads against multiple reference genomes [22]. These specialized tools can be incorporated into enhanced QC workflows when project requirements warrant additional checks.

Workflow Visualization and Implementation

The following diagram illustrates the integrated quality assessment workflow incorporating both FastQC and MultiQC:

Quality Assessment Workflow: This diagram illustrates the sequential process of raw read quality evaluation, highlighting the iterative nature of quality remediation when issues are detected.

The MultiQC reporting system generates interactive visualizations that enable researchers to explore quality metrics across multiple dimensions:

MultiQC Reporting Structure: This diagram outlines the internal processing steps within MultiQC, from log file parsing to the generation of interactive output reports with multiple visualization components.

Interpretation Guidelines and Decision Framework

Critical Metrics and Thresholds

Effective quality assessment requires not just metric calculation but also evidence-based interpretation against established thresholds. Based on empirical analyses of RNA-seq data, certain thresholds provide practical guidance for quality determinations. Per-base sequence quality should generally maintain Phred scores above 20 (99% base call accuracy) throughout most of the read, with minor degradation at the very ends often being tolerable for alignment [16]. Adapter content exceeding 1% typically warrants trimming intervention, while GC content deviations from the expected distribution may indicate contamination or other technical artifacts [15].
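The relationship between Phred scores and accuracy can be made concrete with a short Python sketch. It uses the standard definition Q = -10 log10(P) and assumes the Phred+33 ASCII encoding used by current Illumina FASTQ files; the helper names are illustrative only.

```python
import math


def phred_to_error_probability(q):
    """Convert a Phred score to the probability of an incorrect base call."""
    return 10 ** (-q / 10)


def error_probability_to_phred(p):
    """Convert an error probability back to a Phred score."""
    return -10 * math.log10(p)


def decode_quality_string(quality_string, offset=33):
    """Decode a FASTQ quality string (assumed Phred+33) into integer scores."""
    return [ord(char) - offset for char in quality_string]


def fraction_at_or_above(quality_string, threshold=20):
    """Fraction of bases in a read whose Phred score meets the threshold."""
    scores = decode_quality_string(quality_string)
    return sum(score >= threshold for score in scores) / len(scores)


print(phred_to_error_probability(20))   # error probability at Q20
print(decode_quality_string("I5#"))     # per-base scores of a toy read
print(fraction_at_or_above("IIII5##"))  # share of bases at Q20 or better
```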

The interpretation must be contextualized to the specific experiment and sequencing technology. For instance, RNA-seq data naturally exhibits sequence content bias at the beginning of reads due to random hexamer priming artifacts, which would be flagged as problematic in genomic DNA but represents expected technical bias in transcriptomic data [16]. Similarly, duplication levels must be interpreted in light of sequencing depth and transcriptome diversity, with highly expressed genes expected to produce duplicate reads at appropriate levels. This nuanced interpretation prevents both undetected quality issues and unnecessary rejection of biologically valid data patterns.

Performance Considerations and Alternative Approaches

As sequencing projects grow in scale, computational efficiency of quality assessment becomes increasingly important. Performance comparisons indicate that Falco, a FastQC alternative implemented in C++, provides equivalent results with significantly faster execution times—approximately three times faster than FastQC while using less memory [21]. This performance advantage becomes substantial when processing large datasets or in high-throughput sequencing facilities where computational resource optimization is essential.

Alternative quality assessment tools offer different functionality trade-offs. The fastp tool provides an integrated solution that performs filtering, trimming, and quality control in a single step, with speed advantages particularly valuable for large-scale analyses [17]. However, FastQC remains the most widely adopted tool with the most comprehensive module coverage, making it particularly suitable for initial quality assessment in regulated environments where standardized methodologies are preferred. For most research applications, the combination of FastQC and MultiQC offers a practical balance between comprehensive assessment and ease of implementation.

Quality assessment of raw reads with FastQC and MultiQC represents the essential foundation upon which reliable RNA-seq analysis is built. This initial phase enables researchers to verify data quality, identify potential technical artifacts, and make informed decisions about necessary preprocessing steps before proceeding to alignment and expression quantification. The comprehensive visualization and aggregation capabilities of these tools facilitate both technical validation and documentation of quality metrics for publication or regulatory compliance.

When properly implemented within the broader RNA-seq workflow, this quality assessment phase serves as a critical checkpoint that safeguards downstream analyses from technical artifacts that could compromise biological interpretations. This is particularly crucial in drug development contexts, where decisions about target engagement or biomarker selection may hinge on accurate differential expression results. By establishing robust, reproducible quality assessment procedures using the methodologies outlined in this guide, researchers can ensure the reliability and interpretability of their RNA-seq analyses throughout subsequent computational and biological validation stages.

In RNA sequencing (RNA-seq) analysis, data cleaning through adapter trimming and quality filtering forms the essential foundation for all downstream biological interpretations. Raw sequencing data almost always contains technical artifacts that can compromise analytical integrity if left unaddressed. Without proper cleaning, researchers risk misalignment of reads, inaccurate quantification of gene expression, and ultimately, biologically misleading conclusions [23] [1].

Adapter contamination occurs when segments of the artificial sequences used during library preparation remain attached to the biological sequences of interest. This typically happens when DNA fragments are shorter than the read length, causing the sequencer to continue reading into the adapter sequence [24]. Simultaneously, sequencing quality naturally degrades along the length of reads, particularly at the 3' end, introducing base-calling errors [25]. The cleaning process systematically removes these technical artifacts while preserving biological signal, ensuring that the data passed to alignment and quantification tools represents actual transcript sequences rather than technical noise [26].

Within the broader RNA-seq workflow, data cleaning occupies a critical position immediately following initial quality assessment and preceding alignment [1]. This strategic placement ensures that aligners and quantifiers receive optimized input, leading to more accurate mapping, reduced computational resources, and ultimately, more reliable detection of differentially expressed genes [25] [26].

Key Concepts: Adapters, Quality Scores, and Filtering Criteria

Understanding Adapter Contamination

Adapter sequences are artificial oligonucleotides integrated during library preparation that enable the sequencing process. However, they become contaminants when they appear within the sequenced reads themselves. The most common scenario occurs with short RNA fragments where the read length exceeds the fragment length, resulting in the sequencer "reading through" the biological fragment and into the adapter sequence [24]. The resulting adapter contamination can manifest in several ways: as full adapter sequences within reads, partial adapter sequences at read ends, or in paired-end sequencing, as short adapter remnants forming "palindrome" sequences between forward and reverse reads [24].

Quality Metrics and Filtering Parameters

Sequencing quality is quantified using Phred quality scores (Q-scores), which encode the probability P that a base was called incorrectly on a logarithmic scale, Q = -10 log10(P) [25]. A Q-score of 30 therefore corresponds to a 1 in 1000 error probability (99.9% accuracy), which is typically treated as the benchmark for high-quality data [25]. Effective filtering employs multiple criteria to eliminate problematic reads while preserving biological content:

- Minimum Length Threshold: Discards reads that become too short after trimming to contain meaningful mapping information (e.g., < 25-35 nucleotides) [27] [23].

- Quality Thresholds: Removes reads with overall poor quality or trims low-quality regions using sliding window approaches [24].

- Ambiguous Base Limits: Filters reads containing excessive 'N' characters representing uncertain base calls [23].

- Complexity Filtering: Eliminates reads with low sequence complexity (e.g., homopolymers) that may align unreliably [23].
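The four criteria above can be combined into a single read-level filter. The Python sketch below is a simplified illustration, not the logic of any specific tool: the threshold defaults are hypothetical, quality strings are assumed to be Phred+33 encoded, and the complexity check is a crude proxy (the share of the most frequent base).

```python
def mean_phred(quality_string, offset=33):
    """Mean Phred score of a read, assuming Phred+33 encoding."""
    return sum(ord(char) - offset for char in quality_string) / len(quality_string)


def passes_filters(seq, qual, min_length=25, min_mean_q=20,
                   max_n_fraction=0.1, max_single_base_fraction=0.8):
    """Return True if a read survives all four filtering criteria."""
    # Minimum length threshold (also rejects empty reads).
    if not seq or len(seq) < min_length:
        return False
    # Overall quality threshold.
    if mean_phred(qual) < min_mean_q:
        return False
    upper = seq.upper()
    # Ambiguous base limit.
    if upper.count("N") / len(upper) > max_n_fraction:
        return False
    # Crude complexity filter: reject reads dominated by one base.
    most_common = max(upper.count(base) for base in "ACGT")
    return most_common / len(upper) <= max_single_base_fraction


print(passes_filters("ACGT" * 10, "I" * 40))  # diverse, high-quality read
print(passes_filters("A" * 40, "I" * 40))     # homopolymer read
```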

Trimmomatic: A Versatile Processing Workhorse

Trimmomatic operates as a comprehensive trimming tool specifically designed for Illumina data, employing a multi-step processing pipeline to address various quality issues systematically [27] [24]. Its modular architecture allows researchers to combine different processing steps tailored to their specific data characteristics.

The tool's key advantage lies in its flexible handling of adapter contamination through the ILLUMINACLIP parameter, which simultaneously manages standard adapter removal and specialized palindrome clipping for paired-end data [27] [24]. This parameter requires multiple sub-parameters including the adapter file path, seed mismatches, palindrome clip threshold, and simple clip threshold, providing fine-grained control over the adapter detection process [27].

Cutadapt: Precision Adapter Trimming

Cutadapt specializes in finding and removing adapter sequences through sophisticated alignment algorithms that allow for error-tolerant matching [28] [29]. Its core functionality revolves around searching for adapter sequences with a user-defined error rate (default: 0.1 or 10%), enabling detection of adapters even when they contain sequencing errors or minor degradation [29].

A particular strength of Cutadapt is its comprehensive adapter typology, which recognizes that adapters can appear in different orientations and locations relative to the biological sequence [28]. The tool supports regular 3' adapters (-a), 5' adapters (-g), anchored adapters that must appear at read ends (^ for 5', $ for 3'), and non-internal adapters that allow partial matches only at sequence termini [28]. This specificity prevents over-trimming while ensuring complete adapter removal.
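The adapter types described above map onto Cutadapt's command-line syntax in a regular way, which the following Python sketch makes explicit. The helper function and its argument names are hypothetical; only the resulting `-a`/`-g` specifications (with `^`, `$`, and `X` markers) are Cutadapt syntax.

```python
def cutadapt_adapter_args(sequence, end="3prime", mode="regular"):
    """Translate an adapter type into the matching Cutadapt arguments.

    end:  "3prime" (uses -a) or "5prime" (uses -g)
    mode: "regular", "anchored", or "noninternal"
    """
    if end not in ("3prime", "5prime"):
        raise ValueError(f"unknown end: {end}")
    flag = "-a" if end == "3prime" else "-g"
    if mode == "regular":
        spec = sequence
    elif mode == "anchored":
        # Anchored adapters must sit exactly at the read end.
        spec = sequence + "$" if end == "3prime" else "^" + sequence
    elif mode == "noninternal":
        # Non-internal adapters may match partially, but only at the terminus.
        spec = sequence + "X" if end == "3prime" else "X" + sequence
    else:
        raise ValueError(f"unknown mode: {mode}")
    return [flag, spec]


print(cutadapt_adapter_args("ADAPTER"))                      # regular 3' adapter
print(cutadapt_adapter_args("ADAPTER", "5prime", "anchored"))  # anchored 5' adapter
```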

Comparative Analysis: Tool Selection Guide

Feature Comparison

Table 1: Comparative analysis of Trimmomatic and Cutadapt features and performance.

| Feature | Trimmomatic | Cutadapt |
|---|---|---|
| Primary Strength | Comprehensive quality processing | Precise adapter removal |
| Adapter Detection | Sequence-based + palindrome clipping | Alignment with error tolerance |
| Quality Trimming | Sliding window, leading, trailing | Quality cutoff, NextSeq-specific |
| Paired-end Handling | Dedicated modes with separate outputs | Coordinated trimming with pair awareness |
| Performance | Moderate speed, good efficiency | Fast with multicore support (-j option) |
| Best Applications | RNA-seq, WGS, datasets needing broad quality control | Small RNA-seq, amplicon sequencing, targeted adapter removal |
| Key Parameters | ILLUMINACLIP, SLIDINGWINDOW, MINLEN | -a/-g adapters, -e error rate, -m minimum length |
| Limitations | Limited non-Illumina adapter support | Less comprehensive quality processing |

Selection Guidelines

The choice between Trimmomatic and Cutadapt depends on experimental context and data characteristics. Trimmomatic excels when dealing with standard RNA-seq data requiring comprehensive quality control beyond just adapter removal [23]. Its integrated approach to quality trimming, adapter clipping, and length filtering makes it ideal for transcriptomic studies where overall read quality may be variable.

Cutadapt proves superior when working with specialized library types such as small RNA sequencing, where precise removal of specific adapters is paramount [23]. Its flexible adapter specification and support for multiple adapter types make it invaluable for protocols with complex adapter structures or when targeting only specific contaminants without altering other read regions.

For datasets suffering from severe adapter contamination, some practitioners employ both tools sequentially—first using Cutadapt for precise adapter removal followed by Trimmomatic for general quality control—though this approach requires careful parameterization to avoid over-trimming [30].

Experimental Protocols and Methodologies

Trimmomatic Protocol for RNA-Seq Data

A standard Trimmomatic workflow for paired-end RNA-seq data incorporates multiple processing steps designed to address the most common data quality issues:

- ILLUMINACLIP: Removes adapter sequences using a reference adapter file with optimized parameters for sensitivity and specificity: `ILLUMINACLIP:TruSeq3-PE.fa:2:30:10` [24].

- SLIDINGWINDOW: Performs quality-based trimming using a sliding window approach: `SLIDINGWINDOW:5:10` (window size: 5 bases, required quality: 10) [24].

- LEADING/TRAILING: Removes low-quality bases from read starts and ends: `LEADING:5 TRAILING:5` [24].

- MINLEN: Filters reads that become too short after processing: `MINLEN:50` [24].

The complete command implementation appears as:
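The sketch below assembles such a command in Python from the steps listed above. The `trimmomatic` executable name (as provided by common package managers; a `java -jar` invocation is the usual alternative), the output file naming scheme, the `-phred33` setting, and the thread count are assumptions made for illustration. Only the trimming steps themselves come from the protocol.

```python
import shlex


def trimmomatic_pe_command(r1, r2, out_prefix, adapters="TruSeq3-PE.fa", threads=4):
    """Build a paired-end Trimmomatic call using the protocol's trimming steps."""
    outputs = [
        f"{out_prefix}_R1.paired.fastq.gz",    # forward reads whose mate survived
        f"{out_prefix}_R1.unpaired.fastq.gz",  # forward reads whose mate was dropped
        f"{out_prefix}_R2.paired.fastq.gz",    # reverse reads whose mate survived
        f"{out_prefix}_R2.unpaired.fastq.gz",  # reverse reads whose mate was dropped
    ]
    steps = [
        f"ILLUMINACLIP:{adapters}:2:30:10",  # seed mismatches:palindrome:simple clip
        "SLIDINGWINDOW:5:10",
        "LEADING:5",
        "TRAILING:5",
        "MINLEN:50",
    ]
    return ["trimmomatic", "PE", "-threads", str(threads), "-phred33",
            r1, r2, *outputs, *steps]


# Hypothetical sample paths; to execute: subprocess.run(cmd, check=True)
cmd = trimmomatic_pe_command("raw/sampleA_R1.fastq.gz", "raw/sampleA_R2.fastq.gz",
                             "trimmed/sampleA")
print(shlex.join(cmd))
```

Trimmomatic applies the steps in the order given on the command line, so the list order above mirrors the order of the protocol.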

This protocol typically processes reads in a paired-end mode that maintains read synchronization, outputting both paired reads (where both mates survived filtering) and unpaired reads (where one mate was discarded) [24]. The ILLUMINACLIP step is particularly crucial for RNA-seq data, as it eliminates adapter sequences that could otherwise prevent accurate alignment to splice junctions.

Cutadapt Protocol for Precision Trimming

For targeted adapter removal in applications like small RNA sequencing, Cutadapt implements a focused approach:

- Adapter Specification: Define the exact adapter sequence and type based on library preparation method: `-a ADAPTER` for 3' adapters [28].

- Error Tolerance: Set appropriate error rates balancing sensitivity and specificity: `-e 0.1` allows 10% errors in adapter matching [29].

- Quality Filtering: Apply quality thresholds and length filters: `-q 20 -m 15` [30].

- Parallel Processing: Utilize multicore support for efficiency: `-j 0` auto-detects available cores [29].

A typical Cutadapt command for removing Illumina universal adapters demonstrates this approach:

For paired-end data, the command expands to coordinate trimming between mates:
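A minimal Python sketch of both variants follows, built from the options listed in the protocol (`-a`, `-e 0.1`, `-q 20 -m 15`, `-j 0`). The adapter sequence `AGATCGGAAGAGC` is an assumption: it is the commonly used prefix of Illumina TruSeq-style adapters and should be checked against the library kit and the FastQC report. File names are hypothetical.

```python
import shlex


def cutadapt_command(r1, out1, adapter, r2=None, out2=None, adapter_r2=None,
                     error_rate=0.1, min_quality=20, min_length=15, cores=0):
    """Build a single-end or paired-end Cutadapt call."""
    cmd = ["cutadapt", "-a", adapter, "-e", str(error_rate),
           "-q", str(min_quality), "-m", str(min_length), "-j", str(cores),
           "-o", out1]
    if r2 is None:
        return cmd + [r1]
    if out2 is None:
        raise ValueError("paired-end mode needs an output path for read 2")
    # -A gives the 3' adapter for read 2; -p names the read 2 output file.
    return cmd + ["-A", adapter_r2 or adapter, "-p", out2, r1, r2]


ADAPTER = "AGATCGGAAGAGC"  # assumed adapter prefix; verify for your library

single = cutadapt_command("raw/s1.fastq.gz", "trimmed/s1.fastq.gz", ADAPTER)
paired = cutadapt_command("raw/s1_R1.fastq.gz", "trimmed/s1_R1.fastq.gz", ADAPTER,
                          r2="raw/s1_R2.fastq.gz", out2="trimmed/s1_R2.fastq.gz")
print(shlex.join(single))
print(shlex.join(paired))
```

In paired-end mode Cutadapt filters both mates together, so a pair is discarded if either read falls below the minimum length.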

A critical consideration when using Cutadapt is adapter sequence specification. Research indicates that using the exact adapter sequence referenced by quality control tools like FastQC (sometimes shorter than manufacturer-provided sequences) significantly improves removal efficiency [30].

Integration within the RNA-Seq Workflow

Position in Analytical Pipeline

Data cleaning occupies a defined position in the comprehensive RNA-seq analytical workflow, situated between initial quality assessment and alignment/quantification steps. The complete pathway encompasses:

Diagram 1: RNA-seq workflow with trimming step highlighted.

This sequence demonstrates how data cleaning bridges the gap between raw data generation and biological interpretation. The process typically begins with FastQC analysis of raw reads to identify specific quality issues, which informs parameter selection for trimming tools, and concludes with post-cleaning verification to ensure successful artifact removal before proceeding to computationally intensive alignment steps [25] [1].

Quality Control Verification

Effective data cleaning requires quality verification at multiple stages. Initial FastQC reports on raw data identify adapter contamination levels, sequence quality degradation patterns, and other artifacts that inform trimming parameter selection [27]. Following trimming, a second FastQC analysis confirms the successful removal of adapters and quality improvement [27].

Key metrics to evaluate include:

- Adapter Content: Should approach 0% after successful trimming [27]

- Per Base Sequence Quality: Should show improved scores, particularly at 3' ends

- Sequence Length Distribution: Should reflect the minimum length threshold applied

For large-scale studies with multiple samples, MultiQC aggregates these metrics across all samples, enabling researchers to identify outliers and verify consistency throughout the dataset [25].

Table 2: Key reagents, tools, and their functions in RNA-seq data cleaning.

| Resource | Function | Application Context |
|---|---|---|
| Trimmomatic | Comprehensive read trimming | General RNA-seq quality control |
| Cutadapt | Precision adapter removal | Small RNA-seq, targeted trimming |
| FastQC | Quality control assessment | Pre- and post-trimming validation |
| TruSeq3 Adapters | Standard Illumina adapter sequences | Reference for adapter contamination |
| MultiQC | Aggregate QC reporting | Multi-sample experiment validation |
| High-Quality RNA Samples | Starting material for sequencing | Foundation for all downstream steps |
| RIN > 7 | RNA Integrity Number threshold | Sample quality verification pre-seq |
| Qubit/Bioanalyzer | Nucleic acid quantification and QC | Library preparation quality assurance |

Impact on Downstream Analysis

Proper data cleaning exerts a profound influence on all subsequent analytical stages in the RNA-seq workflow. Alignment rates typically improve significantly after trimming, as reads devoid of adapter sequences and low-quality bases map more reliably to reference transcriptomes [23]. This enhanced mapping efficiency directly translates to more accurate gene-level quantification, as reads are assigned to their correct transcriptional origins rather than misaligning due to adapter contamination or quality issues.

The ultimate impact emerges in differential expression analysis, where cleaned data demonstrates improved detection power for genes with low to moderate expression levels [26]. Research has shown that appropriate cleaning methods can significantly increase the number of detected differentially expressed genes while providing more significant p-values, without introducing bias toward low-count genes [26]. This enhancement proves particularly valuable for identifying biologically relevant but weakly expressed transcripts that might otherwise be lost in technical noise.

Furthermore, effective cleaning reduces false positive variant calls in genomic studies, improves transcript assembly completeness, and increases the reproducibility of results across technical replicates [23]. By investing computational resources in thorough data cleaning, researchers establish a foundation of analytical integrity that supports all subsequent biological interpretations and conclusions derived from their RNA-seq experiments.

Within the RNA-seq data analysis workflow, the steps of selecting, annotating, and indexing a reference genome are foundational. The quality and appropriateness of these references directly determine the accuracy of all subsequent analyses, from read mapping and quantification to the final identification of differentially expressed genes. This guide provides researchers and drug development professionals with a technical roadmap for these critical initial steps, framed within the context of the complete RNA-seq workflow.

Reference Genome Selection

Selecting an appropriate reference genome is the first and most critical choice in an RNA-seq study. Using an unsuitable reference can introduce mapping errors and systematic biases, compromising the entire project.

Official Selection Criteria

For species with established genomic resources, reference genomes are often formally designated by authoritative databases. The National Center for Biotechnology Information (NCBI) RefSeq database, for instance, selects a single "reference" genome for each defined species to provide a normalized, taxonomically diverse view of its collection [31].

The criteria for selection differ between prokaryotes and eukaryotes, with a summary of key metrics provided in Table 1.

Table 1: Key Criteria for Reference Genome Selection

| Organism Type | Primary Criteria | Additional Considerations |
|---|---|---|
| Prokaryotes [31] | Assembly contiguity (scaffold count); CheckM completeness score; low count of pseudo CDSs; deviation from mean species assembly length | Manual selection based on community input; presence of plasmid sequences; type strain status |
| Eukaryotes [31] | Contig N50 value; assembly completeness (gapless chromosomes, full chromosome set) | Manual curation for high-profile species (e.g., human); preference for existing RefSeq genomes |

For prokaryotes, the process is highly automated, weighing factors like assembly contiguity, gene completeness, and annotation quality [31]. For eukaryotes, the decision is often more nuanced, considering the contiguity of the assembly (e.g., contig N50) and its completeness, such as whether it possesses a full set of gapless chromosomes [31]. In some cases, manual selection overrides computational rankings, particularly for well-studied model organisms where a specific genome build has become the community standard [31].

Practical Selection for RNA-seq

When initiating an RNA-seq study, researchers should first seek out these officially designated reference genomes from databases like NCBI RefSeq or GENCODE [32]. If multiple options exist, the selection hierarchy for eukaryotes should be followed, prioritizing more contiguous and complete assemblies [31].

In the absence of a designated reference, such as for a non-model organism, the guiding principle is to select the assembly with the highest quality and most comprehensive gene annotation. It is also crucial to ensure that the genome sequence file (FASTA) and the annotation file (GTF/GFF) are from the same source and version to avoid coordinate mismatches [33].
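One quick sanity check for FASTA/GTF consistency is to compare sequence names between the two files, since a frequent symptom of mixed sources is a naming mismatch such as `chr1` versus `1`. The Python sketch below illustrates the idea on in-memory lines; the helper names are hypothetical, and a match of names does not by itself prove that the assembly versions agree.

```python
def fasta_sequence_names(lines):
    """Collect sequence names (first word after '>') from FASTA lines."""
    return {line[1:].split()[0] for line in lines
            if line.startswith(">") and line[1:].strip()}


def gtf_sequence_names(lines):
    """Collect sequence names (column 1) from GTF lines, skipping comments."""
    return {line.split("\t", 1)[0] for line in lines
            if line.strip() and not line.startswith("#")}


def unmatched_gtf_contigs(fasta_lines, gtf_lines):
    """Sequence names used in the GTF that are missing from the FASTA."""
    return gtf_sequence_names(gtf_lines) - fasta_sequence_names(fasta_lines)


# Toy example: the FASTA uses 'chr1' while the GTF uses '1'.
fasta = [">chr1 primary assembly", "ACGTACGT", ">chrM", "GGCC"]
gtf = ["#!genome-build example",
       "1\tsource\tgene\t1\t8\t.\t+\t.\tgene_id \"g1\";",
       "chrM\tsource\tgene\t1\t4\t.\t+\t.\tgene_id \"g2\";"]
print(unmatched_gtf_contigs(fasta, gtf))
```

For real files, the same functions can be fed open file handles, because iterating a text file yields its lines.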

Genome Annotation

Genome annotation is the process of inferring the structure and function of genomic sequences, identifying the locations of genes, exons, splice sites, and other functional elements. Accurate annotation is essential for correctly assigning RNA-seq reads to the genes from which they originated.

Annotation Methodologies

Annotation strategies have evolved from relying on single sources of evidence to integrated, evidence-driven pipelines.

- Ab Initio Prediction: Tools like AUGUSTUS use computational models to predict gene structures based on statistical signatures in the DNA sequence, such as codon usage and splice site patterns [34]. These are useful when experimental data is scarce but can have high false positive rates [35].

- Evidence-Based Annotation: This more accurate method leverages experimental data. Transcriptome data (e.g., from RNA-seq) is mapped to the genome to directly define exon-intron structures using assemblers like StringTie [35]. Protein sequences from related species can also be aligned to the genome to identify conserved coding regions [35].

- Integrated Annotation Pipelines: Modern pipelines like MAKER and BRAKER combine multiple lines of evidence—including ab initio predictions, transcriptomic alignments, and protein homology—to generate a consolidated and more accurate annotation [35] [34]. They use algorithms like EvidenceModeler to weigh and combine evidence into a final gene set [35].

Emerging Technologies: DNA Foundation Models

A transformative new approach frames genome annotation as a multilabel semantic segmentation task. Models like SegmentNT are built by fine-tuning pre-trained DNA foundation models, such as the Nucleotide Transformer, on known genomic elements [34].

These models can process long DNA sequences (up to 50 kb) and simultaneously predict the location of diverse genic and regulatory elements—including exons, introns, promoters, and enhancers—at single-nucleotide resolution [34]. This end-to-end methodology shows state-of-the-art performance and strong generalization across species, representing a significant advance over traditional pipeline-based methods [34].

The following diagram illustrates the conceptual workflow of this approach.

Diagram 1: Foundation model-based annotation workflow.

Genome Indexing for RNA-seq

Before RNA-seq reads can be mapped, the reference genome and its annotation must be indexed. This process creates auxiliary files that allow the aligner to quickly and efficiently find potential mapping locations for each read, drastically reducing computation time.

Purpose of Indexing

Indexing creates a searchable catalog of all subsequences (e.g., k-mers) in the genome. For splicing-aware aligners like STAR, the process also incorporates the genome annotation to create a database of known splice junctions. This allows the aligner to recognize reads that span an intron, a critical feature for accurate transcriptome mapping [33].
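The idea of a searchable k-mer catalog can be illustrated with a toy Python example. This is a conceptual sketch only: production aligners such as STAR use suffix arrays and other compact structures rather than a plain dictionary, and the sequences here are invented.

```python
from collections import defaultdict


def build_kmer_index(genome, k):
    """Map every k-mer in the genome to the list of positions where it starts."""
    index = defaultdict(list)
    for start in range(len(genome) - k + 1):
        index[genome[start:start + k]].append(start)
    return index


def exact_hits(read, genome, index, k):
    """Use the read's first k-mer to find candidates, then verify the full read."""
    candidates = index.get(read[:k], [])
    return [pos for pos in candidates if genome[pos:pos + len(read)] == read]


genome = "ACGTACGTTT"
index = build_kmer_index(genome, k=4)
print(index["ACGT"])                           # candidate positions of the seed
print(exact_hits("ACGTT", genome, index, k=4))  # positions where the read matches
```

The index is built once per genome and reused for every read, which is why indexing is a separate, up-front step.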

Indexing with STAR

STAR (Spliced Transcripts Alignment to a Reference) is a widely used aligner for RNA-seq data. The following protocol details the steps for building a genome index.

Experimental Protocol: Building a Genome Index with STAR

Objective: To generate a genome index for use with the STAR RNA-seq aligner.

Inputs:

- Genome sequence in FASTA format (e.g., `GRCh38.primary_assembly.genome.fa`).

- Genome annotation in GTF format (e.g., `gencode.v29.annotation.gtf`).

Method:

- Data Preparation: Download the genome FASTA and GTF files from a trusted source like GENCODE, Ensembl, or UCSC, ensuring the versions match [33]. Unzip the files if necessary.

- Load Software Module: On a high-performance computing (HPC) cluster, load the STAR module (e.g., `module load star`).

- Run GenomeGenerate: Execute the STAR command in `genomeGenerate` mode. A typical command is shown below [33]:
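The following Python sketch assembles a `genomeGenerate` call using the five options covered in this protocol. The index directory name and thread count are hypothetical, the input file names are the examples given under Inputs, and the overhang is derived as read length minus one.

```python
import shlex


def star_index_command(genome_dir, fasta, gtf, read_length, threads=8):
    """Build a STAR genomeGenerate call; sjdbOverhang is read_length - 1."""
    return ["STAR", "--runMode", "genomeGenerate",
            "--genomeDir", genome_dir,
            "--genomeFastaFiles", fasta,
            "--sjdbGTFfile", gtf,
            "--sjdbOverhang", str(read_length - 1),
            "--runThreadN", str(threads)]


# To execute: subprocess.run(cmd, check=True), with STAR on the PATH.
cmd = star_index_command("star_index",
                         "GRCh38.primary_assembly.genome.fa",
                         "gencode.v29.annotation.gtf",
                         read_length=100)
print(shlex.join(cmd))
```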

Key Parameters:

- `--genomeDir`: Path to the directory where the index will be saved.

- `--genomeFastaFiles`: Path to the input genome FASTA file.

- `--sjdbGTFfile`: Path to the annotation GTF file for splice junction information.

- `--sjdbOverhang`: A critical parameter specifying the length of the genomic sequence around annotated junctions. This should be set to ReadLength - 1. For 100 bp paired-end reads, use 99 [33].

- `--runThreadN`: Number of CPU threads to use for faster indexing.

Output: A directory containing the genome index files (e.g., chrLength.txt, Genome, SAindex).

The Scientist's Toolkit

Successful execution of a genome-based RNA-seq study requires a suite of key reagents and software tools. Table 2 details these essential components.

Table 2: Research Reagent and Tool Solutions for Reference-Based RNA-seq

| Item | Function | Example Tools/Databases |
|---|---|---|
| Reference Genome (FASTA) | The DNA sequence of the organism used as a map for read alignment. | NCBI RefSeq [31], GENCODE [32], Ensembl |
| Genome Annotation (GTF/GFF) | File containing the coordinates and metadata for genes, transcripts, and other features. | GENCODE [32], Ensembl, NCBI |
| Splicing-aware Aligner | Software that aligns RNA-seq reads to a genome, accounting for introns. | STAR [33], HISAT2, minimap2 (long reads) [33] |
| Alignment-based Quantifier | Tool that estimates gene/transcript abundance from aligned reads. | Salmon (alignment mode) [6], featureCounts |
| Pseudo-aligner | Tool that rapidly estimates abundance by matching reads to a transcriptome without full alignment. | Kallisto [36], Salmon (standard mode) [6] |
| Integrated Workflow | Frameworks that automate multi-step RNA-seq analysis from raw data to counts. | nf-core/rnaseq [6] |

The overall workflow for an RNA-seq experiment, from raw data to differential expression, is summarized in the diagram below.

Diagram 2: RNA-seq workflow with reference preparation highlighted.

The processes of genome selection, annotation, and indexing form the critical foundation of a robust RNA-seq analysis. By understanding the official criteria for genome selection, leveraging integrated and modern deep learning-based annotation methods, and correctly implementing indexing protocols, researchers can ensure that their downstream differential expression and functional analysis results are built upon a reliable and accurate base. Mastery of these initial steps is indispensable for generating biologically meaningful and reproducible insights in genomics and drug development research.

From Alignment to Discovery: A Step-by-Step Analytical Pipeline

In RNA sequencing (RNA-Seq) analysis, the step of determining where sequencing reads originate from in the genome is fundamental to connecting genomic information with phenotypic and physiological data [37]. The choice of alignment strategy directly impacts the accuracy, efficiency, and biological validity of downstream results, including differential gene expression analysis. Currently, two predominant computational approaches exist: traditional spliced aligners (e.g., STAR, HISAT2) that perform base-by-base alignment to a reference genome, and modern pseudo-aligners (e.g., Salmon, Kallisto) that use fast k-mer matching to a reference transcriptome for quantification without exact positional alignment [38]. This technical guide provides an in-depth comparison of these strategies, supported by quantitative benchmarking data and detailed protocols, to inform researchers and drug development professionals in selecting optimal workflows for their specific research contexts.

Core Concepts: Fundamental Differences Between Alignment Strategies

Spliced Aligners: Genome-Based Mapping

Spliced aligners are designed to address the unique challenges of RNA-seq data mapping, particularly the presence of introns that cause reads to span splice junctions.

STAR (Spliced Transcripts Alignment to a Reference) utilizes a novel strategy based on sequential maximum mappable seed search followed by clustering, stitching, and scoring stages [39]. For every read, STAR searches for the longest sequence that exactly matches one or more locations on the reference genome (Maximal Mappable Prefixes). These "seeds" are then stitched together based on proximity and alignment scoring to generate complete read alignments, including those spanning splice junctions [39].
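The seed search idea can be illustrated with a deliberately naive Python sketch. It is not STAR's implementation (STAR uses uncompressed suffix arrays and adds clustering, stitching, and scoring); it only shows how repeatedly taking the longest exactly matching prefix splits a junction-spanning read into two seeds. The toy genome and read are invented.

```python
def maximal_mappable_prefix(read, genome):
    """Return (length, position) of the longest read prefix found exactly in genome."""
    for length in range(len(read), 0, -1):
        pos = genome.find(read[:length])
        if pos != -1:
            return length, pos
    return 0, -1


def seed_search(read, genome):
    """Split a read into consecutive seeds as (read_offset, length, genome_position)."""
    seeds, offset = [], 0
    while offset < len(read):
        length, pos = maximal_mappable_prefix(read[offset:], genome)
        if length == 0:
            offset += 1  # base matches nowhere; skip it and keep searching
            continue
        seeds.append((offset, length, pos))
        offset += length
    return seeds


# Toy genome: exon 1, a 10-base "intron", exon 2.
genome = "GATTACAGATC" + "T" * 10 + "CCGGAAGCTA"
read = "ACAGATC" + "CCGGAAG"  # spans the exon 1 / exon 2 junction
seeds = seed_search(read, genome)
print(seeds)
# Gap between the end of seed 1 and the start of seed 2 on the genome:
print(seeds[1][2] - (seeds[0][2] + seeds[0][1]))
```

The genomic gap between the two seeds corresponds to the skipped intron, which is how a spliced alignment is inferred from exact-match seeds in this simplified picture.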

HISAT2 (Hierarchical Indexing for Spliced Alignment of Transcripts 2) employs a different approach using a graph-based alignment with a Ferragina-Manzini index (also used by bowtie2) that can align reads to a genome while being aware of splice sites [37]. This hierarchical indexing strategy allows efficient mapping against both small genomic regions and the entire genome.

Pseudo-aligners: Transcriptome-Based Quantification

Pseudo-aligners represent a paradigm shift in RNA-seq analysis by focusing directly on quantification while bypassing the computationally expensive step of base-by-base alignment.

Kallisto introduces the concept of pseudo-alignments based on k-mer matching in a transcript De Bruijn graph. Rather than determining exact genomic positions, it establishes a relationship between a read and a set of compatible transcripts, then uses equivalence classes for rapid isoform quantification [37].
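A toy Python sketch can convey the core idea of compatibility sets. It replaces the transcript De Bruijn graph with a plain k-mer lookup table and intersects the transcript sets of all k-mers in a read; this is a simplification for illustration, not Kallisto's actual algorithm, and the transcripts are invented.

```python
from collections import Counter, defaultdict


def build_kmer_to_transcripts(transcripts, k):
    """Map each k-mer to the set of transcripts that contain it."""
    index = defaultdict(set)
    for name, seq in transcripts.items():
        for start in range(len(seq) - k + 1):
            index[seq[start:start + k]].add(name)
    return index


def compatible_transcripts(read, index, k):
    """Intersect the transcript sets of every k-mer in the read."""
    compatible = None
    for start in range(len(read) - k + 1):
        hits = index.get(read[start:start + k], set())
        compatible = set(hits) if compatible is None else compatible & hits
        if not compatible:
            break
    return compatible or set()


# Two isoforms sharing a first exon but ending differently.
transcripts = {"t1": "ACGTACGGA" + "TTGCA", "t2": "ACGTACGGA" + "CCATG"}
index = build_kmer_to_transcripts(transcripts, k=5)
reads = ["GTACG", "CGGATTGC", "GTACGG"]
# Reads with the same compatibility set fall into one equivalence class.
classes = Counter(frozenset(compatible_transcripts(r, index, 5)) for r in reads)
for members, count in classes.items():
    print(sorted(members), count)
```

Quantification then works on the counts per equivalence class rather than on individual reads, which is a large part of the speed advantage.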

Salmon utilizes a similar approach called quasi-mapping, which employs an indexed suffix array and Burrows-Wheeler Transform to discover shared substrings of any length between a read and transcript sequences [37]. It incorporates additional statistical modeling to correct for GC and sequence biases during quantification.

Table 1: Fundamental Operational Differences Between Alignment Tools

| Feature | STAR | HISAT2 | Salmon | Kallisto |
|---|---|---|---|---|
| Reference Type | Genome | Genome | Transcriptome | Transcriptome |
| Alignment Strategy | Seed extension & stitching [39] | Hierarchical FM-index [37] | Quasi-mapping [37] | Pseudo-alignment [37] |
| Splice Junction Handling | Direct detection via segmented alignment [39] | Graph-based alignment [37] | Not applicable | Not applicable |
| Primary Output | Genomic coordinates (BAM) [38] | Genomic coordinates (BAM) [38] | Transcript abundance [38] | Transcript abundance [38] |
| Key Innovation | Speed through uncompressed suffix arrays [39] | Memory efficiency through hierarchical indexing [40] | Bias correction & quasi-mapping [37] | k-mer matching in De Bruijn graph [37] |

Quantitative Performance Comparison: Benchmarking Studies and Results

Mapping Efficiency and Concordance

Multiple studies have systematically compared the performance of different RNA-seq alignment tools. A 2020 evaluation of seven alignment tools on Arabidopsis thaliana data found that between 92.4% and 99.5% of reads were successfully mapped across different tools, with STAR achieving the highest mapping rates (99.5% for Col-0 and 98.1% for N14 accessions) [37]. The raw count distributions generated by different mappers showed high correlation, with coefficients ranging from 0.977 to 0.997 for Col-0 samples [37]. Notably, the highest similarity was observed between the pseudo-aligners kallisto and salmon (Rv = 0.9999) [37].

Differential Gene Expression Concordance

When assessing the impact on downstream differential expression analysis, studies reveal substantial but incomplete overlap between tools. Research on checkpoint blockade-treated CT26 experimental data showed that while all methods identified a core set of differentially expressed genes, HISAT2 detected additional unique genes not found by the pseudo-aligners [41]. The overlap of differentially expressed genes between kallisto and salmon was notably high (98%), while the lowest overlaps were detected between bwa and STAR (93.4%) [37].

Table 2: Performance Benchmarking Across Alignment Tools (Based on Multiple Studies)

| Performance Metric | STAR | HISAT2 | Salmon | Kallisto |

|---|---|---|---|---|

| Mapping Rate (%) | 99.5 [37] | 95-98 [37] | 95-98 [37] | 95-98 [37] |

| DGE Overlap with Salmon (%) | 92-94 [37] | 92-94 [37] | - | 97-98 [37] |

| Small RNA Quantification | Accurate [42] | Accurate [42] | Reduced accuracy [42] | Reduced accuracy [42] |

| Resource Requirements | High memory [39] | Moderate [40] | Low [38] | Low [38] |

| Speed | Fast but memory-intensive [39] | Moderate [40] | Very fast [38] | Very fast [38] |

Performance with Specialized RNA Types

Important performance differences emerge when quantifying specific RNA classes. A comprehensive 2018 study revealed that alignment-based pipelines significantly outperformed alignment-free tools for quantifying small structured non-coding RNAs (e.g., tRNAs, snoRNAs) and lowly-expressed genes [42]. While all pipelines showed high accuracy for quantifying protein-coding genes and synthetic spike-ins, alignment-free tools showed systematically poorer performance for small RNAs, particularly those containing biological variations [42].

Practical Implementation: Protocols and Workflow Integration

STAR Alignment Protocol

For researchers implementing STAR alignment, the following protocol provides a robust starting point:

Genome Index Generation:
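The command for this step is not shown in the text. The following is a minimal sketch of how it might be assembled, written in Python so that the `--sjdbOverhang` arithmetic is explicit. The file paths, thread count, read length, and the helper name `star_index_cmd` are illustrative assumptions; the STAR options used are standard ones from the STAR manual.

```python
import shlex


def star_index_cmd(genome_dir, fasta, gtf, read_length, threads=8):
    """Build the argument list for STAR genome index generation (hypothetical helper)."""
    return [
        "STAR",
        "--runMode", "genomeGenerate",
        "--runThreadN", str(threads),
        "--genomeDir", genome_dir,
        "--genomeFastaFiles", fasta,
        "--sjdbGTFfile", gtf,
        # The overhang is the read length minus 1 (e.g., 99 for 100 bp reads).
        "--sjdbOverhang", str(read_length - 1),
    ]


# Placeholder paths; substitute your own reference FASTA and GTF.
index_cmd = star_index_cmd("star_index", "genome.fa", "annotation.gtf", read_length=100)
print(shlex.join(index_cmd))
```

The printed string can be pasted into a shell, or the list can be passed to `subprocess.run(index_cmd, check=True)` on a machine where STAR is installed.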

Read Alignment:
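The alignment command is likewise missing from the text. A hedged sketch of a typical invocation is given below; the sample file names, output prefix, and the helper name `star_align_cmd` are assumptions made for illustration, and the option set is a common starting point rather than the only valid configuration.

```python
import shlex


def star_align_cmd(genome_dir, read1, read2=None, out_prefix="sample_", threads=8):
    """Build the argument list for a STAR alignment run (hypothetical helper)."""
    reads = [read1] if read2 is None else [read1, read2]
    cmd = [
        "STAR",
        "--runThreadN", str(threads),
        "--genomeDir", genome_dir,
        "--readFilesIn", *reads,
        # Ask STAR to write a coordinate-sorted BAM directly.
        "--outSAMtype", "BAM", "SortedByCoordinate",
        "--outFileNamePrefix", out_prefix,
    ]
    # gzip-compressed FASTQ files need a decompression command.
    if read1.endswith(".gz"):
        cmd += ["--readFilesCommand", "zcat"]
    return cmd


# Placeholder paired-end input files.
align_cmd = star_align_cmd("star_index", "sample_R1.fastq.gz", "sample_R2.fastq.gz")
print(shlex.join(align_cmd))
```

With this output type and prefix, STAR names the alignment file `sample_Aligned.sortedByCoord.out.bam`.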

This two-step process first creates a genome index optimized for the specific read length (--sjdbOverhang should be set to the read length minus 1, e.g., 99 for 100 bp reads), then performs the actual read alignment, producing sorted BAM files ready for downstream quantification [39].

Pseudo-alignment Protocol with Salmon

For pseudo-alignment-based quantification, the workflow with Salmon typically involves:

Transcriptome Indexing:
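The indexing command is not shown in the text. As a minimal sketch, assuming a transcript FASTA named `transcripts.fa` and an output directory named `salmon_index` (both placeholders, as is the helper name), it could be built like this:

```python
import shlex


def salmon_index_cmd(transcripts_fasta, index_dir, kmer=31):
    """Build the argument list for `salmon index` (hypothetical helper)."""
    return [
        "salmon", "index",
        "-t", transcripts_fasta,  # transcriptome FASTA
        "-i", index_dir,          # directory where the index is written
        "-k", str(kmer),          # k-mer size; 31 is Salmon's default
    ]


salmon_idx = salmon_index_cmd("transcripts.fa", "salmon_index")
print(shlex.join(salmon_idx))
```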

Quantification:
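The quantification command is also missing from the text. The sketch below shows one plausible form, handling both single-end and paired-end input. File names, the thread count, and the helper name `salmon_quant_cmd` are assumptions; `-l A` asks Salmon to infer the library type automatically.

```python
import shlex


def salmon_quant_cmd(index_dir, out_dir, read1, read2=None, threads=8):
    """Build the argument list for `salmon quant` (hypothetical helper)."""
    cmd = ["salmon", "quant", "-i", index_dir, "-l", "A"]
    if read2 is None:
        cmd += ["-r", read1]               # single-end reads
    else:
        cmd += ["-1", read1, "-2", read2]  # paired-end mates
    cmd += ["-p", str(threads), "--validateMappings", "-o", out_dir]
    return cmd


quant_cmd = salmon_quant_cmd(
    "salmon_index", "quant/sample", "sample_R1.fastq.gz", "sample_R2.fastq.gz"
)
print(shlex.join(quant_cmd))
```

Salmon writes its transcript-level estimates to `quant.sf` inside the output directory, which is the file that tools such as tximport read.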

This approach directly generates transcript abundance estimates in a fraction of the time required by traditional alignment [38].

Integrated Workflows

Comprehensive RNA-seq pipelines like nf-core/rnaseq support multiple alignment strategies, allowing researchers to choose between STAR, HISAT2, or pseudo-aligners based on their specific needs [40]. When strandedness is set to 'auto', the pipeline also detects it automatically using Salmon quantification, demonstrating how these tools can be complementary rather than mutually exclusive [40].

Table 3: Essential Computational Reagents for RNA-seq Alignment

| Resource Type | Specific Examples | Function & Importance |

|---|---|---|

| Reference Genome | GRCh38 (Ensembl), hg38 (UCSC), GRCm39 (mouse) | Baseline genomic sequence for alignment; must match annotation source [43] |

| Gene Annotation | GTF/GFF files from Ensembl, GENCODE, RefSeq | Provides transcript models for read assignment and quantification [43] |

| Alignment Indices | STAR genome index, HISAT2 index, Salmon transcriptome index | Pre-built structures enabling rapid read mapping [39] |

| Quality Control Tools | FastQC, MultiQC, RSeQC | Assess read quality, library complexity, and strandedness [40] [17] |

| Quantification Tools | featureCounts, HTSeq-count, tximport | Convert alignments (featureCounts, HTSeq-count) or transcript-level abundance estimates (tximport) to gene-level count data for differential expression [5] |

| Differential Expression | DESeq2, edgeR, limma-voom | Statistical analysis of expression changes between conditions [37] |

Strategic Recommendations: Selecting the Right Tool for Your Research Context

Decision Framework for Alignment Strategy Selection

Based on comprehensive benchmarking studies and practical considerations, the following strategic recommendations emerge:

For comprehensive transcriptome characterization including novel transcript discovery, alternative splicing analysis, or identification of genetic variants, traditional spliced aligners like STAR or HISAT2 remain essential [38]. These tools provide the genomic context necessary for such analyses, which pseudo-aligners cannot deliver.

For standardized differential expression analyses focused on well-annotated protein-coding genes, pseudo-aligners offer an excellent combination of speed and accuracy [37] [38]. Their quantification performance for common gene targets is highly concordant with traditional aligners while offering substantial computational efficiency gains.

For specialized applications involving small RNAs or other structured non-coding RNAs, alignment-based approaches currently demonstrate superior performance [42]. The k-mer-based approaches used by pseudo-aligners struggle with the unique characteristics of these RNA classes.

In resource-constrained environments or for rapid iterative analysis, pseudo-aligners provide significant advantages, with kallisto demonstrating 2.6-fold faster processing and 15-fold lower memory usage compared to STAR in single-cell RNA-seq benchmarks [38].

Emerging Trends and Future Directions

The distinction between alignment strategies is becoming increasingly blurred as tools evolve. Modern versions of Salmon incorporate "selective alignment," which represents a hybrid approach between traditional alignment and quasi-mapping [38]. Integrated workflows like nf-core/rnaseq now support multiple alignment strategies within a single framework, allowing researchers to select the optimal approach based on their specific biological questions and computational resources [40].

Furthermore, recent research emphasizes that optimal tool selection should consider species-specific differences, with different analytical tools demonstrating performance variations when applied to data from humans, animals, plants, and fungi [17]. This highlights the importance of context-aware pipeline design rather than one-size-fits-all solutions in RNA-seq analysis.

Within the comprehensive workflow of RNA-seq data analysis, the steps following read alignment are critical for transforming raw sequencing data into biologically interpretable information. Post-alignment processing, encompassing file sorting, indexing, and rigorous quality control (QC), serves as the foundation for all subsequent analyses, including differential expression and pathway analysis [44]. Neglecting these steps can lead to incorrect biological interpretations, low reproducibility, and ultimately, wasted resources [45]. This guide details the essential procedures for processing alignment files using SAMtools and performing advanced quality assessment with Qualimap, providing a robust framework for researchers and drug development professionals to validate their data before proceeding to higher-order analyses.

Understanding the Alignment File Format: SAM/BAM

The Sequence Alignment/Map (SAM) format is a standardized, tab-delimited text file containing all alignment information for each read, while its binary equivalent (BAM) provides a compressed, computationally efficient representation of the same data [46] [47]. The BAM format is significantly smaller in size and is typically the required input for downstream tools [47].

A SAM/BAM file consists of two primary sections:

- Header Section: Optional; each header line begins with the '@' character and describes the source of data, reference sequence, and alignment method [46].

- Alignment Section: Each line is one alignment record for a read and contains 11 mandatory fields that detail the essential mapping information [46].

Table 1: Mandatory Fields in a SAM/BAM File Alignment Record

| Field Position | Field Name | Description |

|---|---|---|

| 1 | QNAME | Query template name (read identifier) |

| 2 | FLAG | Bitwise flag encoding various mapping properties |

| 3 | RNAME | Reference sequence name (chromosome) |

| 4 | POS | 1-based leftmost mapping position |

| 5 | MAPQ | Mapping quality (Phred-scaled probability of incorrect alignment) |

| 6 | CIGAR | String encoding alignment matches, mismatches, indels, and splices |

| 7 | RNEXT (formerly MRNM) | Reference sequence name of the mate (for paired-end reads) |

| 8 | PNEXT (formerly MPOS) | 1-based leftmost position of the mate |

| 9 | TLEN (formerly ISIZE) | Observed template length (inferred insert size) |

| 10 | SEQ | Read sequence |

| 11 | QUAL | Base quality scores (ASCII-encoded Phred values) |

The FLAG field is of particular importance as it provides crucial information about the alignment in a bitwise format: each property is one bit, and the FLAG value is the sum of the bits that are set. Common flag values include 1 (read is paired), 2 (read is mapped in a proper pair), 4 (read is unmapped), and 16 (read aligns to the reverse strand) [46]. The CIGAR (Compact Idiosyncratic Gapped Alignment Report) string details the alignment by specifying the number and type of operations (e.g., M for alignment match or mismatch, I for insertion, D for deletion, N for skipped region) required to match the read to the reference [46]. This is especially critical for RNA-seq data as the 'N' operation can indicate the presence of a splice junction.
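To make the bitwise FLAG encoding and the CIGAR operations concrete, the following sketch decodes both in plain Python. The bit meanings follow the SAM specification; the function names and the example values are illustrative assumptions, not part of any library.

```python
import re

# SAM FLAG bits as defined in the SAM specification.
FLAG_BITS = {
    0x1: "paired",
    0x2: "proper_pair",
    0x4: "unmapped",
    0x8: "mate_unmapped",
    0x10: "reverse_strand",
    0x20: "mate_reverse_strand",
    0x40: "first_in_pair",
    0x80: "second_in_pair",
    0x100: "secondary",
    0x200: "qc_fail",
    0x400: "duplicate",
    0x800: "supplementary",
}

CIGAR_OP = re.compile(r"(\d+)([MIDNSHP=X])")


def decode_flag(flag):
    """Return the names of all bits set in a SAM FLAG value."""
    return [name for bit, name in FLAG_BITS.items() if flag & bit]


def parse_cigar(cigar):
    """Split a CIGAR string into (length, operation) tuples."""
    return [(int(length), op) for length, op in CIGAR_OP.findall(cigar)]


def has_splice(cigar):
    """True if the alignment skips a reference region (N), e.g., an intron."""
    return any(op == "N" for _, op in parse_cigar(cigar))


print(decode_flag(99))             # 99 = 1 + 2 + 32 + 64
print(parse_cigar("50M1200N50M"))  # 50 aligned, 1200 skipped, 50 aligned
print(has_splice("50M1200N50M"))
```

A FLAG of 99 therefore describes a paired read, mapped in a proper pair, whose mate is on the reverse strand and which is the first read of the pair.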

Essential SAM/BAM Processing with SAMtools

SAMtools is a versatile suite of utilities that enables efficient manipulation and analysis of SAM/BAM files [48]. Its functions include format conversion, sorting, indexing, filtering, and statistical summary generation.

Basic SAMtools Operations

The first steps typically involve converting between SAM and BAM formats, and sorting the alignments by genomic coordinate, which is a prerequisite for many downstream analyses.

Converting SAM to BAM and Sorting:
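The commands for this step are not shown in the text. A minimal sketch, assuming placeholder file names and a hypothetical helper `convert_and_sort_cmds`, could look like this:

```python
import shlex


def convert_and_sort_cmds(sam, bam, sorted_bam, threads=4):
    """Return the two samtools commands for SAM-to-BAM conversion and sorting."""
    convert = ["samtools", "view", "-b", "-o", bam, sam]
    # Default sort order is by genomic coordinate; -@ adds extra threads.
    sort = ["samtools", "sort", "-@", str(threads), "-o", sorted_bam, bam]
    return [convert, sort]


steps = convert_and_sort_cmds("sample.sam", "sample.bam", "sample.sorted.bam")
for step in steps:
    print(shlex.join(step))
```

Since `samtools sort` also accepts SAM input, the intermediate unsorted BAM can often be skipped; the two-step form is kept here to mirror the description.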

Indexing the Sorted BAM File: Indexing creates a separate index file (.bai) that allows for rapid retrieval of alignments from specific genomic regions without processing the entire file [48]. This is essential for visualization in genome browsers like IGV and for many quantification tools.
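As an illustration of why the index matters, the sketch below builds the indexing command and a region query that relies on it. The file name, region coordinates, and helper names are assumptions for the example.

```python
import shlex


def index_cmd(sorted_bam):
    """Command that writes the .bai index next to a coordinate-sorted BAM."""
    return ["samtools", "index", sorted_bam]


def region_cmd(sorted_bam, chrom, start, end):
    """Command that retrieves alignments overlapping chrom:start-end (needs the index)."""
    return ["samtools", "view", sorted_bam, f"{chrom}:{start}-{end}"]


print(shlex.join(index_cmd("sample.sorted.bam")))
print(shlex.join(region_cmd("sample.sorted.bam", "chr1", 100000, 200000)))
```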

Filtering and Assessing Alignments

SAMtools provides powerful options for filtering alignments based on specific criteria and for generating basic mapping statistics.

Filtering by Mapping Quality: Removing poorly mapped or non-uniquely mapped reads can improve the reliability of downstream analysis.

It is crucial to understand that Mapping Quality (MAPQ) scores are calculated differently by various aligners, so a threshold chosen for one aligner does not transfer to another. For example, STAR by default outputs MAPQ values of 0, 1, 3, or 255, where 255 represents a unique match, while other aligners like Bowtie2 have a different scoring range [48].
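The filtering logic itself is simple: the MAPQ is the fifth column of each alignment record, and records below a threshold are dropped, which is what `samtools view -q` does on real BAM files. The sketch below applies the same rule to a few made-up SAM lines; the records, the threshold of 30, and the function name are illustrative assumptions, and the appropriate threshold depends on the aligner.

```python
def filter_by_mapq(sam_lines, min_mapq):
    """Yield header lines plus alignment records with MAPQ >= min_mapq."""
    for line in sam_lines:
        if line.startswith("@"):
            yield line  # keep the header, as `samtools view -h` would
            continue
        fields = line.rstrip("\n").split("\t")
        if int(fields[4]) >= min_mapq:  # MAPQ is the 5th field
            yield line


# Toy records with illustrative MAPQ values of 60, 3, and 0.
records = [
    "@SQ\tSN:chr1\tLN:1000",
    "r1\t0\tchr1\t10\t60\t50M\t*\t0\t0\t*\t*",
    "r2\t0\tchr1\t20\t3\t50M\t*\t0\t0\t*\t*",
    "r3\t16\tchr1\t30\t0\t50M\t*\t0\t0\t*\t*",
]
kept_names = [line.split("\t")[0] for line in filter_by_mapq(records, min_mapq=30)]
print(kept_names)
```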

Filtering with SAM Flags: SAM flags can be used to include (-f) or exclude (-F) reads based on specific alignment characteristics.
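The include and exclude semantics can be written as two bitwise tests: `-f` keeps a read only if all of the requested bits are set, and `-F` drops a read if any of the listed bits are set. The following sketch reproduces that logic on a handful of example FLAG values; the function name and the chosen flags are assumptions for illustration.

```python
def passes_flag_filter(flag, require=0, exclude=0):
    """Mimic samtools view -f (require all bits) and -F (exclude any bit)."""
    return (flag & require) == require and (flag & exclude) == 0


PROPER_PAIR = 0x2
UNMAPPED = 0x4
SECONDARY = 0x100

# 99 and 147: a properly paired read and its mate; 4: unmapped;
# 355: the same as 99 but marked as a secondary alignment.
flags = [99, 147, 4, 355]
kept = [
    f for f in flags
    if passes_flag_filter(f, require=PROPER_PAIR, exclude=UNMAPPED | SECONDARY)
]
print(kept)
```

The equivalent command-line filter would combine `-f 2` with `-F 260`, since 4 + 256 = 260.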

Generating Mapping Statistics:

The flagstat command provides a quick overview of the mapping performance.
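To show where such numbers come from, the sketch below tallies a few categories directly from FLAG values. It is a deliberately simplified illustration, not a reimplementation of `samtools flagstat`, whose real output has more categories and distinguishes primary from secondary and supplementary records. The function name and example flags are assumptions.

```python
from collections import Counter


def tally_flags(flags):
    """Count a few flagstat-style categories from SAM FLAG values (simplified)."""
    counts = Counter(total=0, mapped=0, paired=0, properly_paired=0)
    for flag in flags:
        counts["total"] += 1
        is_mapped = not (flag & 0x4)
        if is_mapped:
            counts["mapped"] += 1
        if flag & 0x1:
            counts["paired"] += 1
            if (flag & 0x2) and is_mapped:
                counts["properly_paired"] += 1
    return counts


# Two proper pairs' worth of mapped mates, three unmapped reads, two single-end hits.
example_flags = [99, 147, 4, 77, 141, 0, 16]
stats = tally_flags(example_flags)
mapped_pct = 100 * stats["mapped"] / stats["total"]
print(dict(stats))
print(f"mapped: {mapped_pct:.1f}%")
```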

Table 2: Key SAMtools Commands for Post-Alignment Processing

| Command | Function | Common Usage |

|---|---|---|

| samtools view | Format conversion, read filtering, and region extraction | samtools view -b -h input.sam > output.bam |

| samtools sort | Sorts alignments by genomic coordinate | samtools sort input.bam -o output_sorted.bam |

| samtools index | Creates an index for a sorted BAM file | samtools index sorted_input.bam |

| samtools flagstat | Generates simple statistics from the BAM file | samtools flagstat input.bam |

| samtools idxstats | Reports alignment statistics per reference sequence | samtools idxstats input.bam |

The following workflow diagram outlines the logical sequence of these core SAMtools processing steps:

Figure 1: Core SAMtools Data Processing Workflow

Comprehensive Quality Control with Qualimap

After processing BAM files with SAMtools, the next critical step is an in-depth quality assessment. Qualimap is a Java application that generates comprehensive QC reports for NGS data, including specific modules for RNA-seq [47] [49].

Running Qualimap RNA-seq QC

Qualimap requires a sorted and indexed BAM file, along with an annotation file in GTF format.

Basic Qualimap Command:
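The command itself is not shown in the text. A minimal sketch of a typical `qualimap rnaseq` invocation follows; the file names, output directory, memory setting, and helper name are placeholders chosen for illustration.

```python
import shlex


def qualimap_rnaseq_cmd(bam, gtf, outdir, java_mem="8G", paired=False):
    """Build the argument list for `qualimap rnaseq` (hypothetical helper)."""
    cmd = [
        "qualimap", "rnaseq",
        "-bam", bam,
        "-gtf", gtf,
        "-outdir", outdir,
        f"--java-mem-size={java_mem}",  # raise this for large BAM files
    ]
    if paired:
        cmd.append("-pe")  # count fragments instead of reads
    return cmd


qm_cmd = qualimap_rnaseq_cmd(
    "sample.sorted.bam", "annotation.gtf", "qualimap/sample", paired=True
)
print(shlex.join(qm_cmd))
```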

Key Input Parameters:

- -bam: Path to the input sorted BAM file.

- -gtf: Annotation file in GTF format.

- -outdir: Directory to store the HTML report and output files.

- --java-mem-size: Memory allocated to the Java engine (important for large files).

- -pe: Option for paired-end data.

- -p: Sequencing library protocol (e.g., -p strand-specific-forward); the default is non-strand-specific.

Interpreting Key Qualimap Output and Metrics

The Qualimap HTML report provides multiple sections with diagnostic plots and metrics. For RNA-seq data, several aspects are particularly important for assessing technical quality [47] [49].

Reads Genomic Origin: This analysis determines the proportion of reads mapping to exonic, intronic, and intergenic regions. A high-quality RNA-seq sample typically shows a majority of reads (e.g., >55%) mapping to exonic regions. An unusually high number of reads in intronic regions (>30%) may indicate significant genomic DNA contamination or a high level of pre-mRNA [46].
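The thresholds above can be applied mechanically once the per-category read counts are taken from the report. The sketch below does this arithmetic; the counts are invented for the example, the function names are hypothetical, and the 55% and 30% cut-offs are the rules of thumb quoted above rather than hard limits.

```python
def genomic_origin_fractions(exonic, intronic, intergenic):
    """Convert read counts per category into fractions of the total."""
    total = exonic + intronic + intergenic
    if total == 0:
        raise ValueError("no reads to summarize")
    return {
        "exonic": exonic / total,
        "intronic": intronic / total,
        "intergenic": intergenic / total,
    }


def origin_warnings(fractions, min_exonic=0.55, max_intronic=0.30):
    """Return human-readable warnings for samples outside the rule-of-thumb ranges."""
    warnings = []
    if fractions["exonic"] < min_exonic:
        warnings.append(f"low exonic fraction ({fractions['exonic']:.0%})")
    if fractions["intronic"] > max_intronic:
        warnings.append(f"high intronic fraction ({fractions['intronic']:.0%})")
    return warnings


good = genomic_origin_fractions(7_000_000, 2_000_000, 1_000_000)
suspect = genomic_origin_fractions(4_000_000, 4_000_000, 2_000_000)
print(origin_warnings(good))
print(origin_warnings(suspect))
```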

Junction Analysis: Qualimap analyzes splice junctions, categorizing them as known, novel, or partly known. This provides insight into the transcriptomic complexity of the sample and the performance of the splice-aware aligner.

Transcript Coverage and 5'-3' Bias: Ideal transcript coverage should be relatively uniform from the 5' to 3' end. A significant coverage drop-off at either end indicates bias, potentially introduced during library preparation (e.g., RNA degradation or fragmentation issues). Qualimap calculates a 5'-3' bias value, where a value near 1 indicates minimal bias.