A Practical Guide to Filtering Low-Quality Cells in scRNA-seq Data: From Foundational QC to Advanced Optimization

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on filtering low-quality cells in single-cell RNA sequencing (scRNA-seq) data. It covers the foundational principles of quality control, including the sources of technical noise and the biological meaning behind key QC metrics. The guide details methodological best practices for applying filters related to library size, mitochondrial content, and doublet detection, while also addressing critical troubleshooting scenarios like sample-specific thresholds and the unique challenges of cancer data. Finally, it explores validation strategies and comparative analyses of computational tools, offering a holistic framework to ensure data integrity and robust biological discovery in scRNA-seq studies.

Understanding the Why: Foundational Principles of scRNA-seq Quality Control

Core Concepts and Impact on Data Quality

What are the key technical artifacts that affect scRNA-seq data quality? The three primary technical artifacts in scRNA-seq data are ambient RNA, doublets, and cell stress signatures. Ambient RNA consists of cell-free mRNAs released from lysed cells that contaminate droplet contents, distorting transcriptome profiles by adding background expression noise [1] [2]. Doublets occur when two or more cells are captured within a single droplet, creating artificial hybrid expression profiles that can be misinterpreted as novel cell states [3] [4]. Cell stress signatures represent transcriptional changes induced by sample processing, which can obscure genuine biological signals and be mistaken for biological stress responses [4].

How do these artifacts impact downstream biological interpretations? These artifacts significantly compromise data integrity and can lead to incorrect biological conclusions. Ambient RNA contamination causes misidentification of cell types and false detection of differentially expressed genes, particularly impacting rare cell populations [1] [2]. Doublets create artificial cell types that don't exist biologically and obscure true cellular heterogeneity by blending expression profiles [3] [4]. Cell stress signatures mask true biological variation and can be misinterpreted as disease-related pathways, potentially leading to incorrect mechanistic insights [4].

Table 1: Characteristic Features of Major Technical Artifacts in scRNA-seq

| Artifact Type | Primary Causes | Key Indicators | Impact on Downstream Analysis |
|---|---|---|---|
| Ambient RNA | Cell lysis during tissue dissociation, extracellular RNA, RNA degradation [1] | Expression of cell-type-specific markers in inappropriate cell types, particularly markers from abundant cell populations [4] [5] | Misclassification of cell types, false-positive DEGs, obscured cellular heterogeneity [1] [2] |
| Doublets | Overloading cells during library preparation, incomplete tissue dissociation [4] [6] | Co-expression of marker genes from distinct cell types, unusually high UMI counts/number of genes [4] [6] | Artificial hybrid cell types, obscured true heterogeneity, incorrect trajectory inference [3] [4] |
| Cell Stress | Sample processing delays, enzymatic digestion, mechanical stress [1] [4] | Elevated mitochondrial gene percentage (>5-15%), expression of dissociation-induced stress genes [4] [6] | Masked biological variation, misinterpretation of stress pathways, incorrect cell state identification [4] |

Detection and Troubleshooting Methodologies

How can I detect and quantify ambient RNA contamination in my dataset? Effective detection of ambient RNA utilizes both computational tools and biological indicators. SoupX provides a profile of the ambient RNA content by analyzing empty droplets and estimates contamination levels using known marker genes that shouldn't be expressed in certain cell types [1] [5]. CellBender employs deep learning to distinguish true cell expression from background noise, offering an end-to-end solution for large datasets [1] [2]. Biologically, a key indicator is detecting hemoglobin genes in non-erythroid cells or other cell-type-specific markers appearing in inappropriate contexts, suggesting contamination from the ambient pool [5].
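The marker-gene logic behind SoupX-style contamination estimation can be sketched in plain Python. This is a simplified illustration, not SoupX's implementation. It assumes a "soup" profile pooled from empty droplets and a user-chosen set of genes (here hemoglobin) that the cell should not express, so every count of those genes is attributed to contamination. The function names are hypothetical, and the final subtraction is a naive stand-in for SoupX's more careful count adjustment.

```python
from typing import Dict, Iterable, List

Counts = Dict[str, float]


def soup_profile(empty_droplets: Iterable[Counts]) -> Dict[str, float]:
    """Pool counts from empty droplets and normalise them to per-gene fractions."""
    totals: Dict[str, float] = {}
    for droplet in empty_droplets:
        for gene, count in droplet.items():
            totals[gene] = totals.get(gene, 0) + count
    grand_total = sum(totals.values())
    return {gene: count / grand_total for gene, count in totals.items()}


def estimate_rho(cell: Counts, soup: Dict[str, float], absent_genes: List[str]) -> float:
    """Contamination fraction from genes assumed NOT expressed by this cell.

    All counts of those genes must come from the soup, so
    observed = rho * total_UMIs * (soup fraction of those genes).
    """
    observed = sum(cell.get(g, 0) for g in absent_genes)
    soup_share = sum(soup.get(g, 0.0) for g in absent_genes)
    expected_if_rho_is_1 = sum(cell.values()) * soup_share
    if expected_if_rho_is_1 == 0:
        raise ValueError("the chosen genes are absent from the soup profile")
    return observed / expected_if_rho_is_1


def subtract_soup(cell: Counts, soup: Dict[str, float], rho: float) -> Counts:
    """Naive correction: remove the expected soup counts per gene, clipped at zero."""
    n_umi = sum(cell.values())
    return {g: max(0.0, c - rho * n_umi * soup.get(g, 0.0)) for g, c in cell.items()}


empty = [{"HBB": 8, "ACTB": 2}, {"HBB": 9, "ACTB": 1}]
t_cell = {"CD3E": 50, "ACTB": 40, "HBB": 10}
soup = soup_profile(empty)                  # HBB 0.85, ACTB 0.15
rho = estimate_rho(t_cell, soup, ["HBB"])   # 10 / (100 * 0.85), about 0.118
print(round(rho, 3), subtract_soup(t_cell, soup, rho))
```

A single cell gives a noisy estimate. SoupX aggregates evidence over groups of cells rather than relying on one barcode as this toy example does.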

What methods reliably identify doublets in scRNA-seq data? Doublet detection combines computational scoring and expression-based filtering. Scrublet creates artificial doublets and compares them to real cells to predict doublet scores, demonstrating good scalability for large datasets [1] [7]. DoubletFinder employs a neighborhood-based approach that has shown superior accuracy in benchmarking studies and effectively preserves downstream analyses [1] [4]. Additionally, cells exhibiting simultaneous expression of established marker genes for distinct cell types (e.g., immune and epithelial markers) should be carefully scrutinized as potential doublets [4].
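The simulation idea shared by Scrublet and DoubletFinder can be illustrated with a toy, dependency-free sketch. The sketch sums random pairs of observed cells to create artificial doublets. It then scores each real cell by the fraction of its k nearest neighbours that are artificial. This is closest in spirit to DoubletFinder's proportion of artificial nearest neighbours. Both real tools add dimensionality reduction, parameter selection, and score thresholding, all omitted here, and every name below is hypothetical.

```python
import math
import random
from typing import List, Sequence, Tuple

Profile = List[float]


def normalise(counts: Sequence[float]) -> Profile:
    """Library-size normalise to 10,000 counts, then log1p-transform."""
    total = sum(counts)
    return [math.log1p(1e4 * c / total) for c in counts]


def simulate_doublets(cells: Sequence[Sequence[float]], n_sim: int,
                      rng: random.Random) -> List[Profile]:
    """Create artificial doublets by summing the raw counts of two random cells."""
    sims = []
    for _ in range(n_sim):
        a, b = rng.sample(range(len(cells)), 2)
        sims.append([x + y for x, y in zip(cells[a], cells[b])])
    return sims


def doublet_scores(cells: Sequence[Sequence[float]], k: int = 5,
                   n_sim: int = 0, seed: int = 0) -> List[float]:
    """Fraction of each real cell's k nearest neighbours that are simulated doublets."""
    rng = random.Random(seed)
    sims = simulate_doublets(cells, n_sim or 2 * len(cells), rng)
    observed = [normalise(c) for c in cells]
    pool: List[Tuple[Profile, bool]] = (
        [(v, False) for v in observed] + [(normalise(s), True) for s in sims]
    )
    scores = []
    for i, v in enumerate(observed):
        # Distances to every other profile, excluding the cell itself.
        ranked = sorted((math.dist(v, w), is_sim)
                        for j, (w, is_sim) in enumerate(pool) if j != i)
        scores.append(sum(is_sim for _, is_sim in ranked[:k]) / k)
    return scores


# Toy data: genes = [epithelial marker, immune marker, housekeeping gene]
cells = [[100, 0, 5]] * 10 + [[0, 100, 5]] * 10 + [[100, 100, 10]]
scores = doublet_scores(cells, k=5)
print(scores[-1], max(scores[:-1]))
```

In this idealised data the singlets score 0 and the epithelial/immune hybrid scores high. With real data, simulated homotypic doublets also land near singlets, so the tools calibrate a score threshold rather than expecting clean zeros.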

Which experimental and computational strategies effectively mitigate cell stress effects? Addressing cell stress requires both protocol optimization and computational correction. Experimentally, reducing tissue processing time, optimizing dissociation protocols, and implementing rapid sample fixation can minimize stress induction [4]. Computationally, regressing out mitochondrial percentage and stress gene signatures during data scaling helps remove these confounding technical effects [4]. Filtering cells with mitochondrial percentages exceeding 5-15% (tissue-dependent) and removing cells with very low gene counts further cleanses the data of stress-affected cells [4] [6].
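Regressing out a covariate such as mitochondrial percentage amounts to fitting a linear model of each gene's expression on the covariate and keeping the residuals. Seurat exposes this through the vars.to.regress argument of ScaleData(). The dependency-free sketch below shows the arithmetic for a single gene. It assumes an ordinary least-squares fit and uses a hypothetical function name.

```python
from statistics import fmean
from typing import List, Sequence


def regress_out(expression: Sequence[float], covariate: Sequence[float]) -> List[float]:
    """Residuals of one gene's expression after an OLS fit on a per-cell covariate."""
    x_mean, y_mean = fmean(covariate), fmean(expression)
    sxx = sum((x - x_mean) ** 2 for x in covariate)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(covariate, expression))
    slope = sxy / sxx if sxx else 0.0  # constant covariate: nothing to remove
    intercept = y_mean - slope * x_mean
    return [y - (intercept + slope * x) for x, y in zip(covariate, expression)]


pct_mito = [2.0, 4.0, 6.0, 8.0]
stress_gene = [1.0 + 0.5 * m for m in pct_mito]  # driven entirely by mito percentage
print(regress_out(stress_gene, pct_mito))       # residuals are ~0
```

The residuals are centred on zero by construction, so scaling and PCA no longer see the covariate's linear effect. Non-linear stress effects are not removed by this model.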

Table 2: Computational Tools for Artifact Identification and Removal

| Tool Name | Primary Application | Methodology | Key Strengths |
|---|---|---|---|
| SoupX | Ambient RNA removal | Estimates contamination from empty droplets; uses marker genes for decomposition [1] [5] | Does not require precise pre-annotation; effective with single-nucleus data [4] |
| CellBender | Ambient RNA and background noise removal | Deep learning model to distinguish biological signal from technical noise [1] [2] | End-to-end strategy; accurate background estimation; handles large datasets well [1] [4] |
| DecontX | Ambient RNA decontamination | Bayesian method to estimate and remove contamination [1] | Integrated with Celda pipeline; effective for diverse sample types [1] |
| Scrublet | Doublet detection | Creates synthetic doublets for comparison to real cells [1] [7] | Scalable for large datasets; widely adopted in community [7] [4] |
| DoubletFinder | Doublet detection | Neighborhood-based classification; uses artificial nearest neighbors [1] [4] | High accuracy in benchmarking; minimal impact on downstream analyses [4] |

Advanced Experimental Design Considerations

Can doublets ever provide biologically meaningful information? In specific contexts, doublets can indeed offer valuable biological insights. The CIcADA pipeline identifies biologically meaningful doublets representing cells engaged in juxtacrine interactions that maintained physical contact through processing [3]. These preserved doublets consistently upregulated immune response genes in tumor microenvironments, providing direct evidence of cell-cell communication events that would be invisible in singlet-based analyses [3]. To distinguish biological doublets from artifacts, CIcADA compares potential doublets against synthetic doublets created from high-confidence singlets, with differential expression analysis revealing interaction-specific signatures [3].

How should quality control thresholds be adapted for different biological systems? Quality control thresholds must be tailored to specific biological contexts as rigid universal standards can eliminate valid cell populations. Mitochondrial percentage thresholds should account for species and tissue differences, with human tissues typically exhibiting higher baseline mitochondrial gene expression than murine tissues [4]. Highly metabolically active tissues like kidney and heart naturally exhibit elevated mitochondrial content, necessitating adjusted thresholds to avoid filtering biologically relevant cells [4]. For heterogeneous samples, consider implementing cluster-specific QC metrics rather than global thresholds, as different cell types may have distinct technical characteristics [6].

Table 3: Essential Computational Tools for scRNA-seq Artifact Management

| Resource Category | Specific Tools | Primary Function | Implementation Platform |
|---|---|---|---|
| Ambient RNA Correction | SoupX, CellBender, DecontX [1] [4] [2] | Estimate and remove background RNA contamination | R (SoupX, DecontX), Python (CellBender) |
| Doublet Detection | Scrublet, DoubletFinder, Solo [1] [4] [6] | Identify and filter multiplets from single-cell data | Python (Scrublet, Solo), R (DoubletFinder) |
| Quality Control & Filtering | Seurat, Scanpy, Scarf [7] [4] | Comprehensive QC metrics calculation and data filtering | R (Seurat), Python (Scanpy, Scarf) |
| Data Integration & Batch Correction | Harmony, BBKNN, scVI [7] [4] | Remove technical batch effects while preserving biology | R/Python (Harmony), Python (BBKNN, scVI) |

Frequently Asked Questions (FAQs)

What percentage of mitochondrial genes should trigger cell filtering? While commonly used thresholds range from 5-15%, this must be adapted to your specific biological system [4]. Human samples typically exhibit higher mitochondrial percentages than mouse tissues, and highly metabolic tissues like kidney and heart naturally have elevated mitochondrial content [4] [6]. Cardiomyocytes, for instance, normally show high mitochondrial gene expression, and applying standard thresholds would inappropriately remove these biologically valid cells [6].

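How does the multiplet rate change with the number of loaded cells? Multiplet rates increase substantially with higher cell loading concentrations. 10x Genomics reports that loading 7,000 target cells yields 378 multiplets (5.4%), while increasing to 10,000 cells raises the multiplet rate to 7.6% [4]. The rate itself rises roughly in proportion to the number of cells recovered (about 0.8% per 1,000 cells in these figures). As a result, the absolute number of multiplets grows faster than the cell count: 10,000 cells at 7.6% yield about 760 multiplets, roughly twice as many as at 7,000 cells. This relationship necessitates careful experimental planning to balance cell recovery against data quality, with consideration of downstream doublet detection tools to manage the resulting artifacts [4] [6].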
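The arithmetic behind these figures can be made explicit. The sketch assumes a multiplet rate that grows linearly at 0.76% per 1,000 recovered cells. This constant is derived from the quoted 10,000-cell figure (7.6% / 10) rather than taken from any vendor specification. With it, 7,000 cells give about 5.3%, close to the quoted 5.4%. Because both the rate and the cell count increase, the expected number of multiplets grows roughly with the square of the cell count.

```python
RATE_PER_1000_CELLS = 0.76  # percent; assumption derived from 7.6% at 10,000 cells


def multiplet_rate(cells_recovered: int, rate_per_1000: float = RATE_PER_1000_CELLS) -> float:
    """Approximate multiplet fraction, assuming linear growth with cells recovered."""
    return (rate_per_1000 / 100) * (cells_recovered / 1000)


def expected_multiplets(cells_recovered: int, rate_per_1000: float = RATE_PER_1000_CELLS) -> float:
    """Expected number of multiplet barcodes among the recovered cells."""
    return cells_recovered * multiplet_rate(cells_recovered, rate_per_1000)


for n in (2_000, 4_000, 7_000, 10_000):
    print(n, f"{multiplet_rate(n):.1%}", round(expected_multiplets(n)))
```

Under this approximation, doubling the cells recovered roughly quadruples the multiplets that downstream tools must remove.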

Should I always remove cells co-expressing markers of different lineages? Not necessarily. While such co-expression often indicates doublets, it may also represent legitimate transitional states or hybrid cell identities [4]. Carefully examine these cells using tools like CIcADA, which distinguish biological interactions from technical artifacts [3]. The experimental context is crucial; partially dissociated tissues may preserve biologically meaningful cell pairs that provide valuable interaction information [3].

Frequently Asked Questions (FAQs)

1. What are the key QC metrics for filtering low-quality cells in scRNA-seq data? The three fundamental QC metrics are UMI counts, genes detected, and mitochondrial read percentage. These metrics help distinguish high-quality cells from those compromised by technical issues like failed reverse transcription, cell damage, or apoptosis. Proper filtering is crucial as low-quality libraries can form misleading clusters, interfere with population heterogeneity characterization, and create false "upregulation" of genes [8] [9].

2. Why is the number of genes detected per cell an important metric? The number of genes detected (also called nFeature) indicates the complexity of a cell's transcriptome. Cells with an unusually low number of genes often represent empty droplets or severely damaged cells, while those with an extremely high number may be multiplets (droplets containing more than one cell) [10] [6]. This metric is closely related to the total UMI count.

3. How do I interpret and set a threshold for mitochondrial percentage? A high percentage of reads mapping to mitochondrial genes is a strong indicator of poor cell quality, often resulting from broken cells where cytoplasmic RNA has leaked out, leaving behind mitochondrial RNA [9] [6]. While a fixed threshold of 10% is sometimes used, the appropriate cutoff can vary by organism, cell type, and protocol. Some cell types, like cardiomyocytes, naturally have high mitochondrial activity, so applying a universal threshold may introduce bias [6]. It is often better to identify outliers statistically [9].

4. My dataset has cells with very low UMI counts. Should I filter them? Yes, barcodes with very low UMI counts (e.g., below 500) often do not represent true cells but instead contain only ambient RNA [6]. The lower limit can be data-dependent. For example, in the Seurat guided clustering tutorial, cells with fewer than 200 genes detected are filtered out. The distribution of UMI counts should be visualized to set an appropriate, dataset-specific threshold [8] [6].

5. Are fixed thresholds for these QC metrics applicable to all experiments? No, fixed thresholds are not universally applicable. The expected values for QC metrics can vary substantially based on the experimental protocol, sample type, and biological system [11] [6]. Using data-driven, adaptive thresholds—such as identifying outliers based on the median absolute deviation (MAD)—is a more robust approach, especially for heterogeneous samples [9] [6].
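Following on from question 4, one simple way to turn the barcode UMI distribution into a cut-off is a fixed-fraction rule of the kind used by earlier Cell Ranger releases. Take the expected number of cells and find a robust maximum among the top-ranked barcodes (their 99th percentile). Then keep barcodes with at least 10% of that value. The sketch below is a simplified, hypothetical illustration. Current Cell Ranger versions additionally rescue lower-count barcodes with an EmptyDrops-style test, so treat this as a first-pass diagnostic rather than a replacement for the pipeline's cell calling.

```python
import math
from typing import Dict, List


def call_cells(umi_per_barcode: Dict[str, int], expected_cells: int,
               fraction: float = 0.1) -> List[str]:
    """Keep barcodes with >= `fraction` of a robust maximum UMI count."""
    top = sorted(umi_per_barcode.values(), reverse=True)[:expected_cells]
    # 99th percentile of the top barcodes (counted from the highest), so that a
    # handful of extreme barcodes cannot inflate the cut-off.
    robust_max = top[max(0, math.ceil(0.01 * len(top)) - 1)]
    cutoff = fraction * robust_max
    return [bc for bc, n in umi_per_barcode.items() if n >= cutoff]


barcodes = {"AAAC": 5200, "AAAG": 4100, "AACT": 2900, "ACGT": 210, "AGGA": 35}
print(call_cells(barcodes, expected_cells=3))  # -> ['AAAC', 'AAAG', 'AACT']
```

A fixed fraction can discard genuine low-RNA cells such as neutrophils, so inspect the barcode-rank distribution before trusting any single cut-off.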

Troubleshooting Common QC Metric Issues

Problem 1: Unexpectedly Low UMI Counts or Genes Detected Across Most Cells

- Potential Cause: Inefficient cDNA capture or amplification during library preparation, or low sequencing depth.

- Solution:

- Bioinformatic: Be cautious when setting a lower threshold to avoid losing genuine cell types with naturally low RNA content (e.g., neutrophils). Use data-driven thresholds (e.g., 3 MADs below the median) instead of arbitrary ones [6].

- Experimental: Optimize cell viability and ensure accurate cell concentration quantification using a hemocytometer or automated cell counter rather than a FACS machine or Bioanalyzer [8].

Problem 2: High Mitochondrial Percent in a Subset of Cells

- Potential Cause: This is typically a sign of apoptotic or physically damaged cells, which can occur during tissue dissociation [9] [6].

- Solution:

- Bioinformatic: Filter out cells that are outliers for mitochondrial percentage. Visually inspect the distribution per sample and apply a filter (e.g., 10% for PBMCs) [10]. For heterogeneous samples, consider cluster-specific QC [6].

- Experimental: Improve tissue dissociation techniques to reduce cell stress. Use a dead cell removal kit during sample preparation to enrich for live cells [12].

Problem 3: A Shoulder or Bimodal Distribution in Genes Detected per Cell

- Potential Cause: The presence of a distinct group of low-quality cells, or biologically distinct cell populations with inherently lower RNA content [8].

- Solution:

- Bioinformatic: Calculate and visualize the distribution of genes detected per cell. Correlate this metric with the mitochondrial percentage. Cells that are outliers for both are likely low-quality and should be removed [8] [11].

- Biological: Prior knowledge of the biological system is crucial. If your sample is expected to contain less complex cell types (e.g., quiescent cells), avoid filtering them out based on this metric alone [8].

Problem 4: Persistent Technical Noise After Standard Filtering

- Potential Cause: Ambient RNA contamination from lysed cells in the solution, which can be captured in droplets and distort gene expression counts [6].

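- Solution:

- Bioinformatic: Apply an ambient RNA removal tool such as SoupX or CellBender to estimate and subtract the background contamination profile.

- Experimental: Reduce the amount of free RNA in the suspension by minimizing cell lysis, for example with a dead cell removal kit [12].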

Table 1: Common Thresholds and Considerations for Key QC Metrics

| QC Metric | Common Thresholds (Starting Points) | Biological/Technical Meaning | Caveats and Considerations |
|---|---|---|---|
| UMI Counts | Lower limit: 500-1000 [8] [6]; Seurat example: > 500 [8] | Low: empty droplets, ambient RNA. High: multiplets. | Highly heterogeneous samples may contain real cells with naturally low (e.g., neutrophils) or high RNA content. Use data-driven thresholds [6]. |
| Genes Detected | Lower limit: 200-500 genes [6]; Seurat example: 200-2500 [6] | Correlates with library complexity. Low values indicate poor-quality cells or empty droplets. | Often correlates strongly with UMI counts. Can be cell-type specific. |
| Mitochondrial Percent | Upper limit: ~5-10% [10] [6]; alternatively 3-5 MADs from the median [9] | High values indicate cellular stress, apoptosis, or physical damage. | Varies by organism and cell type. Cardiomyocytes naturally have high mtRNA; applying a standard threshold can be misleading [6]. |

Table 2: Key Bioinformatics Tools for QC and Filtering

| Tool Name | Primary Function | Application Context |
|---|---|---|
| Seurat [8] [13] | Comprehensive analysis toolkit (R) | Calculates QC metrics, visualization, and filtering. |
| scater [9] [11] | Single-cell QC and visualization (R/Bioconductor) | Computes per-cell QC statistics and diagnostic plots. |
| Cell Ranger [10] [13] | Raw data processing (10x Genomics) | Initial processing, alignment, and cell calling from FASTQ files. |
| DoubletFinder / Scrublet [6] | Doublet detection | Identifies potential multiplets post-cell-calling. |
| SoupX / CellBender [6] [13] | Ambient RNA removal | Corrects for background noise in droplet-based data. |

Experimental Protocol: Calculating QC Metrics with Seurat

This protocol details the steps for calculating key QC metrics from a merged Seurat object, as outlined in the Harvard Chan Bioinformatics Core (HBC) training materials [8].

1. Explore Initial Metadata:

- After creating or merging a Seurat object, begin by examining the automatically generated metadata with `View(merged_seurat@meta.data)`. This includes `nCount_RNA` (number of UMIs per cell) and `nFeature_RNA` (number of genes detected per cell) [8].

2. Calculate Genes per UMI:

- Compute a complexity score for each cell as the ratio of log10(genes detected) to log10(UMIs). This score reflects the complexity of the RNA species in the cell. Log-transforming both counts before taking the ratio makes the score easier to compare across samples [8].

- Code:

```r
merged_seurat$log10GenesPerUMI <- log10(merged_seurat$nFeature_RNA) / log10(merged_seurat$nCount_RNA)
```

3. Compute Mitochondrial Ratio:

- Use the `PercentageFeatureSet()` function to calculate the percentage of transcripts mapping to mitochondrial genes, then divide by 100 to store it as a ratio. The pattern `"^MT-"` matches human gene names; adjust this pattern for your organism of interest (e.g., `"^mt-"` for mouse) [8].

- Code:

```r
merged_seurat$mitoRatio <- PercentageFeatureSet(object = merged_seurat, pattern = "^MT-")
merged_seurat$mitoRatio <- merged_seurat@meta.data$mitoRatio / 100
```

4. Create and Augment Metadata Dataframe:

- Extract the metadata into a separate dataframe for safer manipulation [8].

- Code:

```r
library(dplyr)    # provides %>% and rename()
library(stringr)  # provides str_detect()

metadata <- merged_seurat@meta.data
# Add cell IDs as an explicit column
metadata$cells <- rownames(metadata)
# Rename columns to more intuitive names
metadata <- metadata %>%
  dplyr::rename(seq_folder = orig.ident,
                nUMI = nCount_RNA,
                nGene = nFeature_RNA)
# Create a sample column from the cell-ID prefixes ("ctrl_" / "stim_" in the training dataset)
metadata$sample <- NA
metadata$sample[which(str_detect(metadata$cells, "^ctrl_"))] <- "ctrl"
metadata$sample[which(str_detect(metadata$cells, "^stim_"))] <- "stim"
```

5. Integrate Metadata Back to Seurat Object:

- Save the updated metadata back into the Seurat object to complete the process [8].

- Code:

```r
merged_seurat@meta.data <- metadata
```

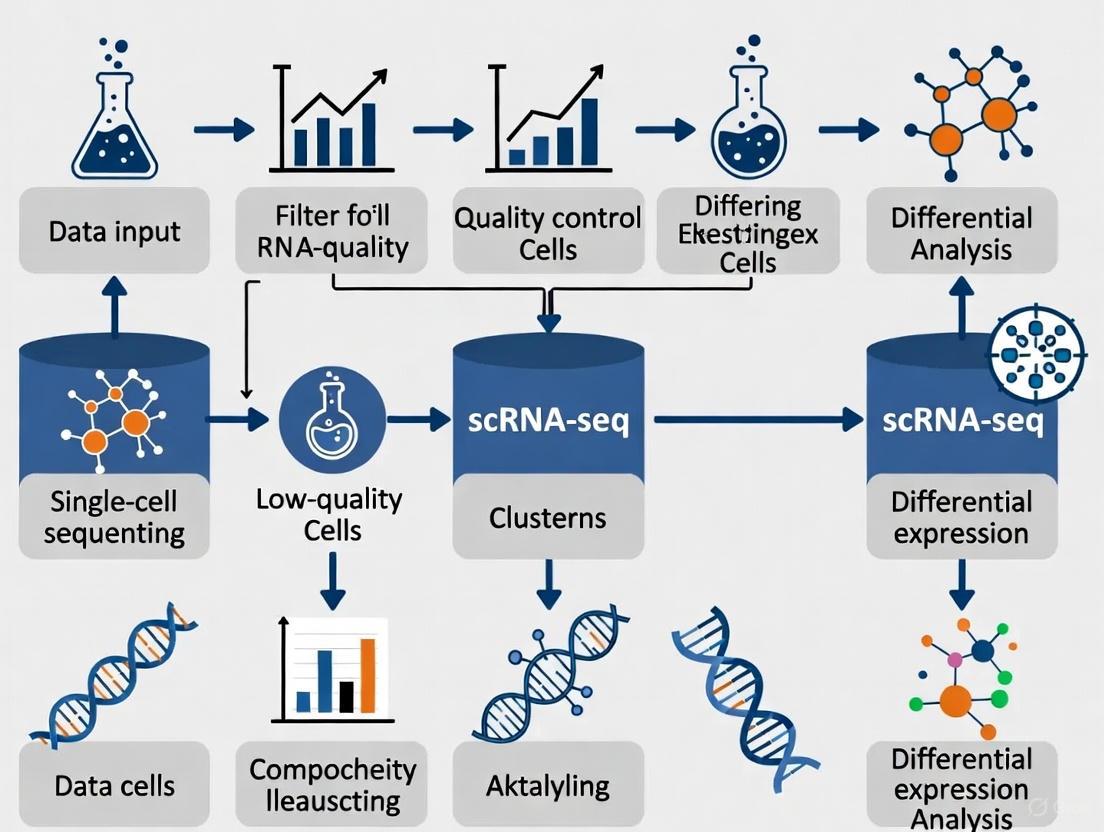

scRNA-seq QC Workflow

The following diagram illustrates the logical workflow for quality control in single-cell RNA sequencing analysis, from raw data to a filtered cell matrix.

A Researcher's Toolkit for scRNA-seq QC

Table 3: Essential Research Reagent Solutions for scRNA-seq QC

| Item | Function in QC Context |
|---|---|
| Dead Cell Removal Kit [12] | Improves initial sample quality by enriching for live cells, which reduces background signal from lysed cells and leads to a lower mitochondrial percentage in the final data. |
| Cell Strainer | Removes cell aggregates and large debris to prevent clogs in microfluidic chips and reduce multiplet rates, leading to more accurate UMI and gene counts per cell. |
| Hemocytometer / Automated Cell Counter [8] [12] | Provides an accurate count of cell concentration and viability. Inaccurate counting is a common source of poor cell recovery and can affect the interpretation of UMI counts per cell. |
| Trypan Blue or Fluorescent Viability Dyes [12] | Allows discrimination between live and dead cells during counting. Fluorescent dyes are more accurate for complex samples like nuclei suspensions or those with debris. |
| Cryopreservation Media (with DMSO) [12] | Enables freezing of high-quality cell suspensions for later processing, preserving cell viability and RNA integrity to prevent degradation that inflates mitochondrial metrics. |
| Nuclei Isolation Kit [12] | Provides a standardized method for nuclei extraction from difficult tissues, ensuring nuclear integrity and reducing contamination from cytoplasmic RNA, which affects gene and UMI counts. |

As part of the broader task of filtering low-quality cells in single-cell RNA sequencing (scRNA-seq) data, this guide addresses a fundamental challenge: reliably distinguishing intact, high-quality cells from empty droplets and other artifacts. scRNA-seq data are inherently sparse and prone to dropouts, making initial quality assessment critical for all downstream analyses [14] [15]. Proper identification of real cells ensures that subsequent discoveries about cellular heterogeneity, disease mechanisms, and drug development are built on a solid biological foundation.

Frequently Asked Questions

1. What are the primary quantitative metrics used to distinguish real cells from empty droplets, and what are their typical thresholds?

The initial quality control (QC) step typically relies on three core metrics calculated for each cellular barcode. The following table summarizes these key indicators and their generally accepted thresholds for identifying high-quality cells [15] [8] [4].

Table 1: Key Quality Control Metrics for scRNA-seq Data

| Metric | Biological/Technical Meaning | Typical Threshold (for high-quality cells) | Rationale |
|---|---|---|---|
| Number of Counts per Cell (nUMI) | Total number of transcripts (UMIs) detected. Represents the "library size." | > 500 - 1000 [8] | Values that are too low suggest an empty droplet or a cell with little RNA content (e.g., a dead cell). |
| Number of Genes per Cell (nGene) | The diversity of expressed genes. | > 250 - 300 [8] | Low numbers indicate a poor-quality cell or empty droplet. Excessively high numbers may indicate a doublet. |
| Mitochondrial Count Fraction | Percentage of transcripts originating from mitochondrial genes. | Varies by species and sample; often 5% - 15% [4] | A high percentage suggests cell stress, apoptosis, or a ruptured cell membrane through which cytoplasmic RNA has leaked out while mitochondrial RNA is retained. |

2. Beyond the standard metrics, what additional quality indicators can reveal low-quality cells?

Recent research advocates for incorporating the nuclear fraction, based on intronic read content, as a crucial quality metric [16]. In droplet-based scRNA-seq, all nucleated cells should have a significant fraction of reads mapped to introns. Cells with a very low intronic fraction likely represent empty droplets, cytoplasmic debris, or nuclei-free cytoplasmic remnants. Conversely, cells with an extremely high intronic fraction may represent lysed cells that have lost their cytosol [16]. The expression of the long non-coding RNA MALAT1 can also serve as a nuclear marker; its absence can flag cells lacking a nucleus [16].
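A minimal sketch of the nuclear-fraction idea follows. It assumes that per-barcode intronic and exonic read counts have already been tallied, for example by a tool such as DropletQC. The low and high cut-offs are hypothetical placeholders, as are the function names. DropletQC's own methods consider the nuclear fraction together with UMI counts rather than applying fixed global thresholds.

```python
from typing import Dict, Tuple


def nuclear_fraction(intronic: int, exonic: int) -> float:
    """Share of a barcode's reads that map to introns (a proxy for nuclear RNA)."""
    total = intronic + exonic
    return intronic / total if total else 0.0


def flag_barcodes(reads: Dict[str, Tuple[int, int]], low: float = 0.1,
                  high: float = 0.6) -> Dict[str, str]:
    """Label barcodes using hypothetical nuclear-fraction cut-offs."""
    labels = {}
    for barcode, (intronic, exonic) in reads.items():
        nf = nuclear_fraction(intronic, exonic)
        if nf < low:
            labels[barcode] = "no nucleus (empty droplet or debris?)"
        elif nf > high:
            labels[barcode] = "possibly lysed (cytosol lost?)"
        else:
            labels[barcode] = "ok"
    return labels


print(flag_barcodes({"bc1": (5, 95), "bc2": (300, 700), "bc3": (800, 200)}))
```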

3. What are doublets/multiplets, and why are they problematic?

A doublet or multiplet is a droplet that contains more than one cell. Multiplets form at a non-negligible rate during library preparation, especially at higher cell loading concentrations [4] [17]. They are problematic because they create hybrid expression profiles, which can be misinterpreted as novel or transitional cell types, leading to spurious biological conclusions [17]. The multiplet rate for a platform like 10x Genomics can be around 5.4% when loading 7,000 target cells [4].

4. How can I differentiate a true rare cell population from a technical artifact like a doublet?

This is a significant challenge. True rare cell types will have coherent gene expression programs, including the expression of established marker genes for a known lineage. In contrast, heterotypic doublets (formed from two different cell types) may co-express marker genes from two distinct lineages, which is biologically implausible for a single cell [4]. Computational tools like DoubletFinder and Scrublet are designed to detect these anomalous cells by comparing observed expression profiles to simulated doublets [4]. However, caution is needed, as some true cells in transitional states might also co-express markers; therefore, a combination of automated tools and manual inspection is recommended [4].

5. My dataset has a high level of ambient RNA. How does this affect cell calling, and how can I correct for it?

Ambient RNA consists of transcripts from lysed cells that exist in the solution and are subsequently encapsulated into droplets along with intact cells [4]. This contamination can lead to the misidentification of empty droplets as cells and can blur the distinct expression profiles of real cell types, complicating annotation. Tools like SoupX and CellBender have been developed to estimate and subtract this background contamination [4]. SoupX is noted for its performance with single-nucleus data, while CellBender provides accurate estimation of background noise in diverse datasets [4].

Experimental Protocols for Robust Quality Control

The following workflow provides a detailed methodology for a comprehensive QC analysis of scRNA-seq data, integrating both standard and advanced metrics.

Protocol: A Comprehensive Workflow for scRNA-seq Quality Control

Step 1: Environment Setup and Data Input

Begin by loading your count matrix (e.g., from Cell Ranger) into a standard analysis environment like Scanpy (Python) or Seurat (R). For example, in Scanpy, you would use sc.read_10x_h5() to import the data [15].

Step 2: Calculation of QC Metrics

Compute the standard QC metrics for each barcode:

- Total counts (nUMI) and number of genes detected (nGene).

- Mitochondrial fraction: Identify mitochondrial genes (e.g., those starting with "MT-" in humans, "mt-" in mice) and calculate their percentage of total counts [15] [8].

- Additional gene sets: Calculate the proportion of ribosomal protein genes (RPS, RPL) and hemoglobin genes (HB) as they can also indicate specific biological or technical states [15].

- Nuclear fraction: Use specialized packages (e.g., the DropletQC R package) to calculate the proportion of intronic reads for each barcode, a key metric for identifying nucleus-free debris [16].
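The per-barcode metrics in Step 2 reduce to simple sums over a cell's counts. The sketch below is a dependency-free illustration; Scanpy's sc.pp.calculate_qc_metrics and Seurat's PercentageFeatureSet perform these calculations on full matrices. It assumes human gene symbols, an "MT-" mitochondrial prefix, and RPS/RPL prefixes for ribosomal genes. It also uses an explicit, assumed list of hemoglobin genes, because a bare "HB" prefix would also match unrelated genes such as HBEGF.

```python
from typing import Dict

# Assumed human hemoglobin gene symbols; adjust for other organisms.
HEMOGLOBIN_GENES = {"HBA1", "HBA2", "HBB", "HBD", "HBG1", "HBG2", "HBE1", "HBZ", "HBM", "HBQ1"}


def qc_metrics(counts: Dict[str, int], mito_prefix: str = "MT-") -> Dict[str, float]:
    """Per-cell nUMI, nGene and percentage of counts in selected gene sets."""
    total = sum(counts.values())

    def pct(selected) -> float:
        subset = sum(c for gene, c in counts.items() if selected(gene))
        return 100.0 * subset / total if total else 0.0

    return {
        "n_umi": total,
        "n_genes": sum(1 for c in counts.values() if c > 0),
        "pct_mito": pct(lambda g: g.startswith(mito_prefix)),
        # Prefix matching is crude: it also catches kinases such as RPS6KA1.
        "pct_ribo": pct(lambda g: g.startswith(("RPS", "RPL"))),
        "pct_hb": pct(lambda g: g in HEMOGLOBIN_GENES),
    }


cell = {"MT-CO1": 20, "RPL13": 10, "HBB": 5, "ACTB": 65, "GAPDH": 0}
print(qc_metrics(cell))
# {'n_umi': 100, 'n_genes': 4, 'pct_mito': 20.0, 'pct_ribo': 10.0, 'pct_hb': 5.0}
```

For mouse data, pass mito_prefix="mt-" and use the corresponding lower-case gene symbols.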

Step 3: Automated and Manual Thresholding for Filtering

- Strategy: It is advised to be as permissive as possible initially to avoid filtering out viable cell populations, especially rare subtypes [15].

- Manual Inspection: Visualize the distribution of QC metrics (nUMI, nGene, mitochondrial fraction) using histograms, violin plots, and scatter plots to identify outliers [8].

- Automated Thresholding: For larger datasets, apply an automatic outlier detection method. A robust method is to use the Median Absolute Deviation (MAD). Cells that deviate by more than 5 MADs from the median for a given metric can be flagged as potential low-quality cells [15].
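The MAD rule in Step 3 can be sketched as follows, in the spirit of scater's isOutlier(). Optionally log-transform the metric, then compute its median and median absolute deviation. The MAD is scaled by 1.4826 so that it estimates a standard deviation for normally distributed data. Values more than nmads MADs away in the chosen direction are flagged. The function names and defaults are assumptions. The default of 5 MADs follows the permissive recommendation above, while 3 MADs is a common stricter choice.

```python
import math
from statistics import median
from typing import List, Sequence


def mad(values: Sequence[float]) -> float:
    """Median absolute deviation, scaled to be consistent with a normal SD."""
    centre = median(values)
    return 1.4826 * median([abs(v - centre) for v in values])


def is_outlier(values: Sequence[float], nmads: float = 5.0, log: bool = False,
               direction: str = "both") -> List[bool]:
    """Flag values more than `nmads` MADs below and/or above the median."""
    x = [math.log1p(v) for v in values] if log else list(values)
    centre, spread = median(x), mad(x)
    low, high = centre - nmads * spread, centre + nmads * spread
    if direction == "lower":
        return [v < low for v in x]
    if direction == "higher":
        return [v > high for v in x]
    return [v < low or v > high for v in x]


n_umi = [1000, 1100, 900, 1050, 950, 10]
pct_mito = [2.0, 3.0, 2.5, 3.5, 3.0, 40.0]
low_umi = is_outlier(n_umi, nmads=3, log=True, direction="lower")
high_mito = is_outlier(pct_mito, nmads=3, direction="higher")
keep = [not (a or b) for a, b in zip(low_umi, high_mito)]
print(keep)  # [True, True, True, True, True, False]
```

Library size is log-transformed because its distribution is strongly right-skewed. Mitochondrial percentage is checked only in the "higher" direction, since low values are not a quality concern.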

Step 4: Doublet Detection

- Apply a computational doublet detection tool such as DoubletFinder, Scrublet, or COMPOSITE (the latter is designed for multi-omics data) [4] [17].

- DoubletFinder and Scrublet work by creating artificial doublets and then comparing all cells to these simulated profiles to assign a doublet score, whereas COMPOSITE uses a statistical model-based framework [17]. Remove cells classified as doublets with high probability.

Step 5: Ambient RNA Correction

- Apply an ambient RNA removal tool like SoupX or CellBender to clean the count matrix. This step helps to correct the expression values of the remaining cells, improving downstream analysis [4].

Step 6: Iterative Re-assessment

- QC is not always a linear process. Re-assess your filtering strategy after initial clustering and annotation, as some biological populations may naturally have higher mitochondrial or lower gene content [15].

The following diagram illustrates the logical workflow and decision points in this protocol:

The following table details key computational tools and resources essential for implementing the quality control procedures described above.

Table 2: Research Reagent Solutions: Key Computational Tools for scRNA-seq QC

| Tool Name | Function | Brief Description of Role |
|---|---|---|
| Scanpy [15] | Data Analysis & QC | A comprehensive Python-based toolkit for analyzing single-cell gene expression data. Used for calculating QC metrics, filtering, and visualization. |
| Seurat [8] | Data Analysis & QC | A widely used R toolkit for single-cell genomics. Provides functions for QC, normalization, and clustering. |
| DoubletFinder [4] | Doublet Detection | A tool that uses artificial nearest-neighbor networks to classify doublets in scRNA-seq data. |
| Scrublet [4] | Doublet Detection | A scalable tool for predicting doublets in scRNA-seq data by simulating doublets and identifying neighbors. |
| COMPOSITE [17] | Multiplet Detection | A statistical model-based framework for detecting multiplets, particularly effective in single-cell multi-omics data. |
| SoupX [4] | Ambient RNA Removal | A tool for estimating and removing the ambient RNA contamination profile from droplet-based scRNA-seq data. |
| CellBender [4] | Ambient RNA Removal | A tool that uses a deep generative model to remove technical artifacts, including ambient RNA, from count data. |
| DropletQC [16] | Nuclear Fraction | An R package for identifying empty droplets and low-quality cells based on nuclear fraction (intronic content). |

Frequently Asked Questions (FAQs)

Q1: I am working with fragile cells, like those from epithelial tissues. Which platform is gentler and might prevent cell stress? A1: For fragile cells, such as gastrointestinal tract epithelial cells, picowell-based (well-based) platforms are often the gentler option. They utilize processes that are less mechanically and enzymatically stressful compared to droplet-based methods, which helps preserve more natural gene expression profiles and reduces sample degradation [18].

Q2: My project requires profiling thousands of cells. Which platform is better for high-throughput studies? A2: Droplet-based technologies, like the 10x Genomics Chromium system, are generally superior for high-throughput applications. They are designed to process thousands to millions of cells in a single experiment, offering a lower cost per cell when working at a large scale [19] [14].

Q3: I am concerned about technical artifacts like doublets, where two cells are mistakenly sequenced as one. How do these platforms compare? A3: Both platforms generate doublets, but the causes and rates differ.

- Droplet-based: Doublets occur primarily from co-encapsulation of multiple cells in a single droplet. The rate follows a Poisson distribution and is typically kept below 5% with optimal cell loading concentrations [19] [20].

- Well-based: Doublets can occur but some systems, like Well-TEMP-seq, achieve a high single cell-barcoded bead pairing rate of ~80%, which is significantly higher than the Poisson-dependent pairing in some droplet methods [21].
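The Poisson argument for droplet loading can be checked with a few lines of arithmetic. Assume the number of cells per droplet follows a Poisson distribution with mean λ (lam below). The fraction of cell-containing droplets that hold two or more cells is then (1 - e^(-λ) - λe^(-λ)) / (1 - e^(-λ)), which is roughly λ/2 for small λ. Lower loading therefore yields fewer multiplets at the price of more empty droplets. This idealised model ignores cell aggregation and bead-occupancy effects, and the function names are hypothetical.

```python
import math


def multiplet_fraction(lam: float) -> float:
    """Share of occupied droplets with >= 2 cells, assuming cells ~ Poisson(lam)."""
    p0 = math.exp(-lam)      # probability a droplet receives no cell
    p1 = lam * p0            # probability of exactly one cell
    occupied = 1.0 - p0      # probability of at least one cell
    return (occupied - p1) / occupied


def empty_fraction(lam: float) -> float:
    """Share of droplets that receive no cell at all."""
    return math.exp(-lam)


for lam in (0.05, 0.1, 0.2):
    print(lam, f"multiplets {multiplet_fraction(lam):.1%}", f"empty {empty_fraction(lam):.0%}")
```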

Q4: What about background noise from ambient RNA? Is one platform less prone to this? A4: Picowell-based platforms can have an advantage in reducing ambient RNA contamination. The workflow for some well-based systems allows for the removal of cell-free RNAs by washing the wells before the barcoding step, which is not possible in standard droplet workflows [21]. In droplet-based systems, ambient RNA is a known challenge that often requires computational tools for correction [19] [22].

Technical Comparison Tables

Table 1: Key Performance Metrics for Droplet vs. Well-Based Platforms

| Performance Metric | Droplet-Based (e.g., 10x Genomics, inDrop) | Well-Based (e.g., Picowell platforms) |
|---|---|---|
| Typical Cell Throughput | Very high (thousands to millions of cells) [19] | Variable, but generally lower than droplet-based systems [18] |
| Cell Capture Efficiency | 30% - 75% [19] | Not reported in the cited sources |
| mRNA Capture Efficiency | 10% - 50% of cellular transcripts [19] | Not reported in the cited sources |
| Typical Multiplet Rate | < 5% (with optimal loading) [19] | Not reported in the cited sources |
| Single Cell-Bead Pairing Efficiency | Low in some systems (e.g., <1% for Drop-seq based methods) due to Poisson distribution [21] | Can be very high (e.g., ~80% for Well-TEMP-seq) [21] |
| Gentleness on Fragile Cells | Lower; enzymatic/mechanical processes can stress delicate cells [18] | Higher; gentler capture process better preserves cell integrity [18] |
| Ambient RNA Control | Challenging; requires computational cleanup [19] [22] | Better; allows physical washing to remove cell-free RNA [21] |
| Cost per Cell | Lower at very high throughput [19] [14] | Can be a cost-effective alternative [18] |

Table 2: Essential Experimental Protocols for Platform Validation

| Experiment Name | Purpose | Key Steps | Interpretation & Role in Filtering Low-Quality Cells |
|---|---|---|---|
| Species-Mixing Experiment [20] | To quantify the cell doublet rate. | 1. Mix cells from different species (e.g., human & mouse). 2. Process the mixed sample through the scRNA-seq platform. 3. Sequence and analyze the data. | Identifies doublets: Cells expressing genes from both species are technical doublets. The measured heterotypic doublet rate is used to estimate the overall (including homotypic) doublet rate, allowing for the computational removal of these artifacts. |
| Cell Hashing / Multiplexing (e.g., MULTI-seq) [20] | To label cells from different samples with unique barcodes before pooling, enabling sample multiplexing and doublet detection. | 1. Label individual cell samples with unique lipid-conjugated or antibody-conjugated oligonucleotide barcodes. 2. Pool the labeled samples. 3. Process the pooled sample through the scRNA-seq platform. | Identifies sample multiplets: After sequencing, cells with more than one hashtag barcode are identified as doublets or multiplets and filtered out. This allows for intentional overloading of cells to increase throughput while controlling the final doublet rate. |
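The extrapolation from heterotypic to total doublets in a species-mixing experiment can be sketched as follows. The sketch assumes that doublets form by random pairing. With a human fraction h and a mouse fraction m = 1 - h, a doublet is then heterotypic with probability 2hm, so the total doublet rate is roughly the observed heterotypic rate divided by 2hm. The 0.9 purity cutoff, the function names, and the barcode counts are hypothetical and chosen only for illustration.

```python
def classify_barcode(human_umis: int, mouse_umis: int, purity: float = 0.9) -> str:
    """Label a barcode by species purity (the 0.9 cutoff is an illustrative assumption)."""
    total = human_umis + mouse_umis
    if total == 0:
        return "empty"
    frac_human = human_umis / total
    if frac_human >= purity:
        return "human"
    if frac_human <= 1.0 - purity:
        return "mouse"
    return "mixed"  # substantial reads from both species: heterotypic doublet


def estimate_total_doublet_rate(heterotypic_rate: float, frac_human: float) -> float:
    """Scale the observed heterotypic rate up to all doublets, assuming random pairing."""
    if not 0.0 < frac_human < 1.0:
        raise ValueError("frac_human must be strictly between 0 and 1")
    frac_mouse = 1.0 - frac_human
    # Under random pairing, a doublet is heterotypic with probability 2*h*m.
    p_heterotypic = 2.0 * frac_human * frac_mouse
    return heterotypic_rate / p_heterotypic


# Hypothetical (human UMIs, mouse UMIs) per barcode from a 50:50 mix.
barcodes = [(950, 20), (15, 880), (500, 470), (1020, 5), (8, 760),
            (900, 30), (12, 990), (980, 10), (20, 870), (930, 25)]
labels = [classify_barcode(h, m) for h, m in barcodes]
het_rate = labels.count("mixed") / len(labels)
print(labels)
print(f"heterotypic rate={het_rate:.1%}, "
      f"estimated total doublet rate={estimate_total_doublet_rate(het_rate, 0.5):.1%}")
```

In a 50:50 mix, 2hm = 0.5, so the estimated total doublet rate is twice the observed heterotypic rate. Homotypic doublets (human-human or mouse-mouse) are invisible to this assay but are accounted for by the scaling.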

Workflow Diagrams

Diagram 1: Key Steps for scRNA-seq Quality Control

Diagram 2: Droplet vs. Well-Based Cell Capture

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents for scRNA-seq Quality Control

| Reagent / Material | Function | Considerations for Platform Choice |
|---|---|---|
| Viability Stains (e.g., Calcein-AM) [22] | Fluorescent dye used to identify live cells based on esterase activity. | Can be used with advanced droplet systems (e.g., spinDrop) for fluorescence-activated droplet sorting (FADS) to enrich for live cells before lysis and barcoding. |
| Cell Hashing Oligonucleotides (e.g., MULTI-seq) [20] | Sample-specific barcodes (antibody- or lipid-conjugated) that label cells prior to pooling. | Enables sample multiplexing and doublet detection on both droplet and well-based platforms. Crucial for increasing throughput while maintaining data quality. |
| Barcoded Beads (Gel Beads) [19] [23] | Microbeads containing millions of oligonucleotides with cell barcodes and UMIs for capturing mRNA. | A core component for both platforms. Hydrogel beads (used in 10x, inDrop) allow for sub-Poisson loading, while hard resin beads are used in Drop-seq. |
| Unique Molecular Identifiers (UMIs) [24] [25] | Short random nucleotide sequences added to each transcript during reverse transcription. | Essential for both platforms to digitally count individual mRNA molecules and correct for PCR amplification bias, ensuring quantitative data. |
| Surfactant/Oil Emulsion [23] | Creates stable, nanoliter-scale water-in-oil droplets that act as isolated reaction chambers. | Critical for the stability of droplet-based systems. The quality and formulation directly impact droplet integrity and prevent cross-contamination. |
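The way UMIs correct for PCR amplification bias can be shown with a minimal sketch. It assumes reads have already been parsed into (cell barcode, UMI, gene) tuples. Reads that share all three fields are collapsed into one molecule. Production pipelines also correct sequencing errors within UMIs, which this sketch omits, and the function name count_molecules is illustrative.

```python
from collections import defaultdict


def count_molecules(reads):
    """Collapse reads to molecules and count UMIs per (cell barcode, gene).

    Each read is a (cell_barcode, umi, gene) tuple. Reads sharing all three
    fields are treated as PCR copies of one original molecule.
    """
    molecules = set(reads)  # deduplicate PCR duplicates
    counts = defaultdict(lambda: defaultdict(int))
    for barcode, _umi, gene in molecules:
        counts[barcode][gene] += 1
    return {barcode: dict(genes) for barcode, genes in counts.items()}


reads = [
    ("AAAC", "UMI1", "ACTB"), ("AAAC", "UMI1", "ACTB"), ("AAAC", "UMI1", "ACTB"),  # 3 PCR copies
    ("AAAC", "UMI2", "ACTB"),
    ("AAAC", "UMI3", "CD3E"),
    ("TTTG", "UMI1", "ACTB"),  # same UMI, different cell: a distinct molecule
]
print(count_molecules(reads))  # 6 reads collapse to 4 molecules
```

Counting molecules instead of reads means that a transcript amplified many times still contributes one count, which is why UMI totals (nCount_RNA) are the standard library-size metric.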

The How-To Guide: Methodologies and Tools for Effective Cell Filtering

This guide details the critical process of filtering low-quality cells in single-cell RNA sequencing (scRNA-seq) data analysis. The quality of your initial cell matrix profoundly impacts all downstream biological interpretations, from identifying cell types to understanding cellular communication. This workflow provides researchers with a structured, troubleshooting-oriented approach to ensure that the cellular data underlying their research is robust and reliable.

Why is Filtering Low-Quality Cells So Critical?

In scRNA-seq data, low-quality cells can arise from several sources, including cells damaged during dissociation, empty droplets, and droplets containing doublets/multiplets. If not removed, these barcodes introduce technical noise that can obscure true biological variation. For instance, transcripts released from ruptured cells become "ambient RNA" that is captured in other droplets, contaminating their profiles and leading to misclassification. Furthermore, dying cells often exhibit aberrantly high mitochondrial gene expression, which can be mistaken for a genuine biological state. Effective filtering is the first line of defense against these artifacts, ensuring that subsequent clustering and differential expression analysis reflect biology rather than technical artifacts [26] [14] [10].

Frequently Asked Questions (FAQs)

1. What are the key metrics used to identify a low-quality cell? Three primary metrics are commonly used:

- Unique Gene Count (nFeature_RNA): The number of genes detected in a cell. Low counts may indicate empty droplets or broken cells, while very high counts can suggest doublets (multiple cells captured together) [27] [10].

- UMI Count (nCount_RNA): The total number of transcripts detected. This often correlates strongly with the gene count and is used similarly to identify outliers [27].

- Mitochondrial Gene Percentage (percent.mt): The proportion of transcripts derived from mitochondrial genes. A high percentage is a hallmark of stressed, apoptotic, or low-quality cells due to compromised cell membranes [27] [28].
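All three metrics can be computed from a single cell's gene-to-UMI counts. The Python sketch below mirrors what Seurat stores as nCount_RNA and nFeature_RNA and what PercentageFeatureSet(pattern = "^MT-") computes for human data. The dictionary input and the function name qc_metrics are assumptions. The mitochondrial prefix is matched case-insensitively so that mouse "mt-" symbols are also covered, whereas Seurat's pattern is case-sensitive.

```python
def qc_metrics(cell_counts: dict, mito_prefix: str = "MT-") -> dict:
    """Compute nCount_RNA, nFeature_RNA and percent.mt for one cell.

    cell_counts maps gene symbol -> UMI count. The prefix match is
    case-insensitive, so it covers human "MT-" and mouse "mt-" symbols.
    """
    n_count = sum(cell_counts.values())
    n_feature = sum(1 for v in cell_counts.values() if v > 0)
    mito = sum(v for gene, v in cell_counts.items()
               if gene.upper().startswith(mito_prefix.upper()))
    percent_mt = 100.0 * mito / n_count if n_count else 0.0
    return {"nCount_RNA": n_count, "nFeature_RNA": n_feature, "percent.mt": percent_mt}


cell = {"ACTB": 40, "CD3E": 25, "GAPDH": 0, "MT-CO1": 20, "MT-ND1": 15}
print(qc_metrics(cell))
```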

2. How do I set specific filtering thresholds for my dataset? Thresholds are not universal and must be determined empirically from the data distribution of your own experiment. The following steps are recommended:

- Visualize Distributions: Use violin plots or scatter plots to inspect the distributions of the three key metrics across all cells [27] [29].

- Identify Outliers: Manually set thresholds to exclude clear outliers. A common strategy is to filter cells with a mitochondrial percentage significantly above the majority of the population (e.g., >5-10% for PBMCs) and remove cells at the extreme low ends of the gene and UMI count distributions [27] [10].

- Iterate and Re-cluster: Filtering is an iterative process. After applying initial thresholds, re-visualize the data to ensure that low-quality clusters are removed while biologically relevant populations are retained [28].

3. My dataset has a high overall mitochondrial percentage. What should I do? A uniformly high mitochondrial percentage can indicate a problem with the sample viability itself. Before filtering, consider:

- Biology vs. Artifact: Certain cell types, like cardiomyocytes, naturally have high respiratory activity and thus high mitochondrial RNA content. Filtering these could introduce bias [26] [10].

- Experimental Review: If the biology does not explain the high percentage, it may point to an issue with the sample preparation protocol, such as excessive cell stress during dissociation. If possible, troubleshoot the wet-lab process.

4. What tools can I use to perform this filtering?

- Seurat (R): A widely used toolkit that provides functions for calculating QC metrics (PercentageFeatureSet), visualization (VlnPlot, FeatureScatter), and filtering (subset) [27] [29].

- Loupe Browser (10x Genomics): A graphical interface that allows for interactive visualization and filtering of cells based on QC metrics, providing real-time feedback on how filtering affects cell clusters [10] [28].

- Command-Line Tools: Pipelines like Cell Ranger (10x Genomics) and STARsolo perform initial quantification and can generate summary reports used for QC [26] [10].

Troubleshooting Common Issues

Problem: Loss of a rare cell population after filtering.

- Potential Cause: Overly stringent thresholds on gene or UMI counts may have removed small cells with naturally low RNA content.

- Solution: Relax the lower bounds on UMI and gene counts. Visually inspect the pre-filtering plots to see if the potential rare population forms a distinct cluster at the lower end of the counts and adjust thresholds to preserve it [26] [28].

Problem: A distinct cluster of cells has a high mitochondrial percentage.

- Potential Cause: This could be a genuine population of stressed or dying cells, or a technical artifact.

- Solution: Do not automatically filter this entire cluster. First, check for the expression of marker genes for known cell types. If the cluster expresses markers for a real cell type but also has high stress signatures, you may choose to retain it and regress out the mitochondrial signal as a source of unwanted variation in downstream steps, rather than removing the cells entirely [27] [28].
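Regressing out the mitochondrial signal means fitting each gene's expression against percent.mt and keeping the residuals. In Seurat this is done through ScaleData(vars.to.regress = "percent.mt"). The Python sketch below shows the idea for a single gene with ordinary least squares. It is not Seurat's implementation, and the function name regress_out is illustrative.

```python
def regress_out(expression, covariate):
    """Residuals of one gene's expression after a least-squares fit on a covariate."""
    n = len(expression)
    if n == 0 or n != len(covariate):
        raise ValueError("expression and covariate must be non-empty and equal length")
    mean_x = sum(covariate) / n
    mean_y = sum(expression) / n
    sxx = sum((x - mean_x) ** 2 for x in covariate)
    if sxx == 0:
        # Covariate is constant: nothing to regress, just center the gene.
        return [y - mean_y for y in expression]
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(covariate, expression))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return [y - (intercept + slope * x) for x, y in zip(covariate, expression)]


percent_mt = [2.0, 4.0, 6.0, 8.0, 10.0]
gene = [1.0, 1.4, 2.1, 2.4, 3.1]  # expression rising with percent.mt
print([round(r, 3) for r in regress_out(gene, percent_mt)])
```

The residuals are uncorrelated with percent.mt by construction. Downstream clustering on them is therefore less driven by stress-related variation, while the cells themselves are retained.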

Problem: Persistent technical batch effects after filtering.

- Potential Cause: Filtering alone cannot always correct for strong technical differences between samples processed in different batches.

- Solution: After performing quality control on each sample individually, use data integration tools like those provided in Seurat (FindIntegrationAnchors, IntegrateData) to harmonize the datasets before proceeding to clustering [27] [30].

Standard Operating Procedure: Cell Quality Control and Filtering

Methodology Using Seurat in R

This protocol outlines the standard pre-processing workflow for scRNA-seq data in Seurat, focusing on quality control and filtering of cells.

1. Load Data and Calculate QC Metrics

2. Visualize QC Metrics to Inform Thresholds

3. Filter Cells Based on Visualized Distributions
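In Seurat, step 3 is typically a single call such as subset(pbmc, subset = nFeature_RNA > 200 & nFeature_RNA < 2500 & percent.mt < 5), as in the PBMC tutorial. The Python sketch below applies the same strict inequalities to a plain dictionary of per-cell metrics and also tallies why cells were removed, which supports reproducible reporting. The data layout and the name apply_fixed_thresholds are assumptions, and the default cutoffs are the PBMC starting points, not universal values.

```python
def apply_fixed_thresholds(metrics, min_features=200, max_features=2500, max_percent_mt=5.0):
    """Keep cells with min_features < nFeature_RNA < max_features and percent.mt < max_percent_mt.

    metrics maps barcode -> dict with "nFeature_RNA" and "percent.mt".
    Returns the kept barcodes and how many cells failed each criterion
    (a cell can fail more than one).
    """
    kept = []
    failures = {"low_features": 0, "high_features": 0, "high_mt": 0}
    for barcode, m in metrics.items():
        checks = {
            "low_features": m["nFeature_RNA"] <= min_features,
            "high_features": m["nFeature_RNA"] >= max_features,
            "high_mt": m["percent.mt"] >= max_percent_mt,
        }
        for reason, failed in checks.items():
            failures[reason] += failed
        if not any(checks.values()):
            kept.append(barcode)
    return kept, failures


metrics = {
    "cell_1": {"nFeature_RNA": 1200, "percent.mt": 2.1},
    "cell_2": {"nFeature_RNA": 150, "percent.mt": 1.0},   # likely empty or broken
    "cell_3": {"nFeature_RNA": 3100, "percent.mt": 3.0},  # possible doublet
    "cell_4": {"nFeature_RNA": 900, "percent.mt": 12.5},  # possible dying cell
}
print(apply_fixed_thresholds(metrics))
```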

Workflow Visualization

Reference Table: Common QC Threshold Guidelines

Table 1: Example quality control thresholds for different sample types. These are starting points and must be validated for each dataset.

| Sample Type | nFeature_RNA (Low) | nFeature_RNA (High) | percent.mt | Notes |
|---|---|---|---|---|
| PBMCs (10x) [27] | > 200 | < 2500 | < 5% | Standard immune cells; adjust high threshold for activated cells. |
| Complex Tissue [26] | > 500-1000 | < 5000-10000 | < 10-20% | More diverse cell sizes and types; thresholds are wider. |
| Cardiomyocytes [26] [10] | Cell-specific | Cell-specific | Use with caution | Naturally high mitochondrial content; do not filter based on this metric alone. |
| Nuclei (snRNA-seq) [26] | > 200 | < 5000 | < 5% | Generally lower gene counts per nucleus. |

The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key tools and resources for scRNA-seq data quality control and analysis.

| Tool / Resource | Category | Primary Function | Reference |
|---|---|---|---|
| Cell Ranger | Processing Pipeline | Processes 10x Genomics FASTQ files into a count matrix; provides initial QC web summary. | [10] |
| Seurat | R Analysis Toolkit | Comprehensive toolkit for scRNA-seq analysis, including QC, filtering, normalization, and clustering. | [27] [29] |
| Loupe Browser | Visualization Software | Interactive visualization of 10x Genomics data; allows manual filtering with real-time cluster feedback. | [10] [28] |
| FastQC | Read QC Tool | Assesses the quality of raw sequencing reads from FASTQ files. | [26] [31] |
| DoubletFinder | R Package | Computational prediction of doublets in the data based on artificial nearest neighbors. | [26] |
| SoupX | R Package | Corrects for ambient RNA contamination in droplet-based data. | [10] |

## Frequently Asked Questions (FAQs)

Q1: Why should I use a data-driven method instead of fixed thresholds for filtering cells by UMI and gene counts? Fixed thresholds (e.g., keeping cells with gene counts between 200 and 2,500) are often borrowed from tutorials but are not suitable for all datasets. Using arbitrary cutoffs can inadvertently eliminate valid biological cells, especially in highly heterogeneous samples where some cell types naturally have very high or low RNA content. A data-driven approach identifies outliers specific to your dataset, which helps preserve biological heterogeneity and leads to more reliable downstream results [6] [9].

Q2: What are the common data-driven methods for identifying outlier cells? A common and robust method is to use the Median Absolute Deviation (MAD). This method calculates a threshold based on the median value of a metric (like UMI counts) across all cells and how much each cell deviates from that median. Cells that fall beyond a certain number of MADs from the median are considered outliers. This approach is more resistant to the influence of extreme values than methods based on the mean and standard deviation [6] [9].

Q3: How do I handle samples with different cell types that have naturally different UMI counts? When your sample contains cell types with vastly different RNA contents (e.g., neutrophils versus lymphocytes), applying one global threshold to the entire dataset can be harmful. In such cases, a more advanced strategy is cluster-specific QC. This involves performing an initial, permissive clustering of the cells and then applying data-driven QC thresholds within each cluster separately. This protects rare or biologically distinct cell populations from being filtered out [6].
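Cluster-specific QC amounts to running the same outlier rule within each preliminary cluster instead of once over all cells. The sketch below uses a 3-MAD rule on log1p-transformed UMI counts. The cluster labels, toy counts, and function names are hypothetical.

```python
import math
from collections import defaultdict
from statistics import median


def mad_outliers(values, nmads=3.0):
    """Flag values more than nmads MADs from the median on the log1p scale."""
    logs = [math.log1p(v) for v in values]
    med = median(logs)
    mad = median(abs(x - med) for x in logs)
    lower, upper = med - nmads * mad, med + nmads * mad
    return [not (lower <= x <= upper) for x in logs]


def cluster_specific_outliers(umi_counts, clusters, nmads=3.0):
    """Apply mad_outliers separately within each (permissive) cluster."""
    members = defaultdict(list)
    for i, cluster in enumerate(clusters):
        members[cluster].append(i)
    flags = [False] * len(umi_counts)
    for idx in members.values():
        for i, flag in zip(idx, mad_outliers([umi_counts[i] for i in idx], nmads)):
            flags[i] = flag
    return flags


# Hypothetical sample: many high-RNA lymphocytes, a few low-RNA neutrophils.
umis = [4800, 4850, 4900, 4950, 5000, 5050, 5100, 5150, 5200, 460, 500, 520]
clusters = ["lymphocyte"] * 9 + ["neutrophil"] * 3
print("global :", mad_outliers(umis))
print("cluster:", cluster_specific_outliers(umis, clusters))
```

With these toy values, the pooled threshold flags all three neutrophils as low-UMI outliers, while the per-cluster threshold keeps all twelve cells. This illustrates how a single global cutoff can remove a biologically distinct minority population.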

Q4: My data is very large. Are there automated tools for this process?

Yes, many widely used single-cell analysis toolkits have built-in functions for data-driven QC. For example, the perCellQCFilters() function in the scater R package (part of the Bioconductor ecosystem) can automatically identify outliers for multiple QC metrics using the MAD method [9]. The Seurat and Scanpy packages also provide extensive functionality for calculating and visualizing these metrics to guide threshold setting.

Q5: What other metrics should I consider alongside UMI and gene counts? A comprehensive QC workflow always includes assessing the percentage of reads mapping to the mitochondrial genome. A high percentage often indicates broken or dying cells, as cytoplasmic RNA leaks out while mitochondrial RNAs are retained. However, this threshold is also biology-dependent; some active cell types, like cardiomyocytes, naturally have high mitochondrial activity [6] [10].

Q6: Is quality control a one-time step? No, quality control is often an iterative process. It is good practice to start with permissive filtering parameters. After performing initial clustering and cell type annotation, you should re-inspect the QC metrics across the clusters. You may discover that some low-quality cells were missed or that some valid cells were incorrectly filtered, requiring you to revisit your thresholds [6].

## Troubleshooting Guide

### Problem: Loss of a known cell population after filtering

Potential Cause: The filtering thresholds for UMI counts, gene counts, or mitochondrial percentage were too stringent and did not account for the natural biological variation of that specific cell type.

Solution:

- Re-visit your distributions: Before filtering, plot the distribution of your QC metrics (library size, number of genes, mitochondrial percentage) colored by the cluster or cell type identity from an initial, lightly filtered analysis.

- Implement cluster-specific filtering: If you observe that one cluster has systematically lower UMI counts or higher mitochondrial content, apply your data-driven thresholding method (e.g., MAD-based filtering) separately to each cluster. This ensures that thresholds are tailored to the biological properties of each cell group [6].

- Use diagnostic plots: A scatter plot of UMI counts against the number of detected genes, colored by mitochondrial percentage or by cluster, helps visualize the relationship between key QC metrics and can reveal whether certain cell populations are driving specific metric distributions.

### Problem: Ambiguous boundary between cells and empty droplets in UMI/gene count distribution

Potential Cause: The data does not have a clear inflection point ("knee") in the barcode rank plot, making it difficult to distinguish true cells from background noise using simple thresholds.

Solution:

- Use advanced cell-calling algorithms: Move beyond a simple UMI cutoff. Tools like emptyDrops use a statistical framework to test whether each barcode's expression profile is significantly different from the ambient RNA profile. Barcodes that are significantly different are classified as cells [6] [10].

- Leverage ambient RNA removal: Consider using tools like SoupX, CellBender, or DecontX to estimate and subtract the background ambient RNA signal from your count matrix. This can "clean" the data and make the separation between cells and empty droplets more distinct, simplifying subsequent filtering [6] [10].
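The statistical idea behind emptyDrops can be illustrated with a simplified sketch. An ambient profile is estimated from low-UMI barcodes. Each candidate barcode's counts are then scored by their multinomial likelihood under that profile, and the score is compared with random ambient draws of the same size to get a Monte Carlo p-value. This is an assumption-laden simplification, not the emptyDrops implementation: the real method uses a Dirichlet-multinomial model, handles high-UMI barcodes separately, and corrects for multiple testing. The lower cutoff of 100 UMIs, the pseudocount, and all names are illustrative.

```python
import math
import random
from collections import Counter


def ambient_profile(barcodes, lower=100, pseudocount=0.01):
    """Estimate the ambient ("soup") profile from barcodes with total UMIs <= lower."""
    genes = sorted({g for counts in barcodes.values() for g in counts})
    pooled = Counter()
    for counts in barcodes.values():
        if sum(counts.values()) <= lower:
            pooled.update(counts)
    denom = sum(pooled.values()) + pseudocount * len(genes)
    return {g: (pooled[g] + pseudocount) / denom for g in genes}


def multinomial_logpmf(counts, profile):
    """Log-probability of a count vector under a multinomial with probabilities `profile`."""
    n = sum(counts.get(g, 0) for g in profile)
    logp = math.lgamma(n + 1)
    for g, p in profile.items():
        x = counts.get(g, 0)
        logp += x * math.log(p) - math.lgamma(x + 1)
    return logp


def ambient_test_pvalue(counts, profile, n_sim=199, seed=0):
    """Monte Carlo p-value: how often is a random ambient draw at least as unlikely?"""
    rng = random.Random(seed)
    genes = list(profile)
    weights = [profile[g] for g in genes]
    n = sum(counts.get(g, 0) for g in genes)
    observed = multinomial_logpmf(counts, profile)
    as_extreme = sum(
        multinomial_logpmf(Counter(rng.choices(genes, weights=weights, k=n)), profile) <= observed
        for _ in range(n_sim)
    )
    return (as_extreme + 1) / (n_sim + 1)


barcodes = {
    "empty_1": {"A": 50, "B": 30, "C": 15, "D": 5},
    "empty_2": {"A": 45, "B": 35, "C": 12, "D": 8},
    "cell": {"A": 5, "B": 5, "C": 10, "D": 280},           # profile unlike the soup
    "ambient_like": {"A": 142, "B": 98, "C": 40, "D": 20},  # large but soup-like
}
profile = ambient_profile(barcodes)
for bc in ("cell", "ambient_like"):
    print(bc, ambient_test_pvalue(barcodes[bc], profile))
```

A small p-value means the barcode's composition is unlikely to be ambient RNA alone, so it is called a cell even if its total UMI count sits in the ambiguous region of the barcode rank plot.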

## Experimental Protocols & Data Presentation

### Protocol: A Data-Driven Workflow for Setting UMI and Gene Count Thresholds

This protocol outlines the steps for using the Median Absolute Deviation (MAD) method to set robust, dataset-specific filtering thresholds.

Step 1: Calculate QC Metrics

Using your analysis toolkit (e.g., Seurat's PercentageFeatureSet and CreateSeuratObject or Scanpy's pp.calculate_qc_metrics), compute for every cell barcode:

- nCount_RNA: Total number of UMIs (library size).

- nFeature_RNA: Total number of unique genes detected.

- percent.mt: Percentage of UMIs mapping to mitochondrial genes.

Step 2: Visualize Metric Distributions Plot the distributions of these three metrics using violin plots or histograms. This provides an initial overview of data quality and helps identify obvious issues.

Step 3: Calculate MAD-Based Thresholds For each metric, calculate the lower and upper bounds. The following logic is typically applied:

- For UMI Counts and Gene Counts: Filter out outliers on both the lower end (likely empty droplets) and the upper end (likely multiplets).

- For Mitochondrial Percentage: Filter out outliers only on the upper end (likely dead/dying cells).

The standard formula for a MAD-based threshold for a metric $x$ is:

$$\text{Lower Bound} = \operatorname{median}(x) - 3 \times \text{MAD}$$

$$\text{Upper Bound} = \operatorname{median}(x) + 3 \times \text{MAD}$$

where $\text{MAD} = \operatorname{median}_i\left(\lvert x_i - \operatorname{median}(x) \rvert\right)$.

Note: For library size and gene counts, calculations are often performed on log-transformed values to mitigate the influence of extreme high outliers on the MAD [9].
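Steps 3 and 4 can be sketched together in Python. Counts are bounded on both sides using log1p-transformed values, and mitochondrial percentage is bounded only from above. The function returns the thresholds so they can be recorded. This follows the unscaled MAD formula above. R's stats::mad() multiplies by a consistency constant of 1.4826 by default, so R-based tools may produce wider bounds, and you should check the documentation of whichever tool you use. The log1p transform, the dictionary layout, and the function names are assumptions.

```python
import math
from statistics import median


def mad_bounds(values, nmads=3.0, log=False):
    """Median +/- nmads * MAD, optionally computed on log1p-transformed values.

    Bounds computed on the log scale are mapped back to the original scale.
    """
    x = [math.log1p(v) for v in values] if log else list(values)
    med = median(x)
    mad = median(abs(v - med) for v in x)
    lower, upper = med - nmads * mad, med + nmads * mad
    if log:
        return math.expm1(lower), math.expm1(upper)
    return lower, upper


def mad_filter(cells, nmads=3.0):
    """Two-sided filter on UMI and gene counts (log scale), upper-only on percent.mt."""
    thresholds = {
        "nCount_RNA": mad_bounds([c["nCount_RNA"] for c in cells], nmads, log=True),
        "nFeature_RNA": mad_bounds([c["nFeature_RNA"] for c in cells], nmads, log=True),
        "percent.mt": (-math.inf, mad_bounds([c["percent.mt"] for c in cells], nmads)[1]),
    }
    keep = [all(lo <= c[key] <= hi for key, (lo, hi) in thresholds.items()) for c in cells]
    return keep, thresholds  # record thresholds for reproducibility (Step 4)


# Hypothetical data: nine ordinary cells (one with 30% mito) plus one near-empty barcode.
mito = [2, 3, 4, 5, 3, 4, 2, 5, 30, 3]
cells = [{"nCount_RNA": 2000 + 100 * i, "nFeature_RNA": 1000 + 50 * i, "percent.mt": mito[i]}
         for i in range(9)]
cells.append({"nCount_RNA": 30, "nFeature_RNA": 20, "percent.mt": mito[9]})
keep, thresholds = mad_filter(cells)
print(keep)
print({k: (round(lo, 1), round(hi, 1)) for k, (lo, hi) in thresholds.items()})
```

Here the near-empty barcode fails the lower UMI and gene bounds, and the ordinary-looking cell with 30% mitochondrial reads fails the upper percent.mt bound. The remaining eight cells are kept.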

Step 4: Apply Filters and Document Remove all cell barcodes that fall outside the calculated bounds for any of the key metrics. It is critical to record the final thresholds and the number of cells filtered for each metric to ensure reproducibility.

The table below summarizes the key QC metrics, their biological interpretations, and the recommended data-driven approach for setting thresholds.

Table 1: Key Quality Control Metrics for scRNA-seq Data Filtering

| QC Metric | Description | What Low Values May Indicate | What High Values May Indicate | Data-Driven Thresholding Method |
|---|---|---|---|---|
| UMI Counts (Library Size) | Total number of mRNA molecules detected per cell. | Empty droplet, ambient RNA, or very small cell (e.g., platelet). | Multiplet (multiple cells in one droplet) or a large, transcriptionally active cell. | Median Absolute Deviation (MAD), typically applied to log-transformed values. Common cutoff: 3 MADs from the median [6] [9]. |
| Gene Counts (Number of Features) | Number of unique genes detected per cell. | Empty droplet, poor-quality cell, or a cell type with low transcriptional complexity. | Multiplet or a cell with very broad transcriptional activity. | Median Absolute Deviation (MAD), typically applied to log-transformed values. Common cutoff: 3 MADs from the median [6] [9]. |
| Mitochondrial Percent | Percentage of a cell's UMIs that map to mitochondrial genes. | Not typically used as a lower filter. | Cell stress, apoptosis, or broken cell where cytoplasmic RNA has been lost. | Median Absolute Deviation (MAD). Common cutoff: 3 MADs above the median [6] [9]. |

### The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for scRNA-seq QC

| Item | Function in scRNA-seq QC |
|---|---|
| Cell Ranger | A set of official pipelines from 10x Genomics that processes raw sequencing data (FASTQ) into aligned reads, generates the feature-barcode count matrix, and performs initial cell calling. It is the foundational step before applying further QC filters [6] [10]. |
| Unique Molecular Identifiers (UMIs) | Short nucleotide barcodes added to each mRNA molecule during library preparation. UMIs allow for the accurate counting of original transcript molecules and correction for amplification bias, making UMI count a core QC metric [14]. |
| ERCC Spike-in RNAs | A set of synthetic, external RNA controls added to the cell lysate in known concentrations. They can be used to monitor technical variability and assess the sensitivity of the assay, providing an alternative metric for identifying low-quality cells [9]. |
| Mitochondrial Read Proportion | Not a reagent, but a critical computational metric. The proportion of reads from mitochondrial genes serves as a natural internal control for cell health, as it increases in compromised cells [6] [32]. |

## Workflow Visualization

The following diagram illustrates the complete, iterative workflow for quality control and filtering of single-cell RNA-seq data, integrating both data-driven thresholding and biological inspection.

Addressing Ambient RNA Contamination with Tools like SoupX and CellBender

Ambient RNA contamination is a pervasive technical challenge in droplet-based single-cell RNA sequencing (scRNA-seq). It occurs when freely floating mRNAs from the input solution are captured along with cell-specific mRNAs, leading to a contaminated gene expression profile that can confound downstream biological interpretation [33] [34]. This background contamination, often originating from lysed or dead cells, varies significantly between experiments (typically 2-50%), with around 10% being common [33]. The consequences are particularly severe for rare cell type identification and can lead to biological misinterpretation, such as misannotation of glial cell types in brain samples due to neuronal ambient RNA [35]. This guide provides comprehensive troubleshooting and FAQs for addressing ambient RNA contamination within the broader context of filtering low-quality cells in scRNA-seq research.

FAQs and Troubleshooting for Ambient RNA Correction Tools

SoupX

Q: The autoEstCont function fails with an "Extremely high contamination estimated" error. What should I do?

A: This error occurs when SoupX estimates an unrealistically high contamination fraction (e.g., >0.5), often in problematic samples. You have several options:

- Manual Estimation: Use setContaminationFraction to manually set a reasonable value based on the distribution from the failed autoEstCont call. For example, if the distribution shows a peak around 0.1, set contFrac = 0.1 [36].

- Range Limitation: Re-run autoEstCont with the contaminationRange parameter to restrict the estimation range (e.g., c(0.01, 0.5)) [36].

- Sample Evaluation: Consider if the sample quality is too poor. Extremely high contamination may indicate fundamental issues with sample preparation that cannot be fully resolved computationally [36].

Q: My data still appears contaminated after running SoupX. Why didn't it work?

A: Several factors could cause this:

- Missing Cluster Information: SoupX requires clustering information to identify contamination accurately. Ensure you have provided clustering information either via setClusters or by loading 10X data with load10X, which automatically imports Cell Ranger clusters [33].

- Insufficient Marker Diversity: The automatic estimation relies on diverse marker genes. For homogeneous data (e.g., cell lines) or very few cells (fewer than a few hundred), manual estimation may be necessary [33].

- Conservative Defaults: SoupX is designed to err on the side of not removing true counts. For situations where aggressive removal is preferred, try manually increasing the contamination fraction with setContaminationFraction [33].
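It helps to see what the contamination fraction (rho) controls. The sketch below assumes a cell's expected ambient counts for gene g are rho × (the cell's total UMIs) × (soup fraction of g), and subtracts them with clipping at zero. This is a deliberately naive version of the idea, not SoupX's adjustCounts(), which redistributes removed counts more carefully. The soup profile, counts, and function name are hypothetical.

```python
def subtract_soup(cell_counts, soup_profile, rho):
    """Remove the expected ambient contribution from one cell's counts.

    Expected soup counts for gene g = rho * (cell's total UMIs) * soup_profile[g].
    Corrected counts are clipped at zero. This is a naive illustration of the idea.
    """
    if not 0.0 <= rho <= 1.0:
        raise ValueError("rho must be between 0 and 1")
    n_umis = sum(cell_counts.values())
    return {
        gene: max(0.0, observed - rho * n_umis * soup_profile.get(gene, 0.0))
        for gene, observed in cell_counts.items()
    }


soup = {"HBB": 0.6, "ACTB": 0.3, "CD3E": 0.1}   # hypothetical soup profile (fractions sum to 1)
t_cell = {"HBB": 12, "ACTB": 28, "CD3E": 60}     # 100 UMIs in a T cell
print(subtract_soup(t_cell, soup, rho=0.1))       # HBB ~6, ACTB ~25, CD3E ~59
```

Raising rho removes more counts, mostly from genes that dominate the soup (here HBB). This is why manually increasing the contamination fraction gives more aggressive cleanup.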

Q: I cannot find appropriate genes to estimate the contamination fraction. What should I try?

A: Ideal genes are highly specific to certain cell types and show bimodal expression patterns:

- Common Markers: First try commonly successful gene sets like hemoglobin (HB) genes for erythrocytes, immunoglobulin genes for B-cells, or TPSB2/TPSAB1 for mast cells [33].

- Visual Inspection: Use plotMarkerDistribution to identify genes with appropriate expression patterns [33].

- Alternative Approach: If no clear markers emerge, test a range of contamination fractions (e.g., 2-10%) to evaluate their impact on your downstream analysis [33].
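Marker genes are useful because cells that should not express them (for example, hemoglobin genes in non-erythroid cells) can only have picked them up from the soup. A simple estimate of rho is therefore observed marker UMIs divided by the marker UMIs expected if those cells were pure soup. The sketch below implements that ratio. The soup profile, cells, and function name are hypothetical, and SoupX's own estimation handles cell selection and uncertainty more carefully.

```python
def estimate_contamination(cells, soup_profile, marker_genes, non_expressing):
    """Estimate rho from marker genes in cells that should not express them.

    Any marker counts in those cells are assumed to come from the soup, so
    rho = observed marker UMIs / (marker UMIs expected if the cells were pure soup).
    """
    soup_fraction = sum(soup_profile.get(g, 0.0) for g in marker_genes)
    observed = 0
    expected_if_all_soup = 0.0
    for barcode in non_expressing:
        counts = cells[barcode]
        observed += sum(counts.get(g, 0) for g in marker_genes)
        expected_if_all_soup += sum(counts.values()) * soup_fraction
    if expected_if_all_soup == 0:
        raise ValueError("marker genes are absent from the soup profile")
    return observed / expected_if_all_soup


soup = {"HBB": 0.15, "HBA1": 0.05, "ACTB": 0.5, "CD3E": 0.3}  # hypothetical soup profile
cells = {
    "t_cell_1": {"HBB": 15, "HBA1": 5, "ACTB": 500, "CD3E": 480},    # 1000 UMIs
    "t_cell_2": {"HBB": 30, "HBA1": 10, "ACTB": 1100, "CD3E": 860},  # 2000 UMIs
}
rho = estimate_contamination(cells, soup, ["HBB", "HBA1"], ["t_cell_1", "t_cell_2"])
print(f"estimated contamination fraction: {rho:.2f}")  # 60 / (3000 * 0.2) = 0.10
```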

CellBender

Q: How do I know if CellBender worked correctly after it completes?

A: Several diagnostic approaches can verify success:

- Learning Curve Inspection: Check the ELBO (Evidence Lower Bound) versus epoch plot in the output HTML report (the file ending in _report.html). A good run shows the ELBO increasing and plateauing, not spiking or decreasing [37].

- HTML Report: Review the automatic diagnostics in the output report, which provides warnings and recommendations [37].

- Downstream Analysis: Load the output with load_anndata_from_input_and_output and compare raw vs. corrected data in downstream analyses like clustering and marker gene expression [37].

Q: The learning curve (ELBO vs. epoch) looks strange with spikes or dips. What does this indicate?

A: Spikes or downward trends in the learning curve typically indicate training instability:

- Solution: Reduce the --learning-rate by a factor of two and rerun CellBender. Training should proceed for at least 150 epochs until the ELBO plateaus [37].

- Expected Pattern: Ideally, the ELBO should increase monotonically and stabilize. Examples of good and bad learning curves are provided in the CellBender documentation [37].
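The visual check described above can be approximated with a small heuristic. It assumes the per-epoch ELBO values are already available as a Python list (extracting them from CellBender output is not shown). It flags any epoch where the ELBO drops by more than a relative tolerance and checks whether the final epochs have plateaued. The tolerances, window size, and function name are illustrative assumptions, not CellBender defaults.

```python
def check_learning_curve(elbo, drop_tol=0.01, window=10, plateau_tol=1e-3):
    """Heuristic checks on an ELBO-per-epoch curve (higher ELBO is better).

    Flags any epoch where the ELBO falls by more than drop_tol (relative), and
    reports whether the last `window` epochs still changed by more than plateau_tol.
    """
    issues = []
    for epoch in range(1, len(elbo)):
        prev, cur = elbo[epoch - 1], elbo[epoch]
        if cur < prev - drop_tol * abs(prev):
            issues.append(f"ELBO dropped at epoch {epoch}: {prev:.1f} -> {cur:.1f}")
    if len(elbo) < window:
        issues.append("too few epochs to judge a plateau")
    else:
        start, end = elbo[-window], elbo[-1]
        if abs(end - start) > plateau_tol * abs(start):
            issues.append("ELBO still changing over the last epochs; consider more epochs")
    return issues


# Hypothetical curves: a smooth rise to a plateau, and the same curve with one dip.
good = [-1000 + 900 * (1 - 0.8 ** t) for t in range(150)]
bad = list(good)
bad[40] = bad[39] - 200
print(check_learning_curve(good))  # no issues expected for a smooth, plateaued curve
print(check_learning_curve(bad))
```

A flagged drop corresponds to the "spikes or dips" pattern discussed above, for which the suggested remedy is a lower learning rate.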

Q: CellBender seems to have called too many or too few cells. How can I adjust this?

A: Cell calling depends on several parameters:

- Too Many Cells: Increase --total-droplets-included or decrease --expected-cells. Remember that CellBender identifies "non-empty" droplets, which may include low-quality cells requiring downstream filtering [37].

- Too Few Cells: Increase --expected-cells and ensure --total-droplets-included is large enough to include all potentially non-empty droplets [37].

- Downstream Filtering: Apply additional filters post-CellBender based on mitochondrial read percentage and genes expressed per cell [37].

Q: Do I need a GPU to run CellBender, and how can I work around resource limitations?

A: While not absolutely necessary, GPU usage significantly speeds up processing:

- CPU Alternative: For CPU-only runs, use a smaller --total-droplets-included, increase --projected-ambient-count-threshold to analyze fewer features, and decrease --empty-drop-training-fraction [37].

- Cloud Solutions: Consider Terra or Google Colab for free GPU access. CellBender produces checkpoint files, allowing you to resume interrupted runs [37].

Quantitative Data Comparison

Table 1: Key Characteristics of Ambient RNA Removal Tools

| Feature | SoupX | CellBender |
|---|---|---|
| Primary Approach | Estimates contamination from empty droplets; subtracts counts using cluster information [33] [34] | Deep generative model that distinguishes cell-containing from cell-free droplets; learns background profile [37] [34] |
| Contamination Levels Handled | Typically 2-50% of counts, depending on the experiment [33] | Varies by dataset; metrics provided in output [37] |
| Computational Demand | Moderate | High (GPU recommended) [37] [34] |
| Key Parameters | Contamination fraction (rho), cluster labels [33] [38] | Expected cells, FPR, total droplets included [37] |
| Integration with scRNA-seq Pipelines | Compatible with Seurat; outputs corrected count matrix [33] [38] | Outputs HDF5 count matrices that can be loaded into Scanpy (AnnData) and Seurat [37] |
| Best Suited For | Standard 10X data; cases where cluster information is available [33] | Complex datasets requiring joint cell calling and background removal [37] [34] |

Table 2: Typical Parameter Settings for Common Scenarios

| Scenario | SoupX Parameters | CellBender Parameters |
|---|---|---|
| Standard 10X Data | autoEstCont with default parameters [33] | --expected-cells 10000 --fpr 0.01 [37] |
| High Contamination Samples | Manual setContaminationFraction at 0.1-0.2 or contaminationRange = c(0.01, 0.5) [36] | --fpr 0.05 --total-droplets-included 20000 [37] |
| Low Cell Number (<1000) | Manual contamination fraction setting; cluster information critical [33] | Reduce --total-droplets-included; consider CPU parameters [37] |
| Complex Tissues (e.g., Brain) | Ensure clustering resolution sufficient to distinguish cell types [33] | Standard parameters typically adequate; check for neuronal contamination in glia [35] |

Workflow Integration Diagrams

Diagram 1: Ambient RNA Correction Workflow Integration. This diagram outlines the decision process for incorporating ambient RNA correction into scRNA-seq quality control, showing tool selection criteria and iterative evaluation.

Table 3: Key Resources for Ambient RNA Correction Experiments

| Resource | Function/Purpose | Implementation Notes |
|---|---|---|
| 10X Genomics Cell Ranger | Initial processing of 10X data; provides the raw and filtered matrices used for ambient RNA correction [34] | SoupX uses the raw matrix to estimate the soup and the filtered matrix to define cells; CellBender takes the raw (unfiltered) matrix as input |
| Clustering Information | Essential for SoupX to distinguish true expression from contamination [33] | Can be from Cell Ranger or custom clustering (e.g., Seurat, Scanpy) |
| Marker Gene Sets | Genes with cell-type specific expression used to estimate contamination [33] [35] | Hemoglobin genes, immunoglobulin genes, cell-type specific markers |
| Seurat/Scanpy | Downstream analysis platforms for evaluating correction effectiveness [33] [37] | Compare clustering and marker expression before/after correction |
| Mitochondrial Gene List | Quality control metric to identify compromised cells [39] | High expression may indicate cell stress/death contributing to ambient RNA |
| Reference Datasets | Positive controls for expected cell-type specific expression patterns [39] [35] | e.g., Allen Brain Atlas for neuronal markers; PanglaoDB for general cell markers |

Effective management of ambient RNA contamination is an essential component of comprehensive scRNA-seq quality control. Both SoupX and CellBender offer powerful solutions, with complementary strengths: SoupX provides a cluster-aware approach that integrates well with standard workflows, while CellBender offers a more comprehensive joint modeling of cells and background. Success requires careful parameter optimization, thorough diagnostic checks, and integration with other quality control measures. By addressing ambient RNA contamination appropriately, researchers can significantly improve cell type identification accuracy, enhance differential expression detection, and draw more reliable biological conclusions from their single-cell transcriptomic studies.

Identifying and Removing Doublets with Scrublet and DoubletFinder

Within the broader context of filtering low-quality cells in single-cell RNA sequencing (scRNA-seq) research, the identification and removal of doublets (artifacts formed when two or more cells are sequenced as a single entity) is a critical preprocessing step. Doublets can lead to spurious cell type identification, obscure genuine biological signals, and compromise the integrity of downstream analyses [40]. This guide focuses on two widely used computational tools for doublet detection, Scrublet and DoubletFinder. It addresses common implementation challenges and frequently asked questions in a troubleshooting format.

Scrublet: Core Principle and Workflow

Scrublet is a Python-based framework designed to predict the impact of multiplets and identify problematic doublets in scRNA-seq data. Its method operates on two key assumptions: multiplets are relatively rare events, and all cell states contributing to doublets are also present as single cells elsewhere in the data [40].

The algorithm works by:

- Simulating Doublets: Generating artificial doublets from the observed data by combining random pairs of observed transcriptomes.

- Building a Classifier: Constructing a k-nearest neighbor (KNN) classifier to calculate a continuous doublet_score for each observed transcriptome based on the relative densities of simulated doublets and observed transcriptomes in its vicinity [40].
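The two steps above can be illustrated with a minimal NumPy sketch of the same idea. It is not Scrublet's implementation. It works as follows:

- It simulates doublets by summing the raw counts of random pairs of observed cells.
- It projects observed and simulated cells into a PCA space fitted on the observed cells only.
- It scores each observed cell by the fraction of its k nearest neighbors that are simulated doublets.

Scrublet additionally converts neighbor counts into a score that accounts for the expected doublet rate. The function names and defaults here are assumptions chosen for illustration. The brute-force distance computation is only practical for small datasets.

```python
import numpy as np

def simulate_doublets(counts, n_sim, rng):
    """Sum the raw counts of randomly chosen pairs of observed cells."""
    i = rng.integers(0, counts.shape[0], size=n_sim)
    j = rng.integers(0, counts.shape[0], size=n_sim)
    return counts[i] + counts[j]

def normalize_log(counts):
    """Scale every cell to the median library size, then log1p-transform."""
    totals = counts.sum(axis=1, keepdims=True).astype(float)
    totals[totals == 0] = 1.0
    return np.log1p(counts / totals * np.median(totals))

def knn_doublet_scores(counts, sim_ratio=2.0, n_pcs=10, k=20, seed=0):
    rng = np.random.default_rng(seed)
    n_obs = counts.shape[0]
    sim = simulate_doublets(counts, int(sim_ratio * n_obs), rng)
    data = normalize_log(np.vstack([counts, sim]).astype(float))
    # Fit the PCA basis on observed cells only, then project all cells
    mean = data[:n_obs].mean(axis=0)
    _, _, vt = np.linalg.svd(data[:n_obs] - mean, full_matrices=False)
    pcs = (data - mean) @ vt[:n_pcs].T
    # Brute-force squared distances from each observed cell to every cell
    dist = ((pcs[:n_obs, None, :] - pcs[None, :, :]) ** 2).sum(axis=2)
    dist[np.arange(n_obs), np.arange(n_obs)] = np.inf  # ignore self-matches
    neighbors = np.argsort(dist, axis=1)[:, :k]
    # Indices >= n_obs belong to simulated doublets
    return (neighbors >= n_obs).mean(axis=1)

# Toy data: two cell types plus 10 true A+B doublets
rng = np.random.default_rng(1)
profile_a = np.r_[np.full(25, 5.0), np.full(25, 0.2)]
profile_b = profile_a[::-1]
counts = np.vstack([
    rng.poisson(profile_a, size=(100, 50)),
    rng.poisson(profile_b, size=(100, 50)),
    rng.poisson(profile_a + profile_b, size=(10, 50)),
])
scores = knn_doublet_scores(counts)
print(scores[:200].mean(), scores[200:].mean())  # singlets vs. true doublets
```

The true heterotypic doublets sit among many simulated A+B doublets, so they receive much higher scores than the singlets. A homotypic doublet would look like its parent cluster and gain little, which is the limitation discussed in the FAQs below.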

DoubletFinder: Core Principle and Workflow

DoubletFinder is an R package that interfaces with Seurat objects to predict doublets. Its performance is largely invariant to the proportion of artificial doublets generated (pN) but is sensitive to the neighborhood size (pK), which must be optimized for each dataset [41].

The process involves four main steps:

- Generate Artificial Doublets: Create artificial doublets from existing scRNA-seq data.

- Pre-process Merged Data: Merge real and artificial data and pre-process.

- Compute pANN: Perform PCA and use the PC distance matrix to find each cell's proportion of artificial k nearest neighbors (pANN).

- Threshold pANN Values: Rank order and threshold pANN values according to the expected number of doublets [41].
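The four steps above can be sketched in NumPy. This is an illustrative re-implementation, not DoubletFinder's code. Following DoubletFinder's description, it makes these choices:

- Artificial doublets average the counts of random cell pairs.
- pN sets the artificial fraction of the merged data.
- pK sets the neighborhood size as a proportion of the merged data.

The simple log-normalization, brute-force neighbor search, function name, and the pK value of 0.09 are assumptions. They stand in for the Seurat preprocessing and the parameter sweep that DoubletFinder relies on.

```python
import numpy as np

def pann_doublets(counts, n_exp, pN=0.25, pK=0.09, n_pcs=10, seed=0):
    rng = np.random.default_rng(seed)
    n_real = counts.shape[0]
    # Step 1: pN is the fraction of the merged data that is artificial
    n_art = int(round(n_real / (1 - pN) - n_real))
    i = rng.integers(0, n_real, size=n_art)
    j = rng.integers(0, n_real, size=n_art)
    artificial = (counts[i] + counts[j]) / 2.0  # average of two random cells
    # Step 2: merge real and artificial cells, then log-normalize
    merged = np.vstack([counts, artificial]).astype(float)
    totals = merged.sum(axis=1, keepdims=True)
    totals[totals == 0] = 1.0
    logged = np.log1p(merged / totals * 1e4)
    # Step 3: PCA on the merged data, then pANN with k = pK * merged size
    centered = logged - logged.mean(axis=0)
    u, s, _ = np.linalg.svd(centered, full_matrices=False)
    pcs = u[:, :n_pcs] * s[:n_pcs]
    k = max(1, int(round(pK * merged.shape[0])))
    dist = ((pcs[:n_real, None, :] - pcs[None, :, :]) ** 2).sum(axis=2)
    dist[np.arange(n_real), np.arange(n_real)] = np.inf  # skip self
    neighbors = np.argsort(dist, axis=1)[:, :k]
    pann = (neighbors >= n_real).mean(axis=1)
    # Step 4: rank pANN and call the top n_exp real cells doublets
    calls = np.zeros(n_real, dtype=bool)
    calls[np.argsort(-pann, kind="stable")[:n_exp]] = True
    return pann, calls

# Toy data: two cell types plus 10 true A+B doublets
rng = np.random.default_rng(1)
profile_a = np.r_[np.full(25, 5.0), np.full(25, 0.2)]
profile_b = profile_a[::-1]
counts = np.vstack([
    rng.poisson(profile_a, size=(100, 50)),
    rng.poisson(profile_b, size=(100, 50)),
    rng.poisson(profile_a + profile_b, size=(10, 50)),
])
pann, calls = pann_doublets(counts, n_exp=10)
print(int(calls[200:].sum()), "of 10 true doublets called")
```

Step 4 always calls exactly n_exp cells. This is why an accurate expected doublet number matters as much as the choice of pK.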

Classifying Doublet-Associated Errors

Understanding the types of errors doublets introduce helps in appreciating the tools' utility:

- Neotypic Errors: Generate new features in the data, such as spurious cell clusters, "branches" from an existing cluster, or "bridges" between clusters. These can lead to qualitatively incorrect biological inferences and are typically caused by heterotypic doublets (formed from transcriptionally distinct cell types) [40].

- Embedded Errors: Cause quantitative changes in gene expression when a doublet is grouped with a large population of similar singlets. Their impact is generally smaller if doublets are rare and are often caused by homotypic doublets (formed from transcriptionally similar cells) [40].

The following diagram illustrates the logical workflow and key decision points for both tools:

Troubleshooting Guides

Scrublet Troubleshooting

| Problem | Possible Cause | Solution |
|---|---|---|
| Poorly defined bimodal histogram [42] | Suboptimal choice of min_gene_variability_pctl, which controls the set of highly variable genes used for classification. | Re-run Scrublet trying multiple percentile values (e.g., 80, 85, 90, 95). Choose the value that produces the clearest bimodal distribution in doublet_score_histogram.png. |
| Predicted doublets do not co-localize in UMAP [43] | The doublet score threshold was set incorrectly, or pre-processing parameters do not adequately resolve the underlying cell states. | Manually adjust the scrublet_doublet_threshold parameter and/or re-process the data to better resolve cell states before running Scrublet. |
| Low doublet detection rate on merged data | Running Scrublet on an aggregated dataset from multiple samples (e.g., different 10X lanes), so simulated doublets pair cells that could never have shared a droplet. | Run Scrublet on each sample separately; the tool is designed to detect technical doublets within a single sample [43]. |
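The first row depends on what min_gene_variability_pctl controls. Only genes whose variability score is at or above that percentile are used to build the classifier, so raising the percentile shrinks the gene set.

The sketch below shows that effect using the Fano factor (variance/mean) as a stand-in for Scrublet's own v-score. The function name and the toy data are hypothetical.

```python
import numpy as np

def variable_gene_mask(counts, min_pctl):
    """Keep expressed genes whose Fano factor is at or above the given percentile."""
    mean = counts.mean(axis=0)
    var = counts.var(axis=0)
    expressed = mean > 0
    fano = np.zeros(counts.shape[1])
    fano[expressed] = var[expressed] / mean[expressed]
    cutoff = np.percentile(fano[expressed], min_pctl)
    return expressed & (fano >= cutoff)

# Toy data: 30 Poisson genes (Fano ~1) plus 10 overdispersed negative binomial genes (Fano ~4)
rng = np.random.default_rng(0)
counts = np.hstack([
    rng.poisson(3.0, size=(300, 30)),
    rng.negative_binomial(1, 0.25, size=(300, 10)),
])
masks = {p: variable_gene_mask(counts, p) for p in (80, 85, 90, 95)}
for p, mask in masks.items():
    print(p, int(mask.sum()), "genes kept")
```

Higher percentiles keep a strictly nested, smaller gene set. Very high values can leave too few genes to separate cell states, which is one way the score histogram loses its bimodality.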

DoubletFinder Troubleshooting

| Problem | Possible Cause | Solution |
|---|---|---|
| Multiple potential pK values when visualizing BCmvn [41] | The mean-variance normalized bimodality coefficient (BCmvn) plot shows several local maxima, making pK selection ambiguous. | Spot-check the results in gene expression (GEX) space for the top candidate pK values. Select the pK that makes the most sense given your biological understanding of the data [41]. |
| Inaccurate doublet number estimation | Using the Poisson statistic alone, which overestimates detectable doublets by ignoring homotypic doublets (transcriptionally similar cells). | Use literature-supported cell type annotations to model the proportion of homotypic doublets. The Poisson estimate (without homotypic adjustment) and the adjusted estimate can 'bookend' the real detectable doublet rate [41]. |
| Poor performance on aggregated data | Running DoubletFinder on data merged from multiple distinct samples (e.g., WT and mutant cell lines). Artificial doublets generated from biologically distinct samples cannot exist in the real data and skew results. | Only run DoubletFinder on data from a single sample or from splitting a single sample across multiple lanes. Do not run on integrated Seurat objects representing biologically distinct conditions [41]. |
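The "bookend" approach in the second row can be written out explicitly. This sketch follows the approach described in the DoubletFinder README (its modelHomotypic helper):

- The homotypic proportion is estimated as the sum of squared cell-type frequencies, which is the chance that two randomly paired cells share an annotation.
- The Poisson-based doublet count is then scaled down by that proportion.

The Python function names and the example annotations below are hypothetical.

```python
from collections import Counter

def homotypic_proportion(annotations):
    """Probability that two randomly paired cells share the same annotation."""
    n = len(annotations)
    return sum((c / n) ** 2 for c in Counter(annotations).values())

def bookend_doublet_counts(n_cells, doublet_rate, annotations):
    """Return (unadjusted, homotypic-adjusted) expected doublet numbers."""
    n_exp_poisson = round(doublet_rate * n_cells)
    n_exp_adjusted = round(n_exp_poisson * (1 - homotypic_proportion(annotations)))
    return n_exp_poisson, n_exp_adjusted

# Example: 10,000 cells, 8% expected doublet rate, three annotated cell types
labels = ["T"] * 6000 + ["B"] * 3000 + ["NK"] * 1000
print(bookend_doublet_counts(10_000, 0.08, labels))  # (800, 432)
```

With these proportions, 0.36 + 0.09 + 0.01 = 0.46 of random pairs are homotypic. The detectable doublet count is therefore bracketed between about 432 and 800.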

Frequently Asked Questions (FAQs)

Q1: What anticipated doublet rate should I use for my experiment?

The expected doublet rate depends on your platform (10x, Parse, etc.) and the number of cells loaded; it is not a fixed value. Consult the user guide for your specific technology to determine the expected rate for your cell loading density. For example, one common calculation for 10x data is (number of recovered cells / 1000) * 0.008, i.e., roughly 0.8% per 1,000 recovered cells [42] [41].
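As a worked example of that rule of thumb, the expected rate and the corresponding number of doublets can be computed as follows. The 0.008-per-1,000 factor comes from the text above and should be checked against your chemistry's user guide. The function name is hypothetical.

```python
def expected_doublets(n_recovered, rate_per_1000=0.008):
    """Rule-of-thumb 10x doublet rate and expected doublet count."""
    rate = n_recovered / 1000 * rate_per_1000
    return rate, round(rate * n_recovered)

for n in (5_000, 10_000):
    rate, n_doublets = expected_doublets(n)
    print(f"{n} cells: {rate:.1%} expected doublet rate, ~{n_doublets} doublets")
```

Because the rate itself scales with the number of cells, the expected count grows roughly quadratically. Doubling the recovered cells from 5,000 to 10,000 quadruples the expected doublets, from about 200 to about 800.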

Q2: Can Scrublet and DoubletFinder detect homotypic doublets?

Both tools are primarily sensitive to heterotypic doublets (formed from different cell types). They are largely insensitive to homotypic doublets (formed from the same or very similar cell types) because the resulting transcriptome closely resembles a singlet [41] [40]. This is a fundamental limitation of most computational doublet detection methods.

Q3: Should I run doublet detection before or after data integration and normalization?

Run doublet detection on individual samples prior to data integration. Running these tools on aggregated or integrated data can create artificial cell states that do not biologically exist, leading to inaccurate doublet predictions [41] [43]. Perform it after initial quality control to remove low-quality cells, but before batch correction or integration of multiple samples. Note that the two tools expect different inputs. Scrublet takes a raw (unnormalized) count matrix and normalizes it internally. DoubletFinder operates on a Seurat object that has already been normalized and reduced with PCA.

Q4: How do I determine the optimal pK parameter for DoubletFinder?

DoubletFinder does not set a default pK. The optimal pK must be determined for each dataset using the parameter sweep function (paramSweep_v3) and the mean-variance normalized bimodality coefficient (BCmvn) metric. The pK value corresponding to the maximum BCmvn is typically selected for downstream analysis [41].
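The pK selection described above can be sketched under stated assumptions. For each candidate pK, the bimodality coefficient (BC) of the pANN distribution is computed for every pN value tried. BCmvn is then the mean of those BCs divided by their variance.

The BC formula here is the standard sample bimodality coefficient with small-sample corrections. Values above 5/9 are conventionally read as evidence of bimodality. Whether this formula matches DoubletFinder's internal calculation exactly is an assumption, and the function names are hypothetical.

```python
import numpy as np

def bimodality_coefficient(x):
    """Sample bimodality coefficient with small-sample skewness/kurtosis corrections."""
    x = np.asarray(x, dtype=float)
    n = x.size
    d = x - x.mean()
    m2, m3, m4 = (np.mean(d ** p) for p in (2, 3, 4))
    skew = (m3 / m2 ** 1.5) * np.sqrt(n * (n - 1)) / (n - 2)
    excess_kurt = ((n + 1) * (m4 / m2 ** 2 - 3.0) + 6.0) * (n - 1) / ((n - 2) * (n - 3))
    return (skew ** 2 + 1.0) / (excess_kurt + 3.0 * (n - 1) ** 2 / ((n - 2) * (n - 3)))

def select_pk(pann_by_pk):
    """pann_by_pk maps each pK to a list of pANN arrays, one per pN value tried."""
    bcmvn = {}
    for pk, runs in pann_by_pk.items():
        bcs = np.array([bimodality_coefficient(p) for p in runs])
        bcmvn[pk] = bcs.mean() / bcs.var()  # mean-variance normalized BC
    best_pk = max(bcmvn, key=bcmvn.get)
    return best_pk, bcmvn

rng = np.random.default_rng(0)
unimodal = rng.normal(0.0, 1.0, 2000)
bimodal = np.concatenate([rng.normal(-3.0, 1.0, 1000), rng.normal(3.0, 1.0, 1000)])
print(bimodality_coefficient(unimodal) < 5 / 9 < bimodality_coefficient(bimodal))  # True
```

In practice, each list passed to select_pk would hold the pANN vectors produced for one pK across the pN values of the parameter sweep. The pK with the largest BCmvn is the candidate to spot-check, as the troubleshooting table recommends.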

Q5: My data is very homogeneous. Will these tools work?

Performance of both tools suffers when applied to transcriptionally homogeneous data because the simulated doublets will be embedded within the main cell population, making them difficult to distinguish from singlets [41] [40]. In such cases, it is even more critical to use an accurate prior expectation for the doublet rate and to be aware that many true doublets may go undetected.

The Scientist's Toolkit: Essential Materials and Reagents

The following table details key reagents and computational tools essential for preparing samples for scRNA-seq and subsequent doublet detection analysis.

| Item | Function/Description | Relevance to Doublet Detection |
|---|---|---|
| 10x Genomics Chromium Platform | A droplet-based system for high-throughput single-cell partitioning and barcoding. | The platform's user guide provides expected doublet rates based on cell loading density, which is a critical input parameter for both Scrublet and DoubletFinder [10]. |
| Parse Biosciences Evercode Combinatorial Barcoding | A plate-based technology that uses split-pool combinatorial barcoding for single-cell analysis. | Known for lower doublet rates, which can be used to inform the expected_doublet_rate parameter in Scrublet [44]. |
| Cell Hashing Antibodies [17] | Sample-specific antibody tags that allow experimental multiplexing. | Provides a ground-truth method for identifying multiplets formed from cells of different samples, enabling validation of computational predictions. |
| Cell Ranger Software | 10x Genomics' pipeline for processing raw sequencing data into a count matrix. | Generates the filtered_feature_bc_matrix.h5 file that serves as the primary input for Scrublet and is used to create the Seurat object for DoubletFinder [10]. |
| Seurat R Toolkit | A comprehensive R package for single-cell genomics. | DoubletFinder is implemented to interface directly with processed Seurat objects, making it a dependency for using this tool [41]. |

Beyond the Basics: Troubleshooting Common Pitfalls and Optimizing Filters

Core Concepts: Why Mitochondrial Thresholds Are Not One-Size-Fits-All

What is the biochemical threshold effect in mitochondrial biology?

The biochemical threshold effect refers to the minimum percentage of mutant mitochondrial DNA (mtDNA) copies, known as the Variant Allele Frequency (VAF), required before a measurable defect in oxidative phosphorylation (OXPHOS) complex activity occurs [45]. It is widely accepted that the mere presence of a pathogenic mtDNA variant is not sufficient to alter mitochondrial function and result in disease; the proportion of mutant mtDNAs must reach a critical level to cause a biochemical defect [45].

Why can't I use a single mitochondrial read percentage cutoff for all my scRNA-seq experiments?

Using a single cutoff is not recommended because the expression level of mitochondrial genes and the sensitivity to mitochondrial dysfunction vary significantly among different cell types [6]. For example:

- High-Energy Tissues: Cells from tissues with high energy demands, such as cardiomyocytes, neurons, and skeletal muscle, naturally have higher levels of mitochondrial gene expression. Applying a standard, stringent cutoff might incorrectly filter out these viable, biologically distinct cells [46] [6].

- Disease States: In the context of certain diseases, such as spinocerebellar ataxia type 1 (SCA1), mitochondrial processes are significantly affected in specific cell types like Purkinje cells. A generic filter could remove the very cells central to the study [47].

- Cell Death Indicator: An increased percentage of mitochondrial reads is often associated with broken or dying cells, as cytoplasmic mRNA leaks out while mitochondrial RNAs remain trapped and are captured in the assay [15] [6]. However, as the two points above show, a high mitochondrial fraction does not always indicate a damaged cell.

The following table summarizes key factors that necessitate tailored thresholds:

Table 1: Factors Influencing Mitochondrial Thresholds in scRNA-seq

| Factor | Impact on Mitochondrial Read Percentage | Example Cell Types or Conditions |
|---|---|---|
| Inherent Cell Metabolism | Cells with high metabolic rates naturally have higher mitochondrial content. | Cardiomyocytes, neurons, skeletal muscle cells [46] [6] |
| Pathological Cell Stress | Loss of cytoplasmic RNA due to cell rupture artificially inflates the mitochondrial fraction. | Apoptotic or necrotic cells in any sample [15] [6] |
| Specific Disease Pathways | Disease mechanisms may directly alter mitochondrial gene expression or mass. | Neurodegenerative diseases, metabolic syndromes [47] [48] |
| Species and Gene Annotation | Mitochondrial gene prefixes differ by species (e.g., MT- in human vs. mt- in mouse) [15] [49]. | Human (MT-ND1, MT-CO1), Mouse (mt-Nd1, mt-Co1) |
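Because the prefix differs by species, it is safer to make it an explicit parameter than to hard-code it. The sketch below is a minimal NumPy version. It assumes a dense cells × genes count matrix and gene symbols that follow the MT-/mt- conventions in the table. The function name and the prefix dictionary are hypothetical.

```python
import numpy as np

MITO_PREFIX = {"human": "MT-", "mouse": "mt-"}

def percent_mito(counts, gene_names, species="human"):
    """Percentage of each cell's counts that come from mitochondrial genes."""
    names = np.asarray(gene_names, dtype=str)
    is_mt = np.char.startswith(names, MITO_PREFIX[species])  # case-sensitive match
    totals = counts.sum(axis=1)
    mt = counts[:, is_mt].sum(axis=1)
    return 100.0 * mt / np.maximum(totals, 1)

counts = np.array([[10, 0, 90], [0, 5, 5]])
print(percent_mito(counts, ["MT-ND1", "ACTB", "GAPDH"], "human"))  # [10.  0.]
print(percent_mito(counts, ["mt-Nd1", "Actb", "Gapdh"], "mouse"))  # [10.  0.]
```

Applying the human prefix to mouse gene symbols silently returns 0% for every cell. Any mitochondrial filter would then pass all cells, which is the failure the table warns about.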

Experimental Protocols & Methodologies

How do I implement a data-driven threshold for mitochondrial read filtering?

A robust method for setting a flexible threshold is using the Median Absolute Deviation (MAD), a robust statistic of variability. This is preferable to arbitrary cutoffs, especially for heterogeneous samples [15] [6].

Protocol: Data-Driven Thresholding with Scanpy

This protocol assumes you have an AnnData object named adata containing your raw count matrix.
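A minimal sketch of the thresholding logic assumes that per-cell mitochondrial percentages are already available. In Scanpy, these typically come from sc.pp.calculate_qc_metrics with qc_vars=["mt"], which writes adata.obs["pct_counts_mt"].

The function flags cells more than n_mads MADs above the median. It can optionally do this within each annotated group, so that high-mitochondria cell types are judged against their own distribution. The function name, the default of 3 MADs, and the grouping option are assumptions, not part of the original protocol.

```python
import numpy as np

def mad_upper_outliers(values, n_mads=3.0, groups=None):
    """Flag values more than n_mads MADs above the median (per group if given)."""
    values = np.asarray(values, dtype=float)
    if groups is None:
        groups = np.zeros(values.shape[0], dtype=int)
    groups = np.asarray(groups)
    flagged = np.zeros(values.shape[0], dtype=bool)
    for g in np.unique(groups):
        in_group = groups == g
        v = values[in_group]
        med = np.median(v)
        mad = np.median(np.abs(v - med))  # unscaled MAD; if 0, anything above the median is flagged
        flagged[in_group] = v > med + n_mads * mad
    return flagged

pct_mt = np.array([1, 2, 2, 3, 3, 3, 4, 4, 50])
print(mad_upper_outliers(pct_mt).nonzero()[0])  # [8]

# Per-group thresholds: group "b" has a high baseline but no outliers of its own
pct_mt_b = np.array([20, 22, 22, 23, 23, 23, 24, 24, 25])
values = np.concatenate([pct_mt, pct_mt_b])
labels = ["a"] * 9 + ["b"] * 9
print(mad_upper_outliers(values, groups=labels).nonzero()[0])  # [8]
```

In the grouped call, group "b" is judged against its own median of 23%, so none of its cells are flagged. Only the 50% cell in group "a" is removed. With a permissive first pass, the groups could be preliminary cluster labels.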

What is the recommended workflow for mitochondrial QC in scRNA-seq analysis?

A rigorous QC workflow involves multiple steps and iterative assessment. The diagram below outlines the logical sequence for making filtering decisions, emphasizing the importance of context.

Diagram Title: Iterative scRNA-seq Mitochondrial QC Workflow

Troubleshooting FAQs

I am studying a disease with known mitochondrial involvement. Should I relax my mitochondrial filters?

Not necessarily. Instead, adopt a more nuanced strategy. Filtering should be performed with the specific biological context in mind [47] [48].

- Recommended Action: Perform an initial, permissive round of filtering and clustering to annotate your cell types. Then, examine the distribution of