A Researcher's Guide to Batch Effects in Gene Expression PCA: From Detection to Correction and Validation


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on addressing batch effects in Principal Component Analysis (PCA) of gene expression data. It covers the foundational knowledge of identifying technical variations through visualization tools like PCA and UMAP, explores current methodological solutions including established algorithms like ComBat and Harmony, and delves into troubleshooting common pitfalls like over-correction. The guide also outlines rigorous validation frameworks using both quantitative metrics and downstream sensitivity analysis to ensure biological signals are preserved. By synthesizing the latest research and best practices, this resource aims to empower scientists to improve the reliability, reproducibility, and biological accuracy of their transcriptomic analyses.

Understanding and Detecting Batch Effects: Why Your PCA Plots Can Be Misleading

What are batch effects and why are they a critical problem in gene expression research?

Answer: Batch effects are systematic technical variations introduced into high-throughput omics data during the experimental process that are unrelated to the biological factors of interest [1] [2] [3]. These non-biological fluctuations occur when samples are processed and measured under different conditions, creating artifacts that can confound biological interpretation [4] [2].

The profound impact of batch effects makes them a critical concern:

- Misleading Conclusions: Batch effects can lead to false discoveries in differential expression analysis and prediction, especially when batch is correlated with biological outcomes [1] [3]. In one clinical trial example, a change in RNA-extraction solution caused incorrect classification outcomes for 162 patients, with 28 receiving incorrect or unnecessary chemotherapy regimens [1] [3].

- Irreproducibility Crisis: Batch effects from reagent variability and experimental bias are paramount factors contributing to the reproducibility crisis in science [1] [3]. A Nature survey found that 90% of respondents believed there is a reproducibility crisis, with batch effects identified as a major contributor [1] [3].

- Economic and Scientific Loss: Irreproducibility caused by batch effects has resulted in retracted articles, discredited research findings, and significant financial losses [1] [3].

Table 1: Common Sources of Batch Effects in Omics Studies

| Source Category | Specific Examples | Affected Omics Types |

|---|---|---|

| Study Design | Flawed/confounded design, sample size, number of batches | All omics types [1] [3] |

| Sample Preparation | Different centrifugal forces, storage temperature, freeze-thaw cycles | Transcriptomics, Proteomics, Metabolomics [1] [3] |

| Reagents & Personnel | Reagent lot variations, different personnel skill sets | All omics types [4] [2] |

| Sequencing & Instrumentation | Different sequencing platforms, instruments, runs | Genomics, Transcriptomics [5] [1] |

| Temporal Factors | Processing on different days, at different times of day, or under different atmospheric conditions | All omics types [1] [2] |

How can I detect batch effects in my PCA of gene expression data?

Answer: Principal Component Analysis (PCA) is one of the most effective methods for visualizing and detecting batch effects in gene expression data [5] [6]. When examining your PCA results, look for these telltale signs of batch effects:

Visual Detection Methods:

- PCA Cluster Separation: Create a PCA plot from your raw data and color the samples by batch. If samples cluster primarily by their batch rather than by biological condition, this indicates strong batch effects [5] [6]. The scatter plot of top principal components should be analyzed for variations induced by batch effects rather than biological sources [5].

- t-SNE/UMAP Examination: Visualize cell groups on a t-SNE or UMAP plot, labeling cells based on their batch number. In the presence of uncorrected batch effects, cells from the same batch tend to cluster together regardless of cell type, instead of grouping based on biological similarities [5] [6].

Quantitative Assessment Metrics: For more objective assessment, several quantitative metrics can complement visual inspection:

Table 2: Quantitative Metrics for Batch Effect Detection

| Metric Name | Purpose | Interpretation |

|---|---|---|

| k-Nearest Neighbor Batch Effect Test (kBET) | Tests if batches are well-mixed in local neighborhoods | Lower rejection rates indicate better mixing [5] |

| Local Inverse Simpson's Index (LISI) | Measures diversity of batches in local neighborhoods | Higher values indicate better integration [7] |

| Principal Component Analysis (PCA) | Identifies batch effect through analysis of top principal components | Sample separation by batch indicates batch effect [5] [6] |

| Clustering Examination | Checks if data clusters by batches instead of treatments | Clustering by batch signals batch effects [6] |
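The idea behind LISI can be sketched in a few lines. The example below is a simplified, unweighted version: it computes the inverse Simpson's index of batch labels among each sample's k nearest neighbours in a low-dimensional embedding. The published LISI weights neighbours with a perplexity-based kernel, so the function name `simple_lisi`, the parameter `k`, and the input layout are assumptions made for illustration, not the reference implementation.

```python
import math
from collections import Counter


def simple_lisi(points, batches, k=5):
    """Unweighted inverse Simpson's index of batch labels among each
    point's k nearest neighbours (a simplification of LISI).

    points  : list of coordinate tuples (e.g. top PCs per sample)
    batches : list of batch labels, one per point
    Returns one score per point: 1.0 means all neighbours share one batch,
    higher values mean more batches are mixed in the neighbourhood.
    """
    scores = []
    for i, p in enumerate(points):
        # Euclidean distance from point i to every other point
        dists = sorted(
            (math.dist(p, q), j) for j, q in enumerate(points) if j != i
        )
        neighbours = [batches[j] for _, j in dists[:k]]
        counts = Counter(neighbours)
        # Simpson's index: probability that two neighbours share a batch
        simpson = sum((c / len(neighbours)) ** 2 for c in counts.values())
        scores.append(1.0 / simpson)
    return scores
```

With two batches, scores near 1 suggest neighbourhoods dominated by a single batch, while scores approaching 2 suggest the batches are well mixed.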

Experimental Protocol: PCA-Based Batch Effect Detection

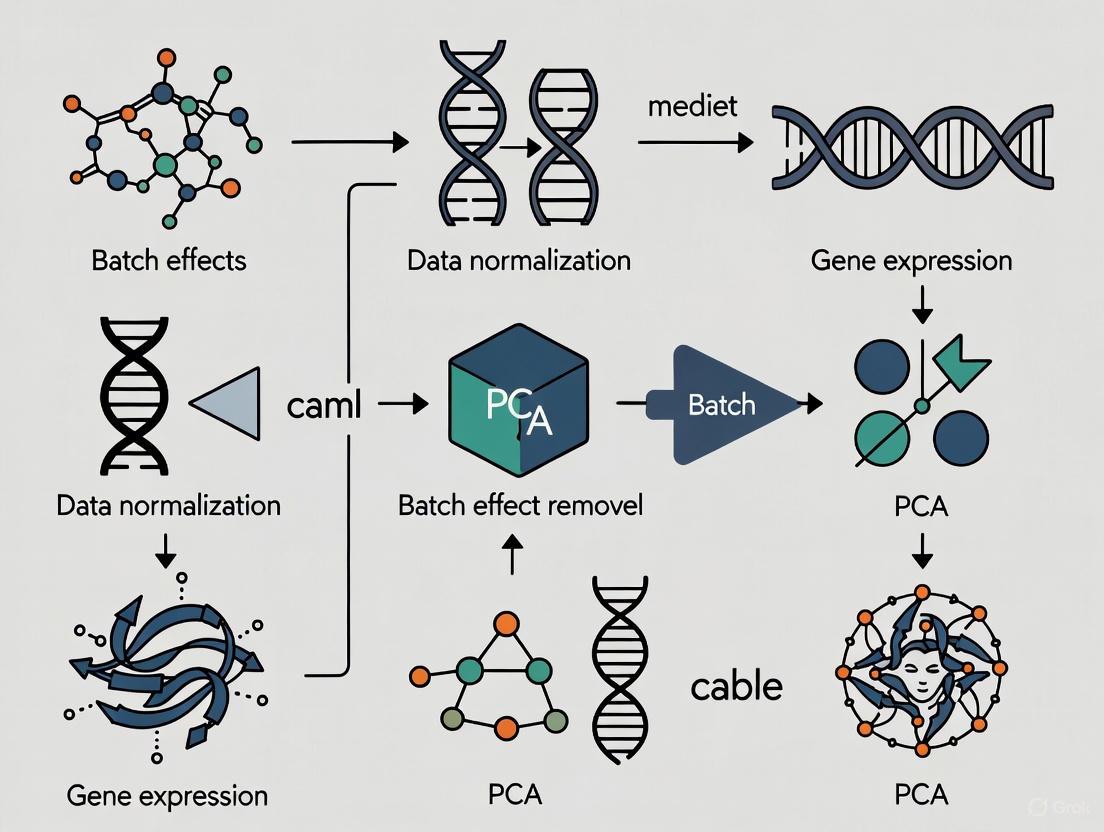

Diagram 1: Batch Effect Assessment Workflow
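As a rough sketch of the PCA-based check, assuming a genes-by-samples raw count matrix and one batch label per sample, the code below log-transforms counts per million, runs PCA through a singular value decomposition, and reports how much of each leading component's variance is explained by batch (a one-way R²). The function name `pca_batch_association` and its parameters are hypothetical, and plotting is left out.

```python
import numpy as np


def pca_batch_association(counts, batches, n_pcs=3):
    """Return (explained_variance_ratio, r2_with_batch) for the top PCs.

    counts  : genes x samples matrix of raw counts
    batches : one batch label per sample
    """
    counts = np.asarray(counts, dtype=float)
    # log2 counts-per-million with a pseudocount of 1
    cpm = counts / counts.sum(axis=0, keepdims=True) * 1e6
    logcpm = np.log2(cpm + 1.0)

    # Samples as rows; centre every gene before PCA
    x = logcpm.T
    x = x - x.mean(axis=0, keepdims=True)
    u, s, _ = np.linalg.svd(x, full_matrices=False)
    scores = u * s                      # sample coordinates on each PC
    var_ratio = s**2 / np.sum(s**2)

    labels = np.asarray(batches)
    r2 = []
    for pc in range(n_pcs):
        y = scores[:, pc]
        total = np.sum((y - y.mean()) ** 2)
        # Between-batch sum of squares for this component
        between = sum(
            np.sum(labels == b) * (y[labels == b].mean() - y.mean()) ** 2
            for b in np.unique(labels)
        )
        r2.append(between / total if total > 0 else 0.0)
    return var_ratio[:n_pcs], np.array(r2)
```

A leading component with a high batch R² and a large share of total variance is the numerical counterpart of samples separating by batch in the scatter plot. Repeating the calculation with biological labels in place of batch labels gives a point of comparison.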

What are the most effective methods for correcting batch effects in PCA of gene expression data?

Answer: Multiple computational approaches have been developed for batch effect correction, each with different strengths and appropriate use cases. The choice of method depends on your experimental design, data type, and the severity of batch effects.

Batch Effect Correction Methods:

Table 3: Comparison of Major Batch Effect Correction Methods

| Method | Algorithm Type | Best For | Key Features | Performance Notes |

|---|---|---|---|---|

| ComBat/ComBat-seq | Empirical Bayes | Bulk RNA-seq, small sample sizes | Adjusts for batch effects using empirical Bayes framework [4] [8] | Particularly useful for small sample sizes as it borrows information across genes [8] |

| Harmony | PCA-based iterative clustering | Single-cell RNA-seq, large datasets | Uses PCA + iterative clustering to maximize diversity within clusters [5] [6] | Recommended for faster runtime; good performance in benchmarks [5] [6] |

| Limma removeBatchEffect | Linear model adjustment | Bulk RNA-seq, microarray | Removes estimated batch effects using linear regression techniques [4] [8] | Well-integrated with limma-voom workflow; works on normalized data [8] |

| Seurat CCA | Canonical Correlation Analysis | Single-cell RNA-seq | Uses CCA to project data into subspace, finds mutual nearest neighbors [5] [6] | Good performance but has lower scalability [6] |

| MNN Correct | Mutual Nearest Neighbors | Single-cell RNA-seq | Detects mutual nearest neighbors between datasets to quantify batch effects [5] [7] | Can be computationally intensive due to high-dimensional neighbor computations [5] |

| SVA (Surrogate Variable Analysis) | Surrogate variable estimation | Studies with unknown batch factors | Identifies and adjusts for unknown sources of variation [1] [8] [9] | Particularly useful when batch information is incomplete [8] |

Experimental Protocol: GTEx_Pro Batch Correction Pipeline

The GTEx_Pro pipeline provides a robust framework for batch correction in large-scale transcriptomic data, integrating multiple correction strategies [9]:

Diagram 2: GTEx_Pro Batch Correction Pipeline

This pipeline has demonstrated:

- Enhanced Tissue-Specific Clustering: 3D PCA showed pronounced enhancement in tissue-specific clustering after processing [9]

- Improved Euclidean Distances: Average Euclidean distance between tissue clusters increased after SVA batch correction [9]

- Better Clustering Quality: Davies-Bouldin index (DBI) scores decreased, indicating better clustering following batch correction [9]

How can I avoid overcorrection and ensure I'm preserving biological signals?

Answer: Overcorrection occurs when batch effect removal methods inadvertently remove biological variation, potentially causing more harm than the original batch effects. Watch for these key signs of overcorrection:

Signs of Overcorrection:

- Distinct Cell Types Cluster Together: On dimensionality reduction plots (PCA, t-SNE, UMAP), biologically distinct cell types that should form separate clusters appear merged together [5] [6]

- Complete Overlap of Samples: When samples from very different biological conditions show complete overlap in visualizations, suggesting loss of meaningful biological variation [6]

- Loss of Expected Markers: Canonical cell-type-specific markers that are known to be present in the dataset fail to appear in differential expression analysis [5]

- Ribosomal Gene Dominance: A significant portion of cluster-specific markers comprises genes with widespread high expression (e.g., ribosomal genes) rather than true biological markers [5]

- Absence of Differential Expression: Scarcity or absence of differential expression hits associated with pathways expected based on the experimental conditions [5]

Strategies to Prevent Overcorrection:

- Start with Assessment: Always assess whether batch effects actually exist before applying correction methods [6]

- Compare Multiple Methods: Test different batch correction algorithms as performance can vary across datasets [6]

- Use Positive Controls: Include known biological signals in your experiment to verify they persist after correction

- Validate with External Data: Compare your corrected data with independent datasets or published results

- Examine Negative Controls: Ensure that biologically unrelated samples don't artificially cluster together after correction
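The positive-control strategy can be turned into a simple automated check. The sketch below compares the log fold change of known marker genes before and after correction and flags markers whose effect flips sign or shrinks below a chosen fraction of its original size. The function names, the dictionary layout, and the `min_fraction=0.5` threshold are illustrative assumptions, not an established standard.

```python
from statistics import mean


def log_fold_change(values, groups, case, control):
    """Difference in mean log-expression between two groups."""
    case_vals = [v for v, g in zip(values, groups) if g == case]
    ctrl_vals = [v for v, g in zip(values, groups) if g == control]
    return mean(case_vals) - mean(ctrl_vals)


def positive_controls_retained(before, after, groups, case, control,
                               min_fraction=0.5):
    """Check whether known biological signals survive batch correction.

    before, after : dict mapping gene -> list of log-expression values
                    (same sample order as `groups`)
    Returns dict gene -> (lfc_before, lfc_after, retained)
    """
    report = {}
    for gene in before:
        lfc_before = log_fold_change(before[gene], groups, case, control)
        lfc_after = log_fold_change(after[gene], groups, case, control)
        same_sign = lfc_before * lfc_after > 0
        # Hypothetical rule: the effect must keep at least min_fraction
        # of its pre-correction magnitude
        retained = same_sign and abs(lfc_after) >= min_fraction * abs(lfc_before)
        report[gene] = (lfc_before, lfc_after, retained)
    return report
```

A marker reported as not retained does not prove overcorrection on its own, but several such markers together are a reason to revisit the correction model.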

How does sample imbalance affect batch effect correction and how can I address it?

Answer: Sample imbalance occurs when there are differences in the number of cell types present, cells per cell type, and cell type proportions across samples. This is particularly common in cancer biology with significant intra-tumoral and intra-patient discrepancies [6].

Impact of Sample Imbalance: Recent benchmarking across 2,600 integration experiments has demonstrated that "sample imbalance has substantial impacts on downstream analyses and the biological interpretation of integration results" [6]. When sample imbalance occurs with batch effects, it can:

- Skew correction toward over-represented cell types

- Cause under-represented cell types to be improperly corrected or lost

- Lead to inaccurate biological interpretations

- Reduce the effectiveness of integration techniques

Guidelines for Imbalanced Sample Integration: Based on recent benchmarking studies [6], follow these refined guidelines:

- Assess Imbalance First: Quantify the degree of sample imbalance before selecting a correction method

- Method Selection: Choose batch correction methods that have demonstrated robustness to sample imbalance

- Stratified Sampling: Consider using stratified approaches when possible to balance cell type representation

- Validation: Pay special attention to rare cell populations in your validation to ensure they haven't been adversely affected

- Multiple Method Testing: Test how different correction methods handle your specific imbalance pattern
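One way to carry out the "assess imbalance first" step is to compare cell type proportions between batches. The sketch below assumes per-cell batch and cell type annotations are available and summarizes imbalance as the largest total variation distance between any two batches. The choice of this distance and the function names are assumptions for illustration; the benchmarking study cited above may quantify imbalance differently.

```python
from collections import Counter


def cell_type_proportions(batches, cell_types):
    """Per-batch cell type proportions from per-cell annotations."""
    counts = {}
    for b, ct in zip(batches, cell_types):
        counts.setdefault(b, Counter())[ct] += 1
    return {
        b: {ct: n / sum(c.values()) for ct, n in c.items()}
        for b, c in counts.items()
    }


def max_total_variation(proportions):
    """Largest total variation distance between any two batches.

    0 means identical cell type composition, 1 means no shared cell types.
    """
    names = sorted(proportions)
    all_types = {ct for p in proportions.values() for ct in p}
    worst = 0.0
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            tv = 0.5 * sum(
                abs(proportions[a].get(ct, 0.0) - proportions[b].get(ct, 0.0))
                for ct in all_types
            )
            worst = max(worst, tv)
    return worst
```

Cell types that appear in only one batch show up as missing keys in the proportion table, which is worth checking separately because such populations are the ones most at risk during integration.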

The Researcher's Toolkit: Essential Resources for Batch Effect Management

Table 4: Key Research Reagent Solutions for Batch Effect Mitigation

| Tool/Resource | Function | Application Context |

|---|---|---|

| Omics Playground | Automated batch effect correction platform with multiple methods | Accessible bioinformatics for users without programming skills [4] |

| Polly Processed Data | Batch-corrected single-cell data with quantitative validation | Ensuring "Polly Verified" absence of batch effects in delivered datasets [5] |

| CDIAM Multi-Omics Studio | Interactive platform with preset workflows for batch correction | Convenient exploration of various omics data with interactive UI [6] |

| RECODE/iRECODE | Simultaneous technical and batch noise reduction | Single-cell RNA-seq, epigenomics, and spatial transcriptomics [7] |

| GTEx_Pro Pipeline | TMM + CPM + SVA integrated normalization and correction | Large-scale transcriptomic datasets like GTEx [9] |

| HarmonizR | Data harmonization across independent proteomic datasets | Appropriate handling of missing values in proteomics [2] |

Are batch effect correction methods different for single-cell RNA-seq versus bulk RNA-seq?

Answer: Yes, significant algorithmic differences exist between batch effect correction methods for single-cell versus bulk RNA-seq data, primarily due to fundamental data structure differences [5] [1].

Key Differences:

- Data Sparsity: Single-cell RNA-seq data exhibits high dropout rates (almost 80% of gene expression values are zero), requiring methods specifically designed to handle this sparsity [5] [1]

- Data Scale: Single-cell experiments typically involve thousands of cells versus tens of samples in bulk RNA-seq, necessitating different computational approaches [5]

- Technical Variation: Single-cell technologies suffer from higher technical variations including lower RNA input, higher dropout rates, and greater cell-to-cell variation [1] [3]

Method Compatibility:

- Bulk Methods on Single-cell Data: Techniques used in bulk RNA-seq are often insufficient for single-cell data due to data size and sparsity challenges [5]

- Single-cell Methods on Bulk Data: Single-cell RNA-seq techniques may be excessive for the smaller experimental design of bulk RNA-seq [5]

- Cross-omics Applications: Some batch effect correction algorithms originally developed for one omics type have shown applicability to other types, while others remain platform-specific [1] [3]

The selection of appropriate batch effect correction methods should therefore be guided by your specific data type and experimental design, with particular attention to the fundamental differences between bulk and single-cell approaches.

Batch effects are systematic technical variations in data that are not related to the biological variables of interest. These non-biological variations arise from differences in experimental conditions, such as processing samples on different days, using different reagent lots, different sequencing instruments, or different personnel [8] [5] [10]. In transcriptomics studies, these effects represent one of the most challenging technical hurdles researchers face, as they can create significant artifacts in your data that may be mistakenly interpreted as biological signals if not properly addressed [8].

The impact of batch effects extends to virtually all aspects of RNA-seq data analysis. They can cause differential expression analysis to identify genes that differ between batches rather than between biological conditions, lead clustering algorithms to group samples by batch rather than by true biological similarity, and cause pathway enrichment analysis to highlight technical artifacts instead of meaningful biological processes [8]. The stakes are particularly high in large-scale studies where samples are processed in multiple batches over time, and in meta-analyses that combine data from multiple sources [8].

The Serious Consequences of Uncorrected Batch Effects

Batch effects have profound negative impacts on research outcomes. In the most benign cases, they increase variability and decrease statistical power to detect real biological signals. However, in worse scenarios, they can actively mislead researchers and contribute to the reproducibility crisis in scientific research [3].

Documented Cases of Severe Consequences:

- Clinical Misclassification: In a clinical trial, a change in RNA-extraction solution introduced batch effects that resulted in incorrect gene-based risk calculations for 162 patients, 28 of whom subsequently received incorrect or unnecessary chemotherapy regimens [3].

- Species vs. Tissue Clustering: One study initially reported that cross-species differences between human and mouse were greater than cross-tissue differences within the same species. However, reanalysis revealed this was an artifact of data generated 3 years apart. After proper batch correction, the data clustered by tissue type rather than by species [3].

- Retracted Research: High-profile articles have been retracted due to batch-effect-driven irreproducibility. In one case published in Nature Methods, authors identified a fluorescent serotonin biosensor, but later discovered its sensitivity was highly dependent on the reagent batch, particularly the batch of fetal bovine serum. When the FBS batch changed, the key results could not be reproduced, leading to article retraction [3].

A survey conducted by Nature found that 90% of respondents believed there is a reproducibility crisis in science, with over half considering it a significant crisis. Among the many factors contributing to irreproducibility, batch effects from reagent variability and experimental bias are paramount factors [3].

Impact on Differential Expression Analysis

One of the most critical consequences of batch effects in transcriptomic data is their impact on differential expression analysis. When samples cluster by technical variables rather than biological conditions, statistical models may falsely identify genes as differentially expressed [10]. This introduces a high false-positive rate, misleading researchers and wasting downstream validation efforts. Conversely, true biological signals may be masked, resulting in missed discoveries [10].

Table 1: How Batch Effects Skew Research Outcomes

| Scenario | Impact on Data | Downstream Consequences |

|---|---|---|

| Benign Case | Increased technical variability | Reduced statistical power to detect real effects |

| Moderate Case | Batch-correlated features identified as significant | False positives in differential expression analysis |

| Severe Case | Batch effects correlated with outcomes of interest | Incorrect conclusions, irreproducible findings |

Detecting Batch Effects in Your Data

Before attempting correction, it's crucial to detect and visualize batch effects to understand their magnitude and pattern. Several approaches are available for this purpose, ranging from simple visualizations to quantitative metrics [5] [6].

Visualization Methods

Principal Component Analysis (PCA) is one of the most common techniques for batch effect detection. Perform PCA on the raw data and color samples by batch in the scatter plot of the top principal components [8] [5]. If samples cluster primarily by batch rather than by biological condition, significant batch effects are present and require correction [8].

t-SNE/UMAP Plot Examination provides another effective approach. Visualize cell groups on a t-SNE or UMAP plot and label cells by batch. In the presence of uncorrected batch effects, cells tend to cluster by batch, driven by technical factors, instead of by biological similarity [5].

Quantitative Assessment

Beyond visual inspection, several quantitative metrics can objectively assess batch effect severity and correction quality [5] [10]:

Table 2: Quantitative Metrics for Batch Effect Assessment

| Metric | What It Measures | Interpretation |

|---|---|---|

| Average Silhouette Width (ASW) | Cluster compactness and separation | Higher values indicate better-defined clusters |

| Adjusted Rand Index (ARI) | Clustering accuracy compared to known cell types | Values closer to 1 indicate better cell type purity |

| Local Inverse Simpson's Index (LISI) | Neighborhood diversity in batch mixing | Higher values indicate better mixing of batches |

| k-nearest neighbor Batch Effect Test (kBET) | Proportion of cells with well-mixed neighbors | Higher acceptance rates indicate successful correction |

These metrics evaluate different aspects of correction—such as clustering tightness, batch mixing, and preservation of cell identity. To ensure robust results, it is recommended to combine both visualizations and quantitative metrics when validating batch effects and their correction [10].
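To make the silhouette metric concrete, the following minimal sketch computes the average silhouette width for any labeling of samples in an embedding. It is written in plain Python for readability rather than speed, needs at least two distinct labels, and the function name is an assumption.

```python
import math


def average_silhouette_width(points, labels):
    """Mean silhouette width of `points` under the grouping `labels`.

    points : list of coordinate tuples (e.g. top PCs per sample)
    labels : one label per point (biological group or batch)
    """
    n = len(points)
    widths = []
    for i in range(n):
        # Sum and count of distances from point i to each label group
        sums, counts = {}, {}
        for j in range(n):
            if i == j:
                continue
            d = math.dist(points[i], points[j])
            sums[labels[j]] = sums.get(labels[j], 0.0) + d
            counts[labels[j]] = counts.get(labels[j], 0) + 1
        own = labels[i]
        if own not in counts:
            widths.append(0.0)          # singleton group: width defined as 0
            continue
        a = sums[own] / counts[own]     # mean distance within own group
        b = min(sums[l] / counts[l] for l in counts if l != own)
        denom = max(a, b)
        widths.append((b - a) / denom if denom > 0 else 0.0)
    return sum(widths) / n
```

The same function can be called twice: once with biological labels, where higher is better, and once with batch labels, where values near or below zero after correction suggest that batches no longer form separate groups.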

Batch Effect Correction Methods

Multiple computational methods have been developed to address batch effects in transcriptomic data. These can be broadly categorized into one-step and two-step methods, each with distinct advantages and limitations [11].

One-step methods perform batch correction and data analysis simultaneously by integrating batch correction directly in the statistical model. For example, including a batch indicator covariate in a linear model during differential expression analysis represents a one-step approach. These methods have the advantage of removing batch effects directly in the modeling step but may be limited in their ability to capture complex batch effects [11].
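A minimal sketch of the one-step idea, assuming log-scale expression for a single gene and 0/1 coded condition and batch indicators: batch enters the linear model as a covariate, and the condition coefficient is read off already adjusted for batch. This uses ordinary least squares for illustration; dedicated differential expression tools add variance moderation and count-specific modeling that are not reproduced here.

```python
import numpy as np


def condition_effect_adjusted_for_batch(expr, condition, batch):
    """Fit expr ~ intercept + condition + batch by ordinary least squares.

    expr      : log-expression of one gene, one value per sample
    condition : 0/1 indicator per sample (e.g. control/treated)
    batch     : 0/1 indicator per sample (two batches assumed)
    """
    y = np.asarray(expr, dtype=float)
    design = np.column_stack([
        np.ones_like(y),
        np.asarray(condition, dtype=float),
        np.asarray(batch, dtype=float),
    ])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return {"intercept": coef[0], "condition": coef[1], "batch": coef[2]}
```

The condition effect is only estimable when condition and batch are not completely confounded; if every treated sample sits in one batch, the two columns of the design matrix coincide and the model cannot separate them.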

Two-step methods perform batch correction as a separate data preprocessing step before downstream analysis. Methods like ComBat and SVA fall into this category. These approaches allow for richer modeling of batch effects (mean, variance, or other moments) but can introduce correlation structures in the corrected data that must be accounted for in downstream analyses [11].
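For contrast, a two-step correction can be sketched as a regression that estimates the batch term per gene and subtracts only that term from the data, leaving the condition effect in place. This is a simplified linear adjustment in the spirit of limma's removeBatchEffect, not a reimplementation of it or of ComBat; it assumes two batches coded 0/1 and a log-scale genes-by-samples matrix, and the function name is hypothetical.

```python
import numpy as np


def remove_batch_linear(logexpr, batch, condition):
    """Subtract the fitted batch term from each gene.

    logexpr   : genes x samples matrix of log-expression
    batch     : 0/1 batch indicator per sample
    condition : 0/1 condition indicator per sample (protected from removal)
    """
    y = np.asarray(logexpr, dtype=float)
    batch = np.asarray(batch, dtype=float)
    # Centre the batch indicator so the overall gene mean is preserved
    batch_centered = batch - batch.mean()
    design = np.column_stack([
        np.ones(y.shape[1]),
        np.asarray(condition, dtype=float),
        batch_centered,
    ])
    # One regression per gene, solved jointly: coef has shape (3, genes)
    coef, *_ = np.linalg.lstsq(design, y.T, rcond=None)
    batch_term = np.outer(coef[2], batch_centered)   # genes x samples
    return y - batch_term
```

The corrected matrix is suitable for visualization such as PCA. As noted above, using it directly in downstream statistical tests requires care because the correction itself introduces dependence between samples.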

Table 3: Comparison of Popular Batch Correction Methods

| Method | Type | Strengths | Limitations |

|---|---|---|---|

| ComBat | Two-step | Simple, widely used; adjusts known batch effects using empirical Bayes | Requires known batch info; may not handle nonlinear effects well [10] |

| SVA | Two-step | Captures hidden batch effects; suitable when batch labels are unknown | Risk of removing biological signal; requires careful modeling [10] |

| limma removeBatchEffect | Two-step | Efficient linear modeling; integrates with DE analysis workflows | Assumes known, additive batch effect; less flexible [10] |

| Harmony | Two-step | Fast runtime; good performance in benchmarks | Output is embedding space rather than corrected counts [5] [6] |

| Seurat CCA | Two-step | Well-integrated in Seurat workflow; good for complex data | Lower scalability for very large datasets [6] |

Practical Implementation

For RNA-seq count data, ComBat-seq and its refined version ComBat-ref use a negative binomial model specifically designed for count data adjustment [8] [12]. ComBat-ref innovates by selecting a reference batch with the smallest dispersion, preserving count data for the reference batch, and adjusting other batches toward the reference batch, demonstrating superior performance in both simulated environments and real-world datasets [12].

For single-cell RNA-seq data, Harmony and Seurat are among the most recommended methods. A comprehensive benchmark study recommended Harmony and Seurat CCA, with preference given to Harmony due to its faster runtime [6].

Troubleshooting Guide: FAQs on Batch Effect Correction

Q1: How can I tell if I'm overcorrecting my data?

Overcorrection occurs when batch effect removal also removes genuine biological variation. Signs of overcorrection include [5] [6]:

- Distinct cell types clustering together on dimensionality reduction plots (PCA, UMAP)

- A complete overlap of samples from very different biological conditions

- Cluster-specific markers comprising genes with widespread high expression across various cell types (e.g., ribosomal genes)

- Significant overlap among markers specific to different clusters

- Absence of expected cluster-specific markers

- Scarcity of differential expression hits in pathways expected based on sample composition

Q2: Should I always correct for batch effects?

Not necessarily. First assess whether your data actually has batch effects using the detection methods described under "Detecting Batch Effects in Your Data". If samples don't cluster by batch in PCA/UMAP plots and no batch-driven trends are apparent, correction might not be needed [10] [6]. Additionally, if you're working with cell hashing or sample multiplexed data (where multiple samples are processed in a single run), batch effects may be minimal [6].

Q3: What's the difference between normalization and batch effect correction?

These are distinct processes addressing different technical variations [5]:

- Normalization operates on the raw count matrix and mitigates differences in sequencing depth across cells, library size, and amplification bias caused by gene length.

- Batch effect correction mitigates differences from different sequencing platforms, timing, reagents, or different conditions/laboratories.

Normalization typically precedes batch effect correction in analysis workflows.
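To illustrate the normalization step that comes first, here is a minimal sketch of log2 counts-per-million scaling, which removes differences in library size only. It does not address gene length or composition effects and is not a substitute for methods such as TMM; the function name and the pseudocount of 1 are assumptions.

```python
import math


def log_cpm(counts, pseudocount=1.0):
    """Convert raw counts to log2 counts-per-million.

    counts : list of per-gene rows, each a list of per-sample raw counts
    """
    n_samples = len(counts[0])
    # Library size = total counts in each sample (column sums)
    lib_sizes = [sum(row[s] for row in counts) for s in range(n_samples)]
    return [
        [math.log2(row[s] / lib_sizes[s] * 1e6 + pseudocount)
         for s in range(n_samples)]
        for row in counts
    ]
```

Two samples that differ only in sequencing depth end up with identical values after this step, so any remaining systematic difference between batches has to come from something other than library size.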

Q4: How does sample imbalance affect batch correction?

Sample imbalance—where there are differences in cell type numbers, cells per cell type, and cell type proportions across samples—substantially impacts integration results and biological interpretation [6]. In fully confounded studies where biological groups completely separate by batches, it may be impossible to distinguish whether differences are due to biological signals or technical effects [4]. In such cases, specific guidelines for imbalanced settings should be followed [6].

Q5: What are the best practices for experimental design to minimize batch effects?

The best approach is to minimize batch effects during experimental design through [10] [13]:

- Randomizing samples across batches so each condition is represented within each processing batch

- Balancing biological groups across time, operators, and sequencing runs

- Using consistent reagents and protocols throughout the study

- Avoiding processing all samples of one condition together

- Including pooled quality control samples and technical replicates across batches

- For single-cell studies, multiplexing libraries across flow cells to spread out flow cell-specific variation
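The randomization and balancing points above can be sketched as a stratified assignment: samples are shuffled within each condition and then dealt across batches in turn, so every batch receives a near-equal share of every condition. The function name, the input layout, and the fixed seed are illustrative assumptions.

```python
import random
from collections import defaultdict


def assign_batches(sample_conditions, n_batches, seed=0):
    """Randomly assign samples to batches, balanced within each condition.

    sample_conditions : dict mapping sample ID -> condition label
    Returns dict mapping sample ID -> batch index (0 .. n_batches - 1)
    """
    rng = random.Random(seed)           # fixed seed keeps the design reproducible
    by_condition = defaultdict(list)
    for sample, cond in sample_conditions.items():
        by_condition[cond].append(sample)

    assignment = {}
    for cond in sorted(by_condition):
        samples = sorted(by_condition[cond])
        rng.shuffle(samples)            # random order within the condition
        offset = rng.randrange(n_batches)
        # Deal samples round-robin so each batch gets a similar share
        for i, sample in enumerate(samples):
            assignment[sample] = (i + offset) % n_batches
    return assignment
```

Recording the seed and the resulting assignment alongside the sample metadata makes it possible to include batch as a covariate later, even when the design is well balanced.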

Table 4: Key Research Reagent Solutions for Batch Effect Management

| Resource Category | Specific Tools/Methods | Function/Purpose |

|---|---|---|

| Detection & Visualization | PCA, UMAP, t-SNE | Identify and visualize batch effects in datasets |

| Quantitative Metrics | ASW, ARI, LISI, kBET | Objectively measure batch effect severity and correction quality |

| Bulk RNA-seq Correction | ComBat, limma removeBatchEffect, SVA | Correct batch effects in bulk transcriptomic data |

| Single-cell RNA-seq Correction | Harmony, Seurat, scANVI, MNN Correct | Correct batch effects in single-cell data |

| Experimental Quality Control | Pooled QC samples, technical replicates | Monitor and account for technical variation across batches |

| Workflow Platforms | Omics Playground, CDIAM Multi-Omics Studio | Integrated platforms with preset workflows for batch correction |

Batch effects represent a significant challenge in transcriptomics research with potentially serious consequences for data interpretation and research reproducibility. Through proper detection using visualization and quantitative metrics, appropriate application of correction methods, and vigilant experimental design, researchers can effectively mitigate these technical variations. By implementing the troubleshooting guidelines and best practices outlined in this technical support document, researchers can ensure their findings reflect true biological signals rather than technical artifacts, ultimately advancing reliable and reproducible science.

Troubleshooting Guides

FAQ 1: Why do my samples cluster by batch instead of biological condition in a PCA plot, and how can I confirm this is a batch effect?

Issue: A PCA plot shows clear separation of sample groups based on processing batch (e.g., different sequencing runs, days, or technicians) rather than the expected biological conditions (e.g., treatment vs. control, different tissue types).

Diagnosis: This indicates strong batch effects—systematic technical variations introduced during experimental procedures that can obscure true biological signals [10]. Batch effects are a common challenge in transcriptomics and can originate from various sources throughout the experimental workflow [10] [8].

Confirmation Steps:

- Visual Inspection: Generate a PCA plot colored by the known batch variable (e.g., sequencing run, processing date) and a second plot colored by the biological condition. If samples group primarily by batch in the first plot, a batch effect is likely present [10] [8].

- Quantitative Validation: Use statistical metrics to objectively assess the effect:

  - Principal Variance Component Analysis (PVCA): Quantifies the proportion of variance in the data explained by the batch variable compared to the biological variable [14].

  - Batch Effect Score (BES): A metric from the BEEx tool that evaluates whether image features can distinguish datasets from different batches in an unsupervised manner [14].

  - kBET (k-nearest neighbor Batch Effect test): Measures the extent to which the local neighborhood of a sample reflects the overall batch distribution [10].
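The logic of kBET can be approximated with a short sketch: for each sample, compare the batch composition of its nearest neighbours with the global batch composition using a Pearson chi-square statistic, then report the fraction of samples that fail. This is a simplification under stated assumptions, not the kBET package: the neighbourhood includes the sample itself, the default `critical_value` of 3.841 is the 5% chi-square cut-off for one degree of freedom and therefore only fits the two-batch case, and the function name is hypothetical.

```python
import math
from collections import Counter


def knn_batch_rejection_rate(points, batches, k=5, critical_value=3.841):
    """Fraction of samples whose local batch mix deviates from the global mix.

    points  : list of coordinate tuples (e.g. top PCs per sample)
    batches : one batch label per sample
    """
    n = len(points)
    global_freq = {b: c / n for b, c in Counter(batches).items()}
    rejected = 0
    for i in range(n):
        # Indices ordered by distance to sample i (sample i itself comes first)
        order = sorted(range(n), key=lambda j: math.dist(points[i], points[j]))
        observed = Counter(batches[j] for j in order[:k])
        # Pearson chi-square against the expected global composition
        stat = sum(
            (observed.get(b, 0) - k * f) ** 2 / (k * f)
            for b, f in global_freq.items()
        )
        if stat > critical_value:
            rejected += 1
    return rejected / n
```

A rejection rate close to 1 points to neighbourhoods dominated by single batches, while a rate close to 0 is consistent with well-mixed batches. With very small neighbourhoods the chi-square approximation is crude, so larger `k` values are preferable on real data.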

Solution: Proceed with statistical batch effect correction methods after confirming its presence. The following troubleshooting questions detail specific correction strategies.

FAQ 2: What are the main computational methods to correct for batch effects in RNA-seq data before PCA?

Issue: After identifying a batch effect, you need to choose an appropriate correction method for your RNA-seq count data.

Diagnosis: Multiple statistical methods exist, each with strengths and limitations. The choice depends on your data structure, whether batch labels are known, and the level of correction needed [10] [8].

Resolution Methods: The table below summarizes standard batch effect correction methods applicable to RNA-seq data.

Table: Common Batch Effect Correction Methods for RNA-seq Data

| Method | Underlying Principle | Strengths | Limitations |

|---|---|---|---|

| ComBat/ComBat-seq [12] [10] [8] | Empirical Bayes framework; ComBat-seq uses a negative binomial model for count data. | Highly effective; adjusts for known batch effects; good for structured bulk RNA-seq data. | Requires known batch information. |

| limma removeBatchEffect [10] [8] | Linear modeling to remove batch effects as an additive component. | Efficient; integrates well with differential expression workflows in R. | Assumes known, additive batch effects; less flexible for non-linear effects. |

| SVA (Surrogate Variable Analysis) [10] [9] | Estimates and adjusts for hidden sources of variation (surrogate variables). | Does not require known batch labels; captures unobserved technical factors. | Risk of overcorrection and removing biological signal if not carefully modeled. |

| Harmony [10] [15] | Iterative clustering and mixture-based correction to integrate datasets. | Effective for complex datasets (e.g., single-cell); preserves biological variation. | Originally designed for single-cell data; may require recomputation for new data. |

Solution: For bulk RNA-seq with known batches, ComBat-seq is a robust choice as it works directly on count data. If batches are unknown, SVA is a practical option, but results require careful validation.

FAQ 3: How do I validate that my batch correction worked without removing the biological signal?

Issue: After applying a correction algorithm, you need to verify that technical variation has been reduced while biologically relevant signals are preserved.

Diagnosis: Over-correction is a risk where true biological differences are mistakenly removed along with technical noise [10]. Validation requires both visual and quantitative assessments.

Validation Protocol:

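- Visual Assessment: Repeat the PCA on the corrected data and color the points by batch and, separately, by biological condition. Batches should intermix while biological groups remain distinct.

- Quantitative Metrics: Calculate metrics before and after correction to measure improvement. The table below lists key metrics and their interpretation.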

Table: Key Metrics for Validating Batch Effect Correction

| Metric | What It Measures | Interpretation of Success |

|---|---|---|

| Average Silhouette Width (ASW) [10] | How similar a sample is to its own cluster (biology) compared to other clusters. | Higher values indicate better, tighter biological clustering. |

| Adjusted Rand Index (ARI) [10] | Agreement between two clusterings (e.g., inferred clusters versus known biological labels). | Increased ARI for biological labels indicates improved alignment with the true condition. |

| kBET Acceptance Rate [10] | The local mixing of batches in the data. | A higher acceptance rate indicates better batch mixing. |

| Davies-Bouldin Index (DBI) [9] | The average similarity between each cluster and its most similar one. | A lower DBI indicates better, more distinct separation between biological clusters. |

Solution: A combination of visual inspection (intermixed batches in PCA) and improved quantitative scores confirms successful correction that preserves biology. For example, the GTEx_Pro pipeline used DBI to show improved tissue clustering after SVA correction [9].
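As a rough illustration of the ASW row in the table above, the sketch below computes the average silhouette width directly from coordinates (for example, the first two principal components) for two labelings: batch and biology. The toy coordinates and the function name `average_silhouette_width` are made up for this example; in practice a library implementation such as scikit-learn's silhouette score would normally be used.

```python
import numpy as np

def average_silhouette_width(points, labels):
    """Mean silhouette width of `points` (samples x dims) for the given labels.

    Assumes at least two distinct labels. Singleton groups get a width of 0.
    """
    points = np.asarray(points, dtype=float)
    labels = np.asarray(labels)
    dist = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=2)
    groups = np.unique(labels)
    widths = np.zeros(len(points))
    for i in range(len(points)):
        same = labels == labels[i]
        same[i] = False  # exclude the point itself
        if not same.any():
            continue
        a = dist[i, same].mean()  # mean distance to own group
        b = min(dist[i, labels == g].mean() for g in groups if g != labels[i])
        if max(a, b) > 0:
            widths[i] = (b - a) / max(a, b)
    return widths.mean()

# Hypothetical PCA coordinates: biology separates along x, batch along y.
bio = np.array(["A", "A", "B", "B", "A", "A", "B", "B"])
batch = np.array([0, 0, 0, 0, 1, 1, 1, 1])
x = np.array([0.0, 0.4, 5.0, 5.4, 0.2, 0.6, 5.2, 5.6])
jitter = np.array([0.0, 0.3, 0.1, 0.4, 0.2, 0.5, 0.0, 0.3])
before = np.column_stack([x, jitter + 10.0 * batch])  # batch shift present
after = np.column_stack([x, jitter])                  # batch shift removed

asw_batch_before = average_silhouette_width(before, batch)
asw_batch_after = average_silhouette_width(after, batch)
asw_bio_before = average_silhouette_width(before, bio)
asw_bio_after = average_silhouette_width(after, bio)
print("ASW by batch:", round(asw_batch_before, 2), "->", round(asw_batch_after, 2))
print("ASW by biology:", round(asw_bio_before, 2), "->", round(asw_bio_after, 2))
```

A successful correction should push the batch-labeled ASW down (batches no longer form their own clusters) while the biology-labeled ASW stays the same or rises.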

Experimental Protocols

Detailed Methodology: A Standard Workflow for Batch Effect Diagnosis and Correction in RNA-seq Data

This protocol outlines the steps from data preprocessing to batch effect correction and validation, commonly used in transcriptomic analysis [8] [9].

I. Preprocessing and Normalization

- Data Input: Load the raw count matrix and sample metadata (including batch and biological group labels).

- Filter Low-Expressed Genes: Remove genes with negligible counts across most samples to reduce noise. A common threshold is to keep genes with counts > 0 in at least 80% of samples [8].

- Normalization: Account for differences in library size and RNA composition. A standard method is TMM (Trimmed Mean of M-values) normalization, often implemented with the edgeR package in R [8] [9].

II. Diagnostic Visualization via PCA

- Transform Data: Convert normalized counts to log2-CPM (Counts Per Million) to stabilize variance for PCA.

- Perform PCA: Run Principal Component Analysis on the transformed data.

- Visualize: Plot the first two principal components, coloring points by the batch variable and, separately, by the biological condition variable.
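The preprocessing and PCA steps above can be sketched in a few lines of NumPy. This is a simplified illustration on simulated counts, not the edgeR workflow: it uses plain library-size scaling instead of TMM, and the function names (`filter_genes`, `log2_cpm`, `pca_scores`) and the simulated batch effect are assumptions made for the example.

```python
import numpy as np

def filter_genes(counts, min_fraction=0.8):
    """Keep genes with a nonzero count in at least `min_fraction` of samples."""
    detected = (counts > 0).mean(axis=1)
    return counts[detected >= min_fraction]

def log2_cpm(counts, prior=1.0):
    """Scale to counts per million by library size, then log2 with an offset."""
    lib_sizes = counts.sum(axis=0, keepdims=True)
    return np.log2(counts / lib_sizes * 1e6 + prior)

def pca_scores(logexpr, n_components=2):
    """PCA of samples: center each gene, then take the SVD of samples x genes."""
    x = logexpr.T - logexpr.T.mean(axis=0)
    u, s, _ = np.linalg.svd(x, full_matrices=False)
    explained = s ** 2 / np.sum(s ** 2)
    return u[:, :n_components] * s[:n_components], explained[:n_components]

# Simulated data: 200 genes x 12 samples; batch 1 inflates the first 100 genes.
rng = np.random.default_rng(0)
batch = np.repeat([0, 1], 6)
mean_expr = rng.gamma(shape=2.0, scale=50.0, size=(200, 1))
fold = np.ones((200, 12))
fold[:100, batch == 1] = 3.0
counts = rng.poisson(mean_expr * fold)

scores, explained = pca_scores(log2_cpm(filter_genes(counts)))
for b in (0, 1):
    print(f"batch {b}: mean PC1 = {scores[batch == b, 0].mean():.2f}")
print("variance explained by PC1, PC2:", np.round(explained, 3))
```

If the two batches sit at opposite ends of PC1, as they do in this simulation, the leading axis of variation is technical rather than biological. With real data, the `scores` array would be plotted twice, once colored by batch and once by biological condition.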

III. Batch Effect Correction

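Apply a chosen correction method. For count data, a common choice is the ComBat_seq function from the sva package, which takes the raw count matrix together with the batch labels (and, optionally, the biological group labels) and returns adjusted counts [12] [8].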
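The ComBat_seq call itself is not reproduced here. To show what a correction step does at its simplest, the sketch below removes per-gene batch mean shifts from log-scale expression. This is a plain location adjustment, not ComBat or ComBat-seq: it has no empirical Bayes shrinkage and no count model, and it will also remove biology if batch and condition are confounded. The function name `center_batches` and the toy data are assumptions for illustration.

```python
import numpy as np

def center_batches(logexpr, batch):
    """Shift each batch so its per-gene mean matches the overall per-gene mean.

    logexpr: genes x samples matrix of log-scale expression.
    batch:   one batch label per sample (column).
    """
    logexpr = np.asarray(logexpr, dtype=float)
    batch = np.asarray(batch)
    corrected = logexpr.copy()
    grand_mean = logexpr.mean(axis=1, keepdims=True)
    for b in np.unique(batch):
        cols = batch == b
        batch_mean = logexpr[:, cols].mean(axis=1, keepdims=True)
        corrected[:, cols] += grand_mean - batch_mean
    return corrected

# Toy data: 50 genes x 8 samples, with a +2 shift on every gene in batch 1.
rng = np.random.default_rng(0)
batch = np.array([0, 0, 0, 0, 1, 1, 1, 1])
logexpr = rng.normal(loc=8.0, scale=0.5, size=(50, 8))
logexpr[:, batch == 1] += 2.0

corrected = center_batches(logexpr, batch)
gap_before = abs(logexpr[:, batch == 1].mean() - logexpr[:, batch == 0].mean())
gap_after = abs(corrected[:, batch == 1].mean() - corrected[:, batch == 0].mean())
print(f"mean batch gap: {gap_before:.2f} -> {gap_after:.2f}")
```

Dedicated methods go further than this by modeling variance differences, protecting known biological covariates, and (for ComBat-seq) keeping the output on the count scale.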

IV. Post-Correction Validation

- Repeat PCA: Perform PCA on the batch-corrected data (e.g., the corrected_counts matrix).

- Generate Validation Plots: Create new PCA plots, again colored by batch and biology.

- Calculate Quantitative Metrics: Compute metrics like ASW or ARI on the corrected data to quantitatively confirm the improvement in data structure.

Workflow Diagram

Figure: Batch Effect Diagnosis and Correction Workflow, showing the logical workflow for diagnosing and correcting batch effects, from raw data to validated results.

The Scientist's Toolkit: Research Reagent Solutions

This table details essential computational tools and resources used for effective batch effect management in gene expression studies.

Table: Essential Tools and Resources for Batch Effect Analysis

| Item / Tool Name | Function / Application | Brief Explanation |

|---|---|---|

| BEEx (Batch Effect Explorer) [14] | Open-source platform for batch effect identification in medical images. | Provides qualitative and quantitative metrics (like BES) to determine if batch effects exist across multi-site imaging datasets. |

| ComBat-seq [12] | Batch effect correction algorithm for RNA-seq count data. | Employs a negative binomial model to adjust data, preserving the count nature of the data. An improved version, ComBat-ref, uses a low-dispersion reference batch for adjustment. |

| SVA (Surrogate Variable Analysis) [10] [9] | Statistical method for identifying and adjusting for unknown batch effects. | Estimates "surrogate variables" that represent unmodeled technical variation, which can then be included in downstream models to improve specificity. |

| Harmony [10] [15] | Batch integration algorithm for single-cell or complex data. | Iteratively clusters cells and computes correction factors to align datasets in a shared embedding, effectively removing batch-driven clustering. |

| GTEx_Pro Pipeline [9] | A specialized preprocessing pipeline for GTEx transcriptomic data. | Integrates TMM normalization, CPM scaling, and SVA correction into a robust, scalable workflow to enhance multi-tissue comparability in large-scale studies. |

| Reference Materials (e.g., Quartet) [16] | Physically defined standards used across batches and labs. | In proteomics and other fields, these materials are profiled concurrently with study samples to enable ratio-based batch correction, providing a technical baseline. |

Frequently Asked Questions

- What are the primary visualization tools for assessing batch effects? Principal Component Analysis (PCA), t-Distributed Stochastic Neighbor Embedding (t-SNE), and Uniform Manifold Approximation and Projection (UMAP) are standard techniques. PCA is a linear method, while t-SNE and UMAP are non-linear and often used for their powerful clustering visualizations [6] [8].

- How can I tell if my data has a batch effect by looking at a UMAP/t-SNE plot? In the presence of batch effects, cells or samples from different batches cluster separately, rather than grouping based on biological similarities (like cell type or disease condition). A clear separation of batches on the UMAP or t-SNE plot signals a batch effect [6].

- What is the main practical difference between t-SNE and UMAP for this task? t-SNE excels at preserving local structure, creating tight, well-separated clusters ideal for identifying cell types. UMAP better preserves global structure, providing a more holistic view of how clusters relate to each other, which can be crucial for understanding overarching data trends [17] [18].

- My batches are mixed after correction, but distinct cell types are now overlapping. What happened? This is a classic sign of over-correction. The correction algorithm has been too aggressive and has removed biological variation along with the technical batch effect. You should try a less aggressive correction method or adjust its parameters [6].

- Are there quantitative ways to measure batch effects beyond visualization? Yes. Metrics such as the k-nearest neighbor batch-effect test (kBET) and the local inverse Simpson's index (LISI) provide quantitative scores for batch mixing and cell type purity, reducing human bias in assessment [6] [19].

Experimental Protocols for Batch Effect Assessment

This section provides a step-by-step guide for visually diagnosing batch effects in your data.

Protocol 1: Basic Workflow for Batch Effect Assessment

The following diagram outlines the core process for using visualization to detect and confirm batch effects.

Step-by-Step Instructions:

- Data Preprocessing: Begin with your raw gene expression count matrix. Perform standard normalization (e.g., Total-count normalization, log-transformation, or Z-scoring) to account for technical variation. The choice of transformation can significantly impact downstream results [20].

- Dimensionality Reduction: Perform PCA on the preprocessed data. This linear reduction technique helps capture the major sources of variation and is often used as input for non-linear methods [6] [19].

- Generate UMAP/t-SNE Plots: Using the top principal components from PCA (or the highly variable genes), create UMAP and t-SNE plots. Color the data points by their batch identifier (e.g., processing date, sequencing run) [6] [8].

- Visual Assessment for Batch Effects: Examine the plot. If you see clear separation or strong clustering of points based on their batch color, this indicates a batch effect [6].

- Control for Biological Variation: To confirm that the separation is technical and not biological, re-plot the same UMAP/t-SNE coordinates but color the points by a biological label (e.g., cell type, treatment condition). If the biological groups are fragmented across the plot while batches are distinct, you have confirmed that a batch effect is obscuring your biological signal [6].

Protocol 2: Choosing Between UMAP and t-SNE

The decision to use UMAP or t-SNE depends on your dataset size and analytical goals. The following flowchart guides this choice.

Guidance for Use:

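- Choose UMAP for: larger datasets, or when you need to see how clusters relate to one another (global structure).

- Choose t-SNE for: smaller datasets, or when the priority is tight, well-separated local clusters.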

Comparative Data and Technical Specifications

Table 1: Technical Comparison of Visualization Techniques for Batch Effect Assessment

| Feature | PCA | t-SNE | UMAP |

|---|---|---|---|

| Primary Strength | Fast, linear, preserves global variance | Excellent for local structure and tight clustering | Balances local and global structure; faster |

| Structure Preservation | Global (linear relationships) | Primarily Local | Both Local and Global |

| Computational Speed | Fast | Slow, especially on large datasets | Faster, scalable to large datasets |

| Key Parameter(s) | Number of components | Perplexity | n_neighbors, min_dist |

| Deterministic Output | Yes | No (results vary between runs) | No (results vary between runs) |

| Interpretability of Distances | Yes, distances are meaningful | No, inter-cluster distances are not meaningful | Partially; more meaningful than t-SNE, but still approximate |

Table 2: Troubleshooting Common Visualization Artifacts

| Symptom | Potential Cause | Next Steps |

|---|---|---|

| Distinct clusters based solely on batch | Strong batch effect present. | Proceed with batch effect correction methods (e.g., Harmony, Seurat) [6] [19]. |

| All batches and biological groups collapse into one overlapping cloud after correction | Over-correction; biological signal has been removed. | Try a less aggressive correction method or adjust parameters [6]. |

| Different cell types are mixed together after correction | Over-correction or poor choice of correction method. | Verify with a different method and check if biological markers are retained. |

| Plots look drastically different between t-SNE and UMAP | Normal, as they emphasize different structures. | Use both for complementary insights. Trust cell type labels and marker genes. |

| A single biological group splits into sub-clusters | Could be a batch effect or a novel biological subtype. | Investigate marker genes for the sub-clusters to determine if the separation is technical or biological. |

Table 3: Key Computational Tools for Batch Effect Analysis

| Item | Function | Relevance to Batch Effect Assessment |

|---|---|---|

| Seurat [19] | A comprehensive R toolkit for single-cell genomics. | Provides integrated workflows for PCA, t-SNE, UMAP, and batch correction (e.g., CCA integration). |

| Harmony [6] [19] | Batch effect correction algorithm. | Effectively integrates datasets; is fast and often a top-performing method in benchmarks. |

| Scanpy | A Python-based toolkit for single-cell analysis. | Offers scalable and flexible functions for normalization, dimensionality reduction (PCA, UMAP), and batch integration. |

| scANVI [6] | A deep learning-based method for data integration. | Performs well in complex integration tasks, as noted in benchmark studies. |

| ComBat/reComBat [21] | Empirical Bayes method for batch correction. | Adjusts for batch effects in gene expression data; reComBat is designed for large-scale data. |

| kBET & LISI Metrics [6] [19] | Quantitative batch effect evaluation metrics. | Provide objective, numerical scores for batch mixing (kBET, batch LISI) and cell type purity (cell-type LISI) post-correction. |

In the analysis of high-dimensional genomic data, particularly Principal Component Analysis (PCA) of gene expression data, batch effects represent a critical challenge. These technical artifacts arise from variations in sample processing, sequencing platforms, or laboratory conditions and can obscure genuine biological signals. To objectively evaluate the success of batch effect correction methods, researchers rely on quantitative metrics that assess how well batches are mixed while preserving biological variation. Three widely adopted metrics—Silhouette Width, Local Inverse Simpson's Index (LISI), and k-Nearest Neighbour Batch Effect Test (kBET)—form the cornerstone of this evaluation process in single-cell RNA sequencing (scRNA-seq) and other genomic studies. [22] [23] [19]

The following diagram illustrates the conceptual relationship between these metrics and their role in assessing data integration quality:

Metric Comparison Table

The table below provides a comprehensive comparison of the three key quantitative metrics used for assessing batch effect correction:

| Metric | Calculation Basis | Score Range | Optimal Value | Primary Application Context | Key Advantages | Main Limitations |

|---|---|---|---|---|---|---|

| Silhouette Width (ASW) | Distance-based cohesion vs separation [24] | -1 to +1 | → +1 (Strong clustering) [24] | Cluster validation [24] | Intuitive interpretation; No reference needed [24] | Poor performance on non-convex clusters [24] |

| LISI | Inverse Simpson's index in local neighborhoods [22] [23] | 1 to B (number of batches) | → B (Perfect mixing) [22] | Batch mixing assessment [22] | Cell-specific scores; Handles multiple batches [22] | Requires pre-defined cell neighborhoods [22] |

| kBET | Chi-square test of batch proportions in neighborhoods [23] [19] | 0 to 1 (rejection rate) | → 0 (Well-mixed) [19] | Local batch effect test [19] | Statistical testing framework; Local assessment [19] | Sensitive to parameter k [19] |
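To make the LISI row concrete, the sketch below computes a simplified per-cell score: the inverse Simpson's index of batch labels among each cell's k nearest neighbors. It is an approximation under stated assumptions. Published LISI implementations weight neighbors with a perplexity-based kernel, whereas this version weights the k neighbors equally, and the function name `simple_lisi` and the simulated embeddings are made up for the example.

```python
import numpy as np

def simple_lisi(points, labels, k=5):
    """Inverse Simpson's index of `labels` among each point's k nearest neighbors."""
    points = np.asarray(points, dtype=float)
    labels = np.asarray(labels)
    dist = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=2)
    np.fill_diagonal(dist, np.inf)  # a point is not its own neighbor
    neighbors = np.argsort(dist, axis=1)[:, :k]
    scores = np.empty(len(points))
    for i, idx in enumerate(neighbors):
        _, counts = np.unique(labels[idx], return_counts=True)
        p = counts / counts.sum()
        scores[i] = 1.0 / np.sum(p ** 2)
    return scores

rng = np.random.default_rng(0)
batch = np.repeat([0, 1], 20)

# Uncorrected embedding: the two batches form distant clusters.
separated = np.vstack([rng.normal(0, 1, (20, 2)), rng.normal(100, 1, (20, 2))])
# Well-integrated embedding: both batches drawn from the same cloud.
mixed = rng.normal(0, 1, (40, 2))

lisi_separated = simple_lisi(separated, batch)
lisi_mixed = simple_lisi(mixed, batch)
print("mean LISI, separated batches:", lisi_separated.mean())
print("mean LISI, mixed batches:", round(lisi_mixed.mean(), 2))
```

With two batches the score ranges from 1 (every neighbor from one batch) to 2 (neighbors split evenly), which matches the "1 to B" range in the table.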

Frequently Asked Questions

What are the most critical limitations of Silhouette Width when evaluating batch-corrected gene expression data?

The Silhouette Width has several important limitations in the context of batch effect evaluation. It assumes clusters are convex-shaped and may perform poorly when data clusters have irregular shapes or are of varying sizes, which is common in real-world biological data. [24] The metric also becomes less reliable with increasing dimensionality due to the curse of dimensionality, as distances become more similar in high-dimensional spaces. [24] Additionally, when applied with external labels (e.g., batch effects or cell types), it can yield misleadingly high scores if clusters overlap with only one other group, failing to detect residual separations in partially integrated data. [25]

How do I interpret conflicting results between LISI and kBET metrics after applying batch correction methods?

Conflicting results between LISI and kBET typically indicate different aspects of batch mixing. LISI measures the effective number of batches in local neighborhoods, with higher values indicating better mixing. [22] [23] kBET uses a statistical test to check if local batch proportions match the global distribution, with lower rejection rates indicating successful integration. [23] [19] When conflicts occur:

- High LISI but poor kBET: Suggests generally good mixing, with specific regions of batch imbalance

- Good kBET but low LISI: May indicate overall balanced proportions but insufficient fine-grained mixing

Consider visualizing the specific regions where each metric performs poorly using UMAP or t-SNE plots to identify problematic cell populations. [6] Also, ensure you're using appropriate parameters (neighborhood size for kBET, perplexity for LISI) as these significantly impact results. [22] [19]

My batch correction appears successful by visual inspection (UMAP), but quantitative metrics show poor performance. Which should I trust?

This common discrepancy typically arises because two-dimensional projections such as UMAP compress high-dimensional structure and can hide local mixing issues. [6] Quantitative metrics like kBET and LISI provide an objective, localized assessment that often reveals problems not visible in 2D projections. [22] [23] When this occurs:

- Verify metric parameters align with your biological question

- Examine metric scores at the cellular level to identify specific poorly-mixed populations

- Check for over-correction where biological signal has been removed along with batch effects

- Compare multiple metrics to identify consistent patterns across different assessment methods

Quantitative metrics should generally take precedence over visual interpretation alone, as they provide statistical rigor and are less susceptible to perceptual biases. [23] [19]

Which metric is most suitable for evaluating integration of datasets with highly unbalanced batch compositions?

For highly unbalanced datasets where cell types or sample proportions vary significantly between batches, LISI generally performs more reliably than kBET or Silhouette Width. [22] LISI's use of the Inverse Simpson's Index makes it less sensitive to population imbalances compared to kBET, which relies on expected proportions. [22] The cell-specific mixing score (cms) from the CellMixS package was specifically designed to handle unbalanced batches and can differentiate between true batch effects and natural population imbalances. [22] When working with unbalanced data, avoid relying solely on Silhouette Width, as it may give misleading results when cluster sizes vary substantially. [24]

What are the recommended threshold values for determining successful batch integration using these metrics?

While optimal thresholds can vary by dataset and biological context, these general guidelines provide a starting point:

- Silhouette Width: Values >0.7 indicate "strong" clustering, >0.5 "reasonable," and >0.25 "weak" structure—but note these were established for cluster validation rather than batch mixing assessment. [24]

- LISI: Target values approaching the number of batches (B) in your dataset, with scores >B/2 generally indicating acceptable mixing. [22] [23]

- kBET: Rejection rates <0.1-0.2 typically indicate well-mixed data, though some studies use more stringent thresholds (<0.05). [19]

Always compare post-integration metrics to pre-correction values to assess improvement magnitude, and consider your specific research context when setting thresholds. [23] [6]

Experimental Protocols

Standardized Workflow for Batch Effect Metric Calculation

Step-by-Step Protocol for Comprehensive Metric Assessment

Data Preparation

- Begin with batch-corrected gene expression matrices or embeddings

- Ensure batch labels and optional cell type annotations are prepared

- For large datasets, consider subsampling to 10,000-50,000 cells for computational efficiency [23]

Parameter Optimization

Metric Computation

- Calculate global scores for overall assessment

- Generate cell-specific scores to identify problematic subpopulations

- Compute pre-correction and post-correction values for comparison

Visual Validation

- Create UMAP/t-SNE plots colored by metric scores to spatialize results

- Generate violin plots of metric distributions across cell types

- Visualize batch mixing before and after correction [6]

The Scientist's Toolkit

Essential Software Packages for Metric Implementation

| Tool/Package | Primary Function | Implementation | Key Features |

|---|---|---|---|

| scIB [23] | Comprehensive integration benchmarking | Python | Unified implementation of multiple metrics including ASW, LISI, kBET |

| CellMixS [22] | Batch effect evaluation | R/Bioconductor | Cell-specific mixing score (cms) for detecting local batch bias |

| scater [26] | Single-cell analysis toolkit | R | Quality control and basic metric calculation |

| Seurat [19] | Single-cell analysis | R | Integration methods with built-in assessment visualizations |

| scikit-learn [25] | Machine learning library | Python | Silhouette score implementation for general clustering validation |

Critical Computational Considerations

When implementing these metrics in practice:

- Computational Complexity: kBET and LISI scale with O(N²) for N cells without optimizations [24]

- Memory Requirements: Large datasets (>100,000 cells) may require subsampling or batch processing [23]

- Parallelization: Many implementations support multi-core processing for faster computation [22]

- Dimensionality Reduction: Most metrics perform better on PCA-reduced data (20-50 components) than raw expression matrices [23] [19]

Troubleshooting Guide

Common Issues and Solutions

| Problem | Potential Causes | Solutions |

|---|---|---|

| Poor metric scores despite good visualization | Overfitting to visualization; Inappropriate metric parameters | Adjust neighborhood sizes; Try multiple metrics; Check cell-specific scores |

| High variance in metric values across cell types | Cell type-specific batch effects; Population imbalances | Apply cell type-specific analysis; Use metrics robust to imbalances (LISI) |

| Extremely long computation times | Large dataset size; Inefficient implementation | Subsample data; Use approximated algorithms; Increase computational resources |

| Conflicting results between metrics | Different aspects of mixing being measured | Create consensus scoring; Focus on metrics most relevant to biological question |

| Worsening scores after correction | Over-correction removing biological signal; Incorrect method application | Verify correction method suitability; Check for technical artifacts in data |

Optimization Strategies for Reliable Assessment

- Always benchmark multiple metrics rather than relying on a single measure of success [23]

- Compare to pre-correction baselines to quantify improvement magnitude [6]

- Validate with biological knowledge to ensure preservation of meaningful signal [23]

- Use dataset-specific positive controls when available to establish expected performance [19]

- Consider the final analytical goal when weighting the importance of different metrics [23]

What are batch effects and why do they matter in my research?

Batch effects are systematic non-biological variations that are introduced when samples are processed in different groups or "batches" [27]. These technical artifacts are not related to your scientific question but can drastically alter your data, leading to misleading analysis results and false conclusions [28] [29].

In gene expression studies, batch effects can cause you to identify genes that differ between batches rather than between your biological conditions of interest [8]. They can cause clustering algorithms to group samples by processing date instead of by cell type or disease state, and they are a significant challenge for meta-analyses that combine data from different sources [8] [27]. Effectively managing batch effects is therefore not just a technical detail—it is essential for ensuring the reliability and reproducibility of your research findings [8].

How can I detect batch effects in my gene expression data?

The first step is visualization, often using Principal Component Analysis (PCA). When you run PCA on your data, look for clustering or separation of data points colored by their batch (e.g., processing date, sequencing run). If samples from the same batch cluster together distinctly from other batches, this is a clear indicator of a batch effect [27] [30].

For a more quantitative approach, you can use statistical tests and metrics designed to quantify batch effects:

| Metric/Test | Description | Interpretation |

|---|---|---|

| Dispersion Separability Criterion (DSC) [27] | Quantifies the ratio of dispersion between batches vs. within batches. A higher DSC indicates a greater batch effect. | DSC < 0.5: Batch effects likely minor. DSC > 0.5: Batch effects may exist. DSC > 1: Strong batch effects likely present. |

| Guided PCA (gPCA) [28] | A statistical test that calculates the proportion of variance due to batch. | A significant p-value (< 0.05) indicates a statistically significant batch effect. |

| Local Inverse Simpson's Index (LISI) [31] | Measures how well batches are mixed within local neighborhoods. A higher Batch LISI score indicates better integration. | Scores closer to the total number of batches indicate good mixing. |
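The DSC row describes a ratio of between-batch to within-batch dispersion. A minimal sketch is given below, assuming the commonly stated form DSC = D_b / D_w, where D_b and D_w are the square roots of the traces of the batch-size-weighted between-batch and within-batch scatter matrices. The exact weighting used by a given tool may differ, so treat the function `dsc` and its toy inputs as illustrative.

```python
import numpy as np

def dsc(data, batch):
    """Dispersion separability criterion for a samples x features matrix."""
    data = np.asarray(data, dtype=float)
    batch = np.asarray(batch)
    overall = data.mean(axis=0)
    between = 0.0
    within = 0.0
    for b in np.unique(batch):
        rows = data[batch == b]
        weight = len(rows) / len(data)
        centroid = rows.mean(axis=0)
        # Squared distance of the batch centroid from the global centroid.
        between += weight * np.sum((centroid - overall) ** 2)
        # Mean squared distance of the batch's samples from their centroid.
        within += weight * np.mean(np.sum((rows - centroid) ** 2, axis=1))
    return np.sqrt(between / within)

batch = np.array([0, 0, 1, 1])
# Batches far apart relative to their internal spread.
separated = np.array([[0.0, 0.0], [2.0, 0.0], [10.0, 0.0], [12.0, 0.0]])
# Batches sharing the same centroid.
overlapping = np.array([[0.0, 0.0], [12.0, 0.0], [2.0, 0.0], [10.0, 0.0]])

dsc_separated = dsc(separated, batch)
dsc_overlapping = dsc(overlapping, batch)
print("DSC, separated batches:", dsc_separated)
print("DSC, overlapping batches:", dsc_overlapping)
```

In the separated case the batch centroids are each 5 units from the global centroid while samples sit 1 unit from their own centroid, so the score is well above the "strong batch effect" threshold of 1 quoted in the table.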

Batch effects can arise at virtually every stage of your experimental workflow, from sample collection to data generation. Being aware of these common sources can help you plan and mitigate them proactively.

| Experimental Stage | Specific Examples of Batch Effect Sources |

|---|---|

| Sample Preparation | Different personnel handling samples [8] [29], variations in protocols (e.g., incubation times, number of washes) [29], different reagent lots or manufacturing batches [8], use of different anticoagulants in blood collection [29]. |

| Sequencing Runs | Different sequencing runs, instruments, or platforms (e.g., Illumina vs. Ion Torrent) [8] [28], changes in laboratory environmental conditions (temperature, humidity) [8], replacement of a laser or detector module during the study [29]. |

| Time & Organization | Samples processed over multiple weeks or months (time-related factors) [8], acquiring all samples from one experimental group on a single day instead of randomizing across runs [29]. |

What can I do to prevent batch effects?

The best strategy is a combination of good experimental design and practical laboratory practices.

- Plan Your Experiment Carefully: Whenever possible, randomize your samples across processing batches. Do not run all your control samples on one day and all your treatment samples on another [29]. If you are banking samples, randomize which samples are included in each acquisition session.

- Standardize Protocols: Ensure all technicians follow the same detailed, written protocols to minimize unwritten variations [29].

- Use Bridge or Anchor Samples: A highly effective method is to include a consistent control sample (a "bridge" sample) in every batch. This sample, such as an aliquot from a large leukopak for PBMC studies, serves as a reference point to quantify and correct for technical variation between batches [29].

- Titrate Reagents and Control Instrument Variation: Titrate your antibodies correctly for the expected cell number and type to avoid under- or over-staining [29]. Use the instrument's QC programs to ensure a consistent detection level before each run [29].
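The randomization advice in the first point can be sketched as a small stratified assignment: shuffle samples within each biological group, then deal them out across batches in turn so that every batch receives a similar share of each group. The function name `assign_batches` and the sample identifiers are hypothetical.

```python
import random
from collections import Counter, defaultdict

def assign_batches(sample_groups, n_batches, seed=0):
    """Assign samples to batches, balancing biological groups across batches.

    sample_groups: dict mapping sample ID -> biological group label.
    Returns a dict mapping sample ID -> batch index.
    """
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for sample, group in sample_groups.items():
        by_group[group].append(sample)

    assignment = {}
    slot = 0
    for group in sorted(by_group):
        members = by_group[group]
        rng.shuffle(members)  # random order within the group
        for sample in members:
            assignment[sample] = slot % n_batches  # deal round-robin
            slot += 1
    return assignment

samples = {f"ctrl_{i}": "control" for i in range(6)}
samples.update({f"trt_{i}": "treatment" for i in range(6)})

assignment = assign_batches(samples, n_batches=3)
layout = Counter((assignment[s], samples[s]) for s in samples)
for (batch_id, group), n in sorted(layout.items()):
    print(f"batch {batch_id}: {n} {group}")
```

Fixing the seed keeps the layout reproducible, which is useful when the assignment needs to be documented alongside the protocol.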

How can I correct for batch effects in my data?

If batch effects are detected, several computational tools can be used to correct them. The choice of tool often depends on your data type and analysis goals.

| Tool/Method | Description | Best For |

|---|---|---|

| ComBat-seq [8] | A negative binomial regression method from the ComBat family that works directly on raw count data. | RNA-seq count data; when you need to correct data before differential expression analysis. |

| removeBatchEffect (limma) [8] | A linear model-based adjustment that works on normalized, log-transformed expression data. | Microarray data or RNA-seq data normalized with the limma-voom workflow. Note: Not recommended for direct use before differential expression; include batch in your model instead. |

| Harmony [31] | Integrates datasets by iteratively clustering and correcting in a low-dimensional space (e.g., PCA). | Large, complex datasets (scales to millions of cells); preserving biological variation while removing batch effects. |

| Seurat Integration [31] | Uses canonical correlation analysis (CCA) and mutual nearest neighbors (MNN) to align datasets. | Single-cell RNA-seq data; when high biological fidelity is required for distinguishing cell types. |

| Mixed Linear Models (MLM) [8] | Incorporates batch as a random effect into a statistical model, offering a sophisticated approach for complex designs. | Complex experimental designs with nested or hierarchical batch effects. |
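The note in the table about including batch in the model, rather than correcting the data first, can be illustrated with ordinary least squares on a single gene. This is a bare-bones sketch of the idea, not the limma workflow: there is no variance moderation, and the function name `fit_gene`, the effect sizes, and the simulated values are assumptions.

```python
import numpy as np

def fit_gene(expr, condition, batch):
    """Fit expr ~ intercept + condition + batch by least squares.

    Returns (intercept, condition_effect, batch_effect).
    """
    design = np.column_stack([np.ones(len(expr)), condition, batch])
    coef, *_ = np.linalg.lstsq(design, expr, rcond=None)
    return coef

# One simulated gene: condition adds 1.5, batch adds 3.0 (log scale).
rng = np.random.default_rng(1)
condition = np.array([0, 1, 0, 1, 0, 1, 0, 1])
batch = np.array([0, 0, 0, 0, 1, 1, 1, 1])
expr = 5.0 + 1.5 * condition + 3.0 * batch + rng.normal(0, 0.1, size=8)

intercept, condition_effect, batch_effect = fit_gene(expr, condition, batch)
print(f"condition effect: {condition_effect:.2f}, batch effect: {batch_effect:.2f}")
```

Because batch is a covariate, the condition estimate is adjusted for the batch shift without altering the data. This only works when condition and batch are not fully confounded, which is why the balanced design above matters.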

The Scientist's Toolkit: Key Reagents & Materials for Batch Control

| Item | Function in Mitigating Batch Effects |

|---|---|

| Bridge/Anchor Sample | A consistent control sample included in every batch to monitor and correct for technical variation [29]. |

| Single Reagent Lot | Using the same manufacturing lot for all critical reagents (e.g., antibodies, enzymes) throughout a study to minimize variability [29]. |

| Fluorescent Cell Barcoding Kits | Allows unique labeling and pooling of multiple samples for simultaneous staining and acquisition, eliminating variability from these steps [29]. |

| Reference Control Beads/Cells | Stable particles with fixed fluorescence, used for daily instrument quality control to ensure consistent detection across batches [29]. |

Batch Correction Methodologies: A Practical Toolkit for Gene Expression Data

Batch effects are unwanted technical variations in data resulting from differences in labs, experimental protocols, handling personnel, reagent lots, sequencing platforms, or processing times [13] [32]. In gene expression studies, these systematic non-biological variations can confound true biological signals, compromising data reliability and potentially leading to false biological discoveries [32] [33]. The challenge is particularly pronounced in single-cell RNA sequencing (scRNA-seq) and mass spectrometry-based proteomics, where the integration of multiple datasets is essential for comprehensive biological insights [32] [34] [19].

The principal challenge addressed by Batch Effect Correction Algorithms (BECAs) is removing these technical variations while preserving biologically relevant information [32] [33]. Over-correction, where true biological variation is erroneously removed, is a significant risk that can lead to inaccurate downstream analyses and conclusions [33].

Numerous computational methods have been developed to address batch effects across different omics data types. The table below summarizes key algorithms, their primary methodologies, and common applications.

Table 1: Common Batch Effect Correction Algorithms (BECAs)

| Algorithm | Primary Methodology | Typical Application | Key Reference |

|---|---|---|---|

| Harmony | Iterative clustering in PCA space with linear correction | scRNA-seq, Multi-omics | [Korsunsky et al., 2019] |

| Seurat | Canonical Correlation Analysis (CCA) and Mutual Nearest Neighbors (MNN) | scRNA-seq | [Stuart et al., 2019] |

| ComBat/ComBat-seq | Empirical Bayes - linear correction (ComBat); Negative binomial regression (ComBat-seq) | Bulk RNA-seq, scRNA-seq | [Johnson et al., 2007; Zhang et al., 2020] |

| MNN Correct | Mutual Nearest Neighbors in high-dimensional or PCA space | scRNA-seq | [Haghverdi et al., 2018] |

| LIGER | Integrative Non-negative Matrix Factorization (NMF) and quantile alignment | scRNA-seq | [Welch et al., 2019] |

| Scanorama | Mutual Nearest Neighbors in a panoramic, stitching-like approach | scRNA-seq | [Hie et al., 2019] |

| BBKNN | Graph-based correction of the k-Nearest Neighbor graph | scRNA-seq | [Polański et al., 2020] |

| scVI | Variational Autoencoder (VAE) in a deep learning framework | scRNA-seq | [Lopez et al., 2018] |

| RUV-III-C | Linear regression model to estimate and remove unwanted variation | Proteomics data | [32] |

| WaveICA2.0 | Multi-scale decomposition with injection order time trend | Metabolomics, Proteomics | [32] |

| NormAE | Deep learning-based correction via neural networks | Proteomics | [32] |

| scGen | Variational Autoencoder (VAE) model trained on a reference dataset | scRNA-seq | [19] |

Benchmarking and Performance Evaluation

Selecting an appropriate BECA requires careful consideration of performance. Benchmarking studies evaluate methods based on their ability to remove technical variation while preserving biological truth.

Table 2: BECA Performance Evaluation Metrics

| Metric | What it Measures | Interpretation |

|---|---|---|

| kBET | Local batch mixing using nearest neighbors | Lower rejection rate indicates better mixing [19] [33]. |

| LISI | Batch and cell type diversity within neighborhoods | Higher score indicates better mixing or diversity [19] [33]. |

| ASW (Average Silhouette Width) | Clustering compactness and separation | Values closer to 1 indicate well-separated, compact clusters [19] [33]. |

| ARI (Adjusted Rand Index) | Similarity between two clusterings | Higher value (max 1) indicates better agreement with known labels [19]. |

| RBET | Batch effect on reference genes (RGs) | Lower value indicates better performance; sensitive to overcorrection [33]. |
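To make the neighborhood-based metrics in Table 2 concrete, here is a minimal Python sketch of a LISI-style score. It is a simplification and not the published implementation: every one of the k nearest neighbors is weighted equally, whereas LISI proper uses perplexity-based weights. The function name `simple_lisi`, the parameter `k`, and the toy data are illustrative assumptions.

```python
import numpy as np

def simple_lisi(embedding, labels, k=15):
    """Per-cell inverse Simpson index of `labels` among the k nearest neighbors.

    Simplification: neighbors are weighted equally (assumption made for brevity).
    """
    embedding = np.asarray(embedding, dtype=float)
    labels = np.asarray(labels)
    # Pairwise squared Euclidean distances (only suitable for small examples).
    sq = np.sum(embedding**2, axis=1)
    dist = sq[:, None] + sq[None, :] - 2.0 * embedding @ embedding.T
    np.fill_diagonal(dist, np.inf)  # a cell is not its own neighbor
    neighbors = np.argsort(dist, axis=1)[:, :k]
    categories = np.unique(labels)
    scores = np.empty(len(labels))
    for i, idx in enumerate(neighbors):
        props = np.array([(labels[idx] == c).mean() for c in categories])
        scores[i] = 1.0 / np.sum(props**2)  # 1 = single label, len(categories) = even mix
    return scores

rng = np.random.default_rng(0)
batch = np.repeat(["A", "B"], 100)
mixed = rng.normal(size=(200, 2))                            # batches overlap
separated = mixed + np.where(batch == "A", 0, 10)[:, None]   # batch B shifted far away

print("mean score, mixed batches:    ", round(simple_lisi(mixed, batch).mean(), 2))
print("mean score, separated batches:", round(simple_lisi(separated, batch).mean(), 2))
```

Passing batch labels gives a mixing score where higher is better; passing cell type labels to the same function gives a score where values near 1 indicate that biological neighborhoods stayed pure.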

Key Benchmarking Findings

- Harmony, LIGER, and Seurat 3 are frequently recommended as top performers for scRNA-seq data integration. Due to its significantly shorter runtime, Harmony is often recommended as the first method to try [19].

- A 2025 evaluation notes that methods like MNN, scVI, and LIGER can alter data considerably during correction, while Harmony was the only method that performed consistently well across all tests [34].

- For MS-based proteomics data, protein-level batch correction is often more robust than correction at the precursor or peptide level [32].

- The Ratio method (intensities of study samples divided by concurrently profiled reference materials) has been shown to be a universally effective BECA, particularly when batch effects are confounded with biological groups [32].
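The Ratio method described above can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than the reference implementation from [32]: ratios are taken on the log2 scale, multiple reference replicates in a batch are averaged, and the names `ratio_correct`, `batch`, and `is_reference` are hypothetical.

```python
import numpy as np

def ratio_correct(intensities, batch, is_reference):
    """Express every sample relative to the reference material profiled in its own batch.

    intensities: (features x samples) array of positive intensities.
    batch: per-sample batch labels; is_reference: per-sample boolean mask.
    Returns log2 ratios (assumption: log scale, reference replicates averaged).
    """
    intensities = np.asarray(intensities, dtype=float)
    batch = np.asarray(batch)
    is_reference = np.asarray(is_reference, dtype=bool)
    log_ratio = np.empty_like(intensities)
    for b in np.unique(batch):
        in_batch = batch == b
        ref = intensities[:, in_batch & is_reference]
        if ref.shape[1] == 0:
            raise ValueError(f"batch {b!r} has no reference sample")
        ref_profile = np.log2(ref).mean(axis=1, keepdims=True)
        log_ratio[:, in_batch] = np.log2(intensities[:, in_batch]) - ref_profile
    return log_ratio

# Toy data: 3 features; batch 2 measures everything 4x higher than batch 1.
truth = np.array([[100.0, 200.0], [50.0, 50.0], [10.0, 40.0]])  # columns: reference, study sample
raw = np.hstack([truth, 4.0 * truth])
batch = np.array([1, 1, 2, 2])
is_reference = np.array([True, False, True, False])

corrected = ratio_correct(raw, batch, is_reference)
print(corrected[:, [1, 3]])  # the study samples from both batches now agree
```

Because each batch is scaled only by its own reference measurements, the correction never uses the biological group labels, which is why it remains usable when batch and group are confounded.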

BECA Selection Workflow

The following diagram illustrates a logical workflow for selecting and evaluating an appropriate batch effect correction method, based on common data characteristics and benchmarking recommendations.

Troubleshooting Guides and FAQs

FAQ 1: My PCA results show poor separation of biological groups after batch correction. What might be happening?

This could indicate overcorrection, where the batch effect correction algorithm has erroneously removed true biological variation along with the technical batch effects [33].

- Solution:

- Re-evaluate parameter settings: For methods like Seurat, increasing the number of anchors (k) beyond an optimal point can lead to overcorrection. Try a lower k value [33].

- Use a different algorithm: If using a method known for aggressive correction (e.g., some implementations of MNN or LIGER [34]), try a method like Harmony, which has demonstrated better calibration in preserving biological structure [34] [19].

- Employ RBET for evaluation: Use the Reference-informed Batch Effect Testing (RBET) metric, which is sensitive to overcorrection, to guide your method selection and parameter tuning [33].

FAQ 2: How can I objectively determine if my batch correction was successful?

Successful correction effectively removes technical variation without removing biological signal. Use a combination of quantitative metrics and visual inspection.

- Actionable Checklist:

- Quantitative Metrics:

- Calculate kBET and LISI scores to quantify batch mixing. Successful correction should yield a low kBET rejection rate and a higher LISI score for batch [19] [33].

- Use RBET to check for overcorrection by testing on stable reference genes. A low RBET value indicates good performance [33].

- Compute the Silhouette Coefficient (SC) for cell type clusters. Well-defined biological clusters should persist or improve after correction [33].

- Visual Inspection:

- Downstream Validation:


FAQ 3: I have missing values in my data matrix. Can I still perform PCA and batch correction?

Standard PCA requires a complete data matrix. Common solutions include data imputation (which can be arbitrary) or deleting parts of the data (which loses information) [35].

- Solution:

- Consider using InDaPCA (PCA of Incomplete Data), a modified eigenanalysis-based PCA that calculates correlations using different numbers of observations for each variable pair, avoiding artificial imputation [35].

- The success of this method is less dependent on the total percentage of missing entries and more on the minimum number of observations available for comparing any given pair of variables [35].
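The core idea, correlations computed from whatever observations each pair of variables shares, can be sketched as follows. This is a rough illustration in the spirit of that description, not the published InDaPCA code; the function name `pairwise_complete_corr`, the `min_obs` threshold, and the simulated data are assumptions.

```python
import numpy as np

def pairwise_complete_corr(X, min_obs=3):
    """Correlation matrix where each entry uses only rows observed for both variables.

    Also returns the number of shared observations per pair, which is the
    quantity the text above identifies as limiting.
    """
    X = np.asarray(X, dtype=float)
    p = X.shape[1]
    corr = np.eye(p)
    n_pairs = np.zeros((p, p), dtype=int)
    for a in range(p):
        for b in range(a, p):
            ok = ~np.isnan(X[:, a]) & ~np.isnan(X[:, b])
            n_pairs[a, b] = n_pairs[b, a] = ok.sum()
            if a == b:
                continue
            if ok.sum() < min_obs:
                raise ValueError(f"variables {a} and {b} share only {ok.sum()} observations")
            corr[a, b] = corr[b, a] = np.corrcoef(X[ok, a], X[ok, b])[0, 1]
    return corr, n_pairs

# Simulated data: three variables driven by one latent factor, ~15% of entries missing.
rng = np.random.default_rng(1)
latent = rng.normal(size=(60, 1))
X = latent @ np.array([[1.0, 0.8, 0.6]]) + 0.3 * rng.normal(size=(60, 3))
X[rng.random(X.shape) < 0.15] = np.nan

corr, n_pairs = pairwise_complete_corr(X)
eigvals, eigvecs = np.linalg.eigh(corr)      # eigenvalues in ascending order
order = np.argsort(eigvals)[::-1]
explained = eigvals[order] / eigvals.sum()
print("minimum shared observations:", n_pairs.min())
print("variance explained per component:", np.round(explained, 2))
```

One caveat of this construction is that a matrix assembled from pairwise-complete correlations is not guaranteed to be positive semi-definite, so small negative eigenvalues can appear when many entries are missing.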

FAQ 4: When using PCA for dimensionality reduction before classification, why does my classifier performance sometimes worsen?

PCA is an unsupervised method that maximizes variance, not class separation. The principal components that explain the most variance may not be the most discriminatory features for your classification task [36].

- Explanation & Solution:

- Cause: The direction of maximal variance captured by PCA might be orthogonal or even contradictory to the features that best separate your classes [36].

- Illustration: If class separation is determined by the difference x1 - x2, but the first PC is x1 + x2 (which has higher variance), then using the first PC for classification will discard the most informative feature [36].

- Alternative: For supervised analyses, consider using methods like PLS (Partial Least Squares), which finds components that simultaneously explain variance and are correlated with the outcome variable [36].
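The x1 - x2 illustration can be reproduced with a small simulation. The sketch below uses made-up data (the variances, class offset, and the `separation` helper are assumptions chosen for clarity) in which most variance lies along x1 + x2 while the class signal lies along x1 - x2.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 500
labels = np.repeat([0, 1], n)
shared = rng.normal(scale=3.0, size=2 * n)     # large variance along x1 + x2
offset = np.where(labels == 0, -0.5, 0.5)      # class signal lives in x1 - x2
noise = rng.normal(scale=0.2, size=2 * n)
x1 = shared + offset + noise
x2 = shared - offset - noise
X = np.column_stack([x1, x2])

# PCA via SVD of the centered data; rows of vt are the principal axes.
Xc = X - X.mean(axis=0)
_, _, vt = np.linalg.svd(Xc, full_matrices=False)
scores = Xc @ vt.T

def separation(z, y):
    """Absolute difference in class means divided by the pooled standard deviation."""
    a, b = z[y == 0], z[y == 1]
    pooled = np.sqrt((a.var(ddof=1) + b.var(ddof=1)) / 2.0)
    return abs(a.mean() - b.mean()) / pooled

print("PC1 axis:", np.round(vt[0], 2))
print("class separation on PC1:", round(separation(scores[:, 0], labels), 2))
print("class separation on PC2:", round(separation(scores[:, 1], labels), 2))
```

In this setup the first axis points along x1 + x2 and carries almost no class information, while the low-variance second axis separates the classes clearly, so keeping only PC1 would discard the useful feature.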

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Batch Effect Management

| Reagent/Material | Function in Mitigating Batch Effects |

|---|---|

| Universal Reference Materials (e.g., Quartet) | Provides a standardized benchmark across batches and labs to quantify and correct for technical variation [32]. |

| Validated Housekeeping Genes | Serve as stable, non-varying reference genes (RGs) for evaluation of overcorrection in frameworks like RBET [33]. |

| Standardized Reagent Lots | Using the same reagent lots across an experiment minimizes a major source of technical variation [13]. |

| Multiplexing Libraries | Pooling libraries and spreading them across sequencing flow cells helps to distribute technical variation evenly across samples [13]. |

RNA sequencing (RNA-seq) has become a cornerstone technology in transcriptomics, providing detailed insights into gene expression profiles across various biological conditions. However, the reliability of RNA-seq data is often compromised by batch effects—systematic non-biological variations introduced when samples are processed in different batches, by different personnel, using different reagents, or at different times [37] [8]. These technical artifacts can be substantial enough to obscure true biological signals, leading to false discoveries and reduced statistical power in differential expression analysis [37].

The Empirical Bayes framework has emerged as a powerful statistical approach for addressing these challenges. This methodology borrows information across genes to stabilize parameter estimates, making it particularly effective for studies with limited sample sizes. Two prominent implementations of this framework for RNA-seq count data are ComBat-seq and its recent refinement ComBat-ref, which specifically address the unique characteristics of count-based sequencing data through negative binomial regression models [37] [38].

Understanding ComBat-seq: Core Algorithm and Methodology

Theoretical Foundation

ComBat-seq builds upon the established ComBat algorithm but replaces the normal distribution assumption used for microarray data with a negative binomial distribution, which better captures the characteristics of RNA-seq count data [37] [38]. This approach models each count value ( n_{ijg} ) for gene ( g ) in sample ( j ) from batch ( i ) as:

[ n_{ijg} \sim \text{NB}(\mu_{ijg}, \lambda_{ig}) ]

where ( \mu_{ijg} ) represents the expected expression level and ( \lambda_{ig} ) is the dispersion parameter for gene ( g ) in batch ( i ) [37].

The expected expression is modeled using a generalized linear model (GLM) with a logarithmic link function:

[ \log(\mu_{ijg}) = \alpha_g + \gamma_{ig} + \beta_{c_j g} + \log(N_j) ]

where:

- ( \alpha_g ) = global background expression of gene ( g )

- ( \gamma_{ig} ) = effect of batch ( i ) on gene ( g )

- ( \beta_{c_j g} ) = effect of biological condition ( c_j ) on gene ( g )

- ( N_j ) = library size for sample ( j ) [37]
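To see how the terms of this model combine, the following Python sketch evaluates the log-linear mean and draws negative binomial counts from it. All parameter values are invented for illustration, the function name `expected_counts` is hypothetical, and the dispersion is assumed to enter as variance = mu + lambda * mu^2, which corresponds to NumPy's parameterization with n = 1/lambda and p = n / (n + mu).

```python
import numpy as np

def expected_counts(alpha, gamma, beta, batch_idx, cond_idx, lib_size):
    """mu[g, j] = exp(alpha[g] + gamma[batch_j, g] + beta[cond_j, g]) * N_j."""
    log_mu = (alpha[:, None]
              + gamma[batch_idx].T          # (genes x samples) batch effects
              + beta[cond_idx].T            # (genes x samples) condition effects
              + np.log(lib_size)[None, :])  # library-size offset
    return np.exp(log_mu)

rng = np.random.default_rng(0)
n_genes = 4
alpha = np.log(np.array([1e-4, 2e-4, 5e-5, 1e-3]))           # baseline proportion per gene
gamma = np.array([[0.0] * n_genes, [0.5, -0.3, 0.0, 0.2]])   # batch effects (batch 0 as baseline)
beta = np.array([[0.0] * n_genes, [1.0, 0.0, 0.0, -1.0]])    # condition effects
batch_idx = np.array([0, 0, 0, 1, 1, 1])
cond_idx = np.array([0, 1, 0, 1, 0, 1])
lib_size = np.array([1e6, 1.2e6, 0.9e6, 1e6, 1.1e6, 1e6])
dispersion = np.array([[0.05] * n_genes, [0.20] * n_genes])  # lambda[i, g]

mu = expected_counts(alpha, gamma, beta, batch_idx, cond_idx, lib_size)
size = 1.0 / dispersion[batch_idx].T                         # NB "n" parameter per gene and sample
counts = rng.negative_binomial(size, size / (size + mu))
print(np.round(mu, 1))
print(counts)
```

Simulated counts of this kind are also a convenient way to test a correction pipeline, because the true batch and condition effects are known.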

Parameter Estimation and Adjustment

ComBat-seq employs a two-stage estimation process:

- Dispersion Estimation: Gene-wise dispersions are estimated within each batch using methods adapted from edgeR [38]

- Model Fitting: Parameters are estimated via GLM fitting, followed by empirical Bayes shrinkage to improve stability [38]

The adjustment procedure uses the estimated parameters to remove batch effects while preserving biological signals. The algorithm maintains the integer nature of count data, making the adjusted values compatible with downstream differential expression tools like edgeR and DESeq2 [37].

Table 1: Key Parameters in ComBat-seq Implementation

| Parameter | Description | Default Value | Recommendation |

|---|---|---|---|

| batch | Batch indices for samples | Required | Ensure adequate samples per batch |

| group | Biological conditions | NULL | Specify to preserve biological variation |

| covar_mod | Additional covariates | NULL | Include known confounding factors |

| shrink | Apply parameter shrinkage | FALSE | Set to TRUE for small sample sizes |

| shrink.disp | Apply dispersion shrinkage | FALSE | Enable for improved precision |

| full_mod | Include group in model | TRUE | Set FALSE if group-batch confounded |

ComBat-ref: Advanced Refinement with Reference Batch Selection

Theoretical Advancements

ComBat-ref represents a significant refinement of ComBat-seq that introduces a reference batch selection strategy to enhance performance. The key innovation lies in identifying the batch with the smallest dispersion and using it as a reference for adjusting all other batches [37].

The mathematical adjustment in ComBat-ref modifies the expected expression values as:

[ \log(\tilde{\mu}_{ijg}) = \log(\mu_{ijg}) + \gamma_{1g} - \gamma_{ig} ]

where batch 1 is the reference batch with the smallest dispersion ( \lambda_1 ), and the adjusted dispersion for all batches is set to ( \tilde{\lambda}_i = \lambda_1 ) [37]. This approach minimizes the propagation of technical variance while maximizing the preservation of biological signals.
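The adjustment formula can be checked numerically with a short Python sketch. It covers only the two steps stated above, picking the lowest-dispersion batch and shifting the expected values toward it; the step that converts observed counts into adjusted counts is not reproduced here. The function name `combat_ref_adjust_mean` and all numbers are illustrative assumptions.

```python
import numpy as np

def combat_ref_adjust_mean(mu, gamma, batch_idx, batch_dispersion):
    """Apply log(mu_tilde) = log(mu) + gamma_ref - gamma_i for each sample's batch i.

    mu: (genes x samples) expected counts; gamma: (batches x genes) batch effects;
    batch_dispersion: one dispersion per batch, used to pick the reference.
    """
    ref = int(np.argmin(batch_dispersion))            # batch with the smallest dispersion
    shift = gamma[ref][None, :] - gamma[batch_idx]    # (samples x genes)
    mu_adj = mu * np.exp(shift.T)
    disp_adj = np.full(len(batch_dispersion), batch_dispersion[ref])
    return ref, mu_adj, disp_adj

# Two genes, four samples: the first two from batch 0, the last two from batch 1.
gamma = np.array([[0.2, -0.1], [0.7, 0.4]])
batch_idx = np.array([0, 0, 1, 1])
base = np.array([100.0, 50.0])
mu = base[:, None] * np.exp(gamma[batch_idx].T)
batch_dispersion = np.array([0.05, 0.30])

ref, mu_adj, disp_adj = combat_ref_adjust_mean(mu, gamma, batch_idx, batch_dispersion)
print("reference batch:", ref)
print(np.round(mu_adj, 2))
print("adjusted dispersions:", disp_adj)
```

In this toy case the reference batch is left untouched and the other batch is moved onto the same expected values, which mirrors the "preserve the reference, adjust the others" behavior described for ComBat-ref.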

Performance Advantages

Simulation studies demonstrate that ComBat-ref maintains exceptionally high statistical power comparable to data without batch effects, even when significant variance exists between batch dispersions [37]. The method particularly excels in scenarios with large dispersion factors (disp_FC > 2), where traditional methods including ComBat-seq show reduced sensitivity in differential expression detection [37].

Diagram 1: ComBat-ref Batch Correction Workflow

Frequently Asked Questions (FAQs)

Q1: What are the key differences between ComBat-seq and ComBat-ref?

Table 2: Comparison Between ComBat-seq and ComBat-ref

| Feature | ComBat-seq | ComBat-ref |

|---|---|---|

| Dispersion Handling | Averages dispersions across batches | Selects reference batch with minimum dispersion |

| Reference Strategy | No specific reference batch | Uses lowest-dispersion batch as reference |

| Statistical Power | Good, but reduced with high dispersion variance | Excellent, maintained even with dispersion differences |

| Implementation | Available in sva R package | Newer method, check original publication |

| Data Adjustment | Adjusts all batches collectively | Preserves reference batch, adjusts others toward it |

Q2: When should I choose ComBat-ref over ComBat-seq?

ComBat-ref is particularly beneficial when dealing with batches that exhibit substantially different levels of technical variation. If preliminary analysis shows significant differences in dispersion parameters between batches, ComBat-ref will likely provide superior results by using the least variable batch as a reference [37].

Q3: Can these methods handle studies with only one sample per batch?

No, neither ComBat-seq nor ComBat-ref currently supports single-sample batches. The algorithms require multiple samples per batch to estimate batch-specific parameters reliably. The software will return an error if any batch contains only one sample [38].

Q4: How do I determine whether batch correction has been effective?

Principal Component Analysis (PCA) visualization before and after correction is the most common diagnostic approach. Effective correction should reduce clustering by batch while maintaining or enhancing separation by biological conditions [39] [8]. Additionally, you can quantify the reduction in batch-associated variance, for example as the percentage of variance in the leading principal components that is explained by batch.
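One way to put a number on the PCA diagnostic is to measure how much of the variance in the leading principal components lies between batch means. The Python sketch below is one possible definition, not a standard named metric; the function `batch_variance_fraction`, the choice of five components, and the simulated data are assumptions, and per-batch centering is used only as a crude stand-in for a real correction method.

```python
import numpy as np

def batch_variance_fraction(X, batch, n_pcs=5):
    """Share of the variance in the top PCs that lies between batch means.

    X: (samples x genes) matrix of log-scale expression values.
    """
    X = np.asarray(X, dtype=float)
    batch = np.asarray(batch)
    Xc = X - X.mean(axis=0)
    u, s, _ = np.linalg.svd(Xc, full_matrices=False)
    scores = (u * s)[:, :n_pcs]
    total = np.sum(scores**2, axis=0)      # scores are centered, so this is the total SS per PC
    between = np.zeros(scores.shape[1])
    for b in np.unique(batch):
        members = scores[batch == b]
        between += len(members) * members.mean(axis=0) ** 2
    return between.sum() / total.sum()

rng = np.random.default_rng(0)
batch = np.repeat(["A", "B"], 20)
X = rng.normal(size=(40, 200))
X[batch == "B", :100] += 3.0               # batch B shifted on half of the genes

# Crude stand-in for correction: center each gene within each batch.
X_centered = X.copy()
for b in np.unique(batch):
    X_centered[batch == b] -= X_centered[batch == b].mean(axis=0)

print("before:", round(batch_variance_fraction(X, batch), 3))
print("after: ", round(batch_variance_fraction(X_centered, batch), 3))
```

Running the same function with biological condition labels in place of batch labels gives the complementary check: that fraction should not collapse after correction.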

Q5: What precautions should I take when including biological covariates?

Ensure that your biological conditions of interest are not completely confounded with batch. If all samples from one condition come from a single batch, the methods cannot distinguish biological effects from batch effects. The design matrix must be full rank for parameter estimation [38].
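The two structural requirements from Q3 and Q5, at least two samples per batch and a full-rank design, can be checked before running any correction. The following Python sketch is a hypothetical pre-flight helper (the name `check_batch_design` and the message wording are assumptions); it is not part of the sva package.

```python
import numpy as np

def check_batch_design(batch, group):
    """Return a list of problems that would prevent batch-effect estimation."""
    batch = np.asarray(batch)
    group = np.asarray(group)
    problems = []
    batches, counts = np.unique(batch, return_counts=True)
    for b, n in zip(batches, counts):
        if n < 2:
            problems.append(f"batch {b} has only {n} sample")
    # One indicator column per batch, plus group indicators with the first level dropped.
    batch_cols = (batch[:, None] == batches[None, :]).astype(float)
    groups = np.unique(group)
    group_cols = (group[:, None] == groups[None, 1:]).astype(float)
    design = np.hstack([batch_cols, group_cols])
    if np.linalg.matrix_rank(design) < design.shape[1]:
        problems.append("batch and group are confounded (design matrix is not full rank)")
    return problems

balanced = check_batch_design(["A", "A", "B", "B"], ["ctl", "trt", "ctl", "trt"])
confounded = check_batch_design(["A", "A", "B", "B"], ["ctl", "ctl", "trt", "trt"])
singleton = check_batch_design(["A", "A", "A", "B"], ["ctl", "trt", "ctl", "trt"])
print(balanced)
print(confounded)
print(singleton)
```

A design that passes this check can still be heavily unbalanced, so a cross-tabulation of batch against condition remains worth inspecting by eye.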

Troubleshooting Guides

Batch Correction Not Working Effectively

Symptoms: PCA plots show similar batch clustering before and after correction.

Potential Causes and Solutions:

Insufficient Model Specification

- Problem: Not accounting for all relevant batch factors or covariates