A Researcher's Guide to PCA for RNA-seq Data: From Quality Control to Biological Insight

Abstract

This article provides a comprehensive guide to performing and interpreting Principal Component Analysis (PCA) on bulk RNA-sequencing data. Tailored for researchers and drug development professionals, it covers the foundational principles of PCA as a dimensionality reduction technique, a step-by-step methodological workflow for implementation using common tools like DESeq2, and crucial troubleshooting advice for optimizing results and avoiding common pitfalls. Furthermore, it contextualizes the role of PCA within the broader RNA-seq analysis pipeline, comparing it with other methods and explaining how it validates experimental design. By transforming complex gene expression data into actionable visual insights, PCA serves as an indispensable tool for quality control, exploratory data analysis, and uncovering the biological drivers of variation.

What is PCA and Why is it Essential for RNA-seq Exploration?

Principal Component Analysis (PCA) stands as a foundational technique in the analysis of high-dimensional genomic data, particularly for RNA-sequencing (RNA-seq) and single-cell RNA-sequencing (scRNA-seq) studies. This dimensionality reduction method transforms complex gene expression datasets with thousands of variables into a simplified, manageable form while preserving essential biological information. By identifying the principal axes of variation within high-dimensional data, PCA enables researchers to visualize sample relationships, identify outliers, detect batch effects, and uncover hidden patterns that might represent distinct biological states or responses. This technical guide provides a comprehensive examination of PCA's mathematical foundations, practical implementation for gene expression data, and interpretation within the context of exploratory data analysis, offering researchers and drug development professionals an essential toolkit for navigating the complexities of modern transcriptomics.

High-throughput sequencing technologies have revolutionized biological research by enabling comprehensive profiling of gene expression across entire transcriptomes. However, this power comes with a significant challenge: the curse of dimensionality. A typical RNA-seq dataset may contain expression measurements for 20,000-50,000 genes across multiple samples, creating a high-dimensional space where each gene represents a separate dimension [1] [2]. In such spaces, distances between samples become less meaningful, computational demands skyrocket, and visualization becomes virtually impossible.

Dimensionality reduction techniques address these challenges by transforming data into a lower-dimensional representation while preserving essential structures and patterns. PCA accomplishes this through an orthogonal transformation that converts correlated variables (gene expression values) into a set of linearly uncorrelated variables called principal components (PCs) [3]. These components are ordered such that the first few retain most of the variation present in the original dataset, providing a powerful framework for data compaction and noise reduction.

In the specific context of RNA-seq analysis, PCA serves multiple critical functions: it enables quality control by identifying outliers and technical artifacts, reveals biological heterogeneity and sample groupings, informs hypothesis generation, and provides a preprocessing step for downstream analyses like clustering and differential expression [4]. For drug discovery professionals, PCA offers a systemic approach to understanding complex pharmacological responses, moving beyond reductionist approaches to capture network-level effects of therapeutic interventions [5] [6].

Mathematical Foundations of PCA

Core Linear Algebra Concepts

PCA operates on fundamental principles of linear algebra to redefine the coordinate system of a dataset. The transformation occurs through the following mathematical operations:

Covariance Matrix Computation: PCA begins by calculating the covariance matrix, which captures how pairs of variables (genes) vary together from their means. For a dataset with p variables, this produces a p×p symmetric matrix where diagonal elements represent variances of individual variables, and off-diagonal elements represent covariances between variable pairs [3].

Eigen Decomposition: The covariance matrix is decomposed into its eigenvectors and eigenvalues. Eigenvectors represent the directions of maximum variance in the data (the principal components), while eigenvalues quantify the amount of variance carried by each corresponding eigenvector [3].

Orthogonal Transformation: The principal components are constrained to be orthogonal (perpendicular) to each other, ensuring they capture uncorrelated directions of variance in the data [3].

The first principal component (PC1) corresponds to the eigenvector with the largest eigenvalue, representing the direction of maximum variance. The second component (PC2) captures the next greatest variance direction orthogonal to the first, and so on. Mathematically, each principal component constitutes a linear combination of the original variables:

PC = a₁x₁ + a₂x₂ + ... + aₚxₚ

Where x₁...xₚ represent the original variables (gene expression values) and a₁...aₚ are the coefficients (loadings) indicating each variable's contribution to that component [5].
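The three operations above can be sketched in a few lines of NumPy. This is a minimal illustration on toy data, not a production implementation; the function name `pca_eig` and the random matrix are assumptions made for the example.

```python
import numpy as np

def pca_eig(X):
    """PCA by eigendecomposition of the covariance matrix.

    X: (samples x genes) array of already-transformed expression values.
    Returns eigenvalues (variance per PC), loadings (one column per PC),
    and scores (samples projected onto the PCs).
    """
    Xc = X - X.mean(axis=0)                  # center each gene at zero
    cov = np.cov(Xc, rowvar=False)           # p x p covariance matrix
    eigvals, eigvecs = np.linalg.eigh(cov)   # eigh: for symmetric matrices, ascending order
    order = np.argsort(eigvals)[::-1]        # re-sort so PC1 has the largest variance
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    scores = Xc @ eigvecs                    # linear combinations a1*x1 + ... + ap*xp
    return eigvals, eigvecs, scores

# Toy data (hypothetical): 12 samples, 5 "genes", two of which covary.
rng = np.random.default_rng(0)
X = rng.normal(size=(12, 5))
X[:, 1] += 2.0 * X[:, 0]
eigvals, loadings, scores = pca_eig(X)
```

In this sketch the variance of each column of `scores` equals the corresponding eigenvalue, and the columns of `loadings` are mutually orthogonal unit vectors, which is the orthogonality constraint described above.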

Geometric Interpretation

Geometrically, PCA can be visualized as fitting a p-dimensional ellipsoid to the data, where p is the number of variables and each principal component represents an axis of the ellipsoid. The orientation of these axes follows the directions of maximal variance, with the longest axis (major axis) corresponding to PC1 [3]. This process effectively identifies a new coordinate system where the origin centers at the data mean, axes align with directions of maximal variance, and dimensions are ordered by their importance in describing data spread.

Table: Key Mathematical Concepts in PCA

| Concept | Mathematical Definition | Interpretation in PCA Context |
|---|---|---|
| Eigenvector | Vector satisfying Av = λv for matrix A | Direction of a principal component |
| Eigenvalue | Scalar λ in the equation Av = λv | Amount of variance explained by the component |
| Covariance | Measure of how two variables change together | Indicates redundant information between genes |
| Orthogonality | Vectors with zero dot product | Principal components are uncorrelated |

PCA Workflow for RNA-seq Data

Preprocessing and Data Standardization

Proper preprocessing is critical for successful PCA of RNA-seq data due to technical variations that can dominate biological signals:

Data Normalization: RNA-seq data requires normalization to account for differences in sequencing depth between samples. Common approaches include the shifted logarithm transformation (log1p_norm) for single-cell data [1] or variance-stabilizing transformations for bulk RNA-seq.

Feature Selection: Including all genes in PCA introduces noise and computational burden. Selecting the most variable genes (e.g., the top 2000 genes with the largest biological components) focuses the analysis on biologically informative features and reduces high-dimensional random noise [2].

Standardization: Scaling variables to have comparable ranges ensures genes with naturally higher expression levels don't dominate the PCA results. This typically involves centering (subtracting the mean) and scaling (dividing by standard deviation) each variable [3].
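The three preprocessing steps can be chained as in the sketch below. It assumes a simple log-CPM transform as a stand-in for the normalization step (a variance-stabilizing transformation would take its place in a DESeq2-style bulk workflow), and the function name, the `n_top` parameter, and the simulated counts are all illustrative.

```python
import numpy as np

def preprocess_counts(counts, n_top=2000):
    """Normalize, select variable genes, and standardize.

    counts: (samples x genes) raw count matrix.
    Returns the standardized matrix and the indices of the selected genes.
    """
    counts = np.asarray(counts, dtype=float)
    lib_size = counts.sum(axis=1, keepdims=True)
    log_cpm = np.log2(counts / lib_size * 1e6 + 1.0)   # depth normalization + log transform

    variances = log_cpm.var(axis=0, ddof=1)
    n_top = min(n_top, counts.shape[1])
    top = np.argsort(variances)[::-1][:n_top]          # most variable genes first
    selected = log_cpm[:, top]

    sd = selected.std(axis=0, ddof=1)
    sd[sd == 0] = 1.0                                  # guard against constant genes
    z = (selected - selected.mean(axis=0)) / sd        # center and scale each gene
    return z, top

# Simulated counts (hypothetical): 6 samples, 20 genes with different mean levels.
rng = np.random.default_rng(0)
counts = rng.poisson(lam=rng.uniform(1, 50, size=20), size=(6, 20))
z, top_genes = preprocess_counts(counts, n_top=10)
```

Whether to scale genes to unit variance after a variance-stabilizing transform is a judgment call; the sketch scales because the text above describes that step.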

Computational Implementation

The PCA workflow can be implemented using various bioinformatics tools and programming environments:

Using Scanpy for Single-Cell Data:

Using scran for R/Bioconductor:

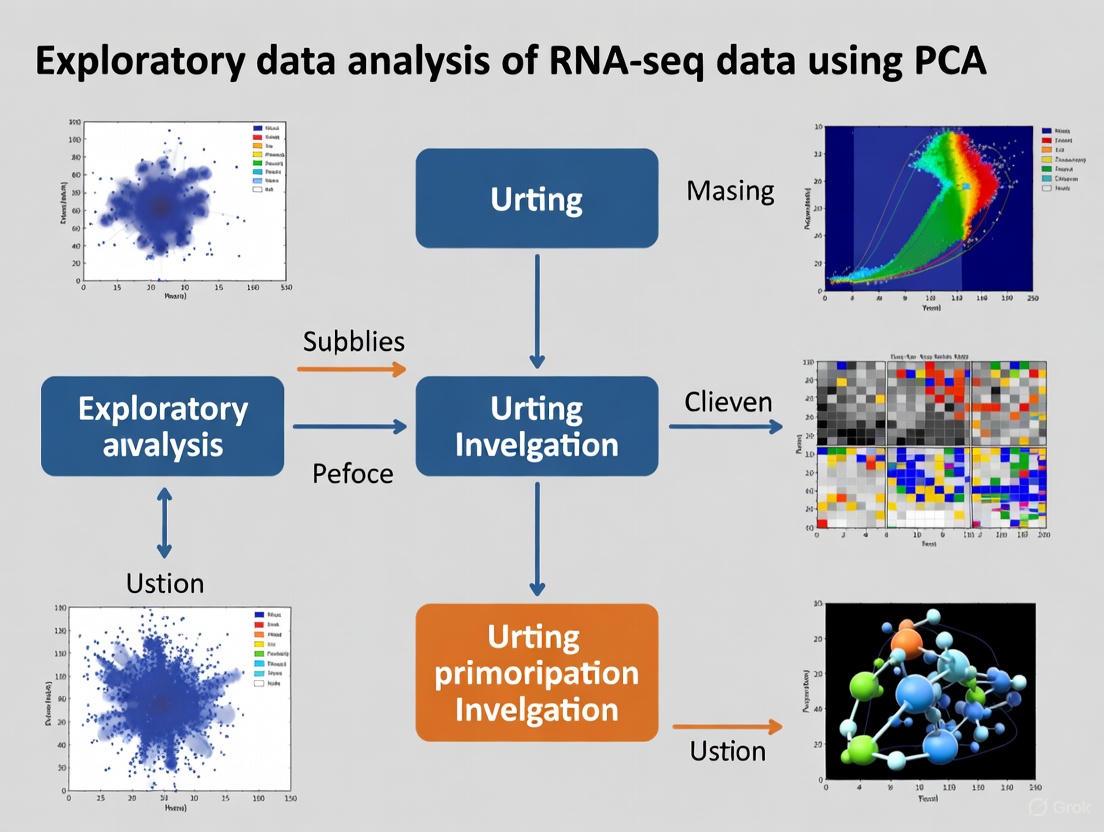

The following diagram illustrates the complete PCA workflow for RNA-seq data analysis:

Visualizing and Interpreting PCA Results

Explained Variance and Scree Plots

A critical first step in interpreting PCA is determining how many components to retain for downstream analysis. The explained variance ratio indicates the proportion of total dataset variance captured by each component [7]. A scree plot visualizes the percentage of variance explained by each principal component in descending order, typically showing a sharp drop followed by a gradual decline (the "elbow"), helping researchers identify how many components meaningfully contribute to data structure.

Table: Example of Cumulative Variance Explained by Principal Components

| Number of Components | Cumulative Variance Explained |
|---|---|
| 1 | 36.2% |
| 2 | 55.4% |
| 3 | 66.5% |
| 4 | 73.6% |
| 5 | 80.2% |
| 6 | 85.1% |
| 7 | 89.3% |
| 8 | 92.0% |
| 9 | 94.2% |
| 10 | 96.2% |

In practice, retaining enough components to capture 70-95% of total variance is common, though this threshold depends on specific research goals and data characteristics [7] [2]. For many RNA-seq applications, 10-50 principal components provide a reasonable balance between information retention and dimensionality reduction [2].
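As a small worked example, the cumulative values from the example table above can be turned into a component count for a chosen threshold. The helper name `components_for_threshold` is an assumption for illustration.

```python
# Cumulative variance explained (%), copied from the example table above.
cumulative = [36.2, 55.4, 66.5, 73.6, 80.2, 85.1, 89.3, 92.0, 94.2, 96.2]

def components_for_threshold(cumulative_pct, threshold_pct):
    """Smallest number of components whose cumulative variance reaches the threshold."""
    for k, value in enumerate(cumulative_pct, start=1):
        if value >= threshold_pct:
            return k
    return None  # threshold not reached with the listed components

# Per-component contribution is the difference between consecutive cumulative values,
# which is what a scree plot displays.
per_component = [round(b - a, 1) for a, b in zip([0.0] + cumulative[:-1], cumulative)]

k70 = components_for_threshold(cumulative, 70.0)   # 4 components (73.6%)
k95 = components_for_threshold(cumulative, 95.0)   # 10 components (96.2%)
```

For this table, the 70% threshold is met with 4 components and the 95% threshold only with all 10, which shows how strongly the choice of threshold drives the number retained.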

PCA Plots and Biological Interpretation

The most common visualization approach projects samples onto the first two principal components, creating a 2D scatter plot where each point represents a sample and spatial relationships approximate expression profile similarities:

Cluster Identification: Samples with similar expression profiles group together in PCA space, potentially representing distinct biological states, cell types, or experimental conditions [4].

Outlier Detection: Samples far from main clusters may represent technical artifacts, contamination, or unique biological states worthy of further investigation [4].

Batch Effect Assessment: Systematic separations along principal components may reveal technical batch effects rather than biological signals [4].

Treatment Effects: In drug discovery contexts, separation of treated vs. control samples along specific components can reveal compound effects on transcriptional programs [5].

The diagram below illustrates the relationship between high-dimensional gene expression data and its 2D PCA projection:

While the first two components provide the "best" 2D view of the data, they may not capture all biologically relevant variance. Examining additional component pairs (e.g., PC1 vs. PC3, PC2 vs. PC3) through pairwise scatterplots can reveal patterns obscured in the initial projection [2].
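Outlier detection in PC space is usually done by eye, but a simple numeric screen can support it. The sketch below uses a robust z-score (median and median absolute deviation) on the leading components; the method, the 3.5 cutoff, and the simulated scores are assumptions for illustration rather than a rule taken from the cited sources.

```python
import numpy as np

def flag_outliers(scores, n_pcs=2, cutoff=3.5):
    """Flag samples lying far from the bulk of the data on the leading PCs.

    scores: (samples x components) PCA scores.
    Uses a robust z-score per PC; a sample is flagged if any PC exceeds the cutoff.
    """
    s = np.asarray(scores, dtype=float)[:, :n_pcs]
    med = np.median(s, axis=0)
    mad = np.median(np.abs(s - med), axis=0)
    mad[mad == 0] = 1.0                        # guard against zero spread
    robust_z = 0.6745 * (s - med) / mad        # 0.6745 rescales MAD to ~1 SD for normal data
    return np.any(np.abs(robust_z) > cutoff, axis=1)

# Simulated scores (hypothetical): 20 samples, one pushed far out on PC1.
rng = np.random.default_rng(1)
scores = rng.normal(size=(20, 3))
scores[7, 0] = 15.0
flags = flag_outliers(scores)
```

A flagged sample is a prompt to check RNA quality and metadata, not an automatic exclusion, since it may reflect a genuine biological state.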

Advanced Applications in Drug Discovery and Biomedical Research

Network Pharmacology and Systems Biology

PCA facilitates a shift from reductionist drug discovery approaches toward network pharmacology by capturing system-level responses to therapeutic interventions. Rather than examining individual gene targets, PCA enables researchers to identify coordinated transcriptional programs that reflect:

Mode of Action: Similar compounds often induce similar transcriptional changes, creating distinct clusters in PCA space that reflect shared mechanisms of action [5].

Polypharmacology: Drugs with multiple targets produce unique expression signatures that may be distinguishable through PCA, even when traditional metrics show similarity [5].

Toxicological Profiles: Adverse effects may manifest as distinct transcriptional programs detectable as separations in principal component space [5].

Biomarker Identification and Patient Stratification

In translational research, PCA of transcriptomic data enables:

Patient Stratification: Identifying molecular subtypes within seemingly homogeneous patient populations that may respond differently to therapies [5].

Biomarker Discovery: Genes with high loadings on components that separate clinical groups represent potential biomarkers for diagnosis, prognosis, or treatment selection.

Clinical Trial Optimization: Ensuring balanced allocation of molecular subtypes across treatment arms in clinical trials [5].
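Ranking genes by their loadings is the step that links a separating component back to candidate biomarkers. The following sketch shows the idea on toy data; the gene names, group structure, and helper `top_loading_genes` are hypothetical.

```python
import numpy as np

def top_loading_genes(loadings, gene_names, pc=0, n=5):
    """Return the n genes with the largest absolute loading on one component."""
    idx = np.argsort(np.abs(loadings[:, pc]))[::-1][:n]
    return [(gene_names[i], float(loadings[i, pc])) for i in idx]

# Toy data: samples 0-3 and 4-7 differ only in the first two (hypothetical) genes.
rng = np.random.default_rng(2)
X = rng.normal(scale=0.1, size=(8, 6))
X[4:, 0] += 3.0          # geneA higher in the second group
X[4:, 1] -= 3.0          # geneB lower in the second group
genes = ["geneA", "geneB", "geneC", "geneD", "geneE", "geneF"]

Xc = X - X.mean(axis=0)
_, _, Vt = np.linalg.svd(Xc, full_matrices=False)
loadings = Vt.T                                   # genes x components
top = top_loading_genes(loadings, genes, pc=0, n=2)
```

Here the two genes that define the group difference carry the largest PC1 loadings, with opposite signs. The overall sign of a component is arbitrary, so only relative signs and magnitudes are interpretable.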

Practical Considerations and Methodological Limitations

Critical Parameter Choices

Several analytical decisions significantly impact PCA results and interpretation:

Number of Components: While automated methods like elbow detection in scree plots exist, the optimal number of components ultimately depends on research goals. For visualization, 2-3 components suffice; for downstream analyses, retain enough components to capture biological signal while excluding noise [2].

Gene Selection: Restricting PCA to highly variable genes improves signal-to-noise ratio but may exclude biologically relevant features with lower variability [2].

Normalization Method: The choice of normalization approach significantly affects PCA results, particularly for comparisons across experiments or platforms [1] [8].

Limitations and Complementary Approaches

While powerful, PCA has specific limitations that researchers should acknowledge:

Linearity Assumption: PCA captures linear relationships but may miss important nonlinear structures in biological data [9].

Variance vs. Biology: Components capture directions of maximum variance, which may not always align with biologically meaningful axes [2].

Global Structure: PCA prioritizes preservation of large-scale distances, potentially at the expense of local neighborhood structures [1].

For these reasons, PCA is often complemented by nonlinear dimensionality reduction techniques like t-SNE and UMAP, which excel at preserving local structures and revealing fine-grained cluster relationships [1] [2]. Recent benchmarking studies have also explored alternatives like Random Projection methods, which offer computational advantages for extremely large datasets [9].

Table: Key Software Tools for PCA Analysis of RNA-seq Data

| Tool/Package | Platform | Primary Function | Application Context |
|---|---|---|---|
| Scanpy [1] | Python | Single-cell analysis | End-to-end scRNA-seq analysis including PCA |
| scran [2] | R/Bioconductor | Single-cell analysis | PCA with automated biological component detection |
| scikit-learn [7] | Python | General machine learning | Flexible PCA implementation for various data types |
| MDAnalysis [10] | Python | Molecular dynamics | PCA for protein trajectories and structural biology |
| edgeR/DESeq2 [8] | R/Bioconductor | Differential expression | RNA-seq analysis with PCA visualization capabilities |

Table: Critical Experimental Considerations for RNA-seq PCA

| Consideration | Potential Impact | Mitigation Strategy |
|---|---|---|
| Batch Effects | Spurious separation along components | Balance experimental conditions across batches |
| RNA Quality | Increased technical variance | Use samples with high RNA integrity numbers (RIN >7) [4] |
| Sequencing Depth | Dominant effect on early components | Apply appropriate normalization methods [8] |
| Cell Type Heterogeneity | Confounded signals in bulk RNA-seq | Use cell sorting or single-cell approaches [4] |

Principal Component Analysis remains an indispensable tool in the analysis of high-dimensional gene expression data, serving as a bridge between raw sequencing data and biological insight. By transforming thousands of correlated gene measurements into a compact set of uncorrelated components, PCA enables visualization of complex relationships, quality assessment, and hypothesis generation essential for modern genomics research. When properly applied and interpreted within the context of study design and biological knowledge, PCA provides a powerful framework for exploratory analysis of RNA-seq data, from basic research to drug discovery applications. As sequencing technologies continue to evolve, producing increasingly complex and large-scale datasets, the principles of dimensionality reduction embodied by PCA will remain fundamental to extracting meaningful biological understanding from transcriptional complexity.

The Critical Role of PCA in RNA-seq Quality Control and Batch Effect Detection

Principal Component Analysis (PCA) is a foundational statistical technique for dimensionality reduction, serving as an essential first step in the exploratory data analysis of RNA-sequencing (RNA-seq) studies. In the context of high-dimensional genomic data, where the number of measured genes (features) far exceeds the number of samples (observations), PCA provides a powerful framework to simplify complex datasets by transforming the original variables into a smaller set of uncorrelated variables called principal components (PCs) [11] [5]. These components are linear combinations of the original gene expressions, orthogonal to each other, and ordered such that the first component captures the largest possible variance in the data, with each succeeding component capturing the next highest variance under the constraint of orthogonality [12]. This characteristic makes PCA an indispensable tool for visualizing underlying data structure, identifying patterns, and detecting technical artifacts such as batch effects, which are systematic technical variations introduced during different experimental processing rounds that can confound biological interpretation if left unaddressed [13] [14].

The application of PCA to RNA-seq data analysis is particularly crucial because it allows researchers to project the high-dimensional gene expression data into a two- or three-dimensional space that can be easily visualized and interpreted [11]. This projection often reveals the dominant sources of variation in the dataset, enabling the rapid assessment of data quality and the detection of sample outliers, batch effects, and other unwanted variations [15] [14]. By examining which samples cluster together in the reduced PCA space, researchers can determine whether the observed separation is driven by biological factors of interest or by technical artifacts, thus guiding subsequent analytical decisions in the RNA-seq workflow [13].

Theoretical Foundations of PCA for High-Dimensional Biological Data

Mathematical Framework and Computational Implementation

The mathematical foundation of PCA rests on eigen decomposition of the covariance matrix or singular value decomposition (SVD) of the data matrix itself [11]. For a gene expression data matrix X with dimensions m×n (where m is the number of samples and n is the number of genes), PCA identifies a set of new variables (PCs) that are linear combinations of the original genes. The first PC is defined as the linear combination PC₁ = a₁₁x₁ + a₁₂x₂ + ... + a₁ₙxₙ that captures the maximum variance in the data, subject to the constraint that the sum of squares of the coefficients a₁ᵢ equals 1 [5]. Subsequent components capture the remaining variance in decreasing order, each orthogonal to all previous components.
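The link between the two routes can be written out explicitly. The block below is a short derivation sketch using the notation of the preceding paragraph (m samples, n genes); the symbols for the centered matrix, the covariance matrix C, and the SVD factors are introduced here for illustration.

```latex
\begin{aligned}
\tilde{X} &= X - \mathbf{1}\,\bar{x}^{\top}, \qquad
C = \frac{1}{m-1}\,\tilde{X}^{\top}\tilde{X} \in \mathbb{R}^{n \times n},\\[4pt]
a_1 &= \arg\max_{\lVert a \rVert_2 = 1} a^{\top} C\, a
\;\Longrightarrow\; C a_1 = \lambda_1 a_1,\\[4pt]
\tilde{X} &= U \Sigma V^{\top}
\;\Longrightarrow\; C = V\,\frac{\Sigma^{2}}{m-1}\,V^{\top},
\qquad \lambda_i = \frac{\sigma_i^{2}}{m-1}.
\end{aligned}
```

The columns of V are therefore the coefficient vectors of the components, the scores are given by the product of the centered matrix with V (equivalently UΣ), and the variance of the i-th component follows directly from the i-th singular value. This is why implementations can skip forming the n×n covariance matrix, which is large when n is the number of genes.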

The computational implementation of PCA typically follows a standardized workflow [16]:

- Data Preprocessing: Standardize or normalize the data to ensure all variables have the same scale, as PCA is sensitive to variable variances.

- Covariance Matrix Computation: Calculate the covariance matrix representing relationships between all pairs of variables.

- Eigenvalue and Eigenvector Calculation: Compute eigenvalues and corresponding eigenvectors of the covariance matrix.

- Component Selection: Sort eigenvalues in descending order and select top k eigenvectors corresponding to the largest eigenvalues.

- Data Transformation: Project original data onto the selected principal components to obtain a lower-dimensional representation.

For large-scale RNA-seq datasets, computational efficiency becomes paramount. Benchmarking studies have found that PCA algorithms based on Krylov subspace methods and randomized singular value decomposition offer the best balance of speed, memory efficiency, and accuracy for single-cell RNA-seq datasets with hundreds of thousands of cells [15].
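To make the randomized approach concrete, here is a minimal NumPy sketch of a randomized SVD with power iterations. It illustrates the general technique only and is not the implementation evaluated in the cited benchmark; the function name, the oversampling and iteration defaults, and the simulated matrix are assumptions.

```python
import numpy as np

def randomized_pca(X, k, n_oversamples=10, n_iter=4, seed=0):
    """Approximate the top-k principal components with a randomized SVD.

    X: (samples x genes). Returns scores (samples x k), loadings (genes x k),
    and the approximate top-k singular values of the centered matrix.
    """
    rng = np.random.default_rng(seed)
    Xc = X - X.mean(axis=0)
    width = min(k + n_oversamples, min(Xc.shape))

    # Random projection captures the dominant column space of Xc.
    Q, _ = np.linalg.qr(Xc @ rng.normal(size=(Xc.shape[1], width)))
    for _ in range(n_iter):                    # power iterations sharpen the subspace
        Q, _ = np.linalg.qr(Xc.T @ Q)
        Q, _ = np.linalg.qr(Xc @ Q)

    B = Q.T @ Xc                               # small (width x genes) matrix
    Ub, s, Vt = np.linalg.svd(B, full_matrices=False)
    U = Q @ Ub
    return U[:, :k] * s[:k], Vt[:k].T, s[:k]

# Simulated low-rank data (hypothetical): 60 samples, 200 genes, 3 strong factors.
rng = np.random.default_rng(3)
signal = rng.normal(size=(60, 3)) @ rng.normal(size=(3, 200))
X = 5.0 * signal + 0.01 * rng.normal(size=(60, 200))
scores, loadings, s_approx = randomized_pca(X, k=3)
s_exact = np.linalg.svd(X - X.mean(axis=0), compute_uv=False)[:3]
```

The expensive full decomposition is replaced by a few matrix products and an SVD of a much smaller matrix, which is where the speed and memory savings come from. For real data that do not fit in memory, the centering step would need to be handled implicitly.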

Component Selection Strategies in Biomedical Research

A critical step in PCA is determining the optimal number of principal components to retain for downstream analysis. Several methods exist, each with distinct advantages and limitations, particularly in high-dimensional biological data where the number of variables (genes) far exceeds the number of observations (samples) [17].

Table 1: Methods for Selecting Principal Components in PCA

| Method | Approach | Advantages | Limitations |
|---|---|---|---|
| Kaiser-Guttman Criterion | Retains components with eigenvalues >1 | Simple, objective criterion | Tends to select too many components when variables are numerous [17] |
| Cattell's Scree Test | Identifies "elbow" where eigenvalues level off | Visual, intuitive approach | Subjective interpretation; no clear cutoff definition [17] |
| Percent Cumulative Variance | Retains components explaining specific variance percentage (typically 70-80%) | Directly controls information retention | Arbitrary threshold selection; may retain too many/few components [17] |

In healthcare and biomedical research, the percent cumulative variance approach often provides the most stable and reliable component selection, particularly in high-dimensional settings where n (samples) is much smaller than p (variables) [17]. This method ensures sufficient biological signal is preserved while reducing dimensionality for downstream analyses.
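Two of the criteria in Table 1 are easy to compare side by side in code. The sketch below assumes eigenvalues from a PCA on standardized variables (so they sum to the number of variables); the eigenvalues themselves are made up for the example.

```python
import numpy as np

def kaiser_guttman(eigenvalues):
    """Number of components with eigenvalue > 1 (PCA on standardized variables)."""
    return int(np.sum(np.asarray(eigenvalues, dtype=float) > 1.0))

def cumulative_variance(eigenvalues, threshold=0.8):
    """Smallest number of components whose cumulative variance fraction reaches threshold."""
    ev = np.sort(np.asarray(eigenvalues, dtype=float))[::-1]
    cum = np.cumsum(ev) / ev.sum()
    return int(np.searchsorted(cum, threshold) + 1)

# Hypothetical eigenvalues for 7 standardized variables (they sum to 7).
eigenvalues = [3.1, 1.6, 1.1, 0.5, 0.4, 0.2, 0.1]

k_kaiser = kaiser_guttman(eigenvalues)              # eigenvalues 3.1, 1.6, 1.1 exceed 1
k_cum80 = cumulative_variance(eigenvalues, 0.8)     # cumulative: 0.44, 0.67, 0.83, ...
k_cum60 = cumulative_variance(eigenvalues, 0.6)
```

In this toy case both criteria agree on three components at the 80% level. With thousands of genes the Kaiser-Guttman count typically grows much larger, which is the limitation noted in the table.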

PCA as a Tool for Quality Control in RNA-seq Data

Visualizing Data Structure and Detecting Outliers

In RNA-seq quality control, PCA serves as a primary tool for visualizing global gene expression patterns and identifying potential outliers that may represent poor-quality samples or experimental artifacts. By projecting high-dimensional expression data onto the first two or three principal components, researchers can quickly assess the overall structure of their dataset and identify samples that deviate substantially from the majority [15]. These outliers may result from various technical issues, including RNA degradation, library preparation failures, or sequencing errors, and their early detection prevents them from confounding subsequent differential expression or clustering analyses.

The application of PCA to a dataset of 33,148 human peripheral blood mononuclear cells (PBMCs) exemplifies this approach, where the first two principal components clearly separated major immune cell populations while simultaneously identifying potentially problematic samples that clustered separately from the main cell groups [18]. Such visual inspection enables researchers to make informed decisions about sample inclusion or exclusion before proceeding with more sophisticated analyses. Furthermore, the projection of samples in PCA space can reveal whether biological replicates cluster together as expected, providing an initial assessment of experimental reproducibility and technical variance within the dataset [13].

Workflow for RNA-seq Quality Control Using PCA

The following diagram illustrates a standardized workflow for implementing PCA in RNA-seq quality control, from raw data processing to outlier detection:

The effectiveness of PCA for quality control depends heavily on proper data preprocessing. Normalization is an essential prerequisite to address differences in sequencing depth across samples, which if uncorrected, would dominate the variation captured by the first principal component [19] [18]. Common normalization approaches include regularized negative binomial regression for UMI-based data [18], size factor estimation similar to bulk RNA-seq [18], or more sophisticated approaches that model technical noise while preserving biological heterogeneity [18]. Following normalization, standardization (scaling) of expression values ensures that each gene contributes equally to the PCA, preventing highly expressed genes from dominating the component structure simply due to their larger numerical range [16] [19].
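The size-factor idea mentioned above can be illustrated with a median-of-ratios calculation. This is a simplified sketch of the concept and not the code of any particular package; the function name and the toy counts are assumptions.

```python
import numpy as np

def median_of_ratios_size_factors(counts):
    """Estimate one size factor per sample from a (samples x genes) count matrix.

    Each sample is compared with a per-gene geometric mean across samples;
    the median ratio is taken as that sample's size factor.
    """
    counts = np.asarray(counts, dtype=float)
    expressed = np.all(counts > 0, axis=0)           # use genes with no zero counts
    log_counts = np.log(counts[:, expressed])
    log_geo_mean = log_counts.mean(axis=0)           # log of per-gene geometric mean
    return np.exp(np.median(log_counts - log_geo_mean, axis=1))

# Toy example: the same expression profile sequenced at 1x, 2x, and 4x depth.
base = np.array([10, 50, 200, 1000, 5])
counts = np.vstack([base, 2 * base, 4 * base])
sf = median_of_ratios_size_factors(counts)           # proportional to depth: 0.5, 1, 2
normalized = counts / sf[:, None]
```

After dividing by the size factors the three rows are identical, so a PCA on the normalized values would no longer separate the samples by sequencing depth.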

Detecting and Correcting Batch Effects with PCA

Identifying Batch Effects Through PCA Visualization

Batch effects represent one of the most significant technical challenges in RNA-seq studies, particularly when samples are processed across different sequencing runs, laboratories, or experimental conditions [13] [14]. These technical artifacts can introduce systematic variations that obscure biological signals and lead to false conclusions if not properly addressed. PCA serves as a powerful diagnostic tool for detecting batch effects by visualizing whether samples cluster primarily by batch rather than by biological group in the reduced-dimensional space [14].

In a comprehensive evaluation of 12 publicly available RNA-seq datasets, researchers utilized PCA to identify pronounced batch effects in multiple datasets, observing that samples from different experimental batches formed distinct clusters along the first two principal components, separate from the expected biological groupings [13]. This visual separation indicates that technical variance exceeds biological variance in these components, signaling the need for batch effect correction before proceeding with downstream analyses. The same study demonstrated that quality scores derived from machine learning classifiers could successfully predict batch membership based on sample quality differences, reinforcing the connection between technical quality and batch effects in RNA-seq data [13].

Quantitative Metrics for Batch Effect Assessment

While visual inspection of PCA plots provides an intuitive assessment of batch effects, quantitative metrics offer objective measures of batch effect severity and correction efficacy. Several metrics have been developed specifically for this purpose:

Table 2: Quantitative Metrics for Assessing Batch Effects in RNA-seq Data

| Metric | Description | Interpretation |
|---|---|---|
| Normalized Mutual Information (NMI) | Measures similarity between batch labels and clustering assignments | Values closer to 0 indicate better mixing of batches [14] |
| Adjusted Rand Index (ARI) | Measures agreement between batch labels and clustering | Values closer to 0 indicate successful batch integration [14] |
| kBET | k-nearest neighbor batch effect test | Quantifies local batch mixing; lower values indicate better correction [14] |
| Design Bias | Correlation between quality scores and sample groups | Higher values indicate potential confounding [13] |

These metrics complement visual PCA inspection by providing objective criteria for evaluating batch effect correction methods. For instance, in the analysis of dataset GSE163214, a strong batch effect was quantitatively confirmed by poor clustering evaluation scores (Gamma = 0.09, Dunn1 = 0.01) in the uncorrected PCA, which improved significantly after appropriate correction (Gamma = 0.32, Dunn1 = 0.17) [13].
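As an illustration of one metric from Table 2, the Adjusted Rand Index can be computed directly from two label vectors. The sketch below uses only the standard library; the batch labels and cluster assignments are hypothetical.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(labels_a, labels_b):
    """Adjusted Rand Index between two labelings of the same samples."""
    n = len(labels_a)
    pair_counts = Counter(zip(labels_a, labels_b))     # contingency table cells
    sum_cells = sum(comb(c, 2) for c in pair_counts.values())
    sum_a = sum(comb(c, 2) for c in Counter(labels_a).values())
    sum_b = sum(comb(c, 2) for c in Counter(labels_b).values())
    expected = sum_a * sum_b / comb(n, 2)              # agreement expected by chance
    max_index = 0.5 * (sum_a + sum_b)
    if max_index == expected:
        return 1.0                                     # degenerate case: trivial labelings
    return (sum_cells - expected) / (max_index - expected)

batch = ["b1", "b1", "b1", "b1", "b2", "b2", "b2", "b2"]
clusters_by_batch = [0, 0, 0, 0, 1, 1, 1, 1]   # clustering reproduces the batches
clusters_mixed = [0, 1, 0, 1, 0, 1, 0, 1]      # clusters cut across the batches

ari_batch = adjusted_rand_index(batch, clusters_by_batch)
ari_mixed = adjusted_rand_index(batch, clusters_mixed)
```

An ARI of 1 between batch labels and clusters means the clustering simply recovers the batches, whereas values near or below 0 mean the clusters are unrelated to batch. When the comparison is against biological labels instead, the interpretation reverses and higher is better.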

Batch Effect Correction Strategies and Their Evaluation

Once detected, batch effects can be addressed using various computational approaches that leverage the PCA framework or alternative dimensionality reduction techniques:

The effectiveness of these correction methods varies across datasets and experimental designs. A comparative study of preprocessing pipelines for RNA-seq data found that batch effect correction improved classification performance when trained on TCGA data and tested against GTEx datasets, but sometimes worsened performance when tested against independent ICGC and GEO datasets, highlighting the context-dependent nature of batch correction [19]. This underscores the importance of carefully evaluating correction efficacy using both visual (PCA plots) and quantitative metrics rather than applying these methods indiscriminately.
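To show what a correction step does to the data that enter PCA, the sketch below applies the simplest possible adjustment: per-batch mean-centering of each gene. This is deliberately not ComBat, Harmony, or any method named in this article, only an additive location adjustment used for illustration, and the function name and simulated data are assumptions.

```python
import numpy as np

def center_by_batch(expr, batches):
    """Remove additive per-gene batch shifts.

    expr: (samples x genes) transformed expression values.
    batches: one batch label per sample.
    Each batch is centered per gene, then the overall gene mean is restored.
    """
    expr = np.asarray(expr, dtype=float)
    batches = np.asarray(batches)
    corrected = expr.copy()
    grand_mean = expr.mean(axis=0)
    for b in np.unique(batches):
        mask = batches == b
        corrected[mask] -= expr[mask].mean(axis=0)   # subtract this batch's gene means
    return corrected + grand_mean

# Simulated data (hypothetical): second run carries an additive shift of +5.
rng = np.random.default_rng(4)
expr = rng.normal(size=(8, 4))
batches = np.array(["run1"] * 4 + ["run2"] * 4)
expr[batches == "run2"] += 5.0
corrected = center_by_batch(expr, batches)
```

This kind of adjustment is only defensible when biological groups are balanced across batches. If a condition is confined to one batch, centering removes the biological difference along with the technical one, which is one reason correction can worsen results on independent data.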

Experimental Protocols and Case Studies

Standardized Protocol for PCA-Based Batch Effect Detection

Implementing a robust PCA analysis for batch effect detection requires careful attention to experimental design and computational parameters. The following protocol outlines a standardized approach suitable for most RNA-seq studies:

Sample Preparation and Sequencing

- Process samples in randomized order across sequencing batches to avoid confounding biological conditions with technical batches

- Include control samples or reference materials across batches when possible

- Record detailed metadata including sequencing date, lane, library preparation batch, and operator

Data Preprocessing

- Perform standard RNA-seq quality control using FastQC or similar tools

- Align reads to reference genome using STAR or HISAT2 [19]

- Generate gene count matrices using featureCounts or HTSeq

- Apply normalization method appropriate for data type (e.g., regularized negative binomial regression for UMI data [18])

- Filter lowly expressed genes (e.g., requiring at least 10 counts in a minimum number of samples)

- Apply variance-stabilizing transformation or logCPM transformation

PCA Implementation

- Standardize the expression matrix so that each gene is mean-centered with unit variance

- Perform PCA using computationally efficient implementation (e.g., randomized SVD for large datasets [15])

- Retain principal components explaining cumulative variance of 70-80% [17]

Batch Effect Assessment

- Visualize first 2-3 PCs colored by batch and biological condition

- Calculate quantitative batch effect metrics (kBET, ARI, NMI) [14]

- Perform statistical tests (e.g., Kruskal-Wallis) to assess association between quality metrics and batches [13]
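One way to put a number on the visual assessment is to ask how much of each component's variance is attributable to batch. The sketch below computes a one-way ANOVA R² per component with NumPy; it is a stand-in for the rank-based Kruskal-Wallis test mentioned above, and the function name and simulated scores are assumptions.

```python
import numpy as np

def variance_explained_by_group(pc_scores, groups):
    """Per-component R^2: between-group sum of squares over total sum of squares."""
    pc_scores = np.asarray(pc_scores, dtype=float)
    groups = np.asarray(groups)
    grand = pc_scores.mean(axis=0)
    ss_total = ((pc_scores - grand) ** 2).sum(axis=0)
    ss_between = np.zeros(pc_scores.shape[1])
    for g in np.unique(groups):
        block = pc_scores[groups == g]
        ss_between += len(block) * (block.mean(axis=0) - grand) ** 2
    return ss_between / ss_total

# Simulated scores (hypothetical): PC1 tracks batch, PC2 is unrelated noise.
rng = np.random.default_rng(5)
batch = np.array(["A"] * 6 + ["B"] * 6)
pc1 = np.where(batch == "A", -3.0, 3.0) + rng.normal(scale=0.3, size=12)
pc2 = rng.normal(size=12)
r2 = variance_explained_by_group(np.column_stack([pc1, pc2]), batch)
```

Running the same function with the biological condition as the grouping variable gives a direct comparison: a leading component explained mostly by batch and little by condition is the pattern that calls for correction.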

Interpretation and Decision

- If batches separate clearly in PCA space, apply appropriate correction method

- If biological groups remain separated after accounting for batch effects, proceed to downstream analysis

- Document all findings and correction steps for reproducibility

Case Study: Integrated Analysis of Multiple RNA-seq Datasets

A comprehensive study evaluating batch effect correction methods across 12 public RNA-seq datasets provides compelling evidence for the utility of PCA in quality control [13]. In dataset GSE163214, researchers observed a strong batch effect in the uncorrected PCA, where samples clustered primarily by experimental batch rather than biological group. This visual assessment was supported by a significant Kruskal-Wallis test (p-value = 1.03e−2) and high correlation between quality scores and batch groups (designBias = 0.44) [13].

After applying batch correction using the ComBat method, the PCA showed improved clustering by biological group rather than technical batch, with enhanced clustering metrics (Gamma improved from 0.09 to 0.32) and an increased number of differentially expressed genes (from 4 to 12) [13]. Notably, when the researchers incorporated quality-aware correction using machine learning-predicted quality scores, the result was comparable to the reference method that used a priori batch knowledge in 10 of 12 datasets and better in 1, for 11 of 12 (92%) in total [13]. This case study demonstrates how PCA serves as both a diagnostic tool for identifying batch effects and an evaluation framework for assessing correction efficacy.

Research Reagent Solutions for RNA-seq Quality Control

Table 3: Essential Research Reagents and Computational Tools for PCA-Based RNA-seq QC

| Resource | Type | Function in PCA-based QC |
|---|---|---|
| STAR/HISAT2 | Alignment Tool | Aligns RNA-seq reads to reference genome; impacts gene count matrix quality [19] |
| sctransform | Normalization Method | Regularized negative binomial regression for UMI data; improves PCA input quality [18] |
| Harmony | Batch Correction | Integrates PCA with iterative clustering to remove batch effects [14] |
| ComBat | Batch Correction | Empirical Bayes framework for batch effect removal on PCA or full data [19] [14] |
| Seurat | Single-cell Toolkit | Implements PCA visualization and batch correction using CCA and MNN [14] |
| Scanpy | Single-cell Toolkit | Provides PCA implementations and downstream analysis for large-scale data [15] |

Advanced Applications and Future Directions

The application of PCA to RNA-seq data continues to evolve with methodological advancements and emerging computational challenges. Recent developments include supervised PCA approaches that incorporate outcome variables to guide component identification, sparse PCA techniques that enhance interpretability by producing components with fewer non-zero loadings, and functional PCA methods designed to analyze time-course gene expression data [11]. These specialized approaches address specific limitations of standard PCA while maintaining its core strength as a dimensionality reduction technique.

For large-scale single-cell RNA-seq datasets, computational efficiency has become a critical consideration. Benchmarking studies have demonstrated that PCA algorithms based on Krylov subspace methods and randomized singular value decomposition offer the best balance of speed, memory efficiency, and accuracy when analyzing datasets comprising hundreds of thousands to millions of cells [15]. These implementations enable researchers to apply PCA to the increasingly massive datasets generated by modern sequencing technologies without sacrificing analytical precision.
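As a rough illustration of the randomized SVD idea mentioned above, the following NumPy sketch approximates the leading singular values of a centered matrix. The function name `randomized_svd`, its parameters, and the synthetic low-rank matrix are assumptions made for this example; they do not correspond to any of the implementations benchmarked in [15].

```python
import numpy as np

def randomized_svd(X, k, n_oversample=10, n_iter=2, seed=0):
    """Approximate the top-k SVD of X with a randomized range finder (illustrative sketch)."""
    rng = np.random.default_rng(seed)
    n, p = X.shape
    # Random test matrix: X @ omega approximately spans the leading left singular subspace
    omega = rng.standard_normal((p, k + n_oversample))
    Y = X @ omega
    # A few power iterations sharpen the separation between large and small singular values
    for _ in range(n_iter):
        Y, _ = np.linalg.qr(Y)
        Y = X @ (X.T @ Y)
    Q, _ = np.linalg.qr(Y)
    # Exact SVD of the small projected matrix B = Q^T X
    B = Q.T @ X
    U_small, s, Vt = np.linalg.svd(B, full_matrices=False)
    U = Q @ U_small
    return U[:, :k], s[:k], Vt[:k]

# Synthetic rank-5 "cells x genes" matrix, for illustration only
rng = np.random.default_rng(1)
X = rng.standard_normal((500, 5)) @ rng.standard_normal((5, 200))
X = X - X.mean(axis=0)

U, s, Vt = randomized_svd(X, k=5)
s_exact = np.linalg.svd(X, compute_uv=False)[:5]
print(np.round(s, 3))
print(np.round(s_exact, 3))
```

The expensive exact decomposition is only ever applied to a matrix with `k + n_oversample` rows, which is why this family of methods scales to very large cell counts.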

Future directions in PCA development for RNA-seq analysis include improved methods for component interpretation that better bridge statistical patterns with biological mechanisms, enhanced integration with downstream machine learning applications for disease classification and biomarker discovery, and more sophisticated approaches for handling zero-inflated single-cell data distributions [11] [15]. As RNA-seq technologies continue to evolve and dataset scales expand, PCA will undoubtedly remain a cornerstone technique for quality assessment, batch effect detection, and exploratory analysis in transcriptional genomics.

Principal Component Analysis serves as an indispensable tool in the RNA-seq analytical workflow, providing critical insights into data quality, batch effects, and underlying biological structure. Through appropriate implementation and interpretation, PCA enables researchers to identify technical artifacts, assess normalization strategies, and guide subsequent analytical decisions. The visual and quantitative framework offered by PCA makes it particularly valuable for detecting unwanted technical variance that could otherwise confound biological interpretation. When integrated with modern batch correction methods and quality-aware approaches, PCA significantly enhances the reliability and reproducibility of RNA-seq studies, ultimately strengthening the biological conclusions drawn from high-throughput transcriptional profiling.

Principal Component Analysis (PCA) serves as a cornerstone of exploratory data analysis in RNA-seq studies, enabling researchers to visualize high-dimensional transcriptomic data in a simplified low-dimensional space. This technical guide elucidates the core principles of PCA interpretation within the context of RNA-seq research, focusing on three critical analytical aspects: assessing sample similarity, detecting outliers, and identifying cluster patterns. We provide a comprehensive framework for extracting biological insights from PCA visualizations, supplemented by robust statistical methodologies for outlier detection, detailed experimental protocols, and practical implementation guidelines. By establishing a standardized approach to PCA plot interpretation, this whitepaper empowers researchers and drug development professionals to enhance the reliability of their transcriptomic analyses and make informed decisions in subsequent investigative pathways.

RNA-sequencing generates complex high-dimensional datasets where each sample contains expression values for tens of thousands of genes, creating visualization and interpretation challenges [20]. Principal Component Analysis (PCA) addresses this complexity through dimensionality reduction, transforming the original variables into a new set of uncorrelated variables called principal components (PCs) that capture maximum variance in the data [21]. The first principal component (PC1) represents the axis of maximum variance in the dataset, followed by PC2 capturing the next greatest variance orthogonal to PC1, and so forth for subsequent components [20]. This transformation allows researchers to project samples into a two- or three-dimensional space defined by the top principal components, facilitating visual assessment of global expression patterns.

The explained variance ratio quantifies the proportion of total dataset variance captured by each principal component, while the cumulative explained variance ratio indicates the total variance explained by the first m components combined [20]. For example, if PC1 explains 50% of variance and PC2 explains 30%, their cumulative explained variance equals 80%, indicating that the two-dimensional visualization captures most of the structure in the original data [20]. When the first two components together explain roughly 70-90% of the variance, the plot is a reasonably accurate representation of sample relationships; when they explain much less, additional components should be inspected before drawing conclusions.

PCA's utility in RNA-seq quality assessment stems from its ability to reveal underlying data structure through sample positioning and clustering patterns. When samples cluster tightly in PCA space, they share similar gene expression profiles, suggesting technical reproducibility or biological similarity [20]. Conversely, samples distant from main clusters may represent outliers requiring further investigation [22]. The subsequent sections detail systematic approaches for interpreting these patterns to derive meaningful biological and technical insights.

Theoretical Framework of PCA

Mathematical Foundations

Principal Component Analysis operates on the fundamental principle of identifying orthogonal directions of maximum variance in high-dimensional data through eigen decomposition of the covariance matrix [23]. Formally, given a mean-centered data matrix X with dimensions n × p (where n represents samples and p represents genes), PCA computes the covariance matrix C = (XᵀX)/(n-1). The eigenvectors of C correspond to the principal components (directions of maximum variance), while the eigenvalues represent the magnitude of variance along each component direction [24]. The descending order of eigenvalues ensures that each subsequent component captures the maximum possible remaining variance, providing an optimal linear transformation for dimensionality reduction.
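The covariance-based definition above can be sketched in a few lines of NumPy. This is a minimal illustration on random toy data; the function name `pca_eig` and the matrix dimensions are assumptions for the example.

```python
import numpy as np

def pca_eig(X):
    """PCA via eigendecomposition of the sample covariance matrix.

    X is a samples x genes array.
    """
    Xc = X - X.mean(axis=0)                 # mean-center each gene
    n = Xc.shape[0]
    C = (Xc.T @ Xc) / (n - 1)               # covariance matrix, genes x genes
    eigvals, eigvecs = np.linalg.eigh(C)    # eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1]       # reorder to descending variance
    eigvals = eigvals[order]
    eigvecs = eigvecs[:, order]
    scores = Xc @ eigvecs                   # sample coordinates on each PC
    return eigvals, eigvecs, scores

rng = np.random.default_rng(0)
X = rng.normal(size=(6, 4))                 # toy data: 6 samples, 4 genes
eigvals, eigvecs, scores = pca_eig(X)
print(eigvals / eigvals.sum())              # explained variance ratio per PC
```

With tens of thousands of genes the covariance matrix becomes very large, which is one reason SVD-based routines are usually preferred in practice.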

The intrinsic dimensionality of a dataset reflects the minimal number of dimensions needed to approximately represent the data, equivalent to the matrix rank in linear algebra terms [23]. In RNA-seq applications, biological systems typically exhibit lower intrinsic dimensionality than the measured feature space (thousands of genes), as genes operate in coordinated pathways and networks rather than independently. This biological principle enables effective dimensionality reduction without substantial information loss, making PCA particularly valuable for transcriptomic data exploration.

Computational Implementation for RNA-seq Data

RNA-seq data requires specific preprocessing before PCA application to ensure meaningful results. The standard workflow involves: (1) raw count normalization to account for library size differences; (2) transformation to stabilize variance across the expression range; and (3) filtering to remove uninformative genes. Common normalization approaches include counts per million (CPM) with TMM normalization for effective library size correction [25], or geometric methods as implemented in DESeq2 [24]. Variance-stabilizing transformations such as log2(CPM + k) or regularized log transformation (rlog) address the mean-variance relationship in count data, preventing highly expressed genes from disproportionately influencing component calculations [24].

Table 1: Standard Preprocessing Steps for RNA-seq PCA

| Step | Purpose | Common Methods |
|---|---|---|
| Normalization | Correct for library size differences | TMM (edgeR), geometric (DESeq2), CPM [25] [24] |
| Transformation | Stabilize variance across expression range | log2(CPM+1), rlog, vst [24] |
| Filtering | Remove uninformative genes | Minimum count threshold (e.g., ≥10 reads total) [26] |
| Scaling | Standardize gene variances | Z-score normalization (optional) [25] |
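The filtering, normalization, and transformation steps can be sketched with plain CPM and a log2(CPM + 1) transform. This is a simplified illustration: it does not apply TMM or DESeq2 size factors, and the function name `log_cpm`, the toy counts, and the default thresholds are assumptions for the example.

```python
import math

def log_cpm(counts, prior=1.0, min_total=10):
    """Return log2(CPM + prior) for genes with at least min_total reads overall.

    counts maps gene name -> list of raw counts, one per sample.
    """
    genes = list(counts)
    n_samples = len(next(iter(counts.values())))
    # Library size per sample, computed over all genes before filtering
    lib_sizes = [sum(counts[g][j] for g in genes) for j in range(n_samples)]
    out = {}
    for g in genes:
        if sum(counts[g]) < min_total:
            continue  # drop low-count genes
        out[g] = [
            math.log2(counts[g][j] / lib_sizes[j] * 1e6 + prior)
            for j in range(n_samples)
        ]
    return out

# Toy counts: the three samples differ only in sequencing depth
toy = {
    "geneA": [500, 1000, 250],
    "geneB": [300, 600, 150],
    "geneC": [1, 0, 2],        # fewer than 10 reads in total, so it is filtered out
    "geneD": [199, 400, 98],
}
log_expr = log_cpm(toy)
print(sorted(log_expr))
print(log_expr["geneA"])
```

Because geneA makes up the same fraction of every library, its transformed values are identical across samples once depth is accounted for.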

Following preprocessing, PCA computation typically employs singular value decomposition (SVD) or eigen decomposition algorithms [23]. The prcomp() function in R and specialized RNA-seq tools implement these algorithms, generating principal component scores for samples and loadings for genes. For effective visualization, researchers typically select the first 2-3 components that capture the greatest variance, though examining additional components may reveal subtler data patterns.
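A minimal NumPy sketch of SVD-based PCA is shown below. It returns standard deviations, loadings, and scores, which is loosely analogous to what prcomp() reports, but it is not a reimplementation of that function. The name `pca_svd` and the random toy matrix are assumptions for the example.

```python
import numpy as np

def pca_svd(X, scale=False):
    """SVD-based PCA on a samples x genes matrix.

    Returns (sdev, loadings, scores):
      sdev     - standard deviation of each principal component
      loadings - genes x PCs matrix of weights
      scores   - samples x PCs matrix of sample coordinates
    """
    Xc = X - X.mean(axis=0)
    if scale:
        Xc = Xc / Xc.std(axis=0, ddof=1)    # optional unit-variance scaling per gene
    U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    sdev = s / np.sqrt(X.shape[0] - 1)
    loadings = Vt.T
    scores = U * s
    return sdev, loadings, scores

rng = np.random.default_rng(42)
X = rng.normal(size=(8, 50))                # toy data: 8 samples, 50 genes
sdev, loadings, scores = pca_svd(X)
explained = sdev**2 / np.sum(sdev**2)
print(np.round(explained[:3], 3))           # variance share of PC1 to PC3
```

Plotting the first two columns of `scores`, with the corresponding entries of `explained` in the axis labels, gives the familiar PCA scatter plot.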

Interpreting PCA Plots: Key Analytical Aspects

Assessing Sample Similarity and Clustering Patterns

In PCA plots, proximity between sample points indicates similarity in their global gene expression profiles [20]. Samples clustering tightly together demonstrate high reproducibility and shared biological characteristics, while greater distances reflect increasing transcriptional differences. Interpretation requires correlation with experimental metadata – when samples from the same experimental group (e.g., treatment condition, tissue type) cluster together, this validates expected biological patterns and suggests a strong treatment effect relative to other sources of variation [27].

The explained variance for each component, typically displayed in axis labels, indicates each dimension's relative importance [20]. For example, a PCA plot where PC1 explains 60% of variance and PC2 explains 20% suggests that the primary separation axis (PC1) captures the most substantial source of transcriptional variation. Researchers should examine whether this dominant separation corresponds to biological factors of interest (e.g., disease status) or technical artifacts (e.g., batch effects), guiding subsequent analytical decisions.

Table 2: Common Cluster Patterns and Their Interpretations in RNA-seq PCA

| Cluster Pattern | Potential Interpretation | Recommended Actions |
|---|---|---|
| Tight within-group clustering | High reproducibility; strong biological signal | Proceed with confidence in group differences |
| Overlapping group clusters | Weak treatment effect; high biological variability | Consider increased replication; validate findings |
| Separation along PC1 | Strongest source of variation drives separation | Identify biological or technical factors associated with PC1 |
| Subclusters within groups | Potential hidden covariates or biological subtypes | Investigate additional metadata; consider stratification |

Biological interpretation extends beyond simple cluster identification to examining the specific genes driving component separations. Genes with extreme loadings (weights) on a particular principal component disproportionately influence sample positioning along that axis [24]. Functional enrichment analysis of high-loading genes can reveal biological processes, pathways, or cell type signatures underlying observed clustering patterns, transforming descriptive visualizations into mechanistic hypotheses.

Detecting and Validating Outliers

Outlier samples in PCA plots appear as isolated points distant from the main sample cloud, potentially indicating technical artifacts, sample mislabeling, or genuine biological extremes [22]. Visual inspection remains the most common initial detection approach, where samples "a magnitude of ~200 to 1000 from the main group along PC1" warrant further investigation [28]. However, subjective visual assessment introduces bias, particularly for subtle outliers or datasets with small sample sizes where biological and technical variation may be conflated.

Robust statistical methods provide objective outlier detection by quantifying a sample's deviation from the multivariate distribution. The Z-score method calculates standardized distances along principal components, flagging samples with absolute Z-scores exceeding 3 on major components as potential outliers [28]. More advanced approaches include Robust PCA (rPCA) methods like PcaGrid and PcaHubert, which iteratively fit the majority of data distribution before identifying deviant observations [22]. These methods demonstrate particular utility in RNA-seq studies with limited replicates, where classical PCA may fail to detect outliers that significantly impact downstream analyses [22].

Table 3: Outlier Detection Methods for RNA-seq PCA

| Method | Principle | Advantages | Limitations |
|---|---|---|---|
| Visual Inspection | Subjective assessment of sample positioning | Quick; intuitive; requires no statistical expertise | Prone to bias; inconsistent between analysts |
| Z-score (absolute Z > 3 on PC1) | Standard deviation-based threshold | Simple implementation; objective criterion | Assumes normal distribution; may miss multivariate outliers |
| Robust PCA (PcaGrid) | Statistical fitting resistant to outlier influence | High sensitivity/specificity; automated detection [22] | Computational intensity; requires specialized implementation |

When outliers are detected, researchers must carefully determine their nature before exclusion. Technical outliers resulting from RNA degradation, library preparation failures, or sequencing artifacts should be removed, as they introduce non-biological variance that reduces statistical power [22]. In contrast, genuine biological outliers may represent important biological phenomena or patient subgroups and require preservation despite increasing apparent variability. Integrating RNA quality metrics (e.g., transcript integrity numbers) with expression-based PCA can distinguish these scenarios [27].

Advanced Interpretation Considerations

Batch Effects and Confounding Factors

Batch effects represent systematic technical variations introduced by processing date, reagent lots, sequencing lanes, or personnel, potentially confounding biological interpretation when correlated with experimental conditions [22]. In PCA plots, batch effects manifest as sample clustering by processing batch rather than biological group, often visible along secondary principal components. For example, separation of samples along PC2 correlated with sequencing date suggests batch effects requiring statistical correction.

Diagnosing batch effects necessitates incorporating technical metadata into PCA visualization through color, shape, or size encoding [26]. The pcaExplorer package facilitates this exploratory process through interactive visualization, enabling researchers to rapidly assess potential confounders [29]. When batch effects are detected, several mitigation strategies exist, including experimental design (randomization, blocking), statistical adjustment (ComBat, removeBatchEffect), or incorporating batch as a covariate in differential expression models [22].
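Alongside visual inspection, one simple numeric check is the fraction of a component's variance that is explained by a metadata grouping (the eta-squared statistic from one-way ANOVA). The sketch below is an illustrative addition rather than a method taken from the cited sources; the function name and the toy scores are invented for the example.

```python
def variance_explained_by_group(scores, groups):
    """Fraction of variance in one PC's scores explained by a grouping (eta squared)."""
    grand_mean = sum(scores) / len(scores)
    ss_total = sum((s - grand_mean) ** 2 for s in scores)
    by_group = {}
    for s, g in zip(scores, groups):
        by_group.setdefault(g, []).append(s)
    # Between-group sum of squares: group size times squared offset of the group mean
    ss_between = sum(
        len(vals) * (sum(vals) / len(vals) - grand_mean) ** 2
        for vals in by_group.values()
    )
    return ss_between / ss_total

# Made-up PC2 scores for six samples
pc2 = [-4.1, -3.8, -4.4, 3.9, 4.3, 4.1]
batch = ["run1", "run1", "run1", "run2", "run2", "run2"]
condition = ["ctrl", "treated", "ctrl", "treated", "ctrl", "treated"]

r2_batch = variance_explained_by_group(pc2, batch)
r2_condition = variance_explained_by_group(pc2, condition)
print(round(r2_batch, 3), round(r2_condition, 3))
```

In this toy case nearly all of the PC2 variance tracks the sequencing run and very little tracks the experimental condition, which is the pattern that would prompt batch correction or inclusion of batch as a covariate.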

Functional Interpretation of Components

Moving beyond sample relationships, PCA enables investigation of biological processes driving observed patterns through component loadings analysis. Genes with extreme positive or negative loadings on a principal component disproportionately influence sample positioning along that component's axis [24]. For example, a principal component separating immune from epithelial samples would show high positive loadings for immune cell markers and negative loadings for epithelial genes.
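Extracting the genes with the most extreme loadings is a small sorting exercise. The sketch below assumes the loadings for one component are available as a gene-to-value mapping; the numeric values are invented for illustration and do not come from any dataset.

```python
def top_loading_genes(loadings, n_top=2):
    """Return (most positive, most negative) genes for one principal component.

    loadings maps gene name -> loading value on that component.
    """
    ranked = sorted(loadings.items(), key=lambda kv: kv[1])   # ascending by loading
    negative = [gene for gene, _ in ranked[:n_top]]
    positive = [gene for gene, _ in ranked[::-1][:n_top]]
    return positive, negative

# Hypothetical loadings on a component separating immune from epithelial samples
pc1_loadings = {
    "CD3E": 0.41, "PTPRC": 0.38, "CD19": 0.30,
    "EPCAM": -0.44, "KRT8": -0.36,
    "ACTB": 0.02, "GAPDH": -0.01,
}
pos, neg = top_loading_genes(pc1_loadings)
print("positive:", pos)
print("negative:", neg)
```

The two gene lists are the natural input for an enrichment analysis of each end of the component.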

Functional enrichment analysis of high-loading genes transforms abstract components into biological narratives. Specialized tools like pcaExplorer automatically perform Gene Ontology (GO) enrichment for genes with extreme loadings in each component, identifying biological processes, molecular functions, and cellular compartments associated with major variance sources [29]. This approach can reveal unexpected biological themes or quality concerns, such as components dominated by stress response genes potentially indicating sample processing issues.

Experimental Protocols and Workflows

Standardized PCA Workflow for RNA-seq Data

Implementing a robust PCA analysis requires systematic execution of sequential steps from data preprocessing through interpretation. The following workflow represents best practices derived from multiple RNA-seq analysis frameworks:

Step 1: Data Normalization – Apply library size normalization to remove technical variability. The TMM method in edgeR or median-of-ratios in DESeq2 represent robust approaches that assume most genes are not differentially expressed [25] [29].

Step 2: Data Transformation – Convert normalized counts to log2-scale to stabilize variance across the expression range. Regularized log transformation (rlog) in DESeq2 or voom transformation in limma specifically address mean-variance relationships in count data [24].

Step 3: Gene Filtering – Remove uninformative genes with low expression across samples. A common threshold retains genes with ≥10 counts in at least X samples, where X depends on study design [26].

Step 4: PCA Computation – Perform principal component analysis using an SVD-based implementation. The prcomp() function in R with scale. = FALSE is recommended for data that have already been transformed [24].

Step 5: Visualization – Generate 2D/3D scatter plots of sample scores with biological/technical metadata overlay. Interactive tools like pcaExplorer enhance exploration capabilities [29].

Step 6: Interpretation – Systematically evaluate clustering, outliers, and variance explanations to inform downstream analyses.
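To make Step 1 more concrete, the following sketch illustrates the idea behind median-of-ratios size factors: each sample is compared with a per-gene geometric mean, and the median ratio is taken as its size factor. This is a simplified illustration and not DESeq2's actual code; the function name and toy counts are assumptions for the example.

```python
import math
from statistics import median

def median_of_ratios_size_factors(counts):
    """Estimate one size factor per sample from a genes x samples list of raw counts."""
    n_samples = len(counts[0])
    # Only genes with nonzero counts in every sample have a finite log geometric mean
    usable = [row for row in counts if all(c > 0 for c in row)]
    log_geo_means = [sum(math.log(c) for c in row) / n_samples for row in usable]
    factors = []
    for j in range(n_samples):
        log_ratios = [math.log(row[j]) - lgm for row, lgm in zip(usable, log_geo_means)]
        factors.append(math.exp(median(log_ratios)))
    return factors

# Toy counts: sample 2 is sequenced twice as deeply as sample 1, sample 3 half as deeply
toy_counts = [
    [100, 200, 50],
    [30, 60, 15],
    [8, 16, 4],
    [0, 5, 1],        # contains a zero, so it is skipped when estimating factors
    [400, 800, 200],
]
size_factors = median_of_ratios_size_factors(toy_counts)
normalized = [[c / sf for c, sf in zip(row, size_factors)] for row in toy_counts]
print([round(sf, 3) for sf in size_factors])
```

Using the median makes the estimate insensitive to a minority of strongly changing genes, which matches the assumption stated in Step 1 that most genes are not differentially expressed.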

Robust Outlier Detection Protocol

For studies requiring rigorous outlier assessment, particularly with limited replicates, the following protocol implements robust statistical detection:

Method 1: Statistical Thresholding – Compute principal components using prcomp(), extract PC1 values, convert to Z-scores, and flag samples with |Z-score| > 3 as potential outliers [28].

Method 2: Robust PCA Implementation – Apply the PcaGrid algorithm from the rrcov R package, reported to achieve 100% sensitivity and specificity in controlled tests [22]. Run pca <- PcaGrid(t(expression_matrix)) and identify outliers with which(!pca@flag), since flagged outliers carry a FALSE value in the flag slot.

Validation – Correlate statistical outliers with RNA quality metrics (e.g., TIN scores) [27], sequencing statistics, and sample metadata to distinguish technical failures from biological extremes before deciding on exclusion.
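Method 1 can be sketched as follows. The protocol above is described in terms of R's prcomp(); this Python version assumes the PC1 scores have already been computed, and the sample names and score values are invented for illustration.

```python
from statistics import mean, stdev

def flag_outliers_by_z(pc_scores, threshold=3.0):
    """Flag samples whose standardized PC score exceeds the threshold in absolute value.

    pc_scores maps sample name -> score on one principal component.
    """
    mu = mean(pc_scores.values())
    sd = stdev(pc_scores.values())          # sample standard deviation (n - 1)
    z = {sample: (value - mu) / sd for sample, value in pc_scores.items()}
    flagged = [sample for sample, zv in z.items() if abs(zv) > threshold]
    return flagged, z

# Made-up PC1 scores: 19 unremarkable samples and one extreme sample
pc1 = {f"S{i + 1}": float([-2, -1, 0, 1, 2][i % 5]) for i in range(19)}
pc1["S20"] = 60.0

flagged, z = flag_outliers_by_z(pc1)
print(flagged)
```

Because the candidate outlier also inflates the standard deviation, the largest attainable absolute Z-score among n values is (n - 1)/sqrt(n), which stays below 3 until n reaches 11. With fewer samples this threshold cannot flag anything, which is one reason robust methods are preferred for small studies.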

Research Reagent Solutions

Table 4: Essential Tools for RNA-seq PCA Analysis

| Tool/Category | Specific Implementation | Function in PCA Workflow |
|---|---|---|
| Statistical Environment | R/Bioconductor [29] | Primary computational platform for analysis |
| Normalization Methods | TMM (edgeR) [25], geometric (DESeq2) [24] | Correct library size differences before PCA |
| PCA Computation | prcomp() [24], PcaGrid() [22] | Perform principal component analysis |
| Visualization Packages | ggplot2 [26], pcaExplorer [29] | Generate publication-quality PCA plots |
| Quality Metrics | TIN scores [27], FastQC | Assess RNA integrity and sequencing quality |
| Interactive Analysis | pcaExplorer Shiny app [29] | Explore PCA results dynamically |
| Functional Interpretation | GO enrichment [29], Metascape [27] | Biological meaning of components |

Effective interpretation of PCA plots represents a fundamental competency in RNA-seq exploratory analysis, enabling researchers to assess data quality, identify patterns, and detect anomalies before committing to specific analytical pathways. This whitepaper has established a comprehensive framework for extracting maximum insight from PCA visualizations through systematic assessment of sample similarity, statistical outlier detection, and biological interpretation of components. The standardized protocols and methodologies presented here provide researchers and drug development professionals with practical tools to enhance analytical rigor in transcriptomic studies.

As RNA-seq technologies evolve toward single-cell applications and larger sample sizes, PCA remains an indispensable tool for initial data exploration. Future developments will likely enhance interactive visualization capabilities and integrate PCA more seamlessly with downstream analysis workflows. By adopting the systematic interpretation framework outlined in this guide, researchers can ensure they extract meaningful biological insights from complex transcriptomic datasets while maintaining analytical transparency and reproducibility.

Principal Component Analysis (PCA) serves as a fundamental dimensionality reduction technique in the exploratory analysis of high-dimensional biological data, particularly RNA-seq datasets. This technical guide provides a comprehensive examination of PCA's core concepts—principal components, explained variance, and scree plots—framed within the context of RNA-seq data analysis. We detail the mathematical foundations, interpretation methodologies, and practical applications for researchers, scientists, and drug development professionals working with transcriptomic data. The whitepaper synthesizes current methodologies and provides structured frameworks for implementing PCA within RNA-seq workflows to extract meaningful biological insights from complex gene expression matrices.

RNA-seq datasets present significant analytical challenges due to their high-dimensional nature, where the number of genes (variables) far exceeds the number of samples (observations). This curse of dimensionality creates computational, analytical, and visualization difficulties that PCA helps mitigate [30]. In a typical RNA-seq experiment, researchers measure expression levels of >20,000 genes across <100 samples, creating a scenario where P ≫ N (variables greatly outnumber observations) [30]. PCA addresses this by transforming correlated variables into a smaller set of uncorrelated principal components that capture the essential patterns of variation in the data [31].

The application of PCA to RNA-seq data requires special considerations. As noted in research by Tang [32], the default approach in tools like DESeq2 uses the top 500 most variable genes to compute PCA rather than the full gene matrix. This preprocessing step reduces noise and emphasizes key drivers of sample differences, demonstrating how domain-specific implementations enhance PCA's utility in transcriptomic analysis.

Principal Components: The Foundation of PCA

Mathematical Definition

Principal Components (PCs) are linear combinations of the original variables (genes) that redefine the coordinate system of the data [31]. Mathematically, the first principal component score for observation i is defined as:

\[ t_{1(i)} = \mathbf{x}_{(i)} \cdot \mathbf{w}_{(1)} \quad \text{for} \quad i = 1,\dots,n \]

where \(\mathbf{x}_{(i)}\) represents the original variables and \(\mathbf{w}_{(1)}\) represents the weight vector [31]. The first weight vector \(\mathbf{w}_{(1)}\) is defined such that:

\[ \mathbf{w}_{(1)} = \arg\max_{\|\mathbf{w}\|=1} \left\{ \sum_{i} \left( \mathbf{x}_{(i)} \cdot \mathbf{w} \right)^2 \right\} \]

This maximizes the variance of the projected data [31]. Subsequent components are derived similarly, with each successive component capturing the next highest variance direction while being constrained to be orthogonal to previous components.
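The link between this maximization and the eigenvectors of the covariance matrix can be made explicit with a Lagrange multiplier. The short derivation below is a standard textbook argument added for illustration; it writes \(\mathbf{X}\) for the mean-centered data matrix whose rows are the \(\mathbf{x}_{(i)}\).

```latex
% Objective in matrix form, with the unit-norm constraint
\[
\sum_{i} \left( \mathbf{x}_{(i)} \cdot \mathbf{w} \right)^2
  = \mathbf{w}^{\top} \mathbf{X}^{\top} \mathbf{X}\, \mathbf{w},
\qquad \text{subject to } \mathbf{w}^{\top}\mathbf{w} = 1
\]

% Lagrangian and stationarity condition
\[
\mathcal{L}(\mathbf{w}, \lambda)
  = \mathbf{w}^{\top} \mathbf{X}^{\top} \mathbf{X}\, \mathbf{w}
  - \lambda \left( \mathbf{w}^{\top}\mathbf{w} - 1 \right),
\qquad
\frac{\partial \mathcal{L}}{\partial \mathbf{w}}
  = 2\, \mathbf{X}^{\top} \mathbf{X}\, \mathbf{w} - 2 \lambda\, \mathbf{w} = 0
\;\Longrightarrow\;
\mathbf{X}^{\top} \mathbf{X}\, \mathbf{w} = \lambda\, \mathbf{w}
\]

% Value of the objective at a stationary point
\[
\mathbf{w}^{\top} \mathbf{X}^{\top} \mathbf{X}\, \mathbf{w}
  = \lambda\, \mathbf{w}^{\top}\mathbf{w} = \lambda
\]
```

Every stationary point is therefore an eigenvector of \(\mathbf{X}^{\top}\mathbf{X}\), and the objective equals the corresponding eigenvalue, so \(\mathbf{w}_{(1)}\) is the eigenvector with the largest eigenvalue. Dividing by \(n-1\) turns \(\mathbf{X}^{\top}\mathbf{X}\) into the sample covariance matrix without changing its eigenvectors.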

Geometric Interpretation

Geometrically, PCA can be visualized as fitting a p-dimensional ellipsoid to the data, where each axis represents a principal component [31]. The axes of the ellipsoid are determined by the eigenvectors of the covariance matrix, with their lengths proportional to the corresponding eigenvalues. The direction of the first principal component aligns with the longest axis of the ellipsoid, representing the direction of maximum variance in the data [31].

Practical Implementation in RNA-seq

In RNA-seq analysis, principal components transform gene expression measurements into new variables that represent patterns of co-expression across samples. The resulting PC scores can reveal sample clusters, outliers, and batch effects that might not be apparent in the original high-dimensional space [33]. When samples cluster closely in PCA space, they share similar expression profiles, potentially indicating similar biological states or experimental conditions [32].

Explained Variance: Quantifying Information Retention

Mathematical Foundation

Explained variance represents the amount of variability in the original data captured by each principal component [34]. Mathematically, the explained variance for a component equals its corresponding eigenvalue from the covariance matrix's eigen decomposition [35]. For a dataset with total variance equal to the sum of all eigenvalues (\(\sum_{j=1}^{p} \lambda_j\)), the proportion of variance explained by the \(k^{\text{th}}\) principal component is:

\[ \text{Proportion}_k = \frac{\lambda_k}{\sum_{j=1}^{p} \lambda_j} \]

This proportion represents the fraction of total variance attributed to the \(k^{\text{th}}\) component [34] [35].

Explained Variance vs. Explained Variance Ratio

In practice, two related metrics are used to assess variance capture:

- Explained Variance: The absolute amount of variance captured by each component (eigenvalues) [34]

- Explained Variance Ratio: The proportion of total variance explained by each component (eigenvalues divided by total variance) [34]

The following table summarizes the key differences:

Table 1: Explained Variance versus Explained Variance Ratio

| Aspect | Explained Variance | Explained Variance Ratio |
|---|---|---|
| Definition | Absolute amount of variance explained by each PC | Proportion of total variance explained by each PC |
| Calculation | Equal to the eigenvalue for each component | Eigenvalue divided by sum of all eigenvalues |
| Interpretation | Useful for determining how many PCs to retain | Useful for understanding relative importance of each PC |
| Range | 0 to total variance | 0 to 1 (or 0% to 100%) |
| Cumulative Sum | Cumulative explained variance | Cumulative proportion of variance explained |

Example from RNA-seq Analysis

Consider a PCA on an RNA-seq dataset with 8 variables (genes), standardized so that the eigenvalues sum to 8. The eigenanalysis might yield the following results:

Table 2: Example Variance Explanation in PCA

| Principal Component | Eigenvalue | Proportion | Cumulative Proportion |
|---|---|---|---|
| PC1 | 3.5476 | 0.443 | 0.443 |
| PC2 | 2.1320 | 0.266 | 0.710 |
| PC3 | 1.0447 | 0.131 | 0.841 |
| PC4 | 0.5315 | 0.066 | 0.907 |
| PC5 | 0.4112 | 0.051 | 0.958 |
| PC6 | 0.1665 | 0.021 | 0.979 |
| PC7 | 0.1254 | 0.016 | 0.995 |
| PC8 | 0.0411 | 0.005 | 1.000 |

In this example, the first three principal components explain 84.1% of the total variance in the data, suggesting they capture most of the meaningful patterns [36]. This cumulative proportion is crucial for determining how many components to retain for further analysis.
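The proportions in the table follow directly from the eigenvalues. The short sketch below recomputes them using only the eigenvalues listed above; the variable names are arbitrary.

```python
from itertools import accumulate

# Eigenvalues copied from the example table
eigenvalues = [3.5476, 2.1320, 1.0447, 0.5315, 0.4112, 0.1665, 0.1254, 0.0411]

total = sum(eigenvalues)                         # total variance
proportion = [ev / total for ev in eigenvalues]  # explained variance ratio per PC
cumulative = list(accumulate(proportion))        # running sum of the ratios

for k, (ev, p, c) in enumerate(zip(eigenvalues, proportion, cumulative), start=1):
    print(f"PC{k}: eigenvalue={ev:.4f}  proportion={p:.3f}  cumulative={c:.3f}")
```

The cumulative value for PC2 is computed from unrounded proportions, which is why it rounds to 0.710 rather than the 0.709 obtained by adding the two rounded proportions.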

Scree Plots: Determining Component Retention

Definition and Purpose

A scree plot is a line graph displaying the eigenvalues of principal components in descending order of magnitude [37]. The term "scree" refers to the accumulation of rock fragments at the base of a cliff, analogous to the point where eigenvalues level off and form a straight line [37]. The primary function of a scree plot is to serve as a diagnostic tool to determine the optimal number of principal components to retain in an analysis [33].

Interpretation Guidelines

The scree plot provides visual criteria for component retention:

- Elbow Method: Identify the "elbow" point where the curve bends and begins to flatten [33] [37]. Components to the left of this point (with higher eigenvalues) are considered meaningful and retained for analysis.

- Kaiser Criterion: Retain components with eigenvalues greater than 1 [36]. The rationale is that a component should explain at least as much variance as a single standardized variable, so the rule applies only when PCA is run on standardized variables (the correlation matrix).

- Proportion of Variance: Select enough components to explain a predetermined percentage of total variance (typically 70-90% for descriptive purposes) [36].
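The Kaiser and proportion-of-variance rules are easy to express in code; the elbow method is left out here because it relies on visual judgment. The sketch below applies both rules to the eigenvalues from the earlier example table. The function names and the 80% default target are assumptions for the example.

```python
def n_components_kaiser(eigenvalues):
    """Count components with eigenvalue above 1 (assumes standardized variables)."""
    return sum(1 for ev in eigenvalues if ev > 1.0)

def n_components_for_variance(eigenvalues, target=0.80):
    """Smallest number of leading components whose cumulative variance reaches target."""
    total = sum(eigenvalues)
    running = 0.0
    for k, ev in enumerate(eigenvalues, start=1):
        running += ev
        if running / total >= target:
            return k
    return len(eigenvalues)

# Eigenvalues from the example table, already sorted in descending order
eigenvalues = [3.5476, 2.1320, 1.0447, 0.5315, 0.4112, 0.1665, 0.1254, 0.0411]

kaiser_k = n_components_kaiser(eigenvalues)
variance_k = n_components_for_variance(eigenvalues, target=0.80)
print(kaiser_k, variance_k)
```

For this example both rules point to three components, while raising the target to 90% would require a fourth.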

Practical Application in RNA-seq

In RNA-seq analysis, scree plots help balance dimensionality reduction with information retention. An ideal scree plot shows a steep curve that quickly bends at an "elbow" before flattening out [33]. If the first 2-3 components capture most variation, PCA effectively reduces dimensionality while preserving biological signal. If too many components are needed (e.g., >5 for 80% variance explained), alternative dimension reduction techniques like t-SNE may be more appropriate [33].

Integrated Workflow for RNA-seq Data

Preprocessing Considerations

Proper data preprocessing is critical for meaningful PCA results with RNA-seq data:

- Data Transformation: RNA-seq count data typically requires log transformation to stabilize variance [38].

- Feature Selection: Using the top 500 most variable genes, as implemented in DESeq2, often produces clearer patterns than using all genes [32].

- Scaling Decision: Variables with substantially different variances may require standardization to prevent highly expressed genes from dominating the analysis [39].
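Feature selection by variance can be sketched as a ranking step applied to the transformed expression values. This is a minimal illustration of the idea, not the DESeq2 implementation; the function name and toy values are assumptions for the example.

```python
from statistics import variance

def top_variable_genes(expr, n_top=500):
    """Return the n_top genes with the highest variance across samples.

    expr maps gene name -> list of transformed expression values, one per sample.
    """
    ranked = sorted(expr, key=lambda gene: variance(expr[gene]), reverse=True)
    return ranked[:n_top]

# Toy log-scale expression values for four genes across four samples
toy_expr = {
    "geneA": [5.0, 5.1, 4.9, 5.0],   # nearly constant
    "geneB": [2.0, 8.0, 2.5, 7.5],   # strongly variable
    "geneC": [6.0, 6.0, 6.0, 6.0],   # constant
    "geneD": [3.0, 4.5, 3.2, 4.4],   # moderately variable
}
selected = top_variable_genes(toy_expr, n_top=2)
print(selected)
```

Ranking should be done after the variance-stabilizing transformation; on raw counts the most highly expressed genes would dominate the ranking simply because their counts are larger.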

Complete Analytical Pipeline

The integrated workflow for PCA in RNA-seq analysis involves:

- Data Preparation: Raw count matrix with samples as columns and genes as rows

- Preprocessing: Filtering, transformation, and optionally scaling

- PCA Implementation: Eigen decomposition of covariance/correlation matrix

- Result Interpretation: Evaluating scree plots, calculating explained variance

- Visualization: Creating PCA plots colored by experimental conditions

Table 3: Researcher's Toolkit for PCA in RNA-seq Analysis

| Tool/Resource | Function | Application Context |
|---|---|---|
| DESeq2 | Differential expression analysis with built-in PCA | RNA-seq count data normalization and visualization |
| Top 500 most variable genes | Feature selection for PCA | Noise reduction and emphasis on key differential signals |
| Scree Plot | Determining number of components to retain | Assessing dimensionality and information compression |
| Cumulative Variance Plot | Visualizing explained variance progression | Decision making for component retention threshold |
| Biplot | Simultaneous visualization of samples and variable influences | Interpreting biological meaning of components |

Case Study: Airway Smooth Muscle Cells

A representative example comes from the airway dataset, which examines the transcriptomic response of human airway smooth muscle cells to dexamethasone treatment [38]. In this experiment:

- Four primary cell lines were treated with dexamethasone or control

- PCA would typically be applied to the transformed count matrix

- The first two principal components would likely separate samples by treatment status

- A scree plot would determine if additional components capture biological variation related to cell line differences

Principal Component Analysis provides an essential foundation for exploratory analysis of RNA-seq data through its core components: principal components that redefine the data space, explained variance that quantifies information retention, and scree plots that guide dimensional reduction. When properly implemented within the RNA-seq workflow, PCA enables researchers to identify strong patterns, detect outliers, visualize sample relationships, and compress high-dimensional transcriptomic data into interpretable forms. The integration of these concepts forms a critical toolkit for extracting biological insights from complex gene expression datasets, supporting hypothesis generation and guiding subsequent analytical steps in the drug development pipeline.

A Step-by-Step Protocol: Running PCA on Your RNA-seq Dataset

In the realm of transcriptomics, RNA sequencing (RNA-seq) has revolutionized our ability to measure gene expression at a genome-wide scale. This high-throughput technology enables researchers to address diverse biological questions, from disease biomarker discovery to understanding developmental biology and environmental responses [40]. The initial output of an RNA-seq experiment consists of millions of short DNA sequences (reads) that represent fragments of the RNA molecules present in the sample at the time of sequencing [40]. Before these raw data can yield biological insights, they must undergo a rigorous transformation process to become a normalized gene expression matrix suitable for downstream analyses, including Principal Component Analysis (PCA).

PCA serves as a fundamental exploratory technique in the analysis of RNA-seq data, providing a valuable tool for visualizing high-dimensional datasets in a reduced space [41]. This dimensionality reduction method helps researchers identify patterns, relationships, and potential outliers within their data by projecting samples onto principal components (PCs) that capture the greatest variance [41] [42]. However, the reliability and interpretability of PCA results are profoundly influenced by the preceding data preparation steps, particularly normalization. Recent research demonstrates that normalization methods significantly impact both the PCA model and its biological interpretation [43]. This section describes how to transform raw count data into a normalized matrix optimized for PCA.

RNA-Seq Data Structure and the Need for Normalization

The Raw Count Matrix

RNA-seq data is typically stored in specialized formats throughout the processing pipeline. The analysis begins with raw reads in FASTQ format, which contain both sequence information and quality scores [40]. After alignment to a reference genome (resulting in SAM/BAM files) and quantification, the data is summarized as a raw count matrix [40] [44]. This matrix represents the fundamental starting point for normalization, with rows corresponding to genes or transcripts and columns corresponding to individual samples. Each value in the matrix indicates the number of sequencing reads that have been uniquely mapped to a particular gene in a specific sample [44].

A critical characteristic of this raw count data is its compositionality. RNA-seq naturally generates relative abundance information because sequencers can only process a fixed number of nucleotide fragments, creating a competitive situation where an increase in one transcript's count may effectively decrease the observed counts of others [45]. This compositional nature means the data carries relative rather than absolute information, which has important implications for normalization strategy selection.

Challenges Necessitating Normalization

Raw RNA-seq counts cannot be directly compared between samples or used for PCA without normalization due to several technical artifacts:

Sequencing Depth Variation: Samples with more total reads will naturally have higher counts, even for genes expressed at identical biological levels [40]. This variation in library size must be corrected to enable valid comparisons.

Gene Length Bias: Longer transcripts have a higher probability of being sequenced, resulting in more reads independent of actual expression level [46].

Library Composition Effects: When a few genes are extremely highly expressed in one sample, they consume a large fraction of the sequencing budget, distorting the representation of other genes [40].

Data Sparsity: Especially in single-cell RNA-seq applications, a large proportion of genes may show zero counts due to technical artifacts called "dropouts," which can lead to false discoveries or ambiguous conclusions if not properly handled [45].

Without appropriate normalization, these technical artifacts can dominate the variance structure of the data, potentially leading to misleading PCA results and incorrect biological interpretations [43].
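To make the depth and composition artifacts concrete, here is a minimal sketch using counts-per-million scaling on three invented samples. The gene names and counts are hypothetical toy values chosen only to show the effect.

```python
def cpm(counts):
    """Scale one sample's gene counts to counts per million (CPM)."""
    total = sum(counts.values())
    return {gene: c * 1_000_000 / total for gene, c in counts.items()}


# Hypothetical toy samples (not real data).
sample_a = {"geneA": 100, "geneB": 200, "geneC": 700}
# Same expression as sample_a, sequenced twice as deeply.
sample_b = {"geneA": 200, "geneB": 400, "geneC": 1400}
# geneA and geneB unchanged, but geneC is very highly expressed.
sample_c = {"geneA": 100, "geneB": 200, "geneC": 9700}

cpm_a, cpm_b, cpm_c = cpm(sample_a), cpm(sample_b), cpm(sample_c)

# Depth variation is removed: geneA has the same CPM in samples a and b.
print(cpm_a["geneA"], cpm_b["geneA"])
# Composition effect remains: geneA looks 10-fold lower in sample c,
# even though its raw count is identical to sample a.
print(cpm_a["geneA"], cpm_c["geneA"])
```

Simple total-count scaling corrects sequencing depth but not library composition, which is the motivation for methods such as median-of-ratios and TMM.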

The Normalization Workflow: From Raw Data to PCA-Ready Matrix

The transformation of raw RNA-seq data into a normalized matrix suitable for PCA follows a structured workflow encompassing multiple quality control and processing stages. The following diagram visualizes this complete pathway:

Figure 1: Complete RNA-seq Data Normalization Workflow for PCA

Preprocessing Steps Preceding Normalization

Before normalization can begin, several critical preprocessing steps must be performed to ensure data quality:

Quality Control (QC): The initial QC step identifies potential technical errors, including leftover adapter sequences, unusual base composition, or duplicated reads [40]. Tools like FastQC or MultiQC generate quality reports that should be carefully reviewed to identify any issues requiring remediation [40].

Read Trimming: This process cleans the data by removing low-quality segments of reads and residual adapter sequences that could interfere with accurate mapping [40]. Common tools include Trimmomatic, Cutadapt, or fastp [40].

Alignment/Mapping: Cleaned reads are aligned to a reference genome or transcriptome using software such as STAR, HISAT2, or TopHat2 [40]. This step identifies which genes or transcripts are expressed in the samples. Alternatively, pseudo-alignment tools like Kallisto or Salmon estimate transcript abundances without full base-by-base alignment, offering faster processing with lower memory requirements [40].

Post-Alignment QC: After alignment, additional QC is performed to remove poorly aligned reads or those mapped to multiple locations, using tools like SAMtools, Qualimap, or Picard [40]. This step is crucial because incorrectly mapped reads can artificially inflate read counts, distorting true expression levels.

Read Quantification: The final preprocessing step counts the number of reads mapped to each gene, producing a raw count matrix [40]. Tools like featureCounts or HTSeq-count perform this counting, generating a matrix where higher read counts indicate higher gene expression [40].

Experimental Design Considerations for Effective PCA

The reliability of downstream PCA and other analyses depends heavily on thoughtful experimental design implemented prior to sequencing:

Biological Replicates: With only two replicates, differential expression analysis is technically possible, but the ability to estimate variability and control false discovery rates is greatly reduced [40]. While three replicates per condition is often considered the minimum standard, this may not be universally sufficient, especially when biological variability within groups is high [40]. Increasing replicate numbers improves power to detect true expression differences.

Sequencing Depth: For standard differential gene expression analysis, approximately 20–30 million reads per sample is often sufficient [40]. Deeper sequencing captures more reads per gene, increasing sensitivity to detect lowly expressed transcripts.

Batch Effects: Every effort should be made to minimize batch effects, which can result in identification of differentially expressed genes unrelated to the experimental design [4]. Potential sources include different users, temporal variation (time of day), environmental factors, and technical variations in RNA isolation, library preparation, or sequencing runs [4]. Strategies to mitigate batch effects include processing controls and experimental conditions simultaneously, using intra-animal controls, and sequencing all samples in the same run [4].

Normalization Methods: Theory and Application

Common Normalization Techniques

Multiple normalization approaches have been developed to address different technical artifacts in RNA-seq data. The table below summarizes the key characteristics of popular methods:

| Method | Sequencing Depth Correction | Gene Length Correction | Library Composition Correction | Suitable for DE Analysis | Key Assumptions & Limitations |
|---|---|---|---|---|---|
| CPM (Counts per Million) | Yes | No | No | No | Simple scaling by total reads; heavily affected by highly expressed genes [40] |
| RPKM/FPKM (Reads/Fragments per Kilobase of Transcript, per Million Mapped Reads) | Yes | Yes | No | No | Adjusts for gene length; still affected by library composition; not recommended for cross-sample comparison [40] [46] |
| TPM (Transcripts per Million) | Yes | Yes | Partial | No | Scales sample to constant total (1M), reducing composition bias; good for visualization and cross-sample comparison [40] |
| Median-of-Ratios (DESeq2) | Yes | No | Yes | Yes | Implemented in DESeq2; affected by expression shifts; assumes most genes are not differentially expressed [40] [42] |
| TMM (Trimmed Mean of M-values) | Yes | No | Yes | Yes | Implemented in edgeR; affected by over-trimming genes; assumes most genes are not differentially expressed [40] |
| VST (Variance Stabilizing Transformation) | Yes | No | Yes | No (intended for visualization and clustering; DE testing in DESeq2 uses raw counts) | Implemented in DESeq2; transforms data to minimize mean-variance dependence; useful for visualization and PCA [44] [42] |

Table 1: Comparison of RNA-Seq Normalization Methods

Specialized Normalization Approaches

DESeq2's Median-of-Ratios Method: This approach, implemented in the DESeq2 package, calculates a scaling factor (size factor) for each sample by comparing it to a pseudo-reference sample built from the geometric mean of each gene across all samples [40]. The method assumes that most genes are not differentially expressed, and takes the median of the per-gene ratios of observed counts to the pseudo-reference as the size factor, which corrects for sequencing depth and library composition [42]. DESeq2 models the counts with a negative binomial distribution whose mean is proportional to the concentration of cDNA fragments from each gene in each sample, scaled by that sample's normalization factor [42].
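The median-of-ratios idea can be sketched in a few lines of plain Python. This is a simplified illustration of the calculation, not DESeq2's actual code; the function name, sample names, and toy counts are assumptions made for the example. Genes with a zero in any sample are skipped because their geometric mean is zero.

```python
import math
from statistics import median


def size_factors(counts):
    """Median-of-ratios size factors.

    counts: dict mapping sample name -> list of raw gene counts
            (same gene order in every sample).
    """
    samples = list(counts)
    n_genes = len(counts[samples[0]])

    # Log geometric mean of each gene across samples (the pseudo-reference).
    log_geo_means = []
    for g in range(n_genes):
        values = [counts[s][g] for s in samples]
        if all(v > 0 for v in values):
            log_geo_means.append(sum(math.log(v) for v in values) / len(values))
        else:
            log_geo_means.append(None)  # gene excluded from the calculation

    # Size factor = median over genes of (sample count / pseudo-reference).
    factors = {}
    for s in samples:
        log_ratios = [
            math.log(counts[s][g]) - lgm
            for g, lgm in enumerate(log_geo_means)
            if lgm is not None
        ]
        factors[s] = math.exp(median(log_ratios))
    return factors


# Hypothetical toy data: s2 is sequenced twice as deeply as s1,
# the fourth gene has a zero, and the fifth gene is strongly induced in s2.
toy = {"s1": [100, 200, 300, 0, 50], "s2": [200, 400, 600, 10, 1000]}
sf = size_factors(toy)
normalized = {s: [c / sf[s] for c in row] for s, row in toy.items()}
print(sf)
```

Because the median is used, the single induced gene does not pull the size factors away from the twofold depth difference shared by the other genes.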

Variance Stabilizing Transformation (VST): DESeq2 also offers VST, which transforms the count data to minimize the relationship between variance and mean expression [44] [42]. This transformation is particularly useful for techniques like PCA, which work best when variance is roughly constant (homoscedastic) across the range of expression values; without it, the most highly expressed genes tend to dominate the leading components.
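The motivation can be illustrated with a much simpler stand-in for VST. The sketch below uses log2(x + 1), which is not DESeq2's transformation, on two invented genes that have the same relative variability but very different expression levels.

```python
import math
from statistics import variance

# Hypothetical counts for two genes across four replicates (not real data).
# Both genes vary by the same relative amount; only the scale differs.
low_gene = [10, 12, 8, 11]
high_gene = [10000, 12000, 8000, 11000]


def log2p1(values):
    """Shifted log transform, a simple stand-in for a variance-stabilizing step."""
    return [math.log2(v + 1) for v in values]


# On raw counts the highly expressed gene has vastly larger variance,
# so it would dominate a PCA of untransformed data.
raw_ratio = variance(high_gene) / variance(low_gene)

# After the log transform the two genes contribute comparable variance.
log_ratio = variance(log2p1(high_gene)) / variance(log2p1(low_gene))

print(f"variance ratio on raw counts: {raw_ratio:.0f}")
print(f"variance ratio after log2(x + 1): {log_ratio:.2f}")
```

A plain log transform tends to inflate the variance of very low counts, which is one reason dedicated methods such as VST are usually preferred in practice.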

Compositional Data Analysis (CoDA): An emerging approach treats RNA-seq data explicitly as compositional data, applying log-ratio transformations to address its relative nature [45]. CoDA methods offer scale invariance, sub-compositional coherence, and permutation invariance, potentially providing more robust normalization for challenging datasets [45]. These approaches are particularly relevant for single-cell RNA-seq data with high sparsity but can also be applied to bulk RNA-seq [45].

Practical Implementation for PCA

Method Selection Guidelines

Choosing the appropriate normalization method depends on the research question, data characteristics, and planned analyses:

For PCA Preceding Differential Expression Analysis: When PCA serves as an exploratory step before formal differential expression testing, using the same normalization method planned for differential expression is recommended. DESeq2's median-of-ratios or VST approaches work well in this context [42].

For Visualization-Focused PCA: When the primary goal is sample clustering and outlier detection, TPM can effectively facilitate cross-sample comparison [40]. VST is also excellent for visualization purposes as it stabilizes variance across the expression range [42].

For Data with Suspected Compositional Artifacts: When dealing with datasets where highly variable genes dominate the signal, or when concerned about the relative nature of RNA-seq data, CoDA-based approaches like centered log-ratio (CLR) transformation may provide more robust results [45].

For Single-Cell RNA-seq Data: Specialized methods like SCTransform or CoDA approaches designed for high-dimensional sparse data may be preferable due to the excessive zero counts (dropouts) characteristic of single-cell datasets [45].

Implementation Protocols

DESeq2 Variance Stabilizing Transformation Protocol:

- Begin with a raw count matrix where rows represent genes and columns represent samples

- Optionally pre-filter low-count genes to reduce memory use and noise (DESeq2 also applies its own independent filtering later, during differential expression testing)

- Create a DESeqDataSet object containing the count matrix and sample metadata

- Apply the varianceStabilizingTransformation function to the DESeqDataSet

- Extract the transformed matrix for downstream PCA

- The transformed data approximates variance stability across the mean expression range, making it suitable for PCA and other distance-based analyses [42]
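The DESeq2 functions named above are R calls, so they are not reproduced here. As a language-neutral illustration of the pre-filtering step only, the following Python sketch drops genes whose total count across samples falls below a threshold. The threshold of 10, the function name, and the toy matrix are assumptions for the example; a suitable cutoff depends on the dataset.

```python
def prefilter(gene_ids, counts, min_total=10):
    """Keep genes whose summed count across all samples is >= min_total.

    gene_ids: list of gene identifiers (one per row)
    counts:   list of rows, each row holding one gene's counts per sample
    """
    kept = [(g, row) for g, row in zip(gene_ids, counts) if sum(row) >= min_total]
    return [g for g, _ in kept], [row for _, row in kept]


# Hypothetical toy matrix: 3 genes (rows) x 4 samples (columns).
gene_ids = ["g1", "g2", "g3"]
counts = [
    [0, 1, 0, 2],      # total 3   -> removed
    [50, 60, 55, 40],  # total 205 -> kept
    [3, 4, 2, 5],      # total 14  -> kept
]

kept_ids, kept_counts = prefilter(gene_ids, counts)
print(kept_ids)
```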

TPM Calculation Protocol:

- Start with raw read counts for each gene

- Divide each gene count by the length of the gene in kilobases, producing reads per kilobase (RPK)

- Sum all RPK values in a sample and divide by 1,000,000 to obtain the per-million scaling factor

- Divide each RPK value by the per-million scaling factor to obtain TPM [40] [46]

- TPM values sum to 1,000,000 for each sample, facilitating comparison across samples
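The TPM steps above translate directly into code. This is a minimal sketch for a single sample; the function name and the toy counts and gene lengths are hypothetical.

```python
def tpm(counts, lengths_bp):
    """Transcripts per million for one sample.

    counts:     raw read counts per gene
    lengths_bp: gene lengths in base pairs, same order as counts
    """
    # Step 1: reads per kilobase (RPK) corrects for gene length.
    rpk = [c / (length / 1000) for c, length in zip(counts, lengths_bp)]
    # Step 2: per-million scaling factor from the sum of RPK values.
    scale = sum(rpk) / 1_000_000
    # Step 3: divide each RPK by the scaling factor.
    return [r / scale for r in rpk]


# Hypothetical toy sample: counts grow in proportion to gene length,
# so all three genes end up with the same TPM.
toy_counts = [100, 200, 300]
toy_lengths = [1000, 2000, 3000]
toy_tpm = tpm(toy_counts, toy_lengths)
print(toy_tpm, sum(toy_tpm))
```

Because length correction happens before depth scaling, every sample's TPM values sum to the same total, which is what distinguishes TPM from RPKM/FPKM.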

Compositional Data Analysis Protocol:

- Begin with the raw count matrix, with zeros handled appropriately (e.g., by adding a pseudocount to every value)

- Calculate centered log-ratio (CLR) transformation by taking the logarithm of each value divided by the geometric mean of all values for that sample

- The transformed data represents log-ratios rather than absolute values, accounting for compositional nature [45]

- Use CLR-transformed data for PCA and other multivariate analyses
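A minimal sketch of the CLR step for one sample follows. The pseudocount of 1 is an assumption used here as a simple zero-handling scheme; other schemes exist, and the function name and toy values are hypothetical.

```python
import math


def clr(counts, pseudocount=1.0):
    """Centered log-ratio transform of one sample's counts.

    Subtracting the mean of the logs is equivalent to dividing each value
    by the sample's geometric mean before taking the logarithm.
    """
    logs = [math.log(c + pseudocount) for c in counts]
    mean_log = sum(logs) / len(logs)
    return [x - mean_log for x in logs]


# Hypothetical sample containing a zero, handled by the pseudocount.
with_zero = clr([0, 10, 20, 40])

# Scale invariance: without a pseudocount (valid only when no zeros are
# present), multiplying all counts by 10 leaves the CLR values unchanged.
shallow = clr([10, 20, 40], pseudocount=0.0)
deep = clr([100, 200, 400], pseudocount=0.0)
print(shallow, deep)
```

CLR values always sum to zero within a sample, which reflects the fact that only ratios between genes carry information in compositional data.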

Evaluation of Normalization Effectiveness

After normalization, assessing the success of the procedure is crucial before proceeding to PCA:

Distribution Inspection: Boxplots or density plots of normalized values should show similar distributions across samples, indicating successful correction for technical variations [46].

Sample Correlation: Scatter plots or correlation matrices of normalized expression between samples should show high correlation among replicates and lower correlation between different conditions [46].
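The sample-correlation check can be sketched with a plain Pearson correlation between normalized expression profiles. The profiles below are invented log-scale values for four genes, and the sample names are hypothetical; real data would involve thousands of genes.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length profiles."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical normalized, log-scale expression for four genes.
control_1 = [1.0, 5.0, 9.0, 3.0]
control_2 = [1.2, 4.8, 9.1, 3.1]  # replicate of control_1
treated_1 = [6.0, 5.0, 2.0, 8.0]  # different condition

r_replicates = pearson(control_1, control_2)
r_conditions = pearson(control_1, treated_1)
print(f"replicates: {r_replicates:.3f}, across conditions: {r_conditions:.3f}")
```

If replicates correlate no better than samples from different conditions, that is a cue to revisit normalization or to look for outliers and batch effects before running PCA.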