Accurate Gene Prediction in Long-Read Microbial Genomes: Methods, Tools, and Clinical Applications

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on leveraging long-read sequencing for microbial gene prediction. It covers foundational principles of long-read assembly, explores integrated bioinformatics platforms and lineage-specific methodologies, addresses common troubleshooting and optimization challenges, and establishes validation frameworks for ensuring prediction accuracy. The content synthesizes current best practices to enable reliable genome annotation, supporting applications in microbial ecology, antibiotic resistance tracking, and therapeutic discovery.

The Rise of Long-Read Sequencing in Microbial Genomics: Foundations and New Frontiers

Why Long-Read Sequencing is a Game-Changer for Microbial Genome Assembly

For years, short-read sequencing (SRS) platforms have been the workhorse of microbial genomics. However, a significant limitation has hindered progress: their inability to accurately resolve repetitive genomic regions and complex structural variants [1] [2]. Assembling a genome from these short snippets, typically a few hundred base pairs long, is like reconstructing a book from countless sentence fragments without any paragraph breaks. This often results in fragmented, incomplete genome assemblies that misrepresent the true biology of the microbe [1] [3].

These gaps are particularly problematic for key genomic features, such as:

- Repetitive elements (e.g., ribosomal RNA operons, transposons).

- Mobile genetic elements (e.g., plasmids, integrons) that facilitate the spread of antimicrobial resistance genes (ARGs) [4].

- Large biosynthetic gene clusters (BGCs) encoding pathways for secondary metabolites, which are crucial for drug discovery [3].

Long-read sequencing (LRS) technologies, primarily from Pacific Biosciences (PacBio) and Oxford Nanopore Technologies (ONT), have emerged as a transformative solution. By generating sequence reads that are thousands to tens of thousands of bases long, LRS can span these repetitive and complex regions, enabling the routine production of complete, gapless microbial genomes [1] [2]. For researchers focused on gene prediction, this completeness is foundational, as an uninterrupted genomic sequence is essential for the accurate identification and annotation of gene models.

Technical Advantages and Quantitative Comparisons

The core advantage of long-read sequencing lies in its ability to produce reads that are long enough to span repetitive regions, thereby simplifying the assembly process into a much more accurate and complete genomic picture [1]. This capability directly translates into superior outcomes for microbial genomics.

Table 1: A Comparative Overview of Sequencing Technologies for Microbial Genomics

| Feature | Short-Read Sequencing (e.g., Illumina) | Long-Read Sequencing (PacBio HiFi) | Long-Read Sequencing (ONT) |

|---|---|---|---|

| Typical Read Length | 150-300 bp [5] | 15-20 kb [6] | 5-30+ kb (can exceed 1 Mb) [5] [1] |

| Typical Raw Read Accuracy | >99.9% (Q30) [5] | >99.9% (Q30) [6] | ~97-99% (roughly Q15-Q20), improving with new chemistries [5] [1] |

| Typical Assembly Outcome | Highly fragmented assemblies due to repeats [1] | Highly contiguous, often complete assemblies [7] | Highly contiguous, often complete assemblies [8] |

| Ability to Resolve Repetitive Regions | Poor [2] | Excellent [6] | Excellent [4] |

| Plasmid & Mobile Element Reconstruction | Difficult, often misassembled [4] | Accurate, complete reconstruction [4] | Accurate, complete reconstruction [4] |

| Epigenetic Modification Detection | Requires special treatment, degrades DNA [2] | Built-in, native detection [2] | Built-in, native detection [2] |

| Portability / Throughput | Benchtop to high-throughput | Moderate to high-throughput (e.g., Revio system) [6] | Portable (MinION) to ultra-high-throughput (PromethION) [4] [1] |

Table 2: Impact of Long-Read Sequencing on Genome Assembly Quality in Recent Studies

| Study Context | Sequencing Technology | Key Genomic Outcome |

|---|---|---|

| Antimicrobial Resistance (AMR) Research [4] | Nanopore Long-Read Sequencing | Precise identification of the genetic context of ARGs and their location on mobile elements like plasmids. |

| Terrestrial Microbial Diversity [8] | Nanopore Long-Read Sequencing | Recovery of 15,314 previously undescribed microbial species from complex soil samples. |

| Phytopathogen Epidemiology [7] | Nanopore vs. Illumina | Long-read assemblies were more complete than short-read assemblies and contained few sequence errors. |

| Genome Mining for Drug Discovery [3] | PacBio & ONT Long-Reads | Essential for obtaining finished-quality genomes to correctly assemble large NRPS and PKS-I biosynthetic gene clusters. |

Applications in Microbial Research

The shift to long-read sequencing is unlocking new possibilities across multiple areas of microbiology.

Unveiling the Spread of Antimicrobial Resistance (AMR)

LRS uniquely enables researchers to track the horizontal transfer of antimicrobial resistance genes (ARGs). Because long reads can encompass an entire ARG and its surrounding genetic context, they can precisely determine whether the gene is located on a chromosome, plasmid, or other mobile genetic element [4]. This is critical for understanding and containing the spread of multidrug-resistant pathogens in both clinical and environmental settings [4].

Accelerating Natural Product Discovery

Microbial genome mining is a powerful approach for discovering new drugs. Many valuable compounds are synthesized by large, repetitive biosynthetic gene clusters (BGCs), such as those for nonribosomal peptide synthetases (NRPS) and polyketide synthases (PKS). Short-read sequencing routinely fragments and misassembles these BGCs, leading to failed discovery efforts [3]. Finished-quality genomes from LRS are now considered critical for the robust assembly of these clusters, opening up a vast untapped resource for novel antibiotics and therapeutics [3].

Expanding the Microbial Tree of Life

Metagenomic studies of complex environments like soil have been historically challenging due to their immense microbial diversity. Deep long-read sequencing, as demonstrated by the Microflora Danica project, allows for the recovery of high-quality metagenome-assembled genomes (MAGs) directly from environmental samples [8]. This approach has dramatically expanded the known microbial diversity, leading to the discovery of thousands of novel species and genera that had previously eluded detection using short-read methods [8].

Enhancing Pathogen Surveillance and Outbreak Investigation

In microbial epidemiology, long-read sequencing facilitates both accurate genotyping and high-quality genome assembly from a single assay. A 2025 study on phytopathogenic bacteria confirmed that variant calls and genotypes inferred from Nanopore long reads are as accurate as those from short reads when using optimized bioinformatic pipelines [7]. This enables researchers to track transmission chains with high resolution while also obtaining complete genomes to understand virulence and resistance mechanisms.

Experimental Protocols

Below is a generalized workflow for generating a complete microbial genome assembly using long-read sequencing, from DNA extraction to functional annotation.

Sample Preparation and Sequencing

Goal: Obtain high-molecular-weight (HMW), ultra-pure genomic DNA for sequencing.

- Step 1: Cell Lysis. Use gentle, enzyme-based lysis methods (e.g., lysozyme treatment for Gram-positive bacteria) to avoid shearing DNA.

- Step 2: DNA Extraction. Employ HMW DNA extraction kits designed for long-read sequencing. Critical: Assess DNA quality and quantity using a fluorometer (e.g., Qubit) and fragment size using pulsed-field gel electrophoresis (PFGE) or a Femto Pulse system. An ideal input is >150 ng of DNA with fragments >50 kb [1] [3].

- Step 3: Library Preparation. Follow the manufacturer's protocol for your chosen platform. For PacBio, this involves converting DNA into SMRTbell libraries for HiFi sequencing. For ONT, this entails ligating motor protein adapters to the DNA ends. New automated and high-throughput kits have significantly reduced preparation time and cost [6].

Genome Assembly and Quality Control

Goal: Convert raw sequencing reads into a complete, accurate genome sequence.

- Step 1: Base Calling and Read QC. Convert raw signal data (ONT) or movie files (PacBio) into nucleotide sequences. Filter reads by length and quality.

- Step 2: De Novo Assembly. Use long-read-specific assemblers. Common tools include:

- Flye [9]: A fast and efficient assembler that is widely used.

- Canu [9]: Excellent for correcting errors in noisy long reads.

- hifiasm (for PacBio HiFi data): Specialized for highly accurate reads.

- Note: Some advanced pipelines, like the one from MIRRI ERIC, run multiple assemblers and combine the results for the best possible output [9].

- Step 3: Polishing (if required). While HiFi reads typically do not require polishing, traditional ONT or PacBio CLR reads may benefit from a polishing step using the same long reads or with high-accuracy short reads to correct small indels.

- Step 4: Quality Assessment. Evaluate the assembly using:

- Completeness and Contamination: Check with tools like CheckM (for prokaryotes) or BUSCO (lineage datasets are available for both prokaryotes and eukaryotes).

- Contiguity: N50 (the length of the shortest contig in the smallest set of longest contigs that together cover 50% of the assembly; higher is better) and L50 (the number of contigs in that set; lower is better).

- Circularization: For prokaryotes, check if the chromosome and plasmids have been assembled into single, circular contigs.
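The contiguity metrics in Step 4 are simple to compute by hand, which helps when comparing assemblers. The following is a minimal sketch in Python; the function name `n50_l50` and the example contig lengths are illustrative assumptions, not output from any tool cited here.

```python
def n50_l50(contig_lengths):
    """Return (N50, L50) for a collection of contig lengths in base pairs."""
    lengths = sorted(contig_lengths, reverse=True)
    if not lengths:
        raise ValueError("at least one contig length is required")
    half_total = sum(lengths) / 2
    running = 0
    for count, length in enumerate(lengths, start=1):
        running += length
        if running >= half_total:
            # 'length' is the shortest contig needed to reach 50% (N50);
            # 'count' is how many contigs it took to get there (L50).
            return length, count


# Hypothetical assemblies (lengths in bp), chosen only for illustration.
closed = [4_600_000, 120_000, 45_000]   # chromosome plus two plasmids
fragmented = [100, 80, 60, 40, 20]      # toy numbers for readability

print(n50_l50(closed))      # (4600000, 1)
print(n50_l50(fragmented))  # (80, 2)
```

A closed bacterial chromosome dominates the assembly, so its N50 equals the chromosome length and its L50 is 1. This is why both values are worth reporting together.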

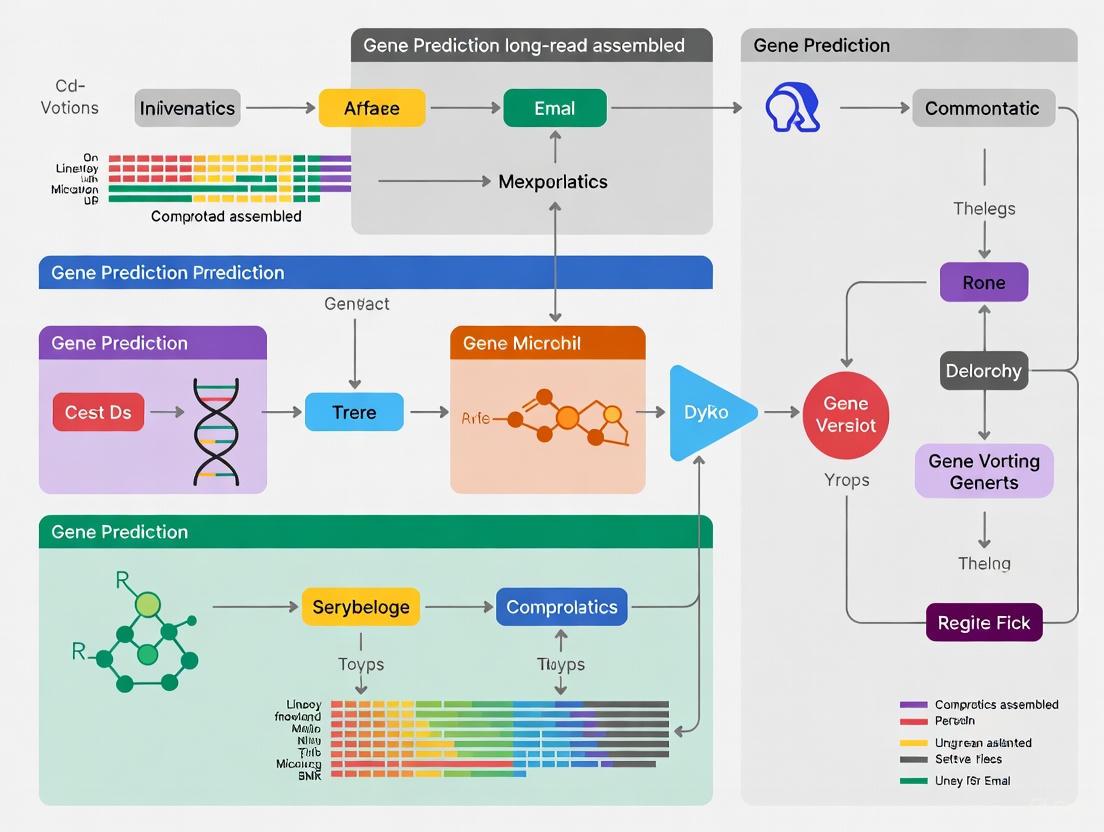

The following diagram illustrates the core bioinformatics workflow from raw data to an annotated genome.

Diagram 1: Core bioinformatics workflow for long-read genome assembly, from raw data to an annotated genome.

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Long-Read Microbial Genome Sequencing

| Item | Function / Application | Examples / Notes |

|---|---|---|

| HMW DNA Extraction Kits | Gentle isolation of long, intact DNA fragments. | HMW kits such as the Qiagen MagAttract HMW DNA Kit or similar, optimized for microbes. |

| PacBio SMRTbell Prep Kits | Library preparation for PacBio HiFi sequencing. | SMRTbell prep kit 3.0; enables multiplexing of microbial genomes [6]. |

| ONT Ligation Sequencing Kits | Library preparation for Nanopore sequencing. | Ligation Sequencing Kit (e.g., SQK-LSK114); compatible with various flow cells. |

| Long-Range PCR Kits | Target enrichment for specific genes or regions. | Not always required but useful for amplifying low-abundance BGCs or resistance genes. |

| Flow Cells | The consumable where sequencing occurs. | PacBio SMRT Cells (8M, 25M); ONT Flongle, MinION (R10.4.1), PromethION (R10.4.1). |

| Bioinformatics Platforms | For assembly, gene prediction, and annotation. | MIRRI ERIC platform [9], Galaxy, CLAWS, or custom Snakemake/CWL workflows. |

Long-read sequencing has unequivocally transformed microbial genome assembly from a challenging puzzle into a streamlined process for generating complete, reference-quality genomes. Its ability to resolve complex genomic landscapes provides an accurate foundation for all downstream analyses, most critically for precise gene prediction and functional annotation. As the technology continues to evolve, with costs decreasing and accuracy and throughput increasing [6], long-read sequencing is poised to become the new gold standard in microbial genomics. For researchers and drug development professionals, adopting this technology is no longer a niche choice but a strategic imperative to drive discovery in antimicrobial resistance, natural products, and our fundamental understanding of the microbial world.

The advent of long-read sequencing technologies has revolutionized microbial genomics, enabling researchers to generate unprecedented amounts of raw genomic data. However, transforming this data into meaningful biological insights presents significant computational and analytical challenges [9] [10]. This application note examines the key bioinformatics hurdles in gene prediction from long-read assembled microbial genomes and presents integrated solutions currently bridging the gap between raw data and biological understanding, with particular relevance for drug development targeting microbial pathogens.

Key Challenges in Microbial Genome Analysis

The journey from raw sequencing data to assembled, annotated genomes involves multiple critical steps where challenges can compromise final results. The table below summarizes these primary challenges and their implications for downstream analysis.

Table 1: Key Bioinformatics Challenges in Microbial Genome Analysis

| Challenge Category | Specific Challenges | Impact on Biological Insight |

|---|---|---|

| Computational Demands | Genome reconstruction and annotation remain computationally demanding and technically complex [9] [10]. | Limits accessibility for researchers without advanced computational skills or HPC access. |

| Data Integration | Few platforms integrate assembly, gene prediction, and annotation for both prokaryotes and eukaryotes [9]. | Hinders comprehensive analysis of host-pathogen systems relevant to drug development. |

| Workflow Reproducibility | Combining multiple tools into reproducible, scalable workflows requires significant bioinformatics expertise [9]. | Reduces reliability and transparency of results for critical applications like therapeutic target identification. |

| Genome Complexity | Repetitive elements, heterozygosity, and extreme GC-content complicate assembly and annotation [11]. | Can obscure important genomic features such as virulence factors or drug resistance genes. |

Integrated Platforms Addressing Analytical Challenges

The MIRRI-ERIC Bioinformatics Platform

The Italian node of the Microbial Resource Research Infrastructure (MIRRI ERIC) has developed a specialized bioinformatics platform to address these challenges through a unified workflow [9] [10]. This service provides a comprehensive solution for analyzing both prokaryotic and eukaryotic genomes, from assembly to functional protein annotation, specifically designed for long-read sequencing data.

The platform's architecture employs a hybrid computational infrastructure, integrating cloud computing for user interaction and High-Performance Computing (HPC) for accelerated analysis execution [9]. This design effectively addresses the computational demands highlighted in Table 1 while maintaining accessibility for non-bioinformatics specialists.

Comparative Analysis of Genomic Workflows

Table 2: Comparison of Genomic Analysis Workflows and Platforms

| Platform/Workflow | Supported Assemblers | Gene Prediction Tools | Functional Annotation | Key Limitations |

|---|---|---|---|---|

| MIRRI-ERIC Platform [9] [10] | Canu, Flye, wtdbg2 | BRAKER3 (eukaryotes), Prokka (prokaryotes) | InterProScan | Newer platform with growing adoption |

| Galaxy Europe [9] | Canu, Flye | Prokka, BRAKER3 | Various tools | Lacks integrated workflow for both genomic domains |

| CLAWS [9] | Flye, NextDenovo | None | None | No gene prediction or functional annotation |

| MGnify [9] | Multiple | Multiple | Multiple | Focused on metagenomics rather than isolated microbes |

Experimental Protocol: From Sequencing to Annotation

Complete Microbial Genome Analysis Workflow

The following protocol outlines the comprehensive workflow for microbial genome analysis using the MIRRI-ERIC platform, which integrates state-of-the-art tools within a reproducible, scalable framework built on Common Workflow Language (CWL) and containerized with Docker [9] [10].

Phase 1: Genome Assembly

Input Requirements: Pacific Biosciences (PacBio) HiFi or Oxford Nanopore Technologies (ONT) long-read sequencing data. DNA quality is crucial for successful long-read sequencing, requiring High Molecular Weight (HMW) DNA with both chemical purity and structural integrity [11].

Assembly Tools and Parameters:

- Canu: Specialized for noisy long reads, performs correction, trimming, and assembly [9]

- Flye: Designed for de novo assembly using repeat graphs [9]

- wtdbg2: Efficient assembly without error correction [9]

Protocol Notes: The platform executes all three assemblers in parallel, then integrates their outputs to enhance performance, completeness, and accuracy [9]. Users specify their sequencing technology (Nanopore, PacBio, or PacBio HiFi) through the graphical interface.
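Outside the platform's graphical interface, the same technology choice has to be translated into each assembler's read-type option. The sketch below shows one way to do that in Python. The function `assembler_commands`, the output directory names, and the default genome size are hypothetical. The flags are written as recalled from each tool's documentation and should be checked against the installed versions. This is not the MIRRI-ERIC implementation.

```python
import shlex

# Read-type options per tool, keyed by the three technology choices named
# in the text. Assumption: flag spellings follow each tool's own docs.
FLYE_FLAG = {"nanopore": "--nano-raw", "pacbio": "--pacbio-raw", "pacbio-hifi": "--pacbio-hifi"}
CANU_FLAG = {"nanopore": "-nanopore", "pacbio": "-pacbio", "pacbio-hifi": "-pacbio-hifi"}
WTDBG2_PRESET = {"nanopore": "ont", "pacbio": "sq", "pacbio-hifi": "ccs"}


def assembler_commands(reads, technology, genome_size="5m", threads=8):
    """Build (but do not run) one command line per assembler."""
    if technology not in FLYE_FLAG:
        raise ValueError(f"unknown technology: {technology!r}")
    return {
        "flye": ["flye", FLYE_FLAG[technology], reads,
                 "--out-dir", "asm_flye", "--threads", str(threads)],
        "canu": ["canu", "-p", "asm", "-d", "asm_canu",
                 f"genomeSize={genome_size}", CANU_FLAG[technology], reads],
        # wtdbg2 writes a layout file; a separate consensus step
        # (wtpoa-cns) is still needed to obtain contig sequences.
        "wtdbg2": ["wtdbg2", "-x", WTDBG2_PRESET[technology], "-g", genome_size,
                   "-i", reads, "-t", str(threads), "-fo", "asm_wtdbg2"],
    }


for name, cmd in assembler_commands("reads.fastq.gz", "nanopore").items():
    print(f"{name}: {shlex.join(cmd)}")
```

Building the commands as lists keeps them safe to pass to `subprocess.run` and easy to log for reproducibility. Recent high-accuracy ONT reads may be better served by Flye's `--nano-hq` mode than by `--nano-raw`.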

Phase 2: Assembly Evaluation

Quality Metrics:

- Standard metrics: N50 and L50 statistics [9]

- Evolutionarily informed metrics: BUSCO analysis to assess gene content completeness using near-universal single-copy orthologs [9]

Validation: This phase ensures the assembly quality before proceeding to computationally intensive annotation steps, crucial for reliable downstream analysis.

Phase 3: Gene Prediction

Organism-Specific Tools:

- Prokaryotic genomes: Prokka for rapid annotation [9] [10]

- Eukaryotic genomes: BRAKER3 for automated eukaryotic genome annotation [9] [10]

Implementation: The workflow automatically routes data through the appropriate prediction tool based on the organism type specified by the user.
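The routing step can be pictured as a small dispatcher. The following Python sketch is an assumption about how such routing might look, not the platform's actual code. The function name, output directory, and prefix are hypothetical, and the Prokka and BRAKER options shown are limited to basic ones from each tool's documentation.

```python
def gene_prediction_command(assembly, organism_type, protein_db=None):
    """Pick a gene predictor from the user-declared organism type."""
    kind = organism_type.lower()
    if kind == "prokaryote":
        # Prokka takes the contigs FASTA as its positional argument.
        return ["prokka", "--outdir", "annotation", "--prefix", "genome", assembly]
    if kind == "eukaryote":
        # Assumption: BRAKER3 is run here with protein evidence only.
        if protein_db is None:
            raise ValueError("a protein FASTA is required for this BRAKER3 sketch")
        return ["braker.pl", f"--genome={assembly}", f"--prot_seq={protein_db}"]
    raise ValueError(f"unsupported organism type: {organism_type!r}")


print(gene_prediction_command("assembly.fasta", "prokaryote"))
print(gene_prediction_command("assembly.fasta", "eukaryote", "proteins.fasta"))
```

Failing loudly on an unknown organism type is deliberate: running a prokaryotic predictor on a eukaryotic genome would produce plausible-looking but wrong gene models.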

Phase 4: Functional Annotation

Primary Tool: InterProScan for comprehensive protein classification, identifying domains, families, and functional sites [9] [10].

Output: Annotated genomic features with functional predictions, enabling biological interpretation and insight generation for downstream applications such as drug target identification.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Computational Tools for Long-Read Microbial Genomics

| Category | Specific Tool/Reagent | Function/Purpose | Application Context |

|---|---|---|---|

| Sequencing Technologies | PacBio HiFi | Generates highly accurate long reads (>99% accuracy) [12]. | Ideal for variant calling and assembly where accuracy is prioritized. |

| | Oxford Nanopore (ONT) | Produces long reads with additional functionality for methylation analysis [12]. | Suitable when detecting epigenetic modifications or adaptive sampling is needed. |

| Assembly Tools | Canu | Performs assembly optimized for noisy long reads [9]. | Microbial genome assembly from noisy long-read data. |

| | Flye | Enables de novo assembly using repeat graphs [9]. | Assembling genomes with significant repetitive content. |

| Gene Prediction | BRAKER3 | Automated eukaryotic genome annotation [9] [10]. | Gene prediction in fungal pathogens such as Candida auris. |

| | Prokka | Rapid prokaryotic genome annotation [9] [10]. | Annotation of bacterial genomes like Klebsiella pneumoniae. |

| Functional Annotation | InterProScan | Classifies proteins into families, predicts domains and functional sites [9] [10]. | Functional characterization of predicted genes. |

| Quality Assessment | BUSCO | Assesses genome completeness using evolutionary-informed expectations [9]. | Evaluation of assembly and annotation completeness. |

Implementation Considerations for Research and Drug Development

Technical Infrastructure Requirements

Successful implementation of microbial genome analysis workflows requires appropriate computational resources. The MIRRI-ERIC platform utilizes a hybrid infrastructure with both cloud computing and High-Performance Computing (HPC) components [9]:

- Cloud subsystem: Built on OpenStack, hosting the web-based component for data upload, parameter configuration, and result visualization

- HPC subsystem: Orchestrated by BookedSlurm, comprising multiple nodes with varied architectures (Intel and ARM) for parallel processing of computationally intensive tasks

- Storage systems: Utilizing BeeGFS and Lustre configured in all-flash setups for rapid data access

Data Management and FAIR Principles

Effective data management is crucial in genomic research. Researchers should:

- Submit assembled genomes and annotations to public repositories such as INSDC (GenBank, ENA, or DDBJ) [11]

- Apply FAIR (Findable, Accessible, Interoperable, and Reusable) principles to ensure data sustainability and reuse [11]

- Document workflow parameters and software versions to ensure reproducibility [9] [10]
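For the last point, a small machine-readable record written alongside each run is often enough. The sketch below is one possible layout in Python. The field names, the `build_manifest` helper, and the placeholder version strings are illustrative assumptions rather than a format prescribed by the cited sources.

```python
import hashlib
import json
from datetime import datetime, timezone


def file_sha256(path, chunk_size=1 << 20):
    """Checksum an input file in chunks so large read sets fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(input_paths, parameters, tool_versions):
    """Collect what is needed to repeat a run: inputs, settings, versions."""
    return {
        "created_utc": datetime.now(timezone.utc).isoformat(),
        "inputs": {path: file_sha256(path) for path in input_paths},
        "parameters": dict(parameters),
        "tool_versions": dict(tool_versions),
    }


manifest = build_manifest(
    input_paths=[],  # e.g. ["reads.fastq.gz"]; left empty so the sketch runs anywhere
    parameters={"technology": "nanopore", "organism_type": "prokaryote"},
    tool_versions={"flye": "x.y.z", "prokka": "x.y.z"},  # placeholders, not real versions
)
print(json.dumps(manifest, indent=2, sort_keys=True))
```

Depositing such a manifest with the assembly and annotation makes the "Reusable" part of FAIR concrete, because a reader can confirm which inputs and settings produced a given result.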

This application note has detailed the key bioinformatics challenges in deriving biological insights from long-read sequencing data of microbial genomes and presented integrated solutions through platforms like MIRRI-ERIC. The comprehensive workflow from assembly to functional annotation, when properly implemented with appropriate computational resources and quality controls, enables researchers and drug development professionals to reliably characterize microbial genomes. This capability is particularly valuable for identifying potential therapeutic targets in pathogenic species, advancing both basic research and applied drug discovery efforts. The continuous evolution of long-read technologies and analytical methods promises to further enhance our ability to extract meaningful biological insights from microbial genomic data.

The vast majority of microorganisms on Earth have never been cultivated in a laboratory, representing a vast reservoir of unexplored biological diversity known as "microbial dark matter" (MDM) [13]. Traditional metagenomic studies, relying on short-read sequencing technologies, have struggled to assemble complete genomes from complex environmental samples due to challenges in resolving repetitive regions and distinguishing between closely related strains [1]. The emergence of high-throughput, long-read DNA sequencing has fundamentally transformed this landscape, enabling researchers to recover microbial genomes from environmental samples at an unprecedented scale and quality [8] [14]. This application note details how long-read sequencing technologies and associated bioinformatic workflows are expanding the known microbial diversity, providing researchers with powerful tools to access this untapped source of biodiversity for drug discovery and basic research.

Quantitative Advances in Microbial Diversity Discovery

Recent large-scale studies demonstrate the profound impact of long-read sequencing on cataloging microbial diversity. The Microflora Danica project, which performed deep long-read Nanopore sequencing of 154 complex terrestrial samples, exemplifies this progress [8] [14].

Table 1: Genome Recovery from the Microflora Danica Project

| Metric | Value | Significance |

|---|---|---|

| Total high-quality MAGs | 6,076 | Meet MIMAG high-quality standards |

| Total medium-quality MAGs | 17,767 | Useful for diversity assessments |

| Previously undescribed species | 15,314 | Substantial expansion of known diversity |

| Previously uncharacterized genera | 1,086 | 8% expansion of prokaryotic tree of life |

| Total sequencing data generated | 14.4 Tbp | Deep coverage enables high MAG recovery |

| Median MAGs per sample | 154 | Effective for complex terrestrial habitats |

The taxonomic novelty of these discoveries is striking, with 97.9% of the recovered metagenome-assembled genomes (MAGs) representing previously undescribed microbial genera or species [8]. This expansion is not merely quantitative but also functional, as the long-read assemblies enabled the recovery of thousands of complete ribosomal RNA operons, biosynthetic gene clusters, and CRISPR-Cas systems, providing valuable insights into the functional capabilities of these novel microorganisms [8] [14].
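The high- and medium-quality tiers in Table 1 refer to the MIMAG standard. As a rough illustration, the published MIMAG thresholds can be expressed as a short Python function. The function name and argument names are hypothetical, and whether the project applied exactly these cut-offs to every genome is an assumption here.

```python
def mimag_quality(completeness, contamination, has_rrna_set=False, trna_count=0):
    """Classify a MAG using MIMAG-style thresholds (percent values).

    has_rrna_set: True when 5S, 16S and 23S rRNA genes are all present.
    trna_count:   number of distinct tRNAs detected.
    """
    if (completeness > 90 and contamination < 5
            and has_rrna_set and trna_count >= 18):
        return "high"
    if completeness >= 50 and contamination < 10:
        return "medium"
    if contamination < 10:
        return "low"
    # Bins with 10% or more contamination fall outside the MIMAG tiers.
    return "unclassified"


print(mimag_quality(95.0, 2.0, has_rrna_set=True, trna_count=20))  # high
print(mimag_quality(95.0, 2.0))                                    # medium
print(mimag_quality(30.0, 2.0))                                    # low
```

The second call shows why long reads matter for the high-quality tier. A bin can be nearly complete and clean yet still rank as medium quality if its rRNA operon was not assembled, a common failure with short reads.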

Table 2: Impact on Taxonomic Classification

| Database Enhancement | Improvement | Application |

|---|---|---|

| Incorporation into public databases | Substantially improved species-level classification | Better interpretation of soil and sediment metagenomes |

| Recovery of complete rRNA operons | Improved phylogenetic resolution | More accurate taxonomic placement |

| Biosynthetic gene cluster discovery | Identification of novel natural product pathways | Drug discovery and biotechnology |

Experimental Protocols for Genome-Resolved Metagenomics

Sample Collection and DNA Extraction

The success of long-read metagenomics begins with appropriate sample handling. The Microflora Danica project analyzed 125 soil, 28 sediment, and 1 water sample, selected from over 10,000 samples to represent 15 distinct habitat types [8]. For optimal results:

- Sample Preservation: Immediately freeze samples at -80°C or use specialized preservation buffers to prevent nucleic acid degradation.

- DNA Extraction: Use amplification-free, high-quality DNA extraction protocols. Long-read sequencing typically requires 150 ng to 1 μg of high-molecular-weight DNA, with minimal fragmentation [1].

- Quality Assessment: Verify DNA integrity using pulsed-field gel electrophoresis or similar methods to ensure sufficient fragment length.

Sequencing Platform Considerations

The two dominant long-read sequencing platforms offer complementary advantages for metagenomic studies:

Table 3: Sequencing Platform Comparison for Metagenomics

| Parameter | PacBio HiFi | Oxford Nanopore Technologies (ONT) |

|---|---|---|

| Read accuracy | >99.5% [15] | 97-99% [1] |

| Average read length | 15-18 kb [1] | 13-20 kb (up to 4 Mb) [1] |

| DNA input requirement | 150 ng-1 μg [1] | 150 ng-1 μg [1] |

| Throughput | 90 Gb per SMRT Cell (Revio) [1] | Up to 120 Gb (PromethION) [1] |

| Methylation detection | Native detection without bisulfite conversion [15] | Direct detection of modifications [12] |

| Cost considerations | Higher per-Gb cost [1] | Lower initial investment for portable units |

For the Microflora Danica project, Nanopore sequencing was selected, generating a median of 94.9 Gbp per sample with a read N50 of 6.1 kbp [8]. The total output of 14.4 Tbp demonstrates the scalability of this approach for large biodiversity surveys.

The mmlong2 Bioinformatics Workflow

The computational recovery of genomes from complex metagenomes requires specialized bioinformatic workflows. The custom mmlong2 workflow developed for the Microflora Danica project incorporates multiple optimizations for recovering prokaryotic MAGs from extremely complex datasets [8]:

Figure 1: The mmlong2 bioinformatic workflow for recovering MAGs from complex metagenomes. The workflow integrates multiple assembly and binning strategies to maximize genome recovery from long-read data.

Key computational steps include:

- Metagenome Assembly: Use long-read assemblers such as Canu or Flye to generate contigs from raw reads.

- Polishing and Eukaryotic Contig Removal: Refine assemblies and filter eukaryotic sequences based on taxonomic classification.

- Circular MAG Extraction: Identify circular contigs representing potential complete genomes or plasmids.

- Differential Coverage Binning: Incorporate read mapping information from multi-sample datasets to distinguish populations.

- Ensemble Binning: Apply multiple binning algorithms (e.g., MetaBAT2, MaxBin2) to the same metagenome and consolidate results.

- Iterative Binning: Perform multiple rounds of binning, progressively refining the metagenome.

This workflow recovered 3,349 (14.0%) additional MAGs through iterative binning that would have been missed with standard approaches [8].

Advantages of Long-Read Over Short-Read Metagenomics

Long-read technologies provide distinct advantages for exploring microbial dark matter:

Figure 2: Comparative advantages of long-read versus short-read sequencing for metagenomic studies. Long reads enable more complete genome reconstruction and additional layers of analysis.

- Resolution of Repetitive Regions: Long reads can span repetitive elements and highly homologous regions that confound short-read assembly, enabling complete genome reconstruction [12] [1].

- Strain-Level Differentiation: Read lengths covering multiple variants enable precise discrimination of microbial strains, which is crucial as strain-level differences often determine functional capabilities and host interactions [16].

- Complete Gene and Operon Recovery: Long reads facilitate the assembly of complete biosynthetic gene clusters, ribosomal RNA operons, and other multi-gene elements, providing better insights into functional potential [8].

- Epigenetic Profiling: Both PacBio and ONT technologies can natively detect DNA methylation patterns during sequencing, providing additional layers of regulatory information without requiring specialized library preparation [12] [15].

Table 4: Key Research Reagents and Computational Tools for Long-Read Metagenomics

| Category | Tool/Resource | Function | Application Notes |

|---|---|---|---|

| Wet Lab | AMPure XP beads | DNA size selection and cleanup | Critical for obtaining high-molecular-weight DNA |

| | Nanopore Ligation Sequencing Kit | Library preparation for ONT | Rapid protocol (1-2 hours) [1] |

| | PacBio SMRTbell Prep Kit | Library preparation for HiFi | Longer protocol (minimum 7 hours) [1] |

| Sequencing Platforms | PacBio Revio | High-throughput HiFi sequencing | 360 Gb in 24 hours [1] |

| | ONT PromethION | High-throughput nanopore sequencing | ~120 Gb per flow cell [1] |

| | ONT MinION | Portable sequencing | Enables field sequencing [1] |

| Bioinformatics | mmlong2 [8] | MAG recovery from complex samples | Custom workflow for terrestrial metagenomes |

| | hifiasm [15] | De novo assembly | Optimized for PacBio HiFi reads |

| | minimap2/pbmm2 [12] [15] | Read alignment | Foundation for variant detection |

| | Dorado [12] | Basecalling for ONT | Converts raw signal to nucleotide sequences |

| Databases | GTDB (Genome Taxonomy Database) [17] | Taxonomic classification | Standardized microbial taxonomy |

| | IMG/M [18] | Genome data management and analysis | Includes statistical analysis tools |

Implications for Gene Prediction and Functional Annotation

The application of long-read sequencing to microbial dark matter has profound implications for gene prediction in assembled genomes:

- Improved Gene Model Accuracy: Continuous sequence information across full coding sequences enables more accurate prediction of gene start and stop sites, particularly for genes with repetitive domains or complex structures.

- Discovery of Novel Gene Families: The expanded genomic diversity revealed through long-read metagenomics has led to the identification of previously unknown protein families and functional domains [8].

- Metabolic Pathway Reconstruction: Complete genome assemblies enable more reliable reconstruction of metabolic pathways, revealing the functional potential of uncultivated microorganisms [17].

- Connection to Expression Data: The generation of complete gene models facilitates integration with metatranscriptomic data, enabling researchers to distinguish which genes are actively expressed in different environments [16].

Long-read sequencing technologies have fundamentally transformed our ability to explore microbial dark matter, moving from fragmented genomic glimpses to complete genome reconstruction for thousands of previously unknown microorganisms. The combination of advanced sequencing platforms with specialized bioinformatic workflows like mmlong2 enables researchers to efficiently recover high-quality microbial genomes from even the most complex terrestrial environments. These advances are rapidly expanding the known microbial tree of life and providing unprecedented access to the genomic potential of uncultivated microorganisms, opening new frontiers for drug discovery, biotechnology, and fundamental understanding of microbial evolution and ecology.

Within gene prediction research for long-read assembled microbial genomes, the initial quality of the genome assembly is paramount. A fragmented or incomplete assembly will inevitably lead to fragmented and incomplete gene models, compromising all downstream biological interpretation [9]. For drug development professionals investigating microbial secondary metabolites or virulence factors, accurate identification of these often repetitive genomic features is entirely dependent on a high-quality, contiguous assembly [19]. This guide details the essential metrics and standardized protocols for evaluating the completeness and accuracy of microbial genome assemblies, providing a critical foundation for reliable gene prediction and annotation.

Core Concepts and Metric Definitions

The quality of a de novo genome assembly is evaluated through multiple lenses, primarily focusing on contiguity, completeness, and accuracy [20]. Contiguity measures how much of the assembly is reconstructed into large, uninterrupted sequences. Completeness assesses whether the entire expected genomic content, particularly genes, is present. Accuracy evaluates the correctness of the assembled nucleotide sequence.

Key Metric Definitions

- N50/L50: Sort the contigs or scaffolds from longest to shortest and sum their lengths until the running total reaches 50% of the total assembly length. The N50 is the length of the last (shortest) sequence added, and the L50 is the number of sequences needed to reach that point [20]. A higher N50 and a lower L50 indicate a more contiguous assembly.

- BUSCO (Benchmarking Universal Single-Copy Orthologs): This metric assesses genome completeness by searching for a set of evolutionarily conserved orthologs that are expected to be present as a single copy in a given lineage [9] [20]. The result is reported as the percentage of these orthologs that are complete (single-copy or duplicated), fragmented, or missing.

- QV (Quality Value): A logarithmic measure of assembly accuracy, calculated as QV = -10 × log₁₀(error rate). For example, a QV of 30 corresponds to an error rate of 1 in 1,000 bases [20]. This can be calculated using k-mer based tools like Merqury.

- k-mer Completeness: This metric, derived from tools like Merqury, evaluates what percentage of the k-mers from the original sequencing reads are present in the final assembly, indicating how well the assembly represents the raw data [20].
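The N50/L50 and QV definitions above can be made concrete with a short Python sketch. The function names are illustrative and are not part of QUAST or Merqury; the code follows only the definitions given in the text.

```python
import math


def n50_l50(contig_lengths):
    """Return (N50, L50) for a list of contig or scaffold lengths."""
    if not contig_lengths:
        raise ValueError("no contig lengths supplied")
    ordered = sorted(contig_lengths, reverse=True)
    half_total = sum(ordered) / 2
    running = 0
    # Add contigs from longest to shortest until half the assembly is covered.
    for count, length in enumerate(ordered, start=1):
        running += length
        if running >= half_total:
            return length, count


def qv_from_error_rate(error_rate):
    """QV = -10 * log10(error rate)."""
    if not 0 < error_rate < 1:
        raise ValueError("error rate must be between 0 and 1 (exclusive)")
    return -10 * math.log10(error_rate)


def error_rate_from_qv(qv):
    """Inverse of the QV formula: error rate = 10^(-QV/10)."""
    return 10 ** (-qv / 10)
```

For a toy assembly with contig lengths 80, 70, 50, 40, 30, and 20, the two longest contigs (150 in total) already exceed half of the 290-base assembly, so the N50 is 70 and the L50 is 2.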

Table 1: A summary of key assembly quality metrics, their descriptions, and ideal targets for microbial genomes.

| Metric Category | Specific Metric | Description | Ideal Target (Microbial Genomes) |

|---|---|---|---|

| Contiguity | Number of Contigs | Total number of contigs in the assembly. | As low as possible; approaching the number of chromosomes/plasmids. |

| | N50 / L50 | N50: Length of the shortest contig among the longest contigs that together span at least 50% of the total assembly length. L50: The number of contigs in that set [20]. | Higher N50, lower L50. |

| | Total Assembly Length | Total number of base pairs in the assembly, including 'N's. | Should match the expected genome size for the organism. |

| Completeness | BUSCO Score | Percentage of conserved, single-copy orthologs found complete in the assembly [9] [20]. | >95% for a high-quality draft. |

| | k-mer Completeness | Percentage of unique k-mers from sequencing reads found in the assembly [20]. | >99%. |

| Accuracy | QV (Quality Value) | Logarithmic measure of base-level accuracy [20]. | QV > 40 (error rate < 1/10,000) is excellent. |

| | GC Content | Percentage of Guanine and Cytosine bases. | Should be consistent with known biology of closely related species. |

Experimental Protocols for Quality Assessment

Implementing a standardized workflow is crucial for consistent and comprehensive assembly evaluation. The following protocols are widely adopted in the field.

A Standardized Workflow for Assembly QC

The diagram below outlines a generalized workflow for genome assessment, integrating the key tools and metrics described in this guide.

Protocol 1: Contiguity and Basic Statistics with QUAST

Principle: This protocol uses QUAST (Quality Assessment Tool for Genome Assemblies) to generate a comprehensive report on assembly contiguity and basic statistics, which are fundamental for initial quality screening [20].

Materials:

- Genome assembly file in FASTA format.

- A reference genome (optional, for comparative analysis).

- QUAST software (v5.0.2 or higher).

Method:

- Execute QUAST: Run QUAST from the command line. If you have a reference genome, include it for a more detailed analysis.

- Interpret Results: Open the generated report.html file. Key metrics to examine include:

- Total number of contigs: Fewer is better.

- Largest contig: Larger is better.

- N50 & L50: Primary indicators of contiguity.

- Total length: Check against expected genome size.

- GC (%): Verify it is consistent with the organism.
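As a cross-check on the headline numbers in a QUAST report, the basic statistics listed above can be recomputed directly from the FASTA file. The sketch below is a simplified stand-in, not QUAST itself; the function names are illustrative, and GC content is computed over unambiguous A/C/G/T bases only, which is an assumption of this example.

```python
def read_fasta(lines):
    """Yield (contig_id, sequence) pairs from an iterable of FASTA lines."""
    header, chunks = None, []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            if header is not None:
                yield header, "".join(chunks)
            parts = line[1:].split()
            header, chunks = (parts[0] if parts else ""), []
        else:
            chunks.append(line)
    if header is not None:
        yield header, "".join(chunks)


def basic_stats(lines):
    """Compute contig count, total length, largest contig, and GC percentage."""
    lengths, gc, acgt = [], 0, 0
    for _, seq in read_fasta(lines):
        seq = seq.upper()
        lengths.append(len(seq))
        gc += seq.count("G") + seq.count("C")
        acgt += sum(seq.count(base) for base in "ACGT")
    return {
        "contigs": len(lengths),
        "total_length": sum(lengths),
        "largest_contig": max(lengths, default=0),
        "gc_percent": 100 * gc / acgt if acgt else 0.0,
    }
```

To apply it to a real assembly, pass an open file handle, for example `with open("assembly.fasta") as handle: stats = basic_stats(handle)`, where the file name is a placeholder.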

Protocol 2: Gene-Completeness Assessment with BUSCO

Principle: BUSCO assesses the completeness of a genome assembly based on expected gene content. It works by searching for universal single-copy orthologs that should be present in a specific evolutionary lineage [9] [20].

Materials:

- Genome assembly file in FASTA format.

- BUSCO software (v5.4.6 or higher).

- Appropriate BUSCO lineage dataset (e.g., bacteria_odb10 for prokaryotes).

Method:

- Run BUSCO: Execute BUSCO with the appropriate lineage.

- Analyze Output: The key results are in short_summary.json:

- Complete Single-Copy: The percentage of BUSCO genes found exactly once. This is the primary measure of completeness.

- Complete Duplicated: A high percentage here may indicate falsely duplicated regions in the assembly, contamination with a closely related genome, or, in diploid eukaryotes, uncollapsed haplotypes.

- Fragmented: BUSCOs found only partially.

- Missing: BUSCOs entirely absent from the assembly.
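The four BUSCO categories above are usually condensed into a one-line summary string of the form C:..%[S:..%,D:..%],F:..%,M:..%,n:... The sketch below parses such a string and flags results against thresholds. The 95% completeness cut-off comes from Table 1; the 5% duplication cut-off and the function names are assumptions made for this example, and the sample string in the usage note is illustrative rather than a real result.

```python
import re

# Pattern for the BUSCO one-line summary, e.g.
# "C:98.4%[S:97.6%,D:0.8%],F:0.8%,M:0.8%,n:124"
BUSCO_LINE = re.compile(
    r"C:(?P<complete>[\d.]+)%\[S:(?P<single>[\d.]+)%,D:(?P<duplicated>[\d.]+)%\],"
    r"F:(?P<fragmented>[\d.]+)%,M:(?P<missing>[\d.]+)%,n:(?P<total>\d+)"
)


def parse_busco_summary(text):
    """Extract BUSCO percentages and the number of searched orthologs."""
    match = BUSCO_LINE.search(text)
    if match is None:
        raise ValueError("no BUSCO summary line found")
    result = {
        key: float(value)
        for key, value in match.groupdict().items()
        if key != "total"
    }
    result["total"] = int(match.group("total"))
    return result


def flag_busco(result, min_complete=95.0, max_duplicated=5.0):
    """Return a list of warnings; the thresholds are example values."""
    warnings = []
    if result["complete"] < min_complete:
        warnings.append("completeness below target")
    if result["duplicated"] > max_duplicated:
        warnings.append("unexpectedly high duplication")
    return warnings
```

Calling `flag_busco(parse_busco_summary("C:98.4%[S:97.6%,D:0.8%],F:0.8%,M:0.8%,n:124"))` returns an empty list, meaning neither threshold was violated.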

Protocol 3: k-mer Based Accuracy and Completeness with Merqury

Principle: Merqury evaluates assembly quality by comparing the k-mers present in the assembly to those in the original high-quality sequencing reads (e.g., PacBio HiFi or Illumina). This provides independent measures of accuracy (QV) and completeness without a reference genome [20].

Materials:

- Genome assembly file in FASTA format.

- The raw sequencing reads used for assembly (in fastqsanger.gz format).

- Merqury software (v1.3 or higher).

- Meryl for k-mer counting.

Method:

- Build k-mer Database: First, count k-mers from the raw reads using Meryl.

- Run Merqury: Use the k-mer database to evaluate the assembly.

- Review Key Metrics:

- QV: The overall base-level quality value.

- k-mer Completeness: The proportion of read k-mers found in the assembly.

- Spectra CN Plot: Visualizes k-mer multiplicity to help identify assembly artifacts like false duplications.
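To show what the QV and k-mer completeness values represent, the following toy sketch computes both from plain Python sets. It is a deliberate simplification: real Merqury runs use Meryl databases, canonical k-mers, and filtering of low-count read k-mers, none of which is modeled here. The QV function follows the published Merqury formula, in which the per-base accuracy is the k-th root of the fraction of assembly k-mers that are supported by the reads.

```python
import math


def kmers(sequence, k):
    """Return the set of distinct k-mers in a sequence (no canonicalization)."""
    seq = sequence.upper()
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}


def kmer_completeness(read_kmers, assembly_kmers):
    """Percentage of distinct read k-mers that also occur in the assembly."""
    if not read_kmers:
        raise ValueError("read k-mer set is empty")
    return 100 * len(read_kmers & assembly_kmers) / len(read_kmers)


def kmer_qv(assembly_only, assembly_total, k):
    """Merqury-style QV.

    assembly_only:  number of assembly k-mers that are absent from the reads
    assembly_total: number of all k-mers in the assembly
    """
    if assembly_total <= 0:
        raise ValueError("assembly_total must be positive")
    if assembly_only == 0:
        return math.inf  # no unsupported k-mers were observed
    per_base_accuracy = (1 - assembly_only / assembly_total) ** (1 / k)
    return -10 * math.log10(1 - per_base_accuracy)
```

With k = 21, one unsupported k-mer per 1,000 assembly k-mers corresponds to a QV of roughly 43, which illustrates why a single base error (which disrupts up to k overlapping k-mers) is not counted k times.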

The Scientist's Toolkit

Table 2: Essential software and databases for evaluating genome assembly quality.

| Tool / Resource | Function | Application in Quality Control |

|---|---|---|

| QUAST | Genome assembly quality assessment [20]. | Calculates contiguity metrics (N50, L50) and compares assembly versions. |

| BUSCO | Assessment of genome completeness [9] [20]. | Quantifies the presence of universal single-copy orthologs to benchmark completeness. |

| Merqury | k-mer based evaluation of accuracy and completeness [20]. | Provides QV and k-mer completeness scores without a reference genome. |

| BUSCO Lineage Datasets | Curated sets of orthologs for specific taxonomic groups. | Serves as the reference for BUSCO analysis; critical to select the correct lineage (e.g., bacteria, fungi). |

| Meryl | Efficient k-mer counting and database management [20]. | Creates the k-mer databases required for Merqury analysis. |

| LongReadSum | Quality control for long-read sequencing data [12] [21]. | Assesses raw read quality prior to assembly, which impacts final assembly quality. |

Rigorous evaluation of assembly completeness and accuracy is a non-negotiable step in any research pipeline involving long-read microbial genomes. For gene prediction in particular, a high BUSCO score indicates that the expected gene repertoire is present for annotation, while a high QV and strong contiguity metrics indicate that the gene models themselves are accurately reconstructed and not fragmented. By adhering to the standardized metrics and protocols outlined in this guide, researchers and drug development scientists can establish a robust foundation for their genomic studies, so that subsequent findings on gene function, virulence, and drug targets are built upon reliable data.

Integrated Workflows and Advanced Tools for Precision Gene Prediction

The advent of long-read sequencing technologies has significantly enhanced our ability to generate high-quality microbial genome assemblies, providing more complete and contiguous genomic sequences. However, transforming these raw sequencing data into biologically meaningful insights remains a formidable challenge for many researchers. The process requires the integration of multiple sophisticated tools for genome assembly, gene prediction, and functional annotation, demanding advanced computational skills and access to powerful computing infrastructure that may not be readily available to all research groups [9] [10].

To address these challenges, the Italian node of the Microbial Resource Research Infrastructure (MIRRI ERIC) has developed a comprehensive bioinformatics platform specifically designed for long-read microbial sequencing data. This service provides an end-to-end solution for analyzing both prokaryotic and eukaryotic genomes, making advanced genomic analysis accessible to researchers without extensive computational expertise while maintaining the reproducibility and rigor required for scientific research [9]. Developed as part of the SUS-MIRRI.IT project, this platform represents a significant advancement toward user-centered scalable bioinformatics services for microbial research [9] [10].

The MIRRI platform employs a modular architecture that operates on a hybrid computational infrastructure, seamlessly integrating both cloud computing and High-Performance Computing (HPC) resources. This design ensures both accessibility and computational power for demanding bioinformatics workflows [9] [10].

The system is structured around two primary components. The web-based component operates on virtual machines within a cloud infrastructure and is responsible for user interactions, including data upload, configuration of analysis parameters, and visualization of results. It features an intuitive user interface designed to ensure seamless interaction for users with varying levels of computational expertise. The computing component manages the execution of data analysis workflows on HPC infrastructure, processing user data in parallel across multiple HPC nodes and returning results to the web interface [9].

This service is integrated into the broader Italian Collaborative Working Environment (ItCWE), serving as the primary access point for SUS-MIRRI.IT services. The platform stands out for three innovations: (1) ease of use through an intuitive web application, (2) transparent use of HPC infrastructure to accelerate analysis, and (3) reproducibility through Common Workflow Language (CWL) and Docker containers [9] [10].

Table 1: Computational Infrastructure Supporting the MIRRI Platform

| Infrastructure Component | Specifications | Role in Platform |

|---|---|---|

| Cloud System (OpenStack) | 2,400+ physical cores, 60 TB RAM, 120 GPUs, 25 Gb/s networking | Hosts web-based component for user interaction |

| HPC Subsystem (BookedSlurm) | 68 Intel nodes (36 cores, 128 GB RAM each), 4 ARM nodes (80 cores, 512 GB RAM each) | Executes computationally intensive analysis workflows |

| Storage Systems | BeeGFS and LUSTRE, configured in all-flash setup | Manages large genomic datasets and interim results |

Application Notes: Workflow and Implementation

End-to-End Analysis Workflow

The platform implements a comprehensive workflow for microbial genome analysis that encompasses four main phases: assembly, evaluation, gene prediction, and functional annotation. This workflow is designed to be flexible, supporting data from various long-read sequencing technologies including Nanopore, PacBio, and PacBio HiFi [10].

The following diagram illustrates the complete analysis workflow implemented by the platform:

Diagram 1: The four-phase workflow for microbial genome analysis, showing the pathway from raw sequencing data to functional annotation. The process begins with multiple assemblers operating in parallel to enhance assembly quality, followed by comprehensive evaluation, domain-specific gene prediction, and concluding with functional characterization.

Phase 1: Assembly Phase Protocol

The assembly phase employs multiple state-of-the-art assemblers to enhance the completeness and accuracy of genome reconstructions. The protocol begins with quality assessment of raw long-read sequencing data, followed by simultaneous processing through three assemblers [9] [10].

Materials and Reagents:

- Input: Long-read sequencing data (Nanopore, PacBio, or PacBio HiFi)

- Canu assembler (v2.0 or higher)

- Flye assembler (v2.9 or higher)

- wtdbg2 assembler (v2.5 or higher)

- Computational resources: Minimum 128 GB RAM, 36 cores per assembler instance

Step-by-Step Procedure:

- Data Preprocessing: Upload raw FASTQ files through the web interface. The platform accepts compressed (.gz) or uncompressed formats.

- Parameter Configuration: Select sequencing technology used (Nanopore, PacBio, or PacBio HiFi) via the graphical interface. Default parameters are optimized for each technology but can be customized.

- Parallel Assembly Execution: Launch simultaneous assembly jobs using Canu, Flye, and wtdbg2. The platform automatically distributes these across available HPC nodes.

- Output Generation: Each assembler produces a draft genome assembly in FASTA format. The platform retains all outputs for evaluation in the next phase.

Technical Notes: Using multiple assemblers improves the probability of obtaining a high-quality assembly, as different algorithms may perform better depending on the specific characteristics of the dataset and organism [9].

Phase 2: Assembly Evaluation Protocol

The evaluation phase assesses the quality of the generated assemblies using both standard metrics and evolutionarily informed assessments of gene content.

Materials and Reagents:

- Input: Draft genome assemblies from Phase 1

- BUSCO software (v5 or higher) with appropriate lineage datasets

- QUAST or similar assembly metrics tool

- Reference genome (optional, for comparative analysis)

Step-by-Step Procedure:

- Contiguity Metrics Calculation: Compute standard assembly statistics including N50, L50, total assembly size, and number of contigs.

- BUSCO Analysis: Run BUSCO analysis using the appropriate lineage dataset (bacteria, fungi, etc.) to assess completeness based on evolutionarily informed expectations of gene content.

- Comparative Assessment: Compare results across assemblies from different tools to select the highest quality assembly for downstream analysis.

- Report Generation: Compile a comprehensive quality assessment report highlighting strengths and weaknesses of each assembly.

Technical Notes: The platform automatically selects the best assembly based on a weighted score incorporating both contiguity metrics and BUSCO completeness scores. However, users can manually override this selection based on their specific research needs [9] [10].
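The source does not give the platform's actual scoring formula, so the following is only a hypothetical sketch of how a weighted score combining contiguity and BUSCO completeness could rank candidate assemblies. The weights (0.4 and 0.6), the normalization of N50 against the best candidate, and the function names are all assumptions made for illustration.

```python
def score_assembly(n50, busco_complete, best_n50,
                   w_contiguity=0.4, w_completeness=0.6):
    """Combine normalized N50 and BUSCO completeness (hypothetical weights)."""
    contiguity = n50 / best_n50 if best_n50 else 0.0
    completeness = busco_complete / 100
    return w_contiguity * contiguity + w_completeness * completeness


def pick_best(assemblies):
    """Return (best_name, scores) for a dict of name -> metrics.

    Each metrics dict needs an 'n50' value and a 'busco_complete' percentage.
    """
    if not assemblies:
        raise ValueError("no assemblies to compare")
    best_n50 = max(metrics["n50"] for metrics in assemblies.values())
    scores = {
        name: score_assembly(metrics["n50"], metrics["busco_complete"], best_n50)
        for name, metrics in assemblies.items()
    }
    return max(scores, key=scores.get), scores
```

A manual override, as the platform allows, then amounts to ignoring the returned name and choosing another key from the score table.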

Phase 3: Gene Prediction Protocol

This phase employs specialized tools for gene prediction based on the genomic domain of the organism—prokaryotic or eukaryotic.

Materials and Reagents:

- Input: Selected genome assembly from Phase 2

- Prokka (v1.14 or higher) for prokaryotic genomes

- BRAKER3 (v3.0 or higher) for eukaryotic genomes

- Reference protein sequences (optional, for homology-based prediction)

Step-by-Step Procedure:

- Domain Specification: Indicate whether the organism is prokaryotic or eukaryotic through the web interface.

- Tool Execution:

- For prokaryotes: Run Prokka with default parameters or customized settings

- For eukaryotes: Execute BRAKER3 pipeline incorporating both gene model prediction and evidence-based annotation

- Output Processing: Extract predicted gene models, protein sequences, and functional assignments

- Format Standardization: Generate standard GFF3 and GBK files containing annotation information

Technical Notes: BRAKER3 incorporates GeneMark-EP+ and AUGUSTUS supported by protein database information, significantly improving prediction accuracy for eukaryotic genomes [9].

Phase 4: Functional Annotation Protocol

The final phase focuses on characterizing the functional elements of predicted genes through protein domain analysis and functional classification.

Materials and Reagents:

- Input: Predicted protein sequences from Phase 3

- InterProScan (v5.0 or higher)

- Functional databases: Pfam, PROSITE, PRINTS, PANTHER, Gene3D, SUPERFAMILY

Step-by-Step Procedure:

- Protein Sequence Submission: Submit predicted protein sequences in FASTA format to InterProScan

- Domain Analysis: Execute simultaneous searches against multiple protein signature databases

- Integration: Combine results from all databases to generate comprehensive functional annotations

- Enrichment: Map functional annotations to Gene Ontology (GO) terms, metabolic pathways, and other functional classifications

Technical Notes: The platform provides enriched annotations by querying multiple external repositories, facilitating biological interpretation and extraction of meaningful insights from analysis outcomes [9].

Table 2: Bioinformatics Tools Integrated in the MIRRI Platform

| Tool | Version | Function | Organism Type |

|---|---|---|---|

| Canu | 2.0+ | Long-read assembly | Prokaryotes & Eukaryotes |

| Flye | 2.9+ | Long-read assembly | Prokaryotes & Eukaryotes |

| wtdbg2 | 2.5+ | Long-read assembly | Prokaryotes & Eukaryotes |

| BUSCO | 5.0+ | Assembly evaluation | Prokaryotes & Eukaryotes |

| Prokka | 1.14+ | Gene prediction | Prokaryotes |

| BRAKER3 | 3.0+ | Gene prediction | Eukaryotes |

| InterProScan | 5.0+ | Functional annotation | Prokaryotes & Eukaryotes |

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of genomic analyses requires both computational tools and biological materials. The following table details essential research reagent solutions for researchers working with the MIRRI platform or similar bioinformatics workflows.

Table 3: Essential Research Reagents and Materials for Microbial Genome Analysis

| Reagent/Material | Function/Application | Specifications |

|---|---|---|

| Microbial Culturing Media | Isolation and propagation of pure microbial cultures | Composition varies by microbial type (e.g., LB for bacteria, PDA for fungi) |

| DNA Extraction Kits | High-molecular-weight DNA isolation suitable for long-read sequencing | Must yield DNA >20 kb fragment size (e.g., Qiagen Genomic-tip, Nanobind CBB) |

| Long-read Sequencing Kits | Library preparation for sequencing platforms | Oxford Nanopore Ligation Sequencing Kit or PacBio SMRTbell Prep Kit 3.0 |

| Quality Control Reagents | Assessment of DNA quality and quantity prior to sequencing | Fluorometric assays (Qubit dsDNA HS), fragment analyzers (Femto Pulse) |

| Reference Genomes | Comparative analysis and validation | Species-specific complete genomes from NCBI RefSeq |

| BUSCO Lineage Datasets | Assessment of genome completeness | Specific to taxonomic group (e.g., bacteria_odb10, fungi_odb10) |

| Functional Annotation Databases | Protein domain identification and functional classification | InterPro-integrated databases (Pfam, PROSITE, Gene3D, etc.) |

Validation and Case Studies

The utility of the MIRRI platform has been demonstrated through case studies involving three microorganisms of clinical and environmental significance from the TUCC culture collections: Scedosporium dehoogii MUT6599, Klebsiella pneumoniae TUCC281, and Candida auris TUCC287 [9] [10].

For each organism, the platform successfully generated high-quality genome assemblies, accurate gene predictions, and biologically meaningful functional annotations. The integration of multiple assemblers proved particularly valuable, as different tools performed variably across organisms, allowing selection of the optimal assembly for each species. The platform's ability to handle both prokaryotic (K. pneumoniae) and eukaryotic (S. dehoogii and C. auris) genomes demonstrated its versatility across microbial domains [9].

The case studies validated the platform's performance in producing reliable, biologically meaningful insights, positioning it as a valuable tool for both routine genome analysis and advanced microbial research. The automated evaluation metrics provided objective assessment of assembly and annotation quality, while the user-friendly interface made these advanced analyses accessible to researchers without specialized bioinformatics training [9] [10].

The MIRRI ERIC Italian node's bioinformatics platform represents a significant advancement in microbial genome analysis, providing an end-to-end solution that bridges the gap between raw long-read sequencing data and biologically meaningful insights. By integrating state-of-the-art tools within a reproducible, scalable workflow and providing access through an intuitive web interface, the platform addresses critical challenges in computational microbiology.

The platform's modular architecture, leveraging both cloud computing for accessibility and HPC for computational intensity, makes advanced genomic analyses accessible to a broader research community. Its support for both prokaryotic and eukaryotic organisms, combined with rigorous quality assessment at each analysis phase, ensures reliable results suitable for diverse research applications from basic microbiology to drug development.

As long-read sequencing technologies continue to evolve and become more widely adopted, comprehensive platforms like this will play an increasingly important role in accelerating microbial genomics research and translating genomic information into biological understanding with potential applications in health, biotechnology, agriculture, and environmental science.

In the field of microbial genomics, accurate gene prediction is a cornerstone for understanding gene function, evolutionary dynamics, and biotechnological potential. Traditional gene prediction tools often assume a standard genetic code and uniform gene structure, an approach that fails to account for the remarkable diversity of genetic codes used by different microbial lineages. This limitation is particularly acute when analyzing complex metagenomic samples or long-read assembled genomes that include organisms from multiple domains of life (Bacteria, Archaea, and Eukarya) as well as viruses, each with distinct genetic architectures. Lineage-specific prediction has emerged as a critical solution, leveraging the taxonomic assignment of genetic sequences to apply optimized, lineage-appropriate gene-finding tools and parameters. This paradigm shift enables a more accurate and comprehensive exploration of the functional potential encoded within microbial genomes [22].

The advent of long-read sequencing technologies has significantly improved the quality of microbial genome assemblies by producing longer contiguous sequences (contigs). However, transforming these high-quality assemblies into biologically meaningful annotations requires sophisticated computational workflows that respect biological diversity. Lineage-specific prediction addresses this by ensuring that the correct genetic code is used for different taxa, that incomplete protein predictions are filtered out, and that the prediction of small proteins is optimized. This is especially vital for the growing field of protein ecology, which studies the distribution and association of proteins, rather than just taxonomic markers, to understand their ecological importance and relationship with host health [22].

Comparative Analysis of Gene Prediction Approaches

A comparative analysis reveals the significant quantitative and qualitative advantages of a lineage-specific workflow over a one-size-fits-all approach. The core improvement lies in using taxonomic classification to select the most appropriate gene prediction tools for each contig, rather than applying a single tool to all data.

Quantitative Workflow Output Comparison

The table below summarizes a performance comparison between a standard single-tool approach and an integrated lineage-specific workflow, applied to a large-scale human gut microbiome dataset comprising 9,677 metagenomes [22].

Table 1: Performance comparison of gene prediction approaches on human gut microbiome data

| Performance Metric | Standard Approach (Pyrodigal only) | Lineage-Specific Workflow | Change |

|---|---|---|---|

| Total Genes Predicted | 737,874,876 | 846,619,045 | +14.7% |

| Protein Clusters (90% similarity) | Not Available | 29,232,514 | +210.2% vs. UHGP* |

| Singleton Protein Clusters | Not Available | 14,043,436 | - |

| Expressed Singletons (via metatranscriptomics) | Not Available | 39.1% | - |

| Bacterial Contig Proteins | Dominant | 58.4% ± 18.9% | - |

| Eukaryotic Contig Proteins | Underrepresented | 0.03% ± 1.31% | - |

*UHGP: Unified Human Gastrointestinal Protein catalogue, used as a reference benchmark [22].

The lineage-specific workflow identified over 108 million additional genes, substantially expanding the known protein landscape of the human gut. Crucially, metatranscriptomic validation showed that a significant proportion (39.1%) of the rare "singleton" proteins are expressed, indicating that they are not computational artifacts but real, functionally relevant components of the microbiome [22].
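The headline figures in Table 1 can be reproduced with simple arithmetic from the two gene totals. The snippet below is only a worked check of the reported values; the variable and function names are illustrative.

```python
# Gene totals reported in Table 1 [22].
STANDARD_GENES = 737_874_876          # Pyrodigal only
LINEAGE_SPECIFIC_GENES = 846_619_045  # lineage-specific workflow


def percent_change(baseline, new):
    """Relative change of `new` versus `baseline`, in percent."""
    return 100 * (new - baseline) / baseline


additional_genes = LINEAGE_SPECIFIC_GENES - STANDARD_GENES
gain = percent_change(STANDARD_GENES, LINEAGE_SPECIFIC_GENES)
print(f"{additional_genes:,} additional genes ({gain:+.1f}%)")
```

The difference is 108,744,169 genes, which matches both the "+14.7%" entry in the table and the "over 108 million" figure in the text.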

Tool Selection by Taxonomic Domain

The effectiveness of the lineage-specific approach depends on using the optimal combination of gene prediction tools for each taxonomic group. The following table details the tool selection based on systematic benchmarking.

Table 2: Lineage-specific tool selection and key parameters for gene prediction

| Taxonomic Group | Recommended Tool Combination | Key Rationale and Parameters |

|---|---|---|

| Bacteria | Pyrodigal, MetaGeneMark, FragGeneScan+ | Optimized for prokaryotic gene structure; uses translation table 11 [22]. |

| Archaea | Pyrodigal, MetaGeneMark, FragGeneScan+ | Adapted for archaeal genetic codes and gene structures [22]. |

| Eukaryotes | AUGUSTUS, SNAP, GeneMark-ES | Critical for predicting multi-exon genes with introns; Pyrodigal is not suitable [22]. |

| Viruses | Pyrodigal, MetaGeneMark, PHANOTATE | Tailored for compact viral genomes and alternative genetic codes [22]. |

| Unknown/Unassigned | Pyrodigal, MetaGeneMark, FragGeneScan+ | Applies a conservative prokaryotic-leaning model for contigs without taxonomic assignment [22]. |

Benchmarking showed that while using multiple tools per domain slightly increases spurious predictions, the benefit of capturing a much larger set of real proteins outweighs this cost. For eukaryotic genes, the combination of tools is particularly important due to the inability of prokaryotic-centric tools like Pyrodigal to handle introns [22].

Integrated Protocol for Lineage-Specific Gene Prediction

This section provides a detailed, executable protocol for implementing lineage-specific gene prediction, from genome assembly to functional annotation.

The diagram below illustrates the complete integrated workflow for long-read microbial genome assembly and lineage-specific gene prediction.

Step-by-Step Experimental Protocol

Phase 1: Genome Assembly and Evaluation

Step 1.1: Multi-Tool Assembly

- Objective: Generate a high-quality, contiguous genome assembly from long-read data.

- Procedure: Execute at least two of the following assemblers in parallel on your long-read data (e.g., Oxford Nanopore or PacBio):

- Canu: canu -p [output_prefix] -d [output_dir] genomeSize=[size] useGrid=false -nanopore [input.fastq]

- Flye: flye --nano-raw [input.fastq] --out-dir [out_dir] --threads [threads]

- wtdbg2: wtdbg2 -x ont -g [size] -i [input.fastq] -t [threads] -o [output_prefix], followed by wtpoa-cns -t [threads] -i [output_prefix].ctg.lay.gz -fo [output_prefix].ctg.fa to derive the consensus FASTA from the contig layout

- Rationale: Running multiple assemblers and selecting the best-scoring result in Step 1.2 improves the chance of obtaining a complete and accurate assembly [9] [10].

Step 1.2: Assembly Evaluation

- Objective: Assess the quality and completeness of the assembled genome.

- Procedure:

- Run BUSCO to assess gene content completeness against a near-universal single-copy ortholog dataset: busco -i [assembly.fasta] -l [lineage_dataset] -o [busco_output] -m genome

- Calculate standard assembly metrics (N50, L50) using tools like QUAST.

- Note: A high-quality microbial assembly should typically have a BUSCO completeness score >90% and a high N50 value, indicating contiguity [9] [10].

Phase 2: Taxonomic Classification and Gene Prediction

Step 2.1: Taxonomic Classification of Contigs

- Objective: Determine the taxonomic origin of each contig in the assembly to inform tool selection.

- Procedure: Use Kraken 2 with a standard database (e.g., RefSeq) to classify contigs [22]:

kraken2 --db [kraken_db] --threads [threads] --report [report.txt] --output [output.txt] [assembly.fasta]

Step 2.2: Lineage-Specific Gene Calling

- Objective: Accurately predict protein-coding genes on each contig using domain-appropriate tools.

- Procedure: Parse the Kraken 2 output and process contigs based on their taxonomic assignment using the tool combinations specified in Table 2.

- For Bacterial/Archaeal/Viral/Unknown contigs: Execute the recommended three-tool combination (e.g., Pyrodigal, MetaGeneMark, FragGeneScan+). Merge the results, prioritizing overlapping predictions.

- For Eukaryotic contigs: Execute AUGUSTUS, SNAP, and GeneMark-ES. Use evidence-based approaches where possible to reconcile multi-exon gene predictions.

- Critical Parameter: Ensure the correct genetic code (e.g., translation table) is specified for each tool based on the lineage [22].
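The routing logic in Step 2.2 can be sketched as follows. The code reads Kraken 2's standard per-sequence output (tab-separated: classification flag, sequence ID, taxonomy ID, length, LCA mapping) and assigns each contig the tool combination from Table 2. Resolving a taxonomy ID to its domain requires the NCBI taxonomy, so the sketch takes a precomputed `taxid_to_domain` dictionary, which is a hypothetical input, as are the function name and the example contig IDs. It also assumes Kraken 2 was run without --use-names, so the third column holds a bare taxonomy ID.

```python
# Tool combinations per taxonomic group, as listed in Table 2 [22].
TOOLS_BY_GROUP = {
    "Bacteria": ("Pyrodigal", "MetaGeneMark", "FragGeneScan+"),
    "Archaea": ("Pyrodigal", "MetaGeneMark", "FragGeneScan+"),
    "Eukaryota": ("AUGUSTUS", "SNAP", "GeneMark-ES"),
    "Viruses": ("Pyrodigal", "MetaGeneMark", "PHANOTATE"),
    "Unknown": ("Pyrodigal", "MetaGeneMark", "FragGeneScan+"),
}


def route_contigs(kraken_lines, taxid_to_domain):
    """Map each contig ID to (group, tools) based on Kraken 2 output lines.

    taxid_to_domain is a hypothetical, precomputed lookup from taxonomy ID
    (as a string) to one of the group names in TOOLS_BY_GROUP.
    """
    routes = {}
    for line in kraken_lines:
        fields = line.rstrip("\n").split("\t")
        if len(fields) < 3:
            continue  # skip malformed lines
        status, contig_id, taxid = fields[0], fields[1], fields[2]
        group = "Unknown"
        if status == "C":  # "C" = classified, "U" = unclassified
            group = taxid_to_domain.get(taxid, "Unknown")
        if group not in TOOLS_BY_GROUP:
            group = "Unknown"
        routes[contig_id] = (group, TOOLS_BY_GROUP[group])
    return routes
```

Unclassified contigs and contigs whose taxonomy ID is missing from the lookup both fall back to the "Unknown" group, mirroring the conservative prokaryotic-leaning default in Table 2.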

Phase 3: Functional Annotation and Downstream Analysis

Step 3.1: Functional Protein Annotation

- Objective: Assign biological functions to the predicted protein sequences.

- Procedure: Run InterProScan on the final set of predicted protein sequences [9] [10]:

interproscan.sh -i [proteins.faa] -f tsv -o [output.tsv] --goterms --pathways

For prokaryotic-focused analyses, Prokka can provide a rapid, integrated annotation [9].
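Once InterProScan has produced its TSV output, a common next step is to collect the GO terms assigned to each protein. The sketch below assumes the standard tab-separated layout, in which the protein accession is the first column and, when --goterms is used, GO annotations are the fourteenth column, separated by "|" with "-" marking an empty field. The function name is illustrative, and the stripping of a parenthesized source suffix is a precaution for newer releases rather than something stated in the source.

```python
from collections import defaultdict


def go_terms_by_protein(tsv_lines):
    """Collect sorted, de-duplicated GO terms per protein from InterProScan TSV."""
    terms = defaultdict(set)
    for line in tsv_lines:
        fields = line.rstrip("\n").split("\t")
        if len(fields) < 14:
            continue  # row has no GO column (e.g., run without --goterms)
        protein, go_field = fields[0], fields[13]
        if go_field in ("", "-"):
            continue
        for term in go_field.split("|"):
            # Drop a trailing source tag such as "(InterPro)" if present.
            terms[protein].add(term.split("(")[0])
    return {protein: sorted(found) for protein, found in terms.items()}
```

The resulting dictionary can be joined to the predicted gene models by protein ID for downstream enrichment or pathway summaries.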

Step 3.2: Protein Ecology Analysis

- Objective: Study the ecological distribution of proteins and their association with host parameters.

- Procedure: For large-scale metagenomic studies, dereplicate proteins into clusters (e.g., at 90% identity using CD-HIT). Use tools like InvestiGUT to correlate protein cluster prevalence and abundance with host metadata (e.g., disease state, diet) [22].

This section catalogs the key software tools and computational resources essential for implementing the lineage-specific prediction protocol.

Table 3: Essential resources for lineage-specific gene prediction workflows

| Resource Name | Type | Primary Function | Key Application Note |

|---|---|---|---|

| Flye / Canu | Software Tool | De novo genome assembly from long reads. | Used in the initial assembly phase to generate contigs from raw sequencing data [9] [10]. |

| Kraken 2 | Software Tool | Taxonomic classification of sequence contigs. | Determines the lineage of each contig, directing it to the appropriate gene prediction tools [22]. |

| Pyrodigal | Software Tool | Gene prediction in prokaryotic and viral sequences. | Fast and accurate; a core tool for bacterial, archaeal, and viral contigs [22]. |

| AUGUSTUS | Software Tool | Gene prediction in eukaryotic sequences. | Critical for predicting complex, multi-exon genes in fungal and other microbial eukaryotic contigs [22]. |

| BRAKER3 | Software Tool | Eukaryotic gene prediction with RNA-seq data integration. | An alternative for eukaryotic gene prediction that can leverage transcriptomic evidence [9]. |

| InterProScan | Software Tool | Functional annotation of protein sequences. | Scans against multiple databases to assign protein families, domains, and functional sites [9] [10]. |

| Prokka | Software Tool | Rapid prokaryotic genome annotation. | Provides a streamlined pipeline for functional annotation of bacterial and archaeal genomes [9]. |

| MIRRI-IT Platform | Web Service | Integrated online platform for microbial analysis. | Provides a user-friendly, HPC-powered implementation of a similar long-read assembly-to-annotation workflow [9]. |

| InvestiGUT | Software Tool | Protein ecology analysis. | Enables association studies between protein prevalence from metagenomes and host parameters [22]. |

Workflow Logic of Lineage-Specific Gene Prediction

The core logical process of the lineage-specific prediction step (Phase 2) is detailed in the following diagram.

Within the framework of gene prediction research on long-read assembled microbial genomes, the selection of an appropriate annotation tool is a critical determinant of success. High-quality genome assemblies from technologies like Oxford Nanopore or PacBio provide the foundation, but accurate gene structural annotation transforms this sequence into biologically meaningful information [9] [10]. For microbial genomics, which encompasses both prokaryotic and eukaryotic organisms, this process is not one-size-fits-all. The choice of tool must be guided by the fundamental biological distinctions between these cellular life forms, primarily the presence of a nucleus and complex gene architecture in eukaryotes.

This Application Note provides a structured comparison between two established annotation tools: Prokka, optimized for prokaryotic genomes, and BRAKER3, designed for eukaryotic genomes. We detail their operational principles, provide validated protocols for their use with long-read data, and contextualize their application within a broader microbial genomics research pipeline. The platform developed by the Italian MIRRI ERIC node demonstrates the integration of both tools (Prokka for prokaryotes and BRAKER3 for eukaryotes) into a unified, reproducible workflow for long-read microbial data, highlighting their complementary roles in comprehensive microbial research [9] [10].

Tool Comparison and Selection Criteria

The table below summarizes the core characteristics of Prokka and BRAKER3 to guide tool selection.

Table 1: Key Comparison between Prokka and BRAKER3

| Feature | Prokka | BRAKER3 |

|---|---|---|

| Primary Domain | Bacteria, Archaea, Viruses [23] | Eukaryotes [24] [25] |

| Core Prediction Method | Ab initio feature predictors (e.g., Prodigal for coding sequences) | Combination of GeneMark-ETP and AUGUSTUS [24] |

| Evidence Integration | Protein homology (e.g., UniProt) [23] | RNA-Seq alignments and/or protein homology [24] [25] |

| Typical Inputs | Assembled genome (FASTA) [23] | Assembled genome (FASTA), plus RNA-Seq (BAM) and/or protein sequences (FASTA) [24] [25] |

| Key Strength | Speed, standardization of output for prokaryotes [23] | High accuracy by leveraging extrinsic evidence and combining multiple gene finders [24] |

| Considerations | Less suitable for genomes with atypical features without manual curation [26] | Computationally intensive; requires evidence data for optimal performance [24] |

Rationale for Domain-Specific Tool Selection

The divergence in tool design is driven by fundamental biological differences. Prokaryotic genes are relatively simple, lacking introns and being densely packed on the genome. Prokka leverages this by using fast, ab initio predictors like Prodigal and aligning sequences to protein databases for functional annotation [23]. In contrast, eukaryotic genes contain introns, making their prediction more complex. BRAKER3 addresses this by employing a sophisticated pipeline that first trains GeneMark-ETP and AUGUSTUS using extrinsic evidence from RNA-Seq or protein homologs. This evidence is crucial for accurately identifying exon-intron boundaries [24] [25]. Using Prokka on a eukaryotic genome would fail to predict spliced genes, while using BRAKER3 on a prokaryote would be unnecessarily complex and resource-intensive.

Experimental Protocols for Long-Read Assembled Genomes

The following protocols assume you have a high-quality, long-read genome assembly. Using a repeat-masked genome is highly recommended, especially for eukaryotes, as it prevents the prediction of false positive gene structures in repetitive regions [24].

Protocol 1: Rapid Prokaryotic Genome Annotation with Prokka

This protocol is designed for annotating a bacterial or archaeal genome assembly using Prokka.

Materials and Reagents

- Input Data: A high-quality prokaryotic genome assembly in FASTA format.

- Computational Environment: A Unix-based system (Linux/macOS) with Prokka installed. Installation can be performed via Conda [23]:

conda install -c conda-forge -c bioconda -c defaults prokka

- Optional: A custom protein database (in FASTA format) for improved homology-based annotation, if annotating a non-model organism.

Step-by-Step Procedure

- Database Setup (First-time use): Run prokka --setupdb to index the default databases [23].

- Basic Annotation Command: Execute Prokka with a minimal command. This will create a directory my_genome_annotation containing all output files with the prefix my_bacterium [23].

- Advanced Annotation (Recommended): For more accurate and compliant annotation, specify the genus and enable the --addgenes flag. For public submission, use --compliant.

- Output Analysis: Key output files include:

  - .gff: The master annotation file in GFF3 format.

  - .gbk: A standard GenBank format file.

  - .faa: Protein FASTA file of the translated CDS sequences.

  - .txt: Summary statistics of the annotated features [23].
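The Prokka invocations described in this protocol can be assembled programmatically, which helps when annotating many assemblies in a loop. The following is a minimal sketch, not the exact commands from the cited source: the helper name `build_prokka_command`, the input file name `genome.fasta`, and the genus `Escherichia` are assumptions for illustration, while `--outdir`, `--prefix`, `--genus`, `--addgenes`, `--compliant`, and `--proteins` are Prokka command-line options.

```python
import shlex


def build_prokka_command(assembly, outdir, prefix, genus=None,
                         addgenes=False, compliant=False, proteins=None):
    """Return the Prokka argument list for one genome assembly."""
    cmd = ["prokka", "--outdir", outdir, "--prefix", prefix]
    if genus:
        cmd += ["--genus", genus]
    if addgenes:
        cmd.append("--addgenes")      # add 'gene' features alongside each CDS
    if compliant:
        cmd.append("--compliant")     # stricter settings intended for submission
    if proteins:
        cmd += ["--proteins", proteins]  # optional custom protein FASTA
    cmd.append(assembly)
    return cmd


basic = build_prokka_command("genome.fasta", "my_genome_annotation", "my_bacterium")
advanced = build_prokka_command(
    "genome.fasta", "my_genome_annotation", "my_bacterium",
    genus="Escherichia", addgenes=True, compliant=True,
)
basic_as_text = shlex.join(basic)  # shell-quoted string, handy for logging
```

To execute a command, the list can be passed to `subprocess.run(cmd, check=True)`; building a list rather than a single string avoids shell-quoting problems with unusual file names.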

Protocol 2: Evidence-Driven Eukaryotic Genome Annotation with BRAKER3

This protocol describes annotating a eukaryotic genome using BRAKER3 with RNA-Seq and protein evidence.

Materials and Reagents

- Input Data:

  - genome.fasta: The repeat-masked eukaryotic genome assembly.

  - rnaseq.bam: RNA-Seq reads aligned to the genome using a splice-aware aligner (e.g., HISAT2, or STAR with the --outSAMstrandField intronMotif option) [25].

  - protein_db.fasta: A database of protein sequences from a related species (e.g., a subset of UniProt/SwissProt) [24] [25].

- Computational Environment: BRAKER3 is available as a command-line tool and on public platforms like Galaxy Europe (usegalaxy.eu) [24] [25].

Step-by-Step Procedure

- Data Preparation: Ensure the BAM file is sorted and indexed. The genome file should have simple scaffold names (e.g., >scaffold_1) [24].

- Run BRAKER3 with Combined Evidence: Execute the pipeline using both RNA-Seq and protein evidence. This is the most robust mode for BRAKER3. Key options:

  - --species: A unique name for the training species.

  - --prg=exonerate: Specifies the tool for protein-to-genome alignment.

  - --gff3: Produces output in GFF3 format [24].

- Run BRAKER3 with Proteins Only: If no RNA-Seq data is available, run using protein evidence alone. This requires a database of protein families for optimal performance (e.g., OrthoDB) [24].

- Output Analysis: With --gff3 set, the primary output of a command-line run is braker/braker.gff3 (braker/braker.gtf otherwise); output names may differ on hosted platforms. This file contains the final gene predictions combining results from both GeneMark-ETP and AUGUSTUS, filtered for high support from extrinsic evidence [24] [25]. Visual inspection of the results in a genome browser like UCSC or JBrowse is highly recommended [24].
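The data preparation and launch steps above can be sketched in Python. This is an illustrative outline under stated assumptions rather than the command from the cited tutorial: the helper names `simplify_fasta_headers` and `build_braker_command` and the species label `my_species` are hypothetical, and only the options `--genome`, `--species`, `--bam`, `--prot_seq`, and `--gff3` of `braker.pl` are used. Other options mentioned in the protocol are deliberately left out of the sketch.

```python
def simplify_fasta_headers(lines, prefix="scaffold_"):
    """Rename FASTA headers to >scaffold_1, >scaffold_2, ... and keep a mapping."""
    renamed, mapping = [], {}
    for line in lines:
        line = line.rstrip("\n")
        if line.startswith(">"):
            new_name = f"{prefix}{len(mapping) + 1}"
            mapping[new_name] = line[1:]   # remember the original header text
            renamed.append(f">{new_name}")
        else:
            renamed.append(line)
    return renamed, mapping


def build_braker_command(genome, species, bam=None, proteins=None, gff3=True):
    """Return the braker.pl argument list for RNA-Seq and/or protein evidence."""
    if bam is None and proteins is None:
        raise ValueError("provide RNA-Seq alignments, protein sequences, or both")
    cmd = ["braker.pl", f"--genome={genome}", f"--species={species}"]
    if bam:
        cmd.append(f"--bam={bam}")
    if proteins:
        cmd.append(f"--prot_seq={proteins}")
    if gff3:
        cmd.append("--gff3")
    return cmd


renamed, mapping = simplify_fasta_headers(
    [">contig_1 length=5000 cov=30\n", "ACGT\n", ">contig_2 length=100\n", "GG\n"]
)
combined = build_braker_command(
    "genome.fasta", "my_species", bam="rnaseq.bam", proteins="protein_db.fasta"
)
proteins_only = build_braker_command(
    "genome.fasta", "my_species", proteins="protein_db.fasta"
)
```

Header renaming has to happen before the RNA-Seq reads are aligned, because the sequence names in the BAM file must match those in the genome FASTA; the returned mapping allows the original names to be restored in downstream reports.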

The logical relationship and data flow for the BRAKER3 protocol with combined evidence is illustrated below.

BRAKER3 Evidence Integration Flow

The Scientist's Toolkit: Research Reagents and Materials

The table below lists essential materials and their functions for the gene prediction workflows described.

Table 2: Essential Research Reagents and Computational Tools

| Item Name | Function / Application Notes |

|---|---|

| High-Quality Genomic DNA | Source material for long-read sequencing. For challenging samples like plants, a sorbitol wash may be required to remove polysaccharides before extraction [27]. |

| Long-read Sequencer (Nanopore/PacBio) | Generates long sequencing reads, enabling high-contiguity genome assemblies that are crucial for accurate annotation [9] [8]. |

| Prokka Software Suite | Integrated annotation tool for prokaryotes. Rapidly produces standard-compliant GFF and GenBank files [23]. |

| BRAKER3 Pipeline | Eukaryotic gene prediction pipeline. Uses RNA-Seq and/or protein evidence to train and run GeneMark-ETP and AUGUSTUS [24]. |

| RNA-Seq Data (Paired-end) | Provides direct evidence of transcribed regions and splice sites for training and guiding eukaryotic gene prediction in BRAKER3 [24] [25]. |

| Curated Protein Database (e.g., UniProt/SwissProt) | Provides protein homology evidence for both Prokka and BRAKER3. Using high-quality, curated sequences is critical for accuracy [24] [25]. |

| High-Performance Computing (HPC) Infrastructure | Essential for managing computationally demanding tasks, especially BRAKER3 and long-read genome assembly [9] [10]. |

The accurate annotation of microbial genomes is a critical step in translating sequence data into biological discovery. The choice between Prokka for prokaryotes and BRAKER3 for eukaryotes is dictated by the fundamental biology of the organism under study. Prokka offers a fast, efficient, and standardized solution for bacterial and archaeal genomes. In contrast, BRAKER3 provides a powerful, evidence-driven approach capable of handling the complexity of eukaryotic gene structures. By following the detailed protocols and leveraging the integrated toolkit outlined in this guide, researchers can confidently apply these tools to their long-read assembled genomes, ensuring high-quality annotations that serve as a reliable foundation for downstream functional and comparative genomic studies within a thesis or broader research project.

Functional annotation is a critical step following gene prediction in microbial genomics, transforming raw nucleotide sequences into biologically meaningful information. For research on long-read assembled microbial genomes, this process reveals the putative roles of predicted genes within metabolic pathways, cellular components, and biological processes, thereby enabling hypothesis generation about the organism's ecological role or biotechnological potential [9]. InterProScan stands as a cornerstone tool in this domain, providing a unified interface to multiple protein signature databases through a single analysis [28].

This protocol details the application of InterProScan for the comprehensive functional annotation of protein sequences derived from microbial genomes. By integrating results from databases such as Pfam, PANTHER, and Gene Ontology (GO), InterProScan facilitates the transfer of functional knowledge from well-characterized proteins to novel sequences identified in genomic studies [29] [28]. The following sections provide a structured workflow, from data preparation to advanced analysis, tailored for researchers annotating microbial genomes.

Experimental Protocols

Installation and Setup

Local Installation on a Computing Cluster

For large-scale projects, such as annotating an entire microbial genome, a local installation of InterProScan is recommended for performance and flexibility [29].

- Download and Install: Follow the official installation instructions from the EMBL-EBI website. Ensure your system meets the requirements, notably an up-to-date Java runtime (the cited guide specifies a version above 1.7; recent InterProScan 5 releases require Java 11 or later) and GCC libraries [29].

- Add Proprietary Algorithms: InterProScan does not include all algorithms by default. To add Phobius, SignalP, and TMHMM, request the compiled binaries and place them in the respective subfolders within the bin directory of your InterProScan installation [29].

- Configure Environment Variables: For tools like SignalP, you must set environment variables to point to the installation directory. Add the corresponding line to your configuration to ensure proper library loading.

- Verify Installation: Run ./interproscan.sh without arguments. The tool will list all successfully loaded analysis algorithms. Check this list to ensure no critical algorithms failed to load [29].

Using the Web-Based REST Service

For smaller datasets or users without access to a high-performance computing cluster, the InterProScan REST service provides an accessible alternative, though it is limited to 30 sequences per job [29] [30].

- Access Method: The REST service can be accessed programmatically using provided client libraries for Python 2 or 3. The service is also integrated into user-friendly platforms like the Geneious Prime bioinformatics software via a dedicated plugin [31].
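Because of the per-job limit, a protein FASTA file has to be split into batches before submission. The sketch below shows one way to do this in plain Python. The function names are hypothetical, the batch size of 30 is taken from the limit stated above, and the actual submission through the REST client is intentionally not shown.

```python
def read_fasta(lines):
    """Yield (header, sequence) tuples from FASTA-formatted lines."""
    header, chunks = None, []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            if header is not None:
                yield header, "".join(chunks)
            header, chunks = line[1:], []
        elif header is not None:
            chunks.append(line)
    if header is not None:
        yield header, "".join(chunks)


def batch_records(records, batch_size=30):
    """Group records into lists of at most batch_size entries."""
    batch = []
    for record in records:
        batch.append(record)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:
        yield batch


# Hypothetical input: 65 short proteins, which should give batches of 30, 30, and 5.
fasta_lines = [line for i in range(65) for line in (f">p{i}", "MKT")]
batches = list(batch_records(read_fasta(fasta_lines)))
batch_sizes = [len(batch) for batch in batches]
```

Each batch can then be written to its own temporary FASTA file and submitted as a separate job, with the results concatenated afterwards.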

Input Data Preparation