Assessing Enzyme Active Site Models: From AI-Driven Prediction to Experimental Validation


Abstract

Accurately assessing the quality of enzyme active site models is critical for advancing drug discovery, enzyme engineering, and synthetic biology. This article provides a comprehensive framework for researchers and drug development professionals, covering foundational concepts, cutting-edge multi-modal deep learning methods like EasIFA, common troubleshooting pitfalls, and rigorous experimental validation strategies. By synthesizing the latest advancements in computational scoring and experimental benchmarking, this guide aims to bridge the gap between in silico predictions and real-world functionality, enabling more reliable and efficient model selection for biomedical applications.

The Critical Role of Active Site Annotation in Modern Biology

The enzyme active site is a specific region on an enzyme where substrate molecules bind and undergo a chemical reaction [1]. This region, often described as a ‘binding pocket,’ may partially or completely envelop the substrate to facilitate catalysis [1]. While the active site itself is typically small—often comprising only about a dozen amino acid residues—it represents the functional core of enzymatic activity, with as few as three residues directly involved in substrate binding and catalysis [1]. Understanding the structural and functional characteristics of active sites is crucial for applications ranging from fundamental biochemistry to drug discovery and the development of industrial biocatalysts.

In neuroscience, for example, understanding enzyme active sites is essential because many neural enzymes regulate neurotransmitter metabolism and signal transduction [1]. The precise architecture of these active sites determines substrate specificity, catalytic efficiency, and regulatory mechanisms that maintain neurological function. This protocol outlines comprehensive approaches for defining enzyme active site characteristics, with a particular emphasis on assessing model quality in structural and computational research.

Key Structural Features of Enzyme Active Sites

Architectural Motifs and Chemical Microenvironments

Active sites possess distinct structural features that enable their catalytic functions. They often exist as internal cavities or clefts within the enzyme structure, providing a specialized chemical environment that differs from the surrounding aqueous solution. The amino acid residues that constitute the active site, while potentially distant in the primary sequence, are brought into close proximity through protein folding to form a three-dimensional catalytic unit.

Catalytic triads represent a classic architectural motif found in many enzyme families. In acetylcholinesterase (AChE), for instance, the catalytic triad consists of serine 200, histidine 440, and glutamate 327, which differs from the serine-histidine-aspartate triad commonly found in serine proteases [1]. This triad exhibits opposite handedness compared to serine proteases, highlighting how similar functional modules can evolve distinct structural variations [1]. The active site of AChE is located at the bottom of a narrow gorge extending approximately 20 Å into the protein, with aromatic residues such as tryptophan and phenylalanine contributing to substrate binding and transition state stabilization within this deep cavity [1].

Metal ion coordination is another critical structural component for many enzymes. Catechol O-methyltransferase (COMT) requires a magnesium ion (Mg²⁺) for catalysis, where the binding of the two catechol hydroxyl groups to Mg²⁺ facilitates the direct transfer of a methyl group from S-adenosylmethionine (AdoMet) to the catechol substrate in an SN2 reaction [1]. The binding pocket for AdoMet is deep within the protein, behind the Mg²⁺-binding site, with limited solvent accessibility—less than 1% of the AdoMet surface is exposed [1].

Structural Conservation and Variation Across Enzyme Families

Table 1: Comparative Structural Features of Neural Enzyme Active Sites

| Enzyme | Key Active Site Components | Structural Features | Catalytic Efficiency or Function |
|---|---|---|---|
| Acetylcholinesterase (AChE) | Ser200, His440, Glu327 (catalytic triad); choline-binding pocket (Trp84, Phe330, Glu199) | Located at the bottom of a 20 Å deep gorge; opposite handedness vs. serine proteases | kcat/Km of 10⁸ M⁻¹s⁻¹ (approaches diffusion-controlled limit) |
| Aromatic Amino Acid Hydroxylases (AAAHs) | 2-histidine-1-carboxylate facial triad coordinating iron | Structurally conserved iron-binding motif | Diverse catalytic reactions essential for neurotransmitter biosynthesis |
| Monoamine Oxidase B (MAO-B) | Flavin cofactor (binds at N-5 position) | Bipartite structure: outer entry chamber and inner combining cavity | Degrades monoamines (dopamine, serotonin) via unstable adduct formation |
| Catechol O-Methyltransferase (COMT) | Mg²⁺ ion; S-adenosylmethionine (AdoMet) binding pocket | Deeply embedded AdoMet binding site (<1% solvent exposure) | Methyl group transfer to catecholamines (meta-hydroxyl preferred) |
| Glycogen Synthase Kinase-3 (GSK-3) | Bilobar structure with ATP binding site; positively charged catalytic site | Activation loop plays a major role in kinase activation | Phosphorylates diverse protein substrates in signal transduction |

The active sites of related enzymes often show remarkable structural conservation despite sequence variations. For example, the glutamate decarboxylase (GAD) isoforms GAD65 and GAD67 possess a catalytic domain that is highly conserved, containing six motifs and several residues that interact directly with the cofactor pyridoxal phosphate (PLP) [1]. Meanwhile, the N-terminal domains of these isoforms are involved in targeting and membrane association, demonstrating how conserved active sites can be coupled with variable regulatory regions [1].

Functional Characteristics and Mechanistic Insights

Substrate Recognition and Binding

Substrate specificity originates from the three-dimensional structure of the enzyme active site and the complex transition state of the reaction [2]. The binding affinity between enzyme and substrate is a primary determinant of substrate specificity, though many enzymes exhibit promiscuity: the ability to catalyze reactions or act on substrates beyond those for which they originally evolved [2].

In AChE, substrate specificity and catalytic efficiency are enhanced by the choline-binding pocket formed by tryptophan 84, phenylalanine 330, and glutamate 199, and the acyl-binding pocket formed by phenylalanine 288 and phenylalanine 290, which stabilize and orient the acetyl portion of acetylcholine [1]. The tetrahedral transition state is stabilized via interaction with alanine 201, demonstrating how multiple residues coordinate to achieve efficient catalysis [1].

Catalytic Efficiency and Kinetic Parameters

Enzymes are characterized by their remarkable catalytic efficiency, often accelerating reactions by many orders of magnitude compared to uncatalyzed reactions. The catalytic efficiency (kcat/Km) of AChE reaches 10⁸ M⁻¹s⁻¹, a value approaching the diffusion-controlled limit for substrate entry into the active site [1]. This exceptional efficiency results from the precise arrangement of catalytic residues and the optimization of the active site environment for transition state stabilization.

Kinetic parameters such as the Michaelis constant (Km) and turnover number (kcat) provide crucial insights into enzyme function. Km is the substrate concentration at which the reaction rate is half of Vmax; it is often used as an approximate inverse indicator of the binding affinity between enzyme and substrate, with a lower Km generally indicating tighter binding. The turnover number kcat quantifies the maximum number of catalytic cycles per active site per second [3]. These parameters are essential for understanding enzymatic behavior under physiological conditions and for designing enzyme applications in biotechnology and medicine.
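As a minimal sketch of how these parameters relate, the snippet below evaluates the Michaelis-Menten rate law, v = Vmax·[S] / (Km + [S]), and the catalytic efficiency kcat/Km. The function names and all numerical values are illustrative assumptions rather than measurements from the cited sources; the values were chosen only so that kcat/Km falls at the 10⁸ M⁻¹s⁻¹ order of magnitude quoted for AChE.

```python
def michaelis_menten_rate(substrate_conc, vmax, km):
    """Initial reaction rate v = Vmax * [S] / (Km + [S])."""
    if substrate_conc < 0 or km <= 0:
        raise ValueError("substrate_conc must be >= 0 and km must be > 0")
    return vmax * substrate_conc / (km + substrate_conc)


def catalytic_efficiency(kcat, km):
    """Catalytic efficiency kcat/Km in M^-1 s^-1 (kcat in s^-1, Km in M)."""
    if km <= 0:
        raise ValueError("km must be > 0")
    return kcat / km


# Illustrative values only (assumed, not taken from the cited references).
kcat = 1.0e4         # turnover number, s^-1
km = 1.0e-4          # Michaelis constant, M
enzyme_conc = 1.0e-9 # total enzyme concentration, M

vmax = kcat * enzyme_conc                      # Vmax = kcat * [E]total
half_rate = michaelis_menten_rate(km, vmax, km)  # rate when [S] equals Km
efficiency = catalytic_efficiency(kcat, km)

print(f"rate at [S] = Km: {half_rate:.3e} M/s (Vmax = {vmax:.3e} M/s)")
print(f"kcat/Km: {efficiency:.3e} M^-1 s^-1")
```

Evaluating the rate at [S] = Km returns half of Vmax, which is exactly the definition of Km given in the text.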

Computational Protocols for Active Site Analysis

Sequence-Based Annotation with CASA Workflow

The Computer-Assisted Sequence Annotation (CASA) workflow is a freely available Python-based tool designed to automate portions of novel protein characterization while producing human-interpretable output [4]. This approach is particularly valuable for enzyme discovery, where determining which sequences are suitable for further study requires annotation that goes beyond basic sequence similarity.

Protocol: Active Site Analysis Using CASA

- Input Preparation: Compile FASTA-formatted protein sequences of interest

- BLAST Search: Run `search_proteins.py` against the manually curated Swiss-Prot database

- Annotation Retrieval: Execute `retrieve_annotations.py` to obtain feature annotations for valid UniProt entries

- Multiple Sequence Alignment: Generate alignment using `alignment.py` with Clustal Omega

- Visualization: Create publication-quality scalable vector graphics (SVG) files using `clustal_to_svg.py`

The resulting alignments provide comparisons to known reference sequences, displaying user-specified features such as active site residues, disulfide bonds, and substrate-binding residues [4]. This facilitates the integration of biological knowledge into sequence interpretation and supports targeted selection of enzymes for experimental characterization.
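The idea of carrying annotated features from a reference sequence onto a query through an alignment can be sketched as follows. This is not CASA's implementation; the function name, the toy sequences, and the residue numbers are hypothetical and serve only to show the column-walking logic.

```python
def map_reference_sites(ref_aligned, query_aligned, ref_sites):
    """Map 1-based reference residue numbers onto a query via a pairwise alignment.

    Both inputs are gapped strings of equal length ('-' marks a gap).
    Returns {ref_position: (query_position, query_residue)}, or None as the
    value when the reference residue is aligned to a gap in the query.
    """
    if len(ref_aligned) != len(query_aligned):
        raise ValueError("aligned sequences must have the same length")
    wanted = set(ref_sites)
    mapping = {}
    ref_pos = 0
    query_pos = 0
    for ref_char, query_char in zip(ref_aligned, query_aligned):
        if ref_char != "-":
            ref_pos += 1
        if query_char != "-":
            query_pos += 1
        if ref_char != "-" and ref_pos in wanted:
            if query_char == "-":
                mapping[ref_pos] = None
            else:
                mapping[ref_pos] = (query_pos, query_char)
    return mapping


# Toy alignment (hypothetical sequences, not real enzymes).
reference = "MKS-HDG"
query = "M-SAHEG"
site_map = map_reference_sites(reference, query, ref_sites=[3, 4, 5])
print(site_map)
```

In this toy case the reference residue at position 5 (D) aligns to a different residue in the query (E), which is the kind of substitution a reviewer would want flagged before selecting a sequence for experimental work.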

Structure-Based Evaluation of Enzyme Active Sites

Protocol: Molecular Docking for Active Site Characterization

- Receptor Preparation:

  - Obtain protein structure from PDB or generate via AlphaFold

  - Prepare receptor file using appropriate scripts (e.g., `prepare_receptor.py` for pdbqt format)

  - Define binding site coordinates based on known active site residues

- Ligand Preparation:

  - Obtain small molecule structures in appropriate formats (e.g., SMILES, InChI)

  - Convert to docking-compatible formats (pdbqt, mol2) using tools like Open Babel

  - Ensure proper protonation states and tautomers

- Docking Execution:

  - Select docking program based on target (GNINA recommended for CNN scoring)

  - Set appropriate search parameters and grid size

  - Run docking simulations with multiple poses

- Result Evaluation:

  - Assess pose quality using multiple metrics (affinity scores, RMSD, CNN score)

  - Apply CNN score cutoff of 0.9 (GNINA) to improve specificity [5]

  - Validate against known crystal structures when available
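The result-evaluation step can be illustrated with a small filter that applies the 0.9 CNN score cutoff and then orders the surviving poses by predicted affinity. The dictionary layout, field names, and scores are assumptions made for this sketch; they do not reproduce GNINA's output format, which would need to be parsed separately.

```python
def select_poses(poses, cnn_cutoff=0.9):
    """Keep poses whose CNN score meets the cutoff, best predicted affinity first."""
    kept = [pose for pose in poses if pose["cnn_score"] >= cnn_cutoff]
    # More negative affinity (kcal/mol) means stronger predicted binding.
    return sorted(kept, key=lambda pose: pose["affinity"])


# Hypothetical docking results for one ligand (values invented for illustration).
poses = [
    {"name": "pose_1", "cnn_score": 0.95, "affinity": -7.2},
    {"name": "pose_2", "cnn_score": 0.62, "affinity": -8.9},
    {"name": "pose_3", "cnn_score": 0.91, "affinity": -8.1},
    {"name": "pose_4", "cnn_score": 0.40, "affinity": -5.0},
]

selected = select_poses(poses)
selected_names = [pose["name"] for pose in selected]
print(selected_names)
```

Here pose_2 has the most favorable affinity but is discarded because its CNN score falls below the cutoff, which shows how the cutoff trades some sensitivity for specificity.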

Molecular docking performance should be evaluated using receiver operating characteristic (ROC) analysis, which characterizes the ability of a docking method to distinguish true binders from false binders [5]. The area under the ROC curve (AUC) separates good classifiers (AUC ≥ 0.70) from those performing no better than random guessing (AUC ≤ 0.5). For structure-based evaluation of novel enzymes, tools like DeepMolecules provide predictions of enzyme-substrate interactions and kinetic parameters (Km and kcat) using deep learning-generated numerical representations of proteins and small molecules [3].
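For readers who want to see what the AUC measures, the sketch below computes it directly as the probability that a randomly chosen true binder is scored above a randomly chosen non-binder (ties count as one half). It assumes that higher scores mean "more likely to bind", so affinities in kcal/mol would need to be negated first; the scores and labels are invented for illustration.

```python
def roc_auc(scores, labels):
    """ROC AUC via pairwise comparison of positives (label 1) and negatives (label 0)."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for pos_score in positives:
        for neg_score in negatives:
            if pos_score > neg_score:
                wins += 1.0
            elif pos_score == neg_score:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Hypothetical docking scores (higher = more binder-like) with known labels.
scores = [0.95, 0.80, 0.70, 0.60, 0.40, 0.30]
labels = [1, 1, 0, 1, 0, 0]

auc = roc_auc(scores, labels)
print(f"AUC = {auc:.3f}")
```

The pairwise formulation is quadratic in the number of compounds, so it suits small benchmark sets; for large screens a rank-based implementation from an established statistics library would be the more practical choice.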

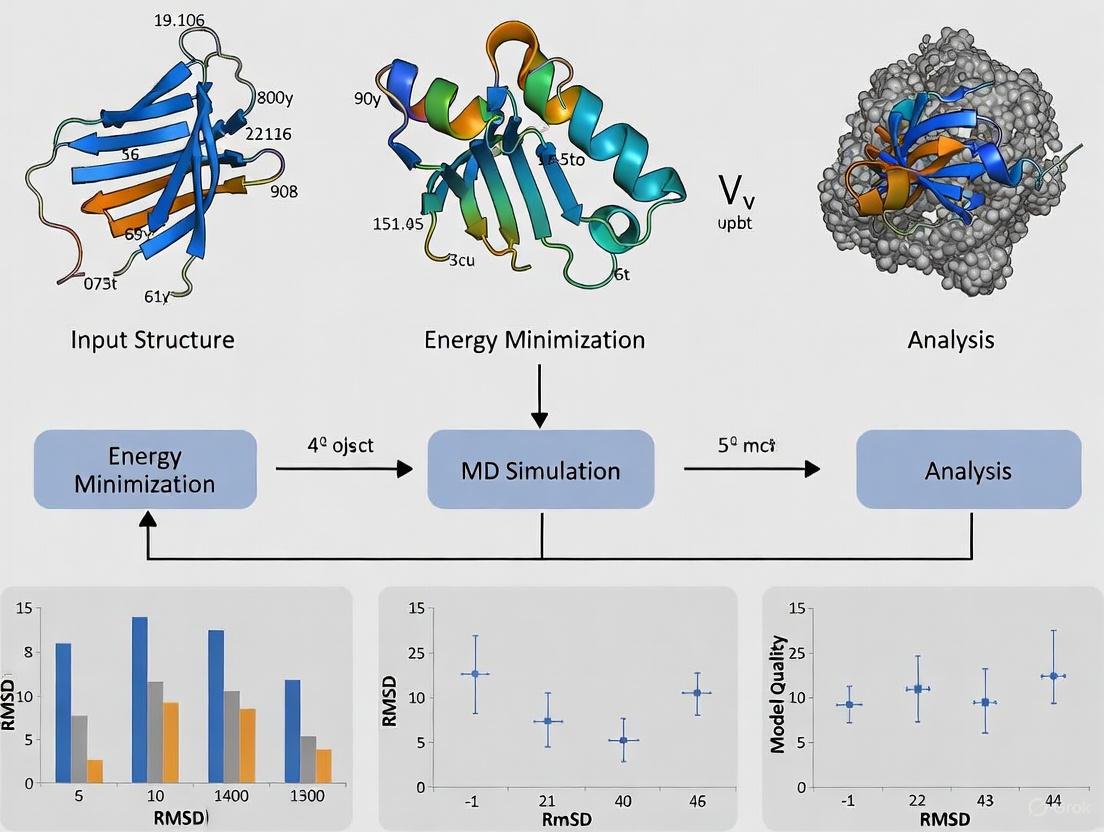

Figure 1: Computational workflow for defining enzyme active site characteristics, integrating both sequence-based and structure-based approaches with quality assessment metrics.

Advanced AI-Driven Approaches for Active Site Analysis

Substrate Specificity Prediction with EZSpecificity

EZSpecificity is a cross-attention-empowered SE(3)-equivariant graph neural network architecture for predicting enzyme substrate specificity, trained on a comprehensive database of enzyme-substrate interactions at sequence and structural levels [2]. This model outperforms existing machine learning approaches for enzyme substrate specificity prediction, achieving 91.7% accuracy in identifying single potential reactive substrates compared to 58.3% for state-of-the-art models in experimental validation with halogenases [2].

Protocol: Substrate Scope Prediction

- Input Preparation: Provide enzyme structure and potential substrates

- Feature Extraction: EZSpecificity generates numerical representations of enzyme active sites and substrates

- Interaction Modeling: The cross-attention mechanism identifies complementary features between enzymes and substrates

- Specificity Scoring: Outputs likelihood scores for enzyme-substrate pairs

- Experimental Validation: Prioritize predicted substrates for testing

AI-Enabled Enzyme Design

Recent breakthroughs in AI-driven protein design now enable the generation of efficient protein catalysts with complex active sites tailored for specific chemical reactions [6]. These approaches integrate deep learning-based protein design with novel assessment tools to evaluate catalytic preorganization across multiple reaction states.

Protocol: De Novo Enzyme Design

- Reaction Definition: Specify target chemical transformation and mechanism

- Active Site Scaffolding: Generate protein folds that position catalytic residues optimally

- Sequence Optimization: Design sequences that stabilize the intended fold and active site geometry

- In Silico Screening: Evaluate designs for structural integrity and catalytic preorganization

- Experimental Characterization: Test designed enzymes for activity and specificity

In one demonstration of this approach, over 300 computer-generated proteins were tested in the lab, with a subset showing reactivity with chemical probes, indicating successful installation of an activated catalytic serine [6]. Structural analysis revealed that the designed enzymes closely matched their intended architectures, with crystal structures deviating by less than 1 Å from computational models [6].
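The sub-ångström agreement reported here is a root-mean-square deviation (RMSD) between matched atoms. A minimal sketch of that calculation follows, assuming the two coordinate sets are already superposed and listed in the same atom order; it does not perform the optimal superposition that structural biology software applies first, and the coordinates are invented.

```python
import math


def rmsd(coords_a, coords_b):
    """RMSD in the input units between two equally ordered lists of (x, y, z) points."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("coordinate lists must be non-empty and of equal length")
    squared_sum = 0.0
    for (xa, ya, za), (xb, yb, zb) in zip(coords_a, coords_b):
        squared_sum += (xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
    return math.sqrt(squared_sum / len(coords_a))


# Hypothetical pre-superposed coordinates in ångströms.
design_model = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (3.0, 0.0, 0.0)]
crystal = [(0.0, 0.0, 0.3), (1.5, 0.4, 0.0), (3.0, 0.0, 0.0)]

deviation = rmsd(design_model, crystal)
print(f"RMSD = {deviation:.2f} Å")
```

The same function applies to docking pose validation, where a predicted ligand pose is compared with the crystallographic pose of the same ligand.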

Table 2: Key Research Reagent Solutions for Enzyme Active Site Studies

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| CASA Workflow | Software Package | Automated sequence annotation with custom feature mapping | Identifying conserved active site residues in novel enzymes [4] |
| DeepMolecules | Web Server | Predicting enzyme-substrate pairs and kinetic parameters | Virtual screening of potential substrates; metabolic engineering [3] |
| EZSpecificity | Deep Learning Model | Substrate specificity prediction from enzyme structure | Determining enzyme function and promiscuity [2] |
| GNINA | Docking Software | Molecular docking with CNN-based scoring | Structure-based analysis of ligand binding in active sites [5] |
| EnzyMS | Python Pipeline | LC-MS data analysis for biocatalysis experiments | Detecting enzymatic reaction products and unexpected outcomes [7] |
| RoseTTAFold | AI Protein Design | De novo enzyme design with complex active sites | Creating custom enzymes for specific chemical transformations [6] |
| ZRANK2 | Scoring Function | Ranking protein-protein complex conformations | Assessing macromolecular interactions involving enzymes [8] |
| PyDock | Scoring Function | Electrostatic and desolvation energy-based scoring | Evaluating protein-protein docking in enzyme complexes [8] |

Defining enzyme active sites requires a multi-faceted approach that integrates sequence analysis, structural characterization, and computational modeling. The key structural features—including catalytic triads, metal coordination sites, and specific binding pockets—create unique microenvironments optimized for substrate recognition and transition state stabilization. Functional characterization through kinetic parameters and substrate specificity profiling provides insights into catalytic mechanisms and efficiency.

The protocols outlined here, from sequence annotation with CASA to structure-based analysis with molecular docking and AI-driven design, provide researchers with comprehensive methodologies for active site investigation. Critical to these approaches is the rigorous assessment of model quality through ROC analysis, CNN scoring, and experimental validation. As AI methods continue to advance, the precision with which we can define, predict, and even design enzyme active sites will further accelerate applications in drug discovery, metabolic engineering, and sustainable biotechnology.

Accurate data annotation is a foundational step in developing reliable computational models for biomedical research. It involves the precise labeling of key biological elements—such as enzyme active sites or disease subtypes—to create high-quality datasets that train machine learning (ML) and artificial intelligence (AI) algorithms. The performance of these models is critically dependent on the quality of their underlying annotations; even advanced algorithms can fail or produce misleading results if trained on inconsistent or error-filled data [9] [10]. This application note explores the critical importance of accurate annotation, focusing on its applications in two key areas: the identification of enzyme active sites for drug discovery and the classification of disease subtypes for personalized medicine. We provide detailed protocols and data summaries to guide researchers in implementing these annotation strategies effectively.

The Critical Role of Annotation in Model Performance

In supervised machine learning, models learn patterns from the data they are provided. When this data contains annotation inaccuracies, the model's ability to learn the true underlying patterns is compromised. The principle of "garbage in, garbage out" is particularly relevant here.

Impact on Clinical Decision-Making: A 2023 study highlighted the profound implications of annotation inconsistencies in healthcare. When 11 intensive care unit (ICU) consultants independently annotated the same patient dataset, the resulting inter-annotator agreement was only fair (Fleiss’ κ = 0.383). Classifiers built from these individually annotated datasets subsequently showed low pairwise agreement (average Cohen’s κ = 0.255) when applied to an external validation dataset. This demonstrates that annotation inconsistencies directly propagate into inconsistent model predictions, which in critical care settings can impact patient discharge and mortality decisions [10].
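To make the agreement statistics concrete, the sketch below computes Cohen's κ for two annotators as (observed agreement − chance agreement) / (1 − chance agreement). The labels are invented and are not data from the cited study; the function name is likewise an assumption of this example.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    categories = set(counts_a) | set(counts_b)
    # Chance agreement: product of each annotator's marginal label frequencies.
    expected = sum(counts_a[c] * counts_b[c] for c in categories) / (n * n)
    if expected == 1.0:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (observed - expected) / (1.0 - expected)


# Hypothetical discharge decisions from two annotators on ten patients.
annotator_1 = ["discharge"] * 5 + ["stay"] * 5
annotator_2 = ["discharge"] * 4 + ["stay"] * 5 + ["discharge"]

kappa = cohens_kappa(annotator_1, annotator_2)
print(f"Cohen's kappa = {kappa:.2f}")
```

In this toy example the annotators agree on 8 of 10 items, yet κ is only about 0.6 because half of that agreement would be expected by chance. Raw percentage agreement therefore overstates consistency, which is why the cited study reports κ values.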

Sources of Annotation Error: Major sources of inconsistency include human subjectivity, data complexity, insufficient domain expertise, and ambiguity in the data itself. For instance, annotating complex medical images or genomic sequences requires specialized knowledge, and a lack of clear guidelines can lead to significant inter-annotator variability [9] [10].

Table 1: Common Challenges in Biological Data Annotation and Their Impacts

| Challenge | Description | Potential Impact on Model |
|---|---|---|
| Human Subjectivity | Variation in interpretation among annotators, especially with nuanced data. | Introduces inconsistent labels, reducing model reliability and accuracy [9]. |
| Data Complexity | Requires specialized expertise (e.g., for medical images or enzyme structures). | Errors from lack of expertise compromise the model's ability to learn true patterns [9]. |
| Ambiguity & Context | Data that can be interpreted in multiple ways depending on context. | Leads to mislabeled data, causing the model to learn incorrect associations [9]. |
| Insufficient Information | Poor quality data or unclear annotation guidelines. | Prevents reliable labeling, resulting in a "shifting ground truth" and unstable models [10]. |

Application Note 1: Accurate Annotation of Enzyme Active Sites in Drug Discovery

Background and Rationale

Enzymes are fundamental catalysts in biochemical processes, and their active sites are primary targets for drug design. Accurately annotating these active sites is crucial for understanding disease mechanisms and developing therapeutic inhibitors. However, high-quality annotation is challenging; of over forty million enzyme sequences identified in the UniProt database, less than 0.7% have high-quality annotations of their active sites [11]. This scarcity of reliable data has historically hindered computational approaches.

Recent advances in AI are overcoming these limitations. The EasIFA (Enzyme active site Identification by Feature Alignment) algorithm exemplifies how multi-modal deep learning can leverage accurate annotations to achieve breakthroughs in speed and precision [11].

Quantitative Performance of Advanced Annotation Tools

The integration of protein language models and 3D structural encoders has led to significant performance improvements.

Table 2: Performance Comparison of Enzyme Active Site Annotation Tools

| Annotation Method | Key Principle | Recall Improvement | Speed Increase | Key Advantage |
|---|---|---|---|---|
| EasIFA (Proposed) | Multi-modal deep learning fusing sequence, structure, and reaction data [11]. | +7.57% vs. BLASTp [11] | 10x faster than BLASTp; 650-1400x faster than PSSM-based DL [11] | High accuracy and speed, suitable for large-scale annotation. |
| BLASTp | Homology-based sequence alignment [11]. | Baseline | Baseline | Well-established, but performance drops if similar sequences are absent from the database. |
| AEGAN | Structure-based graph network using PSSM features [11]. | High accuracy | Baseline (Slow) | Good performance but computationally expensive, limiting large-scale use. |
| 3D Catalytic Modules | Identifies recurring 3D structural motifs in active sites [12]. | Functional insights | Not Specified | Provides mechanistic understanding, useful for enzyme design. |

Experimental Protocol: Annotation of Enzyme Active Sites Using EasIFA

Objective: To accurately annotate catalytic residues in an enzyme's amino acid sequence using its 3D structure and reaction information.

Materials and Reagents:

- Input Data: Enzyme structure file (PDB format) and the chemical reaction it catalyzes (Reaction SMILES format) [11].

- Software: EasIFA algorithm (Freely accessible via web server at http://easifa.iddd.group) [11].

- Computing Environment: Standard computer with internet access for the web server; for local installation, a Python environment with necessary deep learning libraries (e.g., PyTorch) is required.

Procedure:

- Data Preparation:

  - Obtain the 3D atomic coordinates of the target enzyme in PDB format. This can be from an experimental source (e.g., RCSB PDB) or a computational prediction (e.g., AlphaFold2).

  - Define the enzymatic reaction catalyzed by the target enzyme in SMILES (Simplified Molecular Input Line Entry System) format [11].

- Feature Extraction:

  - The enzyme's amino acid sequence is processed by a protein language model (e.g., ESM) to extract evolutionary and contextual features.

  - The 3D enzyme structure is encoded using a structural encoder to capture spatial relationships and physicochemical properties of residues [11].

  - The reaction SMILES is processed by a lightweight graph neural network, pre-trained on a broad dataset of organic reactions, to generate a representation of the chemical transformation [11].

- Multi-Modal Information Fusion:

  - The latent representations from the protein language model and the 3D structural encoder are fused.

  - A multi-modal cross-attention framework aligns the combined enzyme representation with the reaction representation. This step allows the model to focus on enzyme residues that are relevant to the specific chemical reaction being catalyzed [11].

- Active Site Prediction:

  - The fused and aligned features are fed into a classifier (e.g., a multi-layer perceptron) to generate per-residue predictions.

  - The output is a probability score for each amino acid residue in the sequence, indicating its likelihood of being part of the active site. Residues with scores above a defined threshold (e.g., 0.5) are annotated as active site residues [11].

- Validation:

  - Where possible, compare the predictions with experimentally validated catalytic residues from databases such as the Mechanism and Catalytic Site Atlas (M-CSA) [12].
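The last two steps, thresholding per-residue scores and checking them against curated residues, can be sketched as below. This is a post-processing illustration only and does not call EasIFA; the probability values and the reference residue set are invented, and the function names are assumptions of this example.

```python
def call_active_sites(probabilities, threshold=0.5):
    """Return 1-based residue positions whose score is above the threshold."""
    return [i + 1 for i, p in enumerate(probabilities) if p > threshold]


def precision_recall(predicted, reference):
    """Precision and recall of predicted positions against reference positions."""
    predicted_set = set(predicted)
    reference_set = set(reference)
    true_positives = len(predicted_set & reference_set)
    precision = true_positives / len(predicted_set) if predicted_set else 0.0
    recall = true_positives / len(reference_set) if reference_set else 0.0
    return precision, recall


# Hypothetical per-residue scores for an eight-residue toy sequence.
residue_scores = [0.02, 0.91, 0.10, 0.64, 0.05, 0.48, 0.77, 0.03]
# Hypothetical curated catalytic residues (e.g., as would be taken from M-CSA).
curated_sites = [2, 7, 8]

predicted_sites = call_active_sites(residue_scores)
precision, recall = precision_recall(predicted_sites, curated_sites)
print(predicted_sites, f"precision={precision:.2f}", f"recall={recall:.2f}")
```

Because catalytic residues are a small minority of any sequence, recall and precision are more informative here than overall accuracy, which would be high even for a model that predicted no active site residues at all.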


The Scientist's Toolkit: Research Reagent Solutions for Enzyme Annotation

Table 3: Essential Resources for Enzyme Active Site Research

| Item/Tool Name | Function/Application | Specifications/Notes |
|---|---|---|
| EasIFA Web Server | Automated annotation of enzyme active sites from structure and reaction data. | Freely available at http://easifa.iddd.group; no local installation required [11]. |
| Mechanism and Catalytic Site Atlas (M-CSA) | Database of enzyme catalytic mechanisms and annotated active sites. | Used for model training and validation; provides expert-curated gold-standard data [12]. |
| 3D Catalytic Template Library | A compiled library of recurring 3D modules in enzyme active sites. | Useful for understanding catalytic function and for enzyme design [12]. |
| AlphaFold2 | Protein structure prediction tool. | Generates reliable 3D structural models when experimental structures are unavailable [11]. |

Application Note 2: Annotation of Disease Subtypes for Personalized Medicine

Background and Rationale

Distinguishing diseases into distinct subtypes is crucial for developing effective, personalized treatment strategies. The Open Targets Platform integrates vast biomedical datasets to support disease classification, yet many disease annotations remain incomplete, requiring laborious expert input [13]. This is especially problematic for rare diseases. Machine learning models trained on accurately annotated datasets can predict disease subtypes from genomic, phenotypic, and clinical data, enabling a more robust and scalable approach to ontology completion [13].

Quantitative Performance in Disease Subtype Identification

A machine learning model designed to identify diseases with potential subtypes achieved a high ROC AUC (Area Under the Receiver Operating Characteristic Curve) of 89.4% [13]. This performance demonstrates the model's strong capability to distinguish between diseases with and without known subtypes. Furthermore, the model identified 515 disease candidates predicted to possess previously unannotated subtypes, offering novel targets for personalized medicine and drug repurposing [13].

Experimental Protocol: Predicting Novel Disease Subtypes

Objective: To build a machine learning model that identifies diseases likely to have unannotated subtypes using features from integrated biomedical datasets.

Materials and Reagents:

- Data Source: The Open Targets Platform (OT), which integrates approximately 23,000 diseases with genetic, genomic, and biochemical data [13].

- Features: Novel features derived from direct evidence, such as genetic associations (from GWAS), phenotypic data (from HPO), and pathway information [13].

- Software: Machine learning libraries (e.g., Scikit-learn for Random Forest/LR) and deep learning frameworks (for integrating pre-trained language models) [13].

Procedure:

- Dataset Curation:

  - Extract known disease-subtype relationships from established ontologies like the Experimental Factor Ontology (EFO) and Orphanet to create a labeled training set [13].

  - For each disease, compute feature vectors representing genetic, phenotypic, and environmental associations.

- Feature Engineering and Model Training:

  - Split the data into training and validation sets using cross-validation (CV) to ensure robust performance estimation [13].

  - Train multiple classifier models, such as Logistic Regression (LR) and Random Forest (RF). Integrate embeddings from pre-trained deep-learning language models to capture semantic information from biomedical literature [13].

  - Use feature importance analysis (e.g., SHAP values) to interpret which data types (genetic, phenotypic, etc.) most strongly contribute to predictions [13].

- Prediction and Candidate Prioritization:

  - Apply the trained model to all diseases in the Open Targets Platform.

  - Diseases with high prediction scores but no current subtype annotations in the database are considered high-confidence candidates for novel subtypes.

  - Generate a ranked list of candidate diseases (e.g., the 515 candidates identified) for further experimental validation [13].
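The cross-validation split used during model training can be sketched in plain Python as follows. This is a generic, unshuffled k-fold partition written for illustration; it is not the cited study's code, and in practice a shuffled or stratified variant from a library such as scikit-learn would usually be preferred for imbalanced labels.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, validation_indices) for k contiguous folds."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    # Spread the remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        validation = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, validation
        start += size


# Ten hypothetical diseases split into three folds.
folds = list(k_fold_indices(n_samples=10, k=3))
for fold_number, (train, validation) in enumerate(folds, start=1):
    print(f"fold {fold_number}: train={train} validation={validation}")
```

Each disease appears in exactly one validation fold, so every labeled example contributes once to the performance estimate and is never scored by a model that saw it during training.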


Best Practices for High-Quality Data Annotation

To ensure the development of robust AI models, adhering to established annotation best practices is essential.

- Develop Detailed Annotation Guidelines: Create comprehensive documentation with clear label definitions, practical examples of common and edge cases, and instructions for handling ambiguities. This minimizes subjectivity and aligns all annotators [14] [9].

- Implement Rigorous Quality Control: Employ a multi-tiered validation system. This includes peer review, redundant annotations (multiple annotators per data point), and the use of agreement metrics like the Kappa coefficient to measure consistency [9] [10].

- Leverage AI-Assisted Annotation and Active Learning: Use pre-trained models to suggest initial annotations, which human experts can then validate or correct. An active learning approach, where the model prioritizes the most uncertain data for annotation, maximizes efficiency and impact [9].

- Involve Domain Experts: For complex fields like enzymology and medicine, the involvement of biochemists, pathologists, and clinicians is non-negotiable. Their expertise ensures contextual accuracy and handles nuances that non-specialists might miss [9] [10].
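The active learning practice listed above can be illustrated with simple uncertainty sampling for a binary labeling task: items whose predicted probability is closest to 0.5 are sent to experts first. The function name and probabilities are assumptions made for this sketch, and other selection strategies exist.

```python
def most_uncertain(probabilities, batch_size):
    """Return indices of the items whose predicted probability is closest to 0.5."""
    if batch_size < 0:
        raise ValueError("batch_size must be non-negative")
    ranked = sorted(range(len(probabilities)),
                    key=lambda i: abs(probabilities[i] - 0.5))
    return ranked[:batch_size]


# Hypothetical model confidence that each unlabeled residue is catalytic.
predicted_probabilities = [0.97, 0.52, 0.10, 0.45, 0.71]

to_annotate = most_uncertain(predicted_probabilities, batch_size=2)
print(to_annotate)
```

Items the model already scores near 0 or 1 are left for later, so expert time is concentrated on the cases where a human label is most likely to change the model.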

Accurate data annotation is not a mere preliminary step but a critical determinant of success in computational biology and drug discovery. As demonstrated, advanced annotation tools like EasIFA for enzyme active sites and ML models for disease subtypes are achieving high performance, enabling large-scale, reliable applications that were previously infeasible. By adhering to the detailed protocols and best practices outlined in this document—such as using multi-modal data, implementing robust quality control, and leveraging domain expertise—researchers can build more accurate and trustworthy models. Ultimately, precise annotation directly accelerates the pace of scientific discovery, from identifying novel drug targets to enabling personalized medicine through refined disease classification.

A profound data challenge lies at the heart of modern enzymology: the critical gap between the linear protein sequences being generated at an unprecedented rate and their detailed functional annotation. While advances in sequencing technology have made enzyme sequences readily available, the experimental characterization of their active sites—the specific regions responsible for catalytic activity—has failed to keep pace. The UniProt database reveals that despite the identification of over forty million enzyme sequences, less than 0.7% have high-quality annotations of their active sites [11]. This massive annotation deficit impedes progress across multiple fields, including drug discovery, disease research, and enzyme engineering, where understanding catalytic mechanisms is paramount.

This application note addresses this challenge by presenting structured protocols and computational solutions for accurate enzyme active site annotation. We frame these methodologies within the broader context of assessing model quality for enzyme active site research, providing researchers with practical tools to bridge the sequence-function divide through integrated computational approaches that leverage both evolutionary and structural information.

Quantitative Landscape of the Annotation Gap

Table 1: Scale of the Enzyme Sequence-Function Annotation Gap

| Metric | Value | Source | Implication |
|---|---|---|---|
| Annotated enzyme sequences in UniProtKB/Swiss-Prot | 216,785 records (38.6% of total) | [15] | Vast majority of sequences lack expert curation |
| Rhea reactions mapped in UniProtKB/Swiss-Prot | 6,654 unique reactions | [15] | Coverage of biochemical transformations remains incomplete |
| Rhea reactions linked to EC numbers | ~75% (4,938 reactions) | [15] | Significant portion of reactions lack standard classification |
| Sequences with high-quality active site annotations | <0.7% | [11] | Critical catalytic information is missing for most enzymes |

Integrated Tools for Active Site Annotation

Table 2: Computational Tools for Enzyme Active Site Annotation

| Tool | Methodology | Input | Output | Strengths |
|---|---|---|---|---|
| EasIFA [11] | Multi-modal deep learning (PLM + 3D structure) | Protein structure, reaction SMILES | Active site residues with types | 10x faster than BLASTp, high accuracy |
| CAPIM [16] | Integrates P2Rank, GASS, and AutoDock Vina | Protein structure | Binding pockets, catalytic residues, EC numbers, docking validation | Residue-level function annotation; multimer support |
| GASS [16] | Genetic algorithm with structural templates | Protein structure | Catalytic residues, EC numbers | Identifies residues across different protein chains |
| P2Rank [16] | Machine learning (Random Forest) | Protein structure | Ligand-binding pockets | Template-free approach; suitable for automation |

Protocol 1: Multi-Modal Active Site Annotation with EasIFA

Application Note: EasIFA (Enzyme active site annotation) represents a significant advancement in annotation technology by fusing protein language models with 3D structural encoders, enabling both rapid and accurate identification of catalytic residues.

Experimental Protocol:

Input Preparation:

- Obtain the protein structure file in PDB format for the enzyme of interest.

- Prepare the corresponding enzymatic reaction information in Reaction SMILES format.

Feature Extraction:

- Process the amino acid sequence through a protein language model (ESM) to generate evolutionary context embeddings.

- Encode the 3D structural information using a graph neural network to capture spatial relationships.

- Utilize a pre-trained molecular transformer to encode the reaction SMILES into a latent representation.

Multi-Modal Fusion:

- Employ a cross-attention mechanism to align the protein-level representations with the reaction knowledge.

- This fusion enables the model to understand the relationship between the enzyme's structure and the specific chemistry it catalyzes.

Active Site Prediction:

- The fused representations are processed through a classification layer to predict whether each amino acid residue belongs to an active site.

- The model further classifies the type of active site (e.g., catalytic vs. binding).

Validation:

- Compare predictions against known annotated structures in databases such as the Catalytic Site Atlas (CSA).

- Performance metrics including recall, precision, F1 score, and Matthews correlation coefficient (MCC) should be calculated to assess model quality.
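
The validation metrics named above can be computed directly from residue-level predictions. The following is a minimal sketch, assuming predictions and reference annotations are available as sets of residue numbers; the function name `residue_metrics` and the toy residue numbers are illustrative and do not come from EasIFA or the CSA.

```python
from math import sqrt

def residue_metrics(predicted, reference, n_residues):
    """Compare predicted and reference active-site residues.

    predicted, reference: sets of residue numbers (hypothetical inputs).
    n_residues: total number of residues in the chain.
    """
    tp = len(predicted & reference)      # correctly predicted site residues
    fp = len(predicted - reference)      # predicted but not annotated
    fn = len(reference - predicted)      # annotated but missed
    tn = n_residues - tp - fp - fn       # everything else

    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    denom = sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "f1": f1, "mcc": mcc}

# Toy example: three annotated residues in a 300-residue chain,
# with one extra (false positive) residue in the prediction.
scores = residue_metrics({40, 57, 102, 195}, {57, 102, 195}, 300)
```

Because active site residues are a small minority of any chain, MCC is usually more informative than plain accuracy, which would be close to 1 even for a model that predicts no active site residues at all.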

Figure 1: EasIFA Multi-Modal Annotation Workflow

Protocol 2: Residue-Level Functional Annotation with CAPIM

Application Note: CAPIM addresses the critical gap between catalytic site identification and functional characterization by integrating pocket detection, EC number assignment, and docking validation in a unified pipeline, with particular utility for multimeric enzymes.

Experimental Protocol:

Binding Pocket Prediction:

- Input the protein structure (in PDB format) into P2Rank.

- P2Rank generates solvent-accessible points and uses a Random Forest classifier to evaluate ligandability based on physicochemical and geometric features.

- Output is a ranked list of predicted binding pockets with their spatial coordinates.

Catalytic Residue Identification and EC Number Assignment:

- Process the same protein structure using GASS (Genetic Active Site Search).

- GASS employs genetic algorithms to compare the query structure against a database of active site templates.

- The output includes identification of catalytically active residues and assignment of potential Enzyme Commission (EC) numbers based on template matches.

Data Integration and Analysis:

- Merge P2Rank and GASS outputs to generate residue-level activity profiles within predicted pockets.

- Correlate spatial pocket information with functional EC number annotations.
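
The integration step can be pictured as a set intersection between pocket residues and template-matched catalytic residues. The sketch below assumes both tool outputs have already been parsed into plain Python structures; the data layout, the function name `merge_pockets_and_sites`, and the toy values are hypothetical and do not reflect the actual P2Rank, GASS, or CAPIM file formats.

```python
def merge_pockets_and_sites(pockets, template_hits):
    """Attach catalytic-residue hits to the pockets that contain them.

    pockets: {pocket_id: set of (chain, residue_number)}       (assumed layout)
    template_hits: list of {"ec": str, "residues": set of
                   (chain, residue_number)}                     (assumed layout)
    """
    profile = {}
    for pocket_id, pocket_residues in pockets.items():
        catalytic = set()
        ec_numbers = set()
        for hit in template_hits:
            overlap = hit["residues"] & pocket_residues
            if overlap:
                # Keep only the catalytic residues that lie inside this pocket.
                catalytic |= overlap
                ec_numbers.add(hit["ec"])
        profile[pocket_id] = {
            "catalytic_residues": sorted(catalytic),
            "ec_numbers": sorted(ec_numbers),
            "fraction_catalytic": (len(catalytic) / len(pocket_residues)
                                   if pocket_residues else 0.0),
        }
    return profile

# Toy input: one pocket on chain A containing a template match, one empty pocket.
pockets = {
    "pocket1": {("A", 57), ("A", 102), ("A", 195), ("A", 214)},
    "pocket2": {("B", 10), ("B", 11)},
}
hits = [{"ec": "3.4.21.1", "residues": {("A", 57), ("A", 102), ("A", 195)}}]
profile = merge_pockets_and_sites(pockets, hits)
```

Keying residues by `(chain, residue_number)` keeps matches that span several chains distinguishable, which matters for the multimeric enzymes this protocol targets.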

Functional Validation via Docking:

- Prepare ligand structures of known substrates for the predicted EC classes.

- Use AutoDock Vina to perform docking simulations into the predicted binding pockets.

- Analyze binding poses and affinities to validate the functional predictions.

Quality Assessment:

- For homomeric enzymes, special attention must be paid to symmetric interface accuracy, as inaccurate interface modeling can prevent atomic-level accuracy in active site prediction [17].

- Cross-validate predictions against experimental data where available.

Figure 2: CAPIM Integrated Annotation Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Databases and Resources for Enzyme Annotation

| Resource | Type | Function | Application in Annotation |
|---|---|---|---|
| RCSB PDB Annotations [18] | Database | Aggregates structural domain and gene ontology information | Provides evolutionary context (CATH, SCOP) and functional clues (GO terms) |
| Rhea [15] | Biochemical reaction knowledgebase | Expert-curated biochemical reactions with ChEBI ontology | Standardized enzyme annotation in UniProtKB; connects sequences to chemistry |
| Catalytic Site Atlas (CSA) | Database | Manually curated catalytic residues in enzyme structures | Gold-standard validation set for predictive tools |
| UniProtKB [15] | Protein sequence database | Central repository of protein sequence and functional information | Primary source of sequence data with Rhea-integrated enzyme annotation |
| EC Number Classification | Nomenclature system | Hierarchical classification of enzymes by catalyzed reaction | Standardized functional classification across tools and databases |

The integration of multi-modal computational approaches represents a paradigm shift in addressing the enzyme annotation challenge. By simultaneously leveraging sequence embeddings, structural information, and chemical reaction data, tools like EasIFA and CAPIM demonstrate that it is possible to achieve both high accuracy and efficiency in active site prediction. The protocols outlined in this application note provide researchers with standardized methodologies for implementing these advanced annotation strategies, creating a foundation for more reliable assessment of model quality in enzyme active site research. As these computational methods continue to evolve, they will dramatically accelerate our ability to translate the vast landscape of enzyme sequences into functional understanding, ultimately driving innovation across biotechnology, drug discovery, and fundamental biochemical research.

The accurate prediction of protein structure, particularly for enzyme active sites, is a cornerstone of modern drug discovery and biotechnology. The evolution of computational methods from traditional homology modeling to contemporary artificial intelligence (AI) has fundamentally transformed this field, enabling unprecedented accuracy in modeling functional sites. This progression represents a paradigm shift from reliance on evolutionary templates to the de novo generation of structures through deep learning. Within enzyme research, where function is dictated by the precise three-dimensional geometry of active sites, this evolution is critically important for assessing the quality and reliability of structural models. The journey began with homology modeling, which depended on structural similarities with known proteins, and has now reached a new era with AI systems like AlphaFold achieving atomic accuracy, thereby offering profound implications for understanding enzyme mechanism and function [19] [20].

The Foundation: Homology Modeling

Homology modeling, also known as comparative modeling, established the foundational framework for computational protein structure prediction. This methodology is predicated on two core principles: that a protein's amino acid sequence determines its three-dimensional structure, and that this structure is more conserved than its sequence over evolutionary time. When a protein of unknown structure (the target) shares a detectable level of sequence similarity with one or more proteins of known structure (the templates), the structural coordinates of the templates can be used to model the target [20].

The classical homology modeling process is a sequential pipeline involving multiple, refined steps:

- Template Identification and Selection: The target sequence is searched against protein structure databases like the Protein Data Bank (PDB) using tools such as BLASTp, HHblits, or JackHMMER to identify suitable templates.

- Alignment Correction and Optimization: The sequence alignment between the target and template(s) is carefully optimized to minimize errors from gaps and mutations, often using multiple sequence alignments from tools like CLUSTALW or MUSCLE.

- Backbone Model Building: The backbone of the target protein is constructed based on the coordinates of the aligned regions in the template. This can be achieved through rigid-body assembly, segment matching, or spatial restraint methods.

- Loop Modeling and Side-Chain Addition: Regions not aligned with any template, typically loops, are modeled separately. Side-chain conformations (rotamers) are then built onto the backbone.

- Model Optimization and Validation: The initial model is subjected to energy minimization and molecular dynamics to relieve steric clashes and improve stereochemistry. The final model is validated using geometric checks and statistical potentials [20].

A significant challenge for homology modeling, particularly in the context of enzyme active sites, has been the accurate prediction of binding site residues from sequence alone. To address this, evolutionary approaches were developed that leverage the principle that spatial patterns of functional residues are conserved. One such method constructed a database of pocket-containing segments and used a residue-matching profiling technique to predict binding site residues with a reported precision of 70% at 60% sensitivity, even when sequence identity with the template was below 30% [21].

Table 1: Key Steps in the Homology Modeling Pipeline

| Step | Key Methods/Tools | Primary Objective | Challenge for Active Sites |
|---|---|---|---|
| 1. Template Identification | BLASTp, PSI-BLAST, HHblits, JackHMMER | Find structurally characterized homologs | Low sequence identity can lead to misalignment of functional residues. |
| 2. Alignment Optimization | CLUSTALW, MUSCLE, profile-profile alignment | Maximize alignment accuracy for core regions | Gaps and shifts can distort the geometry of the binding pocket. |
| 3. Backbone Construction | MODELLER, SWISS-MODEL | Build an initial 3D coordinate set | Conserved backbone geometry may not reflect catalytic state. |
| 4. Loop & Side-Chain Modeling | Ab initio loop modeling, rotamer libraries | Model variable regions and atomic details | High flexibility of catalytic loops is difficult to sample accurately. |
| 5. Validation | MolProbity, PROCHECK, Verify3D | Assess structural reasonableness | Physics-based force fields may not correctly rank models for function. |

For enzyme active site research, the primary limitation of homology modeling is its inherent dependence on the existence and quality of available templates. If the template's active site is not representative of the target's true catalytic state, or if the target possesses a novel fold, the model will be unreliable. Furthermore, the method often fails to capture the dynamic reality of proteins in their native biological environments, a critical aspect for understanding enzyme mechanism [22].

The AI Revolution in Structure Prediction

The advent of artificial intelligence has catalyzed a revolutionary shift in protein structure prediction, moving beyond the template constraints of homology modeling to achieve unprecedented atomic-level accuracy. This revolution is exemplified by DeepMind's AlphaFold2, which demonstrated in the 14th Critical Assessment of protein Structure Prediction (CASP14) that computational methods could regularly predict protein structures to near-experimental accuracy [19]. The core innovation of modern AI systems lies in their ability to learn the complex relationships between protein sequence, evolutionary history, and 3D structure directly from vast datasets of known sequences and structures.

AlphaFold2 introduced several groundbreaking architectural components. Its neural network employs an Evoformer block, a novel architecture that processes input data as a graph inference problem. The Evoformer jointly embeds and refines two key representations: a multiple sequence alignment (MSA) representation and a pair representation that encapsulates relationships between residues. This is followed by a structure module that introduces an explicit 3D structure, iteratively refining it using a novel equivariant transformer to produce accurate coordinates for all heavy atoms [19]. The system incorporates physical and biological knowledge about protein structure and leverages intermediate losses and iterative refinement (recycling) to progressively enhance the predicted model.

The quantitative leap in accuracy has been profound. In CASP14, AlphaFold2 achieved a median backbone accuracy (Cα root-mean-square deviation) of 0.96 Å, a value comparable to the width of a carbon atom and significantly superior to the next best method at 2.8 Å [19]. This level of precision extends to side-chain placement and the packing of domains in large, multi-domain proteins, making these predictions highly useful for inferring enzyme function.

However, AI-based structure prediction faces its own set of challenges. A fundamental epistemological challenge is that the machine learning methods are trained on experimentally determined structures from databases, which may not fully represent the thermodynamic environment controlling protein conformation at functional sites [22]. Furthermore, these methods typically produce single, static models, which are inadequate for representing the millions of possible conformations that proteins, especially those with flexible regions or intrinsic disorder, can adopt in solution. For enzyme active sites, which often rely on precise dynamics for catalysis, this static representation is a significant limitation [22].

Table 2: Evolution of Key Prediction Methods and Their Performance

| Method Era | Representative Tool | Core Methodology | Reported Accuracy (Backbone) | Key Limitation for Active Sites |
|---|---|---|---|---|
| Homology Modeling | MODELLER, SWISS-MODEL | Template-based coordinate assembly | Highly variable; degrades sharply with <30% sequence identity to template. | Template dependence; poor performance on novel folds or binding sites. |
| Deep Learning (c. 2020) | AlphaFold2 [19] | Evoformer & SE(3)-equivariant transformer | 0.96 Å median RMSD (CASP14) | Static structure output; limited representation of dynamics and flexibility. |
| Specificity Prediction | EZSpecificity [2] | SE(3)-equivariant graph neural network | 91.7% accuracy in identifying reactive substrate (vs. 58.3% for previous model) | Focused on substrate specificity, not full atomic structure. |

Application Notes for Enzyme Active Site Research

The transition to AI-driven models has necessitated new protocols for assessing the quality of predicted enzyme active sites. The following application notes provide a structured framework for researchers to validate and utilize these models effectively.

Protocol for Benchmarking Compound Activity Predictions

For drug discovery applications, benchmarking the predictive power of models against real-world experimental data is crucial. The CARA (Compound Activity benchmark for Real-world Applications) benchmark provides a robust framework for this task, distinguishing between two primary application scenarios: Virtual Screening (VS) and Lead Optimization (LO) [23].

Procedure:

- Data Curation and Assay Classification: Collect compound activity data from public resources like ChEMBL, grouped by Assay ID. Classify each assay as either VS-type (characterized by a diverse set of compound scaffolds) or LO-type (characterized by series of congeneric compounds with high similarity).

- Data Splitting: Implement different data splitting schemes tailored to the task.

- For VS tasks, apply a protein-level split, where all data for a specific target protein is held out in the test set. This evaluates the model's ability to generalize to novel targets.

- For LO tasks, apply a scaffold-level split, where compounds sharing a core molecular scaffold are held out. This tests the model's ability to predict activity for novel chemotypes within the same target project.

- Model Training and Evaluation:

- Train selected machine learning or deep learning models (e.g., graph neural networks, random forests) on the training split.

- Evaluate model performance on the test set using metrics appropriate for each task. For VS, prioritize metrics like AUC-ROC and enrichment factors. For LO, use metrics sensitive to ranking quality, such as Spearman's correlation or Kendall's Tau, to assess structure-activity relationships.
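
The splitting and ranking-based evaluation steps above can be sketched in a few lines. This is a minimal illustration, assuming each activity record is a dictionary with `target` and `scaffold` fields; the field names, the helper names, and the toy records are hypothetical and are not part of the CARA benchmark code.

```python
def group_split(records, group_key, held_out_groups):
    """Split records so every record of a held-out group lands in the test set.

    group_key="target" gives a protein-level split (VS-style);
    group_key="scaffold" gives a scaffold-level split (LO-style).
    """
    train = [r for r in records if r[group_key] not in held_out_groups]
    test = [r for r in records if r[group_key] in held_out_groups]
    return train, test

def average_ranks(values):
    """Rank values from 1..n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the two rank vectors."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        return float("nan")  # undefined when one vector is constant
    return cov / (var_x * var_y) ** 0.5

# Toy records: hold out every measurement for target "P2".
records = [
    {"target": "P1", "scaffold": "s1", "activity": 6.1},
    {"target": "P1", "scaffold": "s2", "activity": 7.3},
    {"target": "P2", "scaffold": "s1", "activity": 5.0},
]
train, test = group_split(records, "target", {"P2"})
```

Splitting by group rather than by individual record is what prevents near-duplicate compounds or the same target from appearing on both sides of the split, which is the main source of over-optimistic estimates.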

Interpretation and Analysis: This protocol helps identify whether a model is fit for a specific purpose in the drug discovery pipeline. It has been observed that popular training strategies like meta-learning can improve performance for VS tasks, while training separate QSAR models per assay can be effective for LO tasks due to their distinct data distributions [23]. This benchmark is essential for avoiding over-optimism and ensuring model utility in practical enzyme inhibitor discovery.

Protocol for Predicting Enzyme Substrate Specificity

Accurately defining an enzyme's function requires predicting its substrate specificity, which originates from the 3D structure of its active site and the complicated reaction transition state. AI models like EZSpecificity have been developed specifically for this task [2].

Procedure:

- Input Data Preparation:

- Sequence & Structure: Obtain the enzyme's amino acid sequence and its 3D structure (either experimentally determined or computationally predicted, e.g., by AlphaFold2).

- Substrate Library: Prepare a library of candidate substrate molecules in a standardized molecular format (e.g., SMILES strings).

- Model Application:

- Input the enzyme and substrate data into the EZSpecificity model. This model uses a cross-attention-empowered SE(3)-equivariant graph neural network architecture, which is inherently aware of 3D rotational and translational symmetries, making it ideal for structural data.

- The model will output a score or probability representing the likelihood of a catalytic reaction between the enzyme and each substrate.

- Validation:

- For high-priority predictions, experimental validation is essential. For example, in the case of halogenases, the top predicted reactive substrate can be tested in vitro, where EZSpecificity achieved an accuracy of 91.7% in identifying the single potential reactive substrate [2].

Key Considerations: This protocol moves beyond static structure prediction to infer dynamic function. The high accuracy of specialized models like EZSpecificity demonstrates how AI can leverage structural information to provide deep functional insights, bridging a critical gap in enzyme characterization.

Protocol for Assessing Model Quality and Dynamics

Given the limitations of static AI models, a critical protocol involves assessing the quality of a predicted active site and inferring its dynamic properties.

Procedure:

- Confidence Metric Analysis: Always examine the per-residue confidence score provided with the prediction (e.g., AlphaFold's pLDDT). Low confidence (typically pLDDT < 70) in active site regions is a major red flag and suggests the model may be unreliable for that locale. The pLDDT score has been shown to reliably predict the local accuracy of the model [19].

- Ensemble Generation: To probe flexibility, inspect the predicted aligned error (PAE) matrix from models like AlphaFold. A high PAE between the active site and other structural elements means their relative placement is uncertain, which may reflect flexibility or domain movements. To sample conformational space explicitly, run molecular dynamics simulations starting from the AI-predicted structure.

- Functional Consistency Check: Map known catalytic residues from sequence annotations onto the predicted structure. Verify that the geometric arrangement (distances, angles) between these residues is chemically plausible for the proposed enzymatic mechanism. For proteins with no annotation, tools for functional site prediction can be used.
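
The confidence and geometry checks in this procedure can be sketched as follows. The example assumes per-residue pLDDT values and one representative atom coordinate per residue have already been extracted from the model file; the function names, the 10 Å compactness cutoff, and the toy numbers are assumptions for illustration, not values from the cited work.

```python
from math import dist

def low_confidence_residues(plddt, site_residues, cutoff=70.0):
    """Return active-site residues whose pLDDT falls below the cutoff.

    plddt: {residue_number: pLDDT score} (assumed to be pre-parsed).
    """
    return sorted(r for r in site_residues if plddt[r] < cutoff)

def pairwise_distances(coords, site_residues):
    """Distances in Å between representative atoms of each residue pair.

    coords: {residue_number: (x, y, z)}, e.g. one side-chain atom per residue.
    """
    residues = sorted(site_residues)
    return {
        (a, b): dist(coords[a], coords[b])
        for i, a in enumerate(residues)
        for b in residues[i + 1:]
    }

def site_is_compact(distances, max_distance=10.0):
    """Crude plausibility check: every residue pair lies within max_distance.

    The 10 Å default is an illustrative assumption; a real check should use
    distances appropriate to the proposed mechanism.
    """
    return all(d <= max_distance for d in distances.values())

# Toy values for a three-residue site.
site = {57, 102, 195}
plddt = {57: 92.0, 102: 65.5, 195: 88.0}
coords = {57: (0.0, 0.0, 0.0), 102: (3.0, 4.0, 0.0), 195: (0.0, 0.0, 6.0)}

flagged = low_confidence_residues(plddt, site)
distances = pairwise_distances(coords, site)
compact = site_is_compact(distances)
```

A residue flagged here does not prove the model is wrong; it marks where the geometric check is least trustworthy and where experimental data or further sampling is most needed.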

Interpretation and Analysis: This quality assessment is vital for determining whether a model is sufficient for downstream tasks like drug docking or rational design. An active site model that is both high-confidence and geometrically plausible is a reasonable starting point for these tasks. A low-confidence model necessitates experimental structure determination or the use of more advanced sampling methods to explore conformational ensembles, as single static models cannot represent the dynamic reality of functional proteins [22].

Table 3: Key Software and Database Resources for Enzyme Structure Prediction

| Resource Name | Type | Primary Function in Enzyme Research | Access |
|---|---|---|---|
| AlphaFold [19] | AI Structure Prediction | Predicts 3D protein structure from sequence with high accuracy; provides confidence metrics (pLDDT/PAE). | AlphaFold Protein Structure Database (pre-computed); source code (local deployment). |
| Rosetta [24] | Software Suite | Enables de novo protein design, enzyme engineering, ligand docking, and loop modeling using physics-based and knowledge-based methods. | Rosetta Commons (academic license). |
| EZSpecificity [2] | Specificity Prediction | Predicts enzyme substrate specificity using 3D structural information via a graph neural network. | Code available on Zenodo. |
| ChEMBL [23] | Database | A manually curated database of bioactive molecules with drug-like properties. Used for benchmarking compound activity. | Publicly available online. |
| SplitPocket/PSD [21] | Database (Template Library) | Database of functional pockets and pocket-containing sequence segments; used for template-based binding site prediction. | Publicly available. |
| CARA Benchmark [23] | Benchmarking Dataset | A curated benchmark for evaluating compound activity prediction methods in real-world virtual screening and lead optimization tasks. | Derived from public data. |

Workflow and Relationship Visualizations

Figure: Homology to AI Modeling Evolution (modeling evolution workflow)

Figure: Active Site Quality Assessment (active site quality check)

Cutting-Edge Computational Methods for Active Site Prediction

Application Notes

The integration of protein language models (PLMs) with 3D structural data is revolutionizing computational biology, particularly in the high-precision task of enzyme active site research. This multi-modal approach overcomes the inherent limitations of sequence-only or structure-only models by creating a unified representation that captures evolutionary, structural, and functional constraints. The assessment of model quality in this domain hinges on the ability to accurately annotate catalytic residues and predict functional dynamics, which are critical for drug discovery and enzyme engineering.

Advanced Multi-Modal Architectures for Active Site Analysis

1.1.1. EasIFA: Fusing Sequence, Structure, and Reaction Information

The EasIFA (Enzyme active site annotation) framework demonstrates the power of integrating latent enzyme representations from a PLM with 3D structural encoders. Its core innovation lies in a multi-modal cross-attention framework that aligns protein-level information with knowledge of enzymatic reactions. This architecture allows the model to precisely understand the relationship between an enzyme and its specific substrates and reaction types. Evaluated against standard tools, EasIFA outperforms BLASTp with a 10-fold speed increase and improvements in recall (7.57%), precision (13.08%), F1 score (9.68%), and Matthews Correlation Coefficient (0.1012). It also surpasses other deep learning methods based on Position-Specific Scoring Matrices (PSSM), achieving a 650 to 1400-fold speed increase while enhancing annotation quality. This makes it suitable for large-scale industrial and academic applications [11].

1.1.2. OneProt: A Multi-Modal Foundation Model

OneProt represents a significant step towards general-purpose multi-modal protein foundation models. It integrates five distinct modalities: protein sequence, 3D structure (in two representations), text annotations, and crucially, binding site data. Using the ImageBind framework, OneProt aligns the latent spaces of these modalities through lightweight fine-tuning that focuses on pairwise alignment with sequence data. This approach enables emergent alignment, where modalities not directly paired during training (e.g., text and binding sites) become aligned through their common connection to the sequence anchor. The model employs a mix of Graph Neural Networks and transformer architectures, with exhaustive ablation studies highlighting the critical contribution of the binding site encoder to its performance on enzyme function prediction and binding site analysis tasks [25].

1.1.3. MICA: Combining Experimental and Predicted Structures

For protein structure determination, MICA (Multimodal Integration of Cryo-EM and AlphaFold) exemplifies deep learning integration at both input and output levels. It combines experimental cryo-electron microscopy (cryo-EM) density maps with AlphaFold3-predicted structures using an encoder-decoder architecture with a Feature Pyramid Network (FPN). This allows the model to compensate for limitations in either modality, such as low-resolution regions in cryo-EM maps or incorrectly predicted regions in AF3 structures. Tested on density maps with resolutions between 1.5 Å and 4 Å, MICA significantly outperformed state-of-the-art methods, constructing high-accuracy structural models with an average TM-score of 0.93. This demonstrates the robustness of multi-modal integration for real-world, automated protein structure determination [26].

Quantitative Performance of Multi-Modal Models

Table 1: Performance Metrics of Multi-Modal Models in Key Tasks

| Model Name | Primary Task | Key Metric | Performance | Baseline Comparison |
|---|---|---|---|---|
| EasIFA [11] | Enzyme active site annotation | F1 Score / MCC | Improved by 9.68% / 0.1012 | Outperforms BLASTp & PSSM-based DL |
| EasIFA [11] | Inference Speed | Speed Increase | 10x faster than BLASTp; 650-1400x faster than PSSM-DL | Enables large-scale application |
| MICA [26] | Protein structure modeling | TM-score (Avg.) | 0.93 on high-res cryo-EM maps | Outperforms ModelAngelo & EModelX(+AF) |
| OneProt [25] | Multi-modal retrieval | Downstream Task Accuracy | Enhanced by binding site modality | Ablation shows pocket encoder is crucial |

Table 2: Key Research Reagents and Computational Tools for Multi-Modal Protein Research

| Item Name | Type | Function in Research | Relevant Model/Study |
|---|---|---|---|
| ESM Protein Language Model [11] | Software / Encoder | Provides evolutionary and semantic information from protein sequences. | EasIFA, OneProt |
| AlphaFold3 (AF3) [26] | Software / Encoder | Generates highly accurate 3D structural predictions from amino acid sequences. | MICA |
| Cryo-EM Density Maps [26] | Experimental Data | Provides experimental 3D structural information from cryo-electron microscopy. | MICA |
| Mechanism and Catalytic Site Atlas (M-CSA) [12] | Database | A curated repository of enzyme catalytic mechanisms and active site annotations for training and validation. | 3D Module Library Study |
| Catalytic Site Atlas (CSA) [27] | Database | The largest catalogue of catalytic residues, used for compiling datasets of active site groups. | Active Site Flexibility Study |
| CLoSA Algorithm [27] | Software | A Constraint-based Local Structure Alignment tool for comparing active site geometries and measuring flexibility. | Active Site Flexibility Study |
| Graph Neural Network (GNN) [25] [28] | Software / Architecture | Models protein structures as graphs to capture spatial relationships and residue interactions. | OneProt, STAG-LLM |
| Reaction SMILES [11] | Data Format | Represents the chemical reaction an enzyme catalyzes as a string, providing critical functional context. | EasIFA |

Experimental Protocols

Protocol 1: Multi-Modal Training for Enzyme Active Site Annotation with EasIFA

This protocol outlines the procedure for training a model to annotate enzyme active sites by integrating protein sequence, structure, and reaction information, as exemplified by EasIFA [11].

2.1.1. Input Data Preparation

- Protein Structural Information: Obtain or predict the 3D structure of the target enzyme. If an experimental structure is unavailable from the PDB, use a predictive tool like AlphaFold2. Structure files should be in PDB format.

- Chemical Reaction Information: For the enzyme of interest, retrieve the Reaction SMILES string representing the specific biochemical transformation it catalyzes from a database like Rhea or BRENDA.

- Active Site Annotations: Source high-quality, curated catalytic residue labels from databases like the Mechanism and Catalytic Site Atlas (M-CSA) or Catalytic Site Atlas (CSA) for supervised training.

2.1.2. Feature Extraction and Fusion

- Sequence-Structure Representation:

- Process the amino acid sequence using a pre-trained Protein Language Model (e.g., ESM) to generate a latent representation that captures evolutionary constraints.

- Simultaneously, process the 3D atomic coordinates of the enzyme structure using a 3D structural encoder (e.g., a Graph Neural Network that represents residues as nodes and spatial relationships as edges).

- Fuse the latent representations from the PLM and the structural encoder into a unified enzyme representation.

- Reaction Representation:

- Process the Reaction SMILES string using a lightweight, self-supervised graph neural network pre-trained on a broad dataset of organic chemical reactions. This network should employ an atom-wise, distance-aware attention mechanism.

- Multi-Modal Cross-Attention:

- Design an interpretable, attention-based information interaction network. This network uses cross-attention layers to allow the unified enzyme representation and the reaction representation to interact, enabling the model to focus on protein regions most relevant to the catalytic chemistry.
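
To make the cross-attention idea concrete, the sketch below implements single-head scaled dot-product cross-attention in plain Python, with residue vectors as queries and reaction-atom vectors as keys and values. It is a simplified illustration only: it omits the learned query, key, and value projections, multiple heads, and everything else specific to EasIFA, and the function names and toy vectors are assumptions.

```python
from math import exp, sqrt

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(queries, keys, values):
    """Single-head scaled dot-product cross-attention (no learned weights).

    queries: one vector per residue (enzyme representation).
    keys, values: one vector per reaction atom (reaction representation).
    Returns (outputs, weights); weights[i][j] is how strongly residue i
    attends to reaction atom j.
    """
    d = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / sqrt(d) for k in keys]
        w = softmax(scores)
        # Weighted sum of value vectors, one output component at a time.
        out = [sum(wj * v[c] for wj, v in zip(w, values))
               for c in range(len(values[0]))]
        outputs.append(out)
        weights.append(w)
    return outputs, weights

# Toy example: two residues, two reaction atoms, 2-dimensional embeddings.
residues = [[1.0, 0.0], [0.0, 1.0]]
atoms_k = [[10.0, 0.0], [0.0, 10.0]]
atoms_v = [[1.0, 0.0], [0.0, 1.0]]
outputs, weights = cross_attention(residues, atoms_k, atoms_v)
```

The attention weights are what makes such a network inspectable: for each residue, they show which parts of the reaction contributed most to its updated representation.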

2.1.3. Model Training and Output

- Task Formulation: Frame the problem as a token classification task, where the model predicts whether each amino acid residue in the sequence is part of the active site.

- Loss Function: Use a standard cross-entropy loss for the classification task between the predicted and true catalytic residue labels.

- Output: The model produces a probability for each residue, indicating its likelihood of being a catalytic residue. A threshold can then be applied to generate the final binary annotation.
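
The loss and thresholding steps can be illustrated with a short sketch. It assumes the binary case (each residue is either part of the active site or not), for which cross-entropy reduces to the binary form below; the function names, the 0.5 default threshold, and the toy probabilities are illustrative assumptions rather than EasIFA settings.

```python
from math import log

def binary_cross_entropy(probabilities, labels, eps=1e-12):
    """Mean binary cross-entropy between predicted probabilities and 0/1 labels."""
    total = 0.0
    for p, y in zip(probabilities, labels):
        p = min(max(p, eps), 1 - eps)  # clamp to avoid log(0)
        total += -(y * log(p) + (1 - y) * log(1 - p))
    return total / len(labels)

def annotate(probabilities, threshold=0.5):
    """Return 1-based residue positions whose probability meets the threshold."""
    return [i for i, p in enumerate(probabilities, start=1) if p >= threshold]

# Toy per-residue probabilities for a four-residue fragment.
probs = [0.1, 0.9, 0.4, 0.7]
labels = [0, 1, 0, 1]
loss = binary_cross_entropy(probs, labels)
sites = annotate(probs)
```

Because active site residues are rare, the threshold is worth tuning on a validation set rather than leaving at 0.5; lowering it trades precision for recall.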

The following workflow diagram illustrates the complete EasIFA process:

Protocol 2: Multi-Modal Protein Representation Alignment with OneProt

This protocol describes the method for aligning multiple protein modalities into a shared latent space using a framework like OneProt, which is foundational for many downstream tasks such as retrieval and function prediction [25].

2.2.1. Data Curation and Pairing

- Modalities: Collect data for a set of proteins across the following modalities: Sequence (ℱ), Structure (𝒮), Text (𝒯), and Binding Site/Pocket (𝒫).

- Pairing: Form paired samples (a_i, b_i) where a_i is always a protein sequence, and b_i is data from one of the other modalities for the same protein. For example: (Sequence_A, Structure_A), (Sequence_A, Text_A), (Sequence_A, Pocket_A).

- Pre-processing: To reduce redundancy, cluster the protein sequences at a threshold (e.g., ≤50% identity).

2.2.2. Encoder and Projection Setup

- Modality-Specific Encoders: Use pre-trained encoders, or encoders trained from scratch, for each modality:

- ϕ_ℱ: A transformer-based encoder for protein sequences.

- ϕ_𝒮: A Graph Neural Network for 3D structures.

- ϕ_𝒯: A text encoder (e.g., based on BERT) for textual descriptions.

- ϕ_𝒫: A specialized encoder for binding site pockets.

- Projection Heads: For each encoder, attach a lightweight Multi-Layer Perceptron (MLP) projection head (proj_ℱ, proj_𝒮, etc.). The purpose of these heads is to map the encoder-specific output vectors into a shared latent space of the same dimension l.

2.2.3. Contrastive Alignment Training

- Batch Construction: For a batch of size n, construct batches of paired modalities, always using the sequence as the anchor. For instance, a batch could consist of n (Sequence, Structure) pairs.

- Embedding Calculation:

- For a batch of pairs {(a_1, b_1), ..., (a_n, b_n)}, pass a_i and b_i through their respective encoders and projection heads to get normalized unit embeddings 𝐚_i and 𝐛_i.

- Loss Calculation:

- Compute the symmetric InfoNCE (NT-Xent) loss. The loss for a single direction is:

$$
L_{\mathcal{F},\mathcal{E}} = -\frac{1}{n} \sum_{i=1}^{n} \log \frac{\exp\left(\mathbf{a}_i^{\top} \mathbf{b}_i / \tau\right)}{\exp\left(\mathbf{a}_i^{\top} \mathbf{b}_i / \tau\right) + \sum_{j \neq i} \exp\left(\mathbf{a}_i^{\top} \mathbf{b}_j / \tau\right)}
$$

where τ is a temperature parameter.

- The total loss for the batch is the sum of the loss in both directions: L_total = L_ℱ,ℰ + L_ℰ,ℱ.

- Sequential Update: Perform a gradient update for the encoders and projection heads of the two modalities in the current pair before moving to the next modality pair in the training step. This sequential optimization aligns all modalities to the sequence anchor.
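The loss described in this section can be written out in a few lines. The following standard-library sketch normalizes two batches of embeddings and evaluates the InfoNCE loss in both directions; it is an illustration under stated assumptions rather than the OneProt code. The function names, the toy two-dimensional embeddings, and the default temperature of 0.07 are hypothetical.

```python
import math


def normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def info_nce(anchors, positives, tau):
    """One direction: each anchor a_i must identify its own b_i among all b_j."""
    n = len(anchors)
    loss = 0.0
    for i in range(n):
        logits = [
            sum(x * y for x, y in zip(anchors[i], positives[j])) / tau
            for j in range(n)
        ]
        # log-sum-exp with the maximum subtracted for numerical stability
        peak = max(logits)
        log_denom = peak + math.log(sum(math.exp(l - peak) for l in logits))
        loss += log_denom - logits[i]
    return loss / n


def symmetric_info_nce(a, b, tau=0.07):
    """Sum of the sequence-to-modality and modality-to-sequence losses."""
    a = [normalize(v) for v in a]
    b = [normalize(v) for v in b]
    return info_nce(a, b, tau) + info_nce(b, a, tau)


# Hypothetical projected embeddings for two (Sequence, Structure) pairs.
seq_emb = [[1.0, 0.0], [0.0, 1.0]]
struct_emb = [[0.9, 0.1], [0.1, 0.9]]

aligned = symmetric_info_nce(seq_emb, struct_emb)
shuffled = symmetric_info_nce(seq_emb, struct_emb[::-1])
```

Correctly paired batches give a loss near zero, while the shuffled pairing is penalized heavily. A smaller temperature sharpens this contrast.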

The following diagram visualizes the representation alignment process:

Protocol 3: Integrating Cryo-EM and AlphaFold3 with MICA for Structure Modeling

This protocol details the multi-modal integration of experimental density maps and AI-predicted structures for building high-accuracy atomic models, as implemented in MICA [26].

2.3.1. Input Data Preprocessing

- Cryo-EM Density Maps: Obtain the cryo-EM density map file for the target protein complex. Preprocess the map to normalize voxel intensities and adjust the grid orientation as needed.

- AlphaFold3 Prediction: Run AlphaFold3 using the amino acid sequences of all protein chains in the complex. This will generate predicted structures and confidence metrics (pLDDT) for each residue.

2.3.2. Multi-Task Deep Learning with Feature Pyramid Network (FPN)

- 3D Grid Feature Extraction: Convert both the cryo-EM density map and the AlphaFold3-predicted structure into 3D voxel grids. Extract feature representations from both grids.

- Encoder-Stack and FPN:

- Pass the fused feature input through a progressive encoder stack comprising three encoder blocks with increasing feature depth to generate hierarchical feature representations.

- Process the encoder output with a Feature Pyramid Network (FPN) to generate multi-scale feature maps. These maps capture hierarchical structural information at different resolutions, from local atomic details to global protein fold patterns.

- Task-Specific Decoding: The FPN feature maps are fed into three separate, hierarchically organized decoder blocks:

- Backbone Atom Decoder: Uses the FPN features to predict the positions of backbone atoms (N, Cα, C, O).

- Cα Atom Decoder: Uses both the FPN features and the predictions from the backbone atom decoder to specifically refine Cα atom positions.

- Amino Acid Decoder: Uses the FPN features and the predictions from both the backbone and Cα decoders to predict the amino acid type at each Cα position.

2.3.3. Backbone Tracing and Refinement

- Initial Backbone Tracing: Use the predicted Cα atoms and their assigned amino acid types to build an initial backbone model. This involves linking Cα positions into chains and registering the sequence.

- Gap Filling and Hybrid Refinement:

- Identify any unmodeled gaps in the initial backbone model.

- Fill these gaps using a sequence-guided Cα extension procedure that leverages the structural information from the corresponding regions of the AlphaFold3-predicted structure.

- Full-Atom Model Building and Refinement:

- Convert the Cα backbone model into a full-atom model using a tool like PULCHRA.

- Finally, refine the full model against the experimental cryo-EM density map using a tool like phenix.real_space_refine to improve the fit and stereochemical quality.
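The gap identification step in the procedure above amounts to simple bookkeeping once the traced Cα atoms have been registered to the sequence. The sketch below shows only that bookkeeping; it is not part of MICA, and the function name and example residue numbers are hypothetical. The actual sequence-guided extension from the AlphaFold3 model is not shown.

```python
def find_gaps(sequence_length, modeled_indices):
    """Return (start, end) pairs, 1-based and inclusive, of residues
    that have no traced C-alpha atom in the initial backbone model."""
    modeled = set(modeled_indices)
    gaps, start = [], None
    for pos in range(1, sequence_length + 1):
        if pos not in modeled:
            if start is None:
                start = pos  # a new gap opens here
        elif start is not None:
            gaps.append((start, pos - 1))  # the gap closed at the previous residue
            start = None
    if start is not None:
        gaps.append((start, sequence_length))  # gap runs to the C-terminus
    return gaps


# Hypothetical 12-residue chain with two unmodeled stretches.
gaps = find_gaps(12, [1, 2, 3, 6, 7, 8, 9, 12])
```

Each returned range identifies the residues whose coordinates would then be taken from the corresponding region of the predicted structure.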

The workflow for this multi-modal structure determination is as follows:

The accurate annotation of enzyme active sites is a cornerstone for advancing drug discovery, disease research, and enzyme engineering. However, a significant trade-off between speed and accuracy has long hindered the large-scale application of automated annotation tools. The EasIFA (enzyme active site annotation algorithm) framework addresses this challenge by introducing a multi-modal deep learning approach that fuses latent enzyme representations from a Protein Language Model (PLM) and a 3D structural encoder. A key innovation is its use of a multi-modal cross-attention framework to align protein-level information with the knowledge of enzymatic reactions. This architecture enables EasIFA to outperform traditional homology-based methods like BLASTp by a substantial margin, achieving not only superior accuracy but also a 10-fold increase in inference speed. Furthermore, it surpasses other state-of-the-art deep learning methods, providing a speed increase of 650 to 1400 times while simultaneously enhancing annotation quality, making it a suitable replacement for conventional tools in both industrial and academic settings [11] [29].

Performance Benchmarking and Quantitative Assessment

To assess the quality of a model for enzyme active site research, it is crucial to evaluate its performance against established benchmarks. The following tables summarize the key quantitative metrics demonstrating EasIFA's capabilities compared to other methods.

Table 1: Performance Comparison of EasIFA Against BLASTp and AEGAN on Key Metrics [11]

| Model | Recall (%) | Precision (%) | F1 Score | MCC | Relative Speed |
|---|---|---|---|---|---|
| EasIFA (vs. BLASTp) | +7.57 | +13.08 | +9.68 | +0.1012 | 10x faster |
| BLASTp (Baseline) | Baseline | Baseline | Baseline | Baseline | 1x |
| EasIFA (vs. AEGAN) | - | - | - | - | ~1400x faster |
| AEGAN (PSSM-based) | - | - | - | - | 1x |

MCC: Matthews Correlation Coefficient. Signed values are EasIFA's improvements over the baseline listed in the following row; "-" indicates a value not reported here.

Table 2: Overview of Modern Enzyme Active Site Prediction Tools

| Tool Name | Modality | Key Innovation | Primary Application |
|---|---|---|---|
| EasIFA [11] | Sequence, Structure, Reaction | Multi-modal cross-attention between enzyme and reaction data | High-speed, accurate active site annotation |
| Squidly [30] | Sequence-only | Biology-informed contrastive learning on PLM embeddings | Fast, large-scale screening from sequence alone |
| OmniESI [31] | Enzyme-Substrate Interaction | Two-stage progressive conditional deep learning | Multi-task prediction (kinetics, pairing, mutation, annotation) |
| EZSpecificity [2] | Structure, Sequence | Cross-attention SE(3)-equivariant GNN | Predicting enzyme substrate specificity |

Experimental Protocols for Model Evaluation

For researchers to independently verify the performance claims of EasIFA and similar models, the following detailed methodologies are provided for key benchmarking experiments.

Protocol for Benchmarking Against BLASTp and Structure-Based Tools

Objective: To compare the annotation accuracy and inference speed of EasIFA against BLASTp and empirical-rule-based algorithms (e.g., SiteMap) [11].

Materials:

- Test Dataset: A standardized benchmark dataset such as CataloDB [30] or others derived from UniProt and M-CSA (Mechanism and Catalytic Site Atlas) with experimentally validated active sites.

- Hardware: A computing node with a modern GPU (e.g., NVIDIA V100 or A100) for deep learning models and standard CPU resources for BLASTp.

- Software: EasIFA (available via its web server http://easifa.iddd.group), BLASTp suite, and Schrödinger's SiteMap.

Procedure:

- Data Preparation: Curate a test set of enzyme sequences and their corresponding 3D structures (from PDB or predicted via AlphaFold2). Ensure the dataset includes the associated reaction SMILES strings for EasIFA.

- Run BLASTp:

- Use the query enzyme sequence as input against a comprehensive protein database (e.g., Swiss-Prot).

- Execute BLASTp with standard parameters, recording the runtime.

- Extract active site annotations from the top homologous hits.

- Run Empirical-Rule-Based Tool (e.g., SiteMap):

- Input the 3D structure of the enzyme.

- Execute the software's binding site detection function.

- Record the predicted catalytic pockets and the computation time.

- Run EasIFA:

- Input the enzyme's 3D structure (PDB format) and the SMILES string of the reaction it catalyzes.

- Execute the EasIFA model.

- Record the predicted active site residues and the inference time.

- Evaluation:

- Calculate recall, precision, F1 score, and MCC using the experimentally validated active sites as the ground truth.

- Compare the computational time of each method, normalizing to the slowest method for relative speed calculation.
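The evaluation step can be made concrete with a short standard-library sketch that scores one enzyme's predicted residues against the ground truth and converts runtimes into relative speeds. The function names are hypothetical, the residue positions are a toy example, and the runtimes are made-up numbers used only to show the normalization.

```python
import math


def residue_metrics(predicted, truth, sequence_length):
    """Recall, precision, F1, and MCC for per-residue active site labels."""
    predicted, truth = set(predicted), set(truth)
    tp = len(predicted & truth)
    fp = len(predicted - truth)
    fn = len(truth - predicted)
    tn = sequence_length - tp - fp - fn
    recall = tp / (tp + fn) if tp + fn else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"recall": recall, "precision": precision, "f1": f1, "mcc": mcc}


def relative_speed(runtimes):
    """Speed-up of each method relative to the slowest one (slowest = 1.0)."""
    slowest = max(runtimes.values())
    return {name: slowest / seconds for name, seconds in runtimes.items()}


# Toy example: 245-residue enzyme, three true catalytic residues,
# one false positive in the prediction.
metrics = residue_metrics(predicted=[57, 102, 195, 214],
                          truth=[57, 102, 195],
                          sequence_length=245)

# Made-up runtimes in seconds, for illustration only.
speeds = relative_speed({"method_a": 10.0, "method_b": 1.0})
```

MCC is worth reporting alongside F1 because it also accounts for true negatives, which dominate when only a handful of residues per enzyme are catalytic.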

Protocol for Low-Sequence-Identity Generalization Test

Objective: To evaluate the model's performance on enzymes with low homology to those in the training set, assessing its generalizability [30].

Materials:

- Test Dataset: A specialized benchmark like CataloDB, which is explicitly designed to contain test sequences with less than 30% sequence and structural identity to the training set [30].

- Software: EasIFA and other models for comparison (e.g., Squidly, AEGAN).

Procedure:

- Dataset Splitting: Use the predefined training and test splits of the benchmark dataset to ensure no data leakage.

- Model Inference: Run the trained models on the low-identity test set.

- Performance Analysis: Calculate the F1 score and other metrics specifically on this challenging subset. A model that maintains a high F1 score (e.g., Squidly reports 0.64 on <30% identity sequences [30]) demonstrates robust generalization.
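A minimal sketch of the subset analysis is given below. It assumes that each test entry already carries its maximum sequence identity to any training sequence, computed beforehand with an alignment tool of your choice. The field names, the entries, and the choice to average F1 per enzyme (rather than pooling residues across enzymes) are all assumptions made for illustration.

```python
def f1_score(predicted, truth):
    """F1 between predicted and true residue positions for one enzyme."""
    predicted, truth = set(predicted), set(truth)
    tp = len(predicted & truth)
    if tp == 0:
        return 0.0
    precision = tp / len(predicted)
    recall = tp / len(truth)
    return 2 * precision * recall / (precision + recall)


def low_identity_f1(entries, max_identity=30.0):
    """Mean per-enzyme F1 over test entries below the identity cutoff.

    Returns the mean F1 and the number of entries that were evaluated.
    """
    subset = [e for e in entries if e["max_train_identity"] < max_identity]
    if not subset:
        raise ValueError("no test entries below the identity cutoff")
    scores = [f1_score(e["predicted"], e["truth"]) for e in subset]
    return sum(scores) / len(scores), len(subset)


# Hypothetical test entries; identities are percentages.
entries = [
    {"id": "enzA", "max_train_identity": 22.5,
     "predicted": [10, 45], "truth": [10, 45, 80]},
    {"id": "enzB", "max_train_identity": 28.0,
     "predicted": [5], "truth": [5]},
    {"id": "enzC", "max_train_identity": 64.0,
     "predicted": [33], "truth": [12]},
]

mean_f1, n_evaluated = low_identity_f1(entries)
```

Reporting the number of evaluated entries alongside the score makes it clear how much of the test set actually falls below the cutoff.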

Workflow and Architecture Visualization

Diagram 1: EasIFA Multi-modal Annotation Workflow. The framework integrates representations from three distinct modalities (sequence, structure, and reaction) via a cross-attention mechanism to produce final active site annotations [11].

Successful application and development of enzyme annotation models require a suite of computational tools and data resources.

Table 3: Key Research Reagent Solutions for Enzyme Active Site Research

| Resource | Type | Function & Application | Access |
|---|---|---|---|
| ESM-2 [11] [30] | Protein Language Model | Generates high-quality, biologically meaningful representations from amino acid sequences alone. | Publicly Available |
| AlphaFold2 [11] [32] | Structure Prediction | Provides reliable 3D structural models for enzymes where experimental structures are unavailable. | Publicly Available |
| UniProt [11] [30] | Protein Database | Source of enzyme sequences and high-quality, manually curated active site annotations for training and testing. | Publicly Available |
| M-CSA [30] | Mechanism Database | A manually curated database of enzyme catalytic mechanisms and active sites; essential for creating high-quality benchmark sets. | Publicly Available |
| CataloDB [30] | Benchmark Dataset | A modern benchmark designed to evaluate model performance on low-sequence-identity enzymes, reducing evaluation bias. | Research Paper |
| EasIFA Web Server [11] | Annotation Tool | A user-friendly web interface for running the EasIFA algorithm without local installation. | http://easifa.iddd.group |

Homology modeling, a cornerstone of structural bioinformatics, predicts a protein's three-dimensional structure based on its sequence similarity to templates with experimentally determined structures. While traditional methods have relied heavily on sequence alignment accuracy, the integration of geometric constraints has emerged as a transformative approach for enhancing atomic-level accuracy, particularly in functionally critical regions like enzyme active sites. These constraints, derived from physical laws, evolutionary conservation, and machine learning predictions, restrict the conformational search space to biologically plausible configurations, leading to more reliable models. This application note details protocols for incorporating geometric constraints into homology modeling workflows, with a specific focus on improving the model quality for enzyme active site research, which is essential for accurate function annotation and drug discovery.

The Performance of Geometric Constraint-Based Refinement

Integrating geometric constraints into protein structure prediction and refinement significantly improves model accuracy across diverse protein classes. The following table summarizes the performance gains reported by various constraint-based methodologies.

Table 1: Performance Metrics of Geometric Constraint-Based Modeling Approaches