Batch Effect Correction for PCA in Genomics: A Comprehensive Guide for Researchers and Clinicians

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on identifying, correcting, and validating batch effects in genomic studies using Principal Component Analysis (PCA) and advanced methods. It covers foundational concepts of non-biological technical variation, explores specialized methodologies like guided PCA (gPCA) and ratio-based correction, and offers practical troubleshooting for common challenges like over-correction and sample imbalance. Building on the latest benchmarking studies, the guide delivers evidence-based recommendations for method selection and robust validation strategies to ensure data integrity in downstream analyses, including differential expression and predictive modeling.

Understanding and Diagnosing Batch Effects in Genomic Data

What Are Batch Effects? Defining Non-Biological Technical Variation

In molecular biology, batch effects are systematic technical variations introduced into experimental data by non-biological factors. These unwanted variations occur when samples are processed and measured in different batches, creating differences that are unrelated to any genuine biological variation. Batch effects are notoriously common across various high-throughput technologies, including microarrays, mass spectrometry, and single-cell RNA-sequencing, and can lead to inaccurate conclusions when their causes correlate with experimental outcomes of interest [1].

The fundamental challenge with batch effects stems from their ability to confound analysis. When technical variations—arising from factors like different reagent lots, personnel, or instrument calibrations—become systematically linked to biological groups, they can create the illusion of biological signals where none exist or mask true biological signals. This is particularly problematic in large-scale genomics research where samples often must be processed across multiple batches due to practical limitations [2].

Batch effects originate from numerous technical sources throughout the experimental workflow. Understanding these sources is crucial for both prevention and effective correction.

- Laboratory conditions: Fluctuations in temperature, humidity, or atmospheric ozone levels can introduce systematic variations [1] [3]

- Reagent variability: Different lots or batches of reagents, enzymes, or kits may have varying efficiencies [1]

- Personnel differences: Variations in technique between different technicians handling samples [1]

- Instrumentation factors: Changes in machine calibration, performance drift over time, or using different instruments [1] [3]

- Temporal factors: Experiments conducted at different times of day, different days, or across longer periods [1] [4]

- Sample preparation inconsistencies: Variations in extraction protocols, incubation times, or solvent batches [3]

Experimental Scenarios Prone to Batch Effects

Batch effects are particularly problematic in specific experimental scenarios:

- Longitudinal studies where samples are collected and processed over extended periods

- Multi-center studies involving different laboratories or facilities

- Large-scale genomics studies requiring processing in multiple batches due to technical constraints

- Meta-analyses combining existing datasets from different sources [4] [5]

Impact of Batch Effects on Genomic Research

The consequences of uncorrected batch effects can severely impact research validity and reproducibility.

Analytical Consequences

- False discoveries in differential expression analysis: Batch-confounded features may be erroneously identified as significant [4] [5]

- Misleading clustering patterns: Samples may cluster by batch rather than biological similarity [6]

- Reduced statistical power: Technical variation dilutes true biological signals [5]

- Compromised prediction models: Batch effects can lead to overfitted models that fail to generalize [5]

Reproducibility Implications

Batch effects are a major contributor to the reproducibility crisis in scientific research. A Nature survey found that 90% of responding researchers believed there was a reproducibility crisis, and batch effects arising from reagent variability and experimental bias have been identified as key contributing factors [5].

In one notable example, batch effects introduced by a change in RNA-extraction solution resulted in incorrect classification outcomes for 162 patients in a clinical trial, 28 of whom subsequently received incorrect or unnecessary chemotherapy regimens [5].

Table 1: Documented Impacts of Batch Effects in Biomedical Research

| Impact Category | Specific Consequences | Field |
|---|---|---|
| Clinical Implications | Incorrect patient classifications; Inappropriate treatment decisions | Clinical trials [5] |
| Scientific Integrity | Retracted publications; Irreproducible findings | Multiple fields [5] |
| Data Integration | Inability to combine datasets; Misleading cross-study comparisons | Multi-omics [5] |
| Biological Interpretation | False pathway identification; Incorrect biological conclusions | Genomics, transcriptomics [4] |

Detecting Batch Effects Using Principal Component Analysis

Principal Component Analysis (PCA) serves as a powerful unsupervised method for detecting batch effects by exploring the variance structure of high-dimensional data and reducing it to a few principal components (PCs) that explain the greatest variation [7].

PCA Workflow for Batch Effect Detection

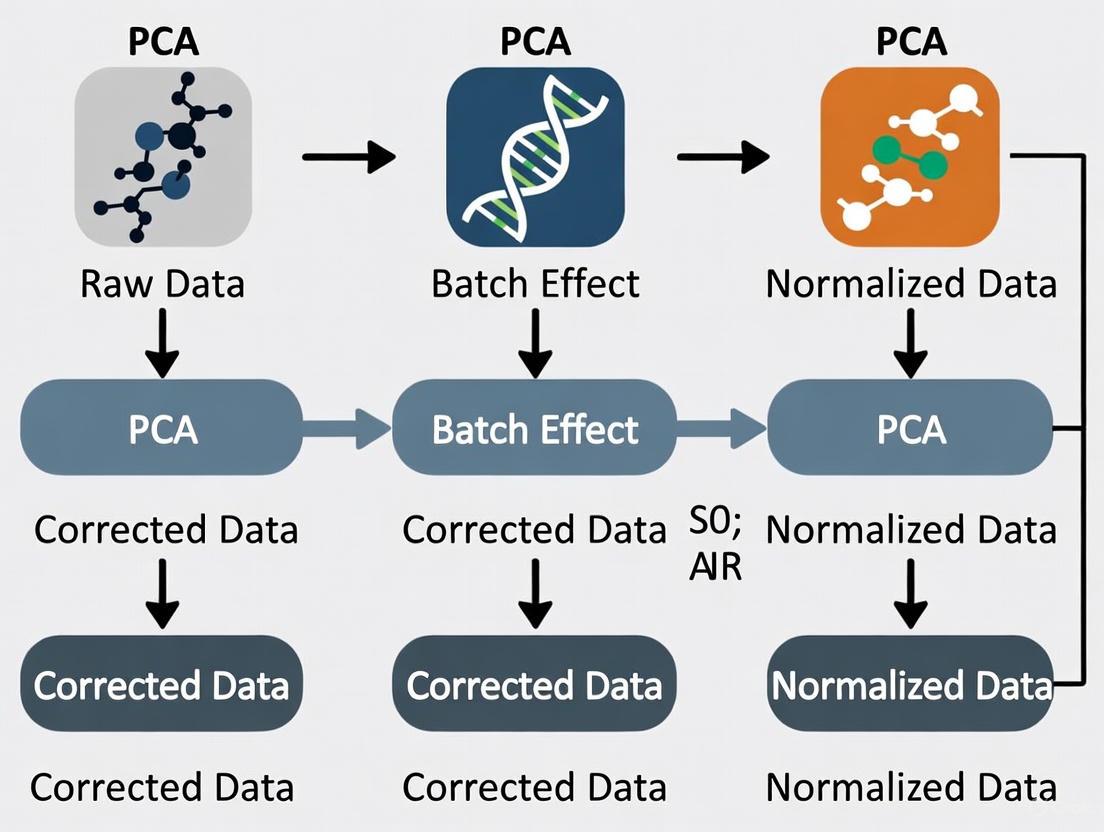

The following diagram illustrates the standard workflow for PCA-based batch effect detection:

Interpretation of PCA Results

In PCA, the first few principal components capture the largest sources of variation in the data. When batch effects represent a major source of variation:

- PC scatter plots show clear separation of samples by batch rather than biological condition [7] [6]

- Density plots for each principal component reveal different distributions across batches [7]

- Variance explained shows batch-related components accounting for a substantial proportion of total variance [7]

Practical Example from Sponge Dataset

Analysis of the sponge dataset demonstrates how PCA reveals batch effects:

In this example, PC1 captured biological variation between different tissues (the effect of interest), while PC2 displayed sample differences due to different gel batches. This clear separation in PCA space confirms the presence of batch effects that require correction [7].

Quantitative Assessment Methods

Beyond visual inspection, quantitative metrics can strengthen batch effect detection:

- Linear models testing batch coefficient significance for individual features [7]

- ANOVA assessing batch contribution to overall variance [7]

- kBET (k-nearest neighbor batch effect test) measuring local batch mixing [6] [8]

- Silhouette width quantifying separation strength between batches [8]
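As an illustration of the last metric in the list, the sketch below computes a mean silhouette width on PC scores, using batch labels as the grouping. It is a minimal NumPy implementation with Euclidean distances and needs at least two batches. The function name `batch_silhouette` and the simulated scores are illustrative assumptions, not part of any cited tool.

```python
import numpy as np

def batch_silhouette(scores, batch):
    """Mean silhouette width with batch labels as clusters (Euclidean distance)."""
    scores = np.asarray(scores, dtype=float)
    batch = np.asarray(batch)
    # Pairwise Euclidean distances between all samples
    diff = scores[:, None, :] - scores[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=2))
    levels = np.unique(batch)
    widths = np.zeros(len(scores))
    for i in range(len(scores)):
        own = batch == batch[i]
        n_own = own.sum()
        if n_own < 2:
            continue  # singleton batches keep a width of 0
        # Mean distance to the other members of the same batch (dist[i, i] is 0)
        a = dist[i, own].sum() / (n_own - 1)
        # Smallest mean distance to the members of any other batch
        b = min(dist[i, batch == lev].mean() for lev in levels if lev != batch[i])
        denom = max(a, b)
        widths[i] = 0.0 if denom == 0 else (b - a) / denom
    return widths.mean()

# Simulated PC scores (hypothetical data): 20 samples in each of two batches
rng = np.random.default_rng(0)
batch = np.repeat(["A", "B"], 20)
mixed = rng.normal(size=(40, 2))
shifted = mixed.copy()
shifted[batch == "B", 0] += 6.0  # add a batch offset along the first component

sil_mixed = batch_silhouette(mixed, batch)
sil_shifted = batch_silhouette(shifted, batch)
print(f"mixed: {sil_mixed:.2f}, shifted: {sil_shifted:.2f}")
```

With batch labels as the grouping, values near 0 suggest well-mixed batches, while values approaching 1 suggest that samples sit closer to their own batch than to any other.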

Table 2: PCA Interpretation Guide for Batch Effect Detection

| PCA Pattern | Interpretation | Recommended Action |
|---|---|---|
| Clear batch separation in top PCs | Strong batch effects present; may confound biological analysis | Batch effect correction required before downstream analysis |
| Biological grouping in PC1, batch effects in later PCs | Batch effects present but smaller than biological effects | Evaluate whether correction is needed based on effect size |
| Mixed patterns without clear batch separation | Minimal batch effects or complex interactions | Proceed with caution; consider covariate adjustment in models |
| Batch effects stronger than biological signals | Severe batch confounding | Major correction needed; may require re-analysis with different approach |

Experimental Design Strategies for Batch Effect Prevention

Proper experimental design represents the most effective approach to managing batch effects, as prevention is superior to correction.

Strategic Sample Allocation

The Optimal Sample Assignment Tool (OSAT) was specifically developed to facilitate proper allocation of collected samples to different batches in genomics studies. OSAT optimizes the even distribution of biological groups and confounding factors across batches, reducing the correlation between batches and biological variables of interest [2].

Key principles for effective sample allocation include:

- Balance biological groups across batches to avoid confounding

- Distribute confounding factors (e.g., age, sex, sample source) homogeneously

- Include replicate samples across batches to enable correction validation

- Randomize processing order where possible to avoid systematic biases [2]
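The balancing and randomization principles above can be sketched with a simple stratified round-robin assignment. This is not OSAT's optimization algorithm; it is a minimal standard-library illustration that balances a single grouping variable, and the function name `allocate_to_batches` and the sample IDs are hypothetical.

```python
import random
from collections import Counter, defaultdict

def allocate_to_batches(samples, n_batches, seed=0):
    """Spread each biological group evenly across batches.

    `samples` is a list of (sample_id, group) tuples.
    Returns a dict mapping sample_id to a batch index (0-based).
    """
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for sample_id, group in samples:
        by_group[group].append(sample_id)

    assignment = {}
    offset = 0
    for group in sorted(by_group):
        ids = by_group[group]
        rng.shuffle(ids)  # randomize which samples of a group land in which batch
        for j, sample_id in enumerate(ids):
            # Deal samples out round-robin so group counts per batch differ by at most 1
            assignment[sample_id] = (offset + j) % n_batches
        offset += len(ids)  # continue where the previous group stopped
    return assignment

# Hypothetical study: 12 cases and 12 controls processed in 3 batches
samples = [(f"S{i:02d}", "case" if i < 12 else "control") for i in range(24)]
assignment = allocate_to_batches(samples, n_batches=3)

group_of = dict(samples)
composition = defaultdict(Counter)
for sample_id, batch_index in assignment.items():
    composition[batch_index][group_of[sample_id]] += 1
for batch_index in sorted(composition):
    print(batch_index, dict(composition[batch_index]))
```

Balancing several confounders at once (age, sex, sample source) needs a joint stratification or an optimization-based tool; the round-robin above only illustrates the single-factor case.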

Quality Control Measures

Incorporating appropriate quality control (QC) samples is essential for both detecting and correcting batch effects:

- Pooled QC samples: Inserted at regular intervals to monitor and correct instrumental drift [3]

- Technical replicates: Processed across different batches to assess cross-batch reproducibility [3]

- Reference materials: Commercial or internally standardized materials for normalization [9]

Batch Effect Correction Methods

When batch effects cannot be prevented through experimental design, numerous computational approaches exist for batch effect correction.

Classification of Correction Approaches

Genomics-Focused Correction Tools

Table 3: Batch Effect Correction Methods for Genomics Research

| Method | Underlying Approach | Best For | Considerations |
|---|---|---|---|
| ComBat-seq [4] | Empirical Bayes framework | RNA-seq count data | Preserves biological signals; handles small batch sizes |
| removeBatchEffect (limma) [4] | Linear model adjustment | Normalized expression data | Integrated with limma-voom workflow |
| Harmony [10] [6] | Iterative clustering with PCA | Single-cell and bulk data | Fast runtime; good scalability |
| Mutual Nearest Neighbors (MNN) [1] [6] | Matching mutual nearest neighbors | Single-cell RNA-seq data | Identifies shared cell populations across batches |
| Surrogate Variable Analysis (sva) [1] [4] | Estimation of unmodeled variation | Studies with unknown covariates | Handles incomplete batch information |
| Mixed Linear Models [4] | Random effects for batch | Complex experimental designs | Handles nested and hierarchical structures |

Correction Strategy Selection Guidelines

Choosing an appropriate correction method depends on multiple factors:

- Data type: Count-based (ComBat-seq) vs. continuous (ComBat) data

- Batch structure: Balanced vs. confounded designs [9]

- Sample size: Empirical Bayes methods advantageous for small batches [1]

- Biological complexity: Methods preserving subtle biological signals [6]

Recent benchmarking studies recommend:

- Harmony and Seurat CCA for single-cell data with preference for Harmony due to faster runtime [6]

- Protein-level correction for MS-based proteomics data [9]

- Ratio-based methods when batch effects are confounded with biological groups [9]
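To make the linear-model style of adjustment listed in Table 3 more concrete, the sketch below fits an ordinary least-squares model with a biological group term and a batch term, then subtracts only the fitted batch component. It follows the general idea of regressing out batch while protecting a covariate; it is not the limma implementation, and the function name `remove_batch_linear` and the simulated data are assumptions.

```python
import numpy as np

def remove_batch_linear(Y, batch, group):
    """Subtract the fitted batch term from Y (samples x features), keeping group effects."""
    Y = np.asarray(Y, dtype=float)
    batch = np.asarray(batch)
    group = np.asarray(group)

    def dummies(labels):
        levels = np.unique(labels)
        # One indicator column per level, dropping the first level as reference
        return (labels[:, None] == levels[None, 1:]).astype(float)

    B = dummies(batch)
    B = B - B.mean(axis=0)  # centre so each feature's overall mean is preserved
    G = dummies(group)
    design = np.column_stack([np.ones(len(Y)), G, B])
    coef, *_ = np.linalg.lstsq(design, Y, rcond=None)
    batch_coef = coef[1 + G.shape[1]:]  # rows belonging to the batch columns
    return Y - B @ batch_coef

# Simulated balanced design (hypothetical): 2 batches x 2 groups, 5 features
rng = np.random.default_rng(1)
batch = np.array(["b1"] * 12 + ["b2"] * 12)
group = np.array(["ctrl", "trt"] * 12)
Y = rng.normal(scale=0.5, size=(24, 5))
Y[group == "trt", 0] += 2.0  # biological effect on feature 0
Y[batch == "b2"] += 3.0      # additive batch shift on every feature

corrected = remove_batch_linear(Y, batch, group)
gap_before = Y[batch == "b2"].mean(axis=0) - Y[batch == "b1"].mean(axis=0)
gap_after = corrected[batch == "b2"].mean(axis=0) - corrected[batch == "b1"].mean(axis=0)
group_effect = corrected[group == "trt", 0].mean() - corrected[group == "ctrl", 0].mean()
print(gap_before.round(2), gap_after.round(2), round(group_effect, 2))
```

Because the group term is in the model, the treatment effect on feature 0 survives the adjustment. In a fully confounded design the batch and group columns would be collinear and this approach could not separate them, which is the situation where the ratio-based methods above are recommended.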

Research Reagent Solutions for Batch Effect Management

Table 4: Essential Research Reagents and Resources for Batch Effect Control

| Reagent/Resource | Function in Batch Effect Management | Application Context |
|---|---|---|
| Reference Materials (e.g., Quartet protein reference materials) [9] | Inter-batch calibration standards | Large-scale proteomics and multi-omics studies |
| Pooled QC Samples [3] | Monitoring technical variation across batches | Metabolomics, proteomics, and transcriptomics |
| Internal Standards (isotopically labeled) [3] | Normalization within batches | Mass spectrometry-based proteomics and metabolomics |
| Universal Reference Samples [9] | Cross-batch normalization | Multi-center studies and dataset integration |
| Standardized Reagent Lots [1] | Minimizing batch-to-batch reagent variation | All high-throughput genomics applications |

Batch effects represent a fundamental challenge in genomics research, introducing non-biological technical variation that can compromise data interpretation and research reproducibility. Through careful experimental design, vigilant detection using methods like PCA, and appropriate application of correction algorithms, researchers can effectively manage batch effects to ensure the reliability of their genomic findings. As genomic technologies continue to evolve and datasets grow in complexity, sophisticated batch effect management will remain essential for generating biologically meaningful and reproducible results.

In genomics research, Principal Component Analysis (PCA) is a cornerstone tool for the exploratory analysis of high-dimensional data. Its standard application involves projecting samples into a reduced-dimensional space defined by principal components (PCs) that sequentially capture the greatest variance in the dataset. A fundamental assumption in this process is that the largest sources of variation represent the most biologically significant signals. However, this assumption fails dramatically when technically introduced batch effects—systematic technical variations arising from different processing times, laboratories, protocols, or operators—constitute an intermediate source of variation, neither the largest nor the smallest in the dataset [11] [5].

This technical limitation of standard PCA has profound implications for genomic studies. When batch effects are not the primary drivers of variance, they often remain hidden within later (higher-numbered) principal components, evading visual detection while still significantly confounding biological interpretation [11] [12]. Consequently, researchers may draw incorrect biological conclusions from data where technical artifacts masquerade as biological signals. This paper examines why standard PCA fails under these conditions, introduces enhanced methodologies for detecting and correcting hidden batch effects, and provides practical protocols for genomics researchers working toward robust batch effect correction.

The Limitation of Standard PCA in Batch Effect Detection

How Standard PCA Obscures Intermediate Batch Effects

Standard PCA operates on a straightforward variance-maximization principle: the first PC captures the direction of maximum variance in the data, with subsequent PCs capturing remaining orthogonal variance in descending order. This approach succeeds when batch effects either dominate the variance structure (appearing in early PCs) or represent minor noise (appearing in late PCs). However, when batch effects constitute an intermediate source of variation, they become embedded within middle-order PCs where they are rarely visualized and often overlooked [11].

The consequence is that biologically distinct sample types may cluster by batch rather than by biological condition in the latent space defined by these intermediate components. As noted in assessments of genomic consortia data, "batch effects are a considerable issue, but it is non-trivial to determine if batch adjustment leads to an improvement in data quality" [11]. Visual inspection of only the first two or three PCs—a common practice—provides a false sense of security when batch effects reside in higher-order components.

Specific Failure Scenarios in Genomic Research

- Heterogeneous Samples with Strong Biological Signals: In datasets with substantial legitimate biological variation (e.g., different tissue types, cancer subtypes), biological differences may dominate the first several PCs, pushing batch effects to intermediate components [11].

- Confounded Designs: When batch effects are correlated with biological groups of interest—a common occurrence in multi-center studies—standard PCA cannot distinguish technical artifacts from biological signals [13].

- Low Replicate Numbers: With few biological replicates per batch, the statistical power to detect batch effects through standard PCA diminishes significantly [11].

Table 1: Scenarios Where Standard PCA Fails to Detect Batch Effects

| Scenario | Impact on PCA | Potential Consequences |
|---|---|---|
| High sample heterogeneity | Biological variation dominates early PCs, pushing batch effects to middle PCs | False biological interpretations; batch-confounded results |
| Confounded batch and biological groups | Inability to distinguish technical from biological variance | Incorrect assignment of batch effects as biological signals |
| Longitudinal studies | Time effects entangled with batch effects | Misattribution of temporal changes to batch effects or vice versa |
| Multi-platform data integration | Platform-specific technical variations appear across multiple PCs | Failure to properly integrate datasets from different technologies |

Enhanced Methods for Detecting Hidden Batch Effects

PCA-Plus: Extensions to Standard PCA

To address the limitations of standard PCA, enhanced methods like PCA-Plus introduce algorithmic extensions that improve batch effect detection [12]. PCA-Plus incorporates several key enhancements:

- Group Centroids: Computes and visualizes the central point for each pre-defined batch or biological group

- Dispersion Rays: Shows the variation and distribution of samples within each group

- Trend Trajectories: Identifies and visualizes temporal or sequential patterns across batches

- Dispersion Separability Criterion (DSC): A novel metric that quantifies the separation between groups while accounting for within-group variation

The DSC metric is particularly valuable as it provides a quantitative measure of batch effect severity. It is defined as $\mathrm{DSC} = D_b / D_w$, where $D_b$ represents the trace of the between-group scatter matrix and $D_w$ represents the trace of the within-group scatter matrix [12]. Higher DSC values indicate greater separation between groups relative to within-group variation, suggesting more pronounced batch effects.
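The ratio can be computed directly from sample coordinates (for example, PC scores). The sketch below follows the definition as stated in the text, with each group's contribution weighted by its share of the samples; that weighting, the function name `dsc`, and the simulated data are assumptions of this example rather than details confirmed by the source.

```python
import numpy as np

def dsc(X, labels):
    """Ratio of between-group to within-group scatter (traces), per the text's definition."""
    X = np.asarray(X, dtype=float)
    labels = np.asarray(labels)
    grand_centroid = X.mean(axis=0)
    d_b = 0.0
    d_w = 0.0
    for lab in np.unique(labels):
        members = X[labels == lab]
        centroid = members.mean(axis=0)
        weight = len(members) / len(X)
        # Trace of between-group scatter: squared distance of group centroid to grand centroid
        d_b += weight * np.sum((centroid - grand_centroid) ** 2)
        # Trace of within-group scatter: mean squared distance of members to their centroid
        d_w += weight * np.sum((members - centroid) ** 2) / len(members)
    return d_b / d_w

# Hypothetical scores: two batches of 20 samples in a 3-dimensional PC space
rng = np.random.default_rng(2)
batch = np.repeat(["A", "B"], 20)
mixed = rng.normal(size=(40, 3))
shifted = mixed.copy()
shifted[batch == "B", 0] += 4.0  # batch offset along the first axis

dsc_mixed = dsc(mixed, batch)
dsc_shifted = dsc(shifted, batch)
print(f"DSC mixed: {dsc_mixed:.3f}, DSC shifted: {dsc_shifted:.3f}")
```

In this toy setting the shifted data give a much larger DSC than the mixed data, matching the interpretation that higher values indicate stronger group separation.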

Alternative Visualization and Quantification Approaches

Beyond PCA-Plus, several other methods have proven effective for detecting batch effects that evade standard PCA:

- t-Distributed Stochastic Neighbor Embedding (t-SNE): This nonlinear dimensionality reduction technique can reveal batch-associated patterns that remain hidden in PCA [14].

- k-Nearest Neighbor Batch Effect Test (kBET): Quantifies batch effects by measuring how well batches mix at the local level of each sample's nearest neighbors [15].

- Principal Variance Component Analysis (PVCA): Partitions total variation in the dataset into components attributable to batch, biological, and other known factors [9].
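To illustrate the local-mixing idea behind kBET, the sketch below compares the batch composition of each sample's k nearest neighbours with the global batch proportions using a Pearson chi-squared test, and reports the fraction of samples for which the test rejects. It is a simplified stand-in, not the kBET package: neighbourhoods exclude the sample itself, no subsampling is done, and the helper names `chi2_sf` and `kbet_rejection_rate` are hypothetical.

```python
import math
import numpy as np

def chi2_sf(x, df):
    """Chi-squared survival function via the recurrence Q(a+1, y) = Q(a, y) + y^a e^-y / Gamma(a+1)."""
    y = x / 2.0
    if df % 2:  # odd degrees of freedom start from Q(1/2, y)
        a, q = 0.5, math.erfc(math.sqrt(y))
    else:       # even degrees of freedom start from Q(1, y)
        a, q = 1.0, math.exp(-y)
    while a < df / 2.0 - 1e-9:
        q += y ** a * math.exp(-y) / math.gamma(a + 1.0)
        a += 1.0
    return q

def kbet_rejection_rate(X, batch, k=20, alpha=0.05):
    """Fraction of samples whose k-neighbourhood batch mix differs from the global mix."""
    X = np.asarray(X, dtype=float)
    batch = np.asarray(batch)
    levels, counts = np.unique(batch, return_counts=True)
    expected = k * counts / counts.sum()
    dist = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=2)
    np.fill_diagonal(dist, np.inf)  # a sample is not its own neighbour
    rejected = 0
    for i in range(len(X)):
        neighbours = np.argsort(dist[i])[:k]
        observed = np.array([(batch[neighbours] == lev).sum() for lev in levels])
        stat = ((observed - expected) ** 2 / expected).sum()
        if chi2_sf(stat, len(levels) - 1) < alpha:
            rejected += 1
    return rejected / len(X)

# Hypothetical data: 100 samples, two interleaved batches, 5 features
rng = np.random.default_rng(3)
batch = np.tile(["A", "B"], 50)
X_mixed = rng.normal(size=(100, 5))
X_separated = X_mixed.copy()
X_separated[batch == "B"] += 10.0  # strong batch shift

rate_mixed = kbet_rejection_rate(X_mixed, batch)
rate_separated = kbet_rejection_rate(X_separated, batch)
print(f"rejection rate mixed: {rate_mixed:.2f}, separated: {rate_separated:.2f}")
```

The rejection rate depends on `k` and `alpha`, which is the parameter sensitivity noted for kBET in the comparison of detection methods.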

Table 2: Methods for Detecting Hidden Batch Effects

| Method | Underlying Principle | Advantages | Limitations |
|---|---|---|---|
| PCA-Plus | Enhanced PCA with group centroids and DSC metric | Quantifiable separation index; maintains PCA interpretability | Requires pre-defined group labels |
| t-SNE | Nonlinear dimensionality reduction | Can reveal complex batch patterns invisible to linear methods | Computationally intensive; harder to interpret |
| kBET | Local neighborhood batch mixing | Quantifies batch effect at local scale | Requires batch labels; sensitive to parameters |
| PVCA | Variance partitioning | Quantifies contribution of known factors | Requires complete metadata |

Advanced Batch Effect Correction Strategies

Reference-Based Correction Methods

For scenarios where batch effects are confounded with biological groups, reference-based methods have demonstrated particular effectiveness:

Ratio-Based Scaling: This method transforms absolute feature values into ratios relative to concurrently profiled reference materials. The approach has shown superior performance in multi-omics studies, especially when batch effects are completely confounded with biological factors [13] [9].

Reference Material Design: The Quartet Project employs multi-omics reference materials from four related cell lines, enabling robust batch effect correction across diverse genomic platforms [13]. When implementing ratio-based correction, expression values of study samples are scaled relative to the reference material processed in the same batch:

Corrected Value = Original Value / Reference Value.

Algorithmic Correction Methods

Multiple batch effect correction algorithms (BECAs) have been developed with varying strengths for different scenarios:

Harmony: This method iteratively clusters cells by similarity and calculates cluster-specific correction factors, demonstrating strong performance across both single-cell and bulk genomic data [13] [16].

ComBat: Utilizing empirical Bayes frameworks, ComBat adjusts for batch effects by modeling them as additive and multiplicative noise. Its performance improves significantly when biological covariates are included in the model [17] [16].

Mutual Nearest Neighbors (MNN): This approach identifies pairs of cells across batches that are mutual nearest neighbors in expression space, using these "anchors" to correct batch effects while preserving biological variation [14].
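The additive and multiplicative components mentioned for ComBat can be illustrated with a plain per-batch location and scale adjustment. The sketch below is deliberately simpler than ComBat: it has no empirical Bayes shrinkage and no biological covariates, so it would also remove biological differences that are unevenly distributed across batches. The function name `location_scale_adjust` and the simulated data are assumptions.

```python
import numpy as np

def location_scale_adjust(Y, batch):
    """Align each batch to the grand mean and pooled within-batch SD, feature by feature."""
    Y = np.asarray(Y, dtype=float)
    batch = np.asarray(batch)
    levels = np.unique(batch)
    grand_mean = Y.mean(axis=0)

    # Pooled within-batch standard deviation per feature
    residuals = Y.copy()
    for lev in levels:
        idx = batch == lev
        residuals[idx] -= Y[idx].mean(axis=0)
    pooled_sd = np.sqrt((residuals ** 2).sum(axis=0) / (len(Y) - len(levels)))

    adjusted = np.empty_like(Y)
    for lev in levels:
        idx = batch == lev
        batch_mean = Y[idx].mean(axis=0)      # additive (location) effect
        batch_sd = Y[idx].std(axis=0, ddof=1)  # multiplicative (scale) effect
        adjusted[idx] = (Y[idx] - batch_mean) / batch_sd * pooled_sd + grand_mean
    return adjusted

# Hypothetical data: three batches of 10 samples with different shifts and scales
rng = np.random.default_rng(4)
batch = np.repeat(["A", "B", "C"], 10)
Y = rng.normal(size=(30, 4))
Y[batch == "B"] = Y[batch == "B"] * 2.0 + 3.0  # scaled and shifted batch
Y[batch == "C"] -= 1.0                          # shifted batch

adjusted = location_scale_adjust(Y, batch)
means = np.array([adjusted[batch == lev].mean(axis=0) for lev in "ABC"])
sds = np.array([adjusted[batch == lev].std(axis=0, ddof=1) for lev in "ABC"])
print(means.round(3))
print(sds.round(3))
```

After adjustment every batch shares the same per-feature mean and standard deviation. With small batches the raw per-batch estimates used here are noisy, which is the situation the empirical Bayes step is meant to stabilize.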

Batch Effect Correction Workflow

Experimental Protocols for Robust Batch Effect Management

Protocol 1: Comprehensive Batch Effect Assessment

Purpose: Systematically evaluate batch effects in genomic data when standard PCA suggests minimal technical artifacts.

Materials:

- Normalized genomic data matrix (e.g., gene expression, methylation)

- Complete metadata including batch identifiers and biological covariates

- R or Python statistical environment

Procedure:

- Standard PCA Visualization

  - Perform conventional PCA and plot PC1 vs. PC2, PC2 vs. PC3, and PC1 vs. PC3

  - Color points by known batch variables and biological conditions

  - Document apparent clustering patterns

- Enhanced PCA Analysis

  - Implement PCA-Plus with DSC calculation [12]

  - Compute group centroids for each batch

  - Calculate DSC metric with permutation testing for significance

  - Retain components explaining >80% cumulative variance for full assessment

- Alternative Visualization

  - Apply t-SNE with multiple perplexity values

  - Color resulting embeddings by batch and biological variables

  - Compare patterns across visualizations

- Quantitative Assessment

  - Perform kBET analysis to quantify local batch mixing

  - Conduct PVCA to partition variance components

  - Calculate correlation between replicates within and across batches

Interpretation: Significant batch effects are indicated by DSC p-value <0.05, kBET rejection rate >0.2, or batch accounting for >15% variance in PVCA.
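The permutation test on the DSC metric used in this protocol can be sketched as follows: batch labels are shuffled repeatedly, the DSC is recomputed each time, and the p-value is the share of shuffled values at least as large as the observed one. The sample-share weighting inside `dsc`, the add-one p-value estimate, and all names and data here are assumptions of this example.

```python
import numpy as np

def dsc(X, labels):
    """Between-group over within-group scatter (traces), weighted by group share."""
    grand = X.mean(axis=0)
    d_b = d_w = 0.0
    for lab in np.unique(labels):
        members = X[labels == lab]
        centroid = members.mean(axis=0)
        weight = len(members) / len(X)
        d_b += weight * np.sum((centroid - grand) ** 2)
        d_w += weight * np.sum((members - centroid) ** 2) / len(members)
    return d_b / d_w

def dsc_permutation_pvalue(X, labels, n_perm=199, seed=0):
    """Observed DSC and a permutation p-value obtained by shuffling the labels."""
    X = np.asarray(X, dtype=float)
    labels = np.asarray(labels)
    rng = np.random.default_rng(seed)
    observed = dsc(X, labels)
    exceed = 0
    for _ in range(n_perm):
        if dsc(X, rng.permutation(labels)) >= observed:
            exceed += 1
    # Add-one estimate keeps the p-value strictly above zero
    return observed, (exceed + 1) / (n_perm + 1)

# Hypothetical PC scores with a batch offset on the first component
rng = np.random.default_rng(5)
batch = np.repeat(["A", "B"], 20)
scores = rng.normal(size=(40, 3))
scores[batch == "B", 0] += 4.0

observed_dsc, p_value = dsc_permutation_pvalue(scores, batch)
print(f"DSC = {observed_dsc:.2f}, permutation p = {p_value:.3f}")
```

The smallest attainable p-value is 1 / (n_perm + 1), so the number of permutations should be chosen with the intended significance threshold in mind.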

Protocol 2: Reference Material-Based Batch Correction

Purpose: Implement ratio-based batch effect correction using reference materials.

Materials:

- Study samples with reference materials processed in parallel batches

- Quantified feature-level data (e.g., gene expression values)

- Computing environment with statistical software

Procedure:

- Data Preparation

  - Organize data by processing batch

  - Verify reference material presence in each batch

  - Log-transform expression data if necessary

- Ratio Calculation

  - For each feature in every study sample, compute Ratio = Study Sample Value / Reference Material Value (on log-transformed data, subtract the reference value instead of dividing)

  - Use median reference value if multiple reference replicates available

  - Handle zero values with appropriate pseudo-counts

- Batch Effect Assessment

  - Apply PCA to ratio-transformed data

  - Compare with pre-correction visualization

  - Quantify improvement using DSC or similar metrics

- Validation

  - Assess biological signal preservation through known biological groups

  - Evaluate technical artifact reduction through replicate correlation

Notes: This method is particularly effective for multi-omics studies and confounded batch-group scenarios [13].
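The ratio calculation in Protocol 2 can be sketched in a few lines. The example works on the log2 scale, where dividing by the reference becomes subtracting the per-batch median reference profile. The function name `ratio_correct`, its arguments, and the toy values are hypothetical; the pseudo-count is set to zero in the demonstration only because the toy data contain no zeros.

```python
import numpy as np

def ratio_correct(values, batch, is_reference, pseudo_count=1.0):
    """Return log2(study / reference) per feature, using each batch's median reference."""
    values = np.asarray(values, dtype=float)
    batch = np.asarray(batch)
    is_reference = np.asarray(is_reference, dtype=bool)
    log_values = np.log2(values + pseudo_count)  # pseudo-count guards against log2(0)
    corrected = np.full_like(log_values, np.nan)
    for lev in np.unique(batch):
        in_batch = batch == lev
        ref_rows = in_batch & is_reference
        if not ref_rows.any():
            raise ValueError(f"batch {lev!r} has no reference sample")
        ref_profile = np.median(log_values[ref_rows], axis=0)
        study_rows = in_batch & ~is_reference
        # log2(a / b) = log2(a) - log2(b)
        corrected[study_rows] = log_values[study_rows] - ref_profile
    return corrected[~is_reference]

# Toy data: the same study sample and three reference replicates measured in two batches,
# with a feature-specific multiplicative batch factor applied to everything in batch B
references = np.array([[100.0, 200.0, 50.0], [110.0, 190.0, 54.0], [90.0, 210.0, 46.0]])
study = np.array([[120.0, 180.0, 60.0]])
factors = {"A": np.array([1.0, 1.0, 1.0]), "B": np.array([3.0, 0.5, 2.0])}

rows, batch, is_reference = [], [], []
for lev, factor in factors.items():
    rows.append(references * factor)
    rows.append(study * factor)
    batch += [lev] * 4
    is_reference += [True, True, True, False]
values = np.vstack(rows)

ratios = ratio_correct(values, batch, is_reference, pseudo_count=0.0)
print(ratios.round(3))  # one row per study sample, in input order
```

Because the batch factor scales the study sample and the reference replicates alike, it cancels in the ratio, and both batches yield the same corrected profile for the shared study sample.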

Protocol 3: Algorithmic Batch Effect Correction for Complex Datasets

Purpose: Apply and compare computational batch correction methods.

Materials:

- Normalized genomic data with batch labels

- Biological covariates of interest

- High-performance computing resources for resource-intensive methods

Procedure:

- Data Preprocessing

  - Select highly variable genes (top 5000 by default) [14]

  - Scale data using multiBatchNorm or equivalent approach

  - Split into discovery and validation sets if possible

- Method Application

  - Apply multiple correction methods (Harmony, ComBat, MNN, etc.)

  - For ComBat, run with and without biological covariates

  - For deep learning methods (e.g., scVI), ensure adequate computational resources

- Performance Evaluation

  - Visualize corrected data using PCA and t-SNE

  - Quantify batch mixing using kBET or similar metrics

  - Assess biological preservation through clustering of known cell types

  - Evaluate method robustness via replicate correlation

- Method Selection

  - Choose method that optimally balances batch removal and biological signal preservation

  - Consider computational efficiency for large datasets

Troubleshooting: If over-correction is suspected (loss of biological signal), prioritize methods that incorporate biological covariates or use more conservative parameters.

Table 3: Research Reagent Solutions for Batch Effect Management

| Reagent/Resource | Function | Application Notes |
|---|---|---|
| Quartet Reference Materials | Multi-omics quality control and ratio-based correction | Enables ratio-based scaling across DNA, RNA, protein, and metabolite profiling [13] |
| Cell Line Controls | Batch effect monitoring through consistent biological material | Include in every processing batch to track technical variation |
| Universal RNA References | Standardization of transcriptomic measurements | Particularly valuable for cross-laboratory studies |
| Synthetic Spike-in Controls | Technical variation assessment | Add known quantities of synthetic sequences to distinguish technical from biological variation |

Standard PCA represents a necessary but insufficient tool for comprehensive batch effect detection in genomics research. Its fundamental limitation lies in the variance-maximization principle that inevitably misses batch effects when they constitute intermediate rather than dominant sources of variation. This oversight can lead to biologically misleading conclusions and compromised analytical outcomes.

The enhanced methodologies presented here—including PCA-Plus with its DSC metric, reference material-based ratio correction, and sophisticated algorithms like Harmony and ComBat—provide researchers with a robust toolkit for identifying and correcting these hidden technical artifacts. The experimental protocols offer practical guidance for implementation across diverse genomic research scenarios.

As genomic studies grow in scale and complexity, with increasing integration of multi-omics data from multiple centers, rigorous approaches to batch effect management become increasingly critical. By moving beyond standard PCA and adopting the comprehensive framework outlined here, researchers can significantly enhance the reliability and reproducibility of their genomic findings, ensuring that biological signals remain distinct from technical artifacts in even the most challenging research contexts.

In genomics research, batch effects are technical variations introduced during the experimental process that are unrelated to the biological signals of interest. These non-biological variations arise from differences in reagent lots, processing times, equipment calibration, laboratory personnel, or sequencing platforms [18]. In large-scale omics studies, such as those using single-cell RNA sequencing (scRNA-seq), batch effects can confound biological variation, reduce statistical power, and potentially lead to misleading conclusions if not properly addressed [18] [19]. The detection and correction of these effects are therefore crucial steps in ensuring data reliability and reproducibility.

Visual diagnostic tools play a fundamental role in the initial detection and assessment of batch effects. Dimensionality reduction techniques – including Principal Component Analysis (PCA), t-distributed Stochastic Neighbor Embedding (t-SNE), and Uniform Manifold Approximation and Projection (UMAP) – transform high-dimensional genomic data into two- or three-dimensional spaces that can be visually inspected [20] [6] [21]. These methods allow researchers to observe systematic patterns in their data that may indicate the presence of batch effects before applying quantitative metrics or correction algorithms. When samples cluster by batch rather than by biological condition, this provides strong visual evidence of batch effects that require remediation [6].

Theoretical Foundations of Visualization Methods

Principal Component Analysis (PCA)

PCA is a linear dimensionality reduction technique that projects data onto the directions of maximum variance, called principal components [20] [19]. It operates by computing the eigenvectors of the covariance matrix of the data, with the first component capturing the greatest variance, the second component the second greatest, and so on. For batch effect detection, PCA is computationally efficient and effective when batch effects exhibit linear patterns [19]. However, its linear nature makes it less capable of capturing complex nonlinear batch effects that are common in genomic data [19].

t-Distributed Stochastic Neighbor Embedding (t-SNE)

t-SNE is a nonlinear probabilistic method that minimizes the Kullback-Leibler divergence between probability distributions in high and low dimensions [20]. It emphasizes the preservation of local data structures, making it particularly effective for visualizing distinct cell types or sample groups. However, t-SNE may not preserve global structures well, and its interpretation can be complicated by parameters such as perplexity that significantly affect the resulting visualization [20].

Uniform Manifold Approximation and Projection (UMAP)

UMAP is based on Riemannian geometry and fuzzy simplicial set theory [20]. It constructs a weighted neighbor-graph representation of the data manifold and optimizes a low-dimensional layout that preserves both local and some global structures [20]. UMAP generally offers faster runtime than t-SNE and often provides better preservation of global data structure, making it increasingly popular for single-cell genomics visualization [20] [21].

Table 1: Comparative Characteristics of Dimensionality Reduction Methods

| Feature | PCA | t-SNE | UMAP |
|---|---|---|---|
| Type | Linear | Nonlinear | Nonlinear |
| Preservation | Global variance | Local structure | Local & global structure |
| Speed | Fast | Slow | Moderate to Fast |
| Deterministic | Yes | No | No (stochastic; reproducible only with a fixed random seed) |
| Parameters | Few | Perplexity, iterations | Neighbors, min distance |
| Batch Effect Detection | Effective for linear patterns | Effective for local patterns | Effective for complex patterns |

Experimental Protocols for Batch Effect Detection

Data Preprocessing Requirements

Prior to applying visualization techniques, proper data preprocessing is essential. For scRNA-seq data, this typically includes quality control filtering to remove low-quality cells, normalization to account for sequencing depth variations, logarithmic transformation to stabilize variance, and selection of highly variable genes that drive biological variation [20] [21]. These steps help ensure that technical artifacts do not dominate the visualization and that the resulting plots reflect true biological signals and batch effects rather than preprocessing artifacts.
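The normalization, log-transformation, and gene-selection steps described above can be sketched with NumPy alone. This is a simplified illustration: established toolkits select highly variable genes with mean-variance-aware procedures, whereas this example simply ranks genes by variance of the log-normalized values. The function name `preprocess_counts`, the scaling target of 10,000, and the simulated counts are assumptions.

```python
import numpy as np

def preprocess_counts(counts, n_top_genes=2000, scale=1e4):
    """Depth-normalize, log-transform, and keep the most variable genes.

    `counts` is a cells x genes matrix of raw counts.
    Returns (log-normalized matrix restricted to selected genes,
             selected gene indices, boolean mask of retained cells).
    """
    counts = np.asarray(counts, dtype=float)
    depth = counts.sum(axis=1)
    keep_cells = depth > 0  # minimal quality filter: drop empty cells
    # Scale every cell to the same total so sequencing depth no longer differs
    normalized = counts[keep_cells] / depth[keep_cells, None] * scale
    logged = np.log1p(normalized)  # log(1 + x) stabilizes variance and tolerates zeros
    variances = logged.var(axis=0, ddof=1)
    top = np.sort(np.argsort(variances)[::-1][:n_top_genes])
    return logged[:, top], top, keep_cells

# Hypothetical counts: 6 cells x 30 genes; cell 1 is cell 0 sequenced three times deeper
rng = np.random.default_rng(6)
counts = rng.poisson(5, size=(6, 30)).astype(float)
counts[1] = 3 * counts[0]

logged, selected_genes, keep_cells = preprocess_counts(counts, n_top_genes=10)
print(logged.shape, selected_genes)
```

The depth-tripled copy ends up identical to the original cell after normalization, which is the intended effect of removing sequencing-depth differences before visualization.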

Protocol for PCA-Based Batch Effect Detection

- Input Preparation: Begin with a normalized gene expression matrix (cells × genes) and associated batch metadata.

- Feature Selection: Use highly variable genes (typically 2,000-5,000) as input features to focus on biologically relevant signals [21].

- PCA Computation: Perform PCA on the standardized expression matrix using singular value decomposition.

- Variance Examination: Check the proportion of variance explained by each principal component, noting components that correlate with batch metadata.

- Visualization: Create scatter plots of the first few principal components (e.g., PC1 vs. PC2, PC2 vs. PC3) colored by batch labels.

- Interpretation: Look for clear separation of samples by batch rather than biological condition in the PCA plot, which indicates batch effects [6].
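The PCA protocol above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not a reference implementation: the function name `pca_batch_association` and the toy data are hypothetical, and the per-component association with batch is measured here with a one-way ANOVA R² (between-batch sum of squares over total sum of squares), which is one reasonable choice among several.

```python
import numpy as np

def pca_batch_association(X, batch, n_components=5):
    """Return explained-variance ratios and per-PC R^2 with batch.

    X: (samples x features) normalized expression matrix.
    batch: length-n array of batch labels.
    Hypothetical helper for illustration only.
    """
    X = np.asarray(X, dtype=float)
    batch = np.asarray(batch)
    # Standardize each feature; constant features are left at zero.
    Xc = X - X.mean(axis=0)
    sd = Xc.std(axis=0, ddof=1)
    Xs = Xc / np.where(sd > 0, sd, 1.0)
    # PCA via singular value decomposition: PC scores are U * S.
    U, S, _ = np.linalg.svd(Xs, full_matrices=False)
    scores = (U * S)[:, :n_components]
    var_ratio = (S ** 2 / np.sum(S ** 2))[:n_components]
    # One-way ANOVA R^2 per component: between-batch SS / total SS.
    r2 = np.zeros(scores.shape[1])
    for j in range(scores.shape[1]):
        pc = scores[:, j]
        total_ss = np.sum((pc - pc.mean()) ** 2)
        between_ss = sum(
            np.sum(batch == b) * (pc[batch == b].mean() - pc.mean()) ** 2
            for b in np.unique(batch)
        )
        r2[j] = between_ss / total_ss if total_ss > 0 else 0.0
    return var_ratio, r2

# Toy example (synthetic data): batch B carries an additive shift.
rng = np.random.default_rng(0)
batch = np.repeat(["A", "B"], 30)
X = rng.normal(size=(60, 200))
X[batch == "B"] += 1.0
var_ratio, r2 = pca_batch_association(X, batch)
print("variance explained:", np.round(var_ratio, 3))
print("R^2 with batch:    ", np.round(r2, 3))
```

A component with both a large explained-variance ratio and a high R² with batch is a candidate batch-driven axis; plotting the corresponding scores colored by batch is the visual counterpart of this check.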

Protocol for t-SNE and UMAP-Based Batch Effect Detection

- Input Preparation: Use the same normalized expression matrix and batch metadata as for PCA.

- Initial Dimensionality Reduction: First reduce dimensions with PCA (e.g., 50 principal components) to denoise data and reduce computational burden [21].

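- Parameter Optimization: Tune the key parameters of each method (perplexity and number of iterations for t-SNE; number of neighbors and minimum distance for UMAP), since these choices can significantly affect the resulting embedding.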

- Embedding Generation: Run t-SNE or UMAP on the PCA-reduced data to generate 2D coordinates for each cell.

- Visualization: Create scatter plots of the t-SNE or UMAP embeddings, coloring points by batch labels and optionally by cell type labels.

- Interpretation: Assess whether cells cluster primarily by batch rather than biological cell type, which indicates batch effects [6].

The following workflow diagram illustrates the complete batch effect detection process:

Quantitative Validation of Visual Findings

While visual inspection provides initial evidence of batch effects, quantitative metrics offer objective validation. The most commonly used metrics include:

- k-nearest neighbor Batch Effect Test (kBET): Measures batch mixing by comparing local versus global batch label distributions using a chi-squared test [19] [21]. Lower rejection rates indicate better batch mixing.

- Local Inverse Simpson's Index (LISI): Quantifies the diversity of batches in local neighborhoods, with higher scores indicating better integration [19] [21]. Integration LISI (iLISI) specifically measures batch mixing.

- Average Silhouette Width (ASW): Evaluates cluster compactness and separation, with separate calculations for batch and biological labels [20] [21]. Batch ASW should be low while cell type ASW should be high after successful correction.

- Adjusted Rand Index (ARI): Measures similarity between clustering results and known cell type annotations, with higher values indicating better preservation of biological signals [22] [21].

Table 2: Quantitative Metrics for Batch Effect Assessment

| Metric | Measurement Target | Ideal Value | Interpretation |

|---|---|---|---|

| kBET Rejection Rate | Batch mixing in local neighborhoods | < 0.2 | Lower = better mixing |

| iLISI Score | Diversity of batches in local neighborhoods | > 1.5 | Higher = better integration |

| Batch ASW | Separation by batch | Close to 0 | Lower = less batch effect |

| Cell Type ASW | Separation by cell type | > 0.5 | Higher = biological preservation |

| ARI | Agreement with cell type labels | > 0.7 | Higher = biological preservation |
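To make the idea behind iLISI concrete, the sketch below computes an unweighted inverse Simpson's index of batch labels over each cell's k nearest neighbors. This is a deliberate simplification: the published LISI uses perplexity-based neighbor weights, which are omitted here, and the function name `simple_ilisi` and the toy embeddings are hypothetical.

```python
import numpy as np

def simple_ilisi(embedding, batch, k=15):
    """Unweighted inverse Simpson's index of batch labels among k neighbors.

    Simplified stand-in for iLISI (no perplexity-based weighting).
    Values range from 1 (one batch per neighborhood) to the number of batches.
    """
    emb = np.asarray(embedding, dtype=float)
    batch = np.asarray(batch)
    labels, codes = np.unique(batch, return_inverse=True)
    # Pairwise squared Euclidean distances (O(n^2) memory; small data only).
    sq = np.sum(emb ** 2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * emb @ emb.T
    np.fill_diagonal(d2, np.inf)  # exclude each cell from its own neighborhood
    nn = np.argsort(d2, axis=1)[:, :k]
    scores = np.empty(len(emb))
    for i, idx in enumerate(nn):
        p = np.bincount(codes[idx], minlength=len(labels)) / k
        scores[i] = 1.0 / np.sum(p ** 2)
    return scores

# Toy example (synthetic 2D embeddings): well-mixed versus separated batches.
rng = np.random.default_rng(1)
batch = np.repeat(["A", "B"], 100)
mixed = rng.normal(size=(200, 2))
separated = mixed.copy()
separated[batch == "B"] += 50.0
print("median score, mixed:    ", np.median(simple_ilisi(mixed, batch)))
print("median score, separated:", np.median(simple_ilisi(separated, batch)))
```

With two batches, scores near 2 indicate neighborhoods containing both batches in similar proportion, while scores near 1 indicate neighborhoods dominated by a single batch.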

Advanced Detection Methods and Limitations

Addressing Nonlinear Batch Effects with BEENE

Traditional PCA has limitations in detecting nonlinear batch effects, which are common in complex genomic datasets [19]. To address this challenge, Batch Effect Estimation using Nonlinear Embedding (BEENE) employs a deep autoencoder network that learns both batch and biological variables simultaneously [19]. BEENE generates embeddings that are more sensitive to both linear and nonlinear batch effects compared to PCA, providing enhanced detection capability for complex batch effects that might be missed by linear methods [19].

Limitations and Considerations

Each visualization method has limitations that researchers must consider. PCA may miss complex nonlinear batch effects [19]. t-SNE results can vary between runs due to stochasticity and are sensitive to parameter choices [20]. UMAP may create artificial connections between distinct clusters, potentially obscuring true biological separation [20]. Additionally, over-reliance on visual inspection without quantitative validation can lead to subjective interpretations [19]. Therefore, a combination of multiple visualization methods and quantitative metrics is recommended for comprehensive batch effect assessment [6] [21].

Research Reagent Solutions

Table 3: Essential Tools for Batch Effect Analysis in Genomics Research

| Tool/Resource | Function | Application Context |

|---|---|---|

| BEEx [23] | Open-source platform for qualitative and quantitative batch effect assessment in medical images | Digital pathology, radiology |

| BEENE [19] | Deep autoencoder for detecting nonlinear batch effects | scRNA-seq data with complex batch effects |

| Harmony [16] [21] | Batch effect correction using iterative clustering | scRNA-seq, image-based profiling |

| Seurat [16] [21] | Integration method using CCA or RPCA and mutual nearest neighbors | scRNA-seq, multi-modal genomics |

| Scanpy [20] | Python-based toolkit for single-cell data analysis | scRNA-seq preprocessing and visualization |

| TCGA Batch Effects Viewer [24] | Web-based platform for assessing batch effects in TCGA data | Cancer genomics, multi-institutional studies |

Effective batch effect detection using PCA, t-SNE, and UMAP visualization is a critical first step in ensuring the reliability of genomic analyses. Each method offers complementary strengths: PCA provides linear efficiency, t-SNE reveals local structure, and UMAP balances local and global patterns. When combined with quantitative metrics like kBET and LISI, these visual tools form an essential component of rigorous genomic data quality assessment. As batch effects grow more complex in large-scale multi-omics studies, advanced methods like BEENE that address nonlinear patterns will become increasingly important for maintaining data quality and biological validity in genomics research.

In genomics research, batch effects are a pervasive challenge, defined as systematic non-biological variations between groups of samples processed under different conditions, such as different times, laboratories, or technicians [25]. These technical artifacts can confound biological signals, leading to misleading conclusions in downstream analyses. Principal Component Analysis (PCA) is a common visual tool for initial batch effect detection; however, its utility is limited because it identifies directions of maximum variance, which reveal batch effects only when batch is among the largest sources of variation [25] [26]. This limitation underscores the need for robust, quantitative statistical metrics that reliably identify and measure batch effects before correction methods such as ComBat or Harmony are applied [27] [21] [28]. This document provides detailed application notes and protocols for three key metrics, the Dispersion Separability Criterion (DSC), guided PCA (gPCA), and findBATCH, enabling researchers to make informed decisions about the presence and severity of batch effects in their genomic data.

The following table summarizes the core characteristics of the three quantitative batch effect assessment metrics discussed in this protocol.

Table 1: Overview of Quantitative Batch Effect Assessment Metrics

| Metric | Full Name | Underlying Principle | Primary Output | Key Reference |

|---|---|---|---|---|

| DSC | Dispersion Separability Criterion | Ratio of between-batch to within-batch dispersion | A continuous positive value (DSC) and an empirical p-value | [24] |

| gPCA | guided Principal Component Analysis | Modifies PCA to be guided by a batch indicator matrix, comparing variance to unguided PCA | Test statistic (δ) and a p-value from a permutation test | [25] [26] |

| findBATCH | finding Batch Effects | Evaluates batch effects based on Probabilistic Principal Component and Covariates Analysis (PPCCA) | A statistical measure for diagnosing and quantifying batch effects | [29] |

The Scientist's Toolkit: Essential Research Reagents and Software

Successful implementation of the assessment protocols requires specific computational tools and resources.

Table 2: Key Research Reagent Solutions for Batch Effect Assessment

| Item Name | Function/Application | Implementation |

|---|---|---|

| gPCA R Package | Provides functions to perform the gPCA method and compute the δ statistic. | Available via CRAN [25] |

| MBatch R Package | Contains algorithms (e.g., ANOVA, Empirical Bayes, Median Polish) for assessing and correcting batch effects, and is associated with the TCGA Batch Effects Viewer. | R package [24] [28] |

| TCGA Batch Effects Viewer | A web-based platform to quantitatively and visually assess batch effects in TCGA data, including DSC metric calculation. | Online tool [24] |

| Harman R Package | An alternative batch effect correction and diagnosis tool that maximizes batch noise removal while constraining the risk of signal loss. | Available on Bioconductor [30] |

| findBATCH Algorithm | A method to evaluate batch effect based on probabilistic principal component and covariates analysis (PPCCA). | Methodology described in literature [29] |

Detailed Methodologies and Experimental Protocols

Dispersion Separability Criterion (DSC)

The DSC metric quantifies batch effect by measuring the ratio of dispersion between batches to the dispersion within batches [24].

Mathematical Foundation

The DSC is calculated using the following formulas:

- Between-batch dispersion (\(D_b\)): \( D_b = \sqrt{\operatorname{trace}(S_b)} \)

- Within-batch dispersion (\(D_w\)): \( D_w = \sqrt{\operatorname{trace}(S_w)} \)

- DSC: \( \mathrm{DSC} = D_b / D_w \)

Here, \(S_b\) is the between-batch scatter matrix and \(S_w\) is the within-batch scatter matrix, as defined in Dy et al., 2004 [24]. \(D_w\) represents the average distance between samples within a batch and the batch's centroid, while \(D_b\) represents the average distance between batch centroids and the global mean.

Interpretation and Decision Guidelines

Table 3: Interpreting DSC Values and Associated Actions

| DSC Value | p-value | Interpretation | Recommended Action |

|---|---|---|---|

| < 0.5 | Any | Batch effects are not strong. | Proceed with analysis; correction may be unnecessary. |

| > 0.5 | < 0.05 | Significant batch effects are likely present. | Consider batch effect correction before analysis. |

| > 1 | < 0.05 | Strong batch effects are present. | Batch effect correction is strongly recommended. |

Note: The p-value is derived empirically via permutation tests (e.g., 1000 permutations). Both the DSC value and its p-value should be considered for a robust assessment [24].

Experimental Protocol

Procedure:

- Input Data Preparation: Start with a normalized data matrix (e.g., gene expression counts) and a metadata file specifying the batch identifier for each sample.

- DSC Calculation: a. Compute the global mean feature vector across all samples. b. For each batch, calculate the batch centroid (mean feature vector of samples within the batch). c. Compute \(S_b\), the between-batch scatter matrix. d. For each sample, calculate its deviation from its respective batch centroid. Compute \(S_w\), the within-batch scatter matrix. e. Calculate \(D_b\) and \(D_w\) as the square root of the trace of \(S_b\) and \(S_w\), respectively. f. Compute the DSC statistic.

- Significance Testing via Permutation: a. Randomly permute the batch labels across all samples. b. Recalculate the DSC statistic with the permuted labels. c. Repeat this process a large number of times (e.g., M=1000) to build a null distribution of DSC under the hypothesis of no batch effects. d. The empirical p-value is the proportion of permuted DSC values that are greater than or equal to the observed DSC value.

- Decision: Use Table 3 to interpret the results and decide whether batch effect correction is needed.
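The DSC procedure above can be sketched as follows. This is a minimal illustration, not the MBatch implementation: the function names `dsc` and `dsc_permutation_test` are hypothetical, and the scatter matrices are assumed to be weighted by batch proportion (each batch contributes in proportion to its sample count), which the text does not specify. Only the traces are needed, so the full scatter matrices are never formed.

```python
import numpy as np

def dsc(X, batch):
    """Dispersion Separability Criterion: sqrt(trace(S_b)) / sqrt(trace(S_w)).

    Assumes proportion-weighted scatter matrices (an assumption of this sketch).
    X: (samples x features); batch: length-n labels.
    """
    X = np.asarray(X, dtype=float)
    batch = np.asarray(batch)
    n = X.shape[0]
    global_mean = X.mean(axis=0)
    tr_sb = 0.0  # trace of between-batch scatter
    tr_sw = 0.0  # trace of within-batch scatter
    for b in np.unique(batch):
        Xb = X[batch == b]
        centroid = Xb.mean(axis=0)
        tr_sb += (len(Xb) / n) * np.sum((centroid - global_mean) ** 2)
        tr_sw += np.sum((Xb - centroid) ** 2) / n
    return np.sqrt(tr_sb) / np.sqrt(tr_sw)

def dsc_permutation_test(X, batch, n_perm=1000, seed=0):
    """Empirical p-value: share of permuted DSC values >= the observed DSC."""
    rng = np.random.default_rng(seed)
    batch = np.asarray(batch)
    observed = dsc(X, batch)
    null = np.array([dsc(X, rng.permutation(batch)) for _ in range(n_perm)])
    return observed, float(np.mean(null >= observed))

# Toy example (synthetic data): three batches with increasing additive shifts.
rng = np.random.default_rng(2)
batch = np.repeat([0, 1, 2], 20)
X_null = rng.normal(size=(60, 50))
X = X_null + batch[:, None] * 1.0
dsc_obs, p_value = dsc_permutation_test(X, batch, n_perm=200)
print("DSC with batch shift:", round(dsc_obs, 3), "p =", p_value)
print("DSC without shift:   ", round(dsc(X_null, batch), 3))
```

The observed value and its permutation p-value can then be read against Table 3.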

guided Principal Component Analysis (gPCA)

gPCA is an extension of traditional PCA that incorporates a batch indicator matrix to directly guide the decomposition towards variance associated with batch [25].

Mathematical Foundation and Test Statistic

The core of gPCA involves performing singular value decomposition (SVD) not on the data matrix \(X\) itself, but on \(Y'X\), where \(Y\) is the batch indicator matrix. This guides the analysis to find components that separate batches [25].

The primary test statistic, δ, quantifies the proportion of variance attributable to batch effects: \[ \delta = \frac{\text{Variance of 1st PC from gPCA}}{\text{Variance of 1st PC from unguided PCA}} = \frac{\lambda_g}{\lambda_u} \] where \(\lambda_g\) and \(\lambda_u\) are the first eigenvalues from the gPCA and unguided PCA, respectively [25]. A δ value near 1 implies a large batch effect.

The percentage of total variation explained by batch can be estimated as: \[ \%\,\text{Var} = \frac{\sum_i \lambda_{g,i}}{\sum_i \lambda_{u,i}} \times 100\% \] where the summation is over all principal components [25].

Experimental Protocol

Procedure:

- Data Preprocessing: Center the data matrix \(X\). Filter non-informative features (e.g., retain the 1000 most variable probes) to reduce noise [25].

- Unguided PCA: Perform standard SVD on the centered matrix \(X\) to obtain the eigenvalues (\(\lambda_u\)) for the unguided principal components.

- Guided PCA (gPCA): a. Construct the batch indicator matrix \(Y\). b. Perform SVD on the matrix \(Y'X\) to obtain the guided eigenvalues (\(\lambda_g\)).

- Calculate the δ Statistic: Compute δ using the first eigenvalues from steps 2 and 3.

- Significance Testing via Permutation: a. Permute the batch assignment vector. b. Recompute the δ statistic with the permuted batch labels. c. Repeat this process M times (e.g., M=1000) to create a permutation distribution for δ under the null hypothesis. d. Calculate a one-sided p-value as the proportion of permuted δ values that are greater than or equal to the observed δ.

- Interpretation: A significant p-value (e.g., < 0.05) indicates the presence of a statistically significant batch effect.
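The gPCA procedure above can be sketched with NumPy as follows. This is an illustrative sketch rather than the gPCA R package: the function names are hypothetical, and the first-component variances are assumed to be the variances of the centered data projected onto the first right singular vector of \(Y'X\) (guided) and of \(X\) (unguided). Under that reading, δ is bounded above by 1.

```python
import numpy as np

def gpca_delta(X, batch):
    """delta = var(X v_g) / var(X v_u) for the first guided/unguided loadings.

    X: (samples x features); batch: length-n labels.
    Interpretation of the eigenvalue ratio is an assumption of this sketch.
    """
    X = np.asarray(X, dtype=float)
    batch = np.asarray(batch)
    Xc = X - X.mean(axis=0)
    # Batch indicator matrix Y (samples x batches), one column per batch.
    Y = (batch[:, None] == np.unique(batch)[None, :]).astype(float)
    # First right singular vectors: unguided (X) and guided (Y'X).
    v_u = np.linalg.svd(Xc, full_matrices=False)[2][0]
    v_g = np.linalg.svd(Y.T @ Xc, full_matrices=False)[2][0]
    return np.var(Xc @ v_g, ddof=1) / np.var(Xc @ v_u, ddof=1)

def gpca_permutation_test(X, batch, n_perm=1000, seed=0):
    """One-sided p-value: share of permuted deltas >= the observed delta."""
    rng = np.random.default_rng(seed)
    batch = np.asarray(batch)
    observed = gpca_delta(X, batch)
    null = np.array(
        [gpca_delta(X, rng.permutation(batch)) for _ in range(n_perm)]
    )
    return observed, float(np.mean(null >= observed))

# Toy example (synthetic data): two batches, batch B shifted on all features.
rng = np.random.default_rng(3)
batch = np.repeat(["A", "B"], 20)
X = rng.normal(size=(40, 100))
X[batch == "B"] += 1.5
delta, p_value = gpca_permutation_test(X, batch, n_perm=200)
print("delta =", round(delta, 3), "p =", p_value)
```

For real data, the feature filtering described in the preprocessing step (for example, keeping the most variable probes) would be applied before calling these functions.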

findBATCH

The findBATCH algorithm offers a novel approach to diagnosing and quantifying batch effects using a probabilistic framework [29].

findBATCH is based on Probabilistic Principal Component and Covariates Analysis (PPCCA). This method integrates the assessment of batch effects directly into a probabilistic model for dimensionality reduction, allowing for a more formal statistical assessment of the influence of batch covariates on the high-dimensional data structure.

The following diagram illustrates the logical workflow for applying and interpreting these three batch effect assessment metrics.

Diagram 1: Batch effect assessment workflow.

Experimental Protocol

Procedure:

- Input: A normalized genomic data matrix (e.g., from microarray or RNA-seq) and a covariate matrix that includes batch identifiers and potentially other biological factors.

- Model Fitting: Apply the PPCCA model to the data. This model jointly estimates the principal components and the effects of the provided covariates (like batch) on the data.

- Batch Effect Diagnosis: The model output provides a statistical measure quantifying the extent to which batch covariates explain the variance in the data. A stronger association indicates a more substantial batch effect.

- Correction (Optional): The same PPCCA framework underlying findBATCH can be extended to provide a correction method, correctBATCH, which aims to remove the identified batch effects [29].

Integrated Application Workflow

For a comprehensive assessment, it is advisable to use these metrics in concert, as they probe different aspects of batch effects.

- Exploratory Analysis: Begin with a standard PCA plot colored by batch to visually inspect for obvious clustering by batch.

- Quantitative Assessment: a. Run the gPCA test to obtain a p-value for the presence of any batch effect. b. Calculate the DSC metric and its p-value to quantify the strength and significance of batch separation relative to within-batch variation. c. Apply findBATCH to leverage a probabilistic model for a complementary assessment.

- Holistic Decision Making: Synthesize results from all metrics. If multiple metrics indicate a significant batch effect (e.g., gPCA p-value < 0.05 and DSC > 0.5), proceed with batch effect correction using a method such as ComBat, Harmony, or a ratio-based method before any downstream biological analysis [31] [27] [21].

Within the broader objective of developing robust batch effect correction pipelines for genomics, reliable detection is the critical first step. The DSC, gPCA, and findBATCH metrics provide a powerful, statistically grounded toolkit that moves beyond visual PCA inspection. By implementing the detailed application notes and protocols outlined herein, researchers and drug development professionals can systematically diagnose batch effects, thereby ensuring the integrity and reproducibility of their genomic findings.

In genomics research, batch effects introduce non-biological technical variation that can compromise data integrity and lead to irreproducible findings. These effects are notoriously common in omics data and, if left uncorrected, can result in misleading outcomes and biased biological interpretation [18]. This application note presents a concrete case study demonstrating how uncorrected batch effects skewed analysis in a real genomic dataset and details the experimental protocols used to diagnose and correct these effects, with a focus on batch effect correction for principal component analysis (PCA).

Case Study: Batch Effects in Breast Cancer Gene Expression Profiling

Background and Experimental Design

This case study examines the integration of gene expression data from three independent breast cancer studies profiled using the Affymetrix GeneChip Human Genome U133 Plus 2.0 Array [32]. The pooled dataset comprised 70 samples (30, 22, and 18 from studies GSE12763, GSE13787, and GSE23593, respectively) after standard microarray quality control procedures. The research aimed to identify conserved gene expression signatures across different breast cancer cohorts.

Table 1: Dataset Composition for Breast Cancer Case Study

| Dataset Identifier | Sample Size | Platform | Primary Tissue Source |

|---|---|---|---|

| GSE12763 | 30 | Affymetrix U133 Plus 2.0 | Primary human breast tumors |

| GSE13787 | 22 | Affymetrix U133 Plus 2.0 | Primary human breast tumors |

| GSE23593 | 18 | Affymetrix U133 Plus 2.0 | Primary human breast tumors |

Manifestation of Batch Effects

Initial PCA of the pooled dataset revealed a critical problem: sample clustering in the principal subspace was exclusively driven by batch effect rather than biological characteristics. As shown in Figure 1, samples clustered strictly by their study of origin (batch) in the principal component space, with the first two principal components capturing technical variations rather than biological signals [32].

Figure 1: Workflow demonstrating how batch effects manifested in the breast cancer gene expression case study. PCA visualization revealed clustering by study origin rather than biological characteristics.

Formal statistical testing using the findBATCH method (part of the exploBATCH framework based on Probabilistic Principal Component and Covariates Analysis - PPCCA) confirmed significant batch effects on three of the first five probabilistic principal components (pPCs) [32]. The 95% confidence intervals for the estimated batch effects on pPC1, pPC2, and pPC4 did not include zero, indicating statistically significant technical variation across the batches.

Consequences of Uncorrected Batch Effects

The profound impact of these uncorrected batch effects included:

Masked Biological Signals: True biological differences between breast cancer subtypes were obscured by stronger technical variations [32].

Risk of False Associations: Differential expression analysis conducted on uncorrected data risked identifying falsely significant genes correlated with batch rather than biology [18].

Irreproducible Findings: Any conclusions drawn from the uncorrected data would be specific to the individual studies rather than generalizable across breast cancer populations [18].

Quantitative Impact Assessment

The consequences of batch effects extend beyond this single case study. In a clinical trial context, batch effects introduced by a change in RNA-extraction solution resulted in incorrect classification outcomes for 162 patients, 28 of whom received incorrect or unnecessary chemotherapy regimens [18]. The table below summarizes documented impacts of uncorrected batch effects across various genomic studies.

Table 2: Documented Impacts of Uncorrected Batch Effects in Genomic Studies

| Research Context | Impact of Uncorrected Batch Effects | Consequence |

|---|---|---|

| Breast Cancer Gene Expression [32] | Samples clustered by study origin rather than biology | Masked true biological signals; risk of false conclusions |

| Clinical Trial Molecular Profiling [18] | Shift in gene-based risk calculation | 162 patients misclassified, 28 received incorrect chemotherapy |

| Cross-Species Comparison [18] | Apparent species differences greater than tissue differences | Misleading evolutionary conclusions; corrected to show tissue similarities |

| Ovarian Cancer Study [33] | False gene expression signatures identified | Retracted study and misdirected research directions |

Experimental Protocols for Batch Effect Diagnosis and Correction

Protocol 1: Statistical Diagnosis of Batch Effects

Principle: Implement formal statistical testing to diagnose batch effects before correction [32].

Reagents and Materials:

- R statistical environment (v4.0 or higher)

- exploBATCH R package (https://github.com/syspremed/exploBATCH)

- Normalized gene expression matrix (samples × features)

- Batch metadata (study/site/platform of origin for each sample)

Procedure:

- Data Preprocessing: Normalize each dataset separately according to platform-specific protocols, then pool based on common gene identifiers.

- PPCCA Modeling: Apply the findBATCH function, which selects the optimal number of probabilistic principal components using the Bayesian Information Criterion.

- Batch Effect Quantification: Calculate 95% confidence intervals for estimated batch effects on each probabilistic principal component.

- Significance Determination: Identify components with 95% CIs not including zero as significantly affected by batch effects.

- Visualization: Generate forest plots to visualize effect sizes and confidence intervals across components.

Expected Results: Formal statistical testing will identify which principal components are significantly affected by batch effects, providing guidance for targeted correction approaches.

Protocol 2: Batch Effect Correction Using exploBATCH

Principle: Implement PPCCA-based correction to remove batch effects while preserving biological variation [32].

Reagents and Materials:

- R statistical environment

- exploBATCH R package

- Expression matrix with confirmed batch effects

- Biological covariates of interest (e.g., disease status)

Procedure:

- Model Fitting: Apply the correctBATCH function to estimate batch effects on the significant components identified in Protocol 1.

- Effect Subtraction: Subtract the estimated batch effect from each affected probabilistic principal component.

- Data Reconstruction: Reconstruct batch-corrected expression data using the adjusted components.

- Validation: Confirm removal of batch effects using PCA visualization and statistical testing.

- Biological Signal Verification: Verify preservation of biological effects using known biological covariates.

Expected Results: Batch-corrected data where samples cluster by biological characteristics rather than technical artifacts, enabling valid cross-study comparisons.
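The subtract-and-reconstruct logic of this protocol can be illustrated with ordinary PCA and least squares. The sketch below is a simplified analog and not the PPCCA model or the exploBATCH `correctBATCH` function: it regresses the leading PC scores on batch indicators (plus an optional biological covariate), subtracts only the fitted batch term, and reconstructs the data. The function name `pca_batch_correct` and the toy data are hypothetical.

```python
import numpy as np

def pca_batch_correct(X, batch, covariate=None, n_components=5):
    """Remove the fitted batch term from the leading PC scores and reconstruct.

    Plain-PCA analog of component-wise correction (not PPCCA).
    X: (samples x features); batch: labels; covariate: optional biology.
    """
    X = np.asarray(X, dtype=float)
    batch = np.asarray(batch)
    Xc = X - X.mean(axis=0)
    U, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    k = min(n_components, len(S))
    scores = U[:, :k] * S[:k]
    # Design matrix: intercept, batch dummies (first level as baseline),
    # and the optional biological covariate so its effect is not absorbed.
    levels = np.unique(batch)
    B = (batch[:, None] == levels[None, 1:]).astype(float)
    cols = [np.ones((len(batch), 1)), B]
    if covariate is not None:
        cols.append(np.asarray(covariate, dtype=float).reshape(len(batch), -1))
    D = np.hstack(cols)
    coef, *_ = np.linalg.lstsq(D, scores, rcond=None)
    batch_part = B @ coef[1:1 + B.shape[1]]
    # Center the batch term so each component keeps its overall mean.
    batch_part -= batch_part.mean(axis=0)
    # Subtracting the batch term in score space and mapping back to features.
    return X - batch_part @ Vt[:k]

def centroid_gap(M, groups):
    """Distance between the two group centroids (two-level labels only)."""
    a, b = np.unique(groups)
    return np.linalg.norm(M[groups == a].mean(0) - M[groups == b].mean(0))

# Toy example (synthetic): batch shift on all features, biology on ten.
rng = np.random.default_rng(4)
batch = np.repeat([0, 1], 20)
bio = np.tile([0, 1], 20)  # balanced within each batch
X = rng.normal(size=(40, 100))
X[batch == 1] += 2.0
X[bio == 1, :10] += 3.0
X_corr = pca_batch_correct(X, batch, covariate=bio)
print("batch gap before/after:", centroid_gap(X, batch), centroid_gap(X_corr, batch))
print("bio gap before/after:  ", centroid_gap(X, bio), centroid_gap(X_corr, bio))
```

Including the biological covariate in the regression matters when batch and biology are partially confounded; in a fully confounded design, no regression-based approach of this kind can separate the two.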

Protocol 3: ComBat-based Correction for RNA-seq Data

Principle: Implement reference-based batch correction using negative binomial models for count-based RNA-seq data [31].

Reagents and Materials:

- R statistical environment

- ComBat-ref implementation

- RNA-seq count matrix

- Batch metadata

- Reference batch identification

Procedure:

- Reference Batch Selection: Identify the batch with the smallest dispersion and use it as the reference batch.

- Model Parameter Estimation: Fit negative binomial models to estimate batch-specific parameters.

- Data Adjustment: Adjust non-reference batches toward the reference batch while preserving count data nature.

- Quality Assessment: Evaluate correction using silhouette scores and PCA visualization.

- Downstream Analysis: Proceed with differential expression analysis on corrected data.

Expected Results: Effective removal of batch effects while maintaining the statistical properties of count data and improving sensitivity and specificity of differential expression analysis.
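The reference batch selection step can be illustrated with a rough dispersion estimate. The sketch below is not the ComBat-ref implementation: it assumes a per-gene method-of-moments estimate of the negative binomial dispersion (from var = μ + φμ²), summarizes each batch by its median dispersion, and ignores library-size differences and within-batch biological groups. The function names and simulated counts are hypothetical.

```python
import numpy as np

def batch_dispersions(counts, batch):
    """Median method-of-moments NB dispersion per batch.

    counts: (samples x genes) raw count matrix; batch: length-n labels.
    Simplified estimate for illustration (no library-size normalization).
    """
    counts = np.asarray(counts, dtype=float)
    batch = np.asarray(batch)
    out = {}
    for b in np.unique(batch):
        C = counts[batch == b]
        mu = C.mean(axis=0)
        var = C.var(axis=0, ddof=1)
        keep = mu > 0
        # NB variance: var = mu + phi * mu^2  ->  phi = (var - mu) / mu^2
        phi = np.clip((var[keep] - mu[keep]) / mu[keep] ** 2, 0.0, None)
        out[b.item()] = float(np.median(phi))
    return out

def select_reference_batch(counts, batch):
    """Return the batch label with the smallest median dispersion."""
    disp = batch_dispersions(counts, batch)
    return min(disp, key=disp.get), disp

# Toy example: simulate NB counts with mean 100 and two dispersion levels.
rng = np.random.default_rng(5)
mu = 100.0

def simulate(phi, n_samples=30, n_genes=200):
    size = 1.0 / phi  # NB "number of successes" parameter
    return rng.negative_binomial(size, size / (size + mu), (n_samples, n_genes))

counts = np.vstack([simulate(0.05), simulate(0.5)])
batch = np.repeat(["A", "B"], 30)
ref, disp = select_reference_batch(counts, batch)
print("reference batch:", ref, "dispersions:", disp)
```

In practice, dispersion estimates from an established count-modeling package would be preferable to this moment-based shortcut.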

The Scientist's Toolkit: Essential Research Reagents and Computational Tools

Table 3: Essential Research Reagents and Computational Tools for Batch Effect Management

| Tool/Reagent | Function | Application Context |

|---|---|---|

| exploBATCH R Package [32] | Statistical diagnosis and correction of batch effects using PPCCA | General genomic studies (microarray, RNA-seq) |

| ComBat/ComBat-seq [31] [34] | Empirical Bayes framework for batch correction | Microarray (ComBat) and RNA-seq count data (ComBat-seq) |

| ComBat-met [34] | Beta regression framework for DNA methylation data | Methylation array or bisulfite sequencing data |

| findBATCH Function [32] | Formal statistical testing for batch effects | Pre-correction diagnosis in any high-throughput data |

| Reference Materials [9] | Quality control samples for batch effect monitoring | Large-scale multi-batch proteomics and genomics studies |

| Harmony Algorithm [10] | Iterative clustering-based batch correction | Single-cell RNA sequencing and spatial transcriptomics |

Batch Effect Correction Workflow

A robust batch effect management strategy requires a systematic approach from experimental design through data analysis, as illustrated below.

Figure 2: Comprehensive batch effect management workflow spanning experimental design through validation phases. A systematic approach is essential for generating reliable, reproducible genomic data.

This case study demonstrates that uncorrected batch effects can severely compromise genomic analyses, leading to misleading biological interpretations and potentially costly clinical misapplications. The breast cancer gene expression example illustrates how technical variations can dominate the principal components that should ideally capture biological signals. Through implementation of rigorous statistical diagnosis and appropriate correction methods such as those provided by the exploBATCH framework, researchers can effectively mitigate these technical artifacts while preserving biological signals of interest. As genomic technologies continue to evolve and multi-study integrations become increasingly common, robust batch effect management will remain essential for generating reliable, reproducible research findings.

A Practical Guide to Batch Correction Methods Integrating PCA

In genomic studies, batch effects are technical, non-biological variations introduced when samples are processed in different groups or "batches" due to factors like different time points, personnel, reagents, or sequencing platforms [10] [18]. These effects can confound biological signals, reduce statistical power, and if left uncorrected, lead to misleading scientific conclusions and irreproducible results [18] [5]. A survey in Nature found that 90% of researchers believe there is a reproducibility crisis, with batch effects being a paramount contributing factor [5]. Traditional diagnostic methods like Principal Component Analysis (PCA) rely on visual inspection to detect batch clustering, but this approach is subjective and can fail when batch effect is not the greatest source of variability [32].

Guided PCA (gPCA) is a statistical methodology designed specifically to address the limitations of conventional PCA in batch effect diagnosis. Unlike standard PCA, which identifies directions of maximal variance without considering their source, gPCA provides a formal, statistical framework to determine whether the observed patterns in high-dimensional genomic data are significantly associated with batch [32]. This targeted approach offers researchers an objective measure to diagnose batch effects before proceeding with correction, thereby reducing the risk of unnecessary data manipulation or failure to detect confounding technical variation.

gPCA in Context: Comparison with Other Batch Effect Evaluation Methods

Several methods exist for diagnosing and evaluating batch effects in genomic data, each with different strengths and limitations. The table below summarizes the key characteristics of gPCA against other common evaluation approaches.

Table 1: Comparison of Batch Effect Evaluation Methods

| Method | Underlying Principle | Key Output | Primary Use | Key Advantage | Key Limitation |

|---|---|---|---|---|---|

| Guided PCA (gPCA) | Extension of traditional PCA that incorporates batch labels to test for significant batch-associated variation [32]. | A formal statistical test p-value for the global presence of batch effect across all principal components [32]. | Formal statistical diagnosis of batch effect. | Provides an objective, global test for batch effect significance, reducing subjectivity [32]. | Does not assess the effect of batch on individual principal components [32]. |

| Principal Component Analysis (PCA) | Identifies directions of maximal variance in the data without using batch information. | Visualization (e.g., scatter plot) of samples in the space of the first few principal components. | Exploratory visual inspection for batch clustering. | Intuitive and widely used for an initial, quick check of data structure. | Subjective; relies on visual inspection. Can fail if batch effect is not the largest source of variance [32]. |

| findBATCH (via PPCCA) | Uses Probabilistic PCA and Covariates Analysis to model batch as a covariate [32]. | Forest plots with 95% confidence intervals for the estimated batch effect on each probabilistic PC [32]. | Formal statistical diagnosis and quantification of batch effect on individual components. | Identifies which specific principal components are significantly affected by batch [32]. | More complex multi-step procedure; requires selection of the optimal number of probabilistic PCs [32]. |

| Principal Variation Component Analysis (PVCA) | Combines PCA and a linear mixed model to quantify variance contributions from batch and other factors [32]. | Proportion of total variability in the data attributed to batch effect. | Quantifying the magnitude of batch effect relative to other sources of variation. | Provides an estimate of how much of the total data variance is due to batch. | Involves multiple steps, reducing statistical power; no formal statistical test for the presence of batch effect [32]. |

gPCA: Theoretical Foundation and Statistical Protocol

Core Principles of gPCA

Guided PCA is built upon an extension of the traditional PCA framework. While standard PCA identifies a set of orthogonal axes (principal components) that capture the greatest variance in the data matrix X, gPCA specifically tests whether the structure of this variance is significantly associated with a known batch variable b. The method determines if the data distribution in the principal subspace is statistically dependent on the batch labels, providing a formal test for the null hypothesis that no batch effect exists [32]. This makes it a more powerful and objective diagnostic tool than visual inspection of PCA plots, particularly in cases where batch effects are subtle or confounded with biological signals.

Detailed Statistical Protocol

The following workflow outlines the key steps for implementing a gPCA analysis to diagnose batch effects.

Diagram 1: gPCA analysis workflow for batch effect diagnosis.

Step 1: Data Preparation. Begin with a normalized genomic data matrix (e.g., gene expression counts) where rows represent features (genes) and columns represent samples. Ensure the data is properly normalized and filtered according to the standards for the specific omics technology (e.g., RNA-seq, microarrays) [32]. Simultaneously, define a batch covariate vector that assigns each sample to a specific batch (e.g., study, processing date, lab).

Step 2: Algorithm Execution. Run the gPCA algorithm, which performs a supervised decomposition of the data matrix that is "guided" by the batch covariate and compares the variance it captures with the variance captured by standard, unguided PCA [32].

Step 3: Test Statistic Calculation. The gPCA algorithm computes a test statistic, usually denoted δ, which quantifies how strongly the principal components are associated with the batch variable. δ is the ratio of the variance captured by the first guided principal component to the variance captured by the first unguided principal component, so it lies between 0 and 1, and values near 1 indicate a stronger batch effect.

Step 4: Significance Assessment. To determine the statistical significance of the δ statistic, gPCA employs a permutation test [32]. This involves:

- a) Randomly shuffling the batch labels across the samples a large number of times (e.g., 1000 permutations).

- b) Recalculating the δ statistic for each permuted dataset.

- c) Generating a null distribution of δ values under the assumption of no true batch effect.

- d) Comparing the observed δ from the original data to this null distribution to calculate a p-value.

Step 5: Interpretation. A p-value below the chosen threshold (e.g., p < 0.05) leads to the rejection of the null hypothesis and provides evidence of a batch effect in the dataset. This objective result should then inform the decision to apply a batch correction method before proceeding with further biological analysis.
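
The five steps above can be sketched in Python with NumPy. This is a minimal illustration under stated assumptions, not a reference implementation: it assumes the commonly described form of the statistic, in which δ is the variance of the samples projected on the first guided component (from the SVD of Y'X, where Y is the batch indicator matrix) divided by the variance on the first unguided component. The function name `gpca_permutation_test`, its parameters, the add-one p-value correction, and the simulated data are all hypothetical choices made for this example.

```python
import numpy as np


def _projected_variance(centered, matrix):
    """Variance of the samples projected on the first right singular vector of `matrix`."""
    _, _, vt = np.linalg.svd(matrix, full_matrices=False)
    return np.var(centered @ vt[0], ddof=1)


def gpca_permutation_test(data, batch, n_perm=1000, seed=0):
    """Return (delta, p_value) for a features x samples matrix and per-sample batch labels."""
    # Step 1: features x samples -> samples x features, then center each feature.
    x = np.asarray(data, dtype=float).T
    centered = x - x.mean(axis=0)
    batch = np.asarray(batch)
    levels = np.unique(batch)

    # The unguided PCA variance does not depend on the labels, so compute it once.
    unguided_var = _projected_variance(centered, centered)

    def delta(labels):
        # Steps 2-3: the batch indicator matrix (samples x batches) guides the SVD.
        indicator = (labels[:, None] == levels[None, :]).astype(float)
        return _projected_variance(centered, indicator.T @ centered) / unguided_var

    observed = delta(batch)

    # Step 4: null distribution of delta from shuffled batch labels.
    rng = np.random.default_rng(seed)
    null = np.array([delta(rng.permutation(batch)) for _ in range(n_perm)])
    # Add-one correction (an assumption of this sketch) keeps the p-value above zero.
    p_value = (1 + np.sum(null >= observed)) / (1 + n_perm)
    return observed, p_value


# Simulated example: 50 genes x 20 samples, second batch shifted on 25 genes.
sim_rng = np.random.default_rng(1)
expr = sim_rng.normal(size=(50, 20))
labels = np.repeat(["batch1", "batch2"], 10)
expr[:25, labels == "batch2"] += 3.0
delta_obs, p = gpca_permutation_test(expr, labels, n_perm=200)
print(f"delta = {delta_obs:.3f}, p = {p:.4f}")
```

Because the unguided component maximizes projected variance, δ cannot exceed 1 in this formulation. With only a few hundred permutations the smallest attainable p-value is limited by the permutation count, which is one reason to use 1000 or more in practice.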

A Practical Toolkit for gPCA and Batch Analysis

Implementing gPCA and related analyses requires specific computational tools and reagents. The following table lists essential components for a research pipeline focused on batch effect diagnosis and correction.

Table 2: Research Reagent Solutions for Batch Effect Analysis

| Item Name / Resource | Type / Category | Function in Analysis | Relevant Method(s) |
|---|---|---|---|
| R Statistical Software | Software Environment | Primary platform for statistical computing, implementing most batch effect diagnosis and correction algorithms. | gPCA [32], findBATCH [32], ComBat [32] [35] |
| exploBATCH R Package | Software Tool / R Package | Provides a framework for formal statistical testing of batch effects using findBATCH (PPCCA) and includes correctBATCH for correction [32]. | findBATCH, correctBATCH [32] |
| Normalized Count Matrix | Data Object | A pre-processed genomic data matrix (e.g., gene expression), normalized for sequencing depth and other technical factors, serving as the input for analysis. | gPCA [32], PCA, all correction methods |
| Batch Covariate File | Data Object | A text file or vector defining the batch membership (e.g., lab, date) for each sample in the study. | gPCA [32], all batch-aware methods |
| ComBat / ComBat-seq | Algorithm / R Function | Empirical Bayes methods for batch correction, often used as a standard against which new methods are compared [32] [35]. | ComBat (microarray), ComBat-seq (RNA-seq) [35] |
| Harmony | Algorithm / R/Python Function | Batch correction method that operates on a principal component embedding, often recommended for single-cell RNA-seq data [36]. | Harmony |

Comparative Performance and Application Protocols

Performance Evaluation: gPCA vs. findBATCH

In a study integrating three breast cancer gene expression datasets (GSE12763, GSE13787, GSE23593), both gPCA and findBATCH were applied to diagnose batch effects. Visual inspection via traditional PCA showed clear clustering by batch, suggesting a strong effect [32]. The findBATCH method, part of the exploBATCH package, identified significant batch effects on three out of the first five probabilistic principal components (pPCs) by using 95% confidence intervals that did not include zero [32]. In the same analysis, gPCA provided a global p-value of less than 0.001, confirming the presence of a significant batch effect across all components, a finding consistent with findBATCH but without identifying which specific PCs were affected [32].

Integrated Experimental Protocol for Batch Management

This protocol describes a complete workflow from batch effect diagnosis to correction and validation, positioning gPCA as a critical first diagnostic step.

Diagram 2: Integrated workflow for batch effect management.

Step 1: Cohort and Study Design. During the initial experimental design, implement strategies to minimize batch effects. This includes randomizing biological samples across processing batches, balancing biological groups of interest within batches, and using the same reagents and equipment where possible [10] [18].

Step 2: Data Generation and Collection. Generate the omics data (e.g., RNA-seq), meticulously recording all technical metadata that could define a batch, such as sequencing date, flow cell, library preparation kit lot, and personnel [18].

Step 3: Data Pre-processing. Normalize the raw data using standard methods for the specific technology (e.g., TPM for RNA-seq, RMA for microarrays). Perform quality control and filtering to remove low-quality features and samples.

Step 4: Batch Effect Diagnosis. This is the critical stage where gPCA is applied.

- 4a. Apply gPCA: Execute the gPCA protocol described under "Detailed Statistical Protocol" above, using the prepared batch covariate file.

- 4b. Visualize with PCA: Generate a standard PCA plot colored by batch for visual corroboration of the gPCA result.

Step 5: Decision Point. Interpret the gPCA result. A significant p-value (e.g., p < 0.05) indicates a batch effect that should be corrected before biological analysis. A non-significant result means the test found no evidence of a global batch effect; you may then proceed to biological analysis, but still inspect the PCA plot, because a non-significant test does not prove that batch effects are absent.

Step 6: Batch Effect Correction. If a significant batch effect is diagnosed, select and apply an appropriate correction algorithm. For single-cell RNA-seq data, Harmony has been shown to perform well without introducing significant artifacts [36]. For bulk RNA-seq count data, ComBat-seq or the newer ComBat-ref are suitable choices that preserve the integer nature of the data [35].

Step 7: Post-Correction Validation. Re-run gPCA and PCA visualization on the corrected data to confirm the removal of the batch effect. The gPCA p-value should now be non-significant, and samples should no longer cluster by batch in the PCA plot.

Step 8: Biological Analysis. Only after confirming the successful mitigation of batch effects should you proceed with downstream analyses such as differential expression, clustering, or biomarker discovery.
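
The diagnose, correct, and validate loop in Steps 4 to 7 can be illustrated with a small NumPy sketch. It is a simplified stand-in rather than the workflow's actual tooling: the correction used here is per-batch mean-centering (BMC), chosen only because it fits in a few lines, not ComBat-seq or Harmony, and the check is the fraction of each principal component's variance explained by batch (a one-way ANOVA R²), offered as a numeric companion to the PCA plot rather than a replacement for gPCA. All function names and the simulated data are hypothetical.

```python
import numpy as np


def pca_scores(data, n_components=2):
    """PCA scores (samples x components) for a features x samples matrix."""
    x = np.asarray(data, dtype=float).T
    centered = x - x.mean(axis=0)
    u, s, _ = np.linalg.svd(centered, full_matrices=False)
    return u[:, :n_components] * s[:n_components]


def batch_r2(scores, batch):
    """Fraction of each component's variance explained by batch membership."""
    batch = np.asarray(batch)
    grand_mean = scores.mean(axis=0)
    total = ((scores - grand_mean) ** 2).sum(axis=0)
    between = np.zeros(scores.shape[1])
    for level in np.unique(batch):
        group = scores[batch == level]
        between += len(group) * (group.mean(axis=0) - grand_mean) ** 2
    return between / total


def mean_center_per_batch(data, batch):
    """Stand-in correction: subtract each batch's per-feature mean (BMC)."""
    data = np.asarray(data, dtype=float)
    batch = np.asarray(batch)
    corrected = data.copy()
    for level in np.unique(batch):
        cols = batch == level
        corrected[:, cols] -= data[:, cols].mean(axis=1, keepdims=True)
    return corrected


# Simulated data: 50 genes x 20 samples, second batch shifted on 25 genes.
rng = np.random.default_rng(2)
expr = rng.normal(size=(50, 20))
labels = np.repeat(["batch1", "batch2"], 10)
expr[:25, labels == "batch2"] += 3.0

r2_before = batch_r2(pca_scores(expr), labels)
r2_after = batch_r2(pca_scores(mean_center_per_batch(expr, labels)), labels)
print("batch R^2 per PC before:", np.round(r2_before, 3))
print("batch R^2 per PC after: ", np.round(r2_after, 3))
```

Mean-centering drives the batch R² to zero by construction, so a low value after correction only shows that batch separation is gone. It says nothing about whether biological signal survived, which is why validation should also confirm that known biological groups remain distinguishable.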

Guided PCA provides a crucial, statistically rigorous tool for the initial diagnosis of batch effects, addressing a key challenge in modern genomics: distinguishing technical artifacts from true biological signals. Its primary strength lies in its objectivity, replacing the subjective visual inspection of PCA plots with a formal hypothesis test [32]. This is particularly valuable in large-scale or multi-center studies where batch effects are almost inevitable and can have profound negative impacts, including false conclusions and irreproducible research [18] [5].

However, the utility of gPCA must be understood in the context of its limitations. As a global test, it indicates the presence of a batch effect but does not specify which principal components are affected, a detail offered by alternative methods like findBATCH [32]. Therefore, gPCA is best deployed as part of an integrated workflow, such as the one detailed in this protocol, where it serves as a gatekeeper to determine the necessity of batch correction.

The ultimate goal of any batch effect management strategy is to preserve biological truth while removing technical noise. Over-correction poses a real risk of distorting or removing meaningful biological variation [18]. By providing a statistically sound basis for the decision to correct, gPCA helps ensure that subsequent analytical steps—whether using established methods like ComBat-seq and Harmony or newer algorithms like ComBat-ref—are applied judiciously. This promotes the generation of reliable, reproducible genomic findings that can robustly inform drug development and scientific discovery.

Batch effects are notorious technical variations in genomic and multi-omics studies that are irrelevant to biological factors of interest but can profoundly skew analytical outcomes and lead to misleading conclusions [13]. These effects arise from differences in experimental conditions, reagent lots, operators, and other non-biological factors across batches [25]. When biological factors and batch factors are completely confounded—where distinct biological groups are processed in entirely separate batches—most conventional batch-effect correction algorithms (BECAs) struggle to distinguish true biological signals from technical artifacts [13].

Ratio-based correction methods provide a powerful alternative by scaling the absolute feature values of study samples relative to those of concurrently profiled reference materials [37] [38]. This approach fundamentally addresses the limitation of absolute feature quantification, which has been identified as a root cause of irreproducibility in multi-omics measurement and data integration [38]. By transforming data to a ratio scale, this method enhances comparability across batches, laboratories, and analytical platforms.

The Quartet Project has pioneered the development and characterization of multi-omics reference materials specifically designed to enable ratio-based correction approaches [38]. These publicly available reference materials, derived from immortalized cell lines from a family quartet, provide built-in biological truth defined by pedigree relationships and central dogma information flow, offering an objective foundation for assessing batch effect correction performance [38].

Core Principles and Advantages of Ratio-Based Scaling

Theoretical Foundation

Ratio-based batch effect correction operates on the principle of scaling absolute feature measurements from study samples relative to corresponding measurements from common reference materials analyzed within the same batch [37] [13]. This approach effectively converts absolute measurements to relative values, thereby canceling out batch-specific technical variations that affect both study samples and reference materials similarly.

The mathematical transformation can be represented as:

\[ R_{ij} = \frac{A_{ij}}{R_{j}} \]

Where:

- \(R_{ij}\) = Ratio-scaled value for feature \(i\) in study sample \(j\)

- \(A_{ij}\) = Absolute value for feature \(i\) in study sample \(j\)

- \(R_{j}\) = Absolute value for feature \(i\) in the reference material analyzed in the same batch as sample \(j\)

This simple yet powerful transformation effectively mitigates batch effects when the technical variations systematically influence both study samples and reference materials within a batch [13].
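
The transformation can be sketched in Python with NumPy as follows. This is a minimal illustration, assuming a features x samples matrix of strictly positive absolute values, one batch label per sample, and a boolean flag marking which samples are reference material. Averaging multiple reference replicates within a batch is an assumption of this sketch, and the name `ratio_scale` and its parameters are hypothetical.

```python
import numpy as np


def ratio_scale(values, batch, is_reference):
    """Divide every sample by the reference profile measured in its own batch."""
    values = np.asarray(values, dtype=float)
    batch = np.asarray(batch)
    is_reference = np.asarray(is_reference, dtype=bool)
    ratios = np.empty_like(values)
    for level in np.unique(batch):
        in_batch = batch == level
        ref_cols = in_batch & is_reference
        if not ref_cols.any():
            raise ValueError(f"batch {level!r} has no reference sample")
        # Per-feature reference value for this batch (mean over replicates: an assumption).
        ref_profile = values[:, ref_cols].mean(axis=1, keepdims=True)
        ratios[:, in_batch] = values[:, in_batch] / ref_profile
    return ratios


# Two features, two batches; the first sample of each batch is the reference.
values = np.array([[10.0, 20.0, 30.0, 60.0],
                   [5.0, 5.0, 20.0, 20.0]])
batch = np.array(["b1", "b1", "b2", "b2"])
is_reference = np.array([True, False, True, False])
print(ratio_scale(values, batch, is_reference))
```

In this toy input the study samples differ threefold and fourfold in absolute value between batches, yet they receive identical ratios because their references shifted by the same factors. Features with a zero reference value would need separate handling, such as filtering or working on a log scale with a pseudocount.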

Comparative Advantages

Table 1: Performance Comparison of Batch Effect Correction Methods in Confounded Scenarios

| Method | DEF (Differentially Expressed Feature) Identification Accuracy | Predictive Model Robustness | Sample Classification Accuracy | Applicability in Confounded Designs |
|---|---|---|---|---|
| Ratio-Based Scaling | High | High | High | Excellent |
| ComBat | Moderate | Moderate | Moderate | Limited |
| Harmony | Moderate | Moderate | Moderate | Limited |
| BMC (Per Batch Mean-Centering) | Low | Low | Low | Poor |
| SVA | Variable | Variable | Variable | Limited |
| RUVseq | Moderate | Moderate | Moderate | Limited |

Ratio-based methods demonstrate particular superiority in confounded experimental scenarios where biological groups are completely aligned with batch groups, making biological signals technically inseparable through conventional methods [13]. In such challenging cases, ratio-based scaling maintains the ability to distinguish true biological differences while effectively removing technical artifacts.
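
A noise-free toy example makes the confounded case concrete. The sketch below is purely illustrative and uses made-up numbers: group A is measured only in batch 1, group B only in batch 2, the true biological difference is a twofold increase of the first feature in group B, and batch 2 inflates every measurement threefold, including the reference material. It assumes a purely multiplicative batch effect that acts equally on study samples and reference, which is the condition stated above for ratio scaling to work.

```python
import numpy as np

# Hypothetical true profiles for three features.
group_a = np.array([10.0, 20.0, 40.0])
group_b = group_a * np.array([2.0, 1.0, 1.0])   # true log2 fold change: [1, 0, 0]
reference = np.array([5.0, 10.0, 20.0])
replicate = np.array([0.9, 1.0, 1.1, 1.0])      # same replicate spread in both groups

# Fully confounded design: batch 1 holds group A, batch 2 holds group B.
a_obs = np.outer(group_a, replicate) * 1.0
b_obs = np.outer(group_b, replicate) * 3.0
ref_batch1 = reference * 1.0
ref_batch2 = reference * 3.0


def mean_log2(matrix):
    """Per-feature mean of log2 values across samples."""
    return np.log2(matrix).mean(axis=1)


def center(log_matrix):
    """Per-batch mean-centering on the log scale."""
    return log_matrix - log_matrix.mean(axis=1, keepdims=True)


# Uncorrected: the batch factor is added to every fold change.
raw_lfc = mean_log2(b_obs) - mean_log2(a_obs)

# BMC: each batch is centered on its own mean, which is also the group mean.
bmc_lfc = center(np.log2(b_obs)).mean(axis=1) - center(np.log2(a_obs)).mean(axis=1)

# Ratio scaling: divide by the reference measured in the same batch.
ratio_lfc = mean_log2(b_obs / ref_batch2[:, None]) - mean_log2(a_obs / ref_batch1[:, None])

print("uncorrected log2FC:", np.round(raw_lfc, 3))
print("BMC log2FC:        ", np.round(bmc_lfc, 3))
print("ratio log2FC:      ", np.round(ratio_lfc, 3))
```

In this setup the uncorrected estimate is inflated by log2(3) on every feature, mean-centering removes the true difference along with the batch effect, and the ratio-based estimate recovers the simulated fold change. Real data contain noise and batch effects that may not act identically on reference and study samples, so the recovery would be approximate rather than exact.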

Additionally, ratio-based approaches show broad applicability across diverse omics types, including transcriptomics, proteomics, and metabolomics data, making them particularly valuable for integrated multi-omics studies [37] [13].

Experimental Protocols for Ratio-Based Implementation

Reference Material Selection and Design

The foundation of effective ratio-based correction lies in appropriate reference material selection. The Quartet Project reference materials provide an exemplary model with the following characteristics: