Benchmarking Differential Expression Tools: A Researcher's Guide to Accuracy, Power, and Workflow Optimization


Abstract

Differential expression (DE) analysis is a cornerstone of transcriptomics, crucial for biomarker discovery and understanding disease mechanisms. This article provides a comprehensive evaluation of DE tool performance across bulk, single-cell, and proteomics data. We explore foundational statistical models, address methodological challenges like multi-layer variability and data sparsity, and present optimization strategies for robust, reproducible analysis. Drawing on extensive benchmark studies, we compare the sensitivity, false discovery rate control, and computational efficiency of leading methods. This guide is tailored for researchers and drug development professionals seeking to navigate the complex landscape of DE analysis and implement high-performing, reliable workflows.

The Statistical Foundations of Differential Expression Analysis: From Bulk RNA-seq to Single-Cell and Proteomics

Differential expression (DE) analysis is a cornerstone of genomic research, enabling the identification of genes regulated across different biological conditions. However, the reliability of its results is continually challenged by inherent technical and biological complexities. This guide objectively compares the performance of modern DE tools in addressing three core challenges: overdispersion in count data, prevalent drop-out events in single-cell RNA sequencing (scRNA-seq), and multi-layer biological variability. Supporting experimental data and detailed methodologies provide a framework for selecting robust analytical tools.

Core Challenges in Differential Expression Analysis

The transition from bulk to single-cell transcriptomics has exacerbated specific statistical challenges that can confound traditional DE analysis methods.

- Overdispersion: In bulk RNA-seq data, the variance in gene expression counts between biological replicates often exceeds the mean, a phenomenon known as overdispersion. This violates the Poisson assumption that the variance equals the mean. Methods like DESeq2 and edgeR address this with negative binomial models that add a per-gene dispersion parameter. Because a handful of replicates makes gene-wise dispersion estimates noisy, these methods shrink the estimates towards a value shared across genes (in DESeq2, a fitted mean-dispersion trend) for stable inference [1].

- Drop-out Events: A defining characteristic of scRNA-seq data is the "drop-out" problem, where a gene is observed at a low or moderate expression level in one cell but is undetected in another cell of the same type. These zero counts are a mixture of technical artifacts (failure to detect a truly expressed gene) and biological absence. Traditional bulk methods often misinterpret these zeros, leading to a loss of power. While some scRNA-seq-specific DE methods have been developed, a recent benchmark suggests they do not consistently outperform bulk-oriented methods, which treat this variability as noise [2].

- Multi-layer Variability: Single-cell technologies reveal that cell-to-cell heterogeneity is not just noise but a critical dimension of biology. The "variation-is-function" hypothesis posits that this variability is central to cellular identity and function [2]. Mean-centric DE analysis, which focuses on differences in average expression between conditions, overlooks the rich information encoded in how expression variability changes. A shift towards differential variability (DV) analysis is emerging, with frameworks like spline-DV designed to identify genes with significant changes in expression variance between conditions, independent of the mean [2].
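The quantities behind these three challenges can be computed directly from a gene's counts. The following is a minimal sketch using only the Python standard library; the function name `gene_summary` and the example counts are illustrative assumptions, not part of DESeq2, edgeR, or spline-DV.

```python
from statistics import mean, variance


def gene_summary(counts):
    """Summarize one gene's counts across cells or replicates."""
    m = mean(counts)
    var = variance(counts)  # sample variance (n - 1 denominator)
    nan = float("nan")
    return {
        "mean": m,
        "variance": var,
        # Variance-to-mean ratio: about 1 under a Poisson model, > 1 when overdispersed.
        "dispersion_index": var / m if m > 0 else nan,
        # Coefficient of variation: standard deviation relative to the mean.
        "cv": var ** 0.5 / m if m > 0 else nan,
        # Fraction of observations with a zero count.
        "dropout_rate": sum(1 for c in counts if c == 0) / len(counts),
    }


# Hypothetical counts for one gene across ten cells.
example = gene_summary([0, 0, 3, 12, 0, 7, 1, 25, 0, 4])
print(example)
```

A variance-to-mean ratio well above 1 is the signature of overdispersion, while the coefficient of variation and zero fraction are the kind of per-gene variability summaries that variability-centric methods compare between conditions.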

Performance Comparison of DE Analysis Methods

The table below synthesizes findings from recent benchmarks to compare how different methodologies handle core analytical challenges.

Table 1: Benchmarking Differential Expression and Variability Analysis Methods

| Method Name | Core Methodology | Handling of Overdispersion | Handling of Drop-out Events | Analysis of Multi-layer Variability | Key Findings from Benchmarks |
|---|---|---|---|---|---|
| DESeq2 [1] | Negative binomial model | Shrinks gene-wise dispersion estimates towards a trended mean. | Treats as part of technical noise; not specifically designed for scRNA-seq drop-outs. | Mean-centric; focuses on differences in average expression. | A robust, widely adopted method for bulk RNA-seq; performance can be impacted by high drop-out rates in scRNA-seq data [1] [2]. |
| edgeR [1] | Negative binomial model | Uses a weighted conditional likelihood to estimate dispersion. | Similar to DESeq2; treats zeros as technical noise in bulk analysis. | Mean-centric; focuses on differences in average expression. | Consistently performs well in bulk RNA-seq benchmarks; often used in scRNA-seq via pseudobulk approaches [1] [2]. |
| voom-limma [1] | Linear modeling of log-CPM | Models the mean-variance relationship to assign observation-level weights. | Preprocessing and weighting can mitigate some effects, but not specifically for drop-outs. | Mean-centric; focuses on differences in average expression. | Effective for bulk RNA-seq, particularly with complex experimental designs [1]. |
| dearseq [1] | Robust statistical framework | Handles complex designs and heteroscedasticity (unequal variances). | Designed to be robust against various data anomalies. | Mean-centric; focuses on differences in average expression. | Performs well, especially with small sample sizes; identified 191 DEGs in a real vaccine dataset benchmark [1]. |
| Pseudobulk [2] | Aggregating single-cell counts | Uses bulk methods (e.g., DESeq2, edgeR) on aggregated data. | Aggregation reduces the impact of individual drop-out events. | Obscures cellular variability; ignores cell-to-cell heterogeneity by design. | May recover bulk-like DE signals but misses the primary advantage of single-cell resolution, potentially obscuring key biology [2]. |
| spline-DV [2] | Non-parametric differential variability | Models variability directly using coefficient of variation (CV) and dropout rate. | Explicitly incorporates dropout rate as a key metric in its 3D model. | Variability-centric; specifically designed to identify genes with significant changes in expression variance. | Successfully identified ground-truth DV genes in simulations; case studies revealed functionally relevant genes in obesity and cancer [2]. |
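The pseudobulk row in the table refers to summing single-cell counts within each biological sample before running a bulk method. A minimal sketch of that aggregation step follows, assuming counts are held in plain dictionaries; the names `pseudobulk`, `cells`, and `samples` are hypothetical and do not correspond to any specific package.

```python
from collections import defaultdict


def pseudobulk(cell_counts, cell_to_sample):
    """Sum per-cell counts into one count vector per sample."""
    totals = defaultdict(lambda: defaultdict(int))
    for cell, gene_counts in cell_counts.items():
        sample = cell_to_sample[cell]
        for gene, n in gene_counts.items():
            totals[sample][gene] += n
    return {sample: dict(genes) for sample, genes in totals.items()}


# Hypothetical toy data: three cells from two donors.
cells = {
    "c1": {"GeneA": 0, "GeneB": 2},
    "c2": {"GeneA": 3, "GeneB": 0},
    "c3": {"GeneA": 1, "GeneB": 1},
}
samples = {"c1": "donor1", "c2": "donor1", "c3": "donor2"}
pb = pseudobulk(cells, samples)
print(pb)
```

The zero for GeneA in cell c1 disappears into the donor-level sum, which illustrates both why aggregation dampens drop-outs and why it discards cell-to-cell variability.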

Experimental Protocols for Benchmarking

To ensure fair and reproducible comparisons, benchmarks must employ rigorous, standardized pipelines. The following protocols are commonly used in method evaluation studies.

A Standard RNA-seq Preprocessing Pipeline

A robust preprocessing phase is critical for reliable downstream DE analysis. A typical pipeline involves the following steps and tools [1]:

- Quality Control: Raw sequencing reads are assessed using FastQC to identify potential sequencing artifacts and biases.

- Trimming and Cleaning: Trimmomatic is employed to trim low-quality bases and adapter sequences, producing high-quality reads.

- Read Quantification: Transcript abundance is estimated using efficient tools like Salmon, which uses quasi-mapping rather than full alignment; the transcript-level estimates are then summarized to the gene level for DE analysis.

- Normalization: To account for sequencing depth and compositional biases, the Trimmed Mean of M-values (TMM) normalization method, implemented in edgeR, is widely used.

- Batch Effect Correction: Proper identification and correction of batch effects, a common source of technical variation, is essential. This is typically handled using specialized statistical approaches before DEA.

Benchmarking with Real and Synthetic Data

Performance evaluations often leverage a combination of real and synthetic datasets to assess different aspects of a method's capabilities [1] [2].

- Real Datasets: Benchmarks use real data (e.g., from a Yellow Fever vaccine study) to evaluate performance under realistic biological conditions and complex experimental designs [1].

- Synthetic Datasets: Data is simulated with controlled modifications (e.g., known differentially expressed or differentially variable genes) to create a "ground truth." This allows for rigorous testing of a method's accuracy, true positive rate, and robustness to challenges like sparse expression patterns and varying dropout rates [2].
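With a simulated ground truth, a method's calls can be scored directly. The sketch below shows one way to compute the true positive rate and the observed false discovery rate; the function name `evaluate_calls` and the gene identifiers are illustrative assumptions.

```python
def evaluate_calls(called, truth, all_genes):
    """Compare a set of called genes against simulated ground truth."""
    called, truth = set(called), set(truth)
    tp = len(called & truth)   # true DE genes that were called
    fp = len(called - truth)   # called genes that are not truly DE
    fn = len(truth - called)   # true DE genes that were missed
    tn = len(set(all_genes)) - tp - fp - fn
    nan = float("nan")
    return {
        "tpr": tp / (tp + fn) if tp + fn else nan,
        "fdr": fp / (tp + fp) if tp + fp else 0.0,
        "fpr": fp / (fp + tn) if fp + tn else nan,
    }


# Hypothetical example: 10 genes, 4 truly DE, 4 called.
genes = [f"g{i}" for i in range(10)]
scores = evaluate_calls({"g0", "g1", "g2", "g7"}, {"g0", "g1", "g2", "g3"}, genes)
print(scores)
```

Comparing the observed `fdr` with the nominal threshold used when calling genes indicates whether a method controls false discoveries as advertised.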

The SG-NEx Resource for Long-Read Benchmarking

The Singapore Nanopore Expression (SG-NEx) project provides a comprehensive benchmark dataset for evaluating transcript-level analysis. This resource includes [3]:

- Multiple Protocols: Seven human cell lines sequenced with multiple replicates using short-read RNA-seq, Nanopore long-read direct RNA, direct cDNA, PCR-amplified cDNA sequencing, and PacBio IsoSeq.

- Spike-In Controls: Inclusion of six different spike-in RNA variants with known concentrations (Sequin, ERCC, SIRVs) to enable precise accuracy measurements.

- Application: This dataset is invaluable for benchmarking computational methods for differential expression, transcript discovery, and quantification, particularly for long-read RNA-seq data.

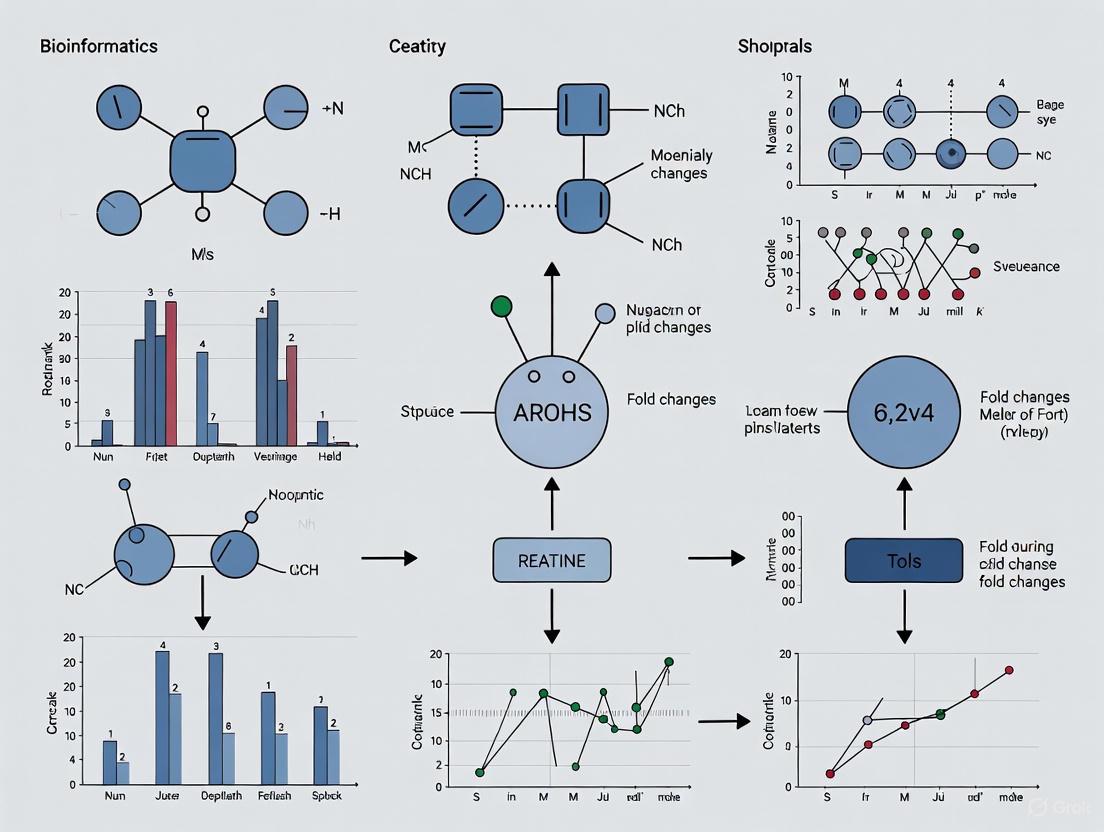

Visualizing Analytical Workflows and Concepts

The following diagrams illustrate the logical workflow of a standard DE analysis and the conceptual foundation of differential variability analysis.

DE Analysis Workflow

The Variation-is-Function Concept

The Scientist's Toolkit: Essential Research Reagents & Materials

The table below details key reagents and computational tools essential for conducting and benchmarking differential expression analyses.

Table 2: Key Research Reagent Solutions for DE Analysis

| Item / Reagent | Function in DE Analysis |
|---|---|
| Spike-in RNA Controls (ERCC, Sequin, SIRVs) [3] | Synthetic RNA sequences spiked into samples at known concentrations. They serve as an external standard for evaluating the accuracy, sensitivity, and technical bias of RNA-seq protocols and DE tools. |
| Trimmomatic [1] | A software tool used to remove adapter sequences and trim low-quality bases from raw sequencing reads, ensuring clean input data for alignment and quantification. |
| Salmon [1] | A computationally efficient tool for quantifying transcript abundance from RNA-seq data. It uses a quasi-mapping approach, enabling fast and accurate transcript-level estimates that can be summarized to the gene level. |
| FastQC [1] | A quality control tool that provides an overview of sequencing data quality, highlighting potential problems like sequence adapter contamination or low-quality bases. |
| SG-NEx Data Resource [3] | A comprehensive community resource of long-read RNA-seq data from multiple platforms and cell lines. It is invaluable for benchmarking DE and isoform analysis methods. |
| spline-DV Algorithm [2] | A statistical framework for identifying differentially variable (DV) genes from scRNA-seq data, moving beyond mean expression to capture changes in cell-to-cell heterogeneity. |

A Guide to Differential Expression Analysis

In the field of transcriptomics, differential expression (DE) analysis is a cornerstone for identifying genes that change across different biological conditions. The choice of statistical model is critical, as it directly impacts the reliability and replicability of results. This guide objectively compares the performance of three dominant approaches: the Negative Binomial model, the Poisson model, and Non-Parametric methods, framing the evaluation within broader research on the performance of differential expression tools.

Model Comparison at a Glance

The table below summarizes the core characteristics, strengths, and weaknesses of the three main statistical models used in differential expression analysis.

| Model | Core Principle & Assumptions | Typical Use Case & Performance | Key Strengths | Major Limitations |
|---|---|---|---|---|
| Negative Binomial | Generalized Linear Model (GLM); assumes variance > mean (overdispersion) [4] [5]. | Default for many modern tools (e.g., DESeq2, edgeR); powerful for RNA-seq data where overdispersion is common [1]. | Robustly handles overdispersion; generally higher power for eQTL mapping [6]; provides a more appropriate fit than Poisson when overdispersion is present [7] [5]. | Parameter estimation (dispersion) can be challenging and requires specialized methods for unbiased results [6]. |
| Poisson | GLM; assumes variance = mean (equidispersion) [4] [5]. | Less common for bulk RNA-seq; can be a principled choice for data that follows its strict assumptions, such as certain PET AIF data [4]. | Principled for count data with no overdispersion; simpler model [4]. | Assumption of equidispersion is often violated in RNA-seq data, leading to unreliable results and poor replicability [8] [9]. |
| Non-Parametric | Data-driven; no assumed distribution for read counts [8]. | Useful when distributional assumptions are violated or for robust control of False Discovery Rate (FDR) [8]. | Robust to violations of distributional assumptions; effective FDR control [8]. | May have less power to detect true differentially expressed genes compared to well-specified parametric models [8]. |

Experimental Protocols & Performance

Benchmarking Differential Expression Tools

Independent research has evaluated the performance of various DE tools, which are often built upon the models discussed.

- Protocol: A robust pipeline was used, starting with quality control of raw sequencing reads (FastQC), trimming of low-quality bases and adapters (Trimmomatic), and quantification of transcript abundance (Salmon). Normalization was performed using the Trimmed Mean of M-values (TMM) method. Differential analysis was then conducted using several popular methods, including voom-limma, edgeR (Negative Binomial), and DESeq2 (Negative Binomial) [1].

- Findings: This benchmark highlights that the choice of differential analysis method, including the underlying statistical model, has a direct impact on the list of identified Differentially Expressed Genes (DEGs). The performance and conclusions can vary depending on the specific dataset and experimental design [1].

The Critical Issue of Replicability

A major concern in high-throughput biology is whether results from one study can be replicated in another. For RNA-seq studies, replicability is highly dependent on statistical power, which is influenced by cohort size and the choice of model.

- Protocol: A 2025 study investigated replicability by performing 18,000 subsampled RNA-seq experiments. From 18 large real datasets, small cohorts of size N were randomly sampled, and differential expression and enrichment analyses were performed for each subsample. The overlap of significant genes and gene sets between these subsampled experiments was then measured [9].

- Findings: Experiments with small cohort sizes (e.g., fewer than five replicates) produce results that are unlikely to replicate well [9]. Low replicability can reflect limited sensitivity (recall), limited precision, or both. Underpowered experiments inevitably find only a small fraction of the true DEGs (low recall), yet they can still be precise (few false positives among the reported genes), depending on the dataset characteristics [9]. This underscores that no model can compensate for a fundamentally underpowered study design.
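The subsampling protocol can be sketched as follows. This is a simplified illustration and not the cited study's code: the names `jaccard` and `subsample_replicability` are assumptions, and `call_degs` stands in for whatever DE pipeline is being evaluated (it receives the sampled case and control IDs and returns a collection of significant genes).

```python
import random
from itertools import combinations


def jaccard(a, b):
    """Overlap of two gene sets; two empty sets count as identical."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def subsample_replicability(cases, controls, n, call_degs, n_draws=20, seed=0):
    """Mean pairwise Jaccard overlap of DEG lists from repeated size-n subsamples."""
    rng = random.Random(seed)
    results = []
    for _ in range(n_draws):
        sub_cases = rng.sample(cases, n)        # draw n samples per condition
        sub_controls = rng.sample(controls, n)
        results.append(set(call_degs(sub_cases, sub_controls)))
    pairs = list(combinations(results, 2))
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Hypothetical usage with a placeholder caller that always returns the same genes.
cases = [f"case{i}" for i in range(12)]
controls = [f"ctrl{i}" for i in range(12)]
score = subsample_replicability(cases, controls, 3, lambda a, b: {"g1", "g2"})
print(score)
```

Running this for increasing `n` gives a rough picture of how quickly DEG lists stabilize as cohort size grows.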

Comparing Poisson and Negative Binomial Models

Since the Poisson model is nested within the Negative Binomial model, a likelihood ratio test can formally determine which provides a better fit for a given dataset.

- Protocol: The test compares two models fitted to the same data. The null hypothesis is that the Poisson model is sufficient (i.e., the dispersion parameter in the Negative Binomial model is zero). The test statistic is twice the difference in the log-likelihoods of the two models: 2 * (logLik(NB_model) - logLik(Poisson_model)). This statistic is conventionally compared against a chi-squared distribution with degrees of freedom (df) equal to the difference in the number of parameters (df=1, for the extra dispersion parameter) [10]. Because a dispersion of zero lies on the boundary of the parameter space, this reference distribution is conservative, and the chi-squared p-value is often halved.

- Interpretation: A significant p-value (e.g., < 0.05) provides statistical evidence that the Negative Binomial model, which accounts for overdispersion, is a significantly better fit for the data than the Poisson model [7] [10].
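As an illustration of the test, the sketch below fits intercept-only Poisson and Negative Binomial models to a single vector of counts using only the Python standard library. It is a simplified stand-in for a GLM fit, assuming no covariates and a positive sample mean; the function names and example counts are hypothetical.

```python
import math


def poisson_loglik(y, mu):
    return sum(k * math.log(mu) - mu - math.lgamma(k + 1) for k in y)


def nb_loglik(y, mu, alpha):
    """NB log-likelihood with mean mu and variance mu + alpha * mu**2."""
    r = 1.0 / alpha
    return sum(
        math.lgamma(k + r) - math.lgamma(r) - math.lgamma(k + 1)
        + r * math.log(r / (r + mu)) + k * math.log(mu / (r + mu))
        for k in y
    )


def golden_max(f, lo, hi, tol=1e-6):
    """Golden-section search for the maximum of a unimodal function on [lo, hi]."""
    invphi = (5 ** 0.5 - 1) / 2
    a, b = lo, hi
    c, d = b - invphi * (b - a), a + invphi * (b - a)
    fc, fd = f(c), f(d)
    while b - a > tol:
        if fc > fd:
            b, d, fd = d, c, fc
            c = b - invphi * (b - a)
            fc = f(c)
        else:
            a, c, fc = c, d, fd
            d = a + invphi * (b - a)
            fd = f(d)
    return (a + b) / 2


def lrt_poisson_vs_nb(y):
    mu = sum(y) / len(y)  # MLE of the mean in both intercept-only models
    # Maximize the NB likelihood over log(alpha) on an assumed search range.
    log_alpha = golden_max(
        lambda t: nb_loglik(y, mu, math.exp(t)), math.log(1e-6), math.log(1e3)
    )
    alpha = math.exp(log_alpha)
    stat = max(0.0, 2.0 * (nb_loglik(y, mu, alpha) - poisson_loglik(y, mu)))
    # Chi-squared survival function with df = 1; halve it for the boundary correction.
    p_value = math.erfc(math.sqrt(stat / 2.0))
    return stat, p_value, alpha


# Hypothetical overdispersed counts for one gene.
stat, p_value, alpha = lrt_poisson_vs_nb([0, 1, 0, 12, 3, 0, 25, 2, 0, 7, 1, 40, 0, 5, 9, 0])
print(stat, p_value, alpha)
```

For counts that are not overdispersed, the estimated dispersion collapses towards the lower bound of the search range and the statistic is close to zero, so the Poisson model is retained.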

The Scientist's Toolkit

This table details key reagents, software, and methodological solutions essential for conducting differential expression analysis.

| Tool / Reagent | Function / Purpose | Relevant Model(s) |
|---|---|---|
| DESeq2 / edgeR | Software packages implementing the Negative Binomial model for DE analysis. | Negative Binomial |
| LFCseq / NOISeq | Software packages implementing non-parametric methods for DE analysis. | Non-Parametric |
| Trimmed Mean of M-values (TMM) | A normalization method to account for compositional differences and sequencing depth across samples. | All Models [1] |
| Salmon | A tool for fast and accurate quantification of transcript abundances from raw RNA-seq reads. | All Models [1] |
| Likelihood Ratio Test (LRT) | A statistical test to formally compare the goodness-of-fit between Poisson and Negative Binomial models. | Poisson, Negative Binomial [10] |
| Cox-Reid Adjusted Profile Likelihood | A method implemented in tools like quasar for unbiased estimation of the Negative Binomial dispersion parameter, improving Type 1 error control. | Negative Binomial [6] |

- Negative Binomial is the Dominant Model: For most bulk RNA-seq datasets, which exhibit overdispersion, the Negative Binomial model is the most appropriate and powerful choice [5] [6].

- Validate Poisson Assumptions with Care: The standard Poisson model is rarely suitable for RNA-seq data due to its strict equidispersion assumption. Its use can lead to inflated false positives and poor replicability [8] [9].

- Non-Parametric Methods Offer Robustness: When distributional assumptions are severely violated or when robust FDR control is the priority, non-parametric methods provide a valuable alternative, though sometimes at the cost of some statistical power [8].

- Power and Replicability Depend on Design: Regardless of the model, underpowered studies with few biological replicates will struggle to produce replicable results. Adequate sample size is a foundational requirement [9].

Single-cell RNA sequencing (scRNA-seq) has fundamentally transformed biological research by enabling the characterization of complex cellular populations at unprecedented resolution. Unlike bulk RNA-seq, which measures average gene expression across thousands of cells, scRNA-seq reveals the transcriptional landscape of individual cells, providing crucial insights into cellular heterogeneity, rare cell populations, and developmental trajectories [11]. However, this revolutionary technology presents two fundamental analytical challenges that complicate interpretation: extreme data sparsity and complex cellular heterogeneity.

The high-dimensional nature of scRNA-seq data is characterized by substantial technical noise and frequent "dropout events" (where genes are undetected in some cells despite being expressed), leading to expression matrices with typically 80-95% zero values [12] [13]. Simultaneously, the biological complexity researchers seek to understand—the very cellular heterogeneity that makes single-cell approaches valuable—creates analytical obstacles through continuous cellular states and subtle differences between cell types [14]. This comparison guide evaluates computational strategies designed to overcome these challenges, providing objective performance assessments based on recent large-scale benchmarking studies to inform tool selection in research and drug development.

Benchmarking Methodologies: How Tools Are Evaluated

Robust evaluation of scRNA-seq analytical methods requires carefully designed benchmarking frameworks that simulate realistic biological scenarios while maintaining known ground truth. The most comprehensive benchmarks employ multiple complementary approaches:

Simulation Frameworks

Model-based simulations generate scRNA-seq count data using statistical models like the negative binomial (NB) or zero-inflated negative binomial (ZINB) distributions to replicate the over-dispersion and dropout characteristics of real data [15] [13]. The Splatter framework implements a gamma-Poisson hierarchical model to simulate mean expression, biological noise, and differential expression between pre-defined cell groups [13]. Benchmarking studies systematically vary parameters including sequencing depth (from an average nonzero count of 4, "depth-4", up to 77, "depth-77"), batch effect strength, proportion of differentially expressed genes (typically 10-20%), and effect sizes to assess method performance across diverse experimental conditions [15] [16].
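The gamma-Poisson idea can be illustrated in a few lines. This is a heavily simplified sketch and not Splatter's implementation or API: the function names, the baseline-expression distribution, the fold change, and the dispersion value are all assumptions chosen for illustration.

```python
import math
import random


def poisson(rng, lam):
    """Draw a Poisson variate (Knuth's method, applied in chunks to avoid underflow)."""
    total = 0
    while lam > 0:
        chunk = min(lam, 30.0)
        limit, k, p = math.exp(-chunk), 0, rng.random()
        while p > limit:
            k += 1
            p *= rng.random()
        total += k
        lam -= chunk
    return total


def simulate_counts(n_genes, n_per_group, frac_de=0.1, fold=4.0, dispersion=0.5, seed=1):
    """Simulate two groups of cells; the first frac_de genes are truly DE."""
    rng = random.Random(seed)
    truth = set(range(int(n_genes * frac_de)))
    counts = []
    for g in range(n_genes):
        base = rng.gammavariate(2.0, 5.0)  # assumed baseline mean expression
        row = []
        for group in (0, 1):
            mu = base * fold if (group == 1 and g in truth) else base
            for _ in range(n_per_group):
                # Gamma-distributed rate with mean mu and variance dispersion * mu**2,
                # so the resulting count is negative binomial.
                lam = rng.gammavariate(1.0 / dispersion, mu * dispersion)
                row.append(poisson(rng, lam))
        counts.append(row)
    return counts, truth


counts, truth = simulate_counts(n_genes=50, n_per_group=5)
print(len(counts), len(counts[0]), sorted(truth))
```

Because the set of truly DE genes is known, any DE method run on `counts` can be scored against `truth`.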

Model-Free Validation

Model-free approaches utilize real scRNA-seq datasets with established cellular identities, employing subsampling strategies or using well-annotated datasets from resources like scUnified—a standardized collection of 13 datasets across human and mouse tissues with uniform quality control and preprocessing [12]. These validation frameworks measure a method's ability to recover known biological truths, such as established cell type markers or experimentally validated cellular hierarchies.

Performance Metrics

Evaluation encompasses multiple performance dimensions:

- Accuracy metrics: F-scores (particularly F₀.₅ prioritizing precision), area under precision-recall curve (AUPR), and partial AUPR for recall rates <0.5

- False discovery control: Actual versus nominal false discovery rates (FDR)

- Replicability: Consistency of results across subsampled cohorts

- Scalability: Computational efficiency with increasing cell numbers

- Biological relevance: Prioritization of known disease genes and prognostic signatures [15] [9]
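The F-score family weights precision against recall through a parameter beta; with beta = 0.5, precision counts more than recall. A minimal sketch, with the function name `f_beta` chosen for illustration:

```python
def f_beta(precision, recall, beta=0.5):
    """Weighted harmonic mean of precision and recall; beta < 1 favors precision."""
    if precision == 0 and recall == 0:
        return 0.0
    b2 = beta ** 2
    return (1 + b2) * precision * recall / (b2 * precision + recall)


# A precise but low-recall method versus a high-recall but imprecise one.
precise = f_beta(precision=0.9, recall=0.5)
sensitive = f_beta(precision=0.5, recall=0.9)
print(precise, sensitive)
```

Under F0.5 the precise method scores higher, whereas the two would tie under the balanced F1 score.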

The following diagram illustrates the comprehensive benchmarking workflow that integrates these evaluation strategies:

Comparative Performance Analysis of Computational Methods

Differential Expression Analysis Under Sparsity

Large-scale benchmarking of 46 differential expression (DE) workflows reveals how method performance varies with data characteristics, particularly sparsity levels and batch effects [15]. The optimal choice of DE method depends strongly on sequencing depth and the magnitude of technical artifacts:

Table 1: Performance of Differential Expression Methods Across Data Conditions

| Method Category | Representative Methods | High Sequencing Depth | Low Sequencing Depth | Large Batch Effects | Small Batch Effects |
|---|---|---|---|---|---|
| Covariate Models | MAST_Cov, ZW_edgeR_Cov | Excellent | Good | Best Performance | Good |
| Bulk RNA-seq Adapted | limmatrend, DESeq2 | Excellent | Best Performance | Good | Good |
| Single-cell Specific | MAST, scREHurdle | Good | Good | Good | Good |
| Non-parametric | Wilcoxon test | Moderate | Best Performance | Poor | Moderate |
| Meta-analysis | FEM, REM | Moderate | Good | Moderate | Moderate |

For datasets with substantial batch effects, covariate modeling approaches (which include batch as a covariate rather than pre-correcting data) consistently outperform methods that use batch-corrected data [15]. At low sequencing depths (average nonzero counts of 4-10), methods relying on zero-inflation models often deteriorate in performance, whereas limmatrend, Wilcoxon test, and fixed effects models maintain robust performance [15].

Clustering Methods for Resolving Cellular Heterogeneity

Clustering analysis forms the foundation for identifying cell types and states within heterogeneous tissues. Recent benchmarking studies evaluating 13 state-of-the-art clustering methods reveal several critical patterns:

Table 2: Performance of Clustering Approaches for Cellular Heterogeneity

| Method Category | Representative Methods | Handling Sparsity | Rare Cell Type Detection | Scalability | Stability |
|---|---|---|---|---|---|
| Graph-based Traditional | Seurat, Phenograph | Moderate | Moderate | Good | Good |
| Deep Learning Autoencoders | scDeepCluster, DCA | Good | Moderate | Moderate | Good |
| Graph Neural Networks | scGraphformer, scSGC | Excellent | Excellent | Good | Excellent |
| Multi-kernel Spectral | SIMLR, MPSSC | Good | Good | Poor | Moderate |

Graph neural network (GNN) approaches represent the most promising direction for addressing both sparsity and heterogeneity. The recently developed scGraphformer leverages transformer-based GNNs to dynamically learn cell-cell relational networks directly from scRNA-seq data without relying on predefined graphs, outperforming seven state-of-the-art methods in cell type identification across 20 diverse datasets [14]. Similarly, scSGC (Soft Graph Clustering) introduces non-binary edge weights to capture continuous similarities between cells, overcoming limitations of traditional hard graph constructions and demonstrating superior performance across ten benchmarking datasets compared to 11 existing methods [17].

Advanced Computational Frameworks

Integration of Gene Functional Information

The scMUG pipeline represents a novel approach that integrates gene functional module information to enhance scRNA-seq clustering analysis [11]. By incorporating biological prior knowledge about gene interactions and functional associations, scMUG moves beyond purely statistical approaches to clustering. The pipeline introduces a similarity measure that combines local density and global distribution in the latent cell representation space, presenting comparable or better clustering performance than other state-of-the-art methods while providing deeper insights into functional relationships between gene expression patterns and cellular heterogeneity [11].

Addressing Replicability Challenges in scRNA-seq Studies

Replicability remains a significant concern in single-cell research, particularly given the financial and practical constraints that often limit cohort sizes. A recent analysis of 18,000 subsampled RNA-seq experiments found that results from underpowered studies (fewer than five replicates per condition) show poor replicability, though this doesn't necessarily imply low precision [9]. The study provides a bootstrapping procedure to estimate expected replicability and recommends at least six biological replicates per condition for robust DEG detection, increasing to ten or more replicates when seeking to identify the majority of differentially expressed genes [9].

Table 3: Key Research Reagent Solutions for Single-Cell Analysis

| Resource Name | Type | Primary Function | Application Context |
|---|---|---|---|
| scUnified | Standardized Data Resource | Provides 13 uniformly processed scRNA-seq datasets | Method benchmarking, cross-dataset validation [12] |
| SimBench | Evaluation Framework | Comprehensive benchmarking of 12 simulation methods | Simulation method selection, method development [13] |
| ZINB-WaVE | Statistical Method | Observation weights for zero-inflated data | Enabling bulk tools for single-cell data [15] |
| scGraphformer | Analysis Tool | Transformer-based cell type identification | Handling complex heterogeneity, large-scale data [14] |
| scSGC | Analysis Tool | Soft graph clustering with non-binary edges | Overcoming hard graph limitations in GNNs [17] |
| Splatter | Simulation Tool | Model-based scRNA-seq data simulation | Method validation, power analysis [13] [16] |

Based on comprehensive benchmarking evidence, we recommend:

- For differential expression analysis: Employ covariate models (MAST_Cov) when substantial batch effects are present and limmatrend or fixed effects models for very low-depth data. Avoid using batch-corrected data for DE analysis when data is sparse [15].

- For clustering and cell type identification: Implement graph neural network approaches (scGraphformer, scSGC) for large, complex datasets with subtle cellular heterogeneity, as these methods consistently outperform traditional approaches while better handling data sparsity [14] [17].

- For experimental design: Include sufficient biological replicates (at least 6, preferably 10+ per condition) to ensure replicable findings, and utilize bootstrapping procedures to estimate expected performance given cohort size constraints [9].

- For method validation: Leverage standardized resources like scUnified and multiple simulation approaches from SimBench to ensure comprehensive evaluation across diverse data characteristics [12] [13].

The single-cell revolution continues to advance through sophisticated computational methods that directly address the fundamental challenges of data sparsity and cellular heterogeneity. By selecting approaches validated through rigorous benchmarking and appropriate for specific data characteristics, researchers can maximize biological insights while maintaining statistical rigor in their single-cell studies.

Mass spectrometry-based proteomics has evolved from a qualitative, descriptive discipline into a high-throughput quantitative field, playing an increasingly crucial role in biological interpretation of protein functions and biomarker discovery [18]. This transition has been accelerated by significant advances in instrumentation capable of generating enormous volumes of data, which has in turn emphasized that computational data analysis now represents the primary bottleneck for proteomics development [18]. The complexity of proteomics data analysis stems from multiple challenges, including measurement noise, missing values, and the hierarchical structure of bottom-up MS data where protein abundances must be inferred from peptide-level information [19]. This article examines the workflow parallels and unique computational challenges in mass spectrometry-based proteomics, with particular focus on evaluating the performance of differential expression analysis tools essential for drug development and clinical research.

Core Computational Workflows in Mass Spectrometry Proteomics

Foundational Principles of Quantitative Proteomics

Mass spectrometry-based protein quantitation relies on the fundamental linearity of MS ion signal versus molecular concentration, initially confirmed for protein abundances by Chelius and Bondarenko in 2002 [18]. Proteomics quantification strategies fall into two primary categories: stable isotope labeling methods (e.g., SILAC, TMT, iTRAQ) that introduce mass tags into proteins or peptides, and label-free approaches that correlate ion current signals or spectral counts directly with protein quantity [18]. The effectiveness of these approaches depends heavily on appropriate software tools for data processing, whose development has lagged behind instrumental advances [18].

A typical LC-MS quantitative proteomics workflow involves multiple coordinated steps: proteins are first digested to peptides by a site-specific enzymatic protease (typically trypsin), followed by liquid chromatography separation, conversion to gas phase ions, and analysis by MS. The mass spectrometer produces high-resolution MS spectra and automatically selects peptides for fragmentation and further analysis by tandem mass spectrometry (MS/MS). The resulting MS/MS spectra are compared to theoretical fragmentation spectra generated from protein sequence databases or spectral libraries to retrieve corresponding peptide sequences [18].

Generalized Proteomics Data Analysis Workflow

The following diagram illustrates the core computational workflow for differential expression analysis in proteomics, highlighting the multiple decision points where tool selection significantly impacts results:

Figure 1: Generalized computational workflow for differential expression analysis in proteomics, highlighting the five critical steps where tool selection significantly impacts results.

As illustrated, differential expression analysis workflows encompass five critical steps: (1) raw data quantification, (2) expression matrix construction, (3) matrix normalization, (4) missing value imputation (MVI), and (5) differential expression analysis using statistical methods. At each step, researchers must select from multiple available tools and methods, creating a combinatorial challenge for workflow optimization [20].
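The combinatorial growth described above can be made concrete with a minimal sketch. The option lists below are hypothetical and heavily abbreviated (they are not the benchmark's actual grid); the point is only that one choice per step multiplies quickly.

```python
from itertools import product

# Hypothetical, abbreviated option lists for each of the five steps.
# The names are illustrative and do not reproduce the benchmark's full grid.
workflow_options = {
    "quantification": ["MaxQuant", "FragPipe", "DIA-NN", "Spectronaut"],
    "matrix_type": ["top0", "directLFQ", "MaxLFQ"],
    "normalization": ["none", "median", "quantile"],
    "imputation": ["SeqKNN", "Impseq", "MinProb", "none"],
    "de_method": ["limma", "t-test", "ANOVA", "SAM"],
}

def enumerate_workflows(options):
    """Yield every combination of one choice per step as a dict."""
    steps = list(options)
    for combo in product(*(options[step] for step in steps)):
        yield dict(zip(steps, combo))

workflows = list(enumerate_workflows(workflow_options))
print(len(workflows))  # 4 * 3 * 3 * 4 * 4 = 576
```

Even this reduced grid yields several hundred candidate workflows, which is why exhaustive benchmarks of this kind rely on automated enumeration and scoring.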

Comparative Analysis of Proteomics Data Analysis Tools

The proteomics field has developed numerous specialized software tools for data analysis, each with distinct strengths, limitations, and optimal use cases. The table below summarizes key characteristics of major proteomics data analysis platforms:

Table 1: Comprehensive comparison of primary proteomics data analysis software tools

| Software Tool | License Model | Primary Strengths | Optimal Use Cases | Quantification Methods Supported | Usability Considerations |
|---|---|---|---|---|---|
| MaxQuant [21] | Free, open-source | Comprehensive pipeline; integrated Perseus for statistics; Match-between-runs | Discovery proteomics; Academic labs | LFQ, SILAC, dimethyl, TMT/iTRAQ, DIA (via MaxDIA) | Windows/Linux; GUI and command-line |
| FragPipe/MSFragger [21] | Free, open-source | Ultra-fast search engine; Open modification searches; High-throughput data | Large datasets; Custom modifications; High-throughput workflows | TMT/iTRAQ, LFQ via IonQuant, DIA via MSFragger-DIA | Windows/Linux; Functional GUI; Less polished interface |
| DIA-NN [22] [21] | Free, open-source | High-performance DIA; Neural networks; Library-free analysis; Scalability | DIA proteomics; Large cohort studies; Limited library availability | DIA (specialized) | CLI with basic GUI; Minimal visualization |
| Spectronaut [22] [21] | Commercial | Gold standard DIA; Machine learning; Extensive visualization; Vendor-agnostic | Precision DIA projects; Industrial labs; Regulatory environments | DIA (specialized) | Professional GUI; Steep licensing cost |
| Proteome Discoverer [21] | Commercial | Thermo instrument integration; Multiple search engines; User-friendly workflow | Core facilities; Thermo Orbitrap users; Targeted applications | LFQ, SILAC, TMT/iTRAQ, DIA (v3.0+) | Windows GUI; Node-based workflow |
| Skyline [21] | Free, open-source | Excellent visualization; Targeted analysis; Method development | Targeted proteomics; MRM/PRM; DIA quantification; Assay development | SRM, MRM, PRM, DIA, DDA | Clean GUI; Extensive documentation |

Performance Benchmarking of Differential Expression Analysis Tools

Recent large-scale benchmarking studies have systematically evaluated the performance of various proteomics workflows. One comprehensive analysis tested 34,576 combinatorial workflow variations on 24 gold-standard spike-in datasets to identify optimal strategies for differential expression analysis [20]. The evaluation showed that the optimal workflow depends strongly on the quantification setting (e.g., label-free DDA, DIA, or TMT-labeled data).

The following table summarizes key performance characteristics identified through systematic benchmarking:

Table 2: Performance characteristics of proteomics data analysis tools based on benchmark studies

| Software Tool | Identification Performance | Quantification Accuracy | Missing Value Handling | DIA Specialization | Recommended Normalization & Imputation |
|---|---|---|---|---|---|
| DIA-NN [22] [20] | High peptide-level detection | High precision (median CV: 16.5-18.4%) | Higher missing values (48% shared proteins) | Excellent; Library-based and library-free | DirectLFQ intensity; No normalization; SeqKNN/Impseq/MinProb imputation |
| Spectronaut [22] [20] | Highest protein detection (3066±68 proteins) | Good precision (median CV: 22.2-24.0%) | Better data completeness (57% shared proteins) | Excellent; directDIA workflow | Library-based preferred for detection; directDIA for accuracy |
| PEAKS Studio [22] | Good proteome coverage (2753±47 proteins) | Moderate precision (median CV: 27.5-30.0%) | Moderate data completeness | Growing capability | Sample-specific spectral libraries optimal |
| FragPipe/MaxQuant [20] | Varies by dataset and workflow | Better with specific normalization | Missing values challenge all workflows | Compatible with specialized workflows | Normalization and DEA methods most influential for label-free DDA |

The benchmark showed that, for label-free DDA and TMT data, the choice of normalization method and of the statistical test for differential expression influences outcomes more than any other step, while for label-free DIA data the matrix type is also critical [20]. High-performing workflows for label-free data are enriched for directLFQ intensity, no normalization (here meaning no separate distribution-correction step beyond those already embedded in specific quantification settings), and the imputation methods SeqKNN, Impseq, or MinProb. Simple statistical tools such as ANOVA, SAM, and the t-test are instead enriched in low-performing workflows [20].

Specialized Computational Challenges in Emerging Areas

Single-Cell Proteomics Data Analysis

Single-cell proteomics presents unique computational challenges due to low signal-to-noise ratio, high missing value rates, and limited sample size compared to bulk proteomics [19]. The extremely low abundance of proteins in single cells means measurements often occur near detection limits, resulting in sparse data matrices that complicate statistical analysis. Traditional bulk proteomics analysis methods, which often remove proteins with only one reliable peptide to avoid false identification, are unsuitable for single-cell data as they would severely compromise already limited proteome coverage [19].

Specialized tools like pepDESC (peptide-level Differential Expression analysis for Single-Cell proteomics) have been developed to address these challenges by leveraging peptide-level quantification signals to balance proteome coverage and quantification accuracy [19]. This approach recognizes the hierarchical structure of bottom-up MS-based proteomics data, where protein abundances depend on aggregating peptide-level information.

Recent benchmarking of data-independent acquisition (DIA) workflows for single-cell proteomics revealed that software tools perform differently at the single-cell level compared to bulk analyses [22]. In systematic evaluations using simulated single-cell samples, Spectronaut's directDIA workflow quantified the highest number of proteins (3066 ± 68), while DIA-NN demonstrated superior quantitative precision (median CV values of 16.5-18.4% versus 22.2-24.0% for Spectronaut) [22].
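The precision figures quoted above are median coefficients of variation (CV). As a rough illustration of how such a summary can be computed, the sketch below takes per-protein intensities across replicates, skips missing values, and reports the median CV. The data, the function names, and the rule of requiring at least two observed values are assumptions made for illustration, not the benchmark's exact procedure.

```python
from statistics import mean, median, stdev

def coefficient_of_variation(values):
    """Return the CV (%) of one protein's intensities across replicates."""
    return 100.0 * stdev(values) / mean(values)

def median_cv(intensity_table):
    """Median CV (%) over proteins with at least two observed values."""
    cvs = []
    for intensities in intensity_table.values():
        observed = [x for x in intensities if x is not None]
        if len(observed) >= 2 and mean(observed) > 0:
            cvs.append(coefficient_of_variation(observed))
    return median(cvs)

# Hypothetical intensities for three proteins across four replicates;
# None marks a missing value.
table = {
    "P1": [100.0, 110.0, 90.0, 100.0],
    "P2": [50.0, None, 55.0, 45.0],
    "P3": [200.0, 200.0, 200.0, 200.0],
}
result = median_cv(table)
print(result)
```

A lower median CV indicates tighter agreement between replicates, which is the sense in which one tool is described as more precise than another.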

Data-Independent Acquisition (DIA) Computational Strategies

DIA mass spectrometry generates highly convoluted data that requires sophisticated computational solutions for interpretation [22]. Typical DIA analysis methods rely on spectral libraries that define the space of detectable peptides and their characteristics (retention time, ion mobility, fragment patterns). These libraries can be generated from data-dependent acquisition (DDA) runs, from the DIA data itself through deconvolution, or through in-silico prediction [22].

The performance of DIA data analysis solutions depends heavily on the computational approach and instrument platform. Benchmarking studies comparing OpenSWATH, EncyclopeDIA, Skyline, DIA-NN, and Spectronaut across TripleTOF, Orbitrap, and TimsTOF Pro instruments have demonstrated that library-free approaches can outperform library-based methods when spectral libraries have limited comprehensiveness, though constructing comprehensive libraries still generally benefits most DIA analyses [23].

Experimental Protocols for Tool Benchmarking

Large-Scale Workflow Performance Assessment

The comprehensive benchmarking study that evaluated 34,576 workflow combinations implemented a rigorous experimental protocol [20]. Researchers assembled 24 gold-standard spike-in datasets including 12 label-free DDA datasets, 5 TMT datasets, and 7 label-free DIA datasets from various proteomics projects. This collection represents the most extensive benchmark data assembly for cross-platform workflow optimization to date.

Performance was evaluated using five metrics: partial area under the receiver operating characteristic curve (pAUC) at false-positive rate thresholds of 0.01, 0.05, and 0.1; normalized Matthews correlation coefficient (nMCC); and the geometric mean of specificity and recall (G-mean). For each workflow, the metrics were calculated and converted to ranks relative to the other workflows, and the final rank was obtained by averaging across all five metrics [20].
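Two of these metrics and the rank-averaging step can be sketched in a few lines. This is an illustration under stated assumptions rather than the benchmark's code: nMCC is taken here as (MCC + 1) / 2, a common convention that the source does not spell out, pAUC is omitted for brevity, ties in ranking are not handled, and all names and numbers are hypothetical.

```python
import math

def confusion_counts(truth, called):
    """Count TP, FP, TN, FN from parallel lists of booleans."""
    tp = sum(t and c for t, c in zip(truth, called))
    fp = sum((not t) and c for t, c in zip(truth, called))
    tn = sum((not t) and (not c) for t, c in zip(truth, called))
    fn = sum(t and (not c) for t, c in zip(truth, called))
    return tp, fp, tn, fn

def g_mean(tp, fp, tn, fn):
    """Geometric mean of recall and specificity."""
    return math.sqrt((tp / (tp + fn)) * (tn / (tn + fp)))

def normalized_mcc(tp, fp, tn, fn):
    """MCC rescaled from [-1, 1] to [0, 1] (assumed convention)."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return (mcc + 1.0) / 2.0

def average_ranks(scores_by_metric):
    """Rank workflows per metric (1 = best, higher score wins), then average."""
    names = list(next(iter(scores_by_metric.values())))
    totals = {name: 0.0 for name in names}
    for scores in scores_by_metric.values():
        ordered = sorted(names, key=lambda n: scores[n], reverse=True)
        for rank, name in enumerate(ordered, start=1):
            totals[name] += rank
    return {name: totals[name] / len(scores_by_metric) for name in names}

# Hypothetical spike-in truth (4 changed, 6 unchanged proteins) and calls.
truth = [True] * 4 + [False] * 6
called = [True, True, True, False, True, False, False, False, False, False]
counts = confusion_counts(truth, called)
print(counts, g_mean(*counts), normalized_mcc(*counts))

# Hypothetical scores for three workflows on two metrics.
mean_ranks = average_ranks({
    "g_mean": {"A": 0.90, "B": 0.80, "C": 0.70},
    "nmcc": {"A": 0.80, "B": 0.70, "C": 0.75},
})
print(mean_ranks)
```

Averaging ranks rather than raw scores keeps metrics with different scales from dominating the final ordering.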

DIA Method Benchmarking Protocol

The benchmarking of DIA analysis tools for single-cell proteomics followed a standardized protocol using simulated single-cell samples [22]. Researchers constructed samples consisting of tryptic digests of human HeLa cells, yeast, and Escherichia coli proteins with defined composition ratios. A reference sample contained 50% human, 25% yeast, and 25% E. coli proteins, while other samples varied the yeast and E. coli proportions from 0.4 to 1.6× reference levels.

The total protein abundance injected into LC-MS/MS systems was 200 pg to mimic single-cell level input. Each sample was analyzed by diaPASEF using a timsTOF Pro 2 mass spectrometer with six technical replicates. These samples with known relative quantities enabled precise evaluation of quantification accuracy at the single-cell level [22].
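Because the mixing ratios are known, each spiked species has an expected log2 ratio against the reference, and the deviation of a measured ratio from that expectation quantifies accuracy. The sketch below assumes the total injected amount is constant, so a species spiked at a given multiple of its reference proportion changes in abundance by the same multiple. The intensities and function names are hypothetical.

```python
import math

# Reference composition stated in the protocol (fractions of total protein).
REFERENCE = {"human": 0.50, "yeast": 0.25, "ecoli": 0.25}

def spiked_fraction(species, multiplier):
    """Fraction of a species in a sample spiked at `multiplier` x reference."""
    return REFERENCE[species] * multiplier

def expected_log2_ratio(multiplier):
    """Expected log2(sample / reference) for a protein of the spiked species."""
    return math.log2(multiplier)

def log2_error(sample_intensity, reference_intensity, multiplier):
    """Measured minus expected log2 ratio; 0 means perfectly accurate."""
    measured = math.log2(sample_intensity / reference_intensity)
    return measured - expected_log2_ratio(multiplier)

for m in (0.4, 1.0, 1.6):
    print(m, spiked_fraction("yeast", m), expected_log2_ratio(m))

# Hypothetical yeast protein measured at 420 vs 1000 intensity units at 0.4x.
error = log2_error(420.0, 1000.0, 0.4)
print(error)
```

Summarizing these errors across all proteins of a spiked species gives a per-tool view of quantification accuracy that complements the CV-based precision measure.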

Essential Research Reagent Solutions

The following table catalogues key reagents and materials essential for implementing robust proteomics workflows, particularly in method benchmarking and validation studies:

Table 3: Essential research reagents and materials for proteomics workflow implementation

| Reagent/Material | Function/Purpose | Application Examples | Quality Considerations |
|---|---|---|---|
| Universal Proteomics Standard (UPS1/2) [20] | Spike-in standards with known concentrations; Enable accuracy assessment | Benchmarking workflow performance; Quantification calibration | Defined protein composition; Certified concentrations |
| Stable Isotope-Labeled Amino Acids [18] | Metabolic labeling for quantitative comparisons (SILAC) | Relative quantification between cell states; Internal standardization | High isotopic purity; Metabolic incorporation efficiency |
| Isobaric Mass Tags (TMT, iTRAQ) [18] [21] | Chemical labeling for multiplexed quantification | Multiplexed experimental designs; Reduced technical variability | Labeling efficiency; Reporter ion purity |
| Trypsin (Protease) [18] [21] | Site-specific protein digestion to peptides | Sample preparation for bottom-up proteomics | Sequencing grade; High purity; Modified to prevent autolysis |
| Spectral Libraries [22] [23] | Reference data for peptide identification | DIA data analysis; Targeted method development | Comprehensiveness; Platform-specific optimization |
| Internal Standard Peptides [19] | Retention time calibration; Quantification standards | Targeted proteomics; Absolute quantification | Stable isotope-labeled; High purity |

Ensemble Approaches and Workflow Integration

Recent evidence suggests that integrating results from multiple top-performing workflows through ensemble inference can expand differential proteome coverage beyond individual workflows [20]. This approach leverages complementary strengths of different analysis strategies, potentially increasing true positive detection. Research demonstrates that ensemble methods can improve mean partial AUC(0.01) by 1.17-4.61% and enhance G-mean scores by up to 11.14% across different quantification settings [20].

Specifically, integrating top-performing workflows using top0 intensities (incorporating all precursors) with intensities extracted using directLFQ and MaxLFQ can improve differential expression analysis performance more than any single workflow, achieving pAUC(0.01) gains of 4.61% in MaxQuant-processed DDA data [20]. This suggests that while individual intensity extraction methods may have limitations, their strategic combination provides complementary information that enhances overall outcomes.

However, workflow integration presents a double-edged sword: while increasing true positive detection, it also carries the risk of elevated false positives. This highlights the need for further development of robust frameworks for conducting ensemble inference across multiple proteomics workflows [20].
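The trade-off can be illustrated with a simple voting scheme. This is not the ensemble inference procedure used in the benchmark; it is a hypothetical stand-in that combines the differential calls of several workflows and shows how a permissive threshold gains true positives at the cost of false positives.

```python
from collections import Counter

def ensemble_calls(call_sets, min_votes):
    """Proteins called differential by at least `min_votes` workflows."""
    votes = Counter(p for calls in call_sets for p in set(calls))
    return {p for p, n in votes.items() if n >= min_votes}

# Hypothetical calls from three workflows and a hypothetical ground truth.
call_sets = [{"P1", "P2", "P5"}, {"P1", "P3"}, {"P1", "P2", "P6"}]
truth = {"P1", "P2", "P3", "P4"}

union = ensemble_calls(call_sets, min_votes=1)      # most permissive
majority = ensemble_calls(call_sets, min_votes=2)   # stricter

for label, calls in (("union", union), ("majority", majority)):
    print(label, "TP:", len(calls & truth), "FP:", len(calls - truth))
```

In this toy case the union recovers three true positives but admits two false positives, while the majority vote recovers two true positives with none, which mirrors the double-edged behavior described above.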

The expanding toolbox of proteomics data analysis software offers researchers multiple pathways for extracting biological insights from mass spectrometry data. The evidence from systematic benchmarking indicates that optimal workflow selection depends significantly on the specific experimental context, including the mass spectrometry platform, quantification strategy, and biological question. Rather than seeking a universal solution, researchers should strategically select and potentially integrate multiple complementary workflows to maximize proteome coverage and quantification accuracy.

For drug development professionals and clinical researchers, these findings emphasize that computational workflow design deserves the same rigorous optimization as experimental protocols. The emergence of ensemble methods points toward a future where strategic integration of multiple computational approaches may become standard practice for maximizing biological insights from precious proteomics samples. As the field continues to evolve, ongoing benchmarking and validation of new computational tools will remain essential for advancing proteomics from a descriptive to a truly quantitative discipline.

A Practical Toolkit: Selecting and Applying Differential Expression Methods for Your Data Type

Differential expression (DE) analysis is a fundamental step in transcriptomics, enabling researchers to identify genes whose expression changes significantly between different biological conditions, such as disease states or treatment groups [24]. Among the numerous methods developed for this task, three have established themselves as cornerstone tools in the field: edgeR, DESeq2, and limma-voom [25]. These tools are frequently applied to bulk RNA-seq data to uncover molecular mechanisms underlying biological processes.

The ongoing evaluation of these tools' performance remains a critical research area within bioinformatics. Understanding their statistical foundations, relative strengths, and limitations is essential for selecting the most appropriate method for a given experimental context and ensuring biologically valid conclusions [24] [26]. This guide provides a comprehensive, objective comparison of these three widely-used workflows, synthesizing information on their methodologies, performance characteristics, and optimal use cases, supported by experimental data and benchmark studies.

Statistical Foundations and Core Methodologies

The three methods employ distinct statistical approaches to model RNA-seq count data and test for differential expression.

Core Statistical Models

- DESeq2: Utilizes a negative binomial modeling framework with empirical Bayes shrinkage for both dispersion estimates and fold changes. This approach stabilizes estimates, particularly for genes with low counts or few replicates [24] [27].

- edgeR: Also employs a negative binomial model but offers more flexible dispersion estimation options, including common, trended, or tagwise dispersion. It provides both likelihood ratio and quasi-likelihood F-testing frameworks [24] [28].

- limma-voom: Applies linear modeling with empirical Bayes moderation to log-transformed counts. The "voom" transformation converts counts to log-CPM values and generates precision weights that account for the mean-variance relationship in the data [24].
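The log-CPM step of the voom transformation is simple enough to write out. The sketch below applies the offsets voom uses, log2((count + 0.5) / (library size + 1) × 10^6), to one sample. It is a plain-Python illustration rather than the limma implementation: it does not compute the precision weights, and it uses the raw column sum as the library size when none is supplied, which is an assumption.

```python
import math

def voom_log_cpm(counts, lib_size=None):
    """log2-CPM for one sample: log2((count + 0.5) / (lib_size + 1) * 1e6)."""
    if lib_size is None:
        lib_size = sum(counts)  # assumption: raw column sum as library size
    return [math.log2((c + 0.5) / (lib_size + 1.0) * 1e6) for c in counts]

# Hypothetical sample with three genes and a library size of 999 reads.
sample_counts = [0, 10, 989]
log_cpm = voom_log_cpm(sample_counts)
print(log_cpm)
```

The 0.5 offset keeps zero counts finite on the log scale, which is why the first gene above still receives a defined value.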

Normalization Approaches

Normalization addresses differences in sequencing depth and RNA composition across samples, a critical step in RNA-seq analysis [1].

- DESeq2: Uses a median-of-ratios method based on the geometric mean across all samples, assuming most genes are not differentially expressed [28].

- edgeR: Typically applies the Trimmed Mean of M-values (TMM) method, which also assumes most genes are non-DE and calculates a weighted mean of log ratios between test and reference samples [28].

- limma-voom: Can utilize various normalization methods, including TMM normalization, though historically it has been associated with quantile normalization [28].
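The median-of-ratios idea can be sketched as follows: compute each gene's geometric mean across samples, divide each sample's count by it, and take the per-sample median of those ratios as the size factor. This is a minimal illustration, not the DESeq2 code; the count data are hypothetical, and skipping genes with any zero count is a simplification made here.

```python
import math
from statistics import median

def median_of_ratios_size_factors(counts):
    """counts: dict gene -> list of counts per sample. Returns size factors."""
    n_samples = len(next(iter(counts.values())))
    log_ratios = [[] for _ in range(n_samples)]
    for gene_counts in counts.values():
        if any(c <= 0 for c in gene_counts):
            continue  # geometric mean is undefined on the log scale
        log_geo_mean = sum(math.log(c) for c in gene_counts) / n_samples
        for j, c in enumerate(gene_counts):
            log_ratios[j].append(math.log(c) - log_geo_mean)
    return [math.exp(median(r)) for r in log_ratios]

# Hypothetical data: sample 2 was sequenced at twice the depth of sample 1.
counts = {
    "g1": [10, 20],
    "g2": [100, 200],
    "g3": [5, 10],
    "g4": [0, 3],  # skipped because of the zero
}
size_factors = median_of_ratios_size_factors(counts)
print(size_factors)
```

Dividing each sample's counts by its size factor puts the samples on a common scale; using the median makes the estimate robust to a minority of strongly changing genes, consistent with the assumption that most genes are not differentially expressed.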

Table 1: Core Statistical Foundations of the Three Methods

| Aspect | limma-voom | DESeq2 | edgeR |
|---|---|---|---|
| Core Statistical Approach | Linear modeling with empirical Bayes moderation | Negative binomial modeling with empirical Bayes shrinkage | Negative binomial modeling with flexible dispersion estimation |
| Data Transformation | voom transformation converts counts to log-CPM values | Internal normalization based on geometric mean | TMM normalization by default |
| Variance Handling | Empirical Bayes moderation improves variance estimates for small sample sizes | Adaptive shrinkage for dispersion estimates and fold changes | Flexible options for common, trended, or tagwise dispersion |
| Key Components | voom transformation, linear modeling, empirical Bayes moderation, precision weights | Normalization, dispersion estimation, GLM fitting, hypothesis testing | Normalization, dispersion modeling, GLM/QLF testing, exact testing option |

Workflow Diagrams

The following diagram illustrates the general analytical workflow shared by these methods, as well as their key differences:

Diagram 1: Comparative workflow of the three differential expression analysis methods. All methods begin with the same preprocessing steps but diverge in their statistical modeling approaches.

Performance Comparison and Benchmark Data

Extensive evaluations have been conducted to assess the performance of these tools under various experimental conditions.

Sample Size Considerations

Sample size significantly influences method performance, with each tool having different optimal ranges:

- limma-voom: Requires at least 3 replicates per condition for reliable variance estimation. Demonstrates remarkable versatility and computational efficiency, especially with large sample sizes [24].

- DESeq2: Performs well with moderate to large sample sizes (≥3 replicates) and can handle high biological variability effectively [24].

- edgeR: Efficient with very small sample sizes (≥2 replicates) and maintains good performance with large datasets [24] [29].

A critical consideration emerges in population-level studies with large sample sizes (dozens to thousands of samples). Recent research has revealed that both DESeq2 and edgeR can exhibit exaggerated false positive rates in such scenarios, with actual FDRs sometimes exceeding 20% when the target is 5% [30]. In these specific large-sample contexts, non-parametric methods like the Wilcoxon rank-sum test have demonstrated superior FDR control [30].
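For readers unfamiliar with the non-parametric alternative, the sketch below implements a per-gene Wilcoxon rank-sum test with midranks and a normal approximation. It is a simplified illustration: it applies no tie or continuity correction to the variance, the data are hypothetical, and in a real analysis the per-gene p-values would still need multiple-testing adjustment.

```python
import math

def midranks(values):
    """Ranks starting at 1, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = average
        i = j + 1
    return ranks

def wilcoxon_rank_sum(x, y):
    """Return (U statistic for x, two-sided p-value by normal approximation)."""
    n1, n2 = len(x), len(y)
    ranks = midranks(list(x) + list(y))
    u = sum(ranks[:n1]) - n1 * (n1 + 1) / 2.0
    mu = n1 * n2 / 2.0
    sigma = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12.0)
    z = (u - mu) / sigma
    return u, math.erfc(abs(z) / math.sqrt(2.0))

# Hypothetical normalized expression of one gene in two groups of five samples.
u_stat, p_value = wilcoxon_rank_sum([1, 2, 3, 4, 5], [6, 7, 8, 9, 10])
print(u_stat, p_value)
```

Because the test uses only ranks, it makes no negative binomial assumption, which is the property the large-sample findings above point to; the cost is low power when only a handful of replicates are available.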

Agreement and Discrepancies in Results

Multiple studies have investigated the concordance between results from these three methods:

- In standard experiments, there is typically a remarkable level of agreement in the differentially expressed genes identified by all three tools, despite their distinct statistical approaches [24].

- However, significant discrepancies can emerge in specific contexts. One analysis of an immunotherapy dataset (51 vs. 58 samples) found that DESeq2 and edgeR had only an 8% overlap in identified DEGs, raising concerns about reliability in certain scenarios [30].

- When methods disagree, it often manifests as one method identifying a subset of the genes detected by another, rather than completely discordant results [27].
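Concordance between two DEG lists can be quantified in more than one way, and the choice affects the headline number. The sketch below reports the shared count, the Jaccard index, the shared fraction relative to each list, and whether one list is nested in the other. The source does not state which definition underlies the 8% figure, so the metrics here are offered as options, and the gene lists are hypothetical.

```python
def overlap_summary(set_a, set_b):
    """Summarize agreement between two sets of differentially expressed genes."""
    shared = set_a & set_b
    union = set_a | set_b
    return {
        "shared": len(shared),
        "jaccard": len(shared) / len(union) if union else 0.0,
        "frac_of_a": len(shared) / len(set_a) if set_a else 0.0,
        "frac_of_b": len(shared) / len(set_b) if set_b else 0.0,
        "a_subset_of_b": set_a <= set_b,
    }

# Hypothetical DEG lists from two methods.
deseq2_genes = {"G1", "G2", "G3", "G4"}
edger_genes = {"G3", "G4", "G5", "G6", "G7", "G8"}
summary = overlap_summary(deseq2_genes, edger_genes)
print(summary)
```

Reporting the subset flag alongside the overlap fraction helps distinguish the benign case, where one method is simply more conservative, from genuinely discordant results.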

Table 2: Performance Characteristics Under Different Experimental Conditions

| Aspect | limma-voom | DESeq2 | edgeR |
|---|---|---|---|
| Ideal Sample Size | ≥3 replicates per condition | ≥3 replicates, performs well with more | ≥2 replicates, efficient with small samples |
| Best Use Cases | Small sample sizes, multi-factor experiments, time-series data, integration with other omics | Moderate to large sample sizes, high biological variability, subtle expression changes, strong FDR control | Very small sample sizes, large datasets, technical replicates, flexible modeling needs |
| Computational Efficiency | Very efficient, scales well | Can be computationally intensive | Highly efficient, fast processing |
| Large Sample Performance | Excellent speed and performance | Potential FDR inflation in population studies | Potential FDR inflation in population studies |
| Low Count Performance | May not handle extreme overdispersion well | Automatic outlier detection, independent filtering | Particularly shines with low expression counts |

Performance in Species-Specific Contexts

Recent evaluations indicate that tool performance can vary across species. One study analyzing RNA-seq data from plants, animals, and fungi found that the same analytical tools did not perform uniformly across these groups [26]. Species-specific factors should therefore inform tool selection and parameter choice, rather than applying identical parameters to all organisms.

Practical Implementation Protocols

This section provides detailed methodologies for implementing these tools based on established protocols.

Data Preparation and Quality Control

Proper data preparation is essential for all three methods:

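Initial Setup in R: load the DESeq2, edgeR, and limma packages, then read in the raw count matrix and the sample metadata.

Data Filtering: Filter low-expressed genes to improve power and reduce the multiple testing burden. A common approach is to keep genes expressed in at least 80% of samples [24].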
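The filtering rule can be sketched independently of any package. The example below is written in plain Python for illustration (the pipelines in this section run in R); the threshold of one read for calling a gene "expressed" is an assumption, since the source specifies only the 80% fraction, and the counts are hypothetical.

```python
def filter_low_expression(counts, min_count=1, min_fraction=0.8):
    """Keep genes with count >= min_count in at least min_fraction of samples."""
    kept = {}
    for gene, values in counts.items():
        n_expressed = sum(1 for v in values if v >= min_count)
        if n_expressed >= min_fraction * len(values):
            kept[gene] = values
    return kept

# Hypothetical raw counts for three genes across five samples.
raw_counts = {
    "geneA": [5, 3, 0, 2, 8],    # expressed in 4 of 5 samples: kept
    "geneB": [0, 0, 1, 0, 0],    # expressed in 1 of 5 samples: dropped
    "geneC": [12, 9, 15, 11, 7], # expressed in all samples: kept
}
filtered = filter_low_expression(raw_counts)
print(sorted(filtered))
```

Filtering before testing reduces the number of hypotheses, so the subsequent multiple-testing correction is less severe for the genes that remain.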

DESeq2 Analysis Pipeline

Protocol Source: Adapted from established DESeq2 workflows [24] [31]

Step-by-Step Implementation:

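- Create DESeq2 Dataset: build the dataset object from the raw count matrix, the sample metadata, and the design formula, typically with DESeqDataSetFromMatrix().

- Set Reference Level: apply relevel() to the condition factor so that fold changes are reported relative to the control group.

- Perform DE Analysis: run DESeq(), which estimates size factors and dispersions and fits the negative binomial GLM.

- Extract Results: call results() to obtain log2 fold changes, p-values, and adjusted p-values for the contrast of interest.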

edgeR Analysis Pipeline

Protocol Source: Adapted from established edgeR workflows [24]

Step-by-Step Implementation:

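- Create DGEList Object: wrap the count matrix and group labels with DGEList().

- Normalize Library Sizes: compute TMM scaling factors with calcNormFactors().

- Estimate Dispersion: call estimateDisp() with the design matrix.

- Perform Quasi-likelihood F-tests: fit the model with glmQLFit() and test the coefficient or contrast of interest with glmQLFTest().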

limma-voom Analysis Pipeline

Protocol Source: Adapted from established limma-voom workflows [24]

Step-by-Step Implementation:

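- Create DGEList and Normalize: build the object with DGEList() and compute TMM factors with calcNormFactors().

- Apply voom Transformation: call voom() with the design matrix to obtain log-CPM values and precision weights.

- Fit Linear Model: fit gene-wise models with lmFit() and moderate the variances with eBayes().

- Extract Results: retrieve the ranked table of genes with topTable().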

The following diagram illustrates the method-specific statistical approaches and their key steps:

Diagram 2: Detailed statistical workflows for each method showing key analytical steps and their sequence.

Essential Research Reagent Solutions

A robust RNA-seq analysis pipeline requires both computational tools and appropriate methodological components. The following table outlines key elements used in a comprehensive RNA-seq workflow:

Table 3: Essential Components of an RNA-seq Analysis Pipeline

| Component | Function | Example Tools |
|---|---|---|
| Quality Control | Assess read quality and identify sequencing artifacts | FastQC, Trimmomatic, fastp, Trim_Galore [26] |
| Read Trimming | Remove adapter sequences and low-quality bases | Trimmomatic, Cutadapt, fastp [26] |
| Alignment | Map sequencing reads to reference genome | HISAT2, STAR [32] |
| Quantification | Generate count data for genes/transcripts | Salmon, HTSeq, featureCounts [31] [1] |
| Differential Expression | Identify statistically significant expression changes | DESeq2, edgeR, limma-voom [24] [25] |
| Functional Analysis | Interpret biological meaning of results | GO enrichment, pathway analysis |
| Visualization | Create informative data representations | ggplot2, pheatmap, VennDiagram [24] |

DESeq2, edgeR, and limma-voom represent mature, highly-optimized solutions for differential expression analysis from bulk RNA-seq data. While they share the common goal of identifying statistically significant expression changes, their differing statistical approaches lead to distinct performance characteristics and optimal application domains.

The choice between methods should be guided by specific experimental parameters. For well-replicated experiments with complex designs, limma-voom often provides excellent performance and computational efficiency. When working with low-abundance transcripts or very small sample sizes, edgeR may be preferable. DESeq2 offers robust performance across many scenarios, with particularly strong FDR control in standard experimental setups [24].

Recent research has highlighted that no single method dominates all experimental scenarios. Particularly concerning are findings of FDR control issues with DESeq2 and edgeR in large population-level studies [30], suggesting that alternative approaches like non-parametric tests may be preferable in these specific contexts. Future methodological developments will likely focus on improving reliability across diverse sample sizes and experimental designs, as well as enhancing integration with other data types in multi-omics frameworks.

Differential expression (DE) analysis represents a fundamental methodology in single-cell RNA sequencing (scRNA-seq) investigations, enabling researchers to identify genes with expression patterns that vary significantly across different biological conditions, cell types, or experimental treatments. Unlike bulk RNA-seq, which measures average gene expression across cell populations, scRNA-seq provides unprecedented resolution at the individual cell level, revealing cellular heterogeneity and enabling the discovery of novel cell states and subtypes [33]. However, this technological advancement introduces substantial analytical challenges, including high levels of technical noise, dropout events (where genes that are expressed fail to be detected), and complex multimodal expression distributions that violate assumptions of traditional statistical methods [34].

The development of specialized computational tools capable of addressing these scRNA-seq-specific challenges has become an active area of bioinformatics research. Among these, MAST (Model-based Analysis of Single-cell Transcriptomics), scDD (single-cell Differential Distributions), and DiSC (Differential State Correlation) represent three distinct methodological approaches to single-cell DE analysis. MAST employs a two-part generalized linear hurdle model to separately model expression rates and levels, scDD detects diverse distributional changes beyond mean shifts, while DiSC incorporates correlation structures across cell types to improve detection power [35] [34]. Understanding the relative strengths, limitations, and appropriate application contexts for these methods is essential for researchers seeking to extract biologically meaningful insights from their single-cell data.

This review presents a comprehensive comparative analysis of MAST, scDD, and DiSC, focusing on their methodological foundations, performance characteristics, and suitability for different experimental contexts. Through examination of benchmark studies and experimental validations, we provide evidence-based guidance for researchers navigating the complex landscape of single-cell differential expression tools.

MAST (Model-based Analysis of Single-cell Transcriptomics)

MAST employs a sophisticated two-part generalized linear hurdle model that explicitly addresses the bimodal nature of single-cell expression data resulting from dropout events. The method separately models the discrete (presence/absence of expression) and continuous (expression level when present) components of gene expression [34] [36]. For each gene, MAST fits a logistic regression component to model the probability of expression (detection rate) and a Gaussian linear model component to model the conditional expression level given that the gene is detected. These two components are assumed to be independent conditional on the cellular detection rate, which is included as a covariate to account for technical variation. Statistical significance is assessed through likelihood ratio tests comparing models with and without the condition of interest [36].

The key advantage of MAST's approach lies in its explicit handling of dropout events, which typically account for a substantial proportion of zeros in scRNA-seq data. By separately modeling the detection rate and expression level, MAST can distinguish between biological absence of expression and technical dropouts, potentially increasing power to detect true differential expression. This methodology has demonstrated particularly strong performance in benchmark evaluations, with one comprehensive study identifying it as the only scRNA-seq-specific method that outperformed established bulk RNA-seq DE methods [37].
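The two quantities that a hurdle model treats separately can be illustrated with simple descriptive summaries. The sketch below is not MAST: it fits no regression, includes no cellular detection rate covariate, and performs no likelihood ratio test. It only splits one gene's values into a detection rate and a conditional mean, using hypothetical log-scale expression values in which 0 means not detected.

```python
from statistics import mean

def hurdle_summary(expression):
    """Return (detection rate, mean expression among detected cells)."""
    detected = [v for v in expression if v > 0]
    rate = len(detected) / len(expression)
    conditional_mean = mean(detected) if detected else float("nan")
    return rate, conditional_mean

# Hypothetical log-scale expression of one gene in eight cells per condition.
control = [0, 0, 2.0, 2.2, 0, 1.8, 0, 0]
treated = [2.1, 0, 1.9, 2.0, 2.2, 0, 1.8, 2.0]

control_rate, control_mean = hurdle_summary(control)
treated_rate, treated_mean = hurdle_summary(treated)
print(control_rate, control_mean)
print(treated_rate, treated_mean)
```

In this toy gene the expression level among detected cells is the same in both conditions, while the fraction of cells expressing it doubles. A test on the conditional mean alone would miss the change, whereas the discrete component of a hurdle model is designed to capture it.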

scDD (single-cell Differential Distributions)

scDD adopts a fundamentally different approach by focusing on detecting diverse distributional changes between conditions rather than simply testing for differences in mean expression. The method employs a Bayesian modeling framework to categorize genes into several distinct patterns of differential distribution: traditional differential expression of the mean (DE), differential proportion (DP), differential modality (DM), and both differential modality and different component means (DB) [34]. This classification enables scDD to identify more subtle and complex patterns of regulation that might be missed by methods focusing solely on mean differences.

The scDD framework utilizes a semiparametric approach that combines the flexibility of Dirichlet process mixture models with the efficiency of empirical Bayes methods. Genes are modeled using a mixture of normal distributions to capture potential multimodality in expression patterns, and Bayes factors are computed to compare the evidence for differential distributions versus equivalent distributions across conditions [34]. This approach is particularly powerful for detecting genes with multimodal expression distributions, which may indicate the presence of novel cell subtypes or distinct regulatory states within apparently homogeneous cell populations.

DiSC (Differential State Correlation)

DiSC introduces a novel perspective on single-cell DE analysis by incorporating correlation structures across cell types through a Bayesian hierarchical model. Unlike traditional methods that analyze each cell type independently, DiSC leverages the biological insight that cell types with common developmental lineages or functional roles often exhibit correlated differential expression patterns [35]. The method implements a sophisticated framework where DE states across related cell types are modeled as dependent random variables, with correlations estimated from the data or derived from a cell type hierarchy.

The DiSC methodology involves creating a "pseudo-bulk" expression matrix for each cell type by aggregating counts from individual cells, followed by normalization. A cell type hierarchical tree is constructed based on correlation of expression changes across cell types, which informs the prior probabilities in the Bayesian model. Posterior probabilities for DE are then computed, enabling information sharing across correlated cell types and potentially improving power, particularly for rare cell populations with limited numbers of cells [35]. This approach represents a significant departure from conventional single-cell DE methods and addresses the important biological reality of coordinated regulation across cell types.
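The aggregation step can be sketched as a grouped sum. The example below is a generic pseudo-bulk illustration rather than the method's own code; grouping by sample within each cell type is an assumption made here, and the cell records are hypothetical.

```python
from collections import defaultdict

def pseudo_bulk(cells):
    """Sum counts over cells sharing the same (sample_id, cell_type).

    cells: iterable of (sample_id, cell_type, {gene: count}) records.
    Returns {(sample_id, cell_type): {gene: summed count}}.
    """
    totals = defaultdict(lambda: defaultdict(int))
    for sample_id, cell_type, counts in cells:
        for gene, count in counts.items():
            totals[(sample_id, cell_type)][gene] += count
    return {key: dict(genes) for key, genes in totals.items()}

# Hypothetical cells from two samples and two cell types.
cells = [
    ("s1", "T cell", {"GENE1": 2, "GENE2": 0}),
    ("s1", "T cell", {"GENE1": 1, "GENE2": 3}),
    ("s1", "B cell", {"GENE1": 0, "GENE2": 5}),
    ("s2", "T cell", {"GENE1": 4, "GENE2": 1}),
]
bulk = pseudo_bulk(cells)
print(bulk)
```

Summing over cells removes most of the zeros that complicate cell-level models, which is how aggregation-based approaches handle sparsity, at the cost of discarding within-group heterogeneity.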

Table 1: Key Methodological Characteristics of MAST, scDD, and DiSC

| Feature | MAST | scDD | DiSC |
|---|---|---|---|
| Statistical Model | Two-part generalized linear hurdle model | Bayesian semiparametric mixture model | Bayesian hierarchical model |
| Handling of Zeros | Explicit via hurdle component | Through mixture components | Via pseudo-bulk aggregation |
| Distributional Focus | Mean and prevalence | Entire distribution | Mean with correlation |
| Cell Type Correlation | Not incorporated | Not incorporated | Explicitly modeled |
| Key Strength | Handling dropout events | Detecting multimodal differences | Leveraging cross-cell-type information |
| Computational Demand | Moderate | High | High |

Experimental Design and Benchmarking Approaches

Benchmarking Frameworks and Performance Metrics

Rigorous evaluation of differential expression methods requires carefully designed benchmarking studies that assess performance across multiple dimensions. The most informative benchmarks employ a combination of simulated datasets, where the ground truth is known, and real datasets with validated differential expression patterns. For scRNA-seq methods, key performance metrics include:

- Sensitivity (True Positive Rate): The proportion of truly differentially expressed genes that are correctly identified by the method.

- False Discovery Rate (FDR): The expected proportion of false positives among all genes called differentially expressed.

- Area Under the ROC Curve (AUROC): A comprehensive measure of classification performance across all possible significance thresholds.

- Precision and Recall: Precision is the fraction of called DE genes that are truly DE, and recall is the same quantity as sensitivity. Together they capture the trade-off between identifying true positives and minimizing false positives.

- Reproducibility: Consistency of results across technical replicates or subsampled datasets [34] [36].
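When the ground truth is known, as in simulated data, several of the metrics above can be computed directly. The following is a minimal sketch with hypothetical function names. It assumes DE calls are available as sets of gene identifiers and that higher scores indicate stronger evidence for DE. AUROC is computed with the rank-sum (Mann-Whitney) identity, with ties given average ranks.

```python
def confusion_metrics(truth, called):
    """Sensitivity, observed false discovery proportion, and precision."""
    tp = len(truth & called)
    fp = len(called - truth)
    fn = len(truth - called)
    return {
        "sensitivity": tp / (tp + fn) if truth else float("nan"),
        "fdr": fp / (tp + fp) if called else 0.0,
        "precision": tp / (tp + fp) if called else float("nan"),
    }

def auroc(scores, labels):
    """Area under the ROC curve from scores and 0/1 labels."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("AUROC needs at least one positive and one negative")
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks = [0.0] * len(scores)
    i = 0
    while i < len(order):
        j = i
        # Extend j over a run of tied scores.
        while j + 1 < len(order) and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1  # ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1
    rank_sum = sum(r for r, y in zip(ranks, labels) if y)
    return (rank_sum - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)

metrics = confusion_metrics({"g1", "g2", "g3"}, {"g1", "g2", "g4"})
area = auroc([0.9, 0.8, 0.3, 0.7, 0.1], [1, 1, 1, 0, 0])
```

In this toy example two of three true DE genes are recovered and one of three calls is false, so sensitivity is 2/3 and the observed false discovery proportion is 1/3. Five of the six positive-negative pairs are ranked correctly, giving an AUROC of 5/6.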

Comprehensive benchmarking studies have revealed that the performance of DE methods can vary substantially depending on experimental factors such as sample size, the proportion of truly DE genes, the magnitude of expression differences, and the underlying distribution of the data [36]. These findings highlight the importance of context-specific method selection rather than seeking a universally superior approach.

Reference Datasets and Validation Strategies

Well-characterized datasets play a crucial role in the objective evaluation of DE method performance. The Kang dataset, comprising scRNA-seq data from peripheral blood mononuclear cells (PBMCs) of lupus patients profiled with and without interferon-beta stimulation, has emerged as a valuable benchmark due to its well-defined experimental conditions and the availability of multiple biological replicates [38]. This dataset enables researchers to evaluate how methods perform in detecting expression changes in response to a specific stimulus across diverse immune cell types.

Other validation approaches include using bulk RNA-seq data from the same samples as a reference standard, cross-validation with RT-qPCR results, and sophisticated simulation frameworks that generate scRNA-seq data with known DE patterns while preserving key characteristics of real data such as dropout rates and expression distributions [37]. These complementary approaches provide a comprehensive assessment of method performance under different scenarios and with varying degrees of prior knowledge about true differential expression.
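A simulation with known DE patterns can be sketched in a few lines. The example below is a deliberately simple assumption-laden toy, not one of the published frameworks cited above: counts are drawn from a gamma-Poisson (negative binomial) model, extra zeros are injected at a fixed dropout rate, and the first `n_de` genes receive a fixed fold change. All names and parameter values are hypothetical.

```python
import math
import random

def poisson(lam, rng):
    """Knuth's multiplication method; adequate for the small means used here."""
    threshold = math.exp(-lam)
    k, p = 0, rng.random()
    while p > threshold:
        k += 1
        p *= rng.random()
    return k

def simulate_gene(mean, dispersion, dropout, n_cells, rng):
    """Draw counts for one gene: negative binomial plus extra zeros."""
    shape = 1.0 / dispersion
    counts = []
    for _ in range(n_cells):
        if rng.random() < dropout:
            counts.append(0)  # technical zero
            continue
        rate = rng.gammavariate(shape, mean * dispersion)  # E[rate] = mean
        counts.append(poisson(rate, rng))
    return counts

def simulate_dataset(n_genes, n_de, fold_change, n_cells, seed=0):
    """Return per-gene counts for two groups and the set of truly DE genes."""
    rng = random.Random(seed)
    truth = set(range(n_de))  # the first n_de genes are DE by construction
    group_a, group_b = [], []
    for gene in range(n_genes):
        base = rng.uniform(1.0, 5.0)
        shifted = base * fold_change if gene in truth else base
        group_a.append(simulate_gene(base, 0.5, 0.3, n_cells, rng))
        group_b.append(simulate_gene(shifted, 0.5, 0.3, n_cells, rng))
    return group_a, group_b, truth

group_a, group_b, truth = simulate_dataset(n_genes=20, n_de=5, fold_change=4.0, n_cells=200)
```

Because `truth` is fixed by construction, any DE method's calls on `group_a` versus `group_b` can be scored against it. Realistic frameworks additionally estimate means, dispersions, and dropout behavior from real data rather than fixing them as done here.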

Diagram 1: Experimental Benchmarking Workflow for Evaluating Differential Expression Methods. This workflow illustrates the comprehensive approach used to compare the performance of MAST, scDD, and DiSC against established methods and benchmarks.

Performance Comparison and Results

Statistical Power and Accuracy

Multiple benchmarking studies have evaluated the performance of MAST, scDD, and related methods under various experimental conditions. In a comprehensive assessment comparing eleven differential expression tools, MAST demonstrated particularly strong performance with the largest AUROC values across different sample sizes and proportions of truly differentially expressed genes when data followed negative binomial distributions [36]. When sample size increased to 100 cells per group, MAST maintained superior performance with the highest AUROC regardless of data distributions.

scDD has shown exceptional capability in detecting complex distributional differences beyond simple mean shifts. While its performance on traditional DE detection metrics may be comparable to other methods, its unique strength lies in identifying genes with differential modality or proportion of expressing cells, patterns that are often biologically significant but missed by conventional approaches [34]. This makes scDD particularly valuable for discovering novel cell subtypes or identifying genes with heterogeneous responses within seemingly uniform cell populations.

Although direct benchmarking results for DiSC remain limited in the available literature, its approach of incorporating cell type correlations shows promise for improving detection power, especially for rare cell types with limited numbers of cells. By leveraging information across correlated cell types, DiSC can potentially identify consistent expression changes that might be statistically undetectable when analyzing each cell type in isolation [35].

Table 2: Performance Comparison Across Experimental Conditions

| Experimental Condition | MAST | scDD | DiSC | Best Performing Method |
|---|---|---|---|---|
| Small Sample Size (< 50 cells/group) | AUROC: 0.75-0.82 | AUROC: 0.72-0.79 | Limited data | MAST |
| Large Sample Size (> 100 cells/group) | AUROC: 0.84-0.89 | AUROC: 0.81-0.86 | Limited data | MAST |
| High Dropout Rate (> 60% zeros) | Maintains performance | Moderate performance decrease | Limited data | MAST |
| Multimodal Distributions | Limited detection | Excellent detection | Unknown | scDD |
| Rare Cell Types | Standard performance | Standard performance | Potentially improved | DiSC (theoretical) |
| Mean-Only Differences | Excellent detection | Good detection | Good detection | MAST |

False Discovery Control and Reproducibility

Proper control of false discoveries is crucial for any statistical method in genomics applications. Evaluation studies have revealed important differences in how MAST, scDD, and DiSC handle Type I error rates. MAST has demonstrated generally good FDR control across various simulation scenarios, particularly when the hurdle model assumptions align with the data characteristics [36]. However, like most methods designed for scRNA-seq data, it may become conservative or anti-conservative under certain conditions, such as when the relationship between detection rate and expression level differs from the modeled assumptions.
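For methods that report per-gene p-values, FDR is commonly controlled with the Benjamini-Hochberg adjustment. The sketch below is a generic illustration of that procedure with a hypothetical function name. Whether a particular tool applies exactly this correction by default is an assumption that should be checked against its documentation.

```python
def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so that adjusted values stay monotone.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

pvalues = [0.01, 0.04, 0.03, 0.20]
adjusted = benjamini_hochberg(pvalues)
significant = [i for i, q in enumerate(adjusted) if q <= 0.05]
```

With these four p-values, only the first gene has an adjusted value at or below 0.05, even though three raw p-values fall under that threshold.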

scDD's Bayesian framework provides natural uncertainty quantification through posterior probabilities, which can be calibrated to control false discoveries. However, the method's flexibility in detecting diverse distributional changes may come at the cost of increased false positives when applied to datasets with limited sample sizes or high technical noise [34]. Proper prior specification and careful threshold selection are particularly important for scDD to maintain appropriate error control.

The reproducibility of results across technical replicates or slightly different analytical choices represents another important dimension of method performance. Studies comparing the reproducibility of single-cell DE methods have found that MAST shows generally good concordance across replicates, while methods like scDD may show more variability due to their sensitivity to complex distributional patterns that might not be consistently captured across subsamples [36]. The reproducibility characteristics of DiSC have not been extensively evaluated in the available literature.
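One simple way to quantify this kind of concordance is the Jaccard overlap between DE gene sets obtained from different replicates or subsamples. The sketch below assumes that measure. The function names are hypothetical, and the cited studies may use other concordance statistics.

```python
from itertools import combinations

def jaccard(a, b):
    """Jaccard index of two collections of gene identifiers."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def mean_pairwise_jaccard(gene_sets):
    """Average Jaccard index over all pairs of DE gene sets."""
    pairs = list(combinations(gene_sets, 2))
    if not pairs:
        raise ValueError("need at least two gene sets")
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)

runs = [{"a", "b", "c"}, {"b", "c", "d"}, {"a", "b", "c"}]
concordance = mean_pairwise_jaccard(runs)
```

A value near 1 indicates that the method returns almost the same gene list on each subsample, while values near 0 indicate unstable calls.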

Computational Efficiency and Scalability

As scRNA-seq datasets continue to grow in size, computational efficiency becomes an increasingly important practical consideration. Among the three methods, MAST has the lowest computational demands, which makes it feasible for datasets containing tens of thousands of cells. Its implementation handles the two-component model efficiently through parallelization and optimized linear algebra operations.

scDD's Bayesian framework and semiparametric approach are computationally more intensive, particularly for large datasets with many cells and genes. The method employs various approximation techniques to improve scalability, but may still require substantial computational resources for comprehensive analyses of complex datasets [34].

DiSC's hierarchical Bayesian model and the need to estimate correlation structures across cell types introduce significant computational complexity. The method requires careful tuning of Markov Chain Monte Carlo (MCMC) sampling procedures and may benefit from variational approximation approaches for application to very large datasets [35]. Users working with massive single-cell atlas projects should consider these computational requirements when selecting an appropriate DE method.

Diagram 2: Method Strengths and Limitations Comparison. This diagram summarizes the key advantages and challenges associated with MAST, scDD, and DiSC, highlighting their complementary capabilities.

Table 3: Key Experimental Resources for Single-Cell Differential Expression Studies

| Resource Type | Specific Examples | Function in DE Analysis | Considerations |
|---|---|---|---|
| Reference Datasets | Kang PBMC dataset [38], Blischak cell line dataset [37] | Method validation and benchmarking | Ensure appropriate experimental design and replication |
| Benchmarking Frameworks | Soneson & Robinson pipeline [37], scRNA-seq best practices [38] | Standardized performance evaluation | Include multiple performance metrics and experimental conditions |
| Normalization Methods | Library size normalization, DESeq2-style normalization, TMM, UQ | Remove technical biases prior to DE analysis | Choice affects downstream results; test sensitivity |
| Quality Control Metrics | Detection rate, mitochondrial percentage, doublet scores | Filter low-quality cells and genes | Stringency affects power and false discovery rates |
| Validation Platforms | Bulk RNA-seq, RT-qPCR, spike-in controls [37] | Verify biological findings | Orthogonal confirmation strengthens conclusions |
| Computational Infrastructure | High-performance computing clusters, optimized software containers | Handle computational demands of large datasets | Scalability essential for large-scale studies |

Discussion and Practical Recommendations

The comparative analysis of MAST, scDD, and DiSC reveals a complex landscape where each method excels in specific scenarios, reflecting their different methodological foundations and design priorities. MAST consistently demonstrates strong overall performance in traditional differential expression detection, particularly when dealing with typical scRNA-seq data characteristics such as high dropout rates and moderate sample sizes. Its rigorous statistical framework and good false discovery control make it a reliable choice for most standard applications where the goal is identifying genes with mean expression differences between conditions.

scDD offers unique capabilities for detecting more complex patterns of differential expression that may reflect biologically important but subtle regulatory mechanisms. Its ability to identify genes with multimodal distributions or differences in expression proportion makes it particularly valuable for exploratory analyses aimed at discovering novel cell states or heterogeneous responses within cell populations. However, this increased sensitivity to complex distributional patterns comes with higher computational demands and requires careful interpretation of results.

DiSC represents an innovative approach that addresses the important biological reality of correlated expression changes across related cell types. While comprehensive benchmarking data is more limited, its theoretical foundation suggests particular utility for studies involving multiple cell types with known developmental or functional relationships, especially when analyzing rare cell populations with limited numbers of cells. The method's ability to leverage information across cell types could significantly improve power in such scenarios.

For researchers selecting an appropriate method for their specific application, we recommend the following evidence-based guidelines:

- For standard differential expression analysis focusing on mean expression differences, particularly with moderate sample sizes and typical scRNA-seq data characteristics, MAST provides an excellent balance of statistical power, false discovery control, and computational efficiency.

- For exploratory analyses aimed at detecting complex distributional changes, heterogeneous responses, or potential novel cell states, scDD offers unique capabilities not available in other methods, despite its higher computational requirements.

- For studies involving multiple correlated cell types, especially when including rare cell populations, DiSC presents a promising approach for improving detection power by leveraging cross-cell-type information, though users should be prepared for its computational complexity and implementation challenges.

- For critical applications where method choice could significantly impact biological conclusions, we recommend a consensus approach combining multiple complementary methods, with careful validation of results using orthogonal experimental techniques.
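A consensus approach can be as simple as keeping genes called by a minimum number of methods. The sketch below assumes each method's significant genes are already available as a set. The function name, the voting rule, and the example gene lists are illustrative assumptions, not results from any benchmark.

```python
from collections import Counter

def consensus_calls(calls_by_method, min_methods=2):
    """Genes reported as DE by at least `min_methods` of the supplied methods."""
    votes = Counter(
        gene for genes in calls_by_method.values() for gene in set(genes)
    )
    return {gene for gene, n in votes.items() if n >= min_methods}

# Hypothetical per-method DE calls, for illustration only.
calls = {
    "MAST": {"ISG15", "IFI6", "MX1"},
    "scDD": {"ISG15", "MX1", "CD79A"},
    "DiSC": {"ISG15", "NKG7"},
}
majority = consensus_calls(calls, min_methods=2)
unanimous = consensus_calls(calls, min_methods=3)
```

Raising `min_methods` trades sensitivity for specificity: a unanimous vote discards genes that only one method is designed to detect, such as the multimodal patterns that scDD targets.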

As the single-cell field continues to evolve with increasingly complex experimental designs and larger datasets, further method development and benchmarking will be essential. Promising directions include methods that combine the strengths of these approaches, such as modeling cross-cell-type correlations while accommodating complex distributional changes, and improved computational strategies for scaling these methods to massive single-cell atlas projects. Through continued methodological innovation and rigorous evaluation, the research community will be better equipped to extract the full biological potential from single-cell transcriptomic studies.