Benchmarking Gene Finders: A Framework for Rigorous Evaluation Using Experimentally Validated Transcription Start Sites


Abstract

Accurately identifying gene start sites is a fundamental challenge in genomics, with direct implications for understanding gene regulation, variant interpretation, and drug discovery. This article provides a comprehensive framework for evaluating the performance of gene prediction tools against experimentally validated transcription start sites. We explore the biological and computational foundations of gene finding, detail current methodologies and benchmark suites like DNALONGBENCH and PhEval, address common troubleshooting and optimization strategies, and present rigorous validation and comparative analysis techniques. Aimed at researchers and bioinformaticians, this review synthesizes best practices to enable standardized, reproducible, and biologically meaningful assessment of gene finders, ultimately enhancing their reliability in research and clinical applications.

The Biology and Benchmarking Challenge: Why Experimentally Validated Start Sites Are Crucial

Transcription Start Sites (TSSs) represent the definitive genomic locations where RNA synthesis initiates, serving as fundamental landmarks for understanding gene regulation, expression patterns, and transcript diversity. The precise mapping of TSSs provides the "ground truth" necessary for evaluating computational gene finders and interpreting regulatory mechanisms. This guide compares experimental methodologies for TSS verification and computational tools for TSS prediction, providing researchers with a framework for assessing the accuracy and limitations of current technologies in characterizing the transcriptional landscape.

Experimental Methods for TSS Verification

Experimental determination of TSSs provides the foundational data against which computational predictions are validated. Several high-throughput methodologies have been developed to map TSSs genome-wide at single-base resolution.

Key Experimental Techniques

Table 1: Comparison of Major TSS Mapping Technologies

| Method | Approach | Key Features | Reported Input Requirements | Advantages | Limitations |

|---|---|---|---|---|---|

| CAGE-seq [1] | Cap-trapping / Illumina sequencing | Identifies capped 5' ends of transcripts | 5 μg total RNA or 500 ng poly(A)+ RNA | High spatial resolution and sensitivity | High RNA input, 5' G artifact, complex protocols |

| nAnT-iCAGE [1] | Cap-trapping / Illumina sequencing | Improved cap-trapping methodology | 5 μg total RNA | High spatial resolution and sensitivity | High RNA input, 5' G artifact |

| SLIC-CAGE [1] | Cap-trapping / Illumina sequencing | Lower input requirement variant | 1-100 ng total RNA (brought to 5 μg with carrier) | High spatial resolution with reduced input | 5' G artifact remains an issue |

| Cappable-Seq [2] [1] | Direct modification / Illumina or long-read sequencing | Enriches for 5' complete transcripts | 1-5 μg total RNA | Single-base resolution, compatible with multiple sequencing platforms | High RNA input, complex protocols |

| Deep-RACE [3] | Rapid amplification of cDNA ends with deep sequencing | Targeted verification of specific genes | Small batches (as few as 17 genes) | Cost-effective for specific gene sets, avoids cloning steps | Lower throughput than genome-wide methods |

| TSS-seq [1] | Oligo-capping / Illumina sequencing | Enzymatic conversion of 5' PPP to 5' P ends | 200 μg total RNA or 500 ng poly(A)+ RNA | High specificity and sensitivity | High RNA input, complex protocols |

| dRNA-seq [2] | Differential RNA-seq | Compares treated and untreated RNA populations | Varies by implementation | Specifically identifies primary transcripts | Primarily for prokaryotic systems |

Detailed Protocol: TSS Mapping with TAP Treatment

The TSS mapping protocol using Tobacco Acid Pyrophosphatase (TAP) exemplifies the experimental rigor required for accurate TSS identification [4]. This method employs a comparative approach:

- RNA Preparation: Extract high-quality RNA from target cells or tissues

- TAP Treatment: Divide RNA into two aliquots - one treated with TAP enzyme and one untreated control

- Enzymatic Conversion: TAP converts the 5' triphosphate (PPP) ends of native RNAs to 5' monophosphate (P) ends, making them compatible with adapter ligation

- Library Preparation: Construct sequencing libraries from both treated and untreated samples

- Sequencing and Analysis: Perform high-throughput sequencing and compare results between conditions

Without TAP treatment, adapter ligation captures only RNA species that already carry a 5' monophosphate, so primary transcripts with native 5' triphosphate ends are excluded. After TAP treatment, the same species are recovered together with the primary transcripts. The 5' ends that are enriched in the treated library relative to the untreated control therefore mark genuine transcription start sites [4].
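The treated-versus-untreated comparison can be sketched in a few lines of Python. This is a minimal illustration, not the protocol's published analysis: the function name `call_tss_by_enrichment`, the depth normalisation, the pseudocount, and the `min_reads` and `min_ratio` thresholds are all assumptions chosen for the example.

```python
from typing import Dict, List, Tuple


def call_tss_by_enrichment(
    treated: Dict[int, int],
    untreated: Dict[int, int],
    min_reads: int = 10,       # assumed coverage cutoff
    min_ratio: float = 2.0,    # assumed enrichment cutoff
    pseudocount: float = 1.0,  # avoids division by zero
) -> List[Tuple[int, float]]:
    """Return (position, enrichment) for 5' ends enriched in the TAP-treated library."""
    # Normalise by library size so that sequencing depth alone
    # does not look like enrichment.
    treated_total = sum(treated.values()) or 1
    untreated_total = sum(untreated.values()) or 1

    calls = []
    for pos, t_count in sorted(treated.items()):
        if t_count < min_reads:
            continue  # too few reads to call confidently
        u_count = untreated.get(pos, 0)
        t_norm = (t_count + pseudocount) / treated_total
        u_norm = (u_count + pseudocount) / untreated_total
        ratio = t_norm / u_norm
        if ratio >= min_ratio:
            calls.append((pos, ratio))
    return calls


# Toy 5'-end read counts keyed by genomic position (illustrative values).
treated_counts = {100: 50, 250: 40, 400: 5}
untreated_counts = {100: 4, 250: 38, 400: 5}
tss_calls = call_tss_by_enrichment(treated_counts, untreated_counts)
print([pos for pos, _ in tss_calls])
```

In this toy input only position 100 is called: position 250 is equally abundant in both libraries, which is the pattern expected of a processed 5' end, and position 400 falls below the read cutoff.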

Advanced Integrative Methods: Hi-Coatis

Hi-Coatis (high-throughput capture of actively transcribed region-interacting sequences) represents a recent advancement that integrates TSS mapping with three-dimensional chromatin interaction studies [5]. This method:

- Captures 3D genome interactions at actively transcribed regions without antibodies or probes

- Enables low-input cell experiments with high resolution and robustness

- Identifies over 60,000 regulatory loci in human cells, capturing more than 93% of expressed genes

- Reveals regulatory potential of repetitive/copy number variation regions

- Demonstrates how silent genes transition to transcriptionally active states through transcription factor cooperation

Computational Tools for TSS Prediction

Computational methods for TSS prediction provide scalable alternatives to experimental verification, with varying degrees of accuracy and biological insight.

Comparative Performance of Prediction Algorithms

Table 2: Evaluation of TSS Prediction Tools on Human Chromosomes

| Prediction System | Sensitivity | Positive Predictive Value (PPV) | Key Methodology | Genomic Features Utilized |

|---|---|---|---|---|

| Dragon GSF [6] | 65.1% | 77.8% | Combines CpG islands, TSS predictions, and downstream signals | CpG islands, sequence composition, downstream features |

| FirstEF (CpG+) [6] | 71.4% | 66.4% | Ab initio prediction focusing on first exons | CpG islands, sequence motifs, splice sites |

| Eponine [6] | 39.5% | 76.9% | Scanning window with position-specific scoring | Sequence motifs, nucleotide composition |

| TSS-Captur [2] | N/A | N/A | Pipeline for characterizing unclassified TSSs | Genomic context, coding potential, termination signals |
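Sensitivity and PPV figures such as those in Table 2 depend on how a predicted TSS is matched to a validated one. The sketch below shows one common way to compute both metrics with a distance tolerance. The 50 bp default, the function names, and the single-chromosome, strand-agnostic treatment are assumptions for illustration and are not taken from the cited evaluation [6].

```python
from bisect import bisect_left
from typing import Iterable, List, Tuple


def _has_neighbor(sorted_sites: List[int], pos: int, tol: int) -> bool:
    """True if any site in sorted_sites lies within tol bp of pos."""
    i = bisect_left(sorted_sites, pos - tol)
    return i < len(sorted_sites) and sorted_sites[i] <= pos + tol


def sensitivity_ppv(
    predicted: Iterable[int],
    validated: Iterable[int],
    tolerance: int = 50,  # assumed matching window in bp
) -> Tuple[float, float]:
    """Sensitivity = validated TSSs recovered; PPV = predictions that are correct."""
    pred = sorted(set(predicted))
    val = sorted(set(validated))
    recovered = sum(_has_neighbor(pred, v, tolerance) for v in val)
    correct = sum(_has_neighbor(val, p, tolerance) for p in pred)
    sensitivity = recovered / len(val) if val else 0.0
    ppv = correct / len(pred) if pred else 0.0
    return sensitivity, ppv


# Toy coordinates on one chromosome and one strand.
validated_tss = [1000, 5000, 9000, 12000]
predicted_tss = [1020, 5200, 8990, 20000]
sens, ppv = sensitivity_ppv(predicted_tss, validated_tss)
print(f"sensitivity={sens:.2f} PPV={ppv:.2f}")
```

Because the tolerance changes both numbers, a benchmark report should state it alongside the metrics.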

Next-Generation Prediction: Enformer Architecture

The Enformer deep learning model represents a significant advancement in gene expression prediction from sequence by integrating long-range interactions [7]. Key innovations include:

- Expanded Receptive Field: Attends to sequence elements up to 100 kb away from the TSS, compared to 20 kb in previous models

- Transformer Architecture: Uses self-attention layers to weigh relevant regulatory elements regardless of distance

- Multitask Training: Predicts thousands of epigenetic and transcriptional datasets simultaneously

- Performance Gains: Increased mean correlation for CAGE signal prediction at human protein-coding gene TSSs from 0.81 (Basenji2) to 0.85

Enformer's attention mechanisms enable it to identify functional enhancer-promoter interactions directly from sequence, performing competitively with methods that require experimental interaction data as input [7].

Specialized Tools for Prokaryotic Systems

Bacterial TSS prediction requires distinct approaches due to fundamental differences in transcriptional machinery:

- GS-Finder [8]: Employs a self-training method using six recognition variables including Shine-Dalgarno sequences, coding potential, and start codon context

- TSS-Captur [2]: Specifically designed for prokaryotes, characterizing orphan and antisense TSSs, and predicting transcription termination sites

- Performance: GS-Finder achieves 92% accuracy on experimentally confirmed E. coli CDS and improves Glimmer 2.02 start site prediction from 63% to 91%

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagent Solutions for TSS Research

| Reagent/Resource | Function in TSS Research | Example Applications |

|---|---|---|

| Tobacco Acid Pyrophosphatase (TAP) [4] | Converts 5' PPP ends to 5' P ends for adapter ligation | Experimental TSS mapping protocols |

| Cap-Trapping Reagents [1] | Selectively capture 5'-capped RNAs | CAGE-seq, nAnT-iCAGE, SLIC-CAGE |

| Oligo-Capping Enzymes [1] | Replace 5' cap with synthetic oligonucleotides | TSS-seq, PEAT, CapSeq |

| Crosslinking Reagents [5] | Preserve protein-DNA interactions for chromatin studies | Hi-Coatis, ChIP-seq experiments |

| CAGE-Compatible Sequencing Kits [1] [7] | Library preparation for cap-analysis | Genome-wide TSS identification |

| Rho-Termination Prediction Tools [2] | Computational identification of Rho-dependent termination sites | Bacterial transcript boundary mapping |

| Intrinsic Terminator Prediction Algorithms [2] | Identify hairpin-based termination signals | Prokaryotic transcriptome annotation |

Biological Significance and Research Applications

Regulatory Implications of TSS Positioning

The precise location of TSSs has profound biological consequences:

- Promoter Architecture: TSS positioning determines core promoter element arrangement and transcription factor binding accessibility [1]

- Transcript Diversity: Alternative TSS usage generates transcript isoforms with different 5' UTRs and coding potential, contributing to cellular heterogeneity [1]

- Dynamic Regulation: TSS positions can shift in response to cellular stimuli, developmental signals, or environmental influences [1]

- Mutational Hotspots: Recent research identifies TSSs as sites of concentrated heritable variation, with implications for evolution and disease [9]

Clinical and Diagnostic Relevance

TSS misregulation has significant clinical implications:

- Disease Associations: Aberrant TSS usage is documented in cancer, neurological disorders, and developmental diseases [1]

- Biomarker Potential: TSS utilization patterns show promise for disease subtyping and treatment response monitoring [1]

- Therapeutic Targeting: Understanding TSS regulation may enable new intervention strategies through promoter manipulation

The rigorous evaluation of computational gene finders requires comparison against experimentally validated TSS datasets. Our analysis reveals:

- Performance Gaps: Even advanced systems like Dragon GSF reach only about 65% sensitivity and 78% positive predictive value on human genomic sequences, leaving substantial room for improvement [6]

- Architectural Advancements: Deep learning approaches like Enformer that incorporate long-range genomic context show promising gains in prediction accuracy [7]

- Biological Validation: The most reliable TSS predictions integrate multiple genomic features including CpG islands, sequence composition, and evolutionary conservation [6]

- Experimental Imperative: High-throughput verification methods like Deep-RACE and Cappable-Seq remain essential for establishing the ground truth required for computational method development [3] [1]

As TSS mapping technologies continue to evolve, with methods like Hi-Coatis providing integrated views of transcription and chromatin architecture [5], the benchmark for evaluating computational predictions will increasingly require multidimensional validation against both sequence-based and structural genomic features.

In the field of genomics, accurately identifying genes and their start sites is fundamental. While in silico gene prediction tools offer a powerful, high-throughput approach, their utility is ultimately constrained by a critical dependency on experimental validation. Without rigorous benchmarking against experimentally confirmed data, the performance claims of these tools remain theoretical, potentially leading to misinterpretations in downstream research and drug development. This guide objectively compares the performance of leading gene finders, underscoring the indispensable role of experimental validation.

Comparative Performance of Gene Prediction Tools

Independent benchmarking studies reveal significant performance variations among gene prediction tools, especially when challenged with metagenomic data of different complexities. The following table summarizes the quantitative performance of several tools as reported in a benchmark study.

Table 1: Performance comparison of gene prediction tools on a benchmark dataset of 12 public genomes (3 archaea, 9 bacteria), totaling 54,980 sequences [10].

| Tool Name | Underlying Methodology | Reported Specificity | Comparative Note |

|---|---|---|---|

| geneRFinder | Random Forest (Machine Learning) | 79% higher than FragGeneScan [10] | Outperformed state-of-the-art tools across the benchmark; used only one pre-trained model [10]. |

| Prodigal | Ab initio (Traditional Algorithm) | 66% lower than geneRFinder [10] | A well-used and typically well-performing tool, though challenges exist with high-complexity metagenomes [10]. |

| FragGeneScan | Ab initio (Traditional Algorithm) | 79% lower than geneRFinder [10] | Another common tool that faces difficulties with complex environmental metagenomic samples [10]. |

| MetaGene | Ab initio (Traditional Algorithm) | Compared in the study [10] | Performance was evaluated alongside other state-of-the-art tools in the benchmark [10]. |

| Orphelia | Machine Learning | Compared in the study [10] | Performance was evaluated alongside other state-of-the-art tools in the benchmark [10]. |

These results suggest that machine learning-based tools like geneRFinder can achieve superior specificity. However, the study's authors explicitly noted a major challenge in the field: the lack of a standard metagenomic benchmark for gene prediction, which can allow tools to "inflate their results by obfuscating low false discovery rates" [10]. This highlights the necessity of independent, experimentally grounded benchmarks for a true performance assessment.

Experimental Protocols for Validation

To address this validation gap, researchers employ several rigorous methodological frameworks. The protocols below are critical for moving beyond pure computation and establishing biological truth.

Benchmarking Against Manually Curated Data

- Objective: To evaluate the precision and recall of a gene finder by comparing its predictions against a "ground truth" derived from professionally curated databases and manual annotations [10] [11].

- Protocol:

  - Source Ground Truth: Obtain complete genomes and their annotated Coding Sequences (CDS) from professionally curated repositories like the NCBI genome database [10].

  - Extract Sequences: Systematically extract all possible Open Reading Frames (ORFs) from the genomic sequences.

  - Label ORFs: Label each extracted ORF as a positive instance ("gene") if it matches a known CDS in the annotation, or as a negative instance ("intergenic") if it falls between known CDS [10].

  - Run Predictions: Process the same genomes with the gene prediction tool(s) under evaluation.

  - Statistical Analysis: Compare the tool's predictions against the ground truth labels using metrics like specificity, sensitivity, and false discovery rate. The statistical significance of performance differences can be assessed with tests like McNemar's test [10].
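The protocol steps above can be sketched end to end on a toy sequence. This is a simplified illustration under stated assumptions, not the pipeline of the cited study [10]: ORFs are taken on the forward strand only, from the first ATG to the next in-frame stop; an ORF counts as positive only if its coordinates match an annotated CDS exactly; and McNemar's test is computed in its exact binomial form. All function names are hypothetical.

```python
from math import comb
from typing import Dict, List, Set, Tuple

Interval = Tuple[int, int]  # 0-based, half-open (start, end)
STOP_CODONS = {"TAA", "TAG", "TGA"}


def extract_orfs(seq: str, min_len: int = 9) -> List[Interval]:
    """Forward-strand ORFs from the first ATG to the next in-frame stop codon."""
    seq = seq.upper()
    orfs = []
    for frame in range(3):
        start = None
        for i in range(frame, len(seq) - 2, 3):
            codon = seq[i:i + 3]
            if start is None and codon == "ATG":
                start = i
            elif start is not None and codon in STOP_CODONS:
                if i + 3 - start >= min_len:
                    orfs.append((start, i + 3))
                start = None
    return sorted(orfs)


def label_orfs(orfs: List[Interval], annotated_cds: Set[Interval]) -> Dict[Interval, bool]:
    """True marks a 'gene' ORF, False an 'intergenic' one."""
    return {orf: orf in annotated_cds for orf in orfs}


def _ratio(num: int, den: int) -> float:
    return num / den if den else 0.0


def confusion_metrics(labels: Dict[Interval, bool], predicted: Set[Interval]) -> Dict[str, float]:
    tp = sum(1 for o, pos in labels.items() if pos and o in predicted)
    fn = sum(1 for o, pos in labels.items() if pos and o not in predicted)
    fp = sum(1 for o, pos in labels.items() if not pos and o in predicted)
    tn = sum(1 for o, pos in labels.items() if not pos and o not in predicted)
    return {
        "sensitivity": _ratio(tp, tp + fn),
        "specificity": _ratio(tn, tn + fp),
        "fdr": _ratio(fp, tp + fp),
    }


def mcnemar_exact(b: int, c: int) -> float:
    """Two-sided exact McNemar p-value from the two discordant counts.

    b: ORFs tool A classified correctly and tool B incorrectly; c: the reverse.
    """
    n = b + c
    if n == 0:
        return 1.0
    tail = sum(comb(n, k) for k in range(min(b, c) + 1)) / 2 ** n
    return min(1.0, 2 * tail)


genome = "CCATGAAATAGGGATGCCCTGA"   # toy sequence
orfs = extract_orfs(genome)
labels = label_orfs(orfs, annotated_cds={(2, 11)})
metrics = confusion_metrics(labels, predicted=set(orfs))  # a tool that calls every ORF
print(orfs, metrics, mcnemar_exact(1, 8))
```

A tool that calls every ORF a gene reaches perfect sensitivity with zero specificity here, which is why the protocol reports several metrics together rather than one.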

Functional Enrichment and Pathway Analysis

- Objective: To assess the biological relevance of predicted genes by determining if they are enriched in known, experimentally validated biological pathways.

- Protocol:

  - Pathway Database Curation: Collect ground-truth pathway data from manually validated bioinformatics databases such as Reactome [11].

  - Gene Set Compilation: For a given pathway, compile the list of genes known to be involved from the database.

  - Prediction Mapping: Map the genes predicted by the in silico tool to the same pathway.

  - Enrichment Testing: Use statistical methods (e.g., hypergeometric tests) to determine if the number of predicted genes mapping to the pathway is significantly higher than what would be expected by chance, indicating the tool is capturing biologically meaningful signals [11].
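The enrichment step can be made concrete with a one-sided hypergeometric test. The sketch below uses only the standard library; the function name and the toy gene counts are assumptions for illustration, and a real analysis would also correct for testing many pathways.

```python
from math import comb


def hypergeom_enrichment_p(background: int, pathway: int, predicted: int, overlap: int) -> float:
    """P(X >= overlap) for X ~ Hypergeometric.

    background: total genes considered
    pathway:    genes annotated to the pathway
    predicted:  genes reported by the tool
    overlap:    predicted genes that fall in the pathway
    """
    max_overlap = min(pathway, predicted)
    total = comb(background, predicted)
    tail = sum(
        comb(pathway, k) * comb(background - pathway, predicted - k)
        for k in range(overlap, max_overlap + 1)
    )
    return tail / total


# Toy example: 10 background genes, 3 in the pathway, 3 predicted, 2 overlapping.
p_value = hypergeom_enrichment_p(background=10, pathway=3, predicted=3, overlap=2)
print(round(p_value, 4))
```

With these toy counts the tail contains 22 of the 120 possible draws, so an overlap of 2 would not be considered significant.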

Experimental Validation via CRISPR-based Assays

- Objective: To directly test the functional impact of a predicted regulatory element (like an enhancer) on gene expression.

- Protocol:

  - In Silico Prediction: Use a sequence-based model to identify and prioritize candidate enhancers for a specific gene, generating a contribution score for each [7].

  - CRISPR Interference (CRISPRi): Experimentally suppress the activity of thousands of candidate enhancers in a relevant cell line (e.g., K562) [7].

  - Measure Effect: Quantify the change in expression of the putative target gene following enhancer suppression.

  - Validate Prediction: Compare the model's contribution scores against the experimentally measured effects. A high-performing model will successfully prioritize enhancers that, when suppressed, lead to a significant change in gene expression [7].
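One way to quantify the final comparison is average precision, which rewards a model for ranking experimentally supported enhancers ahead of unsupported ones. The sketch below is an illustrative choice of metric rather than the exact statistic used in [7]; the function name and the toy scores are assumptions.

```python
from typing import Sequence


def average_precision(scores: Sequence[float], is_validated: Sequence[bool]) -> float:
    """Mean of precision values taken at the rank of each validated enhancer.

    scores:       model contribution score per candidate enhancer
    is_validated: True if CRISPRi suppression changed target expression
    Tied scores keep their input order (Python's sort is stable).
    """
    ranked = sorted(zip(scores, is_validated), key=lambda pair: -pair[0])
    hits = 0
    precision_sum = 0.0
    for rank, (_, validated) in enumerate(ranked, start=1):
        if validated:
            hits += 1
            precision_sum += hits / rank
    return precision_sum / hits if hits else 0.0


# Toy data: four candidate enhancers for one gene.
contribution_scores = [0.9, 0.8, 0.3, 0.1]
crispri_validated = [True, False, True, False]
ap = average_precision(contribution_scores, crispri_validated)
print(round(ap, 4))
```

A value of 1.0 would mean every validated enhancer outranks every non-validated one; because validated enhancers are typically a small minority, average precision is usually more informative than raw accuracy.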

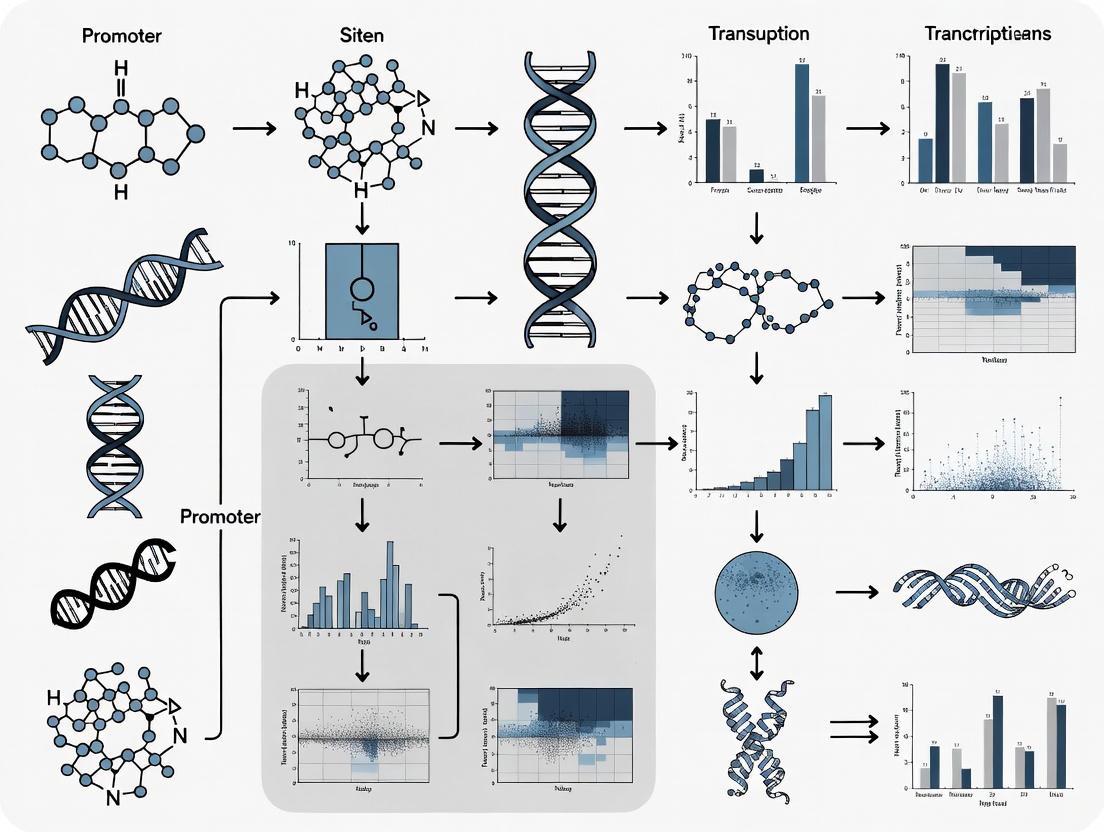

Workflow for Benchmarking Gene Finders

The following diagram illustrates the logical flow and critical steps involved in the experimental benchmarking of in silico gene prediction tools.

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key reagents and materials essential for conducting the experimental validation of gene predictions.

Table 2: Key research reagents and materials for experimental validation of gene predictions [10] [7] [11].

| Reagent / Material | Function in Validation |

|---|---|

| Curated Genomes & Annotations (e.g., from NCBI) | Serves as the experimentally derived "ground truth" or reference standard against which computational predictions are benchmarked for accuracy [10]. |

| CRISPRi System Components | Enables direct functional testing of predicted regulatory elements (like enhancers) by knocking down their activity and measuring the impact on target gene expression [7]. |

| Pathway Databases (e.g., Reactome) | Provides a collection of manually curated and validated biological pathways used to assess the functional relevance and enrichment of genes predicted in silico [11]. |

| Protein Signature Databases (e.g., via InterproScan) | Used to functionally annotate predicted gene products by identifying known protein domains and features, helping to distinguish true coding sequences from non-coding ones [10]. |

| Clustering Tools (e.g., CD-HIT) | Reduces redundancy in large sequence datasets generated from metagenomic assemblies, making subsequent functional annotation steps computationally feasible [10]. |

The integration of in silico prediction with experimental validation is not merely a best practice but a necessity for rigorous genomic research. While tools like geneRFinder demonstrate the advancing power of machine learning, their performance must be quantified against experimentally validated benchmarks. The protocols and reagents detailed here provide a framework for researchers to critically assess these tools, ensuring that predictions used in drug discovery and functional genomics are grounded in biological reality.

In the field of computational genomics, the development of accurate and reliable models depends critically on robust evaluation frameworks. Benchmarking suites provide standardized resources for comparing the performance of different algorithms and approaches, enabling researchers to identify strengths, weaknesses, and areas for improvement. Without such standardized evaluation, claims about model performance remain difficult to verify or compare across studies. This article explores two significant benchmarking suites—DNALONGBENCH and PhEval—that address distinct but equally important challenges in genomic analysis. DNALONGBENCH focuses on the challenge of modeling long-range DNA dependencies, which are crucial for understanding genome structure and function across diverse biological contexts [12] [13]. Meanwhile, PhEval addresses the need for standardized evaluation of phenotype-driven variant and gene prioritization algorithms, which are essential tools in rare disease diagnosis [14]. Both suites represent important contributions to the field by providing standardized datasets, evaluation metrics, and frameworks that facilitate transparent and reproducible benchmarking of computational methods.

DNALONGBENCH: A Comprehensive Suite for Long-Range DNA Prediction

DNALONGBENCH is presented by its authors as the most comprehensive benchmark designed specifically for evaluating long-range DNA prediction tasks. It addresses a significant gap in genomics research, as previous benchmarks primarily focused on short-range tasks spanning thousands of base pairs, while long-range dependencies can span millions of base pairs in tasks such as three-dimensional chromatin folding prediction [12]. The suite was designed with four key criteria in mind: biological significance, requiring tasks to address important genomics problems; long-range dependencies, spanning hundreds of kilobase pairs or more; task difficulty, presenting significant challenges for current models; and task diversity, spanning various length scales and including different task types such as classification and regression [12] [13].

Tasks and Specifications

DNALONGBENCH comprises five distinct long-range DNA prediction tasks, each covering different aspects of important regulatory elements and biological processes within a cell. The table below summarizes the key specifications for each task:

Table 1: DNALONGBENCH Task Specifications

| Task | Task Type | Input Length (bp) | Output Shape | Evaluation Metric |

|---|---|---|---|---|

| Enhancer-target Gene Interaction | Binary Classification | 450,000 | 1 | AUROC |

| Expression Quantitative Trait Loci (eQTL) | Binary Classification | 450,000 | 1 | AUROC |

| 3D Genome Organization (Contact Map) | Binned 2D Regression | 1,048,576 | 99,681 | SCC & PCC |

| Regulatory Sequence Activity | Binned 1D Regression | 196,608 | Human: (896, 5313); Mouse: (896, 1643) | PCC |

| Transcription Initiation Signal | Nucleotide-wise 1D Regression | 100,000 | (100,000, 10) | PCC |

As shown in the table, DNALONGBENCH supports sequences up to 1 million base pairs, significantly longer than previous benchmarks such as BEND (100k bp) and LRB (192k bp) [12]. This extensive range enables evaluation of models on truly long-range dependencies that are biologically relevant but computationally challenging to capture.

Experimental Protocol and Evaluation Methodology

The evaluation protocol for DNALONGBENCH involves assessing model performance across all five tasks using three types of models: a lightweight convolutional neural network (CNN), task-specific expert models that represent the state-of-the-art for each specific task, and fine-tuned DNA foundation models including HyenaDNA and Caduceus [12] [13]. For each task, the respective expert model serves as a strong baseline: the Activity-by-Contact model for enhancer-target gene prediction, Enformer for eQTL and regulatory sequence activity prediction, Akita for contact map prediction, and Puffin for transcription initiation signal prediction [13].

The benchmarking process involves training or fine-tuning each model on the specified input sequences for each task and evaluating predictions using the appropriate metrics—AUROC for classification tasks and Pearson correlation coefficient (PCC) or stratum-adjusted correlation coefficient (SCC) for regression tasks [12]. This comprehensive approach allows for direct comparison of different modeling approaches across diverse task types and difficulty levels.
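For readers who want to see what the two most frequently used metrics compute, the following dependency-free sketch implements AUROC (as the probability that a positive example outscores a negative one) and the Pearson correlation coefficient. The function names are illustrative, the pairwise AUROC is only practical for small inputs, and SCC is omitted because it requires distance-stratified contact maps.

```python
from math import sqrt
from typing import Sequence


def auroc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Probability that a random positive scores above a random negative (ties count 0.5)."""
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative example")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length tracks."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


# Classification-style task (e.g. a binary interaction label per example).
auc = auroc([1, 1, 0, 0], [0.9, 0.4, 0.5, 0.1])
# Regression-style task (e.g. predicted vs. observed signal per bin).
pcc = pearson([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
print(auc, round(pcc, 6))
```

In the toy classification example three of the four positive-negative pairs are ordered correctly, giving an AUROC of 0.75.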

Performance Comparison and Key Findings

Experimental results from DNALONGBENCH reveal important patterns in model performance across different task types. The table below summarizes performance comparisons across the five tasks:

Table 2: Performance Comparison of Model Types on DNALONGBENCH Tasks

| Task | CNN | DNA Foundation Models | Expert Models |

|---|---|---|---|

| Enhancer-target Gene | Moderate performance | Reasonable performance | Highest performance |

| eQTL | Moderate performance | Reasonable performance | Highest performance |

| Contact Map | Lower performance | Challenging | Substantially higher |

| Regulatory Sequence Activity | Moderate performance | Reasonable performance | Highest performance |

| Transcription Initiation Signal | 0.042 PCC | 0.108-0.132 PCC | 0.733 PCC |

A key finding from DNALONGBENCH evaluations is that expert models consistently outperform DNA foundation models across all tasks [12] [13]. However, the performance gap varies substantially across tasks. For example, in the transcription initiation signal prediction task, the expert model Puffin achieves an average Pearson correlation coefficient of 0.733, significantly surpassing CNN (0.042), HyenaDNA (0.132), Caduceus-Ph (0.109), and Caduceus-PS (0.108) [13]. The contact map prediction task proves particularly challenging for all models, highlighting the difficulty of capturing complex three-dimensional genome organization from sequence data alone [13].

PhEval: Standardized Framework for Phenotype-Driven Variant Prioritization

PhEval addresses a critical challenge in rare disease diagnosis: the standardized evaluation of variant and gene prioritization algorithms (VGPAs). These computational tools are essential for identifying pathogenic variants from among the millions of variations in an individual's genome, but their performance has been difficult to measure and compare due to lack of standardization [14]. PhEval provides an empirical framework that solves issues of patient data availability and experimental tooling configuration when benchmarking rare disease VGPAs. By providing standardized data on patient cohorts from real-world case reports and controlling the configuration of evaluated VGPAs, PhEval enables transparent, portable, comparable, and reproducible benchmarking [14].

A key innovation of PhEval is that it is built on the Phenopacket Schema, a GA4GH and ISO standard for sharing detailed phenotypic descriptions with disease, patient, and genetic information [14]. This standardized format ensures consistency in how phenotypic data is represented and processed across different tools and evaluations, addressing a significant challenge in the field where patient phenotypic profiles may be represented differently across tools.
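To give a sense of what such a record looks like, the sketch below builds a heavily reduced phenopacket-style document as a Python dictionary and extracts the observed HPO terms from it. The field names follow the author's understanding of the version 2 schema, but the record is not schema-complete (the metadata block in particular is abbreviated) and has not been validated; the case identifiers and the helper `observed_hpo_terms` are hypothetical.

```python
import json
from typing import Dict, List

# Reduced, illustrative record; a real phenopacket carries fuller metadata
# and usually an interpretation block naming the causative variant or gene.
phenopacket: Dict = {
    "id": "example-case-1",
    "subject": {"id": "patient-1", "sex": "FEMALE"},
    "phenotypicFeatures": [
        {"type": {"id": "HP:0001250", "label": "Seizure"}},
        {"type": {"id": "HP:0001263", "label": "Global developmental delay"}},
        {"type": {"id": "HP:0000252", "label": "Microcephaly"}, "excluded": True},
    ],
    "metaData": {"createdBy": "example", "phenopacketSchemaVersion": "2.0"},
}


def observed_hpo_terms(packet: Dict) -> List[str]:
    """Return HPO identifiers for features that were observed (not excluded)."""
    return [
        feature["type"]["id"]
        for feature in packet.get("phenotypicFeatures", [])
        if not feature.get("excluded", False)
    ]


hpo_ids = observed_hpo_terms(phenopacket)
print(json.dumps(hpo_ids))
```

Because every tool receives the same structured list of terms, differences in results reflect the prioritization algorithm rather than differences in how the phenotype was encoded.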

Core Functionality and Implementation

PhEval operates through a modular architecture that automates the evaluation pipeline while maintaining flexibility for different algorithm types. The framework includes three main components: the prepare stage, which sets up the necessary data and environment; the run stage, which executes the prioritization algorithms; and the post-process stage, which harmonizes outputs into a standardized format for comparison [15]. This structured approach ensures that despite the diversity of data formats expected by different VGPAs, all tools can be evaluated consistently using the same metrics and datasets.

The implementation supports various types of prioritization analyses, including variant prioritization, gene prioritization, and disease prioritization [15]. For each analysis type, PhEval generates standardized output directories and results files, enabling straightforward comparison across multiple tools. The framework also includes comprehensive metadata tracking, recording tool versions, configuration details, and run timestamps to ensure full reproducibility [15].

Experimental Protocol and Evaluation Methodology

The benchmarking process in PhEval begins with standardized test corpora derived from real-world patient data. The framework includes tools for generating these test corpora, ensuring that evaluations are based on clinically relevant scenarios [14]. When benchmarking a tool, researchers must implement a custom runner that extends the PhEvalRunner base class, defining the specific prepare, run, and post-process methods required for their tool [15].
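The three-stage runner pattern can be illustrated without depending on PhEval itself. In the sketch below, `StageRunner` is a hypothetical stand-in written for this example and is not the real `PhEvalRunner` class; its fields, the `execute` helper, and the toy tool output are assumptions. Consult the PhEval documentation [15] for the actual base class and its required attributes.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass, field
from pathlib import Path
from typing import Dict, List


@dataclass
class StageRunner(ABC):
    """Hypothetical stand-in for a prepare / run / post-process runner base class."""
    input_dir: Path
    output_dir: Path
    log: List[str] = field(default_factory=list)

    @abstractmethod
    def prepare(self) -> None:
        """Set up data and environment needed by the tool."""

    @abstractmethod
    def run(self) -> None:
        """Execute the prioritization tool."""

    @abstractmethod
    def post_process(self) -> None:
        """Convert tool-specific output into a standardized ranked format."""

    def execute(self) -> None:
        # Stages always run in the same order, whatever the tool.
        for stage in (self.prepare, self.run, self.post_process):
            stage()
            self.log.append(stage.__name__)


class ToyToolRunner(StageRunner):
    """Runner for an imaginary tool that scores genes between 0 and 1."""

    def prepare(self) -> None:
        self.raw: Dict[str, float] = {}

    def run(self) -> None:
        # A real runner would invoke the tool here; these scores are made up.
        self.raw = {"GENE_B": 0.4, "GENE_A": 0.9}

    def post_process(self) -> None:
        ranked = sorted(self.raw.items(), key=lambda item: -item[1])
        self.results = [
            {"rank": rank, "gene": gene, "score": score}
            for rank, (gene, score) in enumerate(ranked, start=1)
        ]


runner = ToyToolRunner(input_dir=Path("in"), output_dir=Path("out"))
runner.execute()
print(runner.results)
```

Keeping the tool-specific logic inside the three methods is what lets a framework treat very different tools uniformly: only `post_process` needs to know the tool's native output format.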

PhEval employs traditional machine learning metrics for evaluation, including receiver operating characteristic (ROC) curves and precision-recall (PR) curves [14]. The area under the ROC curve (AUROC) provides a comprehensive measure of accuracy across all possible classification thresholds. The benchmarking process evaluates how effectively VGPAs can prioritize known causative variants or genes associated with a patient's phenotypes, with successful prioritization measured by the rank of the true causative entity in the results list [14].
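Rank-based success is often summarized as the fraction of cases whose causative entity appears in the top k results, together with the mean reciprocal rank. The sketch below shows both calculations under the assumption that each case contributes one rank, with `None` when the causative entity was not returned at all. The function names and toy ranks are illustrative and do not reproduce PhEval's own reporting code.

```python
from typing import Optional, Sequence


def top_k_rate(ranks: Sequence[Optional[int]], k: int) -> float:
    """Fraction of cases whose causative entity is ranked within the top k."""
    found = sum(1 for rank in ranks if rank is not None and rank <= k)
    return found / len(ranks)


def mean_reciprocal_rank(ranks: Sequence[Optional[int]]) -> float:
    """Average of 1/rank, counting cases with no hit as 0."""
    return sum(1.0 / rank for rank in ranks if rank is not None) / len(ranks)


# Rank of the known causative gene in four toy cases (None = not found).
case_ranks = [1, 3, None, 12]
top1 = top_k_rate(case_ranks, 1)
top3 = top_k_rate(case_ranks, 3)
mrr = mean_reciprocal_rank(case_ranks)
print(top1, top3, round(mrr, 4))
```

Counting missing cases in the denominator keeps a tool from looking better simply because it returned no result for its hardest cases.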

Performance Enhancements and Recent Developments

Recent versions of PhEval have significantly improved performance and functionality. Version 0.5.1 introduced a major refactoring that replaced Pandas with Polars for data processing. Benchmarking 111 phenopackets on Exomiser and GADO now takes approximately 2.09 seconds, compared with 41.83 seconds in the previous implementation [16]. This is a roughly 20x speedup (41.83 / 2.09 ≈ 20) and also reduces memory usage.

Other notable enhancements include improved MONDO disease ID mapping for more consistent disease benchmarking, better handling of duplicate results, and more informative logging throughout the execution pipeline [16]. The framework continues to evolve with regular releases that address usability issues and extend functionality, making it an increasingly robust solution for VGPA evaluation.

Comparative Analysis: DNALONGBENCH vs. PhEval

Problem Domain and Application Scope

While both DNALONGBENCH and PhEval serve as benchmarking suites for genomic tools, they address fundamentally different problems in computational biology. DNALONGBENCH focuses on the challenge of predicting functional elements and interactions from DNA sequence data, particularly emphasizing long-range dependencies that span hundreds of thousands to millions of base pairs [12] [13]. In contrast, PhEval addresses the problem of prioritizing genetic variants and genes based on their association with patient phenotypes, a critical step in rare disease diagnosis [14].

This difference in scope is reflected in their respective target applications. DNALONGBENCH is designed for evaluating deep learning models that predict various aspects of genome function and structure from sequence data, with applications in basic research on gene regulation and genome organization [12]. PhEval, meanwhile, targets the evaluation of clinical decision support tools that integrate genomic and phenotypic information to facilitate diagnosis of rare genetic diseases [14].

Technical Implementation and Evaluation Metrics

The technical approaches of these benchmarking suites differ significantly, reflecting their distinct domains. DNALONGBENCH employs primarily sequence-based inputs and evaluates models on their ability to predict specific functional elements or interactions, using metrics such as AUROC for classification tasks and Pearson correlation for regression tasks [12]. PhEval, on the other hand, utilizes phenotypic profiles encoded using the Human Phenotype Ontology (HPO) and evaluates tools based on their ability to prioritize known causative variants or genes, using ranking-based metrics and AUROC [14].

Table 3: Comparison of DNALONGBENCH and PhEval Benchmarking Suites

| Feature | DNALONGBENCH | PhEval |

|---|---|---|

| Primary Focus | Long-range DNA dependency modeling | Variant and gene prioritization for rare diseases |

| Input Data | DNA sequences up to 1M bp | Phenopackets with HPO terms, genomic data |

| Evaluation Metrics | AUROC, Pearson correlation coefficient (PCC), stratum-adjusted correlation coefficient (SCC) | AUROC, precision-recall, ranking accuracy |

| Model Types | Deep learning models (CNNs, transformers, expert models) | Variant and gene prioritization algorithms |

| Key Innovation | Comprehensive long-range tasks up to 1M bp | Standardized test corpora and automated evaluation |

| Primary Application | Basic research on gene regulation | Clinical diagnostics for rare diseases |

| Recent Versions | Initial release (2025) | Ongoing development (v0.6.5 as of 2025) |

Complementary Strengths and Shared Challenges

Despite their differences, both suites address the critical need for standardized evaluation in genomics and face similar challenges regarding ground truth completeness. DNALONGBENCH tackles the problem of evaluating models on tasks where the complete set of functional elements is not fully known, while PhEval addresses the challenge of benchmarking prioritization algorithms when the true causative variants may not be identified in all cases [14] [17].

Both frameworks also emphasize reproducibility and transparency in benchmarking. DNALONGBENCH provides standardized datasets and evaluation protocols to enable fair comparison of different modeling approaches [12], while PhEval automates the evaluation pipeline and ensures consistent configuration across tools [14]. This shared commitment to reproducible research represents an important advancement in computational genomics.

Essential Research Reagents and Computational Tools

Implementing and utilizing benchmarking suites like DNALONGBENCH and PhEval requires specific computational resources and tools. The table below outlines key research reagent solutions essential for working with these frameworks:

Table 4: Essential Research Reagents and Computational Tools

| Resource Type | Specific Examples | Function in Benchmarking |

|---|---|---|

| Deep Learning Models | HyenaDNA, Caduceus, CNN baselines | Provide baseline implementations for comparing model architectures on DNALONGBENCH tasks |

| Expert Models | ABC model, Enformer, Akita, Puffin | Serve as state-of-the-art references for specific tasks in DNALONGBENCH |

| Variant Prioritization Tools | Exomiser, LIRICAL, Phen2Gene | Target algorithms for evaluation using PhEval framework |

| Data Standards | Phenopacket-schema, HPO, BED format | Enable standardized data representation and exchange between tools |

| Implementation Frameworks | PhEval custom runners, Cookiecutter templates | Provide extensible infrastructure for adding new tools to benchmarks |

| Evaluation Metrics | AUROC, PCC, SCC, precision-recall | Quantify performance consistently across different tools and tasks |

These resources collectively enable researchers to implement, evaluate, and compare genomic analysis tools using standardized benchmarks. The deep learning models and expert models provide reference points for DNALONGBENCH evaluations, while the variant prioritization tools represent the target applications for PhEval. Data standards ensure consistency across evaluations, and implementation frameworks support extensibility as new tools and methods are developed.

DNALONGBENCH and PhEval represent significant advancements in standardized evaluation for computational genomics, though they address distinct challenges. DNALONGBENCH fills a critical gap in evaluating long-range DNA dependency modeling, providing the most comprehensive benchmark to date for tasks involving sequences up to 1 million base pairs. Its evaluations demonstrate that while DNA foundation models show promise, expert models specifically designed for each task still achieve superior performance, particularly for complex regression tasks like contact map prediction [12] [13].

PhEval addresses the equally important challenge of standardizing evaluation for variant and gene prioritization algorithms (VGPAs), which are essential tools in rare disease diagnosis. By providing automated, reproducible benchmarking pipelines and standardized test corpora, PhEval enables transparent comparison of VGPAs and facilitates improvements in diagnostic yield [14]. Recent releases have also made the benchmarking pipeline itself faster, with version 0.5.1 achieving 20x faster benchmarking through implementation with Polars [16].

Together, these benchmarking suites provide critical infrastructure for advancing computational genomics. DNALONGBENCH drives progress in deep learning applications for genomics by enabling rigorous evaluation of model capabilities for capturing long-range dependencies. PhEval supports improvement of clinical decision support tools by providing standardized evaluation frameworks for variant prioritization. As both suites continue to evolve, they will play increasingly important roles in ensuring that claims about model and algorithm performance are based on transparent, reproducible, and standardized evaluations.

The accurate identification of gene coding regions represents a fundamental challenge in computational genomics, with the performance of gene-finding tools having profound implications for downstream biological research and therapeutic development. Evaluating these tools requires a nuanced understanding of specific performance metrics—accuracy, specificity, and recall—each of which illuminates a different aspect of predictive behavior. These metrics are derived from a classification model's ability to correctly identify true positives (TP), true negatives (TN), false positives (FP), and false negatives (FN), which together form the confusion matrix, a foundational concept for classification evaluation [18] [19].

The choice of evaluation metric is not merely a technical decision but a strategic one that reflects the biological and practical context of the gene-finding task. In scenarios such as the identification of experimentally validated transcription start sites, different metrics answer different questions: accuracy provides an overall measure of correctness, specificity quantifies the tool's ability to avoid false alarms in non-coding regions, and recall (sensitivity) measures its capability to locate all genuine coding elements [20] [18]. This article provides a comprehensive comparison of these key performance metrics within the context of evaluating gene finders, supported by experimental data and methodological insights from recent benchmarking studies.

Metric Definitions and Mathematical Foundations

Core Performance Metrics

The evaluation of binary classification models, including gene-finding algorithms, relies on several interconnected metrics derived from the confusion matrix [18] [19]:

Accuracy: Measures the overall correctness of the model by calculating the proportion of true results among the total number of cases examined [20] [21]. Mathematically, accuracy is defined as:

\[ \text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN} \]

Accuracy answers the question: "How often is the gene finder correct overall?" [21]

Recall (Sensitivity or True Positive Rate): Measures the model's ability to correctly identify all relevant instances of a class [20] [19]. For gene finders, this metric quantifies the proportion of actual genes that are correctly identified:

\[ \text{Recall} = \frac{TP}{TP + FN} \]

Recall answers the question: "What fraction of all genuine genes does the finder detect?" [20]

Specificity: Measures the model's ability to correctly exclude negative instances [18]. This metric assesses how well a gene finder avoids misclassifying non-coding regions as genes:

\[ \text{Specificity} = \frac{TN}{TN + FP} \]

Specificity answers the question: "What fraction of non-coding regions are correctly identified as such?" [18]

Table 1: Performance Metrics for Binary Classification

| Metric | Formula | What It Measures | Primary Concern |

|---|---|---|---|

| Accuracy | (TP + TN)/(TP + TN + FP + FN) | Overall correctness | Both false positives and false negatives |

| Recall | TP/(TP + FN) | Ability to find all positive instances | False negatives (missed genes) |

| Specificity | TN/(TN + FP) | Ability to exclude negative instances | False positives (false genes) |

| Precision | TP/(TP + FP) | Accuracy when predicting positive class | False positives (false genes) |
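The four formulas in the table can be computed directly from paired truth and prediction labels. The sketch below is a minimal plain-Python version; the helper names (`confusion_counts`, `metrics`) and the toy labels are assumptions made for illustration, and an undefined ratio is returned as NaN by choice rather than by any standard.

```python
def confusion_counts(truth, pred):
    """Count TP, TN, FP, FN for binary labels (1 = gene, 0 = non-gene)."""
    tp = sum(1 for t, p in zip(truth, pred) if t and p)
    tn = sum(1 for t, p in zip(truth, pred) if not t and not p)
    fp = sum(1 for t, p in zip(truth, pred) if not t and p)
    fn = sum(1 for t, p in zip(truth, pred) if t and not p)
    return tp, tn, fp, fn


def safe_div(numerator, denominator):
    """Return NaN instead of raising when a metric is undefined."""
    return numerator / denominator if denominator else float("nan")


def metrics(tp, tn, fp, fn):
    """Accuracy, recall, specificity, and precision from confusion counts."""
    return {
        "accuracy": safe_div(tp + tn, tp + tn + fp + fn),
        "recall": safe_div(tp, tp + fn),
        "specificity": safe_div(tn, tn + fp),
        "precision": safe_div(tp, tp + fp),
    }


# Toy example: 3 true genes among 10 regions, one missed, one false call.
truth = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
pred = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]
print(metrics(*confusion_counts(truth, pred)))
```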

The Relationship Between Metrics

These metrics exist in a dynamic tension, particularly in genomic applications where researchers must often make trade-offs based on their specific priorities [20] [22]. There is typically an inverse relationship between precision and recall, where increasing one often decreases the other [20]. Similarly, tension exists between recall and specificity, as aggressively minimizing false negatives (increasing recall) may increase false positives (reducing specificity) [19].

This relationship can be visualized through a precision-recall curve or by evaluating metrics at different classification thresholds [20] [18]. The optimal balance depends fundamentally on the research context and the relative costs of different error types [20].
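To make the threshold dependence concrete, the following sketch evaluates precision and recall at a few score cut-offs. The scores, labels, and the convention of reporting precision as 1.0 when nothing is predicted positive are all assumptions chosen for illustration.

```python
def precision_recall_at(truth, scores, threshold):
    """Precision and recall when every score >= threshold is called positive."""
    pred = [s >= threshold for s in scores]
    tp = sum(1 for t, p in zip(truth, pred) if t and p)
    fp = sum(1 for t, p in zip(truth, pred) if not t and p)
    fn = sum(1 for t, p in zip(truth, pred) if t and not p)
    # Assumed convention: with no positive calls, precision is reported as 1.0.
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def sweep(truth, scores, thresholds):
    """Return (threshold, precision, recall) for each requested threshold."""
    return [(th, *precision_recall_at(truth, scores, th)) for th in thresholds]


# Hypothetical gene-finder confidence scores for six candidate regions.
truth = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.1]
for th, precision, recall in sweep(truth, scores, [0.75, 0.35]):
    print(f"threshold={th:.2f} precision={precision:.2f} recall={recall:.2f}")
```

In this toy data, lowering the threshold from 0.75 to 0.35 recovers the third true region but admits one false call, which is the trade-off described above.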

Figure 1: Logical relationships between confusion matrix components and key performance metrics. Metrics are derived from different combinations of true positives (TP), true negatives (TN), false positives (FP), and false negatives (FN).

Contextualizing Metric Selection for Gene-Finder Evaluation

When to Prioritize Specific Metrics

The appropriate emphasis on accuracy, specificity, or recall depends heavily on the research objectives and the biological context [20] [18]:

Prioritize Recall when false negatives (missing actual genes) are more costly than false positives. This is particularly important in exploratory research where comprehensive gene identification is crucial, or when studying genes with high biological significance but subtle signatures [20]. As exemplified in medical diagnostics, "a false negative typically has more serious consequences than a false positive" [20].

Prioritize Specificity and Precision when false positives (incorrectly labeling non-genes as genes) would lead to wasted experimental resources or erroneous conclusions. This approach is valuable in clinical applications or when prioritizing candidates for expensive validation studies [20] [21].

Rely on Accuracy mainly for balanced datasets where both classes (gene and non-gene) are approximately equally represented and both types of errors have similar costs [20] [21]. In imbalanced datasets—common in genomics where coding regions represent a small fraction of the genome—accuracy becomes misleading [18] [21].

The Challenge of Imbalanced Datasets in Genomics

In gene finding, the region of interest (genes) typically represents a small fraction of the total genomic sequence, creating a naturally imbalanced classification problem [21]. In such cases, a naive model that always predicts "non-gene" could achieve high accuracy while being biologically useless [20] [21].

For example, if genes constitute only 5% of the genomic regions being analyzed, a model that always predicts "non-gene" would achieve 95% accuracy while having 0% recall for actual genes [21]. This "accuracy paradox" necessitates the use of more informative metrics like recall, specificity, and precision [21].
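The arithmetic behind this example can be checked with a few lines of Python. The function name and the choice of 1,000 regions are illustrative assumptions; only the 5% gene fraction comes from the text.

```python
def always_negative_baseline(n_regions=1000, gene_fraction=0.05):
    """Score a model that labels every region as non-gene."""
    n_genes = round(n_regions * gene_fraction)
    if n_genes == 0 or n_genes == n_regions:
        raise ValueError("need at least one gene and one non-gene region")
    truth = [1] * n_genes + [0] * (n_regions - n_genes)
    pred = [0] * n_regions  # the naive model never predicts a gene
    tp = sum(1 for t, p in zip(truth, pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(truth, pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(truth, pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(truth, pred) if t == 1 and p == 0)
    return {
        "accuracy": (tp + tn) / n_regions,
        "recall": tp / (tp + fn),
        "specificity": tn / (tn + fp),
    }


print(always_negative_baseline())
```

Accuracy and specificity both look excellent here, while recall exposes that no gene was found.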

Table 2: Metric Selection Guide for Different Gene-Finder Applications

| Research Context | Priority Metrics | Rationale | Exemplar Applications |

|---|---|---|---|

| Exploratory gene discovery | Recall, F1 score | Minimizing missed genes is paramount; false positives can be filtered later | Identifying novel genes in poorly annotated genomes |

| Clinical variant interpretation | Precision, Specificity | False positives could lead to incorrect diagnoses; prediction confidence is critical | Pathogenicity prediction of rare BRCA1/2 variants [23] |

| Comparative genomics | Balanced accuracy, Matthews correlation coefficient (MCC) | Balanced view of performance across classes is needed | Benchmarking gene finders across multiple species |

| Resource-intensive validation | Precision, Specificity | Avoiding wasted resources on false positives is economically important | Selecting candidates for experimental validation |
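For reference, the composite metrics named in this table follow from the same confusion-matrix counts used earlier. These are the standard textbook definitions, stated here for convenience rather than taken from the cited studies:

\[ \text{Precision} = \frac{TP}{TP + FP} \]

\[ F_1 = \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}} = \frac{2\,TP}{2\,TP + FP + FN} \]

\[ \text{Balanced Accuracy} = \frac{\text{Recall} + \text{Specificity}}{2} \]

\[ \text{MCC} = \frac{TP \cdot TN - FP \cdot FN}{\sqrt{(TP + FP)(TP + FN)(TN + FP)(TN + FN)}} \]

Neither the F1 score nor precision uses TN, so the large non-gene background does not inflate them. Balanced accuracy and MCC do use TN, but in a way that weights the two classes evenly, which is why they remain informative on imbalanced genomic data.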

Experimental Benchmarking: Insights from Genomic Evaluation Studies

Benchmarking Frameworks for Genomic Tools

Recent efforts to standardize the evaluation of genomic prediction tools have yielded sophisticated benchmarking suites that illustrate the practical application of performance metrics. The DNALONGBENCH benchmark, for example, evaluates long-range DNA prediction tasks across five biologically meaningful categories: enhancer-target gene interaction, expression quantitative trait loci, 3D genome organization, regulatory sequence activity, and transcription initiation signals [13]. This comprehensive framework assesses models on sequences up to 1 million base pairs, using multiple performance metrics to capture different aspects of predictive performance [13].

Similarly, CausalBench provides a benchmark suite for evaluating network inference methods using real-world large-scale single-cell perturbation data [24]. This platform employs both biology-driven approximations of ground truth and quantitative statistical evaluations, including precision-recall tradeoffs specifically adapted for biological networks [24].

Performance Tradeoffs in Practice

Experimental evaluations consistently reveal inherent tradeoffs between performance metrics. In the assessment of network inference methods using CausalBench, researchers observed the classic tension between precision and recall across multiple algorithms [24]. Some methods achieved high recall on biological evaluation but with correspondingly low precision, while others demonstrated the opposite pattern [24].

These tradeoffs manifest differently across biological tasks. In the DNALONGBENCH evaluation, expert models consistently outperformed DNA foundation models across all five tasks, but the performance advantage was more pronounced in regression tasks (like contact map prediction and transcription initiation signal prediction) than in classification tasks [13]. This suggests that the size of the gap between model families depends on the task type, so results are best compared per task using metrics suited to classification or regression.

Figure 2: Generalized experimental workflow for benchmarking gene-finder performance against experimentally validated datasets.

Experimental Data and Comparative Performance

Quantitative Comparisons from Genomic Studies

Recent benchmarking studies provide concrete quantitative data on the performance of various genomic prediction tools. In the evaluation of gene-specific versus disease-specific machine learning for pathogenicity prediction of rare BRCA1 and BRCA2 missense variants, researchers found that gene-specific training variants could produce optimal predictors despite smaller training datasets [23]. This study employed multiple machine learning classifiers (regularized logistic regression, XGBoost, random forests, SVMs, and deep neural networks) and evaluated performance using the area under the precision-recall curve (AUPRC), a metric particularly informative for imbalanced classification problems [23].
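One common estimator of the area under the precision-recall curve is average precision, which sums the precision observed at each rank where a true positive is recovered. The sketch below is a plain-Python version that assumes distinct scores (ties are not handled specially); it is not claimed to be the procedure used in the cited study, and the labels and scores are made up.

```python
def average_precision(truth, scores):
    """Average precision: mean of precision values at each true-positive rank.

    truth: 0/1 labels; scores: higher means "more likely positive".
    Assumes scores are distinct; tied scores keep their input order.
    """
    n_pos = sum(truth)
    if n_pos == 0:
        raise ValueError("average precision needs at least one positive")
    ranked = sorted(zip(scores, truth), key=lambda pair: -pair[0])
    tp = 0
    total = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label:
            tp += 1
            total += tp / rank  # precision at the rank of this true positive
    return total / n_pos


# Hypothetical pathogenicity scores for four variants, two truly pathogenic.
print(average_precision([1, 0, 1, 0], [0.9, 0.8, 0.7, 0.1]))
```

A useful reference point when reading such values is that a random ranking scores close to the fraction of positives in the data, not 0.5 as with AUROC.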

In the network inference domain, CausalBench evaluations revealed substantial performance variations across methods [24]. The best-performing methods achieved F1 scores (the harmonic mean of precision and recall) of approximately 0.25-0.35 on biological evaluation tasks, while others traded higher recall for lower precision or vice versa [24]. These results underscore the importance of selecting evaluation metrics that align with biological priorities.

Table 3: Experimental Performance of Genomic Tools Across Benchmarking Studies

| Benchmark Study | Task Type | Best-Performing Model | Key Performance Results | Evaluation Metrics Emphasized |

|---|---|---|---|---|

| DNALONGBENCH [13] | Long-range DNA prediction | Expert models (e.g., Enformer, Akita) | Consistently outperformed DNA foundation models across all tasks | AUROC, AUPR, stratum-adjusted correlation |

| CausalBench [24] | Network inference from single-cell data | Mean Difference, Guanlab | Superior trade-off between precision and recall | Precision, Recall, F1 Score, Mean Wasserstein distance |

| Gene-specific ML [23] | Pathogenicity prediction | Gene-specific classifiers | Optimal performance despite smaller training data | AUPRC, Precision-Recall tradeoffs |

Table 4: Key Research Reagents and Computational Tools for Gene-Finder Evaluation

| Resource Category | Specific Examples | Function in Evaluation | Application Context |

|---|---|---|---|

| Benchmark Datasets | DNALONGBENCH [13], CausalBench [24] | Provide standardized tasks and datasets for comparative evaluation | Long-range DNA prediction, Network inference |

| Experimentally Validated Gene Sets | ClinVar variants [23], ENCODE annotations | Serve as gold standards for method validation | Pathogenicity prediction, Functional element identification |

| Performance Evaluation Libraries | scikit-learn metrics [18], specialized bioinformatics packages | Calculate performance metrics and generate visualizations | General model evaluation, Precision-recall analysis |

| Visualization Tools | Graphviz, precision-recall curve plotters | Illustrate performance relationships and workflows | Metric tradeoff analysis, Method communication |

The evaluation of gene-finding tools requires careful consideration of multiple performance metrics, each providing distinct insights into different aspects of algorithmic performance. Accuracy offers a general overview of correctness but becomes misleading with imbalanced datasets common in genomics. Recall ensures comprehensive identification of genuine coding elements, while specificity guards against false positives that could misdirect valuable research resources.

Experimental benchmarks demonstrate that performance tradeoffs are inherent in genomic tool design, with different algorithms excelling at different aspects of prediction tasks [13] [24]. The optimal metric emphasis depends fundamentally on the research context: exploratory discovery prioritizes recall, clinical applications demand high specificity and precision, and balanced benchmarking requires comprehensive metric evaluation.

Future directions in gene-finder evaluation will likely involve more sophisticated biologically-grounded benchmarks and metric frameworks that better capture functional relevance beyond mere sequence prediction. As genomic tools continue to evolve, so too must our approaches to evaluating their performance, ensuring they deliver biologically meaningful insights rather than merely optimizing abstract statistical measures.

Tools of the Trade: From Deep Learning Architectures to Standardized Benchmarking Pipelines

Gene prediction, the computational task of identifying the precise locations and structures of genes within a raw DNA sequence, represents a foundational step in genomics. Accurate gene models are crucial for downstream analyses in fields ranging from basic biology to drug development, enabling researchers to interpret genetic variants, understand disease mechanisms, and identify potential therapeutic targets. The sophistication of gene prediction methodologies has evolved significantly, from early algorithms based on statistical signals to modern approaches leveraging artificial intelligence. This guide provides a comprehensive comparison of the three dominant methodological paradigms: traditional ab initio techniques, classical machine learning approaches, and cutting-edge deep learning models. The evaluation is framed within the critical context of benchmarking against experimentally validated gene start sites, a gold standard for assessing prediction accuracy in real-world research scenarios. As genomic data continues to grow exponentially in both volume and complexity, understanding the strengths, limitations, and appropriate applications of each methodology becomes increasingly vital for researchers and drug development professionals aiming to extract meaningful biological insights from sequence data.

Methodology Categories

Ab Initio Methods

Ab Initio (Latin for "from the beginning") methods predict genes solely based on genomic DNA sequence, without relying on external evidence like transcripts or homologous proteins. These approaches utilize intrinsic sequence features and statistical models to distinguish protein-coding regions from non-coding DNA.

- Core Principles: Ab initio predictors identify signals such as start and stop codons, splice sites (donor and acceptor sites), branch points, and promoter motifs. They also employ content sensors that exploit statistical biases in coding sequences, including codon usage patterns, nucleotide composition, and exon/intron length distributions [25].

- Underlying Technologies: Most established ab initio tools are based on Hidden Markov Models (HMMs) or Generalized HMMs (GHMMs). These probabilistic models are trained to recognize the grammar of gene structures, transitioning between states representing different genomic features (e.g., exon, intron, intergenic region) [26]. Notable examples include GeneMark-ES, AUGUSTUS, and FGENESH [25] [26].

- Typical Workflow: The process involves scanning the input DNA sequence for the presence of signal and content features, scoring potential gene structures based on the trained model, and outputting the most probable gene models that conform to biological rules (e.g., starting with a start codon and ending with a stop codon) [25].
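The signal-scanning step can be illustrated with a deliberately simple open reading frame (ORF) scanner. This is a toy sketch, not an HMM or GHMM and not how AUGUSTUS or GeneMark-ES work internally: it looks only at the forward strand, ignores introns and content statistics, and the `min_codons` cut-off is an arbitrary assumption.

```python
STOP_CODONS = {"TAA", "TAG", "TGA"}


def find_orfs(seq, min_codons=3):
    """Return sorted (start, end) half-open intervals of forward-strand ORFs.

    An ORF here runs from the first in-frame ATG to the next in-frame stop
    codon (stop included). min_codons counts the start and stop codons too.
    """
    seq = seq.upper()
    orfs = []
    for frame in range(3):
        start = None
        for i in range(frame, len(seq) - 2, 3):
            codon = seq[i:i + 3]
            if start is None and codon == "ATG":
                start = i  # signal sensor: candidate start codon
            elif start is not None and codon in STOP_CODONS:
                if (i + 3 - start) // 3 >= min_codons:
                    orfs.append((start, i + 3))
                start = None  # resume scanning after the stop codon
    return sorted(orfs)


toy = "CCATGAAATTTTAGGG"
for start, end in find_orfs(toy):
    print(start, end, toy[start:end])
```

Real ab initio predictors replace the hard rules above with probabilistic scores, so that a weak start codon in a strongly coding-like region can still be preferred over a perfect signal in an unlikely context.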

Machine Learning Approaches

Machine Learning (ML) approaches for gene prediction expand upon traditional ab initio concepts by incorporating a wider array of features and utilizing more complex, data-driven classification algorithms.

- Core Principles: While still using fundamental sequence signals, ML methods can integrate additional evolutionary conservation data, sequence homology, and functional features (e.g., Gene Ontology terms) to improve prediction accuracy. They learn the complex relationships between these features and gene structures from training data [27].

- Underlying Technologies: Classical ML algorithms used in this domain include Support Vector Machines (SVMs), Random Forests, and earlier neural network architectures. These models often require careful feature engineering, where domain experts manually select and construct relevant input features from the sequence and auxiliary data [25] [27]. GeneID and SNAP are sometimes mentioned alongside this family, although both are generally classed as ab initio predictors and are benchmarked as such in G3PO [25].

- Typical Workflow: After extensive feature extraction and selection from the genomic sequence and potentially aligned related genomes, the ML model is trained on a curated set of known genes. The trained classifier then evaluates genomic regions to predict their functional status (e.g., coding vs. non-coding) and assembles complete gene models [27].

Deep Learning Approaches

Deep Learning (DL) represents the most recent paradigm shift in gene prediction, using neural networks with multiple layers to automatically learn relevant features directly from raw or minimally processed sequence data.

- Core Principles: DL models minimize the need for manual feature engineering by learning hierarchical representations of genomic sequences end-to-end. They can capture complex, long-range dependencies in DNA that are difficult to model with traditional methods [26].

- Underlying Technologies: Modern gene prediction tools employ sophisticated architectures such as Convolutional Neural Networks (CNNs) for detecting local motifs and patterns, Recurrent Neural Networks (RNNs) and Long Short-Term Memory (LSTM) networks for handling sequential dependencies, and attention mechanisms for focusing on the most informative parts of a sequence [26] [27]. Helixer and Tiberius are prominent examples of deep learning-based gene finders [26].

- Typical Workflow: Nucleotide sequences are typically converted into numerical representations (e.g., one-hot encoding). These sequences are fed into a deep neural network that outputs base-wise predictions of functional categories (e.g., intergenic, intron, exon). Post-processing steps then assemble these predictions into coherent gene models, often using a dedicated HMM or rule-based system [26].
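The encoding and decoding ends of this workflow can be sketched in a few lines. The A, C, G, T column order, the all-zero row for ambiguous bases such as N, and the three class names are assumptions made for illustration; specific tools may use different conventions.

```python
BASES = "ACGT"  # assumed column order
CLASSES = ("intergenic", "intron", "exon")  # assumed output categories


def one_hot(seq):
    """Encode a DNA string as a list of 4-element 0/1 rows."""
    index = {base: i for i, base in enumerate(BASES)}
    rows = []
    for base in seq.upper():
        row = [0, 0, 0, 0]
        if base in index:
            row[index[base]] = 1
        rows.append(row)  # ambiguous bases (e.g. N) stay all-zero
    return rows


def call_classes(prob_rows):
    """Pick the highest-scoring class for each base-wise probability row."""
    calls = []
    for row in prob_rows:
        best = max(range(len(CLASSES)), key=lambda i: row[i])
        calls.append(CLASSES[best])
    return calls


print(one_hot("ACGTN"))
# Stand-in for network output: one probability row per base.
print(call_classes([[0.7, 0.2, 0.1], [0.1, 0.1, 0.8]]))
```

The per-base calls produced by the second function are what a post-processing step would then stitch into gene models.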

Performance Comparison

Rigorous benchmarking against experimentally validated gene structures provides the most meaningful comparison of prediction methodologies. The following tables summarize key performance metrics across different methodological categories and tools, with emphasis on accuracy relative to confirmed start sites and other structural features.

Table 1: Overall Performance Comparison of Methodology Categories

| Methodology Category | Typical Gene F1 Score | Start/Stop Codon Accuracy | Splice Site Accuracy | Data Requirements | Cross-Species Generalization |

|---|---|---|---|---|---|

| Ab Initio (HMM) | ~0.70-0.85 (varies by species) | Moderate | Moderate | Genome sequence only | Requires species-specific training |

| Machine Learning | ~0.75-0.88 | Moderate to High | Moderate to High | Sequence + multiple data sources | Limited without retraining |

| Deep Learning | ~0.85-0.95 | High | High | Genome sequence only | Strong with pretrained models |

Table 2: Tool-Specific Performance Metrics on Benchmark Datasets

| Tool | Methodology | Gene F1 Score | Exon F1 Score | Nucleotide F1 Score | BUSCO Completeness |

|---|---|---|---|---|---|

| Helixer | Deep Learning (CNN+RNN) | 0.892 | 0.921 | 0.945 | ~95% |

| AUGUSTUS | Ab Initio (HMM) | 0.831 | 0.865 | 0.891 | ~90% |

| GeneMark-ES | Ab Initio (HMM) | 0.819 | 0.854 | 0.883 | ~88% |

| Tiberius | Deep Learning (Mammals) | 0.912 | 0.934 | 0.951 | ~96% |

| EGP Hybrid-ML | Machine Learning (Ensemble) | 0.861 | - | - | - |

Recent large-scale benchmarks reveal important trends in method performance. The GGRN/PEREGGRN benchmarking platform, which evaluates expression forecasting based on gene regulatory networks, highlights that it remains challenging for computational methods to consistently outperform simple baselines when predicting outcomes of unseen genetic perturbations [28]. However, for structural gene prediction, deep learning methods like Helixer show notable advantages, achieving state-of-the-art performance across diverse eukaryotic genomes without requiring species-specific retraining or extrinsic evidence [26].

In direct comparisons, Helixer demonstrated higher phase F1 scores than both GeneMark-ES and AUGUSTUS across plant and vertebrate species, with particularly strong performance in nucleotide-level and exon-level prediction [26]. Meanwhile, specialized deep learning models like Tiberius show exceptional performance within specific clades, outperforming Helixer in mammalian genomes by approximately 20% in gene recall and precision [26].

For essential gene prediction, hybrid machine learning models like EGP Hybrid-ML (combining graph convolutional networks with Bi-LSTM and attention mechanisms) achieve sensitivity up to 0.9122, demonstrating robust cross-species generalization capabilities [27].

Experimental Protocols for Benchmarking

To ensure fair and biologically meaningful comparisons between gene prediction methods, standardized experimental protocols and benchmarking frameworks are essential. The following sections detail key methodologies for evaluating prediction accuracy against experimentally validated gene structures.

Benchmark Dataset Construction

High-quality benchmark datasets form the foundation of reliable method evaluation. The G3PO (benchmark for Gene and Protein Prediction Programs) framework exemplifies best practices in this area [25]:

- Curation of Reference Genes: The benchmark incorporates 1,793 reference genes from 147 phylogenetically diverse eukaryotic organisms, ensuring broad taxonomic representation from humans to protists.

- Validation of Gene Structures: Each gene undergoes careful validation through multiple sequence alignments to identify and flag potential annotation errors. Proteins with inconsistent sequence segments are labeled 'Unconfirmed', while those without errors are labeled 'Confirmed'.

- Complexity Representation: The benchmark includes genes with varying structural complexity, from single-exon genes to those with over 20 exons, representing challenges typical of real annotation projects.

- Genomic Context Provision: Genomic sequences are extracted with additional flanking regions (150 to 10,000 nucleotides upstream and downstream) to provide necessary context for evaluating signal sensor performance.
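The flank-extraction step in the last item can be sketched as follows. The function name, the 0-based half-open coordinate convention, and the toy sequence are assumptions; G3PO's own extraction scripts are not reproduced here.

```python
def extract_with_flanks(chrom_seq, gene_start, gene_end, flank):
    """Cut out a gene plus up to `flank` bases on each side.

    Coordinates are 0-based, half-open. Flanks are clipped at the sequence
    ends. Returns the subsequence and the gene start offset inside it, so
    that reference annotations can be shifted into the new coordinates.
    """
    if not 0 <= gene_start < gene_end <= len(chrom_seq):
        raise ValueError("gene interval lies outside the sequence")
    if flank < 0:
        raise ValueError("flank must be non-negative")
    left = max(0, gene_start - flank)
    right = min(len(chrom_seq), gene_end + flank)
    return chrom_seq[left:right], gene_start - left


chrom = "A" * 10 + "ATGCCCTAA" + "T" * 5  # toy 24-base "chromosome"
window, offset = extract_with_flanks(chrom, 10, 19, 3)
print(window, offset)
```

Returning the offset matters in practice: predicted start sites are reported relative to the extracted window, so they must be shifted back before comparison with the reference coordinates.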

Evaluation Metrics and Validation Procedures

Comprehensive assessment requires multiple complementary metrics that capture different aspects of prediction quality:

- Base-Wise Metrics: Calculate precision, recall, and F1-score at the nucleotide level, classifying each base as coding, intronic, or intergenic. This provides the finest-grained assessment of prediction accuracy [26].

- Feature-Level Metrics: Evaluate accuracy in predicting discrete gene features:

  - Start/Stop Codon Accuracy: Precisely measuring correct identification of translation initiation and termination sites.

  - Splice Site Accuracy: Assessing correct prediction of donor and acceptor sites.

  - Exon-Intron Structure: Computing exon-level and intron-level F1 scores [26].

- Gene-Level Metrics: Assess performance at the complete gene level, including:

  - Gene F1 Score: Harmonic mean of gene prediction precision and recall.

  - BUSCO (Benchmarking Universal Single-Copy Orthologs): Measures completeness by searching for evolutionarily conserved single-copy orthologs [26].

- Cross-Validation Strategies: Implement cross-species validation to evaluate generalization capability, where models trained on one set of species are tested on evolutionarily distant species [27].
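Two of the metrics listed above lend themselves to a short sketch: a base-wise F1 score for one label, and precision and recall for predicted start sites against a validated set. The single-letter labels, the greedy one-to-one matching, and the `tolerance` parameter (0 means an exact position match) are assumptions made for illustration rather than a published protocol.

```python
def f1_for_label(truth, pred, label):
    """Base-wise F1 for one label over two equal-length label sequences."""
    tp = sum(1 for t, p in zip(truth, pred) if t == label and p == label)
    fp = sum(1 for t, p in zip(truth, pred) if t != label and p == label)
    fn = sum(1 for t, p in zip(truth, pred) if t == label and p != label)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else float("nan")


def start_site_scores(validated, predicted, tolerance=0):
    """Precision and recall of predicted start positions.

    Each validated site can be matched by at most one prediction. Matching
    is greedy in coordinate order, which is simple but not guaranteed to be
    optimal when sites are closer together than the tolerance.
    """
    unmatched = sorted(validated)
    tp = 0
    for pos in sorted(predicted):
        hit = next((v for v in unmatched if abs(v - pos) <= tolerance), None)
        if hit is not None:
            unmatched.remove(hit)
            tp += 1
    precision = tp / len(predicted) if predicted else float("nan")
    recall = tp / len(validated) if validated else float("nan")
    return precision, recall


# Toy labels: I = intergenic, E = exon, N = intron.
print(f1_for_label("IIEEEENNEE", "IIIEEENNNE", "E"))
# Toy coordinates for validated and predicted start sites.
print(start_site_scores([100, 500, 900], [100, 503, 1200], tolerance=0))
print(start_site_scores([100, 500, 900], [100, 503, 1200], tolerance=5))
```

Reporting the tolerance alongside the score is important, because the same predictions can look very different under exact matching and under a window of a few bases.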

Research Reagents and Tools

Table 3: Essential Research Reagents and Computational Tools for Gene Prediction Research

| Category | Item/Resource | Function in Gene Prediction Research |

|---|---|---|

| Benchmarking Platforms | GGRN/PEREGGRN [28] | Framework for evaluating expression forecasting methods against perturbation data |

| Benchmarking Platforms | G3PO [25] | Benchmark for gene and protein prediction programs with diverse eukaryotic genes |

| Database Resources | DEG (Database of Essential Genes) [27] | Repository of essential gene information for training and validation |

| Database Resources | UniProt [25] | Source of validated protein sequences for benchmark construction |

| Database Resources | Ensembl [25] | Genomic infrastructure for accessing reference gene annotations |

| Computational Tools | Helixer [26] | Deep learning-based ab initio gene prediction across diverse eukaryotes |

| Computational Tools | AUGUSTUS [25] [26] | HMM-based ab initio gene predictor |

| Computational Tools | GeneMark-ES [25] [26] | Self-training HMM for gene prediction |

| Computational Tools | EGP Hybrid-ML [27] | Hybrid machine learning model for essential gene prediction |

| Computational Tools | DeepCNNvalid [29] | Deep convolutional network for validating NGS variants |

| Sequencing Technologies | Oxford Nanopore [30] [31] | Long-read sequencing for structural variant detection |

| Sequencing Technologies | Illumina NGS [31] [29] | High-accuracy short-read sequencing for validation |

| Experimental Validation | CRISPR Screens [31] | Functional validation of predicted essential genes |

| Experimental Validation | Single-cell RNA-seq [28] [31] | Transcriptomic validation of predicted gene models |

Technical Workflows

Implementing gene prediction methodologies requires understanding their distinct computational workflows. The following diagrams illustrate the standard processes for both ab initio/deep learning approaches and experimental validation protocols.

Figure: Ab Initio and Deep Learning Gene Prediction Workflow

Figure: Experimental Validation Workflow for Prediction Accuracy

The evolution of gene prediction methodologies from traditional ab initio approaches to modern deep learning systems represents a paradigm shift in computational genomics. Each methodological category offers distinct advantages: ab initio methods provide interpretable models requiring minimal external data; machine learning approaches leverage diverse feature sets for improved accuracy; and deep learning systems automatically learn complex sequence determinants while demonstrating remarkable generalization across diverse species. Performance benchmarks consistently show that deep learning tools like Helixer and Tiberius achieve state-of-the-art results in nucleotide-level, exon-level, and gene-level prediction metrics, particularly for well-studied clades like plants, vertebrates, and mammals.

For researchers focused on experimentally validated start sites, the critical considerations include not only raw prediction accuracy but also computational efficiency, ease of implementation, and interpretability of results. While deep learning methods generally provide the highest accuracy, traditional HMM-based tools may still offer advantages for certain applications, particularly in resource-constrained environments or for highly divergent species where training data is limited. The emergence of comprehensive benchmarking platforms like GGRN/PEREGGRN and G3PO provides researchers with standardized frameworks for objective method evaluation, enabling informed selection of appropriate tools for specific genomic contexts and research objectives. As the field continues to evolve, integration of multi-omics data and development of more sophisticated neural architectures promise to further bridge the gap between computational prediction and biological reality, ultimately accelerating discovery in basic research and drug development.

The accurate identification of genes and their regulatory elements is a fundamental challenge in genomics. Traditional computational methods often struggle with a key biological reality: critical regulatory interactions can span vast genomic distances. Enhancers, for instance, can influence gene expression from positions hundreds of thousands to millions of base pairs away [13] [7]. This challenge of long-range genomic context has necessitated the development of sophisticated deep-learning architectures capable of capturing these dependencies.

This guide provides an objective comparison of three leading deep-learning architectures—Enformer, HyenaDNA, and Caduceus—designed to model long-range DNA interactions. We focus on their performance in tasks relevant to gene finding and functional genomics, framing the evaluation within the critical context of experimentally validated genomic elements. The analysis is based on recent benchmark studies and original research to offer a current and data-driven perspective for researchers, scientists, and drug development professionals.

The three models represent distinct evolutionary paths in overcoming the computational limitations of earlier approaches, such as Convolutional Neural Networks (CNNs), which were constrained by their local receptive fields.

Table 1: Core Architectural Features of Enformer, HyenaDNA, and Caduceus

| Feature | Enformer | HyenaDNA | Caduceus |
|---|---|---|---|
| Core Innovation | Transformer with self-attention | Hyena operator (long convolutions) | Bi-directional Mamba with RC equivariance |
| Primary Mechanism | Global attention weighted by sequence content | Fast convolution via implicit kernels | Selective State Space Models (SSMs) |
| Maximum Context Length | ~100,000 bp [7] | 1,000,000 bp [32] | Hundreds of thousands of bp [33] |
| Handling of Bi-directionality | Implicit in attention | Implicit in convolution | Explicit via two Mamba passes [34] |
| Reverse Complement (RC) Symmetry | Not inherent | Not inherent | Explicitly enforced (Caduceus-PS) or used via augmentation (Caduceus-Ph) [33] |
| Computational Complexity | Quadratic in sequence length (O(N²)) | Sub-quadratic (O(N log N)) [32] | Linear (O(N)) [34] |
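To make the complexity row concrete, the following minimal sketch compares idealized costs for the three scaling regimes when the context grows from 100,000 bp to 1,000,000 bp. It is back-of-the-envelope arithmetic only: constant factors, memory, and hardware effects are ignored, and the function name `relative_cost` is a hypothetical helper, not part of any of the three models.

```python
import math


def relative_cost(n: int, base_n: int = 100_000) -> dict:
    """Idealized cost of processing n bases relative to base_n bases.

    Only the asymptotic terms from the table are used; real runtimes
    also depend on constants and implementation details.
    """
    return {
        "quadratic": (n / base_n) ** 2,
        "n_log_n": (n * math.log2(n)) / (base_n * math.log2(base_n)),
        "linear": n / base_n,
    }


for n in (100_000, 1_000_000):
    costs = relative_cost(n)
    print(n, {name: round(value, 1) for name, value in costs.items()})
```

Under these assumptions a tenfold longer input costs roughly 100 times more for a quadratic model, about 12 times more for an O(N log N) model, and 10 times more for a linear one.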

Model-Specific Strengths and Limitations

- Enformer: Applies transformer-based self-attention to long genomic inputs, allowing any position in a ~100 kb input sequence to interact directly with any other. This enables it to integrate information from distal enhancers effectively. However, its quadratic computational complexity limits further scaling of context length [7].

- HyenaDNA: Leverages long convolutional operators (Hyena) to achieve a global receptive field at every layer. Its sub-quadratic scaling enables it to process sequences up to 1 million nucleotides at single-character resolution, a significant leap in context length. It uses a simple single-nucleotide tokenizer (vocabulary of 4) [32].

- Caduceus: Builds on the Mamba SSM architecture, which is selective and data-dependent. Its key innovations are bi-directionality, crucial for genomic context, and reverse complement (RC) equivariance, which respects the biological reality of double-stranded DNA. Its authors describe it as the first family of RC-equivariant, bi-directional, long-range DNA language models [33] [34] [35].
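The reverse complement symmetry mentioned above can be illustrated with a small sketch. The scorer `toy_score` is a deliberately strand-dependent, hypothetical stand-in for a model, and `rc_averaged_score` shows generic post-hoc averaging over both strands. This is an illustration of the symmetry idea under those assumptions, not the Caduceus implementation.

```python
# Translation table for complementing DNA bases (N is left unchanged).
COMPLEMENT = str.maketrans("ACGTNacgtn", "TGCANtgcan")


def reverse_complement(seq: str) -> str:
    """Return the reverse complement of a DNA sequence."""
    return seq.translate(COMPLEMENT)[::-1]


def toy_score(seq: str) -> float:
    """Hypothetical strand-dependent scorer: fraction of A bases."""
    return seq.upper().count("A") / len(seq)


def rc_averaged_score(seq: str) -> float:
    """Average the scorer over both strands so the result is RC-invariant."""
    return 0.5 * (toy_score(seq) + toy_score(reverse_complement(seq)))


seq = "AAACGT"
rc = reverse_complement(seq)
print(rc)                                          # reverse strand sequence
print(toy_score(seq), toy_score(rc))               # differ between strands
print(rc_averaged_score(seq), rc_averaged_score(rc))  # identical
```

Averaging after the fact gives strand-consistent outputs for any scorer, whereas building the symmetry into the architecture removes the need for the second forward pass over the reverse strand.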

Performance Benchmarking on Genomic Tasks

The DNALONGBENCH suite provides a standardized framework for evaluating long-range DNA prediction models across five biologically distinct tasks, encompassing dependencies up to 1 million base pairs [13] [12]. The suite was designed to ensure biological significance, task difficulty, and diversity in task types (classification, regression) and dimensionality (1D, 2D) [13].

Comprehensive Benchmark Results

Table 2: Model Performance on DNALONGBENCH Tasks (Summarized from [13])

| Task | Task Type | Input Length (bp) | Expert Model (Performance) | HyenaDNA | Caduceus-PS/Ph | CNN Baseline |
|---|---|---|---|---|---|---|
| Enhancer-Target Gene | Binary Classification | 450,000 | ABC Model | Moderate | Moderate | Lower |
| eQTL Prediction | Binary Classification | 450,000 | Enformer | Moderate | Moderate | Lower |
| Contact Map Prediction | 2D Binned Regression | 1,048,576 | Akita | Substantially Lower | Substantially Lower | Lower |
| Regulatory Sequence Activity | 1D Binned Regression | 196,608 | Enformer | Lower | Lower | Lower |
| Transcription Initiation Signal | Nucleotide-wise Regression | 100,000 | Puffin-D | 0.132 (PCC) | ~0.109 (PCC) | 0.042 (PCC) |

Key Findings from Benchmarking

- Expert Models Lead: A consistent finding across DNALONGBENCH is that task-specific expert models (e.g., Enformer, Akita, Puffin-D) achieve the highest performance on their respective tasks. Their specialized architectures and, in some cases, larger parameter counts make them a practical upper bound against which other models are measured [13].

- Foundation Models Show Promise: DNA foundation models (HyenaDNA, Caduceus) demonstrate a reasonable ability to capture long-range dependencies, generally outperforming lightweight CNN baselines but still lagging behind expert models. This suggests that their pre-training provides a strong foundation that can be fine-tuned for specific tasks [13].

- The Contact Map Challenge: Predicting 3D genome organization (contact maps) proved to be particularly difficult for all foundation models, highlighting this as a key area for future architectural improvement [13].

- Variant Effect Prediction: On the critical task of predicting the effect of genetic variants (SNPs) on gene expression, Caduceus has been reported to outperform HyenaDNA and even the much larger Nucleotide Transformer model (500M parameters), especially when the variant lies far (more than 100 kb) from the transcription start site [33].
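Distance-stratified comparisons like the one described for variant effect prediction require assigning each variant to a bin by its distance from the nearest transcription start site. The sketch below shows one way to do this for a single chromosome. The function names and the 30 kb and 100 kb bin edges are illustrative assumptions, not values taken from the benchmark's code.

```python
from bisect import bisect_left


def distance_to_nearest_tss(pos: int, sorted_tss: list) -> int:
    """Distance (bp) from pos to the closest TSS on the same chromosome.

    sorted_tss must be a non-empty list of positions in ascending order.
    """
    i = bisect_left(sorted_tss, pos)
    # Only the neighbours on either side of the insertion point can be nearest.
    candidates = sorted_tss[max(i - 1, 0): i + 1]
    return min(abs(pos - t) for t in candidates)


def distance_bucket(distance: int, edges=(30_000, 100_000)) -> str:
    """Assign a distance to a coarse bin; the edges are illustrative."""
    if distance < edges[0]:
        return "<30 kb"
    if distance < edges[1]:
        return "30-100 kb"
    return ">=100 kb"


tss_positions = [1_000, 500_000]  # hypothetical TSS coordinates
for variant_pos in (1_200, 60_000, 250_000):
    d = distance_to_nearest_tss(variant_pos, tss_positions)
    print(variant_pos, d, distance_bucket(d))
```

Per-bin metrics can then be computed separately, which exposes whether a model's accuracy degrades as variants move away from the start site.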

Experimental Protocols for Model Evaluation

To ensure reproducible and rigorous comparisons, benchmark studies follow structured experimental protocols. The key methodological details of a typical model evaluation, on a task such as variant effect prediction or regulatory element annotation, are summarized below.

Key Methodological Details

- Data Sourcing and Curation: Benchmarks use experimentally validated data from projects like ENCODE [13], CRISPRi screens [7], and eQTL studies [13]. For gene finder evaluation, this involves using curated transcription start sites [17] [36] to avoid the pitfalls of incomplete or noisy annotations.

- Model Inputs and Targets:

- Fine-tuning Protocol: Foundation models are typically fine-tuned on benchmark tasks by adding a task-specific prediction head and using an appropriate loss function (e.g., cross-entropy for classification, MSE for regression) [13].

- Performance Metrics: Metrics are chosen to fit the task. Common ones include Area Under the Receiver Operating Characteristic Curve (AUROC), Area Under the Precision-Recall Curve (AUPR), Pearson Correlation Coefficient (PCC), and Stratum-Adjusted Correlation Coefficient (SCC) for contact maps [13].
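Two of the metrics listed above are simple enough to write out directly. The following is a minimal, dependency-free sketch of the Pearson correlation coefficient and of AUROC computed as the probability that a randomly chosen positive outscores a randomly chosen negative. The function names are illustrative; a production evaluation would more likely use an established statistics library, and AUPR and SCC are not covered here.

```python
import math


def pearson_cc(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def auroc(labels, scores):
    """AUROC via pairwise comparison of positives and negatives.

    Ties contribute 0.5. This is O(P * N), which is fine for a sketch
    but slow for large evaluation sets.
    """
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    wins = sum((p > q) + 0.5 * (p == q) for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


print(pearson_cc([1, 2, 3], [2, 4, 6]))
print(auroc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))
```

PCC suits the regression tasks (for example nucleotide-wise transcription initiation signal), while AUROC suits the binary classification tasks.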

The Scientist's Toolkit: Essential Research Reagents

The following table details key computational and data resources essential for conducting research in this field.

Table 3: Key Research Reagents and Resources

| Resource Name | Type | Function/Purpose | Relevance to Model Evaluation |
|---|---|---|---|
| DNALONGBENCH [13] | Benchmark Dataset | Standardized suite of 5 long-range genomics tasks. | Provides a comprehensive and rigorous testbed for comparing model performance on biologically meaningful problems. |
| Enformer Model [7] | Pre-trained Model | Predicts chromatin profiles and gene expression from sequence. | Serves as a strong expert model baseline for expression and variant effect prediction tasks. |
| Caduceus Checkpoints [33] | Pre-trained Model | RC-equivariant foundation model for DNA. | Enables fine-tuning on custom tasks and exploration of bi-directional, equivariant modeling. |
| HyenaDNA Checkpoints [32] | Pre-trained Model | Long-context foundation model (up to 1M bp). | Allows researchers to investigate the impact of extreme context lengths on genomic task performance. |
| Activity-by-Contact (ABC) Model [13] | Algorithm & Score | Links enhancers to target genes using experimental data. | Expert model baseline for enhancer-target gene prediction tasks. |
| Akita Model [13] | Pre-trained Model | Predicts 3D genome architecture from sequence. | Expert model baseline for the challenging contact map prediction task. |
| BED Format Files | Data Format | Stores genomic interval coordinates from which input sequences are extracted. | The standard input format for DNALONGBENCH, allowing flexible adjustment of flanking sequence context [13]. |
| Experimentally Validated Gold Standards [17] | Curated Dataset | High-confidence sets of true positive/negative examples. | Critical for reliable evaluation, especially for gene finders, to avoid benchmarking with incomplete annotations. |
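Because BED records hold coordinates rather than sequence, adjusting the flanking context amounts to widening an interval before extracting bases. The sketch below parses one tab-separated BED line and pads it symmetrically. The helper names are hypothetical and are not part of the DNALONGBENCH tooling; BED coordinates are 0-based and half-open.

```python
def parse_bed_line(line: str):
    """Parse the first three BED fields; extra columns are returned as a list."""
    chrom, start, end, *rest = line.rstrip("\n").split("\t")
    return chrom, int(start), int(end), rest


def expand_interval(start: int, end: int, flank: int, chrom_length=None):
    """Pad a 0-based, half-open interval by flank bp on each side.

    The start is clipped at 0; the end is clipped at chrom_length if given.
    """
    new_start = max(0, start - flank)
    new_end = end + flank
    if chrom_length is not None:
        new_end = min(new_end, chrom_length)
    return new_start, new_end


chrom, start, end, extra = parse_bed_line("chr1\t1000\t2000\tgeneA\n")
print(chrom, expand_interval(start, end, flank=5_000))
print(chrom, expand_interval(start, end, flank=5_000, chrom_length=6_000))
```

Clipping at chromosome boundaries matters in practice, since a window that runs off the end of a contig would otherwise yield a shorter sequence than the model expects.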

The landscape of deep learning for genomics is rapidly evolving, with Enformer, HyenaDNA, and Caduceus representing significant milestones in modeling long-range context. Current benchmarks indicate that while specialized expert models still hold a performance edge on their specific tasks, the scalability and generalizability of foundation models like HyenaDNA and Caduceus present a powerful alternative.

For the critical task of evaluating gene finders, several key takeaways emerge:

- Context is King: Models with a longer effective receptive field (HyenaDNA, Caduceus) are in principle better equipped to capture the distal regulatory signals that govern transcription initiation, although on current benchmarks task-specific expert models still outperform them.

- Architecture Matters: Bi-directionality and reverse complement symmetry (as in Caduceus) are more than theoretical improvements; they translate to measurable gains on biologically relevant tasks like variant effect prediction.

- Validation is Paramount: Reliable evaluation requires experimentally validated benchmarks [17] to prevent the propagation of errors and ensure models learn true biological signals rather than annotation artifacts.
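For gene finders specifically, evaluation against validated start sites reduces to matching predicted positions to experimentally supported TSSs on the same chromosome and strand. The following is a minimal sketch under stated assumptions: the function name, the tuple layout, the greedy one-to-one matching, and the 50 bp tolerance are all illustrative choices, not a protocol prescribed by the cited benchmarks.

```python
def evaluate_tss_predictions(predicted, validated, tolerance=50):
    """Compare predicted start sites with validated TSSs.

    predicted, validated: lists of (chrom, strand, position) tuples.
    A prediction is a true positive if an unmatched validated TSS on the
    same chromosome and strand lies within `tolerance` bp; each validated
    TSS can be matched at most once.
    """
    unmatched = {}
    for chrom, strand, pos in validated:
        unmatched.setdefault((chrom, strand), []).append(pos)

    true_pos = 0
    for chrom, strand, pos in predicted:
        candidates = unmatched.get((chrom, strand), [])
        within = [t for t in candidates if abs(t - pos) <= tolerance]
        if within:
            nearest = min(within, key=lambda t: abs(t - pos))
            candidates.remove(nearest)  # enforce one-to-one matching
            true_pos += 1

    precision = true_pos / len(predicted) if predicted else 0.0
    recall = true_pos / len(validated) if validated else 0.0
    return {"true_positives": true_pos, "precision": precision, "recall": recall}


validated = [("chr1", "+", 1000), ("chr1", "-", 5000), ("chr2", "+", 300)]
predicted = [("chr1", "+", 1020), ("chr1", "+", 1030),
             ("chr1", "-", 5200), ("chr2", "+", 300)]
print(evaluate_tss_predictions(predicted, validated))
```

The tolerance window is the main design choice: a tight window rewards base-pair accuracy, while a wider one accommodates the dispersed initiation seen at many promoters, so results are best reported at several tolerances.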

The future of this field lies in developing architectures that can further extend context windows while efficiently leveraging the fundamental symmetries and constraints of molecular biology, ultimately leading to more accurate and interpretable models for genomics and drug discovery.

The accurate identification of genes and functional elements within genomic sequences represents a foundational challenge in computational biology. Advances in sequencing technologies have produced an abundance of genomic data, creating an urgent need for robust and standardized methods to evaluate the computational tools that interpret this information. Within the specific context of research focused on evaluating gene finders on experimentally validated start sites, benchmark suites provide the essential standardized framework required for objective performance comparison, method refinement, and ultimately, scientific progress. Without such standards, assertions of tool capability remain difficult to verify and reproduce.

This guide focuses on two contemporary benchmark suites, DNALONGBENCH and PhEval, that address distinct but critical aspects of genomic annotation. DNALONGBENCH is designed to assess the capability of models, including modern DNA foundation models, to capture long-range genomic dependencies that are crucial for understanding gene regulation [12] [37]. In contrast, PhEval provides a standardized framework for evaluating phenotype-driven variant and gene prioritization algorithms (VGPAs), which is essential for rare disease diagnosis [14]. The following sections provide a detailed, objective comparison of these suites, their associated performance data, and practical protocols for their implementation in a research setting.

DNALONGBENCH and PhEval were developed to address different gaps in the genomics benchmarking landscape. The table below summarizes their core characteristics and applications.

Table 1: Core Characteristics of DNALONGBENCH and PhEval

| Feature | DNALONGBENCH | PhEval |

|---|---|---|

| Primary Purpose | Evaluate long-range DNA dependency modeling in deep learning models [12] | Standardize the evaluation of phenotype-driven variant and gene prioritization algorithms (VGPAs) [14] |