Benchmarking Gene Prediction Algorithms: A Framework for Prokaryotic Genomics in Biomedical Research

Abstract

Accurate gene prediction is a cornerstone of prokaryotic genomics, with direct implications for microbial ecology, infectious disease research, and drug discovery. However, the performance of prediction algorithms can vary significantly across diverse bacterial and archaeal taxa, posing a challenge for reliable genome annotation. This article provides a comprehensive guide to benchmarking gene prediction tools across diverse prokaryotic taxa. We explore the foundational principles of algorithm evaluation, detail methodological approaches for constructing robust benchmark datasets, and present strategies for troubleshooting and optimizing pipelines. Furthermore, we synthesize validation frameworks and comparative performance analyses to guide tool selection. Designed for researchers, scientists, and drug development professionals, this resource aims to enhance the accuracy and reproducibility of genomic analyses, ultimately supporting advancements in biomedical and clinical research.

The Critical Need for Benchmarking in Prokaryotic Gene Prediction

The 'Garbage In, Garbage Out' Principle in Genomic Data Analysis

In genomic data analysis, the "Garbage In, Garbage Out" (GIGO) principle dictates that the quality of analytical outputs is fundamentally constrained by the quality of input data [1]. This concept has become increasingly critical as datasets grow larger and analytical methods more complex. A 2016 review found that quality control problems are pervasive in publicly available RNA-seq datasets, stemming from issues in sample handling, batch effects, and data preprocessing [1]. Without careful quality control at every stage, key outcomes like transcript quantification and differential expression analyses can be severely compromised [1].

The stakes of data quality in genomics extend beyond academic research. In clinical settings, errors in genomic data can affect patient diagnoses, while in drug discovery, they can waste millions of research dollars [1]. Studies indicate that up to 30% of published research contains errors traceable to data quality issues at the collection or processing stage [1]. The invisibility of bad data makes this problem particularly dangerous—compromised data doesn't announce itself but quietly corrupts results while appearing valid [1].

Quantifying the GIGO Impact: Experimental Evidence

The Data Quality Benchmarking Framework

To objectively assess how data quality issues impact genomic analyses, researchers have developed sophisticated benchmarking approaches. These methodologies typically involve comparing algorithm performance across standardized datasets with known quality parameters. The core components of this framework include:

- Reference Dataset Selection: Curating datasets representing diverse biological contexts (normal physiology, developmental stages, disease states) and varying quality metrics [2]

- Quality Metric Establishment: Defining standardized quality thresholds for parameters including base call quality scores (Phred scores), read length distributions, GC content analysis, and alignment rates [1]

- Multi-Algorithm Validation: Testing multiple analytical tools against standardized benchmarks to identify performance variations [2]

- Cross-Validation Strategies: Employing alternative methods like targeted PCR to confirm genetic variants identified through sequencing, or qPCR to validate RNA-seq findings [1]
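The Phred-based quality thresholds mentioned above can be made concrete with a short sketch. It assumes the standard Phred definition (Q = -10·log10 p) and Phred+33 FASTQ encoding. The function names and the mean-quality cutoff of 20 are illustrative choices, not values taken from the cited studies.

```python
import math

def phred_to_error_prob(q: int) -> float:
    """Convert a Phred score Q to a base-call error probability, p = 10^(-Q/10)."""
    return 10 ** (-q / 10)

def decode_quality_string(qual: str, offset: int = 33) -> list:
    """Decode a FASTQ quality string into integer Phred scores (Phred+33 assumed)."""
    return [ord(ch) - offset for ch in qual]

def expected_errors(qual: str) -> float:
    """Expected number of miscalled bases in a read (sum of per-base error probabilities)."""
    return sum(phred_to_error_prob(q) for q in decode_quality_string(qual))

def passes_mean_quality(qual: str, min_mean_q: float = 20.0) -> bool:
    """Hypothetical filter: keep a read if its arithmetic mean Phred score meets the cutoff."""
    scores = decode_quality_string(qual)
    return bool(scores) and sum(scores) / len(scores) >= min_mean_q
```

Expected errors is often a more informative read-level summary than the mean score, because a few very low-quality bases dominate the error budget while barely moving the mean.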

Performance Comparison of Annotation Tools

Recent research has quantified how data quality and methodological approaches affect annotation reliability in single-cell RNA sequencing. The development of LICT (Large Language Model-based Identifier for Cell Types) demonstrates the performance advantages of innovative approaches to combat GIGO problems in cell type annotation [2].

Table 1: Performance Comparison of Annotation Methods Across Heterogeneity Conditions

| Method | PBMC Dataset Match Rate | Gastric Cancer Match Rate | Embryo Dataset Match Rate | Stromal Cells Match Rate |
|---|---|---|---|---|
| GPT-4 Only | ~78.5% | ~88.9% | ~39.4% | ~33.3% |
| LICT (Multi-Model) | ~90.3% | ~91.7% | ~48.5% | ~43.8% |
| LICT (Talk-to-Machine) | ~92.5% | ~97.2% | ~48.5% | ~43.8% |

The data reveal significant performance disparities across heterogeneity conditions. All methods performed well on highly heterogeneous cell populations (PBMC and gastric cancer) but showed substantially diminished performance on low-heterogeneity datasets (embryo and stromal cells) [2]. Relative to the GPT-4-only baseline (GPTCelltype), LICT's multi-model integration strategy reduced mismatch rates in the highly heterogeneous datasets from 21.5% to 9.7% for PBMCs and from 11.1% to 8.3% for gastric cancer data [2].

Table 2: Credibility Assessment of Annotation Methods

| Method | PBMC Credible Annotations | Gastric Cancer Credible Annotations | Embryo Dataset Credible Annotations | Stromal Cells Credible Annotations |
|---|---|---|---|---|
| Manual Annotation | Baseline | Comparable to LICT | 21.3% | 0% |
| LICT Annotation | Superior to manual | Comparable to manual | 50.0% | 29.6% |

The credibility assessment demonstrated that in low-heterogeneity datasets, LICT-generated annotations significantly outperformed manual annotations. In the embryo dataset, 50% of mismatched LICT-generated annotations were deemed credible compared to only 21.3% for expert annotations [2].

Methodologies for Quality Assurance in Genomic Analysis

Standardized Quality Control Workflow

Implementing robust quality control measures throughout the genomic analysis pipeline is essential for preventing GIGO scenarios. The following workflow visualization outlines key checkpoints in a comprehensive quality assurance process:

[Figure: Genomic Data Quality Control Workflow]

This workflow emphasizes quality checkpoints at critical stages where errors commonly propagate. Sample mislabeling represents one of the most persistent and problematic errors in bioinformatics, with a 2022 survey of clinical sequencing labs finding that up to 5% of samples had some form of labeling or tracking error before corrective measures were implemented [1].

Advanced Computational Strategies

To address specific GIGO challenges in genomic analysis, researchers have developed sophisticated computational approaches:

- Multi-Model Integration: Leveraging complementary strengths of multiple large language models to reduce uncertainty and increase annotation reliability, particularly for low-heterogeneity datasets [2]

- Iterative "Talk-to-Machine" Strategy: Implementing human-computer interaction processes where initial annotations are validated against marker gene expression patterns, with iterative feedback loops refining predictions [2]

- Objective Credibility Evaluation: Establishing reference-free validation frameworks that assess annotation reliability based on marker gene expression within input datasets, reducing dependency on potentially biased reference data [2]

- Batch Effect Correction: Employing statistical methods specifically designed to detect and correct for non-biological variations introduced when samples are processed at different times or using different methods [1]

Essential Research Reagents and Tools

Table 3: Key Research Reagent Solutions for Genomic Quality Control

| Tool/Reagent | Function | Application Context |
|---|---|---|
| FastQC | Quality control metric generation | Pre-alignment sequence data assessment [1] |
| Phred Scores | Base call quality quantification | Sequencing error probability estimation [1] |
| LICT (LLM-based Identifier) | Cell type annotation with reliability assessment | Single-cell RNA sequencing data analysis [2] |
| Picard Tools | Sequencing artifact identification and removal | PCR duplicate marking, adapter contamination detection [1] |
| GToTree | Phylogenomic tree construction with completion estimates | Evolutionary analysis, genome comparison [3] |
| Trimmomatic | Read trimming and quality control | Adapter removal, quality-based filtering [1] |
| SAMtools | Alignment processing and metrics | Alignment rate analysis, file format conversion [1] |
| Global Alliance for Genomics and Health (GA4GH) Standards | Data handling standardization | Cross-laboratory reproducibility enhancement [1] |

These tools and reagents form the foundation of robust genomic analysis workflows that mitigate GIGO risks. Implementation of standardized protocols across all stages of data handling—from tissue sampling to DNA extraction to sequencing—reduces variability between labs and improves reproducibility of results [1].

Addressing the Garbage In, Garbage Out challenge in genomic data analysis requires integrated quality control strategies spanning technical, computational, and human dimensions. The experimental data presented demonstrates that while methodological advances like LICT significantly improve annotation reliability, particularly for challenging datasets, vigilance at every processing stage remains essential [2]. Standardized protocols, automated validation pipelines, and objective credibility assessments collectively provide a robust defense against the propagation of errors in genomic research [1].

Future directions in combating GIGO problems will likely involve increasingly sophisticated AI-driven approaches that can adapt to complex biological contexts while maintaining transparency in reliability assessment. As genomic technologies continue to evolve and find broader applications in clinical and industrial settings, the implementation of comprehensive quality frameworks will be essential for ensuring that conclusions drawn from genomic data analysis reflect biological reality rather than technical artifacts.

Prokaryotic genome annotation is a fundamental process in genomics, enabling researchers to decipher the genetic blueprint of bacteria and archaea. Despite advancements in sequencing technologies, the path from raw assembly to a fully annotated genome remains fraught with challenges. These include inconsistencies caused by varying assembly qualities, the limitations of traditional algorithms in identifying novel genes, and the critical difficulty in accurately pinpointing translation initiation sites (TIS). Within the broader context of benchmarking gene prediction algorithms across diverse prokaryotic taxa, this guide objectively compares the performance of current annotation tools. Ranging from established homology-based methods to innovative deep learning approaches, these tools are evaluated on their ability to deliver precise and reliable annotations, which are crucial for downstream research in microbial ecology, pathogenesis, and drug development.

Comparative Performance of Annotation Tools

The performance of annotation tools varies significantly based on the specific task, the underlying algorithm, and the genomic context. The following sections and tables summarize experimental data from recent benchmarking studies.

Traditional Tools vs. Deep Learning for Gene Prediction

Traditional gene finders like Prodigal, Glimmer, and GeneMark rely on statistical models and heuristic rules to identify coding sequences (CDSs). In contrast, newer genomic language models (gLMs), such as GeneLM (a fine-tuned DNABERT model), treat DNA sequences as linguistic data, using transformers to capture contextual dependencies [4].

A comparative evaluation of these tools on bacterial gene prediction revealed distinct performance differences [4]:

Table 1: Performance Comparison of Gene Prediction Tools on CDS Identification

| Tool | Type | Precision | Recall | Key Strengths |
|---|---|---|---|---|
| GeneLM (gLM) | Deep Learning (Transformer) | Highest | Highest | Reduces missed CDS predictions; excels at capturing complex patterns. |
| Prodigal | Traditional (Heuristic) | High | High | Fast, widely used; reliable for standard genomes. |
| GeneMark-HMM | Traditional (HMM) | High | High | Robust for well-studied taxa. |
| Glimmer | Traditional (Interpolated Markov Models) | Moderate | Moderate | Can overpredict short ORFs. |

A more critical challenge than identifying the general CDS region is the accurate prediction of the translation initiation site (TIS). Here, deep learning models show a particularly notable advantage [4].

Table 2: Performance Comparison on Translation Initiation Site (TIS) Prediction

| Tool | Type | Accuracy on Experimentally Verified TIS |
|---|---|---|
| GeneLM (gLM) | Deep Learning (Transformer) | Surpasses traditional methods |
| TiCO | Traditional | Misses several TIS predictions |
| TriTISA | Traditional | Misses several TIS predictions |

Functional Annotation: The Case of Antimicrobial Resistance (AMR) Genes

The choice of annotation tool and database directly impacts the ability to predict phenotypes like antimicrobial resistance (AMR). A study on Klebsiella pneumoniae genomes compared eight annotation tools to build "minimal models" of resistance, which use only known AMR markers to predict resistance phenotypes [5].

The performance of these minimal models, assessed using machine learning classifiers (Elastic Net and XGBoost), highlighted that the completeness of the underlying database is a major factor in the tool's effectiveness [5].

Table 3: Comparison of AMR Annotation Tools and Minimal Model Performance

| Annotation Tool | Database(s) Used | Key Characteristics | Performance in Minimal Models |
|---|---|---|---|
| AMRFinderPlus | Custom, includes point mutations | Comprehensive, includes species-specific mutations | High performance; captures broadest range of known markers. |
| Kleborate | Species-specific (K. pneumoniae) | Concise, less spurious gene matching for target species | High performance for K. pneumoniae. |
| RGI (CARD) | CARD | Stringent validation of ARGs | Varies; depends on antibiotic. |
| Abricate | NCBI (default) or others | Does not detect point mutations; covers a subset of AMRFinderPlus | Lower performance due to incomplete gene coverage. |
| DeepARG | DeepARG | Includes variants predicted with high confidence | Good performance. |

This study demonstrated that for some antibiotics, even the best minimal models using known markers significantly underperform, clearly indicating where novel AMR variant discovery is most necessary [5].

Integrated Pipelines for Comprehensive Annotation

For researchers seeking an all-in-one solution, several integrated pipelines combine multiple tools for structural and functional annotation.

Table 4: Comparison of Integrated Prokaryotic Annotation Pipelines

| Pipeline | Scope | Key Features | Use Case |
|---|---|---|---|
| NCBI PGAP | Structural & Functional | Standardized, automated; uses GeneMarkS-2+, tRNAscan-SE, and HMMER [6]. | Gold standard for submissions to public databases. |
| CompareM2 | Comparative Genomics | Bakta/Prokka annotation, QC, phylogeny, pangenome, AMR, virulence [7]. | Easy-to-use, genomes-to-report pipeline for multi-genome studies. |
| SynGAP | Structural Polishing | Uses gene synteny with related species to correct and add gene models [8]. | Improving genome structure annotation (GSA) quality for closely related species. |

Experimental Protocols for Benchmarking

To ensure fair and reproducible comparisons, benchmarking studies follow rigorous experimental protocols. Below is a detailed methodology adapted from recent publications.

Protocol for Benchmarking Gene Prediction Tools

Objective: To evaluate the accuracy of gene finders in identifying coding sequences (CDS) and translation initiation sites (TIS) in prokaryotic genomes [4].

1. Data Curation:

- Obtain complete, high-quality bacterial genomes from NCBI GenBank.

- Apply stringent filtering: include only genomes with "complete" status and classified as "reference."

- For each genome, retrieve the genome sequence (.fna) and its corresponding annotation file (.gff).

2. Data Processing for CDS and TIS Classification:

- ORF Extraction: Use a tool like ORFipy to scan all six frames of the genome sequence, identifying all open reading frames that begin with a start codon (ATG, TTG, GTG, CTG) and end with a stop codon.

- Labeling:

- For the CDS dataset, label an ORF as positive (1) if its start or end coordinates match an annotated CDS in the reference GFF file.

- For the TIS dataset, extract a 60-nucleotide sequence (30 upstream and 30 downstream of the potential start codon) from ORFs that match a CDS. Label the sequence as positive (1) if the start codon is the true TIS according to the reference.

- Class Balancing: Apply downsampling to negative classes to ensure balanced datasets and prevent model bias.

3. Model Training and Evaluation:

- For gLMs (e.g., GeneLM): Tokenize DNA sequences using a k-mer approach (e.g., k=6). Fine-tune a pre-trained transformer model (e.g., DNABERT) using the curated datasets.

- For Traditional Tools: Run tools like Prodigal and GeneMark with default parameters on the test genomes.

- Performance Metrics: Calculate precision, recall, and F1-score for CDS prediction. For TIS, report accuracy against a set of experimentally verified sites.
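The ORF extraction, labeling, and scoring steps above can be sketched in plain Python. This is a simplified stand-in for a dedicated ORF finder, not the cited study's code. It assumes 0-based, half-open coordinates on the forward strand (GFF coordinates are 1-based inclusive and would need converting first), and it keeps only the longest ORF per stop codon. All function names are illustrative.

```python
START_CODONS = {"ATG", "TTG", "GTG", "CTG"}
STOP_CODONS = {"TAA", "TAG", "TGA"}
_COMPLEMENT = str.maketrans("ACGT", "TGCA")

def reverse_complement(seq: str) -> str:
    """Reverse-complement an uppercase DNA string."""
    return seq.translate(_COMPLEMENT)[::-1]

def find_orfs(genome: str) -> list:
    """Scan all six frames; return (start, end, strand) with the stop codon included.

    Coordinates are 0-based, half-open, and always given on the forward strand.
    Assumption: only the first in-frame start after the previous stop is kept.
    """
    genome = genome.upper()
    n = len(genome)
    orfs = []
    for strand, seq in (("+", genome), ("-", reverse_complement(genome))):
        for frame in range(3):
            first_start = None
            for i in range(frame, n - 2, 3):
                codon = seq[i:i + 3]
                if codon in STOP_CODONS:
                    if first_start is not None:
                        end = i + 3
                        if strand == "+":
                            orfs.append((first_start, end, "+"))
                        else:
                            # Map reverse-strand positions back to forward coordinates.
                            orfs.append((n - end, n - first_start, "-"))
                    first_start = None
                elif codon in START_CODONS and first_start is None:
                    first_start = i
    return orfs

def label_orfs(orfs: list, annotated_cds: list) -> list:
    """Label an ORF 1 if it is on the same strand as an annotated CDS and
    shares its start or its end coordinate, else 0 (the rule described above)."""
    labels = []
    for start, end, strand in orfs:
        hit = any(strand == s and (start == a or end == b)
                  for a, b, s in annotated_cds)
        labels.append(1 if hit else 0)
    return labels

def precision_recall_f1(y_true: list, y_pred: list) -> tuple:
    """Compute precision, recall, and F1 for binary labels (1 = positive)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return precision, recall, f1
```

For TIS datasets, the scan would need to keep every in-frame start codon rather than only the first, so that alternative starts for the same stop can serve as negative examples.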

Protocol for Benchmarking AMR Annotation Tools

Objective: To assess the ability of different annotation tools to identify known AMR markers and accurately predict antimicrobial resistance phenotypes [5].

1. Data Collection and Pre-processing:

- Acquire a large dataset of assembled genomes (e.g., Klebsiella pneumoniae from BV-BRC) with paired, high-quality clinical phenotyping data (binary resistant/susceptible calls).

- Filter genomes for quality (e.g., exclude outliers based on contig count and genome size) and remove potential contaminants.

2. Sample Annotation and Feature Matrix Construction:

- Annotate all genomes using a suite of target tools (e.g., AMRFinderPlus, Kleborate, RGI, Abricate).

- For each tool, process the output to generate a presence/absence matrix $X \in \{0,1\}^{p \times n}$, where $X_{ij} = 1$ if AMR feature $j$ is present in sample $i$, and $X_{ij} = 0$ otherwise.

3. Building and Evaluating Minimal Models:

- For a specific antibiotic, use the list of known associated resistance genes from a curated database like CARD to define a minimal feature subset.

- Use the presence/absence matrix of these minimal features to train machine learning models (e.g., Logistic Regression with Elastic Net regularization, XGBoost) to predict the binary resistance phenotype.

- Evaluate model performance using metrics like Area Under the Receiver Operating Characteristic Curve (AUROC) through cross-validation.

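- Compare the performance of models built from annotations provided by different tools. When even the best-performing minimal model scores poorly for an antibiotic, this points to a gap in the known AMR mechanisms for that antibiotic rather than a shortcoming of any one tool.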
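The feature-matrix and evaluation steps above can be illustrated with a small dependency-free sketch. The input layout (a mapping from sample ID to the set of markers a tool reported) is a hypothetical format, not the actual output of any listed tool. The marker-count score is a deliberately naive stand-in for the Elastic Net and XGBoost classifiers used in the study.

```python
def presence_absence_matrix(hits_per_sample: dict, features: list) -> tuple:
    """Build a binary matrix with rows = samples and columns = AMR features.

    matrix[i][j] is 1 if feature j was reported for sample i, else 0.
    Returns (sample_ids, matrix), with samples in sorted order.
    """
    sample_ids = sorted(hits_per_sample)
    matrix = [[1 if f in hits_per_sample[s] else 0 for f in features]
              for s in sample_ids]
    return sample_ids, matrix

def marker_count_scores(matrix: list) -> list:
    """Naive resistance score: number of known markers present per sample.
    Illustrative stand-in only; the study trains classifiers instead."""
    return [sum(row) for row in matrix]

def auroc(labels: list, scores: list) -> float:
    """Rank-based AUROC: the probability that a randomly chosen resistant
    sample (label 1) scores higher than a susceptible one (label 0).
    Ties contribute 0.5."""
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one sample of each class")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Toy example with made-up sample IDs and placeholder marker names.
hits = {"s1": {"markerA"}, "s2": set(), "s3": {"markerA", "markerB"}}
ids, X = presence_absence_matrix(hits, ["markerA", "markerB"])
phenotype = [1, 0, 1]  # resistant / susceptible calls, in the order of `ids`
score = auroc(phenotype, marker_count_scores(X))
```

In practice the AUROC would be computed on held-out folds during cross-validation, once per annotation tool, so that differences between tools reflect their marker coverage rather than overfitting.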

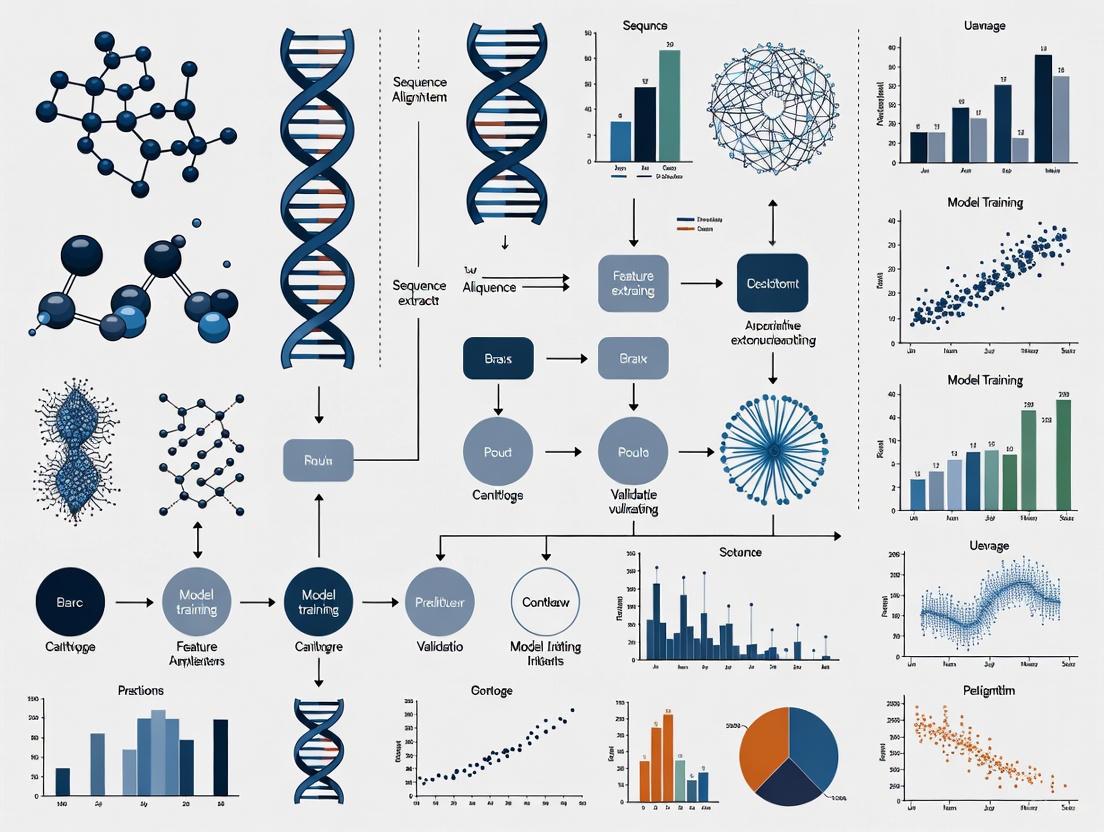

Visualization of Workflows and Relationships

The following diagrams illustrate the logical relationships and experimental workflows described in this guide.

[Figure: Prokaryotic Genome Annotation and Benchmarking Workflow]

[Figure: Gene Prediction Model Decision Process]

This table details key software, databases, and resources essential for prokaryotic genome annotation and benchmarking research.

Table 5: Key Research Reagent Solutions for Prokaryotic Genome Annotation

| Category | Item | Function | Example Sources / IDs |
|---|---|---|---|
| Software & Algorithms | GeneLM / DNABERT | gLM for precise CDS and TIS prediction. | [4] |
| Software & Algorithms | Prodigal, GeneMark-HMM | Traditional, reliable gene finders for baseline comparison. | [4] |
| Software & Algorithms | AMRFinderPlus | Comprehensive annotation of AMR genes and mutations. | [5] [7] |
| Software & Algorithms | NCBI PGAP | Integrated pipeline for standardized structural/functional annotation. | [6] |
| Software & Algorithms | CompareM2 | All-in-one pipeline for comparative genomic analysis and reporting. | [7] |
| Databases | CARD (Comprehensive Antibiotic Resistance Database) | Curated repository of AMR genes, proteins, and mutations. | [5] |
| Databases | UniProtKB (Swiss-Prot) | Database of reviewed protein sequences for functional annotation. | [9] |
| Databases | OrthoDB | Catalog of orthologous genes for benchmarking universal single-copy orthologs. | [9] |
| Data Resources | NCBI GenBank/RefSeq | Primary sources for genomic sequences and annotations. | [4] [6] |
| Data Resources | BV-BRC (Bacterial & Viral Bioinformatics Resource Center) | Integrated data and analysis platform for bacterial genomes. | [5] |
| Validation Tools | BUSCO | Assesses completeness and quality of genome annotations using universal orthologs. | [9] [8] |
| Validation Tools | CheckM2 | Estimates genome completeness and contamination for quality control. | [7] |

In the field of genomics, particularly for benchmarking gene prediction algorithms and genome assemblers across diverse prokaryotic taxa, a standardized framework has emerged for evaluating performance based on three fundamental metrics: contiguity, completeness, and correctness—collectively known as the "3 Cs" [10]. This framework provides researchers with a systematic approach to assess the quality of genomic assemblies, enabling meaningful comparisons between different algorithms, sequencing technologies, and bioinformatic pipelines. For prokaryotic research, where the accurate reconstruction of microbial genomes is crucial for understanding pathogenesis, metabolism, and evolutionary relationships, rigorous benchmarking using the 3 Cs is indispensable.

The contiguity metric evaluates how seamlessly a genome has been reconstructed, while completeness assesses whether all expected genetic material is present. Correctness, often the most challenging dimension to measure, evaluates the accuracy of each base pair in the assembly [10]. Together, these metrics provide a comprehensive picture of assembly quality that far surpasses what any single measurement can reveal. As the field moves toward reference-grade assemblies for both model and non-model prokaryotes, the 3 Cs framework ensures that assemblies meet the quality standards required for downstream biological interpretation and application in drug development [10] [11].

The Three Pillars of Genome Assembly Quality

Contiguity: Measuring Structural Integrity

Contiguity assesses the fragmentation level of an assembled genome, reflecting how well the assembly process has reconstructed continuous DNA sequences from shorter sequencing reads. The most commonly used metric for contiguity is the contig N50: when contigs are sorted from longest to shortest, N50 is the length of the contig at which the running total first reaches 50% of the total assembly length [10]. In practical terms, a higher N50 value indicates a less fragmented, more contiguous assembly. In the current era of long-read sequencing, a contig N50 over 1 Mb is generally considered good for many applications, though this threshold varies depending on organism complexity and research goals [10].
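As a worked illustration of the N50 statistic, the following minimal sketch computes it from a list of contig lengths. The function name is illustrative. Tools such as QUAST report this value directly, so this is only meant to make the definition concrete.

```python
def n50(contig_lengths: list) -> int:
    """Return the contig N50: sort contigs from longest to shortest and
    report the length of the contig at which the cumulative sum first
    reaches at least half of the total assembly length."""
    if not contig_lengths:
        raise ValueError("no contigs supplied")
    total = sum(contig_lengths)
    running = 0
    for length in sorted(contig_lengths, reverse=True):
        running += length
        if running * 2 >= total:
            return length
```

For contigs of 80, 70, 50, 40, 30, and 20 kb (290 kb in total), the two longest contigs already cover 150 kb, which exceeds the 145 kb halfway mark, so the N50 is 70 kb.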

Recent benchmarking studies on bacterial models including Escherichia coli, Pseudomonas aeruginosa, and Xylella fastidiosa have demonstrated that assembly strategy significantly impacts contiguity. Long-read-based strategies consistently show higher contiguity compared to short-read approaches, which typically produce more fragmented assemblies despite higher base-level accuracy [11]. Hybrid assembly strategies, which leverage both long and short reads, successfully balance contiguity with other quality metrics, often making them the preferred approach for high-quality prokaryotic genome assemblies [11] [12].

Completeness: Assessing Genetic Content

Completeness evaluates whether an assembled genome contains all the genetic elements expected for that organism. The standard tool for assessing completeness is BUSCO (Benchmarking Universal Single-Copy Orthologs), which searches for a set of evolutionarily conserved, single-copy genes that should be present in complete assemblies [10] [11]. These gene sets are specific to taxonomic groups, making BUSCO particularly valuable for prokaryotic taxa research where gene content conservation varies across lineages.

A BUSCO complete score above 95% is generally considered indicative of a high-quality assembly [10]. Benchmarking studies have revealed that while long-read sequencing strategies excel at contiguity, they sometimes exhibit lower completeness compared to short-read approaches, highlighting the trade-offs between different assembly strategies [11]. This underscores the importance of using multiple metrics when evaluating assembly quality, as excellence in one dimension does not guarantee performance across all criteria.

Correctness: Evaluating Sequence Accuracy

Correctness represents the accuracy of each base pair in the assembly and is often the most challenging dimension to quantify [10]. Unlike contiguity and completeness, correctness lacks a single standardized metric and instead relies on multiple approaches tailored to available resources and research contexts. For prokaryotic taxa with existing high-quality reference genomes, correctness can be measured through concordance analysis, where the assembly is aligned to the reference to identify discrepancies [10].

When reference genomes are unavailable, alternative approaches include k-mer comparison tools like Merqury, which compare k-mers between the assembly and original sequencing reads to identify errors [10]. Another method involves identifying frameshifting indels in coding genes, as these typically represent assembly errors rather than biological variation [10]. Each approach has advantages and limitations, with k-mer analysis providing comprehensive genome-wide assessment while frameshift analysis focuses on the most functionally constrained regions.
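The k-mer approach can be turned into a consensus quality value (QV) with a short calculation. The sketch below assumes the k-mer survival model described for Merqury: the fraction of assembly k-mers that are also found in the reads is treated as the probability that all k bases of a k-mer are correct. The counts are supplied by the caller, and how they are obtained (for example, with a k-mer counter) is outside this sketch.

```python
import math

def kmer_qv(shared_kmers: float, total_kmers: float, k: int) -> float:
    """Estimate a Phred-scaled consensus QV from k-mer agreement.

    shared_kmers: assembly k-mers that are also present in the read set
    total_kmers:  all k-mers in the assembly
    Assumed model: P(base correct) = (shared / total) ** (1 / k)
    """
    if total_kmers <= 0 or not 0 <= shared_kmers <= total_kmers or k <= 0:
        raise ValueError("invalid k-mer counts or k")
    p_base_correct = (shared_kmers / total_kmers) ** (1.0 / k)
    error_rate = 1.0 - p_base_correct
    if error_rate == 0.0:
        return math.inf  # no unsupported k-mers observed
    return -10.0 * math.log10(error_rate)
```

Under this model a QV of 40 corresponds to roughly one error per 10,000 bases and a QV of 30 to one per 1,000, the same scale as Phred base qualities.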

Table 1: Metrics for Assessing Genome Assembly Quality

| Quality Dimension | Primary Metric | Tool Examples | Interpretation Guidelines |
|---|---|---|---|
| Contiguity | Contig N50 | QUAST | Higher values indicate less fragmentation; >1 Mb considered good |
| Completeness | BUSCO score | BUSCO | >95% considered complete; taxon-specific gene sets |
| Correctness | Base concordance | Merqury, Yak | Higher concordance and lower error rates indicate better accuracy |
| Correctness | Frameshift analysis | Gene annotation pipelines | Fewer frameshifts in coding regions indicate higher quality |
| Correctness | K-mer agreement | Merqury | QV scores >40 indicate high quality |

Benchmarking Gene Prediction Algorithms

DNA Foundation Models for Genomic Tasks

The emergence of DNA foundation models through self-supervised pre-training represents a paradigm shift in genomic sequence analysis, mirroring the revolution in natural language processing [13]. These models, including DNABERT-2, Nucleotide Transformer, HyenaDNA, Caduceus, and GROVER, are pre-trained on large genomic datasets and can be adapted for various downstream tasks including gene prediction [13]. Benchmarking these models requires specialized approaches, particularly through zero-shot embeddings where model weights remain frozen to prevent fine-tuning biases [13].

Recent comprehensive evaluations have revealed that mean token embedding consistently and significantly improves sequence classification performance compared to other pooling strategies like summary tokens or maximum pooling [13]. This embedding approach provides a more comprehensive representation of entire DNA sequences, which is particularly valuable for gene prediction tasks where discriminative features may be distributed throughout the sequence rather than concentrated in specific regions.
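The three pooling strategies compared above differ only in how per-token embeddings are collapsed into one sequence-level vector. The sketch below uses plain Python lists in place of a real model's output tensors. It assumes the summary token sits at index 0, as in BERT-style models, and the position may differ for other architectures.

```python
def mean_pool(token_embeddings: list) -> list:
    """Average each embedding dimension over all tokens in the sequence."""
    if not token_embeddings:
        raise ValueError("empty sequence")
    n = len(token_embeddings)
    dim = len(token_embeddings[0])
    return [sum(tok[d] for tok in token_embeddings) / n for d in range(dim)]

def max_pool(token_embeddings: list) -> list:
    """Take the maximum of each embedding dimension over all tokens."""
    if not token_embeddings:
        raise ValueError("empty sequence")
    dim = len(token_embeddings[0])
    return [max(tok[d] for tok in token_embeddings) for d in range(dim)]

def summary_token(token_embeddings: list, index: int = 0) -> list:
    """Use a single designated token as the sequence representation.
    Assumption: the summary token is at `index` (0 for BERT-style [CLS])."""
    if not token_embeddings:
        raise ValueError("empty sequence")
    return list(token_embeddings[index])

# Toy frozen-model output: two tokens, two embedding dimensions.
embeddings = [[1.0, 0.0], [3.0, 4.0]]
pooled = mean_pool(embeddings)
```

In a zero-shot benchmark the pooled vectors would then be fed to a lightweight classifier while the foundation model's weights stay frozen.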

Performance Across Genomic Tasks

DNA foundation models have demonstrated competitive performance across diverse genomic tasks, though their effectiveness varies substantially depending on the specific application. For foundational tasks like promoter identification, splice site prediction, and transcription factor binding site prediction, these models consistently achieve AUC scores above 0.8, indicating strong predictive capability [13]. However, performance degrades for more complex tasks such as gene expression prediction and identifying putative causal quantitative trait loci (QTLs), where specialized models still maintain an advantage [13].

The architecture and pre-training data of foundation models significantly influence their performance on gene prediction tasks. For instance, DNABERT-2 shows particular strength in splice site prediction, while Caduceus exhibits superior performance in transcription factor binding site prediction [13]. These specialized capabilities highlight the importance of model selection based on the specific gene prediction task and target prokaryotic taxa.

Table 2: Performance of DNA Foundation Models on Genomic Tasks

| Model | Promoter Identification (AUC) | Splice Site Prediction (AUC) | TFBS Prediction (AUC) | Variant Effect Quantification |
|---|---|---|---|---|
| DNABERT-2 | 0.964–0.986 | 0.906 (donor), 0.897 (acceptor) | Competitive | Pathogenic variant identification |
| Nucleotide Transformer | High | Moderate | Moderate | Moderate |
| HyenaDNA | 0.689–0.864 | Moderate | Moderate | Less effective for QTLs |
| Caduceus | High | Moderate | Superior | Moderate |
| GROVER | High | Moderate | Moderate | Moderate |

Experimental Protocols for Benchmarking

Standardized Workflow for Assembly Evaluation

To ensure reproducible and comparable results when benchmarking genome assemblers and gene prediction algorithms, standardized experimental protocols must be implemented. The following workflow represents a consensus approach derived from multiple recent benchmarking studies [11] [12] [14]:

1. Data Acquisition and Preparation: Begin with standardized sequencing data from well-characterized reference strains. For prokaryotic benchmarking, include organisms with varying GC content and genomic features [12].

2. Quality Control: Perform rigorous quality assessment using tools such as FastQC to evaluate read quality, followed by adapter trimming and quality filtering [12].

3. Assembly and Gene Prediction: Execute multiple assembly algorithms and gene prediction tools using standardized computational resources and parameter settings to ensure fair comparison [11] [14].

4. Quality Assessment: Evaluate resulting assemblies using the 3 Cs framework with tools such as QUAST for contiguity, BUSCO for completeness, and Merqury for correctness [11] [12].

5. Comparative Analysis: Perform statistical comparisons between approaches, identifying significant differences in performance metrics across different prokaryotic taxa.

[Figure: Standardized benchmarking workflow]

Benchmarking Long-Range Dependencies with DNALONGBENCH

For advanced genomic analyses that require understanding long-range regulatory interactions, specialized benchmarking suites have been developed. DNALONGBENCH represents the most comprehensive benchmark specifically designed for long-range DNA prediction tasks, spanning up to 1 million base pairs across five distinct tasks: enhancer-target gene interaction, expression quantitative trait loci, 3D genome organization, regulatory sequence activity, and transcription initiation signals [15] [16].

When applying DNALONGBENCH to evaluate gene prediction algorithms, researchers should:

1. Task Selection: Choose biologically meaningful tasks relevant to the research question, considering that model performance varies substantially across different task types [15].

2. Model Comparison: Include three model types in evaluations: task-specific expert models, convolutional neural networks, and DNA foundation models to provide comprehensive performance baselines [15].

3. Performance Metrics: Utilize appropriate metrics for each task type, including AUROC for classification tasks and Pearson correlation coefficient for regression tasks [16].
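For the regression-style tasks, the Pearson correlation coefficient between predicted and observed signal can be computed as in the sketch below. It is a plain-Python illustration with a hypothetical function name. Benchmark suites normally rely on a numerical library for this.

```python
import math

def pearson_r(predicted: list, observed: list) -> float:
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(predicted)
    if n != len(observed) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    mean_p = sum(predicted) / n
    mean_o = sum(observed) / n
    cov = sum((p - mean_p) * (o - mean_o) for p, o in zip(predicted, observed))
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    var_o = sum((o - mean_o) ** 2 for o in observed)
    if var_p == 0 or var_o == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_p * var_o)
```

Pearson correlation rewards getting the shape of the signal right rather than its absolute scale, so a model whose predictions are off by a constant factor can still score close to 1.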

Evaluation results show that while DNA foundation models capture long-range dependencies to some extent, expert models specifically designed for each task outperform them across all benchmarks [15]. The performance gap is particularly pronounced for complex tasks like contact map prediction, which presents greater challenges for current algorithms [15].

Essential Research Reagents and Tools

Successful benchmarking of gene prediction algorithms requires not only computational tools but also well-characterized biological materials and reference datasets. The following table summarizes key resources essential for rigorous genomic benchmarking studies:

Table 3: Research Reagent Solutions for Genomic Benchmarking

| Resource Category | Specific Examples | Function in Benchmarking | Key Characteristics |
|---|---|---|---|
| Reference Materials | HG002 (Human); ZymoBIOMICS Microbial Community Standards; ATCC strains | Provide ground truth for method validation | Well-characterized, publicly available, standardized |
| Sequencing Technologies | Illumina short-reads; Oxford Nanopore long-reads; PacBio HiFi | Generate input data for assemblies | Different error profiles, read lengths, and costs |
| Assembly Algorithms | Canu, Flye, Unicycler, NECAT, NextDenovo | Reconstruct genomes from sequencing reads | Varying strengths in 3 Cs metrics |
| Quality Assessment Tools | QUAST, BUSCO, Merqury, CheckM | Evaluate assembly quality against 3 Cs | Provide standardized, interpretable metrics |
| Taxonomic Classification | Kraken2, KMA, MetaPhlAn3, mOTUs2 | Assign taxonomic labels to sequences | DNA-to-DNA, DNA-to-protein, and DNA-to-marker approaches |
| Reference Databases | SILVA, GTDB, NCBI, GreenGenes2 | Provide reference sequences for classification | Varying coverage, quality, and taxonomic breadth |

Benchmarking gene prediction algorithms across diverse prokaryotic taxa requires a multifaceted approach centered on the 3 Cs framework: contiguity, completeness, and correctness. Through systematic evaluation using standardized metrics and experimental protocols, researchers can identify the most appropriate tools and methods for their specific research goals. Current evidence indicates that while emerging technologies like long-read sequencing and DNA foundation models offer substantial improvements for certain tasks, traditional approaches and specialized expert models still maintain advantages for specific applications.

The field continues to evolve rapidly, with ongoing developments in sequencing technologies, algorithmic approaches, and benchmarking methodologies. Future directions include more comprehensive integration of hybrid assembly strategies, enhanced evaluation of long-range dependency capture, and continued development of standardized reference materials spanning diverse prokaryotic taxa. By adhering to rigorous benchmarking principles and the 3 Cs framework, researchers and drug development professionals can ensure that their genomic analyses provide reliable, reproducible insights into prokaryotic biology.

The Impact of Taxonomic Diversity on Algorithm Performance

Taxonomic diversity presents a significant challenge in genomic research, particularly for the benchmarking and application of bioinformatics algorithms. The performance of tools for tasks such as gene prediction, genome assembly, and taxonomic classification can vary substantially when applied to organisms across different phylogenetic lineages. This variation stems from fundamental biological differences including genomic architecture, guanine-cytosine (GC) content, gene family expansions, and horizontal gene transfer events. Understanding these performance disparities is crucial for researchers, especially in drug development, where accurate genomic data from diverse prokaryotic taxa can inform target identification and resistance mechanism studies. This guide objectively compares the performance of various algorithms when confronted with taxonomic diversity, providing supporting experimental data and detailed methodologies to aid selection of appropriate tools for specific research contexts.

Algorithm Performance Across Diverse Taxa: Quantitative Comparisons

Performance of Pan-genome Analysis Tools

Pan-genome analysis, which aims to characterize the full complement of genes in a bacterial species or clade, is particularly sensitive to taxonomic diversity. Different algorithms employ distinct approaches (reference-based, phylogeny-based, or graph-based) with varying success across taxa.

Table 1: Performance of Pan-genome Analysis Tools on Simulated Datasets with Varying Taxonomic Diversity

| Tool | Approach | Ortholog Threshold Range | Reported Advantage | Limitations with Diverse Taxa |
|---|---|---|---|---|
| PGAP2 | Graph-based with fine-grained feature networks | 0.91-0.99 | More precise, robust, and scalable; superior accuracy on simulated datasets [17] | Not specified in evaluated studies |
| Roary | Graph-based (pan-genome pipeline) | Not specified | Rapid, standard for large-scale pan-genomes | Struggles with paralogous genes and mobile elements [17] |
| Panaroo | Graph-based (improved pan-genome) | Not specified | Better handles errors in assembly and gene annotation | Performance varies with genomic diversity [17] |
| PPanGGOLiN | Graph-based (partitioned pan-genome) | Not specified | Efficient for large datasets; partitions genome | Accuracy challenges with high genomic variability [17] |
| PEPPAN | Phylogeny-based | Not specified | Leverages phylogenetic relationships | Computationally intensive for thousands of genomes [17] |

The PGAP2 toolkit introduces a dual-level regional restriction strategy that confines homology searches to predefined identity and synteny ranges, significantly improving ortholog identification in diverse prokaryotic datasets [17]. In systematic evaluations, PGAP2 demonstrated superior precision and robustness compared to other state-of-the-art tools, particularly when analyzing the pan-genome of 2,794 zoonotic Streptococcus suis strains, revealing new insights into the genetic diversity of this pathogen [17].

Performance of Taxonomic Classification Tools

Taxonomic assignment represents another domain where algorithm performance is highly dependent on the diversity of the target dataset. Methods range from similarity-based approaches to modern machine learning models.

Table 2: Performance Metrics for Taxonomic Classification Algorithms

| Tool | Method | Target Gene/Region | Reported Accuracy (Species Level) | Computational Efficiency |
|---|---|---|---|---|
| DeepCOI | LLM (BERT-based) | COI (animals) | AU-ROC: 0.913, AU-PR: 0.817 [18] | ~4x faster than RDP, ~73x faster than BLAST [18] |
| RDP Classifier | Naïve Bayesian | 16S rRNA | AU-ROC: 0.828, AU-PR: 0.793 [18] | Slower than DeepCOI; speed decreases with DB size [18] |
| BLASTn | Local alignment | COI/16S rRNA | AU-ROC: 0.872, AU-PR: 0.740 [18] | Slowest method; speed decreases linearly with DB size [18] |
| Skmer | Alignment-free k-mer | Genome skimming | Varies by dataset and phylogenetic depth [19] | Not explicitly quantified |
| varKoder | Image representation | Genome skimming | Effective across phylogenetic depths [19] | Not explicitly quantified |

DeepCOI represents a significant advancement by employing a large language model pre-trained on seven million cytochrome c oxidase I (COI) gene sequences. This model achieves an AU-ROC of 0.958 and AU-PR of 0.897 across eight major animal phyla, substantially outperforming existing methods while dramatically reducing computation time [18]. The model's architecture enables it to identify taxonomically informative sequence positions, providing both accurate classification and interpretable results.

Experimental Protocols for Benchmarking Algorithm Performance

Benchmarking Dataset Curation

To ensure fair comparisons, benchmarking datasets must encompass appropriate taxonomic diversity. The curated benchmark dataset for molecular identification based on genome skimming provides a framework for standardizing evaluations [19]. This includes:

Multi-level taxonomic sampling: Datasets should include closely related populations or subspecies, congeneric species, and higher taxonomic ranks to test classification resolution at different evolutionary depths [19]. For example, the Malpighiales plant dataset contains 287 accessions representing 195 species, including comprehensive sampling of the genus Stigmaphyllon (10 species with 10 accessions each) to enable validation at shallow phylogenetic depths [19].

Taxonomically verified samples: Novel sequences from expert-curated samples (e.g., the Malpighiales dataset) ensure reliable ground truth for method validation [19].

Publicly available data compilation: Incorporating existing public data (e.g., Mycobacterium tuberculosis lineages, Corallorhiza orchids, Bembidion beetles) enables testing across diverse biological contexts and phylogenetic scales [19].

Inclusion of multiple kingdoms: Bacteria, plants, animals, and fungi exhibit different genomic architectures that can differentially impact algorithm performance [19].

Diagram 1: Benchmarking dataset curation workflow. A robust strategy incorporates multiple taxonomic levels and validation approaches to comprehensively evaluate algorithm performance.
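Whether a candidate dataset actually covers several evolutionary depths can be checked programmatically before any tool is run. The sketch below is illustrative only: the record fields (`kingdom`, `family`, `genus`, `species`), the function name, and the `min_species_per_genus` cutoff are assumptions, not part of the curated benchmark described in [19].

```python
from collections import defaultdict

def sampling_depth_summary(accessions, min_species_per_genus=5):
    """Summarise how many taxa a dataset covers at each rank and which
    genera are sampled densely enough for shallow-depth validation."""
    species_by_genus = defaultdict(set)
    genera_by_family = defaultdict(set)
    kingdoms = set()
    for acc in accessions:
        species_by_genus[acc["genus"]].add(acc["species"])
        genera_by_family[acc["family"]].add(acc["genus"])
        kingdoms.add(acc["kingdom"])
    dense = sorted(genus for genus, species in species_by_genus.items()
                   if len(species) >= min_species_per_genus)
    return {
        "n_kingdoms": len(kingdoms),
        "n_families": len(genera_by_family),
        "n_genera": len(species_by_genus),
        "densely_sampled_genera": dense,
    }

# Hypothetical accession records (placeholder names, not real benchmark data).
accessions = [
    {"kingdom": "Bacteria", "family": "FamA", "genus": "GenusX", "species": f"sp{i}"}
    for i in range(6)
] + [
    {"kingdom": "Bacteria", "family": "FamB", "genus": "GenusY", "species": "sp1"},
    {"kingdom": "Plantae", "family": "FamC", "genus": "GenusZ", "species": "sp1"},
]
print(sampling_depth_summary(accessions))
```

A dataset with no densely sampled genus can still test family-level or order-level assignment, but it cannot say much about resolution among close relatives.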

Performance Evaluation Metrics

Standardized metrics are essential for objective algorithm comparison. The following metrics should be reported in benchmarking studies:

Accuracy metrics: Area Under Receiver Operating Characteristic Curve (AU-ROC) and Area Under Precision-Recall Curve (AU-PR) provide comprehensive classification performance assessment [18]. For instance, DeepCOI achieved AU-ROC of 0.991 (class), 0.984 (order), 0.97 (family), 0.948 (genus), and 0.913 (species) across eight animal phyla [18].

Computational efficiency: Execution time and memory usage should be measured across dataset sizes, as algorithms may scale differently with increasing taxonomic diversity [18].

Completeness metrics: For assembly and gene prediction tools, metrics such as BUSCO scores assess the completeness of genomic reconstructions based on evolutionarily informed expectations of universal single-copy orthologs [20].

Diversity representation: The ability to recover genomes or identify taxa across the phylogenetic breadth of a sample, particularly from underrepresented lineages [21].
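For the computational efficiency metric, wall-clock time and peak memory can be recorded around each tool invocation. The sketch below uses Python's standard `time` and `tracemalloc` modules; `profile_call` and `toy_classifier` are hypothetical names introduced for illustration. Note that `tracemalloc` only tracks allocations made by the Python interpreter, so external command-line tools would need an operating-system-level measurement instead.

```python
import time
import tracemalloc

def profile_call(func, *args, **kwargs):
    """Run func and return (result, elapsed_seconds, peak_bytes)."""
    tracemalloc.start()
    start = time.perf_counter()
    try:
        result = func(*args, **kwargs)
    finally:
        elapsed = time.perf_counter() - start
        _, peak = tracemalloc.get_traced_memory()
        tracemalloc.stop()
    return result, elapsed, peak

def toy_classifier(sequences):
    """Hypothetical stand-in for a real classifier: labels by GC fraction."""
    return ["high_gc" if (s.count("G") + s.count("C")) / len(s) > 0.5 else "low_gc"
            for s in sequences]

# Repeat the measurement at several dataset sizes to see how the method scales.
for n in (100, 1_000, 10_000):
    data = ["GGCCATGC", "ATATATGC"] * (n // 2)
    _, seconds, peak_bytes = profile_call(toy_classifier, data)
    print(f"{n} sequences: {seconds:.4f} s, peak {peak_bytes / 1024:.1f} KiB")
```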

The Impact of Sequencing Technology on Taxonomic Classification

The choice of sequencing technology interacts significantly with taxonomic diversity in affecting algorithm performance. Different platforms generate data with distinct characteristics that can advantage or disadvantage certain analytical approaches.

Long-read technologies (Oxford Nanopore, PacBio): Enable recovery of more complete genomes from diverse, previously uncharacterized microbial species. The mmlong2 workflow applied to 154 complex environmental samples yielded 15,314 previously undescribed microbial species genomes, expanding phylogenetic diversity of the prokaryotic tree of life by 8% [21]. Long reads facilitate assembly of complete ribosomal RNA operons and better resolution of repetitive regions.

Short-read technologies (Illumina): Provide lower error rates per base but limited taxonomic resolution due to shorter read lengths. One study found that only 50.2% of Illumina-derived 16S rRNA gene sequences could be classified at the genus level using the SILVA database [22].

Full-length marker gene sequencing: Nanopore sequencing of near-full-length 16S rRNA genes provides superior genus-level identification compared to Illumina sequencing of V3-V4 regions (50.2% vs 15.6% unclassified rate) [22].

Genome skimming: Low-coverage whole genome sequencing provides an efficient method for expanding reference databases, with k-mer-based approaches enabling classification even at 1× coverage [23].

Essential Research Reagent Solutions

Table 3: Key Research Reagents and Computational Tools for Taxonomic Diversity Studies

| Category | Specific Tool/Resource | Function in Taxonomic Diversity Research |
|---|---|---|
| Bioinformatics Platforms | MIRRI ERIC Italian Node Platform [20] | Integrated workflow for long-read microbial genome assembly, gene prediction, and annotation |
| Bioinformatics Platforms | Galaxy Europe [20] | Web-based platform with tool library for genomic analysis (e.g., CANU, Flye, Prokka) |
| Bioinformatics Platforms | CLAWS Workflow [20] | Snakemake-based long-read assembly workflow with polishing and evaluation steps |
| Reference Databases | BOLD Database [18] | 7.9+ million COI gene sequences for animal taxonomic identification |
| Reference Databases | SILVA [22] | Curated database of 16S/18S rRNA sequences for prokaryotic and eukaryotic classification |
| Reference Databases | GTDB [22] | Genome Taxonomy Database providing phylogenetically consistent taxonomy |
| Specialized Algorithms | PGAP2 [17] | Prokaryotic pan-genome analysis based on fine-grained feature networks |
| Specialized Algorithms | DeepCOI [18] | Large language model for taxonomic assignment of animal COI sequences |
| Specialized Algorithms | mmlong2 [21] | Metagenomic workflow for MAG recovery from complex environmental samples |

Diagram 2: Algorithm selection framework for diverse taxa. Choosing the right tool requires considering input data characteristics against specific selection criteria to match algorithms to research contexts.

Taxonomic diversity significantly impacts the performance of bioinformatics algorithms, with substantial variation observed across different tools and approaches. Pan-genome analysis tools like PGAP2 demonstrate superior performance for diverse prokaryotic datasets through graph-based approaches with fine-grained feature analysis. For taxonomic classification, large language models such as DeepCOI represent a breakthrough in both accuracy and efficiency, particularly for animal COI sequences. The choice of sequencing technology further modulates these performance differences, with long-read technologies enabling better characterization of diverse taxonomic groups. Navigating these complexities requires careful selection of algorithms based on specific research questions, target taxa, and available data types. As reference databases continue to expand and methods evolve, the development of more taxonomically aware algorithms promises to further improve our ability to extract meaningful biological insights from genomically diverse samples.

In the rapidly advancing field of genomics, the establishment of curated datasets and reference genomes serves as the fundamental bedrock for validating and benchmarking bioinformatic tools and algorithms. The proliferation of high-throughput sequencing technologies has generated an unprecedented volume of genomic data, creating an urgent need for standardized resources that enable fair comparison of computational methods across diverse prokaryotic taxa. Without such gold standards, researchers face significant challenges in objectively evaluating tool performance, leading to inconsistent results and hindered reproducibility. The critical importance of these resources is exemplified by successes in related biological fields; for instance, the carefully curated Critical Assessment of protein Structure Prediction (CASP) benchmark was instrumental in catalyzing developments that ultimately led to AlphaFold's solution to the protein folding problem [24].

Gold standard datasets provide the essential foundation for rigorous benchmarking studies, allowing researchers to assess the accuracy, efficiency, and robustness of gene prediction algorithms under controlled conditions. For prokaryotic genomics, where genetic diversity and horizontal gene transfer complicate analysis, well-characterized reference datasets enable meaningful comparisons across different computational approaches. These resources are particularly valuable for evaluating tools designed for specific applications such as antimicrobial resistance (AMR) gene identification, pan-genome analysis, and variant effect prediction [25]. By offering a common framework for assessment, curated benchmarks help identify methodological strengths and weaknesses, guide tool selection for specific research needs, and drive innovation through healthy competition within the scientific community.

Curated Genomic Datasets for Benchmarking

The genomic research community has developed several curated datasets specifically designed for benchmarking bioinformatics tools. These resources vary in scope, biological focus, and application, but share the common goal of providing reliable ground truth data for method evaluation.

Table 1: Curated Genomic Benchmarking Datasets

| Dataset Name | Biological Focus | Scale | Primary Application | Key Features |
|---|---|---|---|---|
| AMR Gold Standard Dataset [25] | Antimicrobial Resistance Genes | 174 bacterial genomes across 22 species | AMR gene detection tool benchmarking | Includes ESKAPE pathogens; paired raw reads and assemblies; simulated metagenomic data |
| Genomic Benchmarks Collection [24] | Regulatory elements (promoters, enhancers) | 9 datasets across human, mouse, roundworm | Genomic sequence classification | Standardized format for machine learning; training/test splits; Python package availability |
| NABench [26] | Nucleotide fitness prediction | 2.6 million mutated sequences from 162 assays | DNA/RNA fitness prediction | Covers diverse DNA/RNA families; multiple evaluation settings; standardized data splits |
| Expert Panel Dataset [27] | Missense variants in clinically relevant genes | 404 missense variants across 21 genes | Variant pathogenicity prediction | Expert-curated pathogenic/benign variants; independent benchmarking datasets |

These datasets address different aspects of the benchmarking challenge. The AMR Gold Standard Dataset, for instance, was specifically developed to compare methods for identifying antimicrobial resistance genes in bacterial isolates [25]. This resource includes 174 complete genomes from clinically relevant pathogens, with particular emphasis on ESKAPE pathogens (Enterococcus faecium, Staphylococcus aureus, Klebsiella pneumoniae, Acinetobacter baumannii, Pseudomonas aeruginosa, and Enterobacter species) plus Salmonella species. The dataset provides both raw sequencing reads and assembled genomes, enabling benchmarking of tools that operate on either data type. Additionally, it includes simulated metagenomic data, allowing researchers to evaluate performance on more complex microbial community samples.

Similarly, the Genomic Benchmarks Collection addresses the need for standardized evaluation in genomic sequence classification [24]. This resource aggregates datasets focused on regulatory elements such as promoters, enhancers, and open chromatin regions across multiple model organisms. By providing consistently formatted training and testing splits with associated documentation, this collection reduces technical variability in evaluations and enables more direct comparison of different machine learning approaches for functional genomic element prediction.

Quality Control in Benchmark Curation

The development of high-quality benchmarking datasets requires rigorous quality control procedures to ensure reliability and representativeness. For the AMR Gold Standard Dataset, researchers implemented a multi-step filtering process [25]. Initial candidate genomes were selected based on completeness and sequencing depth (>40X coverage, >100 bp read length). Subsequent quality assessment included assembly evaluation (requiring N50 >50Kb and <100 contigs), verification of read coverage against reference genomes (excluding samples with >200Kb of zero coverage), and validation of sequence variants (excluding samples with >10 SNPs between Illumina reads and their assembly). This comprehensive approach ensures that only high-quality, consistent data is included in the benchmark.
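The filtering thresholds described above translate directly into a set of pass/fail checks. The following sketch is an illustration of those criteria, not the dataset authors' pipeline: the `GenomeQC` record, its field names, and the reading of "Kb" as 1,000 bp are assumptions.

```python
from dataclasses import dataclass

KB = 1_000  # assumption: "Kb" in the text means 1,000 bp

@dataclass
class GenomeQC:
    name: str
    coverage_x: float       # mean sequencing depth
    read_length_bp: int
    n50_bp: int
    n_contigs: int
    zero_coverage_bp: int   # reference bases with no mapped reads
    snps_vs_assembly: int   # SNPs between Illumina reads and their assembly

def qc_failures(g: GenomeQC) -> list[str]:
    """Return the list of criteria a candidate genome fails (empty if it passes)."""
    failures = []
    if g.coverage_x <= 40:
        failures.append("coverage not above 40X")
    if g.read_length_bp <= 100:
        failures.append("read length not above 100 bp")
    if g.n50_bp <= 50 * KB:
        failures.append("N50 not above 50 Kb")
    if g.n_contigs >= 100:
        failures.append("100 or more contigs")
    if g.zero_coverage_bp > 200 * KB:
        failures.append("more than 200 Kb of zero coverage")
    if g.snps_vs_assembly > 10:
        failures.append("more than 10 SNPs versus assembly")
    return failures

# Hypothetical candidates with made-up metrics.
candidates = [
    GenomeQC("isolate_A", 85.0, 150, 120_000, 42, 5_000, 2),
    GenomeQC("isolate_B", 35.0, 150, 30_000, 180, 5_000, 2),
]
for g in candidates:
    problems = qc_failures(g)
    print(g.name, "PASS" if not problems else f"FAIL: {problems}")
```

Returning the reasons for exclusion, rather than a bare boolean, makes it easier to report how many candidates were lost at each filtering step.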

Similar rigorous approaches are implemented in other benchmarking resources. For example, the PEREGGRN expression forecasting platform incorporates extensive quality control measures, including verification that targeted genes in perturbation experiments show expected expression changes (e.g., 73-92% of overexpressed transcripts increasing as expected across different datasets) and assessment of replicate consistency [28]. These quality control steps are essential for creating benchmarks that accurately reflect biological reality and provide meaningful evaluation metrics.

Benchmarking Methodologies and Experimental Design

Standardized Evaluation Frameworks

Effective benchmarking requires not only curated datasets but also standardized experimental protocols and evaluation metrics. The PGAP2 (Pan-Genome Analysis Pipeline 2) toolkit exemplifies a comprehensive approach to prokaryotic pan-genome analysis, employing a structured workflow that includes data quality control, ortholog identification, and result visualization [17]. The methodology can be summarized in the following workflow:

Diagram 1: PGAP2 pan-genome analysis workflow featuring quality control and ortholog inference.

Another sophisticated benchmarking framework is found in the PEREGGRN platform for evaluating gene expression forecasting methods [28]. This system employs a specialized data splitting strategy where no perturbation condition appears in both training and test sets, ensuring that evaluations measure performance on truly novel interventions rather than memorization of training examples. The platform also implements careful handling of directly targeted genes to prevent inflated performance metrics, recognizing that predicting decreased expression for knocked-down genes does not represent meaningful biological insight.
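The key property of this splitting strategy is that the split is made over perturbation conditions rather than over individual samples. A minimal sketch of that idea follows; it is not the PEREGGRN implementation, and the record layout, function name, and `test_fraction` parameter are assumptions.

```python
import random

def split_by_perturbation(samples, test_fraction=0.2, seed=0):
    """Split samples so that every perturbation condition falls entirely
    in the training set or entirely in the test set."""
    perturbations = sorted({s["perturbation"] for s in samples})
    rng = random.Random(seed)
    rng.shuffle(perturbations)
    n_test = max(1, round(len(perturbations) * test_fraction))
    test_perts = set(perturbations[:n_test])
    train = [s for s in samples if s["perturbation"] not in test_perts]
    test = [s for s in samples if s["perturbation"] in test_perts]
    return train, test

# Hypothetical samples: 5 perturbed genes, 3 replicates each.
samples = [{"perturbation": f"gene{g}", "replicate": r}
           for g in range(5) for r in range(3)]
train, test = split_by_perturbation(samples, test_fraction=0.4)

train_perts = {s["perturbation"] for s in train}
test_perts = {s["perturbation"] for s in test}
print(sorted(train_perts), sorted(test_perts))
print("overlap:", train_perts & test_perts)  # empty by construction
```

A random per-sample split would place replicates of the same perturbation on both sides, which is exactly the leakage this design avoids.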

Performance Metrics for Algorithm Evaluation

The selection of appropriate performance metrics is critical for meaningful benchmarking. Different types of genomic prediction problems require specialized evaluation approaches:

Table 2: Performance Metrics for Genomic Tool Evaluation

| Task Category | Key Metrics | Considerations | Example Applications |
|---|---|---|---|
| Classification | Sensitivity, Specificity, AUROC, Precision-Recall | Handles imbalanced datasets; depends on decision thresholds | Variant pathogenicity prediction [27], genomic element classification [24] |
| Regression | Mean Absolute Error (MAE), Mean Squared Error (MSE), Spearman correlation | Sensitive to outliers; different metrics capture different aspects of performance | Expression forecasting [28], fitness prediction [26] |
| Clustering | Adjusted Rand Index (ARI), Adjusted Mutual Information (AMI), Silhouette index | Extrinsic vs. intrinsic measures; ground truth dependency | Pan-genome analysis [17], cell type identification |

For classification tasks such as variant pathogenicity prediction, metrics like sensitivity and specificity provide insight into different aspects of performance. However, these single-threshold measures can be misleading, making area under the receiver operating characteristic curve (AUROC) a more robust alternative as it summarizes performance across all possible thresholds [27] [29]. For clustering applications like pan-genome analysis, the Adjusted Rand Index (ARI) measures similarity between computational results and ground truth clusters while correcting for chance agreement: a value of 1 indicates perfect agreement, values near 0 indicate agreement no better than chance, and negative values indicate agreement worse than chance [29].
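The ARI can be computed from the contingency table of the two clusterings using pair counts. The sketch below is a plain-Python illustration with hypothetical gene-to-cluster assignments; in practice an established implementation from a statistics library would normally be used.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(truth, pred):
    """Adjusted Rand Index between two cluster label assignments."""
    if len(truth) != len(pred):
        raise ValueError("label lists must have the same length")
    n = len(truth)
    if n < 2:
        raise ValueError("need at least two items")
    # Pairs of items placed together in both clusterings, in truth, and in pred.
    sum_cells = sum(comb(c, 2) for c in Counter(zip(truth, pred)).values())
    sum_rows = sum(comb(c, 2) for c in Counter(truth).values())
    sum_cols = sum(comb(c, 2) for c in Counter(pred).values())
    expected = sum_rows * sum_cols / comb(n, 2)   # agreement expected by chance
    max_index = (sum_rows + sum_cols) / 2
    if max_index == expected:
        return 1.0  # degenerate case: both clusterings are trivial and identical
    return (sum_cells - expected) / (max_index - expected)

# Hypothetical ortholog clusters for four genes.
truth = ["clusterA", "clusterA", "clusterB", "clusterB"]
same_partition = ["x", "x", "y", "y"]        # same grouping, different names
crossed_partition = ["x", "y", "x", "y"]     # every true pair is split

print(adjusted_rand_index(truth, same_partition))     # 1.0
print(adjusted_rand_index(truth, crossed_partition))  # -0.5
```

Because the score depends only on which items are grouped together, cluster names do not need to match between the tool output and the ground truth.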

The interpretation of these metrics requires careful consideration of biological context. For example, in a benchmark of variant pathogenicity prediction tools, performance varied substantially across different datasets, with Matthews Correlation Coefficient (MCC) and AUROC providing more reliable assessment than sensitivity or specificity alone [27]. Similarly, in expression forecasting, different metrics (MAE, MSE, Spearman correlation) can lead to substantially different conclusions about method performance, highlighting the importance of metric selection aligned with biological goals [28].
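A small worked example shows why sensitivity or specificity alone can mislead on imbalanced data while MCC does not. The counts below are invented for illustration and do not come from the cited benchmark; the function names are likewise assumptions.

```python
from math import sqrt

def sensitivity(tp, fn):
    return tp / (tp + fn) if (tp + fn) else 0.0

def specificity(tn, fp):
    return tn / (tn + fp) if (tn + fp) else 0.0

def mcc(tp, fp, tn, fn):
    """Matthews Correlation Coefficient; defined as 0 when any margin is empty."""
    denom = sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return 0.0 if denom == 0 else (tp * tn - fp * fn) / denom

# Hypothetical variant set: 5 pathogenic, 95 benign.
# A predictor that calls every variant benign:
tp, fp, tn, fn = 0, 0, 95, 5
print(specificity(tn, fp))   # 1.0, looks excellent in isolation
print(sensitivity(tp, fn))   # 0.0
print(mcc(tp, fp, tn, fn))   # 0.0, no better than an uninformative predictor

# A predictor that classifies every variant correctly:
print(mcc(5, 0, 95, 0))      # 1.0
```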

Case Study: Benchmarking Antimicrobial Resistance Detection

Experimental Protocol for AMR Tool Evaluation

The AMR Gold Standard Dataset provides a comprehensive framework for benchmarking antimicrobial resistance gene detection tools [25]. The experimental workflow begins with data selection prioritizing ESKAPE pathogens and other clinically relevant species, with genomes filtered based on completeness, sequencing depth (>40X coverage), and read length (>100 bp). Quality control includes assembly using multiple tools (Shovill with both SPAdes and Skesa), assessment of assembly metrics (N50 >50Kb, <100 contigs), verification of read coverage against reference genomes, and validation of variant calls.

For tool evaluation, the benchmark incorporates multiple analysis approaches. For tools that operate on assembled genomes, the provided assemblies serve as input, while read-based tools can utilize the raw sequencing data. The benchmark also includes simulated metagenomic data created by amplifying the gold-standard assemblies following a log-normal distribution to represent natural species distributions, with additional AMR reference genes randomly inserted to ensure comprehensive coverage. Performance is assessed by comparing tool predictions against the annotated AMR genes in the benchmark, with the Resistance Gene Identifier (RGI) from the Comprehensive Antibiotic Resistance Database (CARD) serving as a reference point based on its comparable performance with other AMR detection tools.
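The abundance step of such a simulation can be sketched as drawing one log-normal weight per genome and normalising the weights to relative abundances. This is a simplified illustration rather than the published procedure: the `mu`, `sigma`, and seed values, the genome identifiers, and the function names are all assumptions, and read simulation itself (for example with ART) is not shown.

```python
import random

def lognormal_abundances(genome_ids, mu=0.0, sigma=1.0, seed=42):
    """Draw a log-normal weight per genome and normalise to fractions summing to 1."""
    rng = random.Random(seed)
    raw = {g: rng.lognormvariate(mu, sigma) for g in genome_ids}
    total = sum(raw.values())
    return {g: weight / total for g, weight in raw.items()}

def reads_per_genome(abundances, total_reads):
    """Convert relative abundances into an approximate read budget per genome."""
    return {g: round(frac * total_reads) for g, frac in abundances.items()}

genomes = [f"genome_{i:02d}" for i in range(1, 6)]  # placeholder identifiers
abundances = lognormal_abundances(genomes)
budget = reads_per_genome(abundances, total_reads=1_000_000)

for g in genomes:
    print(f"{g}: {abundances[g]:.3f} of community, ~{budget[g]} reads")
```

A larger `sigma` produces a more skewed community dominated by a few genomes, which is a harder test for detecting resistance genes carried by rare members.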

Results and Comparative Performance

Implementation of this benchmarking approach has revealed important differences in tool performance. In comparative analyses using the hAMRonization workflow, which standardizes outputs from multiple AMR detection tools, RGI demonstrated similar performance to other established tools including ABRicate, c-SSTAR, ResFinder, and sraX when evaluated on a subset of 94 genomes from the benchmark [25]. This validation approach, depicted as a radar plot comparing multiple performance dimensions, provides a comprehensive assessment of tool capabilities and limitations.

The availability of this curated benchmark has enabled more systematic comparisons of AMR detection methods, helping researchers select appropriate tools for specific applications and identify areas for methodological improvement. The inclusion of both genomic and simulated metagenomic data facilitates evaluation across different use cases, from analysis of individual bacterial isolates to complex microbial communities.

Advanced Benchmarking Frameworks

The PGAP2 Pan-Genome Analysis Benchmarking Approach

The PGAP2 toolkit exemplifies advanced benchmarking methodologies for prokaryotic pan-genome analysis [17]. This approach employs a sophisticated ortholog identification method that combines gene identity networks with synteny information. The process begins with data abstraction that organizes input into gene identity networks (where edges represent similarity between genes) and gene synteny networks (where edges represent adjacent genes). The system then applies a dual-level regional restriction strategy that evaluates gene clusters within predefined identity and synteny ranges, significantly reducing computational complexity while maintaining accuracy.
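The two network types can be illustrated with a toy example. The sketch below builds an identity network from pairwise similarity scores and a synteny network from gene order, then keeps a candidate ortholog pair only if the genes also have similar neighbours. This neighbour check is a deliberately simplified stand-in chosen for illustration; it is not PGAP2's dual-level regional restriction algorithm, and all names, scores, and the identity threshold are assumptions.

```python
from collections import defaultdict

def build_identity_network(pairwise_identity, min_identity):
    """Edges connect genes whose sequence identity meets the threshold."""
    edges = defaultdict(set)
    for (a, b), identity in pairwise_identity.items():
        if identity >= min_identity:
            edges[a].add(b)
            edges[b].add(a)
    return edges

def build_synteny_network(genomes):
    """Edges connect genes that are adjacent in a genome's gene order."""
    edges = defaultdict(set)
    for gene_order in genomes.values():
        for a, b in zip(gene_order, gene_order[1:]):
            edges[a].add(b)
            edges[b].add(a)
    return edges

def synteny_supported(a, b, identity_net, synteny_net):
    """True if some neighbour of a is identity-linked to some neighbour of b."""
    return any(nb in identity_net.get(na, ())
               for na in synteny_net.get(a, ())
               for nb in synteny_net.get(b, ()))

# Hypothetical two-genome example with three genes each.
genomes = {"g1": ["a1", "b1", "c1"], "g2": ["a2", "b2", "c2"]}
identities = {("a1", "a2"): 0.98, ("b1", "b2"): 0.95, ("c1", "c2"): 0.60}

identity_net = build_identity_network(identities, min_identity=0.90)
synteny_net = build_synteny_network(genomes)

for a, b in identities:
    linked = b in identity_net.get(a, ())
    supported = linked and synteny_supported(a, b, identity_net, synteny_net)
    print(a, b, "identity edge:", linked, "synteny support:", supported)
```

Restricting comparisons to pairs that pass both checks is what keeps the search space small: most gene pairs are never evaluated in detail.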

The performance of PGAP2 was rigorously evaluated against five state-of-the-art tools (Roary, Panaroo, PanTa, PPanGGOLiN, and PEPPAN) using simulated datasets with varying thresholds for orthologs and paralogs [17]. This systematic assessment demonstrated PGAP2's advantages in precision, robustness, and scalability, particularly when analyzing diverse prokaryotic populations. The tool was further validated through application to 2,794 zoonotic Streptococcus suis strains, providing new insights into the genetic diversity of this pathogen and showcasing the utility of advanced pan-genome analysis for understanding genomic structure and adaptation.

Specialized Benchmarks for Emerging Applications

As genomic research advances, specialized benchmarks have emerged to address new computational challenges. The NABench resource focuses on nucleotide fitness prediction, aggregating 2.6 million mutated sequences from 162 high-throughput assays [26]. This benchmark supports multiple evaluation settings including zero-shot prediction (assessing pre-trained models without additional training), few-shot learning (limited training examples), supervised learning, and transfer learning. The inclusion of diverse DNA and RNA families (mRNA, tRNA, ribozymes, enhancers, promoters) enables comprehensive assessment of model generalization across different biological contexts.

Similarly, the PEREGGRN platform addresses the growing field of expression forecasting, providing a standardized framework for evaluating methods that predict gene expression changes in response to genetic perturbations [28]. This benchmark incorporates 11 large-scale perturbation datasets and employs specialized evaluation protocols that test model performance on unseen perturbations, a critical requirement for real-world applications where researchers need to predict outcomes of novel interventions.

Essential Research Reagents and Computational Tools

The implementation of rigorous benchmarking studies requires access to both biological datasets and computational tools. The following resources represent essential components of the genomic researcher's toolkit for developing and evaluating gene prediction algorithms.

Table 3: Essential Research Reagents and Computational Tools

| Resource Name | Type | Function | Application Context |
|---|---|---|---|
| CARD RGI [25] | Database & Tool | Antimicrobial resistance gene identification | AMR gene detection in bacterial genomes |
| NCBI Genome Access [25] | Data Repository | Source of complete bacterial genomes | Genome selection for benchmark development |
| Shovill [25] | Computational Tool | Genome assembly from Illumina reads | Data processing in benchmark creation |
| SPAdes/Skesa [25] | Computational Tool | Genome assembly algorithms | Alternative assemblers for method validation |
| QUAST [25] | Quality Assessment | Evaluation of assembly metrics | Quality control in benchmark curation |
| SNIPPY [25] | Computational Tool | Mapping reads to reference genomes | Read coverage analysis and variant calling |
| bedtools [25] | Computational Utility | Genome arithmetic operations | Data processing and manipulation |
| ART [25] | Simulation Tool | Sequencing read simulation | Metagenomic benchmark data generation |
| PGAP2 [17] | Pan-genome Analysis | Ortholog identification and visualization | Prokaryotic pan-genome benchmarking |
| Genomic Benchmarks [24] | Data Package | Curated genomic sequences for classification | Machine learning method evaluation |

These resources collectively enable the end-to-end process of benchmark development, from data acquisition and quality control to tool evaluation and comparison. The integration of multiple tools in standardized workflows, such as the hAMRonization pipeline for AMR gene detection comparison [25], facilitates comprehensive benchmarking across different methodologies and approaches.

The establishment of curated datasets and reference genomes has transformed the landscape of genomic tool development and evaluation. By providing standardized resources for benchmarking, these initiatives enable objective comparison of computational methods, identification of performance limitations, and targeted improvement of algorithms. The case studies in antimicrobial resistance detection, pan-genome analysis, and variant effect prediction demonstrate how well-designed benchmarks drive methodological advances and enhance scientific reproducibility.

As genomic research continues to evolve, future benchmarking efforts will need to address emerging challenges including the integration of diverse data types (e.g., long-read sequencing, chromatin conformation, single-cell data), standardization of evaluation metrics across different biological domains, and development of more sophisticated validation approaches that better capture real-world performance requirements. The continued collaboration between biological domain experts and computational researchers will be essential for creating next-generation benchmarks that keep pace with technological advances and enable new discoveries across diverse prokaryotic taxa.

Building Your Benchmarking Pipeline: Tools, Datasets, and Execution

The accuracy and robustness of computational methods in genomics and microbial ecology depend on the quality of the benchmark datasets used to evaluate them. For gene prediction algorithms targeting diverse prokaryotic taxa, a benchmark that deliberately incorporates phylogenetic diversity (PD) and functional diversity (FD) is essential for producing biologically meaningful and generalizable results. Such datasets ensure that algorithms are tested against the wide range of genomic architectures and evolutionary histories present in nature, rather than against a few model organisms. This guide compares prevailing strategies and resources for curating these benchmarks and provides a structured framework that researchers can use to evaluate and implement best practices in their own work.

The rationale for this integrated approach is underscored by empirical research. A large-scale study analyzing over 15,000 vertebrate species found that while maximizing phylogenetic diversity results in an average gain of 18% in functional diversity compared to random selection, this strategy is not perfectly reliable. In over one-third of comparisons, maximum PD sets contained less FD than randomly chosen sets [30]. This highlights the inherent risk in relying solely on phylogeny and underscores the necessity of directly measuring functional traits in benchmark curation where possible.
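To make the distinction between PD and FD concrete, the following minimal sketch computes Faith's PD (the summed branch length of the rooted subtree spanning a taxon set) on a toy tree and uses a simple count of distinct trait states as a crude stand-in for FD. The tree, branch lengths, trait labels, and function names are all hypothetical and are not taken from the cited study [30].

```python
# Toy tree stored as child -> (parent, branch_length); "root" has no entry.
# All node names, branch lengths, and traits are invented for illustration.
TREE = {
    "n1": ("root", 1.0),
    "n2": ("root", 1.0),
    "A": ("n1", 0.5),
    "B": ("n1", 0.5),
    "C": ("n2", 2.0),
    "D": ("n2", 2.0),
}
TRAITS = {"A": "aerobe", "B": "anaerobe", "C": "phototroph", "D": "phototroph"}


def faith_pd(taxa, tree=TREE):
    """Sum branch lengths on the paths from each taxon to the root,
    counting every branch only once (Faith's PD, root included)."""
    seen = set()
    total = 0.0
    for taxon in taxa:
        node = taxon
        # Walk upward until the root or an already-counted branch is reached.
        while node in tree and node not in seen:
            parent, length = tree[node]
            seen.add(node)
            total += length
            node = parent
    return total


def trait_richness(taxa, traits=TRAITS):
    """Number of distinct trait states in the set (a crude FD stand-in)."""
    return len({traits[t] for t in taxa})


for pair in (("C", "D"), ("A", "B")):
    print(pair, faith_pd(pair), trait_richness(pair))
```

In this toy case the pair with the highest PD, C and D, shares a single trait state, while the low-PD pair A and B covers two. This is the kind of mismatch that motivates measuring functional traits directly.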

Core Principles for Integrated Benchmark Datasets

Effective benchmark datasets for gene prediction must be constructed to address specific, recurring challenges in computational biology. The following principles outline the key considerations.

Principle 1: Hierarchical Taxonomic Sampling. A robust benchmark should include taxa spanning multiple phylogenetic depths, from closely related populations or species to distantly related families. This allows researchers to test whether a gene prediction tool performs consistently across different levels of evolutionary divergence. The Malpighiales plant dataset exemplifies this principle by including comprehensively sampled genera (e.g., Stigmaphyllon with 10 species) alongside broader sampling across multiple families, enabling validation from species to family level [19].

Principle 2: Contrasting Evolutionary History with Function. While phylogenetic diversity is often used as a proxy for functional diversity, the correlation is imperfect [30]. Benchmarks should therefore intentionally sample lineages that are phylogenetically closely related but ecologically or functionally divergent, as well as distantly related lineages that have converged on similar functions. This design directly tests an algorithm's ability to handle complex genotype-phenotype relationships.

Principle 3: Accounting for Data Quality Gradients. In practice, researchers often work with draft genomes of varying quality. Benchmarks that incorporate real-world challenges, such as incomplete genome assemblies, low coverage, and varying sequence quality, provide a more realistic assessment of a tool's practical utility. The G3PO benchmark for gene prediction was specifically designed to include these real-world data quality issues [31].
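One simple way to build a quality gradient is to degrade a complete sequence in controlled steps. The sketch below cuts a sequence at random breakpoints and discards short contigs to mimic a fragmented draft assembly. It is an illustrative assumption, not the procedure used to construct G3PO [31], and it does not model coverage or base-level errors. The function name and parameters are hypothetical.

```python
import random


def fragment_sequence(seq, n_breaks, min_contig_len=0, seed=0):
    """Cut `seq` at `n_breaks` random internal positions and drop contigs
    shorter than `min_contig_len`, mimicking a fragmented draft assembly."""
    if n_breaks >= len(seq):
        raise ValueError("n_breaks must be smaller than the sequence length")
    rng = random.Random(seed)  # fixed seed keeps the benchmark reproducible
    cuts = sorted(rng.sample(range(1, len(seq)), n_breaks))
    bounds = [0] + cuts + [len(seq)]
    contigs = [seq[a:b] for a, b in zip(bounds, bounds[1:])]
    return [c for c in contigs if len(c) >= min_contig_len]


genome = "ATGC" * 250  # 1,000 nt placeholder, not a real genome
for n_breaks in (0, 5, 50):
    contigs = fragment_sequence(genome, n_breaks, min_contig_len=10)
    # Report break count, surviving contigs, and retained bases.
    print(n_breaks, len(contigs), sum(len(c) for c in contigs))
```

Running the same gene predictor on each level of fragmentation gives a per-level accuracy curve rather than a single score.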

Different benchmarking initiatives are designed to address distinct challenges. The table below provides a comparative overview of several key resources, their primary applications, and their handling of phylogenetic and functional diversity.

Table 1: Comparison of Benchmark Dataset Resources and Their Characteristics

| Resource Name | Primary Application | Handling of Phylogenetic Diversity | Handling of Functional Diversity | Key Strengths |
|---|---|---|---|---|
| Genome Skimming Benchmark [19] | Molecular identification & DNA barcoding | Curated datasets from closely-related species to all taxa in NCBI SRA. Includes a novel plant (Malpighiales) dataset. | Implicit through phylogenetic diversity; not explicitly measured. | Includes raw reads and 2D genomic representations; spans vast taxonomic breadth. |
| G3PO Benchmark [31] | Gene prediction accuracy | Based on 1793 genes from 147 phylogenetically diverse eukaryotes, from humans to protists. | Focus on gene structure complexity (e.g., exon number, protein length) as a functional proxy. | Designed for challenging, real-world annotation tasks; includes data quality gradients. |
| PhyloNext Pipeline [32] | Phylogenetic diversity analysis | Integrates GBIF occurrence data with OpenTree phylogenies to calculate phylogenetic diversity indices. | Does not directly calculate functional diversity metrics. | Automated, reproducible workflow from data download to analysis; uses open data. |
| OrthoBench [19] | Orthogroup inference | Provides standard datasets for testing algorithms on evolutionary relationships. | Not explicitly measured. | Long-standing standard for over a decade; enables unbiased method comparison. |
| EukRef Initiative [33] | Phylogenetic curation of rRNA | Community-driven curation of ribosomal RNA databases to improve taxonomic accuracy. | Not explicitly measured, but improves ecological inference. | Enhances reliability of environmental sequence annotation; community standards. |

Experimental Protocols for Benchmark Curation and Validation

The process of creating a benchmark is as critical as its final composition. The following protocols, drawn from established methods, provide a roadmap for developing robust datasets.

Protocol for Constructing a Phylogenetically Broad Benchmark

This protocol is adapted from methods used in creating genome-skimming and gene prediction benchmarks [19] [31].

- Define Taxonomic Scope: Determine the phylogenetic breadth of the benchmark (e.g., within a specific phylum, across all prokaryotes). Use authoritative taxonomic sources such as the GTDB (Genome Taxonomy Database) for consistency.

- Stratified Taxon Sampling: Select taxa in a stratified manner to ensure representation of major lineages and different divergence times. This avoids over-representation of well-studied groups.

- Sequence Acquisition and Curation: Obtain genomic data from public repositories (e.g., NCBI SRA, GenBank). Rigorously curate metadata and exclude datasets with poor quality or incomplete annotations. The EukRef initiative provides a robust model for this phylogenetic curation process [33].

- Generate Image Representations (Optional): For methods like varKoder, generate two-dimensional graphical representations of genomic data (e.g., chaos game representations) from raw reads to enable a variety of testing modalities [19].

- Validation Set Creation: Partition the data into training and validation sets. The validation set should include samples from lineages not present in the training set to test generalizability.
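The stratified sampling and validation-set steps above can be sketched as follows. This is a minimal illustration under stated assumptions: genomes are given as (ID, lineage) pairs, the genome IDs are hypothetical, the lineage labels merely follow the GTDB-style `p__` prefix convention, and both function names are invented for this example.

```python
import random
from collections import defaultdict


def stratified_sample(genomes, per_lineage, seed=0):
    """Pick up to `per_lineage` genomes from each lineage so that heavily
    sequenced groups do not dominate the benchmark."""
    rng = random.Random(seed)
    by_lineage = defaultdict(list)
    for genome_id, lineage in genomes:
        by_lineage[lineage].append(genome_id)
    picked = []
    for lineage in sorted(by_lineage):  # sorted for reproducible output
        members = sorted(by_lineage[lineage])
        k = min(per_lineage, len(members))
        picked.extend((g, lineage) for g in rng.sample(members, k))
    return picked


def lineage_holdout_split(genomes, holdout_lineages):
    """Send whole lineages to the validation set so that it contains
    lineages the training set never sees."""
    train = [(g, lin) for g, lin in genomes if lin not in holdout_lineages]
    valid = [(g, lin) for g, lin in genomes if lin in holdout_lineages]
    return train, valid


# Hypothetical genome IDs paired with GTDB-style phylum labels.
GENOMES = [
    ("g01", "p__Pseudomonadota"), ("g02", "p__Pseudomonadota"),
    ("g03", "p__Pseudomonadota"), ("g04", "p__Pseudomonadota"),
    ("g05", "p__Pseudomonadota"), ("g06", "p__Bacillota"),
    ("g07", "p__Bacillota"), ("g08", "p__Thermoproteota"),
]

subset = stratified_sample(GENOMES, per_lineage=2)
train, valid = lineage_holdout_split(subset, {"p__Thermoproteota"})
print(len(subset), len(train), len(valid))
```

Holding out entire lineages, rather than random genomes, is what lets the validation set measure generalization to unseen groups.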

Protocol for Evaluating Gene Prediction Tools on G3PO

The G3PO benchmark provides a framework for a rigorous evaluation of gene prediction tools [31].

- Tool Selection and Setup: Select the ab initio gene prediction programs to evaluate (e.g., Augustus, GlimmerHMM, GeneMark-ES). Install and configure each tool according to its documentation, using recommended parameters.

- Data Preparation: Download the G3PO benchmark sequences. The benchmark includes genomic sequences with varying amounts of flanking regions (150 to 10,000 nucleotides) to simulate different annotation scenarios.

- Execution and Output Generation: Run each gene prediction tool on all benchmark sequences. Record the predicted gene models, including exon-intron structures and protein sequences.

- Accuracy Assessment: Compare the tool's predictions against the benchmark's curated reference genes. Standard metrics include:

  - Sensitivity (Recall): Proportion of true exons/genes that were correctly predicted.

  - Specificity (Precision): Proportion of predicted exons/genes that are correct.

  - Exact Gene Match Rate: The percentage of genes for which the entire exon-intron structure was predicted perfectly.

- Analysis of Failure Modes: Analyze cases where predictions were inaccurate to identify patterns. Common issues include missing exons, retaining non-coding sequence in exons, fragmenting genes, or merging neighboring genes.
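The accuracy metrics in the protocol above can be computed with a few set operations. The sketch below assumes that each benchmark sequence carries one reference gene and that gene models are represented as sets of (start, end) exon coordinates keyed by sequence ID. This data layout and the function name are illustrative assumptions, not the official G3PO evaluation code.

```python
def evaluate_predictions(reference, predicted):
    """Compare gene models given as {sequence_id: set of (start, end) exons}.
    Returns exon-level sensitivity and precision plus the exact gene match rate."""
    ref_exons = {(s, e) for s, exons in reference.items() for e in exons}
    pred_exons = {(s, e) for s, exons in predicted.items() for e in exons}
    true_pos = len(ref_exons & pred_exons)  # exons with identical boundaries
    sensitivity = true_pos / len(ref_exons) if ref_exons else 0.0
    precision = true_pos / len(pred_exons) if pred_exons else 0.0
    # A gene counts as an exact match only if every exon boundary agrees.
    exact = sum(1 for s, exons in reference.items() if predicted.get(s) == exons)
    exact_rate = exact / len(reference) if reference else 0.0
    return {
        "sensitivity": sensitivity,
        "precision": precision,
        "exact_gene_match_rate": exact_rate,
    }


# Hypothetical coordinates: the second exon of seq1 has a shifted start.
reference = {"seq1": {(100, 200), (300, 400)}, "seq2": {(50, 150)}}
predicted = {"seq1": {(100, 200), (310, 400)}, "seq2": {(50, 150)}}
print(evaluate_predictions(reference, predicted))
```

Here two of three reference exons are recovered exactly, so sensitivity and precision are both 2/3, and one of two genes matches completely, giving an exact gene match rate of 0.5.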

The workflow for this integrated benchmarking process, from dataset creation to tool evaluation, is visualized below.

Successful benchmark curation and analysis rely on a suite of computational tools and data resources. The following table details key solutions for building and evaluating phylogenetically and functionally diverse benchmarks.

Table 2: Key Research Reagent Solutions for Benchmarking

| Tool/Resource Name | Type | Primary Function in Benchmarking | Relevance to PD/FD |
|---|---|---|---|
| NCBI SRA & GenBank [19] | Data Repository | Source of public raw sequence data and annotated genomes for building benchmarks. | Provides taxonomic (PD) and sometimes functional (FD) metadata for vast organism diversity. |
| GTDB (Genome Taxonomy Database) | Taxonomic Database | Provides a standardized bacterial and archaeal taxonomy based on phylogenomics. | Essential for consistent and accurate phylogenetic diversity assessment in prokaryotes. |
| OpenTree of Life [32] | Phylogenetic Resource | Provides a synthetic, downloadable tree of life integrating published phylogenetic trees. | Used by pipelines like PhyloNext to calculate phylogenetic diversity metrics for a given taxon set. |
| PhyloNext [32] | Computational Pipeline | Automated workflow for phylogenetic diversity analysis using GBIF data and OpenTree phylogenies. | Streamlines the calculation of PD indices; improves reproducibility of phylogenetic analyses. |
| Biodiverse Software [32] | Analysis Tool | Calculates a range of phylogenetic diversity and endemicity indices from spatial and phylogenetic data. | Core analytical engine for quantifying phylogenetic diversity in benchmark datasets. |
| SILVA / PR2 [33] | rRNA Database | Curated databases for ribosomal RNA sequences, providing high-quality taxonomic references. | Enables accurate phylogenetic placement of sequences, especially for microbial eukaryotes. |
| OrthoBench [19] | Benchmark Dataset | Standardized dataset for evaluating orthogroup inference algorithms. | Provides a reliable benchmark for testing methods that infer evolutionary relationships. |
| EukRef [33] | Curation Framework | Community-driven protocol for phylogenetically curating ribosomal RNA reference databases. | Improves the foundational data quality for any benchmark involving microbial eukaryotes. |

Curating benchmark datasets that faithfully represent phylogenetic and functional diversity is complex, but it is a necessary standard for advancing computational genomics, particularly for gene prediction in diverse prokaryotic taxa. As the comparative data demonstrate, no single resource serves all purposes; researchers must instead combine datasets such as the G3PO benchmark, which targets gene-specific challenges, with broader phylogenetic frameworks such as those generated by PhyloNext.

The experimental evidence clearly shows that while phylogenetic diversity is a powerful guiding principle, it is an imperfect surrogate for functional diversity [30]. Therefore, the most robust future benchmarks will be those that directly integrate functional trait data—such as protein domain architectures, metabolic pathway annotations, and ecological niche characteristics—alongside comprehensive phylogenetic sampling. By adhering to the structured protocols and utilizing the toolkit outlined in this guide, researchers and drug development professionals can develop more rigorous benchmarks, leading to more accurate, reliable, and biologically insightful gene prediction algorithms.

Selecting Ab Initio and Evidence-Based Prediction Algorithms for Evaluation

Accurate gene prediction is a foundational step in genomic research, enabling downstream analyses in functional genomics, comparative genomics, and drug target identification. For prokaryotic taxa, this process is particularly critical as precise gene models define protein-coding sequences and the regulatory elements that control their expression. Gene prediction algorithms are broadly categorized into ab initio methods, which rely on statistical models of coding potential and signal sequences within the genomic DNA, and evidence-based methods, which incorporate extrinsic data such as homologous sequences or transcriptomic evidence [34] [31].

Selecting the appropriate tools for a benchmarking study requires a clear understanding of their underlying methodologies, performance characteristics, and the specific challenges presented by diverse prokaryotic genomes, such as variable GC content, the presence of leaderless genes, and non-canonical ribosome binding sites (RBS) [34] [35]. This guide provides an objective comparison of current algorithms, supported by experimental data and detailed protocols, to inform their evaluation across diverse prokaryotic taxa.

Performance Comparison of Major Algorithms

Extensive benchmarking studies reveal that the performance of gene prediction tools can vary significantly based on genomic characteristics and the specific metric being evaluated. The following tables summarize key quantitative findings from recent evaluations.

Table 1: Summary of Algorithm Performance on Prokaryotic Gene Prediction

| Algorithm | Prediction Type | Reported Accuracy on Verified Starts | Key Strengths | Noted Limitations |

|---|---|---|---|---|