Benchmarking Gene Start Prediction: A Framework for Accuracy on Verified Genomic Datasets

Abstract

Accurate gene start prediction is fundamental for genome annotation and understanding regulatory mechanisms, yet it remains a challenge due to weak sequence patterns and a historical lack of standardized benchmarks. This article provides a comprehensive framework for researchers and bioinformatics professionals to rigorously evaluate gene start prediction tools. We explore the foundational need for verified datasets and standardized benchmarks in genomics, detail the current landscape of methodologies from traditional algorithms to modern deep learning models, address common troubleshooting and optimization strategies to close performance gaps, and finally, present a validation and comparative analysis of leading tools using consistent metrics. By synthesizing insights from recent community challenges and benchmark suites, this resource aims to establish best practices for model selection, evaluation, and the development of more accurate predictive tools, ultimately enhancing the reliability of genomic annotations for biomedical and clinical research.

The Critical Need for Standardized Benchmarks in Genomic Prediction

Accurately identifying the translation start site of a gene is a foundational step in genome annotation. An error in pinpointing this single position can lead to an incorrect definition of the entire protein product, with cascading effects on downstream functional analysis and experimental design. In prokaryotes, the difficulty is particularly acute because no strong sequence pattern definitively identifies true translation initiation sites [1]. For decades, the "longest open reading frame" rule was applied as a default strategy, assigning the gene start to the 5′-most ATG, GTG, or TTG codon in the open reading frame. However, simple probability estimates suggest this rule achieves only about 75% accuracy, a level insufficient for precise genomic analysis [1]. This review compares the performance of established and emerging gene prediction methods, framing the discussion within the broader context of benchmarking on verified datasets to guide researchers in selecting tools for their annotation projects.
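To make the "longest ORF" rule concrete, the following is a minimal sketch rather than any tool's actual implementation. It assumes a single forward-strand sequence, a known stop codon position, and the standard stop codons TAA, TAG, and TGA; the function name `longest_orf_start` is hypothetical.

```python
from typing import Optional

START_CODONS = {"ATG", "GTG", "TTG"}
STOP_CODONS = {"TAA", "TAG", "TGA"}  # assumption: standard genetic code


def longest_orf_start(seq: str, stop_index: int) -> Optional[int]:
    """Return the index of the 5'-most in-frame start codon for the ORF
    ending at the stop codon located at stop_index, or None if none exists.

    The scan walks upstream one codon at a time and halts at the first
    in-frame stop codon, which bounds the open reading frame.
    """
    seq = seq.upper()
    if seq[stop_index:stop_index + 3] not in STOP_CODONS:
        raise ValueError("stop_index does not point at a stop codon")
    best = None
    pos = stop_index - 3
    while pos >= 0:
        codon = seq[pos:pos + 3]
        if codon in STOP_CODONS:
            break  # upstream boundary of the ORF
        if codon in START_CODONS:
            best = pos  # keep moving upstream: the 5'-most candidate wins
        pos -= 3
    return best
```

Because the rule always prefers the most upstream candidate, it is wrong whenever the true start is an internal ATG, GTG, or TTG, which is the failure mode that verified start sets are meant to expose.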

Experimental Benchmarks for Gene Prediction Accuracy

Defining Benchmark Standards: From G3PO to DNALONGBENCH

Robust benchmarking is essential for evaluating the real-world performance of gene prediction methods. The G3PO benchmark (benchmark for Gene and Protein Prediction PrOgrams) was specifically designed to represent challenges faced by modern genome annotation projects [2]. It comprises 1,793 carefully validated and curated reference genes from 147 phylogenetically diverse eukaryotic organisms, spanning a wide spectrum of gene structure complexities from single-exon genes to those with over 20 exons [2]. This diversity is crucial, as prediction accuracy varies significantly across phylogenetic groups, with Chordata genes generally being more accurately predicted than those from other eukaryotic clades.

More recently, DNALONGBENCH has emerged as a comprehensive benchmark suite specifically designed for long-range DNA prediction tasks [3]. While its scope extends beyond start prediction to include enhancer-target interactions and 3D genome organization, it establishes important standardized frameworks for evaluating how well models capture dependencies that may influence gene annotation accuracy. This benchmark assesses performance across five distinct genomics tasks with dependencies spanning up to 1 million base pairs, providing a more holistic view of model capabilities [3].

Historical Performance and the Rise of Self-Training Methods

The development of GeneMarkS represented a significant advance in non-supervised gene start prediction for prokaryotes. By combining models of protein-coding and non-coding regions with models of regulatory sites near gene starts within an iterative Hidden Markov Model framework, it achieved 83.2% accuracy on validated Bacillus subtilis genes and 94.4% accuracy on experimentally validated Escherichia coli genes [1]. This demonstrated that self-training methods could substantially outperform the simple "longest ORF" rule, while having the advantage of requiring no prior knowledge of protein or rRNA genes for a newly sequenced genome.

Table 1: Historical Accuracy of Gene Start Prediction Methods

| Method | Approach | Test Genome | Start Prediction Accuracy | Key Innovation |
|---|---|---|---|---|
| Longest ORF Rule | Heuristic | Various | ~75% (theoretical) | Simple implementation |
| GeneMarkS | Self-training HMM | Bacillus subtilis | 83.2% | Non-supervised training |
| GeneMarkS | Self-training HMM | Escherichia coli | 94.4% | Regulatory site integration |
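Accuracy figures like those in Table 1 are fractions of verified genes whose predicted start matches the verified one. The sketch below shows one way to compute such a figure. It assumes genes are matched by strand and stop coordinate, a common convention for prokaryotic comparisons but an assumption here, and it scores only verified genes that the tool predicted at all. The name `start_accuracy` is hypothetical.

```python
from typing import Dict, Tuple

GeneKey = Tuple[str, int]  # (strand, stop coordinate): assumed matching key


def start_accuracy(predicted: Dict[GeneKey, int],
                   verified: Dict[GeneKey, int]) -> float:
    """Fraction of verified genes (also present in the predictions)
    whose predicted start coordinate equals the verified start."""
    shared = [key for key in verified if key in predicted]
    if not shared:
        raise ValueError("no verified gene was found among the predictions")
    correct = sum(predicted[key] == verified[key] for key in shared)
    return correct / len(shared)
```

Genes missed entirely are excluded from the denominator in this sketch, so gene-finding sensitivity should be reported separately alongside start accuracy.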

Comparative Performance of Modern Prediction Methods

The Ab Initio Prediction Landscape

A comprehensive benchmark study of ab initio gene prediction methods across diverse eukaryotic organisms evaluated five widely used programs: Genscan, GlimmerHMM, GeneID, Snap, and Augustus [2]. The study revealed the intrinsically challenging nature of gene prediction, with 68% of exons and 69% of confirmed protein sequences not predicted with 100% accuracy by all five programs. The performance varied substantially based on gene structure complexity, with multi-exon genes presenting significantly greater challenges than single-exon genes.

The G3PO benchmark tests highlighted that several factors significantly influence prediction accuracy, including genome sequence quality, GC content, gene length, and number of exons. Augustus consistently demonstrated competitive performance across multiple test sets, particularly for complex gene structures. The benchmark also revealed that prediction programs trained on evolutionary distant species suffered significant performance drops, emphasizing the importance of species-specific training or model adaptation [2].
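Since accuracy depends on factors such as GC content and exon count, a benchmark report is more informative when results are stratified rather than pooled. The sketch below illustrates the idea. The bin boundaries and the names `gc_content`, `exon_bin`, and `accuracy_by_bin` are illustrative assumptions, not the categories used by G3PO.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def gc_content(seq: str) -> float:
    """Fraction of G and C bases in a sequence (0.0 for an empty sequence)."""
    seq = seq.upper()
    if not seq:
        return 0.0
    return (seq.count("G") + seq.count("C")) / len(seq)


def exon_bin(n_exons: int) -> str:
    """Assign a gene to a complexity bin (hypothetical boundaries)."""
    if n_exons == 1:
        return "single-exon"
    if n_exons <= 5:
        return "2-5 exons"
    if n_exons <= 20:
        return "6-20 exons"
    return ">20 exons"


def accuracy_by_bin(records: Iterable[Tuple[int, bool]]) -> Dict[str, float]:
    """records holds (number of exons, prediction was fully correct) pairs."""
    totals = defaultdict(lambda: [0, 0])  # bin -> [correct, total]
    for n_exons, is_correct in records:
        bucket = totals[exon_bin(n_exons)]
        bucket[0] += int(is_correct)
        bucket[1] += 1
    return {name: correct / total for name, (correct, total) in totals.items()}
```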

The Emergence of Deep Learning Approaches

Recent years have witnessed the emergence of deep learning architectures that substantially improve gene expression prediction from DNA sequences. Enformer, a neural network architecture based on self-attention, represents a significant advance by integrating information from long-range interactions (up to 100 kb away) in the genome [4]. This contrasts with previous convolutional neural network approaches like Basenji2, which could only consider sequence elements up to 20 kb from the transcription start site.

Enformer outperformed previous state-of-the-art models for predicting RNA expression measured by CAGE at transcription start sites of human protein-coding genes, increasing mean correlation from 0.81 to 0.85 [4]. This improvement is particularly relevant for start site annotation because the model's attention mechanisms allow it to identify distal regulatory elements that influence promoter activity and transcription initiation. The model also learned to predict enhancer-promoter interactions directly from DNA sequence competitively with methods that take experimental data as input [4].
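The correlation figures quoted above are averages of a per-track correlation between predicted and measured signal. As a rough illustration, the sketch below computes a Pearson correlation per track and then averages across tracks. Any preprocessing applied in the published evaluation (for example, transformation or normalization of CAGE values) is not described here and is not reproduced. The function names are hypothetical.

```python
import math
from typing import Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


def mean_track_correlation(pred_tracks, obs_tracks) -> float:
    """Average the per-track Pearson correlation across all tracks."""
    values = [pearson(p, o) for p, o in zip(pred_tracks, obs_tracks)]
    return sum(values) / len(values)
```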

Table 2: Performance Comparison of Modern Gene Prediction Architectures

| Model | Architecture | Receptive Field | Key Advantage | Reported Accuracy/Performance |
|---|---|---|---|---|
| Basenji2 | Dilated CNN | ~20 kb | Established baseline | Correlation: 0.81 (CAGE at TSS) |
| Enformer | Transformer + CNN | ~100 kb | Long-range context | Correlation: 0.85 (CAGE at TSS) |
| HyenaDNA | Foundation Model | Up to 450 kb | Long-range dependencies | Variable across tasks [3] |
| Caduceus | Foundation Model | Up to 1M bp | Reverse complement support | Variable across tasks [3] |

Experimental Protocols for Benchmarking

Standardized Evaluation Frameworks

Comprehensive benchmarking requires standardized protocols to ensure fair comparisons across methods. The G3PO benchmark established rigorous evaluation criteria including:

- Sequence Quality Tiers: Testing performance across different levels of genome completeness and contamination

- Gene Complexity Categories: Separating evaluations by number of exons, protein length, and functional domains

- Phylogenetic Groups: Assessing performance across diverse evolutionary clades

- Validation Status: Distinguishing between "Confirmed" and "Unconfirmed" protein sequences based on multiple sequence alignment consistency checks [2]

For start codon prediction specifically, benchmarks should include:

- Experimentally validated translation starts (as used in GeneMarkS validation)

- Stratification by gene function and codon usage

- Assessment of flanking sequence features including ribosomal binding sites
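One way to assess flanking features such as ribosomal binding sites is to score the region upstream of each candidate start against a Shine-Dalgarno-like motif. The sketch below is a simple illustration under stated assumptions: the consensus `AGGAGG`, the spacer range of 4 to 12 nt, and the name `best_sd_match` are choices made for this example, and real tools typically learn the motif and spacer distribution from the genome instead.

```python
from typing import Optional, Tuple

SD_CONSENSUS = "AGGAGG"  # assumption: canonical Shine-Dalgarno core


def best_sd_match(seq: str, start: int,
                  min_spacer: int = 4,
                  max_spacer: int = 12) -> Optional[Tuple[int, int]]:
    """Scan upstream of a candidate start codon for the window that best
    matches the consensus.

    Returns (matching positions, spacer length), or None when the upstream
    region is too short. The spacer is the number of bases between the end
    of the motif window and the first base of the start codon.
    """
    seq = seq.upper()
    k = len(SD_CONSENSUS)
    best = None
    for spacer in range(min_spacer, max_spacer + 1):
        left = start - spacer - k
        if left < 0:
            continue  # window would run off the 5' end
        window = seq[left:left + k]
        score = sum(a == b for a, b in zip(window, SD_CONSENSUS))
        if best is None or score > best[0]:
            best = (score, spacer)
    return best
```

For example, `best_sd_match("TTAGGAGGACAATCATGAAA", 14)` finds a perfect six-base match with a 6 nt spacer before the ATG at index 14.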

The DNALONGBENCH Assessment Approach

The DNALONGBENCH suite employs a structured evaluation protocol comparing three model classes:

- Task-specific expert models (e.g., ABC model, Enformer, Akita, Puffin)

- Convolutional neural network (CNN) baselines

- Fine-tuned DNA foundation models (HyenaDNA, Caduceus) [3]

The benchmarking results demonstrated that highly parameterized and specialized expert models consistently outperform DNA foundation models across most tasks, with the performance advantage being more pronounced in regression tasks like contact map prediction and transcription initiation signal prediction than in classification tasks [3].

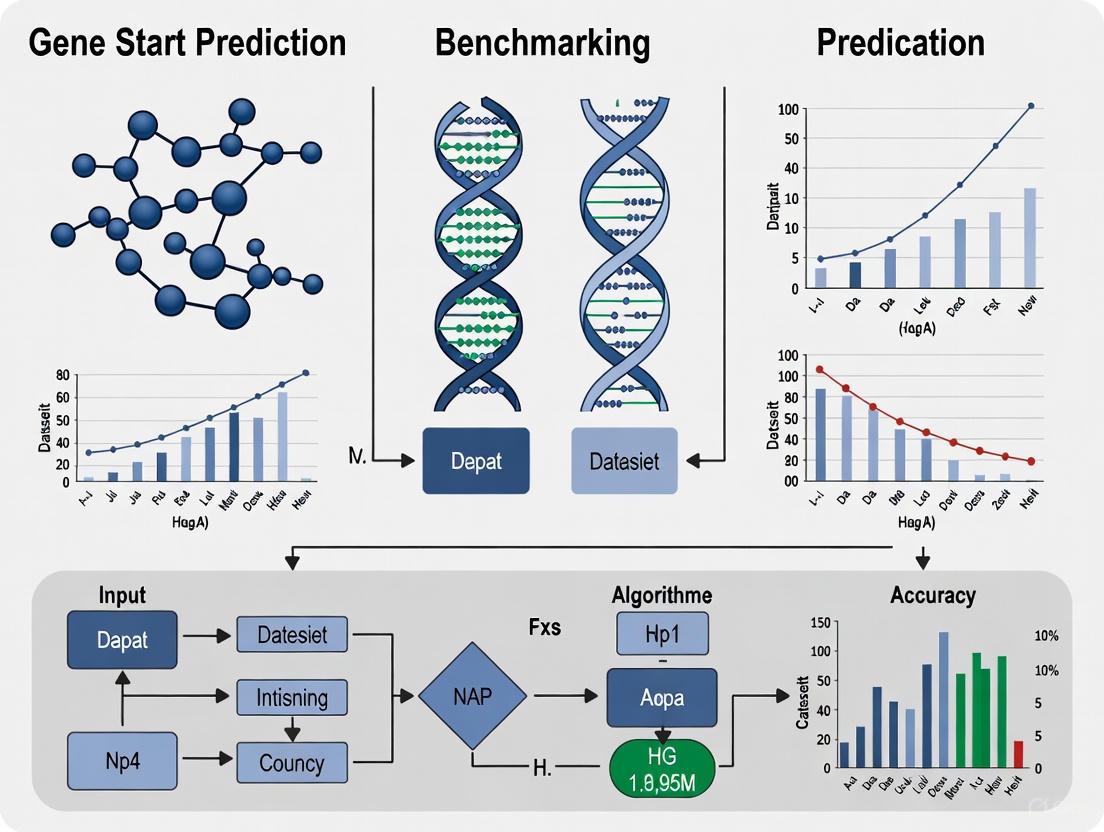

Visualization of Gene Prediction Workflows

Gene Start Prediction Logic

Benchmarking Methodology

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents and Computational Tools for Gene Prediction Research

| Tool/Resource | Type | Function | Application Context |
|---|---|---|---|
| G3PO Benchmark | Dataset | Curated reference genes | Method evaluation & validation |
| DNALONGBENCH | Dataset | Long-range dependency tasks | Benchmarking long-context models |
| Enformer | Model | Gene expression prediction | Sequence-to-function modeling |
| GeneMarkS | Software | Self-training gene prediction | Prokaryotic genome annotation |
| Augustus | Software | Ab initio gene prediction | Eukaryotic genome annotation |
| ROSMAP Dataset | Data | Paired WGS & expression | Personal genome interpretation |
| UK Biobank | Data | Population-scale genomics | Training large predictive models |

Accurate gene start prediction remains challenging but essential for biological discovery. Benchmark studies consistently show that while modern methods have substantially improved beyond simple heuristic rules, significant accuracy gaps remain—particularly for complex gene structures and evolutionarily distant species. The emergence of deep learning approaches that capture long-range genomic dependencies offers promising directions, though current DNA foundation models still lag behind specialized expert models for most tasks [3].

Future progress will likely come from several directions: improved integration of multi-omics data, better modeling of phylogenetic constraints, and more comprehensive benchmarking on diverse biological sequences. As noted in assessments of personal genome interpretation, even state-of-the-art models like Enformer still struggle with correctly attributing the direction of variant effects on gene expression [5]. This highlights the need for continued refinement of our computational models and benchmarking frameworks to achieve the accuracy required for precision medicine and functional genomics applications.

For researchers engaged in genome annotation, selection of prediction tools should be guided by benchmarking results specific to their organism of interest and gene types of primary concern. Combining multiple complementary approaches and maintaining rigorous validation standards remains essential for producing high-quality gene annotations that support downstream biological insights.

In the pursuit of genomic precision, benchmark datasets serve as the foundational yardstick for evaluating sequencing technologies and bioinformatics methods. The adage "if you cannot measure it, you cannot improve it" is particularly pertinent in this field, where accurate variant identification paves the way for advances in clinical diagnostics and systematic research [6]. However, a significant benchmarking gap persists, especially for challenging genomic regions and for specific tasks like gene start prediction. The absence of comprehensive standards for these areas directly hinders the development and validation of more accurate genomic tools. This guide compares several benchmarking resources, highlighting their coverage, limitations, and applications, to illuminate the current state and the path forward in genomic research.

Comparative Analysis of Genomic Benchmarks

Benchmark datasets vary widely in their genomic coverage, the types of variants they catalog, and their applicability to different prediction tasks. The table below summarizes key characteristics of several publicly available benchmarks.

| Benchmark Name | Primary Application | Genomic Region Coverage | Variants / Content | Key Features & Limitations |
|---|---|---|---|---|
| GIAB v.4.2.1 [7] [6] | Small variant (SNV, Indel) calling | 92.2% of GRCh38 autosomes [7] | Adds >300,000 SNVs and >50,000 Indels relative to the previous benchmark version [7] | Includes challenging medically relevant genes and segmental duplications; excludes some complex structural variants [7]. |
| GIAB CMRG [6] | Medically relevant genes | Focused on 386 genes [6] | ~17,000 SNVs; ~3,600 Indels; ~200 SVs [6] | Targets challenging, clinically important genes in repetitive/complex regions [6]. |
| G3PO [2] | Ab initio gene prediction | 1,793 genes from 147 eukaryotes [2] | N/A (Assesses exon-intron structures) | Tests complex gene structures; used to evaluate prediction programs like Augustus and GlimmerHMM [2]. |
| DNALONGBENCH [8] | Long-range DNA dependencies | Tasks span up to 1 million base pairs [8] | N/A (Assesses interactions and signals) | Evaluates five tasks like enhancer-gene interaction and 3D genome organization; shows foundation models lag behind expert models [8]. |

Experimental Protocols for Benchmarking

To ensure reliable and reproducible results, benchmarking studies follow rigorous experimental and computational protocols. The general process for creating and using a variant benchmark, synthesized from established methodologies [7] [6], is outlined in the stages that follow.

Detailed Methodologies

The creation and application of benchmarks involve several critical stages:

Sample and Sequencing: The process begins with stable, well-characterized reference cell lines, such as the GIAB's HG002 sample [6]. To mitigate technological biases, these samples are sequenced using a diverse array of platforms. This typically includes:

- Short-read sequencing (e.g., Illumina) for high base-level accuracy in calling small variants [6].

- Long-read sequencing (e.g., PacBio, Oxford Nanopore) and linked-read technologies to resolve repetitive regions and complex structural variants that are challenging for short reads [7] [6].

- High sequencing coverage on each technology, to ensure statistical confidence in the resulting calls.

Variant Calling and Integration: The sequenced data is processed through multiple bioinformatics pipelines, which involve read alignment to a reference genome and variant calling using a variety of tools [6]. An integration approach then combines these results, using expert-driven rules to determine genomic positions where each method is trusted. Regions where all methods show systematic errors or disagree without clear evidence of bias are typically excluded from the final benchmark [7].
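The integration step can be pictured as a voting scheme over call sets. The sketch below is a deliberately simplified stand-in: it keeps variants supported by a minimum number of pipelines and sets the rest aside as disputed. The actual GIAB integration relies on expert-driven rules about where each method is trusted, which this example does not model. The name `integrate_calls` and the tuple representation of a variant are assumptions.

```python
from collections import Counter
from typing import Dict, Optional, Set, Tuple

Variant = Tuple[str, int, str, str]  # (chromosome, position, ref, alt)


def integrate_calls(callsets: Dict[str, Set[Variant]],
                    min_support: Optional[int] = None):
    """Split the union of all calls into (benchmark, disputed) sets.

    A variant enters the benchmark when at least min_support pipelines
    report it; by default every pipeline must agree.
    """
    if not callsets:
        raise ValueError("at least one call set is required")
    if min_support is None:
        min_support = len(callsets)
    counts = Counter(v for calls in callsets.values() for v in set(calls))
    benchmark = {v for v, n in counts.items() if n >= min_support}
    disputed = set(counts) - benchmark
    return benchmark, disputed
```

In a real benchmark the disputed calls are not simply dropped: the regions around them are excluded from the confident regions, so that a method is neither rewarded nor penalized there.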

Manual Curation and Validation: This is a crucial step for verifying potential errors in the computational benchmark. For example, in the GIAB v.4.2.1 benchmark, variants in Long Interspersed Nuclear Elements (LINEs) that were identified as potential errors in a previous benchmark version were validated using long-range PCR followed by Sanger sequencing across multiple samples [7]. This wet-lab confirmation ensures the highest possible accuracy for the benchmark set.

The Gene Prediction Benchmarking Gap

While benchmarks for variant calling have advanced, ab initio gene prediction, including the accurate identification of gene starts, remains a formidable challenge. The G3PO benchmark, designed to evaluate ab initio prediction programs, reveals the limitations of current tools.

Performance Evaluation of Gene Prediction Tools

The G3PO benchmark was used to assess the accuracy of five widely used ab initio gene prediction programs. The results, summarized in the table below, highlight a significant performance gap on complex gene structures [2].

| Prediction Program | Reported Behavior on Complex Genes | Key Strengths | Key Weaknesses |
|---|---|---|---|
| Augustus | Variable; highly dependent on training data [2] | Generally robust across diverse eukaryotes [2] | Performance drops with increasing gene complexity and number of exons [2] |
| SNAP | Sensitive to genomic GC content [2] | Effective in specific genomic environments [2] | Accuracy decreases in genes with atypical GC content [2] |
| GlimmerHMM | Lower accuracy on genes with many exons [2] | Not specified | Struggles with predicting long genes and complex exon-intron structures [2] |
| GeneID | Lower accuracy on genes with many exons [2] | Not specified | Struggles with predicting long genes and complex exon-intron structures [2] |
| Genscan | Lower accuracy on genes with many exons [2] | Not specified | Struggles with predicting long genes and complex exon-intron structures [2] |

A critical finding from the G3PO evaluation was that 69% of the confirmed benchmark protein sequences were not predicted with 100% accuracy by all five programs [2]. This illustrates how far existing tools remain from reliably handling gene structures that are biologically complex yet common.

The Scientist's Toolkit: Essential Research Reagents

Leveraging genomic benchmarks requires a suite of well-characterized reagents and computational resources. The following table details key materials essential for work in this field.

| Reagent / Resource | Function in Benchmarking | Example Sources |
|---|---|---|
| Reference DNA Sample | Provides a ground truth source for sequencing and method validation; available as immortalized cell lines or purified DNA. | GIAB Consortium (e.g., HG002), Coriell Institute [6] |
| Benchmark Variant Call Set (VCF) | The core set of curated variants (SNVs, Indels, SVs) used as the "truth set" for evaluating a new method's calls. | GIAB FTP Repository [7] [6] |
| Benchmark Regions (BED Files) | Defines the genomic coordinates where the benchmark is considered reliable; essential for calculating accurate performance metrics. | GIAB Stratification Files [7] [6] |
| Benchmarking Tools | Software that compares a new set of variant calls against the benchmark, generating standardized precision and recall metrics. | GA4GH Benchmarking Tool [7] |
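The role of the benchmark regions in the table above is easiest to see in code: only calls that fall inside the confident regions are scored. The following is a minimal sketch, assuming exact-match comparison of variants and 0-based half-open region coordinates as in BED files. Production benchmarking tools also reconcile different representations of the same variant, which is not attempted here. The function names are hypothetical.

```python
from typing import Iterable, List, Set, Tuple

Variant = Tuple[str, int, str, str]  # (chromosome, 0-based position, ref, alt)
Region = Tuple[str, int, int]        # (chromosome, start, end), half-open


def in_regions(variant: Variant, regions: Iterable[Region]) -> bool:
    """True when the variant position lies inside any benchmark region."""
    chrom, pos = variant[0], variant[1]
    return any(c == chrom and start <= pos < end for c, start, end in regions)


def precision_recall(query: Set[Variant], truth: Set[Variant],
                     regions: List[Region]) -> Tuple[float, float, float]:
    """Return (precision, recall, F1), restricted to the benchmark regions."""
    q = {v for v in query if in_regions(v, regions)}
    t = {v for v in truth if in_regions(v, regions)}
    tp = len(q & t)
    fp = len(q - t)
    fn = len(t - q)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return precision, recall, f1
```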

The journey toward comprehensive genomic benchmarks has made remarkable progress, with resources like GIAB v.4.2.1 and CMRG now enabling the validation of variant calls in previously inaccessible but clinically vital regions [7] [6]. However, a pronounced benchmarking gap remains. Evaluations on suites like G3PO for gene prediction and DNALONGBENCH for long-range interactions demonstrate that current bioinformatics methods are still not fully equipped to handle the complexity of eukaryotic genomes [2] [8]. Closing this gap requires a continued community effort, integrating more diverse sequencing technologies, advanced assembly methods, and rigorous manual curation. Only by refining these essential yardsticks can we drive the development of next-generation tools capable of unlocking the complete functional landscape of the human genome for research and medicine.

In computational biology, the accurate prediction of gene starts remains a significant challenge, with the performance of prediction tools varying substantially across different genomic contexts [2] [1]. The establishment of robust, standardized benchmarks is crucial for driving progress in this and other complex computational fields. This guide explores two highly successful benchmarking initiatives from adjacent domains—the Critical Assessment of protein Structure Prediction (CASP) in structural biology and the ImageNet Large Scale Visual Recognition Challenge (ILSVRC) in computer vision. By examining their methodologies, quantitative outcomes, and organizational principles, we aim to extract transferable strategies for advancing the benchmarking of gene start prediction accuracy on verified datasets.

The CASP Benchmarking Model in Structural Biology

Experimental Design and Protocol

The Critical Assessment of protein Structure Prediction (CASP) is a community-wide, blind experiment established to objectively assess the state of the art in protein structure prediction [9]. Its rigorous protocol is built on several key components:

- Blind Prediction Experiment: CASP organizers release amino acid sequences of recently solved but unpublished protein structures. Prediction teams worldwide submit their models within a specified deadline, without access to the experimental structures [9].

- Independent Assessment: A separate team of assessors evaluates the submitted predictions against the experimental structures using objective metrics, ensuring impartiality [9].

- Standardized Evaluation Metrics: Multiple quantitative metrics are employed to evaluate different aspects of prediction accuracy, including:

  - GDT_TS (Global Distance Test Total Score): Measures the overall fold similarity, with scores ranging from 0 to 100, where higher values indicate better accuracy [9].

  - Interface Contact Score (ICS/F1): Specifically assesses the accuracy of multimeric complex interfaces [9].

  - LDDT (Local Distance Difference Test): Evaluates local structure quality [9].
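As an illustration of how a standardized metric turns a structural comparison into a single number, GDT_TS is the average, over distance cutoffs of 1, 2, 4, and 8 Å, of the percentage of residues whose model position lies within the cutoff of the experimental position. The sketch below assumes the per-residue distances have already been computed from one fixed superposition. The official procedure searches over many superpositions to maximize the count at each cutoff, so this simplified version can only underestimate the true score.

```python
from typing import Sequence


def gdt_ts(distances: Sequence[float],
           cutoffs: Sequence[float] = (1.0, 2.0, 4.0, 8.0)) -> float:
    """Approximate GDT_TS (0-100) from per-residue distances in angstroms
    between model and experimental positions under one superposition."""
    if not distances:
        raise ValueError("at least one residue distance is required")
    n = len(distances)
    fractions = [sum(d <= c for d in distances) / n for c in cutoffs]
    return 100.0 * sum(fractions) / len(fractions)
```

For distances of 0.5, 1.5, 3.0, and 9.0 Å, the fractions within each cutoff are 1/4, 2/4, 3/4, and 3/4, giving a score of 56.25.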

Quantifiable Impact and Progress

The table below summarizes key performance breakthroughs documented through the CASP experiment:

Table 1: Key Performance Milestones in CASP History

| CASP Edition | Key Methodological Advance | Quantitative Improvement | Biological Impact |
|---|---|---|---|
| CASP13 (2018) | Use of advanced deep learning with residue-residue distance prediction [9] | 20%+ increase in backbone accuracy for template-free models (GDT_TS from 52.9 to 65.7) [9] | Significant advance in the most challenging prediction category |
| CASP14 (2020) | Emergence of AlphaFold2 deep learning method [9] | ~2/3 of targets reached GDT_TS >90 (competitive with experimental accuracy) [9] | Four experimental structures solved with AlphaFold2 model assistance [9] |
| CASP15 (2022) | Extension of deep learning to multimeric modeling [9] | Accuracy of multimeric models doubled (ICS metric) compared to CASP14 [9] | Enabled accurate reproduction of oligomeric complex structures [9] |

The trajectory of progress in CASP demonstrates how standardized benchmarking accelerates methodological innovation. From 2014 to 2016, the backbone accuracy of submitted models improved more than in the preceding 10 years, with the next CASP continuing this trend [9].

The ImageNet Benchmark in Computer Vision

Challenge Design and Evaluation Framework

The ImageNet Large Scale Visual Recognition Challenge (ILSVRC) was designed to evaluate algorithms for object detection and image classification at scale [10]. Its core components include:

- Curated Dataset: The challenge provided a dataset of over 1 million images, each labeled with objects from 1000 categories, ensuring a diverse and comprehensive testbed [10].

- Annual Competition Cycle: A yearly cycle of challenges, workshops, and publications created a rhythm of innovation, assessment, and knowledge sharing [10].

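- Standardized Tasks and Metrics: The challenge focused on two main tasks, image classification (scored by top-1 and top-5 error rates) and object detection (scored by mean average precision) [10].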

Catalyzing Architectural Innovation

ILSVRC served as a catalyst for groundbreaking architectural advances in deep learning. The competition track record reveals a direct correlation between benchmark participation and model evolution:

Table 2: Model Evolution and Performance on ImageNet

| Model Era | Exemplary Architecture | Key Innovation | Reported Top-5 Error |
|---|---|---|---|
| Pre-ILSVRC | Traditional computer vision | Hand-engineered features | High error rates (>25%) |
| Early Deep Learning | AlexNet (2012) | Successful application of deep convolutional networks [11] | 16.4% [10] |
| Architecture Evolution | ResNet, ViT, ConvNeXt | Residual connections, attention mechanisms, modernized ConvNets [11] | ~3% (surpassing human-level performance) |

The benchmark's impact extended beyond raw accuracy, spurring investigation into model properties like robustness, calibration, and transferability [11]. This encouraged the development of models that were not only accurate on the benchmark but also effective in real-world applications.

Comparative Analysis: Core Success Principles

The sustained success of both CASP and ImageNet stems from shared foundational principles, visualized in the workflow below:

[Figure not reproduced: "Core Benchmarking Workflow"]

Standardized Evaluation Metrics

Both initiatives established quantitative, reproducible metrics that enabled direct comparison between methods and tracking of progress over time:

- CASP: Employed a suite of metrics including GDT_TS, LDDT, and ICS, each targeting different aspects of structural accuracy [9].

- ImageNet: Used top-1 and top-5 error rates for classification, and mean average precision for detection tasks [10].
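The top-k error used for classification is simple to state precisely: a prediction counts as a miss when the true label is absent from the model's k highest-ranked guesses. A minimal sketch follows, with the hypothetical name `top_k_error` and predictions supplied as ranked label lists.

```python
from typing import Hashable, Sequence


def top_k_error(ranked_predictions: Sequence[Sequence[Hashable]],
                labels: Sequence[Hashable], k: int = 5) -> float:
    """Fraction of examples whose true label is missing from the first k
    entries of the ranked prediction list (best guess first)."""
    if len(ranked_predictions) != len(labels) or not labels:
        raise ValueError("need one non-empty ranked list per label")
    misses = sum(label not in ranked[:k]
                 for ranked, label in zip(ranked_predictions, labels))
    return misses / len(labels)
```

An analogous relaxed metric for gene start prediction could count a prediction as correct when the verified start is among a tool's k best-scoring candidate codons, which separates ranking failures from candidate-generation failures.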

The evolution of these metrics is noteworthy. As initial metrics became saturated (e.g., ImageNet classification accuracy), both communities developed more nuanced evaluations—CASP introduced new categories like multimeric modeling [9], while computer vision researchers investigated model robustness, calibration, and error types [11].

Blind Assessment and Independent Verification

The blind evaluation paradigm is central to both frameworks:

- In CASP, predictors submit models for protein sequences whose structures are known but unpublished, preventing targeted tuning to specific targets [9].

- In ImageNet, evaluation is performed on a sequestered test set with labels inaccessible to participants, ensuring honest assessment [10].

This approach eliminates conscious or unconscious overfitting and provides a genuine measure of methodological generalization.

Application to Gene Start Prediction Benchmarking

Current State and Challenges in Gene Prediction

Existing benchmarks like G3PO have revealed significant challenges in gene prediction. Recent evaluations show that ab initio gene structure prediction remains difficult, with 68% of exons and 69% of confirmed protein sequences not predicted with 100% accuracy by all five major prediction programs tested [2]. The problem is particularly acute for complex gene structures, with performance varying substantially across different phylogenetic groups [2].

The historical approach of using the "longest ORF" rule for gene start annotation has demonstrated limited accuracy, with theoretical estimates suggesting approximately 75% accuracy under equal nucleotide frequency assumptions [1]. Empirical data from prokaryotic genomes shows that the percentage of genes whose annotated starts are not at the 5' end of the longest ORF ranges from 0% to 25% across different species [1].

Proposed Benchmarking Framework

Building on the success of CASP and ImageNet, we propose a framework for benchmarking gene start prediction:

Table 3: Transferable Principles for Gene Start Prediction Benchmarking

| Principle | CASP Example | ImageNet Example | Application to Gene Start Prediction |
|---|---|---|---|
| Blind Assessment | Prediction on unpublished structures [9] | Evaluation on sequestered test set [10] | Reserve experimentally verified gene starts from diverse organisms for testing |
| Standardized Metrics | GDT_TS, LDDT, ICS [9] | Top-1/Top-5 error rates [10] | Develop metrics for start codon, RBS, and full 5' UTR accuracy |
| Community Engagement | Regular experiments with workshops [9] | Annual challenges with workshops [10] | Establish regular assessment cycles with results dissemination |
| Task Diversity | Categories for different prediction types [9] | Classification, detection, localization tasks [10] | Include prokaryotic/eukaryotic, typical/atypical start codons |
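The blind-assessment principle in Table 3 implies a sequestered test set. One simple way to build it, sketched below under stated assumptions, is to hold out whole organisms rather than individual genes, so that a tool cannot benefit from having seen other genes of the same genome. The hashing scheme, the `organism` record field, the salt string, and the 20% default are all illustrative choices, not part of any existing benchmark.

```python
import hashlib
from typing import Dict, Iterable, List, Tuple


def is_sequestered(organism: str, holdout_fraction: float = 0.2,
                   salt: str = "gene-start-benchmark") -> bool:
    """Deterministically assign an organism to the sequestered set by
    hashing its name into [0, 1) and comparing with holdout_fraction."""
    digest = hashlib.sha256(f"{salt}:{organism}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000
    return bucket < holdout_fraction


def split_by_organism(records: Iterable[Dict],
                      holdout_fraction: float = 0.2
                      ) -> Tuple[List[Dict], List[Dict]]:
    """Split verified-start records into (public, sequestered) lists,
    keeping every record of a given organism on the same side."""
    public, sequestered = [], []
    for record in records:
        if is_sequestered(record["organism"], holdout_fraction):
            sequestered.append(record)
        else:
            public.append(record)
    return public, sequestered
```

Keeping the salt private to the assessors would prevent participants from reconstructing which organisms are held out.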

The following diagram illustrates how these principles can be integrated into a coherent benchmarking workflow for gene start prediction:

[Figure not reproduced: "Gene Start Prediction Benchmarking Workflow"]

The Scientist's Toolkit: Essential Research Reagents

The table below outlines key computational resources and datasets essential for implementing rigorous benchmarking of gene start prediction:

Table 4: Essential Research Reagents for Gene Prediction Benchmarking

| Resource Type | Specific Examples | Function in Benchmarking | Key Characteristics |
|---|---|---|---|
| Verified Datasets | G3PO benchmark [2] | Provides curated set of real eukaryotic genes from diverse organisms for method evaluation | Contains 1,793 reference genes from 147 species with varying complexity [2] |
| Ab Initio Prediction Tools | GeneMarkS, GlimmerHMM, Augustus [2] [1] | Baseline methods for comparative performance assessment | GeneMarkS uses self-training HMM for start prediction; achieved 83.2-94.4% accuracy in validation [1] |
| Evaluation Metrics | Exon-level accuracy, Protein-level accuracy [2] | Quantifies prediction performance at different biological scales | Measures sensitivity/specificity for start sites, contextual accuracy in genomic environment |
| Genomic Context Data | Upstream/downstream sequences [2] | Enables evaluation of regulatory region prediction | Provides 150-10,000 nt flanking regions for realistic assessment |

The remarkable success of CASP in driving the protein structure prediction revolution and ImageNet in accelerating computer vision progress provides a powerful blueprint for advancing the field of gene start prediction. Their shared principles—blind assessment, standardized metrics, regular community-wide evaluation, and public dissemination of results—offer a proven pathway for establishing authoritative benchmarks that not only measure but actively accelerate scientific progress. By adapting these principles to the specific challenges of gene start annotation, the research community can establish benchmarks that catalyze similar breakthroughs, ultimately enhancing the accuracy of genome annotation and expanding our understanding of genomic regulation.

For decades, the "longest open reading frame (ORF)" rule has served as a fundamental heuristic for initial gene prediction in computational genomics. This method identifies the longest contiguous sequence between a start and stop codon as the most likely protein-coding region. While computationally straightforward and useful for preliminary annotations, this approach suffers from significant limitations in accuracy, particularly for alternative splicing variants, non-canonical start sites, and genes with complex structures. As genomics advances into an era of precision medicine and therapeutic development, researchers require more sophisticated tools capable of accurately identifying true coding sequences amid complex transcriptional landscapes.

The establishment of verified experimental data as a gold standard represents a paradigm shift in how we benchmark gene prediction tools. This approach moves beyond computational convenience to biological accuracy, enabling the development of models that can discern genuine coding potential with remarkable precision. This comparison guide examines how modern computational methods, particularly ORFhunteR, are leveraging verified datasets to surpass the limitations of traditional rules-based approaches, providing researchers and drug development professionals with more reliable tools for genomic annotation.

Performance Comparison: Quantitative Benchmarking Against Traditional Methods

Modern ORF prediction tools report high accuracy when validated on curated reference annotations, whereas the longest ORF rule has rarely been evaluated systematically, which makes a direct numerical comparison difficult. The table below summarizes the available performance figures for ORFhunteR alongside the traditional approach:

Table 1: Performance Comparison of ORF Prediction Methods

| Method | Accuracy | Approach | Key Features | Validation Dataset |
|---|---|---|---|---|
| Longest ORF Rule | Not quantified | Heuristic-based | Identifies longest ATG-to-stop sequence | Limited systematic validation |
| ORFhunteR | 94.9% (RefSeq), 94.6% (Ensembl) | Machine learning | Vectorization of nucleotide sequences followed by random forest classification | Human mRNA molecules from NCBI RefSeq and Ensembl [12] |

The performance advantage of ORFhunteR stems from its multi-faceted approach to sequence analysis. Unlike the longest ORF method, which relies on a single structural feature, ORFhunteR employs a comprehensive feature extraction process that evaluates multiple sequence characteristics simultaneously [12]. This enables the model to discern subtle patterns indicative of genuine coding potential that would be overlooked by rules-based methods.

Experimental Protocols and Methodologies

ORFhunteR's Machine Learning Framework

ORFhunteR employs a sophisticated computational workflow that transforms raw nucleotide sequences into accurately annotated ORFs through multiple processing stages:

Figure 1: ORFhunteR's machine learning workflow for ORF prediction.

The methodology employs several key technical innovations:

Sequence Vectorization: The approach transforms nucleotide sequences into numerical features that capture essential characteristics of genuine coding regions [12]. This process converts biological sequences into a format amenable to machine learning algorithms while preserving critical discriminatory information.

Random Forest Classification: The core of ORFhunteR utilizes an ensemble learning method that constructs multiple decision trees during training and outputs the mode of their classes for classification tasks [12]. This approach reduces overfitting and enhances generalization compared to single-model classifiers.

Experimental Validation Framework: The model was rigorously validated on human mRNA molecules from the NCBI RefSeq and Ensembl databases, establishing verified ORF annotations as the gold standard for benchmarking prediction accuracy [12].
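
To make the first two steps concrete, the sketch below vectorizes a sequence as k-mer frequencies and combines the votes of several classifiers by taking the mode. It is a toy illustration only: the k-mer features, the helper names `kmer_vector`, `majority_vote`, and `make_stump`, and the hand-written threshold rules are assumptions for demonstration, not ORFhunteR's actual feature set or trained forest.

```python
from collections import Counter
from itertools import product

def kmer_vector(seq, k=2):
    """Map a nucleotide sequence to a fixed-length vector of k-mer frequencies."""
    seq = seq.upper()
    kmers = ["".join(p) for p in product("ACGT", repeat=k)]
    counts = Counter(seq[i:i + k] for i in range(len(seq) - k + 1))
    total = sum(counts[km] for km in kmers)
    return [counts[km] / total if total else 0.0 for km in kmers]

def majority_vote(votes):
    """Return the most common class label (the mode of the individual votes)."""
    return Counter(votes).most_common(1)[0][0]

def make_stump(index, threshold):
    """Toy stand-in for a decision tree: a single threshold on one feature.

    A real random forest learns its split features and thresholds from training data.
    """
    return lambda vec: "coding" if vec[index] > threshold else "non-coding"

# Hypothetical feature indices and thresholds, chosen only for illustration.
stumps = [make_stump(0, 0.05), make_stump(5, 0.05), make_stump(10, 0.05)]
vec = kmer_vector("ATGGCCAAGCTGACCGGA")
print(len(vec), majority_vote([stump(vec) for stump in stumps]))
```

The fixed vector length (4^k entries) is what allows sequences of different lengths to be fed to the same classifier.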

Benchmarking Experimental Design

Robust benchmarking in genomics requires standardized evaluation frameworks. While ORFhunteR established its accuracy against verified datasets, broader benchmarking initiatives in genomics provide models for comprehensive tool assessment:

Table 2: Key Methodological Considerations for ORF Prediction Benchmarking

| Aspect | Traditional Approach | Verified Data Approach |
|---|---|---|
| Reference Data | Computational predictions | Experimentally verified ORFs |
| Evaluation Metrics | Length-based heuristics | Accuracy, precision, recall against gold standard |
| Feature Space | Single feature (length) | Multi-dimensional feature vectors |
| Validation Framework | Limited cross-validation | k-fold cross-validation on verified datasets |
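
The k-fold cross-validation listed in the table can be sketched without any external library. The helper name `k_fold_indices` and the default of five folds are assumptions made for illustration; in practice a library implementation would normally be used.

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs so that every sample is tested exactly once."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)          # fixed seed keeps the split reproducible
    folds = [idx[i::k] for i in range(k)]     # k roughly equal-sized folds
    for i, test in enumerate(folds):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, test

for train, test in k_fold_indices(10, k=5):
    print(len(train), len(test))
```

Each verified ORF is held out exactly once, so the averaged score reflects performance on examples the model did not see during training.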

The DNALONGBENCH suite exemplifies this rigorous approach to genomic benchmark development, emphasizing biological significance, long-range dependency modeling, task difficulty, and task diversity as essential criteria for meaningful evaluation [3]. Similar principles apply to ORF prediction, where verified datasets enable comprehensive assessment of model performance across diverse genomic contexts.

Implementing and validating advanced ORF prediction methods requires specific computational resources and biological data. The following table details key components of the research toolkit for gene prediction studies:

Table 3: Essential Research Toolkit for ORF Prediction and Validation

| Resource Category | Specific Examples | Function in Research |
|---|---|---|
| Genomic Databases | NCBI RefSeq, Ensembl | Provide verified mRNA sequences and annotations for model training and validation [12] |
| Software Tools | ORFhunteR (R/Bioconductor package) | Implements machine learning approach for ORF identification [12] |
| Programming Environments | R/Bioconductor | Provides computational environment for genomic analysis [12] |
| Validation Frameworks | k-fold cross-validation | Assesses model performance and generalizability [12] |
| Benchmarking Suites | DNALONGBENCH | Standardized resources for evaluating genomic prediction tasks [3] |

The integration of these resources enables a comprehensive workflow from initial sequence analysis to final model validation. The availability of ORFhunteR as both an R/Bioconductor package and online tool increases accessibility for researchers with varying computational backgrounds [12].

Implications for Research and Therapeutic Development

Accurate gene prediction has far-reaching implications across biological research and pharmaceutical development. The transition from heuristic methods to verified data-driven approaches represents a critical advancement with several important applications:

Enhanced Genome Annotation: Improved ORF prediction directly contributes to more comprehensive and accurate genome annotations, facilitating the discovery of novel protein-coding genes and alternative splicing variants that may have been overlooked by traditional methods.

Drug Target Identification: In pharmaceutical research, accurately identifying coding regions is essential for target validation. Machine learning approaches like ORFhunteR reduce false positives in coding sequence identification, providing greater confidence in potential therapeutic targets [12].

Functional Genomics Studies: High-quality ORF predictions enable more reliable functional characterization of genes, particularly for poorly annotated genomes or newly sequenced organisms where experimental data is limited.

The broader context of benchmarking in genomics, as exemplified by initiatives like DNALONGBENCH, highlights the importance of standardized evaluation across multiple biological tasks including enhancer-target gene interaction, expression quantitative trait loci, 3D genome organization, regulatory sequence activity, and transcription initiation signals [3]. This comprehensive approach to model assessment ensures that computational tools meet the rigorous demands of modern genomic research and therapeutic development.

The movement toward verified data as a gold standard represents a fundamental shift in genomic computational methods, replacing convenient heuristics with biologically validated benchmarks. ORFhunteR's machine learning framework, achieving approximately 95% accuracy through rigorous validation on reference datasets, demonstrates the significant advantages of this approach over traditional methods like the longest ORF rule [12].

As the field advances, the integration of diverse biological features—including sequence composition, evolutionary conservation, and functional genomic signals—will further enhance prediction accuracy. The establishment of comprehensive benchmarking suites across multiple genomic tasks provides a robust foundation for evaluating these emerging tools [3]. For researchers and drug development professionals, these advancements translate to more reliable genomic annotations, accelerating the discovery of novel genes and potential therapeutic targets with greater confidence in computational predictions.

The accurate interpretation of genomic information represents one of the most significant challenges in modern biology. As high-throughput technologies generate increasingly vast amounts of biological data, researchers face the critical task of distinguishing true biological signals from computational artifacts. This challenge is particularly acute in genomics, where the complex nature of gene regulation and the multifactorial influences on phenotypic outcomes complicate the establishment of reliable benchmarks. Community-driven benchmarking efforts have emerged as essential mechanisms for addressing these challenges, providing standardized frameworks that enable rigorous comparison of computational methods and help establish consensus around foundational biological truths. These collaborative initiatives harness the collective expertise of the scientific community to create evaluation resources that no single research group could develop independently, thereby accelerating methodological progress and enhancing the reproducibility of genomic findings.

The development of robust benchmarks has become increasingly important with the proliferation of artificial intelligence and deep learning models in genomics. DNA foundation models—sophisticated neural networks pre-trained on large-scale genomic datasets—have demonstrated remarkable potential for predicting various biological functions directly from sequence data. However, as these models grow in complexity and capability, the scientific community requires standardized methods to evaluate their performance objectively, particularly for tasks involving long-range genomic interactions that span hundreds of thousands to millions of base pairs. This article explores how community-driven benchmarks are addressing these needs and establishing ground truth in genomic research through carefully designed challenges and evaluation frameworks.

The Benchmarking Imperative in Genomics

The establishment of reliable benchmarks in genomics faces unique challenges distinct from those in other domains of computational biology. Genomic elements exhibit tremendous variability across biological contexts, cell types, and species, making it difficult to define universal ground truths. Furthermore, the functional characterization of genomic elements often depends on indirect measurements rather than direct observational data, introducing additional layers of complexity to validation approaches. Prior to the emergence of structured community benchmarks, the field suffered from fragmented evaluation practices where researchers typically assessed new methods using different datasets, metrics, and validation protocols, making meaningful comparisons across studies nearly impossible.

Community-driven benchmarks address these challenges by providing standardized datasets, uniform evaluation metrics, and systematic comparison frameworks that enable direct assessment of methodological performance. These resources allow researchers to identify the strengths and limitations of various approaches, guide methodological improvements, and establish consensus around the state of the art in specific genomic prediction tasks. The development of these benchmarks often involves substantial effort in curating high-quality experimental data, defining appropriate negative controls, and implementing rigorous validation strategies that ensure the resulting resources accurately reflect biological reality.

Table 1: Foundational Genomic Benchmarking Initiatives

| Benchmark Name | Primary Focus | Input Sequence Length | Key Tasks | Notable Features |
|---|---|---|---|---|
| DNALONGBENCH | Long-range DNA dependencies | Up to 1 million base pairs | Enhancer-target gene interaction, 3D genome organization, eQTL, regulatory activity, transcription initiation | Includes base-pair-resolution regression and 2D tasks [8] [3] |
| G3PO | Gene and protein prediction | Varies with gene length | Ab initio gene structure prediction | Covers 1,793 genes from 147 phylogenetically diverse organisms [13] |
| BENGI | Enhancer-gene interactions | Dependent on enhancer-promoter distance | Linking enhancers to target genes | Integrates cCREs with experimental interactions from multiple technologies [14] |
| DNA Foundation Model Benchmark | DNA foundation model evaluation | Model-dependent | Sequence classification, gene expression prediction, variant effect quantification | Compares five foundation models across 57 datasets [15] |

Major Community Benchmarking Initiatives

DNALONGBENCH: Establishing Standards for Long-Range Genomic Dependencies

DNALONGBENCH represents a significant advancement in the benchmarking of long-range genomic interactions, addressing a critical gap in existing evaluation resources. This comprehensive benchmark suite encompasses five distinct biological tasks that require modeling dependencies across genomic distances up to one million base pairs: (1) enhancer-target gene interaction prediction, (2) expression quantitative trait loci (eQTL) classification, (3) 3D genome organization through contact map prediction, (4) regulatory sequence activity quantification, and (5) transcription initiation signal identification [8] [3].

The development of DNALONGBENCH followed rigorous design principles to ensure biological relevance and methodological challenge. Each task was selected based on biological significance, demonstrated long-range dependencies, appropriate task difficulty, and diversity in task types, including both classification and regression problems with varying dimensionalities (1D and 2D) and resolution levels (binned, nucleotide-wide, and sequence-wide) [8]. This careful design ensures that the benchmark comprehensively evaluates model capabilities across multiple aspects of long-range genomic function.

In benchmark evaluations, specialized expert models consistently outperformed both convolutional neural networks and fine-tuned DNA foundation models across all five tasks. For example, in contact map prediction—a particularly challenging task that requires modeling the three-dimensional architecture of chromatin—highly parameterized expert models demonstrated substantially better performance than general-purpose approaches [3]. This performance gap highlights both the current limitations of generalizable models and the value of benchmarks in identifying areas for future methodological development.

G3PO: Benchmarking Gene Prediction Accuracy

The G3PO (benchmark for Gene and Protein Prediction PrOgrams) initiative addresses the critical challenge of accurately predicting gene structures in eukaryotic genomes, particularly as new sequencing technologies produce increasingly complex draft assemblies. This benchmark was constructed from a carefully validated set of 1,793 real eukaryotic genes from 147 phylogenetically diverse organisms, representing a wide spectrum of gene structures from single-exon genes to those with over 20 exons [13].

A key innovation in G3PO is its classification of genes into 'Confirmed' and 'Unconfirmed' categories based on rigorous multiple sequence alignment analysis that identifies potentially problematic sequence segments indicative of annotation errors. This quality control process ensures that the benchmark reflects the challenges of real-world gene prediction while maintaining high standards for reliability. The benchmark also incorporates genomic sequences with varying flanking regions (150 to 10,000 nucleotides upstream and downstream) to simulate realistic genome annotation scenarios where gene boundaries are not precisely known [13].

Evaluation using G3PO revealed the substantial challenges facing ab initio gene prediction methods, with even state-of-the-art tools failing to achieve perfect accuracy on a significant majority of test cases. Notably, approximately 68% of exons and 69% of confirmed protein sequences were not predicted with 100% accuracy by all five evaluated gene prediction programs [13]. These findings underscore the difficulty of gene prediction and the value of comprehensive benchmarks in driving methodological improvements.

BENGI: Ground Truth for Enhancer-Gene Interactions

The BENGI (Benchmark of candidate Enhancer-Gene Interactions) platform addresses the critical need to connect candidate cis-regulatory elements with their target genes, a fundamental challenge in functional genomics. BENGI integrates the Registry of candidate cis-Regulatory Elements (cCREs) with experimentally derived genomic interactions from multiple technologies, including ChIA-PET, Hi-C, CHi-C, eQTL, and CRISPR/dCas9 perturbations [14].

This integration creates a comprehensive benchmark comprising over 162,000 unique cCRE-gene pairs across 13 biosamples. A particularly important aspect of BENGI's design is its handling of ambiguous assignments in 3D chromatin interaction data, where interaction anchors may overlap with multiple gene promoters. The benchmark provides both inclusive datasets that retain all cCRE-gene links and refined datasets that remove these ambiguous pairs, allowing researchers to assess the impact of assignment certainty on method performance [14].

Statistical analyses of BENGI datasets revealed that different experimental techniques capture distinct aspects of enhancer-gene interactions. For example, eQTL datasets showed higher overlap coefficients with RNAPII ChIA-PET and CHi-C datasets (0.20-0.36) than with Hi-C and CTCF ChIA-PET datasets (0.01-0.05), reflecting the promoter-focused nature of the former techniques compared to the more comprehensive chromatin interaction mapping of the latter [14]. This understanding helps researchers select appropriate benchmarks based on the specific biological questions they are addressing.
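
For readers unfamiliar with the statistic, the sketch below computes an overlap coefficient between two sets of cCRE-gene pairs. It assumes the standard definition (shared items divided by the size of the smaller set); the pair identifiers are invented placeholders, not BENGI data.

```python
def overlap_coefficient(a, b):
    """Overlap coefficient: |A ∩ B| / min(|A|, |B|)."""
    a, b = set(a), set(b)
    if not a or not b:
        raise ValueError("both sets must be non-empty")
    return len(a & b) / min(len(a), len(b))

# Hypothetical (cCRE, gene) pairs from two assays.
eqtl_pairs = {("cCRE1", "GENE_A"), ("cCRE2", "GENE_B"), ("cCRE3", "GENE_C")}
chic_pairs = {("cCRE2", "GENE_B"), ("cCRE3", "GENE_C"),
              ("cCRE4", "GENE_D"), ("cCRE5", "GENE_E")}
print(overlap_coefficient(eqtl_pairs, chic_pairs))
```

Normalizing by the smaller set keeps the statistic interpretable when two assays yield very different numbers of pairs.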

Experimental Protocols and Evaluation Methodologies

Standardized Assessment Frameworks

Community benchmarks employ rigorous experimental protocols to ensure fair and informative comparisons of computational methods. The DNA foundation model benchmark, for example, utilizes a standardized evaluation pipeline that generates zero-shot embeddings from pre-trained models, splits samples into training and testing sets, trains classifiers on these embeddings, and reports performance on the test set [15]. This approach minimizes biases introduced by different fine-tuning strategies and enables direct comparison of the intrinsic capabilities of various models.

A critical finding from this benchmarking effort was the substantial impact of embedding strategies on model performance. The evaluation revealed that mean token embedding consistently outperformed other pooling methods, such as sentence-level summary tokens or maximum pooling, across most models and datasets [15]. For instance, mean token embedding improved AUC scores by an average of 4.0% for DNABERT-2, 6.8% for Nucleotide Transformer, and 8.7% for HyenaDNA across binary classification tasks. This discovery has important implications for both benchmark design and practical applications, as it demonstrates how technical implementation choices can significantly influence perceived model performance.
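
The three pooling strategies compared above differ only in how per-token vectors are collapsed into one sequence-level vector. The sketch below shows the idea on plain Python lists. It assumes that padding tokens have already been removed and that the summary token sits at index 0, which depends on the model; the function names are hypothetical.

```python
def mean_pool(token_embeddings):
    """Average each embedding dimension over all tokens."""
    n = len(token_embeddings)
    return [sum(col) / n for col in zip(*token_embeddings)]

def max_pool(token_embeddings):
    """Take the maximum of each embedding dimension over all tokens."""
    return [max(col) for col in zip(*token_embeddings)]

def summary_token(token_embeddings, index=0):
    """Use a single designated token (assumed here to be the first) as the summary."""
    return list(token_embeddings[index])

# Two tokens with two-dimensional embeddings (toy values).
tokens = [[1.0, 2.0], [3.0, 6.0]]
print(mean_pool(tokens), max_pool(tokens), summary_token(tokens))
```

In the evaluation pipeline described above, the pooled vector is what the downstream classifier is trained on, so this choice affects every reported score.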

Performance Metrics and Evaluation Criteria

Genomic benchmarks employ diverse evaluation metrics tailored to specific biological tasks and data types. These include:

- Area Under the Receiver Operating Characteristic Curve (AUROC): Used for binary classification tasks such as enhancer-target gene interaction prediction and eQTL classification [8]

- Pearson Correlation Coefficient (PCC): Applied to regression tasks including regulatory sequence activity and transcription initiation signal prediction [8]

- Stratum-Adjusted Correlation Coefficient (SCC): Utilized for evaluating contact map predictions where data exhibits strong spatial autocorrelation [8]

- Semantic Similarity Measures: Employed in benchmarks like GeneAgent to evaluate the functional relevance of generated biological process names [16]

The selection of appropriate metrics is crucial for meaningful benchmarking, as different metrics capture distinct aspects of model performance. For example, while AUROC provides a comprehensive view of classification performance across all threshold values, precision-recall curves may be more informative for imbalanced datasets where positive cases are rare.
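
Two of the metrics listed above are simple enough to write out directly. The sketch below computes AUROC as the probability that a randomly chosen positive outscores a randomly chosen negative (ties count one half) and the Pearson correlation coefficient from its definition. It is an unoptimized illustration; SCC is omitted because it requires stratifying contact maps by genomic distance.

```python
import math

def auroc(labels, scores):
    """AUROC via pairwise comparison of positive and negative scores."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

print(auroc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))
print(pearson([1, 2, 3], [2, 4, 6]))
```

The pairwise form of AUROC runs in quadratic time, which is acceptable for small examples; rank-based implementations are preferable for large benchmarks.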

Table 2: Performance Comparison of Model Types on DNALONGBENCH Tasks

| Task | Expert Models | DNA Foundation Models | CNN Models | Performance Gap |
|---|---|---|---|---|
| Enhancer-target gene prediction | 0.803 (ABC Model) | 0.602-0.681 | 0.612 | 17.9-33.4% |
| Contact map prediction | 0.841 (Akita) | 0.108-0.132 | 0.042 | 84.3-86.1% |
| eQTL prediction | 0.702 (Enformer) | 0.569-0.587 | 0.551 | 16.4-23.4% |
| Regulatory sequence activity | 0.782 (Enformer) | 0.381-0.435 | 0.432 | 44.4-51.3% |
| Transcription initiation signal | 0.733 (Puffin-D) | 0.108-0.132 | 0.042 | 81.9-84.3% |

Visualization of Benchmarking Workflows

Benchmark Development and Evaluation Process

The following diagram illustrates the standardized workflow for developing and evaluating genomic benchmarks, from data collection to method comparison:

Community Benchmark Development Workflow: This diagram illustrates the standardized process for creating and utilizing genomic benchmarks, from initial data collection to final performance analysis and methodological insights.

Essential Research Reagents and Computational Tools

The development and application of genomic benchmarks rely on a diverse set of computational tools and data resources that enable rigorous evaluation of methodological performance. The following table details key components of the benchmarking toolkit:

Table 3: Essential Research Reagents and Computational Tools for Genomic Benchmarking

| Resource Type | Examples | Primary Function | Relevance to Benchmarking |
|---|---|---|---|
| Experimental Data Sources | ENCODE cCREs, GTEx eQTLs, Hi-C data | Provide ground truth data for benchmark construction | Supply validated biological interactions for positive examples [14] |
| DNA Foundation Models | DNABERT-2, Nucleotide Transformer, HyenaDNA, Caduceus | Generate sequence embeddings and predictions | Serve as benchmark targets for evaluating pre-trained models [15] [17] |
| Specialized Expert Models | Enformer, Akita, ABC Model, Puffin-D | Provide state-of-the-art performance baselines | Establish upper bounds of performance for specific tasks [3] |
| Evaluation Metrics | AUROC, PCC, SCC, Semantic Similarity | Quantify model performance consistently | Enable standardized comparison across different methods [8] [16] |
| Benchmark Platforms | DNALONGBENCH, G3PO, BENGI | Host standardized tasks and datasets | Provide centralized resources for method evaluation [8] [13] [14] |

Impact and Future Directions

Community-driven benchmarking efforts have profoundly influenced genomic research by establishing standardized evaluation practices and facilitating direct comparison of computational methods. These initiatives have revealed critical insights about the current state of computational genomics, including the superior performance of specialized expert models on specific tasks compared to general-purpose foundation models, the significant challenges in predicting certain genomic features such as 3D chromatin contacts, and the importance of technical implementation choices like embedding strategies on overall performance [8] [15] [3].

The consistent finding that expert models outperform DNA foundation models across diverse tasks suggests that while foundation models capture general sequence patterns effectively, task-specific architectural innovations and training strategies remain essential for achieving state-of-the-art performance on many genomic prediction problems. This observation highlights the continued importance of domain knowledge in computational method development, even as general-purpose models become more sophisticated.

Looking forward, several emerging trends are likely to shape the next generation of genomic benchmarks. These include the development of multi-modal benchmarks that integrate sequence data with epigenetic, structural, and functional information; the creation of more comprehensive negative examples that better reflect biological reality; and the establishment of benchmarks specifically designed to evaluate model generalization across species, cell types, and experimental conditions. As the field continues to evolve, community-driven benchmarking will remain essential for grounding computational advances in biological reality and ensuring that methodological progress translates to genuine biological insights.

Community-driven benchmarking efforts represent a cornerstone of modern genomic research, providing the standardized frameworks necessary to establish ground truth and objectively evaluate computational methods. Initiatives such as DNALONGBENCH, G3PO, and BENGI have created essential resources that enable rigorous comparison of diverse approaches, reveal fundamental insights about methodological strengths and limitations, and guide future development toward the most pressing challenges. As genomic data grows in volume and complexity, and as computational models become increasingly sophisticated, these collaborative benchmarking efforts will play an ever more critical role in ensuring that scientific progress rests on a foundation of biological truth rather than computational artifact. By fostering transparency, reproducibility, and rigorous evaluation, community benchmarks accelerate the translation of algorithmic innovations into genuine biological understanding.

From Algorithms to Action: A Toolkit for Gene Start Prediction

Metagenomics enables the direct study of genetic material from environmental samples, bypassing the need for laboratory cultivation. A central challenge in analyzing these datasets is the accurate identification of protein-coding genes within short, anonymous DNA sequences, a task for which traditional gene-finding tools are poorly suited. Ab initio methods that rely on statistical models, rather than homology alone, are essential for discovering novel genes. GeneMark and Orphelia are two prominent programs developed to address the specific demands of metagenomic gene prediction. This guide provides an objective comparison of their performance, principles, and optimal use cases, with a focus on their accuracy in predicting gene starts within the rigorous context of benchmark studies.

Core Principles and Methodologies

GeneMark: Heuristic Model Construction

The GeneMark family of tools employs a sophisticated approach based on inhomogeneous Markov models. For metagenomic applications, its hallmark is a heuristic methodology that constructs a model of protein-coding sequence even from a minimal amount of anonymous DNA [18] [19]. This is critical for metagenomic reads where the phylogenetic origin is unknown, and standard training procedures are not feasible.

- Model Building Workflow: The heuristic procedure involves several steps. First, it establishes relationships between positional nucleotide frequencies and global nucleotide frequencies, as well as between amino acid frequencies and the global GC content of the input sequences [18]. These relationships are approximated using linear regression. An initial codon usage table is derived from the products of positional nucleotide frequencies, which is then modified by the GC content-determined amino acid frequencies. Finally, a 3-periodic zero-order Markov model for the protein-coding region is constructed from this refined codon usage table [18].

- Training and Application: GeneMark for metagenomics utilizes pre-trained heuristic models built from a large and diverse collection of hundreds of Bacterial and Archaeal genomes [18]. This allows it to make predictions on metagenomic fragments without requiring a prior training step on the sample itself, offering a significant practical advantage.
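
The codon-table step of the heuristic procedure can be illustrated as follows. This sketch covers only the product of positional nucleotide frequencies. The positional frequencies below are invented placeholders (in the published method they come from linear regressions on global composition), the exclusion of stop codons with renormalization is an assumption of this example, and the subsequent adjustment by GC-dependent amino acid frequencies is not shown.

```python
from itertools import product

STOP_CODONS = {"TAA", "TAG", "TGA"}

def initial_codon_usage(pos_freqs):
    """Build a codon usage table from nucleotide frequencies at codon positions 1-3.

    pos_freqs is a list of three dicts mapping each nucleotide to its frequency.
    Stop codons are dropped and the remaining 61 values are renormalized to sum to 1.
    """
    raw = {}
    for a, b, c in product("ACGT", repeat=3):
        codon = a + b + c
        if codon in STOP_CODONS:
            continue
        raw[codon] = pos_freqs[0][a] * pos_freqs[1][b] * pos_freqs[2][c]
    total = sum(raw.values())
    return {codon: value / total for codon, value in raw.items()}

# Hypothetical positional frequencies for a GC-rich fragment.
pos_freqs = [
    {"A": 0.25, "C": 0.25, "G": 0.35, "T": 0.15},
    {"A": 0.30, "C": 0.25, "G": 0.20, "T": 0.25},
    {"A": 0.10, "C": 0.40, "G": 0.40, "T": 0.10},
]
table = initial_codon_usage(pos_freqs)
print(len(table), round(table["GCC"], 4))
```

Because the table depends only on composition, it can be built for a fragment of unknown origin, which is the property the heuristic approach relies on.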

Orphelia: A Machine Learning Framework

Orphelia adopts a distinct, two-stage machine learning approach for gene prediction, specifically engineered for short DNA fragments [18] [20].

- Feature Extraction: In its first stage, Orphelia extracts multiple sequence features from every potential Open Reading Frame (ORF). These features include monocodon usage, dicodon usage, and the probability of a translation initiation site (TIS), which are calculated using pre-trained linear discriminants [18] [20]. A notable feature is the "TIS coverage," which accounts for incomplete TIS regions at fragment edges, a common occurrence in short reads [20].

- Classification: In the second stage, an artificial neural network integrates these sequence features with contextual information such as the ORF length and the overall GC-content of the DNA fragment. The neural network then computes a posterior probability for the ORF to be protein-coding [18] [20]. A final greedy selection algorithm chooses a non-overlapping set of high-probability genes per fragment.
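
The final selection step can be sketched as a simple greedy loop over scored ORFs. This is an illustration under stated assumptions rather than Orphelia's implementation: the 0.5 probability cutoff, the strict no-overlap rule, and the function name `greedy_select` are all choices made for this example.

```python
def greedy_select(orfs, min_prob=0.5):
    """Pick non-overlapping ORFs in order of decreasing coding probability.

    orfs is a list of (start, end, prob) tuples with end-exclusive coordinates.
    """
    chosen = []
    for start, end, prob in sorted(orfs, key=lambda o: o[2], reverse=True):
        if prob < min_prob:
            break  # all remaining candidates score even lower
        if all(end <= s or start >= e for s, e, _ in chosen):
            chosen.append((start, end, prob))
    return sorted(chosen)

candidates = [(0, 300, 0.9), (200, 500, 0.8), (300, 600, 0.7), (600, 700, 0.3)]
print(greedy_select(candidates))
```

The second candidate is rejected because it overlaps the higher-scoring first one, while the third is kept because it begins exactly where the first ends.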

Table 1: Core Methodological Differences Between GeneMark and Orphelia

| Feature | GeneMark | Orphelia |
|---|---|---|
| Core Approach | Heuristic inhomogeneous Markov models | Machine learning with linear discriminants & neural network |
| Key Features | Codon usage, GC content, positional nucleotide frequencies | Monocodon & dicodon usage, TIS probability, ORF length, fragment GC content |
| Training Data | Large set of prokaryotic genomes (e.g., 357 species) [18] | 131 annotated prokaryotic genomes [20] |
| Handling Short Reads | Single model for various lengths | Multiple, fragment length-specific models (e.g., Net300, Net700) [20] |

Figure 1: Computational workflows of GeneMark and Orphelia, highlighting GeneMark's model application versus Orphelia's feature-based classification.

Benchmarking Performance and Accuracy

Rigorous benchmarking on simulated and validated datasets is crucial for evaluating gene prediction tools. Key performance metrics include sensitivity (the proportion of real genes found) and specificity (the proportion of predicted genes that are real; this is the gene-prediction convention and corresponds to what most machine learning contexts call precision).
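
These definitions, together with the harmonic mean reported in the benchmark tables, can be written out as a short sketch. It assumes that a predicted gene counts as correct when its strand and stop coordinate match an annotated gene; that matching rule, the function name, and the coordinates are illustrative assumptions.

```python
def gene_prediction_metrics(true_genes, predicted_genes):
    """Return (sensitivity, specificity, harmonic_mean) for two sets of gene keys.

    Specificity follows the gene-prediction convention: TP / (TP + FP).
    """
    true_genes, predicted_genes = set(true_genes), set(predicted_genes)
    if not true_genes or not predicted_genes:
        raise ValueError("both gene sets must be non-empty")
    tp = len(true_genes & predicted_genes)
    sensitivity = tp / len(true_genes)
    specificity = tp / len(predicted_genes)
    if tp == 0:
        return sensitivity, specificity, 0.0
    harmonic = 2 * sensitivity * specificity / (sensitivity + specificity)
    return sensitivity, specificity, harmonic

# Hypothetical genes keyed by (strand, stop coordinate).
annotated = {("+", 300), ("+", 900), ("-", 1500), ("+", 2100)}
predicted = {("+", 300), ("+", 900), ("-", 1800)}
print(gene_prediction_metrics(annotated, predicted))
```

Reporting the harmonic mean alongside both components prevents a tool from looking strong simply by over-predicting or under-predicting.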

Performance on Metagenomic Fragments

Benchmarking studies that simulate metagenomic fragments from diverse lineages provide direct performance comparisons. One comprehensive study evaluated programs on fragments from 100 species, analyzing both fully coding regions and the harder-to-predict "gene edge" regions [21] [18].

- Sensitivity vs. Specificity Trade-off: A consistent finding is that MetaGeneAnnotator (MGA, an algorithm related to the MetaGene family) often achieves the highest sensitivity, but at the cost of lower specificity. Conversely, GeneMark typically demonstrates high specificity, while Orphelia strikes a balance, maintaining high specificity with robust sensitivity [18]. For instance, on 700 bp fragments, Orphelia's Net700 model achieved a sensitivity of 88.4% and a specificity of 92.9% [20].

- Impact of Read Length: Performance for all tools improves with longer fragment lengths. Orphelia's use of length-specific models (Net300 for short pyrosequencing reads, Net700 for Sanger reads) optimizes its performance across different sequencing technologies [20]. On 300 bp fragments, its Net300 model maintained a specificity of 91.7%, outperforming the Net700 model on the same short fragments (specificity of 88.1%) [20].

- Gene Start Prediction: Accurately identifying the translation initiation site is a major challenge. The GeneMarkS algorithm (a self-training method for complete genomes) demonstrated the ability to predict precisely 83.2% of annotated starts in Bacillus subtilis and 94.4% in a validated set of Escherichia coli genes [1]. This high accuracy is achieved by integrating models of coding regions with models of regulatory sites like the ribosome binding site (RBS).
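
Gene start accuracy is usually scored separately from gene detection. The sketch below assumes one common convention: only genes whose stop codon (3' end) is predicted correctly are considered, and a start counts as correct only if it matches the verified coordinate exactly. Studies differ in the denominator they use, so the convention, the function name, and the coordinates here are assumptions for illustration.

```python
def start_accuracy(verified, predicted):
    """Fraction of shared genes whose predicted start equals the verified start.

    Both arguments map a gene key, here (strand, stop coordinate), to a start coordinate.
    """
    shared = verified.keys() & predicted.keys()
    if not shared:
        raise ValueError("no genes with matching 3' ends")
    exact = sum(verified[key] == predicted[key] for key in shared)
    return exact / len(shared)

# Hypothetical coordinates: one exact start, one start off by 30 nt, one unmatched gene.
verified = {("+", 900): 100, ("+", 2000): 1200, ("-", 50): 800}
predicted = {("+", 900): 100, ("+", 2000): 1230, ("-", 3000): 3500}
print(start_accuracy(verified, predicted))
```

Requiring an exact match is deliberate: a start that is off by even one codon changes the predicted N-terminus of the protein.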

Table 2: Benchmarking Performance on Simulated Metagenomic Reads

| Tool | Read Length | Sensitivity (%) | Specificity (%) | Harmonic Mean (%) | Source |
|---|---|---|---|---|---|
| Orphelia (Net300) | 300 bp | 82.1 ± 3.6 | 91.7 ± 3.8 | 86.6 ± 2.7 | [20] |
| Orphelia (Net700) | 700 bp | 88.4 ± 3.1 | 92.9 ± 3.2 | 90.6 ± 2.9 | [20] |
| MetaGene | 700 bp | 92.6 ± 3.1 | 88.6 ± 5.9 | 90.4 ± 4.0 | [20] |
| GeneMark | 700 bp | 90.9 ± 2.7 | 92.2 ± 5.0 | 91.5 ± 3.3 | [20] |

The Power of Method Combination

Benchmarking reveals that different tools have complementary strengths and weaknesses. Consequently, combining their predictions can yield superior results. One study found that by taking a consensus of multiple methods, it was possible to significantly improve specificity with a minimal cost to sensitivity, boosting overall annotation accuracy by 1-8% depending on read length [18]. For shorter reads (≤400 bp), a majority vote of all predictors was optimal, whereas for longer reads (≥500 bp), the intersection of just GeneMark and Orphelia predictions performed best [18]. This establishes an upper-bound performance for metagenomic gene prediction when methods are used in concert [21].
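The length-dependent combination rule reported above can be sketched in Python. This is a minimal illustration under stated assumptions, not the procedure used in [18]: the function names, the representation of a gene call as a hashable tuple, and the decision to raise an error for read lengths between 400 and 500 bp (which the text does not cover) are all hypothetical.

```python
from collections import Counter


def majority_vote(predictions):
    """Keep gene calls reported by more than half of the predictors."""
    votes = Counter(call for calls in predictions.values() for call in set(calls))
    threshold = len(predictions) / 2
    return {call for call, n in votes.items() if n > threshold}


def intersection(predictions, tools):
    """Keep gene calls reported by every tool named in `tools`."""
    return set.intersection(*(set(predictions[tool]) for tool in tools))


def combine(predictions, read_length):
    """Apply the length-dependent rule described in the text."""
    if read_length <= 400:
        return majority_vote(predictions)
    if read_length >= 500:
        return intersection(predictions, ("GeneMark", "Orphelia"))
    # Assumption: the text gives no rule for lengths between 400 and 500 bp.
    raise ValueError("no combination rule reported for this read length")


# Hypothetical gene calls, written as (read id, strand, frame).
predictions = {
    "GeneMark": {("read1", "+", 1), ("read2", "-", 2)},
    "Orphelia": {("read1", "+", 1), ("read3", "+", 3)},
    "MGA": {("read1", "+", 1), ("read2", "-", 2), ("read3", "+", 3), ("read4", "+", 1)},
}

print(sorted(combine(predictions, 300)))  # majority vote of all three tools
print(sorted(combine(predictions, 700)))  # GeneMark and Orphelia must agree
```

The intersection is the stricter rule: it discards any call that either GeneMark or Orphelia fails to report, which fits the reported gain in specificity at a small cost in sensitivity.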

Experimental Protocols for Benchmarking

To support reproducibility and help readers judge the validity of the performance data cited in this guide, this section outlines the standard experimental protocols used in the referenced benchmarking studies.

Dataset Curation and Simulation

- Source Genomes: Benchmarks use a curated set of completely sequenced prokaryotic genomes whose phylogenetic lineages are not represented in the training sets of the evaluated tools. This prevents over-optimistic performance and tests generalization. One major study used 100 species of diverse lineages [21], while others used 12 [20] or a selected set of prokaryotes [19].

- Fragment Simulation: DNA fragments of fixed lengths (e.g., 100 bp, 300 bp, 500 bp, 700 bp) are randomly excised from the source genomes to a specified coverage (e.g., 10x) [20]. This creates a realistic mix of fully coding, fully non-coding, and gene-edge fragments.

- Sequencing Error Introduction: To assess robustness, reads are often simulated with characteristic error profiles (substitutions, insertions, deletions) of sequencing technologies like Sanger and pyrosequencing, with error rates varying from 0% to over 2% [19].
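The simulation steps above can be sketched as follows. This is an illustrative sketch, not the simulator used in the cited studies: the function names are hypothetical, and the error model is a deliberate simplification that applies substitutions, insertions, and deletions uniformly at random, whereas real Sanger and pyrosequencing error profiles are position- and context-dependent.

```python
import random


def simulate_fragments(genome, length, coverage, rng):
    """Excise fixed-length fragments at random positions up to a target coverage."""
    if length > len(genome):
        raise ValueError("fragment length exceeds genome length")
    n_fragments = round(coverage * len(genome) / length)
    fragments = []
    for _ in range(n_fragments):
        start = rng.randrange(len(genome) - length + 1)
        fragments.append((start, genome[start:start + length]))
    return fragments


def add_errors(seq, rate, rng):
    """Apply a uniform per-base error model (assumption: equal chance of each error type)."""
    out = []
    for base in seq:
        if rng.random() < rate:
            kind = rng.choice(("sub", "ins", "del"))
            if kind == "sub":
                out.append(rng.choice([b for b in "ACGT" if b != base]))
            elif kind == "ins":
                out.append(base)
                out.append(rng.choice("ACGT"))
            # "del": the base is dropped
        else:
            out.append(base)
    return "".join(out)


rng = random.Random(0)  # fixed seed so the simulated dataset is reproducible
genome = "".join(rng.choice("ACGT") for _ in range(1000))  # toy stand-in for a real genome
fragments = simulate_fragments(genome, length=100, coverage=10, rng=rng)
noisy = [add_errors(seq, rate=0.02, rng=rng) for _, seq in fragments]
print(len(fragments), "fragments simulated")
```

Keeping the start coordinate of each fragment makes it possible to map predictions back to the source annotation during evaluation.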

Accuracy Measurement Protocol

- Ground Truth: The annotated genes of the source genomes serve as the reference for validating predictions.

- Defining a True Positive: A common and rigorous method uses sequence alignment to define a true positive. A predicted gene is considered a true positive if it aligns with an annotated gene over at least 20 amino acids with at least 80% sequence identity, and crucially, is in the same reading frame [19]. For start codon accuracy, exact nucleotide matches to the annotated start are required [1].

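- Metric Calculation:

  - Sensitivity: \( \text{Sn} = \frac{TP}{TP + FN} \), where TP = true positives and FN = false negatives (annotated genes not predicted).

  - Specificity: \( \text{Sp} = \frac{TP}{TP + FP} \), where FP = false positives (predicted genes not in the annotation). Outside the gene prediction literature, this quantity is usually called precision.

  - Harmonic Mean: \( \text{HM} = \frac{2 \cdot \text{Sn} \cdot \text{Sp}}{\text{Sn} + \text{Sp}} \), the combined score reported in Table 2.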
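The metrics above translate directly into code. The sketch below is illustrative: the function names are hypothetical, and `start_accuracy` assumes that genes are keyed by strand and stop coordinate and that accuracy is computed only over genes present in both the prediction and the annotation, which is one common convention rather than a rule stated in the cited studies.

```python
def sensitivity(tp, fn):
    """Sn = TP / (TP + FN): fraction of annotated genes that were predicted."""
    return tp / (tp + fn) if (tp + fn) else 0.0


def specificity(tp, fp):
    """Sp = TP / (TP + FP): fraction of predicted genes that are annotated."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def harmonic_mean(sn, sp):
    """Combined score that penalizes an imbalance between Sn and Sp."""
    return 2 * sn * sp / (sn + sp) if (sn + sp) else 0.0


def start_accuracy(predicted, annotated):
    """Fraction of shared genes whose predicted start matches the annotation exactly.

    Both arguments map a gene key, here (strand, stop coordinate), to a start coordinate.
    """
    shared = [gene for gene in annotated if gene in predicted]
    if not shared:
        return 0.0
    exact = sum(1 for gene in shared if predicted[gene] == annotated[gene])
    return exact / len(shared)


# Cross-check against the Orphelia Net700 row of Table 2 (values in percent).
print(round(harmonic_mean(88.4, 92.9), 1))

# Hypothetical coordinates for illustration only.
annotated = {("+", 1200): 300, ("-", 5000): 5900, ("+", 9000): 8100}
predicted = {("+", 1200): 300, ("-", 5000): 5870, ("+", 7000): 6400}
print(start_accuracy(predicted, annotated))
```

Keying genes by their stop coordinate separates two questions: whether the gene was found at all (a matching 3' end) and whether its start was placed on the correct nucleotide.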

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational "reagents" and resources essential for conducting research in metagenomic gene prediction and benchmarking.

Table 3: Essential Research Reagents and Resources

| Item / Resource | Function / Description | Relevance to Benchmarking |
|---|---|---|
| Annotated Prokaryotic Genomes (e.g., from GenBank) | Serves as the source of ground truth data for simulating test fragments and validating predictions. | Provides the verified dataset against which prediction accuracy is measured [19] [1]. |
| Sequence Simulation Software (e.g., MetaSim) | Generates realistic synthetic metagenomic reads with controllable parameters like length, coverage, and error profiles. | Creates standardized, reproducible datasets for controlled benchmarking experiments [19]. |
| Pre-trained Model Files (for GeneMark, Orphelia) | The statistical parameters and models required by the gene prediction programs to analyze DNA sequences. | Essential for ensuring the tool functions as intended and for reproducing published results [18] [20]. |
| Benchmark Datasets (e.g., from PubMed Central) | Curated collections of sequences and annotations specifically designed for testing bioinformatics tools. | Allows for direct comparison of new tools against established benchmarks like GeneMark and Orphelia [18] [22]. |
| Sequence Alignment Tool (e.g., BLAT) | Aligns predicted protein sequences to annotated reference sequences. | Used in the validation phase to define true positives based on sequence and reading frame overlap [19]. |

Figure 2: A standard experimental workflow for benchmarking gene prediction tools, from dataset creation to performance evaluation.

In genomics, accurately predicting functional elements such as gene starts from DNA sequence is a fundamental challenge with direct implications for biological discovery and therapeutic development. Deep learning has introduced three dominant architectural paradigms: Convolutional Neural Networks (CNNs), Transformers, and Hybrid CNN-Transformer models. Each offers distinct capabilities for interpreting the regulatory grammar of the genome. This guide compares these architectures on gene start prediction and related functional genomics tasks, benchmarking them against verified datasets to inform method selection within the research community. The comparison rests on three criteria: accuracy, capacity to model long-range dependencies, and computational efficiency.

Performance Comparison of Deep Learning Architectures

Table 1: Quantitative Performance Comparison Across Genomic Tasks

| Architecture | Representative Model | Primary Task | Reported Accuracy/Performance | Key Strengths | Key Limitations |
|---|---|---|---|---|---|
| CNN | CNN-MGP [23] | Metagenomics Gene Prediction | 91% Accuracy | Efficient local feature extraction; Computationally lightweight | Limited receptive field for long-range dependencies |
| CNN | Basset [23] | DNA Sequence Functional Activity | Not Specified | Automated feature learning from raw sequences | Limited to local regulatory context |
| Transformer | Nucleotide Transformer [24] | Multiple Genomics Tasks | Matched baseline in 6 and surpassed it in 12 of 18 tasks | Context-specific representations; Effective in low-data settings | High computational requirements |
| Transformer | DNABERT [25] | Promoter/Splice Site Prediction | Not Specified | K-mer tokenization effective for sequence patterns | Primarily evaluated on short-range tasks |
| Hybrid CNN-Transformer | Hybrid CNN-Transformer [26] | EEG-based Emotion Recognition (non-genomic task) | 87% Accuracy on DEAP Dataset | Combines local pattern detection with global dependencies | Increased model complexity |
| Hybrid CNN-Transformer | Enformer [27] | Gene Expression Prediction | Spearman R = 0.85 (CAGE at TSS) | Integrates information up to 100 kb from TSS | Requires substantial computational resources |
| Hybrid CNN-Transformer | SVEN [28] | Tissue-Specific Gene Expression | Spearman R = 0.892 | Multi-modality architecture; Accurate for structural variants | Complex training pipeline |

Table 2: Performance on Specific Benchmark Tasks

| Architecture | Gene Expression Prediction (Spearman R) | Regulatory Element Classification | Variant Effect Prediction | Long-Range Interaction Modeling |
|---|---|---|---|---|
| CNN | 0.812 (ExPecto) [27] | Effective for local motifs | Limited to local context | Limited (typically <20 kb) |
| Transformer | Competitive on 18-task benchmark [24] | High accuracy with pre-training | Improved through attention maps | Moderate with long-sequence variants |
| Hybrid CNN-Transformer | 0.892 (SVEN) [28] | Not Specified | Accurate for both small and large variants | Excellent (up to 100 kb with Enformer) [27] |
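The gene expression columns above report Spearman correlation between predicted and measured expression. As a reminder of what that number measures, the sketch below computes it from first principles: both vectors are converted to ranks, with tied values sharing their average rank, and the Pearson correlation of the ranks is returned. The function names and the toy values are hypothetical, and this is not the evaluation code of any cited model.

```python
def average_ranks(values):
    """Return 1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant input")
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the rank-transformed data."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("inputs must have equal length of at least 2")
    return pearson(average_ranks(x), average_ranks(y))


# Toy example: predictions that preserve the ordering of the measurements.
measured = [0.1, 2.5, 7.0, 40.0]
predicted = [0.3, 1.0, 3.2, 9.9]
print(spearman(measured, predicted))
```

Because only the ordering matters, a model can score highly without matching absolute expression levels. In practice a library routine such as `scipy.stats.spearmanr` would normally be used instead of a hand-written version.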

Experimental Protocols and Methodologies

CNN-Based Gene Prediction (CNN-MGP)

The CNN-MGP framework demonstrates a specialized approach for metagenomics gene prediction [23]. The methodology employs:

Data Pre-processing: ORFs are extracted from DNA fragments and encoded using one-hot encoding (A=[1,0,0,0], T=[0,0,0,1], C=[0,1,0,0], G=[0,0,1,0]). Each ORF is represented as an L×4 matrix, where L is the sequence length (maximum 705 bp in their implementation).

GC-Content Specific Modeling: Ten separate CNN models are trained on mutually exclusive datasets binned by GC-content ranges, acknowledging that fragments with similar GC content share closer features like codon usage.

Architecture Configuration: The network comprises convolutional layers for pattern detection, non-linear activation, pooling layers for dimensionality reduction, and fully connected layers for classification. The final output is the probability that an ORF encodes a gene, with a greedy algorithm selecting the final gene list.

Validation: Testing on 700 bp fragments from 11 prokaryotic genomes (3 archaeal, 8 bacterial), with 5-fold coverage for each testing genome, demonstrated 91% accuracy, matching or outperforming state-of-the-art gene prediction programs that use manually engineered features [23].
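The pre-processing and GC-binning steps described above can be illustrated with a short sketch. It is not the CNN-MGP implementation: the one-hot mapping and the 705 bp maximum come from the text, but zero-padding shorter ORFs, encoding ambiguous bases as all zeros, and using ten equal-width GC bins are assumptions made here for illustration, since the text does not give the exact GC ranges or padding scheme.

```python
# One-hot mapping as stated in the text.
ONE_HOT = {
    "A": [1, 0, 0, 0],
    "C": [0, 1, 0, 0],
    "G": [0, 0, 1, 0],
    "T": [0, 0, 0, 1],
}
MAX_LEN = 705  # maximum ORF length reported for CNN-MGP


def one_hot_encode(orf, max_len=MAX_LEN):
    """Encode an ORF as a max_len x 4 matrix (assumption: zero-padded at the end)."""
    if len(orf) > max_len:
        raise ValueError("ORF is longer than the maximum supported length")
    # Assumption: bases other than A, C, G, T are encoded as all zeros.
    rows = [list(ONE_HOT.get(base, [0, 0, 0, 0])) for base in orf.upper()]
    rows.extend([0, 0, 0, 0] for _ in range(max_len - len(rows)))
    return rows


def gc_content(seq):
    """Fraction of G and C bases in the sequence."""
    if not seq:
        raise ValueError("empty sequence")
    seq = seq.upper()
    return (seq.count("G") + seq.count("C")) / len(seq)


def gc_bin(seq, n_bins=10):
    """Index of the GC-specific model to use (assumption: equal-width bins)."""
    return min(int(gc_content(seq) * n_bins), n_bins - 1)


orf = "ATGGCGTAA"  # toy ORF for illustration
matrix = one_hot_encode(orf)
print(len(matrix), "x", len(matrix[0]), "matrix, routed to model", gc_bin(orf))
```

Routing each fragment by GC content means each model is trained and applied only on sequences with similar composition, which is the rationale the text gives for training ten separate networks.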

Transformer-Based Genomic Modeling (Nucleotide Transformer)

The Nucleotide Transformer represents a foundation model approach for genomics [24]:

Pre-training Strategy: Models ranging from 50 million to 2.5 billion parameters are pre-trained on unlabeled genomic sequences from 3,202 human genomes and 850 diverse species using masked language modeling, where the model predicts missing nucleotides in sequences.

Task Adaptation: Two primary evaluation strategies are employed:

- Probing: Frozen model embeddings from various layers are used as features for simple classifiers (logistic regression or small MLPs) to predict genomic labels.

- Fine-tuning: The entire model or subsets (using parameter-efficient methods) are adapted to specific tasks with minimal additional parameters (as low as 0.1% of total parameters).

Benchmarking: Evaluation across 18 curated genomic tasks including splice site prediction (GENCODE), promoter identification (Eukaryotic Promoter Database), and histone modification prediction (ENCODE) using rigorous 10-fold cross-validation.

Performance: Fine-tuned models matched baseline CNN models in 6 tasks and surpassed them in 12 out of 18 tasks, with larger and more diverse training datasets consistently yielding better performance [24].
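The masked language modeling objective mentioned in the pre-training strategy above can be illustrated with a small sketch of how a training example is built. This is not the Nucleotide Transformer pipeline: treating each nucleotide as one token, the 15% masking rate, and the `<mask>` symbol are assumptions chosen for illustration, and the actual tokenization and masking scheme may differ.

```python
import random

MASK = "<mask>"  # hypothetical mask symbol


def mask_sequence(seq, mask_rate, rng):
    """Hide a random subset of tokens and return (masked tokens, labels).

    `labels` maps each masked position to the original token the model
    would be trained to recover.
    """
    if not seq:
        raise ValueError("empty sequence")
    tokens = list(seq)  # assumption: one token per nucleotide
    n_mask = max(1, round(mask_rate * len(tokens)))
    positions = sorted(rng.sample(range(len(tokens)), n_mask))
    labels = {i: tokens[i] for i in positions}
    for i in positions:
        tokens[i] = MASK
    return tokens, labels


rng = random.Random(0)  # fixed seed for a reproducible example
sequence = "ACGTACGTACGTACGTACGT"
tokens, labels = mask_sequence(sequence, mask_rate=0.15, rng=rng)
print(" ".join(tokens))
print(labels)
```

No labels are needed beyond the sequence itself, which is why this objective can be applied to large collections of unannotated genomes.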

Hybrid Architecture (Enformer)

The Enformer architecture exemplifies the hybrid approach for gene expression prediction [27]:

Architecture Design: Combines convolutional layers for initial feature extraction from raw sequence with transformer layers that apply self-attention mechanisms to capture long-range dependencies.

Input Processing: Takes sequences of roughly 200 kb (196,608 bp) as input and predicts epigenetic and transcriptional outputs across multiple cell types.

Attention Mechanism: Uses custom relative positional encoding in transformer layers to distinguish between proximal and distal regulatory elements, enabling the model to integrate information from enhancers up to 100 kb away from transcription start sites.

Validation: Outperforms previous state-of-the-art models (Basenji2) for predicting RNA expression measured by CAGE at human protein-coding genes, increasing mean correlation from 0.81 to 0.85. Notably, the model accurately prioritizes validated enhancer-gene pairs from CRISPRi screens competitively with methods that use experimental data as input [27].

Architecture Workflows and Signaling Pathways

Diagram 1: Hybrid CNN-Transformer Genomic Analysis Workflow

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Resources for Genomic Deep Learning Research

| Resource Category | Specific Tool/Dataset | Function in Research | Application Context |
|---|---|---|---|
| Benchmark Datasets | DEAP Dataset [26] | Evaluation of model performance on physiological data | 40 EEG sessions from 32 subjects for emotion recognition |
| Benchmark Datasets | DNALONGBENCH [3] | Comprehensive benchmarking suite for long-range DNA prediction | Five tasks with dependencies up to 1 million base pairs |
| Genomic Datasets | ENCODE [24] [28] | Repository of functional genomics data | TF binding, histone modifications, chromatin accessibility across cell types |
| Genomic Datasets | 1000 Genomes Project [24] | Catalog of human genetic variation | Diverse human genomes for training and testing |
| Model Architectures | Nucleotide Transformer [24] | Pre-trained foundation model for genomics | Transfer learning for various genomic prediction tasks |
| Model Architectures | Enformer [27] | Hybrid CNN-Transformer specialized for gene expression | Predicting expression from sequence with long-range context |
| Model Architectures | SVEN [28] | Hybrid architecture for variant effect prediction | Quantifying tissue-specific transcriptomic impacts of variants |
| Evaluation Suites | GenBench [25] | Standardized benchmarking platform | Evaluating model performance across diverse genomic tasks |