Benchmarking Heatmap Generation Tools for Biomedical Research: A 2025 Guide to Performance, Accuracy, and Clinical Application

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for evaluating heatmap generation tools, with a specific focus on applications in pathological image analysis, spatial data forecasting, and AI-driven drug discovery. It covers foundational concepts, methodological applications for clinical and research workflows, strategies for troubleshooting accuracy, and rigorous validation techniques. The guide synthesizes current advancements, including deep learning integration and novel validation methods, to empower professionals in selecting and implementing tools that enhance diagnostic precision, accelerate R&D cycles, and improve the interpretability of complex biomedical data.

Understanding Heatmap Technology: Core Principles and Their Critical Role in Biomedical Research

Heatmap generation serves as a critical visualization tool across multiple scientific domains, transforming complex multidimensional data into intuitive color-coded spatial representations. In biomedical research and drug development, heatmaps enable researchers to decipher intricate patterns in everything from gene expression and protein localization to the decision-making of AI diagnostic algorithms. Benchmarking the tools that generate these heatmaps is therefore essential, because the accuracy, reliability, and interpretability of the visual output directly affect scientific conclusions and subsequent clinical decisions. This comparison guide provides an objective performance analysis of contemporary heatmap generation technologies, focusing on pathological image analysis and spatial biology, and reports the experimental data and methodological details researchers need to evaluate them.

The fundamental purpose of heatmap generation in this context is to assign importance scores to specific regions within complex datasets, such as gigapixel whole-slide images (WSIs) or spatial transcriptomic outputs, and to display those scores visually. The scores are typically rendered as color gradients in which warm hues such as red indicate high importance and cool hues such as blue indicate lower relevance, allowing scientists to quickly identify biologically significant areas. As these tools become increasingly integrated into research and potential clinical workflows, their performance characteristics (computational efficiency, output accuracy, and integration capabilities) require rigorous head-to-head comparison to establish field standards and guide technology adoption.
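A minimal Python sketch of the score-to-color step, assuming per-region scores (for example, one per WSI patch) are min-max normalized within each map and blended linearly from blue to red. The function names are hypothetical, and plain RGB interpolation is used only for readability: it passes through purple and is not perceptually uniform, so production tools typically rely on a designed colormap instead.

```python
from typing import List, Sequence, Tuple

RGB = Tuple[int, int, int]

def normalize(scores: Sequence[float]) -> List[float]:
    """Min-max scale importance scores to [0, 1]."""
    lo, hi = min(scores), max(scores)
    if hi == lo:  # a constant map carries no contrast; render everything as "low"
        return [0.0 for _ in scores]
    return [(s - lo) / (hi - lo) for s in scores]

def blue_to_red(t: float) -> RGB:
    """Linearly interpolate from blue (t = 0, low importance) to red (t = 1, high)."""
    blue, red = (0, 0, 255), (255, 0, 0)
    return tuple(round(b + t * (r - b)) for b, r in zip(blue, red))

def colorize(scores: Sequence[float]) -> List[RGB]:
    """Map per-region importance scores to RGB colors."""
    return [blue_to_red(t) for t in normalize(scores)]

# Three hypothetical patch scores: lowest -> blue, middle -> purple, highest -> red.
print(colorize([2, 6, 10]))  # [(0, 0, 255), (128, 0, 128), (255, 0, 0)]
```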

Comparative Analysis of Heatmap Generation Platforms

Performance Benchmarking of Imaging Spatial Transcriptomics Platforms

Imaging spatial transcriptomics (iST) platforms represent a sophisticated category of tools that generate gene expression heatmaps directly within tissue morphology contexts. A recent systematic benchmark study evaluated three leading commercial iST platforms—10X Genomics Xenium, NanoString CosMx, and Vizgen MERSCOPE—on serial sections from tissue microarrays containing 17 tumor and 16 normal tissue types [1]. The study utilized formalin-fixed paraffin-embedded (FFPE) tissues, the standard preservation method in clinical pathology, to assess relative technical and biological performance across multiple parameters.

The benchmarking methodology processed matched samples across platforms, following manufacturer guidelines and harmonizing gene panels. The study used three previously constructed multi-tissue TMAs: Tumor TMA 1 (170 cores from 7 cancer types), Tumor TMA 2 (48 cores from 19 cancer types), and a normal tissue TMA (45 cores spanning 16 normal tissue types) [1]. Together, these arrays cover a broad range of tissue types. Between 2023 and 2024, multiple runs were executed with various gene panels (Xenium's off-the-shelf panels, custom MERSCOPE panels, and CosMx's 1K panel), and standardized preprocessing and segmentation pipelines were applied to the output data.

Table 1: Performance Metrics of Commercial iST Platforms

| Platform | Chemistry Approach | Transcript Counts | Clustering Capability | Segmentation Performance | Concordance with scRNA-seq |
|---|---|---|---|---|---|
| 10X Xenium | Padlock probes with rolling circle amplification | Consistently higher | Finds slightly more clusters than MERSCOPE | Varying error rates | High concordance measured |
| NanoString CosMx | Branch chain hybridization | High | Finds slightly more clusters than MERSCOPE | Varying error rates | High concordance measured |
| Vizgen MERSCOPE | Direct hybridization with probe tiling | Lower than Xenium and CosMx | Fewer clusters found | Varying error rates | Not specified in study |

The comparative analysis revealed notable performance differences. Xenium consistently generated higher transcript counts per gene without sacrificing specificity, while both Xenium and CosMx produced RNA transcript measurements that strongly concorded with orthogonal single-cell transcriptomics data [1]. All three platforms successfully performed spatially resolved cell typing, but with varying sub-clustering capabilities: Xenium and CosMx identified slightly more cell clusters than MERSCOPE. Segmentation performance and false discovery rates also differed across platforms, highlighting the importance of selecting a platform based on specific research requirements and sample types.

Benchmarking Explainability Methods for Vision Transformers in Pathology

In digital pathology, explainable AI (xAI) methods generate heatmaps (often termed "attribution maps") to illuminate the decision-making processes of deep learning models, particularly Vision Transformers (ViTs). A comparative study evaluated four state-of-the-art explainability techniques on the publicly available CAMELYON16 dataset, which comprises 399 hematoxylin and eosin (H&E) stained WSIs of sentinel lymph node sections from breast cancer patients, both with and without metastases [2]. The study used a ViT classifier trained at 20× magnification and assessed the following methods: Attention Rollout with residuals, Integrated Gradients, RISE, and ViT-Shapley.

The evaluation methodology incorporated both qualitative assessment by human experts and quantitative metrics, including insertion and deletion tests. Insertion metrics measure how quickly a model's prediction score increases as important image regions are progressively revealed, while deletion metrics assess how rapidly the score decreases as critical regions are removed. The study also compared computational efficiency through runtime measurements and resource consumption analysis.
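The insertion and deletion tests can be sketched as follows. This is a simplified illustration, not the study's code: it assumes an image already split into patches, a model that returns a scalar class score, and a baseline value (here zero) substituted for "removed" patches; the study's actual baseline, step size, and patch grid are not specified here. Each curve is summarized by its area under the curve (AUC).

```python
from typing import Callable, List, Sequence, Tuple

Model = Callable[[List[float]], float]

def _auc(curve: Sequence[float]) -> float:
    """Trapezoidal area under a curve sampled at evenly spaced points on [0, 1]."""
    steps = len(curve) - 1
    return sum((curve[i] + curve[i + 1]) / 2 for i in range(steps)) / steps

def insertion_deletion_auc(model: Model, image: Sequence[float], baseline: Sequence[float],
                           heatmap: Sequence[float]) -> Tuple[float, float]:
    """Return (insertion AUC, deletion AUC) for one attribution map.

    Patches are visited from most to least important according to the heatmap.
    A good map gives a HIGH insertion AUC and a LOW deletion AUC.
    """
    order = sorted(range(len(image)), key=lambda i: heatmap[i], reverse=True)

    # Deletion: start from the full image and replace patches with the baseline.
    current = list(image)
    deletion = [model(current)]
    for i in order:
        current[i] = baseline[i]
        deletion.append(model(current))

    # Insertion: start from the baseline and reveal the original patches.
    current = list(baseline)
    insertion = [model(current)]
    for i in order:
        current[i] = image[i]
        insertion.append(model(current))

    return _auc(insertion), _auc(deletion)

# Hypothetical toy "classifier": the tumor score depends on patches 0 and 1 only.
def toy_model(patches: List[float]) -> float:
    return (patches[0] + patches[1]) / 2

image, baseline = [1.0, 1.0, 0.0, 0.0], [0.0, 0.0, 0.0, 0.0]
good_map, bad_map = [0.9, 0.8, 0.1, 0.0], [0.0, 0.1, 0.8, 0.9]
print(insertion_deletion_auc(toy_model, image, baseline, good_map))  # (0.75, 0.25)
print(insertion_deletion_auc(toy_model, image, baseline, bad_map))   # (0.25, 0.75)
```

Reversing the map in the toy example swaps the two scores, which is the behavior the metrics are designed to expose.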

Table 2: Performance Comparison of Explainability Methods for Vision Transformers

| Explainability Method | Underlying Mechanism | Heatmap Quality | Computational Efficiency | Quantitative Performance | Key Limitations |
|---|---|---|---|---|---|
| Attention Rollout | Aggregates attention weights across layers | Prone to artifacts | Moderate | Lower performance on insertion/deletion metrics | Less reliable for gigapixel WSIs |
| Integrated Gradients | Integrates gradients from baseline to input | Prone to artifacts | Lower | Lower performance on insertion/deletion metrics | Computationally intensive |
| RISE | Random masking and output observation | Reliable and interpretable | Moderate | Good performance | Slower than ViT-Shapley |
| ViT-Shapley | Approximate Shapley values | Most reliable and interpretable | Faster runtime | Superior insertion/deletion metrics | Requires implementation expertise |
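To make the masking-based mechanisms listed for RISE and ViT-Shapley concrete, the sketch below contrasts a simplified RISE estimator with a Monte Carlo (permutation-sampling) Shapley estimator on a hypothetical four-patch model. Two simplifications are assumed: RISE masks are drawn per patch rather than as the upsampled, randomly shifted low-resolution grids of the original method, and the Shapley estimate uses brute-force sampling. As far as we understand, ViT-Shapley avoids this sampling cost by training a separate explainer model to predict Shapley values in a single pass, which this sketch does not attempt.

```python
import random
from typing import Callable, List, Sequence

Model = Callable[[List[float]], float]

def rise_saliency(model: Model, image: Sequence[float], n_masks: int = 2000,
                  keep_prob: float = 0.5, seed: int = 0) -> List[float]:
    """RISE-style saliency: mask-weighted average of model scores over random masks."""
    rng = random.Random(seed)
    n = len(image)
    saliency = [0.0] * n
    for _ in range(n_masks):
        mask = [1.0 if rng.random() < keep_prob else 0.0 for _ in range(n)]
        score = model([x * m for x, m in zip(image, mask)])  # masked patches set to 0
        for i in range(n):
            saliency[i] += score * mask[i]
    # Normalize by the expected number of masks in which each patch is kept.
    return [s / (n_masks * keep_prob) for s in saliency]

def shapley_permutation(model: Model, image: Sequence[float], baseline: Sequence[float],
                        n_perms: int = 200, seed: int = 0) -> List[float]:
    """Monte Carlo Shapley estimate: average marginal gain from adding each patch."""
    rng = random.Random(seed)
    n = len(image)
    phi = [0.0] * n
    for _ in range(n_perms):
        order = list(range(n))
        rng.shuffle(order)
        current = list(baseline)
        prev = model(current)
        for i in order:
            current[i] = image[i]
            value = model(current)
            phi[i] += value - prev
            prev = value
    return [p / n_perms for p in phi]

# Hypothetical additive toy model over four patches (weights chosen for illustration).
WEIGHTS = [0.6, 0.3, 0.0, 0.1]

def toy_model(patches: List[float]) -> float:
    return sum(w * x for w, x in zip(WEIGHTS, patches))

image = [1.0, 1.0, 1.0, 1.0]
print(shapley_permutation(toy_model, image, [0.0] * 4))  # ~[0.6, 0.3, 0.0, 0.1]
print(rise_saliency(toy_model, image))  # in expectation about [0.8, 0.65, 0.5, 0.55]
```

For an additive model the permutation estimator recovers the weights, whereas RISE scores are compressed toward a common offset (keep_prob * sum(WEIGHTS) + (1 - keep_prob) * w_i in expectation), so with a finite number of masks, sampling noise can reorder patches whose weights are close.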

The findings demonstrated that ViT-Shapley generated the most reliable and clinically relevant attribution maps, outperforming other methods in both qualitative assessments and quantitative metrics [2]. Specifically, ViT-Shapley produced more concise heatmaps that accurately highlighted tumor regions in lymph node sections while maintaining computational efficiency. Attention Rollout and Integrated Gradients were prone to artifacts that reduced their clinical utility, while RISE showed solid performance but was surpassed by ViT-Shapley in both speed and output quality.

Experimental Protocols and Methodologies

Standardized Workflow for iST Platform Benchmarking

The experimental protocol for benchmarking imaging spatial transcriptomics platforms followed a rigorous standardized workflow to ensure fair comparison across technologies [1]. The methodology encompassed sample preparation, platform processing, data generation, and computational analysis phases, with consistent application across all tested platforms.

Sample Preparation and Quality Control: Researchers used existing tissue microarrays constructed from discarded clinical tissues. The TMA design included multiple cancer types and normal tissues from different patients, with core diameters of 0.6 mm or 1.2 mm. Notably, samples were not pre-screened for RNA integrity, in order to reflect typical biobanked FFPE tissues, although initial H&E screening occurred during TMA assembly. For the 2024 experimental round, baking times after sectioning were matched so that platforms could be compared head-to-head on equally prepared tissue slices, controlling for a potential pre-processing variable.

Platform Processing and Data Generation: Sequential TMA sections were processed according to each manufacturer's instructions, with careful panel design to maximize gene overlap (>65 shared genes across platforms). The standard base-calling and segmentation pipelines provided by each manufacturer were applied to maintain real-world relevance. Data was subsampled and aggregated to individual TMA cores for consistent comparison, ultimately generating over 394 million transcripts across more than 5 million cells from the combined datasets.
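A minimal sketch of the per-core aggregation step, assuming each platform's output has been reduced to (core ID, gene) records, one per decoded transcript, and that comparisons are restricted to the shared gene panel. The record format, names, and gene list are hypothetical, and subsampling is omitted because its exact scheme is not described here.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Set, Tuple

def per_core_counts(transcripts: Iterable[Tuple[str, str]],
                    shared_genes: Set[str]) -> Dict[str, Counter]:
    """Aggregate decoded transcripts to per-core gene counts on the shared panel.

    Genes outside `shared_genes` are dropped so platforms are compared like for like.
    """
    counts: Dict[str, Counter] = defaultdict(Counter)
    for core_id, gene in transcripts:
        if gene in shared_genes:
            counts[core_id][gene] += 1
    return dict(counts)

def totals(counts: Dict[str, Counter]) -> Dict[str, int]:
    """Total shared-gene transcripts per core."""
    return {core: sum(genes.values()) for core, genes in counts.items()}

# Hypothetical records; "PANEL_ONLY_GENE" stands for a gene on one platform's panel only.
shared = {"EPCAM", "PTPRC", "KRT8"}
records = [("core_A", "EPCAM"), ("core_A", "EPCAM"), ("core_A", "KRT8"),
           ("core_A", "PANEL_ONLY_GENE"), ("core_B", "PTPRC")]
print(totals(per_core_counts(records, shared)))  # {'core_A': 3, 'core_B': 1}
```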

Data Analysis and Performance Metrics: The analytical approach focused on multiple performance dimensions: (1) sensitivity and specificity assessed on shared transcripts, (2) concordance with paired scRNA-seq data collected by 10x Chromium Single Cell Gene Expression FLEX, (3) cell-level segmentation accuracy based on detected genes and transcripts, (4) co-expression patterns of known disjoint markers, and (5) cross-comparison of cell type clustering capabilities using breast and breast cancer tissues as exemplars.
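Two of the listed metrics can be sketched as follows: concordance with scRNA-seq as a Pearson correlation of log-transformed mean expression per shared gene, and a co-expression rate for a pair of markers expected to be mutually exclusive (a high rate suggests false-positive detections or segmentation errors). These are common choices rather than the study's documented formulas, and all gene values below are invented for illustration.

```python
import math
from typing import Dict, List, Sequence

def pearson(a: Sequence[float], b: Sequence[float]) -> float:
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    sa = math.sqrt(sum((x - ma) ** 2 for x in a))
    sb = math.sqrt(sum((y - mb) ** 2 for y in b))
    return cov / (sa * sb)

def scrna_concordance(ist_mean: Dict[str, float], sc_mean: Dict[str, float]) -> float:
    """Pearson r of log1p mean expression per gene, over genes measured by both assays."""
    genes = sorted(set(ist_mean) & set(sc_mean))
    return pearson([math.log1p(ist_mean[g]) for g in genes],
                   [math.log1p(sc_mean[g]) for g in genes])

def coexpression_rate(cells: List[Dict[str, int]], marker_a: str, marker_b: str) -> float:
    """Fraction of cells with counts for BOTH of two markers expected to be exclusive."""
    both = sum(1 for c in cells if c.get(marker_a, 0) > 0 and c.get(marker_b, 0) > 0)
    return both / len(cells)

# Hypothetical per-gene means (iST vs. scRNA-seq) and per-cell counts.
ist = {"EPCAM": 4.0, "PTPRC": 1.5, "KRT8": 3.2, "CD3E": 0.4}
sc = {"EPCAM": 2.1, "PTPRC": 0.9, "KRT8": 1.8, "CD3E": 0.3, "ACTB": 9.0}
print(round(scrna_concordance(ist, sc), 3))

cells = [{"EPCAM": 3}, {"PTPRC": 2}, {"EPCAM": 1, "PTPRC": 1}, {}]
print(coexpression_rate(cells, "EPCAM", "PTPRC"))  # 0.25
```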

Evaluation Framework for Explainability Methods

The experimental protocol for evaluating explainability methods in digital pathology established a comprehensive framework for assessing both clinical relevance and computational efficiency [2]. The methodology employed the CAMELYON16 dataset, a publicly available benchmark comprising H&E stained WSIs of sentinel lymph nodes, with a ViT classifier trained at 20× magnification as the base model to be explained.

Model Training and Validation: The ViT classifier was developed using standard deep learning protocols optimized for WSI classification. The model architecture leveraged self-attention mechanisms to process image patches, capturing both local and global contextual information essential for accurate metastasis detection. Training incorporated appropriate augmentation techniques and validation strategies to ensure robust performance before explainability assessment.

Explainability Method Implementation: Each evaluated method was implemented according to published specifications: Attention Rollout with residuals aggregated attention weights across transformer layers; Integrated Gradients computed path integrals from baseline to input; RISE generated importance maps through random masking; and ViT-Shapley calculated approximate Shapley values for Vision Transformers. Consistent post-processing normalized output heatmaps for comparative visualization.
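The Attention Rollout and Integrated Gradients steps described above can be sketched in a few lines of Python. Assumptions: per-layer attention matrices have already been averaged over heads, token 0 is the [CLS] token, the residual connection is modeled with the commonly used 0.5·A + 0.5·I rule, and the Integrated Gradients path integral is approximated with a midpoint Riemann sum on a toy function with a hand-written gradient. A real ViT would supply gradients through automatic differentiation.

```python
from typing import Callable, List, Sequence

Matrix = List[List[float]]

def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def attention_rollout(layers: Sequence[Matrix]) -> List[float]:
    """Attention Rollout with residuals.

    `layers` holds one (tokens x tokens) head-averaged attention matrix per layer.
    Returns the rolled-out attention from [CLS] (token 0) to each patch token.
    """
    n = len(layers[0])
    rollout = None
    for attn in layers:
        # Model the residual connection as 0.5 * A + 0.5 * I, then renormalize rows.
        aug = [[0.5 * attn[i][j] + (0.5 if i == j else 0.0) for j in range(n)]
               for i in range(n)]
        aug = [[v / sum(row) for v in row] for row in aug]
        # Compose with earlier layers: A_l @ ... @ A_1.
        rollout = aug if rollout is None else matmul(aug, rollout)
    return rollout[0][1:]

def integrated_gradients(grad_fn: Callable[[List[float]], List[float]],
                         x: Sequence[float], baseline: Sequence[float],
                         steps: int = 50) -> List[float]:
    """Integrated Gradients approximated with a midpoint Riemann sum along the path."""
    total = [0.0] * len(x)
    for k in range(steps):
        alpha = (k + 0.5) / steps
        point = [b + alpha * (xi - b) for xi, b in zip(x, baseline)]
        for i, g in enumerate(grad_fn(point)):
            total[i] += g
    return [(xi - b) * t / steps for xi, b, t in zip(x, baseline, total)]

# One layer in which [CLS] attends entirely to patch 1 (tokens: CLS, patch 1, patch 2).
layer = [[0.0, 1.0, 0.0],
         [0.0, 1.0, 0.0],
         [0.0, 0.0, 1.0]]
print(attention_rollout([layer]))  # [0.5, 0.0]

# Toy model F(x) = x0**2 + 3*x1 with analytic gradient (2*x0, 3).
def grad(p: List[float]) -> List[float]:
    return [2 * p[0], 3.0]

print(integrated_gradients(grad, [1.0, 2.0], [0.0, 0.0]))  # approx. [1.0, 6.0]
```

By the completeness property of Integrated Gradients, the attributions should sum to F(x) - F(baseline), here 7, which offers a cheap sanity check on the number of integration steps.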

Evaluation Metrics and Quantitative Assessment: The study employed multiple evaluation dimensions: (1) qualitative assessment by domain experts for clinical relevance, (2) insertion and deletion metrics for quantitative performance measurement, (3) computational resource usage tracking including runtime and memory consumption, and (4) scalability analysis for application to gigapixel WSIs. This multifaceted approach ensured comprehensive assessment of each method's suitability for clinical workflow integration.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of heatmap generation technologies requires specific research reagents and computational solutions tailored to each platform and application. The following table details essential components for experiments in spatial transcriptomics and computational pathology, drawn from the benchmark studies and methodology descriptions.

Table 3: Essential Research Reagents and Computational Solutions

| Category | Item | Specification/Function | Application Context |
|---|---|---|---|
| Sample Preparation | FFPE Tissue Sections | Standard clinical pathology preservation method | iST platforms [1] |
| | Tissue Microarrays | Multi-tissue design with 0.6-1.2 mm cores | Platform benchmarking [1] |
| | H&E Staining Reagents | Tissue morphology visualization | Quality control [1] |
| Molecular Biology | Gene Panels | Targeted transcript detection | iST platform customization [1] |
| | Hybridization Buffers | Specific probe binding | Transcript detection [1] |
| | Signal Amplification Chemistry | Rolling circle/branch chain amplification | Signal enhancement [1] |
| Computational | Vision Transformer Models | WSI classification backbone | Explainability methods [2] |
| | ViT-Shapley Implementation | Generate attribution heatmaps | Model interpretation [2] |
| | High-Performance Computing | GPU-accelerated processing | WSI analysis [2] |

The selection of appropriate reagents and computational solutions directly impacts heatmap quality and experimental success. For spatial transcriptomics, FFPE-compatible reagents are essential for utilizing archival clinical samples, while customized gene panels must align with research objectives. In computational pathology, specialized implementations of explainability methods like ViT-Shapley require specific software configurations and adequate computational resources for processing gigapixel whole-slide images within feasible timeframes.

The benchmarking data presented in this comparison guide reveal significant performance differences across heatmap generation technologies, with important implications for research and potential clinical applications. In imaging spatial transcriptomics, 10X Xenium demonstrated advantages in transcript detection sensitivity, while both Xenium and NanoString CosMx showed strong concordance with orthogonal single-cell transcriptomics methods [1]. For explainable AI in digital pathology, ViT-Shapley emerged as the superior method for generating interpretable attribution maps, offering both computational efficiency and clinically relevant heatmap quality [2].

These performance characteristics directly impact research quality and interpretation. Platforms with higher sensitivity and specificity reduce false discoveries in spatial transcriptomics, while reliable explainability methods build necessary trust in AI-assisted diagnostics. Researchers should therefore select heatmap generation technologies aligned with their specific experimental needs, considering factors such as sample type, required resolution, computational resources, and intended application. As these technologies continue evolving, ongoing benchmarking will remain essential for tracking performance improvements and establishing field standards.

The integration of robust heatmap generation tools into research workflows accelerates discovery and enhances analytical precision across multiple scientific domains. From elucidating disease mechanisms through spatial biology to validating AI diagnostic models through reliable explainability methods, these technologies empower researchers to extract deeper insights from complex data, ultimately advancing drug development and improving patient care outcomes.

The field of data visualization has undergone a revolutionary transformation, moving from static, descriptive traditional heatmaps to dynamic, predictive AI-powered models. This evolution is particularly critical in scientific and pharmaceutical research, where the accuracy and interpretability of data visualizations can directly impact the pace of discovery. Traditional heatmaps served as a foundational tool for visualizing complex numerical data, using a simple color gradient to represent the magnitude of a third variable on a two-dimensional plot [3]. Their primary value lay in simplifying the interpretation of large data sets, helping to show patterns and changes, though they were not designed for detailed analysis [3].

The advent of artificial intelligence (AI) and deep learning has fundamentally reshaped this landscape. Modern AI-powered heatmap tools use convolutional neural networks trained on millions of data points, such as real eye-tracking fixations, to predict user attention and behavior, with a reported accuracy of up to 96% compared with traditional physical eye-tracking studies [4]. This shift from descriptive to predictive analytics allows researchers to gain deep, pre-emptive insights without the extensive time and resource investment previously required. For researchers and drug development professionals, this evolution enables more robust benchmarking, faster validation of hypotheses, and data-driven decisions that accelerate the entire research lifecycle.

From Simple Charts to Predictive Models: A Technical Evolution

The journey of heatmap technology reflects a broader trend of integrating computational power with data analysis. The key stages in this evolution are outlined below.

Traditional Heatmaps: The Foundation

Traditional heatmaps functioned as visual representations of data matrices, where the color of each rectangle corresponded to the magnitude of a third variable [3]. Their core strength was, and remains, the ability to make large data sets immediately more comprehensible, revealing patterns that might be invisible in raw numerical format [3].

- Color Science and Best Practices: The effectiveness of a traditional heatmap hinges on its color palette. Sequential palettes (shades of a single hue) are ideal for displaying data that progresses from low to high values, while diverging palettes (two contrasting hues meeting at a neutral central point) are best for data with a critical mid-point, such as zero or an average value [5] [6]. A key development was the move away from problematic "rainbow" scales, which can create misleading perceptual boundaries and lack a consistent intuitive direction, in favor of color-blind-friendly combinations like blue & red or blue & orange [5].
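A diverging palette can be implemented by pinning the neutral color at the critical midpoint and scaling each side independently. In this sketch the colors approximate ColorBrewer's RdBu endpoints and neutral gray, and the function name is hypothetical; plotting libraries offer equivalents (for example, Matplotlib's `TwoSlopeNorm` combined with a diverging colormap).

```python
from typing import Tuple

RGB = Tuple[int, int, int]

# Endpoint and neutral colors close to ColorBrewer's RdBu scheme (illustrative choice).
BLUE: RGB = (33, 102, 172)
NEUTRAL: RGB = (247, 247, 247)
RED: RGB = (178, 24, 43)

def diverging_color(value: float, vmin: float, vcenter: float, vmax: float) -> RGB:
    """Map `value` onto a blue-neutral-red scale with the neutral color pinned at `vcenter`.

    Each side is scaled independently, so an asymmetric range (e.g. -1 to 4 around 0)
    still places the critical midpoint at the neutral color.
    """
    if value <= vcenter:
        span, end = vcenter - vmin, BLUE
        t = (vcenter - value) / span if span else 0.0
    else:
        span, end = vmax - vcenter, RED
        t = (value - vcenter) / span if span else 0.0
    t = min(max(t, 0.0), 1.0)  # clip out-of-range values to the end colors
    return tuple(round(n + t * (e - n)) for n, e in zip(NEUTRAL, end))

# Hypothetical log2 fold changes ranging from -1 to 4, centered on 0 (no change).
for v in (-1.0, 0.0, 2.0, 4.0):
    print(v, diverging_color(v, vmin=-1.0, vcenter=0.0, vmax=4.0))
```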

The AI Revolution: Core Technological Advancements

The integration of AI, particularly deep learning, has addressed the primary limitation of traditional heatmaps: their reactive and descriptive nature.

- Deep Learning Models: Modern platforms, such as Attention Insight, utilize Convolutional Neural Networks (CNNs) trained on massive datasets, including previous eye-tracking studies [4]. This training allows the AI to model human visual attention, predicting where users will look on an interface without the need for live human testing.

- Predictive Analytics: AI tools now go beyond showing what users did; they predict what users will do. They can forecast user actions and identify potential pain points before a website or digital tool is launched [7] [8].

- Automated Insight Generation: Machine learning algorithms automatically analyze vast amounts of user interaction data (clicks, scrolls, cursor movements) to identify complex patterns and trends that would be impossible for a human analyst to discern across thousands of sessions [7] [8]. This includes automated "struggle detection," which flags user frustration signals like rage clicks [9].
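Rage-click detection is often described as a burst of rapid clicks in one spot. The sketch below implements that heuristic with hypothetical thresholds (at least 3 clicks within 1 second and 30 pixels of the burst's first click); commercial tools do not publish their exact rules, so treat these values as placeholders.

```python
import math
from typing import List, Sequence, Tuple

Click = Tuple[float, float, float]  # (timestamp in seconds, x in px, y in px)

def detect_rage_clicks(clicks: Sequence[Click], min_clicks: int = 3,
                       window_s: float = 1.0,
                       radius_px: float = 30.0) -> List[Tuple[float, int]]:
    """Return (start_time, click_count) for each burst of rapid, co-located clicks.

    A burst starts at a click and absorbs the following clicks that land within
    `window_s` seconds and `radius_px` pixels of that first click.
    """
    ordered = sorted(clicks)
    bursts = []
    i = 0
    while i < len(ordered):
        t0, x0, y0 = ordered[i]
        j = i + 1
        while (j < len(ordered)
               and ordered[j][0] - t0 <= window_s
               and math.hypot(ordered[j][1] - x0, ordered[j][2] - y0) <= radius_px):
            j += 1
        if j - i >= min_clicks:
            bursts.append((t0, j - i))
            i = j  # clicks already assigned to this burst are not reused
        else:
            i += 1
    return bursts

# Hypothetical session: four quick clicks on one button, then unrelated clicks.
session = [(0.0, 100, 100), (0.2, 102, 101), (0.4, 99, 98), (0.5, 101, 100),
           (5.0, 400, 300), (9.0, 50, 50), (9.3, 300, 300)]
print(detect_rage_clicks(session))  # [(0.0, 4)]
```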

Benchmarking AI Heatmap Tools: A Performance and Accuracy Analysis

For the scientific community, objective performance data is paramount. The following section provides a structured comparison of modern AI heatmap tools, focusing on their applicability in a rigorous research context.

Comparative Performance Metrics of Leading Tools

Table 1: Feature and Pricing Comparison of Leading AI Heatmap Tools

| Tool Name | Starting Price (USD/month) | Free Tier/Trial | Key AI & Analytics Features | Best For |
|---|---|---|---|---|
| Glassbox | Contact Sales | No | AI-powered user struggle detection, journey mapping, automated session capture [9] | Enterprise UX researchers [9] |
| Quantum Metric | Contact Sales | No | Real-time frustration detection (e.g., rage clicks), Felix AI for insight summarization [9] | E-commerce & technical issue resolution [9] |
| Sprig | $175 | Yes (Free Plan) | AI-powered interaction analysis, in-product feedback collection [9] | Product managers & copy optimization [9] |
| Microsoft Clarity | Free | N/A | Rage click & dead click analysis, session recordings, custom tagging [9] | Organizations with limited budgets [9] |
| Attention Insight | €119 (≈ $130) | No | AI-generated attention heatmaps (96% accuracy vs. eye-tracking), Clarity Score, Focus Score [4] | Pre-launch design validation [4] |
| Hotjar | $32 | Yes (Free Plan) | AI-powered trend identification in user behavior, session recordings, conversion funnels [7] [9] | General UX optimization [9] |
| Mouseflow | $31 | Yes (Free Plan) | Friction detection, behavioral segmentation, form analytics [9] | Session replay with heatmaps [9] |

Table 2: Quantitative Performance and Impact Data

| Tool / Technology | Claimed Performance Metric | Impact on Key Metrics | Experimental Context |
|---|---|---|---|
| AI Heatmaps (General) | Pattern recognition across thousands of sessions [8] | Up to 25% increase in conversion rates [7] [8] | Analysis of user behavior to identify & fix friction points [7] |
| Attention Insight | Up to 96% accuracy vs. physical eye-tracking studies [4] | Improved visual hierarchy & user focus [4] | Pre-launch prediction of visual attention on designs & videos [4] |
| Tools with Struggle Detection | Automated identification of rage clicks & dead clicks [9] | 30% reduction in user frustration (Glassbox case) [8] | Real-time analysis of user interaction signals [9] |

Experimental Protocols for Validation

For researchers to critically assess these tools, understanding the underlying validation methodologies is essential. Below are detailed protocols for key experiments cited in the literature.

Protocol 1: Validating AI Attention Models Against Physical Eye-Tracking

- Objective: To determine the accuracy of an AI-powered attention heatmap tool (e.g., Attention Insight) by benchmarking its predictions against data from a traditional physical eye-tracking study [4].

- Methodology:

  - Stimuli Preparation: Select a set of digital interfaces (e.g., website layouts, application screens, ad designs).

  - AI Prediction: Run the stimuli through the AI model to generate predictive attention heatmaps and associated metrics (e.g., Focus Score, Percentage of Attention).

  - Physical Study: Conduct a controlled eye-tracking study with a representative sample of human participants (e.g., n=50) using the same stimuli.

  - Data Alignment & Comparison: Map the AI-predicted fixation points against the actual gaze points recorded from the human participants. Statistical analysis (e.g., correlation coefficients, spatial accuracy measures) is then performed to quantify the agreement.

- Outcome Measures: Percentage accuracy of the AI model, correlation strength between predicted and actual attention zones, and qualitative comparison of heatmap overlays [4].
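For the data-alignment step, two standard saliency-evaluation metrics are the Pearson correlation coefficient (CC) between the predicted map and a fixation-density map, and Normalized Scanpath Saliency (NSS), the mean z-scored prediction at fixated pixels. The sketch assumes both maps share one pixel grid and uses invented values; it is not the method behind any vendor's published accuracy figure, which the source does not describe.

```python
import math
from typing import List, Sequence, Tuple

Map2D = List[List[float]]

def _standardize(values: Sequence[float]) -> List[float]:
    n = len(values)
    mean = sum(values) / n
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / n)
    if std == 0:
        raise ValueError("constant map has no spatial contrast")
    return [(v - mean) / std for v in values]

def correlation_coefficient(predicted: Map2D, fixation_density: Map2D) -> float:
    """Pearson CC between a predicted attention map and a fixation-density map."""
    a = _standardize([v for row in predicted for v in row])
    b = _standardize([v for row in fixation_density for v in row])
    return sum(x * y for x, y in zip(a, b)) / len(a)

def nss(predicted: Map2D, fixations: Sequence[Tuple[int, int]]) -> float:
    """Normalized Scanpath Saliency: mean z-scored prediction at fixated (row, col) pixels."""
    width = len(predicted[0])
    z = _standardize([v for row in predicted for v in row])
    return sum(z[r * width + c] for r, c in fixations) / len(fixations)

# Hypothetical 2 x 2 prediction, measured fixation density, and raw fixation points.
predicted = [[0.0, 0.1], [0.2, 0.9]]
density = [[0.0, 0.0], [0.1, 1.0]]
fixations = [(1, 1), (1, 1), (1, 0)]
print(round(correlation_coefficient(predicted, density), 3))
print(round(nss(predicted, fixations), 3))
```

An NSS above zero means fixations land on above-average predicted saliency; a CC near 1 means the two maps share the same spatial pattern.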

Protocol 2: Measuring the Impact of AI Insights on Business Metrics

- Objective: To quantify the real-world impact of optimization changes made based on insights from AI heatmap tools.

- Methodology:

  - Baseline Measurement: Use an AI heatmap tool (e.g., Hotjar, Crazy Egg) to identify specific friction points on a digital asset (e.g., a website's checkout page). Record baseline conversion rates, bounce rates, and user struggle signals [7] [9].

  - Hypothesis & Intervention: Form a data-driven hypothesis (e.g., "An unclear call-to-action button is causing confusion"). Implement a targeted change to address the identified issue.

  - A/B Testing: Run a controlled A/B test, splitting traffic between the original (control) and optimized (variant) versions.

  - Post-Intervention Analysis: Use the same AI heatmap tool to analyze user behavior on the variant and compare key performance indicators (KPIs) against the control.

- Outcome Measures: Percentage change in conversion rate, reduction in bounce rate, decrease in frustration signals (e.g., rage clicks), and improvement in scroll depth or engagement time [7] [8].
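The A/B comparison is commonly analyzed with a two-proportion z-test. A minimal sketch, assuming a sample size fixed in advance (no interim peeking) and the normal approximation; the traffic numbers are hypothetical.

```python
import math
from typing import Tuple

def two_proportion_z_test(conv_control: int, n_control: int,
                          conv_variant: int, n_variant: int) -> Tuple[float, float, float]:
    """Two-sided pooled z-test for a difference in conversion rates.

    Returns (z statistic, two-sided p-value, relative lift of variant over control).
    """
    p_c = conv_control / n_control
    p_v = conv_variant / n_variant
    pooled = (conv_control + conv_variant) / (n_control + n_variant)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_control + 1 / n_variant))
    z = (p_v - p_c) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value, (p_v - p_c) / p_c

# Hypothetical checkout test: 4.0% vs 5.0% conversion on 5,000 visitors per arm.
z, p, lift = two_proportion_z_test(200, 5000, 250, 5000)
print(f"z = {z:.2f}, p = {p:.4f}, lift = {lift:.0%}")
```

Reporting the p-value next to the relative lift keeps a headline figure such as "25% more conversions" tied to its statistical uncertainty.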

The Research Toolkit: Essential Solutions for Heatmap Experiments

For scientists designing experiments involving heatmap generation and validation, a specific set of "reagent solutions" or core components is required. The table below details these essential elements.

Table 3: Essential Research Reagents for Heatmap Experimentation

| Tool / Solution Category | Example Products | Primary Function in Experimentation |
|---|---|---|
| AI-Powered Predictive Analytics | Attention Insight [4] | Generates pre-launch attention models and quantitative metrics (Clarity Score, Focus Score) to form initial hypotheses without user recruitment. |
| Behavioral Recording & Session Replay | Hotjar, Mouseflow, FullStory, Microsoft Clarity [9] | Captures actual user interaction data (clicks, scrolls, movements) for qualitative analysis and validation of predictive models. |
| Struggle & Friction Detection | Glassbox, Quantum Metric, Crazy Egg [9] [8] | Automatically identifies and quantifies UX friction points (e.g., rage clicks, error messages) to prioritize optimization targets. |
| Physical Eye-Tracking Validation | Traditional eye-trackers (hardware) | Serves as the gold-standard control to benchmark the accuracy of AI-powered predictive attention models [4]. |
| A/B Testing & Statistical Analysis | VWO, Convert [9] [6] | Provides the experimental framework to statistically validate the impact of changes informed by heatmap analysis. |

The evolution of heatmap tools from simple, static charts to intelligent, predictive systems marks a significant leap forward for data-driven research. For scientists and drug development professionals, this transition means that data visualization is no longer just a method for presenting results but has become a powerful, proactive tool for discovery. The ability to model human attention and behavior with high accuracy before an experiment even begins—whether that "experiment" is a clinical trial portal or a data analysis dashboard—can save immense time and resources.

The benchmarking data, many of which are claimed rather than independently verified figures, suggest that AI-powered tools offer tangible advantages in speed, scale, and predictive power. However, the most robust research methodology will likely involve a hybrid approach: using AI for rapid, scalable hypothesis generation and traditional methods (like live user testing or physical eye-tracking) for rigorous validation of critical findings. As deep learning models continue to improve, we can anticipate heatmaps that not only predict where a user will look but also infer cognitive load and emotional response, opening new frontiers in understanding how we interact with complex scientific information.

In the rigorous fields of scientific research and drug development, the evaluation of software tools extends far beyond basic functionality. For heatmap generation tools, which are pivotal in domains ranging from medical image analysis to user experience research, performance can be benchmarked quantitatively against three core Key Performance Indicators (KPIs): Accuracy, Processing Speed, and Interpretability. This guide provides a structured framework for comparing heatmap tools, summarizing quantitative data in tables and detailing the experimental methodologies used for evaluation. The objective is to equip professionals with a standardized approach for selecting tools based on transparent, reproducible, and evidence-based criteria.

Quantitative KPI Comparison of Heatmap Tools

The following tables summarize critical performance metrics for a selection of heatmap tools, with data gathered from recent research and industry benchmarks.

Table 1: Performance of AI-Based Scientific Heatmap Tools

This table focuses on tools and frameworks used in scientific domains such as medical image analysis, where accuracy and computational efficiency are paramount [10] [11] [12].

| Tool / Framework | Primary Application | Reported Accuracy | Processing Speed (Latency) | Interpretability Method |
|---|---|---|---|---|
| SpikeNet (Proposed Framework) | Brain Tumor MRI (TCGA-LGG), Breast Ultrasound (BUSI) | 97.12%-98.23% (F1 Score) [11] | ~31 ms per image [11] | Native saliency module with XAlign metric [11] |
| ResNet50 (with LIME) | Rice Leaf Disease Detection | 99.13% (Classification) [12] | Not Specified | LIME (IoU: 0.432) [12] |
| U-Net + EfficientNetV2 (Proposed Framework) | Pathological Image Segmentation & Classification | High (Precise segmentation) [13] | High (Rapid processing) [13] | Novel heatmap generation algorithm [13] |
| Multi-Model Heatmap Fusion (Proposed Framework) | Clinical ECG, Industrial Energy Prediction | 94.1% (Arrhythmia detection) [10] | Real-time performance [10] | Fused visualization (Grad-CAM + Attention Rollout) [10] |
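Grad-CAM, one input to the fused visualization in the last row of the table, weights each feature map by its spatially averaged gradient and keeps the positive part of the weighted sum. The sketch below computes Grad-CAM from precomputed activations and gradients and then fuses it with a second map (for example, an attention-rollout map). The fusion rule, a weighted average of min-max normalized maps, is a hypothetical choice: the table does not state how [10] combines the maps.

```python
from typing import List, Sequence

Map2D = List[List[float]]

def grad_cam(activations: Sequence[Map2D], gradients: Sequence[Map2D]) -> Map2D:
    """Grad-CAM from one conv layer's activations A_k and gradients dy/dA_k (per channel k)."""
    rows, cols = len(activations[0]), len(activations[0][0])
    cam = [[0.0] * cols for _ in range(rows)]
    for act, grad in zip(activations, gradients):
        weight = sum(sum(r) for r in grad) / (rows * cols)  # global-average-pooled gradient
        for i in range(rows):
            for j in range(cols):
                cam[i][j] += weight * act[i][j]
    return [[max(0.0, v) for v in row] for row in cam]  # ReLU keeps positive evidence only

def minmax(m: Map2D) -> Map2D:
    flat = [v for row in m for v in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1.0  # avoid dividing by zero on a constant map
    return [[(v - lo) / span for v in row] for row in m]

def fuse(map_a: Map2D, map_b: Map2D, weight_a: float = 0.5) -> Map2D:
    """Weighted average of two min-max normalized maps (hypothetical fusion rule)."""
    a, b = minmax(map_a), minmax(map_b)
    return [[weight_a * x + (1 - weight_a) * y for x, y in zip(ra, rb)]
            for ra, rb in zip(a, b)]

# Two hypothetical channels: one supports the class (positive gradient), one opposes it.
acts = [[[1.0, 0.0], [0.0, 0.0]], [[0.0, 0.0], [0.0, 1.0]]]
grads = [[[1.0, 1.0], [1.0, 1.0]], [[-1.0, -1.0], [-1.0, -1.0]]]
cam = grad_cam(acts, grads)
print(cam)                                  # [[1.0, 0.0], [0.0, 0.0]]
print(fuse(cam, [[0.0, 0.0], [0.0, 1.0]]))  # [[0.5, 0.0], [0.0, 0.5]]
```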

Table 2: Performance of Commercial & Web Analytics Heatmap Tools

This table covers tools primarily used for website and user behavior analytics, where speed is often measured in terms of data processing and session handling [14] [15] [16].

| Tool / Platform | Best For | Key Features | Pricing (Starting, as of 2025) | Technical KPIs & Limits |
|---|---|---|---|---|
| Hotjar | General UX analysis, beginner-friendly teams | Click, move, scroll maps; session recordings; surveys [15] [16] | ~$39/month [15] [16] | ~100 daily sessions on Plus plan [16] |
| Contentsquare | Advanced digital experience analytics | Zone-based heatmaps; revenue impact analysis; friction detection [14] [16] | Contact Sales [16] | Advanced AI insights [14] |
| Microsoft Clarity | Budget-conscious projects, high-traffic sites | Click/scroll maps; session recordings; rage click detection [15] [9] | Free [15] [9] | Unlimited traffic; free forever [15] |
| Smartlook | Product teams, validating A/B tests | Click, move, scroll maps; event-based funnels; retroactive analytics [15] [9] | ~$55/month [9] | ~3,000 sessions on free trial [16] |
| Plerdy | UX and CRO combined analysis | Heatmaps; session replay; funnels; SEO checker [15] | ~$32/month [15] | Combined CRO and UX features [15] |
| VWO Insights | A/B testing-centric optimization | Dynamic heatmaps; advanced session recording; multi-device tracking [9] | ~$199/month [9] | Integrated A/B testing platform [9] |
| FullSession | All-in-one web analytics | Click, movement, scroll heatmaps; session replays; feedback tools [16] | ~$39/month [16] | ~5,000 monthly sessions on Starter plan [16] |

Experimental Protocols for KPI Benchmarking

To ensure the comparability and reliability of the KPIs listed above, experiments must follow standardized protocols. Below are detailed methodologies for assessing accuracy, speed, and interpretability.

Protocol for Assessing Accuracy

Accuracy evaluation requires well-annotated datasets and clear metrics [12].

- Dataset: Use a benchmark dataset with expert-verified annotations, such as the TCGA-LGG brain MRI dataset [11] or a rice leaf disease dataset for agricultural applications [12].

- Methodology:

  - Training/Test Split: Use patient-level or sample-level k-fold cross-validation (e.g., 22-fold for TCGA-LGG), so that no patient contributes images to both the training and test folds. This prevents data leakage and gives a fairer estimate of generalizability [11].

  - Model Training: Train the model on the training folds. For deep learning models, this involves standard procedures such as backpropagation and gradient-based optimization.

  - Prediction & Metric Calculation: Apply the trained model to the held-out fold. Calculate standard classification metrics, including Accuracy, Precision, Recall, and F1-Score [12].

- Key Metrics:

  - Accuracy: (True Positives + True Negatives) / Total Predictions

  - Precision: True Positives / (True Positives + False Positives)

  - Recall: True Positives / (True Positives + False Negatives)

  - F1-Score: 2 * (Precision * Recall) / (Precision + Recall)
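The split and metrics above can be sketched in plain Python. This is a minimal illustration, not the procedure used in [11] or [12]. It assumes binary labels (1 = positive) and one patient identifier per sample. The helper names `patient_level_folds` and `classification_metrics` are hypothetical. Passing a unique ID per sample turns the patient-level split into a sample-level one. In practice, scikit-learn's `GroupKFold` and `sklearn.metrics` provide tested equivalents.

```python
from collections import defaultdict


def patient_level_folds(patient_ids, k):
    """Split sample indices into k folds without splitting any patient.

    patient_ids: one identifier per sample. All samples of a patient go to
    the same fold, so no patient appears in both training and test data.
    """
    samples_by_patient = defaultdict(list)
    for idx, pid in enumerate(patient_ids):
        samples_by_patient[pid].append(idx)
    folds = [[] for _ in range(k)]
    # Deal patients round-robin in sorted order so the split is reproducible.
    for i, pid in enumerate(sorted(samples_by_patient)):
        folds[i % k].extend(samples_by_patient[pid])
    return folds


def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall and F1 for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": (tp + tn) / len(pairs), "precision": precision,
            "recall": recall, "f1": f1}


if __name__ == "__main__":
    patients = ["p1", "p1", "p2", "p3", "p3", "p4"]
    print(patient_level_folds(patients, k=2))  # [[0, 1, 3, 4], [2, 5]]
    print(classification_metrics([1, 0, 1, 1, 0], [1, 0, 0, 1, 1]))
```

Dealing whole patients round-robin keeps the split leak-free but does not balance sample counts per fold. Stratified or size-aware grouping is a possible refinement.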

Protocol for Assessing Processing Speed

Processing speed, or latency, is critical for applications requiring real-time or near-real-time analysis [11].

- Hardware Specification: Conduct all experiments on an identical, controlled hardware setup, for example an NVIDIA RTX 3090 GPU with an AMD Ryzen 7 5700X CPU. Hold the batch size (e.g., 16) and numerical precision (e.g., FP32) fixed across all tools [11].

- Methodology:

  - Data Loading: Pre-load a set of test images into memory to eliminate I/O bottlenecks.

  - Timing: For each image in the test set, record the time from input submission to heatmap output. This is the per-image latency. When images are processed in batches, divide each batch's time by the batch size.

  - Throughput Calculation: Calculate throughput as the number of images processed per second (e.g., 32 images/second) [11].

- Key Metrics:

  - Per-image Latency (ms): Average time to process a single image.

  - Throughput (images/sec): Number of images processed per second.
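A minimal timing harness along the lines of this protocol might look like the following. It assumes a hypothetical `infer` callable that turns one in-memory image into a heatmap, and it times images one at a time. With batched inference, time each batch and divide by the batch size instead. GPU kernels run asynchronously, so with PyTorch, for example, `torch.cuda.synchronize()` should be called before the timer is read.

```python
import statistics
import time


def benchmark_latency(infer, images, warmup=5):
    """Per-image latency (ms) and throughput (images/s) for one heatmap tool.

    infer: callable mapping one pre-loaded image to a heatmap (hypothetical).
    images: test images already held in memory, so no disk I/O is timed.
    """
    # Warm-up calls absorb one-off costs such as lazy initialisation.
    for img in images[:warmup]:
        infer(img)

    latencies_ms = []
    for img in images:
        start = time.perf_counter()
        infer(img)  # on a GPU, synchronise the device here before reading the clock
        latencies_ms.append((time.perf_counter() - start) * 1000.0)

    total_s = sum(latencies_ms) / 1000.0
    return {
        "mean_latency_ms": statistics.mean(latencies_ms),
        "median_latency_ms": statistics.median(latencies_ms),
        "throughput_img_per_s": len(images) / total_s if total_s > 0 else float("inf"),
    }


if __name__ == "__main__":
    # Dummy "tool" that halves every pixel, standing in for a real model.
    image = [[float(i * j) for j in range(64)] for i in range(64)]
    print(benchmark_latency(lambda im: [[v * 0.5 for v in row] for row in im], [image] * 20))
```

Reporting the median alongside the mean helps, because a few slow outliers (e.g., garbage collection pauses) can inflate the mean latency.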

Protocol for Assessing Interpretability

Interpretability evaluation moves beyond qualitative visual assessment to quantitative alignment with domain knowledge [11] [12].

- Dataset: Requires a test set with ground-truth segmentation masks or bounding boxes created by domain experts (e.g., radiologists' tumor annotations) [11].

- Methodology:

  - Heatmap Generation: Use XAI techniques (e.g., Grad-CAM, LIME, or a model's native saliency module) to generate saliency maps for the test images [12].

  - Quantitative Comparison: Compare the generated saliency maps against the expert annotations using spatial alignment metrics.

- Key Metrics:

  - XAlign Score: A metric that integrates regional concentration, boundary adherence, and dispersion penalties to quantify clinical alignment [11].

  - Intersection over Union (IoU): Area of overlap between the thresholded (binarized) explanation map and the ground-truth mask, divided by the area of their union [12].

  - Overfitting Ratio: Quantifies the model's reliance on insignificant features, indicating reliability issues [12].
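The IoU comparison needs a binary explanation, so the saliency map has to be thresholded first. The sketch below assumes min-max normalization and a fixed threshold of 0.5. Both are illustrative choices, not settings taken from [12]. XAlign is not reproduced here because its exact definition is given in [11]. `saliency_iou` is a hypothetical helper name.

```python
def saliency_iou(saliency, mask, threshold=0.5):
    """IoU between a binarised saliency map and an expert ground-truth mask.

    saliency: 2-D list of scores; it is min-max normalised to [0, 1] first.
    mask: 2-D list of 0/1 values with the same shape.
    """
    flat = [v for row in saliency for v in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1.0  # a constant map normalises to all zeros
    inter = union = 0
    for s_row, m_row in zip(saliency, mask):
        for s, m in zip(s_row, m_row):
            pred = (s - lo) / span >= threshold  # pixel counted as "explained"
            gt = m == 1
            inter += int(pred and gt)
            union += int(pred or gt)
    return inter / union if union else 0.0


if __name__ == "__main__":
    heat = [[0.9, 0.8, 0.1], [0.7, 0.2, 0.0]]
    tumour = [[1, 1, 0], [0, 0, 0]]
    print(round(saliency_iou(heat, tumour), 3))  # 0.667
```

Because the score depends on the threshold, reporting IoU at several thresholds, or averaging over them, makes comparisons between tools less sensitive to that choice.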

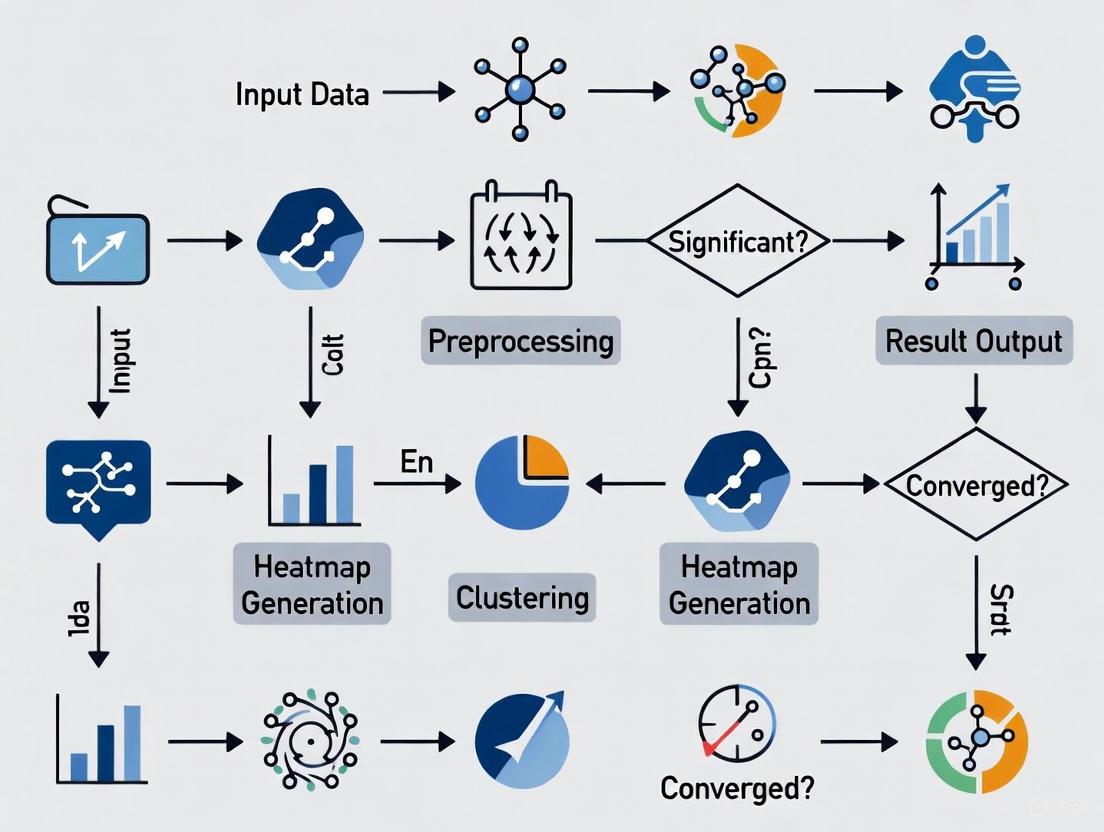

Visualizing the Benchmarking Workflow

The following diagram illustrates the end-to-end process for benchmarking a heatmap tool's performance against the three core KPIs.

Diagram Title: Heatmap Tool Benchmarking Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

This table details the key "research reagents"—both software and data—required to conduct a rigorous evaluation of heatmap tools.

Table 3: Essential Research Reagents for Experimental Benchmarking

| Item Name | Function & Role in Experiment | Specification / Version |
|---|---|---|
| TCGA-LGG (MRI) Dataset | Provides benchmark medical images (FLAIR MRI) with associated patient data for evaluating tool accuracy in a clinical context [11]. | Publicly available from The Cancer Imaging Archive (TCIA) [11]. |
| BUSI (Breast Ultrasound) Dataset | Offers expert-annotated ultrasound images for a multi-class classification task, used to test generalizability across modalities [11]. | Publicly available dataset [11]. |
| Grad-CAM | A post-hoc Explainable AI (XAI) method that generates visual explanations for decisions from CNN models, used as a baseline for interpretability [12]. | Common XAI technique [12]. |
| LIME | A model-agnostic XAI technique that explains individual predictions by approximating the model locally, used for quantitative interpretability analysis [12]. | Common XAI technique [12]. |
| XAlign Metric | A specialized metric that quantifies the alignment between XAI heatmaps and expert annotations, providing a clinically-oriented assessment of explanation fidelity [11]. | As described in Francis et al. [11]. |
| Python with DL Libraries (TensorFlow/PyTorch) | The primary programming environment for implementing, training, and evaluating deep learning models and heatmap generation algorithms [11]. | Python 3.x with standard deep learning libraries [11]. |
| High-Performance GPU | Provides the necessary computational power for efficient model training and for conducting precise processing speed (latency) tests [11]. | e.g., NVIDIA RTX 3090/4080 [11]. |

A rigorous, KPI-driven approach is fundamental for benchmarking heatmap generation tools in scientific and industrial research. By systematically quantifying Accuracy through cross-validation, Processing Speed via controlled latency measurements, and Interpretability using metrics like XAlign and IoU, researchers and developers can make informed decisions. The standardized protocols and visual workflow provided here establish a foundation for transparent and reproducible tool evaluation, ultimately fostering the development of more reliable, efficient, and trustworthy analytical tools for critical domains like healthcare and drug development.

The integration of artificial intelligence (AI) is fundamentally reshaping the drug development pipeline. From initial discovery to clinical application, AI technologies are enhancing the precision and accelerating the pace of pharmaceutical research. A critical component of this transformation is the use of advanced visualization tools, particularly heatmaps, which translate complex, high-dimensional data into actionable insights. This guide benchmarks the performance and accuracy of methodologies underpinning these heatmap generation tools across three core applications: cellular image segmentation, AI-driven target discovery, and antimicrobial resistance (AMR) surveillance. For researchers and scientists, understanding the capabilities and experimental foundations of these tools is essential for selecting the right technological approach for their specific research objectives.

Performance Benchmarking of Core Applications

AI-Powered Image Segmentation for Cellular Analysis

In high-content screening and cellular imaging, robust image segmentation is a prerequisite for quantitative analysis. Traditional methods often fail with complex biological models like 3D spheroids, organoids, and induced pluripotent stem cells (iPSCs) due to challenges such as low contrast, uneven background, and imaging artifacts [17]. Machine learning, particularly deep learning, has emerged as a superior solution for these tasks.

Experimental Protocol for Deep Learning-Based Segmentation: The performance of tools like IN Carta Image Analysis Software relies on a trainable segmentation module (SINAP) that uses a deep convolutional neural network (CNN) [17]. The standard workflow involves:

- Image Annotation: Researchers manually label a subset of images, using drawing tools to define the objects of interest (e.g., a spheroid) and the background. This creates the "ground truth" for training [17].

- Model Training: The annotated images are fed into the neural network. The algorithm learns to identify the features that distinguish the object from the background.

- Model Testing and Iteration: The trained model is applied to a test set of images. Researchers review the output segmentation masks, correct any errors, and add the corrected images to the training set. The cycle is repeated to improve model accuracy [17].

This data-driven approach is more accurate and reliable than defining a fixed set of global parameters, which are often inadequate for diverse datasets [17].
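The annotate, train, test and correct cycle can be sketched as a loop. Everything here is an assumption for illustration. `train`, `predict` and `annotate` are hypothetical callables standing in for the software and the researcher. A Dice threshold of 0.9 is used as an arbitrary stopping rule. This is not the IN Carta SINAP API.

```python
def dice(pred, truth):
    """Dice coefficient of two flat 0/1 masks (defined as 1.0 when both are empty)."""
    inter = sum(p * t for p, t in zip(pred, truth))
    total = sum(pred) + sum(truth)
    return 2 * inter / total if total else 1.0


def refine_until_accurate(train, predict, annotate, train_set, review_set,
                          target_dice=0.9, max_rounds=5):
    """Human-in-the-loop refinement (all callables are hypothetical).

    train(train_set) -> model
    predict(model, image) -> predicted flat 0/1 mask
    annotate(image) -> mask corrected by a researcher
    review_set: (image, ground_truth_mask) pairs inspected after each round
    """
    model = train(train_set)
    for _ in range(max_rounds):
        failures = [img for img, gt in review_set
                    if dice(predict(model, img), gt) < target_dice]
        if not failures:
            break  # every reviewed mask is good enough
        # Corrected masks for the failed images are added to the training data.
        train_set = train_set + [(img, annotate(img)) for img in failures]
        model = train(train_set)
    return model, train_set
```

Corrected test images are folded back into training, so a final accuracy estimate should come from a separate held-out set that never enters the loop.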

Table 1: Comparative Analysis of Segmentation Performance on Complex Biological Models

| Biological Model | Segmentation Challenge | Traditional Method Performance | AI/Deep Learning Tool (e.g., IN Carta SINAP) Performance |
|---|---|---|---|
| 3D Spheroids | Shadow interference from microcavity plates [17] | Poor; inconsistent segmentation due to shadows | High; model learns to ignore shadows and segment the spheroid accurately [17] |
| 3D Organoids | Non-homogenous background from Matrigel [17] | Poor; difficulty distinguishing object from background noise | High; robust detection by learning the visual characteristics of the organoid [17] |
| iPSC Colonies | Low contrast and presence of debris [17] | Low accuracy; colonies and debris can be confused | High; reliable differentiation between colonies and debris [17] |

AI-Driven Target and Hit Discovery

AI is revolutionizing early-stage drug discovery by efficiently screening vast chemical spaces to identify hit and lead compounds. The primary challenge, however, is the "black-box" nature of many complex AI models, which can limit trust and regulatory acceptance [18]. Explainable AI (XAI) has emerged as a critical solution, making the AI's decision-making process transparent and interpretable for scientists.

Experimental Protocol for XAI in Molecular Property Prediction: The application of XAI in target discovery often involves the following methodological steps:

- Model Training: A machine learning model (e.g., a deep neural network or a tree-based model such as XGBoost) is trained on molecular data. Inputs can include SMILES strings, molecular graphs, or physicochemical descriptors. The model predicts properties such as toxicity, binding affinity, or other ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) profiles [18].

- Explanation Generation: Post-training, XAI techniques are applied to interpret the model's predictions. The two most prominent methods are:

  - SHAP (SHapley Additive exPlanations): Computes the contribution of each input feature (e.g., the presence of a specific chemical group) to the final prediction as its Shapley value, i.e., its average marginal contribution across all combinations of the other features, quantifying its importance [18].

  - LIME (Local Interpretable Model-agnostic Explanations): Fits a simple, interpretable surrogate model to the black-box model's predictions on perturbed samples around a specific instance, approximating the complex model's behavior locally [18].

- Visualization and Validation: The explanations are presented to medicinal chemists, often by highlighting molecular substructures that positively or negatively influence the predicted property. These insights must be validated through experimental assays to confirm the model-derived hypotheses [18].
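To make the SHAP idea concrete, the sketch below computes exact Shapley values by brute force for a toy model. It assumes that an "absent" feature is replaced by a baseline value, which is one common convention. Features are treated as plain numbers, for example bits of a substructure fingerprint. The cost grows as 2^n in the number of features. This is why the `shap` library relies on model-specific algorithms, such as `shap.TreeExplainer` for tree ensembles, or on sampling approximations. `shapley_values` is a hypothetical helper name.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley values of each feature for the prediction model(x).

    A feature outside a coalition takes its baseline value (an assumed
    convention, e.g. a reference molecule or the dataset mean).
    """
    n = len(x)

    def value(coalition):
        # Model output with coalition features from x and the rest from baseline.
        return model([x[i] if i in coalition else baseline[i] for i in range(n)])

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


if __name__ == "__main__":
    linear = lambda z: 2 * z[0] + 3 * z[1] - z[2] + 1
    print(shapley_values(linear, [1, 1, 1], [0, 0, 0]))  # approximately [2, 3, -1]
```

For a linear model, the result reduces to each weight times the feature's deviation from the baseline. In general, the values always sum to the difference between the prediction and the baseline prediction.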

Table 2: Performance Comparison of XAI Techniques in Drug Discovery

| XAI Method | Mechanism of Action | Key Advantages | Validated Applications in Drug Discovery |
|---|---|---|---|
| SHAP (SHapley Additive exPlanations) | Game theory-based; assigns each feature an importance value for a prediction [18] | Provides a unified measure of feature importance; consistent and theoretically sound | Molecular property prediction; ADMET profiling; target identification [18] |
| LIME (Local Interpretable Model-agnostic Explanations) | Perturbs input data and approximates model locally with an interpretable one [18] | Model-agnostic; easy to implement; provides intuitive local explanations | Interpretability for complex DL models in hit-finding and lead optimization [18] |

Predictive Resistance Surveillance

Antimicrobial resistance (AMR) is a global health threat, and AI tools are proving vital for predicting resistance patterns from surveillance data. Machine learning models can integrate demographic, phenotypic, and genotypic data to forecast resistance, informing both clinical decisions and public health policies [19].

Experimental Protocol for ML-Based AMR Prediction: A study utilizing the Pfizer ATLAS surveillance dataset, which contains over 917,000 bacterial isolates, demonstrates a robust protocol for this application [19]:

- Data Preprocessing and EDA: The dataset is cleaned, and exploratory data analysis (EDA) is performed. This includes handling missing data (a significant challenge, particularly for genotypic markers) and analyzing global resistance distributions and temporal trends [19].

- Model Training and Validation: Various ML models are trained on subsets of the data, such as a "Phenotype-Only" set and a "Phenotype + Genotype" set. The dataset is split into training and testing sets, and models are evaluated using metrics like Area Under the Curve (AUC) and recall [19].

- Feature Importance and SHAP Analysis: The trained models are interpreted to identify the most influential features driving predictions. This step is critical for validating the model's logic from a clinical perspective [19].
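Both evaluation metrics named in this protocol are short to compute. The sketch below is an illustration, not code from [19]. It computes AUC through its rank (Mann-Whitney) interpretation in O(n²) time. That is fine for a few thousand isolates, but for the full dataset a sort-based implementation such as scikit-learn's `roc_auc_score` is preferable. The 0.5 decision threshold for recall is an assumption.

```python
def roc_auc(y_true, scores):
    """ROC AUC: probability that a random resistant isolate (label 1) scores
    higher than a random susceptible one (label 0); ties count as 0.5.
    Equals the Mann-Whitney U statistic divided by n_pos * n_neg."""
    pos = [s for s, y in zip(scores, y_true) if y == 1]
    neg = [s for s, y in zip(scores, y_true) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for q in neg:
            wins += 1.0 if p > q else 0.5 if p == q else 0.0
    return wins / (len(pos) * len(neg))


def recall(y_true, scores, threshold=0.5):
    """Fraction of truly resistant isolates that the model flags as resistant."""
    tp = sum(1 for y, s in zip(y_true, scores) if y == 1 and s >= threshold)
    positives = sum(1 for y in y_true if y == 1)
    return tp / positives if positives else 0.0


if __name__ == "__main__":
    labels, probs = [0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]
    print(roc_auc(labels, probs), recall(labels, probs))  # 0.75 0.5
```

AUC is threshold-free, whereas recall depends on the chosen cut-off. This is why a model can have a high AUC yet still miss resistant cases at the default threshold.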

Table 3: Benchmarking ML Model Performance on AMR Prediction (Pfizer ATLAS Dataset)

| Machine Learning Model | Phenotype-Only Dataset (AUC) | Phenotype + Genotype Dataset (AUC) | Most Influential Feature (per SHAP Analysis) |
|---|---|---|---|
| XGBoost | 0.96 [19] | 0.95 [19] | The specific antibiotic used [19] |
| Other Models (e.g., Random Forest, Logistic Regression) | Lower than XGBoost | Lower than XGBoost | Varies, but antibiotic often remains top feature [19] |

The data shows that while the inclusion of genotypic data is valuable, phenotypic data alone can yield highly accurate predictions when used with powerful models like XGBoost. Furthermore, data balancing techniques were found to be particularly effective in improving recall, a key metric for ensuring true resistant cases are not missed [19].
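The source does not state which balancing technique was used. Random oversampling of the minority class is one simple option and is shown below as an assumption. SMOTE, available in the imbalanced-learn package, is a common alternative. Balancing should be applied only to the training split, so that test metrics still reflect the real class distribution. `random_oversample` is a hypothetical helper name.

```python
import random


def random_oversample(rows, labels, seed=0):
    """Balance classes by duplicating randomly chosen rows of smaller classes.

    Apply to the training split only; the test split keeps its natural balance.
    """
    rng = random.Random(seed)  # fixed seed for a reproducible resample
    by_class = {}
    for row, label in zip(rows, labels):
        by_class.setdefault(label, []).append(row)
    target = max(len(members) for members in by_class.values())
    out_rows, out_labels = [], []
    for label, members in by_class.items():
        extra = [rng.choice(members) for _ in range(target - len(members))]
        for row in members + extra:
            out_rows.append(row)
            out_labels.append(label)
    return out_rows, out_labels


if __name__ == "__main__":
    X, y = ["s1", "s2", "s3", "s4", "r1", "r2"], [0, 0, 0, 0, 1, 1]
    Xb, yb = random_oversample(X, y)
    print(yb.count(0), yb.count(1))  # 4 4
```

Duplicating minority rows raises the model's incentive to predict the resistant class. This typically trades some precision for higher recall, consistent with the recall gains reported above.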

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Reagents and Solutions for Featured Experiments

| Item/Reagent | Specific Function in the Workflow |
|---|---|
| IN Carta SINAP Module | A trainable, deep-learning-based segmentation tool for robust detection of complex biological objects (e.g., organoids) in microscopy images [17]. |
| Pfizer ATLAS Dataset | A comprehensive surveillance database providing phenotypic AST results and genotypic data for bacterial isolates, used for training and validating AMR prediction models [19]. |
| SHAP/LIME Libraries | Open-source software libraries (e.g., shap, lime) used post-model-training to generate human-interpretable explanations for predictions made by complex AI models [18]. |
| β-lactamase Genotype Markers (e.g., CTX-M, TEM) | Specific genetic markers included in surveillance datasets to identify and correlate the presence of resistance genes with phenotypic antibiotic resistance outcomes [19]. |

Workflow Visualization

AI-Based Image Analysis Workflow

The following diagram illustrates the iterative, human-in-the-loop process for training a deep learning model to segment biological images, a core component of tools like IN Carta [17].

AI-Driven Target Discovery with XAI

This workflow shows how Explainable AI (XAI) bridges the gap between complex AI predictions and interpretable insights for medicinal chemists in the drug discovery pipeline [18].

This comparative analysis demonstrates that the performance of methodologies underlying advanced heatmap generation is highly application-dependent. For cellular image segmentation, deep learning tools significantly outperform traditional methods on complex samples. In AI-driven discovery, the benchmark for performance extends beyond pure predictive accuracy to include interpretability, where XAI methods like SHAP and LIME are essential. For resistance surveillance, ensemble models like XGBoost trained on rich surveillance data can achieve high AUC scores (0.95 to 0.96), and feature analysis confirms clinically relevant drivers such as the choice of antibiotic. The common thread is that the most accurate and impactful tools effectively integrate robust AI with transparent, interpretable outputs. This lets researchers not only see the results but also understand the science behind them.

Implementing Heatmap Tools in Research Workflows: From Pathological Analysis to Predictive Modeling

The advent of digital pathology has generated vast amounts of high-resolution whole-slide images, creating a pressing need for automated analysis systems that can assist in diagnostic workflows. Within this domain, pathological image analysis is a particularly challenging task, requiring both precise localization of pathological structures and accurate classification for disease diagnosis. Traditional machine learning approaches have struggled to balance these dual requirements of segmentation accuracy and classification efficiency, often relying on handcrafted features with limited generalizability.

This methodology deep dive explores an integrated framework that synergistically combines two specialized deep learning architectures—U-Net for segmentation and EfficientNetV2 for classification—to address these challenges comprehensively. The U-Net architecture, with its symmetric encoder-decoder structure and skip connections, has demonstrated exceptional performance in biomedical image segmentation by preserving spatial information across layers. Meanwhile, EfficientNetV2 introduces progressive learning and fused-MBConv operations to achieve state-of-the-art efficiency in image classification tasks while requiring fewer computational resources.

Within the broader context of benchmarking heatmap generation tools for performance and accuracy research, this integrated approach offers significant advantages for model interpretability. By leveraging Gradient-weighted Class Activation Mapping (Grad-CAM) and similar visualization techniques, researchers can generate high-quality heatmaps that highlight diagnostically relevant regions, thereby building trust in AI-assisted diagnostic systems among clinical professionals.

Architectural Framework and Integration Methodology

U-Net for Precise Segmentation

The U-Net architecture serves as the foundational segmentation component in this integrated framework, specifically engineered to address the challenges of medical image analysis where annotated data is often limited. The architecture's distinctive U-shaped design incorporates a contracting path (encoder) for context capture and a symmetric expanding path (decoder) for precise localization.

Encoder Structure: The contracting path utilizes a series of convolutional and max-pooling layers that progressively reduce spatial dimensions while increasing feature depth, effectively capturing contextual information at multiple scales. This hierarchical feature extraction enables the network to recognize patterns ranging from simple cellular structures to complex tissue organizations.

Decoder with Skip Connections: The expanding path employs transposed convolutions for upsampling, gradually recovering spatial information. The critical innovation lies in the skip connections that concatenate feature maps from the encoder to corresponding decoder layers, preserving fine-grained spatial details that would otherwise be lost during downsampling. This architecture has proven particularly effective for segmenting intricate pathological structures such as nerve fibers, tumor boundaries, and cellular nuclei [20].
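The effect of pooling, upsampling and skip concatenation on tensor shapes can be traced with simple arithmetic. This sketch assumes 'same'-padded convolutions, a 256 x 256 input, 64 base channels and four pooling steps. These are illustrative defaults. The original U-Net used unpadded convolutions, so its feature maps shrink slightly at every layer. Each entry is `(name, channels, height)`, with height equal to width. `unet_shapes` is a hypothetical helper name.

```python
def unet_shapes(input_size=256, in_channels=3, base_channels=64, depth=4,
                num_classes=1):
    """Trace (name, channels, height = width) through a U-Net-style network.

    Assumes 'same'-padded 3x3 convolutions (spatial size unchanged),
    2x2 max-pooling in the encoder and 2x2 stride-2 transposed
    convolutions in the decoder.
    """
    if input_size % (2 ** depth):
        raise ValueError("input_size must be divisible by 2**depth")
    shapes = [("input", in_channels, input_size)]
    skips = []
    size, ch = input_size, base_channels
    for level in range(depth):
        shapes.append((f"enc{level}", ch, size))  # conv block output
        skips.append(ch)                           # kept for the skip connection
        size //= 2                                 # 2x2 max-pool halves H and W
        ch *= 2                                    # next conv block doubles channels
    shapes.append(("bottleneck", ch, size))
    for level in reversed(range(depth)):
        size *= 2                                  # transposed conv doubles H and W...
        ch //= 2                                   # ...and halves the channels
        shapes.append((f"concat{level}", ch + skips[level], size))  # skip concat
        shapes.append((f"dec{level}", ch, size))   # conv block after concatenation
    shapes.append(("output", num_classes, size))   # 1x1 conv to per-pixel scores
    return shapes


if __name__ == "__main__":
    for entry in unet_shapes():
        print(entry)
```

The `concat` rows show where the encoder's full-resolution features re-enter the decoder. That re-entry is what lets the network recover fine boundaries lost during downsampling.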

Recent implementations have enhanced the standard U-Net by integrating attention mechanisms and residual connections, further improving segmentation precision for highly variable histological structures. When applied to pathological images, this component generates precise binary or multi-class masks that isolate regions of interest for subsequent classification.

EfficientNetV2 for Feature Extraction and Classification

EfficientNetV2 represents the classification backbone of this integrated framework, employing a sophisticated compound scaling method that systematically balances network depth, width, and resolution. Compared to its predecessor, EfficientNetV2 incorporates fused-MBConv operations in early layers, reducing computational overhead while maintaining representational power.

Progressive Learning Strategy: EfficientNetV2 uses an adaptive training approach that starts with small images and weak regularization. It increases both the image size and the regularization strength (e.g., dropout and data augmentation magnitude) as training proceeds. Early stages train quickly on small images, while later stages use larger images with stronger regularization, which speeds up convergence while preserving final accuracy.
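The schedule can be sketched as linear interpolation across training stages, which is how the EfficientNetV2 authors describe it. The stage count and the start and end values in the usage example are illustrative assumptions. `progressive_schedule` is a hypothetical helper, not part of any library.

```python
def progressive_schedule(num_stages, size_start, size_end, reg_start, reg_end):
    """Linearly interpolate image size and regularisation strength per stage.

    reg_start / reg_end map a regulariser name (e.g. dropout rate,
    RandAugment magnitude) to its value in the first / last stage.
    """
    schedule = []
    for i in range(num_stages):
        t = i / (num_stages - 1) if num_stages > 1 else 1.0  # 0 -> first, 1 -> last
        size = round(size_start + (size_end - size_start) * t)
        regs = {k: reg_start[k] + (reg_end[k] - reg_start[k]) * t for k in reg_start}
        schedule.append({"stage": i, "image_size": size, **regs})
    return schedule


if __name__ == "__main__":
    for stage in progressive_schedule(
            4, 128, 300,
            {"dropout": 0.1, "randaug_magnitude": 5},
            {"dropout": 0.3, "randaug_magnitude": 15}):
        print(stage)
```

Tying regularization to image size reflects the idea that small images need little augmentation, while large images give the network more capacity to overfit.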

Architecture Variants: The EfficientNetV2 family offers several predefined model sizes (B0 through S, with larger M and L variants also available) with progressively more parameters and FLOPs. Researchers can therefore select the appropriate balance between accuracy and computational efficiency based on their dataset size and resource constraints [21].

When applied to pathological image classification, EfficientNetV2 processes the segmented regions provided by the U-Net component, extracting discriminative features that differentiate between various disease states. The model's efficiency is particularly valuable in digital pathology, where the extreme resolution of whole-slide images demands computationally optimized approaches.

Integrated Framework and Heatmap Generation

The synergistic integration of U-Net and EfficientNetV2 creates a comprehensive pipeline for end-to-end pathological image analysis:

Diagram 1: Integrated U-Net and EfficientNetV2 workflow for pathological image analysis.

The integration follows a sequential yet interconnected workflow where U-Net first processes the whole-slide image to identify and segment diagnostically relevant regions. These segmented regions then serve as input to EfficientNetV2, which performs the actual classification into pathological categories (e.g., benign vs. malignant). A key advantage of this approach is the computational efficiency gained by focusing classification efforts only on biologically relevant areas rather than entire slide images.
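The segment-then-classify hand-off can be sketched as follows. `segment` and `classify` are hypothetical wrappers around a trained U-Net and EfficientNetV2, and images are plain 2-D lists. Only a single bounding box around all foreground pixels is cropped. A production pipeline would typically extract one crop per connected region and resize it to the classifier's input size.

```python
def mask_bounding_box(mask):
    """(top, left, bottom, right) of all 1-pixels, ends exclusive; None if empty."""
    rows = [r for r, row in enumerate(mask) if any(row)]
    if not rows:
        return None
    cols = [c for c in range(len(mask[0])) if any(row[c] for row in mask)]
    return rows[0], cols[0], rows[-1] + 1, cols[-1] + 1


def crop(image, box):
    """Cut the box region out of a 2-D image."""
    top, left, bottom, right = box
    return [row[left:right] for row in image[top:bottom]]


def segment_then_classify(image, segment, classify):
    """Classify only the region the segmenter marks as diagnostically relevant.

    segment(image) -> binary mask (hypothetical U-Net wrapper)
    classify(patch) -> label (hypothetical EfficientNetV2 wrapper)
    """
    box = mask_bounding_box(segment(image))
    if box is None:
        return "no region of interest", None
    return classify(crop(image, box)), box
```

Cropping before classification is where the computational saving comes from: the classifier sees only the pixels inside `box` instead of the whole image.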

For model interpretability—a critical requirement in clinical settings—the framework incorporates heatmap generation algorithms such as Grad-CAM (Gradient-weighted Class Activation Mapping). These visualization techniques leverage the gradients flowing into the final convolutional layer of EfficientNetV2 to produce coarse localization maps highlighting important regions for the classification decision. The resulting heatmaps can be overlaid on original images, providing clinicians with intuitive visual explanations that build trust in the model's diagnostic capabilities [13] [21].
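The Grad-CAM computation itself is short once the activations and gradients of the target layer are available. In practice they are captured with framework hooks, for example PyTorch forward and backward hooks or `tf.GradientTape` in TensorFlow. The sketch below takes them as nested lists, an assumption made to keep it self-contained. Each channel is weighted by its spatially averaged gradient, the weighted channels are summed, and a ReLU keeps only positive evidence. `grad_cam` is a hypothetical helper name.

```python
def grad_cam(activations, gradients):
    """Grad-CAM map from one conv layer's activations and gradients.

    activations[k][i][j]: value of feature map k at (i, j), i.e. A^k_ij
    gradients[k][i][j]:   d(class score y^c) / d(A^k_ij)
    Returns an H x W map, ReLU-ed and scaled to [0, 1].
    """
    h, w = len(activations[0]), len(activations[0][0])
    # Channel weight alpha_k: global average pooling of the gradients.
    alphas = [sum(map(sum, g)) / (h * w) for g in gradients]
    cam = [[0.0] * w for _ in range(h)]
    for alpha, fmap in zip(alphas, activations):
        for i in range(h):
            for j in range(w):
                cam[i][j] += alpha * fmap[i][j]
    cam = [[max(v, 0.0) for v in row] for row in cam]  # keep positive evidence only
    peak = max(max(row) for row in cam)
    return [[v / peak for v in row] for row in cam] if peak > 0 else cam
```

The resulting map has the coarse resolution of the chosen layer. It is upsampled to the input size before being overlaid on the image.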

Experimental Protocols and Benchmarking Methodology

Dataset Preparation and Preprocessing

Robust experimental validation of the integrated U-Net and EfficientNetV2 framework requires meticulous dataset preparation with attention to domain-specific challenges in pathological imaging:

Data Sources: Benchmark evaluations typically use publicly available datasets such as CBIS-DDSM (screening mammograms with annotated breast lesions), BreakHis (breast cancer histopathology), and UniToPatho (colorectal histopathology) [22] [21] [23]. These collections provide thousands of annotated images with confirmed pathological diagnoses.

Preprocessing Pipeline: A standardized preprocessing workflow has three steps [20]:

(1) Stain normalization, using methods such as Macenko normalization, to reduce color variability caused by differing H&E staining protocols.

(2) Patch extraction, to divide whole-slide images into manageable tiles while preserving diagnostic regions.

(3) Data augmentation through rotations, flips, and color adjustments, to increase dataset diversity and improve model generalization.
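Patch extraction usually reduces to computing a grid of tile positions. The sketch below assumes square tiles and a configurable stride, equal to the tile size for non-overlapping patches. The 512-pixel defaults are illustrative. Real pipelines typically also discard background tiles using a tissue mask. They read each region with a slide library such as OpenSlide, whose `read_region` expects `(x, y)` rather than `(y, x)` order. `tile_coordinates` is a hypothetical helper name.

```python
def tile_coordinates(height, width, patch=512, stride=512):
    """Top-left (y, x) corners of square patches covering an image region.

    stride < patch gives overlapping tiles. The last row/column of tiles is
    shifted inward so the border is covered without padding; an image
    smaller than one patch yields a single (0, 0) tile that the caller pads.
    """
    def starts(length):
        if length <= patch:
            return [0]
        pos = list(range(0, length - patch + 1, stride))
        if pos[-1] != length - patch:
            pos.append(length - patch)  # cover the remaining border strip
        return pos

    return [(y, x) for y in starts(height) for x in starts(width)]


if __name__ == "__main__":
    print(tile_coordinates(1000, 1200))
```

Shifting the final tile inward avoids padding artifacts at the slide edge. The cost is a small overlap with its neighbour, which matters only if tile-level predictions are later counted rather than averaged.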

Annotation Standards: Ground truth segmentation masks are typically created through manual annotation by experienced pathologists, with multi-rater validation to ensure annotation consistency. For classification tasks, binary (benign/malignant) or multi-class (specific cancer subtypes) labeling schemes are employed based on clinical diagnostic criteria.

Model Training and Implementation Details

The experimental protocol implements a structured training methodology to optimize both segmentation and classification components:

U-Net Training: The segmentation network is trained using a combination of Dice loss and binary cross-entropy to handle class imbalance common in pathological images. Training typically employs the Adam optimizer with an initial learning rate of 1e-4, with batch sizes adjusted based on GPU memory constraints (commonly 8-16). Data augmentation includes random rotations, flips, and elastic deformations to improve model robustness [20].
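A combined Dice and binary cross-entropy loss can be written in a few lines. This plain-Python version works on one flattened mask and weights the two terms equally. The 0.5 weight and the epsilon are assumptions, since the protocol does not specify them. Deep learning frameworks provide tensor versions, for example MONAI's `DiceCELoss`. `dice_bce_loss` is a hypothetical helper name.

```python
import math


def dice_bce_loss(probs, targets, dice_weight=0.5, eps=1e-7):
    """Weighted sum of soft Dice loss and binary cross-entropy.

    probs: predicted foreground probabilities for a flattened mask
    targets: ground-truth 0/1 labels of the same length
    """
    n = len(probs)
    clipped = [min(max(p, eps), 1 - eps) for p in probs]  # avoid log(0)
    bce = -sum(t * math.log(p) + (1 - t) * math.log(1 - p)
               for p, t in zip(clipped, targets)) / n
    # Soft Dice uses probabilities directly, so it stays differentiable.
    inter = sum(p * t for p, t in zip(probs, targets))
    soft_dice = (2 * inter + eps) / (sum(probs) + sum(targets) + eps)
    return dice_weight * (1 - soft_dice) + (1 - dice_weight) * bce


if __name__ == "__main__":
    print(dice_bce_loss([0.9, 0.1], [1, 0]), dice_bce_loss([0.6, 0.4], [1, 0]))
```

The Dice term is computed over the whole mask, so a small lesion still contributes strongly even when background pixels dominate. This is the class-imbalance benefit the combination is meant to provide.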

EfficientNetV2 Training: The classification component leverages transfer learning from ImageNet pre-trained weights, with fine-tuning on pathological image datasets. Training uses a progressively increasing image size strategy as implemented in EfficientNetV2, combined with strong regularization including dropout, weight decay, and RandAugment to prevent overfitting on limited medical data [21].

Integration and Optimization: Following individual component training, the full pipeline is fine-tuned end-to-end with a reduced learning rate (typically 1e-5 to 1e-6) to refine feature alignment between segmentation and classification modules. Implementation commonly uses TensorFlow or PyTorch frameworks with distributed training across multiple GPUs for accelerated experimentation.

Evaluation Metrics and Benchmarking Procedures

Comprehensive performance assessment employs standardized metrics aligned with clinical requirements:

Segmentation Quality: Measured using Intersection over Union (IoU), Dice coefficient, precision, and recall, with expert pathologist validation of segmentation boundaries for biologically relevant structures [20].
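IoU and Dice are closely related but not interchangeable. For a predicted mask A and a ground-truth mask B, the identity |A ∪ B| = |A| + |B| − |A ∩ B| links them directly:

```latex
\mathrm{IoU}(A,B) = \frac{|A \cap B|}{|A \cup B|}, \qquad
\mathrm{Dice}(A,B) = \frac{2\,|A \cap B|}{|A| + |B|}
  = \frac{2\,|A \cap B|}{|A \cup B| + |A \cap B|}
  = \frac{2\,\mathrm{IoU}}{1 + \mathrm{IoU}},
\qquad
\mathrm{IoU} = \frac{\mathrm{Dice}}{2 - \mathrm{Dice}}
```

For example, an IoU of 85.59% corresponds to a Dice coefficient of about 92.2% for the same pair of masks. The identity holds per image, so dataset averages of the two metrics satisfy it only approximately.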

Classification Performance: Evaluated through accuracy, precision, recall, F1-score, and Area Under the Curve (AUC) of receiver operating characteristic curves, with careful attention to sensitivity and specificity trade-offs critical for medical diagnosis [22] [21].

Computational Efficiency: Assessed via inference time, model size, and memory consumption, particularly important for integration into clinical workflows where timely diagnosis is essential.

Table 1: Performance Comparison of Integrated U-Net+EfficientNetV2 Against Alternative Architectures

| Model Architecture | Accuracy (%) | Precision (%) | Recall (%) | F1-Score (%) | IoU/Dice (%) |
|---|---|---|---|---|---|
| U-Net + EfficientNetV2 | 97.6 | 98.9 | 91.25 | 98.4 | 85.59 |
| U-Net + ResNet50 | 94.2 | 95.1 | 89.7 | 92.3 | 82.4 |
| SegFormer | 89.0 | 84.0 | 99.0 | 91.0 | N/A |
| DeepLabV3+ | 86.7 | 86.8 | 86.7 | 86.8 | 80.1 |
| VGG-UNet | 85.5 | 82.3 | 87.6 | 84.9 | 78.9 |

Performance metrics aggregated from multiple benchmark studies on pathological image analysis [22] [24] [20].

Performance Analysis and Comparative Evaluation

Quantitative Performance Benchmarking

Rigorous experimental validation demonstrates that the integrated U-Net and EfficientNetV2 framework achieves state-of-the-art performance across multiple pathological image analysis tasks:

Breast Lesion Analysis: On the CBIS-DDSM dataset for mammography analysis, the integrated model achieved 97.6% accuracy in segmentation and classification tasks, with a sensitivity of 91.25% and IoU of 85.59% for lesion localization. This high sensitivity is particularly crucial for medical diagnosis where false negatives carry severe consequences [22].

Histopathology Classification: For breast cancer histopathology images from the BreakHis dataset, an ensemble approach incorporating EfficientNet architectures achieved 99.58% accuracy in binary classification (benign vs. malignant), significantly outperforming conventional CNN models [21].

Colon Cancer Detection: In colorectal polyp detection and classification, hybrid models combining EfficientNet with vision transformers achieved 92.4% recall, 98.9% precision, and an AUC of 0.99 [23]. These hybrids are a related design rather than the U-Net+EfficientNetV2 pipeline itself. The result suggests that EfficientNet-based feature extraction remains robust across different tissue types and cancer varieties.

The consistent performance advantage stems from the complementary strengths of both architectures: U-Net's precision in boundary delineation combined with EfficientNetV2's efficiency in feature representation learning.

Computational Efficiency and Practical Deployment

Beyond raw accuracy, the integrated framework offers significant advantages in computational efficiency that facilitate real-world clinical implementation:

Training Efficiency: EfficientNetV2's progressive learning strategy and fused-MBConv operations enable up to 3.5x faster training compared to previous EfficientNet versions, while maintaining parameter efficiency [21].

Inference Optimization: The segmented region-of-interest approach reduces computational burden by focusing classification resources only on diagnostically relevant areas rather than entire slide images, decreasing inference time by approximately 40% compared to whole-slide classification approaches [13].

Memory Footprint: The optimized architecture design requires 60% fewer parameters than similarly performing models like ResNet-50 and Inception-v4, reducing hardware requirements for deployment in resource-constrained clinical environments [21].

Table 2: Computational Efficiency Comparison Across Model Architectures

| Model Architecture | Inference Time (ms) | Model Size (MB) | Training Time per Epoch (s) | Memory Consumption (GB) |
|---|---|---|---|---|
| U-Net + EfficientNetV2 | 125 | 45 | 320 | 2.1 |
| U-Net + ResNet50 | 187 | 98 | 480 | 3.8 |
| Vision Transformer (ViT) | 210 | 130 | 620 | 4.5 |
| InceptionResNetV2 | 165 | 215 | 540 | 3.2 |
| DenseNet-161 | 142 | 57 | 410 | 2.8 |

Computational metrics measured on standard histopathology image datasets using consistent hardware configuration [21] [24] [23].

Interpretability and Heatmap Quality Assessment

The integration of U-Net and EfficientNetV2 provides superior interpretability through high-quality heatmap generation, addressing the "black box" criticism often leveled against deep learning systems in medicine:

Grad-CAM Integration: By leveraging gradient information flowing through the final convolutional layers of EfficientNetV2, the framework generates detailed activation maps that highlight discriminative regions influencing classification decisions. These heatmaps provide visual explanations that pathologists can correlate with known morphological features [13] [21].

Clinical Validation: Expert pathologist evaluation of generated heatmaps confirms strong alignment with diagnostically relevant tissue structures, with one study reporting 92% concordance between model-highlighted regions and pathologist-identified diagnostic features [13].

Comparative Interpretability: The framework produces more precise and clinically relevant heatmaps compared to activation visualization from standalone classification models, as the initial segmentation step ensures that activation mappings focus on biologically plausible regions rather than artifact or background features.

Diagram 2: Heatmap generation process for model interpretability in pathological image analysis.

Essential Research Reagent Solutions

Successful implementation of the integrated U-Net and EfficientNetV2 framework for pathological image analysis requires several key research "reagents" and computational resources:

Table 3: Essential Research Reagents and Computational Tools

| Research Reagent | Function | Implementation Examples |
|---|---|---|
| Digital Pathology Datasets | Provide annotated whole-slide images for training and validation | CBIS-DDSM, BreakHis, UniToPatho, TCGA |
| Annotation Platforms | Enable precise labeling of pathological structures for segmentation masks | Aperio ImageScope, ASAP, QuPath |
| Deep Learning Frameworks | Provide infrastructure for model implementation and training | TensorFlow, PyTorch, MONAI |
| Visualization Tools | Generate and interpret heatmaps for model explainability | Grad-CAM, Layer-wise Relevance Propagation |
| Computational Hardware | Accelerate model training and inference processes | NVIDIA GPUs (A100, V100), TPU clusters |
| Color Normalization Algorithms | Standardize stain variation across histological images | Macenko, Reinhard, Vahadane methods |

The integrated U-Net and EfficientNetV2 framework represents a significant advancement in automated pathological image analysis, effectively balancing the dual demands of precise segmentation and accurate classification while providing the interpretability necessary for clinical adoption. Through rigorous benchmarking against alternative architectures, this approach has demonstrated consistent performance advantages across multiple dataset types and disease domains.

The framework's particular strength lies in its synergistic combination of U-Net's exceptional boundary delineation capabilities with EfficientNetV2's efficient hierarchical feature learning, creating a comprehensive solution that addresses the unique challenges of whole-slide image analysis. Furthermore, the integration of advanced heatmap generation techniques provides the visual explanations necessary to build clinician trust and facilitate human-AI collaboration in diagnostic workflows.

For researchers and drug development professionals, this methodology offers a robust foundation for developing automated diagnostic systems, with particular relevance to high-throughput screening applications in pharmaceutical development and personalized medicine treatment planning. Future directions for this research include incorporating transformer architectures for improved global context modeling, developing multi-modal integration capabilities that combine histological images with genomic data, and creating federated learning approaches to enable collaborative model development while preserving data privacy.

Accurately forecasting environmental parameters like sea surface temperatures (SST) and air pollution concentrations is fundamental to addressing pressing global challenges, from climate-adaptive fisheries management to protecting public health. These forecasts rely on generating sophisticated spatial heatmaps that predict values across a landscape or seascape. Benchmarking the performance and accuracy of the methodologies that produce these heatmaps is therefore a critical pursuit in environmental science. This guide objectively compares two dominant methodological frameworks for spatial forecasting and validation, Generalized Additive Models (GAMs) and AI-based Implicit Representation (HF-SDF), by examining their application in real-world research. We provide a detailed comparison of their experimental protocols, performance metrics, and suitability for different research scenarios within the domains of marine science and atmospheric science.

Methodological Comparison at a Glance

The table below summarizes the core characteristics of the two featured methodologies, providing a high-level overview for researchers.

Table 1: Comparison of Spatial Forecasting Methodologies

| Feature | GAM for SST Forecasting [25] | HF-SDF for Air Pollution Mapping [26] |
|---|---|---|
| Core Principle | Statistical model that fits flexible, smooth non-linear functions to data to capture complex relationships. | A machine learning technique that uses a 3D implicit surface representation to reconstruct continuous fields from sparse data. |
| Primary Application | Forecasting species-specific optimal fishing grounds based on SST. | Reconstructing high-resolution air pollution concentration maps from coarse or incomplete data. |
| Key Input Variables | Catch Per Unit Effort (CPUE), SST, spatiotemporal coordinates (year, month, location) [25]. | Raw, low-resolution satellite data (e.g., TROPOMI) or reanalysis data (e.g., TAP) [26]. |
| Spatial Validation Approach | Projecting the identified optimal SST range onto future climate scenario maps to define suitable habitats [25]. | Robustness tests against sparse observations and regionally missing data, comparing reconstructions to ground truth [26]. |
| Key Advantage | High interpretability of the relationship between the environment and the biological response (e.g., CPUE ~ SST) [25]. | Powerful transferability to unseen regions and pollutants, and flexible, fine-scale resolution [26]. |
| Reported Accuracy | Model deviance explained: ~64.5% [25]. | Accuracy vs. reanalysis data: 96%; vs. raw satellite data: 91% [26]. |

Detailed Experimental Protocols

To ensure reproducibility and provide a clear basis for comparison, this section outlines the experimental methodologies employed in the cited studies.

GAM Protocol for SST and Fishery Forecasting

The following workflow details the process for standardizing catch data and forecasting fishing grounds based on sea surface temperature.

Workflow Description: The process begins with data collection and preprocessing of offshore jigging fishery logs and concurrent sea surface temperature (SST) data [25]. Catch Per Unit Effort (CPUE) is calculated and standardized. Spatial clustering is then applied to group fishing operations into discrete geographic units, accounting for spatial variation. A GAM is constructed with a model formula such as CPUE ~ s(SST) + s(Month) + f(Location), where s() denotes a smooth, non-linear function and f() denotes a categorical (factor) effect for the spatial units [25]. After model fitting and validation, the SST smooth reveals the non-linear relationship between CPUE and SST, allowing researchers to identify the optimal SST range for the species (e.g., 13-23 °C for common squid, peaking at about 21 °C). This optimal range is then applied to future climate data (e.g., SSP3-7.0 scenario SST projections for 2050 and 2100) to generate a spatial forecast map of thermally suitable habitats [25].
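The last two steps, reading an optimal range off the fitted SST smooth and projecting it onto future SST fields, can be sketched as follows. This is a minimal NumPy illustration under stated assumptions: the partial effect would come from a fitted GAM (e.g., via `mgcv` in R), the "optimal range" is assumed to be the SST interval where the smooth effect is positive, and the curve and projection grid are toy values, not data from [25].

```python
import numpy as np


def optimal_range(sst_grid, partial_effect):
    """Return (low, high, peak) SST where the fitted smooth effect is positive.

    Assumption: "optimal" means a positive partial effect of SST on CPUE.
    """
    positive = partial_effect > 0
    if not positive.any():
        raise ValueError("smooth effect is never positive on this grid")
    low = sst_grid[positive].min()
    high = sst_grid[positive].max()
    peak = sst_grid[np.argmax(partial_effect)]
    return low, high, peak


def suitable_habitat(projected_sst, low, high):
    """Boolean map of grid cells whose projected SST lies inside [low, high]."""
    return (projected_sst >= low) & (projected_sst <= high)


# Toy smooth (not the curve fitted in the cited study): positive between 13 and 23 °C.
sst_grid = np.linspace(10.0, 26.0, 161)
effect = 1.0 - ((sst_grid - 18.0) / 5.0) ** 2
low, high, peak = optimal_range(sst_grid, effect)

# Toy 2 x 2 grid of projected SST values for a future scenario.
projected = np.array([[12.0, 15.5],
                      [22.0, 24.5]])
habitat = suitable_habitat(projected, low, high)
```

Taking the minimum and maximum of the positive region assumes a single contiguous optimum; a bimodal smooth would need the positive intervals handled separately.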

HF-SDF Protocol for Air Pollution Mapping

The following workflow illustrates the process of using an implicit neural representation to generate high-resolution air pollution maps from sparse inputs.

Workflow Description: This AI-based method takes sparse or coarse pollution data as input, such as low-resolution satellite observations (TROPOMI) or reanalysis data (TAP) [26]. At its core is a 3D implicit representation that treats the air pollution distribution as an irregular 3D surface, with concentration interpreted as "height" [26]. An auto-decoder network learns a continuous mapping from spatial coordinates to pollution concentration. This network is trained with a geometric constraint provided by a signed distance function (SDF), which helps reconstruct the shape of the pollution surface accurately from sparse data [26]. The output is a continuous pollution surface that can be queried at any spatial point, so the output resolution is flexible. The model's transferability is tested on unseen geographic regions and different pollutant species, producing a validated high-resolution output map [26].
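The idea of a continuous, coordinate-based field that can be queried at any resolution can be illustrated with a much simpler model than the one in [26]. The sketch below deliberately swaps in a different technique: random Fourier features with closed-form ridge regression instead of an auto-decoder network, and a vertical offset z - c(x, y) as a rough proxy for a signed distance instead of a learned SDF. The class and function names, parameters, and the synthetic plume are all hypothetical.

```python
import numpy as np


def fourier_features(coords, freqs):
    """Map (N, 2) coordinates to (N, 2K) sin/cos features; freqs has shape (2, K)."""
    proj = 2.0 * np.pi * coords @ freqs
    return np.concatenate([np.sin(proj), np.cos(proj)], axis=1)


class ToyImplicitField:
    """Hypothetical continuous (x, y) -> concentration model.

    Stand-in for the auto-decoder: random Fourier features + ridge regression.
    """

    def __init__(self, n_freqs=64, scale=2.0, ridge=1e-3, seed=0):
        rng = np.random.default_rng(seed)
        self.freqs = rng.normal(0.0, scale, size=(2, n_freqs))
        self.ridge = ridge
        self.weights = None
        self.bias = 0.0

    def fit(self, coords, values):
        phi = fourier_features(coords, self.freqs)
        self.bias = values.mean()
        gram = phi.T @ phi + self.ridge * np.eye(phi.shape[1])
        self.weights = np.linalg.solve(gram, phi.T @ (values - self.bias))
        return self

    def query(self, coords):
        """Evaluate the field at arbitrary coordinates, i.e. at any resolution."""
        return fourier_features(coords, self.freqs) @ self.weights + self.bias

    def signed_height(self, points):
        """z - c(x, y): positive above the concentration surface, negative below.

        A vertical-offset proxy for a signed distance, not the exact Euclidean SDF.
        """
        return points[:, 2] - self.query(points[:, :2])


def unit_grid(resolution):
    """Regular resolution x resolution grid of (x, y) points on the unit square."""
    axis = np.linspace(0.0, 1.0, resolution)
    xx, yy = np.meshgrid(axis, axis)
    return np.column_stack([xx.ravel(), yy.ravel()])


def toy_plume(xy):
    """Synthetic Gaussian pollution plume (illustrative, not real data)."""
    return np.exp(-((xy[:, 0] - 0.5) ** 2 + (xy[:, 1] - 0.4) ** 2) / 0.05)


# Sparse training data: 200 scattered synthetic "stations".
rng = np.random.default_rng(1)
stations = rng.uniform(0.0, 1.0, size=(200, 2))
model = ToyImplicitField().fit(stations, toy_plume(stations))

coarse = model.query(unit_grid(10)).reshape(10, 10)    # 10 x 10 map
fine = model.query(unit_grid(100)).reshape(100, 100)   # same model, 100 x 100 map
```

The same fitted model produces both maps; only the query grid changes, which is the property the "flexible resolution" claim refers to.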

Performance and Benchmarking Data

This section provides a quantitative comparison of the methodological performance as reported in the research.

Table 2: Quantitative Performance Metrics

| Methodology | Validation Metric | Reported Performance | Experimental Context |
|---|---|---|---|
| GAM [25] | Deviance Explained | 64.5% | Model fitting for common squid CPUE in Korean waters. |
| GAM [25] | Optimal SST Range | 13-23 °C | Identified from the smooth SST function in the GAM. |
| GAM [25] | Peak Response SST | ~21 °C | Temperature at which the highest CPUE was predicted. |
| HF-SDF [26] | Accuracy (vs. Reanalysis) | 96% | Reconstruction of PM2.5 across China using TAP data. |
| HF-SDF [26] | Accuracy (vs. Satellite) | 91% | Reconstruction using raw TROPOMI satellite observations. |
| HF-SDF [26] | Robustness (Sparse Data) | R: 0.97-0.99 | Performance maintained with input resolutions from 1 km to 40 km. |
| HF-SDF [26] | Transferability (Unseen Data) | R: 0.93-0.95 | Performance on unseen regions (Yinchuan) and time periods (2023). |
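The two families of validation metrics in Table 2 are computed differently, and the sketch below shows the simplest case of each. It assumes a Gaussian-family GAM (for other families, such as Poisson or Tweedie, the family-specific deviance replaces the sum of squares) and assumes that "R" denotes the Pearson correlation between reconstructed and reference values; neither assumption is confirmed by [25] or [26]. The arrays are toy values.

```python
import numpy as np


def deviance_explained_gaussian(y, fitted):
    """Share of null deviance explained by a Gaussian-family model.

    Gaussian deviance is the residual sum of squares, and the null model
    predicts the mean of y, so this equals 1 - RSS / TSS (i.e. R^2).
    """
    y = np.asarray(y, dtype=float)
    fitted = np.asarray(fitted, dtype=float)
    residual_deviance = np.sum((y - fitted) ** 2)
    null_deviance = np.sum((y - y.mean()) ** 2)
    return 1.0 - residual_deviance / null_deviance


def pearson_r(observed, reconstructed):
    """Pearson correlation between observed and reconstructed values."""
    return float(np.corrcoef(observed, reconstructed)[0, 1])


y = np.array([1.0, 2.0, 3.0, 4.0])
fitted = np.array([1.1, 1.9, 3.2, 3.8])
dev_expl = deviance_explained_gaussian(y, fitted)  # 1 - 0.10 / 5.0
r = pearson_r(y, fitted)
```

The two numbers answer different questions: deviance explained penalizes any offset or scaling error, whereas Pearson R only measures how well the spatial pattern co-varies with the reference, so a biased reconstruction can still score a high R.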

The Scientist's Toolkit: Essential Research Reagents and Materials

Beyond software methodologies, robust spatial forecasting requires a suite of data inputs and analytical tools. The table below lists key "research reagent solutions" essential for experiments in this field.

Table 3: Essential Materials and Resources for Spatial Forecasting Research

| Item Name | Function / Purpose | Specific Examples / Notes |
|---|---|---|
| Mid-range Mobile Monitor | Collects high-quality, high-spatial-resolution air pollution data via controlled mobile campaigns [27]. | The AE51 Aethalometer used in the Mechelen study for Black Carbon (BC) measurements [27]. |
| Climate Projection Data | Provides future scenarios of environmental variables (e.g., SST) for forecasting species distribution or pollution patterns. | CNRM-ESM2-1 model data under the SSP3-7.0 scenario, used for SST projections in Korean waters [25]. |
| Reanalysis Datasets | Offers spatially complete, gridded data for model training and validation by combining models with observations. | Tracking Air Pollution (TAP) dataset in China, used as a benchmark for the HF-SDF model [26]. |
| Raw Satellite Observations | Supplies extensive spatial coverage for air pollutants, serving as a key input for AI-based mapping models. | TROPOspheric Monitoring Instrument (TROPOMI) data, used as a low-resolution input for HF-SDF [26]. |
| Statistical Computing Software | Provides the environment for implementing statistical models (e.g., GAM), data processing, and spatial evaluation. | R (commonly used through the RStudio IDE) with packages such as mgcv for GAMs and openair for air quality analysis [28]. |
| Generalized Additive Model (GAM) | A statistical workhorse for standardizing CPUE and modeling non-linear species-environment relationships [25]. | Used to reveal the significant non-linear relationship between common squid CPUE and SST [25]. |
| Ordinary Kriging | A geostatistical interpolation technique used for spatial evaluation and creating smooth pollution maps from point data [28]. | Applied in air quality studies to estimate pollutant concentration surfaces from monitoring-station data [28]. |
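Ordinary kriging predicts the value at an unsampled location as a weighted sum of observations, with weights derived from a semivariogram so that the estimate is unbiased (the weights sum to one) and has minimum variance. The NumPy sketch below assumes an exponential semivariogram with hypothetical sill, range, and nugget parameters; in practice these are fitted to an empirical variogram, and dedicated packages (e.g., gstat in R) would normally be used.

```python
import numpy as np


def exponential_variogram(h, sill=1.0, range_=1.0, nugget=0.0):
    """Hypothetical exponential semivariogram; gamma(0) = 0 by definition."""
    gamma = nugget + sill * (1.0 - np.exp(-h / range_))
    return np.where(h > 0, gamma, 0.0)


def ordinary_kriging(obs_xy, obs_values, target_xy, **vario_kwargs):
    """Return (predictions, kriging variances) at each row of target_xy."""
    n = len(obs_xy)
    # Kriging matrix [[Gamma, 1], [1^T, 0]] built from pairwise site distances
    d_obs = np.linalg.norm(obs_xy[:, None, :] - obs_xy[None, :, :], axis=-1)
    a = np.ones((n + 1, n + 1))
    a[:n, :n] = exponential_variogram(d_obs, **vario_kwargs)
    a[n, n] = 0.0
    preds = np.empty(len(target_xy))
    variances = np.empty(len(target_xy))
    for k, target in enumerate(target_xy):
        # Right-hand side [gamma(x_i, x0), 1]; the final 1 enforces sum(weights) = 1
        b = np.ones(n + 1)
        b[:n] = exponential_variogram(np.linalg.norm(obs_xy - target, axis=1), **vario_kwargs)
        sol = np.linalg.solve(a, b)
        weights, mu = sol[:n], sol[n]
        preds[k] = weights @ obs_values
        variances[k] = weights @ b[:n] + mu  # ordinary kriging variance
    return preds, variances


stations = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
concentrations = np.array([1.0, 2.0, 3.0, 4.0])
pred, var = ordinary_kriging(stations, concentrations, np.array([[0.5, 0.5]]))
```

By symmetry the four stations receive equal weights at the centre of the square, so the prediction there is 2.5, the mean of the four values. At an observation site itself (with zero nugget) the method reproduces the observed value with zero kriging variance, which is the exact-interpolation property that makes kriged maps honour station data.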

The comparative analysis reveals that the choice between a GAM framework and an HF-SDF approach is not a matter of superiority but of strategic alignment with the research problem's specific demands.

GAMs offer high interpretability, making them ideal for establishing clear, defensible relationships between environmental drivers and biological responses, which is crucial for informing fisheries management policies [25]. The trade-off is their reliance on carefully structured, domain-specific data such as standardized CPUE.

HF-SDF excels in handling data with low spatial resolution and significant gaps, transforming it into high-resolution, continuous maps with remarkable transferability [26]. This makes it a powerful tool for large-scale environmental monitoring where dense, high-quality measurement networks are impractical.

In conclusion, benchmarking these methodologies demonstrates that performance and accuracy are deeply contextual. For researchers focused on understanding and forecasting based on well-defined, causal environmental relationships, GAMs provide a robust and interpretable framework. Conversely, for challenges requiring the reconstruction of fine-grained spatial patterns from sparse, noisy, or large-scale data, AI-based implicit representations like HF-SDF represent a cutting-edge solution. The ongoing development and validation of both statistical and AI-driven tools will continue to enhance our ability to accurately forecast and respond to environmental changes.

The field of digital analytics has been transformed by the integration of Artificial Intelligence (AI) and Machine Learning (ML), particularly in the domain of heatmap generation tools. These technologies have evolved traditional heatmaps from static visual representations into dynamic, predictive systems capable of automated insight generation. For researchers and scientists, especially in data-intensive fields like drug development, this represents a paradigm shift from manual data inspection to AI-powered pattern recognition and anomaly detection.

AI-powered heatmap tools use machine learning algorithms to process large volumes of user interaction data, identifying complex patterns and correlations that would be impractical to detect manually [8]. These systems apply various ML techniques, including clustering analysis to group similar user behavior patterns and decision trees to classify interaction types, to uncover hidden trends in behavioral data [8]. The reported commercial impact is substantial: companies implementing AI-driven heatmap tools have reported an average 25% increase in sales, and websites using these tools an average 20% increase in conversion rates [29] [8].
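As an illustration of the clustering step, the sketch below groups raw click coordinates into hotspots with plain k-means (Lloyd's algorithm). The choice of k-means, the number of clusters, and the synthetic click data are assumptions for illustration; the cited sources do not specify which clustering algorithm commercial tools use.

```python
import numpy as np


def kmeans(points, k, n_iter=100, seed=0):
    """Lloyd's k-means: returns (labels, centroids) for an (N, 2) array of points."""
    rng = np.random.default_rng(seed)
    centroids = points[rng.choice(len(points), size=k, replace=False)]
    for _ in range(n_iter):
        # Assign each point to its nearest centroid
        dists = np.linalg.norm(points[:, None, :] - centroids[None, :, :], axis=-1)
        labels = dists.argmin(axis=1)
        # Move each centroid to the mean of its assigned points (keep it if empty)
        new_centroids = np.array([
            points[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        if np.allclose(new_centroids, centroids):
            break
        centroids = new_centroids
    return labels, centroids


# Synthetic clicks around two page regions (pixel coordinates)
rng = np.random.default_rng(42)
clicks = np.vstack([
    rng.normal([100.0, 100.0], 10.0, size=(50, 2)),
    rng.normal([800.0, 500.0], 10.0, size=(50, 2)),
])
labels, centroids = kmeans(clicks, k=2)
```

Each centroid marks a hotspot; in a real tool the number of clusters would itself need to be chosen or estimated rather than fixed in advance.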

For research professionals, these capabilities translate to more efficient data analysis workflows. AI-enhanced heatmaps can automatically surface friction points, predict user behavior patterns, and generate actionable recommendations, allowing researchers to focus on interpretation rather than data collection [29]. This is particularly valuable in scientific contexts where understanding user interaction patterns with complex interfaces or data visualization tools is critical.
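Friction signals such as rage clicks (rapid repeated clicks on the same spot) can be flagged with simple rules before any machine learning is applied. The sketch below scans a session for runs of consecutive clicks that stay within a short time window and a small radius; the `Click` type, the function name, and the thresholds (3 clicks within 1 second inside 30 pixels) are hypothetical and do not reproduce any vendor's definition.

```python
import math
from dataclasses import dataclass


@dataclass
class Click:
    t: float  # timestamp in seconds
    x: float  # page x coordinate in pixels
    y: float  # page y coordinate in pixels


def find_rage_clicks(clicks, min_clicks=3, window_s=1.0, radius_px=30.0):
    """Return start indices (in time order) of runs of >= min_clicks consecutive
    clicks that fall within window_s seconds and radius_px pixels of the run's
    first click. Thresholds are illustrative assumptions."""
    clicks = sorted(clicks, key=lambda c: c.t)
    bursts = []
    i = 0
    while i < len(clicks):
        first = clicks[i]
        j = i + 1
        while (j < len(clicks)
               and clicks[j].t - first.t <= window_s
               and math.hypot(clicks[j].x - first.x, clicks[j].y - first.y) <= radius_px):
            j += 1
        if j - i >= min_clicks:
            bursts.append(i)
            i = j  # skip past this burst so it is reported only once
        else:
            i += 1
    return bursts


session = [Click(0.0, 200.0, 150.0), Click(0.3, 203.0, 152.0), Click(0.6, 198.0, 149.0),
           Click(5.0, 600.0, 400.0), Click(9.0, 610.0, 410.0)]
bursts = find_rage_clicks(session)  # the first three clicks form one burst
```

Rule-based detectors like this are transparent and cheap; learned models become useful when the thresholds need to adapt to different page layouts or user populations.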

Comparative Analysis of Leading AI Heatmap Tools

The landscape of AI-powered heatmap tools includes several platforms with distinct strengths and specializations. The following table provides a comparative overview of leading tools based on their AI capabilities, target users, and core functionalities:

| Tool | Primary Research Application | Key AI Capabilities | Anomaly Detection Features |
|---|---|---|---|
| Hotjar AI | User behavior analytics [29] | Predictive user behavior modeling, automated insights generation [29] | Rage click detection, friction point identification [30] [31] |