Benchmarking scRNA-seq Pipelines: A Comprehensive Guide for Robust and Reproducible Single-Cell Analysis

Abstract

The rapid proliferation of single-cell RNA sequencing technologies and analytical methods presents a major challenge for researchers and drug development professionals: how to select the optimal pipeline for accurate biological interpretation. This article synthesizes findings from major benchmarking studies to provide a structured guide for navigating scRNA-seq analysis. We explore the critical impact of platform selection, data preprocessing, and normalization methods on downstream results. The content systematically addresses foundational concepts, methodological comparisons, troubleshooting of common pitfalls, and validation frameworks. By highlighting how dataset characteristics dictate optimal bioinformatic choices, this guide empowers scientists to design robust studies, improve reproducibility, and generate reliable insights into cellular heterogeneity for biomedical and clinical applications.

Laying the Groundwork: Understanding scRNA-seq Technologies and Core Analysis Challenges

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by allowing scientists to investigate gene expression at the resolution of individual cells. Since its emergence in 2009, this technology has progressed from a novel method to a standard approach across diverse fields including cancer research, immunology, neuroscience, and developmental biology [1]. As the field has matured, numerous high-throughput commercial platforms have become available, each with distinct technical approaches, performance characteristics, and applications. This review provides a comprehensive comparison of major scRNA-seq platforms and protocols, framing the discussion within the broader context of benchmarking scRNA-seq analysis pipelines to guide researchers in selecting the most appropriate technology for their specific experimental needs.

Section 1: Comparison of Major scRNA-seq Platforms

The selection of an appropriate scRNA-seq platform is critical for experimental success, as each technology offers different advantages in throughput, sensitivity, sample compatibility, and cost. The table below summarizes the key specifications of four mainstream platforms frequently used in research settings.

Table 1: Technical Specifications of Major scRNA-seq Platforms

| Platform | Technology | Throughput (cells/run) | Capture Efficiency | Key Strengths | Sample Compatibility | Viability Requirement |
|---|---|---|---|---|---|---|
| 10x Genomics Chromium [1] | Droplet Microfluidics | ~80,000 (8 channels) | Up to ~65% | High throughput, strong reproducibility, broad species compatibility | Fresh, frozen, gradient-frozen, FFPE tissue | Standard viability requirements |
| 10x Genomics FLEX [1] | Droplet Microfluidics | Up to 1 million (with multiplexing) | Similar to Chromium | FFPE compatibility, sample multiplexing (up to 128 samples), fixation stability | FFPE, 4% PFA fixed samples | Suitable for fixed samples |
| BD Rhapsody [1] | Microwell with Magnetic Beads | Variable | Up to ~70% | Combined RNA & protein profiling, tolerance for lower viability samples | Fresh, frozen; lower viability samples | ~65% viability |
| MobiDrop [1] | Droplet-based | Adjustable | Not specified | Cost-effectiveness, automated workflow, scalable for large projects | Fresh, frozen, and FFPE samples | Standard viability requirements |

Platform Strengths and Optimal Applications

Each platform's unique design lends itself to particular research scenarios:

- The 10x Genomics Chromium system remains the most widely adopted platform globally, often chosen by more than 80% of researchers for its robust performance and reproducibility [1]. Its droplet-based approach provides consistent results for standard fresh or frozen samples across a broad range of eukaryotic species.

- The 10x Genomics FLEX chemistry addresses specific challenges related to sample preservation and complex study designs. Its ability to handle formalin-fixed, paraffin-embedded (FFPE) tissue unlocks vast archives of clinical specimens for single-cell analysis [1]. The platform's powerful multiplexing capability (up to 16 samples per channel) enables million-cell scale experiments, making it suitable for multi-center and multi-timepoint projects.

- The BD Rhapsody platform employs a microwell-based capture system with 200,000 wells (50µm diameter) paired with 35µm magnetic barcoded beads [1]. This technology provides the highest capture efficiency among the platforms compared and offers unique advantages for immunology studies through its compatibility with CITE-seq, Cell Hashing, and AbSeq kits for simultaneous transcriptome and surface protein profiling.

- The MobiDrop system emphasizes flexibility and cost control, featuring lower per-cell reagent costs compared to most droplet-based systems and a streamlined workflow that integrates capture, library preparation, and nucleic acid extraction in a single step [1].

Section 2: Experimental Design and Methodologies

Key Elements of scRNA-seq Experimental Workflows

A typical scRNA-seq experiment involves multiple critical steps from sample preparation to sequencing. Understanding these steps is essential for proper experimental design and interpretation of results.

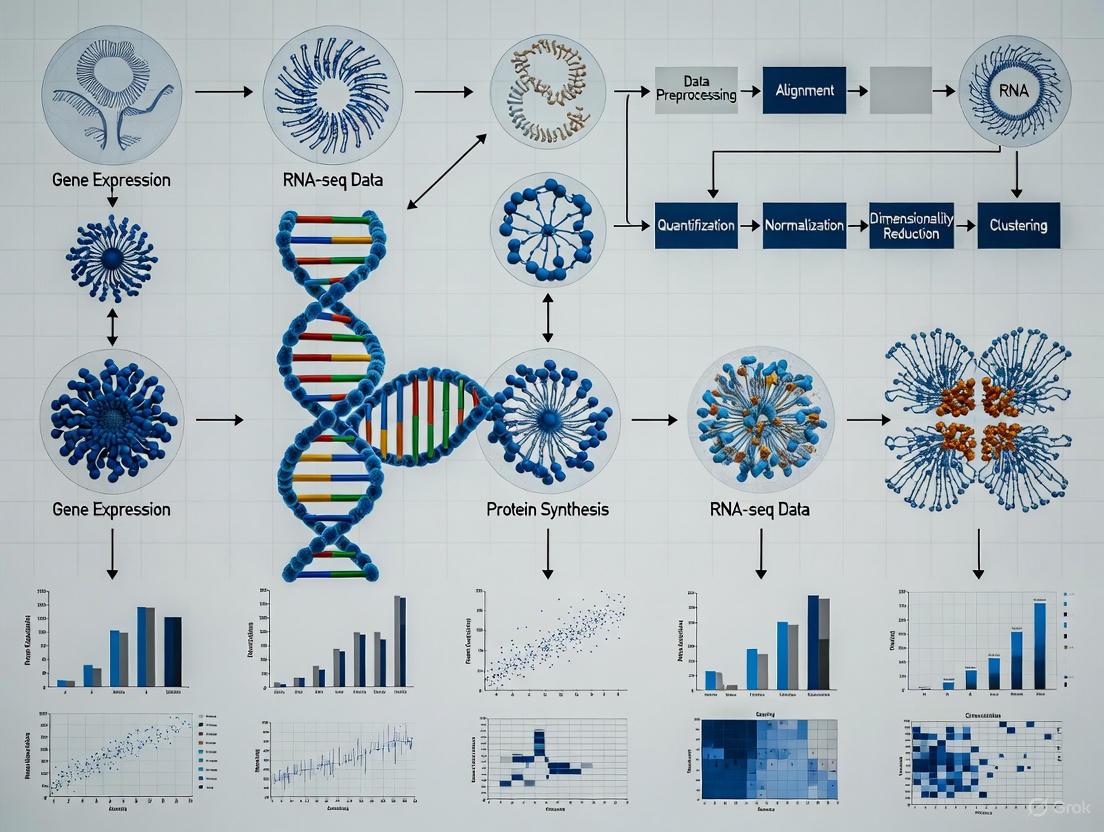

Diagram: scRNA-seq Experimental Workflow

The process begins with single-cell dissociation, where tissue samples are digested to create a single-cell suspension [2]. The method of single-cell isolation varies by platform, with plate-based techniques isolating cells into individual wells and droplet-based methods capturing cells in microfluidic droplets [2]. During library construction, intracellular mRNA is captured, reverse-transcribed to cDNA, and amplified. Critical to this process is the labeling of mRNA from each cell with a cellular barcode and, in many protocols, unique molecular identifiers (UMIs) that distinguish between amplified copies of the same mRNA molecule and reads from separate mRNA molecules [2]. Protocol choices significantly impact experimental outcomes, with full-length protocols like Smart-seq2 providing coverage across the entire transcript, while 3' or 5' tag-based methods like those used by 10x Genomics focus on either end of the transcripts [3].
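The role of cellular barcodes and UMIs in counting can be sketched in a few lines. The example below is a minimal illustration, assuming reads have already been assigned to a gene and are available as hypothetical `(cell_barcode, umi, gene)` tuples; it is not any vendor pipeline's file format or algorithm. Reads that share barcode, gene, and UMI are treated as amplified copies of one molecule.

```python
from collections import defaultdict


def count_umis(reads):
    """Collapse reads into per-cell, per-gene molecule counts.

    `reads` is an iterable of (cell_barcode, umi, gene) tuples. This layout is
    an assumption made for illustration only.
    """
    # Reads with the same barcode, gene, and UMI are PCR copies of one
    # molecule, so each distinct UMI is counted once per (barcode, gene).
    umis_seen = defaultdict(set)
    for barcode, umi, gene in reads:
        umis_seen[(barcode, gene)].add(umi)

    counts = defaultdict(dict)
    for (barcode, gene), umis in umis_seen.items():
        counts[barcode][gene] = len(umis)
    return dict(counts)


reads = [
    ("AAAC", "TTG", "CD3E"),
    ("AAAC", "TTG", "CD3E"),   # amplified copy of the read above
    ("AAAC", "GCA", "CD3E"),   # second molecule of the same gene
    ("AAAC", "TTG", "MS4A1"),  # same UMI, different gene: separate molecule
    ("GGGT", "TTG", "CD3E"),   # same UMI, different cell: separate molecule
]
counts = count_umis(reads)
print(counts)
```

This sketch treats UMIs as error-free. Dedicated tools such as UMI-tools additionally account for sequencing errors within UMI sequences, which this example deliberately omits.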

Minimum Information Standards and Experimental Design

To ensure reproducibility and robust interpretation of scRNA-seq data, the minSCe (Minimum Information about a Single-Cell Experiment) guidelines provide a framework for reporting critical metadata [3]. Key experimental design considerations include:

- Species specification: Determines appropriate reference genomes and data resources for analysis [4].

- Sample origin: Affects analysis strategies, with common sources including tumor biopsies, peripheral blood mononuclear cells (PBMCs), and patient-derived organoids [4].

- Experimental design: Case-control studies, cohort designs with nested case-control approaches, and sample multiplexing each require tailored analysis strategies [4].

Section 3: Data Processing and Quality Control Framework

Raw Data Processing and Quality Control

The initial processing of scRNA-seq data transforms raw sequencing reads into gene expression matrices. Standardized pipelines are typically provided by platform vendors, such as Cell Ranger for 10x Genomics Chromium and CeleScope for Singleron's systems [4]. Alternative tools include UMI-tools, scPipe, zUMIs, and kallisto bustools [4]. The outputs of these pipelines are count matrices that represent molecular counts per gene per cell, forming the foundation for all downstream analyses.

Quality control (QC) is a critical step to ensure that only high-quality cells are included in subsequent analyses. The table below outlines the key QC metrics and their interpretation.

Table 2: Essential Quality Control Metrics and Interpretation

| QC Metric | Description | Indication of Low Quality | Indication of Doublets |
|---|---|---|---|
| Count Depth [4] [2] | Total UMI counts per barcode | Low count depth | Unexpectedly high counts |
| Genes Detected [4] [2] | Number of genes detected per barcode | Few detected genes | Large number of detected genes |
| Mitochondrial Fraction [4] [2] | Fraction of counts from mitochondrial genes | High fraction (>10-20%) | Not typically indicative |
| Hemoglobin Genes [4] | Expression of hemoglobin genes (e.g., HBB) | High in RBC contamination | Not applicable |

Quality control involves examining the distributions of these QC metrics and applying appropriate thresholds to filter out low-quality cells [2]. Cells with low numbers of detected genes and low count depth typically indicate damaged cells, while a high proportion of mitochondrial counts (often >10-20%) suggests dying cells [4] [2]. Conversely, barcodes with unexpectedly high counts and large numbers of detected genes may represent doublets (multiple cells captured together) [2]. These QC covariates should be considered jointly rather than in isolation to avoid inadvertently filtering out biologically distinct cell populations [2].
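A minimal sketch of joint QC filtering follows. It computes the three core covariates for a single barcode and only labels a barcode as likely damaged when low complexity and high mitochondrial fraction coincide. All thresholds, the `MT-` gene prefix (human gene naming), and the category names are illustrative assumptions rather than recommended defaults; in practice thresholds should be set from the observed distributions of each dataset.

```python
def qc_metrics(gene_counts, mito_prefix="MT-"):
    """Compute QC covariates for one barcode from a {gene: UMI count} dict."""
    total = sum(gene_counts.values())
    n_genes = sum(1 for c in gene_counts.values() if c > 0)
    mito = sum(c for g, c in gene_counts.items() if g.startswith(mito_prefix))
    return {
        "n_counts": total,
        "n_genes": n_genes,
        "mito_frac": mito / total if total else 0.0,
    }


def classify_barcode(m, min_counts=500, min_genes=200, max_mito=0.2,
                     doublet_counts=30000, doublet_genes=6000):
    """Label a barcode by considering the QC covariates jointly.

    Thresholds are hypothetical placeholders, not validated cutoffs.
    """
    low_complexity = m["n_counts"] < min_counts or m["n_genes"] < min_genes
    high_mito = m["mito_frac"] > max_mito
    if low_complexity and high_mito:
        return "likely_damaged"
    if m["n_counts"] > doublet_counts and m["n_genes"] > doublet_genes:
        return "possible_doublet"
    if low_complexity or high_mito:
        # A single unusual covariate may reflect biology (for example a
        # metabolically active cell type), so flag it instead of discarding.
        return "review"
    return "keep"


example = qc_metrics({"MT-CO1": 30, "CD3E": 60, "ACTB": 10, "GAPDH": 0})
print(example, classify_barcode(example))
```

Routing single-covariate outliers to a "review" category instead of discarding them is one way to avoid removing biologically distinct populations by accident.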

Benchmarking Computational Frameworks for scRNA-seq Analysis

The computational analysis of scRNA-seq data presents significant challenges, particularly with increasingly large datasets. A recent benchmarking study compared five widely used analysis frameworks—Seurat, OSCA, scrapper, Scanpy, and rapids-singlecell—focusing on their scalability, efficiency, and accuracy [5]. Key findings include:

- GPU acceleration: The rapids-singlecell pipeline, which utilizes GPU-based computation, provided a 15× speed-up over the best CPU methods with moderate memory usage [5].

- Clustering accuracy: OSCA and scrapper achieved the highest clustering accuracy (Adjusted Rand Index up to 0.97) in datasets with known cell identities [5].

- PCA performance: All principal component analysis (PCA) methods showed high concordance, with truncated approaches (ARPACK and IRLBA for sparse matrices; randomized SVD for HDF5-backed data) providing optimal efficiency without significant accuracy loss [5].

- Performance determinants: Differences in overall pipeline performance were largely driven by the choice of highly variable genes (HVGs) and PCA implementation rather than other analysis steps [5].

Section 4: The Scientist's Toolkit

Essential Research Reagent Solutions

Table 3: Key Reagents and Their Functions in scRNA-seq Workflows

| Reagent/Kit | Function | Application Context |
|---|---|---|
| Cellular Barcodes [2] | Labels mRNA from individual cells during library construction | All scRNA-seq protocols; enables multiplexing |
| Unique Molecular Identifiers (UMIs) [2] | Distinguishes between amplified mRNA molecules | UMI-based protocols; enables accurate transcript counting |
| CITE-seq Kits [1] | Enables simultaneous measurement of surface proteins and transcriptome | BD Rhapsody platform; immunology applications |
| Cell Hashing [1] | Allows sample multiplexing by labeling cells from different samples | 10x Genomics FLEX and BD Rhapsody; large cohort studies |
| AbSeq Kits [1] | Combines antibody-based protein detection with transcriptome profiling | BD Rhapsody platform; multi-omics studies |
| Spike-in RNAs [3] | Added to samples for quality control and normalization | Experimental quality assessment |

Platform Selection Framework

Selecting the appropriate scRNA-seq platform requires careful consideration of multiple experimental factors. The decision framework below illustrates key considerations in this process.

Diagram: Platform Selection Decision Framework

Section 5: Advanced Analytical Applications

Beyond basic cell type identification, scRNA-seq data enables several advanced analytical applications that provide deeper biological insights:

- Trajectory Inference: Reconstructs cellular differentiation pathways and developmental trajectories by ordering cells along pseudotemporal axes [4].

- Cell-Cell Communication (CCC) Analysis: Infers potential interactions between different cell types by analyzing ligand-receptor expression patterns [4].

- Transcription Factor Activity Prediction: Uses tools like regulon inference to predict transcription factor activity from gene expression data [4].

- Metabolic Analysis: Estimates metabolic flux and pathway activity at single-cell resolution [4].

Each of these advanced applications requires specialized computational tools and careful interpretation within the context of specific biological questions and experimental designs.

The scRNA-seq landscape offers multiple mature platform options, each with distinct strengths that make them suitable for different research scenarios. The 10x Genomics Chromium platform provides robust, high-throughput analysis for standard sample types, while the FLEX system enables unique applications with archived specimens. The BD Rhapsody platform offers advantages for integrated RNA-protein profiling and challenging clinical samples, and MobiDrop provides a cost-effective solution for large-scale studies. As dataset sizes continue to grow, computational considerations including GPU acceleration and efficient algorithm implementation become increasingly important. By matching platform capabilities to experimental requirements and implementing rigorous quality control and analysis frameworks, researchers can maximize the biological insights gained from single-cell RNA sequencing studies.

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the profiling of gene expression at the individual cell level, uncovering cellular heterogeneity, identifying novel cell types, and illuminating developmental trajectories. However, the analytical pathway from raw data to biological insight is fraught with technical challenges that can compromise interpretation if not properly addressed. Two predominant technical issues—dropout events and batch effects—represent significant hurdles in scRNA-seq data analysis. Dropouts, where genes expressed in a cell are incorrectly measured as zero due to technical limitations, create sparse data matrices that obscure true biological signals [6]. Batch effects, systematic technical variations introduced when datasets are generated under different conditions, can confound biological variation and lead to spurious results [7] [8]. This guide provides a comprehensive benchmarking framework for computational strategies addressing these challenges, offering researchers evidence-based recommendations for optimizing their scRNA-seq analysis pipelines.

Understanding and Addressing Dropout Events

The Nature and Impact of Dropouts

Dropout events constitute a fundamental characteristic of scRNA-seq data, arising from the stochastic nature of gene expression combined with technical limitations in mRNA capture and amplification efficiency. These events result in excessive zero values in the gene expression matrix, where a gene actively expressed in a cell may fail to be detected. The implications for downstream analysis are profound: dropouts can break the assumption that similar cells remain proximate in high-dimensional space, thereby compromising clustering stability and making subpopulation identification increasingly difficult [9]. As datasets grow in size and complexity, the reliable detection of local cell neighborhoods becomes challenging under increasing dropout rates, potentially leading to inconsistent biological conclusions.
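The dependence of dropout on expression level can be illustrated with a logistic model in which lowly expressed genes are more likely to be recorded as zero. The sketch below is a simplified illustration: the parameter names `mid` and `shape` echo the dropout parameters of the Splatter simulator, but the functions are assumptions written for this guide and are not Splatter's implementation.

```python
import math
import random


def dropout_probability(mean_expr, mid=0.0, shape=-1.0):
    """Probability that a gene with the given mean expression drops out.

    Logistic function of log mean expression; `mean_expr` must be > 0.
    A negative `shape` makes dropout more likely for lowly expressed genes,
    and a larger `mid` shifts the curve so that more genes drop out.
    """
    x = math.log(mean_expr)
    return 1.0 / (1.0 + math.exp(-shape * (x - mid)))


def apply_dropout(counts, means, mid=0.0, shape=-1.0, seed=0):
    """Zero out counts at random according to the logistic dropout model."""
    rng = random.Random(seed)
    observed = []
    for count, mean_expr in zip(counts, means):
        p = dropout_probability(mean_expr, mid, shape)
        observed.append(0 if rng.random() < p else count)
    return observed


for mean_expr in (0.01, 1.0, 100.0):
    print(mean_expr, round(dropout_probability(mean_expr), 3))
```

Under these default parameters a gene with mean expression 1 is lost half of the time, while highly expressed genes are almost always detected, which is why dropouts disproportionately erase low-abundance marker genes.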

Benchmarking Dropout Correction Strategies

Table 1: Comparative Analysis of scRNA-seq Dropout Handling Methods

| Method | Underlying Approach | Key Advantages | Documented Limitations |
|---|---|---|---|
| scDoc | Cell-to-cell similarity-based imputation | Directly incorporates dropout information in similarity estimation; superior performance in visualization and cell identification [10] | Requires definition of similar cells; performance depends on accurate similarity estimation |
| Co-occurrence Clustering | Binary dropout pattern analysis | Uses dropout patterns as biological signals; identifies cell types without highly variable genes [6] | Discards quantitative expression information; limited for detecting subtle expression differences |
| DCA | Denoising autoencoder with ZINB loss | Global model-based approach; captures nonlinear gene-gene dependencies [11] | May oversmooth rare cell populations; computationally intensive |
| M3Drop | Statistical modeling of dropout rates | Identifies genes with higher-than-expected dropouts; useful for cross-experiment mapping [6] | Limited to specific patterns of differential expression |
| DropDAE | Contrastive learning-enhanced denoising autoencoder | Improves cluster separation while imputing; balances reconstruction and cluster differentiation [11] | Requires careful hyperparameter tuning; complex training process |

Experimental Protocols for Dropout Method Evaluation

Benchmarking Framework for Dropout Correction Performance

To objectively evaluate dropout correction methods, researchers should implement the following experimental protocol:

Data Preparation and Simulation:

- Utilize both synthetic datasets with known ground truth (simulated using tools like Splatter) and real-world datasets with orthogonal validation [11] [12].

- Systematically introduce additional dropout events using controlled parameters (e.g., `dropout.mid` in Splatter) to test method robustness under varying noise levels [11].

Performance Metrics Calculation:

- Cluster Quality: Assess using Adjusted Rand Index (ARI), Normalized Mutual Information (NMI), and Silhouette Coefficient (SC) against known cell labels [13].

- Biological Signal Preservation: Evaluate through differential expression analysis precision/recall, measuring false positive and false negative rates against established marker genes [12].

- Computational Efficiency: Measure runtime and memory usage across increasing dataset sizes.

Visualization Assessment:

- Employ t-SNE and UMAP projections to qualitatively examine cluster separation and cell type mixing after correction [12].
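Of the cluster quality metrics in the protocol above, the Adjusted Rand Index is simple enough to compute from first principles. The following dependency-free sketch follows the standard pair-counting definition; it is intended to make the metric concrete, and an established implementation (for example in scikit-learn) would normally be used in practice.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels_true, labels_pred):
    """Pair-counting Adjusted Rand Index between two partitions."""
    n = len(labels_true)
    if n != len(labels_pred):
        raise ValueError("label lists must have the same length")
    if n < 2:
        return 1.0

    # Count pairs of cells that fall in the same group in both partitions,
    # in the reference partition, and in the predicted partition.
    pairs_both = sum(comb(c, 2) for c in Counter(zip(labels_true, labels_pred)).values())
    pairs_true = sum(comb(c, 2) for c in Counter(labels_true).values())
    pairs_pred = sum(comb(c, 2) for c in Counter(labels_pred).values())

    expected = pairs_true * pairs_pred / comb(n, 2)
    maximum = (pairs_true + pairs_pred) / 2
    if maximum == expected:
        # Degenerate case, e.g. both partitions place all cells in one group.
        return 1.0
    return (pairs_both - expected) / (maximum - expected)


known = ["T", "T", "B", "B"]
print(adjusted_rand_index(known, [1, 1, 0, 0]))  # same partition, renamed labels
print(adjusted_rand_index(known, [0, 1, 0, 1]))  # every known pair is split
```

Because the index is corrected for chance, a clustering that agrees with the known labels no better than random scores near zero, and systematically discordant clusterings can score below zero.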

Figure 1: Computational Workflow for Addressing Dropout Events in scRNA-seq Data

Tackling Batch Effects in scRNA-seq Integration

The Batch Effect Challenge

Batch effects represent systematic technical variations introduced when datasets are generated across different experiments, sequencing platforms, or processing conditions. These non-biological variations can obscure true biological signals and lead to incorrect inferences in downstream analyses [7]. In scRNA-seq data, batch effects arise from multiple sources including differences in sample preparation, reagent batches, sequencing platforms, and laboratory personnel. Because scRNA-seq depends on amplifying minute amounts of starting material, these technical variations are amplified alongside biological signals, which makes batch effect correction especially important in single-cell studies [12].

Benchmarking Batch Effect Correction Methods

Table 2: Performance Comparison of scRNA-seq Batch Effect Correction Methods

| Method | Algorithmic Approach | Batch Mixing Performance | Biological Preservation | Computational Scaling |
|---|---|---|---|---|
| Harmony | Iterative clustering in PCA space | High (LISI: 0.78) [8] | High (cLISI: 0.82) [8] | Fast, suitable for large datasets [7] |
| Seurat Integration | CCA + MNN anchoring | High (LISI: 0.75) [8] | High (cLISI: 0.85) [8] | Memory-intensive for large datasets [7] |
| BBKNN | Batch-balanced k-nearest neighbors | Moderate (LISI: 0.71) [7] | Moderate (cLISI: 0.76) [7] | Fast, lightweight [7] |
| LIGER | Integrative non-negative matrix factorization | Moderate (LISI: 0.72) [8] | High (cLISI: 0.83) [8] | Moderate, suitable for large datasets [8] |
| scANVI | Deep generative modeling | High (LISI: 0.79) [7] | High (cLISI: 0.84) [7] | Requires GPU acceleration [7] |
| ComBat | Empirical Bayes framework | Moderate (LISI: 0.69) [8] | Low (cLISI: 0.65) [14] | Fast, but limited by model assumptions [14] |

Experimental Framework for Batch Correction Evaluation

Comprehensive Benchmarking Protocol for Integration Methods

Dataset Selection and Preprocessing:

- Curate datasets with known batch structure and established cell type annotations, ensuring representation of various scenarios (identical cell types across technologies, partially overlapping cell types, multiple batches) [8].

- Apply consistent preprocessing including normalization, highly variable gene selection, and scaling according to method-specific recommendations.

Integration Execution:

- Apply batch correction methods using default parameters as specified in original publications.

- For methods requiring reference-based approaches (e.g., Seurat), designate the largest batch as reference.

Multi-metric Assessment:

- Batch Mixing Metrics: Calculate Local Inverse Simpson's Index (LISI) [8], kBET rejection rate [8], and batch ASW (Average Silhouette Width) [8] to quantify technical effect removal.

- Biological Preservation Metrics: Evaluate using cell-type LISI (cLISI) [8], ARI (Adjusted Rand Index) [8], and label ASW to assess biological structure retention.

- Overcorrection Detection: Apply Reference-informed Batch Effect Testing (RBET), which uses reference gene expression patterns and is sensitive to overcorrection [13].

Downstream Analysis Validation:

- Assess performance in real analytical tasks including differential expression analysis, trajectory inference, and cell-cell communication prediction [13].

- Compare results with biological ground truth where available.
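The intuition behind the LISI family of metrics in the protocol above can be shown with a simplified, unweighted variant. The sketch below computes the inverse Simpson's index of batch labels among each cell's k nearest neighbors in an embedding. This is an assumption-laden simplification: the published LISI uses perplexity-based distance weighting, which is omitted here, and the function name `knn_lisi` is hypothetical.

```python
import math
from collections import Counter


def knn_lisi(points, labels, k):
    """Unweighted inverse Simpson's index over each point's k nearest neighbors.

    Returns one score per point, ranging from 1 (all neighbors share a label)
    up to the number of distinct labels (neighbors evenly mixed).
    """
    scores = []
    for i, p in enumerate(points):
        # Brute-force neighbor search; fine for a toy example only.
        by_distance = sorted(
            (math.dist(p, q), j) for j, q in enumerate(points) if j != i
        )
        neighbor_labels = [labels[j] for _, j in by_distance[:k]]
        freqs = Counter(neighbor_labels)
        simpson = sum((c / len(neighbor_labels)) ** 2 for c in freqs.values())
        scores.append(1.0 / simpson)
    return scores


# Two batches forming separate clusters: no mixing.
separated = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
separated_scores = knn_lisi(separated, ["A", "A", "A", "B", "B", "B"], k=2)

# Two batches interleaved along one axis: substantial mixing.
mixed = [(0, 0), (1, 0), (2, 0), (3, 0), (4, 0), (5, 0)]
mixed_scores = knn_lisi(mixed, ["A", "B", "A", "B", "A", "B"], k=4)

print(sum(separated_scores) / 6, sum(mixed_scores) / 6)
```

Applied to batch labels, higher scores indicate better mixing; applied to cell-type labels (cLISI), scores near 1 are desirable because they indicate that cell types remain separated. Benchmark reports often rescale the raw score to the unit interval so that methods can be compared across datasets with different numbers of batches.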

Figure 2: Comprehensive Evaluation Workflow for scRNA-seq Batch Effect Correction Methods

Table 3: Essential Computational Tools for scRNA-seq Analysis

| Tool Category | Specific Tools | Primary Function | Key Applications |
|---|---|---|---|
| Comprehensive Analysis Suites | Seurat, Scanpy | End-to-end scRNA-seq analysis | Data preprocessing, normalization, clustering, visualization, differential expression |
| Batch Correction Methods | Harmony, BBKNN, Seurat Integration, LIGER | Multi-dataset integration | Atlas building, cross-study comparisons, meta-analyses |
| Dropout Imputation | scDoc, DCA, DropDAE | Handling zero-inflated data | Data denoising, improving cluster separation, enhancing downstream analysis |
| Normalization Algorithms | SCTransform, Scran, LogNormalize | Technical bias removal | Correcting sequencing depth differences, RNA content variability |
| Evaluation Metrics | LISI, kBET, RBET, ARI | Method performance assessment | Benchmarking tool efficacy, pipeline optimization, quality control |
| Visualization Packages | ggplot2, plotly, UMAP, t-SNE | Data exploration and presentation | Cluster visualization, batch effect diagnosis, result communication |

Integrated Analysis: Navigating Trade-offs in Pipeline Design

Method Selection Guidelines

Building robust scRNA-seq analysis pipelines requires careful consideration of the trade-offs between different computational approaches. For dropout correction, researchers must choose between imputation methods that borrow information from similar cells (e.g., scDoc) and global denoising approaches (e.g., DCA, DropDAE). The former excels at recovering subtle biological signals but depends on accurate cell similarity estimation, while the latter provides more stable performance across diverse cell types but may oversmooth rare populations [10] [11]. For batch effect correction, the choice often involves balancing computational efficiency against biological preservation. Methods like Harmony offer fast processing suitable for large-scale atlas projects, while Seurat provides superior biological fidelity at the cost of greater computational resources [7] [8].

The interdependence between preprocessing steps necessitates integrated benchmarking rather than isolated method evaluation. Feature selection strategies significantly impact downstream integration success, with highly variable genes generally outperforming random gene sets [15]. Similarly, normalization choices affect both dropout imputation and batch correction efficacy, with SCTransform generally providing superior variance stabilization compared to standard log normalization [7].

Emerging Approaches and Future Directions

Recent methodological advances highlight promising new directions for addressing scRNA-seq technical challenges. The concept of leveraging dropout patterns as biological signals rather than noise represents a paradigm shift in the field [6]. Similarly, order-preserving batch correction methods that maintain gene expression rankings while removing technical artifacts offer improved preservation of biological relationships [14]. Deep learning approaches continue to evolve, with architectures like DropDAE integrating contrastive learning to simultaneously address dropouts and enhance cluster separation [11].

Evaluation frameworks are also advancing, with reference-informed metrics like RBET addressing the critical challenge of overcorrection detection that was largely overlooked in earlier benchmarking studies [13]. As single-cell technologies continue to scale, developing computationally efficient yet biologically sensitive evaluation metrics will remain essential for method development and pipeline optimization.

This benchmarking guide demonstrates that addressing technical artifacts in scRNA-seq data requires method selection tailored to specific biological questions and experimental designs. For dropout correction, methods like scDoc and DropDAE show particular promise in balancing imputation accuracy with biological structure preservation. For batch effect correction, Harmony and Seurat emerge as consistently strong performers, though optimal choice depends on dataset size and complexity. Critically, researchers should implement comprehensive evaluation frameworks that assess both technical artifact removal and biological signal preservation, with particular attention to overcorrection risks. By applying these evidence-based recommendations and maintaining awareness of methodological trade-offs, researchers can significantly enhance the reliability and biological relevance of their single-cell transcriptomic studies.

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the characterization of gene expression at unprecedented resolution. However, the rapid development of scRNA-seq technologies and analysis methods has created a pressing challenge: the current lack of gold-standard benchmark datasets makes it difficult for researchers to systematically compare the performance of the many methods available [16]. Establishing robust benchmarks through reference samples and controlled experiments has therefore become a critical foundation for ensuring the reliability and reproducibility of single-cell genomics research.

This guide examines the experimental designs and computational frameworks that enable objective performance assessment of scRNA-seq analysis pipelines. By providing structured comparisons of benchmarking methodologies and their associated outcomes, we aim to equip researchers with the knowledge needed to select appropriate benchmarking strategies for their specific research contexts and to critically evaluate the growing array of computational tools in the field.

Experimental Designs for scRNA-seq Benchmarking

Mixture Control Experiments

A powerful approach for creating ground truth in scRNA-seq benchmarking involves the use of mixture control experiments. Researchers from The Walter and Eliza Hall Institute of Medical Research generated a realistic benchmark experiment that included single cells and admixtures of cells or RNA to create 'pseudo cells' from up to five distinct cancer cell lines [16] [17].

Table 1: Key Characteristics of Mixture Control Experiments

| Experimental Feature | Description | Utility in Benchmarking |
|---|---|---|
| Sample Types | Single cells and admixtures of cells/RNA | Creates known cellular composition for validation |
| Cell Lines | Up to five distinct cancer cell lines | Provides biological diversity while maintaining control |
| Protocols | 14 datasets using droplet and plate-based scRNA-seq | Tests protocol-specific performance |
| Analysis Combinations | 3,913 method combinations evaluated | Comprehensive pipeline assessment |

The experimental design involves creating controlled mixtures where the proportions of different cell types are known in advance, enabling researchers to determine how accurately computational pipelines can recover these known biological truths [17]. This approach has been instrumental in benchmarking tasks ranging from normalization and imputation to clustering, trajectory analysis, and data integration.
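How a known mixture serves as ground truth can be sketched as a comparison between designed and recovered proportions. In the example below, the cell line names, the designed proportions, and the per-cell assignments are all hypothetical placeholders; they are not data from the cited benchmark.

```python
from collections import Counter


def estimated_proportions(assignments):
    """Fraction of cells assigned to each cell line by a pipeline."""
    n = len(assignments)
    return {line: count / n for line, count in Counter(assignments).items()}


def mean_absolute_error(known, estimated):
    """Average absolute difference between known and estimated proportions."""
    lines = set(known) | set(estimated)
    return sum(
        abs(known.get(line, 0.0) - estimated.get(line, 0.0)) for line in lines
    ) / len(lines)


# Hypothetical designed mixture and the assignments recovered by a pipeline.
known = {"line_A": 0.5, "line_B": 0.3, "line_C": 0.2}
assignments = ["line_A"] * 5 + ["line_B"] * 2 + ["line_C"] * 3

estimated = estimated_proportions(assignments)
error = mean_absolute_error(known, estimated)
print(estimated, round(error, 4))
```

A lower error means the pipeline recovers the designed composition more faithfully. The same pattern extends to other tasks where the design fixes the expected answer.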

Figure 1: Workflow of mixture control experiments for scRNA-seq benchmarking. Known cell lines are used to create defined mixtures, which are processed through multiple scRNA-seq protocols to generate datasets with known ground truth for objective pipeline evaluation.

In Silico Simulation Methods

When experimental controls are difficult or impossible to generate, in silico simulation methods provide a valuable alternative for benchmarking. Simulation methods employ statistical models to estimate characteristics of real experimental single-cell data and use this information as a template to generate synthetic datasets with known ground truth [18].

Table 2: Categories of scRNA-seq Simulation Methods

| Simulation Approach | Underlying Framework | Representative Methods | Key Characteristics |
|---|---|---|---|
| Parametric Models | Negative Binomial / ZINB | Splat, powsimR, zingeR | Strong distributional assumptions |
| Semi-parametric Models | Density Estimation | SPsimSeq | Fewer distributional assumptions |
| Kinetic Models | Markov Chain Monte Carlo | SymSim | Models transcriptional kinetics |
| Deep Learning | Generative Adversarial Networks | cscGAN | Learns data distribution without explicit assumptions |

A comprehensive evaluation of 12 simulation methods through the SimBench framework revealed significant performance differences in their ability to capture properties of experimental data [18]. The benchmark assessed methods on data property estimation, biological signal retention, computational scalability, and general applicability across 35 diverse experimental datasets.

Performance Comparison Across Analysis Pipelines

Impact of Library Preparation and Normalization

Systematic evaluations of scRNA-seq analysis pipelines have revealed that the choices of normalization method and library preparation protocol have the biggest impact on analytical outcomes [19] [20]. These factors substantially influence the ability to detect differential expression, particularly in challenging scenarios with asymmetric expression changes between cell types.

Library preparation protocol determines the ability to detect symmetric expression differences, while normalization dominates pipeline performance in asymmetric differential expression setups [20]. In extreme scenarios with 60% differentially expressed genes and complete asymmetry, most normalization methods lose false discovery rate control, with only SCnorm and scran maintaining performance when cells are grouped or clustered prior to normalization [20].
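A small worked example shows why asymmetric changes are so damaging to simple scaling normalization. The toy profiles below are invented for illustration: ten genes, of which 60% are upregulated fourfold in the second group and none are downregulated. The "oracle" factor uses knowledge of which genes are unchanged, which real methods can only approximate.

```python
def size_factor(cell, reference):
    """Scaling factor that equalizes the total counts of two profiles."""
    return sum(cell) / sum(reference)


def normalize(cell, factor):
    """Divide every gene's count by the size factor."""
    return [count / factor for count in cell]


# Toy expression profiles: genes 0-5 are upregulated 4x in group 2,
# genes 6-9 are truly unchanged.
group1 = [100.0] * 10
group2 = [400.0] * 6 + [100.0] * 4

# Library-size normalization assumes that total RNA content is comparable.
naive_factor = size_factor(group2, group1)
naive = normalize(group2, naive_factor)

# Oracle factor computed only from the genes known to be unchanged.
oracle_factor = size_factor(group2[6:], group1[6:])
oracle = normalize(group2, oracle_factor)

print("naive factor:", naive_factor, "unchanged gene after scaling:", round(naive[9], 1))
print("oracle factor:", oracle_factor, "unchanged gene after scaling:", oracle[9])
```

With library-size scaling, the unchanged genes appear to fall from 100 to roughly 36, so a differential expression test would report them as downregulated. These spurious calls are the mechanism by which false discovery rate control is lost in asymmetric setups.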

Figure 2: Impact of different analysis steps on scRNA-seq pipeline performance. Library preparation protocols most strongly affect symmetric differential expression (DE) detection, while normalization methods dominate performance in asymmetric DE setups.

Platform-Specific Performance Characteristics

Benchmarking studies have also revealed platform-specific performance characteristics in complex tissues. A systematic comparison of 10× Chromium and BD Rhapsody platforms using tumors with high cellular diversity showed distinct performance metrics including gene sensitivity, mitochondrial content, reproducibility, clustering capabilities, cell type representation, and ambient RNA contamination [21].

Table 3: Platform Performance Comparison in Complex Tissues

| Performance Metric | 10× Chromium | BD Rhapsody | Biological Implications |

|---|---|---|---|

| Gene Sensitivity | Similar between platforms | Similar between platforms | Comparable transcript detection |

| Mitochondrial Content | Lower | Higher | Affects quality control metrics |

| Cell Type Detection | Lower sensitivity for granulocytes | Lower proportion of endothelial/myofibroblast cells | Platform-specific cell type biases |

| Ambient RNA Source | Droplet-specific background | Plate-based specific background | Different decontamination strategies needed |

| Data Reproducibility | High | High | Both platforms produce robust data |

These platform-specific differential performances should be carefully considered during experimental design, as they can significantly impact cell type detection and downstream biological interpretations [21].

Benchmarking Frameworks and Computational Tools

The CellBench Framework

The CellBench R package was developed specifically for benchmarking single-cell analysis methods and provides a comprehensive framework for evaluating the most common scRNA-seq analysis steps [16] [17]. The package enables systematic performance assessment of computational methods on controlled experimental data, allowing researchers to identify optimal pipelines for their specific data types and analytical tasks.

CellBench facilitates the comparison of multiple analysis methods across different data modalities and provides standardized evaluation metrics to ensure fair comparisons. The availability of this framework in Bioconductor ensures accessibility to the broader research community and promotes reproducible benchmarking practices.

The scCompare Pipeline

For comparing biological similarities and differences between scRNA-seq samples, the scCompare computational pipeline provides a specialized approach. It transfers phenotypic identities from an annotated reference dataset to a second dataset by correlating each cell with the average transcriptomic signature of each annotated phenotype cluster [22].

A key feature of scCompare is its use of statistically derived lower cutoffs for phenotype inclusivity: cells that are distinct from all known phenotypes remain unmapped, which facilitates novel cell type detection [22]. In comparisons using scRNA-seq datasets from human peripheral blood mononuclear cells (PBMCs), scCompare achieved higher precision and sensitivity than single-cell variational inference (scVI) for most cell types [22].
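
The mapping idea described above can be illustrated with a minimal sketch. This is not the scCompare implementation: the function names, the toy signatures, and the fixed cutoff of 0.8 are all assumptions made for illustration (scCompare derives its cutoffs statistically). Each cell is correlated with every phenotype signature and is left unmapped when its best correlation falls below that phenotype's cutoff.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length expression vectors."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx > 0 and sy > 0 else 0.0

def map_cells(cells, signatures, cutoffs):
    """Assign each cell to its best-correlated phenotype, or 'unmapped'."""
    labels = []
    for profile in cells:
        best, best_r = None, -2.0
        for name, signature in signatures.items():
            r = pearson(profile, signature)
            if r > best_r:
                best, best_r = name, r
        # A cell below the phenotype-specific cutoff stays unmapped.
        labels.append(best if best_r >= cutoffs[best] else "unmapped")
    return labels

# Hypothetical average signatures over four genes (toy values).
signatures = {"T cell": [9.0, 1.0, 0.5, 0.2], "B cell": [0.5, 8.0, 1.0, 0.3]}
cutoffs = {"T cell": 0.8, "B cell": 0.8}  # assumed, not statistically derived
cells = [[8.0, 1.5, 0.4, 0.1], [0.2, 7.0, 1.2, 0.5], [1.0, 1.0, 6.0, 7.0]]
labels = map_cells(cells, signatures, cutoffs)
print(labels)
```

The third toy cell expresses genes that neither signature covers, so it correlates poorly with both and is reported as unmapped rather than being forced into the nearest known phenotype.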

Essential Research Reagents and Tools

Table 4: Key Research Reagent Solutions for scRNA-seq Benchmarking

| Reagent/Tool | Function | Utility in Benchmarking |

|---|---|---|

| CellBench R Package | Computational framework for method comparison | Standardized evaluation of analysis pipelines |

| Cell Line Mixtures | Reference samples with known composition | Ground truth for validation studies |

| scCompare Pipeline | Computational tool for dataset comparison | Objective assessment of biological reproducibility |

| Spike-in RNA Standards | External RNA controls | Normalization quality assessment |

| Human Protein Atlas Data | Reference transcriptome data | Annotation quality benchmarking |

| Tabula Sapiens Dataset | Multicellular reference atlas | Cross-study reproducibility assessment |

Benchmarking using reference samples and controlled experiments provides an essential foundation for advancing single-cell genomics. The development of mixture control experiments, sophisticated simulation frameworks, and standardized computational benchmarking tools has created a robust ecosystem for objective performance evaluation of scRNA-seq analysis methods.

As the field continues to evolve, these benchmarking approaches will play an increasingly critical role in ensuring analytical validity and reproducibility. Researchers should select benchmarking strategies that align with their specific experimental designs and analytical questions, leveraging the growing collection of reference datasets and computational frameworks now available to the community.

The systematic evaluation of analysis pipelines has demonstrated that informed choices can have the same impact on detecting biological signals as quadrupling the sample size [20], highlighting the tremendous value of rigorous benchmarking in maximizing the scientific return from scRNA-seq studies.

Impact of Pre-processing Pipelines on Cell Identification and Gene Detection

The analysis of single-cell RNA-sequencing (scRNA-seq) data is a multi-step process, with the initial pre-processing stage forming the critical foundation for all downstream biological interpretations. This stage encompasses tasks such as quality control (QC), empty droplet detection, normalization, and feature selection. The choice of methods at this juncture significantly influences the ability to accurately identify cell populations and detect genes that define cellular identity and state [23] [24] [25]. While numerous benchmarking studies have evaluated downstream tasks like clustering and differential expression, the impact of the initial pre-processing pipeline has received less systematic attention. This guide synthesizes current benchmarking research to objectively compare pre-processing methodologies, providing experimental data and protocols to inform pipeline selection for researchers and drug development professionals.

Critical Pre-processing Steps and Their Impact on Downstream Analysis

The scRNA-seq pre-processing workflow involves several interdependent steps, each addressing specific technical artifacts. The following diagram illustrates the logical sequence of these steps and their potential impacts on the final analysis.

The choices made at each step of the pre-processing pipeline can introduce distinct analytical artifacts. For instance, inappropriate normalization can fail to stabilize variance across the gene expression dynamic range, while overzealous quality control can remove rare but biologically critical cell populations [23] [26] [27]. The following sections provide a detailed, evidence-based comparison of methods for each step.

Benchmarking Quality Control and Empty Droplet Detection

Experimental Protocols for QC Assessment

Benchmarking QC pipelines requires datasets with known cell population labels or spike-in controls to establish ground truth. A standard protocol involves:

- Data Acquisition: Utilize datasets with validated cell type labels, such as the human pancreas dataset (CEL-Seq2, SMART-seq2) or the scmixology cell line mixture [13] [25].

- Pipeline Application: Apply different QC workflows (e.g., SCTK-QC, Scanpy, Seurat) to the same raw data. The SCTK-QC pipeline, for example, integrates multiple algorithms: barcodeRanks and EmptyDrops from the DropletUtils package for empty droplet detection; scds and Scrublet for doublet detection; and decontX for ambient RNA estimation [23].

- Metric Calculation: Calculate clustering accuracy metrics after downstream analysis (e.g., Adjusted Rand Index (ARI), Normalized Mutual Information (NMI), and cell type annotation accuracy) to compare how well each QC pipeline preserves biological signal while removing technical noise [13] [28].

- Overcorrection Evaluation: Use negative controls where batches are randomly assigned to measure the pipeline's tendency to introduce artifacts in the absence of true batch effects [29].
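
The metric-calculation step of this protocol relies on the Adjusted Rand Index. As a minimal sketch, the standard ARI formula can be written with the Python standard library alone; the function name and the toy labels below are illustrative, and in practice an established implementation would normally be used.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(truth, pred):
    """ARI between known cell type labels and predicted cluster labels."""
    total_pairs = comb(len(truth), 2)
    # Pairs of cells placed together in both partitions.
    sum_ij = sum(comb(c, 2) for c in Counter(zip(truth, pred)).values())
    # Pairs placed together within each partition separately.
    sum_a = sum(comb(c, 2) for c in Counter(truth).values())
    sum_b = sum(comb(c, 2) for c in Counter(pred).values())
    expected = sum_a * sum_b / total_pairs
    max_index = (sum_a + sum_b) / 2
    if max_index == expected:  # degenerate case, e.g. a single cluster in both
        return 1.0
    return (sum_ij - expected) / (max_index - expected)

truth = ["A", "A", "B", "B", "C", "C"]
clusters = [0, 0, 1, 1, 2, 2]
print(adjusted_rand_index(truth, clusters))
```

Because ARI is corrected for chance, it is invariant to how clusters are numbered and can become negative when a clustering agrees with the ground truth less than a random assignment would.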

Performance Comparison of QC Metrics

Systematic benchmarking reveals that the choice of QC metrics significantly impacts the fairness of method evaluation. The following table summarizes key metrics and their properties, as identified in recent benchmarking studies.

Table 1: Performance Metrics for Evaluating Quality Control and Batch Correction

| Metric Category | Metric Name | What It Measures | Performance Insights |

|---|---|---|---|

| Batch Correction | RBET (Reference-informed Batch Effect Testing) | Batch effect removal using stable reference genes | More sensitive to overcorrection and robust to large batch effect sizes compared to other metrics [13]. |

| | LISI (Local Inverse Simpson's Index) | Local batch mixing and cell type separation | Can lose discrimination power with large batch effects; may not detect overcorrection [13]. |

| | kBET (k-Nearest Neighbour Batch Effect Test) | Overall batch mixing in k-nearest neighbour graph | Prone to loss of type I error control; variation collapses with large batch effects [13] [15]. |

| Biological Conservation | ARI (Adjusted Rand Index) / NMI (Normalized Mutual Information) | Similarity between clustering results and known cell type labels | Highly correlated metrics; selecting a subset is sufficient for benchmarking [15]. |

| | cLISI (Cell-type LISI) | Separation of known cell types | A value of 1 indicates perfect separation of cell types [15]. |

| | Graph Connectivity | Whether cells of the same type form a connected graph | Measures preservation of continuous biological trajectories [15]. |

| Cluster Purity | Silhouette Coefficient (SIL) | How similar a cell is to its own cluster vs. other clusters | Requires correction for dependency on the number of clusters [28]. |

| | Calinski-Harabasz Index (CH) | Ratio of between-cluster to within-cluster dispersion | Also requires correction for number of clusters [28]. |
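
To make the LISI-style metrics in the table more concrete, the following sketch computes an unweighted inverse Simpson's index over each cell's k nearest neighbours. This is a deliberate simplification: the published LISI uses distance-weighted neighbourhoods, and the function names and toy embedding here are assumptions for illustration only.

```python
import math
from collections import Counter

def inverse_simpson(labels):
    """Effective number of distinct labels in a neighbourhood."""
    n = len(labels)
    return 1.0 / sum((c / n) ** 2 for c in Counter(labels).values())

def mean_neighbourhood_diversity(points, labels, k):
    """Average inverse Simpson's index over each point's k nearest neighbours."""
    scores = []
    for i, p in enumerate(points):
        others = [j for j in range(len(points)) if j != i]
        others.sort(key=lambda j: math.dist(p, points[j]))
        scores.append(inverse_simpson([labels[j] for j in others[:k]]))
    return sum(scores) / len(scores)

# Toy 2-D embedding with two well-separated cell types.
points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
cell_types = ["T", "T", "T", "B", "B", "B"]
print(mean_neighbourhood_diversity(points, cell_types, k=2))
```

Applied to cell type labels, a score of 1 means every neighbourhood contains a single type, which matches the cLISI interpretation in the table. Applied to batch labels, higher values indicate better batch mixing.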

Benchmarking Normalization and Transformation Methods

Experimental Protocols for Normalization Benchmarking

To evaluate normalization methods, benchmarking studies typically employ a standardized workflow:

- Dataset Curation: Select datasets with strong ground truth (e.g., cell lines or FACS-sorted populations) and include a dilution series or datasets with varying library sizes to test robustness [26] [25].

- Method Application: Apply a range of normalization methods, from simple global scaling (e.g., log(CPM)) to more sophisticated approaches like Pearson residuals (sctransform) or latent expression inference (Sanity, Dino) [26].

- Downstream Analysis: Perform standard dimensionality reduction (PCA, UMAP) and clustering on the normalized data.

- Performance Quantification: Assess performance using metrics that evaluate the removal of technical artifacts (e.g., correlation between principal components and batch) and the preservation of biological variation (e.g., cluster purity metrics from Table 1) [26] [28].

Performance Comparison of Normalization Methods

A comprehensive comparison of transformation methods for scRNA-seq data revealed that their performance is context-dependent, with simple methods often rivaling more complex ones.

Table 2: Comparison of scRNA-seq Normalization and Transformation Methods

| Method Class | Example Methods | Key Principle | Impact on Cell ID & Gene Detection |

|---|---|---|---|

| Delta Method | Log(CPM), acosh | Applies a non-linear function to stabilize variance. | Simple and effective, but performance depends heavily on the chosen pseudo-count. Log(CPM) can fail to mix cells with different size factors [26]. |

| Residuals-based | Pearson Residuals (sctransform) | Fits a GLM and uses Pearson residuals for normalization. | Better handles the mean-variance relationship and can more effectively mix cells with different size factors compared to delta methods [26]. |

| Latent Expression | Sanity, Dino, Normalisr | Infers a latent "true" expression state from the observed counts. | Has appealing theoretical properties, but in benchmarks, does not consistently outperform simpler approaches [26]. |

| Factor Analysis | GLM-PCA, NewWave | Directly models counts using a factor analysis framework. | A powerful alternative to transformations, directly producing a low-dimensional representation for downstream analysis [26]. |
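
The first two method classes in the table can be contrasted with a small sketch. The Pearson residual function below is a simplified analytic variant that takes expected counts from the row and column totals and uses a fixed overdispersion `theta`; it is not the sctransform algorithm, which fits a regularized GLM per gene. The function names, the `theta` value, and the toy matrix are assumptions.

```python
import math

def log_cpm(counts, pseudo=1.0):
    """Delta-method style transform: log(counts per million + pseudo-count)."""
    out = []
    for cell in counts:
        total = sum(cell)
        out.append([math.log(c / total * 1e6 + pseudo) for c in cell])
    return out

def pearson_residuals(counts, theta=100.0):
    """Residuals of a negative binomial null model with fixed overdispersion."""
    cell_totals = [sum(cell) for cell in counts]
    gene_totals = [sum(col) for col in zip(*counts)]
    grand_total = sum(cell_totals)
    residuals = []
    for cell, n_c in zip(counts, cell_totals):
        row = []
        for y, n_g in zip(cell, gene_totals):
            mu = n_c * n_g / grand_total  # expected count under the null model
            if mu == 0:
                row.append(0.0)  # gene never observed: no residual
            else:
                row.append((y - mu) / math.sqrt(mu + mu * mu / theta))
        residuals.append(row)
    return residuals

# Toy matrix (cells x genes): cell 2 is cell 1 at double depth, cell 3 differs.
counts = [[10, 0, 5], [20, 0, 10], [10, 0, 25]]
for row in pearson_residuals(counts):
    print([round(r, 2) for r in row])
```

In this formulation sequencing depth enters through the expected count rather than through a scaling step followed by a pseudo-count, so two cells that differ only in depth receive identical (zero) residuals.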

The Scientist's Toolkit: Essential Research Reagents and Software

This section details key computational tools and resources used in benchmarking scRNA-seq pre-processing pipelines, which are essential for reproducing and extending the findings discussed in this guide.

Table 3: Key Research Reagents and Computational Tools for scRNA-seq Pre-processing

| Tool or Resource Name | Type | Primary Function in Pre-processing |

|---|---|---|

| SCTK-QC Pipeline | R/Python Software | An integrated pipeline for comprehensive QC, including empty droplet detection, doublet prediction, and ambient RNA estimation [23]. |

| Scanpy | Python Toolkit | A widely used Python-based toolkit for single-cell analysis that provides standard QC, normalization, and clustering workflows [27]. |

| Seurat | R Toolkit | A comprehensive R package for single-cell genomics, offering a full suite of pre-processing and analysis functions [30]. |

| scRNA-seq Benchmarking Datasets | Data Resource | Publicly available datasets with known ground truth (e.g., cell line mixtures, annotated pancreas data) essential for validating pipelines [13] [25]. |

| Harmony | R/Python Software | A high-performing batch integration tool that corrects for batch effects without severely altering the underlying data structure, as recommended in benchmarks [29]. |

| scIB | R/Python Software | A curated set of benchmarking metrics and tools for evaluating data integration, including LISI and other metrics [15]. |

End-to-End Pre-processing Workflow

The following diagram synthesizes the key steps, common tool choices, and critical decision points in a standard scRNA-seq pre-processing workflow, based on the aggregated benchmarking evidence.

The collective evidence from benchmarking studies indicates that the pre-processing pipeline has a non-negligible impact on cell identification and gene detection, though this impact can be context-dependent.

- Pre-processing vs. Downstream Analysis: One major benchmarking study of 10 pre-processing workflows found that while quantification properties varied, the choice of pre-processing method was generally less influential on final clustering results than the choice of downstream normalization and clustering methods [25]. This suggests that a well-validated downstream analysis pipeline can be robust to moderate variations in pre-processing.

- Interaction Between Steps: The effect of a pre-processing step can be modulated by other steps in the pipeline. For example, feature selection has been shown to significantly affect the performance of data integration and query mapping, with Highly Variable Gene (HVG) selection generally producing high-quality integrations [15].

- Risk of Overcorrection: A critical finding across multiple studies is the risk of overcorrection, where batch effect correction or normalization methods erase genuine biological variation. Metrics like RBET have been developed specifically to detect this issue, which can lead to false biological discoveries [13] [29].

- No One-Size-Fits-All Solution: No single pipeline performs best across all datasets and biological questions. The optimal choice depends on dataset-specific characteristics, such as the number of cells, batch effect size, and the complexity of the biological system [28]. Consequently, a rigorous, metric-driven evaluation of the pre-processing pipeline is recommended for every new study.

In single-cell RNA sequencing (scRNA-seq), the strategic allocation of a finite sequencing budget presents a fundamental experimental design challenge: should one sequence fewer cells more deeply or more cells at a shallower depth? [31] The resolution of this trade-off directly influences the accuracy of gene expression estimation, the ability to resolve rare cell populations, and the power to detect subtle transcriptional variations. The concepts of sequencing depth (number of reads per cell) and library complexity (the diversity of represented transcripts) are intrinsically linked to the phenomenon of saturation—the point at which additional sequencing yields diminishing returns in transcript detection [32]. Within the broader context of benchmarking scRNA-seq analysis pipelines [17], understanding how these parameters interact across different technological platforms is essential for designing biologically informative experiments, optimizing costs, and ensuring that downstream analytical pipelines operate on high-quality data. This guide objectively compares performance across major scRNA-seq platforms, providing the experimental data and frameworks needed to make evidence-based decisions.

Key Concepts and Experimental Designs for Saturation Analysis

Foundational Concepts and the Sequencing Budget

- Sequencing Depth: Defined as the number of reads allocated per cell. Deeper sequencing reduces technical noise for more accurate estimation of a cell's true transcriptional state [31].

- Library Complexity: Refers to the number of unique transcripts detected in a sample. Higher complexity libraries provide a more complete picture of the transcriptome [33].

- Saturation: In scRNA-seq, this describes the point at which additional sequencing reads fail to detect a significant number of new genes or unique transcripts. It is a key metric for determining the optimal stopping point for sequencing [32].

- The Sequencing Budget Constraint: The core trade-off is framed by the fixed total sequencing budget, expressed as \( B = n_{\text{cells}} \times n_{\text{reads}} \), where \( B \) is the total number of reads, \( n_{\text{cells}} \) is the number of cells, and \( n_{\text{reads}} \) is the mean read depth per cell [31]. Allocating this budget effectively is the central problem of experimental design.

Mathematical Frameworks for Determining Optimal Depth

A mathematical framework for scRNA-seq posits that for estimating fundamental gene properties, the optimal allocation is to sequence at a depth of around one read per cell per gene [31]. This framework uses a hierarchical model:

- The true gene expression of a cell, \( \mathbf{X}_c \), is a sample from a biological distribution \( P_{\mathbf{X}} \).

- The observed read counts, \( \mathbf{Y}_c \), are generated through Poisson sampling from \( \mathbf{X}_c \), given a sequencing depth \( n_{\text{reads}} \).

The optimal estimator derived from this model is not the standard plug-in estimator but one developed via empirical Bayes, suggesting that significantly shallower sequencing than traditionally practiced may be sufficient for many tasks [31]. For instance, an analysis of a 10x Genomics pbmc_4k dataset suggested that the optimal trade-off would have been achieved by sequencing 10 times shallower with 10 times more cells, potentially reducing the estimation error by half [31].
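
The budget trade-off behind this result is simple arithmetic, sketched below. The budget of 4,000 cells at 50,000 reads per cell is a hypothetical figure chosen for illustration and is not taken from the pbmc_4k analysis; the function name is likewise an assumption.

```python
def allocations(budget, depths):
    """Number of cells affordable at each read depth for a fixed read budget."""
    return [(depth, budget // depth) for depth in depths]

# Hypothetical budget: 4,000 cells sequenced at 50,000 reads per cell.
budget = 4_000 * 50_000
for depth, n_cells in allocations(budget, [50_000, 25_000, 5_000]):
    print(f"{depth:>6} reads/cell -> {n_cells:>6} cells")
```

Sequencing ten times shallower frees enough reads for ten times as many cells under the same budget. Whether that reallocation is beneficial depends on the estimation target, as the framework in [31] describes.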

Table 1: Key Metrics for Saturation Analysis

| Metric | Description | Application in Saturation Analysis |

|---|---|---|

| Sequencing Saturation | The fraction of reads that originate from an already-observed unique molecular identifier (UMI). | A high saturation value (>80-90%) often indicates that additional sequencing will yield few new transcripts. |

| Gene Detection Saturation Curve | A plot of the number of genes detected per cell as a function of sequencing depth. | Used to identify the point where the curve plateaus, indicating optimal depth for gene discovery. |

| Cells vs. Reads Trade-off | The analytical framework for balancing \( n_{\text{cells}} \) and \( n_{\text{reads}} \) under a fixed budget \( B \) [31]. | Determines the allocation that minimizes the estimation error for a target gene property. |

| Jaccard Index (JI) | A statistic measuring the similarity between enhancer calls or cell type identifications from different datasets [34]. | Assesses the consistency of biological discovery as a function of sequencing depth and platform. |
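
The sequencing saturation metric in the table can be computed directly from its definition. The sketch below assumes each read has already been assigned a cell barcode, gene, and UMI; the tuple layout, function name, and toy reads are illustrative, and vendor pipelines may apply additional filtering before reporting this value.

```python
def sequencing_saturation(reads):
    """Fraction of reads that duplicate an already-observed molecule.

    reads: iterable of (cell_barcode, gene, umi) tuples, one per read.
    """
    reads = list(reads)
    unique_molecules = len(set(reads))
    return 1.0 - unique_molecules / len(reads)

toy_reads = [
    ("cell1", "GENE_A", "AAA"),
    ("cell1", "GENE_A", "AAA"),  # PCR duplicate of the first read
    ("cell1", "GENE_A", "AAC"),
    ("cell2", "GENE_A", "AAA"),  # same UMI in another cell: a new molecule
]
print(sequencing_saturation(toy_reads))
```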

Experimental Designs for Benchmarking

Benchmarking studies rely on controlled experimental designs to disentangle technical effects from biological signals.

- Mixture Control Experiments: These involve creating 'pseudo cells' from admixtures of cells or RNA from distinct cancer cell lines. The known composition provides a ground truth for evaluating how well analysis pipelines recover expected proportions and expression profiles [17]. For example, one study generated 14 datasets using both droplet and plate-based protocols from up to five cell lines to compare 3,913 method combinations [17] [35].

- Physical Resampling for Validation: Techniques like transcriptome resampling can physically recover targeted cDNA subsets from scRNA-seq libraries for deeper re-sequencing [33]. This allows direct validation of whether low-depth missing transcripts are detectable with higher depth, as demonstrated by increasing the median genes detected per megakaryocyte from 1,313 to 2,002 [33].

- Cross-Platform Consistency Checks: Systematic evaluation of different platforms (e.g., droplet-based, plate-based, combinatorial barcoding) on the same cell line reveals substantial inconsistencies in outputs like enhancer calls, which can be mitigated through uniform data processing pipelines [34].

Comparative Performance Across Platforms and Protocols

The optimal sequencing depth and the resulting library complexity are highly dependent on the scRNA-seq platform and library preparation method.

Table 2: Platform Comparison and Recommended Sequencing Depth

| Platform / Technology | Typical Recommended Reads/Cell | Key Strengths | Saturation Characteristics & Evidence |

|---|---|---|---|

| Droplet-Based (e.g., 10x Genomics) | 20,000 - 50,000 reads [32] | High cell throughput, commercial standardization. | A mathematical analysis suggests that for estimating gene properties, the optimal depth may be much shallower (~1 read/cell/gene), favoring more cells over deeper sequencing [31]. |

| Combinatorial Barcoding (e.g., Parse Biosciences) | Flexible; determined via sub-sampling [32] | Low multiplet rate, flexible scaling, no specialized equipment. | A key advantage is the ability to use one sublibrary to empirically determine the saturation point, then apply this optimal depth to all other sublibraries, ensuring cost-effectiveness [32]. |

| Plate-Based (Smart-seq2) | >1,000,000 reads | High sensitivity for gene detection, full-length transcript coverage. | Designed for deep sequencing to maximize library complexity from individual cells. Saturation of isoform detection may require extreme depths, as seen in ultra-deep RNA-seq studies [36]. |

| Ultra-High-Depth RNA-seq (Bulk) | Up to 1 billion reads [36] | Detection of very low-abundance transcripts and rare splicing events. | In Mendelian disease diagnostics, gene detection nears saturation at ~1 billion reads, but isoform detection continues to improve with further depth, revealing pathologies invisible at 50 million reads [36]. |

The relationship between experimental goals, platform choice, and data quality is structured as follows:

Figure 1: Logical workflow for designing a sequencing experiment. The experimental goal drives the choice of platform, which in turn influences key sequencing design parameters like depth. The resulting data quality and saturation metrics should inform future experimental designs.

Detailed Experimental Protocols for Saturation Analysis

Protocol for Saturation Analysis Using Combinatorial Barcoding

Purpose: To empirically determine the optimal sequencing depth for a given sample using combinatorial barcoding technology.

Steps:

- Library Preparation: Prepare the scRNA-seq library according to the combinatorial barcoding protocol (e.g., fixed and permeabilized cells undergo multiple rounds of barcoding in 96-well plates) [32].

- Sub-sampling: Take one or more completed sublibraries for a sequencing depth test [32].

- Sequencing: Sequence the test sublibrary(s) to a high depth.

- Bioinformatic Analysis: Use the provider's software or custom pipelines (e.g., CellBench [17]) to generate saturation curves.

  - Downsample the sequencing data to various fractions (e.g., 10%, 25%, 50% of reads).

  - For each downsampled dataset, count the number of genes detected per cell and the total transcripts (UMIs) detected.

- Determine Optimal Depth: Identify the point on the saturation curve where the rate of new gene discovery sharply declines. This is the cost-effective optimal depth [32].

- Sequence Remaining Libraries: Apply the determined optimal depth to sequence all remaining sublibraries.
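
The downsampling step of this protocol can be sketched as follows. The code simulates a small read table and is not tied to any provider's software; the function name, the skewed gene weights, and the library dimensions are assumptions chosen so that the example runs on its own.

```python
import random

def genes_detected_curve(reads, fractions, seed=0):
    """Mean genes detected per cell after downsampling reads to each fraction.

    reads: list of (cell, gene) tuples, one per read.
    """
    rng = random.Random(seed)
    n_cells = len({cell for cell, _ in reads})
    curve = {}
    for frac in fractions:
        k = round(frac * len(reads))
        subsample = rng.sample(reads, k)  # sample reads without replacement
        detected = set(subsample)         # distinct (cell, gene) pairs
        curve[frac] = len(detected) / n_cells
    return curve

# Simulated library: 20 cells, 50 genes with skewed abundance, 200 reads per cell.
sim = random.Random(1)
genes = [f"g{i}" for i in range(50)]
weights = [1.0 / (i + 1) for i in range(50)]
reads = [(f"cell{c}", g)
         for c in range(20)
         for g in sim.choices(genes, weights=weights, k=200)]

curve = genes_detected_curve(reads, [0.1, 0.25, 0.5, 1.0])
for frac, mean_genes in curve.items():
    print(f"{frac:>4.0%} of reads: {mean_genes:.1f} genes per cell")
```

Plotting these values against depth gives the gene detection saturation curve. The depth at which the increments become small is the candidate for sequencing the remaining sublibraries.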

Protocol for Benchmarking Pipeline Performance with Mixture Controls

Purpose: To evaluate how different analysis pipelines (normalization, clustering, etc.) perform under varying sequencing depths using a known ground truth.

Steps:

- Generate Control Data: Create a benchmark dataset, such as a mixture of single cells and 'pseudo cells' from distinct cell lines. Generate data using multiple scRNA-seq protocols (e.g., droplet and plate-based) [17].

- Variable Depth Simulation: Use the raw data (available under GEO SuperSeries GSE118767 [17]) and computationally subsample it to simulate different sequencing depths (e.g., from 10,000 to 100,000 reads per cell).

- Run Multiple Pipelines: Analyze each depth-simulated dataset with a wide array of analysis pipelines. The landmark study compared 3,913 method combinations for tasks like normalization, imputation, clustering, and trajectory analysis [17].

- Evaluate Performance: Compare the pipeline outputs against the known ground truth. Metrics include:

  - Clustering Accuracy: Using Adjusted Rand Index (ARI) to measure concordance with known cell line identities [17].

  - Differential Expression Power: The ability to recover known differentially expressed genes between cell lines.

  - Trajectory Inference Accuracy: How well the inferred trajectory matches the known lineage relationships in the mixture.

The Scientist's Toolkit: Essential Reagents and Computational Tools

Table 3: Key Research Reagent Solutions and Computational Tools

| Item Name | Type | Function in Saturation Analysis |

|---|---|---|

| CellBench [17] | R/Bioconductor Package | Provides data and framework for benchmarking scRNA-seq analysis methods, enabling direct comparison of how pipelines perform at different depths. |

| CellRanger (10x Genomics) | Commercial Pipeline | Processes FASTQ files from droplet-based platforms into count matrices, and includes metrics like "Sequencing Saturation" in its summary. |

| Split-pipe [37] | Computational Pipeline | An example of a commercial provider's pipeline (Parse Biosciences) for processing FASTQ files from combinatorial barcoding data, generating initial count matrices for QC. |

| STAR [37] | Open-Source Aligner | A widely used spliced transcript aligner for reference genomes, a critical step in generating count data from FASTQ files. |

| Kallisto \| Bustools [37] | Open-Source Pseudoaligner | A fast, lightweight alternative for transcriptome alignment and count matrix generation, useful for large-scale studies. |

| FastQC [32] | Quality Control Tool | Assesses raw sequencing data quality from FASTQ files, a prerequisite for any meaningful saturation analysis. |

| SC3 [17] | Clustering Tool | A consensus clustering method for single-cell data; its performance can be benchmarked at different depths using mixture controls. |

| Slingshot [17] | Trajectory Analysis Tool | Infers cell lineages and pseudotime; its accuracy can be evaluated on benchmark datasets with known trajectories at varying depths. |

| Unique Molecular Identifiers (UMIs) [38] | Molecular Barcode | Short random sequences added to each mRNA molecule during library prep to correct for PCR amplification bias and allow accurate transcript counting. |

| Phi-X Control [32] | Sequencing Control | Spiked into Illumina sequencing runs to increase base diversity, which is crucial for maintaining sequencing quality on low-diversity libraries. |

The pursuit of optimal sequencing depth is not a quest for a single universal number, but rather a strategic balance dictated by the biological question, the chosen technology, and the constraints of the sequencing budget. Evidence from rigorous benchmarking studies demonstrates that shallow sequencing around one read per cell per gene can be optimal for estimating many gene properties, favoring the sequencing of more cells [31]. However, for applications requiring the detection of low-abundance transcripts or rare splicing events, significantly deeper sequencing remains necessary [36].

Platform choice directly influences this calculus. While droplet-based systems offer standardized workflows, combinatorial barcoding technologies provide a unique empirical method to determine sample-specific saturation, potentially leading to significant cost savings [32]. Ultimately, effective scRNA-seq experimental design requires researchers to clearly define their biological objectives, understand the performance characteristics of their chosen platform, and leverage the growing body of benchmarking data and mathematical frameworks to allocate their sequencing budget in a way that maximizes the discovery potential of their research.

Method Selection in Practice: Normalization, Batch Correction, and Differential Expression

In the analysis of high-throughput sequencing data, such as single-cell RNA-sequencing (scRNA-seq) and Chromatin Immunoprecipitation sequencing (ChIP-seq), normalization is a critical preprocessing step that accounts for technical variability to enable accurate biological comparisons. The performance of normalization methods is highly dependent on whether key technical assumptions about the data are met. Recent research has highlighted that the symmetry of differentially expressed (DE) features—whether the number of up-regulated and down-regulated features is approximately balanced—is a crucial factor influencing normalization efficacy [39] [40]. This comparative guide examines the performance of various normalization methods under symmetric versus asymmetric differential expression (DE) setups, providing researchers with evidence-based recommendations for selecting appropriate methods based on their experimental conditions.

The fundamental challenge in normalization stems from the compositional nature of sequencing data, where an increase in one transcript's abundance can technically lead to decreases in others due to library size constraints [41]. This property means that normalization methods relying on different statistical assumptions will perform variably when the underlying data characteristics match or violate these assumptions. Understanding these relationships is essential for accurate differential expression analysis, clustering, and trajectory inference in scRNA-seq studies [24] [41].

Conceptual Framework: Technical Conditions and Assumptions

Normalization methods operate based on specific technical assumptions about the data structure. When these assumptions are violated, normalization performance deteriorates, leading to increased false discovery rates or reduced power to detect true biological signals [39] [40]. Three key technical conditions have been identified as particularly important for normalization methods:

- Balanced Differential Signal: The number of genomic features with increased expression (or binding) between conditions is approximately equal to the number with decreased expression [39] [40]. This is also referred to as "symmetric differential DNA occupancy" in ChIP-seq contexts [40].

- Equal Total Signal: The total amount of signal (e.g., total RNA expression or DNA binding) remains constant across experimental states [39].

- Equal Background Signal: The level of non-specific background signal is consistent across samples and experimental conditions [40].

The balanced differential signal condition is particularly crucial for many widely used normalization methods. Methods such as Library Size normalization, Trimmed Mean of M-values (TMM), and Relative Log Expression (RLE) assume that most features are not differentially expressed and that up- and down-regulation are approximately balanced [39] [40]. When this symmetry assumption is violated (for example, in experiments with strong global transcriptional activation or repression), these methods may produce biased scaling factors and, in turn, biased downstream results.

Table 1: Technical Conditions Underlying Major Normalization Methods

| Normalization Method | Balanced DE Assumption | Equal Total Signal Assumption | Primary Application |
|---|---|---|---|
| Library Size/TC | Required | Required | scRNA-seq, ChIP-seq |
| TMM | Required | Not Required | scRNA-seq, ChIP-seq |
| RLE | Required | Not Required | scRNA-seq, ChIP-seq |
| Med-pgQ2/UQ-pgQ2 | Less Stringent | Not Required | RNA-seq (low expression) |
| SCTransform | Less Stringent | Not Required | scRNA-seq |
| CoDA-CLR | Not Required | Required (compositional) | scRNA-seq |

Normalization Methods: Categories and Mechanisms

Global Scaling Methods

Global scaling methods apply a single scaling factor to all features in a sample to adjust for technical variations in sequencing depth or library size. These include:

- Total Count (TC): Normalizes by the total number of reads or counts per sample [42] [43].

- Reads Per Million (RPM): Similar to TC, but rescales each sample to a fixed total of one million reads [42].

- Trimmed Mean of M-values (TMM): Trims features with extreme log fold-changes (M-values) and extreme average abundances (A-values) before calculating scaling factors, assuming most genes are not differentially expressed [42] [43].

- Relative Log Expression (RLE): Builds a pseudo-reference sample from the geometric mean of each gene's counts across samples and uses the median ratio of each sample to that reference as its scaling factor [40].

These methods are computationally efficient but sensitive to violations of the balanced DE assumption, particularly when large-scale differential expression exists between conditions [39] [40].
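As a minimal sketch of how two of these scaling factors are computed, the code below implements total-count factors and an RLE-style median-of-ratios factor in plain Python. The function names and the toy counts are illustrative assumptions; TMM's trimming and weighting are not reproduced here, and established packages should be preferred for real analyses.

```python
import math
from statistics import median

def total_count_factors(counts):
    """Library-size factors: each sample's total divided by the mean total."""
    totals = [sum(sample) for sample in counts]
    mean_total = sum(totals) / len(totals)
    return [t / mean_total for t in totals]

def rle_factors(counts):
    """Median-of-ratios factors against a geometric-mean pseudo-reference.

    counts: list of samples, each a list of per-gene counts in the same gene order.
    Genes with a zero in any sample are skipped because their geometric mean is zero.
    """
    n_genes = len(counts[0])
    usable = [g for g in range(n_genes) if all(s[g] > 0 for s in counts)]
    # Log of the geometric mean of each usable gene across samples.
    log_ref = {g: sum(math.log(s[g]) for s in counts) / len(counts) for g in usable}
    return [
        math.exp(median(math.log(s[g]) - log_ref[g] for g in usable))
        for s in counts
    ]

# Invented example: sample_b is sequenced twice as deeply as sample_a,
# and its last gene is additionally up-regulated tenfold.
sample_a = [10, 20, 30, 40, 100]
sample_b = [20, 40, 60, 80, 2000]
counts = [sample_a, sample_b]

tc = total_count_factors(counts)
rle = rle_factors(counts)
print("TC ratio b/a: ", tc[1] / tc[0])
print("RLE ratio b/a:", rle[1] / rle[0])
```

In this toy case the total-count ratio (11) is dominated by the single up-regulated gene, while the median-of-ratios estimate recovers the twofold depth difference because most genes are unchanged. Once a large share of genes shifts in one direction, the median ratio itself moves and the same bias appears.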

Per-Gene Normalization Methods

Per-gene normalization approaches apply different normalization factors to individual genes based on their expression characteristics:

- Med-pgQ2 and UQ-pgQ2: These methods perform per-gene normalization after per-sample median or upper-quartile global scaling, making them more robust for data skewed towards lowly expressed genes with high variation [43].

- SCTransform: Applies regularized negative binomial regression to normalize data and is particularly effective for handling technical noise in scRNA-seq data [41].

These methods demonstrate advantages when analyzing datasets with asymmetric differential expression or low-expression skewness, maintaining better specificity while controlling false discovery rates [43].

Compositional Data Analysis (CoDA) Methods

Compositional data analysis methods explicitly treat sequencing data as relative abundances rather than absolute counts:

Centered Log-Ratio (CLR) Transformation: Transforms data using the logarithm of the ratio between each component and the geometric mean of all components [41]. The CLR transformation for a gene i in cell j is calculated as:

\[ \mathrm{CLR}(x_{ij}) = \log\left( \frac{x_{ij}}{g(\mathbf{x}_j)} \right) \]

where \(g(\mathbf{x}_j)\) represents the geometric mean of all gene counts in cell j.

CoDA methods inherently address the compositional nature of sequencing data and can be more robust to asymmetric differential expression patterns, though they require careful handling of zeros in the data [41].
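The CLR formula translates directly into code. The sketch below assumes a simple pseudocount of 1 for zero handling, which is only one of several possible strategies and is an assumption of this example rather than a recommendation from the cited work.

```python
import math

def clr(cell_counts, pseudocount=1.0):
    """Centered log-ratio transform of one cell's gene counts.

    A pseudocount is added before taking logs because log(0) is undefined.
    Subtracting the mean log equals dividing by the geometric mean.
    """
    logs = [math.log(x + pseudocount) for x in cell_counts]
    log_geometric_mean = sum(logs) / len(logs)
    return [v - log_geometric_mean for v in logs]

cell = [0, 3, 7, 1]  # invented counts for four genes in one cell
transformed = clr(cell)
print([round(v, 3) for v in transformed])
```

By construction the transformed values of a cell sum to zero, which is a quick sanity check for any CLR implementation.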

Figure 1: A decision framework for selecting normalization methods based on differential expression symmetry. Methods should be chosen based on whether the data exhibits symmetric or asymmetric differential expression patterns.

Performance Benchmarking in Symmetric vs. Asymmetric Setups

Simulation Studies

Simulation studies where ground truth is known provide the most reliable assessment of normalization method performance. In systematically designed simulations, researchers can control the symmetry of differential expression and directly measure false discovery rates (FDR) and statistical power.

In ChIP-seq simulations where technical conditions were violated, normalization methods showed markedly different performances [39] [40]. When the symmetric differential DNA occupancy assumption was violated, methods like Library Size normalization, TMM, and RLE demonstrated elevated false discovery rates in downstream differential binding analysis. Under these asymmetric conditions, the high-confidence peakset approach—taking the intersection of peaks identified by multiple normalization methods—proved more robust than relying on any single method [39].
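The effect of violating the symmetry condition can be reproduced in a deterministic toy simulation. The sketch below is not the simulation design of the cited studies: it uses invented abundances, library-size normalization only, and a fixed log2 fold-change threshold as a stand-in for a real differential test.

```python
import math

def library_size_normalize(abundances, target=1_000_000):
    """Scale a sample so that its values sum to a fixed target."""
    total = sum(abundances)
    return [a * target / total for a in abundances]

def spurious_calls(reference, treated, unchanged_genes, threshold=0.5):
    """Count truly unchanged genes whose |log2 fold change| exceeds the threshold."""
    ref_n = library_size_normalize(reference)
    trt_n = library_size_normalize(treated)
    return sum(
        1 for g in unchanged_genes
        if abs(math.log2(trt_n[g] / ref_n[g])) > threshold
    )

n_genes = 100
reference = [100.0] * n_genes
unchanged = range(20, n_genes)  # genes 20..99 never change in absolute terms

# Symmetric setup: 10 genes doubled, 10 genes halved.
symmetric = list(reference)
for g in range(0, 10):
    symmetric[g] *= 2.0
for g in range(10, 20):
    symmetric[g] *= 0.5

# Asymmetric setup: 20 genes up fourfold, none down.
asymmetric = list(reference)
for g in range(0, 20):
    asymmetric[g] *= 4.0

symmetric_false = spurious_calls(reference, symmetric, unchanged)
asymmetric_false = spurious_calls(reference, asymmetric, unchanged)
print(symmetric_false, asymmetric_false)
```

Under the symmetric setup none of the 80 unchanged genes crosses the threshold, whereas under the asymmetric setup all 80 appear down-regulated after library-size normalization.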

For scRNA-seq data, the scone framework provides a comprehensive evaluation approach using multiple data-driven metrics to assess normalization performance [42]. This framework evaluates normalization methods based on their ability to remove unwanted technical variation while preserving biological signal, with performance assessment including:

- Clustering accuracy using silhouette widths

- Batch effect removal using the k-nearest-neighbor batch-effect test (kBET)

- Preservation of biological variation using highly variable genes detection [24]
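To illustrate the first of these metrics, the following sketch computes a mean silhouette width from scratch using Euclidean distance. It is a generic textbook implementation with invented coordinates, not the code used inside scone, and the choice to score singleton clusters as 0 is a convention assumed here.

```python
import math

def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def mean_silhouette(points, labels):
    """Average silhouette width over all points; needs at least two clusters."""
    clusters = {}
    for idx, lab in enumerate(labels):
        clusters.setdefault(lab, []).append(idx)
    widths = []
    for i, lab in enumerate(labels):
        own = [j for j in clusters[lab] if j != i]
        if not own:
            widths.append(0.0)  # assumed convention: singleton clusters score 0
            continue
        # a: mean distance to the other members of the point's own cluster.
        a = sum(euclidean(points[i], points[j]) for j in own) / len(own)
        # b: smallest mean distance to the members of any other cluster.
        b = min(
            sum(euclidean(points[i], points[j]) for j in members) / len(members)
            for other, members in clusters.items() if other != lab
        )
        denom = max(a, b)
        widths.append((b - a) / denom if denom > 0 else 0.0)
    return sum(widths) / len(widths)

# Invented 2D embedding with two well-separated groups of cells.
points = [(0, 0), (0, 1), (10, 0), (10, 1)]
good = mean_silhouette(points, [0, 0, 1, 1])
bad = mean_silhouette(points, [0, 1, 0, 1])
print(round(good, 3), round(bad, 3))
```

A labeling that matches the two groups yields a width close to 1, while a labeling that splits each group yields a negative width.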

Table 2: Performance Comparison of Normalization Methods Under Different DE Setups

| Normalization Method | Symmetric DE Setup | Asymmetric DE Setup | Key Strengths | Notable Limitations |
|---|---|---|---|---|
| TMM | High accuracy, Controlled FDR | Elevated FDR, Bias | Computational efficiency, Established use | Sensitive to DE symmetry |
| RLE | High accuracy, Controlled FDR | Elevated FDR, Bias | Robust for small sample sizes | Sensitive to DE symmetry |
| Med-pgQ2/UQ-pgQ2 | Moderate accuracy | Maintained specificity, Controlled FDR | Handles low-expression skewness | Less established in scRNA-seq |
| SCTransform | High accuracy | Maintained accuracy, Controlled FDR | Handles technical noise, Zero inflation | Computational intensity |
| CoDA-CLR | Moderate accuracy | High accuracy, Robust performance | Compositional nature, Cluster separation | Zero-handling challenges |

Experimental Validations

Experimental benchmarks using mixture control experiments—where cells or RNA from different cell lines are mixed in known proportions—provide validation for simulation findings. In one comprehensive benchmark involving 14 datasets and 3,913 analysis pipelines, normalization methods performed variably depending on the data structure and technology platform [16].

For symmetric differential expression setups, global scaling methods like TMM and RLE performed well when the balanced DE assumption held. However, under asymmetric conditions—such as when one condition had widespread transcriptional activation—these methods introduced systematic biases, while per-gene methods and CoDA approaches maintained better performance [43] [41].

In the scRNA-seq context, the OSCA and scrapper pipelines achieved the highest clustering accuracy (ARI up to 0.97) in datasets with known cell identities when appropriate normalization was applied [5]. Performance differences were largely driven by the choice of highly variable genes and PCA implementation, both of which are influenced by prior normalization steps [5].

Experimental Protocols for Performance Assessment

Benchmarking Framework Implementation

Comprehensive benchmarking of normalization methods should follow established protocols to ensure reproducible and biologically meaningful assessments:

Data Preprocessing and Quality Control

- Perform initial QC assessment including alignment rates, count distributions, and detection of technical artifacts [42]

- Filter cells and genes based on quality metrics (mitochondrial content, library size, gene detection) [42] [24]

- The scone framework provides standardized approaches for these initial steps [42]
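The cell-filtering step can be sketched as a simple predicate over per-cell QC metrics. The `CellQC` record, the barcodes, and every threshold below are hypothetical placeholders; appropriate cutoffs depend on the tissue, protocol, and platform.

```python
from dataclasses import dataclass

@dataclass
class CellQC:
    barcode: str
    total_counts: int    # library size of the cell
    genes_detected: int  # number of genes with at least one count
    mito_counts: int     # counts assigned to mitochondrial genes

def passes_qc(cell, min_counts=500, min_genes=200, max_mito_fraction=0.2):
    """Return True if a cell meets all three (placeholder) QC thresholds."""
    if cell.total_counts == 0:
        return False
    if cell.total_counts < min_counts or cell.genes_detected < min_genes:
        return False
    return cell.mito_counts / cell.total_counts <= max_mito_fraction

cells = [
    CellQC("AAAC", total_counts=4200, genes_detected=1500, mito_counts=210),
    CellQC("AAAG", total_counts=300, genes_detected=150, mito_counts=10),    # too shallow
    CellQC("AACT", total_counts=3800, genes_detected=1200, mito_counts=1900),  # high mito
]
kept = [c.barcode for c in cells if passes_qc(c)]
print(kept)
```

Keeping the thresholds as keyword arguments makes it easy to record and vary them across benchmark runs.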

Normalization Implementation

- Apply multiple normalization methods including global scaling (TMM, RLE), per-gene approaches (Med-pgQ2, SCTransform), and compositional methods (CoDA-CLR)

- For each method, use recommended parameter settings and address method-specific requirements (e.g., zero handling for CoDA) [41]

Performance Metric Calculation

- Evaluate clustering accuracy using adjusted Rand index (ARI) when ground truth cell labels are available [5] [16]

- Assess batch effect correction using the k-nearest-neighbor batch-effect test (kBET) [24]

- Measure detection of highly variable genes using established benchmarks [24]

- For differential expression analysis, calculate precision-recall curves and false discovery rates when spike-ins or synthetic datasets are available [43] [16]
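For the clustering metric, the adjusted Rand index can be computed from the contingency table of true and predicted labels. The sketch below is a standard-library implementation of the usual pair-counting formula, with invented labels; it assumes at least two cells and returns 1.0 in the degenerate case where the index is otherwise undefined.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(true_labels, predicted_labels):
    """Adjusted Rand index via pair counts; expects at least two cells."""
    n = len(true_labels)
    contingency = Counter(zip(true_labels, predicted_labels))
    true_sizes = Counter(true_labels)
    pred_sizes = Counter(predicted_labels)

    sum_cells = sum(comb(c, 2) for c in contingency.values())
    sum_true = sum(comb(c, 2) for c in true_sizes.values())
    sum_pred = sum(comb(c, 2) for c in pred_sizes.values())

    expected = sum_true * sum_pred / comb(n, 2)
    max_index = (sum_true + sum_pred) / 2
    if max_index == expected:
        return 1.0  # degenerate case, e.g. every cell in a single cluster
    return (sum_cells - expected) / (max_index - expected)

truth = ["T", "T", "T", "B", "B", "B"]   # invented ground-truth cell types
perfect = adjusted_rand_index(truth, [1, 1, 1, 0, 0, 0])
one_error = adjusted_rand_index(truth, [1, 1, 0, 0, 0, 0])
print(perfect, round(one_error, 4))
```

Cluster identifiers do not need to match the ground-truth names, since the index depends only on which cells are grouped together.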

Figure 2: Workflow for systematic benchmarking of normalization methods. The process begins with quality control, applies multiple normalization approaches, performs downstream analyses, and assesses performance using multiple metrics before making method recommendations.

High-Confidence Peakset Strategy

For analyses where the appropriate normalization method is uncertain, a high-confidence peakset (or gene set) strategy can be employed:

- Conduct parallel analyses using multiple normalization methods with different technical assumptions [39] [40]

- Identify differentially expressed genes or bound peaks for each normalization method

- Take the intersection of these gene/peak sets as a high-confidence set for biological interpretation [39]

- In experimental analyses, roughly half of called peaks were identified as differentially bound across all normalization methods, providing a robust set for downstream investigation [39]

This approach reduces sensitivity to the choice of a specific normalization method and provides more robust biological conclusions when there is uncertainty about which technical conditions are satisfied.
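The intersection step of this strategy is simple to express in code. In the sketch below, the method names and gene identifiers are invented, and each set stands for the significant features obtained after running the same differential analysis under one normalization method.

```python
def high_confidence_set(calls_by_method):
    """Intersect the significant features reported under each normalization method."""
    call_sets = [set(calls) for calls in calls_by_method.values()]
    if not call_sets:
        return set()
    return set.intersection(*call_sets)

# Hypothetical significant genes per normalization method.
calls = {
    "TMM": {"geneA", "geneB", "geneC", "geneD"},
    "RLE": {"geneA", "geneB", "geneC"},
    "CLR": {"geneA", "geneC", "geneE"},
}
consensus = high_confidence_set(calls)
print(sorted(consensus))
```

Only features called under every method survive, so the result is conservative: it trades sensitivity for robustness to the normalization choice.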

Table 3: Key Computational Tools for Normalization Benchmarking

| Tool/Resource | Primary Function | Application Context | Key Features |
|---|---|---|---|
| SCONE [42] | Normalization implementation and evaluation | scRNA-seq | Comprehensive metric panel, Ranking methods, Modular framework |
| CellBench [16] | Pipeline benchmarking | scRNA-seq | Mixture control data, Multi-method comparison, Reproducible workflows |
| CoDAhd [41] | Compositional data normalization | scRNA-seq | CLR transformation, Zero-handling, High-dimensional data |
| SCTransform [41] | Regularized negative binomial regression | scRNA-seq | Handles technical noise, Addresses zero inflation |
| Seurat [41] | Integrated scRNA-seq analysis | scRNA-seq | Log-normalization, SCTransform implementation, Clustering |
| OSCA [44] [5] | Single-cell analysis workflow | scRNA-seq | Quality control, Normalization, Clustering, Bioconductor-based |

Based on comprehensive benchmarking studies, we provide the following evidence-based recommendations for selecting normalization methods:

- For symmetric DE setups where most genes are not differentially expressed and up-/down-regulation is balanced, global scaling methods (TMM, RLE) provide excellent performance with computational efficiency [39] [43].

- For asymmetric DE setups with widespread transcriptional changes, per-gene normalization methods (Med-pgQ2, UQ-pgQ2) and model-based approaches (SCTransform) demonstrate superior performance by maintaining specificity while controlling false discovery rates [43] [41].

- For exploratory analyses or when the DE structure is unknown, compositional data analysis methods (CoDA-CLR) offer robustness to various data structures and should be included in method comparisons [41].

- When uncertainty exists about which technical conditions are satisfied, employing a high-confidence set approach that intersects results from multiple normalization methods provides the most robust biological conclusions [39].

- For large-scale datasets, consider computational efficiency and scalability, with GPU-accelerated implementations providing up to 15× speed-ups over CPU-based methods [5].

Normalization method selection should be guided by both the technical conditions of the experiment and the biological context. Researchers should assess the likely symmetry of differential expression based on their experimental system and employ benchmarking frameworks like scone to evaluate multiple normalization approaches when analyzing novel datasets [42]. As scRNA-seq technologies continue to evolve and dataset sizes increase, ongoing methodology development and benchmarking will remain essential for ensuring accurate biological interpretation of sequencing data.

Batch effects, defined as unwanted technical variations introduced by differences in laboratories, experimental protocols, sequencing platforms, or reagent batches, present a significant challenge in the analysis of single-cell RNA sequencing (scRNA-seq) data [45]. These non-biological variations can obscure genuine biological signals, reduce statistical power, and potentially lead to misleading scientific conclusions if not properly addressed [45]. The proliferation of large-scale scRNA-seq studies, often combining datasets from multiple sources, has intensified the need for effective batch-effect correction algorithms (BECAs) to ensure data integration reliability and analytical reproducibility.