Best Clustering Methods for RNA-seq Data Visualization: A 2025 Benchmarking Guide for Biomedical Researchers



Abstract

This article provides a comprehensive guide for researchers and drug development professionals on selecting and applying clustering methods for RNA-seq data visualization. It covers foundational principles of single-cell and bulk RNA-seq clustering, explores top-performing algorithms like scDCC, scAIDE, and FlowSOM based on recent 2025 benchmarking studies, and offers practical workflows for implementation. The content includes troubleshooting common issues such as high dimensionality and data sparsity, and delivers validated performance comparisons across multiple metrics including accuracy, speed, and memory usage. By synthesizing the latest evaluation criteria and methodological advances, this guide empowers scientists to make informed choices that enhance cell type discovery and biological interpretation in their transcriptomic studies.

Understanding RNA-seq Clustering: Core Concepts and Challenges in Transcriptomic Data Analysis

The Critical Role of Clustering in Single-Cell and Bulk RNA-seq Analysis

Frequently Asked Questions

What are the first steps after receiving my single-cell RNA-seq data?

Your first step should be quality control (QC) and filtering of low-quality cells. Use the web_summary.html file generated by the Cell Ranger pipeline for an initial assessment. Key metrics to check include the number of cells recovered, the percentage of confidently mapped reads in cells, and median genes per cell. Following this, you should filter cell barcodes based on UMI counts, number of features, and the percentage of mitochondrial reads to remove potential multiplets, ambient RNA, and dying cells [1].
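The barcode filtering described above can be sketched in a few lines. This is a minimal illustration, not the Cell Ranger or Loupe Browser procedure: the per-cell records, field names, and every threshold below are hypothetical and should be tuned to your tissue and chemistry.

```python
# Hypothetical per-cell QC summaries; in practice these are derived from the
# feature-barcode matrix. All thresholds are illustrative assumptions.
cells = [
    {"barcode": "AAAC", "umis": 5200, "genes": 1800, "mito_pct": 3.1},
    {"barcode": "AAAG", "umis": 310, "genes": 150, "mito_pct": 2.0},     # too few genes
    {"barcode": "AACT", "umis": 48000, "genes": 7200, "mito_pct": 4.5},  # possible multiplet
    {"barcode": "AAGT", "umis": 2900, "genes": 1100, "mito_pct": 27.4},  # likely dying cell
]


def passes_qc(cell, min_genes=200, max_genes=6000, min_umis=500, max_mito_pct=10.0):
    """Return True if the cell lies within all (assumed) QC bounds."""
    return (
        min_genes <= cell["genes"] <= max_genes
        and cell["umis"] >= min_umis
        and cell["mito_pct"] <= max_mito_pct
    )


kept = [c["barcode"] for c in cells if passes_qc(c)]
print(kept)
```

Plotting the distribution of each metric before fixing the cut-offs is usually safer than reusing thresholds from another dataset.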

My bulk RNA-seq PCA shows poor clustering and high variation between samples. What could be wrong?

Poor clustering in PCA can often indicate a batch effect. Even if samples were sequenced in the same flow cell, batch effects can be introduced during library preparation. It is recommended to check if the separation along principal components (e.g., PC1) correlates with processing batches. You can account for this in your differential expression analysis by including a batch factor in your design formula (e.g., ~ batch + condition in DESeq2). If the treatments themselves do not cause strong transcriptional changes, a lack of clustering might be a true biological result [2].

How do I choose the right number of clusters (k) for my data?

Determining the correct number of clusters is critical. You can use several visual methods:

- Elbow Plot: Use the yellowbrick package to create a plot of within-cluster sum of squares against the number of clusters (k). The "elbow" point, where the rate of decrease sharply shifts, provides a recommendation for k [3].

- Silhouette Analysis: This method measures how similar a cell is to its own cluster compared to other clusters. You can plot the silhouette score for different values of k; a higher average silhouette width indicates better-defined clusters [3].
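To make the silhouette idea concrete, the sketch below computes the silhouette width from its definition using only the Python standard library. It is an illustration on toy 2-D points (hypothetical values), not the yellowbrick implementation, and it assumes at least two clusters and no duplicated points.

```python
import math


def silhouette_widths(points, labels):
    """Silhouette width s(i) = (b - a) / max(a, b) for each point, where
    a = mean distance to the other points in the same cluster and
    b = smallest mean distance to the points of any other cluster."""
    clusters = sorted(set(labels))
    widths = []
    for i, (p, lab) in enumerate(zip(points, labels)):
        mean_dist = {}
        for c in clusters:
            members = [q for j, (q, l) in enumerate(zip(points, labels))
                       if l == c and j != i]
            if members:
                mean_dist[c] = sum(math.dist(p, q) for q in members) / len(members)
        if lab not in mean_dist:  # singleton cluster: width is defined as 0
            widths.append(0.0)
            continue
        a = mean_dist[lab]
        b = min(d for c, d in mean_dist.items() if c != lab)
        widths.append((b - a) / max(a, b))
    return widths


def mean(xs):
    return sum(xs) / len(xs)


points = [(0, 0), (0, 1), (10, 0), (10, 1)]
avg_good = mean(silhouette_widths(points, [0, 0, 1, 1]))  # groups match the geometry
avg_bad = mean(silhouette_widths(points, [0, 1, 0, 1]))   # groups cut across it
print(round(avg_good, 3), round(avg_bad, 3))
```

The labeling that follows the geometry scores close to 1, while the one that cuts across it scores below 0, which is the behavior the average silhouette width is meant to expose when comparing values of k.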

Which clustering algorithm should I use for single-cell data?

The choice of algorithm depends on your data and priorities. A recent large-scale benchmark study evaluated 28 methods [4]. For top all-around performance on both transcriptomic and proteomic data, the study recommends:

- scAIDE

- scDCC

- FlowSOM (which also offers excellent robustness)

If you prioritize memory efficiency, consider scDCC and scDeepCluster. For time efficiency, TSCAN, SHARP, and MarkovHC are recommended [4].

Troubleshooting Guides

Issue 1: Poor Clustering Results in Single-Cell RNA-seq Analysis

Problem: Your t-SNE or UMAP plot shows messy, unconvincing clusters, or too many/few clusters.

Investigation and Solutions:

- Review QC Metrics: Re-examine your quality control. High levels of ambient RNA or mitochondrial reads can obscure biological signal. Tools like SoupX or CellBender can be applied to estimate and remove ambient RNA background [1].

- Check Feature Selection: The selection of Highly Variable Genes (HVGs) significantly impacts clustering. Ensure you are using an appropriate number of HVGs to capture relevant biological variation without introducing excessive noise [4].

- Re-assess Cluster Number: Use the elbow method and silhouette analysis, as described in the FAQs, to verify you are not overfitting or underfitting your data [3].

- Try a Different Algorithm: If performance is poor, consider switching to a top-performing algorithm like scDCC, scAIDE, or FlowSOM [4].

- Explore Advanced Methods: For complex data, consider newer methods like scGGC, which uses a graph autoencoder and generative adversarial network (GAN) to model cell-gene interactions and improve clustering accuracy [5].

Issue 2: Handling High Variation and Batch Effects in Bulk RNA-seq

Problem: PCA of your bulk RNA-seq data shows large variation between samples, with poor separation by experimental condition but potential grouping by batch.

Investigation and Solutions:

- Confirm the Batch Effect: Check if samples separate based on processing date, sequencing lane, or other technical factors. Plot PCA with color-coding by potential batch variables [2].

- Incorporate Batch in Model: Use statistical models that can account for batch effects. In DESeq2, include the batch as a factor in the design formula (e.g., design = ~ batch + condition) before attempting to identify differentially expressed genes [2].

- Analyze Driving Genes: Investigate the genes that contribute most to the largest principal component (e.g., PC1). This can reveal if the variation is technical or has an unexpected biological cause [2].

Experimental Protocols & Best Practices

Standard Workflow for Single-Cell RNA-seq Clustering

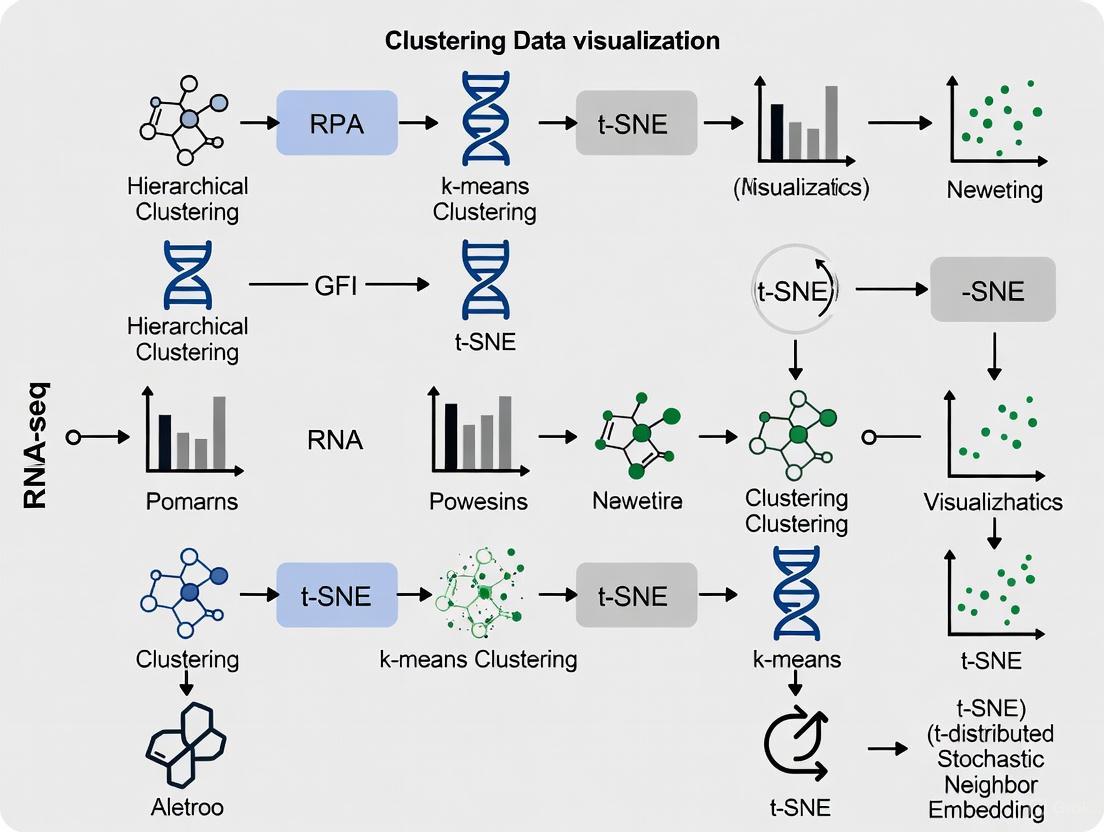

The following diagram outlines a standard bioinformatics workflow for clustering single-cell RNA-seq data, from raw data to biological interpretation.

Detailed Methodology:

- Raw Data Processing: Process raw FASTQ files using the cellranger multi pipeline from 10x Genomics for read alignment, UMI counting, and cell calling. This generates a feature-barcode matrix and a web_summary.html file for initial QC [1].

- Quality Control & Filtering:

- Use the web_summary.html file to check for critical issues. Expect a high percentage of confidently mapped reads in cells (e.g., >90%) [1].

- Filter the cell barcode matrix to remove low-quality cells using Loupe Browser or other tools. Typical filters are based on UMI counts, number of features, and the percentage of mitochondrial reads [1].

- Normalization and Feature Selection: Normalize the data to account for sequencing depth and select ~2000-3000 Highly Variable Genes (HVGs) for downstream analysis [4] [5].

- Dimensionality Reduction and Clustering:

- Perform linear dimensionality reduction using Principal Component Analysis (PCA).

- Apply a graph-based clustering algorithm (e.g., Louvain, Leiden) or a top-performing deep learning method (e.g., scDCC) on the top principal components to assign cells to clusters [4].

- Visualization and Annotation:

- Visualize the clusters in 2D using non-linear methods like UMAP or t-SNE.

- Identify marker genes for each cluster and annotate clusters with known cell types using biological knowledge and reference databases.

Comparative Benchmarking of Clustering Algorithms

A 2025 benchmark study evaluated 28 clustering algorithms across 10 paired transcriptomic and proteomic datasets. Performance was ranked based on Adjusted Rand Index (ARI) and Normalized Mutual Information (NMI) [4].

Table 1: Top-Performing Single-Cell Clustering Algorithms (2025 Benchmark)

| Algorithm | Overall Rank (Transcriptomics) | Overall Rank (Proteomics) | Key Strengths | Algorithm Category |

|---|---|---|---|---|

| scDCC | 1 | 2 | Top performance, Memory efficient | Deep Learning |

| scAIDE | 2 | 1 | Top performance across omics | Deep Learning |

| FlowSOM | 3 | 3 | Excellent robustness, Fast | Classical Machine Learning |

| CarDEC | 4 | >15 | Good for transcriptomics | Deep Learning |

| PARC | 5 | >15 | Good for transcriptomics | Community Detection |

Table 2: Algorithm Recommendations Based on User Priority

| Priority | Recommended Algorithms | Notes |

|---|---|---|

| Overall Performance | scAIDE, scDCC, FlowSOM | Best ARI/NMI scores across datasets [4]. |

| Memory Efficiency | scDCC, scDeepCluster | Lower peak memory usage [4]. |

| Time Efficiency | TSCAN, SHARP, MarkovHC | Faster running times [4]. |

| Robustness | FlowSOM | Consistent performance under noise [4]. |

Protocol for a Novel Clustering Method: scGGC

The scGGC model is a two-stage, semi-supervised method that integrates graph autoencoders and generative adversarial networks (GANs) to improve clustering accuracy [5].

Methodology:

- Data Preprocessing: Remove genes with nonzero expression in fewer than 1% of cells. Select the top 2000 highly variable genes. Standardize and normalize the resulting expression matrix [5].

- Cell-Gene Pathway Construction:

- Graph Autoencoder Training:

- Use the adjacency matrix A as the graph structure input for a graph autoencoder.

- The encoder uses graph convolutional layers to map data to a low-dimensional embedding Z. The decoder reconstructs the adjacency matrix. The model is trained by minimizing the reconstruction loss [5].

- Initial Clustering and High-Confidence Sample Selection:

- Apply K-means on the graph embedding Z to get initial clusters.

- For each cluster, calculate the distance of each cell to the cluster centroid. The cell closest to the centroid is selected as a high-confidence sample [5].

- Adversarial Training for Refinement:

- Train a Generative Adversarial Network (GAN) using the high-confidence samples.

- This step optimizes the clustering results again, improving the model's generalization and final accuracy [5].
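The high-confidence sample selection step in the methodology above (pick the cell closest to each cluster centroid) can be illustrated as follows. This is a sketch under stated assumptions, not the scGGC code: the embedding values and labels are hypothetical, the function name is invented for illustration, and Euclidean distance is assumed.

```python
import math


def closest_to_centroid(embedding, labels):
    """For each cluster, return the index of the cell nearest its centroid."""
    selected = {}
    dim = len(embedding[0])
    for cluster in sorted(set(labels)):
        idx = [i for i, l in enumerate(labels) if l == cluster]
        centroid = [sum(embedding[i][d] for i in idx) / len(idx) for d in range(dim)]
        selected[cluster] = min(idx, key=lambda i: math.dist(embedding[i], centroid))
    return selected


# Toy 2-D embedding Z (hypothetical values) with K-means-style labels.
Z = [(0.0, 0.0), (1.0, 0.0), (0.4, 0.1), (5.0, 5.0), (6.0, 5.0), (5.6, 5.2)]
labels = [0, 0, 0, 1, 1, 1]
high_conf = closest_to_centroid(Z, labels)
print(high_conf)
```

The returned indices identify one representative cell per cluster, which is the role the high-confidence samples play in the refinement stage.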

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Single-Cell RNA-seq

| Item | Function / Application |

|---|---|

| Chromium Single Cell 3' Reagent Kits (10x Genomics) | Generate barcoded single-cell RNA-seq libraries from thousands of cells simultaneously [1]. |

| Cell Ranger Software Suite | Primary analysis pipeline for processing 10x Genomics data; performs alignment, filtering, and initial counting [1]. |

| Loupe Browser | Interactive desktop software for visual exploration and preliminary analysis of 10x Genomics single-cell data [1]. |

| SoupX / CellBender | Computational tools for estimating and removing ambient RNA contamination, a common issue in droplet-based protocols [1]. |

| scDCC / scAIDE / FlowSOM Software | Top-performing clustering algorithms identified in independent benchmarks for achieving high accuracy [4]. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the most effective clustering methods for handling high-dimensional scRNA-seq data?

High dimensionality is a hallmark of scRNA-seq data: each cell (observation) is profiled across tens of thousands of genes (features), most of which carry little signal for any given cell. Methods that incorporate dimensionality reduction or deep learning are particularly effective [6] [7].

- Graph-based clustering methods, such as Seurat, construct a k-nearest neighbor (KNN) graph from a reduced dimension space (e.g., PCA) and then use community detection algorithms like Louvain or Leiden to identify cell clusters [6].

- Deep learning-based methods, such as scSMD and scMSCF, use autoencoders or other neural network architectures to learn a non-linear, low-dimensional representation of the data that captures essential features for clustering, effectively mitigating the "curse of dimensionality" [7] [8].

- Spectral clustering methods, like MPSSC, use the Laplacian matrix of a similarity graph to perform clustering, which can reveal complex structures in high-dimensional space [6].

FAQ 2: How can I minimize the impact of technical noise on my clustering results?

Technical noise, including sparsity (many zero counts) and batch effects, can obscure biological signals. Addressing this requires careful preprocessing and specialized algorithms [9] [1].

- Preprocessing and Quality Control (QC): Rigorous QC is essential. Filter out low-quality cells expressing fewer than 200 genes or more than 5000 genes, and remove genes detected in only a few cells. Calculate the percentage of mitochondrial reads per cell and filter out cells with unusually high percentages, as this can indicate broken cells [8] [1].

- Normalization: Use methods like SCTransform (in Seurat) that employ regularized negative binomial regression to normalize count data while controlling for technical covariates like sequencing depth [7].

- Specialized Models: Employ clustering models designed to handle noise. The scSMD model, for instance, is built on a denoising convolutional autoencoder informed by the negative binomial distribution, which explicitly models the noise characteristics of scRNA-seq data [8].

FAQ 3: What strategies can help distinguish subtle biological variation, such as closely related cell subtypes?

Biological variability, especially between rare or highly similar cell types, requires sensitive methods that can capture fine-grained patterns [6] [8].

- Biclustering Methods: Algorithms like QUBIC2 and runibic can identify local patterns by simultaneously clustering genes and cells. This is effective for finding gene modules that are co-expressed in only a subset of cells, which can help define rare subtypes [6].

- Multi-Scale and Ensemble Approaches: Frameworks like scMSCF use a multi-dimensional PCA strategy combined with a weighted meta-clustering approach. This integrates multiple clustering results to form a more robust and stable consensus, enhancing the ability to capture subtle cell groups [7].

- Attention Mechanisms: Advanced deep learning models like scSMD incorporate a Multi-Dilated Attention Gate, allowing the model to adaptively focus on key genes and capture expression patterns at different scales, improving the resolution of closely situated cell populations [8].

Troubleshooting Guides

Problem: Clustering results are inconsistent or poorly separated.

| Potential Cause | Solution |

|---|---|

| High technical noise or batch effects | Apply batch effect correction tools (e.g., in SCTransform) and consider using deep learning models like DESC, which iteratively removes batch effects during clustering [7]. |

| Inappropriate number of clusters | Use internal validation metrics (e.g., silhouette width) to evaluate clustering quality across different resolution parameters. Some methods, like scSSA, use the BIC index to automatically determine the number of clusters [7]. |

| High data sparsity | Utilize models designed for sparse data, such as those using a negative binomial loss (e.g., scSMD, scTPC) or ZINB-based denoising autoencoders (e.g., scSemiAAE), which better model the distribution of scRNA-seq counts [7] [8]. |

Problem: Clustering algorithm fails to identify a known rare cell type.

| Potential Cause | Solution |

|---|---|

| Rare cell signals are overwhelmed by larger populations | Employ methods specifically designed for rare cell identification. GiniClust3 uses the Gini index to detect genes with highly specific expression patterns, which can be markers for rare cell types [6]. |

| Standard dimensionality reduction loses rare cell information | Use supervised or semi-supervised methods (e.g., scTPC, scSemiAAE) if some label information is available. These methods can leverage prior knowledge to guide the clustering of rare populations [7]. |
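The Gini index mentioned for rare cell detection in the table above measures how unevenly a gene's expression is spread across cells. The sketch below computes the plain Gini index for two toy genes; it is not the GiniClust3 implementation (which applies its own normalization), and the expression values are hypothetical.

```python
def gini(values):
    """Gini index of non-negative values: 0 means perfectly even,
    values near 1 mean expression is concentrated in few cells."""
    xs = sorted(values)
    n, total = len(xs), sum(xs)
    if total == 0:
        return 0.0
    weighted = sum(rank * x for rank, x in enumerate(xs, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n


housekeeping = [5, 5, 5, 5, 5, 5, 5, 5, 5, 5]  # expressed evenly in all cells
rare_marker = [0, 0, 0, 0, 0, 0, 0, 0, 0, 40]  # expressed in a single cell

g_even = gini(housekeeping)
g_rare = gini(rare_marker)
print(round(g_even, 3), round(g_rare, 3))
```

A gene expressed in only a handful of cells receives a high Gini index, which is why such genes are candidate markers for rare populations.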

Problem: The clustering method is computationally slow and does not scale to large datasets.

| Potential Cause | Solution |

|---|---|

| Inefficient handling of high dimensionality | Switch to alignment-free quantification tools like Kallisto or Salmon for fast gene expression estimation [9]. For clustering, use scalable graph-based methods (Seurat, SCANPY) or deep learning frameworks (scMSCF) optimized for large-scale data [7] [1]. |

| Complex algorithm with high runtime | For very large datasets, consider ensemble methods like SHARP that use efficient random projections, or leverage the computational optimizations in tools like Cell Ranger from 10x Genomics for initial processing [7] [1]. |

Table 1: Performance Comparison of Selected scRNA-seq Clustering Methods on Benchmark Datasets [7]

| Method | Type | Average ARI | Average NMI | Key Strengths |

|---|---|---|---|---|

| scMSCF | Ensemble / Deep Learning | 0.86 (on PBMC5k) | ~15% higher than benchmarks | Robust to noise, integrates multi-scale clustering |

| Seurat | Graph-based | 0.72 (on PBMC5k) | Baseline | Widely adopted, good all-rounder |

| scSMD | Deep Learning (Autoencoder) | High (outperforms 6 other models) | High | Handles high sparsity, uses multi-dilated attention |

| Biclustering (e.g., QUBIC2) | Biclustering | N/A | N/A | Identifies local gene-cell patterns, good for rare cells |

Table 2: Key Computational Tools for scRNA-seq Data Preprocessing and Clustering [9] [1]

| Tool | Purpose | Key Function |

|---|---|---|

| Cell Ranger | Primary Analysis | Alignment, filtering, UMI counting, and initial clustering from FASTQ files. |

| Seurat / SCANPY | Comprehensive Analysis | R/Python suites for QC, normalization, dimensionality reduction, and graph-based clustering. |

| Kallisto / Salmon | Quantification | Ultra-fast alignment-free transcript/gene quantification. |

| FastQC | Quality Control | Quality check of raw sequencing reads. |

| SoupX / CellBender | Ambient RNA Removal | Computational removal of background noise from lysed cells. |

Experimental Protocols

Protocol 1: Standard Workflow for Clustering scRNA-seq Data using a Graph-Based Approach [1]

This protocol outlines the steps for clustering scRNA-seq data using a standard graph-based pipeline, as implemented in tools like Seurat or SCANPY.

Data Preprocessing

- Quality Control: Load the count matrix and filter out cells with low unique gene counts (e.g., <200) and high mitochondrial gene percentage (threshold varies by cell type; >10% is common for PBMCs). Remove genes not expressed in a sufficient number of cells [1].

- Normalization: Normalize the data to account for varying sequencing depth per cell. A common method is log-normalization: scale each cell's counts to a common total (e.g., 10,000, the Seurat default), then apply a log(1 + x) transform. For advanced analysis, use SCTransform, which also corrects for technical sources of variation [7].

- Feature Selection: Identify the top ~2000 highly variable genes (HVGs) that drive biological heterogeneity for downstream analysis [7].
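The normalization and feature selection steps above can be sketched with the standard library alone. This is a simplified illustration: the scale factor of 10,000 and the ranking of genes by raw variance are assumptions made for brevity (Seurat and SCANPY model the mean-variance trend instead), and the count matrix is hypothetical.

```python
import math
from statistics import pvariance


def log_normalize(counts, scale=10_000):
    """Scale each cell (row) to `scale` total counts, then apply log(1 + x)."""
    normalized = []
    for cell in counts:
        total = sum(cell)
        normalized.append([math.log1p(c / total * scale) for c in cell])
    return normalized


def top_variable_genes(matrix, gene_names, n_top):
    """Rank genes (columns) by the variance of their normalized expression."""
    variances = {
        gene: pvariance([row[j] for row in matrix])
        for j, gene in enumerate(gene_names)
    }
    return sorted(variances, key=variances.get, reverse=True)[:n_top]


# Toy counts: rows are cells, columns are genes (hypothetical values).
genes = ["ACTB", "CD3E", "MS4A1"]
counts = [
    [100, 50, 0],
    [200, 100, 0],   # same composition as the first cell, twice the depth
    [100, 0, 50],
    [200, 0, 100],
]
norm = log_normalize(counts)
hvgs = top_variable_genes(norm, genes, n_top=2)
print(hvgs)
```

After normalization, the two cells that differ only in sequencing depth become identical, and the gene expressed at a constant proportion in every cell drops out of the variable gene list.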

Dimensionality Reduction and Clustering

- Linear Reduction: Perform Principal Component Analysis (PCA) on the scaled data of the HVGs. Select a sufficient number of principal components (PCs) based on an elbow plot of standard deviations.

- Graph Construction: Construct a K-Nearest Neighbor (KNN) graph in PCA space, typically based on Euclidean distance.

- Community Detection: Apply a community detection algorithm such as Louvain or Leiden to the graph to partition cells into clusters. The resolution parameter can be adjusted to control the granularity of the clusters [6] [1].
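As a rough illustration of the graph construction step above, the sketch below builds an undirected KNN graph from toy PCA coordinates and then labels connected components. Connected components are only a crude stand-in for Louvain or Leiden, which optimize modularity and can split a connected graph; the coordinates and the choice k = 2 are hypothetical.

```python
import math


def knn_graph(points, k):
    """Undirected KNN graph: i and j are linked if either is among the
    other's k nearest neighbors (Euclidean distance)."""
    edges = {i: set() for i in range(len(points))}
    for i, p in enumerate(points):
        others = sorted(
            (j for j in range(len(points)) if j != i),
            key=lambda j: math.dist(p, points[j]),
        )
        for j in others[:k]:
            edges[i].add(j)
            edges[j].add(i)
    return edges


def connected_components(edges):
    """Label each node by its connected component (simplified substitute
    for modularity-based community detection)."""
    labels, current = {}, 0
    for start in edges:
        if start in labels:
            continue
        stack = [start]
        while stack:
            node = stack.pop()
            if node in labels:
                continue
            labels[node] = current
            stack.extend(n for n in edges[node] if n not in labels)
        current += 1
    return [labels[i] for i in range(len(edges))]


# Toy PCA coordinates (hypothetical): two well-separated groups of cells.
pcs = [(0.0, 0.0), (0.2, 0.1), (0.1, 0.3), (8.0, 8.0), (8.2, 7.9), (7.9, 8.3)]
clusters = connected_components(knn_graph(pcs, k=2))
print(clusters)
```

In a real analysis the graph is rarely disconnected, which is exactly why a resolution-controlled community detection algorithm is needed instead of this shortcut.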

Protocol 2: Clustering with a Deep Learning Autoencoder Framework (e.g., scSMD) [8]

This protocol describes the core methodology for using a deep learning model like scSMD for clustering.

Model Architecture Setup

- Encoder: The encoder consists of convolutional layers and a fully connected layer that non-linearly transform the high-dimensional input gene expression matrix into a low-dimensional latent space representation.

- Multi-Dilated Attention Gate: This component, integrated into the encoder, uses dilated convolutional layers with different dilation rates. This allows the model to capture gene-gene interaction patterns at multiple scales, enhancing feature learning [8].

- Decoder: The decoder, often using deconvolutional layers, attempts to reconstruct the input data from the latent representation. The model is trained by minimizing the reconstruction error, often with a loss function like negative binomial divergence suited for count data.

Training and Clustering

- The model is trained to learn a latent representation that captures the essential features of the data while filtering out noise.

- Clustering is performed directly in the latent space, often using a loss function that simultaneously optimizes for accurate reconstruction and cluster compactness (e.g., centroid loss). The output is a set of cluster labels for each cell [8].
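To show what a count-aware reconstruction loss looks like, the sketch below evaluates the negative log-likelihood of an observed count under a negative binomial distribution parameterized by a mean and a dispersion. It is a generic textbook form, not the loss used by scSMD; the parameter names mu and theta and all numeric values are assumptions, and both parameters must be positive.

```python
import math


def nb_neg_log_likelihood(x, mu, theta):
    """Negative log-likelihood of count x under NB(mean=mu, dispersion=theta)."""
    log_p = (
        math.lgamma(x + theta) - math.lgamma(theta) - math.lgamma(x + 1)
        + theta * math.log(theta / (theta + mu))
        + x * math.log(mu / (theta + mu))
    )
    return -log_p


observed = 5
good = nb_neg_log_likelihood(observed, mu=5.0, theta=2.0)  # reconstruction near the count
poor = nb_neg_log_likelihood(observed, mu=0.5, theta=2.0)  # reconstruction far from it

# Sanity check: probabilities over all counts should sum to about 1.
total_prob = sum(
    math.exp(-nb_neg_log_likelihood(x, mu=2.0, theta=1.5)) for x in range(200)
)
print(round(good, 3), round(poor, 3), round(total_prob, 6))
```

A decoder trained with this kind of loss is penalized less when its predicted mean is close to the observed count, while the dispersion term lets it tolerate the overdispersion typical of scRNA-seq data.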

Method Selection and Workflow Diagram

Method Selection Workflow

The Scientist's Toolkit: Essential Research Reagents and Computational Tools

Table 3: Research Reagent Solutions for scRNA-seq Experiments [9] [1]

| Item | Function | Example Product / Tool |

|---|---|---|

| RNA Stabilization Reagent | Prevents RNA degradation immediately after cell collection. | RNAlater, liquid nitrogen [9]. |

| Low-Input RNA Library Prep Kit | Enables library construction from very small amounts of input RNA, crucial for single-cell workflows. | SMART-Seq v4 Ultra Low Input RNA Kit; QIAseq UPXome RNA Library Kit [9]. |

| rRNA Depletion Kit | Removes abundant ribosomal RNA (rRNA) to increase reads from mRNA. | QIAseq FastSelect [9]. |

| Single Cell 3' Reagent Kit | Comprehensive solution for generating barcoded cDNA libraries from single-cell suspensions. | 10x Genomics Chromium GEM-X Single Cell 3' Reagent Kits [1]. |

| Alignment & Quantification Software | Processes raw sequencing data (FASTQ) into gene expression counts. | Cell Ranger, STAR, HISAT2, Kallisto, Salmon [9] [1]. |

The standard RNA-seq analysis pipeline transforms raw sequencing data into meaningful biological insights, such as identified gene clusters and differentially expressed genes. This process involves several critical stages, from initial quality control to final interpretation [10] [11]. The following diagram provides a high-level overview of this workflow, illustrating the key steps and their relationships.

Frequently Asked Questions (FAQs) and Troubleshooting

Common Issues and Solutions

Table: Common RNA-seq Pipeline Issues and Recommended Solutions

| Problem Category | Specific Issue | Possible Causes | Solutions & Troubleshooting Steps |

|---|---|---|---|

| Data Quality | Hidden quality imbalances between sample groups [12] | Systematic technical variations | Use tools like seqQscorer for machine learning-based quality assessment; Check for correlations between quality metrics and experimental groups [12] |

| Data Quality | Low overall read quality | Sequencing chemistry issues, degraded RNA | Use FastQC for quality assessment; Trim low-quality bases with Trimmomatic or fastp [11] [13] |

| Alignment | STAR alignment errors with trimmed files [14] | Incorrect file formatting or path specifications | Verify FASTQ file integrity after trimming; Ensure correct specification of paired-end files in STAR command; Check genome index path [14] |

| Alignment | Low alignment rates | Poor RNA quality, incorrect reference genome | Check RNA integrity number (RIN > 7.0); Ensure reference genome and annotation versions match [10] [11] |

| Clustering | Poor separation in PCA plots | High batch effects, insufficient normalization | Minimize batch effects through experimental design; Use ComBat, Harmony, or Scanorama for batch correction [10] [15] |

| Clustering | Failure to identify known cell types | High data sparsity and noise | Apply appropriate clustering methods (e.g., Seurat, SC3, scMSCF) that handle high-dimensional, sparse data [6] [7] |

| Single-Cell Specific | High dropout events (false zeros) | Low RNA input, inefficient capture | Use computational imputation methods; Apply unique molecular identifiers (UMIs) [15] |

| Single-Cell Specific | Cell doublets | Multiple cells in single droplet | Implement cell hashing; Use computational detection based on gene expression profiles [15] |

Detailed Troubleshooting Guides

Q: My PCA plots show poor separation between experimental groups. What could be wrong?

Poor separation in PCA plots can result from several technical issues rather than true biological similarity. First, assess whether batch effects are confounding your analysis. Technical variation from different library preparation dates, sequencing runs, or personnel can introduce systematic differences that overshadow biological signals [10] [16]. To mitigate this:

- Experimental Design: Process controls and experimental samples simultaneously whenever possible [10]

- Batch Correction: Use computational methods like ComBat, Harmony, or Scanorama to remove technical variability [15]

- Quality Imbalances: Check for systematic quality differences between groups using tools like seqQscorer, as hidden quality imbalances can significantly impact clustering results and lead to false positives [12]

Additionally, ensure you have sufficient sequencing depth and biological replicates. For RNA-seq, a minimum of three replicates per condition is recommended, though more replicates provide greater power to detect subtle expression differences [16] [11].

Q: I'm getting unexpected results in differential expression analysis. How can I validate my findings?

Unexpected differential expression results can stem from both technical and analytical issues. First, verify that the strandedness of your library is correctly specified, as this dramatically affects read quantification [17]. Most modern pipelines can auto-detect strandedness using tools like Salmon [17].

Second, examine whether quality imbalances between sample groups might be driving apparent differences rather than true biological signals. Studies have found that 35% of clinically relevant RNA-seq datasets exhibit significant quality imbalances that can inflate false positive rates [12].

Third, ensure your normalization method is appropriate for your data characteristics. The TMM (Trimmed Mean of M-values) method implemented in edgeR is widely used for bulk RNA-seq, while single-cell data may require specialized approaches to handle its unique characteristics [11] [13].

Q: What clustering methods work best for single-cell RNA-seq data with high sparsity?

Single-cell RNA-seq data presents unique challenges due to its high dimensionality, sparsity, and noise [6] [7]. No single clustering method performs optimally across all datasets, but some have demonstrated superior performance:

- Graph-based clustering (e.g., Seurat, Phenograph): Constructs cell similarity graphs using k-nearest neighbors and applies community detection algorithms [6] [7]

- Ensemble methods (e.g., SC3, SHARP): Combine multiple clustering results to improve stability and accuracy [7]

- Deep learning approaches (e.g., scDSC, scMSCF): Use neural networks to capture complex patterns in high-dimensional data [7]

The recently developed scMSCF framework combines multi-dimensional PCA with a Transformer model and has shown 10-15% improvements in clustering metrics (ARI, NMI, ACC) compared to existing methods [7].

For optimal results, consider your specific data characteristics. Biclustering methods can be particularly effective for identifying local consistency in partially annotated datasets, while standard clustering methods generally perform better on completely unknown datasets [6].

Clustering Methods for RNA-seq Data Visualization

Comparison of Clustering Approaches

Table: RNA-seq Clustering Methods and Their Applications

| Method Type | Specific Tools | Key Features | Best For | Limitations |

|---|---|---|---|---|

| Biclustering [6] | QUBIC2, runibic, GiniClust3 | Simultaneously clusters genes and cells; Identifies local patterns | Finding functional gene modules; Partially annotated datasets | Computationally intensive; Complex implementation |

| Graph-Based Clustering [6] [7] | Seurat, Phenograph, ScGSLC | Models cell-cell relationships; Handles nonlinear structures | Large datasets; Identifying subtle cell subtypes | Sensitive to similarity matrix quality |

| Deep Learning [7] | scMSCF, scDSC, CellVGAE | Captures complex patterns; Handles high dimensionality | Noisy data; Complex biological relationships | Requires substantial computational resources |

| Spectral Clustering [7] | MPSSC | Uses graph Laplacian properties; Combines multiple similarity matrices | High-noise data; Missing data | Less efficient for very large datasets |

| Ensemble Methods [7] | SC3, SHARP | Improves stability; Reduces method-specific bias | General-purpose clustering; No prior knowledge | Higher computational costs |

Advanced Clustering Framework: scMSCF

For researchers working with complex single-cell RNA-seq data, the single-cell Multi-Scale Clustering Framework (scMSCF) represents a significant advancement. This method integrates three powerful approaches [7]:

- Multi-dimensional PCA reduction with K-means clustering across dimensions

- Weighted ensemble meta-clustering to integrate results

- Transformer model with self-attention mechanism to capture gene dependencies

This framework has demonstrated substantial improvements over existing methods, achieving on average 10-15% higher ARI, NMI, and ACC scores across diverse single-cell datasets [7]. For example, on the PBMC5k dataset, scMSCF improved the Adjusted Rand Index (ARI) from 0.72 to 0.86, indicating much more accurate identification of cell populations [7].

The following diagram illustrates the scMSCF workflow, showing how it integrates multiple clustering approaches with deep learning to achieve superior performance.

Key Research Reagent Solutions

Table: Essential Materials and Tools for RNA-seq Analysis

| Item | Function/Purpose | Examples/Alternatives |

|---|---|---|

| Splice-aware Aligner [11] [13] | Aligns RNA-seq reads across splice junctions | STAR, HISAT2, GSNAP |

| Quality Control Tools [11] [12] | Assess sequence quality and technical artifacts | FastQC, MultiQC, seqQscorer |

| Trimming Tools [11] [13] | Remove adapter sequences and low-quality bases | Trimmomatic, fastp, Trim Galore! |

| Clustering Algorithms [6] [7] | Identify cell types or co-expressed genes | Seurat, scMSCF, SC3, Phenograph |

| Normalization Methods [11] | Account for technical variability in sequencing depth | TMM, TPM, FPKM, CPM |

| Batch Effect Correction [10] [15] | Remove technical variation from non-biological factors | ComBat, Harmony, Scanorama |

| Unique Molecular Identifiers (UMIs) [15] | Correct for amplification bias in single-cell data | Included in many scRNA-seq protocols |

| Reference Annotations [11] | Genome annotation for read assignment | Gencode, ENSEMBL, UCSC gene annotations |

This technical support center provides troubleshooting guides and frequently asked questions (FAQs) for researchers evaluating clustering results, specifically within the context of RNA-seq data visualization research. Clustering is a fundamental unsupervised learning technique for grouping similar data points together, such as identifying cell types from single-cell RNA sequencing (scRNA-seq) data. However, assessing the performance and quality of clustering algorithms can be challenging. This guide focuses on three critical concepts for this assessment: the Adjusted Rand Index (ARI), Normalized Mutual Information (NMI), and Cluster Stability. The following sections provide clear definitions, methodologies, and practical solutions to common problems encountered during experimental analysis.

FAQ: Understanding the Core Metrics

1. What are ARI and NMI, and when should I use them?

ARI and NMI are external validation metrics used to measure the similarity between a clustering result and a ground truth (reference) labeling, such as known cell types or experimental conditions [18] [19] [20].

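- Adjusted Rand Index (ARI): Measures the similarity between two clusterings by counting pairs of samples that are assigned to the same or different clusters in both the predicted and true clusterings, while adjusting for chance agreement [19] [20]. Its values range from -1 to 1, where 1 indicates perfect agreement, values near 0 indicate agreement no better than random labeling, and negative values indicate agreement worse than chance.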

- Normalized Mutual Information (NMI): Measures the amount of statistical information shared between the clustering result and the ground truth. It quantifies how much knowing the cluster labels reduces uncertainty about the true class labels [18] [21]. It is normalized to a range of 0 to 1, where 1 indicates perfect correlation.

You should use these metrics when you have a reliable ground truth and want to quantitatively benchmark your clustering algorithm's accuracy against it [22].
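
The two definitions above can be made concrete with a small, dependency-free sketch. It computes ARI from pair counts and NMI from the contingency table; the arithmetic-mean normalization of NMI is one common choice and is an assumption here, since the text does not specify a variant. In practice, library routines such as `sklearn.metrics.adjusted_rand_score` and `sklearn.metrics.normalized_mutual_info_score` would normally be used instead.

```python
from collections import Counter
from math import comb, log


def adjusted_rand_index(labels_true, labels_pred):
    """ARI computed from pair counts in the contingency table (needs n >= 2)."""
    n = len(labels_true)
    cells = Counter(zip(labels_true, labels_pred))
    rows = Counter(labels_true)
    cols = Counter(labels_pred)
    sum_cells = sum(comb(c, 2) for c in cells.values())
    sum_rows = sum(comb(c, 2) for c in rows.values())
    sum_cols = sum(comb(c, 2) for c in cols.values())
    expected = sum_rows * sum_cols / comb(n, 2)  # agreement expected by chance
    max_index = (sum_rows + sum_cols) / 2
    if max_index == expected:  # degenerate case: both partitions are trivial
        return 1.0
    return (sum_cells - expected) / (max_index - expected)


def normalized_mutual_info(labels_true, labels_pred):
    """NMI normalized by the arithmetic mean of the two label entropies."""
    n = len(labels_true)
    cells = Counter(zip(labels_true, labels_pred))
    rows = Counter(labels_true)
    cols = Counter(labels_pred)
    mi = sum((c / n) * log(c * n / (rows[a] * cols[b]))
             for (a, b), c in cells.items())
    h_true = -sum((c / n) * log(c / n) for c in rows.values())
    h_pred = -sum((c / n) * log(c / n) for c in cols.values())
    if h_true == 0 and h_pred == 0:
        return 1.0
    return mi / ((h_true + h_pred) / 2)


# Cluster IDs are arbitrary, so a relabeled but identical partition scores 1.
truth = ["T", "T", "B", "B"]
print(adjusted_rand_index(truth, [1, 1, 0, 0]))
print(normalized_mutual_info(truth, [1, 1, 0, 0]))
# A partition that cuts across every true class scores below zero on ARI.
print(adjusted_rand_index(truth, [0, 1, 0, 1]))
```

Both functions depend only on which cells share a label, not on the label values themselves, which is why cluster IDs never need to be matched to cell type names before scoring.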

2. What is cluster stability, and how is it measured?

Cluster stability is an internal validation concept that assesses how consistent a clustering result is when the algorithm is applied to different subsets of the data or when parameters are slightly perturbed [21]. A stable clustering method produces robust and reliable partitions that are not highly sensitive to minor changes in the input.

It is typically measured by:

- Sub-sampling or Bootstrapping: Repeatedly clustering random subsamples of the dataset.

- Comparing Results: Using metrics like ARI or NMI to compare the cluster labels across these multiple runs. High average similarity between runs indicates high stability [21].

This is particularly important in RNA-seq analysis where the absence of a definitive ground truth is common, and researchers need confidence in the identified cellular subgroups.
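
As a rough illustration of the sub-sampling procedure described above, the sketch below clusters random subsamples and averages the pairwise ARI over the cells that two subsamples share. The function names, the 80% sampling fraction, and the toy `midpoint_split` clusterer are all hypothetical stand-ins; a real analysis would pass in a wrapper around the actual clustering algorithm.

```python
import random
from collections import Counter
from itertools import combinations
from math import comb


def ari(a, b):
    """Compact Adjusted Rand Index between two label lists."""
    n = len(a)
    cells, rows, cols = Counter(zip(a, b)), Counter(a), Counter(b)
    s_cells = sum(comb(c, 2) for c in cells.values())
    s_rows = sum(comb(c, 2) for c in rows.values())
    s_cols = sum(comb(c, 2) for c in cols.values())
    expected = s_rows * s_cols / comb(n, 2)
    max_index = (s_rows + s_cols) / 2
    return 1.0 if max_index == expected else (s_cells - expected) / (max_index - expected)


def subsample_stability(data, cluster_fn, n_runs=10, fraction=0.8, seed=0):
    """Mean pairwise ARI between clusterings of random subsamples."""
    rng = random.Random(seed)
    size = int(len(data) * fraction)
    runs = []
    for _ in range(n_runs):
        idx = sorted(rng.sample(range(len(data)), size))
        labels = cluster_fn([data[i] for i in idx])
        runs.append(dict(zip(idx, labels)))  # item index -> cluster label
    scores = []
    for r1, r2 in combinations(runs, 2):
        shared = sorted(set(r1) & set(r2))   # compare only items seen in both runs
        scores.append(ari([r1[i] for i in shared], [r2[i] for i in shared]))
    return sum(scores) / len(scores)


def midpoint_split(values):
    """Toy 1-D clusterer (hypothetical): split at the midpoint of the range."""
    cut = (min(values) + max(values)) / 2
    return [int(v > cut) for v in values]


well_separated = [0.1, 0.2, 0.3, 0.4, 0.5, 9.5, 9.6, 9.7, 9.8, 9.9]
print(subsample_stability(well_separated, midpoint_split))
```

Restricting each comparison to shared items matters because two subsamples rarely contain exactly the same cells.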

3. I have no ground truth labels. Which metrics can I use?

In the absence of ground truth, you must rely on internal validation metrics. These evaluate the clustering structure based on the intrinsic properties of the data itself [22]. Common choices include:

- Silhouette Coefficient: Measures how similar a sample is to its own cluster compared to other clusters. It ranges from -1 to 1, with higher values indicating better-defined clusters [18] [22].

- Davies-Bouldin Index (DBI): Evaluates the average similarity between each cluster and its most similar one. Lower values indicate better, more distinct clustering [18] [21].

- Dunn Index: Assesses the ratio of the smallest inter-cluster distance to the largest intra-cluster distance. Higher values indicate compact and well-separated clusters [18].
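
To show what an internal metric actually computes, here is a minimal sketch of the Silhouette Coefficient using Euclidean distance on a handful of 2-D points. The zero score for singleton clusters is a convention assumed here; for real data a library routine such as `sklearn.metrics.silhouette_score` is the more practical option.

```python
from math import dist


def silhouette_score(points, labels):
    """Mean silhouette over all points, using Euclidean distance."""
    clusters = {}
    for p, l in zip(points, labels):
        clusters.setdefault(l, []).append(p)
    if len(clusters) < 2:
        raise ValueError("silhouette needs at least two clusters")
    scores = []
    for p, l in zip(points, labels):
        own = clusters[l]
        if len(own) == 1:
            scores.append(0.0)  # assumed convention for singleton clusters
            continue
        # a: mean distance to the other members of the point's own cluster
        a = sum(dist(p, q) for q in own) / (len(own) - 1)
        # b: mean distance to the nearest other cluster
        b = min(sum(dist(p, q) for q in other) / len(other)
                for k, other in clusters.items() if k != l)
        denom = max(a, b)
        scores.append(0.0 if denom == 0 else (b - a) / denom)
    return sum(scores) / len(scores)


points = [(0, 0), (0, 1), (10, 0), (10, 1)]
print(silhouette_score(points, [0, 0, 1, 1]))  # labels follow the two groups
print(silhouette_score(points, [0, 1, 0, 1]))  # labels cut across the groups
```

The first labeling yields a score close to 1 and the second a negative score, matching the interpretation given in the list above.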

4. Why does my ARI value sometimes disagree with other metrics like F-score?

ARI and metrics derived from Hungarian matching (like Precision, Recall, F1-score) measure similarity differently [23].

- ARI is a symmetric measure that considers all pairs of samples (both same-cluster and different-cluster pairs) and is adjusted for chance.

- Hungarian matching first forces a one-to-one mapping between predicted and true clusters and then computes classification-like metrics. This assumes the number of clusters equals the number of classes.

It is well-documented that ARI can provide a higher score (e.g., 0.96) while F1-score may be low (e.g., 0.50), especially when the cluster-to-class mapping is not one-to-one or when there are imbalances [23]. ARI is generally considered more robust for comparing overall partition similarity.

Troubleshooting Guide: Common Experimental Issues

Problem: Low ARI/NMI scores when comparing to known cell type annotations.

- Potential Cause 1: Poor data preprocessing or normalization. RNA-seq count data requires proper normalization (e.g., Variance Stabilizing Transformation - VST) before clustering to avoid technical artifacts dominating the signal [24].

- Solution: Re-check your preprocessing pipeline. Ensure you have performed quality control, normalized for sequencing depth, and stabilized variance.

- Potential Cause 2: The chosen clustering algorithm or its parameters (e.g., k in k-means, resolution in graph-based clustering) are unsuitable for your data's structure.

- Solution: Perform sensitivity analysis. Systematically vary key parameters and evaluate the resulting ARI/NMI to find the optimal setting. Consider trying algorithms known to work well with transcriptomic data, such as graph-based methods (e.g., Leiden, Louvain) [1] [25].

Problem: Unstable clustering results across different algorithm runs or subsamples.

- Potential Cause: The algorithm is sensitive to initialization or the data has weak, ambiguous cluster boundaries, which is common in scRNA-seq data due to continuous biological processes like differentiation [25].

- Solution:

- Increase the number of algorithm initializations and select the most consistent result.

- Use ensemble methods: Combine results from multiple clustering runs or algorithms to achieve a consensus, more stable partition.

- Check for over-clustering: Reduce the number of clusters or the resolution parameter. Stability often decreases when forcing too many clusters from data that lacks clear separation.

Problem: Negative ARI values.

- Potential Cause: The agreement between your clustering and the ground truth is worse than what would be expected by chance [19] [20]. This is a strong indicator that the clustering algorithm is finding a structure that is fundamentally different from your reference labels.

- Solution: Investigate the biological or technical reason for the discrepancy. It may indicate that the ground truth labels are not the primary driver of the variation captured by the clustering, or that a key batch effect has not been corrected.

Problem: Choosing the optimal number of clusters without ground truth.

- Potential Cause: Reliance on a single, potentially misleading internal metric.

- Solution: Use a combination of methods and visual inspection:

- Elbow Method: Plot the within-cluster sum of squares (Inertia) against the number of clusters and look for the "elbow" point [26].

- Silhouette Analysis: Plot the average silhouette score for a range of cluster numbers. The number with the highest average score is a good candidate [26].

- Heuristic from Stability: The number of clusters that leads to the most stable results across subsampling is often a reliable choice.
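
The elbow method can be sketched with a small, deterministic k-means written from scratch. The farthest-point initialization and the toy three-blob data are assumptions made so the example is reproducible; they are not part of the elbow method itself.

```python
from math import dist


def assign(points, centers):
    """Index of the nearest center for every point."""
    return [min(range(len(centers)), key=lambda j: dist(p, centers[j]))
            for p in points]


def kmeans(points, k, n_iter=100):
    """Lloyd's algorithm with deterministic farthest-point initialization."""
    centers = [points[0]]
    while len(centers) < k:
        centers.append(max(points, key=lambda p: min(dist(p, c) for c in centers)))
    for _ in range(n_iter):
        labels = assign(points, centers)
        updated = []
        for j in range(k):
            members = [p for p, l in zip(points, labels) if l == j]
            updated.append(tuple(sum(x) / len(members) for x in zip(*members))
                           if members else centers[j])
        if updated == centers:  # converged
            break
        centers = updated
    labels = assign(points, centers)
    inertia = sum(dist(p, centers[l]) ** 2 for p, l in zip(points, labels))
    return labels, inertia


# Three well-separated toy groups of three points each.
points = [(0, 0), (0, 1), (1, 0),
          (10, 10), (10, 11), (11, 10),
          (20, 0), (20, 1), (21, 0)]
inertia_by_k = {k: kmeans(points, k)[1] for k in range(1, 6)}
for k, inertia in inertia_by_k.items():
    print(k, round(inertia, 2))
```

On this toy data the inertia falls sharply up to k = 3 and only marginally afterwards, which is the "elbow" the method looks for. Real scRNA-seq data rarely shows such a clean bend, which is why the text recommends combining several criteria.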

The table below summarizes the primary metrics used for clustering evaluation.

Table 1: Key Clustering Evaluation Metrics

| Metric | Type | Range | Interpretation | Key Advantage |

|---|---|---|---|---|

| Adjusted Rand Index (ARI) | External | -1 to 1 | 1=Perfect, 0=Random, <0=Worse than chance | Robust adjustment for chance agreement [19] [20] |

| Normalized Mutual Info (NMI) | External | 0 to 1 | 1=Perfect, 0=No shared information | Normalized and symmetric; good for comparing different results [18] [21] |

| Silhouette Coefficient | Internal | -1 to 1 | Higher values=Better, denser clusters | Intuitive; relates to cluster cohesion and separation [18] [22] |

| Davies-Bouldin Index (DBI) | Internal | 0 to ∞ | Lower values=Better, more distinct clusters | Considers both intra-cluster and inter-cluster distances [18] [21] |

| Cluster Stability | Internal | Varies | Higher similarity across runs=More stable | Assesses robustness without ground truth [21] |

Experimental Protocol: A Standard Workflow for RNA-seq Clustering Evaluation

Here is a detailed methodology for a typical clustering evaluation experiment on RNA-seq data.

Table 2: Essential Research Reagent Solutions for scRNA-seq Clustering

| Item | Function / Description | Example Tool / Package |

|---|---|---|

| Count Matrix | The primary input data; rows are genes/transcripts, columns are cells/samples. | Output from Cell Ranger [1] |

| Quality Control Metrics | Used to filter out low-quality cells that could distort clustering. | % Mitochondrial reads, UMI counts, Genes detected per cell [1] |

| Normalization Algorithm | Corrects for technical variation like sequencing depth. | SCTransform, DESeq2's VST [24] |

| Dimensionality Reduction Tool | Reduces high-dimensional gene expression space for clustering. | PCA, UMAP, t-SNE |

| Clustering Algorithm | The core method for grouping cells. | K-means, Leiden, Louvain, DBSCAN [1] [26] [25] |

| Validation Metric | Quantifies the success of the clustering. | ARI, NMI, Silhouette Score (as detailed above) |

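Workflow Overview: The standard workflow for clustering and evaluation proceeds through five stages: data preprocessing and quality control, normalization and feature selection, dimensionality reduction, clustering, and evaluation.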

Step-by-Step Protocol:

Data Preprocessing & Quality Control (QC):

- Input: Raw gene expression count matrix (e.g., from Cell Ranger [1]).

- Action: Calculate QC metrics per cell: total UMI counts, number of genes detected, and percentage of mitochondrial reads. Filter out cells that are outliers (e.g., a very high mitochondrial percentage suggests dead or dying cells; very low UMI or gene counts suggest empty droplets) [1].

- Documentation: Record all filtering thresholds for reproducibility.
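
The QC step above can be illustrated with a minimal sketch that computes the three per-cell metrics and applies thresholds. The function names, the toy counts, and the threshold values are hypothetical; the `MT-` prefix is the usual naming convention for human mitochondrial genes and would differ for other species.

```python
def qc_metrics(counts, gene_names, mito_prefix="MT-"):
    """Per-cell QC metrics from one cell's UMI counts (aligned with gene_names)."""
    total = sum(counts)
    mito = sum(c for c, g in zip(counts, gene_names) if g.startswith(mito_prefix))
    return {
        "total_umis": total,
        "n_genes": sum(c > 0 for c in counts),
        "pct_mito": 100.0 * mito / total if total else 0.0,
    }


def passes_qc(metrics, min_genes, max_genes, max_pct_mito):
    """True if the cell lies inside all (illustrative) thresholds."""
    return (min_genes <= metrics["n_genes"] <= max_genes
            and metrics["pct_mito"] <= max_pct_mito)


genes = ["ACTB", "GAPDH", "CD3E", "MS4A1", "MT-CO1"]
cells = {
    "healthy": [10, 8, 5, 0, 1],
    "dying": [2, 1, 0, 0, 9],   # dominated by mitochondrial reads
    "empty": [1, 0, 0, 0, 0],   # almost no signal
}
# Thresholds are toy values for this 5-gene example; record the real ones you use.
thresholds = {"min_genes": 2, "max_genes": 5, "max_pct_mito": 20.0}
kept = [name for name, counts in cells.items()
        if passes_qc(qc_metrics(counts, genes), **thresholds)]
print(kept)
```

Keeping the thresholds in a single dictionary makes it easy to log them alongside the results, which supports the reproducibility point above.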

Normalization & Feature Selection:

- Action: Apply a normalization method like the Variance Stabilizing Transformation (VST) from the DESeq2 package to correct for library size and variance trends [24].

- Action: Select highly variable genes (HVGs) that are likely to be informative for distinguishing cell types.
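
A deliberately simplified sketch of HVG selection follows: it ranks genes by the variance of their normalized expression across cells. This is an assumption made for brevity; established toolkits typically model the mean-variance relationship rather than ranking on raw variance, so treat this only as an illustration of the idea.

```python
from statistics import pvariance


def top_variable_genes(expression, n_top):
    """Rank genes by variance across cells and return the n_top most variable.

    expression: dict mapping gene name -> list of normalized values per cell.
    """
    ranked = sorted(expression, key=lambda g: pvariance(expression[g]), reverse=True)
    return ranked[:n_top]


expression = {
    "CD3E": [0, 5, 0, 6],    # on in some cells, off in others
    "ACTB": [3, 3, 3, 3],    # constant, uninformative for clustering
    "MS4A1": [4, 0, 5, 0],
    "GAPDH": [2, 2, 3, 2],
}
print(top_variable_genes(expression, n_top=2))
```

Genes with near-constant expression contribute little to separating cell types, which is why they are dropped before dimensionality reduction.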

Dimensionality Reduction:

- Action: Perform Principal Component Analysis (PCA) on the normalized and scaled HVG matrix to reduce noise and computational complexity.

Clustering:

- Action: Apply your chosen clustering algorithm (e.g., graph-based Leiden clustering) on the top principal components.

- Action: Perform sensitivity analysis by running the algorithm with different key parameters (e.g., the resolution parameter). Repeat this process multiple times to assess stability.

Evaluation:

- If ground truth is available (e.g., known cell types from the literature): Calculate ARI and NMI between the cluster labels and the ground truth.

- If no ground truth is available: Calculate internal metrics like the average silhouette width. Perform a stability analysis by sub-sampling the data or by running the algorithm multiple times with different random seeds, then compute the mean ARI between the labels from all pairs of runs. A high mean ARI indicates stability.

Metric Relationships and Selection Guide

Understanding how different metrics relate helps in forming a comprehensive evaluation. External metrics (ARI, NMI) apply when ground truth labels are available; internal metrics (Silhouette Coefficient, Davies-Bouldin Index, Dunn Index) and cluster stability apply when they are not.

Top-Performing Clustering Algorithms: Implementation and Workflow Integration

This technical support center is designed to assist researchers in implementing and troubleshooting the top-performing single-cell clustering methods as identified by a recent 2025 benchmarking study. The comprehensive evaluation, published in Genome Biology, systematically compared 28 computational algorithms on 10 paired transcriptomic and proteomic datasets [4]. The study revealed that scDCC, scAIDE, and FlowSOM demonstrated superior performance across multiple metrics and data modalities [4]. This guide provides detailed methodologies, troubleshooting advice, and technical FAQs to help you successfully apply these methods in your single-cell RNA-seq data visualization research.

The 2025 benchmarking study evaluated methods across multiple dimensions, including clustering accuracy, computational efficiency, and robustness [4]. The table below summarizes the key quantitative findings for the top-performing methods.

| Method | Overall Ranking (Transcriptomics) | Overall Ranking (Proteomics) | Key Strength | Computational Efficiency | Robustness |

|---|---|---|---|---|---|

| scDCC | 2nd | 2nd | High clustering accuracy, Memory efficiency | High memory efficiency | Good |

| scAIDE | 3rd | 1st | High clustering accuracy | Moderate | Good |

| FlowSOM | 1st | 3rd | Top robustness, Excellent performance across omics | Fast execution | Excellent |

| scDeepCluster | Not in top 3 | Not in top 3 | Memory efficiency | High memory efficiency | Not specified |

| TSCAN, SHARP, MarkovHC | Not in top 3 | Not in top 3 | Time efficiency | Fast execution | Not specified |

Experimental Protocols for Top Methods

General Single-Cell Clustering Workflow

The standard experimental workflow for single-cell clustering (quality control, normalization, HVG selection, dimensionality reduction, clustering, and evaluation) forms the basis for applying scDCC, scAIDE, and FlowSOM.

Protocol for scDCC Implementation

Principle: scDCC is a deep learning-based method that uses a deep clustering network to learn feature representations and cluster assignments simultaneously [4].

Step-by-Step Procedure:

- Input Data Preparation: Begin with a preprocessed and normalized count matrix. Ensure data is properly scaled and that highly variable genes (HVGs) have been selected.

- Parameter Configuration:

- Set the dimensions of the latent representation (typically 32-128 units).

- Define the number of clusters (can be set to an over-estimation if unknown).

- Configure optimizer settings (learning rate, batch size).

- Model Training:

- The model is trained in a joint framework, optimizing both cluster assignment and data reconstruction.

- Training includes a self-training mechanism with a target distribution to improve cluster purity.

- Output Generation:

- The model outputs cluster labels for each cell.

- It also generates a low-dimensional embedding for visualization.

Protocol for scAIDE Implementation

Principle: scAIDE is another advanced deep learning approach designed for accurate cell type identification, ranking first for proteomic data and third for transcriptomic data [4].

Step-by-Step Procedure:

- Input Data Preparation: Similar to scDCC, start with a high-quality, normalized count matrix.

- Architecture Setup:

- scAIDE typically employs a more complex neural network architecture to model the complex distributions of single-cell data.

- It may incorporate attention mechanisms or other advanced structures to weight important features.

- Training Process:

- The training involves minimizing a combined loss function that includes clustering loss and reconstruction loss.

- Data augmentation might be used to improve model robustness.

- Result Extraction:

- Extract final cluster assignments from the output layer of the trained model.

- Use the model's latent space for generating visualizations like UMAP plots.

Protocol for FlowSOM Implementation

Principle: FlowSOM is a classical machine learning method that uses a self-organizing map (SOM) followed by hierarchical consensus metaclustering, noted for its excellent robustness [4].

Step-by-Step Procedure:

- Input Data Preparation: Use a normalized and scaled expression matrix. FlowSOM is particularly effective on proteomic data but performs well on transcriptomic data too.

- SOM Training:

- Define the grid size for the SOM (e.g., 10x10).

- The algorithm assigns cells to nodes on the grid based on expression similarity.

- Consensus Metaclustering:

- Apply hierarchical clustering on the SOM nodes to generate final clusters.

- The number of final clusters can be specified or determined automatically.

- Visualization and Interpretation:

- Visualize the results using a minimum spanning tree (MST) built on the SOM codes.

- This provides an intuitive graph-based representation of cell populations and their relationships.

Troubleshooting Guides & FAQs

Data Preprocessing Issues

Q1: My clustering results show strong batch effects instead of biological variation. How can I correct for this?

A: Batch effects are a common challenge. The benchmarking study highlights that data integration methods can be applied before clustering [4].

- Solution: Apply batch correction tools like Harmony or use integration methods (e.g., Seurat's CCA) before running the clustering algorithm. For deep learning methods like scDCC, you can also add a batch covariate to the model if the architecture permits.

- Verification: After correction, visualize the data using UMAP or t-SNE. Cells from different batches but the same cell type should mix well.

Q2: How does the selection of Highly Variable Genes (HVGs) impact the performance of scDCC, scAIDE, and FlowSOM?

A: The benchmarking study specifically investigated the impact of HVGs and found that clustering performance is indeed sensitive to this preprocessing step [4]. The optimal number can vary by dataset and method.

- Solution: Do not rely on a default number of HVGs. Perform a sensitivity analysis by testing different numbers of HVGs (e.g., 1,000, 2,000, 3,000) and evaluate the clustering stability and biological coherence of the results. Using too few genes can miss important signals, while too many can introduce noise.

Algorithm-Specific Problems

Q3: When running scDCC or scAIDE, the training process is unstable and produces different results each time. What should I do?

A: This is often due to the random initialization of neural network weights.

- Solution:

- Set a random seed at the beginning of your script to ensure reproducibility.

- Increase the number of training epochs to ensure the model converges fully.

- Tune the learning rate; a rate that is too high can cause instability.

- For scDCC, which the benchmark recommends for its strong performance [4], consult the original method's documentation for specific best practices.

Q4: FlowSOM is running quickly but seems to be over-clustering my data (splitting one cell type into multiple clusters). How can I fix this?

A: This is a known behavior of FlowSOM, whose output is sensitive to the SOM grid size (the xdim and ydim parameters) and to the number of metaclusters (the maxMeta parameter).

- Solution:

- Reduce the number of metaclusters (maxMeta parameter).

- Manually merge clusters post-analysis based on marker gene expression.

- Use the Clustering Accuracy (CA) metric, as used in the benchmark, to quantitatively compare the results of different parameters against a known ground truth or biological expectations [4].

Performance and Interpretation

Q5: The benchmarking study ranks these methods highly, but on my specific dataset, the performance is poor. What factors could explain this discrepancy?

A: The "no free lunch" theorem applies to clustering; no single method is best for all datasets. The 2025 benchmark notes that performance can be influenced by cell type granularity and data quality [4].

- Diagnosis Checklist:

- Data Quality: Check for high levels of technical noise or low number of cells per population.

- Granularity: Are you trying to identify very fine subpopulations? Some methods are better at coarse-grained clustering.

- Modality: Remember that scAIDE ranked #1 for proteomic data, while FlowSOM was top for transcriptomic data. Consider if your data's characteristics align more with one modality.

- Solution: Always try a consensus approach. Run 2-3 top-performing methods (e.g., scDCC, FlowSOM, and a community detection method like Leiden). Results that are consistent across methods are more reliable.
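
One simple way to act on the consensus idea is a co-association matrix: for every pair of cells, record the fraction of methods that placed them in the same cluster. The sketch below is a generic illustration with made-up label vectors, not the procedure used in the benchmark.

```python
def co_association(labelings):
    """Fraction of labelings in which each pair of cells shares a cluster."""
    n = len(labelings[0])
    counts = [[0] * n for _ in range(n)]
    for labels in labelings:
        for i in range(n):
            for j in range(n):
                if labels[i] == labels[j]:
                    counts[i][j] += 1
    return [[c / len(labelings) for c in row] for row in counts]


# Hypothetical outputs of three methods on the same five cells.
# Cluster IDs need not match between methods.
method_a = [0, 0, 1, 1, 2]
method_b = [1, 1, 0, 0, 0]
method_c = [0, 0, 1, 1, 1]

matrix = co_association([method_a, method_b, method_c])
for row in matrix:
    print([round(v, 2) for v in row])
```

Pairs with a value of 1.0 are grouped together by every method and can be treated as the reliable core; intermediate values flag cells whose assignment depends on the method. Hierarchical clustering on one minus this matrix is a common follow-up step for deriving a consensus partition.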

Q6: For a large dataset (>100k cells), which of the top methods is most suitable?

A: Computational efficiency is key for large datasets. The benchmarking study provides clear guidance here [4]:

- FlowSOM is highly recommended due to its excellent speed and top-tier robustness.

- scDCC is also a good choice as it is specifically recommended for its memory efficiency.

- Avoid methods that are computationally intensive unless necessary. The study recommends TSCAN, SHARP, and MarkovHC for users who prioritize time efficiency.

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Computational Tools for Single-Cell Clustering

| Tool / Resource | Category | Primary Function | Relevance to Top Performers |

|---|---|---|---|

| Scanpy / Seurat | General Analysis Frameworks | Data preprocessing, normalization, visualization, and downstream analysis. | Standard environment for preparing data for scDCC, scAIDE, and FlowSOM. |

| HVGs (Highly Variable Genes) | Preprocessing | Identifies genes with high cell-to-cell variation for feature selection. | Critical preprocessing step that directly impacts all clustering performance [4]. |

| UMAP/t-SNE | Dimensionality Reduction | Non-linear dimensionality reduction for 2D/3D visualization of clusters. | Used to visualize and validate the results from all clustering methods. |

| ARI/NMI/CA Metrics | Validation | Adjusted Rand Index, Normalized Mutual Info, Clustering Accuracy. | Standard metrics from the benchmark to evaluate your results against a ground truth [4]. |

| CITE-seq Data | Multimodal Data | Provides paired transcriptomic and proteomic data from the same cell. | Ideal for training and validating models, as used in the benchmark to assess cross-modal performance [4]. |

Workflow Integration Diagram

The following diagram illustrates how the troubleshooting and best practice concepts integrate into a robust single-cell analysis workflow, from raw data to biological insights.

This technical support center serves researchers, scientists, and drug development professionals utilizing advanced deep learning clustering methods for single-cell RNA sequencing (scRNA-seq) data. scRNA-seq data presents significant challenges including high dimensionality, sparsity, pervasive dropout events (false zero counts), and technical noise, which complicate the identification of cell types and states [27] [28] [29]. This guide focuses on three powerful deep learning-based clustering tools—scDCC, scDeepCluster, and scGNN—framed within a broader thesis on best practices for clustering in RNA-seq data visualization research. Below you will find troubleshooting guides, FAQs, and detailed methodologies to address specific issues encountered during experimental implementation.

Method Comparison and Selection Guide

The table below summarizes the core characteristics, strengths, and weaknesses of scDCC, scDeepCluster, and scGNN to help you select the most appropriate method for your data and research goals.

Table 1: Comparative Overview of Deep Learning Clustering Methods for scRNA-seq Data

| Method | Core Architecture | Key Innovation | Primary Strengths | Common Challenges |

|---|---|---|---|---|

| scDCC [27] | Model-based Deep Embedded Clustering | Integrates domain knowledge via soft pairwise constraints (Must-Link/Cannot-Link). | Significantly improves clustering interpretability; Handles partial prior knowledge; Superior performance in benchmarks [4]. | Requires construction of constraint pairs; Performance depends on constraint quality. |

| scDeepCluster [30] | Autoencoder (ZINB model) + Deep Embedding Clustering | Jointly optimizes feature learning and clustering loss using a ZINB model. | Effective for discrete, over-dispersed, zero-inflated data [27]; Memory efficient [4]. | May struggle with highly sparse data without leveraging relational information [29]. |

| scGNN [31] | Graph Neural Network (GNN) + Multi-modal Autoencoders | Formulates and aggregates cell-cell relationships using a graph structure. | Captures complex cell-cell relationships; Powerful for gene imputation and clustering; Robust on complex datasets [31]. | Computationally intensive; Complex architecture requires more tuning [31]. |

Troubleshooting Guides and FAQs

FAQ 1: How do I choose between a constrained method (like scDCC) and a fully unsupervised method?

Answer: Your choice depends on the availability and quality of prior biological knowledge for your dataset.

- Use scDCC when: You have reliable prior information, such as known marker genes, flow cytometry data, or pilot experiments. scDCC converts this knowledge into soft pairwise constraints (Must-Link and Cannot-Link), guiding the clustering towards biologically interpretable results and preventing exotic, meaningless clusters [27] [32]. This is ideal when the goal is to validate or refine existing biological understanding.

- Use scDeepCluster or scGNN when: You are in an exploratory discovery phase with no or limited prior knowledge. These fully unsupervised methods are designed to discover cell types and states de novo from the data structure itself [27] [31].

FAQ 2: My clustering results are biologically uninterpretable. What could be wrong?

Answer: This is a common challenge. Below is a troubleshooting workflow to diagnose and address the issue.

Actions:

- Revisit Preprocessing: Ensure proper normalization and selection of Highly Variable Genes (HVGs). Inadequate preprocessing amplifies noise [32].

- Leverage Prior Knowledge: If available, use scDCC. It integrates domain knowledge to steer clusters toward biologically plausible structures, directly addressing the problem of uninterpretable results [27].

- Handle Complex Relationships: If cell-type relationships are highly complex and non-linear, switch to scGNN. Its graph-based model can capture global topological structures that linear methods might miss [31] [33].

FAQ 3: How can I improve clustering performance on a highly sparse dataset with many dropout events?

Answer: Dropout events are a major source of sparsity. The following table outlines method-specific strategies.

Table 2: Troubleshooting High Data Sparsity and Dropouts

| Method | Underlying Solution | Recommended Actions |

|---|---|---|

| scDeepCluster | Uses a Zero-Inflated Negative Binomial (ZINB) model in its autoencoder loss, which explicitly models the dropout events and over-dispersion of scRNA-seq data [27]. | Ensure the ZINB loss function is correctly implemented. This model is statistically tailored to handle false zeros. |

| scGNN | Employs an iterative imputation-autoencoder. It uses the learned cell-graph to recover gene expression values, effectively imputing dropouts as part of its clustering pipeline [31]. | Use the imputation output from scGNN for downstream analysis. The method is designed to denoise data during clustering. |

| scDCC | Its deep embedding network is robust to noise. The integration of constraints helps guide the learning of a latent space that is meaningful despite sparsity [27]. | Verify that your constraints are based on robust markers. The constraints help the model learn correctly even with missing data. |
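
To make the ZINB model in the table concrete, the sketch below evaluates the log-likelihood of a single count under a zero-inflated negative binomial with mean `mu`, dispersion `theta`, and dropout probability `pi`. The parameter names follow common usage and are assumptions here; in an autoencoder these three quantities would be predicted per gene and per cell by the decoder, and the negative log-likelihood would be summed as the reconstruction loss.

```python
from math import exp, lgamma, log


def zinb_log_likelihood(x, mu, theta, pi):
    """Log-probability of count x under ZINB(mu, theta, pi).

    Requires mu > 0, theta > 0, and 0 <= pi < 1.
    """
    # Negative binomial log-pmf parameterized by mean and dispersion.
    log_nb = (lgamma(x + theta) - lgamma(theta) - lgamma(x + 1)
              + theta * log(theta / (theta + mu))
              + x * log(mu / (theta + mu)))
    if x == 0:
        # A zero is either a dropout (prob. pi) or a genuine NB zero.
        return log(pi + (1 - pi) * exp(log_nb))
    return log(1 - pi) + log_nb


# Zero inflation raises the probability of observing a zero count.
p_zero_nb = exp(zinb_log_likelihood(0, mu=2.0, theta=1.5, pi=0.0))
p_zero_zinb = exp(zinb_log_likelihood(0, mu=2.0, theta=1.5, pi=0.3))
print(p_zero_nb, p_zero_zinb)
```

Setting `pi` to zero recovers the plain negative binomial, which connects to the observation elsewhere in this guide that UMI data may not need the extra zero-inflation term.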

Experimental Protocols and Workflows

Detailed Workflow for scDCC with Pairwise Constraints

This protocol is crucial for successfully applying scDCC to achieve superior, biologically interpretable clustering [27].

Step 1: Data Preprocessing

- Input: Raw UMI count matrix.

- Normalization: Normalize the counts per cell using a scale factor (e.g., 10,000), followed by log-transformation [32].

- Feature Selection: Select the top 2,000-3,000 Highly Variable Genes (HVGs) to reduce dimensionality and noise.
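
The normalization in Step 1 amounts to the following per-cell calculation, shown here as a plain-Python sketch. In Scanpy the equivalent calls would typically be `sc.pp.normalize_total(adata, target_sum=1e4)` followed by `sc.pp.log1p(adata)`.

```python
from math import log1p


def normalize_log1p(cell_counts, scale_factor=10_000):
    """Scale one cell's counts to a fixed total, then apply log(1 + x)."""
    total = sum(cell_counts)
    if total == 0:
        return [0.0] * len(cell_counts)
    return [log1p(c / total * scale_factor) for c in cell_counts]


shallow = [1, 1, 2, 0]   # 4 UMIs in total
deep = [2, 2, 4, 0]      # same composition, twice the sequencing depth
print(normalize_log1p(shallow))
print(normalize_log1p(deep))
```

Both cells produce the same normalized profile, which is the purpose of the step: differences in sequencing depth are removed while relative expression is preserved.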

Step 2: Generation of Pairwise Constraints

- Select a small subset of cells (e.g., 10%) with high-confidence labels derived from known marker genes or other assays.

- Must-Link (ML): Create constraints between pairs of cells that are known to belong to the same cell type.

- Cannot-Link (CL): Create constraints between pairs of cells that are known to belong to different cell types.

- The number of constraints can vary, but studies show performance improves consistently with several thousand constraints, representing a small fraction of all possible pairs [27].
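
Step 2 can be sketched as random pair sampling over the labeled subset. The function name, the cell IDs, and the sampling scheme are illustrative assumptions; the original scDCC code may construct constraints differently.

```python
import random


def generate_constraints(labeled_cells, n_pairs, seed=0):
    """Sample distinct cell pairs and sort them into Must-Link / Cannot-Link.

    labeled_cells: dict mapping cell ID -> high-confidence label.
    """
    rng = random.Random(seed)
    ids = sorted(labeled_cells)
    n_pairs = min(n_pairs, len(ids) * (len(ids) - 1) // 2)  # cap at all possible pairs
    must_link, cannot_link = set(), set()
    while len(must_link) + len(cannot_link) < n_pairs:
        a, b = sorted(rng.sample(ids, 2))
        if labeled_cells[a] == labeled_cells[b]:
            must_link.add((a, b))      # same cell type
        else:
            cannot_link.add((a, b))    # different cell types
    return must_link, cannot_link


# Hypothetical high-confidence labels derived from marker genes.
labeled = {"c1": "T", "c2": "T", "c3": "B", "c4": "B", "c5": "NK"}
ml, cl = generate_constraints(labeled, n_pairs=6)
print(sorted(ml))
print(sorted(cl))
```

Because the pairs come only from the confidently labeled subset, a labeling error in that subset propagates directly into the constraints, which is why the quality of the prior labels matters more than their quantity.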

Step 3: Model Training and Clustering

- Train the scDCC model, which uses a deep autoencoder, by jointly optimizing:

- The standard reconstruction loss.

- A clustering loss (e.g., KL divergence).

- A constraint loss term that penalizes violations of the provided ML and CL constraints.

- The model output is the cluster assignment for all cells.
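
The clustering and constraint terms in Step 3 can be illustrated numerically. The sketch below uses the soft-assignment and target-distribution formulas from the deep embedded clustering (DEC) family and a log-based penalty on pairwise constraints. Whether scDCC uses exactly these forms and weightings is an assumption to check against the original paper; the reconstruction loss is omitted, and the embeddings and centroids are made-up values.

```python
from math import log


def soft_assignments(embeddings, centroids):
    """Student's t kernel between each embedded cell and each centroid."""
    q = []
    for z in embeddings:
        w = [1.0 / (1.0 + sum((a - b) ** 2 for a, b in zip(z, mu))) for mu in centroids]
        q.append([x / sum(w) for x in w])
    return q


def target_distribution(q):
    """Sharpened targets: square q, divide by cluster frequency, renormalize."""
    freq = [sum(row[j] for row in q) for j in range(len(q[0]))]
    p = []
    for row in q:
        w = [row[j] ** 2 / freq[j] for j in range(len(row))]
        p.append([x / sum(w) for x in w])
    return p


def kl_clustering_loss(p, q):
    """KL(P || Q) summed over cells."""
    return sum(pij * log(pij / qij)
               for prow, qrow in zip(p, q)
               for pij, qij in zip(prow, qrow) if pij > 0)


def constraint_loss(q, must_link, cannot_link):
    """Penalize ML pairs with dissimilar and CL pairs with similar assignments."""
    def same(i, j):
        return sum(a * b for a, b in zip(q[i], q[j]))
    ml = -sum(log(same(i, j)) for i, j in must_link)
    cl = -sum(log(1.0 - same(i, j)) for i, j in cannot_link)
    return ml, cl


embeddings = [(0.0, 0.0), (0.2, 0.0), (5.0, 5.0), (5.2, 5.0)]
centroids = [(0.0, 0.0), (5.0, 5.0)]
q = soft_assignments(embeddings, centroids)
p = target_distribution(q)
print(kl_clustering_loss(p, q))
print(constraint_loss(q, must_link=[(0, 1)], cannot_link=[(0, 2)]))  # consistent
print(constraint_loss(q, must_link=[(0, 2)], cannot_link=[(0, 1)]))  # violated
```

Constraints that agree with the embedding add almost nothing to the loss, while violated constraints add a large penalty, which is how prior knowledge steers training.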

General Single-Cell Clustering Evaluation Protocol

To fairly compare methods, use the following standardized evaluation procedure [4] [6].

Step 1: Metric Selection

Use a combination of external validation metrics that compare clustering results to ground truth labels:

- Adjusted Rand Index (ARI): Measures the similarity between two data clusterings. Values near 1 indicate excellent agreement [27] [32].

- Normalized Mutual Information (NMI): Measures the mutual dependence between the clusterings. Values range from 0 (no mutual information) to 1 (perfect correlation) [27] [32].

- Clustering Accuracy (CA): Measures the accuracy of the cluster assignments by finding the best match between clusters and true labels [27].
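
Clustering Accuracy requires an explicit one-to-one matching between clusters and true labels. The sketch below finds the best matching by brute force over permutations, which is only feasible for a handful of clusters; with more clusters, the Hungarian algorithm (for example `scipy.optimize.linear_sum_assignment`) is the usual choice. The function name and the toy labels are illustrative.

```python
from collections import Counter
from itertools import permutations


def clustering_accuracy(labels_true, labels_pred):
    """Best one-to-one cluster-to-class matching accuracy (brute force)."""
    classes = sorted(set(labels_true))
    clusters = sorted(set(labels_pred))
    overlap = Counter(zip(labels_pred, labels_true))
    # Pad the shorter side so unmatched clusters or classes contribute zero.
    k = max(len(classes), len(clusters))
    pad = object()
    classes += [pad] * (k - len(classes))
    clusters += [pad] * (k - len(clusters))
    best = max(sum(overlap[(c, t)] for c, t in zip(clusters, perm))
               for perm in permutations(classes))
    return best / len(labels_true)


truth = ["T", "T", "B", "B", "NK", "NK"]
pred = [2, 2, 0, 0, 1, 0]   # one NK cell was placed in the B-cell cluster
print(clustering_accuracy(truth, pred))
```

Unlike ARI and NMI, this metric forces each cluster to stand for exactly one class, so it penalizes over-clustering and under-clustering more directly.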

Step 2: Robustness Analysis

- Perform multiple runs with different random seeds to assess the stability of the clustering results.

- Use simulated datasets with known noise levels to evaluate method robustness, as performed in large-scale benchmarks [4].

The Scientist's Toolkit: Essential Research Reagents

This table lists key computational "reagents" and their functions in the analysis of scRNA-seq data using deep learning methods.

Table 3: Key Research Reagent Solutions for scRNA-seq Deep Clustering

| Tool / Resource | Function | Relevance to scDCC/scDeepCluster/scGNN |

|---|---|---|

| Scanpy [29] | A Python-based toolkit for single-cell data analysis. | Used for standard preprocessing: filtering low-quality cells/genes, normalization, HVG selection, and PCA. |

| Scanpy / Seurat [32] | Comprehensive toolkits for single-cell genomics (Scanpy in Python, Seurat in R). | Provides robust pipelines for data normalization, scaling, and initial exploratory analysis. |

| Pairwise Constraints | Domain knowledge encoded as Must-Link/Cannot-Link pairs. | The essential "reagent" for scDCC, guiding the clustering towards biological accuracy [27]. |

| ZINB Model | A statistical distribution modeling over-dispersed and zero-inflated count data. | The core of scDeepCluster's loss function, allowing it to handle scRNA-seq noise effectively [27]. |

| Cell-Graph / KNN Graph | A graph structure where nodes are cells and edges represent similarity. | The fundamental data structure for scGNN, enabling it to propagate information and learn complex relationships [31] [29]. |

| Gold-Standard Benchmarks | Public scRNA-seq datasets with well-annotated cell labels. | Critical for validating and benchmarking the performance of any new method or protocol [31] [4]. |

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the transcriptomic profiling of individual cells, revealing cellular heterogeneity and identifying novel cell types [34]. Unsupervised clustering stands as a critical first step in scRNA-seq data analysis, allowing researchers to group cells with similar expression patterns and infer cell types and states [34] [35]. Among the plethora of computational methods developed, SC3, Seurat, and CIDR have emerged as prominent classical machine learning approaches that balance computational efficiency with biological relevance. These methods operate under different algorithmic assumptions and are sensitive to specific data characteristics, making the understanding of their strengths, limitations, and optimal application parameters crucial for robust analysis [36]. Within the broader thesis on best clustering methods for RNA-seq data visualization research, this technical support center provides targeted troubleshooting guidance and experimental protocols to ensure researchers can effectively implement these tools and accurately interpret their results.

Key Characteristics of SC3, Seurat, and CIDR

| Method | Core Algorithm | Key Features | Cluster Number Estimation | Primary Output |

|---|---|---|---|---|

| SC3 | Consensus Clustering (Spectral + k-means) | Combines multiple distance matrices & clustering solutions; user-friendly | Tracy-Widom test on eigenvalues [36] | Consensus clusters & cell labels |

| Seurat | Graph-based (Louvain/Leiden) | PCA + shared nearest neighbor (SNN) graph + community detection [34] | Modularity optimization [36] | Cell clusters & 2D visualizations |

| CIDR | Hierarchical Clustering with Imputation | Implicit imputation for dropout reduction; Principal Coordinate Analysis (PCoA) | Calinski-Harabasz (CH) index [36] | Hierarchical clusters & cell labels |

Data Preprocessing Requirements

| Method | Normalization Approach | Dimension Reduction | Feature Selection | Input Data Format |
|---|---|---|---|---|
| SC3 | Log-transformation after adding pseudocount [35] | PCA on multiple distance matrices [34] | Optional gene filtering; can be disabled [35] | Read counts or UMI counts |
| Seurat | Log-normalization; SCTransform (recommended) [7] | Linear (PCA) followed by non-linear (t-SNE, UMAP) [34] | Top highly variable genes (default: 2000) [7] | UMI counts recommended |
| CIDR | Log-transformation with implicit imputation [35] | Principal Coordinate Analysis (PCoA) [35] | Intrinsic dropout-based weighting | Read counts or UMI counts |

Troubleshooting Guide: Common Issues and Solutions

Data Quality and Preprocessing Issues

Q1: My clustering results show poor separation between expected cell types. What preprocessing steps should I verify?

A: Poor cluster separation often originates from inadequate preprocessing. First, perform rigorous quality control to remove low-quality cells. Remove cells whose number of detected genes falls outside the commonly used range of 200-2,500, and exclude cells with >5% mitochondrial counts, as these often represent damaged or dying cells [34]. Treat these thresholds as starting points and adjust them to your tissue and protocol. For Seurat specifically, use SCTransform normalization rather than standard log-normalization, as it better addresses technical variance while preserving biological heterogeneity [7]. When using CIDR, verify that the implicit imputation is addressing dropout events effectively by checking the PCoA plot for clear separation trends [35].

Q2: How should I handle excessive zeros in my UMI count data for these methods?

A: The approach depends on your chosen method. For UMI-based protocols, evidence suggests that UMI counts generally follow negative binomial distributions without excess zero inflation [37]. CIDR is specifically designed to handle dropout events through implicit imputation during its dimensionality reduction process [35]. For SC3 and Seurat, ensure you're using UMI counts rather than read counts, as PCR amplification artifacts in read counts can create problematic zero inflation [37]. Avoid applying additional zero-inflation models to UMI data unless your specific protocol is known to produce excessive zeros.
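
One way to check the zero-inflation claim on your own data is to compare the observed fraction of zeros for a gene with the fraction a negative binomial model would predict. The sketch below uses the standard result that a negative binomial with mean mu and size theta has P(0) = (theta / (theta + mu))^theta. The function names, the toy counts, and the fixed theta are illustrative assumptions; in practice theta would be estimated from the data.

```python
def nb_zero_probability(mean: float, size: float) -> float:
    """P(count == 0) under a negative binomial with the given mean and size (theta)."""
    return (size / (size + mean)) ** size


def observed_zero_fraction(counts) -> float:
    """Fraction of cells in which the gene has a zero count."""
    return sum(1 for c in counts if c == 0) / len(counts)


# Hypothetical UMI counts for one gene across 10 cells.
gene_counts = [0, 0, 1, 0, 2, 0, 0, 3, 0, 1]
gene_mean = sum(gene_counts) / len(gene_counts)
theta = 1.0  # assumed size parameter, not estimated here

expected = nb_zero_probability(gene_mean, theta)
observed = observed_zero_fraction(gene_counts)
print(f"expected zeros: {expected:.3f}, observed zeros: {observed:.3f}")
```

If the observed fraction is close to the expected one across most genes, an additional zero-inflation component is unlikely to help.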

Parameter Tuning and Method Selection

Q3: How do I determine the optimal number of clusters (k) for each method?

A: Each method employs different internal indices for estimating k:

- SC3 uses the Tracy-Widom test on eigenvalues to estimate cluster number [36]

- Seurat relies on modularity optimization within graph-based clustering, which intrinsically determines cluster number [36]

- CIDR applies the Calinski-Harabasz (CH) index to hierarchical clustering results [36]

For robust estimation, consider using the Clustering Deviation Index (CDI), which measures distributional deviation of clustering labels from observed data and works well across methods [37]. Alternatively, ensemble approaches like SAFE-clustering can integrate results from multiple methods and provide more stable cluster number estimates [35].
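
To make the Calinski-Harabasz criterion concrete, the following minimal sketch computes the index from its textbook definition: between-cluster dispersion divided by (k - 1), over within-cluster dispersion divided by (n - k). It is a NumPy illustration of the formula, not CIDR's own code, and the toy points are invented for the example.

```python
import numpy as np


def calinski_harabasz(points, labels):
    """Calinski-Harabasz index: higher values indicate tighter, better separated clusters."""
    points = np.asarray(points, dtype=float)
    labels = np.asarray(labels)
    n = points.shape[0]
    clusters = np.unique(labels)
    k = clusters.size
    overall_mean = points.mean(axis=0)

    between = 0.0
    within = 0.0
    for cluster in clusters:
        members = points[labels == cluster]
        centroid = members.mean(axis=0)
        between += members.shape[0] * np.sum((centroid - overall_mean) ** 2)
        within += np.sum((members - centroid) ** 2)
    return (between / (k - 1)) / (within / (n - k))


# Toy data: two well separated pairs of points.
toy_points = [[0, 0], [0, 1], [10, 0], [10, 1]]
good = calinski_harabasz(toy_points, [0, 0, 1, 1])
bad = calinski_harabasz(toy_points, [0, 1, 0, 1])
print(good, bad)
```

Scanning this score over candidate values of k and keeping the maximum is the usual way the index is used to choose a cluster number.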

Q4: Which method performs best for identifying rare cell types in heterogeneous populations?

A: Methods vary in their sensitivity to rare cell types. SC3 has demonstrated good performance in identifying rare cell types through its consensus approach [34]. RaceID (not covered here) was specifically designed for rare cell identification [36]. For Seurat, increasing the resolution parameter can help identify finer subpopulations, though this may also increase sensitivity to noise. When rare cell types are suspected, consider using multiple methods and examining consensus, as ensemble approaches like SAFE-clustering have shown improved performance over individual methods [35].
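
The effect of a resolution parameter can be seen in the modularity score that graph-based methods optimize. The sketch below implements the generalized modularity Q = (1 / 2m) * sum_ij [A_ij - gamma * k_i * k_j / 2m] * delta(c_i, c_j), where gamma is the resolution. This is the generic formulation, assumed here for illustration; it is not taken from Seurat's source code, and the toy graph is invented.

```python
import numpy as np


def modularity(adjacency, labels, resolution=1.0):
    """Generalized modularity of a partition of an undirected graph."""
    a = np.asarray(adjacency, dtype=float)
    labels = np.asarray(labels)
    degrees = a.sum(axis=1)
    two_m = degrees.sum()
    same_cluster = labels[:, None] == labels[None, :]
    expected = resolution * np.outer(degrees, degrees) / two_m
    return float(((a - expected) * same_cluster).sum() / two_m)


# Toy graph: two triangles (0-1-2 and 3-4-5) joined by the single edge 2-3.
edges = [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]
adjacency = np.zeros((6, 6))
for i, j in edges:
    adjacency[i, j] = adjacency[j, i] = 1.0

q_split = modularity(adjacency, [0, 0, 0, 1, 1, 1])
q_merged = modularity(adjacency, [0, 0, 0, 0, 0, 0])
print(q_split, q_merged)
```

Raising the resolution increases the penalty on large communities, which is why higher values tend to yield more, smaller clusters and can surface rare populations along with noise.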

Performance and Scalability

Q5: My dataset contains over 50,000 cells. Which method is most scalable?

A: For large-scale datasets, Seurat generally offers the best scalability due to its efficient graph-based implementation [36]. SC3 can handle datasets with tens of thousands of cells but may require enabling the support vector machine (SVM) option for datasets exceeding 5,000 cells to speed up computation [35]. CIDR may face computational constraints with extremely large datasets (>100,000 cells). Recent benchmarking indicates that Seurat maintains reasonable accuracy even with large cell numbers, though it may tend to overestimate cluster numbers in some cases [36].

Q6: Why do I get different clustering results when using the same dataset with different methods?

A: Discrepancies arise because each method utilizes different characteristics of the data. SC3 employs consensus across multiple transformations and distance metrics [35]. Seurat relies on graph-based community detection which is sensitive to the construction of nearest neighbor graphs [34]. CIDR uses dimensionality reduction with implicit imputation that weights genes based on dropout rates [35]. These methodological differences lead to varying sensitivities to data characteristics. Benchmarking studies show that no single method consistently outperforms others across all datasets [36]. For critical analyses, consider using ensemble methods like SAFE-clustering or scEFSC that combine multiple individual methods to produce more robust consensus clusters [35] [38].

Experimental Protocols: Standardized Workflows

Comprehensive scRNA-seq Clustering Workflow

Diagram Title: Comprehensive scRNA-seq Clustering Workflow

Quality Control Protocol

Step 1: Cell-level Filtering

- Remove cells with fewer than 200 or more than 2,500 detected genes [34]

- Exclude cells with >5% mitochondrial counts [34]

- Treat cells with aberrantly high gene counts as potential doublets and remove them [34]
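
The cell-level filters above can be sketched as a boolean mask over cells. The example assumes a genes-by-cells count matrix and a boolean vector marking mitochondrial genes; the function name, parameter names, and toy matrix are hypothetical, and the toy call uses small thresholds only so the result is easy to follow.

```python
import numpy as np


def qc_cell_mask(counts, is_mito_gene, min_genes=200, max_genes=2500, max_mito_pct=5.0):
    """Return True for cells (columns) that pass the gene-count and mitochondrial filters."""
    counts = np.asarray(counts)
    is_mito_gene = np.asarray(is_mito_gene, dtype=bool)
    genes_detected = (counts > 0).sum(axis=0)
    total_counts = counts.sum(axis=0)
    mito_counts = counts[is_mito_gene].sum(axis=0)
    # Guard against division by zero for empty barcodes.
    mito_pct = 100.0 * mito_counts / np.maximum(total_counts, 1)
    return (
        (genes_detected >= min_genes)
        & (genes_detected <= max_genes)
        & (mito_pct <= max_mito_pct)
    )


# Toy matrix: 4 genes (last one mitochondrial) x 3 cells.
toy_counts = np.array([
    [50, 0, 1],
    [30, 0, 1],
    [0, 0, 1],
    [1, 9, 1],
])
toy_mito = [False, False, False, True]
mask = qc_cell_mask(toy_counts, toy_mito, min_genes=2, max_genes=3, max_mito_pct=5.0)
print(mask)
```

Here only the first cell passes: the second has too few detected genes and the third exceeds both the gene-count ceiling and the mitochondrial limit.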

Step 2: Gene-level Filtering

- Remove genes expressed in fewer than 2% of cells [38]

- Retain highly variable genes for downstream analysis (2000 genes recommended for Seurat) [7]
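
A minimal sketch of the two gene-level steps follows. It drops genes detected in fewer than a given fraction of cells and then ranks the remainder by the variance of their log-transformed counts. Ranking by plain variance is a deliberate simplification assumed for illustration; Seurat's variable-feature selection uses a variance-stabilizing procedure that is not reproduced here. All names and the toy matrix are hypothetical.

```python
import numpy as np


def filter_and_rank_genes(counts, min_cell_fraction=0.02, n_top=2000):
    """Return row indices of the most variable genes among those detected in enough cells."""
    counts = np.asarray(counts, dtype=float)
    n_cells = counts.shape[1]
    detected_fraction = (counts > 0).sum(axis=1) / n_cells
    kept = np.flatnonzero(detected_fraction >= min_cell_fraction)
    # Simplified variability score: variance of log(1 + count) across cells.
    variances = np.log1p(counts[kept]).var(axis=1)
    order = np.argsort(variances)[::-1][:n_top]
    return kept[order]


# Toy matrix: 4 genes x 4 cells.
toy_counts = [
    [0, 0, 0, 0],    # never detected, removed
    [1, 1, 1, 1],    # detected but constant
    [0, 10, 0, 10],  # highly variable
    [1, 2, 1, 2],    # mildly variable
]
selected = filter_and_rank_genes(toy_counts, min_cell_fraction=0.25, n_top=2)
print(selected)
```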

Step 3: Normalization

- For SC3 and CIDR: Apply log-transformation after adding a pseudocount of 1 [35]

- For Seurat: Use SCTransform normalization for optimal variance stabilization [7]
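
The log-based options can be sketched in a few lines. The first function applies a log transform after adding a pseudocount; base 2 is an assumption, since the text does not state the base. The second scales each cell to a common total before taking log(1 + x), which is the general idea behind standard log-normalization. SCTransform fits a regularized negative binomial regression and is not reproduced here. Function names and the scale factor are illustrative.

```python
import numpy as np


def log_transform(counts, pseudocount=1.0):
    """Element-wise log2(count + pseudocount)."""
    return np.log2(np.asarray(counts, dtype=float) + pseudocount)


def log_normalize(counts, scale_factor=1e4):
    """Scale each cell (column) to a common total, then apply log(1 + x).

    Assumes empty cells were already removed, so no column sums to zero.
    """
    counts = np.asarray(counts, dtype=float)
    totals = counts.sum(axis=0, keepdims=True)
    return np.log1p(counts / totals * scale_factor)


# Toy matrix: 2 genes x 2 cells.
toy_counts = [[0, 1], [3, 7]]
print(log_transform(toy_counts))
print(log_normalize(toy_counts))
```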

Method-Specific Implementation Protocols

SC3 Protocol:

- Input data as count matrix with genes as rows and cells as columns

- Disable gene filtering if too many genes would be removed (as noted in some implementations) [35]

- Estimate cluster number using Tracy-Widom method

- Compute multiple distance matrices (Euclidean, Pearson, Spearman)

- Perform k-means clustering on each transformation

- Build consensus matrix using Cluster-based Similarity Partitioning Algorithm (CSPA)

- Apply hierarchical clustering to consensus matrix for final clusters [35]
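
The consensus step can be illustrated with a co-association matrix: for every pair of cells, the fraction of individual clustering runs that placed them in the same cluster. This is a generic sketch of that idea, not SC3's implementation, and the three toy labelings are invented.

```python
import numpy as np


def consensus_matrix(labelings):
    """Fraction of clustering runs in which each pair of cells shares a cluster."""
    labelings = np.asarray(labelings)
    n_runs, n_cells = labelings.shape
    consensus = np.zeros((n_cells, n_cells))
    for labels in labelings:
        # Cluster IDs are arbitrary per run, so only co-membership is compared.
        consensus += labels[:, None] == labels[None, :]
    return consensus / n_runs


# Three hypothetical k-means runs over four cells.
runs = [
    [0, 0, 1, 1],
    [1, 1, 0, 0],
    [0, 0, 0, 1],
]
consensus = consensus_matrix(runs)
print(consensus)
```

Because only co-membership is counted, runs that use different label numbering for the same grouping still agree. The final clusters would then come from hierarchical clustering of this matrix.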

Seurat Protocol:

- Create Seurat object with raw counts

- Perform SCTransform normalization

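- Select highly variable genes (2,000 is the FindVariableFeatures default; SCTransform selects its own variable features, 3,000 by default)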

- Run principal component analysis (PCA)

- Construct shared nearest neighbor (SNN) graph using top principal components

- Apply Louvain or Leiden algorithm for community detection

- Project results in 2D using UMAP or t-SNE for visualization [7]
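
The shared nearest neighbor step can be sketched as follows: find each cell's k nearest neighbors in the reduced space, then weight each pair of cells by the Jaccard overlap of their neighborhoods. This is a dense NumPy illustration suitable only for small inputs, written under the assumption that each cell counts as its own nearest neighbor; it is not Seurat's implementation, and the one-dimensional toy embedding stands in for the top principal components.

```python
import numpy as np


def snn_jaccard(embedding, k=3):
    """Jaccard overlap between the k-nearest-neighbor sets of every pair of cells."""
    x = np.asarray(embedding, dtype=float)
    n = x.shape[0]
    distances = np.linalg.norm(x[:, None, :] - x[None, :, :], axis=2)
    # Each neighborhood holds the cell itself (distance 0) plus its k - 1 closest cells.
    neighbors = np.argsort(distances, axis=1)[:, :k]
    membership = np.zeros((n, n), dtype=int)
    membership[np.arange(n)[:, None], neighbors] = 1
    shared = membership @ membership.T
    union = 2 * k - shared  # every neighborhood has exactly k members
    return shared / union


# Toy embedding: two groups of three cells on a line.
toy_embedding = [[0.0], [1.0], [2.0], [10.0], [11.0], [12.0]]
snn = snn_jaccard(toy_embedding, k=3)
print(snn)
```

Edges with zero or very low overlap are typically pruned before community detection, so the two groups in this toy example end up disconnected.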

CIDR Protocol:

- Input log-transformed count matrix

- Perform implicit imputation for dropout reduction

- Calculate dissimilarity matrix using imputed values

- Perform Principal Coordinate Analysis (PCoA)

- Determine optimal number of principal coordinates using internal nPC function or visual elbow detection

- Apply hierarchical clustering to PCoA results [35]

- Determine optimal cluster number using Calinski-Harabasz index [36]
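
The PCoA step corresponds to classical multidimensional scaling: double-center the squared dissimilarity matrix and keep the leading eigenvectors scaled by the square roots of their eigenvalues. The sketch below shows that generic procedure on an ordinary Euclidean distance matrix; CIDR's imputation-aware dissimilarity is not reproduced, and the function name is hypothetical.

```python
import numpy as np


def pcoa(dissimilarity, n_components=2):
    """Principal coordinates from a symmetric dissimilarity matrix (classical MDS)."""
    d = np.asarray(dissimilarity, dtype=float)
    n = d.shape[0]
    centering = np.eye(n) - np.ones((n, n)) / n
    gram = -0.5 * centering @ (d ** 2) @ centering
    eigenvalues, eigenvectors = np.linalg.eigh(gram)
    order = np.argsort(eigenvalues)[::-1][:n_components]
    # Negative eigenvalues can arise from non-Euclidean dissimilarities; clip them to zero.
    top_values = np.clip(eigenvalues[order], 0.0, None)
    return eigenvectors[:, order] * np.sqrt(top_values)


# Toy check: coordinates recovered from distances reproduce those distances.
toy_points = np.array([[0.0, 0.0], [3.0, 0.0], [0.0, 4.0]])
toy_distances = np.linalg.norm(toy_points[:, None] - toy_points[None, :], axis=2)
coords = pcoa(toy_distances, n_components=2)
recovered = np.linalg.norm(coords[:, None] - coords[None, :], axis=2)
print(np.round(recovered, 6))
```

The sorted eigenvalues are also what an elbow plot for choosing the number of principal coordinates would display.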

Performance Benchmarking: Quantitative Comparisons

Method Performance Across Datasets

| Evaluation Metric | SC3 | Seurat | CIDR | Ensemble (SAFE) |
|---|---|---|---|---|
| Adjusted Rand Index (ARI) | Variable across datasets | Generally high | Moderate | Average 36.0% improvement; up to 18.5% over the best single method [35] |
| Cluster Number Accuracy | Tendency to overestimate [36] | Tendency to overestimate [36] | More accurate estimation using CH index [36] | 18.2-58.1% reduction in absolute deviation [35] |
| Rare Cell Type Detection | Good sensitivity [34] | Moderate (depends on parameters) | Moderate | Improved through consensus |
| Computational Speed | Moderate (improves with SVM) [35] | Fast | Moderate | Slower (runs multiple methods) |
| Scalability | Good with SVM for >5,000 cells [35] | Excellent for large datasets | Limited for very large datasets | Depends on constituent methods |
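
Since the Adjusted Rand Index is the main accuracy metric in the table above, a small reference implementation may help when reproducing such comparisons. The sketch follows the standard pair-counting definition using only the Python standard library, and assumes at least two cells; the toy labelings are invented.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels_true, labels_pred):
    """Adjusted Rand Index between two labelings of the same cells."""
    n = len(labels_true)
    pair_counts = Counter(zip(labels_true, labels_pred))
    true_sizes = Counter(labels_true)
    pred_sizes = Counter(labels_pred)

    sum_pairs = sum(comb(c, 2) for c in pair_counts.values())
    sum_true = sum(comb(c, 2) for c in true_sizes.values())
    sum_pred = sum(comb(c, 2) for c in pred_sizes.values())

    expected = sum_true * sum_pred / comb(n, 2)
    max_index = 0.5 * (sum_true + sum_pred)
    if max_index == expected:
        # Degenerate case, e.g. both labelings put every cell in one cluster.
        return 1.0
    return (sum_pairs - expected) / (max_index - expected)


reference = [0, 0, 1, 1]
relabeled = adjusted_rand_index(reference, [1, 1, 0, 0])
crossed = adjusted_rand_index(reference, [0, 1, 0, 1])
print(relabeled, crossed)
```

The index is invariant to how clusters are numbered, is close to zero for random assignments, and can be negative when agreement is worse than chance.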

Dimension Reduction Impact on Clustering Performance

| Dimension Reduction Method | Neighborhood Preservation | Recommended Usage | Compatibility |
|---|---|---|---|
| PCA | Moderate (linear assumptions) | Initial linear reduction for all methods | SC3, Seurat, CIDR |
| t-SNE | High (non-linear preservation) | Final visualization (2D/3D) | Seurat, optional for others |
| UMAP | High, faster than t-SNE [34] | Visualization and clustering | Seurat, increasing adoption |
| PCoA | Moderate | CIDR's primary approach | CIDR-specific |
| Diffusion Map | High for trajectory inference | Lineage analysis | Not primary for these methods |

The Scientist's Toolkit: Essential Research Reagents

Computational Tools and Software Packages

| Tool/Package | Function | Implementation | Availability |
|---|---|---|---|
| SC3 | Consensus clustering | R package | Bioconductor |
| Seurat | Comprehensive scRNA-seq analysis | R package | CRAN, Satija Lab |
| CIDR | Dimensionality reduction & clustering | R package | GitHub |
| SAFE-clustering | Ensemble method | R package | GitHub [35] |
| scEFSC | Ensemble feature selection clustering | R package | GitHub [38] |
| CDI | Clustering evaluation | R package | Bioconductor [37] |

Validation and Biological Interpretation Tools

| Tool/Approach | Application | Key Output |
|---|---|---|
| Differential Expression | Marker gene identification | Cell type-specific genes |
| Gene Ontology (GO) Enrichment | Functional annotation | Biological processes |
| KEGG Pathway Analysis | Pathway activation | Signaling pathways |
| Visualization (t-SNE/UMAP) | Result interpretation | 2D cluster maps |
| Clustering Deviation Index (CDI) | Objective quality assessment | Optimal parameter selection [37] |

Advanced Integration: Ensemble Approaches

Ensemble Clustering Strategies

For critical applications where clustering accuracy is paramount, ensemble methods that combine multiple algorithms typically outperform individual methods. SAFE-clustering exemplifies this approach by integrating four state-of-the-art methods (SC3, CIDR, Seurat, and t-SNE + k-means) using hypergraph partitioning algorithms [35]. The implementation involves:

- Running each clustering method independently with its default parameters

- Combining solutions using one of three hypergraph algorithms:

  - Hypergraph Partitioning Algorithm (HGPA)

  - Meta-Clustering Algorithm (MCLA)

  - Cluster-based Similarity Partitioning Algorithm (CSPA)

- Selecting the consensus solution that demonstrates the highest stability
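
The three combination algorithms share one input representation: a binary incidence matrix in which each cluster from each method is a hyperedge over the cells it contains. The sketch below builds that matrix and derives the CSPA-style similarity from it, as the fraction of methods that co-cluster each pair of cells. It illustrates the general cluster-ensemble idea rather than SAFE-clustering's code; the function names and the two toy labelings are hypothetical.

```python
import numpy as np


def hypergraph_incidence(labelings):
    """Cells x hyperedges binary matrix; each cluster of each method is one hyperedge."""
    columns = []
    for labels in labelings:
        labels = np.asarray(labels)
        for cluster in np.unique(labels):
            columns.append(labels == cluster)
    return np.column_stack(columns).astype(int)


def cspa_similarity(labelings):
    """Pairwise similarity: fraction of methods that put two cells in the same cluster."""
    incidence = hypergraph_incidence(labelings)
    return (incidence @ incidence.T) / len(labelings)


# Hypothetical output of two clustering methods over four cells.
method_labels = [
    [0, 0, 1, 1],
    [0, 0, 0, 1],
]
incidence = hypergraph_incidence(method_labels)
similarity = cspa_similarity(method_labels)
print(incidence)
print(similarity)
```

A consensus partition is then obtained by partitioning this similarity graph (CSPA), the hypergraph itself (HGPA), or groups of related hyperedges (MCLA).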

Benchmarking across 12 datasets demonstrated that SAFE-clustering provides an average improvement of 36.0% in Adjusted Rand Index, and up to 18.5% improvement over the best individual method [35].

Ensemble Feature Selection Clustering (scEFSC)

The scEFSC approach addresses feature selection variability by combining multiple unsupervised feature selection methods before clustering:

- Phase A: Employ multiple feature selection methods (Low Variance, Laplacian Score, SPEC, MCFS) to remove non-informative genes

- Phase B: Apply diverse clustering algorithms to each feature subset

- Phase C: Integrate results using weighted-ensemble meta-clustering [38]

This approach has been reported to perform well across 14 real scRNA-seq datasets [38], highlighting the importance of addressing feature selection as part of a robust clustering workflow.

SC3, Seurat, and CIDR represent complementary approaches with distinct strengths. SC3 provides robust consensus clustering suitable for standard analyses. Seurat offers scalability and integration with visualization. CIDR handles dropout events through implicit imputation. Because no single method wins on every dataset [36], ensemble approaches such as SAFE-clustering or scEFSC, which combine multiple methods, are the more reliable choice when accuracy is critical; both outperformed individual algorithms in their published benchmarks [35] [38]. The Clustering Deviation Index (CDI) additionally provides an objective metric for parameter selection and result validation [37]. By following the standardized protocols and troubleshooting guidance above, researchers can approach single-cell clustering with greater confidence in the biological relevance of their results.

Troubleshooting Guides

FAQ 1: How do I choose between Louvain and Leiden for clustering my scRNA-seq data?