Best Practices for Benchmarking Gene Finding Tools: A Comprehensive Guide for Genomics Researchers


Abstract

This article provides a comprehensive framework for benchmarking gene prediction tools, addressing critical needs in genomic research and drug development. It explores the foundational principles of establishing reliable benchmarks, methodological approaches for tool application, strategies for troubleshooting and optimization, and rigorous validation techniques. By synthesizing current best practices from recent large-scale studies, this guide empowers researchers to conduct more accurate, reproducible, and biologically meaningful evaluations of computational methods, ultimately enhancing the reliability of genomic annotations for downstream biomedical applications.

Laying the Groundwork: Core Principles and Benchmark Design for Gene Prediction

In computational biology, benchmarking serves as the cornerstone for rigorous method evaluation and scientific advancement. As the number of computational methods for genomic analysis grows rapidly (nearly 400 methods are available for analyzing single-cell RNA-sequencing data), the design and implementation of benchmarking studies become increasingly critical for guiding research decisions [1]. Effective benchmarking bridges the gap between methodological development and biological discovery by providing objective performance assessments under controlled conditions. This protocol examines the evolution of benchmarking objectives from simple binary classification tasks to complex biological questions that require modeling long-range genomic dependencies and spatial relationships. We establish a framework for designing benchmarking studies that meet the demands of contemporary genomics research, so that evaluations yield biologically meaningful and statistically robust conclusions.

Foundational Principles of Benchmarking Design

Core Benchmarking Objectives and Typology

Benchmarking studies in computational biology generally serve one of three primary purposes, each with distinct design implications. Method development benchmarks aim to demonstrate the merits of a new approach compared to existing state-of-the-art and baseline methods [1]. These typically focus on a representative subset of methods and specific performance advantages. Neutral comparative benchmarks seek to systematically evaluate all available methods for a particular analysis task without perceived bias [1]. These studies function as comprehensive methodological reviews and should include all available methods meeting predefined inclusion criteria. Community challenges represent large-scale collaborative evaluations organized by consortia such as DREAM, CAMI, or GA4GH, where method authors collectively establish performance standards [1].

Regardless of type, successful benchmarking studies share common design principles: they define clear scope and objectives prior to implementation, select methods and datasets through predetermined criteria that avoid bias, employ multiple performance metrics that reflect diverse aspects of utility, and contextualize results according to the original benchmarking purpose [1]. For method development benchmarks, results should highlight what new capabilities the method enables; for neutral benchmarks, findings should provide clear guidance for method users and identify weaknesses for developers to address.

Defining Appropriate Evaluation Metrics

The selection of evaluation metrics must align with benchmarking objectives and the nature of the prediction task. For classification problems, the area under the receiver operating characteristic curve (AUROC) and area under the precision-recall curve (AUPR) provide comprehensive performance summaries across all classification thresholds [2]. For regression tasks, correlation coefficients (Pearson, stratum-adjusted) measure the strength of association between predictions and experimental measurements [3] [4]. Contemporary benchmarks increasingly combine multiple metric types to capture different performance dimensions, as demonstrated by recent studies that evaluate statistical calibration, computational scalability, and impact on downstream analyses in addition to prediction accuracy [5].

Table 1: Common Evaluation Metrics for Genomic Benchmarking

| Metric Category | Specific Metrics | Primary Use Cases | Interpretation Guidelines |
|---|---|---|---|
| Classification Performance | AUROC, AUPR | Binary classification (e.g., coding potential, enhancer-target interactions) | AUROC > 0.9: excellent; 0.8-0.9: good; 0.7-0.8: fair; <0.7: poor |
| Regression Performance | Pearson Correlation, Stratum-Adjusted Correlation | Quantitative prediction (e.g., gene expression, contact maps) | Closer to 1 indicates stronger predictive relationship |
| Statistical Calibration | P-value distribution, False discovery rate | Method reliability assessment | Uniform p-value distribution under null indicates proper calibration |
| Computational Performance | Runtime, Memory usage | Scalability assessment | Context-dependent based on available resources |
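
To make the classification metrics in the table concrete, the following is a minimal standard-library sketch. It computes AUROC through the rank-sum identity and approximates AUPR with average precision. The function names `auroc` and `average_precision` are illustrative only and are not taken from any of the cited benchmarks, which may rely on established libraries instead.

```python
from typing import Sequence


def auroc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUROC via the rank-sum (Mann-Whitney U) identity, averaging ranks over ties."""
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks = [0.0] * len(scores)
    i = 0
    while i < len(order):
        j = i
        # Extend j to the end of the current group of tied scores.
        while j + 1 < len(order) and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # 1-based average rank of the tied group
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("AUROC needs at least one positive and one negative label")
    rank_sum_pos = sum(r for r, y in zip(ranks, labels) if y == 1)
    return (rank_sum_pos - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)


def average_precision(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Average precision, a step-wise estimate of AUPR (score ties are not handled)."""
    n_pos = sum(labels)
    if n_pos == 0:
        raise ValueError("average precision needs at least one positive label")
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    true_positives = 0
    total = 0.0
    for rank, idx in enumerate(order, start=1):
        if labels[idx] == 1:
            true_positives += 1
            total += true_positives / rank  # precision at this recall step
    return total / n_pos


if __name__ == "__main__":
    y_true = [1, 0, 1, 0, 1]           # toy labels: 1 = coding, 0 = non-coding
    y_score = [0.9, 0.8, 0.7, 0.3, 0.6]  # toy coding-potential scores
    print(auroc(y_true, y_score), average_precision(y_true, y_score))
```

On imbalanced datasets the two numbers can diverge considerably, which is one reason to report both rather than AUROC alone.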

Evolution of Benchmarking Paradigms in Genomics

From Simple Classification to Complex Biological Questions

Early genomic benchmarking studies primarily addressed binary classification problems, such as distinguishing coding from non-coding RNAs. These initial efforts focused on sequence-based features and relatively simple model architectures. For example, benchmarks of RNA classification tools assessed 24 methods producing >55 models on datasets covering a wide range of species [6]. These studies revealed that even "simple" classification tasks present substantial challenges, with performance hampered by lack of standardized training sets, reliance on homogeneous training data, gradual changes in annotated data, and presence of false positives and negatives in datasets [6].

Contemporary benchmarking has evolved to address increasingly complex biological questions that require modeling intricate genomic relationships. The DNALONGBENCH suite exemplifies this evolution, focusing on five tasks with long-range dependencies spanning up to 1 million base pairs: enhancer-target gene interaction, expression quantitative trait loci, 3D genome organization, regulatory sequence activity, and transcription initiation signals [3] [4]. This progression from simple classification to modeling spatial and long-range dependencies reflects the growing sophistication of genomic research and computational methods.

Addressing Domain-Specific Challenges

Specialized domains within genomics present unique benchmarking challenges that require tailored approaches. Spatial transcriptomics benchmarking must account for diverse technologies (sequencing-based vs. imaging-based), varying spatial resolutions, and distinct analytical tasks [5]. Gene regulatory network inference benchmarks must address the difficulty of obtaining experimental ground truth and the challenge of directionality prediction [2]. Long-range dependency modeling requires specialized benchmarks that assess performance on interactions spanning hundreds of kilobases to megabases, presenting significant computational and methodological challenges [3].

Table 2: Domain-Specific Benchmarking Considerations

| Genomic Domain | Specialized Challenges | Adapted Benchmarking Strategies |
|---|---|---|
| Spatial Transcriptomics | Technology-specific resolution differences, lack of experimental ground truth | Realistic simulation frameworks (e.g., scDesign3), multiple pattern types, downstream application assessment |
| Gene Regulatory Networks | Directionality determination, lack of comprehensive validation | Strict scoring requiring correct edge direction, simulation studies to establish best practices |
| Long-Range Interactions | Computational scalability, capturing dependencies across large genomic distances | Tasks spanning up to 1M bp, specialized metrics for 2D predictions, comparison of expert vs. foundation models |
| RNA Classification | Overlapping training-test sets, dataset imbalance, evolutionary conservation | Cross-species validation, balanced dataset design, homology search integration |

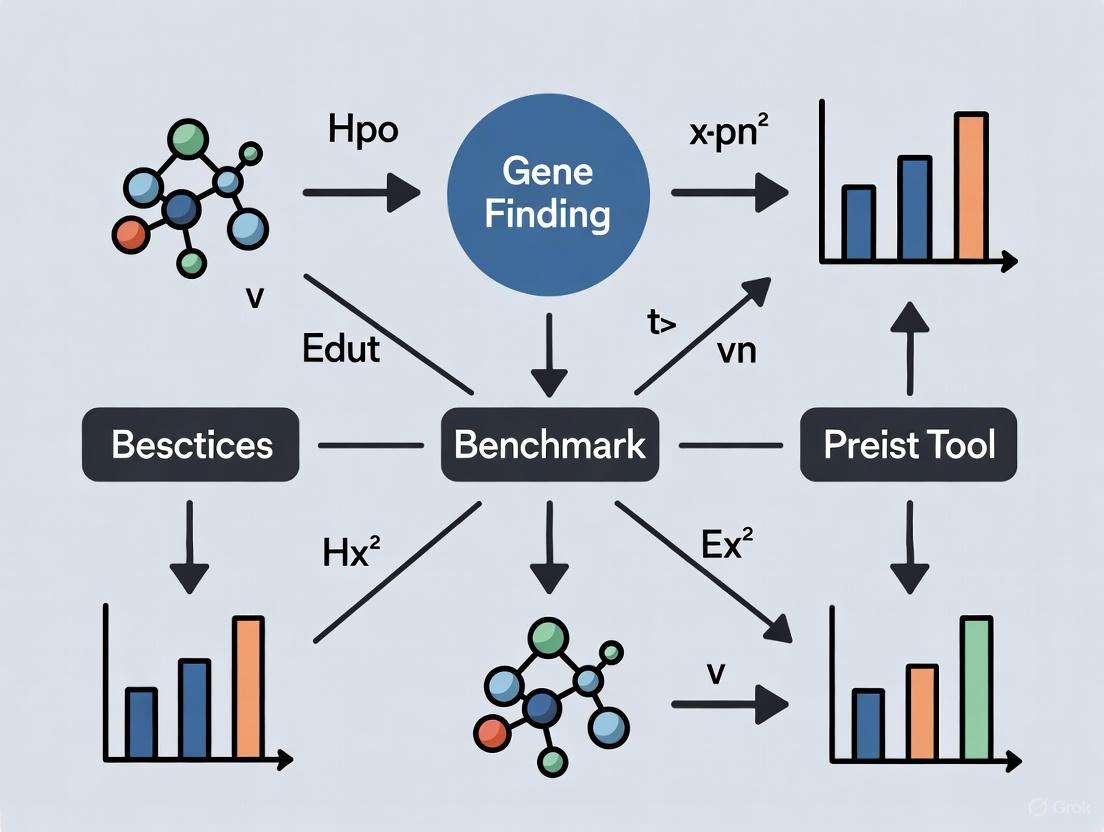

Figure 1: Evolution of Genomic Benchmarking Objectives

Experimental Protocols for Comprehensive Benchmarking

Protocol 1: Benchmarking RNA Classification Tools

Objective: To rigorously evaluate computational methods for distinguishing coding and non-coding RNAs across diverse species and transcript types.

Materials and Reagents:

- Reference Datasets: 135 transcriptomic datasets from existing studies covering 49 species [6]

- Evaluation Framework: RNAChallenge dataset derived from consistently misclassified instances [6]

- Computational Methods: 24 classification tools (e.g., CPAT, PLEK, CPC2, LncADeep) producing >55 models [6]

- Pre-processing Tools: Utilities for removing non-ACGT characters and standardizing sequence formats [6]

Methodology:

- Dataset Preparation and Curation
  - Acquire datasets from existing studies to maintain quality standards
  - Perform quality control by removing characters other than ACGT
  - Balance dataset representation across kingdoms (animal, plant, fungi)
  - Address class imbalance between mRNAs and ncRNAs through stratified sampling
- Method Implementation and Configuration
  - Install all tools following developers' instructions
  - Execute each method with default parameters unless otherwise specified
  - For methods with multiple models, select appropriate models based on species compatibility
  - Standardize output formats for consistent performance evaluation
- Performance Assessment and Analysis
  - Calculate AUROC and AUPR values for each method-dataset combination
  - Identify hard cases (consistently misclassified instances) for further analysis
  - Perform cross-species validation to assess generalization capability
  - Analyze performance variation across different transcript length distributions
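
The dataset preparation steps above can be sketched as follows. This is an illustration under stated assumptions, not the pipeline used in [6]: it assumes that quality control means stripping non-ACGT characters from each sequence, and that class balancing is done by drawing the same number of records from each class. The names `clean_sequence` and `balanced_sample` and the `(id, label, sequence)` record layout are hypothetical.

```python
import random
import re
from collections import defaultdict

NON_ACGT = re.compile(r"[^ACGT]")


def clean_sequence(seq: str) -> str:
    """Upper-case the sequence and drop every character other than A, C, G, T."""
    return NON_ACGT.sub("", seq.upper())


def balanced_sample(records, seed=0):
    """Down-sample each class to the size of the smallest class.

    records: list of (seq_id, label, sequence) tuples (assumed layout).
    """
    by_label = defaultdict(list)
    for record in records:
        by_label[record[1]].append(record)
    n_per_class = min(len(group) for group in by_label.values())
    rng = random.Random(seed)  # fixed seed keeps the draw reproducible
    sample = []
    for label in sorted(by_label):
        sample.extend(rng.sample(by_label[label], n_per_class))
    return sample


if __name__ == "__main__":
    raw = [
        ("t1", "mRNA", "acgtNNacgt"),
        ("t2", "mRNA", "ACGT-ACGT"),
        ("t3", "mRNA", "GGGCCC"),
        ("t4", "ncRNA", "ttttaaaa"),
    ]
    cleaned = [(i, label, clean_sequence(s)) for i, label, s in raw]
    for record in balanced_sample(cleaned):
        print(record)
```

Down-sampling discards data, so for small datasets it may be preferable to keep all records and rely on imbalance-aware metrics such as AUPR.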

Expected Outcomes: This protocol will identify best-performing methods for specific application contexts, reveal systematic weaknesses in current approaches, and generate a challenging validation set (RNAChallenge) for method improvement [6].

Protocol 2: Benchmarking Long-Range Dependency Modeling

Objective: To assess the capability of computational methods to capture genomic dependencies spanning up to 1 million base pairs across five biologically meaningful tasks.

Materials and Reagents:

- Benchmark Suite: DNALONGBENCH with five tasks spanning 450,000 to 1,048,576 bp inputs [3] [4]

- Model Types: Lightweight CNN, task-specific expert models, DNA foundation models (HyenaDNA, Caduceus variants) [4]

- Evaluation Metrics: Task-specific metrics including AUROC, Pearson correlation, stratum-adjusted correlation [4]

- Data Formats: BED files containing genome coordinates for flexible flanking context adjustment [3]

Methodology:

- Task-Specific Experimental Setup
  - For enhancer-target gene prediction: Use Activity-by-Contact (ABC) model as expert baseline
  - For contact map prediction: Implement Akita model with combined 1D-2D convolutional architecture
  - For eQTL prediction: Apply Enformer model with cross-species validation
  - For regulatory sequence activity: Utilize Enformer with Poisson loss training
  - For transcription initiation: Implement Puffin-D model for nucleotide-wise regression
- Model Training and Fine-tuning
  - For foundation models: Extract last-layer hidden representations for reference and allele sequences
  - For classification tasks: Train three-layer CNN with cross-entropy loss
  - For contact map prediction: Design CNN with 1D and 2D convolutional layers trained with MSE loss
  - Implement appropriate loss functions for each task type (cross-entropy, MSE, Poisson)
- Comprehensive Evaluation
  - Compare expert models, DNA foundation models, and lightweight CNNs across all tasks
  - Assess performance variation across different sequence lengths and task difficulties
  - Evaluate computational efficiency metrics (runtime, memory usage)
  - Analyze failure cases to identify systematic limitations

Expected Outcomes: This protocol will establish performance baselines for long-range dependency modeling, reveal relative strengths of different model architectures, and identify particularly challenging tasks such as contact map prediction [3] [4].

Protocol 3: Benchmarking Spatially Variable Gene Detection

Objective: To evaluate computational methods for identifying genes with non-random spatial expression patterns in spatially resolved transcriptomics data.

Materials and Reagents:

- Spatial Transcriptomics Datasets: 96 datasets generated using scDesign3 simulation framework [5]

- Computational Methods: 14 SVG detection methods (SPARK-X, Moran's I, SpatialDE, etc.) [5]

- Evaluation Metrics: 6 metrics covering gene ranking, classification, statistical calibration, and scalability [5]

- Reference Standards: Realistic spatial patterns derived from biological data [5]

Methodology:

- Realistic Data Simulation
  - Employ scDesign3 framework to generate biologically realistic spatial patterns
  - Model expression of each gene as a function of spatial locations with Gaussian Process models
  - Incorporate diverse spatial patterns observed in real biological systems
  - Validate simulated data against empirical summaries of real datasets
- Comprehensive Method Evaluation
  - Assess gene ranking capability using precision-recall metrics
  - Evaluate classification performance based on real spatial variation
  - Analyze statistical calibration through p-value distribution examination
  - Measure computational scalability via runtime and memory usage tracking
  - Assess impact on downstream applications (e.g., spatial domain detection)
- Cross-Technology Validation
  - Apply methods to both sequencing-based and imaging-based spatial technologies
  - Evaluate performance across different spatial resolutions
  - Test applicability to spatial ATAC-seq data for identifying spatially variable peaks

Expected Outcomes: This protocol will identify best-performing methods for different spatial transcriptomics technologies, reveal statistical calibration issues in current approaches, and establish performance baselines for emerging methodologies [5].

Table 3: Essential Research Reagents and Computational Resources

| Resource Category | Specific Tools/Datasets | Function/Purpose | Key Characteristics |
|---|---|---|---|
| Benchmarking Suites | DNALONGBENCH, RNAChallenge, BEND, LRB | Standardized evaluation across multiple tasks | Pre-processed datasets, defined evaluation metrics, baseline implementations |
| Simulation Frameworks | scDesign3, Gaussian Process models | Generation of realistic training and test data | Incorporation of biological patterns, ground truth availability, parameter control |
| Expert Models | ABC model, Enformer, Akita, Puffin-D | Task-specific state-of-the-art performance | Specialized architectures, proven effectiveness on specific problems |
| Foundation Models | HyenaDNA, Caduceus variants | General-purpose genomic sequence modeling | Pre-training on large unlabeled datasets, transfer learning capability |
| Evaluation Metrics | AUROC, AUPR, Pearson/Spearman correlation | Quantitative performance assessment | Comprehensive threshold evaluation, statistical robustness, biological interpretability |

Analysis and Interpretation of Benchmarking Results

Effective interpretation of benchmarking results requires considering multiple performance dimensions and contextual factors. Performance should be evaluated across diverse datasets rather than single benchmarks to assess robustness and generalization [1]. Method rankings often vary substantially across different evaluation metrics, suggesting that composite assessments provide more reliable guidance than single-metric comparisons [5]. For example, in spatial transcriptomics benchmarking, SPARK-X demonstrated superior overall performance while Moran's I represented a strong baseline, but different methods excelled in specific metrics such as computational efficiency (SOMDE) or statistical calibration (SPARK) [5].

Statistical calibration is a frequently overlooked but critical aspect of method evaluation. Most spatially variable gene detection methods except SPARK and SPARK-X produce inflated p-values, indicating poor calibration that can mislead biological interpretations [5]. Similarly, in RNA classification, the best-performing models underfit the benchmark datasets while the worst-performing models overfit them, which highlights the importance of assessing generalization rather than only performance on the data used for optimization [6].
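
One way to quantify calibration is to compare the p-values a method reports on null data (for example, simulated genes with no spatial pattern) against the uniform distribution. The sketch below computes the Kolmogorov-Smirnov distance to Uniform(0, 1) and the empirical false positive rate at a chosen threshold. The function names are illustrative, and the cited benchmark [5] may use a different calibration summary.

```python
from typing import Sequence


def ks_uniform_statistic(p_values: Sequence[float]) -> float:
    """Largest gap between the empirical CDF of the p-values and the Uniform(0, 1) CDF."""
    ps = sorted(p_values)
    n = len(ps)
    if n == 0:
        raise ValueError("need at least one p-value")
    distance = 0.0
    for i, p in enumerate(ps):
        # Compare the uniform CDF (which equals p) with the ECDF just after and just before p.
        distance = max(distance, (i + 1) / n - p, p - i / n)
    return distance


def empirical_false_positive_rate(null_p_values: Sequence[float], alpha: float = 0.05) -> float:
    """Fraction of null p-values below alpha; close to alpha for a calibrated method."""
    return sum(p < alpha for p in null_p_values) / len(null_p_values)


if __name__ == "__main__":
    well_calibrated = [(i + 0.5) / 100 for i in range(100)]  # evenly spread over (0, 1)
    too_small = [p ** 3 for p in well_calibrated]            # skewed toward zero
    for name, ps in [("calibrated", well_calibrated), ("skewed", too_small)]:
        print(name, ks_uniform_statistic(ps), empirical_false_positive_rate(ps))
```

A distance near zero together with a false positive rate near the nominal threshold suggests the reported p-values can be taken at face value.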

Computational efficiency must be balanced against predictive performance based on specific research contexts. Methods with modest performance advantages but substantial computational requirements may be impractical for large-scale applications. Recent benchmarks systematically report runtime and memory usage alongside accuracy metrics to facilitate these trade-off decisions [5].
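
Runtime and memory can be recorded alongside accuracy with very little code. The sketch below wraps a Python callable using `time.perf_counter` and `tracemalloc`. The helper name `profile_call` is hypothetical. Note that `tracemalloc` only sees memory allocated by the Python interpreter, so external command-line tools launched as subprocesses would need an operating-system level measurement instead.

```python
import time
import tracemalloc


def profile_call(func, *args, **kwargs):
    """Run func and return (result, elapsed_seconds, peak_python_bytes)."""
    tracemalloc.start()
    start = time.perf_counter()
    try:
        result = func(*args, **kwargs)
    finally:
        elapsed = time.perf_counter() - start
        _, peak = tracemalloc.get_traced_memory()
        tracemalloc.stop()
    return result, elapsed, peak


def toy_predictor(n_sequences):
    """Stand-in for a real prediction step; allocates one score per sequence."""
    return [0.5] * n_sequences


if __name__ == "__main__":
    scores, seconds, peak_bytes = profile_call(toy_predictor, 200_000)
    print(f"{len(scores)} scores in {seconds:.4f} s, peak {peak_bytes / 1e6:.2f} MB")
```

Repeating the measurement over several input sizes gives a rough scaling curve, which is usually more informative than a single timing.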

Well-designed benchmarking studies serve as critical infrastructure for the genomics community, guiding method selection, stimulating methodological improvements, and establishing performance standards. As genomic assays increase in complexity—capturing spatial organization, long-range interactions, and multi-omic measurements—benchmarking practices must evolve accordingly. Future benchmarking efforts should prioritize biological realism through sophisticated simulation frameworks, comprehensive evaluation across diverse biological contexts, and assessment of downstream scientific utility rather than purely computational metrics. By adopting the rigorous frameworks and protocols outlined in this document, researchers can ensure their benchmarking studies provide accurate, unbiased, and biologically meaningful guidance for the scientific community.

The dramatic reduction in DNA sequencing costs has made de novo genome sequencing widely accessible, creating an urgent need for high-throughput analysis methods. The first and most essential step in this process is the accurate identification of protein-coding genes. However, gene prediction in eukaryotic organisms presents substantial challenges due to complex exon-intron structures, incomplete genome assemblies, and varying sequence quality. Ab initio gene prediction methods that identify protein-coding potential based on statistical models of the target genome alone are particularly vulnerable to these challenges, often producing substantial errors that can jeopardize subsequent analyses including functional annotations and evolutionary studies [7].

High-quality benchmarking datasets are critically needed to evaluate and compare the accuracy of computational methods in bioinformatics. The design of such benchmarks represents a fundamental meta-research challenge, requiring careful attention to dataset composition, performance metrics, and stratification strategies. Well-constructed benchmarks enable rigorous comparison of different computational methods, provide recommendations for method selection, and highlight areas needing improvement in current tools. For gene prediction tools, a benchmark must represent the typical challenges faced by genome annotation projects while providing reliable ground truth for evaluation [1].

The G3PO Benchmark Framework: Design and Construction

Core Design Principles

The G3PO (Gene and Protein Prediction PrOgrams) benchmark was specifically designed to address the critical challenges in evaluating ab initio gene prediction methods. Its construction followed several essential principles for rigorous benchmarking: comprehensive representation of diverse biological scenarios, careful validation and curation of reference data, and systematic definition of test sets to evaluate specific factors affecting prediction accuracy [7] [8].

A crucial innovation in G3PO's design was its focus on real eukaryotic genes from phylogenetically diverse organisms rather than simulated data. This approach ensures that the benchmark reflects the complexity of real-world prediction tasks while maintaining biological relevance. The benchmark construction involved extracting protein sequences from the UniProt database and their corresponding genomic sequences and exon maps from Ensembl, creating a foundation of biologically validated data [7].

Dataset Composition and Curation

The G3PO benchmark comprises 1,793 carefully validated proteins from 147 phylogenetically diverse eukaryotic organisms, providing exceptional taxonomic coverage. The dataset spans a wide biological range from humans to protists, with the majority (72%) of proteins from the Opisthokonta clade, including 1,236 Metazoa, 25 Fungi, and 22 Choanoflagellida sequences. Significant representation from Stramenopila (172 sequences), Euglenozoa (149), and Alveolata (99) ensures broad evolutionary diversity [7].

To ensure data quality, the developers constructed high-quality multiple sequence alignments and identified proteins with inconsistent sequence segments that might indicate annotation errors. This rigorous validation process led to the classification of sequences into two categories: 'Confirmed' (error-free) and 'Unconfirmed' (containing potential errors). This classification enables benchmarks to assess both ideal scenarios and realistic challenges where some annotation errors may be present [7].

Table 1: G3PO Benchmark Dataset Composition

| Category | Specification | Count/Description |
|---|---|---|
| Total Proteins | From UniProt database | 1,793 proteins |
| Organism Diversity | Phylogenetically diverse eukaryotes | 147 species |
| Taxonomic Distribution | Opisthokonta clade | 1,283 sequences (72%) |
| Taxonomic Distribution | Stramenopila | 172 sequences |
| Taxonomic Distribution | Euglenozoa | 149 sequences |
| Taxonomic Distribution | Alveolata | 99 sequences |
| Sequence Validation | Confirmed (error-free) | 1,361 sequences |
| Sequence Validation | Unconfirmed (potential errors) | 1,380 sequences |
| Gene Structure Complexity | Single exon to complex genes | Up to 40 exons |

Structural and Functional Diversity

The G3PO benchmark was specifically designed to cover the full spectrum of gene structure complexity encountered in real genome annotation projects. The test cases range from simple single-exon genes to highly complex genes with up to 40 exons, systematically representing challenges such as varying exon lengths, intron sizes, and alternative splicing patterns. This diversity enables evaluation of how prediction tools perform across different structural architectures [7].

The proteins in G3PO were extracted from 20 orthologous families representing complex proteins with multiple functional domains, repeats, and low-complexity regions. This functional diversity ensures that the benchmark tests the ability of prediction algorithms to handle not just structural variation but also diverse sequence features that affect protein coding potential. Additionally, for each gene, genomic sequences were extracted with additional flanking regions ranging from 150 to 10,000 nucleotides, simulating the challenge of identifying gene boundaries in complete genomic sequences [7].

Experimental Protocols for Benchmark Construction

Data Collection and Curation Workflow

The construction of the G3PO benchmark follows a meticulous multi-stage protocol designed to ensure data quality and biological relevance. The workflow begins with data extraction from authoritative biological databases, proceeds through rigorous validation, and culminates in the creation of stratified test sets suitable for comprehensive method evaluation [7].

G3PO Benchmark Construction Workflow

Step 1: Data Extraction and Selection

- Source 1,793 protein sequences from the UniProt database, focusing on 20 orthologous families (BBS1-21, excluding BBS14) known to represent complex proteins with multiple functional domains

- Retrieve corresponding genomic sequences and exon maps from the Ensembl database

- Extract genomic sequences with additional flanking regions (150-10,000 nucleotides upstream and downstream) to simulate realistic genome annotation contexts
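
The flanking-region extraction in Step 1 comes down to simple coordinate arithmetic. The sketch below is a minimal illustration assuming 0-based, half-open coordinates and a known contig length. The function names and the coordinate convention are assumptions made for this example and are not taken from the G3PO construction scripts.

```python
from typing import List, Tuple

Interval = Tuple[int, int]  # 0-based, half-open (start, end)


def flanked_interval(gene_start: int, gene_end: int, flank: int, contig_length: int) -> Interval:
    """Extend a gene interval by `flank` bases on both sides, clamped to the contig."""
    if not 0 <= gene_start < gene_end <= contig_length:
        raise ValueError("gene coordinates must lie within the contig")
    return max(0, gene_start - flank), min(contig_length, gene_end + flank)


def shift_exons(exons: List[Interval], window_start: int) -> List[Interval]:
    """Re-express exon coordinates relative to the start of the extracted window."""
    return [(start - window_start, end - window_start) for start, end in exons]


if __name__ == "__main__":
    exons = [(1000, 1200), (1800, 2000)]
    window = flanked_interval(1000, 2000, flank=150, contig_length=1_000_000)
    print(window, shift_exons(exons, window[0]))
```

Clamping matters for genes near contig ends in draft assemblies, where the requested flank may not be fully available.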

Step 2: Sequence Validation and Curation

- Construct high-quality multiple sequence alignments for all protein families

- Identify inconsistent sequence segments that may indicate annotation errors through manual inspection and computational analysis

- Classify sequences as 'Confirmed' (no errors detected) or 'Unconfirmed' (potential errors present) based on validation results

- Document all curation decisions to ensure transparency and reproducibility

Step 3: Test Set Stratification

- Define multiple test sets based on specific biological and technical factors:
  - Genome sequence quality (completeness, coverage, error profiles)
  - Gene structure complexity (number of exons, intron length, alternative splicing)
  - Protein length and functional domain architecture
  - Phylogenetic origin and GC content variation
- Ensure each test set contains sufficient examples to support statistically robust evaluation

Implementation of Evaluation Metrics

The G3PO benchmark employs standardized performance metrics adapted from best practices in computational method benchmarking. These metrics enable direct comparison across different prediction tools and provide insights into specific strengths and weaknesses [1] [9].

Core Performance Metrics:

- Exon-level accuracy: Measures the ability to correctly identify exon boundaries, calculated as the proportion of exactly predicted exons

- Gene-level accuracy: Assesses complete gene structure prediction, requiring exact match of all exon-intron boundaries

- Nucleotide-level accuracy: Evaluates coding potential prediction at the base-pair level

- Sensitivity (Recall): Measures the ability to detect true coding elements, calculated as TP/(TP+FN)

- Precision: Measures the accuracy of positive predictions, calculated as TP/(TP+FP)
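
The metrics above can be sketched for a single gene as follows, assuming exons are given as 0-based, half-open `(start, end)` intervals on the same sequence. The function names are illustrative. This is a simplified reading of the definitions in the list, not the G3PO evaluation code.

```python
from typing import Iterable, Set, Tuple

Interval = Tuple[int, int]  # 0-based, half-open (start, end)


def _sens_prec(true_items: Set, predicted_items: Set) -> Tuple[float, float]:
    """Sensitivity TP/(TP+FN) and precision TP/(TP+FP) for two sets of items."""
    tp = len(true_items & predicted_items)
    sensitivity = tp / len(true_items) if true_items else 0.0
    precision = tp / len(predicted_items) if predicted_items else 0.0
    return sensitivity, precision


def exon_level(reference: Iterable[Interval], predicted: Iterable[Interval]):
    """An exon counts as correct only if both of its boundaries match exactly."""
    return _sens_prec(set(reference), set(predicted))


def nucleotide_level(reference: Iterable[Interval], predicted: Iterable[Interval]):
    """Compare the sets of individual coding positions."""
    ref_bases = {pos for start, end in reference for pos in range(start, end)}
    pred_bases = {pos for start, end in predicted for pos in range(start, end)}
    return _sens_prec(ref_bases, pred_bases)


def gene_level_exact(reference: Iterable[Interval], predicted: Iterable[Interval]) -> bool:
    """True only when every exon-intron boundary of the gene is reproduced."""
    return sorted(reference) == sorted(predicted)


if __name__ == "__main__":
    ref = [(100, 200), (300, 400), (500, 650)]
    pred = [(100, 200), (300, 410), (500, 650)]  # second exon overshoots by 10 bases
    print(exon_level(ref, pred), nucleotide_level(ref, pred), gene_level_exact(ref, pred))
```

The toy example shows why the three levels are reported together: a single shifted splice site barely changes nucleotide-level scores but costs one exon and the whole gene at the stricter levels.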

Stratified Performance Analysis: The benchmark enables performance evaluation across different biological contexts through systematic stratification:

- By taxonomic group (Chordata, other Opisthokonta, other Eukaryota)

- By gene structure complexity (single-exon, few-exon, multi-exon genes)

- By sequence quality (high-quality versus draft-quality genomic sequences)

- By protein functional class (based on domain architecture and functional categories)
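
Stratified analysis can be as simple as grouping per-gene outcomes by a stratum label before averaging. The sketch below groups by gene structure complexity. The bin boundaries (one exon, two to five exons, more than five) are placeholders chosen for this example and are not the thresholds used in G3PO.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def complexity_bin(n_exons: int) -> str:
    """Assign a gene to a complexity stratum (cutoffs are illustrative assumptions)."""
    if n_exons == 1:
        return "single-exon"
    if n_exons <= 5:
        return "few-exon"
    return "multi-exon"


def stratified_accuracy(results: Iterable[Tuple[int, bool]]) -> Dict[str, float]:
    """results: (number_of_exons, gene_predicted_exactly) pairs for one tool."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for n_exons, is_exact in results:
        stratum = complexity_bin(n_exons)
        correct[stratum] += int(is_exact)
        total[stratum] += 1
    return {stratum: correct[stratum] / total[stratum] for stratum in total}


if __name__ == "__main__":
    toy_results = [(1, True), (1, True), (3, True), (4, False), (12, False), (20, False)]
    for stratum, accuracy in stratified_accuracy(toy_results).items():
        print(f"{stratum}: {accuracy:.2f}")
```

The same grouping pattern applies to the other stratification criteria by swapping the binning function, for example one keyed on taxonomic group or sequence quality.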

Table 2: G3PO Evaluation Metrics and Stratification

| Evaluation Dimension | Specific Metrics | Stratification Criteria |
|---|---|---|
| Exon-Level Accuracy | Exact exon match, Partial exon overlap | Exon length, Flanking intron size |
| Gene-Level Accuracy | Complete gene structure match | Number of exons, Gene length |
| Nucleotide-Level Accuracy | Coding nucleotide identification | GC content, Regional complexity |
| Sensitivity & Precision | TP, FP, FN rates | Organism group, Sequence quality |
| Boundary Detection | Splice site accuracy | Canonical vs. non-canonical sites |

Application to Gene Prediction Tool Evaluation

Experimental Protocol for Method Assessment

The G3PO benchmark enables systematic evaluation of ab initio gene prediction programs through a standardized experimental protocol. This protocol was used to assess five widely used prediction tools: Genscan, GlimmerHMM, GeneID, Snap, and Augustus [7].

Gene Prediction Tool Evaluation Protocol

Experimental Setup:

- Select representative ab initio prediction tools covering different algorithmic approaches (HMM, SVM, etc.)

- Install each tool following developer recommendations, ensuring consistent execution environment

- Configure tools using default parameters unless specific tuning is being evaluated

- Execute all tools on the complete G3PO benchmark dataset using high-performance computing resources

Execution and Analysis Protocol:

- Input Preparation: Format all G3PO benchmark sequences according to each tool's requirements

- Parallel Execution: Run prediction tools on all benchmark sequences, recording computational resources

- Output Processing: Parse prediction outputs into standardized format for comparison

- Truth Comparison: Compare predictions against G3PO reference annotations using standardized metrics

- Statistical Analysis: Calculate performance metrics with confidence intervals to account for variability
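
For the statistical analysis step, a percentile bootstrap is one common way to attach a confidence interval to a per-gene metric. The sketch below resamples genes with replacement and uses only the standard library. The function name, the number of resamples, and the choice of the percentile method are assumptions, since the source does not specify how intervals were computed.

```python
import random
from typing import Sequence, Tuple


def bootstrap_ci(
    values: Sequence[float],
    n_resamples: int = 2000,
    alpha: float = 0.05,
    seed: int = 0,
) -> Tuple[float, float]:
    """Percentile bootstrap interval for the mean of per-gene scores."""
    if not values:
        raise ValueError("need at least one value")
    rng = random.Random(seed)  # fixed seed for reproducible intervals
    n = len(values)
    means = sorted(sum(rng.choices(values, k=n)) / n for _ in range(n_resamples))
    lower = means[int((alpha / 2) * n_resamples)]
    upper = means[int((1 - alpha / 2) * n_resamples) - 1]
    return lower, upper


if __name__ == "__main__":
    # Toy data: 1 = gene structure predicted exactly, 0 = not.
    outcomes = [1] * 70 + [0] * 30
    low, high = bootstrap_ci(outcomes)
    print(f"accuracy = {sum(outcomes) / len(outcomes):.2f}, 95% CI = [{low:.2f}, {high:.2f}]")
```

Because genes from the same orthologous family are not independent, resampling whole families rather than individual genes may give more honest intervals.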

Key Findings from G3PO Benchmarking Studies

Application of the G3PO benchmark to evaluate ab initio gene prediction tools revealed several critical insights. The overall results demonstrated that gene structure prediction remains exceptionally challenging, with 68% of exons and 69% of confirmed protein sequences not predicted with 100% accuracy by all five evaluated programs [7].

Performance varied substantially across different biological contexts. Prediction accuracy was generally higher for organisms closely related to well-studied model species and for genes with simpler architectures. Conversely, performance declined for evolutionarily distant organisms and genes with complex exon-intron patterns. These findings highlight the importance of phylogenetic diversity in benchmark design and the need for continued method development [7].

The benchmark also enabled identification of specific error patterns common across prediction tools, including missing exons, retention of non-coding sequence in exons, gene fragmentation, and erroneous merging of neighboring genes. This granular analysis provides concrete targets for method improvement and underscores the value of comprehensive benchmarking beyond aggregate performance metrics [7].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents for Benchmark Construction and Validation

| Reagent/Resource | Function in Benchmarking | Source/Specification |
|---|---|---|
| UniProt Database | Source of validated protein sequences | https://www.uniprot.org/ |
| Ensembl Genome Browser | Genomic sequences and exon maps | https://www.ensembl.org |
| Confirmed Gene Sequences | High-quality reference set | 1,361 error-free sequences from G3PO |
| Multiple Sequence Alignment Tools | Identify inconsistent sequence segments | MUSCLE, MAFFT, Clustal Omega |
| Phylogenetic Diversity Set | Test performance across evolutionary distance | 147 species across eukaryotes |
| Stratified Test Sets | Evaluate specific methodological challenges | By complexity, length, quality |

Implementation Guidelines and Best Practices

Applying the G3PO Framework to New Tool Development

For researchers developing new gene prediction methods, the G3PO benchmark provides a robust framework for validation. Implementation should follow established best practices for computational benchmarking, including proper experimental design, comprehensive metric selection, and unbiased interpretation of results [1].

Implementation Protocol:

- Benchmark Acquisition: Download the G3PO benchmark dataset from public repositories

- Tool Configuration: Implement new prediction method with appropriate parameter optimization

- Comparative Evaluation: Execute both new method and established baseline tools on the benchmark

- Stratified Analysis: Evaluate performance across different test sets to identify specific strengths and weaknesses

- Statistical Validation: Apply appropriate statistical tests to confirm significance of performance differences
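
For the statistical validation step, a paired test is appropriate because both tools are scored on the same benchmark genes. The sketch below uses a sign-flip permutation test on per-gene score differences. This is one reasonable choice among several (a Wilcoxon signed-rank or McNemar test would also fit) and is not prescribed by the source. The function name is hypothetical.

```python
import random
from typing import Sequence


def paired_permutation_test(
    scores_a: Sequence[float],
    scores_b: Sequence[float],
    n_permutations: int = 10_000,
    seed: int = 0,
) -> float:
    """Two-sided p-value for the mean per-gene difference between two tools."""
    if len(scores_a) != len(scores_b) or not scores_a:
        raise ValueError("score lists must be non-empty and of equal length")
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    n = len(diffs)
    observed = abs(sum(diffs) / n)
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_permutations):
        # Under the null hypothesis the sign of each paired difference is arbitrary.
        permuted = sum(d if rng.random() < 0.5 else -d for d in diffs) / n
        if abs(permuted) >= observed - 1e-12:
            hits += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (hits + 1) / (n_permutations + 1)


if __name__ == "__main__":
    new_tool = [0.82, 0.75, 0.91, 0.64, 0.88, 0.79, 0.70, 0.93]
    baseline = [0.80, 0.70, 0.85, 0.66, 0.81, 0.75, 0.69, 0.90]
    print(paired_permutation_test(new_tool, baseline))
```

When several tools or strata are compared, the resulting p-values should be corrected for multiple testing before drawing conclusions.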

When using G3PO for method development, it is crucial to avoid overfitting to the benchmark characteristics. This can be achieved by holding out portions of the benchmark during development or using complementary validation datasets. Additionally, performance should be interpreted in the context of specific application requirements, as optimal method choice may vary depending on target organisms and data quality [7] [1].
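
One way to hold out part of the benchmark is to split at the level of orthologous families, so that no family contributes sequences to both the development and the held-out set. The sketch below illustrates this idea. Splitting by family, the 25% hold-out fraction, and the `(protein_id, family)` record layout are assumptions made for illustration and are not a recommendation taken from the G3PO authors.

```python
import random
from typing import List, Sequence, Tuple

Record = Tuple[str, str]  # (protein_id, family_name), an assumed layout


def split_by_family(
    records: Sequence[Record],
    holdout_fraction: float = 0.25,
    seed: int = 0,
) -> Tuple[List[Record], List[Record]]:
    """Return (development, held_out) with no family shared between the two."""
    families = sorted({family for _, family in records})
    rng = random.Random(seed)
    rng.shuffle(families)
    n_holdout = max(1, round(len(families) * holdout_fraction))
    held_out_families = set(families[:n_holdout])
    development = [r for r in records if r[1] not in held_out_families]
    held_out = [r for r in records if r[1] in held_out_families]
    return development, held_out


if __name__ == "__main__":
    toy_records = [(f"protein_{i}", f"BBS{i % 4 + 1}") for i in range(20)]
    dev, test = split_by_family(toy_records)
    print(len(dev), "development records;", len(test), "held-out records")
    print("held-out families:", sorted({family for _, family in test}))
```

Keeping homologous sequences on one side of the split reduces the risk that tuning decisions made on the development set leak into the final reported performance.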

Adaptation to Emerging Challenges

The G3PO framework can be adapted to address emerging challenges in genome annotation, including prediction of atypical genomic features. Recent research has highlighted the need for improved detection of small proteins coded by short open reading frames (sORFs) and identification of events such as stop codon recoding, which are often overlooked by standard prediction pipelines [7].

The modular design of the G3PO benchmark enables expansion to include additional biological scenarios and sequence types. Future developments could incorporate:

- Long non-coding RNAs and other non-coding functional elements

- Epigenetic markers affecting gene expression and structure

- Population variation and personal genome annotation

- Metagenomic sequences from complex environmental samples

Such adaptations would maintain the benchmark's relevance as sequencing technologies and biological applications continue to evolve. The core principles of data quality, phylogenetic diversity, and stratified evaluation ensure that the G3PO approach remains applicable to these new challenges [7] [10].

Application Notes

The accuracy of computational gene prediction is fundamentally challenged by the natural complexity of eukaryotic gene structures. This complexity is characterized by features such as varying exon numbers, diverse protein lengths, and the broad phylogenetic diversity of the target organisms. The G3PO benchmark, a carefully curated set of 1,793 real eukaryotic genes from 147 phylogenetically diverse organisms, has been instrumental in quantifying how these factors impact the performance of modern gene prediction tools [7]. The findings are critical for researchers, especially in drug development, where inaccurate gene models can jeopardize downstream analyses, including the identification of drug targets [7].

Table 1: Impact of Gene Structure Features on Ab Initio Prediction Accuracy (G3PO Benchmark Data) [7]

| Gene Structure Feature | Impact on Prediction Accuracy | Representative Benchmark Statistics |

|---|---|---|

| Exon Number (Complexity) | Accuracy decreases as the number of exons increases. Genes with over 20 exons present a significant challenge. | Test cases range from single-exon genes to genes with up to 40 exons [7]. |

| Protein Length | Longer proteins are often associated with more complex gene structures, leading to lower prediction accuracy. | Benchmark covers a wide range of protein lengths to evaluate this effect [7]. |

| Phylogenetic Distance | Predictors trained on model organisms (e.g., human) show decreased accuracy when applied to distantly related species. | 72% of benchmark proteins are from Opisthokonta; the rest are from Stramenopila, Euglenozoa, and Alveolata [7]. |

| Overall Performance | A majority of complex gene structures are not perfectly predicted. | 68% of exons and 69% of confirmed protein sequences were not predicted with 100% accuracy by all five leading programs [7]. |

Integrating extrinsic evidence, such as RNA-seq data and homologous protein sequences, is a powerful strategy to overcome these challenges. For instance, the GeneMark-ETP pipeline demonstrates how combining transcriptomic and protein-derived evidence significantly improves gene prediction accuracy, particularly in large and complex plant and animal genomes [11]. Its workflow involves generating high-confidence gene models from transcribed evidence, which are then used to iteratively train a statistical model for genome-wide prediction. This approach has been shown to outperform methods that rely on a single type of extrinsic evidence [11].

Table 2: Key Performance Metrics for Gene Prediction Tools [11]

| Metric | Definition | Interpretation in Benchmarking |

|---|---|---|

| Sensitivity (Sn) | Sn = TP / (TP + FN). Measures the proportion of true genes/exons that are correctly predicted. | High sensitivity indicates the tool is effective at finding true genes, with few false negatives. |

| Precision (Pr) | Pr = TP / (TP + FP). Measures the proportion of predicted genes/exons that are correct. | High precision indicates the tool's predictions are reliable, with few false positives. |

| F1 Score | F1 = 2 × (Sn × Pr) / (Sn + Pr). The harmonic mean of Sensitivity and Precision. | A single metric to balance both sensitivity and precision; higher is better (often reported as F1 × 100) [11]. |
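As a minimal sketch of how the metrics in Table 2 could be computed, the function below treats each exon (or gene) as a hashable key and counts only exact matches as true positives. The exact-match criterion, the coordinate tuple layout, and the name `prediction_metrics` are assumptions for illustration; a real evaluation may use a different matching rule.

```python
def prediction_metrics(reference, predicted):
    """Sensitivity, precision and F1 for two collections of features.

    Each feature is any hashable key, e.g. (seq_id, start, end, strand) for
    an exon. Only features identical in both collections count as TP.
    """
    ref, pred = set(reference), set(predicted)
    tp = len(ref & pred)   # predicted and present in the reference
    fn = len(ref - pred)   # in the reference but not predicted
    fp = len(pred - ref)   # predicted but absent from the reference
    sn = tp / (tp + fn) if tp + fn else 0.0
    pr = tp / (tp + fp) if tp + fp else 0.0
    f1 = 2 * sn * pr / (sn + pr) if sn + pr else 0.0
    return {"TP": tp, "FN": fn, "FP": fp, "Sn": sn, "Pr": pr, "F1": f1}


# Toy example: four reference exons, three predictions, two exact matches.
reference_exons = [("chr1", 100, 200, "+"), ("chr1", 300, 400, "+"),
                   ("chr1", 500, 600, "+"), ("chr1", 700, 800, "+")]
predicted_exons = [("chr1", 100, 200, "+"), ("chr1", 300, 400, "+"),
                   ("chr1", 500, 650, "+")]
metrics = prediction_metrics(reference_exons, predicted_exons)
```

In the toy example the third prediction overlaps a true exon but has a wrong end coordinate, so under the exact-match assumption it counts as both a false positive and a false negative.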

Furthermore, evolutionary history plays a crucial role. Large-scale studies of 590 eukaryotic species confirm that gene architecture—including intron number and length—differs markedly between major taxonomic groups [12]. These differences are deeply conserved, meaning a gene finder optimized for the intron-rich genes of vertebrates will likely struggle with the more compact gene structures of fungi or protists. This underscores the necessity of selecting appropriate benchmarks and training data that reflect the phylogenetic context of the organism under study [7] [12].

Protocols

Protocol 1: Constructing a Benchmark Set for Evaluating Gene Prediction Tools

This protocol outlines the methodology for creating a benchmark akin to G3PO, designed to evaluate gene prediction programs against complex gene structures [7].

1. Resource Curation and Selection

- Objective: Assemble a diverse set of genes with validated structures.

- Procedure:

  a. Source Data: Extract protein sequences and their corresponding genomic loci from a trusted, manually curated database such as UniProt.

  b. Phylogenetic Diversity: Deliberately select genes from a wide range of eukaryotic organisms. The G3PO benchmark includes species from Opisthokonta (e.g., metazoa, fungi), Stramenopila, Alveolata, and Euglenozoa [7].

  c. Structural Diversity: Ensure the set includes genes with a broad distribution of exon numbers (e.g., from single-exon to over 20 exons) and protein lengths.

  d. Validation: Construct multiple sequence alignments (MSAs) to identify and label proteins with potential annotation errors as 'Unconfirmed,' using the consistent ones as a 'Confirmed' high-quality set [7].

2. Test Set Definition and Preparation

- Objective: Create specific test sets to isolate the impact of different factors.

- Procedure:

  a. Genomic Context: For each gene locus, extract the genomic sequence with additional flanking regions (e.g., 150 to 10,000 nucleotides upstream and downstream) to simulate the challenge of identifying genes within a larger, non-coding background [7].

  b. Define Subsets: Create focused test sets from the main benchmark to evaluate the effect of specific variables, such as low genome quality, high gene structure complexity, or short protein length.
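The flank extraction in the genomic context step can be sketched as follows. This is an illustrative helper only: the function name `extract_with_flanks`, the 1-based inclusive coordinate convention, and the default flank of 2,000 nucleotides are assumptions, chosen to fall within the 150 to 10,000 nucleotide range mentioned above.

```python
def extract_with_flanks(chrom_seq, start, end, flank=2000):
    """Return (window_sequence, offset) for a gene locus plus flanking sequence.

    start and end are 1-based inclusive coordinates of the locus on chrom_seq.
    offset is the 0-based position of the window start, which is needed to
    translate predictions on the window back to chromosome coordinates.
    """
    if not 1 <= start <= end <= len(chrom_seq):
        raise ValueError("locus coordinates fall outside the sequence")
    # Clip the window at the sequence ends rather than padding.
    win_start = max(0, start - 1 - flank)
    win_end = min(len(chrom_seq), end + flank)
    return chrom_seq[win_start:win_end], win_start


# Toy sequence: 10 nt upstream, a 5 nt "gene", 10 nt downstream.
toy = "A" * 10 + "CCCCC" + "G" * 10
window, offset = extract_with_flanks(toy, start=11, end=15, flank=3)
```

Varying `flank` across runs is one way to build test sets that probe how sensitive a predictor is to the amount of surrounding non-coding sequence.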

3. Tool Execution and Evaluation

- Objective: Run gene prediction tools and measure their performance objectively.

- Procedure:

  a. Tool Selection: Run multiple widely used ab initio predictors (e.g., Augustus, GeneMark-ES, SNAP) on the benchmark sequences.

  b. Accuracy Assessment: Compare the tool predictions against the confirmed reference gene models. Calculate standard metrics at both the exon and gene level, including:

     * Sensitivity: The ability to find true exons/genes.

     * Precision: The ability to avoid false positives.

     * F1 Score: The overall balanced accuracy [11].

  c. Analysis: Analyze the results to determine each tool's strengths and weaknesses relative to the features recorded in Step 1 (e.g., "Tool A's precision drops by 20% on genes with more than 10 exons").
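The analysis step, which relates accuracy to gene structure features, might be sketched as a stratification by exon count. The bin edges, the gene-level exact-match criterion, and the function names below are hypothetical choices for illustration and are not taken from the G3PO study.

```python
from collections import defaultdict


def exon_bin(n_exons, edges=(1, 5, 10, 20)):
    """Label the exon-count bin; edges are inclusive upper bounds (assumed)."""
    lower = 1
    for upper in edges:
        if n_exons <= upper:
            return f"{lower}" if lower == upper else f"{lower}-{upper}"
        lower = upper + 1
    return f">{edges[-1]}"


def exact_match_rate_by_bin(genes):
    """genes: iterable of (reference_exons, predicted_exons) pairs.

    Each exon list holds (start, end) tuples. A gene counts as correct only
    if every exon boundary is reproduced exactly.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for ref_exons, pred_exons in genes:
        label = exon_bin(len(ref_exons))
        totals[label] += 1
        hits[label] += sorted(ref_exons) == sorted(pred_exons)
    return {label: hits[label] / totals[label] for label in totals}


toy_genes = [
    ([(1, 10)], [(1, 10)]),                                   # correct
    ([(1, 10), (20, 30)], [(1, 10), (20, 31)]),               # wrong boundary
    ([(1, 10), (20, 30), (40, 50)], [(40, 50), (1, 10), (20, 30)]),  # correct
]
rates = exact_match_rate_by_bin(toy_genes)
```

The same pattern applies to other features recorded during curation, such as protein length or clade, by swapping the binning function.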

Diagram: G3PO Benchmark Construction. This workflow outlines the key steps in building a comprehensive benchmark for gene prediction tools, from data curation to final analysis.

Protocol 2: Gene Prediction Using the GeneMark-ETP Pipeline with Integrated Evidence

This protocol details the use of the GeneMark-ETP pipeline, which effectively combines intrinsic genomic signals with extrinsic transcriptomic and protein evidence for accurate gene prediction in complex genomes [11].

1. Evidence Integration and High-Confidence Model Generation

- Objective: Generate a set of high-confidence gene models to guide genome-wide training.

- Procedure:

  a. Transcriptome Assembly: Assemble RNA-seq reads into transcripts using a tool like StringTie2 [11].

  b. Initial CDS Prediction: Run GeneMarkS-T on the assembled transcripts to perform ab initio prediction of Coding Sequences (CDSs) within the transcripts.

  c. Evidence-Based Refinement: Use the GeneMarkS-TP module to refine the initial CDS predictions. This is done by:

     i. Translating predicted CDSs into amino acid sequences.

     ii. Searching against a database of cross-species proteins.

     iii. Using the protein alignments to correct the initial CDS predictions, significantly boosting Precision.

     The output is a set of High-Confidence (HC) CDSs [11].

2. Iterative Model Training and Genome-Wide Prediction

- Objective: Use the HC genes to train a model and predict genes across the entire genome.

- Procedure:

  a. Initial Training: Map the HC CDSs to the genome. Use this set as the initial training set for the genomic Generalized Hidden Markov Model (GHMM) in GeneMark-ETP.

  b. Iterative Prediction and Retraining:

     i. The trained model is used to predict genes in the genomic regions between the HC genes.

     ii. Extrinsic evidence (hints from mapped RNA-seq reads and aligned proteins) is integrated into the Viterbi algorithm during prediction.

     iii. The model parameters are re-estimated based on the new predictions.

     iv. Steps i-iii are repeated until the model converges [11].

  c. Final Prediction: The pipeline produces a final, comprehensive set of gene models for the genome.

Diagram: GeneMark-ETP Workflow. The pipeline uses high-confidence genes derived from transcripts and protein homology to iteratively train a model for genome-wide prediction.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Gene Prediction Benchmarking and Analysis

| Research Reagent / Resource | Function and Application |

|---|---|

| G3PO Benchmark [7] | A curated benchmark set of 1,793 genes from 147 eukaryotes. Used for realistic evaluation of gene prediction tools on challenging, phylogenetically diverse data. |

| GeneMark-ETP [11] | An automatic gene finder that integrates genomic, transcriptomic, and protein evidence. Ideal for achieving high accuracy in large, complex plant and animal genomes. |

| Augustus [7] | A widely used ab initio gene prediction program that can also incorporate hints from extrinsic evidence. Often used as a benchmark in comparative studies. |

| StringTie2 [11] | A tool for assembling RNA-seq reads into transcripts. Used to generate transcriptome-based evidence for gene models. |

| UniProt Knowledgebase [7] | A comprehensive resource of protein sequences and functional information. Serves as a key source for curating high-quality protein sequences for benchmark construction and homology searches. |

| CATH Database [13] | A hierarchical classification of protein domain structures. Useful for selecting structurally diverse protein families for testing structure-based phylogenetics and deep homology. |

| Foldseek / FoldTree [13] | Software for rapid protein structure comparison and structure-informed phylogenetic tree building. Useful for resolving evolutionary relationships when sequence similarity is low. |

The accuracy of gene finding and genomic annotation is fundamentally constrained by the quality of the underlying genome assemblies. Incomplete assemblies and low-coverage genomes represent pervasive challenges in genomic research, particularly in non-model organisms, complex metagenomic samples, and clinical settings with limited starting material. These data quality issues can lead to fragmented gene models, missed exons, and incomplete pathway reconstructions, ultimately compromising biological interpretations. This application note outlines standardized protocols and benchmarking strategies to evaluate gene finding tool performance under these real-world constraints, providing a critical framework for researchers developing and selecting tools for robust genomic analysis.

Quantitative Landscape of Assembly Completeness

Current genomic datasets exhibit substantial variation in assembly quality and completeness. The tables below summarize key metrics and their implications for gene finding.

Table 1: Assembly Completeness Metrics and Benchmarks

| Metric | Ideal Value | Typical Range | Impact on Gene Finding |

|---|---|---|---|

| BUSCO Completeness [14] | >95% | 60% - 99% | Lower scores indicate missing conserved genes or fragments. |

| Contig N50 [14] | >1 Mb | 134.34 kb - 11.81 Mb | Lower N50 increases gene fragmentation risk. |

| T2T Gapless Assemblies [14] | Full chromosome | 11/431 medicinal plants | Ensures complete gene models and regulatory regions. |

| Sequencing Coverage | >50x | Highly variable (e.g., <10x in metagenomes [15]) | Low coverage causes misassemblies and missed variants. |
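For readers less familiar with the contig N50 metric in the table above, the following sketch computes it under the standard definition: the length of the shortest contig in the smallest set of longest contigs that together cover at least half of the total assembly length. The function name `n50` is illustrative.

```python
def n50(contig_lengths):
    """Contig N50: walk contigs from longest to shortest and return the
    length at which the running total first reaches half the assembly size."""
    lengths = sorted(contig_lengths, reverse=True)
    if not lengths:
        raise ValueError("at least one contig length is required")
    half_total = sum(lengths) / 2
    running = 0
    for length in lengths:
        running += length
        if running >= half_total:
            return length


# Toy assembly of nine contigs totalling 54 units.
toy_n50 = n50([2, 3, 4, 5, 6, 7, 8, 9, 10])
```

Because N50 depends only on the length distribution, it says nothing about correctness; a misassembled genome can have a high N50, which is why it is paired with BUSCO completeness in the table.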

Table 2: Prevalence of Assembly Issues Across Domains (as of February 2025) [14]

| Domain | Species with Sequenced Genomes | Genomes at Draft Stage | Chromosome-Level Assemblies | Telomere-to-Telomere (T2T) |

|---|---|---|---|---|

| Medicinal Plants | 431 species | 27 assemblies | 267 (of 304 TGS genomes) | 11 assemblies |

| Microbial Metagenomes | N/A | Common in soil [15] | Rare | Extremely Rare |

Experimental Protocols for Benchmarking

Protocol 1: Evaluating Tool Performance on Low-Coverage Metagenomic Data

Purpose: To quantitatively assess how gene finding and genome binning tools perform when sequencing coverage is suboptimal.

Background: In complex environments like soil, low coverage and high sequence diversity are primary drivers of misassemblies in short-read data, particularly in variable genome regions like integrated viruses or defense systems [15].

Materials:

- Paired long-read (PacBio HiFi) and short-read (Illumina) metagenomic assemblies from the same sample [15].

- Binning tool (e.g., SemiBin2 [15]).

- Gene annotation pipeline (e.g., IMG annotation system [15]).

Method Steps:

- Generate Reference Sequences: Split long-read assembled contigs into 1 kb subsequences using a tool like seqkit (e.g., with a 500-bp sliding window) [15].

- Filter for Coverage: Map raw short reads to these subsequences using bowtie2. Retain only subsequences with ≥1× coverage over at least 80% of their length to ensure the region could be assembled [15].

- Assemble with SR Data: Process the same short-read data with multiple assemblers (e.g., MEGAHIT and metaSPAdes) using default settings [15].

- Calculate Recovery Rate: Compare SR-assembled contigs to the LR reference subsequences using BLASTn (>99% identity). For each reference subsequence, calculate "percent recovery" as: (Length of the best BLAST hit / 1000 bp) * 100 [15].

- Categorize and Analyze: Categorize each 1 kb subsequence based on its recovery (0-49%, 50-99%, 100%) and its average short-read coverage (e.g., <10x vs ≥10x). Analyze the percentage of sequences in each bin that fall into these recovery groups [15].

- Annotate Genes: Perform gene annotation on both fully assembled and poorly assembled regions. Use Fisher's exact test to identify COG categories of genes that are significantly enriched in poorly assembled regions [15].
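The windowing, recovery, and categorization steps above can be sketched in plain Python. This is an illustration under stated assumptions rather than the pipeline used in [15]: the 500-bp value is interpreted here as the step between window starts, BLAST hit lengths and mean coverages are assumed to have been parsed already, and all function names are hypothetical. The enrichment test in the final step is not covered.

```python
from collections import Counter, defaultdict


def sliding_windows(seq_len, window=1000, step=500):
    """Yield 0-based, half-open (start, end) windows; a short tail is dropped."""
    for start in range(0, seq_len - window + 1, step):
        yield start, start + window


def percent_recovery(best_hit_len, window=1000):
    """Percent of a reference subsequence covered by its best BLAST hit."""
    return min(best_hit_len, window) / window * 100


def recovery_group(pct):
    """Map a percent recovery onto the three groups used in the protocol."""
    if pct >= 100:
        return "100%"
    return "50-99%" if pct >= 50 else "0-49%"


def recovery_table(records, window=1000, cov_threshold=10):
    """records: iterable of (best_hit_len, mean_short_read_coverage).

    Returns {coverage_group: {recovery_group: percent of subsequences}}.
    """
    counts = defaultdict(Counter)
    for hit_len, coverage in records:
        cov_group = f"<{cov_threshold}x" if coverage < cov_threshold else f">={cov_threshold}x"
        counts[cov_group][recovery_group(percent_recovery(hit_len, window))] += 1
    return {
        cov: {grp: 100 * n / sum(c.values()) for grp, n in c.items()}
        for cov, c in counts.items()
    }


# Toy input: two well-covered and two poorly covered subsequences.
table = recovery_table([(1000, 25), (1000, 12), (400, 3), (750, 4)])
```

Comparing the rows of the resulting table shows directly whether poorly recovered subsequences concentrate in the low-coverage group.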

Protocol 2: Benchmarking with Real-World, Imperfect Genomes

Purpose: To test gene finding tools and related models on authentic, imperfect scenarios drawn from real-world scientific discussions, capturing nuanced biological reasoning.

Background: The Genome-Bench benchmark comprises 3,332 multiple-choice questions derived from over a decade of expert discussions on a CRISPR forum. It reflects realistic scenarios involving ambiguous data, incomplete information, and methodological troubleshooting [16].

Materials:

- Genome-Bench dataset (available on Hugging Face) [16].

- Large language models (LLMs) or other gene finding algorithms to be tested.

- Standard computing resources for model fine-tuning and inference.

Method Steps:

- Data Partitioning: Divide the benchmark into training (2,671 questions) and test (661 questions) sets [16].

- Task Categorization: Annotate test questions by category (e.g., Validation, Troubleshooting & Optimization, GuideRNA Design) and difficulty level (Easy, Medium, Hard) based on linguistic structure and conceptual complexity [16].

- Model Evaluation: Fine-tune or prompt your model on the training set. Evaluate its performance on the test set, analyzing accuracy across the different categories and difficulty levels [16].

- Gap Analysis: Identify specific biological contexts or types of reasoning (e.g., experimental troubleshooting, protocol optimization) where model performance drops significantly, indicating weaknesses in handling real-world complexity [16].
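The evaluation and gap analysis steps amount to grouping test results and comparing group accuracy with the overall figure. The sketch below assumes each test record is a dictionary with `category`, `difficulty`, `answer`, and `predicted` keys; these field names, the 10-point margin, and the function names are hypothetical and do not reflect the actual Genome-Bench schema.

```python
from collections import defaultdict


def accuracy_by(records, key):
    """Accuracy per group, where the group is given by records[i][key]."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for rec in records:
        totals[rec[key]] += 1
        correct[rec[key]] += rec["predicted"] == rec["answer"]
    return {group: correct[group] / totals[group] for group in totals}


def weak_spots(records, key, margin=0.10):
    """Groups whose accuracy falls more than `margin` below overall accuracy."""
    overall = sum(r["predicted"] == r["answer"] for r in records) / len(records)
    per_group = accuracy_by(records, key)
    return sorted(g for g, acc in per_group.items() if acc < overall - margin)


# Toy results for four multiple-choice questions.
results = [
    {"category": "Validation", "difficulty": "Easy", "answer": "A", "predicted": "A"},
    {"category": "Validation", "difficulty": "Hard", "answer": "C", "predicted": "C"},
    {"category": "Troubleshooting", "difficulty": "Hard", "answer": "B", "predicted": "D"},
    {"category": "Troubleshooting", "difficulty": "Medium", "answer": "A", "predicted": "C"},
]
by_category = accuracy_by(results, "category")
gaps = weak_spots(results, "category")
```

Calling the same functions with `key="difficulty"` gives the breakdown by difficulty level.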

Visualizing Experimental Workflows

The following diagrams illustrate the core benchmarking methodologies.

Diagram 1: Benchmarking workflow for low-coverage and complex regions, based on the methodology from [15].

Diagram 2: Pipeline for creating a realistic benchmark from real-world scientific data, adapted from the Genome-Bench construction process [16].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Databases for Real-World Benchmarking

| Tool/Resource | Function | Relevance to Incomplete/Low-Cov Genomes |

|---|---|---|

| BUSCO [14] | Assesses genome completeness based on universal single-copy orthologs. | Core metric for quantifying assembly completeness; low scores flag problematic genomes. |

| PPR-Meta [17] | Virus identification tool using convolutional neural networks. | Top performer in distinguishing viral from microbial contigs in complex metagenomes. |

| Open Problems [18] | Community platform for benchmarking single-cell genomics methods. | Provides standardized tasks and metrics for evaluating tools on noisy, real-world single-cell data. |

| CZI Benchmarking Suite [19] | Standardized toolkit for evaluating virtual cell models. | Offers reproducible pipelines for assessing model performance on biological tasks beyond technical metrics. |

| Genome-Bench [16] | Benchmark for scientific reasoning derived from expert CRISPR discussions. | Tests algorithmic understanding of biological concepts using real-world, imperfect information scenarios. |

| Long-Read Sequencers (PacBio, ONT) [14] [15] | Generate sequencing reads thousands of base pairs long. | Critical for resolving repetitive regions and complex genomic loci that fragment short-read assemblies. |

| OGM (Optical Genome Mapping) [20] | Technique for detecting large-scale structural variants. | Identifies clinically relevant SVs and CNAs with superior resolution, overcoming limitations of short-read sequencing. |

Discussion and Concluding Remarks

Integrating real-world data challenges into the benchmarking of gene finding tools is no longer optional but essential for driving biological discovery. As the data shows, even with advancing technologies, a significant proportion of genomes—from medicinal plants to clinical samples—remain incomplete or are sequenced at low coverage [14] [20]. Benchmarking protocols must therefore move beyond clean, model organism data to include structured tests on fragmented assemblies, low-coverage sequences, and biologically complex regions.

The experimental workflows and community resources outlined here provide a pathway for this transition. By adopting these protocols, tool developers can identify and address specific failure modes, such as the underperformance on low-coverage metagenomic regions [15] or the inability to reason with incomplete evidence as presented in expert forums [16]. Ultimately, the goal is to foster the development of more robust, accurate, and biologically aware gene finding tools that are reliable not just in theory, but in the messy reality of genomic science.

In the field of computational genomics, the accuracy and reliability of gene-finding and protein prediction tools are fundamentally dependent on the quality of the benchmark datasets used for their evaluation. A benchmark dataset serves as the ground truth, providing a standardized reference against which computational predictions are validated. The construction of such datasets requires meticulous attention to biological validation and curation processes. The critical distinction between "Confirmed" and "Unconfirmed" sequences within a benchmark lies in the level of empirical validation supporting their annotation. Confirmed sequences have undergone rigorous checks to minimize potential errors, whereas Unconfirmed sequences may originate from automated annotations that are prone to propagation of inaccuracies [21]. The selection between these classes of data directly impacts the perceived performance of a tool and the biological validity of the conclusions drawn. This application note, framed within a broader thesis on best practices for benchmarking, provides detailed protocols for the construction and application of rigorously validated genomic benchmarks, with a specific focus on protein-coding sequences.

Defining Confirmed and Unconfirmed Data

Theoretical Basis for Data Classification

In the context of benchmark construction, "Confirmed" and "Unconfirmed" labels indicate the degree of confidence in the accuracy of a sequence's annotation.

- Confirmed Sequences: This category comprises protein or gene sequences that have undergone a stringent, multi-step validation process. The validation often involves constructing high-quality multiple sequence alignments (MSA) to identify and remove sequences with inconsistent segments that might indicate potential annotation errors [21]. The objective is to create a high-fidelity subset of data where the functional and structural annotation is strongly supported by empirical evidence, making it suitable for testing the true positive performance of prediction tools.

- Unconfirmed Sequences: This category includes sequences, often extracted from major public databases like UniProt, that lack the same level of manual or structural validation [21]. While they represent valuable biological data, they may contain errors such as incorrect exon boundaries, missing exons, or the retention of non-coding sequence within exons. Including these sequences in a benchmark provides a realistic test scenario that reflects the typical challenges faced when annotating new genomes, including the propagation of pre-existing errors [21].

The following table summarizes the core characteristics and implications of using each data class in benchmarking experiments.

Table 1: Characteristics of Confirmed vs. Unconfirmed Protein Sequences in Benchmarking

| Feature | Confirmed Sequences | Unconfirmed Sequences |

|---|---|---|

| Definition | Sequences with annotation validated through rigorous, often structure- or alignment-based methods. | Sequences from public databases that lack extensive secondary validation. |

| Primary Use | Assessing true positive performance and intrinsic accuracy of prediction tools. | Evaluating performance on realistic, complex, and potentially noisy data. |

| Typical Content | Manually curated sequences; sequences with consistent segments in multiple sequence alignments. | Automatically annotated sequences; sequences with inconsistent segments in MSAs. |

| Impact on Benchmarking | Provides a high-confidence standard; helps identify a tool's upper performance limits. | Tests robustness to real-world data quality issues; reveals susceptibility to error propagation. |

| Example from Literature | G3PO benchmark's "Confirmed" set, based on consistent MSAs [21]. | G3PO benchmark's "Unconfirmed" set, containing sequences with potential errors [21]. |

Impact on Benchmarking Outcomes

The composition of a benchmark dataset significantly influences the evaluation of gene prediction tools. Benchmarks that rely solely on Unconfirmed sequences risk rewarding tools that replicate systemic errors present in existing databases, rather than those that discover biologically accurate gene models. A study on the G3PO benchmark highlighted this challenge, noting that a substantial proportion (69%) of Confirmed protein sequences were not predicted with 100% accuracy by a panel of five ab initio gene prediction programs [21]. This finding underscores the difficulty of the prediction task even for validated sequences and demonstrates that benchmarks incorporating Confirmed data provide a more challenging and meaningful assessment of a tool's capabilities.

Established Benchmarking Frameworks and Protocols

The G3PO Benchmark: A Case Study in Data Curation

The G3PO (benchmark for Gene and Protein Prediction PrOgrams) framework provides a detailed protocol for constructing a benchmark with a confirmed dataset.

- Objective: To create a benchmark representative of the challenges in current genome annotation projects, enabling a realistic evaluation of gene prediction tools on complex genes from diverse eukaryotes [21].

- Data Acquisition: The protocol begins with the extraction of 1,793 reference proteins and their corresponding genomic sequences from 147 phylogenetically diverse eukaryotic organisms using UniProt and Ensembl databases [21].

- Validation and Curation (The Confirmation Step): This is the critical phase for creating the Confirmed dataset.

- Multiple Sequence Alignment (MSA): Construct high-quality MSAs for the protein families.

- Identification of Inconsistencies: Analyze the MSAs to identify proteins with inconsistent sequence segments that suggest potential annotation errors.

- Classification: Label proteins with no identified errors as 'Confirmed'. Label sequences with at least one error as 'Unconfirmed' [21].

- Test Set Definition: The final benchmark includes multiple test sets designed to evaluate the effects of various features, such as genome sequence quality, gene structure complexity, and protein length.

Table 2: Overview of the G3PO Benchmark Construction Protocol

| Protocol Step | Description | Key Technical Details |

|---|---|---|

| 1. Data Sourcing | Extract protein and genomic DNA sequences. | Sources: UniProt for proteins, Ensembl for genomic coordinates and exon maps. |

| 2. Sequence Validation | Classify sequences into Confirmed and Unconfirmed sets. | Method: Construction and analysis of high-quality Multiple Sequence Alignments (MSA). |

| 3. Test Set Design | Define specific benchmark tests. | Variables: Gene length, GC content, exon number/length, protein length, phylogenetic origin. |

| 4. Tool Evaluation | Run gene prediction programs on the benchmark. | Metrics: Exon-level and protein-level accuracy. |

The following diagram illustrates the G3PO benchmark construction workflow.

DNALONGBENCH: A Framework for Long-Range Dependency Evaluation

While G3PO focuses on gene and protein prediction, the DNALONGBENCH framework addresses the challenge of benchmarking models on tasks involving long-range genomic interactions. Its data selection criteria provide a complementary protocol for defining high-quality benchmarks.

- Biological Significance: Tasks must address realistic and biologically important genomics problems [3].

- Long-Range Dependencies: Tasks are required to model input contexts spanning hundreds of kilobase pairs or more, up to 1 million base pairs [3].

- Task Difficulty: Selected tasks must pose significant challenges for current state-of-the-art models [3].

- Task Diversity: The benchmark should span various length scales and include different task types (classification, regression) and dimensionalities (1D, 2D) [3].

This structured approach to task selection ensures that the resulting benchmark is comprehensive, rigorous, and capable of revealing the true strengths and weaknesses of the models being evaluated.

Practical Application and Experimental Protocols

Protocol: Implementing a Benchmarking Study Using Confirmed Data

This protocol describes how to utilize an existing benchmark, like G3PO, to evaluate a gene-finding tool, with an emphasis on the differential analysis of Confirmed and Unconfirmed data.

Step 1: Benchmark and Tool Selection

Step 2: Experimental Execution

- Run each gene prediction tool on the entire benchmark dataset, ensuring that the genomic sequences are provided with sufficient flanking context (e.g., 150 to 10,000 nucleotides upstream and downstream) to mimic a realistic annotation task [21].

Step 3: Result Analysis and Comparison

- Primary Metric Calculation: Calculate standard accuracy metrics (e.g., sensitivity, precision, F1-score) at the exon and whole-gene level. Note that the gene prediction literature often reports precision under the name "specificity". It is critical to perform these calculations separately for the Confirmed and Unconfirmed subsets.

- Comparative Analysis: Compare the performance metrics between the Confirmed and Unconfirmed subsets. Tools that perform well on the Confirmed set but poorly on the Unconfirmed set may be more accurate but sensitive to noisy data. Tools with similar performance on both may be more robust but could be replicating existing errors.

- Error Profiling: Analyze the types of errors made by the tools (e.g., missing exons, incorrect splice site prediction) within the Confirmed set to identify specific weaknesses.
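One way to approach the error profiling and the separate treatment of the two subsets is sketched below. The four error classes, the overlap rule, and the function names are illustrative assumptions; splice-site level analysis would need strand and intron information that this sketch leaves out.

```python
from collections import Counter, defaultdict


def profile_exon_errors(ref_exons, pred_exons):
    """Classify the exons of one gene; exons are (start, end), 1-based inclusive.

    exact: reference exon reproduced exactly
    wrong_boundary: reference exon overlapped by a prediction but not identical
    missing: reference exon with no overlapping prediction
    spurious: predicted exon that overlaps no reference exon
    """
    ref_set, pred_set = set(ref_exons), set(pred_exons)

    def overlaps(a, b):
        return a[0] <= b[1] and b[0] <= a[1]

    profile = {"exact": 0, "wrong_boundary": 0, "missing": 0, "spurious": 0}
    for exon in ref_set:
        if exon in pred_set:
            profile["exact"] += 1
        elif any(overlaps(exon, p) for p in pred_set):
            profile["wrong_boundary"] += 1
        else:
            profile["missing"] += 1
    for p in pred_set:
        if not any(overlaps(p, r) for r in ref_set):
            profile["spurious"] += 1
    return profile


def profile_by_subset(genes):
    """genes: iterable of (subset_label, ref_exons, pred_exons)."""
    totals = defaultdict(Counter)
    for label, ref_exons, pred_exons in genes:
        totals[label].update(profile_exon_errors(ref_exons, pred_exons))
    return {label: dict(counts) for label, counts in totals.items()}


one_gene = profile_exon_errors(
    [(100, 200), (300, 400), (500, 600)],
    [(100, 200), (300, 410), (700, 800)],
)
```

Passing labels such as "Confirmed" and "Unconfirmed" to `profile_by_subset` keeps the two error profiles apart, so that errors on validated sequences are not mixed with apparent errors caused by a faulty reference.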

The workflow for this experimental protocol is summarized below.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key resources and tools essential for conducting rigorous benchmarking studies in genomics.

Table 3: Essential Research Reagents and Tools for Genomic Benchmarking

| Resource Name | Type | Function in Benchmarking |

|---|---|---|

| G3PO Benchmark [21] | Benchmark Dataset | Provides a curated set of Confirmed and Unconfirmed eukaryotic genes for evaluating prediction accuracy on complex gene structures. |

| DNALONGBENCH [3] | Benchmark Suite | Evaluates the ability of models to capture long-range genomic dependencies across diverse tasks (e.g., enhancer-promoter interaction, 3D genome organization). |

| BAliBASE [23] | Reference Alignment | Serves as a gold-standard set of manually curated multiple sequence alignments used for validating alignment methods, which can inform sequence confirmation. |

| Pfam Database [24] | Protein Family Database | A large collection of protein families and domains; commonly used as a source of unlabeled protein sequences for pre-training foundation models. |

| Augustus [21] [22] | Gene Prediction Software | A widely used ab initio gene prediction program often employed as a baseline in benchmarking studies. |

| HMMER [25] | Bioinformatics Tool | Performs sequence homology searches using profile hidden Markov models; a conventional method for functional annotation against which new methods (e.g., deep learning) are compared. |

| MSA (Multiple Sequence Alignment) | Analytical Technique | The core method for validating sequence consistency and classifying sequences as Confirmed or Unconfirmed during benchmark curation [21]. |

The disciplined selection of ground truth data is a cornerstone of rigorous bioinformatics tool development. By strategically incorporating Confirmed protein sequences into benchmarks, researchers can accurately assess the intrinsic predictive power of their tools and avoid the pitfall of perpetuating historical annotation errors. The protocols and frameworks outlined here, including the explicit classification of data confidence levels as demonstrated by G3PO and the principled task selection of DNALONGBENCH, provide a clear roadmap for constructing and applying benchmarks that drive meaningful progress in the field. Adopting these best practices ensures that evaluations reflect true biological accuracy, ultimately leading to more reliable gene finding and protein annotation tools for the scientific community.

Implementation Strategies: Data Processing, Tool Selection, and Performance Metrics

Robust benchmarking of computational models designed to predict cellular responses to perturbations is a cornerstone of modern computational biology. The ability to accurately forecast transcriptomic profiles following genetic or chemical interventions accelerates therapeutic discovery by enabling in-silico screens across a vast space of unobserved perturbations [26]. The core challenge lies in a model's capacity to generalize effectively—to make accurate predictions on data not encountered during training. The strategy employed to split a dataset into training, validation, and test subsets is not a mere preliminary step but a critical determinant of whether a model's reported performance reflects its true utility in a real-world research or clinical setting [27] [28]. This document outlines rigorous data splitting methodologies tailored for the evaluation of perturbation prediction models, framed within the broader context of establishing best practices for benchmarking gene finding tools.

The Critical Role of Data Splitting in Model Evaluation

Data splitting is a fundamental process that separates a dataset into distinct subsets for model construction (training/validation) and final assessment (test). Its primary purpose is to estimate how well a model will perform on new, unseen data, thereby evaluating its generalizability [27]. Inadequate data splitting can lead to overly optimistic performance estimates and models that fail in practical applications.

For perturbation prediction, the stakes are particularly high. These models are tasked with predicting out-of-sample effects, such as in covariate transfer (predicting effects in unseen cell types or lines) or combo prediction (predicting the effects of novel combinatorial perturbations) [26]. The data splitting strategy must therefore meticulously simulate these real-world challenges during evaluation. Recent comprehensive benchmarks have revealed that sophisticated foundation models can be outperformed by simpler baseline models, a finding that underscores the profound impact of evaluation protocols, including data splitting, on the perceived success of a model [29].

Foundational Concepts and Splitting Scenarios

Core Data Splitting Terminology

- Training Set: Used to fit the model's parameters.

- Validation Set: Used for hyperparameter tuning and model selection, preventing overfitting to the training set.

- Test Set: A held-out set used only once for the final evaluation of the model's generalizability. It must remain completely blind during all training and tuning phases.

Key Splitting Scenarios for Perturbation Prediction

To ensure rigorous evaluation, the test set should be constructed to reflect specific, challenging prediction tasks [26]:

- Perturbation-Exclusive (PEX): All cells subjected to a specific set of perturbations are held out from training. The model is evaluated on these unseen perturbations, testing its ability to generalize beyond the perturbations it was trained on [29].

- Covariate Transfer: The model is trained on perturbation effects measured in one set of covariates (e.g., specific cell lines) and tested on different, unseen covariates. This assesses the model's ability to transfer knowledge across biological contexts [26].

- Combinatorial Perturbation Prediction: For datasets involving combinatorial perturbations (e.g., dual-gene knockouts), the test set contains novel combinations of perturbations, some or all of which may have been seen individually during training. This evaluates the model's ability to reason about synergistic or additive effects [29] [26].
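The combinatorial scenario above can be sketched as follows. This is a minimal, dependency-free illustration, not the procedure used by any cited benchmark. The function name `combo_split`, the tuple encoding of conditions, and the choice to hold out only combinations whose component genes were all observed individually are assumptions made for the example.

```python
import random


def combo_split(conditions, test_fraction=0.2, seed=0):
    """Hold out a fraction of multi-gene perturbations for testing.

    `conditions` is a list of tuples of gene names, e.g. ("A",) for a single
    perturbation or ("A", "B") for a dual one (hypothetical encoding).
    Assumption: only combinations whose genes were all seen as single
    perturbations are eligible for the test set.
    """
    rng = random.Random(seed)
    seen_genes = {c[0] for c in conditions if len(c) == 1}
    combos = [c for c in conditions if len(c) > 1]
    # Keep only combinations built entirely from individually observed genes.
    eligible = sorted(c for c in combos if set(c) <= seen_genes)
    n_test = round(test_fraction * len(eligible))
    test = set(rng.sample(eligible, n_test))
    train = [c for c in conditions if c not in test]
    return train, sorted(test)


conditions = [("A",), ("B",), ("C",), ("D",),
              ("A", "B"), ("A", "C"), ("B", "C"), ("C", "D"), ("A", "E")]
train_conditions, test_conditions = combo_split(conditions, test_fraction=0.5)
```

Relaxing the eligibility rule (for example, allowing one unseen gene per combination) gives the harder variant in which only some components were observed during training.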

Quantitative Comparison of Data Splitting Algorithms

The algorithm used to assign samples to training and test sets can significantly impact benchmarking outcomes. The table below summarizes the characteristics of common splitting algorithms.

Table 1: Comparison of Data Splitting Algorithms for Biospectroscopic and Perturbation Data

| Algorithm | Core Principle | Advantages | Limitations | Suitability for Perturbation Data |
|---|---|---|---|---|
| Random Selection (RS) | Purely random assignment of samples to sets. | Simple to implement; no bias. | Can lead to data leakage if structure (e.g., donor, batch) is ignored; may create easy test sets. | Low. Fails to create challenging, biologically relevant test scenarios [27]. |
| Kennard-Stone (KS) | Selects samples to cover the feature space uniformly, maximizing the Euclidean distance between training samples. | Ensures training set is representative of entire data variance. | Can select outliers for training; may create artificially difficult test sets; performance can be unbalanced for classes [27]. | Moderate. Useful for ensuring feature space coverage but does not directly address biological splitting scenarios. |
| Morais-Lima-Martin (MLM) | A modification of KS that introduces a random-mutation factor. | Combines representativeness of KS with randomness to improve class balance in predictions. | Less common; may require custom implementation. | High. Shown to generate better and more balanced predictive performance in biospectroscopic classification compared to RS and KS [27]. |
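To make the Kennard-Stone principle concrete, the sketch below implements the usual maximin form of the procedure: start from the two most distant samples, then repeatedly add the sample whose nearest already-selected neighbour is farthest away. It is a small illustration on plain Python lists, assuming samples are numeric feature vectors of equal length; the function name `kennard_stone` is chosen for this example, and the quadratic distance matrix limits it to modest sample counts.

```python
import math


def kennard_stone(points, n_train):
    """Return the indices of `n_train` samples chosen by Kennard-Stone."""
    n = len(points)
    if not 2 <= n_train <= n:
        raise ValueError("n_train must be between 2 and the number of points")
    # Full pairwise Euclidean distance matrix (O(n^2) memory).
    dist = [[math.dist(p, q) for q in points] for p in points]
    # Seed the training set with the two most distant samples.
    pairs = ((i, j) for i in range(n) for j in range(i + 1, n))
    first, second = max(pairs, key=lambda ij: dist[ij[0]][ij[1]])
    selected = [first, second]
    remaining = set(range(n)) - {first, second}
    while len(selected) < n_train:
        # Add the candidate whose closest selected sample is farthest away.
        nxt = max(sorted(remaining),
                  key=lambda c: min(dist[c][s] for s in selected))
        selected.append(nxt)
        remaining.remove(nxt)
    return selected


points = [(0.0,), (1.0,), (2.0,), (10.0,), (5.0,)]
train_idx = kennard_stone(points, n_train=3)
test_idx = [i for i in range(len(points)) if i not in train_idx]
```

Because the extremes are selected first, outliers tend to land in the training set, which is the limitation noted in the table.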

Experimental Protocol for Rigorous Benchmarking

This protocol provides a step-by-step guide for implementing rigorous data splitting in a benchmark study of perturbation prediction models, using the PEX scenario as a primary example.

Pre-processing and Dataset Preparation

- Data Collection: Gather a Perturb-seq dataset (e.g., Adamson, Norman, or Replogle datasets) containing single-cell gene expression profiles from both unperturbed control cells and cells subjected to genetic perturbations [29].

- Quality Control: Perform standard single-cell RNA-seq QC. Filter cells based on metrics like mitochondrial gene percentage, number of unique genes detected, and total counts. Filter genes based on minimum expression thresholds [30].

- Normalization and Log-Transformation: Normalize counts (e.g., by library size) and apply a log-transform (e.g., log1p) to stabilize variance.

- Pseudo-bulk Aggregation (Optional but recommended for certain benchmarks): To reduce noise and computational cost, aggregate single-cell profiles by perturbation condition to create pseudo-bulk expression profiles. This is the approach used in several key benchmarks [29].
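The normalization and pseudo-bulk steps above can be sketched without any external library. The example assumes each cell is a list of raw counts, that cells with zero total counts were already removed during quality control, and that pseudo-bulk profiles are formed by averaging log-normalized cells per condition; the scaling target of 10,000 counts per cell is an illustrative default, and the function names are hypothetical.

```python
import math
from collections import defaultdict


def normalize_log1p(counts, target_sum=1e4):
    """Scale one cell to `target_sum` total counts, then apply log1p."""
    total = sum(counts)  # assumed > 0 after quality control
    return [math.log1p(c / total * target_sum) for c in counts]


def pseudo_bulk(profiles, labels):
    """Average per-cell profiles within each perturbation condition."""
    groups = defaultdict(list)
    for profile, label in zip(profiles, labels):
        groups[label].append(profile)
    # zip(*cells) iterates gene by gene across the cells of one condition.
    return {label: [sum(gene) / len(gene) for gene in zip(*cells)]
            for label, cells in groups.items()}


raw_cells = [[1, 1], [3, 1], [0, 2]]
labels = ["control", "control", "KO_geneX"]
normalized = [normalize_log1p(cell) for cell in raw_cells]
bulk_profiles = pseudo_bulk(normalized, labels)
```

Summing raw counts per condition before normalizing is an equally common alternative; whichever is chosen should be applied identically to every model in the benchmark.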

Implementing Perturbation-Exclusive (PEX) Data Splitting

- Identify Unique Perturbations: List all unique perturbation targets (e.g., knocked-down genes) in the dataset.

- Hold-Out Perturbations: Randomly select a predefined percentage (e.g., 20%) of these perturbations. All cells—both control and perturbed—associated with these held-out perturbations are assigned to the test set.

- Construct Training Set: The remaining cells, associated with the other 80% of perturbations, form the training and validation sets.

- Validation Set Creation: Within the training perturbations, further split the cells (e.g., 80/20) to create a training and validation set. This validation set is used for hyperparameter tuning and early stopping.

- Verify Separation: Ensure there is zero overlap in the perturbation identities between the training/validation and test sets.
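The PEX steps above can be sketched as a single function over per-cell perturbation labels. This is an illustrative outline under stated assumptions rather than the splitting code of any cited framework: the name `pex_split` and the `"control"` label are hypothetical, and control cells are assumed to be shared across perturbations, so they stay in the training pool. If your dataset pairs controls with specific perturbations, route those controls to the test set instead.

```python
import random


def pex_split(cell_perturbations, test_fraction=0.2, val_fraction=0.2,
              control_label="control", seed=0):
    """Return (train, val, test) cell indices for a perturbation-exclusive split."""
    rng = random.Random(seed)
    # Step 1: list unique perturbations (controls are not a perturbation).
    perturbations = sorted(set(cell_perturbations) - {control_label})
    # Step 2: hold out a fraction of perturbations, not of cells.
    rng.shuffle(perturbations)
    n_test = max(1, round(test_fraction * len(perturbations)))
    test_perts = set(perturbations[:n_test])
    test_idx = [i for i, p in enumerate(cell_perturbations) if p in test_perts]
    # Steps 3-4: remaining cells are split at the cell level into train/val.
    rest = [i for i, p in enumerate(cell_perturbations) if p not in test_perts]
    rng.shuffle(rest)
    n_val = round(val_fraction * len(rest))
    val_idx, train_idx = rest[:n_val], rest[n_val:]
    # Step 5: verify that no held-out perturbation leaked into train/val.
    seen = {cell_perturbations[i] for i in train_idx + val_idx}
    if seen & test_perts:
        raise ValueError("held-out perturbations leaked into training data")
    return train_idx, val_idx, test_idx


labels = ["control"] * 4 + [gene for gene in "ABCDE" for _ in range(4)]
train_idx, val_idx, test_idx = pex_split(labels)
```

Fixing `seed` and recording the held-out perturbation names alongside the results makes the split reproducible across the models being compared.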

Table 2: Essential Research Reagent Solutions for Perturbation Prediction Benchmarking

| Category | Reagent / Resource | Description and Function in Benchmarking |
|---|---|---|
| Reference Datasets | Norman et al. (2019) [29] [26] | Dataset with 155 single and 131 dual genetic perturbations in a single cell line. Essential for testing combo prediction. |
| | Adamson et al. (2016) [29] | CRISPRi Perturb-seq dataset with single perturbations. A standard for benchmarking PEX performance. |
| | Replogle et al. (2022) [29] | Large-scale CRISPRi screen data in K562 and RPE1 cell lines. Useful for cross-cell-line evaluation. |
| | OP3 / NeurIPS 2023 Challenge [26] | Chemical perturbation dataset in PBMCs. Critical for benchmarking generalizability to chemical modalities. |
| Software & Algorithms | scGPT [29] [26] | A foundation transformer model for single-cell biology; serves as a benchmark model and a source of gene embeddings. |
| | GEARS [29] [26] | A model for combinatorial perturbation prediction; a standard baseline for combo prediction tasks. |
| | PerturBench [26] | A comprehensive benchmarking framework and codebase that provides standardized data loading, splitting, and evaluation metrics. |
| Bioinformatics Tools | MAFFT [31] | Multiple sequence alignment tool, used here as an analogy for ensuring proper alignment of data splits. |
| | NCBI Gene & Gene Ontology [32] | Databases for retrieving approved gene symbols and functional annotations, crucial for incorporating biological prior knowledge. |

Model Training and Evaluation

- Model Training: Train the model(s) using only the training set. Use the validation set to monitor for overfitting and to select the best model checkpoint.

- Final Evaluation: Execute a single evaluation run on the held-out test set to report final performance metrics.

- Key Performance Metrics:

  - Prediction Accuracy: Use metrics like Root Mean Squared Error (RMSE) or Mean Absolute Error (MAE) on normalized expression values.

  - Correlation: Calculate Pearson correlation between predicted and ground-truth pseudo-bulk profiles. Crucially, also calculate this in the differential expression space (Pearson Delta), which measures the accuracy of predicting the change in expression relative to control [29].

  - Rank-based Metrics: Employ metrics like Spearman correlation to assess the model's ability to correctly order perturbations by the effect size of key genes, which is critical for in-silico screens [26].
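The difference between raw-space and delta-space correlation is easiest to see in code. The sketch below assumes pseudo-bulk profiles stored as equal-length lists of normalized expression values and defines Pearson Delta as the Pearson correlation of (prediction minus control) against (truth minus control), following the description above. The function names and the toy numbers are illustrative only.

```python
import math


def rmse(pred, true):
    """Root mean squared error between two expression profiles."""
    return math.sqrt(sum((p - t) ** 2 for p, t in zip(pred, true)) / len(true))


def pearson(x, y):
    """Pearson correlation; undefined (division by zero) for constant input."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    dx = [v - mean_x for v in x]
    dy = [v - mean_y for v in y]
    cov = sum(a * b for a, b in zip(dx, dy))
    return cov / math.sqrt(sum(a * a for a in dx) * sum(b * b for b in dy))


def pearson_delta(pred, true, control):
    """Pearson correlation of predicted vs. observed change from control."""
    pred_delta = [p - c for p, c in zip(pred, control)]
    true_delta = [t - c for t, c in zip(true, control)]
    return pearson(pred_delta, true_delta)


control = [1.0, 5.0, 9.0, 3.0]    # mean expression of unperturbed cells
observed = [1.0, 5.5, 9.0, 2.5]   # true perturbed profile
predicted = [1.1, 5.0, 9.1, 3.0]  # model output that barely departs from control

raw_r = pearson(predicted, observed)
delta_r = pearson_delta(predicted, observed, control)
error = rmse(predicted, observed)
```

In this toy case the raw correlation is high because baseline expression dominates both profiles, while the delta correlation is close to zero: the prediction says almost nothing about which genes changed. This is why reporting Pearson Delta alongside raw Pearson matters.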

The methodology used to split data is not a minor technical detail but a foundational aspect of benchmarking that directly shapes the validity and real-world relevance of the results. By moving beyond simple random splitting and adopting structured strategies like Perturbation-Exclusive splitting and Covariate Transfer, the research community can ensure that models are evaluated on their ability to generalize to biologically meaningful, unseen scenarios. The consistent application of these rigorous methodologies, supported by the protocols and resources outlined herein, will lead to more robust, reliable, and ultimately more useful predictive models in computational biology and therapeutic discovery.

Benchmarking gene-finding tools and other genomic deep learning models requires a rigorous and nuanced approach to model evaluation. The selection of appropriate metrics is not merely a procedural step but a critical decision that directly influences the interpretation of a model's capabilities and limitations. Within the context of genomics, where data is often high-dimensional, complex, and biologically nuanced, a comprehensive metric selection strategy is indispensable for deriving meaningful conclusions. This protocol outlines best practices for selecting and applying key metrics—including the Area Under the Receiver Operating Characteristic Curve (AUROC), Pearson Correlation Coefficient (PCC), Spearman Correlation Coefficient (SCC), and task-specific indicators—to ensure robust and biologically relevant benchmarking of genomic tools. The DNALONGBENCH suite, a benchmark for long-range DNA prediction tasks, exemplifies this approach by employing a multi-metric evaluation across diverse biological tasks to provide a holistic view of model performance [4].

Core Metric Definitions and Biological Interpretations

A foundational understanding of core metrics is essential for their correct application in genomic studies. The table below summarizes the primary metrics and their roles in evaluating models.

Table 1: Core Evaluation Metrics for Genomic Model Assessment

| Metric | Full Name | Measurement Focus | Value Range | Interpretation in Genomics |
|---|---|---|---|---|
| AUROC | Area Under the Receiver Operating Characteristic Curve | Overall discriminative ability in binary classification [33] | 0 to 1 (values below 0.5 indicate worse-than-chance ranking) | 0.5 = No better than chance; 0.7-0.8 = Fair; 0.8-0.9 = Considerable; ≥0.9 = Excellent [34] |
| PCC | Pearson Correlation Coefficient | Strength and direction of a linear relationship between two continuous variables [35] | -1 to 1 | -1 = Perfect negative correlation; 0 = No linear correlation; +1 = Perfect positive correlation [36] |
| SCC | Spearman's Rank Correlation Coefficient | Strength and direction of a monotonic relationship (whether linear or not) [37] | -1 to 1 | -1 = Perfect negative monotonic rank; 0 = No monotonic rank correlation; +1 = Perfect positive monotonic rank |

Detailed Metric Characteristics

AUROC (Area Under the Receiver Operating Characteristic Curve): This metric is particularly valuable for binary classification tasks in genomics, such as distinguishing between functional and non-functional genetic elements or identifying enhancer-target gene interactions [4]. It evaluates the model's ability to rank positive instances higher than negative ones across all possible classification thresholds. Because it does not depend on the class distribution, it remains comparable across datasets in which positive cases (e.g., specific gene variants) are rare [33]. On heavily imbalanced data, however, a high AUROC can coexist with many false positives, so it is best read alongside a precision-recall based metric.
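The ranking interpretation of AUROC can be computed directly: it equals the fraction of (positive, negative) pairs in which the positive example receives the higher score, with ties counted as half. The sketch below uses this pairwise definition for clarity; it is quadratic in the number of examples, so it is meant for illustration rather than large benchmarks, and the function name `auroc` is chosen for this example.

```python
def auroc(scores, labels):
    """AUROC as the probability that a positive outranks a negative.

    `labels` holds 1 for positive and 0 for negative examples.
    """
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUROC needs at least one positive and one negative")
    # Count correctly ordered pairs; a tie contributes half a pair.
    wins = sum((p > n) + 0.5 * (p == n) for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))


scores = [0.9, 0.8, 0.3, 0.2]
labels = [1, 0, 1, 0]
value = auroc(scores, labels)                      # 3 of 4 pairs ordered correctly
inverted = auroc(scores, [1 - y for y in labels])  # same scores, labels flipped
```

Flipping the labels gives the complement of the original value, which shows that a model ranking negatives above positives scores below 0.5.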

PCC (Pearson Correlation Coefficient): PCC assesses the linear relationship between the predicted and actual values of a continuous variable. It is ideal for regression tasks, such as predicting gene expression levels or regulatory sequence activity scores [4]. Its formula is:

\( r = \frac{\sum_i (x_i - \bar{x})(y_i - \bar{y})}{\sqrt{\sum_i (x_i - \bar{x})^2 \sum_i (y_i - \bar{y})^2}} \)