Beyond Animal Models: Revolutionizing Predictive Performance in Preclinical Drug Development

Abstract

This article examines the critical challenge of translating preclinical findings across species to successful human outcomes in drug development. We explore the scientific and regulatory evolution driving the adoption of New Approach Methodologies (NAMs), including advanced in vitro systems like organoids and organs-on-chip, alongside sophisticated in silico tools such as AI and digital twins. For researchers and drug development professionals, this piece provides a comprehensive framework covering the foundational limitations of traditional models, methodological applications of innovative tools, strategies for troubleshooting and optimization, and the crucial process of validation and comparative analysis. The synthesis of these elements highlights a paradigm shift towards a more human-relevant, efficient, and predictive preclinical research ecosystem.

The Translational Gap: Why Traditional Animal Models Fail in Drug Development

The pharmaceutical industry operates at the nexus of scientific innovation and substantial financial risk, and its development process is both lengthy and failure-prone. Developing a new drug typically takes 10–15 years and costs on the order of $1–2 billion or more per successful drug. When the cost of failed candidates and of capital is included, the average capitalized cost reaches $2.6 billion [1] [2]. The process is organized into rigorous, sequential stages designed to establish safety and efficacy, and attrition across those stages is severe. Industry analyses consistently show that only about 7.9% of drug candidates entering Phase I clinical trials ultimately receive marketing approval [2]. In other words, more than 90% of clinical drug development efforts fail [3] [4]. This high cost of attrition affects therapeutic advancement, resource allocation, and, ultimately, patient care.

Understanding these success rates, and exactly where failures occur, is crucial for researchers, scientists, and drug development professionals seeking to optimize the pipeline. This analysis views drug development through the lens of validation. It draws an analogy with the Species Threat Abatement and Restoration (STAR) metric, which is used in conservation biology to quantify conservation contributions and whose global values must be validated at national scales [5]. STAR requires validation against local data to ensure accurate threat assessment and resource prioritization. In the same way, drug development strategies must be validated with robust, data-driven approaches at each phase to reduce attrition risk and improve the probability of success.

Quantitative Analysis of Drug Development Success Rates

Phase-Transition Success Probabilities

The drug development pipeline functions as a sequential filtering mechanism, with the highest attrition occurring during clinical trials. Table 1 summarizes the likelihood of a drug successfully transitioning from one phase to the next, based on aggregated industry data:

Table 1: Drug Development Phase Transition Success Rates and Characteristics

| Development Phase | Average Duration | Primary Purpose | Probability of Transition to Next Phase | Primary Reasons for Failure |
|---|---|---|---|---|
| Discovery & Preclinical | 2-4 years | Target identification, lead optimization, safety/toxicology testing | ~0.01% (to approval) | Toxicity, lack of effectiveness in models [2] [4] |
| Phase I | 2.3 years | Safety, tolerability, and dosage in small groups (20-100) | 52% - 70% | Unmanageable toxicity/adverse effects [2] [4] |
| Phase II | 3.6 years | Efficacy and further safety in patients (several hundred) | 29% - 40% | Lack of clinical efficacy (~40-50% of failures) [2] [4] |
| Phase III | 3.3 years | Confirm efficacy, monitor long-term safety in large populations (300-3,000) | 58% - 65% | Insufficient efficacy, safety concerns in larger cohorts [2] [4] |
| Regulatory Review | 1.3 years | Agency review of all data for benefit-risk assessment | ~91% | Safety/efficacy concerns, inadequate evidence [2] |

These data show that Phase II is the largest attrition point in clinical development, with transition success rates of only 29-40% [2] [4]. Phase II is the first rigorous test of efficacy in patients, and lack of clinical efficacy accounts for approximately 40-50% of clinical failures [2] [4]. This pattern suggests that preclinical models often fail to predict human therapeutic responses reliably, exposing a critical validation gap between animal models and human biology.
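Because each phase acts as a sequential filter, the overall likelihood of approval from Phase I is approximately the product of the phase-transition probabilities in Table 1. The minimal Python sketch below multiplies the low and high ends of the ranges quoted in the table. It assumes that transitions are independent and uses the table's figures as illustrative inputs rather than as a validated forecasting model. The dictionary keys and function names are hypothetical.

```python
from math import prod

# Phase-transition probabilities from the low and high ends of the ranges in
# Table 1: Phase I -> II, Phase II -> III, Phase III -> submission, review -> approval.
LOW = {"phase_1": 0.52, "phase_2": 0.29, "phase_3": 0.58, "review": 0.91}
HIGH = {"phase_1": 0.70, "phase_2": 0.40, "phase_3": 0.65, "review": 0.91}


def likelihood_of_approval(transitions: dict[str, float]) -> float:
    """Probability that a Phase I candidate is approved, treating each phase
    as an independent, sequential filter."""
    return prod(transitions.values())


def survivors_by_phase(transitions: dict[str, float], start: int = 100) -> list[tuple[str, float]]:
    """Expected number of candidates (out of `start`) remaining after each phase."""
    remaining = float(start)
    survivors = []
    for phase, p in transitions.items():
        remaining *= p
        survivors.append((phase, remaining))
    return survivors


if __name__ == "__main__":
    print(f"LOA, low end:  {likelihood_of_approval(LOW):.1%}")
    print(f"LOA, high end: {likelihood_of_approval(HIGH):.1%}")
    for phase, n in survivors_by_phase(LOW):
        print(f"after {phase:<8} {n:5.1f} of 100 candidates remain")
```

With the low-end inputs, the product is about 8.0%, in line with the 7.9% figure cited earlier. The high-end inputs give about 16.6%. At the low-end rates, only about 15 of every 100 candidates entering Phase I survive Phase II, which makes that phase's share of the attrition easy to see.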

Therapeutic Area Variability

Success rates vary substantially across therapeutic areas, reflecting differing disease complexities, validation of therapeutic targets, and regulatory environments. Table 2 compares Likelihood of Approval (LOA) from Phase I across selected therapeutic areas:

Table 2: Success Rate Variation by Therapeutic Area (Likelihood of Approval from Phase I)

| Therapeutic Area | Likelihood of Approval (LOA) from Phase I | Notable Challenges |
|---|---|---|
| Hematology | 23.9% | Often better understanding of disease mechanisms [2] |
| Oncology | <10% (average) | Tumor heterogeneity, complex microenvironment [4] |
| Neurology | <10% (average) | Blood-brain barrier delivery, disease complexity [4] |
| Cardiovascular | <10% (average) | Need for large, long-term outcome studies [4] |
| Urology | 3.6% | Specific challenges not detailed in the cited source [2] |

Hematology drugs demonstrate the highest LOA at 23.9%, while urology drugs have the lowest at just 3.6% [2]. Drugs targeting neurology, oncology, cardiovascular disease, and urology consistently show some of the lowest likelihoods of approval [4]. These variations underscore the importance of disease-specific validation strategies and the limitations of one-size-fits-all development approaches.

Methodologies for Analyzing and Reducing Attrition

Experimental Protocols for Efficacy and Safety Validation

Predictive Data Integration for Preclinical Validation

Advanced data integration methodologies are increasingly critical for bridging the translational gap between preclinical models and human outcomes:

- Protocol Objective: To create contextualized data infrastructures that enhance the predictive value of preclinical and early clinical data [3].

- Methodology: Implement unified data platforms that aggregate and contextualize information from numerous systems, equipment, and processes. Sanofi exemplified this approach by building a data infrastructure integrating process data with physical sensors (vibration, ultrasonic monitors) and applying anomaly-detection models to predict equipment issues and optimize maintenance [3].

- Validation Technique: Develop predictive models using real-time operations data to assess product consistency directly within the manufacturing process, as demonstrated by Biogen, which embedded consistency testing into production to dramatically reduce quality control time [3].

- Data Analysis: Deploy AI-driven process simulation in a "lab in a loop" approach, where researchers use data from experiments to create AI models that predict molecular design and interactions, then test these predictions and feed results back into model refinement [3].
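The iterative "lab in a loop" cycle (predict, test, feed results back) can be pictured with a deliberately tiny Python sketch. Everything in it is a hypothetical placeholder rather than the AI models described in [3]. The one-dimensional design space, the `run_assay` function standing in for a wet-lab experiment, and the nearest-neighbour surrogate with a distance-based exploration bonus are all illustrative choices. The exploration bonus is a crude stand-in for the uncertainty estimates a real model would provide.

```python
def run_assay(x: float) -> float:
    """Hypothetical stand-in for a wet-lab measurement (e.g. a potency score).
    In a real loop this step is an experiment, not a formula."""
    return 1.0 - (x - 0.7) ** 2


def nearest(x: float, observed: dict[float, float]) -> float:
    """Return the already-tested candidate closest to x."""
    return min(observed, key=lambda tested: abs(tested - x))


def acquisition(x: float, observed: dict[float, float], beta: float) -> float:
    """Toy surrogate model: the value of the nearest tested candidate, plus an
    exploration bonus proportional to distance (a crude uncertainty proxy)."""
    closest = nearest(x, observed)
    return observed[closest] + beta * abs(closest - x)


def lab_in_a_loop(candidates, initial, rounds=5, beta=0.5):
    # Seed the "model" with a few initial experiments.
    observed = {x: run_assay(x) for x in initial}
    for _ in range(rounds):
        untested = [x for x in candidates if x not in observed]
        if not untested:
            break
        # Predict: rank untested candidates with the current model.
        chosen = max(untested, key=lambda x: acquisition(x, observed, beta))
        # Test, then feed the result back into the data the model predicts from.
        observed[chosen] = run_assay(chosen)
    best = max(observed, key=observed.get)
    return best, observed[best], observed


if __name__ == "__main__":
    candidates = [i / 20 for i in range(21)]  # hypothetical 1-D design space
    best_x, best_value, history = lab_in_a_loop(candidates, initial=[0.0, 0.5, 1.0])
    print(f"tested {len(history)} candidates; best x={best_x:.2f}, value={best_value:.4f}")
```

On each pass, the loop picks the most promising untested candidate, "measures" it, and adds the result to the model's data, which is the feedback step described above. The `beta` parameter trades off exploiting regions near good results against exploring untested ones.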

Real-World Evidence (RWE) Integration for Clinical Validation

The incorporation of Real-World Evidence (RWE) represents a paradigm shift in clinical validation strategies:

- Protocol Objective: To generate clinical evidence from Real-World Data (RWD) collected outside traditional clinical trials, capturing broader patient experiences and outcomes [6].

- Data Sources: Electronic health records (EHRs), insurance claims data, patient registries, and digital health technologies (wearable devices, mobile health apps, electronic patient diaries) [6].

- Methodology: Implement robust data governance frameworks and standardization protocols (HL7, FHIR) to harmonize diverse data sources. Conduct rigorous validation processes to identify errors, missing values, and inconsistencies [6].

- Application: Utilize RWE to enhance preclinical assessment by supplementing animal models with historical clinical data, identifying potential safety signals and efficacy trends early in development. RWE also enables research on more diverse and high-risk patient groups often excluded from traditional RCTs [6].
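The validation step listed under Methodology (screening for errors, missing values, and inconsistencies) can be sketched in Python as a set of record-level checks. The field names (`patient_id`, `birth_year`, `visit_year`, `systolic_bp`), the plausibility range, and the flat-dictionary record layout are hypothetical. A real pipeline would apply such checks to data already harmonized to a standard such as HL7 FHIR.

```python
from dataclasses import dataclass, field

REQUIRED_FIELDS = ("patient_id", "birth_year", "visit_year", "systolic_bp")
SBP_RANGE = (60, 260)  # hypothetical plausibility range for systolic BP (mmHg)


@dataclass
class ValidationReport:
    missing: list = field(default_factory=list)      # (record index, field name)
    implausible: list = field(default_factory=list)  # (record index, reason)
    duplicates: list = field(default_factory=list)   # record indices

    @property
    def clean(self) -> bool:
        return not (self.missing or self.implausible or self.duplicates)


def validate_records(records: list[dict]) -> ValidationReport:
    report = ValidationReport()
    seen = set()
    for i, rec in enumerate(records):
        # 1. Missing values: a required field is absent or None.
        gaps = [f for f in REQUIRED_FIELDS if rec.get(f) is None]
        report.missing.extend((i, f) for f in gaps)
        # 2. Internal inconsistency: a visit dated before the patient's birth.
        if "birth_year" not in gaps and "visit_year" not in gaps:
            if rec["visit_year"] < rec["birth_year"]:
                report.implausible.append((i, "visit before birth"))
        # 3. Out-of-range measurement.
        sbp = rec.get("systolic_bp")
        if sbp is not None and not SBP_RANGE[0] <= sbp <= SBP_RANGE[1]:
            report.implausible.append((i, f"systolic_bp={sbp} out of range"))
        # 4. Duplicate: same values in every required field as an earlier record.
        key = tuple(rec.get(f) for f in REQUIRED_FIELDS)
        if key in seen:
            report.duplicates.append(i)
        seen.add(key)
    return report


if __name__ == "__main__":
    records = [
        {"patient_id": "P1", "birth_year": 1960, "visit_year": 2020, "systolic_bp": 135},
        {"patient_id": "P2", "birth_year": 1985, "visit_year": 1980, "systolic_bp": 120},
        {"patient_id": "P3", "birth_year": 1970, "visit_year": 2021, "systolic_bp": None},
        {"patient_id": "P1", "birth_year": 1960, "visit_year": 2020, "systolic_bp": 135},
        {"patient_id": "P4", "birth_year": 1950, "visit_year": 2019, "systolic_bp": 400},
    ]
    print(validate_records(records))
```

Collecting issues in a report, rather than silently dropping records, keeps the cleaning step auditable. This matters when RWE is later used to support regulatory or development decisions.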

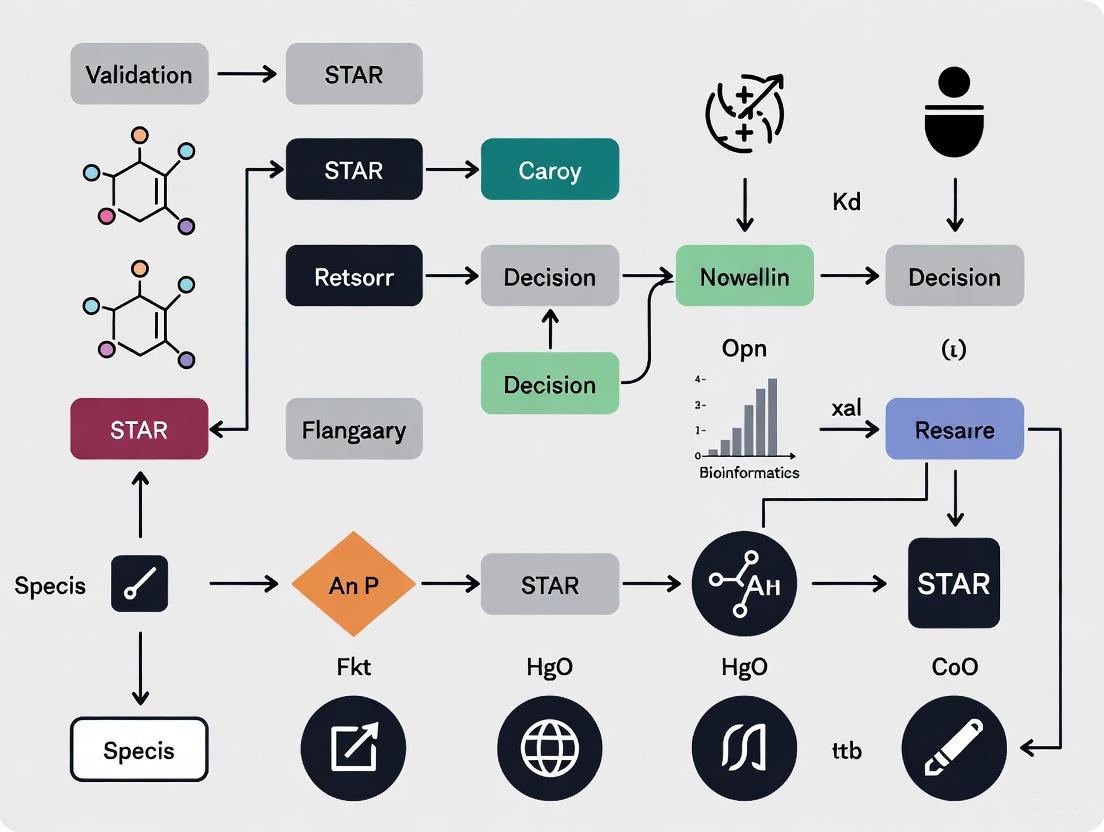

The following diagram illustrates this integrated validation methodology for bridging preclinical and clinical development:

Statistical Analysis Frameworks for Comparative Effectiveness

In the absence of head-to-head clinical trials, validated statistical methods enable indirect treatment comparisons:

- Adjusted Indirect Comparisons: This accepted method compares the magnitude of the treatment effects of two treatments, each measured relative to a common comparator, and so preserves the randomization within each original trial [7]. For example, if Drug A and Drug B have each been compared with Drug C in separate trials, their indirect comparison is calculated as (A vs. C) - (B vs. C) [7], with both effects expressed on an additive scale such as a log odds ratio or a mean difference.

- Multiple Adjusted Indirect Comparisons: When no common comparator exists, a series can be constructed linking two drugs indirectly via multiple comparators (e.g., A vs. C, B vs. D, and C vs. D) [7].

- Mixed Treatment Comparisons (MTC): These Bayesian statistical models incorporate all available data for a drug, including data not relevant to the comparator drug, reducing uncertainty through network integration [7].

All indirect analyses rely on the fundamental assumption that study populations in the trials being compared are sufficiently similar—a validation requirement analogous to ensuring STAR metric applicability across different geographical contexts [7] [5].
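The adjusted indirect comparison described above (commonly attributed to Bucher and colleagues) subtracts effects on an additive scale. The variance of the indirect estimate is the sum of the variances of the independent direct estimates, so uncertainty grows with every link in the chain. The Python sketch below applies that rule to the single-comparator case and to the two-comparator chain (A vs. C, C vs. D, B vs. D). The odds ratios and standard errors in the example are hypothetical numbers, not results from [7].

```python
import math
from statistics import NormalDist


def indirect_comparison(d_ac: float, se_ac: float, d_bc: float, se_bc: float):
    """A vs. B through common comparator C. Effects must be on an additive
    scale (e.g. log odds ratios); the two trials are assumed independent."""
    d_ab = d_ac - d_bc
    se_ab = math.sqrt(se_ac**2 + se_bc**2)
    return d_ab, se_ab


def chained_comparison(d_ac, se_ac, d_cd, se_cd, d_bd, se_bd):
    """A vs. B through two comparators: (A vs. C) + (C vs. D) - (B vs. D).
    Variances add along the chain."""
    d_ab = d_ac + d_cd - d_bd
    se_ab = math.sqrt(se_ac**2 + se_cd**2 + se_bd**2)
    return d_ab, se_ab


def confidence_interval(d: float, se: float, level: float = 0.95):
    """Normal-approximation confidence interval on the additive scale."""
    z = NormalDist().inv_cdf(0.5 + level / 2)
    return d - z * se, d + z * se


if __name__ == "__main__":
    # Hypothetical odds ratios vs. a shared placebo arm (C) from two trials.
    d_ac, se_ac = math.log(0.60), 0.15
    d_bc, se_bc = math.log(0.80), 0.20
    d_ab, se_ab = indirect_comparison(d_ac, se_ac, d_bc, se_bc)
    lo, hi = confidence_interval(d_ab, se_ab)
    print(f"Indirect OR (A vs. B) = {math.exp(d_ab):.2f}, "
          f"95% CI {math.exp(lo):.2f} to {math.exp(hi):.2f}")
```

In this example, the indirect odds ratio is 0.75. However, the combined standard error of 0.25 gives a 95% interval of roughly 0.46 to 1.22, which is wider than either direct comparison. This loss of precision is the usual price of indirect evidence, and it comes on top of the population-similarity assumption noted above.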

The Scientist's Toolkit: Essential Research Reagent Solutions

The following reagents, technologies, and platforms represent essential components for modern drug development workflows focused on validating targets and reducing attrition:

Table 3: Key Research Reagent Solutions for Drug Development Validation

| Tool/Category | Specific Examples | Function in Validation |
|---|---|---|
| Data Integration Platforms | AVEVA PI System, Cloud-based Data Architecture | Aggregates and contextualizes data from disparate systems, equipment, and processes for predictive modeling [3] |
| AI/Modeling Platforms | NVIDIA Earth-2, "Lab in a Loop" AI Models | Applies deep learning to explore chemical databases, streamline testing, and create digital twins for virtual patient testing [3] [8] |
| Real-World Data Sources | Electronic Health Records, Wearable Sensors, Patient Registries | Provides real-world treatment response data and enables remote patient monitoring in clinical trials [3] [6] |
| Statistical Analysis Software | R, Python, CADTH Indirect Comparison Software | Enables complex statistical analyses including adjusted indirect comparisons and mixed treatment comparisons [7] [9] |
| Screening Technologies | High-Throughput Screening, Patient-Derived Organoids | Identifies promising lead compounds and provides more physiologically relevant disease models for efficacy testing [1] |
| Biomarker Assays | Genomic Profiling, Molecular Diagnostics | Enables patient stratification, target engagement assessment, and pharmacodynamic response measurement [1] |

These tools collectively enable a more validated, data-driven approach to drug development, helping to address the high attrition rates by providing better predictive capabilities throughout the pipeline.

The analysis of drug development success rates reveals a process characterized by substantial attrition, particularly at the Phase II efficacy testing stage where approximately 60-70% of candidates fail, primarily due to insufficient clinical efficacy [2] [4]. This high failure rate, combined with lengthy development timelines of 10-15 years and costs exceeding $2 billion per approved drug, creates a challenging environment for therapeutic innovation [1] [2].

The path forward requires a fundamental shift toward rigorously validated approaches at each development stage, mirroring validation principles exemplified by the STAR metric in conservation science [5]. This includes implementing robust data integration platforms that contextualize information from multiple sources [3], incorporating real-world evidence to enhance understanding of treatment effects in diverse populations [6], and applying appropriate statistical methods for comparative effectiveness research when direct trial evidence is unavailable [7].

As the industry increasingly adopts these validated approaches—leveraging AI-driven models, real-world data, and advanced analytics—there is potential to fundamentally reshape the attrition curve, creating a more efficient and predictive drug development pipeline that ultimately delivers better therapies to patients in need.

A fundamental challenge in modern biomedical research lies in the significant biological differences between preclinical animal models and humans. These species-specific disconnects profoundly affect the development of effective therapies and accurate disease models. Chemical individuality, a concept articulated by Archibald Garrod, underscores how genetic variation creates substantial diversity in human metabolic processes and disease susceptibility [10]. Despite this understanding, traditional animal models, particularly rodents, remain the cornerstone of preclinical research. The result is a translational gap in which promising laboratory findings frequently fail to predict human clinical outcomes. This gap contributes significantly to drug development attrition rates, which approach 95% in fields such as oncology [11].

The core issue stems from multifaceted differences across species in drug metabolism pathways, disease pathophysiology mechanisms, and population-level genetic diversity. These disconnects manifest at molecular, cellular, and systemic levels, compromising the predictive value of even sophisticated animal models. For instance, fundamental differences in cytochrome P450 enzyme systems between mice and humans lead to dramatically different drug metabolism profiles, potentially altering both efficacy and toxicity [12] [13]. Simultaneously, differences in immune system regulation, target protein expression, and genetic heterogeneity further complicate extrapolation from model organisms to human populations [14].

This guide systematically compares key species differences across these domains, providing researchers with a framework for critically evaluating preclinical data. By understanding these disconnects, scientists can make more informed decisions in model selection, experimental design, and clinical translation, ultimately improving the efficiency and success rate of therapeutic development.

Comparative Drug Metabolism: Cytochrome P450 System

The cytochrome P450 (CYP) enzyme system represents perhaps the most clinically significant site of species-specific disconnects in pharmacology. These enzymes mediate phase I metabolism for approximately 75-80% of clinically used drugs, creating profound implications for drug development and safety assessment.

Quantitative Differences in CYP Gene Families

Table 1: Cytochrome P450 Composition in Mice Versus Humans

| Species | CYP Genes in Major Drug-Metabolizing Subfamilies | Key Enzymes for Drug Metabolism | Regulatory Nuclear Receptors |
|---|---|---|---|
| Mouse | 34 genes across Cyp1a, Cyp2c, Cyp2d, and Cyp3a subfamilies [12] [13] | Multiple enzymes with overlapping functions | Mouse-specific Car and Pxr with different activation profiles [13] |
| Human | 6 key genes: CYP1A1, CYP1A2, CYP2C9, CYP2D6, CYP3A4, CYP3A7 [12] | CYP3A4 alone metabolizes ~50% of clinical drugs | Human CAR and PXR with distinct ligand binding [13] |

The quantitative disparity in CYP genes leads to functional differences with direct translational consequences. Mice generally metabolize drugs more rapidly than humans due to enzyme redundancy and different expression patterns, potentially leading to underestimation of human drug exposure and half-life [12]. Furthermore, the substrate specificity differs between orthologous enzymes, meaning a compound metabolized by one pathway in mice may follow a completely different metabolic route in humans, producing distinct metabolite profiles with potentially unique pharmacological or toxicological activities [13].

Experimental Models and Solutions

Experimental Protocol 1: Assessing Species-Specific Drug Metabolism Using Humanized Mouse Models

- Objective: To evaluate human-relevant metabolism and pharmacokinetics of investigational compounds while circumventing inherent mouse-specific metabolism.

- Model System: The 8HUM mouse model, in which 33 murine CYP genes together with Car and Pxr are replaced with human CYP1A1, CYP1A2, CYP2C9, CYP2D6, CYP3A4, CYP3A7, PXR, and CAR [12] [13] [15].

- Methodology:

- Administer the test compound to both 8HUM and wild-type mice via appropriate route (e.g., oral gavage, intravenous injection).

- Collect serial blood samples at predetermined time points (e.g., 0.25, 0.5, 1, 2, 4, 8, 12, 24 hours post-dose).

- Process plasma samples and quantify parent drug and major metabolites using LC-MS/MS.

- Determine key pharmacokinetic parameters: AUC (area under the curve), Cmax (maximum concentration), Tmax (time to Cmax), and t1/2 (elimination half-life).

- Compare metabolite profiles between 8HUM and wild-type mice to identify human-specific metabolites.

- Validation: This approach has been validated in studies demonstrating that 8HUM mice accurately replicate clinically observed drug-drug interactions that were not predicted by conventional mouse models or in vitro systems [15].

Figure 1: Experimental workflow for comparing drug metabolism pathways in wild-type versus humanized mouse models. The 8HUM model replaces 33 murine Cyp genes, together with Car and Pxr, with eight human genes (six CYPs plus PXR and CAR) to better recapitulate human metabolic profiles.
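The pharmacokinetic parameters in the protocol are usually derived by non-compartmental analysis. The minimal Python sketch below assumes the linear trapezoidal rule for AUC from the first to the last sample. It estimates the terminal elimination rate by log-linear least squares over the last three time points. Real analyses typically also extrapolate AUC to infinity and choose terminal-phase points more carefully. The concentrations are simulated from a hypothetical one-compartment oral profile, not measured data, and the `nca` function name is illustrative.

```python
import math


def nca(times: list[float], conc: list[float], n_terminal: int = 3) -> dict:
    """Non-compartmental PK summary for one animal's plasma profile."""
    if len(times) != len(conc) or len(times) < n_terminal:
        raise ValueError("need matched times/concentrations and enough points")
    # Cmax and Tmax: the observed peak concentration and when it occurs.
    i_max = max(range(len(conc)), key=conc.__getitem__)
    cmax, tmax = conc[i_max], times[i_max]
    # AUC from first to last sample by the linear trapezoidal rule.
    auc = sum((t2 - t1) * (c1 + c2) / 2
              for t1, t2, c1, c2 in zip(times, times[1:], conc, conc[1:]))
    # Terminal rate constant: least-squares slope of ln(C) vs. t on the last points.
    t_tail = times[-n_terminal:]
    ln_c = [math.log(c) for c in conc[-n_terminal:]]
    t_mean = sum(t_tail) / n_terminal
    y_mean = sum(ln_c) / n_terminal
    slope = (sum((t - t_mean) * (y - y_mean) for t, y in zip(t_tail, ln_c))
             / sum((t - t_mean) ** 2 for t in t_tail))
    kel = -slope
    return {"Cmax": cmax, "Tmax": tmax, "AUC_last": auc,
            "kel": kel, "t_half": math.log(2) / kel}


if __name__ == "__main__":
    # Sampling schedule from the protocol (hours post-dose).
    times = [0.25, 0.5, 1, 2, 4, 8, 12, 24]
    # Hypothetical oral profile: absorption rate 2 /h, elimination half-life 4 h.
    ka, ke, scale = 2.0, math.log(2) / 4, 10.0
    conc = [scale * (math.exp(-ke * t) - math.exp(-ka * t)) for t in times]
    print({k: round(v, 3) for k, v in nca(times, conc).items()})
```

Running the same function on 8HUM and wild-type profiles puts the AUC, Cmax, and t1/2 comparisons on an equal footing. A lower AUC and shorter t1/2 in wild-type animals would be consistent with the faster murine metabolism described above.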

Disease Pathophysiology and Modeling Limitations

Beyond metabolism, significant differences in disease pathophysiology between species complicate modeling of human disorders. These disconnects appear particularly pronounced in cancer, immunology, and neurology, where complex cellular interactions and tissue-specific microenvironments play critical roles.

Tumor Heterogeneity and Microenvironment

Traditional preclinical cancer models often fail to replicate the complexity of human tumors. Two-dimensional cell cultures lack crucial three-dimensional architecture, cell-matrix interactions, and diverse cellular composition characteristic of human tumors [11]. Even patient-derived xenografts implanted into immunocompromised mice suffer from replacement of human stromal components with murine counterparts, distorting the tumor microenvironment and potentially altering drug response [11].

Table 2: Limitations of Preclinical Cancer Models

| Model System | Key Advantages | Species-Specific Limitations | Impact on Translational Predictive Value |
|---|---|---|---|
| 2D Cell Culture | Simple, cost-effective, high-throughput | Lacks 3D architecture, cell-matrix interactions, tumor microenvironment | Poor prediction of clinical efficacy; high false positive rate |
| Murine Xenografts | Uses human cancer cells | Lacks functional human immune system; murine stromal replacement | Fails to predict immunotherapy responses; altered metastasis patterns |
| Patient-Derived Xenografts (PDXs) | Maintains original tumor histology/genomics | Lacks intact human tumor microenvironment; expensive; low throughput | Limited for large-scale drug screens; stromal replacement alters drug response |
| Genetically Engineered Mouse Models (GEMMs) | Studies cancer development in situ | Species differences in pharmacology and safety; time-consuming | May not accurately predict human drug responses due to pharmacological differences |

Immune System and Target Biology

Perhaps the most dramatic examples of species disconnects come from immunology. The TGN1412 catastrophe demonstrated how target expression differences can lead to tragic clinical outcomes. This CD28 superagonist antibody showed excellent tolerance in non-human primates at high doses but triggered life-threatening cytokine storms in human volunteers [14]. The critical difference was that human CD4+ effector memory T cells express CD28 and could be activated by TGN1412, while non-human primate counterparts lacked CD28 expression on this cell subset [14].

Similar target differences affect cancer therapeutics. The checkpoint inhibitor pembrolizumab (anti-PD-1) shows high affinity for human PD-1 but negligible binding to mouse PD-1 due to a single amino acid difference (aspartate in humans versus glycine in mice at position 85) [14]. Such disparities necessitate the development of humanized target models even for basic efficacy testing.

Genetic Diversity and Metabolic Individuality

Beyond species-level differences, genetic diversity within human populations creates additional complexity that animal models cannot fully capture. This chemical individuality significantly influences drug response and disease susceptibility.

Population-Level Genetic Variation

Large-scale metabolomic studies reveal how genetic variation shapes human metabolic profiles. Research analyzing 913 metabolites in 19,994 individuals identified 2,599 variant-metabolite associations, with rare variants (minor allele frequency ≤1%) explaining 9.4% of associations [10]. These genetic influences create genetically influenced metabotypes—clusters of co-regulated metabolites that reflect individual biochemical signatures [10].

Table 3: Examples of Clinically Significant Genetic Polymorphisms with Racial Disparities

| Gene/Protein | Functional Role | Polymorphism Example | Allele Frequency Disparity | Clinical Impact |
|---|---|---|---|---|
| ABCB1/P-gp | Drug efflux transporter | C3435T | Higher in Asian populations [16] | Altered drug absorption and bioavailability |
| CYP3A5 | Drug metabolism | CYP3A5*3 (non-functional) | Less frequent in African-Americans than in Caucasians, ~90% of whom are non-expressors [16] | Higher tacrolimus dose requirements in African-Americans |
| DPYD | Fluoropyrimidine metabolism | DPYD variants | Varies across populations | Severe toxicity from 5-FU/capecitabine in variant carriers [10] |
| SRD5A2 | Androgen metabolism | SRD5A2 variants | Population-specific variants | Altered steroid metabolism; potential adverse effects from SRD5A2 inhibitors [10] |

Experimental Approaches for Studying Metabolic Individuality

Experimental Protocol 2: Identifying Genetically Influenced Metabotypes (GIMs)

- Objective: To identify clusters of co-regulated metabolites influenced by shared genetic variants and assign causal genes.

- Study Population: Large human cohorts (e.g., >15,000 participants) with paired genomic data and untargeted metabolomic profiling [10].

- Metabolomic Profiling: Use high-throughput untargeted mass spectrometry platforms (e.g., Metabolon HD4) quantifying 900+ metabolites across amino acids, lipids, xenobiotics, carbohydrates, nucleotides, cofactors, vitamins, and energy pathways [10].

- Genome-Wide Association Analysis:

- Perform metabolite quantitative trait locus (mQTL) mapping for each metabolite.

- Apply strict significance threshold (P < 1.25 × 10^-11) to identify variant-metabolite associations.

- Conduct conditional analyses to identify conditionally independent variant associations.

- GIM Definition:

- Within associated genomic regions, group metabolites influenced by shared genetic signals.

- Identify minimal set of variants explaining all regional metabolite associations.

- Assign causal genes through manual literature curation and functional annotation.

- Clinical Correlation: Systematically examine clinical relevance of GIMs across electronic health record data for 1,400+ phenotypes [10].

Figure 2: Pathway from genetic variation to clinical phenotype through genetically influenced metabotypes. Genetic variants alter enzyme or transporter function, which regulates metabolite clusters that ultimately influence clinical outcomes like drug response.
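At its core, the mQTL mapping step in Protocol 2 regresses each metabolite level on genotype dosage (0, 1, or 2 copies of the effect allele) at each variant. It then compares the association P value with the study-wide threshold. The Python sketch below shows that single test using ordinary least squares and a normal approximation to the test statistic, which is reasonable only in large cohorts. It omits the covariates, relatedness adjustment, and conditional analysis a real pipeline would include. The simulated genotypes, allele frequency, and effect size are hypothetical.

```python
import math
import random

THRESHOLD = 1.25e-11  # study-wide significance threshold quoted in the protocol


def mqtl_test(dosage: list[int], metabolite: list[float]) -> tuple[float, float]:
    """Regress metabolite level on allele dosage; return (beta, two-sided P)."""
    n = len(dosage)
    x_mean = sum(dosage) / n
    y_mean = sum(metabolite) / n
    sxx = sum((x - x_mean) ** 2 for x in dosage)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(dosage, metabolite))
    beta = sxy / sxx
    alpha = y_mean - beta * x_mean
    rss = sum((y - alpha - beta * x) ** 2 for x, y in zip(dosage, metabolite))
    se = math.sqrt(rss / (n - 2) / sxx)
    z = beta / se
    # Normal approximation to the t distribution (large n): P = erfc(|z| / sqrt(2)).
    p = math.erfc(abs(z) / math.sqrt(2))
    return beta, p


if __name__ == "__main__":
    rng = random.Random(1)
    n, maf, effect = 20_000, 0.2, 0.15  # hypothetical cohort size, MAF, effect
    # Dosage = number of effect alleles drawn independently on two chromosomes.
    dosage = [(rng.random() < maf) + (rng.random() < maf) for _ in range(n)]
    level = [effect * g + rng.gauss(0, 1) for g in dosage]
    beta, p = mqtl_test(dosage, level)
    print(f"beta={beta:.3f}, P={p:.2e}, significant={p < THRESHOLD}")
```

Repeating this test for every metabolite and variant produces the variant-metabolite associations from which GIMs are assembled. The very strict threshold reflects the large number of tests performed.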

The Scientist's Toolkit: Essential Research Reagents and Models

Table 4: Key Research Reagent Solutions for Studying Species Disconnects

| Tool/Reagent | Specific Function | Application in Species Comparison Studies |
|---|---|---|
| 8HUM Mouse Model | Replaces 33 murine CYP genes + Car/Pxr with human orthologs [13] [15] | Predicting human-specific drug metabolism, drug-drug interactions, and metabolite profiles |
| PXB Mouse Model | Humanized liver model via hepatocyte engraftment [17] | Studying human hepatotropic diseases, liver-specific metabolism, and drug-induced liver injury |
| Untargeted Metabolomics Platforms | Simultaneous quantification of 900+ metabolites [10] | Mapping genetically influenced metabotypes and chemical individuality across populations |
| Humanized Target Models | Replacement of murine immune targets with human versions [14] | Testing therapeutics against human-specific epitopes (e.g., PD-1, CD28) |
| Conditional Knockout Systems | Tissue-specific or inducible gene deletion | Studying essential genes with species-specific functions and validating targets |

Species-specific disconnects in drug metabolism, disease pathophysiology, and genetic diversity represent fundamental challenges in translational research. The cytochrome P450 system demonstrates dramatic quantitative and qualitative differences between species, directly impacting drug metabolism rates and pathways. Disease modeling, particularly in oncology and immunology, suffers from inadequate replication of human tumor microenvironments and immune interactions. Furthermore, human genetic diversity creates metabolic individuality that influences drug response and disease susceptibility in ways difficult to capture in inbred animal models.

Advanced model systems, particularly extensively humanized mice like the 8HUM model, provide valuable tools for bridging these translational gaps. Similarly, large-scale human metabolomic studies enable direct examination of genetic influences on biochemical pathways. By acknowledging these species disconnects and employing appropriate models and methodologies, researchers can improve the predictive value of preclinical research and enhance the success rate of therapeutic development.

The 3Rs framework—Replacement, Reduction, and Refinement—represents a fundamental paradigm in humane scientific research, guiding ethical and scientific practices in drug development and toxicity testing. First proposed in 1959 by William Russell and Rex Burch, these principles have evolved from philosophical concepts to actionable guidelines that stimulate policy reform and foster innovative safety assessment approaches in drug development [18] [19]. In modern regulatory practice, the 3Rs principle has revolutionized traditional approaches, shifting focus from mandatory animal toxicity testing toward more human-relevant New Approach Methodologies (NAMs) that minimize animal use while improving predictive accuracy for human safety [18]. This transformation is particularly relevant within the context of species validation research, where the need for translatable results demands models with high biological relevance.

The 3Rs framework operates within a regulatory landscape that has recently changed significantly. In December 2022, the FDA Modernization Act 2.0 was signed into law in the United States, removing the long-standing requirement that all new human drugs be tested on animals [18]. This regulatory shift, together with similar movements globally, has accelerated the adoption of alternative methods. It has also positioned the 3Rs not merely as ethical guidelines but as essential components of sophisticated, predictive toxicology science. The European Medicines Agency has similarly published guidelines on the regulatory acceptance of 3Rs testing approaches, adding to global momentum toward more responsible and human-relevant research practices [18].

Core Principles of the 3Rs Framework

Reduction: Minimizing Animal Numbers Without Compromising Scientific Quality

Reduction refers to the use of methods that minimize the number of animals needed to obtain information of a given amount and precision, consistent with sound scientific statistical standards [20] [19]. In practical application, Reduction strategies enable researchers to extract maximum knowledge from minimal animal use, thereby respecting ethical considerations while maintaining scientific rigor. Modern Reduction goes beyond simply using fewer animals and encompasses sophisticated experimental designs that enhance the quality and translatability of the data obtained.

Longitudinal Experimental Designs: Scientists use longitudinal experiments in which the same animals are imaged repeatedly. Each animal then serves as its own baseline, and separate cohorts are not needed for each time point, which reduces total animal numbers [20]. This approach not only reduces overall animal use but also generates richer datasets by tracking individual animal responses over time.

Microsampling Techniques: In experiments requiring biochemical monitoring, researchers can employ blood microsampling where small blood volumes are collected from the same animal repeatedly instead of requiring multiple animals for terminal blood collection [20]. This technique significantly reduces animal numbers while maintaining data quality.

Data and Resource Sharing: Reduction is further achieved through systematic sharing of data, animals, tissues, and equipment between research groups and organizations, ensuring that similar animal studies are not repeated unnecessarily [20]. This collaborative approach maximizes knowledge gained from each animal used in research.

Advanced Statistical Methods: Going beyond traditional Reduction, modern research employs appropriate statistical analyses and principles of human clinical experimental design—including randomization, heterogenization, and blinding—to reduce the number of animals needed to find meaningful results while accounting for natural variation within populations [19].
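A common way to put this kind of Reduction into practice is an a priori power calculation. It estimates the smallest group size able to detect a biologically meaningful effect, followed by random allocation of animals to groups. The Python sketch below uses the standard normal-approximation formula for comparing two group means, n per group = 2 × (z(1 - α/2) + z(1 - β))² × σ² / Δ². For small groups, a t-distribution-based calculation, as implemented in dedicated power-analysis software, gives slightly larger numbers. The effect sizes, animal IDs, group labels, and seed are hypothetical, as are the function names.

```python
import math
import random
from statistics import NormalDist


def animals_per_group(delta: float, sd: float, alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate n per group for a two-sided, two-sample comparison of means
    (normal approximation; rounds up to a whole animal)."""
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_beta = z.inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_beta) * sd / delta) ** 2)


def randomize(animal_ids: list[str], groups: list[str], seed: int) -> dict[str, str]:
    """Randomly allocate animals to equal-sized groups. The returned key can be
    held by a third party so that outcome assessors stay blinded."""
    if len(animal_ids) % len(groups):
        raise ValueError("animals must divide evenly among groups")
    ids = list(animal_ids)
    random.Random(seed).shuffle(ids)
    per_group = len(ids) // len(groups)
    return {aid: groups[i // per_group] for i, aid in enumerate(ids)}


if __name__ == "__main__":
    n = animals_per_group(delta=10.0, sd=10.0)  # effect of one standard deviation
    print(f"{n} animals per group")
    allocation = randomize([f"M{i:02d}" for i in range(2 * n)], ["vehicle", "treated"], seed=2024)
    print(sorted(allocation.items())[:4])
```

With Δ equal to one standard deviation, this yields 16 animals per group (two-sided α = 0.05, 80% power), and 7 per group if the expected effect is 1.5 standard deviations. This shows how a well-characterized, less variable endpoint directly lowers the number of animals required.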

Refinement: Enhancing Animal Welfare and Data Quality

Refinement encompasses any decrease in the incidence or severity of inhumane procedures applied to those animals which still have to be used, with the goal of minimizing pain, suffering, distress, or lasting harm [19]. Modern Refinement strategies recognize that animal welfare is intrinsically linked to research quality, as stress can significantly alter an animal's behavior and physiology, potentially affecting experimental outcomes [20]. Contemporary Refinement extends beyond pain management to encompass the animal's entire life experience in the research environment.

Environmental Enrichment and Housing: Refinement includes providing comfortable, species-appropriate housing that allows animals to behave as they would in natural settings, implementing up-to-date animal husbandry practices, and offering environmental enrichment that meets an animal's needs while providing opportunities for choice and positive experiences [20] [19].

Procedural Refinements: During experimental procedures, Refinement involves using appropriate anesthesia and analgesia, performing minimally invasive surgery, and training animals to cooperate during procedures rather than using restraint [20]. These approaches reduce distress while often improving data quality.

Evidence-Based Welfare Assessment: Going beyond traditional Refinement means dedicating resources to implementing Refinement strategies. This includes staff who specialize in animal welfare and behavior and keep up with current research, and ongoing assessment of animal care programs to continuously improve practices [19].

Replacement: Advancing Beyond Animal Models

Replacement refers to substituting insentient material for conscious living higher animals, avoiding animal use in experiments through non-animal methods wherever possible [19]. Modern conceptualizations view Replacement as a spectrum. At one end is "soft" replacement, which uses animals considered incapable of experiencing suffering, such as fruit flies or worms. At the other end is "hard" replacement, the absolute avoidance of animal use through human-relevant models [20] [19]. This nuanced perspective acknowledges that any movement toward absolute Replacement is beneficial, even when complete Replacement is not yet feasible.

Full Replacement Methods: These approaches completely avoid animal use and include technologies such as human volunteers, human tissues and cells, established cell lines, computer models, and artificial intelligence simulations [20] [18]. Full Replacement represents the ideal scenario where scientific objectives can be met without any animal use.

Partial Replacement Methods: When full Replacement is not yet possible, researchers may use animals considered incapable of experiencing suffering, such as fruit flies, worms, or other invertebrates, or employ technologies that reduce but do not eliminate animal use [20]. Partial Replacement represents important progress along the Replacement spectrum.

Advanced Non-Animal Technologies: Modern Replacement strategies include several sophisticated approaches [18]:
- organ-on-a-chip devices, which can be fabricated by 3D printing, with compartments that replicate features of human organs;
- in silico modeling and simulations;
- advanced in vitro systems that provide more human-relevant data than traditional animal models.

Table 1: Comparison of Core 3Rs Principles and Implementation Strategies

| Principle | Core Definition | Traditional Approaches | Modern Advancements |
|---|---|---|---|
| Reduction | Using the fewest animals needed for robust, reproducible experiments [19] | Basic statistical power analysis | Longitudinal designs with repeated imaging, blood microsampling, data sharing platforms [20] |
| Refinement | Minimizing pain, suffering, and distress for research animals [19] | Basic pain management during procedures | Species-appropriate environmental enrichment, cooperative training, evidence-based welfare assessment [20] [19] |
| Replacement | Avoiding animal use through non-animal methods [19] | Simple cell cultures, chemical tests | Human organ-on-a-chip models, AI and in silico simulations, human tissue biorepositories [20] [18] |

The 3Rs in Practice: Methodologies and Experimental Approaches

New Approach Methodologies (NAMs) as 3Rs Solutions

New Approach Methodologies (NAMs) represent a broad category of innovative scientific methods. They aim to replace, reduce, or refine animal use in toxicity testing and biomedical research while providing more accurate and relevant human safety data [18]. These methodologies span diverse technological platforms, many of which aim to deliver better human predictivity than traditional animal models. In doing so, they address the limited species translatability that has long plagued pharmaceutical development. The emergence of sophisticated NAMs has been catalyzed by advances in biotechnology and computational power. Growing recognition of the scientific and ethical limitations of animal models has also played a part.

A key framework supporting NAMs implementation is the Integrated Approaches to Testing and Assessment (IATA), developed by the Organisation for Economic Co-operation and Development (OECD). IATA provides a structured methodology that integrates multiple information sources—including in vitro assays, in silico models, omics technologies, and existing in vivo data—to comprehensively assess pharmaceutical safety without relying exclusively on animal testing [18]. This integrated approach allows researchers to build a weight-of-evidence understanding of compound safety using human-relevant systems, strategically employing animal testing only when essential information gaps exist. The OECD has further supported 3Rs implementation through developing guidance documents and tools such as Quantitative Structure-Activity Relationship (QSAR) models and Adverse Outcome Pathways (AOPs) that facilitate non-animal safety assessment [18].

Experimental Protocols for Key Alternative Methods

Organ-on-a-Chip Protocol for Toxicity Screening

Organ-on-a-chip technology represents a cutting-edge Replacement approach that mimics human organ-level physiology more accurately than traditional two-dimensional cell cultures. These microfluidic devices contain hollow channels lined with living human cells arranged to recapitulate organ-specific tissue structures and functions, creating more physiologically relevant models for drug toxicity assessment.

Detailed Experimental Protocol:

- Chip Fabrication: Fabricate microfluidic devices from biocompatible polymers such as polydimethylsiloxane (PDMS), typically by soft lithography or 3D printing. The devices contain compartments that model human organs, including the heart, lungs, kidneys, liver, intestine, and brain [18].

- Cell Sourcing and Seeding: Isolate primary human cells or use differentiated stem cells from relevant tissues. Seed cells at appropriate densities into the respective organ compartments under sterile conditions.

- Tissue Maturation: Perfuse culture medium through the vascular channels and apply appropriate mechanical stimuli (e.g., cyclic stretching for lung chips, fluid flow shear stress for liver chips) to promote tissue maturation and functionality over 7-28 days.

- Compound Exposure: Introduce test compounds at clinically relevant concentrations through the vascular channels, mimicking systemic administration. Collect effluent medium at timed intervals for biomarker analysis.

- Endpoint Assessment: Measure the following endpoints:
  - functional parameters (e.g., beat frequency for heart chips, albumin production for liver chips);
  - barrier integrity (via transepithelial electrical resistance, TEER);
  - cytotoxicity (via lactate dehydrogenase, LDH, release);
  - specific biomarker expression (using immunofluorescence and PCR).

- Data Integration: Analyze multiple endpoint data using computational models to predict human physiological responses, comparing results to historical animal and human data for validation.
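Two of the endpoint calculations in the protocol above are simple enough to show directly: transepithelial electrical resistance (TEER) and lactate dehydrogenase (LDH) release. The sketch below makes two assumptions:
- TEER is normalized to a membrane of known area, as is standard for Transwell-style inserts.
- LDH follows the common three-control design: treated sample, untreated (spontaneous) release, and fully lysed (maximum) release.

The function names and all readings are hypothetical. On-chip TEER electrodes may also need device-specific geometric corrections.

```python
def teer_ohm_cm2(r_measured_ohm: float, r_blank_ohm: float, area_cm2: float) -> float:
    """Unit-area TEER (ohm*cm^2): subtract the cell-free blank, then scale by membrane area."""
    if area_cm2 <= 0:
        raise ValueError("membrane area must be positive")
    return (r_measured_ohm - r_blank_ohm) * area_cm2


def percent_cytotoxicity(ldh_sample: float, ldh_spontaneous: float, ldh_maximum: float) -> float:
    """LDH-release cytotoxicity (%) scaled between untreated and fully lysed controls."""
    span = ldh_maximum - ldh_spontaneous
    if span <= 0:
        raise ValueError("maximum-release control must exceed spontaneous release")
    return 100.0 * (ldh_sample - ldh_spontaneous) / span


if __name__ == "__main__":
    # Hypothetical readings: 1,250 ohm across the cell layer, 150 ohm for a cell-free
    # device, and a 0.33 cm^2 membrane give a TEER of about 363 ohm*cm^2.
    print(teer_ohm_cm2(1250, 150, 0.33))
    # Hypothetical LDH absorbances: treated 0.9, untreated 0.3, lysed 1.5 -> about 50%.
    print(percent_cytotoxicity(0.9, 0.3, 1.5))
```

Expressing both readouts relative to controls makes results comparable across devices and runs. Such normalization is a prerequisite for the data-integration step.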

This protocol enables researchers to study drug metabolism and organ-specific toxicities in a human-relevant system that captures some aspects of organ-organ interactions, potentially replacing certain animal toxicity studies [18].

In Silico Toxicology Protocol Using QSAR Modeling

Quantitative Structure-Activity Relationship (QSAR) modeling is a powerful Replacement and Reduction approach. It predicts a compound's toxicity from its chemical structure, using quantitative relationships between molecular descriptors and toxicity learned from compounds with known toxicological profiles.

Detailed Experimental Protocol:

- Dataset Curation: Compile high-quality experimental data from reliable sources (e.g., EPA's ToxCast, PubChem) for a well-defined toxicity endpoint. Ensure chemical structures are standardized and duplicates removed.

- Descriptor Calculation: Compute molecular descriptors capturing chemical properties (e.g., logP, molecular weight, polar surface area) and structural features using software such as PaDEL or Dragon.

- Dataset Splitting: Divide the dataset into training (70-80%), validation (10-15%), and test sets (10-15%) using rational methods such as Kennard-Stone or sphere exclusion to ensure representative chemical space coverage.

- Model Development: Apply machine learning algorithms (e.g., random forest, support vector machines, neural networks) to training data to build predictive models linking chemical descriptors to toxicity outcomes.

- Model Validation: Assess model performance using validation and test sets, calculating metrics including accuracy, sensitivity, specificity, and concordance. Apply additional validation through external datasets and prospective prediction challenges.

- Application for Safety Assessment: Use validated QSAR models to screen new chemical entities, prioritizing compounds with predicted low toxicity for further development and identifying structural alerts associated with toxicity.
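The Kennard-Stone splitting method named in the protocol is short enough to sketch in pure Python. The sketch makes several assumptions:
- Descriptors have already been computed and scaled, since Euclidean distance is sensitive to units.
- The function name and toy coordinates are illustrative.
- Kennard-Stone picks the training set, and the remaining compounds would then be divided into validation and test sets.

Production QSAR work would normally rely on an established cheminformatics library.

```python
import math
from typing import List, Sequence


def kennard_stone(points: Sequence[Sequence[float]], n_select: int) -> List[int]:
    """Return indices of n_select compounds chosen by the Kennard-Stone algorithm.

    Start with the two most distant compounds, then repeatedly add the compound whose
    distance to its nearest already-selected compound is largest, so the selection
    spreads evenly over descriptor space.
    """
    n = len(points)
    if not 2 <= n_select <= n:
        raise ValueError("n_select must be between 2 and the number of compounds")
    # Pairwise Euclidean distances in (already scaled) descriptor space.
    dist = [[math.dist(points[i], points[j]) for j in range(n)] for i in range(n)]
    # Seed the selection with the two most distant compounds.
    first, second = max(
        ((i, j) for i in range(n) for j in range(i + 1, n)),
        key=lambda pair: dist[pair[0]][pair[1]],
    )
    selected = [first, second]
    remaining = [k for k in range(n) if k not in selected]
    while len(selected) < n_select:
        # Add the compound farthest from its nearest already-selected neighbour.
        best = max(remaining, key=lambda k: min(dist[k][s] for s in selected))
        selected.append(best)
        remaining.remove(best)
    return selected


if __name__ == "__main__":
    # Hypothetical compounds described by two scaled descriptors (e.g., logP, polar surface area).
    descriptors = [(0, 0), (10, 0), (0, 10), (5, 5), (1, 1), (9, 9)]
    train = kennard_stone(descriptors, 4)
    held_out = [i for i in range(len(descriptors)) if i not in train]
    print("training:", train, "held out:", held_out)
```

Because the algorithm deliberately covers the edges of chemical space, the held-out compounds tend to lie inside the region spanned by the training set. This helps when evaluating interpolation, but can flatter performance relative to truly novel chemotypes.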

This computational approach allows rapid, cost-effective toxicity screening of large compound libraries while completely replacing animal use for initial safety assessment [18].

Visualizing the 3Rs Implementation Workflow

The following diagram illustrates the integrated decision-making process for implementing the 3Rs framework in research design:

Diagram 1: 3Rs Implementation Workflow

Comparative Performance Data: Animal Models vs. Alternative Methods

Quantitative Assessment of Alternative Method Performance

The validation and adoption of 3Rs methodologies require rigorous comparison against traditional animal models across multiple performance metrics, including predictive accuracy for human responses, cost efficiency, throughput capacity, and reproducibility. The following table summarizes comprehensive comparative data between established animal models and emerging alternative approaches across key validation parameters.

Table 2: Comprehensive Performance Comparison: Animal Models vs. 3Rs Alternative Methods

| Method Category | Predictive Accuracy for Human Toxicity | Throughput (Compounds/Year) | Cost per Compound | Species Translatability Concerns | Regulatory Acceptance Status |
|---|---|---|---|---|---|
| Traditional Animal Models | Moderate (40-60%) [18] | Low (10-50) | High ($0.5-2M) | Significant species differences in metabolism, distribution | Fully established for most applications |
| In Vitro 2D Cell Cultures | Low-Moderate (30-50%) | High (1,000-5,000) | Low ($5-50K) | Limited physiological complexity, no organ interactions | Accepted for early screening, not for standalone safety |
| Organ-on-a-Chip Systems | Moderate-High (60-80%) [18] | Medium (100-500) | Medium ($100-500K) | Human cell-based but simplified physiology, limited longevity | Emerging acceptance with case-by-case justification |
| In Silico/QSAR Models | Varies by endpoint (50-90%) | Very High (10,000+) | Very Low ($1-10K) | Structure-based prediction, no species translatability issues | Accepted for prioritization and screening |
| Human Primary Tissue Models | High (70-85%) | Low-Medium (50-200) | High ($200-800K) | Maintains human-specific metabolism but donor variability | Limited acceptance, requires complementary data |

The data reveal that while traditional animal models benefit from established regulatory acceptance pathways, they demonstrate significant limitations in predictive accuracy for human outcomes, with estimates suggesting only 40-60% concordance with human toxicity profiles [18]. This translatability gap represents a fundamental scientific limitation that alternative methods specifically aim to address through human biology-based approaches. Organ-on-a-chip systems and human primary tissue models show particularly promising predictive accuracy (60-85%) while maintaining sufficient throughput for meaningful application in drug discovery pipelines [18].

Regulatory Adoption and Validation Metrics

The transition of 3Rs methodologies from research tools to regulatory-accepted approaches requires systematic validation and demonstration of reliability. Recent regulatory changes have significantly accelerated this process, with the FDA Modernization Act 2.0 removing the mandatory animal testing requirement for new drugs and explicitly opening pathways for alternative methods [18]. This legislative shift has catalyzed investment and innovation in NAMs development, with regulatory agencies developing specific guidance documents for evaluating and implementing these approaches.

Critical metrics for regulatory acceptance include:

- Technical Validation: Demonstration of reproducibility within and between laboratories, with clear standard operating procedures and quality control measures.

- Biological Relevance: Evidence that the method measures biologically meaningful endpoints relevant to human physiology and disease.

- Predictive Capacity: Statistical demonstration that the method accurately predicts human responses, typically through comparison with clinical data or established animal models.

- Reliability Assessment: Formal interlaboratory validation studies following established principles such as those developed by OECD, ensuring consistent performance across different laboratory settings.

The European Medicines Agency has published guidance on the principles of regulatory acceptance of 3Rs testing approaches, creating a structured framework for evaluating alternative methods [18]. Similarly, the International Council for Harmonisation (ICH) has advanced the 3Rs through global harmonization of regulatory requirements, reducing redundant animal testing across jurisdictions [18].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Implementing the 3Rs framework requires specialized reagents, tools, and platforms that enable sophisticated non-animal research approaches. The following table details essential research solutions supporting modern Reduction, Refinement, and Replacement strategies.

Table 3: Essential Research Reagents and Solutions for Implementing 3Rs Principles

| Tool/Reagent Category | Specific Examples | Primary Function in 3Rs Research | Key Applications |
|---|---|---|---|
| Human Cell Sources | Primary hepatocytes, iPSCs, organ-specific primary cells | Provides human-relevant biological systems for Replacement | In vitro toxicity screening, disease modeling, metabolic studies |
| Advanced Scaffold Materials | Decellularized ECM, synthetic hydrogels, 3D printing polymers | Supports complex 3D tissue models for Replacement | Organoid development, tissue engineering, organ-on-a-chip systems |
| Microphysiological Systems | Organ-on-a-chip platforms, microfluidic bioreactors | Recreates human organ-level physiology for Replacement | ADME toxicity assessment, disease modeling, drug screening |
| Biosensing Platforms | TEER electrodes, multiparametric sensor arrays, metabolic trackers | Enables longitudinal monitoring for Reduction | Real-time barrier function assessment, metabolic monitoring |
| Computational Tools | QSAR software, PBPK modeling platforms, AI/ML algorithms | Predicts toxicity and efficacy for Replacement/Reduction | Compound prioritization, toxicity prediction, clinical trial design |
| Analytical Technologies | High-content imaging, LC-MS/MS, RNA-seq platforms | Maximizes data generation per sample for Reduction | Mechanistic toxicology, biomarker identification, pathway analysis |
| Environmental Enrichment | Species-specific housing, cognitive challenges, social structures | Improves animal welfare for Refinement | Behavioral studies, neuroscience research, welfare science |

These research tools collectively enable the implementation of all three Rs by providing human-relevant test systems (Replacement), enhancing data quality and quantity from fewer animals (Reduction), and improving animal welfare through better housing and monitoring (Refinement). The continuous development and commercialization of these tools represent a growing market responding to scientific and ethical imperatives in biomedical research.

The 3Rs framework has evolved from an ethical concept to a sophisticated scientific paradigm that simultaneously advances animal welfare and research quality. The ongoing transition from traditional animal models to human-relevant alternative methods represents both an ethical imperative and a scientific opportunity to improve the predictive accuracy of safety assessment. As regulatory agencies worldwide adapt to accept these new approaches—exemplified by the landmark FDA Modernization Act 2.0—the research community is positioned to accelerate the development and implementation of advanced methodologies that better predict human responses [18].

The future of 3Rs implementation will likely focus on further developing integrated testing strategies that combine multiple alternative approaches—computational predictions, in vitro systems, and limited targeted in vivo studies—to build comprehensive safety profiles without relying exclusively on animal models. This evolution will require continued collaboration between researchers, regulatory agencies, and tool developers to establish validated, standardized approaches that meet rigorous scientific standards while adhering to ethical principles. As the scientific community moves "Beyond 3Rs" to expand these concepts, the framework will continue to serve as both a foundation and catalyst for innovation in humane, human-relevant research [19].

The FDA Modernization Act 2.0, signed into law in December 2022, marks a transformative pivot in U.S. drug development policy. It eliminated the long-standing federal mandate for animal testing in preclinical development [21] [22] [23]. The change was driven by the high failure rates of drugs in clinical trials. An estimated 90% of drugs that pass animal studies fail in humans because of unexpected toxicity or lack of efficacy, costing the industry approximately $28 billion annually [21] [24] [25]. The Act explicitly encourages the use of New Approach Methodologies (NAMs) to establish drug safety and effectiveness, including cell-based assays, organ-on-a-chip systems, and sophisticated computer models [24] [23] [25]. This article explores how the regulatory shift affects preclinical validation. It frames the discussion within a broader thesis on scientific and translational research (STAR) performance in cross-species validation research.

The Scientific and Regulatory Catalyst for Change

The impetus for the FDA Modernization Act 2.0 stems from growing recognition of the fundamental pharmacogenomic differences between animal models and humans [21]. These differences lead to substantial variations in how drugs are absorbed, distributed, metabolized, and excreted (ADME) [21].

- The Problem of Genetic Diversity: Highly inbred animal models, such as rodent strains, are genetically homogeneous, effectively acting as technical replicates. This contrasts sharply with the vast genetic diversity of human populations, where genetic variation leads to significant differences in individual responses to drugs [21] [25]. It is estimated that as few as 1 in 25 people are optimal responders to common medications, highlighting the limitations of animal-based predictions [21].

- High-Profile Failures: The 2006 phase I trial of theralizumab (TGN1412), a superagonist anti-CD28 monoclonal antibody, underscores the dangers of poor translatability. Preclinical studies, including studies in cynomolgus monkeys, did not predict the risk. In healthy volunteers, a dose 500 times lower than the dose found safe in monkeys induced a massive cytokine storm, resulting in multi-organ failure and intensive care [21].

- The Legislative Response: The Act amended the Federal Food, Drug, and Cosmetic Act of 1938 by replacing the term "preclinical tests (including tests on animals)" with "nonclinical tests" [26] [23]. It defines these broadly to include cell-based assays, microphysiological systems (MPS), and computer models, alongside other biology-based methods and animal tests. Animal tests therefore remain permitted but are no longer required.

Comparative Performance: Animal Models vs. New Approach Methodologies (NAMs)

The core premise of the regulatory shift is that human biology-based NAMs can provide more predictive data for clinical outcomes than traditional animal models. The tables below summarize key quantitative comparisons.

Table 1: Overall Performance and Translational Value of Preclinical Models

| Model Characteristic | In vivo Animal Models | In vitro 2D Cell Culture | Organ-on-a-Chip (OOC) |
|---|---|---|---|
| Human Relevance | Low (Significant species differences) [21] | Medium | High (Uses primary human cells) [23] |
| Complex 3D Tissues | Yes | No | Yes [23] |
| Blood/Fluid Perfusion | Yes | No | Yes [23] |
| Longevity for Chronic Dosing | > 4 weeks | < 7 days | ~ 4 weeks [23] |
| Predictive Accuracy for Human Toxicity | ~50% agreement with human studies [27] | Low | 87% sensitivity, 100% specificity (Demonstrated in a Liver-Chip DILI study) [26] |
| Time to Result | Slow | Fast | Fast [23] |
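Sensitivity and specificity figures such as those reported for the Liver-Chip are computed from a confusion matrix that compares model calls with known human outcomes. The sketch below shows that arithmetic only. The class name is illustrative, and the counts are hypothetical rather than the data from the cited study.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """Model calls versus known human outcomes for a set of reference compounds."""
    tp: int  # human toxicants correctly flagged as toxic
    fn: int  # human toxicants the model missed
    tn: int  # non-toxic compounds correctly cleared
    fp: int  # non-toxic compounds wrongly flagged

    def sensitivity(self) -> float:
        """Fraction of true toxicants detected (true positive rate)."""
        return self.tp / (self.tp + self.fn)

    def specificity(self) -> float:
        """Fraction of non-toxic compounds correctly cleared (true negative rate)."""
        return self.tn / (self.tn + self.fp)

    def accuracy(self) -> float:
        """Overall agreement with the reference outcomes."""
        return (self.tp + self.tn) / (self.tp + self.fn + self.tn + self.fp)


if __name__ == "__main__":
    # Hypothetical validation set: 20 known toxicants and 10 non-toxic comparators.
    counts = ConfusionCounts(tp=18, fn=2, tn=9, fp=1)
    print(f"sensitivity={counts.sensitivity():.0%} specificity={counts.specificity():.0%} "
          f"accuracy={counts.accuracy():.0%}")
```

With 20 known toxicants, each missed compound lowers sensitivity by 5 percentage points. Headline percentages from small reference sets are best read alongside the number of compounds tested.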

Table 2: Analysis of Clinical Trial Failures and NAMs' Potential Impact

| Metric | Current Animal-Based Paradigm | Potential Impact of NAMs |
|---|---|---|
| Likelihood of Approval from Phase I (2011-2017) | 6-7% [21] | Not yet fully quantified, but expected to improve significantly |
| Common Causes of Clinical Trial Termination | Lack of efficacy (60%), toxicity (30%) [21] | Improved efficacy and safety prediction via human-relevant models [21] [27] |
| Ability to Assess New Modalities (e.g., mAbs) | Low [23] | Medium-High [23] |
| Genetic Diversity of Test System | Low (effectively clones) [21] | High (can leverage diverse iPSC biobanks) [21] |

Detailed Experimental Protocols for Key NAMs

The adoption of NAMs requires robust and standardized experimental protocols. Below are detailed methodologies for three cornerstone technologies.

Protocol for Induced Pluripotent Stem Cell (iPSC)-Based Screening

iPSCs are created by reprogramming somatic cells (e.g., skin fibroblasts, leukocytes) with the Yamanaka factors (OCT4, SOX2, KLF4, and c-MYC) [21]. They enable the creation of patient-specific disease models.

- Step 1: Cell Line Sourcing and Barcoding: Source iPSCs from diverse, ethically screened donors to create a biobank. For large-scale "cell village" experiments, identify each line by its naturally occurring genetic variants (e.g., SNPs catalogued by whole-genome sequencing), which act as an endogenous barcode. Multiple lines can then be cultured together in a single dish, and their data deconvoluted later via single-cell RNA or ATAC sequencing [21].

- Step 2: Directed Differentiation: Differentiate iPSCs into the desired cell type (e.g., cardiomyocytes, neurons) using specific cytokine and small-molecule protocols. For example, to generate cardiomyocytes, activate the Wnt pathway followed by its inhibition [27].

- Step 3: Drug Exposure and Functional Assays: Expose the differentiated cells to the drug candidate. Assay for cell viability, functional output (e.g., contractility for cardiomyocytes), and transcriptomic changes. High-content imaging and multi-electrode arrays are often used [27].

- Step 4: Data Analysis and Stratification: Use single-cell sequencing to assign results back to each barcoded donor. Analyze for patterns of efficacy and toxicity across a genetically diverse cohort, identifying sub-populations of responders and non-responders [21].
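Steps 1 and 4 rest on assigning each sequenced cell back to its donor from the alleles it expresses. The sketch below is a deliberately simplified allele-matching count, not the likelihood model used by dedicated demultiplexing tools such as demuxlet. The data layout, function names, SNP IDs, and the minimum of three informative reads are all hypothetical.

```python
from typing import Dict, List, Optional, Set, Tuple

# Hypothetical layout: donor -> {SNP ID -> alleles carried by that donor}.
Genotypes = Dict[str, Dict[str, Set[str]]]


def assign_cell(reads: List[Tuple[str, str]], genotypes: Genotypes,
                min_informative: int = 3) -> Optional[str]:
    """Assign one cell to a donor by counting observed alleles that match each genotype.

    reads holds (SNP ID, observed allele) pairs from the cell's sequencing reads.
    Returns None when too few informative reads exist or the top donors tie
    (a tie can indicate a doublet containing cells from two donors).
    """
    scores: Dict[str, int] = {}
    informative = 0
    for snp, allele in reads:
        carriers = [d for d, g in genotypes.items() if allele in g.get(snp, set())]
        # Only alleles carried by some donors but not all of them discriminate between donors.
        if 0 < len(carriers) < len(genotypes):
            informative += 1
            for d in carriers:
                scores[d] = scores.get(d, 0) + 1
    if informative < min_informative or not scores:
        return None
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return None
    return ranked[0][0]


def mean_response_by_donor(assignments: Dict[str, Optional[str]],
                           response: Dict[str, float]) -> Dict[str, float]:
    """Average a per-cell readout (e.g., a viability score) over the cells assigned to each donor."""
    grouped: Dict[str, List[float]] = {}
    for cell, donor in assignments.items():
        if donor is not None and cell in response:
            grouped.setdefault(donor, []).append(response[cell])
    return {donor: sum(vals) / len(vals) for donor, vals in grouped.items()}


if __name__ == "__main__":
    genotypes = {
        "donor_A": {"rs1": {"A"}, "rs2": {"C", "T"}, "rs3": {"G"}, "rs4": {"A"}},
        "donor_B": {"rs1": {"G"}, "rs2": {"C"}, "rs3": {"A"}, "rs4": {"A", "T"}},
    }
    cells = {
        "cell_1": [("rs1", "A"), ("rs2", "T"), ("rs3", "G"), ("rs4", "A")],
        "cell_2": [("rs1", "G"), ("rs3", "A"), ("rs4", "T"), ("rs2", "C")],
        "cell_3": [("rs1", "A")],  # too little coverage to call
    }
    assignments = {cell: assign_cell(reads, genotypes) for cell, reads in cells.items()}
    print(assignments)
    print(mean_response_by_donor(assignments, {"cell_1": 0.8, "cell_2": 0.3, "cell_3": 0.5}))
```

Cells that cannot be assigned confidently are left out of the per-donor summary rather than guessed. Responder and non-responder patterns are then compared across donors.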

Protocol for Organ-on-a-Chip (OOC) Toxicological Assessment

Organ-Chips are microfluidic devices containing living human cells that simulate organ-level physiology and organ crosstalk [26] [23].

- Step 1: Device Fabrication and Cell Seeding: Fabricate the chip, typically from polydimethylsiloxane (PDMS) by soft lithography, casting the polymer on photolithographically patterned molds. The device contains microchannels and porous membranes. Seed relevant human cell types (e.g., hepatocytes for a Liver-Chip) into the tissue chamber, and endothelial cells into the adjacent vascular channel to recreate a blood vessel interface [21] [26].

- Step 2: System Maturation and Perfusion: Connect the chip to a perfusion system to circulate cell culture medium, providing nutrients and applying mechanical cues (e.g., fluid shear stress). Allow the tissue to mature and form functional structures for 1-2 weeks [23].

- Step 3: Drug Dosing and Metabolite Tracking: Introduce the drug candidate into the perfusion system. For a multi-organ system, the drug may be introduced into a "gut" chip, with its metabolites transported to a "liver" chip for further analysis. Apply both acute and chronic dosing regimens [23].

- Step 4: Endpoint Analysis: Analyze the effluent for markers of tissue function and injury (e.g., albumin secretion for liver, troponin release for heart). Fix and stain the tissues for immunohistochemistry to assess structural damage. Compare the results against known human toxicants to validate the system's predictive value [26].
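The fluid shear stress applied in Step 2 is usually set by choosing a flow rate for a given channel geometry. A common first estimate for a wide, shallow rectangular channel is the parallel-plate approximation τ = 6μQ/(wh²). Here μ is the medium viscosity, Q the volumetric flow rate, w the channel width, and h its height, with h much smaller than w.

The sketch below assumes a viscosity of 0.001 Pa·s, close to water; culture medium at 37 °C is somewhat less viscous. The channel dimensions and flow rate are hypothetical.

```python
def wall_shear_stress_pa(flow_ul_per_h: float, width_um: float, height_um: float,
                         viscosity_pa_s: float = 1.0e-3) -> float:
    """Approximate wall shear stress (Pa) in a wide, shallow rectangular microchannel.

    Parallel-plate approximation tau = 6 * mu * Q / (w * h**2), valid when h << w.
    """
    q_m3_per_s = flow_ul_per_h * 1e-9 / 3600.0  # 1 uL = 1e-9 m^3; 1 h = 3600 s
    w_m = width_um * 1e-6
    h_m = height_um * 1e-6
    return 6.0 * viscosity_pa_s * q_m3_per_s / (w_m * h_m ** 2)


def pa_to_dyn_per_cm2(tau_pa: float) -> float:
    """Convert Pa to dyn/cm^2 (1 Pa = 10 dyn/cm^2), the unit common in shear-stress studies."""
    return tau_pa * 10.0


if __name__ == "__main__":
    # Hypothetical channel: 1,000 um wide, 100 um high, perfused at 30 uL/h.
    tau = wall_shear_stress_pa(30, 1000, 100)
    print(f"{tau:.4f} Pa = {pa_to_dyn_per_cm2(tau):.3f} dyn/cm^2")
```

Because shear scales with 1/h², halving the channel height quadruples the stress at the same flow rate. Channel geometry is therefore as important as pump settings when matching the physiological range for a given tissue.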

Protocol for In Silico Prediction of Drug-Induced Liver Injury (DILI)

Computational models use AI and machine learning to predict toxicity from a drug's structural and physicochemical properties.

- Step 1: Data Curation and Model Training: Curate a large dataset of chemical structures with known human DILI outcomes. Use this data to train a machine learning model, such as a random forest or deep neural network, to recognize structural features associated with hepatotoxicity [25].

- Step 2: Feature Extraction and Integration: For a new drug candidate, extract key molecular descriptors (e.g., molecular weight, lipophilicity, presence of specific functional groups). Some advanced models may integrate data from lower-level in vitro assays [24] [25].

- Step 3: Prediction and Confidence Scoring: The AI model outputs a prediction of the compound's DILI risk (e.g., high, medium, low). It also provides a confidence score based on the compound's similarity to those in its training set [25].

- Step 4: Experimental Correlation and Validation: Correlate the in silico predictions with experimental data from other NAMs, such as the OOC and iPSC-based assays described above, to build a comprehensive weight-of-evidence safety profile [26].
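Steps 1 to 3 of the in silico protocol can be illustrated with a dependency-free sketch. A k-nearest-neighbour vote over Tanimoto similarity stands in here for the random forest or deep neural network named in Step 1. This substitution makes the similarity-based confidence score of Step 3 explicit.

Fingerprints are represented as sets of "on" bit indices, the form a cheminformatics toolkit would produce. The following are all illustrative assumptions, not validated settings:
- the function names and training labels;
- k = 3;
- the 0.5 applicability-domain cut-off.

```python
from collections import Counter
from typing import FrozenSet, List, Tuple

Fingerprint = FrozenSet[int]  # indices of "on" bits in a binary structural fingerprint


def tanimoto(a: Fingerprint, b: Fingerprint) -> float:
    """Tanimoto (Jaccard) similarity between two binary fingerprints."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def predict_dili(query: Fingerprint, training: List[Tuple[Fingerprint, str]],
                 k: int = 3, domain_threshold: float = 0.5) -> Tuple[str, float, bool]:
    """Return (risk label, confidence, in_domain) for a query compound.

    The label is a majority vote of the k most similar training compounds; the
    confidence is their mean similarity; in_domain flags whether the nearest
    neighbour is at least domain_threshold similar (an illustrative cut-off).
    """
    if not training:
        raise ValueError("training set is empty")
    ranked = sorted(training, key=lambda item: tanimoto(query, item[0]), reverse=True)
    neighbours = ranked[:k]
    sims = [tanimoto(query, fp) for fp, _ in neighbours]
    label = Counter(lbl for _, lbl in neighbours).most_common(1)[0][0]
    confidence = sum(sims) / len(sims)
    return label, confidence, sims[0] >= domain_threshold


if __name__ == "__main__":
    # Hypothetical fingerprints and DILI labels.
    training = [
        (frozenset({1, 2, 3, 4}), "high"),
        (frozenset({1, 2, 3, 5}), "high"),
        (frozenset({10, 11, 12}), "low"),
        (frozenset({10, 11, 13}), "low"),
        (frozenset({1, 2, 10, 11}), "medium"),
    ]
    print(predict_dili(frozenset({1, 2, 3, 6}), training))  # similar to known hepatotoxicants
    print(predict_dili(frozenset({20, 21}), training))      # outside the applicability domain
```

The second query still receives a label, but with zero confidence and an out-of-domain flag. Predictions of this kind are the ones that Step 4 would route to OOC or iPSC assays for experimental confirmation.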

The following workflow diagram illustrates the integrated application of these key NAM protocols within a preclinical validation strategy.

The Scientist's Toolkit: Essential Research Reagent Solutions

Transitioning to a NAM-centric workflow requires a suite of specialized tools and reagents. The following table details essential components for establishing these human-relevant testing platforms.

Table 3: Key Research Reagent Solutions for NAMs-Based Preclinical Validation

| Tool/Reagent | Function | Application in Preclinical Validation |
|---|---|---|
| Induced Pluripotent Stem Cells (iPSCs) | Reprogrammed cells, often patient-derived, that can be differentiated into virtually any cell type. Provide a genetically diverse, human-specific platform for testing [21] [27]. | Disease modeling, target validation, high-throughput safety and efficacy screening, identification of sub-population responses [21]. |
| Microphysiological Systems (MPS) / Organ-Chips | Microfluidic devices containing 3D, perfused human cell cultures that emulate organ-level physiology and organ crosstalk [21] [23]. | Predictive toxicology (e.g., DILI), ADME studies, modeling multi-organ interactions, chronic dosing [26] [23]. |
| Single-Cell Sequencing Assays | Technologies like scRNA-seq and scATAC-seq that measure gene expression and chromatin accessibility at single-cell resolution [21]. | Deconvoluting responses in pooled "cell village" experiments, uncovering mechanistic insights into drug action and toxicity, identifying novel biomarkers [21]. |
| AI/ML Software Platforms | Computational models trained on chemical and biological data to predict drug properties, toxicity, and efficacy in silico [21] [25]. | Early prioritization of lead candidates, prediction of ADME properties and off-target effects, de-risking molecules before wet-lab experiments [24] [25]. |
| Differentiation Kits & Media | Defined cytokine and small-molecule cocktails for directing iPSC differentiation into specific lineages (e.g., cardiomyocytes, hepatocytes) [27]. | Ensuring consistent, high-quality production of target cells for reproducible screening assays and organ-chip tissue seeding [27]. |

The FDA Modernization Act 2.0 has fundamentally redefined the preclinical validation landscape, moving the industry from a rigid, animal-dependent framework to a flexible, evidence-based paradigm centered on human biology. Technologies such as iPSCs, Organ-Chips, and AI-driven in silico models are demonstrating superior performance in predicting human safety and efficacy outcomes, directly addressing the high failure rates that have long plagued drug development. For researchers and drug development professionals, mastering these NAMs is no longer optional but essential. Success in this new era will depend on the strategic integration of these tools into a cohesive preclinical workflow, leveraging their respective strengths to build a more predictive, efficient, and ultimately more successful path for bringing new therapeutics to patients.

Next-Generation Predictive Tools: From Organ-on-a-Chip to AI Digital Twins

The pharmaceutical industry faces a critical challenge in improving the translational relevance of preclinical models used in drug discovery and development. Traditional systems, particularly two-dimensional (2D) cell cultures and animal models, have long served as essential tools for evaluating drug efficacy and safety. However, these models often fail to faithfully recapitulate human-specific responses, leading to poor predictive value and high attrition rates in clinical trials [28]. Conventional 2D cell cultures, propagated on plastic as flat monolayers, cannot replicate the complex spatial interactions that occur in living tissues, while animal models raise ethical concerns and demonstrate limited predictive value for human disease due to interspecies differences [29] [28].

In recent years, advanced in vitro systems have emerged as promising alternatives that bridge the gap between traditional cell culture and in vivo experimentation. Among these, Patient-Derived Tumor Organoids (PDTOs) and Microphysiological Systems (MPS) represent a paradigm shift in preclinical modeling. These technologies offer more physiologically relevant platforms that preserve patient-specific genetic and phenotypic features, enabling more accurate prediction of drug responses and supporting the advancement of precision medicine [28] [30]. By more closely mimicking human physiology and disease states, PDTOs and MPS are transforming biomedical research, drug screening, and personalized therapeutic strategies.

Patient-Derived Tumor Organoids (PDTOs)

Patient-Derived Tumor Organoids (PDTOs) are three-dimensional (3D) miniaturized structures that self-organize and mimic the architecture and functionality of native tumors. These in vitro models are cultured directly from patient tumor samples collected from biopsies, surgical specimens, or biological fluids such as ascites and blood [30]. PDTOs can be grown from a wide variety of human cancers, including colorectal, pancreatic, lung, breast, ovarian, and prostate cancers [30]. The key advantage of PDTOs lies in their ability to faithfully recapitulate the histological and molecular characteristics of the original parental tumor, maintaining intratumoral heterogeneity and drug resistance patterns observed in patients [28] [30].

PDTOs represent a significant advancement over earlier 3D culture approaches such as spheroids, which are highly compact spherical structures obtained primarily from immortalized cell lines. Unlike spheroids, PDTOs are derived directly from patient tissue. They maintain genomic stability over multiple passages without acquiring the extraneous mutations that often accumulate in conventional cell lines [30]. PDTOs can self-organize because they originate from stem cells within the tumor tissue. This allows them to develop multicellular structures that closely resemble in vivo tumor architecture [31].

Microphysiological Systems (MPS)

Microphysiological Systems (MPS), often referred to as "organ-on-chip" technologies, are advanced in vitro platforms that combine the structural complexity of 3D organoids with precise microenvironmental control through microfluidic devices [28]. These systems enable more accurate modeling of human pharmacokinetics and pharmacodynamics by incorporating dynamic flow conditions that better reflect in vivo physiology [28]. MPS can simulate the mechanical and biochemical microenvironments of human tissues, including fluid shear stress, mechanical stretching, and nutrient gradients that influence cellular behavior and drug responses.

The integration of biosensors and real-time readouts within MPS platforms allows for continuous monitoring of drug responses, improving throughput and data quality in pharmaceutical development [28]. Particularly for modeling complex biological barriers and multi-tissue interactions, MPS offer significant advantages over static culture systems. For instance, specialized MPS have been developed to study the interplay between glioblastoma and the blood-brain barrier, addressing a critical challenge in neuro-oncology where over 98% of potential therapeutic candidates fail to penetrate the brain [32].

Comparative Analysis of Preclinical Models

To objectively evaluate the performance of PDTOs against other established preclinical models, we must consider multiple parameters, including physiological relevance, scalability, cost-effectiveness, and predictive value. The following comparative analysis highlights the distinctive advantages and limitations of each model system.

Table 1: Comprehensive Comparison of Preclinical Model Systems

| Model Characteristics | 2D Cell Cultures | Animal Models | Conditionally Reprogrammed (CR) Cells | Patient-Derived Tumor Organoids (PDTOs) |
|---|---|---|---|---|
| Physiological Relevance | Low; lacks 3D architecture and tissue-specific microenvironment [33] | Medium; species-specific differences limit human relevance [29] [28] | Medium; maintains some tissue specificity but lacks 3D organization [33] | High; recapitulates histology and heterogeneity of original tumor [28] [30] |
| Success Rate & Establishment Time | High; 1-3 days [33] | Variable; months for PDX models [29] | High; 1-10 days [33] | Medium; 2-8 weeks depending on cancer type [30] [34] |
| Cost Effectiveness | High; low cost and easy to maintain [29] [33] | Low; expensive and time-consuming [29] | Medium; requires specialized culture conditions [33] | Medium; requires extracellular matrix and growth factors [30] [35] |
| Scalability & Throughput | High; suitable for high-throughput screening [33] | Low; low throughput and resource-intensive [29] | High; suitable for high-throughput drug screening [33] | Medium; adaptable to medium-throughput screening with optimization [28] [34] |
| Genetic Stability | Low; accumulate mutations over passages [33] | High; maintains human tumor genetics in PDX models [30] | High; maintains genomic composition without genetic manipulation [33] | High; maintains original tumor genomic profile over passages [30] [31] |
| Predictive Value for Clinical Response | Poor; limited correlation with patient outcomes [29] [28] | Variable; species-specific differences limit predictability [28] | Emerging evidence; shows promise for personalized medicine [33] | High; multiple studies demonstrate correlation with patient responses [28] [30] [34] |
| Tumor Microenvironment | Absent; homogeneous cell population [33] | Present, but includes murine stromal components [30] | Limited; stromal cells inhibited in co-culture [33] | Can be incorporated through co-culture systems [30] [35] |
| Personalization Potential | Low; limited patient-specific models | Medium; through PDX models but time-consuming | High; can be established from individual patients [33] | High; directly derived from patient tumors [28] [30] |

Table 2: Quantitative Performance Metrics of PDTOs in Predictive Drug Testing

| Cancer Type | Study Type | Number of Patients/PDTOs | Accuracy in Predicting Clinical Response | Key Findings | Reference |
|---|---|---|---|---|---|
| Metastatic Colorectal Cancer | Community cohort | 56 treatment-naive patients | 83% accuracy for forecasting patient responses | "Resistant" predictions associated with inferior progression-free survival | [34] |
| Metastatic Colorectal Cancer | Prospective study (AGITG FORECAST-1) | 30 patients | Similar accuracy achieved for third-line or later treatment | Misclassification rates similar across different treatment regimens | [34] |
| Liver Cancer | Preclinical drug screening | 18 of 28 patient-derived clusters successfully cultured as PDTOs | Individual differences in drug sensitivity observed | Validation of oxaliplatin sensitivity via MRI and biochemical tests after patient treatment | [35] |
| Various Cancers | Review of multiple studies | Multiple cancer types | High correlation with patient response | Retains original tumor morphology, genetics, and drug resistance patterns | [30] |

Taken together, the comparative data indicate that PDTOs replicate human tumor biology and predict clinical drug responses more faithfully than traditional models. In metastatic colorectal cancer, for example, PDTOs forecast patient responses to standard-of-care therapies with approximately 83-85% accuracy, and "resistant" predictions were significantly associated with inferior progression-free survival [34]. By contrast, 2D models often correlate poorly with clinical outcomes, and animal models are compromised by species-specific differences in drug metabolism and target engagement [29] [28].
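Accuracy figures like these are the fraction of PDTO calls that agree with the observed clinical outcome. The sketch below shows one way to compute accuracy, sensitivity, and specificity from paired calls; it assumes clinical responses have already been binarized into "sensitive"/"resistant" and treats "resistant" as the positive class. The helper name and the six example patients are hypothetical and are not data from the cited studies.

```python
from typing import Sequence

VALID_CALLS = {"sensitive", "resistant"}


def prediction_metrics(predicted: Sequence[str], observed: Sequence[str]) -> dict:
    """Summarize agreement between PDTO-based calls and observed clinical responses.

    Hypothetical helper: both inputs use the labels 'sensitive'/'resistant',
    and 'resistant' is treated as the positive class.
    """
    if not predicted or len(predicted) != len(observed):
        raise ValueError("inputs must be non-empty and of equal length")
    labels = set(predicted) | set(observed)
    if not labels <= VALID_CALLS:
        raise ValueError(f"labels must be one of {sorted(VALID_CALLS)}")

    pairs = list(zip(predicted, observed))
    tp = pairs.count(("resistant", "resistant"))  # resistance correctly flagged
    tn = pairs.count(("sensitive", "sensitive"))  # sensitivity correctly flagged
    fp = pairs.count(("resistant", "sensitive"))  # predicted resistant, patient responded
    fn = pairs.count(("sensitive", "resistant"))  # predicted sensitive, patient progressed

    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn) if tp + fn else float("nan"),
        "specificity": tn / (tn + fp) if tn + fp else float("nan"),
    }


# Illustrative, made-up calls for six patients (not study data).
pdto_calls = ["sensitive", "resistant", "sensitive", "resistant", "sensitive", "sensitive"]
clinical = ["sensitive", "resistant", "resistant", "resistant", "sensitive", "sensitive"]
print(prediction_metrics(pdto_calls, clinical))
```

Here five of six calls agree, and the single miss is a tumor predicted sensitive that progressed clinically; reporting sensitivity and specificity alongside accuracy makes that asymmetry visible.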

Experimental Protocols and Methodologies

Establishment of PDTO Cultures

The successful generation of PDTOs requires careful attention to sample processing, extracellular matrix selection, and culture medium composition. The following protocol outlines the standard methodology for establishing PDTOs from patient tumor tissue:

Sample Collection and Processing: Tumor tissues are obtained through surgical resection, core biopsies, or from malignant effusions. The sample should be processed within 1-2 hours of collection to maintain viability. Tissues are washed in cold phosphate-buffered saline (PBS) containing antibiotics (e.g., penicillin-streptomycin) to minimize contamination [30].

Tissue Dissociation: The tumor tissue is subjected to mechanical and/or enzymatic dissociation. Mechanical dissociation involves mincing the tissue into small fragments (approximately 1-2 mm³) using surgical scalpels. Enzymatic dissociation typically uses collagenase, dispase, or other tissue-specific enzymes at 37°C for 30 minutes to 2 hours, depending on tissue consistency [30] [35].

Extracellular Matrix Embedding: The dissociated cell suspension or small tissue fragments are mixed with an extracellular matrix (ECM) hydrogel. The most commonly used ECM is Matrigel, a basement membrane extract from Engelbreth-Holm-Swarm murine sarcoma, which provides a 3D microenvironment conducive to organoid growth. The cell-ECM mixture is plated as domes in culture plates and allowed to solidify at 37°C for 20-30 minutes [30].

Culture Medium and Conditions: Specific culture medium is added over the solidified ECM domes. The composition of the medium varies depending on the cancer type but typically includes:

- Basal medium (e.g., Advanced DMEM/F12)

- Growth factors (e.g., EGF, Noggin, R-spondin)

- Wnt pathway agonists (e.g., Wnt3a) for certain cancer types

- B27 and N2 supplements

- Antibiotics (e.g., penicillin-streptomycin)

- Other tissue-specific additives [30]
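Supplement volumes for a batch of medium follow from the dilution relation C1V1 = C2V2. The sketch below applies it to a hypothetical 50 mL batch; the working and stock concentrations (1X from 50X B-27, 1X from 100X N-2, 50 ng/mL EGF from a 100 µg/mL stock) are illustrative assumptions, not a validated PDTO formulation, and real recipes vary by cancer type as noted above.

```python
def stock_volume_ul(final_volume_ml: float, working_conc: float, stock_conc: float) -> float:
    """Stock volume (µL) needed so that C1*V1 = C2*V2.

    working_conc and stock_conc must be in the same units (e.g. ng/mL, or "X").
    """
    if stock_conc <= working_conc:
        raise ValueError("stock must be more concentrated than the working solution")
    return working_conc * final_volume_ml * 1000.0 / stock_conc


# Hypothetical recipe for 50 mL of medium: (working, stock) concentrations are
# placeholders chosen for illustration, not a validated PDTO formulation.
RECIPE = {
    "B-27 (X)": (1.0, 50.0),
    "N-2 (X)": (1.0, 100.0),
    "EGF (ng/mL)": (50.0, 100_000.0),  # 100 µg/mL stock expressed in ng/mL
}

final_ml = 50.0
additions = {name: stock_volume_ul(final_ml, w, s) for name, (w, s) in RECIPE.items()}
basal_ul = final_ml * 1000.0 - sum(additions.values())  # e.g. Advanced DMEM/F12

for name, vol in additions.items():
    print(f"{name}: {vol:.1f} µL")
print(f"Basal medium: {basal_ul:.1f} µL")
```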

Culture Maintenance: Cultures are maintained at 37°C in a humidified incubator with 5% CO₂. The medium is refreshed every 2-3 days, and organoids are typically passaged every 1-2 weeks using mechanical disruption or enzymatic digestion with trypsin-EDTA or accutase [30].

Drug Sensitivity Assays in PDTOs

Evaluating drug responses in PDTOs follows standardized protocols that enable quantitative assessment of treatment efficacy:

PDTO Preparation for Drug Testing: Organoids are collected and dissociated into single cells or small clusters. The cells are counted, and a predetermined number (typically 1,000-10,000 cells per well) is embedded in ECM in 96-well plates suitable for high-throughput screening [30] [34].
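Turning a cell count into a plating plan is simple arithmetic, sketched below. The assumptions are that cells are pelleted and then resuspended directly in ECM, that each well receives a fixed ECM volume (10 µL here, a hypothetical value), and that a 10% overage covers pipetting losses. The function name and example numbers are illustrative.

```python
def seeding_plan(cells_per_ml: float, cells_per_well: int, n_wells: int,
                 ecm_ul_per_well: float, overage: float = 0.1) -> dict:
    """Plan how much counted suspension to pellet and how much ECM to resuspend it in.

    Hypothetical helper; `overage` adds spare volume for pipetting losses.
    """
    if min(cells_per_ml, cells_per_well, n_wells, ecm_ul_per_well) <= 0:
        raise ValueError("counts and volumes must be positive")
    scale = n_wells * (1.0 + overage)
    total_cells = cells_per_well * scale
    return {
        "total_cells": total_cells,
        # volume of counted suspension that contains total_cells
        "suspension_to_pellet_ul": total_cells / cells_per_ml * 1000.0,
        # ECM used to resuspend the pellet before plating
        "ecm_volume_ul": ecm_ul_per_well * scale,
        "cells_per_ul_ecm": cells_per_well / ecm_ul_per_well,
    }


# Hypothetical example: 60 inner wells of a 96-well plate, 5,000 cells per well
# (within the 1,000-10,000 range above), 10 µL ECM per well, count of 1.2e6 cells/mL.
plan = seeding_plan(cells_per_ml=1.2e6, cells_per_well=5_000, n_wells=60, ecm_ul_per_well=10.0)
for key, value in plan.items():
    print(f"{key}: {value:,.0f}")
```

Using only the inner 60 wells is a common way to limit evaporation edge effects, though it is a design choice rather than a requirement.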

Drug Treatment: Once organoids are established (usually after 5-7 days), drugs are applied at a range of concentrations. Testing typically covers 5-8 concentrations alongside vehicle-only controls, and each condition should be run in three to six technical replicates to ensure statistical robustness [34].
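A 5-8 point concentration range is usually laid out as a serial dilution with a constant fold change, which spaces the points evenly on the log scale used for dose-response fitting. The sketch below assumes a 3-fold series from a hypothetical 100 µM top dose and counts the wells one drug needs if it has its own vehicle wells; in practice vehicle wells are often shared across drugs on a plate.

```python
def dilution_series(top_conc: float, n_points: int = 8, fold: float = 3.0) -> list[float]:
    """Concentrations from highest to lowest, each `fold` times lower than the last."""
    if top_conc <= 0 or n_points < 2 or fold <= 1:
        raise ValueError("need top_conc > 0, n_points >= 2 and fold > 1")
    return [top_conc / fold**i for i in range(n_points)]


def wells_per_drug(n_points: int, replicates: int, include_vehicle: bool = True) -> int:
    """Wells for one drug: every concentration (plus an optional vehicle set) in replicate."""
    return (n_points + int(include_vehicle)) * replicates


series = dilution_series(top_conc=100.0, n_points=8, fold=3.0)  # hypothetical top dose, µM
print([round(c, 3) for c in series])
print(wells_per_drug(n_points=8, replicates=3))
```

An 8-point, 3-fold series spans roughly 3.3 orders of magnitude (3^7 = 2187), which is usually wide enough to capture both plateaus of a sigmoidal curve.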

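Incubation and Response Assessment: Following drug exposure (usually 5-7 days), viability is assessed using 3D-compatible cell viability assays, such as ATP-based luminescence (e.g., CellTiter-Glo 3D), live/dead fluorescent staining (e.g., Calcein AM/EthD-1), or colorimetric assays (e.g., CCK-8) [30] [34].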
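Before curve fitting, raw readouts are usually averaged across technical replicates and normalized to vehicle-treated wells after subtracting background. The sketch below assumes "background" wells contain ECM and medium but no cells, and that the signal (e.g. luminescence) scales with viable cell number; the readouts are made-up values.

```python
from statistics import mean


def percent_viability(treated: list[float], vehicle: list[float], background: list[float]) -> float:
    """Background-subtracted signal of treated wells as % of vehicle-treated wells.

    Each argument holds raw readouts from technical replicates; background wells
    are assumed to contain ECM and medium but no cells.
    """
    bg = mean(background)
    window = mean(vehicle) - bg  # assay signal window attributable to cells
    if window <= 0:
        raise ValueError("vehicle signal must exceed background")
    return 100.0 * (mean(treated) - bg) / window


# Hypothetical triplicate readouts (arbitrary luminescence units).
print(round(percent_viability([5200, 4800, 5000], [10200, 9800, 10000], [1000, 1000, 1000]), 1))
```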

Data Analysis: Dose-response curves are generated, and IC₅₀ values (half-maximal inhibitory concentration) are calculated using nonlinear regression models. Responses are typically categorized as "sensitive" or "resistant" based on predetermined thresholds specific to each drug and cancer type [34].
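A common choice of nonlinear model is the four-parameter logistic (4PL) curve, fitted here with `scipy.optimize.curve_fit`. The source does not name a specific model, so the 4PL form, the log-scale IC₅₀ parameterization (which keeps IC₅₀ positive during optimization), and the 5.0 classification threshold are all assumptions for illustration. Note that the fitted IC₅₀ is the relative midpoint between the fitted top and bottom plateaus; it differs from the concentration giving 50% of vehicle signal whenever the bottom plateau is above zero, and values extrapolated beyond the tested range are unreliable.

```python
import numpy as np
from scipy.optimize import curve_fit


def four_pl(conc, bottom, top, log_ic50, hill):
    """Four-parameter logistic curve; viability falls from `top` to `bottom`.

    IC50 enters on a log10 scale so it stays positive during optimization.
    """
    return bottom + (top - bottom) / (1.0 + (conc / 10.0**log_ic50) ** hill)


def fit_ic50(conc, viability) -> float:
    """Fit four_pl by nonlinear least squares and return the relative IC50."""
    conc = np.asarray(conc, dtype=float)
    viability = np.asarray(viability, dtype=float)
    # Initial guesses: observed plateaus, mid-range concentration, unit slope.
    p0 = [viability.min(), viability.max(), np.log10(np.median(conc)), 1.0]
    params, _ = curve_fit(four_pl, conc, viability, p0=p0, maxfev=10_000)
    return 10.0 ** params[2]


def classify(ic50: float, threshold: float) -> str:
    """Hypothetical binary call; real thresholds are drug- and cancer-type-specific."""
    return "sensitive" if ic50 <= threshold else "resistant"


# Synthetic, noise-free example with a true IC50 of 1.0 (same units as conc).
conc = 100.0 / 3.0 ** np.arange(8)
viability = four_pl(conc, 10.0, 100.0, 0.0, 1.2)
ic50 = fit_ic50(conc, viability)
print(f"IC50 = {ic50:.3f}; call = {classify(ic50, threshold=5.0)}")
```

Some groups prefer area under the curve over IC₅₀ when curves do not reach a clear lower plateau; whichever metric is used, the sensitive/resistant cutoff should be set per drug and cancer type, as the text notes.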

Advanced MPS Integration

For more complex microenvironmental studies, PDTOs can be integrated into microphysiological systems:

Microfluidic Device Preparation: Polydimethylsiloxane (PDMS)-based microfluidic devices are fabricated using soft lithography techniques and sterilized before use [32].

PDTO Loading in MPS: Dissociated PDTO cells or small organoid fragments are loaded into the designated tissue chamber of the microfluidic device, typically in an ECM hydrogel [32].

Perfusion Establishment: Culture medium is perfused through the system using syringe pumps or gravity-driven flow at physiologically relevant flow rates (typically 0.1-10 µL/min, depending on the organ system being modeled) [32].
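Flow rate alone does not say what the cells experience; the fluid shear stress on the channel floor also depends on channel geometry. For a shallow rectangular channel (width much greater than height), a standard parallel-plate approximation is τ = 6μQ / (w·h²). The sketch below applies it to a hypothetical 1 mm × 100 µm channel across the 0.1-10 µL/min range; the channel dimensions and the medium viscosity (0.75 mPa·s at 37 °C) are assumed values.

```python
def wall_shear_stress_pa(flow_ul_per_min: float, width_m: float, height_m: float,
                         viscosity_pa_s: float = 0.75e-3) -> float:
    """Approximate wall shear stress (Pa) in a shallow rectangular channel.

    Parallel-plate approximation tau = 6*mu*Q / (w*h^2), reasonable when width >> height.
    The default viscosity is an assumed value for culture medium at 37 °C.
    """
    q_m3_per_s = flow_ul_per_min * 1e-9 / 60.0  # 1 µL = 1e-9 m^3; per minute -> per second
    return 6.0 * viscosity_pa_s * q_m3_per_s / (width_m * height_m**2)


# Hypothetical channel: 1 mm wide, 100 µm high; sweep the 0.1-10 µL/min range.
for q in (0.1, 1.0, 10.0):
    tau = wall_shear_stress_pa(q, width_m=1e-3, height_m=100e-6)
    print(f"{q:>5} µL/min -> {tau:.4f} Pa ({tau * 10:.3f} dyn/cm²)")  # 1 Pa = 10 dyn/cm²
```

Because shear scales with 1/h², halving channel height quadruples shear at the same flow rate, so geometry and flow rate should be chosen together for the tissue being modeled.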

Endpoint Analysis: After drug treatment under flow conditions, various endpoints can be assessed, including:

- Transepithelial/transendothelial electrical resistance (TEER) for barrier integrity

- Immunofluorescence staining for specific markers

- Collection of effluents for cytokine/metabolite analysis

- Real-time imaging of cellular responses [32]
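TEER is conventionally reported per unit area: the resistance of a cell-free (blank) device is subtracted from the measured resistance and the result is multiplied by the barrier area, giving Ω·cm². The sketch below uses that convention with hypothetical readings on a 0.1 cm² barrier and expresses post-treatment TEER as a change from baseline. In microfluidic chips, electrode placement can make current distribution non-uniform, so this simple formula should be treated as an approximation.

```python
def teer_ohm_cm2(r_measured_ohm: float, r_blank_ohm: float, area_cm2: float) -> float:
    """Unit-area TEER: (R_measured - R_blank) * effective barrier area."""
    if area_cm2 <= 0:
        raise ValueError("area must be positive")
    return (r_measured_ohm - r_blank_ohm) * area_cm2


def percent_change(baseline: float, value: float) -> float:
    """Relative change from the pre-treatment baseline, in percent."""
    return 100.0 * (value - baseline) / baseline


# Hypothetical readings (ohms) on a 0.1 cm² barrier, before and after drug exposure.
before = teer_ohm_cm2(1250.0, 250.0, 0.1)
after = teer_ohm_cm2(850.0, 250.0, 0.1)
print(f"{before:.1f} -> {after:.1f} ohm·cm² ({percent_change(before, after):+.1f}%)")
```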

Key Signaling Pathways in PDTO Development and Maintenance

The successful establishment and long-term maintenance of PDTOs depend on the precise regulation of several critical signaling pathways. Understanding these pathways is essential for optimizing culture conditions and interpreting experimental results.

The Wnt/β-catenin pathway plays a fundamental role in maintaining cancer stem cells, which are crucial for PDTO self-renewal and long-term expansion. Many colorectal cancer PDTOs harbor mutations in the Wnt pathway (e.g., APC mutations), making them independent of exogenous Wnt ligands for growth [30].

The EGFR signaling pathway promotes cancer cell proliferation and survival, and many culture media therefore require EGF supplementation. However, tumors with constitutive activation of EGFR signaling (e.g., activating EGFR mutations) may grow without added EGF [30].

The TGF-β/BMP pathway typically inhibits epithelial cell growth and promotes differentiation. In PDTO culture, this pathway is often suppressed with specific inhibitors (e.g., the TGF-β receptor inhibitor A83-01 or the BMP antagonist Noggin) to create a favorable environment for stem cell expansion [30].

Rho-associated kinase (ROCK) inhibition is used in some culture systems, including conditional reprogramming, to prevent anoikis (cell death due to detachment) and to promote cell survival and proliferation through cytoskeletal remodeling [33].
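The pathway dependencies above translate into simple rules for a starting medium. The sketch below encodes only the relationships stated in this section; the `TumorFeatures` class and `suggest_supplements` function are hypothetical, and an "optional" label marks a factor the tumor may not need, whose withdrawal should be tested empirically rather than assumed.

```python
from dataclasses import dataclass


@dataclass
class TumorFeatures:
    """Hypothetical summary of a tumor's pathway status (e.g. from sequencing)."""
    wnt_activating_mutation: bool      # e.g. APC loss in colorectal cancer
    egfr_constitutively_active: bool   # e.g. activating EGFR mutation


def suggest_supplements(features: TumorFeatures) -> dict[str, str]:
    """Map pathway status to a starting supplementation plan (illustrative only)."""
    return {
        # Wnt-pathway-mutant tumors may not need exogenous Wnt ligands.
        "Wnt3a": "optional" if features.wnt_activating_mutation else "include",
        # Constitutive EGFR signaling may make added EGF unnecessary.
        "EGF": "optional" if features.egfr_constitutively_active else "include",
        # Suppress TGF-β/BMP-driven differentiation to favor stem cell expansion.
        "A83-01 / Noggin": "include",
        # ROCK inhibition to limit anoikis after single-cell dissociation.
        "Y-27632": "include after dissociation",
    }


print(suggest_supplements(TumorFeatures(wnt_activating_mutation=True, egfr_constitutively_active=False)))
```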

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful establishment and experimentation with PDTOs and MPS require specific reagents and materials optimized for 3D culture systems. The following table details essential components and their functions in advanced in vitro model development.

Table 3: Essential Research Reagent Solutions for PDTO and MPS Workflows

| Reagent Category | Specific Examples | Function and Application | Considerations and Alternatives |
|---|---|---|---|
| Extracellular Matrices | Matrigel, BME (Basement Membrane Extract) | Provides 3D scaffolding for organoid growth; contains essential basement membrane proteins (laminin, collagen IV, entactin) | Significant batch-to-batch variability; animal origin limits clinical translation [30] |
| Synthetic Hydrogels | Polyethylene glycol (PEG), alginate-gelatin blends | Defined composition with tunable mechanical properties; better reproducibility than natural matrices | May lack natural bioactive motifs unless functionalized [30] [35] |
| Growth Factors and Cytokines | EGF, FGF, R-spondin, Noggin, Wnt3a | Activate specific signaling pathways essential for stem cell maintenance and organoid growth | Requirements vary by cancer type; optimized in specific commercial media [30] |
| ROCK Inhibitors | Y-27632 | Prevents anoikis in dissociated cells; enhances cell survival during passaging and cryopreservation | Can interfere with cell morphology and motility studies [33] |
| Dissociation Enzymes | Collagenase, Dispase, Trypsin-EDTA, Accutase | Break down extracellular matrix and cell-cell junctions for organoid passaging and single-cell culture | Enzyme concentration and incubation time must be optimized for each organoid type [30] |
| Viability Assays | CellTiter-Glo 3D, Calcein AM/EthD-1, CCK-8 | Quantify cell viability and proliferation in 3D cultures; adapted for high-throughput screening | Standard ATP-based assays may underestimate viability in quiescent cells [30] [34] |
| Culture Media Supplements | B-27, N-2, N-Acetylcysteine, Gastrin | Provide essential nutrients, antioxidants, and specific factors for optimal organoid growth | Serum-free formulations help maintain lineage commitment and differentiation capacity [30] |