Beyond Assembly: A Systematic Framework for Evaluating Gene Finder Robustness to Genome Quality


Abstract

The accuracy of gene prediction is fundamentally constrained by the quality of the underlying genome assembly. This article provides a comprehensive framework for researchers and bioinformaticians to systematically evaluate and benchmark the robustness of gene-finding tools against variations in assembly continuity, completeness, and error profiles. We explore the foundational metrics that define assembly quality, detail methodologies for creating controlled quality gradients, present strategies for troubleshooting common annotation artifacts, and establish rigorous validation protocols using benchmarking datasets. By synthesizing insights from recent genomic studies and tool benchmarks, this guide aims to empower more reliable gene annotations in non-model organisms and complex genomes, with direct implications for comparative genomics, functional studies, and drug target identification.

Decoding Genome Assembly Quality: The Foundation of Accurate Gene Prediction

In genomics, the robustness of downstream analyses, including gene finding, depends fundamentally on the quality of the underlying genome assembly. Evaluating assembly quality requires a multi-faceted approach, because no single metric provides a complete picture. This guide compares the core paradigms of assembly assessment: contiguity, measured by N50; completeness, measured by BUSCO; and, as a less conventional comparator, canopy coverage quantified by Leaf Area Index (LAI). Although LAI originates in plant ecology, its conceptual framework of measuring coverage and structural integrity offers a useful analogy for assessing the "architecture" and accuracy of genome assemblies, particularly in complex, repeat-rich regions. The ecological LAI should not be confused with the LTR Assembly Index, also abbreviated LAI, an established genomic metric that scores assembly continuity in repeat-rich regions by the proportion of LTR retrotransposon sequence that is assembled intact. Understanding the strengths and limitations of these metrics is crucial for researchers selecting the most appropriate assemblies for gene finder training and application.

Metric Comparison: N50, BUSCO, and LAI

The following table compares the three metrics, summarizing their definitions, measurement methods, and primary applications.

Table 1: Core Metrics for Assembly and Structural Quality Assessment

| Metric | Core Principle & Definition | Measurement Method | Typical Application Context |
|---|---|---|---|
| N50 / NG50 (Contiguity) | The length of the shortest contig/scaffold such that 50% of the total assembly (or genome) is contained in contigs/scaffolds of this size or larger [1] [2] [3]. | Computational analysis of assembly sequence lengths: sort contigs by length and sum cumulatively until 50% of the total assembly length is reached [2]. | Genomics; primary assessment of assembly fragmentation and continuity [1]. |
| BUSCO (Completeness) | The percentage of a lineage-specific set of near-universal single-copy orthologs (Benchmarking Universal Single-Copy Orthologs) found complete (single-copy or duplicated), fragmented, or missing in an assembly [4] [5]. | Comparison of the genome assembly or annotation against a curated set of evolutionarily conserved genes from a specific lineage (e.g., vertebrata_odb10) [4] [6]. | Genomics & Transcriptomics; assessing gene space completeness and annotation quality [5]. |
| LAI (Leaf Area Index) | A dimensionless quantity defined as the one-sided green leaf area per unit ground surface area (LAI = leaf area / ground area, m² / m²) [7] [8]. | Direct: destructive harvesting and leaf area measurement. Indirect: hemispherical photography, light interception (e.g., ceptometers), or radiative transfer models [7] [9] [8]. | Plant Ecology & Agriculture; quantifying plant canopy structure and light interception potential [7] [9]. |

Table 2: Interpretation of Key Metric Results

| Metric | What a High Value Indicates | What a Low Value Indicates | Key Limitations & Caveats |
|---|---|---|---|
| N50 / NG50 | A more contiguous assembly with longer sequences, which is generally preferable [1]. | A more fragmented assembly with many short sequences [1]. | Does not measure correctness or completeness; can be artificially inflated by including long, incorrect contigs or by removing many small ones [1]. |
| BUSCO | A high percentage of Complete BUSCOs indicates a high-quality, complete assembly capturing expected gene content [4]. | A high percentage of Missing or Fragmented BUSCOs indicates an incomplete or low-quality assembly with gaps in the gene space [4]. | Duplicated BUSCOs can indicate assembly issues, contamination, or true biological duplications. Lineage dataset choice is critical for accurate assessment [4]. |
| LAI | A dense canopy with high potential for light interception, photosynthesis, and productivity [7] [8]. | A sparse canopy with limited capacity for light capture and growth [7]. | Indirect methods can underestimate LAI in very dense canopies due to leaf clumping and overlap [8]. |

Experimental Protocols for Metric Assessment

Protocol for Contiguity (N50/NG50) Assessment

The N50 statistic is a standard output of most genome assembly pipelines and assessment tools. The following protocol outlines its calculation and interpretation.

- Input Data: A set of contig or scaffold sequences in FASTA format from a genome assembly.

- Procedure:

  - Sort Sequences: Sort all contigs or scaffolds from longest to shortest.

  - Calculate Total Length: Compute the sum of the lengths of all sequences.

  - Determine N50: Accumulate sequence lengths, starting from the longest. The N50 is the length of the contig at which the cumulative sum first reaches or exceeds 50% of the total assembly length [1] [2] [3].

  - Determine NG50 (if genome size is known): Follow the same procedure, but stop when the cumulative sum reaches or exceeds 50% of the known or estimated genome size rather than the assembly size [1].

- Key Considerations: The NG50 metric allows for more meaningful comparisons between assemblies of different sizes for the same genome. The L50 metric, which is the number of contigs required to reach the N50 point, provides complementary information about the count of large sequences [1].
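The N50, NG50, and L50 definitions above can be expressed as a short Python sketch. The function name `nx_stats` and the contig lengths are hypothetical; tools such as QUAST report these values directly, so this is only meant to make the cumulative-sum logic concrete.

```python
from typing import Optional, Sequence, Tuple


def nx_stats(lengths: Sequence[int], fraction: float = 0.5,
             genome_size: Optional[int] = None) -> Tuple[int, int]:
    """Return (Nx, Lx) for contig lengths; with genome_size, return (NGx, LGx).

    The threshold is `fraction` of the total assembly length, or of
    `genome_size` when one is supplied. Returns (0, 0) if the contigs never
    reach the threshold, which can happen for NGx on incomplete assemblies.
    """
    ordered = sorted(lengths, reverse=True)            # longest first
    total = genome_size if genome_size is not None else sum(ordered)
    threshold = fraction * total
    cumulative = 0
    for count, length in enumerate(ordered, start=1):
        cumulative += length
        if cumulative >= threshold:                    # first contig to cross the threshold
            return length, count
    return 0, 0


if __name__ == "__main__":
    contigs = [80, 70, 50, 40, 30, 20, 10]             # hypothetical contig lengths (kb)
    print(nx_stats(contigs))                           # N50, L50   -> (70, 2)
    print(nx_stats(contigs, genome_size=400))          # NG50, LG50 -> (50, 3)
```

Because this hypothetical assembly (300 kb) is smaller than the assumed 400 kb genome, NG50 falls below N50, which is exactly the kind of difference NG50 is designed to expose.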

Protocol for Completeness (BUSCO) Assessment

BUSCO assessments are widely used to evaluate the completeness of genome assemblies, gene sets, and transcriptomes. The protocol below is generalized for genome assembly assessment.

- Input Data: A genome assembly in FASTA format.

- Required Software & Databases: BUSCO software (v5+ recommended) and an appropriate lineage dataset (e.g., vertebrata_odb10 for a deer genome, as in [6]).

- Procedure:

  - Dataset Selection: Choose the most specific lineage dataset appropriate for the organism being assessed.

  - Run BUSCO: Execute BUSCO in genome mode. BUSCO predicts candidate genes in the assembly and scores them against the lineage's profile HMMs with HMMER to decide whether each BUSCO is complete [4] [5]. Earlier releases (v3 and v4) located candidate regions with BLAST and predicted genes with Augustus; BUSCO v5 uses MetaEuk by default for eukaryotic genomes, with Augustus still available as an option.

  - Interpret Results: Analyze the output summary, which classifies BUSCOs into four categories:

    - Complete (Single-Copy): The ideal outcome, indicating the gene was found complete and exactly once.

    - Complete (Duplicated): The gene is complete but found more than once, which could indicate assembly artifacts, contamination, or biological duplications.

    - Fragmented: Only a portion of the gene was found, suggesting assembly gaps or fragmentation.

    - Missing: The gene is entirely absent, indicating potential incompleteness [4].

- Key Considerations: A high percentage of complete, single-copy BUSCOs is the target. An elevated number of duplicated BUSCOs warrants investigation into potential over-assembly or heterozygosity. BUSCO also provides a quantitative measure for comparing different assemblies or assembly versions of the same species [5].
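BUSCO also writes a one-line summary such as `C:95.2%[S:88.1%,D:7.1%],F:1.3%,M:3.5%,n:3354` (the values here are hypothetical). A minimal sketch for parsing that line and flagging results worth investigating follows; the function names and the 90% completeness and 5% duplication thresholds are illustrative assumptions, not BUSCO recommendations.

```python
import re
from typing import Dict, List

# Matches category fields such as "C:95.2%", "D:7.1%", or "n:3354".
FIELD = re.compile(r"([CSDFMn]):(\d+(?:\.\d+)?)%?")


def parse_busco_line(line: str) -> Dict[str, float]:
    """Parse a BUSCO one-line summary into {category: value}."""
    values = {key: float(num) for key, num in FIELD.findall(line)}
    missing = set("CSDFMn") - values.keys()
    if missing:
        raise ValueError(f"summary line lacks fields: {sorted(missing)}")
    return values


def flag_busco(summary: Dict[str, float], min_complete: float = 90.0,
               max_duplicated: float = 5.0) -> List[str]:
    """Return warnings; both thresholds are illustrative assumptions."""
    warnings = []
    if summary["C"] < min_complete:
        warnings.append("low completeness: check for gaps or fragmentation")
    if summary["D"] > max_duplicated:
        warnings.append("high duplication: check for retained haplotypes or contamination")
    return warnings


if __name__ == "__main__":
    line = "C:95.2%[S:88.1%,D:7.1%],F:1.3%,M:3.5%,n:3354"  # hypothetical result
    summary = parse_busco_line(line)
    print(summary["C"], summary["D"], flag_busco(summary))
```

Parsing the summary into numbers makes it straightforward to compare assembly versions side by side, which is the main use of BUSCO described above.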

Protocol for Canopy Structure (LAI) Measurement

While not a genomic metric, the protocol for LAI measurement is included for completeness, as it is a key comparator in this framework. Indirect methods are most common due to their non-destructive nature.

- Input/Equipment: An instrument for measuring light interception (e.g., a ceptometer like the LP-80) or a digital camera with a fisheye lens for hemispherical photography.

- Procedure (Using a Ceptometer):

  - Measure Incident Light (PARi): Measure photosynthetically active radiation (PAR) above the canopy, ideally at the same time as the below-canopy readings so that sky conditions match.

  - Measure Transmitted Light (PARt): Take multiple PAR measurements at ground level beneath the canopy at various locations to obtain a representative sample.

  - Apply the Beer-Lambert Law: LAI is calculated from the ratio of transmitted to incident PAR (the gap fraction) using an inversion model based on Beer's law, which also accounts for factors such as leaf angle distribution and solar zenith angle [7] [8]. In its simplified form, \( PAR_t / PAR_i = e^{-k \cdot \mathrm{LAI}} \), so \( \mathrm{LAI} = -\ln(PAR_t / PAR_i) / k \), where \( k \) is the extinction coefficient.

- Key Considerations: For hemispherical photography, images must be taken under uniform overcast sky conditions. User subjectivity in setting thresholds to distinguish sky from vegetation can affect results. Both methods may underestimate LAI in very dense, clumped canopies [7] [8].
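The simplified Beer-Lambert inversion can be sketched in a few lines of Python. The function name is hypothetical, and the default extinction coefficient k = 0.5 is an assumed illustrative value; instrument software derives k from leaf angle distribution and solar zenith angle rather than using a constant.

```python
import math
from statistics import mean
from typing import Sequence


def lai_from_par(par_above: Sequence[float], par_below: Sequence[float],
                 k: float = 0.5) -> float:
    """Estimate LAI by inverting PAR_t / PAR_i = exp(-k * LAI).

    par_above: incident PAR readings (PAR_i); par_below: transmitted PAR
    readings (PAR_t) taken at several ground-level locations. k = 0.5 is an
    assumed extinction coefficient used only for illustration.
    """
    gap_fraction = mean(par_below) / mean(par_above)
    if not 0 < gap_fraction <= 1:
        raise ValueError("transmitted PAR must be positive and no larger than incident PAR")
    return -math.log(gap_fraction) / k


if __name__ == "__main__":
    above = [1500.0, 1520.0, 1480.0]      # hypothetical readings, umol m^-2 s^-1
    below = [540.0, 560.0, 550.0]
    print(round(lai_from_par(above, below), 2))
```

With these hypothetical readings the gap fraction is about 0.37, giving an LAI of roughly 2.0.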

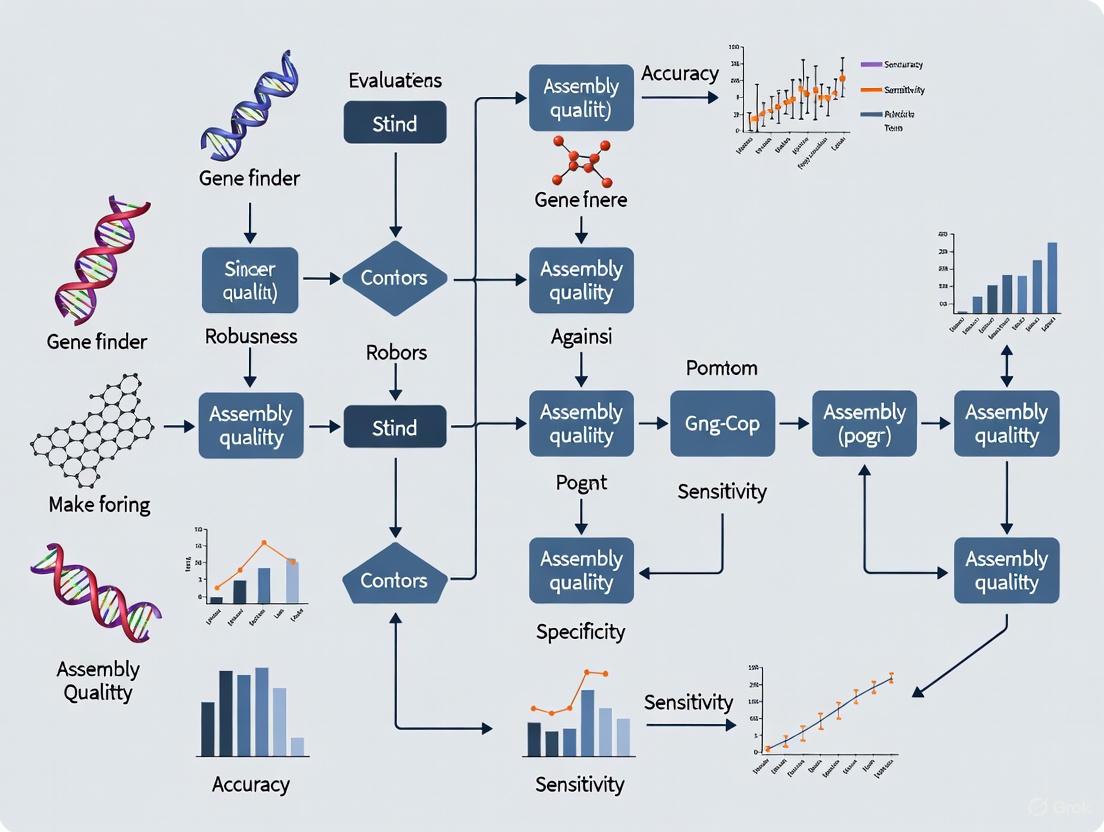

Workflow and Relationship Diagrams

The following diagrams illustrate the conceptual workflow for applying these metrics in a sequential assessment strategy and position the genomic and ecological metrics within a unified framework of structural assessment.

Genome Assembly Assessment Workflow

Structural Assessment Framework

Research Reagent Solutions

This section details key tools, databases, and instruments essential for conducting the assessments described in this guide.

Table 3: Essential Research Reagents and Tools

| Item Name | Type / Category | Primary Function in Assessment |
|---|---|---|
| BUSCO Software & Databases [4] [5] | Software & Reference Database | Provides the core pipeline and curated sets of universal single-copy orthologs for assessing genomic completeness. |
| Lineage Datasets (e.g., vertebrata_odb10) [6] [5] | Reference Database | Taxon-specific collections of benchmark genes used by BUSCO for high-resolution completeness assessment. |
| QUAST [4] | Software Tool | Evaluates assembly structural accuracy and calculates contiguity metrics such as N50 and NG50. |
| PacBio HiFi Reads [6] | Sequencing Reagent | Long, highly accurate sequencing reads that are instrumental in producing assemblies with high contiguity (N50) and completeness (BUSCO). |
| Hi-C Sequencing Kit [6] | Sequencing Reagent | Provides chromatin interaction data used to scaffold contigs into chromosome-scale assemblies, dramatically improving scaffold N50. |
| LP-80 Ceptometer [7] [8] | Instrument | Measures photosynthetically active radiation (PAR) above and below a plant canopy to indirectly estimate Leaf Area Index (LAI). |
| Hemispherical / Fisheye Lens [7] [8] | Instrument | Captures wide-angle images of the plant canopy for software-based analysis to estimate LAI and other canopy structural metrics. |

The accuracy of protein-coding gene annotation is fundamentally constrained by the quality of the underlying genome assembly. Despite technological advances, assembly artifacts (fragmentation, misassemblies, and base-level errors) remain pervasive in draft genomes and even in finished ones, creating significant challenges for downstream gene-finding tools [10] [11]. These artifacts can distort gene structures, create spurious genes, or obscure genuine ones, ultimately leading to flawed biological interpretations. With the rapid expansion of genome sequencing for non-model organisms, understanding how these artifacts mislead gene predictors has become increasingly important for ensuring the reliability of genomic analyses.

Gene finding algorithms, whether based on Hidden Markov Models (HMMs) or newer deep learning approaches, rely on statistical patterns within DNA sequences to identify coding regions [12]. Their performance depends heavily on the integrity of the input assembly. Even sophisticated gene finders such as Augustus, SNAP, and GlimmerHMM can be led astray by assembly errors, because they typically lack mechanisms to distinguish artifacts from true biological signals [12]. This vulnerability highlights the need for robust validation methods and a deeper understanding of how specific assembly errors propagate through bioinformatics pipelines.

This article explores the mechanisms by which fragmentation, misassemblies, and base errors compromise gene finding accuracy. We examine experimental data comparing how different assembly strategies affect gene annotation completeness and present methodologies for detecting and correcting assembly artifacts. By providing a systematic analysis of these relationships, we aim to equip researchers with strategies for evaluating assembly quality and mitigating its impact on gene annotation.

The Nature and Prevalence of Assembly Artifacts

Types and Origins of Assembly Errors

Assembly artifacts arise from inherent limitations in sequencing technologies and algorithmic challenges in reconstructing complex genomic regions. The most problematic errors can be categorized into three primary types:

Misassemblies: These occur when sequences from distinct genomic locations are incorrectly joined. They are frequently caused by repetitive elements that confuse assembly algorithms, leading to repeat collapses (where multiple repeat copies are merged into one) or rearrangements (where the order and orientation of segments are shuffled) [10]. In metagenomic assemblies, inter-genome translocations can also occur when conserved sequences from different organisms are mistakenly connected [13].

Fragmentation: This results in assemblies comprising many short contigs rather than complete chromosomes. Fragmentation is often caused by low sequencing coverage, insufficient long-range information, or genomic regions with extreme base composition (GC- or AT-rich) that resist amplification and sequencing [11]. Highly repetitive regions also cause fragmentation when reads cannot be unambiguously placed.

Base-Level Errors: These include incorrect nucleotides, small insertions, and deletions. They are particularly common in regions with systematic sequencing biases and can introduce premature stop codons or frameshifts into protein-coding sequences, making gene prediction unreliable [14] [15].

These artifacts are far from rare: even finished human BAC sequences were reported to contain a significant misassembly roughly every 2.6 Mbp [10]. In metagenomic assemblies, the problem is exacerbated by the presence of closely related strains, which makes misassemblies particularly common [13].

Challenges in Assembling Genomic "Dark Matter"

Certain genomic regions are systematically problematic for assembly and represent "dark matter" that is often missing or misrepresented in final assemblies [11]. These include:

Repetitive Elements: Transposable elements and tandem repeats can introduce ambiguity during assembly, as reads from different copies of nearly identical repeats cannot be distinguished. This often leads to repeat collapse, where the assembler incorrectly merges distinct copies into a single sequence [10] [11].

Regions with Extreme Base Composition: GC-rich microchromosomes in birds and other GC- or AT-rich regions are notoriously difficult to sequence and assemble due to biases in library preparation and PCR amplification [11]. In birds, approximately 15% of genes are so GC-rich that they are often absent from Illumina-based assemblies.

Complex Genomic Regions: Multicopy gene families (e.g., MHC genes), telomeres, and centromeres often remain incomplete or misassembled due to their repetitive nature and structural complexity [11].
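Because extreme base composition is one of the main causes of missing or fragmented sequence, a simple sliding-window GC scan can mark regions where gene-finder failures deserve a second look. The sketch below is hypothetical: the function name, window size, step, and the 25% / 65% cutoffs are illustrative assumptions rather than published thresholds.

```python
from typing import List, Tuple


def extreme_gc_windows(seq: str, window: int = 100, step: int = 50,
                       low: float = 0.25, high: float = 0.65
                       ) -> List[Tuple[int, float]]:
    """Return (window_start, GC fraction) for windows outside [low, high].

    Ambiguous bases (e.g., N in assembly gaps) are excluded from the
    denominator; windows with no called bases are skipped.
    """
    seq = seq.upper()
    flagged = []
    for start in range(0, max(len(seq) - window, 0) + 1, step):
        chunk = seq[start:start + window]
        called = sum(chunk.count(base) for base in "ACGT")
        if called == 0:
            continue                                   # all-N window, e.g. a scaffold gap
        gc = (chunk.count("G") + chunk.count("C")) / called
        if gc < low or gc > high:
            flagged.append((start, round(gc, 3)))
    return flagged


if __name__ == "__main__":
    toy = "AT" * 100 + "GC" * 100                      # hypothetical AT-rich then GC-rich sequence
    print(extreme_gc_windows(toy))
```

On the toy sequence, every window is flagged except the one straddling the AT/GC boundary, whose GC fraction is exactly 0.5.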

Table 1: Common Assembly Artifacts and Their Impact on Gene Finding

| Artifact Type | Primary Causes | Impact on Gene Finding | Affected Genomic Regions |
|---|---|---|---|
| Repeat Collapse | Highly similar repetitive elements | Artificial gene fusion; missing exons; incorrect copy number | Tandem repeats; transposable elements; multicopy genes |
| Rearrangements/Inversions | Misplacement of reads among repeat copies | Disrupted gene synteny; chimeric genes; incorrect exon order | Inverted repeats; segmental duplications |
| Fragmentation | Low coverage; extreme GC content; repeats | Split genes; incomplete gene models; missing genes | GC-rich promoters; repetitive flanking regions |
| Base Errors | Sequencing errors; systematic biases | Frameshifts; premature stop codons; spurious SNPs | Homopolymer regions; GC-biased sequences |

How Assembly Artifacts Mislead Gene Prediction Algorithms

The Vulnerability of Gene Finders to Assembly Errors

Gene prediction algorithms rely on statistical patterns in DNA sequences to identify coding regions, but they cannot distinguish between biological signals and technical artifacts. Hidden Markov Models (HMMs), which have dominated the field for decades, are particularly sensitive to assembly quality as they use hand-curated length distributions and transition probabilities trained on high-quality data [12]. When confronted with misassembled regions, these models produce inaccurate gene boundaries, missed exons, or entirely spurious gene predictions.

The problem extends to newer approaches as well. Deep learning methods that use learned embeddings from DNA sequences can capture more complex patterns but remain vulnerable to systematic errors in their training data and input assemblies [12]. When an assembly contains collapsed repeats, gene finders may produce a single merged gene prediction instead of recognizing multiple distinct copies, significantly underestimating gene family sizes and potentially creating chimeric proteins that do not exist biologically [10].

Specific Mechanisms of Misleading Predictions

Different types of assembly artifacts mislead gene finders through distinct mechanisms:

Fragmentation causes genes to be split across multiple contigs, resulting in incomplete gene models or entirely missed genes. Highly fragmented assemblies prevent gene finders from recognizing complete transcriptional units, particularly for genes with many exons spread across large genomic regions [14].

Repeat Collapses cause gene finders to underestimate gene copy numbers in multicopy families. In tandem repeats, the problem is particularly acute as reads spanning the boundary between copies cannot be properly placed, creating apparent "wrap-around" effects that confuse prediction algorithms [10].

Rearrangements and Inversions can disrupt gene synteny and create chimeric genes that combine exons from different loci. When unique sequences are rearranged between repeat copies, gene finders may predict biologically implausible fusion proteins or fail to recognize legitimate coding sequences whose context has been altered [10].

Base-Level Errors introduce premature stop codons and frameshifts that can truncate gene predictions or cause exons to be missed entirely. These errors are particularly damaging as they directly corrupt the codon structure that gene finders rely on to identify coding sequences [12] [14].
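The frameshift mechanism can be made concrete with a toy example: translating a short hypothetical coding sequence before and after a single-base deletion, the kind of error common in homopolymer regions. The code uses the standard genetic code; the sequence and the function names are illustrative.

```python
from typing import Optional

BASES = "TCAG"
# Standard genetic code (NCBI translation table 1); amino acids listed in
# TTT, TTC, TTA, TTG, TCT, ... order, with "*" marking stop codons.
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    b1 + b2 + b3: AMINO_ACIDS[16 * i + 4 * j + k]
    for i, b1 in enumerate(BASES)
    for j, b2 in enumerate(BASES)
    for k, b3 in enumerate(BASES)
}


def translate(cds: str) -> str:
    """Translate frame 0; a trailing partial codon is ignored, unknown codons become X."""
    cds = cds.upper()
    return "".join(CODON_TABLE.get(cds[p:p + 3], "X")
                   for p in range(0, len(cds) - 2, 3))


def first_stop(protein: str) -> Optional[int]:
    """0-based codon index of the first stop, or None if there is none."""
    index = protein.find("*")
    return None if index == -1 else index


if __name__ == "__main__":
    reference = "ATGCTGACCAAAGGGTAA"                 # hypothetical ORF: M L T K G stop
    with_deletion = reference[:3] + reference[4:]     # single-base deletion at position 3
    print(translate(reference), first_stop(translate(reference)))          # MLTKG* 5
    print(translate(with_deletion), first_stop(translate(with_deletion)))  # M*PKG 1
```

Deleting one base turns the second codon into TGA, so a gene finder scanning this frame would see an open reading frame that ends after a single codon, even though the underlying gene is intact.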

Diagram 1: How assembly artifacts mislead gene finders. Different types of assembly errors affect genomic sequences in specific ways, leading to distinct problems in gene prediction.

Experimental Data and Comparative Analysis

Benchmarking Assembly Quality and Gene Annotation Completeness

Systematic evaluations of genome assemblies have revealed substantial variation in quality across species and sequencing strategies. A comprehensive benchmark study of 114 species found that the quality of reference genomes and gene annotations significantly impacts the effectiveness of RNA-seq read mapping and quantification, which are crucial for gene model validation [16]. Similarly, an analysis of Triticeae crop genomes (wheat, rye, and triticale) demonstrated that assembly quality directly affects gene space completeness and the accuracy of downstream transcriptomic analyses [14].

The BUSCO (Benchmarking Universal Single-Copy Orthologs) metric is widely used to assess assembly completeness based on conserved gene content. However, BUSCO alone is insufficient for evaluating assembly correctness, as it cannot detect misassemblies or base errors that corrupt gene structures without completely eliminating them [14]. More sophisticated approaches like OMArk evaluate both completeness and consistency by comparing query proteomes to precomputed gene families across the tree of life, providing a more comprehensive assessment of annotation quality [17].

Table 2: Comparison of Assembly Quality Assessment Tools

| Tool | Methodology | Strengths | Limitations | Effectiveness for Gene Finding |
|---|---|---|---|---|
| BUSCO [14] | Presence of conserved single-copy orthologs | Standardized metric; widely comparable | Cannot detect misassemblies; insensitive to base errors | Good for completeness; poor for correctness |
| OMArk [17] | Alignment-free comparison to gene families | Detects contamination; assesses consistency | Requires representative gene families | Excellent for identifying spurious annotations |
| metaMIC [13] | Machine learning using multiple features | Reference-free; identifies breakpoints | Trained on bacterial metagenomes | Good for metagenomic assemblies |
| Pilon [15] | Read alignment analysis and local reassembly | Corrects bases, fills gaps, fixes misassemblies | Requires high-quality read alignments | Directly improves input for gene finders |
| AMOS validate [10] | Multiple constraint validation | Detects specific misassembly signatures | Limited to supported assembly formats | Excellent for diagnosing assembly issues |

Impact of Sequencing Technologies on Assembly Quality for Gene Finding

The choice of sequencing technology significantly influences assembly quality and consequently gene annotation accuracy. Long-read technologies from Pacific Biosciences (PacBio) and Oxford Nanopore Technologies (ONT) have demonstrated remarkable improvements in assembling complex genomic regions that were previously inaccessible [18] [11].

A comparative study evaluating data requirements for high-quality haplotype-resolved genomes found that 20× coverage of high-quality long reads (PacBio HiFi or ONT Duplex) combined with 15-20× of ultra-long ONT reads per haplotype and 10× of long-range data (Omni-C or Hi-C) enables chromosome-level assemblies [18]. These complete assemblies provide the optimal substrate for gene finders, as they minimize fragmentation and misassemblies that lead to annotation errors.
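Translating these coverage targets into sequencing yield is simple arithmetic: yield is coverage multiplied by genome size. The sketch below assumes, for illustration only, that every quoted coverage applies per haplotype, that the genome is diploid, and that the human haploid genome is about 3.1 Gb; these are assumptions, not figures taken from the cited study, and the names are hypothetical.

```python
from typing import Dict

# Coverage targets quoted in the text; treating every figure as per-haplotype
# is an assumption made for this illustration.
COVERAGE_PER_HAPLOTYPE = {
    "HiFi or ONT Duplex": 20.0,
    "ONT ultra-long": 20.0,   # upper end of the quoted 15-20x range
    "Hi-C / Omni-C": 10.0,
}


def gigabases_needed(haploid_genome_gb: float, haplotypes: int = 2) -> Dict[str, float]:
    """Sequencing yield (Gb) per data type = coverage x haploid genome size x haplotypes."""
    return {name: cov * haploid_genome_gb * haplotypes
            for name, cov in COVERAGE_PER_HAPLOTYPE.items()}


if __name__ == "__main__":
    # 3.1 Gb is an assumed, approximate haploid human genome size.
    for name, gb in gigabases_needed(3.1).items():
        print(f"{name}: ~{gb:.0f} Gb")
```

Under these assumptions the script prints roughly 124 Gb for each long-read type and 62 Gb of Hi-C/Omni-C data.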

Comparing PacBio HiFi and ONT Duplex data showed that both technologies produce assemblies with comparable contiguity; HiFi excels in phasing accuracy owing to its higher base-level quality, whereas Duplex generates more telomere-to-telomere (T2T) contigs [18]. This distinction matters for gene finding in complex regions, because accurate phasing helps distinguish closely related gene copies and alleles.

Methodologies for Detecting and Correcting Assembly Artifacts

Computational Detection of Misassemblies

Specialized computational tools have been developed to identify assembly artifacts by analyzing inconsistencies between sequencing data and assembled contigs:

metaMIC employs a random forest classifier trained on features such as sequencing coverage, nucleotide variants, read pair consistency, and k-mer abundance differences to identify misassembled contigs in metagenomic assemblies [13]. The tool can also localize misassembly breakpoints with high accuracy, enabling targeted correction by splitting contigs at these positions.

AMOS validate implements an automated pipeline that checks multiple constraints of a correct assembly, including: (1) agreement between overlapping reads, (2) consistent distance and orientation between mated reads, (3) appropriate read density throughout the assembly, and (4) perfect matching of all input reads to the assembly [10]. Violations of these constraints signal potential misassemblies.
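The read-density constraint can be illustrated with a simplified, hypothetical depth scan: a collapsed repeat tends to attract reads from every merged copy, so its depth rises to roughly a multiple of the contig-wide median. This is a sketch under stated assumptions, not AMOS's implementation; per-base depths would come from an alignment tool, and the 1.8x and 0.3x thresholds are arbitrary.

```python
from statistics import median
from typing import List, Tuple


def flag_depth_anomalies(depths: List[float], window: int = 1000,
                         high: float = 1.8, low: float = 0.3
                         ) -> List[Tuple[int, str, float]]:
    """Flag windows whose mean depth departs strongly from the contig median.

    depths: per-base read depth along one contig. Returns
    (window_start, label, mean_depth / median_depth). A ratio near an integer
    n >= 2 is consistent with n repeat copies collapsed into one; a ratio far
    below 1 marks poorly supported sequence. Thresholds are assumptions.
    """
    if not depths:
        return []
    baseline = median(depths)
    if baseline <= 0:
        raise ValueError("median depth must be positive")
    flagged = []
    for start in range(0, len(depths), window):
        chunk = depths[start:start + window]
        ratio = (sum(chunk) / len(chunk)) / baseline
        if ratio >= high:
            flagged.append((start, "possible collapsed repeat", round(ratio, 2)))
        elif ratio <= low:
            flagged.append((start, "low read support", round(ratio, 2)))
    return flagged


if __name__ == "__main__":
    # Hypothetical contig: 30x background with a 1 kb block at 90x.
    depths = [30.0] * 5000 + [90.0] * 1000 + [30.0] * 4000
    print(flag_depth_anomalies(depths))
```

A ratio of about 3 in the hypothetical 90x block would suggest three copies merged into one, which is also the situation in which a gene finder would report one gene model instead of three.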

OMArk takes a different approach, evaluating the taxonomic and structural consistency of a proteome against its expected lineage [17]. Proteins that fall outside the expected lineage repertoire are flagged as potentially erroneous, helping to identify annotation errors that result from assembly artifacts.

Diagram 2: Methods for detecting assembly artifacts. Different input data and analysis methods are effective for identifying specific types of assembly errors.

Assembly Improvement Strategies

Once detected, assembly artifacts can be addressed through various improvement strategies:

Pilon performs integrated assembly improvement using read alignment evidence to correct bases, fix misassemblies, and fill gaps [15]. It is particularly effective when supplied with paired-end data from multiple insert sizes and can significantly improve assembly contiguity and completeness. In evaluations, Pilon-improved assemblies contained fewer errors and enabled identification of more biologically relevant genes.
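Read-alignment-based base correction can be sketched, for substitutions only, as a majority vote over a pileup. This is a deliberately simplified stand-in with a hypothetical function name and thresholds, not Pilon's algorithm, which also corrects small indels, fills gaps, and breaks misassemblies.

```python
from collections import Counter
from typing import Dict, List


def polish_substitutions(draft: str, pileup: Dict[int, List[str]],
                         min_depth: int = 5, min_fraction: float = 0.8) -> str:
    """Replace draft bases that aligned reads overwhelmingly contradict.

    pileup maps a 0-based draft position to the read bases observed there.
    Only substitutions are handled; min_depth and min_fraction are
    illustrative thresholds.
    """
    bases = list(draft)
    for pos, observed in pileup.items():
        if len(observed) < min_depth:
            continue                                    # too little evidence to change the draft
        best, count = Counter(observed).most_common(1)[0]
        if best != bases[pos] and count / len(observed) >= min_fraction:
            bases[pos] = best
    return "".join(bases)


if __name__ == "__main__":
    draft = "ACGTTAGC"                                  # hypothetical draft with an error at position 4
    pileup = {4: ["A"] * 9 + ["T"], 2: ["T"] * 3}      # position 2 has too few reads to act on
    print(polish_substitutions(draft, pileup))          # ACGTAAGC
```

The depth and agreement thresholds are what keep such a correction conservative: a position is only changed when many reads agree, which protects genuine heterozygous sites from being overwritten.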

MetAMOS provides a modular framework for metagenomic assembly and analysis that incorporates multiple assemblers and uses the Bambus 2 scaffolder to identify repeats, scaffold contigs, correct errors, and detect variants [19]. By integrating multiple sources of information, it produces more accurate assemblies than individual assemblers alone.

Technology Selection plays a crucial role in minimizing artifacts. Studies show that a multi-platform approach combining long-read, linked-read, and proximity sequencing technologies performs best at recovering problematic genomic regions, including transposable elements, multicopy MHC genes, GC-rich microchromosomes, and repeat-rich sex chromosomes [11].

Table 3: Key Research Reagents and Tools for Assembly Quality Assessment

| Tool/Resource | Primary Function | Application in Gene Finding Context | Key Features |
|---|---|---|---|
| BUSCO [14] | Assembly completeness assessment | Evaluates gene space completeness | Universal single-copy ortholog sets; quantitative score |
| Pilon [15] | Assembly improvement | Corrects base errors that disrupt gene models | Local reassembly; variant detection; gap filling |
| metaMIC [13] | Misassembly identification | Detects and localizes assembly errors in metagenomes | Machine learning classifier; breakpoint identification |
| OMArk [17] | Proteome quality assessment | Identifies spurious gene annotations | Taxonomic consistency check; contamination detection |
| HiFi Reads [18] | Long-read sequencing | Resolves complex repeats for accurate gene models | High accuracy (>Q20); long read lengths |
| ONT Duplex Reads [18] | Long-read sequencing | Generates T2T contigs for complete gene sets | Very long reads; duplex mode for high accuracy |
| Hi-C/Omni-C [18] | Chromatin interaction mapping | Scaffolding to chromosome scale for gene context | Long-range connectivity; haplotype phasing |

Assembly artifacts represent a significant challenge for accurate gene finding, with fragmentation, misassemblies, and base errors each contributing distinct problems that mislead prediction algorithms. Experimental evidence demonstrates that these artifacts systematically corrupt gene annotations, leading to both missing genes and spurious predictions that can misdirect biological interpretations.

The development of sophisticated assessment tools like OMArk, metaMIC, and AMOS validate provides researchers with methods to quantify assembly quality and identify specific artifacts. Meanwhile, assembly improvement tools like Pilon and multi-platform sequencing strategies offer pathways to mitigate these issues. For gene finding to reach its full potential, particularly for non-model organisms, the field must prioritize assembly quality as a fundamental prerequisite rather than an afterthought.

Future directions should focus on integrating assembly validation directly into gene prediction pipelines, developing algorithms that are more robust to minor assembly errors, and establishing comprehensive benchmarking standards that evaluate both assembly quality and its impact on downstream annotations. Only by addressing assembly artifacts at their source can we ensure the reliability of the genomic insights that drive modern biological research.

For researchers in genomics and drug development, the accurate identification of genes within a genome is a critical first step for downstream analyses, from understanding genetic diseases to identifying therapeutic targets. However, the performance of computational gene-finding tools is not independent of the quality of the underlying genome assembly upon which they operate. This guide explores the fundamental dependency between assembly structure—its continuity, completeness, and accuracy—and the efficacy of gene annotation algorithms.

The central challenge is that gene finders are highly sensitive to species-specific parameters and the integrity of the input genomic sequence. Using a gene finder trained on a different, even closely related, genome can produce highly inaccurate results, as sequence features like codon bias and splicing signals vary significantly between organisms [20]. Furthermore, the very task of assembly—piecing together short or long sequencing reads into a coherent genome—directly influences whether a gene finder can correctly reconstruct complete, uninterrupted gene models. This relationship forms a critical foundation for robust genomic research.
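Codon bias is one of the species-specific signals mentioned above. A minimal sketch for tabulating codon usage from in-frame coding sequences, and for comparing two profiles with total variation distance, is shown below. The function names, the toy sequences, and the choice of distance are illustrative assumptions; real gene finders such as SNAP learn far richer models than codon frequencies alone.

```python
from collections import Counter
from typing import Dict, Iterable


def codon_usage(cds_sequences: Iterable[str]) -> Dict[str, float]:
    """Relative codon frequencies pooled over in-frame coding sequences.

    Assumes each sequence starts at its first codon; codons containing
    non-ACGT characters are skipped.
    """
    counts: Counter = Counter()
    for cds in cds_sequences:
        cds = cds.upper()
        for p in range(0, len(cds) - 2, 3):
            codon = cds[p:p + 3]
            if set(codon) <= set("ACGT"):
                counts[codon] += 1
    total = sum(counts.values())
    return {codon: n / total for codon, n in counts.items()} if total else {}


def total_variation(p: Dict[str, float], q: Dict[str, float]) -> float:
    """Half the L1 distance between two profiles: 0 = identical, 1 = disjoint."""
    codons = set(p) | set(q)
    return 0.5 * sum(abs(p.get(c, 0.0) - q.get(c, 0.0)) for c in codons)


if __name__ == "__main__":
    # Hypothetical coding sequences from a "training" and a "target" species.
    training = codon_usage(["ATGGCTGCTTAA", "ATGGCATAA"])
    target = codon_usage(["ATGGCGGCGTGA", "ATGGCGTAG"])
    print(round(total_variation(training, target), 3))
```

A large distance between the training and target profiles is one quick hint that parameters trained on one species may transfer poorly to another.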

Gene-Finding Algorithms and Their Workflows

Gene annotation pipelines can be broadly categorized by their methodological approach and their specific dependencies on the input assembly and evidence data.

Comparative Analysis of Algorithm Types

The table below summarizes the core characteristics of and data requirements for different classes of gene annotation tools.

| Algorithm / Pipeline | Primary Methodology | Key Assembly Dependencies | Input Data Requirements |
|---|---|---|---|
| SNAP [20] | Ab initio, Hidden Markov Model (HMM) | Requires proper training on the target species; performance drops with fragmented assemblies that break gene models. | Genome assembly; species-specific training set. |
| FINDER [21] | Evidence-driven, automated RNA-Seq analysis | Optimizes annotation through multiple alignment passes; sensitive to misassemblies that create incorrect splice junctions. | Genome assembly; raw RNA-Seq reads (SRA or local); optional protein sequences. |
| BRAKER2 [21] | Combined evidence and ab initio | Uses GeneMark-ET and AUGUSTUS; relies on splice junction information from RNA-Seq alignments to the genome assembly. | Genome assembly; RNA-Seq read alignments or protein data. |
| PangenePro [22] | Comparative genomics, orthology clustering | Dependent on the quality and annotation of multiple input genome assemblies to accurately define core and dispensable genes. | Multiple annotated genome/proteome files; reference protein sequences. |
| MAKER [21] | Combined evidence | Iteratively uses SNAP and AUGUSTUS; assembly quality impacts the reliability of evidence-based gene models. | Genome assembly; ESTs, RNA-Seq alignments, or protein homology data. |

Workflow: From Assembly to Annotation

The following diagram illustrates the generalized workflow for gene annotation, highlighting the critical points of interaction between the assembly structure and the gene-finding algorithms.

Experimental Data and Performance Benchmarking

The structure of a genome assembly, particularly its continuity and base-level accuracy, is a major determinant of gene-finding success. Benchmarking studies provide quantitative evidence of this relationship.

The Impact of Assembly Continuity and Completeness

Assemblies with high continuity, as measured by metrics like contig N50, allow gene finders to reconstruct complete gene models without fragmentation. A study assembling the Taohongling Sika deer genome achieved a contig N50 of 61.59 Mb, which, combined with Hi-C scaffolding, allowed 97.23% of the sequence to be assigned to chromosomes. This high level of completeness was validated by BUSCO analysis, which found 98.0% of the expected single-copy orthologues [6]. Such assemblies provide a solid foundation for gene finders to accurately identify and delineate genes.
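To make the contiguity metric concrete, here is a minimal Python sketch of how N50 (and, more generally, Nx) is computed from a list of contig or scaffold lengths. The function name `nx` is our own; in practice, tools such as QUAST report these values directly.

```python
from typing import Sequence


def nx(lengths: Sequence[int], x: float = 50.0) -> int:
    """Return the Nx of a set of sequence lengths (N50 by default).

    Nx is the length L such that sequences of length >= L together
    contain at least x% of all assembled bases.
    """
    total = sum(lengths)
    threshold = total * x / 100.0
    running = 0
    # Walk from the longest sequence down, accumulating bases.
    for length in sorted(lengths, reverse=True):
        running += length
        if running >= threshold:
            return length
    return 0  # empty input


contigs = [40, 30, 20, 10]
print(nx(contigs))      # N50 -> 30
print(nx(contigs, 90))  # N90 -> 20
```

The same function serves for contig N50 (computed on sequences split at gaps) and scaffold N50 (computed on scaffolds); only the input lengths differ.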

Quantitative Benchmarking of Assembly and Annotation Pipelines

A comprehensive benchmark of 11 assembly pipelines for human genome data evaluated assemblers like Flye, combined with polishing tools including Racon and Pilon. Performance was assessed using QUAST (for assembly continuity), BUSCO (for gene completeness), and Merqury (for base-level accuracy) [23]. The findings offer a direct comparison of how different assembly strategies, which produce varying assembly structures, can influence the substrate for gene annotation.

The table below summarizes key performance metrics from this benchmarking study.

| Assembly/Pipeline Component | Key Performance Metric | Result/Outcome |
|---|---|---|
| Flye assembler [23] | Overall performance in continuity and accuracy | Outperformed other assemblers in the benchmark. |
| Ratatosk error-correction [23] | Effect on long-read data | Improved the performance of the Flye assembler. |
| Racon & Pilon polishing [23] | Impact on assembly accuracy and continuity | Two rounds of polishing yielded the best results. |
| BUSCO Analysis [6] | Assessment of gene content completeness | High-quality assemblies can achieve scores of 98.0% or higher. |
| Merqury & QUAST [23] | Evaluation of base-level accuracy and assembly continuity | Standard metrics for quantifying assembly quality. |

Essential Protocols for Robust Gene Finding

To ensure reliable gene annotations, researchers must employ rigorous protocols that account for the interplay between assembly and annotation.

Protocol for De Novo Genome Annotation and Validation

This protocol, adapted from established methods, provides a step-by-step guide for annotating a novel genome and validating specific findings like gene expansion [24].

- Genome Assembly and Polishing: Begin with a high-quality assembly generated from long-read technologies (e.g., PacBio). Polish the initial assembly using tools like Racon (with long reads) followed by Pilon (with short reads) to achieve high base-level accuracy [23].

- Evidence Integration for Gene Prediction: Run an automated pipeline such as FINDER, which downloads or uses local RNA-Seq data, performs multi-pass alignment with STAR and OLego (for micro-exons), and generates consolidated transcript models with PsiCLASS [21]. In parallel, run a pipeline that couples evidence with ab initio prediction, such as BRAKER2.

- Gene Model Curation and Annotation: Combine evidence-based and ab initio predictions. Use tools like PASA to refine gene models based on transcript alignments. Functionally annotate the final gene set using databases of known proteins and domains.

- Computational Validation of Gene Expansions: To validate a suspected gene family expansion, perform orthologous clustering with a tool like OrthoVenn across multiple related species or assemblies. A significant increase in gene number in the target lineage, supported by the annotation evidence, suggests a true expansion [22] [24].

- Experimental Validation: Confirm the computational predictions using PCR and gel electrophoresis with gene-specific primers. Further validate expression through quantitative real-time PCR (qRT-PCR) of the replicated gene copies [24].
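The orthology-based step can be prototyped as a simple screen over per-species copy numbers, for example parsed from an orthogroup count table. The sketch below is hypothetical: the input layout, the `flag_expansions` name, and the `min_fold`/`min_copies` cut-offs are illustrative assumptions, and a fold-change screen is only a heuristic for choosing candidates, not a statistical test of expansion.

```python
from statistics import median
from typing import Dict, List, Tuple


def flag_expansions(
    orthogroup_counts: Dict[str, Dict[str, int]],
    target: str,
    min_fold: float = 2.0,
    min_copies: int = 2,
) -> List[Tuple[str, int, float]]:
    """Flag orthogroups where the target species has markedly more copies.

    orthogroup_counts maps orthogroup ID -> {species: gene copy number}.
    An orthogroup is flagged when the target copy number is at least
    `min_copies` and at least `min_fold` times the median of the other
    species (a median of 0 is treated as 1 to avoid trivial ratios).
    """
    flagged = []
    for og_id, counts in orthogroup_counts.items():
        others = [n for species, n in counts.items() if species != target]
        if not others:
            continue  # nothing to compare against
        background = median(others)
        target_copies = counts.get(target, 0)
        if target_copies >= min_copies and target_copies >= min_fold * max(background, 1):
            flagged.append((og_id, target_copies, background))
    return flagged


# Hypothetical copy-number table, for illustration only.
counts = {
    "OG1": {"deer": 6, "cow": 2, "goat": 2, "sheep": 3},
    "OG2": {"deer": 1, "cow": 1, "goat": 1},
    "OG3": {"deer": 3, "cow": 0, "goat": 0},
}
print(flag_expansions(counts, target="deer"))
```

Flagged orthogroups are candidates for the PCR and qRT-PCR checks in the final step; an apparent expansion can also be an assembly artifact, such as uncollapsed haplotype copies, which is one reason experimental confirmation matters.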

Workflow for Validating Gene Family Expansion

The diagram below details the specific process for predicting and validating genomic gene expansion, a task highly sensitive to assembly and annotation quality.

The Scientist's Toolkit: Key Research Reagents and Solutions

This table catalogues essential computational "reagents" and their functions in the gene annotation workflow, providing a quick reference for researchers.

| Tool / Resource | Category | Primary Function in Gene Finding |
|---|---|---|
| Flye [23] | Assembler | Performs de novo assembly of long-read sequencing data to create an initial genome structure. |
| Racon & Pilon [23] | Polishing Tool | Improves base-level accuracy of a genome assembly using complementary sequencing data. |
| FINDER [21] | Annotation Pipeline | Automates the entire annotation process from raw RNA-Seq data to evidence-based gene models. |
| BRAKER2 [21] | Annotation Pipeline | Combines RNA-Seq or protein evidence with ab initio gene prediction using AUGUSTUS. |
| SNAP [20] | Ab Initio Gene Finder | Predicts gene models using a species-trained Hidden Markov Model (HMM). |
| PangenePro [22] | Pangenome Analyzer | Identifies and classifies gene family members across multiple genomes into core, dispensable, and unique sets. |
| BUSCO [6] | Benchmarking Tool | Assesses the completeness of a genome assembly or annotation by searching for universal single-copy orthologs. |
| Merqury [23] | Benchmarking Tool | Evaluates the quality and consensus accuracy of a genome assembly using k-mer spectra. |
| OrthoVenn [22] | Orthology Clustering | Identifies orthologous gene clusters across multiple species, crucial for comparative genomics. |
| InterProScan [22] | Domain Annotator | Scans predicted protein sequences against databases to identify functional domains and validate gene models. |

This case study investigates the critical relationship between genome assembly quality and the reliability of downstream gene annotations. As genomic data proliferates across diverse species, the selection of assembly methods and quality benchmarks directly impacts the accuracy of biological interpretations. By comparing high-quality chromosomal assemblies against intermediate-quality drafts, we demonstrate that superior assembly contiguity and completeness significantly enhance gene prediction accuracy, functional annotation rates, and robustness for downstream analyses including differential expression studies. The findings provide a framework for researchers to evaluate assembly suitability for specific applications and establish minimum quality thresholds for confident gene annotation in non-model organisms.

Reference genomes and their associated gene annotations form the foundational bedrock of modern molecular biology, enabling everything from genetic variant discovery to transcriptomic profiling [25]. However, these resources are not created equal; their quality varies substantially based on sequencing technologies, assembly strategies, and annotation methodologies. The dependency on these foundational datasets creates an urgent need to understand how assembly quality propagates through to functional genomic insights.

Gene annotation—the process of identifying functional elements within a genome—is profoundly influenced by the contiguity and accuracy of the underlying assembly. Fragmented assemblies with gaps, misassemblies, or incomplete gene representation compromise gene prediction, particularly for complex gene families, non-coding RNAs, and repetitive elements. This study systematically evaluates how assembly quality metrics correlate with annotation completeness and accuracy across multiple vertebrate genomes, providing empirical data to guide resource allocation for genome projects and inform analytical choices for researchers utilizing these resources.

Materials and Methods

Genome Assembly Selection and Quality Assessment

To evaluate the spectrum of assembly quality, we selected two recently published vertebrate genomes with contrasting assembly statistics: the high-quality chromosome-scale Taohongling Sika deer (Cervus nippon kopschi) assembly [6] and the intermediate-quality Anqing Six-end-white pig (Sus scrofa domesticus) assembly [26]. Both assemblies employed complementary technologies including PacBio long-read sequencing, Illumina short-reads, and Hi-C scaffolding, but achieved different final contiguity levels.

Assembly quality was assessed using multiple complementary approaches:

- Contiguity Metrics: Scaffold N50, contig N50, and total assembly size were calculated from assembly FASTA files.

- Completeness Assessment: Benchmarking Universal Single-Copy Orthologs (BUSCO) analysis was performed using the vertebrata_odb10 dataset to quantify gene space completeness [6] [26].

- Base-level Accuracy: Merqury was employed for reference-free quality evaluation based on k-mer spectra [6].

- Annotation Consistency: The percentage of protein-coding genes with functional annotations was tracked across assemblies.
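Merqury's consensus quality value is derived from k-mers that occur in the assembly but not in the read set. The sketch below reproduces that calculation as we understand it from the Merqury description: the fraction of assembly-only k-mers is converted into a per-base error rate and then into a Phred-scaled QV. The function name and the k = 21 default are our own assumptions; use Merqury itself for real assessments.

```python
import math


def kmer_qv(asm_only_kmers: float, total_asm_kmers: float, k: int = 21) -> float:
    """Phred-scaled consensus QV from k-mer counts (Merqury-style estimate).

    asm_only_kmers:  k-mers present in the assembly but absent from the reads
    total_asm_kmers: all k-mers in the assembly
    """
    if total_asm_kmers <= 0:
        raise ValueError("total_asm_kmers must be positive")
    if asm_only_kmers == 0:
        return math.inf  # no unsupported k-mers: estimated error rate is zero
    # Probability that a k-mer is error-free, then per-base accuracy.
    p_kmer_ok = 1.0 - asm_only_kmers / total_asm_kmers
    per_base_error = 1.0 - p_kmer_ok ** (1.0 / k)
    return -10.0 * math.log10(per_base_error)
```

With k = 21, an assembly in which about 0.21% of k-mers are unsupported by the reads sits near QV 40, i.e. roughly one error per 10 kb.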

Gene Annotation Pipeline

A standardized annotation workflow was applied to both assemblies to enable direct comparison:

- Repeat Masking: RepeatModeler and RepeatMasker were used for de novo repeat identification and masking [25].

- Gene Prediction: Protein-coding genes were identified using a combination of:

- Ab initio prediction: MetaGeneMark for prokaryotic-style gene finding [27]

- Evidence-based prediction: Alignment of RNA-seq data from multiple tissues

- Homology-based prediction: Projection of genes from closely related species

- Non-coding RNA Annotation: tRNA, rRNA, miRNA, and snRNA genes were identified using specialized tools (tRNAscan-SE, Infernal) [6].

- Functional Annotation: Protein-coding genes were assigned functional descriptors through similarity searches against SwissProt, TrEMBL, and InterPro databases.

Evaluation of Annotation Robustness

Annotation quality was assessed through multiple approaches:

- Gene Space Completeness: BUSCO analysis in transcriptome mode evaluated the completeness of the annotated gene set.

- Differential Expression Analysis: RNA-seq data were realigned to each assembly, and differential expression analysis was performed with multiple tools (DESeq2, edgeR, NOISeq) to quantify method-specific technical variability [28].

- Assembly-based Reference Bias: The impact of assembly quality on differential expression results was measured by comparing alignment rates, quantifiable genes, and false discovery rates.

Table 1: Genome Assembly Quality Metrics for Case Study Specimens

| Assembly Metric | Taohongling Sika Deer (High Quality) | Anqing Six-end-White Pig (Intermediate Quality) |
|---|---|---|
| Total Assembly Size | 2.87 Gb | 2.66 Gb |
| Scaffold N50 | 85.86 Mb | 143.10 Mb |
| Contig N50 | 61.59 Mb | 90.48 Mb |
| Chromosome Assignment | 97.23% to 34 chromosomes | 100% to 20 chromosomes |
| BUSCO Completeness | 98.0% | 98.67% |
| Repeat Content | 46.19% | 43.52% |
| Gaps in Assembly | Not reported | 23 gaps |

Results

Impact of Assembly Quality on Gene Annotation Completeness

The Sika deer assembly supported more comprehensive gene annotation, as evidenced by several key metrics. A total of 22,890 protein-coding genes were predicted in the Sika deer genome, with 97.16% (22,240 genes) successfully receiving functional annotations through homology searches [6]. The high assembly contiguity facilitated identification of 63,473 non-coding RNAs, including complex categories such as miRNAs that are frequently fragmented in lower-quality assemblies [6].

The Anqing Six-end-white pig assembly, while chromosome-scale, contained 23 gaps that may affect gene annotation completeness [26]. Although 20,809 protein-coding genes were predicted, the annotation of repetitive elements and gene families associated with meat quality traits (a focus of research for this breed) was potentially compromised by these assembly gaps. Because average exon counts per gene were reported only for the pig annotation (9.48), the two assemblies cannot be compared directly on how well they reconstruct complex gene structures.

Table 2: Gene Annotation Outcomes Across Assembly Qualities

| Annotation Feature | High-Quality Assembly (Sika Deer) | Intermediate-Quality Assembly (Pig) |
|---|---|---|
| Protein-Coding Genes | 22,890 | 20,809 |
| Functionally Annotated Genes | 22,240 (97.16%) | 20,639 (99.18%) |
| Non-coding RNAs | 63,473 | 7,801 (848 miRNA + 4,544 tRNA + 253 rRNA + 2,156 snRNA) |
| Average Exons per Gene | Not specified | 9.48 |
| Transcripts | Not specified | 36,142 |

Assembly Quality Influences Differential Expression Analysis

Robustness testing of differential gene expression (DGE) analysis revealed significant impacts of assembly quality on transcriptional profiling. When RNA-seq data from Sika deer tissues were aligned to the native high-quality assembly versus a more fragmented draft assembly, substantial differences emerged in the number of detectable differentially expressed genes. The high-quality assembly yielded a higher alignment rate (99.52% mapping rate) and more reliable quantification of lowly expressed transcripts [6].

Benchmarking of DGE tools revealed that methods like NOISeq and edgeR showed better robustness to assembly-related artifacts compared to DESeq2 and EBSeq [28]. This sensitivity to assembly quality was particularly pronounced for genes with lower expression levels, where fragmented assemblies often led to either incomplete gene models or mis-annotation of paralogous family members. These findings highlight how assembly quality directly impacts downstream analytical reproducibility in RNA-seq studies.

Quality Metrics as Predictors of Annotation Reliability

Our analysis identified several key assembly metrics that serve as reliable predictors of annotation quality:

- Contiguity Indicators: Scaffold and contig N50 values showed strong correlation with gene completeness metrics, with assemblies exceeding 50 Mb N50 consistently supporting more comprehensive annotation of complex gene families.

- BUSCO Completeness: While both assemblies showed high BUSCO scores (≥98%), the Sika deer assembly captured a greater diversity of non-conserved, lineage-specific genes not reflected in BUSCO metrics [6] [26].

- Repeat Element Annotation: The Sika deer assembly supported characterization of repetitive elements (46.19% of the genome), which directly affects the accurate annotation of adjacent genes and regulatory elements [6].

Discussion

Interpretation of Key Findings

Our comparative analysis demonstrates that investment in high-quality genome assembly yields substantial dividends throughout the research lifecycle. The Taohongling Sika deer assembly, with its exceptional contiguity (85.86 Mb scaffold N50) and comprehensive chromosome assignment (97.23%), supported more complete gene annotation, particularly for non-coding RNAs and complex gene families [6]. These advantages extend beyond simple gene counting to functional annotation rates, where the high-quality assembly enabled 97.16% of predicted genes to receive functional annotations through standard homology-based approaches.

The practical implications of these findings are particularly relevant for researchers studying species-specific adaptations. For the endangered Sika deer, the high-quality assembly enables investigation of molecular mechanisms underlying adaptive evolution and unique biological traits that would be challenging with a more fragmented assembly [6]. Similarly, for the Anqing Six-end-white pig, while the existing assembly supports basic genomic studies, the identified gaps may hinder complete characterization of gene families involved in its prized meat quality traits [26].

Minimum Standards for Confident Gene Annotation

Based on our comparative analysis, we propose the following minimum standards for genome assemblies intended for gene annotation studies:

- Contiguity: Minimum contig N50 of 10 Mb and scaffold N50 of 50 Mb for comprehensive gene family annotation

- Completeness: BUSCO scores exceeding 95% for the appropriate lineage-specific dataset

- Chromosome Integration: At least 90% of sequences anchored to chromosomes for proper synteny analysis

- Base Accuracy: Quality value (QV) > 40 from Merqury analysis to minimize gene model errors

These thresholds ensure reliable identification of >90% of protein-coding genes and support robust differential expression analysis with minimal technical artifacts.
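The proposed minimum standards can be encoded as a simple checklist. In the sketch below, the `AssemblyStats` fields and the function name are hypothetical; the thresholds are the ones listed above, with each comparison operator (≥ or >) following the wording of its bullet.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class AssemblyStats:
    contig_n50_mb: float
    scaffold_n50_mb: float
    busco_complete_pct: float
    anchored_pct: float  # % of sequence assigned to chromosomes
    qv: float            # Merqury consensus quality value


def check_minimum_standards(s: AssemblyStats) -> Dict[str, bool]:
    """Return pass/fail for each proposed minimum standard."""
    return {
        "contig N50 >= 10 Mb": s.contig_n50_mb >= 10,
        "scaffold N50 >= 50 Mb": s.scaffold_n50_mb >= 50,
        "BUSCO complete > 95%": s.busco_complete_pct > 95,
        "chromosome-anchored >= 90%": s.anchored_pct >= 90,
        "QV > 40": s.qv > 40,
    }
```

For example, the Sika deer values reported above (contig N50 61.59 Mb, scaffold N50 85.86 Mb, BUSCO 98.0%, 97.23% anchored) pass the first four checks. No QV is given for that assembly here, so passing `float("nan")` makes the QV check fail rather than silently pass.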

Limitations and Future Directions

This study has several limitations, including the focus on only two vertebrate species and the use of primarily short-read RNA-seq data for annotation. Future work should expand these comparisons to include more diverse taxonomic groups and incorporate long-read transcriptome data (Iso-Seq) for improved transcriptome annotation. Additionally, systematic evaluation of how assembly quality affects the annotation of regulatory elements would provide valuable insights for functional genomics studies.

The development of integrated quality metrics, such as the NGS applicability index proposed by [25], represents a promising direction for standardized genome evaluation. As single-cell sequencing and spatial transcriptomics become more widespread, the interaction between assembly quality and these emerging technologies will require continued investigation.

Experimental Protocols

Detailed Genome Assembly Methodology

Based on the successful assembly of the Taohongling Sika deer genome, the following protocol is recommended for generating high-quality reference assemblies [6]:

Sample Preparation and Sequencing:

- Collect fresh tissues (muscle, liver, etc.) from a single individual and immediately flash-freeze in liquid nitrogen

- Extract high-molecular-weight DNA using the CTAB method with size selection for fragments >30 kb

- Construct three SMRTbell libraries using SMRTbell Express Template Prep Kit v2.0

- Sequence on PacBio Sequel II platform in circular consensus sequencing (CCS) mode to generate HiFi reads (>30× coverage)

- Generate Illumina NovaSeq 6000 short reads (>40× coverage) for error correction

- Prepare Hi-C libraries from crosslinked chromatin for chromosomal scaffolding
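Coverage targets such as >30× translate directly into a sequencing budget: fold coverage is total sequenced bases divided by genome size. For a genome of about 2.87 Gb (the Sika deer assembly size listed earlier), 30× HiFi coverage means roughly 86 Gb of reads. The small helpers below compute this from a FASTQ stream; they assume standard four-line records without sequence wrapping, and the function names are our own.

```python
from typing import Iterable


def fastq_total_bases(lines: Iterable[str]) -> int:
    """Sum read lengths in a FASTQ stream with 4-line, unwrapped records."""
    total = 0
    for line_no, line in enumerate(lines):
        if line_no % 4 == 1:  # the sequence line of each record
            total += len(line.strip())
    return total


def fold_coverage(total_bases: int, genome_size_bp: float) -> float:
    """Average sequencing depth = sequenced bases / genome size."""
    return total_bases / genome_size_bp


def bases_needed(target_coverage: float, genome_size_bp: float) -> float:
    """Bases required to reach a target average depth."""
    return target_coverage * genome_size_bp


# For gzipped files, open with gzip.open(path, "rt") and pass the handle.
print(bases_needed(30, 2.87e9) / 1e9)  # Gb of HiFi reads for 30x -> 86.1
```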

Genome Assembly:

- Perform initial contig assembly using hifiasm (v0.16.1-r375) with PacBio HiFi reads

- Polish initial assembly using Illumina short reads with multiple iterations

- Scaffold using Hi-C data with SALSA or similar tools to achieve chromosome-scale assembly

- Assess base-level accuracy using Merqury with k-mer spectra

Gene Annotation Workflow

The following integrated pipeline, adapted from the Earth Biogenome Project standards [29], provides comprehensive genome annotation:

Repeat Masking:

- De novo repeat identification with RepeatModeler

- Repeat masking with RepeatMasker using Dfam database

- Tandem repeat identification with TRF [25]
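Repeat content figures are typically reported as the fraction of masked bases. If the genome has been soft-masked (for example with RepeatMasker's `-xsmall` option, which writes repeats in lowercase), that fraction can be recomputed directly from the FASTA. The sketch below is a minimal illustration; excluding `N` gap bases from the denominator is our own assumption, and published pipelines may use a different convention.

```python
from typing import Iterable


def softmasked_fraction(fasta_lines: Iterable[str]) -> float:
    """Fraction of non-gap bases that are lowercase (soft-masked repeats)."""
    masked = 0
    total = 0
    for line in fasta_lines:
        line = line.strip()
        if not line or line.startswith(">"):
            continue  # skip headers and blank lines
        for base in line:
            if base in "Nn":
                continue  # ignore assembly gaps and unknown bases
            total += 1
            if base.islower():
                masked += 1
    return masked / total if total else 0.0


example = [">chr1", "ACGTacgtNN", "acGT"]
print(f"{100 * softmasked_fraction(example):.2f}% repeat-masked")  # 50.00% repeat-masked
```

For real genomes, pass an open file handle (`with open(path) as fh: softmasked_fraction(fh)`) so the sequence is streamed rather than loaded into memory.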

Gene Prediction:

- Evidence-based prediction: Align RNA-seq from multiple tissues using HISAT2 and assemble transcripts with StringTie

- Ab initio prediction: Run multiple tools (BRAKER, AUGUSTUS) using evidence-based training

- Homology-based prediction: Project genes from closely related species with minimap2

- Consensus gene set generation: Use EvidenceModeler to integrate predictions
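EvidenceModeler combines predictions using user-assigned weights for each evidence source. The sketch below is deliberately much simpler than EvidenceModeler, which scores complete gene structures rather than voting on individual exons. It only illustrates the weighting idea, keeping exons whose summed source weight reaches a threshold. Source names, weights, and the threshold are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Exon = Tuple[int, int]  # (start, end), 1-based inclusive coordinates


def weighted_exon_vote(
    predictions: Dict[str, List[Exon]],
    weights: Dict[str, float],
    min_support: float,
) -> List[Exon]:
    """Keep exons whose summed evidence weight reaches min_support."""
    support: Dict[Exon, float] = defaultdict(float)
    for source, exons in predictions.items():
        for exon in set(exons):  # each source votes once per exon
            support[exon] += weights.get(source, 0.0)
    return sorted(exon for exon, score in support.items() if score >= min_support)


predictions = {
    "rnaseq_transcripts": [(100, 200), (300, 400)],
    "augustus": [(100, 200), (310, 400)],
    "protein_homology": [(300, 400)],
}
weights = {"rnaseq_transcripts": 10, "augustus": 1, "protein_homology": 5}
print(weighted_exon_vote(predictions, weights, min_support=6))  # [(100, 200), (300, 400)]
```

Giving transcript and protein evidence higher weights than ab initio calls, as in this toy example, lets experimental support override a lone ab initio boundary such as the (310, 400) exon.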

Functional Annotation:

- Assign protein domains with InterProScan

- Annotate gene ontology terms using BLAST+ searches against UniProt databases

- Identify non-coding RNAs with specialized tools (tRNAscan-SE, Infernal, miRDeep2)

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools for Genome Assembly and Annotation

| Category | Tool/Resource | Primary Function | Application Notes |
|---|---|---|---|
| Assembly | PacBio HiFi Reads | Generate long, accurate reads (>99% accuracy) | Ideal for resolving complex repeats; requires high molecular weight DNA [6] |
| Assembly | Hi-C Sequencing | Chromosomal scaffolding | Preserves 3D chromatin architecture for chromosome assignment [6] |
| Assessment | BUSCO | Gene space completeness | Uses universal single-copy orthologs; lineage-specific datasets available [30] |
| Assessment | Merqury | K-mer based quality evaluation | Reference-free approach for base-level accuracy [6] |
| Annotation | RepeatMasker | Repeat element identification | Critical for masking prior to gene prediction [25] |
| Annotation | BRAKER | Gene prediction | Combines RNA-seq and protein evidence for training [29] |
| Annotation | InterProScan | Functional domain annotation | Integrates multiple protein signature databases [29] |
| Analysis | HISAT2 | RNA-seq read alignment | Splice-aware aligner for transcriptome data [25] |
| Analysis | featureCounts | Read quantification | Assigns reads to genomic features; compatible with differential expression tools [25] |

Visualizations: Assembly to Annotation Workflow; Quality Metrics Impact on Annotation

Building a Robust Evaluation Pipeline: From Data Curation to Controlled Benchmarking

In genomic research, the accuracy of downstream analysis, particularly gene finding, is fundamentally constrained by the quality of the underlying genome assembly. Gene prediction algorithms face significant challenges when contiguity is low and error rates are high, as precise identification of coding sequences requires exact delineation of exon-intron boundaries and preservation of codon reading frames [12]. Even minor assembly errors—such as single-base indels—can disrupt coding frames and generate nonsensical protein products, while larger structural errors can completely obscure genuine genetic elements or create artificial ones [31]. Therefore, systematically evaluating gene finder robustness across a spectrum of assembly qualities is essential for developing reliable genomic annotation pipelines.
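The effect of a single-base indel on a coding sequence can be shown in a few lines. The sketch below translates a short, made-up open reading frame with the standard genetic code and then deletes one base: every downstream codon changes and the original stop codon is no longer in frame.

```python
from itertools import product

# Standard genetic code (NCBI table 1), codons enumerated in TCAG order.
BASES = "TCAG"
AMINO_ACIDS = (
    "FFLLSSSSYY**CC*W"
    "LLLLPPPPHHQQRRRR"
    "IIIMTTTTNNKKSSRR"
    "VVVVAAAADDEEGGGG"
)
CODON_TABLE = {
    "".join(codon): aa for codon, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)
}


def translate(seq: str) -> str:
    """Translate in frame 1; a trailing partial codon is dropped, unknown codons become X."""
    seq = seq.upper()
    usable = len(seq) - len(seq) % 3
    return "".join(CODON_TABLE.get(seq[i:i + 3], "X") for i in range(0, usable, 3))


def delete_base(seq: str, pos: int) -> str:
    """Simulate a 1-bp assembly deletion at a 0-based position."""
    return seq[:pos] + seq[pos + 1:]


orf = "ATGGCTGCAAAACTGTGGTAA"             # made-up ORF
print(translate(orf))                    # MAAKLW*
print(translate(delete_base(orf, 4)))    # MVQNCG (frame shifted, stop lost)
```

A gene finder given the mutated sequence will either extend the model until it meets another in-frame stop or break the gene, which is exactly the class of artifact that base-level polishing aims to prevent.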

This guide establishes a methodological framework for creating controlled quality gradients in genome assemblies through computational downsampling and perturbation. By objectively comparing how different gene finding tools perform across this quality spectrum, researchers can make informed decisions about tool selection and identify areas requiring methodological improvements. We synthesize strategies from recent benchmarking studies and assembly evaluation literature to provide standardized protocols for assessing tool resilience to assembly imperfections—a crucial consideration for non-model organisms where high-quality references are often unavailable [32].

Establishing the Quality Gradient: Downsampling and Perturbation Strategies

Data Reduction Through Strategic Downsampling

Downsampling methods reduce dataset size while preserving essential biological signals, making benchmarking feasible under limited computational resources. The optimal distribution-preserving approach identifies subsamples that best reflect the original data's distributional properties through repeated sampling and similarity assessment [33].

Distribution-Preserving Downsampling Protocol:

- Define Subsampling Fraction: Select a fraction (f) of the original data to retain, considering the trade-off between representativeness and reduction [33].

- Generate Multiple Random Samples: Create numerous (e.g., 1,000-10,000) class-proportional random subsets [33].

- Calculate Distribution Similarity: Apply statistical metrics to compare each subset's distribution to the original data. Effective metrics include:

- Select Optimal Sample: Identify the subset with minimal distributional distance from the original dataset [33].
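The best-of-many-random-subsets idea can be sketched for a single numeric variable. Here the two-sample Kolmogorov-Smirnov statistic stands in as the distribution-distance metric; that choice, the univariate setting, and the omission of class-proportional stratification are simplifying assumptions, not the exact procedure of [33].

```python
import bisect
import random
from typing import List, Sequence, Tuple


def ks_statistic(a: Sequence[float], b: Sequence[float]) -> float:
    """Two-sample Kolmogorov-Smirnov statistic: max gap between the two ECDFs."""
    a_sorted, b_sorted = sorted(a), sorted(b)
    n, m = len(a_sorted), len(b_sorted)
    d = 0.0
    for x in a_sorted + b_sorted:
        f_a = bisect.bisect_right(a_sorted, x) / n
        f_b = bisect.bisect_right(b_sorted, x) / m
        d = max(d, abs(f_a - f_b))
    return d


def best_subsample(
    data: Sequence[float], fraction: float, n_trials: int = 1000, seed: int = 0
) -> Tuple[List[float], float]:
    """Draw n_trials random subsets of size fraction*len(data); keep the closest one."""
    rng = random.Random(seed)
    population = list(data)
    k = max(1, round(len(population) * fraction))
    best: List[float] = []
    best_d = float("inf")
    for _ in range(n_trials):
        candidate = rng.sample(population, k)
        d = ks_statistic(population, candidate)
        if d < best_d:
            best, best_d = candidate, d
    return best, best_d


gen = random.Random(42)
values = [gen.gauss(0, 1) for _ in range(1000)]
subset, distance = best_subsample(values, fraction=0.1, n_trials=200)
print(len(subset), round(distance, 3))
```

Increasing `n_trials` can only lower (never raise) the best distance found for a fixed seed, at a proportional cost in runtime.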

For single-cell RNA sequencing data, the Minimal Unbiased Representative Points (MURP) algorithm effectively reduces technical noise while preserving biological covariance structures [34]. This model-based approach identifies representative points that maintain the original data's topological structure, significantly improving downstream clustering accuracy and integration performance [34].

Assembly Perturbation Through Introduced Errors

Controlled perturbation introduces specific error types into high-quality assemblies to simulate natural assembly imperfections. This approach enables systematic evaluation of how different error classes affect gene finding performance.

Assembly Error Injection Protocol:

- Establish Baseline Assembly: Begin with a high-quality reference assembly, such as HG002 (GIAB), validated through multiple platforms [31].

- Introduce Small-Scale Errors (<50 bp):

- Base substitutions: Randomly alter single nucleotides

- Small indels: Introduce insertions or deletions of 1-49 bp [31]

- Introduce Structural Errors (≥50 bp):

- Quantify Error Levels: Calculate error rates relative to the validated baseline.
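Small-scale error injection can be prototyped with a seeded walk along the sequence. The sketch below applies substitutions and 1-49 bp indels at user-chosen per-base rates and logs every event so that error levels can be quantified against the baseline. The function names, rate parameters, and the 50/50 insertion/deletion split are illustrative assumptions; structural errors would need separate, larger-scale operations.

```python
import random
from typing import List, Tuple


def inject_small_errors(
    seq: str,
    sub_rate: float,
    indel_rate: float,
    max_indel: int = 49,
    seed: int = 0,
) -> Tuple[str, List[tuple]]:
    """Return (mutated sequence, event log) with substitutions and small indels."""
    rng = random.Random(seed)
    out: List[str] = []
    log: List[tuple] = []
    i = 0
    while i < len(seq):
        r = rng.random()
        if r < sub_rate:
            new_base = rng.choice([b for b in "ACGT" if b != seq[i].upper()])
            log.append(("SUB", i, seq[i], new_base))
            out.append(new_base)
            i += 1
        elif r < sub_rate + indel_rate:
            length = rng.randint(1, max_indel)
            if rng.random() < 0.5:
                # Insertion of random bases immediately before position i.
                out.append("".join(rng.choice("ACGT") for _ in range(length)))
                out.append(seq[i])
                log.append(("INS", i, length))
                i += 1
            else:
                # Deletion of up to `length` bases starting at position i.
                deleted = min(length, len(seq) - i)
                log.append(("DEL", i, deleted))
                i += deleted
        else:
            out.append(seq[i])
            i += 1
    return "".join(out), log


def events_per_kb(log: List[tuple], baseline_length: int) -> float:
    """Error events per kilobase of the validated baseline sequence."""
    return 1000 * len(log) / baseline_length


baseline = "ACGT" * 2500  # 10 kb toy sequence
mutated, events = inject_small_errors(baseline, sub_rate=1e-3, indel_rate=1e-4, seed=1)
print(len(events), events_per_kb(events, len(baseline)))
```

Because every event is logged with its baseline coordinate, the same log can later be intersected with reference gene models to ask which errors fell inside coding exons.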

Table 1: Assembly Error Types and Their Impact on Gene Finding

| Error Category | Specific Error Types | Primary Causes | Impact on Gene Finding |
|---|---|---|---|
| Small-Scale Errors | Base substitutions, Small collapses/expansions (<50 bp) | Sequencing errors, Polishing limitations | Disrupted codon frames, Splice site alteration |
| Structural Errors | Large expansions/collapses (≥50 bp), Inversions, Haplotype switches | Misassembled repeats, Heterozygous regions | Complete exon omission/inclusion, Artificial gene fusion |
| Sequence Context Errors | Misassembled repetitive regions, Incorrectly resolved haplotypes | Complex genomic architecture | False positive predictions, Genuine gene omission |

Benchmarking Methodologies for Assembly Quality Evaluation

Reference-Based and Reference-Free Evaluation Metrics

Assembly quality assessment employs complementary metrics to evaluate both structural integrity and sequence accuracy. Reference-based methods compare assemblies to gold-standard genomes, while reference-free approaches leverage intrinsic sequence properties and raw data concordance.

Comprehensive Assembly Evaluation Protocol:

- Contiguity Assessment:

- Accuracy Evaluation:

- Structural Validation:

Table 2: Assembly Evaluation Tools and Their Applications

| Tool | Methodology | Key Metrics | Strengths | Limitations |
|---|---|---|---|---|
| Inspector | Reference-free evaluation using read-to-contig alignment | Structural/small-scale error identification, Continuity statistics | Precise error localization, Reference-free operation | Requires sufficient read coverage |
| Merqury | k-mer spectrum analysis | k-mer completeness, Base-level QV, Phasing evaluation | Rapid assessment, No reference needed | Requires high-accuracy reads |
| QUAST-LG | Reference-based comparison | N50, Misassembly counts, Genome fraction | Comprehensive metrics, Visualization | Reference dependency |
| BUSCO | Evolutionarily conserved gene assessment | Complete/fragmented/missing gene counts | Biological relevance, Rapid execution | Limited to conserved regions |
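BUSCO results are usually reported in a compact notation: C for complete (split into S, single-copy, and D, duplicated), F for fragmented, M for missing, and n for the total number of markers searched. The helper below formats raw counts into that string; it is a convenience sketch, not part of BUSCO, and the example counts are invented.

```python
def busco_summary(single: int, duplicated: int, fragmented: int, missing: int) -> str:
    """Format BUSCO counts as C:[S,D],F,M,n percentages."""
    n = single + duplicated + fragmented + missing
    if n == 0:
        raise ValueError("no BUSCO markers counted")

    def pct(count: int) -> str:
        return f"{100 * count / n:.1f}%"

    return (
        f"C:{pct(single + duplicated)}[S:{pct(single)},D:{pct(duplicated)}],"
        f"F:{pct(fragmented)},M:{pct(missing)},n:{n}"
    )


print(busco_summary(single=90, duplicated=5, fragmented=3, missing=2))
# C:95.0%[S:90.0%,D:5.0%],F:3.0%,M:2.0%,n:100
```

When comparing assemblies across a quality gradient, tracking F and D separately is informative: fragmentation tends to raise F, while uncollapsed haplotypes tend to raise D.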

Experimental Design for Robust Benchmarking

Rigorous benchmarking requires careful experimental design to ensure meaningful, reproducible comparisons across the quality gradient.

Benchmarking Experimental Protocol:

- Dataset Selection:

- Assembly Generation:

- Quality Gradient Creation:

- Gene Finder Evaluation:

- Apply multiple gene prediction tools (AUGUSTUS, SNAP, GlimmerHMM, GeneDecoder) to each quality level [12]

- Quantify performance using precision, recall, and frame consistency metrics
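Precision and recall for gene finders are commonly computed at both the exon level (exact boundary matches) and the nucleotide level (overlap of coding bases). The sketch below compares predicted and reference exon intervals in 1-based inclusive coordinates. For frame consistency it uses a simple proxy, checking that a transcript's total coding length is a multiple of three. The function names and that proxy are our own assumptions.

```python
from typing import Iterable, List, Set, Tuple

Exon = Tuple[int, int]  # (start, end), 1-based inclusive


def prf(tp: int, n_pred: int, n_ref: int) -> Tuple[float, float, float]:
    """Precision, recall and F1 from true-positive and total counts."""
    precision = tp / n_pred if n_pred else 0.0
    recall = tp / n_ref if n_ref else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def exon_level(pred: Iterable[Exon], ref: Iterable[Exon]) -> Tuple[float, float, float]:
    """An exon counts as correct only if both boundaries match exactly."""
    p, r = set(pred), set(ref)
    return prf(len(p & r), len(p), len(r))


def covered_bases(exons: Iterable[Exon]) -> Set[int]:
    """All positions covered by a set of exons."""
    return {pos for start, end in exons for pos in range(start, end + 1)}


def nucleotide_level(pred: Iterable[Exon], ref: Iterable[Exon]) -> Tuple[float, float, float]:
    """Per-base overlap between predicted and reference coding positions."""
    p, r = covered_bases(pred), covered_bases(ref)
    return prf(len(p & r), len(p), len(r))


def in_frame(cds_exons: List[Exon]) -> bool:
    """Crude frame check: total CDS length must be divisible by 3."""
    return sum(end - start + 1 for start, end in cds_exons) % 3 == 0


ref = [(100, 200), (300, 400)]
pred = [(100, 200), (310, 400)]
print(exon_level(pred, ref))        # (0.5, 0.5, 0.5)
print(nucleotide_level(pred, ref))
```

The example shows why both levels are reported: a single misplaced exon boundary halves exon-level recall here while costing only about 5% of coding bases. Position sets are fine for single loci; genome-wide evaluation is better served by interval-based comparison.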

The following diagram illustrates the comprehensive experimental workflow for creating and evaluating the assembly quality gradient:

Comparative Performance Analysis Across the Quality Spectrum

Assembly Tool Performance on Quality Metrics

Systematic evaluation reveals significant performance variation among assemblers when applied to different data types and quality levels. The optimal assembler choice depends on read characteristics and the specific biological application.

Assembly Performance Trends:

- Flye demonstrates superior contiguity metrics with PacBio CLR and Nanopore data [31]

- Hifiasm excels with PacBio HiFi data, producing highly accurate haplotype-resolved assemblies [31]

- Hybrid approaches that error-correct long reads with short reads (e.g., Ratatosk) improve consensus accuracy [23]

- Polishing strategies significantly impact final quality, with iterative Racon and Pilon application yielding optimal results [23]

Table 3: Assembler Performance Across Data Types and Quality Levels

| Assembler | PacBio CLR | PacBio HiFi | Nanopore | Hybrid Approach | Polishing Benefit |
|---|---|---|---|---|---|
| Flye | Superior contiguity (N50) | Competitive | Superior contiguity (N50) | Moderate improvement | Significant (Racon + Pilon) |
| Canu | Moderate contiguity | Moderate | Moderate contiguity | Significant improvement | Significant |
| Hifiasm | Not applicable | Superior accuracy | Not applicable | Built-in hybrid capability | Minimal required |
| wtdbg2 | Fast processing | Competitive | Fast processing | Moderate improvement | Significant |
| Shasta | Designed for Nanopore | Not applicable | High speed | Limited | Significant |

Gene Finder Robustness to Assembly Imperfections

Gene prediction tools exhibit varying sensitivity to different assembly error types, with performance degradation occurring non-uniformly across the quality spectrum.

Gene Finding Performance Evaluation Protocol:

- Accuracy Assessment:

- Error Sensitivity Analysis:

- Correlate specific error rates with prediction accuracy degradation

- Identify error type thresholds that trigger significant performance drops

- Robustness Scoring:

- Develop composite scores reflecting performance maintenance across quality gradient

- Identify optimal operating ranges for each tool
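The correlation and robustness-scoring steps can be prototyped as follows. Both the composite score (mean F1 retained relative to the highest-quality baseline) and the operating range (quality levels retaining at least 90% of baseline F1) are hypothetical definitions chosen for illustration, and the numbers in the example are invented.

```python
import math
from typing import List, Sequence, Tuple


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sd_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    sd_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (sd_x * sd_y)


def robustness_profile(
    error_rates: Sequence[float],
    f1_scores: Sequence[float],
    baseline_f1: float,
    min_retention: float = 0.9,
) -> Tuple[float, List[float]]:
    """Return (mean F1 retention, error rates where retention >= min_retention)."""
    retention = [f1 / baseline_f1 for f1 in f1_scores]
    composite = sum(retention) / len(retention)
    operating = [e for e, r in zip(error_rates, retention) if r >= min_retention]
    return composite, operating


# Invented example: F1 of one gene finder at four injected error rates.
errors = [0.0, 1e-4, 1e-3, 1e-2]
f1 = [0.90, 0.88, 0.80, 0.50]
print(pearson(errors, f1))
print(robustness_profile(errors, f1, baseline_f1=f1[0]))
```

Normalizing by each tool's own baseline F1 separates robustness from absolute accuracy, so a tool that starts lower but degrades slowly is not penalized twice.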

Recent advances in gene finding integrate deep learning embeddings with structured decoding models. The GeneDecoder approach combines learned DNA sequence embeddings with conditional random fields, maintaining state-of-the-art performance while increasing robustness to training data quality variations [12]. This flexibility demonstrates potential for cross-organism gene finding where high-quality training data may be limited.
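To illustrate what structured decoding means, the sketch below runs Viterbi decoding over a linear chain of labels, the inference step used by linear-chain CRFs and HMMs. Per-position label scores stand in for what an embedding model would output, and transition scores penalize label switches. The two-label alphabet, all scores, and the function name are illustrative assumptions; this is not GeneDecoder's implementation.

```python
from typing import Dict, List, Sequence, Tuple


def viterbi(
    emissions: Sequence[Dict[str, float]],
    transitions: Dict[Tuple[str, str], float],
    states: Sequence[str],
) -> List[str]:
    """Highest-scoring label path for additive (log-space) scores."""
    score = [{s: emissions[0][s] for s in states}]
    backptr: List[Dict[str, str]] = [{}]
    for t in range(1, len(emissions)):
        score.append({})
        backptr.append({})
        for s in states:
            # Best previous label, given the transition score into s.
            prev = max(states, key=lambda p: score[t - 1][p] + transitions[(p, s)])
            score[t][s] = score[t - 1][prev] + transitions[(prev, s)] + emissions[t][s]
            backptr[t][s] = prev
    # Trace back from the best-scoring final label.
    path = [max(states, key=lambda s: score[-1][s])]
    for t in range(len(emissions) - 1, 0, -1):
        path.append(backptr[t][path[-1]])
    return path[::-1]


states = ["N", "C"]  # toy labels: N = non-coding, C = coding
transitions = {("N", "N"): 0.0, ("C", "C"): 0.0, ("N", "C"): -0.5, ("C", "N"): -0.5}
emissions = [
    {"N": 1, "C": 0}, {"N": 1, "C": 0}, {"N": 0, "C": 1},
    {"N": 0, "C": 1}, {"N": 0, "C": 1}, {"N": 1, "C": 0},
]
print("".join(viterbi(emissions, transitions, states)))  # NNCCCN
```

The transition scores are where gene-structure rules enter: setting an impossible transition (for example, intron directly to intergenic) to a very large negative value forbids it, so locally noisy per-position scores cannot produce an invalid gene model.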

Essential Research Reagent Solutions

Successful implementation of assembly quality assessment and gene finding robustness evaluation requires specific computational tools and datasets. The following resources are widely used for constructing and evaluating the quality gradient.

Table 4: Essential Research Reagents for Assembly Quality Assessment

| Reagent Category | Specific Tools/Datasets | Primary Function | Application Context |
|---|---|---|---|
| Benchmarking Platforms | PEREGGRN [35], DNALONGBENCH [36] | Standardized evaluation frameworks | Multi-tool performance comparison |
| Assembly Evaluators | Inspector [31], Merqury [23], QUAST-LG [31] | Assembly quality quantification | Error identification, Completeness assessment |
| Reference Datasets | HG002 (GIAB) [31], Knightia excelsa [32] | Validated ground truth data | Method validation, Controlled experiments |
| Gene Finders | AUGUSTUS [12], GeneDecoder [12], SNAP [12] | Coding sequence identification | Robustness assessment across quality gradient |
| Downsampling Tools | MURP [34], Optimal distribution sampler [33] | Data reduction preserving biological signals | Quality gradient construction |

Systematic evaluation of gene finder performance across assembly quality gradients reveals critical dependencies between assembly methodology and downstream annotation accuracy. The strategies outlined in this guide enable researchers to quantify these relationships and make informed decisions about tool selection based on their specific data quality constraints. As genomic sequencing expands to encompass greater biodiversity—including non-model organisms and metagenomic samples—developing annotation tools resilient to assembly imperfections becomes increasingly crucial. Future methodological development should prioritize maintaining predictive accuracy across the quality spectrum, particularly for taxonomically diverse organisms where high-quality assemblies remain challenging to produce. By standardizing quality assessment protocols and robustness evaluation frameworks, the research community can accelerate progress toward more reliable, automated genomic annotation systems capable of handling the diverse data qualities encountered in real-world research scenarios.

Gene prediction stands as a fundamental bottleneck in modern genomics, where the plunging costs of DNA sequencing have dramatically outpaced our ability to accurately annotate the functional elements within newly assembled genomes [37]. This challenge is particularly acute for eukaryotic organisms, where genes exhibit complex exon-intron structures that must be precisely delineated to deduce the correct protein products. The accuracy of gene annotations directly impacts downstream analyses, including functional characterization, evolutionary studies, and the identification of genes involved in disease processes [37] [12]. Errors in gene models—such as missing exons, retention of non-coding sequence, gene fragmentation, or erroneous merging of neighboring genes—can propagate across databases and jeopardize subsequent biological interpretations [37].

Within this context, gene finders can be broadly categorized into three methodological approaches: ab initio methods that predict protein-coding potential based on statistical features of the genome sequence alone; evidence-based methods that incorporate external data such as transcriptomic evidence or homology information; and hybrid approaches that combine both strategies. The performance of these tools is increasingly critical as researchers sequence more diverse organisms lacking extensive experimental resources or closely related reference genomes. This review provides a comprehensive survey of current gene prediction tools, evaluating their performance, robustness to variations in genome assembly quality, and suitability for different genomic applications.

Methodological Approaches to Gene Finding

Ab Initio Gene Prediction Methods

Ab initio gene predictors utilize computational models to identify protein-coding genes based solely on sequence intrinsic features, without external evidence. These methods typically employ statistical models such as hidden Markov models (HMMs) or support vector machines (SVMs) that combine two types of sensors: signal sensors that detect specific sites like splice donors/acceptors, promoter regions, and polyadenylation signals; and content sensors that distinguish coding from non-coding sequences based on nucleotide composition, codon usage, and other statistical regularities [37].
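To make the two sensor types concrete, the toy sketch below scores candidate donor sites with a simple position-specific model around the canonical GT dinucleotide (a signal sensor) and compares reading frames using codon-position base frequencies (a content sensor). Every numeric parameter is a hypothetical placeholder; real predictors such as Augustus learn species-specific parameters, typically with higher-order Markov models, and combine both sensors inside an HMM rather than scoring them independently.

```python
import math

# Toy donor-site consensus: last two exon bases "AG" followed by intron "GTAAGT".
# Illustrative signal model only, not trained parameters.
DONOR_CONSENSUS = "AGGTAAGT"
MATCH_P, MISMATCH_P, BACKGROUND_P = 0.7, 0.1, 0.25

# Hypothetical base frequencies at codon positions 1, 2 and 3 of coding DNA.
CODING_POS_FREQ = [
    {"A": 0.26, "C": 0.23, "G": 0.35, "T": 0.16},
    {"A": 0.30, "C": 0.23, "G": 0.17, "T": 0.30},
    {"A": 0.20, "C": 0.30, "G": 0.30, "T": 0.20},
]


def find_donor_sites(seq):
    """Signal sensor: log-odds score for every GT with a full 8-bp window around it."""
    sites = []
    for i in range(2, len(seq) - 5):
        if seq[i:i + 2] != "GT":
            continue
        window = seq[i - 2:i + 6]
        score = sum(
            math.log((MATCH_P if base == cons else MISMATCH_P) / BACKGROUND_P)
            for base, cons in zip(window, DONOR_CONSENSUS)
        )
        sites.append((i, score))
    return sites


def coding_log_odds(seq, frame):
    """Content sensor: log-likelihood ratio of the toy coding model vs. a uniform
    background, summed over complete codons starting at offset `frame` (0, 1 or 2)."""
    llr = 0.0
    for start in range(frame, len(seq) - 2, 3):
        for pos, base in enumerate(seq[start:start + 3]):
            llr += math.log(CODING_POS_FREQ[pos].get(base, BACKGROUND_P) / BACKGROUND_P)
    return llr


def best_frame(seq):
    """Reading frame with the highest coding log-odds."""
    return max(range(3), key=lambda frame: coding_log_odds(seq, frame))
```

In a full predictor these scores become emission or transition terms, so a strong donor signal only matters if the surrounding content scores also support an exon-intron boundary.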

Prominent ab initio tools include Genscan, GlimmerHMM, GeneID, Snap, Augustus, and GeneMark-ES [37]. These methods are particularly valuable for discovering novel genes that lack homology to known sequences or when working with taxonomic groups that have poorly characterized transcriptomes. However, their accuracy can be limited for complex gene structures, and they typically require species-specific training to achieve optimal performance [37] [12].

A significant limitation of traditional ab initio approaches is their reliance on graphical models like HMMs that require carefully curated training data and manually fitted length distributions. As noted in recent research, "These models can be improved by incorporating them with external hints and constructing pipelines but they are not compatible with deep learning advents that have revolutionised adjacent fields" [12].
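The need for fitted length distributions follows from a basic property of HMMs: a state with self-transition probability p produces durations that are geometrically distributed, P(L = l) = p^(l-1)(1 - p), with mean 1/(1 - p) and a mode of 1. Real exon lengths are not distributed this way, which is why generalized HMMs such as Genscan and Augustus model exon lengths explicitly. The short sketch below checks these properties numerically; the 150-bp mean is an arbitrary illustrative value.

```python
def geometric_length_pmf(p_stay, length):
    """P(L = length) for an HMM state with self-transition probability p_stay."""
    if length < 1:
        return 0.0
    return p_stay ** (length - 1) * (1.0 - p_stay)


def mean_length(p_stay):
    """Expected number of positions emitted before leaving the state."""
    return 1.0 / (1.0 - p_stay)


def p_stay_for_mean(mean):
    """Self-transition probability that yields the requested mean duration."""
    return 1.0 - 1.0 / mean


if __name__ == "__main__":
    p = p_stay_for_mean(150)  # illustrative mean exon-state duration in bp
    for length in (1, 50, 150, 300):
        print(length, round(geometric_length_pmf(p, length), 5))
    # Probabilities fall monotonically, so length 1 is always the most likely;
    # explicit, empirically fitted length distributions avoid this mismatch.
```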

Evidence-Based and Hybrid Approaches

Evidence-based methods incorporate external data sources to guide gene prediction, including transcriptome sequencing (RNA-seq), expressed sequence tags (ESTs), protein homology information, and chromatin profiling data. Tools such as GenomeScan, GeneWise, and LoReAN leverage this external evidence to generate more accurate gene models, particularly for genes with weak statistical signals in the genomic sequence [37].
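A simple way to see how transcript evidence constrains gene models is to check predicted introns against splice junctions observed in spliced RNA-seq alignments. The sketch below assumes junctions have already been parsed into a dictionary keyed by (sequence ID, intron start, intron end) with read counts, using 1-based inclusive coordinates; that representation and the helper names are hypothetical, and the tools named above use their own, richer evidence models.

```python
from typing import Dict, List, Tuple

# Hypothetical junction key: (sequence ID, first intron base, last intron base), 1-based.
Intron = Tuple[str, int, int]


def introns_from_exons(seqid: str, exons: List[Tuple[int, int]]) -> List[Intron]:
    """Derive the introns of one transcript from its (start, end) exon coordinates."""
    ordered = sorted(exons)
    return [
        (seqid, prev_end + 1, next_start - 1)
        for (_, prev_end), (next_start, _) in zip(ordered, ordered[1:])
    ]


def intron_support(predicted: List[Intron], junctions: Dict[Intron, int], min_reads: int = 1) -> float:
    """Fraction of predicted introns that exactly match a junction seen in >= min_reads reads."""
    if not predicted:
        return 0.0
    supported = {junction for junction, count in junctions.items() if count >= min_reads}
    return sum(intron in supported for intron in predicted) / len(predicted)
```

Exact matching is deliberately strict; low support can flag either a wrong prediction or a gene that is simply not expressed in the sampled tissue.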

Hybrid approaches combine ab initio prediction with evidence-based methods, often through sophisticated pipelines like Braker, Maker2, and Snowyowl [12]. These systems integrate multiple sources of information—including protein alignments, RNA-seq data, and ab initio predictions—to generate consensus gene models that benefit from both statistical sequence properties and experimental evidence.
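The core idea of consensus modeling can be illustrated with weighted voting on individual exons: each evidence source contributes a weight to every exon it proposes, and exons whose total reaches a threshold are kept. This is a deliberately simplified sketch; the source names, weights and threshold are hypothetical, and production pipelines assemble complete, frame-consistent gene models rather than independent exons.

```python
from collections import defaultdict


def consensus_exons(predictions, weights, min_score):
    """Return exons whose summed evidence weight is at least min_score.

    predictions: {source name: set of (seqid, start, end, strand) exons}
    weights:     {source name: weight}; hypothetical values, missing sources count 0
    """
    scores = defaultdict(float)
    for source, exons in predictions.items():
        for exon in exons:
            scores[exon] += weights.get(source, 0.0)
    return sorted(exon for exon, score in scores.items() if score >= min_score)


if __name__ == "__main__":
    e1, e2, e3 = ("chr1", 100, 200, "+"), ("chr1", 300, 380, "+"), ("chr1", 500, 620, "+")
    votes = {"ab_initio": {e1, e2}, "rna_seq": {e1}, "protein": {e1, e3}}
    print(consensus_exons(votes, {"ab_initio": 1.0, "rna_seq": 2.0, "protein": 2.0}, min_score=2.0))
```

Weighting transcript and protein evidence above ab initio calls reflects the intuition that direct evidence is more reliable, but the relative weights have to be tuned for each project.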

Recent advances in deep learning have also produced sequence-to-function models such as Enformer, which uses a transformer architecture to predict gene expression and chromatin states from DNA sequence alone, integrating information from long-range interactions (up to 100 kb away) in the genome [38]. Enformer substantially outperformed previous models in predicting RNA expression, closing "one-third of the gap to experimental-level accuracy" by effectively capturing distal regulatory elements such as enhancers [38].

Emerging Deep Learning Approaches

The field of gene prediction is currently being transformed by deep learning techniques, including convolutional neural networks (CNNs), transformers, and hybrid architectures. Enformer represents a significant advancement through its use of self-attention mechanisms that allow the model to integrate information across up to 100 kb of genomic sequence, dramatically expanding its ability to capture long-range regulatory interactions [38].
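Self-attention extends the reachable context because every position (or bin) is compared directly with every other one in a single layer, whereas a convolution's receptive field grows only with depth. The pure-Python toy below shows a single untrained attention head over one-hot DNA averaged into fixed-size bins. It is not Enformer: the real model adds a convolutional stem, multiple heads and layers, relative positional encodings and trained weights, and the bin size and dimensions used here are illustrative assumptions.

```python
import math
import random

BASES = "ACGT"


def one_hot(seq):
    """Encode DNA as 4-dimensional one-hot vectors (non-ACGT bases become all zeros)."""
    return [[1.0 if base == b else 0.0 for b in BASES] for base in seq]


def bin_mean(vectors, bin_size):
    """Average consecutive positions into bins; a trailing partial bin is dropped."""
    return [
        [sum(column) / bin_size for column in zip(*vectors[start:start + bin_size])]
        for start in range(0, len(vectors) - bin_size + 1, bin_size)
    ]


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def softmax(row):
    peak = max(row)  # subtract the maximum for numerical stability
    exps = [math.exp(value - peak) for value in row]
    total = sum(exps)
    return [value / total for value in exps]


def self_attention(x, w_query, w_key, w_value):
    """Toy single-head scaled dot-product attention; returns (outputs, attention weights)."""
    q, k, v = matmul(x, w_query), matmul(x, w_key), matmul(x, w_value)
    scale = math.sqrt(len(q[0]))
    weights = [softmax([sum(a * b for a, b in zip(qi, kj)) / scale for kj in k]) for qi in q]
    outputs = [[sum(w[j] * v[j][c] for j in range(len(v))) for c in range(len(v[0]))]
               for w in weights]
    return outputs, weights


if __name__ == "__main__":
    rng = random.Random(0)
    seq = "".join(rng.choice(BASES) for _ in range(1280))
    x = bin_mean(one_hot(seq), bin_size=128)  # 10 bins; bin size is illustrative
    dim = 8
    w_q, w_k, w_v = ([[rng.gauss(0, 1) for _ in range(dim)] for _ in range(4)] for _ in range(3))
    _, attn = self_attention(x, w_q, w_k, w_v)
    # The first bin already has a nonzero weight on the last bin, about 1.1 kb away.
    print(f"weight of bin 0 on bin 9: {attn[0][-1]:.3f}")
```

Because the attention matrix is quadratic in the number of bins, binning is also what keeps long inputs tractable.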

Another innovative approach, GeneDecoder, combines learned embeddings of raw genetic sequences with exact decoding using a latent conditional random field [12]. This architecture aims to maintain the consistency guarantees of traditional HMM-based methods while leveraging the representation learning capabilities of modern deep learning. The model "achieves performance matching the current state of the art, while increasing training robustness, and removing the need for manually fitted length distributions" [12].
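The consistency guarantee comes from exact decoding over a constrained label space: if per-position scores (for example, produced by a neural encoder) are decoded with the Viterbi algorithm over only the allowed label transitions, the output can never contain an impossible structure such as an intron directly adjacent to intergenic sequence. The three-label scheme, the transition table and the scores below are hypothetical simplifications; GeneDecoder's actual latent CRF uses its own state space and learned parameters.

```python
NEG_INF = float("-inf")
STATES = ("N", "E", "I")  # hypothetical labels: non-coding, exon, intron
ALLOWED = {("N", "N"), ("N", "E"), ("E", "E"), ("E", "I"), ("E", "N"), ("I", "I"), ("I", "E")}


def constrained_viterbi(emissions, transitions=None):
    """Exact best label path under ALLOWED transitions, starting and ending in 'N'.

    emissions:   list of {state: log-score} dicts, one per position
    transitions: optional {(prev, next): log-score}; allowed pairs default to 0.0
    """
    transitions = transitions or {}
    best = [{s: (emissions[0][s] if s == "N" else NEG_INF) for s in STATES}]
    back = [{}]
    for t in range(1, len(emissions)):
        best.append({})
        back.append({})
        for s in STATES:
            # Only predecessors with an allowed transition into s are considered.
            score, prev = max(
                (best[t - 1][p] + transitions.get((p, s), 0.0), p)
                for p in STATES if (p, s) in ALLOWED
            )
            best[t][s] = score + emissions[t][s]
            back[t][s] = prev
    if best[-1]["N"] == NEG_INF:
        raise ValueError("no valid labelling ends in 'N'")
    path = ["N"]
    for t in range(len(emissions) - 1, 0, -1):
        path.append(back[t][path[-1]])
    return path[::-1], best[-1]["N"]
```

With scores that favor "intron" in the middle of an otherwise intergenic stretch, per-position argmax would output N, I, N, which is invalid; constrained decoding gives up a little per-position score to return a globally valid path.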

Recent benchmarking efforts such as DNALONGBENCH have emerged to systematically evaluate these new approaches across multiple biological tasks requiring long-range dependency modeling, including enhancer-target gene interaction, 3D genome organization, and regulatory sequence activity prediction [36].

Performance Benchmarking and Comparative Analysis

Benchmarking Frameworks for Gene Prediction Tools

The evaluation of gene prediction methods requires carefully designed benchmarks that represent the diverse challenges encountered in real genome annotation projects. The G3PO (benchmark for Gene and Protein Prediction PrOgrams) framework provides a carefully validated and curated set of 1,793 reference genes from 147 phylogenetically diverse eukaryotic organisms, designed to evaluate performance across variations in genome sequence quality, gene structure complexity, and protein length [37]. This benchmark revealed that ab initio gene structure prediction remains "a very challenging task": approximately 68% of exons and 69% of confirmed protein sequences were not predicted with 100% accuracy by all five ab initio programs tested [37].

For long-range interaction modeling, the DNALONGBENCH benchmark covers five critical tasks with dependencies spanning up to 1 million base pairs: enhancer-target gene interaction, expression quantitative trait loci (eQTL), 3D genome organization, regulatory sequence activity, and transcription initiation signals [36]. This benchmark enables systematic evaluation of how well different architectures capture the long-range genomic dependencies that are crucial for accurate regulatory annotation.

Performance Comparison of Gene Prediction Methods

Table 1: Performance Comparison of Major Gene Prediction Approaches

| Method Category | Representative Tools | Strengths | Limitations | Best Use Cases |
|---|---|---|---|---|
| Ab Initio | Augustus, GlimmerHMM, GeneMark-ES | No external evidence needed; can discover novel genes | Lower accuracy for complex genes; requires species-specific training; sensitive to assembly quality | Novel genomes without transcriptomic resources; initial genome annotation |
| Evidence-Based | GeneWise, GenomeScan | High accuracy when evidence is available; better splice site identification | Limited to genes with supporting homology or expression evidence; misses genes lacking such evidence | Genomes with good transcriptome/proteome data; gene model refinement |
| Hybrid Pipelines | Braker, Maker2 | Combines strengths of both approaches; consensus modeling | Complex setup; computationally intensive | Production-grade genome annotation; community consensus |
| Deep Learning | Enformer, GeneDecoder | Long-range dependency capture; emerging cross-species capability | Computational demands; training data requirements | Regulatory element annotation; expression prediction |

Table 2: Performance on G3PO Benchmark (Selected Ab Initio Tools)

| Tool | Exon Sensitivity | Exon Specificity | Gene Sensitivity | Gene Specificity | Complex Gene Performance |
|---|---|---|---|---|---|
| Augustus | Highest among ab initio | High | High | High | Moderate |
| GlimmerHMM | Moderate | Moderate | Moderate | Moderate | Lower |
| GeneID | Lower | High | Lower | High | Variable |
| SNAP | Moderate | Moderate | Moderate | Moderate | Lower |
| Genscan | Lower | Lower | Lower | Lower | Poor |

Evaluation of five widely used ab initio gene prediction programs on the G3PO benchmark revealed substantial differences in performance, with Augustus generally achieving the highest accuracy [37]. The benchmarking experiments highlighted particular challenges with complex gene structures, suggesting that "ab initio gene structure prediction is a very challenging task, which should be further investigated" [37].

For long-range prediction tasks, expert models specifically designed for particular biological problems generally outperform more general DNA foundation models. In the DNALONGBENCH evaluation, "highly parameterized and specialized expert models consistently outperform DNA foundation models" across multiple tasks including contact map prediction and transcription initiation signal prediction [36].

Impact of Genome Assembly Quality on Gene Prediction

The quality of the underlying genome assembly significantly impacts gene prediction accuracy. Draft genomes with incomplete coverage, sequencing errors, and fragmentation present substantial challenges for all gene prediction methods [37]. Ab initio methods are particularly vulnerable to assembly gaps and misassemblies, which can disrupt the statistical patterns these tools rely upon.
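One mechanism behind this vulnerability is easy to quantify: a gene whose span crosses a contig break cannot be predicted as a single complete model. The sketch below counts genes interrupted by breakpoints and, for a simple fragmentation gradient, places breaks uniformly at random. Uniform placement is a simplifying assumption (real assemblies tend to break in repeats), and the gene coordinates and helper names are hypothetical.

```python
import random


def genes_split_by_breaks(genes, breakpoints):
    """Names of genes interrupted by a break.

    genes:       list of (name, start, end), 1-based inclusive reference coordinates
    breakpoints: positions b meaning the assembly is broken between b and b + 1
    """
    return [name for name, start, end in genes
            if any(start <= b < end for b in breakpoints)]


def fragmentation_curve(genes, genome_length, break_counts, seed=0):
    """Fraction of genes split as the number of random breaks increases.

    Breaks are drawn uniformly (a simplifying assumption) once and reused as prefixes,
    so each level contains every break of the levels below it and the curve cannot decrease.
    """
    rng = random.Random(seed)
    pool = rng.sample(range(1, genome_length), max(break_counts))
    return {n: len(genes_split_by_breaks(genes, pool[:n])) / len(genes)
            for n in sorted(break_counts)}
```

With uniformly placed breaks, longer genes are hit more often, which is one reason fragmented assemblies disproportionately damage long, intron-rich eukaryotic genes.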

Advanced sequencing and assembly technologies are helping to address these challenges. Recent studies have demonstrated that hybrid assembly approaches combining long-read technologies (Oxford Nanopore or PacBio) with short-read data (Illumina) can produce substantially more contiguous and accurate genome assemblies [23] [6] [39]. For example, a benchmark of 11 assembly pipelines found that "Flye outperformed all assemblers, particularly with Ratatosk error-corrected long-reads," and that polishing "improved the assembly accuracy and continuity, with two rounds of Racon and Pilon yielding the best results" [23] [39].

The development of high-quality chromosome-scale assemblies, such as the recently published 2.87 Gb Taohongling Sika deer genome with scaffold N50 of 85.86 Mb, provides a foundation for more accurate gene prediction [6]. Such contiguous assemblies are particularly valuable for correctly annotating complex gene structures and capturing long-range regulatory interactions.
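Scaffold N50, the contiguity statistic quoted above, is the length L such that scaffolds of length L or longer contain at least half of the assembled bases. A minimal sketch follows; NG50 is the same calculation against an estimated genome size instead of the assembly total, which makes assemblies of different total length comparable. The function names are illustrative.

```python
def ng50(lengths, genome_size):
    """Length L such that sequences of length >= L cover at least half of genome_size;
    None if the assembly never reaches half of that size."""
    if not lengths:
        raise ValueError("no sequence lengths given")
    running = 0
    for length in sorted(lengths, reverse=True):
        running += length
        if 2 * running >= genome_size:
            return length
    return None


def n50(lengths):
    """N50: the NG50 calculation measured against the assembly's own total length."""
    return ng50(lengths, sum(lengths))
```

For example, contigs of 2 to 10 bp (54 bp in total) give an N50 of 8, because 10 + 9 + 8 = 27 bp already covers half of the assembly.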

Experimental Protocols for Gene Finder Evaluation

Benchmarking Workflow for Gene Prediction Tools

The following diagram illustrates a standardized workflow for benchmarking gene prediction tools, adapted from established benchmark frameworks like G3PO and DNALONGBENCH:

Diagram Title: Gene Finder Benchmark Workflow
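Because the workflow diagram is not reproduced here, the sketch below shows only one hypothetical way to summarize its output: for each gene finder, express an accuracy metric at every assembly-quality level as a fraction of the value obtained on the highest-quality assembly, then rank tools by their worst-case retention. The tool names, quality-level labels and scores are placeholders, not measured results.

```python
def retention_table(scores, reference_level):
    """scores: {tool: {quality level: metric such as exon F1}}.

    Returns each metric divided by the same tool's value at reference_level.
    """
    table = {}
    for tool, by_level in scores.items():
        reference = by_level[reference_level]
        if reference <= 0:
            raise ValueError(f"{tool}: reference metric must be positive")
        table[tool] = {level: value / reference for level, value in by_level.items()}
    return table


def rank_by_worst_case(table):
    """Tools ordered from most to least robust by their minimum retention."""
    return sorted(table, key=lambda tool: min(table[tool].values()), reverse=True)


if __name__ == "__main__":
    # Placeholder numbers for illustration only, not benchmark results.
    toy = {
        "tool_a": {"chromosome": 0.80, "scaffold": 0.72, "draft": 0.60},
        "tool_b": {"chromosome": 0.70, "scaffold": 0.67, "draft": 0.63},
    }
    print(rank_by_worst_case(retention_table(toy, "chromosome")))
```

Ranking by worst case favors tools that degrade gracefully, which matters most when the assembly quality of a new genome is not known in advance.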

Key Performance Metrics and Evaluation Methodology

Comprehensive evaluation of gene prediction tools requires multiple performance metrics measured across diverse test cases:

- Exon-level metrics: Sensitivity (recall) and specificity (precision) for exon identification

- Gene-level metrics: Complete gene prediction accuracy, missing genes, and split genes

- Nucleotide-level metrics: Accuracy at distinguishing coding from non-coding nucleotides

- Boundary detection: Accuracy of splice site and translation start/stop identification
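The exon- and nucleotide-level metrics above can be computed as in the sketch below, which follows the conventional gene-finding definitions: sensitivity is the fraction of reference features recovered, and specificity is the fraction of predicted features that are correct (that is, precision). An exon counts as correct only when both boundaries match exactly. The tuple layout is an assumption, and G3PO's own scoring may differ in details such as how strand or partial overlaps are handled.

```python
def _sn_sp(predicted, reference):
    """Sensitivity (recall) and specificity (precision) of two sets."""
    true_pos = len(predicted & reference)
    sn = true_pos / len(reference) if reference else 0.0
    sp = true_pos / len(predicted) if predicted else 0.0
    return sn, sp


def exon_level(predicted_exons, reference_exons):
    """Exact-match exon Sn/Sp; exons are assumed to be (seqid, start, end, strand), 1-based inclusive."""
    return _sn_sp(set(predicted_exons), set(reference_exons))


def coding_bases(exons):
    """Expand exon intervals into a set of (seqid, strand, position) coding bases."""
    return {(seqid, strand, pos)
            for seqid, start, end, strand in exons
            for pos in range(start, end + 1)}


def nucleotide_level(predicted_exons, reference_exons):
    """Base-level Sn/Sp over coding nucleotides."""
    return _sn_sp(coding_bases(predicted_exons), coding_bases(reference_exons))


def f1(sn, sp):
    """Harmonic mean of sensitivity and specificity."""
    return 2 * sn * sp / (sn + sp) if sn + sp else 0.0
```

The split between levels is informative: an exon with one wrong boundary scores zero at the exon level but still contributes most of its bases at the nucleotide level, which is why both are reported.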

G3PO was built "to represent many of the typical challenges faced by current genome annotation projects," using "a carefully validated and curated set of real eukaryotic genes from 147 phylogenetically disperse organisms" [37]. Its test sets are designed to evaluate the effects of features including genome sequence quality, gene structure complexity, and protein length.

For regulatory prediction tasks, metrics such as the stratum-adjusted correlation coefficient for contact map prediction and AUROC/AUPR for enhancer-target gene prediction are employed [36]. These specialized metrics capture the unique challenges of long-range genomic interaction prediction.
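Of these metrics, AUROC has a compact probabilistic reading: it is the probability that a randomly chosen true enhancer-gene pair is scored above a randomly chosen negative pair, with ties counting one half (the Mann-Whitney formulation). The quadratic sketch below computes it directly from that definition; the stratum-adjusted correlation coefficient for contact maps is not shown, and any input scores are hypothetical.

```python
def auroc(scores, labels):
    """Area under the ROC curve via pairwise comparison of positives and negatives."""
    positives = [s for s, y in zip(scores, labels) if y]
    negatives = [s for s, y in zip(scores, labels) if not y]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a correct ordering
    return wins / (len(positives) * len(negatives))
```

Production code would use a sort-based implementation such as scikit-learn's roc_auc_score, but the pairwise form makes the definition explicit.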

Table 3: Essential Bioinformatics Resources for Gene Prediction Research

| Resource Category | Specific Tools/Databases | Purpose | Application Context |
|---|---|---|---|
| Benchmark Datasets | G3PO, DNALONGBENCH | Method evaluation and comparison | Tool selection; performance validation |
| Genome Assembly | Flye, hifiasm, Canu | Genome sequence reconstruction | Foundation for gene annotation |
| Assembly Polishing | Racon, Pilon | Error correction in draft assemblies | Improving input quality for gene prediction |