Beyond the pLDDT Score: Advanced Strategies to Improve Low-Confidence AlphaFold Predictions


Abstract

AlphaFold has revolutionized structural biology, yet its self-reported confidence scores (pLDDT) are not infallible, and low-confidence regions pose significant challenges for downstream applications in drug discovery and functional analysis. This article provides a comprehensive guide for researchers aiming to understand, troubleshoot, and improve these unreliable regions. Drawing on the latest research, we explore the root causes of low confidence, detail advanced methodological fixes like EQAFold and enhanced sampling, offer targeted troubleshooting for specific scenarios like antibody-antigen complexes and disordered regions, and establish a rigorous framework for validating the improved models against experimental data and specialized benchmarks.

Decoding Low Confidence: Why AlphaFold Struggles with Certain Regions

Frequently Asked Questions (FAQs)

What is the pLDDT score?

The pLDDT (predicted Local Distance Difference Test) is a per-residue measure of local confidence in AlphaFold's predicted protein structures. It is scaled from 0 to 100, with higher scores indicating higher confidence and usually a more accurate prediction. This metric estimates how well the prediction would agree with an experimental structure by assessing the correctness of local distances without relying on structural superposition [1].
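To make the superposition-free idea concrete, the following is a minimal Python/NumPy sketch of a Cα-only lDDT, the quantity that pLDDT tries to predict. It assumes the two coordinate sets are already matched residue-for-residue and uses the commonly cited 15 Å inclusion radius and 0.5/1/2/4 Å tolerance thresholds; the full lDDT also scores all heavy atoms and applies stereochemical checks, which are omitted here.

```python
import numpy as np


def lddt_ca(pred, ref, cutoff=15.0, thresholds=(0.5, 1.0, 2.0, 4.0)):
    """Per-residue Cα lDDT (0-100), computed without any superposition.

    pred, ref: (L, 3) arrays of Cα coordinates for the same residues, in the same order.
    """
    pred = np.asarray(pred, dtype=float)
    ref = np.asarray(ref, dtype=float)
    # All pairwise distances within each structure; rotation/translation leave these unchanged
    d_ref = np.linalg.norm(ref[:, None, :] - ref[None, :, :], axis=-1)
    d_pred = np.linalg.norm(pred[:, None, :] - pred[None, :, :], axis=-1)
    # Score only pairs closer than the inclusion radius in the reference, excluding self-pairs
    mask = (d_ref < cutoff) & ~np.eye(len(ref), dtype=bool)
    diff = np.abs(d_pred - d_ref)
    # For each pair, the fraction of thresholds within which its distance is preserved
    preserved = np.mean([diff < t for t in thresholds], axis=0)
    n_pairs = mask.sum(axis=1)
    # Residues with no neighbours inside the radius get a score of 0
    per_residue = (preserved * mask).sum(axis=1) / np.maximum(n_pairs, 1)
    return 100.0 * per_residue
```

Because only intra-structure distances enter the calculation, rotating or translating the model leaves the score unchanged.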

How should I interpret different pLDDT value ranges?

pLDDT scores are categorized into confidence bands that correspond to expected prediction accuracy, as shown in the table below [1].

| pLDDT Score Range | Confidence Level | Typical Structural Accuracy |
|---|---|---|
| > 90 | Very High | High accuracy for both backbone and side chains. |
| 70 - 90 | Confident | Correct backbone, but potential side chain placement errors. |
| 50 - 70 | Low | Low confidence in the backbone geometry. |
| < 50 | Very Low | Unreliable prediction; likely intrinsically disordered region. |
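AlphaFold writes each residue's pLDDT into the B-factor field of its PDB output, so these bands can be applied straight to a model file. The sketch below reads Cα B-factors using fixed PDB column positions and maps each value to a band. How values exactly on a boundary (90, 70, 50) are assigned is our own choice, because the table leaves it open; for mmCIF output you would need a CIF parser instead.

```python
def plddt_from_pdb(pdb_text):
    """Map (chain, residue number) -> pLDDT, read from the B-factor field of Cα ATOM records."""
    scores = {}
    for line in pdb_text.splitlines():
        # Fixed PDB columns: atom name 13-16, chain 22, residue number 23-26, B-factor 61-66
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            scores[(line[21], int(line[22:26]))] = float(line[60:66])
    return scores


def plddt_band(score):
    """Assign a pLDDT value to one of the four confidence bands (boundary handling is our choice)."""
    if score > 90:
        return "Very High"
    if score >= 70:
        return "Confident"
    if score >= 50:
        return "Low"
    return "Very Low"
```

For example, `{key: plddt_band(v) for key, v in plddt_from_pdb(text).items()}` labels every residue of a model read into `text`.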

Why do some protein regions have low pLDDT scores?

Low pLDDT scores (<50) generally indicate one of two scenarios [1]:

- Natural Flexibility: The region is highly flexible or intrinsically disordered and does not adopt a single, well-defined structure.

- Insufficient Information: The region has a predictable structure, but AlphaFold lacks sufficient evolutionary or sequence information to model it confidently.

Low pLDDT is also common in flexible linkers between well-defined globular domains [1].

Does a high pLDDT score guarantee the prediction is correct?

No. While a high pLDDT score generally indicates high local reliability, it is not an absolute guarantee of accuracy. Global distortions and errors in domain orientation can occur even in high-confidence models [2]. Furthermore, AlphaFold does not account for environmental factors like ligands, covalent modifications, or specific protein-protein interactions, which can alter a protein's structure [2]. High-confidence predictions should be treated as exceptionally useful hypotheses, with experimental validation remaining crucial for verifying structural details, especially for interaction sites [2].

How can I improve the reliability of low pLDDT regions in my predictions?

One option is the EQAFold framework, which targets the reliability of the confidence estimate rather than the predicted coordinates themselves. It replaces AlphaFold's standard pLDDT prediction head with an Equivariant Graph Neural Network (EGNN) that incorporates additional features for a more reliable confidence assessment [3].

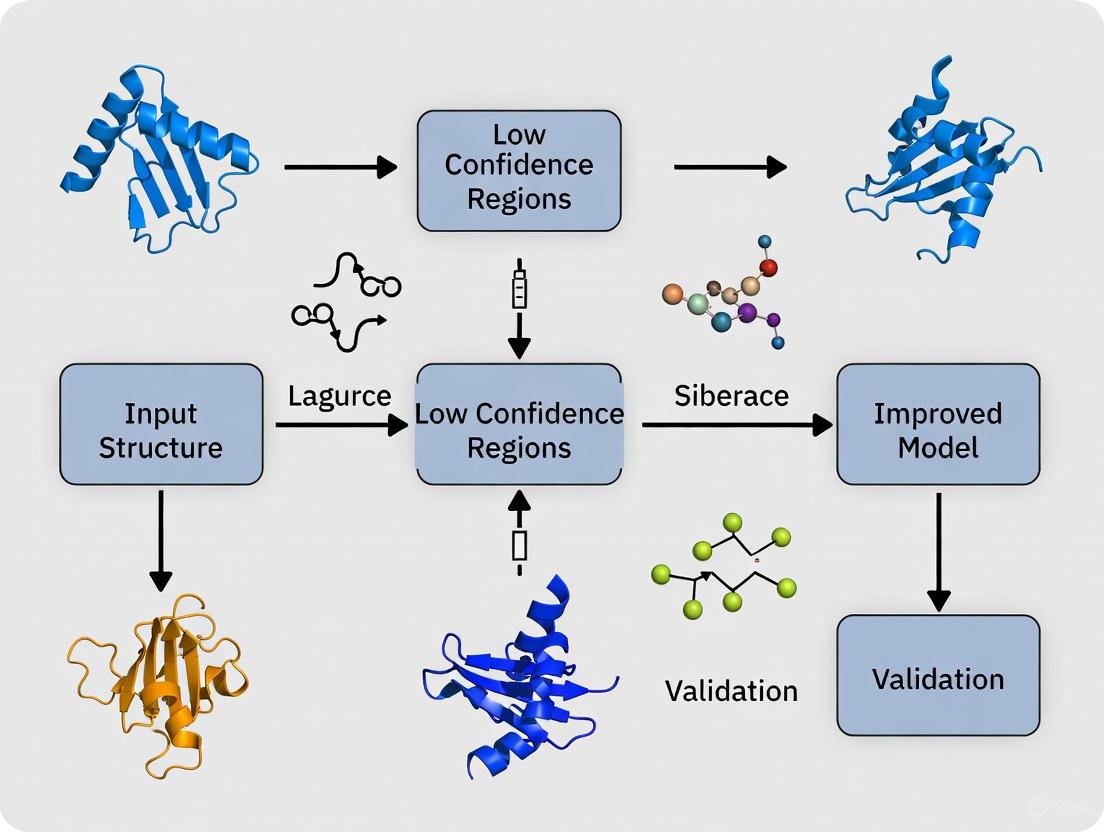

Diagram: Workflow comparing standard AlphaFold2 and enhanced EQAFold pLDDT scoring.

Troubleshooting Low Confidence Predictions

Problem: My model has a region with very low pLDDT. What does this mean?

Interpretation & Solution:

- Likely Intrinsic Disorder: A continuous region with pLDDT < 50 often corresponds to an Intrinsically Disordered Region (IDR). In that case the low score reflects biology rather than a failure of the model [1]. Interpret such a region as flexible and do not over-interpret its specific coordinates.

- Verify with Experimental Data: If the region is suspected to be structured, its low confidence may stem from a lack of evolutionary information. Consider whether experimental data (e.g., from crystallography or cryo-EM) is available or could be generated to validate or guide modeling [2].

- Use Enhanced Assessment Tools: For a more reliable confidence assessment, tools like EQAFold can be used. EQAFold has been shown to provide more accurate confidence metrics, particularly in regions where the standard AlphaFold pLDDT is erroneous [3].

Problem: The high-pLDDT domains in my model appear correct, but their relative orientation looks wrong.

Interpretation & Solution:

- pLDDT Measures Local Accuracy: pLDDT does not measure confidence in the relative positions or orientations of domains or large-scale assembly [1].

- Consult the PAE Plot: Examine the Predicted Aligned Error (PAE) plot. PAE(i, j) is the expected position error, in Å, at residue j when the predicted and true structures are aligned on residue i, which makes it the appropriate metric for assessing inter-domain confidence and relative domain placement. Low PAE between domains indicates high confidence in their relative placement; because PAE is not symmetric, check both off-diagonal blocks.
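A single number per domain pair is often easier to compare than a heat map. This sketch averages the two off-diagonal PAE blocks between two domains; it assumes the PAE matrix has already been loaded as an L × L array (file formats differ between pipelines) and that the domain boundaries, given as 1-based inclusive ranges, come from your own annotation.

```python
import numpy as np


def mean_interdomain_pae(pae, dom_a, dom_b):
    """Mean PAE (Å) between two domains, averaged over both alignment directions.

    pae: (L, L) matrix; pae[i, j] is the expected error at residue j when aligned on residue i.
    dom_a, dom_b: (start, end) residue ranges, 1-based and inclusive.
    """
    pae = np.asarray(pae, dtype=float)
    a = slice(dom_a[0] - 1, dom_a[1])
    b = slice(dom_b[0] - 1, dom_b[1])
    # PAE is not symmetric, so average the A->B and B->A blocks
    return float(0.5 * (pae[a, b].mean() + pae[b, a].mean()))
```

Low values suggest the pair is confidently placed; high values indicate that the relative orientation should not be trusted, whatever the per-domain pLDDT.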

Diagram: Troubleshooting logic for low pLDDT regions.

Problem: My high pLDDT prediction disagrees with my experimental data.

Interpretation & Solution:

- Trust the Experiment: Global distortion and domain movements can occur in AlphaFold predictions, even for high-confidence residues [2]. A systematic analysis found that the median Cα root-mean-square deviation (RMSD) between high-confidence predictions and experimental structures was 1.0 Å, which is considerably larger than the median RMSD (0.6 Å) between experimental structures of the same protein determined under different conditions [2].

- Use the Prediction as a Hypothesis: The prediction is a strong starting model. Use it for molecular replacement in crystallography or as an initial model for fitting into cryo-EM maps [4].

- Iterative Rebuilding: Implement iterative refinement workflows where the initial AlphaFold model is fitted into the experimental density and then used as a template for a new prediction. This can progressively improve the model's agreement with the data [4].

Experimental Protocol: Improving Self-Assessment with EQAFold

The following protocol summarizes the EQAFold methodology for generating more accurate self-confidence scores, as detailed in the research [3].

Objective: To generate a protein structure prediction with a refined and more reliable pLDDT confidence score using the EQAFold framework.

Key Research Reagent Solutions:

| Reagent / Software | Function in Protocol |
|---|---|
| Pre-trained AlphaFold2 Model | Provides the foundational structure prediction and initial representations. |
| ESM2 Protein Language Model | Supplies evolutionary embeddings used as node features in the graph network. |
| Equivariant Graph Neural Network (EGNN) | Core architecture that refines pLDDT prediction using spatial and relational data. |
| RMSF from Dropout Replicates | Quantifies structural fluctuations; used as a feature to indicate local uncertainty. |

Methodology:

- Generate Base Structure Prediction: Run the target protein sequence through a standard AlphaFold2 pipeline (including MSA generation, Evoformer, and Structure Module) to obtain the initial 3D coordinates and the single and pair representations [3].

- Create Multiple Dropout Models: Run the AlphaFold2 structure module five additional times with 50% dropout enabled. Calculate the Root Mean Square Fluctuation (RMSF) of the Cα atoms across these five models. The RMSF provides a measure of positional variance that correlates with local confidence [3].

- Construct Graph Representation:

- Nodes: Represent each amino acid residue.

- Node Features: Concatenate the final single representation from AlphaFold's Evoformer (L × 384), averaged embeddings from the ESM2 language model (L × 33), and the calculated RMSF values (L × 1). Process this through a linear layer to create final node features of dimension L × 384 [3].

- Edges: Connect residues whose Cα atoms are within 16 Å of each other.

- Edge Features: Comprise the pair embeddings from AlphaFold (L × L × 128) and the averaged attention layers from ESM2 for the corresponding residue pairs [3].

- Process with EGNN: Pass the constructed graph through an EGNN network consisting of four equivariant graph convolutional layers. The network reduces the 384 input node features to 128 hidden features and finally to 50 output features [3].

- Output Refined pLDDT: The final layer produces the refined per-residue pLDDT score, which has been demonstrated to be more accurate than the standard AlphaFold output, especially in regions with substantial initial errors [3].
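The learned EGNN itself is beyond a short example, but three non-learned steps of this methodology are easy to sketch: the Cα RMSF across dropout replicates, the 16 Å Cα radius graph, and turning a 50-way output into a pLDDT value. The last function assumes, as in AlphaFold2's own pLDDT head, that the 50 outputs are logits over equal-width bins spanning 0 to 100; the source does not state this, so treat it as an assumption. The RMSF function also assumes the replicates are already in a common frame.

```python
import numpy as np


def ca_rmsf(replicates):
    """Per-residue Cα RMSF across replicate models.

    replicates: (n_models, L, 3) Cα coordinates, assumed to be in a common frame
    (e.g. superposed onto the base prediction beforehand).
    """
    x = np.asarray(replicates, dtype=float)
    mean = x.mean(axis=0)  # average position of each residue
    return np.sqrt(((x - mean) ** 2).sum(axis=-1).mean(axis=0))


def radius_graph(ca, cutoff=16.0):
    """Directed edges (i, j), i != j, between residues whose Cα atoms are closer than `cutoff` Å."""
    ca = np.asarray(ca, dtype=float)
    d = np.linalg.norm(ca[:, None, :] - ca[None, :, :], axis=-1)
    i, j = np.nonzero((d < cutoff) & ~np.eye(len(ca), dtype=bool))
    return np.stack([i, j], axis=1)


def plddt_from_bins(logits):
    """Expected pLDDT (0-100) from per-residue logits over equal-width bins (assumed 50)."""
    logits = np.asarray(logits, dtype=float)
    n_bins = logits.shape[-1]
    centers = (np.arange(n_bins) + 0.5) / n_bins  # 0.01, 0.03, ..., 0.99 for 50 bins
    p = np.exp(logits - logits.max(axis=-1, keepdims=True))  # numerically stable softmax
    p /= p.sum(axis=-1, keepdims=True)
    return 100.0 * (p * centers).sum(axis=-1)
```

With five dropout replicates, `ca_rmsf` returns the L × 1 RMSF feature described above, and `radius_graph` gives the edge list on which edge features would be gathered.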

Frequently Asked Questions (FAQs)

Q1: What does a low pLDDT score in an AlphaFold prediction mean? A low pLDDT (predicted Local Distance Difference Test) score is AlphaFold's per-residue estimate of its own confidence. Scores below 50 are typically associated with intrinsically disordered regions (IDRs) that lack a fixed 3D structure. However, low confidence can also indicate a "hidden order"—a region capable of folding but for which AlphaFold lacks sufficient evolutionary information to make a confident prediction [5] [6].

Q2: Why would a seemingly foldable protein segment receive a low-confidence prediction? A segment may be foldable but receive low pLDDT scores due to a shallow Multiple Sequence Alignment (MSA). AlphaFold relies heavily on co-evolutionary signals from homologous sequences to infer structural contacts. If few related sequences exist in databases, these evolutionary constraints are missing, and the model has limited information to build a confident prediction, even for structured domains [5].

Q3: How can I distinguish between true intrinsic disorder and a false negative due to a lack of data? Combining AlphaFold's output with other bioinformatics tools is key. A region with low pLDDT that is also predicted to be disordered by tools like IUPred2 is likely genuinely disordered. Conversely, a region with low pLDDT that is flagged as foldable by a tool like pyHCA (which analyzes hydrophobic cluster density) may be a structured domain suffering from a lack of evolutionary data [5].

Q4: My protein-protein complex has a poor ipTM score. What does this indicate? The ipTM (interface predicted Template Modeling) score estimates the quality of a predicted protein-protein interaction. The score can be artificially lowered if your input sequences contain large disordered regions or accessory domains that do not participate in the core interaction. Trimming the sequences to the specific interacting domains often results in a higher and more reliable ipTM score for the interaction interface itself [7].

Troubleshooting Guides

Issue: Low Confidence in Structured Regions

Problem: Your protein has segments that are suspected to be structured, but AlphaFold assigns them low pLDDT scores (e.g., < 50).

Investigation and Solution Protocol:

Confirm Foldability:

- Action: Run your protein sequence through the pyHCA tool.

- Interpretation: This tool delineates "foldable segments" (FS) based on hydrophobic cluster density. Note segments with an HCA score typical of folded domains (e.g., between -1 and 3.5) that simultaneously have low pLDDT [5].

- Rationale: An HCA score in the range typical of folded domains combined with low pLDDT suggests the region is likely structured but AlphaFold cannot confidently model it, often due to a lack of evolutionary information.

Check Evolutionary Coverage:

- Action: Examine the depth of the Multiple Sequence Alignment (MSA) generated during the AlphaFold run. Most AlphaFold pipelines save the alignment files, and some (e.g., ColabFold) also plot per-position sequence coverage.

- Interpretation: A shallow MSA, with very few homologous sequences, strongly points to a lack of co-evolutionary signals as the root cause of low confidence [5].
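If your pipeline writes its alignment in A3M format (ColabFold does, for example), per-residue depth can be counted directly. In A3M, lowercase letters are insertions relative to the query, so removing them leaves every row aligned to the query's columns. This sketch assumes a single-chain A3M with the query as the first record.

```python
def read_a3m(text):
    """Return the aligned sequences of an A3M file in order (query first)."""
    seqs, current = [], None
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):  # skip blank lines and '#' comment lines
            continue
        if line.startswith(">"):
            if current is not None:
                seqs.append("".join(current))
            current = []
        elif current is not None:
            current.append(line)
    if current is not None:
        seqs.append("".join(current))
    return seqs


def per_residue_depth(a3m_text):
    """Number of homologs (query excluded) with a residue aligned at each query position."""
    seqs = read_a3m(a3m_text)
    # Lowercase letters and '.' are insertion states; dropping them aligns rows to query columns
    rows = ["".join(c for c in s if not c.islower() and c != ".") for s in seqs]
    depth = [0] * len(rows[0])
    for row in rows[1:]:
        if len(row) != len(depth):
            raise ValueError("row length does not match the query; is this a valid A3M?")
        for k, c in enumerate(row):
            if c != "-":  # '-' is a deletion relative to the query
                depth[k] += 1
    return depth
```

Averaging the returned list over a region gives its mean number of aligned homologs per residue.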

Experimental Validation Pathway:

- If a region is confirmed as foldable but has low confidence, it becomes a high-priority candidate for experimental structure determination (e.g., via X-ray crystallography or cryo-EM) to confirm the "hidden" structure [5].

Issue: Poor Protein-Protein Interaction (PPI) Scores

Problem: The ipTM score for a predicted protein complex is low, making the model's reliability uncertain.

Investigation and Solution Protocol:

Analyze the Interface:

- Action: Inspect the predicted complex model. Look at the interface itself and check the pLDDT of the interfacial residues and the Predicted Aligned Error (PAE) between chains.

- Interpretation: A localized region of high inter-chain PAE and low pLDDT at the interface suggests a low-quality interaction prediction. High PAE across the entire chain, however, indicates global uncertainty [7].

Refine Input Constructs:

- Action: If the interface looks plausible but the global ipTM is low, truncate your input FASTA sequences to include only the known or suspected interacting domains, removing long disordered tails or non-participating domains.

- Rationale: The standard ipTM calculation is normalized over the entire length of the input sequences. Removing non-interacting regions prevents these segments from artificially depressing the score, providing a clearer signal of the interface quality [7].
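Trimming constructs by hand is error-prone with long sequences. This minimal sketch reads a FASTA file, cuts a sequence to a 1-based inclusive range (UniProt-style numbering), and writes the result. The record names and boundaries used below are hypothetical; take real boundaries from your domain annotation or the initial model.

```python
def read_fasta(text):
    """Map record ID (first word of the header) -> sequence."""
    records, name = {}, None
    for line in text.splitlines():
        line = line.strip()
        if line.startswith(">"):
            name = line[1:].split()[0]
            records[name] = []
        elif line and name is not None:
            records[name].append(line)
    return {key: "".join(parts) for key, parts in records.items()}


def truncate(seq, start, end):
    """Residues start..end of seq, 1-based and inclusive."""
    if not 1 <= start <= end <= len(seq):
        raise ValueError(f"range {start}-{end} is outside 1-{len(seq)}")
    return seq[start - 1:end]


def write_fasta(records, width=60):
    """Format {name: sequence} as FASTA text with a fixed line width."""
    lines = []
    for name, seq in records.items():
        lines.append(f">{name}")
        lines.extend(seq[i:i + width] for i in range(0, len(seq), width))
    return "\n".join(lines) + "\n"
```

For instance, `write_fasta({"P1_3-8": truncate(recs["P1"], 3, 8)})` produces a one-record FASTA for a hypothetical trimmed construct of record `P1`.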

Use Alternative Metrics:

- Action: For a more focused assessment, calculate scores like pDockQ or the newly proposed ipSAE, which are designed to be less sensitive to disordered regions and provide a better estimate of interface accuracy [7].
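pDockQ is simple enough to compute directly from a model. The sketch below follows the published pDockQ definition as we understand it: interface contacts are Cβ-Cβ pairs (Cα for glycine) across the two chains within 8 Å, x is the mean pLDDT of the interface residues multiplied by log10 of the number of contacts, and the score is a fitted sigmoid with constants 0.724, 0.052, 152.611 and 0.018. Check these constants against the reference implementation before relying on the numbers; extracting Cβ coordinates and pLDDT from the model file is left to you.

```python
import numpy as np


def pdockq(cb_a, cb_b, plddt_a, plddt_b, cutoff=8.0):
    """pDockQ for two chains from Cβ coordinates (N, 3) and per-residue pLDDT."""
    cb_a = np.asarray(cb_a, dtype=float)
    cb_b = np.asarray(cb_b, dtype=float)
    d = np.linalg.norm(cb_a[:, None, :] - cb_b[None, :, :], axis=-1)
    contacts = np.argwhere(d <= cutoff)  # (i, j) index pairs across the interface
    if len(contacts) == 0:
        return 0.0
    # Mean pLDDT over the residues of both chains that take part in any contact
    if_plddt = np.concatenate([np.asarray(plddt_a, dtype=float)[np.unique(contacts[:, 0])],
                               np.asarray(plddt_b, dtype=float)[np.unique(contacts[:, 1])]])
    x = if_plddt.mean() * np.log10(len(contacts))
    return float(0.724 / (1.0 + np.exp(-0.052 * (x - 152.611))) + 0.018)
```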

Data Presentation

Table: Interpreting pLDDT Confidence Scores

| pLDDT Range | Confidence Level | Typical Interpretation | Recommended Action |
|---|---|---|---|
| > 90 | Very high | High-confidence model; reliable for most analyses. | Can be used for detailed mechanistic studies. |
| 70 - 90 | Confident | Good backbone prediction; side-chain rotamers may vary. | Suitable for most applications like homology modeling. |
| 50 - 70 | Low | Caution advised; may be disordered or poorly aligned. | Use with caution; combine with disorder predictors. |
| < 50 | Very low | Likely intrinsically disordered region (IDR). | Treat as flexible; consider experimental validation for function. |

Source: Adapted from AlphaFold benchmarks and literature analysis [5] [6] [8].

Experimental Protocols

Protocol 1: Identifying "Hidden Order" with pyHCA and MSA Analysis

Objective: To systematically identify protein segments that are likely folded but are assigned low confidence by AlphaFold due to insufficient evolutionary information.

Methodology:

- Input: AlphaFold2 prediction for your protein of interest, including the pLDDT scores and the amino acid sequence.

- Foldable Segment Delineation:

- Use the `segment` function of the pyHCA tool (available on GitHub) to automatically delineate foldable segments (FS) from your protein sequence [5].

- The tool calculates an HCA score for each segment, which estimates its order/disorder ratio. Segments with an HCA score between -1 and 3.5 are considered "soluble-like" and foldable [5].

- Cross-Reference with pLDDT:

- Map the pyHCA-derived segments onto the AlphaFold model and calculate the mean pLDDT for each segment.

- Identify candidates: Flag long segments (>30 amino acids) that have an HCA score typical of a folded domain but a very low mean pLDDT (<50). These are your "full-VL" (Very Low) segments of interest [5].

- MSA Depth Check:

- For the candidate segments, check the depth of the Multiple Sequence Alignment (MSA) generated by AlphaFold. This can be quantified by the average number of homologs per residue found in databases like BFD or UniRef [5].

- A shallow MSA confirms that lack of evolutionary data is the likely cause of low confidence.
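Once the pyHCA segments are available, the cross-referencing and MSA depth checks reduce to a filter. This sketch assumes you have already parsed the pyHCA segment output into (start, end, HCA score) tuples (we do not reproduce pyHCA's file format here) and, optionally, a per-residue MSA depth list. Thresholds default to the values in the protocol: longer than 30 residues, HCA score between -1 and 3.5, and mean pLDDT below 50.

```python
import numpy as np


def flag_hidden_order(plddt, segments, depth=None, min_len=30,
                      hca_range=(-1.0, 3.5), max_mean_plddt=50.0):
    """Return candidate 'hidden order' segments.

    plddt: per-residue pLDDT values (index 0 = residue 1).
    segments: (start, end, hca_score) tuples, 1-based inclusive (assumed pre-parsed from pyHCA).
    depth: optional per-residue MSA depth; if given, its segment mean is reported too.
    """
    plddt = np.asarray(plddt, dtype=float)
    hits = []
    for start, end, hca in segments:
        mean_plddt = float(plddt[start - 1:end].mean())
        long_enough = end - start + 1 > min_len
        foldable = hca_range[0] <= hca <= hca_range[1]
        if long_enough and foldable and mean_plddt < max_mean_plddt:
            mean_depth = None if depth is None else float(np.mean(depth[start - 1:end]))
            hits.append({"start": start, "end": end, "hca": hca,
                         "mean_plddt": mean_plddt, "mean_depth": mean_depth})
    return hits
```

Segments returned with a low `mean_depth` are the strongest candidates for hidden order caused by missing evolutionary information.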

Protocol 2: Improving ipTM Scores for Complex Prediction

Objective: To enhance the reliability of protein-protein interaction scoring by optimizing input sequence constructs.

Methodology:

- Initial Full-Length Prediction:

- Run AlphaFold-Multimer using the full-length sequences of the interacting proteins from UniProt.

- Record the ipTM score and analyze the PAE plot. Note the regions that appear to form the core interaction interface.

- Construct Truncation:

- Based on the initial model and domain knowledge, create new truncated FASTA files. Include only the structured domains involved in the interaction and short linkers, removing long N/C-terminal tails and known disordered regions [7].

- Second Prediction with Truncated Constructs:

- Run AlphaFold-Multimer again using the new, shorter sequence constructs.

- Compare the new ipTM score to the original. A significant increase suggests the original full-length score was artificially lowered by non-interacting regions.

- Validation with ipSAE:

- As an independent check, run the `ipSAE` program (available on GitHub) on the original full-length prediction's PAE output.

- The `ipSAE` metric is designed to be less sensitive to disordered regions and may give a higher, more accurate score for the interface even with the full-length input [7].
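To see what an ipSAE-style score does with a PAE matrix, here is a simplified sketch based on our reading of the published description, not the authors' code: for each residue i in one chain, keep only partner-chain residues j with PAE(i, j) below a cutoff, score them with a TM-score-like term whose d0 depends on how many were kept, and take the best residue in either direction. The 10 Å cutoff and the 27-residue floor on d0 are assumptions; use the authors' program for any numbers you report.

```python
import numpy as np


def d0_tm(n):
    """TM-score distance scale; the floor of 27 residues is an assumption to keep d0 positive."""
    n = max(n, 27)
    return 1.24 * (n - 15) ** (1.0 / 3.0) - 1.8


def ipsae_like(pae, chain_a, chain_b, pae_cutoff=10.0):
    """ipSAE-style score from an (L, L) PAE matrix and residue index lists for two chains."""
    pae = np.asarray(pae, dtype=float)

    def one_direction(aligned_on, scored):
        best = 0.0
        for i in aligned_on:
            row = pae[i, scored]
            # Keep only partner residues with low PAE when aligned on residue i
            close = row[row < pae_cutoff]
            if close.size:
                term = 1.0 / (1.0 + (close / d0_tm(close.size)) ** 2)
                best = max(best, float(term.mean()))
        return best

    # Score both alignment directions and keep the larger value
    return max(one_direction(chain_a, chain_b), one_direction(chain_b, chain_a))
```

Because residues with high PAE are simply excluded, long disordered tails do not drag the score down the way they can for ipTM.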

Visualizations

Diagram: Diagnosing Low Confidence in AlphaFold

Title: Diagnostic workflow for low-confidence AlphaFold predictions.

Diagram: Experimental Workflow for Characterization

Title: Workflow for characterizing low-confidence regions.

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Resource | Function | Application in Troubleshooting |
|---|---|---|
| pyHCA | Automatically delineates foldable segments in a protein sequence based on hydrophobic cluster density (HCA). | Identifies regions that are likely structured ("hidden order") despite having low AlphaFold pLDDT scores [5]. |
| IUPred2 | Predicts intrinsic disorder from amino acid sequence. | Helps confirm whether a low-pLDDT region is likely a genuine IDR [5]. |
| BFD / UniRef Databases | Large-scale collections of protein sequences used to build Multiple Sequence Alignments (MSAs). | Provides the evolutionary data. Checking MSA depth from these databases confirms if low confidence is due to a lack of homologous sequences [5]. |
| ipSAE Software | Calculates an alternative protein-protein interaction score from AlphaFold's PAE output. | Provides a more reliable interaction score for complexes where the standard ipTM is depressed by disordered regions [7]. |
| AlphaFold-Multimer | Specialized version of AlphaFold for predicting protein complexes. | Used in the protocol for predicting and scoring protein-protein interactions [7]. |

Frequently Asked Questions

Q1: If AlphaFold gives a region a high pLDDT score, can I fully trust that part of the model? A: No. While a high pLDDT score (e.g., >90) generally indicates high model confidence, it does not guarantee the prediction is a perfect match for the experimental, biological structure. Global distortions and incorrect local side-chain conformations can occur even in high-confidence regions [2]. Always treat high-confidence predictions as exceptionally useful hypotheses, not final truths.

Q2: What are the main structural limitations in high-confidence AlphaFold predictions? A: The primary limitations, even at high confidence, are:

- Global Distortion: The entire predicted structure can be slightly distorted compared to the real structure.

- Domain Orientation: The relative placement of protein domains can be incorrect.

- Local Conformation: The precise shaping of the protein backbone and the positioning of amino acid side chains can be inaccurate [2].

Q3: How much can a high-confidence AlphaFold model differ from an experimental structure? A: The difference is measurable and often significant. When compared to experimental crystallographic data, the atomic coordinates in high-confidence AlphaFold predictions can have a median Cα root-mean-square deviation (RMSD) of around 1.0 Å from deposited models in the Protein Data Bank (PDB). This is considerably more than the median difference of 0.6 Å observed between two high-resolution experimental structures of the same protein crystallized under different conditions [2].

Q4: Why might a high-confidence prediction be inaccurate? A: AlphaFold's training does not fully account for the cellular environment. Predictions may be inaccurate because they do not incorporate:

- Ligands (e.g., drugs, cofactors)

- Covalent modifications (e.g., phosphorylation)

- Interactions with other biomolecules (e.g., DNA, other proteins)

- Effects of specific solution conditions [2] [9].

Q5: What is the definitive method to verify a structural detail from an AlphaFold prediction? A: Experimental structure determination is the only way to verify structural details, particularly those involving interactions not included in the prediction [2]. Techniques like X-ray crystallography or cryo-electron microscopy are required for confirmation.

Troubleshooting Guide: Diagnosing Mismatches Between High Confidence and Experimental Data

This guide helps you systematically identify and address discrepancies between your high-confidence AlphaFold model and experimental results.

Table: Summary of Quantitative Data on AlphaFold Prediction Accuracy

| Metric | AlphaFold Prediction (vs. Experimental) | Experimental Structures (vs. Each Other) | Source |
|---|---|---|---|
| Median Cα RMSD | ~1.0 Å | ~0.6 Å | [2] |
| Mean Map-Model Correlation | 0.56 | 0.86 (for deposited models) | [2] |
| Inter-atomic Distance Deviation (for atoms 48-52 Å apart) | ~0.7 Å | ~0.4 Å | [2] |
| Key Limitation | Does not model ligands, modifications, or environmental factors | Represents a single experimental condition | [2] [9] |

Issue: Suspected Global Distortion or Incorrect Domain Placement

Symptoms:

- Your entire AlphaFold model has high pLDDT but does not fit well into a cryo-EM map or X-ray crystallography density.

- Specific domains of your protein appear rotated or shifted relative to their expected position in a complex.

Diagnosis & Resolution Protocol:

- Quantify the Mismatch: Calculate the global RMSD between your AlphaFold prediction and any available experimental structure. Use the morphing analysis described in the Nature Methods study to determine if the discrepancy is due to a smooth distortion or a rigid domain movement [2].

- Check for "Conditional Order": The region might be foldable but requires a binding partner or specific cellular condition to adopt its true structure. This "conditional order" can lead to low-confidence or inaccurate predictions in the unbound state. Use tools like pyHCA to identify such foldable segments that AlphaFold may struggle with [5].

- Investigate Co-evolutionary Signal: The accuracy of AlphaFold is tied to the depth of the multiple sequence alignment (MSA). A shallow MSA, indicating a lack of evolutionary information, can be a source of error, even if the final pLDDT is high. Analyze the MSA depth for the problematic region [5].

- Consider Environmental Factors: If your experiment involves a specific ligand, ion, or post-translational modification, remember that standard AlphaFold predictions do not include these. The inaccuracy may arise because the protein's true structure in your experiment is dependent on these factors [2].
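For the first step, global Cα RMSD after optimal superposition can be computed with the Kabsch algorithm. This NumPy sketch assumes you have already extracted matched Cα coordinates (same residues, same order) from the prediction and the experimental model; it does not reproduce the morphing analysis from [2], only the global RMSD that motivates it.

```python
import numpy as np


def kabsch_rmsd(p, q):
    """Cα RMSD (Å) between matched coordinate sets p and q, each (N, 3), after optimal superposition."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    p_c = p - p.mean(axis=0)  # remove translation
    q_c = q - q.mean(axis=0)
    u, _, vt = np.linalg.svd(p_c.T @ q_c)  # SVD of the 3x3 covariance matrix
    # Flip the last axis if needed so the result is a proper rotation, not a reflection
    d = np.sign(np.linalg.det(vt.T @ u.T))
    rot = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    diff = p_c @ rot.T - q_c
    return float(np.sqrt((diff ** 2).sum(axis=1).mean()))
```

Computing the same RMSD separately for each domain, and comparing it with the global value, is a quick way to tell a rigid domain movement from a distortion spread across the whole model.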

Diagnostic Workflow for Inaccurate High-Confidence Models

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Resources for AlphaFold Research and Validation

| Research Reagent / Tool | Function & Purpose | Key Details |
|---|---|---|
| AlphaFold Server | Provides free, non-commercial access to AlphaFold 3 for predicting complexes of proteins, nucleic acids, ligands, and modified residues [10]. | Predicts joint structures; uses AlphaFold 3's updated diffusion-based architecture [9]. Output is in mmCIF format. |
| ColabFold | A user-friendly, web-based platform for predicting protein structures and complexes using AlphaFold 2 and RoseTTAFold [10]. | Useful for multimers; accessible via Google Colab. Allows control over parameters like max_recycles (recommended: 12-48) [10]. |
| AlphaFill | An algorithm that "transplants" missing ligands, cofactors, and ions into pre-existing AlphaFold models [10]. | Provides approximate ligand positioning. Caution: Not suitable for quantifying precise atomic interactions [10]. |
| pyHCA | A computational tool that identifies foldable segments and estimates order/disorder ratio from a single protein sequence using Hydrophobic Cluster Analysis (HCA) [5]. | Helps identify "conditional order" and segments that may be well-folded but are missed by AlphaFold due to a shallow MSA [5]. |
| FirstGlance in Jmol | A molecular visualization tool that automatically colors uploaded AlphaFold models by their pLDDT confidence score [10]. | Simplifies initial assessment of model reliability. Displays average pLDDT for selected residue ranges. |
| Experimental Structure Determination (X-ray, Cryo-EM) | The definitive method for verifying structural details and hypotheses generated by AlphaFold predictions [2]. | Essential for confirming structures, especially for regions involved in interactions or with bound ligands not modeled by AlphaFold. |

Research Workflow Integrating Key Tools

Troubleshooting Guide & FAQs

This guide addresses common challenges researchers face when using AlphaFold to model protein conformational diversity and ligand interactions, providing targeted solutions based on current research.

FAQ 1: Why does AlphaFold only predict one structure for my protein, which is known to have multiple functional states?

AlphaFold was primarily trained to predict a single, thermodynamically stable conformation and often converges on the most common state found in structural databases [11]. To sample alternative conformations, you can manipulate the input multiple sequence alignment (MSA). Reducing the depth and information content of the MSA encourages the model to explore a broader conformational landscape [12].

- Recommended Protocol: MSA Subsampling

- Generate a deep MSA using standard AlphaFold2 (AF2) procedures.

- Stochastically subsample the MSA to a reduced depth (e.g., between 16 and 512 sequences) before structure inference.

- Generate a large number of models (e.g., 50-100) for each subsampled MSA.

- Cluster the resulting models and analyze them using metrics like TM-score to identify distinct, high-confidence conformational states [12].
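Many AlphaFold pipelines expose MSA-depth settings directly; as a pipeline-agnostic alternative, this sketch writes stochastically subsampled copies of an existing single-chain A3M file, always keeping the query as the first record. Varying `depth` across the 16-512 range and `seed` across runs yields a set of MSAs to feed into separate predictions; the clustering step is not shown, and the function names are our own.

```python
import random


def read_a3m_records(text):
    """(header, sequence) pairs from an A3M file, query first; '#' comment lines are skipped."""
    records = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        if line.startswith(">"):
            records.append([line[1:], ""])
        elif records:
            records[-1][1] += line
    return [tuple(r) for r in records]


def subsample_a3m(text, depth, seed=0):
    """Keep the query plus `depth` randomly chosen homologs; returns A3M text."""
    records = read_a3m_records(text)
    query, homologs = records[0], records[1:]
    rng = random.Random(seed)  # fixed seed so each subsample is reproducible
    chosen = rng.sample(homologs, min(depth, len(homologs)))
    return "".join(f">{h}\n{s}\n" for h, s in [query] + chosen)
```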

FAQ 2: How can I generate a structural ensemble for an intrinsically disordered protein (IDP) or a protein with disordered regions?

Standard AlphaFold2 predictions for disordered regions often appear as unrealistic, low-confidence coils [11]. The AlphaFold-Metainference method addresses this by using AlphaFold-predicted distances as restraints in molecular dynamics (MD) simulations to generate a physically plausible ensemble [13].

- Recommended Protocol: AlphaFold-Metainference

- Run AlphaFold to obtain a distogram (predicted distance map).

- Use these predicted distances as structural restraints within an MD simulation framework.

- Run the simulation to generate a conformational ensemble that satisfies the AlphaFold-derived distances.

- Validate the ensemble against experimental data, such as Small-Angle X-Ray Scattering (SAXS) profiles or NMR chemical shifts [13].
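The first step relies on turning AlphaFold's distogram into distances. This sketch converts distogram logits into a mean and standard deviation per residue pair, assuming AlphaFold2's default binning (64 bins with 63 equally spaced edges from 2.3125 Å to 21.6875 Å, for Cβ distances, Cα for glycine). Placing the two open-ended outer bins half a bin width beyond the outer edges is a convention of ours, and how AlphaFold-Metainference turns these values into restraints is not reproduced here.

```python
import numpy as np


def distogram_stats(logits, first_break=2.3125, last_break=21.6875):
    """Mean and standard deviation (Å) of the predicted distance for each residue pair.

    logits: array (..., n_bins); with AlphaFold2's defaults n_bins = 64, i.e. 63 bin edges.
    """
    logits = np.asarray(logits, dtype=float)
    n_bins = logits.shape[-1]
    step = (last_break - first_break) / (n_bins - 2)  # spacing between the n_bins - 1 edges
    # Interior bins use their midpoints; the open-ended outer bins sit half a step beyond the edges
    centers = first_break - step / 2 + step * np.arange(n_bins)
    p = np.exp(logits - logits.max(axis=-1, keepdims=True))  # softmax over bins
    p /= p.sum(axis=-1, keepdims=True)
    mean = (p * centers).sum(axis=-1, keepdims=True)
    std = np.sqrt((p * (centers - mean) ** 2).sum(axis=-1))
    return mean[..., 0], std
```

Applied to an (L, L, 64) array this returns two L × L matrices; pairs whose mean sits near the top of the range mostly reflect the open-ended last bin and carry little distance information.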

FAQ 3: Why does AlphaFold3 (AF3) sometimes produce physically unrealistic protein-ligand complexes?

While AF3 demonstrates high accuracy in benchmarks, its predictions can fail to adhere to fundamental physical principles. It may rely on pattern recognition from its training data rather than an underlying understanding of physics, leading to issues like steric clashes and incorrect ligand placement when the binding site is subtly altered [14].

- Troubleshooting Steps:

- Always inspect predictions for severe steric clashes and impossible bond geometries.

- Perform a "sanity check" by mutating key binding residues in silico (e.g., to glycine or phenylalanine) and re-running the prediction. If the ligand pose remains unchanged, this indicates a potential over-reliance on memorization [14].

- Do not rely solely on AF3. Use its predictions as initial hypotheses and refine them with physics-based methods like molecular dynamics (MD) simulations and free energy calculations [15].
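A quick first pass for the clash inspection can be scripted. This sketch lists protein-ligand heavy-atom pairs closer than a cutoff; the 2.2 Å default is an illustrative assumption, not a validated threshold, and covalently bound ligands will legitimately trigger it. Dedicated validation suites apply element-specific criteria and should be preferred for real assessments.

```python
import numpy as np


def ligand_clashes(protein_xyz, ligand_xyz, min_dist=2.2):
    """(protein_index, ligand_index, distance) for heavy-atom pairs closer than `min_dist` Å."""
    prot = np.asarray(protein_xyz, dtype=float)
    lig = np.asarray(ligand_xyz, dtype=float)
    d = np.linalg.norm(prot[:, None, :] - lig[None, :, :], axis=-1)
    pi, li = np.nonzero(d < min_dist)
    return [(int(a), int(b), float(d[a, b])) for a, b in zip(pi, li)]
```

Running the same check on the prediction for the in silico mutant makes it easy to see whether the ligand has simply been placed on top of the new side chain.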

FAQ 4: How reliable are the confidence scores (pLDDT, pTM) for assessing predictions of alternative conformations or ligand binding?

Confidence scores are a useful but imperfect guide. While a low pLDDT often indicates disorder or flexibility, a high score does not guarantee a unique or functionally relevant state. When generating diverse conformations via MSA subsampling, the overall confidence may decrease, but this does not necessarily correlate with lower quality for the alternative state [16]. For ligands, confidence metrics may not reliably drop even in physically implausible binding scenarios [14].

- Recommendation: Use confidence scores as one piece of evidence. Prioritize the experimental validation of predicted conformational states and ligand-binding poses, especially for novel targets [2].

Experimental Methodologies & Data

The following table summarizes key methods for capturing conformational diversity.

| Method | Core Principle | Key Applications | Key Metric(s) |
|---|---|---|---|
| MSA Subsampling [12] | Reduces MSA depth to weaken the evolutionary signal that biases the model toward a single state, enabling sampling of alternative states. | Transporters, GPCRs, proteins with known open/closed states. | TM-score (≥0.9 considered high accuracy). |
| AFsample2 [16] | Randomly masks columns in the MSA with "X" to break co-evolutionary constraints. | Predicting alternative end states and intermediate conformations. | TM-score improvement (ΔTM); model diversity. |
| AlphaFold-Metainference [13] | Uses AF-predicted distances as restraints in MD simulations to generate ensembles. | Intrinsically disordered proteins, partially disordered proteins. | Kullback-Leibler divergence to SAXS data; radius of gyration (Rg). |
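The column-masking idea behind AFsample2 can be illustrated on a plain aligned MSA. This sketch replaces a random fraction of columns with "X"; leaving the query row unmasked and the masking fraction are assumptions for illustration, not AFsample2's published settings, and insertion states (A3M lowercase letters) must be removed before calling it.

```python
import random


def mask_columns(msa_rows, fraction, seed=0):
    """Replace a random subset of MSA columns with 'X' in every row except the query.

    msa_rows: equal-length aligned sequences, query first, insertion states already removed.
    Returns the masked rows and the sorted list of masked column indices.
    """
    length = len(msa_rows[0])
    if any(len(row) != length for row in msa_rows):
        raise ValueError("all rows must have the query's length")
    rng = random.Random(seed)
    cols = set(rng.sample(range(length), int(round(fraction * length))))
    masked = [msa_rows[0]]  # assumption: the query row is kept intact
    for row in msa_rows[1:]:
        masked.append("".join("X" if k in cols else c for k, c in enumerate(row)))
    return masked, sorted(cols)
```

Drawing a fresh mask (new `seed`) for every model is what turns a single MSA into a source of diverse predictions.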

Workflow Visualization

AlphaFold-Metainference Workflow

MSA Manipulation for Conformational Sampling

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Resource | Function in Experiment |
|---|---|
| AlphaFold-Metainference Server/Code [13] | Generates structural ensembles for disordered and ordered proteins by integrating AF predictions with MD simulations. |
| AFsample2 Software [16] | An AlphaFold2-based method that uses random MSA column masking to predict multiple conformations and ensembles. |
| Molecular Dynamics (MD) Software (e.g., GROMACS, AMBER) | Used to refine AF models, sample dynamics, and validate predictions using physics-based force fields [13] [15]. |
| Serratus Platform [17] | A bioinformatics platform for identifying RNA-dependent RNA polymerase (RdRp) sequences and their palmprint motifs. |
| PoseBusters V2 Dataset [14] | A benchmark dataset for evaluating protein-ligand docking accuracy, used to test AF3's co-folding performance. |

Methodological Breakthroughs: From Built-in Fixes to External Tools

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: What does a low pLDDT score mean in my AlphaFold prediction, and how should I interpret it? A low pLDDT score (typically below 70) indicates low local confidence in the predicted structure. This can mean one of two things: (1) the region is genuinely flexible or intrinsically disordered and does not adopt a single, stable structure, or (2) AlphaFold lacks sufficient information to predict the region with confidence, even if it might be structured. The EQAFold framework aims to provide more accurate confidence estimates for these challenging regions [1] [18].

Q2: My prediction contains regions of "barbed wire" or "pseudostructure." What are these?

These are specific behavioral modes identified within low-pLDDT regions:

- Barbed Wire: Characterized by wide, looping coils, an absence of packing contacts, and numerous validation outliers. It is extremely un-protein-like, and its conformation is considered to have no predictive value [19].

- Pseudostructure: Presents an intermediate behavior with a misleading appearance of isolated, badly formed secondary-structure-like elements [19].

Tools like `phenix.barbed_wire_analysis` can automatically identify these non-predictive regions and help you manage them in your models [19].

Q3: Can a region with low pLDDT ever be structurally accurate?

Yes. Some low-pLDDT regions exhibit a "near-predictive" mode. These regions resemble folded protein structure, can be nearly accurate, and are often associated with conditional folding, where a region folds upon binding to a partner or after post-translational modification [19]. Identifying these regions is a key focus of improved quality assessment methods.

Q4: How does EQAFold improve upon standard AlphaFold's self-assessment?

Standard AlphaFold provides a pLDDT score, but it can struggle to consistently select the best models for difficult targets and to accurately assess flexibility in the presence of interacting partners [18] [20]. EQAFold replaces the confidence (LDDT) prediction head with an equivariant graph neural network, producing self-confidence scores that correlate better with the true quality of the structural model.

Q5: What is the best way to generate an accurate structural ensemble for a disordered protein?

A single standard AlphaFold output structure is often inconsistent with experimental data for disordered proteins [13]. Advanced methods such as AlphaFold-Metainference use AlphaFold-predicted distances as restraints in molecular dynamics simulations to generate structural ensembles that agree better with experimental techniques such as small-angle X-ray scattering (SAXS) [13].
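Because agreement with SAXS is often summarized first through the radius of gyration (Rg), a quick sanity check on any generated ensemble is to compute Rg per conformer and average it. The sketch below is a minimal pure-Python illustration, not part of the AlphaFold-Metainference code; it assumes each conformer is a list of (x, y, z) coordinates in Å with equal atomic weights, and the function names are hypothetical. A full comparison would back-calculate the complete scattering profile rather than Rg alone.

```python
import math
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]

def radius_of_gyration(coords: Sequence[Coord]) -> float:
    """Unweighted Rg of one conformer: RMS distance of atoms from their centroid."""
    n = len(coords)
    cx = sum(c[0] for c in coords) / n
    cy = sum(c[1] for c in coords) / n
    cz = sum(c[2] for c in coords) / n
    msd = sum((x - cx) ** 2 + (y - cy) ** 2 + (z - cz) ** 2 for x, y, z in coords) / n
    return math.sqrt(msd)

def ensemble_rg(ensemble: Sequence[Sequence[Coord]]) -> float:
    """Ensemble Rg as sqrt(<Rg^2>), the average usually compared with SAXS-derived Rg."""
    rg2 = [radius_of_gyration(conf) ** 2 for conf in ensemble]
    return math.sqrt(sum(rg2) / len(rg2))

# Toy two-atom "conformers" (coordinates in Å): one compact, one extended
compact = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
extended = [(0.0, 0.0, 0.0), (6.0, 0.0, 0.0)]
print(radius_of_gyration(compact))   # 1.0
print(radius_of_gyration(extended))  # 3.0
print(ensemble_rg([compact, extended]))
```

Averaging Rg² rather than Rg reflects how an ensemble's scattering combines; for a single structure the two are identical.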

Troubleshooting Guides

Issue 1: Handling Low-Confidence Regions in Predictions

Problem: Your AlphaFold model contains extensive regions with low pLDDT scores, and you are unsure how to proceed with your analysis.

Solution:

- Categorize the Low-Confidence Region: Use analysis tools to determine the behavioral mode of the low-pLDDT region. The following table summarizes the modes and recommended actions [19].

| Behavioral Mode | Key Characteristics | Recommended Action |
|---|---|---|
| Barbed Wire | Wide loops, no packing, many validation outliers. | Remove for most tasks (e.g., molecular replacement). The coordinates are non-predictive [19]. |
| Pseudostructure | Poorly formed, isolated secondary structures. | Treat with caution. Use annotations (e.g., signal peptides) for context; generally not reliable [19]. |
| Near-Predictive | Protein-like, reasonable packing, few outliers. | Retain for analysis; can be useful for molecular replacement and studying conditionally folded regions [19]. |

- Run a Barbed Wire Analysis:

- Tool: `phenix.barbed_wire_analysis` (included in the Phenix software package) [19].

- Input: Your predicted structure in PDB or mmCIF format, with pLDDT in the B-factor field.

- Output: The tool categorizes residues into behavioral modes based on pLDDT, packing scores, and MolProbity validation metrics. It can output annotated files or a new structure file pruned of non-predictive regions.

- Consult External Annotations: Cross-reference the low-pLDDT regions with databases like MobiDB to see if they are annotated as intrinsically disordered. An association between "barbed wire" and disorder annotations supports the interpretation of genuine disorder [19].
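As a lightweight pre-screen before (not instead of) the Phenix analysis, contiguous low-pLDDT segments can be listed directly from the B-factor column. The sketch below assumes AlphaFold-style PDB output with pLDDT stored in the B-factor field and reads Cα records only; it does not reproduce the packing or MolProbity criteria that `phenix.barbed_wire_analysis` uses to assign behavioral modes. All function names, including the `toy_atom_line` demo helper, are hypothetical.

```python
from typing import List, Tuple

Record = Tuple[str, int, float]  # (chain ID, residue number, pLDDT)

def ca_plddt_from_pdb(pdb_text: str) -> List[Record]:
    """Read (chain, residue number, pLDDT) from every CA atom record.

    Assumes AlphaFold-style PDB output, where the B-factor field
    (columns 61-66) holds the residue's pLDDT.
    """
    records = []
    for line in pdb_text.splitlines():
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            records.append((line[21], int(line[22:26]), float(line[60:66])))
    return records

def low_confidence_segments(records: List[Record], threshold: float = 70.0,
                            min_length: int = 3) -> List[Tuple[str, int, int]]:
    """Return (chain, first residue, last residue) for runs of consecutive
    residues with pLDDT below threshold that are at least min_length long."""
    segments = []
    start = prev = None  # (chain, residue number) of the open segment
    for chain, resnum, plddt in records:
        if plddt < threshold:
            if prev is not None and prev[0] == chain and resnum == prev[1] + 1:
                prev = (chain, resnum)              # extend the open segment
            else:
                if start is not None:               # close a non-adjacent segment
                    segments.append((start[0], start[1], prev[1]))
                start = prev = (chain, resnum)      # open a new segment
        elif start is not None:                     # a confident residue ends the run
            segments.append((start[0], start[1], prev[1]))
            start = prev = None
    if start is not None:
        segments.append((start[0], start[1], prev[1]))
    return [s for s in segments if s[2] - s[1] + 1 >= min_length]

def toy_atom_line(serial: int, resnum: int, plddt: float, chain: str = "A") -> str:
    """Hypothetical demo helper that writes a fixed-width PDB CA record."""
    return (f"ATOM  {serial:5d}  CA  ALA {chain}{resnum:4d}    "
            f"{0.0:8.3f}{0.0:8.3f}{0.0:8.3f}{1.0:6.2f}{plddt:6.2f}           C")

demo = "\n".join(toy_atom_line(i, i, p) for i, p in
                 enumerate([92.0, 88.0, 45.0, 40.0, 38.0, 91.0, 30.0], start=1))
print(low_confidence_segments(ca_plddt_from_pdb(demo)))  # [('A', 3, 5)]
```

The isolated low-pLDDT residue 7 is dropped by `min_length`; lowering it to 1 reports every low-confidence run, however short.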

Issue 2: Generating Accurate Models for Difficult Targets

Problem: For a target with shallow multiple sequence alignments (MSAs) or complicated architecture, the standard AlphaFold pipeline produces poor-quality models, and the pLDDT score is not reliable for selecting the best one.

Solution: Adopt an integrative prediction strategy, as demonstrated by high-performing systems in CASP16 [20].

- MSA Engineering: Generate diverse MSAs using different sequence databases and alignment tools. For large proteins, consider a divide-and-conquer approach using domain-based alignments [20].

- Extensive Model Sampling: Run multiple predictions with different MSAs and model seeds to explore a larger conformational space [20].

- Advanced Model Ranking: Do not rely solely on pLDDT. Use a combination of complementary model quality assessment (QA) methods and model clustering to rank and select your final predicted structures [20].
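One simple way to combine complementary QA signals is average-rank aggregation, sketched below under the assumption that every QA method returns a higher-is-better score for every model. The method names and scores are invented placeholders, and this is not the specific ranking scheme used by any CASP16 group.

```python
from typing import Dict, List

def mean_rank_consensus(scores: Dict[str, Dict[str, float]]) -> List[str]:
    """Order models by their average rank across several QA methods.

    scores maps model name -> {qa_method_name: score}. Every model must be
    scored by every method, and higher scores are assumed to be better.
    """
    models = list(scores)
    methods = list(next(iter(scores.values())))
    mean_rank = {m: 0.0 for m in models}
    for method in methods:
        ordered = sorted(models, key=lambda m: scores[m][method], reverse=True)
        for rank, model in enumerate(ordered, start=1):
            mean_rank[model] += rank / len(methods)
    return sorted(models, key=lambda m: mean_rank[m])

# Invented toy scores: pLDDT alone would pick model_b first
scores = {
    "model_a": {"plddt": 88.0, "qa_1": 0.71, "qa_2": 0.65},
    "model_b": {"plddt": 91.0, "qa_1": 0.62, "qa_2": 0.58},
    "model_c": {"plddt": 85.0, "qa_1": 0.74, "qa_2": 0.69},
}
print(mean_rank_consensus(scores))  # ['model_c', 'model_a', 'model_b']
```

Working with ranks rather than raw scores sidesteps the fact that different QA methods report on different scales.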

Table: Key Research Reagent Solutions for Integrative Structure Prediction

| Research Reagent / Tool | Function in Experiment |
|---|---|
| Multiple Sequence Alignments (MSAs) | Provides evolutionary constraints from diverse homologs; primary input for deep learning-based prediction [20]. |
| AlphaFold-Metainference | Uses AF-predicted distances as MD restraints to generate accurate structural ensembles of disordered/ordered proteins [13]. |
| Molecular Dynamics (MD) Simulations | Used in AlphaFold-Metainference and for independent validation; provides flexibility metrics and conformational sampling [13] [18]. |
| Model Quality Assessment (QA) Methods | Estimates the accuracy of predicted models; crucial for ranking models from extensive sampling [20] [21]. |
| phenix.barbed_wire_analysis Tool | Automates identification of predictive vs. non-predictive residues in low-pLDDT regions of AlphaFold2 models [19]. |

Experimental Protocols

Protocol 1: Using AlphaFold-Metainference to Generate Structural Ensembles

Purpose: To construct a structural ensemble of a protein (including disordered regions) that is consistent with both AlphaFold-predicted distances and experimental data [13].

Methodology:

- Input Generation: Obtain the distogram (the predicted probability distribution over binned inter-residue distances) for your protein sequence from AlphaFold.

- Restraint Setup: Use the predicted inter-residue distances as structural restraints within a molecular dynamics (MD) simulation framework, implemented according to the maximum entropy principle via the metainference approach.

- Simulation: Run the MD simulation with the applied distance restraints to generate an ensemble of conformers.

- Validation: Compare the back-calculated properties (e.g., radius of gyration, pairwise distance distributions) of your ensemble against experimental data, such as SAXS profiles, for validation.
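The restraint-setup step begins by turning each residue pair's distogram into a distance estimate with an uncertainty. The sketch below is a simplification, not the AlphaFold-Metainference implementation: it converts one pair's logits into a mean and standard deviation. It assumes the AlphaFold2 convention of one more logit than bin edges (the two outermost bins are open-ended), and it places those open bins half a bin width beyond the outer edges, which is an arbitrary choice. The function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

def bin_representatives(edges: Sequence[float]) -> List[float]:
    """Representative distance for each of the len(edges) + 1 bins.

    Inner bins use their midpoint; the open-ended first and last bins are
    placed half a bin width beyond the outermost edges (an assumption).
    """
    width = edges[1] - edges[0]
    inner = [(lo + hi) / 2 for lo, hi in zip(edges[:-1], edges[1:])]
    return [edges[0] - width / 2] + inner + [edges[-1] + width / 2]

def softmax(logits: Sequence[float]) -> List[float]:
    m = max(logits)                      # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def distance_restraint(logits: Sequence[float], edges: Sequence[float]) -> Tuple[float, float]:
    """Mean and standard deviation (Å) of one residue pair's predicted distance."""
    if len(logits) != len(edges) + 1:
        raise ValueError("expected one more logit than bin edges")
    probs = softmax(logits)
    centers = bin_representatives(edges)
    mean = sum(p * d for p, d in zip(probs, centers))
    var = sum(p * (d - mean) ** 2 for p, d in zip(probs, centers))
    return mean, math.sqrt(var)

# Toy example: 3 edges -> 4 bins, uniform logits
print(distance_restraint([0.0, 0.0, 0.0, 0.0], [4.0, 6.0, 8.0]))
```

A pair with a sharply peaked distribution yields a small standard deviation and could be restrained tightly, while a flat distribution (as in the toy example) signals a weak, uncertain restraint.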

The workflow for this protocol is illustrated below.

Protocol 2: Benchmarking EQAFold Performance on Low-pLDDT Regions

Purpose: To quantitatively evaluate whether the EQAFold framework provides more accurate confidence estimates for low-pLDDT regions compared to standard AlphaFold.

Methodology:

- Dataset Curation: Select a set of protein targets with known experimental structures that contain regions of conditional folding or intrinsic disorder, evidenced by low pLDDT scores in standard AlphaFold predictions.

- Model Generation: Process the targets using both the standard AlphaFold and the EQAFold frameworks.

- Quality Assessment:

- Calculate the per-residue accuracy (e.g., using lDDT-Cα) of the predicted models against the experimental reference.

- Compare the correlation between the predicted confidence scores (pLDDT from AlphaFold vs. the improved score from EQAFold) and the calculated per-residue accuracy, focusing on regions originally with low pLDDT.

- Analysis: A successful benchmark will show that EQAFold's confidence scores have a stronger correlation with the true structural accuracy in low-confidence regions, correctly identifying "near-predictive" segments.
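The correlation analysis above reduces to a Pearson coefficient computed only over residues whose original AlphaFold pLDDT was low. The sketch below is a minimal pure-Python version; the cutoff of 70, the function names, and all toy per-residue values (including the hypothetical `improved` score standing in for an EQAFold-style output) are illustrative assumptions, not published results. Because Pearson correlation is invariant to linear rescaling, pLDDT on a 0-100 scale can be compared directly with lDDT on a 0-1 scale.

```python
import math
from typing import Sequence

def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant input")
    return cov / (sx * sy)

def low_plddt_correlation(plddt, score, true_lddt, cutoff=70.0):
    """Pearson r between a confidence score and true per-residue lDDT,
    restricted to residues whose original AlphaFold pLDDT is below cutoff."""
    idx = [i for i, p in enumerate(plddt) if p < cutoff]
    return pearson([score[i] for i in idx], [true_lddt[i] for i in idx])

# Invented toy values for six residues
plddt = [90.0, 65.0, 55.0, 45.0, 60.0, 95.0]
true_lddt = [0.90, 0.70, 0.30, 0.20, 0.60, 0.92]
improved = [0.88, 0.68, 0.35, 0.25, 0.55, 0.90]   # hypothetical improved score

r_af = low_plddt_correlation(plddt, plddt, true_lddt)
r_new = low_plddt_correlation(plddt, improved, true_lddt)
print(round(r_af, 3), round(r_new, 3))
```

A rank correlation (Spearman) is a reasonable alternative if you care only about ordering residues by reliability.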

The logical flow of this benchmark is shown in the following diagram.

Leveraging Massive Sampling and Dropout for Conformational Diversity

Frequently Asked Questions (FAQs) and Troubleshooting Guides

FAQ 1: How does reducing information in the Multiple Sequence Alignment (MSA) help sample alternative conformations?

Answer: Standard AlphaFold2 (AF2) uses a deep, information-rich MSA, which constrains it to predict a single, high-confidence ground state. Reducing information in the MSA by randomly masking columns or subsampling sequences disrupts the co-evolutionary signals that bias the model toward one conformation. This increased uncertainty allows the network to explore alternative structural solutions, effectively revealing different conformational states of the same protein [22] [23] [24].

FAQ 2: What is the difference between MSA column masking and MSA subsampling?

Answer: Both methods aim to reduce evolutionary constraints, but they operate differently:

- MSA Column Masking (as in AFsample2): This method randomly replaces entire columns in the MSA with a placeholder (e.g., "X"), directly removing covariance information for specific residue positions across the alignment. This is integrated directly into the inference process, with a unique masking pattern applied for each predicted model [22].

- MSA Subsampling: This method reduces the depth of the MSA by randomly selecting fewer sequences as cluster centers (`max_seq`) and fewer extra sequences (`extra_seq`) for each prediction. A shallower MSA provides a noisier evolutionary signal, encouraging conformational diversity [24].
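Both strategies are easy to prototype on a plain aligned MSA. The sketch below assumes an MSA given as equal-length aligned strings (no A3M lowercase insertions) with the query first. It leaves gap characters untouched in masked columns, which is a choice made here rather than a documented AFsample2 detail. In an actual AlphaFold2 run, `max_seq` and `extra_seq` are applied by the model's own MSA sampling, so the hypothetical `subsample_msa` only illustrates the idea of a shallower alignment.

```python
import random
from typing import List

def mask_msa_columns(msa: List[str], fraction: float, seed: int) -> List[str]:
    """Replace residues in a random fraction of columns with 'X' in every row
    except the first (query) row; a different seed per model gives a unique pattern."""
    rng = random.Random(seed)
    length = len(msa[0])
    masked_cols = set(rng.sample(range(length), round(fraction * length)))
    out = [msa[0]]                                   # query row stays intact
    for row in msa[1:]:
        out.append("".join("X" if i in masked_cols and ch != "-" else ch
                           for i, ch in enumerate(row)))
    return out

def subsample_msa(msa: List[str], depth: int, seed: int) -> List[str]:
    """Keep the query plus a random subset of at most depth - 1 other sequences."""
    rng = random.Random(seed)
    others = msa[1:]
    k = min(len(others), max(depth - 1, 0))
    return [msa[0]] + rng.sample(others, k)

msa = ["MKTAYIAKQR", "MKSAYIAKQR", "MRTAY-AKQK", "LKTAFIAEQR"]
for model_seed in range(2):                          # one masking pattern per model
    print(mask_msa_columns(msa, fraction=0.3, seed=model_seed))
print(subsample_msa(msa, depth=2, seed=1))
```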

FAQ 3: My models for alternative states have low pLDDT scores. Should I disregard them?

Answer: Not necessarily. A decrease in mean pLDDT is an expected consequence of introducing noise via MSA masking or subsampling and does not automatically indicate a lower-quality model. Research shows that models generated with these methods can have high accuracy (high TM-score to experimental structures) even with a moderately reduced pLDDT. You should validate the alternative state models by comparing them to known experimental structures of alternate conformations if available [22] [23].

FAQ 4: How much sampling (number of models) is sufficient to capture conformational diversity?

Answer: There is no one-size-fits-all number, but increased sampling consistently improves the probability of discovering high-quality alternative conformations. One study found that generating 160 models per run was effective for capturing the conformational ensemble of Abl1 kinase. As a general guideline, you should generate hundreds of models. The quality of the best-predicted state typically improves as the number of samples increases [22] [24].
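A rough way to reason about sample counts is to treat each model as an independent draw that lands in a rarely sampled state with some probability p; the chance of seeing that state at least once in n models is then 1 - (1 - p)^n. Real AlphaFold2 samples are not strictly independent, and p is unknown in advance, so this sketch (with a hypothetical function name) only illustrates why rare states call for hundreds of models.

```python
def samples_needed(p_hit: float, target: float) -> int:
    """Smallest n with 1 - (1 - p_hit)**n >= target, assuming each model
    independently reaches the state of interest with probability p_hit."""
    if not (0.0 < p_hit < 1.0 and 0.0 < target < 1.0):
        raise ValueError("p_hit and target must both lie strictly between 0 and 1")
    n, p_miss_all = 0, 1.0
    while 1.0 - p_miss_all < target:
        n += 1
        p_miss_all *= 1.0 - p_hit    # probability that all n models miss the state
    return n

print(samples_needed(0.01, 0.8))  # 161
```

Under these idealized assumptions, if only about 1% of models adopt an alternative state, roughly 160 models give close to an 80% chance of observing it at least once.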

FAQ 5: What are the key parameters I need to adjust in my AlphaFold2 implementation to enable this kind of sampling?

Answer: The key parameters to modify are:

- MSA Depth Parameters: `max_seq` and `extra_seq`. Reducing these from their default values is a primary method for MSA subsampling [24].

- Dropout: Enable dropout during inference at rates of approximately 10% for the Evoformer module and 25% for the structure module to sample from the model's uncertainty [24].

- MSA Masking Probability: If using an AFsample2-like approach, this probability (e.g., 15%) controls how many columns are randomized [22].

- Number of Recycles: Limiting the number of recycles (e.g., to 3) can prevent over-optimization toward a single state [23].

Troubleshooting Common Experimental Issues

Issue 1: The generated ensemble lacks diversity and shows only one conformational state.

Possible Causes and Solutions:

- Cause 1: The MSA masking level is too low, or the subsampled MSA is still too deep, so the network remains too constrained.

- Solution: Systematically increase the MSA column masking probability (e.g., from 5% to 15% to 25%) or further reduce the `max_seq`/`extra_seq` parameters. Note that excessive masking (>30-35%) can lead to a rapid drop in confidence and model quality [22].

- Cause 2: Insufficient sampling.

- Solution: Drastically increase the number of models generated. Run hundreds of predictions with different random seeds to adequately explore the conformational landscape [22].

- Cause 3: Dropout is not enabled.

- Solution: Ensure that dropout is activated for the Evoformer and structural modules during inference [24].

Issue 2: All generated models have very low pLDDT confidence scores and appear unstructured.

Possible Causes and Solutions:

- Cause 1: Excessively aggressive MSA manipulation.

- Solution: Reduce the MSA masking probability or increase the MSA subsampling depth. There is a trade-off between diversity and confidence; find the optimal balance for your target protein [22].

- Cause 2: The protein may be intrinsically disordered.

- Solution: Check the pLDDT profile from a standard AF2 prediction. Regions with low pLDDT (<50) are likely disordered, and their structural heterogeneity may not reflect a functional conformational change [23].

Issue 3: I cannot reproduce a known alternative conformation from a reference paper.

Possible Causes and Solutions:

- Cause: The optimal parameters (masking level, subsampling depth) can be target-dependent.

- Solution: Do not rely on a single parameter set. Perform a grid search over key parameters like masking probability (e.g., 5%, 10%, 15%, 20%) to find what works best for your specific protein [22].

Experimental Protocols & Data

Protocol 1: Implementing an AFsample2-like Workflow with MSA Column Masking

This protocol is based on the AFsample2 method for predicting multiple conformations [22].

- Input Preparation: Generate your master MSA using standard tools (e.g., JackHMMER against the UniRef90, BFD, and MGnify databases).

- MSA Masking: For each model you generate, create a uniquely masked version of the master MSA. Randomly select a predefined percentage (e.g., 15%) of columns and replace all amino acids in those columns with "X" (unknown residue). The first row (target sequence) should not be masked.

- Run Inference: Execute the AlphaFold2 neural network with the following modifications:

- Input a differently masked MSA for each model.

- Activate dropout during inference (e.g., 10% for Evoformer, 25% for structure module).

- Use a limited number of recycles (e.g., 3).

- Post-processing: Cluster the resulting models (e.g., by TM-score). Analyze clusters to identify representative structures for different conformational states. Use the pLDDT and Predicted Aligned Error (PAE) for validation.
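For the post-processing step, once pairwise TM-scores between models have been computed with an external tool (for example TM-score or US-align), a simple greedy clustering can group models into candidate states. The sketch below is an illustrative choice, not the clustering procedure used in AFsample2; it assumes a symmetric similarity matrix and an arbitrary 0.8 threshold, and the function name is hypothetical.

```python
from typing import List, Sequence

def greedy_cluster(sim: Sequence[Sequence[float]], threshold: float = 0.8) -> List[List[int]]:
    """Greedy clustering on a symmetric pairwise similarity matrix (e.g. TM-scores).

    Repeatedly picks the unassigned model with the most unassigned neighbours
    (similarity >= threshold) as a cluster centre, assigns those neighbours to
    it, and removes them. Returns index lists with the centre first.
    """
    unassigned = set(range(len(sim)))
    clusters = []
    while unassigned:
        def neighbours(i):
            return sorted(j for j in unassigned if j != i and sim[i][j] >= threshold)
        centre = max(sorted(unassigned), key=lambda i: len(neighbours(i)))
        members = [centre] + neighbours(centre)
        clusters.append(members)
        unassigned.difference_update(members)
    return clusters

# Toy matrix: models 0-2 form one state, models 3-4 another
sim = [
    [1.00, 0.90, 0.92, 0.50, 0.48],
    [0.90, 1.00, 0.88, 0.52, 0.50],
    [0.92, 0.88, 1.00, 0.49, 0.51],
    [0.50, 0.52, 0.49, 1.00, 0.85],
    [0.48, 0.50, 0.51, 0.85, 1.00],
]
clusters = greedy_cluster(sim)
print(clusters)                                   # [[0, 1, 2], [3, 4]]
print([len(c) / len(sim) for c in clusters])      # cluster populations
```

Cluster sizes divided by the total number of models give the kind of cluster populations that Protocol 2 compares with experimental state populations.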

Protocol 2: Exploring Conformational Landscapes via MSA Subsampling

This protocol is derived from high-throughput methods used to predict conformational populations [24].

- Build a Deep Master MSA: Compile an extensive MSA for your target sequence.

- Configure AF2 Parameters: Set the key MSA depth parameters to reduced values. A combination of `max_seq:256` and `extra_seq:512` has been shown to be effective for kinases, but it should be optimized for each target.

- Enable Stochastic Inference: Run multiple independent predictions (e.g., 32 runs with 5 models each, totaling 160 models) with different random seeds.

- Activate Dropout: Use dropout with a 10% rate for the Evoformer and 25% for the structure module during predictions.

- Analyze the Ensemble: The resulting ensemble of structures can be analyzed to identify major alternative conformations. For some systems, the population of different clusters can be correlated with relative state populations in experiments like NMR [24].

The following tables summarize key quantitative findings from recent studies to guide your experimental design and expectations.

Table 1: Performance Improvement of AFsample2 over Standard AF2 (AFvanilla)

| Protein Dataset | Targets with Improved Alternate State (ΔTM>0.05) | Notable Example Improvement |
|---|---|---|
| OC23 (Open-Closed Proteins) | 9 out of 23 cases | TM-score improved from 0.58 to 0.98 [22] |
| Membrane Protein Transporters | 11 out of 16 targets | Significant improvements in alternate state modeling [22] |

Table 2: Effect of MSA Column Masking Level on Model Quality and Confidence (AFsample2)

| Masking Level | Best TM-score (Alternate State) | Impact on Mean pLDDT | Recommendation |
|---|---|---|---|
| 0% (No Masking) | 0.80 | Baseline (Highest) | Avoid for diverse sampling [22] |
| 15% | 0.88 | Linear decrease (~2% drop per 5% masking) | Optimal starting point [22] |
| >30-35% | Deteriorates | Rapid drop in confidence | Use with caution [22] |

Table 3: Key Parameters for MSA Subsampling Protocol

| Parameter | Standard AF2 Setting | Subsampling Setting | Function |
|---|---|---|---|
| max_seq | 512 | 256 | Number of sequences randomly sampled from the master MSA as cluster centers for the main MSA representation [24] |
| extra_seq | 1024 | 512 | Number of additional (non-center) sequences passed to the extra-MSA stack [24] |
| Dropout (Inference) | Off | On (Evoformer: 10%, Structure: 25%) | Introduces stochasticity during model generation [24] |
| Number of Models | 5 | 160+ | Increased sampling to explore conformational space [22] [24] |

Workflow and Signaling Pathways

Diagram 1: Workflow for Enhanced Conformational Sampling

Diagram 2: Logic of MSA-Based Sampling Strategies

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Tools and Resources

| Item | Function / Description | Example / Source |
|---|---|---|
| AlphaFold2 Codebase | Open-source code for running structure predictions. Can be modified for methods like MSA masking. | GitHub: https://github.com/google-deepmind/alphafold/ [25] |
| ColabFold | Accelerated and user-friendly version of AF2, useful for rapid prototyping. | https://colabfold.mmseqs.com [23] |
| AFsample2 | A specific method that integrates MSA column masking into the AF2 inference process. | Described in Communications Biology [22] |
| UniProt | Standard repository of protein sequences; used for finding homologs for MSA construction. | https://www.uniprot.org/ [26] |
| AlphaFold Protein Structure Database | Repository of pre-computed AF2 predictions; useful for obtaining a ground state model for comparison. | https://alphafold.ebi.ac.uk [26] |
| JackHMMER/MMseqs2 | Software tools for building deep Multiple Sequence Alignments (MSAs) from sequence databases. | Standard tools for MSA generation [24] |
| PDB (Protein Data Bank) | Repository of experimental protein structures; essential for validating predicted alternative conformations. | https://www.rcsb.org/ |

Frequently Asked Questions (FAQs) & Troubleshooting Guides

FAQ 1: Why are the self-confidence scores (pLDDT) from AlphaFold2 not always reliable, especially in certain regions?

AlphaFold2's predicted Local Distance Difference Test (pLDDT) scores serve as a key confidence metric for its structural predictions. However, a significant limitation is that poorly modeled regions of a protein may sometimes be assigned high confidence, which can be misleading for downstream applications [3].

The reliability of pLDDT scores is notably lower in intrinsically disordered regions (IDRs). These regions lack a well-defined 3D structure and often exhibit lower sequence conservation. Since AlphaFold2's architecture and training are optimized for structured domains, its pLDDT scores are less accurate for IDRs. Consequently, tools like AlphaMissense that rely on AlphaFold2 models also show reduced sensitivity in predicting pathogenic mutations within disordered regions [27].

Table: Challenges with AlphaFold2 Self-Confidence Scores in Different Protein Regions

| Protein Region Type | Key Characteristics | pLDDT Reliability | Impact on Downstream Tools |
|---|---|---|---|
| Structured / Ordered Regions | Well-defined 3D structure; higher sequence conservation. | High | High sensitivity for variant effect prediction (e.g., AlphaMissense). |
| Intrinsically Disordered Regions (IDRs) | Lack fixed 3D structure; dynamic and flexible; lower conservation. | Low | Reduced sensitivity; pathogenic mutations are harder to identify accurately. |

Troubleshooting Guide: Interpreting Low pLDDT Scores

- Symptom: Your AlphaFold2 model has contiguous regions with pLDDT scores below 70.

- Diagnosis: These regions are likely intrinsically disordered or have few or no detectable evolutionary homologs, making them difficult for AlphaFold2 to model from co-evolutionary signals [27].

- Action:

- Cross-verify with disorder predictors: Use specialized tools like AIUPred or metapredict to confirm if the low-pLDDT region is predicted to be disordered [27].

- Use with caution: Avoid using the atomic coordinates of these low-confidence regions for functional analysis or docking studies.

- Seek experimental validation: Consider spectroscopic techniques such as nuclear magnetic resonance (NMR) to probe the actual structural properties of these regions.
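To make the cross-check with disorder predictors concrete, the sketch below reports what fraction of low-pLDDT residues a disorder predictor also flags. It assumes you have already exported per-residue disorder scores in the range 0 to 1 from a tool such as metapredict or AIUPred; the 0.5 disorder cutoff and the function name are assumptions, so adjust the cutoff to the predictor's documented threshold.

```python
from typing import Optional, Sequence

def disorder_agreement(plddt: Sequence[float], disorder: Sequence[float],
                       plddt_cutoff: float = 70.0,
                       disorder_cutoff: float = 0.5) -> Optional[float]:
    """Fraction of low-pLDDT residues that the disorder predictor also calls
    disordered; None if no residue falls below the pLDDT cutoff."""
    low = [i for i, p in enumerate(plddt) if p < plddt_cutoff]
    if not low:
        return None
    both = sum(1 for i in low if disorder[i] >= disorder_cutoff)
    return both / len(low)

# Toy per-residue values (invented)
plddt = [92.0, 85.0, 60.0, 45.0, 40.0, 88.0]
disorder = [0.10, 0.20, 0.70, 0.90, 0.30, 0.10]
print(disorder_agreement(plddt, disorder))
```

A high fraction supports genuine disorder; low-pLDDT residues that the predictor considers ordered (residue 5 in the toy data) are candidates for conditional folding or missing evolutionary information.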

FAQ 2: How can I improve the accuracy of self-confidence scores for my AlphaFold2 models?

A proposed solution is to replace AlphaFold2's standard pLDDT prediction module with an enhanced framework that integrates more sophisticated data analysis. The Equivariant Quality Assessment Folding (EQAFold) method addresses this by using an Equivariant Graph Neural Network (EGNN) as a new prediction head [3].

EQAFold generates more reliable confidence scores by leveraging a broader set of input features than standard AlphaFold2, including:

- Root Mean Square Fluctuation (RMSF): Calculated from multiple AlphaFold2 models generated with 50% dropout in the structure module. RMSF provides a measure of positional variance, which negatively correlates with LDDT [3].

- Protein Language Model Embeddings: Layer-wise embeddings from models like ESM2 are incorporated, capturing deep evolutionary and semantic information from protein sequences [3].

- Graph Representation of Structure: The protein structure is converted into a graph, allowing the EGNN to leverage both node (residue-level) and edge (pairwise) information, which the original AlphaFold2 pLDDT head does not fully utilize [3].
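The RMSF feature can be computed in a few lines once the dropout replicate models are available. The sketch assumes the Cα coordinates of all replicates have already been superposed onto a common frame (for example with a Kabsch fit, not shown); that superposition is a required preprocessing step that this hypothetical function does not perform. It illustrates the quantity only, and EQAFold's own feature code may differ.

```python
import math
from typing import List, Sequence, Tuple

Coord = Tuple[float, float, float]

def per_residue_rmsf(models: Sequence[Sequence[Coord]]) -> List[float]:
    """RMSF of each residue's Cα position across replicate models.

    Assumes the models are already superposed on a common frame and list
    the same residues in the same order.
    """
    n_models = len(models)
    rmsf = []
    for r in range(len(models[0])):
        mean = [sum(m[r][k] for m in models) / n_models for k in range(3)]
        msd = sum(sum((m[r][k] - mean[k]) ** 2 for k in range(3))
                  for m in models) / n_models
        rmsf.append(math.sqrt(msd))
    return rmsf

# Two toy replicates: residue 0 is fixed, residue 1 moves by 2 Å along x
model_a = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
model_b = [(0.0, 0.0, 0.0), (3.0, 0.0, 0.0)]
print(per_residue_rmsf([model_a, model_b]))  # [0.0, 1.0]
```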

Table: Key Research Reagent Solutions for Enhanced Confidence Scoring

| Reagent / Resource | Type | Function in the Protocol | Source/Availability |
|---|---|---|---|
| EQAFold | Software Framework | Replaces AlphaFold2's LDDT head with an EGNN to provide more accurate self-confidence scores. | GitHub: kiharalab/EQAFold_public [3] |
| ESM-2 Protein Language Model | Pre-trained Model | Provides contextual sequence embeddings that capture evolutionary and structural constraints. | Hugging Face / Meta GitHub [28] |
| Equivariant Graph Neural Network (EGNN) | Algorithm/Architecture | Parses protein structure graphs while respecting rotational and translational symmetries. | [3] |
| CABS-flex | Simulation Software | A tool for protein flexibility simulations that can be enhanced by integrating AlphaFold's pLDDT scores to refine its restraint schemes. | GitHub: kwroblewski7/cabsflex_restraints [29] |

Experimental Protocol: Benchmarking Improved Confidence Scores

- Dataset Curation: Assemble a test set of monomeric protein structures with high-resolution experimental determinations (e.g., ≤ 2.5 Å). Ensure no more than 40% sequence similarity between training and test sets to prevent redundancy [3].

- Model Generation:

- Generate predicted structures and pLDDT scores using the standard AlphaFold2 pipeline.

- Generate five additional structure models using AlphaFold2 with 50% dropout in the structure module. Calculate the RMSF from the Cα atoms of these replicate models [3].

- Feature Extraction:

- Extract the final single and pair representations from AlphaFold2's Evoformer and structure modules.

- Compute embeddings for the target sequence using a protein language model like ESM2 [3].

- Graph Construction and Training:

- Represent the protein as a graph where nodes are amino acids and edges connect residues within a 16 Å Cα distance.

- Construct node features by concatenating single representations, ESM2 embeddings, and RMSF values. Use pair embeddings as edge features [3].

- Train the EGNN-based LDDT prediction head on your curated dataset to minimize the error between predicted and true LDDT.

- Validation: Evaluate the model-level and residue-level accuracy of the new confidence scores against the true LDDT calculated from the experimental structure. Compare the performance against the standard AlphaFold2 pLDDT [3].
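The graph-construction step can be sketched as follows. This is a minimal illustration under stated assumptions: Cα coordinates are in Å, per-residue feature vectors are plain lists of floats, the 16 Å boundary is treated as exclusive, and the helper names are hypothetical. An actual EGNN pipeline would typically build these with tensors and a graph library instead.

```python
import math
from typing import List, Sequence, Tuple

def contact_graph_edges(ca: Sequence[Tuple[float, float, float]],
                        cutoff: float = 16.0) -> List[Tuple[int, int]]:
    """Undirected edges (i, j), i < j, between residues whose Cα atoms lie within cutoff Å."""
    edges = []
    for i in range(len(ca)):
        for j in range(i + 1, len(ca)):
            if math.dist(ca[i], ca[j]) < cutoff:
                edges.append((i, j))
    return edges

def node_features(single: Sequence[Sequence[float]], esm: Sequence[Sequence[float]],
                  rmsf: Sequence[float]) -> List[List[float]]:
    """Concatenate each residue's single representation, ESM2 embedding and RMSF."""
    return [list(s) + list(e) + [r] for s, e, r in zip(single, esm, rmsf)]

ca = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0), (30.0, 0.0, 0.0)]
print(contact_graph_edges(ca))  # [(0, 1)]
print(node_features([[1.0], [2.0]], [[0.5, 0.5], [0.1, 0.2]], [0.3, 0.4]))
```

Pair representations would be attached to these same (i, j) edges as edge features.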

The workflow for integrating these diverse data sources into an improved confidence score can be visualized as follows:

FAQ 3: What approaches exist for proteins with no homologs (orphan proteins) where MSA-based methods fail?

For orphan proteins that lack evolutionary homologs, generating a deep Multiple Sequence Alignment (MSA) is impossible. This severely limits the performance of MSA-dependent tools like AlphaFold2 [30].

Alternative strategies that do not rely on MSAs include:

- Single-Sequence Protein Language Models (PLMs): Models like RGN2, which uses AminoBERT, learn latent structural information from millions of unaligned protein sequences. They can predict structure directly from a single sequence, achieving performance comparable to or better than AlphaFold2 on orphan proteins while being significantly faster (up to a 10⁶-fold reduction in compute time) [30].

- Leveraging Implicit Evolutionary Information: PLMs like ESM-2 are trained on massive datasets of diverse sequences, allowing them to learn evolutionary patterns implicitly without needing explicit MSA generation [31]. This captured information can be used for structure prediction or directly as feature inputs for other tasks.

Troubleshooting Guide: Handling Orphan Protein Prediction

- Symptom: AlphaFold2 produces a model with very low overall confidence, or the MSA generation stage returns few or no hits.

- Diagnosis: The target protein is likely an orphan or has rapidly evolving sequences.

- Action:

- Use a single-sequence method: Run the target sequence through a single-sequence predictor like RGN2 or ESMFold [30].

- Compare results: If both AlphaFold2 and the single-sequence method yield a high-confidence model with similar folds, confidence in the prediction increases.

- Focus on PLM embeddings: Even if the 3D structure is uncertain, use the embeddings from models like ESM-2 as features for predicting other properties, such as function or interaction sites [28].

Table: Comparison of MSA-dependent vs. Single-Sequence Prediction Approaches

| Feature | MSA-Dependent (e.g., AlphaFold2) | Single-Sequence PLM (e.g., RGN2, ESMFold) |
|---|---|---|
| Core Requirement | Deep Multiple Sequence Alignment (MSA) | Single amino acid sequence |
| Performance on Orphan Proteins | Low (fails without homologs) | High (designed for this scenario) |
| Computational Speed | Slower (due to MSA generation) | Significantly faster (up to ~10⁶-fold for RGN2) |
| Theoretical Basis | Leverages co-evolutionary signals from related sequences | Learns biophysical rules from the statistical patterns in the sequence universe |

Frequently Asked Questions

Q1: What does a low pLDDT score (e.g., below 50) mean in my AlphaFold prediction, and how should I handle it?

A low pLDDT score indicates very low local confidence. This typically means one of two things: the region is naturally unstructured (intrinsically disordered) or AlphaFold lacks sufficient information to make a confident prediction [1]. To handle this:

- For structural analysis: Consider removing residues with pLDDT < 70 to obtain a more reliable core structure. Residues with pLDDT above 70 usually have a correctly predicted backbone, while scores above 90 indicate high accuracy for both backbone and side chains [1] [32].

- For functional analysis: Be aware that some intrinsically disordered regions (IDRs) with low pLDDT may undergo binding-induced folding. Conversely, AlphaFold may sometimes predict conditionally folded states for IDRs with high confidence, which may not reflect the physiological unbound state [1].

Q2: DeepSCFold constructs "paired MSAs." Why is this critical for predicting protein complexes, and what can I do if my paired MSA is too shallow?

Traditional monomeric MSAs lack information about co-evolution between potential interaction partners. Paired MSAs are critical because they explicitly encode residue-residue correlations across protein chains, which provide evolutionary constraints that guide accurate modeling of the interaction interface [33] [34]. If your paired MSA is shallow (contains too few sequences), DeepSCFold addresses this in two ways:

- It uses deep learning models (pSS-score and pIA-score) to predict structural similarity and interaction probability between monomeric sequences to construct more accurate paired MSAs [34].

- It integrates information from multiple sources, including species annotations, UniProt accession numbers, and known complexes from the PDB [34].
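For context on what the species-annotation route does, the sketch below shows the classic species-based pairing heuristic: keep the best hit per species in each chain's MSA, then concatenate hits from species present in both. It is a simplified stand-in, not DeepSCFold's pSS-score/pIA-score procedure. The input format (pre-parsed species labels, hits sorted best-first, query rows handled separately) and the function name are assumptions.

```python
from typing import Dict, List, Tuple

Hit = Tuple[str, str]  # (species identifier, aligned sequence)

def pair_by_species(hits_a: List[Hit], hits_b: List[Hit]) -> List[str]:
    """Pair the top-ranked hit per species from two chains' MSAs.

    Assumes each list is sorted best-first and that species identifiers were
    parsed from the sequence headers beforehand. Returns concatenated rows
    (chain A segment followed by chain B segment).
    """
    def best_per_species(hits: List[Hit]) -> Dict[str, str]:
        best: Dict[str, str] = {}
        for species, seq in hits:
            best.setdefault(species, seq)   # keep only the first (best) hit
        return best

    best_a = best_per_species(hits_a)
    best_b = best_per_species(hits_b)
    return [best_a[s] + best_b[s] for s in best_a if s in best_b]

hits_a = [("HUMAN", "MKV"), ("MOUSE", "MRV"), ("HUMAN", "MKI"), ("YEAST", "LKV")]
hits_b = [("MOUSE", "GSA"), ("HUMAN", "GTA"), ("ECOLI", "ATA")]
print(pair_by_species(hits_a, hits_b))  # ['MKVGTA', 'MRVGSA']
```

Species without a counterpart in the other chain's MSA (YEAST and ECOLI here) are simply dropped, which is exactly why paired MSAs end up shallow and why complementary pairing signals help.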

Q3: My protein complex prediction has high confidence (pLDDT) for individual domains but the overall orientation seems wrong. What metric should I check?

The pLDDT score is a per-residue local confidence metric and does not reliably assess the relative positions or orientations of domains [1]. You must examine the Predicted Aligned Error (PAE) plot, which indicates AlphaFold's confidence in the relative positioning of any two residues in the structure. For domain orientation, check for large predicted errors between residues in different domains. Tools such as the `phenix.process_predicted_model` protocol can use PAE information to help identify compact domains [32].
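A single number summarizing AlphaFold's confidence in the relative placement of two domains is the mean PAE over all residue pairs that span them. The sketch assumes the PAE matrix has already been loaded (for example from the JSON file AlphaFold writes) as a nested list in Å, with domain boundaries that you supply as 0-based residue ranges; the function name is hypothetical. It averages both off-diagonal blocks, so the result does not depend on which matrix axis is the aligned residue.

```python
from typing import Sequence

def mean_interdomain_pae(pae: Sequence[Sequence[float]], dom_a: range, dom_b: range) -> float:
    """Average PAE (Å) over residue pairs spanning two domains, in both directions.

    pae[i][j] is taken as the expected position error of residue j when the
    model is aligned on residue i; averaging both blocks makes the result
    insensitive to that orientation.
    """
    values = [pae[i][j] for i in dom_a for j in dom_b]
    values += [pae[j][i] for i in dom_a for j in dom_b]
    return sum(values) / len(values)

# Toy 4-residue PAE matrix: residues 0-1 and 2-3 form two confident domains
pae = [
    [0.0, 1.0, 20.0, 22.0],
    [1.0, 0.0, 21.0, 23.0],
    [18.0, 19.0, 0.0, 1.0],
    [20.0, 21.0, 1.0, 0.0],
]
print(mean_interdomain_pae(pae, range(0, 2), range(2, 4)))  # 20.5
```

Low intra-domain values combined with a high inter-domain average, as in this toy matrix, is the pattern that flags an unreliable domain orientation.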

Q4: Are there methods newer than standard AlphaFold that provide more reliable self-confidence scores like pLDDT?

Yes, research is actively addressing cases where AlphaFold's self-confidence scores are unreliable. EQAFold is an enhanced framework that replaces AlphaFold's standard pLDDT prediction head with an Equivariant Graph Neural Network (EGNN). This architecture better leverages spatial and pairwise information, leading to a more accurate alignment between the predicted confidence and the actual quality of the structural model [3].

Troubleshooting Guides

Problem: Poor Quality Paired Multiple Sequence Alignment (MSA)

Symptoms: Low overall pLDDT in the complex interface, inconsistent models across multiple runs, and high PAE between chains.

Solutions:

- Utilize Integrated Predictors: Ensure you are using DeepSCFold's built-in pSS-score and pIA-score models, which are designed to identify potential interaction partners from monomeric MSAs even with sparse direct evolutionary data [34].

- Broaden Sequence Databases: Confirm your search includes diverse databases such as UniRef30, UniRef90, BFD, and MGnify to maximize the number of homologous sequences found [34].

- Leverage External Information: Use the protocol's option to incorporate metadata like species annotation and UniProt IDs, which can help in generating more biologically plausible paired sequences [34].

Problem: Handling Low-Confidence Regions and Domain Splitting

Symptoms: Long, flexible loops or linkers with pLDDT < 50 are obscuring the analysis of well-folded domains.

Solutions:

- Identify Low-Confidence Residues: AlphaFold stores pLDDT scores in the B-factor column, so in tools like PyMOL you can select low-confidence residues by filtering on this field (e.g., `b < 50`) [35].

- Automated Processing: Use the `phenix.process_predicted_model` protocol. Feed it your predicted structure (with pLDDT as B-factor) and the corresponding PAE file. The protocol can automatically remove low-confidence residues (e.g., pLDDT < 70) and split the remaining structure into compact, well-defined domains [32].

- Manual Inspection: Always visually inspect the processed model to confirm that the biological units of interest have been preserved.
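If you only need the trimming half of that workflow in a script, a minimal sketch is shown below. It assumes AlphaFold-style PDB files in which every atom of a residue carries the residue's pLDDT in the B-factor columns (61-66); it keeps all non-coordinate records unchanged and does not split domains, which still requires `phenix.process_predicted_model`. The function name is hypothetical.

```python
def trim_by_plddt(pdb_text: str, min_plddt: float = 70.0) -> str:
    """Drop ATOM/HETATM records whose B-factor (pLDDT) is below min_plddt.

    Assumes AlphaFold-style PDB output with pLDDT in columns 61-66;
    all other record types (TER, END, ...) are kept unchanged.
    """
    kept = []
    for line in pdb_text.splitlines():
        if line.startswith(("ATOM", "HETATM")) and float(line[60:66]) < min_plddt:
            continue  # low-confidence atom: skip it
        kept.append(line)
    return "\n".join(kept) + "\n"

example = "\n".join([
    "ATOM      1  CA  ALA A   1      11.104   6.134  -6.504  1.00 92.00           C",
    "ATOM      2  CA  GLY A   2      14.200   6.000  -6.100  1.00 41.50           C",
    "END",
])
print(trim_by_plddt(example))  # keeps the ALA record and END, drops the GLY record
```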

Problem: Inaccurate Self-Assessment of Model Quality by AlphaFold

Symptoms: Regions of the model that appear poorly folded are assigned high pLDDT scores, or well-folded regions are assigned low confidence.

Solutions:

- Run EQAFold: If available, use EQAFold to generate the structure and its confidence scores. Its EGNN-based quality assessment is specifically designed to provide a more reliable confidence metric than standard AlphaFold's pLDDT, particularly for regions with substantial errors [3].

- Consensus-Based Assessment: Generate multiple models (e.g., by running AlphaFold with dropout enabled at inference) and calculate the per-residue root mean square fluctuation (RMSF) across them. Residues with high RMSF across runs typically have lower reliability, a signal that EQAFold integrates directly into its confidence prediction [3].
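A minimal sketch of the per-residue RMSF calculation follows. It assumes the models have already been superposed onto a common frame (for example with a Kabsch fit, not shown) and reduced to one representative atom per residue, such as Cα. It is not EQAFold's own feature code.

```python
import math


def per_residue_rmsf(models):
    """Per-residue RMSF across an ensemble of superposed models.

    models: list of models; each model is a list of (x, y, z) coordinates,
    one per residue, all in the same frame and residue order.
    """
    n_models = len(models)
    n_res = len(models[0])
    rmsf = []
    for r in range(n_res):
        coords = [m[r] for m in models]
        # Mean position of this residue over all models.
        mean = [sum(c[k] for c in coords) / n_models for k in range(3)]
        # Mean squared deviation from that mean position.
        msd = sum(sum((c[k] - mean[k]) ** 2 for k in range(3)) for c in coords) / n_models
        rmsf.append(math.sqrt(msd))
    return rmsf


models = [
    [(0.0, 0.0, 0.0), (0.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)],
]
print(per_residue_rmsf(models))  # [0.0, 1.0]
```

Residue 1 moves 2 Å between the two models, so it deviates 1 Å from its mean position in each, giving an RMSF of 1.0.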

Research Reagent Solutions

Table 1: Essential software and databases for advanced complex prediction pipelines.

| Item Name | Type | Function in the Pipeline |
|---|---|---|
| DeepSCFold | Software Suite | An integrated system for high-accuracy protein complex modeling. Its key function is constructing paired MSAs for complexes using predicted interaction probabilities and structural similarity [34]. |
| AlphaFold-Multimer | Software / Algorithm | The core structure prediction engine within DeepSCFold; it takes the paired MSAs and predicts the structure of the protein complex [34]. |
| UniRef90/UniRef30 | Sequence Database | Curated clusters of protein sequences used to build deep multiple sequence alignments (MSAs), providing evolutionary information for accurate structure prediction [34]. |
| ESM2 Protein Language Model | Algorithm / Embedding | A protein language model that provides evolutionary-scale sequence embeddings. These embeddings are used as input features in methods like EQAFold to improve the accuracy of quality assessment [3]. |
| PAE File | Data / Metric | The Predicted Aligned Error file output by AlphaFold. It is essential for evaluating inter-domain and inter-chain confidence and is used by downstream processing tools [32]. |
| phenix.process_predicted_model | Software Tool | A protocol for post-processing AlphaFold outputs. It automatically removes low-confidence residues and splits the cleaned structure into compact structural domains [32]. |

Experimental Data & Workflows

Table 2: Key quantitative performance metrics from relevant tools and studies.

| Method / Database | Key Performance Metric | Context / Explanation |
|---|---|---|
| DeepSCFold (GuijunLab-Complex) | Ranked 11th out of 111 groups | Based on models with the best scores for protein domains in CASP [34]. |
| DeepSCFold (GuijunLab-Assembly) | Ranked 14th out of 86 groups (2nd for easy/medium targets) | Based on models with the best scores for protein multimers in CASP [34]. |
| EQAFold | Average pLDDT error: 4.74 | Benchmark on 726 monomeric proteins. Lower error indicates more reliable self-confidence scores compared to standard AlphaFold (error of 5.16) [3]. |
| Standard AlphaFold (AFDB) | Average pLDDT error: 5.16 | Baseline for comparison on the same test set of 726 proteins [3]. |
| pLDDT Score Ranges | >90: Very High; 70-90: Confident; 50-70: Low; <50: Very Low | Standard interpretation scale for per-residue confidence. A score above 70 usually indicates a correct backbone [1]. |
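The table does not spell out how "average pLDDT error" is computed. Assuming it is the mean absolute difference between each residue's predicted pLDDT and its true lDDT measured against the experimental structure, a sketch looks like this; how per-residue values are aggregated across the 726 proteins is a further assumption not shown here.

```python
def mean_plddt_error(predicted_plddt, true_lddt):
    """Mean absolute difference between predicted pLDDT and true lDDT (both 0-100).

    Assumed definition of "pLDDT error"; lower means the model's
    self-reported confidence tracks its real accuracy more closely.
    """
    if len(predicted_plddt) != len(true_lddt) or not true_lddt:
        raise ValueError("need one true lDDT value per predicted pLDDT value")
    return sum(abs(p - t) for p, t in zip(predicted_plddt, true_lddt)) / len(true_lddt)


print(round(mean_plddt_error([90, 70, 40], [85, 75, 40]), 2))  # 3.33
```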

Workflow: DeepSCFold for Protein Complex Prediction

Figure: DeepSCFold complex prediction workflow.

Workflow: Improving and Processing Low-Confidence Predictions

Figure: Confidence improvement and processing pipeline.

Troubleshooting Common Scenarios: A Practical Guide for Specific Challenges

Addressing Hallucinations in Intrinsically Disordered Proteins and Regions

Frequently Asked Questions (FAQs)

Q1: What is a "hallucination" in the context of AlphaFold and intrinsically disordered proteins?

A hallucination occurs when AlphaFold3 incorrectly predicts the structural state of a protein region. For Intrinsically Disordered Proteins (IDPs) and Intrinsically Disordered Regions (IDRs), this primarily manifests in two ways:

- False Order: AlphaFold3 predicts a region with a high-confidence, stable 3D structure (high pLDDT score) when experimental data confirms it is genuinely disordered.

- False Disorder: AlphaFold3 predicts a region with low confidence and no defined structure (low pLDDT score) when experimental data shows it has a stable, ordered structure.

A recent study of 72 IDPs from the DisProt database found that 32% of residues were misaligned with DisProt's experimental annotations, and that 22% of all residues were classified as hallucinations [36].

Q2: Why is accurately predicting IDP structure so important for drug discovery?

IDPs are crucial functional components of human biology, which makes them attractive therapeutic targets. They comprise 30-40% of the human proteome and are heavily implicated in critical biological processes such as transcription and signaling, as well as in disease [37] [36]. For example, approximately 80% of human cancer-associated proteins contain long disordered regions [36]. Hallucinations, particularly false order in biologically active regions, can misdirect drug design efforts by suggesting stable binding pockets or interfaces that do not exist in reality, leading to costly dead ends in research [37] [38].

Q3: How can I identify a potential hallucination in my AlphaFold3 prediction?

The primary indicator is a discrepancy between the predicted local distance difference test (pLDDT) confidence score and experimental or bioinformatic evidence.

- pLDDT Score: This per-residue confidence score is scaled from 0 to 100 [1]. While a low pLDDT (below 50) can indicate a naturally disordered region, it can also mean AlphaFold lacks enough information to make a confident prediction [1].

- Key Sign: A high pLDDT score (e.g., >70) in a region that is annotated as disordered in databases like DisProt, or that is predicted to be disordered by other algorithms, is a strong signal of a potential "false order" hallucination [37] [36].
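The check described above can be scripted. This minimal sketch assumes you already have per-residue pLDDT values and a per-residue boolean disorder annotation (for example derived from DisProt) for the same sequence, and it applies the pLDDT ≥ 70 cutoff used in Guide 1 below. Treating residues without a disorder annotation as ordered is itself an assumption, and a residue labelled `false_order` may still be a context-driven case of the kind discussed in Q4. The function names are hypothetical.

```python
def classify_residues(plddt, disordered, threshold=70.0):
    """Label each residue by comparing pLDDT with a disorder annotation.

    plddt:      per-residue pLDDT values (0-100)
    disordered: per-residue booleans, True where the reference marks disorder
    """
    if len(plddt) != len(disordered):
        raise ValueError("inputs must cover the same residues")
    labels = []
    for score, is_disordered in zip(plddt, disordered):
        predicted_ordered = score >= threshold
        if predicted_ordered and is_disordered:
            labels.append("false_order")     # confident structure, annotated disordered
        elif not predicted_ordered and not is_disordered:
            labels.append("false_disorder")  # low confidence, annotated ordered
        else:
            labels.append("aligned")
    return labels


def misaligned_fraction(labels):
    """Fraction of residues whose prediction disagrees with the annotation."""
    return sum(label != "aligned" for label in labels) / len(labels)


labels = classify_residues([92, 85, 40, 30, 75], [False, True, True, False, False])
print(labels)  # ['aligned', 'false_order', 'aligned', 'false_disorder', 'aligned']
print(misaligned_fraction(labels))  # 0.4
```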

Q4: My protein is known to be disordered, but AlphaFold3 predicts a high-confidence structure. Is this always wrong?

Not necessarily. This may represent a context-driven misalignment rather than a pure hallucination. Some IDRs are conditionally folded: they remain disordered in isolation but adopt a stable structure upon binding a partner biomolecule (such as another protein, a nucleic acid, or an ion) or after a post-translational modification [1]. AlphaFold3 tends to predict these conditionally folded, high-affinity states because they are often well represented in its training data from the Protein Data Bank (PDB) [1]. A single AlphaFold3 prediction therefore may not capture the full spectrum of a protein's conformational dynamics.

Q5: What experimental techniques are best for validating the structure of disordered regions?

Nuclear Magnetic Resonance (NMR) spectroscopy is arguably the most powerful technique for studying IDPs. Unlike X-ray crystallography, which requires a stable structure, NMR can characterize disordered states and report on residual structure, dynamics, ligand binding, and structural changes on a per-residue basis [39]. Other key biophysical techniques include:

- Small-Angle X-Ray Scattering (SAXS): Provides information about the overall dimensions and shape of disordered ensembles in solution [13].

- Circular Dichroism (CD) Spectroscopy: Can indicate the presence of transient secondary structure.

These techniques are essential for grounding computational predictions in experimental reality.
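Because SAXS reports overall dimensions such as the radius of gyration (Rg), a common sanity check is to compute Rg from a predicted structure or ensemble and compare it with the experimental value. The sketch below is deliberately simplified: it weights all atoms equally, ignores hydration and scattering contrast, and combines conformers by averaging Rg² rather than Rg, which approximates what a Guinier fit to an ensemble of equal-mass conformers reports.

```python
import math


def radius_of_gyration(coords):
    """Rg of one conformer; coords is a list of (x, y, z) tuples, equal weights."""
    n = len(coords)
    center = [sum(c[k] for c in coords) / n for k in range(3)]
    sq = sum(sum((c[k] - center[k]) ** 2 for k in range(3)) for c in coords)
    return math.sqrt(sq / n)


def ensemble_rg(conformers):
    """Ensemble Rg as the root-mean-square of per-conformer Rg values."""
    return math.sqrt(sum(radius_of_gyration(c) ** 2 for c in conformers) / len(conformers))


ensemble = [
    [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)],  # Rg = 1.0
    [(0.0, 0.0, 0.0), (0.0, 4.0, 0.0)],  # Rg = 2.0
]
print(round(ensemble_rg(ensemble), 4))  # 1.5811
```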

Troubleshooting Guides

Guide 1: Diagnosing and Validating AlphaFold3 Hallucinations in IDRs

This guide provides a step-by-step protocol to assess the reliability of AlphaFold3 predictions for potentially disordered proteins.

- Objective: To systematically identify regions within a protein sequence where AlphaFold3 predictions may be hallucinating and to validate findings with experimental data.

- Background: The diffusion-based architecture of AlphaFold3, while powerful, can invent plausible-looking structures in regions that are inherently unstructured. This protocol uses a multi-source verification approach [37] [36].

Experimental Protocol

| Step | Action | Description | Key Output |
|---|---|---|---|
| 1 | Generate AF3 Predictions | Run the target protein sequence through AlphaFold3. Use multiple random seeds (e.g., no seed, '5', '1234567890') to assess reproducibility. | Multiple predicted structures (mmCIF format). |
| 2 | Extract Confidence Metrics | Programmatically parse the pLDDT scores from the B-factor field (B_iso_or_equiv) of the output mmCIF files. AlphaFold3 writes per-atom values, so take one representative atom per residue (e.g., Cα) or average over each residue's atoms. | Residue-level pLDDT data. |
| 3 | Gather Reference Data | Annotate the sequence using the manually curated DisProt database, which contains experimental evidence for disorder [36]. Run a disorder predictor like IUPred2 for additional computational evidence [5]. | Experimental and computational disorder annotations. |
| 4 | Classify Residues | Compare pLDDT scores to reference data. A common threshold is pLDDT ≥70 for "ordered" and <70 for "disordered" [36]. Classify discrepancies. | Table of aligned, hallucinated, and context-driven residues. |
| 5 | Contextual Modeling | For residues flagged as "context-driven," use AlphaFold3 to model the protein in complex with its known binding partners (if available) to see if the ordered prediction is justified [36]. | Complex structure predictions. |
| 6 | Experimental Validation | For critical regions, validate predictions using experimental techniques such as NMR or SAXS [39] [13]. | Experimental structural data. |
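Step 2 is best done with a dedicated structure library (Biopython's `Bio.PDB.MMCIFParser` or gemmi are more robust choices). To show what the step involves without extra dependencies, this hypothetical stdlib-only parser reads the `_atom_site` loop of a single-model, protein-only mmCIF file. It assumes the category is written as a loop, that no value contains embedded spaces, and that one atom per residue (Cα by default) is representative of that residue's pLDDT. Running it on each seed's output gives per-seed profiles that can be compared residue by residue.

```python
def plddt_from_mmcif(cif_text, atom_name="CA"):
    """Return {(label_asym_id, label_seq_id): pLDDT} from an mmCIF string.

    Reads the B_iso_or_equiv (B-factor) value of one atom per residue from
    the _atom_site loop. Assumes a single model, a looped _atom_site category
    and whitespace-separated values without embedded spaces.
    """
    header, rows, state = [], [], "search"
    for raw in cif_text.splitlines():
        line = raw.strip()
        if state == "search":
            if line.startswith("_atom_site."):
                header.append(line.split(".", 1)[1])
                state = "header"
            continue
        if state == "header":
            if line.startswith("_atom_site."):
                header.append(line.split(".", 1)[1])
                continue
            state = "data"
        # Data rows: stop at the end of the loop.
        if not line or line.startswith(("#", "loop_", "_")):
            break
        rows.append(line.split())

    col = {name: k for k, name in enumerate(header)}
    plddt = {}
    for fields in rows:
        if "group_PDB" in col and fields[col["group_PDB"]] != "ATOM":
            continue  # skip ligands and ions (e.g. a calcium ion named "CA")
        if fields[col["label_atom_id"]] != atom_name:
            continue
        key = (fields[col["label_asym_id"]], int(fields[col["label_seq_id"]]))
        plddt[key] = float(fields[col["B_iso_or_equiv"]])
    return plddt


example = """
data_example
loop_
_atom_site.group_PDB
_atom_site.id
_atom_site.label_atom_id
_atom_site.label_comp_id
_atom_site.label_asym_id
_atom_site.label_seq_id
_atom_site.B_iso_or_equiv
ATOM 1 N MET A 1 55.2
ATOM 2 CA MET A 1 61.0
ATOM 3 CA GLY A 2 88.5
HETATM 4 CA CA B . 90.0
#
"""
print(plddt_from_mmcif(example))  # {('A', 1): 61.0, ('A', 2): 88.5}
```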

Guide 2: Generating Structural Ensembles for Disordered Proteins

This guide outlines an advanced method, AlphaFold-Metainference, to move beyond single structures and model the dynamic ensembles of IDPs.

- Objective: To construct a structural ensemble of a disordered protein that is consistent with both AlphaFold-predicted distances and experimental data.

- Background: A single static structure is inadequate to represent the heterogeneous nature of IDPs. This protocol integrates deep learning predictions with molecular dynamics to generate a more accurate ensemble of conformations [13].

Experimental Protocol

| Step | Action | Description | Key Output |
|---|---|---|---|
| 1 | Obtain AlphaFold Distogram | Generate the raw distance distribution map (distogram) for the protein using AlphaFold. Note: AlphaFold predicts distances up to ~22 Å [13]. | Predicted distogram. |
| 2 | Filter Distance Restraints | Apply a filtering criterion to select the most informative predicted distances for use as restraints, focusing on shorter-range contacts [13]. | Filtered distance restraints. |
| 3 | Set Up Metainference Simulation | Use the AlphaFold-Metainference method, which implements these distance restraints within a molecular dynamics framework according to the maximum entropy principle [13]. | Simulation input files. |
| 4 | Run Ensemble Simulation | Perform the molecular dynamics simulation. The restraints guide the simulation to generate an ensemble of structures that collectively satisfy the predicted distances. | Structural ensemble (trajectory). |
| 5 | Validate with SAXS | Compare the computed SAXS profile from the generated ensemble with experimental SAXS data to validate accuracy [13]. | Kullback-Leibler distance to experiment. |
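Steps 2 and 5 involve simple calculations that a short sketch can clarify. The code below assumes an AlphaFold2-style distogram with 64 bins whose boundaries are evenly spaced from 2.3125 Å to 21.6875 Å, the last bin being open-ended, which is consistent with the ~22 Å limit noted in step 1. The function names and filtering thresholds are hypothetical and are not the criteria used by AlphaFold-Metainference. The KL function simply compares two histograms on a shared grid, such as distance distributions derived from the ensemble and from experiment.

```python
import math

# Assumed distogram layout: 64 bins, 63 evenly spaced boundaries from 2.3125 Å
# to 21.6875 Å; the final bin collects everything beyond 21.6875 Å.
FIRST_BREAK, LAST_BREAK, NUM_BINS = 2.3125, 21.6875, 64
STEP = (LAST_BREAK - FIRST_BREAK) / (NUM_BINS - 2)  # 0.3125 Å
# Centres of the 63 finite bins (the first bin's centre is approximated).
CENTERS = [FIRST_BREAK - STEP / 2] + [
    FIRST_BREAK + (k + 0.5) * STEP for k in range(NUM_BINS - 2)
]


def distogram_restraints(probs, max_distance=15.0, max_open_prob=0.1, min_separation=3):
    """Turn distogram probabilities into (i, j, mean, std) distance restraints.

    probs[i][j] is a list of NUM_BINS probabilities for residue pair (i, j).
    A pair is kept when little probability falls in the open-ended last bin
    and its expected distance is at most max_distance (Å).
    """
    restraints = []
    n = len(probs)
    for i in range(n):
        for j in range(i + min_separation, n):
            p = probs[i][j]
            if p[-1] > max_open_prob:
                continue  # likely beyond the distogram's range
            finite = p[:-1]
            total = sum(finite)
            mean = sum(w * c for w, c in zip(finite, CENTERS)) / total
            var = sum(w * (c - mean) ** 2 for w, c in zip(finite, CENTERS)) / total
            if mean <= max_distance:
                restraints.append((i, j, mean, math.sqrt(var)))
    return restraints


def kl_divergence(p, q):
    """KL(p || q) for two histograms on the same grid; q must be > 0 where p > 0."""
    sp, sq = sum(p), sum(q)
    return sum((a / sp) * math.log((a / sp) / (b / sq)) for a, b in zip(p, q) if a > 0)


def one_hot(k):
    v = [0.0] * NUM_BINS
    v[k] = 1.0
    return v


probs = [[one_hot(10)] * 5 for _ in range(5)]
probs[0][4] = one_hot(NUM_BINS - 1)  # all mass beyond range: dropped
probs[1][4] = one_hot(60)            # expected distance ~20.9 Å: dropped
print(distogram_restraints(probs))   # [(0, 3, 5.28125, 0.0)]
print(round(kl_divergence([1, 0], [1, 1]), 4))  # 0.6931
```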

Table 1: Quantitative Analysis of AlphaFold3 Hallucinations in IDPs

This table summarizes key findings from a study that analyzed AlphaFold3 predictions on 72 IDPs from the DisProt database [36].

| Metric | Value | Interpretation |
|---|---|---|
| Residues Misaligned with DisProt | 32% | Nearly one-third of all residue predictions did not match experimental annotations. |
| Hallucinated Residues | 22% | Represents clear errors (false order or false disorder). |
| Context-Driven Misalignment | 10% | Suggests AF3 predicts conditionally folded states. |
| Hallucinations in Biological Process Residues | 18% | Highlights a significant risk for misinterpretation in functionally critical areas. |
| Proteins with <70% DisProt Alignment | >50% | Over half of the tested proteins showed poor overall agreement. |

Table 2: Interpreting pLDDT Confidence Scores

This table provides a standard guide for interpreting per-residue pLDDT scores, based on AlphaFold documentation and research [1].

| pLDDT Score Range | Confidence Level | Structural Interpretation |
|---|---|---|
| 90 - 100 | Very High | High backbone and side chain accuracy. |
| 70 - 90 | Confident | Generally correct backbone, some side chain placement errors. |
| 50 - 70 | Low | Caution advised; may be flexible or poorly predicted. |
| < 50 | Very Low | Likely to be an intrinsically disordered region (IDR) or unstructured linker [1]. |
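For scripted analyses, these bands can be encoded as a small lookup. The table leaves the exact boundary values ambiguous, so this sketch assumes that a score of exactly 90, 70 or 50 belongs to the higher band, which matches the pLDDT ≥ 70 "ordered" cutoff used in Guide 1.

```python
def plddt_band(score):
    """Map a pLDDT value (0-100) to its confidence band.

    Assumption: boundary values (90, 70, 50) fall into the higher band.
    """
    if not 0 <= score <= 100:
        raise ValueError("pLDDT must lie between 0 and 100")
    if score >= 90:
        return "Very High"
    if score >= 70:
        return "Confident"
    if score >= 50:
        return "Low"
    return "Very Low"


print([plddt_band(s) for s in (95, 70, 69.9, 12)])
# ['Very High', 'Confident', 'Low', 'Very Low']
```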

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function / Explanation | Relevance to IDP Hallucination Research |
|---|---|---|
| DisProt Database | A manually curated database of experimental annotations for IDPs and IDRs. | Serves as the ground-truth benchmark for identifying hallucinations by providing experimental disorder annotations [36]. |
| NARDINI+ Algorithm | An unsupervised learning algorithm that identifies molecular "grammars" in IDR sequences. | Helps classify IDR functions and understand sequence-determinants of structure, providing a basis for why some regions might be mispredicted [40]. |