Beyond the Scatterplot: A Practical Framework for Biologically Validating PCA Results in Biomedical Research


Abstract

Principal Component Analysis (PCA) is a cornerstone of exploratory data analysis in biology and drug development, but its results can be misleading without rigorous biological validation. This article provides a comprehensive framework for researchers and scientists to move beyond simple dimensionality reduction to ensure their PCA findings are biologically meaningful, robust, and clinically actionable. We cover foundational principles, methodological best practices, common troubleshooting strategies, and a structured validation approach based on coherence, uniqueness, robustness, and transferability. By integrating biological annotations and pathway analysis, this guide empowers professionals to build confidence in their PCA-driven discoveries and avoid the pitfalls of technically sound but biologically irrelevant results.

Demystifying PCA: From Mathematical Abstraction to Biological Insight

Modern biological datasets, such as those from genomics, transcriptomics, and proteomics, often comprise hundreds or thousands of features—for instance, expressions of thousands of genes or levels of numerous proteins—creating a high-dimensional space [1] [2]. This phenomenon introduces the "curse of dimensionality," where data becomes sparse, distances between points become less meaningful, and machine learning models face increased computational costs and a higher risk of overfitting [3] [4] [2]. Dimensionality reduction (DR) techniques are essential to mitigate these issues by transforming complex data into a lower-dimensional space while preserving its essential structure [5] [6].

This guide objectively compares Principal Component Analysis (PCA) against other DR methods, framing the evaluation within crucial research on validating PCA results with biological annotations.

Understanding PCA: The Biological Data Workhorse

Principal Component Analysis (PCA) is a foundational linear DR technique. It works by identifying the orthogonal directions, called principal components, that capture the maximum variance in the data [5] [3]. The process involves standardizing the data, computing the covariance matrix to understand feature relationships, and performing eigen-decomposition to derive the new components [5] [4].
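The three steps just described (standardize, compute the covariance matrix, eigen-decompose) can be sketched in a few lines of NumPy. This is a minimal illustration rather than a reference implementation: the function name `pca_eig` and the synthetic data are assumptions made for the example, and production work would normally use an established library routine.

```python
import numpy as np

def pca_eig(X, n_components=2):
    """PCA via eigen-decomposition of the covariance matrix of standardized data."""
    X = np.asarray(X, dtype=float)
    # Step 1: standardize each feature (column) to zero mean and unit variance.
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    # Step 2: covariance matrix of the standardized features (features x features).
    C = np.cov(Z, rowvar=False)
    # Step 3: eigen-decomposition; eigh suits symmetric matrices and
    # returns eigenvalues in ascending order, so we re-sort them descending.
    eigvals, eigvecs = np.linalg.eigh(C)
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    loadings = eigvecs[:, :n_components]      # directions of maximum variance
    scores = Z @ loadings                     # samples projected onto those directions
    explained = eigvals[:n_components] / eigvals.sum()
    return scores, loadings, explained

# Synthetic stand-in for an expression matrix: 50 samples x 6 features
# driven by a single latent factor plus noise (illustrative data only).
rng = np.random.default_rng(0)
latent = rng.normal(size=(50, 1))
X = latent @ rng.normal(size=(1, 6)) + 0.3 * rng.normal(size=(50, 6))

scores, loadings, explained = pca_eig(X, n_components=2)
print("Proportion of variance explained:", explained)
```

Because the data are standardized first, the covariance matrix here equals the correlation matrix, which is why the eigenvalues sum to the number of features.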

Key Strengths and Limitations in Biological Contexts

- Strengths: PCA is computationally efficient, preserves the global data structure, and provides an interpretable transformation [5]. Its speed makes it suitable for initial exploratory analysis of large biological datasets [6].

- Limitations: As a linear method, PCA assumes linear relationships between variables and can struggle to capture the complex, non-linear structures often present in biological systems [5] [3]. It is also sensitive to outliers and requires careful data normalization [5].

Comparative Analysis: PCA Versus Alternative Methods

The choice of DR method depends on the data's nature and the analysis goal. The table below summarizes key techniques and their suitability for biological data.

Table 1: Comparison of Dimensionality Reduction Techniques for Biological Data

| Method | Type | Key Principle | Strengths | Weaknesses | Typical Biological Use Case |

|---|---|---|---|---|---|

| PCA [5] [4] | Linear | Finds orthogonal directions of maximum variance. | Fast; preserves global structure; interpretable. | Fails on nonlinear manifolds; sensitive to outliers. | Exploratory data analysis; compression of gene expression data [6]. |

| Kernel PCA (KPCA) [5] | Non-linear | Uses kernel trick to perform PCA in a high-dimensional feature space. | Captures complex nonlinear relationships. | High computational cost, $O(n^3)$; no explicit inverse mapping; kernel choice is critical [5]. | Pattern recognition and feature extraction where PCA falls short [5]. |

| t-SNE [5] [6] | Non-linear (Manifold) | Preserves local similarities by converting distances to probabilities. | Excellent for visualizing cluster patterns in high-dimensional data. | Computationally intensive; does not preserve global structure well [6]. | Visualization of single-cell RNA-seq data to identify cell clusters [6]. |

| UMAP [5] [6] | Non-linear (Manifold) | Balances preservation of local and global data structures. | Better at preserving global structure than t-SNE; computationally efficient. | Performance depends on hyperparameter tuning [6]. | Handling large datasets and complex topologies, like in large-scale single-cell studies [4] [6]. |

| Linear Discriminant Analysis (LDA) [6] | Linear (Supervised) | Maximizes separation between predefined classes. | Ideal for supervised tasks with labeled data. | Assumes equal class covariances; requires class labels [6]. | Biomarker discovery and classification tasks, such as cancer subtype identification [6]. |

Table 2: Quantitative Performance Comparison Across Methodologies

| Method | Computational Complexity | Scalability to Large Datasets | Preservation of Global Structure | Preservation of Local Structure | Ease of Interpretation |

|---|---|---|---|---|---|

| PCA | $O(nd^2)$ [6] | Excellent [5] | Excellent [5] | Poor [6] | High [5] |

| Kernel PCA | $O(n^3)$ [5] [6] | Poor [5] | Good [5] | Fair | Low [5] |

| t-SNE | $O(n^2)$ [6] | Moderate | Poor [6] | Excellent [5] [6] | Low |

| UMAP | $O(n^{1.14})$ (approx.) [6] | Good [6] | Good [6] | Excellent [6] | Low |

Experimental Protocols for PCA Validation in Biological Studies

Validating the results of PCA with independent biological annotations is a critical step to ensure that the derived principal components capture biologically meaningful variation and not just technical noise or artifact.

Protocol 1: Integrating Machine Learning for Diagnostic Biomarker Discovery

A 2025 study on prostate cancer (PCa) diagnosis established a robust protocol for building and validating a diagnostic signature, where PCA often serves as a foundational DR step [1].

- 1. Data Collection & Processing: Transcriptomic data from 1,096 patients across five cohorts (TCGA-PRAD and four GEO datasets) were collected. The TCGA cohort (502 patients) was the training set, and the GEO cohorts (594 patients) were the validation set [1].

- 2. Differential Expression Analysis: Differential analysis using the R packages "DESeq2," "edgeR," and "limma" identified 1,071 candidate mRNAs ($|\text{logFC}| > 1.5$, p-value < 0.01) [1].

- 3. Dimensionality Reduction & Model Building: The high-dimensional candidate genes were input into an integrated machine learning framework. Researchers built 113 combinatorial models using 12 algorithms, including Lasso, Elastic Net, SVM, and XGBoost [1].

- 4. Validation with Biological & Clinical Annotations:

  - In-vitro Validation: The top genes from the model (e.g., AOX1 and B3GNT8) were validated for their expression in one prostate epithelial cell line and five PCa cell lines.

  - Clinical Liquid Biopsy Validation: Plasma samples from PCa and benign prostatic hyperplasia (BPH) patients were collected. The expression of AOX1 and B3GNT8 was confirmed to be consistent with the model's predictions, achieving a high diagnostic accuracy (AUC=0.91) that outperformed PSA [1].

This protocol demonstrates closed-loop validation, in which PCA-assisted feature reduction feeds into a model whose outputs are directly tested against wet-lab and clinical biological annotations.

Protocol 2: PCA-Based Denoising for Ecological Bioacoustics

A 2025 study in marine ecology provided a methodology for using PCA to denoise data, with validation against ecological ground truth [7].

- 1. Data Acquisition: 700 minutes of field recordings were collected from coral reef ecosystems [7].

- 2. PCA-Driven Noise Reduction: A PCA algorithm was applied to the soundscapes to selectively suppress anthropogenic noise (e.g., vessel sounds) overlapping with biological frequency bands [7].

- 3. Biological Index Calculation & Validation: An automatic Bio-voice Count Index (BCI) was developed to quantify target biological sounds. The method was validated by:

  - Synthetic Soundscapes: Using data with known composition.

  - Correlation with Biological Metrics: The BCI demonstrated strong correlations with direct biological metrics, such as fish abundance estimates. When combined with another index (Acoustic Complexity Index), it improved estimation accuracy [7].

This protocol showcases the use of PCA not just for visualization, but for active denoising, with results validated against synthetic and field-based biological annotations.
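The general idea behind PCA-based denoising can be sketched as follows: decompose the data, keep only the components attributed to signal, and reconstruct. The cited study's actual algorithm is not described here, so this is a generic low-rank reconstruction under stated assumptions. The function name `pca_filter`, the synthetic rank-1 "signal", and the choice of which components to keep are all hypothetical.

```python
import numpy as np

def pca_filter(X, keep):
    """Reconstruct X using only the principal components whose indices are in `keep`."""
    X = np.asarray(X, dtype=float)
    mean = X.mean(axis=0)
    Xc = X - mean
    # SVD of the centered data: rows of Vt are the principal directions.
    U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    # Zero out the singular values of every component that is not kept.
    mask = np.zeros_like(s)
    mask[list(keep)] = 1.0
    return (U * (s * mask)) @ Vt + mean

# Synthetic example: a rank-1 pattern (200 time frames x 10 bands) plus noise.
rng = np.random.default_rng(1)
t = np.linspace(0, 4 * np.pi, 200)
clean = np.outer(np.sin(t), np.linspace(0.5, 1.5, 10))
noisy = clean + 0.2 * rng.normal(size=clean.shape)

denoised = pca_filter(noisy, keep=[0])   # keep only the dominant component
err_noisy = np.mean((noisy - clean) ** 2)
err_denoised = np.mean((denoised - clean) ** 2)
print("MSE before:", err_noisy, "MSE after:", err_denoised)
```

In this toy case the signal dominates the first component, so keeping it suppresses noise. In field recordings the noise source may instead dominate the leading components, in which case the components to drop have to be identified against ground truth, which is the validation step the protocol emphasizes.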

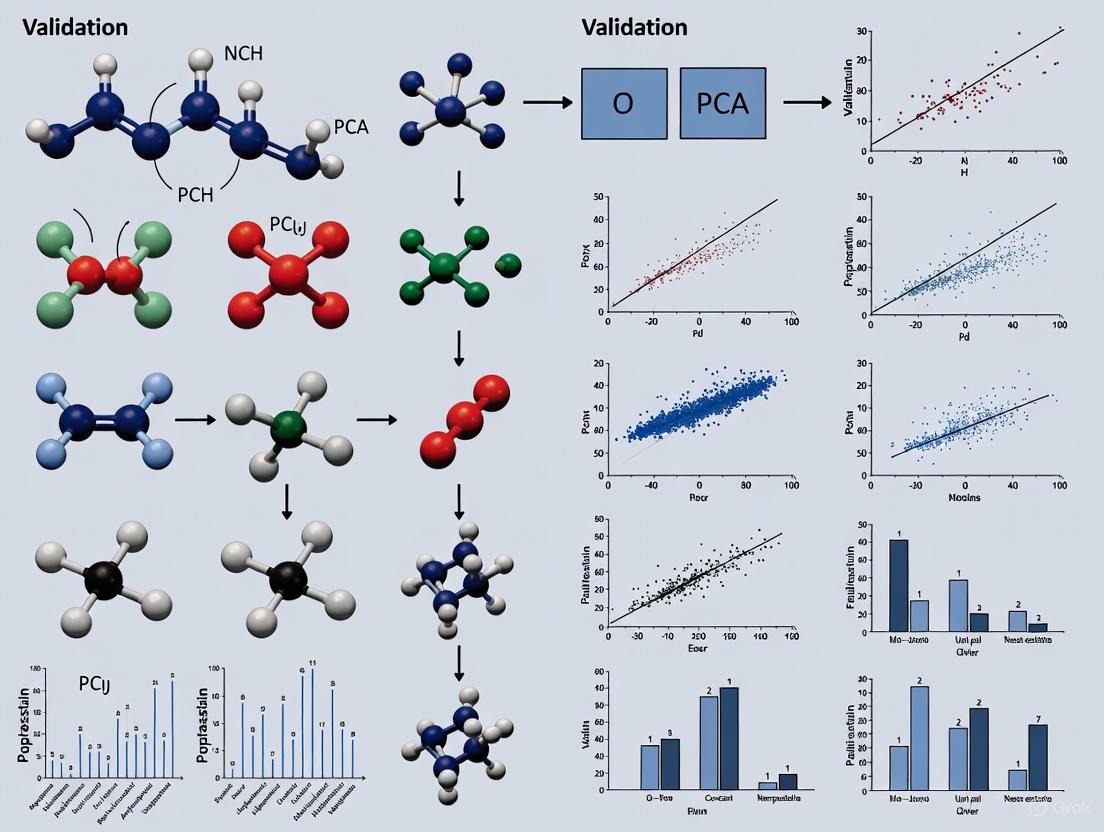

PCA Validation Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key resources and computational tools essential for conducting PCA and validation experiments in biological research.

Table 3: Key Research Reagent Solutions for PCA-Driven Biological Research

| Item / Resource | Function / Purpose | Example from Literature / Use Case |

|---|---|---|

| Cell Lines | In-vitro models for validating biomarker expression. | One prostate epithelial (RWPE-1) and five PCa cell lines (22RV1, C4-2, etc.) used to validate RNA biomarkers AOX1 and B3GNT8 [1]. |

| RNA Extraction Kit | Isolate high-quality RNA from cells or tissue for transcriptomic analysis. | RNAsimple Total RNA Kit (Tiangen Biotech) used in PCa diagnostic study [1]. |

| Public Databases (TCGA, GEO) | Sources of large-scale, annotated biological data for model training and validation. | TCGA-PRAD and four GEO datasets used to build and validate a 9-gene PCa diagnostic panel [1]. |

| R Packages (DESeq2, edgeR, limma) | Perform differential expression analysis to identify candidate features for DR. | Used with thresholds ($\lvert\text{logFC}\rvert > 1.5$, p-value < 0.01) to find 1,071 candidate mRNAs [1]. |

| Scikit-learn (Python Library) | Provides implementations of PCA, Kernel PCA, and other machine learning algorithms. | Standard tool for applying PCA and other DR methods in a Python environment [3]. |

PCA remains an indispensable tool in the biologist's computational arsenal, offering an unparalleled combination of speed, interpretability, and effectiveness for initial data exploration and linear dimensionality reduction. While non-linear methods like UMAP and t-SNE are powerful for visualizing complex structures, PCA's role in mitigating the curse of dimensionality is firmly established.

The future of PCA in biological research lies not in being superseded, but in being integrated. As demonstrated by the cited experimental protocols, its true power is unlocked when its results are rigorously validated through a framework of biological annotations—from cell line experiments and clinical samples to ecological ground truth. This synergy between computational projection and biological validation is foundational to advancing precision medicine and scientific discovery.

Principal Component Analysis (PCA) is a foundational dimensionality reduction technique that transforms complex, high-dimensional datasets into a simpler set of uncorrelated variables called principal components [8] [9]. In biological and healthcare research, where datasets often encompass thousands of variables—from gene expression profiles to clinical measurements—PCA provides an essential tool for extracting meaningful patterns, identifying key variables, and facilitating visualization [10] [11]. By distilling essential information from overwhelming data dimensions, PCA enables researchers to uncover hidden structures that inform hypothesis generation and experimental validation.

The core value of PCA lies in its ability to reorient data along axes of maximum variance, creating a new coordinate system where the first axis (principal component) captures the greatest data spread, the second captures the next greatest while remaining uncorrelated to the first, and so on [12]. This process preserves the most critical information in fewer dimensions, allowing researchers to focus on the most relevant biological signals amid complex multivariate data. For drug development professionals and scientists, understanding how to interpret PCA results—particularly loadings, scores, and variance explained—is crucial for validating findings against biological annotations and ensuring research conclusions rest on statistically sound foundations.

Core Concepts of PCA: Loadings, Scores, and Variance Explained

What Are Principal Components?

Principal components are new variables constructed as linear combinations of the original variables [8]. They are designed to be uncorrelated with each other (orthogonal) and ordered such that the first component captures the maximum possible variance in the data, the second captures the maximum remaining variance while being uncorrelated with the first, and subsequent components continue this pattern [9] [12]. Geometrically, principal components represent the directions of maximum variance in the data, functioning as a new set of axes that provide the optimal perspective for evaluating differences between observations [8].

If you have a dataset with 10 variables, PCA will generate up to 10 principal components (fewer if there are fewer observations than variables). However, the key insight is that the first few components typically contain most of the information, allowing researchers to discard the later components with minimal information loss [8]. This property makes PCA particularly valuable for biological research, where the "curse of dimensionality" often complicates analysis of high-throughput experimental data [11].

Loadings: The Blueprint of Principal Components

Loadings (sometimes referred to as the matrix P in mathematical formulations) represent the weights or coefficients assigned to each original variable when calculating the principal components [13] [14]. These coefficients indicate how much each original variable contributes to the construction of each principal component. Mathematically, the loadings are the eigenvectors of the covariance matrix of the original data [8] [9].

In practical terms, loadings answer the question: "How does each original variable influence the new principal components?" A loading value close to +1 or -1 indicates strong influence, while values near zero suggest minimal contribution [14]. The sign of the loading indicates the direction of the relationship: variables whose loadings on a component share the same sign tend to increase together along that component, while variables with opposite signs vary inversely [14].

For biological researchers, interpreting loadings is crucial for understanding what each principal component represents. For example, in a gene expression study, high loadings for specific genes on the first principal component would indicate that those genes contribute significantly to the major pattern of variation in the dataset, potentially pointing to biologically relevant pathways or processes.

Scores: The Transformed Data in the New Coordinate System

Scores (represented as matrix T) are the actual values of the observations in the new coordinate system defined by the principal components [13] [14]. Each score value represents the position of an observation along a principal component direction. If you have N observations in your original dataset, you will have N score values for the first principal component, another N for the second, and so on [14].

Mathematically, scores are calculated by projecting the original data onto the directions defined by the loadings: T = XP (where X is the original data matrix and P is the loadings matrix) [13] [14]. The score for observation i on component a is computed as:

$$t_{i,a} = x_{i,1} \cdot p_{1,a} + x_{i,2} \cdot p_{2,a} + \ldots + x_{i,K} \cdot p_{K,a}$$

where $x_{i,k}$ is the standardized value of variable *k* for observation *i*, and $p_{k,a}$ is the loading of variable *k* on component *a* [14].
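To make the projection concrete, the short sketch below computes all scores with the matrix product T = XP and then recomputes one score as the explicit sum over variables. The numbers in `X` and `P` are hypothetical values chosen for readability, not results from any cited study.

```python
import numpy as np

# Hypothetical standardized data: 3 observations (rows) x 2 variables (columns).
X = np.array([[ 1.0, -1.0],
              [ 0.0,  0.5],
              [-1.0,  0.5]])

# Hypothetical loadings matrix P: columns are unit-length and mutually orthogonal.
P = np.array([[1.0, -1.0],
              [1.0,  1.0]]) / np.sqrt(2.0)

# All scores at once: T = XP (rows = observations, columns = components).
T = X @ P

# The same score for observation i on component a, written as the explicit sum
# over variables k of x[i, k] * p[k, a].
i, a = 0, 1
t_ia = sum(X[i, k] * P[k, a] for k in range(X.shape[1]))

print(T)
print("Matrix product and explicit sum agree:", np.isclose(T[i, a], t_ia))
```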

Scores enable researchers to visualize and analyze patterns in high-dimensional data by plotting just the first two or three components [12]. Observations with similar characteristics will cluster together in the score plot, while outliers will appear separated from the main clusters [14]. This makes score plots invaluable for identifying natural groupings in biological data, detecting anomalies, and observing temporal patterns [14].

Variance Explained: Measuring Information Capture

The variance explained by each principal component indicates how much of the total variability in the original data that component captures [8] [12]. The total variance in a standardized dataset equals the number of variables, and each principal component accounts for a portion of this total [9].

Eigenvalues (λ) associated with each principal component quantify the amount of variance captured by that component [8] [9]. The proportion of total variance explained by a component is calculated as its eigenvalue divided by the sum of all eigenvalues [8]. Researchers often examine the cumulative explained variance to determine how many components to retain—typically enough to capture 70-95% of the total variance [11].
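Written out, the proportion and cumulative proportion of variance explained take the form below. The symbols PVE and CPVE and the eigenvalues in the worked example are assumptions introduced for illustration only; they do not come from a cited dataset.

```latex
\[
\mathrm{PVE}_a = \frac{\lambda_a}{\sum_{k=1}^{K}\lambda_k},
\qquad
\mathrm{CPVE}_A = \sum_{a=1}^{A}\mathrm{PVE}_a
\]
% Hypothetical example with K = 4 standardized variables:
% \lambda = (2.5,\ 1.0,\ 0.3,\ 0.2), so \sum_k \lambda_k = 4
\[
\mathrm{PVE} = (0.625,\ 0.25,\ 0.075,\ 0.05),
\qquad
\mathrm{CPVE} = (0.625,\ 0.875,\ 0.95,\ 1.0)
\]
```

In this hypothetical case, an 80% cumulative threshold would retain two components, while a 95% threshold would retain three.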

In biological research, the variance explained helps assess whether principal components capture sufficient information to be biologically meaningful. A first component that explains most of the variance might represent a dominant biological factor (e.g., a major environmental influence or treatment effect), while later components with small variance might represent noise or minor modulating factors.

Comparative Analysis: PCA Component Selection Methods in Biological Research

Selecting the optimal number of principal components to retain is critical in PCA applications. Retaining too few components may discard biologically relevant information, while retaining too many introduces noise and reduces analytical efficiency [11]. Different selection methods often yield contradictory results, creating challenges for consistent interpretation across biological studies [11].

Table 1: Comparison of PCA Component Selection Methods

| Method | Approach | Advantages | Limitations | Typical Use in Biological Research |

|---|---|---|---|---|

| Kaiser-Guttman Criterion | Retains components with eigenvalues > 1 [11] | Simple, objective rule | Tends to select too many components when variables are numerous, too few when variables are limited [11] | Less reliable for high-dimensional biological data (e.g., genomics) |

| Cattell's Scree Test | Visual identification of the "elbow" where eigenvalues level off [11] | Intuitive, graphical approach | Subjective, lacks clear cutoff definition, challenging with no obvious breaks [11] | Useful for initial exploration of biological datasets with clear factor separation |

| Percent of Cumulative Variance | Retains components needed to explain specified variance (typically 70-95%) [11] | Straightforward, allows control over information retention | Arbitrary threshold selection, may retain too many/few components [11] | Most reliable for health research; balances information preservation with dimensionality reduction [11] |

Recent research evaluating these methods in simulated high-dimensional biological data found that the Percent of Cumulative Variance approach (typically using 80% threshold) offers the greatest stability and reliability for health research applications [11]. The Kaiser-Guttman criterion often retained fewer components, causing overdispersion, while Cattell's scree test retained more components, compromising reliability in biological interpretations [11].
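The Kaiser-Guttman and cumulative-variance rules are simple enough to express directly, which also makes it easy to see how they can disagree on the same eigenvalues. The sketch below is illustrative: the function names and the eigenvalues are hypothetical, and the 80% threshold mirrors the value mentioned above.

```python
import numpy as np

def kaiser_guttman(eigvals):
    """Number of components with eigenvalue > 1 (assumes PCA on standardized data)."""
    return int(np.sum(np.asarray(eigvals, dtype=float) > 1.0))

def cumulative_variance(eigvals, threshold=0.80):
    """Smallest number of components whose cumulative variance share reaches `threshold`."""
    eigvals = np.sort(np.asarray(eigvals, dtype=float))[::-1]
    cum = np.cumsum(eigvals) / eigvals.sum()
    # First position where the cumulative share is >= threshold, as a count.
    return min(int(np.searchsorted(cum, threshold)) + 1, len(eigvals))

# Hypothetical eigenvalues from a PCA on six standardized variables (sum = 6).
eigvals = [3.2, 1.4, 0.9, 0.3, 0.15, 0.05]

print("Kaiser-Guttman keeps:", kaiser_guttman(eigvals))
print("80% cumulative variance keeps:", cumulative_variance(eigvals, 0.80))
```

With these illustrative eigenvalues the two rules select different numbers of components, which is the kind of disagreement that motivates checking any choice against biological annotations rather than relying on one rule.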

Experimental Protocols and Validation with Biological Annotations

Standard PCA Workflow in Biological Research

The following diagram illustrates the standard workflow for applying PCA in biological research, from data preparation to validation with biological annotations:

Case Study: Predicting Developmental Delay in Preterm Infants

A 2025 study demonstrated PCA's utility in healthcare research by developing a predictive model for developmental delay in preterm infants [10]. Researchers applied PCA to integrate multiple standardized indicators—including length, weight, head circumference, and five neurodevelopmental dimensions from the Gesell Developmental Schedules—at 3, 6, 9, and 12 months corrected age [10].

The experimental protocol followed these key steps:

- Data Collection: Physical growth measurements and neurodevelopmental assessments were collected from 507 preterm infants at four time points [10]

- Standardization: All indicators were standardized as Z-scores to ensure equal contribution to the analysis [10]

- PCA Application: PCA was applied to the multidimensional dataset, with the Kaiser-Meyer-Olkin (KMO) measure used to assess sampling adequacy [10]

- Component Interpretation: The resulting principal components were used to create a comprehensive developmental quality index, with positive values classified as "normal development" and negative values as "developmental delay" [10]

- Model Validation: The PCA-based classifications were used to construct and validate a predictive nomogram using logistic regression, with performance assessed through AUROC, calibration curves, and decision curve analysis [10]

This approach overcame the limitation of using single indicators to assess preterm infant development, demonstrating how PCA can integrate multidimensional factors to create more comprehensive biomarkers for clinical prediction [10].

Case Study: Microbiome Age Prediction Using Transformer-Based PCA

A 2025 study published in Communications Biology introduced a Transformer-based Robust Principal Component Analysis (TRPCA) for chronological age estimation from human microbiomes [15]. This approach combined the strengths of transformer architectures with PCA's interpretability to analyze microbiome data from skin, oral, and gut sites using both 16S rRNA gene amplicon and whole-genome sequencing data [15].

The experimental methodology included:

- Data Processing: Microbial abundance profiles were processed to account for compositionality and sparsity

- TRPCA Implementation: Transformer architecture was integrated with robust PCA to handle microbiome-specific data characteristics

- Multi-task Learning: Combined classification (birth country prediction) and regression (age prediction) tasks

- Residual Analysis: Examined links between subjects and prediction errors across sequencing methods and body sites [15]

TRPCA demonstrated significant improvements in age prediction accuracy, achieving the largest reduction in Mean Absolute Error for WGS skin samples (MAE: 8.03, 28% reduction) and 16S skin samples (MAE: 5.09, 14% reduction) compared to conventional approaches [15]. Additionally, TRPCA achieved 89% accuracy for birth country prediction across five countries while improving age prediction from WGS stool samples [15].

This case study highlights how enhancing PCA with modern deep learning architectures can boost predictive performance while maintaining the interpretability essential for biological research and clinical applications.

Table 2: Essential Research Reagents and Computational Tools for PCA in Biological Research

| Tool/Resource | Function | Application Example | Considerations for Biological Research |

|---|---|---|---|

| StandardScaler (Python) | Standardizes features by removing mean and scaling to unit variance [12] | Preprocessing genomic expression data before PCA | Critical for ensuring equal feature contribution; prevents dominance of highly abundant molecules |

| Covariance Matrix Algorithms | Computes relationships between all variable pairs [8] [9] | Identifying co-regulated genes or proteins in omics studies | Alternative estimators (Ledoit-Wolf) may improve stability with small biological sample sizes [11] |

| Eigendecomposition Libraries | Calculates eigenvectors and eigenvalues [8] [12] | Extracting principal components from biological datasets | Numerical stability crucial for high-dimensional biological data (n ≪ p) |

| Scree Plot Visualization | Graphical tool for component selection [11] | Determining optimal number of components to retain in gene expression analysis | Subjective interpretation; should be combined with variance-based criteria in biological applications |

| BioAnnotation Databases | External biological knowledge bases (e.g., GO, KEGG) | Validating whether high-loading variables share biological functions | Essential for confirming biological relevance of statistical patterns |

PCA remains an indispensable tool for biological researchers facing high-dimensional data, but its true value emerges only when statistical patterns are validated against biological knowledge. The core concepts of loadings, scores, and variance explained form the foundation for biologically meaningful interpretation of PCA results. Loadings identify which variables drive patterns, scores reveal sample relationships and outliers, and variance explained quantifies information capture.

The case studies examined demonstrate that PCA's greatest strength in biological research lies in its ability to integrate multidimensional data into composite biomarkers and patterns that align with biological mechanisms [10] [15]. However, successful application requires appropriate component selection—with the percent cumulative variance method generally providing the most reliable approach for biological data [11]—and rigorous validation against experimental annotations and external biological knowledge bases.

For drug development professionals and researchers, PCA offers a powerful approach to distill complex biological data into interpretable patterns, but these patterns must ultimately make sense in the context of underlying biology. By following structured workflows, utilizing appropriate computational tools, and prioritizing biological validation, scientists can leverage PCA to uncover meaningful insights from increasingly complex biological datasets.

In the field of biological research, Principal Component Analysis (PCA) has become a cornerstone technique for exploring high-throughput data, from transcriptomics and metabolomics to population genetics. This multivariate statistical procedure simplifies complex datasets by generating new, uncorrelated variables—principal components (PCs)—as weighted combinations of the original biological variables [16]. These components are ordered such that the first explains the largest source of variance in the data, the second the next largest, and so on [16]. A critical challenge researchers face is determining how many of these components are biologically meaningful rather than merely representing statistical noise. The scree plot stands as a widely used graphical tool for addressing this fundamental question, yet its interpretation requires careful consideration within biological contexts where the goal is to identify patterns reflecting genuine biological mechanisms rather than mere data variance.

The Scree Plot: A Researcher's Visual Guide

A scree plot is a simple yet powerful graphical representation that displays the eigenvalues of the principal components in descending order of magnitude [17]. The name "scree" derives from geology, referring to the accumulation of loose stones or rocky debris at the base of a cliff [17]. In PCA terms, the ideal scree plot shows a sharp reduction in eigenvalue size (the cliff) followed by a gradual trailing off of the remaining eigenvalues (the rubble) [17].

The eigenvalues themselves represent the amount of variance accounted for by each principal component [18]. When you examine a scree plot, you're looking for the point at which the graph shows a distinct change in slope—the "elbow" where the steep decline transitions to a more gradual slope [19] [17]. The components before this elbow are typically considered meaningful, while those after are often dismissed as noise.

The Mathematics Behind the Plot

Mathematically, eigenvalues (λ) are derived from the covariance matrix of the original data (equivalently, the correlation matrix when the variables are standardized). Under the Kaiser criterion, which presumes standardized variables, a component is considered potentially meaningful if its eigenvalue exceeds 1 [18]. The proportion of variance explained by each component is calculated as the eigenvalue for that component divided by the sum of all eigenvalues [18]. The cumulative proportion reveals the total variance explained by consecutive components, helping researchers determine if they've retained enough components to capture sufficient data variability for their biological question [18].

Quantitative Criteria for Component Selection

While the scree plot offers a visual heuristic, several quantitative approaches complement its interpretation:

Table 1: Quantitative Criteria for Component Selection

| Criterion | Threshold/Approach | Interpretation |

|---|---|---|

| Kaiser-Guttman | Eigenvalue > 1 [18] | Based on the idea that components explaining less variance than a single standardized variable may be unimportant |

| Proportion of Variance | Typically 70-90% cumulative variance [18] | Retain enough components to explain an "adequate" percentage of total variance |

| Scree Test | Visual identification of "elbow" [17] | Subjective but practical approach looking for break point between steep and shallow slopes |

| Parallel Analysis | Eigenvalues exceeding those from random data [17] | More robust method comparing actual eigenvalues to those from uncorrelated variables |

Research suggests that a combination of these approaches yields the most reliable results. As demonstrated in simulation studies, relying on a single criterion can be misleading, particularly with biological data that often contains complex correlation structures [20].

Biological Validation: Beyond Statistical Criteria

Statistical significance does not necessarily equate to biological relevance. While a scree plot might suggest retaining three components based on the elbow criterion, biological validation is essential to confirm their meaningfulness. Several approaches facilitate this validation:

Pathway and Functional Enrichment Analysis

Biologically meaningful components should enrich for coherent biological pathways. After identifying putative meaningful components based on scree plot interpretation, researchers can:

- Examine the loadings (coefficients) of original variables (e.g., genes, metabolites) on each component [18]

- Select variables with the highest absolute loadings (e.g., |loading| > 0.3-0.4) for each component

- Perform enrichment analysis using databases like Gene Ontology, KEGG, or Reactome

- Determine if the component represents a coherent biological process, pathway, or function

Reproducibility and Stability Assessment

Component stability across datasets and methodological variations provides evidence of biological relevance. The syndRomics R package offers specialized functions for assessing component stability through resampling strategies [16]. Biologically meaningful components should demonstrate:

- Robustness to missing data: Consistent patterns despite different imputation approaches

- Cross-dataset reproducibility: Similar components emerge in independent datasets

- Technical reproducibility: Stable across analytical batches or technical replicates
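One way to probe stability by resampling is a simple bootstrap of the loadings. The sketch below is a generic NumPy illustration and does not reproduce the syndRomics API; the function names and synthetic data are assumptions. It refits PCA on resampled observations and measures how closely each bootstrap loading vector matches the full-data one.

```python
import numpy as np

def first_loadings(X, n_components):
    """Loading vectors (rows, unit length) of the leading components of standardized X."""
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    _, _, Vt = np.linalg.svd(Z, full_matrices=False)
    return Vt[:n_components]

def bootstrap_stability(X, n_components=2, n_boot=200, seed=0):
    """Mean |cosine similarity| between full-data and bootstrap loadings, per component."""
    rng = np.random.default_rng(seed)
    X = np.asarray(X, dtype=float)
    ref = first_loadings(X, n_components)
    sims = np.empty((n_boot, n_components))
    for b in range(n_boot):
        # Resample observations (rows) with replacement and refit.
        idx = rng.integers(0, X.shape[0], size=X.shape[0])
        boot = first_loadings(X[idx], n_components)
        # Absolute value ignores the arbitrary sign of each component.
        # Note: components with near-equal eigenvalues can swap order,
        # which this simple index-matched comparison reports as instability.
        sims[b] = np.abs(np.sum(ref * boot, axis=1))
    return sims.mean(axis=0)

# Synthetic data: 80 samples x 6 variables sharing one strong latent factor.
rng = np.random.default_rng(2)
latent = rng.normal(size=(80, 1))
weights = np.array([[1.0, 0.9, 1.1, 0.8, 1.2, 1.0]])
X = latent @ weights + 0.5 * rng.normal(size=(80, 6))

stability = bootstrap_stability(X, n_components=2)
print("Mean |cosine similarity| per component:", stability)
```

Values near 1 suggest a component that is reproduced across resamples; markedly lower values suggest a direction that mostly reflects sampling noise in this dataset.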

Integration with External Biological Knowledge

Meaningful components should align with established biological knowledge or generate testable hypotheses. Researchers should ask:

- Do the component loadings align with known co-regulated genes or metabolic pathways?

- Do sample scores along components separate known biological groups (e.g., disease vs. control)?

- Can components be interpreted in the context of the biological system under study?

Case Study: Spinal Cord Injury Data Analysis

To illustrate the process, consider a case study from spinal cord injury research [16]. Researchers analyzed 18 motor function outcome variables measured at 6 weeks post-injury in 159 subjects. The scree plot revealed a distinct elbow after the third component, suggesting three meaningful dimensions of motor recovery. Biological validation confirmed these components represented: (1) coordinated limb movements, (2) trunk stability and weight support, and (3) fine motor control—each aligning with known spinal cord functional pathways.

Experimental Protocols for Validation

Protocol 1: Component Significance Testing

Purpose: To statistically evaluate whether components explain more variance than expected by chance [16].

Procedure:

- Perform PCA on the original dataset

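- Generate permuted datasets by randomly shuffling values within each variable independently, which preserves each variable's distribution while breaking the correlations between variables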

- Perform PCA on each permuted dataset

- Compare eigenvalues from original data to the distribution of eigenvalues from permuted data

- Components with eigenvalues exceeding the 95th percentile of the null distribution are considered significant
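The procedure above can be sketched in a few lines of NumPy. The function names and the default of 200 permutations are assumptions for the example; a real analysis would typically use more permutations and may standardize the variables first.

```python
import numpy as np


def pca_eigenvalues(X):
    """Eigenvalues of the sample covariance matrix, in descending order."""
    Xc = X - X.mean(axis=0)
    s = np.linalg.svd(Xc, compute_uv=False)
    return s**2 / (X.shape[0] - 1)


def permutation_test(X, n_perm=200, percentile=95, seed=0):
    """Compare observed eigenvalues with a column-wise permutation null."""
    rng = np.random.default_rng(seed)
    observed = pca_eigenvalues(X)
    null = np.empty((n_perm, observed.size))
    for b in range(n_perm):
        # Shuffle every column independently: marginals are kept,
        # correlations between variables are destroyed.
        Xp = np.column_stack([rng.permutation(X[:, j]) for j in range(X.shape[1])])
        null[b] = pca_eigenvalues(Xp)
    threshold = np.percentile(null, percentile, axis=0)
    return observed, threshold, observed > threshold


# Toy data (hypothetical): two latent factors, each driving five variables.
rng = np.random.default_rng(2)
f1, f2 = rng.normal(size=(2, 100))
X = rng.normal(size=(100, 10))
X[:, :5] += 2.0 * f1[:, None]
X[:, 5:] += 2.0 * f2[:, None]
observed, threshold, significant = permutation_test(X)
```

Because permutation preserves the total variance, strong leading components push the later observed eigenvalues below their null counterparts, so the test is most informative for the first few components.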

Protocol 2: Biological Coherence Assessment

Purpose: To evaluate whether components reflect biologically coherent patterns.

Procedure:

- Extract variables with significant contributions to each component (e.g., the top 5% of variables ranked by absolute loading)

- Perform functional enrichment analysis using appropriate databases

- Calculate enrichment p-values and false discovery rates

- Components with significant enrichment (FDR < 0.05) for biologically relevant pathways are considered meaningful
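When many gene sets are tested per component, the raw enrichment p-values need a multiple-testing correction before the FDR < 0.05 rule is applied. A minimal sketch of the Benjamini-Hochberg adjustment is shown below; the pathway names and p-values are invented for illustration.

```python
import numpy as np


def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values (q-values)."""
    p = np.asarray(pvalues, dtype=float)
    n = p.size
    order = np.argsort(p)
    scaled = p[order] * n / np.arange(1, n + 1)
    # Enforce monotonicity, working down from the largest p-value.
    adjusted = np.minimum.accumulate(scaled[::-1])[::-1]
    q = np.empty(n)
    q[order] = np.clip(adjusted, 0.0, 1.0)
    return q


# Hypothetical enrichment p-values for five pathways on one component.
pathways = ["pathway_A", "pathway_B", "pathway_C", "pathway_D", "pathway_E"]
pvals = [0.001, 0.008, 0.039, 0.041, 0.6]
qvals = benjamini_hochberg(pvals)
enriched = [name for name, q in zip(pathways, qvals) if q < 0.05]
```

Here two of the four nominally significant pathways survive correction, which illustrates why the FDR threshold, not the raw p-value, should drive the decision.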

Advanced Considerations in Biological Contexts

High-Dimensional Biological Data

Biological data often exhibits the "curse of dimensionality," where the number of variables (e.g., genes) far exceeds the number of observations (e.g., samples) [21]. In such cases, standard scree plot interpretation may need adjustment. The syndRomics package implements modified approaches specifically designed for high-dimensional biological data [16].

Non-Gaussian Distributions

Biological data frequently follows non-Gaussian distributions [20]. PCA relies only on second-order statistics (variances and covariances), which fully characterize the data only when it is multivariate normal, whereas biological variables (e.g., gene expression counts) often follow super-Gaussian distributions. In such cases, Independent Component Analysis (ICA) or Independent Principal Component Analysis (IPCA) may complement standard PCA [20]. These approaches optimize a different criterion (statistical independence rather than explained variance) and may yield more biologically interpretable components.

Mixed Data Types

Biological experiments often yield mixed data types (continuous, categorical, ordinal). Standard PCA requires modification to handle such data, typically through optimal scaling transformations or alternative algorithms [16].

Visualization Framework

Visual Workflow for Determining Biologically Meaningful Components

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for PCA in Biological Studies

| Tool/Resource | Function | Application Context |

|---|---|---|

| R Statistical Environment | Open-source platform for statistical computing | Primary analysis implementation |

| syndRomics R Package | Specialized functions for syndromic analysis | Component visualization, interpretation, and stability assessment [16] |

| factoextra R Package | Enhanced visualization capabilities for multivariate analysis | Scree plot generation and PCA result visualization [22] |

| vegan R Package | Multivariate statistical methods | Community ecology and gradient analysis [22] |

| Gene Ontology Database | Functional annotation resource | Biological interpretation of component loadings |

| KEGG Pathway Database | Pathway information resource | Pathway enrichment analysis for component validation |

| EIGENSOFT Package | Population genetics-specific PCA implementation | Genetic data analysis [23] |

| mixOmics R Package | Multivariate data analysis | IPCA and sIPCA implementation [20] |

Comparative Performance of PCA Variants in Biological Studies

Different PCA approaches offer varying advantages for biological data:

Table 3: Comparison of PCA-Related Methods for Biological Data

| Method | Advantages | Limitations | Ideal Biological Application |

|---|---|---|---|

| Standard PCA | Simple, interpretable, widely implemented | Sensitive to outliers, assumes linear relationships | Initial data exploration, quality control |

| Independent PCA (IPCA) | Combines PCA and ICA advantages, better for super-Gaussian data | More complex implementation, less familiar to biologists | Microarray, metabolomics data with non-normal distributions [20] |

| Sparse IPCA (sIPCA) | Built-in variable selection, highlights biologically relevant features | Additional parameter tuning required | High-dimensional data with many irrelevant variables [20] |

| Nonlinear PCA | Handles mixed data types, captures nonlinear relationships | Computational intensity, interpretation challenges | Integration of clinical, molecular, and demographic data [16] |

Interpreting scree plots to determine biologically meaningful components requires both statistical rigor and biological reasoning. While the scree plot provides a valuable visual heuristic, biological validation remains essential. By integrating quantitative criteria with pathway analysis, stability assessment, and alignment with existing biological knowledge, researchers can move beyond merely describing variance to uncovering genuine biological insights. As PCA applications continue to evolve in biological research, approaches that combine statistical evidence with biological plausibility will yield the most meaningful and reproducible results.

Principal Component Analysis (PCA) is a foundational unsupervised method for reducing the dimensionality of high-throughput biological data, revealing the directions of greatest variability and sample clustering patterns [24] [25]. However, a significant challenge persists in distinguishing biologically meaningful variation from technical artifacts or noise. While PCA efficiently captures variance, this variance may not always reflect biologically relevant signals [26]. The conventional approach of focusing only on the first few principal components (PCs) risks overlooking crucial biological information embedded in higher components, particularly for specific tissue types or subtle biological phenomena [24]. This guide examines rigorous methodologies for validating PCA results through biological annotations and pathway analysis, providing researchers with frameworks to ensure that their dimensionality reduction yields biologically interpretable insights.

The PCA Validation Framework: Connecting Components to Biology

Validating that principal components represent genuine biological phenomena rather than technical artifacts requires a systematic approach. The following workflow outlines key validation steps, from initial dimensionality reduction to final biological interpretation.

Figure 1. A systematic workflow for validating the biological meaning of Principal Components (PCs). This process connects statistical outputs with biological annotations through multiple evidence layers.

Sample Annotation Correlation Analysis

The initial validation step involves correlating principal components with known sample annotations. This helps determine whether the major variance components separate samples based on biologically meaningful categories like tissue type, disease state, or experimental treatment. In large-scale gene expression studies, the first three PCs often separate hematopoietic cells, malignancy-related processes (particularly proliferation), and neural tissues [24]. However, sample composition strongly influences which biological signals emerge in these components. Studies demonstrate that overrepresentation of specific tissue types (e.g., liver samples) can create dedicated principal components that capture tissue-specific biology [24]. This highlights the importance of considering sample composition when interpreting PCA results.
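As a rough illustration of this step, the sketch below computes, for each component, the fraction of score variance explained by a categorical annotation (eta-squared). The function names, the tissue labels, and the simulated data are assumptions for the example; continuous annotations would call for a correlation coefficient instead.

```python
import numpy as np


def pc_scores(X, n_components):
    """Sample scores on the first components of column-centered data."""
    Xc = X - X.mean(axis=0)
    u, s, _ = np.linalg.svd(Xc, full_matrices=False)
    return u[:, :n_components] * s[:n_components]


def variance_explained_by_groups(scores, labels):
    """Eta-squared per component: between-group / total sum of squares."""
    labels = np.asarray(labels)
    grand_mean = scores.mean(axis=0)
    total = ((scores - grand_mean) ** 2).sum(axis=0)
    between = np.zeros(scores.shape[1])
    for group in np.unique(labels):
        grp = scores[labels == group]
        between += grp.shape[0] * (grp.mean(axis=0) - grand_mean) ** 2
    return between / total


# Toy data (hypothetical): 20 genes are shifted in one tissue.
rng = np.random.default_rng(3)
labels = np.array(["liver"] * 20 + ["brain"] * 20)
X = rng.normal(size=(40, 50))
X[labels == "liver", :20] += 3.0
scores = pc_scores(X, 3)
eta2 = variance_explained_by_groups(scores, labels)
```

Running the same calculation with technical annotations (batch, processing date, RNA quality) is a quick way to check whether a component tracks biology or an artifact.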

Pathway-Level Aggregation Methods

Transforming analysis from gene-level to pathway-level represents a powerful strategy for biological validation. This approach aggregates gene expression data into predefined biological pathways, creating a more robust representation that reduces technical variability while enhancing biological interpretability [27]. Multiple methodologies exist for this pathway-level aggregation:

Table 1: Comparison of Pathway-Level Aggregation Methods

| Method | Mechanism | Best Use Cases | Performance Notes |

|---|---|---|---|

| Mean of All Genes | Averages z-scaled expression of all pathway genes | Baseline approach; large pathways | Shows lowest classification accuracy in benchmarks [27] |

| Mean CORGs | Averages only condition-responsive genes within pathway | When key pathway drivers are known | Can yield discordant pathway signatures between datasets [27] |

| ASSESS | Sample-level extension of GSEA using random walk computations | Complex phenotypes; sample-specific activity | Among best accuracy and correlation in evaluations [27] |

| PCA-Based | Applies PCA to genes within each pathway | Capturing co-regulated gene groups | Good performance but dependent on component selection [27] |

| Mean Top 50% | Averages top half of most responsive genes | Balanced approach | Among best accuracy and correlation in evaluations [27] |
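Two of the simpler strategies in the table, the mean of z-scaled pathway genes and a PCA-based score, can be sketched as below. This is an illustrative simplification rather than the benchmarked implementations from [27]; the function names and the sign convention for PC1 are assumptions.

```python
import numpy as np


def zscore(X):
    """Z-scale each gene (column); assumes no gene is constant."""
    return (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)


def pathway_mean_score(X, gene_idx):
    """Per-sample mean of z-scaled expression over the pathway genes."""
    return zscore(X[:, gene_idx]).mean(axis=1)


def pathway_pc1_score(X, gene_idx):
    """Per-sample score on the first PC of the z-scaled pathway genes."""
    Z = zscore(X[:, gene_idx])
    u, s, _ = np.linalg.svd(Z, full_matrices=False)
    score = u[:, 0] * s[0]
    # PC signs are arbitrary: orient PC1 to agree with the mean score.
    if np.corrcoef(score, Z.mean(axis=1))[0, 1] < 0:
        score = -score
    return score


# Toy data (hypothetical): eight pathway genes follow a shared activity.
rng = np.random.default_rng(4)
activity = rng.normal(size=60)
X = rng.normal(scale=0.5, size=(60, 30))
pathway = np.arange(8)
X[:, pathway] += activity[:, None]
mean_score = pathway_mean_score(X, pathway)
pc1_score = pathway_pc1_score(X, pathway)
```

When all pathway genes move together, the two scores nearly coincide; they diverge when only a subset of genes responds, which is the situation the CORG and Top 50% variants are designed for.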

Advanced Methodologies for Enhanced Validation

Independent Principal Component Analysis (IPCA)

IPCA combines the advantages of both PCA and Independent Component Analysis (ICA) by using ICA as a denoising process for PCA loading vectors. This approach better highlights important biological entities and reveals insightful patterns in the data, leading to improved sample clustering on graphical representations [26]. A sparse variant (sIPCA) incorporates internal variable selection to identify biologically relevant features, further enhancing biological interpretability.

Single-Cell Pathway Scoring (scPS)

For single-cell RNA sequencing data, the single-cell Pathway Score (scPS) method uses principal component scores weighted by their explained variance, combined with average gene set expression. This approach addresses the high noise and dropout rates characteristic of single-cell data while prioritizing biologically relevant genes within pathways [28].

Residual Space Analysis

Conventional PCA often focuses exclusively on the first few components, but significant biological information may reside in higher components. The information ratio (IR) criterion provides a quantitative method to measure phenotype-specific information distribution between projected space (first k PCs) and residual space (remaining variance) [24]. Studies demonstrate that for comparisons within large-scale groups (e.g., between two brain regions or two hematopoietic cell types), most information resides in the residual space beyond the first three PCs [24].

Experimental Comparison of PCA Validation Methods

Benchmarking Study Design

To objectively compare PCA validation methodologies, we designed a comprehensive benchmarking study based on established evaluation frameworks [27] [28]. The experimental protocol assessed method performance across multiple dimensions using both simulated and real biological datasets.

Table 2: Experimental Design for Method Comparison

| Aspect | Evaluation Method | Datasets | Performance Metrics |

|---|---|---|---|

| Classification Accuracy | Internal and external validation on independent test sets | 7 pairs of two-class gene expression datasets [27] | Accuracy, generalizability |

| Pathway Signature Correlation | Correlative extent of pathway signatures between related datasets | Microarray and single-cell RNA-seq data | Correlation coefficients, consistency |

| Biological Relevance | Expert curation and known biological truth | Liver toxicity study [26], PBMC datasets [29] | Known pathway associations, cell type markers |

| Technical Robustness | Varying gene set sizes, noise levels, cell counts | Simulated data with known ground truth [28] | Sensitivity, specificity, false positive rates |

Comparative Performance Results

The experimental comparison revealed significant differences in method performance across various evaluation criteria:

Table 3: Performance Comparison of PCA Validation Methods

| Method | Classification Accuracy | Pathway Signature Consistency | Biological Interpretability | Technical Robustness |

|---|---|---|---|---|

| ASSESS | High (internal & external) | High correlation between datasets | Excellent with sample-level scores | Good with various gene set sizes |

| Mean Top 50% | High (internal & external) | High correlation between datasets | Good for clearly defined pathways | Moderate with noisy data |

| PCA-Based | Moderate | Moderate correlation | Good with component inspection | Good with linear relationships |

| Mean CORGs | Variable | Large discordance in signatures | Good when CORGs are stable | Poor with small sample sizes |

| PLS-Based | Variable | Large discordance in signatures | Moderate with complex interpretation | Sensitive to data distribution |

| IPCA | High for sample clustering | Good with denoised components | Excellent with sparse biology | Good in super-Gaussian cases [26] |

| scPS | High for single-cell data | Good for cell type identification | Excellent for rare cell types | Robust to zero inflation [28] |

Detailed Experimental Protocols

ASSESS Methodology Protocol

The ASSESS (Analysis of Sample Set Enrichment Scores) method employs a two-step random walk approach [27]:

- Gene-Level Log Likelihood Calculation: For each gene in a sample, compute the log likelihood ratio of the sample belonging to one class versus another using random walk probability calculations.

- Pathway-Level Enrichment Scoring: Apply a second random walk at the pathway level using the log likelihood ratio values of member genes to compute enrichment scores for each pathway in each sample.

Implementation: ASSESS is available in R implementations and can process standard pathway formats, including KEGG and WikiPathways.

IPCA Implementation Protocol

Independent Principal Component Analysis implementation follows these steps [26]:

- Standard PCA Pre-processing: Perform conventional PCA to reduce dimensionality and generate initial loading vectors.

- FastICA Application: Apply the FastICA algorithm to the PCA loading vectors to generate Independent Principal Components (IPCs).

- Component Ordering: Order IPCs using kurtosis measures of loading vectors, where higher kurtosis indicates stronger non-Gaussianity and potentially more biologically meaningful components.

- Sparse Variant (sIPCA): Apply soft-thresholding to independent loading vectors for built-in variable selection.
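The last two steps, kurtosis-based ordering and soft-thresholding, are easy to illustrate on their own. The sketch below deliberately omits the FastICA step and is not the mixOmics implementation; the function names and the toy loading vectors are assumptions for the example.

```python
import numpy as np


def excess_kurtosis(v):
    """Excess kurtosis (0 for a Gaussian) of a loading vector."""
    z = (v - v.mean()) / v.std()
    return np.mean(z**4) - 3.0


def order_by_kurtosis(loadings):
    """Reorder columns of `loadings` by decreasing excess kurtosis."""
    kurt = np.array([excess_kurtosis(loadings[:, j]) for j in range(loadings.shape[1])])
    order = np.argsort(-kurt)
    return loadings[:, order], kurt[order]


def soft_threshold(v, lam):
    """Shrink entries toward zero by `lam`; entries below `lam` become 0."""
    return np.sign(v) * np.maximum(np.abs(v) - lam, 0.0)


# Toy loading vectors (hypothetical): one Gaussian, one sparse.
rng = np.random.default_rng(5)
gaussian = rng.normal(size=500)
sparse = np.zeros(500)
sparse[:10] = 1.0
loadings = np.column_stack([gaussian, sparse])
ordered, kurt = order_by_kurtosis(loadings)
sparse_loading = soft_threshold(np.array([0.5, -0.2, 0.05]), 0.1)
```

The sparse vector is ranked first because a few large entries among many near-zero ones give a heavy-tailed (super-Gaussian) distribution, which is the pattern expected when a small group of genes drives a component.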

scPS Calculation Protocol

For single-cell Pathway Score calculation [28]:

PCA on Gene Set: Apply PCA to the gene expression matrix of the pathway/gene set.

Score Calculation: Compute scPS using the formula:

scPS = (1/m) × Σ(sᵢ - sₘᵢₙ) × vᵢ + μ

Where:

- μ = mean gene expression of the gene set

- sᵢ = unweighted principal component score

- sₘᵢₙ = minimum sᵢ among all cells

- vᵢ = percentage of variance explained by PC i

- m = number of PCs at which 50% cumulative variance is explained

Differential Analysis: Apply statistical tests (e.g., Wilcoxon test) to scPS scores to identify differentially active pathways.
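A direct transcription of the formula as written above might look like the following. It is a sketch based on one reading of that formula, not the reference scPS implementation [28]: it assumes the sum runs over the first m PCs, that sₘᵢₙ is taken per PC across cells, that vᵢ is expressed as a proportion between 0 and 1, and that μ is each cell's mean expression over the gene set.

```python
import numpy as np


def scps(expr):
    """Pathway score per cell; `expr` is a cells x genes matrix for one gene set."""
    Xc = expr - expr.mean(axis=0)
    u, s, _ = np.linalg.svd(Xc, full_matrices=False)
    pc_scores = u * s                         # unweighted PC scores s_i
    var_ratio = s**2 / np.sum(s**2)           # v_i as a proportion
    # m: number of PCs needed to reach 50% cumulative explained variance
    m = int(np.searchsorted(np.cumsum(var_ratio), 0.5) + 1)
    shifted = pc_scores[:, :m] - pc_scores[:, :m].min(axis=0)  # s_i - s_min
    mu = expr.mean(axis=1)                    # mean gene-set expression per cell
    return (shifted * var_ratio[:m]).sum(axis=1) / m + mu


# Toy data (hypothetical): 50 cells, 12 genes in the gene set.
rng = np.random.default_rng(6)
expr = rng.poisson(2.0, size=(50, 12)).astype(float)
scores = scps(expr)
```

Because the sign of each PC is arbitrary, the shifted scores depend on the orientation returned by the decomposition; a production implementation would need a rule for fixing that orientation.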

The choice of pathway database significantly impacts biological validation. Different databases offer varying coverage of biological processes and diseases:

Table 4: Pathway Database Comparison for Biological Annotation

| Database | Number of Pathways | Gene Coverage | Disease Coverage | Unique Features |

|---|---|---|---|---|

| PFOCR | ~1000 new pathways monthly | 77% of human genes (18,383 unique) [30] | 791/876 (90%) diseases covered [30] | Automated extraction from published figures; high throughput |

| WikiPathways | ~90 new pathways yearly | Up to 44% of human genes [30] | 127/876 (14%) diseases covered [30] | Community-curated; rapidly updated for emerging topics |

| Reactome | Manually curated | Up to 44% of human genes [30] | 153/876 (17%) diseases covered [30] | Detailed mechanistic pathways; high-quality curation |

| KEGG | Fixed collection | Up to 44% of human genes [30] | 94/876 (11%) diseases covered [30] | Classic pathways; widely recognized |

Visualization and Interpretation Guidelines

Effective visualization is crucial for interpreting and communicating PCA validation results. The following diagram illustrates the logical relationships between PCA results and biological interpretation pathways.

Figure 2. Decision framework for interpreting PCA components through biological validation. Components are evaluated through multiple channels to distinguish technical artifacts from biologically meaningful signals.

Visualization Best Practices

Effective color usage in data visualization enhances interpretation and accessibility:

- Color Palette Selection: Use perceptually uniform color spaces (CIELUV, CIELAB) for scientific visualization [31]. For categorical data, employ qualitative palettes with easily distinguishable colors.

- Accessibility Considerations: Avoid red/green combinations that challenge color-blind readers (affecting 8% of males, 0.5% of females) [32]. Use alternative color combinations like green/magenta or yellow/blue.

- Continuous Data Representation: For expression values or component scores, use sequential palettes with a single color in varying saturations or diverging palettes for data with natural midpoints [33].

Table 5: Essential Research Reagent Solutions for PCA Biological Validation

| Resource Type | Specific Tools | Function | Implementation Notes |

|---|---|---|---|

| Pathway Databases | PFOCR, WikiPathways, Reactome, KEGG | Provide biological context for gene sets | PFOCR offers greatest breadth; Reactome offers curation depth [30] |

| Analysis Packages | fgsea, clusterProfiler, GSVA, Enrichr | Perform enrichment analysis and pathway scoring | clusterProfiler supports multiple database formats [30] |

| Visualization Tools | Loupe Browser, Cytoscape, Color Oracle | Explore results and ensure accessibility | Color Oracle simulates color blindness for accessibility checking [29] [32] |

| Specialized Methods | ASSESS, IPCA, scPS, AUCell | Advanced pathway activity scoring | ASSESS and Mean Top 50% show best performance in benchmarks [27] |

Based on comprehensive experimental comparisons, the following recommendations emerge for validating the biological relevance of PCA results:

- Employ Multiple Validation Methods: No single method captures all biological signals. Combine sample annotation correlation with pathway-level analysis and residual space examination.

- Contextualize with Sample Composition: Interpret components in light of sample distribution, as overrepresented tissues can dominate variance structure [24].

- Look Beyond the First Few Components: Biologically relevant information, particularly for specific tissue types, often resides beyond the first three principal components [24].

- Leverage Complementary Pathway Methods: ASSESS and Mean Top 50% generally provide the most robust performance, but method choice should align with specific research questions and data characteristics [27].

- Utilize Modern Pathway Resources: PFOCR provides exceptional coverage of biological processes and diseases, making it particularly valuable for detecting novel associations [30].

The link between variance and biology must be established through multiple complementary validation approaches. By implementing these evidence-based practices, researchers can interpret PCA results with confidence and turn statistical patterns into actionable biological insights.

Executing Biologically-Grounded PCA: From Data Prep to Annotation

In the analysis of high-throughput biological data, Principal Component Analysis (PCA) is an indispensable tool for dimensionality reduction and noise filtering. However, the suitability of PCA is contingent on appropriate normalization and transformation of count data, as improper choices can result in the loss of biological information or signal corruption due to excessive noise [34]. The discrete nature of biomolecules has driven the widespread use of count data in modern biology, with various experimental methods counting unique entities like RNA transcripts, open chromatin regions, or proteins to characterize biological phenomena [34]. Yet, analysis of these datasets is often complicated by technical biases, noise, and inherent measurement variability associated with discrete counts. This comparison guide objectively evaluates the performance of various PCA-based preprocessing methodologies, focusing on their ability to enhance biological interpretability while effectively handling noise in high-dimensional biological data.

Comparative Analysis of PCA Methodologies for Biological Data

Table 1: Performance Comparison of PCA Variants for Biological Data Analysis

| Method | Core Innovation | Noise Handling | Data Type Suitability | Biological Interpretability | Key Limitations |

|---|---|---|---|---|---|

| Standard PCA [35] | Orthogonal transformation maximizing variance | Homoscedastic noise only | Continuous, normally distributed data | Limited; components are linear combinations of all variables | Assumes linear relationships; sensitive to scaling; poor with count data |

| Biwhitened PCA (BiPCA) [34] | Adaptive row/column rescaling (biwhitening) | Heteroscedastic noise in count data | Omics count data (scRNA-seq, scATAC-seq, etc.) | High; enhances marker gene expression, preserves cell neighborhoods | Recently introduced (2025); requires further community validation |

| Independent PCA (IPCA) [20] [26] | ICA denoising of PCA loading vectors | Separates non-Gaussian signals from Gaussian noise | High-throughput data with super-Gaussian distributions | Better clustering of biological samples than PCA or ICA alone | Performs poorly when loading vectors follow Gaussian distribution |

| Structured Sparse PCA [36] | Incorporates biological network information | Through variable selection | Genomic data with prior pathway information | High; identifies biologically relevant pathways and gene sets | Requires pre-specified biological network information |

Table 2: Experimental Performance Metrics Across Biological Modalities

| Method | Rank Recovery Accuracy | Signal-to-Noise Improvement | Computation Time | Batch Effect Mitigation | Validation Across Modalities |

|---|---|---|---|---|---|

| Standard PCA | Variable (requires heuristics) | Limited for count data | Fast | Limited | Extensive historical use |

| Biwhitened PCA | Reliable across 100+ datasets [34] | 5.3 dB improvement in marine bioacoustics [7] | Efficient for high-dimensional data | Demonstrated to be effective | Validated on 7 omics modalities |

| Independent PCA | Better than PCA/ICA alone [26] | Enhanced through denoising | Moderate (requires multiple runs) | Not specifically reported | Microarray and metabolomics data |

| Structured Sparse PCA | Improved feature selection [36] | Through structured sparsity | Varies with network size | Not specifically reported | Glioblastoma gene expression |

Experimental Protocols and Methodologies

Biwhitened PCA for Omics Count Data

Protocol Objective: To recover the true dimensionality and denoise high-throughput biological count data while preserving biological signals.

Theoretical Foundation: BiPCA models the observed data matrix Y (m×n) as the sum of a low-rank mean matrix X (rank r≪m) and a centered noise matrix ℰ: Y = X + ℰ. This formulation covers count distributions where Yᵢⱼ ~ Poisson(Xᵢⱼ) [34].

Step-by-Step Methodology:

- Biwhitening Normalization: The algorithm finds optimal rescaling factors û and v̂ to transform the data: Ỹ = D(û) Y D(v̂) = D(û) (X + ℰ) D(v̂) = X̃ + ℰ̃, where D(û) and D(v̂) are diagonal matrices. This ensures the average noise variance is 1 in each row and column [34].

- Rank Estimation: The spectrum of the biwhitened noise matrix ℰ̃ converges to the Marchenko-Pastur distribution, allowing identification of signal components exceeding this noise distribution [34].

- Singular Value Shrinkage: The biwhitened signal matrix X̃ is estimated using optimal singular value shrinkage: X̂ = Ũ D(g(s̃)) Ṽᵀ, where g is an optimal shrinker (e.g., Frobenius shrinker g_F) that removes noise singular values while attenuating signal singular values based on noise contamination [34].
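The first two steps can be illustrated with a simplified sketch for Poisson counts. This is not the BiPCA package: it assumes the noise variance of each entry can be estimated by the count itself, uses plain Sinkhorn iterations to find the scaling factors, and uses the asymptotic Marchenko-Pastur edge √m + √n as the rank cutoff. Singular value shrinkage is omitted, and all names are hypothetical.

```python
import numpy as np


def sinkhorn_scale(V, n_iter=500, tol=1e-10):
    """Find u, v so diag(u) @ V @ diag(v) has row sums n and column sums m."""
    m, n = V.shape
    u, v = np.ones(m), np.ones(n)
    for _ in range(n_iter):
        u = n / (V @ v)
        v = m / (V.T @ u)
        # Column sums are exact after the v update; check the row sums.
        if np.max(np.abs(u * (V @ v) - n)) < tol * n:
            break
    return u, v


def biwhiten_poisson(Y):
    """Rescale counts so the average noise variance is ~1 per row and column."""
    Y = Y.astype(float)
    # For Poisson data Var(Y_ij) = X_ij, estimated here by Y_ij itself.
    u, v = sinkhorn_scale(Y)
    return np.sqrt(u)[:, None] * Y * np.sqrt(v)[None, :]


def estimate_rank(Y_tilde):
    """Count singular values above the Marchenko-Pastur noise edge."""
    m, n = Y_tilde.shape
    s = np.linalg.svd(Y_tilde, compute_uv=False)
    return int(np.sum(s > np.sqrt(m) + np.sqrt(n)))


# Toy data (hypothetical): Poisson counts around a rank-3 mean matrix.
rng = np.random.default_rng(7)
X = rng.uniform(1, 3, size=(100, 3)) @ rng.uniform(1, 3, size=(3, 150))
Y = rng.poisson(X)
u, v = sinkhorn_scale(Y.astype(float))
scaled_variance = u[:, None] * Y * v[None, :]
rank = estimate_rank(biwhiten_poisson(Y))
```

The sketch requires every row and column to contain at least one non-zero count; very sparse single-cell matrices would need filtering first.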

Independent PCA for Biological Dimension Reduction

Protocol Objective: To generate denoised loading vectors that better highlight important biological entities and reveal insightful patterns.

Theoretical Foundation: IPCA combines PCA as a preprocessing step with Independent Component Analysis (ICA) applied to the loading vectors. ICA identifies statistically independent components using higher-order statistics, unlike PCA which uses second-order statistics [20] [26].

Step-by-Step Methodology:

- PCA Preprocessing: Perform standard PCA on the high-dimensional data to generate loading vectors and reduce dimensionality.

- ICA Denoising: Apply the FastICA algorithm to the PCA loading vectors to separate mixed signals (noise vs. biological signal).

- Component Ordering: Order the Independent Principal Components (IPCs) using kurtosis values of their associated loading vectors as a measure of non-Gaussianity.

- Sparse Variant (sIPCA): Apply soft-thresholding to the independent loading vectors to perform internal variable selection and identify biologically relevant features [26].

Experimental Validation: In simulation studies with super-Gaussian distributed loading vectors, IPCA achieved a median angle of 12.46° versus 20.47° for standard PCA when recovering known eigenvectors, demonstrating superior performance in recovering true biological signals [26].

Structured Sparse PCA with Biological Information

Protocol Objective: To obtain interpretable principal components that utilize biological network information while performing variable selection.

Theoretical Foundation: The method incorporates prior biological knowledge through two novel approaches: Fused sparse PCA (encourages selection of connected variables in a network) and Grouped sparse PCA (utilizes group information of variables) [36].

Step-by-Step Methodology:

- Network Representation: Represent biological knowledge as a weighted undirected graph 𝒢 = (C, E, W), where C represents nodes (biological features), E represents edges (associations between features), and W represents edge weights.

- Structured Optimization: Solve the constrained optimization problem that minimizes a structured-sparsity inducing penalty of principal component loadings subject to an ℓ∞ norm constraint on the eigenvalue difference.

- Pathway Identification: Utilize the structured sparsity to identify biologically relevant pathways and gene sets that explain variation in the data while respecting known biological relationships.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for PCA in Biological Research

| Tool/Package | Primary Function | Compatibility | Key Features | Application Context |

|---|---|---|---|---|

| BiPCA Python Package [34] | Biwhitening and denoising | Python | Hyperparameter-free, verifiable with goodness-of-fit metrics | Omics count data (scRNA-seq, scATAC-seq, spatial transcriptomics) |

| mixOmics R Package [20] [26] | IPCA and sIPCA implementation | R | Combines PCA and ICA; includes sparse variant with variable selection | Microarray, metabolomics, general high-throughput data |

| FactoMineR, psych, ggfortify [37] | Standard PCA and visualization | R | User-friendly interfaces, biplots, scree plots, correlation circles | General exploratory data analysis and visualization |

| Structured Sparse PCA Code [36] | Fused and Grouped sparse PCA | R (implied) | Incorporates biological network information | Genomic data with known pathway information |

| PCA Denoising for Bioacoustics [7] | Marine bioacoustics denoising | MATLAB/Python (GitHub) | Selective suppression of anthropogenic noise | Ecological monitoring, bioacoustic recordings |

The validation of PCA results with biological annotations requires careful consideration of data preprocessing strategies, particularly for scaling, centering, and noise handling in high-dimensional biological data. Biwhitened PCA demonstrates robust performance for omics count data by addressing fundamental challenges with heteroscedastic noise through mathematically principled biwhitening [34]. Independent PCA offers advantages for data with super-Gaussian distributions by effectively denoising loading vectors [26], while structured sparse PCA incorporates valuable biological network information to enhance interpretability [36]. Standard PCA remains valuable for continuous, normally distributed data but shows limitations with count data common in biological applications. The choice of methodology should be guided by data characteristics, noise structure, and availability of biological prior knowledge, with validation through biological annotations essential for confirming methodological efficacy.

A Step-by-Step Workflow for PCA in Gene Expression and Clinical Datasets

Principal Component Analysis (PCA) is a fundamental dimensionality reduction technique widely used to analyze high-dimensional biological data, such as gene expression profiles and clinical datasets. By transforming complex datasets into a reduced set of principal components, PCA helps researchers identify key patterns, trends, and sources of variation while minimizing information loss [9]. In genomics and clinical research, where datasets often contain thousands of variables measured across relatively few samples, PCA provides an essential tool for exploratory data analysis, noise reduction, and data visualization [11] [38].

The application of PCA extends across multiple domains within biological research. In gene expression analysis, PCA helps summarize the biological state of profiled tumors through gene signature scores [38]. For microbiome studies, PCA-based approaches enable researchers to connect microbial community patterns to host phenotypes such as age [15]. The technique also serves crucial functions in data preprocessing before applying machine learning algorithms, where it reduces multicollinearity and minimizes overfitting by projecting high-dimensional data into smaller feature spaces [9]. This article presents a comprehensive workflow for implementing PCA specifically designed for gene expression and clinical datasets, with emphasis on validation through biological annotations.

Theoretical Foundations of PCA

Mathematical Principles

PCA operates by performing an eigendecomposition of the covariance matrix of the original data, resulting in eigenvectors (principal components) and eigenvalues (variances) [9]. The first principal component (PC1) represents the direction of maximum variance in the data, with each subsequent component capturing the next highest variance while remaining orthogonal to previous components [9]. This transformation creates a new coordinate system whose axes are the principal components, allowing the original data to be represented in a lower-dimensional space while retaining the most significant patterns and relationships.

The mathematical process begins with data standardization, ensuring each variable contributes equally to the analysis by transforming features to have a mean of zero and standard deviation of one [9]. Next, the algorithm computes the covariance matrix to identify correlations between variables, followed by extraction of eigenvectors and eigenvalues from this matrix [9]. The eigenvectors represent the principal components, while the eigenvalues indicate the amount of variance captured by each component. Researchers then select a subset of components based on their eigenvalues, typically retaining those that collectively explain most of the dataset variance [11].
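The sequence just described (standardize, compute the covariance matrix, eigendecompose, select components) can be written out directly in NumPy. The function name and the 90% cumulative-variance target are assumptions for this sketch; library implementations usually rely on the SVD instead, which is numerically preferable when variables outnumber samples.

```python
import numpy as np


def pca_eig(X, var_target=0.9):
    """PCA via eigendecomposition of the covariance of standardized data."""
    # 1. Standardize: zero mean, unit standard deviation per variable.
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    # 2. Covariance matrix (equal to the correlation matrix after step 1).
    C = np.cov(Z, rowvar=False)
    # 3. Eigendecomposition; eigh returns eigenvalues in ascending order.
    eigvals, eigvecs = np.linalg.eigh(C)
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    # 4. Keep the smallest number of components reaching the variance target.
    explained = eigvals / eigvals.sum()
    k = int(np.searchsorted(np.cumsum(explained), var_target) + 1)
    k = min(k, eigvals.size)
    return Z @ eigvecs[:, :k], eigvals, explained, k


# Toy data (hypothetical): six correlated variables, 80 samples.
rng = np.random.default_rng(8)
X = rng.normal(size=(80, 6)) @ rng.normal(size=(6, 6))
scores, eigvals, explained, k = pca_eig(X)
```

Two quick sanity checks follow from the math: the eigenvalues of a correlation matrix sum to the number of variables, and the variance of each score column equals its eigenvalue.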

Comparison to Alternative Dimensionality Reduction Methods

PCA belongs to a family of dimensionality reduction techniques, each with distinct characteristics and applications. The table below compares PCA to other commonly used methods:

Table 1: Comparison of Dimensionality Reduction Techniques

| Method | Type | Key Characteristics | Best Suited For |
|---|---|---|---|
| PCA | Linear, Unsupervised | Preserves global structure, orthogonal components | Linearly separable data, noise reduction |
| LDA | Linear, Supervised | Maximizes class separability, requires class labels | Classification tasks with labeled data |
| t-SNE | Non-linear, Unsupervised | Preserves local neighborhoods, captures complex manifolds | Visualization of high-dimensional data |
| UMAP | Non-linear, Unsupervised | Preserves both local and global structure | Visualization, pre-processing for clustering |
| Factor Analysis | Linear, Unsupervised | Models latent variables, focuses on covariance structure | Identifying underlying data structures |

Unlike Linear Discriminant Analysis (LDA), PCA is not limited to supervised learning tasks and can reduce dimensions without considering class labels or categories [9]. Compared to non-linear techniques like t-SNE and UMAP, PCA performs linear transformations, making it more suitable for datasets where linear relationships dominate the variance structure [9]. Factor analysis, while similar in reducing dimensions, focuses more on identifying latent variables rather than creating components that maximize explained variance [9].

A Step-by-Step PCA Workflow for Biological Data

Experimental Design and Data Preparation

The initial phase of PCA implementation requires careful experimental design and data preprocessing to ensure meaningful results. For gene expression studies, this involves planning sample collection, determining appropriate sample sizes, and establishing normalization procedures. Sample size considerations are particularly critical in high-dimensional biological data where the number of variables (p) often exceeds the number of samples (n) [11]. In such "n < p" scenarios, specialized statistical approaches may be necessary to ensure reliable covariance estimation [11].

Data normalization represents a crucial preprocessing step before PCA application. For microarray gene expression data, tools like Genealyzer provide comprehensive preprocessing capabilities, including background correction and normalization algorithms for both Affymetrix and Agilent platforms [39]. RNA sequencing data requires appropriate normalization methods such as Counts Per Million (CPM) or others that account for library size differences [40]. Proper normalization ensures that technical variations do not dominate the biological signals captured by principal components.
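As an illustration of library-size normalization, the following sketch computes CPM from a raw count matrix. The function name `cpm` and the toy counts are hypothetical. The log transform uses a simple pseudocount of 1, which is an assumption and not the exact procedure of any particular package.

```python
import numpy as np

def cpm(counts, log=False, pseudocount=1.0):
    """Counts Per Million for a (samples x genes) matrix of raw counts.

    Assumes every sample has a non-zero library size.
    """
    counts = np.asarray(counts, dtype=float)
    # Library size = total counts per sample
    lib_sizes = counts.sum(axis=1, keepdims=True)
    cpm_vals = counts / lib_sizes * 1e6
    if log:
        # Simple log2(CPM + pseudocount); the pseudocount is an illustrative choice
        return np.log2(cpm_vals + pseudocount)
    return cpm_vals

# Two samples with the same composition but a 10-fold difference in depth
counts = np.array([[10, 30, 60],
                   [100, 300, 600]])
cpm_vals = cpm(counts)
log_cpm = cpm(counts, log=True)
```

After normalization the two samples have identical profiles, so the sequencing-depth difference would no longer appear as variance in a subsequent PCA.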

Table 2: Essential Research Reagent Solutions for PCA Workflows

| Research Reagent | Function in PCA Workflow | Example Tools/Implementations |
|---|---|---|
| Normalization Algorithms | Standardize data for comparative analysis | RMA for Affymetrix, CPM for RNA-seq [39] [40] |
| Quality Control Packages | Assess data quality and identify outliers | Genealyzer, ArrayTrack [39] |
| Covariance Estimators | Handle high-dimensional data (n < p) | Ledoit-Wolf Estimator [11] |
| Component Selection Tools | Determine optimal number of principal components | Scree plots, Pareto charts [11] |
| Biological Annotation Databases | Validate components with known biological functions | Gene Ontology, KEGG pathways [38] |

Component Selection and Validation Framework

Determining the optimal number of principal components to retain represents one of the most critical decisions in PCA implementation. Three common approaches include the Kaiser-Guttman criterion (retaining components with eigenvalues >1), Cattell's Scree test (identifying the "elbow" where eigenvalues level off), and the percent cumulative variance approach (retaining components that explain a specific percentage of total variance, typically 70-80%) [11]. Research indicates that the percent cumulative variance method offers greater stability compared to other techniques, with the Pareto chart (which displays both cumulative percentage and cut-off points) providing the most reliable component selection method for health-related research applications [11].
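Two of these rules are easy to express in code. The sketch below applies the Kaiser-Guttman criterion and the percent cumulative variance rule to a vector of eigenvalues. The function name `select_components`, the 80% default, and the example eigenvalues are illustrative assumptions.

```python
import numpy as np

def select_components(eigvals, variance_threshold=0.80):
    """Return component counts under two selection rules.

    eigvals: eigenvalues of the correlation matrix (standardized data),
             which is the setting where the eigenvalue > 1 rule applies.
    """
    eigvals = np.sort(np.asarray(eigvals, dtype=float))[::-1]
    # Kaiser-Guttman: keep components with eigenvalue > 1
    kaiser_k = int(np.sum(eigvals > 1.0))
    # Percent cumulative variance: smallest k reaching the threshold
    cumulative = np.cumsum(eigvals) / eigvals.sum()
    cumvar_k = int(np.searchsorted(cumulative, variance_threshold) + 1)
    cumvar_k = min(cumvar_k, len(eigvals))
    return kaiser_k, cumvar_k, cumulative

# Hypothetical eigenvalues for an 8-variable dataset
eigvals = [4.0, 2.0, 1.5, 0.9, 0.6, 0.5, 0.3, 0.2]
kaiser_k, cumvar_k, cumulative = select_components(eigvals)
print(kaiser_k, cumvar_k)  # 3 components by Kaiser-Guttman, 4 by the 80% rule
```

Plotting `eigvals` as bars with `cumulative` as an overlaid line would give the Pareto-style chart described above. The two rules can disagree, as they do in this example.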

Validation of PCA results requires a multifaceted approach focusing on four key properties: coherence (elements of a gene signature should be correlated beyond chance), uniqueness (the signature should capture specific biological effects rather than general data trends), robustness (biological signals should be strong and distinct compared to other signals), and transferability (PCA gene signature scores should describe the same biology in target datasets as in training datasets) [38]. This validation framework ensures that PCA-based gene signatures perform as expected when applied to datasets beyond those used for training.

Figure 1: PCA Workflow for Biological Data - This diagram illustrates the key steps in implementing PCA for gene expression and clinical datasets, from initial data preparation through biological validation.

Implementation and Computational Considerations

Practical implementation of PCA requires appropriate computational tools and software environments. The R programming language provides extensive capabilities for PCA analysis through packages available in the Bioconductor project [39] [40]. For web-based applications, tools like Genealyzer offer user-friendly interfaces that abstract away mathematical and programming details, enabling researchers without advanced computational backgrounds to perform sophisticated analyses [39]. Python implementations through scikit-learn provide additional alternatives, particularly for integration into larger machine learning pipelines.

Computational efficiency becomes particularly important when analyzing large-scale genomic datasets. For exceptionally large datasets, alternative covariance estimation techniques such as the Ledoit-Wolf Estimator or Pairwise Differences Covariance Estimation can improve stability in high-dimensional settings where n < p [11]. Additionally, specialized implementations like the ICARus package employ PCA as a foundational step for determining parameters in more complex analyses like Independent Component Analysis, demonstrating how PCA integrates into broader analytical workflows [40].
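A minimal sketch of shrinkage covariance estimation in an n < p setting is shown below, using the `LedoitWolf` estimator from scikit-learn. The data are synthetic noise and the dimensions are arbitrary assumptions chosen only to make the sample covariance singular.

```python
import numpy as np
from sklearn.covariance import LedoitWolf

# Synthetic n < p data: 20 samples, 100 features
rng = np.random.default_rng(0)
n_samples, n_features = 20, 100
X = rng.normal(size=(n_samples, n_features))

# The ordinary sample covariance is rank-deficient when n < p
sample_cov = np.cov(X, rowvar=False)
min_eig_sample = np.linalg.eigvalsh(sample_cov).min()

# Ledoit-Wolf shrinks the sample covariance toward a scaled identity matrix
lw = LedoitWolf().fit(X)
shrunk_cov = lw.covariance_
min_eig_shrunk = np.linalg.eigvalsh(shrunk_cov).min()

# Eigendecomposition of the shrunk estimate, sorted by decreasing eigenvalue
eigvals, eigvecs = np.linalg.eigh(shrunk_cov)
eigvals, eigvecs = eigvals[::-1], eigvecs[:, ::-1]

print(f"shrinkage intensity: {lw.shrinkage_:.3f}")
print(f"smallest eigenvalue, sample vs. shrunk: {min_eig_sample:.2e} vs. {min_eig_shrunk:.2e}")
```

Because the shrinkage target is a multiple of the identity, the eigenvectors match those of the sample covariance. What changes is the eigenvalue spectrum, which becomes strictly positive and less extreme. This matters for eigenvalue-based component selection and for any step that needs an invertible covariance matrix.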

Validation of PCA Results with Biological Annotations

Establishing Biological Relevance

A critical challenge in applying PCA to biological data involves ensuring that the identified principal components correspond to meaningful biological phenomena rather than technical artifacts or random noise. Validation with biological annotations provides a framework for addressing this challenge. This process involves connecting statistical patterns revealed by PCA to established biological knowledge through gene set enrichment analysis, pathway mapping, and comparison with known biological signatures [38].

One effective validation approach involves comparing PCA results against randomized gene signatures. By generating thousands of random gene sets and comparing their PCA results to those obtained from biologically-defined signatures, researchers can quantify how much "better" the true gene signature performs compared to random expectations [38]. This method helps control for dataset-specific biases, such as the proliferation-signature bias common in tumor datasets that can cause random gene sets to appear significantly associated with clinical outcomes [38].
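The random-signature comparison can be sketched as follows. This is an illustration under assumptions, not the procedure from [38]: the statistic used here (fraction of variance explained by PC1 within the gene set) is one possible coherence measure, and the function names and synthetic expression matrix are hypothetical.

```python
import numpy as np

def pc1_variance_fraction(X):
    """Fraction of total variance captured by PC1 of a (samples x genes) matrix."""
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    s = np.linalg.svd(Z, compute_uv=False)   # singular values of standardized data
    return s[0] ** 2 / np.sum(s ** 2)

def random_signature_test(expr, signature_idx, n_random=1000, seed=0):
    """Compare a signature's PC1 variance fraction with random gene sets of equal size."""
    rng = np.random.default_rng(seed)
    observed = pc1_variance_fraction(expr[:, signature_idx])
    k, n_genes = len(signature_idx), expr.shape[1]
    null = np.array([
        pc1_variance_fraction(expr[:, rng.choice(n_genes, size=k, replace=False)])
        for _ in range(n_random)
    ])
    # Empirical p-value with the usual +1 correction
    p_value = (1 + np.sum(null >= observed)) / (1 + n_random)
    return observed, null, p_value

# Synthetic data: 60 samples x 500 genes; the first 20 genes share a latent factor
rng = np.random.default_rng(1)
expr = rng.normal(size=(60, 500))
latent = rng.normal(size=(60, 1))
expr[:, :20] += 2.0 * latent

observed, null, p_value = random_signature_test(expr, np.arange(20), n_random=200)
```

In a real tumor dataset the null distribution is rarely as flat as in this synthetic case, which is the reason for building it empirically from the same expression matrix.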

Addressing Common Pitfalls in PCA Interpretation

Several common pitfalls can compromise the interpretation of PCA results in biological contexts. Sign-flipping represents a technical issue where the sign of score values for samples may change depending on the software used or small data variations [38]. While this doesn't change the fundamental interpretation of PCA models, it can cause confusion when comparing different studies. This issue can be resolved by multiplying both scores and loadings by -1 to achieve consistent orientation [38].
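One way to apply this correction systematically is to orient each component against a reference set of loadings, for example those from the training dataset. The helper `align_sign` below is a hypothetical sketch of that idea. It assumes the components in both models are already in the same order.

```python
import numpy as np

def align_sign(scores, loadings, reference_loadings):
    """Flip components so their loadings point the same way as the reference.

    scores:   (samples x components)
    loadings: (features x components), same shape as reference_loadings
    """
    # Sign of the dot product between each component and its reference
    signs = np.sign(np.sum(loadings * reference_loadings, axis=0))
    signs[signs == 0] = 1.0                     # leave orthogonal components untouched
    # Multiply scores and loadings by the same sign
    return scores * signs, loadings * signs

# Synthetic example: compute PCA, then simulate a sign flip on PC2 and PC4
rng = np.random.default_rng(0)
X = rng.normal(size=(30, 4))
Xc = X - X.mean(axis=0)
_, _, Vt = np.linalg.svd(Xc, full_matrices=False)
loadings = Vt.T
scores = Xc @ loadings

flip = np.array([1.0, -1.0, 1.0, -1.0])
flipped_scores, flipped_loadings = scores * flip, loadings * flip

aligned_scores, aligned_loadings = align_sign(flipped_scores, flipped_loadings, loadings)
```

Flipping scores and loadings together leaves the reconstructed data unchanged, which is why the interpretation of the model is unaffected.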

Another significant challenge involves biological complexity within gene signatures. When a signature describes multiple biological processes, PCA may only capture one of these events in the first principal component [38]. Mixed signatures, such as those combining gender-specific genes with proliferation-related genes, exemplify this challenge, as the resulting PCA model may emphasize one biological aspect while obscuring others [38]. Addressing this limitation requires careful signature design and additional validation to ensure all relevant biological processes are adequately represented.

Figure 2: PCA Validation Framework - This validation workflow ensures PCA results capture biologically meaningful signals rather than technical artifacts or random noise.

Advanced PCA Applications in Biological Research

Specialized PCA Variations for Biological Data

Standard PCA implementations can be enhanced through specialized variations designed to address specific challenges in biological data analysis. Robust PCA approaches incorporate additional constraints to improve performance with noisy datasets or outliers. For example, Transformer-based Robust PCA (TRPCA) combines transformer architectures with robust PCA to improve prediction accuracy while maintaining interpretability [15]. In microbiome studies, TRPCA has demonstrated significant improvements in age prediction accuracy from human microbiome samples, achieving a 28% reduction in Mean Absolute Error for whole-genome sequencing skin samples compared to conventional approaches [15].

Independent Component Analysis (ICA) represents another extension that builds upon PCA foundations. While PCA identifies components that maximize variance and are orthogonal, ICA seeks statistically independent components that may better capture biologically independent processes [40]. Packages like ICARus leverage PCA to determine parameter ranges before applying ICA, using the proportion of variance explained by principal components to identify near-optimal parameters for the ICA algorithm [40]. This integrated approach demonstrates how PCA serves as a foundational element in more complex analytical workflows.

PCA in Multi-Omics Data Integration

The growing availability of multi-omics datasets presents both opportunities and challenges for dimensional reduction techniques. PCA facilitates data integration across different molecular profiling technologies, such as microarray and RNA sequencing platforms [39]. Tools like Genealyzer enable comparative analysis of gene expression data from different technologies and organisms, addressing the challenge of platform-specific technical variations that can complicate integrated analysis [39].

When applying PCA to multi-omics data, careful attention to data scaling and normalization becomes increasingly important. Different omics platforms produce measurements on different scales with distinct noise characteristics, requiring platform-specific preprocessing before integrated analysis [39]. Successful implementation also requires validation approaches that account for the unique properties of each data type while identifying biologically consistent patterns across molecular layers.

Comparative Performance Analysis

Benchmarking PCA Against Alternative Methods

Rigorous benchmarking provides essential insights for selecting appropriate analytical methods for specific research contexts. Studies comparing differential gene expression analysis tools have revealed that agreement among different methods in calling differentially expressed genes is generally not high, with a clear trade-off between true-positive rates and precision [41]. Methods with higher true positive rates tend to show low precision due to false positives, while methods with high precision typically identify fewer differentially expressed genes [41].

In the context of single-cell RNA sequencing data, conventional PCA approaches face specific challenges due to data characteristics such as multimodality and an abundance of zero counts [41]. These characteristics play important roles in the performance of differential expression analysis methods and must be considered when applying PCA to such data. Specialized methods designed for single-cell data, such as SCDE and MAST, employ two-part joint models to address zero counts separately from normally observed genes [41].

Table 3: Performance Comparison of PCA Component Selection Methods

| Selection Method | Key Principle | Advantages | Limitations | Recommended Context |
|---|---|---|---|---|
| Kaiser-Guttman Criterion | Retain components with eigenvalues >1 | Simple, objective rule | Tends to select too many components when many variables [11] | Initial screening, large datasets |
| Cattell's Scree Test | Identify "elbow" where eigenvalues level off | Visual, intuitive interpretation | Subjective, lacks clear cutoff definition [11] | Exploratory analysis, clear elbows |
| Percent Cumulative Variance | Retain components explaining set variance (e.g., 70-80%) | Stable, consistent results [11] | Arbitrary threshold selection | Most applications, particularly healthcare [11] |
| Pareto Chart | Display cumulative percentage and cut-off points | Comprehensive visualization, reliable [11] | More complex implementation | Critical healthcare applications [11] |

Practical Recommendations for Researchers