Beyond the Standard Code: Strategies for Predicting Genes with Non-Canonical RBS Patterns


Abstract

Accurate gene prediction is fundamental to genomic annotation but remains challenged by non-canonical Ribosome Binding Site (RBS) patterns that evade detection by traditional methods. This article provides a comprehensive resource for researchers and bioinformaticians, detailing the biological basis of non-canonical translation initiation and its significance in revealing novel proteins, especially in the 'dark genome'. We explore cutting-edge computational methodologies, from ribosome profiling and proteogenomics to advanced deep learning models, for identifying these elusive genetic elements. The content further offers practical troubleshooting strategies to overcome common pitfalls and outlines rigorous validation frameworks to benchmark predictive performance. By synthesizing foundational knowledge with applied methodologies, this guide aims to equip scientists with the tools needed to expand the annotated proteome, with profound implications for discovering novel biomarkers and therapeutic targets.

Unveiling the Hidden Genome: The Biology and Significance of Non-Canonical RBS

In bacterial genetics, the Shine-Dalgarno (SD) mechanism has long been considered the canonical model for translation initiation. This process involves base pairing between an upstream SD sequence on the mRNA and the complementary anti-SD sequence at the 3' end of the 16S rRNA within the 30S ribosomal subunit [1]. This interaction positions the ribosome correctly at the start codon and has been considered the dominant initiation pathway.
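The SD and anti-SD pairing described above can be approximated computationally by looking for a stretch of the SD core in the region upstream of a start codon. The following is a minimal sketch, not a validated predictor: the core motif `AGGAGG`, the minimum match length of 4 nt, and the function names are illustrative assumptions, and real tools typically score hybridization energy and spacing rather than exact substring matches.

```python
# Assumed SD core motif, complementary to the 3' tail of the 16S rRNA.
SD_CORE = "AGGAGG"


def longest_sd_match(upstream: str, core: str = SD_CORE) -> tuple[int, int]:
    """Return (length, index) of the longest exact sub-motif of `core`
    found in `upstream`; (0, -1) if nothing matches."""
    upstream = upstream.upper().replace("U", "T")
    # Try the longest sub-motifs first so the first hit is the best one.
    for size in range(len(core), 0, -1):
        for i in range(len(core) - size + 1):
            idx = upstream.find(core[i:i + size])
            if idx != -1:
                return size, idx
    return 0, -1


def has_sd(upstream: str, min_len: int = 4) -> bool:
    """Call an SD site when at least `min_len` contiguous core bases match
    (the threshold is an assumption, not a published cutoff)."""
    return longest_sd_match(upstream)[0] >= min_len


print(has_sd("TTTAAGGAGGTTTATC"))  # leader containing the full core
print(has_sd("TTTTATATTTTAAAT"))   # A/U-rich leader without an SD-like stretch
```

Genes whose upstream region fails such a check are candidates for the SD-independent pathways discussed in the rest of this section.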

However, systematic genomic analyses have revealed a surprising fact: approximately half of all bacterial genes lack a recognizable SD sequence [1]. This finding has driven the discovery and characterization of multiple non-canonical translation initiation mechanisms that deviate from this conventional route. These alternative pathways include standby binding, leaderless initiation, and protein-mediated initiation, which enable bacteria to regulate gene expression through sophisticated mechanisms beyond the traditional SD model.

Frequently Asked Questions (FAQs)

Q1: What defines a non-canonical translation initiation mechanism? A1: Non-canonical translation initiation encompasses any mechanism that does not primarily rely on the standard base-pairing interaction between the Shine-Dalgarno sequence and the anti-SD sequence of the 16S rRNA. These mechanisms include translation of leaderless mRNAs (which lack a 5' untranslated region), standby binding where ribosomes initially bind to single-stranded regions outside the structured RBS, initiation mediated by ribosomal protein S1, and recognition of non-canonical start codons such as GTG and TTG [1] [2].

Q2: My gene prediction algorithm fails to identify potential coding sequences in certain genomic regions. Could non-canonical translation be the reason? A2: Yes, conventional gene prediction tools often rely on canonical SD sequences and AUG start codons to identify coding regions. Non-canonical mechanisms utilizing non-AUG start codons (GTG, TTG) or SD-independent initiation can lead to these coding sequences being overlooked [2]. Additionally, short open reading frames (sORFs) and those located in untranslated regions (UTRs) or non-coding RNAs often escape detection by standard algorithms [3]. Incorporating ribosome profiling data and expanding search parameters to include alternative start codons can improve identification of these non-canonical coding sequences.
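As an illustration of what "expanding search parameters to include alternative start codons" can mean in practice, the sketch below scans the forward strand of a DNA sequence for ORFs that begin at ATG, GTG, or TTG. The function name, the `min_codons` threshold, and the decision to report every in-frame candidate start are assumptions made for the example; it is not how any particular gene finder works.

```python
STOP_CODONS = {"TAA", "TAG", "TGA"}


def find_orfs(seq, start_codons=("ATG", "GTG", "TTG"), min_codons=10):
    """Return sorted (start, end, start_codon) tuples for forward-strand ORFs.

    `end` is exclusive and includes the stop codon. Every candidate start
    upstream of a stop is reported, because the true start is often ambiguous.
    """
    seq = seq.upper()
    orfs = []
    for frame in range(3):
        open_starts = []  # candidate starts seen since the last in-frame stop
        for i in range(frame, len(seq) - 2, 3):
            codon = seq[i:i + 3]
            if codon in STOP_CODONS:
                for s in open_starts:
                    if (i - s) // 3 >= min_codons:
                        orfs.append((s, i + 3, seq[s:s + 3]))
                open_starts = []
            elif codon in start_codons:
                open_starts.append(i)
    return sorted(orfs)


toy = "CC" + "GTG" + "GCA" * 12 + "TAA"
print(find_orfs(toy))                          # found with GTG allowed
print(find_orfs(toy, start_codons=("ATG",)))   # missed by an ATG-only search
```

Running the same scan on the reverse complement would cover the other strand; that step is omitted here to keep the example short.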

Q3: How does ribosomal protein S1 facilitate non-canonical initiation, and how can I experimentally verify its involvement? A3: Ribosomal protein S1 provides an alternative, SD-independent route to initiation on leadered mRNAs. This large protein has RNA binding and unwinding activities and exhibits affinity for A/U-rich leader regions [1] [4]. To experimentally verify S1's role in translating a specific mRNA:

- Conduct in vitro translation assays using S1-depleted extracts complemented with purified S1

- Perform RNA-protein binding studies (e.g., electrophoretic mobility shift assays) to test direct interaction between S1 and the 5' UTR of your target mRNA

- Use site-directed mutagenesis of potential S1 binding sites and measure effects on translation efficiency

- Employ toeprinting assays to monitor 30S initiation complex formation on the mRNA with and without S1 [4]

Q4: What are the functional consequences of non-canonical start codons in bacterial systems? A4: Non-canonical start codons (GTG, TTG) typically reduce translation initiation efficiency to roughly 8- to 12-fold below that of ATG [2]. However, this apparent disadvantage can provide regulatory benefits. For example, in the E. coli lactose operon, the lacI repressor is translated from a GTG start codon. The resulting lower repressor level increases basal expression of the lactose utilization cluster, enhancing adaptation to lactose consumption and providing a competitive advantage in the gut environment [2]. This demonstrates how non-canonical start codons can serve as important regulatory elements in metabolic genes.

Q5: How prevalent are non-canonical translation mechanisms, and should I routinely check for them? A5: Non-canonical translation mechanisms are not rare exceptions but rather commonplace. Approximately 50% of bacterial genes lack a standard SD sequence [1]. Analysis of E. coli genomes revealed that more than 99% utilize a GTG start codon for the lacI gene [2], and similar preferences for non-canonical start codons exist in other metabolic regulator genes. Given this prevalence, researchers should incorporate checks for non-canonical features, especially when studying metabolic regulation, bacterial adaptation, or when canonical initiation elements are absent.

Key Mechanisms of Non-Canonical Translation

Standby Binding Mechanism

The standby binding model explains how mRNAs with ribosome binding sites sequestered in stable structures can still be translated efficiently. In this mechanism, 30S subunits initially bind to single-stranded RNA flanking the structured RBS-containing element. The bound standby 30S subunit can then compete effectively for RBS capture upon transient opening of the adjacent RNA structure [1].

Table 1: Comparison of Canonical and Major Non-Canonical Translation Initiation Mechanisms

| Mechanism | Key Features | Representative Examples | Functional Consequences |
|---|---|---|---|
| Canonical SD-dependent | Relies on base-pairing between SD and anti-SD sequences | Average E. coli SD length: 6.3 nt [1] | High translation efficiency; susceptible to mRNA structure |
| Standby Binding | 30S subunits bind upstream single-stranded regions before engaging structured RBS | Widespread in bacteria [1] | Enables translation of structured mRNAs; kinetic advantage |
| Leaderless Initiation | mRNAs lack 5' UTR; initiation with 70S ribosomes | ~30% of genes in Actinobacteria [1] | Abundant in archaea and certain bacterial groups |
| S1-Dependent | Initiation mediated by ribosomal protein S1 binding to A/U-rich leaders | rpsA mRNA [4] | Specific for proteobacteria; subject to autogenous control |
| 5'-uAUG Recognition | 5'-terminal AUG attracts 70S ribosomes to mRNA | ptrB mRNA regulation [1] | Compensates for poor SD sequences; increases ribosomal recruitment |

Protein S1-Mediated Initiation

Ribosomal protein S1 provides a distinct non-canonical initiation pathway, particularly important in Gram-negative bacteria. S1 binds to A/U-rich leader regions and facilitates translation without strong SD sequences. The rpsA mRNA, encoding protein S1 itself, represents a key example where high translational efficiency is achieved despite a vestigial SD element (GAAG, forming only 3 bp) [4]. This mechanism is subject to sophisticated autogenous regulation where excess S1 protein represses its own translation by binding to and altering the structure of its mRNA leader [4].

Non-Canonical Start Codons

While ATG (AUG in the mRNA) is by far the most common initiation codon, GTG and TTG serve as start codons for approximately 20% of bacterial genes [2]. These non-canonical start codons recruit the same initiator N-formylmethionyl-tRNA but result in significantly reduced translation efficiency (8- to 12-fold lower than ATG) [2]. This suboptimal efficiency can be advantageous in regulatory contexts, as demonstrated in the E. coli lacI gene, where GTG initiation fine-tunes repressor levels and enhances metabolic adaptation [2].

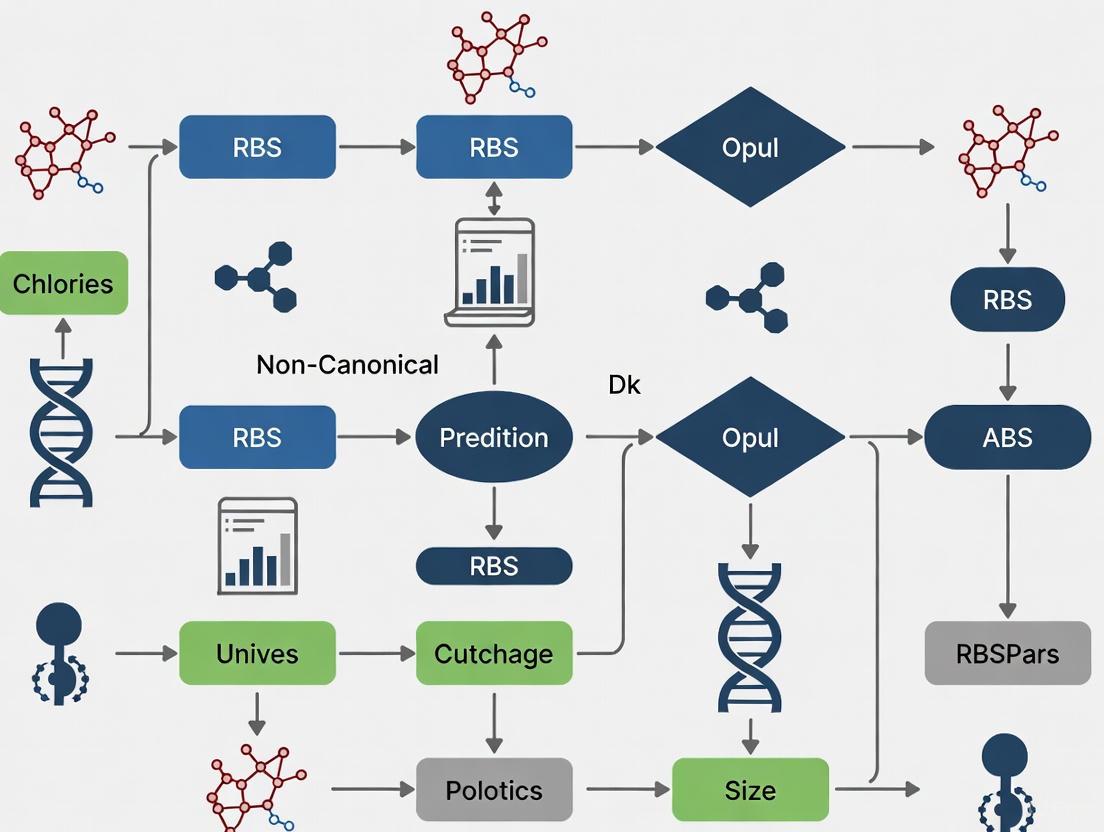

Diagram 1: Relationship between canonical and major non-canonical translation initiation pathways.

Experimental Approaches and Troubleshooting

Identifying Non-Canonical Translation Events

When canonical translation initiation elements are absent, researchers should employ multiple complementary approaches to detect and validate non-canonical translation:

Ribosome Profiling (Ribo-seq): This genome-wide technique provides precise mapping of ribosome positions on mRNAs, revealing translation initiation events regardless of mechanism [3] [5]. Ribo-seq has been instrumental in identifying thousands of non-canonical open reading frames (nORFs) in bacterial and eukaryotic systems [3].

Proteogenomics: Integration of mass spectrometry data with genomic and transcriptomic information enables direct detection of proteins encoded by non-canonical ORFs [3] [5]. This approach has revealed that cryptic proteins often exhibit distinct properties, including higher disorder and lower stability compared to canonical proteins [5].

LacZ Reporter Fusions: Systematic mutagenesis of putative regulatory regions coupled with β-galactosidase assays provides quantitative measurement of translation efficiency [4]. This approach was crucial for defining the structure-function relationship in the rpsA leader and identifying elements necessary for S1-mediated autogenous control.

Table 2: Experimental Approaches for Studying Non-Canonical Translation

| Method | Application | Key Insights Provided | Technical Considerations |
|---|---|---|---|
| Ribosome Profiling | Genome-wide mapping of translating ribosomes | Identifies nORFs; reveals initiation sites independent of sequence features | Requires optimized harvesting and nuclease treatment protocols |
| Proteogenomics | Direct detection of novel protein products | Validates translation of predicted nORFs; characterizes protein properties | Challenging for low-abundance proteins; requires specialized databases |
| Reporter Gene Fusions | Functional assessment of specific regulatory elements | Quantifies translation efficiency; tests structural requirements | May lack genomic context; requires careful design of fusion junctions |
| Site-Directed Mutagenesis | Testing necessity of specific sequence elements | Establishes causal relationships between sequences and function | Comprehensive scanning mutagenesis can be labor-intensive |
| RNA-Protein Binding Assays | Characterizing protein-mRNA interactions | Identifies direct recognition elements (e.g., S1 binding sites) | In vitro conditions may not fully recapitulate cellular environment |

Troubleshooting Common Experimental Challenges

Problem: Inconsistent translation efficiency measurements in reporter assays. Solution: Ensure consistent mRNA levels by incorporating transcriptional controls and measuring transcript abundance. For non-canonical mechanisms particularly sensitive to mRNA structure, conduct experiments at multiple temperatures to assess structure-dependence [4].
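The normalization suggested in this solution amounts to dividing reporter activity by transcript abundance before comparing constructs. A minimal sketch follows; the function names and the example numbers are made up for illustration, and the units are arbitrary as long as they are the same for every construct.

```python
def translation_efficiency(reporter_activity: float, mrna_abundance: float) -> float:
    """Reporter activity per unit of transcript (arbitrary units)."""
    if mrna_abundance <= 0:
        raise ValueError("mRNA abundance must be positive")
    return reporter_activity / mrna_abundance


def relative_efficiency(construct, reference) -> float:
    """Ratio of two (activity, mRNA) measurements, e.g. mutant vs wild type."""
    return translation_efficiency(*construct) / translation_efficiency(*reference)


# Hypothetical measurements: (beta-galactosidase units, relative mRNA level)
wild_type = (200.0, 4.0)
mutant = (50.0, 2.0)
print(relative_efficiency(mutant, wild_type))
```

In this made-up case the raw activity drops 4-fold, but half of that drop is explained by the lower transcript level, which is exactly the confound the transcriptional control is meant to expose.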

Problem: Failure to detect predicted non-canonical proteins via mass spectrometry. Solution: Consider that cryptic non-canonical proteins often have unique properties—they are frequently less structured, more unstable, and may be rapidly degraded [5]. Incorporate proteasome inhibitors during extraction, use specialized fractionation techniques for small proteins, and search MS data against customized databases that include predicted nORFs.

Problem: Ambiguous start codon assignment in genomic analyses. Solution: For genes with multiple potential start codons in close proximity (as seen in some lacI alleles [2]), use comparative genomics to assess conservation patterns, and experimentally validate through N-terminal protein tagging or mutagenesis of candidate codons.

Research Reagent Solutions

Table 3: Essential Research Reagents for Studying Non-Canonical Translation

| Reagent/Tool | Specific Application | Key Function | Implementation Example |
|---|---|---|---|
| S1-Depleted Translation Extracts | Studying S1-mediated initiation | Tests S1-dependence without genetic manipulation | Complementation with wild-type/mutant S1 [4] |
| Ribo-seq Library Prep Kits | Genome-wide translation mapping | Identifies actively translated nORFs | Detection of non-canonical translation events [3] [5] |
| Dual-Luciferase Reporter Systems | Quantifying translation efficiency | Normalizes for transcriptional effects | Testing UTR regulatory elements in bicistronic design |
| Site-Directed Mutagenesis Kits | Functional analysis of sequence elements | Tests necessity of specific nucleotides | Scanning mutagenesis of leader regions [4] |
| Custom nORF Databases | Proteogenomic analyses | Enables identification of non-canonical proteins | Integrated with mass spectrometry data [3] [5] |
| Antibodies Against Non-Canonical Proteins | Validation of protein expression | Detects specific non-canonical proteins | Custom antibodies against unique nORF-encoded epitopes |

Diagram 2: Systematic workflow for investigating non-canonical translation mechanisms.

The expanding repertoire of characterized non-canonical translation mechanisms demonstrates that bacterial gene expression is far more diverse than the canonical SD-centric model suggests. These alternative initiation pathways are not mere curiosities but represent important regulatory strategies that influence bacterial metabolism, adaptation, and competition.

Future research in this field will likely focus on elucidating the full spectrum of non-canonical mechanisms, understanding their integration in global gene regulatory networks, and exploiting this knowledge for biomedical applications. Notably, non-canonical proteins have been shown to generate MHC-I peptides 5-fold more efficiently per translation event than canonical proteins [5], highlighting their potential relevance in immunology and vaccine development.

For researchers working in gene prediction and bacterial genetics, incorporating awareness of these non-canonical mechanisms is essential for comprehensive genomic annotation and understanding of bacterial physiology. The experimental approaches outlined here provide a roadmap for investigating these fascinating deviations from the canonical translation paradigm.

The canonical model of eukaryotic translation initiation, which involves ribosome scanning from the 5' cap to the first AUG codon, no longer fully represents the complexity of gene expression regulation. It is now evident that non-canonical translation initiation mechanisms contribute significantly to the proteomic diversity of cells, particularly under stress conditions and in diseases such as cancer. These mechanisms—including leaky scanning, internal ribosome entry sites (IRES), and initiation from non-AUG start codons—allow for the production of multiple protein isoforms from a single mRNA transcript and facilitate continued protein synthesis when canonical initiation is compromised. For researchers investigating gene function and regulation, recognizing and experimentally verifying these non-canonical events is crucial, as they are often overlooked by standard gene prediction algorithms that primarily focus on canonical AUG-initiated open reading frames (ORFs). This guide addresses the core mechanisms, experimental challenges, and troubleshooting approaches for studying non-canonical translation initiation.

FAQ: Mechanisms and Biological Significance

What are the primary mechanisms of non-canonical translation initiation?

Non-canonical translation initiation bypasses one or more components of the standard cap-dependent scanning mechanism. The three primary mechanisms are:

- Leaky Scanning: The 43S pre-initiation complex (PIC) bypasses a suboptimal start codon—often a near-cognate non-AUG codon (e.g., CUG, GUG) or an AUG in a weak nucleotide context—and initiates at a downstream start site. This allows for the production of multiple protein isoforms from the same mRNA [6] [7].

- Internal Ribosome Entry Sites (IRES): These are structured RNA elements, often in the 5' untranslated region (5' UTR), that directly recruit the ribosome to an internal site near the start codon, independent of the 5' cap. This mechanism is vital for viral replication and cellular stress response [6] [8].

- Non-AUG Start Codons: Translation can initiate from near-cognate codons that differ from AUG by a single nucleotide (e.g., CUG, GUG, UUG, ACG). Although these events are generally less efficient than AUG initiation, they are abundant and generate functionally distinct proteins [9] [7].
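The definition of a near-cognate codon given above (one nucleotide away from AUG) can be made concrete by enumerating the candidates. This short sketch lists all nine; the function name is illustrative, and the listing says nothing about how efficiently each codon is actually used.

```python
def near_cognate_codons(reference: str = "AUG") -> list[str]:
    """All codons that differ from `reference` at exactly one position."""
    codons = []
    for pos in range(3):
        for base in "ACGU":
            if base != reference[pos]:
                codons.append(reference[:pos] + base + reference[pos + 1:])
    return sorted(codons)


print(near_cognate_codons())
```

The output includes the four codons named in the text (CUG, GUG, UUG, ACG) along with AAG, AGG, AUA, AUC, and AUU.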

Why is non-canonical initiation particularly relevant in cancer and viral infection?

- Cancer: Non-canonical initiation enhances the translation of oncogenes, growth factors, and anti-apoptotic proteins, contributing to cancer development and progression. For instance, non-AUG initiation can produce N-terminally extended proteoforms of oncogenes like MYC and FGF2 with distinct functional properties, influencing processes like proliferation and tumorigenicity [10] [7]. Furthermore, peptides encoded by non-canonical ORFs in non-coding RNAs have been shown to be essential for cancer cell proliferation [11].

- Viral Infection: Viruses exploit non-canonical mechanisms to hijack the host's translational machinery. Using IRES and other cap-independent strategies allows viral mRNAs to efficiently compete with cellular mRNAs for ribosomes, enabling robust viral protein synthesis even when the host cell shuts down cap-dependent translation as a defense mechanism [6] [8].

What are the major experimental challenges in confirming non-canonical translation?

Researchers often encounter several key challenges:

- Distinguishing Protein from RNA Effects: A biological phenotype resulting from a non-canonical ORF could be mediated by the transcribed RNA itself rather than the translated peptide.

- Identifying the True Start Codon: Standard gene prediction tools are often biased toward AUG and may miss non-canonical initiation sites [12].

- Detecting Low-Abundance Products: Proteins and micropeptides translated from non-canonical ORFs are often transient, low in abundance, and difficult to detect with standard mass spectrometry protocols [11].

Table 1: Quantitative Efficiencies of Near-Cognate Start Codons

| Start Codon | Relative Efficiency (Approximate) | Notes |
|---|---|---|
| AUG | 100% | The canonical start codon; highest efficiency. |
| CUG | ~3-50% | Typically the most efficient near-cognate codon [9] [7]. |
| GUG | ~3-25% | Less efficient than CUG [9]. |
| UUG | ~3-10% | Less efficient than CUG [9]. |
| ACG | ~3-10% | Less efficient than CUG [9]. |

Troubleshooting Guide: Validating Non-Canonical Initiation Events

Problem 1: How to determine if a biological effect is mediated by a translated peptide and not the RNA.

Solution: Use start codon mutagenesis and CRISPR tiling to demonstrate translation-dependent activity.

Experimental Protocol: Start Codon Mutagenesis [13]

- Clone the Locus: Clone the genomic locus containing the candidate non-canonical ORF into an expression vector.

- Introduce Mutations: Using site-directed mutagenesis, mutate the putative start codon (e.g., CUG to CUC). This disrupts translation initiation but leaves the RNA sequence largely unchanged.

- Assay Phenotype: Express both the wild-type and mutant constructs in an appropriate cell line and assay the resulting phenotype (e.g., gene expression changes via L1000 assay, cell viability, or protein interactions).

- Interpretation: A loss of phenotype in the mutant confirms that the effect is dependent on translation from that specific codon.
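The mutagenesis step above can be planned in silico before ordering primers. The sketch below swaps a putative start codon for a codon that is neither AUG nor a near-cognate, using the CUG to CUC example from the protocol as the default. The function name and the set of codons treated as initiation-competent are assumptions for illustration.

```python
# AUG plus its nine single-nucleotide variants (assumed initiation-competent).
INITIATION_CODONS = {"AUG", "CUG", "GUG", "UUG", "AAG", "ACG", "AGG",
                     "AUA", "AUC", "AUU"}


def mutate_start_codon(rna: str, start: int, replacement: str = "CUC") -> str:
    """Return `rna` with the codon at `start` replaced by `replacement`.

    Raises ValueError if the original codon is not a plausible start or if
    the replacement could itself support initiation.
    """
    codon = rna[start:start + 3]
    if codon not in INITIATION_CODONS:
        raise ValueError(f"{codon!r} at {start} is not AUG or a near-cognate codon")
    if replacement in INITIATION_CODONS:
        raise ValueError(f"{replacement!r} could still initiate translation")
    return rna[:start] + replacement + rna[start + 3:]


wild_type = "GGACUGGCAUAA"
mutant = mutate_start_codon(wild_type, 3)
print(mutant)
print(sum(a != b for a, b in zip(wild_type, mutant)), "nucleotide changed")
```

Keeping the change to a single nucleotide, as here, is what lets the mutant serve as a control for RNA-level effects.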

Experimental Protocol: CRISPR-based Functional Mapping [13]

- Design a Tiling Library: Design a library of sgRNAs that tile densely across the entire genomic region of interest, covering both the putative coding sequence and adjacent non-coding regions.

- Perform Screen: Conduct a CRISPR/Cas9 viability screen in a relevant cell model using this tiling library.

- Analyze Essential Regions: Map the sgRNAs that cause a viability defect. If the essential region maps exclusively to the predicted coding exon, it strongly supports the existence of a translated, functional protein.

- Interpretation: This method helps exclude the possibility that the phenotype is due to disrupting a regulatory non-coding RNA element or a DNA regulatory region.
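A tiling library of the kind described above starts from an enumeration of every targetable site in the region. The following sketch lists all 20-nt protospacers followed by an NGG PAM on both strands, which assumes SpCas9 (the protocol does not specify the nuclease). Function names are illustrative, and a real design would add off-target and on-target scoring, which is not attempted here.

```python
_COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq: str) -> str:
    return seq.translate(_COMPLEMENT)[::-1]


def tile_sgrnas(region: str, spacer_len: int = 20):
    """Return (spacer, strand, cut_position) for every NGG-adjacent site.

    cut_position is the forward-strand index of the base immediately 3' of
    the expected blunt cut, 3 bp upstream of the PAM.
    """
    region = region.upper()
    n = len(region)
    guides = []
    for strand, s in (("+", region), ("-", reverse_complement(region))):
        for pam in range(spacer_len, n - 2):
            if s[pam + 1:pam + 3] != "GG":  # PAM is s[pam:pam+3] = N-G-G
                continue
            cut = pam - 3
            if strand == "-":
                cut = n - cut  # convert back to forward-strand coordinates
            guides.append((s[pam - spacer_len:pam], strand, cut))
    return sorted(guides, key=lambda g: g[2])


for guide in tile_sgrnas("A" * 20 + "TGG" + "A" * 5):
    print(guide)
```

Sorting by cut position makes it straightforward to plot viability scores along the locus and see whether the essential region coincides with the predicted coding exon.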

Problem 2: How to definitively identify the start codon of a non-canonical ORF.

Solution: Employ ribosome profiling (Ribo-seq) with initiation inhibitors and validate with mass spectrometry.

Experimental Protocol: Ribo-seq with Harringtonine or Lactimidomycin [9] [7]

- Treat Cells: Treat cells with an inhibitor that traps newly initiated ribosomes, such as harringtonine or lactimidomycin. These drugs stall ribosomes at the start codon, enriching for initiating ribosomes.

- Perform Ribo-seq: Harvest cells and perform standard ribosome profiling to sequence the mRNA fragments protected by the arrested ribosomes.

- Map Initiation Sites: After correcting for the offset between each fragment's 5' end and the ribosomal P-site, the ribosome-protected fragments reveal the positions of translation initiation sites across the transcriptome, including those at non-AUG codons.

- Validation: Confirm the Ribo-seq predictions by inserting a small epitope tag (e.g., V5, FLAG) in-frame at the endogenous locus immediately downstream of the predicted start codon. Detection of the tagged protein by immunoblotting or immunofluorescence provides orthogonal validation of translation [13] [11].
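The site-mapping step of this protocol can be sketched as a simple peak count over inferred P-site positions. In the example below, the 12-nt offset from the fragment 5' end to the P-site and the minimum read count are assumptions that would need to be calibrated per dataset (for instance on annotated AUG starts), and the function name is hypothetical.

```python
from collections import Counter


def call_initiation_sites(five_prime_ends, transcript, p_site_offset=12, min_reads=5):
    """Return (position, codon, read_count) for candidate initiation sites.

    five_prime_ends: 0-based transcript positions of footprint 5' ends from
    an initiation-inhibitor Ribo-seq library.
    """
    p_sites = Counter(pos + p_site_offset for pos in five_prime_ends)
    sites = []
    for pos, count in sorted(p_sites.items()):
        codon = transcript[pos:pos + 3]
        if count >= min_reads and len(codon) == 3:
            sites.append((pos, codon, count))
    return sites


transcript = "G" * 15 + "CUG" + "GCA" * 5
reads = [3] * 6 + [10] * 2  # six footprints at one site, two stray reads
print(call_initiation_sites(reads, transcript))
```

Reporting the codon alongside each peak makes it easy to see which candidate sites fall on AUG and which on near-cognate codons.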

Problem 3: How to detect proteins and micropeptides that are low in abundance or poorly immunogenic.

Solution: Optimize mass spectrometry (MS) approaches and use in vitro translation.

Experimental Protocol: Optimized Peptidomics with Ultrafiltration [11]

- Database Construction: Build a custom reference database of all potential ORFs, including those with non-AUG start codons and from non-coding RNAs. This is critical for peptide identification.

- Sample Preparation: Use an ultrafiltration tandem MS assay to enrich for small peptides and separate them from larger, more abundant cellular proteins.

- Liquid Chromatography-Tandem MS (LC-MS/MS): Analyze the enriched peptide fraction with LC-MS/MS, searching the data against your custom database.

- In Vitro Transcription/Translation: As a complementary approach, express the candidate ORF in a cell-free in vitro translation system. This can provide direct evidence that the RNA can be translated into a protein of the expected size, bypassing complexities of the cellular environment [13].
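The database construction step can be prototyped by translating every ORF that starts at AUG or a chosen near-cognate codon and writing the peptides as FASTA. The sketch below makes several assumptions that should be checked against the actual search engine: the standard genetic code, methionine as the first residue regardless of the start codon, only ORFs with an in-frame stop, and an illustrative header format.

```python
from itertools import product

# Standard genetic code in TCAG order.
_AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): a for c, a in zip(product("TCAG", repeat=3), _AMINO)}


def orf_peptides(transcript, starts=("ATG", "CTG", "GTG", "TTG", "ACG"), min_aa=10):
    """Yield (start_index, peptide) for every ORF that ends at a stop codon."""
    seq = transcript.upper().replace("U", "T")
    for i in range(len(seq) - 2):
        if seq[i:i + 3] not in starts:
            continue
        residues = []
        for j in range(i, len(seq) - 2, 3):
            aa = CODON_TABLE.get(seq[j:j + 3], "X")  # X for ambiguous bases
            if aa == "*":
                if len(residues) >= min_aa:
                    # Assumption: the initiator tRNA inserts Met at any start.
                    yield i, "M" + "".join(residues[1:])
                break
            residues.append(aa)


def to_fasta(name, transcript, **kwargs):
    """Format all ORF peptides of one transcript as FASTA text."""
    return "".join(f">{name}|orf_start={i}\n{pep}\n"
                   for i, pep in orf_peptides(transcript, **kwargs))


print(to_fasta("toy_lncRNA", "GGCTGGCTGCTGCTTAA", min_aa=3), end="")
```

Because such databases grow quickly, restricting the input to transcripts with Ribo-seq support is one way to keep the search space, and therefore the false discovery rate, manageable.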

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents for Studying Non-Canonical Translation

| Reagent / Tool | Primary Function | Key Considerations |
|---|---|---|
| Ribosome Profiling (Ribo-seq) | Genome-wide mapping of translating ribosomes and initiation sites. | Use of initiation inhibitors (harringtonine) is crucial for pinpointing start codons. Requires specialized bioinformatics analysis [9] [7]. |
| CRISPR/Cas9 Tiling Libraries | Functional mapping of coding regions essential for cell fitness. | Helps distinguish protein-encoding ORFs from functional RNA elements. Design sgRNAs to cover the entire candidate locus [13] [11]. |
| Epitope Tagging (V5, FLAG) | Detecting protein expression from non-canonical ORFs. | Tags must be inserted at the endogenous genomic locus (via knock-in) to avoid overexpression artifacts and confirm natural regulation [13] [11]. |
| Custom Peptide Reference Databases | Identifying novel peptides via mass spectrometry. | Must include non-AUG initiated ORFs and ORFs in non-coding RNAs to avoid false negatives. Database size and quality are paramount [11]. |
| In Vitro Translation Systems | Confirming the translatability of an ORF independent of cellular context. | Provides clean evidence of protein synthesis from a specific RNA template without confounding cellular factors [13]. |
| Start Codon Mutagenesis | Establishing the functional requirement of a specific codon for translation. | The gold standard for proving that a phenotype is translation-dependent and not mediated by the RNA molecule itself [13]. |

Visualizing the Core Mechanisms

The following diagrams illustrate the key non-canonical initiation pathways and a recommended experimental workflow.

Non-Canonical Initiation Pathways

Experimental Validation Workflow

FAQ: Key Concepts and Experimental Challenges

What is the "dark proteome"? The dark proteome refers to the vast and largely unexplored collection of non-canonical proteins that do not follow traditional gene annotation rules. These proteins are derived from genomic regions previously not thought to be protein-coding and include miniproteins (50-100 amino acids) and microproteins (under 50 amino acids) [14].
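The size classes in this definition translate directly into a length check. The sketch below uses the thresholds stated above; the label for products longer than 100 amino acids is an assumption added for completeness.

```python
def classify_by_length(n_amino_acids: int) -> str:
    """Size class of a translated product, using the thresholds in the text."""
    if n_amino_acids < 1:
        raise ValueError("length must be at least 1")
    if n_amino_acids < 50:
        return "microprotein"
    if n_amino_acids <= 100:
        return "miniprotein"
    return "above conventional size cutoff"  # label assumed for illustration


for length in (31, 50, 100, 250):
    print(length, classify_by_length(length))
```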

What are the main sources of non-canonical proteins? Non-canonical proteins originate from several types of non-canonical open reading frames (ncORFs), including:

- Upstream ORFs (uORFs): Located in the 5' untranslated region (UTR) of mRNA transcripts [15].

- Downstream ORFs (dORFs): Located in the 3' UTR [15].

- ORFs within long non-coding RNAs (lncRNAs): Transcripts previously annotated as non-coding [16].

- Overlapping ORFs (ouORFs/odORFs): That overlap with canonical coding sequences [15].
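The categories listed above can be assigned from transcript coordinates alone. The following sketch assumes half-open, 0-based coordinates on the transcript and treats a transcript without an annotated CDS as a lncRNA; the `internal` label for ORFs nested entirely inside the CDS is not one of the categories named in the text and is included only so that every case returns something.

```python
def classify_ncorf(orf_start, orf_end, cds_start=None, cds_end=None):
    """Classify an ORF relative to the annotated CDS of its transcript."""
    if cds_start is None or cds_end is None:
        return "lncRNA-ORF"   # no annotated CDS on this transcript
    if orf_end <= cds_start:
        return "uORF"         # entirely within the 5' UTR
    if orf_start >= cds_end:
        return "dORF"         # entirely within the 3' UTR
    if orf_start < cds_start:
        return "ouORF"        # starts upstream, overlaps the CDS
    if orf_end > cds_end:
        return "odORF"        # starts inside the CDS, ends downstream
    return "internal"         # nested in the CDS (assumed label)


cds = (100, 400)
for orf in [(10, 70), (80, 130), (380, 450), (420, 480)]:
    print(orf, classify_ncorf(*orf, *cds))
print((5, 95), classify_ncorf(5, 95))
```

A fuller classifier would also compare reading frames, since an ORF that overlaps the CDS in the same frame is an N-terminal extension or truncation rather than an independent ncORF.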

Why have these proteins been overlooked until recently? Historical gene annotation relied on parameters that excluded ncORFs, such as a requirement for a protein size over 100 amino acids, an AUG start codon, and the "one-gene one-polypeptide" hypothesis. Additionally, reliance on biased detection methods like antibodies designed for known proteins and curated reference databases that filtered out non-canonical sequences created a blind spot [14] [17].

What is the functional significance of the dark proteome? Mounting evidence shows dark proteome proteins play critical roles in fundamental biological processes and diseases. Examples include:

- Immunity: A microprotein encoded by the lncRNA Aw112010 is essential for orchestrating mucosal immunity during bacterial infection [16].

- Cancer: Non-canonical proteins can support cancer cell survival, suggesting they may act as oncogenic drivers or therapeutic vulnerabilities [14] [18].

- Cellular Regulation: Microproteins regulate key processes, such as the microprotein MP31, which disrupts mitochondrial homeostasis in glioblastoma cells [14].

Troubleshooting Guide: Illuminating the Dark Proteome

Issue 1: Failure to Detect Non-Canonical Peptides in Mass Spectrometry

Problem: Expected non-canonical peptides are not identified in your mass spectrometry (MS) data.

Solution:

- Check Your Reference Database: Standard protein databases are built from canonical gene annotations and will filter out non-canonical hits. For your analysis, use a custom database that includes predicted ncORFs from ribosome profiling or other genomic searches [14].

- Improve Enrichment for Low-Abundance Proteins: Non-canonical peptides are often low in abundance. Use nanoparticle enrichment protocols, where engineered particles bind and concentrate specific or low-abundance proteins, to increase sensitivity prior to MS analysis [14].

- Verify Sample Preparation: Ensure sample preparation protocols are optimized for shorter, potentially more labile, microproteins to prevent their loss or degradation.

Issue 2: Distinguishing Functional Peptides from Translational Noise

Problem: You have identified a translated ncORF, but need to determine if it produces a stable, functional protein or is a quickly degraded byproduct.

Solution:

- Apply Computational Stability Prediction: Use machine learning models like PepScore to calculate the probability that an ORF-encoded peptide is stable. PepScore is based on ORF features such as expected length, encoded domains, and conservation [15].

- Assess Protein Stability Experimentally: Transfect cells to express your ncORF of interest and treat with inhibitors of proteasomal (e.g., MG132) and lysosomal (e.g., chloroquine) degradation pathways. If the peptide's abundance increases, it is likely actively degraded, providing clues about its regulation and half-life [15].

- Test for Cellular Localization: Use epitope tagging (e.g., HA, FLAG) to determine the subcellular localization of the microprotein. Stable, functional microproteins often show distinct subcellular localization, such as vesicular or cytoplasmic patterns, which can hint at function [16].

Issue 3: Validating the Protein-Coding Potential of a Putative lncRNA

Problem: A transcript is annotated as a non-coding RNA (lncRNA), but you suspect it may encode a microprotein.

Solution:

- Perform Ribosome Profiling (Ribo-seq): This is the gold-standard technique. Actively translated ORFs show a characteristic 3-nucleotide periodicity in ribosome-protected fragments, which can be detected by tools like RibORF or RiboCode [16] [15].

- Conduct Epitope Tagging at the Endogenous Locus: Use CRISPR/Cas9 to introduce an epitope tag (e.g., HA) directly into the genomic locus of the candidate ncORF. This allows for the detection of the endogenously expressed protein under native regulation, avoiding artifacts from overexpression systems [16].

- Validate with Immunoprecipitation and Mass Spectrometry (IP-MS): After epitope tagging, perform anti-HA immunoprecipitation on cell lysates and analyze the purified fractions by mass spectrometry. Identification of peptides that map uniquely to the predicted ncORF sequence provides high-confidence validation [16].

Experimental Protocols for Dark Proteome Analysis

Protocol 1: Identifying Translated ncORFs with Ribosome Profiling

Objective: To identify, genome-wide, open reading frames that are actively being translated, including non-canonical ones [16] [15].

Methodology:

- Cell Treatment and Lysis: Treat cells under the desired condition (e.g., LPS stimulation for immune activation). Harvest and lyse cells with a detergent-based buffer, followed by nuclease digestion to degrade RNA not protected by ribosomes [16].

- Ribosome-Bound RNA Isolation: Purify the ribosome-protected mRNA fragments using sucrose density gradient centrifugation or immunopurification [16].

- Library Preparation and Sequencing: Convert the purified RNA fragments into a sequencing library. Key steps include size-selection for ~30 nt fragments, reverse transcription, and circularization before sequencing on platforms like Illumina [15].

- Bioinformatic Analysis:

- Quality Control: Assess the sequencing data for strong 3-nucleotide periodicity, a hallmark of active translation.

- ORF Identification: Use specialized software (e.g., RibORF 2.0, RiboCode) to identify translated ORFs. These tools use logistic regression models based on features like the fraction of reads assigned to the first nucleotide of codons and the uniformity of reads across codons [15].

- Annotation: Cross-reference the identified ORFs with genomic annotations to classify them as upstream (uORF), downstream (dORF), or lncRNA-encoded.
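The 3-nucleotide periodicity check used for quality control, and the "fraction of reads assigned to the first nucleotide of codons" feature mentioned for ORF identification, both come down to counting P-site positions by reading frame. The sketch below shows that calculation in isolation; it is not the RibORF or RiboCode implementation, and the function name is hypothetical.

```python
def frame_fractions(p_site_positions, orf_start, orf_end):
    """Fraction of P-site reads in frames 0, 1 and 2 of an ORF.

    Coordinates are 0-based and half-open; frame 0 is the first nucleotide
    of each codon. Returns (0.0, 0.0, 0.0) when no reads fall in the ORF.
    """
    counts = [0, 0, 0]
    for pos in p_site_positions:
        if orf_start <= pos < orf_end:
            counts[(pos - orf_start) % 3] += 1
    total = sum(counts)
    if total == 0:
        return (0.0, 0.0, 0.0)
    return tuple(c / total for c in counts)


print(frame_fractions([0, 3, 6, 1], 0, 12))        # mostly frame 0
print(frame_fractions([0, 1, 2, 3, 4, 5], 0, 12))  # no frame preference
```

A translated ORF is expected to show a strong excess in one frame, whereas background RNA fragments spread roughly evenly across all three.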

Protocol 2: Validating a Novel Microprotein via Endogenous Tagging

Objective: To confirm the existence and subcellular localization of a microprotein encoded by a ncORF in its native context [16].

Methodology:

- CRISPR/Cas9 Design: Design a guide RNA (gRNA) to target the genomic locus just before the stop codon of the ncORF. Design a single-stranded DNA (ssDNA) donor template containing a C-terminal epitope tag (e.g., HA) flanked by homology arms [16].

- Cell Transfection and Clonal Selection: Co-transfect cells (e.g., murine embryonic stem cells or a relevant cell line) with the gRNA, Cas9 protein, and the donor template. Allow cells to grow and then select for successfully edited clones via antibiotic selection or fluorescence-activated cell sorting (FACS) [16].

- Genomic Validation: Expand clonal cell lines and perform genomic PCR and sequencing across the targeted locus to confirm the precise integration of the epitope tag.

- Protein Detection and Localization:

- If genetically modified mice are derived from the edited cells, generate primary cells (e.g., bone marrow-derived macrophages) from these mice.

- Stimulate the cells (e.g., with LPS for 6-24 hours) to induce expression.

- Detect the tagged microprotein via western blotting of cell lysates using an anti-HA antibody.

- For localization, perform immunofluorescence microscopy on fixed cells using the anti-HA antibody [16].

Research Reagent Solutions

Table 1: Essential reagents and tools for dark proteome research.

| Reagent/Tool | Function | Example Use Case |

|---|---|---|

| Ribosome Profiling (Ribo-seq) | Captures genome-wide snapshot of actively translating ribosomes to identify ncORFs [16] [15]. | Discovering translated uORFs in response to cellular stress. |

| Mass Spectrometry | Unbiased detection and sequencing of peptides; cornerstone for validating novel proteins [14]. | Identifying non-canonical peptides in immunoprecipitated samples. |

| CRISPR/Cas9 Gene Editing | Enables precise insertion of epitope tags into endogenous genomic loci [16]. | Creating endogenously HA-tagged microprotein for native expression studies. |

| PepScore Computational Model | Machine learning model that calculates the probability an ncORF encodes a stable peptide based on genomic features [15]. | Prioritizing high-confidence candidate ncORFs from Ribo-seq data for functional studies. |

| Unique Molecular Identifiers (UMIs) | Short random nucleotide sequences used to tag molecules before PCR to accurately quantify abundance and remove PCR duplicates [19]. | Precisely quantifying the abundance of specific vector-genome junctions in integration site analysis. |

| Nanoparticle Enrichment | Engineered particles that bind and concentrate low-abundance proteins from complex mixtures [14]. | Enhancing detection of low-abundance microproteins in mass spectrometry. |

Troubleshooting Guide: Non-canonical RBS Patterns in Gene Prediction

Frequently Asked Questions

Q1: My gene prediction tool is missing genuine coding sequences in microbial genomes. What could be wrong? This is a common issue when tools are calibrated only for canonical Shine-Dalgarno (SD) motifs. Non-canonical translation initiation mechanisms are widespread; approximately half of bacterial genes lack an SD sequence entirely [1]. MetaGeneAnnotator (MGA) addresses this by training a model on the input sequences themselves and by using adaptable RBS models that detect species-specific patterns without relying solely on SD sequences [20].

Q2: How can I improve translation start site prediction accuracy for short sequence fragments, such as those from metagenomic studies? For short sequences, conventional gene-finding tools that require long sequences for statistical training often fail [20]. MGA improves prediction accuracies for short sequences (96% sensitivity and 93% specificity for 700 bp fragments) by using adapted RBS models and di-codon frequencies correlated with GC content [20].
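To make the two features named in this answer concrete, the sketch below computes GC content and in-frame di-codon (hexamer) frequencies for a single ORF. It only illustrates the quantities themselves; it is not MGA's implementation, and the function names are hypothetical.

```python
from collections import Counter


def gc_content(seq):
    """Fraction of G and C bases in a nucleotide sequence."""
    seq = seq.upper()
    if not seq:
        return 0.0
    return (seq.count("G") + seq.count("C")) / len(seq)


def dicodon_frequencies(orf):
    """Relative frequencies of adjacent in-frame codon pairs (hexamers)."""
    orf = orf.upper()
    n_codons = len(orf) // 3
    counts = Counter(orf[3 * i:3 * i + 6] for i in range(n_codons - 1))
    total = sum(counts.values())
    return {pair: n / total for pair, n in counts.items()} if total else {}


orf = "ATGAAAAAATAA"  # toy ORF: ATG AAA AAA TAA
print(gc_content(orf))
print(dicodon_frequencies(orf))
```

A gene finder would compare such frequencies against reference models chosen to match the GC content of the fragment, which is why both quantities are computed together here.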

Q3: What are the major types of non-canonical translation initiation I should account for in my analysis? Several non-canonical mechanisms exist, as summarized in the table below [1].

| Mechanism | Description | Key Features |

|---|---|---|

| SD-Independent (Leadered) | Initiation on mRNAs with untranslated regions but no SD sequence. | Often relies on ribosomal protein bS1 binding to A/U-rich leaders [1]. |

| Leaderless | Initiation on mRNAs that completely lack a 5' untranslated region (5'-UTR). | Begins with the binding of a 70S ribosome directly to the 5' end; common in archaea and some bacterial groups [1]. |

| 5'-uAUG Mediated | A 5'-terminal AUG acts as a ribosome recognition signal, compensating for a poor downstream SD sequence. | Attracts 70S ribosomes to the transcript, increasing local ribosome concentration [1]. |
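As a rough triage aid, the categories in this table can be approximated from the 5' UTR sequence alone. The sketch below is a heuristic under stated assumptions, not a validated classifier: the SD core motifs, the 5-nt leaderless cutoff, the 20-nt search window, and the function name are all illustrative choices, and the sequence is assumed to run from the transcription start site to the base just before the start codon.

```python
# SD-like cores complementary to the anti-SD (5'-CCUCC-3') of 16S rRNA.
SD_CORES = ("AGGAGG", "GGAGG", "AGGAG", "GGAG", "GAGG", "AGGA")


def classify_initiation(utr5, max_leaderless_len=5, sd_window=20):
    """Heuristically label an mRNA by its 5' UTR (thresholds are assumptions)."""
    utr = utr5.upper().replace("U", "T")
    if len(utr) <= max_leaderless_len:
        return "leaderless"
    # Look for an SD-like core in the region closest to the start codon.
    window = utr[-sd_window:]
    if any(core in window for core in SD_CORES):
        return "SD-led"
    # A 5'-terminal AUG without a recognizable SD suggests uAUG-mediated entry.
    if utr.startswith("ATG"):
        return "5'-uAUG candidate"
    return "SD-independent (leadered)"


for utr in ("", "AAUUAAGGAGGUAAAUA", "AUGAAAUUUAAAUUU", "AAAUUUAAAUUUAAA"):
    print(repr(utr), "->", classify_initiation(utr))
```

Labels produced this way are hypotheses for follow-up, since accurate classification depends on experimentally mapped transcription start sites.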

Q4: How does the presence of prophage or horizontally transferred genes affect prediction accuracy? Atypical genes, such as those from prophages or horizontally transferred elements, often have codon usages that differ significantly from the host's typical genes [20]. MGA integrates statistical models for prophage genes in addition to bacterial and archaeal models. It uses an ORF-by-ORF scoring procedure for sequences longer than 5000 bp to sensitively detect these atypical genes [20].

Experimental Protocols for Validating Non-canonical RBS Patterns

Protocol 1: In Silico Identification and Characterization of Non-canonical Initiation Sites

Application: This methodology is used for the genome-wide identification and analysis of non-canonical translation initiation sites, particularly useful for annotating new genomes or metagenomic assemblies.

Steps:

- Sequence Input: Provide the DNA sequence(s) for analysis. MGA can process a single sequence or a set of sequences of varying lengths [20].

- ORF Prediction: The tool identifies all potential open reading frames (ORFs) within the input sequence.

- Model Application & Scoring:

- Four sets of di-codon frequency models (self-training, bacterial, archaeal, prophage) are applied to score each candidate gene [20].

- For each ORF, the tool calculates scores based on its own GC content [20].

- The RBS model searches upstream regions of start codons for potential RBS motifs based on complementarity to the 3' tail of 16S rRNA and calculates a score using position weight matrices [20].

- Result Integration: A maximal scoring combination of genes is calculated as the definitive prediction, allowing typical genes to score highest with the self-training model and atypical genes to score highest with one of the other models [20].
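The position-weight-matrix scoring described in the steps above can be sketched as follows. This is a generic log-odds PWM, not MGA's actual model: the training motifs, pseudocount, uniform background, and function names are assumptions for illustration, and the upstream sequence is assumed to end immediately before the start codon.

```python
import math


def build_pwm(motifs, pseudocount=1.0, background=0.25):
    """Log-odds PWM from equal-length, uppercase A/C/G/T motif instances."""
    pwm = []
    for i in range(len(motifs[0])):
        column = {base: pseudocount for base in "ACGT"}
        for motif in motifs:
            column[motif[i]] += 1
        total = sum(column.values())
        pwm.append({b: math.log2((column[b] / total) / background) for b in "ACGT"})
    return pwm


def best_rbs_hit(upstream, pwm):
    """Return (score, spacer) of the best window, or None if upstream is too short.

    spacer is the number of bases between the motif end and the start codon.
    """
    width = len(pwm)
    best = None
    for i in range(len(upstream) - width + 1):
        window = upstream[i:i + width]
        # Unknown bases (e.g., N) contribute a neutral score of 0.
        score = sum(pwm[j].get(window[j], 0.0) for j in range(width))
        spacer = len(upstream) - (i + width)
        if best is None or score > best[0]:
            best = (score, spacer)
    return best


pwm = build_pwm(["AGGAGG", "AGGAGG", "AGGAGA", "TGGAGG"])
print(best_rbs_hit("TTTTAGGAGGTTTTTTT", pwm))
```

Returning the spacer alongside the score matters because a strong motif at an implausible distance from the start codon is weak evidence for initiation.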

Protocol 2: Functional Validation of Predicted Non-canonical RBS Sites

Application: This experimental protocol validates the functionality of a predicted non-canonical RBS and its associated start codon, confirming translational activity.

Steps:

- Cloning: Clone the predicted gene with its native upstream region (putative RBS) into a reporter vector (e.g., expressing GFP or LacZ).

- Mutagenesis: Create mutant constructs:

- Introduce point mutations to disrupt the predicted start codon (e.g., ATG to a non-initiating codon such as GCG; avoid GTG or TTG, which can themselves serve as start codons).

- If a potential structure is predicted, create mutants that alter the RNA stability.

- Expression Analysis: Introduce the wild-type and mutant constructs into a suitable host cell line.

- Measurement:

- Quantify reporter gene expression (e.g., fluorescence, enzyme activity).

- Use western blotting with an antibody against the native protein (or a tagged version) to confirm the protein product's size and expression level.

- Validation: A significant reduction in expression or absence of the protein in the start-codon mutant, compared to the wild-type construct, confirms the functional translation initiation site.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key resources for investigating non-canonical gene regulation.

| Item | Function/Application |

|---|---|

| MetaGeneAnnotator (MGA) | A comprehensive gene prediction tool for prokaryotic sequences that detects typical and atypical genes using self-training and adaptable RBS models [20]. |

| AUGUSTUS | An open-source program for ab initio eukaryotic gene prediction, which can incorporate hints from RNA-Seq, EST alignments, and protein similarities [21]. |

| Eugene | An integrative gene finder for eukaryotic and prokaryotic genomes that can combine various sources of information like RNA-Seq and protein homologies [21]. |

| Reporter Vectors (e.g., GFP, LacZ) | Used in functional validation experiments (Protocol 2) to measure the activity of a predicted promoter and RBS when fused to a gene of interest. |

| NCBI RefSeq Database | A curated collection of genomic sequences and annotations used for constructing training models and validating predictions [20]. |

Workflow Visualization

Diagram 1: Gene Prediction with Non-canonical RBS Handling

Diagram 2: Non-canonical Translation Initiation Pathways

Frequently Asked Questions (FAQs)

Q1: What are non-canonical RBS and non-canonical start codons, and how do they differ from canonical ones? A1: In canonical translation, the process begins with a defined ribosome binding site (RBS) and an AUG start codon, which is recognized by the initiator tRNA. Non-canonical mechanisms bypass these strict rules. This includes using non-canonical start codons (e.g., GTG, TTG, CTG) instead of AUG, and employing alternative RBS-independent ribosome recruitment methods like Internal Ribosome Entry Sites (IRESes) [22] [2]. These alternative start codons typically lead to a lower translation initiation efficiency, resulting in reduced protein expression levels compared to AUG [2].

Q2: What is the primary evolutionary pressure driving the use of non-canonical translation mechanisms? A2: The strongest evolutionary driver is the need to maximize coding capacity and optimize genetic information within compact genomes. RNA viruses, for instance, have very limited genome sizes (often under 30 kb) and are under intense selective pressure to express multiple proteins from a single mRNA transcript. Non-canonical mechanisms enable access to overlapping open reading frames (ORFs) and allow for sophisticated gene regulation without increasing genome length [22].

Q3: During my experiment, I suspect a non-canonical ORF is functional. How can I confirm its translation is required for the observed phenotype and not just an RNA-level effect? A3: This is a critical validation step. The gold-standard experiment is start codon mutagenesis combined with a functional assay. As demonstrated in multiple studies, you should mutate the putative non-canonical start codon (e.g., from GTG to GCG) and test if the biological effect is abolished. In one case, mutating the start site in 51 ORFs resulted in a loss of the perturbational response in 48 of them (94%), confirming that the effect was mediated by translation of the ORF and not the RNA itself [13]. This should be complemented by CRISPR tiling across the locus to ensure the phenotype maps to the coding region [13] [23].

Q4: We've identified a novel microprotein from a non-canonical ORF. How can we assess its stability and potential function in cells? A4: You can employ a machine learning framework like PepScore, which was developed to calculate the probability that a non-canonical ORF-encoded peptide is stable. This model is based on ORF features such as length, presence of structured domains, and conservation [15]. For functional clues, perform ectopic expression with an epitope tag (e.g., V5) to determine the protein's subcellular localization, which can indicate potential function (e.g., nuclear, cytoplasmic, secreted) [13] [16]. Furthermore, inhibiting proteasomal and lysosomal degradation pathways can test the protein's inherent stability [15].

Q5: Are non-canonical start codons and ORFs found in prokaryotes, and do they confer an advantage? A5: Yes, they are widespread and functionally important. For example, genomic analysis of E. coli revealed that over 99% of strains use a non-canonical GTG start codon for the lacI repressor gene. This suboptimal start codon fine-tunes the basal expression level of the lactose utilization operon, providing a competitive advantage in the gut by enabling faster adaptation to available lactose [2]. This demonstrates that non-canonical start codons can serve as sophisticated metabolic tuning mechanisms.

Troubleshooting Common Experimental Challenges

| Problem | Possible Cause | Solution |

|---|---|---|

| Cannot detect microprotein via Western blot | Protein is short-lived/degraded; V5 tag may disrupt structure/function of very small proteins. | Treat cells with proteasome (e.g., MG132) and/or lysosome (e.g., chloroquine) inhibitors prior to lysis [15]. For ORFs <50 aa, consider a smaller tag or endogenous epitope tagging via CRISPR [13] [16]. |

| CRISPR knock-out shows effect, but it's unclear if it's due to the ORF or RNA | sgRNA may be disrupting a regulatory genomic element or non-coding function of the transcript. | Perform CRISPR tiling with dense sgRNAs across the entire genomic locus. A phenotype that maps exclusively to the predicted coding exon strongly suggests a protein-mediated effect [13] [23]. |

| Ribosome profiling data is noisy, confounding ORF prediction | Variable RNase digestion or lineage-specific regulation can lead to poor 3-nt periodicity. | Use computational tools like RibORF 2.0 that automate quality control, select reads with strong 3-nt periodicity, and use ribosomal A-site corrected reads for prediction. Require ORFs to be identified in multiple, independent replicates [15]. |

| Uncertain biological relevance of a predicted non-canonical ORF | The ORF may be a translational "byproduct" without function. | Conduct a focused CRISPR/Cas9 viability screen. If knock-out of the ORF impairs cell growth/survival, it indicates biological essentiality. Compare the hit rate to that of canonical genes for context (~10% for non-canonical vs. ~17% for canonical in one study) [13]. |

Key Data and Experimental Evidence

Table 1: Prevalence and Validation of Non-Canonical ORFs

The following table summarizes key quantitative findings from large-scale studies investigating non-canonical ORFs.

| Metric | Finding | Experimental Context | Source |

|---|---|---|---|

| Translated ncORFs in Human Genes | 73.5% of coding genes showed translation outside the canonical ORF (58,383 ncORFs total). | Ribosome profiling analysis of 669 human samples. | [15] |

| Functional ORFs (Viability Effect) | 57 of 553 (10%) tested non-canonical ORFs induced a viability defect upon CRISPR knock-out. | CRISPR/Cas9 loss-of-function screens in 8 cancer cell lines. | [13] |

| Protein Validation Rate | 257 of 553 (46%) produced a detectable V5-tagged protein upon ectopic expression. | Epitope-tagging and detection in human cell lines. | [13] |

| Protein vs. RNA Effect | 48 of 51 (94%) ORFs lost biological activity upon start codon mutation. | Start codon mutagenesis coupled with L1000 gene expression profiling. | [13] |

| Non-AUG Start Codon Usage | ~56.7% of human ncORFs use AUG; remainder use near-cognate codons (CTG, TTG, GTG). | Ribosome profiling and computational analysis in human, mouse, zebrafish, worm, and yeast. | [15] |

| E. coli lacI Start Codon | >99% of E. coli strains use a GTG start codon for the lacI repressor. | Genomic analysis of 10,643 E. coli genomes. | [2] |

Table 2: Essential Research Reagent Solutions

This table lists key reagents and their applications for studying non-canonical translation.

| Reagent / Tool | Function / Application | Key Consideration |

|---|---|---|

| Ribosome Profiling (Ribo-seq) | Genome-wide identification of actively translated ORFs by sequencing ribosome-protected mRNA fragments. | Data quality is paramount; ensure strong 3-nt periodicity for accurate ORF calling [15]. |

| CRISPR/Cas9 Tiling | Dense tiling of sgRNAs across a genomic locus to map the specific region essential for function. | Distinguishes between protein-coding function and regulatory DNA or functional RNA elements [13] [23]. |

| Start Codon Mutagenesis | Definitive test to confirm a phenotype is mediated by translation of an ORF and not the RNA. | Mutate the start codon to a non-functional sequence (e.g., GTG to GCG) and re-test function [13]. |

| PepScore | A logistic regression model that predicts the stability of a non-canonical ORF-encoded peptide. | Uses ORF features like expected length, encoded domain, and conservation to calculate a stability probability [15]. |

| Endogenous Epitope Tagging | Using CRISPR/Cas9 to tag an endogenous non-canonical ORF (e.g., with HA) for detection under native regulation. | Crucial for detecting low-abundance or condition-specific microproteins without overexpression artifacts [16]. |

| RibORF / RiboCode | Computational algorithms to identify translated ORFs from ribosome profiling data. | Use multiple algorithms and require consensus predictions to reduce false positives [16] [15]. |
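The consensus requirement in the last row (and the replicate requirement mentioned in the troubleshooting table) amounts to counting how many independent call sets support each ORF. A minimal sketch, assuming each ORF is keyed by a hypothetical (chromosome, strand, start, end) tuple and that the coordinates from different tools are already harmonized:

```python
from collections import Counter


def consensus_orfs(call_sets, min_support=2):
    """Return ORFs reported in at least min_support of the given call sets."""
    support = Counter()
    for calls in call_sets:
        # set() ensures one vote per tool or replicate, even with duplicate rows.
        support.update(set(calls))
    return {orf for orf, n in support.items() if n >= min_support}


tool_a = [("chr1", "+", 100, 160), ("chr2", "-", 500, 590)]
tool_b = [("chr1", "+", 100, 160), ("chr3", "+", 10, 70)]
replicate_2 = [("chr1", "+", 100, 160), ("chr2", "-", 500, 590)]
print(consensus_orfs([tool_a, tool_b, replicate_2], min_support=2))
```

Exact coordinate matching is strict; tools that differ in how they choose the start codon may need matching on the stop codon position instead.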

Core Experimental Protocols

Protocol 1: Validating a Non-Canonical ORF Phenotype with Start Codon Mutagenesis

This protocol is essential for distinguishing protein-mediated effects from RNA-based mechanisms.

- Clone the Locus: Clone the genomic DNA fragment containing the putative non-canonical ORF and its endogenous regulatory context (e.g., promoter, 5' UTR) into an expression vector.

- Introduce Mutation: Using site-directed mutagenesis, mutate the predicted non-canonical start codon (e.g., GTG, TTG) to a non-functional codon (e.g., GCG, GGG). Preserve the overall RNA sequence as much as possible.

- Functional Assay: Transfect the wild-type and mutant constructs into your relevant cell line and perform the functional assay used to identify the ORF (e.g., cell viability assay, reporter gene assay, L1000 transcriptomic profiling).

- Interpretation: A significant loss of the biological activity in the start codon mutant, while the wild-type construct retains activity, strongly indicates that translation of the ORF is required for the function [13].
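The mutation step of this protocol can be planned in silico before ordering primers. The helper below is a sketch with hypothetical names: it swaps the start codon for a replacement, refuses replacements that are themselves start-like, and reports how many bases change so the RNA sequence is disturbed as little as possible. The set of start-like codons is limited to those named in this guide and is an assumption; other near-cognate codons exist.

```python
# Codons treated as potential initiators here (assumed, not exhaustive).
START_LIKE = {"ATG", "GTG", "TTG", "CTG"}


def mutate_start_codon(seq, start_index, replacement="GCG"):
    """Return (mutant_sequence, n_base_changes) for a start-codon knockout."""
    seq = seq.upper()
    replacement = replacement.upper()
    codon = seq[start_index:start_index + 3]
    if len(codon) != 3:
        raise ValueError("start_index does not leave room for a full codon")
    if len(replacement) != 3 or replacement in START_LIKE:
        raise ValueError("replacement must be a 3-nt codon that cannot initiate")
    mutant = seq[:start_index] + replacement + seq[start_index + 3:]
    n_changes = sum(a != b for a, b in zip(codon, replacement))
    return mutant, n_changes


mutant, n_changes = mutate_start_codon("CCGTGAAATAA", start_index=2)
print(mutant, n_changes)
```

A single-base change such as GTG to GCG keeps the transcript nearly identical, which strengthens the argument that any loss of activity is due to lost translation.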

Protocol 2: Endogenous Tagging of a Non-Canonical ORF using CRISPR/Cas9

This protocol allows for the study of the native protein without overexpression.

- Design gRNA and Donor Template: Design a gRNA to cut near the stop codon of the non-canonical ORF. Design a single-stranded oligodeoxynucleotide (ssODN) donor template containing the epitope tag (e.g., 3xHA) flanked by homology arms (≥ 50 nt) matching the genomic sequence.

- Transfect: Co-transfect cells (or generate a stable cell line) with plasmids expressing Cas9 and the gRNA, together with the ssODN donor template.

- Screen and Validate: Single-cell clone isolation is recommended. Screen clones by genomic PCR and sequencing to confirm precise, in-frame integration of the tag.

- Detection: Use the tagged cell line for downstream applications like Western blotting to confirm protein expression under endogenous regulation or immunoprecipitation for mass spectrometry analysis to identify interacting partners [16].
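The donor design in the first step can be drafted computationally. The sketch below assembles a C-terminal tagging donor by inserting the tag in frame directly before the stop codon. It is a simplified illustration with hypothetical names: it assumes the ORF lies on the plus strand of the supplied sequence, uses a single HA tag (YPYDVPDYA) rather than 3xHA to keep the example short, and does not handle gRNA/PAM disruption or synthesis constraints.

```python
HA_TAG = "TACCCATACGATGTTCCAGATTACGCT"  # encodes YPYDVPDYA
STOP_CODONS = {"TAA", "TAG", "TGA"}


def design_donor(genome, stop_index, tag=HA_TAG, arm_len=50):
    """Return left arm + tag + right arm, with the tag placed before the stop codon."""
    genome = genome.upper()
    if genome[stop_index:stop_index + 3] not in STOP_CODONS:
        raise ValueError("stop_index does not point at a stop codon")
    if len(tag) % 3 != 0:
        raise ValueError("tag length must be a multiple of 3 to stay in frame")
    if stop_index < arm_len or stop_index + arm_len > len(genome):
        raise ValueError("not enough flanking sequence for the homology arms")
    left_arm = genome[stop_index - arm_len:stop_index]
    right_arm = genome[stop_index:stop_index + arm_len]  # begins with the stop codon
    return left_arm + tag + right_arm


locus = "A" * 60 + "TAA" + "C" * 60  # toy locus with a stop codon at index 60
donor = design_donor(locus, stop_index=60)
print(len(donor), donor[50:77])
```

Because the right arm starts at the stop codon, the edited ORF reads through the tag and terminates at its native stop.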

Visualization of Key Concepts

Diagram: Mechanisms of Non-Canonical Translation Initiation

From Sequence to Function: Computational and Experimental Tools for Detection

Leveraging Ribosome Profiling (Ribo-seq) to Map Actively Translated ORFs

Troubleshooting Guides: Addressing Common Ribo-seq Experimental Challenges

This section provides solutions to frequent issues encountered during Ribosome Profiling experiments, framed within the context of investigating non-canonical translation events.

Problem: Inadequate Ribosome-Protected Fragment (RPF) Yield

- Symptoms: Low library concentration, insufficient sequencing reads after rRNA depletion.

- Primary Causes & Solutions:

- Cause 1: Over-digestion by RNase. Excessive nuclease activity can degrade ribosome-protected fragments.

- Solution: Titrate RNase concentration (e.g., RNase I) and optimize digestion time and buffer conditions (e.g., 150-200 mM sodium, 5-10 mM magnesium) to achieve uniform digestion without destroying RPFs [24].

- Cause 2: Sample Loss During Library Preparation. Traditional gel-based size selection and multiple purification steps lead to significant material loss, especially with low-input samples.

- Solution: Adopt ligation-free, one-pot library preparation methods like Ribo-lite or OTTR (Ordered Two-Template Relay) that skip gel purification and reduce handling steps. These methods enable successful profiling from as few as 1,000 cells or even a single oocyte [25].

Problem: Poor Quality Data with Weak Tri-Nucleotide Periodicity

- Symptoms: Metagene analysis shows no strong 3-nucleotide (codon) periodicity at the start and stop codons; inability to confidently assign the ribosome's A and P sites.

- Primary Causes & Solutions:

- Cause 1: Ineffective Translation Arrest. Ribosome run-off during cell harvesting leads to a loss of genuine translational snapshots.

- Solution: Flash-freeze cells cryogenically or use immediate lysis with a detergent-based buffer. If using elongation inhibitors like cycloheximide, be aware of potential artifacts in ribosome distribution, particularly at the 5' end of transcripts, and validate findings with inhibitor-free protocols where possible [24].

- Cause 2: Contamination from Non-Translating RNAs. Ribosomal RNA (rRNA) can constitute over 80% of sequenced reads if not effectively removed.

- Solution: Use rigorous rRNA depletion kits (e.g., Ribo-zero Plus). Alternatively, employ computational subtraction of rRNA-derived reads post-sequencing. Newer protocols like Ribo-ITP use microfluidic isotachophoresis to physically enrich for ribosome footprints, reducing rRNA background [25].

Problem: Data Interpretation Challenges for Non-Canonical ORFs

- Symptoms: Detecting ribosome occupancy in non-annotated regions (lncRNAs, UTRs) but lacking evidence for stable protein production.

- Primary Causes & Solutions:

- Cause 1: Distinguishing Translation from Mere Ribosome Binding. Ribosome occupancy does not always equate to productive, full-length protein synthesis [26].

- Solution: Perform CRISPR-based tiling or start codon mutagenesis. If mutating the start codon abolishes the observed phenotypic effect, it strongly indicates the effect is mediated by translation of the ORF and not the RNA itself [13].

- Cause 2: Detecting the Protein Product. Small proteins from non-canonical ORFs are often missed by standard tryptic mass spectrometry [13] [26].

- Solution: Utilize complementary methods:

- Immunopeptidomics: Identifies HLA-I presented peptides, which has proven effective for detecting peptides from non-canonical ORFs without tryptic digestion [26].

- V5/Epitope Tagging: Express the ORF with a C-terminal tag (e.g., V5) to enable detection via immunoassays. Note that tags can be disruptive for very small proteins (<50 amino acids) [13].

- Targeted Mass Spectrometry: Use methods like Selected Reaction Monitoring (SRM) to hunt for specific peptides predicted from the ORF [26].

Table 1: Critical Steps for Validating Non-Canonical ORFs

| Validation Method | Key Function | Considerations for Non-Canonical ORFs |

|---|---|---|

| CRISPR Start Codon Mutagenesis [13] | Confirms translation dependence by disrupting initiation. | Essential for distinguishing protein effects from RNA-mediated effects. |

| Ribo-seq with Periodicity [27] | Provides evidence of active, in-frame translation. | Strong 3-nt periodicity is a hallmark of productive elongation. |

| Epitope Tagging & Western Blot [13] | Confirms stable protein expression. | Tag size may interfere with function or stability of very small proteins. |

| Immunopeptidomics [26] | Detects endogenously processed and presented peptides. | Excellent for detecting stable, HLA-binding peptides; cell-type dependent. |

| Mass Spectrometry (Whole Proteome) [13] [26] | Direct detection of tryptic peptides from cellular lysates. | Low detection rate for non-canonical ORFs due to small size and low abundance. |

Problem: Difficulty in Low-Input and Single-Cell Applications

- Symptoms: Failure to generate libraries from rare cell populations or small tissue samples.

- Primary Causes & Solutions:

- Cause: Massive Sample Loss. Standard protocols require millions of cells, making small samples intractable.

- Solution: Implement specialized low-input protocols.

- Ribo-lite: A ligation-free method that skips rRNA depletion and gel purification, enabling translatome analysis from 50-1,000 cells [25].

- LiRibo-seq: Combines biotinylated-puromycin-based ribosome capture (RiboLace) with a one-pot ligation-free library prep, suitable for ~5,000 cells [25] [28].

- scRibo-seq & Ribo-ITP: True single-cell Ribo-seq methods that use either well-plate-based or microfluidic (ITP) processing to profile translation in individual cells [25].

Frequently Asked Questions (FAQs)

Q1: How does Ribo-seq fundamentally differ from RNA-seq, and why is it crucial for studying non-canonical ORFs?

RNA-seq measures the abundance and sequence of all cellular RNAs, providing a view of the transcriptome. In contrast, Ribo-seq identifies which mRNAs are actively being translated by ribosomes, providing a snapshot of the translatome. This is critical for non-canonical ORFs because many are located on transcripts annotated as non-coding (lncRNAs) or within untranslated regions (UTRs) of mRNA. RNA-seq alone would not distinguish a translated from a non-translated lncRNA, whereas Ribo-seq can reveal the active translation of a small ORF within it [24] [28].

Q2: My RNA-seq and proteomics data show poor correlation. Can Ribo-seq help explain the discrepancy?

Yes, this is a key application of Ribo-seq. The discrepancy often arises from post-transcriptional regulation, where mRNA levels do not directly predict translation rates. Ribo-seq measures the immediate step before protein synthesis (translation), effectively bridging the gap between transcript abundance and protein yield. It can reveal instances where an mRNA is abundant but poorly translated, or vice versa, providing a more direct correlate of proteome dynamics than RNA-seq [24] [28].

Q3: What is the gold-standard evidence for confirming a non-canonical ORF encodes a functional microprotein?

A multi-faceted approach is recommended:

- Translation Evidence: High-quality Ribo-seq data showing strong triplet periodicity across the putative ORF [27].

- Protein Evidence: Detection of the protein product, ideally through epitope tagging, immunopeptidomics, or targeted mass spectrometry [13] [26].

- Functional Evidence: A phenotype (e.g., viability defect, gene expression change) upon ORF knockout by CRISPR, which is abolished by start codon mutation, proving the phenotype is translation-dependent [13].

Q4: What are the best practices for visualizing Ribo-seq data to identify novel ORFs?

Use visualization tools that display reads color-coded by their reading frame, which makes the 3-nucleotide periodicity of translating ribosomes visually apparent. Tools like ggRibo (an R package) are specifically designed for this, allowing researchers to see periodicity in the context of full gene structure, including UTRs and introns, which is essential for spotting uORFs, dORFs, and ORFs in lncRNAs [27].

Q5: How can I accurately quantify global translational changes from Ribo-seq data, such as during cellular stress?

Relative analysis can be misleading during global translation shutdown. To enable absolute quantification, use spike-in controls. These can be:

- External Spike-ins: Defined synthetic RNA oligonucleotides added after RNase digestion [25].

- Orthogonal Lysates: Lysates from a different species (e.g., yeast) mixed with your sample before digestion, providing a constant reference [25].

- Internal References: Under certain conditions, mitochondrial ribosome footprints can serve as an internal control, assuming their translation is unaffected by the treatment [25].
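Whichever reference is used, the normalization itself is simple rescaling. The sketch below, with hypothetical function names and toy numbers, scales each sample so that its spike-in read count matches the mean across samples. It assumes the same amount of spike-in was added to every sample.

```python
def spike_in_scale_factors(spike_counts):
    """Per-sample factors that equalize spike-in read counts to their mean."""
    if any(count <= 0 for count in spike_counts.values()):
        raise ValueError("every sample needs a positive spike-in count")
    reference = sum(spike_counts.values()) / len(spike_counts)
    return {sample: reference / count for sample, count in spike_counts.items()}


def normalize(counts, factors):
    """Apply per-sample scale factors to a {sample: {gene: count}} table."""
    return {
        sample: {gene: n * factors[sample] for gene, n in genes.items()}
        for sample, genes in counts.items()
    }


spike = {"control": 1000, "stress": 2000}
footprints = {"control": {"geneA": 100}, "stress": {"geneA": 100}}
print(normalize(footprints, spike_in_scale_factors(spike)))
```

In this toy case the raw footprint counts are identical, but the stressed sample contains twice as many spike-in reads, so after scaling its footprint signal is half that of the control, which is the kind of global decrease that relative analysis would miss.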

Experimental Workflows and Visualization

The following diagrams outline the core workflows for Ribo-seq experimentation and data analysis, highlighting steps critical for investigating non-canonical ORFs.

Core Ribo-seq Wet-Lab Workflow

Computational Analysis & ORF Discovery Pipeline

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Research Reagents and Kits for Ribo-seq Studies

| Reagent / Kit | Primary Function | Role in Studying Non-Canonical ORFs |

|---|---|---|

| Translation Inhibitors (e.g., Cycloheximide, Harringtonine) [24] | Arrests ribosomes at specific stages (elongation vs. initiation). | Harringtonine enriches for initiating ribosomes, crucial for pinpointing start codons of novel ORFs. |

| RNase I [24] | Digests unprotected mRNA, generating Ribosome-Protected Fragments (RPFs). | Produces clean RPFs for accurate mapping of translated regions, including non-canonical ones. |

| Ribo-zero Plus Kit [24] | Depletes ribosomal RNA (rRNA) from the RPF sample. | Increases the fraction of informative mRNA reads in the library, boosting signal for rare ORFs. |

| RiboLace / ALL-IN-ONE Gel Free Kit [25] [28] | Affinity-based capture of elongating ribosomes using a puromycin analog. | Gel-free workflow reduces sample loss, improves reproducibility, and is adaptable to low-input samples. |

| miRNeasy Kit [24] | Purifies small RNA fragments after nuclease digestion. | Efficiently recovers RPFs (~28-30 nt) while removing contaminants. |

Frequently Asked Questions (FAQs)

General Principles

Q: What is proteogenomics and why is it important for gene prediction research? A: Proteogenomics is the use of genomic or transcriptomic nucleotide sequencing data to create customized protein databases for mass spectrometry (MS) database searching [29]. It is crucial for gene prediction because it provides empirical evidence at the protein level to validate predicted genes, discover novel genes, and correct annotation errors, especially for non-canonical open reading frames (nORFs) that are often missed by conventional algorithms [30] [3].
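One simple way to build such a customized database is six-frame translation of the nucleotide sequence. The sketch below is a minimal illustration, not a production pipeline: it uses the standard genetic code, keeps stop-to-stop segments without requiring an AUG (so non-AUG initiation is not excluded), and the function names, entry identifiers, and `min_len` cutoff are hypothetical.

```python
from itertools import product

BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
# Standard genetic code, built in TCAG order.
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO)}


def revcomp(seq):
    """Reverse complement of an uppercase A/C/G/T sequence."""
    return seq.translate(str.maketrans("ACGT", "TGCA"))[::-1]


def six_frame_peptides(seq, min_len=10):
    """Return {entry_id: peptide} for stop-to-stop segments in all six frames."""
    seq = seq.upper()
    entries = {}
    for strand, s in (("+", seq), ("-", revcomp(seq))):
        for frame in range(3):
            protein = "".join(
                CODON_TABLE.get(s[i:i + 3], "X") for i in range(frame, len(s) - 2, 3)
            )
            for k, segment in enumerate(protein.split("*")):
                if len(segment) >= min_len:
                    entries[f"{strand}{frame}_{k}"] = segment
    return entries


print(six_frame_peptides("ATGAAACCCGGGTTTTAA", min_len=5))
```

Six-frame databases grow quickly with genome size, which inflates the search space; restricting the input to transcribed or ribosome-occupied regions is a common way to keep the database manageable.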

Q: How does proteogenomics help in studying non-canonical RBS patterns and leaderless genes? A: Proteogenomics can experimentally validate gene expression that occurs through non-canonical patterns. Research on Deinococcus radiodurans, for example, has confirmed a −10 region-like motif (5′-TANNNT-3′) functioning as a promoter immediately upstream of ORFs, producing leaderless mRNA without a Shine–Dalgarno (SD) sequence [31]. This allows proteogenomics to detect and provide evidence for proteins expressed from such non-canonical genes.

Technical Challenges

Q: Why are noncanonical proteins often missed in standard proteomic analyses? A: Noncanonical proteins, often encoded by small open reading frames (sORFs), present unique challenges: their often small size, low abundance, non-AUG initiation, and rapid turnover make them difficult to detect using conventional proteomic approaches [3]. Standard gene prediction algorithms also typically prioritize ORFs exceeding 100 codons, systematically overlooking sORFs with validated coding potential [30].

Q: What are common reasons for the failure to detect peptides in a proteogenomic experiment? A: Failure can occur due to sample loss during processing, protein degradation, or unsuitable peptide sizes post-digestion [32]. Low-abundant proteins can be lost during preparation or be undetectable next to high-abundance proteins. Peptides may also "escape detection" if they are too long or too short due to suboptimal digestion [32].

Q: How can I improve the detection of low-abundance or non-canonical proteins? A: Scale up the experiment, increase relative protein concentration using cell fractionation, or enrich low-abundance proteins by immunoprecipitation (IP) [32]. Digesting with a combination of two different proteases (double digestion) can also help generate a more suitable range of peptide sizes for detection [32].
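
To see why double digestion can rescue a protein, it helps to simulate the cleavage in silico. The sketch below is a simplified model under stated assumptions: trypsin cleaves after K/R, the second protease is assumed to be Glu-C cleaving after E, missed cleavages and the proline rule are ignored, and the 7 to 30 residue detectability window is an illustrative choice rather than a value from the source.

```python
def cleave(protein: str, sites: str) -> list[str]:
    """Cut C-terminal to every residue listed in `sites` (no missed cleavages)."""
    peptides, start = [], 0
    for i, residue in enumerate(protein):
        if residue in sites:
            peptides.append(protein[start:i + 1])
            start = i + 1
    if start < len(protein):
        peptides.append(protein[start:])
    return peptides

def detectable(peptides: list[str], min_len: int = 7, max_len: int = 30) -> list[str]:
    """Keep peptides inside an assumed MS-friendly length window."""
    return [p for p in peptides if min_len <= len(p) <= max_len]

# Hypothetical 33-residue protein with a single tryptic site at its C-terminus.
protein = "MSALTPGAVE" + "GLSPATVLGAE" + "TPSLGAVNLSGK"

trypsin_only = detectable(cleave(protein, "KR"))
double_digest = detectable(cleave(protein, "KRE"))  # trypsin + Glu-C (assumed)
print(len(trypsin_only), len(double_digest))  # 0 3
```

With trypsin alone, the only peptide is 33 residues long and falls outside the window; adding the second protease yields three peptides of 10 to 12 residues.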

Troubleshooting Guides

Problem: High False Discovery Rate or Poor Spectral Matching

| Potential Cause | Diagnostic Check | Solution |
|---|---|---|
| Incomplete or generic protein database | Check if your custom database includes sample-specific variants and novel ORFs. | Create a comprehensive custom database using RNA-Seq data from the same sample to include novel splice junctions, SAVs, and indels [33] [29]. |
| Suboptimal mass spectrometer calibration | Check instrument performance with a standard HeLa protein digest [34]. | Clean and recalibrate the mass spectrometer using a commercial calibration solution [34]. |
| Incorrect search parameters | Verify search settings in your software (e.g., Mascot Score, P-value). | Ensure parameters like species, enzyme, fragment ions, and mass tolerance are correctly set. Use a P-value/Q-value of < 0.05 or a significant Mascot Score for validation [32]. |
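
The q-value threshold mentioned in the table can be made concrete with a small calculation. The sketch below assumes a target-decoy search, a common way to estimate FDR that the source does not explicitly name; the scores and the `(score, is_decoy)` input format are hypothetical.

```python
def q_values(psms):
    """Estimate q-values for peptide-spectrum matches from a target-decoy search.

    `psms` is a list of (score, is_decoy) tuples, higher score = better match.
    Returns (score, is_decoy, q_value) tuples sorted by descending score.
    """
    ranked = sorted(psms, key=lambda psm: psm[0], reverse=True)
    fdrs, targets, decoys = [], 0, 0
    for _, is_decoy in ranked:
        if is_decoy:
            decoys += 1
        else:
            targets += 1
        fdrs.append(decoys / max(targets, 1))  # FDR estimate at this threshold
    # q-value: the lowest FDR at which this PSM would still be accepted
    q_list, running_min = [], float("inf")
    for fdr in reversed(fdrs):
        running_min = min(running_min, fdr)
        q_list.append(running_min)
    q_list.reverse()
    return [(score, is_decoy, q) for (score, is_decoy), q in zip(ranked, q_list)]

# Hypothetical search output
psms = [(95, False), (90, False), (80, False), (70, True), (65, False), (50, True)]
scored = q_values(psms)
accepted = [s for s, is_decoy, q in scored if not is_decoy and q < 0.05]
print(accepted)  # [95, 90, 80]
```

For novel or non-canonical peptides, some groups estimate FDR separately from known peptides, because the two classes can have very different prior probabilities of being correct.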

Problem: Low Protein Coverage or Peptide Count

| Potential Cause | Diagnostic Check | Solution |
|---|---|---|
| Protein degradation during processing | Monitor each preparation step by Western Blot or Coomassie staining [32]. | Add broad-spectrum, EDTA-free protease inhibitor cocktails to all buffers during sample prep [32]. |
| Inefficient enzymatic digestion | Evaluate peptide size range and coverage. | Optimize digestion time or change the protease type (e.g., trypsin vs. Lys-C). Consider double digestion with two different proteases [32]. |
| High sample complexity masking low-abundance targets | Assess the number of proteins and dynamic range in your sample. | Fractionate complex samples using a high-pH reversed-phase peptide fractionation kit to reduce complexity [34]. |

Problem: Inability to Validate Predicted Novel Genes

| Potential Cause | Diagnostic Check | Solution |
|---|---|---|
| Limitations of gene prediction algorithms | Check if initial gene models were based only on ab initio prediction. | Perform a multi-stage proteogenomic analysis across different biological conditions (e.g., life cycle states) to capture condition-specific expression [30]. |
| Low expression of non-canonical ORFs | Check RNA-Seq data (e.g., FPKM) for the locus of interest. | Use ribosome profiling (Ribo-seq) to provide evidence of translation, then target the specific peptide with PRM or MRM MS assays [3] [35]. |

Key Experimental Protocols

Protocol 1: Creating a Custom Protein Database from RNA-Seq Data

This protocol is essential for detecting sample-specific variations and non-canonical proteins [33] [29].

- Data Upload and Preparation: Import the reference genome (FASTA), RNA-seq reads (FASTQ), and gene annotation file (GTF). Ensure chromosome naming conventions are consistent (e.g., change "1" to "chr1") using a text replacement tool [33].

- Read Alignment: Use a splice-aware aligner like HISAT2 to map the RNA-Seq reads (FASTQ) to the reference genome. This generates a BAM file containing the alignment information [33].

- Variant Calling: Use a variant caller like FreeBayes on the BAM file to identify single-nucleotide polymorphisms (SNPs) and insertions/deletions (indels). This outputs a VCF file [33].

- Database Generation: Translate the identified variants and de novo assembled transcripts into amino acid sequences. Merge these with the reference protein database (e.g., from UniProt) to create a comprehensive, sample-specific FASTA database [33] [36].
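
The database generation step can be sketched as follows. This is a minimal illustration, not the pipeline from the cited protocols: it translates only the three forward frames, starts each ORF at the first methionine (so non-AUG starts would be missed), uses the standard codon assignments, and takes in-memory dictionaries instead of FASTA files. All names and the `min_aa` cutoff are assumptions.

```python
from itertools import product

# Standard genetic code, codons enumerated in TCAG order
BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO)}

def translate(seq: str) -> str:
    """Translate a nucleotide string codon by codon; unknown codons become X."""
    seq = seq.upper()
    return "".join(CODON_TABLE.get(seq[i:i + 3], "X") for i in range(0, len(seq) - 2, 3))

def forward_orfs(transcript: str, min_aa: int = 10):
    """Yield M-initiated peptides between stop codons in the three forward frames."""
    for frame in range(3):
        for segment in translate(transcript[frame:]).split("*"):
            start = segment.find("M")
            if start != -1 and len(segment) - start >= min_aa:
                yield segment[start:]

def build_database(reference: dict, transcripts: dict, min_aa: int = 10) -> dict:
    """Merge reference proteins with novel translations, skipping duplicates."""
    database = dict(reference)
    seen = set(reference.values())
    for name, seq in transcripts.items():
        for n, peptide in enumerate(forward_orfs(seq, min_aa), 1):
            if peptide not in seen:
                database[f"{name}_orf{n}"] = peptide
                seen.add(peptide)
    return database

# Hypothetical inputs; min_aa is lowered only to keep the example short
db = build_database({"ref1": "MKT"}, {"tx1": "ATGGCTGCTGCTTAA"}, min_aa=3)
print(db)  # {'ref1': 'MKT', 'tx1_orf1': 'MAAA'}
```

Deduplicating against the reference keeps the search space from growing unnecessarily, which matters because larger databases make FDR control harder.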

Protocol 2: Multi-State Proteogenomic Analysis for Gene Discovery

This protocol, adapted from a Tetrahymena thermophila study, is designed to comprehensively reassess gene discovery across different biological conditions [30].

- Experimental Design:

  - Select multiple distinct biological or environmental states relevant to your organism (e.g., growth, starvation, conjugation).

  - Harvest cells from each state independently.

- Sample Preparation and Fractionation:

  - Prepare total protein extracts from each state.

  - To increase depth, process aliquots of the lysate using both in-gel and in-solution tryptic digestion.

  - Pre-fractionate the in-solution digested peptides using reversed-phase HPLC (e.g., on a TechMate C18 column) to reduce complexity.

- Mass Spectrometry Data Acquisition:

  - Analyze all peptide fractions on a high-resolution mass spectrometer (e.g., Q Exactive HFX).

  - Consolidate proteomic data for each state by integrating results from both digestion protocols.

- Database Search and Novel Event Identification:

  - Search the consolidated MS data against your custom protein database using search engine software (e.g., pFind in open search mode).

  - Use integrated software (e.g., GAPE) to identify peptides, novel genes, and post-translational modifications.

- Bioinformatic Validation:

  - Functionally annotate identified proteins using KOG and GO terms.

  - Determine the correlation between transcriptomic (FPKM) and proteomic (NSAF) expression levels.

  - Perform cluster analysis to identify proteins and PTMs associated with specific states.
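
For the FPKM versus NSAF comparison in the validation step, the sketch below computes NSAF from spectral counts and protein lengths, then a rank correlation against FPKM. Using Spearman correlation is an assumption (the protocol only says "correlation"), and all gene names and values are hypothetical.

```python
def nsaf(spectral_counts: dict, lengths: dict) -> dict:
    """Normalized spectral abundance factor: (SpC / L) divided by the sum over all proteins."""
    saf = {p: spectral_counts[p] / lengths[p] for p in spectral_counts}
    total = sum(saf.values())
    return {p: value / total for p, value in saf.items()}

def ranks(values: list) -> list:
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            result[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return result

def spearman(x: list, y: list) -> float:
    """Spearman correlation as the Pearson correlation of the ranks."""
    rx, ry = ranks(x), ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    return cov / (sx * sy)

# Hypothetical data for three genes
counts = {"g1": 20, "g2": 5, "g3": 10}
lengths = {"g1": 200, "g2": 100, "g3": 50}
fpkm = {"g1": 30.0, "g2": 8.0, "g3": 55.0}

abundance = nsaf(counts, lengths)
genes = sorted(counts)
rho = spearman([fpkm[g] for g in genes], [abundance[g] for g in genes])
print(round(rho, 3))  # 1.0
```

Length normalization matters here: g3 has fewer spectra than g1 but is much shorter, so its NSAF is the highest of the three.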

Experimental Workflow and Data Integration

The following diagram illustrates the core proteogenomic workflow for validating gene models, integrating genomics, transcriptomics, and proteomics data.

Research Reagent Solutions

The following table details key reagents and kits used in proteogenomic workflows for reliable results.

| Item | Function | Example Use Case |
|---|---|---|
| Pierce HeLa Protein Digest Standard [34] | Verifies overall MS instrument performance and sample preparation protocol. | Run as a control to determine if poor results are from sample prep or the LC-MS system. |
| Pierce Peptide Retention Time Calibration Mixture [34] | Diagnoses and troubleshoots the Liquid Chromatography (LC) system and gradient. | Ensures consistent peptide elution times, critical for reproducible LC-MS/MS runs. |
| Pierce Calibration Solutions [34] | Calibrates the mass spectrometer for accurate mass measurement. | Regular instrument calibration to maintain mass accuracy, essential for peptide identification. |
| Protease Inhibitor Cocktails (EDTA-free) [32] | Prevents protein degradation during sample preparation. | Added to lysis and extraction buffers to preserve protein integrity, especially for low-abundance targets. |
| High pH Reversed-Phase Peptide Fractionation Kit [34] | Reduces sample complexity by fractionating peptides before MS analysis. | Used with complex samples (e.g., TMT-labeled) to improve depth of coverage and detect low-abundance proteins. |
| Trypsin (or alternative proteases) [32] | Digests proteins into peptides suitable for MS analysis. | Standard enzyme for proteolysis. Changing protease type or using double digestion can improve coverage of problematic proteins. |

Frequently Asked Questions (FAQs)

Q1: Why do standard gene prediction tools often fail to correctly identify short ORFs? Standard gene prediction tools frequently miss or mis-annotate short open reading frames (sORFs, <100 codons) for several key reasons. Firstly, many gene annotation pipelines deliberately ignore sORFs, often setting an arbitrary cutoff (e.g., 100 codons) and dismissing anything shorter as non-functional [37] [38]. Secondly, the statistical models (e.g., codon usage indices) and homology search methods (like BLAST) used by conventional tools are optimized for longer, typical genes and perform poorly on sORFs due to their limited sequence length [37]. This is compounded by the fact that databases contain very few experimentally verified sORFs for training and comparison, making homology-based detection unreliable [37]. Consequently, a significant number of genuine sORFs are incorrectly annotated as 'hypothetical' or 'putative' proteins [38].
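
The effect of a length cutoff is easy to demonstrate. The sketch below scans the three forward frames for ATG-initiated ORFs and applies a minimum codon count; it is a toy ORF finder written for illustration (forward strand only, ATG starts only), not the logic of any specific annotation pipeline.

```python
STOPS = {"TAA", "TAG", "TGA"}

def find_orfs(seq: str, min_codons: int) -> list[tuple[int, int, int]]:
    """Return (start, end, n_codons) for forward-strand ORFs that begin with ATG.

    `end` is exclusive and includes the stop codon; `n_codons` excludes it.
    """
    seq = seq.upper()
    orfs = []
    for frame in range(3):
        start = None
        for i in range(frame, len(seq) - 2, 3):
            codon = seq[i:i + 3]
            if start is None and codon == "ATG":
                start = i
            elif start is not None and codon in STOPS:
                n_codons = (i - start) // 3
                if n_codons >= min_codons:
                    orfs.append((start, i + 3, n_codons))
                start = None
    return orfs

# Hypothetical sequence: a 31-codon sORF followed by a 151-codon ORF
short_orf = "ATG" + "GCT" * 30 + "TAA"
long_orf = "ATG" + "GCA" * 150 + "TGA"
sequence = short_orf + "CC" + long_orf

print([n for _, _, n in find_orfs(sequence, min_codons=100)])  # [151]
print([n for _, _, n in find_orfs(sequence, min_codons=10)])   # [31, 151]
```

Lowering the cutoff recovers the sORF, but on a real genome it also admits many spurious ORFs, which is why sORF candidates need additional evidence such as conservation, Ribo-seq, or peptide data.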

Q2: What are non-canonical RBS patterns, and why are they problematic for prediction? Non-canonical Ribosome Binding Site (RBS) patterns are translation initiation signals that deviate from the standard Shine-Dalgarno (SD) sequence (5'-AGGAGG-3'). The main categories are:

- Leaderless mRNAs: These transcripts completely lack a 5' untranslated region (5'-UTR), meaning translation starts directly at the 5' end. This mode is abundant in archaea and certain bacterial groups [12] [1].

- Non-SD RBSs: Many mRNAs possess alternative, often weaker, sequence patterns in their 5' UTR that are not complementary to the anti-Shine-Dalgarno sequence [39] [1]. It is estimated that approximately half of bacterial genes may lack a canonical SD sequence [1].

- Other Initiation Mechanisms: Recent research has identified additional non-canonical mechanisms, such as initiation mediated by a 5'-terminal AUG codon (5'-uAUG) that attracts 70S ribosomes, compensating for a poor downstream SD sequence [1].

These patterns are problematic because most ab initio gene finders are primarily trained to recognize canonical SD sequences, leading to inaccurate prediction of translation start sites for genes with atypical RBSs [12].
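
A crude way to sort genes into the categories above is to inspect the 5' UTR directly. The heuristic below is an illustrative assumption, not the model used by any published gene finder: it calls a transcript leaderless when the UTR is at most 5 nt, SD-type when at least 4 consecutive bases of AGGAGG occur in the last 20 nt of the UTR, and non-SD otherwise. Real tools use probabilistic motif and spacer models instead of fixed thresholds.

```python
SD_CONSENSUS = "AGGAGG"

def best_sd_match(utr: str, min_len: int = 4, window: int = 20):
    """Return the longest substring of AGGAGG (>= min_len nt) found near the start codon."""
    region = utr.upper()[-window:]
    for size in range(len(SD_CONSENSUS), min_len - 1, -1):
        for offset in range(len(SD_CONSENSUS) - size + 1):
            core = SD_CONSENSUS[offset:offset + size]
            if core in region:
                return core
    return None

def classify_start(utr: str, leaderless_max: int = 5) -> str:
    """Classify the initiation context from the 5' UTR sequence (DNA alphabet)."""
    if len(utr) <= leaderless_max:
        return "leaderless"
    return "SD" if best_sd_match(utr) else "non-SD"

# Hypothetical 5' UTRs
print(classify_start(""))                       # leaderless
print(classify_start("TTTACAAGGAGGTATACAT"))    # SD
print(classify_start("TTTACATTTATTATACATTTA"))  # non-SD
```

A classifier like this is mainly useful for quickly estimating what fraction of a genome's annotated starts lack an SD-like signal before choosing a prediction tool.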

Q3: How do advanced tools like StartLink+ and MetaGeneAnnotator improve gene start prediction? Next-generation predictors use innovative strategies to overcome the limitations of standard tools.

- StartLink+ combines ab initio prediction with a homology-based approach. It uses multiple sequence alignments of homologous nucleotide sequences to identify conserved start codon patterns. When its prediction matches that of an ab initio algorithm (GeneMarkS-2), the accuracy is exceptionally high (98-99%) [12].

- MetaGeneAnnotator (MGA) integrates specialized statistical models for prophage genes alongside bacterial and archaeal models. A key feature is its self-training model that adapts to the di-codon usage of the input sequence itself, making it sensitive to atypical genes. It also employs a sophisticated, species-specific RBS model that can detect non-canonical patterns, leading to more precise translation start site identification [20].
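
The consensus idea behind StartLink+ can be illustrated with a few lines of code. This is only a sketch of the agreement rule described above, not the StartLink+ implementation; the gene keys (strand plus stop coordinate) and the coordinates are hypothetical.

```python
def consensus_starts(ab_initio: dict, alignment_based: dict):
    """Split genes into those where two start predictions agree and those where they conflict.

    Each argument maps a gene key (here: strand and stop coordinate) to a predicted
    start coordinate. Genes without an alignment-based call are left out of both groups.
    """
    agreed, conflicting = {}, {}
    for gene, start in ab_initio.items():
        other = alignment_based.get(gene)
        if other is None:
            continue  # not enough homologs for an alignment-based prediction
        if other == start:
            agreed[gene] = start
        else:
            conflicting[gene] = (start, other)
    return agreed, conflicting

# Hypothetical predictions keyed by (strand, stop coordinate)
ab_initio = {("+", 1500): 600, ("+", 3200): 2450, ("-", 5000): 5900}
alignment = {("+", 1500): 600, ("+", 3200): 2420}

agreed, conflicting = consensus_starts(ab_initio, alignment)
print(agreed)       # {('+', 1500): 600}
print(conflicting)  # {('+', 3200): (2450, 2420)}
```

The trade-off is coverage: requiring agreement raises confidence in the starts that are reported, but genes with conflicting or missing calls still need another line of evidence.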

Q4: What experimental validation methods are available for predicted short ORFs and gene starts? Computational predictions should be confirmed experimentally. The primary methods for validating gene starts include:

- N-terminal protein sequencing: This is a classical method for directly determining the start of a protein. Studies in E. coli and Mycobacterium tuberculosis have used this to create benchmark datasets [12].

- Mass spectrometry: This technique is powerful for identifying proteins and, with specific protocols, can be used to characterize their N-termini [12].

- Ribosome profiling (Ribo-Seq): This method maps the positions of all ribosomes on an mRNA, providing direct evidence of translation initiation events, including those from non-canonical sites [22].

- Mutagenesis: Introducing frameshift mutations or mutating the start codon can be used to test if a predicted ORF is functional [12].

Comparative Accuracy of Gene Start Prediction Tools

Table 1: Performance of different gene prediction methodologies on prokaryotic genomes.

| Tool / Method | Primary Approach | Key Strength | Reported Accuracy on Experimentally Verified Starts |
|---|---|---|---|
| StartLink+ | Hybrid (Alignment + Ab initio) | Resolves ambiguous starts by requiring consensus. | 98 - 99% [12] |
| StartLink | Alignment-based | Infers starts from conserved homologs; works on short contigs. | High, but limited to genes with sufficient homologs [12] |
| GeneMarkS-2 | Ab initio | Uses multiple models for SD, non-SD, and leaderless initiation. | High, but starts often disagree with other tools [12] |
| MetaGeneAnnotator | Ab initio | Self-training model and species-specific RBS detection. | Precisely predicts translation starts on anonymous sequences [20] |
| Prodigal | Ab initio | Optimized for canonical Shine-Dalgarno (SD) RBSs. | Performance drops with non-canonical RBS patterns [12] |

Performance of sORF Prediction Methods in S. cerevisiae

Table 2: A comparison of computational methods for predicting small Open Reading Frames (sORFs) based on a study in Saccharomyces cerevisiae [37].

| Method | Type | True Positive Rate at 5% False Positive Rate (1-99 codons) | Overall Assessment |
|---|---|---|---|
| BLAST (Homology Search) | Similarity-based | Low performance due to limited verified sORFs in databases. | Poor for novel sORFs, depends on existing knowledge [37] |
| sORF finder | Ab initio | Similar accuracy to homology search for sORFs. | Designed specifically for sORFs; uses hexamer composition bias [37] |
| CodonW (Codon Usage) | Ab initio | Performs poorly for small genes. | Effective for standard genes but unreliable for sORFs [37] |

Troubleshooting Guides

Problem: Inconsistent Gene Start Predictions Between Tools

Potential Cause: The gene in question likely uses a non-canonical translation initiation mechanism (e.g., a leaderless mRNA, a non-SD RBS, or an unknown mechanism) that each tool models differently [12] [1].

Solution:

- Run a specialist tool: Use a predictor designed for non-canonical patterns, such as GeneMarkS-2 or MetaGeneAnnotator, which model multiple types of RBSs and leaderless initiation [20] [12].

- Employ a hybrid approach: Use StartLink+ if possible. Its consensus-based approach is highly reliable where it produces a result [12].

- Conduct a manual check: