Building a Robust Functional Annotation Pipeline: From Gene Calls to Actionable Biological Insights

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on constructing and executing a high-quality functional annotation pipeline following gene calling. It covers foundational principles, diverse methodological approaches including evidence-based and ab initio strategies, and best practices for troubleshooting and optimization. A strong emphasis is placed on validation techniques and comparative genomics to ensure annotation accuracy, completeness, and reproducibility, which are critical for downstream analyses in biomedical and clinical research.

Laying the Groundwork: Core Principles of Functional Genome Annotation

Following the structural annotation of a genome, where gene locations and coding sequences are identified, the crucial next step is functional annotation. This process assigns biologically relevant meaning to predicted genes, transforming a list of nucleotide coordinates and protein sequences into a functional catalog of the organism's genomic capabilities [1]. Functional annotation adds critical information such as putative protein function, presence of specific domains, cellular localization, assigned metabolic pathways, Gene Ontology (GO) terms, and comprehensive gene descriptions [1]. For predicted protein-coding genes, function is automatically assigned based on sequence and/or structural similarity to proteins in available databases, providing the essential link between genetic information and biological activity that drives hypothesis-driven research and drug discovery.

Core Components of Functional Annotation

The typical functional annotation workflow integrates multiple methods that analyze protein sequences from complementary angles [1]. The primary approaches include:

- Homology-Based Annotation: This method relies on performing similarity searches (e.g., using BLAST+ or DIAMOND) against representative sequence databases like UniProt or NCBI NR (non-redundant) [1] [2]. It assigns function based on evolutionary relationships and sequence conservation.

- Orthology-Based Annotation: Tools like EggNOG-mapper assign a predicted protein to a known orthologous group, inferring functional annotation from these evolutionarily related genes [1].

- Signature-Based Annotation: This approach identifies known protein signatures and domains using diagnostic models, such as hidden Markov models (HMM), against specialized databases including InterPro, which integrates PANTHER, Pfam, and SUPERFAMILY, among others [1] [2].
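As an illustration of the homology-based approach, the sketch below selects the best hit per query from tabular search output. It assumes the standard 12-column tabular format written by BLAST+ and DIAMOND (outfmt 6); the E-value cutoff of 1e-5, the function name `best_hits`, and the example accessions are illustrative assumptions, not values taken from any of the pipelines discussed here.

```python
import csv
import io

# Default 12 columns of BLAST+/DIAMOND tabular output (outfmt 6).
COLUMNS = ["qseqid", "sseqid", "pident", "length", "mismatch", "gapopen",
           "qstart", "qend", "sstart", "send", "evalue", "bitscore"]


def best_hits(tabular_text, max_evalue=1e-5):
    """Return the highest-bitscore hit per query, skipping hits above max_evalue.

    max_evalue is an illustrative cutoff, not a recommended default.
    """
    best = {}
    reader = csv.reader(io.StringIO(tabular_text), delimiter="\t")
    for row in reader:
        if not row or row[0].startswith("#"):
            continue  # skip blank lines and comment lines
        hit = dict(zip(COLUMNS, row))
        hit["evalue"] = float(hit["evalue"])
        hit["bitscore"] = float(hit["bitscore"])
        if hit["evalue"] > max_evalue:
            continue
        current = best.get(hit["qseqid"])
        if current is None or hit["bitscore"] > current["bitscore"]:
            best[hit["qseqid"]] = hit
    return best


# Made-up example rows; the subject identifiers are placeholders.
example = (
    "prot1\tsubject_A\t62.1\t300\t110\t2\t1\t300\t5\t304\t1e-120\t350.0\n"
    "prot1\tsubject_B\t41.0\t280\t160\t5\t10\t289\t3\t282\t1e-40\t150.0\n"
    "prot2\tsubject_C\t25.0\t60\t45\t1\t1\t60\t1\t60\t0.5\t30.2\n"
)
hits = best_hits(example)
for query, hit in hits.items():
    print(query, hit["sseqid"], hit["bitscore"])
```

In this example only `prot1` receives a hit, because the single alignment for `prot2` has an E-value above the cutoff. In practice, a best hit is a starting point for annotation rather than proof of shared function.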

Quantitative Comparison of Functional Annotation Pipelines

Selecting an appropriate annotation pipeline requires careful consideration of their methodologies, dependencies, and outputs. The table below summarizes key features of several available tools.

Table 1: Comparative Analysis of Functional Annotation Pipelines

| Program/Pipeline | Installation | Core Software Used | Primary Databases | Key Characteristics |
|---|---|---|---|---|
| FA-nf [1] | Local Installation | Nextflow, BLAST+, DIAMOND, InterProScan | Custom (typically NCBI BLAST DBs, InterPro, UniProt-GOA) | Based on Nextflow for workflow management; uses software containers for reproducibility and ease of deployment. |
| MicrobeAnnotator [2] | Local Installation | DIAMOND, KOFAM | KEGG Orthology (KO), SwissProt/TrEMBL, RefSeq | Focused on microbial genomes; provides metabolic summary heatmaps; standard and light running modes. |
| Blast2GO [1] | Local & Web/Cloud | BLAST+, InterProScan | NCBI BLAST DBs, InterPro, GO | Subscription-based tool; includes a visualization dashboard and gene structural annotation options. |
| EggNOG Mapper [1] | Web Service & Local | DIAMOND, HMMER | EggNOGdb (consolidated from several sources), GO, PFAM, SMART, COG | Available as a command-line tool and via REST API; performs orthology assignments. |
| PANNZER2 [1] | Web Service | SANSparallel | UniProt, UniProt-GOA, GO, KEGG | Web-service based; also offers a command-line tool for querying the service. |
| DAVID [3] | Web Service | Not Specified | DAVID Knowledgebase (integrates multiple sources) | Specialized in functional enrichment analysis of gene lists; identifies enriched GO terms and pathways. |

Experimental Protocols for Functional Annotation

Protocol A: Comprehensive Annotation Using the FA-nf Pipeline

The FA-nf pipeline exemplifies a robust, containerized approach for comprehensive functional annotation [1].

I. Input Requirements

- Primary Input: A FASTA file containing predicted protein sequences.

- Optional Input: A structural annotation file in GFF3 format, where protein IDs must match those in the FASTA file [1].
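Because the protein IDs in the GFF3 file must match those in the FASTA file, a quick consistency check before launching the pipeline can save a failed run. The sketch below is one way to do this. It assumes that the protein IDs correspond to the `ID` attribute of `mRNA` features, which is a hypothetical convention: the attribute and feature type that actually carry the protein ID depend on the annotation source. The function names and example records are illustrative.

```python
def fasta_ids(fasta_text):
    """Collect sequence IDs: the first whitespace-delimited token after '>'."""
    ids = set()
    for line in fasta_text.splitlines():
        if line.startswith(">"):
            tokens = line[1:].split()
            if tokens:
                ids.add(tokens[0])
    return ids


def gff3_ids(gff3_text, feature_types=("mRNA",)):
    """Collect ID attributes from the selected feature types of a GFF3 file.

    Assumption: protein IDs are stored in the ID attribute of mRNA features.
    """
    ids = set()
    for line in gff3_text.splitlines():
        if not line or line.startswith("#"):
            continue  # skip blank lines, directives, and comments
        cols = line.split("\t")
        if len(cols) != 9 or cols[2] not in feature_types:
            continue
        attrs = dict(pair.split("=", 1) for pair in cols[8].split(";") if "=" in pair)
        if "ID" in attrs:
            ids.add(attrs["ID"])
    return ids


# Made-up example inputs.
fasta = ">gene1.t1 some description\nMKT\n>gene2.t1\nMAA\n"
gff3 = (
    "##gff-version 3\n"
    "chr1\tsrc\tgene\t1\t900\t.\t+\t.\tID=gene1\n"
    "chr1\tsrc\tmRNA\t1\t900\t.\t+\t.\tID=gene1.t1;Parent=gene1\n"
    "chr1\tsrc\tmRNA\t1200\t2000\t.\t-\t.\tID=gene3.t1;Parent=gene3\n"
)

missing_in_gff = fasta_ids(fasta) - gff3_ids(gff3)
missing_in_fasta = gff3_ids(gff3) - fasta_ids(fasta)
print("In FASTA but not GFF3:", sorted(missing_in_gff))
print("In GFF3 but not FASTA:", sorted(missing_in_fasta))
```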

II. Computational Configuration

- Software Deployment: The pipeline is implemented in Nextflow. All software dependencies are pre-packaged in Docker or Singularity containers, ensuring full reproducibility and eliminating manual installation steps [1].

- Resource Tuning: In the nextflow.config file, adjust CPU cores, memory, and maximum run time for each process based on the number and length of the protein sequences and the available HPC infrastructure [1].

- Database Setup: Configure the pipeline to use relational database management systems (SQLite or MySQL/MariaDB) for storing intermediate results and speeding up report generation [1].

III. Execution Steps

- Preprocessing: If a GFF file is provided, it is automatically verified and cleaned using the AGAT Toolkit to ensure compatibility with downstream processes [1].

- Parallelized Analysis: The workflow executes multiple annotation processes in parallel, typically including:

  - Homology searches using BLAST+ and DIAMOND against databases like UniRef90 and NCBI NR.

  - Domain and family analysis using InterProScan.

  - Motif identification via databases like CDD.

  - Prediction of subcellular localization using SignalP and TargetP [1].

- Data Consolidation: Results from all processes are stored in the relational database. A consensus annotation is generated by gathering and merging the best-matching predictions for each protein entry [1].

- Output Generation: The pipeline produces several outputs, including GO assignments, summary files from the various programs, a final annotation report in GFF3 format, and a comprehensive HTML report [1].
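The data consolidation step can be pictured with a small relational example. The sketch below uses Python's built-in `sqlite3` module with an in-memory database. The `hits` table, its columns, and the example rows are hypothetical and do not reflect FA-nf's actual schema. Scores from different tools are not directly comparable, so this sketch keeps the best hit per protein and per tool rather than ranking across tools.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical schema for illustration only.
conn.execute(
    "CREATE TABLE hits (protein_id TEXT, source TEXT, description TEXT, score REAL)"
)
rows = [
    ("prot1", "blast", "serine/threonine kinase", 350.0),
    ("prot1", "blast", "putative kinase", 210.0),
    ("prot1", "interproscan", "Protein kinase domain", 120.5),
    ("prot2", "interproscan", "ABC transporter-like", 88.0),
]
conn.executemany("INSERT INTO hits VALUES (?, ?, ?, ?)", rows)


def consolidate(connection):
    """Keep the top-scoring hit per (protein, source) and merge them per protein."""
    merged = {}
    cursor = connection.execute(
        "SELECT protein_id, source, description, score FROM hits "
        "ORDER BY protein_id, source, score DESC"
    )
    for protein_id, source, description, _score in cursor:
        per_source = merged.setdefault(protein_id, {})
        # Rows arrive best-first within each source, so only the first is kept.
        per_source.setdefault(source, description)
    return merged


merged = consolidate(conn)
for protein_id, annotations in merged.items():
    print(protein_id, annotations)
```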

Protocol B: Specialized Microbial Annotation Using MicrobeAnnotator

MicrobeAnnotator provides a user-friendly, comprehensive pipeline optimized for microbial genomes [2].

I. Input Requirements

- Input: One or multiple FASTA files containing predicted protein sequences from microbial genomes [2].

II. Pre-Run Setup

- Database Building: Execute the microbeannotator_db_builder script to download and format the required databases (KOfam, SwissProt, TrEMBL, and RefSeq). The user selects the search tool (BLAST, DIAMOND, or Sword) and the number of threads to use [2].

III. Execution Steps

- Iterative Database Search:

  - Step 1: All proteins are searched against the curated KEGG Orthology (KO) database using KOfamscan; best matches are selected based on adaptive score thresholds [2].

  - Step 2: Proteins without a KO match are searched against SwissProt (default: ≥40% amino acid identity, bitscore ≥80, alignment length ≥70%) [2].

  - Step 3: Proteins still without annotation are searched against RefSeq [2].

  - Step 4: Remaining proteins are searched against TrEMBL [2].

- Annotation Compilation: All annotations are compiled into a single table per genome, including associated metadata and cross-database identifiers (KO, E.C., GO, Pfam, InterPro) [2].

- Metabolic Reconstruction: KO identifiers are used to calculate the completeness of KEGG modules, which are functional units linked to metabolic pathways. The results are summarized in a graphical heatmap to quickly compare metabolic capabilities across multiple genomes [2].
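The module completeness calculation in the metabolic reconstruction step can be approximated as follows. This is a simplified sketch, not MicrobeAnnotator's implementation. It assumes each module is a list of steps, that each step is satisfied by any one of a set of alternative KOs, and that completeness is the percentage of satisfied steps. Real KEGG module definitions use a richer syntax. The module names and KO identifiers are placeholders.

```python
def module_completeness(steps, genome_kos):
    """Percentage of module steps for which the genome has at least one KO."""
    if not steps:
        return 0.0
    satisfied = sum(1 for alternatives in steps if alternatives & genome_kos)
    return 100.0 * satisfied / len(steps)


# Placeholder modules: each step is a set of alternative KOs.
modules = {
    "module_X": [{"KO_A"}, {"KO_B", "KO_C"}, {"KO_D"}, {"KO_E"}],
    "module_Y": [{"KO_F"}, {"KO_G"}],
}
# Placeholder KO sets, standing in for the per-genome annotation tables.
genomes = {
    "genome_1": {"KO_A", "KO_C", "KO_D", "KO_F", "KO_G"},
    "genome_2": {"KO_A", "KO_F"},
}

# Genome-by-module matrix: the kind of data a completeness heatmap is drawn from.
matrix = {
    genome: {name: module_completeness(steps, kos) for name, steps in modules.items()}
    for genome, kos in genomes.items()
}
for genome, row in matrix.items():
    print(genome, row)
```

Here `genome_1` covers three of the four steps of `module_X` and both steps of `module_Y`, while `genome_2` covers one step of each.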

Successful functional annotation relies on a suite of computational "reagents" – databases and software tools.

Table 2: Key Research Reagent Solutions for Functional Annotation

| Resource Name | Type | Primary Function in Annotation |
|---|---|---|
| UniProt (SwissProt/TrEMBL) [2] | Protein Sequence Database | Provides high-quality, manually annotated (SwissProt) and automatically annotated (TrEMBL) sequences for homology searches. |
| RefSeq [2] | Protein Sequence Database | A comprehensive, non-redundant database used as a reference for sequence similarity searches. |
| KEGG Orthology (KO) [2] | Orthology Database | A database of orthologous groups used to assign functional identifiers and reconstruct metabolic pathways. |
| InterPro [1] | Protein Signature Database | Integrates multiple protein signature databases (Pfam, PANTHER, etc.) to identify domains, families, and functional sites. |
| Gene Ontology (GO) [1] | Ontology Database | Provides a standardized set of terms (Molecular Function, Biological Process, Cellular Component) for functional characterization. |
| DIAMOND [1] [2] | Search Software | A high-throughput sequence aligner for protein searches, much faster than BLAST, used for large datasets. |
| InterProScan [1] | Search Software | Scans protein sequences against the InterPro database to classify them into families and predict domains. |

Workflow Visualization and Data Interpretation

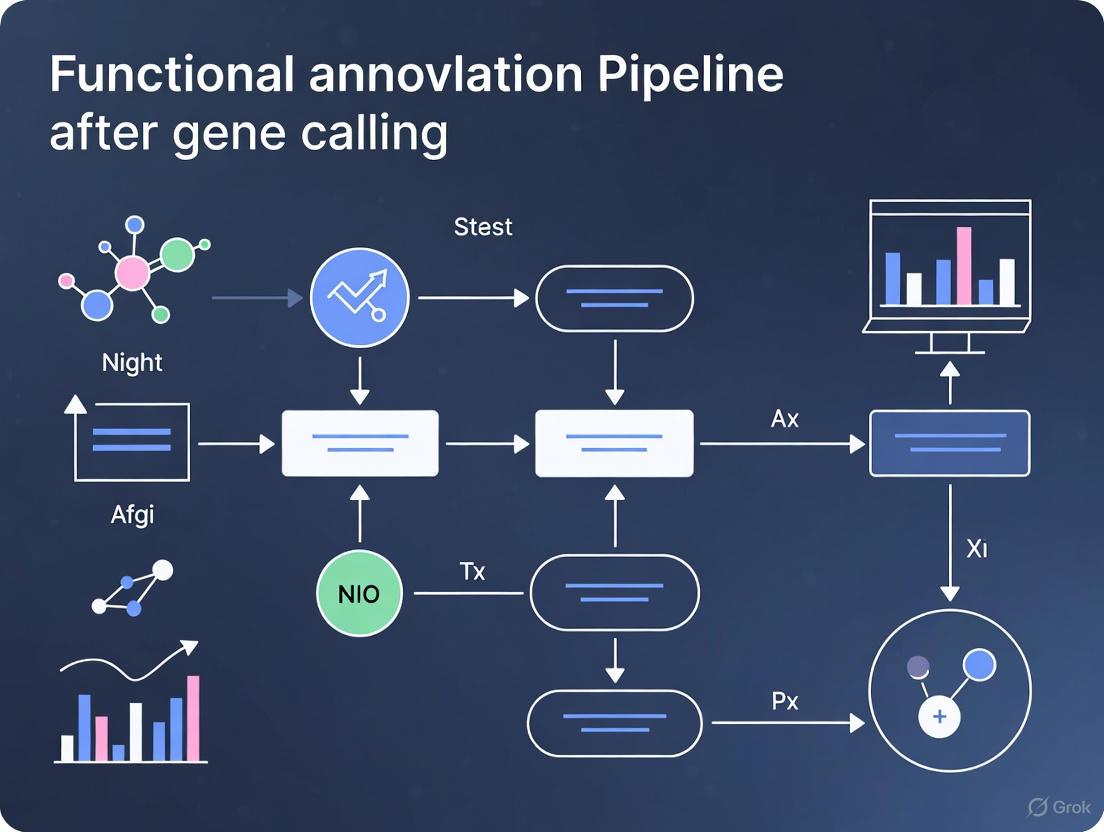

The following diagram illustrates the generalized logical workflow of an integrated functional annotation pipeline, synthesizing the steps from the described protocols.

Functional Annotation Workflow Logic

Visualizing the MicrobeAnnotator Iterative Process

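MicrobeAnnotator employs a specific sequential strategy to maximize annotation yield efficiently: each database is searched only with the proteins that remain unannotated after the previous step. The diagram captioned "Iterative Annotation Strategy" models this iterative process.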

Iterative Annotation Strategy
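The sequential strategy can also be expressed as a short program. The sketch below is an illustration under stated assumptions, not MicrobeAnnotator's code. The search results are stubbed as dictionaries. The KOfam tier accepts a hit when its score meets a per-profile threshold. The SwissProt defaults quoted in Protocol B (≥40% identity, bitscore ≥80, alignment length ≥70%) are reused for the RefSeq and TrEMBL tiers, which is an assumption, since the text states those thresholds only for SwissProt. All identifiers are placeholders.

```python
def passes_defaults(hit, min_identity=40.0, min_bitscore=80.0, min_aln_pct=70.0):
    """Apply the SwissProt default thresholds quoted in Protocol B."""
    return (hit["identity"] >= min_identity
            and hit["bitscore"] >= min_bitscore
            and hit["aln_pct"] >= min_aln_pct)


def kofam_accept(hit):
    """Accept a KOfam hit when its score reaches the profile-specific threshold."""
    return hit["score"] >= hit["threshold"]


def cascade(protein_ids, tiers):
    """Search each tier only with proteins left unannotated by earlier tiers."""
    annotations = {}
    remaining = list(protein_ids)
    for name, hits, accept in tiers:
        still_unannotated = []
        for pid in remaining:
            hit = hits.get(pid)
            if hit is not None and accept(hit):
                annotations[pid] = (name, hit["subject"])
            else:
                still_unannotated.append(pid)
        remaining = still_unannotated
    return annotations, remaining


# Stubbed search results (placeholders for real KOfamscan/DIAMOND output).
kofam_hits = {
    "p1": {"subject": "KO_A", "score": 250.0, "threshold": 200.0},
    "p2": {"subject": "KO_B", "score": 90.0, "threshold": 150.0},
}
swissprot_hits = {
    "p2": {"subject": "sp_entry_1", "identity": 55.0, "bitscore": 120.0, "aln_pct": 85.0},
    "p3": {"subject": "sp_entry_2", "identity": 35.0, "bitscore": 95.0, "aln_pct": 90.0},
}
refseq_hits = {
    "p3": {"subject": "refseq_entry_1", "identity": 48.0, "bitscore": 101.0, "aln_pct": 75.0},
}
trembl_hits = {}

tiers = [
    ("KOfam", kofam_hits, kofam_accept),
    ("SwissProt", swissprot_hits, passes_defaults),
    ("RefSeq", refseq_hits, passes_defaults),
    ("TrEMBL", trembl_hits, passes_defaults),
]
annotations, unannotated = cascade(["p1", "p2", "p3", "p4"], tiers)
print(annotations)
print("Unannotated:", unannotated)
```

Ordering the tiers from most to least curated means a protein takes its annotation from the most reliable source that yields an acceptable match, and the largest databases are searched with the fewest queries.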

The Critical Role of Annotation in Translational Research and Drug Discovery

In the landscape of modern translational research, the path from genomic sequencing to actionable biological insights is paved by functional annotation. Following gene calling, which identifies the structural elements within a genome, functional annotation adds biologically relevant meaning to these predicted features, such as putative function, presence of specific domains, cellular localization, and involvement in metabolic pathways [1]. This process is indispensable for drug discovery, enabling researchers to prioritize targets, understand disease mechanisms, and predict potential side effects. The integration of diverse annotation approaches—from homology searches to orthology assignment and protein signature identification—provides a consensus view that is critical for validating findings and building reliable models for therapeutic development [1]. As stem cell research and single-cell genomics advance, sophisticated annotation methods further allow scientists to evaluate the congruence of stem cell-derived models with in vivo biology, ensuring these powerful tools accurately recapitulate human disease for therapy development [4].

Functional Annotation Pipeline: Components and Workflow

A typical functional annotation workflow integrates multiple methods to analyze a protein sequence from complementary angles. The FA-nf pipeline, implemented via the Nextflow workflow management engine, exemplifies a scalable framework that combines various tools into a cohesive, reproducible process [1]. This pipeline begins with a protein sequence FASTA file and an optional structural annotation file in GFF3 format, proceeding through several critical stages.

Key integrated approaches include:

- Homology Search: Using tools like BLAST+ or DIAMOND to search against reference databases such as UniProt or NCBI NR, identifying sequences with significant similarity [1].

- Orthology Assignment: Leveraging resources like eggNOG to assign proteins to known orthologous groups, inferring functional annotation from these evolutionarily related proteins [1].

- Protein Signature Identification: Utilizing InterProScan to identify known protein domains and motifs by searching against diagnostic models, including hidden Markov models (HMM) from databases such as PANTHER, Pfam, and SUPERFAMILY [1].

The following workflow diagram illustrates the integrated process of a functional annotation pipeline, from raw sequence data to biologically relevant insights:

Comparative Analysis of Annotation Pipelines and Tools

The bioinformatics community has developed numerous frameworks for functional annotation, each with distinct strengths, installation requirements, and specialized applications. These tools can be broadly categorized into web-based services with user-friendly interfaces and locally installed pipelines that offer greater control and scalability for large datasets [1].

Table 1: Comparative Analysis of Functional Annotation Pipelines

| Program/Pipeline | Installation | Key Software Components | Primary Databases | Specialized Applications |
|---|---|---|---|---|
| FA-nf | Local Installation | BLAST+, DIAMOND, InterProScan, KOFAM, SignalP, TargetP | Custom (typically NCBI BLAST DBs, InterPro, UniProt-GOA) | General-purpose annotation with containerization for reproducibility [1] |
| Blast2GO | Local installation & Web/Cloud services | BLAST+, InterProScan, Blast2GO specific software | NCBI BLAST DBs, InterPro, GO | Subscription tool with visualization dashboard and gene structural annotation options [1] |
| eggNOG mapper | Web service & Local installation | DIAMOND, HMMER | eggNOGdb (multiple sources), GO, PFAM, SMART, COG | Command-line tool and REST API; suitable for orthology-based annotation [1] |
| MicrobeAnnotator | Local Installation | BLAST+, DIAMOND, KOFAM | SwissProt/TrEMBL, RefSeq, KEGG | Specialized for microbiome datasets [1] |
| PANNZER2 | Web Service | SANSparallel | UniProt, UniProt-GOA, GO, KEGG | Command-line tool available for querying service [1] |
| GenSAS | Web Service | BLAST+, DIAMOND, InterProScan, SignalP, TargetP | SwissProt/TrEMBL, RefSeq, RepBase | Online platform with structural annotation and visualization capabilities [1] |

Web-accessible tools like GenSAS and PANNZER2 offer convenience but may limit the number of sequences that can be processed, while locally installed pipelines such as FA-nf and MicrobeAnnotator provide scalability for high-throughput analysis at the cost of requiring more computational expertise [1]. The FA-nf pipeline distinguishes itself through its use of software containerization, which mitigates installation challenges and ensures reproducibility across different computing environments [1].

Advanced Annotation Applications in Translational Research

LA3: Language-Based Automatic Annotation Augmentation

Recent advancements in artificial intelligence are addressing the critical bottleneck of annotation scarcity in biological research. The LA3 (Language-based Automatic Annotation Augmentation) framework leverages large language models to systematically rewrite and augment molecular annotations, creating more varied sentence structures and vocabulary while preserving essential information [5]. This approach has demonstrated remarkable efficacy in training AI models for tasks such as text-based de novo molecule generation and molecule captioning. Experimental results show that models trained on LA3-augmented datasets can outperform state-of-the-art architectures by up to 301%, highlighting the transformative potential of enriched annotations for AI-driven drug discovery [5].

Single-Cell Annotation in Stem Cell Research

Stem cell-derived models represent a powerful tool for studying biology and developing cell-based therapies. However, determining precisely which cell types are present and how closely they recapitulate in vivo cells remains challenging. Single-cell genomics coupled with advanced annotation methods provides a framework for evaluating this congruence [4]. These annotation approaches face particular challenges in stem cell-derived models, including heterogeneity, incomplete differentiation, and the dynamic nature of cellular identities. Emerging recommendations emphasize the application of cell manifolds and integrated annotation strategies to better capture the complexity of stem cell systems for therapeutic development [4].

The following diagram illustrates the experimental validation workflow for confirming annotated gene functions:

Research Reagent Solutions for Annotation Experiments

Table 2: Essential Research Reagents and Resources for Functional Annotation

| Reagent/Resource | Function/Application | Key Features |
|---|---|---|
| UniProt Knowledgebase | Protein sequence and functional information repository | Manually annotated records with experimental evidence; computational annotation with taxonomy-specific rules [1] |
| InterPro | Protein signature database integrating multiple resources | Combines protein domain, family, and functional site predictions from PANTHER, Pfam, SUPERFAMILY, and other databases [1] |
| eggNOG | Orthology database and functional annotation resource | Evolutionary genealogy of genes; broad taxonomic coverage with functional inference from orthologs [1] |
| KEGG (Kyoto Encyclopedia of Genes and Genomes) | Pathway mapping and functional assignment | Maps annotated genes to metabolic pathways; useful for understanding systemic biological functions [1] |
| NCBI NR (Non-Redundant) Database | Comprehensive protein sequence database | Large-scale reference for homology searches; contains sequences from multiple sources [1] |
| SignalP | Subcellular localization prediction | Predicts presence and location of signal peptide cleavage sites [1] |
| TargetP | Subcellular localization prediction | Predicts subcellular localization of eukaryotic proteins [1] |

Implementation Protocols for Functional Annotation

Protocol 1: Basic Functional Annotation Using FA-nf Pipeline

Objective: To perform comprehensive functional annotation of protein sequences from a de novo genome assembly.

Materials and Input Requirements:

- Protein sequences in FASTA format (required)

- Structural annotation file in GFF3 format (optional but recommended)

- Linux computational environment with Docker/Singularity support

- Access to reference databases (UniProt, InterPro, KEGG, etc.)

Methodology:

- Pipeline Setup: Configure the Nextflow workflow management engine and associated container images. Software containers are provided for each process to ensure reproducibility and avoid dependency conflicts [1].

- Input Validation and Preprocessing: Verify and clean input GFF files using the AGAT Toolkit to ensure compatibility with downstream processes, as GFF3 formats vary significantly between sources like ENSEMBL and NCBI [1].

- Parallelized Execution: Launch the FA-nf pipeline with appropriate computational resources. Adjust chunk size parameters based on sequence number and length to optimize performance on available HPC infrastructure [1].

- Multi-Method Annotation: The pipeline automatically executes in parallel:

  - Homology searches using BLAST+ and DIAMOND against reference databases

  - Domain and motif identification using InterProScan

  - Orthology assignment using eggNOG mapper

  - Pathway mapping using KEGG

  - Subcellular localization prediction using SignalP and TargetP [1]

- Data Integration and Output Generation: Results from all processes are stored in a relational database (SQLite or MySQL/MariaDB) with final annotation reports generated in GFF3, plain text, and HTML formats [1].
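The chunking idea behind the parallelized execution step can be illustrated with a few lines of code. This is a minimal sketch of splitting a protein FASTA file into fixed-size chunks so that each chunk can be processed as a separate job. It is not FA-nf's own code, since in the pipeline the splitting is handled by the workflow itself. The function names and the chunk size of 2 are illustrative assumptions.

```python
def read_fasta(text):
    """Yield (header, sequence) tuples from FASTA-formatted text."""
    header, seq = None, []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            if header is not None:
                yield header, "".join(seq)
            header, seq = line[1:], []
        elif header is not None:
            seq.append(line)
    if header is not None:
        yield header, "".join(seq)


def chunk_records(records, chunk_size):
    """Group records into lists of at most chunk_size entries."""
    if chunk_size < 1:
        raise ValueError("chunk_size must be at least 1")
    chunk = []
    for record in records:
        chunk.append(record)
        if len(chunk) == chunk_size:
            yield chunk
            chunk = []
    if chunk:
        yield chunk  # final, possibly smaller, chunk


# Made-up example with five short sequences.
fasta = "".join(f">prot{i}\nMKTAYIAK\nQRQISFVK\n" for i in range(1, 6))
chunks = list(chunk_records(read_fasta(fasta), chunk_size=2))
print([len(chunk) for chunk in chunks])
```

Smaller chunks give more, shorter jobs and better load balancing, while larger chunks reduce scheduling overhead. The right balance depends on the sequence set and the cluster.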

Troubleshooting Tips:

- For shared file systems in HPC environments, use MySQL/MariaDB instead of SQLite for better performance [1].

- Adjust CPU cores, memory allocation, and maximum runtime in the nextflow.config file based on sequence characteristics [1].

- Test parameters with debug mode (debugSize parameter) before full execution to optimize resource allocation [1].

Protocol 2: Annotation Augmentation Using LA3 Framework

Objective: To enhance existing molecular annotations using large language models for improved AI training in drug discovery applications.

Materials:

- Established dataset with molecular annotations (e.g., ChEBI)

- Computational environment with access to large language models

- Benchmark architecture for model training (e.g., MolT5-based models)

Methodology:

- Dataset Preparation: Extract existing molecular annotations from established datasets, preserving essential molecular information while identifying areas for linguistic diversification [5].

- Systematic Rewriting: Apply the LA3 framework to rewrite annotations while maintaining scientific accuracy. This process creates more varied sentence structures and vocabulary, enhancing the linguistic diversity of the training data [5].

- Dataset Validation: Ensure augmented annotations preserve critical molecular information while improving linguistic patterns suitable for AI model training.

- Model Training: Train molecular translation models (e.g., LaMolT5) on the enhanced dataset (e.g., LaChEBI-20) to learn mappings between molecular representations and augmented annotations [5].

- Performance Evaluation: Assess model performance on text-based de novo molecule generation and molecule captioning tasks, comparing results against state-of-the-art benchmarks [5].

Functional annotation represents the critical bridge between genomic sequences and biologically meaningful insights that drive translational research and drug discovery. As the field advances, integrated pipelines that combine multiple annotation approaches, augmented datasets enhanced by natural language processing, and specialized methods for emerging technologies like single-cell genomics are collectively expanding the boundaries of what can be achieved. The implementation of robust, reproducible annotation workflows, coupled with appropriate experimental validation, provides the foundation for identifying novel therapeutic targets, understanding disease mechanisms, and ultimately accelerating the development of new treatments. As these methodologies continue to evolve, they are likely to play an increasingly important role in personalized medicine and biomedical research.

Following gene calling in genomic sequencing pipelines, functional annotation serves as the critical next step, translating raw variant calls into meaningful biological insights. This process involves predicting the potential impact of genetic variants on protein structure, gene expression, cellular functions, and biological processes [6]. The landscape of genetic variation encompasses both protein-coding regions and vast non-coding territories, each requiring specialized annotation strategies to elucidate their roles in health and disease [6].

As sequencing technologies advance, the scope of functional annotation has expanded dramatically beyond protein-coding genes to include diverse regulatory elements that orchestrate gene expression. The integration of multi-omics approaches—combining genomic, epigenomic, transcriptomic, and proteomic data—now provides a more comprehensive view of biological systems than genomic analysis alone [7]. This evolution is particularly crucial given that the majority of human genetic variation resides in non-protein coding regions of the genome, presenting both a challenge and opportunity for discovering novel therapeutic targets [6].

Key Genomic Feature Classes

Protein-Coding Genes

Protein-coding genes represent the best-understood class of genomic features, with functional annotation primarily focusing on variants that alter amino acid sequences and affect protein function or structure [6]. Traditional approaches for automatic functional annotation in non-model species or newly sequenced genomes rely on homology transfer based on sequence similarity (using tools like Blast2GO), identification of conserved domains, and InterPro2GO mappings [8].

Accurately determining orthologous relationships between genes allows for more robust functional assignment of predicted genes. The underlying premise, that orthologs tend to retain the same function, is often described as the "orthology-function conjecture" [8]. Guided by collinear genomic segments, researchers can infer orthologous protein-coding gene pairs (OPPs) between species, which serve as proxies for transferring functional terms from well-annotated genomes to newly sequenced ones [8].

Non-Coding Regulatory Elements

Beyond protein-coding genes, the genome contains numerous regulatory elements that control gene expression, including:

- Promoter and enhancer sequences that initiate and amplify transcription [6]

- Non-coding RNAs with diverse regulatory functions [6]

- Transcription factor binding sites (TFBS) that mediate protein-DNA interactions [6]

- DNA methylation sites that influence epigenetic regulation [6]

- Transposable elements that can reshape genomic architecture [6]

The functional annotation of variants lying outside protein-coding genomic regions (intronic or intergenic) and their potential co-localization with these regulatory elements is essential for understanding their impact on phenotype [6]. Advanced sequencing techniques such as Hi-C provide insights into the three-dimensional organization of the genome, mapping global physical interactions between different genomic regions and revealing long-range interactions between regulatory elements and gene promoters [6].

Table 1: Key Genomic Feature Classes and Their Functional Characteristics

| Feature Class | Genomic Location | Primary Function | Annotation Challenges |
|---|---|---|---|
| Protein-Coding Genes | Exonic regions | Encode protein sequences | Missense variant interpretation, splice site determination |
| Promoters | 5' upstream of genes | Transcription initiation | Identifying core vs. regulatory regions |
| Enhancers | Intergenic or intronic | Enhance transcription levels | Tissue-specific activity, target gene assignment |
| Non-coding RNAs | Various genomic locations | Gene regulation | Functional classification, target identification |
| TFBS | Throughout genome | Transcription factor binding | Cell-type specificity, binding affinity prediction |
| DNA Methylation Sites | CpG islands, gene bodies | Epigenetic regulation | Tissue-specific patterns, functional consequences |

The field of genomic annotation employs diverse computational tools and databases, each with specific strengths for different annotation tasks. Ensembl Variant Effect Predictor (VEP) and ANNOVAR are widely used tools that can directly handle raw VCF files and are well suited for large-scale annotation tasks, such as whole-genome and whole-exome sequencing projects [6].

The landscape of variant annotation tools is complex, with different tools targeting different genomic regions and performing different types of analyses. Some tools specialize in annotating exonic regions, others concentrate on non-exonic intragenic regions, and comprehensive tools annotate variants across all genomic regions [6]. A less represented category includes tools designed to assess the cumulative impact of multiple variants, analyzing their collective effect on genes, pathways, or biological processes, which is particularly important for understanding complex traits and polygenic diseases [6].

Table 2: Quantitative Comparison of Functional Annotation Approaches

| Annotation Method | Primary Application | Data Input Requirements | Output Metrics | Limitations |
|---|---|---|---|---|
| Sequence Homology (BLAST) | Gene function prediction | Protein or nucleotide sequences | E-value, percent identity | May miss distant homologs |
| Orthology Inference (OrthoMCL) | Cross-species function transfer | Multiple genome sequences | Orthologous groups | Sensitive to parameter settings |
| Collinearity Analysis (i-ADHoRe) | Positional orthology detection | Genome assemblies and annotations | Collinear segments | Requires multiple related genomes |
| Machine Learning (DeepVariant) | Variant calling | Sequencing read alignments | Variant quality scores | Computational intensity |
| Multi-omics Integration | Systems biology modeling | Diverse molecular datasets | Pathway enrichment scores | Data normalization challenges |

Experimental Protocols for Comprehensive Functional Annotation

Protocol 1: Genome-Wide Collinearity Detection for Orthology Inference

Objective: Identify positional orthologous regions across multiple genomes to facilitate reliable functional annotation transfer.

Materials and Reagents:

- Genome assemblies for target species and related organisms

- High-performance computing infrastructure

- Multiple genome alignment software (LASTZ/MULTIZ)

- Collinearity detection tools (i-ADHoRe v3.0)

Methodology:

Data Preparation: Obtain genome sequences for multiple related species meeting quality thresholds (preferably chromosome-level assemblies or scaffold N50 >260 kbp) [8].
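The scaffold N50 threshold mentioned above can be checked with a short calculation. N50 is the length of the shortest scaffold in the smallest set of longest scaffolds that together cover at least half of the assembly. The sketch below implements that standard definition; the scaffold lengths are made up for illustration.

```python
def n50(lengths):
    """Return the N50 of a collection of scaffold (or contig) lengths."""
    if not lengths:
        raise ValueError("at least one length is required")
    ordered = sorted(lengths, reverse=True)
    half_total = sum(ordered) / 2
    running = 0
    for length in ordered:
        running += length
        if running >= half_total:
            return length


# Made-up scaffold lengths in base pairs.
scaffold_lengths = [800_000, 400_000, 300_000, 200_000, 100_000, 50_000]
assembly_n50 = n50(scaffold_lengths)
print("Scaffold N50:", assembly_n50)
print("Meets the 260 kbp threshold:", assembly_n50 > 260_000)
```

In this example the two longest scaffolds cover more than half of the 1.85 Mbp total, so the N50 is 400,000 bp.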

Multiple Whole-Genome Alignment:

- Perform pairwise whole-genome alignments against the reference genome using LASTZ, optimized for whole-genome alignments [8].

- Process pairwise alignments through alignment "chaining" and "netting" pipelines to ensure each reference base aligns with at most one base in other genomes [8].

- Join resulting pairwise alignments using MULTIZ, guided by phylogenetic relationships [8].

Collinearity Detection:

- Use genomic markers (multiple alignment anchors or protein-coding gene families) with i-ADHoRe to identify homologous regions [8].

- Set parameters including alignment_method = gg4, anchor_points = 3, gap_size = 30 for multiple-alignment-anchor (MAA) markers or 10 for protein-based markers, and prob_cutoff = 0.01 [8].

- Identify n-way collinear segments (n∈{3,4,…,15}), that is, segments that are collinear across n species [8].

Orthology Inference:

- Designate protein-coding genes as orthologous pairs if they share sequence similarity (BLASTP E-value cutoff of 1E-05) and at least 50% of their sequences are located in the same collinear segment [8].

- Apply additional optional conditions including bidirectional best hits and confirmation using different marker types [8].
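The core orthology criterion can be written as a simple predicate. The sketch below is one possible reading of it, under explicit assumptions. A collinear segment is represented as one genomic region per species. The 50% requirement is interpreted as the fraction of each gene's span that falls inside its species' region. The E-value of the pair must not exceed 1e-5. Coordinates are 1-based and inclusive. The data structures and names are hypothetical and are not taken from i-ADHoRe output.

```python
def fraction_inside(gene, region):
    """Fraction of a gene's span (1-based, inclusive) lying within a region."""
    if gene["chrom"] != region["chrom"]:
        return 0.0
    overlap = min(gene["end"], region["end"]) - max(gene["start"], region["start"]) + 1
    length = gene["end"] - gene["start"] + 1
    return max(overlap, 0) / length


def is_orthologous_pair(gene_a, gene_b, evalue, segment,
                        max_evalue=1e-5, min_fraction=0.5):
    """Apply the similarity and collinearity conditions to one gene pair."""
    return (evalue <= max_evalue
            and fraction_inside(gene_a, segment["a"]) >= min_fraction
            and fraction_inside(gene_b, segment["b"]) >= min_fraction)


# Made-up collinear segment spanning a region in species A and one in species B.
segment = {
    "a": {"chrom": "chr1", "start": 1_000, "end": 50_000},
    "b": {"chrom": "chrA", "start": 2_000, "end": 60_000},
}
gene_a = {"chrom": "chr1", "start": 10_000, "end": 12_000}
gene_b_inside = {"chrom": "chrA", "start": 30_000, "end": 33_000}
gene_b_edge = {"chrom": "chrA", "start": 59_000, "end": 62_000}

print(is_orthologous_pair(gene_a, gene_b_inside, 1e-20, segment))
print(is_orthologous_pair(gene_a, gene_b_edge, 1e-20, segment))
print(is_orthologous_pair(gene_a, gene_b_inside, 1e-3, segment))
```

The second call fails because only about a third of `gene_b_edge` lies inside the segment, and the third fails on sequence similarity alone.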

Protocol 2: Integrated Coding and Non-Coding Variant Annotation

Objective: Perform comprehensive functional annotation of both coding and non-coding genetic variants from whole-genome sequencing data.

Materials and Reagents:

- Variant Call Format (VCF) files from WGS/WES

- Functional annotation tools (Ensembl VEP, ANNOVAR)

- Regulatory element databases (ENCODE, Roadmap Epigenomics)

- High-performance computing resources

Methodology:

Variant Effect Prediction:

Coding Variant Analysis:

- Focus on variants that alter amino acid sequences (missense, nonsense).

- Predict potential impact on protein function using pathogenicity prediction scores.

- Assess effect on protein structure and functional domains.

Non-Coding Variant Analysis:

- Annotate variants overlapping known regulatory elements from specialized databases [6].

- Identify variants in promoter regions, enhancers, non-coding RNAs, DNA methylation sites, TFBS, and transposable elements [6].

- Prioritize variants based on evolutionary conservation and functional genomic evidence.

Regulatory Impact Assessment:

- Integrate chromatin interaction data (Hi-C) to connect non-coding variants with potential target genes [6].

- Correlate with transcriptomic data to assess effect on gene expression.

- Utilize machine learning approaches to predict variant disruptiveness.
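The overlap step of the non-coding analysis can be sketched as an interval lookup. The example below assumes that regulatory elements are given as 1-based, inclusive intervals and that each variant is a single position, as for a SNV in a VCF file. Data taken from BED files would need converting from 0-based, half-open coordinates first. The element coordinates and names are made up, and the linear scan is chosen for clarity. An interval tree or a dedicated tool would be the usual choice at whole-genome scale.

```python
from collections import defaultdict


def build_index(elements):
    """Group (chrom, start, end, kind) elements by chromosome, sorted by start."""
    index = defaultdict(list)
    for chrom, start, end, kind in elements:
        index[chrom].append((start, end, kind))
    for intervals in index.values():
        intervals.sort()
    return index


def overlapping_elements(chrom, pos, index):
    """Return the kinds of all elements that contain the given position."""
    return [kind for start, end, kind in index.get(chrom, [])
            if start <= pos <= end]


# Made-up regulatory elements (1-based, inclusive coordinates).
elements = [
    ("chr1", 1000, 1500, "promoter"),
    ("chr1", 1400, 2000, "TFBS"),
    ("chr2", 500, 900, "enhancer"),
]
index = build_index(elements)

# Made-up variant positions.
variants = [("chr1", 1450), ("chr1", 3000), ("chr2", 500)]
annotated = {variant: overlapping_elements(*variant, index) for variant in variants}
for variant, kinds in annotated.items():
    print(variant, kinds or "no overlap")
```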

Visualization of Functional Annotation Workflows

Comprehensive Functional Annotation Pipeline

Functional Annotation Pipeline: This workflow illustrates the comprehensive process from raw sequencing data to biological insights, integrating both coding and non-coding variant analysis with multi-omics data.

Orthology-Based Function Transfer Protocol

Orthology-Based Function Transfer: This diagram shows the protocol for transferring functional annotations from well-characterized genomes to newly sequenced ones using collinearity and orthology inference.

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for Functional Annotation

| Reagent/Tool | Category | Primary Function | Application Context |

|---|---|---|---|

| Ensembl VEP | Bioinformatics Tool | Variant effect prediction | Annotates variants with functional consequences |

| ANNOVAR | Bioinformatics Tool | Variant annotation | Handles VCF files from WGS/WES projects |

| LASTZ/MULTIZ | Alignment Software | Multiple genome alignment | Identifies conserved genomic segments |

| i-ADHoRe | Computational Algorithm | Collinearity detection | Finds homologous regions across genomes |

| OrthoMCL | Orthology Tool | Gene family clustering | Identifies orthologous groups across species |

| Hi-C Data | Genomic Resource | 3D genome organization | Maps physical interactions between genomic regions |

| ENCODE Data | Regulatory Annotation | Regulatory element mapping | Provides reference regulatory element annotations |

The Impact of Assembly Quality and Evidence on Final Annotation Outcomes

The functional annotation of predicted genes represents a critical phase in genomic studies, bridging the gap between raw sequence data and biological insight. The quality and completeness of this annotation are not predetermined but are profoundly influenced by two interdependent factors: the quality of the genome assembly itself and the nature and breadth of the experimental evidence integrated into the annotation pipeline. High-quality assembly provides a solid architectural foundation, enabling the accurate placement and structural determination of gene models. Concurrently, multifaceted evidence, including transcriptomic and protein data, is essential for corroborating these models and elucidating functional elements. This application note details protocols and analytical measures for evaluating and optimizing these factors within eukaryotic genome annotation projects, providing a framework for generating biologically meaningful and reliable annotations to support downstream research and drug development.

Quantitative Measures for Annotation Assessment and Management

Effective annotation management requires moving beyond simple gene counts to quantitative measures that track changes and quality over time. The table below summarizes key metrics for intra- and inter-genome annotation analysis.

Table 1: Quantitative Measures for Genome Annotation Management

| Measure Name | Application Scope | Description | Interpretation |

|---|---|---|---|

| Annotation Edit Distance (AED) | Intra-genome | Quantifies the structural change to a specific gene annotation between two pipeline runs or releases [9]. | Ranges from 0 (identical) to 1 (completely different). A low average AED indicates stable annotations [9]. |

| Annotation Turnover | Intra-genome | Tracks the addition and deletion of gene annotations from one release to the next [9]. | Supplements gene counts; can detect "resurrection events" where annotations are deleted and later re-created [9]. |

| Splice Complexity | Inter-genome | Quantifies the transcriptional complexity of a gene based on its alternative splicing patterns, independent of sequence homology [9]. | Allows for novel, global comparisons of alternative splicing across different genomes [9]. |

| Assembly-Induced Changes | Intra-genome | Identifies annotations altered solely due to changes in the underlying genomic assembly [9]. | Helps separate artifacts of assembly improvement from genuine annotation refinements. |

These measures reveal significant variation in annotation stability across organisms. For instance, the D. melanogaster genome is highly stable, with 94% of genes in one release remaining unaltered since 2004, and only 0.3% altered more than once. In contrast, 58% of C. elegans annotations were modified over a similar period, with 32% altered more than once, despite gene counts changing by less than 3% [9]. Furthermore, the magnitude of changes can differ; while fewer D. melanogaster annotations were revised, the changes tended to be larger (average AED 0.092) compared to C. elegans (average AED 0.058) [9].
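As a worked illustration of the AED values quoted above, the sketch below uses the commonly cited nucleotide-level definition, AED = 1 - (SN + SP) / 2, where SN and SP are the fractions of the old and new annotation covered by their overlap. It assumes exons are supplied as 1-based inclusive intervals on the same sequence; the function names are hypothetical, and expanding intervals into position sets is chosen for clarity rather than efficiency.

```python
def covered_positions(exons):
    """Expand 1-based inclusive exon intervals into a set of nucleotide positions."""
    positions = set()
    for start, end in exons:
        positions.update(range(start, end + 1))
    return positions


def annotation_edit_distance(old_exons, new_exons):
    """AED = 1 - (sensitivity + specificity) / 2 at the nucleotide level."""
    old = covered_positions(old_exons)
    new = covered_positions(new_exons)
    if not old or not new:
        return 1.0  # nothing to compare: treat as completely different
    shared = len(old & new)
    sensitivity = shared / len(old)  # fraction of the old annotation recovered
    specificity = shared / len(new)  # fraction of the new annotation that was in the old
    return 1 - (sensitivity + specificity) / 2


identical = annotation_edit_distance([(1, 100)], [(1, 100)])
half_shift = annotation_edit_distance([(1, 100)], [(51, 150)])
disjoint = annotation_edit_distance([(1, 100)], [(201, 300)])
print(identical, half_shift, disjoint)
```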

The NCBI Eukaryotic Genome Annotation Pipeline: A Reference Protocol

The NCBI Eukaryotic Genome Annotation Pipeline provides a robust, publicly available framework that exemplifies the integration of assembly and evidence. The following protocol describes its key steps, which can be adapted for custom annotation projects [10].

Inputs: Genome Assembly and Evidence Data

- Source of Genome Assemblies: The pipeline annotates RefSeq assemblies, which are copies of public genome assemblies from INSDC (DDBJ, ENA, and GenBank). Note that unplaced scaffolds shorter than 1000 bp may be excluded from the RefSeq copy under specific conditions [10].

- Evidence Data Curation:

- Transcripts: The evidence set includes RefSeq transcripts (NM_, NR_), GenBank transcripts from taxonomically relevant divisions, and ESTs from dbEST. Sequences with potential contamination or low quality are screened out [10].

- Proteins: The set includes known RefSeq proteins and GenBank proteins from cDNA. Highly repetitive sequences are removed [10].

- RNA-Seq Data: When available, RNA-Seq reads from SRA are aligned. Projects spanning wide ranges of tissues and developmental stages are preferred, with a focus on untreated/non-diseased samples [10].

Core Computational Workflow

- Masking: The genomic sequence is masked to identify and handle repetitive elements. Human and mouse genomes are masked with RepeatMasker using Dfam libraries, while other species are masked with WindowMasker [10].

- Sequence Alignment:

- Transcripts: Cleaned transcripts are aligned to the genome using BLAST to find locations, followed by global re-alignment with Splign to refine splice sites. Only the best-placed (rank 1) alignment is selected [10].

- Proteins: Proteins are aligned locally with BLAST and then re-aligned globally using ProSplign [10].

- RNA-Seq Reads: RNA-Seq reads are aligned with STAR. Alignments with identical splice structures are collapsed, and rare introns likely to be noise are filtered out [10].

- Long Reads: Transcriptomics long reads (PacBio, Oxford Nanopore) are aligned with Minimap2, and the best-placed alignment above 85% identity is selected [10].

- Gene Model Prediction: The alignment evidence is passed to the Gnomon gene prediction software. Gnomon first chains non-conflicting alignments into putative models. It then performs HMM-based ab initio prediction to extend predictions or create models in regions with no supporting evidence but with long open reading frames. This initial set is refined by alignment against a non-redundant protein database, and the prediction steps are repeated [10].

- Model Selection and Integration: The final annotation is built by combining pre-existing RefSeq sequences and well-supported Gnomon predictions.

- Priority is given to known and curated RefSeq models.

- Gnomon predictions are included if they meet quality thresholds, such as being fully or partially supported by evidence, or being pure ab initio predictions with high coverage hits to Swiss-Prot proteins.

- Conflicting or poorly supported models are excluded [10].
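The long-read rule in the alignment step above (keep the best-placed alignment above 85% identity) can be sketched as follows. This is not NCBI's implementation: it assumes alignments are already parsed into dictionaries, and it ranks placements by a generic alignment score, which is an assumption made for illustration. The field names and toy records are hypothetical.

```python
def select_best_alignments(alignments, min_identity=0.85):
    """Keep, per read, the highest-scoring alignment whose identity exceeds the cutoff."""
    best = {}
    for aln in alignments:
        if aln["identity"] <= min_identity:
            continue  # not above the identity threshold
        current = best.get(aln["read"])
        if current is None or aln["score"] > current["score"]:
            best[aln["read"]] = aln
    return best


# Toy alignments: r1 has two placements, r2 falls below the identity cutoff.
alignments = [
    {"read": "r1", "target": "chr1:100", "identity": 0.99, "score": 900},
    {"read": "r1", "target": "chr2:500", "identity": 0.97, "score": 850},
    {"read": "r2", "target": "chr3:10", "identity": 0.80, "score": 700},
]
best = select_best_alignments(alignments)
print({read: aln["target"] for read, aln in best.items()})
```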

Outputs and Functional Annotation

- Annotation Products: The pipeline produces gene, RNA, and CDS features on the genome. Models are assigned accessions: known RefSeq transcripts (NM_, NR_), model RefSeq transcripts (XM_, XR_), and predicted proteins (XP_) [10].

- Functional Naming: Genes from known RefSeq inherit their existing names and locus types. Genes represented by predicted models are named based on homology to Swiss-Prot proteins. Models with indels or frameshifts may be labeled as pseudogenes or, if they have strong homology, as "PREDICTED: LOW QUALITY PROTEIN" [10].

A Case Study in Assembly and Annotation: The Taohongling Sika Deer

A recent high-quality chromosome-scale genome assembly of the Taohongling Sika deer (Cervus nippon kopschi) demonstrates the impact of modern sequencing and assembly methods on annotation outcomes [11].

Experimental Protocol for Genome Assembly

- Sequencing Technology: The assembly utilized a combination of PacBio Sequel II (HiFi long reads, 36.22x coverage), Illumina NovaSeq 6000 (short reads, 41.82x coverage), and Hi-C sequencing (82.66x coverage) from muscle tissue [11].

- Assembly Process: Contig-level assembly was performed with HIFIASM (v0.16.1) using PacBio HiFi reads, resulting in a primary contig assembly with an N50 of 61.59 Mb. Hi-C data was then used to scaffold and assign 97.23% of the assembled sequence onto 34 chromosomes, achieving a scaffold N50 of 85.86 Mb [11].

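- Assembly Quality Assessment: The assembly reached a BUSCO completeness of 98.0% and a short-read mapping rate of 99.52% (see Table 2).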

Annotation Results and Analysis

The high-quality assembly directly enabled a comprehensive annotation [11]:

- Repeat Content: 46.19% of the genome was identified as repetitive sequences.

- Protein-Coding Genes: 22,890 genes were predicted, with 97.16% (22,240 genes) receiving functional annotations.

- Non-Coding RNAs: 63,473 noncoding RNAs were identified.

Table 2: Impact of Assembly Quality on Annotation Metrics in Sika Deer

| Assembly Metric | Value | Impact on Annotation |

|---|---|---|

| Sequencing Coverage | 36.22x PacBio HiFi, 41.82x Illumina | Provides high accuracy for base calling and structural variant resolution. |

| Contig N50 | 61.59 Mb | Greatly reduces gene fragmentation, allowing complete genes to be assembled on single contigs. |

| Scaffold N50 / Chromosome Assignment | 85.86 Mb / 97.23% anchored | Enables accurate analysis of gene order, synteny, and regulatory contexts. |

| BUSCO Completeness | 98.0% | Indicates near-complete representation of universal single-copy orthologs, minimizing gene loss. |

| Mapping Rate of Short Reads | 99.52% | Suggests high base-level accuracy, reducing frameshifts and erroneous stop codons in gene models. |
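For readers less familiar with the N50 statistic used in the table above, the following minimal sketch computes it under the standard definition: the length of the sequence at which the cumulative sum, taken from longest to shortest, first reaches half of the total assembly length. The function name and the toy lengths are illustrative.

```python
def n50(lengths):
    """Length L such that sequences of length >= L contain at least half of all bases."""
    total = sum(lengths)
    running = 0
    for length in sorted(lengths, reverse=True):
        running += length
        if running * 2 >= total:
            return length
    return 0  # empty input


toy_contigs = [80, 70, 50, 40, 30, 20]  # total 290, half is 145
print(n50(toy_contigs))
```

Here the two longest contigs (80 + 70 = 150) already cover half of the total, so the N50 is 70.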

Critical Considerations for Comparative Genomics

The choice of annotation method is a critical, often overlooked variable that directly impacts downstream comparative analyses. A key study demonstrated that using genomes annotated with different methods (i.e., "annotation heterogeneity") within a comparative analysis can dramatically inflate the apparent number of lineage-specific genes—by up to 15-fold in some case studies [12]. These genes, which appear unique to one species or clade, are often interpreted as sources of genetic novelty and unique adaptations. However, annotation heterogeneity can create artifacts where orthologous DNA sequences are annotated as a gene in one species but not in another, falsely appearing to be lineage-specific [12]. Therefore, for meaningful comparative genomics, it is crucial to either use annotations generated by a uniform pipeline or to account for methodological differences when interpreting results.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Reagents for Modern Genome Assembly and Annotation

| Tool / Reagent | Function in Workflow | Example Use Case |

|---|---|---|

| PacBio HiFi Reads | Long-read sequencing providing high accuracy; ideal for resolving complex repeats and assembling complete contigs. | Generating the primary contig assembly for the Taohongling Sika deer [11]. |

| Hi-C Sequencing | Captures chromatin conformation data; used to scaffold contigs into chromosome-scale assemblies. | Anchoring 97.23% of the Sika deer assembly onto 34 chromosomes [11]. |

| Illumina Short Reads | High-accuracy short reads; used for error correction of long reads and base-level quality assessment. | Polishing the Sika deer assembly and calculating mapping rates/coverage [11]. |

| Iso-Seq (PacBio) | Full-length transcriptome sequencing without assembly; provides direct evidence for splice variants and UTRs. | Informing gene model prediction; part of the NCBI pipeline when data is available [10]. |

| RNA-Seq from SRA | Provides evidence for transcribed regions and splice junctions; crucial for supporting and refining gene models. | Aligned with STAR in the NCBI pipeline to support Gnomon predictions [10]. |

| BUSCO | Software to assess genome completeness based on evolutionarily informed expectations of gene content. | Benchmarking the completeness of the Sika deer assembly (98.0%) [11]. |

| Gnomon | NCBI's HMM-based gene prediction program that integrates evidence and performs ab initio prediction. | Core of the NCBI annotation pipeline for predicting gene models [10]. |

| Splign / ProSplign | Algorithms for computationally mapping transcripts and proteins to a genome to determine precise exon-intron structures. | Used in the NCBI pipeline for global alignment of transcripts and proteins [10]. |

In the final stages of a functional annotation pipeline, following the initial gene calling phase, researchers must systematically interpret and catalog predicted genomic elements. This process relies on standardized file formats to represent features such as genes, transcripts, exons, and regulatory elements in a computationally accessible manner. The General Feature Format (GFF) and Gene Transfer Format (GTF) are two cornerstone file specifications that enable this critical step, serving as the lingua franca for exchanging genomic annotations between visualization tools, databases, and analysis software [13] [14]. While they appear structurally similar at a glance, understanding their nuanced differences—rooted in historical development and specific use cases—is essential for selecting the appropriate format to ensure analytical accuracy and pipeline interoperability. Misapplication of these formats can lead to erroneous data interpretation, processing failures in downstream tools, and ultimately, compromised research conclusions [15] [16].

The evolution of these formats mirrors the increasing complexity of genomic science. The original GFF specification emerged from a 1997 meeting at the Isaac Newton Institute, designed as a common format for sharing information between gene-finding sensors and predictors [14]. This format has since undergone significant revisions, culminating in GFF3, while GTF developed as a more gene-centric derivative, often termed "GFF2.5," to meet the specific needs of collaborative genome annotation projects around the year 2000 [13] [17]. Today, these files are not merely passive containers of data but active components in sophisticated annotation pipelines, interfacing with tools for transcriptome assembly, functional prediction, and comparative genomics [18] [19].

Historical Development and Format Specification

The Evolution of GFF and GTF

The historical development of the GFF and GTF formats reveals a trajectory of increasing specificity and structural complexity. The inaugural GFF version 0, used before November 1997, established the basic paradigm of a tab-delimited, one-feature-per-line format for describing genomic features, though it lacked the modern source field [14]. The subsequent GFF1 specification, formalized in 1998, introduced the source field and laid the foundation for the column structure that persists in contemporary versions, with an optional group attribute for feature grouping [14]. A significant evolutionary leap occurred with GFF2 in 2000, which standardized the attribute column formatting and enhanced the specification's robustness for increasingly complex genomic annotations [14].

The GTF format (GFF2.5) emerged around 2000 as a specialized branch from the GFF2 lineage, developed specifically to address the requirements of genome annotation projects like Ensembl [13] [17]. This format imposed stricter conventions on the attribute column, mandating specific tags such as gene_id and transcript_id to unambiguously define gene model hierarchies. The most recent major revision, GFF3, was developed to overcome GFF2's limitations in representing complex biological relationships, most notably through the introduction of explicit parent-child feature relationships using ID and Parent attributes, enabling true multi-level hierarchies of genomic features [13] [14]. This progression from a flat feature representation to a structured hierarchy mirrors the growing understanding of genomic complexity, from simple gene models to intricate networks of alternative transcripts, regulatory elements, and non-coding RNAs.

Structural Anatomy of GFF and GTF Files

Despite their historical divergence, GFF and GTF files share a common structural framework consisting of nine mandatory tab-delimited columns that provide a complete descriptor for each genomic feature [13] [20]. The table below delineates the function and content of each column:

Table: Column-by-Column Specification of GFF/GTF Format Structure

| Column Number | Column Name | Description | Format Notes |

|---|---|---|---|

| 1 | SeqID (seqname) | Reference sequence identifier (chromosome/scaffold) | Must match corresponding FASTA file [16] |

| 2 | Source | Algorithm/database that generated the feature | e.g., "AUGUSTUS," "Ensembl"; "." for unknown [20] |

| 3 | Feature (type) | Biological feature type | e.g., "gene," "exon," "CDS"; should use Sequence Ontology terms [16] |

| 4 | Start | Start coordinate of the feature | 1-based, inclusive [13] [20] |

| 5 | End | End coordinate of the feature | 1-based, inclusive [13] [20] |

| 6 | Score | Confidence measure or statistical score | Floating-point or "." if null/not applicable [13] |

| 7 | Strand | Genomic strand orientation | "+", "-", or "." for non-stranded features [13] |

| 8 | Phase (Frame) | Reading frame offset for CDS features | "0", "1", "2", or "." for non-CDS features [13] [20] |

| 9 | Attributes | Semicolon-separated key-value pairs | Meta-information (IDs, parents, names) [13] |

One property the two formats share is their coordinate system. Both GFF and GTF use a 1-based, inclusive coordinate system, where the first nucleotide of a sequence is position 1, and an interval includes both the start and end positions [20]. This contrasts with other bioinformatics formats like BED, which use a 0-based, half-open coordinate system (where the start coordinate is zero-indexed and the end coordinate is exclusive) [13]. This difference necessitates careful handling when converting to or from such formats to prevent off-by-one errors that could misalign genomic features.
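The conversion between the two coordinate conventions is small enough to show in full. In this sketch (function names are illustrative), only the start coordinate shifts by one, because a 1-based inclusive end and a 0-based exclusive end are numerically identical.

```python
def gff_to_bed(start, end):
    """1-based inclusive (GFF/GTF) -> 0-based half-open (BED)."""
    return start - 1, end


def bed_to_gff(start, end):
    """0-based half-open (BED) -> 1-based inclusive (GFF/GTF)."""
    return start + 1, end


# The first 100 bases of a sequence: 1..100 in GFF, 0..100 in BED.
bed_start, bed_end = gff_to_bed(1, 100)
print(bed_start, bed_end)

# Feature length is end - start + 1 in GFF and end - start in BED.
assert (100 - 1 + 1) == (bed_end - bed_start)
```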

The attribute column (column 9) constitutes the most complex and format-divergent field, housing the semantic relationships between features. In GFF3, attributes follow a strict key=value syntax with multiple values comma-separated (e.g., ID=exon00001;Parent=mRNA00001;Name=EDEN) [20] [16]. In GTF, the attribute syntax is less standardized, often mixing key "value"; (with quotes and trailing semicolon) and key value; formats, though it mandates specific tags like gene_id and transcript_id for gene model representation [20] [21].
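The two attribute syntaxes can be compared side by side with a pair of small parsers. This is a minimal sketch rather than a full implementation of either specification: the function names are hypothetical, the GTF parser assumes every pair ends with a semicolon, and quoted values containing escaped quotes are not handled.

```python
import re
from urllib.parse import unquote


def parse_gff3_attributes(column9):
    """Parse 'key=value1,value2;key=value' into {key: [values]} with URL-decoding."""
    attrs = {}
    for field in column9.strip().strip(";").split(";"):
        field = field.strip()
        if not field:
            continue
        key, _, value = field.partition("=")
        # Split on commas first, then decode each value.
        attrs[key] = [unquote(v) for v in value.split(",")]
    return attrs


# Matches both  key "value";  and  key value;
GTF_PAIR = re.compile(r'(\S+)\s+(?:"([^"]*)"|([^";\s]+))\s*;')


def parse_gtf_attributes(column9):
    """Parse GTF attribute pairs into {key: [values]} (tags may repeat)."""
    attrs = {}
    for key, quoted, bare in GTF_PAIR.findall(column9):
        attrs.setdefault(key, []).append(quoted if quoted else bare)
    return attrs


gff3 = parse_gff3_attributes("ID=exon00001;Parent=mRNA00001,mRNA00002;Name=EDEN")
gtf = parse_gtf_attributes('gene_id "G1"; transcript_id "T1"; exon_number 2;')
print(gff3)
print(gtf)
```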

Key Differences Between GFF and GTF

Structural and Philosophical Divergences

While GFF and GTF share a common ancestry and structural framework, they diverge significantly in their philosophical approach to genomic annotation. GTF adopts a gene-centric perspective, with its specification deliberately optimized for representing eukaryotic gene models with precise exon-intron structures [13] [15]. This focus is evident in its requirement for specific attribute tags—gene_id, transcript_id—that explicitly define the relationships between transcripts and their parent genes, creating a rigid but unambiguous hierarchy necessary for transcriptome analysis and gene expression quantification [13] [17]. Consequently, GTF files are particularly valuable in RNA-seq workflows where tools like Cufflinks, StringTie, and TopHat rely on these consistent identifiers to assemble transcripts and quantify expression levels [13] [18].

In contrast, GFF3 embraces a genome-centric paradigm designed to annotate the full complexity of genomic features beyond protein-coding genes alone [13] [15]. Its flexibility allows for the representation of diverse elements including regulatory regions (promoters, enhancers), repetitive elements, non-coding RNAs, and epigenetic marks within a unified framework [22]. This comprehensive scope is facilitated by GFF3's implementation of explicit parent-child relationships through ID and Parent attributes, which can represent complex multi-level hierarchies such as gene → transcript → exon → CDS, or even more intricate relationships in the case of alternatively spliced transcripts or polycistronic genes [16] [14]. This makes GFF3 the preferred format for genome browsers, comparative genomics, and comprehensive genome annotation projects that require representing biological reality in its full complexity.
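To make the ID/Parent mechanism concrete, the sketch below rebuilds the feature hierarchy from a few GFF3 lines. It is a simplified illustration with hypothetical function names and toy records: it ignores URL-encoding, skips features that lack an ID, and holds everything in memory.

```python
from collections import defaultdict


def build_children(gff3_lines):
    """Return (feature type by ID, list of child IDs by parent ID)."""
    types, children = {}, defaultdict(list)
    for line in gff3_lines:
        if not line.strip() or line.startswith("#"):
            continue
        cols = line.rstrip("\n").split("\t")
        if len(cols) != 9:
            continue  # skip malformed rows in this sketch
        attrs = dict(f.split("=", 1) for f in cols[8].split(";") if "=" in f)
        feature_id = attrs.get("ID")
        if feature_id is None:
            continue  # features without an ID cannot be referenced here
        types[feature_id] = cols[2]
        for parent in filter(None, attrs.get("Parent", "").split(",")):
            children[parent].append(feature_id)
    return types, children


def descendants(children, root):
    """List every feature ID below root once, even if it has several parents."""
    seen, stack, order = set(), [root], []
    while stack:
        node = stack.pop()
        for child in children.get(node, []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                stack.append(child)
    return order


lines = [
    "chr1\tsketch\tgene\t1\t1000\t.\t+\t.\tID=gene1",
    "chr1\tsketch\tmRNA\t1\t1000\t.\t+\t.\tID=mrna1;Parent=gene1",
    "chr1\tsketch\tmRNA\t1\t800\t.\t+\t.\tID=mrna2;Parent=gene1",
    "chr1\tsketch\texon\t1\t200\t.\t+\t.\tID=exon1;Parent=mrna1,mrna2",
]
types, children = build_children(lines)
print(descendants(children, "gene1"))
```

The shared exon illustrates why GFF3 allows multiple parents: one exon record can belong to two alternatively spliced transcripts without being duplicated.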

Practical Implications for Genomic Analyses

The philosophical differences between GFF and GTF translate directly to practical consequences in bioinformatics workflows. The stricter, more standardized nature of GTF ensures greater consistency and reliability when processed by different gene-centric tools, reducing the likelihood of parsing errors in pipelines focused on transcriptomics [15] [17]. However, this specificity comes at the cost of flexibility—GTF is less adept at representing the broad universe of genomic elements beyond genes and their subfeatures.

The richer hierarchical model of GFF3 comes with a computational cost; parsing and interpreting these relationships requires more sophisticated software capable of reconstructing the feature graph from the parent-child relationships [22] [14]. While this offers superior representational power, it can lead to compatibility issues with tools expecting the simpler, flatter structure of GTF files. Furthermore, the flexibility of GFF3's attribute column can result in "format flavors," where different annotation tools or communities implement semi-compatible variations, potentially causing interoperability challenges without careful validation [14] [21].

Table: Comparative Analysis of GFF3 and GTF Format Characteristics

| Characteristic | GFF3 | GTF |

|---|---|---|

| Primary Focus | Whole-genome annotation [13] [15] | Gene structure annotation [13] [15] |

| Attribute Syntax | key=value pairs [20] [16] | Mixed: key "value"; and key value; [20] [21] |

| Hierarchy Representation | Explicit ID/Parent relationships [16] [14] | Implicit via gene_id, transcript_id [13] [17] |

| Coordinate System | 1-based, inclusive [13] [20] | 1-based, inclusive [13] [20] |

| Flexibility | High (diverse feature types) [13] [15] | Low (gene-centric) [13] [15] |

| Ideal Use Cases | Genome browsing, comparative genomics, regulatory annotation [13] [22] | Transcriptome assembly, RNA-seq, expression quantification [13] [18] |

Application in Functional Annotation Pipelines

Integration with Annotation Tools and Workflows

In a complete functional annotation pipeline, GFF and GTF files serve as the critical junction between structural gene prediction and functional characterization. Modern annotation tools produce outputs in these formats, which then feed into downstream processes including functional term assignment, motif identification, and comparative genomic analyses [18] [19]. The decision between GFF3 and GTF often depends on the annotation methodology employed. Evidence-based approaches utilizing RNA-seq data, such as StringTie and Scallop, typically output GTF files that describe transcript structures assembled from RNA-seq read alignments [18]. By contrast, annotation transfer tools like Liftoff and TOGA, which map annotations from a reference genome to a target assembly, often use GFF3 as both input and output to preserve complex feature relationships during the transfer process [18].

Gene prediction tools show a similar convergence on GFF3, whatever their methodological foundations. BRAKER3, which combines protein homology and RNA-seq evidence with GeneMark-ETP and AUGUSTUS, generates GFF3 output containing comprehensive gene models [19]. Newer approaches like Helixer, which employs deep learning models for cross-species gene prediction, also produce GFF3, underscoring its status as the current community standard for comprehensive genome annotation [19]. This convergence toward GFF3 reflects the growing recognition that high-quality genome annotations must capture biological complexity beyond simple coding sequences, including untranslated regions (UTRs), non-coding RNAs, and regulatory elements.

Protocol: Processing and Validating Annotation Files

A critical step in any annotation pipeline involves the quality control and processing of GFF/GTF files to ensure downstream compatibility. The following protocol outlines a standardized workflow for handling these files:

Step 1: File Validation and Syntax Checking

- For GFF3 files, use standalone validators such as gff3-validator or AGAT to confirm syntactic correctness and adherence to specification [16].

- For GTF files, leverage format-specific validators like gtf2validator or the parsing functions in Bioconductor/BioPython libraries to identify common formatting issues.

- Check for critical attributes: GFF3 files require proper ID and Parent relationships, while GTF files must contain gene_id and transcript_id for all features [16] [17].

Step 2: Format Conversion (When Necessary)

- Utilize robust conversion tools like AGAT or GenomeTools for interconversion between GFF and GTF formats [13].

- Exercise caution during conversion, as the transformation is lossy: GTF to GFF3 conversion may infer hierarchical relationships, while GFF3 to GTF requires flattening of complex hierarchies [13] [14].

- Validate converted files to ensure critical information (gene boundaries, transcript structures) is preserved accurately.

Step 3: Feature Indexing for Rapid Access

- For large annotation files, implement indexing strategies to enable efficient querying. The Rust-based GFFx toolkit demonstrates performance benchmarks of 10-80× faster feature extraction compared to traditional tools [22].

- Alternative indexing approaches include loading annotations into SQLite databases (gffutils) or creating interval trees for rapid region-based queries [22].

- For whole-genome alignment workflows, ensure coordinate consistency between annotation files and the reference genome assembly [16].
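The SQLite-based indexing idea from Step 3 can be sketched with Python's standard sqlite3 module alone. This is not how gffutils or GFFx work internally; the table layout, column names, and function names below are assumptions chosen for illustration, and the database lives in memory.

```python
import sqlite3


def build_index(gff_lines):
    """Load GFF/GTF feature rows into an in-memory SQLite table with a region index."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE features (seqid TEXT, ftype TEXT, start_pos INTEGER, "
        "end_pos INTEGER, strand TEXT, attributes TEXT)"
    )
    conn.execute("CREATE INDEX idx_region ON features (seqid, start_pos, end_pos)")
    rows = []
    for line in gff_lines:
        if not line.strip() or line.startswith("#"):
            continue
        c = line.rstrip("\n").split("\t")
        if len(c) != 9:
            continue  # skip malformed rows in this sketch
        rows.append((c[0], c[2], int(c[3]), int(c[4]), c[6], c[8]))
    conn.executemany("INSERT INTO features VALUES (?, ?, ?, ?, ?, ?)", rows)
    return conn


def query_region(conn, seqid, start, end):
    """Return (type, start, end) for features overlapping a 1-based inclusive region."""
    cursor = conn.execute(
        "SELECT ftype, start_pos, end_pos FROM features "
        "WHERE seqid = ? AND start_pos <= ? AND end_pos >= ? "
        "ORDER BY start_pos, end_pos",
        (seqid, end, start),
    )
    return cursor.fetchall()


lines = [
    "##gff-version 3",
    "chr1\tsketch\tgene\t100\t500\t.\t+\t.\tID=gene1",
    "chr1\tsketch\texon\t100\t200\t.\t+\t.\tID=exon1;Parent=gene1",
    "chr1\tsketch\texon\t400\t500\t.\t+\t.\tID=exon2;Parent=gene1",
    "chr2\tsketch\tgene\t150\t250\t.\t-\t.\tID=gene2",
]
conn = build_index(lines)
hits = query_region(conn, "chr1", 150, 250)
print(hits)
```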

Step 4: Quality Assessment Metrics

- Compute completeness metrics using tools like BUSCO to evaluate the annotation's coverage of evolutionarily conserved genes [18] [19].

- Perform internal consistency checks: validate coding sequence lengths (should be multiples of 3), check for premature stop codons within CDS features, and verify splice site consensus sequences [16].

- For comparative assessments, employ annotation editing tools like Mikado to integrate predictions from multiple methods and select highest-quality models [18].
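Two of the internal consistency checks listed in Step 4 can be sketched directly on a spliced coding sequence. The example assumes the CDS has already been extracted and concatenated in coding orientation and that the standard genetic code applies; the function name and problem labels are hypothetical, and splice-site checks are not covered.

```python
STOP_CODONS = {"TAA", "TAG", "TGA"}  # standard genetic code


def check_cds(cds_sequence):
    """Return a list of problem labels for a spliced CDS given 5'->3' on the coding strand."""
    seq = cds_sequence.upper()
    problems = []
    if len(seq) % 3 != 0:
        problems.append("length_not_multiple_of_3")
    # Split into complete codons, ignoring any trailing partial codon.
    codons = [seq[i:i + 3] for i in range(0, len(seq) - len(seq) % 3, 3)]
    # A stop codon anywhere before the final codon is premature.
    if any(codon in STOP_CODONS for codon in codons[:-1]):
        problems.append("premature_stop")
    return problems


print(check_cds("ATGAAATAA"))
print(check_cds("ATGTAAAAATAA"))
print(check_cds("ATGAA"))
```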

Table: Essential Bioinformatics Tools for GFF/GTF File Processing

| Tool Name | Primary Function | Use Case | Citation |

|---|---|---|---|

| AGAT | Comprehensive annotation manipulation | Format conversion, statistics, filtering | [13] [14] |

| GFFx | High-performance indexing/querying | Ultra-large annotation files, rapid access | [22] |

| GenomeTools | Genome analysis toolkit | Format conversion, manipulation, visualization | [13] |

| gffutils | Python database interface | Programmatic access, SQL queries of annotations | [22] |

| BCBio-GFF | Python parser and validator | Validation, processing in custom pipelines | [22] |

| BedTools | Genomic interval operations | Intersection, comparison with other genomic files | [22] |

| SeqAn GffIO | C++ library for file I/O | High-performance parsing in C++ applications | [20] |

Within the framework of a comprehensive functional annotation pipeline, the selection between GFF and GTF formats represents more than a mere technical implementation detail—it constitutes a fundamental decision that shapes the scope, compatibility, and biological fidelity of the resulting annotation. The gene-centric specificity of GTF offers advantages for transcriptomics-focused workflows where consistent gene model representation is paramount, while the comprehensive flexibility of GFF3 better serves whole-genome annotation projects that aim to capture the full complexity of genomic architecture [13] [15]. As genomic datasets continue to expand in both scale and resolution, with emerging technologies revealing ever-more intricate regulatory networks and non-coding elements, the representational capacity of GFF3 positions it as the increasingly preferred community standard [22] [18].

The ongoing development of high-performance processing tools like GFFx, which demonstrates order-of-magnitude improvements in annotation indexing and query performance, underscores the growing importance of efficient GFF/GTF handling in contemporary genomics research [22]. For researchers engaged in drug development and precision medicine, where accurate genomic annotation forms the foundation for variant interpretation and target identification, adherence to format specifications and implementation of rigorous validation protocols is not merely good practice—it is an essential component of reproducible, clinically translatable genomics. As annotation methodologies continue to evolve, integrating deep learning approaches with multi-omic evidence, the role of standardized, computable annotation formats will only increase in importance for bridging the gap between genome sequence and biological function.

A Practical Toolkit: Evidence-Based and Ab Initio Annotation Strategies

Following gene calling, the selection of an appropriate functional annotation pipeline is a critical step that directly determines the quality and biological relevance of your genomic research outcomes. This process involves attaching biological information—such as Gene Ontology (GO) terms describing molecular functions, biological processes, and cellular components—to predicted protein-coding genes. Traditional methods have primarily relied on sequence similarity approaches using tools like BLAST to transfer annotations from characterized proteins in databases, a process with inherent limitations when annotating fast-evolving, divergent, or orphan genes without clear homologs [23]. The emergence of artificial intelligence, particularly protein Language Models, has introduced a paradigm shift, capturing functional and structural signals beyond traditional homology to illuminate the "dark proteome"—the substantial portion of protein-coding genes that remain uncharacterized in both model and non-model organisms [24].

This framework provides a structured decision process to navigate the expanding toolkit of annotation methodologies, balancing factors including research goals, data availability, and computational resources to optimize functional discovery.

Method Comparison and Selection Framework

The choice between traditional homology-based and novel embedding-based annotation strategies represents a fundamental decision point. The table below summarizes the core characteristics of these approaches to guide initial selection.

Table 1: Core Methodologies for Functional Annotation

| Method Type | Underlying Principle | Key Tools | Primary Input | Typical Output |

|---|---|---|---|---|

| Homology-Based | Transfer of function via sequence similarity to annotated proteins | BLAST, DIAMOND [23] | Protein/Nucleotide FASTA | Annotations based on best database match |

| Embedding-Based (pLMs) | Function inference via embedding similarity in AI-learned space | FANTASIA [24], GOPredSim [24] | Protein FASTA | Gene Ontology (GO) terms |

A more detailed analysis of performance characteristics reveals critical trade-offs that influence method selection based on specific research objectives.

Table 2: Performance and Application Comparison of Annotation Methods

| Characteristic | Homology-Based Methods | Embedding-Based Methods (pLMs) |

|---|---|---|

| Annotation Coverage | Lower (fails on ~30-50% of genes, especially in non-models) [24] | Higher (can annotate a large portion of the dark proteome) [24] |

| Basis for Annotation | Sequence similarity (primary structure) | Functional/structural signals in embeddings [24] |

| Ideal for Non-Model Taxa | Limited by database representation | Strong zero-shot generalization [24] |

| Output Informativeness | Can be noisy with many non-specific terms | More precise and informative GO terms [24] |

| Computational Demand | Moderate (alignment-based) | High (requires GPU for embedding generation) |

The following decision workflow synthesizes this information into a logical selection pathway.
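
One way to encode the trade-offs from Table 2 as a selection pathway is sketched below. The function name, its inputs, and the 0.3 cut-off for the expected fraction of genes without homologs are illustrative assumptions, not values taken from [23] or [24].

```python
def choose_annotation_strategy(has_gpu, is_model_organism,
                               expected_no_hit_fraction, has_omics_evidence):
    """Suggest annotation steps from coarse project characteristics.

    All thresholds are illustrative assumptions, not published cut-offs.
    """
    # Homology search is the moderate-cost baseline in every scenario.
    steps = ["homology (BLAST/DIAMOND)"]

    # Embedding-based annotation pays off when many genes lack clear homologs,
    # but embedding generation realistically needs a GPU.
    wants_plm = (not is_model_organism) or expected_no_hit_fraction >= 0.3
    if wants_plm and has_gpu:
        steps.append("embedding-based (FANTASIA)")
    elif wants_plm:
        steps.append("embedding-based (FANTASIA) once GPU access is available")

    # RNA-seq or MS/MS data allow experimental validation of predictions.
    if has_omics_evidence:
        steps.append("multi-omics validation (AnnotaPipeline)")
    return steps


plan = choose_annotation_strategy(
    has_gpu=True, is_model_organism=False,
    expected_no_hit_fraction=0.4, has_omics_evidence=False,
)
print(plan)
```

The sketch treats the approaches as complementary rather than mutually exclusive: homology search always runs, and the other steps are added when the project characteristics justify them.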

Detailed Experimental Protocols

Protocol 1: Large-Scale Annotation with FANTASIA

Purpose: To perform zero-shot functional annotation of a eukaryotic proteome using protein language models (pLMs), ideal for non-model organisms with high proportions of uncharacterized genes.

Principle: This protocol uses FANTASIA (Functional ANnoTAtion based on embedding space SImilArity) to generate numerical embeddings (dense vector representations) for query protein sequences using a pre-trained pLM. It then infers Gene Ontology terms by finding the closest embeddings in a reference database (e.g., GOA) and transferring their annotations based on embedding similarity, capturing functional signals beyond sequence homology [24].


Steps:

Input Preprocessing

- Provide a FASTA file containing the protein sequences from gene calling.

- Optional: Filter sequences by length or cluster to remove near-identical sequences (>90% identity) to reduce computational redundancy.
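
The optional filtering step can be approximated in plain Python, as in the minimal sketch below. It applies a length filter and removes exact duplicates only. The length bounds are assumed defaults, and clustering at >90% identity would need a dedicated tool such as CD-HIT or MMseqs2, which is not shown here.

```python
def read_fasta(lines):
    """Yield (header, sequence) pairs from an iterable of FASTA lines."""
    header, chunks = None, []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            if header is not None:
                yield header, "".join(chunks)
            header, chunks = line[1:], []
        else:
            chunks.append(line)
    if header is not None:
        yield header, "".join(chunks)


def filter_proteins(records, min_len=50, max_len=5000):
    """Keep sequences within the length bounds and drop exact duplicates."""
    seen = set()
    kept = []
    for header, seq in records:
        seq = seq.rstrip("*").upper()  # strip a trailing stop-codon symbol
        if not (min_len <= len(seq) <= max_len):
            continue
        if seq in seen:
            continue
        seen.add(seq)
        kept.append((header, seq))
    return kept


# Toy input: p2 duplicates p1, and p3 is too short.
example = [">p1", "MKT" * 20, ">p2", "MKT" * 20, ">p3", "MK"]
kept = filter_proteins(read_fasta(example))
print([header for header, _ in kept])
```

For a real proteome, pass an open file handle to `read_fasta` instead of the toy list.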

Compute Protein Embeddings

- Install FANTASIA v2 from GitHub (https://github.com/CBBIO/FANTASIA).

- Choose a pre-trained pLM. ProtT5 is recommended based on benchmarking [24].

- Execute the embedding generation. This step is computationally intensive and benefits significantly from GPU acceleration.

- Example Command:

Embedding Similarity Search

- FANTASIA automatically accesses its on-the-fly generated database of reference embeddings (from GOA).

- The pipeline computes the cosine similarity between your query protein embeddings and all reference embeddings.
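
To make the similarity measure concrete, the sketch below computes cosine similarity between a query vector and a few reference vectors. It is a generic illustration with toy three-dimensional vectors, not FANTASIA's implementation. Real pLM embeddings have far more dimensions and would normally be handled with a vectorized numerical library.

```python
import math


def cosine_similarity(u, v):
    """Cosine similarity between two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same dimension")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0  # convention chosen here for zero vectors
    return dot / (norm_u * norm_v)


# Toy embeddings: refA points in the same direction as the query,
# refB is orthogonal to it.
query = [1.0, 0.0, 1.0]
references = {"refA": [2.0, 0.0, 2.0], "refB": [0.0, 3.0, 0.0]}
scores = {name: cosine_similarity(query, emb) for name, emb in references.items()}
for name, score in scores.items():
    print(name, round(score, 3))
```

Cosine similarity ignores vector length, so `refA` scores as a perfect match even though its magnitude differs from the query.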

GO Term Transference

- For each query protein, the pipeline identifies the k-nearest reference embeddings (default k=50).

- GO terms associated with these nearest neighbors are transferred to the query protein.

- Distance-based filtering is applied to reduce noise from low-similarity hits.
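
The transfer logic described above can be sketched as a k-nearest-neighbour lookup followed by a distance cut-off. The function below is a hypothetical illustration: `k=50` follows the default stated in the text, while `max_distance=0.5` and the data layout are assumptions, and FANTASIA's actual filtering rule may differ.

```python
def transfer_go_terms(neighbor_distances, reference_go, k=50, max_distance=0.5):
    """Transfer GO terms from the k nearest references within max_distance.

    neighbor_distances: list of (reference_id, distance) for one query protein.
    reference_go: dict mapping reference_id -> set of GO term IDs.
    Returns a dict mapping GO term -> smallest distance at which it was seen.
    """
    nearest = sorted(neighbor_distances, key=lambda pair: pair[1])[:k]
    transferred = {}
    for ref_id, dist in nearest:
        if dist > max_distance:
            break  # the list is sorted, so all remaining hits are farther away
        for term in reference_go.get(ref_id, ()):
            if term not in transferred or dist < transferred[term]:
                transferred[term] = dist
    return transferred


# Toy data: refB is excluded because only the two nearest hits are used.
hits = [("refA", 0.10), ("refB", 0.80), ("refC", 0.25)]
go = {
    "refA": {"GO:0003677"},
    "refB": {"GO:0005524"},
    "refC": {"GO:0003677", "GO:0006355"},
}
result = transfer_go_terms(hits, go, k=2, max_distance=0.5)
print(sorted(result.items()))
```

Keeping the smallest distance per term gives a simple per-annotation confidence proxy that can be thresholded again downstream.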

Output and Interpretation

- The primary output is a tabular file listing each protein and its predicted GO terms.

- Analyze the results by performing GO enrichment analysis on newly annotated genes to reveal potential biological insights specific to your organism.
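
GO enrichment is commonly assessed with a one-sided hypergeometric test. The sketch below shows that calculation for a single GO term, using only the standard library. The function and argument names are illustrative, and a real analysis would also correct for multiple testing across terms (for example with Benjamini-Hochberg).

```python
from math import comb


def hypergeom_enrichment_p(population, annotated_in_population,
                           sample, annotated_in_sample):
    """One-sided p-value P(X >= annotated_in_sample) under the hypergeometric model.

    population: number of genes in the background set.
    annotated_in_population: background genes carrying the GO term.
    sample: number of genes in the set of interest.
    annotated_in_sample: genes in the set of interest carrying the GO term.
    """
    N, K, n, x = population, annotated_in_population, sample, annotated_in_sample
    upper = min(K, n)
    total = comb(N, n)
    # Sum the probability mass of observing x or more annotated genes.
    tail = sum(comb(K, i) * comb(N - K, n - i) for i in range(x, upper + 1))
    return tail / total


# Toy example: 10 background genes, 3 carry the term;
# 4 genes of interest, 2 of which carry the term.
p_value = hypergeom_enrichment_p(10, 3, 4, 2)
print(round(p_value, 4))
```

In this toy case the p-value is (3*21 + 1*7) / 210 = 1/3, so the term would not count as enriched.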

Protocol 2: Integrated Validation with AnnotaPipeline

Purpose: To provide automated functional annotation validated by experimental multi-omics data (RNA-Seq and MS/MS), enhancing confidence in predictions.

Principle: This protocol uses the AnnotaPipeline to perform classic homology-based annotation via BLAST against SwissProt and user-defined databases, then integrates transcriptomic and proteomic evidence to validate the in silico predictions [23]. This proteogenomic approach cross-validates predicted CDS, reducing the annotation of false-positive genes and providing expression-level support.


Steps:

Input and Configuration

- Inputs:

  - Required: Genomic FASTA file OR protein FASTA file OR structural annotation GFF3 file.

  - Optional: RNA-seq data (FASTQ) and/or MS/MS data (mzXML format).

- Configuration:

  - Install AnnotaPipeline (https://github.com/bioinformatics-ufsc/AnnotaPipeline).

  - Edit the AnnotaPipeline.yaml file to specify paths to software (AUGUSTUS, BLAST) and databases (SwissProt, custom databases).

  - Define classification keywords for "hypothetical proteins" (e.g., "uncharacterized," "hypothetical").

Gene Prediction and Similarity Analysis

- If starting from a genomic FASTA, gene prediction is performed using AUGUSTUS (requires a pre-trained model for your organism).

- BLASTp is run against the SwissProt database and any user-specified databases.

- Proteins are classified as "annotated," "hypothetical," or "no-hit" based on search results and keyword filtering.
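
The three-way classification can be illustrated with a small keyword filter over hit descriptions, for example the subject titles from a BLASTp tabular report. This is a hypothetical sketch of the idea, not AnnotaPipeline's own code, and its exact rules may differ.

```python
# Keywords that mark a hit description as uninformative (from the example above).
HYPOTHETICAL_KEYWORDS = ("uncharacterized", "hypothetical")


def classify_protein(hit_descriptions, keywords=HYPOTHETICAL_KEYWORDS):
    """Return 'no-hit', 'hypothetical', or 'annotated' for one protein."""
    if not hit_descriptions:
        return "no-hit"
    # A hit is informative if its description contains none of the keywords.
    informative = [
        desc for desc in hit_descriptions
        if not any(kw in desc.lower() for kw in keywords)
    ]
    return "annotated" if informative else "hypothetical"


# Toy search results keyed by predicted protein ID.
blast_hits = {
    "g1": ["Heat shock protein 70"],
    "g2": ["Uncharacterized protein", "hypothetical protein"],
    "g3": [],
}
classes = {gene: classify_protein(hits) for gene, hits in blast_hits.items()}
print(classes)
```

A protein counts as "annotated" as soon as one hit carries an informative description, which is a design choice that favours coverage over strictness.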

Experimental Data Integration

- RNA-seq Validation: Map RNA-seq reads to the genome to provide transcriptional evidence for predicted CDS.

- Proteomic Validation: Search MS/MS spectra against a custom database built from the predicted proteome to confirm protein expression.

- Predicted genes with supporting transcriptional and/or proteomic evidence are flagged as validated.
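
The flagging step amounts to combining two evidence tables per gene. The sketch below assumes expression is summarized as TPM and proteomic support as a count of identified peptides. Both thresholds and all names are illustrative assumptions rather than AnnotaPipeline defaults.

```python
def flag_validated(gene_ids, tpm, peptide_counts, min_tpm=1.0, min_peptides=2):
    """Label each gene with the kind of experimental support it has.

    tpm: dict gene -> transcripts per million from RNA-seq quantification.
    peptide_counts: dict gene -> number of peptides identified by MS/MS.
    """
    labels = {}
    for gene in gene_ids:
        has_rna = tpm.get(gene, 0.0) >= min_tpm
        has_protein = peptide_counts.get(gene, 0) >= min_peptides
        if has_rna and has_protein:
            labels[gene] = "transcript+protein"
        elif has_rna:
            labels[gene] = "transcript"
        elif has_protein:
            labels[gene] = "protein"
        else:
            labels[gene] = "unsupported"
    return labels


# Toy evidence for four predicted genes.
genes = ["g1", "g2", "g3", "g4"]
expression = {"g1": 12.5, "g2": 3.0, "g3": 0.2}
peptides = {"g1": 5, "g3": 4}
support = flag_validated(genes, expression, peptides)
print(support)
```

Genes labelled "unsupported" are not necessarily false positives: they may simply be unexpressed under the sampled conditions.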

Output Analysis

- The pipeline produces an annotated GFF3 file and summary statistics.

- Focus downstream analyses on annotated proteins with multi-omics support for high-confidence results.

Successful implementation of functional annotation pipelines requires access to specific data resources and software tools. The following table details key components of the annotation toolkit.

Table 3: Essential Resources for Functional Annotation Pipelines

| Resource Name | Type | Primary Function | Key Features |

|---|---|---|---|

| SwissProt/UniProtKB [23] | Protein Database | Provides high-quality, manually curated protein sequences for homology-based annotation. | Low redundancy; high annotation reliability; standard for benchmark comparisons. |

| Gene Ontology (GO) [24] | Ontology/Vocabulary | Standardized framework for describing gene functions across species. | Three aspects: Molecular Function, Biological Process, Cellular Component; enables enrichment analysis. |

| Gene Ontology Annotation (GOA) [24] | Annotation Database | Source of pre-annotated proteins used as a reference database for tools like FANTASIA. | Comprehensive; regularly updated; links GO terms to proteins. |

| AUGUSTUS [23] | Gene Prediction Tool | Predicts the structure of protein-coding genes in genomic sequences. | Species-specific training; accurate ab initio prediction; often used in annotation pipelines. |

| BLAST/DIAMOND [23] | Sequence Similarity Tool | Performs rapid sequence alignment for homology detection and annotation transfer. | BLAST is standard; DIAMOND is faster for large datasets; core of traditional annotation. |

| ProtT5/ESM2 [24] | Protein Language Model | Converts protein sequences into numerical embeddings that encode functional features. | Enables annotation without sequence similarity; captures structural/functional signals. |

| FANTASIA [24] | Annotation Pipeline | Uses pLM embeddings for large-scale, zero-shot functional annotation of proteomes. | High coverage in non-model organisms; reduced annotation noise; GitHub available. |

| AnnotaPipeline [23] | Annotation Pipeline | Integrates multi-omics data (RNA-seq, MS/MS) to validate in silico functional predictions. | Proteogenomic approach; provides experimental evidence; reduces false positives. |

The advent of high-throughput sequencing has revolutionized transcriptomics, enabling comprehensive analysis of gene expression and RNA transcript diversity. A major challenge for researchers lies in selecting and effectively utilizing the appropriate sequencing technology—short-read or long-read—to answer specific biological questions within functional annotation pipelines. Short-read sequencing (e.g., Illumina) provides high-throughput, cost-effective data ideal for quantifying gene expression levels [25] [26]. In contrast, long-read sequencing (e.g., PacBio, Oxford Nanopore) captures full-length transcripts, enabling the precise identification of alternative splicing, novel isoforms, fusion transcripts, and RNA modifications [27]. This application note provides clear guidelines, detailed protocols, and resource tables to help researchers design robust transcriptomic studies that leverage the complementary strengths of both technologies for enhanced functional annotation after gene calling.

Technology Comparison and Selection Guidelines

The choice between short- and long-read sequencing technologies involves trade-offs between throughput, read length, accuracy, and the specific biological features of interest. The following table summarizes the core characteristics of each approach to inform experimental design.

Table 1: Comparative analysis of short-read and long-read RNA sequencing technologies

| Feature | Short-Read Sequencing (Illumina) | Long-Read Sequencing (PacBio) | Long-Read Sequencing (Oxford Nanopore) |

|---|---|---|---|

| Typical Read Length | 50-300 bp [26] | 5,000-30,000 bp [26] | 5,000 to >1,000,000 bp [26] |

| Primary Accuracy | High (Q30+) [26] | High for HiFi (Q30-Q40+) [26] | Variable; improves with consensus [26] |

| Key Strengths | High sequencing depth, low cost per base, established analysis pipelines [25] [28] | Full-length isoform resolution, high single-read accuracy [25] [27] | Direct RNA sequencing, detection of base modifications, extreme read lengths [27] |

| Main Limitations | Limited resolution of complex isoforms and repetitive regions [27] [26] | Lower throughput, higher input requirements, higher cost per sample [25] | Historically higher error rates, though improving [26] |

| Ideal Applications | Gene-level differential expression, large cohort studies, single-cell RNA-seq [25] | Discovery of novel isoforms, fusion transcripts, and alternative splicing [25] [27] | Direct RNA modification detection (e.g., m6A), real-time sequencing, complex structural variation [27] |

Recent advancements are blurring the lines between these platforms. Pacific Biosciences (PacBio) has introduced Kinnex (formerly MAS-ISO-seq) to increase throughput by concatenating multiple transcripts into a single long read [25]. Conversely, Illumina now offers Complete Long-Reads kits to generate long-read data on their established short-read instruments [26]. For the most comprehensive functional annotation, an integrated approach using both technologies is often most powerful, using short reads for robust gene-level quantification and long reads to resolve underlying transcript isoform complexity [29].

Experimental Protocols for RNA-Seq Library Preparation

The following section outlines standard protocols for preparing RNA-seq libraries, highlighting critical steps where methodology influences downstream functional annotation.

Short-Read RNA-Seq Protocol (Illumina)

This protocol is based on standard procedures using the Illumina platform, which remains the workhorse for gene expression quantification [28] [30].

- STEP 1: RNA Extraction and Quality Control. Extract total RNA using a column-based or phenol-chloroform method. Assess RNA quality and integrity using an instrument such as an Agilent TapeStation or Bioanalyzer. An RNA Integrity Number (RIN) > 7.0 is generally recommended for high-quality libraries [28].

- STEP 2: RNA Enrichment. Select for mRNA using poly(A) tail capture beads or deplete ribosomal RNA (rRNA) using probe-based kits. Poly(A) selection is standard for eukaryotic mRNA sequencing [30].

- STEP 3: cDNA Synthesis and Library Construction. Reverse-transcribe RNA into double-stranded cDNA. Fragment the cDNA mechanically or enzymatically to a target size of 200-300 bp. Perform end repair, A-tailing, and ligation of Illumina-specific indexing adapters [25] [30].

- STEP 4: Library Amplification and Quality Control. Amplify the adapter-ligated DNA using a limited-cycle PCR to enrich for properly constructed fragments. Validate the final library size distribution using a Fragment Analyzer or TapeStation and quantify using a fluorescence-based method like Qubit [25].

- STEP 5: Sequencing. Pool multiplexed libraries and sequence on an Illumina platform (e.g., NovaSeq) with paired-end 150 bp reads being common for transcriptomics [28].
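
Pooling in STEP 5 usually requires converting the fluorometric concentration and mean fragment size from STEP 4 into molarity. The sketch below uses the common approximation of 660 g/mol per base pair of double-stranded DNA. The function names and the example values are illustrative assumptions.

```python
def library_molarity_nM(conc_ng_per_ul, mean_fragment_bp):
    """Convert a dsDNA mass concentration to molarity (nM).

    Assumes an average mass of 660 g/mol per base pair.
    """
    return conc_ng_per_ul * 1e6 / (660.0 * mean_fragment_bp)


def equimolar_pool_volumes(libraries, target_fmol_each):
    """Volume (in microlitres) of each library needed for an equimolar pool.

    libraries: dict name -> (concentration in ng/uL, mean fragment size in bp).
    """
    volumes = {}
    for name, (conc, size_bp) in libraries.items():
        molarity = library_molarity_nM(conc, size_bp)  # 1 nM equals 1 fmol/uL
        volumes[name] = target_fmol_each / molarity
    return volumes


# Toy libraries: the mean size should include the ligated adapters.
libs = {"sampleA": (2.64, 400), "sampleB": (5.28, 400)}
volumes = equimolar_pool_volumes(libs, target_fmol_each=20)
for name, vol in volumes.items():
    print(name, round(vol, 2), "uL")
```

Here `sampleA` works out to about 10 nM, so 20 fmol corresponds to roughly 2 µL, and the twice-as-concentrated `sampleB` needs about half that volume.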

Long-Read RNA-Seq Protocol (PacBio Iso-Seq)

This protocol describes the Pacific Biosciences Iso-Seq method, which preserves full-length transcript information, crucial for annotating splice variants [25] [27].

- STEP 1: Full-Length cDNA Synthesis. For single-cell experiments, use the 10x Genomics Chromium platform or similar to partition cells and barcode RNA. Within each partition (GEM), reverse-transcribe RNA using an oligo-dT primer containing a cell barcode and a Unique Molecular Identifier (UMI) to generate full-length cDNA [25].

- STEP 2: cDNA Amplification and Size Selection. PCR-amplify the cDNA, then clean up and size-select with SPRI (Solid-Phase Reversible Immobilization) beads to remove primers and very short fragments. Choose the bead ratio so that transcripts shorter than 500 bp, which might otherwise be lost, are retained [25].

- STEP 3: Removal of Artefacts (Critical for PacBio). To remove truncated cDNA contaminated by template-switching oligos (TSO), perform a PCR with a modified primer (e.g., MAS capture primer) to incorporate a biotin tag only into desired full-length cDNA products. Capture these using streptavidin-coated beads [25].