Comparative Analysis of Ribosome Binding Site Detection Methods: From Foundational Principles to Clinical Applications

Abstract

This comprehensive review provides researchers, scientists, and drug development professionals with a systematic analysis of contemporary Ribosome Binding Site (RBS) detection methodologies. We explore foundational principles of translational regulation, examine cutting-edge experimental and computational techniques including Ribo-seq, nanopore sensing, and deep learning approaches, and address critical troubleshooting considerations. The analysis highlights performance validation across platforms and discusses emerging applications in synthetic biology, biomarker development, and therapeutic intervention. By synthesizing recent advances from high-throughput sequencing to machine learning prediction tools, this review serves as an essential resource for selecting appropriate RBS detection strategies based on specific research objectives and clinical requirements.

Understanding RBS Biology and Detection Principles

The Central Role of RBS in Translational Regulation and Gene Expression

The Ribosome Binding Site (RBS) is a pivotal cis-acting element in translational regulation: it is the mRNA region where the ribosome is recruited to initiate protein synthesis. In bacterial systems, riboswitches (structured noncoding RNA domains) can control gene expression by modulating the accessibility of the RBS in response to cellular metabolite concentrations [1] [2]. These regulatory elements have a modular architecture consisting of a ligand-binding aptamer domain and a downstream expression platform that converts the binding event into a change in gene expression [2]. Whether the aptamer domain is occupied determines the conformation of the expression platform, which either exposes or occludes the RBS, thereby activating or repressing translation [2]. Over 55 distinct classes of natural riboswitches have been experimentally validated, and riboswitches are widespread in bacteria [1] [2]. Understanding how riboswitches control the RBS is fundamental to both basic molecular biology and applied synthetic biology, enabling the development of novel genetic tools and therapeutic strategies.

Comparative Analysis of RBS Detection and Riboswitch Study Methodologies

Studying RBS regulation, particularly through riboswitches, requires a multifaceted approach. The following section provides a comparative analysis of key methodological frameworks, summarizing their core principles, experimental protocols, and outputs to guide researchers in selecting the appropriate tool for their investigations.

Table 1: Comparison of Methodologies for Studying RBS-Mediated Regulation

| Method Category | Core Principle | Key Experimental Steps | Primary Data Output | Key Advantages |
|---|---|---|---|---|
| Computational Prediction & Mining [3] [4] | Identifies riboswitch elements by analyzing sequence conservation and secondary structure features. | 1. Input genomic sequence. 2. Scan for conserved motif patterns. 3. Predict secondary structure and folding energy. 4. Classify potential riboswitches. | List of genomic loci with high riboswitch potential; predicted secondary structures. | High-throughput capability; can screen entire genomes in silico; identifies novel candidates. |
| Structural Ensemble Mapping (DeConStruct) [5] | Deconvolutes multiple RNA conformations from chemical probing data to identify functional regulatory switches. | 1. In vivo DMS probing of cells. 2. Mutational Profiling (MaP) via reverse transcription. 3. High-throughput sequencing. 4. DRACO algorithm ensemble deconvolution. | RNA secondary structure ensembles; stoichiometries of alternative conformations; identification of structurally heterogeneous regions. | Captures dynamic structural changes in living cells; transcriptome-wide scale. |
| In Vitro & In Vivo Functional Validation [2] | Directly tests the regulatory function of a riboswitch and its effect on the RBS using reporter constructs. | 1. Clone putative riboswitch into the reporter gene's 5' UTR. 2. Transfer into host organism (e.g., E. coli). 3. Expose to varying ligand concentrations. 4. Measure reporter output (e.g., fluorescence). | Quantitative gene expression data (e.g., fluorescence units); dose-response curves; dynamic range measurements. | Directly confirms regulatory function and mechanism; provides quantitative performance data. |

Experimental Protocols for Key Methods

Protocol 1: DRACO-Mediated Structural Ensemble Mapping [5]

This protocol maps RNA structural ensembles in living cells and is well suited to observing native RBS accessibility.

- Cell Culture and Probing: Grow E. coli cells (e.g., DH5α or TOP10 strains) to mid-exponential phase in appropriate medium at 37°C.

- In Vivo DMS Treatment: Treat living cells with dimethyl sulfate (DMS) at a final concentration optimized to modify unpaired adenines and cytosines.

- RNA Extraction: Harvest cells and extract total RNA using a hot phenol protocol or commercial kit, including DNase I treatment to remove genomic DNA.

- Library Preparation and Sequencing: Perform DMS-MaPseq. Use rRNA-depleted RNA as the template for reverse transcription with a highly processive reverse transcriptase (e.g., MarathonRT or TGIRT-III) that records DMS modification sites as mutations during cDNA synthesis. Prepare sequencing libraries and run them on an Illumina platform to obtain a minimum of 1 billion paired-end reads.

- Bioinformatic Analysis: Process raw sequencing data to generate mutation counts. Use the DRACO algorithm to deconvolute the data, identifying regions populating multiple conformations. A minimum effective read depth of 2,000x per analyzed region is recommended for robust ensemble deconvolution.
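Before ensemble deconvolution, DMS-MaPseq data are commonly summarized as per-nucleotide mutation frequencies. The sketch below is a minimal illustration, assuming per-position mutation and coverage counts have already been extracted from the alignments; the function name and the reuse of the 2,000x depth figure as a per-nucleotide filter are assumptions made for illustration. It computes only population-average rates and is not DRACO, which deconvolutes co-occurring mutations on individual reads.

```python
from typing import List, Optional


def dms_reactivity(
    sequence: str,
    mutations: List[int],
    coverage: List[int],
    min_depth: int = 2000,
) -> List[Optional[float]]:
    """Per-position DMS mutation frequency (mutations / coverage).

    Positions that are not A or C, or whose coverage is below min_depth,
    are masked as None. min_depth reuses the protocol's 2,000x figure as a
    per-nucleotide filter (an illustrative assumption).
    """
    if not (len(sequence) == len(mutations) == len(coverage)):
        raise ValueError("sequence, mutations and coverage must have equal length")
    profile: List[Optional[float]] = []
    for base, mut, cov in zip(sequence.upper(), mutations, coverage):
        if base not in "AC" or cov < min_depth:
            profile.append(None)  # non-A/C base or insufficient depth
        else:
            profile.append(mut / cov)
    return profile


# Toy example: the last A is masked because its coverage is below 2,000.
print(dms_reactivity("ACGUA", [120, 40, 5, 3, 90], [3000, 2500, 3000, 3000, 1500]))
```

Masking G and U positions reflects DMS's preference for unpaired A and C; in practice, reactivities are usually normalized before they are used for structure modeling.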

Protocol 2: Functional Validation of a Synthetic Riboswitch [6]

This protocol tests the function of an engineered riboswitch controlling an RBS in vivo.

- Construct Design: Synthesize a DNA construct where the candidate riboswitch sequence is cloned upstream of a reporter gene (e.g., GFP) in a plasmid. The RBS of the reporter gene should be embedded within the riboswitch's putative expression platform.

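- Transformation: Introduce the constructed plasmid into a suitable host organism, for example Corynebacterium glutamicum (by transformation) for metabolic engineering, or human cell lines (by transfection) for eukaryotic applications. Eukaryotic hosts lack a Shine-Dalgarno-type RBS, so in these cells the switch must act through a different mechanism, such as aptazyme-controlled mRNA stability.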

- Ligand Induction: Grow transformed cells and split the culture into aliquots. Expose these aliquots to a range of concentrations of the target ligand (e.g., tetracycline, theophylline, or a custom metabolite).

- Output Measurement: After a defined incubation period, measure the reporter signal. For fluorescent reporters, use flow cytometry or a plate reader. For other outputs, assess via enzymatic assays or western blot.

- Data Analysis: Calculate the fold-change in gene expression between induced and uninduced states to determine the dynamic range of the riboswitch. Generate dose-response curves to quantify its sensitivity, expressed as the EC50 (the ligand concentration giving a half-maximal response).
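The data-analysis step can be sketched as follows. This is a minimal example, assuming an ON-switch whose reporter signal rises monotonically with ligand concentration; the function names are hypothetical. The EC50 is estimated by interpolating on a log10 concentration scale between the two measurements that bracket the half-maximal signal, a rough approximation to fitting a Hill (four-parameter logistic) model.

```python
import math
from typing import Sequence


def fold_change(induced: float, uninduced: float) -> float:
    """Dynamic range: reporter signal with ligand divided by signal without ligand."""
    if uninduced <= 0:
        raise ValueError("uninduced signal must be positive")
    return induced / uninduced


def ec50_interpolated(conc: Sequence[float], signal: Sequence[float]) -> float:
    """Rough EC50 by log-linear interpolation at the half-maximal signal.

    Assumes all concentrations are > 0 (measure the uninduced state separately)
    and that the signal rises monotonically with ligand (an ON-switch).
    """
    low, high = min(signal), max(signal)
    half = low + (high - low) / 2
    points = sorted(zip(conc, signal))
    for (c1, s1), (c2, s2) in zip(points, points[1:]):
        if s1 <= half <= s2 and s1 != s2:
            frac = (half - s1) / (s2 - s1)
            log_ec50 = math.log10(c1) + frac * (math.log10(c2) - math.log10(c1))
            return 10 ** log_ec50
    raise ValueError("half-maximal signal is not bracketed by the data")
```

For example, `ec50_interpolated([0.1, 1, 10, 100], [100, 200, 900, 1000])` returns about 3.16 (10^0.5), because the half-maximal signal of 550 lies midway between the measurements at 1 and 10; `fold_change(1000, 100)` gives a 10-fold dynamic range. Nonlinear fitting of a Hill model is the more rigorous choice when enough concentrations are sampled.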

Research Reagent Solutions Toolkit

Table 2: Essential Reagents for RBS and Riboswitch Research

| Reagent / Solution | Function / Application | Example Context |
|---|---|---|
| DMS (Dimethyl Sulfate) | Chemical probe that modifies unpaired A and C nucleotides in RNA, used for structural probing. | RNA structure ensemble mapping in vivo [5]. |
| Processive Reverse Transcriptase (e.g., MarathonRT, TGIRT-III) | Enzyme for Mutational Profiling (MaP); records DMS modifications as mutations during cDNA synthesis. | Key for DMS-MaPseq protocols to decode RNA structure [5]. |
| Riboswitch Finder Software | Dedicated motif search program to identify riboswitch RNAs in sequence data based on sequence elements and secondary structure. | Computational identification of potential riboswitches in genomic sequences [3]. |
| Orthogonal FMN Aptamer | A re-engineered natural aptamer that responds to a synthetic ligand (e.g., DHEF, MHEF) instead of its native FMN ligand. | Tool for conditional gene regulation in bacteria and human cells without interference from endogenous FMN [7]. |
| Tetracycline-Responsive Aptazyme | A synthetic ribozyme controlled by a tetracycline-binding aptamer; ligand binding modulates self-cleavage activity and mRNA stability. | Used in synthetic riboswitches to control gene expression in various organisms, including C. elegans and human B cells [6]. |

RBS Regulatory Mechanisms: A Visual Guide

Riboswitches regulate the RBS through distinct mechanistic paradigms. The following diagram illustrates the "Direct Occlusion" mechanism, a common strategy in which ligand binding directly controls RBS accessibility.

Direct Occlusion Mechanism

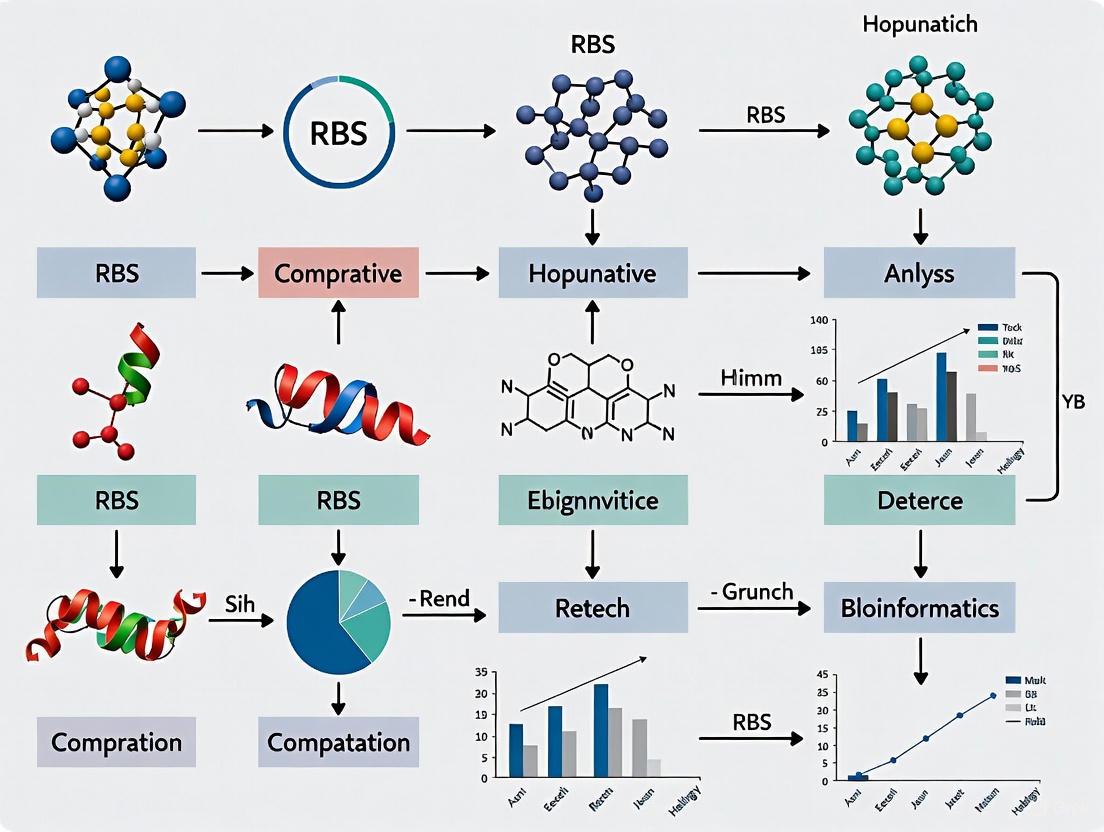

Experimental Workflow for RBS Regulator Discovery

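Discovering and validating a novel RBS-regulating riboswitch integrates computational, structural, and functional biology techniques: computational prediction nominates candidate elements, in vivo structural ensemble mapping tests whether the region around the RBS populates alternative conformations, and reporter assays confirm ligand-dependent regulation.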

The RBS serves as a central processing unit for translational control, with riboswitches representing one of nature's most elegant solutions for its dynamic regulation. The comparative analysis presented herein underscores that a synergistic approach—combining computational prediction, structural ensemble mapping in living cells, and rigorous functional validation—is the most powerful strategy for dissecting RBS regulatory mechanisms [3] [2] [5]. The future of RBS research is poised to be transformed by the increasing sophistication of in vivo structural methods like DRACO and the application of machine learning, which can predict regulatory potential in vast genomic datasets [5] [4]. Furthermore, the rational engineering of natural riboswitches into orthogonal tools that respond to synthetic ligands opens new frontiers in biotechnology and medicine, allowing for precise, protein-independent control of therapeutic gene expression in complex organisms, including humans [6] [7]. As these tools mature, our ability to diagnose and treat diseases by targeting the RNA layer of gene regulation will become an increasingly tangible reality.

Ribo-seq as a High-Throughput Technology for Global Translatome Snapshots

Understanding gene expression requires moving beyond transcript abundance to directly measuring protein synthesis. Translatome profiling technologies fill this crucial gap by identifying mRNAs that are actively engaged with ribosomes. Among these methods, Ribosome Profiling (Ribo-seq) has emerged as a powerful technique that provides nucleotide-resolution snapshots of translation dynamics across the entire transcriptome. Developed in 2009, Ribo-seq builds upon earlier polysome profiling approaches but offers significantly enhanced precision in mapping ribosome positions [8] [9].

The fundamental principle underlying Ribo-seq is that translating ribosomes protect approximately 28-30 nucleotides of mRNA from nuclease digestion. By sequencing these ribosome-protected fragments (RPFs), researchers can determine the exact positions of ribosomes on transcripts, enabling codon-resolution analysis of translation dynamics [10] [9]. This technical advancement has revolutionized our understanding of translational regulation, revealing previously unannotated translated regions and nuanced regulatory mechanisms that were undetectable with previous methodologies.
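The positional logic behind codon-resolution analysis can be illustrated with a short sketch. It assumes reads are given as (5' end, length) pairs in transcript coordinates and uses a fixed P-site offset of 12 nt, a commonly used value for ~28-30 nt footprints but an assumption here, since offsets are normally estimated per footprint length. It counts P-sites in each reading frame of the CDS; footprints from translating ribosomes should concentrate in one frame, producing the three-nucleotide periodicity that distinguishes them from background.

```python
from collections import Counter
from typing import Iterable, Tuple


def frame_distribution(
    reads: Iterable[Tuple[int, int]],
    cds_start: int,
    p_offset: int = 12,
    min_len: int = 28,
    max_len: int = 30,
) -> Counter:
    """Count P-sites in frame 0, 1, or 2 relative to the CDS start codon.

    reads: (5' end position, footprint length) in 0-based transcript coordinates.
    p_offset: assumed distance from the read 5' end to the P-site (illustrative).
    """
    frames: Counter = Counter()
    for five_prime, length in reads:
        if not min_len <= length <= max_len:
            continue  # outside the expected footprint size range
        p_site = five_prime + p_offset
        if p_site >= cds_start:  # skip P-sites upstream of the start codon
            frames[(p_site - cds_start) % 3] += 1
    return frames
```

With `cds_start=100`, reads starting at 88, 91, and 94 place their P-sites in frame 0, while an over-long 35-nt fragment is discarded before frame assignment.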

Comparative Analysis of Translatome Profiling Methods

Methodological Comparison

Ribo-seq and RNC-seq represent the two primary high-throughput approaches for translatome analysis, each with distinct methodological foundations and data outputs. RNC-seq (Ribosome-Nascent Chain Complex sequencing) combines polysome profiling with RNA sequencing, separating actively translated mRNAs bound by multiple ribosomes through sucrose gradient centrifugation before sequencing [8]. This method provides information about the ribosome load on transcripts but lacks single-codon resolution. In contrast, Ribo-seq employs nuclease digestion to isolate and sequence the short mRNA fragments protected by individual ribosomes, enabling precise mapping of ribosome positions at nucleotide-level resolution [8] [11].

According to database analyses, Ribo-seq has been more widely adopted in scientific literature, with PubMed returning 1,454 publications for "Ribo-seq" compared to only 210 for "RNC-seq" as of February 2024 [8]. Similarly, TranslatomeDB contained 4,054 Ribo-seq datasets versus 216 RNC-seq datasets in 2024, reflecting the broader application of Ribo-seq across diverse research contexts [8].

Table 1: Technical Comparison of Ribo-seq and RNC-seq

| Feature | Ribo-seq | RNC-seq |
|---|---|---|
| Resolution | Nucleotide-level (28-30 nt) | Transcript-level (variable length) |
| Primary Output | Ribosome footprint positions | Ribosome-associated mRNA sequences |
| Mapping Precision | Codon-level positioning | Regional association |
| Protocol Complexity | High (specialized library prep) | Moderate (similar to RNA-seq) |
| Information on Ribosome Density | Indirect inference | Direct measurement from ribosome count |
| Identification of Novel ORFs | Excellent (precise start/stop mapping) | Limited (imprecise boundaries) |

Performance Metrics and Detection Capabilities

Both Ribo-seq and RNC-seq detect translated transcripts robustly: each identifies approximately 80% of protein-coding genes across several human cell lines (HBE, A549, and MCF-7) at an RPKM cutoff of >0 [8]. This detection rate far exceeds the approximately 30% of protein-coding genes typically detected by panoramic mass spectrometry proteomics, highlighting the greater sensitivity of translatome methods for comprehensive gene expression assessment [8].

However, the methods differ in how detected transcripts are distributed across expression levels, particularly at higher levels. In HBE and A549 cells, Ribo-seq detects the largest number of translated protein-coding transcripts in the 1-10 RPKM interval, whereas in MCF-7 cells the two methods detect comparable numbers in every expression interval [8]. This variation suggests context-dependent performance that researchers should consider when selecting a method for a specific experimental system.
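The RPKM cutoff used in these comparisons can be made concrete. A minimal sketch, assuming raw read counts per gene, gene lengths in bp, and the total number of mapped reads are available (the function names are hypothetical): RPKM = reads × 10^9 / (length × total mapped reads), and a gene counts as detected when its RPKM is strictly above the cutoff.

```python
from typing import Dict


def rpkm(reads: int, length_bp: int, total_mapped: int) -> float:
    """Reads per kilobase of transcript per million mapped reads."""
    return reads * 1e9 / (length_bp * total_mapped)


def fraction_detected(
    counts: Dict[str, int],
    lengths: Dict[str, int],
    total_mapped: int,
    cutoff: float = 0.0,
) -> float:
    """Fraction of genes whose RPKM is strictly greater than `cutoff`."""
    detected = sum(
        1 for gene, n in counts.items() if rpkm(n, lengths[gene], total_mapped) > cutoff
    )
    return detected / len(counts)
```

For example, `rpkm(500, 2000, 10_000_000)` is 25.0. With a cutoff of >0, any gene with at least one read counts as detected, which is why such a permissive threshold yields high detection rates.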

Technical Advancements in Ribo-seq Methodology

Enhanced Protocols for Improved Data Quality

Recent methodological innovations have substantially addressed key limitations in conventional Ribo-seq protocols. The development of Ribo-FilterOut represents a significant advancement by incorporating an ultrafiltration step that physically separates ribosome footprints from ribosomal subunits after EDTA-mediated dissociation [10]. This approach dramatically reduces rRNA contamination, which traditionally consumed up to 92% of sequencing reads in standard protocols. When combined with conventional rRNA subtraction methods, Ribo-FilterOut increases usable reads for footprint analysis from 5.4% to 49% of the total library, significantly enhancing cost-efficiency and data yield [10].
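The practical effect of raising the usable fraction from 5.4% to 49% can be worked through directly. The sketch below assumes a hypothetical target of 10 million usable footprint reads and computes the total sequencing depth needed at each usable fraction.

```python
import math


def reads_required(target_footprints: int, usable_fraction: float) -> int:
    """Total reads to sequence so that `usable_fraction` of them yields the target footprint reads."""
    if not 0 < usable_fraction <= 1:
        raise ValueError("usable_fraction must be in (0, 1]")
    return math.ceil(target_footprints / usable_fraction)


target = 10_000_000  # hypothetical number of usable footprint reads wanted
standard = reads_required(target, 0.054)  # usable fraction with conventional rRNA subtraction
filtered = reads_required(target, 0.49)  # usable fraction with Ribo-FilterOut plus subtraction
print(standard, filtered, round(standard / filtered, 1))
```

Roughly 185 million reads are needed at 5.4% versus about 20 million at 49%, an approximately nine-fold reduction in sequencing for the same footprint yield.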

Complementing this advancement, Ribo-Calibration utilizes external spike-ins of stoichiometrically defined mRNA-ribosome complexes prepared via in vitro translation systems [10]. These spike-ins enable absolute quantification of ribosome numbers on transcripts and facilitate cross-experiment normalization, addressing a longstanding challenge in traditional Ribo-seq analysis. The combination of these approaches allows researchers to estimate critical kinetic parameters, including translation initiation rates and the total number of translation events before mRNA decay, providing unprecedented insights into translation dynamics [10].
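The spike-in logic can be sketched as a simple linear scaling. This is an illustration under strong assumptions, not the published Ribo-Calibration procedure: it assumes spike-in and endogenous footprints are recovered and sequenced with equal efficiency, and that the mRNA copy number of the gene of interest is known from an independent measurement. The function name and inputs are hypothetical.

```python
def ribosomes_per_mrna(
    gene_reads: float,
    spike_reads: float,
    spike_ribosomes: float,
    mrna_copies: float,
) -> float:
    """Estimate the mean number of ribosomes per mRNA for one gene.

    Assumes (hypothetically) that spike-in and endogenous footprints are
    recovered and sequenced with equal efficiency, so one scaling factor
    converts reads into ribosome numbers.
    """
    if spike_reads <= 0 or mrna_copies <= 0:
        raise ValueError("spike_reads and mrna_copies must be positive")
    ribosomes_per_read = spike_ribosomes / spike_reads  # calibration factor
    return gene_reads * ribosomes_per_read / mrna_copies
```

With 10^6 spike-in ribosomes yielding 2 × 10^4 reads, each read represents 50 ribosomes, so 3,000 reads on a gene with 50,000 mRNA copies corresponds to about 3 ribosomes per mRNA.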

Specialized Profiling Techniques

Beyond general improvements, specialized Ribo-seq variants have emerged to investigate specific translational mechanisms. Translation Initiation Site (TIS) profiling utilizes inhibitors like retapamulin or oncocin 112 to enrich initiating ribosomes, enabling precise mapping of start codons, including non-AUG initiation events [12]. Conversely, Translation Termination Site (TTS) profiling employs apidaecin to trap terminating ribosomes, revealing stop codon usage and recoding events such as programmed frameshifts [12]. These specialized approaches have proven particularly valuable for comprehensive annotation of bacterial coding landscapes, as demonstrated in Campylobacter jejuni, where they facilitated a two-fold expansion of the known small proteome [12].
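A TIS profiling peak call can be sketched as follows. It assumes P-site counts per nucleotide from an inhibitor-treated (e.g., retapamulin) library and uses a hypothetical threshold of five times the median nonzero count; enriched positions are reported and the codon there is classified as AUG, near-cognate (one substitution away from AUG), or other. Published pipelines use more careful statistics and background models; the threshold and names here are illustrative only.

```python
from statistics import median
from typing import List, Tuple

START = "ATG"


def is_near_cognate(codon: str) -> bool:
    """True if the codon differs from ATG at exactly one position."""
    return len(codon) == 3 and sum(a != b for a, b in zip(codon, START)) == 1


def call_tis_peaks(
    seq: str, p_counts: List[int], fold: float = 5.0
) -> List[Tuple[int, str, str]]:
    """Report positions whose P-site count is at least `fold` x the median nonzero count.

    seq: transcript sequence (DNA or RNA letters); p_counts: P-site counts per nucleotide.
    Returns (position, codon, class) tuples, class being AUG, near-cognate, or other.
    """
    seq = seq.upper().replace("U", "T")
    nonzero = [c for c in p_counts if c > 0]
    if not nonzero:
        return []
    threshold = fold * median(nonzero)
    calls = []
    for i, count in enumerate(p_counts[: len(seq) - 2]):  # need a full codon
        if count >= threshold:
            codon = seq[i : i + 3]
            if codon == START:
                kind = "AUG"
            elif is_near_cognate(codon):
                kind = "near-cognate"
            else:
                kind = "other"
            calls.append((i, codon, kind))
    return calls
```

For example, with `seq = "CCATGAAGTGCC"` and counts `[1, 1, 50, 1, 1, 1, 2, 40, 1, 1, 1, 1]`, the function reports `(2, "ATG", "AUG")` and `(7, "GTG", "near-cognate")`, the latter a candidate non-AUG start.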

Table 2: Specialized Ribo-seq Applications and Their Utilities

| Application | Key Reagent | Primary Utility | Representative Finding |
|---|---|---|---|
| TIS Profiling | Retapamulin, Oncocin (Onc112) | Start codon mapping, initiation efficiency | Identification of non-AUG start codons and upstream ORFs |
| TTS Profiling | Apidaecin | Stop codon mapping, termination efficiency | Discovery of programmed frameshifting events |
| Disome-seq | Cycloheximide | Ribosome collision/stacking sites | Mapping translational pausing and stall sites |
| Selective Ribo-seq | Affinity purification of ribosomes bound to a specific factor | Translation by factor-engaged ribosome subpopulations | Co-translational engagement of chaperones (e.g., trigger factor) |

Bioinformatics Tools for Ribo-seq Data Analysis

The complexity of Ribo-seq data demands specialized bioinformatics tools for accurate interpretation. Several integrated platforms have been developed to address the unique challenges of ribosome footprint analysis. RiboParser/RiboShiny represents one such comprehensive framework that offers improved P-site detection accuracy through optimized start/stop codon-based and ribosome structure-based models [13]. This platform maintains robust performance even for non-model organisms and species with high proportions of leaderless transcripts (exceeding 70% in Haloferax volcanii), where conventional tools frequently struggle [13].

Other specialized tools focus on specific analytical aspects: riboWaltz and Plastid excel at P-site offset detection; RIBOVIEW provides comprehensive quality control metrics; ORF-rater, RiboCode, and RiboTaper specialize in translation initiation site and open reading frame identification [13]. For detecting differential translation events, Anota2Seq, Xtail, and RiboDiff offer statistical frameworks that account for both ribosome occupancy and mRNA abundance [13]. The availability of these specialized tools has significantly lowered the barrier to entry for researchers seeking to implement Ribo-seq in their experimental workflows.
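The start-codon-based idea behind P-site offset detection can be illustrated with a simplified heuristic. This is not the algorithm of RiboParser, riboWaltz, or Plastid; it assumes that reads covering an annotated start codon come mostly from initiating ribosomes with the start codon in the P-site, and takes, for each footprint length, the most frequent distance from the read 5' end to the first nucleotide of the start codon.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Tuple


def estimate_p_offsets(
    reads: Iterable[Tuple[int, int]], start_codons: Iterable[int]
) -> Dict[int, int]:
    """Most frequent 5'-end-to-start-codon distance for each footprint length.

    reads: (5' end, length) pairs; start_codons: positions of the first nucleotide
    of annotated start codons, in the same coordinate system as the reads.
    """
    starts = sorted(set(start_codons))
    distances: Dict[int, Counter] = defaultdict(Counter)
    for five_prime, length in reads:
        three_prime = five_prime + length - 1
        for s in starts:
            # count only reads that contain the whole start codon (s..s+2)
            if five_prime <= s and s + 2 <= three_prime:
                distances[length][s - five_prime] += 1
    return {length: c.most_common(1)[0][0] for length, c in distances.items()}
```

For example, `estimate_p_offsets([(88, 29), (88, 29), (87, 29), (88, 30), (88, 30), (70, 28)], [100])` returns `{29: 12, 30: 12}`; the 28-nt read ends before the start codon and is ignored. The nested loop is fine for a sketch but would need an interval index for genome-scale data.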

Table 3: Key Bioinformatics Tools for Ribo-seq Analysis

| Tool | Primary Function | Key Strength | Reference |
|---|---|---|---|
| RiboParser/RiboShiny | Comprehensive analysis & visualization | Optimized for non-model organisms | [13] |
| riboWaltz | P-site offset detection | Accurate metagene analysis | [13] |
| RiboTaper | ORF identification | Periodicity-based detection | [13] |
| Anota2Seq | Differential translation | Statistical robustness | [13] |
| RIBOVIEW | Quality control | Data quality assessment | [13] |
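The quantity that differential-translation tools such as Anota2Seq, Xtail, and RiboDiff model is, at its simplest, translational efficiency (TE): footprint abundance relative to mRNA abundance. The sketch below computes only a naive log2 TE change between two conditions, assuming counts are already depth-normalized and using a hypothetical pseudocount of 1; it has none of the variance modeling or significance testing those tools provide.

```python
import math


def translational_efficiency(rpf: float, mrna: float, pseudocount: float = 1.0) -> float:
    """Naive TE: normalized footprint abundance over normalized mRNA abundance."""
    return (rpf + pseudocount) / (mrna + pseudocount)


def log2_te_change(
    rpf_a: float, mrna_a: float, rpf_b: float, mrna_b: float, pseudocount: float = 1.0
) -> float:
    """log2 of TE in condition B relative to condition A (no statistics)."""
    te_a = translational_efficiency(rpf_a, mrna_a, pseudocount)
    te_b = translational_efficiency(rpf_b, mrna_b, pseudocount)
    return math.log2(te_b / te_a)
```

For a gene with 99 normalized footprint and 99 mRNA counts in condition A, and 399 footprint and 99 mRNA counts in condition B, `log2_te_change(99, 99, 399, 99)` returns 2.0, a four-fold TE increase with unchanged mRNA levels.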

Research Applications and Case Studies

Expanding Annotated Proteomes

Ribo-seq has dramatically expanded our understanding of genomic coding potential through systematic discovery of previously unannotated open reading frames. The GENCODE consortium has used Ribo-seq data from multiple studies to catalogue 7,264 non-canonical translated ORFs in the human genome, substantially expanding the known translational landscape [14]. Similarly, in yeast, a comprehensive analysis of small sequences detected by Ribo-seq revealed 20,023 small open reading frames, with 1,134 unannotated microproteins displaying conservation patterns and signatures of purifying selection comparable to those of canonical proteins [15].

In bacterial systems, integrated Ribo-seq approaches have proven equally transformative. A study in Campylobacter jejuni employing conventional Ribo-seq, TIS profiling, and TTS profiling expanded the known small proteome by two-fold, identifying novel virulence-associated factors including CioY, a 34-amino acid component of the CioAB oxidase [12]. These findings across diverse organisms highlight Ribo-seq's unparalleled sensitivity in detecting translated elements that evade prediction by conventional computational methods.

Elucidating Regulatory Mechanisms

Beyond expanding catalogs of translated genes, Ribo-seq provides crucial insights into translational regulation under various physiological and pathological conditions. In the model green alga Chlamydomonas reinhardtii, optimized Ribo-seq revealed that the translation efficiency of core cell cycle genes increases significantly during the early synthesis/mitosis stage, demonstrating cell cycle-coupled translational regulation [16]. The study also identified upstream ORFs (uORFs) that are differentially regulated across the diurnal cycle, suggesting their involvement in circadian control of gene expression [16].

In biotechnological applications, Ribo-seq has guided strain engineering for improved protein production. In Komagataella phaffii, ribosome profiling identified translational bottlenecks during heterologous expression of human serum albumin (HSA) [17]. This data-driven approach revealed that ER trafficking becomes overloaded with abundant, non-essential host proteins; knocking out three such host genes with high ribosome usage collectively increased HSA secretion by 35% [17].

The Scientist's Toolkit: Essential Research Reagents

Successful implementation of Ribo-seq requires specific reagents and methodologies tailored to preserve ribosome-mRNA interactions while minimizing artifacts. The following table summarizes key solutions employed in modern ribosome profiling studies:

Table 4: Essential Research Reagents for Ribo-seq Studies

| Reagent Category | Specific Examples | Function | Considerations |
|---|---|---|---|
| Translation Inhibitors | Cycloheximide (eukaryotes), Chloramphenicol (prokaryotes) | Arrest ribosomes in native positions | Concentration and timing critical for artifact minimization |
| RNase Enzymes | RNase I, Micrococcal Nuclease | Digest unprotected mRNA regions | Concentration optimization essential for proper footprint length |
| rRNA Depletion Reagents | Ribo-Zero, riboPOOL, Ribo-FilterOut | Remove contaminating ribosomal RNA | Combination approaches yield best results (up to 83% usable reads) |
| Specialized Inhibitors | Retapamulin (TIS), Apidaecin (TTS) | Enrich specific ribosome populations | Enable mapping of initiation/termination sites |
| Spike-in Controls | Defined mRNA-ribosome complexes | Normalization and absolute quantification | Ribo-Calibration approach for stoichiometric measurements |
| Library Prep Kits | RiboLace, commercial alternatives | Streamlined footprint isolation | Gel-free methods improve reproducibility and yield |

Ribo-seq has established itself as an indispensable technology for comprehensive translatome analysis, offering unprecedented resolution for mapping translated regions and quantifying translational dynamics. While the method demands specialized experimental and computational expertise, continuous methodological refinements have substantially improved its accessibility and data quality. The complementary strengths of Ribo-seq and RNC-seq provide researchers with flexible options for translatome assessment, with Ribo-seq excelling in nucleotide-resolution mapping and novel ORF discovery, while RNC-seq offers a more straightforward analytical pipeline similar to conventional RNA-seq.

Looking forward, emerging innovations such as single-cell translatomics and nano-scale Ribo-seq promise to further expand the applications of this powerful technology, potentially enabling translational profiling of rare cell populations and spatially resolved tissue microenvironments [9]. As these advancements mature, Ribo-seq is poised to remain at the forefront of translational regulation research, continuing to reveal new layers of complexity in gene expression regulation across diverse biological contexts.

The accurate detection and analysis of Ribosome Binding Sites (RBS) are fundamental to molecular biology, enabling researchers to understand and engineer gene expression control. In prokaryotes, translation initiation is primarily governed by the Shine-Dalgarno (SD) sequence, a purine-rich region upstream of the start codon that base-pairs with the 3' end of the 16S ribosomal RNA (rRNA) [18] [19]. This key molecular interaction facilitates the recruitment of the ribosome to the mRNA transcript. However, RBS functionality is also profoundly influenced by RNA secondary structures in the 5' untranslated region (UTR), which can either mask the RBS or, in certain cases, promote alternative translation initiation mechanisms [20]. The field has developed multiple methodological approaches to interrogate these interactions, each with distinct advantages and limitations in sensitivity, specificity, and applicability to different research contexts.
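The SD/anti-SD interaction can be illustrated with a simple ungapped scan. This is a minimal sketch, assuming the E. coli 16S rRNA 3'-terminal sequence ACCUCCUU as the anti-SD and an illustrative window of 3-12 nt between the candidate SD and the start codon; each candidate is scored by the number of positions matching the ideal SD-complementary sequence (AAGGAGGU). Established RBS predictors instead use hybridization free energies and also account for spacing and mRNA secondary structure, so this is a teaching illustration only.

```python
from typing import Optional, Tuple

# 3'-terminal anti-Shine-Dalgarno region of E. coli 16S rRNA, written 5'->3'
ANTI_SD = "ACCUCCUU"
PAIR = {"A": "U", "U": "A", "G": "C", "C": "G"}


def revcomp_rna(seq: str) -> str:
    """Reverse complement of an RNA sequence."""
    return "".join(PAIR[b] for b in reversed(seq))


def best_sd_match(
    utr: str, min_spacing: int = 3, max_spacing: int = 12
) -> Optional[Tuple[int, str, int]]:
    """Find the upstream k-mer most similar to the ideal SD (AAGGAGGU).

    utr: sequence immediately upstream of the start codon (DNA or RNA letters).
    Returns (spacing to start codon, k-mer, matching positions), or None if no
    k-mer falls inside the spacing window.
    """
    target = revcomp_rna(ANTI_SD)  # "AAGGAGGU", pairs perfectly with ANTI_SD
    k = len(target)
    utr = utr.upper().replace("T", "U")
    best: Optional[Tuple[int, str, int]] = None
    for i in range(len(utr) - k + 1):
        spacing = len(utr) - (i + k)  # nucleotides between k-mer and start codon
        if not min_spacing <= spacing <= max_spacing:
            continue
        kmer = utr[i : i + k]
        score = sum(a == b for a, b in zip(kmer, target))
        if best is None or score > best[2]:
            best = (spacing, kmer, score)
    return best
```

For example, `best_sd_match("CCCCAAGGAGGUAAUCAC")` returns `(6, "AAGGAGGU", 8)`: a perfect 8-nt match whose 3' end lies 6 nt upstream of the start codon.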

This guide provides a comparative analysis of the primary experimental and computational methods used in RBS detection research. We evaluate the performance of 16S rRNA hybridization techniques, sequencing-based approaches, and computational prediction algorithms, providing researchers with objective data to select the most appropriate methodology for their specific applications. The 16S rRNA-targeted methods characterize the organisms present and their ribosomal RNA, the partner of the SD interaction, whereas computational algorithms locate RBSs directly in mRNA or genomic sequence. The comparative framework focuses on key performance metrics including detection sensitivity, phylogenetic resolution, capacity for novel discovery, and technical requirements, with particular emphasis on applications in microbial genomics and drug development research.

Comparative Analysis of RBS Detection Methods

Performance Metrics Across Method Categories

Table 1: Comprehensive Comparison of RBS Detection Method Performance Characteristics

| Method | Sensitivity & Specificity | Phylogenetic Resolution | Novel Discovery Potential | Technical Requirements | Primary Applications |
|---|---|---|---|---|---|
| 16S rRNA Hybridization Probes | High specificity for targeted taxa; sensitivity to sequence mismatches [21] | Limited to pre-defined taxa; cannot resolve below species level without multiple probes [21] | Low; requires prior sequence knowledge for probe design [21] | Medium; requires hybridization optimization and control experiments [21] | Specific pathogen detection; microbial diagnostics; fluorescence in situ hybridization (FISH) [21] |
| 16S rRNA Amplicon Sequencing | High sensitivity but prone to amplification biases; affected by primer selection [22] [23] | Species to strain level depending on region sequenced; hampered by microheterogeneity [22] | Medium; can detect novel taxa but limited by primer specificity [22] | Low to Medium; standardized PCR and sequencing protocols [22] [23] | Microbial community profiling; phylogenetic studies; clinical microbiology identification [22] |
| Shotgun Metagenomics | High sensitivity for abundant taxa; reduced for low-biomass samples [23] | Highest resolution (strain level); enables genome reconstruction [23] | High; can identify completely novel organisms and genes [23] | High; requires extensive sequencing depth and computational resources [23] | Comprehensive microbiome analysis; functional potential assessment; novel gene discovery [23] |
| 16S rRNA Hybridization Capture | High sensitivity for fragmented DNA; reduced background contamination [23] | Similar to amplicon sequencing; limited by reference database [23] | Medium; can detect novel taxa but dependent on reference databases [23] | Medium to High; specialized bait design and capture protocols [23] | Ancient DNA studies; low-biomass samples; targeted enrichment [23] |
| Computational RBS Prediction | Varies by algorithm; can detect non-canonical RBS sites [18] | Not applicable | High for predicting novel RBS in sequenced genomes [18] | Low; requires genomic sequences and appropriate software [18] | Genome annotation; genetic engineering; synthetic biology [18] |

Experimental Workflow Comparison

Table 2: Technical Requirements and Experimental Considerations

| Method | Sample Input Requirements | Hands-on Time | Total Processing Time | Cost Category | Data Output |
|---|---|---|---|---|---|
| 16S rRNA Hybridization Probes | Can work with small amounts; 100 cfu/100 mL demonstrated in water/milk [21] | Medium (hybridization steps) | 1-2 days including pre-culture [21] | Low to Medium | Presence/absence data for specific targets [21] |
| 16S rRNA Amplicon Sequencing | Varies; 1-10 ng DNA typical | Low (standardized kits) | 1-2 days (library prep to sequencing) | Low to Medium | Sequence reads of targeted 16S region [23] |
| Shotgun Metagenomics | Higher DNA input needed; >10 ng recommended | Low to Medium (library preparation) | 2-5 days (including deeper sequencing) | High | Entire genomic content of sample [23] |
| 16S rRNA Hybridization Capture | Compatible with degraded DNA; works with ancient samples [23] | Medium (additional capture step) | 3-4 days (including capture protocol) | Medium | Enriched 16S rRNA gene fragments [23] |
| Computational RBS Prediction | Genomic sequence data | Minimal (computational time) | Hours to days depending on dataset size | Low | Predicted RBS locations and strengths [18] |

Detailed Experimental Protocols

16S rRNA-Targeted Hybridization Probe Development and Validation

The development of specific oligonucleotide probes for 16S rRNA hybridization involves multiple stages of design, testing, and validation [21]:

Step 1: Target Sequence Identification and Alignment

- Select hypervariable regions of the 16S rRNA gene (e.g., V3, V6) that provide sufficient phylogenetic discrimination for your target organisms [21]

- Obtain 16S rRNA sequences from reference databases (e.g., RDP, SILVA) for both target and non-target species that may be present in the sample [21] [23]

- Perform multiple sequence alignment to identify regions unique to the target organism(s)

- Design oligonucleotide probes (typically 15-30 nucleotides) complementary to the unique regions

- Verify probe specificity in silico against comprehensive 16S rRNA databases
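The in silico design steps can be sketched for a single candidate site. The example assumes the target site is given 5'->3' as it appears in the 16S rRNA (U or T accepted) and that aligned, equal-length sites from non-target organisms have already been extracted from the multiple sequence alignment; all names are hypothetical. The Wallace rule (2 °C per A/T plus 4 °C per G/C) is only a first-pass Tm estimate for short oligonucleotides; nearest-neighbor thermodynamic models are more accurate for setting hybridization conditions.

```python
from typing import Dict

DNA_COMP = {"A": "T", "T": "A", "G": "C", "C": "G"}


def probe_for_target(target_site: str) -> str:
    """DNA probe complementary to a 16S rRNA target site given 5'->3'."""
    site = target_site.upper().replace("U", "T")
    return "".join(DNA_COMP[b] for b in reversed(site))


def gc_fraction(seq: str) -> float:
    """Fraction of G and C bases."""
    return sum(b in "GC" for b in seq.upper()) / len(seq)


def wallace_tm(seq: str) -> int:
    """First-pass Tm in °C: 2 per A/T plus 4 per G/C (Wallace rule, short oligos)."""
    s = seq.upper()
    return 2 * sum(b in "AT" for b in s) + 4 * sum(b in "GC" for b in s)


def mismatches_to_nontargets(target_site: str, nontarget_sites: Dict[str, str]) -> Dict[str, int]:
    """Count mismatches between the target site and each aligned non-target site."""
    t = target_site.upper().replace("U", "T")
    result = {}
    for name, site in nontarget_sites.items():
        s = site.upper().replace("U", "T")
        if len(s) != len(t):
            raise ValueError(f"{name}: aligned site length differs from target")
        result[name] = sum(a != b for a, b in zip(t, s))
    return result
```

For the target site GGAUUAGAUACCCUGG, `probe_for_target` returns CCAGGGTATCTAATCC (GC fraction 0.5, Wallace Tm 48 °C). A non-target whose aligned site differs at only one position is a cross-hybridization risk, as with closely related genera such as Shigella and Escherichia.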

Step 2: Probe Labeling and Hybridization Optimization

- Incorporate appropriate labels (radioactive ³²P, fluorescent, or biotin tags) during oligonucleotide synthesis [21] [24]

- Establish hybridization conditions (temperature, buffer composition, washing stringency) using control organisms with known sequences [21]

- Optimize probe concentration and hybridization time to maximize signal-to-noise ratio

- Validate with positive and negative control samples to confirm specificity

Step 3: Sample Processing and Hybridization Assay

- For environmental or clinical samples, concentrate cells if necessary (filtration or centrifugation)

- Lyse cells to release rRNA while maintaining RNA integrity

- Immobilize target nucleic acids on solid support (nylon or nitrocellulose membranes) or perform in situ hybridization

- Perform pre-hybridization to block non-specific binding sites

- Apply labeled probes under optimized hybridization conditions

- Conduct stringent washes to remove non-specifically bound probes

- Detect hybridized probes using appropriate methods (autoradiography, fluorescence microscopy, or colorimetric detection)

Step 4: Sensitivity and Specificity Determination

- Establish limit of detection using serial dilutions of target organisms

- Test against closely related non-target organisms to confirm specificity

- Validate with real-world samples spiked with known quantities of target organisms

- For quantitative applications, develop standard curves relating signal intensity to cell numbers [21]
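For quantitative use, the standard curve can be sketched as an ordinary least-squares fit of signal against log10 cell number (or cfu), inverted to estimate cell numbers in unknown samples. This assumes a log-linear relationship within the calibrated range; the function names are hypothetical, and estimates outside the range of the standards should not be trusted.

```python
import math
from typing import Sequence, Tuple


def fit_standard_curve(cells: Sequence[float], signal: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit of signal = slope * log10(cells) + intercept."""
    if len(cells) != len(signal) or len(cells) < 2:
        raise ValueError("need at least two paired standards")
    x = [math.log10(c) for c in cells]
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(signal) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, signal))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def cells_from_signal(signal: float, slope: float, intercept: float) -> float:
    """Invert the standard curve to estimate cell number from a measured signal."""
    return 10 ** ((signal - intercept) / slope)
```

With standards of 10^2 to 10^5 cells giving signals of 10, 20, 30, and 40, the fit gives a slope of 10 and an intercept of -10, and a sample signal of 25 maps to about 3,160 cells.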

This method has demonstrated sensitivity for detecting as few as 100 cfu/100 mL in tap water or milk samples when combined with an 8-hour pre-culture step [21]. A key limitation is that Shigella species may cross-hybridize with Escherichia coli-specific probes due to high 16S rRNA sequence similarity [21].

Hybridization Capture for Ancient or Fragmented DNA

Hybridization capture has emerged as particularly valuable for analyzing ancient dental calculus or other samples with degraded DNA [23]:

Step 1: RNA Bait Design and Synthesis

- Select full-length 16S rRNA gene sequences from target species or broader phylogenetic groups

- For ancient oral microbiome studies, include species from the Human Oral Microbiome Database (HOMD) [23]

- Design biotinylated RNA baits targeting the entire 16S rRNA gene using in vitro transcription with biotin-labeled nucleotides [23]

- Purify baits and quantify concentration accurately

Step 2: Library Preparation and Capture

- Prepare DNA libraries from samples using protocols optimized for ancient DNA (including dual-indexing)

- Fragment DNA to appropriate size (100-500 bp) if not already degraded

- Hybridize biotinylated baits with DNA libraries at an optimized temperature, typically within the 55-65°C range [23]

- Capture bait-bound fragments using streptavidin-coated magnetic beads

- Wash thoroughly to remove non-specifically bound DNA

- Elute captured DNA and amplify for sequencing

This approach has demonstrated a 334-fold enrichment of 16S rRNA gene fragments compared to unenriched libraries in ancient dental calculus samples, with lower susceptibility to background contamination than 16S rRNA amplification approaches [23].
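Fold enrichment of this kind is usually computed as the on-target read fraction after capture divided by the on-target fraction in the unenriched (shotgun) library. The sketch assumes that definition, which may differ in detail from the one used in the cited study. The read counts in the example are hypothetical and chosen only to illustrate the arithmetic.

```python
def on_target_fraction(on_target_reads: int, total_reads: int) -> float:
    """Fraction of reads assigned to the target (here, 16S rRNA gene) region."""
    return on_target_reads / total_reads


def fold_enrichment(captured_on: int, captured_total: int,
                    shotgun_on: int, shotgun_total: int) -> float:
    """Ratio of on-target fractions: captured library vs unenriched library."""
    return (on_target_fraction(captured_on, captured_total)
            / on_target_fraction(shotgun_on, shotgun_total))


# Hypothetical counts: 33.4% on-target after capture vs 0.1% before.
print(fold_enrichment(3340, 10000, 10, 10000))  # approximately 334
```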

Computational RBS Prediction Using Neural Networks

Computational methods provide a complementary approach for RBS identification in genomic sequences [18]:

Step 1: Training Set Preparation

- Curate a set of known RBS sequences with confirmed translation initiation sites

- Include negative examples (non-RBS sequences) for robust model training

- For neural network approaches, format sequences into fixed-length numerical inputs
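One standard way to turn sequences into fixed-length numerical inputs is one-hot encoding, with four indicator values per position. The sketch assumes every training window has the same length and leaves ambiguous bases (for example N) as all-zero vectors. Both are illustrative choices, not requirements stated in [18].

```python
import numpy as np

NUCLEOTIDES = "ACGT"


def one_hot(seq: str) -> np.ndarray:
    """Flattened one-hot encoding (length 4 * len(seq)); U is treated as T."""
    seq = seq.upper().replace("U", "T")
    x = np.zeros((len(seq), 4), dtype=np.float32)
    for i, base in enumerate(seq):
        j = NUCLEOTIDES.find(base)
        if j >= 0:
            x[i, j] = 1.0  # ambiguous bases such as N remain all-zero
    return x.reshape(-1)


def encode_windows(seqs, window: int) -> np.ndarray:
    """Stack equal-length sequences into an (n_sequences, 4 * window) matrix."""
    rows = []
    for s in seqs:
        if len(s) != window:
            raise ValueError(f"expected length {window}, got {len(s)}")
        rows.append(one_hot(s))
    return np.stack(rows)
```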

Step 2: Model Architecture Selection

- Implement feedforward neural network with one input layer, one or more hidden layers, and output layer [18]

- Determine optimal number of nodes in hidden layers through iterative testing

- Select appropriate activation functions (sigmoid, tanh, or ReLU)

Step 3: Model Training and Validation

- Split data into training, validation, and test sets

- Train network using backpropagation to minimize prediction error

- Monitor performance on validation set to prevent overfitting

- Evaluate final model on independent test set
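Steps 2 and 3 can be illustrated with a minimal NumPy feedforward network that has one tanh hidden layer and a sigmoid output. It is trained by full-batch backpropagation on binary cross-entropy and keeps the weights with the lowest validation loss as a simple guard against overfitting. The layer size, learning rate, and epoch count are assumptions. This is a teaching sketch, not the architecture used in [18].

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def bce(p, y, eps=1e-9):
    """Mean binary cross-entropy between probabilities p and labels y."""
    return -np.mean(y * np.log(p + eps) + (1 - y) * np.log(1 - p + eps))


def forward(params, X):
    """One tanh hidden layer followed by a single sigmoid output unit."""
    W1, b1, W2, b2 = params
    h = np.tanh(X @ W1 + b1)
    p = sigmoid(h @ W2 + b2).ravel()
    return h, p


def train(X_tr, y_tr, X_val, y_val, hidden=16, lr=0.5, epochs=500, seed=0):
    rng = np.random.default_rng(seed)
    params = [rng.normal(0.0, 0.1, (X_tr.shape[1], hidden)), np.zeros(hidden),
              rng.normal(0.0, 0.1, (hidden, 1)), np.zeros(1)]
    best_loss, best_params = np.inf, [q.copy() for q in params]
    n = len(y_tr)
    for _ in range(epochs):
        W2 = params[2]
        h, p = forward(params, X_tr)
        # Gradient of mean BCE w.r.t. the output logits is (p - y) / n
        dz2 = (p - y_tr)[:, None] / n
        dh = (dz2 @ W2.T) * (1.0 - h ** 2)          # chain rule through tanh
        grads = [X_tr.T @ dh, dh.sum(axis=0), h.T @ dz2, dz2.sum(axis=0)]
        for q, g in zip(params, grads):
            q -= lr * g                              # in-place gradient step
        # Keep the weights that score best on the validation set
        val_loss = bce(forward(params, X_val)[1], y_val)
        if val_loss < best_loss:
            best_loss, best_params = val_loss, [q.copy() for q in params]
    return best_params
```

The returned weights would then be evaluated once on the held-out test set. In practice, a framework such as PyTorch or TensorFlow would replace the hand-written gradients.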

Step 4: RBS Prediction on Novel Sequences

- Apply trained model to scan unannotated DNA sequences

- Generate probability scores for potential RBS sites

- Apply appropriate threshold for positive predictions

- Integrate with other gene finding algorithms for comprehensive annotation
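Step 4 amounts to sliding a fixed-length window along the sequence, scoring each window, and keeping windows at or above a threshold. In the sketch, the scorer is any callable, such as a trained model's probability output. The `sd_like_score` function is a hypothetical toy that counts matches to an assumed AGGAGG Shine-Dalgarno-like core and is included only to make the example runnable.

```python
from typing import Callable, List, Tuple


def scan_sequence(seq: str, window: int, score_fn: Callable[[str], float],
                  threshold: float = 0.5, step: int = 1) -> List[Tuple[int, float]]:
    """Return (start, score) for every window whose score meets the threshold."""
    hits = []
    for start in range(0, len(seq) - window + 1, step):
        score = score_fn(seq[start:start + window])
        if score >= threshold:
            hits.append((start, score))
    return hits


def sd_like_score(window_seq: str, core: str = "AGGAGG") -> float:
    """Hypothetical toy scorer: best fraction of positions matching the core."""
    best = 0
    for i in range(len(window_seq) - len(core) + 1):
        matches = sum(a == b for a, b in zip(window_seq[i:i + len(core)], core))
        best = max(best, matches)
    return best / len(core)


print(scan_sequence("TTTTTAGGAGGTTTTTT", 6, sd_like_score, threshold=1.0))
```

Integration with gene finders would typically add a further filter, for example requiring a start codon a few nucleotides downstream of each hit.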

These computational approaches must account for the high degeneracy of RBS sequences and can be complemented by Gibbs sampling methods for improved accuracy [18].
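The source does not describe its Gibbs sampling procedure. The sketch below is a generic motif-finding site sampler in the classic style. It repeatedly removes one sequence, builds a position frequency profile with pseudocounts from the others, and resamples that sequence's motif start in proportion to its odds against a uniform background. The uniform background, pseudocount of 1, and fixed iteration count are all assumptions, and sequences are assumed to contain only A/C/G/T.

```python
import random

BASES = "ACGT"


def profile(seqs, starts, w, skip, pseudo=1.0):
    """Position frequency matrix from all sequences except index `skip`."""
    counts = [{b: pseudo for b in BASES} for _ in range(w)]
    for idx, (s, p) in enumerate(zip(seqs, starts)):
        if idx == skip:
            continue
        for j in range(w):
            counts[j][s[p + j]] += 1
    total = len(seqs) - 1 + 4 * pseudo
    return [{b: c[b] / total for b in BASES} for c in counts]


def window_weight(window, prof):
    """Odds of a window under the profile vs a uniform background."""
    weight = 1.0
    for j, base in enumerate(window):
        weight *= prof[j][base] / 0.25
    return weight


def gibbs_motif(seqs, w, iters=500, seed=0):
    """Return one sampled motif start position per sequence."""
    rng = random.Random(seed)
    seqs = [s.upper() for s in seqs]
    starts = [rng.randrange(len(s) - w + 1) for s in seqs]
    for _ in range(iters):
        i = rng.randrange(len(seqs))
        prof = profile(seqs, starts, w, skip=i)
        weights = [window_weight(seqs[i][p:p + w], prof)
                   for p in range(len(seqs[i]) - w + 1)]
        starts[i] = rng.choices(range(len(weights)), weights=weights)[0]
    return starts
```

Because a single run can settle on a shifted or suboptimal alignment, such samplers are usually run from several random starts and the highest-scoring alignment is kept.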

Research Reagent Solutions

Table 3: Essential Research Reagents for RBS Detection Methods

| Reagent/Category | Specific Examples | Function & Application | Key Considerations |
|---|---|---|---|
| rRNA Depletion Kits | riboPOOLs, RiboMinus, MICROBExpress, RiboZero (discontinued) [24] | Enrich mRNA by removing abundant rRNA; improves sequencing efficiency | riboPOOLs show similar efficiency to former RiboZero; RiboMinus and MICROBExpress show lower efficiency [24] |
| Biotinylated Probes | Custom-designed oligonucleotides targeting 16S rRNA [23] [24] | Selective capture of complementary DNA/RNA sequences; used in hybridization and capture methods | Species-specific design possible; enables customized depletion or enrichment; comparable efficiency to commercial kits [24] |
| Streptavidin-Coated Magnetic Beads | Various commercial sources | Binding biotinylated probes for physical separation in capture methods | Strong non-covalent binding allows efficient depletion or enrichment [24] |
| Universal Primers | Primers targeting conserved 16S rRNA regions [22] | Amplification of variable regions for sequencing identification | Conserved regions enable broad amplification; variable regions provide phylogenetic discrimination [22] |
| Neural Network Software | Custom implementations in Python, TensorFlow, PyTorch | Computational prediction of RBS locations in genomic sequences | Requires curated training set of known RBS; can identify degenerate sequences [18] |

Molecular Interaction Diagrams

Prokaryotic Translation Initiation Mechanism

16S rRNA Hybridization Capture Workflow

RBS Detection Method Selection Algorithm

The selection of an appropriate RBS detection methodology requires careful consideration of research objectives, sample characteristics, and technical constraints. For targeted detection of specific pathogens or taxonomic groups, 16S rRNA hybridization probes offer high specificity and relatively simple implementation [21]. When working with complex microbial communities, 16S amplicon sequencing provides a balanced approach for comparative community profiling, though it is susceptible to amplification biases [22] [23]. For maximum phylogenetic resolution and functional insights, shotgun metagenomics represents the gold standard, despite higher computational and sequencing requirements [23]. In specialized applications involving degraded DNA, such as ancient microbiome studies, hybridization capture techniques provide superior recovery of target sequences with reduced background contamination [23]. Computational methods serve as complementary approaches for genome annotation and genetic engineering applications, capable of identifying both canonical and non-canonical RBS sequences [18].

Emerging methodologies, including machine learning approaches for multi-geometry data analysis [25] [26] and advanced hybridization techniques [23] [24], continue to enhance the precision and efficiency of RBS detection and analysis. The integration of multiple complementary approaches often provides the most comprehensive understanding of microbial taxonomy and gene regulation mechanisms in both basic research and drug development applications.

Evolution from Traditional Methods to Next-Generation Sequencing Approaches

The field of RNA modification detection, a crucial component of epitranscriptomics, has undergone a significant technological evolution. This transition has moved research from traditional, low-throughput biochemical techniques to sophisticated next-generation sequencing (NGS) approaches that provide comprehensive, transcriptome-wide insights. More than 170 chemical RNA modifications have been characterized since the first discovery over 60 years ago, creating a new layer of gene expression regulation termed the "epitranscriptome" [27]. These modifications, including prominent examples such as N6-methyladenosine (m6A), 5-methylcytosine (m5C), pseudouridine (Ψ), and N1-methyladenosine (m1A), play distinct regulatory roles in RNA metabolism and function, influencing stability, splicing, translation, and RNA secondary structure [27]. The development of detection technologies has been instrumental in advancing the functional studies of these modifications, moving from simple quantification to single-nucleotide resolution mapping across entire transcriptomes.

Traditional Methodologies: Foundation of RNA Modification Detection

Traditional methods for detecting RNA modifications are primarily characterized by their reliance on biochemical properties and their lower throughput. These techniques are categorized into quantification methods, which measure modification abundance without sequence context, and locus-specific detection methods, which provide positional information for known RNA sequences.

RNA Modification Quantification Methods

- Two-Dimensional Thin-Layer Chromatography (2D-TLC): This sensitive method involves partial digestion of isolated RNA into oligonucleotides, labeling with ³²P using T4 polynucleotide kinase, and subsequent digestion to 5'-³²P-NMPs with nuclease P1. These nucleotides are separated by 2D-TLC based on their distinct mobilities in the solvent, and modifications are identified by comparing their retardation factor values to standards. Quantification is achieved by measuring the radioactivity of corresponding spots. While sensitive enough to work with small amounts of RNA (50-200 ng) and inexpensive, it requires radioactive reagents and can be biased by differential RNase digestion and labeling efficiency [27].

- Dot Blot: This semiquantitative assay uses specific antibodies for target modifications. Isolated RNAs are immobilized on a membrane and probed with a modification-specific primary antibody, followed by a secondary antibody for signal detection. Although straightforward, inexpensive, and widely applicable, its accuracy is highly dependent on antibody specificity, and it lacks both absolute quantification and locus information [27].

- Liquid Chromatography-Mass Spectrometry (LC-MS): This method involves complete digestion and dephosphorylation of RNA to single nucleosides, which are then separated by liquid chromatography and analyzed by mass spectrometry. Nucleosides are identified based on retention time, mass-to-charge ratio, and product ions. LC-MS is considered a benchmark for quantification due to its high sensitivity (low femtomolar range) and ability to work with RNA amounts as low as 50 ng. Its main limitations are the requirement for expensive instrumentation and the need to avoid contamination from highly modified abundant RNAs like rRNA and tRNA when studying mRNA [27].
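The 2D-TLC identification and quantification steps described above can be sketched as two operations. First, each radioactive spot is matched to the nearest reference standard in two-dimensional Rf space. Second, the modification level is expressed as the modified nucleotide's radioactivity as a percentage of the modified plus parent nucleotide (for example pm6A relative to pA + pm6A). The standard Rf coordinates, matching tolerance, and counts in the test are hypothetical.

```python
import math


def nearest_standard(spot_rf, standards, max_dist=0.05):
    """Name of the closest standard in (Rf dim 1, Rf dim 2) space, or None."""
    best_name, best_d = None, math.inf
    for name, rf in standards.items():
        d = math.dist(spot_rf, rf)
        if d < best_d:
            best_name, best_d = name, d
    return best_name if best_d <= max_dist else None


def modification_percent(spots, standards, modified, parent):
    """Percent modified = counts(modified) / (counts(modified) + counts(parent))."""
    totals = {}
    for rf, counts in spots:
        name = nearest_standard(rf, standards)
        if name is not None:
            totals[name] = totals.get(name, 0.0) + counts
    mod, par = totals.get(modified, 0.0), totals.get(parent, 0.0)
    if mod + par == 0:
        raise ValueError("no radioactivity assigned to either nucleotide")
    return 100.0 * mod / (mod + par)
```

Expressing the result relative to the parent nucleotide, rather than to total radioactivity, reduces sensitivity to overall labeling efficiency, although differential digestion bias remains.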

Locus-Specific Detection Methods

- Primer Extension: This reverse transcription-based method uses a labeled primer hybridized to a specific RNA sequence. Reverse transcriptase extension is blocked immediately upstream of certain modified nucleotides, producing truncated cDNA products. These products are separated on denaturing polyacrylamide gels, and the truncation position indicates the modification site. This method is sensitive and specific but is generally limited to detecting modifications that block or significantly hinder reverse transcriptase progression [27].
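Mapping a truncated product back to a modification site is simple coordinate arithmetic. The sketch assumes 1-based RNA coordinates (5' to 3'), a primer that pairs with RNA positions `primer_start` to `primer_start + primer_len - 1`, and a product length measured from the primer's 5' end. Under the "blocked immediately upstream" behavior described above, the modified residue sits one nucleotide 5' of the last templated position. The `stop_offset` parameter is an illustrative knob for modifications that stop RT at a different position.

```python
def modification_position(primer_start: int, primer_len: int,
                          product_len: int, stop_offset: int = 1) -> int:
    """Infer the modified RNA position from a primer-extension stop."""
    if product_len < primer_len:
        raise ValueError("product cannot be shorter than the primer")
    # RNA nucleotide paired with the primer's 5' end (3'-most annealed position)
    primer_5p_pair = primer_start + primer_len - 1
    # cDNA grows toward the RNA 5' end; this is the last templated RNA position
    last_copied = primer_5p_pair - product_len + 1
    # The blocking modification lies just 5' of the last copied nucleotide
    return last_copied - stop_offset


print(modification_position(100, 20, 30))  # 89
```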

Table 1: Comparison of Traditional RNA Modification Detection Methods

| Method | Principle | Throughput | Locus Information | Key Advantages | Key Limitations |
|---|---|---|---|---|---|
| 2D-TLC | Separation based on nucleotide mobility | Low | No | High sensitivity; inexpensive | Requires radioactivity; potential digestion bias |
| Dot Blot | Antibody-based detection | Low | No | Simple workflow; inexpensive | Semiquantitative; antibody-dependent |
| LC-MS | Mass-to-charge ratio of nucleosides | Low | No | Highly sensitive and quantitative; gold standard | Expensive equipment; risk of contamination |
| Primer Extension | Reverse transcription blockage | Medium | Yes, for known sequences | High specificity and sensitivity | Limited to blocking modifications |

Diagram 1: Workflows of Traditional RNA Modification Detection Methods. These methods form the foundational approaches for RNA modification analysis, focusing on quantification or specific locus interrogation.

Next-Generation Sequencing Approaches: High-Throughput Revolution

NGS-based technologies have transformed the field by enabling the transcriptome-wide mapping of RNA modifications, offering unparalleled scale and resolution. These methods typically involve converting modification signals into sequencer-detectable changes in cDNA, often through antibody-based enrichment or chemical treatment.

Core NGS-Based Detection Technologies

The core of NGS-based epitranscriptomics lies in methods that convert the presence of a modification into a sequencer-detectable signal. MeRIP-Seq/m6A-Seq and miCLIP are common antibody-based enrichment strategies for modifications such as m6A. Alternatively, chemical treatment methods, such as Pseudo-Seq for Ψ, exploit the unique chemistry of modifications to induce mutations or truncations in cDNA, which are then detected by high-throughput sequencing [27]. These approaches generate transcriptome-wide maps of modifications but often require a dedicated protocol for each modification type.
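For chemical methods that work through reverse-transcription truncations, a generic way to call candidate sites is to compare per-position RT-stop rates between a chemically treated library and an untreated control. The sketch assumes per-position stop and coverage counts are already available. Its pseudocounts, minimum coverage, and rate-difference cutoff are illustrative and do not reproduce the published Pseudo-Seq scoring. Reported positions are stop positions; converting them to the modified nucleotide requires a method-specific offset.

```python
import numpy as np


def stop_rates(stops, coverage, pseudocount=1.0):
    """Smoothed fraction of reads terminating at each position."""
    stops = np.asarray(stops, dtype=float)
    coverage = np.asarray(coverage, dtype=float)
    return (stops + pseudocount) / (coverage + 2 * pseudocount)


def call_sites(treated_stops, treated_cov, control_stops, control_cov,
               min_diff=0.1, min_cov=20):
    """Positions where treated stop rate exceeds control by at least min_diff."""
    diff = (stop_rates(treated_stops, treated_cov)
            - stop_rates(control_stops, control_cov))
    covered = ((np.asarray(treated_cov) >= min_cov)
               & (np.asarray(control_cov) >= min_cov))
    return np.flatnonzero(covered & (diff >= min_diff)), diff
```

Subtracting the untreated control removes stops that reflect RNA structure or other RT-blocking features rather than the chemical adduct.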

Direct RNA Sequencing via Nanopore Technology

A groundbreaking development in the field is nanopore direct RNA sequencing. This third-generation sequencing technology allows RNA molecules to be sequenced directly without the need for reverse transcription or amplification. As an RNA molecule passes through a nanopore, it causes characteristic disruptions in an ionic current. Since RNA modifications alter the physical and chemical properties of the RNA molecule, they produce distinct current signatures that can be decoded to identify the modification and its precise location [27] [28]. This approach is particularly powerful because it can, in principle, detect multiple different modifications simultaneously on single RNA molecules, providing insights into the co-occurrence and dynamics of the epitranscriptome [27].
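A common comparative strategy for detecting modifications from nanopore signal is to test, position by position, whether the current measured on sample reads differs from that measured on a modification-free control. Such a control could be in vitro transcribed RNA, which is an assumption here, not something the source specifies. The sketch uses Welch's t-statistic on per-read mean currents with an arbitrary cutoff. Dedicated tools additionally model k-mer context, dwell time, and multiple testing.

```python
import numpy as np


def welch_t(a, b):
    """Welch's t-statistic for a difference in means with unequal variances."""
    a, b = np.asarray(a, dtype=float), np.asarray(b, dtype=float)
    va, vb = a.var(ddof=1) / len(a), b.var(ddof=1) / len(b)
    return (a.mean() - b.mean()) / np.sqrt(va + vb)


def flag_positions(sample, control, t_cut=5.0):
    """sample/control: dict position -> per-read mean currents (pA).

    Returns {position: t} for positions whose current shift exceeds t_cut.
    """
    flagged = {}
    for pos in sorted(set(sample) & set(control)):
        t = welch_t(sample[pos], control[pos])
        if abs(t) >= t_cut:
            flagged[pos] = t
    return flagged
```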

Comparative Analysis: Performance and Data

The evolution from traditional to NGS methods represents a dramatic improvement in detection capabilities. Pathogen detection, a field that has undergone an analogous technological progression, offers direct head-to-head performance comparisons that illustrate the scale of this improvement.

Quantitative Performance Comparison

A prospective study on Lower Respiratory Tract Infections (LRTIs) starkly illustrates the performance gap. The study compared a broad-spectrum targeted NGS (bstNGS) panel covering 1872 microorganisms against traditional culture methods and metagenomic NGS (mNGS). bstNGS demonstrated a 96.33% detection rate of microorganisms found by mNGS and a 91.15% detection rate for those identified by culture, even detecting microorganisms with lower loads [29]. Another study at a community hospital in Eastern China directly compared NGS of bronchoalveolar lavage fluid with traditional methods (culture, nucleic acid amplification, antibody tests) in 71 LRTI patients. The pathogen detection rate of NGS was 84.5%, vastly superior to the 26.8% achieved by traditional methods. Furthermore, the turnaround time for NGS was significantly shorter [30].
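The detection rates in the community-hospital study can be reproduced from counts and given an uncertainty estimate. The counts of 60 and 19 positive patients out of 71 are reconstructed from the reported percentages (84.5% and 26.8%) and are an assumption. The Wilson score interval is a standard binomial confidence interval chosen here for illustration. Non-overlapping intervals are only a rough indication; a formal paired comparison such as McNemar's test would need the per-patient discordant counts, which are not reported here.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96):
    """Wilson score confidence interval for a binomial proportion."""
    p = successes / n
    denom = 1 + z ** 2 / n
    centre = (p + z ** 2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return centre - half, centre + half


for label, k in [("NGS", 60), ("traditional", 19)]:
    lo, hi = wilson_interval(k, 71)
    print(f"{label}: {100 * k / 71:.1f}% (95% CI {100 * lo:.1f}-{100 * hi:.1f}%)")
```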

Table 2: Experimental Comparison of Traditional vs. NGS Methods in Pathogen Detection

| Method | Pathogen Detection Rate | Turnaround Time | Consistency with Other Methods | Key Identified Pathogens (Examples) |
|---|---|---|---|---|
| Traditional Culture/Methods | 26.8% [30] | Significantly longer [30] | Gold standard for comparison | Aspergillus, Pseudomonas aeruginosa, Candida albicans [30] |
| Metagenomic NGS (mNGS) | 82.0% [29] | Shorter | Used as a benchmark for bstNGS [29] | Broad spectrum, unbiased identification [31] |
| Targeted NGS (bstNGS) | 87.3% [29] | Shorter | 68.4% consistency with traditional methods [30] | Mycobacterium, Streptococcus pneumoniae, Viruses (HPV, EBV) [30] |
| NGS (General) | 84.5% [30] | Significantly shorter [30] | Detected additional pathogens missed by culture | Mycobacterium, Klebsiella pneumoniae, Pneumocystis jirovecii [30] |

Advantages and Limitations in Context

These data indicate that NGS offers superior sensitivity and speed. Because metagenomic NGS is non-targeted, it can identify unexpected or novel pathogens without a prior hypothesis [31] [30]. However, traditional methods are not obsolete: culture remains essential for obtaining the isolates needed for antibiotic susceptibility testing. Integrating both approaches therefore provides the most robust diagnostic and research framework [30]. Key limitations of broader NGS approaches such as mNGS include high cost and interference from host nucleic acids, which newer targeted NGS (tNGS) panels aim to mitigate through enrichment, improving accuracy and cost-effectiveness for specific applications [29] [31].

Essential Research Toolkit

The following table details key reagents and materials central to conducting experiments in RNA modification detection and analysis.

Table 3: Key Research Reagent Solutions for RNA Modification Studies

| Reagent/Material | Function in Research | Application Context |
|---|---|---|
| Specific Antibodies | Immunoprecipitation or detection of specific RNA modifications (e.g., m6A, m5C). | Antibody-based enrichment methods like MeRIP-Seq and dot blot [27]. |
| Chemical Probing Agents | React with RNA bases to mark modifications, altering reverse transcription efficiency. | Chemical-based mapping methods (e.g., for Ψ); also used in RNA structure probing [28]. |
| Nuclease P1 & Alkaline Phosphatase | Digest RNA to single nucleosides for downstream analytical separation. | Essential for sample preparation in LC-MS and HPLC quantification [27]. |
| Capture Probes (for tNGS) | Designed oligonucleotides that enrich for target sequences from a complex nucleic acid mixture. | Targeted NGS (tNGS) to improve detection of specific pathogens or genes [29]. |
| Reverse Transcriptases | Synthesize cDNA from RNA templates; different enzymes have varying sensitivities to RNA modifications. | Critical for most NGS library prep and locus-specific methods like primer extension [27] [28]. |
| Oxford Nanopore Flow Cells | Contain the nanopores for direct electrical detection of RNA or DNA molecules. | The core consumable for direct RNA sequencing on platforms like MinION [28]. |

Diagram 2: Core Workflows of Modern Sequencing Approaches. Next-generation and third-generation sequencing leverage high-throughput data generation and sophisticated bioinformatic analysis for epitranscriptome-wide discovery.

The evolution from traditional biochemical methods to NGS represents a paradigm shift from targeted, low-throughput analysis to comprehensive, systems-level investigation of RNA modifications. While traditional methods like LC-MS remain the gold standard for absolute quantification and primer extension for validating specific sites, NGS technologies, particularly nanopore sequencing, have unlocked the potential to map the dynamic epitranscriptome at an unprecedented scale and resolution. The future of the field lies in the continued refinement of these sequencing technologies, the development of robust bioinformatic tools for data analysis, and the intelligent integration of complementary methods to achieve a truly holistic understanding of RNA biology. This technological progression will be vital for unraveling the complex functional roles of RNA modifications in health and disease, ultimately informing novel therapeutic strategies.

Integration with Multi-Omics Data for Comprehensive Gene Expression Analysis

Multi-omics integration represents a transformative approach in biological research, enabling a holistic perspective on complex disease mechanisms by combining data from various molecular layers, including the genome, epigenome, transcriptome, proteome, and metabolome [32]. This methodology plays a crucial role in promoting the study of human diseases by overcoming the limitations of single-omics approaches, which can only provide correlative associations rather than causal relationships [32]. While single-omics research can reflect changes in disease processes, it cannot fully explain the intricate mechanisms underlying complex conditions like Alzheimer's disease or cancer [32].

The fundamental challenge in multi-omics integration stems from the inherent differences in data structure, scale, and noise characteristics across various omics layers [33]. Each omic modality possesses unique data scales, noise ratios, and preprocessing requirements, creating substantial technical hurdles for researchers [33]. Furthermore, the biological correlations between different omic layers within the same sample are not always straightforward—for instance, actively transcribed genes typically show greater open chromatin accessibility, while abundant proteins may not necessarily correlate with high gene expression levels [33].

Multi-omics data integration strategies can be broadly classified into two main categories: vertical (matched) integration and diagonal (unmatched) integration [33]. Vertical integration merges data from different omics within the same set of samples, using the cell itself as an anchor to bring these omics together. In contrast, diagonal integration involves combining different omics from different cells or different studies, requiring the creation of a co-embedded space to find commonality between cells [33]. A third emerging category, mosaic integration, handles experimental designs where each experiment has various combinations of omics that create sufficient overlap through shared modalities [33].

Comparative Analysis of Multi-Omics Integration Methods

Methodological Approaches and Tool Classifications

The computational landscape for multi-omics integration has evolved substantially, with tools now available for various data integration scenarios. These methods can be meaningfully categorized based on their underlying computational approaches and their capacity to handle matched versus unmatched data [33].

Table 1: Multi-Omics Integration Tools by Data Type and Methodology

| Tool Name | Year | Methodology | Modalities Integrated | Integration Type |
|---|---|---|---|---|
| Seurat v4 | 2020 | Weighted nearest-neighbour | mRNA, spatial coordinates, protein, accessible chromatin | Matched |
| MOFA+ | 2020 | Factor analysis | mRNA, DNA methylation, chromatin accessibility | Matched |
| totalVI | 2020 | Deep generative | mRNA, protein | Matched |
| SCENIC+ | 2022 | Unsupervised identification model | mRNA, chromatin accessibility | Matched |
| GLUE | 2022 | Variational autoencoders | Chromatin accessibility, DNA methylation, mRNA | Unmatched |
| LIGER | 2019 | Integrative non-negative matrix factorization | mRNA, DNA methylation | Unmatched |
| Cobolt | 2021 | Multimodal variational autoencoder | mRNA, chromatin accessibility | Mosaic |
| StabMap | 2022 | Mosaic data integration | mRNA, chromatin accessibility | Mosaic |

Technical Approaches to Integration

The computational strategies for multi-omics integration encompass diverse mathematical and machine learning frameworks, each with distinct strengths and limitations for specific research applications.

Classical Statistical and Machine Learning Approaches

Classical approaches include correlation/covariance-based methods such as Canonical Correlation Analysis (CCA) and its extensions, which explore relationships between two sets of variables with the same set of samples [34]. Sparse and regularized Generalised CCA (sGCCA/rGCCA) represent widely used generalizations of CCA to multi-omics data [34]. Matrix factorization methods, including Joint and Individual Variation Explained (JIVE) and Non-Negative Matrix Factorization (NMF), are powerful techniques for joint dimensionality reduction that condense datasets into fewer factors to reveal important patterns [34]. Probabilistic-based methods like iCluster offer advantages in handling missing data by incorporating uncertainty estimates and allowing for flexible regularization [34].
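To make the correlation-based idea concrete, the sketch computes the first canonical pair for two omics matrices measured on the same samples. Each block is whitened with the inverse square root of its covariance, and the singular value decomposition of the whitened cross-covariance is then taken. The small ridge term `reg` is an assumption added for numerical stability. It is a much weaker form of regularization than that used by rGCCA or sGCCA, which also handle more than two blocks.

```python
import numpy as np


def _inv_sqrt(C):
    """Inverse matrix square root of a symmetric positive-definite matrix."""
    w, V = np.linalg.eigh(C)
    return V @ np.diag(1.0 / np.sqrt(w)) @ V.T


def first_canonical_pair(X, Y, reg=1e-6):
    """Return (rho, a, b): first canonical correlation and weight vectors.

    X: (n_samples, p) and Y: (n_samples, q) measured on the same samples.
    """
    Xc, Yc = X - X.mean(axis=0), Y - Y.mean(axis=0)
    n = X.shape[0]
    Cxx = Xc.T @ Xc / (n - 1) + reg * np.eye(X.shape[1])
    Cyy = Yc.T @ Yc / (n - 1) + reg * np.eye(Y.shape[1])
    Cxy = Xc.T @ Yc / (n - 1)
    Wx, Wy = _inv_sqrt(Cxx), _inv_sqrt(Cyy)
    # Singular values of the whitened cross-covariance are canonical correlations
    U, s, Vt = np.linalg.svd(Wx @ Cxy @ Wy)
    a, b = Wx @ U[:, 0], Wy @ Vt[0]
    return s[0], a, b
```

Projecting each centred block onto its weight vector gives a pair of sample scores whose correlation equals the first canonical correlation.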

Deep Learning Approaches

Deep generative models, particularly variational autoencoders (VAEs), have gained prominence since 2020 for tasks such as imputation, denoising, and creating joint embeddings of multi-omics data [34]. These approaches excel at learning complex nonlinear patterns and offer flexible architecture designs that can support missing data and denoising operations [34]. The strength of deep learning approaches lies in their ability to handle high-dimensional omics integration and perform data augmentation, though they typically demand substantial computational resources and larger training datasets [34].
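Two ingredients are common to the VAE objective behind these models: the reparameterization trick, which makes latent sampling differentiable, and the closed-form KL divergence between a diagonal Gaussian posterior and a standard normal prior. The sketch shows both in NumPy, together with a negative ELBO that uses a unit-variance Gaussian reconstruction term. That reconstruction choice is an assumption for illustration; multi-omics VAEs typically use modality-specific likelihoods, such as count-based distributions for sequencing data.

```python
import numpy as np


def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, I)."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps


def gaussian_kl(mu, log_var):
    """KL(N(mu, sigma^2) || N(0, I)) per sample, summed over latent dimensions."""
    return -0.5 * np.sum(1 + log_var - mu ** 2 - np.exp(log_var), axis=-1)


def negative_elbo(x, x_recon, mu, log_var):
    """Mean over samples of Gaussian reconstruction NLL (up to a constant) + KL."""
    recon = 0.5 * np.sum((x - x_recon) ** 2, axis=-1)
    return np.mean(recon + gaussian_kl(mu, log_var))
```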

Table 2: Technical Approaches to Multi-Omics Integration

| Model Approach | Strengths | Limitations | Typical Applications |
|---|---|---|---|
| Correlation/Covariance-based | Captures relationships across omics, interpretable, flexible extensions | Limited to linear associations, typically requires matched samples | Disease subtyping, detection of co-regulated modules |
| Matrix Factorization | Efficient dimensionality reduction, identifies shared and omic-specific factors, scalable | Assumes linearity, does not explicitly model uncertainty or noise | Disease subtyping, identification of shared molecular patterns |
| Probabilistic-based | Efficient dimensionality reduction, captures uncertainty in latent factors | Computationally intensive, may require careful tuning and strong model assumptions | Disease subtyping, latent factors discovery, biomarker discovery |
| Network-based | Represents samples or omics relationships as networks, robust to missing data | Sensitive to similarity metrics choice, may require extensive tuning | Disease subtyping, patient similarity analysis |
| Deep Generative Learning | Learns complex nonlinear patterns, flexible architecture designs, can support missing data | High computational demands, limited interpretability, requires large data to train | High-dimensional omics integration, data augmentation and imputation |

Experimental Design Considerations

Robust multi-omics study design requires careful consideration of several computational and biological factors that fundamentally influence integration outcomes. Based on comprehensive benchmarking across multiple TCGA datasets, researchers should adhere to several critical criteria for optimal results [35]:

- Sample Size: Include 26 or more samples per class to ensure robust statistical power

- Feature Selection: Select less than 10% of omics features to reduce dimensionality while preserving biological signal

- Class Balance: Maintain a sample balance under a 3:1 ratio between classes

- Noise Management: Keep the noise level below 30% to maintain data integrity
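The four criteria above can be encoded as a simple pre-analysis checklist. The thresholds are taken from the list: at least 26 samples per class, fewer than 10% of features selected, a class ratio below 3:1, and noise below 30%. The `DesignSummary` container and how noise is measured are hypothetical, and the function only reports pass/fail per criterion.

```python
from dataclasses import dataclass


@dataclass
class DesignSummary:
    samples_per_class: dict      # class label -> number of samples
    n_features_total: int        # features available across omics layers
    n_features_selected: int     # features retained after selection
    noise_fraction: float        # estimated noise level, 0-1 (hypothetical metric)


def check_design(d: DesignSummary) -> dict:
    """Pass/fail for each benchmark-derived design criterion."""
    counts = list(d.samples_per_class.values())
    return {
        "sample_size": min(counts) >= 26,
        "feature_selection": d.n_features_selected / d.n_features_total < 0.10,
        "class_balance": max(counts) / min(counts) < 3.0,
        "noise": d.noise_fraction < 0.30,
    }


print(check_design(DesignSummary({"A": 40, "B": 30}, 20000, 1500, 0.10)))
```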

Feature selection emerges as particularly important, with demonstrated improvements in clustering performance of up to 34% when appropriately implemented [35]. Proper preprocessing strategies, including min-max normalization, handling missing values, encoding target labels, and dataset splitting, are essential for ensuring clean, consistent inputs that improve training stability and reduce noise [36].
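A minimal version of the preprocessing described here imputes missing values with column means, applies min-max scaling to [0, 1], and keeps a small fraction of the most variable features. Variance-based filtering is one simple selection criterion assumed for illustration; the cited benchmark may have used others. The default `keep_fraction=0.05` is chosen to stay under the 10% ceiling above. In a real pipeline, scaling parameters should be fit on the training split only.

```python
import numpy as np


def preprocess(X, keep_fraction=0.05):
    """Impute, min-max scale, and keep the most variable features.

    Returns (X_selected, kept_column_indices).
    """
    X = np.asarray(X, dtype=float).copy()
    # Replace missing values with the mean of their column
    col_means = np.nanmean(X, axis=0)
    rows, cols = np.where(np.isnan(X))
    X[rows, cols] = col_means[cols]
    # Min-max scale each feature to [0, 1]; constant features map to 0
    mins, maxs = X.min(axis=0), X.max(axis=0)
    span = np.where(maxs > mins, maxs - mins, 1.0)
    X = (X - mins) / span
    # Keep the highest-variance features, preserving original column order
    n_keep = max(1, int(keep_fraction * X.shape[1]))
    keep = np.sort(np.argsort(X.var(axis=0))[::-1][:n_keep])
    return X[:, keep], keep
```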

Experimental Protocols and Benchmarking

Reference Materials and Quality Control

The Quartet Project provides essential multi-omics reference materials for objective assessment of data quality and integration reliability [37]. These reference suites include matched DNA, RNA, protein, and metabolites derived from immortalized cell lines from a family quartet of parents and monozygotic twin daughters, providing built-in ground truth defined by their biological relationships [37]. This approach enables researchers to implement ratio-based profiling that scales absolute feature values of study samples relative to a concurrently measured common reference sample, producing reproducible and comparable data suitable for integration across batches, laboratories, and platforms [37].
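Ratio-based profiling can be sketched as a log2 ratio of each study sample's feature values to those of the common reference measured in the same batch. Replicate reference measurements are averaged first. The usage example illustrates the motivating property under an assumed purely multiplicative batch effect: scaling both study and reference by the same factor leaves the ratios unchanged. Real batch effects are rarely that simple.

```python
import numpy as np


def ratio_profile(study, reference, eps=1e-12):
    """log2(study / reference) per feature.

    study: (n_features,) or (n_samples, n_features)
    reference: (n_features,) or (n_replicates, n_features), same batch as study
    """
    study = np.asarray(study, dtype=float)
    ref = np.atleast_2d(np.asarray(reference, dtype=float)).mean(axis=0)
    return np.log2((study + eps) / (ref + eps))


ref = np.array([10.0, 20.0, 40.0])
sample = np.array([20.0, 20.0, 10.0])
print(ratio_profile(sample, ref))            # approximately [1, 0, -2]
print(ratio_profile(3 * sample, 3 * ref))    # unchanged by a 3x batch factor
```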

Case Study: Multi-Omics in Sepsis Research

A comprehensive multi-omics analysis investigating the role of short-chain fatty acids (SCFAs) in sepsis illustrates a robust integration protocol [38]. The study employed an integrated strategy combining murine models, untargeted metabolomics, human transcriptomics (datasets GSE185263, GSE54514), single-cell RNA sequencing (GSE167363), and Mendelian randomization [38].

Experimental Protocol:

- Animal Modeling: Cecal ligation and puncture (CLP) was performed in C57BL/6 mice (n=60) divided into three groups: sham operation, sepsis, and SCFA treatment [38]

- Multi-omics Data Collection:

  - LC-MS untargeted metabolomics with quality control using multivariate statistical analyses (PCA, OPLS-DA)

  - Transcriptomic analysis from human datasets with batch effect correction using the ComBat method

  - Differential expression analysis using the limma package with significance thresholds of |log2 fold change| > 1 and adjusted p-value < 0.05 [38]

- Machine Learning Integration:

  - Support Vector Machine Recursive Feature Elimination (SVM-RFE) and LASSO regression to prioritize SCFA-associated hub genes

  - Single-cell profiling to localize targets to specific cell types

  - Immune infiltration analysis using single-sample Gene Set Enrichment Analysis (ssGSEA) [38]
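The differential-expression filter in this protocol combines a fold-change cutoff with multiple-testing correction. The sketch applies the Benjamini-Hochberg adjustment, a common choice and the one limma's `topTable` uses by default, to raw p-values, then keeps genes with |log2 fold change| > 1 and adjusted p < 0.05. The inputs are assumed to come from an upstream test such as limma; the example values are made up.

```python
import numpy as np


def benjamini_hochberg(pvals):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    p = np.asarray(pvals, dtype=float)
    n = p.size
    order = np.argsort(p)
    ranked = p[order] * n / np.arange(1, n + 1)
    # Enforce monotonicity, working down from the largest p-value
    ranked = np.minimum.accumulate(ranked[::-1])[::-1]
    adj = np.empty(n)
    adj[order] = np.minimum(ranked, 1.0)
    return adj


def select_degs(log2fc, pvals, fc_cut=1.0, padj_cut=0.05):
    """Indices of genes passing both the fold-change and adjusted-p thresholds."""
    padj = benjamini_hochberg(pvals)
    mask = (np.abs(np.asarray(log2fc, dtype=float)) > fc_cut) & (padj < padj_cut)
    return np.flatnonzero(mask), padj
```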

This integrated approach identified five SCFA-associated hub genes (CASP5, GPR84, MMP9, MPO, PRTN3) and revealed glycerophospholipid metabolism as the most significantly altered pathway under SCFA intervention [38].

Performance Benchmarking in Cancer Genomics

Rigorous benchmarking of multi-omics integration methods across The Cancer Genome Atlas (TCGA) datasets provides critical insights into methodological performance [35]. An evaluation of 10 clustering methods across multiple TCGA cancer types showed that feature selection improved clustering performance by up to 34%, highlighting its importance in analysis pipelines [35].

Experimental Parameters for Optimal Performance:

- Sample Size: Minimum of 26 samples per class for robust discrimination

- Feature Selection: Retention of less than 10% of omics features to reduce dimensionality

- Class Balance: Maintenance of sample balance under 3:1 ratio between classes

- Noise Threshold: Noise levels kept below 30% to preserve biological signal integrity [35]

The benchmarking analysis incorporated multi-omics layers including gene expression (GE), miRNA (MI), mutation data, copy number variation (CNV), and methylation (ME) across ten cancer types from 3,988 patients in TCGA [35].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents for Multi-Omics Studies

| Reagent/Material | Function | Application Examples |
|---|---|---|
| Quartet Reference Materials | Provides multi-omics ground truth for quality control | Assessing wet-lab proficiency in data generation [37] |
| Cell Line Models | Reproducible biological systems for mechanistic studies | B-lymphoblastoid cell lines for reference materials [37] |
| LC-MS/MS Systems | Simultaneous quantification of proteins and metabolites | Proteomic and metabolomic profiling [37] |
| Single-Cell RNA-seq Kits | High-resolution transcriptomic profiling at cellular level | Identifying cell-type specific responses in sepsis [38] |
| Methylation Arrays | Genome-wide epigenetic profiling | DNA methylation analysis in cancer subtyping [35] |
| Quality Control Metrics | Objective assessment of data quality and integration reliability | Mendelian concordance rates, signal-to-noise ratios [37] |

Multi-omics integration represents a paradigm shift in biological research, enabling comprehensive characterization of complex disease mechanisms through the combined analysis of multiple molecular layers. The comparative analysis presented herein demonstrates that method selection must be guided by specific experimental designs, particularly the availability of matched versus unmatched samples across omics modalities [33]. While classical statistical methods offer interpretability and efficiency for well-defined linear relationships, deep learning approaches provide superior performance for capturing complex nonlinear patterns in high-dimensional data, albeit with greater computational demands and reduced interpretability [34].

Future developments in multi-omics integration will likely focus on several key areas: enhanced scalability for increasingly large datasets, improved handling of missing data across modalities, more effective integration of spatial omics technologies, and the development of more interpretable deep learning models [34]. Furthermore, the adoption of standardized reference materials and ratio-based profiling approaches will be crucial for ensuring reproducibility and comparability across studies and laboratories [37]. As these technologies mature, multi-omics integration will continue to transform our understanding of biological systems and accelerate the development of precision medicine approaches for complex diseases.

Experimental and Computational RBS Detection Platforms

Ribosome profiling sequencing (Ribo-seq) is a transformative high-throughput technology that uses deep sequencing of ribosome-protected mRNA fragments to produce a 'global snapshot' of the translatome [39]. Since its development, the technique has opened new avenues for measuring translation across the transcriptome in various biological contexts. It reveals translational efficiency, identifies new open reading frames (ORFs), and monitors ribosome traversal speed at codon resolution genome-wide [40]. The fundamental principle underpinning Ribo-seq is that translating ribosomes protect short mRNA fragments (~28-30 nucleotides in eukaryotes) from nuclease digestion. These ribosome-protected fragments (RPFs) can be isolated, sequenced, and mapped to the transcriptome to determine the precise positions of actively translating ribosomes [41] [9].

The importance of Ribo-seq in modern molecular biology is underscored by its rapidly expanding adoption. A bibliometric analysis identified 2,744 published articles that used the term 'Ribo-seq' between 2009 and January 2024, 684 of which contained both 'Ribo-seq' and 'RNA-seq', reflecting the growing integration of this technology into multi-omics studies [39]. Unlike transcriptomics or proteomics alone, Ribo-seq captures which mRNAs are being actively translated at the moment of sample harvest, offering direct visibility into translational dynamics under normal and disease conditions. This makes it particularly valuable both for biotech and pharmaceutical companies focused on RNA-based drug development and for academic labs studying gene regulation, cancer biology, and neurodegeneration [9].

Core Principles and Methodological Workflow

Fundamental Biochemical Basis

At its core, Ribo-seq exploits the physical protection of mRNA fragments by actively translating ribosomes. When ribosomes engage with mRNA to synthesize proteins, they shield approximately 28-30 nucleotides of mRNA from nuclease activity. This protection creates a precise footprint of the ribosome's position, which serves as a snapshot of translational activity at the moment of cell harvesting [41]. The length distribution of these protected fragments typically shows a single symmetrical peak with a median of 28-29 nucleotides in S. cerevisiae or 30-31 nucleotides in mammalian cells, reflecting the larger size of mammalian 60S ribosomal subunits [41].
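The length-distribution check described above can be sketched in a few lines of Python. This is a minimal illustration, assuming footprint lengths have already been extracted from aligned reads. The function name `length_summary` and the default 26-34 nt acceptance window are hypothetical choices; in practice the window is set from the observed peak for each organism and nuclease.

```python
from collections import Counter
from statistics import median


def length_summary(read_lengths, window=(26, 34)):
    """Summarise footprint lengths and report how many fall in a size window.

    read_lengths: iterable of fragment lengths in nucleotides.
    window: inclusive (min, max) range treated as candidate ribosome
            footprints; the default is an illustrative assumption.
    """
    lengths = list(read_lengths)
    if not lengths:
        raise ValueError("no read lengths supplied")
    counts = Counter(lengths)
    modal_length, _ = counts.most_common(1)[0]
    lo, hi = window
    in_window = sum(1 for n in lengths if lo <= n <= hi)
    return {
        "median": median(lengths),
        "mode": modal_length,
        "fraction_in_window": in_window / len(lengths),
        "histogram": dict(sorted(counts.items())),
    }


# Toy input: a dominant 28-nt peak plus two outliers (e.g. degraded RNA).
lengths = [28] * 5 + [29] * 3 + [30] * 2 + [20, 45]
summary = length_summary(lengths)
print(summary["median"], summary["mode"])  # 28.5 28
```

A sharp single peak with most reads inside the window is consistent with the distribution expected from translating ribosomes; a broad histogram or an unexpected second peak warrants a closer look at nuclease digestion and size selection.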

The precision of ribosome positioning achieved with Ribo-seq is remarkably high, particularly when using E. coli RNase I for footprint generation, as this enzyme exhibits little sequence specificity compared to other nucleases like RNase A, RNase T1, or micrococcal nuclease used in earlier methods [41]. This precision enables determination of ribosome positions along the ORF with single nucleotide resolution and reveals a clear trinucleotide periodicity in the footprint data, which allows assignment of the translation reading frame and distinguishes footprints arising from translating ribosomes from RNA fragments protected for other reasons [41].
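Reading-frame assignment can be illustrated with a short sketch that converts footprint 5' ends to P-site positions and tallies their frame relative to the annotated start codon. It assumes a single fixed P-site offset of 12 nt (`psite_offset`, a hypothetical default; real offsets are usually estimated per read length) and coordinates on a single transcript. `frame_fractions` is an illustrative name, not a published tool.

```python
from collections import Counter


def frame_fractions(five_prime_ends, cds_start, cds_end, psite_offset=12):
    """Fraction of P-sites in frames 0, 1 and 2 of an ORF.

    five_prime_ends: 0-based transcript positions of footprint 5' ends.
    cds_start, cds_end: half-open CDS interval [cds_start, cds_end).
    psite_offset: assumed distance from 5' end to P-site (nt).
    """
    frames = Counter()
    for end in five_prime_ends:
        psite = end + psite_offset
        if cds_start <= psite < cds_end:
            # Frame 0 means the P-site sits on the first base of a codon.
            frames[(psite - cds_start) % 3] += 1
    total = sum(frames.values())
    if total == 0:
        return {0: 0.0, 1: 0.0, 2: 0.0}
    return {f: frames[f] / total for f in (0, 1, 2)}


# Three footprints in frame 0, one in frame 1, one upstream of the CDS.
print(frame_fractions([88, 91, 94, 95, 10], cds_start=100, cds_end=400))
# {0: 0.75, 1: 0.25, 2: 0.0}
```

Footprints from translating ribosomes concentrate in one frame, whereas fragments protected for other reasons tend to spread more evenly across all three.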

Standard Experimental Workflow

The canonical Ribo-seq protocol consists of several critical steps that must be carefully optimized for different organisms and experimental systems [42]. The process begins with preparation of biological samples, typically involving rapid translation arrest to preserve the native distribution of ribosomes on mRNAs. This is achieved either through flash-freezing or treatment with translation inhibitors such as cycloheximide (CHX), though the choice and timing of inhibitors require careful consideration as they can introduce artifacts [41] [42].

Following cell lysis using optimized buffers to preserve ribosome-mRNA complexes, the lysate undergoes nuclease footprinting, where mRNA not protected by ribosomes is digested. The ribosome-protected mRNA fragments are then recovered, often through sucrose gradient ultracentrifugation to isolate monosomes [42] [9]. Subsequent steps involve linker ligation to the protected fragments, rRNA depletion to remove highly abundant ribosomal RNA sequences, and library preparation for high-throughput sequencing [42]. The entire process requires meticulous execution at each step to minimize biases and ensure high-quality data.

Figure 1: Standard Ribo-seq Experimental Workflow

Comparative Analysis of Ribo-seq Methodologies

Advanced Methodological Variations

Recent innovations in Ribo-seq technologies have significantly enhanced their sensitivity, specificity, and resolution, leading to specialized protocol variations designed to overcome specific technical limitations [40] [43]. One major advancement addresses the challenge of applying Ribo-seq to limited input material. Conventional protocols typically require ~10⁶ or more cells, creating barriers for studies of rare cell populations or precious clinical samples [40]. Several ligation-free methods have been implemented to address this limitation, including Ribo-lite, which can be applied to low inputs such as 1,000 HEK293 cells and even ultralow inputs such as a single oocyte [40]. Another low-input method, LiRibo-seq, recovers footprints using biotin-conjugated puromycin, which covalently links to nascent peptide chains and allows footprint-ribosome complexes to be isolated on streptavidin beads [40].

Another significant innovation is the expansion of Ribo-seq to single-cell resolution. Two independent methods, scRibo-seq and Ribo-ITP, have been developed to measure translatomes at the single-cell level [40]. scRibo-seq involves collecting individual cells in multi-well plates, with each well undergoing cell lysis, MNase digestion, and linker ligation in a single-pot reaction [40]. Ribo-ITP uses a microfluidic isotachophoresis system for high-yield RNA purification and footprint enrichment, substantially reducing sample processing time and materials required [40]. These single-cell techniques enable researchers to characterize translational heterogeneity within cell populations, providing insights previously masked by bulk measurements.

Method-Specific Performance Characteristics

Table 1: Comparative Analysis of Advanced Ribo-seq Methodologies

| Method | Key Innovation | Input Requirements | Primary Applications | Technical Limitations |
|---|---|---|---|---|
| Conventional Ribo-seq [42] | Nuclease protection & ultracentrifugation | ~10⁶ cells | Genome-wide ribosome positioning, ORF discovery | High input requirement, rRNA contamination |
| Ribo-lite [40] | Ligation-free, one-pot reaction | 50 cells to single oocyte | Low-input translatomics, maternal-to-zygotic transition | Restricted RNA complexity in low-input samples |
| LiRibo-seq [40] | Biotin-puromycin ribosome capture | ~5,000 cells | Rare cell populations, embryonic development | Potential bias in puromycin incorporation |
| scRibo-seq [40] | Single-cell processing in multi-well plates | Single cells | Translational heterogeneity, cell-to-cell variation | Lower read depth, MNase sequence bias |
| Ribo-ITP [40] | Microfluidic footprint enrichment | Single cells | Allele-specific translation, early embryogenesis | Specialized equipment requirement |
| Thor-Ribo-seq [40] | T7 RNA polymerase amplification | ~10³ to 10⁶ cells | Wide dynamic range applications, dissected tissues | Potential amplification biases |
| Ribo-RET/TIS [12] | Translation initiation site mapping | Varies by protocol | Start codon identification, uORF discovery | Requires specific inhibitors (retapamulin) |

Critical Experimental Considerations and Optimization

Technical Challenges and Limitations

Despite its powerful capabilities, Ribo-seq presents several technical challenges that researchers must address during experimental design and data interpretation. One significant limitation concerns the reproducibility of local ribosome density measurements. While Ribo-seq replicates typically show high correlation at the gene level (r between 0.85 and 1.00), the reproducibility at nucleotide-level resolution is considerably lower, with median correlations between replicates often below 0.4 [42]. This indicates that ribosome profiles at single-nucleotide scale are not as reproducible as previously thought, necessitating careful statistical treatment when analyzing local features such as ribosome pausing.
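The gap between gene-level and nucleotide-level reproducibility can be demonstrated with a deliberately extreme toy example: two replicates can agree perfectly on total footprints per gene while placing those footprints at different positions. The sketch below computes a Pearson correlation on gene totals and, separately, on each gene's per-position profile. The data structure (a dict mapping gene name to per-position counts) and the function names are assumptions for illustration.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equal-length sequences with >= 2 values")
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return float("nan")  # undefined for constant profiles
    return sxy / math.sqrt(sxx * syy)


def replicate_agreement(rep1, rep2):
    """Return (gene-level r, {gene: position-level r}) for shared genes.

    rep1, rep2: dict mapping gene -> list of per-position footprint counts.
    """
    genes = sorted(set(rep1) & set(rep2))
    gene_r = pearson([sum(rep1[g]) for g in genes],
                     [sum(rep2[g]) for g in genes])
    local_r = {g: pearson(rep1[g], rep2[g]) for g in genes}
    return gene_r, local_r


# Identical gene totals, but footprints shifted between replicates.
rep1 = {"a": [5, 0, 3, 0], "b": [0, 1, 0, 1], "c": [10, 10, 0, 0]}
rep2 = {"a": [0, 5, 0, 3], "b": [1, 0, 1, 0], "c": [0, 0, 10, 10]}
gene_r, local_r = replicate_agreement(rep1, rep2)
print(round(gene_r, 3), {g: round(r, 3) for g, r in local_r.items()})
# 1.0 {'a': -0.889, 'b': -1.0, 'c': -1.0}
```

Real replicates are far less extreme; the point is only that high gene-level agreement does not by itself imply positional agreement.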

Another critical challenge involves potential artifacts introduced during sample preparation, particularly through the use of translation inhibitors. Cycloheximide (CHX) treatment, commonly employed to arrest translation before cell lysis, can distort the natural distribution of ribosomes [41]. As noted in earlier studies, incubation with CHX for 3-5 minutes before cooling can lead to overrepresentation of initiation sites: CHX does not inhibit scanning or initiation, so additional 80S initiation complexes can form on mRNAs with vacant initiation sites during the inhibition period [41]. Similar concerns apply to harringtonine treatment used to identify initiation sites [41]. These artifacts can obscure the true relative utilization frequency of different initiation sites under steady-state conditions.
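One hypothetical way to screen for this kind of inhibitor artifact is to compare footprint density near the start codon with density over the rest of the CDS, for example between a CHX-pretreated sample and a flash-frozen control. The sketch below is an assumed diagnostic rather than an established metric; the 15-nt window and the name `start_codon_enrichment` are illustrative.

```python
def start_codon_enrichment(cds_profile, window=15):
    """Mean footprint density in the first `window` nt of a CDS divided by
    the mean density over the remainder of the CDS.

    cds_profile: per-nucleotide P-site counts, index 0 = first base of the
                 start codon. window: assumed size of the initiation region.
    """
    if len(cds_profile) <= window:
        raise ValueError("CDS profile must be longer than the start window")
    head, body = cds_profile[:window], cds_profile[window:]
    head_mean = sum(head) / len(head)
    body_mean = sum(body) / len(body)
    return float("inf") if body_mean == 0 else head_mean / body_mean


# A pronounced initiation peak versus a flat profile.
print(start_codon_enrichment([9] * 15 + [1] * 45))  # 9.0
print(start_codon_enrichment([2] * 30))             # 1.0
```

A markedly higher ratio in inhibitor-treated samples than in an inhibitor-free control would be consistent with the initiation-site overrepresentation described above, although genuine biological differences could produce a similar signal.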

The presence of sporadic high-density peaks and long alignment gaps in Ribo-seq data creates additional challenges for data normalization and interpretation [44]. These fluctuations may arise from genuine biological phenomena like ribosome pausing or from technical artifacts, making it difficult to distinguish signal from noise without appropriate controls and normalization strategies.
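A simple first pass at this problem is to flag candidate peaks and zero-coverage gaps so they can be inspected or down-weighted. The sketch below assumes a per-position count profile for one ORF. The thresholds (`peak_fold`, relative to the median of non-zero positions, and `min_gap`, in positions) are arbitrary illustrative defaults, and flagging alone cannot tell genuine pausing from technical artifact.

```python
from statistics import median


def flag_peaks_and_gaps(profile, peak_fold=10.0, min_gap=30):
    """Flag sporadic high-density positions and long zero-coverage runs.

    Peaks: positions whose count exceeds peak_fold times the median of
    the non-zero counts. Gaps: half-open (start, end) runs of zeros at
    least min_gap positions long.
    """
    nonzero = [c for c in profile if c > 0]
    if nonzero:
        baseline = median(nonzero)
        peaks = [i for i, c in enumerate(profile) if c > peak_fold * baseline]
    else:
        peaks = []

    gaps, run_start = [], None
    for i, c in enumerate(profile):
        if c == 0 and run_start is None:
            run_start = i                       # a zero run begins
        elif c != 0 and run_start is not None:
            if i - run_start >= min_gap:
                gaps.append((run_start, i))     # run ended at position i
            run_start = None
    if run_start is not None and len(profile) - run_start >= min_gap:
        gaps.append((run_start, len(profile)))  # run reaches the end
    return peaks, gaps


profile = [2, 3, 2, 50, 2, 3] + [0] * 40 + [2, 2]
print(flag_peaks_and_gaps(profile))  # ([3], [(6, 46)])
```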

Quality Assessment and Normalization Approaches

Robust quality assessment is essential for ensuring reliable Ribo-seq data. Key quality metrics include fragment length distribution, triplet periodicity, and ribosomal RNA contamination levels [45] [9]. The expected triplet periodicity—a pattern where ribosome footprint density oscillates with a three-nucleotide period corresponding to codon positions—serves as an important indicator of data quality, as it reflects the codon-by-codon movement of ribosomes during translation [41] [9].
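Triplet periodicity can also be summarised as a single number. One way to do this, shown here as an assumed approach rather than the metric used in the cited studies, is a discrete Fourier transform of the P-site profile: a well-phased profile concentrates its non-constant power at the frequency corresponding to a three-nucleotide period. The direct O(n²) transform keeps the sketch dependency-free; long profiles would normally be handled with an FFT library. `periodicity_score` is a hypothetical name.

```python
import cmath
import math


def dft_power(x, k):
    """Power of the k-th discrete Fourier coefficient of sequence x."""
    n = len(x)
    s = sum(v * cmath.exp(-2j * math.pi * k * t / n) for t, v in enumerate(x))
    return abs(s) ** 2


def periodicity_score(profile):
    """Share of non-constant spectral power at a period of 3 positions.

    profile: per-nucleotide P-site counts whose length is a multiple of 3,
    so that period 3 falls exactly on frequency index n // 3.
    """
    n = len(profile)
    if n < 6 or n % 3:
        raise ValueError("profile length must be a multiple of 3 and >= 6")
    mean = sum(profile) / n
    centred = [v - mean for v in profile]  # remove the constant component
    powers = {k: dft_power(centred, k) for k in range(1, n // 2 + 1)}
    total = sum(powers.values())
    return 0.0 if total == 0 else powers[n // 3] / total


print(round(periodicity_score([3, 0, 0] * 10), 3))  # 1.0
print(periodicity_score([1] * 30))                  # 0.0
```

A perfectly phased profile scores close to 1, while a flat or noisy profile spreads its power across frequencies and scores lower.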

To address the challenges of data heterogeneity and normalization, several computational approaches have been developed. The Ribo-seq Unit Step Transformation (RUST) method provides a robust normalization technique that converts ribosome footprint densities into a binary step unit function, where individual codons receive a score of 1 or 0 depending on whether their footprint density exceeds the ORF average [44]. This approach reduces the impact of heterogeneous noise and sporadic high-density peaks, allowing more accurate identification of mRNA sequence features that affect ribosome footprint densities globally [44]. Simulation studies have demonstrated that RUST outperforms other normalization methods, including conventional normalization (CN) and logarithmic mean normalization (LMN), particularly in the presence of noise or under reduced coverage conditions [44].
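The binary step at the heart of RUST is straightforward to express in code. The sketch below applies the transformation described above to each ORF and then, as a simplified assumption rather than the full published procedure, compares how often each codon type receives a score of 1 with the overall rate, evaluating a single position per codon instead of a window around the footprint. Inputs are assumed to be per-codon footprint counts already assigned to a ribosomal site; the function names are illustrative.

```python
from collections import defaultdict


def rust_transform(codon_counts):
    """Score 1 where a codon's footprint count exceeds the ORF mean, else 0."""
    mean = sum(codon_counts) / len(codon_counts)
    return [1 if c > mean else 0 for c in codon_counts]


def codon_enrichment(orfs):
    """Observed/expected rate of RUST score 1 for each codon type.

    orfs: list of (codons, counts) pairs with one count per codon.
    """
    hits, seen = defaultdict(int), defaultdict(int)
    for codons, counts in orfs:
        if len(codons) != len(counts) or not counts:
            raise ValueError("each ORF needs one count per codon")
        for codon, score in zip(codons, rust_transform(counts)):
            hits[codon] += score
            seen[codon] += 1
    expected = sum(hits.values()) / sum(seen.values())
    return {c: (hits[c] / seen[c]) / expected if expected else float("nan")
            for c in seen}


orfs = [
    (["AUG", "CCG", "GCU", "CCG"], [1, 9, 1, 7]),
    (["AUG", "GCU", "CCG", "GCU"], [2, 2, 50, 2]),  # one sporadic peak
]
print({c: round(v, 3) for c, v in codon_enrichment(orfs).items()})
# {'AUG': 0.0, 'CCG': 2.667, 'GCU': 0.0}
```

Because the 50-count peak contributes the same score of 1 as a modest elevation, a single outlier cannot dominate the codon-level estimate, which is the robustness property the binary transformation is meant to provide.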

For specialized applications like isoform-level analysis, tools such as RPiso have been developed to quantify ribosome profiling data at the transcript isoform level rather than the gene level [45]. This is particularly important in higher eukaryotes where alternative splicing generates multiple mRNA isoforms from a single gene that may be subject to different translational regulation [45].
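Isoform-level quantification can be framed as allocating footprints that are compatible with several isoforms. The sketch below uses a generic expectation-maximisation (EM) scheme of the kind used by RNA-seq quantifiers; it is an assumed illustration of the idea, not RPiso's actual model, and it ignores positional biases and P-site assignment. Compatibility sets and effective lengths are assumed to come from an upstream alignment step, and `em_isoform_fractions` is a hypothetical name.

```python
def em_isoform_fractions(read_compat, lengths, max_iter=500, tol=1e-10):
    """Estimate the fraction of footprints originating from each isoform.

    read_compat: list of sets; each set holds the isoforms a footprint
                 aligns to. lengths: dict isoform -> effective length (nt).
    """
    reads = [comp for comp in read_compat if comp]
    if not reads:
        raise ValueError("no footprints with compatible isoforms")
    isoforms = list(lengths)
    theta = {i: 1.0 / len(isoforms) for i in isoforms}
    for _ in range(max_iter):
        expected = {i: 0.0 for i in isoforms}
        for comp in reads:
            # E-step: split each footprint in proportion to theta / length.
            weights = {i: theta[i] / lengths[i] for i in comp}
            z = sum(weights.values())
            for i, w in weights.items():
                expected[i] += w / z
        # M-step: fractions proportional to the expected counts.
        new_theta = {i: expected[i] / len(reads) for i in isoforms}
        converged = max(abs(new_theta[i] - theta[i]) for i in isoforms) < tol
        theta = new_theta
        if converged:
            break
    return theta


# 30 footprints unique to A, 10 unique to B, 40 shared; equal lengths.
reads = [{"A"}] * 30 + [{"B"}] * 10 + [{"A", "B"}] * 40
est = em_isoform_fractions(reads, {"A": 100, "B": 100})
print({k: round(v, 3) for k, v in est.items()})  # {'A': 0.75, 'B': 0.25}
```

The shared footprints end up split 3:1, in line with the evidence from the uniquely mapped ones, rather than being divided evenly or discarded.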

Essential Research Reagents and Tools

Key Research Reagent Solutions

Table 2: Essential Research Reagents and Tools for Ribo-seq Studies

| Reagent/Tool | Function | Examples/Alternatives | Application Notes |
|---|---|---|---|
| Translation Inhibitors | Arrest ribosomes in native positions | Cycloheximide (CHX), Harringtonine, Retapamulin | CHX can distort initiation site representation; inhibitor choice affects results [41] [12] |
| Nucleases | Digest unprotected mRNA regions | RNase I, Micrococcal nuclease (MNase) | RNase I has minimal sequence bias; MNase has A/U preference [40] [41] |
| Ribosome Capture Methods | Isolate ribosome-protected fragments | Ultracentrifugation, RiboLace (puromycin-based) | Gel-free methods like RiboLace reduce sample loss [40] [9] |
| rRNA Depletion Kits | Remove abundant ribosomal RNAs | Commercial rRNA depletion kits | Critical for enriching meaningful signal; major source of sample loss [40] [42] |
| Spike-in Controls | Enable quantitative comparisons | External RNA controls, Cross-species lysates | Essential for measuring global translation changes [40] |
| Library Prep Kits | Prepare sequencing libraries | Commercial kits, LaceSeq protocol | Ligation-free methods reduce sample loss [40] [9] |
| Bioinformatics Tools | Data processing and analysis | RUST, RPiso, Ribomap, RiboProfiling | Choice affects normalization and interpretation [44] [45] |

Applications and Future Perspectives

Ribo-seq has enabled numerous groundbreaking discoveries in translation biology, revealing unexpected complexity in genomic coding potential. Among the most significant findings has been the identification of numerous translated short upstream ORFs (uORFs) with near-cognate initiation codons in mouse ES cells, which outnumber AUG-initiated uORFs by approximately 4:1 [41]. Additionally, Ribo-seq has demonstrated that for many protein-coding ORFs, the annotated start codon is not the only in-frame initiation site, and in some cases not even the main start site, revealing many more cases of mRNAs coding for N-terminally extended or truncated protein isoforms than previously appreciated [41].
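Candidate uORFs with AUG or near-cognate start codons can be enumerated directly from a transcript sequence before asking whether Ribo-seq supports their translation. This sketch assumes a DNA-alphabet transcript with a known main CDS start, defines near-cognate codons as those differing from ATG at exactly one position, and reports only uORFs whose start codon lies entirely in the 5' UTR and which have an in-frame stop codon. The function names are illustrative. Sequence matches are only candidates; calling a uORF translated requires footprint evidence such as frame-consistent periodicity.

```python
from itertools import product

STOP_CODONS = {"TAA", "TAG", "TGA"}


def near_cognate_codons(reference="ATG"):
    """All codons that differ from `reference` at exactly one position."""
    return {
        "".join(codon) for codon in product("ACGT", repeat=3)
        if sum(a != b for a, b in zip(codon, reference)) == 1
    }


def find_uorfs(transcript, cds_start, include_near_cognate=True):
    """Return (start, end, start_codon) for uORFs beginning in the 5' UTR.

    transcript: uppercase DNA string; cds_start: 0-based index of the main
    ORF's start codon. `end` is exclusive and includes the stop codon.
    ORFs without an in-frame stop before the transcript ends are skipped.
    """
    starts = {"ATG"}
    if include_near_cognate:
        starts |= near_cognate_codons()
    uorfs = []
    for i in range(cds_start - 2):  # whole start codon must lie in the UTR
        codon = transcript[i:i + 3]
        if codon not in starts:
            continue
        for j in range(i + 3, len(transcript) - 2, 3):
            if transcript[j:j + 3] in STOP_CODONS:
                uorfs.append((i, j + 3, codon))
                break
    return uorfs


tx = "GGATGCCCTAGCCTGGCCTAACATGGGGTAA"  # main ATG at index 22
print(find_uorfs(tx, cds_start=22))
# [(2, 11, 'ATG'), (12, 21, 'CTG')]
print(find_uorfs(tx, cds_start=22, include_near_cognate=False))
# [(2, 11, 'ATG')]
```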