Correcting for Sequencing Depth Bias in PCA: A Practical Guide for Genomic Researchers


Abstract

Principal Component Analysis (PCA) is a cornerstone of genomic data exploration, but its results can be severely biased by uneven sequencing depth across samples. This article provides a comprehensive guide for researchers and drug development professionals on understanding, correcting, and validating PCA in the context of sequencing depth bias. We cover foundational concepts of how sequencing depth affects covariance structures, methodological approaches from simple scaling to sophisticated normalization techniques, troubleshooting common pitfalls in high-dimensional data, and validation strategies to ensure biological interpretations are robust. By integrating principles from transcriptomics and population genetics, this guide equips scientists to perform more reliable dimensionality reduction and extract meaningful biological insights from their sequencing data.

Understanding Sequencing Depth Bias: Why Your PCA Results Might Be Misleading

Defining Sequencing Depth and Its Impact on Read Count Distributions

Core Definitions: Depth, Coverage, and Read Counts

What is the precise definition of sequencing depth?

Sequencing depth, also called read depth, refers to the number of times a specific nucleotide in the genome or transcriptome is read during the sequencing process. It is an average expressed as a multiple, such as 30x depth, meaning each base was sequenced 30 times on average [1].
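As a rough worked example of the "30x" figure, average depth can be estimated as total sequenced bases divided by the size of the target. The sketch below assumes every read maps to the target; the function name and the run parameters are hypothetical and chosen only for illustration.

```python
def mean_depth(n_reads: int, read_length: int, target_size: int) -> float:
    """Average depth = total sequenced bases / size of the target in bases."""
    return n_reads * read_length / target_size


# Hypothetical run: 620 million mapped 150 bp reads against a 3.1 Gb genome.
depth = mean_depth(n_reads=620_000_000, read_length=150, target_size=3_100_000_000)
print(f"mean depth: {depth:.1f}x")
```

In practice the realized depth is lower than this estimate, because unmapped and duplicate reads do not contribute.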

How is sequencing coverage different from depth?

While often used interchangeably, these terms have distinct meanings [1]:

| Term | Definition | Measures | Purpose |
|---|---|---|---|
| Sequencing Depth | The number of times a specific base is sequenced. | Redundancy at a base position. | Increases confidence in base calling and variant detection. |
| Sequencing Coverage | The proportion of the genome sequenced at least once. | Comprehensiveness of the sequenced region. | Ensures the target region is adequately represented. |

What are "read counts" and how do they relate to depth? In RNA-Seq, "read counts" are the number of sequencing reads mapped to a gene or transcript. The total sum of all read counts in a sample is directly determined by the sequencing depth. Higher depth yields more total reads, which generally increases the statistical power to detect expressed genes, especially those with low expression [2].

Impact of Sequencing Depth on Data Analysis

How does sequencing depth impact differential expression analysis in RNA-Seq? Sequencing depth positively correlates with the reliability and informational content of the data [2]. Deeper sequencing:

- Increases statistical power to detect differential expression (DE), particularly for genes with low expression levels [2].

- Allows for a more global view of gene expression and can provide information for analyses like alternative splicing [2].

What is the "read count bias" and how is it influenced by sequencing depth and experimental design? A known bias in DE analysis is that highly expressed genes (or longer genes) are more likely to be called differentially expressed [3]. Research shows that this bias is primarily determined by a gene's dispersion (variance) in the negative binomial model used for RNA-Seq count data [3].

- Technical Replicates vs. Biological Replicates: The read count bias is pronounced in data with small gene dispersions, such as technical replicates or data from genetically identical models (e.g., cell lines). In contrast, data from biological replicates from unrelated individuals have much larger dispersions and largely do not suffer from this bias [3].

- Impact on GSEA: This read count bias can cause a considerable number of false positives in sample-permuting Gene Set Enrichment Analysis (GSEA) [3].

What is the recommended sequencing depth for a standard bulk RNA-Seq experiment? The required depth depends on the organism's complexity and the study's goals. For a human differential gene expression (DGE) analysis [2]:

- Bare Minimum: 5 million mapped reads per sample.

- Common Range: 20 - 50 million mapped reads per sample. This provides a good balance for detecting both highly and lowly expressed genes.

Sequencing Depth in Practice: Trade-offs and Troubleshooting

Should I prioritize higher sequencing depth or more biological replicates? For differential expression studies, adding biological replicates often increases statistical power more than adding sequencing depth per sample. In one methodological comparison, going from 2 to 6 replicates at a fixed depth of 10 million reads per sample increased power more than going from 10 million to 30 million reads per sample with only 2 replicates [2].

How do I determine the optimal sequencing depth for a single-cell RNA-Seq (scRNA-seq) experiment? In scRNA-seq, a key trade-off exists between the number of cells sequenced and the sequencing depth per cell for a fixed total budget. A mathematical framework suggests that for estimating important gene properties, the optimal allocation is often to sequence at a depth of around one read per cell per gene, which typically means sequencing more cells at a shallower depth [4].

What are common sources of bias in NGS data that are confounded with sequencing depth? Multiple technical artifacts can introduce biases that affect read count distributions independent of true biological signal [5] [6]:

- GC Content Bias: The dependence between fragment count (read coverage) and the GC content of the DNA fragment. Both GC-rich and AT-rich fragments can be underrepresented. This bias is thought to be primarily introduced during PCR amplification [6].

- PCR Amplification Biases: DNA sequence content and length affect amplification efficiency during PCR, leading to over- or under-representation of certain sequences. This bias increases with the number of PCR cycles [5].

- Read Mapping Biases: Repetitive elements, paralogous genes, and differences between the sequenced genome and the reference genome can create regions with low or no coverage (unmappable regions), which no amount of sequencing depth can resolve [5].

Methodologies and Protocols

Detailed Methodology: Investigating Read Count Bias and Gene Dispersion

- Objective: To systematically analyze how gene dispersion in negative binomial models affects read count bias in DE analysis across different replicate types [3].

- Datasets: Analysis of multiple public RNA-seq datasets (e.g., Marioni, MAQC-2, TCGA KIRC, TCGA BRCA) comprising both technical replicates and biological replicates from unrelated individuals [3].

- Data Processing: Read counts were normalized using methods like the DESeq median method. A Signal-to-Noise Ratio (SNR) was calculated for each gene to represent differential expression scores [3].

- Statistical Analysis: Gene-wise dispersion coefficients were estimated using the `edgeR` package. The relationship between mean read count, dispersion, and SNR (or LRT statistics) was examined to identify bias patterns [3].

Experimental Protocol: Mitigating Technical Variation in RNA-Seq

- Sample Preparation: Randomize samples during library preparation and dilute to the same RNA concentration to minimize batch effects [7].

- Library Multiplexing: Use indexing to multiplex all samples across all sequencing lanes/flow cells. If this is not possible, use a blocking design that includes some samples from each experimental group on each lane [7].

- Control for PCR: Limit the number of PCR amplification cycles to reduce associated biases [5].

- Normalization: Apply appropriate between-sample normalization methods (e.g., TMM) to account for differences in library size and RNA composition [8].

Essential Visualizations

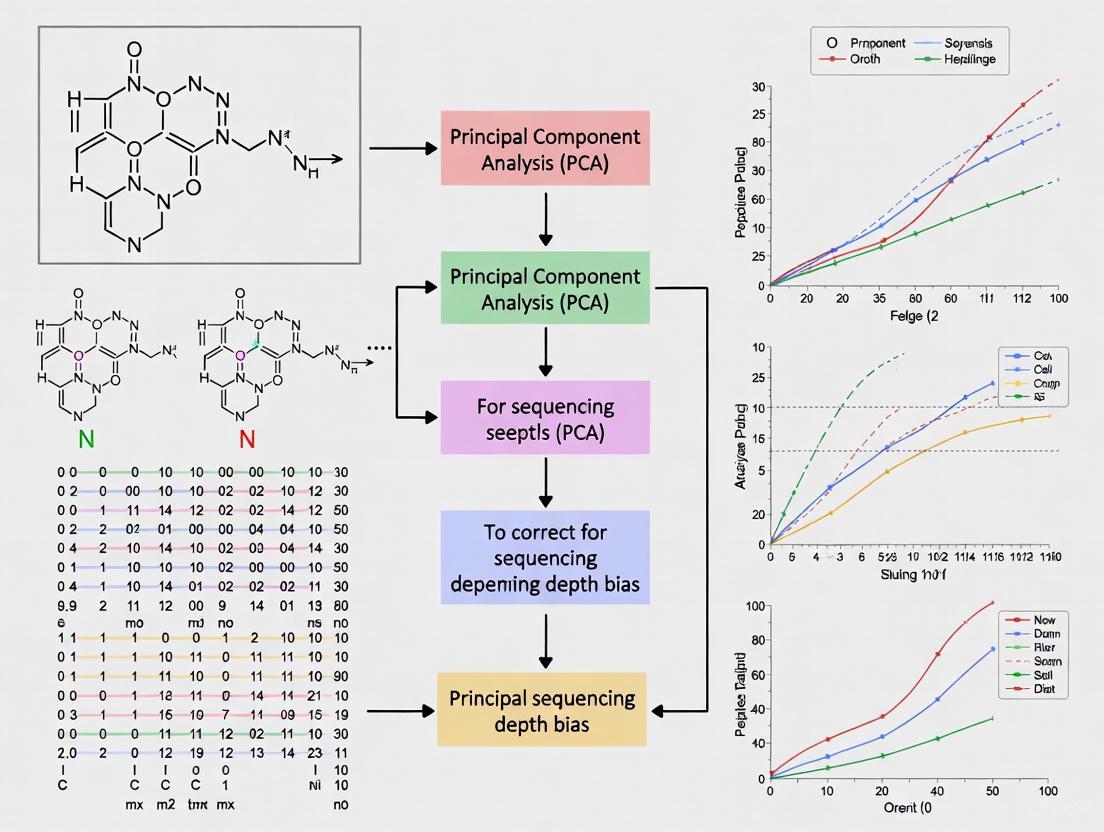

Workflow for Addressing Depth-Related Bias

This diagram illustrates a logical workflow for diagnosing and correcting for sequencing depth-related biases in your data analysis pipeline, particularly before conducting PCA.

The Interplay of Factors Affecting Read Counts

This diagram summarizes the key factors, both technical and biological, that interact to determine the final read count distribution in an NGS experiment.

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key materials and their functions relevant to the experiments and corrections discussed in this guide.

| Research Reagent / Tool | Function in Context |
|---|---|
| DESeq / edgeR | Software packages that use negative binomial models to estimate gene-wise dispersion and test for differential expression, helping to account for count distribution biases [3]. |
| TMM Normalization | A between-sample normalization method that calculates scaling factors to adjust for library composition differences, mitigating the effect of highly expressed genes on the rest of the analysis [8]. |
| UMIs (Unique Molecular Identifiers) | Short random barcodes used in scRNA-seq and other NGS protocols to label individual mRNA molecules before PCR amplification, allowing for accurate counting and removal of PCR duplicates [4]. |
| BEADS / Loess Model | A correction method and model used to estimate and remove the unimodal GC-content bias from fragment count data in DNA-seq and other NGS assays [6]. |
| Input DNA/RNA | A critical control in experiments like ChIP-seq; an input sample that is sonicated and processed but not immunoprecipitated, used to control for technical biases like sonication efficiency and open chromatin [5]. |

How Uneven Sequencing Depth Distorts Sample Covariance Structures

FAQs on Sequencing Depth and Data Structure

How does uneven sequencing depth distort PCA and covariance structures?

Uneven sequencing depth introduces technical variation that distorts the true biological covariance structure between samples. During Principal Component Analysis (PCA), which relies on the covariance matrix of gene expression data, this technical variation can be mistaken for biological signal. The principal components, which should represent major transcriptional programs, become skewed towards representing the technical artifact of sequencing depth rather than true biological differences [9] [10]. One study evaluating 12 normalization methods found that biological interpretation of PCA models can depend heavily on the normalization method applied to counter this effect [9].
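The effect can be reproduced on synthetic data. The following is a minimal sketch, not a reanalysis of any cited study: it simulates two groups whose library sizes vary 20-fold independently of group, then checks how strongly PC1 tracks library size before and after a simple log-CPM transformation. All data, parameter values, and helper names are assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n_samples, n_genes = 40, 500

# Simulated, hypothetical data: two groups that differ in 50 of 500 genes.
group = np.repeat([0, 1], n_samples // 2)
base = rng.gamma(shape=2.0, scale=1.0, size=n_genes)
profile = np.tile(base, (n_samples, 1))
profile[group == 1, :50] *= 2.0
profile /= profile.sum(axis=1, keepdims=True)  # relative abundances per sample

# Library sizes span a 20-fold range and are assigned independently of group.
depth = rng.uniform(1e5, 2e6, size=n_samples)
counts = rng.poisson(profile * depth[:, None]).astype(float)


def pc1_scores(x):
    """Scores of each sample (row) on the first principal component."""
    centered = x - x.mean(axis=0)
    u, s, _ = np.linalg.svd(centered, full_matrices=False)
    return u[:, 0] * s[0]


lib_size = counts.sum(axis=1)
log_cpm = np.log1p(counts / lib_size[:, None] * 1e6)

r_raw = np.corrcoef(pc1_scores(counts), lib_size)[0, 1]
r_norm = np.corrcoef(pc1_scores(log_cpm), lib_size)[0, 1]
print(f"|corr(PC1, library size)| raw counts: {abs(r_raw):.2f}")
print(f"|corr(PC1, library size)| log-CPM:    {abs(r_norm):.2f}")
```

Correlating the leading PC scores with library size (or any other technical covariate) is a cheap first diagnostic to run on real data before interpreting a score plot.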

What are the visible signs of sequencing depth distortion in my analysis?

You can identify sequencing depth distortion through several diagnostic approaches:

- PCA Score Plots: Samples may cluster primarily by sequencing depth rather than biological groups in the first principal component [11].

- Quantitative Metrics: Increased variance and distorted gene-gene correlation patterns emerge, which can be detected using correlation analysis or Covariance Simultaneous Component Analysis [9].

- t-SNE/UMAP Visualization: Cells or samples from different batches or sequencing depths cluster separately rather than by biological similarity [11].

- Statistical Distribution Shifts: Unexpected large shifts in t-statistics when comparing random populations within the same cohort [12].

How does normalization method choice affect covariance structure recovery?

Different normalization methods correct for sequencing depth effects in distinct ways, significantly impacting downstream covariance structures and PCA results. The table below summarizes key methods and their effects:

Table: Normalization Methods and Their Impact on Covariance Structures

| Method | Sequencing Depth Correction | Library Composition Correction | Suitable for Covariance/PCA | Key Considerations |
|---|---|---|---|---|
| CPM | Yes | No | No | Simple scaling; heavily affected by highly expressed genes [13] |
| TPM | Yes | Partial | Limited for PCA | Adjusts for gene length; reduces composition bias [13] |
| Median-of-Ratios (DESeq2) | Yes | Yes | Yes | Affected by global expression shifts [13] |
| TMM (edgeR) | Yes | Yes | Yes | Can be affected by over-trimming genes [13] |
| Rarefaction | Yes (via subsampling) | N/A | For specific metrics | Controversial; discards data but can control for uneven effort [14] |

Research shows that although PCA score plots might appear similar across normalizations, the biological interpretation of the models can differ substantially depending on the method used [9].
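To make the difference between depth correction and composition correction concrete, here is a small NumPy sketch of CPM next to a median-of-ratios size factor calculation. It is written in the style of the DESeq method rather than taken from the DESeq2 code base, and the toy matrix and function names are hypothetical.

```python
import numpy as np


def cpm(counts):
    """Counts per million for a genes x samples matrix."""
    counts = np.asarray(counts, dtype=float)
    return counts / counts.sum(axis=0, keepdims=True) * 1e6


def median_of_ratios_size_factors(counts):
    """Per-sample size factors in the style of the DESeq median-of-ratios method."""
    counts = np.asarray(counts, dtype=float)
    expressed = (counts > 0).all(axis=1)  # genes with any zero are skipped
    log_counts = np.log(counts[expressed])
    log_reference = log_counts.mean(axis=1, keepdims=True)  # log geometric mean per gene
    return np.exp(np.median(log_counts - log_reference, axis=0))


# Toy genes x samples matrix (hypothetical values).
# Sample B is sample A at twice the depth; sample C equals A except that
# gene 0 is ten times higher, which inflates C's library size.
counts = np.array([
    [100, 200, 1000],
    [50, 100, 50],
    [30, 60, 30],
    [20, 40, 20],
    [10, 20, 10],
])

cpm_values = cpm(counts)
size_factors = median_of_ratios_size_factors(counts)
mor_values = counts / size_factors

print("gene 1, CPM:             ", np.round(cpm_values[1], 1))
print("gene 1, median-of-ratios:", np.round(mor_values[1], 1))
```

In this toy case both methods remove the pure depth difference between samples A and B, but only the median-based factors leave gene 1 unchanged in sample C, where a single highly expressed gene inflates the library size.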

Can I use shallow sequencing and still recover true covariance structures?

Yes, due to the inherent low-dimensionality of gene expression data, where genes are co-regulated within transcriptional modules, effective covariance structures can often be recovered even with shallow sequencing [10]. The required depth depends on the dominance of the transcriptional programs you wish to detect—programs that explain greater variance in the dataset require less sequencing depth for accurate identification [10]. One mathematical framework revealed that transcriptional programs can be reproducibly identified at just 1% of conventional read depths for dominant programs [10].

Troubleshooting Guides

Problem: Batch Effects Confounded with Sequencing Depth in PCA

Symptoms: In PCA plots, samples separate by sequencing batch or run date rather than biological conditions, with clear correlation between sequencing depth and position along primary principal components.

Solution Protocol:

- Visual Diagnostic: Generate PCA scores plots colored by both biological groups and technical batches (sequencing date, lane, depth quartile).

- Quantitative Assessment: Apply quantitative batch effect metrics such as kBET (k-nearest neighbor batch effect test) or graph iLISI (graph-based integration Local Inverse Simpson's Index) [11].

- Batch Effect Correction: Apply an appropriate batch correction algorithm (for example ComBat, limma, Harmony, or BBKNN).

- Validation: Verify that biological signals are preserved while technical artifacts are removed by checking known biological markers.

Experimental Workflow for Batch Effect Correction

Problem: Uneven Sequencing Depth Across Samples in 16S rRNA or scRNA-seq Experiments

Symptoms: Large variation (e.g., 100-fold) in sequence counts across samples, leading to distorted alpha and beta diversity metrics, and inability to distinguish technical from biological variation.

Solution Protocol:

- Pre-sequence Normalization: Work with your sequencing lab to normalize the concentration of PCR products before pooling using fluorometric quantification (e.g., Qubit) rather than just absorbance [15].

- Quality Filtering: Remove outlier samples with extremely low counts that cannot support diversity analysis.

- Rarefaction Approach: For diversity metrics, use rarefaction to normalize sequencing effort across samples:

- Select a threshold based on your sample with the lowest usable sequence count

- Randomly subsample without replacement to this threshold

- Repeat multiple times (100-1000x) and calculate mean diversity metrics [14]

- Validation: Compare rarefaction results with alternative normalization methods to ensure robust conclusions.
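The rarefaction steps above can be sketched with NumPy's multivariate hypergeometric sampler, which draws reads without replacement. This is a minimal illustration under stated assumptions: the taxon counts are invented, richness is used as a stand-in for whichever diversity metric you report, and the helper names are hypothetical.

```python
import numpy as np


def rarefy(counts, depth, rng):
    """Randomly subsample a count vector to `depth` reads without replacement."""
    counts = np.asarray(counts, dtype=np.int64)
    if counts.sum() < depth:
        raise ValueError("sample has fewer reads than the rarefaction depth")
    return rng.multivariate_hypergeometric(counts, depth)


def mean_rarefied_richness(counts, depth, n_iter=100, seed=0):
    """Average number of observed taxa over repeated rarefactions."""
    rng = np.random.default_rng(seed)
    richness = [(rarefy(counts, depth, rng) > 0).sum() for _ in range(n_iter)]
    return float(np.mean(richness))


# Hypothetical taxon counts: the same community sequenced deeply and shallowly.
deep = np.array([5000, 3000, 1500, 400, 80, 15, 5])
shallow = np.array([500, 300, 150, 40, 8, 2, 0])

threshold = int(min(deep.sum(), shallow.sum()))  # lowest usable depth
print("observed richness:", (deep > 0).sum(), "vs", (shallow > 0).sum())
print("rarefied richness:",
      mean_rarefied_richness(deep, threshold),
      "vs", mean_rarefied_richness(shallow, threshold))
```

Averaging over many subsamples reduces the dependence of the result on any single random draw, which is the motivation for the repeated-iteration step.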

Table: Research Reagent Solutions for Sequencing Preparation

| Reagent/Tool | Function | Considerations for Covariance Stability |
|---|---|---|
| Qubit Fluorometer | Accurate DNA/RNA quantification | Prevents uneven library concentrations that distort covariance [15] |
| Size Selection Beads | Cleanup of adapter dimers and small fragments | Reduces technical variation in fragment distribution [16] |
| Unique Molecular Identifiers | Corrects for PCR amplification bias | Essential for accurate counting in single-cell RNA-seq [4] |
| Nuclease-Free Water | Dilution and resuspension | Prevents enzymatic degradation that introduces noise [16] |
| High-Fidelity Polymerase | Library amplification | Maintains representation of low-abundance transcripts [16] |

Experimental Protocols for Covariance Structure Validation

Protocol: Evaluating Normalization Method Efficacy for PCA

This protocol helps determine the optimal normalization approach for preserving biological covariance structures in your specific dataset.

Materials:

- Raw count matrix from RNA-seq experiment

- R or Python environment with appropriate packages (DESeq2, edgeR, vegan, scikit-learn)

- Sample metadata including biological groups and technical factors

Methodology:

Apply Multiple Normalizations:

- Process raw counts using CPM, TPM, median-of-ratios (DESeq2), TMM (edgeR), and if appropriate, rarefaction

- Include variance-stabilizing transformations where applicable [12]

Compute Covariance Matrices:

- Generate gene expression covariance matrices for each normalized dataset

- Perform PCA on each covariance matrix

Assess Sample Separation:

- Calculate silhouette widths for biological group separation in PCA space [9]

- Quantify the percentage of variance explained by technical factors vs. biological factors

Evaluate Gene-Gene Correlations:

- Compute correlation distributions for each normalized dataset

- Compare to expected null distributions using summary statistics [9]

Pathway Enrichment Validation:

- Perform gene enrichment analysis on top principal components

- Assess biological coherence of pathway results across normalization methods [9]
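The sample-separation step of this protocol can be sketched as follows. The code computes a mean silhouette width for biological groups in the space of the first two PCs and compares two candidate normalizations on simulated data. It is a NumPy-only illustration; the dataset, the choice of two PCs, and the helper names are assumptions, and a library implementation of the silhouette score would normally be used instead.

```python
import numpy as np


def pca_scores(x, n_components=2):
    """Project samples (rows of x) onto the leading principal components."""
    centered = x - x.mean(axis=0)
    u, s, _ = np.linalg.svd(centered, full_matrices=False)
    return u[:, :n_components] * s[:n_components]


def mean_silhouette(points, labels):
    """Mean silhouette width; assumes every group has at least two members."""
    points = np.asarray(points, dtype=float)
    labels = np.asarray(labels)
    dist = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=-1)
    widths = []
    for i, lab in enumerate(labels):
        same = labels == lab
        same[i] = False  # exclude the point itself
        a = dist[i, same].mean()
        b = min(dist[i, labels == other].mean()
                for other in np.unique(labels) if other != lab)
        widths.append((b - a) / max(a, b))
    return float(np.mean(widths))


# Simulated, hypothetical counts: two groups, 20-fold spread in depth.
rng = np.random.default_rng(0)
group = np.repeat([0, 1], 20)
profile = np.tile(rng.gamma(2.0, 1.0, size=500), (40, 1))
profile[group == 1, :50] *= 2.0
profile /= profile.sum(axis=1, keepdims=True)
depth = rng.uniform(1e5, 2e6, size=40)
counts = rng.poisson(profile * depth[:, None]).astype(float)

candidates = {
    "raw counts": counts,
    "log-CPM": np.log1p(counts / counts.sum(axis=1, keepdims=True) * 1e6),
}
silhouettes = {name: mean_silhouette(pca_scores(x), group)
               for name, x in candidates.items()}
for name, width in silhouettes.items():
    print(f"{name:>10}: mean silhouette by group = {width:.2f}")
```

The same loop extends to any number of normalized matrices; a higher silhouette for the biological grouping is one piece of evidence, to be weighed alongside the correlation and enrichment checks in the protocol.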

Relationship Between Normalization and PCA Interpretation

Protocol: Mathematical Framework for Sequencing Depth Optimization

Based on the research showing low-dimensionality enables shallow sequencing [10], this protocol uses perturbation theory to determine optimal sequencing depth.

Theoretical Foundation:

The principal component error introduced by reduced sequencing depth can be modeled as:

\[ \|pc_i - \hat{pc}_i\| \approx \sqrt{\sum_{j \neq i} \left( \frac{pc_i^T(\hat{C} - C)\,pc_j}{\lambda_i - \lambda_j} \right)^2 } \]

Where:

- \(pc_i\) = true principal component i

- \(\hat{pc}_i\) = estimated principal component at shallow sequencing

- \(C, \hat{C}\) = true and estimated covariance matrices

- \(\lambda_i\) = eigenvalue (variance explained) by component i [10]

Application Steps:

- Subsample Deep Data: Take a deeply sequenced dataset and create artificially shallow versions by random subsampling

- Calculate Covariance Perturbation: Compute how the covariance matrix changes with reduced depth

- Model Component Stability: Use the error equation to determine which principal components remain stable

- Determine Optimal Depth: Identify the sequencing depth where dominant biological signals remain detectable with acceptable error

This framework reveals that the key factor is the eigengap \(\lambda_i - \lambda_j\): larger gaps between eigenvalues make components more stable to sequencing noise [10].
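The role of the eigengap can be checked numerically. The sketch below builds a small covariance matrix with a chosen spectrum, applies a small symmetric perturbation as a stand-in for shallow-sequencing noise, and compares the first-order error expression with the observed change in each component. The spectrum, the perturbation, and the helper names are all assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(1)
eigvals = np.array([10.0, 5.0, 2.0, 1.0, 0.5])  # hypothetical spectrum of C

# Build a "true" covariance C with known eigenvectors (columns of q).
q, _ = np.linalg.qr(rng.normal(size=(5, 5)))
C = q @ np.diag(eigvals) @ q.T

# Small symmetric perturbation standing in for shallow-sequencing noise:
# every off-diagonal entry is eps when written in the eigenbasis of C.
eps = 0.01
C_hat = C + q @ (eps * (np.ones((5, 5)) - np.eye(5))) @ q.T


def sorted_eigh(m):
    """Eigenvalues and eigenvectors of a symmetric matrix, largest first."""
    w, v = np.linalg.eigh(m)
    order = np.argsort(w)[::-1]
    return w[order], v[:, order]


lam, pcs = sorted_eigh(C)
_, pcs_hat = sorted_eigh(C_hat)


def predicted_error(i):
    """Right-hand side of the first-order error expression for component i."""
    terms = [(pcs[:, i] @ (C_hat - C) @ pcs[:, j]) / (lam[i] - lam[j])
             for j in range(len(lam)) if j != i]
    return float(np.sqrt(np.sum(np.square(terms))))


def observed_error(i):
    """Actual distance between true and perturbed component (sign-aligned)."""
    aligned = pcs_hat[:, i] * np.sign(pcs_hat[:, i] @ pcs[:, i])
    return float(np.linalg.norm(pcs[:, i] - aligned))


for i in (0, 3):
    print(f"PC{i + 1}: predicted {predicted_error(i):.4f}, "
          f"observed {observed_error(i):.4f}")
```

Because the perturbation has the same magnitude for every pair of components here, the difference in error between the first and fourth component comes entirely from the eigenvalue gaps in the denominator.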

The Fundamental Link Between Normalization and PCA Performance

Core Concepts: Normalization and PCA

What is Principal Component Analysis (PCA) and why is it used with sequencing data? Principal Component Analysis (PCA) is a linear dimensionality reduction technique that transforms potentially correlated variables into a smaller set of uncorrelated variables called principal components. These components are eigenvectors of the data's covariance matrix and are ordered so that the first component captures the largest possible variance in the data, with each succeeding component capturing the next highest variance while being orthogonal to the preceding components [17] [18]. For single-cell RNA sequencing (scRNA-seq) and other high-dimensional biological data, PCA is indispensable for reducing complexity, suppressing noise, and visualizing cell populations in lower-dimensional space [19].

Why is normalization essential before performing PCA? Normalization is crucial before PCA because PCA is a variance-maximizing exercise. If variables (genes) have vastly different variances—often due to technical artifacts like sequencing depth rather than biological signals—PCA will load heavily on the variables with the largest variances, potentially obscuring biologically relevant patterns [20] [21].

- Without normalization, the first principal component may be dominated by technical variation, such as a single highly-expressed gene or overall differences in library size, making the analysis uninterpretable or misleading [21].

- With proper normalization, the data is transformed onto a comparable scale, allowing PCA to capture underlying biological variance rather than technical noise [9] [19].

Troubleshooting Guide: Normalization for PCA

Problem 1: A single gene or a few highly-expressed genes dominate the first principal component.

- Symptoms: The PCA overview plot shows a very linear first PC. The loadings plot indicates that the first PC is strongly determined by a small number of genes. The variance explained plot shows the first PC captures the vast majority of the variance [21].

- Causes: This is often caused by a single, very highly expressed gene (e.g., `Rn45s` in the Tabula Muris dataset) or a small set of such genes whose expression levels swamp the signal from all other genes [21].

- Solutions:

- Log-transformation and Scaling: Apply a log(1+x) transformation to make the data more closely approximate a Gaussian distribution, then center and scale each gene to have a mean of 0 and a standard deviation of 1. This de-emphasizes highly differentially expressed genes and places equal weight on all genes for downstream analysis [19] [21].

- Exclude Offending Genes: Manually remove the highly-expressed gene(s) and re-run PCA. While effective, this is less systematic than scaling [21].

- Improved Normalization: When performing counts-per-million (CPM) normalization, use functions that allow exclusion of highly expressed genes from the size factor calculation to prevent them from skewing the normalization [21].
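A small simulation shows the symptoms of Problem 1 and the effect of the log-transform and scaling solution. This is a sketch on invented data: gene 0 plays the role of a single very highly expressed gene, library-size normalization is skipped for brevity, and the summary function is a hypothetical helper.

```python
import numpy as np

rng = np.random.default_rng(0)
n_cells, n_genes = 200, 100

# Simulated, hypothetical counts: genes 1-20 mark two cell types.
cell_type = np.repeat([0, 1], n_cells // 2)
mean = np.full((n_cells, n_genes), 5.0)
mean[cell_type == 1, 1:21] = 15.0
counts = rng.poisson(mean).astype(float)

# Gene 0 mimics one very highly expressed, noisy gene unrelated to cell type
# (mean 5000, in the spirit of the Rn45s example).
counts[:, 0] = rng.negative_binomial(n=2, p=2 / (2 + 5000), size=n_cells)


def pc1_summary(x):
    """Return (variance fraction of PC1, largest squared loading on PC1)."""
    centered = x - x.mean(axis=0)
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    return s[0] ** 2 / np.sum(s ** 2), np.max(vt[0] ** 2)


logged = np.log1p(counts)
scaled = (logged - logged.mean(axis=0)) / logged.std(axis=0)

raw_var, raw_top = pc1_summary(counts)
scaled_var, scaled_top = pc1_summary(scaled)
print(f"raw:    PC1 explains {raw_var:.1%}, top gene carries {raw_top:.1%} of the loading")
print(f"scaled: PC1 explains {scaled_var:.1%}, top gene carries {scaled_top:.1%} of the loading")
```

The two numbers reported per matrix correspond to the variance-explained plot and the loadings plot described in the symptoms.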

Problem 2: Library size variation confounds the PCA results.

- Symptoms: Cells cluster primarily by their total number of reads (library size) rather than by biological cell type or condition.

- Causes: Technical variations in RNA capture, PCR amplification efficiency, and sequencing depth on multiplexed platforms cause substantial differences in the total reads derived from each cell. PCA will interpret these large-scale differences as the primary source of variance [21].

- Solutions:

- Linear Scaling (CPM): Normalize the data using Counts Per Million (CPM) or a similar method. This involves dividing the counts for each cell by the total counts for that cell and multiplying by a scaling factor (e.g., 1,000,000). This simple linear approach corrects for differences in library size [19] [21].

- Other Methods: Consider downsampling, or more sophisticated nonlinear normalization methods, if the cell population is highly heterogeneous and cells differ in RNA content [21].

Problem 3: The biological interpretation of PCA models changes drastically with different normalization methods.

- Symptoms: When the same dataset is normalized using different methods, the resulting PCA score plots may look similar, but the gene loadings and biological pathways identified through enrichment analysis differ significantly [9].

- Causes: Different normalization methods alter the correlation patterns and data characteristics in distinct ways, which directly impacts the PCA model's structure and the biological stories they tell [9].

- Solutions:

- Method Evaluation: Do not rely on a single normalization method. Perform a comprehensive evaluation of several normalization methods (e.g., the 12 methods cited in the study) on your dataset [9].

- Downstream Validation: Evaluate the PCA models not just by their score plots, but also by the quality of sample clustering and the biological plausibility of the gene rankings and pathway enrichments they produce [9].

Problem 4: PCA fails to separate known cell populations.

- Symptoms: Biologically distinct cell types do not form separate clusters in the 2D or 3D PCA plot.

- Causes:

- Insufficient Quality Control (QC): The presence of low-quality cells or genes introduces too much noise, obscuring the biological signal [19].

- Not Using Highly Variable Genes (HVGs): Performing PCA on all genes, including non-informative ones, dilutes the signal from genes that actually drive population differences [19].

- Batch Effects: Technical variations between different sequencing runs or facilities can be the strongest source of variation, masking biological differences [19] [22].

- Solutions:

- Stringent QC: Filter out cells with low gene counts, high mitochondrial content, and low UMI counts. Remove genes expressed in only a few cells [19].

- Feature Selection: Identify and use only Highly Variable Genes (HVGs) for the PCA input. This focuses the analysis on the most biologically relevant features [19].

- Batch Correction: Apply batch correction methods (e.g., Harmony, BBKNN) to remove technical batch effects before performing PCA [19].

Experimental Protocols & Data

Quantitative Impact of Normalization on PCA

The following table summarizes key findings from studies that quantitatively assessed the effect of normalization on PCA outcomes.

Table 1: Experimental Evidence on Normalization's Impact on PCA

| Study Context | Key Finding | Quantitative Result |
|---|---|---|
| scRNA-seq Data Analysis [21] | A single gene (Rn45s) can dominate variance. | Before correction: PC1 dominated by one gene. After log-transformation and scaling: Multiple genes contribute to the first ~5-10 PCs. |
| 12 Normalization Methods on Transcriptomics Data [9] | Biological interpretation of PCA models is method-dependent. | PCA score plots were often similar across methods, but biological interpretation via gene enrichment pathway analysis depended heavily on the normalization method used. |
| Sequencing Depth in scRNA-seq [4] | Optimal sequencing budget allocation for accurate estimation. | For estimating gene properties, the optimal allocation is ~1 read per cell per gene. A 10x shallower sequencing of 10x more cells reduced estimation error twofold in one example. |
| Methodological Differences in Sequencing Centers [22] | Technical protocols induce systematic bias detectable by PCA. | PCA showed sequencing depth clustered by facility (69% variance in first two PCs). 96.9% of samples were correctly assigned to their sequencing center based on depth patterns. |

Standardized Protocol: Data Preprocessing for PCA on scRNA-seq Data

This protocol is compiled from established best practices in the field [19] [21].

Quality Control (QC):

- Cell Filtering: Remove cells with low library size (total UMI counts), low numbers of expressed genes, and high proportions of mitochondrial reads (indicative of stressed or dying cells).

- Gene Filtering: Remove genes that are not expressed in a sufficient number of cells (e.g., genes detected in < 10 cells).

Normalization:

- Aim: Correct for differences in sequencing depth between cells.

- Method: Perform linear scaling to Counts Per Million (CPM). Alternatively, use a more robust method like the one implemented in `sc.pp.normalize_total(adata, target_sum=1e6, exclude_highly_expressed=True)` in Scanpy, which mitigates the influence of very highly expressed genes.

Feature Selection:

- Aim: Focus the analysis on biologically relevant signals.

- Method: Identify Highly Variable Genes (HVGs) using a method like `sc.pp.highly_variable_genes` in Scanpy. Use only these genes as input for PCA.

Scaling and Centering:

- Aim: Ensure each gene contributes equally to the variance.

- Method:

- Apply a log-transform: `sc.pp.log1p(adata)`

- Center and scale to unit variance: `sc.pp.scale(adata)`

Perform PCA:

- Run PCA on the preprocessed data matrix. Visually inspect the results using overview plots, scree plots, and scatter plots colored by relevant metadata.
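The protocol can be sketched end to end without any single-cell library. The function below is a NumPy stand-in that follows the same order of steps (QC, CPM, feature selection, log-transform and scaling, PCA); it is not the Scanpy implementation. The thresholds, the dispersion-based gene ranking, the simulated data, and all names are assumptions chosen for illustration.

```python
import numpy as np


def preprocess_and_pca(counts, min_counts=500, min_cells=10, n_hvg=200, n_pcs=10):
    """NumPy stand-in for the protocol; `counts` is a cells x genes matrix.

    Returns PC scores, the variance fraction of each PC, and the mask of
    cells that passed QC. Mitochondrial filtering is omitted for brevity.
    """
    counts = np.asarray(counts, dtype=float)

    # 1. Quality control: drop shallow cells, then rarely detected genes.
    keep_cells = counts.sum(axis=1) >= min_counts
    counts = counts[keep_cells]
    counts = counts[:, (counts > 0).sum(axis=0) >= min_cells]

    # 2. Normalization: linear scaling to counts per million.
    cpm = counts / counts.sum(axis=1, keepdims=True) * 1e6

    # 3. Feature selection: keep the genes with the highest dispersion
    #    (variance / mean), a simple proxy for an HVG method.
    dispersion = cpm.var(axis=0) / cpm.mean(axis=0)
    hvg = np.argsort(dispersion)[::-1][:n_hvg]

    # 4. Log-transform, then center and scale each gene to unit variance.
    x = np.log1p(cpm[:, hvg])
    x = (x - x.mean(axis=0)) / x.std(axis=0)

    # 5. PCA via singular value decomposition.
    u, s, _ = np.linalg.svd(x, full_matrices=False)
    explained = s ** 2 / np.sum(s ** 2)
    return u[:, :n_pcs] * s[:n_pcs], explained[:n_pcs], keep_cells


# Simulated, hypothetical data: 300 cells of two types plus 5 near-empty cells.
rng = np.random.default_rng(0)
cell_type = np.repeat([0, 1], 150)
profile = np.tile(rng.gamma(2.0, 1.0, size=1000), (300, 1))
profile[cell_type == 1, :100] *= 4.0
profile /= profile.sum(axis=1, keepdims=True)
depth = rng.uniform(2e4, 2e5, size=300)
good = rng.poisson(profile * depth[:, None])
empty = rng.poisson(0.1, size=(5, 1000))
counts = np.vstack([good, empty])

scores, explained, kept = preprocess_and_pca(counts)
r = np.corrcoef(scores[:, 0], cell_type[kept[:300]])[0, 1]
print(f"cells kept: {kept.sum()}, |corr(PC1, cell type)| = {abs(r):.2f}")
```

The simulated depths are much higher than typical droplet-based data so that zero counts do not dominate; real scRNA-seq matrices are sparser and usually benefit from the dedicated HVG and normalization routines named in the protocol.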

The logical workflow and the consequences of skipping normalization are summarized in the diagram below.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for scRNA-seq PCA Analysis

| Tool / Reagent | Function / Purpose | Example Use Case |
|---|---|---|
| Scanpy (Python) [19] [21] | A comprehensive toolkit for single-cell data analysis. It includes functions for QC, normalization, HVG selection, PCA, and visualization. | The primary software used in the tutorial from CZI for normalizing data and performing PCA on the Tabula Muris dataset [21]. |
| Seurat (R) [19] | A comprehensive R package for the analysis of single-cell genomics data. Provides a complete workflow from raw data to clustering and differential expression. | A popular alternative to Scanpy, widely used in the bioinformatics community for scRNA-seq analysis, including PCA. |
| Highly Variable Genes (HVGs) [19] | A curated list of genes that exhibit the most variation across cells, likely to drive the separation of cell populations. | Used as input for PCA to reduce noise and improve the separation of cell populations by focusing on biologically relevant features. |
| Counts Per Million (CPM) [19] [21] | A simple linear normalization method that corrects for library size by scaling each cell's total counts to a common value (one million). | The initial normalization step applied to the brain dataset to correct for differences in sequencing depth before log transformation and scaling [21]. |
| Harmony / BBKNN [19] | Algorithms designed for integrating single-cell data from different batches or experiments by removing technical batch effects. | Applied to the data before PCA when cells cluster by batch (e.g., sequencing run, facility) instead of by cell type, to ensure PCA captures biological variance. |

Frequently Asked Questions (FAQs)

If my data is already from a normalized quantification method (like Cufflinks or RSEM), do I need to normalize again before PCA? Some quantification methods incorporate library size during estimation and may not require further normalization for sequencing depth [21]. However, it is still critical to check your data for other sources of unwanted variation. Centering and scaling to unit variance is often still recommended to ensure no single gene dominates the PCA due to its expression level alone.

How do I know if my normalization was successful? Use PCA overview plots to diagnose success [21]. A successful normalization will show:

- Score Plot: Cells forming more Gaussian-looking groups, not strictly separated by technical metadata like `mouse.id` or `plate.barcode`.

- Loadings Plot: Multiple genes contributing to each principal component, not just one or two.

- Variance Explained: The variance is more evenly distributed across the first several principal components (e.g., the first 5-10), rather than being dominated exclusively by PC1.

What is the difference between 'normalization' and 'standardization' in this context? In the context of PCA preprocessing, the terms are sometimes used interchangeably, but they can have specific meanings [20]:

- Normalization often refers to correcting for library size (e.g., CPM).

- Standardization typically refers to the subsequent step of scaling each gene to have a mean of 0 and a standard deviation of 1 (unit variance). This step is crucial for preventing high-expression genes from biasing the PCA [19] [20].

Principal Component Analysis (PCA) is a foundational dimensionality reduction technique used to explore and visualize high-dimensional data, such as that from genomic sequencing studies [23] [17]. It works by transforming the data into a new set of variables, the principal components (PCs), which are ordered so that the first few retain most of the variation present in the original dataset [23]. However, the presence of technical variation, such as batch effects or sequencing depth bias, can confound these components, creating artifacts that obscure true biological signals and lead to spurious conclusions [24] [25]. This guide provides troubleshooting protocols to identify, diagnose, and correct for these artifacts, ensuring the biological integrity of your PCA findings.

Frequently Asked Questions (FAQs)

FAQ 1: What are the common indicators of technical artifacts in my PCA plot? Technical artifacts often manifest as clusters or patterns in the PCA plot that correlate with technical, non-biological variables. Key indicators include:

- Batch Effects: Samples cluster strongly according to processing date, sequencing lane, or laboratory technician [25] [26].

- Outliers: One or a few samples appear isolated from the main cluster of data points. These can be identified using standard deviation thresholds (e.g., samples beyond 2 or 3 standard deviations) [25].

- Dominant, Biologically Irrelevant PCs: The first one or two principal components are driven by technical covariates rather than the biological conditions of interest [24] [27].

FAQ 2: How can I determine if an outlier sample should be removed? Not all outliers are bad. A systematic evaluation is crucial:

- Correlate with Metadata: Check the outlier's metadata for technical issues (e.g., low read count, high contamination).

- Biological Plausibility: Assess if the outlier could represent a valid, rare biological state.

- Re-run Analysis: Re-compute PCA without the suspected outlier. If its removal causes a major shift in the structure of the remaining data, it was exerting undue influence and its removal may be justified [25].

FAQ 3: My data has a strong batch effect. What correction methods are available? After identifying a batch effect, several correction methods can be applied:

- Median Normalization: A straightforward method that adjusts each batch to a common median scale. This is effective when the batch effect is a systematic, global shift [25].

- Advanced Algorithms: Tools like ComBat or those implemented in the limma package can model and remove more complex batch effects.

- Enhanced PCA: Methods like PCA-Plus incorporate batch information directly into the visualization by calculating group centroids and dispersion rays, helping to objectively quantify the batch effect's magnitude [26].

Always validate that the correction method removes technical variation without distorting the underlying biological signal.
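As an illustration of the simplest option, the sketch below applies median normalization to a single gene by shifting each batch so its median matches the overall median. This is a simplified reading of the method, not the implementation of any specific tool; `median_align` and the example values are hypothetical.

```python
import statistics

def median_align(values, batches):
    """Shift each batch so that its median equals the overall median (one gene)."""
    overall = statistics.median(values)
    by_batch = {}
    for value, batch in zip(values, batches):
        by_batch.setdefault(batch, []).append(value)
    # Offset of each batch median from the overall median.
    offsets = {b: statistics.median(vs) - overall for b, vs in by_batch.items()}
    return [value - offsets[batch] for value, batch in zip(values, batches)]

# Hypothetical log-expression values for one gene across two batches.
expr = [5.0, 5.2, 5.4, 7.0, 7.2, 7.4]
batch = ["A", "A", "A", "B", "B", "B"]
corrected = median_align(expr, batch)
```

This only removes a global shift per batch. If the batches also differ in biological composition, the same shift removes real signal, which is why validation after correction matters.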

FAQ 4: Are there PCA alternatives that are more robust to technical variation? Yes, several related techniques can be more effective in specific scenarios:

- Contrastive PCA (cPCA): This method uses a background dataset (e.g., control samples or a dataset known to contain the technical variation) to identify low-dimensional structures that are enriched in your target dataset. This helps to visualize patterns specific to your condition of interest that might be masked by dominant technical noise in standard PCA [27].

- PCA-Plus: This enhancement to conventional PCA provides tools for objectively quantifying group differences (e.g., batch effects) using a novel Dispersion Separability Criterion (DSC) and includes a permutation test for statistical significance [26].

Troubleshooting Guides

Problem 1: Suspected Batch Effect Artifact

Symptoms: Clusters in the PCA plot correspond to processing batches rather than biological groups.

Diagnosis and Solution Protocol:

- Color by Batch: Re-plot the PCA, coloring the data points by their batch identifier (e.g., sequencing run date).

- Compute Centroids: Calculate the centroid (mean position) for each batch group in the principal component space [26].

- Calculate DSC: Apply the Dispersion Separability Criterion (DSC) to quantify the batch effect. DSC is the ratio of between-group dispersion to within-group dispersion. A higher DSC indicates greater separation between batches [26].

- Apply Correction: Choose and apply a batch correction method like median normalization [25].

- Re-assess: Perform PCA on the corrected data and confirm that batch-associated clustering is reduced. The DSC value should decrease post-correction.
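The centroid and DSC steps can be sketched in plain Python as follows. This assumes the trace-ratio definition used in this guide (between-group scatter weighted by group size, divided by within-group scatter); it is not the PCA-Plus package code, and `dsc` and the example scores are illustrative.

```python
def dsc(scores, groups):
    """Ratio of between-group to within-group scatter (traces) in PC space."""
    n, d = len(scores), len(scores[0])
    overall = [sum(s[j] for s in scores) / n for j in range(d)]
    members = {}
    for point, group in zip(scores, groups):
        members.setdefault(group, []).append(point)
    between = within = 0.0
    for points in members.values():
        centroid = [sum(p[j] for p in points) / len(points) for j in range(d)]
        # Trace of the between-group scatter: size-weighted squared centroid distance.
        between += len(points) * sum((c - o) ** 2 for c, o in zip(centroid, overall))
        # Trace of the within-group scatter: squared distances to the group centroid.
        within += sum(sum((x - c) ** 2 for x, c in zip(p, centroid)) for p in points)
    return between / within if within > 0 else float("inf")

# Hypothetical (PC1, PC2) scores for six samples.
pc_scores = [(-5, 0), (-4, 1), (-6, -1), (5, 0), (4, 1), (6, -1)]
dsc_by_batch = dsc(pc_scores, ["run1"] * 3 + ["run2"] * 3)
dsc_mixed = dsc(pc_scores, ["run1", "run2", "run1", "run2", "run1", "run2"])
```

Here the grouping that follows the PC1 split gives a much larger DSC than the interleaved grouping, which is the pattern expected when batch drives the leading component.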

The following workflow outlines this diagnostic and correction process:

Problem 2: Dominant Variation from Sequencing Depth

Symptoms: The first principal component (PC1) explains a very high percentage of variance and separates samples based purely on their total read count (sequencing depth), masking biologically relevant variation.

Diagnosis and Solution Protocol:

- Correlate PC with Depth: Calculate the correlation between PC1 values and the total read count (sequencing depth) for each sample. A strong correlation indicates a depth-related artifact.

- Apply Transformation: Normalize the count data for library size and apply a variance-stabilizing transformation before performing PCA. For RNA-Seq data, a log-transformation of normalized counts or VST (Variance Stabilizing Transformation) is often effective.

- Utilize Contrastive PCA: If a suitable background dataset is available (e.g., samples with similar technical variation but without the biological signal of interest), apply cPCA. cPCA finds components with high variance in your target data but low variance in the background, effectively filtering out the shared technical noise like that from sequencing depth [27].

- Validate: Confirm that the correlation between the new top PCs and sequencing depth is minimized and that biological groups of interest become more distinguishable.
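The correlation check in the first and last steps can be sketched as below. The PC1 scores and read totals are hypothetical placeholders for values taken from your own PCA and QC reports, and the 0.7 cut-off is the rule of thumb used in this guide, not a universal threshold.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical PC1 scores and total read counts for five samples.
pc1_scores = [-3.1, -1.9, -0.2, 1.4, 3.8]
total_reads = [8.0e6, 1.1e7, 1.6e7, 2.1e7, 3.0e7]

r = pearson(pc1_scores, total_reads)
depth_driven = abs(r) > 0.7  # rule-of-thumb threshold for concern
```

Running the same check on the top PCs after normalization gives a direct before-and-after comparison.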

The logical relationship between the problem and solution pathways is shown below:

Table 1: Quantitative Metrics for Artifact Diagnosis

This table summarizes key metrics to diagnose and quantify technical artifacts in PCA.

| Metric | Calculation Method | Interpretation | Typical Threshold for Concern |

|---|---|---|---|

| Dispersion Separability Criterion (DSC) [26] | \( DSC = D_b / D_w \), where \( D_b \) is the trace of the between-group scatter matrix and \( D_w \) is the trace of the within-group scatter matrix. | Quantifies separation between pre-defined groups (e.g., batches). A higher value indicates stronger group structure. | Context-dependent; a significant p-value from the accompanying permutation test indicates a non-random group structure [26]. |

| PC-Sequencing Depth Correlation | Pearson correlation coefficient between sample sequencing depths and their values along a principal component. | Measures the influence of sequencing depth on a specific PC. A strong correlation (near +1 or -1) suggests a technical artifact. | Absolute value > 0.7 suggests a strong, potentially confounding correlation. |

| Standard Deviation Outlier Threshold [25] | Flag samples that fall outside an ellipse set at a specified number of standard deviations from the data centroid in PC space. | Identifies individual samples that are extreme outliers, which may unduly influence the PCA model. | Samples beyond 2.0 (approx. 95% of data) or 3.0 (approx. 99.7% of data) standard deviations. |

Table 2: Key Research Reagent Solutions

Essential computational tools and packages for implementing the diagnostics and corrections discussed.

| Tool / Reagent | Function / Purpose | Key Utility |

|---|---|---|

| PCA-Plus R Package [26] | Enhanced PCA with group centroids, dispersion rays, and DSC metric. | Objectively quantifies and visualizes batch effects and group differences. |

| Contrastive PCA (cPCA) [27] | Identifies patterns enriched in a target dataset relative to a background dataset. | Suppresses shared technical variation (e.g., sequencing depth) to reveal condition-specific signals. |

| SmartPCA (EIGENSOFT) [24] | A widely cited implementation of PCA for population genetics. | Standard tool for initial data exploration; serves as a baseline for identifying artifacts. |

| scales & prismatic packages [28] | Automates the selection of high-contrast colors for data visualization. | Ensures accessibility and clarity in PCA plots, adhering to WCAG contrast guidelines [29] [30]. |

Protocol 1: Implementing Contrastive PCA (cPCA) to Correct for Sequencing Depth Bias

Objective: To use cPCA to visualize biological patterns in gene expression data that are obscured by technical variation from sequencing depth.

Methodology:

- Data Preparation:

- Target Dataset ({x_i}): Your primary gene expression count matrix (e.g., from RNA-Seq), with genes as rows and samples as columns.

- Background Dataset ({y_i}): A carefully chosen dataset that shares the technical variation (e.g., similar distribution of sequencing depths, batch structure) but lacks the specific biological signal of interest. This could be a set of control samples from the same sequencing runs or a public dataset processed with similar technology.

- Preprocessing: Normalize both datasets for sequencing depth (e.g., using TPM or CPM). Log-transform the normalized counts to stabilize variance.

- Covariance Matrix Computation: Calculate the sample covariance matrices for both the target dataset (Σ_X) and the background dataset (Σ_Y).

- Contrastive Parameter Search: The core of cPCA involves finding a direction (contrastive component) that maximizes variance in the target data while minimizing variance in the background data. This is done by computing the leading eigenvectors of the matrix \( \Sigma_X - \alpha \Sigma_Y \), where α ≥ 0 is a contrastive parameter. Setting α = 0 recovers standard PCA of the target data, and larger values penalize background variance more strongly. A range of α values must be tested to find the one that reveals the most informative structure [27].

- Visualization and Interpretation: Project the target data onto the top contrastive principal components (cPCs). Visually inspect the resulting scatterplot for clusters or trajectories that correspond to biological groups. These patterns are enriched in your target data relative to the technical background.

- Validation: Compare the cPCA plot to a standard PCA plot of the same target data. Successful correction is indicated by the emergence of biologically meaningful clusters in cPCA that were absent or obscured in the standard PCA.
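The covariance and contrastive steps of this protocol can be illustrated with a small pure-Python sketch. It is a toy under stated assumptions, not the published cPCA implementation: the data are two hypothetical features (one shared technical axis, one biological axis), rows are samples, and the leading eigenvector is found with a simple shifted power iteration rather than a full eigendecomposition.

```python
import math

def covariance(rows):
    """Sample covariance matrix; rows are samples, columns are features."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    return [[sum(c[a] * c[b] for c in centered) / (n - 1) for b in range(d)]
            for a in range(d)]

def top_eigenvector(matrix, n_iter=500):
    """Leading eigenvector of a symmetric matrix via shifted power iteration."""
    d = len(matrix)
    # Gershgorin bound: adding it to the diagonal makes all eigenvalues
    # non-negative, so the iteration converges to the largest original one.
    shift = max(sum(abs(x) for x in row) for row in matrix)
    shifted = [[matrix[a][b] + (shift if a == b else 0.0) for b in range(d)]
               for a in range(d)]
    v = [1.0 / math.sqrt(d)] * d
    for _ in range(n_iter):
        w = [sum(shifted[a][b] * v[b] for b in range(d)) for a in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0.0:
            break
        v = [x / norm for x in w]
    return v

def contrastive_direction(target, background, alpha):
    """Top direction of cov(target) - alpha * cov(background)."""
    cov_x, cov_y = covariance(target), covariance(background)
    d = len(cov_x)
    contrast = [[cov_x[a][b] - alpha * cov_y[a][b] for b in range(d)]
                for a in range(d)]
    return top_eigenvector(contrast)

# Feature 0: large technical variation shared by both datasets.
# Feature 1: smaller variation that is stronger in the target data.
target = [(10.0, 2.0), (-10.0, 2.0), (10.0, -2.0), (-10.0, -2.0)]
background = [(10.0, 0.5), (-10.0, 0.5), (10.0, -0.5), (-10.0, -0.5)]

pca_dir = contrastive_direction(target, background, alpha=0.0)   # standard PCA
cpca_dir = contrastive_direction(target, background, alpha=1.0)  # contrastive
```

With α = 0 the leading direction follows the shared technical feature, while with α = 1 it switches to the target-enriched feature. For real expression matrices a numerical library's symmetric eigensolver would be the more practical choice than power iteration.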

Normalization Techniques for Robust PCA: From Basic Scaling to Advanced Methods

Counts Per Million (CPM) is a fundamental normalization technique in RNA sequencing (RNA-seq) data analysis. Its primary purpose is to account for differences in sequencing depth—the total number of reads obtained from a sample—which, if uncorrected, would make gene expression levels incomparable between samples [31]. While CPM is valued for its simplicity and intuitiveness [8], researchers must be aware of its specific limitations, particularly in the context of sophisticated analyses like Principal Component Analysis (PCA), where it can introduce significant biases [9].

This guide addresses common questions and troubleshooting points regarding the use and misuse of CPM normalization.

Frequently Asked Questions & Troubleshooting

FAQ 1: What is the correct way to calculate CPM, and when should I use it?

CPM is calculated for each gene in a sample using the following formula [31] [8]:

CPM = (Number of reads mapped to the gene / Total number of mapped reads in the sample) * 1,000,000

CPM is suitable for within-sample comparisons when you need to assess the relative abundance of different genes in a single sample, keeping in mind the gene-length caveat described in FAQ 4. It corrects for the fact that samples sequenced to different depths will have different raw counts, allowing you to see which genes account for the largest share of reads in that specific sample [8].
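A minimal Python sketch of the CPM formula follows. It assumes the total number of mapped reads per sample equals the column sum of the gene count matrix; the function name `cpm` here is a local helper, not the edgeR function of the same name.

```python
def cpm(counts):
    """Counts per million for a genes-by-samples matrix (list of rows)."""
    n_samples = len(counts[0])
    # Library size per sample, taken here as the sum of its gene counts.
    lib_sizes = [sum(row[j] for row in counts) for j in range(n_samples)]
    return [[row[j] / lib_sizes[j] * 1_000_000 for j in range(n_samples)]
            for row in counts]

# Hypothetical counts: sample 2 is the same library sequenced twice as deep.
raw_counts = [
    [100, 200],
    [300, 600],
    [600, 1200],
]
cpm_values = cpm(raw_counts)
```

Both samples end up with identical CPM values, which shows that the depth difference is removed. Nothing in the calculation accounts for gene length or library composition.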

FAQ 2: Why are my sample clusters in a PCA plot driven by sequencing depth and not by biology?

This is a classic symptom of using CPM (or other similar methods like RPKM/FPKM) for between-sample comparisons without further correction. CPM only scales counts by the total library size. It does not account for library composition bias [13]. This bias occurs when a few genes are extremely highly expressed in one condition, consuming a large fraction of the sequencing reads. This skews the total count and, consequently, the CPM values for all other genes in that sample. PCA is highly sensitive to these systematic technical differences, which can overshadow true biological variation [9]. For PCA and other between-sample analyses, use methods designed to handle composition bias, such as TMM (in edgeR) or median-of-ratios (in DESeq2) [13] [31].

FAQ 3: I've normalized with CPM, but my differential expression analysis results are unreliable. Why?

CPM (and also TPM, RPKM, FPKM) is not suitable for direct use in statistical testing for differential expression (DE) [13] [31]. These normalized counts are not the native input for statistical tools like DESeq2 or edgeR. These tools perform their own internal normalization based on robust assumptions about the data (e.g., that most genes are not differentially expressed) to estimate dispersion and model variance accurately [13]. Using CPM-normalized data as input for these tools can violate their statistical assumptions and lead to an increased false discovery rate. You should provide these tools with the raw count matrix and allow them to apply their own normalization methods [8].

FAQ 4: Can I use CPM to compare expression levels between two different genes?

No. CPM does not correct for gene length [31]. Longer genes will naturally have more reads mapped to them than shorter genes expressed at the same biological abundance [31] [8]. Therefore, a higher CPM value for Gene A compared to Gene B could simply mean that Gene A is longer, not that it is more highly expressed. For within-sample comparisons between genes, use units that account for both sequencing depth and gene length, such as TPM (Transcripts Per Million) or FPKM [31] [8].

Comparative Analysis of Normalization Methods

The table below summarizes key normalization methods and their characteristics to help you select the appropriate one for your analysis goals.

| Method | Sequencing Depth Correction | Gene Length Correction | Library Composition Correction | Primary Use Case |

|---|---|---|---|---|

| CPM | Yes [31] | No [13] | No [13] | Initial data screening; within-sample relative comparison [8]. |

| TPM | Yes [31] | Yes [31] | Partial [13] | Within-sample gene comparison; cross-sample visualization [13] [8]. |

| FPKM/RPKM | Yes [31] | Yes [31] | No [13] | (Largely superseded by TPM) Within-sample gene comparison [8]. |

| TMM (edgeR) | Yes [31] | No [13] | Yes [13] [31] | Differential expression analysis; between-sample comparisons [8]. |

| Median-of-Ratios (DESeq2) | Yes [13] | No [13] | Yes [13] | Differential expression analysis; between-sample comparisons [13]. |

| Quantile | Varies | No | Yes (assumes global distribution is technical) | Making sample distributions comparable; cross-dataset integration [31] [8]. |

Experimental Workflow: Placing CPM in the RNA-seq Analysis Pipeline

Understanding where CPM fits into a complete RNA-seq analysis workflow is crucial for avoiding common pitfalls. The diagram below outlines a standard workflow, highlighting the appropriate and inappropriate stages for using CPM.

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table lists key computational tools and resources essential for implementing proper RNA-seq normalization and analysis.

| Tool/Resource | Function | Relevance to CPM & Normalization |

|---|---|---|

| FastQC / MultiQC | Quality control of raw sequencing data [13] | Essential first step to identify issues (e.g., adapter contamination, low quality) before any normalization is applied. |

| DESeq2 (R/Bioconductor) | Differential expression analysis [13] | Uses its own median-of-ratios normalization. Input should be raw counts, not CPM. |

| edgeR (R/Bioconductor) | Differential expression analysis [13] | Uses its own TMM normalization. Input should be raw counts, not CPM. |

| Kallisto / Salmon | Pseudoalignment for transcript quantification [13] | Provides fast transcript-level abundance estimates, which can be aggregated to gene-level counts or imported with uncertainty for differential analysis. |

| SAMtools / Picard | Processing aligned reads (BAM files) [13] | Used in post-alignment QC to remove poorly mapped or duplicate reads, ensuring the integrity of the raw counts used for normalization. |

In the context of sequencing depth bias correction for Principal Component Analysis (PCA) research, the choice of normalization method is a critical preprocessing step that can profoundly influence downstream analytical outcomes. Methods that accurately account for technical variations, such as differences in sequencing depth and RNA composition, are essential for ensuring that the principal components reflect genuine biological signal rather than technical artifacts. Two of the most robust methods for this purpose are the Trimmed Mean of M-values (TMM) from the edgeR package and the Median-of-Ratios (Relative Log Expression) method from the DESeq2 package. This guide provides an in-depth technical overview, troubleshooting advice, and frequently asked questions to assist researchers in implementing these methods effectively.

Methodologies at a Glance

The table below summarizes the core features of the TMM and Median-of-Ratios normalization methods.

Table 1: Comparison of Core Normalization Methods

| Feature | DESeq2's Median-of-Ratios (RLE) | edgeR's TMM |

|---|---|---|

| Primary Biases Accounted For | Sequencing depth & RNA composition [32] | Sequencing depth & RNA composition [33] [8] |

| Core Hypothesis | The majority of genes are not differentially expressed [32] | The majority of genes are not differentially expressed [33] [8] |

| Reference Sample | A pseudo-reference sample created from the geometric mean of all samples [32] | A single sample is chosen as a reference, often the one with an upper quartile closest to the mean [33] |

| Normalization Factor | The median of ratios of counts to the pseudo-reference [32] | A weighted, trimmed mean of log expression ratios (M-values) [33] |

| Recommended Use | Gene count comparisons between samples and DE analysis [32] | Gene count comparisons between and within samples, and DE analysis [32] [8] |

Workflow and Signaling Pathways

The following diagrams illustrate the logical workflows for implementing the DESeq2 and edgeR normalization methods.

DESeq2 Median-of-Ratios Workflow

edgeR TMM Normalization Workflow

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 2: Key Computational Tools and Their Functions

| Item | Function/Purpose |

|---|---|

| R Statistical Environment | The foundational software platform for executing all statistical computations and analyses. |

| Bioconductor Project | A repository for bioinformatics R packages, providing DESeq2, edgeR, and other essential tools. |

| DESeq2 R Package | Implements the Median-of-Ratios normalization and a suite of differential expression analysis methods [32]. |

| edgeR R Package | Implements the TMM normalization and statistical methodologies for differential expression analysis [33]. |

| Raw Count Matrix | The input data, where rows represent genes and columns represent samples, containing the number of mapped reads per gene. |

| High-Performance Computing (HPC) Cluster | Recommended for handling the intensive computational load of large RNA-seq datasets. |

Troubleshooting Common Issues

Problem 1: DESeq2 Error Regarding Repeated Input or Different Number of Rows

Issue: When running DESeq2, you encounter an error stating that the input file has a "repeated input" or that there is a "different number of rows" in your count data [34].

Solutions:

- Check for Replicates: DESeq2 and most differential expression tools require biological replicates. Ensure your experimental design includes at least two samples per condition [34].

- Verify Gene Count Consistency: All count files must have the same number of rows (genes). A different number of lines indicates an upstream problem with the reference annotation or the feature counting step (e.g., using different genome annotations or versions for different samples) [34].

- Inspect Upstream Analysis: Re-run the alignment and read counting steps (e.g., StringTie, featureCounts) using the exact same reference annotation file for all samples to ensure consistency [34].

Problem 2: Conceptual Confusion with edgeR's "Normalized Counts"

Issue: There is confusion about how to obtain "TMM-normalized counts" from edgeR, as the package does not store a separate matrix of normalized counts internally [35].

Solutions:

- Use the cpm() Function: The standard way to export normalized expression values from edgeR is to use the cpm() (counts per million) function on the DGEList object after running calcNormFactors(). This yields counts normalized by the effective library sizes [35].

- Understand Effective Library Size: TMM normalization produces a scaling factor used to compute an "effective library size." The cpm() function uses this effective library size internally, resulting in values that are normalized for both sequencing depth and RNA composition [35].
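The arithmetic behind the effective library size can be sketched in Python as below. The normalization factors are hypothetical placeholders for values that calcNormFactors() would estimate, and `effective_cpm` is an illustrative helper, not an edgeR function.

```python
def effective_cpm(counts, norm_factors):
    """CPM computed against effective library sizes (library size x factor)."""
    n_samples = len(counts[0])
    lib_sizes = [sum(row[j] for row in counts) for j in range(n_samples)]
    # Effective library size = raw library size scaled by the normalization factor.
    effective = [size * f for size, f in zip(lib_sizes, norm_factors)]
    return [[row[j] / effective[j] * 1e6 for j in range(n_samples)]
            for row in counts]

# Hypothetical counts for two samples with equal raw library sizes (200 reads).
raw = [
    [50, 50],
    [150, 150],
]
factors = [1.25, 0.8]  # assumed TMM-style factors; not estimated here
tmm_cpm = effective_cpm(raw, factors)
```

Although the raw library sizes are equal, the two samples receive different CPM values because their effective library sizes differ. This is how composition bias is corrected without storing a separate normalized count matrix.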

Frequently Asked Questions (FAQs)

Q1: Can I use DESeq2 to perform TMM normalization?

No, TMM normalization is a specific method implemented in the edgeR package. While DESeq2 can output normalized counts using its own Median-of-Ratios method, it does not implement TMM [36].

Q2: For between-sample comparisons in a PCA, which normalization method should I use?

Both TMM and Median-of-Ratios are designed for between-sample comparisons and are suitable for use prior to PCA [32] [8]. They effectively correct for sequencing depth and RNA composition biases, which is crucial for ensuring that the computed principal components reflect biological, rather than technical, variance.

Q3: Why are my normalized counts from DESeq2 and edgeR not whole numbers?

Normalized counts generated by these methods are the result of dividing raw counts by a real-numbered size or normalization factor. They are therefore not integers and should not be treated as such [32]. These values are used for downstream analysis and visualization, not as raw counts.

Q4: How do I choose between TMM and DESeq2's Median-of-Ratios method?

Both methods are highly robust and perform similarly in many scenarios [37] [38]. The choice can depend on the specific data set and the broader analytical pipeline you intend to use. If you plan to use the full DESeq2 suite for differential expression analysis, it is logical to use its built-in normalization. The same applies to edgeR. For a simple two-condition experiment, the choice of normalization method may have minimal impact, but for more complex designs, a careful comparison might be beneficial [37].

FAQs on Normalization Methods

1. What is the fundamental difference between TPM and RPKM/FPKM?

The core difference lies in the order of operations for normalization, which profoundly impacts the results [39].

- RPKM/FPKM first normalizes for sequencing depth and then for gene length.

- TPM first normalizes for gene length and then for sequencing depth.

This means that for a given sample, the sum of all TPM values is constant (equal to 1 million), allowing you to directly interpret each value as the fraction of total transcripts that a specific gene represents. In contrast, the sum of all RPKM or FPKM values in a sample can vary, making cross-sample comparisons of individual gene expression less straightforward [39] [40] [41].
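The effect of the order of operations can be checked with a short Python sketch. The counts and gene lengths are hypothetical, and total reads are taken as the sum of the gene counts in each sample.

```python
def rpkm(counts, lengths_bp):
    """Depth first, then length: reads per kilobase per million reads."""
    total_reads = sum(counts)
    return [c / (length / 1e3) / (total_reads / 1e6)
            for c, length in zip(counts, lengths_bp)]

def tpm(counts, lengths_bp):
    """Length first, then depth: per-kilobase rates rescaled to sum to 1e6."""
    rates = [c / (length / 1e3) for c, length in zip(counts, lengths_bp)]
    scale = sum(rates)
    return [rate / scale * 1e6 for rate in rates]

# Hypothetical gene lengths (bp) and counts for two samples.
lengths = [1000, 2000, 4000]
sample_a = [100, 200, 400]
sample_b = [100, 100, 1600]

tpm_sums = (sum(tpm(sample_a, lengths)), sum(tpm(sample_b, lengths)))
rpkm_sums = (sum(rpkm(sample_a, lengths)), sum(rpkm(sample_b, lengths)))
```

The TPM totals are one million for both samples, while the RPKM totals differ because each sample distributes its reads differently across genes of different lengths.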

2. Why is TPM often preferred for cross-sample comparison, especially in the context of PCA?

PCA is sensitive to the variance structure of the entire dataset. Normalization methods that do not produce a consistent total across samples, like RPKM/FPKM, can introduce spurious variance that distorts the principal components [9]. Since TPM ensures that the total quantified expression is the same for every sample, it preserves the relative relationships between genes and provides a more stable foundation for multivariate analyses like PCA, leading to more reliable and interpretable results [39] [41].

3. Can I directly compare TPM values from different sequencing protocols (e.g., poly(A)+ selection vs. rRNA depletion)?

No, you should not directly compare them. The relative abundance measured by TPM depends on the composition of the RNA population in your sample [42]. Different sample preparation protocols sequence fundamentally different RNA repertoires. For example, in a poly(A)+ selected library, protein-coding genes may dominate, while in an rRNA-depleted library, non-coding RNAs can be the most abundant species [42]. Comparing TPM values directly in such a case would be misleading, as the same physical RNA molecule could have a very different relative value in the two different backgrounds.

Troubleshooting Guide

Problem: Poor sample clustering in PCA that does not reflect biological expectations.

- Potential Cause: The use of RPKM/FPKM normalization for cross-sample comparison.

- Solution: Re-normalize your gene count data using the TPM method. Because TPM maintains a constant total across samples, it provides a more consistent proportional representation of gene expression, which often improves clustering based on biological rather than technical differences [39] [9]. For downstream differential expression analysis, consider using tools like DESeq2 or edgeR that employ more advanced normalization methods (e.g., median-of-ratios or TMM) designed specifically for cross-condition comparisons [43] [13].

Problem: Inconsistent results when comparing expression of the same gene across different projects.

- Potential Cause: Differences in experimental protocol or data processing pipelines, combined with the use of relative expression measures (TPM, RPKM) [42].

- Solution: Be cautious when integrating public datasets. Always note the library preparation protocol (e.g., poly(A)+ selection vs. rRNA depletion) and the transcriptome annotation used for quantification. If possible, re-process raw reads through a unified bioinformatics pipeline. For meta-analyses, consider using normalized counts from a robust pipeline (e.g., DESeq2) instead of relative abundance measures [43].

Comparison of RNA-Seq Normalization Methods

The table below summarizes the key characteristics, formulas, and use cases for common normalization measures [13] [40] [41].

| Method | Full Name | Key Formula | Corrects for Sequencing Depth? | Corrects for Gene Length? | Sum is Constant Across Samples? | Primary Recommended Use |

|---|---|---|---|---|---|---|

| TPM | Transcripts Per Million | \( \text{TPM}_i = \frac{\text{Reads}_i / \text{Length}_i}{\sum_j \text{Reads}_j / \text{Length}_j} \times 10^6 \) | Yes | Yes | Yes | Cross-sample comparison, PCA |

| RPKM/FPKM | Reads/Fragments Per Kilobase per Million | \( \text{RPKM}_i = \frac{\text{Reads}_i}{\frac{\text{Length}_i}{10^3} \times \frac{\text{Total Reads}}{10^6}} \) | Yes | Yes | No | Within-sample gene comparison (legacy) |

| RPM/CPM | Reads/Counts Per Million | \( \text{RPM}_i = \frac{\text{Reads}_i}{\text{Total Reads}} \times 10^6 \) | Yes | No | Yes (10^6, when Total Reads is the sum over all quantified features) | Small RNA-seq, ChIP-seq |

Note: FPKM is the paired-end equivalent of RPKM. The formulas use "Reads" for simplicity [40] [41].

Experimental Workflow: From Raw Reads to Normalized Expression

The following diagram illustrates a standard RNA-seq data processing workflow, highlighting the quantification and normalization steps critical for obtaining accurate expression values for downstream analyses like PCA [13] [44].

The Scientist's Toolkit: Essential Reagents & Materials

The table below lists key materials and tools used in a typical RNA-seq experiment for gene expression analysis [42] [13] [44].

| Item | Function / Description |

|---|---|

| Oligo(dT) Beads | For purification and enrichment of polyadenylated mRNA from total RNA. |

| rRNA Depletion Kits | For removal of abundant ribosomal RNA (rRNA) to sequence both polyA+ and polyA- transcripts. |

| Fragmentation Enzymes/Buffers | To randomly fragment RNA or cDNA into uniform sizes suitable for sequencing. |

| Reference Transcriptome | A curated set of known transcript sequences (e.g., from GENCODE) used for read alignment and quantification. |

| Alignment Software (e.g., STAR, HISAT2) | Maps sequenced reads to a reference genome or transcriptome. |

| Pseudoalignment Tools (e.g., Kallisto, Salmon) | Fast, alignment-free software for transcript abundance estimation. |

| Quantification Tools (e.g., featureCounts, HTSeq) | Generates raw read counts per gene from aligned read files (BAM). |

Frequently Asked Questions (FAQs)

Core Concepts

1. Why is normalization absolutely necessary before performing PCA on RNA-seq data?

PCA is a variance-maximizing technique. In RNA-seq data, the raw read counts for a gene are influenced not only by its true biological expression level but also by technical factors, primarily the sequencing depth (the total number of reads obtained for a sample). A sample with a higher sequencing depth will naturally have higher raw counts across all genes. If PCA is run on unnormalized data, it will prioritize this technical variance from sequencing depth over more subtle biological variances, potentially leading to misleading results where samples cluster based on their library size rather than biological condition [13] [20]. Normalization adjusts the counts to remove these technical biases, ensuring that PCA captures meaningful biological variation [9].

2. What is the difference between 'within-sample' and 'between-sample' normalization?

- Within-sample normalization adjusts gene counts to account for two factors: sequencing depth and gene length. This allows for a more accurate comparison of expression levels between different genes within the same sample. Methods like FPKM, RPKM, and TPM fall into this category [8].

- Between-sample normalization adjusts counts primarily to account for differences in sequencing depth and library composition across multiple samples. This is essential for comparing the expression of the same gene between different samples or conditions. Methods like TMM (Trimmed Mean of M-values) and DESeq2's "median-of-ratios" are designed for this purpose and are required prior to analyses like PCA and differential expression [13] [8].

Implementation & Methodology

3. Which normalization method should I choose before PCA?

The choice of method depends on your data and analytical goals. The table below summarizes common methods and their suitability.

Table 1: Common RNA-seq Normalization Methods and Their Characteristics

| Method | Sequencing Depth Correction | Gene Length Correction | Library Composition Correction | Suitable for Between-Sample PCA? | Key Notes |

|---|---|---|---|---|---|

| CPM | Yes | No | No | No | Simple scaling; highly influenced by a few highly expressed genes [13] [8]. |

| FPKM/RPKM | Yes | Yes | No | No | Designed for within-sample comparisons; not ideal for between-sample comparison due to composition bias [13] [8]. |

| TPM | Yes | Yes | Partial | No | An improvement over FPKM/RPKM where the sum of all TPMs is constant per sample; good for visualization but not for downstream statistical testing [8]. |

| TMM | Yes | No | Yes | Yes | Implemented in edgeR. Robust to composition bias by assuming most genes are not differentially expressed [13] [8] [45]. |

| Median-of-Ratios | Yes | No | Yes | Yes | Implemented in DESeq2. Uses a geometric mean-based pseudo-reference to calculate size factors [13]. |

| Quantile | Yes | No | Yes | Yes | Forces the distribution of gene expression to be the same across all samples. Can be effective but may distort true biological differences in some cases [8] [45]. |

For a standard PCA aimed at exploring sample relationships based on gene expression, between-sample methods like TMM (from the edgeR package) or the median-of-ratios method (from the DESeq2 package) are generally recommended and are considered the standard in the field [13] [9].

4. What is a detailed protocol for normalizing RNA-seq data prior to PCA?

The following workflow outlines the key steps from raw data to a normalized matrix ready for PCA.

Step-by-Step Protocol:

- Quality Control (QC): Use FastQC to generate quality reports for your raw sequencing files (FASTQ format). Use MultiQC to aggregate reports from multiple samples into a single view. Examine metrics like per-base sequence quality, adapter contamination, and GC content [13].

- Read Trimming & Adapter Removal: Based on the QC report, use tools like Trimmomatic or fastp to remove low-quality base calls, adapter sequences, and other technical sequences. This step is crucial for accurate alignment [13].

- Alignment/Pseudo-alignment: Map the cleaned reads to a reference genome using aligners like STAR or HISAT2. Alternatively, for faster processing and quantification, use pseudo-aligners like Kallisto or Salmon, which estimate transcript abundances without generating base-by-base alignments [13].

- Read Quantification: Generate a raw count matrix that summarizes the number of reads mapped to each gene in each sample. Tools like featureCounts or HTSeq-count are commonly used for this step after alignment. Pseudo-aligners like Salmon directly output count estimates [13].

- Normalization (Key Step for PCA): Import the raw count matrix into an analysis environment (e.g., R/Bioconductor). Apply a between-sample normalization method.

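- Example using DESeq2's median-of-ratios in R: build a DESeqDataSet from the raw counts, call estimateSizeFactors(), and extract the normalized counts with counts(dds, normalized = TRUE), where dds is the dataset object.

- Example using TMM from edgeR in R: build a DGEList from the raw counts, call calcNormFactors() (TMM is its default method), and compute normalized log-CPM values with cpm(y, log = TRUE), where y is the DGEList object.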

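- Perform PCA: Use the normalized count matrix (not the raw counts) as input for PCA, typically after a log or variance-stabilizing transformation so that a few highly expressed genes do not dominate the variance. Most PCA functions expect rows to be samples and columns to be genes, so a genes-by-samples matrix usually needs to be transposed first.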
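The normalization and PCA-input steps of this protocol can be sketched without any Bioconductor dependency. The following is a minimal pure-Python illustration of the median-of-ratios idea (a per-gene geometric-mean pseudo-reference, then the median ratio per sample). It is not DESeq2's own code; the function names and the toy matrix are assumptions made for illustration.

```python
import math
from statistics import median


def size_factors(counts):
    """Median-of-ratios size factors for a genes x samples matrix of raw counts."""
    n_samples = len(counts[0])
    # Genes with any zero count are skipped: their geometric mean is zero,
    # so the ratio to the pseudo-reference is undefined.
    log_rows = [[math.log(c) for c in row] for row in counts if all(c > 0 for c in row)]
    factors = []
    for j in range(n_samples):
        # log(count / geometric mean) = log(count) - mean(log(counts))
        log_ratios = [row[j] - sum(row) / n_samples for row in log_rows]
        factors.append(math.exp(median(log_ratios)))
    return factors


def log_normalize(counts, factors):
    """Divide each sample by its size factor, then apply log2(x + 1)."""
    return [[math.log2(c / f + 1) for c, f in zip(row, factors)] for row in counts]


def transpose(matrix):
    """Turn a genes x samples matrix into the samples x genes layout used for PCA."""
    return [list(col) for col in zip(*matrix)]


# Hypothetical toy matrix: sample 2 was sequenced about twice as deeply as sample 1.
raw = [
    [10, 20],
    [50, 100],
    [200, 400],
    [0, 3],
]

factors = size_factors(raw)
pca_input = transpose(log_normalize(raw, factors))
print("size factors:", [round(f, 3) for f in factors])
print(len(pca_input), "samples x", len(pca_input[0]), "genes")
```

In this toy case the second size factor is twice the first, so genes that differ only by depth end up with identical normalized values. The resulting samples-by-genes matrix is the layout most PCA routines expect.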

Troubleshooting

5. My PCA results are still dominated by technical batches after normalization. What should I do?

Between-sample normalization methods correct for sequencing depth but may not fully account for other batch effects (e.g., samples processed on different days, by different personnel, or at different sequencing centers). If your PCA shows strong clustering by batch rather than condition, you need to apply batch effect correction methods after normalization [8] [46] [45].

- Common Methods: Tools like ComBat (from the sva package) or removeBatchEffect (from the limma package) can be used to model and subtract known batch effects from your normalized data before proceeding with PCA [8] [45].

- Important Note: Batch correction should only be applied when the batch effect is not confounded with the biological variable of interest. For example, you cannot correct for a batch if all control samples were sequenced in one batch and all treated samples in another [46].
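Before running any batch correction, it can help to check the sample sheet for the confounding described above. The sketch below is a hypothetical helper, not part of sva or limma, and it flags only the extreme case in which every batch contains a single condition, so batch and condition cannot be separated.

```python
from collections import defaultdict


def conditions_per_batch(batches, conditions):
    """Map each batch label to the set of conditions observed in it."""
    seen = defaultdict(set)
    for batch, condition in zip(batches, conditions):
        seen[batch].add(condition)
    return dict(seen)


def fully_confounded(batches, conditions):
    """True when every batch holds only one condition.

    In that case the condition indicator is a sum of batch indicators,
    so removing batch effects would also remove the condition effect.
    """
    return all(len(c) == 1 for c in conditions_per_batch(batches, conditions).values())


# Hypothetical sample sheets.
confounded_design = fully_confounded(
    ["day1", "day1", "day2", "day2"],
    ["control", "control", "treated", "treated"],
)
balanced_design = fully_confounded(
    ["day1", "day1", "day2", "day2"],
    ["control", "treated", "control", "treated"],
)
print("confounded design flagged:", confounded_design)
print("balanced design flagged:", balanced_design)
```

A design that passes this check can still be poorly balanced, so inspecting the table returned by conditions_per_batch remains worthwhile.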

6. I have single-cell RNA-seq (scRNA-seq) data with vastly different read depths per cell. How should I normalize?

scRNA-seq data presents unique challenges like high dropout rates and extreme variability in sequencing depth between cells. Standard bulk RNA-seq methods may not be optimal.

- Specialized Methods: For UMI-based scRNA-seq data, methods like analytic Pearson residuals (as implemented in scTransform and Scanpy) have been shown to outperform traditional normalization for tasks like highly variable gene selection and dimensionality reduction [47].

- Other Options: Tools like SCnorm are also designed to address the dependence of transcript count distributions on sequencing depth in single-cell data [48].

The core principle remains: normalization is non-negotiable before PCA.
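As an illustration of the Pearson-residual idea mentioned above, the following is a minimal pure-Python sketch. It assumes the commonly described analytic form: the expected count is the cell total times the gene total divided by the grand total, the variance is negative binomial with a fixed overdispersion theta, and residuals are clipped to plus or minus the square root of the number of cells. The default theta of 100 and the function name are assumptions for this sketch, not the API of scTransform or Scanpy.

```python
import math


def pearson_residuals(counts, theta=100.0):
    """Analytic Pearson residuals for a cells x genes matrix of UMI counts."""
    n_cells = len(counts)
    cell_totals = [sum(row) for row in counts]
    gene_totals = [sum(col) for col in zip(*counts)]
    grand_total = sum(cell_totals)
    clip = math.sqrt(n_cells)  # residuals are bounded to [-sqrt(n), sqrt(n)]

    residuals = []
    for row, cell_total in zip(counts, cell_totals):
        out = []
        for x, gene_total in zip(row, gene_totals):
            # Expected count if the cell differed from others only in depth.
            mu = cell_total * gene_total / grand_total
            if mu == 0:
                out.append(0.0)
                continue
            r = (x - mu) / math.sqrt(mu + mu * mu / theta)
            out.append(max(-clip, min(clip, r)))
        residuals.append(out)
    return residuals


# Hypothetical toy data: the second cell is the first cell sequenced 3x deeper.
depth_only = pearson_residuals([[1, 2, 3], [3, 6, 9]])
print(depth_only)

# Hypothetical toy data with a real expression difference between cells.
different = pearson_residuals([[10, 0], [0, 10]])
print(different)
```

When two cells differ only in depth, every residual is zero, which is the property that makes these values a reasonable PCA input for cells with very different read depths.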

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Bioinformatics Tools for RNA-seq Normalization and PCA

| Tool / Resource Name | Function / Category | Brief Explanation of Role |

|---|---|---|

| FastQC / MultiQC | Quality Control | Provides initial assessment of raw sequencing data quality, identifying issues like adapter contamination or low-quality bases [13]. |

| Trimmomatic / fastp | Read Trimming | Cleans raw reads by removing adapter sequences and low-quality base calls, which is critical for accurate downstream alignment [13]. |

| STAR / HISAT2 | Read Alignment | Aligns (maps) sequencing reads to a reference genome to determine their genomic origin [13]. |

| Kallisto / Salmon | Pseudo-alignment & Quantification | Fast, alignment-free tools for quantifying transcript abundances. Useful for large datasets and often incorporated into modern pipelines [13]. |

| featureCounts / HTSeq | Read Quantification | Generates the raw count matrix by counting the number of reads mapped to each gene [13]. |

| DESeq2 | Normalization & Differential Expression | Provides the "median-of-ratios" method for between-sample normalization, a standard for downstream statistical analysis [13]. |

| edgeR | Normalization & Differential Expression | Provides the "TMM" (Trimmed Mean of M-values) method, another standard for between-sample normalization [13] [8]. |

| ComBat (sva package) | Batch Effect Correction | Empirical Bayes method used to adjust for known batch effects in normalized data before PCA [8] [45]. |

| scTransform (Seurat) | Single-Cell Normalization | Uses Pearson residuals to normalize scRNA-seq UMI data, accounting for sequencing depth and biological variance [47]. |

Frequently Asked Questions (FAQs)

1. What is the fundamental trade-off between sequencing depth and sample size? The core trade-off lies in the allocation of a fixed sequencing budget. Deeper sequencing (more reads per cell) reduces technical noise for more precise gene expression measurements in each cell, while sequencing more cells provides a broader view of biological variability within the cell population. The goal is to balance these to minimize the overall estimation error for your biological question [4].

2. How does sequencing depth specifically bias Principal Component Analysis (PCA)? PCA is a multivariate tool that can be heavily influenced by how data is normalized. Different normalization methods, required to make samples comparable, affect the correlation structure of the data. Consequently, the outcome of PCA and the biological interpretation of the principal components can depend significantly on the normalization method chosen, which is itself influenced by sequencing depth and library size [9].

3. What is a simple starting point for determining sequencing depth in a bulk RNA-Seq experiment? For bulk RNA-Seq, a foundational observation is that approximately 91% ± 4% of all annotated genes are sequenced at a rate of 0.1 reads per million mapped reads [49]. A gene sequenced at that rate accumulates about 1 read per 10 million mapped reads, so 10 million reads is a reasonable baseline for detecting most annotated genes, while reaching 10 reads for such a gene takes roughly 100 million reads.

4. What is a key guideline for sequencing depth in a single-cell RNA-Seq (scRNA-seq) experiment? For many scRNA-seq experiments, particularly those using 3'-end counting (like UMI protocols), the optimal strategy for estimating fundamental gene properties is often to sequence at a depth of around one read per cell per gene. This typically means maximizing the number of cells sequenced under a fixed budget [4].

5. What are common library preparation issues that affect data quality? Common issues fall into several categories, each with distinct failure signals [16]:

- Sample Input/Quality: Degraded DNA/RNA or contaminants leading to low yield and complexity.

- Fragmentation/Ligation: Over- or under-shearing, or inefficient ligation causing adapter-dimer peaks.

- Amplification/PCR: Too many cycles leading to high duplicate rates and amplification artifacts.

- Purification/Cleanup: Incorrect bead ratios or over-drying leading to sample loss or adapter carryover.

Troubleshooting Guides

Guide 1: Troubleshooting Poor PCA Results Suspected from Depth Bias

Problem: Your PCA results are unstable, difficult to interpret biologically, or seem driven by technical artifacts rather than biological groups.

| Step | Action | Diagnostic Cues & Solutions |

|---|---|---|

| 1 | Audit Your Normalization | Check if the normalization method is appropriate for your data. Try several widely used methods and compare if the core biological story in the PCA remains consistent [9]. |

| 2 | Check Gene Detection Rates | Investigate the mean counts per gene. An abundance of genes with very low average counts (e.g., <1) across cells may indicate insufficient sequencing depth, making the data dominated by technical noise [4]. |

| 3 | Re-assess Experimental Design | If the project is in the planning stage, use a power calculation tool to determine the optimal allocation of your sequencing budget between the number of biological replicates and sequencing depth per sample [49]. |
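Step 2 of this guide can be checked with a few lines of code. The sketch below is a hypothetical helper that reports the fraction of genes whose mean count falls below a threshold. The threshold of 1 follows the example in the table, and the toy matrix is invented for illustration.

```python
def low_count_fraction(counts, threshold=1.0):
    """Fraction of genes whose mean count across cells or samples is below threshold.

    counts is a genes x cells (or genes x samples) matrix of raw counts.
    """
    means = [sum(row) / len(row) for row in counts]
    return sum(m < threshold for m in means) / len(means)


# Hypothetical toy matrix: 4 genes measured in 4 cells.
toy_counts = [
    [0, 0, 1, 0],
    [5, 7, 6, 6],
    [0, 1, 0, 0],
    [2, 0, 1, 3],
]

fraction = low_count_fraction(toy_counts)
print(f"{fraction:.0%} of genes have a mean count below 1")
```

A high fraction does not prove that depth is insufficient, since many genes are simply not expressed in a given tissue, but a value that varies strongly with library size across samples is a useful warning sign.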

Guide 2: Diagnosing and Fixing Low Library Yield

Problem: The final concentration of your sequencing library is unexpectedly low.

| Symptom | Possible Root Cause | Corrective Action |

|---|---|---|

| Low yield across all samples; degraded sample smear on electropherogram. | Poor input quality or contaminants inhibiting enzymes [16]. | Re-purify input sample; use fluorometric quantification (Qubit) over absorbance (NanoDrop); ensure purity ratios (260/280 ~1.8) [16] [50]. |

| Sharp peak at ~70-90 bp on Bioanalyzer. | High adapter-dimer formation from inefficient ligation or purification [16]. | Titrate adapter-to-insert ratio; optimize bead clean-up parameters to remove short fragments; ensure fresh ligase buffer [16]. |

| Yield drop after amplification. | PCR overcycling or inhibitors [16]. | Reduce the number of PCR cycles; use high-fidelity polymerases; ensure no carryover of purification beads or salts [16]. |

Experimental Protocols & Data Analysis

Power Analysis for RNA-Seq Experimental Design

This methodology helps determine the number of biological replicates needed to detect a specific fold-change with a given statistical power.

Key Formula:

The required number of samples per group (n) can be estimated as [49]:

n = 2 * (z_(1-α/2) + z_β)^2 * (1/μ + σ^2) / (ln Δ)^2

Parameter Definitions:

- z_(1-α/2) & z_β: Critical values from the standard normal distribution for the significance level (α, e.g., 1.96 for a two-sided α=0.05) and the statistical power (β, e.g., 1.28 for 90% power).

- μ: The average read count or coverage for the gene of interest.

- σ: The coefficient of biological variation for the gene.

- Δ: The target fold-change to be detected (e.g., 1.5 for a 50% change).
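A short worked example may make the parameters easier to interpret. This sketch assumes the form n = 2 (z_(1-α/2) + z_β)^2 (1/μ + σ^2) / (ln Δ)^2, which is the form I understand the RNASeqPower documentation to use. The function name and the example inputs are hypothetical.

```python
import math
from statistics import NormalDist


def samples_per_group(depth, cv, effect, alpha=0.05, power=0.9):
    """Estimate samples per group needed to detect a fold change.

    depth  : average read count for the gene (mu)
    cv     : biological coefficient of variation (sigma)
    effect : target fold change (Delta), e.g. 2 for a doubling
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 1.28 for 90% power
    return 2 * (z_alpha + z_beta) ** 2 * (1 / depth + cv ** 2) / math.log(effect) ** 2


# Hypothetical inputs: 20 reads per gene, CV of 0.4, two-fold change.
n = samples_per_group(depth=20, cv=0.4, effect=2)
print(f"estimated n per group: {n:.2f}, rounded up to {math.ceil(n)}")
```

Note how the 1/μ term shrinks as depth grows while σ^2 does not: beyond moderate depth, biological variation dominates, which is why adding replicates usually buys more power than adding reads.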

Practical Application:

This formula is implemented in user-friendly tools. For grant preparations, a simple Excel sheet is available. For complex queries, an R package (RNASeqPower) is available via Bioconductor [49].

Standardized Workflow for Depth and Size Optimization

The following diagram outlines a logical workflow for designing your sequencing experiment.

Recommended Sequencing Depth for scRNA-seq

| Biological Task | Recommended Guideline | Key Consideration |

|---|---|---|

| Gene Property Estimation (e.g., CV, correlation) | ~1 UMI/cell/gene on average for genes of interest [4]. | Allocate budget to maximize cell number. |

| Cell Type Identification | Can be performed at relatively shallow sequencing depths [4]. | Sufficient to capture major transcriptional states. |

| Accurate Gene Expression | Deeper sequencing is recommended [4]. | Required to reduce technical noise for precise quantification. |

Bulk RNA-Seq Gene Coverage

| Sequencing Depth (Million Reads) | Approx. % of Genes Covered | Notes |

|---|---|---|

| 10 | ~90% | Based on the 0.1 reads/million rate for most genes, which corresponds to about 1 read per gene at this depth [49]. |

| 100 | Varies | Early studies showed mixed results, highlighting influence of variability [49]. |

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function & Importance in Experimental Design |

|---|---|

| Fluorometric Quantification Kit (e.g., Qubit) | Accurately measures double-stranded DNA concentration. Critical for avoiding overestimation common with photometric methods (NanoDrop), which is a leading cause of sequencing failure [16] [50]. |

| High-Fidelity Polymerase | Reduces errors during PCR amplification in library preparation, minimizing biases in the final sequence data [16]. |

| SPRIselect Beads | Used for post-fragmentation clean-up and size selection. The precise bead-to-sample ratio is critical for removing adapter dimers and selecting the desired insert size [16]. |

| Power Calculation Software (e.g., R RNASeqPower package) | Enables statistical justification of experimental design by calculating necessary replicates and depth to achieve target power, a requirement for grant applications [49]. |

| UMI Adapters (for scRNA-seq) | Unique Molecular Identifiers tag individual mRNA molecules before amplification, allowing bioinformatic correction for PCR duplication bias and more accurate digital counting [4]. |
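The digital counting that UMIs enable can be illustrated with a small sketch. It assumes reads have already been assigned a cell barcode, a gene, and a UMI sequence, and it ignores UMI sequencing errors, which dedicated tools handle. The function name and the read tuples are hypothetical.

```python
from collections import defaultdict


def umi_counts(reads):
    """Count unique UMIs per (cell, gene) pair.

    reads is an iterable of (cell_barcode, gene, umi) tuples, one per read.
    Reads sharing all three values are treated as PCR duplicates of one molecule.
    """
    molecules = defaultdict(set)
    for cell, gene, umi in reads:
        molecules[(cell, gene)].add(umi)
    return {key: len(umis) for key, umis in molecules.items()}


# Hypothetical reads: the first three are PCR copies of the same molecule.
example_reads = [
    ("cellA", "GAPDH", "AACGT"),
    ("cellA", "GAPDH", "AACGT"),
    ("cellA", "GAPDH", "AACGT"),
    ("cellA", "GAPDH", "TTGCA"),
    ("cellB", "GAPDH", "AACGT"),
]

molecule_counts = umi_counts(example_reads)
print(molecule_counts)
```

Counting molecules rather than reads removes much of the amplification-driven variation in apparent depth, although cells still differ in how many molecules were captured, so normalization remains necessary.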

Troubleshooting PCA in High-Dimensional Data: Optimization Strategies and Pitfalls

A fundamental question in virtually every single-cell RNA sequencing (scRNA-seq) experiment is how to best allocate a limited sequencing budget: should you sequence a few cells deeply or many cells at a shallower depth [4] [51] [52]? This dilemma is central to experimental design, as the choice directly impacts the reliability of downstream analyses, including Principal Component Analysis (PCA). Insufficient or uneven sequencing depth can introduce technical biases that obscure true biological variation, a critical concern when using PCA to explore cellular heterogeneity [9]. This guide addresses this core issue through a technical FAQ format, providing actionable protocols and insights to correct for sequencing depth bias in your research.