Covariance Matrix Calculation for Gene Expression Data: A Guide for Genomic Researchers and Drug Developers

Abstract

This article provides a comprehensive guide to covariance matrix calculation and analysis for high-dimensional gene expression data. Aimed at researchers and drug development professionals, it covers foundational concepts, practical methodologies for tackling the 'large p, small n' problem, optimization strategies to overcome computational challenges, and frameworks for the biological validation and comparison of results. By integrating statistical rigor with biological interpretation, this resource aims to enhance the reliability and insight gained from covariance-based analyses in genomics and biomedical research.

The What and Why: Core Concepts and Biological Significance of Covariance in Genomics

Defining Covariance and Correlation in the Context of Gene Co-expression

In the field of genomics, understanding the complex interplay between genes is fundamental to elucidating biological processes and disease mechanisms. Covariance and correlation serve as fundamental statistical measures for quantifying these gene-gene relationships through co-expression analysis [1]. Gene co-expression networks, constructed using these measures, provide a powerful framework for predicting gene function, identifying disease modules, and uncovering novel regulatory relationships [2] [3]. The calculation of a covariance matrix forms the foundational step in these analyses, enabling researchers to capture the joint variability of gene expression profiles across multiple biological conditions [1]. With advances in sequencing technologies and the emergence of sophisticated analytical tools, the application of these concepts has evolved beyond linear relationships to capture the complex, higher-order interactions that characterize real biological systems [4]. This article explores the definitions, methodologies, and practical applications of covariance and correlation in gene co-expression studies, providing researchers with detailed protocols for implementing these analyses in their own work.

Theoretical Foundations

Definitions and Mathematical Formulations

In probability theory and statistics, covariance is formally defined as a measure of the joint variability of two random variables [1]. For two jointly distributed real-valued random variables X and Y, the covariance is defined as:

cov(X,Y) = E[(X - E[X])(Y - E[Y])] = E[XY] - E[X]E[Y]

where E[X] and E[Y] represent the expected values of X and Y, respectively [1]. The sign of the covariance indicates the tendency in the linear relationship between the variables: positive covariance suggests that greater values of one variable correspond with greater values of the other, while negative covariance indicates that greater values of one variable correspond with lesser values of the other [1].

A significant limitation of covariance is that its magnitude is affected by the units of measurement, making it difficult to assess the strength of relationship between different pairs of random variables [1]. To overcome this, researchers use the correlation coefficient, which normalizes the covariance by dividing by the geometric mean of the total variances (the product of the standard deviations) for the two random variables [1]. This normalization produces a dimensionless value between -1 and 1, facilitating comparison across different variable pairs and studies.

In the context of gene co-expression analysis, these random variables represent expression levels of genes measured across multiple samples, conditions, or time points. The resulting covariance matrix for a set of genes is a symmetric matrix where each entry (i,j) represents the covariance between the expression profiles of gene i and gene j [5]. This matrix serves as the foundation for constructing gene co-expression networks and modules.
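The definitions above can be illustrated with a minimal NumPy sketch. It uses the sample estimator (dividing by n - 1), which is an assumption relative to the population definition given earlier, and the toy expression values and the function name `covariance_and_correlation` are made up for illustration.

```python
import numpy as np

def covariance_and_correlation(expr):
    """Return (covariance, correlation) matrices for a samples x genes array."""
    expr = np.asarray(expr, dtype=float)
    centered = expr - expr.mean(axis=0)             # subtract each gene's mean
    n_samples = expr.shape[0]
    cov = centered.T @ centered / (n_samples - 1)   # sample covariance, entry (i, j)
    sd = np.sqrt(np.diag(cov))                      # per-gene standard deviations
    corr = cov / np.outer(sd, sd)                   # divide by sd_i * sd_j
    return cov, corr

# Made-up toy data: 6 samples (rows) x 3 genes (columns).
expr = np.array([
    [2.0, 4.1, 9.0],
    [3.0, 6.2, 7.5],
    [4.0, 7.9, 6.1],
    [5.0, 10.1, 4.4],
    [6.0, 12.0, 3.2],
    [7.0, 13.8, 1.9],
])
cov, corr = covariance_and_correlation(expr)

# Rescaling gene 0 (a change of units) alters the covariance but not the correlation.
rescaled = expr * np.array([1000.0, 1.0, 1.0])
cov_rescaled, corr_rescaled = covariance_and_correlation(rescaled)
```

The rescaling step shows the unit dependence discussed above: the covariance between genes 0 and 1 grows by the scaling factor, while the correlation matrix is unchanged.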

Correlation Metrics in Genomic Research

Several correlation metrics are commonly employed in gene co-expression studies, each with distinct advantages and limitations:

Table 1: Comparison of Correlation Metrics for Gene Co-expression Analysis

| Metric | Relationship Type | Advantages | Limitations |
|---|---|---|---|
| Pearson Correlation | Linear | Simple interpretation; Computationally efficient [4] | Sensitive to outliers; Only captures linear relationships [4] |
| Spearman's Rank Correlation | Monotonic | Robust to outliers; No distributional assumptions [4] | Lower accuracy for continuous data; Loss of information due to ranking [4] |
| Distance Correlation | Linear and Non-linear | Measures all dependence types; Distribution-free; Zero only if independent [4] | High computational complexity; Requires more memory [4] |
| Maximal Information Coefficient (MIC) | All types | Captures complex relationships [4] | May create false correlations; Limited mathematical foundations [4] |

The choice of correlation metric significantly impacts the biological insights gained from co-expression analysis. While Pearson correlation remains the default measure in many tools, its assumption of linearity often fails to capture the complex relationships observed in real biological systems [4]. Distance correlation has emerged as a powerful alternative because it does not assume normality, can measure nonlinear relationships, is more robust to outliers, and is zero only if the random vectors are independent [4].
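To make the contrast between metrics concrete, the sketch below compares Pearson, Spearman, and distance correlation on a purely quadratic relationship. It is a plain NumPy illustration, not the implementation used in [4]: the distance correlation is the standard sample (double-centering) estimator, and the helper names and synthetic data are assumptions.

```python
import numpy as np

def pearson(x, y):
    return float(np.corrcoef(x, y)[0, 1])

def average_ranks(v):
    """1-based ranks, with tied values sharing their average rank."""
    _, inverse, counts = np.unique(v, return_inverse=True, return_counts=True)
    last_rank = np.cumsum(counts)
    return (last_rank - (counts - 1) / 2.0)[inverse]

def spearman(x, y):
    # Spearman's rho is the Pearson correlation of the ranks.
    return pearson(average_ranks(x), average_ranks(y))

def distance_correlation(x, y):
    """Sample distance correlation for two 1-D arrays of equal length."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    a = np.abs(x[:, None] - x[None, :])   # pairwise distances within x
    b = np.abs(y[:, None] - y[None, :])   # pairwise distances within y
    # Double-center each distance matrix (remove row, column, and grand means).
    A = a - a.mean(axis=0) - a.mean(axis=1)[:, None] + a.mean()
    B = b - b.mean(axis=0) - b.mean(axis=1)[:, None] + b.mean()
    dcov2 = (A * B).mean()
    dvar_x = (A * A).mean()
    dvar_y = (B * B).mean()
    return float(np.sqrt(dcov2 / np.sqrt(dvar_x * dvar_y)))

# Synthetic example: y depends on x, but not linearly or monotonically.
x = np.arange(-100, 101) / 100.0
y = x ** 2

r_pearson = pearson(x, y)                 # close to zero
r_spearman = spearman(x, y)               # close to zero
r_distance = distance_correlation(x, y)   # clearly above zero
```

The pairwise distance matrices require O(n²) memory in the number of samples, which reflects the computational cost listed for distance correlation in Table 1.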

Computational Methods and Protocols

Covariance Matrix Calculation for Gene Expression Data

The calculation of a robust covariance matrix constitutes a critical first step in gene co-expression network analysis. Below is a detailed protocol for generating a covariance matrix from RNA-Seq data, adapted from the methodology used in Correlation AnalyzeR [2]:

Table 2: Key Research Reagents and Computational Tools

| Item | Function | Example Tools/Databases |
|---|---|---|
| RNA-Seq Data | Raw gene expression measurements | ARCHS4, GEO, TCGA [2] |
| Normalization Method | Removes technical artifacts and library size differences | DESeq2's VST function [2] |
| Correlation Algorithm | Calculates gene-gene associations | WGCNA, correlationAnalyzeR [2] |
| Metadata Resources | Sample categorization | Cellosaurus ontology, GEO metadata [2] |

Protocol: Calculating Tissue and Disease-Specific Covariance Matrices

Data Acquisition and Categorization

- Download RNA-Seq read counts and metadata from repositories such as ARCHS4, which contains standardized counts across hundreds of thousands of human sequencing samples [2].

- Categorize samples based on tissue descriptions from GEO using manually curated regex dictionaries in conjunction with established ontologies like Cellosaurus [2].

- Classify sample disease status (e.g., cancer vs. normal) using curated dictionaries of disease-related terms [2].

Data Filtering

- Identify and remove single-cell RNA-Seq (scRNA-Seq) samples, because Pearson correlation has been shown to be poorly suited to co-expression network inference from this data type [2].

- Discard samples with fewer than 5 million raw read counts to reduce noise from low-quality samples [2].

- Remove tissue-disease groups with fewer than 30 distinct samples to limit the effects of bias from individual samples [2].

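Normalization and Transformation

- Normalize the filtered read counts to remove technical artifacts and library size differences, for example with the VST function of DESeq2 [2].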

Covariance and Correlation Calculation

- Calculate gene-gene Pearson correlations separately for each tissue-disease group using the correlation function of the WGCNA package [2].

- For large datasets, transform each correlation matrix row into comma-separated values for efficient storage and querying in database systems such as Azure MySQL [2].
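The filtering and per-group correlation steps of this protocol can be sketched as follows. This is an illustrative NumPy version, not the Correlation AnalyzeR pipeline itself: log2(CPM + 1) is used as a simple stand-in for the DESeq2 variance-stabilizing transformation, and the function names, group labels, and simulated counts are all hypothetical.

```python
import numpy as np

def groupwise_correlation(counts, groups, min_reads=5_000_000, min_samples=30):
    """Pearson correlation matrix per tissue-disease group.

    counts: (n_samples, n_genes) raw read counts
    groups: length n_samples array of group labels
    """
    counts = np.asarray(counts, dtype=float)
    groups = np.asarray(groups)
    depth = counts.sum(axis=1)
    keep = depth >= min_reads                      # drop low-depth samples
    counts, groups, depth = counts[keep], groups[keep], depth[keep]
    # Stand-in normalization (assumption): log2 counts-per-million, not VST.
    logcpm = np.log2(counts / depth[:, None] * 1e6 + 1.0)
    result = {}
    for label in np.unique(groups):
        block = logcpm[groups == label]
        if block.shape[0] < min_samples:           # drop small groups
            continue
        # Genes with zero variance in a group would yield NaN entries here.
        result[str(label)] = np.corrcoef(block, rowvar=False)
    return result

def row_to_csv(row, digits=3):
    """Serialize one correlation-matrix row as comma-separated values."""
    return ",".join(f"{value:.{digits}f}" for value in row)

# Simulated counts: 85 samples x 5 genes in three hypothetical groups.
rng = np.random.default_rng(7)
groups = np.array(["liver_normal"] * 40 + ["liver_cancer"] * 35 + ["bone_cancer"] * 10)
rates = rng.lognormal(mean=np.log(4e6), sigma=0.3, size=(85, 5))
counts = rng.poisson(rates)
counts[:3] = rng.poisson(100, size=(3, 5))         # three low-depth samples

corr_by_group = groupwise_correlation(counts, groups)
first_row_csv = row_to_csv(corr_by_group["liver_normal"][0])
```

In this simulated example the three low-depth samples are removed before grouping, and the ten-sample group is excluded because it falls below the 30-sample minimum.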

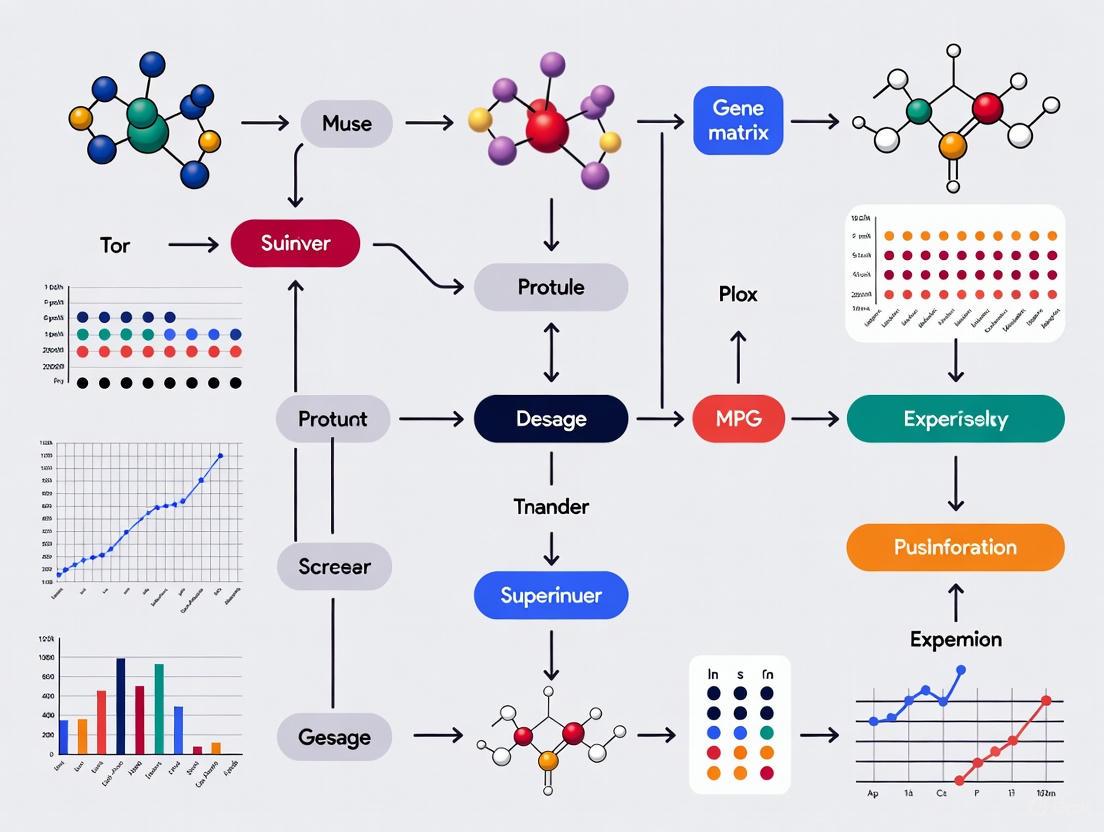

Figure 1: Workflow for calculating covariance matrices from RNA-Seq data.

Advanced Network Inference Using Covariance Dynamics

For more sophisticated applications, particularly with single-cell RNA-seq data measured after interventions at multiple time points, the WENDY (netWork infErence by covariaNce DYnamics) method provides an advanced approach to gene regulatory network inference [6]. This method leverages covariance dynamics between time points to infer regulatory relationships:

Protocol: GRN Inference with Covariance Dynamics (WENDY)

Experimental Design

- Implement an intervention that affects gene expression (e.g., drugs, gene knockdown).

- Measure expression levels of genes for different single cells at multiple time points, focusing on time points where expression has not yet reached a stationary state [6].

Covariance Matrix Computation

Dynamics Modeling

Network Inference

A key advantage of WENDY is that it requires only two time points' worth of data, making it particularly valuable in scenarios where intervention and/or measurement ultimately result in cell death [6]. Additionally, WENDY exhibits high data utilization efficiency: for single-cell expression data comprising n genes across T time points, WENDY extracts (0.5n² + 0.5n)T numbers, corresponding to the unique entries of T symmetric n × n covariance matrices, for further analyses [6].
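The count of extracted numbers can be checked with a short sketch. It only computes per-time-point covariance matrices and collects their unique entries; it does not implement WENDY's dynamics model or network inference, and the simulated data and names are assumptions.

```python
import numpy as np

def unique_covariance_entries(n_genes, n_timepoints):
    """(0.5 n^2 + 0.5 n) T: unique entries of T symmetric n x n matrices."""
    return (n_genes * (n_genes + 1) // 2) * n_timepoints

# Simulated expression for different cells at two time points (cells x genes).
rng = np.random.default_rng(0)
n_cells, n_genes = 500, 10
expr_t0 = rng.normal(size=(n_cells, n_genes))
expr_t1 = rng.normal(size=(n_cells, n_genes))

K0 = np.cov(expr_t0, rowvar=False)   # covariance matrix at the first time point
K1 = np.cov(expr_t1, rowvar=False)   # covariance matrix at the second time point

upper = np.triu_indices(n_genes)     # diagonal plus upper triangle
features = np.concatenate([K0[upper], K1[upper]])
```

For n = 10 genes and T = 2 time points, `features` holds 55 × 2 = 110 values, matching the formula.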

Applications and Case Studies

Functional Prediction and Disease Module Discovery

Gene co-expression correlations have proven particularly valuable for predicting gene functionality within specific biological contexts, such as different tissue and disease conditions [2]. The Correlation AnalyzeR tool provides a user-friendly interface for exploring these relationships through several analytical modes:

- Single Gene Mode: For any gene of interest in any tissue-disease group, this method retrieves genome-wide correlations and uses them to predict gene function through specialized visualizations and statistical analyses [2].

- Gene vs. Gene Mode: This approach analyzes the relationship between two genes across multiple biological contexts, revealing condition-specific interactions [2].

- Gene Set Topology: This mode identifies biologically relevant subgroups within gene sets to facilitate discovery of novel relationships [2].

In practice, these approaches have been successfully applied to explore complex biological phenomena. For example, researchers have used Correlation AnalyzeR to investigate the role of BRCA1-NRF2 interplay in the context of bone cancer, demonstrating how co-expression analysis can effectively generate and support novel biological hypotheses [2].

The integration of co-expression networks with protein-protein interaction data has further enhanced the utility of these approaches for disease module discovery. The SWIM (SWItch Miner) methodology exemplifies this integration, combining co-expression network analysis with the human interactome to predict novel disease genes and modules [3]. This approach identifies "switch genes" within co-expression networks that regulate disease state transitions, then maps them to the human protein-protein interaction network to reveal disease-specific modules [3].

Figure 2: Workflow for disease module discovery via co-expression analysis.

Spatial Gene Co-expression Network Analysis

Recent technological advances have enabled the recovery of spatially-varying cell-specific gene co-expression networks from single-cell spatial expression data [7]. This represents a significant advancement over traditional approaches that average co-expression patterns across cell populations, ignoring spatial context. The following two-step algorithm facilitates this spatial analysis:

Protocol: Spatially-Varying Cell-Specific Networks

Data Preprocessing

Cell-Type Covariance Estimation

Cell-Specific Network Construction

- For each cell, find its appropriate spatial neighborhood [7].

- Combine cell label proportions in the neighborhood with cell-type covariance matrices to assign an empirical prior to the covariance matrix of that cell [7].

- Apply Bayes' rule to obtain posterior mean estimates, transform to correlation matrix, and apply thresholding to generate cell-specific co-expression network [7].

This approach enables not only the estimation of co-expression networks for individual cells based on their spatial context but also prediction of network structures for tissue positions where cells are not detected [7].
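The cell-specific construction step can be sketched under explicit assumptions. The version below assumes an inverse-Wishart prior whose mean is the neighborhood-weighted mixture of cell-type covariance matrices, a single centered expression vector per cell, and a hypothetical degrees-of-freedom value; these modeling choices and all names are illustrative and are not taken from [7].

```python
import numpy as np

def cell_specific_network(x, neighbor_labels, type_covs, nu=None, threshold=0.3):
    """Posterior-mean correlation and thresholded network for one cell.

    x: (p,) centered expression vector of the cell
    neighbor_labels: cell-type labels of cells in its spatial neighborhood
    type_covs: dict mapping cell-type label -> (p, p) covariance matrix
    nu: inverse-Wishart degrees of freedom (assumed; must exceed p + 1)
    """
    x = np.asarray(x, dtype=float)
    p = x.size
    if nu is None:
        nu = p + 4                                   # hypothetical default
    labels, counts = np.unique(neighbor_labels, return_counts=True)
    proportions = counts / counts.sum()
    # Empirical prior mean: mixture of cell-type covariances by label proportion.
    prior_mean = sum(w * type_covs[str(l)] for l, w in zip(labels, proportions))
    psi = (nu - p - 1) * prior_mean                  # scale matrix giving that mean
    # Conjugate update with one zero-mean Gaussian observation.
    posterior_mean = (psi + np.outer(x, x)) / (nu - p)
    sd = np.sqrt(np.diag(posterior_mean))
    corr = posterior_mean / np.outer(sd, sd)
    adjacency = np.abs(corr) >= threshold
    np.fill_diagonal(adjacency, False)
    return corr, adjacency

# Hypothetical example with three genes and two cell types.
type_covs = {
    "A": np.eye(3),
    "B": np.array([[1.0, 0.8, 0.0], [0.8, 1.0, 0.0], [0.0, 0.0, 1.0]]),
}
corr_cell, network_cell = cell_specific_network(
    x=np.zeros(3), neighbor_labels=["A", "B", "B", "B"], type_covs=type_covs
)
```

Because the prior depends only on neighborhood composition, the same function could in principle be evaluated at a position without a detected cell by omitting the observation term, which is one way to read the prediction capability described above.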

Emerging Methods and Future Directions

The field of gene co-expression analysis continues to evolve with several emerging methodologies enhancing traditional approaches:

Higher-Order Network Analysis: Traditional weighted gene co-expression network analysis (WGCNA) primarily characterizes pairwise relationships, failing to capture complex higher-order interactions among genes [8]. The newly proposed Weighted Gene Co-expression Hypernetwork Analysis (WGCHNA) addresses this limitation by modeling genes as nodes and samples as hyperedges using hypergraph theory [8]. This approach more effectively reveals complex co-regulatory patterns between multiple genes, such as when TP53, BRCA1, and PIK3CA jointly participate in the same cancer signaling pathway [8].

Covariation versus Coexpression: Most massive analyses of transcriptomic results obtained with single-channel techniques have used coexpression (correlation between absolute expression levels) [9]. However, evidence suggests that variation data (covariation) are far more reproducible than expression level results [9]. A method that systematically compares any two biological conditions and classifies genes as increased (I), decreased (D), or not changed (N) for each comparison can generate positive and negative covariation matrices with potentially improved biological relevance [9].

Distance Correlation Integration: The integration of distance correlation into WGCNA (DC-WGCNA) has shown promise in enhancing enrichment analysis results and improving module stability [4]. This approach is particularly valuable given that most gene expression data are not normally distributed - in analyses of multiple datasets, only 23-38% of genes followed normal distributions even after log transformation [4]. Since distance correlation does not require distributional assumptions, it maintains statistical power for non-normally distributed data [4].

As genomic technologies continue to advance, incorporating spatial context, capturing higher-order interactions, and utilizing more robust correlation metrics will likely become standard practice in gene co-expression analysis, further strengthening the biological insights gained from covariance and correlation-based approaches.

In the field of genomics, where researchers simultaneously measure the expression levels of tens of thousands of genes, the covariance matrix has emerged as a fundamental mathematical tool for extracting meaningful biological insights from high-dimensional data. While individual gene expression levels provide limited information, the coordinated expression patterns between genes, quantified through covariance and correlation, reveal the functional organization of the genome. These patterns form the basis for constructing genetic interaction networks, identifying functional gene modules, and detecting disease-specific pathway disruptions [10].

The importance of covariance analysis has grown alongside technological advancements. From early microarray studies that allowed monitoring of gene expression for thousands of genes in parallel [10] to modern single-cell RNA sequencing (scRNA-seq) technologies that profile individual cells [11], the challenge remains transforming raw expression data into biological knowledge. Covariance structure analysis enables researchers to move beyond single-gene differential expression to understand system-level rewiring in diseases like cancer, neurological disorders, and complex traits.

This Application Note provides a comprehensive framework for applying covariance-based analyses to gene expression data, with detailed protocols for network construction, confounder adjustment, and differential analysis. We focus particularly on addressing the statistical challenges inherent to high-dimensional genomic data, where the number of variables (genes) far exceeds the number of observations (samples or cells) [11] [12].

Key Applications of Covariance in Genomics

Covariance analysis enables several critical applications in genomic research, each providing unique biological insights. The table below summarizes the primary applications and their research utilities.

Table 1: Key Applications of Covariance Analysis in Genomic Research

| Application Area | Specific Uses | Research Utility |
|---|---|---|
| Gene Co-expression Networks | Identification of gene modules; Functional annotation of unknown genes; Cancer classification [10] [13] | Reveals functionally related gene groups; Predicts gene functions; Identifies disease subtypes |
| Differential Network Analysis | Detection of condition-specific changes in gene interactions; Pathway-level differential co-expression [14] | Identifies disrupted biological processes in disease; Reveals regulatory rewiring |
| Confounder Adjustment | Batch effect correction [15]; Removal of hidden technical artifacts [16] | Improves data quality and integration; Reduces false discoveries in correlation analysis |
| Single-Cell Analysis | Cell-type classification [11]; Identification of spatially variable genes [17] | Enables analysis of heterogeneous cell populations; Maps gene expression to tissue architecture |

Covariance Fundamentals for Gene Expression Data

In gene expression studies, covariance matrices capture the coordinated fluctuations in mRNA transcript abundances across genes. For a gene expression matrix \( X \in \mathbb{R}^{n \times p} \) with \( n \) samples (or cells) and \( p \) genes, the sample covariance matrix \( S \) is calculated as:

\[ S_{kq} = \frac{1}{n-1} \sum_{i=1}^{n} (X_{ik} - \bar{X}_k)(X_{iq} - \bar{X}_q) \]

where \( \bar{X}_k \) represents the mean expression of gene \( k \) across all samples [11].

In high-dimensional genomic settings where \( p \gg n \), the classical sample covariance matrix becomes poorly conditioned and inaccurate. Several specialized approaches have been developed to address this challenge:

- Regularization Methods: Incorporating shrinkage estimators to produce well-conditioned covariance estimates [12]

- Sparse PCA: Identifying sparse principal components that better approximate the true underlying signal structure [11]

- Robust Covariance Estimation: Using methods like Minimum Regularized Covariance Determinant (MRCD) to minimize outlier effects [12]
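The conditioning problem and the effect of regularization can be seen in a small simulation. The sketch below uses linear shrinkage toward a scaled identity with a fixed, hand-picked intensity `alpha`; this is a generic illustration rather than the estimator of [12], and data-driven choices of the intensity (such as Ledoit-Wolf) are not shown.

```python
import numpy as np

def sample_covariance(X):
    """Sample covariance S for a samples x genes matrix, as defined above."""
    Xc = X - X.mean(axis=0)
    return Xc.T @ Xc / (X.shape[0] - 1)

def shrink_to_identity(S, alpha):
    """Linear shrinkage: (1 - alpha) * S + alpha * mu * I, with mu = trace(S) / p."""
    p = S.shape[0]
    mu = np.trace(S) / p                 # average variance across genes
    return (1.0 - alpha) * S + alpha * mu * np.eye(p)

# Simulated p >> n setting: 20 samples, 100 genes.
rng = np.random.default_rng(1)
n, p = 20, 100
X = rng.normal(size=(n, p))

S = sample_covariance(X)
S_shrunk = shrink_to_identity(S, alpha=0.2)   # alpha chosen arbitrarily here

rank_S = np.linalg.matrix_rank(S)                    # at most n - 1
min_eig_shrunk = np.linalg.eigvalsh(S_shrunk).min()  # strictly positive
```

With 20 samples the sample covariance of 100 genes has rank at most 19 and cannot be inverted, whereas the shrunken estimate has all eigenvalues bounded away from zero.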

Experimental Protocols

Protocol 1: Constructing Weighted Gene Co-expression Networks

Purpose: Identify modules of co-expressed genes from transcriptomic data to infer functional relationships and identify key regulatory genes.

Materials:

- Gene expression matrix (microarray, bulk RNA-seq, or scRNA-seq data)

- Normalization and transformation tools

- Computational resources (R/Python and relevant packages)

Table 2: Research Reagent Solutions for Network Construction

| Reagent/Resource | Function | Example Tools/Implementations |
|---|---|---|
| Normalized Expression Matrix | Input data for correlation calculations | DESeq2, edgeR, SCTransform |
| Correlation Metrics | Quantify gene-gene associations | Pearson, Spearman, Mutual Information |
| Network Construction Algorithms | Build co-expression networks | WGCNA, WGCHNA, ARACNE, CLR |
| Module Detection Methods | Identify gene clusters | Hierarchical clustering, Dynamic tree cutting |

Procedure:

- Data Preprocessing: Normalize raw expression data to account for technical variations (library size, batch effects). For scRNA-seq data, consider pseudo-bulk aggregation approaches like metacells to reduce sparsity [13].

- Gene Selection: Filter genes to focus analysis on biologically relevant features. Common approaches include selecting genes with:

  - Highest variability across samples

  - Highest mean expression

  - Largest differential expression across conditions [13]

- Similarity Matrix Calculation: Compute pairwise correlations between all selected genes using an appropriate measure (Pearson, Spearman, or mutual information).

- Network Construction: Transform the correlation matrix into an adjacency matrix using:

  - Weighted networks: Soft thresholding to preserve connection strengths

  - Unweighted networks: Hard thresholding to create binary connections [8]

- Module Detection: Identify modules of highly interconnected genes using:

  - Topological Overlap Matrix (TOM): Calculate interconnectedness considering shared neighbors

  - Hierarchical clustering: Group genes based on TOM dissimilarity

  - Dynamic tree cutting: Define module boundaries from dendrograms [8]

- Module Characterization: Annotate modules functionally using:

  - Enrichment analysis (GO, KEGG pathways)

  - Correlation with sample traits

  - Identification of hub genes (highly connected genes) [8]
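The network construction and topological overlap steps can be sketched in NumPy as follows. The adjacency is the unsigned soft-thresholded form |cor|^β and the TOM uses the standard unsigned formula; the power β = 6 and the simulated two-module data are illustrative assumptions, and clustering and dynamic tree cutting are not shown.

```python
import numpy as np

def soft_threshold_adjacency(corr, beta=6):
    """Unsigned weighted adjacency a_ij = |cor_ij| ** beta with a zero diagonal."""
    adj = np.abs(corr) ** beta
    np.fill_diagonal(adj, 0.0)
    return adj

def topological_overlap(adj):
    """TOM_ij = (sum_u a_iu a_uj + a_ij) / (min(k_i, k_j) + 1 - a_ij)."""
    shared = adj @ adj                    # shared-neighbor term (diagonal of adj is 0)
    k = adj.sum(axis=1)                   # connectivity of each gene
    denom = np.minimum.outer(k, k) + 1.0 - adj
    tom = (shared + adj) / denom
    np.fill_diagonal(tom, 1.0)
    return tom

# Simulated data: 60 samples, two modules of 5 genes driven by separate factors.
rng = np.random.default_rng(3)
n_samples = 60
f1 = rng.normal(size=n_samples)
f2 = rng.normal(size=n_samples)
X = np.empty((n_samples, 10))
X[:, :5] = f1[:, None] + 0.5 * rng.normal(size=(n_samples, 5))
X[:, 5:] = f2[:, None] + 0.5 * rng.normal(size=(n_samples, 5))

corr = np.corrcoef(X, rowvar=False)
adj = soft_threshold_adjacency(corr, beta=6)
tom = topological_overlap(adj)
dissimilarity = 1.0 - tom                 # input for hierarchical clustering

within = tom[:5, :5][~np.eye(5, dtype=bool)].mean()
between = tom[:5, 5:].mean()
```

Genes in the same simulated module share a much higher topological overlap than genes in different modules, which is the property hierarchical clustering on `dissimilarity` would exploit.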

Troubleshooting:

- For low module robustness, try different correlation thresholds or clustering parameters

- For computationally intensive analyses with large gene sets, implement feature selection or dimension reduction first

- For sparse single-cell data, use specialized methods like CS-CORE or hdWGCNA [13]

Protocol 2: Confounder Adjustment with CorrAdjust

Purpose: Identify and remove the effects of hidden confounders to improve the specificity of gene-gene correlation analyses.

Materials:

- Normalized gene expression matrix

- Reference gene sets (e.g., Gene Ontology, TarBase for miRNA-mRNA pairs)

- Computational implementation of CorrAdjust

Procedure:

- Input Preparation: Format expression data with samples as rows and genes as columns. Optionally, include multiple data types (e.g., mRNA and miRNA) with appropriate labels [16].

- Reference Collection Definition: Compile ground-truth gene sets representing known biological relationships:

  - For mRNA-mRNA pairs: Gene Ontology terms sharing genes

  - For miRNA-mRNA pairs: Experimentally validated targets from TarBase [16]

- Principal Component Analysis: Perform PCA on the expression matrix to derive potential confounding factors.

- Iterative PC Selection: Apply CorrAdjust's greedy algorithm to select PCs for adjustment:

  - Rank gene pairs by correlation strength

  - Evaluate enrichment of reference pairs among top-ranked pairs

  - Select PCs that maximize this enrichment [16]

- Data Residualization: Regress out selected PCs from the expression data.

- Adjusted Correlation Analysis: Compute correlations from the residualized expression data.
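The residualization and adjusted-correlation steps can be illustrated with a short NumPy sketch. It removes a user-supplied set of principal components via the SVD; the greedy, enrichment-guided PC selection that defines CorrAdjust is not implemented here, and the function names and simulated confounder are assumptions.

```python
import numpy as np

def residualize_pcs(X, pc_indices):
    """Remove the chosen principal components from a samples x genes matrix."""
    Xc = X - X.mean(axis=0)
    U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    idx = list(pc_indices)
    # Rank-len(idx) reconstruction of the part of Xc explained by the chosen PCs.
    confound = (U[:, idx] * s[idx]) @ Vt[idx, :]
    return Xc - confound

def mean_abs_offdiag(M):
    """Average absolute off-diagonal entry of a square matrix."""
    mask = ~np.eye(M.shape[0], dtype=bool)
    return float(np.abs(M[mask]).mean())

# Simulated data: 20 otherwise independent genes sharing one hidden confounder.
rng = np.random.default_rng(4)
n, p = 100, 20
confounder = 2.0 * rng.normal(size=(n, 1))
X = confounder + rng.normal(size=(n, p))

corr_before = np.corrcoef(X, rowvar=False)
X_adj = residualize_pcs(X, pc_indices=[0])     # here the first PC is assumed confounding
corr_after = np.corrcoef(X_adj, rowvar=False)

before = mean_abs_offdiag(corr_before)
after = mean_abs_offdiag(corr_after)
```

In this simulation the shared confounder induces strong spurious correlations between all gene pairs, and removing the first PC largely eliminates them. With real data, removing PCs can also remove biological signal, which is why the reference-based selection step matters.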

Validation:

- Compare enrichment of reference pairs before and after adjustment

- Evaluate biological coherence of resulting correlation networks

- Assess specificity using negative control gene pairs [16]

Protocol 3: Differential Co-expression with DRaCOoN

Purpose: Identify gene-gene associations that change significantly between two biological conditions (e.g., healthy vs. diseased).

Materials:

- Gene expression data for two conditions (minimum ~10 samples per condition) [14]

- Predefined TF-target pairs for pathway-level analysis (optional)

- DRaCOoN implementation (Python package or web tool)

Procedure:

- Data Preparation: Ensure adequate sample size and homogeneity within each condition. Check for batch effects and apply correction if needed [14] [15].

- Association Calculation: For each gene pair, compute co-expression values separately for each condition using:

  - Pearson's correlation

  - Spearman's correlation

  - Entropy-based measures [14]

- Differential Metric Computation: Quantify association changes between conditions using:

  - Absolute Difference: \( \Delta r = |r_a - r_b| \)

  - Degree of Association Shift: \( s = r_{ab} - 2 \times (r_a + r_b) \) [14]

  Here \( r_a \) and \( r_b \) are co-expression values under conditions A and B, and \( r_{ab} \) is computed across all samples.

- Significance Assessment: Apply permutation testing to evaluate statistical significance:

  - Randomly shuffle condition labels

  - Recompute differential metrics

  - Compare observed values to null distribution [14]

- Multiple Testing Correction: Adjust p-values using Benjamini-Hochberg FDR control.

- Network Reconstruction: Build differential co-expression networks using significant gene pairs.
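The procedure above can be sketched end to end for the absolute-difference metric with Pearson correlation. This is a generic NumPy illustration of label permutation and Benjamini-Hochberg adjustment, not the DRaCOoN package or its API; the function names, permutation count, and simulated data are assumptions.

```python
import numpy as np

def abs_corr_difference(X, is_b):
    """|r_a - r_b| for every gene pair (upper triangle, row-major order)."""
    r_a = np.corrcoef(X[~is_b], rowvar=False)
    r_b = np.corrcoef(X[is_b], rowvar=False)
    upper = np.triu_indices(X.shape[1], k=1)
    return np.abs(r_a - r_b)[upper]

def benjamini_hochberg(pvals):
    """Benjamini-Hochberg adjusted p-values."""
    p = np.asarray(pvals, dtype=float)
    m = p.size
    order = np.argsort(p)
    scaled = p[order] * m / np.arange(1, m + 1)
    scaled = np.minimum.accumulate(scaled[::-1])[::-1]   # enforce monotonicity
    adjusted = np.empty(m)
    adjusted[order] = np.clip(scaled, 0.0, 1.0)
    return adjusted

def differential_coexpression(X, is_b, n_perm=500, seed=0):
    """Permutation p-values and BH-adjusted values for each gene pair."""
    rng = np.random.default_rng(seed)
    is_b = np.asarray(is_b, dtype=bool)
    observed = abs_corr_difference(X, is_b)
    exceed = np.zeros_like(observed)
    for _ in range(n_perm):
        shuffled = rng.permutation(is_b)                  # shuffle condition labels
        exceed += abs_corr_difference(X, shuffled) >= observed
    pvals = (exceed + 1.0) / (n_perm + 1.0)
    return observed, pvals, benjamini_hochberg(pvals)

# Simulated data: genes 0 and 1 are positively correlated in A, negatively in B.
rng = np.random.default_rng(5)
n = 30
s_a, s_b = rng.normal(size=n), rng.normal(size=n)
block_a = np.column_stack([s_a, s_a, rng.normal(size=n), rng.normal(size=n)])
block_b = np.column_stack([s_b, -s_b, rng.normal(size=n), rng.normal(size=n)])
block_a[:, :2] += 0.3 * rng.normal(size=(n, 2))
block_b[:, :2] += 0.3 * rng.normal(size=(n, 2))
X = np.vstack([block_a, block_b])
is_b = np.array([False] * n + [True] * n)

observed, pvals, qvals = differential_coexpression(X, is_b)
```

Pairs are returned in upper-triangle order, so index 0 corresponds to genes (0, 1), the only pair simulated with a condition-dependent association. Pairs with small adjusted values would form the edges of the differential network.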

Advanced Application: For pathway-level differential regulation, provide predefined transcription factor-target gene pairs and focus analysis on these regulatory relationships [14].

Workflow Visualization

Covariance Analysis Workflow for Gene Expression Data

Advanced Applications and Methods

Hypergraph-Based Analysis for Higher-Order Interactions

Traditional co-expression networks based on pairwise correlations cannot capture complex multi-gene interactions. Weighted Gene Co-expression Hypernetwork Analysis (WGCHNA) addresses this limitation by modeling higher-order relationships using hypergraph theory:

- Nodes: Represent genes

- Hyperedges: Represent samples connecting multiple genes simultaneously

- Weights: Capture the strength of co-expression among multiple genes [8]

WGCHNA demonstrates superior performance in identifying biologically relevant modules, particularly for complex processes like neuronal energy metabolism in Alzheimer's disease [8].

Spatial Transcriptomics and Covariance

In spatially resolved transcriptomics (SRT), covariance analysis helps identify spatially variable genes (SVGs) whose expression patterns show non-random spatial distributions. Three categories of SVGs have been defined:

- Overall SVGs: Screen informative genes for downstream analyses

- Cell-type-specific SVGs: Reveal spatial variation within cell types

- Spatial-domain-marker SVGs: Annotate and interpret spatial domains [17]

Robust Covariance Testing for High-Dimensional Data

Comparing covariance matrices between groups (e.g., healthy vs. disease) presents challenges in high-dimensional settings. A novel robust test based on Minimum Regularized Covariance Determinant (MRCD) estimators addresses this by:

- Providing accurate testing when \( p > n \)

- Maintaining robustness against outliers

- Operating without distributional assumptions through permutation [12]

This approach enables reliable detection of covariance differences in complex genomic datasets contaminated with outliers.

Covariance matrix analysis provides an essential foundation for extracting system-level insights from high-dimensional gene expression data. From constructing gene co-expression networks to detecting condition-specific pathway disruptions, covariance-based methods enable researchers to move beyond single-gene analysis to understand the complex regulatory architecture underlying biological processes and disease states.

The protocols presented here offer practical frameworks for implementing these analyses while addressing common challenges such as confounding artifacts, high dimensionality, and outlier sensitivity. As genomic technologies continue evolving toward higher resolution—including single-cell and spatial transcriptomics—covariance analysis will remain indispensable for translating expression measurements into biological understanding.

In the field of genomics, researchers are frequently confronted with a significant statistical challenge known as the "Large p, Small n" problem. This scenario occurs when the number of features or variables (p), such as genes, single nucleotide polymorphisms (SNPs), or other molecular markers, vastly exceeds the number of observations or samples (n). The emergence of high-throughput technologies, including microarray and next-generation sequencing, has made this a common feature of modern genomic studies, where it is not unusual to measure tens of thousands of genes across only dozens or hundreds of samples [18].

This imbalance between variables and samples creates substantial obstacles for statistical analysis. Traditional statistical methods, which were developed for scenarios where n exceeds p, often become inapplicable or unreliable. In the context of gene expression data research, this problem is particularly acute when attempting to estimate covariance matrices—fundamental structures that describe the interrelationships between genes. Accurate estimation of these matrices is crucial for understanding co-expression networks, identifying functional pathways, and detecting subtle patterns in genetic regulation [15].

The primary challenge in large p, small n situations is that the sample covariance matrix becomes singular or ill-conditioned, meaning it cannot be inverted reliably, which is often required for multivariate statistical procedures. Furthermore, with limited samples, the estimates of parameters become highly variable, leading to overfitting where models perform well on the training data but fail to generalize to new data. This directly impacts the reliability of biological conclusions drawn from genomic studies and necessitates specialized statistical approaches to overcome these limitations [19] [18].

Covariance Matrix Estimation in High-Dimensional Gene Expression Data

The Centrality of Covariance in Genomic Analysis

In gene expression studies, the covariance matrix provides critical insights into the complex web of biological relationships. It captures how the expression levels of genes co-vary across different conditions, treatments, or time points. These co-expression patterns often reflect underlying functional relationships, such as genes participating in the same biological pathway or being co-regulated by the same transcriptional mechanisms. Under the large p, small n regime, however, standard covariance estimation methods fail because the sample size is insufficient to reliably estimate the vast number of parameters involved [15].

The dimensionality challenge is stark: with p genes, the covariance matrix contains p(p + 1)/2 unique parameters (p variances plus all pairwise covariances), a number that grows as O(p²). For a typical gene expression microarray with 20,000 genes, this amounts to 20,000 × 20,001 / 2, or approximately 200 million parameters. With sample sizes rarely exceeding a few hundred, the estimation problem is underdetermined: the number of unknown parameters far exceeds the number of data points available for estimation [15].

Impact of Batch Effects on Covariance Structure

Batch effects represent a particularly pernicious problem in covariance estimation for genomic studies. These technical artifacts arise when samples are processed in different batches, at different times, or by different personnel. Batch effects not only alter the mean expression levels of individual genes but can also distort the inter-gene relationships, leading to biased covariance estimates [15].

When analyzing data affected by batch effects, the assumed model is that each observed array vector \( Y_{ij} \) from batch \( i \) can be expressed as an affine transformation of some unobservable "true" expression vector \( Y \): \( Y_{ij} = A_i Y + b_i \), where \( A_i \) is a transformation matrix and \( b_i \) is a shift vector. This means that the covariance structure within each batch, \( \Sigma_i \), relates to the true underlying covariance \( \Sigma \) through \( \Sigma_i = A_i \Sigma A_i^\top \). The multivariate nature of batch effects necessitates covariance adjustment methods that can correct both means and covariance structures across batches to create properly harmonized datasets [15].
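The relationship between the batch-level and true covariance under this affine model can be verified numerically. The sketch below simulates a "true" expression matrix, applies an arbitrary transformation matrix and shift vector, and compares covariances; the dimensions, random values, and function name are illustrative assumptions.

```python
import numpy as np

def apply_batch_effect(Y, A, b):
    """Apply the affine batch model row-wise: each sample y becomes A @ y + b."""
    return Y @ A.T + b

rng = np.random.default_rng(2)
n_samples, p = 200, 4

# Simulated "true" expression (samples x genes) with correlated genes.
Y = rng.normal(size=(n_samples, p)) @ rng.normal(size=(p, p))

# Hypothetical batch-specific transformation matrix and shift vector.
A = np.eye(p) + 0.3 * rng.normal(size=(p, p))
b = rng.normal(size=p)

Y_batch = apply_batch_effect(Y, A, b)

S_true = np.cov(Y, rowvar=False)
S_batch = np.cov(Y_batch, rowvar=False)
S_predicted = A @ S_true @ A.T        # covariance implied by the affine model
```

The shift vector changes only the gene means, while the transformation matrix changes both means and covariances, so mean-centering alone cannot undo such a batch effect.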

Table 1: Comparison of Covariance Estimation Methods for Large p, Small n Problems

| Method | Key Approach | Advantages | Limitations |
|---|---|---|---|
| Sample Covariance Matrix | Standard empirical estimation | Simple computation | Singular when p > n; High variance |
| Factor Model-Based Estimation [15] | Uses factor model with hard-thresholding | Captures underlying structure; Scalable to high dimensions | Requires selection of tuning parameters |
| Shrinkage Methods | Shrinks off-diagonal elements towards zero | Stable inversion; Reduced variance | May overshrink true signals |
| Sparse Covariance Estimation | Assumes many zero elements in covariance matrix | Computational efficiency; Biological interpretability | May miss subtle dependencies |

Statistical Frameworks and Protocols for Large p, Small n Problems

Resampling Approaches for Genome-Wide Significance

For determining genome-wide statistical thresholds in large p, small n situations, resampling methods offer a robust solution. Diao et al. (2013) developed a resampling approach specifically designed to control the genome-wide Type I error rate in quantitative trait loci (QTL) mapping studies [19]. The methodology employs the following protocol:

- Step 1: Repeatedly resample the data under the null hypothesis while preserving the correlation structure among genes.

- Step 2: Calculate the test statistics for each resampled dataset to build an empirical null distribution.

- Step 3: Determine genome-wide significance thresholds based on percentiles of the empirical null distribution.

- Step 4: Apply these thresholds to the original data to identify statistically significant associations.

This approach maintains control of the genome-wide Type I error rate even when the number of tests far exceeds the sample size, addressing a fundamental challenge in genome-wide association studies and QTL mapping [19].
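The four steps can be sketched with a generic max-statistic permutation scheme. This is not the specific procedure of [19]; it is a common stand-in, assuming a quantitative trait, Pearson correlation as the per-gene test statistic, and simulated data. All function names are hypothetical.

```python
import random


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def max_abs_stat(genes, trait):
    """Largest absolute gene-trait correlation across all genes."""
    return max(abs(pearson(g, trait)) for g in genes)


def genomewide_threshold(genes, trait, n_perm=1000, alpha=0.05, seed=0):
    """Empirical (1 - alpha) quantile of the permutation null of the maximum statistic."""
    rng = random.Random(seed)
    null_max = []
    for _ in range(n_perm):
        shuffled = trait[:]
        rng.shuffle(shuffled)  # shuffling the trait leaves gene-gene correlation intact
        null_max.append(max_abs_stat(genes, shuffled))
    null_max.sort()
    return null_max[min(n_perm - 1, int((1 - alpha) * n_perm))]


# Simulated null data: 200 genes (rows) measured in 30 samples.
rng = random.Random(1)
n_samples, n_genes = 30, 200
genes = [[rng.gauss(0, 1) for _ in range(n_samples)] for _ in range(n_genes)]
trait = [rng.gauss(0, 1) for _ in range(n_samples)]

threshold = genomewide_threshold(genes, trait, n_perm=200)
significant = [k for k, g in enumerate(genes) if abs(pearson(g, trait)) > threshold]
```

Taking the maximum over genes within each permutation is what makes the threshold genome-wide: a gene is declared significant only if its statistic exceeds what the strongest null gene typically achieves.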

Bayesian Variable Selection for QTL Mapping with Epistases

In the context of QTL mapping with epistatic effects, the large p, small n problem becomes particularly acute due to the explosive number of possible interactions. With p markers, the number of possible pairwise interactions is O(p²), creating an extremely high-dimensional search space [18].

The Bayesian variable selection protocol for this problem involves a two-step procedure:

Initial Screening Step:

- Use restrictive priors to identify a candidate set of markers and interactions

- Employ spike-and-slab priors to separate potentially important effects from negligible ones

- Incorporate sparsity constraints reflecting the expectation that few true effects exist

Refinement Step:

- Perform detailed analysis on the reduced set of candidates

- Use Gibbs sampling to stochastically search through low-dimensional subspaces

- Estimate posterior inclusion probabilities for each potential QTL and interaction

This approach handles the sparse parameter space characteristic of genomic studies, where only a small fraction of markers typically have detectable effects on traits of interest [18].

Table 2: Experimental Reagents and Computational Tools for Genomic Studies

| Research Reagent/Resource | Function/Application | Key Features |

|---|---|---|

| Affymetrix Microarray Platforms | Genome-wide expression profiling | Standardized protocols; High reproducibility [20] |

| R/qtl Software Package | QTL mapping in experimental crosses | Comprehensive mapping tools; Cross-platform compatibility [19] |

| Gene Expression Omnibus (GEO) | Public repository of expression data | Data sharing; Meta-analysis capability [20] |

| Spike-and-Slab Priors | Bayesian variable selection | Automatic sparsity control; Model uncertainty quantification [18] |

| Distance Weighted Discrimination (DWD) | Batch effect correction | Supervised adjustment; Preserves biological signals [15] |

Covariance Adjustment Methods for Batch Effect Correction

Multivariate Batch Adjustment Protocol

The presence of batch effects significantly compromises the validity of combined genomic datasets. While simple mean-centering methods can remove location shifts between batches, they fail to address changes in covariance structure. A comprehensive batch adjustment protocol should include [15]:

Batch Effect Diagnosis:

- Perform principal component analysis (PCA) on the combined dataset

- Visualize batch separation in the first few principal components

- Check if batch differences dominate biological signals

Covariance Adjustment Implementation:

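- Estimate batch-specific covariance matrices Σi for each batch i (using a regularized estimator when p > n, since the sample covariance matrix is then singular and Σi^{-1/2} does not exist)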

- Estimate target covariance structure Σ* (either from a reference batch or pooled)

- Compute adjustment transformation: Âi = Σ*^{1/2}Σi^{-1/2}

- Apply adjustment to each batch: Yij(adjusted) = Âi(Yij - μi) + μ*

Quality Assessment:

- Verify reduction in batch separation via PCA

- Confirm preservation of biological signals using control samples

- Check stability of adjusted covariance estimates

This multivariate approach corrects both mean shifts and covariance distortions, providing more scientifically valid combined datasets for downstream analysis [15].
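As a simplified sketch of the adjustment step, the code below applies Yij(adjusted) = Âi(Yij − μi) + μ* under the strong assumption that every covariance matrix is diagonal, so Âi reduces to a per-gene scale factor sqrt(σ*² / σi²). This ignores the gene-gene distortions that the full multivariate protocol targets; the full version needs matrix square roots and a regularized Σi. The reference-batch choice and all names are illustrative.

```python
from statistics import mean, variance


def gene_stats(batch):
    """Per-gene mean and sample variance; batch is a list of samples (rows)."""
    columns = list(zip(*batch))
    return [mean(c) for c in columns], [variance(c) for c in columns]


def adjust_batch(batch, target_mean, target_var):
    """Diagonal special case of A_i (y - mu_i) + mu*:
    rescale each gene to the target variance, then shift to the target mean."""
    batch_mean, batch_var = gene_stats(batch)
    scale = [(tv / bv) ** 0.5 for tv, bv in zip(target_var, batch_var)]
    return [
        [s * (y - m) + tm for y, m, s, tm in zip(sample, batch_mean, scale, target_mean)]
        for sample in batch
    ]


# Two toy batches with 3 samples and 2 genes each; the first serves as the reference.
reference = [[1.0, 10.0], [2.0, 12.0], [3.0, 14.0]]
other = [[11.0, 30.0], [15.0, 31.0], [13.0, 32.0]]

ref_mean, ref_var = gene_stats(reference)
adjusted = adjust_batch(other, ref_mean, ref_var)
adj_mean, adj_var = gene_stats(adjusted)
```

After adjustment, the per-gene means and variances of the second batch match those of the reference, which corresponds to the mean and variance part of the quality assessment checks listed above.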

Covariation Matrix Construction for Gene Expression Data

An alternative approach to handling batch effects involves constructing covariation matrices based on expression change patterns rather than absolute expression levels. The protocol involves [20]:

Systematic Condition Comparisons:

- For n biological conditions, perform all N = n(n-1)/2 pairwise comparisons

- For each gene in each comparison, classify as increased (I), decreased (D), or not changed (N)

- Represent each gene by an ordered string of N symbols (e.g., IDDNNIDID...)

Covariation Matrix Calculation:

- For each gene pair, calculate positive and negative correlation scores by comparing their symbol strings

- Use statistical significance testing to identify meaningful covariation patterns

- Construct separate positive and negative covariation matrices

Biological Interpretation:

- Apply clustering methods to identify functional modules

- Characterize modules using Gene Ontology information

- Validate with known biological pathways

This method leverages the improved reproducibility of variation measures compared to absolute expression levels, potentially providing more robust gene relationship networks [20].
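The symbol-string construction can be sketched as follows. Two simplifications are assumptions made for illustration and are not taken from [20]: changes are classified with a fixed threshold on log-scale differences rather than a statistical test, and the positive and negative scores are plain fractions of concordant and discordant positions.

```python
from itertools import combinations


def classify_change(a, b, threshold=1.0):
    """Return 'I', 'D', or 'N' for the change from level a to level b (log scale)."""
    diff = b - a
    if diff > threshold:
        return "I"
    if diff < -threshold:
        return "D"
    return "N"


def variation_string(levels, threshold=1.0):
    """One symbol per pairwise comparison: n conditions give n*(n-1)/2 symbols."""
    return "".join(classify_change(levels[i], levels[j], threshold)
                   for i, j in combinations(range(len(levels)), 2))


def covariation_scores(s1, s2):
    """(positive, negative) scores: fractions of positions where both genes change
    in the same or in opposite directions; positions with an 'N' are skipped."""
    informative = [(a, b) for a, b in zip(s1, s2) if a != "N" and b != "N"]
    if not informative:
        return 0.0, 0.0
    same = sum(a == b for a, b in informative)
    return same / len(informative), (len(informative) - same) / len(informative)


# Four conditions give six pairwise comparisons per gene.
gene_a = variation_string([1.0, 3.0, 5.0, 5.0])
gene_b = variation_string([6.0, 4.0, 2.0, 2.0])
pos_score, neg_score = covariation_scores(gene_a, gene_b)
```

Here `gene_a` rises wherever `gene_b` falls, so the pair would contribute only to the negative covariation matrix.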

Workflow Visualization and Experimental Design

The following diagrams illustrate key protocols and analytical workflows for handling large p, small n problems in genomic research.

Diagram 1: Batch Effect Correction Workflow

Diagram 2: Covariance Estimation Under Sparsity

Diagram 3: Gene Covariation Network Construction

The large p, small n problem presents fundamental challenges in genomic research that require specialized statistical approaches. Through resampling methods, Bayesian variable selection techniques, and sophisticated covariance adjustment procedures, researchers can extract meaningful biological signals from high-dimensional genomic data while maintaining statistical validity. The continued development of these methodologies remains crucial for advancing our understanding of complex biological systems and improving the reliability of genomic findings across diverse applications in basic research and drug development.

In the analysis of gene expression data, the covariance matrix has emerged as a powerful tool for moving beyond simple differential expression to understand the complex, coordinated nature of biological systems. While traditional analyses have focused predominantly on changes in mean expression levels between conditions, covariance-based methods capture the dynamic interrelationships between genes, providing insights into co-regulation patterns and pathway activity that would otherwise remain hidden [21]. This shift in perspective—from analyzing genes in isolation to studying them as interconnected systems—aligns with the fundamental biological understanding that genes operate in coordinated networks rather than independently.

The biological rationale for this approach stems from the "guilt-by-association" principle, which posits that genes involved in the same biological pathway or regulated by the same transcription factors tend to exhibit correlated expression patterns across multiple conditions [22]. When pathway activity changes—due to cellular state transitions, disease processes, or experimental perturbations—the coordination between member genes also changes, leading to detectable shifts in the covariance structure. These structural changes often manifest before significant mean expression differences emerge, making covariance analysis particularly sensitive for detecting early biological responses.

From a technical perspective, analyzing high-dimensional gene expression data (where the number of genes p often exceeds the number of samples n) presents significant challenges. Classical multivariate tests require n > p and become unstable or inapplicable in high-dimensional settings [23] [12]. This has spurred the development of specialized statistical methods that can handle these challenging data structures while extracting biologically meaningful signals.

Biological Foundations: From Covariance to Co-regulation

The Spiked Eigenvalue Model for Pathway Activity

A fundamental model for understanding how pathway activity influences gene expression covariance proposes that the observed expression of pathway genes derives from a shared latent variable representing pathway activity level combined with gene-specific noise. Mathematically, this can be expressed as:

\[ y_{ik} = \mu_k + h_k a_i + \varepsilon_{ik} \]

where \(y_{ik}\) represents the expression of gene k in sample i, \(\mu_k\) is a gene-specific intercept, \(a_i\) is the pathway activity level in sample i with variance \(\sigma_a^2\), \(h_k\) are gene-specific scaling coefficients, and \(\varepsilon_{ik}\) represents unstructured noise with variance \(\sigma_\varepsilon^2\) [21].

Under this model, the covariance matrix of the pathway genes takes a specific form:

\[ \text{cov}(y_i) = \sigma_a^2 h h^T + \sigma_\varepsilon^2 I \]

This structure leads to what is known as a spiked eigenvalue pattern, where the first eigenvalue (\(\sigma_a^2 \lVert h \rVert^2 + \sigma_\varepsilon^2\), which reduces to \(\sigma_a^2 + \sigma_\varepsilon^2\) when h is scaled to unit length) captures variability due to changes in pathway activity, while the remaining eigenvalues (\(\sigma_\varepsilon^2\)) represent unstructured noise [21]. The leading eigenvector corresponds to the direction of maximum coordinated variability and provides a natural measure of pathway activity in each sample.
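The spiked structure can be verified numerically. The sketch below builds the population covariance for a small, hand-picked coefficient vector h with unit length and recovers the leading eigenvalue by power iteration; the numbers and function names are illustrative assumptions.

```python
def spiked_covariance(h, var_a, var_eps):
    """Population covariance var_a * h h^T + var_eps * I."""
    p = len(h)
    return [[var_a * h[i] * h[j] + (var_eps if i == j else 0.0) for j in range(p)]
            for i in range(p)]


def leading_eigenvalue(S, n_iter=500):
    """Largest eigenvalue of a symmetric PSD matrix by power iteration.
    Assumes the start vector is not orthogonal to the leading eigenvector."""
    p = len(S)
    v = [1.0 / p ** 0.5] * p
    for _ in range(n_iter):
        w = [sum(S[i][j] * v[j] for j in range(p)) for i in range(p)]
        norm = sum(x * x for x in w) ** 0.5
        v = [x / norm for x in w]
    Sv = [sum(S[i][j] * v[j] for j in range(p)) for i in range(p)]
    return sum(a * b for a, b in zip(v, Sv))  # Rayleigh quotient


h = [0.8, 0.6, 0.0, 0.0]  # unit length: 0.64 + 0.36 = 1
var_a, var_eps = 4.0, 1.0
Sigma = spiked_covariance(h, var_a, var_eps)

lam1 = leading_eigenvalue(Sigma)                  # close to var_a + var_eps
trace = sum(Sigma[k][k] for k in range(len(h)))
residual = trace - lam1                           # close to (p - 1) * var_eps
```

With these values the leading eigenvalue is approximately 5 and the remaining three eigenvalues sum to approximately 3, i.e. each equals the noise variance.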

Biological Interpretation of Covariance Patterns

Different patterns of change in the covariance structure between conditions reflect distinct biological scenarios:

- Increased leading eigenvalue with stable residual variance suggests heightened pathway activity in one condition

- Increased residual variance may indicate pathway dysregulation or breakdown of normal co-regulation

- Changes in multiple eigenvalues often reflect the involvement of additional biological processes affecting subsets of pathway genes

- Altered eigenvectors suggest fundamental rewiring of regulatory relationships within the pathway

These patterns provide a rich interpretive framework that goes beyond what can be learned from mean expression alone. For example, in cancer research, changes in covariance structure have been linked to oncogenic pathway activation, loss of normal regulatory control, and cellular plasticity [21] [22].

Table 1: Biological Interpretation of Covariance Patterns

| Covariance Pattern | Biological Interpretation | Typical Context |

|---|---|---|

| Increased leading eigenvalue | Enhanced pathway activity | Pathway activation in disease or stimulus response |

| Increased residual variance | Loss of co-regulation | Pathway dysregulation in disease |

| Multiple changed eigenvalues | Multiple processes affecting pathway | Complex pathophysiology |

| Changed eigenvectors | Rewiring of regulatory relationships | Cellular reprogramming, adaptation |

Experimental Protocols and Analytical Workflows

Protocol 1: Co-expression Pathway Analysis Using Spiked Eigenvalue Methods

This protocol tests for changes in pathway activity between two biological conditions by examining the leading eigenvalue and trace of covariance matrices.

Sample Processing and Data Generation

Sample Collection and RNA Extraction

- Collect biological samples from conditions to be compared (e.g., diseased vs. normal, treated vs. control)

- Extract total RNA using standardized methods (e.g., column-based purification)

- Assess RNA quality using Bioanalyzer or similar (RIN > 8 recommended)

Library Preparation and Sequencing

- Prepare sequencing libraries using poly-A selection for mRNA enrichment

- Use unique molecular identifiers (UMIs) to correct for PCR duplicates

- Sequence on an appropriate platform (Illumina recommended) to sufficient depth (≥20 million reads per sample)

Read Processing and Quantification

- Perform quality control using FastQC

- Align reads to reference genome using STAR aligner

- Generate count matrices using HTSeq-count or featureCounts [24]

Data Preprocessing and Normalization

Count Matrix Preprocessing

- Filter low-count genes (recommended: retain genes with ≥10 counts in ≥20% of samples)

- Normalize for library size differences using DESeq2's median of ratios method or similar [24]
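The two preprocessing steps can be sketched in plain Python. The size-factor function is a re-implementation of the general median-of-ratios idea for illustration, not DESeq2 code, and the toy count table and names are assumptions.

```python
from math import exp, log
from statistics import median


def filter_low_counts(counts, min_count=10, min_frac=0.2):
    """Keep genes with at least min_count reads in at least min_frac of samples.
    counts maps gene name -> list of raw counts, one per sample."""
    return {gene: row for gene, row in counts.items()
            if sum(c >= min_count for c in row) >= min_frac * len(row)}


def median_of_ratios_size_factors(counts):
    """Per-sample size factor: median over genes of count / geometric mean across samples.
    Genes with a zero count are skipped because their geometric mean is zero."""
    n_samples = len(next(iter(counts.values())))
    log_ratios = [[] for _ in range(n_samples)]
    for row in counts.values():
        if min(row) <= 0:
            continue
        log_geo_mean = sum(log(c) for c in row) / n_samples
        for j, c in enumerate(row):
            log_ratios[j].append(log(c) - log_geo_mean)
    return [exp(median(r)) for r in log_ratios]


# Toy counts: samples 2 and 4 were sequenced twice as deeply as the others.
counts = {
    "geneA": [10, 20, 10, 20, 10],
    "geneB": [100, 200, 100, 200, 100],
    "geneC": [50, 100, 50, 100, 50],
    "geneD": [0, 3, 1, 2, 5],  # never reaches 10 reads, so it is filtered out
}
kept = filter_low_counts(counts)
size_factors = median_of_ratios_size_factors(kept)
normalized = {g: [c / s for c, s in zip(row, size_factors)] for g, row in kept.items()}
```

After dividing by the size factors, each retained gene has the same normalized value in every sample, reflecting that the only difference between the toy samples was sequencing depth.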

Pathway Gene Selection

- Select genes from pathway databases (KEGG, Reactome, Hallmark gene sets)

- Subset normalized expression data to pathway genes only

Data Scaling for Covariance Analysis

- Calculate the median eigenvalue of the pooled sample covariance matrix from both classes

- Divide all observations by the square root of this median eigenvalue

- This ensures unspiked eigenvalues are approximately 1, satisfying methodological assumptions [21]

Covariance Matrix Estimation and Testing

Covariance Matrix Calculation

- For each condition, calculate the sample covariance matrix of the preprocessed pathway gene expressions

- Ensure sample sizes are adequate (recommended: n ≥ 10 per condition for initial pilot studies)

Eigenvalue Decomposition

- Perform eigenvalue decomposition of each covariance matrix

- Extract the leading eigenvalue (\(\hat{\alpha}_{j,1}\)) and trace (\(\hat{T}_j\)) for each condition j

Hypothesis Testing

- Test the null hypothesis \(H_0: (\alpha_{1,1}, T_1) = (\alpha_{2,1}, T_2)\) using a χ² test statistic

- The test statistic combines information from both the leading eigenvalue difference and trace difference between conditions [21]

- Calculate p-values using asymptotic results or permutation testing
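The estimation steps can be sketched on simulated data. The code below draws samples from the latent-activity model for two conditions, then extracts the leading eigenvalue and trace of each sample covariance matrix. It does not implement the χ² statistic of [21]; it only produces the two summaries that the test compares. Simulation settings and function names are assumptions.

```python
import random


def sample_covariance(X):
    """Sample covariance (n - 1 denominator); rows are samples, columns are genes."""
    n, p = len(X), len(X[0])
    means = [sum(row[j] for row in X) / n for j in range(p)]
    C = [[row[j] - means[j] for j in range(p)] for row in X]
    return [[sum(r[a] * r[b] for r in C) / (n - 1) for b in range(p)] for a in range(p)]


def leading_eigenvalue(S, n_iter=200):
    """Largest eigenvalue by power iteration (start vector assumed non-orthogonal)."""
    p = len(S)
    v = [1.0 / p ** 0.5] * p
    for _ in range(n_iter):
        w = [sum(S[i][j] * v[j] for j in range(p)) for i in range(p)]
        norm = sum(x * x for x in w) ** 0.5
        v = [x / norm for x in w]
    Sv = [sum(S[i][j] * v[j] for j in range(p)) for i in range(p)]
    return sum(a * b for a, b in zip(v, Sv))


def simulate(n, h, var_a, var_eps, rng):
    """Draw n samples from y_ik = h_k * a_i + eps_ik (intercepts set to zero)."""
    rows = []
    for _ in range(n):
        a = rng.gauss(0.0, var_a ** 0.5)
        rows.append([hk * a + rng.gauss(0.0, var_eps ** 0.5) for hk in h])
    return rows


def eigen_summary(X):
    """Return (leading eigenvalue, trace) of the sample covariance of X."""
    S = sample_covariance(X)
    return leading_eigenvalue(S), sum(S[k][k] for k in range(len(S)))


rng = random.Random(42)
h = [0.5, 0.5, 0.5, 0.5]  # unit-length coefficient vector
alpha1, T1 = eigen_summary(simulate(60, h, var_a=9.0, var_eps=1.0, rng=rng))
alpha2, T2 = eigen_summary(simulate(60, h, var_a=1.0, var_eps=1.0, rng=rng))
```

Condition 1 is simulated with the larger activity variance, so its leading eigenvalue should be clearly larger, while the residual terms `T1 - alpha1` and `T2 - alpha2` should be similar because the noise variance is the same in both conditions.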

Biological Interpretation

- If \(H_0\) is rejected, examine the direction of changes:

  - \(\alpha_{1,1} > \alpha_{2,1}\) suggests stronger pathway activity in condition 1

  - \(T_1 - \alpha_{1,1} > T_2 - \alpha_{2,1}\) suggests greater dysregulated noise in condition 1

Figure 1: Workflow for co-expression pathway analysis using spiked eigenvalue methods

Protocol 2: Differential Network Analysis with Pathway Integration

This protocol identifies changes in gene-gene interactions within pathways between two conditions, incorporating prior pathway knowledge to improve detection power.

Data Preparation and Network Estimation

Data Processing

- Follow the "Sample Processing and Data Generation" and "Data Preprocessing and Normalization" steps of Protocol 1 for sample processing and normalization

- Include potential covariates (e.g., batch, sex, age) in normalization if available

Association Measure Selection

- Choose an appropriate association measure based on the biological question:

  - Marginal correlations for overall co-expression patterns

  - Partial correlations for direct connections (conditional independence)

  - Robust correlations for data with outliers

  - Information-theoretic measures for non-linear associations [22]

Network Estimation

- For each condition, calculate association matrices (G₁ and G₂) using the chosen measure

- For partial correlations, use graphical lasso with appropriate regularization to ensure sparsity

- Compute the differential network: G_diff = G₁ - G₂ [25]
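A minimal sketch of the differential network computation, assuming marginal Pearson correlation as the association measure and a tiny hand-made dataset; partial correlations via graphical lasso would need a dedicated library and are not shown.

```python
def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def correlation_matrix(X):
    """Gene-by-gene correlation matrix; rows of X are samples, columns are genes."""
    cols = list(zip(*X))
    p = len(cols)
    return [[1.0 if a == b else pearson(cols[a], cols[b]) for b in range(p)]
            for a in range(p)]


def differential_network(X1, X2):
    """Entrywise difference G1 - G2 of the two condition-specific association matrices."""
    G1, G2 = correlation_matrix(X1), correlation_matrix(X2)
    p = len(G1)
    return [[G1[a][b] - G2[a][b] for b in range(p)] for a in range(p)]


# Genes 0 and 1 move together in condition 1 and in opposite directions in condition 2;
# genes 0 and 2 are measured identically in both conditions.
X1 = [[1.0, 2.0, 5.0], [2.0, 4.0, 1.0], [3.0, 6.0, 3.0], [4.0, 8.0, 2.0]]
X2 = [[1.0, 8.0, 5.0], [2.0, 6.0, 1.0], [3.0, 4.0, 3.0], [4.0, 2.0, 2.0]]
G_diff = differential_network(X1, X2)
```

The entry for genes 0 and 1 is approximately 2 (correlation +1 versus −1), the largest difference a pair of correlations can show, while the entry for genes 0 and 2 is 0 because that relationship is unchanged.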

Differential Connectivity Analysis

Pathway Integration

- Obtain pathway information from curated databases (KEGG, GO, BioCarta)

- Account for potential pathway misspecification (missing or extraneous genes)

Sparse Singular Value Decomposition (SSVD)

- Apply SSVD to G_diff to identify gene clusters contributing most to network differences

- The first sparse singular vector identifies the primary gene cluster driving differences

- Subsequent vectors identify additional contributing clusters [25]

Statistical Significance Assessment

- Use permutation testing (recommended: 10,000 permutations) to assess significance

- Permute condition labels and recalculate differential connectivity measures

- Compute empirical p-values based on the permutation distribution [22]
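The permutation scheme can be sketched for a single gene pair. The connectivity measure used here (absolute difference in Pearson correlation between conditions) is an assumption chosen for brevity, and the data are simulated; the (b + 1) / (B + 1) estimator is a standard way to avoid reporting a p-value of exactly zero.

```python
import random


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def diff_correlation(samples, labels):
    """|r1 - r2| for one gene pair; samples is a list of (gene_x, gene_y) tuples."""
    g1 = [s for s, lab in zip(samples, labels) if lab == 1]
    g2 = [s for s, lab in zip(samples, labels) if lab == 2]
    r1 = pearson([s[0] for s in g1], [s[1] for s in g1])
    r2 = pearson([s[0] for s in g2], [s[1] for s in g2])
    return abs(r1 - r2)


def permutation_pvalue(samples, labels, n_perm=10_000, seed=0):
    """Empirical p-value (b + 1) / (B + 1) from permuting condition labels."""
    rng = random.Random(seed)
    observed = diff_correlation(samples, labels)
    perm_labels = labels[:]
    exceed = 0
    for _ in range(n_perm):
        rng.shuffle(perm_labels)  # group sizes are preserved by shuffling
        if diff_correlation(samples, perm_labels) >= observed:
            exceed += 1
    return (exceed + 1) / (n_perm + 1)


# Simulated pair: positively coupled in condition 1, negatively coupled in condition 2.
rng = random.Random(1)
xs = [rng.gauss(0, 1) for _ in range(30)]
samples = ([(x, x + rng.gauss(0, 0.3)) for x in xs[:15]]
           + [(x, -x + rng.gauss(0, 0.3)) for x in xs[15:]])
labels = [1] * 15 + [2] * 15
p_value = permutation_pvalue(samples, labels, n_perm=2000)
```

The smallest attainable p-value is 1 / (B + 1), which is one reason a large number of permutations is recommended when many pathways are tested and multiple-testing correction follows.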

Assisted Differential Network Analysis

Regulator Data Incorporation

- Collect regulator data (e.g., copy number variation, methylation, miRNA) if available

- These serve as auxiliary information to improve detection power

Integrated Analysis

- Use assisted analysis methods that leverage regulator information without requiring complete knowledge of all relevant regulators

- This approach improves identification of key genes contributing to network differences [25]

Biological Validation

- Examine identified differentially connected genes for known biological functions

- Validate findings using external datasets or experimental follow-up

Table 2: Comparison of Covariance-Based Analytical Methods

| Method | Primary Application | Key Strengths | Sample Size Requirements | Implementation |

|---|---|---|---|---|

| Spiked Eigenvalue Test [21] | Pathway activity changes | Direct biological interpretation of eigenvalues | n ≥ 10 per group | Custom R implementation |

| Differential Network Analysis [22] | Network rewiring between conditions | Flexible association measures; pathway integration | n ≥ 15 per group | R package dnapath |

| Assisted Differential Network [25] | Network changes with regulator data | Improved power using multi-omics data | n ≥ 20 per group | Custom R algorithms |

| Regularized Covariance Test (RCMAT) [23] | Gene set mean differences | Handles n < p settings; uses regularization | n ≥ 5 per group | Custom R implementation |

Visualization and Interpretation Framework

Visualizing Differential Network Results

Effective visualization is crucial for interpreting differential network analysis results. The following approaches facilitate biological insight:

Differential Network Layouts

- Visualize both condition-specific networks with consistent node placement

- Highlight edges that differ significantly between conditions

- Use edge color and thickness to represent association strength and direction

Module-Based Representations

- Group genes into functional modules based on network topology

- Display modules that show significant reorganization between conditions

- Annotate modules with enriched biological functions

Covariance Matrix Heatmaps

- Plot covariance matrices for each condition side by side

- Use clustering to group genes with similar covariance patterns

- Highlight regions of significant difference [20]

Figure 2: Interpretation framework for differential network analysis

Table 3: Research Reagent Solutions for Covariance-Based Analyses

| Resource Category | Specific Tools/Databases | Primary Function | Application Notes |

|---|---|---|---|

| Pathway Databases | KEGG, Reactome, GO, MSigDB Hallmarks | Provide biologically defined gene sets for analysis | Hallmark gene sets often show strong co-regulation [26] |

| Analysis Software/Packages | R packages: limma, roastgsa, dnapath | Implement various covariance-based tests | roastgsa compares rotation-based scores for GSA [26] |

| Expression Forecasting | GGRN/PEREGGRN framework | Benchmarks expression prediction methods | Useful for validating inferred regulatory relationships [27] |

| Normalization Tools | DESeq2, edgeR, HTSeq-count | Process raw sequencing data into normalized counts | Essential preprocessing before covariance analysis [24] |

| Covariance Estimation | Graphical lasso, MRCD estimators, Regularized methods | Handle high-dimensional n < p settings | MRCD estimators provide robustness to outliers [12] |

Advanced Applications and Integration with Other Data Types

Integration with Multi-omics Data

The covariance-based framework can be extended to incorporate multiple data types for enhanced biological insight:

Regulator-Assisted Analysis

- Include copy number variation, methylation, or transcription factor binding data

- These regulators provide explanatory context for observed covariance changes

- Methods exist that don't require complete knowledge of all relevant regulators [25]

Multi-omics Factor Analysis

- Use factor models to integrate transcriptomic with other omics data

- Identify latent factors that drive coordinated changes across data types

- The glfBLUP approach demonstrates this for genomic prediction [28]

Expression Forecasting and Validation

Covariance patterns can inform expression forecasting models:

Benchmarking Platforms

- Use platforms like PEREGGRN to evaluate expression prediction methods

- Test ability to predict effects of novel genetic perturbations

- Assess whether covariance patterns improve prediction accuracy [27]

Validation Strategies

- Compare covariance-based findings with experimental perturbation data

- Use external datasets to confirm identified network rewiring events

- Employ cross-validation within studies to assess robustness

Covariance-based analyses provide a powerful framework for extracting biologically meaningful signals from gene expression data that complement traditional mean-based approaches. By focusing on the coordinated behavior of genes within pathways, these methods can detect changes in pathway activity, identify network rewiring events, and reveal dysregulation patterns that might otherwise remain hidden. The protocols outlined here—from spiked eigenvalue methods to differential network analysis—offer practical approaches for implementing these analyses while properly handling the statistical challenges of high-dimensional data. As biological research increasingly recognizes the importance of systems-level understanding, covariance-based methods will continue to provide essential tools for linking statistical patterns to biological mechanism.

Genetic networks represent the complex functional interactions between genes and their products, forming the circuitry that dictates cellular behavior. A critical characteristic of these networks is their dynamism; they can be rewired in response to different genetic backgrounds or environmental conditions [29]. This rewiring manifests as changes in epistatic interactions—where the phenotypic effect of one gene mutation depends on the presence of other mutations [29]. In the context of disease, such rewiring can fundamentally alter pathological mechanisms and clinical presentations.

The study of genetic network rewiring is significantly advanced by analyzing covariance matrices derived from gene expression data. The covariance structure among a set of genes reveals the extent and pattern of their co-regulation. Changes in this structure between healthy and diseased states, or between different disease subtypes, provide a powerful mathematical framework for quantifying network rewiring and its impact on disease classification [21]. This case study explores how computational analyses of covariance-based network rewiring are refining our understanding of disease mechanisms and enabling more precise molecular classifications.

Covariance Matrix Calculation: A Theoretical Framework for Quantifying Rewiring

Biological Model of Co-Expression

The calculation of a covariance matrix for a set of genes begins with a biological model that explains their co-expression patterns. For a predefined pathway or gene set comprising p genes, the expression data from n observations is represented as an n × p matrix. A common model assumes that a shared biological process, such as pathway activity, is the primary driver of coordinated gene expression [21]. This can be expressed as:

y_ik = μ_k + h_k * a_i + ε_ik

Here, y_ik is the expression level of gene k in sample i, μ_k is a gene-specific intercept, a_i is the latent level of pathway activity in sample i (with variance σ_a^2), h_k is the scaling coefficient for gene k, and ε_ik represents unstructured noise (with variance σ_ε^2) [21]. Under this model, the population covariance matrix of the gene expressions takes the form:

Σ = σ_a^2 * h*h^T + σ_ε^2 * I

This structure implies that the covariance matrix has a "spiked" eigenvalue, where the first eigenvalue is σ_a^2 * ||h||^2 + σ_ε^2 (which equals σ_a^2 + σ_ε^2 when h is scaled to unit length) and the remaining eigenvalues are σ_ε^2 [21]. The leading eigenvalue thus captures variability due to changes in pathway activity, while the sum of the remaining eigenvalues represents noisy, non-co-regulated variability.

Testing for Covariance Changes Indicating Rewiring

To detect genetic network rewiring between two conditions (e.g., healthy vs. diseased), one tests for changes in the biologically meaningful components of the covariance structure. Instead of testing for the equality of the entire covariance matrices Σ_1 and Σ_2, a more powerful and interpretable approach is to test the null hypothesis:

H_0: (α_{1,1}, T_1) = (α_{2,1}, T_2)

where α_{j,1} is the leading eigenvalue (representing co-regulated variability) and T_j is the trace of the covariance matrix (representing total variability) for class j [21]. Rejecting this hypothesis indicates a significant change in the network's structure: specifically, a rewiring that alters the strength of co-regulated pathway activity (α_{j,1}) or the level of disordered noise (T_j - α_{j,1}). This covariance-based test provides a parsimonious summary of network changes that directly ties to biological interpretation.

Application Note: Network Rewiring in Disease Subtyping

Case Study: Diffuse Large B-Cell Lymphoma (DLBCL)

The application of covariance matrix analysis to identify disease-relevant genetic networks is exemplified by a meta-analysis of 11 gene expression studies of Diffuse Large B-Cell Lymphoma (DLBCL) [30]. The study aimed to estimate a common covariance matrix of gene expressions across multiple independent datasets to identify robust gene correlation networks with clinical significance.

- Methodological Approach: The researchers developed a hierarchical random covariance model and an accompanying EM algorithm to estimate a common underlying covariance matrix obscured by study-specific technical noise [30]. This approach improved upon simple pooling of data by accounting for between-study heterogeneity.

- Findings and Outcome: The meta-analytically derived common covariance matrix enabled the identification of novel, biologically meaningful gene correlation networks. Furthermore, the eigengenes (principal components) of these networks demonstrated prognostic value, potentially distinguishing patient subgroups with different clinical outcomes [30]. This demonstrates how stable features of genetic networks, distilled from noisy multi-study data through advanced covariance estimation, can refine disease classification.

Covariation vs. Coexpression in Network Construction

The quality of the genetic network used for rewiring analysis is paramount. An important methodological consideration is the choice between coexpression and covariation measures when calculating gene correlations from transcriptomic data [20].

- Coexpression is defined as the correlation between absolute gene expression levels across multiple biological conditions.

- Covariation is defined as the correlation between relative changes in gene expression (e.g., fold-change) across a series of comparisons between pairs of conditions [20].

Evidence suggests that variation data (e.g., fold-changes) are often more reproducible across laboratories than absolute expression levels [20]. Therefore, constructing genetic networks based on covariation can, in some cases, yield more robust and biologically relevant networks than the more traditionally used coexpression networks, ultimately leading to more reliable detection of rewiring events.
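A toy calculation illustrates why the two measures can disagree. It assumes, purely for illustration and not as a claim from [20], that a second laboratory adds a gene-specific offset to log-scale expression values. Coexpression is computed on the pooled absolute levels, covariation on within-laboratory pairwise differences (log fold-changes), where the offsets cancel.

```python
from itertools import combinations


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def pairwise_changes(levels):
    """Log fold-changes for all pairs of conditions measured in one laboratory."""
    return [levels[j] - levels[i] for i, j in combinations(range(len(levels)), 2)]


# Both genes follow the same profile across three conditions (log2 scale).
base = [5.0, 6.0, 7.0]
gene_a_lab1, gene_b_lab1 = base, base
gene_a_lab2 = [v + 3.0 for v in base]  # hypothetical lab-specific offset for gene A
gene_b_lab2 = [v - 3.0 for v in base]  # hypothetical lab-specific offset for gene B

# Coexpression: correlate absolute levels pooled over both laboratories.
coexpression = pearson(gene_a_lab1 + gene_a_lab2, gene_b_lab1 + gene_b_lab2)

# Covariation: correlate within-laboratory changes, which are offset-free.
covariation = pearson(
    pairwise_changes(gene_a_lab1) + pairwise_changes(gene_a_lab2),
    pairwise_changes(gene_b_lab1) + pairwise_changes(gene_b_lab2),
)
```

In this constructed example the pooled coexpression is negative even though the two genes respond identically to the conditions, whereas the covariation is 1. Real data will be less clear-cut, and the additive offset model is only one possible source of between-laboratory differences.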

Experimental Protocols

Protocol 1: Differential Gene Expression and Covariation Analysis from RNA-seq Data

This protocol outlines the steps for identifying differentially expressed genes and preparing data for covariation matrix analysis, using the RumBall pipeline within a Docker container for reproducibility [31].

Software Setup and Data Acquisition

- Step 1: Install Docker. Follow the official Docker installation instructions for your operating system to create a containerized environment [31].

- Step 2: Obtain RumBall. Download the RumBall Docker image from DockerHub with the command `docker pull rnakato/rumball` [31].

- Step 3: Acquire Data. Download public RNA-seq data (e.g., FASTQ files) from repositories like the Gene Expression Omnibus (GEO) using tools like SRA Toolkit.

Read Mapping and Expression Quantification

- Step 4: Create a Project Directory. Run `mkdir RumBall_tutorial` and navigate into it.

- Step 5: Map Reads and Generate Counts. Use a mapping tool like STAR (included in RumBall) to align reads to a reference genome. The aligned BAM files are then processed to generate a gene count matrix, which tabulates the number of reads mapped to each gene in each sample [31].

Differential Expression and Analysis

- Step 6: Identify Differentially Expressed Genes (DEGs). Perform differential expression analysis on the count matrix using tools like DESeq2 or edgeR (included in RumBall) to identify genes with statistically significant expression changes between conditions [31].

- Step 7: Generate Input for Covariation Analysis. For each gene, across all pairs of biological conditions (e.g., healthy vs. disease, different time points), classify its expression change as significantly increased (I), decreased (D), or not changed (N). This generates a string of symbols (e.g., "IDDNNIDID...") for each gene, representing its variation profile [20].

Covariation Matrix Construction

- Step 8: Calculate Covariation Scores. Construct a covariation matrix (CVM) by calculating statistically significant positive or negative correlation scores for every pair of genes by comparing their ordered strings of I/D/N symbols [20]. This matrix forms the basis for network construction and rewiring analysis.

Protocol 2: Integrated Analysis of Gene Expression and Genetic Variants Using exvar

This protocol utilizes the exvar R package for an integrated analysis that combines gene expression with genetic variation, which can provide mechanistic insights into the causes of network rewiring [32].

Data Preprocessing

- Step 1: Install exvar. Install the package from GitHub using `devtools::install_github("omicscodeathon/exvar/Package")`. Alternatively, pull the Docker container with `docker pull imraandixon/exvar` [32].

- Step 2: Process FASTQ Files. Use the `processfastq()` function to perform quality control (with Rfastp), trim reads, and align them to a reference genome (with gmapR), producing indexed BAM files [32].

Gene Expression and Variant Calling

- Step 3: Perform Gene Expression Analysis. Use the `expression()` function to generate gene counts from BAM files (with GenomicAlignments) and perform differential expression analysis (with DESeq2) [32].

- Step 4: Call Genetic Variants. Use the `callsnp()`, `callindel()`, and `callcnv()` functions to identify single nucleotide polymorphisms, insertions/deletions, and copy number variations from the BAM files, respectively, using VariantTools and other packages [32].

Data Integration and Visualization

- Step 5: Visualize Integrated Data. Use the Shiny applications within exvar for visualization:

  - `vizexp()`: Creates MA plots, PCA plots, and volcano plots, and performs GO enrichment analysis for differential expression results.

  - `vizsnp()`: Compares SNP profiles between patient and control groups, visualizing SNP types and transitions/transversions.

  - `vizcnv()`: Illustrates recurrent CNV regions and their overlaps with functional genomic elements [32].

Table 1: Key Software Tools for Genetic Network Analysis

| Tool Name | Type | Primary Function | Application in Protocol |

|---|---|---|---|

| RumBall [31] | Dockerized Pipeline | Reproducible RNA-seq analysis | Differential gene expression analysis from FASTQ files. |

| DESeq2 [31] [32] | R Package | Differential expression analysis | Identifies statistically significant DEGs between conditions. |

| exvar [32] | R Package | Integrated expression & variant analysis | Analyzes and visualizes gene expression, SNPs, Indels, and CNVs. |

| STAR [31] | Aligner | RNA-seq read mapping | Aligns sequencing reads to a reference genome. |

| ClusterProfiler [31] [32] | R Package | Gene ontology enrichment | Interprets biological functions of DEGs or variant-affected genes. |

Visualization of Workflows and Genetic Networks

Workflow for Covariance-Based Disease Classification

This diagram outlines the end-to-end process for using genetic network rewiring in disease classification.

Model of Pathway Co-Regulation and Rewiring

This diagram illustrates the biological model of pathway co-regulation and how its disruption (rewiring) manifests in the covariance structure.

Table 2: Key Research Reagent Solutions for Genetic Network Studies

| Reagent / Resource | Function | Example & Notes |

|---|---|---|

| Reference Genome | A standard genomic sequence for read alignment and variant calling. | Ensembl or GENCODE human genome assembly (e.g., GRCh38). Essential for all mapping steps. |

| Annotation Databases | Provide functional genomic context (gene models, regulatory elements). | Bioconductor TxDb objects (e.g., TxDb.Hsapiens.UCSC.hg38.knownGene) or Ensembl via AnnotationHub [32]. |

| Gene Ontology (GO) Resources | Provide standardized vocabulary for gene function annotation. | Used in enrichment analysis (e.g., with ClusterProfiler) to interpret results from DEG lists or rewired modules [31] [32]. |

| Variant Database | Annotates identified genetic variants with known population frequency and clinical significance. | dbSNP, used to inject SNP IDs into VCF files via SNPlocs packages [32]. |

| Species-Specific R/Bioconductor Packages | Provide organized genomic annotations for non-human models. | Packages like org.Mm.eg.db for mouse or org.Sc.sgd.db for yeast are crucial for cross-species analysis [32]. |

The rewiring of genetic networks is a fundamental mechanism underlying disease heterogeneity. By applying sophisticated statistical analyses to covariance matrices derived from gene expression data, researchers can quantify this rewiring, moving beyond static gene lists to dynamic network interactions. The integration of these approaches with genetic variant data and standardized, reproducible bioinformatics protocols enables a systems-level understanding of disease. This paradigm shift from a gene-centric to a network-centric view is crucial for advancing precision medicine, leading to more accurate disease classification, the discovery of robust prognostic biomarkers, and the identification of novel therapeutic targets anchored in network pathology.

From Theory to Practice: Robust Methods for High-Dimensional Covariance Estimation

In the analysis of high-dimensional genomic data, researchers frequently encounter singular or ill-conditioned covariance matrices, particularly when the number of genes (p) far exceeds the number of samples (n). In this p >> n scenario the sample covariance matrix has rank at most n − 1, so it cannot be inverted, and traditional statistical methods fail to produce the stable covariance estimates needed for understanding gene-gene interactions and regulatory networks [33]. Sparse estimation techniques address this limitation by leveraging the inherent biological structure of genomic systems, where most gene pairs do not interact directly, resulting in covariance matrices with many zero or near-zero elements [34].

These methodologies have become indispensable in computational biology for constructing gene co-expression networks, identifying differentially expressed gene clusters, and understanding the molecular underpinnings of disease through sophisticated statistical regularization. By systematically constraining model complexity, sparse estimation techniques enable researchers to extract meaningful biological signals from high-dimensional transcriptomic data where conventional approaches would fail due to mathematical singularity [33] [34].
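The singularity problem can be seen directly in a small simulation. The following is a minimal sketch in Python with NumPy; the sample and gene counts are hypothetical and the data are random noise rather than real expression values.

```python
import numpy as np

rng = np.random.default_rng(0)
n_samples, n_genes = 10, 50                 # hypothetical sizes with p >> n
X = rng.normal(size=(n_samples, n_genes))   # rows are samples, columns are genes

# Gene-by-gene sample covariance (p x p).
S = np.cov(X, rowvar=False)

# Centering removes one degree of freedom, so the rank cannot exceed n - 1.
rank = np.linalg.matrix_rank(S)
smallest_eig = np.linalg.eigvalsh(S).min()

print("shape:", S.shape)
print("rank:", rank, "(upper bound:", n_samples - 1, ")")
print("smallest eigenvalue:", smallest_eig)
```

Because the rank is far below the number of genes, the matrix has no inverse, which is why regularized or sparse estimators are needed before any precision-matrix or network analysis.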

Sparse Estimation Paradigms for Covariance Estimation

Bayesian Sparse Covariance Estimation

Bayesian frameworks provide a powerful approach for sparse covariance estimation by incorporating shrinkage priors that systematically pull small off-diagonal elements toward zero. The bspcov R package implements state-of-the-art Bayesian methods specifically designed for high-dimensional covariance estimation [33].

Key functions within this package include:

- `bmspcov`: Implements block Gibbs samplers using beta-mixture shrinkage priors to estimate sparse covariance matrices

- `sbmspcov`: Employs screened beta-mixture shrinkage priors for enhanced computational efficiency

- `bandPPP` and `thresPPP`: Perform direct posterior sampling from post-processed posteriors for banded and thresholded sparse covariance structures [33]

These Bayesian approaches are particularly valuable for gene expression analysis as they provide natural uncertainty quantification through posterior distributions, allowing researchers to assess the reliability of estimated gene co-expression relationships.
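To build intuition for what "pulling small off-diagonal elements toward zero" does to a matrix, the sketch below applies plain soft-thresholding in Python. This is a simple non-Bayesian stand-in chosen for illustration. It is not the beta-mixture prior or any routine from bspcov, and the threshold `lam` and the toy matrix are assumptions.

```python
import numpy as np

def soft_threshold_cov(S, lam):
    """Shrink off-diagonal entries of S toward zero by lam; keep the diagonal.

    Illustrative only: a frequentist analogue of shrinkage, not a Bayesian sampler.
    """
    T = np.sign(S) * np.maximum(np.abs(S) - lam, 0.0)
    np.fill_diagonal(T, np.diag(S))   # variances are left untouched
    return T

# Toy 3-gene covariance matrix (hypothetical values).
S = np.array([[1.00, 0.05, -0.60],
              [0.05, 1.00,  0.10],
              [-0.60, 0.10, 1.00]])

T = soft_threshold_cov(S, lam=0.2)
print(T)
```

Weak entries are set to exactly zero while the strong negative association is retained with reduced magnitude. A Bayesian shrinkage prior achieves a similar effect but additionally returns a posterior distribution for each entry.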

Weighted Gene Co-expression Network Analysis (WGCNA)

WGCNA represents a widely adopted framework for constructing scale-free co-expression networks from transcriptomic data. This method identifies modules of highly correlated genes that often correspond to functionally related gene sets or pathways [34]. The protocol involves:

- Correlation matrix construction using pairwise gene expression correlations

- Topological overlap transformation to minimize effects of spurious correlations

- Hierarchical clustering with dynamic tree cutting to identify co-expression modules

- Module preservation analysis to assess conservation across different conditions [34]

For tumor vs. normal tissue comparisons, WGCNA with module preservation analysis enables identification of cancer-specific network disruptions versus conserved biological processes, providing crucial insights into disease mechanisms [34].

Integrated Analysis Pipelines

The exvar R package offers an integrated solution for gene expression and genetic variation analysis, combining differential expression analysis and variant calling within a unified framework [32]. This package supports eight model organisms including Homo sapiens, Mus musculus, and Arabidopsis thaliana, and provides:

- Differential expression analysis using DESeq2

- Variant calling (SNPs, Indels, CNVs) from RNA-seq data

- Interactive visualization tools for exploratory analysis [32]

Such integrated pipelines are particularly valuable for clinical and biological researchers with basic programming skills, as they streamline the complex workflow from raw sequencing data to biologically interpretable results.

Table 1: Software Solutions for Sparse Covariance Estimation in Genomics

| Software/Tool | Methodology | Key Features | Genomic Applications |

|---|---|---|---|

| bspcov R Package [33] | Bayesian shrinkage priors | Beta-mixture priors, block Gibbs sampling, posterior inference | Gene co-expression network estimation, high-dimensional covariance regularization |

| WGCNA Protocol [34] | Weighted correlation network analysis | Scale-free network construction, module detection, preservation analysis | Identification of coordinated gene expression modules in tumor vs. normal tissues |

| exvar R Package [32] | Integrated analysis pipeline | Variant calling, differential expression, Shiny apps for visualization | Multi-species gene expression and genetic variation analysis from RNA-seq data |

Experimental Protocols

Protocol for WGCNA with Module Preservation Analysis

This protocol enables researchers to construct and compare gene co-expression networks across conditions (e.g., tumor vs. normal tissues) to identify conserved and condition-specific functional modules [34].

Materials and Reagents

Table 2: Essential Research Reagents and Computational Tools

| Item | Function/Application | Implementation Notes |

|---|---|---|

| R Statistical Environment | Primary computational platform | Version 4.0 or higher recommended |

| WGCNA R Package [34] | Co-expression network construction | Enables weighted network analysis and module detection |

| RNA-seq Dataset (FASTQ files) | Raw gene expression data | Paired tumor and normal samples recommended |

| DESeq2 R Package [32] | Differential expression analysis | Used for count data normalization and preprocessing |

| ClusterProfiler R Package [32] | Functional enrichment analysis | GO and KEGG pathway analysis of identified modules |

Step-by-Step Methodology

Data Preprocessing and Quality Control

Network Construction

- Calculate pairwise correlation matrices between all genes

- Transform correlations using signed network approach with beta parameter estimation

- Construct adjacency matrix and convert to topological overlap matrix (TOM)

- Perform hierarchical clustering using TOM-based dissimilarity

- Identify co-expression modules using dynamic tree cutting with minimum module size of 30 genes [34]
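The first three network construction steps can be sketched numerically as follows. This Python version is an illustration of the standard signed adjacency and topological overlap formulas, not the WGCNA package's own implementation. The soft-threshold power `beta=12` is a placeholder (in practice it is estimated from the data), and the expression matrix is random.

```python
import numpy as np

def signed_adjacency(expr, beta=12):
    """Signed adjacency from a samples x genes matrix.

    beta is a placeholder value; it is normally chosen from a scale-free fit.
    """
    cor = np.corrcoef(expr, rowvar=False)   # gene-by-gene Pearson correlation
    adj = ((1.0 + cor) / 2.0) ** beta       # signed transform maps [-1, 1] into [0, 1]
    np.fill_diagonal(adj, 0.0)              # no self-connections
    return adj

def topological_overlap(adj):
    """TOM_ij = (l_ij + a_ij) / (min(k_i, k_j) + 1 - a_ij)."""
    shared = adj @ adj                      # l_ij: connection strength via shared neighbors
    k = adj.sum(axis=1)                     # connectivity of each gene
    k_min = np.minimum.outer(k, k)
    tom = (shared + adj) / (k_min + 1.0 - adj)
    np.fill_diagonal(tom, 1.0)
    return tom

rng = np.random.default_rng(1)
expr = rng.normal(size=(20, 6))             # hypothetical: 20 samples, 6 genes
adj = signed_adjacency(expr)
tom = topological_overlap(adj)
diss_tom = 1.0 - tom                        # dissimilarity used as clustering input
```

The resulting `diss_tom` matrix is what hierarchical clustering and dynamic tree cutting would operate on; those steps are omitted here.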

Module Preservation Analysis

- Calculate preservation statistics (Zsummary) between tumor and normal networks

- Identify modules with Zsummary < 2 as non-preserved (condition-specific)

- Classify modules with Zsummary > 10 as highly preserved across conditions

- Extract module eigengenes for downstream association with clinical traits [34]
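As a rough illustration of the last three steps, the Python sketch below applies the Zsummary cut-offs listed above and computes a module eigengene as the first singular vector of the standardized module expression. The label for the 2 to 10 range and the function names are assumptions made for this example. Computing Zsummary itself requires permutation testing and is not shown.

```python
import numpy as np

def classify_preservation(zsummary):
    """Apply the Zsummary thresholds from the protocol."""
    if zsummary < 2:
        return "non-preserved"
    if zsummary > 10:
        return "highly preserved"
    return "intermediate"       # label for the 2-10 range is an assumption

def module_eigengene(expr_module):
    """First principal component of a samples x genes module matrix."""
    Z = (expr_module - expr_module.mean(axis=0)) / expr_module.std(axis=0, ddof=1)
    U, s, Vt = np.linalg.svd(Z, full_matrices=False)
    eig = U[:, 0]
    # Orient the eigengene so it correlates positively with mean module expression.
    if np.corrcoef(eig, Z.mean(axis=1))[0, 1] < 0:
        eig = -eig
    return eig

rng = np.random.default_rng(3)
factor = rng.normal(size=30)                                   # shared hypothetical signal
module = factor[:, None] + 0.1 * rng.normal(size=(30, 5))      # 30 samples, 5 genes
eig = module_eigengene(module)
labels = [classify_preservation(z) for z in (1.5, 5.0, 12.0)]
```

The eigengene gives one value per sample, which can then be correlated with clinical traits.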

Functional Validation

Anticipated Results and Interpretation

This protocol typically requires 2-3 hours of hands-on time and 8-12 hours of computational time, depending on dataset size and permutation numbers used for preservation analysis [34]. Researchers can expect to identify 5-20 co-expression modules per condition, with approximately 30-70% showing significant preservation across conditions. Non-preserved modules in tumor tissues often reveal dysregulated biological processes central to disease pathogenesis.

Figure 1: WGCNA workflow for gene co-expression network analysis.

Protocol for Bayesian Sparse Covariance Estimation

This protocol details the application of Bayesian sparse covariance methods for estimating gene association networks from high-dimensional transcriptomic data.

Materials and Reagents

Table 3: Bayesian Estimation Research Tools

| Item | Function/Application | Implementation Notes |

|---|---|---|

| bspcov R Package [33] | Bayesian sparse covariance estimation | Implements beta-mixture and screened beta-mixture priors |

| Normalized Expression Matrix | Input data for covariance estimation | Rows as samples, columns as genes |

| coda R Package | Convergence diagnostics | Assesses MCMC chain convergence |

Step-by-Step Methodology

Data Preparation

- Format normalized expression data as an `n × p` matrix, where `n` is sample size and `p` is number of genes

- Standardize each gene to have mean zero and unit variance

- For ultra-high-dimensional data (`p > 1000`), consider preliminary screening
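The data preparation steps could look like the following in Python. The protocol does not say how preliminary screening should be done, so the variance filter here is an assumption, as are the function names and the default of 1000 retained genes.

```python
import numpy as np

def screen_top_variance(X, max_genes=1000):
    """Return sorted column indices of the most variable genes.

    Variance filtering is an assumed screening rule, not one prescribed by the protocol.
    """
    var = X.var(axis=0, ddof=1)
    idx = np.argsort(var)[::-1][:max_genes]
    return np.sort(idx)

def standardize_genes(X):
    """Center and scale each gene (column); drop genes with zero variance."""
    mu = X.mean(axis=0)
    sd = X.std(axis=0, ddof=1)
    keep = sd > 0                       # constant genes cannot be scaled
    return (X[:, keep] - mu[keep]) / sd[keep], keep

rng = np.random.default_rng(4)
X = rng.normal(size=(8, 5))             # hypothetical: n = 8 samples, p = 5 genes
X[:, 2] = 3.0                           # a constant gene, to show it is dropped

top = screen_top_variance(X, max_genes=2)
Z, keep = standardize_genes(X)
```

Screening has to happen before standardization, because after scaling every gene has unit variance and a variance filter can no longer discriminate between genes.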

Model Specification

- Select appropriate prior structure based on sparsity assumptions:

  - Use `bmspcov()` for general sparse covariance estimation

  - Use `sbmspcov()` for screened beta-mixture priors on large datasets

  - Use `bandPPP()` for banded covariance structures

  - Use `thresPPP()` for thresholded sparse estimation [33]

- Set hyperparameters according to prior biological knowledge

Posterior Inference

- Run Markov Chain Monte Carlo (MCMC) sampling with sufficient iterations (typically 10,000+)

- Discard initial samples as burn-in (first 20%)

- Assess convergence using trace plots and Gelman-Rubin statistics
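The burn-in and Gelman-Rubin steps can be sketched for a single scalar parameter, such as one covariance entry tracked across several chains. This Python version uses the classic potential scale reduction formula and simulated chains. It is an illustration, not the coda package's routine, and the 20% burn-in fraction simply mirrors the step above.

```python
import numpy as np

def drop_burn_in(chains, frac=0.2):
    """Discard the first `frac` of draws along the last axis."""
    start = int(chains.shape[-1] * frac)
    return chains[..., start:]

def gelman_rubin(chains):
    """Potential scale reduction factor for an (m_chains, n_draws) array."""
    chains = np.asarray(chains, dtype=float)
    m, n = chains.shape
    W = chains.var(axis=1, ddof=1).mean()        # mean within-chain variance
    B = n * chains.mean(axis=1).var(ddof=1)      # between-chain variance
    var_hat = (n - 1) / n * W + B / n            # pooled variance estimate
    return np.sqrt(var_hat / W)

rng = np.random.default_rng(2)
good = rng.normal(size=(4, 2000))                # four simulated chains, same target
bad = good.copy()
bad[:2] += 5.0                                   # two chains stuck in a different region

rhat_good = gelman_rubin(drop_burn_in(good))
rhat_bad = gelman_rubin(drop_burn_in(bad))
print(rhat_good, rhat_bad)
```

Values close to 1 suggest the chains agree, while values well above 1 indicate that more iterations or a different initialization are needed.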

Result Extraction and Interpretation

- Extract posterior mean of covariance matrix as point estimate

- Calculate posterior credible intervals for specific gene-gene associations

- Identify significant associations based on inclusion probabilities
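Given an array of post-burn-in covariance draws, the extraction steps reduce to a few array operations. The Python sketch below assumes the draws are stored as an array of shape `(n_draws, p, p)`, which is a hypothetical layout rather than the bspcov output format. It flags associations whose credible interval excludes zero as a simple proxy for inclusion probabilities.

```python
import numpy as np

def summarize_posterior(draws, level=0.95):
    """Posterior mean, credible bounds, and a zero-exclusion flag per entry."""
    alpha = (1.0 - level) / 2.0
    mean = draws.mean(axis=0)
    lower = np.quantile(draws, alpha, axis=0)
    upper = np.quantile(draws, 1.0 - alpha, axis=0)
    excludes_zero = (lower > 0) | (upper < 0)    # proxy for a "significant" association
    return mean, lower, upper, excludes_zero

# Simulated draws around a hypothetical 3-gene covariance matrix.
rng = np.random.default_rng(5)
truth = np.array([[1.0, 0.5, 0.0],
                  [0.5, 1.0, 0.0],
                  [0.0, 0.0, 1.0]])
draws = rng.normal(loc=truth, scale=0.05, size=(2000, 3, 3))
draws = (draws + draws.transpose(0, 2, 1)) / 2.0  # keep every draw symmetric

mean, lower, upper, excludes_zero = summarize_posterior(draws)
```

In this toy example the gene 1 and gene 2 association is flagged, while the entries simulated around zero are not.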

Anticipated Results and Interpretation

Bayesian sparse covariance estimation produces posterior distributions for all elements of the gene covariance matrix, allowing probabilistic statements about gene-gene associations. Researchers can expect shrinkage of small correlations toward zero while preserving strong biological associations, resulting in sparse, interpretable networks. The method particularly excels in low-sample scenarios where traditional correlation estimates are unstable.

Comparative Analysis of Sparse Estimation Techniques

Performance Considerations

Each sparse estimation technique offers distinct advantages for specific genomic applications:

Bayesian Methods (bspcov) provide:

- Natural uncertainty quantification through posterior distributions

- Flexible incorporation of prior biological knowledge

- Robustness to small sample sizes through regularization