Decoding Prokaryotic Genomes: How Gene Prediction Algorithms Power Biomedical Discovery



Abstract

This article provides a comprehensive overview of prokaryotic gene prediction algorithms, from foundational ab initio methods to advanced machine learning approaches. Tailored for researchers and drug development professionals, it explores the core mechanisms of tools like Prodigal and GeneMark, their integration into pipelines like NCBI's PGAP, and critical evaluation frameworks. The content addresses persistent challenges including small protein prediction and lineage-specific optimization, highlighting direct implications for functional genomics, microbiome research, and therapeutic target identification.

The Core Mechanics: Understanding Ab Initio Prokaryotic Gene Prediction

Prokaryotic genomes are characterized by their high gene density, with protein-coding sequences (CDS) typically constituting approximately 86-90% of the DNA [1] [2]. This "wall-to-wall" architecture stands in stark contrast to eukaryotic genomes, where coding DNA often represents only 1-2% of the total sequence [2]. Despite this high coding density, the remaining 10-14% of non-coding DNA in prokaryotes plays crucial biological roles through its content of regulatory elements, origins of replication, and non-coding RNA genes [2] [3]. The accurate distinction between coding and non-coding regions presents a fundamental challenge in genomics, with significant implications for our understanding of bacterial biology, virulence, and metabolic capabilities. As the volume of sequenced prokaryotic genomes continues to grow exponentially, the development and refinement of computational tools for gene prediction have become increasingly critical for accurate genome annotation and subsequent biological discovery [1] [4].

Table 1: Genomic Composition Across Life Domains

| Organism Type | Total Genome Size | Percentage Coding DNA | Percentage Non-Coding DNA | Key Non-Coding Components |
|---|---|---|---|---|
| Prokaryotes | 0.5 - 10 Mbp | 86-90% | 10-14% | Regulatory elements, origins of replication, non-coding RNA [2] [3] |
| Eukaryotes | 10 - 150,000 Mbp | 1-2% (human) | 98-99% (human) | Introns, regulatory sequences, repetitive DNA, telomeres, centromeres [2] |
| Human | ~3,000 Mbp | 1-2% | 98-99% | Introns (37%), repetitive elements, regulatory sequences [2] |

Fundamental Biological Distinctions

Composition and Function

The primary distinction between coding and non-coding DNA lies in their functional roles and molecular outputs. Coding DNA consists of nucleotide sequences that are transcribed into messenger RNA (mRNA) and subsequently translated into amino acid sequences to form proteins [5]. These proteins execute the vast majority of catalytic, structural, and regulatory functions within the cell. In prokaryotes, coding sequences are typically contiguous, lacking the intron-exon structure common in eukaryotes, which significantly simplifies their identification in theory, though several practical challenges remain [1].

Non-coding DNA encompasses all genomic regions that do not encode protein sequences but may still be functional [2]. This category includes several important subclasses: promoters and other regulatory sequences that control gene expression; origins of DNA replication; genes for functional non-coding RNAs (such as tRNA, rRNA, and regulatory RNAs); and sequences without clearly defined functions, sometimes termed "junk" DNA [2] [5]. In prokaryotes, non-coding regions are significantly shorter than in eukaryotes but contain a high density of regulatory information essential for coordinating cellular processes.

Structural and Organizational Differences

Beyond their functional distinctions, coding and non-coding regions exhibit differential structural properties at the nucleotide level. Research has revealed that purines and pyrimidines show distinct distribution patterns between these genomic compartments. In non-coding DNA, these bases demonstrate significant aggregation, whereas in coding regions, their distribution is more uniform or even over-dispersed in nearly half of prokaryotic genomes [6]. This structural difference likely reflects the contrasting evolutionary constraints acting on these regions: coding sequences are constrained by the dual requirements of maintaining open reading frames and encoding functional proteins, while non-coding regions are shaped by the selective pressure to maintain regulatory signals while minimizing genome size [3] [6].

Table 2: Structural Properties of Coding vs. Non-Coding DNA in Prokaryotes

| Structural Property | Coding DNA | Non-Coding DNA | Biological Significance |
|---|---|---|---|
| Base Distribution | Uniform or over-dispersed in ~44% of genomes | Aggregated in 86% of genomes | Reflects different evolutionary constraints and functions [6] |
| Sequence Conservation | High amino acid sequence conservation | Higher nucleotide-level conservation in regulatory motifs | Different evolutionary rates due to different functional constraints |
| GC Content Bias | Exhibits codon position-specific GC bias | Lacks consistent positional bias | Coding bias relates to translation efficiency and accuracy [7] |
| Typical Length | ~300-1000 nucleotides per gene | Short (often <50 bp) between convergent genes; longer between divergent genes | Determined by functional requirements and selective pressure for compaction [3] |

The Computational Challenge: Gene Prediction Algorithms

Core Principles and Historical Approaches

Prokaryotic gene prediction algorithms leverage specific statistical and sequence properties to distinguish coding from non-coding regions. The fundamental assumption underlying these tools is that coding sequences exhibit statistical signatures distinct from non-coding DNA, reflecting their biological function and evolutionary constraints [7]. Early algorithms primarily relied on codon usage bias—the non-random use of synonymous codons—and GC content variation across the three codon positions [7]. Coding sequences typically show preference for certain codons that may correspond to abundant tRNAs or optimize translation efficiency, and often display GC content that differs significantly between codon positions, particularly in the third ("wobble") position where mutations are frequently silent [7].
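
The position-specific GC signal described above can be made concrete with a few lines of Python. This is a minimal sketch, not the scoring used by any particular tool; the function name `gc_by_codon_position` and the example sequence are illustrative assumptions.

```python
def gc_by_codon_position(cds: str) -> list[float]:
    """Return the GC fraction at codon positions 1, 2 and 3 of an in-frame CDS."""
    cds = cds.upper()
    usable = len(cds) - len(cds) % 3  # ignore a trailing partial codon
    fractions = []
    for pos in range(3):
        bases = cds[pos:usable:3]  # every third base, starting at this codon position
        gc = sum(base in "GC" for base in bases)
        fractions.append(gc / len(bases) if bases else 0.0)
    return fractions


# Hypothetical toy CDS: ATG GCC GCG CTG ACC GGC TAA
example_cds = "ATGGCCGCGCTGACCGGCTAA"
gc1, gc2, gc3 = gc_by_codon_position(example_cds)
print(f"GC1={gc1:.2f} GC2={gc2:.2f} GC3={gc3:.2f}")
```

In this toy sequence the third position is the most GC-rich of the three, which is the kind of frame-dependent skew a gene finder can exploit when the same window is scored in each of the three reading frames.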

Additional key signals include the presence of ribosomal binding sites (RBS), such as the Shine-Dalgarno sequence, located upstream of start codons; identifiable start and stop codons that define open reading frames (ORFs); and sequence composition biases that reflect the constraints of encoding functional proteins [1] [7]. Early generation tools like GLIMMER and GeneMark implemented these principles using Markov models of varying orders to capture the statistical properties of coding sequences and distinguish them from non-coding background [7].
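
To illustrate the Markov-model idea in general terms, the sketch below trains one fixed-order model on coding sequences and one on non-coding background, then scores a candidate region by its log-likelihood ratio. It is a deliberately simplified assumption: the class `MarkovModel`, the order of 2, the add-one smoothing, and the toy training strings are all hypothetical, and real tools use more elaborate variants (for example, frame-aware or interpolated models) rather than this homogeneous one.

```python
import math
from collections import defaultdict


class MarkovModel:
    """Fixed-order Markov chain over DNA with additive smoothing (illustrative only)."""

    def __init__(self, order: int = 2, pseudocount: float = 1.0):
        self.order = order
        self.pseudocount = pseudocount
        self.counts = defaultdict(lambda: defaultdict(float))

    def train(self, sequences):
        for seq in sequences:
            seq = seq.upper()
            for i in range(self.order, len(seq)):
                context, base = seq[i - self.order:i], seq[i]
                if base in "ACGT":
                    self.counts[context][base] += 1
        return self

    def log_prob(self, seq: str) -> float:
        """Log-probability of seq given the model, conditioned on its first `order` bases."""
        seq = seq.upper()
        total = 0.0
        for i in range(self.order, len(seq)):
            context, base = seq[i - self.order:i], seq[i]
            ctx_counts = self.counts.get(context, {})
            numerator = ctx_counts.get(base, 0.0) + self.pseudocount
            denominator = sum(ctx_counts.values()) + 4 * self.pseudocount
            total += math.log(numerator / denominator)
        return total


def coding_log_odds(seq, coding_model, background_model) -> float:
    """Positive values mean the sequence looks more like the coding training set."""
    return coding_model.log_prob(seq) - background_model.log_prob(seq)


# Toy training data (hypothetical); real models are trained on whole genomes.
coding = MarkovModel().train(["ATGGCCGCGCTGACCGGCGCCTAA"])
background = MarkovModel().train(["ATATTTAATATAAATTTTATATAT"])
print(coding_log_odds("GCCGCGCTGGCC", coding, background))
print(coding_log_odds("TATATAAATATT", coding, background))
```

With these toy inputs the first score is positive and the second negative, simply because each query resembles one training set more than the other; meaningful discrimination requires training on representative coding and non-coding sequence from the target genome.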

The Prodigal Algorithm: A Case Study in Modern Gene Prediction

Prodigal (PROkaryotic DYnamic programming Gene-finding ALgorithm) represents a significant advancement in gene prediction methodology, explicitly designed to address three key challenges: improved gene structure prediction, more accurate translation initiation site recognition, and reduction of false positives [7]. The algorithm employs a multi-stage process that begins with unsupervised training on the input genome to identify organism-specific signatures.

During its initial training phase, Prodigal analyzes the GC frame plot bias across the genome, examining the preference for guanine and cytosine bases in each of the three codon positions within potential open reading frames [7]. This analysis reveals the characteristic codon position bias of the organism, which is then used to construct preliminary coding scores for each putative gene. The algorithm subsequently applies dynamic programming to identify an optimal "tiling path" of genes across the genome, considering constraints on gene overlaps (maximum 60 bp for same-strand overlaps) and ensuring comprehensive coverage while minimizing false positives [7].

A distinctive feature of Prodigal is its sophisticated approach to translation initiation site (TIS) prediction. The algorithm evaluates multiple potential start sites for each gene using a weighted combination of evidence, including RBS motif strength, sequence conservation upstream of start codons, and the coding potential of the resulting extended ORF [7]. This comprehensive approach enables Prodigal to achieve higher accuracy in start site identification compared to earlier methods, reducing the need for post-processing correction with specialized TIS prediction tools.

Current Limitations and Biases in Gene Prediction

Systematic Biases in Tool Performance

Despite considerable advances, current gene prediction tools exhibit systematic biases that impact our understanding of prokaryotic genomes. The ORForise evaluation framework, which assesses tools across 12 primary and 60 secondary metrics, has demonstrated that no single tool performs optimally across all genomes or metrics [1]. This performance variability stems from several factors, including differences in algorithmic approaches, training data composition, and inherent biases toward specific gene characteristics.

A significant limitation shared by many tools is poor performance with atypical genes, including those with non-standard codon usage, genes that overlap other coding sequences, and particularly short genes encoding small proteins [1]. The latter represents a substantial challenge, as many tools implement minimum length thresholds (often 90-110 nucleotides) that automatically exclude genuine small coding sequences [1] [7]. This bias has profound implications for genome annotation, as it results in the systematic under-representation of entire functional categories, such as short/small ORFs (sORFs) that play important regulatory roles [1].

Furthermore, most algorithms exhibit biases toward historic genomic annotations from model organisms, creating a self-reinforcing cycle where tools are optimized to find genes similar to those already known [1]. This "knowledge bias" hinders the discovery of novel genomic information, particularly when analyzing genomes from poorly characterized taxonomic groups or metagenomic assemblies from environmental samples [1]. The integration of machine learning approaches, while powerful, can exacerbate this problem if training datasets are not representative of the full diversity of prokaryotic gene sequences.

The Impact of Genome Composition

Tool performance varies substantially with genomic characteristics, particularly GC content [7]. High-GC genomes present specific challenges due to their lower frequency of stop codons and consequent abundance of spurious open reading frames. This increases both false positive rates and errors in translation initiation site identification, as longer ORFs contain more potential start codons [7]. Performance differences across the GC spectrum highlight the importance of tool selection based on the specific characteristics of the target genome.
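
A back-of-the-envelope calculation shows why stop codons become rarer as GC content rises. The sketch below assumes a deliberately crude model that is not taken from the cited work: bases are independent, A and T are equally frequent, and G and C are equally frequent. The function names are illustrative.

```python
def stop_codon_probability(gc: float) -> float:
    """Probability that a random codon is TAA, TAG or TGA under an independent-base model."""
    a_or_t = (1.0 - gc) / 2.0  # frequency of A (and of T)
    g_or_c = gc / 2.0          # frequency of G (and of C)
    p_taa = a_or_t ** 3
    p_tag = a_or_t ** 2 * g_or_c
    p_tga = a_or_t ** 2 * g_or_c
    return p_taa + p_tag + p_tga


def expected_codons_to_first_stop(gc: float) -> float:
    """Mean number of codons read up to and including the first stop (geometric distribution)."""
    return 1.0 / stop_codon_probability(gc)


for gc in (0.3, 0.5, 0.7):
    print(f"GC={gc:.0%}: P(stop)={stop_codon_probability(gc):.4f}, "
          f"mean run={expected_codons_to_first_stop(gc):.1f} codons")
```

Under these assumptions a random reading frame at 50% GC hits a stop about every 21 codons, while at 70% GC the mean run is roughly 52 codons, so chance ORFs are substantially longer and carry more candidate start codons. Real genomes deviate from this model, so the numbers indicate the trend rather than predict actual ORF lengths.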

Comparative analyses have revealed that tool performance is genome-dependent, with different tools exhibiting superior accuracy on different organisms [1]. This context-dependent performance underscores the limitations of a "one-size-fits-all" approach to gene prediction and emphasizes the need for systematic evaluation frameworks that can guide tool selection for specific applications.

Table 3: Performance Challenges with Specific Gene Classes

| Gene Class | Prediction Challenge | Biological Significance | Potential Solutions |
|---|---|---|---|
| Short Genes (<300 nt) | Often missed due to length filters; high false negative rate | Encode important regulatory proteins; underrepresented in databases [1] | Specialized tools (e.g., smORFer); integration of transcriptomic data [1] |
| High-GC Genes | More spurious ORFs; reduced TIS accuracy | Common in Actinobacteria and other soil microbes [7] | Organism-specific training; adjusted statistical thresholds [7] |
| Non-Canonical Starts | Non-ATG start codons poorly recognized | Limited knowledge of translation initiation mechanisms [7] | Expanded start codon models; RBS motif integration |
| Horizontally Acquired Genes | Atypical codon usage reduces sensitivity | Important for adaptation and virulence [1] | Integration of homology searches; codon adaptation index analysis |

Evaluation Frameworks and Emerging Solutions

Systematic Tool Assessment with ORForise

The ORForise evaluation framework represents a significant advancement in the objective assessment of gene prediction tools [1]. This comprehensive system employs 12 primary and 60 secondary metrics to facilitate detailed comparison of tool performance across diverse genomic contexts. By providing a standardized, replicable approach to tool evaluation, ORForise enables researchers to make data-informed decisions about tool selection for specific applications [1].

Key findings from ORForise-based evaluations include the lack of a universally superior tool, with performance depending strongly on the specific genome being analyzed and the metrics considered most important for the research question [1]. Even top-performing tools produce substantially different gene collections, and simple aggregation of multiple tool outputs does not resolve these discrepancies effectively [1]. These observations highlight the complex nature of gene prediction and the limitations of current computational approaches.
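
The kind of comparison such evaluations rest on can be sketched in a few lines. The example below is not ORForise's implementation or metric set; it is a hypothetical illustration that matches predicted genes to a reference by strand and 3' end, then separates exact matches from predictions that share the stop codon but pick a different start. The `Gene` type, its field names, and the coordinates are assumptions.

```python
from typing import NamedTuple


class Gene(NamedTuple):
    five_prime: int   # coordinate of the first base of the start codon
    three_prime: int  # coordinate of the last base of the stop codon
    strand: str       # "+" or "-"


def compare_gene_sets(reference, predicted):
    """Summarise agreement between a reference annotation and one tool's predictions."""
    ref_by_end = {(g.strand, g.three_prime): g for g in reference}
    exact = stop_only = 0
    for gene in predicted:
        match = ref_by_end.get((gene.strand, gene.three_prime))
        if match is None:
            continue  # prediction has no counterpart in the reference
        if match.five_prime == gene.five_prime:
            exact += 1
        else:
            stop_only += 1  # same gene detected, different start site
    detected = exact + stop_only
    return {
        "sensitivity": detected / len(reference) if reference else 0.0,
        "precision": detected / len(predicted) if predicted else 0.0,
        "start_accuracy": exact / detected if detected else 0.0,
    }


# Hypothetical coordinates for illustration.
reference = [Gene(100, 400, "+"), Gene(900, 500, "-"), Gene(1000, 1300, "+")]
predicted = [Gene(100, 400, "+"), Gene(870, 500, "-"), Gene(2000, 2300, "+")]
print(compare_gene_sets(reference, predicted))
```

Passing one tool's output as `reference` and another's as `predicted` gives a quick view of how far two predictors disagree, which is one way to see why naive merging of outputs leaves conflicts over start sites unresolved.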

Integration of Artificial Intelligence and Multi-Omics Data

The integration of artificial intelligence, particularly deep learning models, represents a promising direction for improving gene prediction accuracy [8] [4]. Frameworks such as gReLU provide comprehensive environments for developing and applying deep learning models to genomic sequences, enabling advanced analyses including variant effect prediction, regulatory element identification, and even synthetic sequence design [8]. These approaches can capture complex, non-linear sequence patterns that may elude traditional statistical methods.

The incorporation of additional data types significantly enhances gene prediction accuracy. Transcriptomic data (RNA-seq) provides direct evidence of transcription, helping to validate putative genes and identify non-coding RNAs [1]. Homology evidence from sequence databases can support gene calls, particularly for evolutionarily conserved genes, though this approach risks reinforcing existing biases in genomic knowledge [1]. Epigenomic signatures and ribosome profiling data provide additional layers of functional evidence that can distinguish coding from non-coding regions with high confidence [4].

Table 4: Key Computational Tools and Resources

| Tool/Resource | Primary Function | Application Context | Key Features |
|---|---|---|---|
| Prodigal | Prokaryotic gene prediction | Initial genome annotation | Dynamic programming; unsupervised training; high accuracy with TIS identification [7] |
| ORForise | Tool evaluation framework | Comparative assessment of gene predictors | 12 primary and 60 secondary metrics; reproducible analyses [1] |
| gReLU | Deep learning framework | Regulatory element prediction; variant effect analysis | Unified environment for sequence modeling; model zoo with pre-trained models [8] |
| smORFer | Short ORF prediction | Identification of small protein-coding genes | Integration of RNA-seq and conservation scores [1] |
| DeepVariant | Variant calling | Mutation detection in sequenced genomes | Deep learning-based approach; superior accuracy to traditional methods [4] |

The distinction between coding and non-coding DNA in prokaryotes remains a challenging computational problem with significant implications for genomic interpretation and biological discovery. While current gene prediction algorithms leverage sophisticated statistical models and evolving machine learning approaches, systematic biases and limitations persist, particularly for atypical gene classes and genetically diverse organisms. The development of comprehensive evaluation frameworks like ORForise provides researchers with critical insights for selecting appropriate tools based on specific genomic contexts and research objectives. Future advances will likely emerge from the integration of multi-omics data, the application of more sophisticated AI models, and continued refinement of algorithms to reduce existing biases. As prokaryotic genomics continues to expand into non-model organisms and complex metagenomic samples, accurate distinction between coding and non-coding sequences will remain fundamental to unlocking the biological insights encoded in microbial genomes.

In the realm of genomics, accurate gene prediction is a fundamental challenge, particularly in prokaryotic organisms where genomic architecture differs significantly from that of eukaryotes. The efficiency of computational algorithms designed to identify genes hinges on the recognition of key genomic signals. Among these, ribosomal binding sites (RBS), start/stop codons, and GC-content play pivotal roles in delineating the beginning, end, and structural context of protein-coding sequences. These elements are not merely passive landmarks; they are active participants in the mechanistic process of translation, influencing both the efficiency and fidelity of gene expression. This guide provides an in-depth technical examination of these core signals, framing their functionality and properties within the context of prokaryotic gene prediction algorithms. Understanding these components is essential for researchers and bioinformaticians aiming to refine annotation accuracy, explore genomic diversity, and advance applications in synthetic biology and drug development.

Ribosomal Binding Sites (RBS)

Definition and Core Function

The Ribosomal Binding Site (RBS) is a specific nucleotide sequence upstream of the start codon on an mRNA transcript that is responsible for the recruitment of a ribosome to initiate translation [9]. In prokaryotes, this site is paramount for the correct and efficient initiation of protein synthesis. The primary function of the RBS is to ensure the ribosome is positioned correctly on the mRNA, with the start codon aligned in the ribosome's P-site, thereby setting the correct reading frame for translation [10]. RBSs are discussed predominantly in bacterial systems because eukaryotic ribosomes typically employ a different mechanism: they are recruited to the 5' cap of the mRNA, although internal ribosome entry sites (IRES) provide an alternative, cap-independent initiation pathway [9].

Key Sequence Elements: The Shine-Dalgarno Sequence

The most critical component of the prokaryotic RBS is the Shine-Dalgarno (SD) sequence [10] [9]. This consensus sequence, 5'-AGGAGG-3', is located upstream of the start codon and base-pairs with a complementary sequence (CCUCCU), known as the anti-Shine-Dalgarno (ASD) sequence, located at the 3' end of the 16S rRNA component of the 30S ribosomal subunit [9]. This specific Watson-Crick base pairing is a key determinant for the identification of the correct translation initiation site by the ribosome.

Table 1: Key Prokaryotic RBS Components and Their Functions

| Component | Sequence/Location | Function in Translation Initiation |
|---|---|---|
| Shine-Dalgarno (SD) Sequence | 5'-AGGAGG-3' (consensus) | Base-pairs with 16S rRNA to position the ribosome on the mRNA. |
| Anti-Shine-Dalgarno (ASD) | 5'-CCUCCU-3' (near the 3' end of 16S rRNA) | The ribosomal binding partner for the SD sequence. |
| Spacer Region | ~5-10 nucleotides | Separates the SD sequence from the start codon; length and composition affect initiation efficiency. |
| Start Codon | AUG (most common), GUG, UUG | Specifies the first amino acid of the protein (fMet in bacteria). |

Factors Influencing RBS Efficiency and Algorithmic Detection

The efficiency of translation initiation is highly regulated and influenced by several RBS properties, which also pose challenges and provide features for gene prediction algorithms.

- Complementarity to ASD: The degree of complementarity between the mRNA's SD sequence and the ribosomal ASD significantly affects initiation efficiency. Greater complementarity generally leads to higher efficiency, although extremely tight binding can paradoxically reduce the translation rate by impeding ribosome progression downstream [9].

- Spacer Region: The distance and the nucleotide composition between the SD sequence and the start codon are critical. An optimal spacing (typically 5-10 nucleotides) maximizes the rate of translation initiation once a ribosome has been bound [9].

- Secondary Structure: mRNA can form secondary structures through base-pairing, which may hide the RBS and make it inaccessible to the ribosome. This is a key regulatory mechanism, as seen in heat shock proteins whose RBS secondary structures melt at elevated temperatures, allowing translation to initiate [9].

- Sequence Degeneracy: Not all prokaryotic genes possess a strong, canonical SD sequence. Some, like E. coli's rpsA, completely lack an identifiable SD sequence, relying on alternative, less-characterized signals for ribosome binding [9]. This degeneracy makes computational identification of RBSs non-trivial and necessitates sophisticated pattern recognition or machine learning models, such as neural networks or Gibbs sampling methods, for accurate N-terminal prediction in unannotated sequences [9].
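
The spacing and complementarity factors listed above suggest a very simple detector: slide the SD consensus across the region upstream of a candidate start codon and keep the best match that leaves a plausible spacer. The sketch below is an assumption-laden illustration, not how any specific gene finder scores RBS motifs; the function name `best_sd_site`, the mismatch-count score, and the 5-10 nt spacer window are choices made for the example.

```python
SD_CONSENSUS = "AGGAGG"


def best_sd_site(upstream: str, min_spacer: int = 5, max_spacer: int = 10):
    """Find the best SD-like hexamer upstream of a start codon.

    `upstream` is the DNA immediately 5' of the start codon (its last base is
    adjacent to the start). Returns (matches, spacer, window) or None if the
    region is too short. Ties are resolved in favour of the shorter spacer.
    """
    upstream = upstream.upper()
    k = len(SD_CONSENSUS)
    best = None
    for spacer in range(min_spacer, max_spacer + 1):
        end = len(upstream) - spacer  # window ends `spacer` bases before the start codon
        begin = end - k
        if begin < 0:
            break
        window = upstream[begin:end]
        matches = sum(a == b for a, b in zip(window, SD_CONSENSUS))
        if best is None or matches > best[0]:
            best = (matches, spacer, window)
    return best


# Hypothetical upstream region: TTAC + AGGAGG + 7-nt spacer, then the start codon.
print(best_sd_site("TTACAGGAGGTTTAACC"))  # → (6, 7, 'AGGAGG')
```

A count of matching positions ignores which mismatches are tolerated and how secondary structure masks the site, so a low score here does not rule out a functional start; genes without a recognisable SD motif would score poorly by design.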

Start and Stop Codons

The Genetic Code's Punctuation Marks

Start and stop codons are triple-nucleotide sequences within messenger RNA (mRNA) that signal the initiation and termination of translation, respectively. They function as the fundamental punctuation marks of the genetic code, defining the boundaries of the protein-coding region [11].

Start Codons

The Canonical Start Codon and Initiator tRNA

AUG is the canonical start codon across all domains of life. It is decoded by a specialized initiator transfer RNA (tRNA) that is distinct from the tRNA used to incorporate methionine during elongation [12]. This distinction is crucial for the fidelity of initiation. In bacteria, the initiator tRNA carries formylmethionine (fMet), whereas in eukaryotes and archaea it carries unmodified methionine (Met) [10] [12].

Alternative Start Codons

Despite the centrality of AUG, alternative start codons are utilized, particularly in bacteria, archaea, and mitochondria. Because they are read by the initiator tRNA, these codons are still translated as formylmethionine (in bacteria) or methionine rather than as the amino acid they specify during elongation [12].

Table 2: Start Codon Usage in Prokaryotes and Other Systems

| System | Primary Start Codon | Alternative Start Codons | Notes |
|---|---|---|---|
| General Prokaryotes (e.g., E. coli) | AUG (83%) | GUG (14%), UUG (3%) [12] | Non-AUG start codons are functional in genes like lacI (GUG) and lacA (UUG) [12]. |
| Eukaryotes | AUG | Very rare non-AUG codons [12] | AUG initiation is highly regulated and precise. |
| Human Mitochondria | AUG | AUA, AUU [12] | Utilize an alternative genetic code. |
| Archaea | AUG | UUG, GUG [12] | Simpler initiation machinery compared to eukaryotes. |
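
As a rough illustration of how start and stop codons delimit candidate genes, the sketch below scans all six reading frames for start-to-stop ORFs using ATG, GTG and TTG as starts. It is a naive enumerator under stated assumptions, not a gene predictor: the function names and the default 90-nt minimum length are choices for the example, and coordinates for the "-" strand refer to the reverse-complemented sequence.

```python
START_CODONS = {"ATG", "GTG", "TTG"}
STOP_CODONS = {"TAA", "TAG", "TGA"}
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq: str) -> str:
    return seq.translate(COMPLEMENT)[::-1]


def find_orfs(seq: str, min_len: int = 90):
    """Return (strand, start, end, orf) tuples, using the first in-frame start per stop.

    Coordinates are 0-based and end-exclusive on the scanned strand.
    """
    seq = seq.upper()
    orfs = []
    for strand, s in (("+", seq), ("-", reverse_complement(seq))):
        for frame in range(3):
            start = None
            for i in range(frame, len(s) - 2, 3):
                codon = s[i:i + 3]
                if start is None and codon in START_CODONS:
                    start = i  # most upstream start gives the longest ORF
                elif codon in STOP_CODONS:
                    if start is not None and i + 3 - start >= min_len:
                        orfs.append((strand, start, i + 3, s[start:i + 3]))
                    start = None
    return orfs


# Hypothetical toy sequence containing a single 15-nt ORF.
toy = "CCATGGCTGCTGCTTAAG"
print(find_orfs(toy, min_len=9))   # → [('+', 2, 17, 'ATGGCTGCTGCTTAA')]
print(find_orfs(toy))              # → [] because the ORF is shorter than 90 nt
```

The second call shows how a fixed length threshold silently discards short ORFs, and always taking the most upstream start illustrates why start-site choice needs additional evidence such as RBS motifs.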

Stop Codons

Standard Termination Signals

There are three stop codons in the standard genetic code: UAA, UAG, and UGA [13] [14]. These codons are also known as nonsense or termination codons. Unlike sense codons, they are not recognized by a tRNA. Instead, they are bound by proteins called release factors, which cause the ribosome to disassemble and release the completed polypeptide chain [14].

The stop codons have historical names derived from the mutants in which they were first characterized: UAG is "amber," UAA is "ochre," and UGA is "opal" or "umber" [14].

Genomic Distribution and Context

The distribution of stop codons within a genome is non-random and can be influenced by the overall GC-content [14]. For example, in the E. coli K-12 genome (GC content 50.8%), the UAA (TAA) stop codon, which is AT-rich, is the most prevalent (63%), followed by UGA (TGA) (29%), and the UAG (TAG) is the least used (8%) [14]. The frequency of TAA decreases in high-GC genomes, while TGA frequency increases [14].

Recoding and Exceptions

In certain contexts, the standard function of a stop codon can be "overridden" in a process called translational readthrough, where a near-cognate tRNA incorporates an amino acid instead of terminating translation [14]. Furthermore, specific mechanisms have evolved to reassign stop codons. For instance, UGA can be recoded to incorporate the amino acid selenocysteine, and UAG can be recoded to incorporate pyrrolysine [14]. These exceptions are important considerations for advanced gene prediction and annotation pipelines.

GC-Content

Definition and Structural Implications

GC-content is the percentage of nitrogenous bases in a DNA or RNA molecule that are guanine (G) or cytosine (C) [15]. It is a fundamental genomic property with significant structural and functional implications. Guanine and cytosine form a base pair held together by three hydrogen bonds, in contrast to the two hydrogen bonds of adenine-thymine (A-T) base pairs. This makes GC base pairs thermodynamically more stable than AT pairs [15].

It was once presumed that this hydrogen bonding was the primary reason for the higher thermostability of high-GC DNA; however, research has shown that the base-stacking interactions between adjacent bases are a more important factor contributing to thermal stability [15].

GC-Content in Genomes and Genes

Genomic Variation and Isochores

GC-content is not uniform across a genome. In more complex organisms, the genome is organized into mosaic regions with different GC-ratios, known as isochores [15]. These variations can be observed as different staining intensities on chromosomes. GC-rich isochores are typically associated with a higher density of protein-coding genes [15].

GC-Content and Coding Sequences

Protein-coding regions often exhibit a higher GC-content compared to the genomic background [15]. This is a critical feature exploited by gene prediction algorithms. Coding-sequence length also correlates positively with GC-content, partly because all three stop codons (UAA, UAG, UGA) are AT-biased: AT-rich sequence runs into stop codons more often, so shorter coding sequences tend to be more AT-rich [15]. Furthermore, within a gene, the GC-content at the third, or "wobble," position of a codon is highly variable and is a major contributor to codon usage bias [16].

Table 3: GC-Content Variations Across Genomes and Regions

| Genomic Region/Organism | GC-Content Characteristics | Significance |
|---|---|---|
| Human Genome | 35% - 60% across 100-kb fragments (mean ~41%) [15] | Shows strong isochore structure. |
| Yeast (S. cerevisiae) | 38% [15] | A standard model organism with a relatively low GC-content. |
| Actinomycetota | High GC-content (e.g., Streptomyces coelicolor at 72%) [15] | Historically classified as "high GC-content bacteria." |
| Plasmodium falciparum | ~20% [15] | An example of an extremely AT-rich genome. |
| Typical Coding Sequence | Higher than genomic background [15] | A key signal for computational gene identification. |

Experimental and Computational Analysis

Determining GC-Content: An HPLC Protocol

A standard and accurate method for determining the molar percentage (mol%) G+C content of DNA is Reverse-Phase High-Performance Liquid Chromatography (HPLC) [16]. This protocol is essential for the taxonomic description of novel prokaryotes.

Detailed Methodology:

- DNA Isolation and Purification: Genomic DNA is extracted from the organism and purified to remove contaminants like proteins and RNA.

- Enzymatic Digestion: The purified DNA is completely digested into its constituent deoxynucleosides using a cocktail of enzymes, typically including nuclease P1 and bacterial alkaline phosphatase.

- Chromatographic Separation: The resulting deoxynucleoside mixture is injected into an HPLC system equipped with a reverse-phase C18 column. The nucleosides are separated based on their hydrophobicity as they elute with a solvent gradient.

- Detection and Quantification: The separated nucleosides are detected by their UV absorbance. The area under the peak for each deoxynucleoside (dA, dT, dG, dC) is measured.

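- Calculation: The mol% G+C content is calculated using the formula: mol% G+C = (dG + dC) / (dA + dT + dG + dC) × 100, where each term is the molar amount of that deoxynucleoside derived from its peak area.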

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents and Tools for Genomic Signal Analysis

| Research Reagent / Tool | Function / Application |
|---|---|
| Nuclease P1 & Alkaline Phosphatase | Enzymatic cocktail for complete DNA digestion to deoxynucleosides for HPLC-based GC-content analysis [16]. |
| C18 Reverse-Phase HPLC Column | The core matrix for separating individual nucleosides during chromatographic GC-content determination [16]. |
| Shine-Dalgarno (SD) Sequence (5'-AGGAGG-3') | The key prokaryotic RBS sequence used in synthetic biology to design and control translation initiation rates [17]. |
| Initiator tRNA (tRNAfMet) | Specialized tRNA that recognizes the start codon (AUG/GUG/UUG) and initiates protein synthesis with fMet [10] [12]. |
| Release Factors (RF1/RF2) | Proteins that recognize stop codons and catalyze the release of the finished polypeptide from the ribosome [14]. |
| Neural Network & Gibbs Sampling Software | Computational methods used in gene prediction algorithms to identify degenerate RBS sequences and translation start sites [9]. |

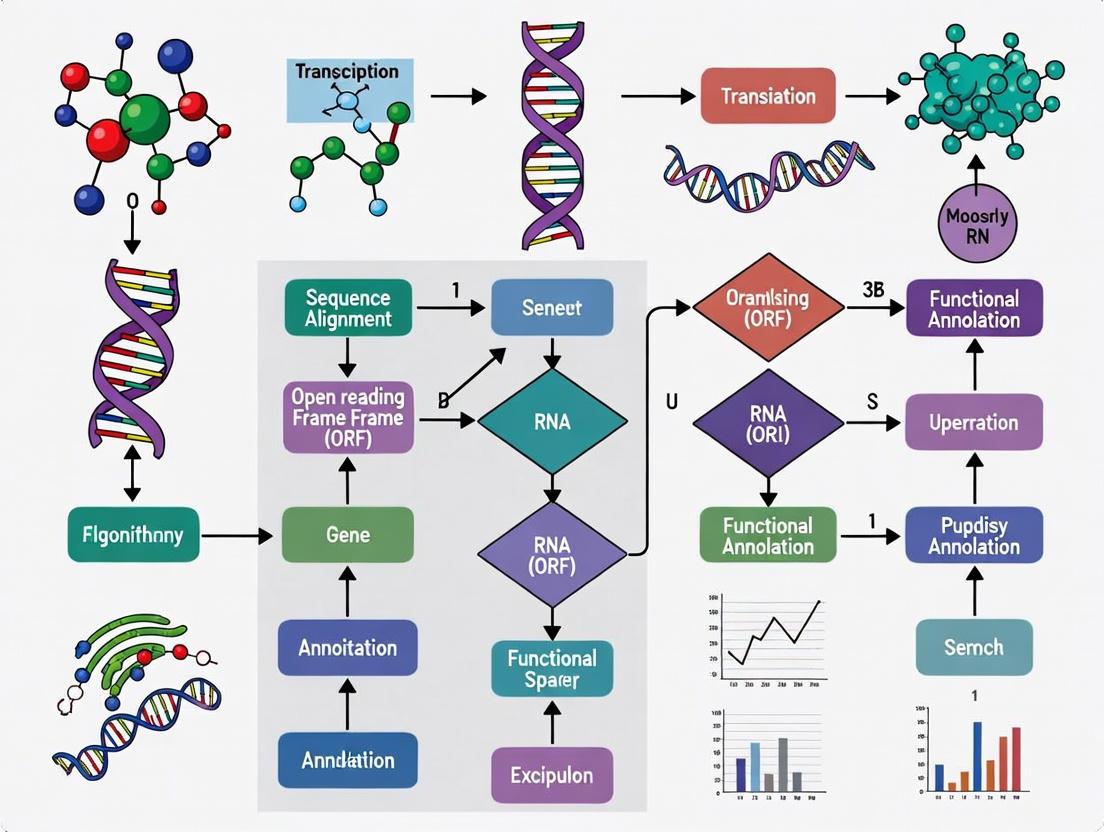

Visualizing the Logic of Prokaryotic Gene Prediction

The following diagram illustrates the logical workflow a prokaryotic gene prediction algorithm follows, leveraging the genomic signals discussed in this guide to identify potential protein-coding regions (Open Reading Frames - ORFs).

Diagram 1: Prokaryotic gene prediction logic based on key genomic signals.

Ribosomal binding sites, start/stop codons, and GC-content are not isolated elements but form an integrated system of genomic signals that guide the machinery of gene expression. For prokaryotic gene prediction algorithms, these signals provide the essential features for distinguishing protein-coding sequences from non-coding background DNA. The Shine-Dalgarno sequence ensures precise initiation, the start and stop codons define the unambiguous boundaries of the coding sequence, and the GC-content and associated codon usage bias provide a statistical measure of coding potential. As genomic sequencing continues to expand into uncharted taxonomic space, and as synthetic biology demands more precise genetic design, a deeper understanding of these core signals—including their variations, exceptions, and interactions—will remain paramount for researchers, scientists, and drug development professionals aiming to decipher and engineer the genetic code.

Prodigal (Prokaryotic Dynamic Programming Gene-finding Algorithm) employs a sophisticated dynamic programming approach to identify optimal gene tiling paths across microbial genomes. This algorithm addresses fundamental challenges in prokaryotic gene prediction, including translation initiation site recognition and false positive reduction. By integrating GC-frame bias analysis with a dynamic programming scoring system, Prodigal achieves high-precision gene calling without requiring extensive manual curation or training data. This technical examination details the core methodology, computational framework, and performance characteristics of Prodigal's tiling path approach, providing researchers with comprehensive insights into its application for genomic annotation.

Prokaryotic gene prediction poses a fundamentally different challenge from eukaryotic gene finding because microbial genomes lack introns and have much higher gene density [18]. While early methods like Glimmer and GeneMarkHMM demonstrated reasonable performance, significant limitations persisted in translation initiation site (TIS) prediction and in the number of false positive predictions, particularly in high GC-content genomes where spurious open reading frames abound [7]. These limitations motivated the development of Prodigal, which implemented a novel dynamic programming framework to select optimal combinations of genes across the entire genome sequence.

The algorithm's "tiling path" approach refers to its methodology of evaluating multiple potential gene arrangements and selecting the highest-scoring combination through dynamic programming, effectively "tiling" the genome with the most probable set of coding sequences. This method significantly improved both gene structure prediction and translation initiation site recognition while reducing false positives compared to previous methodologies [7].

Core Algorithmic Methodology

Dynamic Programming Framework

Prodigal implements a dynamic programming algorithm that operates on a matrix of nodes representing start and stop codons throughout the genome [7]. The algorithm connects these nodes through two types of connections: "gene" connections (start to stop codons) and "intergenic" connections (stop to start codons). Each potential gene receives a preliminary coding score based on GC-frame bias analysis, while intergenic regions receive small bonuses or penalties based on distance between genes.

The dynamic programming process evaluates all possible paths through this network of connections to identify the highest-scoring combination of genes. This approach allows Prodigal to make global decisions about gene selection rather than evaluating each potential gene in isolation, effectively addressing the challenge of choosing between overlapping open reading frames in the same genomic region [7].
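
The global selection idea can be illustrated with a deliberately simplified sketch. It assumes candidate genes are plain intervals with a precomputed score, forbids all overlaps, and ignores strands and intergenic bonuses, so it reduces to classic weighted interval scheduling rather than Prodigal's actual node-and-connection model. The names `Candidate` and `best_tiling` are hypothetical.

```python
from bisect import bisect_right
from typing import List, NamedTuple, Tuple


class Candidate(NamedTuple):
    start: int    # 0-based, inclusive
    end: int      # exclusive
    score: float  # precomputed coding score (hypothetical values)


def best_tiling(cands: List[Candidate]) -> Tuple[float, List[Candidate]]:
    """Pick the non-overlapping subset of candidates with the highest total score."""
    cands = sorted(cands, key=lambda c: c.end)
    ends = [c.end for c in cands]
    best = [0.0] * (len(cands) + 1)  # best[k]: optimum over the first k candidates
    take = [False] * len(cands)      # take[k]: does best[k + 1] use candidate k?
    prev = [0] * len(cands)          # prev[k]: how many earlier candidates end before k starts
    for k, c in enumerate(cands):
        j = bisect_right(ends, c.start, 0, k)
        prev[k] = j
        with_c = best[j] + c.score
        if with_c > best[k]:
            best[k + 1] = with_c
            take[k] = True
        else:
            best[k + 1] = best[k]
    # Backtrack to recover the chosen genes.
    chosen = []
    k = len(cands)
    while k > 0:
        if take[k - 1]:
            chosen.append(cands[k - 1])
            k = prev[k - 1]
        else:
            k -= 1
    chosen.reverse()
    return best[-1], chosen
```

Because each decision is made against the best score achievable from everything to the left, a strong candidate can displace two weaker overlapping ones, which is the kind of global trade-off a per-ORF threshold cannot make.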

GC Frame Plot Analysis

Before executing the dynamic programming algorithm, Prodigal analyzes the GC content bias across codon positions to build a training profile for the specific organism [7]. The algorithm examines all open reading frames longer than 90 base pairs, analyzing the preference for G and C nucleotides in each of the three codon positions:

- Codon Position Analysis: For each ORF, the algorithm identifies which codon position (1st, 2nd, or 3rd) contains the highest GC content within a 120-base pair sliding window centered on each position

- Bias Calculation: The preferences are aggregated across all ORFs and normalized to generate frame bias scores for each of the three codon positions

- Preliminary Scoring: Each potential gene receives an initial score based on how well its GC pattern matches the organism's characteristic coding bias

This GC frame plot analysis enables Prodigal to adapt to the specific codon usage patterns of the input genome without requiring pre-existing training data or manual curation [7].
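
A rough sketch of the idea follows. It is a simplification: it measures GC content per codon position over each whole ORF instead of using the 120 bp sliding window described above, and it normalizes by simple counting. The function names are hypothetical and the output is not Prodigal's actual bias score.

```python
from collections import Counter
from typing import Iterable, List


def gc_by_codon_position(orf: str) -> List[float]:
    """Fraction of G/C bases at codon positions 1, 2 and 3 of an in-frame ORF."""
    orf = orf.upper()
    usable = len(orf) - len(orf) % 3  # ignore a trailing partial codon
    fractions = []
    for pos in range(3):
        bases = orf[pos:usable:3]
        gc = sum(b in "GC" for b in bases)
        fractions.append(gc / len(bases) if bases else 0.0)
    return fractions


def frame_bias(orfs: Iterable[str]) -> List[float]:
    """Share of ORFs in which each codon position has the highest GC content."""
    wins = Counter()
    total = 0
    for orf in orfs:
        fractions = gc_by_codon_position(orf)
        wins[fractions.index(max(fractions))] += 1
        total += 1
    return [wins[p] / total if total else 0.0 for p in range(3)]
```

In a high-GC genome, a profile dominated by the third position would be the expected outcome for true coding ORFs, since synonymous third positions can absorb most of the compositional pressure.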

Scoring System and Tiling Path Selection

The dynamic programming scoring system integrates multiple signals to evaluate potential genes [7]. The score (S) for a gene starting at position n1 and ending at n2 is calculated as:

S = Σ [B(i) × l(i)]

Where B(i) is the bias score for codon position i, and l(i) is the number of bases in the gene where the 120-bp maximal window at that position corresponds to codon position i.
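
As a small worked illustration of this sum, the sketch below assumes each base of a candidate gene has already been labeled with the codon position (0, 1, or 2) that wins its 120 bp window. The bias values used in the usage note are made-up numbers chosen only to show the arithmetic, and `gene_score` is a hypothetical name.

```python
from typing import Sequence


def gene_score(bias: Sequence[float], max_frame_per_base: Sequence[int]) -> float:
    """Compute S = sum over i of B(i) * l(i) for one candidate gene.

    bias[i] is B(i); max_frame_per_base holds, for every base in the gene,
    the codon position (0, 1 or 2) with the highest GC content in its window.
    """
    lengths = [0, 0, 0]  # l(i): number of bases whose window is won by position i
    for frame in max_frame_per_base:
        lengths[frame] += 1
    return sum(b * l for b, l in zip(bias, lengths))
```

For example, with hypothetical biases `[-0.5, -1.0, 1.5]` and a 300 bp gene whose windows are won by the third position for 200 bases, the first for 60, and the second for 40, the score is 1.5 × 200 - 0.5 × 60 - 1.0 × 40 = 230.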

The algorithm populates a dynamic programming matrix by evaluating all valid start-stop pairs, considering three types of connections:

- Gene connections: Start codon to corresponding stop codon

- Intergenic connections: Stop codon to start codon of next gene

- Overlap connections: Special rules for handling genes with limited overlap

Table 1: Dynamic Programming Connection Types in Prodigal

| Connection Type | From | To | Score Basis | Constraints |
|---|---|---|---|---|
| Gene Connection | Start codon | Stop codon | GC frame plot coding score | Minimum 90 bp length |
| Intergenic Connection | Stop codon | Start codon | Distance-based bonus/penalty | Follows stop codon |
| Same-Strand Overlap | 3' end | 3' end | Pre-calculated best overlap | Max 60 bp overlap |
| Opposite-Strand Overlap | 3' end (forward) | 5' end (reverse) | Implied gene score | Max 200 bp 3' overlap |

Implementation Details

Handling Gene Overlaps

A significant innovation in Prodigal's dynamic programming approach is its systematic handling of overlapping genes [7]. Since standard dynamic programming assumes non-overlapping solutions, Prodigal implements special rules to accommodate biologically plausible gene overlaps:

- Same-Strand Overlaps: The algorithm pre-calculates the highest-scoring overlapping gene in each frame for every stop codon, allowing connections between the 3' ends of two genes on the same strand with a maximum overlap of 60 bp

- Opposite-Strand Overlaps: The algorithm permits 200 bp overlap between the 3' ends of genes on opposite strands, but prohibits 5' end overlaps

- Frame-Specific Evaluation: For each potential overlap situation, the algorithm evaluates candidates in all three reading frames to identify optimal configurations

This overlap handling mechanism enables Prodigal to accurately represent the complex gene arrangements found in microbial genomes while maintaining the computational efficiency of the dynamic programming paradigm [7].
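
The overlap limits listed above can be expressed as a small predicate. This is only a sketch of the stated rules (60 bp same-strand, 200 bp between 3' ends on opposite strands, no 5' overlaps), not of how Prodigal wires them into its connections. Rejecting fully nested genes is an added assumption, and `Gene` and `overlap_allowed` are hypothetical names.

```python
from typing import NamedTuple

MAX_SAME_STRAND_OVERLAP = 60       # bp, from the rules above
MAX_OPPOSITE_3PRIME_OVERLAP = 200  # bp, from the rules above


class Gene(NamedTuple):
    left: int    # leftmost coordinate, 0-based inclusive
    right: int   # rightmost coordinate, exclusive
    strand: str  # '+' or '-'


def overlap_allowed(a: Gene, b: Gene) -> bool:
    """Return True if two genes may coexist under the overlap limits."""
    if a.left > b.left:
        a, b = b, a  # make `a` the gene that starts further left
    overlap = min(a.right, b.right) - b.left
    if overlap <= 0:
        return True  # no overlap at all
    if a.right >= b.right or a.left == b.left:
        return False  # assumption: one gene nested inside the other is rejected
    if a.strand == b.strand:
        return overlap <= MAX_SAME_STRAND_OVERLAP
    if a.strand == "+" and b.strand == "-":
        # Right end of a '+' gene and left end of a '-' gene are both 3' ends.
        return overlap <= MAX_OPPOSITE_3PRIME_OVERLAP
    return False  # '-' then '+': the overlapping ends are both 5' ends
```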

Training Set Construction

Prodigal operates in a fully unsupervised manner by automatically constructing a training set from the input sequence [7]. The process includes:

- Initial ORF Collection: All open reading frames longer than 90 bp are identified

- GC Bias Profiling: Organism-specific codon position biases are calculated

- Preliminary Scoring: Each start-stop pair is scored based on GC frame plot compatibility

- Dynamic Programming Selection: The initial tiling path is selected using dynamic programming to identify the most promising training genes

- Profile Refinement: The selected genes are used to build hexamer coding statistics, RBS motifs, and other species-specific signals

This automated training process allows Prodigal to achieve high accuracy without manual intervention or pre-trained models, making it particularly valuable for newly sequenced organisms with no existing annotation [7].

Performance and Evaluation

Quantitative Assessment

Prodigal was rigorously evaluated against existing gene prediction methods including Glimmer and GeneMarkHMM [7]. The evaluation focused on three key metrics: gene structure prediction accuracy, translation initiation site recognition, and false positive reduction.

Table 2: Performance Comparison of Prodigal Against Other Gene Prediction Tools

| Metric | Prodigal | Glimmer | GeneMarkHMM | Evaluation Method |
|---|---|---|---|---|
| Gene Prediction Accuracy | High overall, especially in high-GC genomes | Reduced in high-GC genomes | Moderate across GC ranges | Comparison to curated genomes |
| Start Site Precision | Significantly improved | Lower accuracy | Moderate accuracy | Experimental validation |
| False Positive Rate | Substantially reduced | Higher short gene predictions | Moderate | Proteomics validation |
| Unsupervised Operation | Fully automated | Requires training | Requires training | Pre-processing requirements |

Experimental Validation

The development team employed extensive experimental validation using curated genomes from the JGI ORNL pipeline [7]. The validation methodology included:

- Reference Data Sets: Initial testing used 10 curated genomes plus Escherichia coli K12, Bacillus subtilis, and Pseudomonas aeruginosa

- Expanded Validation: Final testing expanded to over 100 genomes from GenBank

- Rule Optimization: Algorithmic rules were refined based on performance across the entire validation set rather than optimizing for specific genomes

- Cross-Validation: Rules that improved performance only on specific genome types were rejected in favor of generally applicable approaches

This rigorous validation strategy ensured that Prodigal would perform robustly across diverse microbial organisms rather than being optimized for specific phylogenetic groups [7].

Research Reagent Solutions

Table 3: Essential Research Materials for Gene Prediction Validation

| Reagent/Resource | Function in Gene Prediction Research | Example Applications |
|---|---|---|
| Curated Genome Sequences | Gold standard for algorithm training and validation | JGI ORNL pipeline genomes, Ecogene Verified Protein Starts |
| High-Quality Genome Annotations | Benchmark for prediction accuracy comparison | GenBank annotations, manually curated references |
| Proteomics Datasets | Experimental validation of predicted coding sequences | Mass spectrometry data to verify expressed proteins |
| Ribosomal Binding Site Motifs | Training signal for translation initiation site prediction | RBS sequence patterns for start codon identification |
| GC Frame Plot Analysis Tools | Visualization of coding potential across the genome | Artemis compatibility, custom visualization scripts |
| Dynamic Programming Frameworks | Core algorithmic implementation for tiling path selection | Custom C code in Prodigal, general DP libraries |

Visualization of Core Algorithm

Dynamic Programming Matrix Structure

Prodigal Dynamic Programming Network: This diagram illustrates the connection types in Prodigal's dynamic programming matrix, showing how start and stop codons are connected through gene, intergenic, and overlap connections to form the complete tiling path.

GC Frame Plot Analysis Workflow

GC Frame Plot Analysis: This workflow diagram shows Prodigal's process for analyzing GC content bias across codon positions to build organism-specific training profiles for gene prediction.

Prodigal's dynamic programming approach to gene tiling path selection represents a significant advancement in prokaryotic gene prediction methodology. By integrating GC-frame bias analysis with a comprehensive scoring system that evaluates gene combinations across the entire genome, the algorithm achieves improved accuracy in both gene identification and translation initiation site recognition while substantially reducing false positives. The fully automated nature of the algorithm, combined with its robust performance across diverse microbial taxa, has established Prodigal as a valuable tool in genomic annotation pipelines. As sequencing technologies continue to generate vast amounts of microbial genomic data, efficient and accurate computational methods like Prodigal remain essential for extracting biological insights from sequence information.

Prokaryotic gene prediction represents a fundamental challenge in computational genomics, essential for understanding microbial diversity and function. Unlike supervised methods requiring pre-labeled data, unsupervised algorithms autonomously derive organism-specific parameters directly from genomic sequences, enabling their application across the vast diversity of uncharacterized microorganisms. This technical guide elucidates the core principles and methodologies underpinning unsupervised learning in prokaryotic gene finders, focusing on statistical models that self-train on intrinsic genomic features. We examine how these systems detect coding sequences through iterative refinement of sequence models, translation initiation signals, and open reading frame characteristics without external annotations. Within the broader context of prokaryotic gene prediction, the guide details the mathematical foundations and computational frameworks that allow algorithms to adapt to species-specific genetic architectures.

The exponential growth of sequenced prokaryotic genomes has far outpaced experimental characterization, creating a critical need for computational methods that can accurately identify protein-coding genes without relying on existing annotations [19]. Unsupervised algorithms address this challenge by learning organism-specific parameters directly from the genomic sequence itself, requiring no pre-trained models or labeled examples. This capability is particularly vital for studying microbial "dark matter"—the enormous diversity of uncharacterized bacteria and archaea that constitute approximately 99% of microbial species and remain functionally unknown [19].

Unsupervised gene finders operate on the fundamental principle that protein-coding regions exhibit statistical signatures distinct from non-coding DNA. These signatures include codon usage bias, nucleotide composition patterns, and sequence periodicity that reflect the molecular machinery of translation and evolutionary constraints [20]. By detecting these signals through iterative statistical learning, algorithms can derive a species-specific model of gene structure that accommodates the substantial variation in genomic features across different taxa. This adaptability is crucial given the remarkable diversity of prokaryotes, which span extremes of GC content, genome size, and genetic organization [21].

The development of unsupervised methods represents a significant evolution from early gene finders that relied on conserved rules or supervised training on model organisms. By learning directly from each genome, these algorithms avoid biases toward well-studied species and can more accurately annotate novel microorganisms with divergent sequence features [1]. This technical guide examines the core mechanisms through which unsupervised algorithms learn organism-specific parameters, with detailed analysis of their mathematical foundations, implementation workflows, and performance characteristics.

Core Mathematical Principles

Statistical Foundations of Unsupervised Parameter Learning

Unsupervised gene prediction algorithms are grounded in statistical learning theory, employing probabilistic models to distinguish coding from non-coding sequences without labeled training data. The fundamental assumption is that protein-coding regions exhibit measurable statistical biases in nucleotide composition and sequence organization that differ systematically from non-functional DNA [20].

The Entropy Density Profile (EDP) model provides a sophisticated approach to capturing these statistical regularities. For a DNA sequence, the EDP computes the information-theoretic properties of its potential amino acid composition. The model defines a vector $S = \{s_i\}$, $i = 1, \ldots, 20$, with one component per amino acid, where each component is calculated as:

$$s_i = -\frac{1}{H}\, p_i \log p_i$$

Here, $p_i$ represents the probability of amino acid $i$, and $H$ is the Shannon entropy of the amino acid distribution: $H = -\sum_j p_j \log p_j$ [20]. This transformation emphasizes the information content of the sequence rather than simply its composition. In the EDP phase space, coding open reading frames (ORFs) form distinct clusters separate from non-coding ORFs, enabling discrimination based on their position in this multidimensional space [20].
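
The EDP definition translates directly into a few lines of code. The sketch below assumes the ORF has already been translated to a protein string and that amino acid probabilities are estimated as simple relative frequencies. The function name is hypothetical, and this is not the MED 2.0 implementation.

```python
import math
from collections import Counter
from typing import List

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues, fixed order


def entropy_density_profile(protein: str) -> List[float]:
    """Return the 20-component EDP vector s_i = -(1/H) * p_i * log(p_i)."""
    counts = Counter(aa for aa in protein.upper() if aa in AMINO_ACIDS)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("sequence contains no standard amino acids")
    probs = [counts[aa] / total for aa in AMINO_ACIDS]
    entropy = -sum(p * math.log(p) for p in probs if p > 0)
    if entropy == 0:
        raise ValueError("entropy is zero (one residue type only); EDP undefined")
    # Absent residues contribute 0, following the convention 0 * log(0) = 0.
    return [(-p * math.log(p) / entropy) if p > 0 else 0.0 for p in probs]
```

By construction the components sum to 1, so each ORF becomes a point on a simplex in 20-dimensional space, which is what makes clustering in the EDP phase space well defined.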

For GC-rich genomes, Principal Component Analysis reveals that ORFs form six clusters in the EDP phase space—one for coding ORFs and five for non-coding ORFs—reflecting the impact of genomic GC content bias on sequence statistics [20]. This clustering behavior provides the mathematical basis for distinguishing functional genes through unsupervised clustering algorithms.

Modeling Translation Initiation Sites

Accurate identification of translation initiation sites (TIS) is critical for precise gene annotation. Unsupervised approaches model TIS by integrating multiple sequence features around potential start codons. The MED 2.0 algorithm implements a comprehensive TIS model that incorporates:

- Sequence motifs surrounding start codons (ATG, GTG, TTG)

- Ribosomal binding site (Shine-Dalgarno sequence) characteristics

- Sequence conservation patterns upstream of potential starts

- Codon usage biases in the immediate downstream region [20]

These features are combined into a multivariate statistical model that scores potential TIS locations based on their congruence with expected patterns derived from the genome itself. The algorithm learns the genome-specific parameters for these features through iterative analysis, without requiring prior knowledge of validated start sites [20]. This approach is particularly valuable for archaeal genomes, which exhibit divergent translation initiation mechanisms compared to bacteria [20].

Algorithmic Implementation

The MED 2.0 Framework

The Multivariate Entropy Distance (MED 2.0) algorithm exemplifies the unsupervised learning approach to prokaryotic gene prediction. Its implementation involves a structured workflow that iteratively refines genome-specific parameters through statistical analysis of sequence features.

Figure 1: MED 2.0 unsupervised learning workflow. The algorithm iteratively refines genome-specific parameters through statistical analysis until convergence.

The MED 2.0 workflow begins with comprehensive identification of all possible open reading frames (ORFs) in the input genome. For each ORF, the algorithm calculates its Entropy Density Profile vector, which captures the information-theoretic properties of its potential amino acid composition [20]. These vectors are then analyzed through clustering techniques in the 20-dimensional EDP phase space, where coding and non-coding ORFs form distinct clusters due to different evolutionary constraints [20].

Through iterative expectation-maximization, MED 2.0 progressively refines the discrimination boundary between these clusters, simultaneously deriving genome-specific parameters for codon usage bias, nucleotide composition, and other sequence features. This iterative process continues until cluster assignments stabilize, indicating convergence. The final step integrates the EDP-based coding potential assessment with a translation initiation site (TIS) model to produce comprehensive gene predictions [20].

A key advantage of this approach is its ability to reveal divergent biological characteristics across taxa. For example, MED 2.0 can identify variations in translation initiation mechanisms and start codon usage patterns (ATG, GTG, TTG) in archaeal genomes without any prior training on these organisms [20]. This adaptability makes unsupervised methods particularly valuable for studying non-model microorganisms with unusual genetic architectures.

Comparative Performance of Gene Prediction Tools

Different gene prediction algorithms employ varying strategies for learning organism-specific parameters, with significant implications for their performance across diverse taxa.

Table 1: Comparison of prokaryotic gene prediction tools and their parameter learning methods

| Tool | Learning Approach | Primary Features | Organism-Specific Training Required | Key Applications |
|---|---|---|---|---|
| MED 2.0 | Unsupervised (EDP model) | Entropy density profiles, TIS features | No - learns during execution | GC-rich genomes, Archaea [20] |
| Balrog | Supervised (Universal model) | Temporal convolutional network | No - uses pre-trained universal model | Diverse bacteria and archaea [22] |
| Glimmer | Unsupervised | Interpolated Markov models | Yes - before gene prediction | Finished genomes [22] |
| Prodigal | Unsupervised | Dynamic programming, heterogeneous starts | Yes - before gene prediction | Bacterial and archaeal genomes [22] |
| GeneMark | Unsupervised | Inhomogeneous Markov models | Yes - before gene prediction | Standard microbial genomes [20] |

The comparative performance of these tools highlights trade-offs between different learning strategies. In evaluations, Balrog—which uses a universally pre-trained model rather than organism-specific learning—achieved sensitivity comparable to Prodigal (2,248 vs. 2,250 known genes found) while reducing "hypothetical protein" predictions by 11% (664 vs. 747) [22]. This suggests that universal models may reduce false positives while maintaining high sensitivity.

However, unsupervised methods like MED 2.0 show particular strength on non-standard genomes. MED 2.0 demonstrates "competitive high performance in gene prediction for both 5' and 3' end matches, compared to current best prokaryotic gene finders," with advantages "particularly evident for GC-rich genomes and archaeal genomes" [20]. This performance advantage stems from their ability to adapt to the specific statistical properties of each genome without bias from previously seen organisms.

Experimental Validation Protocols

Benchmarking Framework and Metrics

Rigorous evaluation of unsupervised gene prediction algorithms requires standardized benchmarks and quantitative metrics. The ORForise framework provides a comprehensive evaluation system based on 12 primary and 60 secondary metrics that facilitate assessment of coding sequence (CDS) prediction performance [1]. This systematic approach enables researchers to identify which tool performs better for specific use cases, as "the performance of any tool is dependent on the genome being analysed, and no individual tool ranked as the most accurate across all genomes or metrics analysed" [1].

Key evaluation metrics include:

- Sensitivity: Proportion of known genes correctly identified

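- Specificity: Proportion of predicted genes that match known annotations (as the term is commonly used in gene-finding literature, this corresponds to precision, or positive predictive value, rather than the true-negative rate)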

- Accuracy at start codons: Precision in identifying correct translation initiation sites

- Accuracy at stop codons: Precision in identifying correct translation termination sites

- Hypothetical gene rate: Number of predictions labeled as "hypothetical protein"
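
Several of these metrics can be computed from two gene lists with very little code. The sketch below assumes genes are represented as `(strand, start, stop)` tuples, treats a prediction as matching a reference gene when strand and stop coordinate agree, and counts a start as correct when the start coordinate also agrees. These conventions and the `evaluate` function are illustrative assumptions, not the ORForise definitions.

```python
from typing import Dict, Iterable, Tuple

Gene = Tuple[str, int, int]  # (strand, start coordinate, stop coordinate)


def evaluate(predicted: Iterable[Gene], reference: Iterable[Gene]) -> Dict[str, float]:
    """Compare predictions to a reference annotation using 3' end matching."""
    pred = {(strand, stop): start for strand, start, stop in predicted}
    ref = {(strand, stop): start for strand, start, stop in reference}
    shared = pred.keys() & ref.keys()  # genes found with the correct stop
    exact = sum(1 for key in shared if pred[key] == ref[key])
    return {
        "sensitivity": len(shared) / len(ref) if ref else 0.0,
        "precision": len(shared) / len(pred) if pred else 0.0,
        "start_accuracy": exact / len(shared) if shared else 0.0,
    }
```

Matching on the stop codon first reflects that the 3' end of a prokaryotic gene is unambiguous once the reading frame is known, whereas the start is the part that gene finders most often get wrong.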

Experimental protocols typically involve hold-out testing, where algorithms are evaluated on genomes excluded from any training process. For example, in validating Balrog, researchers used "a test set of 30 bacteria and 5 archaea that were not included in the Balrog training set" [22]. This approach provides unbiased performance estimation and reveals how tools generalize to novel organisms.

Genomic Signature Analysis for Environmental Adaptation

Unsupervised learning extends beyond basic gene prediction to uncover correlations between genomic signatures and environmental adaptations. Research on prokaryotic extremophiles has demonstrated that "adaptations to extreme temperatures and pH imprint a discernible environmental component in the genomic signature of microbial extremophiles" [21].

The experimental protocol for this analysis involves:

- Sequence Fragment Selection: Extracting 500 kbp DNA fragments to represent each genome

- k-mer Frequency Calculation: Computing k-mer frequency vectors for values 1≤k≤6

- Unsupervised Clustering: Applying clustering algorithms to group sequences by genomic signature similarity

- Environmental Correlation: Assessing whether clusters correspond to environmental conditions rather than taxonomy
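
The k-mer frequency step of this protocol can be sketched as follows. The code assumes a single-stranded count over the alphabet A, C, G, T, skips windows containing other symbols, and concatenates the vectors for k = 1 to 6 into one signature. Whether the cited study concatenated the vectors or analyzed each k separately is not stated here, so that choice is an assumption, as are the function names.

```python
from itertools import product
from typing import Dict, List


def kmer_frequencies(seq: str, k: int) -> Dict[str, float]:
    """Relative frequency of every possible k-mer over A, C, G, T."""
    seq = seq.upper()
    counts = {"".join(p): 0 for p in product("ACGT", repeat=k)}
    total = 0
    for i in range(len(seq) - k + 1):
        kmer = seq[i:i + k]
        if kmer in counts:  # skips windows containing N or other symbols
            counts[kmer] += 1
            total += 1
    return {kmer: (c / total if total else 0.0) for kmer, c in counts.items()}


def genomic_signature(seq: str, max_k: int = 6) -> List[float]:
    """Concatenate k-mer frequency vectors for k = 1..max_k (assumed layout)."""
    vector: List[float] = []
    for k in range(1, max_k + 1):
        freqs = kmer_frequencies(seq, k)
        vector.extend(freqs[kmer] for kmer in sorted(freqs))
    return vector
```

With `max_k = 6` the signature has 4 + 16 + 64 + 256 + 1024 + 4096 = 5460 components, which can then be passed to any standard clustering routine.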

This methodology has revealed that "hyperthermophile organisms [have] large similarities in their genomic signatures, in spite of belonging to different domains in the Tree of Life" [21]. Such findings demonstrate how unsupervised analysis of sequence composition can reveal fundamental biological relationships beyond taxonomic boundaries.

The Scientist's Toolkit

Implementation and evaluation of unsupervised gene prediction algorithms requires specific computational resources and data sources.

Table 2: Essential research reagents and resources for unsupervised gene prediction research

| Resource | Type | Function | Application Context |
|---|---|---|---|
| ORForise | Evaluation framework | Assess CDS prediction tool performance | Benchmarking gene finders [1] |
| GTDB | Database | Taxonomic classification of genomes | Training and testing set construction [22] |
| BacDive | Database | Phenotypic data for prokaryotes | Correlation of genomic and phenotypic traits [23] |
| Pfam | Database | Protein family annotations | Functional characterization of predictions [23] |
| Genomic-benchmarks | Dataset collection | Standardized sequences for classification | Method development and comparison [24] |

These resources enable comprehensive development and testing of unsupervised learning algorithms. The Genomic-benchmarks collection, for example, provides "a collection of datasets for genomic sequence classification with an interface for the most commonly used deep learning libraries" [24], addressing the critical need for standardized evaluation datasets in computational genomics.

Implementation Considerations for Novel Genomes

When applying unsupervised gene prediction to newly sequenced organisms, several practical considerations influence algorithm performance:

- Genome Quality: Highly fragmented assemblies disrupt the statistical patterns used for unsupervised learning

- GC Content: Extreme GC bias requires specialized handling, as implemented in MED 2.0 for GC-rich genomes [20]

- Taxonomic Group: Algorithm performance varies across bacterial and archaeal domains [22]

- Gene Density: Prokaryotic genomes typically have 80-90% coding density, but this varies significantly [1]

Tools like MED 2.0 specifically address these challenges through their adaptive learning approach, which automatically adjusts to genome-specific characteristics without requiring manual parameter tuning [20]. This capability makes unsupervised methods particularly valuable for annotating novel microorganisms that diverge significantly from model organisms.

Unsupervised learning algorithms represent a powerful approach for prokaryotic gene prediction, capable of deriving organism-specific parameters directly from genomic sequences without prior training or manual intervention. Through statistical models that detect coding potential, translation initiation signals, and sequence composition biases, these methods adapt to the remarkable diversity of microbial genomes, from GC-rich bacteria to archaea with divergent translation initiation mechanisms. The MED framework demonstrates how entropy-based modeling and iterative refinement can achieve performance competitive with state-of-the-art tools while providing insights into genome biology.

As sequencing technologies continue to reveal the vast expanse of microbial diversity, unsupervised methods will play an increasingly vital role in initial genome characterization. Their ability to learn species-specific parameters without external references makes them uniquely suited for exploring the functional dark matter of prokaryotic life—the hypothetical proteins that constitute approximately 30% of genes even in well-studied model organisms like Escherichia coli [19]. Future developments in unsupervised learning will likely incorporate additional sequence features and more sophisticated statistical models to further improve annotation accuracy across the tree of life.

The Role of Hidden Markov Models in GeneMark's Prediction Strategy

Accurate identification of genes is a fundamental challenge in computational genomics. For prokaryotic genomes, which are typically gene-dense and lack the intron-exon structure of eukaryotes, the primary challenges involve locating coding regions and precisely determining translation start sites [25] [26]. The Hidden Markov Model (HMM) has emerged as a powerful statistical framework for addressing these challenges by modeling DNA sequences as stochastic processes with observable nucleotides and hidden functional states [27] [28]. GeneMark.hmm, developed in 1998, represents a significant evolution from the original GeneMark algorithm by embedding GeneMark's probabilistic models into a sophisticated HMM framework specifically designed to improve the accuracy of gene boundary prediction [25]. This integration has established GeneMark.hmm and its self-training successor, GeneMarkS, as standard tools for gene identification in newly sequenced prokaryotic genomes and metagenomes [26].

Theoretical Foundations of Hidden Markov Models

Core Concepts and Definitions

A Hidden Markov Model is a statistical framework that models doubly-embedded stochastic processes: an observable sequence (nucleotides) and an underlying sequence of hidden states (functional regions) that are not directly observable but govern the probability distribution of the observations [27] [28]. Formally, an HMM is characterized by the parameter set λ = (A, B, π), where:

- State Space (Q): The set of all possible hidden states, $Q = \{q_1, q_2, \ldots, q_N\}$, where $N$ is the number of states [28].

- Observation Space (V): The set of all possible observable symbols (in genomics, V = {A, C, G, T}) [28].

- Transition Probability Matrix (A): The probabilities of transitioning between hidden states, $a_{ij} = P(x_{t+1} = q_j \mid x_t = q_i)$ [28].

- Emission Probability Matrix (B): The probabilities of emitting observable symbols given a hidden state, $b_j(k) = P(o_t = v_k \mid x_t = q_j)$ [28].

- Initial State Distribution (π): The probability distribution over states at the beginning of the sequence [28].

The Three Fundamental HMM Problems and Their Solutions

Three canonical problems must be addressed to utilize HMMs in practical applications [28]:

Table 1: The Three Fundamental Problems of Hidden Markov Models

| Problem Name | Description | Solution Algorithm | Relevance to Gene Prediction |
|---|---|---|---|
| Evaluation Problem | Given model λ and observation sequence O, compute P(O \| λ) | Forward Algorithm or Backward Algorithm | Determine likelihood of DNA sequence given gene model |
| Decoding Problem | Given λ and O, find the most likely hidden state sequence | Viterbi Algorithm | Predict locations of coding/non-coding regions in DNA |
| Learning Problem | Given O, adjust λ to maximize P(O \| λ) | Baum-Welch Algorithm or Supervised Learning | Train model parameters on known genomic sequences |

The Viterbi algorithm, particularly crucial for gene finding, employs dynamic programming to efficiently find the most probable path through hidden states [28]. For a DNA sequence of length $T$, it computes two variables: $\delta_t(i)$, the maximum probability over all state paths that end in state $i$ at position $t$, and $\psi_t(i)$, the predecessor state that achieves this maximum. The algorithm proceeds through initialization, recursion, termination, and backtracking along $\psi$ to reconstruct the optimal state sequence [28].
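
A compact log-space version of the algorithm is sketched below for a generic discrete HMM. It assumes a non-empty observation string and strictly positive probabilities so that every logarithm is defined. The two-state model in the usage note is a toy with made-up parameters, not GeneMark.hmm's state design.

```python
import math
from typing import Dict, List


def viterbi(
    obs: str,
    states: List[str],
    start_p: Dict[str, float],
    trans_p: Dict[str, Dict[str, float]],
    emit_p: Dict[str, Dict[str, float]],
) -> List[str]:
    """Return the most probable hidden state path for a non-empty observation string."""
    # Initialization: delta[0][s] = log(pi_s) + log(b_s(o_0))
    delta = [{s: math.log(start_p[s]) + math.log(emit_p[s][obs[0]]) for s in states}]
    psi: List[Dict[str, str]] = [{}]
    # Recursion: extend the best path into each state at each position.
    for t in range(1, len(obs)):
        delta.append({})
        psi.append({})
        for s in states:
            best_prev = max(states, key=lambda r: delta[t - 1][r] + math.log(trans_p[r][s]))
            delta[t][s] = (
                delta[t - 1][best_prev]
                + math.log(trans_p[best_prev][s])
                + math.log(emit_p[s][obs[t]])
            )
            psi[t][s] = best_prev
    # Termination and backtracking.
    path = [max(states, key=lambda s: delta[-1][s])]
    for t in range(len(obs) - 1, 0, -1):
        path.append(psi[t][path[-1]])
    path.reverse()
    return path
```

With a toy model in which state `N` emits A and T with probability 0.4 each, state `C` emits G and C with probability 0.4 each, and both states persist with probability 0.9, decoding `AAAAGGGG` places a single state change between the A run and the G run. Working in log space avoids the numerical underflow that products of probabilities would cause on genome-length sequences.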

Evolution of GeneMark: From Markov Models to HMMs

The Original GeneMark Algorithm

The original GeneMark algorithm, developed in 1993, was among the first gene finding methods recognized as an efficient and accurate tool for genome projects [26]. It was used for the annotation of the first completely sequenced bacterium, Haemophilus influenzae, and the first completely sequenced archaeon, Methanococcus jannaschii [26]. GeneMark employed species-specific inhomogeneous Markov chain models of protein-coding DNA sequence alongside homogeneous Markov chain models of non-coding DNA [26]. The core algorithm computed the a posteriori probability that a sequence fragment carries genetic code in one of six possible frames (three on the direct strand and three on the complementary strand) or is "non-coding" [26].
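
The posterior computation over the seven hypotheses can be illustrated with Bayes' rule. The sketch below assumes the log-likelihood of the fragment under each model has already been computed by the respective Markov chain models, which are not shown. The priors and likelihood values in the usage note are hypothetical, as is the function name.

```python
import math
from typing import Dict


def frame_posteriors(
    log_likelihoods: Dict[str, float], priors: Dict[str, float]
) -> Dict[str, float]:
    """Posterior P(model | fragment) for each coding frame and the non-coding model."""
    log_joint = {m: ll + math.log(priors[m]) for m, ll in log_likelihoods.items()}
    peak = max(log_joint.values())  # subtract the maximum for numerical stability
    weights = {m: math.exp(v - peak) for m, v in log_joint.items()}
    norm = sum(weights.values())
    return {m: w / norm for m, w in weights.items()}
```

For instance, if frame `+1` has a log-likelihood of -100, the non-coding model -105, and the other five frames -110, with a prior of 1/2 for non-coding and 1/12 for each frame, frame `+1` receives a posterior of roughly 0.96.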

The GeneMark.hmm Advancement

GeneMark.hmm was specifically designed to improve gene prediction quality, particularly in finding exact gene boundaries [25] [26]. The key innovation was integrating GeneMark models into a naturally designed hidden Markov model framework with gene boundaries modeled as transitions between hidden states [25] [26]. This HMM architecture allowed for more precise modeling of the sequence segment dependencies and state transitions that characterize genuine gene structures. Additionally, the algorithm incorporated a ribosome binding site (RBS) model to refine predictions of translation initiation codons, addressing one of the most challenging aspects of prokaryotic gene prediction [25].

Table 2: Performance Comparison of GeneMark and GeneMark.hmm

| Algorithm | Development Year | Core Methodology | Key Innovation | Gene Start Prediction Accuracy |
|---|---|---|---|---|
| GeneMark | 1993 | Inhomogeneous Markov Models | Species-specific codon usage models | Limited accuracy |
| GeneMark.hmm | 1998 | Hidden Markov Models | Integration of Markov models into HMM framework with RBS patterns | Significantly improved |
| GeneMarkS | 2001 | Self-training HMM | Unsupervised parameter estimation from target genome | 83.2% in B. subtilis, 94.4% in E. coli [29] |

Evaluation demonstrated that GeneMark.hmm was significantly more accurate than the original GeneMark in exact gene prediction, even when using relatively simple Markov models of order zero, one, and two [25]. That this accuracy holds with such simple underlying models suggests that much of the improvement comes from the HMM framework itself [25].

GeneMark.hmm Architecture and Methodology

HMM State Design for Prokaryotic Genes

The GeneMark.hmm algorithm implements an HMM architecture specifically designed for prokaryotic gene organization. The hidden states correspond to distinct functional regions in DNA sequences:

GeneMark.hmm State Transition Diagram

The model incorporates states for:

- Non-coding regions: Intergenic sequences with homogeneous statistical properties

- Ribosome Binding Sites (RBS): Translation initiation signals upstream of start codons

- Coding regions with codon position awareness: Three distinct states for first, second, and third codon positions, capturing the period-3 property of coding sequences [25] [26]

- Start and stop codons: Critical for defining gene boundaries

This state structure enables the model to capture the fundamental statistical differences between coding and non-coding regions, as well as the distinct nucleotide frequencies at different codon positions, a pattern closely tied to codon usage bias [27].
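To make the codon-position signal concrete, the sketch below tabulates nucleotide frequencies separately for the first, second, and third codon positions of an in-frame coding sequence. The function name `codon_position_frequencies` and the toy sequence are illustrative assumptions; a real model would be estimated from many genes rather than one short string.

```python
from collections import Counter

def codon_position_frequencies(cds):
    """Nucleotide frequencies at codon positions 1, 2 and 3 of an in-frame CDS.

    Assumes `cds` starts in frame and contains only A, C, G, T.
    Returns a list of three dicts mapping base -> frequency.
    """
    counts = [Counter(), Counter(), Counter()]
    usable = len(cds) - len(cds) % 3          # ignore a trailing partial codon
    for i in range(usable):
        counts[i % 3][cds[i]] += 1
    freqs = []
    for position_counts in counts:
        total = sum(position_counts.values())
        freqs.append({b: (position_counts[b] / total if total else 0.0) for b in "ACGT"})
    return freqs

# Toy sequence: ATG GCT GCA GCG TAA
toy_cds = "ATGGCTGCAGCGTAA"
freqs = codon_position_frequencies(toy_cds)
```

In this toy example G dominates the first codon position and C the second, while the third position is more mixed. Position-specific differences of this kind are what three separate coding states can represent and a single homogeneous non-coding state cannot.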

Integration of Ribosome Binding Site Models

A key innovation in GeneMark.hmm was the incorporation of specially derived ribosome binding site patterns to refine predictions of translation initiation codons [25]. The RBS model identifies conserved sequence motifs upstream of start codons that facilitate the initiation of translation in prokaryotes. By integrating this specific signal pattern into the HMM framework, the algorithm could more accurately distinguish true translation start sites from false ones, addressing one of the most persistent challenges in prokaryotic gene prediction.
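One common way to represent such a signal is a position weight matrix scored as log-odds against background nucleotide frequencies. The sketch below illustrates that general technique only: the motif (a Shine-Dalgarno-like `AGGAGG` consensus), the probabilities in `PWM`, the uniform `BACKGROUND`, and the function `best_rbs_hit` are all hypothetical and are not the patterns derived for GeneMark.hmm.

```python
import math

# Hypothetical 6-nt motif model: the consensus base gets probability 0.7 at
# each position and the other three bases 0.1 each (illustrative values only).
MOTIF = "AGGAGG"
PWM = [{b: (0.7 if b == c else 0.1) for b in "ACGT"} for c in MOTIF]
BACKGROUND = {b: 0.25 for b in "ACGT"}

def best_rbs_hit(upstream):
    """Return (log-odds score, offset) of the best-scoring window in `upstream`.

    Assumes `upstream` contains only A, C, G, T. Returns (-inf, -1) when the
    sequence is shorter than the motif. Ties keep the leftmost window.
    """
    width = len(PWM)
    best_score, best_offset = float("-inf"), -1
    for start in range(len(upstream) - width + 1):
        window = upstream[start:start + width]
        score = sum(math.log(PWM[i][b] / BACKGROUND[b]) for i, b in enumerate(window))
        if score > best_score:
            best_score, best_offset = score, start
    return best_score, best_offset

# Toy upstream region containing the consensus at offset 4.
score, offset = best_rbs_hit("TTTAAGGAGGTTTACAT")
```

A gene finder could add a score like this to the evidence for each candidate start codon, so that a start preceded by a strong motif at a plausible distance is preferred over an alternative start without one.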

Implementation of the Viterbi Algorithm for Gene Prediction

GeneMark.hmm employs the Viterbi algorithm to find the most probable path through the hidden states [28]. For a given DNA sequence O = o₁o₂...o_L, the algorithm computes:

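Initialization: $\delta_1(i) = \pi_i \, b_i(o_1)$ for $1 \le i \le N$

Recursion (for $2 \le t \le L$ and $1 \le j \le N$):

$\delta_t(j) = \max_{1 \le i \le N} \left[ \delta_{t-1}(i) \, a_{ij} \right] b_j(o_t)$

$\psi_t(j) = \arg\max_{1 \le i \le N} \left[ \delta_{t-1}(i) \, a_{ij} \right]$

Termination: $P^* = \max_{1 \le i \le N} \delta_L(i)$ and $y_L^* = \arg\max_{1 \le i \le N} \delta_L(i)$

Backtracking: $y_t^* = \psi_{t+1}(y_{t+1}^*)$ for $t = L-1, L-2, \ldots, 1$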

This dynamic programming approach efficiently computes the optimal state sequence (gene structure) without explicitly evaluating all possible paths, making it computationally feasible for entire microbial genomes [28].
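The four steps can be sketched in a few lines of Python. This is a textbook Viterbi decoder on a toy two-state model, not the GeneMark.hmm implementation: the states `N` (non-coding) and `C` (coding), and every probability below, are invented for illustration. Logarithms replace the products in the equations so that long sequences do not underflow; `start_p`, `trans_p`, and `emit_p` play the roles of π, a, and b.

```python
import math

def viterbi(obs, states, start_p, trans_p, emit_p):
    """Most probable state path for `obs`, computed in log space.

    Assumes all probabilities used are strictly positive.
    Returns (path, log-probability of that path).
    """
    # Initialization: delta_1(i) = pi_i * b_i(o_1)
    delta = [{s: math.log(start_p[s]) + math.log(emit_p[s][obs[0]]) for s in states}]
    psi = [{}]
    # Recursion: best predecessor for every state at every position
    for t in range(1, len(obs)):
        delta.append({})
        psi.append({})
        for j in states:
            best_i = max(states, key=lambda i: delta[t - 1][i] + math.log(trans_p[i][j]))
            psi[t][j] = best_i
            delta[t][j] = (delta[t - 1][best_i] + math.log(trans_p[best_i][j])
                           + math.log(emit_p[j][obs[t]]))
    # Termination: best final state
    last = max(states, key=lambda s: delta[-1][s])
    # Backtracking: follow the stored predecessors from the end to the start
    path = [last]
    for t in range(len(obs) - 1, 0, -1):
        path.append(psi[t][path[-1]])
    path.reverse()
    return path, delta[-1][last]

# Toy model with made-up parameters: "C" favours G/C, "N" favours A/T.
states = ["N", "C"]
start_p = {"N": 0.5, "C": 0.5}
trans_p = {"N": {"N": 0.9, "C": 0.1}, "C": {"N": 0.1, "C": 0.9}}
emit_p = {"N": {"A": 0.30, "C": 0.20, "G": 0.20, "T": 0.30},
          "C": {"A": 0.15, "C": 0.35, "G": 0.35, "T": 0.15}}

path, log_prob = viterbi("AAAAAAGGGGGG", states, start_p, trans_p, emit_p)
```

The running time is proportional to the sequence length times the square of the number of states, which is why the approach scales to whole genomes. A real prokaryotic gene finder would use a far richer state set than this two-state toy.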

GeneMarkS: Self-Training Advancement

The Self-Training Methodology

GeneMarkS represents a further evolution of the HMM approach by incorporating a self-training method for prediction of gene starts in microbial genomes [29]. This algorithm combines GeneMark.hmm and GeneMark with a self-training procedure that determines parameters for both models through iterative refinement [26] [29]. The self-training process enables the method to be applied to newly sequenced prokaryotic genomes with no prior knowledge of any protein or rRNA genes, significantly enhancing its applicability to the growing number of sequenced genomes [29].

The self-training procedure operates as follows:

- Initialization: Generate initial heuristic models based on genomic GC content

- Iterative refinement: Alternately predict genes and refine model parameters

- Convergence: Terminate when parameter estimates stabilize between iterations

- Final prediction: Execute GeneMark.hmm with optimized parameters

This methodology leverages the observation that parameters of Markov models used in GeneMark can be approximated by functions of sequence G+C content, enabling parameter derivation from relatively short DNA fragments [26].
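The alternation between prediction and re-estimation listed above can be sketched as a generic loop. This is only a schematic of the control flow, under strong simplifying assumptions: the "model" here is a single numeric threshold on a hypothetical per-ORF coding score, standing in for the full set of Markov and HMM parameters, and the names `self_train`, `predict_by_threshold`, and `estimate_threshold` are invented for the example.

```python
def self_train(data, init_params, predict, estimate, tol=1e-9, max_iter=100):
    """Alternate prediction and parameter re-estimation until parameters stabilise."""
    params = init_params                        # initial heuristic model
    for _ in range(max_iter):
        labels = predict(data, params)          # predict genes with current model
        new_params = estimate(data, labels)     # refine model from the predictions
        converged = abs(new_params - params) < tol
        params = new_params
        if converged:
            break
    return params, predict(data, params)        # final prediction

# Toy stand-ins (hypothetical): each candidate ORF has one coding score.
def predict_by_threshold(scores, threshold):
    return [s >= threshold for s in scores]

def estimate_threshold(scores, labels):
    coding = [s for s, is_coding in zip(scores, labels) if is_coding]
    noncoding = [s for s, is_coding in zip(scores, labels) if not is_coding]
    if not coding or not noncoding:
        raise ValueError("both classes must be non-empty to re-estimate")
    # New threshold: midpoint between the two class means.
    return (sum(coding) / len(coding) + sum(noncoding) / len(noncoding)) / 2

scores = [0.10, 0.20, 0.15, 0.80, 0.90, 0.85]
threshold, labels = self_train(scores, 0.3, predict_by_threshold, estimate_threshold)
```

Starting from the deliberately poor guess of 0.3, the loop settles on a threshold near 0.5 after two passes. The point of the sketch is the structure: no labeled training data is supplied, and the parameters are bootstrapped from the method's own predictions.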

Performance and Accuracy

GeneMarkS demonstrated remarkable accuracy in empirical evaluations, precisely predicting 83.2% of translation starts in GenBank-annotated Bacillus subtilis genes and 94.4% of translation starts in an experimentally validated set of Escherichia coli genes [29]. The self-training approach also proved effective for detecting prokaryotic genes in terms of identifying open reading frames containing real genes, with accuracy matching the best gene detection methods available at the time [29].
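Figures of this kind are typically computed by matching predicted and reference genes on their stop codon and then checking whether the start coordinates agree exactly. The sketch below shows one reasonable definition, assumed here for illustration and not necessarily the exact protocol of [29]: genes are keyed by strand and stop coordinate, and the denominator is the number of reference genes. All coordinates are made up.

```python
def start_accuracy(predicted, reference):
    """Fraction of reference genes whose start codon is predicted exactly.

    Both arguments map (strand, stop_coordinate) -> start_coordinate.
    A reference gene with no prediction sharing its stop counts as a miss.
    """
    if not reference:
        raise ValueError("reference set is empty")
    exact = sum(1 for key, start in reference.items() if predicted.get(key) == start)
    return exact / len(reference)

# Hypothetical coordinates for four reference genes and three predictions.
reference = {("+", 1200): 300, ("+", 2500): 1600, ("-", 3000): 3900, ("-", 5000): 5600}
predicted = {("+", 1200): 300, ("+", 2500): 1630, ("-", 3000): 3900}

accuracy = start_accuracy(predicted, reference)
```

Here two of the four reference starts are reproduced exactly, one gene is found with a shifted start, and one is missed entirely, giving an accuracy of 0.5. Restricting the denominator to detected genes would give a higher number, so the definition in use should always be stated alongside the figure.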

Comparative Analysis with Other HMM-Based Approaches

Prokaryotic versus Eukaryotic Gene Prediction

While this article focuses on prokaryotic applications, it is noteworthy that HMM-based approaches have been extensively applied to eukaryotic gene finding with appropriate architectural modifications. Eukaryotic GeneMark.hmm incorporates additional hidden states for initial, internal, and terminal exons, introns, intergenic regions, single-exon genes on both DNA strands, and states for initiation sites, termination sites, donor sites, and acceptor splice sites [26]. This more complex architecture reflects the additional regulatory elements and splicing mechanisms in eukaryotic genes.

HMMs in Contemporary Gene Finding

Traditional HMMs like those in GeneMark.hmm continue to be used alongside newer deep learning approaches. For example, Helixer, a recently developed AI-based tool for ab initio gene prediction, combines deep learning with a hidden Markov model for post-processing [30]. Interestingly, evaluations show that Helixer's performance is very similar to existing HMM tools for fungi, with only a slight margin of improvement (0.007 overall), though it shows more significant advantages in plant and vertebrate genomes [30]. This demonstrates the continued relevance and competitiveness of well-designed HMM approaches in genomic annotation.

Table 3: Essential Research Reagents and Computational Resources

| Resource Type | Specific Tool/Resource | Function in Gene Prediction | Application Context |
|---|---|---|---|
| Algorithm Suite | GeneMark.hmm (prokaryotic) | Core gene prediction algorithm | Primary gene finding in microbial genomes |
| Training Method | GeneMarkS self-training procedure | Unsupervised parameter estimation | New genome annotation without prior knowledge |
| Sequence Data | FASTA format genomic sequences | Input data for analysis | Standardized sequence representation |
| Model Parameters | Species-specific parameter sets | Pre-computed algorithm parameters | Rapid annotation without training phase |
| Evaluation Framework | False positive/negative analysis | Prediction accuracy assessment | Method validation and comparison |

The integration of Hidden Markov Models into GeneMark's prediction strategy represents a significant milestone in computational genomics. By embedding established Markov models of coding potential into an HMM framework with explicit state transitions for gene boundaries, GeneMark.hmm substantially improved the accuracy of exact gene prediction in prokaryotic genomes [25] [26]. The subsequent development of GeneMarkS with its self-training capability further enhanced the method's applicability to newly sequenced organisms without requiring pre-existing annotation [29].

The enduring utility of HMMs in gene prediction stems from their principled probabilistic foundation, computational efficiency, and natural alignment with the sequential organization of genomic features. While newer approaches based on deep learning are emerging, HMM-based methods continue to offer robust performance, particularly for prokaryotic genomes [30]. The GeneMark.hmm implementation demonstrates how domain knowledge—such as ribosome binding site patterns and codon position statistics—can be effectively incorporated into statistical frameworks to solve complex biological problems.

As genomic sequencing continues to expand into uncharted taxonomic space and metagenomic exploration, the self-training HMM approach pioneered by GeneMarkS provides an essential tool for extracting meaningful genetic information from sequence data. The methodology exemplifies how sophisticated computational strategies can transform raw sequence data into biological knowledge, advancing our understanding of genomic architecture and supporting drug development through improved gene annotation.

From Sequence to Function: Integrated Pipelines and Real-World Applications

The NCBI Prokaryotic Genome Annotation Pipeline (PGAP) is an automated system designed to provide comprehensive structural and functional annotation for bacterial and archaeal genomes, including both chromosomes and plasmids [31]. As a cornerstone of the RefSeq database, PGAP delivers consistent, high-quality annotation that supports comparative genomics and facilitates research in microbial genetics, pathogenesis, and drug discovery. The pipeline has evolved significantly since its initial development in 2001, incorporating increasingly sophisticated methods that combine homology-based evidence with ab initio gene prediction algorithms to accurately identify genomic features [31] [32]. For researchers investigating prokaryotic gene prediction algorithms, PGAP represents a robust, standardized approach that leverages both extrinsic evidence from protein families and intrinsic statistical patterns within genomic sequences.

PGAP operates on a non-redundant protein data model where each unique protein sequence receives a single WP_ accession number that represents all identical occurrences across annotated genomes [33]. This model enables efficient propagation of updated functional annotations across thousands of genomes simultaneously, ensuring that new characterizations of protein function can be systematically applied to all identical sequences. The pipeline is capable of processing both complete genomes and draft Whole Genome Shotgun (WGS) assemblies consisting of multiple contigs, making it applicable to a wide range of sequencing projects [31].
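The effect of a non-redundant protein model can be sketched with a dictionary keyed by sequence. This is a toy illustration of the data model only: the `PROT_` identifiers, genome names, locus tags, and sequences are hypothetical, and real WP_ accessions are assigned by NCBI rather than generated like this.

```python
from collections import defaultdict

def assign_accessions(records):
    """Give each unique protein sequence one accession shared by all occurrences.

    records: iterable of (genome, locus_tag, protein_sequence)
    Returns (accession_of, occurrences) where
      accession_of: sequence  -> accession
      occurrences:  accession -> list of (genome, locus_tag)
    """
    accession_of = {}
    occurrences = defaultdict(list)
    for genome, locus, seq in records:
        # A new accession is created only the first time a sequence is seen.
        acc = accession_of.setdefault(seq, f"PROT_{len(accession_of) + 1:04d}")
        occurrences[acc].append((genome, locus))
    return accession_of, occurrences

# Hypothetical annotations: two genomes share one identical protein.
records = [
    ("genomeA", "A_001", "MKTAYIAKQR"),
    ("genomeB", "B_014", "MKTAYIAKQR"),
    ("genomeB", "B_015", "MSLNERVAAL"),
]
accession_of, occurrences = assign_accessions(records)

# Functional names live on the accession, so one update reaches every genome.
product_name = {acc: "hypothetical protein" for acc in occurrences}
product_name[accession_of["MKTAYIAKQR"]] = "example kinase"   # hypothetical name
```

Because the product name is stored once per accession rather than once per genome, renaming a protein is a single update regardless of how many genomes carry it.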

Core Methodology and Architectural Framework

PGAP employs a sophisticated multi-level approach to genome annotation that integrates multiple evidence sources before executing ab initio prediction. This fundamental architectural difference distinguishes it from other pipelines that typically run ab initio prediction first and then face the challenge of reconciling conflicting evidence [32]. The PGAP workflow determines structural annotation by comparing open reading frames (ORFs) to libraries of protein hidden Markov models (HMMs), representative RefSeq proteins, and proteins from well-characterized reference genomes [34].
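As an illustration of the first step, enumerating candidate ORFs in all six frames, the following is a minimal sketch. It is not ORFfinder and makes several assumptions: the start codons ATG, GTG, and TTG and the three standard stop codons, the leftmost start taken in each stop-to-stop interval, an uppercase A/C/G/T input, and a hypothetical `min_codons` cutoff.

```python
STARTS = {"ATG", "GTG", "TTG"}
STOPS = {"TAA", "TAG", "TGA"}
COMPLEMENT = str.maketrans("ACGT", "TGCA")

def reverse_complement(seq):
    return seq.translate(COMPLEMENT)[::-1]

def find_orfs(seq, min_codons=30):
    """Return (strand, frame, start, end) for start-to-stop ORFs in six frames.

    Coordinates are 0-based, end-exclusive, and relative to the scanned strand
    (for "-" they refer to the reverse-complemented sequence). Lengths are
    counted in codons and include the stop codon.
    """
    orfs = []
    for strand, s in (("+", seq), ("-", reverse_complement(seq))):
        for frame in range(3):
            start = None
            for i in range(frame, len(s) - 2, 3):
                codon = s[i:i + 3]
                if start is None and codon in STARTS:
                    start = i                       # leftmost start in this interval
                elif start is not None and codon in STOPS:
                    if (i + 3 - start) // 3 >= min_codons:
                        orfs.append((strand, frame, start, i + 3))
                    start = None                    # look for the next ORF
    return orfs

# Toy sequence with one short ORF: ATG AAA TTT GGG TAA in frame 2.
orfs = find_orfs("CCATGAAATTTGGGTAACC", min_codons=3)
```

In an evidence-first design, candidates like these would then be compared against protein family models and reference proteins, with ab initio prediction reserved for regions where no such evidence is found.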

Table: Major Components of the PGAP Structural Annotation Workflow

| Component | Function | Tools Used |
|---|---|---|
| ORF Prediction | Identifies potential coding regions in all six frames | ORFfinder |
| Protein Evidence Mapping | Maps homologous proteins to genome | BLAST, ProSplign |
| HMM-based Prediction | Identifies genes using protein family models | HMMER (TIGRFAM, Pfam, NCBIfams) |
| ab initio Prediction | Predicts genes in regions lacking homology evidence | GeneMarkS-2+ |
| Non-coding RNA Identification | Finds structural RNAs, tRNAs, small ncRNAs | tRNAscan-SE, Infernal cmsearch |

The following diagram illustrates the comprehensive workflow of the PGAP system:

Pan-Genome Approach and Protein Family Models

A fundamental innovation in PGAP is its pan-genome approach to protein annotation. For well-populated taxonomic clades, PGAP utilizes pre-computed sets of core proteins that are conserved across at least 80% of genomes within that clade [32]. This approach leverages the exponential growth of sequenced prokaryotic genomes to provide evolutionary context for annotation. The core protein sets are generated through clustering analyses that reduce redundancy while maintaining representative sequences for homologous protein groups.
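The 80% criterion can be expressed as a simple count over a presence table. The sketch below assumes proteins have already been grouped into clusters (the clustering itself is not shown), and the genome names, cluster identifiers, and function name `core_clusters` are hypothetical.

```python
from collections import Counter

def core_clusters(presence, threshold=0.8):
    """Clusters found in at least `threshold` of the genomes in a clade.

    presence: dict genome -> set of protein cluster ids present in that genome
    """
    n_genomes = len(presence)
    if n_genomes == 0:
        return set()
    counts = Counter(c for clusters in presence.values() for c in clusters)
    return {c for c, k in counts.items() if k / n_genomes >= threshold}

# Hypothetical clade of five genomes.
presence = {
    "g1": {"clusterA", "clusterB", "clusterC"},
    "g2": {"clusterA", "clusterB", "clusterC"},
    "g3": {"clusterA", "clusterB", "clusterC"},
    "g4": {"clusterA", "clusterB"},
    "g5": {"clusterA"},
}
core = core_clusters(presence)
```

Here `clusterA` (5 of 5 genomes) and `clusterB` (4 of 5) meet the threshold, while `clusterC` (3 of 5) does not. Allowing less than 100% conservation tolerates gaps in draft assemblies and occasional gene loss.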

PGAP employs a hierarchical system of Protein Family Models for functional annotation, comprising Hidden Markov Models (HMMs), BlastRules, and Conserved Domain Database (CDD) architectures [35]. This evidence hierarchy follows a strict order of precedence when assigning names and functions to predicted proteins:

Table: Protein Family Model Hierarchy and Precedence in PGAP

| Evidence Type | Precedence Score | Description | Typical Use Case |
|---|---|---|---|
| BlastRuleIS | 96 | Strict rules (99% identity) for transposases | Insertion sequence elements |
| BlastRuleException | 95 | Specific function groups (94% identity) | Specialized proteins like toxins |
| Exception HMM | 77 | HMMs for specific chemical functions | Named isozymes with specific roles |
| Equivalog HMM | 70 | Proteins with conserved specific function | Enzymes with conserved EC numbers |
| Domain Architecture | 60 | Conserved domain arrangements | Multi-domain proteins |
| Subfamily HMM | 55 | Proteins with general but not specific function | NAD-dependent oxidoreductases |
| Superfamily HMM | 33 | Broad homology detection | Diverse protein families |
| Domain HMM | 30 | Localized regions of homology | General functional categorization |
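The precedence rule amounts to picking, among all evidence hits for a protein, the one with the highest score. The sketch below uses the scores from the table above; the function `pick_name` and the example product names are hypothetical, and the real pipeline applies additional criteria before a hit qualifies at all.

```python
# Precedence scores copied from the table above.
PRECEDENCE = {
    "BlastRuleIS": 96,
    "BlastRuleException": 95,
    "Exception HMM": 77,
    "Equivalog HMM": 70,
    "Domain Architecture": 60,
    "Subfamily HMM": 55,
    "Superfamily HMM": 33,
    "Domain HMM": 30,
}

def pick_name(hits):
    """Return the (evidence_type, product_name) hit with the highest precedence.

    hits: list of (evidence_type, product_name); returns None if empty.
    """
    if not hits:
        return None
    return max(hits, key=lambda hit: PRECEDENCE[hit[0]])

# Hypothetical hits for one predicted protein.
hits = [
    ("Domain HMM", "oxidoreductase domain-containing protein"),
    ("Subfamily HMM", "NAD-dependent oxidoreductase"),
    ("Equivalog HMM", "example dehydrogenase"),
]
chosen = pick_name(hits)
```

The equivalog hit wins here because it makes the most specific functional claim that the evidence supports; the broader domain and subfamily matches serve as fallbacks when nothing more specific is available.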

Detailed Methodologies and Experimental Protocols

Structural Annotation of Protein-Coding Genes