DESeq2 vs edgeR: How Normalization Choice Impacts PCA in Your RNA-Seq Analysis

Principal Component Analysis (PCA) is a cornerstone of RNA-seq data exploration, yet its results are profoundly shaped by the normalization method chosen.

DESeq2 vs edgeR: How Normalization Choice Impacts PCA in Your RNA-Seq Analysis

Abstract

Principal Component Analysis (PCA) is a cornerstone of RNA-seq data exploration, yet its results are profoundly shaped by the normalization method chosen. This article provides a comprehensive guide for researchers and bioinformaticians comparing DESeq2's RLE and edgeR's TMM normalization. We cover the foundational principles of these methods, detail their direct application for PCA, troubleshoot common pitfalls like skewed data and batch effects, and present a comparative validation framework. By understanding how these popular tools transform count data, scientists can make informed choices that lead to more reliable and biologically accurate interpretations of their transcriptomic studies.

The Core of the Matter: Understanding RLE and TMM Normalization

Why Normalization is Non-Negotiable for RNA-Seq PCA

In the realm of RNA-Seq data analysis, Principal Component Analysis (PCA) is an indispensable tool for exploratory data analysis, providing a visual assessment of sample relationships, batch effects, and overall data structure. However, the raw count data generated by sequencing technologies are not directly suitable for PCA. The necessity of normalization stems from the fundamental nature of sequencing data, where raw counts are influenced by technical artifacts that can obscure biological signals. Without proper normalization, PCA results can be misleading, potentially leading to incorrect biological interpretations. This guide examines why normalization is a critical prerequisite for RNA-Seq PCA, with a specific focus on the comparative approaches of DESeq2 and edgeR, two widely used methods in the field.

The Fundamental Challenges of Raw RNA-Seq Data

RNA-Seq data inherently contains technical variations that must be addressed before any meaningful analysis, including PCA, can be performed. Three primary challenges necessitate normalization:

Sequencing Depth Variation: Samples are sequenced to different depths, resulting in varying total numbers of reads across samples. A gene with 1,000 counts in a sample with 10 million total reads represents a different relative expression level than the same count in a sample with 50 million reads [1]. Without correction, samples with higher sequencing depth will appear more different in PCA space due to technical rather than biological factors.

Library Composition Effects: The presence of a few highly expressed genes can consume a significant fraction of the sequencing library, reducing the counts available for other genes. This creates a competitive dynamic where the increase in one transcript can artificially depress the observed counts of others [2] [3].

Gene Length Bias: Longer transcripts generate more sequencing fragments by virtue of their size, making them appear more highly expressed than shorter transcripts at the same biological abundance level [1]. While some between-sample analyses like PCA are less affected by this, it remains a critical consideration for cross-gene comparisons.

Table 1: Primary Technical Biases in RNA-Seq Data Requiring Normalization

| Bias Type | Description | Impact on Raw Data |

|---|---|---|

| Sequencing Depth | Total number of reads varies between samples. | Counts are not comparable between samples. |

| Library Composition | A few highly expressed genes dominate the read pool. | Reduces counts for other genes non-uniformly. |

| Gene Length | Longer genes produce more sequencing fragments. | Longer genes appear more highly expressed. |

Normalization Methods: DESeq2 vs. edgeR

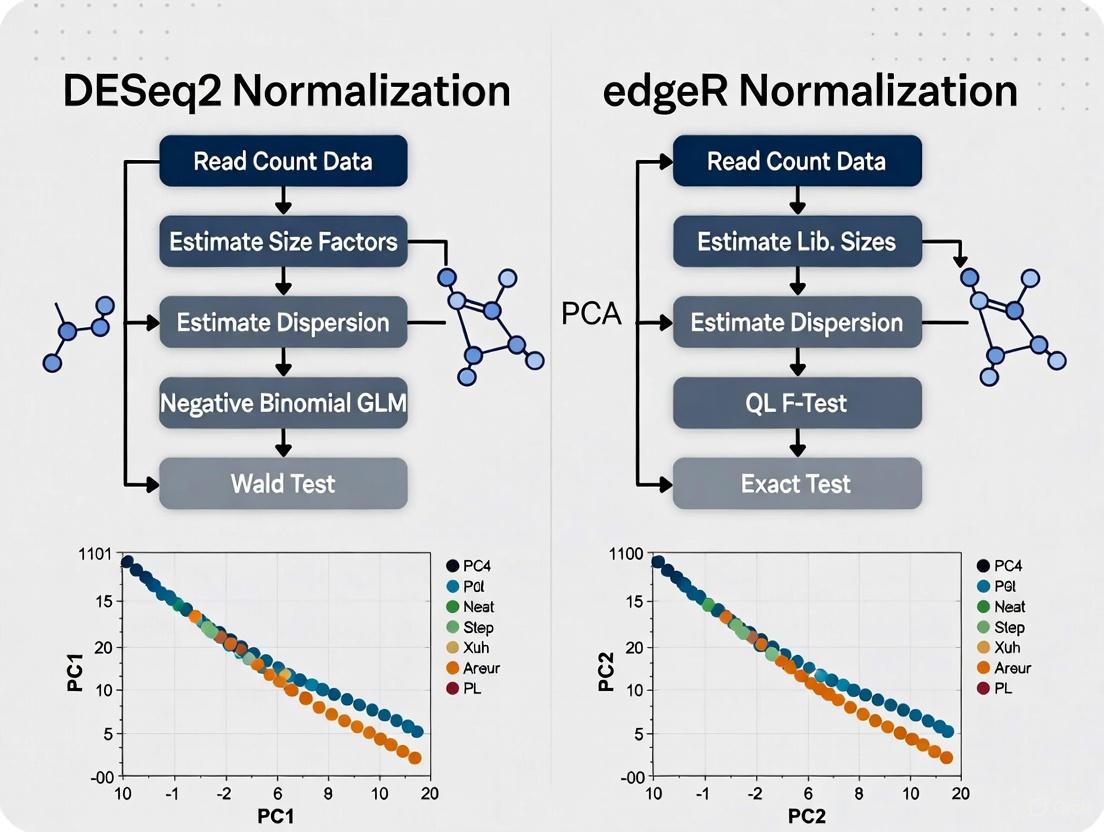

DESeq2 and edgeR employ distinct normalization strategies to counteract these technical biases. While both are designed for differential expression analysis, their normalized data can be used for PCA, with implications for the resulting visualizations.

DESeq2 utilizes the median-of-ratios method. This approach calculates a size factor for each sample by first finding the geometric mean of counts for each gene across all samples. It then computes the ratio of each gene's count to this geometric mean for each sample, and the median of these ratios (excluding genes with extreme values) becomes the sample's size factor. Raw counts are divided by this factor to generate normalized counts [2]. This method is robust to the presence of differentially expressed genes, as it uses the median ratio.

edgeR employs the Trimmed Mean of M-values (TMM) method. TMM selects a reference sample (often the one with the upper quartile closest to the mean across all samples) and compares each other sample to this reference. For each pair, it calculates M-values (log fold changes) and A-values (average expression levels). It then trims the extremes of these distributions (by default, 30% of the M-values and 5% of the A-values) and uses the weighted mean of the remaining M-values to calculate a normalization factor [2].

Table 2: Comparison of DESeq2 and edgeR Normalization Methods

| Feature | DESeq2 | edgeR |

|---|---|---|

| Core Method | Median-of-ratios | Trimmed Mean of M-values (TMM) |

| Approach | Models raw counts and incorporates normalization into the dispersion estimation. | Calculates scaling factors between a test sample and a reference. |

| Robustness | Robust to high numbers of differentially expressed genes (uses median). | Robust to imbalance in differential expression and highly expressed genes (uses trimming). |

| Considerations | Can be affected by large-scale expression shifts. | Can be influenced by the chosen reference sample and the level of trimming. |

The Direct Impact of Normalization on PCA

The choice of normalization method directly influences the outcome of PCA, which is sensitive to the variance structure of the data. A study evaluating 12 different normalization methods found that while PCA score plots might appear similar across methods, the biological interpretation of the models can depend heavily on the normalization method applied [4]. This is because normalization alters the correlation patterns between genes and samples, which in turn affects the principal components.

Specifically, when different normalization techniques were applied to the same dataset, the genes identified as most influential in the principal components (through their loadings) varied. Consequently, the biological pathways highlighted by enrichment analysis of these top genes also changed, demonstrating that the normalization choice can lead to different biological conclusions [5]. DESeq2 and edgeR normalization, by accounting for library composition, aim to ensure that the major sources of variance captured by PCA reflect true biological differences rather than technical artifacts.

Experimental Protocols for Normalization Assessment

To objectively compare the performance of DESeq2 and edgeR normalization for PCA, the following experimental workflow can be implemented. This protocol is adapted from established RNA-Seq analysis practices [2] [6] and normalization comparison studies [4] [5].

Data Preprocessing and Quantification

- Begin with raw sequencing reads (FASTQ files).

- Perform quality control using tools like FastQC or MultiQC to assess base quality, GC content, and adapter contamination [2].

- Trim reads to remove low-quality bases and adapters using Trimmomatic or Cutadapt [2].

- Align reads to a reference genome using STAR or HISAT2, or perform alignment-free quantification with Salmon or Kallisto to obtain a count matrix [2] [6].

Normalization and PCA Execution

- Import the count matrix into an R/Bioconductor environment.

- Apply DESeq2 normalization to generate median-of-ratios scaled counts.

- Apply edgeR normalization to generate TMM-scaled counts.

- Perform log-transformation (e.g., log2(normalized counts + 1)) on the normalized counts from both methods to stabilize variance for PCA.

- Run PCA on both transformed datasets.

Evaluation of Results

- Visual Inspection: Plot the PCA results (typically PC1 vs. PC2) for both methods. Assess the separation of known biological groups and the clustering of technical replicates.

- Variance Attribution: Check if known batch effects or technical covariates (e.g., sequencing depth) are strongly associated with the principal components.

- Biological Consistency: Perform pathway enrichment analysis on the genes contributing most (highest loadings) to the first few PCs. Compare the biological relevance and consistency of the enriched pathways against prior knowledge.

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table details key computational tools and resources necessary for performing the comparative analysis outlined in this guide.

Table 3: Essential Research Reagents and Computational Tools

| Item Name | Function / Role in Analysis |

|---|---|

| FastQC / MultiQC | Quality control tools for assessing raw sequencing data quality and generating aggregate reports [2]. |

| Trimmomatic / Cutadapt | Read trimming tools for removing adapter sequences and low-quality bases from raw reads [2]. |

| STAR / HISAT2 | Spliced transcript alignment to a reference genome for generating count matrices [2]. |

| Salmon / Kallisto | Alignment-free quantification tools for rapid transcript-level abundance estimation [2] [6]. |

| DESeq2 | R/Bioconductor package for differential expression analysis implementing the median-of-ratios normalization [2] [6]. |

| edgeR | R/Bioconductor package for differential expression analysis implementing the TMM normalization [2]. |

| Airway2 Dataset | A well-annotated public RNA-Seq dataset, useful for testing and benchmarking normalization workflows [6]. |

Normalization is a non-negotiable preprocessing step for RNA-Seq PCA. It ensures that the variance structure analyzed by PCA reflects biological reality rather than technical artifacts. Both DESeq2's median-of-ratios and edgeR's TMM methods offer robust, composition-aware normalization strategies. The choice between them can subtly influence the resulting PCA plot and the subsequent biological narrative. Therefore, researchers should understand the principles behind these methods and apply them consistently, as their impact extends beyond differential expression into the foundational exploratory analysis of transcriptomic data.

In RNA-sequencing (RNA-Seq) analysis, normalization stands as a critical preprocessing step that ensures accurate and comparable gene expression measurements between samples. The process addresses several technical biases inherent in sequencing data, including sequencing depth (variation in the total number of reads per sample), RNA composition (changes driven by a few highly differentially expressed genes), and gene length (longer genes accumulating more reads independent of expression level) [7]. Without proper normalization, these technical artifacts can obscure true biological signals and lead to erroneous conclusions in downstream analyses, particularly in Principal Component Analysis (PCA) where the goal is to visualize and interpret the major sources of variation in the data.

DESeq2's Relative Log Expression (RLE) and edgeR's Trimmed Mean of M-values (TMM) represent two widely adopted normalization strategies in transcriptomics research. While both methods aim to account for sequencing depth and RNA composition, they diverge fundamentally in their mathematical foundations and underlying assumptions. DESeq2 employs a geometric approach centered on pseudo-reference samples calculated from per-gene geometric means across all samples, making it particularly robust to outliers and highly differentially expressed genes [7]. In contrast, edgeR's TMM method operates through a trimmed mean strategy that selects a reference sample and calculates weighted mean log-ratios against this reference, providing stability through the exclusion of extreme values [8] [9].

The choice between these normalization methods carries significant implications for PCA, a multivariate exploratory technique essential for visualizing sample relationships and identifying batch effects or outliers in RNA-seq datasets. Research has demonstrated that although PCA score plots often appear superficially similar regardless of normalization method, the biological interpretation of these models can differ substantially depending on the normalization approach applied [4]. This comparison guide examines the geometric foundations of DESeq2's RLE normalization, contrasts it with edgeR's TMM approach, and evaluates their performance characteristics specifically within the context of PCA-based research applications.

Mathematical Foundations: A Comparison of Core Algorithms

The Geometric Framework of DESeq2's RLE Normalization

DESeq2's Relative Log Expression (RLE) normalization employs a geometric mean-based strategy that operates under the fundamental assumption that most genes are not differentially expressed across samples [7]. This method progresses through four defined computational stages, each building upon the previous to generate robust, composition-adjusted normalized counts.

Table 1: Step-by-Step Mathematical Procedure of DESeq2's RLE Normalization

| Step | Procedure Name | Mathematical Operation | Output |

|---|---|---|---|

| 1 | Pseudo-Reference Creation | Calculate geometric mean across all samples for each gene | Row-wise geometric means |

| 2 | Ratio Calculation | Divide each gene count by its pseudo-reference value | Gene-wise ratios for all samples |

| 3 | Size Factor Determination | Compute median of ratios for each sample | Sample-specific size factors |

| 4 | Count Normalization | Divide raw counts by corresponding size factors | Normalized expression values |

In Step 1, the algorithm creates a pseudo-reference sample by calculating the geometric mean (the nth root of the product of n numbers) for each gene across all samples. For a gene g across samples 1 to S, with counts X_{g,1} to X_{g,S}, the geometric mean is calculated as GM_g = (X_{g,1} × X_{g,2} × ... × X_{g,S})^{1/S} [7]. This pseudo-reference represents a "typical" expression profile under the assumption that most genes do not change systematically between conditions.

Step 2 involves calculating the ratio of each gene's count to this pseudo-reference value for every sample, generating a matrix of ratios where each element r_{g,s} = X_{g,s} / GM_g. The underlying logic posits that for non-differentially expressed genes, these ratios should cluster around 1, while differentially expressed genes will show systematic deviations.

In Step 3, the size factor for each sample is determined as the median of all gene ratios for that sample: SF_s = median(r_{1,s}, r_{2,s}, ..., r_{G,s}). The median offers robustness against outliers, ensuring that a small number of highly differentially expressed genes do not disproportionately influence the normalization factor [7].

Finally, in Step 4, normalized counts are obtained by dividing each raw count by its corresponding sample-specific size factor: N_{g,s} = X_{g,s} / SF_s. This process effectively aligns the distributions across samples while preserving the relative expression relationships within each sample.

Diagram 1: DESeq2 RLE normalization workflow. The process transforms raw counts through geometric operations to produce normalized counts suitable for downstream analysis.

edgeR's TMM Normalization: A Reference-Based Approach

In contrast to DESeq2's geometric framework, edgeR's Trimmed Mean of M-values (TMM) normalization employs a reference-sample strategy that also assumes most genes are not differentially expressed [8] [9]. The TMM method selects one sample as a reference (typically the one with upper quartile closest to the mean across all samples) and normalizes all other samples against this reference.

The algorithm begins by calculating M-values (log fold changes) and A-values (average expression intensities) for each gene between the test and reference samples. For genes g in test sample T and reference sample R, with counts X_{g,T} and X_{g,R} and library sizes N_T and N_R respectively:

- M_g = log₂((X_{g,T}/N_T) / (X_{g,R}/N_R))

- A_g = ½·log₂((X_{g,T}/N_T) · (X_{g,R}/N_R))

The method then trims the data by excluding genes with extreme M-values (differential expression) and extreme A-values (low expression), typically removing 5% of the most extreme observations from both ends [8]. The remaining genes are used to compute a weighted mean of M-values, with weights reflecting the approximate precision of the log fold changes. This weighted trimmed mean becomes the normalization factor for that test sample relative to the reference.

Table 2: Comparative Foundations of RLE and TMM Normalization Methods

| Aspect | DESeq2 RLE (Geometric) | edgeR TMM (Reference-Based) |

|---|---|---|

| Core Assumption | Most genes not DE | Most genes not DE |

| Central Tendency | Geometric mean | Trimmed mean |

| Reference Point | Pseudo-reference (all samples) | Single reference sample |

| Robustness Mechanism | Median of ratios | Trimming of extremes + weighting |

| Handling of Zeros | Problematic for geometric mean | Handled through trimming |

| Library Size Relationship | Positive correlation with factors [8] | No direct correlation with factors [8] |

A key distinction between the methods lies in their treatment of library size. Experimental comparisons have demonstrated that DESeq2's size factors typically show a positive correlation with library size, while edgeR's TMM factors do not directly correlate with library size [8]. This fundamental difference arises from RLE's geometric foundation versus TMM's focus on fold-change stability independent of absolute sequencing depth.

Experimental Comparisons: Performance in Real Datasets

Normalization Factor Discrepancies in Tomato Fruit Set Data

Empirical evidence from biological datasets reveals important practical differences between DESeq2's RLE and edgeR's TMM normalization approaches. A comprehensive comparison using RNA-Seq data from tomato fruit set development—comprising 34,675 genes across 9 samples (3 developmental stages with 3 biological replicates each)—demonstrated that while both methods effectively normalize data, they produce meaningfully different normalization factors [8] [9].

Table 3: Normalization Factors for Tomato Fruit Set Data Across Methods

| Sample | Developmental Stage | TMM (edgeR) | RLE (DESeq2) | MRN |

|---|---|---|---|---|

| Bud 1 | Flower Bud | 0.980 | 1.017 | 0.871 |

| Bud 2 | Flower Bud | 0.922 | 0.809 | 0.754 |

| Bud 3 | Flower Bud | 0.720 | 0.727 | 0.914 |

| Ant 1 | Anthesis | 1.058 | 0.866 | 0.793 |

| Ant 2 | Anthesis | 0.981 | 1.236 | 1.201 |

| Ant 3 | Anthesis | 0.884 | 0.736 | 0.805 |

| Pos 1 | Post-Anthesis | 1.130 | 1.282 | 1.340 |

| Pos 2 | Post-Anthesis | 1.194 | 1.272 | 1.253 |

| Pos 3 | Post-Anthesis | 1.241 | 1.373 | 1.293 |

The data reveals several important patterns: TMM normalization factors show the least variability across samples (range: 0.720-1.241), while RLE factors demonstrate greater dispersion (range: 0.727-1.373) [8]. Additionally, RLE and MRN (Median Ratio Normalization) factors tend to be more similar to each other than to TMM factors, reflecting their related mathematical foundations. Statistical analysis of the relationship between normalization factors and library sizes further revealed that TMM factors showed no significant correlation with library size, while both RLE and MRN factors demonstrated statistically significant positive correlations [8].

Impact on Principal Component Analysis and Interpretation

The choice of normalization method significantly influences the outcome of Principal Component Analysis (PCA), a fundamental exploratory technique in transcriptomics research. A comprehensive evaluation of 12 normalization methods applied to both simulated and experimental RNA-sequencing data demonstrated that normalization meaningfully impacts PCA results and their biological interpretation [4].

Research examining correlation patterns in normalized data found that although PCA score plots often appear visually similar regardless of normalization method, the underlying model complexity, sample clustering quality, and gene ranking within the PCA model vary substantially across normalization approaches [4]. These differences directly translate to altered biological interpretations when researchers perform pathway enrichment analysis on genes identified as important drivers of principal components.

Specifically for DESeq2's RLE and edgeR's TMM, studies have found that while both methods generally produce coherent sample clustering in PCA space, the relative positions of samples and the specific genes contributing most to each principal component can differ. These differences emerge from each method's approach to handling compositional bias—situations where a small number of genes show extreme differential expression between conditions, disproportionately influencing overall normalization [7] [9].

For PCA applications, DESeq2's geometric approach offers particular advantages when working with datasets containing extreme outliers or when the assumption of balanced up- and down-regulation is violated. The median-based robustness of RLE normalization prevents these outliers from dominating the normalization factors, thereby preserving more subtle patterns in the data that might be obscured by other methods [7].

Practical Implementation: Protocols for Research Applications

Computational Protocols for DESeq2 RLE Normalization

Implementing DESeq2's RLE normalization requires specific computational protocols to ensure reproducible and accurate results. The following step-by-step protocol outlines the essential procedures for generating and applying RLE normalization to RNA-seq count data:

Step 1: Data Preparation and Input

- Format count data as a matrix with genes as rows and samples as columns

- Ensure metadata (sample information) matches column names and order in count matrix

- Filter out genes with zero counts across all samples to reduce computational burden

- Load the DESeq2 package in R:

library(DESeq2)

Step 2: DESeqDataSet Object Creation

- Create a DESeqDataSet object using:

dds <- DESeqDataSetFromMatrix(countData = counts, colData = metadata, design = ~ condition) - Specify the experimental design formula based on the study structure

- Verify that sample names consistently match between count data and metadata

Step 3: Size Factor Estimation and Normalization

- Estimate size factors using the built-in function:

dds <- estimateSizeFactors(dds) - Access the calculated size factors:

sizeFactors(dds) - Generate normalized counts:

normalized_counts <- counts(dds, normalized = TRUE) - Verify normalization by comparing library sizes before and after normalization

Step 4: Quality Assessment

- Check size factors to ensure they range approximately between 0.5 and 2

- Extreme values may indicate potential issues with specific samples

- Visualize normalized data using mean-difference plots or PCA to assess normalization effectiveness

Diagram 2: Comparative research workflow for normalization method evaluation in PCA applications.

edgeR TMM Normalization Protocol

For comparative studies implementing edgeR's TMM normalization, the following protocol ensures proper application and fair comparison with DESeq2's RLE:

Step 1: Data Preparation and DGEList Creation

- Format count data similarly to DESeq2 preparation

- Create a DGEList object:

dge <- DGEList(counts = counts, group = group) - Include sample information in the DGEList object for downstream analysis

Step 2: TMM Normalization Implementation

- Calculate normalization factors using:

dge <- calcNormFactors(dge, method = "TMM") - Access normalization factors:

dge$samples$norm.factors - Generate normalized counts using:

cpm(dge, normalized.lib.sizes = TRUE)

Step 3: Comparative Analysis Setup

- Apply identical filtering criteria to both DESeq2 and edgeR analyses

- Use the same gene set for PCA implementation after normalization

- Ensure consistent visualization parameters for comparative assessment

Table 4: Essential Research Reagent Solutions for Normalization Comparisons

| Resource Category | Specific Tools | Application Context | Key Functions |

|---|---|---|---|

| Computational Environments | R Statistical Environment | All analyses | Primary platform for statistical computing |

| Bioconductor Packages | DESeq2, edgeR, limma | Normalization implementation | Specialized algorithms for RNA-seq analysis |

| Data Structures | DESeqDataSet, DGEList | Method-specific data handling | Container objects storing counts and normalization factors |

| Visualization Tools | ggplot2, pheatmap | Result interpretation | Data visualization and exploratory analysis |

| Benchmark Datasets | Tomato fruit set, TCGA CESC | Method validation | Experimental data for normalization comparison |

| Quality Metrics | PCA variance, silhouette widths | Performance assessment | Quantitative evaluation of normalization effectiveness |

DESeq2's Relative Log Expression normalization method offers a geometrically grounded approach to RNA-seq count normalization that demonstrates particular strengths in PCA-focused research applications. The method's foundation in geometric means and median-based robustness provides stability against outlier genes and compositional biases that can distort multivariate analyses. While both DESeq2's RLE and edgeR's TMM produce biologically valid results, empirical evidence indicates that RLE normalization factors typically show greater dynamic range and correlation with library size compared to TMM factors [8].

For researchers employing PCA as an exploratory tool, DESeq2's geometric approach offers advantages in preserving subtle biological patterns that might be obscured by more aggressive trimming strategies. The method's inherent resistance to extreme values aligns well with the assumptions of principal component analysis, which can be sensitive to technical artifacts in high-dimensional genomic data. Furthermore, studies have consistently shown that while normalization choice affects PCA interpretation, both DESeq2 and edgeR represent mature, robust approaches suitable for most research applications [4] [10].

When selecting a normalization method for PCA-based studies, researchers should consider their specific data characteristics—including the presence of extreme outliers, balance between up- and down-regulated genes, and overall sample-to-sample variability. DESeq2's RLE normalization provides a geometrically principled option that maintains biological signal integrity while effectively controlling for technical variation, making it particularly valuable for exploratory transcriptomic research where preserving subtle patterns is paramount.

Thesis Context: Normalization in DESeq2 vs edgeR for PCA Research

In the analysis of RNA-seq data, normalization is a critical preprocessing step that ensures the comparability of expression levels across different samples. Within the broader thesis comparing DESeq2 and edgeR, the choice of normalization method is paramount, particularly for analyses like Principal Component Analysis (PCA), where the structure and interpretation of data can be heavily influenced by technical variation. This guide objectively compares the Trimmed Mean of M-values (TMM) method from edgeR with the Relative Log Expression (RLE) method from DESeq2, providing experimental data and protocols to inform researchers and drug development professionals.

The core challenge in RNA-seq analysis is that the total number of reads, or library size, from different samples is not directly comparable. Simple normalization by total count fails when there are significant differences in the RNA composition between samples [11]. If a small number of genes are extremely highly expressed in one condition, they consume a substantial portion of the sequencing "real estate," artificially deflating the read counts for all other genes in that sample. Failure to correct for this composition bias can lead to a high false positive rate in Differential Expression (DE) analysis and can distort the sample relationships observed in PCA.

The two most prominent scaling normalization methods designed to handle this bias are the Trimmed Mean of M-values (TMM) from the edgeR package and the Relative Log Expression (RLE) from the DESeq2 package. Both are founded on the assumption that the majority of genes are not differentially expressed (DE) across the samples, and they use robust statistical techniques to estimate scaling factors that align samples appropriately [8] [9].

Technical Foundations and Comparative Workflow

Core Algorithm of TMM

The TMM normalization procedure is a robust, gene-wise approach that calculates scaling factors between a test sample and a reference sample. The algorithm follows a specific workflow to mitigate the influence of DE genes and low-count genes on the final scaling factor.

The following diagram illustrates the logical workflow and key decision points within the TMM algorithm:

The mathematical definitions for the key values in this workflow are:

M-value (Log Fold Change): ( Mg = \log2(\frac{Y{g,k}/Nk}{Y{g,r}/Nr}) ) [8] [11]

- Represents the log-fold-change for gene ( g ) between the test sample ( k ) and the reference sample ( r ).

- ( Y{g,k} ) and ( Y{g,r} ) are the observed counts for gene ( g ).

- ( Nk ) and ( Nr ) are the total read counts (library sizes) for the samples.

A-value (Absolute Expression): ( Ag = \frac{1}{2} \log2(\frac{Y{g,k}}{Nk} \times \frac{Y{g,r}}{Nr}) ) [8] [11]

- Represents the average expression level of gene ( g ) across the two samples.

The algorithm trims the M-values by 30% and the A-values by 5% by default to remove extreme DE genes and genes with very low expression, which have high variance [11]. The final normalization factor is derived from the weighted mean of the remaining M-values, with weights based on the inverse of the approximate asymptotic variance [12].

Core Algorithm of RLE (DESeq2)

The RLE method used by DESeq2 operates on a different principle. It calculates a scaling factor for a given sample as the median of the ratio of each gene's count to its geometric mean across all samples.

- For each gene ( g ), its geometric mean ( G_g ) is calculated across all samples.

- For each sample ( k ), the ratio ( R{g,k} = Y{g,k} / G_g ) is computed for all genes.

- The scaling factor for sample ( k ) is the median of all ratios ( R_{g,k} ) (excluding zeros) [8].

This method is robust because the median is unaffected by extreme values, ensuring that the scaling factor is not skewed by a subset of highly DE genes.

Direct Comparison of TMM and RLE

The table below summarizes the key technical differences between the TMM and RLE normalization methods.

Table 1: Technical Comparison of TMM and RLE Normalization Methods

| Aspect | TMM (edgeR) | RLE (DESeq2) |

|---|---|---|

| Core Principle | Trimmed mean of gene-wise log-fold-changes (M-values) relative to a reference. | Median of ratios of counts to a per-gene geometric mean. |

| Underlying Assumption | The majority of genes are not DE and should have similar log-fold-changes around zero. | The majority of genes are not DE and should have a ratio to the geometric mean of one. |

| Default Trimming | Dual-trimming of M-values (30%) and A-values (5%). | Implicit trimming via the median. |

| Reference Point | A single sample is typically used as a reference (often the one with the upper quartile closest to the mean). | The geometric mean across all samples serves as a "virtual reference." |

| Handling of Library Size | Normalization factors are largely independent of library size [8]. | Normalization factors show a positive correlation with library size [8]. |

| Typical Use Case | Efficient and robust for a wide range of sample types, including those with very small sample sizes [13]. | Performs well with moderate to large sample sizes and offers strong FDR control [13]. |

Experimental Performance and Benchmarking

A Hypothetical Scenario Revealing Normalization Necessity

A classic hypothetical experiment demonstrates why simple total count normalization fails and how TMM corrects for it [14] [11]. Consider two control samples and two case samples, each with a total of 500 reads (equal sequencing depth). The first 25 transcripts are present at 10 counts each in controls and 20 counts each in cases. The controls, however, contain an additional 25 transcripts (also at 10 counts each) that are completely absent (0 counts) in the cases.

- Without Normalization: A DE analysis incorrectly identifies all 50 transcripts as differentially expressed because it does not account for the fact that the case samples have a smaller "effective" transcriptome. The first 25 genes appear upregulated in cases simply because the sequencing depth is concentrated on a smaller number of genes [14].

- With TMM Normalization: TMM identifies the compositional bias and calculates a scaling factor. The effective library size of the case samples is halved, correctly normalizing the data. Subsequent DE analysis correctly identifies zero differentially expressed genes, as the apparent fold-changes were a technical artifact [14].

Performance on Real and Simulated Data

Benchmarking studies on real and simulated data have consistently shown that TMM and RLE are top-performing methods, often yielding similar results.

Table 2: Normalization Factors from a Tomato Fruit Set RNA-Seq Dataset (9 samples) [8]

| Sample | TMM Factor | RLE (DESeq2) Factor | MRN Factor |

|---|---|---|---|

| Bud 1 | 0.980 | 1.017 | 0.871 |

| Bud 2 | 0.922 | 0.809 | 0.754 |

| Bud 3 | 0.720 | 0.727 | 0.914 |

| Ant 1 | 1.058 | 0.866 | 0.793 |

| Ant 2 | 0.981 | 1.236 | 1.201 |

| Ant 3 | 0.884 | 0.736 | 0.805 |

| Pos 1 | 1.130 | 1.282 | 1.340 |

| Pos 2 | 1.194 | 1.272 | 1.253 |

| Pos 3 | 1.241 | 1.373 | 1.293 |

A study on a large Drosophila melanogaster dataset (726 individuals) found that both TMM and DESeq's RLE method properly aligned data distributions across samples. However, it noted that TMM was more sensitive to the filtering strategy used for low-expressed genes [15]. Another study concluded that for simple two-condition experiments without replicates, the choice of method (TMM, RLE, or MRN) has minimal impact on results. However, for more complex experimental designs, careful selection is advised [9].

Practical Implementation and Protocols

The Scientist's Toolkit: Essential Research Reagents and Software

Table 3: Essential Tools for RNA-seq Normalization and DE Analysis

| Tool / Reagent | Function / Description |

|---|---|

| R Programming Language | The standard computational environment for statistical analysis of RNA-seq data. |

| Bioconductor Project | An open-source repository for bioinformatics R packages, providing edgeR and DESeq2. |

| edgeR Package | Provides the calcNormFactors function for TMM normalization and a full suite for DE analysis. |

| DESeq2 Package | Provides the estimateSizeFactorsForMatrix function for RLE normalization and its own DE pipeline. |

| High-Throughput Sequencer | Platform (e.g., Illumina) for generating raw RNA-seq read data. |

| Alignment Software (e.g., STAR) | Maps raw sequencing reads to a reference genome to generate count data. |

| Count Quantification Tool (e.g., featureCounts) | Summarizes mapped reads into a count matrix for each gene and sample. |

Detailed Protocol: Implementing TMM Normalization in edgeR

The following code block details the steps to perform TMM normalization and generate normalized expression values in R using edgeR.

Protocol Notes:

- The

calcNormFactorsfunction alone does not modify the count data; it only calculates the scaling factors [16]. - The

cpmfunction withlog=TRUEapplies a prior count of 0.25 to avoid taking the log of zero. - These normalized log-CPM values are suitable for downstream analyses like PCA.

Detailed Protocol: Implementing RLE Normalization in DESeq2

For comparison, here is the protocol for RLE normalization within the DESeq2 framework.

Implications for PCA and Differential Expression

The choice of normalization method directly impacts the results of PCA and DE analysis, which are central to many research and drug development pipelines.

- Impact on PCA: PCA is sensitive to technical variance. A proper normalization method like TMM or RLE removes composition biases that could otherwise cause samples to cluster by technical artifacts rather than biological truth. This leads to more reliable and interpretable PCA plots, ensuring that the primary sources of variation in the data are biological [17].

- Impact on DE Analysis: Normalization is a critical step for controlling false positives and false negatives in DE analysis. Using total count normalization in the presence of composition bias can lead to a high false discovery rate. Both TMM and RLE have been shown to control type I error effectively and provide good power for detecting true DE genes [11] [15].

- Tool Selection: While TMM (edgeR) and RLE (DESeq2) often produce concordant results, some benchmarks suggest that

edgeRmay perform slightly better with very small sample sizes (as low as 2 replicates) and for genes with low expression counts, whileDESeq2is robust for moderate to large sample sizes and offers strong FDR control [13].

Within the context of comparing DESeq2 and edgeR for PCA research, the TMM normalization method stands as a robust, ratio-based technique that effectively corrects for library composition biases. The experimental data and protocols outlined in this guide provide a foundation for researchers to make an informed choice. For simple designs, both TMM and RLE are excellent. For complex designs or when integrating datasets from different sources (batches), TMM's properties may offer specific advantages, but careful evaluation, potentially including batch correction methods like ComBat-seq, is recommended [17]. The ultimate choice may also be influenced by the broader statistical framework (edgeR or DESeq2) the researcher intends to use for the entire analysis pipeline.

In RNA-sequencing (RNA-seq) analysis, normalization is an essential preprocessing step that ensures accurate comparisons of gene expression levels between samples. Technical variations, such as differences in sequencing depth (library size) and the compositional structure of the transcript population, can obscure true biological signals and lead to erroneous conclusions in downstream analyses [18] [19]. The choice of normalization method can have a profound impact on the results of differential expression analysis, sometimes even more so than the choice of the statistical test itself [19] [20]. Within the context of differential expression tools, DESeq2 and edgeR have emerged as two of the most widely used packages, each employing its own core normalization technique: the Relative Log Expression (RLE) method for DESeq2 and the Trimmed Mean of M-values (TMM) for edgeR [8] [9]. This guide provides a theoretical and data-driven comparison of how the RLE and TMM normalization methods respond to variations in library size and RNA composition, which is critical for informing their use in PCA and other exploratory analyses.

Theoretical Foundations of RLE and TMM

Core Assumptions and Mathematical Principles

Both RLE and TMM are "scaling normalization" methods designed to make expression counts comparable across samples by calculating sample-specific scaling factors (size factors). They operate under a crucial shared assumption: that the majority of genes in an experiment are not differentially expressed [18] [21]. However, they diverge in their specific strategies and computational implementations.

RLE (Relative Log Expression):

- Principle: The RLE method calculates a size factor for each sample as the median of the ratios of its counts to a "pseudo-reference" sample. This pseudo-reference is constructed for each gene by taking the geometric mean across all samples [18] [22].

- Mathematical Definition: For a given sample ( j ), the RLE size factor ( SFj^{RLE} ) is computed as: ( SFj^{RLE} = median{g} \frac{K{g,j}}{(\prod{m=1}^M K{g,m})^{1/M} ) where ( K_{g,j} ) is the count of gene ( g ) in sample ( j ), and ( M ) is the total number of samples [8] [22].

- Key Trait: The factors are intrinsically correlated with library size, as they are computed directly from the count data without an explicit library size correction beforehand [8].

TMM (Trimmed Mean of M-values):

- Principle: TMM designates one sample as a reference and then calculates scaling factors for all other test samples relative to this reference. It uses a weighted trimmed mean of the log-fold changes (M-values) between the test and reference samples, focusing on genes that are not differentially expressed and have average expression levels (A-values) that are not extreme [18] [14].

- Mathematical Definition: The M-value for a gene ( g ) between test sample ( j ) and reference sample ( ref ) is ( Mg = \log2(K{g,j}/Nj) - \log2(K{g,ref}/N{ref}) ), where ( N ) represents library sizes. The TMM factor for sample ( j ) is the weighted mean of these ( Mg ) values after trimming a fixed percentage (default 30%) from both the upper and lower ends of the M-value and A-value distributions [18] [14].

- Key Trait: TMM factors are designed to be independent of library size, aiming to correct for composition biases rather than mere sequencing depth differences [8].

Visualizing the Normalization Workflows

The following diagrams illustrate the logical procedures and key decision points for the RLE and TMM normalization methods.

RLE (Relative Log Expression) Normalization Workflow

TMM (Trimmed Mean of M-values) Normalization Workflow

Comparative Response to Technical Factors

Handling of Library Size Differences

Library size (total read count per sample) is a primary source of technical variation. While both methods aim to correct for it, their underlying approaches lead to different behaviors.

RLE (DESeq2): The RLE size factors are positively correlated with library size. Because the geometric mean is influenced by the total count distribution, samples with larger library sizes will generally yield larger size factors [8]. The normalization process then divides the counts by this factor, effectively shrinking the counts of larger libraries more aggressively to make them comparable to others.

TMM (edgeR): TMM normalization factors are calculated to be orthogonal to library size [8]. The method intentionally removes the effect of library size during the calculation of M-values (which use counts per million), focusing the adjustment purely on compositional biases. Consequently, the TMM factor for a sample does not directly scale with its library size.

Table 1: Comparative Response to Library Size

| Method | Underlying Principle | Correlation with Library Size | Primary Correction Target |

|---|---|---|---|

| RLE | Median ratio to a geometric mean reference | Positive correlation [8] | Global scaling differences |

| TMM | Weighted trimmed mean of log-ratios (M-values) | No significant correlation [8] | RNA composition bias |

Handling of RNA Composition Biases

RNA composition bias occurs when a few highly expressed genes consume a large fraction of the sequencing resources in one condition, making the counts of all other genes appear artificially lower in that sample relative to a condition without such highly expressed genes [19] [23]. This is a critical challenge for normalization.

TMM (edgeR): TMM is explicitly designed to handle composition bias. By trimming genes with extreme M-values (log-fold changes) and A-values (average expression), it strategically excludes genes that are likely to be differentially expressed. This trimming allows it to base the scaling factor on a stable set of genes assumed to be non-DE, thereby correcting for the "draw-down" effect caused by upregulated genes [19] [14].

RLE (DESeq2): The RLE method is generally robust to moderate composition biases but can be influenced by severe, global shifts in expression. Because it uses the median of all ratios, a widespread imbalance where a large proportion of genes are differentially expressed in one direction can pull the median away from its true value, leading to inaccurate size factors [19] [22].

Table 2: Comparative Response to RNA Composition Bias

| Method | Strategy for Composition Bias | Robustness to Global DE | Key Strength |

|---|---|---|---|

| RLE | Relies on the robustness of the median; assumes most genes are non-DE. | Can be compromised if >50% of genes are DE [22] | Simplicity and computational efficiency |

| TMM | Active trimming of potential DE genes based on M-A values. | High, due to explicit filtering of outliers [19] [14] | Superior correction in presence of strong, unbalanced expression |

Experimental Data and Performance Comparison

Key Findings from Comparative Studies

Empirical comparisons using real and simulated datasets have shed light on the performance characteristics of RLE and TMM.

Similarity in Balanced Designs: In studies with a simple two-condition design and balanced, symmetric differential expression, RLE and TMM often produce highly similar results and normalization factors [8] [9]. A comparative analysis highlighted that for a straightforward two-condition experiment without replicates, users could employ any of these methods with negligible impact on the results [9].

Performance in Complex/Imbalanced Designs: Under more complex experimental designs or when composition bias is pronounced, differences emerge.

- A study by Maza et al. (2016) found that while RLE and TMM factors were correlated, the RLE factors showed a clearer positive relationship with library size, whereas TMM factors did not [8].

- Research comparing normalization methods for downstream Principal Component Analysis (PCA) revealed that while the overall sample clustering in PCA score plots might look similar regardless of the method, the biological interpretation of the principal components, including gene ranking and pathway enrichment results, can be heavily influenced by the choice of normalization [4].

Impact on Downstream Analysis:

- Differential Expression: Both methods, when coupled with their respective statistical frameworks (DESeq2's Wald test and edgeR's exact or quasi-likelihood tests), are considered top performers. Some studies suggest that the RLE method in early DESeq was conservative, while DESeq2's RLE with a Wald test improved sensitivity, and edgeR's TMM with a quasi-likelihood F-test is often recommended for better error control with small sample sizes [20].

- PCA and Exploratory Analysis: Normalization choice directly affects the covariance structure of the data, which is the foundation of PCA. Consequently, different normalizations can lead to different allocations of variance to the first few principal components and alter the list of genes identified as drivers of these components [4].

Table 3: Summary of Experimental Performance Evidence

| Context | RLE (DESeq2) Performance | TMM (edgeR) Performance | Supporting Evidence |

|---|---|---|---|

| Simple Two-Group Design | Similar results to TMM | Similar results to RLE | In papyro comparison shows negligible differences [9] |

| Data with Severe Composition Bias | Can be influenced; may yield biased factors | High robustness due to active trimming | Theoretical and simulation studies [19] [14] |

| Downstream PCA Interpretation | Affects gene loadings and pathway enrichment results | Affects gene loadings and pathway enrichment results | Comprehensive evaluation of 12 methods [4] |

| Differential Expression Analysis | RLE with Wald test (DESeq2) offers good sensitivity | TMM with QL F-test (edgeR) recommended for small n |

Benchmarking on MAQC data [20] |

A Hypothetical Experiment Demonstrating Composition Bias

A classic hypothetical scenario from Robinson and Oshlack (2010) effectively illustrates the problem of composition bias and the need for methods like TMM [14].

- Experimental Setup: Suppose you have two control and two case samples. Each sample has a total of 500 reads (equal library size). The control samples express 50 transcripts, each with a count of 10. The case samples express only 25 of these transcripts (the other 25 have zero expression), but each of the expressed transcripts has a count of 20.

- The Bias: Although the total library sizes are equal, the expression of the 25 shared transcripts is identical. However, a naive analysis without proper normalization would incorrectly show all 25 genes as being differentially expressed (up-regulated in the case) because their raw counts are double. This is an artifact caused by the "loss" of 25 transcripts in the case samples, which changes the composition of the RNA pool.

- Normalization Correction:

- TMM would identify this imbalance. It would trim the 25 missing genes (extreme M-values) and base the scaling factor on the stable, shared genes, correctly determining that no normalization is needed for library size but a factor is needed to account for the composition shift. This would correctly identify that the 25 shared genes are not DE.

- RLE would also perform well in this specific scenario because the majority (the 25 shared genes) are not differentially expressed, making the median ratio a reliable estimator.

Table 4: Key Reagents and Computational Tools for RNA-Seq Normalization Analysis

| Item Name | Function/Brief Explanation | Example/Note |

|---|---|---|

| DESeq2 | An R/Bioconductor package for differential analysis of RNA-seq count data. Uses the RLE normalization by default. | Critical for implementing the RLE method and subsequent Wald test for DE [18] [20] |

| edgeR | An R/Bioconductor package for differential analysis of RNA-seq count data. Uses the TMM normalization by default. | Essential for implementing TMM and associated exact tests or quasi-likelihood F-tests [18] [20] |

| scran | An R/Bioconductor package for single-cell RNA-seq analysis. Uses a deconvolution method for normalization. | Useful for extending normalization concepts to single-cell data where composition biases are more acute [22] |

| Spike-In RNAs | Exogenous RNA sequences added in known quantities to each sample during library preparation. | Used to track technical variation and normalize data based on external controls, an alternative to TMM/RLE [22] |

| MAQC Datasets | Publicly available benchmark RNA-seq datasets with validated expression profiles. | Used for method validation and comparison (e.g., to assess FDR control) [20] |

| TCGA Data | Large-scale public repository of cancer transcriptome data (e.g., CESC - cervical cancer). | Provides real-world, complex data for testing normalization methods on heterogeneous samples [18] |

The theoretical and experimental comparisons reveal that while RLE (DESeq2) and TMM (edgeR) share a common goal and often yield concordant results, their distinct approaches lead to different responses to library size and, most importantly, RNA composition biases.

- RLE is a robust and efficient method, particularly when the assumption of a non-DE gene majority holds true. Its integration within the DESeq2 framework makes it a powerful and user-friendly choice for many standard RNA-seq analyses.

- TMM' explicit design to correct for composition bias through trimming makes it particularly robust in complex experiments where subpopulations of genes show strong, unbalanced differential expression.

For researchers focusing on PCA and exploratory analysis, it is critical to understand that the choice between RLE and TMM is not neutral. Since PCA is sensitive to the covariance structure of the data, and normalization directly alters this structure, the resulting principal components and their biological interpretation can vary [4]. Therefore, it is not a matter of identifying a single "best" method, but rather of selecting the most appropriate one based on the data characteristics.

Recommendation: Researchers should consider the experimental design. If the study involves conditions where massive, global shifts in gene expression are expected (e.g., comparing different tissue types or disease states with known profound transcriptomic changes), TMM might offer a more robust normalization. For more subtle comparisons, RLE is an excellent default. Whenever possible, a sensitivity analysis—running PCA with both normalization methods—is highly recommended to ensure that key findings are not an artifact of the normalization choice.

The Critical Role of Data Transformation (VST, rlog, log-CPM) for PCA Stability

In the analysis of high-throughput RNA sequencing (RNA-seq) data, Principal Component Analysis (PCA) serves as a fundamental tool for exploratory data analysis, quality control, and visualization of sample relationships. However, the raw count data generated by RNA-seq technologies present unique challenges for PCA and similar multivariate methods. These techniques work best with homoskedastic data – where the variance of an observable quantity does not depend on its mean. RNA-seq data intrinsically violate this assumption because variance grows with the mean expression level of genes. Without proper transformation, PCA results become dominated by a few highly expressed genes, potentially masking biologically relevant patterns and leading to misinterpretations of sample relationships [24].

The choice between DESeq2 and edgeR, two widely used packages for differential expression analysis, extends beyond their normalization approaches to encompass their specialized transformation methods that prepare data for dimensionality reduction techniques like PCA. DESeq2 offers the regularized log transformation (rlog) and variance stabilizing transformation (VST), while edgeR commonly employs the log-counts-per-million (log-CPM) transformation. Understanding the performance characteristics, stability, and appropriate applications of these methods is crucial for researchers seeking to extract meaningful biological insights from their PCA plots and ensure the reliability of their exploratory analyses [25] [24].

Understanding Key Transformation Methods

Mathematical Foundations and Implementation

Table 1: Core Transformation Methods for RNA-Seq PCA

| Method | Package | Underlying Principle | Key Parameters | Output Features |

|---|---|---|---|---|

| Variance Stabilizing Transformation (VST) | DESeq2 | Uses parametric dispersion-mean relationship to stabilize variance across expression ranges | Fit to dispersion-mean trend | Approximate homoskedasticity, faster computation |

| Regularized Log Transformation (rlog) | DESeq2 | Empirical Bayesian approach with ridge penalty for shrinking low counts | Shrinkage towards genes' averages | Homoskedasticity, optimal for small datasets |

| log-CPM (log-Counts Per Million) | edgeR (limma) | Simple normalization by library size followed by log transformation | Prior count to avoid log(0) | Computational efficiency, simplicity |

| TPM (Transcripts Per Million) | Various | Within-sample normalization accounting for gene length and sequencing depth | Gene length normalization | Suitable for within-sample comparisons |

The regularized log transformation (rlog) in DESeq2 addresses the mean-variance relationship in RNA-seq data through an empirical Bayesian approach. For genes with high counts, the rlog transformation behaves similarly to an ordinary log2 transformation. However, for genes with lower counts, where relative differences between samples are exaggerated by Poisson noise inherent to small count values, rlog shrinks the values toward the genes' averages across all samples. This shrinkage is implemented using a ridge penalty, effectively minimizing the influence of technical noise on the transformed data while preserving biological signal. The resulting rlog-transformed data exhibit approximately homoskedastic variance, making them suitable for PCA and other distance-based clustering methods [24].

The variance stabilizing transformation (VST) in DESeq2 employs a different strategy, using a parametric dispersion-mean relationship to stabilize the variance across the dynamic range of expression levels. Similar to rlog, VST produces transformed data where the variance is no longer dependent on the mean. A significant advantage of VST over rlog is computational efficiency, particularly valuable when working with large datasets containing hundreds of samples. The DESeq2 documentation recommends VST for such large datasets, noting that it offers similar properties to rlog but with substantially faster computation times [24].

The log-CPM transformation, commonly associated with the edgeR/limma workflow, represents a more straightforward approach. Raw counts are first normalized using the trimmed mean of M-values (TMM) method to account for differences in library size and composition, then converted to counts per million (CPM). A log2 transformation is subsequently applied, typically with a small prior count added to avoid taking the logarithm of zero. While computationally efficient, log-CPM does not explicitly address the mean-variance relationship to the same extent as rlog or VST, which can impact PCA stability, particularly for genes with low expression levels [2] [21].

Relationship Between Normalization and Transformation

It is crucial to distinguish between normalization and transformation in RNA-seq analysis, as these sequential steps serve distinct purposes. Normalization methods, such as the median-of-ratios approach in DESeq2 (RLE - Relative Log Expression) or the trimmed mean of M-values (TMM) in edgeR, primarily correct for technical variations between samples, including differences in sequencing depth and library composition [2] [26]. These methods generate normalized counts that are adjusted for these technical factors.

Transformation methods, including VST, rlog, and log-CPM, then build upon these normalized counts to address statistical properties essential for downstream analyses like PCA. The transformation step modifies the scale and distribution of the data to meet the assumptions of statistical methods used in exploratory analysis, particularly the requirement for homoskedasticity. This distinction explains why researchers must apply both appropriate normalization and subsequent transformation when preparing RNA-seq data for PCA [21].

Experimental Protocols for Method Evaluation

Benchmarking Framework and Evaluation Metrics

Table 2: Experimental Design for Transformation Method Comparison

| Experimental Factor | Configuration | Rationale |

|---|---|---|

| Dataset Types | Large homogeneous datasets (GTEx), Small heterogeneous datasets (SRA) | Assess robustness across data structures |

| Sample Sizes | Ranging from 10 to 100+ samples | Evaluate scalability and performance with varying N |

| Sequencing Depth | Shallow (5-10M reads), Standard (20-30M reads), Deep (50M+ reads) | Test sensitivity to technical variation |

| Evaluation Metrics | Area Under Precision-Recall Curve (auPRC), Silhouette Width, Cluster Separation | Quantify biological signal preservation |

| Gold Standards | Tissue-naive GO terms, Tissue-aware functional relationships | Measure accuracy against biological truth |

To objectively compare the performance of VST, rlog, and log-CPM transformations for PCA stability, researchers have employed rigorous benchmarking frameworks. One comprehensive study analyzed 36 distinct workflows combining different normalization and transformation methods, applying them to both large homogeneous datasets (9,657 GTEx samples) and small heterogeneous datasets (6,301 SRA samples representing 287 individual studies). This design enabled assessment of method performance across diverse experimental scenarios and sample sizes [25].

The evaluation typically utilizes biologically relevant gold standards, such as Gene Ontology (GO) biological process terms with experimentally verified co-annotations. The accuracy of coexpression networks derived from PCA-transformed data is quantified using the area under the precision-recall curve (auPRC), which emphasizes the accuracy of top-ranked coexpression gene pairs and is particularly suitable for genomic data where only a small fraction of gene pairs interact biologically. Additional metrics include silhouette widths for cluster compactness and separation, and the K-nearest neighbor batch-effect test for assessing technical artifact removal [25] [27].

A representative experimental protocol proceeds as follows: (1) Raw RNA-seq counts are processed through either DESeq2's median-of-ratios (RLE) normalization or edgeR's TMM normalization; (2) The normalized counts are transformed using VST, rlog, or log-CPM methods with default parameters; (3) PCA is performed on each transformed dataset; (4) The resulting principal components are evaluated against gold standard gene functional relationships using auPRC; (5) Sample clustering patterns in reduced-dimensional space are assessed using silhouette widths and compared to known biological groups; (6) Computational performance is measured by recording execution time and memory usage [25] [24].

Table 3: Key Research Reagent Solutions for RNA-Seq Transformation Analysis

| Tool/Resource | Function | Implementation |

|---|---|---|

| DESeq2 | Differential expression analysis, VST and rlog transformations | R/Bioconductor package |

| edgeR/limma | Differential expression analysis, TMM normalization, log-CPM transformation | R/Bioconductor package |

| sctransform | Normalization and variance stabilization for single-cell RNA-seq | R package (Seurat integration) |

| recount2 | Unified processed RNA-seq data from GTEx and SRA | Database for benchmarking |

| SCnorm | Quantile regression for count data | R package for specific normalization |

Successful evaluation of transformation methods requires specific computational tools and data resources. The DESeq2 package (version 1.40.0 or later) provides implementation of the median-of-ratios normalization, rlog, and VST transformations, with dedicated functions like plotPCA() for visualization. The edgeR package (version 4.0.0 or later) offers TMM normalization and log-CPM transformation, often used in conjunction with the limma package for downstream analyses. For large-scale benchmarking, the recount2 database provides uniformly processed RNA-seq data from both the GTEx project and SRA repository, enabling consistent evaluation across diverse datasets [25] [6] [24].

The sctransform package, while developed for single-cell RNA-seq data, exemplifies the extension of variance stabilization principles to sparse count data and can inform method development for bulk RNA-seq. The SCnorm tool implements quantile regression for normalizing count data based on the observation that different groups of genes may require distinct normalization factors. These tools collectively enable comprehensive assessment of transformation methods and facilitate reproducible research in transcriptomics [28] [27].

Performance Comparison and Experimental Data

Quantitative Benchmarking Results

Table 4: Performance Comparison of Transformation Methods for PCA Applications

| Performance Dimension | VST (DESeq2) | rlog (DESeq2) | log-CPM (edgeR) |

|---|---|---|---|

| Variance Stabilization | Excellent | Excellent | Moderate |

| Computational Speed | Fast (scales well) | Slow (small datasets only) | Very Fast |

| Large Dataset Handling | Recommended | Not recommended | Recommended |

| Biological Signal Preservation (auPRC) | 0.45-0.65 | 0.44-0.63 | 0.40-0.58 |

| Handling of Low-Count Genes | Effective shrinkage | Effective shrinkage | Moderate shrinkage |

Empirical benchmarking reveals distinct performance characteristics among the three transformation methods. In terms of biological accuracy measured by auPRC against gene functional relationship gold standards, VST and rlog transformations consistently outperform log-CPM, particularly for heterogeneous datasets. One comprehensive study reported auPRC values ranging from 0.45-0.65 for VST, 0.44-0.63 for rlog, and 0.40-0.58 for log-CPM across diverse datasets, indicating superior capture of biologically meaningful relationships by the DESeq2 transformations [25].

Regarding computational efficiency, log-CPM and VST demonstrate significant advantages over rlog for larger datasets. In benchmarks using datasets with >100 samples, VST completed transformation 5-10 times faster than rlog while producing qualitatively similar PCA results. The rlog function showed practical limitations for datasets exceeding 50 samples, with computation time increasing substantially. Consequently, the DESeq2 documentation explicitly recommends VST over rlog for datasets with hundreds of samples due to comparable performance characteristics with markedly improved computational efficiency [24].

All three methods effectively reduce the influence of sequencing depth on downstream analyses when applied following appropriate between-sample normalization. However, VST and rlog demonstrate superior handling of the mean-variance relationship, particularly for lowly expressed genes where technical noise would otherwise dominate the signal in PCA. This translates to more stable PCA results that better reflect biological rather than technical variation between samples [25] [24].

Impact on PCA Stability and Interpretation

The stability of PCA results is crucially dependent on the transformation method applied prior to analysis. Between-sample normalization has been identified as having the most significant impact on PCA stability, with counts adjusted by size factors (as in DESeq2's median-of-ratios or edgeR's TMM) producing networks that most accurately recapitulate known functional relationships [25].

In practical applications, the choice of transformation method can substantially influence the interpretation of PCA plots. For instance, a study examining the impact of normalization methods on PCA found that although PCA score plots often appear superficially similar regardless of the normalization used, the biological interpretation of the models can depend heavily on the method applied. This underscores the importance of selecting an appropriate transformation method that stabilizes variance across the expression range, particularly when analyzing datasets with diverse expression profiles or substantial technical variability [4].

The application of network transformation techniques such as Weighted Topological Overlap (WTO) and Context Likelihood of Relatedness (CLR) following initial transformation and PCA can further enhance the biological relevance of the results. These methods help emphasize connections more likely to represent true biological relationships while downweighting potential spurious correlations, ultimately leading to more robust and interpretable PCA outcomes [25].

Figure 1: RNA-seq Data Transformation Workflow for PCA Analysis

Guidelines for Method Selection

Based on comprehensive benchmarking studies and practical considerations, specific guidelines emerge for selecting appropriate transformation methods for PCA applications. For most standard RNA-seq analyses, particularly those involving moderate to large sample sizes (n > 30), VST implemented in DESeq2 represents the optimal choice, balancing computational efficiency with effective variance stabilization and biological signal preservation. The method's robust performance across diverse dataset types and compatibility with downstream DESeq2 functions make it a versatile tool for exploratory analysis [25] [24].

For smaller datasets (n < 30) where computational efficiency is less concerning, rlog transformation may provide slightly improved variance stabilization, particularly for studies with pronounced biological effects and limited sample sizes. However, the practical differences between VST and rlog in these scenarios are often minimal, and VST remains a defensible choice for consistency across analyses [24].

The log-CPM transformation in the edgeR/limma workflow offers a computationally efficient alternative suitable for large-scale screening analyses or situations where DESeq2 is not already integrated into the analytical pipeline. While generally showing slightly lower performance in biological signal preservation metrics, log-CPM remains a valid approach, particularly when supplemented with additional quality control measures and careful interpretation of results [2] [21].

Concluding Remarks on PCA Stability

The critical role of data transformation methods in ensuring PCA stability for RNA-seq data cannot be overstated. Proper application of VST, rlog, or log-CPM transformations addresses the fundamental mean-variance relationship inherent in count data, enabling more biologically meaningful dimensional reduction and pattern discovery. Through rigorous benchmarking, between-sample normalization followed by variance-stabilizing transformation has emerged as a crucial preprocessing combination for reliable PCA results.

Within the broader context of comparing DESeq2 and edgeR normalization approaches, the transformation methods represent a natural extension of their respective philosophical frameworks. DESeq2's more comprehensive approach to variance stabilization through VST and rlog provides measurable benefits for PCA applications, particularly in studies where exploratory analysis and biological interpretation of sample relationships are primary objectives. As RNA-seq technologies continue to evolve and dataset sizes increase, the principles of variance stabilization and appropriate data transformation will remain essential components of robust transcriptomic analysis workflows.

A Step-by-Step Guide to PCA with DESeq2 and edgeR

In RNA-seq analysis, normalization is an essential preprocessing step that accounts for technical variations, enabling meaningful biological comparisons between samples. Both DESeq2 and edgeR, two widely used Bioconductor packages for differential expression analysis, require careful data preparation to function optimally [19] [29]. While they share similarities in processing raw count data, their underlying normalization methodologies differ significantly, which can impact downstream analyses such as Principal Component Analysis (PCA) [10] [30].

The fundamental challenge in RNA-seq normalization stems from technical variations including sequencing depth, RNA composition, and library preparation protocols [19] [31]. These technical factors can obscure biological signals if not properly accounted for. DESeq2 employs a median-of-ratios approach, whereas edgeR utilizes the trimmed mean of M-values (TMM) method [32]. Both methods operate under the core assumption that most genes are not differentially expressed, though they implement this assumption through different statistical frameworks [19] [30].

This guide provides a comprehensive comparison of data preparation workflows for DESeq2 and edgeR, with particular emphasis on constructing count matrices and target files—the foundational inputs for both tools. We present experimental data comparing their normalization performance and provide detailed protocols for implementing these methods in practice.

Normalization Methods: Core Algorithmic Differences

DESeq2's Median-of-Ratios Method

DESeq2 employs a size factor estimation approach based on the median-of-ratios method [32] [30]. This technique calculates normalization factors by comparing each gene's count to a pseudo-reference sample derived from geometric means across all samples.

The key steps in DESeq2's normalization include:

- Geometric mean calculation: For each gene, compute the geometric mean across all samples

- Ratio computation: For each sample and each gene, compute the ratio of the gene's count to the geometric mean

- Size factor determination: The median of these ratios for each sample serves as the size factor

This method is particularly robust to differential expression in a subset of genes, as the median is resistant to outliers [30]. DESeq2 incorporates these size factors into its generalized linear model (GLM) framework, using them as offsets in the negative binomial regression [30].

edgeR's Trimmed Mean of M-values (TMM)

edgeR implements the TMM normalization procedure, which operates by selecting a reference sample and then calculating scaling factors for all other samples relative to this reference [32]. The method focuses on genes that are not differentially expressed and exhibit moderate expression levels.

The TMM algorithm involves:

- Reference selection: Typically the sample whose upper quartile is closest to the mean upper quartile across all samples

- M-value calculation: For each non-reference sample, compute log-fold changes (M-values) relative to the reference

- A-value calculation: Compute the average intensity (A-values) for each gene

- Trimming: Remove genes with extreme M-values and extreme A-values (typically top and bottom 30%)

- Scaling factor computation: Calculate the weighted mean of M-values for remaining genes

TMM normalization effectively accounts for RNA composition biases, which occur when a small subset of genes is highly expressed in one condition, potentially distorting the apparent expression of other genes [19].

Table 1: Core Normalization Algorithms in DESeq2 and edgeR

| Aspect | DESeq2 | edgeR |

|---|---|---|

| Method Name | Median-of-ratios | Trimmed Mean of M-values (TMM) |

| Key Reference | Geometric mean across samples | Single reference sample |

| Statistical Basis | Median ratios | Weighted mean of log-ratios |

| Trim Parameters | None (uses median) | Typically 30% trim each tail |

| Handling of DE Genes | Robust due to median | Robust due to trimming and weighting |

| Implementation in Package | estimateSizeFactors() |

calcNormFactors() |

Data Preparation Fundamentals

Count Matrix Construction

The foundational input for both DESeq2 and edgeR is a count matrix containing raw read counts for features (genes or transcripts) across all samples [29] [33]. This matrix should be constructed with genes as rows and samples as columns, containing integer values representing the number of reads mapped to each feature.

Proper count matrix construction requires:

- Raw count data: Avoid normalized counts (e.g., RPKM, FPKM) as input

- Feature consistency: Consistent gene identifiers across all samples

- Complete data: No missing values in the matrix

Count data can be generated using various alignment and quantification tools such as HTSeq, featureCounts, or Salmon, with the output typically consisting of separate count files for each sample [29] [33].

Target File Specification

The target file (also called sample metadata or colData) provides essential experimental design information that enables proper statistical modeling [29]. This tab-separated file should include:

Table 2: Essential Target File Components

| Column Name | Description | Example Content | Requirement |

|---|---|---|---|

| sampleName | Unique identifier for each sample | WT1, WT2, KO1, KO2 | Mandatory |

| fileName | Name of the count file for the sample | WT1counts.txt, WT2counts.txt | Mandatory |

| condition | Primary experimental factor | Control, Treated | Mandatory |

| batch | Batch effect factor (if applicable) | Batch1, Batch2 | Optional |

The target file enables both packages to associate count data with experimental factors, which is crucial for proper differential expression testing and accounting for batch effects [29] [10].

Figure 1: Workflow for constructing count matrices and target files from raw sequencing data

Experimental Comparison of Normalization Performance

Methodology for Normalization Assessment

To evaluate the performance of DESeq2 and edgeR normalization methods in the context of PCA, we analyzed data from a comparative study examining multiple normalization approaches [31]. The experimental design included:

- Dataset: 726 individual Drosophila melanogaster from the Drosophila Genetic Reference Panel

- Factors: Genotype, environment, sex, and their interactions

- Replicates: 8 biological replicates per genotype, environment, and sex combination

- Normalization Methods: Total Count (TC), Upper Quartile (UQ), Median (Med), TMM (edgeR), DESeq, Quantile (Q), RPKM, and RUVg

- Evaluation Metrics: Data alignment across samples, dynamic range preservation, and impact on differential expression results

The performance assessment focused on each method's ability to properly align data distributions across samples while preserving biological signals, particularly important for dimensionality reduction techniques like PCA [31] [10].

Comparative Performance Results

Table 3: Normalization Method Performance Comparison

| Normalization Method | Data Alignment | Dynamic Range | DE Result Quality | Sensitivity to Filtering |

|---|---|---|---|---|

| DESeq | Excellent | Excellent | High | Low |

| TMM (edgeR) | Excellent | Excellent | High | High |

| Median | Good | Good | Medium | High |

| Upper Quartile | Good | Good | Medium | Medium |

| Total Count | Poor | Poor | Low | Low |