Ensembl VEP Tutorial 2024: A Beginner's Guide to Variant Annotation for Genomic Research

Abstract

This comprehensive beginner's guide to the Ensembl Variant Effect Predictor (VEP) walks researchers through the foundational concepts, practical application, troubleshooting, and validation of variant annotations. You'll learn what VEP is and why it's essential for genomic analysis, how to run basic and advanced analyses with real-world examples, solve common errors, and ensure your results are reliable and interpretable for applications in biomedical research and drug development.

What is Ensembl VEP? A Beginner's Guide to Genomic Variant Annotation

Variant annotation is the process of identifying and characterizing genetic variants (e.g., SNVs, indels) from sequenced genomes to determine their biological and clinical significance. It is a foundational step in genomic analysis, translating raw variant calls into actionable insights. Within the context of a broader thesis on an Ensembl VEP (Variant Effect Predictor) tutorial for beginners, mastering variant annotation is the critical bridge between data generation and hypothesis-driven research in genomics.

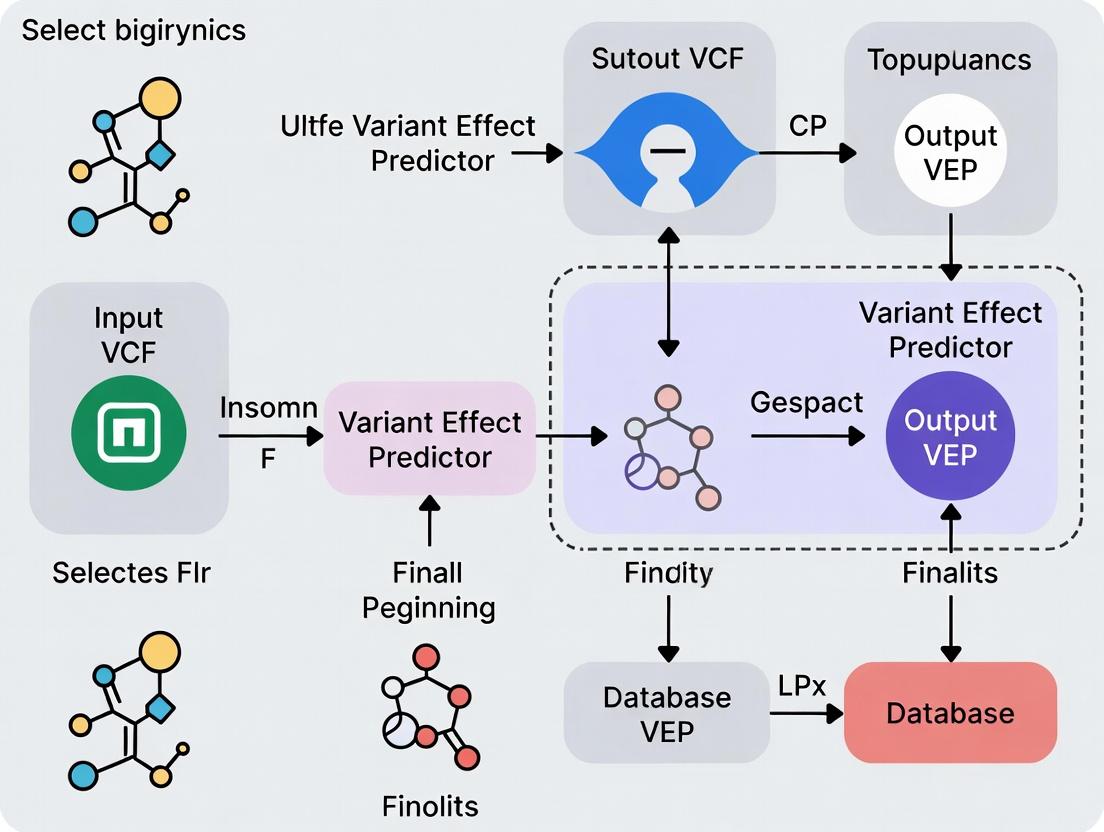

The Core Annotation Workflow

The standard workflow transforms a VCF (Variant Call Format) file into an annotated list of variants with predicted consequences.

Diagram: Variant Annotation Pipeline

Quantitative Impact of Variant Types

Annotation categorizes variants by their predicted functional impact. The following table summarizes common consequences, ordered by typical severity.

| Impact Category | Example Consequences | Typical Proportion in WGS* | Presumed Impact |

|---|---|---|---|

| High | Stop gain, Frameshift, Splice donor/acceptor | ~1-2% of coding variants | Disruptive; often loss of function |

| Moderate | Missense, In-frame indel | ~60-70% of coding variants | Variable, needs assessment |

| Low | Synonymous | ~30-40% of coding variants | Often benign |

| Modifier | Non-coding, intergenic | >98% of all variants | Context-dependent |

Note: Proportions are approximate and vary by population and sequencing depth. WGS=Whole Genome Sequencing.

Protocol: Basic Variant Annotation Using Ensembl VEP (Command Line)

This protocol outlines the steps to perform basic annotation on a VCF file using the offline version of Ensembl VEP.

1. Prerequisite Setup

- Software: Install VEP following the official instructions (requires Perl).

- Data: Download the appropriate cache files for your reference genome (e.g., GRCh38).

- Input: A VCF file (`input.vcf`) containing your called variants.

2. Command Execution

Run VEP from your terminal against the GRCh38 cache, enabling any plugins you need and writing tab-separated (TSV) output to `annotated_variants.tsv`.
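
As a rough illustration of this step, the sketch below assembles an offline VEP command as a Python argument list. The file names follow this protocol; the selection of flags (`--cache`, `--offline`, `--assembly`, `--tab`, `--force_overwrite`, `--plugin`) is an assumption about a reasonable minimal setup rather than the exact command this protocol had in mind, and `build_vep_command` is a hypothetical helper.

```python
import shlex

def build_vep_command(input_vcf, output_tsv, assembly="GRCh38", plugins=()):
    """Assemble an offline VEP command line as a list of arguments."""
    cmd = [
        "vep",
        "--input_file", input_vcf,
        "--output_file", output_tsv,
        "--cache", "--offline",   # read annotations from the local cache only
        "--assembly", assembly,
        "--tab",                  # tab-separated output
        "--force_overwrite",      # replace an existing output file
    ]
    for plugin in plugins:        # each plugin gets its own --plugin flag
        cmd += ["--plugin", plugin]
    return cmd

cmd = build_vep_command("input.vcf", "annotated_variants.tsv")
print(shlex.join(cmd))
```

On a machine where VEP and its cache are installed, the list can be handed to `subprocess.run(cmd, check=True)`; plugin names and their arguments are left to the caller because they depend on which plugin data files are available locally.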

3. Output Interpretation

The annotated_variants.tsv file contains one row per variant-feature combination, so a variant that overlaps several transcripts appears on several rows, with one column for each requested field. Key columns include:

- Consequence: The sequence ontology term (e.g., missense_variant).

- IMPACT: Categorical prediction (HIGH, MODERATE, LOW, MODIFIER).

- CLIN_SIG: Clinical significance from public databases.

- AF: Global allele frequency from the 1000 Genomes Project; gnomAD frequencies are reported in separate columns (e.g., gnomADe_AF) when requested.

Pathway from Variant to Hypothesis

Annotation data feeds into downstream analytical pathways for disease research and drug target identification.

Diagram: Hypothesis Generation from Annotation Data

The Scientist's Toolkit: Key Research Reagent Solutions

| Tool / Resource | Type | Primary Function in Annotation |

|---|---|---|

| Ensembl VEP | Software / Web Tool | Core annotation engine for predicting variant consequences on genes, transcripts, and protein sequence. |

| VCF File | Data Format | Standard container for raw genetic variants; the primary input for annotation pipelines. |

| Reference Genome (GRCh38) | Database | The coordinate system and reference sequence against which variants are defined and mapped. |

| LOFTEE | Plugin / Algorithm | Provides loss-of-function (LoF) transcript effect predictions and filters for high-confidence LoF variants. |

| CADD Scores | Plugin / Algorithm | Integrates diverse annotations into a single metric (C-score) for variant deleteriousness. |

| gnomAD | Database | Provides population allele frequencies, a critical filter for removing common, likely benign variants. |

| ClinVar | Database | A public archive of relationships between variants and human health (clinical significance). |

| PharmGKB | Database | Curates information about the impact of genetic variation on drug response (pharmacogenomics). |

Conclusion: For researchers beginning with Ensembl VEP, understanding variant annotation is not merely a technical step but a crucial interpretive process. It enables the prioritization of millions of genomic variants, guiding subsequent functional experiments, statistical analyses in cohort studies, and the identification of novel therapeutic targets in drug development.

Core Functionality

Ensembl Variant Effect Predictor (VEP) is a powerful tool that determines the functional consequences of genomic variants. It annotates variants with their predicted effect on genes, transcripts, and protein sequences, as well as with known information from public databases. For a beginner's research thesis, VEP is the critical first step in moving from a list of genomic coordinates to biological interpretation.

Inputs and Outputs: Structured Data

VEP accepts multiple input formats and produces comprehensive annotation output.

Table 1: Primary VEP Input Formats

| Format | Description | Key Fields Required |

|---|---|---|

| VCF | Variant Call Format (standard) | CHROM, POS, ID, REF, ALT |

| Ensembl default | Simple whitespace-separated | Chr, Start, End, Allele (REF/ALT), Strand; optional identifier |

| HGVS | Human Genome Variation Society notation | Variant descriptor (e.g., 7:g.140453136A>T) |

| Variant identifiers | Database IDs (e.g., rsIDs) | rs699 |

Table 2: Key VEP Output Fields

| Output Field Category | Example Data Points | Typical Count/Value Range |

|---|---|---|

| Consequence Type | missense_variant, stop_gained, splice_region_variant | 1-5 per transcript |

| Impact Rating | HIGH, MODERATE, LOW, MODIFIER | 1 primary rating |

| Affected Genes & Transcripts | ENSG00000135744, ENST00000366667 | 1-10+ transcripts |

| Frequency Data (gnomAD) | Allele frequency: 0.0012 | 0.0 - 1.0 |

| Clinical Significance (ClinVar) | Pathogenic, Benign, Conflicting interpretations | 1+ annotation |

| Protein Information | Amino acid change: p.Arg150Trp, SIFT/PolyPhen scores | Scores: 0.0 - 1.0 |

Application Notes & Protocols

Protocol 1: Basic Command-Line Annotation of a VCF File

Objective: Annotate a human VCF file with default VEP settings and cache.

Methodology:

- Prerequisite: Install VEP via Docker, Conda, or from GitHub. Download the human cache file (e.g., for GRCh38).

- Command: Run `vep` on the input VCF with the cache enabled and VCF output selected, writing to `annotated_variants.vcf`.

- Output Analysis: Open `annotated_variants.vcf`. The annotations are added to the `INFO` column as `CSQ` fields. Parse these using a script or view in a genome browser.
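
One way to parse the `CSQ` annotations with a script is sketched below. It assumes the VCF header contains the `##INFO=<ID=CSQ,...Format: ...>` line that VEP writes, listing the pipe-separated sub-field names; the helper names and the sample header and record are made up for illustration.

```python
import re

def csq_fields(header_lines):
    """Read the CSQ sub-field names from the VCF header written by VEP."""
    for line in header_lines:
        if line.startswith("##INFO=<ID=CSQ"):
            match = re.search(r'Format: ([^">]+)', line)
            if match:
                return match.group(1).strip().split("|")
    raise ValueError("No CSQ header line found")

def parse_csq(info, fields):
    """Return one dict per annotation (usually one per transcript) in an INFO string."""
    for entry in info.split(";"):
        if entry.startswith("CSQ="):
            return [dict(zip(fields, ann.split("|")))
                    for ann in entry[4:].split(",")]
    return []

# Illustrative header and INFO column; real files list many more sub-fields.
header = ['##INFO=<ID=CSQ,Number=.,Type=String,Description="Consequence '
          'annotations from Ensembl VEP. Format: Allele|Consequence|IMPACT|SYMBOL">']
info = "DP=50;CSQ=T|missense_variant|MODERATE|GENE1,T|intron_variant|MODIFIER|GENE2"

fields = csq_fields(header)
annotations = parse_csq(info, fields)
for ann in annotations:
    print(ann["SYMBOL"], ann["Consequence"], ann["IMPACT"])
```

Reading the field order from the header rather than hard-coding it keeps the parser valid when different VEP options change which sub-fields are written.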

Protocol 2: Advanced Filtering for Rare, Deleterious Missense Variants

Objective: Identify rare, potentially damaging missense variants from exome sequencing data.

Methodology:

- Run VEP with Specific Parameters: Include frequency (gnomAD) and protein prediction (SIFT, PolyPhen) data.

- Post-VEP Filtering (e.g., using `awk`): Isolate variants where:

  - Consequence contains `missense_variant`.

  - `gnomADe_AF` < 0.01 (or is absent).

  - `SIFT` prediction is 'deleterious' AND `PolyPhen` prediction is 'probably_damaging'.
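
The filtering criteria above can be sketched in Python instead of `awk`. The example assumes each output row has already been loaded as a dictionary keyed by column name, that predictions look like `deleterious(0.01)`, and that missing values are written as `-`; the function names and sample rows are hypothetical.

```python
import re

def prediction_label(value):
    """Strip the score from strings such as 'deleterious(0.01)'."""
    return re.sub(r"\(.*\)$", "", value)

def is_rare_damaging_missense(row, max_af=0.01):
    """Apply the three criteria: missense, rare or absent in gnomAD, damaging by both tools."""
    if "missense_variant" not in row["Consequence"].split(","):
        return False
    af = row.get("gnomADe_AF", "-")
    if af not in ("-", "") and float(af) >= max_af:
        return False
    # Exact label match: 'deleterious_low_confidence' is deliberately not accepted.
    return (prediction_label(row.get("SIFT", "-")) == "deleterious"
            and prediction_label(row.get("PolyPhen", "-")) == "probably_damaging")

# Made-up rows for illustration only.
rows = [
    {"Consequence": "missense_variant", "gnomADe_AF": "0.0004",
     "SIFT": "deleterious(0.01)", "PolyPhen": "probably_damaging(0.998)"},
    {"Consequence": "missense_variant", "gnomADe_AF": "0.12",
     "SIFT": "deleterious(0.02)", "PolyPhen": "probably_damaging(0.95)"},
    {"Consequence": "synonymous_variant", "gnomADe_AF": "-",
     "SIFT": "-", "PolyPhen": "-"},
    {"Consequence": "missense_variant,splice_region_variant", "gnomADe_AF": "-",
     "SIFT": "deleterious(0)", "PolyPhen": "probably_damaging(1)"},
]
kept = [r for r in rows if is_rare_damaging_missense(r)]
print(len(kept), "of", len(rows), "rows pass the filter")
```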

Protocol 3: Custom Annotation with a Local Database

Objective: Add internal lab-specific variant observations to VEP annotations.

Methodology:

- Prepare Custom Database: Format lab data as a tab-separated (TSV) file with columns: `#CHROM`, `POS`, `ID`, `REF`, `ALT`, and custom fields (e.g., `Internal_AF`).

- Create a Minimal VCF: Convert the TSV to a simple VCF that keeps the coordinate and allele data and carries the custom fields in the INFO column, then compress it with `bgzip` and index it with `tabix`.

- Run VEP with the `--custom` flag, pointing it at the indexed VCF and naming the INFO fields to report.

- The custom allele frequency will appear in the output alongside public annotations.
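
The TSV-to-VCF conversion step might look like the sketch below. It assumes the lab TSV has exactly the header named in this protocol and that the custom value should travel in the VCF INFO column so VEP can report it; `tsv_to_vcf`, the INFO header wording, and the sample record are illustrative assumptions.

```python
import csv
import io

def tsv_to_vcf(tsv_text, info_keys=("Internal_AF",)):
    """Convert a lab TSV (#CHROM, POS, ID, REF, ALT, custom fields) to minimal VCF text."""
    reader = csv.DictReader(io.StringIO(tsv_text), delimiter="\t")
    lines = ["##fileformat=VCFv4.2"]
    for key in info_keys:
        lines.append(f'##INFO=<ID={key},Number=A,Type=Float,'
                     f'Description="Lab-specific {key}">')
    lines.append("#CHROM\tPOS\tID\tREF\tALT\tQUAL\tFILTER\tINFO")
    for row in reader:
        info = ";".join(f"{key}={row[key]}" for key in info_keys)
        lines.append("\t".join([row["#CHROM"], row["POS"], row["ID"],
                                row["REF"], row["ALT"], ".", ".", info]))
    return "\n".join(lines) + "\n"

# Made-up record for illustration only.
tsv = "#CHROM\tPOS\tID\tREF\tALT\tInternal_AF\n1\t12345\tlab1\tA\tG\t0.02\n"
vcf = tsv_to_vcf(tsv)
print(vcf)
```

The resulting file still needs to be position-sorted, compressed with `bgzip`, and indexed with `tabix` before VEP can use it. The argument syntax of `--custom` has changed between VEP releases, so the exact form should be taken from the documentation for the installed version.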

Visualizations

Title: Ensembl VEP High-Level Data Flow Diagram

Title: Beginner's VEP Analysis Workflow Protocol

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for a VEP-Based Analysis Project

| Item | Function in the Experiment/Process |

|---|---|

| Reference Genome Assembly (FASTA) | Provides the coordinate system and reference sequence against which variants are called and annotated (e.g., GRCh38.p14). |

| VEP Cache Files | Local copies of Ensembl databases enabling rapid, offline annotation of variants for a specific genome assembly and release. |

| High-Quality Input VCF | The primary "reagent"; a file containing variant calls from a sequencing pipeline (e.g., GATK, BCFtools). Quality dictates results. |

| Compute Environment | Sufficient CPU and memory (≥8GB RAM) to run VEP, either on a local server, high-performance cluster (HPC), or cloud instance. |

| Annotation Filtering Scripts | Custom code (e.g., in Python, R, or Bash) to parse and filter the rich VEP output based on study-specific criteria. |

| Validation Platform Data | Independent method (e.g., Sanger sequencing, orthogonal NGS panel) to experimentally confirm prioritized variants post-VEP analysis. |

Application Notes

For a beginner working with Ensembl's Variant Effect Predictor (VEP), three core terms form the foundation for interpreting genetic variation data. Transcripts are the RNA molecules produced from a DNA sequence; a single gene can give rise to several transcripts through alternative splicing. VEP analyzes variants against a reference set of transcripts to determine their biological context. Consequences are the predicted effects of a genetic variant on a transcript (e.g., missense, frameshift, splice donor). VEP uses the Sequence Ontology (SO) to assign standardized consequence terms. Impact scores are categorical or numerical metrics that rank the predicted severity of a variant's consequence, such as SIFT and PolyPhen scores for missense variants, or the Combined Annotation Dependent Depletion (CADD) score, which integrates multiple annotations into a single metric.

VEP outputs are critical for prioritizing variants in research and drug development pipelines, from identifying pathogenic drivers in oncology to assessing the potential impact of pharmacogenomic markers.

Data Presentation

Table 1: Standard Variant Consequence Categories and Impact Scores

| Consequence (SO Term) | Description | Typical Impact Category | Illustrative CADD Phred Range |

|---|---|---|---|

| Transcript Ablation | Deleted region includes a transcript feature | HIGH | > 30 |

| Frameshift Variant | Insertion/deletion causes a shift in the reading frame | HIGH | 25 - 40 |

| Stop Gained | Variant leads to a premature stop codon | HIGH | 30 - 50 |

| Missense Variant | Single nucleotide change alters the amino acid | MODERATE | 15 - 35 |

| Splice Region Variant | Variant occurs within splice site region | LOW | 5 - 20 |

| Synonymous Variant | Single nucleotide change does not alter the amino acid | LOW | 0 - 10 |

| 3' UTR Variant | Variant occurs in the 3' untranslated region | MODIFIER | 0 - 5 |

Table 2: Key Impact Prediction Algorithms Integrated with VEP

| Algorithm | Predicts On | Score Type | Interpretation |

|---|---|---|---|

| SIFT | Missense variants | Probability (0.0 - 1.0) | < 0.05 = Deleterious |

| PolyPhen-2 | Missense variants | Probability (0.0 - 1.0) | > 0.908 = Probably Damaging |

| CADD | All variant types | Phred-scaled score (1 - 99) | > 20 = Top 1% most deleterious |

| REVEL | Missense variants | Score (0.0 - 1.0) | Higher = more likely pathogenic; > 0.75 is a commonly used stringent cutoff |
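
To show how the thresholds in this table could be applied to annotated rows, here is a small sketch. It assumes SIFT and PolyPhen values arrive as `label(score)` strings, that the CADD plugin column is named `CADD_PHRED`, and that missing values are written as `-`; the helper names and the sample row are hypothetical.

```python
import re

def score_of(value):
    """Return the number in 'label(score)' or in a plain numeric string, else None."""
    match = re.search(r"\(([0-9.]+)\)", value)
    if match:
        return float(match.group(1))
    try:
        return float(value)
    except ValueError:
        return None

def threshold_flags(row):
    """Apply the SIFT, PolyPhen-2 and CADD cutoffs from the table to one row."""
    sift = score_of(row.get("SIFT", "-"))
    polyphen = score_of(row.get("PolyPhen", "-"))
    cadd = score_of(row.get("CADD_PHRED", "-"))
    return {
        "sift_deleterious": sift is not None and sift < 0.05,
        "polyphen_probably_damaging": polyphen is not None and polyphen > 0.908,
        "cadd_top_1_percent": cadd is not None and cadd > 20,
    }

# Made-up row for illustration only.
row = {"SIFT": "deleterious(0.01)", "PolyPhen": "possibly_damaging(0.62)",
       "CADD_PHRED": "23.4"}
flags = threshold_flags(row)
print(flags)
```

Keeping the flags separate, rather than collapsing them into one verdict, makes it easy to see where the predictors disagree.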

Experimental Protocols

Protocol 1: Basic VEP Analysis for Variant Prioritization

Objective: To annotate a set of genetic variants (in VCF format) with transcript information, consequences, and impact scores using the Ensembl VEP.

Materials:

- Input file: Variant Call Format (.vcf) file containing genomic coordinates and alleles.

- Ensembl VEP installed locally or access to the web tool (https://useast.ensembl.org/Tools/VEP).

- Reference genome: GRCh38.p14 (or appropriate version).

- Cache files for the chosen genome assembly.

Methodology:

- Data Preparation: Ensure your VCF file is correctly formatted. VEP reads plain or gzip-compressed VCF; compressing with `bgzip` and indexing with `tabix` is optional for the input file but convenient for downstream tools.

- Command Execution (Local): Run VEP with core parameters.

- Output Parsing: The tab-delimited output (`output_annotations.tsv`) will contain columns for: Uploaded variation, Location, Gene, Feature (Transcript), Consequence, cDNA_position, Amino_acids, SIFT, PolyPhen, and CADD scores.

- Filtering & Prioritization: Filter results using command-line tools (e.g., `awk`) or scripting (Python/R). For example, select missense variants with a CADD Phred score above 25.
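
A minimal Python sketch of the CADD filter follows. It assumes VEP's tab output, where metadata lines start with `##` and the column header line starts with a single `#`, and it assumes the CADD score sits in a column named `CADD_PHRED`; the function names and sample text are illustrative.

```python
import csv
import io

def read_vep_tab(text):
    """Parse tab output: skip '##' metadata and use the '#'-prefixed header row."""
    lines = [l for l in text.splitlines() if l and not l.startswith("##")]
    lines[0] = lines[0].lstrip("#")
    return list(csv.DictReader(io.StringIO("\n".join(lines)), delimiter="\t"))

def missense_with_high_cadd(rows, column="CADD_PHRED", threshold=25.0):
    """Keep missense rows whose CADD score exceeds the threshold."""
    kept = []
    for row in rows:
        score = row.get(column, "-")
        if "missense_variant" in row["Consequence"] and score not in ("-", "", None):
            if float(score) > threshold:
                kept.append(row)
    return kept

# Made-up output for illustration only.
sample = (
    "## ENSEMBL VARIANT EFFECT PREDICTOR\n"
    "#Uploaded_variation\tLocation\tConsequence\tCADD_PHRED\n"
    "var1\t1:1000\tmissense_variant\t28.1\n"
    "var2\t1:2000\tmissense_variant\t12.3\n"
    "var3\t1:3000\tsynonymous_variant\t-\n"
)
rows = read_vep_tab(sample)
hits = missense_with_high_cadd(rows)
print([r["Uploaded_variation"] for r in hits])
```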

Protocol 2: Integrating VEP Output with Clinical/Drug Databases

Objective: To cross-reference VEP-annotated variants with known clinical significance and drug response data.

Materials:

- VEP-annotated output file from Protocol 1.

- Local or API access to curated databases: ClinVar, COSMIC, PharmGKB.

- Scripting environment (Python recommended).

Methodology:

- Data Extraction: From the VEP output, extract key identifiers: Genomic location (GRCh38), HGVS cDNA notation, and Gene symbol.

- Database Query:

  - ClinVar: Query the `clinvar` database through the NCBI E-utilities API, or use a local ClinVar data dump, to retrieve clinical significance (e.g., Pathogenic, Benign).

  - COSMIC: Use the COSMIC API (licensed) to check if the variant is a known somatic mutation in cancer.

  - PharmGKB: Use the PharmGKB API or data files to annotate pharmacogenomic associations (e.g., drug metabolism, efficacy).

- Integration: Create a unified table combining VEP consequences, impact scores, and clinical/pharmacogenomic annotations. This integrated view is essential for target validation and safety assessment in drug development.
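
The integration step can be sketched as a simple left join. The example assumes the ClinVar and PharmGKB information has already been retrieved into in-memory lookup tables, keyed by location plus allele and by gene symbol respectively; the keys, the `integrate` helper, and all values shown are placeholders rather than real database records.

```python
def integrate(vep_rows, clinvar, pharmgkb):
    """Left-join VEP rows with clinical and pharmacogenomic lookup tables."""
    merged = []
    for row in vep_rows:
        key = (row["Location"], row["Allele"])
        merged.append({
            **row,
            "clinvar_significance": clinvar.get(key, "not_reported"),
            "pharmgkb_drugs": pharmgkb.get(row["SYMBOL"], []),
        })
    return merged

# Placeholder inputs, not real records.
vep_rows = [
    {"Location": "1:1000", "Allele": "T", "SYMBOL": "GENE1",
     "Consequence": "missense_variant"},
    {"Location": "2:5000", "Allele": "A", "SYMBOL": "GENE9",
     "Consequence": "stop_gained"},
]
clinvar = {("1:1000", "T"): "Pathogenic"}
pharmgkb = {"GENE1": ["drug_x"]}

table = integrate(vep_rows, clinvar, pharmgkb)
for entry in table:
    print(entry["SYMBOL"], entry["clinvar_significance"], entry["pharmgkb_drugs"])
```

Every VEP row is kept even when no database hit exists, so unreported variants stay visible in the unified table instead of silently dropping out.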

Visualizations

Title: Basic Ensembl VEP Annotation Workflow

Title: Decision Logic for Variant Prioritization

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for VEP-based Analysis

| Item | Function in Analysis |

|---|---|

| GRCh38/hg38 Reference Genome FASTA | The definitive genomic coordinate system against which all variants are mapped and annotated. |

| Ensembl/GENCODE Transcriptome | The comprehensive set of transcript models (including MANE Select) used by VEP to determine variant consequences. |

| VEP Cache Files (Species-Specific) | Local data stores of pre-processed annotations (e.g., consequences, frequencies) enabling fast offline analysis. |

| SIFT, PolyPhen, CADD Prediction Models | Pre-computed score databases or algorithms that VEP queries to assign functional impact predictions. |

| ClinVar Database Download | A curated archive of human genetic variants and their relationships to clinical phenotypes, for cross-referencing. |

| PharmGKB Dataset | A resource detailing the impact of genetic variation on drug response, crucial for pharmacogenomics. |

| COSMIC Catalogue (Licensed) | The world's largest resource on somatic mutations in human cancer, essential for oncology target discovery. |

| High-Performance Computing (HPC) Cluster or Cloud Instance | Computational environment for processing large-scale genomic datasets (e.g., whole genomes) with VEP. |

Within the context of a broader thesis on Ensembl VEP for beginner research, this guide provides a practical comparison of variant annotation tools. Ensembl VEP (Variant Effect Predictor) remains a cornerstone in genomic analysis pipelines. This document details its core strengths, comparative positioning against alternatives, and protocols for its effective application in research and drug development.

Variant annotation is the process of predicting the functional impact of genetic variants (e.g., SNPs, indels) using reference genomes, transcript databases, and external data sources. The choice of tool depends on the specific research question, required annotations, and computational environment.

Quantitative Comparison of Major Tools

Table 1: Core Feature Comparison of Major Variant Annotation Tools

| Feature | Ensembl VEP | ANNOVAR | SnpEff | VEP-SpliceAI Plugin |

|---|---|---|---|---|

| Primary Method | Perl/Perl API, REST, web | Perl command line | Java | Plugin for VEP |

| Speed | Moderate to Fast | Very Fast | Fast | Slower (DL model) |

| Offline Operation | Yes (Cache/DB) | Yes | Yes | Yes (with model) |

| Cost | Free, Open Source | Free for academic, fee for commercial | Free, Open Source | Free, Open Source |

| Key Annotation Sources | Ensembl, GENCODE, RefSeq, dbNSFP, ClinVar, COSMIC | Ensembl, UCSC, RefSeq, dbNSFP, ClinVar | Ensembl, UCSC, RefSeq | Splice site disruption score |

| Custom Data Integration | Excellent (Custom annotations, plugins) | Good | Moderate | N/A (is a plugin) |

| VCF I/O | Excellent | Excellent | Excellent | Requires VEP |

| Splicing Prediction | Basic (canonical sites); advanced via plugins (SpliceAI, MaxEntScan) | Basic | Basic | Advanced (neural network) |

| Beginner-Friendliness | High (Web tool, clear docs) | Moderate (command-line focused) | Moderate | Low (requires VEP setup) |

| Typical Use Case | Comprehensive annotation in clinical & research pipelines | Rapid batch annotation in research | Efficient annotation in genomic studies | Prioritizing non-coding splice variants |

Table 2: Performance Metrics (Illustrative, on 10,000 Variants)

| Tool | Runtime (Approx.) | CPU Cores Used | Memory (GB) | Output Complexity |

|---|---|---|---|---|

| Ensembl VEP (offline, cache) | ~2-5 minutes | 1-4 | 2-4 | High (Highly configurable) |

| ANNOVAR | ~1-3 minutes | 1 | < 2 | Moderate |

| SnpEff | ~1-2 minutes | 1 | 1-2 | Moderate |

| VEP + SpliceAI Plugin | ~10-15 minutes | 1 | 4-6 | High (with delta scores) |

When and Why to Choose Ensembl VEP

- For Beginners & Reproducible Pipelines: The well-documented web interface, Docker image, and consistent output format lower the barrier to entry and aid in creating standardized protocols.

- Comprehensive Annotation Across Gene Sets: VEP annotates against Ensembl/GENCODE transcripts by default and can also use RefSeq transcripts (via the `--refseq` or `--merged` cache options), which makes it easier to spot annotations that depend on a single transcript source.

- Extensive Plugin Ecosystem: For specialized needs (splicing, conservation, pathogenicity), plugins like SpliceAI, dbNSFP, and CADD can be seamlessly integrated without altering core code.

- Clinical and Regulatory Context: Strong integration with ClinVar, COSMIC, and frequency databases (gnomAD) makes it suitable for clinical variant interpretation and drug target safety assessment.

- Scalability and Flexibility: Supports everything from single variants via the web interface to population-scale VCFs via command line in high-performance computing (HPC) environments.

Application Note: Protocol for Basic VEP Analysis

Protocol 1: Annotating a VCF File Using Offline VEP (Command Line)

Objective: To annotate a germline or somatic variant call file (VCF) with functional consequences, frequencies, and clinical significance.

Research Reagent Solutions & Essential Materials:

| Item | Function in Protocol |

|---|---|

| Input VCF File | Contains the raw genetic variants (chromosome, position, ref, alt) to be annotated. |

| Ensembl VEP Software | Core annotation engine. Installed locally or via Docker. |

| VEP Cache Files (e.g., Homo_sapiens_GRCh38) | Local database of pre-processed reference genome, gene models, and external data for rapid offline analysis. |

| Reference Genome (FASTA) | Matches the cache version (e.g., GRCh38). Required for certain checks and output. |

| High-Performance Compute (HPC) Node or Local Server | Recommended for processing large VCF files in a reasonable time. |

| Plugin Data Files (e.g., SpliceAI, dbNSFP) | Additional data sources for specialized annotation. |

Methodology:

- Prerequisite Setup:

  - Install VEP via GitHub (`https://github.com/Ensembl/ensembl-vep`) or Docker (`docker pull ensemblorg/ensembl-vep`).

  - Download the appropriate cache and FASTA files matching your genome assembly (GRCh37 or GRCh38) using the VEP `INSTALL.pl` script.

- Basic Command Execution: Run `vep` in offline cache mode on the input VCF and request VCF output, writing to `annotated_variants.vcf`.

- Output Interpretation:

  - The primary output (`annotated_variants.vcf`) will contain all original VCF fields plus new INFO fields added by VEP (e.g., `CSQ`). Use `--tab` for a simpler tab-delimited format.

  - Key annotated data includes: Consequence terms (e.g., missense_variant), impacted gene/transcript, amino acid change, gnomAD allele frequency, and ClinVar clinical significance.

Workflow Diagram:

Title: Basic Offline VEP Annotation Workflow

Application Note: Protocol for Advanced Splice Variant Analysis

Protocol 2: Integrating SpliceAI with VEP for Splice Disruption Prediction

Objective: To prioritize non-coding and coding variants based on their likelihood of disrupting mRNA splicing using the SpliceAI plugin for VEP.

Research Reagent Solutions & Essential Materials:

| Item | Function in Protocol |

|---|---|

| SpliceAI Plugin for VEP | A VEP plugin that retrieves pre-computed SpliceAI delta scores for splice donor/acceptor gain/loss. |

| SpliceAI Pre-computed Annotations (VCF files) | Large VCF files containing pre-calculated SpliceAI scores for all possible SNVs/indels in the genome. |

| High-Memory Compute Node | SpliceAI annotation is memory-intensive; ≥ 8GB RAM recommended. |

| Annotated VCF from Protocol 1 | Can be used as input for a plugin-only re-annotation run. |

Methodology:

- Data Preparation:

  - Download the SpliceAI plugin from GitHub and the pre-computed annotation files (by genome assembly) as per VEP plugin documentation.

- Command Execution (can be added to the Protocol 1 command): Add the `--plugin SpliceAI` option, passing the paths to the pre-computed SNV and indel score files as plugin arguments.

- Analysis and Prioritization:

  - In the output, filter for variants where the maximum of the four delta scores reported under `SpliceAI_pred` is > 0.2 (high recall) or > 0.5 (recommended cutoff).

  - Correlate high-scoring splice variants with known pathogenic ClinVar classifications (`CLIN_SIG`) to validate predictions.
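
The delta-score filter can be sketched as follows. The example assumes tab-format output in which `SpliceAI_pred` is a single pipe-separated string ordered as gene symbol, four delta scores (acceptor gain, acceptor loss, donor gain, donor loss), then four delta positions; that layout should be checked against the plugin documentation for the installed version. The helper names and the sample value are hypothetical.

```python
def max_delta_score(spliceai_pred):
    """Return the largest of the four delta scores, or None when unannotated."""
    if spliceai_pred in ("", "-", None):
        return None
    parts = spliceai_pred.split("|")
    scores = [float(x) for x in parts[1:5] if x not in ("", ".")]
    return max(scores) if scores else None

def splice_tier(score):
    """Bucket a maximum delta score using the 0.2 and 0.5 cutoffs from this protocol."""
    if score is None:
        return "unscored"
    if score > 0.5:
        return "above_0.5"
    if score > 0.2:
        return "above_0.2"
    return "below_0.2"

# Made-up value for illustration only.
example = "GENE1|0.01|0.00|0.62|0.03|-12|5|2|-30"
score = max_delta_score(example)
print(score, splice_tier(score))
```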

SpliceAI Analysis Pathway Diagram:

Title: Splice Variant Analysis with VEP & SpliceAI

Decision Framework for Tool Selection

Use Ensembl VEP when:

- You are a beginner or require a standardized, well-supported pipeline.

- Your analysis requires annotation against more than one gene set (Ensembl/GENCODE and RefSeq).

- You need to incorporate specialized predictions via plugins.

- Your work has a clinical or translational focus.

Consider other tools when:

- ANNOVAR: Annotation speed is the absolute priority for a large batch job and core annotations suffice.

- SnpEff: You need a lightweight, fast Java-based solution for a defined research project without extensive clinical data.

- Specialized Tools (e.g., standalone SpliceAI): You are conducting deep, focused analysis on a specific mechanism (like splicing) and require the most advanced model configurations.

Within the broader thesis of utilizing the Ensembl Variant Effect Predictor (VEP) for beginner genomic research, selecting the appropriate access method is a foundational step. VEP is a critical tool for researchers, scientists, and drug development professionals, enabling the annotation and prioritization of genomic variants. This application note details the three primary access modalities, their respective use cases, and provides protocols for initial setup and use.

Access Method Comparison

Table 1: Comparison of Ensembl VEP Access Methods

| Feature | Web Tool | REST API | Command Line (Perl) |

|---|---|---|---|

| Primary Audience | Beginners, casual users | Programmers, application developers | Bioinformaticians, high-throughput analysis |

| Ease of Setup | Immediate (browser) | Requires API client setup | Requires local installation & dependencies |

| Input Volume | Limited (single variants/small files) | Medium (batch queries via scripts) | High (whole genome VCFs) |

| Automation Potential | None | High | Very High |

| Customization | Basic (pre-set parameters) | High (via request parameters) | Very High (full parameter control) |

| Throughput Speed | Slow | Medium | Fast (local resources dependent) |

| Best For | Quick lookups, validation | Integrating VEP into pipelines/web apps | Large-scale, reproducible analysis |

Protocols & Application Notes

Protocol 1: Accessing VEP via the Web Tool

Methodology: This protocol is designed for researchers requiring rapid annotation of a few variants without software installation.

- Navigate to the Ensembl VEP website (e.g., `https://www.ensembl.org/Tools/VEP`).

- Input data via the text box (e.g., the VCF-style line `9 133748283 . C T`) or upload a small file in VCF, HGVS, or other supported formats.

- Configure basic parameters using the web form (e.g., select genome assembly GRCh38, choose transcript database).

- Click "Run" to submit the job. Results are displayed in an interactive web page with filtering and export options (CSV, VCF).

Protocol 2: Accessing VEP via the REST API

Methodology: This protocol enables programmatic access for integrating VEP functionality into custom scripts or applications.

- Setup: Ensure a tool for making HTTP requests is available (e.g., the `curl` command-line utility or the Python `requests` library).

- Endpoint Construction: Use the base URL `https://rest.ensembl.org/vep/`. Append the species, the input type, and the variant; for a region query this takes the form `human/region/9:133748283-133748283:1/T` (chromosome, start-end, strand, then the alternative allele).

- Making a Request: Execute a `GET` request with a `Content-Type: application/json` header to receive JSON output, for example with `curl`.

- Batch Queries: For multiple variants, use a `POST` request, sending input data as a JSON payload.
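
The request construction can be sketched with Python's standard library. The example uses the region form of the endpoint (`vep/:species/region/:region/:allele` for `GET`, and `vep/:species/region` with a `variants` list for `POST`); the helper names are hypothetical, and the code only builds the requests, since sending them needs network access.

```python
import json
import urllib.request

SERVER = "https://rest.ensembl.org"

def region_url(chrom, pos, alt, species="human"):
    """URL for a single-nucleotide variant on the forward strand."""
    return f"{SERVER}/vep/{species}/region/{chrom}:{pos}-{pos}:1/{alt}"

def batch_request(variants, species="human"):
    """Build (but do not send) a POST request for VCF-style variant strings."""
    body = json.dumps({"variants": variants}).encode("utf-8")
    return urllib.request.Request(
        f"{SERVER}/vep/{species}/region",
        data=body,
        headers={"Content-Type": "application/json", "Accept": "application/json"},
        method="POST",
    )

def fetch_json(request_or_url):
    """Send a request and decode the JSON reply; requires network access."""
    if isinstance(request_or_url, str):
        request_or_url = urllib.request.Request(
            request_or_url, headers={"Content-Type": "application/json"})
    with urllib.request.urlopen(request_or_url, timeout=30) as response:
        return json.load(response)

url = region_url("9", 133748283, "T")
req = batch_request(["9 133748283 . C T . . ."])
print(url)
print(req.get_method(), req.full_url)
```

Calling `fetch_json(url)` or `fetch_json(req)` would perform the actual query; the public server applies rate limits, so large batches are usually better served by the command-line tool.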

Protocol 3: Accessing VEP via the Command Line

Methodology: This protocol is for local, high-performance annotation of large variant datasets, offering maximum flexibility.

- Installation: Install VEP and its cache databases locally using instructions from the Ensembl GitHub repository. This typically involves cloning the repository and running an installer script to download cache files.

- Basic Execution: Run VEP from the terminal. A minimal command requires an input file and output specification.

- Advanced Configuration: Add numerous flags to customize analysis (e.g., `--plugin` for additional functionality, `--custom` for adding custom annotation tracks).

Visualizations

Diagram 1: Decision Workflow for Choosing a VEP Access Method

Diagram 2: Command Line VEP Data Processing Steps

The Scientist's Toolkit: VEP Research Reagent Solutions

Table 2: Essential Materials and Tools for VEP Analysis

| Item | Category | Function/Benefit |

|---|---|---|

| GRCh37/GRCh38 Genome Assembly | Reference Data | The baseline human genome coordinate system to which input variants must be aligned for accurate annotation. |

| VCF (Variant Call Format) File | Input Data | Standardized format containing variant positions, alleles, and quality scores; primary input for batch VEP analysis. |

| LOFTEE Plugin | Software Plugin | Flags loss-of-function variants as high-confidence or low-confidence, critical for disease and drug target research. |

| dbNSFP Database | Custom Annotation | Provides comprehensive pre-computed functional predictions (e.g., SIFT, PolyPhen) for deeper variant prioritization. |

| Conda/Bioconda | Environment Manager | Simplifies installation of VEP and all complex Perl/software dependencies in an isolated, reproducible environment. |

| High-Performance Computing (HPC) Cluster | Infrastructure | Enables parallel processing of whole-genome sequencing VCF files through the command-line VEP, drastically reducing runtime. |

| Jupyter Notebook / RStudio | Analysis Interface | Facilitates interactive exploration of VEP REST API results and downstream statistical analysis in Python or R. |

Article Body

This article, part of a broader thesis on Ensembl VEP tutorials for beginner research, provides a detailed breakdown of the Variant Effect Predictor (VEP) output for researchers, scientists, and drug development professionals. VEP annotates genetic variants with functional consequences, and interpreting its output is critical for genomic analysis.

The following table summarizes the most critical VEP output columns, their data types, and their significance in interpretation.

| Column Name | Data Type / Example | Primary Function in Analysis |

|---|---|---|

| Uploaded_variation | String: 1_123456_G/A | The original variant identifier from input. |

| Location | String: 1:123456 | Genomic coordinate (GRCh38/GRCh37). |

| Allele | String: A | The alternative allele from input. |

| Gene | String: ENSG00000123456 | Ensembl stable gene ID. |

| Feature | String: ENST00000567890 | Ensembl stable transcript ID. |

| Feature_type | String: Transcript | Type of feature (e.g., Transcript, RegulatoryFeature). |

| Consequence | String: missense_variant | Sequence Ontology (SO) term for the effect. |

| cDNA_position | String: 456/789 | Position in cDNA / cDNA length. |

| CDS_position | String: 345/567 | Position in coding sequence / CDS length. |

| Protein_position | String: 115/188 | Position in protein / protein length. |

| Amino_acids | String: E/D | Reference/alternative amino acids (for coding variants). |

| Codons | String: gag/gac | Affected codon sequence. |

| Existing_variation | String: rs699 | Known identifier from databases (e.g., dbSNP). |

| IMPACT | String: MODERATE | Pre-defined severity: HIGH, MODERATE, LOW, MODIFIER. |

| SYMBOL | String: MYH7 | Common gene symbol. |

| BIOTYPE | String: protein_coding | Transcript biotype. |

| CLIN_SIG | String: pathogenic | Clinical significance from ClinVar. |

| PolyPhen | String: probably_damaging(0.998) | Protein effect prediction (score). |

| SIFT | String: deleterious(0.01) | Protein effect prediction (tolerance score). |

| gnomAD_AF | Float: 0.00012 | Allele frequency in gnomAD population database. |

Experimental Protocol: Running and Interpreting VEP for a Candidate Variant List

Objective: To annotate a list of genetic variants from a sequencing study and prioritize them for functional validation.

Materials & Reagent Solutions:

- Input Variant File (VCF/TSV): List of genomic coordinates and alleles.

- Ensembl VEP Software: Installed locally via Docker/Perl or accessed via web tool/API.

- Reference Genome (FASTA): GRCh38.p14 or GRCh37.p13.

- VEP Cache Files: Offline database of pre-computed annotations for speed.

- High-Performance Computing (HPC) Cluster: For large-scale analysis.

Methodology:

- Input Preparation: Format your variants as a standard VCF file or a simple CHROM, POS, ID, REF, ALT TSV.

- VEP Execution (Command Line): Run `vep` on the prepared file with tab-delimited output so that each annotation lands in its own column.

- Output Parsing: Load the TSV output into analysis software (e.g., R, Python Pandas).

- Variant Prioritization:

  - Filter for high-impact consequences (e.g., stop_gained, splice_donor_variant).

  - Cross-reference with `CLIN_SIG` for pathogenic/likely pathogenic variants.

  - Filter by low population frequency (`gnomAD_AF < 0.01`).

  - Assess protein damage predictions (`PolyPhen` probably_damaging, `SIFT` deleterious).

- Validation Planning: Prioritized variants move to orthogonal validation via Sanger sequencing and functional assays.
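
One possible way to combine the four prioritization criteria is sketched below. Instead of applying them as successive hard filters, it counts how many criteria each row meets and ranks rows by that count, which is a substitution made here for illustration. Column names follow the output table in this article; the set of high-impact terms, the `priority_score` helper, and the sample rows are assumptions.

```python
HIGH_IMPACT_TERMS = {"stop_gained", "frameshift_variant",
                     "splice_donor_variant", "splice_acceptor_variant"}

def priority_score(row, max_af=0.01):
    """Count how many of the four prioritization criteria a row satisfies (0-4)."""
    score = 0
    if HIGH_IMPACT_TERMS & set(row["Consequence"].split(",")):
        score += 1
    if {"pathogenic", "likely_pathogenic"} & set(row.get("CLIN_SIG", "").split(",")):
        score += 1
    af = row.get("gnomAD_AF", "-")
    if af in ("-", "") or float(af) < max_af:   # absent counts as rare
        score += 1
    # Prefix match, so 'deleterious_low_confidence' also counts here.
    if (row.get("PolyPhen", "").startswith("probably_damaging")
            and row.get("SIFT", "").startswith("deleterious")):
        score += 1
    return score

# Made-up rows for illustration only.
rows = [
    {"Uploaded_variation": "b", "Consequence": "missense_variant", "CLIN_SIG": "-",
     "gnomAD_AF": "0.2", "PolyPhen": "probably_damaging(0.99)",
     "SIFT": "deleterious(0.02)"},
    {"Uploaded_variation": "a", "Consequence": "stop_gained", "CLIN_SIG": "pathogenic",
     "gnomAD_AF": "0.0001", "PolyPhen": "-", "SIFT": "-"},
]
ranked = sorted(rows, key=priority_score, reverse=True)
print([(r["Uploaded_variation"], priority_score(r)) for r in ranked])
```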

The Scientist's Toolkit: Key Research Reagents & Materials

| Item | Function in VEP-Related Research |

|---|---|

| High-Quality Genomic DNA Sample | Source material for sequencing to generate variant calls. |

| Whole Exome/Genome Sequencing Kit | For capturing and sequencing the target genomic regions. |

| GRCh38 Reference Genome (FASTA) | The coordinate system for mapping and variant calling. |

| Alignment Tool (e.g., BWA) | Aligns sequencing reads to the reference genome. |

| Variant Caller (e.g., GATK) | Identifies genomic variants from aligned reads. |

| VEP Cache (e.g., v110) | Local database for rapid offline annotation. |

| ClinVar Database | Provides curated clinical significance annotations. |

| gnomAD Database | Provides population allele frequency data for filtering. |

| SIFT & PolyPhen Algorithms | Provide in silico predictions of variant effect on protein function. |

Visualizing the VEP Analysis Workflow

Workflow: From Sequencing to Candidate Variants

Visualizing Variant Consequence Logic

Decision Logic for Determining Variant Consequence

How to Run Ensembl VEP: Step-by-Step Tutorial for Variant Analysis

1. Introduction

Within the broader context of a beginner's tutorial for the Ensembl Variant Effect Predictor (VEP), the preparation of a correctly formatted input file is a critical first step. VEP annotates genetic variants to predict their functional consequences. The Variant Call Format (VCF) is the primary and recommended input format. This Application Note details the current specifications, requirements, and validation protocols for preparing a VCF file for successful VEP analysis, tailored for researchers and drug development professionals.

2. VCF Specification & Core Requirements

The VCF file must conform to version 4.0 or later. The following table summarizes the mandatory and critical fields for VEP analysis.

Table 1: Mandatory VCF Columns for VEP Input

| Column Number | Column Header | Description | VEP Requirement & Example |

|---|---|---|---|

| 1 | #CHROM | Chromosome name. | Ensembl-style naming without the 'chr' prefix is recommended (e.g., "1", "X", "MT"). Names must match those used by the cache or database. |

| 2 | POS | Reference position. | 1-based integer position of the variant on the given chromosome. |

| 3 | ID | Variant identifier. | Optional. Can be a dbSNP rsID (e.g., "rs699") or a period (".") if unknown. |

| 4 | REF | Reference allele. | One or more nucleotides. Must match the reference genome at this position (e.g., "A", "CTG"). |

| 5 | ALT | Alternate allele(s). | Comma-separated list for multiple alleles (e.g., "G", "C,TTT"). Symbolic alleles (e.g., <DEL>) may require specialized handling. |

| 6 | QUAL | Quality score. | Optional. Phred-scaled quality score for the assertion made in ALT (e.g., "60"). |

| 7 | FILTER | Filter status. | Optional. Indicates if the variant passed filters (e.g., "PASS", "LowQual"). |

| 8 | INFO | Additional information. | Optional for VEP. No particular INFO tag is required: population frequencies come from the VEP cache or plugins, not from an input AF tag. Existing INFO fields are passed through in VCF output. |

Table 2: Key Formatting & Genotype Data Requirements

| Aspect | Requirement |

|---|---|

| File Compression | Recommended to be bgzipped (e.g., input.vcf.gz). An accompanying Tabix index (input.vcf.gz.tbi) is useful for region-based access to large files. |

| Genotype Columns | Sample columns (following the FORMAT column) are optional but supported. VEP will parse but not alter genotype data. |

| Contig Headers | Inclusion of ##contig header lines (e.g., ##contig=<ID=1,length=248956422>) is strongly recommended for accuracy. |

| Reference Genome | The coordinates and REF alleles must correspond to the genome assembly version specified in the VEP command (e.g., GRCh38). |
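The column requirements in Tables 1 and 2 can be checked programmatically before a file is submitted. The following is a minimal sketch in Python, assuming one uncompressed VCF data line at a time; the function name `check_vcf_record` and the wording of its messages are hypothetical, and the check covers only the basic column rules, not the full VCF specification.

```python
import re

# Plain nucleotide alleles; symbolic alleles are handled separately below.
VALID_ALLELE = re.compile(r"^[ACGTNacgtn]+$")


def check_vcf_record(line):
    """Return a list of problems found in one VCF data line (empty list = OK)."""
    fields = line.rstrip("\n").split("\t")
    if len(fields) < 8:
        return [f"expected at least 8 tab-separated columns, found {len(fields)}"]

    problems = []
    chrom, pos, _variant_id, ref, alt = fields[:5]
    if chrom.lower().startswith("chr"):
        problems.append("CHROM carries a 'chr' prefix")
    if not re.fullmatch(r"[0-9]+", pos) or int(pos) < 1:
        problems.append("POS is not a positive integer")
    if not VALID_ALLELE.match(ref):
        problems.append("REF contains non-nucleotide characters")
    for allele in alt.split(","):
        symbolic = allele.startswith("<") and allele.endswith(">")
        if not (symbolic or allele in (".", "*") or VALID_ALLELE.match(allele)):
            problems.append(f"ALT allele {allele!r} is not recognised")
    return problems
```

A check like this catches formatting slips early, but it does not confirm that REF matches the reference genome; that still requires a tool with access to the FASTA file.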

3. Experimental Protocol: VCF File Validation and Preparation

Protocol 1: Pre-VEP Validation and Normalization Workflow

Objective: To ensure the VCF file is correctly formatted, sorted, normalized, and indexed for optimal VEP performance.

Materials & Reagents: See The Scientist's Toolkit below.

Methodology:

- Syntax Validation:

- Use `bcftools` to validate the basic structure and syntax of the VCF file.

- Command: `bcftools view input.vcf > /dev/null`

- A successful command with no errors indicates a syntactically valid file.

- Reference Alignment & Normalization:

- Variants spanning multiple nucleotides or with complex representations must be decomposed and left-aligned. This ensures consistency with the reference genome.

- Command: `bcftools norm -m-any -f /path/to/reference_genome.fa input.vcf -o input.normalized.vcf`

- The `-m-any` option splits multi-allelic sites into bi-allelic records. `-f` specifies the reference FASTA file.

- Sorting and Compression:

- VCF files must be sorted in chromosomal and positional order.

- Command: `bcftools sort input.normalized.vcf -o input.sorted.vcf`

- Compression: `bgzip input.sorted.vcf` (produces `input.sorted.vcf.gz`).

- Indexing:

- Generate a Tabix index for the compressed VCF to enable rapid querying.

- Command: `tabix -p vcf input.sorted.vcf.gz`

- Final Consistency Check:

- Perform a final validation on the processed file.

- Command: `bcftools stats input.sorted.vcf.gz > vcf_stats.txt`

- Review the summary statistics for variant counts and integrity.
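For repeated use, the preprocessing commands in this protocol can be assembled in a small Python helper. The sketch below only builds the argument lists (validation, normalization, sorting, compression, indexing) from the commands quoted in the protocol and offers an optional runner; the function names are hypothetical, and it assumes `bcftools`, `bgzip`, and `tabix` are on the `PATH`. The validation step uses `-o /dev/null` in place of shell redirection.

```python
import subprocess


def preprocessing_commands(vcf, reference_fasta):
    """Build the command sequence for one uncompressed VCF file."""
    stem = vcf[:-4] if vcf.endswith(".vcf") else vcf
    normalized = f"{stem}.normalized.vcf"
    sorted_vcf = f"{stem}.sorted.vcf"
    return [
        # Syntax validation: parse the whole file, discard the output.
        ["bcftools", "view", vcf, "-o", "/dev/null"],
        # Split multi-allelic sites and left-align against the reference.
        ["bcftools", "norm", "-m-any", "-f", reference_fasta, vcf, "-o", normalized],
        # Sort by chromosome and position.
        ["bcftools", "sort", normalized, "-o", sorted_vcf],
        # Compress (produces <sorted>.gz) and index.
        ["bgzip", sorted_vcf],
        ["tabix", "-p", "vcf", f"{sorted_vcf}.gz"],
    ]


def run_pipeline(vcf, reference_fasta):
    """Run each step in order, stopping at the first failure."""
    for command in preprocessing_commands(vcf, reference_fasta):
        subprocess.run(command, check=True)
```

Calling `run_pipeline("input.vcf", "/path/to/reference_genome.fa")` would execute the steps in order; because `check=True` raises on a non-zero exit code, a malformed file stops the pipeline at the validation step.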

Visualization 1: VCF Preprocessing Workflow

Title: VCF File Preprocessing Steps for VEP

Visualization 2: Logical Structure of a Minimal VCF Record

Title: Essential VCF Fields for VEP Annotation

4. The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software Tools for VCF Preparation

| Tool / Resource | Function in VCF Preparation | Primary Use Case |

|---|---|---|

| BCFtools | A comprehensive suite for VCF/BCF manipulation, validation, filtering, and statistics. | Core utility for Protocol 1 steps: validation, normalization, sorting. |

| HTSlib | A C library for high-throughput sequencing data formats; provides bgzip and tabix. | Underpins BCFtools. Used directly for compression (bgzip) and indexing (tabix). |

| Ensembl Reference Genome FASTA | The precise nucleotide sequence of the reference assembly (e.g., GRCh38.p14). | Essential for the normalization step (bcftools norm -f). Must match VCF coordinates. |

| VCF Validator (EBI) | Online or standalone tool for strict VCF schema validation. | Supplementary, in-depth validation beyond basic syntax checks. |

Within the broader context of creating a comprehensive Ensembl VEP tutorial for beginners, this application note provides a foundational, step-by-step protocol for performing variant effect prediction using the Ensembl VEP web interface. This guide is designed for researchers, scientists, and drug development professionals initiating their journey in genomic variant interpretation, enabling critical first steps in prioritizing variants for further functional studies or therapeutic targeting.

Key Research Reagent Solutions

The following table details the essential "inputs" required to perform a VEP analysis via the web interface.

| Item | Function in Analysis |

|---|---|

| Variant Call Format (VCF) File | Standard input file containing the genomic coordinates and identifiers of your query variants. Must be version 4.0 or above. |

| Reference Genome Assembly | The genomic coordinate system for your variants (e.g., GRCh38, GRCh37). Must match the assembly used for variant calling. |

| VEP Cache Files | Local data libraries used by VEP containing pre-calculated annotations for a reference genome. The web interface uses Ensembl's servers, so this is handled automatically. |

| Gene Annotation Database | The source of transcript models and regulatory features (e.g., Ensembl, RefSeq). The web tool defaults to the Ensembl gene set. |

| FASTA Reference Sequence | The reference genome sequence file. Again, provided automatically by the Ensembl web server. |

Core Protocol: Web Interface Analysis

Input Preparation and Submission

Objective: To correctly format and submit variant data for annotation.

- Access the Tool: Navigate to the Ensembl VEP web interface at https://www.ensembl.org/Tools/VEP.

- Input Data:

- Option A (Paste Data): For a small variant set (<50), paste coordinates directly into the text box. Use the format: `Chromosome Start End Allele Strand`. Example: `13 32315474 32315474 G/A +`.

- Option B (Upload File): For larger sets, upload a VCF file. Click "Upload File" and select your locally stored VCF.

- Species Selection: From the dropdown, select the correct species (e.g., Homo sapiens).

- Assembly Version: Select the reference genome assembly that matches your VCF data (GRCh38 is default).

- Click "Run" to submit the job.

Configuration of Analysis Parameters

Objective: To tailor the annotation output to specific research questions.

This protocol assumes configuration via the "Advanced" options before job submission.

- Transcript Database: Under "Transcript database to use," select the preferred source (e.g., "Ensembl genes" or "RefSeq genes").

- Filtering Options: To reduce output complexity:

- Select "Show one selected consequence per variant."

- Select "Only return results for variants with regulatory consequences" if the focus is on non-coding regions.

- Additional Annotations: In the "Additional databases" section, check boxes for relevant data:

- dbSNP: For known variant IDs.

- ClinVar: For disease-associated variants.

- gnomAD: For global population allele frequencies.

- Output Format: Choose the preferred format (e.g., "HTML" for web viewing, "Tab-delimited" for spreadsheet analysis, "VCF" for downstream piping).

Retrieval and Interpretation of Results

Objective: To locate and interpret key predictive data in the VEP output.

- Job Status: After submission, a results page will auto-refresh. Download links appear upon completion.

- Primary Output Table: The main results are presented in a table. Key columns to interpret include:

- Uploaded variant: Your input.

- Location: Genomic coordinate.

- Allele: The alternate allele.

- Consequence: The most severe predicted molecular effect (e.g., missense_variant).

- IMPACT: Qualitative categorization (HIGH, MODERATE, LOW, MODIFIER).

- Gene & Feature: Affected gene and transcript.

- cDNA & Protein Position: Location within the coding sequence and protein.

- Amino Acid Change: For missense variants, e.g., `Glu125Lys`.

- Extra Column: Contains packed additional data (frequency, phenotype, SIFT/PolyPhen scores).
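The packed Extra column is easier to work with once unpacked. In VEP's default tab-delimited output it is a semicolon-separated list of `KEY=VALUE` pairs, and SIFT/PolyPhen values combine a label with a score in parentheses. A minimal Python sketch follows; the helper names `parse_extra` and `split_prediction` are hypothetical.

```python
import re


def parse_extra(extra):
    """Turn 'IMPACT=MODERATE;SIFT=deleterious(0.01)' into a dictionary."""
    if extra in ("", "-"):
        return {}
    pairs = (item.split("=", 1) for item in extra.split(";") if "=" in item)
    return {key: value for key, value in pairs}


def split_prediction(value):
    """Split 'deleterious(0.01)' into ('deleterious', 0.01).

    Returns (value, None) when no score in parentheses is present.
    """
    match = re.fullmatch(r"([A-Za-z_]+)\(([0-9.]+)\)", value)
    if match is None:
        return value, None
    return match.group(1), float(match.group(2))
```

For example, `split_prediction(parse_extra(cell)["SIFT"])` gives the SIFT label and score for one row, which can then be compared against a threshold.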

Data Presentation: Typical VEP Output Metrics

The following table summarizes quantitative data commonly extracted from a standard VEP run for a human exome dataset, illustrating the distribution of variant consequences.

Table 1: Distribution of Variant Consequences in a Representative Human Exome (n≈20,000 variants)

| Consequence Type | Approximate Count | Percentage (%) | Typical IMPACT Category |

|---|---|---|---|

| Intergenic Variant | 5,000 | 25.0 | MODIFIER |

| Intron Variant | 7,000 | 35.0 | MODIFIER |

| Up/Downstream Gene Variant | 2,000 | 10.0 | MODIFIER |

| Synonymous Variant | 1,800 | 9.0 | LOW |

| Missense Variant | 3,500 | 17.5 | MODERATE |

| Inframe Insertion/Deletion | 100 | 0.5 | MODERATE |

| Stop Gained/Lost | 50 | 0.25 | HIGH |

| Splice Region Variant | 500 | 2.5 | LOW/MODERATE |

| Splice Donor/Acceptor | 25 | 0.125 | HIGH |

| Non-Coding Transcript Variant | 25 | 0.125 | MODIFIER |

Visualization of Workflows

VEP Web Interface User Workflow

VEP Core Annotation Logic Pathway

Application Notes and Protocols

Within the context of a broader thesis on providing an Ensembl Variant Effect Predictor (VEP) tutorial for beginners in research, this document details the procedures for local installation and execution. Local VEP deployment offers researchers, scientists, and drug development professionals significant advantages: no reliance on internet connectivity or web service rate limits, ability to process sensitive data privately, and customization for high-throughput or proprietary genomes.

System Requirements and Performance Benchmarks

A local VEP installation has specific hardware and software dependencies. The following table summarizes quantitative performance data and minimum requirements based on current community benchmarks.

Table 1: System Requirements and Performance Metrics for VEP

| Component | Minimum Specification | Recommended for Production | Performance Notes |

|---|---|---|---|

| CPU | 64-bit, 2 cores | 8+ cores | Runtime scales approximately linearly with core count for multithreading. |

| RAM | 8 GB | 16 GB+ | ~4GB needed for cache files; additional RAM improves speed. |

| Storage | 40 GB free space | 100 GB+ SSD | Required for reference data (e.g., human GRCh38 cache: ~90GB). |

| Perl Version | 5.10+ | 5.26+ | Critical for script execution and module compatibility. |

| Supported OS | Linux, macOS | Linux (Ubuntu/CentOS) | Windows requires Windows Subsystem for Linux (WSL2). |

| Typical Runtime | - | - | ~1,000 variants/second on 8-core system with full cache. |

Experimental Protocols

Protocol 1: Installation of VEP and Dependencies

This methodology outlines the setup of a functional VEP environment.

Prerequisite Installation: Install system-level dependencies.

Clone VEP Repository: Obtain the latest VEP source code.

Install Perl Modules: Use the included installer.

Download Reference Cache Files: Retrieve species-specific data.

Protocol 2: Basic Execution and Annotation of a Variant Call Format (VCF) File

This protocol describes the core command-line operation to annotate a standard VCF file.

Input Preparation: Prepare your VCF file (`input_variants.vcf`). Ensure the chromosome naming matches your cache assembly (e.g., "1" vs "chr1").

Run VEP: Execute the annotation with basic parameters.

Output Interpretation: The default tab-delimited output includes columns for Uploaded variant location, Allele, Gene, Consequence, and more. Use `--vcf` to output in VCF format.
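One way to keep a basic run reproducible is to assemble the command in a script. The Python sketch below is an illustration rather than the tutorial's original command: it builds an offline, cache-based invocation from standard VEP options (`--input_file`, `--output_file`, `--cache`, `--offline`, `--species`, `--assembly`, `--dir_cache`, `--vcf`, `--force_overwrite`). The function name, the default values, and the assumption that the executable is called `vep` and is on the `PATH` are all hypothetical.

```python
import subprocess


def build_vep_command(input_vcf, output_path, assembly="GRCh38",
                      cache_dir=None, vcf_output=False):
    """Return the argument list for a basic offline VEP run."""
    command = [
        "vep",
        "--input_file", input_vcf,
        "--output_file", output_path,
        "--cache", "--offline",          # use the local cache, no database access
        "--species", "homo_sapiens",
        "--assembly", assembly,
        "--force_overwrite",             # replace an existing output file
    ]
    if cache_dir is not None:
        command += ["--dir_cache", cache_dir]
    if vcf_output:
        command.append("--vcf")          # write VCF instead of tab-delimited text
    return command


def run_vep(*args, **kwargs):
    """Execute the command; raises CalledProcessError if VEP exits with an error."""
    subprocess.run(build_vep_command(*args, **kwargs), check=True)
```

Keeping the argument list in one function makes it easy to log the exact command alongside the results, which helps when an analysis has to be repeated with a newer cache.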

Protocol 4: Advanced Customized Annotation

This protocol adds advanced filters, plugins, and output formatting for research-grade analysis.

- Apply Frequency Filter: Use the `--filter_common` flag to exclude variants that are co-located with a known common variant (global allele frequency above 1%), and add regulatory annotation or plugins as required.

Visualization of Workflows

Local VEP Analysis Workflow

VEP Annotation Logic Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Software for Local VEP Analysis

| Item | Function/Benefit | Example/Note |

|---|---|---|

| High-Performance Computing (HPC) or Server | Provides necessary CPU, RAM, and storage for cache files and batch processing. | Cloud instances (AWS EC2, GCP), institutional cluster, or powerful workstation. |

| Reference Genome FASTA | Required for precise variant mapping and HGVS notations. | Downloaded automatically via INSTALL.pl --AUTO acfp. |

| Species Cache File | Pre-processed genomic annotation data enabling offline --cache mode. | homo_sapiens_vep_110_GRCh38.tar.gz; updated with Ensembl releases. |

| VEP Plugin Files | Extends core functionality for specialized annotations (e.g., CADD, SpliceAI). | Must be manually configured; paths provided via --plugin. |

| Custom Annotation File (VCF/GTF/BED) | Allows integration of proprietary or third-party datasets (e.g., internal cohort frequencies). | Added using the --custom command line flag. |

| Perl Environment Manager (e.g., perlbrew) | Manages isolated Perl installations, preventing conflicts with system Perl. | Crucial for maintaining module dependencies across projects. |

| Containerization (Docker/Singularity) | Provides a reproducible, dependency-managed environment for VEP execution. | Official images available from Biocontainers or Docker Hub. |

| VCF Validation Tools | Ensures input file integrity before annotation to avoid runtime errors. | vcf-validator from vcftools package; bcftools norm. |

Within the broader thesis of mastering the Ensembl Variant Effect Predictor (VEP) for beginners in genomic research, a critical step is moving beyond basic annotation. This protocol details the integration of three essential plugins—CADD, dbNSFP, and ClinVar—to augment variant interpretation with pathogenicity scores, comprehensive functional predictions, and clinical significance data. This transforms VEP output from a basic functional report into a powerful, decision-ready resource for researchers, clinical scientists, and drug development professionals.

Table 1: Core Comparison of Essential VEP Plugins

| Plugin Name | Current Version (as of 2024) | Primary Data Provided | Key Metrics/Fields | Typical Use Case in Analysis |

|---|---|---|---|---|

| CADD | v1.7 (GRCh38/v1.6 GRCh37) | Pathogenicity Scores | CADD PHRED score, Raw score | Prioritizing deleterious variants; Filtering (e.g., CADD > 20-30) |

| dbNSFP | 4.7a | Aggregate Functional Predictions | SIFT, PolyPhen-2, MutationTaster, REVEL, MetaLR, etc. | Consolidating multiple in silico tools for consensus view |

| ClinVar | VCF dumps (monthly updates) | Clinical Assertions | Clinical significance, Review status, Condition | Linking variants to known disease phenotypes and classifications |

Table 2: Example dbNSFP Score Ranges and Interpretation

| Prediction Tool | Score Range | Typical Interpretation Threshold (Damaging/Deleterious) |

|---|---|---|

| SIFT | 0.0 - 1.0 | ≤ 0.05 |

| PolyPhen-2 HDIV | 0.0 - 1.0 | Probably Damaging: ≥ 0.957, Possibly Damaging: 0.453-0.956 |

| REVEL | 0.0 - 1.0 | > 0.5 (suggestive), > 0.75 (strong) |

| MetaLR | 0.0 - 1.0 | > 0.5 |
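The thresholds in Table 2 translate directly into small classification helpers. The Python sketch below encodes only the cut-offs listed in the table; the function names and the labels returned for scores below the thresholds ("tolerated", "benign", "below_threshold") are assumptions made for illustration.

```python
def classify_sift(score):
    """SIFT: lower is worse; scores at or below 0.05 are called deleterious."""
    return "deleterious" if score <= 0.05 else "tolerated"


def classify_polyphen_hdiv(score):
    """PolyPhen-2 HDIV: higher is worse, with two damaging bands."""
    if score >= 0.957:
        return "probably_damaging"
    if score >= 0.453:
        return "possibly_damaging"
    return "benign"


def classify_revel(score):
    """REVEL: above 0.75 is strong evidence, above 0.5 is suggestive."""
    if score > 0.75:
        return "strong"
    if score > 0.5:
        return "suggestive"
    return "below_threshold"


def classify_metalr(score):
    """MetaLR: scores above 0.5 are called damaging."""
    return "damaging" if score > 0.5 else "tolerated"
```

Note the direction of each scale: SIFT is the only tool in the table where a smaller score indicates a more damaging prediction.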

Experimental Protocols

Protocol 1: Local Installation and Cache Preparation for Plugins

Objective: To establish a local VEP environment with the necessary plugin data cached for rapid annotation.

Materials & Reagents:

- Ensembl VEP (v110+), installed locally via GitHub (`github.com/Ensembl/ensembl-vep`).

- Perl environment (v5.10+) with required modules (DBI, DBD::mysql, Bio::DB::HTS).

- Reference genome FASTA file (GRCh38 or GRCh37).

- Plugin data files: CADD whole genome SNV/InDel files, dbNSFP database file, ClinVar VCF.

Methodology:

- Install VEP and Plugins:

Download and Cache Plugin Data:

Build Local Cache:

Protocol 2: Execution of VEP with Essential Plugins

Objective: To annotate a user-provided VCF file with CADD, dbNSFP, and ClinVar data.

Input: VCF file (input_variants.vcf) containing genomic variants.

Command:

Output Analysis: The resulting tab-separated file (annotated_output.tsv) will contain all VEP consequences plus columns for CADD PHRED scores, selected dbNSFP rank scores (scaled 0-1), and ClinVar clinical significance.

Visualizations

Diagram 1: VEP Plugin Integration Workflow

Diagram 2: Data Integration for Variant Prioritization Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function/Benefit | Source/Example |

|---|---|---|

| High-Performance Computing (HPC) Node | Essential for processing large VCFs and querying large plugin databases (dbNSFP, CADD) in a reasonable time. | Local cluster or cloud instance (AWS, GCP). |

| Cached Reference Genome | Speeds up VEP operation by storing local copies of genome sequences and pre-calculated annotations. | Ensembl FTP; created via vep_install. |

| Plugin Data Files (Compressed/Indexed) | The primary "reagents" containing the predictive and clinical data for annotation. | CADD: UW GS; dbNSFP: dbNSFP website; ClinVar: NCBI FTP. |

| Tabix | Indexes large coordinate-sorted data files for rapid random access, crucial for plugin performance. | htslib package (htslib.org). |

| Custom Perl/Python Script | For post-processing VEP output to filter, rank, and summarize results based on combined plugin scores. | e.g., Script to select variants where (CADD>25 AND REVEL>0.7) OR CLIN_SIG includes "Pathogenic". |

| Visualization Software | To create Manhattan plots, score distributions, and visual summaries of prioritized variants. | R (ggplot2, trackViewer), Python (matplotlib, seaborn). |

1. Introduction

Within a broader thesis on Ensembl VEP (Variant Effect Predictor) tutorial for beginners, this protocol details the critical downstream step: filtering and interpreting results to identify likely pathogenic variants. Moving from a raw variant list to a shortlist of candidates requires systematic filtering based on population frequency, predicted impact, and clinical annotations.

2. Core Filtering Criteria & Quantitative Data Summary

The following criteria, applied sequentially, form the foundation of pathogenic variant identification. Quantitative thresholds are summarized in Table 1.

Table 1: Standard Filtering Thresholds for Identifying Rare, Damaging Variants

| Filtering Criteria | Typical Threshold | Rationale & Common Data Sources |

|---|---|---|

| Population Frequency | Global MAF < 0.01 (1%) | Excludes common polymorphisms unlikely to cause severe disease. Sources: gnomAD, 1000 Genomes. |

| Variant Consequence | 'High' & 'Moderate' impact | Prioritizes nonsense, frameshift, splice site, missense variants. Based on VEP's Sequence Ontology terms. |

| Pathogenicity Prediction | CADD PHRED-like > 20 | A scaled score of 20 or more places a variant among the top 1% most deleterious of all possible substitutions. REVEL > 0.5 for missense. |

| ClinVar Clinical Significance | Pathogenic/Likely Pathogenic | Direct evidence from curated clinical database. |

| Gene-Disease Relevance | Known association (OMIM) | Filters variants to genes with established disease links. |

3. Experimental Protocol: A Stepwise Filtering Workflow

Protocol Title: Iterative Bioinformatics Filtering for Pathogenic Variant Discovery

3.1 Materials & Input

- Input Data: VEP-annotated variant call format (VCF) file.

- Software Environment: Command-line terminal (Unix/Linux) or bioinformatics platform (e.g., Galaxy, Bioconductor in R).

- Reference Databases (Local or API-based): gnomAD, ClinVar, dbNSFP, OMIM.

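3.2 Procedure

Step 1: Filter by Population Frequency

- Isolate the `AF` (allele frequency) fields from the VEP output (e.g., `gnomADg_AF`).

- Apply a filter to retain only variants where the maximum population allele frequency is < 0.01.

Command-line example (using bcftools): `bcftools view -i 'MAX(AF[*]) < 0.01' input_vep.vcf > output_rare.vcf`. This expression tests the `INFO/AF` tag; frequencies that VEP stores inside the `CSQ` field must be extracted first (for example with the bcftools `split-vep` plugin).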
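When the annotations have already been parsed into key-value form, the same frequency filter can be expressed in Python. This is a minimal sketch under stated assumptions: each variant is a dictionary of VEP fields, the population frequency fields share the `gnomADg_` prefix and end in `AF` (as in `gnomADg_AF`), multiple values in one cell are joined by `&`, and a missing value means the variant was not observed. The function names are hypothetical.

```python
def max_population_af(annotation, prefix="gnomADg_"):
    """Return the highest allele frequency across all matching fields."""
    values = []
    for key, raw in annotation.items():
        if key.startswith(prefix) and key.endswith("AF"):
            for token in raw.split("&"):
                if token not in ("", "-", "."):
                    values.append(float(token))
    # No frequency data at all is treated as 'never observed'.
    return max(values, default=0.0)


def is_rare(annotation, threshold=0.01):
    """True when no population reaches the frequency threshold."""
    return max_population_af(annotation) < threshold
```

Using the maximum across populations rather than the global frequency is the more conservative choice, since a variant that is common in any one population is unlikely to cause a severe, highly penetrant disorder.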

Step 2: Filter by Predicted Functional Impact

- Parse the `Consequence` field from VEP output.

- Retain variants with consequences categorized as HIGH (e.g., transcript ablation, splice donor, stop gained, frameshift) or MODERATE (e.g., missense, inframe deletion).

Step 3: Integrate In Silico Pathogenicity Scores

- Extract pathogenicity scores from fields like `CADD_PHRED`, `REVEL_score`, `SIFT_score`.

- Apply compound thresholds (e.g., `CADD_PHRED > 20` AND `SIFT_pred = "D"`).

- For missense variants, require agreement across multiple tools (e.g., ≥2/3 tools predict deleterious).
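The "at least two of three tools" rule can be written as a simple vote count. The Python sketch below assumes the three tools are CADD, REVEL, and SIFT, uses the thresholds given in this protocol (`CADD_PHRED > 20`, REVEL > 0.5, `SIFT_pred` equal to "D"), and treats a missing score as no vote. The function names and the choice of tools are illustrative assumptions.

```python
def deleterious_votes(cadd_phred=None, revel=None, sift_pred=None):
    """Count how many tools call the variant deleterious; None means no data."""
    votes = 0
    if cadd_phred is not None and cadd_phred > 20:
        votes += 1
    if revel is not None and revel > 0.5:
        votes += 1
    if sift_pred == "D":
        votes += 1
    return votes


def passes_consensus(cadd_phred=None, revel=None, sift_pred=None, min_votes=2):
    """True when at least `min_votes` tools agree on a deleterious call."""
    return deleterious_votes(cadd_phred, revel, sift_pred) >= min_votes
```

Treating missing scores as abstentions keeps the rule strict: a variant scored by only one tool cannot pass a two-vote requirement.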

Step 4: Annotate and Filter with Clinical Databases

- Cross-reference variant identifiers (RSID, HGVS) with ClinVar via its API or a local tab-separated file.

- Flag variants with assertions of `Pathogenic` or `Likely pathogenic`.

- Caution: Review `Conflicting_interpretations` and `Uncertain_significance` variants for novel discoveries.

Step 5: Prioritize by Gene Context

- Filter variants to those occurring in genes relevant to the disease phenotype using OMIM or PanelApp.

- For novel genes, consider constraint metrics (gnomAD pLI > 0.9, loss-of-function observed/expected upper bound fraction < 0.35).

Step 6: Manual Curation & Review

- Visually inspect aligned reads at variant locus using a genome browser (e.g., IGV).

- Review literature for functional studies on the variant or gene.

- Assess variant within protein domains and conservation across species (PhyloP score).

4. Visualization of the Filtering Workflow

Title: Stepwise Filtering Protocol for Pathogenic Variants

5. The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Variant Filtering and Interpretation

| Tool / Resource | Category | Primary Function |

|---|---|---|

| Ensembl VEP | Variant Annotation | Predicts functional consequences of variants on genes, transcripts, and protein sequence. |

| gnomAD Browser | Population Frequency | Provides allele frequencies across diverse populations to filter common variants. |

| ClinVar | Clinical Database | Public archive of relationships between variants and phenotypic evidence. |

| CADD / REVEL | In Silico Prediction | Integrative scores predicting variant deleteriousness. |

| Integrative Genomics Viewer (IGV) | Visualization | Enables manual review of sequencing reads and variant context. |

| OMIM | Gene-Phenotype Database | Catalog of human genes and genetic disorders for relevance assessment. |

| bcftools / GEMINI | Bioinformatics Suite | Command-line utilities for manipulating and querying VCF files post-VEP. |

This application note, framed within a broader thesis on the Ensembl Variant Effect Predictor (VEP) tutorial for beginners, details a practical workflow for annotating variants from a targeted Next-Generation Sequencing (NGS) cancer gene panel. The primary objective is to transform raw variant calls into biologically and clinically interpretable data, a critical step in cancer genomics research and precision oncology drug development.

Table 1: Typical Output Metrics from a Cancer Panel VEP Annotation Run (Hypothetical 50-Gene Panel)

| Metric | Count | Percentage of Total |

|---|---|---|

| Total Variants Processed | 1,250 | 100% |

| Missense Variants | 715 | 57.2% |

| Synonymous Variants | 310 | 24.8% |

| Frameshift Variants | 85 | 6.8% |

| Stop Gained/Lost | 45 | 3.6% |

| Splice Region Variants | 95 | 7.6% |

| Variants in ClinVar | 400 | 32.0% |

| Variants with COSMIC ID | 620 | 49.6% |

Table 2: Critical Database Versions for Reproducible Annotation

| Database | Recommended Version | Purpose in Annotation |

|---|---|---|

| Ensembl VEP Cache | 110 (GRCh38) | Core transcript & consequence data |

| dbSNP | Build 156 | Known polymorphism IDs (rsIDs) |

| ClinVar | 2024-04 | Clinical significance assertions |

| COSMIC | v99 | Somatic mutations in cancer |

| dbNSFP | 4.5a | Aggregated pathogenicity scores (SIFT, PolyPhen, etc.) |

Detailed Experimental Protocol: VEP Annotation for a Cancer Panel

Protocol 3.1: Input File Preparation (VCF Format)

Objective: To format the raw variant call file (VCF) from the NGS pipeline for optimal VEP processing.

Materials: GATK Toolkit, bgzip, tabix.

Procedure:

- Quality Filtering: Filter the initial VCF using GATK's `VariantFiltration` or `bcftools filter` to retain only PASS variants.

- Compression and Indexing: Compress the filtered VCF with bgzip and create a tabix index.

- Field Standardization: Ensure the records carry the genotype quality (GQ) and read depth (DP) tags needed downstream. GQ is a per-sample FORMAT tag, while DP may appear in INFO or FORMAT.

Protocol 3.2: Local VEP Execution with Key Plugins

Objective: To annotate the filtered VCF with consequences, frequencies, and pathogenicity information.

Materials: Ensembl VEP (v110+), Perl environment, cached databases (Table 2).

Procedure:

- Basic Command Execution:

- Integration of Critical Plugins for Cancer: Augment the basic command with plugins for clinical and functional data.

- Output: The final `annotated_full.vcf` contains all added annotations in its INFO field.

Protocol 3.3: Post-Processing and Tier-Based Prioritization

Objective: To filter and prioritize annotated variants based on clinical relevance.

Materials: Custom Python/R script, annotated VCF.

Procedure:

- Extract and Parse: Use `bcftools query` or a custom script to parse the VCF INFO column into a tab-delimited table.

- Apply Tiering Filter:

- Tier I (High Priority): Variants with `Consequence` matching 'stop_gained', 'frameshift_variant', 'splice_donor_variant', 'splice_acceptor_variant' AND `ClinVar` significance includes 'Pathogenic'/'Likely_pathogenic' OR `COSMIC` ID present.

- Tier II (Medium Priority): `missense_variant` with `CADD` score > 25 AND `SIFT` prediction 'deleterious' AND `PolyPhen` prediction 'probably_damaging'.

- Tier III (Other): All other variants, including synonymous and intronic changes outside splice regions.

- Generate a final report table listing Tier I and II variants with key columns: Chromosome, Position, Gene, Consequence, dbSNP ID, ClinVar Significance, COSMIC ID, gnomAD AF, CADD Score.
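The tiering rules can be captured in a single function once each variant has been parsed into a dictionary. The Python sketch below is one possible reading, not a definitive implementation: it interprets the Tier I rule as "qualifying consequence AND (ClinVar pathogenic OR COSMIC ID present)", and it assumes dictionary keys named `Consequence`, `ClinVar`, `COSMIC`, `CADD`, `SIFT`, and `PolyPhen`, with "-" or "." marking a missing value. All names are hypothetical.

```python
TIER1_CONSEQUENCES = {
    "stop_gained", "frameshift_variant",
    "splice_donor_variant", "splice_acceptor_variant",
}
PATHOGENIC = {"pathogenic", "likely_pathogenic"}
MISSING = ("", "-", ".")


def _terms(value):
    """Split a multi-valued cell on the separators commonly seen in VEP output."""
    for sep in ("&", "/"):
        value = value.replace(sep, ",")
    return {t.strip().lower() for t in value.split(",") if t.strip()}


def assign_tier(variant):
    """Return 'I', 'II' or 'III' for one annotated variant."""
    consequences = _terms(variant.get("Consequence", ""))
    clinvar = _terms(variant.get("ClinVar", ""))
    has_cosmic = variant.get("COSMIC", "") not in MISSING

    # Assumed reading of Tier I: consequence AND (ClinVar OR COSMIC).
    if consequences & TIER1_CONSEQUENCES and (clinvar & PATHOGENIC or has_cosmic):
        return "I"

    cadd = variant.get("CADD", "")
    if ("missense_variant" in consequences
            and cadd not in MISSING and float(cadd) > 25
            and variant.get("SIFT", "").startswith("deleterious")
            and variant.get("PolyPhen", "").startswith("probably_damaging")):
        return "II"
    return "III"
```

If the intended Tier I rule is instead "(consequence AND ClinVar) OR COSMIC", only the first `if` condition needs to change; stating the grouping explicitly in the analysis plan avoids the ambiguity.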

Visualization of Workflows and Pathways

Cancer Gene Panel Annotation & Analysis Workflow

BRAF V600E in MAPK Pathway & Drug Inhibition

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Cancer Panel Sequencing & Annotation

| Item | Function in Workflow | Example Product/Provider |

|---|---|---|

| Targeted NGS Panel | Hybridization capture of specific cancer-related genes for efficient sequencing. | Illumina TruSight Oncology 500, Thermo Fisher Oncomine Comprehensive Assay. |

| High-Fidelity DNA Polymerase | Accurate PCR amplification of library constructs to minimize introduction of sequencing errors. | KAPA HiFi HotStart ReadyMix (Roche). |

| Sequence Capture Beads | Magnetic streptavidin beads for binding biotinylated capture probes and target DNA. | Dynabeads MyOne Streptavidin T1 (Thermo Fisher). |

| VEP Cache Files | Local database of genomic features (transcripts, regulatory regions) for offline annotation. | Ensembl FTP Server (species-specific, e.g., Homo_sapiens GRCh38). |

| Pathogenicity Plugin Data | Pre-formatted files enabling functional prediction via VEP plugins. | dbNSFP database, CADD scores, CancerHotspots data. |

| Variant Prioritization Software | GUI or script-based tools to filter and visualize VEP output. | Ensembl VEP web tool, VarSome Clinical, custom Python/R scripts. |

Solving Common VEP Errors & Optimizing Analysis for Efficiency

Top 5 VEP Error Messages and How to Fix Them

Application Note: A Practical Guide for Beginners in Genomic Research

Within the broader thesis of learning Ensembl's Variant Effect Predictor (VEP), encountering error messages is a critical step in the learning process. This guide addresses the five most common errors, providing clear protocols for resolution to maintain the integrity of downstream analysis for research and drug development.

Error 1: "ERROR: Cannot connect to database"

This occurs when VEP cannot access the required local cache or database files, often due to incorrect paths or permissions.

Root Cause Analysis: A failed connection halts all analysis, typically from a misconfigured --dir or --dir_cache parameter.

Protocol for Resolution:

- Verify Cache Installation: Confirm the cache directory is correctly installed and matches the VEP version.

- Check Path Specification: Explicitly define the cache directory using the `--dir` or `--dir_cache` flag.

- Validate Permissions: Ensure read and execute permissions are set for the user on the cache directory.

Error 2: "ERROR: No valid input data has been detected"

VEP fails to parse the input file due to format incompatibility or header issues.

Root Cause Analysis: The input file (VCF, variant IDs) does not adhere to strict format specifications.

Protocol for Resolution:

- Validate VCF Format: Use `bcftools` to validate and normalize the VCF file.

- Check Chromosome Naming: Ensure chromosome names match the reference database (e.g., "1" vs "chr1"). Use `sed` or a script to harmonize.

- Examine File Header: Confirm the `##fileformat=VCFv4.x` header line is present and correct.

Table 1: Common Input Format Issues and Solutions

| Format Issue | Example Error | Solution Command |

|---|---|---|

| Chromosome prefix mismatch | Contig 'chr1' not found | sed 's/^chr//' file.vcf |

| Missing VCF header | | Add ##fileformat=VCFv4.2 |

| Tab-separation error | Fields parsed incorrectly | awk 'BEGIN {FS=OFS="\t"}{...}' |
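A script gives more control than a one-line `sed` when harmonizing chromosome names, because `##contig` header lines also carry the names. The Python sketch below removes the 'chr' prefix from data lines and contig headers and leaves every other line untouched. It also renames `M` to `MT`, which is an added assumption based on Ensembl-style naming; the function names are hypothetical.

```python
import re

_CONTIG = re.compile(r"^(##contig=<ID=)chr(\w+)")


def _rename(name):
    """Map a prefix-stripped name to Ensembl style (assumption: M becomes MT)."""
    return "MT" if name == "M" else name


def strip_chr_prefix(line):
    """Return the line with any 'chr' prefix removed from the chromosome name."""
    if line.startswith("##"):
        # Only contig declarations carry chromosome names in the meta header.
        return _CONTIG.sub(lambda m: m.group(1) + _rename(m.group(2)), line)
    if line.startswith("#"):
        return line  # column header line
    chrom, sep, rest = line.partition("\t")
    if chrom.startswith("chr"):
        chrom = _rename(chrom[3:])
    return chrom + sep + rest


def harmonize(lines):
    """Apply the renaming to every line of a VCF."""
    return [strip_chr_prefix(line) for line in lines]
```

After renaming, the file should be re-sorted and re-indexed, since tools that rely on the index expect it to reflect the current chromosome names.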

Error 3: "WARNING: Failed to fetch data via REST"

This warning appears in offline mode when a required plugin or external data source is unavailable.

Root Cause Analysis: Plugins like dbNSFP, CADD, or SpliceAI require additional data files not present locally.

Protocol for Resolution:

- Install Plugin Data Files: Download the required data file for the plugin (e.g., dbNSFP.gz) to a local directory.

- Specify the Plugin Data Path: Correctly point the plugin to its data source using the appropriate flag.

- Fallback to REST: If resolution fails, consider running the specific query using the online REST API for debugging.

Error 4: "ERROR: BAM file does not appear to be indexed"

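The --bam flag supplies a BAM file of transcript alignments that VEP uses to correct transcript models (typically RefSeq) that differ from the reference genome. The file must be coordinate-sorted and accompanied by an index (.bai).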

Root Cause Analysis: Missing .bai index file or BAM not sorted by coordinate.

Protocol for Resolution:

- Sort BAM File (if needed): Use samtools sort.

- Index the Sorted BAM: Generate the index file.

- Run VEP with BAM Path: Provide the path to the sorted BAM and its index.
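The sort and index steps can be wrapped as follows. This is a sketch: the function name prepare_bam and the SAMTOOLS_BIN override variable are hypothetical conveniences, while `samtools sort -o` and `samtools index` are standard samtools subcommands.

```shell
# Path to samtools; overridable for non-standard installs (assumed variable name).
SAMTOOLS_BIN="${SAMTOOLS_BIN:-samtools}"

# Sort by coordinate, then index. Writes <name>.sorted.bam plus its .bai
# and prints the sorted file's path on success.
prepare_bam() {
    in_bam="$1"
    out_bam="${in_bam%.bam}.sorted.bam"
    "$SAMTOOLS_BIN" sort -o "$out_bam" "$in_bam" &&
    "$SAMTOOLS_BIN" index "$out_bam" &&
    echo "$out_bam"
}
```

The printed path can then be handed to VEP, for example `sorted=$(prepare_bam reads.bam)` followed by `vep ... --bam "$sorted"`.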

Error 5: "ERROR: Out of memory"

The VEP process exceeds the system's available RAM, common with large input files or multiple plugins.

Root Cause Analysis: High memory consumption from processing many variants or resource-intensive annotations.

Protocol for Resolution:

- Batch Processing: Split the input VCF into smaller chunks (e.g., by chromosome).

- Limit Resource-Intensive Plugins: Run plugins like dbNSFP in separate, focused runs.

- Increase System Swap Space: Temporarily add swap memory to prevent crashes.

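- Use --fork Option: Distribute processing across multiple CPU cores to shorten runtime. Forking does not by itself lower total memory use, so pair it with a smaller --buffer_size when RAM is the limiting factor.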
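Splitting by chromosome, as suggested in the first step, can be done with awk alone. The sketch below assumes an uncompressed VCF whose records are grouped by chromosome (as in a coordinate-sorted file); the function name split_vcf_by_chrom is hypothetical.

```shell
# Hypothetical helper: write one VCF per chromosome into <out_dir>,
# repeating the full header in each file.
# Assumes records for each chromosome are contiguous in the input.
split_vcf_by_chrom() {
    in_vcf="$1"
    out_dir="$2"
    mkdir -p "$out_dir"
    awk -v dir="$out_dir" '
        /^#/ { header = header $0 "\n"; next }   # collect header lines
        $1 != cur {                               # chromosome changed
            if (file != "") close(file)
            cur = $1
            file = dir "/" cur ".vcf"
            printf "%s", header > file
        }
        { print > file }
    ' "$in_vcf"
}
```

After `split_vcf_by_chrom cohort.vcf chunks`, each file under chunks/ can be annotated in its own VEP run.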

Table 2: Memory Usage Estimates for Common VEP Operations

| Operation | Base Memory | With 1M Variants | Mitigation Strategy |

|---|---|---|---|

| Basic VEP (cache) | ~2 GB | ~4 GB | Lower --buffer_size |

| + dbNSFP plugin | + ~2 GB | + ~6 GB | Batch processing |

| + CADD plugin | + ~1 GB | + ~3 GB | Run plugins separately |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in VEP Analysis |

|---|---|

| Ensembl VEP Cache (v110+) | Local database of gene models, sequences, and frequencies for offline annotation. |

| dbNSFP Data File | Provides comprehensive functional predictions from multiple algorithms (e.g., SIFT, PolyPhen-2). |

| FASTA Reference Genome | Required for precise allele alignment and HGVS nomenclature generation. |

| BAM/CRAM Index (.bai/.crai) | Allows indexed random access to the alignment file supplied to VEP via --bam. |

| VCF Validator (bcftools) | Essential pre-VEP tool to standardize and clean input variant files. |

| Compute Environment (Conda/Bioconda) | Manages isolated, reproducible installations of VEP and all its dependencies. |

Visual Appendix

VEP Error Diagnosis and Resolution Workflow

Input VCF Preprocessing Protocol

This document provides detailed application notes and protocols for optimizing the cache system of the Ensembl Variant Effect Predictor (VEP). It is framed within a broader thesis aimed at creating a comprehensive beginner's tutorial for genomic annotation in research. Efficient cache usage is critical for researchers, scientists, and drug development professionals who routinely annotate large volumes of genetic variants, as it dramatically reduces computational time and resource expenditure, accelerating the path from genomic data to biological insight.

Core Concepts: VEP Cache Architecture

The Ensembl VEP can use a local cache of genomic data, pre-downloaded from Ensembl's servers, to annotate variants without requiring continuous internet queries. The cache contains species-specific data on genes, transcripts, regulatory regions, and known variants.

Key Benefits of Local Cache:

- Speed: Eliminates network latency.

- Reliability: Functions in offline or low-bandwidth environments.

- Efficiency: Reduces load on public Ensembl servers, enabling high-volume batch processing.

Quantitative Performance Data

The following table summarizes benchmark data from recent tests (2023-2024) comparing VEP runtime with different cache configurations on a standard AWS c5.2xlarge instance (8 vCPUs, 16 GB RAM). The input was a VCF file containing 10,000 human variants.

Table 1: VEP Runtime Comparison with Different Cache Setups

| Configuration | Description | Average Runtime (mm:ss) | Relative Speed Gain |

|---|---|---|---|

| No Cache (Online) | Direct query to Ensembl REST API. | 45:30 | 1x (Baseline) |

| Standard Cache (gzip) | Default compressed cache. | 12:15 | ~3.7x faster |

| Optimized Cache (Tabix) | Cache converted to tabix-indexed, BGZF-compressed format. | 03:40 | ~12.4x faster |

| Cache + FASTA | Using tabix cache and a local reference FASTA file. | 02:50 | ~16x faster |
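The "Relative Speed Gain" column is the baseline runtime divided by each configuration's runtime, both converted to seconds. A small sketch for checking the figures (the function name speedup is hypothetical):

```shell
# Convert two mm:ss runtimes to seconds and print baseline/runtime
# as a speed-up factor with one decimal place.
# Usage: speedup <baseline mm:ss> <runtime mm:ss>
speedup() {
    awk -v base="$1" -v run="$2" 'BEGIN {
        split(base, b, ":")
        split(run, r, ":")
        printf "%.1f\n", (b[1] * 60 + b[2]) / (r[1] * 60 + r[2])
    }'
}
```

For the table above, `speedup 45:30 12:15` prints 3.7 (2730 s / 735 s) and `speedup 45:30 03:40` prints 12.4 (2730 s / 220 s).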

Table 2: Cache Directory Size Comparison (Human, GRCh38)

| Cache Format | Approximate Size | Notes |

|---|---|---|

| Default (gzipped) | ~110 GB | Standard download from Ensembl. |

| Converted (Tabix/BGZF) | ~90 GB | More efficient indexing and compression. |

| Core-Only Filtered | ~25 GB | Contains only "core" transcripts, suitable for most analyses. |

Experimental Protocols

Protocol 4.1: Installation and Initial Cache Setup

Objective: To install the latest VEP and download the standard cache for a reference genome.

Materials: See "The Scientist's Toolkit" below.

Software Prerequisites: Perl, git, curl, tabix.

Methodology:

- Install VEP: Clone the ensembl-vep repository from GitHub and run its INSTALL.pl script.

- Download Cache: During the interactive installation, choose to download cache files. Specify species (e.g., homo_sapiens) and assembly (e.g., GRCh38).

- Verify: The cache will be placed in ~/.vep/. Confirm with: ls -la ~/.vep/homo_sapiens/.
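A non-interactive version of these steps might look like the sketch below. The wrapper name install_vep is hypothetical; the repository URL and the INSTALL.pl options --AUTO, --SPECIES and --ASSEMBLY are the ones documented by Ensembl, with `acf` requesting the API, cache and FASTA downloads. Check the installer's help output for your release before relying on these values.

```shell
# Hypothetical wrapper: clone ensembl-vep and run the installer unattended.
# Usage: install_vep [destination_dir]
install_vep() {
    dest="${1:-ensembl-vep}"
    git clone https://github.com/Ensembl/ensembl-vep.git "$dest" || return 1
    # a = API, c = cache, f = FASTA; species/assembly as in the protocol text.
    ( cd "$dest" && perl INSTALL.pl --AUTO acf --SPECIES homo_sapiens --ASSEMBLY GRCh38 )
}
```

After `install_vep`, listing ~/.vep/homo_sapiens/ should show a directory named after the cache version and assembly.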

Protocol 4.2: Converting Cache to Optimized Tabix Format

Objective: To convert the standard cache to a tabix-indexed format for rapid random access.

Methodology:

- Navigate to the cache directory:

- Use VEP's conversion script (must be run for each cache version number directory, e.g., 110_GRCh38):

The script decompresses .gz files and re-compresses them into BGZF format (.bgz), then creates tabix indices (.tbi).
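The conversion can be sketched as below, assuming the script in question is convert_cache.pl from the ensembl-vep source tree and that it is invoked from that directory with --dir, --species and --version. The wrapper name convert_cache is hypothetical. Recent Ensembl releases also distribute pre-indexed caches, in which case this step may be unnecessary.

```shell
# Hypothetical wrapper around convert_cache.pl (assumed to live in the
# ensembl-vep source directory).
# Usage: convert_cache <vep_source_dir> <cache_root> <species> <version_assembly>
convert_cache() {
    ( cd "$1" && perl convert_cache.pl --dir "$2" --species "$3" --version "$4" )
}
```

An example call would be `convert_cache ~/ensembl-vep "$HOME/.vep" homo_sapiens 110_GRCh38`, repeated for each version directory you keep.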

Protocol 4.3: Running VEP with Optimized Cache

Objective: To execute a VEP annotation run using the optimized cache and measure performance.

Methodology:

- Basic Command with Cache:

- Enhanced Command with Tabix Cache & FASTA:

The --tab flag outputs in tab-delimited format, which is faster than VCF; --fork 4 uses 4 CPU cores for parallel processing.

- Benchmarking: Use the time command prefix (time vep -i ...) to record total runtime.
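The two commands described in this protocol could take roughly the following shape. This is a sketch rather than the original commands: the function names and the VEP_BIN override variable are hypothetical, and the species and assembly values are the examples used earlier in this guide.

```shell
# Path to the vep executable; overridable (assumed variable name).
VEP_BIN="${VEP_BIN:-vep}"

# Basic offline run against the local cache.
# Usage: vep_basic <input.vcf> <output file>
vep_basic() {
    "$VEP_BIN" -i "$1" -o "$2" --cache --offline \
        --species homo_sapiens --assembly GRCh38
}

# Same run with a local FASTA, tab-delimited output and 4 forks.
# Usage: vep_fast <input.vcf> <output file> <reference.fa>
vep_fast() {
    "$VEP_BIN" -i "$1" -o "$2" --cache --offline \
        --species homo_sapiens --assembly GRCh38 \
        --fasta "$3" --tab --fork 4
}
```

In bash, `time vep_fast input.vcf output.txt reference.fa` records the wall-clock runtime of the enhanced run for comparison with `time vep_basic input.vcf output.txt`.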

Visualizations

Diagram 1: VEP annotation decision pathway

Diagram 2: Tabix indexed cache query mechanism

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for VEP Cache Optimization

| Item | Function / Purpose | Example / Note |

|---|---|---|

| Ensembl VEP Software | Core annotation engine. | Latest version from GitHub. Essential for all protocols. |

| Species Cache Files | Local database of genomic features (genes, transcripts, etc.). | Downloaded via INSTALL.pl. Foundation of offline speed. |

| BGZF-compressed FASTA | Local reference genome sequence. | Enables accurate allele mapping and reference sequence checks. |

| Tabix | Indexing utility for BGZF files. | Creates .tbi files. Critical for random access in large cache files. |

| Fork / Threads Parameter (--fork) | Enables parallel processing in VEP. | Utilizes multiple CPU cores. Major speed multiplier. |

| High-Performance Compute (HPC) or Cloud Instance | Execution environment. | AWS, GCP, or local cluster. Ample RAM (16GB+) is recommended. |

| Plugins Data (e.g., CADD, dbNSFP) | Additional, specialized annotation sources. | Requires separate download. Must be formatted for VEP. |

Within a broader thesis on conducting research with the Ensembl Variant Effect Predictor (VEP), efficient handling of large genomic datasets is paramount. This document provides application notes and protocols for managing memory and implementing batch processing strategies, critical for researchers, scientists, and drug development professionals working with whole-genome or large-scale targeted sequencing data.

Core Challenges & Quantitative Benchmarks

Processing genomic variant data through VEP presents significant computational hurdles. The following table summarizes key performance metrics based on current benchmark data.

Table 1: VEP Performance Benchmarks with Different Dataset Sizes

| Dataset Scale | Approx. Variant Count | Input File Size | Default VEP Memory Usage | Processing Time (Single-thread) | Recommended Strategy |

|---|---|---|---|---|---|

| Small (Gene Panel) | 1,000 - 10,000 | 1 - 10 MB | 2 - 4 GB | 1 - 5 minutes | In-memory, direct analysis. |

| Medium (Exome) | 100,000 - 500,000 | 50 - 250 MB | 8 - 12 GB | 20 - 60 minutes | Moderate batching or cache usage. |

| Large (Whole Genome) | 3 - 5 million | 1 - 2 GB | 20+ GB (can exceed 64 GB) | 6 - 24 hours | Essential batching & optimized flags. |

| Population Cohort (Multi-WGS) | 50+ million | 30+ GB | Prohibitive for single run | Days to weeks | Mandatory distributed batch processing. |

Experimental Protocols for Efficient VEP Analysis

Protocol 2.1: Batch Processing with File Splitting

Objective: To annotate a large VCF file (> 1M variants) without exceeding available system memory (e.g., 16 GB RAM).

Materials: Linux-based system, vcftools or bcftools, tabix, VEP installed locally with relevant cache (e.g., GRCh38, release 110).

Methodology:

- Split Input File: Use bcftools to split the large VCF into manageable batches of ~100,000 variants.

- Run VEP in Batch Mode: Execute VEP for each subset with memory-saving flags.

- Merge Results: Concatenate annotated VCFs and summary statistics.
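The split, annotate and merge cycle can be sketched end to end as follows. Two caveats: the splitting here uses plain awk on an uncompressed VCF instead of bcftools, and per-chunk statistics are skipped with --no_stats instead of being merged. The function names and the VEP_BIN variable are hypothetical.

```shell
VEP_BIN="${VEP_BIN:-vep}"   # overridable path to vep (assumed variable name)

# Split <in.vcf> into chunks of at most <n> variants, each with the full
# header: <prefix>.0001.vcf, <prefix>.0002.vcf, ...
# Usage: split_vcf_chunks <in.vcf> <n> <prefix>
split_vcf_chunks() {
    awk -v n="$2" -v prefix="$3" '
        /^#/ { header = header $0 "\n"; next }
        (count % n) == 0 {                     # start a new chunk
            if (file != "") close(file)
            file = sprintf("%s.%04d.vcf", prefix, ++part)
            printf "%s", header > file
        }
        { print > file; count++ }
    ' "$1"
}

# Annotate each chunk, then merge: header from the first result,
# records from all of them.
# Usage: annotate_chunks <prefix> <merged.vcf>
annotate_chunks() {
    prefix="$1"; merged="$2"; first=1
    for chunk in "$prefix".[0-9][0-9][0-9][0-9].vcf; do
        out="${chunk%.vcf}.vep.vcf"
        "$VEP_BIN" -i "$chunk" -o "$out" --cache --offline --vcf \
            --no_stats --buffer_size 5000 || return 1
        if [ "$first" -eq 1 ]; then cat "$out"; first=0
        else grep -v '^#' "$out"; fi
    done > "$merged"
}
```

For the batch size named in the protocol, this would be run as `split_vcf_chunks big.vcf 100000 batch && annotate_chunks batch big.vep.vcf`. For compressed or indexed outputs, bcftools concat is an alternative for the merge step.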

Protocol 2.2: Memory-Optimized VEP Execution

Objective: To configure a single VEP run for a large dataset to minimize peak memory footprint.

Methodology:

- Critical Flags: Use --buffer_size to limit variants held in memory (e.g., 5000). Implement --fork for parallel processing of chunks.

- Cache Optimization: Use --cache with --offline. Ensure the FASTA file is indexed (samtools faidx).

- Output Management: Write output directly to compressed format using --vcf or --tab and --compress_output gzip.

- Example Command:
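Putting the flags from this protocol together gives a command along the lines of the sketch below. The wrapper name vep_low_mem and the VEP_BIN variable are hypothetical, and the fork count of 4 is only a default to tune against available cores and RAM.

```shell
VEP_BIN="${VEP_BIN:-vep}"   # overridable path to vep (assumed variable name)

# Memory-conscious single run: small buffer, offline cache, local FASTA,
# gzip-compressed VCF output.
# Usage: vep_low_mem <input.vcf> <output.vcf.gz> <reference.fa> [forks]
vep_low_mem() {
    "$VEP_BIN" -i "$1" -o "$2" \
        --cache --offline --fasta "$3" \
        --buffer_size 5000 --fork "${4:-4}" \
        --vcf --compress_output gzip
}
```

A call such as `vep_low_mem genome.vcf genome.vep.vcf.gz reference.fa 2` trades some speed for a lower peak footprint; watching the run with htop shows whether the buffer size or fork count needs further adjustment.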

Visualization of Workflows

Diagram 1: Batch Processing Strategy for Large VCFs

Diagram 2: VEP Memory Management Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for VEP Large-Scale Analysis

| Item | Function & Relevance |

|---|---|

| High-Performance Computing (HPC) Cluster | Enables distributed batch processing and parallel execution via job schedulers (Slurm, PBS). Essential for population-scale studies. |

| bcftools/vcftools | Standard utilities for manipulating VCF files: splitting, merging, filtering, and validating. Critical for preprocessing and post-processing. |

| Tabix & BGZF Compression | Indexing and block-gzipped compression for genomic files. Allows random access to large VCFs, facilitating efficient batch extraction. |

| Local VEP Cache (Species-specific) | A pre-downloaded database of genomic annotations (e.g., for human GRCh38). Eliminates network latency and enables offline --cache mode, drastically speeding up runs. |

| Indexed Reference FASTA | The genomic reference sequence, indexed with samtools faidx. Required for accurate positional annotation and sequence retrieval. |

| Perl/BioPerl or VEP Docker Container | Provides the runtime environment VEP depends on. The Docker container bundles these dependencies, simplifying deployment and reproducibility across different systems. |

| Resource Monitoring (e.g., htop, time) | Tools to monitor real-time memory (RAM) and CPU usage during VEP execution, crucial for optimizing --fork and --buffer_size parameters. |

Application Notes

Within a comprehensive tutorial for Ensembl Variant Effect Predictor (VEP) for beginners, a critical step for advanced research is the integration of private, project-specific data with public reference annotations. This protocol details the methodology for custom annotation, enabling researchers to contextualize genetic variants against in-house databases (e.g., patient cohorts, proprietary cell line data) and tailored reference sequences.