Ensuring qPCR Specificity in Transcript Validation: A Comprehensive Guide from Primer Design to Clinical Application

Abstract

This article provides a comprehensive framework for ensuring the specificity of quantitative PCR (qPCR) in transcript validation, a critical factor for rigor and reproducibility in biomedical research and drug development. Covering foundational principles, methodological best practices, advanced troubleshooting, and rigorous validation protocols, it addresses key challenges such as amplification efficiency, primer design, and data analysis. Aimed at researchers and drug development professionals, the guide synthesizes current guidelines, including MIQE and FAIR principles, and explores emerging technologies like digital PCR to empower the development of robust, clinically translatable qPCR assays.

The Pillars of qPCR Specificity: Core Principles for Accurate Transcript Detection

In transcript validation research, specificity is a foundational pillar for ensuring data integrity and reproducible results. While often narrowly defined as the absence of non-specific amplification, a comprehensive assessment of specificity in quantitative PCR (qPCR) encompasses multiple dimensions, including target sequence recognition, amplification fidelity, and detection reliability across diverse experimental conditions. Moving beyond simplistic definitions requires researchers to adopt rigorous experimental designs and validation protocols that address the complete specificity profile of their qPCR assays. This guide examines the multifaceted nature of specificity in qPCR, compares methodological approaches for its verification, and provides detailed protocols for comprehensive specificity validation in transcript analysis.

Defining the Dimensions of Specificity

Specificity in qPCR transcends mere primer binding accuracy. Contemporary frameworks define it through several interconnected components:

- Sequence Specificity: The precise binding of primers and probes to intended target sequences without cross-reacting to homologous regions, pseudogenes, or unrelated transcripts.

- Amplification Specificity: The faithful amplification of only the intended amplicon without generating primer-dimers or other spurious products.

- Detection Specificity: The accurate fluorescence signal reporting that exclusively corresponds to the target amplicon accumulation.

- Analytical Specificity: The reliable differentiation of target transcripts in complex biological samples containing potential interferents.

- Assay Specificity: Consistent performance across different sample types, experimental conditions, and instrumentation platforms.

This expanded definition necessitates a multi-parameter approach to validation, as no single metric sufficiently captures the complete specificity profile of a qPCR assay.

Comparative Analysis of Specificity Assessment Methods

Method Performance Comparison

Table 1: Comparison of Methods for Assessing qPCR Specificity

| Method | Specificity Dimension Assessed | Key Performance Metrics | Throughput | Limitations |
|---|---|---|---|---|
| Melting Curve Analysis | Amplification specificity | Tm peak uniformity, presence of extra peaks | High | Limited to dye-based chemistry; cannot distinguish products with similar Tm |
| Electrophoresis | Amplicon size verification | Fragment size confirmation | Low | Low sensitivity; poor quantification |
| Sequencing | Sequence specificity | 100% identity to target | Low | Costly; not routine for validation |
| No-Template Controls | Reagent contamination | Absence of amplification | High | Only detects contamination |
| Dilution Series Linearity | Amplification specificity | R² > 0.98, consistent efficiency | Medium | Does not detect minor contaminants |
| Multiplex Verification | Probe specificity | Differential fluorescence detection | Medium | Requires specialized design |

Experimental Data on Specificity Performance

Table 2: Specificity Performance of Different Detection Chemistries

| Chemistry | Reported False Positive Rate | Typical Efficiency Range | Best Suited Applications | Specificity Validation Requirements |
|---|---|---|---|---|
| SYBR Green | 5-15% (without optimization) | 90-110% | High-throughput screening; multiple targets | Mandatory melt curve analysis; sequencing verification |
| Hydrolysis Probes (TaqMan) | 1-5% | 90-105% | Multiplexing; low-abundance targets | Probe specificity verification; cross-reactivity testing |
| Molecular Beacons | 1-3% | 85-100% | SNP detection; low-abundance targets | Stem-loop stability assessment; temperature optimization |
| Scorpion Probes | 1-3% | 90-105% | Rapid cycling; closed-tube formats | Intramolecular interaction testing |

Experimental Protocols for Comprehensive Specificity Assessment

Protocol 1: Multi-Stage Specificity Verification

This protocol provides a comprehensive framework for establishing qPCR assay specificity, integrating both in silico and empirical validation steps.

Materials Required

- Template RNA/DNA: Target samples, negative controls

- Primers and Probes: Validated sequences with minimal secondary structure

- qPCR Master Mix: Enzyme, buffer, dNTPs, optimized for chosen chemistry

- No-Template Controls (NTCs): Molecular grade water or buffer

- No-Reverse Transcription Controls (NRTs): For RNA templates

- Positive Controls: Known target sequences

- qPCR Instrument: Calibrated for multi-channel detection if multiplexing

Procedure

Step 1: In Silico Specificity Assessment

- Perform BLAST analysis of primer and probe sequences against relevant genome databases

- Check for secondary structure formation using mFold or similar tools

- Verify Tm consistency (±1°C) across primer pairs

- Ensure amplicon length is 70-200 bp for optimal efficiency

Step 2: Empirical Amplification Verification

- Prepare dilution series of template (5-6 log orders)

- Run qPCR with intercalating dye chemistry (SYBR Green)

- Check that each amplification curve has a single sigmoidal shape

- Perform melt curve analysis with high-resolution temperature ramping (0.1°C increments)

- Confirm single peak with narrow temperature range (<1°C variation across replicates)

Step 3: Sequence Identity Confirmation

- Purify qPCR products from reactions with Cq values <30

- Clone products into appropriate sequencing vector

- Sequence multiple clones (minimum 3) from independent reactions

- Verify 100% sequence identity to expected target

Step 4: Cross-Reactivity Testing

- Test assay against related templates (paralogs, family members)

- Include biological samples known to lack target transcript

- Verify absence of amplification or significantly different Cq values (ΔCq >10)

Step 5: Dynamic Range Assessment

- Run standard curve with 5-6 points of 10-fold serial dilutions

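- Calculate PCR efficiency using the formula: Efficiency = $(10^{-1/\text{slope}} - 1) \times 100\%$, where slope is the slope of the standard curve (Cq against log₁₀ template input)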

- Acceptable range: 90-110% efficiency with R² > 0.98 [1]

- Confirm consistent efficiency across dilution range
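The Step 5 calculation can be sketched in a few lines of Python. This is a minimal illustration rather than a validated analysis pipeline: the function name `standard_curve_stats` and the dilution data are hypothetical, and the fit is an ordinary least-squares regression of Cq on log₁₀ input using only the standard library.

```python
def standard_curve_stats(log10_inputs, cq_values):
    """Least-squares fit of Cq against log10(template input).

    Returns (slope, intercept, r_squared, efficiency_percent).
    """
    n = len(log10_inputs)
    if n != len(cq_values) or n < 3:
        raise ValueError("need at least three paired dilution points")
    mean_x = sum(log10_inputs) / n
    mean_y = sum(cq_values) / n
    sxx = sum((x - mean_x) ** 2 for x in log10_inputs)
    syy = sum((y - mean_y) ** 2 for y in cq_values)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(log10_inputs, cq_values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = (sxy * sxy) / (sxx * syy)
    # Efficiency = (10^(-1/slope) - 1) x 100%
    efficiency_percent = (10 ** (-1.0 / slope) - 1.0) * 100.0
    return slope, intercept, r_squared, efficiency_percent


# Hypothetical 10-fold dilution series: log10(copies) and mean Cq per point.
log10_copies = [6, 5, 4, 3, 2, 1]
mean_cq = [15.1, 18.4, 21.8, 25.1, 28.5, 31.8]

slope, intercept, r2, eff = standard_curve_stats(log10_copies, mean_cq)
passes = 90.0 <= eff <= 110.0 and r2 > 0.98
print(f"slope={slope:.3f}  R^2={r2:.4f}  efficiency={eff:.1f}%  pass={passes}")
```

A slope near -3.32 corresponds to 100% efficiency; repeating the fit on subsets of the dilution points is one simple way to check that efficiency stays consistent across the range.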

Protocol 2: High-Throughput Specificity Screening Using "Dots in Boxes" Method

For laboratories validating multiple qPCR targets simultaneously, the "dots in boxes" method provides a visual framework for rapid specificity assessment [2].

Materials Required

- Multi-target panels: 5+ targets spanning various GC contents and lengths

- qPCR plates: 96 or 384-well formats

- Liquid handling system: For reproducible dispensing

- Data analysis software: With capability for efficiency calculations

Procedure

Step 1: Target Panel Design

- Select minimum of 5 targets representing typical amplicon characteristics

- Include range of GC content (40-60%)

- Vary amplicon lengths (70-200 bp)

- Incorporate both high and low abundance targets

Step 2: Standard Curve Generation

- Prepare 5-log serial dilutions for each target

- Include NTCs for each target in triplicate

- Run qPCR with appropriate controls

- Calculate efficiency and ΔCq values [ΔCq = Cq(NTC) - Cq(lowest input)]

Step 3: Quality Scoring

Apply a 5-point quality score based on these criteria:

- Linearity: R² ≥ 0.98

- Reproducibility: Replicate Cq variation ≤ 1 cycle

- RFU Consistency: Maximum plateau fluorescence within 20% of mean

- Curve Steepness: Rise from baseline to plateau within 10 Cq values

- Curve Shape: Sigmoidal shape with clear plateau

Step 4: Data Visualization

- Plot PCR efficiency (y-axis) against ΔCq (x-axis)

- Establish acceptable region: Efficiency 90-110%, ΔCq ≥ 3

- Represent quality scores by dot size and opacity (solid for scores 4-5)

- Visually identify assays falling outside acceptable specificity parameters
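The box test in Step 4 reduces to two comparisons per assay. The sketch below shows one way to tabulate it before plotting; the assay names and values are hypothetical, and treating an NTC that never amplifies as Cq 40 (the `max_cycles` default) is an assumption made here so that ΔCq can be computed, not something the method prescribes.

```python
def delta_cq(cq_ntc, cq_lowest_input, max_cycles=40.0):
    """Delta-Cq = Cq(NTC) - Cq(lowest input).

    An NTC with no amplification (None) is assigned max_cycles (assumption).
    """
    ntc = max_cycles if cq_ntc is None else cq_ntc
    return ntc - cq_lowest_input


def in_box(efficiency_percent, dcq, eff_range=(90.0, 110.0), min_delta_cq=3.0):
    """True when the assay falls inside the acceptable region."""
    low, high = eff_range
    return low <= efficiency_percent <= high and dcq >= min_delta_cq


def dot_style(quality_score):
    """Solid dots for quality scores 4-5, open dots otherwise."""
    return "solid" if quality_score >= 4 else "open"


# Hypothetical panel of three assays.
assays = [
    {"name": "assay_A", "efficiency": 98.5, "cq_ntc": None, "cq_lowest_input": 31.2, "score": 5},
    {"name": "assay_B", "efficiency": 104.0, "cq_ntc": 33.0, "cq_lowest_input": 31.5, "score": 4},
    {"name": "assay_C", "efficiency": 84.0, "cq_ntc": 38.9, "cq_lowest_input": 30.8, "score": 2},
]

results = []
for a in assays:
    dcq = delta_cq(a["cq_ntc"], a["cq_lowest_input"])
    results.append((a["name"], round(dcq, 1),
                    in_box(a["efficiency"], dcq), dot_style(a["score"])))

for name, dcq, ok, style in results:
    print(f"{name}: dCq={dcq}  in_box={ok}  dot={style}")
```

In this made-up panel, assay_B fails on ΔCq (its NTC amplifies too close to the lowest input) and assay_C fails on efficiency, which is the kind of distinction the plot is meant to make visible at a glance.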

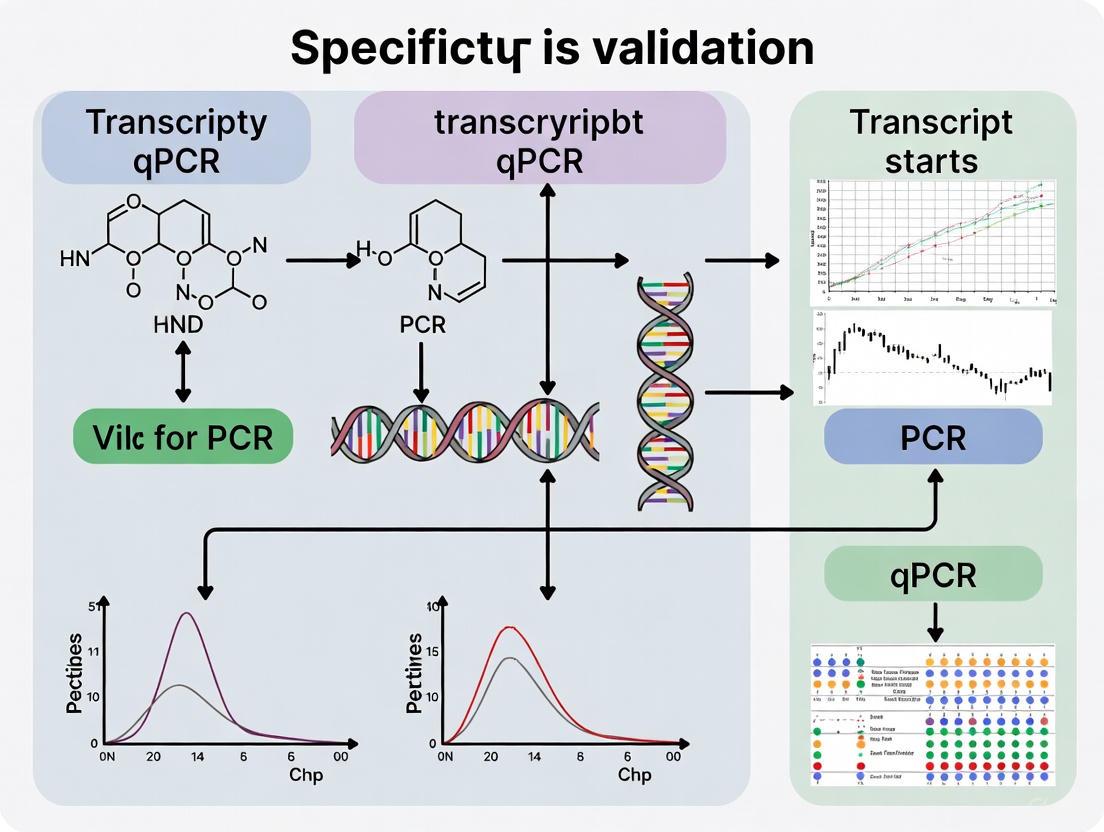

Visualizing Specificity Assessment Workflows

Specificity Validation Workflow: This diagram illustrates the comprehensive, iterative process for establishing qPCR assay specificity, from initial design to final validation.

Table 3: Research Reagent Solutions for Specificity Validation

| Reagent/Resource | Function in Specificity Assessment | Implementation Example | Validation Parameters |
|---|---|---|---|
| Sequence-Specific Probes | Enhance target discrimination | TaqMan probes for SNP detection | ΔCq > 5 between variants |
| No-Template Controls (NTCs) | Detect reagent contamination | Include in every run | No amplification, or Cq at least 5 cycles later than the most dilute sample |
| No-Reverse Transcription Controls | Identify genomic DNA amplification | For RNA targets | No amplification or Cq > 40 |
| Positive Control Plasmids | Verify assay performance | Cloned target sequence | Consistent Cq values (±0.5) |
| Standard Curve Materials | Assess amplification efficiency | Serial dilutions of known quantity | Efficiency 90-110%, R² > 0.98 |
| Multi-Target Validation Panels | Comprehensive specificity profiling | 5+ targets with varying properties | All pass "dots in boxes" criteria |

Data Analysis and Interpretation Framework

Statistical Approaches for Specificity Validation

Robust statistical analysis is essential for objective specificity assessment. The rtpcr package for R provides a comprehensive framework for efficiency-weighted analysis that enhances specificity verification [3]. Key considerations include:

- Efficiency-Weighted ΔCT: Calculate using $\log_2(E_{\text{target}}) \times C_{T,\text{target}} - \log_2(E_{\text{ref}}) \times C_{T,\text{ref}}$ to account for efficiency differences

- ANCOVA Modeling: Provides greater statistical power than the traditional $2^{-\Delta\Delta C_T}$ method, particularly for detecting subtle specificity issues [4]

- Confidence Interval Estimation: Calculate standard errors for fold-change values using Taylor series approximations

- Reference Gene Validation: Use algorithms like geNorm, NormFinder, or BestKeeper to confirm reference gene stability under experimental conditions [5]
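The efficiency-weighted ΔCT expression in the list above can be illustrated with a short Python sketch. It is not the rtpcr package's code: the function names and the CT values are hypothetical, and E is assumed to be expressed as the per-cycle amplification factor (2.0 for 100% efficiency).

```python
import math


def weighted_delta_ct(e_target, ct_target, e_ref, ct_ref):
    """log2(E_target) * CT_target - log2(E_ref) * CT_ref.

    E values are amplification factors per cycle (2.0 = 100% efficiency).
    """
    return math.log2(e_target) * ct_target - math.log2(e_ref) * ct_ref


def fold_change(wdct_treated, wdct_control):
    """Relative expression, treated vs control, from weighted delta-CT values."""
    return 2.0 ** (-(wdct_treated - wdct_control))


# Hypothetical example: target assay at E = 1.95, reference assay at E = 2.0.
wdct_control = weighted_delta_ct(1.95, 25.0, 2.0, 18.0)
wdct_treated = weighted_delta_ct(1.95, 23.0, 2.0, 18.0)
fc_weighted = fold_change(wdct_treated, wdct_control)

# Same CT values analyzed under the 100%-efficiency assumption.
fc_naive = fold_change(weighted_delta_ct(2.0, 23.0, 2.0, 18.0),
                       weighted_delta_ct(2.0, 25.0, 2.0, 18.0))

print(f"efficiency-weighted fold change: {fc_weighted:.2f}")
print(f"fold change assuming E = 2:      {fc_naive:.2f}")
```

When both efficiencies equal 2, the weighted expression collapses to the ordinary ΔCT and the result matches the 2^(-ΔΔCT) calculation; the gap between the two printed values shows how much a modest efficiency shortfall moves the estimate.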

Critical Thresholds for Specificity Acceptance

Establishing predefined acceptance criteria is essential for objective specificity validation:

- Amplification Efficiency: 90-110% with linear dynamic range over at least 3 log orders [2]

- Melt Curve Profiles: Single peak with <1°C variation between replicates

- No-Template Controls: No amplification, or Cq values at least 5 cycles higher than the most dilute sample

- Cross-Reactivity Testing: ΔCq > 10 between target and non-target templates

- Standard Curve Linearity: R² > 0.98 across the dynamic range

- Inter-Assay Variability: CV < 5% for efficiency values [1]
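Predefined criteria are easiest to apply consistently when they are encoded once and reused for every assay. The sketch below is one possible encoding of the thresholds listed above; the `AssayMetrics` structure, its field names, and the example values are all hypothetical.

```python
from dataclasses import dataclass, replace
from typing import List, Optional


@dataclass
class AssayMetrics:
    efficiency_percent: float
    dynamic_range_logs: float
    r_squared: float
    melt_peak_count: int
    melt_tm_spread_c: float          # Tm variation between replicates, in deg C
    ntc_cq: Optional[float]          # None means the NTC did not amplify
    most_dilute_sample_cq: float
    cross_reactivity_delta_cq: float
    efficiency_cv_percent: float


def failed_criteria(m: AssayMetrics) -> List[str]:
    """Return the names of the acceptance criteria the assay does not meet."""
    failures = []
    if not 90.0 <= m.efficiency_percent <= 110.0:
        failures.append("amplification efficiency")
    if m.dynamic_range_logs < 3:
        failures.append("dynamic range")
    if m.melt_peak_count != 1 or m.melt_tm_spread_c >= 1.0:
        failures.append("melt curve profile")
    if m.ntc_cq is not None and m.ntc_cq - m.most_dilute_sample_cq < 5.0:
        failures.append("no-template control")
    if m.cross_reactivity_delta_cq <= 10.0:
        failures.append("cross-reactivity")
    if m.r_squared <= 0.98:
        failures.append("standard curve linearity")
    if m.efficiency_cv_percent >= 5.0:
        failures.append("inter-assay variability")
    return failures


# Hypothetical assay that meets every threshold.
good = AssayMetrics(97.0, 5, 0.995, 1, 0.4, None, 31.0, 12.5, 3.1)
# Same assay, but the NTC amplifies only 2 cycles after the most dilute sample.
contaminated = replace(good, ntc_cq=33.0)

print(failed_criteria(good))
print(failed_criteria(contaminated))
```

Returning the list of failed criteria, rather than a single pass/fail flag, keeps the reason for rejection attached to the assay record.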

Case Study: Specificity Challenges in Viral Detection

The comparative evaluation of SARS-CoV-2 detection methods illustrates the practical implications of specificity assessment. A 2025 study comparing GeneXpert (CBNAAT) with RT-qPCR demonstrated 100% sensitivity but only 32.89% specificity, with a positive predictive value of 53.64% [6]. This highlights how even clinically approved assays can show significant specificity limitations, potentially leading to false positive results. The findings emphasize that sensitivity and specificity represent independent performance dimensions that must both be optimized for reliable transcript detection.

Comprehensive specificity assessment in qPCR-based transcript validation requires a multifaceted approach that extends far beyond verifying the absence of non-specific amplification. By implementing the protocols and frameworks outlined in this guide, researchers can establish rigorous specificity standards that enhance experimental reproducibility and data reliability. The integration of in silico design tools, empirical verification methods, statistical analysis frameworks, and continuous monitoring processes creates a robust system for specificity assurance. As qPCR technologies evolve toward higher throughput and greater sensitivity, maintaining this comprehensive view of specificity will be essential for generating biologically meaningful transcript validation data that advances scientific discovery and therapeutic development.

In transcript validation research, the accuracy of quantitative PCR (qPCR) results is paramount. At the heart of a reliable qPCR assay lies the meticulous design of primers and probes, which directly determines the method's specificity, sensitivity, and efficiency. Failures in oligonucleotide design can lead to skewed abundance data, false positives, and compromised conclusions, particularly when quantifying subtle changes in gene expression [7] [8]. This guide examines the critical design parameters—melting temperature (Tm), GC content, and specificity—by comparing theoretical ideals against experimental data, providing researchers and drug development professionals with evidence-based strategies for developing robust qPCR assays.

The exponential nature of PCR means that even minor imperfections in primer or probe design are amplified over multiple cycles, potentially leading to significant inaccuracies in quantification [9]. As highlighted in recent research, "non-homogeneous amplification due to sequence-specific amplification efficiencies often results in skewed abundance data, compromising accuracy and sensitivity" [7]. By understanding and applying the principles outlined in this guide, researchers can minimize variability and enhance the reliability of their transcript validation data.

Core Principles of Primer and Probe Design

Established Design Parameters and Guidelines

Successful qPCR assays are built upon well-characterized oligonucleotide design parameters that work in concert to ensure specific and efficient amplification. The following guidelines represent the consensus from leading molecular biology resources and manufacturers.

Table 1: Recommended Design Parameters for qPCR Primers and Probes

| Parameter | Primer Recommendations | Probe Recommendations | Rationale |
|---|---|---|---|
| Length | 18-30 bases [10] [11] | 20-30 bases (single-quenched); can be longer with double-quenched probes [10] | Balances specificity with efficient hybridization and binding kinetics |
| Melting Temperature (Tm) | 60-64°C (optimal 62°C); primer pair Tm within ≤2°C [10] | 5-10°C higher than primers [10] | Ensures simultaneous primer binding while maintaining probe hybridization |
| GC Content | 35-65% (ideal 50%) [10] [12] | 35-60% [10] [13] | Provides sequence complexity while minimizing secondary structures |
| GC Clamp | 3' end should end in G or C, but avoid >3 consecutive G/C residues [13] [11] | Should not contain G at 5' end [10] [13] | Stabilizes primer binding while preventing primer-dimer formation |
| Complementarity | No runs of 4+ identical bases; avoid dinucleotide repeats [11]; ΔG of structures > -9 kcal/mol [10] | Similar screening for self-dimers and hairpins [10] | Precludes nonspecific amplification and primer-dimer artifacts |

The annealing temperature (Ta) should be set no more than 5°C below the primer Tm [10]. The amplicon length should ideally be between 70-150 base pairs for optimal amplification efficiency, though longer amplicons up to 500 bp can be successfully amplified with modified cycling conditions [10]. When designing primers for gene expression studies, it's recommended to treat RNA samples with DNase I and design assays to span exon-exon junctions to reduce genomic DNA amplification [10].
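Several of the sequence-level rules in Table 1 can be screened automatically before a candidate primer goes to a dedicated design tool. The sketch below is a minimal illustration with hypothetical function names and made-up primer sequences. It covers length, GC content, the 3' end, and homopolymer runs only; Tm, dinucleotide repeats, and ΔG of secondary structures are deliberately left to the specialized tools discussed later.

```python
def gc_percent(seq):
    """Percentage of G and C bases in the sequence."""
    return 100.0 * sum(base in "GC" for base in seq) / len(seq)


def longest_homopolymer(seq):
    """Length of the longest run of identical bases."""
    longest = current = 1
    for prev, base in zip(seq, seq[1:]):
        current = current + 1 if base == prev else 1
        longest = max(longest, current)
    return longest


def trailing_gc_run(seq):
    """Number of consecutive G/C bases at the 3' end."""
    count = 0
    for base in reversed(seq):
        if base not in "GC":
            break
        count += 1
    return count


def check_primer(seq):
    """Return a list of rule violations; an empty list means no rule was broken."""
    seq = seq.upper()
    issues = []
    if not 18 <= len(seq) <= 30:
        issues.append("length outside 18-30 bases")
    if not 35.0 <= gc_percent(seq) <= 65.0:
        issues.append("GC content outside 35-65%")
    if seq[-1] not in "GC":
        issues.append("3' end is not G or C")
    if trailing_gc_run(seq) > 3:
        issues.append("more than 3 consecutive G/C at 3' end")
    if longest_homopolymer(seq) >= 4:
        issues.append("run of 4 or more identical bases")
    return issues


# Hypothetical primer sequences, for illustration only.
good_primer = "AGCTGACCTGAAGGCTCTGC"
bad_primer = "TTTTAATATTAAATGCGGCG"

print(check_primer(good_primer))
print(check_primer(bad_primer))
```

A screen like this only filters out obvious rule violations; passing it says nothing about off-target binding, which still requires a database search.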

The Critical Relationship Between Design Parameters and Specificity

The parameters outlined in Table 1 interact to determine assay specificity through several mechanisms. Primer length and Tm directly affect binding specificity, with shorter primers (<17 bases) risking nonspecific binding, and longer primers (>30 bases) exhibiting slower hybridization rates [13]. GC content influences both Tm and secondary structure formation, with extremes leading to unstable binding (<30% GC) or complex secondary structures (>70% GC) that hinder amplification [12].

The 3' end of primers is particularly critical, as this is where polymerase extension initiates. A GC clamp (ending in G or C) strengthens binding through additional hydrogen bonds, but excessive GC richness at the 3' end promotes mispriming [13] [11]. Self-complementarity within and between primers enables dimer formation that competes with target amplification, while internal secondary structures prevent proper binding to the template [13].

Diagram 1: Relationship between primer design parameters and specificity outcomes. Proper parameter selection enables molecular mechanisms that lead to reliable experimental results in qPCR.

Experimental Evidence: From Theory to Practice

Case Study: Specificity Failures in Diagnostic Assays

A 2025 investigation evaluating the specificity of LEISH-1/LEISH-2 primer pair with TaqMan MGB probe for visceral leishmaniasis diagnosis revealed critical design flaws [8]. The study reported unexpected amplification in all negative control samples (30 seronegative dogs and 16 negative wild animals), indicating a fundamental specificity failure. Subsequent in silico analysis identified structural incompatibilities and low selectivity in the probe design as the primary culprits.

Experimental Protocol:

- Sample Preparation: 85 serum samples from domestic dogs and wild animals with previously defined serological status (30 positive, 30 negative dogs; 9 positive, 16 negative wild animals) [8]

- qPCR Conditions: Using LEISH-1/LEISH-2 primer pair with TaqMan MGB probe following established protocols [8]

- In silico Analysis: Primer-BLAST specificity checking, Multiple Alignment using Fast Fourier Transform (MAFFT), RNAfold secondary structure prediction, SnapGene analysis [8]

- Results Interpretation: Comparison of amplification curves between seropositive and seronegative samples; computational validation of oligonucleotide properties [8]

This case highlights the critical importance of thorough in silico validation before experimental implementation. The researchers addressed these limitations by designing a new oligonucleotide set (GIO), which computational analyses showed had superior structural stability, absence of unfavorable secondary structures, and improved specificity [8].

Multi-Template PCR Efficiency and Sequence-Specific Bias

Groundbreaking research published in Nature Communications (2025) employed deep learning models to investigate sequence-specific amplification efficiency in multi-template PCR [7]. The study analyzed 12,000 random sequences with common terminal primer binding sites across 90 PCR cycles, revealing that approximately 2% of sequences exhibited severely compromised amplification efficiencies as low as 80% relative to the population mean.

Table 2: Amplification Efficiency Analysis in Multi-Template PCR

| Parameter | GC-All Pool | GC-Fixed Pool (50% GC) | Impact on Amplification |
|---|---|---|---|
| Poorly amplifying sequences | ~2% of pool | ~2% of pool | Independent of GC content |
| Amplification efficiency | As low as 80% of mean | As low as 80% of mean | Halving in relative abundance every 3 cycles |
| Sequence recovery | Effectively drowned out by cycle 60 | Effectively drowned out by cycle 60 | Complete loss of detection after 60 cycles |
| Reproducibility | Consistent across replicates | Consistent across replicates | Reproducible and sequence-specific |

Experimental Protocol:

- Oligonucleotide Pool Design: 12,000 random sequences with truncated TruSeq adapters; parallel experiment with GC-constrained sequences (50% GC) [7]

- Amplification Conditions: Six consecutive PCR reactions of 15 cycles each (total 90 cycles); serial sampling for sequencing at each 15-cycle interval [7]

- Efficiency Calculation: Fit of sequencing coverage data to exponential PCR amplification model with two parameters (initial coverage bias and sequence-specific amplification efficiency, εi) [7]

- Validation: Orthogonal verification via single-template qPCR dilution curves for selected sequences [7]

The finding that poor amplification persisted even in GC-controlled pools indicates that factors beyond traditional design parameters significantly impact amplification efficiency. The researchers developed a convolutional neural network model that achieved high predictive performance (AUROC: 0.88) in identifying poorly amplifying sequences based on sequence information alone [7].
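The depletion figures in Table 2 follow from simple compounding, which the short calculation below makes explicit. It assumes that "80% of the mean" acts as a per-cycle multiplicative factor on a sequence's relative abundance; that reading is consistent with the table's "halving every 3 cycles" but is an interpretation, not a statement taken from the study.

```python
import math

# Per-cycle amplification relative to the pool mean (assumed interpretation).
relative_efficiency = 0.80

# Cycles needed for the relative abundance to drop by half.
halving_cycles = math.log(0.5) / math.log(relative_efficiency)

# Fraction of the starting relative abundance left at each sampling point.
remaining = {cycles: relative_efficiency ** cycles
             for cycles in (15, 30, 45, 60, 75, 90)}

print(f"relative abundance halves every {halving_cycles:.1f} cycles")
for cycles, fraction in remaining.items():
    print(f"cycle {cycles:2d}: {fraction:.2e} of initial relative abundance")
```

Under this assumption a poorly amplifying sequence is down to roughly one part in a million of its starting share by cycle 60, which matches the description of such sequences as effectively drowned out.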

Comparative Analysis of Design Methodologies

Traditional vs. Computational Design Approaches

The evolution of primer design methodologies has expanded from manual design following basic rules to sophisticated computational approaches that can predict performance before synthesis.

Table 3: Comparison of Primer Design and Validation Methodologies

| Methodology | Key Features | Advantages | Limitations |
|---|---|---|---|
| Traditional Rule-Based Design | Manual design based on length, Tm, GC content guidelines [10] [13] [11] | Simple to implement; requires minimal computational resources | May miss sequence-specific inefficiencies; limited predictive power |
| Software-Assisted Design (Primer3, Geneious) | Automated algorithms considering multiple parameters simultaneously [14] | Rapid generation of multiple design options; comprehensive parameter optimization | Limited ability to predict cross-hybridization in complex samples |
| In silico Validation (BLAST, OligoAnalyzer) | Computational specificity checking against database; secondary structure prediction [10] [8] | Identifies potential off-target binding; predicts stable secondary structures | Does not account for reaction condition variability |
| Deep Learning Prediction (1D-CNN) | Neural networks trained on large experimental datasets to predict efficiency [7] | High accuracy in identifying poorly amplifying sequences; accounts for complex interactions | Requires substantial training data; "black box" interpretation challenges |

The integration of these approaches provides the most robust design strategy. For example, using software-assisted design to generate candidate primers, followed by in silico validation and efficiency prediction with advanced models where available, creates a comprehensive design workflow.

Impact of Reaction Conditions on Design Parameters

Theoretical design parameters must be adjusted for specific reaction conditions, as buffer composition significantly impacts oligonucleotide behavior. Salt concentrations particularly influence melting temperature, with Mg²⁺ having approximately 10-100 times the stabilizing effect of monovalent ions per mole [12].

Key Considerations:

- Mg²⁺ Concentration: Standard PCR typically uses 1.5-2.5 mM Mg²⁺; increasing from 1.5 mM to 3.0 mM raises Tm by 1.5-2.5°C [12]

- DMSO Effects: Adding 5% DMSO lowers Tm by approximately 2.5-3.5°C, which can improve specificity for GC-rich templates [12]

- Salt Correction Formulas: The Owczarzy (2008) correction formula accounts for competitive binding between Mg²⁺ and dNTPs, providing more accurate Tm predictions under PCR conditions [12]

When calculating Tm using online tools, it's essential to input the specific reaction conditions rather than relying on default parameters [10]. This includes monovalent and divalent cation concentrations, oligonucleotide concentration, and any additives like DMSO that significantly impact hybridization thermodynamics.

Table 4: Research Reagent Solutions for qPCR Assay Development and Validation

| Reagent/Resource | Function | Application Notes |
|---|---|---|
| Double-Quenched Probes (e.g., with ZEN/TAO internal quenchers) | Reduce background fluorescence; enable longer probe designs [10] | Provide consistently lower background than single-quenched probes |
| High-Fidelity DNA Polymerase | Accurate amplification with minimal introduction of errors | Critical for sequencing applications; reduces mutation accumulation |
| Synthetic RNA/DNA Standards | Generate standard curves for absolute quantification [1] | Essential for efficiency determination; aliquot to avoid freeze-thaw degradation |
| RNase-Free DNase I | Remove residual genomic DNA from RNA preparations [10] | Prevents false positives in transcript detection; essential when not spanning exon junctions |
| TaqMan Fast Virus 1-Step Master Mix | Combine reverse transcription and qPCR in single reaction [1] | Reduces handling time and variability; optimized for difficult templates |

Recommended Bioinformatics Tools

- PrimerQuest Tool (IDT): Design highly customized qPCR assays and PCR primers with sophisticated algorithms [10]

- OligoAnalyzer Tool (IDT): Analyze Tm, hairpins, dimers, and mismatches with BLAST analysis capability [10]

- Geneious Prime: Comprehensive platform for primer design, validation, and management with visualization features [14]

- Primer-BLAST: Verify primer specificity against database sequences to minimize off-target amplification [8]

- RNAfold: Predict secondary structures that might interfere with primer or probe binding [8]

The critical role of primer and probe design in qPCR specificity is evident across both theoretical principles and experimental evidence. Optimal design requires careful attention to multiple interdependent parameters rather than focusing on single factors in isolation. Based on the current evidence, the following best practices emerge for researchers conducting transcript validation studies:

- Employ a Comprehensive Design Approach: Combine length (18-30 bases), Tm (60-64°C with ≤2°C difference between primers), and GC content (40-60%) requirements during initial design [10] [13] [11]

- Validate Specificity Computationally: Utilize BLAST analysis and secondary structure prediction tools to identify potential off-target binding and self-complementarity issues before experimental testing [10] [8]

- Account for Reaction Conditions: Calculate Tm using specific buffer conditions rather than default parameters, particularly noting Mg²⁺ concentration and any additives like DMSO [10] [12]

- Verify Experimentally: Include standard curves in each qPCR run to monitor efficiency, as inter-assay variability can impact quantification accuracy even with well-designed assays [1]

- Consider Advanced Prediction Tools: For critical applications, utilize deep learning-based efficiency prediction where available to identify sequence-specific amplification issues [7]

The integration of these practices creates a robust framework for developing qPCR assays that deliver specific and reproducible results, ultimately strengthening the reliability of transcript validation data in both basic research and drug development applications.

Quantitative Polymerase Chain Reaction (qPCR) is a cornerstone technique in molecular biology, particularly for transcript validation research. Its accuracy hinges on amplification efficiency (E), the fraction of target molecules copied in each PCR cycle [15]. The theoretical ideal is 100% efficiency, meaning every target molecule is copied and the number of molecules doubles each cycle; expressed as a per-cycle amplification factor, this is E=2 [16]. In practice, however, reactions rarely achieve this perfection. This guide objectively compares the theoretical ideal of qPCR efficiency against practical reality, providing a framework for researchers to critically evaluate their data and the methodologies used to generate it.

The Theoretical Ideal: 100% Efficiency

In an ideal qPCR assay, the amplification process is perfectly exponential and predictable.

Mathematical Foundation and Assumptions

The kinetics of ideal PCR amplification are described by the equation $N_C = N_0 \times E^C$, where $N_C$ is the number of amplicons after cycle $C$, $N_0$ is the initial number of target molecules, and $E$ is the efficiency expressed as the per-cycle amplification factor [9]. With 100% efficiency (E=2), this becomes a perfect doubling reaction. This ideal forms the basis of the commonly used $2^{-\Delta\Delta C_T}$ method for relative quantification, which simplifies calculations by assuming maximum efficiency for both target and reference genes [16].

Implications for Data Analysis

This theoretical perfection allows for straightforward quantification. The quantification cycle (Cq) value decreases linearly with the logarithm of the initial target quantity. When all assays in a run operate at 100% efficiency, their amplification curves, plotted on a logarithmic fluorescence axis, appear as parallel lines during the exponential phase, simplifying inter-assay comparisons and normalization [16].
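The link between the amplification equation and the Cq value can be written out in a few steps. The derivation below is a sketch that introduces one symbol not used in the text, $N_q$, for the (assumed fixed) number of amplicons at which the fluorescence threshold is crossed.

```latex
\begin{align}
N_q &= N_0 \, E^{C_q} \\
C_q &= \frac{\log_{10} N_q - \log_{10} N_0}{\log_{10} E} \\
\text{slope} &= \frac{\mathrm{d}C_q}{\mathrm{d}\log_{10} N_0} = -\frac{1}{\log_{10} E}
  \quad\Longrightarrow\quad E = 10^{-1/\text{slope}} \\
E = 2 &\;\Longrightarrow\; \text{slope} = -\frac{1}{\log_{10} 2} \approx -3.32
\end{align}
```

The second line is the linear relationship between Cq and the logarithm of the starting quantity, and the third is the standard-curve relation used to estimate efficiency: subtracting 1 from $E$ and multiplying by 100 gives the percentage efficiency.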

Practical Reality: Causes and Consequences of Variable Efficiency

In reality, qPCR efficiency is variable and frequently deviates from 100%, potentially compromising data accuracy.

Common Causes of Efficiency Variation

- Primer Design and Reaction Components: Non-optimal primer design, inappropriate melting temperatures (Tm), and sub-optimal reagent concentrations are primary reasons for poor amplification efficiency [17]. Secondary structures like dimers and hairpins can affect primer-template annealing.

- PCR Inhibitors: The presence of inhibitors is a major reason for observed efficiencies exceeding 100%. Carry-over contaminants from nucleic acid isolation (e.g., ethanol, phenol, SDS) or inherent sample components (e.g., heparin, hemoglobin) can inhibit the polymerase enzyme [17]. Inhibition is often more pronounced in concentrated samples, leading to a flattening of the standard curve slope and a calculated efficiency of over 100% [17].

- Sample and Template Quality: The purity of DNA/RNA samples is critical. Spectrophotometric measurement (e.g., A260/A280 ratios) is recommended prior to qPCR. Furthermore, the stochastic effect in very diluted samples can introduce high variability [17].

- Pipetting Errors and Reaction Setup: Inaccurate serial dilutions and pipetting errors are practical sources of variation, particularly when generating standard curves [16].

Impact on Quantification Accuracy

The exponential nature of PCR means small efficiency differences cause large quantitative errors. For a Cq of 20, the calculated quantity from an assay with 80% efficiency can be 8.2-fold lower than one with 100% efficiency [16]. This demonstrates why assuming 100% efficiency without validation is a major source of bias in qPCR results [9]. Relying on the $2^{-\Delta\Delta C_T}$ method when efficiencies are variable or unknown overlooks a critical factor influencing results [4].
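The 8.2-fold figure can be reproduced directly from the amplification equation. The sketch below uses a hypothetical helper, `fold_discrepancy`, and assumes efficiencies given as percentages are converted to per-cycle amplification factors as 1 + efficiency/100.

```python
def fold_discrepancy(cq, efficiency_percent, reference_percent=100.0):
    """Ratio of amplification reached after `cq` cycles at the reference
    efficiency to that reached at the given efficiency."""
    e_actual = 1.0 + efficiency_percent / 100.0
    e_reference = 1.0 + reference_percent / 100.0
    return (e_reference / e_actual) ** cq


for eff in (100.0, 95.0, 90.0, 80.0):
    print(f"Cq 20, {eff:.0f}% efficiency: "
          f"{fold_discrepancy(20, eff):.1f}-fold difference vs 100%")
```

Because the ratio is raised to the power of Cq, the discrepancy grows with every additional cycle, so low-abundance targets with late Cq values are affected most.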

Comparative Analysis of Efficiency Estimation Methods

Several mathematical approaches exist to estimate efficiency, each with distinct advantages and limitations. The choice of method significantly impacts the final quantitative result.

The table below summarizes the core methodologies for efficiency estimation and data analysis.

Table 1: Comparison of qPCR Efficiency Estimation and Data Analysis Methods

| Method | Core Principle | Reported Output | Key Advantages | Key Limitations / Sources of Variability |

|---|---|---|---|---|

| Standard Curve | Linear regression of Cq vs. log template concentration [1] [15]. | A single efficiency (E) value for the entire assay. | Well-established and widely understood. | Inter-assay variability; requires significant time, resources, and a suitable standard [1]. |

| Exponential Model | Fits a straight line to data points within the exponential phase of individual amplification curves [15]. | Efficiency for each individual reaction. | Does not require a standard curve; provides reaction-specific efficiency. | Subject to bias from subjective selection of the "exponential phase" [18]. |

| Sigmoidal Model | Fits a non-linear curve (e.g., 4 or 5-parameter sigmoid) to the entire amplification curve [18] [15]. | Calculates the initial fluorescence, F0 [18]. | Uses all data points; less subjective than phase selection. | Complex computation; multiple models exist (e.g., Richards, Gompertz). |

| f0% Method | A modified sigmoidal approach that reports initial fluorescence as a percentage of the maximum [18]. | f0%, an estimate of the initial target amount. | Reported to reduce variation and error compared to Cq and other methods [18]. | Less established; requires specialized software or scripts. |

| ANCOVA | A flexible multivariable linear modeling approach applied to raw fluorescence data [4]. | Differential expression P-values and estimates. | Greater statistical power and robustness; P-values not affected by efficiency variability [4]. | Requires raw fluorescence data and statistical programming proficiency (e.g., R). |
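
As an illustration of the standard curve row, the following sketch fits Cq against log10 template quantity by ordinary least squares and converts the slope to efficiency with E = 10^(−1/slope) − 1. The dilution series and Cq values are hypothetical, and the function name is an assumption made for this example.

```python
import math


def fit_standard_curve(quantities, cq_values):
    """Least-squares fit of Cq against log10(quantity).

    Returns (slope, intercept, r_squared, efficiency); efficiency is a
    fraction, so 1.0 corresponds to 100%.
    """
    x = [math.log10(q) for q in quantities]
    y = list(cq_values)
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = (sxy * sxy) / (sxx * syy)
    efficiency = 10 ** (-1.0 / slope) - 1.0
    return slope, intercept, r_squared, efficiency


# Hypothetical 10-fold dilution series (copies per reaction) and Cq values.
copies = [1e6, 1e5, 1e4, 1e3, 1e2]
cqs = [15.1, 18.5, 21.9, 25.2, 28.6]
slope, intercept, r2, eff = fit_standard_curve(copies, cqs)
print(f"slope={slope:.3f}  R^2={r2:.4f}  efficiency={eff * 100:.1f}%")
```

A slope of about −3.32 corresponds to 100% efficiency; shallower slopes give values above 100% and steeper slopes give values below it.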

Experimental Data on Method Performance and Variability

Independent studies benchmark these methods, revealing significant performance differences. A 2024 study found that the f0% method reduced the coefficient of variation (CV%) by 1.76-fold and variance by 3.13-fold compared to the traditional Cq method in relative quantification [18]. Another study on a prokaryotic model highlighted that the choice of mathematical model (exponential vs. sigmoidal) directly impacted efficiency estimates, which subsequently altered normalized gene expression values [15].
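
To make the model dependence concrete, here is a minimal sketch of the exponential (log-linear window) approach applied to a synthetic, saturating amplification curve. The curve, the window boundaries, and the function name are assumptions for illustration; this is not the procedure used in the cited studies.

```python
import math


def exponential_phase_efficiency(fluorescence, first_cycle, last_cycle):
    """Per-reaction efficiency from a log-linear window.

    fluorescence: baseline-corrected readings, index 0 == cycle 1.
    first_cycle, last_cycle: inclusive 1-based bounds of the window judged
    to be exponential (choosing them is the subjective step).
    """
    cycles = list(range(first_cycle, last_cycle + 1))
    log_f = [math.log10(fluorescence[c - 1]) for c in cycles]
    n = len(cycles)
    mean_c = sum(cycles) / n
    mean_l = sum(log_f) / n
    sxx = sum((c - mean_c) ** 2 for c in cycles)
    sxy = sum((c - mean_c) * (l - mean_l) for c, l in zip(cycles, log_f))
    slope = sxy / sxx  # log10 of the per-cycle amplification factor
    return 10 ** slope - 1.0


# Synthetic logistic curve that starts with an amplification factor of 1.9.
f_max, f0 = 1.0, 1e-7
curve = [f_max / (1 + (f_max / f0 - 1) * 1.9 ** (-c)) for c in range(1, 41)]
early = exponential_phase_efficiency(curve, 10, 15)
late = exponential_phase_efficiency(curve, 22, 27)
print(f"early window: {early:.3f}, late window: {late:.3f}")
```

Shifting the window toward the plateau lowers the estimate, which is the selection bias noted for this method in Table 1.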

Inter-assay variability is a major practical concern. Research on virus quantification found that while all assays met minimum efficiency targets (>90%), there was notable variability between experiments. For instance, the SARS-CoV-2 N2 gene showed a CV of 4.38-4.99%, underscoring the recommendation to include a standard curve in every run for reliable absolute quantification [1].

Table 2: Experimental Variability in qPCR Efficiency (Selected Data)

| Viral Target / Gene | Reported Efficiency | Observed Variability | Study Context |

|---|---|---|---|

| SARS-CoV-2 (N2 gene) | 90.97% | Coefficient of Variation (CV): 4.38-4.99% | 30 independent standard curve experiments [1]. |

| NoVGII | >90% | Higher inter-assay variability compared to other viruses. | Virus surveillance in wastewater [1]. |

| Pseudomonas aeruginosa genes | 50-79% (Exponential Model) | Efficiency decreased as DNA concentration increased. | Assessment of 16 genes, noting inhibitor impact [15]. |

| Pseudomonas aeruginosa genes | 52-75% (Sigmoidal Model) | Different impact on normalized expression vs. exponential model. | Comparison of mathematical approaches [15]. |
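
Inter-assay variability of the kind reported above is usually summarized as a coefficient of variation. A minimal sketch, using hypothetical per-run efficiencies rather than values from the cited study:

```python
import statistics


def coefficient_of_variation(values):
    """Sample CV in percent: standard deviation divided by the mean."""
    return statistics.stdev(values) / statistics.mean(values) * 100.0


# Hypothetical efficiencies (%) from five independent standard-curve runs.
run_efficiencies = [92.1, 88.4, 95.0, 90.3, 89.2]
print(f"inter-assay CV = {coefficient_of_variation(run_efficiencies):.2f}%")
```

The same function can be applied to slopes or intercepts collected across runs.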

The Critical Role of Reference Genes

In relative quantification, the stability of reference genes ("housekeeping genes") is as critical as the efficiency of the target assay. Using inappropriate reference genes is a widespread flaw that affects result precision and reliability [19] [20].

Experimental Protocol for Validation

A rigorous protocol for reference gene selection involves:

- Candidate Selection: Choose multiple candidates from literature or genomic databases. Common genes include those for actin (IbACT, β-actin), ribosomal proteins (IbRPL, rpL32), and elongation or ADP-ribosylation factors (IbEF1α, arf1) [19] [20].

- RNA Extraction & cDNA Synthesis: Extract high-quality RNA from all relevant tissues/conditions, check purity (A260/A280), and synthesize cDNA with a standardized kit [20].

- qPCR Amplification: Run all candidates across all experimental conditions. Primers must be validated for specificity and their own amplification efficiency [19].

- Stability Analysis: Use algorithms like geNorm, NormFinder, BestKeeper, and the comparative ΔCq method. Tools like RefFinder integrate results from these algorithms for a comprehensive ranking [19] [20].

- Experimental Validation: Confirm the stability of the selected genes by normalizing a known target gene (e.g., major royal jelly protein 2, mrjp2) and assessing whether the resulting expression patterns align with biological expectations [20].
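
The stability analysis step can be sketched in the spirit of the comparative ΔCq method: for each candidate, compute the standard deviation of its Cq difference to every other candidate across samples, then average those values. This is a simplified illustration, not a reimplementation of geNorm, NormFinder, BestKeeper, or RefFinder; the gene names and Cq values are hypothetical.

```python
import statistics


def pairwise_dcq_stability(cq_by_gene):
    """Rank candidate reference genes by mean SD of pairwise delta-Cq.

    cq_by_gene: dict mapping gene name -> list of Cq values, one per sample,
    with samples in the same order for every gene (at least two samples).
    Returns (gene, score) pairs sorted from most to least stable.
    """
    scores = {}
    for gene, cqs in cq_by_gene.items():
        spreads = []
        for other, other_cqs in cq_by_gene.items():
            if other == gene:
                continue
            deltas = [a - b for a, b in zip(cqs, other_cqs)]
            spreads.append(statistics.stdev(deltas))
        scores[gene] = statistics.mean(spreads)
    return sorted(scores.items(), key=lambda item: item[1])


# Hypothetical Cq values for three candidates across four samples.
candidates = {
    "geneA": [20.0, 20.5, 21.0, 20.2],
    "geneB": [18.1, 18.6, 19.0, 18.3],
    "geneC": [22.0, 24.5, 21.0, 25.0],
}
for gene, score in pairwise_dcq_stability(candidates):
    print(f"{gene}: {score:.2f}")
```

A low score means the gene tracks the other candidates closely across samples. Using raw Cq differences implicitly assumes similar amplification efficiencies for all candidates.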

Case Study in Experimental Design

A 2025 study on sweet potato highlights this process. It evaluated ten candidate reference genes across four tissues. The study found IbACT, IbARF, and IbCYC to be the most stable, while commonly used genes like IbGAP and IbRPL were among the least stable [19]. This demonstrates that stability must be empirically determined and cannot be assumed.

Best Practices and Future Directions

Recommendations for Rigorous qPCR

To bring practical results closer to theoretical ideals, researchers should adopt the following practices:

- Validate, Don't Assume: Determine the exact efficiency of every assay and reference gene under your specific experimental conditions. Do not assume 100% efficiency [15] [16].

- Embrace Transparency and FAIR Principles: Share raw fluorescence data and detailed analysis scripts starting from raw input. This practice is crucial for reproducibility and allows re-analysis with different methods like ANCOVA [4].

- Report Fully and Accurately: Adhere to MIQE (Minimum Information for Publication of Quantitative Real-Time PCR Experiments) guidelines. Report slopes, R², y-intercepts, and efficiency values for standard curves, and acknowledge the variability of these metrics [1].

- Use Appropriate Analysis Methods: Consider moving beyond the simplistic 2^(−ΔΔCT) method. Methods like ANCOVA or sigmoidal curve fitting (e.g., f0%) can offer greater robustness and power, especially when efficiency is variable [4] [18].
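
One simple step beyond 2^(−ΔΔCT) is the widely used efficiency-corrected ratio (often attributed to Pfaffl), which replaces the fixed base of 2 with the measured amplification factor of each assay. It is shown here as an illustration and is not one of the methods benchmarked in the cited studies; the input values are hypothetical.

```python
def efficiency_corrected_ratio(e_target, e_reference, dcq_target, dcq_reference):
    """Expression ratio of treated relative to control.

    e_target, e_reference: per-cycle amplification factors (2.0 == 100%).
    dcq_target, dcq_reference: Cq(control) - Cq(treated) for each gene.
    """
    return (e_target ** dcq_target) / (e_reference ** dcq_reference)


def ddcq_ratio(dcq_target, dcq_reference):
    """The 2^(-ddCq) shortcut, which fixes both amplification factors at 2."""
    return 2.0 ** (dcq_target - dcq_reference)


# Hypothetical assay: target at 85% efficiency, reference at 95%.
corrected = efficiency_corrected_ratio(1.85, 1.95, dcq_target=3.0, dcq_reference=0.5)
shortcut = ddcq_ratio(dcq_target=3.0, dcq_reference=0.5)
print(f"efficiency-corrected: {corrected:.2f}, 2^(-ddCq): {shortcut:.2f}")
```

When both factors equal 2.0 the two functions agree; the further the measured efficiencies fall from 100%, the more the shortcut diverges.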

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagent Solutions for qPCR Efficiency Analysis

| Item / Reagent | Critical Function | Considerations for Efficiency |

|---|---|---|

| SYBR Green I / DNA Binding Dyes | Binds double-stranded DNA, providing the fluorescence signal for monitoring amplification. | Inexpensive but can bind to non-specific products (e.g., primer dimers), artificially inflating signal and skewing efficiency calculations [18]. |

| TaqMan Probes / Fluorogenic Probes | Sequence-specific probes that increase specificity by requiring hybridization for signal generation. | Generally more specific and reliable for efficiency estimation, but more expensive than intercalating dyes [1]. |

| One-Step / Two-Step RT-PCR Kits | Master mixes that include reverse transcriptase and PCR enzymes. One-step integrates both; two-step separates them. | The reverse transcription step is a major source of variability. Kit formulation and inhibitor tolerance can significantly impact final Cq and calculated efficiency [1] [15]. |

| Synthetic RNA/DNA Standards | Pre-quantified nucleic acids for generating standard curves for absolute quantification. | Essential for determining absolute efficiency. Must be handled carefully (aliquoting, limited freeze-thaw) to prevent degradation and ensure accurate concentration [1]. |

| High-Purity Nucleic Acid Isolation Kits | For extracting DNA/RNA from complex biological samples. | Critical for removing PCR inhibitors that directly suppress amplification efficiency. The purity (A260/A280) should be verified [17]. |

Workflow for Assessing qPCR Efficiency

The following diagram illustrates a logical workflow for assessing and troubleshooting qPCR amplification efficiency, integrating the concepts and methods discussed in this guide.

The gap between the theoretical ideal of 100% qPCR efficiency and the variable reality of laboratory practice is a significant source of bias in molecular data. Acknowledging this discrepancy is the first step toward producing more rigorous and reproducible science. By critically evaluating efficiency using robust methods, rigorously validating reference genes, and adopting transparent data-sharing practices, researchers can ensure their findings on transcript validation are accurate, reliable, and meaningful.

The Impact of Reaction Components and Conditions on Assay Specificity

In transcript validation research, the specificity of quantitative polymerase chain reaction (qPCR) is paramount, as it directly influences the accuracy and reliability of gene expression data. Achieving high specificity ensures that the amplification signal originates solely from the intended target transcript, minimizing false positives and erroneous quantification. This guide provides an objective comparison of how critical reaction components and conditions—from DNA polymerase selection to primer design and experimental optimization—impact qPCR assay specificity. We present supporting experimental data and detailed methodologies to guide researchers and drug development professionals in making informed decisions to optimize their qPCR workflows for superior transcript validation.

Core Reaction Components and Their Impact on Specificity

The specificity of a qPCR assay is governed by a complex interplay of its core components. The table below summarizes the impact of these key elements and provides comparative data on their performance.

Table 1: Impact of Core qPCR Components on Assay Specificity

| Component | Key Feature for Specificity | Comparison of Options | Supporting Data / Effect |

|---|---|---|---|

| DNA Polymerase | Hot-Start Mechanism | Hot-Start vs. Standard: Antibody-mediated hot-start polymerases show no detectable activity at room temperature, while standard or "warm-start" enzymes can exhibit pre-cycling activity [21]. | Figure 2: Hot-start polymerases show improved yields of the desired amplicon and a lack of nonspecific amplification compared to non-hot-start versions [21]. |

| Primers | Design & Melting Temperature (Tm) | Optimal vs. Suboptimal: Primers with Tm of 60-65°C, 40-60% GC content, and amplicons of 70-200 bp outperform those with low Tm, high dimer potential, or long products [22]. | Primer sequences based on SNPs among homologous genes achieve high specificity, enabling discrimination between highly similar sequences [23]. |

| Detection Chemistry | Probe-Based vs. Dye-Based | Hydrolysis Probes vs. DNA Intercalating Dyes: Probe-based chemistries offer higher specificity through a second hybridization event, while dye-based methods are more versatile but less specific [22]. | Table 1 (Detection Methods): Hydrolysis probes are "highly specific" but require custom design; DNA intercalating dyes are "versatile and cost-effective" but have "low specificity" [22]. |

| Magnesium Ions (Mg²⁺) | Optimal Concentration | Titrated vs. Fixed Concentration: The optimal Mg²⁺ concentration is template- and primer-specific. Titration is recommended for new assays [24]. | Implicit in protocol optimization; incorrect Mg²⁺ concentration can promote mispriming and reduce specificity. |

| Template Quality | Purity and Integrity | High-Quality vs. Degraded/Contaminated DNA: High molecular weight DNA without contaminants is critical. Phenol extraction is not recommended as it can introduce oxidative damage [25]. | The QPCR assay for DNA damage measurement requires high-quality DNA to avoid nicking and shearing, which artificially reduces amplification [25]. |

Experimental Protocols for Optimizing Specificity

Protocol for Stepwise qPCR Optimization

A published protocol for the stepwise optimization of real-time RT-PCR analysis emphasizes that computational primer design alone is insufficient and can create a false sense of confidence; empirical optimization remains essential for efficiency, specificity, and sensitivity [23]. The protocol optimizes the following parameters in sequence:

- Primer Sequence Selection: Begin by identifying all homologous gene sequences for the target from the genome. Perform a multiple sequence alignment and design sequence-specific primers based on single-nucleotide polymorphisms (SNPs) present among these homologs. This ensures primers will bind uniquely to the intended transcript [23].

- Annealing Temperature Optimization: Perform a temperature gradient qPCR run (e.g., from 55°C to 65°C) using the designed primers. Select the temperature that yields the lowest Cq value, the highest fluorescence (ΔRn), and a single peak in the melting curve analysis, indicating specific amplification [23] [24].

- Primer Concentration Titration: Test different primer concentrations (e.g., 50 nM, 200 nM, 600 nM) around the manufacturer's recommended starting point (often 200-500 nM) while keeping the annealing temperature constant. The optimal concentration provides the lowest Cq and highest ΔRn without increasing background signal [23].

- cDNA Concentration Range Testing: Generate a standard curve using a serial dilution (e.g., 5-6 points) of a cDNA pool to determine the dynamic range and efficiency of the assay. The goal is to achieve a linear standard curve with an R² ≥ 0.9999 and a PCR efficiency (E) of 100 ± 5% [23].

This protocol was successfully applied to identify stable reference genes in Tripidium ravennae, demonstrating its effectiveness in achieving highly specific and reliable qPCR results [23].
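
The annealing temperature criteria from the protocol above (single melt peak, lowest Cq, highest ΔRn) can be encoded as a small selection rule. The record layout and the tie-breaking order are assumptions made for this sketch, and the gradient results are hypothetical.

```python
def pick_annealing_temperature(gradient_results):
    """Choose an annealing temperature from gradient-run results.

    gradient_results: list of dicts with keys 'temp_c', 'cq', 'delta_rn',
    and 'melt_peaks' (number of peaks in the derivative melt curve).
    Wells with more than one melt peak are rejected; among the rest the
    lowest Cq wins, with higher delta_rn breaking ties.
    Returns the chosen temperature, or None if no well is specific.
    """
    specific = [r for r in gradient_results if r["melt_peaks"] == 1]
    if not specific:
        return None
    best = min(specific, key=lambda r: (r["cq"], -r["delta_rn"]))
    return best["temp_c"]


# Hypothetical gradient run for one primer pair.
gradient = [
    {"temp_c": 55.0, "cq": 21.0, "delta_rn": 3.0, "melt_peaks": 2},
    {"temp_c": 58.0, "cq": 21.4, "delta_rn": 3.2, "melt_peaks": 1},
    {"temp_c": 60.0, "cq": 21.4, "delta_rn": 3.5, "melt_peaks": 1},
    {"temp_c": 63.0, "cq": 23.0, "delta_rn": 2.1, "melt_peaks": 1},
]
print(pick_annealing_temperature(gradient))
```

Filtering on melt peaks first reflects the priority of specificity: the 55°C well has the lowest Cq in this example but is excluded because it shows two products.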

Protocol for SYBR Green Assay Validation

A protocol for detecting antimicrobial resistance genes (ARGs) using SYBR Green chemistry paired with melting curve analysis highlights steps to confirm specificity [24]:

- Reaction Setup: The 10 μL reaction mixture contains 1X PowerUp SYBR Green Master Mix, 600 nM of each forward and reverse primer, and 2.5 μL of DNA template [24].

- Thermal Cycling Profile: The protocol uses an initial incubation at 50°C for 2 min, followed by 2 min at 95°C and then 45 cycles of denaturation at 95°C for 10 s and primer-specific annealing/extension at 56–60°C for 30 s [24].

- Melting Curve Analysis: After amplification, a melting curve is generated by slowly heating the product from 60°C to 95°C while continuously monitoring fluorescence. A single, sharp peak in the derivative melt curve confirms the amplification of a single, specific product. The amplicons from environmental samples were further analyzed by Sanger sequencing to definitively confirm the specificity of the assays [24].
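
The melting curve criterion can be illustrated by computing the negative derivative −dF/dT and counting its peaks. The sketch below runs on synthetic melt data; the 20% peak-height threshold and both function names are arbitrary choices for illustration and do not reproduce any instrument's algorithm.

```python
import math


def synthetic_melt(temps, products):
    """Synthetic melt curve; products is a list of (amplitude, tm_c, width)."""
    return [
        sum(a / (1.0 + math.exp((t - tm) / w)) for a, tm, w in products)
        for t in temps
    ]


def melt_peaks(temps, fluorescence, min_fraction=0.2):
    """Temperatures of peaks in -dF/dT that reach min_fraction of the tallest."""
    # Central-difference estimate of -dF/dT at interior points.
    neg_deriv = [
        -(fluorescence[i + 1] - fluorescence[i - 1]) / (temps[i + 1] - temps[i - 1])
        for i in range(1, len(temps) - 1)
    ]
    mid_temps = temps[1:-1]
    tallest = max(neg_deriv)
    if tallest <= 0:
        return []
    peaks = []
    for i in range(1, len(neg_deriv) - 1):
        is_local_max = neg_deriv[i] > neg_deriv[i - 1] and neg_deriv[i] >= neg_deriv[i + 1]
        if is_local_max and neg_deriv[i] >= min_fraction * tallest:
            peaks.append(mid_temps[i])
    return peaks


temps = [60.0 + 0.5 * i for i in range(71)]  # 60 to 95 C in 0.5 C steps
single = synthetic_melt(temps, [(1.0, 82.0, 0.8)])
mixed = synthetic_melt(temps, [(0.4, 75.0, 0.8), (1.0, 84.0, 0.8)])
print(melt_peaks(temps, single), melt_peaks(temps, mixed))
```

A second, lower-temperature peak like the one in the mixed example is the typical signature of primer dimers or another off-target product. As the protocol notes, sequencing remains the definitive confirmation.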

Quantitative Comparison of Specificity Parameters

The optimization of qPCR conditions ultimately yields quantitative metrics that directly reflect the assay's specificity and performance. The following table compares these key parameters across different experimental contexts.

Table 2: Quantitative Metrics for qPCR Performance and Specificity

| Parameter | Optimal Value / Outcome | Comparative Data / Variability |

|---|---|---|

| Amplification Efficiency (E) | 90–105% (Ideal: 100%) [23] [1] | In a 30-experiment study, efficiencies were >90%, but variability was observed between viral targets. NoVGII showed the highest inter-assay variability in efficiency [1]. |

| Standard Curve R² | R² ≥ 0.9999 [23] | A study on viral targets found that while efficiencies were adequate, the slope of the standard curve showed variability, with the SARS-CoV-2 N2 gene target exhibiting the largest variability (CV 4.38–4.99%) [1]. |

| DNA Polymerase Fidelity (Error Rate) | Lower error rate indicates higher fidelity [26] [21]. | Error rates measured by direct sequencing: Taq polymerase: ~3.0–5.6 × 10⁻⁵; Pfu, Pwo, Phusion: >10x lower than Taq (in the 10⁻⁶ range) [26]. |

| Inter-assay Variability (CV for Efficiency) | As low as possible | Across 30 independent standard curve experiments, NoVGII showed higher inter-assay variability, while SARS-CoV-2 N2 had the lowest efficiency (90.97%) among targets tested [1]. |

Visualization of Workflows and Specificity Mechanisms

qPCR Assay Optimization Workflow

Mechanisms of Hot-Start DNA Polymerase Specificity

The Researcher's Toolkit: Essential Reagents for Specific qPCR

The following table details key reagent solutions and their critical functions in establishing and maintaining a highly specific qPCR assay.

Table 3: Essential Research Reagent Solutions for qPCR Specificity

| Reagent / Kit | Specific Function in Assay | Role in Enhancing Specificity |

|---|---|---|

| Hot-Start DNA Polymerase | Enzyme for DNA synthesis during PCR amplification. | Prevents polymerase activity during reaction setup, dramatically reducing non-specific amplification and primer-dimer formation [21]. |

| High-Purity Primer Pairs | Short, single-stranded DNA sequences that define the target amplicon. | Well-designed primers with appropriate Tm and length ensure binding is specific to the intended transcript, minimizing off-target amplification [23] [22]. |

| Probe-Based Master Mix (e.g., TaqMan) | Contains polymerase, dNTPs, and buffer optimized for probe-based detection. | The requirement for both primers and a probe to bind correctly for signal generation provides a second layer of specificity beyond intercalating dyes [22]. |

| SYBR Green Master Mix | Contains polymerase, dNTPs, buffer, and DNA-intercalating dye. | When paired with rigorous melting curve analysis, it confirms amplification of a single, specific product, making it a cost-effective option [24]. |

| DNA/RNA Extraction Kits (e.g., Qiagen) | Isolate high-quality nucleic acid templates from samples. | Provides pure, intact template free of contaminants (e.g., salts, phenol, proteins) that can inhibit the polymerase and promote non-specific binding [25] [24]. |

| Quantitative Synthetic RNA/DNA Standards | Known concentrations of in vitro transcribed RNA or synthetic DNA. | Essential for generating standard curves to validate assay efficiency and dynamic range, key indicators of a robust and specific assay [1]. |

In the field of transcript validation research, the reliability and reproducibility of experimental data are paramount. Two foundational guidelines have emerged as critical frameworks to uphold these standards: the MIQE (Minimum Information for Publication of Quantitative Real-Time PCR Experiments) guidelines and the FAIR (Findable, Accessible, Interoperable, Reusable) data principles. MIQE provides a standardized framework specifically for the execution and reporting of qPCR experiments, ensuring the credibility and repeatability of this sensitive technique [27]. Concurrently, the FAIR principles offer a broader set of guidelines for scientific data management, designed to enhance the reusability of digital assets by both humans and computational systems [28]. While MIQE focuses on the technical specifics of a key transcript validation method, FAIR addresses the entire data lifecycle. Together, they provide a comprehensive foundation for conducting rigorous, transparent, and impactful research in molecular biology and drug development.

The MIQE Guidelines: A Standard for qPCR Rigor

Purpose and Evolution

The MIQE guidelines were established in 2009 to address widespread concerns about the quality and transparency of qPCR experiments. These guidelines create a standardized framework for designing, executing, and reporting qPCR assays, which is essential for advancing scientific knowledge and maintaining research integrity [27]. The recent release of MIQE 2.0 in 2025 reflects advances in qPCR technology and the expansion of qPCR into new applications, offering updated recommendations tailored to contemporary complexities [29].

Core Requirements and Applications

MIQE guidelines comprehensively cover all aspects of qPCR experiments, including experimental design, sample quality, assay validation, and data analysis [27]. A key focus is on transparent reporting to ensure that all experiments can be independently verified. For transcript validation research, this level of methodological detail is crucial because it standardizes how gene expression levels are measured, a common application in drug discovery and biomarker identification.

The guidelines emphasize that quantification cycle (Cq) values should be converted into efficiency-corrected target quantities and reported with prediction intervals [29]. Furthermore, they outline best practices for normalization and quality control, which are vital for accurate interpretation of qPCR results in transcript studies.
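
Converting a Cq value into an efficiency-corrected quantity amounts to inverting the assay's standard curve. The sketch below assumes a curve of the form Cq = slope × log10(quantity) + intercept with hypothetical parameters; it does not compute the prediction interval, which additionally requires the residual variance of the fit.

```python
def quantity_from_cq(cq, slope, intercept):
    """Invert a standard curve Cq = slope * log10(quantity) + intercept."""
    return 10 ** ((cq - intercept) / slope)


# Hypothetical curve parameters for an assay near 100% efficiency.
slope, intercept = -3.35, 36.8
for cq in (20.0, 23.35, 30.0):
    print(f"Cq {cq:5.2f} -> {quantity_from_cq(cq, slope, intercept):.3g} copies")
```

Because the slope encodes the measured efficiency, quantities derived this way are efficiency-corrected without assuming perfect doubling.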

Table: Key Aspects of MIQE 2.0 Guidelines for qPCR Analysis

| Aspect | Requirement | Significance in Transcript Validation |

|---|---|---|

| Data Reporting | Convert Cq values to efficiency-corrected target quantities and report them with prediction intervals | Enables accurate comparison of transcript levels between samples and conditions |

| Assay Design | Provide probe or amplicon context sequence along with Assay ID | Ensures complete transparency and reproducibility of the qPCR assay [27] |

| Normalization | Apply appropriate normalization techniques using reference genes | Corrects for technical variations, allowing valid biological interpretations |

| Detection Limits | Specify detection limits and dynamic ranges for each target | Defines the quantitative scope and limitations of the transcript detection assay |

| Raw Data | Enable export of raw data for independent analysis | Facilitates re-evaluation by peer reviewers and other researchers [29] |

The FAIR Principles: A Framework for Data Stewardship

Foundation and Rationale

The FAIR Guiding Principles for scientific data management and stewardship were formally published in 2016 to address the challenges posed by the increasing volume, complexity, and creation speed of research data [28] [30]. These principles were designed to enhance the capacity of computational systems to automatically find, access, interoperate, and reuse data with minimal human intervention, while still supporting reuse by individuals [31].

The Four Principles Explained

The FAIR acronym represents four core principles that collectively optimize the reuse of digital research objects:

Findable: The first step in data reuse is discovery. Data and metadata should be easy to find for both humans and computers. This is achieved by assigning globally unique and persistent identifiers (such as DOIs or UUIDs) and ensuring datasets are described with rich, machine-actionable metadata that include these identifiers [30] [32]. Metadata should be registered or indexed in searchable resources [31].

Accessible: Once found, users need to know how data can be accessed. Data should be retrievable by their identifiers using standardized communication protocols, which should be open, free, and universally implementable. When data cannot be made openly available due to privacy or security concerns, clear authentication and authorization procedures should be in place [30] [31].

Interoperable: Data must be able to be integrated with other datasets and work with applications or workflows for analysis, storage, and processing. This requires using formal, accessible, shared languages for knowledge representation and vocabularies that follow FAIR principles [28] [30]. The data should include qualified references to other metadata to establish context and relationships.

Reusable: The ultimate goal of FAIR is to optimize the reuse of data. This requires that metadata and data are well-described with a plurality of accurate and relevant attributes [28]. Reusability is enhanced when data is released with a clear usage license, associated with detailed provenance, and meets domain-relevant community standards [30] [31].

Table: Detailed Breakdown of FAIR Principles Implementation

| Principle | Primary Requirement | Key Implementation Strategies |

|---|---|---|

| Findable | Metadata and data are easy to find for humans and computers | Assign persistent identifiers (DOIs); Use rich, machine-readable metadata; Index in searchable resources [30] [31] |

| Accessible | Data is retrievable through standardized protocols | Implement open, free access protocols; Provide authentication/authorization where needed; Keep metadata accessible even if data isn't [30] |

| Interoperable | Data can be integrated with other data and applications | Use standardized vocabularies and ontologies; Employ formal knowledge representation languages; Include qualified references [28] [31] |

| Reusable | Data can be replicated or combined in different settings | Provide clear data usage licenses; Document detailed provenance; Follow domain-relevant community standards [30] [32] |

Comparative Analysis: Complementary Roles in Research

Distinct Focus Areas with Overlapping Goals

While both MIQE and FAIR aim to enhance research quality, they operate at different levels of the research ecosystem. MIQE is methodology-specific, providing detailed technical requirements for a particular experimental technique (qPCR), whereas FAIR is domain-agnostic, offering high-level principles applicable to any digital research object [29] [28]. This distinction is particularly evident in transcript validation research, where MIQE ensures the technical validity of qPCR data generation, while FAIR ensures the proper management and sharing of the resulting data.

Despite their different scopes, both frameworks share the common goals of enhancing reproducibility, promoting transparency, and facilitating data reuse. MIQE achieves this through standardized reporting of technical parameters, while FAIR accomplishes it through systematic data management practices.

Implementation in Transcript Validation Workflow

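In a typical transcript validation research workflow, MIQE and FAIR principles complement each other at different stages: MIQE governs how qPCR data are generated and reported, while FAIR governs how the resulting data are managed and shared.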

Practical Implementation in Transcript Research

Research Reagent Solutions for qPCR Experiments

Implementing MIQE guidelines requires careful selection of reagents and tools that facilitate compliance. The following table outlines essential materials for rigorous qPCR-based transcript validation studies:

Table: Research Reagent Solutions for MIQE-Compliant qPCR

| Reagent/Tool | Function | MIQE Compliance Consideration |

|---|---|---|

| TaqMan Assays | Sequence-specific detection of target transcripts | Provide Assay ID and amplicon context sequence for complete reporting [27] |

| RNA Quality Assessment Tools (e.g., Bioanalyzer) | Evaluate RNA integrity prior to reverse transcription | Essential for documenting sample quality metrics required by MIQE |

| Reverse Transcription Kits | Convert RNA to cDNA for qPCR analysis | Must detail enzyme properties and reaction conditions for reproducibility |

| qPCR Instruments with Raw Data Export | Amplification and detection of target sequences | Enable export of raw fluorescence data for independent validation [29] |

| Reference Gene Assays | Normalization of technical variations | Critical for accurate quantification as emphasized in MIQE guidelines |

Experimental Protocol for MIQE-Compliant qPCR

For researchers conducting transcript validation studies, the following protocol ensures adherence to MIQE guidelines while incorporating FAIR data principles:

Experimental Design Phase

- Incorporate appropriate biological replicates (minimum 3 per condition) to account for variability [33]

- Include negative controls (no-template controls) and positive controls

- Plan for reference genes for normalization that are stable under experimental conditions

Sample Preparation and Quality Assessment

- Document RNA extraction methodology comprehensively

- Assess RNA quality using appropriate metrics (e.g., RNA Integrity Number)

- Record RNA quantification method and values

Assay Validation

- For TaqMan assays, record the Assay ID and obtain the amplicon context sequence from the manufacturer's Assay Information File [27]

- Determine amplification efficiency for each assay using dilution series

- Establish linear dynamic range and limit of detection for each transcript target

qPCR Execution

- Document complete reaction conditions: component concentrations, thermocycling parameters, and instrument details

- Include appropriate controls in each run

- Record raw Cq values and amplification curves

Data Analysis and FAIR Implementation

- Apply efficiency-corrected quantification methods to convert Cq to target quantities [29]

- Use appropriate normalization strategy based on reference genes

- Export and preserve raw data for future reuse

- Annotate datasets with rich metadata including experimental conditions, analysis parameters, and quality metrics

Data Reporting and Sharing

- Assign persistent identifiers to datasets

- Apply clear usage licenses

- Deposit data in recognized repositories with structured metadata

- Ensure metadata remains accessible even if data access is restricted
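
The data reporting steps can be illustrated with a small machine-readable metadata record. The field names below are hypothetical and do not follow any formal schema; the UUID stands in for the persistent identifier (such as a DOI) that a repository would normally assign on deposit.

```python
import json
import uuid


def build_dataset_metadata(title, license_id, conditions, raw_data_files):
    """Assemble a minimal metadata record for a qPCR dataset (illustrative fields)."""
    return {
        "identifier": f"urn:uuid:{uuid.uuid4()}",  # placeholder for a DOI
        "title": title,
        "license": license_id,
        "experimental_conditions": conditions,
        "raw_data_files": raw_data_files,
        "reporting_standard": "MIQE",
    }


record = build_dataset_metadata(
    title="Hypothetical transcript validation run",
    license_id="CC-BY-4.0",
    conditions={"biological_replicates": 3, "chemistry": "SYBR Green"},
    raw_data_files=["plate01_raw_fluorescence.csv"],
)
print(json.dumps(record, indent=2))
```

Keeping the record as plain JSON means it can stay publicly accessible even when the raw data files themselves are access-restricted.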

Impact on Scientific Research and Drug Development

Enhancing Reproducibility and Rigor

The implementation of both MIQE and FAIR principles significantly strengthens the reliability of transcript validation research, which forms the foundation for many drug development programs. MIQE guidelines directly address the reproducibility crisis in qPCR experiments by ensuring complete methodological transparency [27]. Simultaneously, FAIR principles enhance research traceability by embedding metadata, provenance, and context to help teams track how data was collected, processed, and interpreted [30].

Facilitating Collaboration and Innovation

In the context of drug development, where research often involves multiple institutions and disciplines, both frameworks enable better collaboration. MIQE standardizes the language and reporting of qPCR data, allowing clear communication between academic researchers, pharmaceutical companies, and regulatory bodies. FAIR principles break down data silos by making data interoperable across different systems and teams [30]. This interoperability is particularly valuable in multi-modal research environments that integrate diverse datasets like genomic sequences, imaging data, and clinical trial information.

Supporting Regulatory Compliance and AI Readiness

For drug development professionals, both MIQE and FAIR principles support regulatory requirements by ensuring data integrity and traceability. Furthermore, FAIR data provides the foundation needed to harmonize diverse data types into machine-readable formats with rich metadata, which is essential for scaling AI and ML projects in life sciences [30]. As computational approaches become increasingly central to transcriptomics and drug discovery, having MIQE-compliant experimental data that also adheres to FAIR principles ensures this data remains valuable for future analytical methods.

MIQE guidelines and FAIR principles represent complementary but distinct frameworks that collectively address both experimental rigor and data stewardship in transcript validation research. MIQE provides the methodology-specific standards necessary to ensure qPCR experiments generate reliable, reproducible data on transcript expression [29] [27]. Meanwhile, FAIR principles offer a comprehensive data management framework that ensures research outputs remain findable, accessible, interoperable, and reusable by both humans and computational systems [28] [30].

For researchers, scientists, and drug development professionals, understanding and implementing both frameworks is increasingly essential. Together, they provide a robust foundation for producing high-quality, transparent, and reusable research that can accelerate scientific discovery and innovation in transcriptomics and beyond. As the volume and complexity of biological data continue to grow, the integration of methodological standards like MIQE with data management principles like FAIR will become ever more critical to advancing our understanding of gene expression and developing new therapeutic approaches.

Robust qPCR Workflows: Methodologies for High-Specificity Assay Design and Data Analysis

Step-by-Step Guide to High-Fidelity Primer and Probe Design

In transcript validation research, the accuracy of quantitative PCR (qPCR) data is paramount. The foundation of any reliable qPCR assay lies in the meticulous design of primers and probes. Specificity and sensitivity, the two pillars of a robust qPCR experiment, are dictated primarily by the oligonucleotide designs selected [34]. High-fidelity design goes beyond simple sequence selection; it encompasses a holistic approach that considers sequence uniqueness, secondary structures, and thermodynamic properties to ensure that the fluorescent signal detected originates exclusively from the intended transcript target [35]. This guide provides a detailed, step-by-step framework for designing high-fidelity primers and probes, objectively compares leading probe technologies, and presents supporting experimental data to empower researchers in drug development and biomedical research to generate publication-quality, reproducible data.

Fundamental Principles of Primer and Probe Design

Core Design Parameters for Primers

The following parameters are critical for designing effective PCR primers [10] [36]:

- Length: Optimal primer length is typically 18–30 bases. This range is sufficient to ensure specificity while facilitating efficient binding [10] [36].

- Melting Temperature (Tm): The optimal Tm for primers is 60–64°C, with an ideal of 62°C. The Tm values for the forward and reverse primer should not differ by more than 2°C to ensure both primers bind simultaneously and efficiently [10].

- GC Content: Aim for a GC content of 40–60%, with 50% being ideal. This provides sufficient sequence complexity while maintaining specificity. Avoid regions with very high or very low GC content [10] [36].

- 3' End Stability: Avoid runs of three or more G or C bases at the 3' end, as this can promote non-specific priming. The last five nucleotides at the 3' end should contain no more than two G or C residues [35] [36].

- Specificity and Secondary Structures: Screen primers for self-dimers, hairpins, and cross-dimers. The ΔG value for any secondary structures should be weaker (more positive) than -9.0 kcal/mol [10]. Always verify primer specificity using tools like NCBI BLAST to ensure they are unique to the target sequence [10] [35].
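
The numeric rules in the list above can be screened automatically before a candidate goes to BLAST. The following is a minimal sketch, not a replacement for a dedicated design tool: the function names are hypothetical, and the melting temperature is assumed to be supplied by whatever Tm calculator you already use, because Tm depends on the thermodynamic model and buffer conditions.

```python
def gc_fraction(seq: str) -> float:
    """Fraction of G and C bases in an oligo."""
    seq = seq.upper()
    return (seq.count("G") + seq.count("C")) / len(seq)


def check_primer(seq: str, tm_celsius: float) -> list[str]:
    """Return the list of rule violations for one primer (empty list = passes).

    tm_celsius is an input, not computed here (assumption: it comes from
    an external Tm calculator).
    """
    seq = seq.upper()
    problems = []
    if not 18 <= len(seq) <= 30:
        problems.append(f"length {len(seq)} outside 18-30 bases")
    if not 60.0 <= tm_celsius <= 64.0:
        problems.append(f"Tm {tm_celsius:.1f} C outside 60-64 C")
    gc = gc_fraction(seq)
    if not 0.40 <= gc <= 0.60:
        problems.append(f"GC content {gc:.0%} outside 40-60%")
    # No more than two G/C among the last five bases at the 3' end;
    # this also excludes a run of three G/C at the 3' terminus.
    if sum(base in "GC" for base in seq[-5:]) > 2:
        problems.append("more than two G/C in the last five 3' bases")
    return problems


def tm_matched(fwd_tm: float, rev_tm: float) -> bool:
    """True when forward and reverse primer Tm differ by no more than 2 C."""
    return abs(fwd_tm - rev_tm) <= 2.0
```

Secondary-structure ΔG and sequence uniqueness are deliberately left out of this sketch; they still need a thermodynamic tool and a BLAST search.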

Core Design Parameters for Probes

For hydrolysis probes (e.g., TaqMan), adhere to these guidelines [10] [35] [37]:

- Location: The probe must be located between the forward and reverse primer binding sites. Ideally, it should be in close proximity to a primer but should not overlap with the primer-binding site. For gene expression assays, placing the probe over an exon-exon junction ensures that signal comes from correctly spliced cDNA rather than from contaminating genomic DNA [35].

- Melting Temperature (Tm): The probe should have a Tm that is 5–10°C higher than the primers. This ensures the probe is firmly bound before the primers extend [10] [37].

- Length and GC Content: Probes are typically 20–30 bases long for single-quenched designs. GC content should be 30–80%, a wider window than the 40–60% recommended above for primers [35] [37].

- Sequence Composition: Avoid a guanine (G) base at the 5' end, as this can quench the reporter fluorophore. Also, avoid runs of four or more identical nucleotides, particularly consecutive Gs [10] [37].

- Quencher Selection: Double-quenched probes, which incorporate an internal quencher (such as ZEN or TAO), provide lower background and higher signal compared to single-quenched probes and are recommended for optimal performance [10].
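
The sequence-level probe rules above lend themselves to a quick automated check. This is a sketch under stated assumptions: `check_probe` is a hypothetical helper, both Tm values are assumed to come from an external calculator, and probe location and quencher choice are not evaluated.

```python
import re


def check_probe(seq: str, probe_tm: float, primer_tm: float) -> list[str]:
    """Return the list of rule violations for a hydrolysis probe (empty = passes)."""
    seq = seq.upper()
    problems = []
    if not 20 <= len(seq) <= 30:
        problems.append(f"length {len(seq)} outside 20-30 bases")
    gc = (seq.count("G") + seq.count("C")) / len(seq)
    if not 0.30 <= gc <= 0.80:
        problems.append(f"GC content {gc:.0%} outside 30-80%")
    if seq.startswith("G"):
        problems.append("5' G may quench the reporter fluorophore")
    # A run of four or more identical bases, e.g. GGGG or AAAA.
    if re.search(r"(.)\1{3,}", seq):
        problems.append("run of four or more identical nucleotides")
    delta = probe_tm - primer_tm
    if not 5.0 <= delta <= 10.0:
        problems.append(f"probe Tm is {delta:+.1f} C relative to primers, expected +5 to +10")
    return problems
```

For example, `check_probe(probe_seq, probe_tm=68.0, primer_tm=62.0)` returns an empty list when every rule is met, and a list of readable messages otherwise.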

Amplicon and Target Sequence Considerations

- Amplicon Length: Shorter amplicons (50–150 base pairs) are recommended for standard qPCR as they promote efficient amplification [35]. Amplicons up to 500 bases can be used, but this requires longer extension times [10].

- Genomic DNA Avoidance: Treat RNA samples with DNase I. Design assays to span an exon-exon junction, preferably with the probe (rather than a primer) covering the junction, to avoid detecting contaminating genomic DNA [10] [35].

- Sequence Uniqueness: Select a target region with an unambiguous sequence, free of known single nucleotide polymorphisms (SNPs) or repetitive elements [35]. Use BLAST searches to confirm the uniqueness of the selected primer and probe binding sites [35].
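
Amplicon length and junction coverage can be checked from coordinates alone. The sketch below assumes 0-based, half-open coordinates on the spliced mRNA and a known list of exon lengths; the function names and the `min_flank` default of 3 bases on each side of the junction are illustrative assumptions, not values taken from the cited guidelines.

```python
from itertools import accumulate


def junction_positions(exon_lengths: list[int]) -> list[int]:
    """Exon-exon junction positions on the spliced mRNA.

    A junction at position j lies between bases j-1 and j (0-based).
    """
    return list(accumulate(exon_lengths))[:-1]


def spans_junction(start: int, end: int, junctions: list[int],
                   min_flank: int = 3) -> bool:
    """True if the oligo [start, end) crosses a junction with at least
    min_flank bases on each side (min_flank is an illustrative choice)."""
    return any(start + min_flank <= j <= end - min_flank for j in junctions)


def amplicon_length_ok(fwd_start: int, rev_end: int,
                       shortest: int = 50, longest: int = 150) -> bool:
    """True if the amplicon [fwd_start, rev_end) is within the recommended range."""
    return shortest <= rev_end - fwd_start <= longest
```

With exon lengths of 120, 85, and 200 bases, `junction_positions` gives junctions at 120 and 205, so a probe occupying positions 110 to 133 spans the first junction while one occupying 130 to 155 does not.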

The following workflow diagram summarizes the key stages of the high-fidelity design process.

Comparative Analysis of qPCR Probe Technologies

Established Technologies: TaqMan Probes

TaqMan probes are the most widely used hydrolysis probes in qPCR. They are dual-labeled oligonucleotides with a 5' reporter fluorophore and a 3' quencher [37]. During amplification, the 5' to 3' exonuclease activity of Taq DNA polymerase cleaves the probe, separating the fluorophore from the quencher and generating a fluorescent signal [37]. TaqMan probes are highly reliable and are cited in over 296,000 publications, making them a trusted choice [38].

Variants like TaqMan MGB (Minor Groove Binder) probes incorporate a minor groove binder molecule, which increases the Tm and allows for the use of shorter probes. This enhances specificity, particularly in distinguishing closely related sequences or single nucleotide polymorphisms (SNPs) [38]. MGB probes are suitable for multiplexing up to five targets [38].

An Innovative Approach: High-Fidelity DNA Polymerase-Mediated Probes

A novel qPCR method utilizes high-fidelity (HF) DNA polymerase and a single-stranded HFman probe [39]. This system leverages the 3'→5' exonuclease (proofreading) activity of HF DNA polymerase. Unlike traditional TaqMan probes, the HFman probe can function as a fluorescent primer. The HF polymerase removes the 3'-base (which is labeled with a fluorophore), liberating the fluorescent signal and initiating extension, even in the presence of some mismatches [39].

This innovative one-primer-one-probe system offers distinct advantages, particularly for detecting highly variable viral targets, due to its greater flexibility in probe design and tolerance for sequence variations [39].

Technology Comparison and Experimental Performance Data

The table below summarizes the head-to-head comparison of TaqMan probes and the novel HFman probe system based on experimental data [39].

Table 1: Comparative experimental performance of TaqMan vs. HFman probe systems

| Feature | Conventional TaqMan Probe | Novel HFman Probe |
|---|---|---|
| System Requirements | Two primers, one probe [39] | One primer, one HFman probe [39] |
| Enzyme Used | Standard Taq DNA polymerase [37] | High-fidelity DNA polymerase [39] |
| Probe Cleavage Mechanism | 5'→3' hydrolysis [37] | 3'→5' exonuclease proofreading [39] |
| Fluorophore Labeling | 5' end [37] | 3' end is more efficient [39] |
| Tolerance to Mismatches | Low; mismatches reduce efficiency [39] | High; more flexible to template variability [39] |
| Sensitivity in HIV-1 Viral Load Quantification | Conventional sensitivity [39] | Higher sensitivity [39] |
| Multiplexing Capability | Up to 6-plex with advanced quenchers [38] | Proven feasible in 4-plex format [39] |

Experimental data from a 2017 Scientific Reports study indicate that the HFman probe system offers practical advantages in challenging scenarios: it exhibited higher sensitivity and better adaptability to sequence-variable templates than conventional TaqMan probes in the quantification of HIV-1 viral load [39]. Furthermore, a comparison with the commercial COBAS TaqMan HIV-1 Test showed a correlation of R² = 0.79, supporting its potential clinical applicability [39].

The diagram below illustrates the fundamental difference in the mechanism of action between these two probe systems.

A Step-by-Step Experimental Design and Validation Protocol

In-Silico Design and Screening Workflow

- Define Target Sequence: Input a high-quality, unambiguous sequence into a design tool. For gene expression, use a RefSeq mRNA accession number. Identify a region that spans an exon-exon junction to prevent genomic DNA amplification [40] [35].

- Set Amplicon Parameters: Define the amplicon length, typically 70–150 bp for optimal efficiency [10] [35]. Specify the target region if you need the amplicon in a specific location.

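- Input Design Constraints: Enter the key parameters discussed above under Fundamental Principles of Primer and Probe Design, namely the length, Tm, and GC content ranges for primers and probes.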

- Check for Specificity: Use the tool's built-in BLAST functionality to screen your designed oligonucleotides against the appropriate sequence database (e.g., RefSeq mRNA) to ensure they are unique to your target gene [40] [35].

- Analyze Secondary Structures: Use tools like the IDT OligoAnalyzer to screen for self-dimers, cross-dimers, and hairpins. Ensure the ΔG values for any structures are more positive than -9.0 kcal/mol [10].

Experimental Optimization Using Design of Experiments (DOE)

For complex assay optimization, a statistical Design of Experiments (DOE) approach is superior to the traditional one-factor-at-a-time method. A study on mediator probe optimization demonstrated that DOE could achieve maximum information with only 180 individual reactions, compared to an estimated 320 reactions required for a one-factor-at-a-time approach [41].

A typical DOE screening for a qPCR assay involves:

- Selecting Input Factors: These are critical parameters such as primer concentration, probe concentration, and annealing temperature. For probe sequence optimization, factors like dimer stability (ΔG) between the probe and its target can be evaluated [41].

- Defining the Target Value: This is a composite metric that represents overall assay performance. It can be calculated from key performance characteristics such as PCR efficiency, R² from the standard curve, signal-to-noise ratio, and the Cq value at a fixed concentration [41].

- Running the Experiment and Analysis: The DOE software guides the experimental setup and subsequent data analysis, identifying which input factors have the most significant effect on the target value and determining their optimal levels [41].
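
To make the screening steps above concrete, the sketch below enumerates a full-factorial design and computes the mean response at each level of one factor. It is a simplified illustration: dedicated DOE software typically uses fractional or optimal designs to reduce the run count, the function names are hypothetical, and the factor levels in `example_factors` are placeholders rather than recommended values.

```python
from collections import defaultdict
from itertools import product
from statistics import mean

# Placeholder factor levels for illustration only (assumed, not from the source).
example_factors = {
    "primer_nM": [200, 400, 600],
    "probe_nM": [100, 200],
    "anneal_C": [58, 60, 62],
}


def full_factorial(factors: dict[str, list]) -> list[dict]:
    """Every combination of factor levels, one dict per reaction condition."""
    names = list(factors)
    return [dict(zip(names, levels)) for levels in product(*factors.values())]


def main_effects(design: list[dict], responses: list[float], factor: str) -> dict:
    """Mean response at each level of one factor, averaged over the other factors.

    responses[i] is the measured target value for design[i].
    """
    by_level = defaultdict(list)
    for run, y in zip(design, responses):
        by_level[run[factor]].append(y)
    return {level: mean(ys) for level, ys in by_level.items()}
```

Here `full_factorial(example_factors)` yields 3 × 2 × 3 = 18 conditions. Once a target value has been measured for each condition, comparing the spread of `main_effects` across factors gives a first indication of which factor matters most.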

Validation and Quality Control

Before using a new assay for transcript validation, perform the following quality control checks:

- Standard Curve Analysis: Run a dilution series (at least 5 points) of a template with known concentration. The assay should demonstrate a linear dynamic range covering the expected target concentrations. The PCR efficiency should be 90–110%, and the coefficient of determination (R²) should be >0.99 [41] [34].

- Specificity Check: Analyze the melt curve for SYBR Green assays or use endpoint electrophoresis to confirm a single product of the expected size. For probe-based assays, the absence of signal in a no-template control (NTC) and a no-reverse-transcription control (no-RT) confirms specificity and lack of genomic DNA contamination [35].

- Sensitivity and Limit of Detection (LOD): Determine the lowest concentration of target that can be reliably detected. This often requires multiple replicates (e.g., 9 replicates per concentration) at the lower end of the standard curve [41].
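
The standard-curve criteria above can be evaluated with an ordinary least-squares fit of Cq against log10 of the template concentration. The sketch below uses the standard relation E = 10^(−1/slope) − 1, where a slope of about −3.32 corresponds to 100% efficiency; the function names are hypothetical and the code assumes one Cq value (for example, a replicate mean) per dilution point.

```python
def standard_curve(log10_conc: list[float], cq: list[float]) -> dict:
    """Fit Cq = intercept + slope * log10(concentration) by least squares."""
    n = len(cq)
    mean_x = sum(log10_conc) / n
    mean_y = sum(cq) / n
    sxx = sum((x - mean_x) ** 2 for x in log10_conc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(log10_conc, cq))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2
                 for x, y in zip(log10_conc, cq))
    ss_tot = sum((y - mean_y) ** 2 for y in cq)
    return {
        "slope": slope,
        "intercept": intercept,
        "r_squared": 1.0 - ss_res / ss_tot,
        # Efficiency as a fraction: 1.0 means perfect doubling per cycle.
        "efficiency": 10 ** (-1.0 / slope) - 1.0,
    }


def passes_qc(fit: dict) -> bool:
    """Apply the acceptance criteria: efficiency 90-110% and R^2 > 0.99."""
    return 0.90 <= fit["efficiency"] <= 1.10 and fit["r_squared"] > 0.99
```

A fit whose slope is much shallower than −3.1 or steeper than −3.6 falls outside the 90–110% window and is usually a prompt to revisit primer design or reaction conditions.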

Table 2: Key research reagent solutions for high-fidelity qPCR assay development

| Tool / Reagent | Primary Function | Key Features and Considerations |
|---|---|---|
| High-Fidelity DNA Polymerase | Catalyzes DNA synthesis with proofreading activity. | Essential for HFman probe system; provides tolerance to probe/template mismatches [39]. |
| Double-Quenched Probes | Fluorescent detection of target sequence. | Incorporate internal quenchers (e.g., ZEN, TAO) for lower background and higher signal-to-noise [10]. |
| TaqMan MGB Probes | Fluorescent detection for highly specific applications. | Shorter probes with higher Tm; ideal for discriminating SNPs or GC-rich targets [38]. |
| NCBI Primer-BLAST | In-silico primer design and specificity checking. | Free tool that combines Primer3 design with BLAST search to ensure primer uniqueness [40]. |
| IDT SciTools Suite | A collection of online oligonucleotide analysis tools. | Includes OligoAnalyzer (for Tm, dimers, hairpins) and PrimerQuest (for custom assay design) [10]. |
| Custom Assay Design Services | Bioinformatics-driven design of probe/primer sets. | Services like Thermo Fisher's Custom Plus option perform in-silico QC and specificity checks [35]. |

The journey to producing publication-quality qPCR data begins with rigorous primer and probe design. This guide has detailed the critical parameters for high-fidelity design, highlighting the importance of Tm, GC content, specificity screening, and amplicon selection. The objective comparison between the conventional TaqMan system and the novel HFman probe system reveals a trade-off between established reliability and innovative flexibility, particularly for variable targets. By adhering to these step-by-step protocols, which combine in-silico design tools, efficient optimization strategies such as DOE, and the reagents outlined above, researchers and drug development professionals can ensure their transcript validation data are both specific and reproducible.