Ensuring Reliability in Epigenetic Research: A Comprehensive Guide to Histone Modification Data Reproducibility

Abstract

This article provides a systematic framework for researchers and drug development professionals to assess and enhance the reproducibility of histone modification data. It covers the foundational importance of reproducibility, details best practices in mass spectrometry and bioinformatics, addresses common troubleshooting scenarios, and outlines robust validation and comparative analysis frameworks. By integrating current methodologies, practical optimization strategies, and emerging standards, this guide aims to empower scientists to generate reliable, clinically translatable epigenetic data, thereby accelerating biomarker discovery and therapeutic development.

Why Reproducibility Matters: The Critical Role of Reliable Histone PTM Data in Epigenetic Discovery

Defining Reproducibility in the Context of Histone Post-Translational Modifications (PTMs)

In the evolving field of epigenetics, histone post-translational modifications (PTMs) represent a complex layer of regulatory information that controls gene expression and chromatin dynamics. The reproducibility of histone PTM research is paramount, as it ensures that findings related to these crucial epigenetic marks are reliable, verifiable, and translatable to therapeutic development. In scientific terms, reproducibility means that using the same data and analytical tools should yield the same results as originally reported, providing a foundation for scientific credibility [1]. For histone modification studies, this principle extends across multiple dimensions—from consistent sample preparation and accurate PTM detection to transparent data analysis and computational verification.

The unique challenges in histone PTM research stem from the chemical complexity of modifications themselves. Beyond the well-characterized lysine acetylation and methylation, recent research has uncovered numerous additional PTMs that significantly contribute to chromatin structure and function, including acylations (propionyl, butyryl, crotonyl), glutamine monoaminylation (serotonylation and dopaminylation), and glycation products [2]. This expanding landscape of modifications, coupled with their dynamic and combinatorial nature, creates substantial hurdles for reproducible investigation. Mass spectrometry has emerged as the most effective analytical method for studying histone PTMs, yet computational limitations and methodological variability often restrict analyses and impede reproducibility [2] [3]. This guide systematically compares the leading methodologies for histone PTM analysis, evaluating their performance against critical reproducibility metrics to establish best practices for researchers, scientists, and drug development professionals working in this field.

Comparative Analysis of Histone PTM Research Methods

The pursuit of reproducible histone PTM research employs diverse methodological approaches, each with distinct strengths and limitations. The table below provides a systematic comparison of major technologies and platforms based on key reproducibility metrics.

Table 1: Comparative Analysis of Methodologies for Reproducible Histone PTM Research

| Methodology/Platform | Core Approach | Key Reproducibility Strengths | Quantitative Performance Data | Primary Limitations |
|---|---|---|---|---|
| HiP-Frag (with FragPipe) [2] | Bioinformatics workflow using unrestrictive mass spectrometry search | Integrates closed, open, and detailed mass offset searches; identifies novel PTMs with stringent filtering | Identified 60 novel PTMs on core histones; identified 13 novel PTMs on linker histones | Computational complexity; requires specialized expertise |
| PTMViz [4] | Interactive platform for differential abundance analysis and visualization | Modular R/Shiny-based environment; performs moderated t-tests using limma; interactive data exploration | Identified 3/580 significant histone PTM changes in a murine drug exposure study; detected H3K9me, H3K27me3, H4K16ac regulation | Downstream analysis tool only; dependent on upstream data quality |
| Reverse Phase Protein Array (RPPA) [5] | Antibody-based high-throughput profiling | Validated with synthetic histone PTM peptides; partially automated workflows; high-throughput capability | Profiles 20 histone PTMs simultaneously; analyzes 40 histone-modifying proteins; reproducible across hundreds of samples | Limited to known, antibody-available PTMs; potential antibody cross-reactivity |
| ReproSchema [6] | Schema-driven ecosystem for standardized data collection | Meets 14/14 FAIR principles; built-in version control; supports 6/8 key survey functionalities | Library with >90 standardized assessments; enables conversion to REDCap and FHIR formats | Focused on questionnaire/data collection aspects rather than wet-lab protocols |
| CUT&Tag [7] | Antibody-directed chromatin profiling | High-resolution profiling from minimal input (~10 cells); low background noise; single-cell variant available | Detected H3K4me2, H3K27me3 in low-input samples; superior signal-to-noise ratio vs. ChIP-seq | Requires specific equipment; optimization needed for different histone marks |

This comparative analysis shows that method selection significantly influences reproducibility outcomes. Mass spectrometry-based approaches like HiP-Frag are well suited to discovering novel PTMs but demand substantial computational resources [2]. Antibody-based methods like RPPA provide high-throughput capacity for known modifications but face limitations in specificity and discovery potential [5]. PTMViz and ReproSchema address reproducibility challenges in data analysis and in data collection standardization, respectively [4] [6], while CUT&Tag enables reproducible profiling from precious, limited samples [7].

Experimental Protocols for Reproducible Histone PTM Analysis

Mass Spectrometry-Based Workflow with HiP-Frag

The HiP-Frag workflow is a recent approach for comprehensive histone PTM characterization by mass spectrometry. The protocol begins with histone enrichment from biological samples. Acid extraction recovers core histones with high efficiency, whereas high-salt extraction maintains a neutral pH and is therefore better suited to acid-sensitive modifications [3] [5]. Specialized digestion is then critical: because histones are rich in lysine and arginine, standard trypsin digestion produces peptides too short for reliable MS analysis. The recommended approach uses either in-solution ArgC digestion or an "ArgC-like" method in which lysine residues are chemically derivatized before tryptic digestion, so that trypsin cleaves only after arginine [2].

For derivatization, researchers can employ either deuterated acetic anhydride (D3 protocol) or propionic anhydride (PRO protocol), with the latter often followed by a second derivatization of N termini to enhance chromatographic retention [2]. The mass spectrometry analysis utilizes bottom-up approaches, with data processing through the HiP-Frag bioinformatics workflow that integrates closed, open, and detailed mass offset searches to comprehensively characterize histone modifications without prior restriction to known PTMs [2]. This method has demonstrated its robust capability by identifying 60 previously unreported marks on core histones and 13 on linker histones, establishing a new standard for reproducible, comprehensive histone PTM discovery [2].
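To make the idea of a mass offset search concrete, the sketch below matches an observed precursor mass difference against a small table of well-known monoisotopic mass shifts. It is an illustration only, not the HiP-Frag or FragPipe implementation: the function name `annotate_offset`, the shift table, and the default 10 ppm tolerance are assumptions chosen for the example.

```python
# Monoisotopic mass shifts (Da) for a few common lysine modifications.
# This short table is illustrative; real searches use far larger lists.
KNOWN_SHIFTS = {
    "methyl": 14.01565,
    "dimethyl": 28.03130,
    "acetyl": 42.01057,
    "trimethyl": 42.04695,
    "propionyl": 56.02621,
    "crotonyl": 68.02621,
    "butyryl": 70.04186,
}


def annotate_offset(delta_mass, peptide_mass, tol_ppm=10.0):
    """Return the names of known shifts that match an observed mass offset.

    delta_mass   -- observed minus unmodified peptide mass, in Da
    peptide_mass -- mass of the peptide, in Da (sets the absolute tolerance)
    tol_ppm      -- hypothetical instrument tolerance in parts per million
    """
    tol_da = peptide_mass * tol_ppm / 1e6
    return sorted(
        name
        for name, shift in KNOWN_SHIFTS.items()
        if abs(delta_mass - shift) <= tol_da
    )


if __name__ == "__main__":
    # Acetyl and trimethyl differ by only ~0.036 Da, so the tolerance
    # decides whether the two can be told apart on a 1500 Da peptide.
    print(annotate_offset(42.0106, 1500.0, tol_ppm=10.0))
    print(annotate_offset(42.0106, 1500.0, tol_ppm=50.0))
```

With a tight tolerance the offset resolves to a single candidate, while a loose tolerance leaves acetylation and trimethylation ambiguous, which is one reason mass accuracy settings should be reported alongside PTM identifications.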

Antibody-Based Profiling with Reverse Phase Protein Array (RPPA)

The RPPA platform offers an antibody-based alternative for histone PTM analysis optimized for throughput and reproducibility. The protocol utilizes a rapid microscale method for histone isolation compatible with processing hundreds of samples [5]. Following extraction, histones are arrayed onto nitrocellulose-coated slides using a specialized arrayer, and antibody-based detection is performed with validated antibodies targeting specific histone PTMs. The assay specificity was rigorously validated using synthetic peptides corresponding to known histone PTMs and by detecting expected histone PTM changes in response to inhibitors of histone modifier proteins in cell cultures [5].

The partially automated workflows enable consistent processing and minimize technical variability, while the platform's reproducibility has been demonstrated across applications including induced pluripotent stem cell differentiation and mammary tumor progression models [5]. This methodology provides a valuable approach for studies requiring high-throughput analysis of known histone modifications, particularly in translational applications seeking to discover and validate epigenetic states as therapeutic targets and biomarkers.

Visualization of Reproducibility Concepts and Workflows

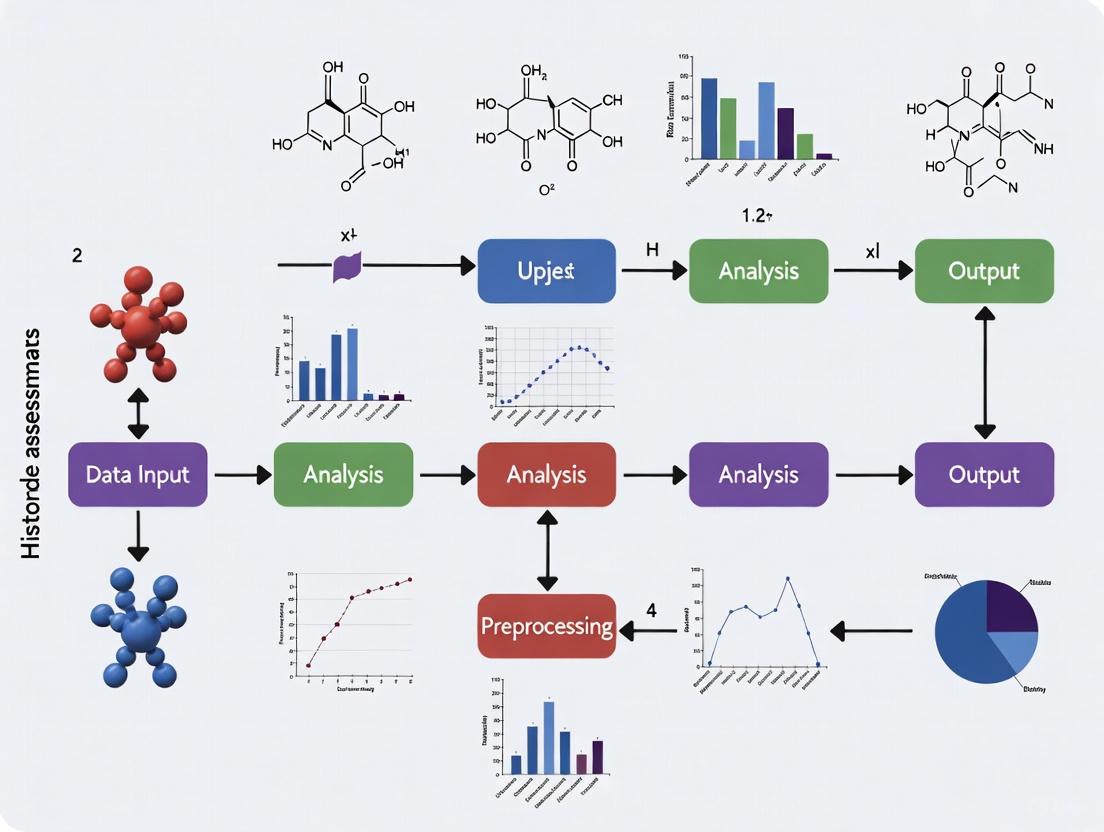

Multi-Dimensional Framework for Reproducibility

The complexity of histone PTM research requires a multi-dimensional approach to reproducibility, encompassing everything from data collection to computational verification. The following diagram illustrates this comprehensive framework and the interrelationships between its components:

This framework highlights how reproducible histone PTM research requires integration across standardized data collection practices [6], analytical transparency [4], and systematic verification practices [8]. Platforms like ReproSchema address the data collection dimension by implementing schema-driven standardization and version control [6], while tools like PTMViz enhance analytical transparency through open workflows and modular analysis environments [4]. Verification practices, including independent confirmation of results and FAIR data compliance, complete this comprehensive approach to reproducibility [8].

HiP-Frag Workflow for Unrestrictive PTM Discovery

The HiP-Frag workflow represents a significant advancement in reproducible histone PTM analysis by overcoming limitations of traditional restricted searches. The following diagram illustrates this integrated approach:

This integrated workflow demonstrates how combining multiple search strategies—closed searches for known PTMs, open searches for unknown modifications, and detailed mass offset analysis—enables comprehensive characterization of the histone modification landscape [2]. The approach systematically addresses the limitation of traditional methods that restrict analysis to common modifications due to computational constraints, thereby enhancing both the discovery potential and reproducibility of histone PTM research.

Essential Research Reagent Solutions for Reproducible Histone PTM Studies

Reproducible histone PTM research relies on carefully selected reagents and platforms that ensure consistency across experiments and laboratories. The following table catalogues essential solutions with demonstrated performance in epigenetic studies.

Table 2: Essential Research Reagent Solutions for Reproducible Histone PTM Studies

| Reagent Category | Specific Solution/Platform | Key Function in Reproducibility | Validation Evidence |
|---|---|---|---|
| Bioinformatics Workflows | HiP-Frag (FragPipe) | Enables unrestrictive PTM searches; integrates multiple search strategies | Identified 73 novel PTMs (60 core + 13 linker histones) [2] |
| Data Analysis Platforms | PTMViz (R/Shiny) | Interactive differential abundance analysis; moderated t-tests via limma | Detected significant H3K9me, H3K27me3, H4K16ac changes [4] |
| Standardized Assessment Libraries | ReproSchema Library | Provides >90 standardized, reusable assessments in JSON-LD format | Meets 14/14 FAIR criteria; supports 6/8 key survey functions [6] |
| High-Throughput Profiling | Reverse Phase Protein Array (RPPA) | Simultaneously profiles 20 histone PTMs + 40 modifying proteins | Validated with synthetic peptides; drug response detection [5] |
| Low-Input Profiling | CUT&Tag | Chromatin profiling from ~10 cells; low background noise | Detected H3K4me2, H3K27me3 in minimal samples [7] |
| Search Engines & Algorithms | Sequence Search Engines (Mascot, Sequest, Andromeda) | Aligns spectra against theoretical database sequences | Standard for bottom-up histone PTM characterization [3] |

These reagent solutions form a foundation for reproducible histone PTM research, each addressing specific challenges in the workflow. Bioinformatics tools like HiP-Frag overcome computational limitations that traditionally restricted analyses [2], while standardized libraries like ReproSchema ensure consistency in data collection methodologies [6]. The selection of appropriate reagents should align with specific research objectives—whether focused on discovery of novel modifications, high-throughput screening of known marks, or analysis of limited clinical samples.

Establishing reproducible practices in histone PTM research requires thoughtful integration of methodological rigor, computational transparency, and standardized workflows. As this comparison demonstrates, platforms like HiP-Frag excel in comprehensive PTM discovery through unrestrictive search strategies [2], while RPPA provides robust, high-throughput capability for profiling known modifications [5]. PTMViz and ReproSchema address analytical standardization and data collection standardization, respectively [4] [6], and CUT&Tag enables reproducible analysis from minimal sample inputs [7].

The evolving landscape of histone PTM research—with its expanding repertoire of modifications and growing relevance to disease mechanisms and therapeutic development—demands continued attention to reproducibility frameworks. Implementation of standardized protocols, adoption of tools that enhance analytical transparency, and commitment to verification practices will collectively strengthen the reliability and translational potential of histone modification studies. By selecting appropriate methodologies based on specific research objectives and consistently applying reproducibility best practices, researchers can advance our understanding of the epigenetic code with greater confidence and scientific rigor.

Reproducible research on histone modifications is fundamental to advancing our understanding of epigenetic regulation in health and disease. However, investigators face a triad of formidable challenges: technical noise introduced during experimental procedures, inherent biological variability between samples, and subtle analysis pitfalls that can compromise data interpretation. For researchers and drug development professionals, navigating these issues is critical for generating reliable, translatable epigenetic data. This guide objectively compares the performance of prevalent methodologies—primarily mass spectrometry and chromatin immunoprecipitation sequencing (ChIP-seq)—in mitigating these challenges, supported by experimental data and detailed protocols.

Technical Noise in Histone Modification Analysis

Technical noise arises from inconsistencies in sample preparation, instrumentation, and data processing, directly impacting the precision and reproducibility of quantitative measurements.

Mass Spectrometry (MS) Technical Noise

Mass spectrometry offers a comprehensive, antibody-free approach for quantifying histone post-translational modifications (PTMs), but its precision is highly dependent on sample input and preparation chemistry.

Sample Input and Quantification Precision: A systematic assessment of bottom-up MS using ion trap instrumentation across four human cell lines (HeLa, 293T, hESCs, and myoblasts) revealed that quantification precision varies with both starting cell number and the abundance of the specific PTM [9]. The table below summarizes the coefficient of variation (CV) for selected histone marks at different cell inputs.

Chemical Derivatization Pitfalls: The propionylation step required before trypsin digestion is a major source of technical variance. An evaluation of eight propionylation protocols found significant problems with incomplete propionylation (up to 85% under-propionylation) and with non-specific over-propionylation of serine and threonine residues (up to 63%), depending on the reagent and reaction conditions [10]. Protocol A2, which used a double round of propionylation with propionic anhydride, performed best, achieving an average conversion rate of 93-100% for monitored peptides and significantly reducing technical variation [10].

Table 1: Precision of Histone PTM Quantification by Mass Spectrometry at Varying Cell Inputs [9]

| Histone PTM | Average Abundance | Coefficient of Variation (CV) at 5 Million Cells | CV at 50,000 Cells |
|---|---|---|---|
| H3K9me2 | ~40% | Low | ~4% |
| H4 Acetylation | High | Low | Efficiently quantified |
| H3K4me2 | <3% | Low | ~34% |
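The CV values in the table are the ratio of the replicate standard deviation to the replicate mean. A minimal sketch of that calculation follows; the replicate values are invented for illustration and are not data from [9].

```python
import statistics


def coefficient_of_variation(values):
    """Percent CV: 100 * sample standard deviation / mean."""
    mean = statistics.mean(values)
    if mean == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return 100.0 * statistics.stdev(values) / mean


if __name__ == "__main__":
    # Hypothetical relative abundances (%) from three technical replicates.
    abundant_mark = [40.0, 41.0, 39.0]  # e.g. a mark near 40% abundance
    rare_mark = [2.0, 3.0, 1.0]         # e.g. a mark below 3% abundance
    print(coefficient_of_variation(abundant_mark))  # 2.5
    print(coefficient_of_variation(rare_mark))      # 50.0
```

Both hypothetical marks have the same absolute spread of one percentage point, yet the rare mark has a twenty-fold higher CV. This is why low-abundance marks lose precision first as cell input decreases.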

ChIP-seq Technical Noise

ChIP-seq technical noise stems from antibody specificity, library preparation, and sequencing depth. The ENCODE consortium has established rigorous standards to control these variables [11].

Antibody Specificity and Library Complexity: A primary source of noise is non-specific antibody binding. The Fraction of Reads in Peaks (FRiP) score is a key quality metric, where a low score indicates high background noise [11]. Library complexity, measured by the Non-Redundant Fraction (NRF > 0.9) and PCR Bottlenecking Coefficients (PBC1 > 0.9, PBC2 > 10), is crucial to avoid biases from over-amplification of a limited number of fragments [11].
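The library complexity metrics can be computed directly from the positions of uniquely mapped reads. The sketch below uses the standard definitions (NRF is distinct positions over total reads, PBC1 is positions seen exactly once over distinct positions, PBC2 is positions seen once over positions seen twice). The function names and the tuple representation of a read position are assumptions for illustration; production pipelines derive these values from BAM files.

```python
from collections import Counter


def library_complexity(read_positions):
    """Compute NRF, PBC1 and PBC2 from uniquely mapped read positions.

    read_positions -- iterable of hashable keys, one per read,
                      e.g. (chromosome, start, strand)
    """
    counts = Counter(read_positions)
    total_reads = sum(counts.values())
    if total_reads == 0:
        raise ValueError("no reads supplied")
    distinct = len(counts)
    seen_once = sum(1 for c in counts.values() if c == 1)
    seen_twice = sum(1 for c in counts.values() if c == 2)
    return {
        "NRF": distinct / total_reads,
        "PBC1": seen_once / distinct,
        # PBC2 is unbounded when no position is covered exactly twice.
        "PBC2": seen_once / seen_twice if seen_twice else float("inf"),
    }


def frip(reads_in_peaks, total_reads):
    """Fraction of Reads in Peaks."""
    if total_reads <= 0:
        raise ValueError("total_reads must be positive")
    return reads_in_peaks / total_reads


if __name__ == "__main__":
    reads = [("chr1", 100, "+")] * 2 + [
        ("chr1", 200, "+"), ("chr1", 300, "-"), ("chr2", 50, "+"),
    ]
    print(library_complexity(reads))
    print(frip(reads_in_peaks=250, total_reads=1000))
```

In this toy library one position is duplicated, so NRF is 0.8 and PBC1 is 0.75, both below the 0.9 thresholds quoted above.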

Sequencing Depth Requirements: Sufficient sequencing depth is non-negotiable for robust peak calling. ENCODE standards mandate different depths for "narrow" and "broad" histone marks [11]:

- Narrow marks (e.g., H3K4me3, H3K27ac): 20 million usable fragments per replicate.

- Broad marks (e.g., H3K27me3, H3K36me3): 45 million usable fragments per replicate.
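These depth requirements are easy to encode as an automated check before peak calling. The following is a minimal sketch: the thresholds and mark classifications are taken from the list above, while the dictionary and function names are hypothetical.

```python
# Minimum usable fragments per replicate, from the list above.
MIN_USABLE_FRAGMENTS = {"narrow": 20_000_000, "broad": 45_000_000}

# Only the example marks named above; extend as needed for other marks.
MARK_CLASS = {
    "H3K4me3": "narrow",
    "H3K27ac": "narrow",
    "H3K27me3": "broad",
    "H3K36me3": "broad",
}


def meets_depth_requirement(mark, usable_fragments):
    """Return True if a replicate has enough usable fragments for its mark."""
    if mark not in MARK_CLASS:
        raise KeyError(f"no depth class recorded for mark {mark!r}")
    return usable_fragments >= MIN_USABLE_FRAGMENTS[MARK_CLASS[mark]]


if __name__ == "__main__":
    # 30 million fragments is enough for a narrow mark but not a broad one.
    print(meets_depth_requirement("H3K4me3", 30_000_000))   # True
    print(meets_depth_requirement("H3K27me3", 30_000_000))  # False
```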

Biological Variability: A Pervasive Challenge

Biological variability refers to the genuine inter-individual and inter-tissue differences in histone modification patterns, which can be conflated with technical noise if not properly accounted for in experimental design.

Genetic and Tissue-Specific Variation

Evidence from recombinant inbred rat strains demonstrates that histone methylation levels are under significant genetic control. In heart and liver tissues, hundreds of quantitative trait loci (QTLs) were mapped that regulate H3K4me3 and H3K27me3 levels in cis (local) and trans (distant) manners [12]. Notably, 7% of H3K4me3 peaks and 16% of H3K4me1 peaks showed significant differential methylation between the two progenitor strains [12]. Furthermore, these marks exhibit tissue specificity; while 55% of H3K4me3 peaks were shared between heart and liver, the remainder were tissue-specific and associated with relevant biological functions [12].

Inter-Individual and Sample Processing Variability

A study on Arabidopsis thaliana ecotypes quantified the contributions of inter-plant variability versus technical sample processing [13]. It found consistently higher inter-individual variability in histone mark levels among Wassilewskija (Ws) plants compared to Columbia-0 (Col-0) plants. This highlights that the required number of biological replicates for sufficient statistical power is organism and ecotype-dependent [13]. Regarding sample processing, tissue homogenization using a cryomill introduced more heterogeneity in histone modification data than the traditional mortar and pestle method, identifying another source of technical variability that can obscure biological signals [13].

Analysis Pitfalls and Reproducibility Metrics

The computational analysis of histone modification data presents its own set of pitfalls, particularly in defining reproducible peaks and assessing data quality.

Pitfalls in Standard Correlation Analyses

For Hi-C and related chromatin conformation data, simple correlation coefficients (Pearson/Spearman) are poor measures of reproducibility. They are susceptible to outliers and dominated by short-range interactions, failing to capture meaningful differences in high-order chromatin structure [14].

Specialized Reproducibility Metrics

To address these shortcomings, specialized tools have been developed. When benchmarked on real and simulated Hi-C data, these methods outperformed simple correlation in accurately ranking data quality and reproducibility [14].

- HiCRep: Smooths the contact matrices, stratifies them by genomic distance, and combines the per-stratum correlations into a single reproducibility score.

- GenomeDISCO: Uses random walks on the contact network for data smoothing before similarity computation.

- QuASAR-Rep: Based on the interaction correlation matrix, weighted by interaction enrichment.
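The motivation for stratifying by genomic distance can be shown with a deliberately simplified sketch. It correlates two contact matrices one diagonal at a time and averages the results, weighted by the number of entries per diagonal. This is not the HiCRep algorithm, which also smooths the matrices and uses variance-based weights; the function names and the weighting scheme are assumptions for illustration.

```python
import math


def pearson(x, y):
    """Pearson correlation, or None if either input is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return None
    return sxy / math.sqrt(sxx * syy)


def stratified_correlation(mat_a, mat_b, max_distance):
    """Size-weighted mean of per-diagonal Pearson correlations.

    mat_a, mat_b -- square contact matrices as lists of lists
    max_distance -- largest diagonal offset (in bins) to include
    """
    n = len(mat_a)
    weighted_sum, total_weight = 0.0, 0
    for d in range(1, max_distance + 1):
        xs = [mat_a[i][i + d] for i in range(n - d)]
        ys = [mat_b[i][i + d] for i in range(n - d)]
        if len(xs) < 3:
            continue  # too few points for a meaningful correlation
        r = pearson(xs, ys)
        if r is None:
            continue  # skip strata with no variation
        weighted_sum += len(xs) * r
        total_weight += len(xs)
    return weighted_sum / total_weight if total_weight else None


if __name__ == "__main__":
    a = [
        [0, 5, 2, 1, 0],
        [5, 0, 4, 3, 1],
        [2, 4, 0, 6, 2],
        [1, 3, 6, 0, 3],
        [0, 1, 2, 3, 0],
    ]
    print(stratified_correlation(a, a, max_distance=2))
```

Because each diagonal is correlated separately, the strong decay of contact frequency with distance cannot inflate the score the way it does for a single matrix-wide Pearson coefficient.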

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential reagents and their functions for generating reproducible histone modification data, based on best practices and cited studies.

Table 2: Key Research Reagent Solutions for Histone Modification Analysis

| Reagent / Material | Function and Importance | Considerations for Reproducibility |
|---|---|---|
| Propionic Anhydride | Chemical derivatization for MS; blocks lysine residues to generate Arg-C-like peptides from trypsin digestion [10]. | Protocol A2 (double propionylation) showed highest specificity and efficiency, minimizing under- and over-propionylation [10]. |
| Histone Modification-Specific Antibodies | Enrichment of histone-marked chromatin fragments in ChIP-seq [11]. | Must be rigorously validated per ENCODE standards. Specificity is critical to avoid off-target peaks and high background [11]. |
| Micrococcal Nuclease (MNase) | Fragmentation of chromatin for native ChIP (N-ChIP) of histones, preferred over sonication for precise nucleosome mapping [15]. | Known sequence bias; requires optimization for consistent digestion across samples [15]. |
| Input DNA Control | Control for ChIP-seq representing the whole-genome background [11]. | Mandatory for ENCODE-compliant experiments. Must be generated from the same cell type with matching replicate structure [11]. |

Experimental Protocols for Reproducible Data

The double-propionylation protocol (Method A2) was identified as optimal for minimizing technical variation [10].

- Reaction Setup: Suspend histone samples in 100 µL of 50 mM HEPES buffer (pH 8.0).

- First Propionylation: Add propionic anhydride to a final concentration of 7.5% (v/v). Incubate for 30 minutes at 37°C with constant agitation.

- Quenching and Drying: Quench the reaction by adding 10% ammonium hydroxide to pH ~10. Dry the sample completely using a vacuum concentrator.

- Trypsin Digestion: Reconstitute and digest the histones with trypsin.

- Second Propionylation: Repeat the first three steps (reaction setup, propionylation, and quenching and drying) on the digested peptides.

- MS Analysis: Desalt the peptides and analyze by LC-MS/MS.
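As a worked example of the reagent arithmetic in the propionylation step, the sketch below computes how much propionic anhydride to add to reach a target final concentration. It assumes that "final concentration (v/v)" means reagent volume divided by total volume after addition; the source does not state this explicitly, and the function name is hypothetical.

```python
def reagent_volume_for_final_fraction(sample_volume_ul, final_fraction):
    """Volume of neat reagent (µL) giving `final_fraction` (v/v) after mixing.

    Solves v / (sample_volume + v) = final_fraction for v.
    """
    if not 0 <= final_fraction < 1:
        raise ValueError("final_fraction must be in [0, 1)")
    return sample_volume_ul * final_fraction / (1.0 - final_fraction)


if __name__ == "__main__":
    # 100 µL of histones in buffer, target 7.5% (v/v) propionic anhydride.
    volume = reagent_volume_for_final_fraction(100.0, 0.075)
    print(round(volume, 2))  # 8.11
```

Under this assumption about 8.1 µL is needed, slightly more than the 7.5 µL obtained by ignoring the volume the reagent itself contributes. Recording which convention was used removes one small source of between-lab variation.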

The ENCODE histone ChIP-seq pipeline standardizes processing so that results are consistent and reproducible across labs [11].

- Mapping (for all ChIP-seq):
  - Input: FASTQ files (min. read length 50 bp, longer encouraged).
  - Process: Concatenate multiple FASTQs from the same library. Map reads to a reference genome (GRCh38/mm10).
  - Output: Filtered BAM files.

- Histone Peak Calling (for replicated experiments):
  - Input: BAM files from ChIP experiment and matched input control.
  - Process:
    - Generate fold-change-over-control and signal p-value tracks (bigWig).
    - Call relaxed peaks on individual replicates and pooled reads.
    - Identify a final set of "replicated peaks" observed in both true biological replicates or in two pseudoreplicates derived from the pooled data.
  - Output: BED/BigBed files of replicated peaks, quality control metrics (library complexity, read depth, FRiP score, reproducibility).
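The "replicated peaks" step can be sketched as an interval-overlap filter: a pooled peak is kept only if a peak in each replicate covers enough of it. The code below is a minimal illustration, not the ENCODE implementation. The tuple representation of a peak, the function names, and the 50% overlap threshold are assumptions for the example.

```python
def overlap_fraction(peak, other):
    """Fraction of `peak` covered by `other`; peaks are (chrom, start, end)."""
    if peak[0] != other[0]:
        return 0.0
    overlap = min(peak[2], other[2]) - max(peak[1], other[1])
    return max(overlap, 0) / (peak[2] - peak[1])


def replicated_peaks(pooled, rep1, rep2, min_fraction=0.5):
    """Keep pooled peaks supported by at least one peak in each replicate.

    min_fraction -- assumed minimum fraction of the pooled peak that a
                    replicate peak must cover to count as support
    """
    def supported(peak, replicate):
        return any(overlap_fraction(peak, p) >= min_fraction for p in replicate)

    return [p for p in pooled if supported(p, rep1) and supported(p, rep2)]


if __name__ == "__main__":
    pooled = [("chr1", 100, 200), ("chr1", 500, 600), ("chr2", 100, 200)]
    rep1 = [("chr1", 120, 220), ("chr1", 500, 600)]
    rep2 = [("chr1", 90, 180), ("chr1", 590, 700), ("chr2", 100, 200)]
    print(replicated_peaks(pooled, rep1, rep2))
```

Only the first pooled peak survives: the second is barely touched by replicate 2, and the third has no support in replicate 1. The pairwise scan is quadratic in the number of peaks, which is fine for a demonstration; sorted or indexed intervals would be needed at genome scale.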

Visualizing Challenges and Workflows

Figure: Major noise sources in histone analysis, showing the main sources of variability and key control points in a standard histone modification analysis workflow.

Producing reproducible histone modification data requires a vigilant, multi-faceted approach. Key takeaways for researchers include: the non-negotiable need for sufficient biological replicates, the superiority of standardized protocols like ENCODE's ChIP-seq pipeline and optimized propionylation for MS, and the critical importance of using sequencing depths and quality metrics that are appropriate for the specific histone mark being studied. By systematically addressing technical noise, accounting for biological variability, and avoiding analytical pitfalls, scientists can generate the robust, reliable epigenetic data necessary for meaningful biological insights and successful drug development.

The Impact of Irreproducible Data on Biomarker Validation and Drug Discovery Pipelines

The reproducibility crisis represents a fundamental challenge in biomedical science, silently undermining progress and wasting billions of dollars annually in failed research and development. In the specific contexts of biomarker validation and drug discovery, this crisis manifests as an inability to replicate promising findings across independent studies, datasets, and experimental conditions. Large-scale assessments have revealed alarming statistics: only 11-25% of landmark preclinical findings can be independently reproduced, and a mere 0.1% of potentially clinically relevant cancer biomarkers described in literature progress to routine clinical use [16] [17]. The problem is particularly acute in biomarker development, where despite advances in 'omics technologies, only about 0-2 new protein biomarkers achieve FDA approval per year across all diseases [18]. This reproducibility gap costs billions in failed R&D and delays life-saving treatments, creating a critical bottleneck where promising candidates face the harsh reality of clinical application. The crisis stems not from a single cause but from a complex interplay of technical, methodological, and systemic factors including biological heterogeneity, analytical variability, inappropriate statistical analyses, and publication biases that favor novel positive results over negative or confirmatory data.

Quantitative Impact: Assessing the Damage

The impact of irreproducible data can be measured in both economic terms and scientific progress delays. The tables below summarize key quantitative findings from reproducibility assessments and their specific impacts on drug development pipelines.

Table 1: Reproducibility Failure Rates Across Biomedical Research

| Field of Study | Reproducibility Rate | Study/Source | Key Findings |
|---|---|---|---|
| General Preclinical Research | 11-25% | Bayer/Amgen Reviews [16] | Only 11-25% of "landmark" preclinical findings could be independently reproduced |
| Cancer Research Studies | 46% | Center for Open Science (2021) [19] | Less than half of 53 different cancer research studies could be replicated |
| Biomarker Translation | 0.1% | Literature to Clinical Use [17] | Only ~0.1% of potentially clinically relevant cancer biomarkers progress to clinical use |
| FDA Biomarker Approval | 0-2/year | Protein Biomarkers [18] | Only about 0-2 new protein biomarkers achieve FDA approval annually across all diseases |

Table 2: Economic and Temporal Costs of Irreproducibility

| Cost Category | Specific Impact | Magnitude | Consequence |
|---|---|---|---|
| Biomarker Validation | Single candidate verification | Up to $2 million [18] | ELISA development for one candidate can cost up to $2 million with high failure rate |
| Drug Development | Attrition due to false leads | Billions annually [16] | Wasted resources on fragile leads and failed trials |
| Research Efficiency | Multiplex vs. ELISA cost | $42.33/sample saved [17] | MSD multiplex assay ($19.20/sample) vs. ELISA ($61.53/sample) for 4 biomarkers |
| Timeline Impact | Project delays | Years [16] | Failed targets set back trials by years; entire pipelines compromised |

Root Causes: Technical Drivers of Irreproducibility

Analytical and Biological Variability

The journey from discovery to validated biomarker is fraught with technical challenges that undermine reproducibility. Analytical variability emerges when different teams use slightly different methods or processing parameters, producing conflicting results that invalidate comparisons [18]. This is compounded by biological heterogeneity arising from batch effects, comorbidities, and demographic variations across sample populations [16]. The "small n, large p" problem—where studies measure thousands of potential features (genes, proteins) but only have a small number of patients—makes it statistically difficult to distinguish meaningful signals from noise [18]. Further complications include heterogeneity in data generation platforms (e.g., microarrays vs. RNA-seq, LC-MS vs. NMR) and lack of standardized preprocessing pipelines for normalization, imputation, and filtering [16].

Statistical and Computational Deficiencies

Improper statistical approaches significantly contribute to irreproducible findings. The overreliance on p-values without correction for multiple hypothesis testing increases false discovery rates [16]. Model overfitting represents another critical failure point, particularly when working with high-dimensional data and small sample sizes, where algorithms may identify patterns that exist only in the specific dataset rather than general biological phenomena [16]. The widespread problem of inadequate metadata documentation and non-standardized protocols further impedes replication attempts, as essential methodological details remain obscured [18].
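To illustrate how correcting for multiple hypothesis testing changes conclusions, the sketch below implements the Benjamini-Hochberg procedure for controlling the false discovery rate. It is one common correction among several, shown here as an example rather than as the method used in any cited study; the p-values are invented.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    # Indices of the p-values from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


if __name__ == "__main__":
    raw = [0.01, 0.04, 0.03, 0.20]  # hypothetical p-values for four PTMs
    adj = benjamini_hochberg(raw)
    print([round(q, 4) for q in adj])
    print(sum(p < 0.05 for p in raw), "significant before correction")
    print(sum(q < 0.05 for q in adj), "significant after correction")
```

In this toy example three of four tests pass an uncorrected 0.05 threshold, but only one remains after adjustment. The gap widens quickly when hundreds of histone PTMs or thousands of peaks are tested at once.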

Systemic and Incentive Problems

Beyond technical issues, structural problems within the scientific ecosystem perpetuate the reproducibility crisis. Publication bias favors novel, positive results over negative or confirmatory data, creating an incomplete evidence base [16] [19]. The competitive academic reward system prioritizes publication in high-impact journals over rigorous replication, with Thomas Powers of the University of Delaware's Center for Science, Ethics, and Public Policy noting that "funding agencies got tired of funding science that's already been done" [19]. Brian Nosek, Executive Director of the Center for Open Science, summarizes the challenge: "The reward system for science is not necessarily aligned with scientific values" [19]. This misalignment creates pressure for selective reporting and, in extreme cases, fabrication; a 2024 meta-analysis of 75,000 studies across multiple fields suggested as many as one in seven may have contained at least partially faked results [19].

Case Study: Reproducibility Challenges in Histone Modification Research

Research on histone modifications exemplifies both the specific technical challenges and potential solutions for reproducibility in epigenetic studies. Histone post-translational modifications (PTMs)—such as H3K27ac, H3K4me3, and H3K9ac—regulate chromatin architecture and gene expression in a context-dependent manner, making them promising biomarkers and therapeutic targets [7]. However, their dynamic nature and technical requirements for analysis present distinct reproducibility challenges.

Experimental Protocols for Histone Modification Analysis

Chromatin Immunoprecipitation Sequencing (ChIP-Seq) Protocol: The classical ChIP-seq method involves cross-linking proteins to DNA, chromatin fragmentation, immunoprecipitation with modification-specific antibodies, and next-generation sequencing to map genomic distributions of histone marks [7]. While powerful, standard ChIP-seq requires high sample input, complex workflows, and often suffers from elevated background noise, limiting its application to precious or trace forensic samples [7]. The protocol typically includes the following critical steps: (1) Cross-linking with formaldehyde to fix protein-DNA interactions; (2) Chromatin shearing by sonication or enzymatic digestion to fragment DNA; (3) Immunoprecipitation with validated, modification-specific antibodies; (4) Library preparation and next-generation sequencing; (5) Bioinformatic analysis including peak calling and annotation.

CUT&Tag (Cleavage Under Targets and Tagmentation) Protocol: Developed to address ChIP-seq limitations, CUT&Tag uses antibody-directed Tn5 transposase to simultaneously fragment and tag chromatin at modification sites [7]. This method enables high-resolution chromatin profiling from as few as 10 cells and has demonstrated superior signal-to-noise ratios compared to earlier approaches [7]. The streamlined protocol includes: (1) Permeabilization of cells or nuclei; (2) Antibody binding with specific primary antibodies against target histone modifications; (3) pA-Tn5 adapter binding where protein A-coated transposase binds to primary antibodies; (4) Tagmentation where activated Tn5 simultaneously cleaves DNA and adds sequencing adapters; (5) DNA purification and library amplification; (6) Sequencing and data analysis. The single-cell variant (scCUT&Tag) offers additional benefits in resolution and reproducibility [7].

Diagram 1: Histone modification research challenges and solutions.

EpiMapper: A Tool for Enhancing Reproducibility in Epigenomic Analysis

The EpiMapper Python package addresses key reproducibility challenges in analyzing high-throughput sequencing data from CUT&Tag, ATAC-seq, or ChIP-seq experiments [20]. It provides a standardized analysis pipeline that covers every step from quality control to annotation and differential peak analysis. Compared to previous protocols, EpiMapper offers improved functionality for reproducibility assessment and adds features such as genome annotation and differential peak analysis [20]. By simplifying data analysis for scientists without expert-level computational skills, EpiMapper helps reduce analytical variability, one of the root causes of irreproducibility. The package has been validated in three case studies (two on CUT&Tag and one on ATAC-seq data), where it reproduced previously published results, demonstrating its utility for robust epigenetic research [20].
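One common way to quantify replicate concordance in this kind of analysis is a correlation between binned read counts from two replicates. The sketch below is a minimal, standard-library illustration of that idea. It is not EpiMapper's API; the `pearson` helper and the bin counts are hypothetical.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length lists of per-bin read counts."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length vectors with at least 2 bins")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant vector")
    return cov / sqrt(var_x * var_y)


# Hypothetical read counts in the same genomic bins for two replicates.
rep1 = [12, 40, 3, 0, 25, 8]
rep2 = [10, 44, 5, 1, 22, 9]

print(f"replicate Pearson r = {pearson(rep1, rep2):.3f}")
```

In practice the bins would come from a genome-wide coverage track, and a low correlation between replicates would flag a sample for closer inspection before peak calling.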

Solutions and Best Practices for Enhancing Reproducibility

Technological and Methodological Advances

Table 3: Solutions for Improving Reproducibility in Biomarker Research

| Solution Category | Specific Approach | Key Benefit | Implementation Example |
|---|---|---|---|
| Advanced Assay Technologies | Meso Scale Discovery (MSD) | Up to 100x greater sensitivity than ELISA; multiplexing capability [17] | U-PLEX platform for custom biomarker panels |
| Advanced Assay Technologies | LC-MS/MS | Analysis of hundreds to thousands of proteins in a single run [17] | Surpassing 10,000 identified proteins in single run [17] |
| Data Standardization | FAIR Principles | Findable, Accessible, Interoperable, Reusable data [18] | Digital Biomarker Discovery Pipeline (DBDP) [18] |
| Data Standardization | Standardized Formats | Enables data comparability across studies [18] | Brain Imaging Data Structure (BIDS) for EEG data [18] |
| Computational Approaches | Explainable AI (XAI) | Builds trust and clinical acceptance of AI-driven biomarkers [18] | Integrating interpretability from start of development |
| Computational Approaches | Open-Source Pipelines | Promotes transparency and verification of methods [18] | DBDP on GitHub with Apache 2.0 License [18] |

Systemic Reforms and Incentive Structures

Systemic reforms are essential for addressing the root causes of irreproducibility. Preregistration of research, in which researchers approach journals before data collection and the journal commits to publication regardless of outcome (the registered-report model), represents a promising approach for reducing publication bias [19]. Creating clear career paths for scientists conducting replication studies would help legitimize and reward this essential work [19]. Funding agencies can play a pivotal role by mandating allocation of resources for replication studies; the Paragon Health Institute has recommended that the NIH devote at least 0.1% of its annual budget (approximately $48 million) to such efforts [19]. Stuart Buck, author of the Paragon report, argues that "we should expect more like 80-90% of science to be replicable" [19], suggesting a tangible target for improvement.

Diagram 2: Comprehensive solutions for irreproducible data.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Research Reagents and Platforms for Reproducible Histone Modification Studies

| Reagent/Platform | Function | Application in Reproducibility |
|---|---|---|
| CUT&Tag Assay Kits | Antibody-directed tagmentation for epigenomic profiling | Enables high-resolution mapping with low input requirements and reduced background [7] [20] |
| Modification-Specific Validated Antibodies | Immunoprecipitation or binding of specific histone PTMs | Critical for specificity; batch-to-batch validation reduces variability [7] |
| MSD U-PLEX Assays | Multiplex electrochemiluminescence detection | Simultaneous measurement of multiple biomarkers with greater sensitivity than ELISA [17] |
| LC-MS/MS Systems | High-sensitivity mass spectrometry | Unbiased protein/biomarker quantification without antibody requirements [17] |
| EpiMapper Python Package | Analysis of CUT&Tag, ATAC-seq, ChIP-seq data | Standardized bioinformatic workflows with reproducibility assessment [20] |
| Digital Biomarker Discovery Pipeline (DBDP) | Open-source biomarker development toolkit | Modular frameworks reduce analytical variability via community standards [18] |

The impact of irreproducible data on biomarker validation and drug discovery pipelines is both profound and multifaceted, affecting everything from early research decisions to late-stage clinical trials. Solving this crisis requires coordinated technological improvements, methodological rigor, and systemic reforms to scientific incentives. Promisingly, emerging technologies like CUT&Tag for epigenomic profiling, MSD and LC-MS/MS for biomarker validation, and standardized computational pipelines like EpiMapper are addressing technical sources of variability [7] [17] [20]. Simultaneously, the adoption of FAIR data principles, preregistration of studies, and dedicated funding for replication efforts represent structural changes that could reshape the research landscape [18] [19]. As Brian Nosek aptly notes, "Science is trustworthy because it doesn't trust itself" [19]—embracing this self-critical ethos through concrete actions offers the path forward. For researchers, drug developers, and the patients who ultimately depend on scientific progress, making reproducibility the standard rather than the exception would transform the efficiency and reliability of biomedical innovation.

Post-translational modifications (PTMs) of histones constitute a fundamental chromatin indexing mechanism that regulates gene expression without altering the underlying DNA sequence. Among the myriad of histone modifications, H3K4me3, H3K27me3, and H3K9ac represent three of the most extensively studied marks, each associated with distinct chromatin states and transcriptional outcomes. H3K4me3 is a well-established marker of active promoters, H3K27me3 denotes facultative heterochromatin and transcriptional repression, and H3K9ac is associated with active transcription. These modifications serve as critical case studies in epigenetics research due to their well-characterized functions and the availability of established detection reagents. However, the reproducibility of data concerning these marks faces significant challenges, primarily stemming from methodological variations and reagent specificity issues. The reliability of histone PTM research has profound implications for drug development, particularly in the context of epigenetic therapies targeting chromatin-modifying enzymes. This guide objectively compares the performance of leading experimental methods for studying these core histone PTMs, providing researchers with the experimental data and protocols necessary to enhance reproducibility in their investigations.

Biological Functions and Genomic Distributions

Functional Roles of Core Histone Modifications

The three histone modifications under examination play distinct and crucial roles in gene regulation and chromatin organization. H3K4me3 is highly enriched at active promoters near transcription start sites (TSS) and is considered a transcription activation epigenetic biomarker [21]. This mark facilitates an open chromatin configuration that permits transcription factor binding and RNA polymerase II recruitment. H3K27me3, in contrast, is a heterochromatin-associated histone mark specific for facultative heterochromatin and indicates repressed transcriptional activity in neighboring genomic regions [21]. This repressive mark is dynamically regulated throughout development and cellular differentiation. H3K9ac denotes active gene transcription and is generally associated with accessible chromatin structures in promoter and enhancer regions [21]. Unlike the stable methylation marks, acetylation is highly dynamic and correlates with immediate transcriptional activation potential.

Genomic Distribution Patterns

The genomic distributions of H3K4me3, H3K27me3, and H3K9ac exhibit characteristic patterns that reflect their functional differences. H3K4me3 typically displays sharp, distinct peaks concentrated around TSS regions of actively transcribed genes [22]. H3K27me3 modifications generally show broad distribution across large genomic domains, often encompassing entire gene clusters involved in developmental regulation [22]. H3K9ac marks tend to localize to both promoters and enhancers of active genes, with patterns that can overlap with H3K4me3 at promoter regions while also extending into regulatory elements further from TSS.

Table 1: Characteristic Genomic Profiles of Core Histone PTMs

| Histone PTM | Chromatin State | Transcriptional Association | Typical Peak Profile | Key Genomic Locations |
|---|---|---|---|---|
| H3K4me3 | Euchromatin | Activation | Sharp, narrow | Active promoters near TSS |
| H3K27me3 | Facultative heterochromatin | Repression | Broad, wide | Developmentally regulated genes |
| H3K9ac | Euchromatin | Activation | Sharp to intermediate | Active promoters and enhancers |

Methodological Comparisons for Histone PTM Analysis

Established Workflows: ChIP-seq and CUT&Tag

The gold standard for genome-wide mapping of histone modifications has traditionally been chromatin immunoprecipitation followed by sequencing (ChIP-seq). This method relies on antibodies specific to histone modifications to immunoprecipitate cross-linked chromatin fragments, which are then sequenced to determine their genomic locations. More recently, CUT&Tag (Cleavage Under Targets and Tagmentation) has emerged as a promising alternative that uses a protein A-Tn5 transposase fusion protein targeted to specific histone marks by antibodies to simultaneously cleave and tag chromatin for sequencing [22]. This method offers several advantages for low-input samples, including applications with single embryos or rare cell populations.

A comparative study analyzing H3K4me3 and H3K27me3 in bovine blastocysts revealed that CUT&Tag produces overall similar genomic distributions to ChIP-seq, though with notable technical differences. For H3K4me3, both methods showed high correlation in signal distribution, with CUT&Tag detecting approximately 20,000 significant peaks throughout the genome, 20% of which were located in promoter regions [22]. However, the study identified a false negative rate (FNR) of 21-32% for H3K4me3 with CUT&Tag compared to ChIP-seq, with missing peaks predominantly having lower signals in ChIP-seq [22]. For the broad domains of H3K27me3, CUT&Tag exhibited lower resolution compared to ChIP-seq, with inter- and intra-assay correlations being lower than those observed for H3K4me3 [22].

Performance Metrics Across Methods

Both ChIP-seq and CUT&Tag face challenges related to the specificity of binding reagents. A significant concern with CUT&Tag is the potential bias of Tn5 transposase toward cutting open chromatin regions, which can affect the accurate detection of repressive marks like H3K27me3. The false positive rate (FPR) caused by this bias was calculated to be 10-15% for H3K4me3 and 12-25% for H3K27me3 [22]. This technical bias must be considered when interpreting data from Tn5-based methods, particularly for marks associated with closed chromatin.
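Rates of this kind are typically derived by intersecting peak sets from the two assays. The sketch below illustrates one simple overlap-based calculation, treating ChIP-seq peaks as the reference. The peak coordinates, the 1 bp overlap rule, and the helper names are assumptions for illustration, and the cited study's exact FNR and FPR definitions may differ (in particular, its FPR is tied to Tn5 open-chromatin bias rather than to simple lack of overlap).

```python
def overlaps(a, b):
    """True if two (chrom, start, end) half-open intervals share at least 1 bp."""
    return a[0] == b[0] and a[1] < b[2] and b[1] < a[2]


def fraction_missed(reference, query):
    """Fraction of reference peaks that no query peak overlaps."""
    if not reference:
        raise ValueError("reference peak list is empty")
    missed = sum(1 for r in reference if not any(overlaps(r, q) for q in query))
    return missed / len(reference)


# Hypothetical peak calls as (chromosome, start, end).
chip_peaks = [("chr1", 100, 300), ("chr1", 1000, 1200),
              ("chr2", 50, 150), ("chr2", 900, 1100)]
cuttag_peaks = [("chr1", 150, 250), ("chr2", 60, 120), ("chr2", 5000, 5200)]

# ChIP-seq peaks that CUT&Tag failed to recover (a false-negative-style rate).
fnr = fraction_missed(chip_peaks, cuttag_peaks)
# CUT&Tag peaks without ChIP-seq support (not identical to the study's FPR).
unsupported = fraction_missed(cuttag_peaks, chip_peaks)

print(f"FNR vs ChIP-seq: {fnr:.2f}, unsupported CUT&Tag peaks: {unsupported:.2f}")
```

The quadratic scan is fine for a toy example; genome-scale peak sets would normally be intersected with a dedicated interval tool.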

Table 2: Performance Comparison of Histone PTM Mapping Methods

| Performance Metric | ChIP-seq | CUT&Tag | Notes |
|---|---|---|---|
| Input Requirements | High (thousands to millions of cells) | Low (100-1000 cells) | CUT&Tag enables single-embryo analysis [22] |
| H3K4me3 Resolution | High, with distinct valley-like shapes near TSS | High, but lacks valley-like shapes near TSS | Overall high correlation between methods [22] |
| H3K27me3 Resolution | High for broad domains | Lower, peaks tend to fragment | Broader domains challenging for CUT&Tag [22] |
| False Positive Rate | Varies with antibody quality | 10-25% (due to Tn5 open chromatin bias) | FPR higher for H3K27me3 than H3K4me3 [22] |
| False Negative Rate | Varies with antibody quality | 21-32% for H3K4me3 | Missing peaks have lower ChIP-seq signals [22] |
| Technical Variability | Moderate to high | Lower between replicates | CUT&Tag shows high concordance between replicates [22] |
| Protocol Complexity | High (crosslinking, sonication, IP) | Moderate (permeabilization, antibody, tagmentation) | CUT&Tag has simpler workflow with in situ tagmentation [22] |

Diagram 1: Comparative Workflows for Histone PTM Mapping. This diagram illustrates the key procedural differences between ChIP-seq and CUT&Tag methods for histone modification analysis, highlighting their divergent approaches to chromatin processing and library preparation.

Reagent Specificity and Reproducibility Challenges

Antibody-Related Variability

A critical challenge in histone PTM research concerns the specificity and consistency of antibodies used for detection. Histone PTM-specific antibodies have been the standard reagent despite documented caveats including lot-to-lot variability of specificity and binding affinity [23]. This variability represents a significant reproducibility concern, particularly for modifications with similar sequence contexts such as H3K9me3 and H3K27me3, which both occur in ARKS amino acid motifs [23]. The problem is compounded by the fact that histone tails are hypermodified, with adjacent amino acid side chains often bearing different modifications that can prevent antibody binding despite the presence of the target modification, yielding false negative results [23].

The ENCODE Project Consortium has established quality criteria for histone PTM antibodies to address these concerns, including requirements for specific detection in Western blots and fulfillment of secondary criteria such as specific binding to modified peptides in dot blot assays, mass spectrometric detection of the modification in precipitated chromatin, or loss of signal upon knockdown of the corresponding histone modifying enzyme [23]. Despite these guidelines, significant variability persists, necessitating careful validation of antibodies for each application.

Alternative Binding Domains

To address antibody limitations, researchers have developed histone modification interacting domains (HMIDs) as alternative reagents. These domains, such as the MPHOSPH8 Chromo domain and ATRX ADD domain for H3K9me3, can be produced recombinantly in E. coli at low cost and constant quality, eliminating lot-to-lot variability [23]. Specificity analyses demonstrate that these HMIDs show comparable specificity to good antibodies currently used in chromatin research, fulfilling ENCODE criteria for specific binding to peptide epitopes [23].

Protein design of reading domains allows for generation of novel specificities, addition of affinity tags, and preparation of PTM binding pocket variants as matching negative controls, which is not possible with antibodies [23]. This engineering capability provides researchers with more precise tools for distinguishing between highly similar modification states and offers opportunities for developing improved detection reagents with minimal cross-reactivity.

Table 3: Research Reagent Solutions for Histone PTM Studies

| Reagent Type | Examples | Advantages | Limitations | Applications |
|---|---|---|---|---|
| Traditional Antibodies | Polyclonal and monoclonal antibodies from various vendors | Wide commercial availability, established protocols | Lot-to-lot variability, cross-reactivity issues [23] | ChIP-seq, Western blot, IHC |
| ENCODE-Validated Antibodies | Abcam ab8898 (H3K9me3) | Rigorously validated, consistent performance | Higher cost, limited target range | Standardized ChIP-seq protocols |
| Histone Modification Interacting Domains (HMIDs) | MPHOSPH8 Chromo domain, ATRX ADD domain | Constant quality, recombinantly produced, engineerable [23] | Limited commercial availability, requires protein production expertise | Alternative to antibodies in ChIP-like experiments, peptide arrays |
| Reverse Phase Protein Array (RPPA) | Platform for 20 histone PTMs and 40 modifier proteins | High-throughput, reproducible, scalable [5] | Requires specialized equipment, antibody validation needed | Comprehensive epigenomic profiling, biomarker discovery |
| CRISPR-based Enrichment | enChIP with dCas9 [24] | Locus-specific, high specificity | Requires guide RNA design, lower throughput | Isolation of specific genomic regions, identification of associated proteins |

Diagram 2: Reagent Selection Strategy for Histone PTM Studies. This decision diagram outlines a systematic approach for selecting appropriate reagents based on experimental goals, highlighting alternatives to traditional antibodies that may enhance reproducibility.

Clinical Relevance and Translational Applications

Prognostic and Diagnostic Value

The reproducible detection of H3K4me3, H3K27me3, and H3K9ac has significant clinical implications, particularly in oncology and developmental disorders. In pediatric acute myeloid leukemia (AML), H3K27me3 expression at diagnosis has demonstrated prognostic value, with high expression significantly associated with superior overall and event-free survival over three years [25]. Among KMT2A-rearranged cases, all patients with high H3K27me3 achieved long-term first remission, whereas those with low expression had higher relapse rates [25]. This correlation suggests that H3K27me3 may serve as both a prognostic biomarker and potential therapeutic target in hematological malignancies.

In sepsis, altered levels of H3K9ac, H3K4me3, and H3K27me3 in promoters of differentially expressed genes related to innate immune response correlate with clinical outcomes [26]. Non-surviving sepsis patients exhibit more pronounced epigenetic dysregulation compared with survivors, including increased H3K27me3 in the IL-10 and HLA-DR promoters, suggesting a more dysfunctional immune response [26]. These clinical correlations highlight the importance of reliable PTM detection for patient stratification and treatment decisions.

High-Throughput Platforms for Translational Research

The Reverse Phase Protein Array (RPPA) platform has been adapted for global profiling of histone modifications, enabling simultaneous analysis of 20 histone PTMs and expression of 40 histone-modifying proteins in a high-throughput manner [5]. This platform addresses the need for reproducible, scalable epigenetic profiling in translational research, particularly for biomarker discovery and therapeutic development. The RPPA method has been validated through detection of histone PTM changes in response to inhibitors of histone modifier proteins in cell cultures and demonstrated useful application in models of induced pluripotent stem cell generation and mammary tumor progression [5].

The reproducibility of histone PTM data for H3K4me3, H3K27me3, and H3K9ac depends critically on methodological choices and reagent quality. Based on comparative studies, CUT&Tag offers advantages for low-input samples but shows higher false negative rates for H3K4me3 and reduced resolution for broad H3K27me3 domains compared to ChIP-seq. Reagent specificity remains a fundamental challenge, with antibody variability constituting a major reproducibility concern that can be mitigated through use of ENCODE-validated reagents or alternative binding domains. For clinical and translational applications, standardized platforms like RPPA provide more reproducible high-throughput profiling capabilities. Enhancing reproducibility requires careful method selection based on experimental goals, rigorous validation of reagents, and implementation of standardized protocols across laboratories. By addressing these factors, researchers can generate more reliable data on these core histone modifications, advancing both basic chromatin biology and the development of epigenetic therapies.

From Bench to Bioinformatics: Robust Methods for Generating and Analyzing Reproducible Histone Data

Mass spectrometry (MS) has emerged as the preeminent analytical technique for characterizing histone post-translational modifications (PTMs), which are crucial regulators of gene expression, DNA repair, and chromosome condensation in epigenetic mechanisms [27] [28]. The reliability and reproducibility of histone PTM data directly impact research validity and translational potential in disease mechanisms and drug development. Histone proteins undergo complex, combinatorial modifications that create a "histone code" influencing chromatin structure and cellular phenotype [27] [2]. Aberrations in PTM abundance are linked to various diseases, particularly cancer, making accurate quantification essential for both basic research and clinical applications [27] [29].

Within this context, three primary MS strategies have been developed: bottom-up, middle-down, and top-down proteomics. Each approach offers distinct advantages and limitations for histone analysis, particularly regarding their ability to preserve and quantify PTM combinations along protein sequences. This guide objectively compares these methodologies, focusing on their performance characteristics, experimental requirements, and appropriateness for specific research goals within epigenetic studies, with special emphasis on generating reproducible, reliable data for histone modification research.

Core Principles and Workflow Comparisons

The fundamental distinction between MS approaches lies in their initial sample handling and the size of the protein fragments analyzed. Bottom-up proteomics involves digesting proteins into short peptides (<20 amino acids) prior to LC-MS/MS analysis [27] [30]. Middle-down proteomics utilizes larger polypeptides (typically >50 amino acids) corresponding to intact histone tails [27]. Top-down proteomics analyzes intact proteins without enzymatic digestion [31] [30].

Diagram: Fundamental steps and key differences between the bottom-up, middle-down, and top-down workflows.

Performance Comparison and Experimental Data

Technical Characteristics and Applications

Table 1: Comparison of Key Technical Characteristics for Histone Analysis

| Parameter | Bottom-Up | Middle-Down | Top-Down |
|---|---|---|---|
| Analysis Level | Short peptides (<20 aa) [27] | Intact histone tails (>50 aa) [27] | Whole intact proteins [30] |
| PTM Co-occurrence | Limited to short sequences [27] | Preserved on histone tails [27] | Fully preserved across entire protein [30] |
| Throughput | High [30] | Moderate [27] | Lower [30] |
| Sensitivity | High [30] | Moderate [27] | Lower for complex mixtures [30] |
| Ionization Efficiency Bias | Significant (requires correction) [27] | Reduced (same peptide sequence) [27] | Minimal for intact proteoforms |
| Stoichiometry Accuracy | Good after correction [27] | Good without correction [27] | Excellent [30] |
| Technical Complexity | Established protocols [30] | Specialized separation needed [27] | Advanced instrumentation required [30] |
| Ideal Application | High-throughput PTM screening [29] | Combinatorial PTM analysis on tails [27] | Complete proteoform characterization [30] |

Quantitative Performance in Histone PTM Analysis

Direct comparative studies have evaluated the accuracy of bottom-up and middle-down approaches for histone PTM quantification. In a benchmark study using synthetic peptide libraries for external correction, both methods demonstrated comparable performance in defining PTM relative abundance and stoichiometry [27] [32].

Table 2: Quantitative Performance Metrics from Comparative Studies

| Performance Metric | Bottom-Up (Uncorrected) | Bottom-Up (Corrected) | Middle-Down |
|---|---|---|---|
| Average CV across replicates | 18.5% [27] | N/A | 42.1% [27] |
| Overall difference from reference | 218.9% [27] | N/A (used as reference) | 172.1% [27] |
| PTM binary ratios within 1 absolute difference unit | 83.1% [27] | N/A (used as reference) | 78.7% [27] |
| Stoichiometry calculation CV | 50.0% [27] | N/A | 94.4% [27] |
| PTMs quantified per experiment | ~44 modified peptides [27] | N/A | ~287 combinatorial PTMs [27] |

These data show that middle-down provided better accuracy for specific PTMs such as K9me1 and K27me2, while bottom-up showed higher precision, with lower coefficients of variation across replicates [27]. After external correction using synthetic standards, the bottom-up data served as the reference, and the comparison indicates that middle-down is at least equally reliable for quantifying histone PTMs [27] [32].
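The coefficient of variation (CV) used as the precision metric here is the sample standard deviation divided by the mean, expressed as a percentage. A minimal sketch, assuming hypothetical replicate measurements of a single peptide's relative abundance (the `cv_percent` helper and the numbers are illustrative, not values from the cited study):

```python
from statistics import mean, stdev


def cv_percent(values):
    """Coefficient of variation (%) = sample standard deviation / mean * 100."""
    if len(values) < 2:
        raise ValueError("need at least two replicate measurements")
    m = mean(values)
    if m == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return 100 * stdev(values) / m


# Hypothetical relative abundances (%) of one modified peptide in three replicates.
replicates = [8.0, 10.0, 12.0]
print(f"CV = {cv_percent(replicates):.1f}%")
```

Averaging this per-peptide CV over all quantified peptides gives a summary figure comparable in spirit to the "Average CV across replicates" row in the table.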

Detailed Experimental Protocols

Bottom-Up Proteomics for Histones

Sample Preparation:

- Histone Derivatization: Propionic anhydride derivatization of lysines is performed before trypsin digestion to create an "ArgC-like" digestion pattern, generating appropriately sized peptides for analysis [27] [2]. Alternative protocols use deuterated acetic anhydride (D3 protocol) for this purpose [29].

- Enzymatic Digestion: Trypsin digestion cleaves at underivatized arginine residues, producing peptides suitable for LC-MS/MS analysis [27] [2]. For comprehensive H4 analysis, ArgC protease can be used for in-solution digestion [29].

- Peptide Cleanup: Solid-phase extraction or ultrafiltration removes salts, detergents, and other impurities prior to LC-MS analysis [30].

LC-MS Analysis:

- Chromatography: Reversed-phase liquid chromatography separates peptides based on hydrophobicity [27]. Peak widths are typically ~40 seconds, providing approximately 20 data points across the elution profile with standard duty cycles [27].

- Mass Spectrometry: Tandem MS with collision-induced dissociation (CID) or higher-energy collisional dissociation (HCD) fragments selected peptides for sequence identification [30].

- Quantification: Label-free quantification or isotopic labeling (TMT, iTRAQ) enables comparison of PTM abundance across samples [30].

Critical Considerations:

- Ionization Efficiency Bias: Different peptides and modified forms have varying ionization efficiencies, requiring external correction using synthetic peptide libraries with known relative abundances for accurate quantification [27].

- PTM Coverage: Bottom-up provides higher sensitivity for certain modifications like H3K4 methylation states but cannot analyze arginine methylation due to trypsin cleavage requirements [27].
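The external correction for ionization efficiency can be sketched as follows. This is one plausible scheme rather than the exact procedure of the cited study: a synthetic library with known relative abundances yields a response factor per peptide form (observed share divided by known share), and sample intensities are divided by that factor before relative abundances are computed. The peptide form names, intensities, and function names are hypothetical.

```python
def response_factors(library_observed, library_known):
    """Per-form response factor = observed signal share / known molar share."""
    total_obs = sum(library_observed.values())
    total_known = sum(library_known.values())
    return {
        form: (library_observed[form] / total_obs) / (library_known[form] / total_known)
        for form in library_known
    }


def corrected_relative_abundance(sample, factors):
    """Divide raw intensities by response factors, then normalize to sum to 1."""
    corrected = {form: intensity / factors[form] for form, intensity in sample.items()}
    total = sum(corrected.values())
    return {form: value / total for form, value in corrected.items()}


# Hypothetical equimolar synthetic library for three forms of one peptide.
known = {"unmod": 1.0, "me1": 1.0, "me2": 1.0}
observed = {"unmod": 200.0, "me1": 100.0, "me2": 100.0}  # unmodified form ionizes better

factors = response_factors(observed, known)

# Hypothetical raw intensities for the same three forms in a biological sample.
sample = {"unmod": 300.0, "me1": 75.0, "me2": 75.0}
for form, share in corrected_relative_abundance(sample, factors).items():
    print(f"{form}: {share:.2%}")
```

Without correction the unmodified form would appear to account for two thirds of the signal; after correction its share drops to one half, which shows how uncorrected ionization bias can distort apparent stoichiometry.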

Middle-Down Proteomics for Histones

Sample Preparation:

- Limited Proteolysis: GluC enzyme cleavage generates polypeptides corresponding to entire histone N-terminal tails (>50 amino acids) [27].

- Chemical Derivatization: Propionic anhydride derivatization may be used to improve chromatographic behavior and fragmentation efficiency.

LC-MS Analysis:

- Specialized Chromatography: Weak cation exchange-hydrophilic interaction liquid chromatography (WCX-HILIC) exploits the high hydrophilicity and basicity of histone tails for separation [27]. This method generates wide, heterogeneous peak widths ranging from 2-7 minutes [27].

- Mass Spectrometry: Electron transfer dissociation (ETD) is preferred for fragmentation as it preserves labile PTMs and provides more complete sequence coverage of the large polypeptides [27]. The high complexity of isobaric peptides requires quantification at the MS/MS level [27].

- Data Analysis: Platforms like isoScale extract total ion intensity of identified MS/MS spectra to retrieve peptide abundance using a fragment ion relative ratio approach [27]. Thousands of MS/MS spectra are typically used for quantification across replicates [27].

Critical Considerations:

- Complexity Management: Each precursor mass corresponds to several combinatorial PTM codes that cannot be separated chromatographically, requiring sophisticated data analysis tools [27].

- Throughput: Analysis time is longer than bottom-up, with lower analytical throughput [27].

Top-Down Proteomics for Histones

Sample Preparation:

- Protein Extraction: Histones are isolated from biological samples using techniques like homogenization or centrifugation with appropriate buffers to maintain protein stability and prevent degradation [30].

- Concentration and Purification: Protein solutions are concentrated using precipitation (ammonium sulfate) or ultrafiltration to remove small molecules and contaminants [30]. MWCO spin cartridges are particularly effective for removing MS-incompatible salts [33].

- Buffer Compatibility: Critical attention must be paid to buffer components, as detergents and less volatile salts cause significant signal suppression. Substitution with volatile alternatives like ammonium acetate is essential [33].

LC-MS Analysis:

- Intact Protein Separation: Reversed-phase or size-exclusion chromatography separates intact proteins, though resolution decreases with molecular weight [30].

- Mass Spectrometry: High-resolution mass spectrometers (FT-ICR, Orbitrap) measure intact protein masses with high accuracy [30]. Electron capture dissociation (ECD), ETD, or ultraviolet photodissociation (UVPD) fragment intact proteins while preserving labile PTMs [31] [30].

- Data Analysis: Specialized software interprets complex mass spectra to identify protein sequences, modifications, and proteoforms directly without database searching [30].

Critical Considerations:

- Technical Requirements: Demands high-resolution mass spectrometers and sophisticated data processing techniques, creating accessibility challenges for some laboratories [30].

- Throughput: Generally has lower analytical throughput compared to bottom-up methods, making it more suitable for in-depth studies of limited numbers of proteins [30].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents for Histone PTM Analysis by Mass Spectrometry

| Reagent/Resource | Function | Application Notes |
|---|---|---|
| Propionic Anhydride | Chemical derivatization of lysine residues | Creates "ArgC-like" digestion pattern in bottom-up; improves chromatographic behavior in middle-down [27] [2] |
| Trypsin | Proteolytic enzyme for protein digestion | Standard enzyme for bottom-up proteomics; requires lysine derivatization for histone analysis [27] [30] |
| GluC | Proteolytic enzyme for limited digestion | Generates intact histone tails (>50 aa) for middle-down approach [27] |
| Synthetic Peptide Libraries | External standards for quantification correction | Essential for correcting ionization efficiency biases in bottom-up quantification [27] |
| Heavy-isotope Labeled Histones | Internal standards for quantification | Spike-in standards improve quantitation accuracy across samples [29] |
| WCX-HILIC Chromatography | Specialized separation resin | Exploits hydrophilicity and basicity of histone tails for middle-down separation [27] |
| ETD/ECD Reagents | Fragmentation techniques | Preserve labile PTMs during fragmentation; preferred for middle-down and top-down [27] [31] |

Integrated Workflows and Emerging Approaches

Recent methodological advances have demonstrated the power of integrating multiple MS approaches. For example, the PolySeq.AI workflow combines bottom-up, middle-down, and intact mass analysis for de novo sequencing of polyclonal antibodies, achieving >99% sequencing accuracy [34]. Similarly, in histone research, multi-omics approaches integrating MS-based epigenomic profiling with transcriptomics and proteomics have revealed novel epigenetic pathways in triple-negative breast cancer [29].

Novel bioinformatic workflows like HiP-Frag represent significant advances for comprehensive histone modification analysis. This approach integrates closed, open, and detailed mass offset searches to enable identification of previously unexplored histone PTMs, discovering 60 novel marks on core histones and 13 on linker histones [2].

The following decision framework illustrates how to select the appropriate MS approach based on specific research goals:

The selection of appropriate mass spectrometry approaches is fundamental to generating reproducible, reliable histone modification data. Bottom-up proteomics offers high throughput and sensitivity for comprehensive PTM screening, while middle-down excels at analyzing combinatorial modifications on histone tails. Top-down proteomics provides the most complete characterization of intact proteoforms but requires advanced instrumentation.
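The trade-offs summarized above can be written down as a small decision helper. This is only a sketch that encodes the three statements in the preceding paragraph; it is not a reconstruction of any published decision figure, and the function name, parameters, and caveat wording are assumptions made for illustration.

```python
def suggest_ms_approach(need_intact_proteoforms,
                        need_combinatorial_tail_ptms,
                        has_advanced_instrumentation=True):
    """Return (approach, caveat) for a histone PTM study.

    Encodes only the qualitative guidance in the text:
    - top-down: most complete view of intact proteoforms, but needs
      advanced instrumentation
    - middle-down: combinatorial modifications on histone tails
    - bottom-up: high-throughput, sensitive PTM screening
    """
    if need_intact_proteoforms:
        caveat = None
        if not has_advanced_instrumentation:
            caveat = "top-down requires advanced instrumentation"
        return "top-down", caveat
    if need_combinatorial_tail_ptms:
        return "middle-down", None
    return "bottom-up", None


# Hypothetical usage for three different study goals.
print(suggest_ms_approach(False, False))        # screening study
print(suggest_ms_approach(False, True))         # tail PTM crosstalk
print(suggest_ms_approach(True, False, False))  # proteoforms, limited instrument
```

A real selection would also weigh sample amount, throughput needs, and available software, none of which this sketch models.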

For research focused on reproducibility assessment in histone modification studies, integrating multiple approaches provides the most robust validation. The consistent epigenetic signatures identified in breast cancer subtypes using MS-based profiling [29], together with the comparable accuracy demonstrated between bottom-up and middle-down methodologies [27] [32], highlight the maturity of MS platforms for reliable epigenetic research. As mass spectrometry technologies continue to advance alongside emerging bioinformatic tools like HiP-Frag [2] and integrated workflows [34], researchers are better equipped than ever to generate reproducible, biologically meaningful histone PTM data that accelerates both basic epigenetic discovery and clinical translation.

Chromatin Immunoprecipitation followed by sequencing (ChIP-seq) has established itself as a foundational methodology for generating genome-wide maps of histone modifications and transcription factor binding. However, the reproducibility of ChIP-seq-derived histone modification data is limited primarily by antibody specificity and cross-reactivity. These technical variables substantially affect data reliability and comparative analysis across experimental conditions and laboratories. The core of the ChIP-seq technique involves immunoprecipitation of crosslinked protein-DNA complexes using antibodies specific to the target epitope, followed by high-throughput sequencing of the purified DNA [35]. While this approach has enabled remarkable insights into the epigenomic landscape, the performance characteristics of antibodies (their affinity, specificity, and tolerance to experimental conditions) remain critical determinants of data quality. As the field moves toward more quantitative comparisons and large-scale consortia like ENCODE, rigorous validation of antibody-based techniques becomes paramount for ensuring reproducible and biologically meaningful results in histone modification research.

ChIP-seq Methodology: Workflows and Critical Validation Steps

Core Experimental Protocol

The standard ChIP-seq protocol encompasses multiple critical steps, each requiring optimization to ensure high-quality results. First, proteins are crosslinked to DNA in living cells using formaldehyde, preserving in vivo protein-DNA interactions [35]. Chromatin is then isolated and fragmented, typically by sonication with instruments such as the Covaris LE220 ultrasonicator or Bioruptor, to generate fragments of 200 to 600 base pairs [35] [36]. The immunoprecipitation step follows, in which specific antibodies capture the protein-DNA complexes of interest; magnetic beads pre-coated with protein A/G are commonly used for this capture. After extensive washing to remove non-specifically bound material, crosslinks are reversed and the immunoprecipitated DNA is purified [35]. This DNA then undergoes library preparation for next-generation sequencing, which may involve specialized amplification approaches to minimize background when working with limited material [36].
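One simple way to check the fragmentation step is to ask what fraction of measured fragment lengths falls inside the 200 to 600 base pair window. The sketch below assumes fragment lengths are already available as a list of integers (for example, exported from an electrophoresis trace or inferred from paired-end alignments). The function name and example values are hypothetical.

```python
def fraction_in_range(fragment_lengths, low=200, high=600):
    """Fraction of fragments whose length lies in [low, high] base pairs."""
    if not fragment_lengths:
        raise ValueError("no fragment lengths supplied")
    in_range = sum(1 for length in fragment_lengths if low <= length <= high)
    return in_range / len(fragment_lengths)


# Hypothetical fragment lengths in base pairs.
lengths = [150, 220, 310, 450, 590, 720, 260, 380]
share = fraction_in_range(lengths)
print(f"{share:.0%} of fragments fall within 200-600 bp")
```

What counts as an acceptable fraction depends on the cell type and crosslinking conditions, so no pass/fail threshold is built in.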

Antibody Validation Frameworks

Comprehensive antibody validation represents the most crucial component for ensuring ChIP-seq reproducibility. Leading antibody providers have established rigorous validation pipelines that extend beyond simple ChIP-qPCR confirmation. According to Cell Signaling Technology, ChIP-seq validated antibodies undergo a multi-tiered validation process that includes: (1) demonstration of acceptable signal-to-noise ratios for target enrichment across the genome compared to input controls; (2) achievement of a minimum threshold of defined enrichment peaks; (3) motif analysis for transcription factor targets to confirm biological relevance; (4) comparison using multiple antibodies against distinct epitopes of the same target protein; and (5) benchmarking against published reference data from consortia like ENCODE [37]. This comprehensive approach addresses both technical performance (sensitivity and specificity) and biological relevance of the obtained data.

Table 1: Key Validation Metrics for ChIP-seq Antibodies

| Validation Metric | Description | Acceptance Criteria |
|---|---|---|
| Signal-to-Noise Ratio | Comparison of target enrichment to input control across genome | Minimum threshold compared to input chromatin [37] |
| Peak Number | Count of defined enrichment regions | Acceptable minimum number based on biological expectation [37] |
| Motif Enrichment | For transcription factors, analysis of enriched DNA sequences | Significant enrichment for known binding motifs [37] |
| Epitope Comparison | Consistency across antibodies targeting different epitopes | High correlation in enrichment profiles [37] |
| Reference Benchmarking | Comparison to established datasets (e.g., ENCODE) | Recapitulation of known genomic distribution patterns [37] [38] |
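The epitope comparison metric can be illustrated with a short calculation. The sketch below computes per-bin log2 enrichment over input for two antibodies and then their Pearson correlation. It assumes read counts have already been binned and depth-normalized. The pseudocount, bin values, and function names are assumptions for illustration and do not represent a vendor's actual validation pipeline.

```python
import math


def log2_enrichment(ip_counts, input_counts, pseudocount=1.0):
    """Per-bin log2(IP / input); the pseudocount avoids division by zero."""
    if len(ip_counts) != len(input_counts):
        raise ValueError("IP and input must cover the same bins")
    return [math.log2((ip + pseudocount) / (inp + pseudocount))
            for ip, inp in zip(ip_counts, input_counts)]


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation undefined for a constant profile")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical binned counts for one input and two antibodies.
input_bins = [10, 12, 9, 11, 10, 13]
antibody_a = [12, 90, 10, 60, 11, 14]
antibody_b = [11, 70, 9, 55, 12, 13]

r = pearson(log2_enrichment(antibody_a, input_bins),
            log2_enrichment(antibody_b, input_bins))
print(f"Pearson r between antibody profiles: {r:.3f}")
```

What counts as "high correlation" is not specified in the source, so any cutoff applied to `r` would need to be chosen and justified by the laboratory.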

Comparative Analysis of ChIP-seq and Emerging Alternatives

Performance Benchmarking: ChIP-seq vs. CUT&Tag

Recent systematic comparisons between ChIP-seq and Cleavage Under Targets & Tagmentation (CUT&Tag) provide valuable insights into their relative performance characteristics. A comprehensive benchmarking study evaluating H3K27ac and H3K27me3 profiling in K562 cells revealed that CUT&Tag recovers approximately 54% of known ENCODE ChIP-seq peaks for both histone modifications [38]. This study implemented a rigorous computational workflow to evaluate multiple experimental parameters, including antibody sources, dilutions, and library preparation methods. The recovered peaks predominantly represented the strongest ENCODE peaks and showed similar functional and biological enrichments, suggesting that CUT&Tag effectively captures the most biologically relevant signals while requiring substantially fewer cells (approximately 200-fold reduction) and lower sequencing depth (10-fold reduction) compared to ChIP-seq [38].
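A recovery figure of this kind is, at its simplest, the fraction of reference peaks overlapped by at least one peak from the test dataset. The sketch below shows that calculation on half-open genomic intervals. It is not the benchmarking study's actual workflow: the overlap rule (any overlap of one base or more), the function name, and the example coordinates are assumptions.

```python
from collections import defaultdict


def recovered_fraction(reference_peaks, test_peaks):
    """Fraction of reference peaks overlapped by at least one test peak.

    Peaks are (chromosome, start, end) tuples with half-open coordinates.
    """
    if not reference_peaks:
        raise ValueError("reference peak set is empty")
    by_chrom = defaultdict(list)
    for chrom, start, end in test_peaks:
        by_chrom[chrom].append((start, end))
    recovered = 0
    for chrom, start, end in reference_peaks:
        # Two half-open intervals overlap when each starts before the other ends.
        if any(s < end and start < e for s, e in by_chrom[chrom]):
            recovered += 1
    return recovered / len(reference_peaks)


# Hypothetical reference (e.g. ChIP-seq) and test (e.g. CUT&Tag) peaks.
reference = [("chr1", 100, 200), ("chr1", 500, 600),
             ("chr2", 100, 300), ("chr2", 1000, 1100)]
test = [("chr1", 150, 250), ("chr2", 250, 400)]
recovery = recovered_fraction(reference, test)
print(f"Recovered {recovery:.0%} of reference peaks")
```

For genome-scale peak sets, a sorted sweep or an interval tree would be faster than this pairwise scan, but the definition of recovery stays the same.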

Technical Considerations Across Methods

The choice between ChIP-seq and its alternatives involves trade-offs that must be considered within specific experimental contexts. Traditional ChIP-seq requires substantial starting material (typically 1-10 million cells) and exhibits limitations in signal-to-noise ratio due to non-specific immunoprecipitation and background from crosslinking [38]. In contrast, CUT&Tag operates under native conditions without crosslinking, utilizes an enzyme-tethering approach for targeted tagmentation, and maintains DNA fragments within permeabilized nuclei throughout the process, minimizing sample loss [38]. However, concerns about the comprehensive capture of regulatory elements remain, as evidenced by the partial overlap with ENCODE references. For specialized applications requiring absolute quantification, Internal Standard Calibrated ChIP (ICeChIP) incorporates spike-in nucleosomes with defined modifications to measure histone modification densities on a biologically meaningful scale, enabling unbiased cross-experimental comparisons [39].

Table 2: Method Comparison for Histone Modification Profiling

| Parameter | Traditional ChIP-seq | CUT&Tag | cChIP-seq | ICeChIP |
|---|---|---|---|---|
| Cell Input | 1-10 million [38] [40] | ~5,000 [38] | As few as 10,000 [36] | Similar to ChIP-seq [39] |
| Crosslinking | Required (formaldehyde) [35] | Not required [38] | Required [36] | Required [39] |
| Sequencing Depth | High (10-50 million reads) [38] | Low (2-5 million reads) [38] | Similar to ChIP-seq [36] | Similar to ChIP-seq [39] |
| ENCODE Peak Recovery | Reference standard | ~54% [38] | Equivalent with proper optimization [36] | Enables absolute quantification [39] |
| Key Advantage | Established benchmark | Low cell input, high signal-to-noise | Robust low-cell implementation | Absolute quantification |
| Limitation | High cell input, crosslinking artifacts | Incomplete peak recovery | Carrier optimization | Complex experimental setup |

Addressing Technical Challenges in Antibody-Based Chromatin Profiling

Strategies for Limited Cell Numbers

Working with rare cell populations or clinical samples often necessitates approaches requiring minimal cell input. Several methods have been developed to address this challenge. Carrier ChIP-seq (cChIP-seq) employs DNA-free recombinant histone carriers to maintain working reaction scales without introducing exogenous DNA that would compromise sequencing libraries [36]. This approach has been successfully applied to profile H3K4me3, H3K4me1, and H3K27me3 starting from as few as 10,000 cells, yielding data equivalent to reference epigenomic maps produced from three orders of magnitude more cells [36]. Similarly, the PerCell methodology integrates cellular spike-in ratios of orthologous species' chromatin with a bioinformatic pipeline to enable quantitative comparisons across experimental conditions and cellular contexts [41]. These approaches maintain the fundamental antibody-based enrichment principle while adapting it to limited input material.
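The general idea behind orthologous spike-in normalization can be shown with a generic scale factor. The sketch below is not the PerCell pipeline itself; it is a common form of exogenous spike-in scaling in which target counts are rescaled by the inverse of the spike-in read count. It assumes the same spike-in ratio was added to every sample, and all names and numbers are hypothetical.

```python
def spike_in_scale_factor(spike_in_reads, per=1_000_000):
    """Scale factor inversely proportional to reads mapped to the spike-in genome."""
    if spike_in_reads <= 0:
        raise ValueError("spike-in read count must be positive")
    return per / spike_in_reads


def normalize_counts(target_counts, spike_in_reads):
    """Rescale per-region target counts by the sample's spike-in factor."""
    factor = spike_in_scale_factor(spike_in_reads)
    return [count * factor for count in target_counts]


# Two hypothetical samples with identical raw counts at two regions
# but different numbers of reads mapping to the spike-in genome.
control = normalize_counts([100, 400], spike_in_reads=2_000_000)
treated = normalize_counts([100, 400], spike_in_reads=500_000)
print(control, treated)
```

Here the treated sample yields fewer spike-in reads for the same raw target counts, implying that target chromatin made up more of its immunoprecipitate, so its normalized signal is scaled up relative to the control.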

Quantitative Comparison Methodologies

Traditional ChIP-seq provides relative enrichment measurements that complicate direct comparisons between experiments or conditions. Recent innovations address this limitation through internal standardization strategies. The PerCell approach combines well-defined cellular spike-in ratios with a flexible bioinformatic pipeline to facilitate highly quantitative comparisons of 2D chromatin sequencing across experimental conditions [41]. Similarly, ICeChIP spikes native chromatin samples with nucleosomes reconstituted from recombinant and semisynthetic histones on barcoded DNA prior to immunoprecipitation, enabling measurement of local histone modification densities on a biologically meaningful scale [39]. These methods provide critical tools for normalizing technical variability and enabling more rigorous assessment of histone modification dynamics across cell states, developmental timepoints, and disease conditions.
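For nucleosome-standard calibration of the ICeChIP type, the quantity of interest is usually expressed as a histone modification density (HMD): the apparent IP efficiency at a locus divided by the IP efficiency of the fully modified barcoded standard. The sketch below reflects that general formulation as an assumption; the function name, argument names, and read counts are hypothetical and not taken from the cited protocol.

```python
def histone_modification_density(ip_locus, input_locus,
                                 ip_standard, input_standard):
    """Histone modification density (percent) at one locus.

    HMD = 100 * (IP_locus / input_locus) / (IP_standard / input_standard)

    The standard is assumed to carry the modification on every nucleosome,
    so its IP/input ratio defines 100% on the density scale.
    """
    if input_locus <= 0 or input_standard <= 0 or ip_standard <= 0:
        raise ValueError("input and standard read counts must be positive")
    standard_efficiency = ip_standard / input_standard
    locus_efficiency = ip_locus / input_locus
    return 100.0 * locus_efficiency / standard_efficiency


# Hypothetical read counts for a locus and for the barcoded standard.
hmd = histone_modification_density(ip_locus=40, input_locus=100,
                                   ip_standard=800, input_standard=1000)
print(f"HMD at locus: {hmd:.1f}%")
```

Because the locus and the standard pass through the same immunoprecipitation, the ratio cancels experiment-specific pull-down efficiency, which is what makes values comparable across experiments.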

Table 3: Research Reagent Solutions for ChIP-seq Experiments

| Reagent Category | Specific Examples | Function & Application Notes |
|---|---|---|
| Validated Antibodies | Anti-H3K4me3 (CST #9751S) [35], Anti-H3K27ac (Abcam-ab4729) [38], Anti-H3K27me3 (CST #9733S) [35] | Target-specific immunoprecipitation; selection of ChIP-seq validated antibodies critical for success [37] |
| Chromatin Shearing Instruments | Covaris LE220 [36], Bioruptor (Diagenode) [35] | Chromatin fragmentation to appropriate size distribution; parameters require optimization for cell type and crosslinking conditions |
| Library Preparation Kits | Illumina Sequencing Kits [35] | Preparation of sequencing libraries; may require modifications for low-input applications [36] |
| Spike-in Controls | Recombinant nucleosomes (ICeChIP) [39], Orthologous chromatin (PerCell) [41] | Normalization for technical variability and quantitative comparisons across conditions |
| Validation Resources | ENCODE reference datasets [36] [38], Positive control primers [35] | Benchmarking experimental results against community standards |

The evolving landscape of antibody-based chromatin profiling techniques presents researchers with multiple options tailored to specific experimental needs and sample limitations. Traditional ChIP-seq remains the benchmarked standard with established validation frameworks, while emerging methods like CUT&Tag offer advantages in sensitivity and required input. Critical to all approaches is the rigorous validation of antibody specificity and the implementation of appropriate controls to ensure reproducible results. As the field advances, the integration of spike-in standards and quantitative normalization methods will further enhance our ability to compare histone modification data across experiments and laboratories. By carefully considering the performance characteristics, limitations, and appropriate applications of each method, researchers can generate more reliable and interpretable epigenomic data that advances our understanding of gene regulatory mechanisms in health and disease.