Ensuring Reproducible PCA: A Comprehensive Framework for Validating Principal Components in Biomedical Research

Abstract

Principal Component Analysis (PCA) is a foundational tool in biomedical research for dimensionality reduction and pattern discovery. However, its reproducibility across datasets is a critical and often overlooked challenge, with implications for the validity of scientific conclusions in areas like drug development and population genetics. This article provides a structured framework for researchers and scientists to assess and ensure the reproducibility of PCA components. We begin by exploring the core concepts of PCA and the fundamental threats to its reproducibility. We then detail robust methodological workflows for application, systematic troubleshooting strategies to address common pitfalls, and finally, rigorous validation and comparative techniques. By integrating insights from recent studies on PCA reliability with practical guidance, this resource aims to empower professionals to implement reproducible PCA practices, thereby enhancing the credibility of their data-driven findings.

The Reproducibility Challenge: Core Concepts and Critical Threats to Reliable PCA

Principal Component Analysis (PCA) stands as a cornerstone technique for dimensionality reduction in data analysis and machine learning. This guide provides an objective primer on PCA, detailing its core mechanisms with a specific focus on interpreting explained variance. Framed within the critical context of assessing the reproducibility of PCA components across datasets, this review synthesizes standard protocols and compares PCA's performance against emerging alternatives. Supporting experimental data and structured comparisons are presented to equip researchers and drug development professionals with the practical knowledge to apply PCA robustly in high-dimensional biological research.

Principal Component Analysis (PCA) is a powerful statistical technique used for dimensionality reduction, which simplifies complex datasets by transforming correlated variables into a smaller set of uncorrelated principal components [1] [2]. These components are linear combinations of the original variables and are designed to capture the maximum possible variance within the data, with the first component accounting for the most variance, the second for the largest share of the remaining variance (while staying uncorrelated with the first), and so on [3]. The concept of "explained variance" is central to PCA, as it quantifies the proportion of the dataset's total variability that is captured by each successive component [4] [5]. This allows researchers to reduce the number of dimensions while retaining the most significant information, thereby improving computational efficiency and facilitating data visualization [1].

However, applying PCA to modern biological research, such as single-cell RNA-sequencing (scRNA-seq) studies of neurodegenerative diseases, reveals a significant challenge: the reproducibility of PCA components across different datasets can be poor [6]. For instance, a 2025 meta-analysis found that differentially expressed genes (DEGs) identified from individual Alzheimer's disease (AD) and Schizophrenia (SCZ) datasets had poor predictive power for the case-control status of other datasets, highlighting a concerning level of variability in results derived from single studies [6]. This reproducibility crisis underscores the necessity for standardized meta-analysis methods and a deeper understanding of how to stabilize PCA outcomes, making the mastery of its core concepts not just beneficial, but essential for generating reliable scientific insights.

Core Concepts: How PCA Works and Variance Explained

The Mathematical Foundation of PCA

PCA operates through a series of defined steps rooted in linear algebra. The process begins with data standardization, where each feature is centered to have a mean of zero and scaled to have a standard deviation of one [1] [2]. This crucial step ensures that variables with larger scales do not disproportionately dominate the analysis. The next step involves computing the covariance matrix, which reveals the relationships and correlations between different features [1] [3]. The core of PCA lies in the eigen decomposition of this covariance matrix, which yields eigenvectors and eigenvalues [1]. The eigenvectors define the directions of the new feature space—these are the principal components themselves. The corresponding eigenvalues quantify the amount of variance carried by each of these directions [1] [4]. The final step involves projecting the original data onto the selected principal components, effectively creating a new, lower-dimensional dataset [2].
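The four steps above can be sketched directly in NumPy. This is a minimal illustration, not a reference implementation: the function name `pca_from_scratch` and the synthetic data are assumptions introduced here, and the sample standard deviation (`ddof=1`) is one of several reasonable standardization choices.

```python
import numpy as np

def pca_from_scratch(X, k):
    """Standardize X, eigendecompose its covariance matrix, project onto the top-k components."""
    # Step 1: standardize each feature to mean 0 and (sample) standard deviation 1
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    # Step 2: covariance matrix of the standardized features
    C = np.cov(Z, rowvar=False)
    # Step 3: eigendecomposition; eigh is meant for symmetric matrices and
    # returns eigenvalues in ascending order, so reorder to descending
    eigenvalues, eigenvectors = np.linalg.eigh(C)
    order = np.argsort(eigenvalues)[::-1]
    eigenvalues, eigenvectors = eigenvalues[order], eigenvectors[:, order]
    # Step 4: project the standardized data onto the first k eigenvectors
    scores = Z @ eigenvectors[:, :k]
    return eigenvalues, eigenvectors, scores

# Synthetic data (illustrative only): 200 samples, 4 features, two of them correlated
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 4))
X[:, 1] += 2.0 * X[:, 0]

eigenvalues, eigenvectors, scores = pca_from_scratch(X, k=2)
print(eigenvalues)   # variance carried by each component, largest first
print(scores.shape)  # (200, 2)
```

Because the data are standardized, the eigenvalues sum to the number of features, and the variance of each column of `scores` equals the corresponding eigenvalue.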

Demystifying "Variance Explained"

The "variance explained" by a principal component is a direct function of its eigenvalue. Specifically, the fraction of total variance explained by a single component is calculated as the ratio of its eigenvalue to the sum of all eigenvalues [4] [5] [7]. If \( \lambda_i \) is the eigenvalue for the \( i^{\text{th}} \) principal component and there are \( n \) components in total, then its explained variance ratio is:

\[ \text{Explained Variance Ratio}_i = \frac{\lambda_i}{\lambda_1 + \lambda_2 + \dots + \lambda_n} \]

The sum of all eigenvalues equals the total variance in the original (standardized) data [7]. Therefore, by ranking the eigenvectors in descending order of their eigenvalues, we obtain the principal components in order of significance [2]. The cumulative explained variance is simply the sum of the explained variances for the first \( k \) components, providing a metric to decide how many components to retain. A common practice is to choose the number of components that capture a sufficiently high percentage (e.g., 95%) of the total variance [1] [3].
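As a small worked example of the ratio and the cumulative rule, the snippet below uses four hypothetical eigenvalues (chosen for illustration, not taken from any cited study) and finds the smallest number of components whose cumulative explained variance reaches a 95% threshold.

```python
import numpy as np

# Hypothetical eigenvalues for four standardized features (they sum to 4)
eigenvalues = np.array([2.92, 0.91, 0.15, 0.02])

# Explained variance ratio: each eigenvalue divided by the sum of all eigenvalues
explained_ratio = eigenvalues / eigenvalues.sum()

# Cumulative explained variance for the first k components
cumulative = np.cumsum(explained_ratio)

# Smallest k whose cumulative ratio reaches the threshold
threshold = 0.95
k = int(np.argmax(cumulative >= threshold)) + 1

print(explained_ratio)
print(cumulative)
print(k)
```

With these values the first two components together account for 95.75% of the variance, so two components satisfy the 95% rule.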

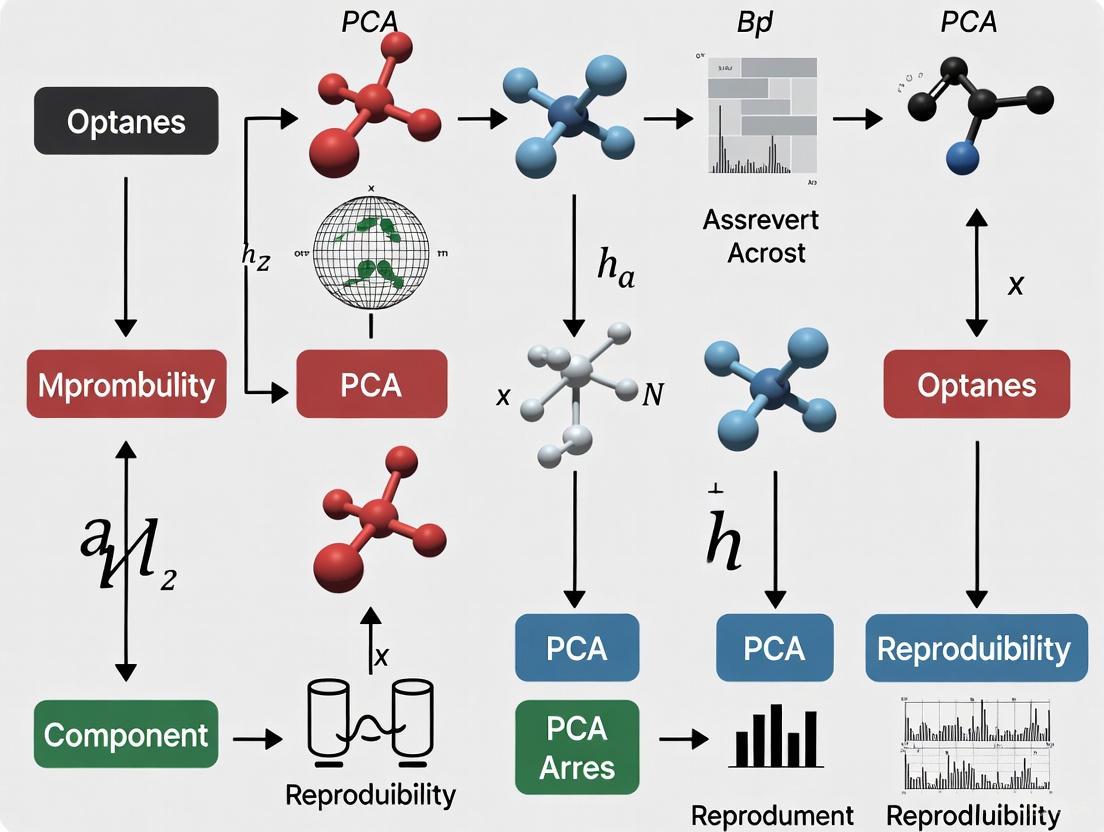

The following diagram illustrates the logical workflow of a PCA analysis and the pivotal role of explained variance in guiding decision-making.

Experimental Protocols for PCA

Standard PCA Workflow with Python Code

Implementing PCA effectively requires a structured protocol. The following methodology, utilizing Python and scikit-learn, outlines the key steps for performing PCA and evaluating the explained variance, which is critical for assessing component reproducibility.

Protocol 1: Standard PCA and Explained Variance Analysis

- Import Libraries: Import the necessary Python libraries, including pandas for data handling, StandardScaler for standardization, and PCA from sklearn.decomposition [1] [5].

- Standardize the Data: Standardization is a non-negotiable prerequisite for PCA. Use StandardScaler to transform the raw data so that each feature has a mean of 0 and a standard deviation of 1. This prevents variables with larger units from biasing the analysis [1] [3].

- Instantiate and Fit PCA: Create a PCA object. Initially, you may leave the number of components unspecified so that all possible components are retained. Fit the PCA model to the standardized data [5] [3].

- Calculate Explained Variance: After fitting the model, access the explained_variance_ratio_ attribute. This returns an array of the variance explained by each principal component, listed in descending order [5].

- Visualize the Explained Variance: Create a scree plot to visualize the explained variance. Plot the individual explained variances as a bar chart and the cumulative explained variance as a step plot. This visual aid is indispensable for deciding how many components to retain [5].

- Transform the Data: Once the number of components, \( k \), is chosen (e.g., enough to capture 95% of the variance), project the original standardized data onto these \( k \) components using the transform method, resulting in the new, lower-dimensional dataset [1].

A Practical Example: Iris Dataset

To illustrate, applying PCA to the classic Iris dataset (with 4 features) reveals how explained variance guides dimensionality reduction. The analysis might show that the first principal component explains 73% of the variance, the second explains 23%, and the last two explain the remaining 4% [3]. This means the 4D data can be effectively reduced to 2D while retaining over 95% of the original variance, demonstrating a powerful trade-off between simplicity and information loss [3].
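Protocol 1 applied to the Iris data might look like the sketch below. It uses scikit-learn's bundled copy of the dataset and omits the scree plot to stay short; passing a float to `n_components` asks scikit-learn to keep the smallest number of components whose cumulative explained variance exceeds that fraction. Variable names are illustrative.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

# Load the 150 x 4 feature matrix and standardize each feature
X = load_iris().data
X_std = StandardScaler().fit_transform(X)

# Fit PCA with all components retained to inspect the variance profile
pca_full = PCA().fit(X_std)
ratios = pca_full.explained_variance_ratio_
cumulative = np.cumsum(ratios)
print(np.round(ratios, 3))
print(np.round(cumulative, 3))

# Keep just enough components to exceed 95% cumulative explained variance
pca_95 = PCA(n_components=0.95).fit(X_std)
X_reduced = pca_95.transform(X_std)
print(X_reduced.shape)
```

On the standardized data the first two ratios come out close to the 73% and 23% quoted above, so the reduced matrix has two columns.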

Comparative Performance of PCA and Modern Alternatives

While PCA is a foundational technique, several alternatives have been developed to address its limitations, particularly in comparative analyses. The table below summarizes key methods.

Table 1: Comparison of Dimensionality Reduction Techniques for Comparative Analysis

| Method | Core Objective | Key Functionality | Advantages | Disadvantages/Limitations |

|---|---|---|---|---|

| Principal Component Analysis (PCA) [1] [3] | Find dimensions of maximum variance in a single dataset. | Unsupervised, orthogonal linear transformation. | Simple, fast, and well-understood. Excellent for exploratory data analysis. | Cannot directly compare covariance structures between two conditions. |

| Linear Discriminant Analysis (LDA) [8] | Find dimensions that best separate predefined classes. | Supervised dimensionality reduction. | Optimizes for class separability, often leading to better predictive performance for classification. | Requires class labels. Does not compare covariance structures, only means. |

| Contrastive PCA (cPCA) [8] | Find dimensions enriched in a target dataset relative to a background dataset. | Eigendecomposition of (C_target - α*C_background). | Identifies patterns specific to a target condition, useful for highlighting differences. | Requires a hyperparameter (α) with no objective criteria for selection, leading to multiple potential solutions. |

| Generalized Contrastive PCA (gcPCA) [8] | Symmetrically find patterns differing between two datasets. | Solves a generalized eigenvalue problem with normalization. | Hyperparameter-free, provides unique solutions, less biased towards high-variance dimensions. | A newer method with less established adoption compared to PCA. |

The challenge of reproducibility is directly addressed by methods like gcPCA. As noted in a 2025 study, cPCA's need for a hyperparameter (α) means it can produce multiple, equally plausible solutions with no way to determine which is correct without prior knowledge [8]. This directly impacts the reproducibility of components across studies. In contrast, gcPCA introduces a normalization factor that penalizes high-variance dimensions prone to noisy estimation, thereby eliminating the need for a hyperparameter and yielding more stable, reproducible results [8].
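The dependence of cPCA on α can be seen in a toy sketch built from the formula in Table 1, the eigendecomposition of (C_target - α*C_background). The synthetic data, the function name `contrastive_directions`, and the two α values are assumptions made for illustration; this is not the gcPCA toolbox and does not implement gcPCA's normalization.

```python
import numpy as np

def contrastive_directions(target, background, alpha):
    """Eigendecompose C_target - alpha * C_background, largest eigenvalue first."""
    C_t = np.cov(target, rowvar=False)
    C_b = np.cov(background, rowvar=False)
    eigenvalues, eigenvectors = np.linalg.eigh(C_t - alpha * C_b)
    order = np.argsort(eigenvalues)[::-1]
    return eigenvalues[order], eigenvectors[:, order]

rng = np.random.default_rng(0)
# Both datasets share a high-variance axis 0; only the target has extra variance on axis 1
background = rng.normal(size=(2000, 3)) * np.array([3.0, 1.0, 1.0])
target = rng.normal(size=(2000, 3)) * np.array([3.0, 2.0, 1.0])

_, dirs_alpha0 = contrastive_directions(target, background, alpha=0.0)
_, dirs_alpha1 = contrastive_directions(target, background, alpha=1.0)
top_alpha0 = dirs_alpha0[:, 0]  # alpha = 0 reduces to ordinary PCA on the target
top_alpha1 = dirs_alpha1[:, 0]  # alpha = 1 subtracts the shared background variance

print(np.round(np.abs(top_alpha0), 2))
print(np.round(np.abs(top_alpha1), 2))
```

In this construction the leading direction switches from the shared high-variance axis to the target-specific axis as α changes, which is the kind of α-dependent answer the paragraph above describes.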

Table 2: Sample Explained Variance Output from a PCA Analysis

| Principal Component Index | Individual Explained Variance Ratio | Cumulative Explained Variance Ratio |

|---|---|---|

| 1 | 0.847 | 0.847 |

| 2 | 0.103 | 0.950 |

| 3 | 0.030 | 0.980 |

| 4 | 0.020 | 1.000 |

The Scientist's Toolkit: Essential Research Reagents

Successful application of PCA and related methods in computational research relies on a suite of software "reagents." The following table details key resources for implementing the analyses discussed in this guide.

Table 3: Key Research Reagent Solutions for PCA and Comparative Analysis

| Tool / Resource Name | Type/Function | Key Use-Case | Implementation Example |

|---|---|---|---|

| scikit-learn [1] [5] | Python machine learning library. | Provides the PCA class for easy implementation of standard PCA, including calculation of explained_variance_ratio_. | from sklearn.decomposition import PCA |

| MDAnalysis [9] | Python toolkit for molecular dynamics (MD) trajectories. | Enables PCA on protein structural ensembles from MD simulations to analyze conformational changes and dynamics. | Analyzing protein flexibility and ligand binding effects in drug discovery. |

| gcPCA Toolbox [8] | Open-source toolbox (Python & MATLAB). | Implements generalized contrastive PCA for symmetrically comparing two high-dimensional datasets without hyperparameters. | Identifying transcriptional patterns specific to a disease state versus a control in scRNA-seq data. |

| NumPy & SciPy [5] | Fundamental Python packages for scientific computing. | Perform linear algebra operations (e.g., eigh for eigen decomposition) for custom PCA implementation without scikit-learn. | from numpy.linalg import eigh for custom covariance matrix decomposition. |

PCA remains an indispensable tool for simplifying complex data, with the interpretation of explained variance being paramount for making informed decisions about dimensionality reduction. However, as research increasingly focuses on comparing conditions and ensuring reproducibility, understanding the limitations of standard PCA is critical. Emerging techniques like gcPCA offer promising avenues for overcoming these limitations by providing robust, hyperparameter-free methods for comparative analysis. For researchers in drug development and biomedicine, mastering both the foundational principles of PCA and the capabilities of these next-generation tools is key to extracting reliable and reproducible insights from high-dimensional data.

Principal Component Analysis (PCA) is a cornerstone of multivariate statistics, widely used for reducing the complexity of datasets while preserving data covariance. Its ability to create intuitive, colorful scatterplots has made it a favored tool across scientific disciplines, from population genetics to drug development. However, a growing body of evidence reveals a concerning reality: PCA results are highly sensitive to analytical choices and data characteristics, potentially undermining the reproducibility of scientific findings. This guide examines the sources of PCA's variability and provides a framework for assessing its reliability in research.

The Core of the Crisis: Why PCA Results Diverge

PCA, developed by Karl Pearson in 1901, is designed to transform high-dimensional data into a set of linear, uncorrelated components that capture maximum variance. Despite its mathematical elegance, PCA possesses inherent characteristics that make it susceptible to producing irreproducible results:

- Data Sensitivity: PCA outcomes are heavily influenced by data composition, including sample selection, outliers, and data preprocessing methods.

- Interpretive Subjectivity: Researchers must make subjective decisions about which components to retain and how to interpret scatterplot patterns.

- Parameter Flexibility: The lack of standardized guidelines for key analytical choices creates room for variability.

One study raised direct concerns about PCA's reliability in scientific investigations. Its authors demonstrated that "PCA results can be artifacts of the data and can be easily manipulated to generate desired outcomes," which points to fundamental reproducibility challenges [10].

Experimental Evidence: Documenting PCA Instability

Population Genetics Case Study

A comprehensive assessment in population genetics analyzed twelve test cases using both color-based models and human population data [10]. The findings were striking:

- Contradictory Conclusions: PCA supported multiple opposing arguments in the same debate when analytical parameters were modified.

- Dimensionality Reduction Artifacts: In a controlled color model where the "true" relationships were known (colors existing in 3D RGB space), PCA failed to accurately represent distances between primary colors in 2D projections.

- Marker Sensitivity: Varying the selection of genetic markers produced fundamentally different population clusters and relationships.

The study concluded that "PCA results may not be reliable, robust, or replicable as the field assumes," noting that between 32,000 and 216,000 genetic studies may need reevaluation due to these methodological concerns [10].

Transcriptomics and Cell Culture Research

Research on cell passage numbers revealed how biological variables affect PCA reproducibility. In a study of tumor cell lines (ACHN and Renca) from passage 3 to 39, researchers observed significant "transcriptomic drift" across passages [11]. The PCA results showed:

- Nonlinear Expression Patterns: Gene expression changes followed a "fluctuating in the middle, stable at both ends" pattern, with mid-passage cells (P10-P17) showing the most transcriptional activity.

- Temporal Dynamics: The gradual dispersion of samples in PCA space across passages complicated direct comparisons between experiments using cells at different passage numbers.

- Biological Interpretation Challenges: Varying numbers of differentially expressed genes across passages (e.g., 1,276 upregulated in P10 vs. P3 versus only 201 in P39 vs. P3 in ACHN cells) affected PCA clustering patterns [11].

Physical Anthropology and Morphometrics

In physical anthropology, researchers applying geometric morphometrics to papionin crania found that "PCA outcomes are artefacts of the input data and are neither reliable, robust, nor reproducible as field members may assume" [12]. Key issues included:

- Landmark Dependency: The use of different landmark types (Type 1, 2, or 3) and semi-landmarks significantly altered PCA outcomes.

- Subjective Interpretation: Researchers selectively presented PC combinations that supported their hypotheses while ignoring contradictory patterns in other components.

- Taxonomic Confusion: In the case of Homo Nesher Ramla, different PC combinations (PC1-PC2 vs. PC2-PC3) produced conflicting phylogenetic placements [12].

Table 1: Documented PCA Reproducibility Challenges Across Disciplines

| Research Domain | Primary Reproducibility Challenge | Impact on Results |

|---|---|---|

| Population Genetics [10] | Sensitivity to population and marker selection | Altered population clustering and ancestry inferences |

| Cell Biology [11] | Biological variability (passage effects) | Transcriptomic drift complicates cross-study comparisons |

| Physical Anthropology [12] | Landmark choice and semi-landmark alignment | Conflicting taxonomic and evolutionary conclusions |

| Metabolomics [13] | High dimensionality with small sample sizes | Overfitting and spurious pattern detection |

Methodology: How PCA Variability is Assessed

Standard PCA Protocol

The conventional PCA workflow involves several critical steps where variability can be introduced [14] [15]:

- Data Standardization: Continuous variables are normalized to mean = 0 and standard deviation = 1 to prevent bias toward specific features.

- Covariance Matrix Computation: Measures how variables deviate from the mean together.

- Eigenvalue Decomposition: Identifies principal components as eigenvectors of the covariance matrix.

- Component Selection: Researchers choose how many components to retain based on eigenvalues or variance explained.

- Data Projection: Original data is projected onto the new component space.

Experimental Designs for Testing Reproducibility

Color-Based Benchmarking

The color model approach uses RGB color space as a ground truth for testing PCA performance [10]. Since all colors consist of three dimensions (red, green, blue), they can be plotted in 3D space representing true relationships. PCA reduces this to 2D, allowing researchers to measure how well the projected distances match true color relationships.
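A minimal sketch of this benchmarking idea follows. The choice of the eight corners of the RGB cube as test colors is an assumption made here for illustration, not the color set used in [10]. The code projects the colors to 2D with PCA and compares each pairwise distance in the projection with the true 3D distance.

```python
import numpy as np

# Eight reference colors (corners of the RGB cube); an illustrative choice
colors = np.array([
    [1, 0, 0], [0, 1, 0], [0, 0, 1],   # red, green, blue
    [0, 0, 0], [1, 1, 1],              # black, white
    [1, 1, 0], [0, 1, 1], [1, 0, 1],   # yellow, cyan, magenta
], dtype=float)

def pairwise_distances(P):
    """Euclidean distance between every pair of rows in P."""
    diff = P[:, None, :] - P[None, :, :]
    return np.sqrt((diff ** 2).sum(axis=-1))

# PCA via SVD of the centered data, keeping the first two components
centered = colors - colors.mean(axis=0)
_, _, Vt = np.linalg.svd(centered, full_matrices=False)
projected = centered @ Vt[:2].T

# Ratio of projected to true distance for each distinct pair (1.0 = preserved)
d3 = pairwise_distances(colors)
d2 = pairwise_distances(projected)
iu = np.triu_indices(len(colors), k=1)
distortion = d2[iu] / d3[iu]

print(np.round(distortion.min(), 3), np.round(distortion.max(), 3))
```

Because the projection discards one dimension, no distance can grow and some must shrink; the spread of `distortion` gives one simple measure of how faithfully the 2D plot represents the known 3D relationships.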

Multi-Scenario Clustering Comparison

Research on health security performance in high-income countries employed a multistage analytical framework comparing three methodological scenarios [16]:

- Scenario 1: Using countries' average scores across nine PCA-derived components

- Scenario 2: Clustering based on 13 high-loading indicators from the first principal component

- Scenario 3: Using aggregated scores across six original GHSI categories

This design enabled direct comparison of how different data representations affected clustering outcomes.

Robustness Testing with Contaminated Data

Studies in image processing introduced outliers (rotated images of cats) into face recognition datasets to test PCA's robustness [14]. Comparing standard PCA with robust variants (Robust Semiparametric PCA) revealed how outlier sensitivity affects feature extraction and image reconstruction accuracy.
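The outlier sensitivity being tested can be illustrated on a much smaller scale than an image dataset. The sketch below uses synthetic 2D data (an assumption for illustration, not the Olivetti faces setup) and shows only how standard PCA reacts to contamination; it does not implement Robust Semiparametric PCA.

```python
import numpy as np

def first_pc(X):
    """Leading eigenvector of the sample covariance matrix."""
    C = np.cov(X, rowvar=False)
    _, eigenvectors = np.linalg.eigh(C)
    return eigenvectors[:, -1]  # eigh sorts ascending, so the last column is PC1

rng = np.random.default_rng(0)
# Clean data: most variance lies along the first axis
clean = rng.normal(size=(200, 2)) * np.array([3.0, 0.5])
# Contamination: 10 identical extreme points far out along the second axis
outliers = np.tile([0.0, 50.0], (10, 1))
contaminated = np.vstack([clean, outliers])

pc1_clean = first_pc(clean)
pc1_contaminated = first_pc(contaminated)

# Angle between the two leading directions (sign of an eigenvector is arbitrary)
angle_deg = np.degrees(np.arccos(abs(pc1_clean @ pc1_contaminated)))
print(np.round(pc1_clean, 2), np.round(pc1_contaminated, 2), round(float(angle_deg), 1))
```

Here fewer than 5% of the points are enough to rotate the first component by close to 90 degrees, which is why outlier screening or a robust variant is worth considering before interpreting components.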

Figure: PCA Workflow with Bias Sources

Comparative Analysis: PCA Performance Across Domains

Table 2: Quantitative Comparison of PCA Reproducibility Factors

| Factor | Impact on Reproducibility | Supporting Evidence | Recommended Mitigation |

|---|---|---|---|

| Sample Size & Composition | High - Explains majority of variance fluctuations | 9 principal components explained 74.50% of variance in health security study, with first component alone contributing 37.62% [16] | Consistent sampling protocols; sample size justification |

| Data Preprocessing | Medium-High - Normalization affects covariance | Data standardization to mean=0, SD=1 prevents feature dominance [15] | Transparent reporting of normalization methods |

| Outlier Presence | High - Significantly shifts components | Robust Semiparametric PCA outperformed standard PCA when outliers were present [14] | Outlier detection and robust PCA variants |

| Component Selection Criteria | Medium - Subjective thresholds affect results | No consensus on PC number; practices range from 2 to 280 components [10] | Objective criteria (e.g., Tracy-Widom, scree plots) |

| Biological Variability | High - Introduces uncontrolled variance | Cell passage number drove transcriptomic drift with 1,276 upregulated genes in P10 vs. P3 [11] | Standardization of biological materials |

The Researcher's Toolkit: Materials & Methods for Reliable PCA

Table 3: Essential Research Reagents and Computational Tools

| Item/Resource | Function in PCA Analysis | Application Context |

|---|---|---|

| SmartPCA (EIGENSOFT) [10] | Implements population genetics-specific PCA with advanced features | Population structure analysis in genetic studies |

| MORPHIX Python Package [12] | Processes landmark data with classifier and outlier detection methods | Geometric morphometrics in physical anthropology |

| Robust Semiparametric PCA [14] | Reduces outlier influence through weighted estimation | Analysis of contaminated datasets or those with extreme values |

| Global Health Security Index Data [16] | Provides standardized metrics for cross-country comparisons | Public health preparedness and capacity assessment |

| Olivetti Faces Dataset [14] | Benchmark for testing image processing and recognition algorithms | Method validation in computer vision research |

| Cell Passage Standardization [11] | Controls for transcriptomic drift in biological experiments | Reproducible cell culture studies |

Strategic Recommendations for Enhanced Reproducibility

Experimental Design Considerations

- Standardize Biological Materials: Control for passage effects in cell cultures by reporting and standardizing passage numbers [11].

- Implement Benchmark Tests: Use color models or other ground-truth datasets to validate PCA performance [10].

- Apply Multiple Scenarios: Conduct sensitivity analyses using different data representations and component selections [16].

Analytical Best Practices

- Address Outlier Sensitivity: Implement robust PCA variants when analyzing data with potential contaminants [14].

- Transparent Reporting: Document all analytical choices, including standardization methods, component selection criteria, and data exclusion rationales.

- Validation with Alternative Methods: Supplement PCA with supervised machine learning classifiers for improved accuracy in classification tasks [12].

Figure: PCA Reproducibility Framework

The evidence from multiple disciplines reveals that PCA, while valuable for exploratory data analysis, carries significant reproducibility risks that researchers must acknowledge and address. The method's sensitivity to data composition, analytical choices, and biological variability means that "identical" analyses can yield different results due to subtle variations in execution.

For researchers in drug development and related fields, the path forward involves:

- Acknowledging PCA's limitations as primarily an exploratory tool

- Implementing robust validation frameworks and sensitivity analyses

- Maintaining rigorous standards for documentation and methodological transparency

- Supplementing PCA with complementary analytical approaches

By adopting these practices, researchers can continue to leverage PCA's strengths for dimensionality reduction and pattern recognition while mitigating the reproducibility concerns that currently challenge its scientific utility.

Principal Component Analysis (PCA) is a foundational technique for dimensionality reduction, widely used across fields from healthcare to genomics. However, the reproducibility and stability of its components are critical for reliable scientific findings. This guide objectively assesses key threats to PCA component stability—sample size, data quality, and algorithmic choices—by comparing experimental data and methodologies from published research. Understanding these factors is essential for researchers, scientists, and drug development professionals who depend on reproducible multivariate data analysis.

Sample Size and Its Impact on Component Stability

Inadequate sample size is a fundamental threat to the development of reliable AI-based prediction models, including those using PCA. Insufficient samples can lead to overfitting, reduce model generalizability, and ultimately produce unstable components that fail to validate on independent datasets [17].
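One simple way to see this effect is a split-sample check: draw two independent samples of the same size from the same population, fit PCA to each, and compare the leading components. The sketch below is an illustrative simulation under assumed population variances (5, 2, 1, 1, 1); the helper names are hypothetical and the similarity measure (absolute cosine between first components) is one of several possible choices.

```python
import numpy as np

def first_pc(X):
    """First principal axis from the SVD of the centered data."""
    Xc = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    return Vt[0]

def split_similarity(n, rng, scales):
    """Absolute cosine similarity of PC1 from two independent samples of size n."""
    A = rng.normal(size=(n, len(scales))) * scales
    B = rng.normal(size=(n, len(scales))) * scales
    return abs(first_pc(A) @ first_pc(B))

rng = np.random.default_rng(0)
# Assumed population: independent features with variances 5, 2, 1, 1, 1
scales = np.sqrt(np.array([5.0, 2.0, 1.0, 1.0, 1.0]))

# Average similarity over 50 repetitions at each sample size
similarity = {
    n: float(np.mean([split_similarity(n, rng, scales) for _ in range(50)]))
    for n in (10, 50, 2000)
}
print(similarity)
```

A similarity near 1 means the two samples agree on the direction of the first component. Running the same check on subsamples of real data is a practical way to judge whether a study's sample size supports the components it reports.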

Experimental Evidence: Sample Size Effects

The following table summarizes findings from research investigating how sample size influences analytical stability:

| Study Focus | Key Finding on Sample Size | Impact on Stability/Performance |

|---|---|---|

| AI-Based Healthcare Models [17] | Most studies lack rationale for sample size; datasets often inadequate for training/evaluation. | Negatively affects model training, evaluation, and performance, with harmful consequences for patient care. |

| Healthcare Prevalence Studies [18] | Convenience samples of 135 hospitals were subsampled to a target of 55 to meet representativeness requirements. | Non-representative sampling introduced distributional bias; structured subsampling methods were required to reduce bias and produce reliable prevalence estimates. |

Experimental Protocol: Assessing Sample Size Adequacy

- Problem Identification: Determine that a convenience sample of 135 units (e.g., hospitals) suffers from over-representation of specific groups (e.g., large hospitals or specific regions) [18].

- Reference Definition: Obtain a national database containing the true distribution of all units according to key characteristics (e.g., hospital size and geographical location) [18].

- Bias Evaluation: Compare the distribution of the convenience sample against the reference population to quantify distributional bias [18].

- Subsampling Application: Apply a structured procedure (e.g., Probability or Distance procedure) to select a subsample of a specific target size (e.g., 55 units) that more closely mirrors the reference population's distribution [18].

- Outcome Comparison: Compare outcome estimates (e.g., disease prevalence) from the original convenience sample and the subsample to assess the impact of improved representativeness [18].
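The bias evaluation and subsampling steps can be sketched with a simple proportional stratified draw. This is a stand-in chosen for brevity, not the Probability or Distance procedure from [18]; the stratum labels, counts, and reference shares below are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical convenience sample of 135 hospitals, labeled by size class
sample_strata = np.array(["small"] * 30 + ["medium"] * 45 + ["large"] * 60)
# Hypothetical shares of each size class in the national reference database
reference_share = {"small": 0.4, "medium": 0.4, "large": 0.2}
target_n = 55

def share(labels):
    """Proportion of each stratum among the given labels."""
    values, counts = np.unique(labels, return_counts=True)
    return dict(zip(values.tolist(), (counts / counts.sum()).tolist()))

# Bias evaluation: sample share minus reference share, per stratum
sample_share = share(sample_strata)
bias_before = {s: sample_share[s] - p for s, p in reference_share.items()}

# Subsampling: draw each stratum's quota at random, without replacement
chosen = []
for stratum, p in reference_share.items():
    quota = int(round(p * target_n))
    candidates = np.flatnonzero(sample_strata == stratum)
    chosen.extend(rng.choice(candidates, size=quota, replace=False))
subsample_idx = np.sort(np.array(chosen))

subsample_share = share(sample_strata[subsample_idx])
bias_after = {s: subsample_share[s] - p for s, p in reference_share.items()}
print(bias_before)
print(bias_after)
```

The final protocol step would then compare an outcome estimate computed on all 135 units with the same estimate on `subsample_idx`.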

Data Quality and Preprocessing Imperatives

The quality of input data directly determines the validity of PCA's output. Violations of PCA's underlying statistical assumptions are a major source of instability, particularly in biological and medical data [19].

Experimental Evidence: Data Quality and Methodological Alignment

| Data Type / Context | PCA Performance Issue | Superior Alternative & Performance |

|---|---|---|

| COVID-19 CT Scans (Nonlinear Data) [19] | 83.76% accuracy; the data violate PCA's linearity assumption, and PCA may discard biologically relevant low-variance features. | Feature Agglomeration: 92.79% accuracy; preserves spatial relationships. |

| Geometric Morphometrics [20] | Inconsistent clustering and taxonomic inferences; highly susceptible to partial sampling and missing data. | Machine Learning Classifiers (e.g., via MORPHIX): Showed superior robustness and classification accuracy. |

| Hyperspectral Image Analysis [21] | Effective for simplifying high-dimensional spectral data by preserving maximal variance. | PCA is appropriately applied for its intended purpose of variance-based distillation. |

Experimental Protocol: Evaluating Dimensionality Reduction Methods

- Dataset Selection: Use a benchmark dataset with known characteristics (e.g., MNIST with 70,000 samples and 784 features) [19].

- Method Application: Apply multiple dimensionality reduction techniques (e.g., PCA, High Variance Gene Selection, Feature Agglomeration) to the same raw dataset without applying scaling or normalization to isolate intrinsic method performance [19].

- Feature Reduction: Select an identical number of top features (e.g., 30) from each method [19].

- Model Training & Validation: Use a consistent classifier (e.g., Random Forest) with cross-validation to assess the accuracy of the reduced feature sets [19].

- Performance Analysis: Compare accuracy and consistency (standard deviation) across methods to determine the best approach for the data type [19].
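A compact version of this protocol can be written with scikit-learn. Two substitutions are made here and should be read as assumptions: the small bundled digits dataset (1,797 samples, 64 features) stands in for MNIST so that the example runs without a download, and a generic top-variance feature filter stands in for High Variance Gene Selection. As in the protocol, no scaling is applied.

```python
import numpy as np
from sklearn.cluster import FeatureAgglomeration
from sklearn.datasets import load_digits
from sklearn.decomposition import PCA
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectKBest
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline

def variance_score(X, y):
    """Score each feature by its variance (labels are ignored)."""
    return X.var(axis=0)

X, y = load_digits(return_X_y=True)  # raw pixel values, no scaling
n_features = 30                      # identical reduced dimension for every method

reducers = {
    "PCA": PCA(n_components=n_features, random_state=0),
    "Top-variance selection": SelectKBest(variance_score, k=n_features),
    "Feature Agglomeration": FeatureAgglomeration(n_clusters=n_features),
}

results = {}
for name, reducer in reducers.items():
    # The reducer sits inside the pipeline so it is refit on each training fold
    model = make_pipeline(reducer, RandomForestClassifier(n_estimators=100, random_state=0))
    scores = cross_val_score(model, X, y, cv=5)
    results[name] = (scores.mean(), scores.std())

for name, (mean_acc, sd_acc) in results.items():
    print(f"{name}: {mean_acc:.3f} +/- {sd_acc:.3f}")
```

Placing each reducer inside the pipeline keeps the test folds out of the fitting step, so the reported accuracy and its standard deviation reflect how the reduction generalizes rather than how well it memorizes.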

Algorithmic Choices: PCA vs. Factor Analysis and Beyond

The choice of dimensionality reduction algorithm is not one-size-fits-all. Selecting between PCA and Factor Analysis (FA), or opting for newer methods, has profound implications for the interpretability and stability of the resulting components.

Experimental Evidence: PCA vs. Factor Analysis

| Comparison Criteria | Principal Component Analysis (PCA) | Factor Analysis (FA) |

|---|---|---|

| Core Purpose | To maximize explained variance in the observed variables [22]. | To identify underlying latent (hidden) constructs that explain covariances [23]. |

| Model Outcome | Creates new, uncorrelated variables (components) as linear combinations of original variables [24]. | Models observed variables as linear combinations of latent factors and unique error terms [23]. |

| Statistical Basis | Eigen-decomposition of the covariance/correlation matrix [24]. | Fits a model to the covariance/correlation structure [23]. |

| Performance in Simulation | Behaves similarly to FA in many cases [23]. | Generally produces factors with stronger correlations to true underlying genetic components in simulated data [23]. |

| Performance in Cancer Diagnosis | Effectively distinguished healthy and cancerous colon tissues in mass spectrometry data [25]. | Also effectively distinguished tissues, with factors showing strong alignment with principal components from PCA [25]. |
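The "Model Outcome" row can be made concrete with a small simulation. The sketch below assumes a single latent factor measured by six variables, one of which is much noisier than the rest; the setup and numbers are illustrative and are not taken from [23] or [25]. Data are deliberately left unstandardized so that the difference in how the two methods treat variable-specific noise is visible.

```python
import numpy as np
from sklearn.decomposition import PCA, FactorAnalysis

rng = np.random.default_rng(0)
n = 1000

# One latent factor drives all six observed variables (loading 1 on each)
latent = rng.normal(size=n)
# Unique noise per variable: five precise measurements and one very noisy one
noise_sd = np.array([0.5, 0.5, 0.5, 0.5, 0.5, 3.0])
X = latent[:, None] + rng.normal(size=(n, 6)) * noise_sd

# PCA: direction of maximum total variance, whatever its source
pca_scores = PCA(n_components=1).fit_transform(X)[:, 0]
# Factor Analysis: models shared variance plus a separate noise variance per variable
fa_scores = FactorAnalysis(n_components=1, random_state=0).fit_transform(X)[:, 0]

# Sign is arbitrary for both methods, so compare absolute correlations
corr_pca = abs(np.corrcoef(latent, pca_scores)[0, 1])
corr_fa = abs(np.corrcoef(latent, fa_scores)[0, 1])
print(round(float(corr_pca), 3), round(float(corr_fa), 3))
```

In this setting the first principal component is pulled toward the noisy variable because it has the largest total variance, while the factor score tracks the latent variable more closely. Standardizing the variables first would narrow the gap, which is one reason preprocessing choices need to be reported alongside the method.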

Emerging Algorithmic Alternatives

- Stratified PCA (SPCA): Addresses limitations of Probabilistic PCA (PPCA) by allowing for repeated eigenvalues, providing a better fit for datasets with limited samples and improving model interpretability by transitioning from principal components to principal subspaces [26].

- Robust PCA (RPCA): Designed to handle very large datasets (e.g., in image analysis) by decomposing a matrix into a low-rank component and a sparse component, making it more resilient to outliers and corruptions [24].

The Scientist's Toolkit: Essential Reagents for Stability

| Reagent / Solution | Function in Ensuring Component Stability |

|---|---|

| Sample Size Calculation Tools | Provides pre-study rationale for minimum sample size required for model training and evaluation, mitigating overfitting [17]. |

| Data Quality Score (QS) | A weighted metric that grades individual data units (e.g., hospitals) on completeness and reliability, enabling quality-based selection for analysis [18]. |

| Structured Subsampling Procedures | Algorithms (e.g., Probability, Distance, Uniformity) that select a representative or balanced subsample from a larger convenience sample to reduce distributional bias [18]. |

| MORPHIX Python Package | Provides tools for morphometrics analysis using machine learning classifiers as a robust alternative to PCA-based geometric morphometrics [20]. |

| Feature Agglomeration | A nonlinear dimensionality reduction technique based on hierarchical clustering that can outperform PCA on image data by preserving local spatial relationships [19]. |

The stability and reproducibility of PCA components are not guaranteed. They are critically dependent on rigorous study design and analytical choices. Evidence shows that inadequate sample size undermines model reliability, poor data quality and violation of methodological assumptions lead to inaccurate feature reduction, and the choice of algorithm must be matched to the data structure and research question. Researchers can mitigate these threats by employing power analysis for sample size, rigorously preprocessing and assessing data quality, and considering robust alternatives like FA, Feature Agglomeration, or SPCA when PCA's assumptions are violated. A deliberate and informed approach to these factors is essential for producing valid, reproducible research in scientific and drug development contexts.

Principal Component Analysis (PCA) is a foundational tool for dimensionality reduction across numerous scientific fields, from population genetics to materials science. Its ability to transform high-dimensional data into lower-dimensional visualizations has made it a staple in exploratory data analysis. However, its unsupervised and mathematically deterministic nature, combined with numerous subjective choices in its application, raises critical concerns about the reproducibility and robustness of its findings. Framed within a broader thesis on assessing the reproducibility of PCA components, this case study synthesizes evidence demonstrating that PCA outcomes can be significantly influenced by pre-processing decisions, sample composition, and parameter selection, at times rendering them statistical artifacts rather than genuine biological or physical discoveries. This analysis aims to equip researchers, scientists, and drug development professionals with a critical understanding of both the pitfalls and the rigorous practices necessary for the reliable application of PCA.

Theoretical Foundations and Standard Protocols

The Core Mechanism of PCA

Principal Component Analysis is a multivariate technique designed to reduce the dimensionality of a dataset while preserving the covariance structure of the data [10]. It operates by identifying new orthogonal axes, termed principal components (PCs), which are linear combinations of the original features. The first PC captures the direction of maximum variance in the data, with each subsequent component capturing the next highest variance under the constraint of orthogonality to preceding components [27] [28]. The process can be implemented via eigen-decomposition of the covariance matrix or through Singular Value Decomposition (SVD) of the mean-centered data matrix [27].
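The equivalence of the two computational routes can be checked numerically. The following is a minimal NumPy sketch on synthetic data; the data, seed, and variable names are illustrative assumptions and are not taken from any cited protocol. It shows that eigen-decomposition of the covariance matrix and SVD of the mean-centered matrix give the same component variances and the same axes up to sign.

```python
import numpy as np

# Synthetic, correlated features (illustrative assumption, not real data).
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 5)) @ rng.normal(size=(5, 5))

Xc = X - X.mean(axis=0)          # mean-center each feature
n = Xc.shape[0]

# Route 1: eigen-decomposition of the sample covariance matrix.
cov = Xc.T @ Xc / (n - 1)
eigvals, eigvecs = np.linalg.eigh(cov)      # eigh returns ascending eigenvalues
order = np.argsort(eigvals)[::-1]           # reorder to descending variance
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

# Route 2: SVD of the mean-centered data matrix.
U, S, Vt = np.linalg.svd(Xc, full_matrices=False)
svd_vals = S**2 / (n - 1)                   # singular values -> variances

# Proportion of variance explained by each principal component.
explained_ratio = eigvals / eigvals.sum()
print(np.round(explained_ratio, 3))
```

Because each axis is only defined up to a sign flip, comparisons between runs or datasets should use absolute cosine similarity rather than raw loadings.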

Standard Experimental Protocol in Genetics

In population genetics, a typical PCA workflow involves several standardized steps to control for population structure, often using packages like EIGENSOFT and PLINK [29] [10].

- Data Preparation: Genotype data is encoded numerically (e.g., for bi-allelic variants). A crucial pre-processing step involves Linkage Disequilibrium (LD) pruning to remove genetic variants in high correlation with their neighbors, as unusual LD patterns can cause PCs to capture local genomic features rather than true population structure [29]. Common practice uses a pairwise-correlation threshold of \( r^2 > 0.2 \) for pruning.

- Covariance Matrix Computation: The genetic relationship matrix or covariance matrix is calculated from the pre-processed genotype data.

- Dimensionality Reduction: Eigen-decomposition is performed on the covariance matrix to obtain eigenvalues and eigenvectors (the PCs).

- Visualization and Interpretation: Samples are projected onto the first few PCs, typically PC1 and PC2, and visualized on a scatter plot. Clusters and distances between data points in this reduced space are interpreted as representing genetic similarity, shared ancestry, or population history [10].
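The four steps above can be sketched end to end on simulated data. This is a simplified illustration, not the EIGENSOFT or PLINK implementation. The allele-count encoding (0, 1, 2), the two simulated populations, the greedy windowed pruning rule, and all function names are assumptions made for the example.

```python
import numpy as np

def ld_prune(G, r2_max=0.2, window=50):
    """Greedy pruning (hypothetical helper): keep a variant only if its r^2
    with every already-kept variant in the previous `window` columns is
    at most r2_max."""
    kept = []
    for j in range(G.shape[1]):
        in_ld = False
        for k in kept:
            if j - k > window:
                continue
            r = np.corrcoef(G[:, j], G[:, k])[0, 1]
            if r * r > r2_max:
                in_ld = True
                break
        if not in_ld:
            kept.append(j)
    return np.array(kept)

# Step 1: simulate allele counts (assumed 0/1/2 encoding) for two populations.
rng = np.random.default_rng(1)
n_per_pop, n_var = 60, 300
p1 = rng.uniform(0.2, 0.8, n_var)
p2 = np.clip(p1 + rng.normal(0, 0.2, n_var), 0.05, 0.95)
G = np.vstack([rng.binomial(2, p1, (n_per_pop, n_var)),
               rng.binomial(2, p2, (n_per_pop, n_var))]).astype(float)
G[:, 4::5] = G[:, 3::5]            # mimic tight LD: exact copies of a neighbor
G = G[:, G.std(axis=0) > 0]        # drop monomorphic variants

kept = ld_prune(G)                 # LD pruning at r^2 > 0.2
Gp = G[:, kept]

# Steps 2-3: standardize, then decompose (SVD is equivalent to
# eigen-decomposition of the covariance matrix).
Z = (Gp - Gp.mean(axis=0)) / Gp.std(axis=0)
U, S, Vt = np.linalg.svd(Z, full_matrices=False)
var_ratio = S**2 / np.sum(S**2)

# Step 4: sample coordinates on the PCs, ready for a PC1 vs PC2 scatter plot.
pcs = U * S
print(f"kept {len(kept)} of {G.shape[1]} variants; "
      f"PC1 explains {var_ratio[0]:.1%} of variance")
```

Rerunning the sketch with a different `r2_max` or `window` is a quick way to see how pruning choices change the retained marker set before any interpretation is attempted.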

Table: Key Research Reagent Solutions for PCA in Genetics

| Item | Function | Example Tools / Datasets |

|---|---|---|

| Genotype Data | Raw data for analysis; can be manipulated to alter outcomes. | Women’s Health Initiative SHARE, Jackson Heart Study [29] |

| LD Pruning Tool | Removes correlated variants to prevent artifact-prone PCs. | PLINK [29] |

| PCA Software | Performs core decomposition calculations. | EIGENSOFT (SmartPCA), PLINK [10] |

| Reference Datasets | Provides population labels for interpretation; choice can bias results. | gnomAD, UK Biobank [10] |

The following workflow diagram summarizes a typical PCA protocol in population genetics.

Figure: Typical PCA Protocol in Population Genetics

Evidence of Artifacts and Manipulation

The Color Model: A Simplistic Demonstration

To unambiguously test PCA's reliability, Elhaik (2022) employed an intuitive color-based model in which the "truth" is known [10]. In this model, distinct populations are represented by the primary colors Red, Green, and Blue, each defined by a pure 3D vector (e.g., Red = [1,0,0]), alongside a "Black" population at [0,0,0]. PCA successfully reduced this data from 3D to a 2D plot with the three primary colors positioned equidistantly and Black equidistant from all of them, correctly representing their true relationships. However, when the sample composition was manipulated, specifically by reducing the number of "Blue" individuals, the PCA plot shifted dramatically and misleadingly: the Black cluster moved significantly closer to the under-sampled Blue cluster. This demonstrates that sample size imbalances alone can drastically alter the perceived relationships between groups in a PCA plot, here suggesting a false closeness between Black and Blue [10].
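The effect is easy to reproduce with a toy reconstruction of the color model. The sketch below is not Elhaik's code. The group sizes (100 per color, then 10 for Blue) and the helper name `black_distances` are assumptions chosen for illustration.

```python
import numpy as np

COLORS = {"Red": [1, 0, 0], "Green": [0, 1, 0],
          "Blue": [0, 0, 1], "Black": [0, 0, 0]}

def black_distances(counts):
    """Distance from Black to each primary color in the PC1-PC2 plane,
    for a sample with counts[color] identical individuals per color."""
    names = list(COLORS)
    X = np.vstack([np.tile(COLORS[c], (counts[c], 1)) for c in names]).astype(float)
    mean = X.mean(axis=0)
    _, _, Vt = np.linalg.svd(X - mean, full_matrices=False)
    W = Vt[:2].T                                  # top two principal axes
    proj = {c: (np.array(COLORS[c], dtype=float) - mean) @ W for c in names}
    return {c: float(np.linalg.norm(proj[c] - proj["Black"]))
            for c in ("Red", "Green", "Blue")}

balanced = black_distances({"Red": 100, "Green": 100, "Blue": 100, "Black": 100})
unbalanced = black_distances({"Red": 100, "Green": 100, "Blue": 10, "Black": 100})

print("balanced:  ", {c: round(d, 3) for c, d in balanced.items()})
print("unbalanced:", {c: round(d, 3) for c, d in unbalanced.items()})
```

In the balanced design Black sits at the same distance from all three primaries. Once Blue is under-sampled, the first two axes tilt toward the plane spanned by the well-sampled groups, and Blue collapses toward Black even though no individual's coordinates changed.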

Case Study: Genetic Origins of Indian Populations

The vulnerability of PCA to manipulation is not merely theoretical. A landmark 2009 study used PCA to conclude that Indians constitute a distinct genetic cluster separate from Europeans, East Asians, and Africans [10] [30]. Elhaik (2022) revisited this finding using the same real-world genomic data. By simply altering the proportions of the non-Indian reference populations in the input dataset, the PCA output was manipulated to support three entirely different historical conclusions: that Indians descend from Europeans, from East Asians, or from Africans [30]. This demonstrates that PCA results can be "easily manipulated to generate desired outcomes," fundamentally challenging the reliability of any single analysis that lacks rigorous sensitivity checks [10].

Contamination from Technical Artifacts

Beyond sample composition, PCA results can be distorted by technical artifacts within the data. In genomics, a significant concern is that principal components may capture patterns from regions with atypical linkage disequilibrium (LD) instead of genuine population structure [29]. Adjusting for these artifact-laden PCs in Genome-Wide Association Studies (GWAS) can induce severe collider bias, leading to both biased effect size estimates and spurious associations [29]. This problem is particularly acute in admixed populations, where standard pre-processing steps like excluding known high-LD regions (e.g., the HLA region on chromosome 6) may not fully resolve the issue [29]. The choice of LD pruning threshold is also critical and non-uniform across studies, further threatening reproducibility.

Table: Summary of PCA Manipulation Evidence

| Experimental Context | Manipulation Method | Impact on PCA Results | Reference |

|---|---|---|---|

| Color Model (Synthetic) | Varying sample size of color groups | Altered perceived distances between clusters; Black moved closer to under-sampled Blue. | [10] |

| Indian Population Genetics | Varying proportions of reference populations | Supported opposing origins (European, East Asian, African) from the same core data. | [10] [30] |

| Admixed Population GWAS | Inclusion of PCs capturing local LD | Induced collider bias, leading to spurious associations and biased effect estimates. | [29] |

| High-LD Genomic Regions | Inclusion of variants from known high-LD regions | PCs reflected local genomic features instead of true population structure. | [29] |

Contrasting Case: A Principled Application of PCA

It is crucial to note that PCA remains a powerful tool when applied with rigor and diagnostic checks. A positive example comes from materials science, where researchers developed a deep learning potential for the LLZO solid-state electrolyte [31]. In this study, PCA was not used as a primary analytical tool but as a diagnostic to ensure the convergence and completeness of the training set for a machine learning model. The researchers calculated the "coverage" of local structural features in both training and test sets using PCA. They established that the iterative training process was complete only when the coverage rate of the test set by the training set reached 99.51%, a quantitative and objective criterion [31]. This contrasts sharply with genetic studies where the number of PCs retained is often arbitrary (e.g., the first 2, 5, or even 280) [10]. The LLZO case demonstrates a reproducible application of PCA, where it serves a specific, validated function within a larger workflow, and its output is measured against a pre-defined, quantitative metric.

Strategies for Mitigation and Alternative Approaches

Recommendations for Robust PCA

In light of the evidence, researchers can adopt several strategies to fortify their use of PCA.

- Rigorous Pre-processing: In genetics, this means careful LD pruning and consideration of excluding known problematic genomic regions, though optimal thresholds may be dataset-specific [29].

- Comprehensive Sensitivity Analysis: It is essential to test how PCA results change with variations in sample composition, the number of markers used, and different pre-processing parameters [10].

- Objective PC Selection: Avoid relying solely on the first two PCs or arbitrary cutoffs. Use statistical guides like the Tracy-Widom test, while acknowledging their sensitivity, and report the proportion of variance explained by the PCs used for interpretation [29] [10].

- Emphasis on Quantification: Move beyond qualitative interpretations of scatter plots. Use PCA results as part of a quantitative diagnostic framework, as in the coverage metric used in materials science [31].
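One way to make the sensitivity analysis recommended above quantitative is to recompute the leading components on repeated subsamples and measure how far the retained subspace moves. The sketch below is one possible implementation under stated assumptions: the 80% subsampling fraction, the 50 repetitions, the similarity score (mean squared cosine of the principal angles), and the synthetic data are all illustrative choices rather than a published standard.

```python
import numpy as np

def top_subspace(X, k):
    """Orthonormal basis (columns) of the top-k principal subspace."""
    Xc = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    return Vt[:k].T

def subspace_similarity(Va, Vb):
    """Mean squared cosine of the principal angles: 1 = identical subspaces,
    near k/p for unrelated k-dimensional subspaces of a p-dimensional space."""
    s = np.linalg.svd(Va.T @ Vb, compute_uv=False)
    return float(np.mean(s**2))

def subsample_stability(X, k=2, frac=0.8, n_rep=50, seed=0):
    """Mean and minimum similarity between the full-data subspace and
    subspaces refit on random subsamples drawn without replacement."""
    rng = np.random.default_rng(seed)
    V_full = top_subspace(X, k)
    n = X.shape[0]
    m = int(frac * n)
    sims = []
    for _ in range(n_rep):
        idx = rng.choice(n, size=m, replace=False)
        sims.append(subspace_similarity(V_full, top_subspace(X[idx], k)))
    return float(np.mean(sims)), float(np.min(sims))

rng = np.random.default_rng(42)
# Two strong latent factors plus noise, versus pure noise (both synthetic).
structured = 3 * rng.normal(size=(200, 2)) @ rng.normal(size=(2, 20)) \
    + rng.normal(size=(200, 20))
noise_only = rng.normal(size=(200, 20))

mean_s, min_s = subsample_stability(structured)
mean_n, min_n = subsample_stability(noise_only)
print(f"structured: mean={mean_s:.3f} min={min_s:.3f}")
print(f"noise only: mean={mean_n:.3f} min={min_n:.3f}")
```

Comparing subspaces rather than individual loading vectors avoids false alarms from sign flips or from components with similar variance swapping order between runs.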

Emerging Alternative Methods

Several methods have been developed to address specific limitations of PCA. The following diagram illustrates the relationships between PCA and its alternatives.

Figure: PCA and Its Alternatives

- Generalized Contrastive PCA (gcPCA): This method is designed to compare the covariance structures of two datasets. It improves upon Contrastive PCA (cPCA) by eliminating the need for a problematic hyperparameter (\( \alpha \)) that previously required tuning and yielded multiple potential solutions. gcPCA provides a more robust, hyperparameter-free way to find patterns enriched in one dataset relative to another [8].

- Model-Based Approaches: In population genetics, tools like ADMIXTURE offer a model-based framework for inferring ancestry, which can provide a complementary or alternative perspective to the more descriptive PCA [29].

- Deep Learning: In fields like materials science and defect detection, deep learning models (e.g., ResNet) can sometimes achieve superior performance, particularly in capturing complex, non-linear patterns, though they may come with their own challenges like requirements for large data and computational resources [32].

The evidence presented in this case study unequivocally shows that PCA results are not inherently objective and can be heavily influenced by subjective analytical choices, leading to artifacts and manipulable outcomes. This poses a significant threat to the reproducibility of research in genetics and beyond, calling into question a vast body of literature. However, PCA is not an irredeemable tool. The path forward requires a paradigm shift from its naive application to a principled one. Researchers must prioritize rigorous sensitivity analyses, transparent reporting of all parameters and procedures, and the use of quantitative diagnostics to validate results. Furthermore, the scientific community should actively explore and adopt next-generation methods like gcPCA, which are specifically designed to address the known weaknesses of standard PCA. By acknowledging these pitfalls and adhering to stricter standards, researchers can continue to leverage PCA's strengths while mitigating its considerable risks.

Foundational Assumptions of PCA and Where They Fail in Biomedical Data

Principal Component Analysis (PCA) stands as a cornerstone dimensionality reduction technique in biomedical research, applied across domains from genomics to medical imaging. This mathematical procedure transforms high-dimensional datasets into a reduced set of uncorrelated principal components that capture maximum variance. However, PCA's foundational assumptions (linearity, correlation between features, and homoscedasticity) frequently contradict the complex biological realities of biomedical data. This guide examines PCA's core assumptions, identifies where they fail in experimental biomedical contexts, and objectively compares PCA's performance against emerging alternatives, providing researchers with an evidence-based framework for selecting appropriate analytical methods.

PCA serves as an essential exploratory tool for analyzing high-dimensional biomedical data, including data from omics technologies, medical imaging, and clinical biomarkers. The technique operates through orthogonal transformation of potentially correlated variables into principal components (PCs), ordered so that the first PC explains the largest possible variance [33] [34]. This dimensionality reduction enables data visualization, noise reduction, and pattern recognition in datasets where the number of variables often vastly exceeds sample sizes [35] [36].

In practical biomedical applications, PCA simplifies complex datasets by identifying multidimensional directions that maximize variation, effectively condensing biological variability into interpretable components. For instance, in congenital adrenal hyperplasia research, PCA has successfully created endocrine profiles from multiple hormone measurements to objectively classify treatment efficacy [37]. Similarly, in mass spectrometry analysis of colon tissues, PCA has demonstrated utility in distinguishing cancerous from healthy samples based on spectral patterns [38].

The technique's mathematical foundation relies on several statistical assumptions that frequently mismatch the intrinsic properties of biological systems. As biomedical data grows in complexity and dimensionality, understanding where PCA's theoretical foundations align with empirical biological reality becomes crucial for research validity and reproducibility.

Foundational Assumptions of PCA

Mathematical and Statistical Foundations

PCA operates according to several non-negotiable mathematical prerequisites that dictate its proper application and interpretation. The algorithm fundamentally assumes linear relationships between all variables in the dataset, implementing a rigid linear transformation that may fail to capture nonlinear biological interactions [33] [19]. This linearity assumption permits the computation of principal components as straight-line axes of maximum variance through high-dimensional data space.

The technique requires meaningful correlations between variables, without which dimensionality reduction becomes ineffective [33]. This dependency manifests mathematically through the covariance matrix computation, which quantifies how variables change together [33] [36]. PCA further presupposes homoscedasticity (uniform variance across observations) and continuous, appropriately standardized data distributions [19]. The algorithm is also sensitive to outlier influence, where extreme values can disproportionately sway component orientation [33] [39].

Practical Implementation Requirements

In applied settings, PCA demands careful data preprocessing to align experimental measurements with algorithmic expectations. Feature standardization proves essential—variables must be centered to zero mean and scaled to unit variance to prevent features with larger numerical ranges from artificially dominating the first components [33] [36]. Without this normalization, PCA results become biased toward high-magnitude features regardless of their biological significance.
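The dominance effect described above can be seen in a few lines. This is a minimal sketch on synthetic data: the three features, their scales, and the helper `first_pc` are assumptions for illustration. Two correlated features on a unit scale are combined with an unrelated feature whose numerical range is 1000 times larger.

```python
import numpy as np

def first_pc(X):
    """Loadings of the first principal component of X (centered internally)."""
    Xc = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    return Vt[0]

rng = np.random.default_rng(7)
n = 500
a = rng.normal(size=n)                 # feature A, unit scale
c = a + 0.3 * rng.normal(size=n)       # feature C, strongly correlated with A
b = 1000 * rng.normal(size=n)          # feature B, unrelated but large range
X = np.column_stack([a, b, c])

# Without scaling, PC1 simply follows the high-magnitude feature B.
raw_loadings = first_pc(X)

# Center to zero mean and scale to unit variance, then repeat.
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
scaled_loadings = first_pc(Z)

print("unscaled PC1 loadings:", np.round(np.abs(raw_loadings), 3))
print("scaled PC1 loadings:  ", np.round(np.abs(scaled_loadings), 3))
```

After standardization, PC1 is driven by the shared variation of A and C rather than by the measurement units of B.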

Implementation further requires adequate sample sizes relative to feature dimensions, with rules of thumb suggesting 5-10 cases per variable or absolute minimums of 150 observations [39]. Absence of missing values represents another practical requirement, as most statistical implementations cannot handle incomplete data matrices [33]. Additionally, researchers must determine the optimal number of components to retain, balancing information preservation against dimensionality reduction—a decision often guided by variance-based thresholds or scree plots [34].
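A variance-based retention rule can be written down explicitly so that the choice is reported rather than made by eye. The sketch below assumes a 90% cumulative-variance threshold and a synthetic dataset with three latent factors. Both are illustrative assumptions, and the function names are hypothetical.

```python
import numpy as np

def explained_variance_ratio(X):
    """Proportion of total variance carried by each principal component."""
    Xc = X - X.mean(axis=0)
    s = np.linalg.svd(Xc, compute_uv=False)
    var = s**2
    return var / var.sum()

def n_components_for(X, threshold=0.90):
    """Smallest number of leading PCs whose cumulative variance ratio
    reaches `threshold`."""
    cum = np.cumsum(explained_variance_ratio(X))
    k = int(np.searchsorted(cum, threshold)) + 1
    return min(k, len(cum))            # guard against floating-point shortfall

# Synthetic data: three orthogonal latent directions of decreasing strength
# embedded in 10 variables, plus isotropic noise (300 cases, 30 per variable).
rng = np.random.default_rng(5)
Q, _ = np.linalg.qr(rng.normal(size=(10, 3)))      # orthonormal loadings
F = rng.normal(size=(300, 3)) * np.array([5.0, 4.0, 3.0])
X = F @ Q.T + 0.5 * rng.normal(size=(300, 10))

ratios = explained_variance_ratio(X)
cum = np.cumsum(ratios)
k90 = n_components_for(X, 0.90)
print("cumulative variance:", np.round(cum[:5], 3))
print("components retained at 90%:", k90)
```

Reporting the threshold together with the cumulative variance curve lets readers check how sensitive the retained dimensionality is to the cutoff.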

The following diagram illustrates the standard PCA workflow and its embedded assumptions:

Where PCA Assumptions Fail in Biomedical Data

Nonlinear Biological Relationships

Biological systems fundamentally operate through nonlinear interactions—from gene regulatory networks and protein folding to metabolic pathways and cellular signaling cascades. These complex relationships directly violate PCA's core linearity assumption [19]. When applied to COVID-19 CT image classification, PCA's linear transformations failed to capture critical spatial relationships, achieving only 83.76% accuracy compared to 92.79% for Feature Agglomeration, a method accommodating nonlinear patterns [19].

In genomics, nonlinear genotype-phenotype relationships and epistatic interactions create multidimensional biological realities that PCA's linear projections inevitably distort. Single-cell RNA sequencing data exhibits particularly pronounced nonlinear structures, with gene expression patterns following complex biological gradients and differentiation trajectories that linear methods cannot adequately capture [40]. The inherent sparsity and technical noise in scRNA-seq data further exacerbate these limitations, resulting in components that may reflect analytical artifacts rather than biological truth.

Data Structure and Composition Challenges

Biomedical data frequently violates PCA's requirement for homoscedasticity and correlation structures. Mass spectrometry data, for instance, exhibits heterogeneous variance patterns across mass-to-charge ratios, contradicting the uniform variance assumption [38]. Medical imaging data, including CT scans, contains local spatial dependencies that PCA treats as independent linear dimensions, discarding critical contextual information [19].

The high dimensionality and sparsity of omics data create additional challenges. In genomic studies, the number of genetic variants (features) vastly exceeds the number of samples, producing unreliable covariance estimates [10]. This "curse of dimensionality" means PCA results become highly sensitive to technical artifacts and sampling variations rather than reflecting stable biological patterns. In population genetics, PCA applications have demonstrated alarming non-reproducibility, with results changing dramatically based on marker selection, sample composition, and implementation parameters [10].

Table 1: Documented PCA Performance Issues Across Biomedical Domains

| Domain | Data Type | Assumption Violated | Documented Consequence |

|---|---|---|---|

| Medical Imaging | COVID-19 CT Scans | Linearity | 83.76% accuracy vs. 92.79% for nonlinear alternative [19] |

| Population Genetics | Genotype Data | Correlation Structure | Highly biased results; manipulation to generate desired outcomes [10] |

| Single-Cell Genomics | scRNA-seq Data | Linearity, Homoscedasticity | Performance degradation with increasing data size/sparsity [40] |

| Cancer Diagnostics | Mass Spectrometry Data | Homoscedasticity | Difficulty distinguishing tissue types without additional preprocessing [38] |

| Clinical Biomarkers | Hormone Measurement Data | Outlier Sensitivity | Required extensive data cleaning and standardization [37] |

Interpretation and Reproducibility Concerns

The interpretability crisis in PCA applications represents another critical failure point. Principal components constitute mathematical constructs that combine original variables in non-intuitive ways, often lacking clear biological correspondence [33] [10]. In population genetics, PCA results have proven highly manipulable—the same data can produce conflicting patterns depending on analytical choices, enabling "desired outcomes" through selective parameterization [10].

Reproducibility concerns further undermine PCA's validity in biomedical contexts. Different preprocessing decisions, component selection criteria, and software implementations generate inconsistent results from identical underlying data [10]. This irreproducibility poses particular risks in clinical applications, where PCA-derived biomarkers might inform diagnostic or therapeutic decisions without stable biological foundation. The combination of mathematical mismatch and implementation variability suggests that many published PCA applications in biomedicine require reevaluation.

Experimental Comparisons and Performance Benchmarking

Methodology for Comparative Evaluation

Rigorous benchmarking studies have employed standardized experimental designs to quantitatively evaluate PCA's performance against alternative dimensionality reduction techniques. These protocols typically apply multiple methods to identical datasets, measuring performance across computational efficiency, information preservation, and downstream analytical utility [40].

In scRNA-seq analysis, comprehensive evaluations have assessed PCA alongside Random Projection methods, including Sparse Random Projection (SRP) and Gaussian Random Projection (GRP) [40]. The standard evaluation protocol involves: (1) applying dimensionality reduction to normalized count matrices; (2) measuring computational time and resource requirements; (3) quantifying preservation of data structure using metrics like Within-Cluster Sum of Squares (WCSS); and (4) evaluating downstream clustering performance using labeled datasets with known cell populations [40].

For medical imaging data, comparative studies typically employ classification accuracy as the primary endpoint, applying reduced features to supervised learning tasks. They often use benchmark datasets like MNIST (for methodological validation) alongside specialized medical image collections, with strict cross-validation protocols to support generalizable results [19].

Quantitative Performance Results

Table 2: Benchmarking Results of PCA Versus Alternative Dimensionality Reduction Methods

| Method | Data Type | Accuracy (%) | Computational Efficiency | Information Preservation | Reference |

|---|---|---|---|---|---|

| Standard PCA (SVD) | scRNA-seq | 84.41 | Low | Moderate | [40] |

| Randomized PCA | scRNA-seq | 84.10 | Medium | Moderate | [40] |

| Sparse Random Projection | scRNA-seq | 85.25 | High | High | [40] |

| Gaussian Random Projection | scRNA-seq | 85.90 | Medium-High | High | [40] |

| PCA (unscaled) | Medical Imaging (MNIST) | 83.76 | Medium | Low | [19] |

| Feature Agglomeration | Medical Imaging (MNIST) | 92.79 | Medium | High | [19] |

| High Variance Gene Selection | scRNA-seq | 84.41 | High | Medium | [19] |

Experimental evidence indicates that PCA can be outperformed by methods better aligned with data characteristics. In scRNA-seq analysis, Random Projection methods not only achieved superior computational efficiency but also exceeded PCA in preserving data variability and enhancing downstream clustering quality [40]. Specifically, SRP and GRP improved clustering accuracy by roughly 1 to 1.5 percentage points over standard PCA (Table 2) while reducing computational time by 30-50% compared to standard PCA implementations.

In medical imaging applications, PCA's performance limitations proved even more pronounced. When applied to CT scan classification, PCA's linearity assumption resulted in significant information loss, achieving only 83.76% classification accuracy compared to 92.79% for Feature Agglomeration, a method that preserves local spatial relationships [19]. This gap of roughly 9 percentage points highlights the practical consequences of violating methodological assumptions in clinical contexts.

Alternative Methodologies

Random Projection Methods

Random Projection (RP) techniques have emerged as computationally efficient alternatives to PCA, particularly for ultra-high-dimensional biomedical data. Based on the Johnson-Lindenstrauss lemma, RP reduces dimensionality by projecting data onto a randomly generated lower-dimensional subspace while approximately preserving pairwise distances [40]. Unlike PCA, RP makes no assumptions about data distribution, linear relationships, or correlation structures, making it particularly suitable for sparse, noisy biological data.

Two main RP variants have demonstrated promising results: Sparse Random Projection (SRP) uses sparse random matrices for enhanced computational efficiency and reduced memory requirements, while Gaussian Random Projection (GRP) employs dense random matrices with entries drawn from Gaussian distributions, offering theoretical guarantees on distance preservation [40]. In benchmarking studies on scRNA-seq data, both SRP and GRP outperformed PCA in clustering accuracy while providing substantial speed improvements, particularly for datasets exceeding 10,000 cells [40].
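The distance-preservation property behind both variants can be checked directly. The sketch below builds the projection matrices by hand in NumPy on synthetic Gaussian data. The target dimension (300), the sparsity parameter `s=3`, and the helper names are assumptions for illustration, and the "sparse" matrix is stored densely for simplicity. In practice, scikit-learn's `GaussianRandomProjection` and `SparseRandomProjection` classes in `sklearn.random_projection` provide maintained implementations.

```python
import numpy as np

def gaussian_rp(d, k, rng):
    """Dense Gaussian projection matrix, scaled so squared lengths are
    preserved in expectation."""
    return rng.normal(size=(d, k)) / np.sqrt(k)

def sparse_rp(d, k, rng, s=3):
    """Sparse sign matrix: entries are +/- sqrt(s/k) with probability
    1/(2s) each and 0 otherwise (stored densely here for simplicity)."""
    signs = rng.choice([-1.0, 0.0, 1.0], size=(d, k),
                       p=[1 / (2 * s), 1 - 1 / s, 1 / (2 * s)])
    return np.sqrt(s / k) * signs

def pairwise_distances(X):
    """Euclidean distances between all unordered pairs of rows."""
    i, j = np.triu_indices(X.shape[0], k=1)
    return np.linalg.norm(X[i] - X[j], axis=1)

rng = np.random.default_rng(3)
X = rng.normal(size=(60, 1000))        # 60 samples, 1000 features (synthetic)
d_orig = pairwise_distances(X)

ratios = {}
for name, make in [("gaussian", gaussian_rp), ("sparse", sparse_rp)]:
    R = make(X.shape[1], 300, rng)     # project 1000 -> 300 dimensions
    ratios[name] = pairwise_distances(X @ R) / d_orig
    print(f"{name}: mean ratio={ratios[name].mean():.3f}, "
          f"max deviation={np.abs(ratios[name] - 1).max():.3f}")
```

A ratio close to 1 for every pair means the projected data retain the geometry that downstream clustering relies on. Unlike PCA, the projection is not fitted to the data, so results vary with the random seed and the seed should be reported.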

Nonlinear and Specialized Alternatives

For biomedical data with inherent nonlinear structures, several specialized alternatives have demonstrated superior performance:

Feature Agglomeration applies hierarchical clustering to group similar features, effectively preserving local spatial relationships that PCA disregards. In medical imaging applications, this approach achieved 92.79% classification accuracy compared to PCA's 83.76%, highlighting the value of method-data alignment [19].

t-Distributed Stochastic Neighbor Embedding (t-SNE) and Uniform Manifold Approximation and Projection (UMAP) excel at visualizing high-dimensional data by preserving local neighborhood structures, though they are primarily visualization tools rather than general dimensionality reduction techniques [35] [40].

Factor Analysis (FA) represents another alternative that, while similar to PCA, incorporates a formal error model and can better distinguish shared versus unique variance components. In mass spectrometry studies of colon tissues, FA provided complementary insights to PCA, with different loading patterns offering enhanced biological interpretability [38].

The following diagram illustrates the decision process for selecting appropriate dimensionality reduction methods based on data characteristics:

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Dimensionality Reduction in Biomedical Research

| Tool/Resource | Function | Implementation Notes |

|---|---|---|

| R Statistical Environment | Primary implementation platform for PCA and alternatives | Use the prcomp() function with center=TRUE and scale.=TRUE [34] [37] |

| Python Scikit-learn | Machine learning library with comprehensive dimensionality reduction modules | Provides PCA, Randomized PCA, and multiple nonlinear alternatives in unified API |

| EIGENSOFT/SmartPCA | Specialized package for population genetic analysis | Implements PCA with specific optimizations for genetic data [10] |

| Seurat Single-cell Toolkit | Integrated scRNA-seq analysis with built-in dimensionality reduction | Offers PCA, UMAP, and t-SNE specifically optimized for single-cell data |

| Custom Benchmarking Scripts | Method comparison and performance validation | Essential for verifying method appropriateness for specific data types [40] [19] |

PCA remains a valuable exploratory tool for biomedical data analysis when its foundational assumptions align with data characteristics. However, evidence demonstrates that linearity requirements, correlation dependencies, and homoscedasticity assumptions frequently contradict the complex realities of biological systems. These mismatches produce unreliable, non-reproducible results that can undermine research validity—particularly concerning in clinical and translational applications.

Researchers should adopt a critical, evidence-based approach to dimensionality reduction, rigorously validating PCA outcomes against biological expectations and considering alternative methods when data characteristics suggest assumption violations. Future methodological development should focus on nonlinear techniques that better capture biological complexity while maintaining computational efficiency and interpretability. As biomedical data grows in scale and complexity, aligning analytical methods with data structures becomes increasingly essential for research reproducibility and biological discovery.

Building a Robust PCA Workflow: From Data Preprocessing to Reproducible Execution

Designing a Principled PCA Workflow for Quality Assessment and Outlier Detection

In the context of assessing the reproducibility of Principal Component Analysis (PCA) components across datasets, establishing a principled workflow is not just beneficial—it is essential for credible scientific discovery. Principal Component Analysis serves as an indispensable tool for quality assessment and exploratory data analysis in omics research and other scientific fields. It provides critical insights into data structure, revealing batch effects, sample outliers, and underlying biological patterns [35]. Without systematic application of PCA-based quality assessment, technical artifacts can masquerade as biological signals, leading to spurious discoveries and irreproducible results. Conversely, true biological outliers may be inappropriately removed if not properly distinguished from technical outliers [35]. This guide objectively compares PCA against alternative multivariate methods within the framework of reproducible research, providing experimental protocols and data-driven comparisons to inform method selection for researchers, scientists, and drug development professionals.

PCA Fundamentals for Quality Assessment

Core Principles and Workflow

PCA is a multivariate statistical procedure that transforms high-dimensional data into a lower-dimensional space through orthogonal transformations. It generates new uncorrelated variables called principal components (PCs), which are ordered such that the first component explains the major source of variance in the data, the second component the second largest source, and so forth [41]. These components are weighted combinations of the original variables that reflect the interrelation between all original features, allowing for disease pattern detection and overcoming univariate analysis limitations [41].

The effectiveness of PCA for outlier detection stems from its ability to reshape data so that unusual points become more easily identifiable. The PCA transformation often creates a situation where outliers are easier to detect through two primary mechanisms: points that follow the general pattern but are extreme become visible in early components, while points that do not follow the general patterns of the data tend to be extreme values in the later components [42].
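Both mechanisms can be turned into per-sample scores. The following sketch is a generic illustration rather than the PyOD implementation. The synthetic data, the two planted outliers, and the function `pca_outlier_scores` are assumptions. It computes a variance-scaled distance within the leading components, which catches points that follow the pattern but are extreme, and a reconstruction error from the discarded components, which catches points that break the pattern.

```python
import numpy as np

def pca_outlier_scores(X, k):
    """Return (score_distance, reconstruction_error) for each row of X,
    using the top-k principal components."""
    Xc = X - X.mean(axis=0)
    _, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    eigvals = S**2 / (X.shape[0] - 1)
    scores = Xc @ Vt[:k].T
    # Extremeness within the leading components, scaled by their variance.
    score_dist = np.sum(scores**2 / eigvals[:k], axis=1)
    # Squared residual left in the discarded (later) components.
    residual = Xc - scores @ Vt[:k]
    recon_err = np.sum(residual**2, axis=1)
    return score_dist, recon_err

rng = np.random.default_rng(11)
L = rng.normal(size=(2, 6))                        # 2 latent factors, 6 variables
inliers = rng.normal(size=(200, 2)) @ L + 0.1 * rng.normal(size=(200, 6))

# Planted outlier 1: follows the latent pattern but is extreme along it.
on_plane_extreme = np.array([8.0, 8.0]) @ L

# Planted outlier 2: leaves the latent plane entirely.
v = rng.normal(size=6)
v -= np.linalg.pinv(L) @ (L @ v)                   # remove the in-plane part
off_plane = 3.0 * v / np.linalg.norm(v)

X = np.vstack([inliers, on_plane_extreme, off_plane])
score_dist, recon_err = pca_outlier_scores(X, k=2)

print("most extreme in early components:", int(np.argmax(score_dist)))
print("largest reconstruction error:    ", int(np.argmax(recon_err)))
```

Flagged samples still need review before removal, since a high score alone does not say whether the cause is technical or biological.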

Essential Research Reagent Solutions

Table 1: Essential Computational Tools for PCA Workflows

| Tool/Solution | Function | Application Context |
|---|---|---|
| StandardScaler (scikit-learn) | Normalizes data to have mean of 0 and unit variance | Preprocessing step to ensure all features contribute equally to PCA [43] |
| PCA Class (scikit-learn) | Performs principal component analysis | Core PCA computation and transformation [42] |
| syndRomics (R package) | Component visualization, interpretation, and stability | Reproducible analysis of disease spaces via principal components [41] |
| Metware Cloud Platform | Web-based PCA visualization | Generating PCA plots without local installation [44] |
| PyOD (Python) | Comprehensive outlier detection | PCA-based outlier detection implementation [42] |

Comparative Analysis of Multivariate Methods

Method Comparison: PCA vs. Supervised Alternatives

Table 2: Objective Comparison of PCA, PLS-DA, and OPLS-DA for Omics Data Analysis

| Feature | PCA | PLS-DA | OPLS-DA |
|---|---|---|---|
| Type | Unsupervised | Supervised | Supervised |
| Core Function | Exploratory data analysis, quality control | Classification, feature selection | Classification with noise separation |
| Advantages | Data visualization, evaluation of biological replicates, outlier detection | Identifies differential metabolites, builds classification models | Improves accuracy by filtering non-experimental variation |
| Disadvantages | Unable to identify differential metabolites based on groups | May be affected by noise | Higher computational complexity, risk of overfitting |
| Risk of Overfitting | Low | Medium | Medium–High |
| Best Suited For | Exploration, quality assessment | Classification tasks | Classification with improved interpretability |
| Reproducibility Considerations | High (deterministic algorithm) | Medium (depends on group labeling) | Medium (requires careful validation) |

Quantitative Performance Metrics

Table 3: Experimental Performance Comparison Across Method Types

| Performance Metric | PCA | PLS-DA | OPLS-DA |
|---|---|---|---|
| Variance Explained | Components ordered by variance explained | Focus on group separation variance | Separates predictive from orthogonal variance |
| Handling of Technical Variance | Excellent for detection | Moderate (can incorporate in model) | Excellent (separates orthogonal variance) |
| Outlier Detection Capability | High | Medium | Low (focused on group separation) |
| Computational Efficiency | High | Medium | Low |
| Interpretability | High (direct feature contribution) | Medium | High (clear separation of variance types) |

Experimental Protocols for PCA Workflows

Protocol 1: PCA-Based Quality Control and Outlier Detection

Objective: To identify sample outliers and assess data quality through PCA in an unsupervised manner.

Materials and Equipment: StandardScaler from scikit-learn, PCA implementation (scikit-learn or syndRomics), visualization tools (Matplotlib, Seaborn, or syndRomics package).

Procedure:

- Data Preprocessing: Center and scale the preprocessed feature data using StandardScaler to ensure all features contribute equally, regardless of their original scale [35].

- PCA Computation: Decompose the scaled data matrix into principal components using linear algebra to determine eigenvectors and eigenvalues [43].

- Variance Threshold Determination: Calculate cumulative explained variance and set a threshold (commonly 95%) for component selection [43].

- Outlier Identification: Implement standard deviation threshold method using multivariate standard deviation ellipses in PCA space with common thresholds at 2.0 and 3.0 standard deviations [35].

- Visualization: Generate PCA score plots of the first two principal components (PC1 vs. PC2) to visualize sample clustering and potential outliers [44].

Validation: Assess biological replicate consistency through tightness of clustering in PCA space. Calculate reconstruction error for each sample—higher errors indicate potential outliers [42].
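The steps of Protocol 1 can be sketched as follows. This is an illustrative outline under stated assumptions, not the implementation used in the cited work: the data are synthetic, one outlier is planted deliberately, and the "standard deviation ellipse" is approximated by a standardized distance on PC1 and PC2, which is reasonable here because principal component scores are uncorrelated.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(42)

# Hypothetical data: 60 samples x 20 features driven by 3 latent factors
latent = rng.normal(size=(60, 3))
latent[0] = 10.0  # plant one gross outlier: sample 0 is extreme on every factor
X = latent @ rng.normal(size=(3, 20)) + 0.3 * rng.normal(size=(60, 20))

# Step 1: center and scale so every feature contributes equally
X_scaled = StandardScaler().fit_transform(X)

# Step 2: full PCA decomposition
pca = PCA().fit(X_scaled)
scores = pca.transform(X_scaled)

# Step 3: smallest number of components reaching 95% cumulative variance
cum_var = np.cumsum(pca.explained_variance_ratio_)
n_keep = int(np.searchsorted(cum_var, 0.95) + 1)

# Step 4: standardized distance from the origin in the PC1/PC2 plane;
# a value above 3.0 lies outside the 3-SD ellipse
z = scores[:, :2] / scores[:, :2].std(axis=0, ddof=1)
sd_distance = np.sqrt((z ** 2).sum(axis=1))
flagged = np.where(sd_distance > 3.0)[0]
print("flagged samples:", flagged)

# Validation: per-sample reconstruction error from the retained components
X_hat = scores[:, :n_keep] @ pca.components_[:n_keep] + pca.mean_
recon_error = ((X_scaled - X_hat) ** 2).sum(axis=1)
```

The planted outlier follows the latent pattern, so it is caught by the score-space distance and not by the reconstruction error; a sample that breaks the correlation structure would show the opposite behavior. Using both checks covers both cases.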

Protocol 2: Comparative Analysis of Multivariate Methods

Objective: To objectively compare PCA, PLS-DA, and OPLS-DA performance on the same dataset.

Materials and Equipment: Standardized dataset (e.g., Wine Quality Dataset from UCI), scikit-learn environment, Metware Cloud Platform for OPLS-DA, cross-validation tools.

Procedure:

- Data Preparation: Split data into training and test sets (typically 70/30 or 80/20) while maintaining class distributions.

- PCA Implementation: Apply PCA without using group labels to explore natural data structure and identify outliers.

- PLS-DA Implementation: Implement PLS-DA using group labels to force separation and identify features contributing to group differences.

- OPLS-DA Implementation: Apply OPLS-DA with orthogonal signal correction to separate predictive variation from noise.

- Model Validation: Perform internal cross-validation (e.g., 10-fold) for PLS-DA and OPLS-DA to assess overfitting risk [44].

- Performance Metrics: Calculate variance explained, classification accuracy (for supervised methods), and feature importance consistency.

Validation: Use permutation testing to assess statistical significance of supervised models. Apply the syndRomics package to evaluate component stability across resampled datasets [41].

Visualization of PCA Workflows for Reproducible Research

[Figure: PCA Workflow for Reproducibility Assessment]

Advanced Considerations for Reproducible PCA

Component Stability and Reproducibility

A critical aspect of reproducible PCA analysis is assessing component stability across datasets and resampled versions of the same data. The syndRomics R package provides specialized functionality for this purpose, implementing resampling strategies that provide data-driven approaches to analytical decision-making aimed to reduce researcher subjectivity and increase reproducibility [41]. The package offers functions to extract metrics for component and variable significance using nonparametric permutation methods, informing component selection and interpretation [41].

For studies aiming to reproduce PCA components across multiple datasets, it is recommended to:

- Perform bootstrap resampling to assess component stability

- Use the component stability metrics provided by syndRomics

- Compare variable loadings across related datasets

- Assess the consistency of variance explained by comparable components
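The first and third recommendations can be sketched in Python. This is not the syndRomics implementation (an R package); it is a simplified illustration that assumes synthetic data with one strong latent factor and uses the absolute cosine similarity between reference and bootstrap loadings as a stand-in stability metric. The absolute value is needed because the sign of a principal component is arbitrary.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(1)

# Synthetic data (assumption): one strong factor on features 0-3, pure noise on 4-7
n, p = 150, 8
factor = rng.normal(size=(n, 1))
weights = np.array([[1.0, 0.9, 0.8, 0.7, 0.0, 0.0, 0.0, 0.0]])
X = factor @ weights + 0.5 * rng.normal(size=(n, p))
X_scaled = StandardScaler().fit_transform(X)

# Reference loadings from the full dataset
ref = PCA(n_components=2).fit(X_scaled).components_

def abs_cosine(a, b):
    """Absolute cosine similarity; ignores the arbitrary sign of a component."""
    return abs(a @ b) / (np.linalg.norm(a) * np.linalg.norm(b))

n_boot = 200
sims = np.empty((n_boot, 2))
for b in range(n_boot):
    idx = rng.integers(0, n, size=n)  # resample rows with replacement
    boot = PCA(n_components=2).fit(X_scaled[idx]).components_
    sims[b] = [abs_cosine(ref[k], boot[k]) for k in range(2)]

# Mean similarity per component: values near 1 indicate a stable component
print(sims.mean(axis=0))
```

In this setup PC1 reflects a real factor and is recovered in nearly every resample, while PC2 sits among near-equal noise eigenvalues and rotates freely. The same comparison of loadings can be applied between two independent datasets that share the same variables.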

Handling Method-Specific Limitations

Each multivariate method carries specific limitations that impact reproducibility:

PCA Limitations: PCA is sensitive to outliers as it is based on minimizing squared distances of points to components, so remote points can have very large squared distances that disproportionately influence results [42]. To address this, robust PCA variants can be employed where extreme values in each dimension are removed before performing the analysis [42].
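The sensitivity to a single remote point, and the effect of trimming extremes first, can be sketched as follows. The trimming rule used here (a per-feature robust z-score based on the median and MAD, with a cutoff of 5) is an assumption chosen for illustration; the source does not specify a rule.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(0)

# 100 inliers spread along the diagonal, plus one remote point far off it
x1 = rng.normal(size=100)
X = np.column_stack([x1, x1 + 0.3 * rng.normal(size=100)])
X = np.vstack([X, [[20.0, -20.0]]])

def robust_z(X):
    """Per-feature robust z-score using the median and MAD (assumed rule)."""
    med = np.median(X, axis=0)
    mad = np.median(np.abs(X - med), axis=0)
    return np.abs(X - med) / (1.4826 * mad)

# Keep only rows with no extreme value in any dimension
keep = (robust_z(X) < 5.0).all(axis=1)

diagonal = np.array([1.0, 1.0]) / np.sqrt(2.0)
pc1_raw = PCA(n_components=1).fit(X).components_[0]
pc1_trimmed = PCA(n_components=1).fit(X[keep]).components_[0]

# Alignment of PC1 with the true direction of the bulk of the data (1 = perfect)
align_raw = abs(pc1_raw @ diagonal)
align_trimmed = abs(pc1_trimmed @ diagonal)
print(align_raw, align_trimmed)
```

One point out of 101 is enough to turn the first component toward itself, which is why outlier handling should be decided and documented before components are compared across datasets.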

PLS-DA/OPLS-DA Limitations: Supervised methods carry higher risks of overfitting, particularly with small sample sizes. Internal cross-validation is crucial to prevent overfitting in OPLS-DA models [44]. Permutation testing should be used to assess the statistical significance of separation observed in supervised methods.

The choice between PCA, PLS-DA, and OPLS-DA fundamentally depends on the research question and the need for unsupervised exploration versus supervised classification. PCA remains the foundation for quality assessment and outlier detection in omics data analysis, providing a robust, interpretable, and scalable framework for identifying batch effects and outliers before downstream analysis [35]. For researchers focused on assessing reproducibility of components across datasets, PCA's deterministic nature (for a fixed dataset and an exact solver, components are unique up to sign when the eigenvalues are distinct) and well-established stability assessment methods make it particularly valuable.

A typical reproducible workflow begins with PCA for quality control and data exploration, followed by supervised methods like PLS-DA or OPLS-DA when specific group separations are of interest and sufficient validation measures are implemented. Throughout this process, tools like the syndRomics package provide critical functionality for component visualization, interpretation, and stability assessment—essential elements for ensuring that PCA components maintain their meaning and utility across diverse datasets and research contexts [41].

Within the broader thesis of assessing the reproducibility of Principal Component Analysis (PCA) components across datasets, robust data preprocessing emerges as a non-negotiable foundation. The credibility of any downstream multivariate analysis hinges on the steps taken to prepare the data. Research highlights that technical artifacts, if not properly addressed through preprocessing, can masquerade as biological signals, leading to spurious and irreproducible discoveries [35]. This guide objectively compares the performance of different preprocessing techniques—specifically centering, scaling, and methods for handling missing data—in the context of generating stable and reliable PCA outcomes, providing supporting experimental data from relevant fields.

Core Preprocessing Operations for Reproducible PCA

The Critical Role of Centering and Scaling

Centering and scaling are foundational preprocessing steps that directly impact the covariance structure that PCA seeks to capture.

Centering involves adjusting the data so that each feature has a mean of zero. This is achieved by subtracting the mean of each feature from its individual values ( X_{\text{centered}} = X - \mu ) [45]. Centering ensures that the first principal component describes the direction of maximum variance rather than the direction of the mean, which is crucial for correct interpretation [46].
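A small numerical check makes the effect of centering concrete. The sketch below assumes synthetic two-dimensional data whose variance lies mainly along the first axis while the mean lies far away along the second; `first_direction` is a hypothetical helper that returns the leading right singular vector.

```python
import numpy as np

rng = np.random.default_rng(0)

# Variance mostly along x (SD 3 vs. 0.5), mean far from the origin along y
X = rng.normal(size=(500, 2)) * np.array([3.0, 0.5]) + np.array([0.0, 50.0])

def first_direction(M):
    """Leading right singular vector of M (hypothetical helper)."""
    _, _, vt = np.linalg.svd(M, full_matrices=False)
    return vt[0]

uncentered = first_direction(X)                   # points toward the mean (y-axis)
centered = first_direction(X - X.mean(axis=0))    # points along max variance (x-axis)
print(uncentered, centered)
```

scikit-learn's `PCA` subtracts the feature means internally, so the uncentered case mainly arises when the decomposition is computed by hand or with a truncated SVD routine that does not center.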

Scaling adjusts the range of features to ensure they contribute equally to the analysis. This is vital when variables are measured on different scales (e.g., age vs. income) [45]. Without scaling, a feature with a larger native range would disproportionately dominate the principal components, potentially obscuring meaningful patterns [45] [47].

Comparative Performance of Scaling Methods

The choice of scaling technique can lead to different PCA outcomes. The table below summarizes the performance of common methods based on their application in reproducible research.

| Scaling Method | Mathematical Formula | Best-Suited Data Types | Impact on PCA Reproducibility |
|---|---|---|---|
| Standard Scaler (Z-score) | ( X_{\text{scaled}} = \frac{X - \mu}{\sigma} ) [45] | Data assumed to be normally distributed; the default for many scenarios [45] [47]. | Ensures all features have unit variance. Prevents high-variance features from dominating, leading to more stable components [35]. |
| Min-Max Scaler | ( X_{\text{scaled}} = \frac{X - X_{\text{min}}}{X_{\text{max}} - X_{\text{min}}} ) [45] | Data where bounds are known (e.g., images); neural networks with sigmoid activation functions [45]. | Sensitive to outliers. A single extreme value can compress the majority of data, reducing reproducibility in the presence of outliers. |
| Robust Scaler | ( X_{\text{scaled}} = \frac{X - \text{median}(X)}{\text{IQR}(X)} ) [47] | Datasets containing significant outliers [47]. | Mitigates the influence of outliers, enhancing the robustness and reliability of derived components in real-world, noisy data. |
| Max-Abs Scaler | ( X_{\text{scaled}} = \frac{X}{\max \lvert X \rvert} ) [45] | Data that is centered around zero and contains both positive and negative values [45]. | Preserves the sparsity of data. Its effect on reproducibility is similar to Min-Max but less common for general PCA applications. |

Experimental data from omics studies confirms that PCA computation begins by centering and scaling the preprocessed feature data to ensure all features contribute equally, regardless of their original scale [35]. This practice is essential for credible scientific discovery when using PCA for quality assessment.
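The different outlier behavior of the four scalers can be seen on a single feature. The sketch below uses a hypothetical five-value column with one extreme entry and scikit-learn's scaler classes with their default settings.

```python
import numpy as np
from sklearn.preprocessing import (
    MaxAbsScaler,
    MinMaxScaler,
    RobustScaler,
    StandardScaler,
)

# One feature with four ordinary values and one extreme value (hypothetical)
x = np.array([[1.0], [2.0], [3.0], [4.0], [100.0]])

scalers = {
    "standard": StandardScaler(),
    "minmax": MinMaxScaler(),
    "robust": RobustScaler(),   # default: median and 25th-75th percentile range
    "maxabs": MaxAbsScaler(),
}
scaled = {name: s.fit_transform(x).ravel() for name, s in scalers.items()}

# Spread of the four ordinary values after scaling: a small spread means
# the outlier has compressed the bulk of the data
inlier_spread = {name: float(v[:4].max() - v[:4].min()) for name, v in scaled.items()}
for name in scaled:
    print(name, np.round(scaled[name], 3), round(inlier_spread[name], 3))
```

With Min-Max scaling the ordinary values are squeezed into about 3% of the output range, whereas the robust scaler keeps them spread over 1.5 units and leaves the extreme value clearly separated.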

Methodologies for Handling Missing Data

Missing data is a common problem that can introduce bias and reduce the statistical power of an analysis if not handled appropriately [48] [49]. The optimal strategy often depends on the mechanism behind the missingness and the specific dataset.

Experimental Protocols for Common Imputation Methods

Mean/Median/Mode Imputation:

- Methodology: Missing values in a column are replaced with the mean (for continuous data), median (for ordinal data or data with outliers), or mode (for categorical data) of that column's observed values [48] [49].

- Implementation: This is typically implemented using the `fillna()` function in pandas [48].

- Use Case: A quick and simple method suitable for data Missing Completely at Random (MCAR) with a low percentage of missing values.
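A minimal sketch of the three variants with pandas `fillna()` follows. The DataFrame and its column names are hypothetical and chosen so that each imputed value is easy to verify by hand.

```python
import numpy as np
import pandas as pd

# Hypothetical table with one missing value per column
df = pd.DataFrame({
    "expression": [2.0, np.nan, 4.0, 6.0],   # continuous: use the mean
    "grade": [1.0, 2.0, np.nan, 100.0],      # contains an outlier: use the median
    "batch": ["A", "B", None, "B"],          # categorical: use the mode
})

imputed = df.copy()
imputed["expression"] = df["expression"].fillna(df["expression"].mean())
imputed["grade"] = df["grade"].fillna(df["grade"].median())
imputed["batch"] = df["batch"].fillna(df["batch"].mode().iloc[0])
print(imputed)
```

When PCA results are to be compared across datasets, the fill values should be computed on the training or reference data and reused, so that the imputation itself does not differ between datasets.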

k-Nearest Neighbors (KNN) Imputation:

- Methodology: For a data point with a missing value, the algorithm finds the 'k' most similar data points (neighbors) based on other available features. The missing value is then imputed using the mean or mode of the value from these neighbors [49].

- Use Case: A more advanced method that preserves relationships between variables, suitable for data Missing at Random (MAR).
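One way to apply this method is scikit-learn's `KNNImputer`, shown below on a small hypothetical matrix. The imputer measures similarity with a Euclidean distance that skips missing entries and, with default settings, averages the neighbors' values uniformly.

```python
import numpy as np
from sklearn.impute import KNNImputer

# Hypothetical matrix: row 1 is missing its third feature
X = np.array([
    [1.0, 2.0, 3.0],
    [1.1, 2.1, np.nan],
    [0.9, 1.9, 2.9],
    [8.0, 9.0, 10.0],
])

imputer = KNNImputer(n_neighbors=2)
X_imputed = imputer.fit_transform(X)

# Rows 0 and 2 are the two nearest neighbors of row 1 on the observed features,
# so the missing entry becomes the mean of 3.0 and 2.9
print(X_imputed[1, 2])
```

Because the neighbor search is distance-based, features on very different scales should be scaled before imputation; otherwise the largest-scale feature determines which samples count as neighbors.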

Forward Fill/Backward Fill:

- Methodology: In time-series or ordered data, missing values are filled using the last observed value (forward fill) or the next observed value (backward fill) [48].

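- Implementation: Also implemented in pandas with `fillna(method='ffill')` or `fillna(method='bfill')` [48]; recent pandas releases deprecate the `method` argument in favor of the equivalent `ffill()` and `bfill()` methods.

- Use Case: Ideal for ordered data where consecutive values are likely to be similar.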
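A short sketch on a hypothetical daily series shows both directions. It uses `Series.ffill()` and `Series.bfill()`, which behave like `fillna(method='ffill')` and `fillna(method='bfill')` and avoid the deprecation warning raised by recent pandas releases.

```python
import numpy as np
import pandas as pd

# Hypothetical daily measurements with gaps
s = pd.Series(
    [1.0, np.nan, np.nan, 4.0, np.nan],
    index=pd.date_range("2024-01-01", periods=5, freq="D"),
)

forward = s.ffill()    # carry the last observed value forward
backward = s.bfill()   # pull the next observed value backward
print(pd.DataFrame({"raw": s, "ffill": forward, "bfill": backward}))
```

A trailing gap cannot be backward-filled (and a leading gap cannot be forward-filled), so any values still missing after this step need a second strategy before PCA is run.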