Essential Quality Control Metrics for Prokaryotic Gene Annotations: A Guide for Accurate Genomic Analysis

This article provides a comprehensive framework for implementing robust quality control (QC) in prokaryotic gene annotation, a critical step for reliable downstream analysis in microbial genomics and drug development.


Abstract

This article provides a comprehensive framework for implementing robust quality control (QC) in prokaryotic gene annotation, a critical step for reliable downstream analysis in microbial genomics and drug development. We cover foundational QC concepts, practical application of tools like BUSCO and OMArk, strategies for troubleshooting common annotation errors, and methods for the comparative validation of genomic data. By synthesizing current methodologies and emerging best practices, this guide empowers researchers to critically assess and improve their genomic annotations, thereby enhancing the reliability of findings in biomedical and clinical research.

Why Annotation Quality Matters: Foundational Concepts and Impact on Research

Defining Prokaryotic Genome Annotation and the QC Imperative

Frequently Asked Questions (FAQs)

1. What is prokaryotic genome annotation? Prokaryotic genome annotation is a multi-level process that identifies the location and function of genomic elements within bacterial and archaeal genomes. This includes predicting protein-coding genes, structural RNAs, tRNAs, small non-coding RNAs, pseudogenes, and mobile genetic elements like insertion sequences and CRISPR regions [1]. The NCBI Prokaryotic Genome Annotation Pipeline (PGAP) combines ab initio gene prediction algorithms with homology-based methods to provide both structural and functional annotation [1] [2].

2. What are the most common errors in genome submission and annotation? Common errors often relate to incorrect feature formatting, biological source description, or sequence problems [3]. Key issues include:

- Internal stop codons in CDS: Caused by an incorrect genetic code, incorrect CDS location, or a genuine pseudogene. Fix by using the correct genetic code (e.g., gcode=11 for prokaryotes), adjusting the CDS location, or adding the /pseudo qualifier to the gene [3].

- Incorrect protein names: For example, a "hypothetical protein" should not have an associated EC number. The EC number should be removed or a valid product name should be provided based on the EC number [3].

- Missing or misformatted source data: This includes errors in culture collection codes, collection dates, and geographic location names, which must follow specific, controlled formats [3].
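The internal-stop check described in the list above can be approximated locally before submission. The following is a minimal sketch, assuming the CDS is supplied as an in-frame nucleotide string; `internal_stop_positions` is a hypothetical helper name, not part of any NCBI tool. The codon table is built from the amino-acid assignments of NCBI translation table 11, which match the standard code (table 11 differs only in its permitted start codons).

```python
from itertools import product

# NCBI translation table layout: bases in T, C, A, G order, first base varying slowest.
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE_11 = {
    "".join(codon): aa for codon, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)
}


def internal_stop_positions(cds):
    """Return 0-based codon indices of stop codons that occur before the final codon."""
    cds = cds.upper()
    usable = len(cds) - len(cds) % 3  # ignore trailing bases of an incomplete codon
    codons = [cds[i:i + 3] for i in range(0, usable, 3)]
    # The last codon is expected to be the terminal stop, so it is not reported.
    return [i for i, codon in enumerate(codons[:-1]) if CODON_TABLE_11.get(codon) == "*"]


print(internal_stop_positions("ATGTAAAAATAA"))  # codon 1 (TAA) is an internal stop
```

A non-empty result does not say which of the three causes applies; it only tells you which CDS features to inspect for a wrong genetic code, a shifted location, or a genuine pseudogene.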

3. My protein accession (NP/YP) has disappeared. Where did it go? NCBI has implemented a non-redundant protein model to reduce data redundancy. Most NP_ and YP_ accessions have been replaced by non-redundant WP_ accessions. An exception is made for a subset of RefSeq reference genomes, which continue to use NP_ or YP_ accessions that cross-reference the WP_ accessions. You can find the replacement by searching for the original protein accession in NCBI's Protein database, where a message typically links to the new WP_ accession [4].

4. The locus_tags on my RefSeq genome have changed. How can I map the old ones to the new ones?

Locus_tags often change when a genome is re-annotated by NCBI's PGAP. The original locus_tag is typically preserved as an /old_locus_tag qualifier on the gene feature in the current RefSeq record. You can view this in the GenBank flatfile of the genome record [4].
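For genomes with many genes, reading the flatfile by eye is impractical. The sketch below shows one way to pull an old-to-new locus_tag mapping out of GenBank flatfile text using only the standard library. It assumes the usual flatfile layout (feature keys indented by five spaces, qualifiers on their own lines); `map_old_locus_tags` is a hypothetical helper, and the locus_tag values in the example are invented.

```python
import re

# A feature key line starts with exactly five spaces followed by the key (gene, CDS, ...).
FEATURE_KEY = re.compile(r"^ {5}\S")
QUALIFIER = re.compile(r'^\s+/(locus_tag|old_locus_tag)="([^"]+)"')


def map_old_locus_tags(flatfile_text):
    """Return {old_locus_tag: current_locus_tag} for features that carry both qualifiers."""
    features = []  # one record per feature encountered
    for line in flatfile_text.splitlines():
        if FEATURE_KEY.match(line):
            features.append({"new": None, "old": []})
            continue
        match = QUALIFIER.match(line)
        if match and features:
            key, value = match.groups()
            if key == "locus_tag":
                features[-1]["new"] = value
            else:
                features[-1]["old"].append(value)
    return {old: f["new"] for f in features if f["new"] for old in f["old"]}


example = (
    '     gene            190..255\n'
    '                     /locus_tag="ABC_RS00005"\n'
    '                     /old_locus_tag="ABC_0001"\n'
)
print(map_old_locus_tags(example))
```

Because both the gene and its CDS usually carry the same qualifiers, duplicate entries simply overwrite each other with the same value.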

5. What should I do if I believe the name assigned to a non-redundant RefSeq protein is incorrect?

You can contact the NCBI help desk at info@ncbi.nlm.nih.gov. Provide the protein accession in question, the suggested name, and the evidence supporting the change [4].

Troubleshooting Common Annotation Problems

| Problem | Error Message / Symptom | Solution |

|---|---|---|

| Internal Stop Codon | SEQ_FEAT.InternalStop or SEQ_INST.StopInProtein [3] | Verify genetic code; adjust CDS location/reading frame; add /pseudo qualifier if gene is non-functional [3]. |

| Hypothetical Protein with EC Number | SEQ_FEAT.BadProteinName: Unknown or hypothetical protein should not have EC number [3] | Remove EC number if protein is truly hypothetical, or assign a valid product name based on the EC number [3]. |

| Missing or Changed Protein Accession | Former NP/YP accession is no longer found [4] | Search for the old accession in the Protein database; the record will link to the replacement WP_ accession [4]. |

| Changed Locus_Tag | Original locus_tag from submission is not present in RefSeq version [4] | Check the RefSeq record's GenBank flatfile; the original locus_tag is retained as an /old_locus_tag [4]. |

| Poor Annotation Quality | Over-prediction of genes, gene fragmentation, or missing genes [5] | Use quality assessment tools like OMArk to evaluate completeness and contamination. Manually curate annotations using tools like Apollo [6]. |

Quality Control Metrics and Assessment

Robust quality control is indispensable for reliable genomic analysis. Quality can be assessed at multiple levels.

1. PGAP Output Metrics

The NCBI Prokaryotic Genome Annotation Pipeline provides a summary of annotation statistics for each genome, which serves as a primary quality check [7].

Table: Key Quality Metrics from PGAP Output [7]

| Metric | Description | What it Indicates |

|---|---|---|

| Genes (coding) | Number of genes that produce a protein. | The coding potential of the genome. |

| Pseudo Genes (total) | Number of genes with frameshifts, internal stops, or that are incomplete. | Genome degradation or assembly/annotation errors. |

| rRNAs (5S, 16S, 23S) | Counts of complete ribosomal RNA genes. | A hallmark of assembly completeness; low numbers suggest a draft assembly. |

| tRNAs | Number of transfer RNA genes. | Essential for functionality; should be close to expected range for the organism. |

| CRISPR Arrays | Number of clustered repeats. | Identified defense systems. |
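If you only have an annotation file rather than the PGAP summary, comparable counts can be tallied directly. The sketch below assumes a tab-delimited GFF3 file in which the third column holds the feature type; `count_feature_types` is a hypothetical helper, and the exact type names (for example `pseudogene` versus a `pseudo=true` attribute) vary between pipelines, so treat the keys as illustrative.

```python
from collections import Counter


def count_feature_types(gff_lines):
    """Count GFF3 records by feature type (column 3), skipping comments and non-feature lines."""
    counts = Counter()
    for line in gff_lines:
        if not line.strip() or line.startswith("#"):
            continue
        columns = line.rstrip("\n").split("\t")
        if len(columns) >= 9:  # a valid GFF3 feature line has nine columns
            counts[columns[2]] += 1
    return counts


example = [
    "##gff-version 3\n",
    "contig1\tPGAP\tgene\t1\t900\t.\t+\t.\tID=gene0\n",
    "contig1\tPGAP\tCDS\t1\t900\t.\t+\t0\tID=cds0;Parent=gene0\n",
    "contig1\tPGAP\ttRNA\t1200\t1275\t.\t-\t.\tID=rna0\n",
]
print(count_feature_types(example))
```

In practice you would pass an open file handle, for example `count_feature_types(open("annotation.gff"))`, and compare the rRNA and tRNA counts with what is expected for the organism.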

2. Advanced Quality Assessment with OMArk

While tools like BUSCO measure completeness, they are blind to other errors like contamination and gene over-prediction. OMArk is a newer tool that addresses this by comparing a query proteome to precomputed gene families across the tree of life [5]. It assesses:

- Completeness: The proportion of expected conserved ancestral genes present.

- Consistency: The taxonomic and structural consistency of the entire gene repertoire. It flags sequences that are contaminants, fragments, or otherwise inconsistent with the expected lineage [5].

Table: OMArk Quality Assessment Output Categories [5]

| Category | Description | Implication |

|---|---|---|

| Consistent | Proteins fit the expected gene families for the lineage. | High-quality, reliable annotation. |

| Contaminant | Proteins are more similar to genes from another species. | Indicates contamination in the genome assembly or sample. |

| Inconsistent | Proteins placed in gene families outside the expected lineage. | Could be novel genes or annotation errors. |

| Unknown | Proteins with no known gene family assignment. | Novel sequences or spurious gene predictions. |

| Fragments | Proteins less than half the median length of their gene family. | Potential gene model inaccuracies or fragmented assemblies. |

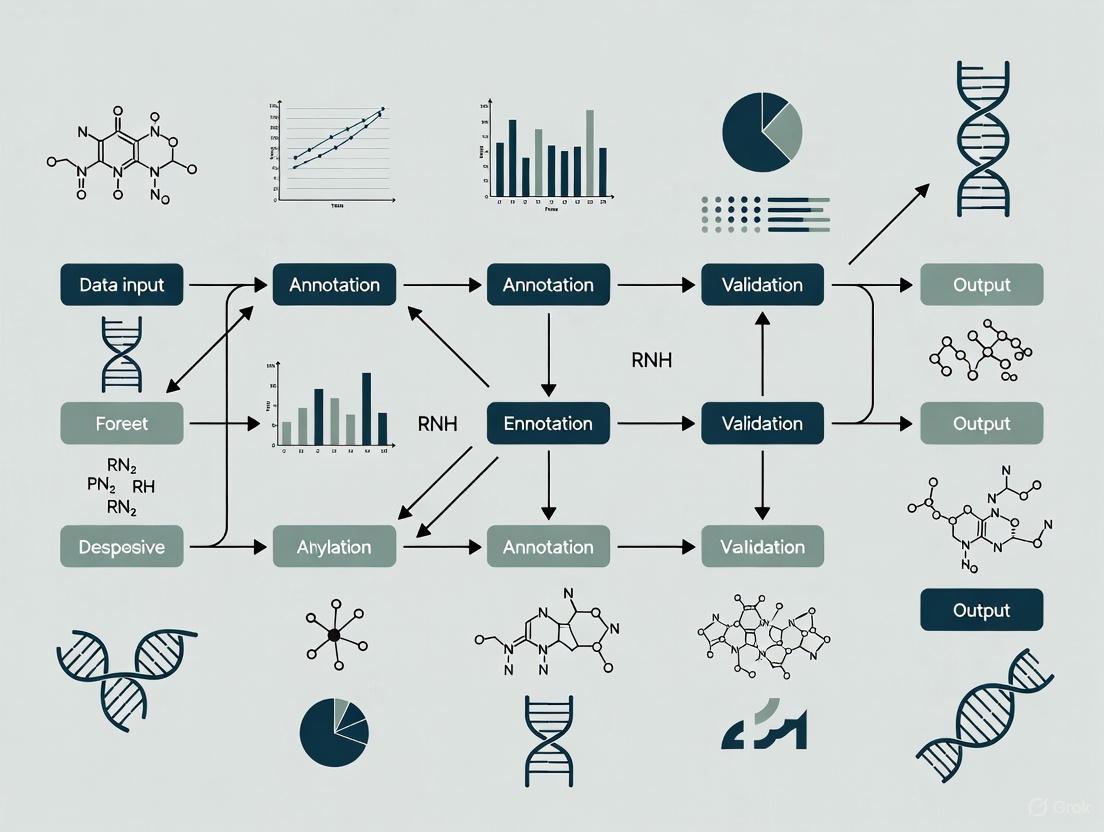

The following diagram illustrates the logical workflow of the OMArk quality assessment process:

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Resources for Prokaryotic Genome Annotation & QC

| Tool / Resource | Function | Use Case |

|---|---|---|

| NCBI PGAP [1] [8] | Automated annotation pipeline for bacterial/archaeal genomes. | Primary structural and functional annotation of genomes for GenBank submission. |

| OMArk [5] | Quality assessment of gene repertoire annotations. | Evaluating proteome completeness, contamination, and gene model errors post-annotation. |

| table2asn [9] | Command-line tool to generate GenBank submission files from a feature table. | Preparing and validating annotation data for submission to GenBank. |

| GeneMarkS-2+ [2] [7] | Ab initio gene prediction algorithm integrating homology evidence. | Core component of PGAP for predicting coding regions, especially those without homology evidence. |

| tRNAscan-SE [7] | Specialized tool for identifying tRNA genes. | Accurate prediction of tRNA genes within the genome. |

| Infernal (cmsearch) [7] | Scans DNA sequences for non-coding RNAs using covariance models (Rfam). | Annotation of structural rRNAs and other non-coding RNAs. |

| Apollo [6] | Web-based, collaborative genome annotation editor. | Manual curation and refinement of automated genome annotations. |

The NCBI Prokaryotic Genome Annotation Pipeline employs a multi-step protocol. The major components and data flow for structural annotation in PGAP are as follows:

- Input: The process begins with a genome assembly (complete or draft) and its predefined taxonomic identity.

- Open Reading Frame (ORF) Prediction: ORFfinder is used to identify all potential ORFs across all six frames of the genome.

- Homology-Based Evidence Collection:

  - Translated ORFs are searched against libraries of protein family hidden Markov models (HMMs), including TIGRFAMs, Pfam, and NCBIfams.

  - Simultaneously, they are aligned using BLAST against curated protein sets, including lineage-specific reference genomes and protein cluster representatives.

- Evidence Integration: The HMM hits and protein alignments are mapped from the ORFs back to the genomic sequence.

- Ab Initio Prediction: GeneMarkS-2+ is run on the genome. Crucially, it uses the mapped homology evidence as "hints" to inform and improve its statistical gene predictions, particularly for defining start codons and genes in regions without strong external evidence.

- Non-Coding RNA Annotation:

  - tRNAs: Identified using tRNAscan-SE with specific parameter sets for Bacteria and Archaea.

  - Other RNAs: Structural rRNAs (5S, 16S, 23S) and small non-coding RNAs are found by searching Rfam models with Infernal's cmsearch.

  - CRISPRs: Identified using PILER-CR and the CRISPR Recognition Tool (CRT).

- Final Feature Determination: The final set of coding sequences (CDSs) is created by combining the supporting homology evidence and the ab initio predictions, resolving conflicts and selecting the best-supported model for each gene.

- Functional Annotation: Predicted proteins are assigned names, gene symbols, EC numbers, and Gene Ontology terms based on hits to the highest-precedence Protein Family Model (HMMs or BlastRules).
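To make the ORF prediction step in the list above more concrete, here is a deliberately simplified six-frame scan. It is an illustrative sketch, not ORFfinder's actual algorithm: it assumes ATG, GTG, and TTG as start codons, reports only start-to-stop ORFs, and the function name and `min_codons` parameter are hypothetical.

```python
STOP_CODONS = {"TAA", "TAG", "TGA"}
START_CODONS = {"ATG", "GTG", "TTG"}  # assumed start codons for this sketch
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def find_orfs(seq, min_codons=30):
    """Return (strand, start, end) tuples in 0-based, end-exclusive forward coordinates."""
    seq = seq.upper()
    n = len(seq)
    reverse_complement = seq.translate(COMPLEMENT)[::-1]
    orfs = []
    for strand, strand_seq in (("+", seq), ("-", reverse_complement)):
        for frame in range(3):
            start = None
            for i in range(frame, n - 2, 3):
                codon = strand_seq[i:i + 3]
                if start is None and codon in START_CODONS:
                    start = i  # first start codon opens the ORF
                elif start is not None and codon in STOP_CODONS:
                    end = i + 3  # include the stop codon
                    if (end - start) // 3 >= min_codons:
                        if strand == "+":
                            orfs.append((strand, start, end))
                        else:
                            # convert reverse-strand offsets to forward coordinates
                            orfs.append((strand, n - end, n - start))
                    start = None
    return orfs


print(find_orfs("CCATGAAAAAAAAATAAG", min_codons=5))
```

A real pipeline then filters these candidates with homology evidence and statistical models, as the later steps describe; the raw scan on its own heavily over-predicts.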

Frequently Asked Questions (FAQs)

Completeness

Q1: How can I assess if my prokaryotic genome annotation contains a complete set of essential genes? Completeness is assessed by checking for the presence of a core set of universal, single-copy orthologs. For prokaryotes, tools like BUSCO (Benchmarking Universal Single-Copy Orthologs) are commonly used. A high-quality, complete genome should have a high percentage of these conserved genes found as single copies. The NCBI Prokaryotic Genome Annotation Pipeline also specifies minimum standards, including the presence of at least one copy each of the 5S, 16S, and 23S structural RNAs, and at least one tRNA for each amino acid [10]. The completeness score is calculated as the proportion of expected conserved ancestral genes present in the query proteome [5].

Q2: My genome assembly is highly fragmented. How does this impact annotation completeness? A fragmented assembly directly leads to a fragmented and incomplete annotation. When assembly contigs are broken, genes may be missing or predicted as partial, especially at the contig ends. This results in a lower count of complete BUSCOs and an inflation in the number of fragmented and missing genes. Furthermore, short contigs cannot resolve repeated genomic regions, which often leads to mis-assemblies and the incorrect collapse of distinct genes into one [11]. For draft genomes, partial coding regions are allowed only at the very end of a contig [10].

Contiguity

Q3: What are the standard metrics for reporting genome assembly contiguity, and what are their limitations? The standard metrics for contiguity are the N50 and L50 values. The N50 is the length of the shortest contig or scaffold such that 50% of the entire assembly is contained in contigs or scaffolds of at least this length. The L50 is the number of contigs or scaffolds that account for 50% of the total assembly size. A major limitation of N50 is that it provides a single data point and can be skewed by a few long sequences. A more comprehensive view is provided by the NG(X) plot, which shows, for every threshold X from 1 to 100% of the estimated genome size (X-axis), the sequence length (Y-axis) at which contigs or scaffolds of that length or longer cover X% of the genome [12]. These metrics gauge contiguity but do not directly report on completeness or correctness.
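The definitions above translate directly into a few lines of code. This is a minimal sketch with a hypothetical function name; passing `genome_size` switches the calculation from N50/L50 (relative to the assembly total) to NG50/LG50 (relative to an estimated genome size).

```python
def n50_l50(lengths, genome_size=None):
    """Return (N50, L50), or (NG50, LG50) when an estimated genome_size is supplied."""
    total = genome_size if genome_size is not None else sum(lengths)
    running = 0
    # Walk contigs from longest to shortest until half of the total is covered.
    for count, length in enumerate(sorted(lengths, reverse=True), start=1):
        running += length
        if running * 2 >= total:
            return length, count
    raise ValueError("contigs cover less than half of the reference size")


contigs = [80, 70, 50, 40, 30, 20]         # total = 290
print(n50_l50(contigs))                    # N50 and L50
print(n50_l50(contigs, genome_size=400))   # NG50 and LG50
```

With these toy lengths, the two longest contigs (80 + 70 = 150) already exceed half of 290, so N50 is 70 and L50 is 2; against a 400-unit genome a third contig is needed, giving NG50 of 50 and LG50 of 3.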

Q4: My contiguity metrics (N50) look good, but my annotation is poor. What could be wrong? High contiguity does not guarantee high annotation quality. The underlying assembly may have other issues that disrupt gene structures, such as:

- Mis-assemblies: Incorrectly joined contigs can create chimeric genes or break real genes.

- Systematic sequencing biases: Regions with extremely high or low GC-content can be missing or poorly sequenced with certain technologies, creating gaps in gene models even within long contigs [11].

- Annotation pipeline errors: The software used for annotation may have mispredicted gene starts, stops, or introns (in eukaryotes). It is crucial to use assessment tools that go beyond contiguity, such as BUSCO for completeness and OMArk for consistency, to identify these issues [5] [12].

Contamination

Q5: What tools can I use to detect contamination in my annotated genome? Several tools are available to detect contamination:

- OMArk: This tool compares your query proteome to known gene families across the tree of life. It identifies proteins that are taxonomically inconsistent with the rest of the proteome and reports them as likely contaminants [5].

- GenomeQC: This toolkit includes a vector contamination check, which blasts the assembly against the UniVec database to identify common cloning vectors or contaminants from the sequencing process [12].

- BlobTools: A tool that uses taxonomy assignment and coverage information to identify and remove contaminant sequences from assemblies [12].

Q6: My analysis shows evidence of contamination. What are the immediate next steps?

- Identify the contaminant source: Use the taxonomic report from tools like OMArk [5] or BlobTools to determine the likely source organism (e.g., bacteria, fungus, or another sample).

- Filter the assembly: Remove the identified contaminant contigs or scaffolds from your assembly file.

- Re-annotate: Run your annotation pipeline on the cleaned assembly. Do not simply remove genes from the old annotation, as the contamination likely affects the underlying sequence.

- Re-assess quality: Re-run your quality assessments (completeness, consistency) on the new, clean annotation to ensure the issue has been resolved.
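The filtering step in the list above amounts to dropping selected records from the assembly FASTA. The following sketch does this with the standard library only; `filter_fasta` is a hypothetical helper and the contig names are invented. It assumes the contaminant list uses the first whitespace-delimited token of each FASTA header as the sequence ID.

```python
def filter_fasta(lines, contaminant_ids):
    """Yield FASTA lines, skipping every record whose ID is in contaminant_ids."""
    keep = True
    for line in lines:
        if line.startswith(">"):
            header = line[1:].split()
            seq_id = header[0] if header else ""
            keep = seq_id not in contaminant_ids
        if keep:
            yield line


assembly = [">contig1 len=4\n", "ACGT\n", ">contig2\n", "GGGG\n", ">contig3\n", "TTTT\n"]
cleaned = list(filter_fasta(assembly, {"contig2"}))
print("".join(cleaned))
```

Because the function works on any iterable of lines, it can stream a large assembly from an open file and write the kept lines to a new file without loading everything into memory.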

Consistency

Q7: What does "taxonomic consistency" mean in genome annotation, and why is it important? Taxonomic consistency measures whether the proteins in your annotated proteome belong to gene families expected for your species' lineage. A high level of consistency indicates that the annotation is reliable and biologically plausible. A significant number of inconsistent proteins can indicate several problems, including:

- Contamination from other species.

- Horizontal gene transfer events.

- Annotation errors, where non-coding sequences have been mis-annotated as protein-coding genes [5].

Q8: How can I check my annotation for consistency and other gene-level errors?

The OMArk software specializes in consistency assessment. It classifies proteins based on their taxonomic origin (consistent, inconsistent, contaminant) and their structural quality compared to their gene family (e.g., fragments, partial mappings) [5]. Additionally, NCBI provides the Discrepancy Report, a tool that performs internal consistency checks (e.g., ensuring no gene is completely contained within another on the same strand) and is available as part of the tbl2asn tool (since superseded by table2asn) used during GenBank submission [10].
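One of the internal consistency checks mentioned above, a gene fully contained within another on the same strand, is easy to reproduce on your own coordinates before submission. This is an illustrative sketch only and does not reproduce the Discrepancy Report itself; the function name and the tuple layout `(gene_id, start, end, strand)` are assumptions.

```python
def contained_genes(genes):
    """Return (inner_id, outer_id) pairs where inner lies entirely within outer on the same strand."""
    flagged = []
    widest = {}  # strand -> (end, gene_id) of the gene reaching furthest right so far
    # Sorting by start, then by descending end, guarantees a container is seen before its contents.
    for gene_id, start, end, strand in sorted(genes, key=lambda g: (g[1], -g[2])):
        previous = widest.get(strand)
        if previous is not None and end <= previous[0]:
            flagged.append((gene_id, previous[1]))
        else:
            widest[strand] = (end, gene_id)
    return flagged


genes = [
    ("geneA", 100, 500, "+"),
    ("geneB", 150, 300, "+"),   # inside geneA, same strand
    ("geneC", 150, 300, "-"),   # same span, opposite strand: not flagged here
    ("geneD", 450, 600, "+"),   # overlaps geneA but is not contained
]
print(contained_genes(genes))
```

Flagged pairs are candidates for review rather than automatic errors; a short spurious ORF inside a well-supported gene is the typical case to remove.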

General Quality

Q9: What are the minimum annotation standards for a complete prokaryotic genome? According to NCBI standards, a complete prokaryotic genome annotation should meet the following minimum requirements [10]:

- Structural RNAs: At least one copy each of 5S, 16S, and 23S rRNA with appropriate length.

- tRNAs: At least one copy for each amino acid.

- Protein-coding density: The ratio of protein-coding gene count to genome length should be close to 1 when genome length is expressed in kilobases (roughly one gene per kb).

- Non-overlapping genes: No gene should be completely contained within another on the same or opposite strand.

- No partial features: All features should be complete, with exceptions only for draft genomes at contig ends.
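Several of the minimum requirements listed above can be screened with simple arithmetic. The sketch below is a hypothetical helper, not an NCBI tool. It assumes that the density ratio is read as genes per kilobase, that rRNA types are given as the strings "5S", "16S", and "23S", and that tRNAs are summarized by the three-letter code of the amino acid they carry.

```python
STANDARD_AMINO_ACIDS = {
    "Ala", "Arg", "Asn", "Asp", "Cys", "Gln", "Glu", "Gly", "His", "Ile",
    "Leu", "Lys", "Met", "Phe", "Pro", "Ser", "Thr", "Trp", "Tyr", "Val",
}
REQUIRED_RRNAS = {"5S", "16S", "23S"}


def check_minimum_standards(genome_length_bp, n_coding_genes, rrna_types, trna_amino_acids):
    """Summarize which minimum requirements are unmet and report gene density per kb."""
    genes_per_kb = n_coding_genes / (genome_length_bp / 1000)
    return {
        "missing_rrnas": sorted(REQUIRED_RRNAS - set(rrna_types)),
        "missing_trnas": sorted(STANDARD_AMINO_ACIDS - set(trna_amino_acids)),
        "genes_per_kb": round(genes_per_kb, 2),
    }


report = check_minimum_standards(
    genome_length_bp=4_600_000,
    n_coding_genes=4300,
    rrna_types=["5S", "16S", "23S"],
    trna_amino_acids=STANDARD_AMINO_ACIDS - {"Trp"},
)
print(report)
```

In this invented example the density is about 0.93 genes per kb and one tRNA (Trp) is missing, which would prompt a closer look at the tRNA predictions or the assembly.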

Q10: Where should I submit my annotated genome and what quality checks will it undergo? Annotated genomes are typically submitted to the International Nucleotide Sequence Database Collaboration (INSDC) databases, which include GenBank (NCBI), the European Nucleotide Archive (ENA), and the DNA Data Bank of Japan (DDBJ). NCBI's RefSeq project provides derived, non-redundant reference sequences [13]. During submission to GenBank, your annotation will be run through NCBI's annotation assessment tools, including the Discrepancy Report and a frameshift check, to identify common problems before the record is made public [10].

Troubleshooting Guides

Problem 1: Low Completeness Score

Issue: Your genome annotation is missing a large number of conserved, single-copy genes according to a BUSCO or OMArk analysis.

Step-by-Step Diagnosis and Solution:

Verify the Assembly:

- Action: Check the assembly contiguity (N50) and completeness (assembly BUSCO).

- Rationale: A fragmented or incomplete genome assembly is the most common cause of missing genes. If the DNA sequence is absent, the gene cannot be annotated.

- Tool: Use GenomeQC or QUAST to get assembly metrics [12].

Investigate Sequencing Bias:

- Action: If the assembly appears contiguous, check for regions of low coverage, which may indicate GC-bias.

- Rationale: Technologies like Illumina struggle with extremely high or low GC-content regions, leading to gaps [11].

- Solution: Consider sequencing with a technology less prone to such bias (e.g., PacBio) or using a PCR-free library preparation.

Review Annotation Evidence:

- Action: Examine the missing genes in a genome browser. Check if there is underlying genomic sequence but no gene model.

- Rationale: The annotation pipeline may have failed to predict genes in otherwise intact genomic regions.

- Solution: Improve the annotation by providing transcriptomic (RNA-seq) evidence to guide the gene prediction. For prokaryotes, ensure the pipeline is using the appropriate genetic code.
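When investigating the sequencing-bias step above, it can help to locate windows of extreme GC content and compare them with coverage drops or missing genes. The following is a minimal sketch; the function name, window size, and step are hypothetical choices, and what counts as "extreme" depends on the organism and sequencing platform.

```python
def gc_windows(seq, window=1000, step=500):
    """Return (start, gc_fraction) for sliding windows across a sequence."""
    seq = seq.upper()
    results = []
    for start in range(0, max(len(seq) - window, 0) + 1, step):
        chunk = seq[start:start + window]
        if chunk:
            gc = (chunk.count("G") + chunk.count("C")) / len(chunk)
            results.append((start, round(gc, 3)))
    return results


print(gc_windows("GGCCAATT", window=4, step=4))
```

Windows whose GC fraction lies far from the genome-wide average are the first places to check for low read depth.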

Problem 2: Suspected Contamination

Issue: OMArk or a similar tool reports a significant proportion of proteins as taxonomically inconsistent, suggesting contamination.

Step-by-Step Diagnosis and Solution:

Confirm and Localize:

Filter the Assembly:

- Action: Create a new, clean version of your genome assembly file (.fasta) by removing all contaminant contigs.

- Rationale: The root of the problem is the sequence data itself; the annotation is just a symptom.

Re-annotate the Genome:

- Action: Run your entire annotation pipeline (structural and functional) on the cleaned assembly.

- Rationale: Generating a new annotation from the clean data is the only way to ensure a correct result.

Validate the Clean Annotation:

- Action: Re-run OMArk and BUSCO on the new annotation.

- Expected Outcome: The proportion of inconsistent/contaminant proteins should drop to near zero, and the completeness score should remain high for your target organism.

Problem 3: Inconsistent Gene Annotations Across Releases

Issue: Gene identifiers (like locus_tags) or protein accessions change or disappear between annotation releases or database updates, disrupting your analysis.

Background and Solution:

- Background: NCBI has undertaken a large-scale re-annotation of prokaryotic genomes to improve consistency and reduce redundancy. This has resulted in the replacement of many strain-specific NP/YP protein accessions with non-redundant WP_ accessions and changes to locus_tags [4].

- Solution:

  - Find Replacements: For a discontinued locus_tag, check the current RefSeq genome record. The original locus_tag is often preserved as an /old_locus_tag qualifier alongside the new one [4].

  - Map Proteins: For a removed protein accession, navigate to its record in the Protein database. A message will often link to the replacement non-redundant WP_ accession. You can also use the "Identical Proteins" report to find all genomes that code for that protein [4].

  - Use Stable Identifiers: Where possible, use the non-redundant WP_ accessions for comparative analyses, as these are more stable across strains and species.

Quantitative Data and Assessment Tools

Table 1: Key Quality Metrics and Target Values

| Quality Dimension | Metric | Tool(s) | Target (Prokaryotic Genome) |

|---|---|---|---|

| Completeness | BUSCO Score [5] [12] | BUSCO, OMArk | >95% (Complete + Single) |

| Completeness | rRNA & tRNA Presence [10] | Manual inspection, PGAP | 5S, 16S, 23S, & 20 tRNAs |

| Contiguity | N50/NG50 [12] | GenomeQC, QUAST | As high as possible, context-dependent |

| Contiguity | L50/LG50 [12] | GenomeQC, QUAST | As low as possible |

| Contamination | Inconsistent Proteins [5] | OMArk | < 1-2% |

| Contamination | Vector Contamination [12] | GenomeQC (UniVec BLAST) | 0% |

| Consistency | Taxonomic Consistency [5] | OMArk | >95% |

| Consistency | Structural Consistency [5] | OMArk | Low % of fragments/partial genes |

| Consistency | Internal Annotation Consistency [10] | NCBI Discrepancy Report | 0 errors |
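The numeric targets in Table 1 can be applied as a simple pass/fail gate once the individual tools have been run. The sketch below is one possible arrangement, not an established tool: the metric names are hypothetical keys you would fill from your own BUSCO, OMArk, GenomeQC, and Discrepancy Report outputs, and the upper bound of the "< 1-2%" range is used for inconsistent proteins.

```python
import operator

# (comparison, threshold) pairs taken from Table 1; the key names are illustrative.
TARGETS = {
    "busco_complete_pct": (operator.gt, 95.0),
    "taxonomic_consistency_pct": (operator.gt, 95.0),
    "inconsistent_protein_pct": (operator.lt, 2.0),
    "vector_contamination_pct": (operator.eq, 0.0),
    "discrepancy_errors": (operator.eq, 0),
}


def failed_targets(metrics):
    """Return the names of supplied metrics that do not meet their Table 1 target."""
    failures = []
    for name, (passes, threshold) in TARGETS.items():
        if name in metrics and not passes(metrics[name], threshold):
            failures.append(name)
    return failures


metrics = {
    "busco_complete_pct": 98.4,
    "taxonomic_consistency_pct": 93.0,
    "inconsistent_protein_pct": 0.5,
    "vector_contamination_pct": 0.0,
    "discrepancy_errors": 3,
}
print(failed_targets(metrics))
```

Context-dependent metrics such as N50 are left out on purpose, since Table 1 gives no fixed threshold for them.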

Table 2: Comparison of Genome Quality Assessment Tools

| Tool | Primary Function | Key Features | Pros | Cons |

|---|---|---|---|---|

| OMArk [5] | Proteome quality assessment | Assesses completeness & taxonomic/structure consistency using gene families. | Identifies contamination and dubious genes; provides holistic quality view. | Newer tool; requires proteome as input. |

| BUSCO [5] [12] | Gene space completeness | Reports % of conserved universal single-copy orthologs found. | Standardized, easy-to-interpret metric. | Blind to contamination and gene over-prediction. |

| GenomeQC [12] | Integrated assembly & annotation assessment | Combines N50, BUSCO, contamination check, and LTR Assembly Index (LAI). | Comprehensive and user-friendly web interface. | LAI is more relevant for eukaryotic repeats. |

| NCBI PGAP [10] | Prokaryotic Genome Annotation Pipeline | Provides structural and functional annotation following standards. | Integrated into the NCBI submission process; uses official standards. | Primarily an annotation tool, not an assessment tool. |

Experimental Protocols

Protocol 1: Comprehensive Genome Quality Assessment Workflow

This protocol describes a holistic approach to assessing the quality of an annotated prokaryotic genome, integrating multiple tools.

Purpose: To evaluate a prokaryotic genome assembly and its annotation across the four key dimensions of completeness, contiguity, contamination, and consistency.

Materials:

- Input Files:

  - genome_assembly.fasta: The assembled genome sequence.

  - annotation.gff: The structural annotation file in GFF format.

  - proteome.fasta: The predicted protein sequences.

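- Software: GenomeQC (Docker pipeline), BUSCO, and OMArk (web server or local installation), as used in the procedure below.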

Procedure:

- Assembly Contiguity and Contamination Check:

  - Run the GenomeQC Docker pipeline on your genome_assembly.fasta.

  - Output: The pipeline will generate NG(X) plots, N50/L50 metrics, and a report on vector contamination.

  - Interpretation: A steady NG(X) curve and high N50 indicate good contiguity. Any vector contamination must be investigated and removed.

- Gene Space Completeness Assessment:

  - Run BUSCO in genome mode on genome_assembly.fasta using the appropriate prokaryotic dataset (e.g., bacteria_odb10).

  - Output: A summary table with percentages of complete (single and duplicated), fragmented, and missing BUSCOs.

  - Interpretation: A high-quality genome should have >95% complete BUSCOs, most of which are single-copy.

- Proteome Consistency and Contamination Assessment:

  - Submit your proteome.fasta file to the OMArk web server or run it locally.

  - Output: OMArk produces a report detailing completeness relative to the lineage, taxonomic consistency, and structural consistency.

  - Interpretation: A high-quality annotation will show high completeness, high taxonomic consistency (>95%), and a low proportion of fragmented and inconsistent proteins.
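For the BUSCO step in the procedure above, the result is usually read from the one-line score string in the short summary file. The sketch below parses that string, assuming the `C:..%[S:..%,D:..%],F:..%,M:..%,n:..` layout; newer BUSCO releases may append further fields, which this pattern simply ignores. The function name and the example values are hypothetical.

```python
import re

BUSCO_LINE = re.compile(
    r"C:(?P<C>[\d.]+)%\[S:(?P<S>[\d.]+)%,D:(?P<D>[\d.]+)%\],"
    r"F:(?P<F>[\d.]+)%,M:(?P<M>[\d.]+)%,n:(?P<n>\d+)"
)


def parse_busco_summary(text):
    """Extract complete, single, duplicated, fragmented, missing percentages and marker count."""
    match = BUSCO_LINE.search(text)
    if match is None:
        raise ValueError("no BUSCO score line found")
    scores = {key: float(value) for key, value in match.groupdict().items() if key != "n"}
    scores["n"] = int(match.group("n"))
    return scores


scores = parse_busco_summary("C:98.4%[S:97.6%,D:0.8%],F:0.8%,M:0.8%,n:124")
print(scores["C"] > 95.0, scores)
```

The parsed values can then be compared with the >95% completeness target used in the interpretation step, and the duplicated fraction (D) inspected as a possible hint of contamination or redundancy.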

Troubleshooting:

- Low BUSCO completeness: Refer to Troubleshooting Guide: Problem 1.

- High contamination in OMArk: Refer to Troubleshooting Guide: Problem 2.

Protocol 2: Exon-by-Exon Ortholog Annotation for Quality Validation

This protocol, adapted from a eukaryotic annotation exercise [14], provides a manual method to validate computationally predicted gene models by mapping exons from a known ortholog. This is especially useful for verifying problematic gene calls.

Purpose: To manually verify and correct the structure of a specific gene model using a trusted ortholog from a related species.

Materials:

- A trusted protein sequence (the ortholog) from a well-annotated reference organism.

- The genomic sequence of your organism (the target).

- NCBI BLAST suite.

Procedure:

- Identify Ortholog: Use your gene of interest from the target organism to BLAST against the reference organism's proteome to identify the correct ortholog [14].

- Retrieve Exon Sequences: Obtain the individual coding sequence (CDS) for each exon of the reference ortholog from a database like Gene Record Finder or Ensembl.

- Map Exons Individually:

  - For each reference exon, perform a blastx search against the entire target genomic sequence.

  - Use BLAST parameters: turn off "low complexity regions" filter and set "Compositional adjustments" to "No adjustment" [14].

- Determine Exon Coordinates:

  - From the BLAST results, record the start and end DNA base coordinates in the target genome for the alignment to each reference exon. Note the reading frame used in the alignment [14].

- Construct Gene Model:

  - Compile the coordinates of all exons to build a validated gene model for your target organism.

  - Compare this model to the one predicted by your annotation pipeline. Discrepancies (e.g., missing exons, incorrect boundaries) indicate errors in the computational prediction.


The Scientist's Toolkit

Table 3: Essential Research Reagents and Software for Quality Control

| Item Name | Type | Function/Benefit | Key Feature |

|---|---|---|---|

| BUSCO [5] [12] | Software | Assesses gene repertoire completeness by searching for universal single-copy orthologs. | Provides a simple, quantitative score (C%, D%, F%, M%). |

| OMArk [5] | Software | Assesses proteome quality for completeness, contamination, and consistency against gene families. | Identifies mis-annotated and contaminant sequences that BUSCO misses. |

| GenomeQC [12] | Software / Web App | Integrates multiple metrics (N50, BUSCO, contamination) for a unified assembly & annotation report. | User-friendly interface and comprehensive Docker pipeline. |

| NCBI PGAP [10] | Software Pipeline | Annotates prokaryotic genomes according to established standards for GenBank submission. | Ensures compliance with NCBI structural and functional annotation rules. |

| NCBI Discrepancy Report [10] | Software Tool | Checks annotation for internal consistency (e.g., overlapping genes, partial features). | Critical for catching errors before database submission. |

| UniVec Database [12] | Database | A database of common vector and adapter sequences. | Used by tools like GenomeQC to identify and flag vector contamination. |

| OMA Database [5] | Database | A repository of gene families and hierarchical orthologous groups (HOGs). | Serves as the reference database for OMArk's taxonomic placement. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the concrete risks of using a poorly annotated genome in my comparative genomics study? Poor annotation directly compromises the validity of your study's findings. Key risks include:

- Inaccurate Evolutionary Models: Misannotated gene repertoires lead to incorrect inferences about gene family evolution, including false conclusions about gene duplication or loss events [5]. This distorts the understanding of evolutionary relationships [15].

- Flawed Functional Predictions: Inferring function from annotation when the underlying gene models are wrong (e.g., fragmented or fused genes) will misguide hypotheses about gene function and regulation in the species of interest [15].

- Propagation of Errors: Using a poorly annotated genome as a reference for annotating other genomes can cause errors to spread through the scientific literature. OMArk analysis, for instance, identified error propagation in avian gene annotations stemming from a fragmented zebra finch proteome used as a reference [5].

FAQ 2: How can poor annotation derail a drug target discovery project? The success of target-based drug discovery hinges on the accurate identification and characterization of the target [16]. Poor annotation introduces significant risks:

- Target Misidentification: Pursuing a "gene" that is, in fact, an annotation error (e.g., a mispredicted non-coding sequence or a fragment) wastes resources and can lead to late-stage project failure [5].

- Overlooked Therapeutic Targets: Poorly annotated genes are often excluded from consideration. Research shows that a significant portion of human genes lack phenotype associations in major databases, meaning potential drug targets are being missed [17]. Methods like phylogenetic profiling are specifically designed to help associate these poorly annotated genes with diseases [17].

- Faulty Insights from Omics Data: Integrative analyses that use transcriptomic or proteomic data rely on a correct catalog of genes. Inaccurate annotation undermines network-based and machine learning methods used for drug-target interaction prediction [16].

FAQ 3: What are the key metrics and tools I can use to assess the quality of a genome annotation before using it? You should assess both completeness and consistency. The table below summarizes the purpose of two key tools and the metrics they provide.

Table: Key Tools for Gene Repertoire Quality Assessment

| Tool | Primary Purpose | Key Quality Metrics | What a Good Result Looks Like |

|---|---|---|---|

| BUSCO [5] | Assesses completeness of a gene repertoire based on universal single-copy orthologs. | Percentage of expected conserved genes found (as single-copy, duplicated, or fragmented). | High percentage (>95%) of complete, single-copy BUSCOs. |

| OMArk [5] | Assesses completeness and consistency of the entire gene repertoire relative to an evolutionary lineage. | Completeness; proportion of consistent proteins; proportion of contaminants, fragments, and inconsistent genes. | High completeness and a high proportion (>95%) of consistent proteins. |

Troubleshooting Guides

Problem: Inconsistent or Unexpected Results in Comparative Genomics Analysis

1. Issue: Anomalous Gene Family Counts

- Potential Cause: Widespread gene prediction errors, leading to either massive over-prediction (many spurious genes) or under-prediction (many missing genes) [5].

- Diagnostic Steps:

- Solution: Re-annotate the genome using a standardized, evidence-based pipeline and manually review gene models in problematic regions.

2. Issue: Suspected Contamination in Genome Assembly

- Potential Cause: The genomic sequence data contains DNA from another organism, which has subsequently been annotated as genuine genes of the target species.

- Diagnostic Steps:

- Use OMArk, which specifically identifies contamination by detecting an overrepresentation of proteins that map to gene families from a taxonomic lineage different from the main species [5].

- For prokaryotes, ensure annotation follows NCBI standards, which include checks for appropriate numbers of rRNA and tRNA genes [10].

- Solution: Identify and remove contaminated contigs from the assembly before re-annotation.

Problem: Failure to Identify a Novel Drug Target

1. Issue: The "Poorly Annotated Gene" Blind Spot

- Potential Cause: Many genes with unknown function or poor annotation are excluded from candidate lists because existing gene-prioritization tools depend on existing data, a phenomenon known as the "rich get richer" [17].

- Diagnostic Steps:

- Check if your candidate gene list is biased towards well-studied genes. Cross-reference with databases like OMIM to see if genes lack established phenotype associations [17].

- Use a tool like EvORanker, which uses phylogenetic profiling to link genes to clinical phenotypes without relying solely on prior annotation [17].

- Solution: Incorporate unbiased methods like clade-wise phylogenetic profiling into your target identification workflow to systematically evaluate poorly annotated genes [17].

2. Issue: Inability to Reproduce Drug-Target Interactions

- Potential Cause: The annotated gene sequence is incorrect, leading to the production of an invalid protein target for in vitro assays.

- Diagnostic Steps:

- Use a tool like NESSie to detect potential annotation errors in your training data or reference datasets [18].

- Validate the gene model experimentally (e.g., via RT-PCR and Sanger sequencing) to confirm the annotated transcript structure.

- Solution: Always validate the sequence of a potential drug target at the DNA and transcript level before initiating high-throughput screening.

Experimental Protocols for Quality Control

Protocol 1: Assessing Proteome Quality with OMArk

This protocol uses OMArk to evaluate the completeness and consistency of a eukaryotic proteome [5].

- Input Preparation: Obtain your proteome in a FASTA format file.

- Software Execution:

- Web Server (Easiest): Upload the FASTA file to the OMArk web server (https://omark.omabrowser.org).

- Command Line (Advanced): Install the OMArk Python package and the required OMAmer database from the OMA browser.

- Results Interpretation: Analyze the output:

- Completeness Report: The proportion of conserved ancestral genes present indicates completeness.

- Consistency Assessment: A high percentage of "consistent" proteins indicates reliable annotation. A high percentage of "fragments," "inconsistent" or "contaminant" proteins indicates potential issues.

- Troubleshooting: If contamination is detected, OMArk will report the likely contaminant taxon, allowing you to filter those sequences.
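
For the command-line route, a run typically has two stages: placing proteins into gene families with OMAmer, then summarizing those placements with OMArk. The sketch below only builds the two command lines and does not execute them. The flags shown are written as recalled from the OMArk documentation and should be treated as assumptions to confirm with `omamer search --help` and `omark --help`; the file names (`proteome.faa`, `LUCA.h5`, `omark_output`) are placeholders.

```python
import shlex


def omark_commands(proteome_fasta, omamer_db, out_dir):
    """Build the two command lines: OMAmer placement, then OMArk assessment."""
    omamer_out = proteome_fasta + ".omamer"
    # Stage 1 (assumed flags): map each protein to a gene family.
    search = ["omamer", "search", "--db", omamer_db,
              "--query", proteome_fasta, "--out", omamer_out]
    # Stage 2 (assumed flags): compute completeness and consistency.
    assess = ["omark", "-f", omamer_out, "-d", omamer_db, "-o", out_dir]
    return search, assess


for cmd in omark_commands("proteome.faa", "LUCA.h5", "omark_output"):
    print(shlex.join(cmd))
```

Returning argument lists rather than shell strings keeps the commands safe to pass to `subprocess.run` once the flags have been verified.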

The following diagram illustrates the logical workflow of the OMArk analysis process:

Protocol 2: Linking Poorly Annotated Genes to Phenotypes with EvORanker

This protocol outlines how to use EvORanker to associate poorly annotated genes with rare disease phenotypes, a common scenario in target identification [17].

- Data Input: Provide clinical phenotype data and the list of mutated genes from a patient's sequencing data.

- Algorithm Execution: EvORanker integrates this data with multi-scale phylogenetic profiles and other omics data from the STRING database.

- Prioritization: The algorithm prioritizes candidate disease genes by identifying functionally related genes through patterns of evolutionary conservation across 1,028 eukaryotic genomes.

- Validation: The top candidate genes (e.g., DLGAP2, LPCAT3 in the original study) require functional validation in the lab.

The workflow for this method is summarized in the following diagram:

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Resources for Managing Annotation Quality

| Resource / Tool | Function / Purpose | Relevant Use Case |

|---|---|---|

| OMArk [5] | Provides a comprehensive quality assessment of a eukaryotic proteome, evaluating completeness, contamination, and gene model errors. | First-line check for any proteome before use in comparative genomics or target identification. |

| EvORanker [17] | An algorithm that uses phylogenetic profiling to link mutated genes, especially poorly annotated ones, to clinical phenotypes. | Prioritizing candidate disease genes from sequencing data where standard methods fail. |

| BUSCO [5] | Benchmarks universal single-copy orthologs to assess the completeness of a genome assembly and annotation. | A quick and standard check for gene repertoire completeness. |

| NCBI Prokaryotic Annotation Standards [10] | Defines the minimum standards and quality checks for annotating prokaryotic genomes. | Ensuring your prokaryotic genome annotation meets community-accepted quality levels. |

| NESSie [18] | A software package that uses various methods to automatically detect potential label errors in annotated corpora. | Checking for inconsistencies in manually curated training data used for gene prediction models. |

| DARTS [16] | A drug affinity responsive target stability method that identifies drug targets by monitoring ligand-induced protein stability. | Experimentally validating a predicted drug target by confirming the interaction between a small molecule drug and its putative protein target. |

| HUGO Gene Nomenclature Committee (HGNC) [19] | The central authority for approving unique and standardized human gene names and symbols. | Ensuring clear and consistent communication about human genes in research and publications. |

Pipeline Comparison and Selection Guide

The selection of an appropriate annotation pipeline is a critical quality control decision. The table below provides a systematic comparison of three major pipelines to guide researchers.

Table 1: Comparative Overview of Prokaryotic Genome Annotation Pipelines

| Feature | NCBI PGAP | PROKKA | RAST |

|---|---|---|---|

| Primary Use Case | High-quality, standardized annotation for GenBank submission [1] [20] | Rapid annotation for initial analysis and draft genomes [21] | User-friendly web-based system with metabolic subsystem analysis [22] |

| Annotation Strategy | Hybrid: Combines homology-based (HMMs, BLAST) and ab initio (GeneMarkS-2+) methods [20] [2] | Hybrid: Relies on curated databases for homology and tools like Prodigal for ab initio prediction [22] [21] | Homology-based leveraging the SEED database and subsystem technology [22] |

| Typical Output | Comprehensive GenBank-ready files with functional assignments, EC numbers, and Gene Ontology terms [20] [8] | Standards-compliant files (GFF, GBK, FAA) for visualization and downstream analysis [21] | Functional roles, subsystem coverage, and metabolic network reconstruction [22] |

| Gene Naming | Follows International Protein Nomenclature Guidelines [20] | Uses user-defined locus tag prefix [21] | Internal naming convention |

| Ideal User | Submitters to public repositories, users requiring NCBI compliance [1] | Bioinformaticians needing quick, local annotation for multiple genomes [21] | Users preferring a web interface with minimal setup for metabolic insights [22] |

Frequently Asked Questions (FAQs) and Troubleshooting

Q1: My PGAP annotation results in many "pseudo" genes. Is this a problem with my genome assembly?

Not necessarily. The PGAP pipeline annotates genes as pseudogenes when it detects frameshifts, internal stop codons, or when it cannot find a start or stop codon for an evidence-based protein match [20]. This can indicate a true biological event or a sequencing/assembly error. As a quality control metric, a very high proportion of pseudogenes (e.g., >10%) may suggest a problematic assembly requiring improvement. A lower percentage is expected in natural isolates due to authentic gene decay [20].
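
The pseudogene proportion can be estimated directly from an annotation file. The following is a minimal sketch, assuming a GFF3 file in which pseudogenes appear either as `pseudogene` features or as `gene` features carrying a `pseudo=true` attribute (the convention used in NCBI-style GFF3; other pipelines may differ). The function name and the toy records are hypothetical.

```python
def pseudogene_fraction(gff3_text):
    """Return (pseudo_count, gene_count, fraction) for gene-level GFF3 rows."""
    genes = 0
    pseudo = 0
    for line in gff3_text.splitlines():
        if not line or line.startswith("#"):
            continue  # skip blank lines, directives, and comments
        cols = line.split("\t")
        if len(cols) != 9 or cols[2] not in ("gene", "pseudogene"):
            continue  # only count gene-level features
        genes += 1
        attributes = cols[8].split(";")
        # Assumed convention: pseudogenes are marked with pseudo=true.
        if cols[2] == "pseudogene" or "pseudo=true" in attributes:
            pseudo += 1
    fraction = pseudo / genes if genes else 0.0
    return pseudo, genes, fraction


# Toy records for illustration only.
rows = [
    ["contig1", "PGAP", "gene", "1", "900", ".", "+", ".", "ID=gene0;locus_tag=X_0001"],
    ["contig1", "PGAP", "gene", "1000", "1500", ".", "-", ".",
     "ID=gene1;locus_tag=X_0002;pseudo=true"],
    ["contig1", "PGAP", "CDS", "1", "900", ".", "+", "0", "ID=cds0;Parent=gene0"],
]
gff = "##gff-version 3\n" + "\n".join("\t".join(r) for r in rows)
pseudo, genes, frac = pseudogene_fraction(gff)
print(pseudo, genes, frac)
```

A fraction above the 10% example threshold mentioned above would be a prompt to inspect the assembly, not proof of a problem.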

Q2: When using PROKKA for a novel bacterium, the number of predicted genes seems low. How can I improve sensitivity?

PROKKA's speed comes from using curated databases, which may lack representatives for highly divergent or novel lineages [22]. To enhance sensitivity:

- Use the `--proteins` option to provide a custom database of proteins from closely related organisms.

- Enable the `--rfam` option to search for non-coding RNAs using the Rfam database, which is not enabled by default due to speed considerations [21].

- Adjust the `--evalue` threshold to be less strict (e.g., `1e-05`) to capture weaker, but potentially valid, homology hits [21].
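
The three options can be combined in a single invocation. The sketch below assembles such a command as an argument list without running it. The option names `--proteins`, `--rfam`, `--evalue`, `--outdir`, and `--prefix` are PROKKA options, while the helper function and all file names are hypothetical placeholders.

```python
import shlex


def prokka_command(contigs, outdir, prefix, proteins=None, rfam=False, evalue=None):
    """Assemble a PROKKA command line with optional sensitivity settings."""
    cmd = ["prokka", "--outdir", outdir, "--prefix", prefix]
    if proteins:
        cmd += ["--proteins", proteins]  # trusted proteins from close relatives
    if rfam:
        cmd.append("--rfam")  # enable the slower Rfam ncRNA search
    if evalue is not None:
        cmd += ["--evalue", str(evalue)]  # similarity e-value cut-off
    cmd.append(contigs)  # input assembly goes last
    return cmd


cmd = prokka_command("contigs.fa", "annotation_out", "isolate1",
                     proteins="relatives.faa", rfam=True, evalue=1e-05)
print(shlex.join(cmd))
```

Relaxing the e-value cut-off trades specificity for sensitivity, so the extra hits it produces deserve closer review.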

Q3: For quality control, how does the functional annotation from RAST and PGAP differ, and which should I trust?

Both pipelines use homology-based functional inference but rely on different underlying protein family models and hierarchies. PGAP uses a hierarchical collection of evidence composed of HMMs (TIGRFAMs, Pfam), BlastRules, and Conserved Domain Database (CDD) architectures [20] [8]. RAST leverages the SEED database and subsystem technology [22]. Discrepancies are common for poorly characterized gene families. For critical genes, manual curation using tools like BLAST against the non-redundant (nr) database and domain analysis with InterProScan is recommended as a gold standard.

Q4: What are the key computational requirements for running PGAP locally?

Running the standalone version of NCBI PGAP requires a Linux environment or a compatible container technology (such as Docker or Singularity) and the Common Workflow Language (CWL) reference implementation, cwltool. You will also need to download approximately 30 GB of supplemental data for the reference databases and models [8].

Experimental Protocol: Executing the NCBI PGAP

This protocol outlines the methodology for annotating a prokaryotic genome using the NCBI PGAP, which employs an evidence-integrated approach for high-quality structural and functional annotation [20] [2].

Workflow Overview:

Procedure:

Input Preparation:

Pipeline Execution (Structural Annotation):

- ORF Prediction: The pipeline identifies all potential Open Reading Frames (ORFs) in all six translational frames using ORFfinder [20].

- Evidence Gathering: Translated ORFs are searched against:

- Ab Initio Prediction: The ab initio gene-finding program GeneMarkS-2+ is run. Unlike other pipelines, PGAP performs evidence-based searching before ab initio prediction, allowing GeneMarkS-2+ to use the alignment evidence as "hints" to modify and improve its statistical predictions [2]. This is particularly important for resolving gene starts and identifying genes in regions lacking homology evidence.

- Non-Coding RNA Annotation:

- Final Determination: The final set of protein-coding genes is determined by combining alignment evidence with ab initio predictions in evidence-free regions [20].

Pipeline Execution (Functional Annotation):

- Predicted protein sequences are searched against a hierarchical collection of Protein Family Models (HMMs, BlastRules, and domain architectures) [20].

- Proteins are assigned a name, gene symbol, EC number, and Gene Ontology terms based on the highest-precedence evidence hit [20] [8]. Names follow the International Protein Nomenclature Guidelines [20].

Output and Quality Control:

- The pipeline produces files ready for GenBank submission [20].

- A summary report is generated. Key metrics for quality control include:

- Total genes and the split between coding (CDS) and RNA genes.

- rRNA completeness: A complete prokaryotic genome should typically have at least one copy each of 5S, 16S, and 23S rRNA.

- Pseudo Genes (total): The number of genes annotated as pseudogenes, which can indicate assembly issues or biological reality [20].

- CRISPR Arrays: Identified by searching with PILER-CR and the CRISPR Recognition Tool (CRT) [20].
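
The rRNA completeness check lends itself to a simple automated test. This is a minimal sketch, assuming the rRNA product names have already been extracted from the annotation and begin with the subunit label (for example "16S ribosomal RNA"). The function name and example list are hypothetical.

```python
REQUIRED_RRNAS = ("5S", "16S", "23S")


def rrna_report(product_names):
    """Count 5S/16S/23S genes from product strings and list missing types."""
    counts = {name: 0 for name in REQUIRED_RRNAS}
    for product in product_names:
        tokens = product.split()
        first = tokens[0] if tokens else ""
        # Match on the first token so that "16S" is never confused with "5S".
        if first in counts:
            counts[first] += 1
    missing = [name for name, n in counts.items() if n == 0]
    return counts, missing


# Illustrative product names, not from a real annotation.
products = ["16S ribosomal RNA", "23S ribosomal RNA", "16S ribosomal RNA"]
counts, missing = rrna_report(products)
print(counts, missing)
```

A missing rRNA type in a draft assembly often reflects collapsed or unassembled rRNA operons, so it is best read together with contiguity metrics.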

Table 2: Key Software Tools and Databases in Prokaryotic Genome Annotation

| Item Name | Function in Annotation | Relevance to Quality Control |

|---|---|---|

| GeneMarkS-2+ | Ab initio gene prediction algorithm that integrates extrinsic homology evidence [20] [2]. | Improves accuracy of gene boundaries and start codon selection, especially in novel genomic regions. |

| tRNAscan-SE | Specialized tool for identifying tRNA genes with high accuracy and low false-positive rates [20]. | A complete set of tRNAs is a marker for genome quality and functional completeness. |

| Infernal & Rfam | Tools for annotating non-coding RNA genes (e.g., rRNAs) based on covariance models [20]. | Essential for identifying structural RNAs; their presence and completeness are key QC metrics. |

| TIGRFAMs & Pfam HMMs | Curated databases of protein family hidden Markov models [20] [8]. | Provides high-specificity functional assignments, crucial for reliable metabolic reconstruction. |

| CheckM | Tool for estimating genome completeness and contamination based on conserved single-copy markers [8]. | A vital independent check for assembly and annotation quality, particularly for draft genomes. |

A Practical Toolkit: Core Metrics and Tools for Prokaryotic Annotation QC

Assessing Gene Repertoire Completeness with BUSCO and OMArk

For researchers in prokaryotic genomics, accurately assessing the completeness of a gene repertoire is a critical step in quality control. Two prominent tools for this task are BUSCO (Benchmarking Universal Single-Copy Orthologs) and OMArk. While BUSCO has been a long-standing standard for assessing genome completeness based on conserved single-copy genes, OMArk offers a more comprehensive approach by evaluating both completeness and consistency while identifying potential contamination.

This technical support guide provides practical troubleshooting advice and detailed protocols to help you effectively implement these tools in your gene annotation quality control pipeline, enabling more reliable downstream analyses in drug development and comparative genomics research.

Frequently Asked Questions

Q1: What are the fundamental differences between BUSCO and OMArk?

A1: While both tools assess gene repertoire completeness, they differ significantly in scope and methodology:

- BUSCO focuses exclusively on completeness assessment by searching for universal single-copy orthologs in specific lineages. It reports these genes as complete (single-copy or duplicated), fragmented, or missing [5].

- OMArk provides a multi-faceted quality assessment that includes not only completeness but also taxonomic consistency and structural consistency. It can identify contamination and questionable genes that don't fit the expected lineage patterns [5].

- OMArk uses alignment-free k-mer comparisons against precomputed gene families across the tree of life, while BUSCO typically uses sequence alignment-based methods [5].

Q2: My OMArk results show a high percentage of duplicated genes. Is this problematic?

A2: Not necessarily. OMArk differentiates between expected and unexpected duplications:

- Expected duplications result from known evolutionary events like whole-genome duplications that occurred after the ancestral lineage's speciation. For example, in the tetraploid plant Hibiscus syriacus, nearly 70% of genes were correctly reported as duplicated due to two WGD events [5].

- Unexpected duplications may indicate potential annotation errors or more recent gene duplication events.

- Interpretation should always consider the ploidy level of your query species compared to the selected ancestral lineage in OMArk [5].

Q3: How does gene annotation quality affect orthology inference in downstream analyses?

A3: Gene annotation quality significantly impacts orthology inference, which is crucial for comparative genomics:

- Studies show that different annotation methods yield markedly distinct orthology inferences, affecting the proportion of orthologous genes per genome and the completeness of Hierarchical Orthologous Groups (HOGs) [23].

- Using heterogeneous genome annotations across species in a study can spuriously inflate the number of lineage-specific genes and misrepresent gene loss patterns [23].

- For consistent results, use the same annotation pipeline for all genomes in your comparative analysis when possible [23].

Q4: What should I do if OMArk detects contamination in my prokaryotic genome?

A4: When OMArk reports contamination:

- First, verify the findings by checking the taxonomic assignments of the flagged sequences.

- Consider revisiting your DNA extraction and sequencing protocols to identify potential contamination sources.

- For public datasets, consult resources like proGenomes4, which provides rigorously quality-controlled prokaryotic genomes, to compare your results against trusted references [24].

- If working with metagenome-assembled genomes (MAGs), adhere to the SeqCode quality criteria for naming and standardizing uncultivated prokaryotes [25].

Troubleshooting Guides

High Incompleteness Scores in BUSCO or OMArk

Symptoms:

- BUSCO reports >20% missing BUSCOs

- OMArk shows low completeness percentage

Possible Causes and Solutions:

Poor Genome Assembly Quality

- Solution: Improve assembly metrics (N50, contiguity) before annotation

- Verification: Check assembly statistics using QUAST or similar tools

Incorrect Lineage Selection

- Solution: Use the most specific lineage possible for your organism

- Verification: Consult available taxonomic information for your species

Annotation Method Issues

- Solution: Try multiple annotation pipelines (NCBI, Ensembl, Augustus) and compare results [23]

- Verification: Use consistency metrics between different approaches

Discrepancies Between BUSCO and OMArk Results

Symptoms:

- BUSCO reports high completeness while OMArk shows inconsistencies

- Significant differences in missing/duplicated gene percentages

Interpretation and Resolution:

Understand Methodological Differences

- BUSCO focuses only on single-copy orthologs, while OMArk considers broader gene family contexts [5]

- OMArk's ancestral lineage selection might be more appropriate for your organism

Check for Contamination

- OMArk may detect contamination that BUSCO misses, affecting completeness calculations [5]

- Investigate inconsistent taxonomic placements in OMArk output

Evaluate Gene Model Quality

- OMArk's structural consistency assessment might identify fragmented or partial genes that BUSCO counts as complete [5]

- Review gene models flagged as fragments or partial mappings

Performance Comparison and Best Practices

Table 1: Comparative Analysis of BUSCO and OMArk Features

| Feature | BUSCO | OMArk |

|---|---|---|

| Completeness Assessment | Yes, based on universal single-copy orthologs | Yes, based on conserved ancestral genes |

| Contamination Detection | Limited | Yes, through taxonomic inconsistency analysis |

| Gene Structural Quality | No | Yes, identifies fragments and partial genes |

| Handling of Gene Duplications | Reports as "duplicated" | Differentiates expected vs. unexpected duplications |

| Reference Database | BUSCO lineage datasets | OMA database of gene families |

| Speed | Fast | Moderate (typically ~35 minutes for 20,000 sequences) |

| Input Format | Genome or proteome FASTA | Proteome FASTA |

Table 2: Quantitative Performance Comparison Based on Validation Studies

| Metric | BUSCO | OMArk |

|---|---|---|

| Average Completeness Overestimation (Model datasets) | +2.1% | +2.3% |

| Average Completeness Overestimation (Diverse datasets) | +6.1% | +9.9% |

| Contamination Detection Capability | Limited to specific tools | Identified contamination in 73 of 1,805 eukaryotic proteomes |

| Effect of High Duplication Rates | Moderate overestimation | Higher overestimation due to inclusive conserved gene set |

Experimental Protocols

Standard Workflow for Gene Repertoire Assessment

Sample Protocol: Integrated BUSCO and OMArk Analysis

Input Data Preparation

- Obtain genome assembly in FASTA format

- Annotate protein-coding genes using a consistent pipeline (e.g., NCBI Eukaryotic Genome Annotation, Ensembl, or Augustus) [23]

- Extract proteome (all predicted protein sequences) in FASTA format

BUSCO Analysis

- Select appropriate lineage (-l parameter) for your organism

- Run in genome mode for assemblies or protein mode for annotated proteomes

- Interpret results: focus on complete, single-copy percentages
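
As an illustration of the BUSCO step, the sketch below builds a command line from the lineage and mode choices described above. The `-i`, `-l`, `-m`, `-o`, and `-c` options are standard BUSCO options. The helper function, the file names, and the choice of `bacteria_odb10` as the lineage are assumptions for this example; substitute the most specific lineage available for your organism.

```python
import shlex

VALID_MODES = ("genome", "proteins", "transcriptome")


def busco_command(input_fasta, lineage, mode, out_name, cpus=1):
    """Assemble a BUSCO command line for the given input, lineage, and mode."""
    if mode not in VALID_MODES:
        raise ValueError(f"mode must be one of {VALID_MODES}")
    return ["busco", "-i", input_fasta, "-l", lineage,
            "-m", mode, "-o", out_name, "-c", str(cpus)]


# Protein mode for an annotated proteome, genome mode for a raw assembly.
protein_cmd = busco_command("proteome.faa", "bacteria_odb10", "proteins", "busco_prot", cpus=4)
genome_cmd = busco_command("assembly.fna", "bacteria_odb10", "genome", "busco_geno", cpus=4)
print(shlex.join(protein_cmd))
print(shlex.join(genome_cmd))
```

Running both modes on the same genome can help separate assembly gaps from gene prediction gaps: a marker found in genome mode but absent in protein mode points to the annotation, not the assembly.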

OMArk Analysis

- Ensure proteome FASTA headers follow standard formatting

- For command-line use, download appropriate OMAmer database

- Review HTML output for interactive visualization of results

Results Integration

- Compare completeness estimates between tools

- Investigate discrepancies using OMArk's consistency metrics

- Cross-reference contamination flags with taxonomic information

Workflow Diagram: Gene Repertoire Quality Assessment

Validation Protocol for Annotation Pipelines

Purpose: To evaluate how different annotation methods affect downstream completeness assessments.

Procedure:

- Select a high-quality reference genome with chromosome-level assembly [23]

- Annotate the same genome using multiple pipelines:

- NCBI Eukaryotic Genome Annotation Pipeline

- Ensembl Gene Annotation System

- Ab initio prediction with Augustus [26]

- Other relevant pipelines for your organism

- Run both BUSCO and OMArk on each resulting proteome

- Compare results using statistical tests (e.g., Wilcoxon signed-rank test) [23]

- Assess orthology inference differences using tools like OMA or OrthoFinder [23]

Expected Outcomes:

- Understanding of annotation pipeline bias in your completeness assessments

- Guidance for selecting the most appropriate annotation method for your organism

- Awareness of potential artifacts in downstream comparative genomics analyses

Research Reagent Solutions

Table 3: Essential Tools and Databases for Gene Repertoire Assessment

| Resource | Type | Purpose | Access |

|---|---|---|---|

| BUSCO Lineages | Database | Curated sets of universal single-copy orthologs for completeness assessment | https://busco.ezlab.org/ |

| OMA Database | Database | Gene families and hierarchical orthologous groups for OMArk comparisons | https://omabrowser.org/ |

| proGenomes4 | Database | Quality-controlled prokaryotic genomes for reference and comparison | https://progenomes.embl.de/ |

| PGAP2 | Software | Prokaryotic pan-genome analysis with ortholog identification | https://github.com/bucongfan/PGAP2 |

| GALBA | Software | Genome annotation pipeline combining miniprot and AUGUSTUS | https://galba.github.io/ |

| SeqCode | Framework | Standards for naming and quality assessment of uncultivated prokaryotes | https://seqco.de/ |

Advanced Applications

Case Study: Addressing Annotation Error Propagation

Problem: The zebra finch proteome, which suffered from fragmentation issues, was used as a reference for other avian gene annotations, propagating errors through multiple species [5].

OMArk Solution:

- OMArk identified this systematic error by detecting inconsistent gene structures across avian species

- The tool recognized unexpectedly high proportions of fragmented and partial genes

- Resolution required reannotation of the original zebra finch genome and subsequent reannotation of affected species

Recommendation: Periodically reassess reference genomes in your study system using tools like OMArk to identify and correct systematic annotation errors.

Special Considerations for Prokaryotic Genomics

While BUSCO and OMArk were developed with eukaryotic genomes in mind, they can be applied to prokaryotic research with these considerations:

- Lineage Selection: Choose the most appropriate bacterial or archaeal lineage in BUSCO, or ensure your species is represented in OMArk's reference database

- Horizontal Gene Transfer: Be aware that HGT can complicate completeness assessments in prokaryotes

- Metagenome-Assembled Genomes: For MAGs, use additional quality metrics like CheckM alongside BUSCO/OMArk [25]

- Population Genomics: For large-scale prokaryotic studies, consider complementing with specialized tools like PGAP2 for pan-genome analysis [27]

By implementing these troubleshooting guides, protocols, and best practices, researchers can significantly improve the reliability of gene repertoire completeness assessments, leading to more robust downstream analyses in drug development and comparative genomics.

Frequently Asked Questions (FAQs)

1. What do N50 and L50 tell me about my genome assembly that basic contig counts cannot? The N50 statistic is a length-weighted median contig length: contigs of at least this length contain half of the assembled bases, so it reflects contiguity by giving more weight to longer sequences. The L50 value is the smallest number of contigs whose combined length reaches half of the assembly. Relying solely on the total number of contigs can be misleading, as this count includes many potentially small, fragmented sequences. Together, N50 and L50 offer a more realistic view of assembly quality by highlighting how the sequence length is distributed [28]. A higher N50 and a lower L50 generally indicate a more contiguous assembly; completeness must be assessed separately, for example with genome fraction or BUSCO.

2. My assembly's N50 is lower than the reference genome's. Does this mean my assembly is of poor quality? Not necessarily. While a lower N50 can indicate a more fragmented assembly, it is not the sole indicator of quality. It is essential to integrate other metrics provided by QUAST for a comprehensive assessment. You should evaluate the genome fraction (the percentage of the reference genome covered by your assembly), the number of misassemblies (structural errors), and gene-based completeness metrics like BUSCO [29] [12]. A fragmented assembly with high genome fraction and complete gene sets can still be highly useful for many downstream analyses, such as gene annotation.

3. How does QUAST calculate N50 and related metrics, and what is the difference between N50 and NG50? QUAST calculates the N50 by first ordering all contigs from longest to shortest. It then calculates the cumulative sum of their lengths. The N50 is the length of the shortest contig in the list at the point where this cumulative sum reaches 50% of the total assembly length [30] [28]. The NG50 is a more rigorous metric used when the genome size is known or estimated. It is the contig length at which 50% of the genome size, not the assembly size, is covered [28]. Therefore, NG50 allows for more meaningful comparisons between different assemblies of the same organism.

4. What is a "misassembly" in QUAST, and how can a high number affect my prokaryotic gene annotation? QUAST defines a misassembly as a significant structural error in a contig, identified when aligned to a reference genome. This includes situations where flanking sequences align to different chromosomes, to the same chromosome but over 1 kilobase apart, or in reverse orientation [30]. For prokaryotic gene annotation, misassemblies can be particularly detrimental. They can disrupt operon structures, split coding sequences, create false gene fusions, or lead to incorrect functional predictions because the genomic context is broken.

5. I have a prokaryotic genome assembly. Which QUAST metrics are most critical for my gene annotation research? For a focus on prokaryotic gene annotation, prioritize the following QUAST metrics:

- Genome Fraction: This indicates what percentage of the reference genome your assembly covers. A high value is crucial for ensuring you have not missed genomic regions containing genes [30].

- # of Genes (Complete/Partial): This reports how many genes from a reference annotation are fully or partially covered by your assembly, directly indicating annotation potential [30].

- # of Mismatches and Indels per 100 kb: A low count here is vital for obtaining accurate gene models, as sequencing errors can create false start/stop codons or frameshifts [30].

- N50 & L50: While indirect, these contiguity metrics help ensure genes are not fragmented across multiple contigs [28].

Metric Definitions and Methodologies

Core Contiguity Metrics Table

The following table summarizes the key metrics for assessing the contiguity of a genome assembly.

| Metric | Definition | Interpretation | Calculation Method |

|---|---|---|---|

| N50 | The length of the shortest contig such that contigs of this length or longer contain at least 50% of the total assembly length [28]. | A higher N50 suggests a more contiguous assembly. Sensitive to the presence of very short contigs. | 1. Sort all contigs from longest to shortest.2. Calculate cumulative sum of lengths.3. N50 is the length of the contig at which the cumulative sum reaches or exceeds 50% of the total assembly length. |

| L50 | The smallest number of contigs whose combined length represents at least 50% of the total assembly length [28]. | A lower L50 indicates a more contiguous assembly. Complements the N50 value. | 1. Sort all contigs from longest to shortest.2. Calculate cumulative sum.3. L50 is the count of contigs at the point the cumulative sum reaches or exceeds 50%. |

| NG50 | The length of the shortest contig such that contigs of this length or longer contain at least 50% of the estimated genome size [28]. | Allows for fair comparison between assemblies, especially when assembly sizes differ. More stringent than N50. | Same as N50, but the cumulative sum is calculated against a known or estimated genome size instead of the assembly length. |

| Total # of Contigs | The total number of contigs in the assembly. | A simple count of sequence fragments. Can be skewed by a large number of very short contigs. | Direct count from the FASTA file. |

| Largest Contig | The length (in base pairs) of the single largest contig in the assembly. | Provides an upper bound on contig length. | Identify the longest sequence in the FASTA file. |
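
The calculation steps in the table can be sketched in a few lines. This is a simplified illustration of the definitions above, not QUAST's own code; the function name and the toy contig lengths are made up for the example. Passing a genome size switches the target from the assembly length to the genome size, which yields NG50 instead of N50.

```python
def nx_stats(contig_lengths, fraction=0.5, genome_size=None):
    """Return (Nx, Lx). With genome_size set, the result is NGx/LGx instead."""
    lengths = sorted(contig_lengths, reverse=True)  # step 1: longest first
    total = genome_size if genome_size is not None else sum(lengths)
    target = fraction * total
    cumulative = 0
    for count, length in enumerate(lengths, start=1):
        cumulative += length  # step 2: running sum of lengths
        if cumulative >= target:  # step 3: first contig reaching the target
            return length, count
    return None, None  # assembly is shorter than the target (possible for NG50)


contigs = [80, 70, 50, 40, 30, 20, 10]  # toy lengths, total = 300
print(nx_stats(contigs))                   # N50 and L50 -> (70, 2)
print(nx_stats(contigs, genome_size=400))  # NG50 and LG50 -> (50, 3)
```

In the toy example the assembly is shorter than the assumed genome, so NG50 (50) is lower than N50 (70), which is why NG50 is described as the more stringent metric.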

Reference-Based Quality Metrics Table

When a reference genome is available, QUAST provides powerful metrics for evaluating assembly accuracy, as shown in the following table.

| Metric | Definition | Impact on Prokaryotic Annotation |

|---|---|---|

| # of Misassemblies | The number of positions where left and right flanking sequences align to distant or opposite locations on the reference [30]. | High impact. Can disrupt operons and split coding sequences, leading to erroneous gene calls. |

| Genome Fraction (%) | The percentage of aligned bases in the reference genome covered by the assembly [30]. | Critical. A low value indicates missing genomic material and potentially missing genes. |

| Duplication Ratio | The total number of aligned bases in the assembly divided by the number of aligned bases in the reference [30]. | A ratio >1.1 may indicate redundant contigs or overestimated repeat copy number (collapsed repeats would instead lower the ratio), confusing gene copy number. |

| # Mismatches per 100 kb | The rate of base substitution errors in the aligned regions [30]. | Can introduce errors in coding sequences, creating false stop codons or altering amino acid sequences. |

| # Indels per 100 kb | The rate of small insertions or deletions in the aligned regions [30]. | Frameshift indels within coding sequences will completely disrupt downstream gene prediction. |

| NGA50 | An N50-like metric based on aligned blocks after breaking contigs at misassembly sites [31]. | Provides a contiguity measure that accounts for structural errors, giving a more realistic quality assessment. |
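
Several of these metrics are simple ratios, and a worked example makes the scales concrete. The sketch below follows the definitions in the table. The function names and all input numbers are hypothetical, and normalizing error rates by aligned bases is a simplifying assumption; QUAST's exact bookkeeping may differ.

```python
def per_100kb(event_count, aligned_bases):
    """Rate of mismatches or indels per 100,000 aligned bases."""
    return 100_000 * event_count / aligned_bases


def genome_fraction(aligned_reference_bases, reference_length):
    """Percentage of the reference covered by at least one alignment."""
    return 100.0 * aligned_reference_bases / reference_length


def duplication_ratio(aligned_assembly_bases, aligned_reference_bases):
    """Aligned bases in the assembly divided by aligned bases in the reference."""
    return aligned_assembly_bases / aligned_reference_bases


# Hypothetical values for a 5 Mb reference.
reference_length = 5_000_000
aligned_reference = 4_900_000
aligned_assembly = 4_998_000

print(genome_fraction(aligned_reference, reference_length))    # 98.0
print(per_100kb(147, aligned_reference))                       # 3.0
print(round(duplication_ratio(aligned_assembly, aligned_reference), 2))  # 1.02
```

In this example the assembly covers 98% of the reference with about 3 mismatches per 100 kb and very little redundancy.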

Workflow and Data Visualization

Genome Assembly Quality Control Workflow

The diagram below illustrates a standard workflow for using QUAST as part of a genome assembly and annotation pipeline, highlighting key decision points.
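
The decision points in such a workflow can be expressed as simple checks on QUAST's tab-separated report. The sketch below assumes a two-column `report.tsv` layout (metric name, then value) and metric labels as they typically appear in QUAST output; both should be verified against the actual report. Only the duplication-ratio threshold of 1.1 comes from the table above; the other thresholds are hypothetical placeholders.

```python
def parse_quast_report(tsv_text):
    """Parse metric<TAB>value lines into a dict, converting numeric values to float."""
    metrics = {}
    for line in tsv_text.splitlines():
        parts = line.split("\t")
        if len(parts) < 2:
            continue
        name, value = parts[0].strip(), parts[1].strip()
        try:
            metrics[name] = float(value)
        except ValueError:
            metrics[name] = value  # keep non-numeric entries such as the assembly name
    return metrics


def review_flags(metrics, min_genome_fraction=95.0, max_duplication_ratio=1.1,
                 max_misassemblies=0):
    """Return human-readable warnings for metrics that cross the given thresholds."""
    flags = []
    fraction = metrics.get("Genome fraction (%)")
    if fraction is not None and fraction < min_genome_fraction:
        flags.append(f"Genome fraction {fraction}% is low: genes may be missing")
    ratio = metrics.get("Duplication ratio")
    if ratio is not None and ratio > max_duplication_ratio:
        flags.append(f"Duplication ratio {ratio} is high: check for redundant contigs")
    misassemblies = metrics.get("# misassemblies")
    if misassemblies is not None and misassemblies > max_misassemblies:
        flags.append(f"{int(misassemblies)} misassemblies: inspect before annotating")
    return flags


sample_report = (
    "Assembly\tmy_assembly\n"
    "# contigs\t42\n"
    "Genome fraction (%)\t91.5\n"
    "Duplication ratio\t1.15\n"
    "# misassemblies\t3\n"
)
for flag in review_flags(parse_quast_report(sample_report)):
    print(flag)
```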

Relationship Between Key QUAST Metrics

This diagram shows the logical relationships between primary QUAST metrics and how they contribute to the overall assessment of an assembly.

The Scientist's Toolkit: Essential Research Reagents and Software

| Tool / Reagent | Category | Function in Evaluation |

|---|---|---|

| QUAST | Software | The core quality assessment tool that computes all contiguity and reference-based metrics [30]. |

| Reference Genome | Data | A high-quality genome sequence from a closely related strain or species, used as a benchmark for calculating misassemblies, genome fraction, and NGA50 [30]. |

| BUSCO Dataset | Data | A set of universal single-copy orthologs used to assess the completeness of the gene space in the assembly, independent of a reference genome [29] [12]. |

| BLAST+ | Software | Used by QUAST and other tools for sequence alignment, such as in contamination checks against the UniVec database [12]. |

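| GeneMark family (GeneMarkS, GeneMark-ES) | Software | Ab initio gene prediction algorithms that QUAST can run to estimate the number of genes in a novel assembly; GeneMarkS is the variant intended for prokaryotes, while GeneMark-ES targets eukaryotes [30] [31]. |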

Detecting Contamination and Taxonomic Inconsistencies with OMArk

OMArk Technical Support Center

Frequently Asked Questions (FAQs)

Q1: What is the primary function of OMArk in quality control for prokaryotic gene annotations?

OMArk is a software package designed for the quality assessment of protein-coding gene repertoires. Its primary functions are to measure proteome completeness, characterize the consistency of all protein-coding genes with their homologs, and identify contamination from other species. Unlike other tools that only measure completeness, OMArk also assesses taxonomic consistency and identifies likely contamination events and dubious proteins, providing a more comprehensive quality overview [32].

Q2: My OMArk results show a high proportion of "Inconsistent" proteins. What does this indicate?

A high proportion of proteins classified as "Inconsistent" suggests that many sequences in your proteome are placed into gene families outside of your species' expected ancestral lineage. While some of these may be novel gene families not previously identified in the target clade, an unusually high proportion often indicates systematic error in the annotation. These sequences could be contamination from other species or misannotated non-coding sequences [32].

Q3: What does OMArk require as input, and what are the output formats?

OMArk requires a proteome in FASTA format where each gene is represented by at least one protein sequence. The pipeline begins by running OMAmer software on this FASTA file to obtain a search result file, which becomes the main input for OMArk. If your proteome contains multiple isoforms per gene, you must also provide a .splice file via the --isoform_file option [33].

OMArk produces two main output files: a machine-readable file with a .sum extension and a human-readable summary ending with _detailed_summary.txt. These files report the reference lineage used, the number of conserved Hierarchical Orthologous Groups (HOGs) used for completeness assessment, and the results of the completeness assessment [33].
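
If a .splice file is needed, it can be generated from a gene-to-protein mapping. The sketch below assumes the layout described in OMArk's documentation, with one gene per line and the identifiers of its isoforms separated by semicolons; this format should be confirmed against the current OMArk README. The function name and the example identifiers are hypothetical.

```python
def build_splice_lines(gene_to_proteins):
    """Return one line per gene, listing its protein identifiers joined by ';'.

    gene_to_proteins maps a gene identifier to the list of protein identifiers
    (as they appear in the FASTA headers) that belong to that gene.
    """
    lines = []
    for gene in sorted(gene_to_proteins):
        proteins = gene_to_proteins[gene]
        if not proteins:
            raise ValueError(f"Gene {gene} has no protein identifiers")
        lines.append(";".join(proteins))
    return lines


# Hypothetical mapping: geneA has two isoforms, geneB has one.
mapping = {"geneA": ["protA.1", "protA.2"], "geneB": ["protB.1"]}
print("\n".join(build_splice_lines(mapping)))
```

Most prokaryotic proteomes contain one protein per gene, in which case no .splice file is required.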

Q4: How does OMArk differentiate from BUSCO in completeness assessment?

While both OMArk and BUSCO assess completeness based on conserved genes, OMArk considers conserved multicopy genes and does not require conserved genes to be in a single copy in extant species. This results in a more inclusive set of conserved gene families. Furthermore, OMArk provides additional consistency assessments that BUSCO does not, specifically evaluating taxonomic origin and structural consistency of all proteins [32].

Q5: What steps should I take if OMArk detects potential contamination in my prokaryotic proteome?

First, verify the OMArk results by checking the specific contaminant proteins identified and their taxonomic assignments. Cross-reference these findings with other contamination detection tools if available. Review your laboratory procedures for potential sources of cross-species contamination during sample preparation. Consider re-sequencing or re-assembling the genome with stricter contamination filtering parameters. The NCBI Prokaryotic Genome Annotation Pipeline also provides annotation assessment tools that can help identify other annotation issues [10] [32].

Troubleshooting Guides

Issue 1: Problems with OMAmer Database Selection

- Symptoms: Inaccurate species identification; inability to properly assess contamination; warnings about limited taxonomic range.

- Solution: Ensure you are using an OMAmer database that covers a wide range of species, such as the LUCA.h5 database constructed from the whole OMA database. Using a database for a restricted taxonomic range (e.g., Metazoa only for a bacterial genome) limits OMArk's ability to detect contamination or identify sequences from outside the expected range [33].

- Prevention: Always download the recommended comprehensive database from the "Current release" page of the OMA Browser or use the provided OMAmerDB.gz file [33].

Issue 2: Interpreting High Duplication Levels in Prokaryotic Genomes

- Symptoms: Completeness appears overestimated; unusually high percentage of genes reported as duplicated.

- Solution: For prokaryotic genomes, high duplication levels are unusual. This result may indicate the presence of multiple contigs from very similar genomes (e.g., different strains) being annotated as a single proteome, which OMArk may interpret as duplication. Check your assembly for possible redundancies. Note that OMArk tends to overestimate completeness in species with high numbers of duplicated genes because reporting a gene as missing requires all copies to be absent [32].

Issue 3: Species Misidentification or Multiple Taxon Placement

- Symptoms: OMArk reports multiple overrepresented clades, with one as the main taxon and others as contaminants.

- Solution: OMArk identifies the most recently emerged clade with overrepresented placements as the inferred taxon. If multiple paths are overrepresented, it reports the most populated as the main taxon and others as contaminants. For prokaryotic genomes with significant horizontal gene transfer, this may require careful biological interpretation. You can override the automatic selection by providing a known taxonomic identifier [32].

OMArk Quality Metrics and Interpretation

Table 1: Key OMArk Output Metrics and Their Interpretation for Prokaryotic Genomics

| Metric | Description | Interpretation in Prokaryotic Genomes |

|---|---|---|

| Completeness | Proportion of expected conserved ancestral genes present [32]. | High value indicates a more complete gene repertoire. Compare to BUSCO results for verification [32]. |

| Consistent Proteins | Proteins fitting the lineage's known gene families [32]. | High proportion (>90%) indicates reliable annotation. Lower values suggest contamination or annotation errors [32]. |

| Contaminant Proteins | Inconsistent placements closer to a contaminant species [32]. | Any significant percentage requires investigation. The NCBI standard emphasizes the importance of contamination-free genomes [10] [32]. |

| Inconsistent Proteins | Proteins placed outside lineage repertoire, not classified as contaminants [32]. | May be novel genes or annotation errors. High proportions suggest potential systematic error [32]. |

| Unknown Proteins | Proteins with no gene family assignment [32]. | Could be sequences without close homologs or misannotated non-coding sequences [32]. |

| Fragments | Proteins with lengths less than half their gene family's median length [32]. | Suggests potential gene model inaccuracies or fragmented assemblies. NCBI standards require "no partial feature" for complete genomes [10] [32]. |
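
Two of the rules in Table 1 translate directly into code: the fragment definition (length below half the gene family's median) and the >90% guideline for consistent proteins. The sketch below is illustrative only. The function names are hypothetical, and the 1% contaminant threshold is an assumed placeholder, since the table only says that any significant percentage requires investigation.

```python
from statistics import median


def is_fragment(protein_length, family_lengths):
    """True if the protein is shorter than half the median length of its gene family."""
    return protein_length < 0.5 * median(family_lengths)


def interpret_omark(consistent_pct, contaminant_pct,
                    min_consistent=90.0, max_contaminant=1.0):
    """Return notes for values that fall outside the chosen thresholds."""
    notes = []
    if consistent_pct < min_consistent:
        notes.append("Consistent proteins below threshold: check for contamination "
                     "or annotation errors")
    if contaminant_pct > max_contaminant:
        notes.append("Contaminant proteins above threshold: investigate the "
                     "flagged sequences")
    return notes


# A 120-residue protein in a family whose median length is 300 residues.
print(is_fragment(120, [290, 300, 310]))
print(interpret_omark(consistent_pct=84.0, contaminant_pct=3.5))
```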

Table 2: Comparison of Common Quality Assessment Tools

| Tool | Completeness | Contamination Detection | Taxonomic Consistency | Structural Consistency |

|---|---|---|---|---|

| OMArk | Yes (conserved single-copy and multicopy genes) [32]. | Yes (identifies contaminant sequences) [32]. | Yes (assesses all proteins) [32]. | Yes (identifies fragments, partial mappings) [32]. |

| BUSCO | Yes (conserved single-copy orthologs) [32]. | Limited [32]. | No | No |

| EukCC/CheckM | Yes | Yes (for some tools) [32]. | No | No |

Experimental Protocols

Protocol 1: Standard OMArk Analysis Workflow for Prokaryotic Proteomes

- Input Preparation: Prepare your proteome in FASTA format. Ensure each gene is represented by a single protein sequence. For multi-contig drafts, partial coding regions are allowed at contig ends per NCBI standards [10].

- OMAmer Database Selection: Download a comprehensive OMAmer database (e.g., LUCA.h5) from the OMA Browser [33].

- OMAmer Execution: Run OMAmer on your proteome FASTA file against the selected database to generate a search result file [33] [32].

- OMArk Analysis: Execute OMArk using the OMAmer output as the primary input.

- Result Interpretation: Analyze the .sum file and the _detailed_summary.txt file. Pay close attention to the completeness score, the proportion of consistent proteins, and any reported contamination.
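
The two command-line steps of this protocol can be assembled as follows. This is a sketch under stated assumptions: the `omamer search` options and the short `omark` flags (`-f`, `-d`, `-o`) follow the usage shown in OMArk's documentation and should be checked with `omamer search --help` and `omark --help` for the installed versions. The file names and the helper function are hypothetical.

```python
import shlex


def omark_commands(proteome_fasta, omamer_db, output_dir, isoform_file=None):
    """Build the OMAmer search command and the OMArk command as argument lists."""
    search_out = proteome_fasta + ".omamer"
    omamer_cmd = ["omamer", "search", "--db", omamer_db,
                  "--query", proteome_fasta, "--out", search_out]
    omark_cmd = ["omark", "-f", search_out, "-d", omamer_db, "-o", output_dir]
    if isoform_file:
        # Only needed when the proteome contains several proteins per gene.
        omark_cmd += ["--isoform_file", isoform_file]
    return omamer_cmd, omark_cmd


# Print the commands instead of running them, so the sketch has no side effects.
for cmd in omark_commands("proteome.fasta", "LUCA.h5", "omark_output"):
    print(shlex.join(cmd))
```

To execute the steps from Python, each list can be passed to `subprocess.run(cmd, check=True)`, which stops the pipeline if either tool exits with an error.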

Protocol 2: Validation of Contamination Findings

- Independent Verification: If OMArk flags contamination, use additional tools like EukCC (for eukaryotes) or CheckM (for bacteria) to corroborate findings [32].

- Taxonomic Analysis: Extract the sequences OMArk identified as contaminants. Perform a BLAST search against the NCBI non-redundant database to confirm their taxonomic origin.

- Assembly-Level Inspection: Map sequencing reads back to the assembled contigs containing the putative contaminants. Look for uneven read coverage or specific sequence composition (e.g., GC content) that differs from the main genome, which can support the contamination hypothesis.
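
The sequence-composition check in the last step can be approximated with a per-contig GC comparison. The sketch below is a simplified illustration: the function names and the 0.10 deviation threshold are assumptions. It compares each contig to the length-weighted genome-wide GC fraction, which works only when the main genome dominates the assembly.

```python
def gc_fraction(sequence):
    """Fraction of G and C among unambiguous bases (A, C, G, T)."""
    seq = sequence.upper()
    acgt = sum(seq.count(base) for base in "ACGT")
    if acgt == 0:
        return 0.0
    return (seq.count("G") + seq.count("C")) / acgt


def gc_outliers(contigs, max_deviation=0.10):
    """Return names of contigs whose GC fraction deviates from the genome-wide value.

    contigs maps contig name to sequence; the genome-wide value is computed
    over the concatenation of all contigs, so it is weighted by contig length.
    """
    genome_gc = gc_fraction("".join(contigs.values()))
    return sorted(name for name, seq in contigs.items()
                  if abs(gc_fraction(seq) - genome_gc) > max_deviation)


# Toy assembly: two contigs at 60% GC and one short contig at 30% GC.
toy = {
    "c1": "GGCAT" * 200,
    "c2": "GGCAT" * 160,
    "c3": "ATGACATTCA" * 10,
}
print(gc_outliers(toy))
```

A flagged contig is only a candidate. Horizontally transferred regions and plasmids can also differ in GC content, so the result should be combined with the read-coverage and BLAST evidence described above.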

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials and Resources for OMArk Analysis

| Item | Function/Description | Source/Download |

|---|---|---|

| OMAmer Database | A precomputed database of gene families used by OMArk for fast protein placement. The LUCA.h5 database (based on the entire OMA database) is recommended [33]. | OMA Browser ("Current Release" page) [33]. |

| Proteome FASTA File | The input proteome to be analyzed. Must be in FASTA format, with ideally one protein sequence representing each gene [33]. | User-provided (e.g., from NCBI, Ensembl, or internal annotation pipeline). |

| OMArk Software | The core software package for proteome quality assessment, installed as a command-line tool [33]. | Bioconda (conda install -c bioconda omark), PyPI (pip install omark), or GitHub [33]. |

| NCBI Annotation Tools | Tools like tbl2asn and the Discrepancy Report, which help find problems with genome annotations, providing complementary checks to OMArk [10]. | NCBI (as part of the submission toolkit or stand-alone) [10]. |

| Splice File (Optional) | A text file defining protein isoforms for genes, required if the input proteome contains multiple proteins per gene [33]. | User-generated, following OMArk's format specifications [33]. |

Analyzing Sequence Quality and k-mer Based Validation with Merqury

Core Concepts and Definitions

What are k-mers and why are they fundamental to genome assembly validation?

K-mers are subsequences of length k (e.g., 21 bases long) derived from longer DNA sequences. In the context of quality control, they provide a reference-free method to assess the accuracy and completeness of a genome assembly by comparing the k-mers present in the original sequencing reads to those found in the final assembly. This approach is powerful because it does not rely on a pre-existing reference genome and can identify issues like missing sequences, artificial duplications, and base-level errors by analyzing k-mer coverage spectra [34] [35].
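
The comparison of read k-mers to assembly k-mers can be illustrated with plain Python sets. This is a toy sketch, not how Merqury works internally: Merqury relies on a dedicated k-mer counter and restricts the comparison to reliable read k-mers, whereas the example below treats every read k-mer as trustworthy. The function names, sequences, and k=5 are chosen only for illustration.

```python
def canonical_kmers(sequence, k):
    """Return the set of canonical k-mers in a sequence.

    A canonical k-mer is the lexicographically smaller of the k-mer and its
    reverse complement, so both strands map to the same key. K-mers that
    contain ambiguous bases are skipped.
    """
    complement = str.maketrans("ACGT", "TGCA")
    seq = sequence.upper()
    kmers = set()
    for i in range(len(seq) - k + 1):
        kmer = seq[i:i + k]
        if set(kmer) <= set("ACGT"):
            reverse_complement = kmer.translate(complement)[::-1]
            kmers.add(min(kmer, reverse_complement))
    return kmers


def kmer_completeness(read_kmers, assembly_kmers):
    """Fraction of read k-mers that are also present in the assembly."""
    if not read_kmers:
        return 0.0
    return len(read_kmers & assembly_kmers) / len(read_kmers)


k = 5
reads = ["ACGTACGGA", "CGGATTC", "GGATTCAAG"]
assembly = "ACGTACGGATTC"

read_kmers = set().union(*(canonical_kmers(r, k) for r in reads))
assembly_kmers = canonical_kmers(assembly, k)

print("completeness:", kmer_completeness(read_kmers, assembly_kmers))
print("assembly-only k-mers:", sorted(assembly_kmers - read_kmers))
```

Read k-mers missing from the assembly point to missing sequence, while assembly k-mers never seen in the reads point to likely base-level errors.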

How does Merqury differ from other quality assessment tools like BUSCO?

While BUSCO assesses completeness by looking for a set of universal single-copy orthologs, Merqury evaluates quality by comparing k-mers from high-accuracy reads (like Illumina) to the assembled genome. Merqury provides metrics for base-level accuracy (QV) and completeness; for phased diploid assemblies, it can also assess haplotype-specific accuracy and phasing. A key advantage is that it is not limited to conserved gene regions and can evaluate the entire assembly, including difficult-to-assemble non-genic regions [34] [5].
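
The base-level accuracy score (QV) follows from the fraction of assembly k-mers that are supported by the reads. The sketch below uses the formula given in the Merqury publication: the probability that a base is correct is P = (K_shared / K_total)^(1/k), and QV = -10 log10(1 - P). The function name and the example counts are hypothetical.

```python
import math


def kmer_based_qv(shared_kmers, total_assembly_kmers, k):
    """Phred-scaled consensus quality from k-mer support.

    shared_kmers: assembly k-mers also found in the read set.
    total_assembly_kmers: all k-mers in the assembly.
    """
    if total_assembly_kmers <= 0 or not 0 <= shared_kmers <= total_assembly_kmers:
        raise ValueError("k-mer counts must satisfy 0 <= shared <= total, total > 0")
    p_correct = (shared_kmers / total_assembly_kmers) ** (1 / k)
    error_rate = 1 - p_correct
    if error_rate == 0:
        return math.inf  # every assembly k-mer is supported by the reads
    return -10 * math.log10(error_rate)


# Example: 0.1% of 21-mers in the assembly are absent from the reads.
print(round(kmer_based_qv(999_000, 1_000_000, k=21), 2))
```

On the Phred scale, QV 30 corresponds to one expected error per 1,000 bases and QV 40 to one per 10,000.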

Frequently Asked Questions (FAQs)

1. What sequencing data is required to run Merqury effectively? Merqury requires two primary inputs:

- A de novo genome assembly in FASTA format.