Evaluating Model Quality Assessment in CASP: From Monomers to Complexes in the AlphaFold Era

Abstract

This article provides a comprehensive analysis of Model Quality Assessment (MQA) for CASP targets, a critical community-wide experiment in protein structure prediction. We explore the foundational principles of CASP evaluation, detailing the shift in assessment focus from monomeric structures to multimeric complexes. The article examines cutting-edge MQA methodologies, including the integration of AlphaFold3-derived per-atom confidence measures and novel evaluation frameworks like QMODE. We address key challenges in model refinement and accuracy estimation, and present validation frameworks for benchmarking MQA performance. Synthesizing insights from recent CASP experiments, this review serves as a resource for researchers and drug development professionals leveraging computational structural biology.

CASP and Model Quality Assessment: Foundations for a Structural Biology Revolution

The Critical Assessment of protein Structure Prediction (CASP) is a community-wide experiment, conducted every two years since 1994, that objectively tests protein structure prediction methods through blind testing [1]. As a cornerstone of structural bioinformatics, CASP provides an independent mechanism for assessing the state of the art in modeling protein three-dimensional structure from amino acid sequence [2]. The core principle of CASP is fully blinded testing: participants receive the amino acid sequences of proteins whose structures are unknown (but soon to be solved experimentally) and submit computed models before the experimental structures are made public [3]. This process ensures an unbiased evaluation and has established CASP as the "world championship" of protein structure prediction, with over 100 research groups regularly participating [1].

The fundamental goal of CASP is to advance methods of identifying protein structure from sequence by establishing current capabilities, identifying progress, and highlighting where future efforts should focus [4]. Because experimental structures are available for fewer than 1 in 500 to 1 in 1,000 of the proteins with known sequences, modeling plays a crucial role in providing structural information for biological research and drug development [5] [3]. CASP has witnessed dramatic progress over its 15+ experiments, particularly with recent breakthroughs in deep learning methods that have revolutionized the field [6] [7].

Experimental Design and Methodology

Target Selection and Blind Testing Protocol

CASP employs a double-blind design in which neither predictors nor organizers know the target structures during the prediction period [1]. Targets are protein sequences from structures soon to be solved by X-ray crystallography, NMR spectroscopy, or cryo-electron microscopy, or from structures recently solved but kept on hold by the Protein Data Bank [1]. The experiment begins with CASP organizers soliciting targets and releasing their sequences to registered participants. Modeling groups then have specified time windows (typically 72 hours for automated servers and 3 weeks for human-expert teams) to submit their predicted 3D structures [3].

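The experimental workflow follows a cyclical process that maintains the blind-testing principle throughout the prediction and assessment phases: target sequences are released, models are submitted within the prediction window, the experimental structures are made public, and the submitted models are then assessed against them.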

Prediction Categories and Evaluation Metrics

CASP assessment has evolved over time to reflect methodological advances. In recent experiments, the main categories include:

- Single Protein and Domain Modeling: Assessment of overall 3D structure accuracy for monomeric proteins and individual domains [2]

- Assembly: Evaluation of domain-domain, subunit-subunit, and protein-protein interactions in complexes [4] [2]

- Accuracy Estimation: Assessment of methods for estimating the reliability of predicted models [2]

- Refinement: Testing the ability to improve initial models toward higher accuracy [5]

- Contact Prediction: Evaluation of residue-residue contact prediction accuracy [7]
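Contact-prediction accuracy has commonly been reported as the precision of the top-ranked long-range contacts. The Python sketch below assumes widely used conventions rather than any single CASP tool: a contact is a residue pair whose Cβ atoms (Cα for glycine) lie within 8 Å, "long range" means a sequence separation of at least 24 residues, and the top L/5 predictions are scored, where L is the target length. The function name and input layout are illustrative.

```python
import math


def top_l5_long_range_precision(predictions, cb_coords, min_sep=24, cutoff=8.0):
    """Precision of the top-L/5 long-range predicted contacts (assumed conventions).

    predictions: (i, j, probability) tuples with 0-based residue indices.
    cb_coords: experimental Cβ coordinates (Cα for glycine), one (x, y, z) per residue.
    """
    k = max(1, len(cb_coords) // 5)
    long_range = [(i, j, p) for i, j, p in predictions if abs(i - j) >= min_sep]
    top = sorted(long_range, key=lambda t: t[2], reverse=True)[:k]
    if not top:
        return 0.0
    correct = sum(math.dist(cb_coords[i], cb_coords[j]) < cutoff for i, j, _ in top)
    # Divide by the number of scored predictions; conventions differ when fewer than k exist.
    return correct / len(top)


# Toy 50-residue target: residue 40 folds back onto residue 0.
coords = [(10.0 * i, 0.0, 0.0) for i in range(50)]
coords[40] = (0.0, 2.0, 0.0)
preds = [(0, 40, 0.9), (0, 30, 0.8), (3, 10, 0.99)]  # (3, 10) is short range, so ignored
print(top_l5_long_range_precision(preds, coords))  # 0.5
```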

The primary metric for evaluating backbone accuracy is the Global Distance Test Total Score (GDT_TS), which measures the average percentage of residues that can be superimposed within multiple distance thresholds (typically 1, 2, 4, and 8 Å) [8] [1]. As a rule of thumb, models with GDT_TS > 50 generally have the correct topology, while those with GDT_TS > 75 capture many correct atomic-level details [7]. Additional metrics include the Local Distance Difference Test (LDDT) for local accuracy, the Interface Contact Score (ICS) for complexes, and Z-scores, which express each model's score relative to all models submitted for the same target so that groups can be compared across targets of different difficulty [4].
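To make the Z-score normalization concrete, the following Python sketch converts the GDT_TS values of all models for a single target into Z-scores. It assumes a convention described in several CASP assessments: compute Z-scores over all models, drop models below -2, recompute the mean and standard deviation from the remaining models, and clamp negative Z-scores to zero before they are summed across targets (summation not shown). Individual assessors have varied these details, so the cutoff and the clamping are assumptions, and the function name is hypothetical.

```python
from statistics import mean, pstdev


def casp_style_zscores(scores, outlier_cutoff=-2.0):
    """Z-scores for one target's models, with one round of outlier removal.

    Assumed convention (varies between CASP assessors): models whose initial
    Z-score falls below `outlier_cutoff` are excluded when recomputing the mean
    and standard deviation, and negative final Z-scores are clamped to zero.
    """
    def zscores(values, reference):
        mu, sd = mean(reference), pstdev(reference)
        # A zero spread means every reference model scored the same.
        return [(v - mu) / sd if sd > 0 else 0.0 for v in values]

    initial = zscores(scores, scores)
    kept = [s for s, z in zip(scores, initial) if z >= outlier_cutoff]
    final = zscores(scores, kept)
    return [max(0.0, z) for z in final]


# Hypothetical GDT_TS values for six models of one target; the first is an outlier.
gdt_ts = [10.0, 80.0, 82.0, 84.0, 86.0, 88.0]
print([round(z, 2) for z in casp_style_zscores(gdt_ts)])
# → [0.0, 0.0, 0.0, 0.0, 0.71, 1.41]
```

Removing the outlier before the second pass keeps one very poor model from inflating the standard deviation and compressing everyone else's Z-scores.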

Target difficulty is classified based on sequence and structure similarity to known structures: TBM-Easy (straightforward template modeling), TBM-Hard (difficult homology modeling), FM/TBM (remote homologies), and FM (free modeling with no detectable homology) [6].

Historical Progress and Key Breakthroughs

Quantitative Assessment of Methodological Evolution

CASP has documented remarkable progress in prediction accuracy over its 15+ experiments. The table below summarizes the key advancements in model quality across major CASP editions:

Table 1: Evolution of Prediction Accuracy in CASP Experiments

| CASP Edition | Year | Key Methodological Advances | Easy Targets (GDT_TS) | Difficult Targets (GDT_TS) | Notable Performers |
|---|---|---|---|---|---|
| CASP4 | 2000 | First reasonable ab initio models [9] | ~70-80 | ~20-30 (small proteins only) | Early comparative modeling |
| CASP7 | 2006 | Improved loop modeling [9] | ~80-85 | ~30-40 (for ≤120 residues) | Graph-based approaches |
| CASP11 | 2014 | Coevolution-based contact prediction [5] | ~85-90 | ~40-45 | Statistical methods |
| CASP12 | 2016 | Advanced statistical methods for contacts [4] | ~90 | ~47 (contact precision) | Evolutionary coupling |
| CASP13 | 2018 | Deep learning for distance prediction [7] | ~90-92 | ~60+ | AlphaFold, DeepMind A7D |
| CASP14 | 2020 | End-to-end deep learning [6] | ~95 | ~85+ | AlphaFold2 |
| CASP15 | 2022 | Extension to complexes, RNA [4] [2] | High accuracy maintained | ~85+ for monomers | Multimeric modeling |

The progression of model quality for the most challenging targets (free modeling category) demonstrates the most dramatic improvement, particularly between CASP12 and CASP14 where GDT_TS scores increased from approximately 47 to over 85 [4] [6].

Paradigm-Shifting Breakthroughs

Several CASP rounds have marked fundamental shifts in protein structure prediction capability:

CASP11 (2014) witnessed the first substantial improvement in contact prediction accuracy due to coevolutionary analysis methods that properly accounted for transitive correlations, nearly doubling precision from 27% to 47% [5]. This enabled the first accurate models of larger proteins (256 residues) without templates [5].

CASP13 (2018) saw dramatic progress driven by deep learning techniques applied to contact and distance prediction [7]. Deep neural networks treated contact maps as images, achieving 70% precision and enabling correct fold prediction for most free modeling targets with adequate sequence information [7]. The AlphaFold system from DeepMind demonstrated particularly impressive performance [1].

CASP14 (2020) marked a revolutionary advance with AlphaFold2 delivering models competitive with experimental accuracy for approximately two-thirds of targets [6]. This end-to-end deep learning approach produced models with median GDT_TS scores above 85 even for the most difficult targets, essentially solving the single-protein folding problem for many cases [6].

Assessment Protocols by Category

Template-Based Modeling (TBM) Assessment

Template-Based Modeling evaluates predictions when detectable homologous structures exist. Assessment focuses on:

- Overall backbone accuracy using GDT_TS and RMSD metrics

- Alignment accuracy measuring correct residue mapping to templates

- Non-template region modeling for regions not covered by structural templates

- Side-chain modeling accuracy for atomic-level details

Until CASP13, TBM showed consistent but gradual improvement, with models based on identified templates remaining the most accurate [4] [3]. CASP14 revealed that the advantage from homologous templates became marginal, with AlphaFold2 achieving high accuracy even without detectable templates [6].

Free Modeling (FM) Assessment

Free Modeling (historically called "ab initio" or "new fold") assesses predictions without detectable templates. Assessment emphasizes:

- Global topology capture using GDT_TS at looser thresholds

- Contact-based accuracy for evaluating physical plausibility

- Structural novelty relative to known folds

FM witnessed the most dramatic progress in CASP13 and CASP14, with accuracy for difficult targets jumping from GDT_TS ~50 to ~85 [6] [7]. This transformation was enabled by deep learning methods that could predict structures without explicit structural templates.

Complex Assembly Assessment

Assembly assessment evaluates predictions of multimolecular complexes, including:

- Interface Contact Score (ICS/F1) for residue contacts at interfaces

- LDDT of interface for local accuracy at binding sites

- DockQ for overall quaternary structure quality

CASP15 showed enormous progress in modeling multimolecular complexes, with accuracy almost doubling in terms of ICS and increasing by one-third in terms of LDDTo (the overall fold similarity score) compared to CASP14 [4]. The deep learning methodology that revolutionized monomer prediction was successfully extended to multimeric modeling [4].

Model Refinement Assessment

Refinement tests the ability to improve initial models, with evaluation focusing on:

- GDT_TS improvement over starting models

- Local geometry enhancement

- Physical plausibility of atomic interactions

Earlier CASPs showed refinement as particularly challenging, but CASP11 and subsequent experiments demonstrated consistent (though modest) improvements using molecular dynamics and related approaches [5].

Key Experimental Results and Data

Quantitative Performance Across CASP Editions

The table below summarizes key quantitative results from recent CASP experiments, demonstrating the rapid progress in prediction accuracy:

Table 2: Comparative Performance Metrics Across Recent CASP Experiments

| Assessment Category | CASP11 (2014) | CASP12 (2016) | CASP13 (2018) | CASP14 (2020) | CASP15 (2022) |
|---|---|---|---|---|---|
| FM Targets GDT_TS | ~40-45 [5] | ~47 [4] | ~60+ [7] | ~85+ [6] | High accuracy maintained [4] |
| TBM Targets GDT_TS | ~85-90 [5] | ~90 [4] | ~90-92 [7] | ~92-95 [6] | High accuracy maintained [4] |
| Contact Precision | 27% → 47% [5] | 47% [4] | 70% [7] | No significant improvement [4] | Category retired [2] |
| Refinement Success | Consistent slight improvements [5] | Moderate improvements [4] | Limited progress [7] | Mixed results [6] | Category retired [2] |
| Assembly Accuracy | Early development [5] | Preliminary assessment | Steady progress | Moderate accuracy | Dramatic improvement [4] |

Impact of Experimental Methodologies

The Scientist's Toolkit for CASP experimentation includes both computational and experimental resources:

Table 3: Essential Research Reagents and Tools in CASP Experiments

| Resource Type | Specific Examples | Function in CASP Experiment |
|---|---|---|
| Experimental Structure Methods | X-ray crystallography, NMR, Cryo-EM [6] | Provide experimental reference structures for blind assessment |
| Sequence Databases | UniProt, Metagenomic databases [7] | Supply evolutionary information for coevolution-based methods |
| Structure Templates | Protein Data Bank (PDB) [1] | Source of template structures for comparative modeling |
| Assessment Software | LGA, TM-score, GDT_TS [1] | Enable objective quantitative comparison of models to experiments |
| Specialized Data | Sparse NMR, chemical crosslinks [5] [3] | Provide experimental constraints for hybrid modeling approaches |

Implications for Structural Biology and Drug Discovery

The advances demonstrated in CASP have profound implications for biological research and therapeutic development:

Accelerating Structure Determination: CASP14 results showed that computational models can now sometimes successfully address biological questions that motivate experimental structure determination [5]. In several cases, models have helped solve crystal structures by molecular replacement, and AlphaFold2 models assisted in solving four structures in CASP14 [4].

Enabling New Research Avenues: The accuracy revolution has expanded into new areas, including protein-ligand complexes relevant to drug design, RNA structures, and protein conformational ensembles, all of which featured as pilot assessments in CASP15 [2].

Transforming Structural Biology Practice: With models now often competitive with medium-resolution experimental structures, computational predictions are becoming integral partners to experimental approaches, helping to resolve challenging cases and interpret low-resolution data [6].

The CASP experiment continues to evolve, with CASP15 introducing new categories like RNA structure prediction and protein-ligand complex modeling while retiring older categories where the problem has been effectively solved [2]. This ongoing adaptation ensures CASP remains relevant for measuring progress in the most current challenges in protein structure modeling.

The Critical Assessment of protein Structure Prediction (CASP) experiments, established in 1994, serve as the cornerstone for objectively evaluating the state of the art in protein structure modeling [4]. These community-wide, blind tests provide a rigorous framework for assessing the accuracy of computational methods in predicting protein structures from amino acid sequences. A critical component of this evaluation is the development and application of quantitative assessment metrics that can reliably measure the similarity between predicted models and experimentally determined reference structures. The evolution of these metrics reflects the changing frontiers of the field, from an initial focus on tertiary structure prediction to the more complex challenge of modeling multimolecular assemblies.

The Global Distance Test Total Score (GDT_TS) emerged as a foundational metric for evaluating tertiary structure predictions and has been a major assessment criterion since CASP3 in 1998 [10] [11]. As the field progressed and began tackling the prediction of protein complexes, it became clear that GDT_TS alone was insufficient for evaluating the accuracy of interfacial regions in oligomeric proteins. This recognition led to the development of the Interface Contact Score (ICS), introduced when assembly prediction became an independent assessment category in CASP12 (2016) [12]. The transition from GDT_TS to ICS represents a significant evolution in assessment methodology, reflecting the protein structure prediction community's growing capability to address biologically relevant quaternary structures.

Understanding GDT_TS: The Foundation of Tertiary Structure Assessment

Calculation and Interpretation

The Global Distance Test (GDT_TS) was developed as a more robust alternative to Root-Mean-Square Deviation (RMSD), which is sensitive to outlier regions that can disproportionately skew results [10]. The metric is calculated by identifying, after iterative structural alignment, the largest set of Cα atoms in the model that lie within a defined distance cutoff of their equivalent atoms in the reference structure [13]. The conventional GDT_TS score is the average of the percentages of residues that can be superimposed under four distance thresholds: 1Å, 2Å, 4Å, and 8Å [10]. This calculation is formally expressed as:

$$\mathrm{GDT\_TS} = \frac{P_{1\,\text{Å}} + P_{2\,\text{Å}} + P_{4\,\text{Å}} + P_{8\,\text{Å}}}{4}$$

where $P_{X\,\text{Å}}$ is the percentage of residues that can be superimposed within $X$ Å.

The score ranges from 0 to 100, where 100 represents a perfect prediction. As a general guideline, random predictions typically score around 20, correctly identifying the gross topology achieves approximately 50, accurate topology reaches about 70, and models that correctly capture detailed structural features climb above 90 [13].
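The percentage-averaging step can be sketched in Python. This is a simplification under a stated assumption: the function takes per-residue Cα deviations from one already computed superposition, whereas LGA searches over many superpositions to find the largest superimposable set for each cutoff. The value returned here is therefore a lower bound on what an exhaustive search would report. The function name gdt_score is hypothetical.

```python
def gdt_score(deviations, cutoffs=(1.0, 2.0, 4.0, 8.0)):
    """GDT-style score (0-100) from per-residue Cα deviations in Å.

    deviations: one entry per residue of the reference structure, holding the
    model-to-reference Cα distance after a single fixed superposition, or None
    if the residue is missing from the model (counted as not superimposed).
    """
    n = len(deviations)
    if n == 0:
        raise ValueError("reference structure has no residues")
    percentages = [
        100.0 * sum(d is not None and d <= c for d in deviations) / n
        for c in cutoffs
    ]
    return sum(percentages) / len(cutoffs)


deviations = [0.5, 1.5, 3.0, 6.0, 10.0]
print(gdt_score(deviations))                                # GDT_TS cutoffs → 50.0
print(gdt_score(deviations, cutoffs=(0.5, 1.0, 2.0, 4.0)))  # tighter GDT_HA cutoffs → 35.0
```

Using the reference length as the denominator means that residues left out of a model are penalized rather than silently ignored.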

Variations and Applications

Several specialized variants of GDT have been developed to address specific assessment needs. The GDT_HA (High Accuracy) version employs more stringent distance cutoffs (0.5Å, 1Å, 2Å, and 4Å), so that even small deviations lower the score, making it particularly useful for evaluating high-accuracy models [10] [11]. To assess side-chain positioning, GDC_sc (Global Distance Calculation for side chains) uses characteristic atoms near the end of each side chain instead of Cα atoms [10]. The GDC_all variant extends this further by using all atoms of the model for a comprehensive evaluation [10].

Table 1: GDT Metric Variations and Their Applications in CASP

| Metric | Distance Cutoffs | Assessment Focus | First Used in CASP |
|---|---|---|---|
| GDT_TS | 1Å, 2Å, 4Å, 8Å | Overall tertiary structure accuracy | CASP3 (1998) |
| GDT_HA | 0.5Å, 1Å, 2Å, 4Å | High-accuracy modeling | CASP7 (2006) |
| GDC_sc | Predefined characteristic atoms | Side-chain positioning | CASP8 (2008) |
| GDC_all | All atoms | Complete atomic model | CASP8 (2008) |

The Emergence of Assembly Prediction and the Need for Interface-Specific Metrics

Biological Significance of Protein Assemblies

Proteins frequently form multimeric complexes to perform their biological functions, with approximately half of the structures in the Protein Data Bank (PDB) annotated as oligomeric [14]. In fact, as of March 2019, the average structure in the PDB is a dimer, and cellular estimates suggest an even higher average oligomeric state [14]. This biological reality underscored the limitation of assessing only monomeric structures and prompted the CASP experiment to formally incorporate assembly prediction as an independent category in CASP12 [12].

The introduction of this category created an immediate need for new assessment metrics that could specifically evaluate the accuracy of protein-protein interfaces. While GDT_TS effectively measures global fold similarity, it is less sensitive to specific interfacial geometry and contact patterns that determine the functional integrity of complexes. This limitation became particularly evident during the collaborative CASP11/CAPRI30 experiment in 2014, which highlighted the challenges of evaluating quaternary structure predictions [14].

The CASP12 Assessment Framework

In CASP12, predictors were provided with protein sequences and stoichiometry information, then asked to submit complete three-dimensional structures of the macromolecular assemblies [12]. The assessment team established a target difficulty classification system:

- Easy: Targets with templates for both subunits and overall assembly findable by sequence homology

- Medium: Targets with partial templates for subunits or interfaces

- Hard: Targets without findable templates for either subunits or assembly [12]

This classification revealed that while interface patches could be reliably predicted even for some hard targets, specific residue contacts at interfaces remained challenging without templates [12].

Interface Contact Score (ICS): A Specialist Metric for Assembly Evaluation

Theoretical Foundation and Calculation

The Interface Contact Score (ICS) was specifically designed to quantify the accuracy of predicted interfacial residues in protein complexes. The metric operates at the level of residue-residue contacts, providing a precise measurement of how well a model recapitulates the specific atomic interactions at subunit interfaces [12].

The calculation involves several defined steps. First, a contact is defined as a residue from one chain having at least one heavy atom within 5Å of a heavy atom from a residue in another chain [12]. The interface contact set (C) comprises all pairs of residues from two chains satisfying this condition. The ICS is derived from precision and recall calculations:

Precision, P(M,T) = |Cₘ ∩ Cₜ| / |Cₘ|

Recall, R(M,T) = |Cₘ ∩ Cₜ| / |Cₜ|

ICS(M,T) = F₁(P,R) = 2 · P(M,T) · R(M,T) / [P(M,T) + R(M,T)]

where Cₘ represents the contact set in the model and Cₜ represents the contact set in the target experimental structure [12]. The ICS score ranges from 0 to 1, with 1 indicating perfect prediction of all native contacts.
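A direct Python transcription of these definitions follows. It assumes that model and target residues already share common identifiers (chain ID, residue number); in practice, homo-oligomers require a chain-mapping step first. The function names are hypothetical, and the brute-force loop over all heavy-atom pairs is chosen for clarity rather than speed.

```python
import math
from itertools import combinations
from typing import Dict, FrozenSet, List, Set, Tuple

ResidueId = Tuple[str, int]                  # (chain ID, residue number)
Coords = List[Tuple[float, float, float]]    # heavy-atom coordinates of one residue
Contact = FrozenSet[ResidueId]               # unordered inter-chain residue pair


def interface_contacts(residues: Dict[ResidueId, Coords], cutoff: float = 5.0) -> Set[Contact]:
    """Inter-chain residue pairs with at least one heavy-atom pair within `cutoff` Å."""
    contacts: Set[Contact] = set()
    for (id1, atoms1), (id2, atoms2) in combinations(residues.items(), 2):
        if id1[0] == id2[0]:
            continue  # same chain: not an interface contact
        if any(math.dist(a, b) <= cutoff for a in atoms1 for b in atoms2):
            contacts.add(frozenset((id1, id2)))
    return contacts


def interface_contact_score(model: Set[Contact], target: Set[Contact]) -> float:
    """ICS as the F1 score of model contacts against target contacts."""
    shared = len(model & target)
    if shared == 0:
        return 0.0  # also covers an empty model or target contact set
    precision = shared / len(model)
    recall = shared / len(target)
    return 2 * precision * recall / (precision + recall)


# Toy two-chain example: one atom per residue, coordinates in Å.
target = {("A", 1): [(0.0, 0.0, 0.0)], ("A", 2): [(10.0, 0.0, 0.0)],
          ("B", 1): [(3.0, 0.0, 0.0)], ("B", 2): [(20.0, 0.0, 0.0)]}
model = {("A", 1): [(0.0, 0.0, 0.0)], ("A", 2): [(10.0, 0.0, 0.0)],
         ("B", 1): [(3.0, 0.0, 0.0)], ("B", 2): [(13.0, 0.0, 0.0)]}
score = interface_contact_score(interface_contacts(model), interface_contacts(target))
print(round(score, 3))  # precision 0.5, recall 1.0 → 0.667
```

In the toy example the model reproduces the single native contact (A1 with B1) but adds a spurious one (A2 with B2), so recall is perfect while precision is halved.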

Related Interface Metrics

The CASP assembly assessment introduced a complementary metric called Interface Patch Similarity (IPS), which provides a less stringent evaluation by measuring the similarity of interface patches without requiring specific residue-residue pairing accuracy [14] [12]. The IPS is calculated as a Jaccard coefficient of the interface residues:

IPS(M,T) = |Iₘ ∩ Iₜ| / |Iₘ ∪ Iₜ|

where Iₘ and Iₜ represent the interface residues in the model and target, respectively [12]. This metric is less sensitive to rotations and translations of partner subunits on the interface plane, providing a complementary perspective to ICS.
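IPS can be sketched in the same style. Here the interface residues are assumed to be every residue that appears in at least one inter-chain contact, which is consistent with the contact definition used for ICS; the function names are hypothetical.

```python
from typing import FrozenSet, Set, Tuple

ResidueId = Tuple[str, int]      # (chain ID, residue number)
Contact = FrozenSet[ResidueId]   # unordered inter-chain residue pair


def interface_residues(contacts: Set[Contact]) -> Set[ResidueId]:
    """Residues that take part in at least one inter-chain contact."""
    return {res for pair in contacts for res in pair}


def interface_patch_similarity(model: Set[Contact], target: Set[Contact]) -> float:
    """IPS as the Jaccard coefficient of model and target interface residues."""
    i_m, i_t = interface_residues(model), interface_residues(target)
    union = i_m | i_t
    if not union:
        return 0.0  # assumed convention when neither structure has an interface
    return len(i_m & i_t) / len(union)


target_contacts = {frozenset({("A", 1), ("B", 1)})}
model_contacts = {frozenset({("A", 1), ("B", 2)})}
print(interface_patch_similarity(model_contacts, target_contacts))  # 1/3
```

In this example the model shares no contact pair with the target, so its ICS would be 0, yet its IPS is 1/3 because residue A1 is correctly placed at the interface. This is the sense in which IPS is the less stringent of the two metrics.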

Table 2: Key Metrics for Protein Assembly Assessment in CASP

| Metric | Calculation Basis | Strengths | Limitations |

|---|---|---|---|

| ICS | F₁-score of residue-residue contacts | Precise evaluation of specific atomic interactions | Sensitive to interfacial rotations |

| IPS | Jaccard index of interface residues | Robust to subunit translations | Doesn't evaluate specific contact pairs |

| GDT_TS | Cα superposition under multiple cutoffs | Comprehensive global structure assessment | Less sensitive to interface accuracy |

Comparative Analysis: GDT_TS vs. ICS in CASP Evaluation

Methodological Differences and Complementary Applications

GDT_TS and ICS employ fundamentally different approaches to structure comparison. While GDT_TS uses a superposition-based method that identifies the maximal set of Cα atoms that can be aligned within specified distance cutoffs, ICS utilizes a contact-based approach that directly evaluates residue-residue interactions without requiring structural alignment [10] [12]. This fundamental difference dictates their respective applications: GDT_TS excels at assessing overall fold similarity, while ICS specifically targets interface accuracy in complexes.

The metrics also differ in their sensitivity to structural variations. GDT_TS effectively captures global topological similarities but may overlook specific interfacial details critical for complex function. Conversely, ICS is specifically designed to detect inaccuracies in interfacial geometry but provides no information about the overall fold. In practice, CASP assessments often employ both metrics to obtain a comprehensive evaluation of assembly predictions [14].

Performance Patterns in CASP Assessments

Analysis across multiple CASP experiments reveals distinct performance patterns for these metrics. In CASP12, predictors demonstrated greater success in accurately identifying interface patches (measured by IPS) than specific residue contacts (measured by ICS), particularly for targets without templates [12]. This pattern continued in CASP13, where researchers observed "consistent, albeit modest, improvement of the predictions quality" in assembly prediction [14].

The evolution of performance is particularly evident when examining recent CASP experiments. CASP15 demonstrated remarkable progress in assembly modeling, with the accuracy of models almost doubling in terms of ICS and increasing by one-third in terms of the overall fold similarity score (LDDTo) [4]. This improvement reflects the successful extension of deep learning methodologies from monomeric to multimeric modeling.

Experimental Protocols and Assessment Methodologies

CASP Assessment Workflow

The evaluation of protein structure predictions in CASP follows a standardized workflow to ensure consistent and objective comparison across methods. The process begins with target selection from experimentally determined structures that are soon to be publicly released [4]. For assembly assessment, particular attention is paid to biological unit assignment, especially for crystal structures where crystal contacts must be distinguished from biologically relevant interfaces using tools like EPPIC and PISA [14] [12].

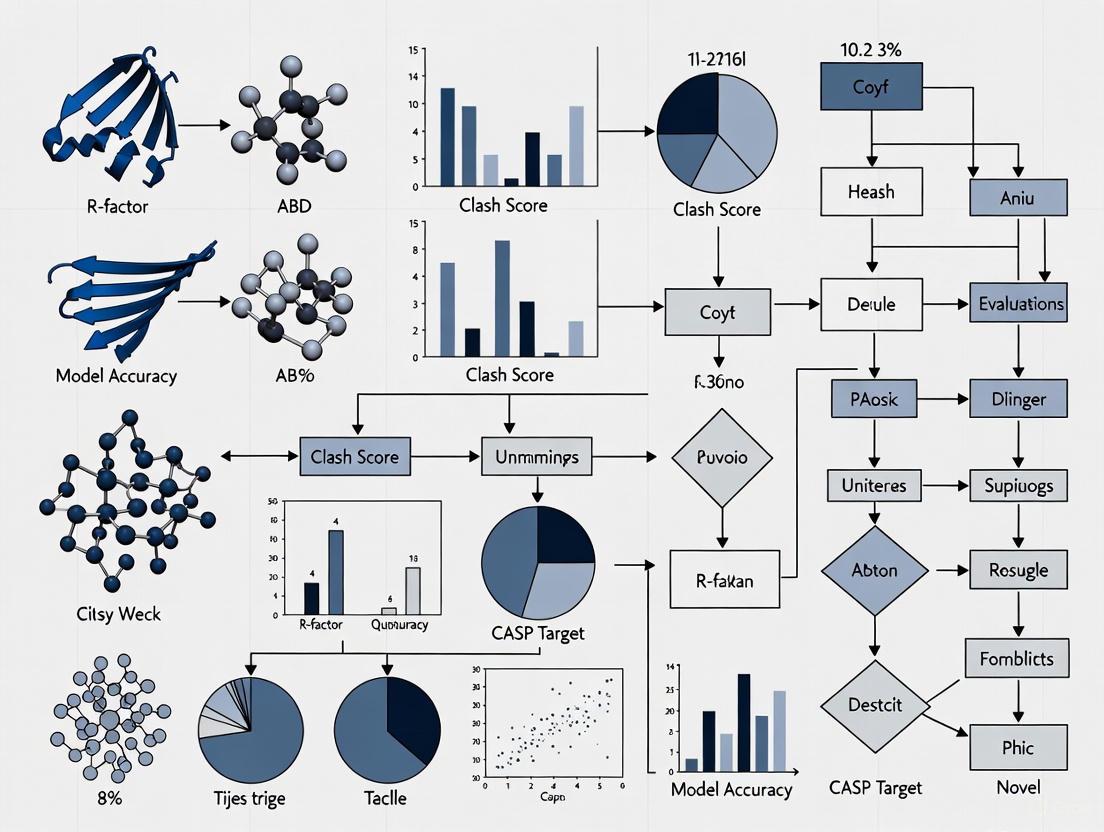

Figure 1: CASP Assessment Workflow for Protein Structure Prediction

Structure Comparison Algorithms

The calculation of GDT_TS typically employs the Local-Global Alignment (LGA) program, which implements the GDT algorithm to identify optimal superpositions under selected distance cutoffs [10] [13]. The algorithm iteratively superposes subsets of Cα atoms to find the largest set that can be aligned within specified thresholds. For ICS calculation, the process involves identifying interfacial residues based on atomic distances and computing the F₁-score of the contact sets [12].

Table 3: Research Reagent Solutions for Structure Prediction Assessment

| Tool/Resource | Function | Application Context |
|---|---|---|
| LGA (Local-Global Alignment) | Structure superposition and GDT calculation | Tertiary structure assessment [10] [13] |
| AS2TS Server | Web-based GDT_TS calculation | Accessible structure comparison [13] |
| EPPIC | Protein-protein interface classification | Biological assembly assignment [14] [12] |
| PISA | Protein Interfaces, Surfaces and Assemblies | Interface analysis and biological unit assignment [14] [12] |
| PredictionCenter.org | CASP results and evaluation data | Access to assessment results and models [4] |

Recent Advances and Future Directions

The field of protein structure assessment continues to evolve with emerging methodologies and expanding applications. The development of uncertainty estimates for GDT_TS scores addresses the inherent flexibility of protein structures, using structural ensembles from NMR or time-averaged X-ray refinement to quantify score variations [11]. The local Distance Difference Test (lDDT) has emerged as a complementary, superposition-free metric that compares interatomic distances within a defined radius, providing an orthogonal assessment to GDT-based scores [14].
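The superposition-free character of lDDT is easiest to see in a reduced form. The Python sketch below follows the commonly cited lDDT parameters (a 15 Å inclusion radius measured on the reference and tolerance thresholds of 0.5, 1, 2, and 4 Å) but simplifies in ways worth stating: it uses Cα atoms only, pools all residue pairs instead of averaging per-residue scores, and omits the stereochemical checks of the full method. The function name ca_lddt is hypothetical.

```python
import math
from itertools import combinations
from typing import List, Tuple

Point = Tuple[float, float, float]


def ca_lddt(reference: List[Point], model: List[Point],
            radius: float = 15.0,
            thresholds: Tuple[float, ...] = (0.5, 1.0, 2.0, 4.0)) -> float:
    """Simplified, superposition-free lDDT on Cα atoms, pooled over all pairs.

    Index i must refer to the same residue in both lists. Only pairs closer than
    `radius` in the reference are scored, and no structural alignment is needed.
    """
    if len(reference) != len(model):
        raise ValueError("reference and model must cover the same residues")
    preserved, checked = 0, 0
    for i, j in combinations(range(len(reference)), 2):
        d_ref = math.dist(reference[i], reference[j])
        if d_ref >= radius:
            continue  # pair lies outside the inclusion radius of the reference
        diff = abs(d_ref - math.dist(model[i], model[j]))
        preserved += sum(diff < t for t in thresholds)
        checked += len(thresholds)
    return preserved / checked if checked else 0.0


ref = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (7.6, 0.0, 0.0)]
shifted = [(x + 10.0, y, z) for x, y, z in ref]      # rigid translation of the reference
distorted = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (9.1, 0.0, 0.0)]
print(ca_lddt(ref, shifted))              # 1.0: translation does not matter
print(round(ca_lddt(ref, distorted), 3))  # 0.667
```

Because only internal distances are compared, a rigidly moved copy scores perfectly, whereas GDT_TS would first need a superposition to reach the same conclusion.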

The most transformative recent development has been the integration of deep learning approaches, exemplified by AlphaFold2's performance in CASP14, which demonstrated GDT_TS scores competitive with experimental accuracy for approximately two-thirds of targets [4]. These advances are now being extended to assembly prediction, with CASP15 showing "enormous progress in modeling multimolecular protein complexes" [4].

Future developments will likely focus on more integrated assessment frameworks that simultaneously evaluate tertiary and quaternary structure accuracy, potentially incorporating functional annotations and multi-scale modeling approaches. As the field progresses, the historical evolution from GDT_TS to ICS illustrates how assessment metrics continue to adapt to address the increasingly complex challenges of protein structure prediction.

The field of computational protein structure prediction has undergone a revolutionary transformation, largely benchmarked by the Critical Assessment of protein Structure Prediction (CASP) experiments [1]. For years, the primary focus remained on predicting the tertiary structure of single-chain proteins, or monomers. However, proteins frequently perform their biological functions by forming multimeric complexes [15]. This shift from monomeric to multimeric structure assessment represents a critical expansion in scope, driven by both biological necessity and technological advancement. The introduction of deep learning methods, particularly AlphaFold2, marked a turning point, achieving accuracy competitive with experimental methods for many monomers [4] [1]. This success redirected community efforts toward the more formidable challenge of predicting the quaternary structures of protein complexes, a transition clearly reflected in the evolving focus of recent CASP experiments [4] [16]. This guide objectively compares the assessment methodologies, performance metrics, and experimental protocols for monomeric versus multimeric protein structures within the context of CASP, providing a framework for researchers and drug development professionals to evaluate model quality.

Comparative Assessment Metrics in CASP

The evaluation of prediction accuracy differs significantly between monomers and multimers, reflecting their distinct structural complexities.

Monomer Assessment Metrics

For monomeric proteins, the Global Distance Test (GDT_TS) is a primary metric. It measures the average percentage of Cα atoms in a model that fall within a series of distance cutoffs (typically 1, 2, 4, and 8 Å) of their correct positions in the experimental reference structure after optimal superposition [4] [1]. A GDT_TS above 90 is considered competitive with experimental accuracy, a benchmark achieved by AlphaFold2 for approximately two-thirds of targets in CASP14 [4]. A related quantity is the predicted Local Distance Difference Test (pLDDT), an internal confidence score provided by AlphaFold2 that estimates the reliability of each residue's predicted local structure without reference to an experimental structure [17].

Multimer Assessment Metrics

Assessing multimer predictions requires metrics that specifically evaluate the interface region between chains. The Interface Contact Score (ICS), also known as F1-score, is a central metric in CASP. It evaluates the model's ability to correctly predict residue-residue contacts across the binding interface [4] [16]. Additionally, LDDTo is used to assess the overall fold similarity of the complex, providing a complementary measure of global accuracy [4].

Table 1: Key Performance Metrics for Monomeric and Multimeric Structure Assessment in CASP

| Category | Metric | Description | Interpretation |
|---|---|---|---|
| Monomer | GDT_TS (Global Distance Test - Total Score) | Average percentage of Cα atoms within the 1, 2, 4, and 8 Å cutoffs after superposition [1]. | >90: Competitive with experiment [4]. |
| Monomer | pLDDT (predicted Local Distance Difference Test) | Per-residue confidence score on a scale from 0 to 100 [17]. | Higher score indicates higher local confidence. |
| Multimer | ICS (Interface Contact Score / F1-score) | Accuracy in predicting inter-chain residue-residue contacts [4] [16]. | >0.8: Considered a satisfactory model [17]. |
| Multimer | LDDTo | Overall fold similarity score for the complex [4]. | Higher score indicates better overall model quality. |

Performance Benchmarking and Historical Progress

The CASP experiments provide a clear historical record of the progress in both domains. The performance leap in monomer prediction with AlphaFold2 in CASP14 was unprecedented [4] [1]. Subsequently, the field's focus has demonstrably shifted to multimers.

In CASP15 (2022), multimeric modeling showed "enormous progress," with the accuracy of models almost doubling in terms of ICS and increasing by one-third in LDDTo compared to CASP14 [4]. Where satisfactory models (ICS >0.8) were achieved for only 7% of complexes in CASP14, the best methods in CASP15 provided them for 47% of cases [17]. This rapid improvement underscores the intensive effort dedicated to multimer challenges.

Table 2: Comparative Performance of State-of-the-Art Prediction Methods on CASP15 Targets

| Method | Type | Reported Performance on CASP15 Multimers | Key Innovation |
|---|---|---|---|
| AlphaFold2 | Monomer-focused | N/A (Defined monomer prediction state-of-the-art in CASP14) [1]. | End-to-end deep learning using MSA-derived co-evolutionary signals [17]. |
| AlphaFold-Multimer | Multimer-focused | Baseline for CASP15 comparisons [16]. | Extension of AlphaFold2 for multimers [16]. |
| DeepSCFold | Multimer-focused | 11.6% and 10.3% higher TM-score than AlphaFold-Multimer & AlphaFold3 [16]. | Uses sequence-derived structural complementarity for paired MSA construction [16]. |
| DeepMSA2 | Multimer-focused | Created models with "considerably higher quality" than AlphaFold2-Multimer server [17]. | Hierarchical MSA construction using huge metagenomic databases [17]. |
| Yang-Multimer, MULTICOM | Multimer-focused | Superior performance to baseline AlphaFold-Multimer in CASP15 [16] [17]. | Strategies based on AlphaFold-Multimer with enhanced sampling and MSA processing [16]. |

Experimental Protocols for Multimer Prediction

The core challenge in multimer prediction lies in capturing inter-chain interactions. The following workflow details the protocol used by advanced methods like DeepSCFold and DeepMSA2.

Workflow for Advanced Multimer Structure Prediction

Step 1: Input and Monomeric MSA Generation. The protocol begins with the amino acid sequences of all constituent chains of the protein complex. Individual, monomeric Multiple Sequence Alignments (MSAs) are generated for each chain using tools like HHblits or MMseqs2 against large genomic and metagenomic sequence databases (e.g., UniRef, BFD, MGnify) [16] [17]. The depth and diversity of these MSAs are critical for success.

Step 2: Deep Learning-Based Analysis for Pairing. This step is where advanced methods diverge. Rather than pairing sequences arbitrarily, deep learning models analyze the monomeric MSAs to identify biologically plausible pairs.

- Structural Similarity (pSS-score): A deep learning model predicts the protein-protein structural similarity between the input sequence and its homologs in the monomeric MSA, providing a complementary metric to sequence similarity for ranking and selecting MSA sequences [16].

- Interaction Probability (pIA-score): Another model estimates the interaction probability for potential pairs of sequence homologs derived from different subunit MSAs, based solely on sequence-level features [16].

Step 3: Construction of Paired MSAs. Using the pSS-scores and pIA-scores, monomeric homologs from different chains are systematically concatenated to build deep paired MSAs. Multi-source biological information (e.g., species annotation, known complexes from the PDB) is also integrated to enhance biological relevance [16] [17]. This process generates multiple candidate paired MSAs.

Step 4: Structure Prediction and Model Selection. The resulting paired MSAs are used as input to a structure prediction engine, typically AlphaFold-Multimer, to generate multiple candidate models [16] [17]. A specialized model quality assessment method for complexes (e.g., DeepUMQA-X) is then used to select the top model. This model may be used as an input template for a final refinement iteration to produce the output quaternary structure [16].
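Steps 2 to 4 depend on how homologs from different chains are paired. The Python sketch below shows one simple approach under stated assumptions. Candidate pairs are restricted to homologs from the same species, which is one of the biological signals mentioned in Step 3. The pairs are ranked by a caller-supplied `score_pair` function, and each homolog is used at most once. `score_pair` is a hypothetical placeholder for a learned interaction probability such as DeepSCFold's pIA-score. This sketch is not the published DeepSCFold or DeepMSA2 algorithm.

```python
from typing import Callable, List, NamedTuple


class Homolog(NamedTuple):
    seq_id: str
    species: str
    sequence: str  # row of a monomeric MSA, aligned to its own query


def build_paired_msa(query_a: str, query_b: str,
                     msa_a: List[Homolog], msa_b: List[Homolog],
                     score_pair: Callable[[Homolog, Homolog], float],
                     min_score: float = 0.5) -> List[str]:
    """Greedy, species-restricted pairing of two monomeric MSAs.

    The first row is the concatenated query; every further row joins one
    homolog of chain A with one homolog of chain B from the same species.
    """
    candidates = []
    for a in msa_a:
        for b in msa_b:
            if a.species != b.species:
                continue  # only pair homologs from the same organism
            score = score_pair(a, b)
            if score >= min_score:
                candidates.append((score, a, b))
    # Highest-scoring pairs first; list.sort is stable for equal scores.
    candidates.sort(key=lambda item: item[0], reverse=True)

    rows = [query_a + query_b]
    used_a, used_b = set(), set()
    for _, a, b in candidates:
        if a.seq_id in used_a or b.seq_id in used_b:
            continue  # each homolog appears in at most one paired row
        used_a.add(a.seq_id)
        used_b.add(b.seq_id)
        rows.append(a.sequence + b.sequence)
    return rows
```

The greedy one-to-one matching is chosen for simplicity. A production pipeline would also need consistent gap handling, and it would write the rows in whatever input format the structure predictor expects.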

Successful protein complex prediction and validation rely on a suite of computational tools and data resources.

Table 3: Key Research Reagent Solutions for Protein Complex Structure Prediction

| Item Name | Type | Function in Multimer Assessment |
|---|---|---|
| AlphaFold-Multimer [16] | Software | Core deep learning model for predicting multimeric protein structures from sequence and MSA. |
| DeepMSA2 Pipeline [17] | Software/Database | Constructs deep multiple sequence alignments from genomic/metagenomic databases, crucial for input quality. |
| DeepSCFold [16] | Software | Predicts structural complementarity and interaction probability to build superior paired MSAs. |
| ColabFold Database [16] | Database | A comprehensive sequence database used for MSA construction. |
| UniRef90/UniRef30 [16] | Database | Clustered sets of protein sequences used to find diverse homologs for MSA construction. |
| Metagenomic Databases (e.g., BFD, MGnify) [16] [17] | Database | Large-scale metagenome sequence collections that significantly increase MSA depth and diversity. |
| Model Quality Assessment (QA) Tools | Software | Methods like DeepUMQA-X assess the per-residue and interface quality of predicted complex models [16]. |

The transition from monomeric to multimeric structure assessment in CASP marks a pivotal and necessary evolution in computational structural biology. While the prediction of single-chain proteins has reached a high level of maturity, the accurate modeling of protein complexes remains a formidable challenge. The core differences lie in the critical need to capture inter-chain interactions, which demands specialized metrics like the Interface Contact Score and sophisticated methods for constructing paired multiple sequence alignments. As benchmarked by CASP15, modern approaches like DeepSCFold and DeepMSA2, which leverage huge metagenomic data and deep learning to infer structural complementarity, are pushing the boundaries of accuracy. For researchers in biomedicine and drug development, understanding these distinctions and the associated experimental protocols is essential for critically evaluating models of protein complexes, which are often the most therapeutically relevant targets.

Model Quality Assessment (MQA) is a critical component in the field of computational structural biology, providing essential estimates of the accuracy of predicted protein models. Within the Critical Assessment of Protein Structure Prediction (CASP) experiments, MQA has evolved to address the unique challenges posed by multimeric protein complexes, with rigorous evaluations conducted through the Estimation of Model Accuracy (EMA) category [18] [19]. For researchers, scientists, and drug development professionals, understanding and selecting high-quality structural models is paramount for downstream applications such as function annotation and drug design. The core concepts of MQA can be distilled into three key areas: global accuracy, which evaluates the overall structural correctness of a model; local confidence, which provides residue-level or atom-level accuracy estimates; and model utility, which determines a model's fitness for specific experimental uses. This guide objectively compares the performance of leading MQA methods from recent CASP experiments, provides detailed experimental protocols, and outlines essential resources for practitioners in the field.

Core MQA Concepts and Evaluation Frameworks in CASP

The CASP experiments provide a standardized, blind framework for evaluating protein structure prediction and quality assessment methods. The introduction of a dedicated EMA category for quaternary structure models in CASP15 marked a significant shift, reflecting the increased emphasis on multimeric assemblies [19]. The evaluation is structured into distinct modes to comprehensively assess different aspects of quality.

Global Accuracy (QMODE1): This evaluation mode focuses on the overall structural correctness of the entire model, particularly for multimers. It requires predictors to submit a single SCORE reflecting the estimated global accuracy, which is evaluated against metrics like oligo-lDDT and TM-score [18] [19]. Accurate global accuracy estimates are crucial for selecting the best overall model from a set of predictions.

Local Confidence and Interface Accuracy (QMODE2): This mode emphasizes the accuracy of interface residues in complexes. Predictors must provide not only global (SCORE) and interface (QSCORE) scores but also a set of individual residue-level confidence scores estimating the likelihood of each residue contributing to the interface [19]. This granular information is vital for understanding binding sites and functional regions.

Model Selection (QMODE3): Introduced in CASP16, this mode tests the ability to select high-quality models from large pools of pre-generated models, such as those derived from AlphaFold2 via MassiveFold [18]. This addresses a practical real-world scenario where researchers must identify the most reliable model from a multitude of options.

Performance Comparison of Leading MQA Methods

The following tables summarize the performance of top-performing MQA methods from CASP15 and CASP16, based on official evaluation data. These metrics allow for an objective comparison of their capabilities in estimating global and local model quality.

Table 1: Performance of Top MQA Methods in CASP15 Global Fold Accuracy Assessment (QMODE1)

| Method Name | Global Pearson Correlation (GDT_TS-like) | Global Spearman Correlation (GDT_TS-like) | Global Pearson Correlation (TM-score) | Global Spearman Correlation (TM-score) |
|---|---|---|---|---|
| MULTICOM_qa | 0.629 | 0.559 | 0.712 | 0.580 |
| ModFOLDdock | 0.613 | 0.487 | 0.636 | 0.517 |
| ModFOLDdockR | 0.565 | 0.510 | 0.635 | 0.504 |
| Venclovas | 0.530 | 0.435 | 0.490 | 0.437 |
| VoroIF | 0.492 | 0.345 | 0.483 | 0.351 |
| GraphCPLMQA-single* | N/A | N/A | N/A | N/A |

*GraphCPLMQA-single was reported to achieve top performance in residue-level interface assessment, but it does not appear in the QMODE1 global ranking from which the values above are taken [20] [21].
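The Pearson and Spearman columns in Table 1 measure how closely each method's predicted scores track the observed accuracy of the same models. The pure-Python sketch below computes both statistics, giving tied values average ranks in the Spearman computation. The results depend on whether correlations are computed per target and then averaged, or pooled over all models. This sketch does not claim which convention the CASP15 assessors used.

```python
import math
from typing import List, Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with >= 2 values")
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (sx * sy)


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share the mean of their rank positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the block while the next value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = shared
        start = end + 1
    return ranks


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman correlation: Pearson correlation of the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))
```

For example, `spearman([0.9, 0.7, 0.4, 0.2], [0.85, 0.6, 0.5, 0.1])` is 1.0, up to floating-point rounding. The predicted and observed orderings agree exactly even though the values themselves differ.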

Table 2: Key Method Characteristics and CASP16 Insights

| Method Name | Method Type | Key Features/Components | Reported CASP16 Performance / Findings |
|---|---|---|---|
| Methods with AlphaFold3-derived features | Hybrid | Utilize per-atom pLDDT confidence measures from AlphaFold3 | Best performance in local accuracy estimation and utility for experimental structure solution [18] |
| ModFOLDdock variants | Consensus | Combines single-model, clustering, and deep learning approaches (e.g., ModFOLDIA, DockQJury, CDA-score) [19] | Strong performance across multiple EMA categories in CASP15 [19] |
| GraphCPLMQA | Single-model & Deep Learning | Graph neural network combined with protein language model (ESM) embeddings [21] | Ranked first in CAMEO blind test (2022); excelled in CASP15 residue-level interface evaluation [21] |
| DeepSCFold (Modeling pipeline) | Modeling Pipeline | Uses sequence-derived structural complementarity for paired MSA construction; includes MQA via DeepUMQA-X [16] | Improved TM-score by 11.6% and 10.3% over AlphaFold-Multimer and AlphaFold3 on CASP15 targets [16] |

Experimental Protocols for MQA Evaluation

The methodologies for developing and benchmarking MQA methods are rigorous, involving specific training datasets, feature extraction techniques, and network architectures. Below are detailed protocols for two representative, high-performing approaches.

Protocol 1: Development of GraphCPLMQA

GraphCPLMQA is a deep learning-based method for residue-level model quality assessment that leverages graph coupled networks and protein language models [21].

Training Dataset Curation:

- A dataset of 15,054 proteins was selected from the PDB (as of November 2021) based on specific criteria: a resolution of 2.5 Å or better, sequence length between 50 and 400 residues, and sequence similarity below 35% within the dataset.

- For each protein, decoy models were generated using three approaches to ensure structural diversity: 1) structural dihedral angle adjustment followed by fast relaxation, 2) template modeling using RosettaCM and I-TASSER-MTD, and 3) deep learning-guided conformational changes using the RocketX method with varying geometric constraints.

- After filtering for structural similarity, the final training set comprised 1,378,676 protein models [21].

Feature Extraction:

- Sequence and Structure Embeddings: The ESM-2 (for single-sequence) or ESM-MSA-1b (for MSA-based) protein language models were used to generate residue-level sequence embeddings. The ESM-IF1 model was used to generate structural embeddings from input backbone atomic coordinates.

- Novel Structural Features: The "triangular location" feature was designed to characterize the orientation and distance of local structures within the overall topology. The "residue-level contact order" feature was also incorporated to describe the topological relationship between residues [21].
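The source does not give the exact formula for GraphCPLMQA's residue-level contact order feature. As an illustration only, the sketch below adapts the classic relative contact order to a per-residue quantity: for each residue, it takes the mean sequence separation of that residue's spatial contacts and divides by chain length. The Cα distance cutoff (8 Å), the minimum sequence separation (3 residues), and the function name are assumptions chosen for this example.

```python
import math
from typing import List, Sequence, Tuple

Coord = Tuple[float, float, float]


def residue_contact_order(ca_coords: Sequence[Coord], cutoff: float = 8.0,
                          min_separation: int = 3) -> List[float]:
    """Hypothetical per-residue contact order (not GraphCPLMQA's exact feature).

    For residue i, contacts are residues j with |i - j| >= min_separation and
    a C-alpha distance below cutoff. The value is the mean |i - j| over those
    contacts divided by the chain length; residues without contacts get 0.0.
    """
    n = len(ca_coords)
    result = []
    for i in range(n):
        separations = [
            abs(i - j)
            for j in range(n)
            if abs(i - j) >= min_separation
            and math.dist(ca_coords[i], ca_coords[j]) < cutoff
        ]
        result.append(sum(separations) / (len(separations) * n) if separations else 0.0)
    return result
```

Higher values flag residues whose spatial neighbours are far away in sequence, which is the topological information a contact-order feature is meant to capture.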

Network Architecture:

- Graph Encoding Network: This module learns the latent connections between sequence and structure representations. It processes the extracted features to capture high-dimensional relationships within the protein model.

- Transform-based Convolutional Decoding Network: This module infers the mapping relationship between the structural representations and the final quality scores, producing the per-residue accuracy estimates [21].

GraphCPLMQA Workflow: This diagram illustrates the process from input model to per-residue quality scores, featuring graph encoding and convolutional decoding networks.

Protocol 2: The ModFOLDdock Consensus Approach

ModFOLDdock is a consensus-based method specifically designed for quaternary structure models, which integrates multiple scoring functions [19].

Component Scoring Methods:

- The server combines up to seven individual scoring algorithms: ModFOLDIA (a clustering interface accuracy score), DockQJury (clustering based on DockQ score), QSscoreJury and QSscoreOfficialJury (clustering using QS-scores), lDDTOfficialJury (clustering using lDDT scores), voronota-js-voromqa (a Voronoi tessellation-based score), and the CDA-score (a contact distance agreement score adapted for multimers) [19].

- The integration of both single-model and clustering methods ensures robustness across different scenarios, such as when there are few model variations or only a limited number of models are available.
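The clustering ("jury") components listed above share one idea: a model that agrees with many other models in the pool is more likely to be correct. The sketch below illustrates that idea generically. It uses the Jaccard similarity between sets of predicted inter-chain contacts as the pairwise agreement measure. This is not ModFOLDdock's implementation. The contact-set representation and the function names are assumptions, and the jury components above compare models with scores such as DockQ, QS-score or lDDT instead.

```python
from typing import Dict, Hashable, Set

Contacts = Set[Hashable]


def jaccard(a: Contacts, b: Contacts) -> float:
    """Jaccard similarity of two sets (1.0 when both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def jury_scores(models: Dict[str, Contacts]) -> Dict[str, float]:
    """Mean pairwise agreement of each model with every other model in the pool."""
    if len(models) < 2:
        raise ValueError("a consensus score needs at least two models")
    names = list(models)
    return {
        name: sum(jaccard(models[name], models[other])
                  for other in names if other != name) / (len(names) - 1)
        for name in names
    }


if __name__ == "__main__":
    pool = {
        "m1": {(1, 10), (2, 11), (3, 12)},
        "m2": {(1, 10), (2, 11)},
        "m3": {(5, 20)},  # an outlier interface
        "m4": {(1, 10), (2, 11), (3, 12), (4, 13)},
    }
    scores = jury_scores(pool)
    print(max(scores, key=scores.get))  # m1
```

Here m1 gets the highest consensus score because it overlaps strongly with both m2 and m4, while the outlier m3 scores 0.0. Pure consensus scoring loses discriminating power when the pool is small or nearly uniform. This is the scenario the combination with single-model methods described above is meant to cover.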

Method Variants and Optimization:

- For CASP15, three variants of ModFOLDdock were developed, each optimized for a different facet of quality estimation:

- ModFOLDdock: Optimized for positive linear correlations with observed quality scores.

- ModFOLDdockR: Optimized for ranking models, ensuring the top-ranked model has the highest accuracy, even if the relationship between predicted and observed scores is not perfectly linear.

- ModFOLDdockS: A quasi-single-model approach that scores each model individually against a set of reference models generated by the MultiFOLD server [19].

Target Score Calculation for Training:

- The method was optimized against benchmark target scores derived from official CASP assessments. The "Interface" target score was an unweighted mean of ICS (F1) and IPS (Jaccard Coeff.), while the "Fold" target score was an unweighted mean of Oligo-lDDT and TM-score [19].
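To make the target-score definitions concrete, the sketch below computes ICS as the F1-score over inter-chain residue contacts. It computes IPS as the Jaccard coefficient over the interface residues implied by those contacts, and averages the two to give the Interface target. It also averages Oligo-lDDT with TM-score to give the Fold target. The sketch assumes that contacts have already been extracted (CASP typically uses an inter-atomic distance threshold) and that model chains have been mapped onto reference chains. It also assumes each contact is written in a canonical order, so that the same contact is represented identically in model and reference. All function names are illustrative.

```python
from typing import Set, Tuple

Residue = Tuple[str, int]           # (chain ID, residue number)
Contact = Tuple[Residue, Residue]   # canonical order, e.g. lower chain ID first


def f1_score(predicted: Set[Contact], reference: Set[Contact]) -> float:
    """Interface Contact Score (ICS): F1 over inter-chain contacts."""
    tp = len(predicted & reference)
    if tp == 0:
        return 0.0
    precision = tp / len(predicted)
    recall = tp / len(reference)
    return 2 * precision * recall / (precision + recall)


def interface_residues(contacts: Set[Contact]) -> Set[Residue]:
    """All residues that take part in at least one inter-chain contact."""
    return {res for pair in contacts for res in pair}


def jaccard(a: Set[Residue], b: Set[Residue]) -> float:
    """Interface Patch Similarity (IPS): Jaccard over interface residues."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def interface_target(model: Set[Contact], reference: Set[Contact]) -> float:
    ics = f1_score(model, reference)
    ips = jaccard(interface_residues(model), interface_residues(reference))
    return (ics + ips) / 2  # unweighted mean of ICS and IPS


def fold_target(oligo_lddt: float, tm_score: float) -> float:
    return (oligo_lddt + tm_score) / 2  # both assumed to be on a 0-1 scale
```

As an example, suppose a model reproduces two of three reference contacts and adds one spurious contact. Precision and recall are then both 2/3, so ICS is 2/3. If the model's interface also shares 4 of the 8 residues in the union of the two interfaces, IPS is 0.5 and the Interface target is about 0.583.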

The following table details key computational tools and resources essential for researchers working in the field of protein model quality assessment.

Table 3: Key Research Reagent Solutions for Protein Model Quality Assessment

| Resource Name | Type | Primary Function in MQA | Access Information |
|---|---|---|---|
| ModFOLDdock Server | Web Server | Quality assessment for quaternary structure models (multimers) | Available at: https://www.reading.ac.uk/bioinf/ModFOLDdock/ [19] |
| MultiFOLD Docker Package | Software Package | Integrated package for multimer structure modeling and quality assessment | Available at: https://hub.docker.com/r/mcguffin/multifold [19] |
| AlphaFold3 | Modeling & Confidence Estimation | Predicts protein structures and provides per-atom pLDDT local confidence measures | Online server: https://golgi.sandbox.google.com/ [18] [16] |
| ESM (Evolutionary Scale Modeling) | Protein Language Model | Generates sequence and structure embeddings used as features in deep learning MQA methods | Publicly available models (ESM-2, ESM-MSA-1b, ESM-IF1) [21] |
| CASP & CAMEO Assessment Data | Benchmark Datasets | Provides standardized datasets and ground truth for training and blind testing of MQA methods | CASP: https://predictioncenter.org; CAMEO: https://www.cameo3d.org [20] [21] |

MQA Application Decision Guide: A flowchart to help researchers select and apply MQA methods based on their available models and assessment needs.

The field of Model Quality Assessment has advanced significantly to meet the challenges posed by high-accuracy protein structure prediction, particularly for multimeric complexes. The core concepts of global accuracy, local confidence, and model utility provide a framework for both developers and experimentalists to evaluate and select models. Performance data from CASP15 and CASP16 reveal a competitive landscape where consensus methods like ModFOLDdock and deep learning approaches leveraging protein language models like GraphCPLMQA each have distinct strengths. A key emerging trend is the utility of AlphaFold3-derived local confidence measures. For researchers, the choice of MQA method depends on the specific application: consensus methods may be preferred for ranking multiple models, while single-model deep learning approaches are invaluable for evaluating individual structures without requiring large ensembles. As computational structural biology continues to evolve, the integration of these sophisticated MQA tools will be indispensable for validating models and ensuring their reliable application in biomedical research and drug development.

Cutting-Edge MQA Methodologies: Integrating AI and Multi-Metric Evaluation Frameworks

The introduction of AlphaFold3 (AF3) represents a paradigm shift in biomolecular structure prediction, extending accuracy from single proteins to complexes involving proteins, nucleic acids, and small molecules. This guide objectively compares AF3's performance against its predecessors and specialized alternatives, with a focused analysis of its per-residue confidence metric, pLDDT (predicted Local Distance Difference Test). Framed within model quality assessment for CASP targets, we detail how researchers can leverage pLDDT for local accuracy estimation, supported by experimental data on its correlations with molecular flexibility and limitations in capturing complex interface thermodynamics. The analysis provides a scientific toolkit for drug development professionals to critically apply AF3 predictions in structural biology and rational drug design.

The Critical Assessment of protein Structure Prediction (CASP) experiments have served as the gold standard for evaluating protein structure prediction methodologies since 1994. The field witnessed revolutionary progress with AlphaFold2 (AF2), which achieved unprecedented accuracy in single-protein structure prediction at CASP14, often generating models competitive with experimental structures in terms of backbone accuracy [22]. This breakthrough, however, was primarily confined to monomeric proteins, with limitations in modeling complexes and providing reliable local confidence metrics for residues in interaction interfaces.

AlphaFold3 (AF3) marks the next evolutionary leap, introducing a substantially updated diffusion-based architecture capable of predicting the joint structure of complexes including proteins, nucleic acids, small molecules, ions, and modified residues [23]. Within the context of CASP-based model quality assessment, AF3's key advancement lies not only in its expanded biomolecular scope but also in its refined confidence scoring system. The model demonstrates substantially improved accuracy over previous specialized tools: far greater accuracy for protein–ligand interactions compared to state-of-the-art docking tools, much higher accuracy for protein–nucleic acid interactions compared to nucleic-acid-specific predictors, and substantially higher antibody–antigen prediction accuracy [23] [24]. This guide provides a comparative analysis of AF3's performance, with a particular emphasis on the practical application of its per-atom pLDDT scores for estimating local accuracy in predicted structures.

Understanding pLDDT as a Local Confidence Metric

Definition and Interpretation

The predicted Local Distance Difference Test (pLDDT) is a per-residue measure of local confidence scaled from 0 to 100, with higher scores indicating higher confidence and typically a more accurate prediction [25]. It is the model's estimate of the local distance difference test computed on Cα atoms (lDDT-Cα). lDDT-Cα is a superposition-free score that compares inter-atomic distances in a model with the corresponding distances in an experimental reference structure [25] [26].
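Because pLDDT is trained to predict lDDT-Cα, it helps to see what the underlying score measures. The simplified sketch below computes per-residue lDDT-Cα on a 0-100 scale. For every residue pair whose Cα atoms lie within a 15 Å inclusion radius in the reference, it checks whether the model preserves that distance to within 0.5, 1, 2 and 4 Å. It then averages the fraction of thresholds met. The sketch assumes one Cα per residue, with model and reference residues already matched. It omits the stereochemistry checks and other details of the reference lDDT implementation.

```python
import math
from typing import List, Optional, Sequence, Tuple

Coord = Tuple[float, float, float]


def lddt_ca(model_ca: Sequence[Coord], ref_ca: Sequence[Coord],
            inclusion_radius: float = 15.0,
            thresholds: Sequence[float] = (0.5, 1.0, 2.0, 4.0)) -> List[Optional[float]]:
    """Simplified per-residue lDDT-Cα (0-100); None if a residue has no neighbours."""
    if len(model_ca) != len(ref_ca):
        raise ValueError("model and reference must have the same residues")
    n = len(ref_ca)
    per_residue: List[Optional[float]] = []
    for i in range(n):
        pair_scores = []
        for j in range(n):
            if i == j:
                continue
            d_ref = math.dist(ref_ca[i], ref_ca[j])
            if d_ref >= inclusion_radius:
                continue  # only pairs that are close in the reference count
            d_model = math.dist(model_ca[i], model_ca[j])
            diff = abs(d_ref - d_model)
            # Fraction of tolerance thresholds within which this distance is preserved.
            pair_scores.append(sum(diff < t for t in thresholds) / len(thresholds))
        per_residue.append(100.0 * sum(pair_scores) / len(pair_scores) if pair_scores else None)
    return per_residue
```

Comparing these per-residue values with a model's pLDDT profile is one direct way to check how well the confidence estimate matches actual local accuracy, as in the validation protocol later in this section.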

Confidence bands are conventionally interpreted as follows:

- pLDDT > 90: Very high confidence; both backbone and side chains are typically predicted with high accuracy.

- 70 < pLDDT < 90: Confident; the backbone is usually correct with potential side chain misplacement.

- 50 < pLDDT < 70: Low confidence; the prediction should be interpreted with caution.

- pLDDT < 50: Very low confidence; the region may be intrinsically disordered or lack sufficient evolutionary information for accurate folding [25].
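The confidence bands above are straightforward to apply in code. AlphaFold2 PDB files store pLDDT in the B-factor column, while AF3 reports per-atom values. The AF3 server returns mmCIF files, so this sketch assumes the model has been converted to PDB format, or that an equivalent mmCIF parser is substituted. The sketch averages per-atom values into one score per residue and assigns each residue to a band. The conventional bands leave boundary values unspecified, so placing values such as exactly 70 in the lower band is an arbitrary choice made here.

```python
from typing import Dict, List, Tuple

ResidueKey = Tuple[str, int, str]  # (chain ID, residue number, insertion code)


def per_residue_plddt(pdb_text: str) -> Dict[ResidueKey, float]:
    """Mean B-factor (read as pLDDT) over the ATOM records of each residue."""
    totals: Dict[ResidueKey, List[float]] = {}
    for line in pdb_text.splitlines():
        if not line.startswith("ATOM"):
            continue
        # Fixed PDB columns: chain 22, resSeq 23-26, iCode 27, B-factor 61-66.
        key = (line[21], int(line[22:26]), line[26].strip())
        entry = totals.setdefault(key, [0.0, 0.0])
        entry[0] += float(line[60:66])
        entry[1] += 1
    return {key: total / count for key, (total, count) in totals.items()}


def plddt_band(score: float) -> str:
    """Map a pLDDT value to the conventional confidence bands."""
    if score > 90:
        return "very high"
    if score > 70:
        return "confident"
    if score > 50:
        return "low"
    return "very low"
```

Residues in the "low" and "very low" bands are the ones to mask, or to treat with particular caution, before using the model for interface analysis or design.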

pLDDT in AF2 vs. AF3

While both AF2 and AF3 employ pLDDT, its calculation and reliability in AF3 are enhanced by the model's updated architecture. AF3 replaces AF2's structure module with a diffusion module that directly predicts raw atom coordinates, leading to improved local stereochemical accuracy [23]. Furthermore, AF3 introduces a diffusion "rollout" procedure during training to compute performance metrics for its confidence head, which predicts pLDDT along with the predicted aligned error (PAE) and predicted distance error (PDE) [23]. This refined training allows AF3's pLDDT to better reflect local accuracy across diverse biomolecular contexts, including interface residues.

Table: Evolution of AlphaFold Capabilities and Confidence Metrics

| Feature | AlphaFold2 | AlphaFold3 |
|---|---|---|
| Primary Prediction Scope | Proteins | Proteins, nucleic acids, ligands, ions, modified residues |
| Architecture Core | Evoformer & Structure Module | Pairformer & Diffusion Module |
| Confidence Metrics | pLDDT, PAE | pLDDT, PAE, PDE (Predicted Distance Error) |
| pLDDT Training | Regressed from structure module output | Trained via diffusion "rollout" procedure |
| Disordered Region Handling | Low-confidence regions typically rendered as extended, ribbon-like loops | Generative diffusion module is prone to hallucinating order in unstructured regions; mitigated via cross-distillation training so these regions mimic extended loops |

Comparative Performance Analysis on Biomolecular Complexes

Protein-Protein Interactions

AF3 represents a significant advance for predicting protein-protein complexes. CASP15 had already shown enormous progress in modeling multimolecular complexes, with the accuracy of models almost doubling in terms of the Interface Contact Score (ICS) compared to CASP14 [4], and AF3 builds on this progress. However, a critical assessment reveals that even when global accuracy metrics such as DockQ and RMSD indicate close agreement with experiment, major discrepancies can remain in the compactness of the complex, in directional polar interactions (e.g., more than two hydrogen bonds may be incorrectly predicted), and in interfacial apolar-apolar packing [27]. These discrepancies caution against using AF3 predictions uncritically for understanding key stabilizing interactions. Furthermore, when AF3-predicted complexes are relaxed by molecular dynamics (MD) simulation, the quality of the structural ensemble often deteriorates, suggesting potential instability in the predicted intermolecular packing [27].

Protein-Ligand and Protein-Nucleic Acid Interactions

AF3's performance in predicting interactions involving non-protein molecules is where it most dramatically surpasses specialized tools.

Table: Accuracy Across Complex Types (Based on [23])

| Interaction Type | Benchmark | AlphaFold3 Performance | Comparison with Specialized Tools |
|---|---|---|---|
| Protein-Ligand | PoseBusters Benchmark (428 structures) | High accuracy at docking | Greatly outperforms classical docking tools (e.g., Vina) and blind docking with RoseTTAFold All-Atom, even though AF3 uses no structural inputs. |
| Protein-Nucleic Acid | Nucleic-acid-specific benchmarks | Much higher accuracy | Substantially improved over nucleic-acid-specific predictors. |
| Antibody-Antigen | Protein interaction benchmarks | Substantially higher accuracy | Improved compared to AlphaFold-Multimer v.2.3. |

The ability to predict protein-ligand interactions with high accuracy is particularly transformative for drug discovery, allowing for rapid identification of potential drug targets and binding sites with greater precision than traditional methods like docking simulations [24] [28].

pLDDT as an Indicator of Local Accuracy and Flexibility

Correlation with Experimental and Simulated Flexibility

A critical question for researchers is whether pLDDT can predict local flexibility and dynamics, not just static accuracy. Large-scale studies comparing AF2/3 pLDDT with flexibility metrics from Molecular Dynamics (MD) simulations and NMR ensembles provide insights.

Table: pLDDT Correlation with Flexibility Metrics (Based on [26])

| Flexibility Metric | Source | Correlation with AF2/AF3 pLDDT |
|---|---|---|
| RMSF (Root Mean Square Fluctuation) | MD Simulations (ATLAS dataset) | Reasonable correlation observed. |
| Local Deformability (Neq) | MD Simulations (ATLAS dataset) | Significant correlation. |
| Structural Variance | NMR Ensembles | Lower correlation than with MD-derived metrics. |
| B-factors | Crystallography | Poor correlation for globular proteins; pLDDT is more relevant for MD/NMR contexts. |

These studies conclude that while AF pLDDT reasonably correlates with protein flexibility, particularly from MD simulations, it fails to capture flexibility variations induced by interacting partners [26]. A region that is flexible in isolation but becomes ordered upon binding may be predicted with high pLDDT, as AF3 tends to lean toward predicting conditionally folded states [25]. AF3 shows only slight improvements over AF2 in capturing protein dynamics, and MD simulations remain superior for comprehensive flexibility assessment [26].

Workflow for Local Accuracy Assessment in CASP-Style Evaluation

The process a researcher should follow to use AF3's pLDDT for local accuracy estimation, incorporating the comparative analyses above to avoid common pitfalls, is set out as a step-by-step protocol later in this section.

The Scientist's Toolkit: Essential Research Reagents and Workflows

Successfully leveraging AF3 for local accuracy estimation requires integrating its predictions with other computational and experimental tools.

Table: Research Reagent Solutions for AF3 Quality Assessment

| Tool/Reagent | Type | Function in AF3 Validation | Key Application |
|---|---|---|---|
| AlphaFold3 Server | Software | Generate 3D structure models and per-residue pLDDT confidence scores. | Primary structure and confidence prediction. |
| Molecular Dynamics (MD) Software (e.g., GROMACS) | Software | Simulate protein dynamics and calculate RMSF for flexibility comparison. | Validate and refine AF3 predictions; assess flexibility. |
| NMR Ensemble Data | Experimental Data | Provide experimental evidence of structural flexibility and heterogeneity. | Benchmark AF3 pLDDT against experimentally observed disorder. |
| PoseBusters Benchmark | Validation Suite | Standardized set for validating protein-ligand pose predictions. | Objectively assess AF3 docking accuracy vs. traditional tools. |
| Alanine Scanning with Generalized Born and Interaction Entropy (ASGB/IE) | Computational Assay | Calculate mutation-induced affinity variations from simulation trajectories. | Evaluate if AF3-predicted interfaces retain functional thermodynamic properties. |

Experimental Protocol: Validating Local Accuracy with pLDDT

For researchers aiming to validate AF3's local accuracy estimates for specific CASP targets or novel complexes, the following methodology is recommended:

- Prediction Generation: Input protein sequences, nucleic acid sequences, and/or ligand SMILES strings into the AF3 model. Download the predicted structure and the accompanying per-residue pLDDT and PAE data.

- Confidence Mapping: Map pLDDT scores onto the 3D structure using molecular visualization software (e.g., PyMOL, ChimeraX) to visually identify low-confidence regions.

- Comparative Analysis:

  - Integration with Experimental Data:

    - If an experimental structure is available, calculate the local lDDT-Cα to directly validate pLDDT accuracy.

    - Compare pLDDT profiles with experimental B-factors from crystallography or order parameters from NMR. Note that correlation may be poor for globular regions but more meaningful for flexible loops and termini [26].

  - Molecular Dynamics Validation:

    - Run short, all-atom MD simulations from the AF3-predicted structure.

    - Calculate per-residue RMSF from the simulation trajectory.

    - Correlate RMSF with pLDDT. A strong negative correlation (high pLDDT, low RMSF) indicates AF3 successfully identified rigid regions, while discrepancies may indicate over-stabilization or instability in the prediction [26] [27].
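The last two Molecular Dynamics Validation steps can be sketched in a few lines. The code below assumes the trajectory frames have already been superposed on a common reference, a fitting step that MD analysis tools such as GROMACS `gmx rmsf` can perform. It also assumes each frame gives one Cα coordinate per residue. The code computes per-residue RMSF and its Pearson correlation with pLDDT. The function names and the plain-list data layout are illustrative assumptions.

```python
import math
from typing import List, Sequence, Tuple

Coord = Tuple[float, float, float]


def rmsf(frames: Sequence[Sequence[Coord]]) -> List[float]:
    """Per-residue root mean square fluctuation about the mean position.

    frames[t][i] is the pre-aligned C-alpha position of residue i in frame t.
    """
    n_frames = len(frames)
    n_res = len(frames[0])
    result = []
    for i in range(n_res):
        mean = [sum(frame[i][k] for frame in frames) / n_frames for k in range(3)]
        msd = sum(
            sum((frame[i][k] - mean[k]) ** 2 for k in range(3)) for frame in frames
        ) / n_frames
        result.append(math.sqrt(msd))
    return result


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)
```

Suppose residues with pLDDT values of 95, 80 and 65 show RMSF values of 0, 1 and 2 Å. The correlation is then close to -1, which is the pattern the protocol reads as agreement: the most confident residues are also the most rigid.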

AlphaFold3 represents a formidable tool for predicting the structures of diverse biomolecular complexes. Its pLDDT score provides a crucial, locally interpretable measure of confidence that shows reasonable correlation with protein flexibility and local accuracy. However, this guide underscores that researchers must apply pLDDT with a nuanced understanding of its limitations—particularly its tendency to reflect a single, conditionally folded state and its potential inaccuracies in describing the precise chemical geometry of interaction interfaces. For drug development professionals, AF3 predictions offer an unparalleled starting point for structure-based design, but critical tasks like hot-spot identification and binding affinity calculation still benefit from, and sometimes require, integration with experimental data and physics-based simulation methods. As the field progresses, the integration of AF3's powerful predictions with multi-state modeling and advanced molecular simulations will further close the gap between prediction and biological reality.

The Model Quality Assessment (MQA) category in the Critical Assessment of Structure Prediction (CASP) experiment provides an independent mechanism for evaluating the accuracy of computational methods for predicting protein structures. In CASP16, the Estimation of Model Accuracy (EMA) experiment was specifically designed to assess the ability of predictors to estimate the accuracy of predicted protein models, with a particular emphasis on multimeric assemblies [18]. The CASP16 EMA framework used a structured evaluation approach through three distinct modes (QMODE1, QMODE2, and QMODE3) that address complementary aspects of model quality assessment, creating a comprehensive benchmark for the field [18] [29].

The expansion of the QMODE framework in CASP16 reflects the evolving challenges in protein structure prediction, especially in the post-AlphaFold era where accuracy estimation has become as crucial as structure generation itself. With the widespread adoption of AlphaFold-derived systems, the critical bottleneck in structural bioinformatics has shifted toward identifying the most accurate models from potentially thousands of candidates [30]. This review examines the experimental protocols, performance outcomes, and methodological innovations revealed through the CASP16 QMODE framework, providing researchers with actionable insights for advancing MQA methodologies in structural biology and drug discovery applications.

The QMODE Framework: Experimental Design and Evaluation Metrics

QMODE1: Global Structure Accuracy Assessment

QMODE1 focused on evaluating predictors' ability to estimate the global accuracy of complete protein models [18]. In this mode, participants were required to provide accuracy estimates that reflected the overall quality of structural models relative to experimental reference structures. The evaluation employed OpenStructure-based metrics to provide a standardized assessment framework that could be consistently applied across diverse protein targets [18]. The primary objective was to determine which methods could most reliably distinguish between high-quality and low-quality structural models at the global level, which is particularly valuable for experimentalists seeking to identify usable models for downstream applications such as molecular replacement in crystallography or structural analysis.

QMODE2: Local Interface Residue Accuracy

QMODE2 shifted the focus from global assessment to local accuracy estimation, specifically targeting interface residues in multimeric assemblies [18]. This mode recognizes that for protein complexes, the accuracy of interfacial regions is often more critical than global fold accuracy, because these regions directly mediate molecular interactions and biological function. Predictors were challenged to estimate per-residue or per-atom accuracy at subunit interfaces, and the evaluation metrics quantified how well these local estimates correlated with actual deviations from the experimental reference structures. The inclusion of QMODE2 reflects the growing importance of protein-protein and protein-ligand interactions in therapeutic development and systems biology.
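Interface-level local estimates can be checked by asking whether higher predicted scores pick out the residues that turned out to be accurate. The sketch below does this with a rank-based ROC AUC. It assumes each interface residue has a predicted and an observed local score, and it labels residues with an observed score of at least 0.6 as "accurate". That threshold and the residue labels are hypothetical choices for illustration, not CASP16 parameters.

```python
"""Hedged sketch: how well do per-residue interface scores rank residues?

Assumptions (illustrative only): each interface residue has a predicted
local score and an observed local score (e.g. a per-residue LDDT-like
value); residues with observed >= 0.6 are labelled "accurate". The
threshold is a hypothetical choice, not a CASP16 parameter.
"""


def roc_auc(scores: list[float], labels: list[bool]) -> float:
    """Mann-Whitney estimate of ROC AUC (ties count as half a win)."""
    pos = [s for s, y in zip(scores, labels) if y]
    neg = [s for s, y in zip(scores, labels) if not y]
    if not pos or not neg:
        raise ValueError("need both accurate and inaccurate residues")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def interface_auc(predicted: dict[str, float], observed: dict[str, float],
                  threshold: float = 0.6) -> float:
    """AUC of predicted scores for separating accurate from inaccurate residues."""
    residues = sorted(predicted)  # keys like "A:45" = chain A, residue 45
    labels = [observed[r] >= threshold for r in residues]
    return roc_auc([predicted[r] for r in residues], labels)


predicted = {"A:45": 0.91, "A:46": 0.40, "B:12": 0.75, "B:13": 0.30}
observed = {"A:45": 0.88, "A:46": 0.65, "B:12": 0.49, "B:13": 0.20}
print(f"interface AUC = {interface_auc(predicted, observed):.2f}")
```

Because the AUC depends only on ranks, it rewards a predictor for ordering interface residues correctly even when its absolute scores are miscalibrated.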

QMODE3: Model Selection Challenge

QMODE3 represented a novel evaluation mode in CASP16, focusing specifically on model selection performance from large-scale pools of pre-generated models [18]. This challenge was designed to address a practical bottleneck in modern structural bioinformatics: identifying the best models from thousands of candidates generated by methods like AlphaFold2. Specifically, predictors were provided with massive model pools generated by MassiveFold and were required to select the five highest-quality models [18] [29]. To address the statistical challenges of score interdependence and varying prediction quality distributions across targets, assessors developed a novel penalty-based ranking scheme for evaluating QMODE3 performance [18]. This mode tested the practical utility of MQA methods in real-world scenarios where researchers must select optimal models from extensive collections.
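The core of a model-selection evaluation is to compare what a predictor picked with the best model the pool actually contained. The sketch below measures, for each target, how much observed quality is lost by the best of the five picks relative to the best model in the pool, and then averages over targets. This is a deliberately simple stand-in and not the CASP16 penalty-based ranking scheme. In particular, it does not handle score interdependence or differing quality distributions across targets, which are the two issues the official scheme was built to address [18].

```python
"""Hedged sketch of a QMODE3-style selection penalty.

This is NOT the CASP16 penalty-based ranking scheme (see [18]); it only
illustrates scoring a five-model pick against the best model in each
pool. Pool values stand in for an observed quality measure such as
DockQ or TM-score.
"""
from statistics import mean


def selection_penalty(pool: dict[str, float], picks: list[str]) -> float:
    """Observed quality lost relative to the best model available in the pool."""
    if len(set(picks)) != 5:
        raise ValueError("QMODE3 asks for five distinct models")
    best_in_pool = max(pool.values())
    best_picked = max(pool[m] for m in picks)
    return best_in_pool - best_picked


def mean_penalty(submission: dict[str, tuple[dict[str, float], list[str]]]) -> float:
    """Average penalty over targets for one predictor (lower is better)."""
    return mean(selection_penalty(pool, picks) for pool, picks in submission.values())


submission = {
    # target: (observed quality of every pooled model, the five models picked)
    "T1": ({"m1": 0.9, "m2": 0.5, "m3": 0.7, "m4": 0.3, "m5": 0.6, "m6": 0.4},
           ["m2", "m3", "m4", "m5", "m6"]),  # missed m1, best pick is m3
    "T2": ({"a": 0.8, "b": 0.8, "c": 0.1, "d": 0.2, "e": 0.3, "f": 0.4},
           ["a", "c", "d", "e", "f"]),        # picked one of the best models
}
print(f"mean penalty = {mean_penalty(submission):.2f}")
```

Taking the maximum over the five picks means a predictor is not punished for filling its remaining slots with weaker models, as long as one pick is close to the pool's best.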

Table 1: QMODE Evaluation Modes in CASP16

| Evaluation Mode | Primary Focus | Evaluation Metrics | Key Challenges |
|---|---|---|---|
| QMODE1 | Global structure accuracy | OpenStructure-based metrics | Overall model quality estimation |
| QMODE2 | Interface residue accuracy | Local contact measures | Focusing on biologically critical interfaces |
| QMODE3 | Model selection performance | Penalty-based ranking scheme | Handling large model pools and score interdependence |

Performance Analysis of Leading Methods

Methodological Trends and Top Performers

The CASP16 evaluation revealed several trends among the leading MQA methods. Methods that incorporated AlphaFold3-derived features, particularly per-atom pLDDT confidence measures, demonstrated superior performance in estimating local accuracy [18]. These methods also showed enhanced utility for experimental structure solution, suggesting that per-atom confidence metrics provide valuable information beyond traditional residue-level estimates. The advantage of AlphaFold3-integrated approaches was particularly evident in QMODE2, where local interface accuracy depends on precise atomic-level interactions.
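To see how per-atom confidence can be turned into an interface-level signal, the sketch below averages per-atom pLDDT over the atoms that lie close to another chain. It assumes the per-atom pLDDT values have already been extracted from an AlphaFold3 prediction, and the parsing step is omitted. The 5 Å contact cutoff and the plain mean are illustrative choices. They do not describe any particular CASP16 method.

```python
"""Hedged sketch: summarise per-atom pLDDT over a chain-chain interface.

Assumptions: per-atom pLDDT values have already been extracted from an
AlphaFold3 prediction (parsing omitted), and an atom counts as
"interface" if it lies within 5 Å of an atom in another chain. The
cutoff and the simple mean are illustrative, not a CASP16 method.
"""
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Atom:
    chain: str
    residue: int
    xyz: tuple[float, float, float]
    plddt: float  # per-atom confidence on a 0-100 scale


def interface_atoms(atoms: list[Atom], cutoff: float = 5.0) -> list[Atom]:
    """Atoms within `cutoff` Å of any atom of a different chain (O(n^2) scan)."""
    return [a for a in atoms
            if any(b.chain != a.chain and math.dist(a.xyz, b.xyz) <= cutoff
                   for b in atoms)]


def interface_plddt(atoms: list[Atom], cutoff: float = 5.0) -> float:
    """Mean per-atom pLDDT over interface atoms (nan if there is no interface)."""
    iface = interface_atoms(atoms, cutoff)
    return sum(a.plddt for a in iface) / len(iface) if iface else float("nan")


atoms = [
    Atom("A", 10, (0.0, 0.0, 0.0), 92.0),
    Atom("A", 11, (20.0, 0.0, 0.0), 55.0),  # far from chain B, not interface
    Atom("B", 3, (3.0, 0.0, 0.0), 84.0),
    Atom("B", 4, (3.5, 3.0, 0.0), 70.0),
]
print(f"interface pLDDT = {interface_plddt(atoms):.1f}")
```

Restricting the average to interface atoms keeps confident but irrelevant regions, such as the distant atom in chain A above, from masking low confidence where the chains actually meet.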

For the model selection challenge in QMODE3, performance varied substantially across target categories: monomers, homomeric complexes, and heteromeric complexes [18]. This variability underscores the ongoing challenge of evaluating complex assemblies, where interface accuracy and subunit arrangement add difficulty beyond single-chain folding. The top-performing groups developed specialized strategies for these different scenarios, but no single approach dominated across all target types, which indicates that method performance remains specialized by target type.

Quantitative Performance Assessment

The CASP16 assessment employed a sophisticated ranking system based on combined z-scores that aggregated performance across multiple metrics and target types [31]. While the precise numerical results for individual groups are published through the official CASP16 assessment portal, the overall analysis revealed that methods with robust performance across all three QMODE categories shared several architectural features, including ensemble approaches that combined multiple confidence metrics and specialized modules for interface assessment [18] [31].
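Combined z-score ranking normalizes each score against the other groups on the same target and metric before summing, so easy and hard targets contribute on a comparable scale. The sketch below follows a common CASP convention and clips negative z-scores to zero, so that one bad target does not dominate a group's total. The exact CASP16 recipe, including any outlier handling and metric weighting, is described in [31] and is not reproduced here.

```python
"""Hedged sketch of combined z-score ranking in the CASP style.

Assumptions: each group has one score per (target, metric), higher is
better, and negative z-scores are clipped to 0 (a common CASP
convention). Outlier handling and metric weights used in CASP16 [31]
are omitted.
"""
from collections import defaultdict
from statistics import mean, pstdev


def combined_z(scores: dict[tuple[str, str], dict[str, float]]) -> dict[str, float]:
    """scores[(target, metric)][group] -> summed, clipped z-score per group."""
    totals: dict[str, float] = defaultdict(float)
    for per_group in scores.values():
        values = list(per_group.values())
        mu, sd = mean(values), pstdev(values)
        for group, value in per_group.items():
            z = (value - mu) / sd if sd > 0 else 0.0
            totals[group] += max(z, 0.0)  # clip: below-average scores add nothing
    return dict(totals)


scores = {
    ("T1", "lddt"): {"G1": 0.8, "G2": 0.6, "G3": 0.7},
    ("T1", "tm"): {"G1": 0.9, "G2": 0.9, "G3": 0.6},
}
totals = combined_z(scores)
for group in sorted(totals, key=totals.get, reverse=True):
    print(group, round(totals[group], 2))
```

Summing per-metric z-scores is one simple way to reward the robustness across categories that the assessment highlighted. A group that is consistently above average accumulates credit on every target and metric.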

Table 2: Key Performance Metrics in CASP16 QMODE Evaluation

| Performance Dimension | Assessment Approach | Key Findings |
|---|---|---|
| Global Accuracy Estimation | Correlation with experimental structures | AlphaFold3-enhanced methods led in local accuracy |
| Interface Residue Assessment | Interface-specific metrics | Per-atom pLDDT provided significant advantage |
| Model Selection Capability | Penalty-based ranking | Performance varied by complex type |
| Methodological Advancement | Comparative z-scores | Integration of multiple confidence metrics proved beneficial |

Research Reagents and Computational Tools

The advanced MQA methods evaluated in CASP16 relied on a sophisticated ecosystem of computational tools and resources. The following table summarizes key research reagents that enable state-of-the-art model quality assessment.

Table 3: Essential Research Reagents for Model Quality Assessment

| Tool/Resource | Type | Primary Function | Application in CASP16 |
|---|---|---|---|
| OpenStructure | Software framework | Structural analysis and metrics | Primary evaluation framework for QMODE1/2 [18] |
| AlphaFold3 | Structure prediction | Atomic-level structure prediction | Source of per-atom pLDDT confidence metrics [18] |
| MassiveFold | Model generation | Large-scale model sampling | Source of model pools for QMODE3 challenge [18] |
| Per-atom pLDDT | Confidence metric | Local accuracy estimation | Key feature for top-performing methods [18] |

Experimental Workflows and Methodologies

QMODE3 Model Selection Pipeline

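The QMODE3 experiment introduced a multi-stage workflow for evaluating model selection: large model pools were generated with MassiveFold, each predictor submitted the five models it judged best, and these selections were scored with the penalty-based ranking scheme [18] [29].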

Integrated MQA Assessment Framework

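The comprehensive QMODE evaluation in CASP16 required careful integration of several assessment components: global accuracy estimates (QMODE1), interface residue estimates (QMODE2), and model selection (QMODE3). Each was evaluated against the experimental reference structures, and the results were aggregated into group rankings through combined z-scores [18] [31].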

Implications for Structural Biology and Drug Discovery

The methodological advances demonstrated through CASP16's QMODE framework have significant implications for structural biology research and pharmaceutical development. The enhanced capability to assess local interface accuracy (QMODE2) directly benefits drug discovery efforts where protein-ligand and protein-protein interactions represent key therapeutic targets [32]. Similarly, the model selection capabilities evaluated in QMODE3 address a critical bottleneck in structural bioinformatics pipelines, enabling researchers to more efficiently identify high-quality models from large-scale predictions [18] [29].

For computational biochemists and drug development professionals, the performance trends observed in CASP16 suggest several strategic considerations. First, the advantage of per-atom confidence metrics supports incorporating atomic-level assessment into structural validation workflows. Second, the specialization of methods across different complex types indicates that optimal MQA may require target-specific approaches rather than one-size-fits-all solutions. Finally, the persistent challenges in evaluating complex assemblies highlight the need for continued method development, particularly for multimeric proteins and antibody-antigen complexes [30] [33].

The QMODE framework established in CASP16 provides a foundation for advancing model quality assessment methodologies that will be essential for realizing the full potential of AI-based structure prediction in basic research and therapeutic development. As these methods mature, they promise to enhance the reliability of computational structural biology and accelerate the application of predicted structures in mechanistic studies and drug design.

The breakthrough of AlphaFold2 marked a revolutionary turn in computational structural biology, transitioning the field's primary challenge from generating accurate protein models to identifying the most accurate ones from vast collections of predictions. This paradigm shift is particularly evident in the prediction of protein complexes (multimers), where most state-of-the-art methods, including DMFold, MassiveFold, and AlphaFold3, achieve high-precision modeling through extensive sampling approaches [34]. The core idea is simple yet powerful: by generating a massive diversity of structural models, the probability of including a high-accuracy structure within the pool increases significantly. However, this success has bred a new, critical challenge: the accurate scoring, ranking, and selection of models from these enormous decoy sets. This challenge became the central focus of the CASP16 Estimation of Model Accuracy (EMA) experiment, which introduced the QMODE3 evaluation specifically designed to test model selection performance from large-scale pools, many of which were derived from AlphaFold2-powered tools like MassiveFold [18] [34].
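The sampling argument can be made concrete with a simple probability model. Assume, unrealistically, that each sampled model independently reaches the desired accuracy with the same probability p. Real samples from the same network are correlated, so actual gains will be smaller than this idealized bound.

```latex
\begin{aligned}
P_{\text{hit}}(N) &= 1 - (1 - p)^{N} \\
P_{\text{hit}}(5)\big|_{p = 0.01} &= 1 - 0.99^{5} \approx 0.049 \\
P_{\text{hit}}(1000)\big|_{p = 0.01} &= 1 - 0.99^{1000} \approx 1 - 4.3 \times 10^{-5}
\end{aligned}
```

Under these assumptions, going from 5 to 1,000 samples raises the chance that a good model is present from about 5% to near certainty. Meanwhile, the fraction of good models in the pool stays around 1%. This is why the difficulty moves from generation to selection.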

Understanding the Contenders: MassiveFold and the Model Quality Assessment Landscape

MassiveFold: An Engine for Massive Structural Sampling

MassiveFold addresses a fundamental bottleneck in the post-AlphaFold era. Massive sampling unlocks stronger modeling performance, particularly for protein assemblies, but it has traditionally been limited by prohibitive GPU costs and data storage requirements. MassiveFold is an optimized, parallelized framework that radically reduces the computing time for large-scale sampling, from several months down to hours. Its architecture cleanly separates the workflow into three stages: (1) alignment computation on CPUs, (2) parallelized structure inference across multiple GPUs, and (3) a CPU post-processing step that gathers, ranks, and analyzes all results [35].
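The three-stage separation can be illustrated with a small pipeline skeleton. Alignments are computed once, many independent inference jobs reuse them in parallel, and a final step gathers and ranks the results. Every function here is a hypothetical stand-in and not MassiveFold code. A thread pool replaces the GPU jobs, and the "confidence" is faked from the random seed.

```python
"""Hedged sketch of a three-stage align / infer / post-process layout.

Mirrors the separation described for MassiveFold [35] but is not its
code: all functions are hypothetical stand-ins. A thread pool stands in
for multi-GPU inference, and confidences are faked from the seed.
"""
import random
from concurrent.futures import ThreadPoolExecutor


def compute_alignments(sequence: str) -> dict:
    """Stage 1 (CPU): placeholder for MSA/template search, run once per target."""
    return {"sequence": sequence, "msa_depth": 128}  # placeholder features


def run_inference(features: dict, seed: int) -> dict:
    """Stage 2 (GPU in practice): one sampled model with a fake confidence."""
    rng = random.Random(seed)
    return {"seed": seed, "confidence": round(rng.uniform(0.3, 0.9), 3)}


def post_process(predictions: list[dict], keep: int = 5) -> list[dict]:
    """Stage 3 (CPU): gather all predictions and rank them by confidence."""
    return sorted(predictions, key=lambda p: p["confidence"], reverse=True)[:keep]


features = compute_alignments("MKTAYIAKQR")  # reused by every inference job
with ThreadPoolExecutor(max_workers=4) as pool:
    preds = list(pool.map(lambda s: run_inference(features, s), range(20)))
for p in post_process(preds, keep=3):
    print(p)
```

Because alignments are computed once and the inference jobs are independent, stage 2 scales with the number of available workers. Stage 3 still ranks by the generator's own confidence, and that ranking is exactly the step MQA methods aim to improve.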

The power of MassiveFold lies in its deliberate injection of structural diversity. It exposes numerous parameters for exploring conformational space: it can use all neural network models released by AlphaFold (both monomeric and multimeric versions), activate dropout during inference to sample model uncertainty, control template use, and extensively modulate the number of recycling steps and the early-stop tolerance threshold [35]. The value of this kind of systematic sampling was demonstrated in CASP15. There, a predecessor method showed that massive sampling with AlphaFold could substantially improve multimer prediction quality, raising the mean DockQ score from 0.43 to 0.56 relative to a baseline that used identical input data [36].
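The diversity axes listed above multiply quickly. The sketch below enumerates one sampling job per combination of network model, dropout, template use, recycle count, early-stop tolerance, and seed. The axes follow the parameters described for MassiveFold, but the names and values are hypothetical and do not reproduce its configuration format.

```python
"""Hedged sketch: enumerating a diversity-oriented sampling grid.

The axes follow the parameters described for MassiveFold [35] (network
models, dropout, templates, recycling, early-stop tolerance); names and
values are hypothetical, not MassiveFold's configuration format.
"""
from itertools import product

NETWORK_MODELS = [f"model_{i}_multimer_v3" for i in range(1, 6)]  # illustrative names
DROPOUT = [False, True]
USE_TEMPLATES = [True, False]
MAX_RECYCLES = [3, 9, 21]
EARLY_STOP_TOL = [0.5, 0.0]  # 0.0 = never stop recycling early (hypothetical)
SEEDS_PER_CONFIG = 5


def sampling_jobs():
    """Yield one job description per (configuration, seed) combination."""
    for model, dropout, templates, recycles, tol in product(
            NETWORK_MODELS, DROPOUT, USE_TEMPLATES, MAX_RECYCLES, EARLY_STOP_TOL):
        for seed in range(SEEDS_PER_CONFIG):
            yield {"model": model, "dropout": dropout, "templates": templates,
                   "max_recycles": recycles, "early_stop_tol": tol, "seed": seed}


jobs = list(sampling_jobs())
print(len(jobs))  # 5 * 2 * 2 * 3 * 2 * 5 combinations for a single target
```

Even this modest grid produces 600 predictions for one target. This illustrates both why the parallelized inference stage matters and why selection from the resulting pool becomes the bottleneck.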

Model Quality Assessment (MQA) Methods: The Selectors

Faced with the vast model pools generated by MassiveFold, researchers rely on MQA (or EMA) methods to identify the best structures. These methods can be categorized based on their operational principles:

- Single-model methods: Evaluate individual models in isolation based on their intrinsic structural and physical properties. Examples include the DeepUMQA series and ProQ series. They are computationally efficient but historically less accurate for complex assemblies [34].

- Consensus (multi-model) methods: Operate on the principle that structurally similar models from a diverse pool are likely to be closer to the native structure. They evaluate model quality based on structural similarity within the input model pool. Representative methods include Pcons and MULTICOM_qa [34] [37].

- Quasi-single-model methods: A hybrid approach that compares each model against reference models or structural constraints the method builds internally, so the score depends less on the quality of the submitted pool [34].
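The consensus principle fits in a few lines: score each model by its average structural similarity to the rest of the pool. The sketch assumes a pairwise similarity matrix (for example, TM-scores between models of the same target) has already been computed, and it omits the superposition step. It captures the idea behind Pcons-style methods but none of their actual weighting details.

```python
"""Hedged sketch of the consensus (multi-model) principle.

Assumption: pairwise structural similarities (e.g. TM-scores between
models of one target) are precomputed. Each model is scored by its mean
similarity to every other model, the idea behind Pcons-style methods,
without their actual weighting details.
"""


def consensus_scores(sim: list[list[float]]) -> list[float]:
    """Mean similarity of each model to all other models in the pool."""
    n = len(sim)
    if n < 2:
        raise ValueError("consensus needs at least two models")
    return [sum(sim[i][j] for j in range(n) if j != i) / (n - 1) for i in range(n)]


# Models 0-2 agree with each other; model 3 is a structural outlier.
sim = [
    [1.0, 0.9, 0.8, 0.3],
    [0.9, 1.0, 0.85, 0.35],
    [0.8, 0.85, 1.0, 0.4],
    [0.3, 0.35, 0.4, 1.0],
]
print([round(s, 3) for s in consensus_scores(sim)])
```

The same mechanism explains the method's weakness. If most of the pool shares a wrong conformation, that conformation receives the highest consensus score. This is why consensus methods work best when the pool is diverse and of high quality.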

Table 1: Categorization of Model Quality Assessment Methods

| Method Type | Core Principle | Representative Methods | Key Advantage |
|---|---|---|---|
| Single-Model | Direct assessment of an individual model's features | DeepUMQA series, ProQ series, Voro series | Does not rely on model pool quality |
| Consensus | Leverages structural similarity within a model pool | Pcons, MULTICOM_qa, ModFOLDclust2 | High performance when pool is diverse and high-quality |
| Quasi-Single-Model | Uses internal modeling for evaluation | ModFOLD series, QMEANDisCo | Reduces reliance on pool quality |

The CASP16 QMODE3 Challenge: An Experimental Framework for Model Selection

The CASP16 experiment formally established the benchmark for evaluating model selection capabilities in the era of massive sampling. Its EMA component was structured around three distinct evaluation modes [18]:

- QMODE1: Assessed the accuracy of global structure quality estimates.

- QMODE2: Focused on the accuracy of interface residue quality estimates, critical for complexes.

- QMODE3: Specifically tested the performance of methods in selecting high-quality models from large-scale pools, notably those generated by MassiveFold and enriched with AlphaFold2-derived models [18] [34].

A key innovation in QMODE3 was the development of a novel penalty-based ranking scheme to handle the complex issues of score interdependence and the varying distributions of prediction quality across different targets. This rigorous framework was designed to objectively determine which MQA methods were most effective at navigating the sea of models produced by tools like MassiveFold and identifying the true structural gems [18].

Performance Comparison: Key Methods Under the QMODE3 Lens

The Rise of DeepUMQA-X

In the competitive blind test of CASP16, one server demonstrated top-tier performance across nearly all tracks, including QMODE3: DeepUMQA-X. This server's success is attributed to its hybrid architecture, which strategically combines the strengths of single-model and consensus approaches [34].
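The source attributes DeepUMQA-X's results to combining single-model and consensus information [34] but does not describe how the two are merged. The sketch below therefore shows only a generic linear blend. The weight `w` and the single-model scores are hypothetical inputs, and this is not DeepUMQA-X's architecture.

```python
"""Hedged sketch of blending single-model and consensus scores.

Not DeepUMQA-X's method: a generic linear blend with a hypothetical
weight `w`. Single-model scores are assumed to come from some per-model
predictor; consensus is the mean pairwise similarity to the rest of the
pool (precomputed similarities, e.g. TM-scores).
"""


def blend(single: list[float], sim: list[list[float]], w: float = 0.5) -> list[float]:
    """w * single-model score + (1 - w) * mean similarity to the other models."""
    n = len(sim)
    if n < 2 or len(single) != n:
        raise ValueError("need at least two models and one single score per model")
    consensus = [sum(sim[i][j] for j in range(n) if j != i) / (n - 1) for i in range(n)]
    return [w * s + (1 - w) * c for s, c in zip(single, consensus)]


single = [0.70, 0.55, 0.60]  # hypothetical per-model predictor outputs
sim = [[1.0, 0.8, 0.7],
       [0.8, 1.0, 0.75],
       [0.7, 0.75, 1.0]]
scores = blend(single, sim, w=0.6)
best = max(range(len(scores)), key=scores.__getitem__)
print(best, [round(s, 3) for s in scores])
```

In this toy pool, model 1 has the highest consensus score, but model 0 wins once its stronger single-model score is weighted in. The single-model term is what keeps a hybrid from simply following the pool majority when the majority is wrong.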