Evaluating RNA-Seq Alignment Tools: A 2025 Comprehensive Guide for Biomedical Researchers

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on evaluating and selecting RNA-seq alignment tools. It covers foundational principles of RNA-seq alignment, methodological comparisons of major tools including HISAT2, STAR, and kallisto, strategies for troubleshooting and optimizing analysis pipelines, and rigorous validation approaches. By synthesizing current benchmarking studies and best practices, this guide aims to equip scientists with the knowledge to make informed decisions that enhance the accuracy and reliability of their transcriptomic studies, ultimately supporting advancements in biomedical research and therapeutic development.

RNA-Seq Alignment Fundamentals: Understanding Core Concepts and Tool Landscape

The Critical Role of Alignment in RNA-Seq Analysis Pipelines

RNA sequencing (RNA-seq) has become the primary method for transcriptome analysis, enabling detailed exploration of gene expression, novel transcripts, and splicing events. The alignment step, where sequenced reads are mapped to a reference genome or transcriptome, serves as the computational foundation of the entire RNA-seq workflow. The choice of alignment tool directly influences the accuracy of all downstream analyses, including differential expression and isoform discovery. Current research demonstrates that alignment is not a one-size-fits-all process, with tool performance varying significantly across different species, experimental designs, and computational environments. This guide provides a systematic comparison of mainstream RNA-seq aligners, evaluates their performance using published experimental data, and offers evidence-based recommendations for researchers and drug development professionals.

Performance Benchmarking of Major Alignment Tools

Comparative Analysis of STAR and HISAT2

Table 1: Performance comparison between STAR and HISAT2 across key metrics

| Performance Metric | STAR | HISAT2 | Experimental Context |
|---|---|---|---|
| Alignment Rate | >90-95% unique mapping [1] | Variable (as low as 50% on complex genomes) [1] | Human genomes & complex draft genomes [1] |
| Splice Junction Detection | Excellent, uses uncompressed suffix arrays [2] [3] | Good, uses hierarchical FM-index [4] [3] | SEQC project; human reference samples [2] |
| Runtime Speed | Very fast (~400M reads/hour) [1] | ~3x faster than STAR [3] | 48 samples of Erysiphe necator [3] |
| Memory Usage | High (can be ~30 GB for human genome) [4] [1] | Low memory footprint [4] | Standard human genome alignment [4] |
| Handling of Complex Genomes | Superior on draft genomes with many scaffolds [1] | Standard performance on reference-quality genomes [3] | Genome with 33,000 scaffolds [1] |
| Key Strength | Accuracy and high mapping rates [1] | Computational efficiency [4] | Multi-site benchmarking studies [5] [6] |

Experimental data from a multi-center benchmarking study involving 45 laboratories confirms that the choice of alignment tool significantly impacts gene expression measurements, especially when detecting subtle differential expression between similar biological samples [6]. The alignment step introduces variations that propagate through the entire analysis pipeline, making tool selection a critical consideration for robust results.

Experimental Protocols for Alignment Assessment

Standardized Workflow for Benchmarking Aligners

1. Input Data Preparation:

- Begin with high-quality RNA-seq datasets from public repositories (e.g., SEQC/MAQC reference samples) or in-house data.

- Use samples with built-in "ground truth" such as synthetic spike-in RNAs (e.g., ERCC controls) or samples mixed in known ratios [2] [6].

- Process raw FASTQ files through quality control (FastQC) and adapter trimming (Trimmomatic, fastp) to ensure input data quality [5] [7].

2. Reference Genome Indexing:

- Download the appropriate reference genome (e.g., GRCh38 for human) and annotation file (GTF/GFF).

- Build aligner-specific indices using default parameters as per developer recommendations.

- For HISAT2, this involves running hisat2-build on the genome, optionally supplying known SNPs through the --snp option for better handling of polymorphisms [1].

- For STAR, run STAR with --runMode genomeGenerate, setting the --sjdbOverhang parameter based on read length (the STAR manual suggests read length minus 1) [4].

3. Alignment Execution:

- Map reads to the reference genome using identical computational resources for fair comparison.

- Use consistent parameters across all tested aligners where possible (e.g., setting similar mismatch thresholds).

- For RNA-seq, ensure all aligners are run in splice-aware mode [3].

4. Performance Quantification:

- Calculate alignment rates from output SAM/BAM files using samtools flagstat.

- Assess splice junction detection against annotated junctions using specialized tools like regtools or custom scripts.

- Evaluate gene body coverage using programs such as Qualimap or RSeQC to identify 3' or 5' biases [3].

- Measure computational resource consumption (CPU time, memory usage) using system monitoring tools.

5. Downstream Analysis Impact:

- Generate read counts using featureCounts or HTSeq for alignment-based methods [4] [7].

- Perform differential expression analysis with DESeq2 or edgeR to determine how alignment affects biological conclusions [8] [7].

- Compare results against ground truth (spike-ins, qPCR validation) to assess accuracy [2] [6].
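The indexing step of the workflow above can be sketched as two small Python helpers that only assemble command lines; nothing is executed. The helper names and file paths are hypothetical. The flags are the documented ones for STAR (--runMode genomeGenerate, --sjdbOverhang) and hisat2-build (-p, --snp), and setting the overhang to read length minus 1 is an assumption taken from the STAR manual's guidance rather than from the benchmarking studies cited here.

```python
def star_index_cmd(genome_fasta, gtf, out_dir, read_length, threads=8):
    """Assemble the argument list for STAR genome index generation."""
    return [
        "STAR",
        "--runMode", "genomeGenerate",
        "--genomeDir", str(out_dir),
        "--genomeFastaFiles", str(genome_fasta),
        "--sjdbGTFfile", str(gtf),
        # Assumption: overhang = read length - 1, per the STAR manual.
        "--sjdbOverhang", str(read_length - 1),
        "--runThreadN", str(threads),
    ]


def hisat2_index_cmd(genome_fasta, index_base, snp_file=None, threads=8):
    """Assemble the argument list for hisat2-build, optionally with SNPs."""
    cmd = ["hisat2-build", "-p", str(threads)]
    if snp_file is not None:
        cmd += ["--snp", str(snp_file)]
    # Positional arguments: reference FASTA, then the index base name.
    cmd += [str(genome_fasta), str(index_base)]
    return cmd


# Hypothetical paths, shown only to illustrate the resulting commands.
print(" ".join(star_index_cmd("GRCh38.fa", "genes.gtf", "star_idx", 100)))
print(" ".join(hisat2_index_cmd("GRCh38.fa", "hisat2_idx/GRCh38")))
```

Passing the lists to subprocess.run would launch the tools; keeping command assembly separate makes it easier to log the exact parameters used for each aligner in a benchmark.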

Key Metrics for Alignment Evaluation

Research indicates that comprehensive alignment assessment should incorporate multiple complementary metrics rather than relying on a single parameter [3]. The most informative metrics include:

- Mapping Rate: Percentage of reads successfully aligned to the reference, with distinctions between uniquely mapped reads and multi-mapped reads [3].

- Splice Junction Accuracy: Precision in identifying canonical and non-canonical splice sites, validated against orthogonal methods [2].

- Runtime and Memory Efficiency: Computational resource requirements measured in CPU hours and RAM consumption [4] [3].

- Gene Coverage Uniformity: Evenness of read distribution across gene bodies, with 3' or 5' bias indicating protocol-specific artifacts [3].

- Differential Expression Concordance: Consistency in differentially expressed gene lists generated from the same data using different aligners [6] [7].
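As a minimal sketch of the mapping-rate metric listed above, the following Python function parses the text printed by samtools flagstat. The function name is hypothetical, and the parser assumes the classic "N + M description" line layout. Note that flagstat counts alignment records, so secondary alignments can inflate the figure; the split between uniquely mapped and multi-mapped reads usually has to come from the aligner's own log or from MAPQ and tag filters.

```python
import re

# A flagstat line looks like: "950 + 0 mapped (95.00% : N/A)"
FLAGSTAT_LINE = re.compile(r"^(\d+) \+ (\d+) (.+)$")


def mapping_rate(flagstat_text):
    """Return the percentage of QC-passed records reported as mapped."""
    total = mapped = None
    for line in flagstat_text.splitlines():
        match = FLAGSTAT_LINE.match(line.strip())
        if match is None:
            continue
        qc_passed, description = int(match.group(1)), match.group(3)
        if description.startswith("in total"):
            total = qc_passed
        elif description.startswith("mapped ("):
            # "primary mapped (" lines do not start with "mapped (",
            # so they are deliberately ignored here.
            mapped = qc_passed
    if not total or mapped is None:
        raise ValueError("could not find total and mapped counts")
    return 100.0 * mapped / total


example = """\
1000 + 0 in total (QC-passed reads + QC-failed reads)
0 + 0 secondary
950 + 0 mapped (95.00% : N/A)
"""
print(f"{mapping_rate(example):.2f}% mapped")
```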

Large-scale consortium studies like SEQC and Quartet have demonstrated that alignment-induced variability becomes particularly problematic when attempting to detect subtle expression differences, as often encountered in clinical samples or drug treatment studies [2] [6].

Impact on Downstream Analysis and Biological Interpretation

The alignment step exerts a profound influence on subsequent analytical stages and biological conclusions. A benchmarking study evaluating 192 analysis pipelines found that the choice of aligner significantly affected both raw gene expression quantification and differential expression results [7]. Different aligners can produce varying counts for genes with paralogs or repetitive elements due to differences in how they handle multi-mapping reads [3].

For clinical applications and drug development, where detecting subtle expression changes is critical, alignment-induced variability can impact biomarker identification. The Quartet project, which focused on detecting subtle differential expression relevant to clinical diagnostics, found that alignment choice was among the bioinformatics factors contributing to inter-laboratory variation [6]. This highlights the importance of aligner selection for applications requiring high sensitivity and precision.
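One simple way to quantify how much aligner choice changes downstream conclusions is to compare the sets of differentially expressed genes obtained from the same data with different aligners. The sketch below uses the Jaccard index for that comparison; this is an illustrative choice, not the metric used by the cited studies, and the gene lists are invented.

```python
from itertools import combinations


def jaccard(genes_a, genes_b):
    """Jaccard index of two gene collections (1.0 when both are empty)."""
    a, b = set(genes_a), set(genes_b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def pairwise_concordance(deg_lists):
    """Jaccard index for every pair of aligners in a name -> genes mapping."""
    return {
        (first, second): jaccard(deg_lists[first], deg_lists[second])
        for first, second in combinations(sorted(deg_lists), 2)
    }


# Hypothetical DEG lists produced from one dataset with three aligners.
deg_lists = {
    "STAR": {"TP53", "MYC", "EGFR", "KRAS"},
    "HISAT2": {"TP53", "MYC", "EGFR"},
    "kallisto": {"TP53", "MYC", "BRCA1"},
}
for pair, score in pairwise_concordance(deg_lists).items():
    print(pair, round(score, 2))
```

Low pairwise scores flag datasets where multi-mapping reads or paralogous genes are likely driving aligner-specific calls and deserve orthogonal validation.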

Table 2: Key research reagents and computational resources for RNA-seq alignment evaluation

| Resource Type | Specific Examples | Function in Alignment Assessment |
|---|---|---|
| Reference Samples | MAQC (A: UHRR, B: Brain) [2]; Quartet Project samples [6] | Provide well-characterized transcriptomes with known expression patterns for benchmarking |
| Spike-in Controls | ERCC RNA Spike-In Mixes [2] [6] | Add known RNA sequences at defined concentrations for accuracy measurement |
| Alignment Software | STAR [4] [1]; HISAT2 [4] [3]; Bowtie2 [7] | Perform the core mapping function with different algorithms and performance characteristics |
| Validation Technologies | qRT-PCR [7]; TaqMan assays [6]; Nanostring nCounter | Provide orthogonal verification of expression measurements from RNA-seq |
| Computational Resources | High-performance computing clusters; Cloud computing platforms | Enable processing of large datasets and comparison of computational requirements |
| Quality Control Tools | FastQC [8] [7]; MultiQC [4]; RSeQC | Assess input data quality and alignment outputs across multiple metrics |

Best Practice Recommendations for Alignment Selection

Species-Specific Considerations

Research indicates that alignment tools perform differently across species, necessitating careful selection based on organism-specific characteristics [5]. For well-annotated model organisms like human and mouse, STAR generally provides excellent performance, particularly for splice junction detection [1] [2]. For non-model organisms or those with complex genomes, performance should be validated using orthogonal methods. Plant pathogenic fungi data, for instance, showed distinct alignment characteristics compared to animal data [5].

Experimental Design Alignment

The optimal aligner choice depends on specific research objectives:

- Differential Gene Expression: Both STAR and HISAT2 perform well when followed by count-based tools like featureCounts and differential expression analysis with DESeq2 or edgeR [4] [8].

- Isoform Discovery and Splice Junction Analysis: STAR demonstrates superior performance for comprehensive splice junction detection, making it preferable for alternative splicing studies [2] [3].

- Single-Cell RNA-seq: For 10x Genomics data, Cell Ranger (which uses STAR internally) remains the standard processing pipeline [9].

- Resource-Constrained Environments: HISAT2 offers a favorable balance between accuracy and computational efficiency for laboratories with limited computing resources [4] [3].

Alignment represents a critical determinant of success in RNA-seq analysis, with tool selection influencing every subsequent analytical step. Experimental evidence from large-scale benchmarking studies indicates that while STAR generally provides superior alignment rates and junction detection, HISAT2 offers significant advantages in computational efficiency. The optimal choice depends on specific research questions, biological systems, and computational resources. For clinical and drug development applications where detecting subtle expression changes is paramount, rigorous alignment validation using spike-in controls and reference samples is strongly recommended. As RNA-seq continues to evolve, alignment tool selection remains a foundational decision that researchers must approach with careful consideration of both technical performance and biological requirements.

Aligning millions of short RNA sequencing (RNA-seq) reads to a reference genome is a foundational step in transcriptomic analysis, but it presents distinct computational challenges that surpass those of DNA read alignment [10]. The process is complicated by biological phenomena such as RNA splicing, which creates reads that span exon-exon junctions, and the frequent presence of sequence polymorphisms and sequencing errors [10] [3]. Furthermore, a significant portion of reads, known as multi-mapping reads, can align equally well to multiple genomic locations due to gene duplications, repetitive sequences, or shared exons among paralogous genes, creating ambiguity in their assignment [11] [12].
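To make the splicing challenge concrete: in SAM/BAM output, a spliced alignment is recorded with an N operation in the CIGAR string, which marks reference bases skipped by the read (the intron). The sketch below, with a hypothetical function name, recovers intron coordinates from a 1-based alignment start and a CIGAR string, following the SAM specification's rules for which operations consume the reference.

```python
import re

CIGAR_OP = re.compile(r"(\d+)([MIDNSHP=X])")
# Operations that advance the position on the reference sequence.
REF_CONSUMING = set("MDN=X")


def junctions_from_cigar(pos, cigar):
    """Return introns as (start, end), 1-based inclusive reference coords.

    pos is the 1-based leftmost mapped position (SAM POS field).
    """
    ref = pos
    introns = []
    for length, op in CIGAR_OP.findall(cigar):
        n = int(length)
        if op == "N":
            # The skipped region spans n reference bases starting at ref.
            introns.append((ref, ref + n - 1))
        if op in REF_CONSUMING:
            ref += n
    return introns


# A 100-base read split across two exons by a 200-base intron.
print(junctions_from_cigar(100, "50M200N50M"))  # [(150, 349)]
```

A read with no N operation, such as one aligned by an unspliced aligner, yields an empty list, which is why such tools cannot report exon-exon junctions.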

This guide provides an objective comparison of modern RNA-seq alignment tools, evaluating their performance in overcoming these hurdles. We summarize quantitative data from independent benchmarking studies and detail experimental methodologies to offer researchers an evidence-based framework for selecting the most appropriate aligner for their specific needs.

Performance Benchmarking of RNA-Seq Aligners

Independent benchmarking studies consistently reveal that aligners exhibit major performance differences across key metrics such as alignment yield, base-wise accuracy, and sensitivity in detecting splice junctions [11].

Comparative Performance on Core Alignment Metrics

The following table synthesizes findings from several studies that evaluated aligners on real and simulated RNA-seq datasets, highlighting their performance regarding key challenges [10] [11] [13].

Table 1: Comparative Performance of RNA-Seq Alignment Tools on Key Challenges

| Aligner | Algorithm Type | Spliced Alignment Accuracy | Handling of Sequence Polymorphisms/Errors | Management of Multi-mapping Reads | Basewise & Junction Accuracy |
|---|---|---|---|---|---|
| STAR | Spliced (Seed-based) | High sensitivity for junction discovery [11] | High basewise accuracy, tolerates mismatches well [11] | Reports a quantitative measure of multireads [3] | High basewise accuracy and precise junction detection [10] [11] |
| HISAT2 | Spliced (FM-index) | Supersedes TopHat; handles splicing well [3] | Good performance, but can misalign reads to retrogene loci [13] | Not reported in the cited studies | Robust performance at both base and junction levels [10] |
| GSNAP | Spliced (Seed-and-extend) | Accurate junction discovery [11] | Robust to polymorphisms and sequencing error [10] | Not reported in the cited studies | High basewise accuracy and sensitive deletion detection [11] |
| TopHat2 | Spliced (Exon-first) | Good junction discovery, but lower mapping yield [11] | Low tolerance for mismatches; lower yield with errors [11] | Higher fraction of pairs with only one read aligned [11] | High rate of perfect spliced alignments, but lower yield [11] |
| MapSplice | Spliced (Two-step) | Accurate junction discovery [11] | Robust to polymorphisms and sequencing error [10] | Not reported in the cited studies | High basewise accuracy, good balance for long indels [11] |
| BWA | Unspliced (BWT) | Does not perform spliced alignment [3] | Handles polymorphisms well; high basewise accuracy [10] [3] | Reports a quantitative measure of multireads [3] | High basewise accuracy, but fails at splice junctions [10] |

Quantitative Alignment Metrics from Real RNA-Seq Data

A large-scale assessment (RGASP) evaluated multiple alignment protocols on human K562 cell line data, revealing significant variations in performance [11]. The following table provides a quantitative snapshot of these results.

Table 2: Quantitative Alignment Metrics on Human K562 RNA-Seq Data (from RGASP Consortium)

| Aligner | Alignment Yield (% of read pairs) | Mismatch Tolerance | Indel Frequency (per 1000 reads) | Truncation of Read Ends |
|---|---|---|---|---|
| GSNAP/GSTRUCT | ~91-95% [11] | High | ~20-40 (high rate of long deletions) [11] | Yes [11] |
| STAR | ~91-95% [11] | High | ~10-20 (internally placed) [11] | Yes [11] |
| MapSplice | ~90% [11] | Low | ~10-20 (internally placed) [11] | Yes [11] |
| TopHat | ~84% [11] | Low | ~10 (long insertions), variable distribution [11] | No [11] |
| PALMapper | ~68-91% [11] | Moderate | Up to ~115 (mostly deletions) [11] | No [11] |

Experimental Protocols for Benchmarking Aligners

To ensure fair and meaningful comparisons, benchmarking studies employ rigorous experimental designs, often using simulated data where the "ground truth" is known, and validating findings with real biological data.

The BEERS RNA-Seq Simulation Framework

The Benchmarker for Evaluating the Effectiveness of RNA-Seq Software (BEERS) was developed to simulate realistic RNA-seq data and measure alignment accuracy [10].

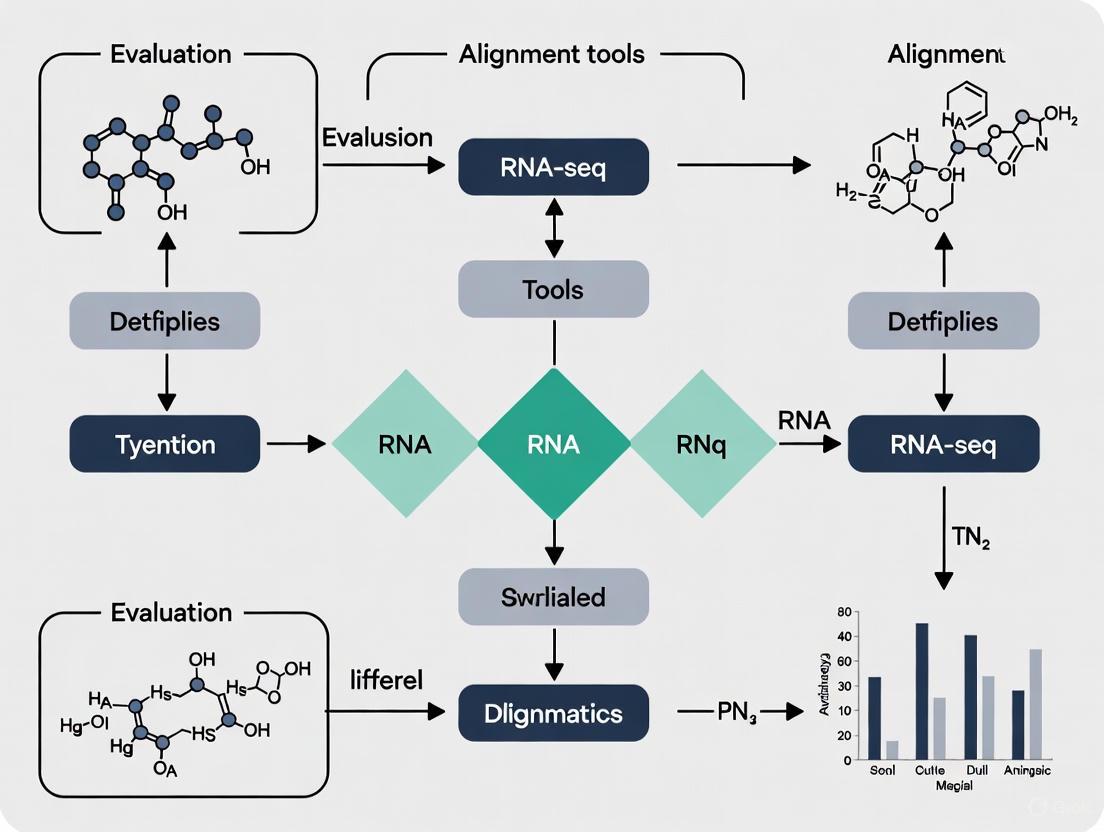

- Workflow Overview: The diagram above outlines the BEERS simulation and evaluation pipeline.

- Simulation Inputs: BEERS uses a filtered set of gene models merged from multiple annotation databases (e.g., AceView, Ensembl, RefSeq) to generate simulated paired-end reads [10].

- Configurable Impediments: The simulator incorporates realistic challenges at controlled rates, including:

  - Alternative splicing and novel transcript forms.

  - Substitutions, insertions, and deletions (indels).

  - Sequencing errors, including decreasing quality scores toward read ends, mimicking Illumina data [10].

- Accuracy Assessment: The known origin of each simulated read allows for direct computation of performance metrics by comparing inferred alignments to the true alignments. Accuracy is evaluated at both the level of individual bases and splice junction calls [10].
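The accuracy assessment described above can be sketched in a few lines of Python. The data layout is an assumption made for illustration (it is not BEERS's file format): each read maps to a list giving the reference position of every base, and junctions are represented as tuples.

```python
def base_accuracy(truth, predicted):
    """Fraction of simulated bases placed at their true reference position.

    truth and predicted map read IDs to lists of per-base positions.
    Reads or bases missing from predicted count as incorrect.
    """
    correct = total = 0
    for read_id, true_positions in truth.items():
        pred_positions = predicted.get(read_id, [])
        for i, true_pos in enumerate(true_positions):
            pred_pos = pred_positions[i] if i < len(pred_positions) else None
            total += 1
            correct += int(pred_pos == true_pos)
    return correct / total if total else 0.0


def junction_precision_recall(true_junctions, called_junctions):
    """Precision and recall of called junctions against the simulated truth."""
    true_set, called_set = set(true_junctions), set(called_junctions)
    hits = len(true_set & called_set)
    precision = hits / len(called_set) if called_set else 0.0
    recall = hits / len(true_set) if true_set else 0.0
    return precision, recall


# Toy data: read r2 was left unaligned, one base of r1 was misplaced.
truth = {"r1": [10, 11, 12, 13], "r2": [50, 51]}
predicted = {"r1": [10, 11, 12, 99]}
print(base_accuracy(truth, predicted))  # 0.5
```

Reporting base-level and junction-level scores separately matters because an aligner can place most bases correctly while still missing or inventing junctions.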

Multi-Center Real-World RNA-Seq Assessment

The Quartet project conducted an extensive multi-center study to evaluate RNA-seq performance in real-world diagnostic scenarios, focusing on the detection of subtle differential expression [6].

- Reference Materials: The study used RNA reference materials from a Chinese quartet family (with small biological differences) and the MAQC reference samples (with large biological differences), spiked with External RNA Control Consortium (ERCC) synthetic RNAs [6].

- Study Design: A total of 45 independent laboratories sequenced 24 RNA samples (including technical replicates) using their in-house experimental protocols and bioinformatics pipelines, generating over 120 billion reads [6].

- Performance Framework: The study assessed:

  - Data Quality: Using signal-to-noise ratio (SNR) from principal component analysis (PCA).

  - Accuracy of Expression: Based on TaqMan datasets, ERCC spike-in ratios, and known sample mixing ratios.

  - Reproducibility: Measuring inter-laboratory variation in gene expression and differential expression analysis [6].
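Spike-in based accuracy checks of the kind listed above are often summarized by fitting observed abundance against the known input amount on a log-log scale. The sketch below is one common way to do this and is not the Quartet project's exact procedure; the function name and the toy values are invented for illustration.

```python
import math


def loglog_fit(expected, observed):
    """Least-squares fit of log2(observed) on log2(expected).

    Returns (slope, intercept, r_squared). Pairs containing a non-positive
    value are skipped because their logarithm is undefined.
    """
    pairs = [
        (math.log2(e), math.log2(o))
        for e, o in zip(expected, observed)
        if e > 0 and o > 0
    ]
    if len(pairs) < 2:
        raise ValueError("need at least two positive pairs")
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in pairs)
    syy = sum((y - mean_y) ** 2 for _, y in pairs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    if sxx == 0 or syy == 0:
        raise ValueError("values must vary to fit a line")
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = (sxy * sxy) / (sxx * syy)
    return slope, intercept, r_squared


# Toy spike-in data: observed signal is exactly twice the input amount.
slope, intercept, r2 = loglog_fit([1, 2, 4, 8], [2, 4, 8, 16])
print(round(slope, 3), round(intercept, 3), round(r2, 3))
```

A slope near 1 with high R-squared indicates that quantification tracks input concentration proportionally; comparing these values across aligners run on the same spiked samples isolates the alignment step's contribution.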

Successful RNA-seq alignment and benchmarking rely on several key resources, from reference materials to software pipelines.

Table 3: Key Research Reagent Solutions for RNA-Seq Alignment Benchmarking

| Resource Name | Type | Primary Function in Evaluation |
|---|---|---|
| BEERS (Benchmarker for Evaluating the Effectiveness of RNA-Seq Software) [10] | Software/Simulation | Generates realistic simulated RNA-seq reads with a known "ground truth" alignment for controlled accuracy testing. |
| Quartet & MAQC Reference RNA Samples [6] | Biological Reference Material | Provides well-characterized, stable RNA samples with built-in truths for assessing performance and reproducibility across labs. |
| ERCC Spike-in Controls [6] | Synthetic RNA Mix | A set of 92 synthetic RNAs with known concentrations spiked into samples to evaluate quantification accuracy. |
| RUM (RNA-Seq Unified Mapper) [10] | Alignment Pipeline | A benchmarked pipeline that combines Bowtie and BLAT alignments against both genome and transcriptome for high accuracy. |
| RGASP (RNA-seq Genome Annotation Assessment Project) Datasets [11] | Consortium & Data | Provided a framework for a competitive, community-wide evaluation of RNA-seq alignment protocols on common real and simulated datasets. |

The performance of RNA-seq aligners is not uniform, with significant differences observed in their ability to handle the core challenges of spliced alignment, sequence variations, and multi-mapped reads [11]. Tools like STAR, GSNAP, and MapSplice generally demonstrate high accuracy in base alignment and junction discovery while being robust to polymorphisms [10] [11]. In contrast, aligners like BWA, while excellent for DNA sequencing, are not designed for spliced alignment and perform poorly at exon junctions [10] [3].

The choice of an aligner must be guided by the specific research context. Studies relying on formalin-fixed, paraffin-embedded (FFPE) samples, which often have more sequencing errors and lower data quality, may benefit from the precision of STAR, which has been shown to generate more precise alignments and fewer misalignments in such challenging datasets compared to HISAT2 [13]. Furthermore, as RNA-seq moves toward clinical applications for detecting subtle differential expression between disease subtypes, ensuring reliability through rigorous benchmarking using appropriate reference materials becomes paramount [6]. Ultimately, there is no single aligner that meets all needs for every user, but a wealth of quality tools exists, and an evidence-based selection is key to generating biologically accurate results [3].

RNA sequencing (RNA-seq) has become a foundational technology in molecular biology and biomedical research, providing precise measurements of gene expression, isoform usage, and novel transcripts. The accuracy of any RNA-seq study hinges on the critical step of read alignment, where sequenced fragments are mapped to a reference genome or transcriptome. Alignment tools transform raw sequencing data into analyzable information by determining the genomic origin of each read, directly impacting all downstream analyses and biological conclusions. The evolution of alignment methodologies has produced three principal categories of tools: splice-aware aligners for identifying exon-intron boundaries, pseudoalignment tools for rapid quantification, and genome-free approaches for de novo transcriptome analysis. Each category employs distinct algorithmic strategies to balance competing demands of accuracy, computational efficiency, and specialized application needs.

Understanding the strengths, limitations, and appropriate use cases for each alignment approach is essential for researchers designing RNA-seq experiments, particularly as studies grow in scale and complexity. This guide provides a comprehensive comparison of these major alignment tool categories, synthesizing current benchmarking evidence to inform tool selection based on experimental goals, sample characteristics, and computational resources. By objectively evaluating performance across standardized metrics and providing detailed experimental protocols, we aim to equip researchers with the knowledge needed to optimize their RNA-seq analysis pipelines for robust, reproducible results.

Tool Category 1: Splice-Aware Aligners

Definition, Key Algorithms, and Applications

Splice-aware aligners are specialized tools designed to handle the mapping of RNA-seq reads across splice junctions, where reads span exon-exon boundaries created during pre-mRNA splicing. This capability requires algorithms that can accommodate large gaps in alignment corresponding to intronic regions, while simultaneously identifying canonical GT-AG splice signals and their variants. These tools typically employ complex indexing strategies of reference genomes and sophisticated seed-and-extend algorithms to efficiently identify potential splicing events. The fundamental challenge they address is the accurate reconstruction of transcript isoforms from short reads that cover only small portions of entire transcripts, making them indispensable for alternative splicing analysis, novel isoform detection, and fusion gene identification.

Splice-aware aligners have evolved significantly since their inception, with modern tools offering enhanced sensitivity for detecting rare splicing events and improved accuracy in complex genomic regions. STAR (Spliced Transcripts Alignment to a Reference) uses a sequential maximal mappable prefix (seed) search, followed by clustering and stitching of the seeds, to achieve ultra-fast mapping, while HISAT2 employs a hierarchical indexing scheme of the global genome and local exonic regions for memory-efficient operation. These tools predominantly output alignment files in SAM/BAM format that detail the genomic coordinates of each read, enabling both quantification and visualization of splicing patterns. Their applications span diverse research contexts including differential splicing analysis between conditions, characterization of splicing quantitative trait loci (sQTLs), and clinical diagnostics where splicing defects underlie disease pathogenesis.
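The seed search idea behind STAR, as described in its original publication, is to repeatedly find the longest prefix of the remaining read that matches the genome exactly (a maximal mappable prefix), so that a spliced read naturally breaks into one seed per exon. The toy sketch below illustrates only that idea using plain substring search; STAR itself searches an uncompressed suffix array and then clusters, stitches, and scores seeds, none of which is reproduced here. The sequences and function names are invented.

```python
def maximal_mappable_prefix(read, genome, start=0):
    """Return (length, genome_pos) of the longest prefix of read[start:]
    that occurs exactly in genome, or (0, -1) if no base matches."""
    best_len, best_pos = 0, -1
    length = 1
    while start + length <= len(read):
        pos = genome.find(read[start:start + length])
        if pos == -1:
            break
        best_len, best_pos = length, pos
        length += 1
    return best_len, best_pos


def seed_search(read, genome):
    """Split a read into consecutive exact-match seeds.

    Returns tuples of (read_offset, genome_pos, length), all 0-based.
    """
    seeds = []
    offset = 0
    while offset < len(read):
        length, pos = maximal_mappable_prefix(read, genome, offset)
        if length == 0:
            offset += 1  # skip a base that matches nowhere
            continue
        seeds.append((offset, pos, length))
        offset += length
    return seeds


exon1, intron, exon2 = "ACGTACGGTC", "GTAAGTTTTTTTTCAG", "CCATGGCATT"
genome = exon1 + intron + exon2
read = exon1[-6:] + exon2[:6]  # a read spanning the exon-exon junction
print(seed_search(read, genome))  # [(0, 4, 6), (6, 26, 6)]
```

In the example, the first seed ends at genome offset 10 and the second begins at offset 26, so the gap between them corresponds to the 16-base intron.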

Performance Evaluation and Comparative Data

Rigorous benchmarking studies have established performance characteristics across leading splice-aware aligners, revealing context-dependent advantages. In a comprehensive evaluation of small RNA analysis, STAR and Bowtie2 demonstrated superior effectiveness compared to BBMap, with STAR coupled with Salmon quantification emerging as a particularly reliable approach for reducing false positives [14]. When considering resource utilization, clear trade-offs emerge between mapping speed and memory requirements. STAR achieves high throughput by building large genome indices that accelerate mapping, making it ideal for large mammalian genomes when compute nodes have sufficient RAM, while HISAT2 uses a hierarchical FM-index strategy that lowers memory requirements while remaining competitive in accuracy [4].

The performance characteristics of splice-aware aligners become particularly important in specialized applications such as RNA variant identification, where different algorithms can produce substantially divergent results. A study investigating variant calling from RNA-seq data found surprisingly low concordance among splice-aware aligners, with the number of common potential RNA editing sites identified by all alignment algorithms being less than 2% of the total, primarily due to differences in how tools handle mapped reads on splice junctions [4]. This highlights how algorithmic differences can significantly impact downstream biological interpretations, necessitating careful tool selection based on analytical goals.

Table 1: Performance Comparison of Major Splice-Aware Alignment Tools

| Tool | Primary Algorithm | Strengths | Limitations | Ideal Use Cases |
|---|---|---|---|---|
| STAR | Sequential seed search and extension | Ultra-fast mapping, high sensitivity for canonical junctions | High memory usage (~32 GB for human genome) | Large-scale studies with sufficient computational resources |
| HISAT2 | Hierarchical FM-index | Lower memory footprint, competitive accuracy | Mapping rates can fall below STAR's on complex or draft genomes | Constrained computing environments, many simultaneous small genomes |
| Bowtie2 | Burrows-Wheeler Transform | Memory efficient, excellent for unspliced alignment | Not splice-aware; cannot align reads across exon-exon junctions | Small RNA analysis, mRNA sequencing without complex splicing |

Tool Category 2: Pseudoalignment

Definition, Key Algorithms, and Applications

Pseudoalignment represents a paradigm shift in RNA-seq analysis, focusing on rapid quantification rather than precise genomic coordinate assignment. These tools utilize lightweight algorithms that determine whether reads are compatible with transcripts through k-mer matching or streamlined mapping, bypassing computationally intensive alignment procedures. The fundamental innovation of pseudoalignment is the recognition that for many statistical quantification purposes, knowing the exact alignment coordinates is unnecessary; instead, determining which transcripts a read could potentially originate from is sufficient. This conceptual shift enables order-of-magnitude improvements in speed and resource utilization while maintaining quantification accuracy for most applications.

Salmon and Kallisto represent leading implementations of the pseudoalignment approach, though they employ distinct algorithmic strategies. Kallisto utilizes a de Bruijn graph constructed from transcript sequences and performs pseudoalignment by examining k-mer compatibility between reads and transcripts, effectively creating a "transcriptome-like" graph for rapid querying. Salmon incorporates similar concepts but adds additional bias correction modules for GC content and fragment-level biases that can improve accuracy in certain library types. Both tools operate directly on raw sequencing reads without prior alignment, generating transcript-level abundance estimates in TPM (Transcripts Per Million) format that are immediately usable for downstream differential expression analysis. Their primary applications include large-scale differential expression studies, meta-analyses combining multiple datasets, and situations with computational constraints where rapid iteration is valuable.
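The k-mer compatibility idea can be illustrated with a deliberately simplified Python sketch: index every k-mer by the transcripts that contain it, then intersect those sets across the k-mers of a read. This is a toy under stated assumptions, not Kallisto's or Salmon's implementation; real tools use a compacted de Bruijn graph or selective alignment, skip redundant k-mers, and handle both strands. The sequences and names are invented.

```python
from collections import defaultdict


def build_kmer_index(transcripts, k):
    """Map each k-mer to the set of transcript names containing it."""
    index = defaultdict(set)
    for name, seq in transcripts.items():
        for i in range(len(seq) - k + 1):
            index[seq[i:i + k]].add(name)
    return index


def pseudoalign(read, index, k):
    """Return the transcripts compatible with every k-mer of the read."""
    compatible = None
    for i in range(len(read) - k + 1):
        hits = index.get(read[i:i + k], set())
        compatible = set(hits) if compatible is None else compatible & hits
        if not compatible:
            break  # no transcript can explain this read
    return compatible or set()


# Two hypothetical isoforms sharing their first seven bases.
transcripts = {"t1": "ATGGCGTACC", "t2": "ATGGCGTTAA"}
index = build_kmer_index(transcripts, k=4)
print(sorted(pseudoalign("ATGGCG", index, k=4)))  # ['t1', 't2']
print(sorted(pseudoalign("GCGTAC", index, k=4)))  # ['t1']
```

The first read is ambiguous between the two isoforms, while the second is assigned to one of them without any base-level alignment; quantification tools then resolve ambiguous reads statistically across the whole dataset.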

Performance Evaluation and Comparative Data

Comprehensive benchmarking has established that pseudoalignment tools provide dramatic speed improvements with minimal accuracy loss for quantification tasks. In evaluations of linearity—a critical metric for deconvolution analyses—Salmon and Kallisto demonstrated superior performance, with their TPM values showing the best fit to linear models compared to count-based methods [15]. This linearity makes them particularly suitable for applications like cell type deconvolution from mixed tissue samples, where the observed signal is assumed to be a weighted sum of constituent expression profiles. The alignment-free approach of these tools also eliminates the need for large intermediate BAM files, significantly reducing storage requirements and data transfer bottlenecks in distributed computing environments.
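For reference, TPM is computed by dividing each transcript's read count by its effective length and then rescaling so that the values sum to one million. The short sketch below shows that arithmetic; the function name and input values are illustrative only.

```python
def tpm(counts, effective_lengths):
    """Convert per-transcript counts to Transcripts Per Million.

    counts and effective_lengths are dicts keyed by transcript name;
    effective lengths must be positive.
    """
    rates = {name: counts[name] / effective_lengths[name] for name in counts}
    total = sum(rates.values())
    if total == 0:
        return {name: 0.0 for name in counts}
    return {name: rate / total * 1e6 for name, rate in rates.items()}


# Equal counts, but transcript "b" is twice as long as "a".
values = tpm({"a": 100, "b": 100}, {"a": 1000, "b": 2000})
print({name: round(v) for name, v in values.items()})
```

Because the values always sum to the same constant, TPM expresses relative abundance within a sample, which is the property that mixture-based analyses such as deconvolution rely on.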

While pseudoalignment tools excel at quantification tasks, they have limitations for analyses requiring precise genomic coordinates. Since they bypass traditional alignment, they do not generate position-level information needed for variant calling, visualization in genome browsers, or novel isoform discovery. However, recent developments have extended pseudoalignment concepts to new domains, as demonstrated by alevin-fry-atac, which applies a modified pseudoalignment scheme with "virtual colors" to single-cell ATAC-seq data, achieving 2.8 times faster processing while using only 33% of the memory required by Chromap [16]. This expansion into new data types highlights the continuing evolution and growing influence of pseudoalignment approaches in computational biology.

Table 2: Performance Comparison of Major Pseudoalignment Tools

| Tool | Primary Algorithm | Speed Advantage | Accuracy Performance | Special Features |
|---|---|---|---|---|
| Salmon | Selective alignment with bias correction | 20-30x faster than traditional alignment | Excellent linearity for deconvolution [15] | GC bias and sequence-specific bias correction |
| Kallisto | k-mer based de Bruijn graph | 25-35x faster than traditional alignment | High concordance with ground truth mixtures [15] | Extremely simple workflow, minimal parameters |
| Alevin-fry | Virtual color partitioning | 2.8x faster than Chromap for ATAC-seq [16] | High concordance with alignment-based methods | Specialized for single-cell data, unified RNA-seq and ATAC-seq |

Tool Category 3: Genome-Free Approaches

Definition, Key Algorithms, and Applications

Genome-free, or de novo, transcriptome approaches reconstruct transcripts without reference genome guidance, using overlap information between reads to assemble complete transcript sequences. These methods employ graph-based algorithms that represent read relationships, iteratively extending and resolving paths to generate candidate isoforms. The fundamental advantage of genome-free approaches is their independence from existing annotations, enabling discovery of novel transcripts in genetically uncharacterized organisms or in contexts where the reference genome is incomplete, poorly assembled, or significantly divergent from the sample being studied. This makes them particularly valuable for non-model organisms, cancer genomics with extensive rearrangements, and metatranscriptomics of microbial communities.

Genome-free assembly typically utilizes de Bruijn graph or overlap-layout-consensus (OLC) algorithms similar to those used in genome assembly, but adapted for the complexities of transcriptomes where multiple isoforms share exonic regions. Tools like Trinity, SOAPdenovo-Trans, and Oases implement specialized strategies to handle varying expression levels, alternative splicing, and sequencing errors that complicate transcriptome assembly. The output of these pipelines is a set of contigs representing putative transcripts that can then be quantified and annotated. Primary applications include exploratory studies in non-model organisms, discovery of novel genes and isoforms in cancer transcriptomes, identification of fusion transcripts, and analysis of samples with significant genetic differences from available references.
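The de Bruijn graph approach can be illustrated with a toy Python sketch: each k-mer in the reads becomes an edge between its (k-1)-base prefix and suffix, and a contig is read off by following nodes that have exactly one successor. Real assemblers such as Trinity add error correction, coverage tracking, and isoform-aware path resolution, none of which is attempted here; the reads and function names are invented.

```python
from collections import defaultdict


def de_bruijn(reads, k):
    """Build a graph whose nodes are (k-1)-mers and whose edges are k-mers."""
    graph = defaultdict(set)
    for read in reads:
        for i in range(len(read) - k + 1):
            kmer = read[i:i + k]
            graph[kmer[:-1]].add(kmer[1:])
    return graph


def walk_unambiguous(graph, start):
    """Extend a contig from start while each node has exactly one successor."""
    contig, node = start, start
    seen = {start}
    while len(graph.get(node, ())) == 1:
        (successor,) = graph[node]
        if successor in seen:
            break  # stop on a cycle instead of looping forever
        contig += successor[-1]
        seen.add(successor)
        node = successor
    return contig


# Three overlapping reads sampled from one hypothetical transcript.
reads = ["ATGGCG", "GGCGTA", "CGTACC"]
graph = de_bruijn(reads, k=4)
print(walk_unambiguous(graph, "ATG"))  # ATGGCGTACC
```

A node with more than one successor is where the walk stops; in transcriptome data such branch points arise from alternative splicing, paralogs, or sequencing errors, which is what makes isoform resolution the hard part of de novo assembly.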

Performance Evaluation and Comparative Data

The performance of genome-free approaches has been systematically evaluated in large-scale benchmarking efforts like the Long-read RNA-Seq Genome Annotation Assessment Project (LRGASP). This consortium generated over 427 million long-read sequences and revealed that libraries with longer, more accurate sequences produce more accurate transcripts than those with increased read depth, whereas greater read depth improved quantification accuracy [17]. For well-annotated genomes, tools based on reference sequences demonstrated the best performance, but genome-free approaches provided valuable capabilities for novel transcript detection. The consortium recommended incorporating additional orthogonal data and replicate samples when aiming to detect rare and novel transcripts using reference-free approaches.

The rise of long-read sequencing technologies has significantly enhanced the capabilities of genome-free transcriptome analysis by providing full-length transcript information that simplifies assembly. The SG-NEx project systematically benchmarked Nanopore long-read RNA sequencing methods, demonstrating that long-read approaches more robustly identify major isoforms and facilitate analysis of complex transcriptional events [18]. However, challenges remain in accurately quantifying transcript abundance from long-read data, with tools still lagging behind short-read methods due to throughput and error rate limitations. Nevertheless, the project validated many lowly expressed, single-sample transcripts, suggesting further exploration of long-read data for reference transcriptome creation.

Table 3: Considerations for Genome-Free Versus Reference-Based Approaches

| Factor | Reference-Based Assembly | Genome-Free Assembly |

|---|---|---|

| Prerequisite | High-quality reference genome | Sufficient read depth and overlap |

| Novelty Discovery | Limited by reference annotation | Unconstrained discovery potential |

| Computational Demand | Generally lower | Significantly higher |

| Accuracy in Well-Studied Systems | Higher when reference is complete | Lower due to assembly artifacts |

| Applicability to Non-Model Organisms | Limited | High |

| Recommended Use Cases | Differential expression, splicing analysis in model organisms | Non-model organisms, cancer genomics, novel isoform discovery |

Integrated Analysis and Decision Framework

Experimental Design Considerations

Selecting the optimal alignment approach requires careful consideration of experimental goals, sample characteristics, and computational resources. For standard differential expression analysis in well-annotated model organisms, pseudoalignment tools like Salmon or Kallisto typically provide the best balance of speed and accuracy, particularly for large sample sizes. When analyzing splicing patterns, identifying novel junctions, or working with clinical samples where precise variant detection is crucial, splice-aware aligners like STAR or HISAT2 remain essential. Genome-free approaches should be reserved for situations where reference genomes are unavailable, incomplete, or significantly divergent, or when the explicit goal is comprehensive novel transcript discovery.

The choice between alignment strategies also has practical implications for computational resource allocation and pipeline design. A multi-alignment framework (MAF) approach that systematically compares results from different alignment programs on the same dataset enables comprehensive analysis of subtle to significant differences [14]. Such frameworks are particularly valuable for method development, quality control, and studies where optimal tool selection is uncertain. As sequencing technologies evolve, the boundaries between these categories are blurring, with hybrid approaches emerging that combine the strengths of multiple methods, such as using pseudoalignment for quantification with selective traditional alignment for visualization and validation.
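The selection guidance in this subsection can be sketched as a small decision function. This is a minimal illustration rather than a definitive rule set: the function name `suggest_tool_category` and its three boolean parameters are hypothetical, and real projects will weigh additional factors such as memory budget and sample count.

```python
def suggest_tool_category(reference_available: bool,
                          needs_junctions_or_variants: bool,
                          novel_transcript_discovery: bool = False) -> str:
    """Map coarse experimental goals to a tool category (hypothetical helper)."""
    # Genome-free assembly: no usable reference, or discovery is the explicit goal.
    if not reference_available or novel_transcript_discovery:
        return "genome-free assembly"
    # Splicing patterns, novel junctions, or variant detection need genomic coordinates.
    if needs_junctions_or_variants:
        return "splice-aware aligner"
    # Standard differential expression in a well-annotated organism.
    return "pseudoalignment"


if __name__ == "__main__":
    print(suggest_tool_category(True, False))   # standard DE study
    print(suggest_tool_category(True, True))    # splicing or variant study
    print(suggest_tool_category(False, False))  # non-model organism
```

Encoding the choice as a function makes the assumptions explicit and easy to revise when a project's constraints differ from the defaults described here.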

Visual Workflow for Tool Selection

The following diagram illustrates a systematic workflow for selecting appropriate alignment tools based on research objectives and sample characteristics:

Experimental Protocols and Reagent Solutions

Detailed Methodologies for Benchmarking Experiments

Comprehensive evaluation of alignment tools requires standardized benchmarking protocols that assess performance across multiple dimensions. The LRGASP consortium established a rigorous framework for evaluating long-read RNA-seq methods across three key challenges: reconstructing full-length transcripts for well-annotated genomes, quantifying transcript abundance, and de novo transcript reconstruction for genomes lacking high-quality references [17]. Their approach utilized aliquots of the same RNA samples processed with varied library protocols and sequencing platforms, enabling direct comparison across methods while controlling for biological variability. This design incorporated spike-in RNAs with known concentrations to assess quantification accuracy, and orthogonal validation data such as m6ACE-seq for RNA modification detection.

For splice-aware aligner evaluation, studies typically employ both synthetic datasets with known ground truth and real biological samples with orthogonal validation. A benchmark of long-read splice-aware aligners developed specialized tools for evaluating alignment results by comparing simulated reads to their genomic origin or aligning real reads to annotated transcripts [19]. Critical metrics include alignment accuracy, splice junction detection sensitivity and precision, resource consumption (memory and time), and the effect of error correction on alignment quality. For pseudoalignment tools, linearity assessments using mixed samples at known proportions provide crucial information about quantification accuracy, with studies fitting multiple linear regression models to evaluate how well estimated abundances reflect expected mixtures [15].
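The linearity assessment mentioned above can be illustrated with a small least-squares fit. The sketch below assumes a two-component mixture and a no-intercept model, which may differ from the regression models used in the cited study [15]; the function name `fit_mixture_proportions` and the toy abundance values are hypothetical.

```python
def fit_mixture_proportions(pure_a, pure_b, mixed):
    """Least-squares fit of mixed = wa * pure_a + wb * pure_b (no intercept).

    Each argument is a list of per-transcript abundance estimates.
    Returns the fitted weights (wa, wb), to be compared with the known mix ratio.
    """
    saa = sum(a * a for a in pure_a)
    sbb = sum(b * b for b in pure_b)
    sab = sum(a * b for a, b in zip(pure_a, pure_b))
    sam = sum(a * m for a, m in zip(pure_a, mixed))
    sbm = sum(b * m for b, m in zip(pure_b, mixed))
    det = saa * sbb - sab * sab
    if det == 0:
        raise ValueError("pure profiles are collinear; weights are not identifiable")
    # Solve the 2x2 normal equations by Cramer's rule.
    wa = (sam * sbb - sbm * sab) / det
    wb = (sbm * saa - sam * sab) / det
    return wa, wb


if __name__ == "__main__":
    sample_a = [10.0, 0.0, 5.0, 20.0]   # toy abundances, sample A
    sample_b = [0.0, 8.0, 5.0, 4.0]     # toy abundances, sample B
    mix = [0.75 * a + 0.25 * b for a, b in zip(sample_a, sample_b)]
    print(fit_mixture_proportions(sample_a, sample_b, mix))
```

If a quantifier behaves linearly, the fitted weights should be close to the proportions at which the samples were physically mixed; systematic deviation points to quantification bias.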

Essential Research Reagent Solutions

Table 4: Key Experimental Resources for Alignment Tool Benchmarking

| Resource Type | Specific Examples | Application in Alignment Evaluation |

|---|---|---|

| Reference Materials | SEQC samples, Sequins (V1, V2), ERCC spike-ins, SIRVs (E0, E2) [18] [15] | Provide known mixture ratios for assessing quantification linearity and accuracy |

| Standardized Data | SG-NEx data (7 human cell lines, 5 protocols) [18], LRGASP data (human, mouse, manatee) [17] | Enable cross-platform and cross-algorithm comparisons on consistent datasets |

| Quality Control Tools | FastQC, MultiQC [4] | Assess read quality and identify technical issues affecting alignment |

| Analysis Pipelines | nf-core RNA-seq pipelines [20], Multi-alignment Framework (MAF) [14] | Provide reproducible workflows for consistent tool evaluation |

| Validation Methods | m6ACE-seq [18], Orthogonal short-read data [19] | Generate complementary data for verifying alignment results |

The landscape of RNA-seq alignment tools encompasses three distinct categories—splice-aware aligners, pseudoalignment, and genome-free approaches—each with characteristic strengths and optimal applications. Splice-aware aligners like STAR and HISAT2 provide comprehensive mapping solutions essential for splicing analysis and variant detection, with performance trade-offs between speed and memory utilization. Pseudoalignment tools including Salmon and Kallisto deliver dramatic speed improvements for quantification tasks with minimal accuracy loss, making them ideal for differential expression studies. Genome-free approaches enable transcriptome characterization without reference genomes, proving invaluable for non-model organisms and comprehensive novel isoform discovery.

Tool selection must be guided by experimental objectives, sample characteristics, and computational resources, with emerging frameworks supporting multi-alignment strategies for comprehensive analysis. As sequencing technologies evolve toward long-read platforms and multi-modal assays, alignment methodologies continue to advance in tandem. Future developments will likely further blur categorical boundaries through hybrid approaches that leverage the respective advantages of each paradigm, ultimately providing researchers with increasingly powerful and precise tools for transcriptome analysis.

In the field of RNA-seq research, the selection of alignment tools is a foundational decision that directly impacts the sensitivity, accuracy, and specificity of all downstream analyses. These metrics are not merely academic; they determine a pipeline's ability to correctly identify true biological signals (sensitivity), reject false ones (specificity), and deliver correct results overall (accuracy). Performance varies significantly across different tools and is influenced by experimental design and computational resources. This guide provides an objective comparison of leading RNA-seq alignment tools based on recent benchmarking data, detailing the experimental methodologies that yield these critical insights.

Core Performance Metrics Explained

In the context of RNA-seq alignment, the terms sensitivity, accuracy, and specificity have specific, technical meanings. The diagram below illustrates the relationship between these key metrics and the outcomes of an alignment process.

- Sensitivity (or Recall): Measures the tool's ability to correctly identify true alignment positions. It is the proportion of truly alignable reads that are successfully mapped. A tool with high sensitivity minimizes false negatives (FN), ensuring that genuine biological signals are not missed [21]. This is crucial for applications like biomarker discovery or detecting rare transcripts.

- Specificity: Measures the tool's ability to avoid incorrect alignments. Strictly, it is the proportion of non-alignable reads that are correctly left unmapped; alignment benchmarks often report precision instead, that is, the proportion of reported alignments that are correct. High specificity minimizes false positives (FP), which is vital for avoiding spurious results that could lead to false conclusions [21].

- Accuracy: A broader measure of overall correctness. It represents the proportion of all reads that are either correctly aligned or correctly not aligned. While useful, accuracy should be interpreted alongside sensitivity and specificity, as it can be skewed if the data has a high proportion of easy-to-map reads [21].
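The three definitions above follow directly from the four outcome counts of an alignment run. The following sketch assumes read-level counts of true positives, false positives, true negatives, and false negatives are already available (for example from simulated data); the function name `alignment_metrics` and the example counts are illustrative.

```python
def _ratio(numerator, denominator):
    """Return numerator / denominator, or NaN when the denominator is zero."""
    return numerator / denominator if denominator else float("nan")


def alignment_metrics(tp, fp, tn, fn):
    """Compute standard metrics from read-level outcome counts."""
    return {
        "sensitivity": _ratio(tp, tp + fn),   # share of alignable reads mapped correctly
        "specificity": _ratio(tn, tn + fp),   # share of non-alignable reads left unmapped
        "precision": _ratio(tp, tp + fp),     # share of reported alignments that are correct
        "accuracy": _ratio(tp + tn, tp + fp + tn + fn),
    }


if __name__ == "__main__":
    # Toy counts: most reads are easy to map, few are truly non-alignable.
    for name, value in alignment_metrics(tp=90, fp=5, tn=3, fn=2).items():
        print(f"{name}: {value:.3f}")
```

With these toy counts, accuracy is 0.93 while specificity is only 0.375, which illustrates why accuracy alone can look reassuring when easy-to-map reads dominate.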

Comparative Performance of RNA-Seq Alignment Tools

Choosing an aligner involves balancing performance metrics with practical computational constraints. The following table summarizes a comparative benchmark of common RNA-seq alignment tools, providing a snapshot of their performance and resource profiles.

Table 1: Comparison of RNA-Seq Alignment Tool Performance

| Tool | Sensitivity | Specificity (On-Target Hits) | Runtime (Minutes) | Memory Usage (GB) |

|---|---|---|---|---|

| STAR | High (Ultra-fast alignment) [4] | High [4] | ~31* [21] | High (~28 GB) [4] [21] |

| HISAT2 | High (Excellent splice-aware mapping) [4] | High [4] | ~47* [21] | Low (Balanced memory footprint) [4] |

| BBMap | Moderate | High (~99%) [21] | ~35* [21] | ~24 (Minimum requirement) [21] |

| TopHat2 | Moderate | High (~99%) [21] | ~125* [21] | Moderate (~3.3 GB) [21] |

*Runtime for aligning 100,000 read pairs, including index loading time [21].

Experimental Protocols for Benchmarking

The performance data presented in this guide are derived from rigorous, real-world benchmarking studies. Understanding their methodology is key to assessing the results.

Benchmarking Design and Reference Materials

Large-scale consortium efforts, such as a study involving 45 independent laboratories, have established robust frameworks for evaluation. These studies often use well-characterized reference RNA samples, such as those from the Quartet Project and the longstanding MAQC Consortium [6]. These materials provide a "ground truth" because their transcriptomes are known, allowing for precise measurement of alignment and quantification accuracy. For instance, the Quartet samples are derived from a family quartet of immortalized cell lines and are designed to have subtle, clinically relevant differential expression, making them a challenging and realistic test [6].

Performance Assessment Metrics

In a typical benchmarking pipeline, the performance of tools is assessed using multiple metrics [6] [5]:

- Data Quality and Signal-to-Noise Ratio (SNR): SNR is calculated using Principal Component Analysis (PCA) to measure a tool's ability to distinguish biological signals from technical noise across sample groups.

- Accuracy of Expression Measurement: The correlation (e.g., Pearson coefficient) between the expression levels quantified from the aligned data and validation datasets (e.g., TaqMan assays) is a key metric for accuracy.

- Sensitivity and Specificity of Differential Expression: The accuracy of identifying Differentially Expressed Genes (DEGs) is assessed against a reference DEG list derived from the ground truth samples. Sensitivity is the proportion of true DEGs correctly identified, while specificity is the proportion of non-DEGs correctly rejected.

- Computational Resource Tracking: Runtime and memory consumption are monitored under standardized conditions to assess efficiency and practical usability [21].
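Two of the metrics listed above, expression correlation and DEG sensitivity/specificity, are simple to compute once the reference values are in hand. The sketch below is a plain-Python illustration under assumed inputs: the gene identifiers, the function names `pearson` and `deg_sensitivity_specificity`, and the toy values are all hypothetical and are not taken from the cited benchmarks.

```python
import math


def pearson(x, y):
    """Pearson correlation between two equal-length lists of expression values."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def deg_sensitivity_specificity(called_degs, reference_degs, all_genes):
    """Compare called DEGs with a reference DEG list over a fixed gene universe."""
    called, reference, universe = set(called_degs), set(reference_degs), set(all_genes)
    non_degs = universe - reference
    sensitivity = len(called & reference) / len(reference)   # true DEGs recovered
    specificity = len(non_degs - called) / len(non_degs)     # non-DEGs correctly rejected
    return sensitivity, specificity


if __name__ == "__main__":
    genes = [f"g{i}" for i in range(1, 11)]
    print(pearson([1.0, 2.0, 3.0, 4.0], [1.1, 1.9, 3.2, 3.9]))
    print(deg_sensitivity_specificity({"g1", "g2", "g3", "g5"},
                                      {"g1", "g2", "g3", "g4"}, genes))
```

In practice the expression values would usually be log-transformed before correlation, and the gene universe restricted to genes tested by both the pipeline and the reference.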

The workflow below illustrates the standard process for generating benchmarking data, from raw sequencing reads to performance evaluation.

Building a reliable RNA-seq analysis pipeline requires both biological reference materials and specialized software tools.

Table 2: Key Resources for RNA-Seq Benchmarking and Analysis

| Resource Name | Type | Function in Evaluation |

|---|---|---|

| Quartet Reference Materials | Biological Sample | Provides a ground truth with subtle differential expression for accurately benchmarking tool performance in detecting clinically relevant changes [6]. |

| MAQC Reference Samples | Biological Sample | Offers samples with large biological differences (e.g., from cancer cell lines), traditionally used for establishing baseline RNA-seq accuracy and reproducibility [6]. |

| ERCC Spike-In Controls | Synthetic RNA | A set of 92 synthetic RNA transcripts spiked into samples at known concentrations to evaluate the accuracy of transcript quantification across experiments [6]. |

| FastQC | Software Tool | Performs initial quality control on raw sequencing reads, identifying potential sequencing artifacts and biases before alignment [4] [5]. |

| fastp / Trim Galore | Software Tool | Used for filtering and trimming raw reads to remove adapter sequences and low-quality bases, producing clean data for downstream alignment [5]. |

| Salmon / Kallisto | Software Tool | Lightweight, alignment-free quantification tools that use pseudoalignment (Kallisto) or quasi-mapping (Salmon) to rapidly estimate transcript abundance, often used for comparison with alignment-based methods [4]. |

The performance of RNA-seq alignment tools is not uniform, and the optimal choice depends heavily on the specific research goals and available infrastructure. Tools like STAR offer high speed and sensitivity for large genomes but require significant memory, making them suitable for well-resourced environments. HISAT2 provides a more balanced memory profile while maintaining high accuracy, ideal for standard servers. Ultimately, there is no universal "best" tool. Researchers must weigh the trade-offs between sensitivity, specificity, computational cost, and the nature of their biological questions—whether detecting subtle differential expression or analyzing large, complex genomes—to select the most appropriate aligner for their investigation.

Practical Implementation: Comparing Leading RNA-Seq Alignment Tools and Workflows

This guide provides an objective comparison of five prominent RNA-seq analysis tools, framing their performance within the broader thesis of selecting optimal alignment and quantification software for robust and efficient transcriptomic research.

The initial step of aligning millions of short sequencing reads to a reference genome or transcriptome is foundational to RNA-seq analysis. The accuracy of this alignment heavily influences all downstream results, including differential gene expression, isoform quantification, and the discovery of novel splice variants [22]. However, the plethora of available tools, each employing distinct algorithms, presents a significant challenge for researchers. This guide profiles five widely used tools—HISAT2, STAR, Kallisto, Salmon, and CLC Genomics—by synthesizing data from independent benchmarking studies. The objective is to move beyond anecdotal evidence and provide a data-driven framework for tool selection, empowering researchers to align their choice with specific experimental goals and resource constraints.

A key conceptual division exists among these tools. HISAT2 and STAR are splice-aware aligners that map reads to a reference genome, determining their precise genomic coordinates and handling reads that span intron-exon junctions [23] [4]. In contrast, Kallisto and Salmon are quantification-focused tools that use pseudoalignment or quasi-mapping to determine transcript abundance directly, bypassing the computationally intensive step of producing base-by-base alignments [23]. CLC Genomics Workbench represents a commercial, integrated solution with a graphical user interface, which often relies on provided annotations for optimal performance [24] [22]. The following workflow diagram illustrates the two primary analytical paradigms and where each tool operates.

Experimental Benchmarking: Methodologies for Objective Comparison

To objectively evaluate tool performance, researchers employ rigorous benchmarking methodologies, primarily using simulated and real experimental data.

Simulation-Based Benchmarking with Polyester

A 2024 study on Arabidopsis thaliana data used the simulation tool Polyester to generate RNA-seq reads with known genomic origins, enabling precise accuracy measurements [25]. The workflow involved:

- Genome Collection: Using the well-annotated A. thaliana genome.

- Read Simulation: Employing Polyester to generate synthetic RNA-seq reads, introducing annotated single nucleotide polymorphisms (SNPs) from The Arabidopsis Information Resource (TAIR) to mimic genetic variation.

- Alignment and Accuracy Calculation: Running each aligner on the simulated reads and computing base-level and junction-level accuracy by comparing the aligner's output to the known truth [25].
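Because simulated reads carry their true origin, the comparison in the last step reduces to checking each reported position against the known one. The sketch below uses read-placement accuracy as a simple proxy; the exact base-level and junction-level definitions used in the cited study [25] are not reproduced here, and the function name `placement_accuracy`, the dictionary layout, and the toy coordinates are assumptions.

```python
def placement_accuracy(truth, aligned, tolerance=0):
    """Fraction of simulated reads placed at their true origin.

    truth / aligned: dict mapping read_id -> (chromosome, start_position).
    Reads the aligner left unmapped are simply absent from `aligned`.
    tolerance: allowed offset in bases between true and reported start.
    """
    correct = 0
    for read_id, (chrom, start) in truth.items():
        hit = aligned.get(read_id)
        if hit is not None and hit[0] == chrom and abs(hit[1] - start) <= tolerance:
            correct += 1
    return correct / len(truth)


if __name__ == "__main__":
    truth = {"r1": ("Chr1", 100), "r2": ("Chr1", 500),
             "r3": ("Chr2", 40), "r4": ("Chr3", 7)}
    aligned = {"r1": ("Chr1", 100), "r2": ("Chr1", 502), "r3": ("Chr1", 40)}
    print(placement_accuracy(truth, aligned))               # exact match only
    print(placement_accuracy(truth, aligned, tolerance=2))  # allow small offsets
```

A small tolerance is sometimes useful because soft-clipping can shift the reported start without the alignment being wrong in any meaningful sense.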

Real Data Benchmarking with Polymorphic Accessions

A 2020 study took an experimental approach using real RNA-seq data from two natural accessions of Arabidopsis thaliana, Columbia-0 (Col-0) and N14 [24]. The methodology was:

- Data Generation: Isolating RNA and generating 150 bp single-end Illumina reads from the two accessions, which possess natural genetic variability.

- Mapping and Quantification: Mapping reads from the polymorphic N14 accession to the Col-0 reference genome using seven different tools (including HISAT2, STAR, Kallisto, Salmon, and CLC-based mapping).

- Downstream Analysis Comparison: Using the raw counts from each mapper to perform Differential Gene Expression (DGE) analysis with DESeq2, and then comparing the overlap in identified differentially expressed genes between the tools [24].

Quantitative Performance Comparison

Synthesizing data from multiple benchmarks reveals clear performance trade-offs. The table below summarizes key metrics for the profiled tools.

Table 1: Comprehensive performance profile of RNA-seq analysis tools

| Tool | Primary Function | Key Algorithm | Alignment Rate/Accuracy | Speed & Memory | Strengths | Weaknesses |

|---|---|---|---|---|---|---|

| HISAT2 | Genome Aligner | Hierarchical Graph FM Index [25] | High base-level accuracy; performs well with polymorphisms [24] [25] | Fast runtime; low memory footprint [3] [4] | Balanced performance; efficient for small servers [4] | Lower junction accuracy vs. SubRead [25] |

| STAR | Genome Aligner | Seed-based search with suffix arrays [25] | High read mapping rate (>98%); superior base-level accuracy [24] [25] | Very fast alignment; high memory usage [23] [4] | Ultra-fast; accurate splice junction detection [22] [4] | High memory demand; less accurate for quantification vs. lightweight tools [23] |

| Kallisto | Transcript Quantifier | Pseudoalignment via k-mers and de Bruijn graphs [24] [23] | High correlation with other tools for count distribution [24] | Fastest; minimal memory use [23] | Extremely fast and lightweight; ideal for transcript quantification [23] [26] | Cannot discover novel transcripts/splice forms [23] |

| Salmon | Transcript Quantifier | Quasi-mapping / Selective alignment [24] [23] | Near-identical results to Kallisto; handles biases [24] [4] | Very fast; low memory use [23] | Accurate with bias correction; suitable for complex libraries [4] | Cannot discover novel transcripts/splice forms [23] |

| CLC Genomics | Commercial Aligner | Method by Mortazavi et al. [24] | High mapping rate; top junction recall with annotation [24] [22] | Moderate runtime and memory requirements | User-friendly GUI; high junction accuracy with annotation [22] | Commercial cost; relies heavily on annotation, limiting novel discovery [22] |

Performance in Differential Gene Expression Analysis

The choice of tool can significantly impact biological interpretation. In the benchmark using polymorphic Arabidopsis accessions, the overlap of differentially expressed genes (DEGs) identified by different mappers was high but not perfect. Kallisto and Salmon showed the highest agreement (over 97% overlap), while comparisons involving STAR and HISAT2 generally showed slightly lower overlaps (around 92-94%) with other mappers [24]. Furthermore, when the commercial CLC software was used with its own DGE module instead of the standard DESeq2, strongly diverging results were obtained, highlighting that the statistical analysis module is also a critical variable [24].

Building a reproducible RNA-seq analysis pipeline requires both software tools and curated data resources. The following table details essential "research reagents" for your computational experiments.

Table 2: Key resources and materials for RNA-seq analysis workflows

| Item Name | Function / Purpose | Usage in Context |

|---|---|---|

| Reference Genome | A curated DNA sequence assembly for an organism. | Serves as the map for aligning sequencing reads. Essential for all alignment-based tools (HISAT2, STAR, CLC). [25] |

| Annotation File (GTF/GFF) | A file defining the coordinates of genomic features (genes, exons, transcripts). | Crucial for guiding splice-aware alignment and for quantifying reads at the gene level. Required by CLC for optimal performance. [22] [4] |

| Transcriptome Index | A pre-built computational index of all known transcripts. | Used by quantification tools Kallisto and Salmon for ultra-fast mapping. Must be built from a FASTA file of all transcript sequences. [23] |

| Polyester | An R/Bioconductor package for simulating RNA-seq datasets. | Allows for controlled benchmarking of aligners and quantifiers by generating data with a known ground truth. [25] |

| DESeq2 / edgeR | R packages for statistical analysis of differential expression from count data. | The standard for downstream DGE analysis after quantification. Their robust statistical models are key for reliable biological conclusions. [24] [27] |

Synthesizing the experimental data, the optimal tool choice is dictated by the specific research question and available resources.

For Maximum Quantification Speed and Efficiency: Choose Kallisto or Salmon. Their pseudoalignment approach is ideal for fast, accurate transcript quantification in studies with well-annotated transcriptomes, offering massive speed and memory advantages [23] [26]. They are the best choice for standard differential expression analyses on a laptop or server without high memory capacity.

For Discovery-Oriented Splice-Aware Alignment: Choose STAR or HISAT2. If your goal is to discover novel splice junctions, fusion genes, or perform variant calling, these genome aligners are essential. Opt for STAR when alignment speed is critical and sufficient computational memory (≥32 GB) is available. Choose HISAT2 for a balanced compromise between accuracy, speed, and a much lower memory footprint, making it suitable for standard workstations [25] [4].

For Annotation-Dependent Analysis with a GUI: Choose CLC Genomics. Its integrated graphical interface and high accuracy with annotated junctions make it a strong candidate for labs with budget for commercial software and less bioinformatics expertise, provided the analysis relies on existing annotation [24] [22].

Ultimately, the broader thesis supported by this data is that there is no single "best" tool for all RNA-seq research. Researchers must weigh the trade-offs between alignment-based and quantification-focused paradigms, considering their specific needs for discovery, quantification accuracy, computational resources, and ease of use.

The accurate alignment of RNA sequencing reads to a reference genome is a critical foundational step in bioinformatics pipelines, with the choice of alignment tool directly impacting downstream analyses, including variant calling and differential expression. For researchers and drug development professionals, selecting the optimal aligner is not merely a technical decision but a strategic one that influences the reliability of biological conclusions, especially in precision medicine contexts like cancer research. This guide provides a performance benchmarking comparison of leading RNA-seq alignment tools—STAR, HISAT2, and minimap2—focusing on their mapping accuracy and capability to handle genetic variants. The evaluation is framed within the broader thesis that effective alignment tools must not only achieve high speed and efficiency but also maintain precision in complex genomic contexts, such as splice junction mapping and variant-dense regions, to support robust RNA-seq research.

Performance Comparison of Major Alignment Tools

The table below summarizes the key performance characteristics, strengths, and limitations of STAR, HISAT2, and minimap2 based on current benchmarking data.

| Tool | Primary Algorithm | Best For | Speed | Memory Usage | Variant Handling | Key Strength | Notable Limitation |

|---|---|---|---|---|---|---|---|

| STAR [4] [28] | Spliced Alignment / Seed-based | Standard RNA-seq (splice-aware), Novel junction discovery | Ultra-fast [28] | High (~30 GB human) [28] | Uses annotations; superior for novel junctions [28] | High accuracy, comprehensive output [28] | High memory footprint [4] |

| HISAT2 [4] [29] [30] | Hierarchical Graph FM-index (HGFM) | RNA-seq in constrained environments, Population variants | Fast [4] | Low [4] | Incorporates known SNPs/indels via graph genome [30] | Low memory, high sensitivity [4] [30] | May be less sensitive for novel junctions vs. STAR [4] |

| Minimap2 [31] [32] | Minimizer-based with k-mer rescuing | Long reads (Iso-seq, Nanopore), Spliced long reads | Very fast [32] | Moderate | Improved alignment in repetitive regions, long INDELs [31] | Versatility for long reads & genomics [32] | Primarily optimized for long-read technologies [32] |

Experimental Protocols for Benchmarking

To ensure fair and reproducible comparisons between alignment tools, a standardized experimental and computational workflow is essential. The following protocols detail the key steps for benchmarking mapping accuracy and variant detection performance.

Benchmarking Workflow for Aligner Evaluation

The diagram below illustrates the core workflow for a rigorous aligner benchmarking study, from data preparation to final performance assessment.

Protocol 1: Mapping Accuracy Assessment

This protocol evaluates the fundamental ability of each aligner to correctly place reads on the genome, which is the foundation for all downstream analysis.

- Input Data Preparation: Obtain high-quality RNA-seq datasets with paired-end reads, such as those from the ENCODE project (e.g., stranded "dUTP" protocol on total RNA from GM12878 cell line) [28]. Ensure datasets include a validated set of known splice junctions for accuracy verification.

- Alignment Execution:

- STAR: Run using a two-pass alignment method to enhance the detection of novel splice junctions. First, perform an initial mapping to discover new junctions, then re-index the genome including the new junctions, and run a second mapping pass [28]. Critical parameters include --runThreadN for parallel processing and --sjdbGTFfile for annotated splice junctions.

- HISAT2: Execute using the hierarchical graph FM-index. The tool should be run with -x to specify the pre-built index and -k to report multiple distinct alignments, which is crucial for assessing mapping ambiguity in variant-rich regions [29] [30].

- Minimap2: For long-read RNA-seq data (e.g., PacBio Iso-seq or Oxford Nanopore cDNA), use the -ax splice preset. For short reads, the -ax sr preset is available. The -uf parameter can be used to force alignment to the forward transcript strand when the technology warrants it [32].

- Accuracy Metrics Calculation: Calculate standard metrics from the alignment summaries generated by each tool. Key metrics include overall alignment rate, unique mapping rate, and the percentage of reads mapped to splice junctions. For a more granular view, use tools like RSeQC to assess the distribution of reads across genomic features (exons, introns, intergenic regions) [4].
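The alignment step of this protocol can be sketched as a set of command builders, which keeps parameters consistent across tools in a benchmark. The helper names, file paths, and default values below are hypothetical. The sketch also swaps in STAR's built-in `--twopassMode Basic` option in place of the manual re-indexing described above, which is an assumption about how a given benchmark would implement two-pass mapping. The commands are only assembled and printed, not executed.

```python
import shlex


def star_cmd(genome_dir, gtf, reads_1, reads_2, threads=8, prefix="star_"):
    """STAR command with annotated junctions and built-in two-pass mode."""
    return ["STAR", "--runThreadN", str(threads), "--genomeDir", genome_dir,
            "--sjdbGTFfile", gtf, "--readFilesIn", reads_1, reads_2,
            "--twopassMode", "Basic",
            "--outSAMtype", "BAM", "SortedByCoordinate",
            "--outFileNamePrefix", prefix]


def hisat2_cmd(index_base, reads_1, reads_2, out_sam, k=5, threads=8):
    """HISAT2 command; -k sets how many distinct alignments to report per read."""
    return ["hisat2", "-p", str(threads), "-x", index_base,
            "-1", reads_1, "-2", reads_2, "-k", str(k), "-S", out_sam]


def minimap2_cmd(reference, reads, out_sam, long_reads=True,
                 forward_strand=False, threads=8):
    """minimap2 command using the splice preset (long reads) or sr preset (short reads)."""
    cmd = ["minimap2", "-t", str(threads), "-ax", "splice" if long_reads else "sr"]
    if long_reads and forward_strand:
        cmd.append("-uf")  # only consider the forward transcript strand
    return cmd + ["-o", out_sam, reference, reads]


if __name__ == "__main__":
    # Placeholder paths; replace with real index and FASTQ locations.
    print(shlex.join(star_cmd("star_index", "genes.gtf", "r_1.fq.gz", "r_2.fq.gz")))
    print(shlex.join(hisat2_cmd("hisat2_index/genome", "r_1.fq.gz", "r_2.fq.gz", "out.sam")))
    print(shlex.join(minimap2_cmd("genome.fa", "isoseq.fq.gz", "long.sam", forward_strand=True)))
```

Returning argument lists rather than shell strings makes the commands straightforward to pass to `subprocess.run` or to log alongside runtime and memory measurements.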

Protocol 2: Evaluation of Variant Calling Performance

This protocol tests the alignment tools in a pipeline where the ultimate goal is the accurate identification of genetic variants, such as single nucleotide variants (SNVs) and insertions/deletions (indels).

- Ground Truth Establishment: Use a cohort with paired tumor and normal DNA exome sequencing data. The variants called from the exome data (e.g., using GATK Mutect2 for somatic variants and HaplotypeCaller for germline) serve as the high-confidence "ground truth" for evaluating RNA-derived variants [33].

- RNA-Seq Variant Calling Pipeline: Align the RNA-Seq data from the same samples using each tool (STAR, HISAT2, minimap2). Subsequently, call variants from the resulting BAM files using a specialized RNA variant caller. A robust method like VarRNA can be employed, which uses two XGBoost machine learning models to classify variants as germline, somatic, or artifact, thereby mitigating the high false-positive rate often associated with RNA-seq variant calling [33].

- Performance Evaluation: Compare the variant calls from the RNA-seq pipeline against the DNA-based ground truth.

- Calculate sensitivity: the percentage of DNA-based variants that are also detected in the RNA-seq data.

- Calculate precision: the percentage of RNA-seq variant calls that are confirmed by the DNA data.

- Notably, also document "unique RNA variants"—those detected in RNA-seq but absent in the exome data. These may represent allele-specific expression or RNA editing events, which are biologically significant findings enabled by RNA-seq [33] [34]. Studies have shown that tools like VarRNA can identify about 50% of exome sequencing variants while also detecting unique variants not found in DNA data [33].
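The performance evaluation above amounts to comparing two sets of variant calls. The sketch below assumes each variant is represented as a `(chromosome, position, ref, alt)` tuple and that both call sets use the same normalization; the function name `compare_variant_calls` and the toy variants are hypothetical. In a real analysis the DNA truth set would usually be restricted to expressed regions with adequate RNA coverage before sensitivity is computed.

```python
def compare_variant_calls(dna_truth, rna_calls):
    """Compare RNA-seq variant calls with a DNA-based ground-truth set."""
    dna, rna = set(dna_truth), set(rna_calls)
    shared = dna & rna
    return {
        "sensitivity": len(shared) / len(dna),   # DNA variants also seen in RNA
        "precision": len(shared) / len(rna),     # RNA calls confirmed by DNA
        "unique_rna": rna - dna,                 # candidates for RNA editing or ASE
    }


if __name__ == "__main__":
    dna_truth = [("chr1", 1000, "A", "G"), ("chr1", 2500, "C", "T"),
                 ("chr2", 300, "G", "A"), ("chr3", 42, "T", "C")]
    rna_calls = [("chr1", 1000, "A", "G"), ("chr2", 300, "G", "A"),
                 ("chr5", 777, "A", "G")]
    result = compare_variant_calls(dna_truth, rna_calls)
    print(result["sensitivity"], result["precision"], sorted(result["unique_rna"]))
```

Keeping the RNA-only calls as a separate set, rather than counting them purely as false positives, preserves them for follow-up as potential biological signal.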

Successful execution of alignment benchmarking and variant analysis requires a suite of reliable software, databases, and computational resources. The following table catalogs the key components of a functional bioinformatics toolkit for this domain.

| Category | Item | Specific Example / Version | Function / Application |

|---|---|---|---|

| Alignment Software | STAR | v2.7.10a+ [33] [28] | Spliced alignment of RNA-seq reads to a reference genome. |

| Alignment Software | HISAT2 | v2.2.1+ [29] [30] | Alignment using a graph-based index representing a population of genomes. |

| Alignment Software | Minimap2 | v2.22+ [31] [32] | Versatile alignment for long reads (e.g., Iso-seq, Nanopore) and short reads. |

| Variant Callers & Classifiers | GATK | v4.1.9+ [33] | Industry standard for variant calling in DNA sequencing data (Mutect2, HaplotypeCaller). |

| Variant Callers & Classifiers | VarRNA | N/A [33] | Specialized classifier for calling and classifying germline/somatic variants from tumor RNA-seq data. |

| Reference Data | Genome Assembly | GRCh38/hg38 [33] [28] | Standard human reference genome for alignment. |

| Reference Data | Gene Annotations | GENCODE / Ensembl GTF [28] | Provides known gene models and splice sites to guide alignment. |

| Reference Data | Known Variants | dbSNP (build 151+) [33] [30] | Database of known polymorphisms for base recalibration and variant filtering. |

| Workflow Management | Pipeline Framework | Snakemake [33] | Tool for creating reproducible and scalable data analysis workflows. |

| Workflow Management | Containerization | Docker / Singularity | Ensures environment consistency and reproducibility across compute platforms. |

Discussion and Strategic Recommendations for Aligner Selection

The choice of an optimal alignment tool is contingent upon the specific research objectives, data types, and computational resources. The recommendations below synthesize the benchmarking data into a strategic decision pathway for tool selection.

- For Standard Short-Read RNA-seq with Ample Resources: STAR remains the gold standard for classic RNA-seq analyses due to its high accuracy in splice junction mapping and its ability to discover novel junctions via its two-pass method [28]. Its main drawback is a high memory footprint (~30 GB for the human genome), which can be prohibitive for some computing environments [4] [28].

- For Resource-Constrained Environments or Known Variant Integration: HISAT2 provides the best balance of performance and efficiency, offering low memory usage without a significant sacrifice in accuracy for standard analyses [4]. Its unique advantage is the graph-based alignment, which incorporates known population variants (from databases like dbSNP) directly into the index, leading to more accurate mapping in polymorphic regions and reducing reference bias [30].

- For Long-Read Transcriptomic Technologies: Minimap2 is the established choice for aligning reads from PacBio Iso-seq or Oxford Nanopore technologies [32]. Recent algorithmic improvements, such as rescuing high-occurrence k-mers and a new scoring function that penalizes long indels less severely, have significantly enhanced its accuracy in the complex and repetitive regions that long reads frequently span [31].

- For Somatic Variant Discovery in Cancer Research: In precision oncology applications, where detecting expressed mutations is critical, the alignment tool is just one part of the pipeline. A specialized variant classification method like VarRNA is recommended post-alignment. It is crucial to use paired DNA-seq data as ground truth for validation, as RNA-seq alone can detect unique, clinically relevant expressed variants that DNA-seq misses, while also missing some DNA variants due to low expression [33] [34]. This integrated approach ensures that variant calls are not only technically accurate but also biologically and clinically relevant.
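The decision pathway described in the bullets above can be written down as a small function. This is only a sketch of the guidance in this section, not a validated selection rule: the function name, the argument labels, and the use of the ~30 GB human-genome figure as a hard cutoff are assumptions made for illustration.

```python
def recommend_aligner(read_type: str, ram_gb: float,
                      use_known_variants: bool = False) -> str:
    """Return an aligner name following the recommendations in this section.

    read_type: "short" or "long" (hypothetical labels chosen for this sketch).
    ram_gb: memory available on the compute node.
    use_known_variants: True if population variants (e.g. from dbSNP)
        should be incorporated into the alignment index.
    """
    # Approximate STAR footprint quoted above for the human genome;
    # smaller genomes need less, so treat this threshold as an assumption.
    star_human_ram_gb = 30

    if read_type == "long":
        return "minimap2"
    if read_type != "short":
        raise ValueError(f"unknown read_type: {read_type!r}")
    if use_known_variants or ram_gb < star_human_ram_gb:
        return "HISAT2"
    return "STAR"


print(recommend_aligner("short", ram_gb=64))                           # STAR
print(recommend_aligner("short", ram_gb=16))                           # HISAT2
print(recommend_aligner("short", ram_gb=64, use_known_variants=True))  # HISAT2
print(recommend_aligner("long", ram_gb=8))                             # minimap2
```

The somatic-variant recommendation is deliberately left out of the function, since it concerns the post-alignment classifier and validation design rather than the aligner itself.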

Selecting an optimal alignment tool is a critical step in RNA-seq data analysis, with direct implications for research efficiency, computational costs, and the validity of biological conclusions. Alignment is often the most computationally intensive step in the workflow, requiring significant memory and processing time [21]. The rapidly growing volume of plant RNA-seq data further underscores the need for tools whose performance and default settings are appropriate beyond mammalian genomes, for which they are often pre-tuned [25]. This guide provides an objective comparison of leading RNA-seq aligners, summarizing quantitative performance data and the experimental methodologies used to generate them, empowering researchers to make informed choices that align with their computational constraints and research objectives.

Performance Comparison of RNA-Seq Alignment Tools

| Tool | Primary Algorithm/Strategy | Key Strengths | Typical Use Case |

|---|---|---|---|

| STAR | Seed-search with maximal mappable prefix (MMP), followed by clustering/stitching [25]. | Ultra-fast alignment, sensitive splice junction detection without prior annotation [4] [25]. | Large datasets (e.g., mammalian genomes) where high speed is prioritized and sufficient memory is available [4]. |

| HISAT2 | Hierarchical Graph FM indexing (HGFM) for efficient mapping of reads to a reference genome and common variants [25]. | Low memory footprint, excellent splice-aware mapping, efficient for smaller genomes [4] [25]. | Environments with limited RAM (e.g., desktop computers), or when processing many small genomes [4]. |

| Subread | Aligner for both DNA- and RNA-Seq, emphasizes identification of structural variations and short indels [25]. | General-purpose aligner, high accuracy in junction base-level assessment [25]. | Analyses requiring precise mapping at splice junctions or general-purpose NGS alignment [25]. |

| BBMap | Splice-aware aligner designed to handle significantly mutated genomes [25]. | Robust alignment to mutated genomes, accounts for long indels and large deletions [25]. | Datasets with high variation or significant structural differences from the reference genome [25]. |

| Salmon | Quasi-mapping and two-phase inference (online/offline EM) for transcript-level quantification [4] [35]. | Dramatic speedups, reduced storage needs, includes bias correction models [4] [35]. | Rapid transcript-level quantification for differential expression analysis [4]. |

| Kallisto | Pseudo-alignment via de Bruijn graphs to check read-transcript compatibility [35]. | Extreme speed and simplicity, accurate transcript abundance estimates [4] [35]. | Situations requiring the fastest possible transcript-level estimates with minimal setup [4]. |
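To make the "read-transcript compatibility" idea in the last table row more concrete, the toy sketch below intersects the transcript sets of every k-mer in a read. It is a deliberate simplification: a plain hash map stands in for the de Bruijn graph, reverse-complement strands are ignored, and the function names and sequences are invented for illustration. It should not be read as how Kallisto or Salmon is actually implemented.

```python
from collections import defaultdict


def build_kmer_index(transcripts: dict, k: int) -> dict:
    """Map every k-mer to the set of transcript IDs that contain it."""
    index = defaultdict(set)
    for name, seq in transcripts.items():
        for i in range(len(seq) - k + 1):
            index[seq[i:i + k]].add(name)
    return index


def pseudoalign(read: str, index: dict, k: int) -> set:
    """Intersect the transcript sets of all k-mers in the read.

    Returns the transcripts compatible with every k-mer; an empty set means
    no single transcript explains the whole read.
    """
    compatible = None
    for i in range(len(read) - k + 1):
        hits = index.get(read[i:i + k], set())
        compatible = set(hits) if compatible is None else compatible & hits
        if not compatible:
            return set()
    return compatible or set()


# Two toy transcripts sharing a common 5' region (invented sequences).
transcripts = {"tx_A": "ACGTACGGTTCA", "tx_B": "ACGTACCCATGA"}
K = 5
index = build_kmer_index(transcripts, K)

print(sorted(pseudoalign("ACGTAC", index, K)))    # ['tx_A', 'tx_B']
print(sorted(pseudoalign("TACGGTT", index, K)))   # ['tx_A']
print(sorted(pseudoalign("GGGGGGG", index, K)))   # []
```

No base-by-base alignment is ever computed here, which is the intuition behind the speed advantage; the quantifier then resolves ambiguous sets such as `{tx_A, tx_B}` statistically.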

Comparative Performance Metrics

Performance data varies based on experimental setup, reference genome, and dataset size. The following summaries are based on benchmark studies.

- Runtime and Memory: In a benchmark study, STAR demonstrated fast runtimes but with high peak memory usage, making it ideal for high-throughput facilities with robust compute nodes. In contrast, HISAT2 offered a balanced compromise with a significantly smaller memory footprint, preferable for constrained environments [4]. A separate analysis noted that for small RNA (microRNA) data, STAR and Bowtie2 were more effective than BBMap [14].

- Alignment Accuracy: In a base-level assessment using simulated Arabidopsis thaliana data, STAR outperformed other aligners with an overall accuracy exceeding 90% under different test conditions. However, at the more challenging junction base-level, which assesses accuracy in deciphering splice sites, Subread emerged as the most promising aligner, with over 80% accuracy [25].

- Sensitivity and Specificity: One comparison measured how well each mapping tool recovered all optimal alignment hits, including reads with multiple mapping loci, and reported the sensitivity (true positive rate) and false positive rate for each tool. Results differed by aligner, which means the choice of tool can materially change the alignments passed to downstream variant identification [21].
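For readers who want to see what a base-level or junction-level accuracy figure amounts to, the sketch below computes both from simulated truth. The data layout (per-base genomic positions keyed by read ID, and per-read lists of intron coordinates) and the exact definitions are assumptions chosen for this illustration; the cited benchmarks may define their metrics differently.

```python
def base_level_accuracy(truth: dict, aligned: dict) -> float:
    """Fraction of simulated bases placed at their true genomic coordinate.

    Both arguments map read ID -> list of per-base positions, where None
    marks a base the aligner left unaligned (e.g. soft-clipped).
    """
    correct = total = 0
    for read_id, true_positions in truth.items():
        called = aligned.get(read_id, [None] * len(true_positions))
        total += len(true_positions)
        correct += sum(
            t == c for t, c in zip(true_positions, called) if c is not None
        )
    return correct / total if total else 0.0


def junction_level_accuracy(true_junctions: dict, called_junctions: dict) -> float:
    """Fraction of true (read, intron start, intron end) junctions recovered."""
    expected = {(r, j) for r, js in true_junctions.items() for j in js}
    found = {(r, j) for r, js in called_junctions.items() for j in js}
    return len(expected & found) / len(expected) if expected else 0.0


# Toy example: r2 and r3 each span an intron; the aligner soft-clips r2.
truth = {"r1": [100, 101, 102, 103],
         "r2": [200, 201, 502, 503],
         "r3": [300, 301, 602, 603]}
aligned = {"r1": [100, 101, 102, 103],
           "r2": [200, 201, None, None],
           "r3": [300, 301, 602, 603]}
true_junctions = {"r2": [(201, 502)], "r3": [(301, 602)]}
called_junctions = {"r2": [], "r3": [(301, 602)]}

base_acc = base_level_accuracy(truth, aligned)
junc_acc = junction_level_accuracy(true_junctions, called_junctions)
print(round(base_acc, 3))  # 0.833
print(junc_acc)            # 0.5
```

The toy numbers show why the two metrics can diverge: a soft-clipped spliced read costs only a few bases but an entire junction.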

Experimental Protocols for Benchmarking Aligners

The quantitative data presented in the previous section are derived from rigorous experimental benchmarks. Understanding their methodologies is crucial for interpreting the results.

Workflow for Comprehensive Aligner Assessment

A typical benchmarking workflow involves multiple stages to evaluate performance and accuracy systematically [25] [35]. The general process for generating and evaluating aligner performance using simulated data, which provides a known ground truth for accuracy measurements, is outlined in the methodologies below.

Key Benchmarking Methodologies

- Use of Simulated Data: Benchmarks often use simulated RNA-seq reads from a reference genome (e.g., Arabidopsis thaliana or human) to establish a "ground truth." Tools like Polyester can simulate reads with biological replicates and specified differential expression [25] [35]. This allows for precise calculation of accuracy metrics by comparing aligner results to known genomic origins.

- Introduction of Genetic Variants: To test alignment robustness, benchmarks may introduce known genetic variations, such as single nucleotide polymorphisms (SNPs) from curated databases like The Arabidopsis Information Resource (TAIR), during the read simulation process [25].

- Performance Metrics: Alignment accuracy is evaluated at two key levels:

  - Base-level Accuracy: Measures the percentage of correctly aligned individual nucleotides against the known simulation truth [25].

  - Junction-level Accuracy: Assesses the aligner's ability to correctly identify and map reads across exon-exon splice junctions, a critical task for transcriptome analysis [25].

- Resource Consumption Tracking: Computational requirements are measured by tracking the central processing unit (CPU) time, wall clock time, and peak memory consumption during the alignment process for each tool [21] [36].
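Resource tracking of the kind described in the last bullet can be approximated with the Python standard library. The sketch below is one possible approach, assuming a Unix-like system (the `resource` module is not available on Windows); the `measure_command` helper is hypothetical, and the command it times here is a trivial Python one-liner standing in for a real aligner invocation.

```python
import resource
import subprocess
import sys
import time


def measure_command(cmd: list) -> dict:
    """Run cmd and report wall-clock time, child CPU time, and peak memory."""
    before = resource.getrusage(resource.RUSAGE_CHILDREN)
    start = time.perf_counter()
    subprocess.run(cmd, check=True)
    wall = time.perf_counter() - start
    after = resource.getrusage(resource.RUSAGE_CHILDREN)

    # CPU time = user + system time consumed by the child process.
    cpu = (after.ru_utime - before.ru_utime) + (after.ru_stime - before.ru_stime)
    return {
        "wall_seconds": wall,
        "cpu_seconds": cpu,
        # ru_maxrss is the largest peak among all children waited for so far;
        # it is reported in kilobytes on Linux and in bytes on macOS.
        "peak_rss_raw": after.ru_maxrss,
    }


# Placeholder workload; replace with an aligner command line in practice.
stats = measure_command([sys.executable, "-c", "sum(range(10**6))"])
print(stats)
```

Because `ru_maxrss` for `RUSAGE_CHILDREN` is a running maximum over all finished children, each tool is best measured from a fresh Python process so that an earlier, larger job does not mask a later, smaller one.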

Successful execution of an RNA-seq experiment and its analysis relies on a suite of computational tools and reference materials. The table below details key components used in the benchmark studies cited in this guide.

| Category | Item | Function and Description |

|---|---|---|

| Reference Annotations | GENCODE (Human) [35], TAIR (Arabidopsis) [25] | High-quality, curated annotations of genes and transcripts for a reference genome. Provides the coordinate systems for read alignment and quantification. Critical for accuracy, as the choice of gene model dramatically impacts results [35]. |

| Read Simulation | Polyester [25] [35], RSEM [35] | Software tools that generate synthetic RNA-seq reads in silico. This creates a dataset with a known "ground truth," which is essential for objectively benchmarking the accuracy of alignment and quantification tools. |

| Quality Control | FastQC [4] [37], MultiQC [4] [37] | Tools that generate quality control reports for raw and processed sequencing data. They help identify issues with read quality, adapter contamination, or other technical artifacts early in the analysis pipeline. |

| Quantification Tools | featureCounts [4], Salmon [4] [35], Kallisto [4] [35] | Software that converts aligned or pseudo-aligned reads into numerical counts of expression for each gene or transcript. Alignment-free tools like Salmon and Kallisto offer significant speed advantages [4]. |

| Workflow Management | Snakemake [37], Bash Scripts [14] | Frameworks that automate multi-step computational workflows. They ensure reproducibility, manage complex dependencies between analysis steps, and efficiently handle computational resources. |

| Containerization | Singularity [37], Docker | Technologies that package software and its environment into a portable container. This guarantees that analyses are reproducible across different computing systems by eliminating dependency conflicts. |

The choice of an RNA-seq alignment tool involves a strategic trade-off between computational resource consumption and analytical accuracy. Researchers working with large mammalian genomes and possessing substantial memory resources may find STAR's speed to be optimal. For projects with limited RAM or those focused on smaller plant genomes, HISAT2 provides an efficient and accurate alternative. When the primary goal is rapid gene expression quantification rather than full genomic alignment, alignment-free tools like Salmon and Kallisto offer an exceptional balance of speed and precision. Ultimately, the selection should be guided by the specific biological question, the experimental organism, and the available computational infrastructure.

A critical factor in selecting an RNA-seq alignment tool is its seamless integration with downstream differential expression (DE) analysis. This guide objectively compares the performance of prominent alignment and quantification tools, focusing on their compatibility with established DE pipelines like DESeq2, and provides supporting experimental data.

In RNA-seq analysis, the alignment or quantification step is not an end in itself but a gateway to identifying biologically significant changes in gene expression. The accuracy of tools like DESeq2, edgeR, and limma-voom depends heavily on the quality of the input data they receive—typically, count matrices of reads mapped to genes or transcripts. The choice of alignment method directly influences this count data, affecting the sensitivity and specificity of DE detection. Studies have shown that while many modern pipelines perform well for common gene targets, their performance can vary significantly for lowly-expressed genes, small RNAs, or in complex experimental designs. This evaluation synthesizes findings from multiple experimental benchmarks to guide researchers in selecting an alignment strategy that ensures reliable and robust downstream DE analysis.