Evaluating the STAR RNA-seq Pipeline: A Comprehensive Guide for Accurate Differential Expression Analysis

This article provides a comprehensive evaluation of the STAR (Spliced Transcripts Alignment to a Reference) pipeline for differential expression analysis from RNA-seq data.

Abstract

This article provides a comprehensive evaluation of the STAR (Spliced Transcripts Alignment to a Reference) pipeline for differential expression analysis from RNA-seq data. Aimed at researchers, scientists, and drug development professionals, it covers foundational concepts, detailed methodological protocols, and critical optimization strategies to enhance accuracy and reliability. We explore STAR's unique alignment algorithm, compare its performance against pseudoalignment tools like Kallisto, and address common troubleshooting scenarios. By synthesizing current best practices and validation techniques, this guide empowers users to construct robust, species-specific analysis workflows that yield precise biological insights, ultimately accelerating discovery in biomedical and clinical research.

Understanding STAR: Core Algorithms and Its Role in the Modern RNA-seq Ecosystem

RNA sequencing (RNA-seq) has become a cornerstone technology in genomics, enabling researchers to analyze the entirety of RNA transcripts within a biological sample. [1] However, the accurate interpretation of this complex data hinges on a critical computational step: sequence alignment. The process of mapping millions of short RNA reads back to a reference genome is fraught with unique challenges, primarily due to the phenomenon of RNA splicing, where introns are removed and exons are joined together in the mature mRNA. [2] This article delves into the core challenges of RNA-seq alignment (splicing, speed, and sensitivity), framed within the context of evaluating differential expression analysis pipelines. For researchers and drug development professionals, the choice of alignment tool can profoundly impact downstream analyses, from identifying novel biomarkers to understanding disease mechanisms. [3] We provide an objective comparison of modern aligners, supported by recent experimental data and detailed methodologies, to guide the selection of optimal tools for specific research scenarios.

The Core Computational Hurdles in RNA-seq Alignment

The fundamental challenge in RNA-seq alignment stems from the biological reality that RNA sequences do not exist as continuous segments in the genome. During splicing, introns can be thousands of bases long, requiring the aligner to correctly identify exon-exon junctions where the sequenced read spans two exons that are far apart in the genomic DNA. [2]

- The Splicing Problem: Accurate spliced alignment requires sophisticated modeling of splice sites. While most introns begin with 'GT' and end with 'AG' (the canonical splice signals), these dinucleotides are abundant throughout the genome; only a small fraction (approximately 0.1%) are genuine splice sites. [2] Disambiguating true splice sites from random occurrences demands algorithms that can incorporate additional sequence context and probabilistic models. Aligners that use simple models may struggle with accuracy, particularly for noisy data or evolutionarily distant sequences. [2]

- The Speed and Sensitivity Trade-off: Sensitivity in alignment refers to the ability to correctly map reads to their true origin, including those that span novel splice junctions not present in existing annotation databases. High sensitivity often requires computationally intensive algorithms, creating a direct trade-off with processing speed. [4] [3] In large-scale studies or clinical diagnostics, where processing hundreds of samples is routine, the computational efficiency of an aligner is a practical concern alongside its accuracy. [3]
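To make the splicing problem above concrete, the following minimal sketch counts GT and AG dinucleotides in a uniformly random sequence. The random sequence is only a stand-in for a genome (an assumption for illustration; real genomes are not uniform), and the helper names are hypothetical. It shows why the canonical dinucleotides alone cannot identify splice sites: each is expected at roughly one position in sixteen.

```python
import random


def count_dinucleotide(seq: str, dinuc: str) -> int:
    """Count occurrences (overlaps allowed) of a two-base motif in seq."""
    return sum(1 for i in range(len(seq) - 1) if seq[i:i + 2] == dinuc)


def random_sequence(length: int, seed: int = 0) -> str:
    """Toy stand-in for a genome: uniform random bases with a fixed seed."""
    rng = random.Random(seed)
    return "".join(rng.choice("ACGT") for _ in range(length))


if __name__ == "__main__":
    genome = random_sequence(100_000)
    # Under a uniform model each dinucleotide has probability 1/16 per position.
    expected = (len(genome) - 1) / 16
    for motif in ("GT", "AG"):
        observed = count_dinucleotide(genome, motif)
        print(f"{motif}: observed {observed}, expected about {expected:.0f}")
```

With thousands of candidate dinucleotides per 100 kb, an aligner needs additional sequence context to decide which ones are genuine donor or acceptor sites.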

Benchmarking Alignment Performance: A Multi-Tool Comparison

Independent benchmarking studies provide crucial empirical data for comparing aligners. A recent large-scale, multi-center study involving 45 laboratories and 140 distinct bioinformatics pipelines offers a real-world perspective on performance. [3] Furthermore, focused evaluations on specific tools yield detailed insights into their strengths and weaknesses.

The table below summarizes key findings from a controlled small RNA sequencing case study, which evaluated the effectiveness of three popular alignment programs—STAR, Bowtie2, and BBMap—when combined with different quantification tools [4].

Table 1: Performance Comparison of Alignment and Quantification Tools in a Small RNA-seq Study

| Alignment Program | Quantification Tool | Key Findings and Recommendations |
|---|---|---|
| STAR | Salmon | Appeared to be the most reliable approach for analysis [4]. |
| STAR | Samtools | A reliable approach, though with some limitations [4]. |
| Bowtie2 | Various | More effective than BBMap for microRNA analysis [4]. |
| BBMap | Various | Less effective than STAR and Bowtie2 for microRNA analysis [4]. |

The broader multi-center study underscored that the choice of genome alignment tool is a primary source of variation in final gene expression measurements, highlighting its profound influence on the reproducibility and reliability of RNA-seq results. [3]

Emerging Solutions and Advanced Protocols

Deep Learning for Enhanced Splicing Accuracy

A significant innovation in this field is the application of deep learning to model splice sites with greater precision. Minisplice is a recently developed tool that uses a one-dimensional convolutional neural network (1D-CNN) to learn conserved splice signals from genome annotations [2]. Unlike traditional models like position weight matrices (PWM), this approach can capture complex dependencies between nucleotide positions and regulatory motifs.

- Implementation: The minisplice workflow involves training a compact model (7,026 parameters) on known splice sites, which is then used to precompute empirical splicing probabilities for every GT and AG dinucleotide in a target genome. These scores are fed into established aligners like minimap2 (for long-read mRNA) and miniprot (for protein-to-genome alignment) to guide the alignment process [2].

- Performance: Evaluation on human long-read RNA-seq data showed that this method greatly improves junction accuracy, especially for noisy reads and when aligning sequences from distantly related species [2].
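For contrast with the CNN approach, the traditional position weight matrix (PWM) baseline mentioned above can be sketched in a few lines. This is not minisplice's model; it is a toy PWM built from four made-up donor-site strings (hypothetical data) with a uniform background, intended only to show that a PWM scores each position independently and therefore cannot represent dependencies between positions.

```python
import math


def build_pwm(sites, pseudocount=1.0):
    """Build a log-odds PWM (base 2) against a uniform 0.25 background."""
    pwm = []
    for pos in range(len(sites[0])):
        counts = {base: pseudocount for base in "ACGT"}
        for site in sites:
            counts[site[pos]] += 1
        total = sum(counts.values())
        pwm.append({b: math.log2((counts[b] / total) / 0.25) for b in "ACGT"})
    return pwm


def pwm_score(pwm, seq):
    """Sum of per-position log-odds; positions contribute independently."""
    return sum(column[base] for column, base in zip(pwm, seq))


if __name__ == "__main__":
    # Hypothetical donor-site windows, all starting with the canonical GT.
    donors = ["GTAAGT", "GTGAGT", "GTAAGA", "GTAAGT"]
    pwm = build_pwm(donors)
    for candidate in ("GTAAGT", "GTCCCC"):
        print(candidate, round(pwm_score(pwm, candidate), 2))
```

Both candidates begin with GT, yet the window resembling the training sites scores higher, which is the kind of extra context needed to separate real splice sites from chance dinucleotides.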

Containerized and Standardized Pipelines

To mitigate inter-laboratory variability and simplify deployment, containerized solutions are gaining traction. Platforms like RumBall provide a self-contained Docker system that encapsulates an entire RNA-seq analysis workflow, from read mapping and normalization to statistical modeling and gene ontology enrichment [5]. Such protocols are designed to ensure consistency and reproducibility, making sophisticated differential expression analysis accessible in a few standardized steps [5].

Essential Research Reagent Solutions for RNA-seq Alignment

A successful RNA-seq alignment experiment relies on a suite of computational "reagents." The table below details key resources and their functions in a standard workflow [4].

Table 2: Key Research Reagent Solutions for RNA-seq Alignment Workflows

| Item Category | Specific Examples | Function in the Experiment |
|---|---|---|
| Alignment Programs | STAR, Bowtie2, BBMap, Minimap2 [4] [2] | Core algorithms that map sequencing reads to a reference genome or transcriptome. |
| Quantification Tools | Salmon, Samtools [4] | Tools that count the number of reads associated with each genomic feature (e.g., gene, transcript) to determine expression levels. |
| Reference Files | Genome Indices (e.g., for STAR, Bowtie2) [4] | Pre-processed reference genomes that enable rapid and efficient alignment of sequencing reads. |
| Sequence Data Formats | FASTQ, BAM/SAM [4] | Standardized file formats for storing raw sequencing reads (FASTQ) and aligned reads (BAM/SAM). |
| Workflow Frameworks | Multi-alignment Framework (MAF), RumBall [4] [5] | Integrated systems that streamline processing steps, saving time when repeating procedures with various datasets. |

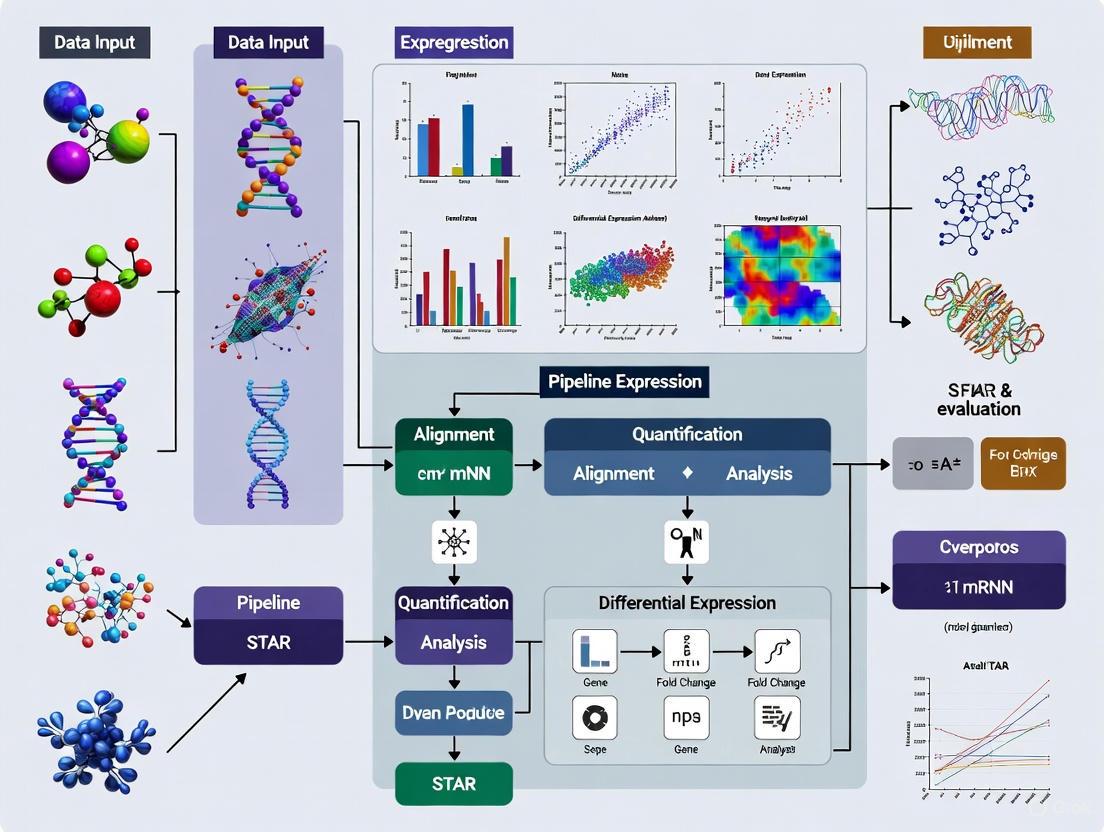

Visualizing the Alignment Workflow and Innovation

The following diagram illustrates a standard RNA-seq alignment workflow, integrating both traditional and deep learning-enhanced steps for a comprehensive view of the process.

Diagram 1: RNA-seq analysis workflow with deep learning splice site integration. The dashed line shows how the Minisplice innovation guides the alignment step.

The landscape of RNA-seq alignment is characterized by a continuous effort to balance the competing demands of splicing accuracy, computational speed, and analytical sensitivity. Evidence from recent large-scale benchmarks indicates that alignment tool selection significantly impacts results, with tools like STAR and Bowtie2 demonstrating particular effectiveness, especially when paired with modern quantification methods like Salmon [4] [3]. The field is advancing with innovations such as deep learning models for splice site prediction, which promise enhanced accuracy for challenging datasets [2]. Furthermore, the adoption of containerized and standardized pipelines is a positive step toward improving the reproducibility and accessibility of robust differential expression analysis [5]. For researchers, the key is to align the choice of alignment tool with the specific biological question, the nature of the sequencing data (short-read vs. long-read), and the available computational resources. An informed, evidence-based selection is paramount for generating reliable and biologically meaningful results in genomics research and drug development.

The Spliced Transcripts Alignment to a Reference (STAR) software represents a cornerstone tool in modern transcriptomics, enabling researchers to accurately align RNA sequencing (RNA-Seq) reads to a reference genome. Developed to address the challenges posed by the non-contiguous structure of transcripts and constantly increasing sequencing throughput, STAR utilizes a novel RNA-seq alignment algorithm that dramatically outperforms previous aligners by more than a factor of 50 in mapping speed while simultaneously improving alignment sensitivity and precision [6]. This exceptional performance has made STAR a fundamental component in countless transcriptomic studies, particularly in the field of drug development where reliable identification of differentially expressed genes can illuminate mechanisms of action and potential therapeutic targets.

The algorithm's design specifically addresses key challenges in RNA-Seq analysis, including the identification of canonical and non-canonical splice junctions, detection of chimeric (fusion) transcripts, and mapping of full-length RNA sequences [6]. Within the broader context of differential expression analysis pipelines, the choice of alignment tool represents a critical decision point that can significantly impact downstream biological interpretations. As comparative studies have revealed, while different mapping tools generally show high correlation in raw count distributions and differentially expressed gene overlap, the specific choice of aligner can introduce subtle but important variations in results, particularly for lowly expressed genes or in studies involving genotypes with substantial sequence polymorphisms [7]. This technical evaluation situates STAR within the ecosystem of RNA-Seq analysis tools, providing researchers with the comprehensive data needed to make informed decisions about their analytical workflows.

The STAR Algorithm: A Technical Breakdown

The STAR algorithm achieves its exceptional performance through a carefully engineered two-step process that combines computational efficiency with mapping accuracy. Unlike more traditional aligners that often search for entire read sequences before performing iterative mapping rounds, STAR employs an innovative strategy centered on maximal mappable prefixes and seed-based alignment [8].

Seed Searching with Sequential Maximal Mappable Prefixes (MMPs)

For every RNA-Seq read that STAR aligns, the algorithm initiates a search for the longest sequence that exactly matches one or more locations on the reference genome. These longest matching sequences are designated as Maximal Mappable Prefixes (MMPs). The initial MMP mapped to the genome is termed seed1 [8]. Following the identification of the first seed, STAR recursively searches only the unmapped portions of the read to identify the next longest sequence that exactly matches the reference genome, producing seed2 and subsequent seeds as needed. This sequential searching strategy, which focuses exclusively on unmapped read segments, underlies the notable efficiency of the STAR algorithm [8].
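The sequential search described above can be sketched naively as follows. This is an illustrative toy, not STAR's implementation: it uses plain substring search instead of a suffix array, and where no base matches it simply skips ahead, whereas STAR extends or soft-clips. The function names and the 30-base "genome" (two exons around an intron that starts with GT and ends with AG) are hypothetical.

```python
def find_all(genome: str, chunk: str):
    """Return every start position where chunk occurs exactly in genome."""
    positions, start = [], genome.find(chunk)
    while start != -1:
        positions.append(start)
        start = genome.find(chunk, start + 1)
    return positions


def longest_prefix_match(genome: str, read: str, start: int):
    """Longest prefix of read[start:] that matches the genome exactly."""
    best_len, best_pos = 0, []
    for length in range(1, len(read) - start + 1):
        positions = find_all(genome, read[start:start + length])
        if not positions:
            break
        best_len, best_pos = length, positions
    return best_len, best_pos


def sequential_seeds(genome: str, read: str):
    """Apply the prefix search repeatedly to the unmapped remainder of the read."""
    seeds, start = [], 0
    while start < len(read):
        length, positions = longest_prefix_match(genome, read, start)
        if length == 0:
            start += 1  # simplification: skip a base that matches nowhere
            continue
        seeds.append((start, length, positions))
        start += length
    return seeds


if __name__ == "__main__":
    exon1, intron, exon2 = "ACGTTGCA", "GTAAGTCCCCCCAG", "TTGACCGA"
    genome = exon1 + intron + exon2
    read = exon1[-5:] + exon2[:5]  # a read spanning the exon-exon junction
    print(sequential_seeds(genome, read))
```

On this toy input the result is `[(0, 5, [3]), (5, 5, [22])]`: seed1 ends at the last exon 1 base and seed2 starts at the first exon 2 base, so the junction falls out of the search without any annotation.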

STAR implements this search using an uncompressed suffix array (SA), a data structure that enables rapid matching against even the largest reference genomes, such as the human genome [8] [6]. When exact matching sequences cannot be identified for particular read segments due to mismatches or indels, STAR employs an extension process for the previously identified MMPs. In cases where extension fails to produce a satisfactory alignment, the algorithm will soft-clip poor quality, adapter sequence, or other contaminating sequences [8].

Clustering, Stitching, and Scoring

Once the seed searching phase is complete, STAR transitions to integrating the separate seeds into a complete read alignment. This process begins with clustering, where seeds are grouped based on their proximity to a set of 'anchor' seeds—seeds that exhibit unique genomic mapping locations rather than multi-mapping across several positions [8].

Following clustering, the algorithm proceeds to stitching, where the clustered seeds are connected to form a continuous alignment. This stitching process is guided by a comprehensive scoring system that evaluates alignment quality based on multiple parameters including mismatches, indels, and gaps [8]. The result is a complete, spliced alignment of the RNA-Seq read to the reference genome, capable of accurately representing complex transcriptional events including intron excision and alternative splicing.
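A minimal sketch of the clustering idea follows, under simplifying assumptions: anchors are seeds with exactly one genomic position (as described above), the window is a plain distance threshold, and the score is a toy that only counts seed bases. STAR's actual scoring also penalizes mismatches, indels, and gaps, which this sketch omits. All names and inputs are hypothetical.

```python
def cluster_seeds(seeds, window):
    """Group seed placements that lie within `window` bases of each anchor.

    seeds: list of (read_start, length, genome_positions).
    Returns a list of (anchor_position, members), where each member is
    (read_start, length, genome_position).
    """
    clusters = []
    for _, _, anchor_positions in seeds:
        if len(anchor_positions) != 1:
            continue  # multi-mapping seeds are not used as anchors here
        anchor = anchor_positions[0]
        members = sorted(
            (read_start, length, pos)
            for read_start, length, positions in seeds
            for pos in positions
            if abs(pos - anchor) <= window
        )
        clusters.append((anchor, members))
    return clusters


def toy_score(members):
    """Toy score: +1 per read base covered by a seed in the cluster."""
    return sum(length for _, length, _ in members)


if __name__ == "__main__":
    # The second seed maps to two loci; only the one near the anchor is kept.
    seeds = [(0, 5, [3]), (5, 5, [22, 5000])]
    for anchor, members in cluster_seeds(seeds, window=100):
        print(anchor, members, toy_score(members))
```

The example shows how an anchor resolves ambiguity: the multi-mapping seed's distant placement at 5000 falls outside the window and is dropped, leaving one collinear cluster to stitch.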

The following diagram illustrates the complete STAR alignment workflow:

Performance Benchmarking: STAR Versus Alternative Approaches

Experimental Design for Method Comparison

To objectively evaluate STAR's performance relative to other RNA-Seq analysis tools, we examine a comprehensive benchmark study that compared seven different mapping and quantification tools using experimentally generated RNA-Seq data from Arabidopsis thaliana accessions Col-0 and N14 [7]. This experimental design specifically addressed the performance of computational tools when analyzing data from genotypes with sequence polymorphisms, a common scenario in both basic and translational research.

The study utilized 36 samples with sequencing data ranging from approximately 21 to 33 million reads per sample [7]. The compared tools included:

- Alignment-based tools: BWA, STAR, HISAT2

- Quantification-focused tools: kallisto, salmon

- Statistical abundance estimation: RSEM

- Commercial solution: CLC Genomics Workbench

The experimental protocol involved mapping pre-processed reads to the reference genome or transcriptome, followed by gene quantification and differential expression analysis between control and cold-acclimated conditions. For alignment-based tools like STAR, reads were mapped to the reference genome, while quantification tools like kallisto and salmon directly estimated transcript abundances from the transcriptome. Differential expression analysis was subsequently performed using DESeq2 to ensure consistent statistical evaluation across methods [7].

Quantitative Performance Metrics

The following tables summarize the key performance metrics for STAR in comparison to other representative tools:

Table 1: Mapping Efficiency Across Tools for Arabidopsis thaliana Accessions

| Tool | Mapping Rate (Col-0) | Mapping Rate (N14) | Indexing Strategy | Alignment Approach |
|---|---|---|---|---|
| STAR | 99.5% | 98.1% | Suffix Array | Seed-and-extend with clustering |
| HISAT2 | 98.7%* | 97.3%* | Graph FM Index | Hierarchical indexing |
| kallisto | 97.2%* | 95.8%* | De Bruijn Graph | Pseudoalignment |
| salmon | 97.5%* | 96.1%* | Suffix Array (FMD) | Quasi-mapping |
| BWA | 95.9% | 92.4% | BWT/FM Index | Backward search |

Note: Values marked with * are estimated based on relative performance data provided in the benchmark study [7].

STAR demonstrated superior mapping efficiency for both accessions, achieving 99.5% for Col-0 and 98.1% for N14, outperforming all other tools in this critical metric [7]. This high mapping sensitivity makes STAR particularly valuable for studies where comprehensive capture of transcriptional events is paramount.

Table 2: Computational Resource Requirements and Differential Expression Concordance

| Tool | Relative Speed | Memory Usage | DGE Overlap with STAR | Primary Output |
|---|---|---|---|---|
| STAR | Baseline | High (∼30GB) | 100% | Genome-mapped BAM |
| HISAT2 | ∼2x faster [9] | Moderate | 93-94% [7] | Genome-mapped BAM |
| kallisto | ∼2.6x faster [9] | Low | 93-94% [7] | Transcript counts |
| salmon | ∼2.5x faster [9] | Low | 93-94% [7] | Transcript counts |
| BWA | Slower | Moderate | 92.1-93.4% [7] | Genome-mapped BAM |

The benchmarking data reveals a fundamental trade-off in RNA-Seq analysis tools: alignment-based methods like STAR typically require more computational resources but provide direct genomic mapping information, while quantification-focused tools like kallisto and salmon offer significant speed advantages but are limited to transcript abundance estimation [7] [9]. STAR's memory-intensive nature (typically requiring ∼30GB for the human genome) reflects its use of uncompressed suffix arrays, which enable its rapid search capabilities [8] [10].
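The ∼30GB figure can be reproduced with back-of-the-envelope arithmetic. The sketch below assumes a rule of thumb of about 10 bytes of RAM per genome base; that ratio is an assumption introduced here for illustration, not a number from the benchmark, and actual usage depends on index parameters.

```python
def estimate_star_ram_gb(genome_bases: int, bytes_per_base: float = 10.0) -> float:
    """Rough RAM estimate in gigabytes (1 GB = 1e9 bytes).

    bytes_per_base is an assumed rule of thumb, not a measured value.
    """
    return genome_bases * bytes_per_base / 1e9


if __name__ == "__main__":
    human_genome_bases = 3_000_000_000  # approximate size, for illustration
    print(f"{estimate_star_ram_gb(human_genome_bases):.0f} GB")
```

Under this assumption a roughly 3-gigabase genome lands at about 30 GB, consistent with the figure quoted above.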

The following diagram illustrates the experimental workflow and key comparison metrics from the benchmarking study:

Differential Expression Concordance

The benchmark study revealed high correlation coefficients for raw count distributions between different tools, ranging from 0.977 to 0.997 for Col-0 samples [7]. However, when examining the concordance of differentially expressed genes (DGEs) identified between control and cold-acclimated conditions, STAR showed approximately 93-94% overlap with the results from kallisto, salmon, and HISAT2 [7]. The lowest overlap (92.1-93.4%) was observed between STAR and BWA [7].
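One plausible way to compute such an overlap is sketched below. The exact definition used in the benchmark is not given here, so this sketch assumes overlap means the share of the reference tool's differentially expressed genes that the other tool also reports; the gene identifiers are made up.

```python
def overlap_percent(reference_genes, other_genes) -> float:
    """Percentage of reference_genes that also appear in other_genes."""
    reference = set(reference_genes)
    if not reference:
        raise ValueError("reference gene set is empty")
    return 100.0 * len(reference & set(other_genes)) / len(reference)


if __name__ == "__main__":
    # Hypothetical gene lists for illustration only.
    star_degs = {"geneA", "geneB", "geneC", "geneD"}
    other_degs = {"geneA", "geneB", "geneC", "geneX"}
    print(f"{overlap_percent(star_degs, other_degs):.1f}% of STAR calls shared")
```

Note that this measure is asymmetric: swapping the two arguments changes the denominator, so a comparison should state which tool serves as the reference.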

Notably, the choice of differential expression analysis software introduced greater variability than the choice of mapper. When the commercial CLC software employed its own DGE module instead of DESeq2, strongly diverging results were obtained despite using the same underlying mapping data [7]. This highlights the critical importance of consistent statistical processing when comparing alignment tools.

Practical Implementation and Research Applications

The following table details key components required for implementing STAR in a research pipeline:

Table 3: Research Reagent Solutions for STAR RNA-Seq Analysis

| Component | Function | Example/Note |
|---|---|---|
| Reference Genome | Sequence for read alignment | Species-specific (e.g., GRCh38 for human) |
| Annotation File | Gene model definitions | GTF or GFF3 format |
| High-Performance Computing | Running STAR alignment | 12+ cores, 32+ GB RAM recommended |
| Quality Control Tools | Assess raw read quality | FastQC [11] |
| Preprocessing Tools | Adapter trimming, quality filtering | Trimmomatic [11] |
| Quantification Tools | Generate count tables | featureCounts, HTSeq [9] |
| Differential Expression | Statistical analysis | DESeq2, edgeR [7] [11] |
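As a hedged illustration of how the components in the table fit together, the sketch below assembles the two STAR invocations (index generation, then alignment) as argument lists. It only builds and prints the commands; nothing is executed. All file paths are placeholders, gzipped paired-end FASTQ input is assumed, and the thread count simply mirrors the table's 12-core suggestion.

```python
import shlex


def star_index_cmd(genome_dir, fasta, gtf, read_length, threads=12):
    """Arguments for building a STAR genome index with annotated junctions."""
    return [
        "STAR", "--runMode", "genomeGenerate",
        "--genomeDir", genome_dir,
        "--genomeFastaFiles", fasta,
        "--sjdbGTFfile", gtf,
        "--sjdbOverhang", str(read_length - 1),  # read length minus one
        "--runThreadN", str(threads),
    ]


def star_align_cmd(genome_dir, fastq_r1, fastq_r2, out_prefix, threads=12):
    """Arguments for aligning one paired-end sample and counting reads per gene."""
    return [
        "STAR", "--genomeDir", genome_dir,
        "--readFilesIn", fastq_r1, fastq_r2,
        "--readFilesCommand", "zcat",  # assumes gzipped FASTQ files
        "--outSAMtype", "BAM", "SortedByCoordinate",
        "--quantMode", "GeneCounts",
        "--outFileNamePrefix", out_prefix,
        "--runThreadN", str(threads),
    ]


if __name__ == "__main__":
    # Placeholder paths for illustration only.
    print(shlex.join(star_index_cmd("star_index", "genome.fa", "genes.gtf", 100)))
    print(shlex.join(star_align_cmd("star_index", "s1_R1.fastq.gz",
                                    "s1_R2.fastq.gz", "results/s1_")))
```

Keeping the commands as lists makes them easy to pass to `subprocess.run` later and avoids shell-quoting problems with unusual file names.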

Application Considerations for Research and Drug Development

STAR's alignment strategy offers distinct advantages for specific research scenarios. Its ability to perform spliced alignment and identify novel splice junctions makes it particularly valuable for studies focusing on transcript isoform regulation, fusion gene detection, and comprehensive annotation of transcriptional diversity [6] [10]. In drug development contexts, where understanding the complete mechanistic impact of compounds is essential, STAR's capability to reveal non-canonical splices and chimeric transcripts can provide insights that might be missed by quantification-focused approaches [10].

However, for large-scale studies prioritizing gene-level expression quantification across many samples, pseudoalignment tools like kallisto and salmon offer compelling advantages in computational efficiency, with demonstrated 2.6-fold faster processing and substantially reduced memory requirements [9]. These tools perform particularly well when working with well-annotated transcriptomes and when the research questions do not require discovery of novel transcriptional events [10] [9].

Recent advancements in long-read RNA sequencing technologies present new opportunities and challenges for alignment tools. While the SG-NEx project has demonstrated that long-read RNA sequencing more robustly identifies major isoforms, the analysis of such data requires specialized approaches beyond the scope of traditional short-read aligners like STAR [12].

The STAR algorithm's innovative two-step strategy of sequential maximum mappable seed search followed by clustering and stitching represents a significant advancement in RNA-Seq analysis methodology. Its high mapping sensitivity (99.5% in benchmark studies), precision in splice junction detection, and ability to identify novel transcriptional events make it an indispensable tool for research requiring comprehensive transcriptome characterization [7] [6].

The empirical data reveals that STAR occupies a specific niche in the tool ecosystem, excelling in discovery-focused research where complete transcriptional landscape mapping is prioritized, particularly in studies of alternative splicing, fusion genes, and non-canonical splicing events [6] [10]. In drug development pipelines, where both throughput and comprehensive mechanistic insights are valued, researchers might strategically employ different tools at various stages: quantification-focused tools for large-scale screening studies and STAR for in-depth mechanistic investigation of prioritized compounds or conditions.

The performance characteristics and trade-offs detailed in this analysis provide researchers and drug development professionals with evidence-based guidance for selecting the most appropriate RNA-Seq analysis strategy for their specific research context and computational resources.

In the analysis of bulk RNA-seq data, a foundational step is the accurate alignment of sequenced reads to a reference genome. This process is complicated in eukaryotes by the presence of spliced transcripts, where mature RNA molecules are composed of non-contiguous exons. Accurately detecting the boundaries between these exons, known as splice junctions, is paramount for correct transcript reconstruction and subsequent gene expression quantification [13] [14]. The Spliced Transcripts Alignment to a Reference (STAR) software was developed specifically to address the challenges of RNA-seq data mapping, offering a unique algorithm that has positioned it as a critical tool in the bioinformatics toolkit, especially for its capabilities in spliced and novel junction detection [13] [15].

This guide objectively evaluates STAR's performance against other widely used aligners, focusing on its core strengths. We frame this evaluation within broader research on differential expression analysis pipelines, where the initial alignment step can significantly influence all downstream results. For researchers and drug development professionals, the choice of aligner is not merely a technicality but a decisive factor in ensuring the reliability of biological interpretations, particularly when investigating complex splicing variants or novel transcripts with potential clinical significance [16].

The STAR Algorithm: A Deeper Dive into Core Mechanics

STAR's alignment strategy is distinct from many earlier RNA-seq aligners that were extensions of DNA short-read mappers. Instead, STAR employs a two-step process designed explicitly for handling non-contiguous sequences.

The Two-Phase STAR Workflow

The following diagram illustrates the core sequential steps of the STAR alignment algorithm:

Phase 1: Sequential Maximal Mappable Prefix (MMP) Search

The first phase of STAR's algorithm involves a sequential search for Maximal Mappable Prefixes (MMPs). Starting from the first base of a read, STAR identifies the longest substring that matches one or more locations in the reference genome exactly. When a splice junction or sequencing error is encountered, the MMP ends, and the search restarts from the next unmapped base. This sequential application of the MMP search to unmapped portions of the read is a key factor in STAR's speed and a natural way to pinpoint splice junction locations without prior knowledge [13] [17].

This MMP search is implemented using uncompressed suffix arrays (SAs), which allow for a binary string search with logarithmic scaling relative to the genome size. This makes the search extremely fast, even for large genomes. A significant advantage is that the SA search can find all distinct genomic matches for each MMP with minimal computational overhead, facilitating accurate handling of reads that map to multiple genomic loci [13].
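The suffix-array search can be sketched as follows. This is a toy under stated assumptions: the array is built by naive sorting (impractical for real genomes), and the search narrows an interval one character at a time with binary search, which illustrates the logarithmic lookup but is not STAR's actual code. Function names and the example sequence are hypothetical.

```python
def build_suffix_array(text: str):
    """Naive suffix array: suffix start positions in lexicographic order."""
    return sorted(range(len(text)), key=lambda i: text[i:])


def _char_at(text: str, pos: int) -> str:
    """Character at pos, or '' past the end ('' sorts before any base)."""
    return text[pos] if pos < len(text) else ""


def _bound(text, sa, lo, hi, depth, ch, upper):
    """Binary search in sa[lo:hi] on the character at offset `depth`."""
    while lo < hi:
        mid = (lo + hi) // 2
        c = _char_at(text, sa[mid] + depth)
        if c < ch or (upper and c == ch):
            lo = mid + 1
        else:
            hi = mid
    return lo


def mmp_search(text: str, sa, query: str):
    """Return (length, positions) of the longest exact prefix of query."""
    lo, hi, matched = 0, len(sa), 0
    for depth, ch in enumerate(query):
        new_lo = _bound(text, sa, lo, hi, depth, ch, upper=False)
        new_hi = _bound(text, sa, new_lo, hi, depth, ch, upper=True)
        if new_lo == new_hi:
            break  # no suffix extends the match by this character
        lo, hi, matched = new_lo, new_hi, depth + 1
    return matched, (sorted(sa[lo:hi]) if matched else [])


if __name__ == "__main__":
    genome = "ACGTTGCA" + "GTAAGTCCCCCCAG" + "TTGACCGA"
    sa = build_suffix_array(genome)
    print(mmp_search(genome, sa, "TTGCATTGAC"))
    print(mmp_search(genome, sa, "TT"))
```

Because the final interval `sa[lo:hi]` contains every suffix sharing the matched prefix, all genomic loci of a multi-mapping seed come out of the same search at no extra cost, as the second call shows.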

Phase 2: Clustering, Stitching, and Scoring

In the second phase, STAR constructs complete read alignments by stitching the seeds (MMPs) identified in the first phase. Seeds are clustered together based on their proximity to selected "anchor" seeds within a user-defined genomic window, which determines the maximum intron size. A dynamic programming algorithm then stitches each pair of seeds, allowing for mismatches and small indels [13].

Notably, for paired-end reads, seeds from both mates are clustered and stitched concurrently. This treats the paired-end read as a single entity, increasing alignment sensitivity, as only one correct anchor from one mate is sufficient to accurately align the entire fragment [13]. Furthermore, this phase is capable of identifying chimeric alignments, where parts of a read map to distal genomic loci, enabling the detection of fusion transcripts like the BCR-ABL fusion in leukemia [13] [16].

Benchmarking Performance: STAR vs. Other Aligners

Experimental Protocols in Benchmarking Studies

To objectively assess STAR's performance, it is essential to understand the methodologies used in comparative studies. A 2024 benchmarking study used simulated RNA-seq data derived from the model plant Arabidopsis thaliana to evaluate five popular aligners. The simulation introduced annotated single nucleotide polymorphisms (SNPs) from The Arabidopsis Information Resource (TAIR) to create a controlled "ground truth." The aligners were assessed on both base-level accuracy (correct alignment of individual bases) and junction base-level accuracy (correct alignment of bases at exon-intron boundaries) under both default and varied parameter settings [17].
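The two metrics can be illustrated with a small sketch. The representation is an assumption made for this example: each read base is keyed by (read ID, offset) and mapped to a genome position, and "junction bases" are taken to be the bases immediately flanking the splice junction. The benchmark's exact definitions may differ.

```python
def base_level_accuracy(truth, aligned) -> float:
    """Fraction of read bases placed at their true genome position.

    truth, aligned: dicts mapping (read_id, offset) -> genome position.
    """
    correct = sum(1 for key, pos in truth.items() if aligned.get(key) == pos)
    return correct / len(truth)


def junction_base_accuracy(truth, aligned, junction_keys) -> float:
    """Same metric, restricted to bases adjacent to splice junctions."""
    subset = {key: truth[key] for key in junction_keys}
    return base_level_accuracy(subset, aligned)


if __name__ == "__main__":
    # Simulated 10-base read: offsets 0-4 in exon 1, offsets 5-9 in exon 2.
    truth = {("r1", i): 3 + i for i in range(5)}
    truth.update({("r1", 5 + i): 22 + i for i in range(5)})
    # Hypothetical aligner output: offset 5 wrongly extended into the intron.
    aligned = dict(truth)
    aligned[("r1", 5)] = 8
    junction_keys = [("r1", 4), ("r1", 5)]
    print(base_level_accuracy(truth, aligned))
    print(junction_base_accuracy(truth, aligned, junction_keys))
```

Here a single misplaced base costs only 10% at the base level but 50% at the junction level, which is why the two metrics can rank aligners differently.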

Another large real-world study, part of the Quartet project, generated over 120 billion reads from 1,080 libraries across 45 independent laboratories. This design used reference materials with known, subtle differential expression to evaluate the real-world performance of 26 experimental processes and 140 bioinformatics pipelines, providing a comprehensive view of how different alignment tools perform in diverse, non-standardized environments [3].

The following workflow diagram generalizes the steps involved in such an alignment benchmarking study:

Quantitative Performance Comparison

The benchmarking data reveals clear strengths for each tool. The table below summarizes key quantitative findings from the 2024 plant study, which are highly relevant for researchers making an evidence-based choice of aligners.

Table 1: Performance Summary of RNA-Seq Aligners from a 2024 Benchmarking Study [17]

| Aligner | Reported Overall Base-Level Accuracy | Reported Junction Base-Level Accuracy | Notable Strengths |
|---|---|---|---|
| STAR | >90% (Superior to others tested) | ~80% (Varies with parameters) | High base-level sensitivity, fast execution |
| SubRead | High (exact % not specified) | >80% (Most promising) | Excellent junction detection precision |
| HISAT2 | High (exact % not specified) | High (exact % not specified) | Efficient memory use, fast for smaller genomes |

The data shows that STAR achieved superior overall performance at the read base-level, with accuracy exceeding 90% under different test conditions. This makes it a robust and reliable choice for general-purpose alignment where overall mapping correctness is the priority. However, at the more specialized junction base-level assessment, SubRead emerged as the most promising aligner, achieving over 80% accuracy under most conditions [17]. This indicates that for studies where the primary goal is the discovery and precise characterization of alternative splicing events, SubRead may have an edge.

Performance in Large-Scale Real-World Studies

The multi-center Quartet study highlighted that bioinformatics pipelines, including the choice of alignment tool, are a primary source of variation in gene expression data. This underscores the profound influence of data processing on final results. The study recommended using the Quartet reference materials, which feature subtle differential expression, for quality control, as they are more sensitive in detecting performance issues than samples with large biological differences [3]. STAR's reliability and speed have made it a popular choice in such large-scale consortium projects, such as the ENCODE Transcriptome project, for which it was originally developed to align over 80 billion reads [13].

STAR in the Differential Expression Analysis Pipeline

In a complete differential expression (DE) analysis pipeline, STAR typically occupies the first and most computationally intensive step. A recommended best practice is a hybrid approach: using STAR to perform spliced alignment to the genome, which generates rich data for quality control (QC) and visualization, and then using the alignment output in alignment-based quantification tools like Salmon to estimate transcript abundances [14]. This workflow leverages the strengths of both tools—STAR's accurate spliced alignment and Salmon's sophisticated handling of assignment uncertainty.
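A minimal sketch of the hand-off in this hybrid approach is shown below, assuming STAR is asked to also project alignments onto the transcriptome and Salmon is run in its alignment-based mode on that output. The commands are only assembled and printed, not executed; the paths are placeholders, and the BAM file name follows STAR's usual output naming for a given prefix, which should be checked against the installed version.

```python
import shlex


def star_transcriptome_cmd(genome_dir, fastq_r1, fastq_r2, out_prefix, threads=8):
    """STAR alignment that also writes transcriptome-coordinate alignments."""
    return [
        "STAR", "--genomeDir", genome_dir,
        "--readFilesIn", fastq_r1, fastq_r2,
        "--outSAMtype", "BAM", "SortedByCoordinate",  # genome BAM for QC
        "--quantMode", "TranscriptomeSAM",
        "--outFileNamePrefix", out_prefix,
        "--runThreadN", str(threads),
    ]


def salmon_alignment_cmd(transcripts_fasta, transcriptome_bam, out_dir, threads=8):
    """Salmon quantification from existing transcriptome alignments."""
    return [
        "salmon", "quant",
        "-t", transcripts_fasta,
        "-l", "A",  # let Salmon infer the library type
        "-a", transcriptome_bam,
        "-o", out_dir,
        "-p", str(threads),
    ]


if __name__ == "__main__":
    prefix = "results/s1_"  # placeholder sample prefix
    bam = prefix + "Aligned.toTranscriptome.out.bam"  # assumed STAR output name
    print(shlex.join(star_transcriptome_cmd("star_index", "s1_R1.fastq",
                                            "s1_R2.fastq", prefix)))
    print(shlex.join(salmon_alignment_cmd("transcripts.fa", bam, "results/s1_salmon")))
```

The genome-coordinate BAM remains available for quality control and visualization, while the transcriptome-coordinate BAM feeds quantification, so a single alignment run serves both purposes.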

Table 2: Key Research Reagent Solutions for a STAR-based RNA-seq Pipeline

| Reagent / Resource | Function / Description | Source / Example |
|---|---|---|
| Reference Genome | A FASTA file of the organism's genomic sequence. Serves as the mapping reference. | ENSEMBL, UCSC Genome Browser |
| Annotation File (GTF/GFF) | Contains coordinates of known genes, transcripts, and exons. Improves junction detection. | ENSEMBL, GENCODE |
| ERCC Spike-In Controls | Synthetic RNA transcripts added to samples to assess technical accuracy and performance. | External RNA Controls Consortium |
| STAR Aligner | The splice-aware aligner software that performs the core read mapping step. | https://github.com/alexdobin/STAR |
| Salmon | A tool for transcript quantification that can use STAR's alignments to model uncertainty. | https://github.com/COMBINE-lab/salmon |
| nf-core/rnaseq | A portable, automated pipeline that integrates STAR and Salmon for end-to-end analysis. | https://nf-co.re/rnaseq |

STAR occupies a critical and enduring position in the bioinformatics toolkit. Its unique MMP-based algorithm provides an exceptional combination of speed and accuracy for base-level alignment, making it ideally suited for large-scale projects like ENCODE [13]. Its ability to perform unbiased de novo detection of canonical and non-canonical splice junctions, as well as chimeric transcripts, provides researchers with a powerful tool for transcriptome discovery [13] [16].

However, benchmarking studies show that the field is diverse, and no single tool is superior in all metrics. While STAR excels in overall base-level accuracy, other aligners such as SubRead can demonstrate higher accuracy at splice junctions [17]. Therefore, the choice of aligner should be guided by the specific research question. For large-scale DE studies where overall gene-level counts are the primary focus, STAR's speed and robustness are major advantages. For investigations centered on alternative splicing, a pipeline that combines STAR's general alignment with a tool offering superior junction accuracy might be optimal.

In conclusion, STAR's design for spliced alignment and its proven performance in real-world and benchmarking studies solidify its role as a cornerstone of modern RNA-seq analysis. Its integration into standardized, high-quality workflows like nf-core/rnaseq ensures that it will continue to be a key asset for researchers and clinicians seeking to extract meaningful biological insights from transcriptome data.

RNA sequencing (RNA-seq) has revolutionized transcriptomics by enabling comprehensive quantification of gene expression across diverse biological conditions, providing unprecedented detail about the RNA landscape [18]. This technology generates vast amounts of raw data that must be processed through a complex computational pipeline to yield biologically meaningful insights. The transformation begins with raw sequencing files (FASTQ), proceeds through alignment (BAM files), and culminates in quantitative gene expression data (count tables) that fuel downstream differential expression analysis.

The critical challenge researchers face lies in selecting appropriate tools from the array of available software, as different analytical tools demonstrate significant variations in performance when applied to data from different species [18]. This guide objectively compares the performance of key software tools throughout this pipeline, with particular emphasis on the STAR aligner within the broader context of differential expression analysis pipeline evaluation research. We present experimental data from benchmark studies to inform researchers, scientists, and drug development professionals in constructing optimal analysis workflows tailored to their specific research needs.

Performance Benchmarks: Alignment and Quantification Tools

Alignment Tool Performance

Alignment tools map sequence reads to a reference genome or transcriptome, a crucial step that significantly impacts all downstream analyses. Benchmarking studies using simulated data from Arabidopsis thaliana have revealed important performance differences among popular aligners [17].

Table 1: Base-Level and Junction-Level Alignment Accuracy Comparison

| Aligner | Base-Level Accuracy | Junction-Level Accuracy | Key Algorithm Features |

|---|---|---|---|

| STAR | >90% [17] | Not specified | Seed-search with maximal mappable prefixes (MMP), suffix arrays [17] |

| HISAT2 | Not specified | Not specified | Hierarchical Graph FM indexing (HGFM), local genomic indices [17] |

| SubRead | Not specified | >80% [17] | General-purpose aligner emphasizing structural variation and indel identification [17] |

At the read base-level assessment, the overall performance of STAR was superior to other aligners, with accuracy exceeding 90% under different test conditions [17]. However, at the junction base-level assessment—critical for detecting alternative splicing events—SubRead emerged as the most promising aligner, achieving over 80% accuracy under most test conditions [17]. These findings highlight the tool-specific strengths that researchers must consider when selecting alignment software.

Quantification Method Comparison

Quantification determines read abundance per genomic feature, with different methods offering distinct advantages. Popular tools include Kallisto and Salmon, which use pseudoalignment for rapid quantification, while traditional aligner-based methods like STAR generate read counts directly through alignment [10].

Table 2: Feature Comparison of STAR and Kallisto

| Feature | STAR | Kallisto |

|---|---|---|

| Alignment Approach | Traditional alignment-based [10] | Pseudoalignment [10] |

| Primary Output | Table of read counts for each gene [10] | Transcripts per million (TPM) and estimated counts [10] |

| Strengths | Identification of novel splice junctions, fusion genes [10] | Speed, memory efficiency [10] |

| Sample Size Suitability | Smaller sample sizes where computational resources are not a concern [10] | Large-scale studies with many samples [10] |

| Transcriptome Requirements | More suitable for incomplete transcriptomes or those with novel splice junctions [10] | Well-annotated, complete transcriptomes [10] |

Experimental design and data quality significantly impact the choice between these methods. Kallisto performs well with short read lengths and is less sensitive to sequencing depth, while STAR may be more suitable for longer read lengths and libraries with high complexity [10].

Experimental Protocols for Benchmarking Studies

Alignment Benchmarking Methodology

Comprehensive benchmarking of alignment tools requires carefully designed experiments using well-characterized datasets. One rigorous approach utilizes simulated data from model organisms with introduced genetic variations to measure alignment accuracy precisely [17].

Genome Collection and Indexing: The process begins with obtaining the reference genome and building the specific index required by each aligner. For plant studies, the completely sequenced and well-characterized genome of Arabidopsis thaliana provides ample resources for benchmarking in a plant context [17].

Read Simulation: Specialized tools such as Polyester can simulate RNA-seq reads with biological replicates and a specified differential expression signal [17]. This simulation approach also allows annotated single nucleotide polymorphisms (SNPs) from databases such as The Arabidopsis Information Resource (TAIR) to be introduced, in order to test how robust alignment is to genetic variation [17].

Accuracy Assessment: Performance evaluation should include both base-level and junction-level accuracy measurements. Base-level assessment scores overall alignment precision, while junction-level evaluation specifically tests the algorithm's capability to correctly identify splice junctions, which is particularly important for eukaryotic transcriptomes [17].
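As a rough illustration of junction-level scoring, the sketch below compares the splice junctions reported by an aligner with the junctions known from a simulation. The exact metric used in the benchmark [17] is not reproduced here; scoring by precision and recall over (chromosome, start, end) tuples, the function name `junction_metrics`, and the toy coordinates are all assumptions made for this example.

```python
def junction_metrics(true_junctions, called_junctions):
    """Return (precision, recall) for a set of called splice junctions.

    Each junction is a hypothetical (chromosome, intron_start, intron_end) tuple.
    """
    true_set = set(true_junctions)
    called_set = set(called_junctions)
    true_positives = len(true_set & called_set)
    # Guard against empty inputs so the ratios are always defined.
    precision = true_positives / len(called_set) if called_set else 0.0
    recall = true_positives / len(true_set) if true_set else 0.0
    return precision, recall


# Toy data: three simulated junctions, four junctions reported by an aligner.
truth = [("chr1", 100, 200), ("chr1", 300, 400), ("chr2", 50, 90)]
called = [("chr1", 100, 200), ("chr2", 50, 90), ("chr2", 60, 90), ("chr3", 1, 9)]
precision, recall = junction_metrics(truth, called)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Reporting precision and recall separately makes it visible whether an aligner misses true junctions or reports spurious ones, which a single accuracy figure can hide.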

Differential Expression Analysis Comparison

Differential expression analysis represents the ultimate goal of most RNA-seq studies, and several established methods exist with different statistical approaches.

limma Protocol: The limma method fits a linear model and requires normalized RNA-seq count data. It adopts the quantile normalization approach established in microarray analysis, which attempts to match gene count distributions across the samples in a dataset [19] [20].

DESeq2 Protocol: DESeq2 uses a negative binomial distribution and does not require pre-normalized count data. It employs a "geometric" normalization strategy based on the assumption that most genes are not differentially expressed: the scaling factor for each lane is the median, across genes, of the ratio of a gene's read count in that lane to its geometric mean across all lanes [19] [20].

edgeR Protocol: Similar to DESeq2, edgeR uses a negative binomial distribution but implements a Trimmed Mean of M-values (TMM) normalization method. This approach computes the TMM factor as the weighted mean of log ratios between test and reference samples, after exclusion of the most expressed genes and the genes with the largest log ratios [19] [20].
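The "geometric" scaling factor described for DESeq2 can be sketched in a few lines. The following is a minimal, standard-library illustration of the median-of-ratios calculation as worded above, not the DESeq2 implementation itself. The function name, the toy count matrix, and the choice to skip genes with a zero count (whose geometric mean would be zero) are assumptions made for this example.

```python
import math
from statistics import median


def median_of_ratios_size_factors(counts):
    """Compute one scaling factor per sample (lane).

    counts: list of genes, each a list of raw read counts, one per sample.
    Raises statistics.StatisticsError if every gene contains a zero count.
    """
    n_samples = len(counts[0])
    log_ratios = [[] for _ in range(n_samples)]
    for gene in counts:
        if any(c <= 0 for c in gene):
            continue  # assumption: genes with a zero count are left out
        # Log of the geometric mean of this gene across all samples.
        log_geo_mean = sum(math.log(c) for c in gene) / n_samples
        for j, c in enumerate(gene):
            log_ratios[j].append(math.log(c) - log_geo_mean)
    # Median ratio per sample, transformed back from log space.
    return [math.exp(median(r)) for r in log_ratios]


# Toy matrix: the second sample was sequenced at twice the depth of the first.
toy_counts = [[10, 20], [100, 200], [5, 10], [0, 3]]
factors = median_of_ratios_size_factors(toy_counts)
print([round(f, 3) for f in factors])
```

In this toy case the second sample's factor is twice the first, so dividing each count by its sample's factor places both samples on a common scale.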

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful RNA-seq analysis requires both computational tools and appropriate experimental reagents. The following table details key components essential for generating reliable data throughout the RNA-seq workflow.

Table 3: Essential Research Reagents and Computational Tools for RNA-Seq Analysis

| Item | Function | Implementation Example |

|---|---|---|

| Quality Control Tools | Assess read quality, identify sequencing artifacts | FastQC for quality control reporting [18] |

| Trimming/Filtering Tools | Remove adapter sequences, low-quality bases | fastp (rapid operation) or Trim_Galore (integrated QC) [18] |

| Alignment Algorithms | Map reads to reference genome | STAR, HISAT2, or SubRead depending on research goals [17] |

| Quantification Methods | Estimate transcript/gene abundance | featureCounts for aligner-based approaches [10] |

| Reference Annotations | Define genomic features for quantification | Organism-specific GTF/GFF files (e.g., TAIR for Arabidopsis) [17] |

| Normalization Methods | Account for technical variation | TMM in edgeR, geometric in DESeq2, quantile in limma [19] |

The journey from FASTQ files to aligned BAMs and count tables involves multiple processing steps, each with several tool options exhibiting distinct performance characteristics. Benchmarking studies reveal that STAR achieves superior base-level alignment accuracy (>90%), while SubRead excels at junction-level precision (>80%) [17]. For quantification and differential expression, the choice between alignment-based and pseudoalignment methods depends on experimental design, with STAR being preferred for discovery of novel splice junctions and Kallisto offering advantages in speed for large-scale studies [10].

The optimal RNA-seq pipeline requires careful tool selection at each stage based on the specific research context, considering factors such as species-specific requirements, experimental design, and data quality [18]. No single tool dominates across all scenarios, but understanding the documented performance characteristics and methodological approaches of each option enables researchers to construct robust, efficient analysis workflows that yield biologically accurate insights from their RNA-seq data.

Within the rigorous framework of STAR (Spliced Transcripts Alignment to a Reference) pipeline evaluation research, a critical yet often overlooked aspect is the computational profile of differential expression (DE) analysis tools. For researchers and drug development professionals, selecting an algorithm involves a strategic trade-off between statistical accuracy, computational speed, and resource demands. This guide provides an objective comparison of three leading DE tools: DESeq2, limma (voom), and edgeR. It synthesizes data from benchmark studies to inform pipeline design and resource allocation for large-scale transcriptomic projects.

Comparative Analysis of Computational Performance

Characteristics and Performance Benchmarks

Extensive benchmarking reveals distinct computational profiles for each tool, shaped by their underlying statistical approaches. The following table summarizes their core characteristics and performance.

Table 1: Computational Characteristics of Differential Expression Tools

| Aspect | limma (voom) | DESeq2 | edgeR |

|---|---|---|---|

| Core Statistical Approach | Linear modeling with empirical Bayes moderation [21] | Negative binomial modeling with empirical Bayes shrinkage [21] | Negative binomial modeling with flexible dispersion estimation [21] |

| Computational Efficiency | Very efficient, scales well with large datasets [21] | Can be computationally intensive for large datasets [21] | Highly efficient, fast processing [21] |

| Ideal Sample Size | ≥3 replicates per condition [21] | ≥3 replicates [21] | ≥2 replicates, efficient with small samples [21] |

| Key Strength | Handles complex designs elegantly [21] | Strong FDR control, automatic outlier detection [21] | Flexible modeling, good with low-count genes [21] |

| Key Limitation | May not handle extreme overdispersion well [21] | Conservative fold change estimates [21] | Requires careful parameter tuning [21] |

A large-scale real-world benchmarking study across 45 laboratories, which analyzed over 120 billion reads, confirmed that the choice of differential analysis tool is a major source of variation in RNA-seq results [3]. Furthermore, a robustness analysis found that patterns of relative performance between tools are reliable when sample sizes are sufficiently large [22].

Quantitative Benchmarking Data

The theoretical characteristics translate into measurable differences in performance. The table below summarizes key benchmarking results from controlled studies.

Table 2: Benchmarking Performance Metrics

| Performance Metric | limma (voom) | DESeq2 | edgeR |

|---|---|---|---|

| Relative Robustness (Rank) | 3rd (after NOISeq & edgeR) [22] | 5th (Least Robust) [22] | 2nd (after NOISeq) [22] |

| Power in Small Samples | Excels with small sample sizes [21] | Requires more replicates for power [21] | Excellent power with very small samples [21] |

| Performance with Low-Count Genes | Standard performance [21] | Standard performance [21] | Particularly shines with low-count genes [21] |

Experimental Protocols for Benchmarking

To ensure the reproducibility and validity of the comparative data cited in this guide, the following section outlines the core experimental methodologies used in the key benchmarking studies.

Large-Scale Multi-Center Benchmarking (Quartet Project)

The Quartet project established a rigorous framework for assessing RNA-seq performance in detecting subtle differential expression, which is critical for clinical applications [3].

- Reference Materials: The study used four well-characterized Quartet RNA samples (from a family quartet) with small biological differences, MAQC samples (A and B) with large differences, and defined spike-in controls (ERCCs). This provided multiple "ground truths" [3].

- Data Generation: A total of 45 independent laboratories sequenced 1080 RNA-seq libraries, generating over 120 billion reads. Each lab used its own in-house experimental protocol and bioinformatics pipeline, capturing real-world variation [3].

- Performance Assessment: A multi-faceted metric framework was used, including:

  - Signal-to-Noise Ratio (SNR): Based on Principal Component Analysis (PCA) to measure the ability to distinguish biological signals from technical noise [3].

  - Accuracy of Expression: Measured by correlation with TaqMan datasets and known spike-in/mixing ratios [3].

  - Accuracy of DEGs: Assessed against established reference datasets [3].

- Pipeline Comparison: To isolate the effect of the analysis tool, 140 distinct bioinformatics pipelines were applied to high-quality data, systematically varying gene annotations, aligners, quantification tools, normalization methods, and differential expression tools (including DESeq2, limma, and edgeR) [3].

Robustness Analysis via Fixed Count Matrices

A separate study provided a controlled assessment of model robustness, which is essential for diagnostic applications [22].

- Data Sets: The analysis used two breast cancer RNA-seq datasets. To test robustness, the study was conducted with both full and reduced sample sizes [22].

- Experimental Manipulation: The key feature of this benchmark was the use of "fixed count matrices." This approach involved introducing controlled sequencing alterations to the same underlying data, allowing for a direct measurement of how consistently each tool performs when faced with technical noise [22].

- Performance Metrics: The study employed unbiased metrics to evaluate robustness, including:

  - Relative False Discovery Rate (FDR): To estimate test sensitivity.

  - Concordance: Measuring agreement between model outputs.

  - Slope Analysis: Generating a 'population' of slopes of relative FDRs across different library sizes to systematically compare robustness [22].

Visualizing the Tool Selection Logic

The following diagram illustrates the decision-making workflow for selecting an appropriate differential expression tool based on key experimental parameters, synthesizing the recommendations from benchmark studies.
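One way to make this kind of selection logic concrete is a small helper function. The sketch below is only one possible reading of the characteristics summarized in Table 1 (replicate requirements, design complexity, low-count genes); it does not reproduce the diagram, and the function name, argument names, and order of the checks are assumptions made for illustration.

```python
def suggest_de_tool(replicates_per_condition, complex_design=False,
                    many_low_count_genes=False):
    """Suggest a DE tool from simple experiment descriptors (illustrative only)."""
    if replicates_per_condition < 2:
        raise ValueError("Table 1 lists at least 2 replicates for every tool")
    # edgeR is listed as efficient with small samples and low-count genes.
    if replicates_per_condition < 3 or many_low_count_genes:
        return "edgeR"
    # limma (voom) is listed as handling complex designs elegantly.
    if complex_design:
        return "limma (voom)"
    # Otherwise fall back to DESeq2, listed for its strong FDR control.
    return "DESeq2"


for args in [(2,), (4, True), (6,)]:
    print(args, "->", suggest_de_tool(*args))
```

A real decision would also weigh dataset size and runtime, since the benchmarks describe DESeq2 as potentially computationally intensive for large datasets.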

The following table details key reagents, reference materials, and software solutions essential for conducting robust differential expression analysis, as utilized in the cited benchmark studies.

Table 3: Key Research Reagents and Resources for RNA-seq Benchmarking

| Item Name | Function / Purpose | Relevance to DE Analysis |

|---|---|---|

| Quartet Reference Materials | Immortalized B-lymphoblastoid cell lines from a Chinese family quartet [3] | Provides samples with subtle, known biological differences for benchmarking accuracy in detecting clinically relevant DE. |

| MAQC Reference Materials | RNA from cancer cell lines (MAQC A) and human brain (MAQC B) [3] | Provides samples with large biological differences for initial pipeline validation and performance assessment. |

| ERCC Spike-In Controls | 92 synthetic RNAs with known concentrations [3] | Acts as an absolute external standard for evaluating the accuracy of gene expression quantification. |

| DESeq2 R Package | A comprehensive package for DE analysis from RNA-seq count data [21] [23] | Implements a negative binomial model with shrinkage estimation; widely used for its robust statistical framework. |

| edgeR R Package | A flexible package for DE analysis of digital gene expression data [21] | Offers multiple testing strategies (exact tests, quasi-likelihood) and efficient dispersion estimation. |

| limma (with voom) R Package | A general-purpose package for analyzing gene expression data [21] | Uses linear modeling with precision weights, ideal for complex designs and computationally efficient. |

| R/Bioconductor Environment | An open-source software platform for bioinformatics [21] [23] [24] | The standard computational environment for running and integrating the aforementioned DE tools. |

The choice of a differential expression tool is a consequential decision that balances statistical robustness, computational efficiency, and suitability for the experimental design at hand. Benchmark studies consistently show that while limma (voom) offers superior speed and handles complex designs elegantly, edgeR provides great flexibility and efficiency, particularly for small samples and low-count genes. DESeq2 is characterized by strong false discovery rate control, though it can be more computationally intensive and conservative. There is no single "best" tool for all scenarios; the optimal choice is contextual, depending on sample size, experimental complexity, and the biological questions being asked. By leveraging reference materials and standardized benchmarking protocols, researchers can make informed decisions to ensure the accuracy and reliability of their RNA-seq pipelines, a critical step in translating transcriptomic findings into scientific and clinical advancements.

Implementing a STAR Workflow: A Step-by-Step Protocol from Raw Reads to Expression Matrix

In the context of STAR-based differential expression analysis, the pre-alignment quality control (QC) and preprocessing of FASTQ files are critical first steps that significantly impact the reliability of all downstream results. This stage involves trimming adapter sequences, removing low-quality bases, and filtering out poor-quality reads, which collectively improve mapping rates and the accuracy of gene expression quantification. Within modern RNA-seq pipelines, fastp and Trim Galore! have emerged as two of the most widely adopted tools for this task [25] [26] [27]. This guide provides an objective comparison of their performance, features, and integration within a holistic differential expression workflow, supporting researchers in making an evidence-based selection for their projects.

fastp

fastp is an ultra-fast, all-in-one FASTQ preprocessor designed for comprehensive quality control and data filtering. Its development prioritized high speed, a comprehensive feature set, and ease of use [28] [29]. A key advantage is its ability to perform simultaneous quality control analysis both before and after processing, generating a single consolidated HTML report [28]. Notably, fastp can automatically detect and trim adapter sequences without user input, simplifying the preprocessing step [28] [29]. It is also cloud-optimized, requiring limited memory, which reduces computational costs [28].

Trim Galore!

Trim Galore! is a popular wrapper tool that automates adapter trimming and quality control by leveraging Cutadapt for trimming and FastQC for quality reporting [30] [31]. It is particularly valued for its simplicity and robust performance in removing adapter contamination. A notable feature is its automatic detection of common adapter sequences, such as the Illumina standard adapters, which streamlines the preprocessing of data from standard library preparations [31].

Table 1: Core Feature Comparison of fastp and Trim Galore!

| Feature | fastp | Trim Galore! |

|---|---|---|

| Core Technology | Standalone C++ application | Wrapper around Cutadapt & FastQC |

| Adapter Trimming | Yes, with auto-detection | Yes, with auto-detection |

| Quality Control | Integrated (before & after) | Via FastQC (separate runs) |

| Report Format | Integrated HTML & JSON | Separate FastQC HTML reports |

| UMI Processing | Supported [29] | Not directly supported |

| PolyX Trimming | Yes (e.g., polyG) [29] | Limited |

| Batch Processing | Supported with scripts [28] | Requires external scripting |

Performance and Experimental Data Comparison

Processing Speed and Efficiency

fastp demonstrates a significant advantage in processing speed due to its highly optimized algorithms and integrated design. The tool achieves this by reading data only once to complete trimming, filtering, and quality analysis simultaneously [28]. Further optimizations, such as a novel one-gap-matching algorithm for adapter detection, reduce computational complexity from O(n²) to O(n), making it substantially faster than many alternatives [28]. In contrast, Trim Galore!'s multi-tool architecture, while effective, inherently involves more steps and can be slower, especially since it typically requires separate FastQC runs before and after trimming for comprehensive QC [30].

Impact on Data Quality and Downstream Analysis

Independent evaluations using RNA-seq data from diverse species, including plants, animals, and fungi, have compared the effectiveness of these tools. In one comprehensive study, both tools were assessed based on their effect on key quality metrics like Q20/Q30 base ratios and subsequent alignment rates.

- fastp significantly enhanced the quality of processed data, leading to robust improvements in Q20 and Q30 scores [30].

- Trim Galore! also improved base quality but was sometimes observed to cause an unbalanced base distribution in the tail regions of reads despite repeated optimization attempts [30].

Table 2: Experimental Performance Metrics from RNA-seq Data Analysis

| Metric | fastp | Trim Galore! | Notes |

|---|---|---|---|

| Q20/Q30 Improvement | Significant enhancement [30] | Quality enhanced, but caused unbalanced tail base distribution [30] | Higher Q20/Q30 indicates fewer sequencing errors |

| Adapter Removal | Effective with auto-detection [28] [29] | Effective with auto-detection [31] | Both are reliable for standard adapters |

| Computational Speed | Very High [28] | Moderate [30] | fastp's integrated architecture is more efficient |

| Alignment Rate Impact | Positive effect on subsequent alignment [30] | Positive effect on subsequent alignment [30] | Cleaner data generally increases STAR mapping rates |

Integration into a STAR Differential Expression Pipeline

The pre-alignment QC step is the first and foundational stage in a complete RNA-seq analysis workflow. The following diagram illustrates a streamlined pipeline, from raw FASTQ files to differential expression analysis with STAR and DESeq2, highlighting where fastp or Trim Galore! are utilized.

Workflow Description

- Pre-alignment QC & Trimming: The raw FASTQ files are processed by either fastp or Trim Galore! to remove adapters, trim low-quality bases, and filter out poor-quality reads. The output is a set of cleaned FASTQ files [25] [32] [27].

- Spliced Alignment with STAR: The trimmed reads are aligned to a reference genome using STAR, a splice-aware aligner. This step often includes the `--quantMode TranscriptomeSAM` option to generate a BAM file aligned to the transcriptome, which is useful for quantification tools like Salmon [25] [32].

- Alignment QC and Quantification: The genome-aligned BAM file is quality-checked with tools like Qualimap [32]. Gene-level counts are then generated using quantifiers like featureCounts or HTSeq [32] [27].

- Differential Expression Analysis: The count matrix is analyzed with DESeq2 (or similar tools) to identify genes that are statistically significantly differentially expressed between conditions [32] [26].

- Consolidated Reporting: Finally, MultiQC aggregates results from all stages—including FastQC/fastp reports, STAR alignment statistics, and Qualimap results—into a single, interactive HTML report, providing a holistic view of the entire experiment's quality [25] [32].
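The alignment step of this workflow could be scripted along the following lines. This is a hedged sketch rather than the configuration used by any pipeline cited here: the file paths, the thread count, and the helper name `star_align_command` are hypothetical, and `--readFilesCommand zcat` assumes gzip-compressed FASTQ input. Only the `--quantMode TranscriptomeSAM` option comes from the workflow description; the remaining options are standard STAR parameters chosen for illustration.

```python
def star_align_command(genome_dir, read1, read2, out_prefix, threads=8):
    """Assemble a STAR alignment command as an argument list (not executed)."""
    return [
        "STAR",
        "--runThreadN", str(threads),
        "--genomeDir", genome_dir,
        "--readFilesIn", read1, read2,
        "--readFilesCommand", "zcat",                  # assumes .fastq.gz input
        "--outSAMtype", "BAM", "SortedByCoordinate",   # genome-aligned BAM
        "--quantMode", "TranscriptomeSAM",             # transcriptome BAM for Salmon
        "--outFileNamePrefix", out_prefix,
    ]


# Placeholder paths; to run for real, pass the list to subprocess.run(cmd, check=True).
cmd = star_align_command("star_index", "sample_R1.trimmed.fastq.gz",
                         "sample_R2.trimmed.fastq.gz", "results/sample_")
print(" ".join(cmd))
```

Building the command as a list rather than a single string avoids shell-quoting problems when sample names contain unusual characters.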

Essential Research Reagent Solutions

A successful RNA-seq experiment relies on a combination of software tools and reference files. The table below details the essential components for the pre-alignment and alignment phases of a differential expression pipeline.

Table 3: Key Research Reagents and Resources for RNA-seq Analysis

| Resource | Function/Description | Example/Standard |

|---|---|---|

| Reference Genome | Genomic sequence (FASTA) to which reads are aligned by the splice-aware aligner. | GRCh38 (human), GRCm39 (mouse) [32] |

| Gene Annotation (GTF/GFF) | Provides genomic coordinates of genes, transcripts, and exons for read quantification. | GENCODE annotations [32] |

| QC & Trimming Tool | Performs adapter trimming, quality filtering, and generates QC reports. | fastp or Trim Galore! [25] [32] |

| Splice-Aware Aligner | Aligns RNA-seq reads to the genome, accounting for introns. | STAR [25] [32] |

| Quantification Tool | Assigns reads to genomic features to create a count matrix for DE analysis. | featureCounts, HTSeq [32] [27] |

| Differential Expression Tool | Identifies statistically significant changes in gene expression between conditions. | DESeq2 [26] |

Detailed Experimental Protocols

Quality Control and Trimming with fastp

The following command provides a standard protocol for processing paired-end RNA-seq data with fastp, generating both cleaned FASTQ files and a comprehensive QC report.

Protocol Explanation:

- `-i` and `-I`: Specify the input read 1 and read 2 FASTQ files.

- `-o` and `-O`: Specify the output filenames for the trimmed reads.

- `--detect_adapter_for_pe`: Enables automatic detection of adapter sequences for paired-end reads, which is a major convenience feature [32].

- `-l 25`: Sets the minimum length for a read to be kept after trimming; reads shorter than 25 bases are discarded [32].

- `-j` and `-h`: Generate both JSON and HTML format reports, with the HTML report providing an easy-to-visualize summary of the QC results [29] [32].
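The options explained here can be combined into a single fastp invocation. The sketch below only assembles the argument list from the flags named in this section; the file names and the helper `fastp_command` are placeholders, and the command is printed rather than executed.

```python
def fastp_command(r1_in, r2_in, r1_out, r2_out, json_report, html_report,
                  min_length=25):
    """Assemble a paired-end fastp command as an argument list (not executed)."""
    return [
        "fastp",
        "-i", r1_in, "-I", r2_in,        # input read 1 / read 2
        "-o", r1_out, "-O", r2_out,      # trimmed output read 1 / read 2
        "--detect_adapter_for_pe",       # adapter auto-detection for paired-end data
        "-l", str(min_length),           # discard reads shorter than this
        "-j", json_report,               # JSON report
        "-h", html_report,               # HTML report
    ]


# Placeholder file names; pass the list to subprocess.run(cmd, check=True) to execute.
cmd = fastp_command("sample_R1.fastq.gz", "sample_R2.fastq.gz",
                    "sample_R1.trimmed.fastq.gz", "sample_R2.trimmed.fastq.gz",
                    "sample.fastp.json", "sample.fastp.html")
print(" ".join(cmd))
```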

Quality Control and Trimming with Trim Galore!

For Trim Galore!, the protocol typically involves a more segmented approach, as quality control reports are generated in separate steps.

Protocol Explanation:

- `--paired`: Indicates the input is paired-end data.

- `--nextera`: Specifies the type of adapter to be trimmed (can be changed to `--illumina` or omitted for auto-detection) [31].

- `--length 25`: Discards reads shorter than 25 bases after trimming.

The multi-step process highlights that Trim Galore! relies on external calls to FastQC for comprehensive quality profiling, both before and after trimming, which is often aggregated by MultiQC for a unified view [25] [31].
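A comparable sketch for the Trim Galore! route is given below. It assembles a FastQC call and a Trim Galore! call as argument lists, using only the options named in this section; the helper names and file names are hypothetical, and nothing is executed. FastQC would be run again on the trimmed files once Trim Galore! has written them.

```python
def fastqc_command(fastq_files):
    """Assemble a FastQC call for one or more FASTQ files (not executed)."""
    return ["fastqc", *fastq_files]


def trim_galore_command(read1, read2, adapter_flag=None, min_length=25):
    """Assemble a paired-end Trim Galore! command (not executed).

    adapter_flag may be "--nextera", "--illumina", or None for auto-detection.
    """
    if adapter_flag not in (None, "--nextera", "--illumina"):
        raise ValueError("unsupported adapter flag in this sketch")
    cmd = ["trim_galore", "--paired", "--length", str(min_length)]
    if adapter_flag is not None:
        cmd.append(adapter_flag)
    cmd += [read1, read2]
    return cmd


raw = ["sample_R1.fastq.gz", "sample_R2.fastq.gz"]  # placeholder file names
steps = [
    fastqc_command(raw),                        # QC before trimming
    trim_galore_command(*raw, "--nextera"),     # adapter and quality trimming
]
for step in steps:
    print(" ".join(step))
```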

The choice between fastp and Trim Galore! for pre-alignment QC depends on the specific priorities of the research project.

- For most users seeking speed and integration, fastp is the superior choice. Its exceptional processing speed, integrated QC that compares data before and after filtering in a single report, and comprehensive feature set (including UMI processing) make it highly efficient and user-friendly [28] [30] [29]. Evidence suggests it provides excellent results that enhance downstream alignment in STAR-based pipelines [30].

- For users who prefer an established, modular approach, Trim Galore! remains a robust and reliable option. Its reliance on the proven combination of Cutadapt and FastQC is its greatest strength, offering transparency and consistency [30] [31]. It is an excellent tool for standard RNA-seq experiments, particularly when the workflow already heavily utilizes FastQC and MultiQC.

In the context of a STAR differential expression pipeline, where data quality directly influences the validity of biological conclusions, both tools are capable of effectively preparing data. However, the performance benefits, streamlined workflow, and growing adoption in community-standard pipelines like nf-core/rnaseq make fastp a compelling and highly recommended option for modern RNA-seq analysis [25] [30].

Genome index generation represents a foundational step in RNA-seq data analysis, creating a structured reference that enables rapid and accurate alignment of sequencing reads. This process significantly influences all downstream analyses, including gene expression quantification, differential expression analysis, and variant discovery. The integration of annotation files (GTF/GFF3) during index generation provides crucial information about known gene structures, substantially improving the identification of splice junctions—a critical capability for RNA-seq analysis. In the context of differential expression pipelines, the precision of genome indexing directly impacts the reliability of resultant gene counts and the biological conclusions drawn from them. This guide objectively examines the critical parameters for genome index generation across leading aligners, with particular focus on their performance implications in sophisticated transcriptomic studies.

Comparative Analysis of Genome Indexing Approaches

Algorithmic Foundations and Indexing Strategies

Different aligners employ distinct algorithmic approaches to genome indexing, each with unique strengths and computational considerations:

STAR (Spliced Transcripts Alignment to a Reference) utilizes an uncompressed suffix array-based index, which allows for rapid exact matching of sequences against the reference genome [33]. This approach provides high sensitivity in detecting splice junctions but requires substantial memory resources—approximately 30 GB for the human genome [15]. STAR's genome generation step creates indices that incorporate sequence information and, when provided, annotation data to pre-populate known splice junctions, enabling comprehensive splice-aware alignment.
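To show why a suffix array supports fast exact matching, the toy sketch below builds a suffix array for a short sequence and locates a pattern by binary search. It is a teaching example under simplifying assumptions (naive construction, a tiny string, hypothetical function names) and does not represent STAR's actual implementation.

```python
def build_suffix_array(text):
    """Return suffix start positions sorted by suffix (naive construction)."""
    return sorted(range(len(text)), key=lambda i: text[i:])


def find_exact(text, suffix_array, pattern):
    """Return all start positions of pattern in text via binary search."""
    m = len(pattern)
    # Leftmost suffix whose first m characters are >= pattern.
    lo, hi = 0, len(suffix_array)
    while lo < hi:
        mid = (lo + hi) // 2
        if text[suffix_array[mid]:suffix_array[mid] + m] < pattern:
            lo = mid + 1
        else:
            hi = mid
    start = lo
    # Leftmost suffix whose first m characters are > pattern.
    hi = len(suffix_array)
    while lo < hi:
        mid = (lo + hi) // 2
        if text[suffix_array[mid]:suffix_array[mid] + m] <= pattern:
            lo = mid + 1
        else:
            hi = mid
    return sorted(suffix_array[start:lo])


genome = "ACGTACGTAC"  # toy reference sequence
sa = build_suffix_array(genome)
print(find_exact(genome, sa, "ACG"))
```

Because all suffixes sharing a prefix sit next to each other in the sorted array, each lookup needs only a logarithmic number of comparisons, at the cost of holding the full array in memory.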

HISAT2 (Hierarchical Indexing for Spliced Alignment of Transcripts 2) employs a more memory-efficient indexing strategy based on the Burrows-Wheeler Transform (BWT) and FM-index [34] [33]. HISAT2 extends this approach with a Hierarchical Graph FM index (HGFM) that incorporates population variants and transcript information, allowing it to account for genetic variation during alignment [35]. This sophisticated graph-based approach typically requires less memory than STAR: approximately 6.2 GB for the human genome including common SNPs [35].

Table 1: Core Algorithmic Differences Between Indexing Approaches

| Parameter | STAR | HISAT2 |
|---|---|---|
| Indexing Data Structure | Uncompressed suffix array | Hierarchical Graph FM index (BWT-based) |
| Memory Footprint (Human Genome) | ~30 GB [15] | ~6.2 GB (with SNPs) [35] |
| Splice Junction Handling | Annotation-guided + novel discovery | Graph-based incorporation of variants |
| Index File Extensions | Generated in genome directory | .ht2 (small) / .ht2l (large) |
| Variant Incorporation | Not native | Built-in capability via genome_snp indices [35] |

Critical Parameters for Genome Index Generation

The accuracy and efficiency of read alignment depend heavily on proper parameter specification during genome index generation. The following parameters have demonstrated significant impact on alignment performance across multiple studies:

Annotation File Integration: Both STAR and HISAT2 support the integration of gene annotation files (GTF/GFF3) during index generation. This integration provides crucial information about known transcript structures, which dramatically improves splice junction detection [15] [36]. For HISAT2, annotation integration creates specialized "genome_tran" or "genome_snp_tran" indices that explicitly incorporate transcriptomic information [35].

Splice Junction Overhang Specification: STAR requires careful specification of the --sjdbOverhang parameter, which defines the length of genomic sequence on each side of annotated splice junctions to include in the index. Optimal performance is achieved when this parameter is set to read length minus 1 (e.g., 149 for 150 bp reads) [36]. This parameter influences the aligner's ability to accurately map reads spanning splice sites.

Memory and Computational Resources: STAR's indexing process is memory-intensive, requiring roughly 10 bytes of RAM per genome base (about 30 GB for the ~3 Gb human genome) [15]. HISAT2 has more moderate memory requirements, making it more accessible in environments with limited computational resources [35].
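The two rules of thumb above reduce to simple arithmetic, sketched here. The helper names are hypothetical. The 3.1 billion base figure for the human genome is an approximation used only for illustration, and the 10 bytes per base factor is the rough guideline from the STAR documentation rather than an exact requirement. For reads of varying length, the sketch takes the maximum read length, following the STAR manual's advice.

```python
def sjdb_overhang(read_lengths):
    """Suggested --sjdbOverhang: longest read length minus 1."""
    lengths = list(read_lengths)
    if not lengths or min(lengths) < 2:
        raise ValueError("need at least one read length of 2 or more")
    return max(lengths) - 1


def star_index_ram_gb(genome_bases, bytes_per_base=10):
    """Rough RAM estimate in GB for STAR index generation (assumed ~10 bytes per base)."""
    return genome_bases * bytes_per_base / 1e9


overhang_150 = sjdb_overhang([150])          # single-end 150 bp reads
overhang_pe = sjdb_overhang([101, 101])      # paired-end 2x101: use one mate's length
overhang_mixed = sjdb_overhang([76, 98, 101])  # trimmed reads of varying length
human_ram = star_index_ram_gb(3_100_000_000)   # approximate human genome size

print(overhang_150, overhang_pe, overhang_mixed, round(human_ram))
```

For paired-end data the relevant length is that of a single mate, not the sum of both mates.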

Table 2: Performance Comparison of Alignment Tools Based on Experimental Data

| Performance Metric | STAR | HISAT2 | TopHat2 | BWA |
|---|---|---|---|---|
| Alignment Speed | Fast [36] | ~3x faster than next fastest aligner [33] | Slower than HISAT2 [33] | Moderate [33] |
| Splice Junction Sensitivity | High (canonical & non-canonical) [36] | High | Lower than HISAT2 [33] | Not primarily designed for RNA-seq |
| Memory Efficiency | Lower (30 GB for human) [15] | Higher [33] | Moderate | Moderate |
| Long Read Support | Yes (PacBio, Ion Torrent) [36] | Limited to shorter reads | Limited | Limited |
| Fusion Gene Detection | Native capability [37] [15] | Not primary function | Limited | No |

Experimental Data and Performance Benchmarks

Systematic Assessment of RNA-seq Procedures

A comprehensive 2020 study systematically compared 192 alternative methodological pipelines for RNA-seq analysis, providing valuable insights into aligner performance characteristics [38]. The research evaluated combinations of trimming algorithms, aligners, counting methods, and normalization approaches using data from two multiple myeloma cell lines. While the study emphasized that optimal pipeline selection depends on specific research objectives, it confirmed that both STAR and HISAT2 represent robust choices for read alignment when properly configured [38].

Another comparative study examining aligner performance across 48 samples of grapevine powdery mildew fungus found that all tested aligners except TopHat2 performed well based on alignment rate and gene coverage metrics [33]. The research specifically noted that "HISAT2 was ~3-fold faster than the next fastest aligner in runtime," while acknowledging that BWA demonstrated strong performance except for longer transcripts (>500 bp) where HISAT2 and STAR excelled [33].

Impact on Differential Expression Analysis

The choice of aligner and indexing parameters directly influences downstream differential expression results. A study investigating spinal cord gliomas utilized STAR-Fusion for detecting gene fusions, demonstrating how specialized alignment approaches can identify biologically relevant alterations in disease states [39]. The research identified novel fusion transcripts like GATSL2-GTF2I in lower-grade tumors, highlighting the importance of sensitive alignment in discovering potential biomarkers [39].

Detailed Methodologies for Genome Index Generation

STAR Genome Index Generation Protocol

The following protocol outlines the critical steps for generating genome indices using STAR aligner:

Necessary Resources:

- Hardware: Computer with Unix/Linux/Mac OS X, sufficient RAM (≥30 GB for human genome), adequate disk space (>100 GB)

- Software: STAR software (latest release recommended)

- Input Files: Reference genome (FASTA format), gene annotation file (GTF/GFF3 format)

Step-by-Step Procedure:

- Download and install STAR from the official GitHub repository [15]

- Prepare reference genome and annotation files in the appropriate formats

- Execute the genome generation command (STAR --runMode genomeGenerate) with the parameters described below

Critical Parameters:

- --runThreadN: Number of parallel threads to utilize (optimizes speed)

- --genomeDir: Output directory for generated indices

- --genomeFastaFiles: Path to reference genome FASTA file

- --sjdbGTFfile: Path to gene annotation file (GTF format)

- --sjdbOverhang: Specifies splice junction overhang length (read length - 1)

For annotation files in GFF3 format, the additional parameter --sjdbGTFtagExonParentTranscript Parent must be included so that STAR can resolve the parent-child relationships between transcripts and exons [36].
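As a minimal sketch of the genome generation step, the helper below assembles the STAR command line from the parameters listed above. The function name and all file paths are hypothetical placeholders, and the command is only printed, not executed. The flags themselves are standard STAR options.

```python
import shlex


def star_genome_generate_cmd(genome_dir, fasta, annotation, read_length, threads=8):
    """Return the STAR genomeGenerate command as an argument list."""
    cmd = [
        "STAR",
        "--runMode", "genomeGenerate",
        "--runThreadN", str(threads),
        "--genomeDir", genome_dir,
        "--genomeFastaFiles", fasta,
        "--sjdbGTFfile", annotation,
        "--sjdbOverhang", str(read_length - 1),
    ]
    # GFF3 files link exons to transcripts through the "Parent" attribute.
    if annotation.lower().endswith((".gff", ".gff3")):
        cmd += ["--sjdbGTFtagExonParentTranscript", "Parent"]
    return cmd


# Hypothetical paths; replace with your own files.
gtf_cmd = star_genome_generate_cmd("star_index", "genome.fa", "genes.gtf", read_length=150)
gff_cmd = star_genome_generate_cmd("star_index", "genome.fa", "genes.gff3", read_length=100)
print(shlex.join(gtf_cmd))
print(shlex.join(gff_cmd))
```

Once STAR is installed and the output directory exists, the returned list can be passed to `subprocess.run(cmd, check=True)`. Building the command as a list avoids shell quoting problems with unusual file names.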

HISAT2 Genome Index Generation Protocol

Step-by-Step Procedure:

- Download and install HISAT2 from the official repository [34]

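- Extract splice sites and exons from the annotation file (optional but recommended), using the hisat2_extract_splice_sites.py and hisat2_extract_exons.py scripts distributed with HISAT2

- Build genome indices with transcriptome integration using hisat2-build, passing the extracted files via --ss and --exon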

Critical Parameters:

- -p: Number of parallel threads for indexing

- --ss: Splice sites file (generated from annotation)

- --exon: Exons file (generated from annotation)

- Final arguments: Input genome FASTA and output index base name

HISAT2 offers multiple index types: basic genome index, genome_snp (including common SNPs), genome_tran (including transcripts), and genome_snp_tran (comprehensive inclusion of variants and transcripts) [35].
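The HISAT2 protocol above can be sketched as three commands: two extraction scripts that write to standard output, followed by hisat2-build. The helper names, file paths, and the ".ss"/".exon" file naming are hypothetical choices for illustration, and the commands are only printed here, not run.

```python
import shlex


def hisat2_index_steps(fasta, gtf, index_base, threads=8):
    """Return (argv, stdout_file) pairs; stdout_file is None when nothing is redirected."""
    ss_file = index_base + ".ss"
    exon_file = index_base + ".exon"
    return [
        # Both extraction scripts print to stdout, so their output is redirected to a file.
        (["hisat2_extract_splice_sites.py", gtf], ss_file),
        (["hisat2_extract_exons.py", gtf], exon_file),
        (["hisat2-build", "-p", str(threads), "--ss", ss_file, "--exon", exon_file,
          fasta, index_base], None),
    ]


def as_shell(argv, stdout_file):
    """Render one step as the equivalent shell command line."""
    line = shlex.join(argv)
    return f"{line} > {shlex.quote(stdout_file)}" if stdout_file else line


# Hypothetical inputs; replace with your own genome and annotation.
steps = hisat2_index_steps("genome.fa", "genes.gtf", "genome_tran")
for argv, stdout_file in steps:
    print(as_shell(argv, stdout_file))
```

The extraction scripts expect a GTF file. Note also that, according to the HISAT2 documentation, building an index with --ss and --exon needs considerably more memory than building a plain genome index, so the modest footprint quoted for alignment does not necessarily apply to this build step.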

Genome Indexing Workflow and Performance Relationships

Diagram 1: Genome Index Generation Workflow and Performance Relationships. This diagram illustrates the critical decision points in genome index generation and how parameter selection influences subsequent alignment performance and downstream analytical capabilities.

Table 3: Essential Research Reagents and Computational Resources for Genome Indexing

| Category | Specific Resource | Function/Purpose | Implementation Example |
|---|---|---|---|
| Reference Sequences | GRCh38 (human) / other model organisms | Provides standardized genomic coordinate system | Ensembl, UCSC, or NCBI RefSeq genomes |
| Annotation Files | GTF/GFF3 format annotations | Defines known gene models and splice junctions | Ensembl, GENCODE, or organism-specific databases |
| Alignment Software | STAR (v2.7.10b or newer) | Splice-aware read alignment with high sensitivity | GitHub repository |
| Alignment Software | HISAT2 (v2.2.1 or newer) | Memory-efficient alignment with variant awareness | Official website |
| Computational Resources | High-performance computing cluster | Enables parallel processing for large genomes | 32+ GB RAM, multiple cores, sufficient storage |
| Quality Control Tools | FastQC, MultiQC | Assesses read quality and alignment metrics | Pre- and post-alignment quality assessment |

The selection of genome indexing approach and parameters should be guided by specific research objectives, computational resources, and analytical requirements. STAR's comprehensive junction detection and fusion identification capabilities make it ideal for discovery-focused research where computational resources are sufficient. HISAT2 offers an excellent balance of performance and efficiency for large-scale studies or environments with limited computational resources. Critically, both aligners benefit substantially from proper annotation file integration during index generation, emphasizing the importance of this often-overlooked step in RNA-seq analysis pipelines. As sequencing technologies evolve toward longer reads and more complex analytical questions, appropriate genome index generation remains a cornerstone of robust transcriptomic analysis in both basic research and drug development contexts.

The accurate discovery and quantification of splice junctions are fundamental to understanding transcriptomic diversity in health and disease. Standard RNA-seq alignment algorithms inherently prioritize known, annotated splice junctions, creating a discovery bias against novel splicing events. The two-pass alignment strategy elegantly addresses this limitation by separating the processes of splice junction discovery and read quantification [40]. This method involves an initial alignment pass performed with high stringency to discover novel splice junctions, which are then incorporated into a custom genomic index to guide a more sensitive second alignment pass [40] [41]. Originally developed for short-read sequencing, its principles are now successfully applied to long-read technologies, making it a versatile approach for comprehensive transcriptome characterization [41].

For researchers investigating novel transcript variants in cancer, genetic diseases, or poorly annotated genomes, two-pass alignment provides a statistically significant enhancement in sensitivity. Profiling across diverse datasets has demonstrated that this method improves quantification for at least 94% of simulated novel splice junctions, delivering up to a 1.7-fold increase in median read depth over these junctions compared to traditional single-pass methods [40]. This technical advance is crucial for studies where detecting rare or condition-specific splicing events can reveal new diagnostic or therapeutic targets.

Performance Comparison: Two-Pass vs. Single-Pass and Other Methods

Quantitative Improvements in Junction Discovery and Quantification

Experimental data from multiple studies consistently demonstrate the superior performance of two-pass alignment. The following table summarizes key quantitative findings from benchmarking experiments:

Table 1: Performance Metrics of Two-Pass Alignment Across Experimental Conditions

| Sample Type | Read Length | Splice Junctions Improved | Median Read Depth Ratio | Primary Benefit |
|---|---|---|---|---|
| Lung Adenocarcinoma Tissue [40] | 48 nt | 99% | 1.68× | Enhanced novel junction quantification |
| Universal Human Reference RNA [40] | 75 nt | 94-97% | 1.25-1.26× | Improved sensitivity in complex transcriptomes |
| Lung Cancer Cell Lines [40] | 101 nt | 97% | 1.19-1.21× | Consistent gain across biological replicates |
| Arabidopsis Samples [40] | 75 nt | 95-97% | 1.12× | Effective in non-human systems |
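The sketch below shows one plausible way to compute the two summary columns in the table from per-junction read depths. It assumes that "Splice Junctions Improved" is the share of junctions with higher depth after two-pass alignment and that "Median Read Depth Ratio" is the median of the per-junction two-pass/one-pass ratios. The exact definitions used in [40] may differ, and the junction names and depths are made-up toy values, not data from any study.

```python
from statistics import median


def two_pass_gain(one_pass_depth, two_pass_depth):
    """Return (fraction of junctions improved, median depth ratio) for shared junctions."""
    ratios = [
        two_pass_depth[j] / depth
        for j, depth in one_pass_depth.items()
        if j in two_pass_depth and depth > 0  # skip junctions with no one-pass support
    ]
    if not ratios:
        raise ValueError("no junctions with one-pass depth > 0 in both inputs")
    improved = sum(r > 1 for r in ratios) / len(ratios)
    return improved, median(ratios)


# Hypothetical read depths over four novel junctions (toy values only).
one_pass = {"j1": 10, "j2": 20, "j3": 8, "j4": 5}
two_pass = {"j1": 15, "j2": 24, "j3": 8, "j4": 10}

fraction_improved, median_ratio = two_pass_gain(one_pass, two_pass)
print(f"{fraction_improved:.0%} improved, median ratio {median_ratio:.2f}x")
```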

The performance advantage extends beyond simple junction detection to the accuracy of downstream bioinformatic analyses. For differential splicing detection, a two-pass-based workflow that incorporates exon-exon junction reads (DEJU) demonstrated increased statistical power while effectively controlling the false discovery rate (FDR) compared to methods using only exon-level counts [42]. This workflow significantly improved the detection of challenging splicing events like intron retention, which are often missed by standard approaches [42].

Comparison with Post-Alignment Correction Methods

Beyond comparison with single-pass alignment, two-pass methods have been evaluated against post-alignment correction strategies. The following table illustrates a direct comparison using simulated data:

Table 2: Two-Pass Guided Alignment vs. Post-Alignment Correction for a Challenging Locus (FLM Exon 6)

| Method | Principle | Correctly Aligned Simulated Reads | Advantages/Limitations |
|---|---|---|---|
| Minimap2 (no guidance) [41] | Standard local alignment | 19.3% | Baseline; fails on short exons with errors |
| FLAIR Correction [41] | Post-alignment junction correction | 40.3% | Moderate improvement; limited by distance to true junction |
| Two-Pass Guided [41] | Junctions guide second alignment | 92.1% | Superior accuracy for complex splicing patterns |

This comparison reveals that providing splice junctions during alignment (two-pass) confers greater benefits than attempting to correct junctions after alignment is complete. The guided alignment approach is particularly advantageous for loci with complex splicing patterns where the alignment bonus for correctly mapping a short exon with sequencing errors is insufficient to overcome the penalty for opening two flanking introns in a single pass [41].

Experimental Protocols and Methodologies

Standardized Two-Pass Workflow with STAR

The two-pass method has been most extensively implemented and validated using the STAR aligner. The following workflow details the established protocol:

First Pass - Junction Discovery:

- Alignment: Perform an initial alignment of all RNA-seq samples using STAR with standard parameters and a comprehensive annotation file (e.g., GENCODE-Basic for human). Critical non-default parameters often include --outFilterType BySJout for consistency in reporting, --alignSJoverhangMin 8 to require that reads span novel junctions by at least 8 nucleotides, and --alignSJDBoverhangMin 3 for known junctions [40].

- Output: This pass generates an SJ.out.tab file for each sample, containing all detected splice junctions, both annotated and novel.
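A minimal sketch of the first pass follows: it assembles the STAR alignment command with the non-default parameters named above, then reads an SJ.out.tab file and separates novel from annotated junctions. The helper names, paths, and the two example rows are hypothetical. The nine tab-separated columns follow the layout described in the STAR manual, where column 6 is 1 for annotated junctions and 0 for novel ones.

```python
import csv
import io


def star_first_pass_cmd(genome_dir, reads, out_prefix, threads=8):
    """Return the first-pass STAR alignment command as an argument list."""
    return [
        "STAR",
        "--runThreadN", str(threads),
        "--genomeDir", genome_dir,
        "--readFilesIn", *reads,
        "--outFileNamePrefix", out_prefix,
        "--outFilterType", "BySJout",
        "--alignSJoverhangMin", "8",    # novel junctions
        "--alignSJDBoverhangMin", "3",  # annotated junctions
    ]


SJ_COLUMNS = ["chrom", "intron_start", "intron_end", "strand", "motif",
              "annotated", "unique_reads", "multi_reads", "max_overhang"]


def read_sj_tab(handle):
    """Yield one dict per junction from an open SJ.out.tab file."""
    for row in csv.reader(handle, delimiter="\t"):
        if not row:
            continue
        record = dict(zip(SJ_COLUMNS, row))
        for key in SJ_COLUMNS[1:]:  # every column except the chromosome is an integer
            record[key] = int(record[key])
        yield record


# Hypothetical sample paths and two made-up junction rows for illustration.
cmd = star_first_pass_cmd("star_index", ["s1_R1.fastq", "s1_R2.fastq"], "pass1/s1_")
example = io.StringIO(
    "chr1\t14830\t14969\t2\t2\t1\t25\t3\t38\n"
    "chr1\t17056\t17232\t2\t2\t0\t4\t0\t20\n"
)
junctions = list(read_sj_tab(example))
novel = [j for j in junctions if j["annotated"] == 0]
print(len(junctions), "junctions,", len(novel), "novel")
```

With real data, replace the `io.StringIO` object with `open("pass1/s1_SJ.out.tab")`, where the path is again a placeholder.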

Second Pass - Sensitive Re-alignment:

- Junction Collation: Collect the