From Contigs to Genes: A Comprehensive Guide to Metagenomic Gene Prediction for Biomedical Research


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the end-to-end process of predicting genes from metagenomic contigs. It covers foundational concepts of metagenomic assembly, explores state-of-the-art gene prediction methodologies including machine learning approaches, addresses common troubleshooting and optimization challenges, and outlines rigorous validation techniques. By integrating established workflows with emerging deep learning tools and comparative benchmarking strategies, this resource aims to enhance the accuracy and biological relevance of gene predictions, ultimately supporting more effective discovery of novel microbial functions and therapeutic targets.

Understanding Metagenomic Contigs: Assembly, Characteristics, and Challenges for Gene Discovery

Defining Metagenomic Contigs and Their Role in Bypassing Microbial Cultivation

Metagenomic contigs are contiguous fragments of DNA sequence assembled from shorter, overlapping sequencing reads derived directly from an environmental sample [1]. They represent a foundational unit in modern microbial ecology, enabling researchers to reconstruct genetic material from complex communities without the need for prior laboratory cultivation of individual species.

The role of contigs in bypassing microbial cultivation is transformative. Traditional microbiology depends on isolating and growing microbes in pure culture, yet an estimated >81% of microbial genera and 25% of phyla remain uncultured [2]. This "uncultured majority" represents a vast reservoir of unexplored biological diversity and functional potential. Metagenomic approaches overcome this limitation by extracting, sequencing, and assembling DNA directly from environmental samples, with contigs serving as the building blocks for recovering genomes from these previously inaccessible microorganisms [2] [3]. This cultivation-independent methodology has dramatically accelerated the discovery of novel genes, metabolic pathways, and taxonomic lineages, fundamentally advancing our understanding of microbial ecosystems in environments ranging from the human gut to extreme habitats.

From Sample to Contig: A Technical Workflow

The journey from an environmental sample to assembled contigs involves a series of critical technical steps, each requiring careful optimization to ensure high-quality output for downstream gene prediction research.

Sample Processing and DNA Extraction

The initial sample processing phase is crucial for obtaining DNA that accurately represents the entire microbial community. Physical separation methods may be employed for host-associated samples to minimize host DNA contamination, which could otherwise overwhelm the microbial signal in subsequent sequencing [4]. For samples with low biomass, Multiple Displacement Amplification (MDA) using phi29 polymerase may be necessary to generate sufficient DNA for library preparation, though this introduces potential biases including chimera formation and uneven sequence coverage that must be considered in interpretation [4].

The choice between direct lysis (cells lysed within the sample matrix) and indirect lysis (cells separated first then lysed) significantly impacts DNA yield, sequence fragment length, and microbial diversity representation [4]. For example, direct lysis often provides higher yields but may co-extract enzymatic inhibitors like humic acids from soil samples, while indirect lysis typically yields purer DNA but may underrepresent certain microbial groups.

Sequencing Technologies and Their Impact on Assembly

Selection of appropriate sequencing technology profoundly influences contig quality and assembly outcomes. The table below compares key technologies used in metagenomic studies:

Table 1: Sequencing Technologies for Metagenomic Studies

| Technology | Read Length | Accuracy | Throughput | Best Use Cases |
|---|---|---|---|---|
| Illumina | 50-300 bp | 99.9% (High) | 300 Gb/run | High-coverage community profiling, variant detection |
| Sanger | 400-900 bp | 99.9% (High) | 50-100 Kb/run | Reference-quality assemblies, validation |
| PacBio | 10-15 kbp | 87% (Low) | 5-10 Gb/run | Complete genome resolution, repeat regions |
| Oxford Nanopore | 5-10 kbp | 70-90% (Low) | 500 Mb/run | Real-time sequencing, long-range continuity |
| 454/Roche | 700 bp | 98% (Medium) | 400 Mb/run | Largely obsolete but historically important |

For contemporary metagenomic studies, Illumina platforms provide the combination of high accuracy and throughput needed for most applications, while long-read technologies from PacBio and Oxford Nanopore are increasingly valuable for resolving complex genomic regions and generating more complete assemblies [5].

Assembly Algorithms and Strategies

Metagenomic assembly faces distinctive challenges including uneven species abundance, closely related strains, and conserved regions shared across taxa [6]. Three primary algorithmic approaches address these challenges:

Overlap-Layout-Consensus (OLC): This classical approach computes all pairwise read overlaps, builds an overlap graph, and identifies paths representing genomic sequences. While effective for high-error rate long reads, computational demands scale poorly with dataset size [5].

De Bruijn Graph: This method breaks reads into shorter k-mers (typically k=21-127), constructs graphs where edges represent k-mers and nodes represent overlaps of k-1 nucleotides, and identifies Eulerian paths through the graph. Highly efficient for large datasets but sensitive to sequencing errors [5] [7].

Greedy Extension: Iteratively extends contigs by joining reads with the best overlaps. Simple to implement but prone to local optima and errors in repetitive regions [5].
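To make the De Bruijn graph idea concrete, here is a minimal Python sketch. It assumes error-free toy reads and uses hypothetical helper names (`build_de_bruijn`, `walk_unbranched`); it follows a single unbranched path rather than finding a full Eulerian path, and it ignores in-degree, reverse complements, and coverage, all of which production assemblers must handle.

```python
from collections import defaultdict

def build_de_bruijn(reads, k):
    """Edge-centric de Bruijn graph: nodes are (k-1)-mers, each k-mer is an edge."""
    graph = defaultdict(list)
    for read in reads:
        for i in range(len(read) - k + 1):
            kmer = read[i:i + k]
            # The k-mer links its (k-1)-prefix to its (k-1)-suffix.
            graph[kmer[:-1]].append(kmer[1:])
    return graph

def walk_unbranched(graph, start):
    """Extend a contig from `start` while the current node has one distinct successor."""
    contig, node, seen = start, start, {start}
    while len(set(graph.get(node, []))) == 1:
        node = graph[node][0]
        if node in seen:  # stop on cycles, which arise from repeats
            break
        seen.add(node)
        contig += node[-1]
    return contig

# Three overlapping toy reads (illustrative data, not from any real sample).
reads = ["ATGGCGT", "GGCGTGC", "CGTGCAA"]
graph = build_de_bruijn(reads, k=4)
contig = walk_unbranched(graph, "ATG")
print(contig)
```

The walk stops at the first branch or dead end, which is one reason sequencing errors and strain variants fragment real assemblies: each creates extra branches in the graph.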

For metagenomic data, De Bruijn graph-based assemblers currently predominate due to their computational efficiency with large, complex datasets. Popular tools include metaSPAdes (producing higher fidelity contigs at greater computational cost) and MEGAHIT (optimized for memory efficiency and co-assembly of multiple samples) [6] [8].

Table 2: Comparative Analysis of Metagenomic Assembly Tools

| Assembler | Algorithm | Strengths | Limitations | Computational Demand |
|---|---|---|---|---|
| metaSPAdes | De Bruijn Graph | High accuracy, handles uneven coverage | High memory requirements | Very High |
| MEGAHIT | De Bruijn Graph | Memory efficient, fast co-assembly | May produce shorter contigs | Medium |
| IDBA-UD | De Bruijn Graph | Optimized for uneven sequencing depth | Complex parameter tuning | High |

A critical strategic consideration is the choice between individual assembly (each sample assembled separately) and co-assembly (multiple samples assembled together). Co-assembly can improve coverage for low-abundance organisms but risks combining genetically distinct populations and increases computational complexity [6]. Co-assembly is most appropriate for related samples from the same sampling event or longitudinal sampling of the same site.

From Contigs to Biological Insights: Gene Prediction and Functional Analysis

Once contigs are generated, the next critical step is extracting biological meaning through gene prediction and functional annotation, with particular considerations for metagenomic data.

Gene Prediction Challenges in Metagenomics

Gene prediction from metagenomic contigs presents unique difficulties compared to isolated genomes. Most fragments are relatively short, potentially containing incomplete genes, and source genomes are often unknown or novel, complicating statistical model construction [9]. Additionally, different microbial lineages utilize varied genetic codes and gene structures that are frequently ignored in standard annotation pipelines, leading to spurious predictions and missed genes [10].

Three computational approaches address these challenges:

- Homology-based methods (e.g., CRITICA) use BLAST comparisons against protein databases but cannot identify novel genes without known homologs [9].

- Model-based methods (e.g., MetaGeneMark) employ Markov chain models but require numerous parameters and may not generalize across diverse taxa [9].

- Machine learning-based methods (e.g., MetaGUN, Orphelia) integrate multiple sequence features (codon usage, ORF length, GC-content) with classifiers to predict coding potential [9].
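The sequence features named for the machine learning-based methods (codon usage, ORF length, GC-content) can be computed with a few lines of Python. This is only an illustrative sketch: the function name `orf_features` is hypothetical, and it does not reproduce the feature sets actually used by MetaGUN or Orphelia.

```python
from collections import Counter

def orf_features(orf):
    """Return simple coding-potential features for one in-frame ORF (DNA string)."""
    orf = orf.upper()
    # Split into complete codons, dropping any trailing partial codon.
    usable = len(orf) - len(orf) % 3
    codons = [orf[i:i + 3] for i in range(0, usable, 3)]
    gc_content = sum(base in "GC" for base in orf) / len(orf)
    usage = Counter(codons)
    return {
        "length": len(orf),
        "gc_content": gc_content,
        "codon_freq": {codon: n / len(codons) for codon, n in usage.items()},
    }

# Toy ORF used purely for illustration.
features = orf_features("ATGGCCGCCAAATAA")
print(features["length"], round(features["gc_content"], 3))
```

A classifier would take vectors like these, computed for candidate ORFs, and learn to separate coding from non-coding sequence.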

Advanced Workflow: Lineage-Specific Gene Prediction

Recent advances introduce lineage-specific gene prediction that uses taxonomic assignment of contigs to inform tool selection and parameters [10]. This approach applies different tools optimized for specific domains (bacteria, archaea, eukaryotes, viruses) and customizes parameters based on genetic code and gene structure variations.

Table 3: Lineage-Specific Tool Selection for Optimal Gene Prediction

| Taxonomic Group | Recommended Tools | Key Considerations |
|---|---|---|
| Bacteria | Pyrodigal, MetaGeneMark, FragGeneScan | Standard genetic code, compact genomes |
| Archaea | Pyrodigal, MetaGeneMark, Prodigal | Potential alternative genetic codes |
| Eukaryotes | AUGUSTUS, SNAP, GeneMark-ES | Multi-exon genes, intron processing |
| Viruses | VirSorter, PhiSpy, Prodigal | High genomic density, atypical genes |

This lineage-aware approach significantly expands the protein landscape captured from metagenomes. When applied to human gut metagenomes, this strategy identified 14.7% more genes compared to using a single tool (Pyrodigal) exclusively, including previously hidden functional groups and 3,772,658 small protein clusters [10].
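The routing logic behind lineage-specific prediction can be sketched as a lookup from a contig's assigned domain to a tool set. The tool lists below mirror Table 3; the translation-table numbers, the function name `plan_gene_prediction`, and the fallback policy are assumptions added for illustration, not part of the published workflow [10].

```python
# Tool lists mirror Table 3. The NCBI translation-table numbers are an
# assumption for illustration (11 = bacterial/archaeal code, 1 = standard code).
LINEAGE_TOOLS = {
    "Bacteria": {"tools": ["Pyrodigal", "MetaGeneMark", "FragGeneScan"], "transl_table": 11},
    "Archaea": {"tools": ["Pyrodigal", "MetaGeneMark", "Prodigal"], "transl_table": 11},
    "Eukaryota": {"tools": ["AUGUSTUS", "SNAP", "GeneMark-ES"], "transl_table": 1},
    "Viruses": {"tools": ["VirSorter", "PhiSpy", "Prodigal"], "transl_table": 11},
}

def plan_gene_prediction(contig_domains, fallback="Bacteria"):
    """Map each contig ID to the tool set for its assigned domain.

    Contigs whose domain is not in LINEAGE_TOOLS use `fallback`; this is a
    hypothetical policy chosen only to keep the sketch simple.
    """
    plan = {}
    for contig_id, domain in contig_domains.items():
        plan[contig_id] = LINEAGE_TOOLS.get(domain, LINEAGE_TOOLS[fallback])
    return plan

# Hypothetical taxonomic assignments, e.g. parsed from a classifier report.
plan = plan_gene_prediction(
    {"contig_1": "Bacteria", "contig_2": "Eukaryota", "contig_3": "unclassified"}
)
print(plan["contig_2"]["tools"])
```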

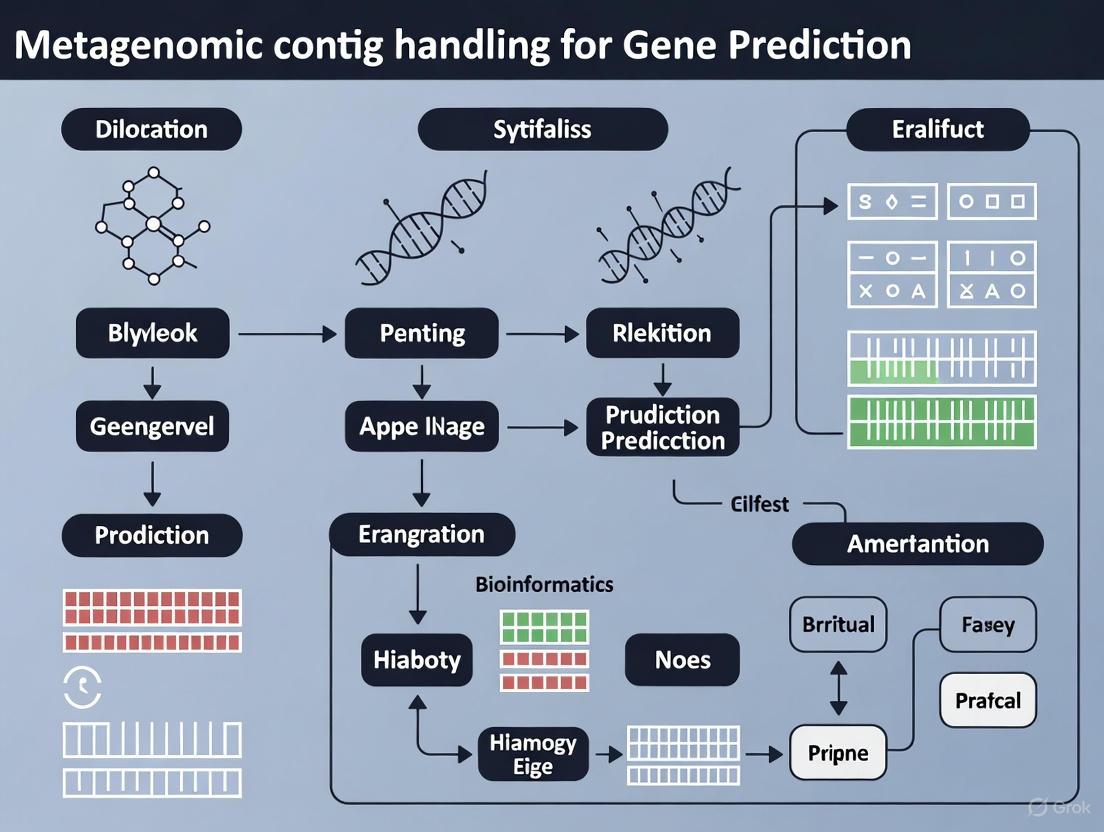

The following workflow diagram illustrates the complete process from sampling to functional analysis:

Workflow for Metagenomic Contig Analysis: This diagram outlines the key stages in processing metagenomic data from environmental samples to biological interpretation, highlighting the central role of contig generation and analysis.

Applications and Protocols

Application Note: Biofuel Enzyme Discovery

Metagenomic contigs have enabled breakthrough applications in industrial biotechnology, particularly in biofuel production. Researchers have discovered numerous lignocellulose-degrading enzymes from uncultured microbes in diverse environments including buffalo rumen, anaerobic digesters, and compost soils [3]. For example, novel thermotolerant cellulases identified from buffalo rumen metagenomes function at elevated temperatures ideal for industrial processes, while halotolerant enzymes from anaerobic beer waste metagenomes remain active in high-salt conditions [3].

These discoveries bypass the traditional limitation of cultivating source organisms, directly addressing the challenge that approximately 99.9% of environmental microbes cannot be cultured using standard methods [3]. The resulting enzymes provide more efficient and cost-effective tools for breaking down plant biomass into fermentable sugars for bioethanol and biodiesel production.

Experimental Protocol: Contig Generation and Analysis

This protocol provides a standardized workflow for generating and analyzing metagenomic contigs from raw sequencing data.

Protocol: Metagenomic Contig Assembly and Gene Prediction

I. Quality Control and Preprocessing

- Quality Assessment: Run FastQC on raw FASTQ files to evaluate per-base sequence quality, GC content, and adapter contamination [8].

- Quality Trimming: Use Trimmomatic or KneadData to remove adapters and trim low-quality bases (Phred score <20). Retain only paired reads after trimming [8].

- Host DNA Removal: Align reads to host reference genome (e.g., GRCh38 for human-associated samples) using Bowtie2 or BWA. Remove aligned reads to enrich for microbial sequences [8].
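To show what trimming at Phred <20 means in practice, the sketch below removes low-quality bases from the 3' end of a single read, similar in spirit to a trailing-quality trim. It assumes Phred+33 encoded quality strings; the function name `trim_3prime` and the example read are hypothetical, and real trimmers such as Trimmomatic apply additional steps (adapter clipping, sliding windows, minimum length filters).

```python
def trim_3prime(seq, qual, min_q=20, offset=33):
    """Drop bases from the 3' end until the last base has Phred >= min_q."""
    end = len(seq)
    while end > 0 and ord(qual[end - 1]) - offset < min_q:
        end -= 1
    return seq[:end], qual[:end]

# 'I' encodes Phred 40, '5' encodes Phred 20, '#' encodes Phred 2 (Phred+33).
trimmed_seq, trimmed_qual = trim_3prime("ACGTACGT", "IIIII5##")
print(trimmed_seq, trimmed_qual)
```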

II. Assembly and Binning

- De Novo Assembly: Run MEGAHIT or metaSPAdes with optimized k-mer ranges. For MEGAHIT, use the parameter `--k-list 27,47,67,87,107,127` for progressive k-mer sizes [6] [8].

- Assembly Quality Assessment: Evaluate contig quality using QUAST, reporting N50, largest contig, and total assembly size. Map reads back to contigs with Bowtie2 to estimate assembly completeness [6].

- Binning: Cluster contigs into Metagenome-Assembled Genomes (MAGs) using MetaBAT2 based on coverage and composition. Refine bins with MetaWRAP to achieve >90% completeness and <5% contamination [8].
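For readers unfamiliar with the N50 statistic reported in the assembly quality assessment step, the following sketch computes it from a list of contig lengths. QUAST reports this value directly; the function here is only an illustration of the definition, and the lengths are made up.

```python
def n50(lengths):
    """Length L such that contigs of length >= L hold at least half the assembly."""
    total = sum(lengths)
    running = 0
    for length in sorted(lengths, reverse=True):
        running += length
        if running * 2 >= total:
            return length
    return 0  # empty input

# Hypothetical contig lengths in bp.
print(n50([100, 200, 300, 400, 500]))
```

Because N50 depends only on lengths, it says nothing about correctness: a misassembled chimeric contig raises N50 just as a correct long contig does, which is why read mapping and bin-level checks accompany it.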

III. Gene Prediction and Annotation

- Taxonomic Classification: Classify contigs using Kraken2 against standard databases. Apply lineage-specific gene prediction based on taxonomic assignments [10].

- Open Reading Frame Prediction: For bacterial contigs, use Pyrodigal with metagenome mode. For eukaryotic contigs, use AUGUSTUS with appropriate species parameters [10].

- Functional Annotation: Annotate predicted genes using eggNOG-mapper, KofamKOALA for KEGG pathways, and CAZy for carbohydrate-active enzymes [8].

IV. Downstream Analysis

- Gene Abundance Quantification: Map reads to non-redundant gene catalog using Bowtie2. Calculate normalized abundance (TPM) with CoverM [8].

- Statistical Analysis: Identify differentially abundant genes between sample groups using DESeq2 with adjusted p-value < 0.05 [8].
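The TPM normalization mentioned in the quantification step can be written out explicitly. The sketch below is a plain restatement of the formula (reads per base, rescaled to sum to one million), not CoverM's implementation; gene names, counts, and lengths are hypothetical.

```python
def tpm(read_counts, gene_lengths):
    """Transcripts-per-million style normalization of mapped read counts.

    read_counts: dict of gene ID -> mapped reads.
    gene_lengths: dict of gene ID -> gene length in bp.
    """
    # Step 1: length-normalize, so long genes do not dominate.
    rates = {gene: read_counts[gene] / gene_lengths[gene] for gene in read_counts}
    scale = sum(rates.values())
    if scale == 0:
        return {gene: 0.0 for gene in rates}
    # Step 2: rescale so values sum to one million within the sample.
    return {gene: rate / scale * 1e6 for gene, rate in rates.items()}

abundance = tpm({"geneA": 100, "geneB": 300}, {"geneA": 1000, "geneB": 3000})
print(abundance)
```

In this toy case both genes receive the same TPM even though geneB has three times the reads, because it is also three times as long.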

The Scientist's Toolkit: Essential Research Reagents and Computational Tools

Table 4: Essential Resources for Metagenomic Contig Research

| Category | Resource | Specification/Version | Application |
|---|---|---|---|
| Sequencing Kits | Illumina NovaSeq 6000 S4 Reagent Kit | 300-400 Gb output | High-throughput metagenome sequencing |
| DNA Extraction | DNeasy PowerSoil Pro Kit | 100 preps | Inhibitor-free DNA from soil/fecal samples |
| Assembly Software | metaSPAdes | v3.15.5 | High-quality contig assembly |
| Binning Tool | MetaBAT 2 | v2.15 | MAG reconstruction from contigs |
| Gene Predictor | Pyrodigal | v2.0 | Prokaryotic gene finding in metagenomes |
| Taxonomic Classifier | Kraken 2 | v2.1.2 | Rapid taxonomic assignment of contigs |
| Functional Database | eggNOG | v6.0 | Orthology assignments and functional annotation |
| Quality Control | FastQC | v0.12.1 | Quality assessment of sequencing reads |

Metagenomic contigs represent the crucial bridge between raw sequencing data and biologically meaningful insights in cultivation-independent microbial studies. Their generation through sophisticated assembly algorithms and subsequent analysis via lineage-aware annotation pipelines has fundamentally expanded access to the genetic and functional diversity of the microbial world. As protocols standardize and computational methods advance, contig-based approaches will continue to drive discoveries in fields ranging from human health to industrial biotechnology, unlocking the functional potential of Earth's vast uncultured microbial majority.

The workflows and methodologies detailed in this application note provide researchers with robust frameworks for constructing and analyzing metagenomic contigs, emphasizing the importance of appropriate tool selection at each step to maximize biological insight from complex microbial communities.

The accurate reconstruction of genomes from metagenomic sequencing data is a foundational step in microbiome research, enabling the discovery of novel organisms, metabolic pathways, and functional genes. For gene prediction research, the quality of assembled contigs directly impacts the accuracy and completeness of identified gene models. Metagenomic assembly presents unique computational challenges compared to single-genome assembly, primarily due to the presence of multiple organisms at varying abundances, strain-level heterogeneity, shared conserved regions across species, and repetitive genomic elements [11] [6]. Within this context, metaSPAdes and MEGAHIT have emerged as two predominant assemblers, each employing distinct algorithmic approaches to address these challenges. This application note provides a comprehensive comparison of these tools, detailed experimental protocols for their implementation, and their critical connection to downstream gene prediction in metagenomic studies.

metaSPAdes: Advanced Graph-Based Assembly

metaSPAdes is part of the SPAdes toolkit and was specifically designed for large and complex metagenomic datasets. It extends the capabilities of the single-cell SPAdes assembler, which was already adept at handling non-uniform coverage, by incorporating new algorithmic ideas to address metagenomic-specific challenges such as high microdiversity and strain heterogeneity [11]. The assembler operates by first constructing a de Bruijn graph from all reads, then transforming it into an assembly graph using various graph simplification procedures, and finally reconstructing paths in the assembly graph that correspond to long genomic fragments within a metagenome [11]. A key feature of metaSPAdes is its focus on reconstructing a consensus backbone of strain mixtures, which allows it to ignore some strain-specific features corresponding to rare strains, thereby improving assembly contiguity in diverse communities.

MEGAHIT: Efficient Succinct Graph Assembly

MEGAHIT employs a succinct de Bruijn graph (SdBG) approach to achieve highly memory-efficient assembly, making it particularly suitable for large-scale metagenomic projects such as soil microbiomes that require substantial computational resources [12] [6]. It utilizes iterative k-mer sizing with progressively larger values, beginning with smaller k-mers to assemble low-coverage regions and advancing to larger k-mers to resolve repetitive areas [12]. The tool includes several preset parameter configurations optimized for different data types, including "meta" for generic metagenomes, "meta-sensitive" for more comprehensive but slower assembly, and "meta-large" for particularly large and complex metagenomes like soil [12].

Comparative Performance Characteristics

Table 1: Key Characteristics of metaSPAdes and MEGAHIT

| Feature | metaSPAdes | MEGAHIT |
|---|---|---|
| Core Algorithm | de Bruijn graph with graph transformation and path reconstruction [11] | Succinct de Bruijn Graph (SdBG) [12] [6] |
| Memory Usage | Higher memory requirements | Highly memory-efficient [6] |
| Speed | Moderate | Ultra-fast [12] |
| Strengths | Handles strain heterogeneity well; better for complex strain variants [11] [13] | Efficient for large datasets; suitable for low-abundance species [13] [6] |
| Optimal Use Cases | Communities with high microdiversity; strain-resolved analysis [13] | Large-scale metagenomic projects; resource-constrained environments [12] [6] |

Table 2: Performance in Genome Recovery Applications

| Application | metaSPAdes | MEGAHIT | Evidence |
|---|---|---|---|
| Low-Abundance Species Recovery | Highly effective when combined with MetaBAT2 [13] | Moderate performance | Evaluation on human metagenomes [13] |
| Strain-Resolved Genomes | Moderate performance | Excels when combined with MetaBAT2 [13] | Evaluation on real and simulated human metagenomes [13] |
| Assembly of Variable Regions | Good recovery of variable genome regions | Struggles with high-sequence diversity regions [14] | Comparison using paired long-read and short-read data [14] |

Experimental Protocols

Protocol 1: metaSPAdes Assembly Workflow

Principle: metaSPAdes utilizes a multi-stage graph construction and simplification process to resolve complex metagenomic communities, making it particularly effective for samples with significant strain diversity [11].

Procedure:

Input Preparation: Obtain quality-filtered paired-end reads in FASTQ format. Ensure reads have been processed through quality control including adapter removal and trimming.

Basic Assembly Command:

Critical Parameters for Metagenomes:

- `-k`: Specify k-mer sizes manually (e.g., `21,33,55,77,99,121` for diverse communities)

- `--meta`: Explicit meta-mode for metagenomic assembly

- `-t`: Number of threads (adjust based on available computational resources)

- `-m`: Memory limit in GB to prevent system overload

Output: The assembly results will be generated in the specified output directory, with `contigs.fasta` containing the final assembled contigs.
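As a hedged illustration of the basic assembly command and the parameters listed in this protocol, the Python sketch below builds a metaSPAdes command line as an argument list. The file names, thread count, and memory limit are placeholder assumptions; the helper name `metaspades_command` is hypothetical. The `metaspades.py` entry point runs SPAdes in metagenomic mode, so `--meta` is not repeated here.

```python
import shlex

def metaspades_command(r1, r2, outdir, threads=16, memory_gb=250, kmers=None):
    """Build (but do not run) a metaSPAdes command for one paired-end library."""
    cmd = ["metaspades.py", "-1", r1, "-2", r2, "-o", outdir,
           "-t", str(threads), "-m", str(memory_gb)]
    if kmers:
        # SPAdes expects odd k-mer sizes smaller than 128.
        bad = [k for k in kmers if k % 2 == 0 or k >= 128]
        if bad:
            raise ValueError(f"k-mer sizes must be odd and < 128: {bad}")
        cmd += ["-k", ",".join(str(k) for k in kmers)]
    return cmd

# Placeholder input and output names, for illustration only.
cmd = metaspades_command("sample_R1.fastq.gz", "sample_R2.fastq.gz",
                         "metaspades_output", kmers=[21, 33, 55])
print(shlex.join(cmd))
```

Passing the list to `subprocess.run(cmd, check=True)` would launch the assembly; building the command separately makes it easy to log exactly what was run.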

Protocol 2: MEGAHIT Assembly Workflow

Principle: MEGAHIT employs iterative k-mer refinement using a memory-efficient succinct de Bruijn graph representation, enabling assembly of large metagenomic datasets on single servers [12] [6].

Procedure:

Input Preparation: Use the same quality-controlled paired-end reads as for metaSPAdes.

Basic Assembly Command:

Critical Parameters and Presets:

- `--presets`: Use preset parameter configurations:
  - `meta-sensitive`: For more comprehensive assembly (slower)
  - `meta-large`: For large, complex metagenomes like soil

- `--min-contig-len`: Set minimum contig length output (default: 200)

- `-t`: Number of CPU threads

- `-m`: Memory usage as fraction of total system memory (default: 0.9)

Output: Assembled contigs are located at `megahit_output/final.contigs.fa`.
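A comparable sketch for MEGAHIT, using the parameters and presets described in this protocol, is given below. Input file names and the thread count are placeholder assumptions, and `megahit_command` is a hypothetical helper; the output directory name matches the path quoted above.

```python
import shlex

VALID_PRESETS = {"meta-sensitive", "meta-large"}

def megahit_command(r1, r2, outdir="megahit_output", preset=None,
                    threads=8, memory_fraction=0.9, min_contig_len=200):
    """Build (but do not run) a MEGAHIT command for one paired-end library."""
    cmd = ["megahit", "-1", r1, "-2", r2, "-o", outdir,
           "-t", str(threads), "-m", str(memory_fraction),
           "--min-contig-len", str(min_contig_len)]
    if preset is not None:
        if preset not in VALID_PRESETS:
            raise ValueError(f"unknown preset: {preset}")
        cmd += ["--presets", preset]
    return cmd

# Placeholder file names, for illustration only.
cmd = megahit_command("sample_R1.fastq.gz", "sample_R2.fastq.gz",
                      preset="meta-large")
print(shlex.join(cmd))
```

MEGAHIT stops with an error if the output directory already exists, so a wrapper script typically chooses a fresh directory name per run.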

Protocol 3: Assembly Strategy Selection Framework

Individual vs. Co-assembly Decision Protocol:

Evaluate Sample Characteristics:

- Choose co-assembly when processing samples from the same sampling event, longitudinal sampling of the same site, or related samples with similar community structures [6].

- Choose individual assembly followed by de-replication when working with distinct sampling events, different environments, or communities with substantial strain variation [6].

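Co-assembly Advantages and Disadvantages: Co-assembly can improve coverage for low-abundance organisms, but it risks combining genetically distinct populations and increases computational complexity [6].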

Integration with Gene Prediction Research

The connection between assembly quality and gene prediction accuracy cannot be overstated. High-quality assemblies with longer contigs and fewer errors provide more complete gene models with accurate structural annotations. Research has demonstrated that short-read assemblies often struggle with variable regions of genomes, such as integrated viruses or defense system islands, potentially leading to underestimation of true functional diversity [14]. These limitations directly impact downstream gene prediction, as fragmented assemblies may yield partial gene sequences or miss genetically variable elements entirely.

The emergence of hybrid assembly approaches, combining short-read technologies with long-read sequencing from platforms such as Pacific Biosciences (PacBio) or Oxford Nanopore Technologies (ONT), has shown promise in addressing these limitations. Studies comparing long-read and short-read metagenome assemblies indicate that long-read sequencing improves assembly contiguity and recovery of variable regions, thereby providing more complete templates for gene prediction algorithms [14].

For gene prediction on metagenomic assemblies, tools such as Helixer represent advances in ab initio prediction, using deep learning to identify gene structures directly from genomic DNA without requiring additional experimental data such as RNA sequencing [15]. Unlike traditional hidden Markov model-based tools like GeneMark-ES or AUGUSTUS, Helixer operates across fungal, plant, vertebrate, and invertebrate genomes without species-specific training, making it particularly valuable for metagenomic contigs from diverse and uncharacterized organisms [15].

Visualization of Assembly Workflows

[Figure: metaSPAdes assembly workflow]

[Figure: MEGAHIT assembly workflow]

Table 3: Essential Resources for Metagenomic Assembly and Analysis

| Resource Category | Specific Tools/Components | Function in Workflow |
|---|---|---|
| Primary Assemblers | metaSPAdes, MEGAHIT | Core assembly algorithms for constructing contigs from sequencing reads [11] [12] |
| Quality Assessment | CheckM2, BUSCO, GUNC, QUAST | Evaluate assembly quality, completeness, contamination, and contiguity [16] |
| Gene Prediction | Helixer, GeneMark-ES, AUGUSTUS | Identify and annotate gene structures on assembled contigs [15] |
| Binning Tools | MetaBAT2, SemiBin2 | Group contigs into metagenome-assembled genomes (MAGs) based on sequence composition and coverage [14] [13] |
| Taxonomic Annotation | GTDB-Tk2 | Assign taxonomic classifications to assembled contigs and MAGs [16] |
| Data Sources | NCBI GenBank, IMG/VR | Reference databases for comparative analysis and completeness assessment [17] [15] |

Selection between metaSPAdes and MEGAHIT represents a critical decision point in metagenomic analysis workflows with direct implications for downstream gene prediction research. metaSPAdes excels in environments with significant strain heterogeneity and when assembly quality is prioritized over computational efficiency. In contrast, MEGAHIT offers a robust solution for large-scale metagenomic projects where computational resources are constrained or when processing extremely complex samples such as soil microbiomes. The emerging paradigm of hybrid assembly approaches, leveraging both short and long-read technologies, presents a promising avenue for further improving contiguity and completeness of metagenomic assemblies. As gene prediction algorithms continue to advance, particularly with deep learning approaches like Helixer, the synergy between high-quality assembly and accurate gene annotation will remain fundamental to extracting biological insights from complex microbial communities.

Within the framework of a broader thesis on handling metagenomic contigs for gene prediction research, this application note addresses three critical contig properties—GC-content, the presence of repeat elements, and heterozygosity. These properties significantly influence the quality of metagenome-assembled genomes (MAGs) and the accuracy of subsequent gene prediction and functional annotation [18] [19] [20]. Inaccurate assemblies, driven by these factors, can lead to fragmented genes, false positives in gene calls, and a misrepresentation of the functional potential and antimicrobial resistance (AMR) profiles within a microbial community [19] [21]. This document provides a structured overview of these challenges, summarizes quantitative data, and offers detailed protocols to identify and mitigate their effects, thereby enabling more reliable metagenomic analysis for researchers, scientists, and drug development professionals.

The following tables consolidate key quantitative findings on how GC-content, repeat elements, and heterozygosity impact metagenomic assembly and gene prediction.

Table 1: Impact of GC-Content on Sequencing Coverage and Assembly

| GC Content Range | Observed Coverage Effect | Sequencing Platform | Library Preparation Notes |
|---|---|---|---|
| ~30% GC | >10-fold less coverage vs. 50% GC | Illumina MiSeq/NextSeq | Major bias outside 45-65% GC range [18] |
| 45-65% GC | Optimal (baseline) coverage | Illumina MiSeq/NextSeq | Least biased range for these platforms [18] |
| GC-rich | Falsely low coverage | Illumina MiSeq/NextSeq | Inefficient PCR amplification [18] |
| GC-rich & GC-poor | Under-coverage relative to optimal | Illumina HiSeq, PacBio | Distinct bias profile from MiSeq/NextSeq [18] |
| All ranges | No significant GC bias | Oxford Nanopore | PCR-free workflow [18] |

Table 2: Impact of Repeat Elements and Heterozygosity on Assembly

| Property | Impact on Assembly | Consequence for Gene Prediction |
|---|---|---|
| Antibiotic Resistance Genes (ARGs) | Assemblies tend to break around ARGs [19] | Fragmented ARG contigs lose genomic context and taxonomic origin [19] [21] |
| Mobile Genetic Elements (MGEs) | Highly fragmented, collapsed contigs [21] | Inability to link ARGs to plasmids or phages, misjudging mobilization risk [22] [21] |
| High Heterozygosity (>3%) | Highly fragmented assembly, high duplication rate [20] | Duplicated single-copy genes can be misinterpreted as different genes [20] |
| High Heterozygosity (e.g., 5.29%) | Conventional assembly procedures fail; scaffolds not reaching chromosome level [20] | Hinders accurate biological and genetic research [20] |

Experimental Protocols for Assessing Critical Properties

Protocol: Evaluating and Mitigating GC Bias

1. Objective: To quantify GC-dependent coverage biases in metagenomic sequencing data and account for them in downstream analyses.

2. Materials:

- High-quality metagenomic DNA

- Library preparation kit (note PCR cycles)

- Sequencing platform (e.g., Illumina, Oxford Nanopore)

- Computing resources with bioinformatics software (e.g., FastQC, metaSPAdes, custom scripts)

3. Methodology:

- Library Preparation: If using Illumina, consider PCR-free protocols where possible. If PCR is necessary, optimize polymerase mixtures and use additives such as betaine (for GC-rich regions) or tetramethylammonium chloride (for GC-poor regions) to improve coverage [18].

- Sequencing: Select an appropriate sequencing platform. Note that GC bias profiles vary significantly between platforms; Oxford Nanopore has been shown to be largely unaffected by GC bias [18].

- Bioinformatic Analysis:

- Quality Control: Process raw reads with tools like Trimmomatic or PRINSEQ to remove adapters and low-quality bases [23].

- Calculate GC-Coverage Relationship: Map quality-filtered reads to contigs or a reference genome. For each contig (or genomic window), calculate its mean coverage and its GC content.

- Visualization: Create a scatter plot of coverage versus GC content. A neutral profile shows a random scatter, while a biased profile shows a clear curve (e.g., peak at 50% GC) [18].

- Account for Bias: For quantitative metagenomics, use the GC-coverage relationship to correct abundance estimates before drawing ecological conclusions [18].
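The GC-coverage relationship described in this methodology can be tabulated with a short Python sketch before plotting. It assumes per-contig mean coverage has already been obtained from read mapping; the function names, the 10-percentage-point bin width, and the toy sequences are illustrative assumptions.

```python
from collections import defaultdict

def gc_percent(seq):
    """GC content of a sequence as a percentage."""
    seq = seq.upper()
    return 100 * sum(base in "GC" for base in seq) / len(seq)

def coverage_by_gc_bin(contigs, coverage, bin_width=10):
    """Mean coverage of contigs grouped by GC bin (bin label = lower bound in %).

    contigs: dict of contig ID -> sequence.
    coverage: dict of contig ID -> mean read depth.
    """
    bins = defaultdict(list)
    for contig_id, seq in contigs.items():
        label = int(gc_percent(seq) // bin_width) * bin_width
        bins[label].append(coverage[contig_id])
    return {label: sum(vals) / len(vals) for label, vals in sorted(bins.items())}

# Toy contigs and depths, for illustration only.
contigs = {"c1": "AAAATTTTGC", "c2": "GCGCGATATA", "c3": "GGCCGATTAA"}
depth = {"c1": 2.0, "c2": 20.0, "c3": 30.0}
profile = coverage_by_gc_bin(contigs, depth)
print(profile)
```

A flat profile across bins suggests little GC bias, whereas a peak near the middle bins matches the biased pattern described above. Note that true abundance differences between organisms also shape this profile, so it is most informative on mock communities or within a single genome.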

Protocol: Managing Repetitive Elements and ARGs

1. Objective: To assess the fragmentation of contigs around repetitive elements like ARGs and MGEs and improve their contextual assembly.

2. Materials:

- Metagenomic short-read (Illumina) and/or long-read (Oxford Nanopore, PacBio) data

- High-performance computing infrastructure

- MetaMobilePicker pipeline

- Assembly tools (metaSPAdes, MEGAHIT, Trinity)

- Annotation databases (CARD, mobileOG)

3. Methodology:

- Assembly: Perform de novo assembly using multiple tools. For short reads, metaSPAdes is generally recommended, but for recovering context around repetitive genes, the transcriptome assembler Trinity has shown promise in producing longer, fewer contigs [19].

- Element Identification: Annotate assembled contigs for ARGs using the Comprehensive Antibiotic Resistance Database (CARD) and for MGEs (plasmids, phages, IS elements) using tools integrated in pipelines like MetaMobilePicker [21].

- Fragmentation Assessment:

- Calculate the number of contigs containing ARGs.

- Determine the average length of ARG-containing contigs.

- Check if the ARG is complete and if flanking sequences (e.g., plasmid backbones, chromosomal genes) are present.

- Complementary Quantification: Since assembly often fragments ARGs, directly map quality-filtered reads to an ARG database (e.g., using BWA or Bowtie2) to obtain accurate abundance and diversity measures that are not biased by assembly fragmentation [19].
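The fragmentation assessment metrics listed in this protocol can be computed from a table of ARG hits on contigs. The sketch below assumes hits have already been produced by an annotation tool and reduced to simple tuples; the input layout, the 500 bp flank threshold, the 95% reference-coverage cutoff, and the function name are all hypothetical choices for illustration.

```python
def arg_fragmentation_summary(contig_lengths, arg_hits,
                              min_flank=500, min_ref_cov=0.95):
    """Summarize how fragmented ARG-carrying contigs are.

    contig_lengths: dict of contig ID -> length in bp.
    arg_hits: list of (contig_id, start, end, ref_coverage) tuples with
        1-based inclusive coordinates; ref_coverage is the fraction of the
        reference ARG covered by the hit.
    """
    arg_contigs = {hit[0] for hit in arg_hits}
    lengths = [contig_lengths[c] for c in arg_contigs]
    with_context = 0
    for contig_id, start, end, ref_cov in arg_hits:
        left_flank = start - 1
        right_flank = contig_lengths[contig_id] - end
        if (ref_cov >= min_ref_cov and left_flank >= min_flank
                and right_flank >= min_flank):
            with_context += 1
    return {
        "arg_contigs": len(arg_contigs),
        "mean_arg_contig_length": sum(lengths) / len(lengths) if lengths else 0.0,
        "complete_args_with_flanks": with_context,
    }

# Hypothetical contigs and hits.
lengths = {"k1": 5000, "k2": 900, "k3": 12000}
hits = [("k1", 1000, 2200, 1.0), ("k2", 1, 900, 0.6)]
summary = arg_fragmentation_summary(lengths, hits)
print(summary)
```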

Protocol: Handling High Heterozygosity in Genomes

1. Objective: To assemble a high-quality genome from a metagenome or isolate with high heterozygosity, minimizing fragmentation and duplication.

2. Materials:

- PacBio long-read data or Hi-C data

- Assemblers: MECAT2, 3D-DNA, ALLHiC

- Duplication purging tools: Purge Haplotigs, purge_dups

3. Methodology (Based on the AealbF3 Workflow):

- Read Generation and Draft Assembly: Generate high-coverage long reads (e.g., ~418x PacBio reads). Process and assemble these reads into a draft genome using an assembler like MECAT2 [20].

- Identify Duplication: Use BUSCO to assess single-copy gene duplication. A high duplication rate (>70%) indicates a highly heterozygous assembly [20].

- Extract Flanking Sequences: Identify flanking sequences (>15,000 bp) around duplicated BUSCO genes with the highest BLAST alignment scores [20].

- Chromosome-Level Scaffolding: Map Hi-C data to the extracted flanking sequences. Use the 3d-dna software to generate a Hi-C contact map and cluster sequences into potential chromosomes. Finally, use ALLHiC to orient and order the sequences, producing a chromosome-level assembly [20].

- Evaluation: Assess the new assembly (e.g., AealbF3) for completeness (BUSCO), duplication rate, and contiguity (N50). The goal is a high completeness (>98%) with a low duplication rate (<2%) [20].
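The BUSCO thresholds used in this protocol can be checked programmatically. The sketch below assumes the one-line BUSCO short-summary notation (for example `C:98.9%[S:97.6%,D:1.3%],F:0.4%,M:0.7%,n:3285`); the function names and the category labels are illustrative choices, while the 98%, 2%, and 70% cut-offs are taken from the protocol text.

```python
import re

# One-line BUSCO summary, e.g. "C:98.9%[S:97.6%,D:1.3%],F:0.4%,M:0.7%,n:3285"
BUSCO_PATTERN = re.compile(
    r"C:(?P<C>[\d.]+)%\[S:(?P<S>[\d.]+)%,D:(?P<D>[\d.]+)%\],"
    r"F:(?P<F>[\d.]+)%,M:(?P<M>[\d.]+)%"
)


def parse_busco_summary(line):
    """Return the BUSCO percentages (C, S, D, F, M) as floats."""
    match = BUSCO_PATTERN.search(line)
    if match is None:
        raise ValueError("not a BUSCO one-line summary: %r" % line)
    return {key: float(value) for key, value in match.groupdict().items()}


def classify_assembly(busco, min_complete=98.0, max_duplicated=2.0,
                      heterozygous_duplicated=70.0):
    """Apply the protocol's thresholds to parsed BUSCO percentages."""
    if busco["D"] > heterozygous_duplicated:
        return "highly_duplicated"  # likely unresolved heterozygosity
    if busco["C"] > min_complete and busco["D"] < max_duplicated:
        return "target_met"
    return "needs_review"
```

For example, `classify_assembly(parse_busco_summary("C:98.9%[S:97.6%,D:1.3%],F:0.4%,M:0.7%,n:3285"))` returns `"target_met"`.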

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Computational Tools

| Item Name | Function / Application | Specific Example / Note |

|---|---|---|

| Betaine (PCR Additive) | Improves amplification of GC-rich regions during library prep, mitigating coverage bias [18]. | Used in PCR-dependent Illumina library protocols. |

| Oxford Nanopore Tech. | Sequencing platform demonstrating minimal GC bias, advantageous for unbiased coverage [18]. | PCR-free library preparation is a key feature. |

| Trimmomatic | Software for quality filtering of raw sequencing reads; removes adapters and low-quality bases [23]. | Critical pre-processing step before assembly. |

| metaSPAdes | Metagenomic assembler for short reads; generally performs well but may fragment repeats [19] [21]. | Resource-intensive; requires high-performance computing. |

| Trinity | Transcriptome assembler that can be applied to metagenomes to recover longer contigs around conserved genes [19]. | Useful for contextualizing ARGs. |

| Comprehensive Antibiotic Resistance Database (CARD) | Reference database for annotating antibiotic resistance genes in contigs or reads [19]. | Essential for resistome analysis. |

| BUSCO (Benchmarking Universal Single-Copy Orthologs) | Tool to assess genome completeness and duplication, identifying potentially heterozygous assemblies [20]. | High duplication rate flags heterozygosity. |

| ALLHiC | Software package for scaffolding and ordering contigs into chromosomes using Hi-C data [20]. | Part of the workflow for handling heterozygosity. |

| BWA-MEM / VG | Read alignment tools. VG maps to graph genomes, reducing reference bias and improving accuracy in repetitive regions [24]. | VG is part of a pangenome approach. |

Workflow and Relationship Diagrams

The following diagram illustrates the logical workflow for analyzing a metagenomic sample, highlighting the points at which the three critical contig properties impact the process and the corresponding mitigation strategies.

The assembly of metagenomic samples is paramount for elucidating the mobility potential and taxonomic origin of antibiotic resistance genes (ARGs), information that is critical for assessing public health risks and designing interventions. However, the presence of nearly identical ARGs and other conserved regions across multiple genomic contexts and bacterial species presents a substantial challenge for assembly algorithms [19]. These highly similar sequences create complex, branched assembly graphs that are difficult to resolve, invariably leading to premature contig breaks precisely in the regions of greatest interest [19]. This fragmentation obscures the genomic context of ARGs, including their association with mobile genetic elements (MGEs) and their bacterial host organisms, thereby complicating risk assessment and hindering efforts to track the dissemination of antimicrobial resistance (AMR) [19].

This Application Note delineates the core technical challenges of ARG fragmentation, provides quantitative data from comparative assessments of assembly strategies, and offers detailed protocols for leveraging advanced sequencing and bioinformatic techniques to overcome these hurdles, all within the framework of processing metagenomic contigs for gene prediction research.

Quantitative Assessment of Assembly Performance

Systematic evaluations of assembly tools reveal significant differences in their ability to reconstruct ARGs and their flanking regions. The following tables summarize key performance metrics from controlled benchmarking studies, providing a basis for selecting an appropriate assembly strategy.

Table 1: Performance of Assembly Tools in Recovering ARG Contexts from a Spiked Metagenomic Sample (Short-Read Data) [19]

| Assembly Tool | Assembly Type | Contiguity (Total contig length ≥500 bp) | Ability to Recover Unique Genomic Contexts | Fragmentation Around ARGs |

|---|---|---|---|---|

| metaSPAdes | Metagenomic | Intermediate | Moderate | High |

| MEGAHIT | Metagenomic | Low (very short contigs) | Poor | Very High |

| Trinity | Transcriptomic | High (longer, fewer contigs) | Good | Moderate |

| Velvet | Genomic | Intermediate | Moderate | High |

| SPAdes | Genomic | Intermediate | Moderate | High |

| Ray | Metagenomic | Intermediate | Moderate | High |

Table 2: Benefits of Co-assembly for Improving Assembly Quality in Low-Biomass Airborne Metagenomes [25]

| Assembly Metric | Individual Assembly | Co-assembly | Improvement |

|---|---|---|---|

| Genome Fraction (%) | 4.83 ± 2.71% | 4.94 ± 2.64% | Slight Increase |

| Duplication Ratio | 1.23 ± 0.20 | 1.09 ± 0.06 | Significant Reduction |

| Mismatches per 100 kbp | 4491.1 ± 344.46 | 4379.82 ± 339.23 | Slight Reduction |

| Number of Misassemblies | 410.67 ± 257.66 | 277.67 ± 107.15 | Significant Reduction |

| Total Contig Length (≥500 bp) | 334.31 Mbp | 555.79 Mbp | Substantial Increase |

Detailed Experimental Protocols

Protocol: Long-Read Metagenomic Sequencing for ARG Host Linking

This protocol utilizes Oxford Nanopore Technologies (ONT) long-read sequencing and DNA modification profiling to link plasmids carrying ARGs to their bacterial hosts [26].

I. Sample Preparation and DNA Extraction

- Input: Complex samples (e.g., chicken feces, wastewater, soil).

- Step 1: Preserve samples immediately upon collection using a stabilizer such as DNA/RNA Shield.

- Step 2: Extract high-molecular-weight genomic DNA. Use kits designed for long-read sequencing to maximize DNA integrity and size.

- Quality Control: Verify DNA quantity and quality using a fluorometer and fragment analyzer.

II. Library Preparation and Sequencing

- Step 3: Prepare a sequencing library from native DNA (without PCR amplification) to preserve epigenetic modifications, using ONT's kit (e.g., Ligation Sequencing Kit).

- Step 4: Load the library onto a PromethION flow cell (R10 or newer) and sequence for up to 72 hours using the associated instrument and V14 chemistry.

III. Bioinformatic Analysis for Host Linking

- Step 5: Basecalling and Assembly. Perform high-accuracy basecalling and assemble reads into contigs using a long-read assembler (e.g., Flye).

- Step 6: ARG Identification. Annotate ARGs on contigs using a tool like AMRFinderPlus or by aligning to the Comprehensive Antibiotic Resistance Database (CARD).

- Step 7: Methylation Motif Detection. Use the basecaller to detect DNA modification signals (4mC, 5mC, 6mA) from the raw sequencing data. Subsequently, run a tool like NanoMotif or MicrobeMod to identify active methylation motifs in the assembled contigs.

- Step 8: Plasmid-Host Linking. Cluster contigs derived from the same bacterial host by identifying shared, strain-specific methylation motifs. A plasmid contig carrying an ARG will cluster with the chromosomal contigs of its host, enabling confident assignment [26].

Diagram 1: Methylation-based host linking workflow.
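The idea behind Step 8 can be illustrated with a toy example. The sketch below is not the algorithm used by NanoMotif or MicrobeMod; it substitutes a simple Jaccard similarity between motif sets, and the function names, the 0.5 similarity threshold, and the motif strings are all assumptions made for illustration.

```python
def jaccard(motifs_a, motifs_b):
    """Jaccard similarity between two collections of methylation motifs."""
    a, b = set(motifs_a), set(motifs_b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def link_plasmids_to_hosts(plasmid_motifs, host_motifs, min_similarity=0.5):
    """Assign each plasmid contig to the host bin with the most similar motif set.

    plasmid_motifs / host_motifs: dict mapping contig or bin ID -> set of motifs.
    Returns a dict plasmid ID -> host ID, or None when no host is similar enough.
    """
    links = {}
    for plasmid, motifs in plasmid_motifs.items():
        best_host, best_score = None, 0.0
        for host, candidate_motifs in host_motifs.items():
            score = jaccard(motifs, candidate_motifs)
            if score > best_score:
                best_host, best_score = host, score
        links[plasmid] = best_host if best_score >= min_similarity else None
    return links


# Hypothetical motif sets for two plasmid contigs and two host bins.
example_links = link_plasmids_to_hosts(
    {"plasmid_1": {"GATC", "CCWGG"}, "plasmid_2": {"AACNNNNNNGTGC"}},
    {"bin_A": {"GATC", "CCWGG", "GANTC"}, "bin_B": {"GTAC"}},
)
```

Leaving a plasmid unassigned when no host passes the threshold is a deliberate choice here: motifs shared by many strains carry little linking information, so a forced assignment would be misleading.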

Protocol: Co-assembly of Metagenomic Samples for Enhanced ARG Recovery

This protocol is designed for complex, low-biomass samples (e.g., air, water) where individual assembly fails. Co-assembly pools multiple samples to increase effective sequencing depth and improve the recovery of ARG contexts [25].

I. Sample Grouping and Data Pre-processing

- Step 1: Define Subgroups. Group individual metagenomic samples based on shared characteristics, such as sampling time, location, or preliminary taxonomic profile (e.g., via 16S rRNA gene sequencing).

- Step 2: Quality Control and Read Trimming. Process raw sequencing reads for each sample independently using tools like FastQC and Trimmomatic to remove adapters and low-quality bases.

II. Co-assembly Execution

- Step 3: Pool Reads. Concatenate all quality-filtered reads from the samples within a predefined subgroup into a single, large read set.

- Step 4: Perform Co-assembly. Assemble the pooled reads using a metagenome assembler such as metaSPAdes.

spades.py --meta -1 pooled_1.fastq -2 pooled_2.fastq -o coassembly_output

- Step 5: Assess Assembly Quality. Use tools like QUAST or CheckM to evaluate assembly metrics (N50, number of contigs) and compare them against individual assemblies.

III. ARG Annotation and Context Analysis

- Step 6: Identify ARGs. Annotate ARGs on the co-assembled contigs using a database like SARG or CARD.

- Step 7: Quantify Context Improvement. Compare the length of ARG-containing contigs and the presence of flanking MGEs (e.g., using tools like MobileElementFinder) between the co-assembly and individual assemblies.
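The read-pooling step of this protocol (Step 3) amounts to concatenating the per-sample files while keeping forward and reverse mates in the same order. A minimal sketch, assuming paired-end FASTQ files; the function name and file names are hypothetical.

```python
import shutil


def pool_paired_reads(sample_pairs, pooled_r1, pooled_r2):
    """Concatenate per-sample paired FASTQ files into one pooled pair.

    sample_pairs: list of (r1_path, r2_path) tuples, one per sample.
    The same sample order is used for both outputs so that mates stay in sync.
    Files are copied as bytes, so gzip-compressed inputs can also be pooled
    (concatenated gzip members form a valid gzip stream).
    """
    with open(pooled_r1, "wb") as out1, open(pooled_r2, "wb") as out2:
        for r1_path, r2_path in sample_pairs:
            with open(r1_path, "rb") as fh:
                shutil.copyfileobj(fh, out1)
            with open(r2_path, "rb") as fh:
                shutil.copyfileobj(fh, out2)
```

A call such as `pool_paired_reads([("s1_1.fastq", "s1_2.fastq"), ("s2_1.fastq", "s2_2.fastq")], "pooled_1.fastq", "pooled_2.fastq")` would produce the pooled inputs expected by the assembler.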

Protocol: Species-Resolved ARG Profiling with Argo

Argo is a read-based profiler that uses long-read overlapping to accurately assign ARGs to their host species without assembly, thus bypassing fragmentation issues [27].

I. Data Preparation and ARG Screening

- Input: Long reads (ONT or PacBio) from a metagenomic sample.

- Step 1: Identify ARG-Carrying Reads. Align all reads against the SARG+ database (a manually curated expansion of CARD, NDARO, and SARG) using DIAMOND in frameshift-aware mode. This step filters out reads without ARGs.

diamond blastx --db SARGplus --query reads.fastq --out argo_matches.txt --outfmt 6 ...

II. Taxonomic Classification via Read Clustering

- Step 2: Map Reads to Taxonomy Database. Map the ARG-containing reads to a comprehensive taxonomy database (e.g., GTDB) using minimap2 in base-level alignment mode to generate candidate species labels for each read.

- Step 3: Build and Segment Overlap Graph. Use minimap2 to compute all-vs-all pairwise overlaps between the ARG-containing reads. Construct an overlap graph and segment it into read clusters using the Markov Cluster (MCL) algorithm. Each cluster ideally represents a single ARG from a specific species.

- Step 4: Assign Taxonomy per Cluster. Assign a consensus taxonomic label to all reads within a cluster, rather than to individual reads. This collective assignment significantly enhances accuracy by leveraging the consensus from multiple overlapping sequences [27].

Diagram 2: Argo workflow for species-resolved ARG profiling.
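The cluster-level labelling in Steps 3 and 4 can be illustrated with a simplified sketch. This is not Argo's implementation: connected components stand in for the Markov Cluster (MCL) algorithm, a plain majority vote stands in for Argo's consensus rule, and all function names and example labels are hypothetical.

```python
from collections import Counter, defaultdict


def connected_components(reads, overlaps):
    """Group reads joined by overlaps (a stand-in for MCL graph segmentation)."""
    parent = {read: read for read in reads}

    def find(read):
        # Union-find lookup with path halving.
        while parent[read] != read:
            parent[read] = parent[parent[read]]
            read = parent[read]
        return read

    for a, b in overlaps:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    clusters = defaultdict(list)
    for read in reads:
        clusters[find(read)].append(read)
    return list(clusters.values())


def consensus_labels(clusters, candidate_labels):
    """Give every read in a cluster the most common candidate species label."""
    assigned = {}
    for cluster in clusters:
        votes = Counter(
            candidate_labels[read] for read in cluster if read in candidate_labels
        )
        label = votes.most_common(1)[0][0] if votes else None
        for read in cluster:
            assigned[read] = label
    return assigned


# Hypothetical reads, overlaps, and per-read candidate labels.
reads = ["r1", "r2", "r3", "r4", "r5"]
overlaps = [("r1", "r2"), ("r2", "r3"), ("r4", "r5")]
per_read = {"r1": "Escherichia coli", "r2": "Escherichia coli",
            "r3": "Shigella flexneri", "r4": "Klebsiella pneumoniae"}
example_assignment = consensus_labels(connected_components(reads, overlaps), per_read)
```

In this toy case the read `r3`, whose individual best hit disagrees with its neighbours, inherits the cluster label, and `r5`, which had no label of its own, is classified through its overlap with `r4`.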

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Overcoming ARG Fragmentation

| Resource Name | Type | Primary Function | Key Application in Protocol |

|---|---|---|---|

| Oxford Nanopore R10 Flow Cell & V14 Chemistry | Sequencing Hardware | Generates highly accurate long reads with native DNA modification detection. | Enables long-read assembly and methylation-based host linking in Protocol 3.1. |

| SARG+ Database | Bioinformatics Database | A comprehensive, non-redundant ARG protein sequence database. | Used by Argo (Protocol 3.3) for sensitive and specific identification of ARGs from long reads. |

| GTDB (Genome Taxonomy Database) | Bioinformatics Database | A high-quality, standardized microbial taxonomy database. | Provides the reference for accurate taxonomic classification of ARG-containing read clusters in Argo. |

| NanoMotif | Bioinformatics Tool | Detects active DNA methylation motifs from native ONT sequencing data. | Critical for identifying strain-specific signatures to link plasmids to hosts in Protocol 3.1. |

| metaSPAdes | Bioinformatics Tool | A metagenomic assembler designed for complex microbial communities. | The core assembler used for both individual and co-assembly approaches in Protocol 3.2. |

| Trinity | Bioinformatics Tool | A transcriptome assembler repurposed for metagenomics. | Useful for recovering longer contigs around conserved regions in complex samples (Table 1). |

| CRISPR-Cas9 Enrichment | Molecular Biology Method | Enriches low-abundance ARG targets prior to sequencing. | Dramatically lowers detection limits, allowing identification of ARGs missed by standard metagenomics [28]. |

The analysis of microbial communities through metagenomics has revolutionized our understanding of the microbial world, bypassing the need for isolation and lab cultivation of individual species [9]. This approach involves directly sampling and sequencing genetic material from environmental samples, enabling researchers to uncover microbial diversity and functional potential with unprecedented resolution [29]. The fundamental challenge in metagenomics lies in transforming raw sequencing data into accurate, analyzable contigs that faithfully represent the genomic content of complex microbial communities. This process is particularly crucial for downstream applications such as gene prediction, which serves as the gateway to understanding the functional capabilities of microbial ecosystems [9] [30].

The complexity of metagenomic data arises from several factors: the coexistence of thousands of different species in a single sample, highly uneven species abundance, and the fragmented nature of sequencing outputs [9]. For researchers and drug development professionals, establishing a robust, reproducible workflow from raw reads to analyzable contigs is essential for extracting biologically meaningful information that can inform therapeutic discovery and functional characterization. This protocol outlines a comprehensive workflow to address these challenges, incorporating both established practices and recent advancements in the field.

Metagenomic Workflow: From Sample to Contigs

The journey from environmental sample to analyzable contigs involves multiple critical steps, each contributing to the quality and reliability of the final output. The overall workflow, integrating both short-read and long-read approaches, is visualized in Figure 1.

Figure 1. Comprehensive workflow for metagenomic analysis from sample collection to gene prediction. The diagram illustrates the parallel paths for short-read and long-read sequencing technologies, converging at assembly evaluation before proceeding to downstream applications like binning and gene prediction. MAG: Metagenome-Assembled Genome.

Sample Collection and DNA Extraction

The initial steps of sample collection and DNA extraction are critical as they establish the foundation for all subsequent analyses.

Sample Collection: The specific sampling strategy must be tailored to the research question and environment. For instance, rhizosphere studies require collecting soil tightly associated with plant roots, while human gut microbiome investigations necessitate fecal samples [30]. Temporal considerations are also important; in a study of infant viromes, samples were collected at multiple time points following vaccination to capture temporal dynamics [30]. Environmental parameters such as pH, temperature, and nutrient availability should be documented as they help interpret resulting genomic data.

DNA Extraction: This step must efficiently lyse diverse microbial cell types while minimizing bias. A recommended approach uses enzymatic lysis with lysozyme, lysostaphin, and mutanolysin to target different cell wall types, followed by chemical or mechanical disruption [30]. The quality and quantity of extracted DNA should be rigorously assessed using spectrophotometric (e.g., Nanodrop) and fluorometric (e.g., Qubit) methods. For highly complex samples like soil, specialized extraction kits designed for environmental samples often yield better results.

Sequencing Technologies and Library Preparation

Selecting appropriate sequencing technology is crucial and involves trade-offs between read length, accuracy, throughput, and cost.

Table 1: Comparison of Sequencing Technologies for Metagenomics

| Technology | Read Length | Key Advantages | Key Limitations | Ideal Applications |

|---|---|---|---|---|

| Illumina | Short-read (75-300 bp) | High accuracy (<0.1% error rate), high throughput, low cost per base | Short reads complicate assembly in repetitive regions | High-coverage sequencing of low-complexity communities, taxonomic profiling |

| PacBio HiFi | Long-read (10-25 kb) | High accuracy (>99.9%), long reads resolve repeats | Higher DNA input requirements, lower throughput | Complex community assembly, complete genome reconstruction |

| Nanopore | Long-read (up to 2 Mb+) | Real-time sequencing, very long reads, portable options | Higher error rates (historically 5-15%; lower with recent chemistries) that may require correction | Complex environmental samples, rapid in-field sequencing |

Library preparation follows standardized protocols specific to each sequencing platform. For Illumina, this typically involves DNA fragmentation, adapter ligation, size selection, and PCR amplification [30]. For long-read technologies, the process often focuses on size selection to maximize read length without fragmentation. Quality control of the final library using bioanalyzer systems or qPCR is essential to ensure sequencing success [30].

Read Processing and Quality Control

Raw sequencing data requires processing to remove low-quality sequences and artifacts before assembly.

Short-Read Processing: Tools like fastp perform adapter trimming, quality filtering, and read pruning [31]. Commonly used settings include removing bases with quality scores below Q20 and discarding reads shorter than 50 bp after trimming.

Long-Read Processing: Nanopore reads typically require filtering based on quality scores and length. Tools like NanoFilt can remove reads with average quality below Q10 and lengths below 1 kb. For PacBio data, circular consensus sequencing (CCS) mode generates high-fidelity (HiFi) reads by sequencing the same molecule multiple times.
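The long-read filtering criteria above (mean quality of at least Q10, length of at least 1 kb) can be expressed as a short Python sketch. It is an illustration of the filtering logic rather than a replacement for NanoFilt; the function names are hypothetical, Phred+33 encoding is assumed, and the mean quality is computed by averaging error probabilities rather than raw Phred scores.

```python
import math


def mean_phred(quality_string, offset=33):
    """Mean read quality, averaged in error-probability space (Phred+33 assumed)."""
    if not quality_string:
        return 0.0
    error_probs = [10 ** (-(ord(ch) - offset) / 10) for ch in quality_string]
    return -10 * math.log10(sum(error_probs) / len(error_probs))


def filter_reads(records, min_quality=10.0, min_length=1000):
    """Yield (name, sequence, quality) records passing both thresholds."""
    for name, sequence, quality in records:
        if len(sequence) >= min_length and mean_phred(quality) >= min_quality:
            yield name, sequence, quality


# Hypothetical reads: '5' encodes Q20 and '&' encodes Q5 in Phred+33.
example_reads = [
    ("long_good", "A" * 1200, "5" * 1200),
    ("too_short", "A" * 500, "5" * 500),
    ("low_quality", "A" * 1200, "&" * 1200),
]
kept_names = [name for name, _, _ in filter_reads(example_reads)]
```

Averaging error probabilities instead of Phred scores penalizes reads with a few very poor stretches more strongly, which is usually the behaviour wanted before assembly.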

Metagenomic Assembly

Assembly reconstructs contiguous sequences (contigs) from the overlapping reads, which is particularly challenging for metagenomic data due to multiple closely related strains and species.

Table 2: Metagenomic Assemblers and Their Applications

| Assembler | Algorithm Type | Read Type | Key Features | Considerations |

|---|---|---|---|---|

| metaSPAdes [9] | De Bruijn graph | Short-read | Multicut, mismatch correction | Effective for diverse communities but computationally intensive |

| metaFlye [32] [31] | Overlap-layout-consensus | Long-read | --meta flag for metagenomes, repeat resolution | Good for complex samples, used in the mmlong2 workflow [33] |

| HiCanu [32] | Overlap-layout-consensus | Long-read | Adapted from Canu for HiFi data, high accuracy | Resource-intensive, requires high-memory computing |

| hifiasm-meta [32] | Graph-based | Long-read | Optimized for PacBio HiFi data, haplotype resolution | Fast assembly with good contiguity |

| metaMDBG [32] | Graph-based | Long-read | Minimizer-based, memory efficient | Suitable for large datasets, multiple versions available |

Recent advancements in long-read sequencing have dramatically improved metagenomic assembly. The mmlong2 workflow exemplifies this progress, incorporating multiple optimizations for recovering prokaryotic genomes from complex samples, including differential coverage binning, ensemble binning (using multiple binners), and iterative binning [33]. This approach recovered over 15,000 previously undescribed microbial species from terrestrial samples, expanding the phylogenetic diversity of the prokaryotic tree of life by 8% [33].

Assembly Quality Assessment and Contig Binning

Evaluating assembly quality is crucial before proceeding to downstream analyses. Key metrics include contiguity (N50, L50), completeness, and contamination. CheckM2 is widely used for assessing completeness and contamination of metagenome-assembled genomes (MAGs) [34]. For a more thorough evaluation, anvi-script-find-misassemblies can identify potential assembly errors by examining long-read mapping patterns [32].
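The contiguity metrics mentioned above have simple definitions: N50 is the length of the contig at which the cumulative length of contigs, sorted from longest to shortest, first reaches half of the total assembly length, and L50 is the number of contigs needed to get there. A minimal sketch (the function name is illustrative):

```python
def n50_l50(contig_lengths):
    """Return (N50, L50) for a collection of contig lengths in bp."""
    ordered = sorted(contig_lengths, reverse=True)
    half_total = sum(ordered) / 2
    running = 0
    for count, length in enumerate(ordered, start=1):
        running += length
        if running >= half_total:
            return length, count
    raise ValueError("no contigs supplied")
```

For contigs of 500, 400, 300, 200, and 100 bp (1,500 bp in total), the two longest contigs already cover 900 bp, so `n50_l50([100, 200, 300, 400, 500])` returns `(400, 2)`.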

Contig binning groups assembled contigs into MAGs representing individual populations. This can be achieved through composition-based methods (using k-mer frequencies) and/or abundance-based methods (using coverage patterns across multiple samples). The mmlong2 workflow demonstrates the power of combining multiple binning strategies to maximize MAG recovery from complex samples [33].
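Composition-based binning rests on the observation that k-mer frequencies (commonly tetranucleotides) are relatively consistent within a genome. The sketch below computes a 256-dimensional tetranucleotide frequency vector for a contig; it is a simplified illustration with a hypothetical function name, and unlike most production binners it does not merge reverse-complementary k-mers.

```python
from itertools import product


def kmer_frequencies(sequence, k=4):
    """Return the k-mer frequency vector of a sequence in lexicographic k-mer order.

    k-mers containing characters other than A, C, G, T (e.g. N) are skipped.
    """
    sequence = sequence.upper()
    kmers = ["".join(p) for p in product("ACGT", repeat=k)]
    counts = dict.fromkeys(kmers, 0)
    total = 0
    for i in range(len(sequence) - k + 1):
        kmer = sequence[i:i + k]
        if kmer in counts:
            counts[kmer] += 1
            total += 1
    return [counts[kmer] / total if total else 0.0 for kmer in kmers]
```

Vectors from different contigs can then be compared with a distance measure or passed to a clustering algorithm, ideally together with per-sample coverage values as described above.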

The Scientist's Toolkit: Essential Research Reagents and Computational Tools

Successful metagenomic analysis requires both wet-lab reagents and computational tools. The following table summarizes key components of the metagenomics toolkit.

Table 3: Essential Research Reagent Solutions and Computational Tools for Metagenomic Analysis

| Category | Item/Software | Specific Application/Function | Key Features/Benefits |

|---|---|---|---|

| Wet-Lab Reagents | Lysozyme, Lysostaphin, Mutanolysin | Enzymatic cell lysis in DNA extraction | Targets diverse cell wall types; increases DNA yield from complex samples |

| | PacBio SMRTbell Express Template Prep Kit | Library preparation for PacBio sequencing | Optimized for long-read sequencing; maintains DNA integrity |

| | Illumina DNA Prep Kits | Library preparation for Illumina sequencing | High-efficiency adapter ligation; compatible with low-input samples |

| Computational Tools | fastp [31] | Quality control and adapter trimming | Fast processing; integrated quality reporting |

| | metaFlye [32] | Long-read metagenomic assembly | Resolves repetitive regions; --meta flag for complex communities |

| | CheckM2 [34] | Assembly quality assessment | Estimates completeness and contamination; rapid analysis |

| | CompareM2 [34] | Comparative genome analysis | Easy installation; comprehensive reporting for bacterial/archaeal genomes |

| | AMRomics [31] | Large-scale microbial genome analysis | Scalable to thousands of genomes; supports progressive analysis |

| | Meteor2 [35] | Taxonomic, functional, and strain-level profiling | Uses environment-specific gene catalogs; improved sensitivity for low-abundance species |

| | Prokka [31] | Genome annotation | Rapid annotation; standardized output format |

| | Bakta [34] | Genome annotation | Comprehensive functional annotation; database included |

Downstream Analysis: Connecting Contigs to Gene Prediction

The ultimate goal of generating analyzable contigs is to enable biological insights through downstream analyses such as gene prediction and functional annotation.

Gene Prediction Approaches

Gene prediction in metagenomic data presents unique challenges compared to isolate genomes, including fragmented genes and unknown source organisms [9]. Three primary approaches have been developed:

Homology-based methods (e.g., CRITICA, Orpheus) use BLAST against known protein databases but cannot predict novel genes without homologs [9].

Model-based methods (e.g., MetaGeneMark, FragGeneScan) employ hidden Markov models or higher-order Markov chains but require thousands of parameters [9].

Machine learning-based methods (e.g., MetaGUN, Orphelia) use features like codon usage, open reading frame (ORF) length, and sequence patterns to train classifiers for gene identification [9].

The Meta-MFDL predictor represents a recent advancement, fusing multiple features (monocodon usage, monoamino acid usage, ORF length coverage, and Z-curve features) with deep stacking networks to improve prediction accuracy [9]. For ORFs shorter than 60 bp, which are typically too short to provide useful information, prediction is generally not recommended [9].
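Two of the feature types named above, ORF length and monocodon usage, are easy to illustrate. The sketch below is not the Meta-MFDL implementation: it scans only the forward strand, reports only complete ORFs (ATG to stop codon), and uses hypothetical function names. The 60 bp minimum length follows the cut-off mentioned in the text.

```python
from itertools import product

STOP_CODONS = {"TAA", "TAG", "TGA"}
CODONS = ["".join(p) for p in product("ACGT", repeat=3)]


def find_orfs(sequence, min_length=60):
    """Return (start, end) coordinates of complete forward-strand ORFs.

    Coordinates are 0-based, end-exclusive, and include the stop codon.
    """
    sequence = sequence.upper()
    orfs = []
    for frame in range(3):
        start = None
        for i in range(frame, len(sequence) - 2, 3):
            codon = sequence[i:i + 3]
            if start is None and codon == "ATG":
                start = i
            elif start is not None and codon in STOP_CODONS:
                if i + 3 - start >= min_length:
                    orfs.append((start, i + 3))
                start = None
    return orfs


def monocodon_usage(orf_sequence):
    """Return the 64-dimensional codon frequency vector of an ORF."""
    orf_sequence = orf_sequence.upper()
    counts = dict.fromkeys(CODONS, 0)
    total = 0
    for i in range(0, len(orf_sequence) - 2, 3):
        codon = orf_sequence[i:i + 3]
        if codon in counts:
            counts[codon] += 1
            total += 1
    return [counts[codon] / total if total else 0.0 for codon in CODONS]
```

A real metagenomic feature extractor would also handle the reverse strand and incomplete ORFs that run off the ends of short contigs, both of which are common in fragmented assemblies.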

Functional Annotation and Interpretation

After gene prediction, functional annotation assigns biological meaning to predicted genes. This typically involves comparing protein sequences against databases such as KEGG for metabolic pathways, dbCAN for carbohydrate-active enzymes, and AMRFinderPlus for antimicrobial resistance genes [34] [35] [31]. Tools like CompareM2 and AMRomics integrate multiple annotation sources to provide comprehensive functional profiles [34] [31].

Advanced approaches are emerging that leverage machine learning to extract deeper insights from genomic context. The genomic language model (gLM) trains transformer neural networks on millions of metagenomic scaffolds to learn functional and regulatory relationships between genes, demonstrating that contextualized protein embeddings can capture biologically meaningful information such as enzymatic function and taxonomic affiliation [36].

The workflow from raw reads to analyzable contigs represents the foundational pipeline in metagenomic studies, determining the quality and reliability of all subsequent biological interpretations. As sequencing technologies continue to evolve, with long-read approaches enabling more complete genome reconstruction from complex samples [33], and computational methods advance through deep learning approaches [9] [36], this workflow continues to improve in both accuracy and accessibility.

For researchers focused on gene prediction from microbial communities, establishing a robust and reproducible workflow following the protocols outlined in this application note is essential. The integration of wet-lab protocols with computational tools, coupled with rigorous quality control at each step, ensures that resulting contigs faithfully represent the microbial community under investigation. This foundation enables accurate gene prediction and functional annotation, ultimately supporting drug discovery and broader microbial ecology research.

Gene Prediction Methodologies: From Ab Initio Tools to Deep Learning Applications

Within the framework of metagenomic contig analysis, ab initio gene prediction represents a critical first step in deciphering the functional potential of uncultured microorganisms. This methodology identifies protein-coding genes directly from nucleotide sequences by leveraging intrinsic signals—such as codon usage, ribosome binding sites, and GC frame bias—without relying on experimental data or homology comparisons [37] [38]. In metagenomics, where a substantial proportion of sequences originate from unknown and uncharacterized organisms, this reference-independent approach is indispensable for discovering novel genes and functions [39].

The computational challenge is substantial. Metagenomic assemblies present fragmented sequences from diverse organisms with varying genomic signatures, complicating the accurate delineation of gene boundaries [40] [41]. This application note details standardized protocols for employing two preeminent ab initio prediction tools, Prodigal and MetaGeneMark, within metagenomic research workflows. We provide experimental validations and performance benchmarks to guide researchers in their effective application.

Prodigal (PROkaryotic DYnamic programming Gene-finding ALgorithm)

Prodigal employs a dynamic programming algorithm to identify the optimal tiling path of genes across a genomic sequence [37]. Its strength lies in its unsupervised operation; it automatically derives a training profile from the input sequence, capturing characteristics like start codon usage, ribosomal binding site motifs, and GC content bias. A key feature is its use of the GC frame plot, which analyzes the bias for guanine and cytosine in the three codon positions across a 120-base pair window to distinguish coding from non-coding regions [37].
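The GC frame plot idea can be illustrated with a small sketch that measures GC content separately at each of the three codon positions within a sliding 120 bp window. This is a conceptual illustration with hypothetical function names, not Prodigal's own code; the window size follows the description above, and the step size is an assumption.

```python
def gc_by_codon_position(sequence, start, window=120):
    """GC fraction at codon positions 1, 2, and 3 (relative to frame 0 of the
    full sequence) within sequence[start:start + window]."""
    gc_counts = [0, 0, 0]
    totals = [0, 0, 0]
    for i in range(start, min(start + window, len(sequence))):
        position = i % 3
        totals[position] += 1
        if sequence[i] in "GCgc":
            gc_counts[position] += 1
    return [g / t if t else 0.0 for g, t in zip(gc_counts, totals)]


def gc_frame_profile(sequence, window=120, step=3):
    """Slide the window along the sequence and collect per-position GC fractions."""
    return [
        (start, gc_by_codon_position(sequence, start, window))
        for start in range(0, len(sequence) - window + 1, step)
    ]
```

In coding regions one codon position typically shows a consistently higher GC fraction than the others, whereas non-coding regions tend to show no stable preference; that periodic signal is what the frame plot exploits.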

MetaGeneMark

The MetaGeneMark algorithm utilizes inhomogeneous Markov models of orders 3, 4, and 5 to model protein-coding regions [42]. The MetaGeneMark software is often applied with models pre-computed for sequences with specific GC content. For more advanced analysis, the GeneMark-HM pipeline leverages a database of pan-genomes from human microbiome species. It assigns a nearly optimal model to each metagenomic contig by first performing a similarity search of initially predicted genes against the pan-genome database. If a close relative is found, the corresponding species-specific model is used for a more accurate prediction; otherwise, the contig is analyzed by the self-training GeneMarkS-2 or the standard MetaGeneMark with GC-specific models [42].

The Scientist's Toolkit: Essential Research Reagents

The following table catalogues the key computational tools and databases essential for implementing the ab initio gene prediction protocols described in this note.

Table 1: Key Research Reagent Solutions for Ab Initio Gene Prediction

| Name | Type | Primary Function |

|---|---|---|

| Prodigal [37] | Gene Prediction Algorithm | Accurately predicts prokaryotic genes and translation initiation sites in isolated genomes and metagenomic contigs. |

| MetaGeneMark [42] | Gene Prediction Algorithm | Predicts genes in metagenomic fragments using Markov models and GC content-specific models. |

| GeneMark-HM [42] | Metagenomic Annotation Pipeline | Improves prediction accuracy in human microbiome samples using a pan-genome database for model selection. |

| geneRFinder [38] | Machine Learning Gene Finder | Identifies coding sequences and intergenic regions using a Random Forest model, designed for complex metagenomes. |

| FragGeneScan [40] | Gene Prediction Algorithm | A tool designed for predicting genes in fragmented metagenomic data, accounting for sequencing errors. |

| CheckM [43] | Genome Quality Assessment Tool | Evaluates the completeness and contamination of Metagenome-Assembled Genomes (MAGs) post-binning. |

| KEGG [44] | Functional Database | Used for the functional annotation of predicted genes, particularly for mapping onto metabolic pathways. |

| eggNOG [44] | Functional Database | Provides orthologous group information for functional annotation and evolutionary analysis of predicted genes. |

| GTDB-tk [40] | Taxonomic Classification Tool | Assigns taxonomic labels to MAGs based on the Genome Taxonomy Database. |

Experimental Protocols & Performance Benchmarks

Protocol: Gene Prediction with Prodigal on Metagenomic Contigs

This protocol is designed for predicting open reading frames (ORFs) from a set of assembled metagenomic contigs.

- Input Preparation: Ensure your input is a FASTA file containing assembled metagenomic contigs. Contigs are typically generated from short-read (e.g., Illumina) or long-read (e.g., PacBio) assemblers like metaSPAdes or Flye [40] [41].

- Tool Execution: Run Prodigal in "metagenomic" mode, which uses a pre-trained model suitable for mixed communities.

  - -i: Input contig FASTA file.

  - -o: Output gene models in GFF format.

  - -a: Output predicted protein sequences in FASTA format.

  - -d: Output predicted nucleotide coding sequences (CDS) in FASTA format.

  - -p meta: Selects the metagenomic mode.

- Output Interpretation: The GFF file contains the precise coordinates, strand, and frame for each predicted gene. The protein and CDS FASTA files are used for downstream functional annotation (e.g., with KEGG or eggNOG via DIAMOND/BLAST+) [44].
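One way to script this protocol is to assemble the Prodigal command line in Python and run it with `subprocess`. The sketch below uses the options listed above plus `-f gff`, which Prodigal needs in order to write the `-o` file in GFF format rather than its default GenBank-like format. The function names and file names are hypothetical, and the `-p meta` option assumes a Prodigal 2.x installation.

```python
import shutil
import subprocess


def build_prodigal_command(contigs, gff_out, proteins_out, cds_out):
    """Return the Prodigal argument list for metagenomic mode."""
    return [
        "prodigal",
        "-i", contigs,        # input contig FASTA
        "-o", gff_out,        # gene coordinates
        "-f", "gff",          # write the -o file as GFF
        "-a", proteins_out,   # predicted protein sequences (FASTA)
        "-d", cds_out,        # predicted nucleotide CDS (FASTA)
        "-p", "meta",         # metagenomic mode
    ]


def run_prodigal(contigs, gff_out, proteins_out, cds_out):
    """Run Prodigal if it is available on PATH; raise otherwise."""
    if shutil.which("prodigal") is None:
        raise RuntimeError("prodigal executable not found on PATH")
    command = build_prodigal_command(contigs, gff_out, proteins_out, cds_out)
    subprocess.run(command, check=True)


# Hypothetical file names.
example_command = build_prodigal_command(
    "contigs.fasta", "genes.gff", "proteins.faa", "cds.fna"
)
```

Keeping command construction separate from execution makes the exact invocation easy to log alongside the results, which helps reproducibility.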

Protocol: Gene Prediction with MetaGeneMark

- Input Preparation: Prepare your metagenomic contigs in a FASTA file.

- Tool Execution: Run MetaGeneMark. The following command uses a model file for general metagenomic data.

  - -M: Specifies the model file (e.g., for metagenomes).

  - -f G: Output in GFF format.

  - -o and -d: Define output files for coordinates and CDS sequences.

- Output Interpretation: Similar to Prodigal, the outputs are coordinate and sequence files for downstream analysis.

Performance Benchmarking

Independent evaluations provide critical insights into tool performance. A 2021 study introduced geneRFinder and benchmarked it against other tools on datasets of varying complexity [38]. The results demonstrated that tool performance is context-dependent.

Table 2: Gene Prediction Tool Performance on Isolated Genomes and Metagenomic Data [38]

| Tool | Reported Sensitivity on Genomes | Reported Specificity on Genomes | Reported Sensitivity on High-Complexity Metagenome | Reported Specificity on High-Complexity Metagenome |
|---|---|---|---|---|
| geneRFinder | 95.8% | 95.7% | 89.5% | 90.1% |
| Prodigal | 94.3% | 93.9% | 58.1% | 84.3% |
| FragGeneScan | 89.9% | 89.7% | 34.9% | 11.2% |

A separate 2021 analysis (ORForise) of 15 CDS predictors concluded that no single tool ranked as the most accurate across all tested genomes or metrics, emphasizing that performance is highly dependent on the genome being analyzed [39]. This underscores the value of a multi-tool approach for comprehensive annotation.

Impact of Sequencing Technology on Prediction

The choice of sequencing technology influences assembly contiguity, which in turn affects gene prediction. A 2023 study comparing short-read (Illumina) and long-read (PacBio) metagenomics found that while short-read data recovered a greater number of MAGs due to higher sequencing depth, long-read data produced MAGs of higher quality [40]. Specifically, 88% of long-read MAGs included a 16S rRNA gene, compared to only 23% of short-read MAGs [40]. The superior contiguity of long-read assemblies provides more complete genomic context, facilitating more accurate and complete gene prediction.

Integrated Analysis Workflow

The gene prediction process is one component of a larger genome-resolved metagenomics workflow [41]. The diagram below illustrates the logical sequence of steps from raw sequencing data to functional and taxonomic insights, highlighting the role of ab initio prediction.

Figure 1: Genome-resolved metagenomic analysis workflow. Ab initio gene prediction is a pivotal step that connects assembled genomes to functional interpretation. MAGs are generated by binning contigs based on sequence composition and coverage [41] [43].

Technical Notes & Best Practices

- Tool Selection: For standard prokaryotic genomes and simple metagenomes, Prodigal is highly efficient and accurate [37]. For complex human microbiome samples, the GeneMark-HM pipeline, which integrates MetaGeneMark with a pan-genome database, may offer improved accuracy [42]. In cases of high metagenomic complexity, machine learning-based tools like geneRFinder can potentially outperform traditional methods [38].

- Start Codon Accuracy: Prodigal was explicitly designed to improve the identification of translation initiation sites (TIS), a known weakness in earlier algorithms. Its dynamic programming approach, trained on manually curated genomes, allows it to outperform tools that require a separate start-codon refinement step [37].

- Addressing Tool Bias: Be aware that gene prediction tools, especially those trained on model organisms, can exhibit biases that cause them to miss genes with atypical features (e.g., non-standard codon usage, short length, or overlapping genes) [39]. This can hinder the discovery of novel genomic elements.

- Quality Control: Always assess the quality of your input MAGs using tools like CheckM before performing gene prediction [43]. High contamination levels can lead to erroneous gene calls.

Within the broader scope of metagenomic contig analysis, homology-based gene prediction serves as a fundamental methodology for annotating putative genes and inferring their biological functions. Unlike ab initio methods that rely solely on statistical models of coding sequences, homology-based approaches leverage vast repositories of known proteins to identify evolutionarily related sequences through sequence similarity searching [45] [46]. The Basic Local Alignment Search Tool (BLAST) algorithm, particularly the BLASTp program for protein-protein comparisons, represents the gold standard for this purpose [47] [48]. This protocol details the application of BLAST-based methods for identifying known genes within metagenomic contigs, a critical step in functional metagenomics that enables researchers to decipher the metabolic potential and ecological roles of uncultured microbial communities [49] [10].

The strategic importance of homology-based annotation is particularly evident in metagenomic studies, where a significant proportion of sequenced data originates from previously uncharacterized organisms [49]. For instance, recent analyses of human gut viromes revealed that approximately 72% (788 out of 1,090) of high-quality viral genomes showed no significant similarity to sequences in the NCBI viral RefSeq database, underscoring both the vast unexplored genetic diversity and the critical need for sensitive annotation methods [49]. Furthermore, in metaproteomic studies, the accuracy of taxonomic annotation directly impacts the quality of downstream functional analyses, with optimized pipelines like ConDiGA (Contigs Directed Gene Annotation) demonstrating that refined BLAST-based searches against carefully selected reference species significantly improve protein identification rates compared to uncurated database searches [50].

Principles of Homology-Based Gene Prediction

Fundamental Concepts

Homology-based gene prediction operates on the principle that evolutionarily related proteins share detectable sequence similarity despite accruing mutations over time. The core assumption is that sequence similarity implies functional relatedness and common evolutionary ancestry. In metagenomic analysis, this approach is particularly valuable because it can provide functional insights for genes from poorly characterized taxa that lack appropriate training data for ab initio prediction tools [10].

The BLAST algorithm implements a heuristic approach to identify High-scoring Segment Pairs (HSPs) between a query sequence and subjects in a database. For protein BLAST (BLASTp), the comparison assesses the similarity between amino acid sequences using substitution matrices (e.g., BLOSUM62) which assign scores to amino acid substitutions based on their observed frequencies in known protein families [48] [51]. The statistical significance of each match is expressed as an E-value, which represents the number of alignments with equivalent scores expected to occur by chance in a database of a given size. Lower E-values indicate more significant matches, with typical thresholds for homology set at 1e-5 or lower [52].
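The dependence of the E-value on database size can be made concrete with the standard Karlin-Altschul approximation E = m × n × 2^(−S'), where S' is the bit score, m the query length, and n the total length of the database. The sketch below is illustrative only: it ignores the effective-length corrections that BLAST applies, so its values only approximate what BLAST reports, and the function name and example numbers are assumptions.

```python
def evalue_from_bitscore(bitscore, query_len, db_len):
    """Approximate E-value: expected number of chance hits scoring at least `bitscore`."""
    return query_len * db_len * 2.0 ** (-bitscore)

# Same alignment (bit score 50, 300-residue query) against two database sizes.
small_db = evalue_from_bitscore(50, 300, 1e7)
large_db = evalue_from_bitscore(50, 300, 1e9)
print(f"{small_db:.2e} vs {large_db:.2e}")
```

Under this approximation, a hit with the same bit score has an E-value 100 times larger in a database 100 times larger, which is why E-value thresholds should be read in the context of the database searched.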

Integration with Metagenomic Analysis Workflows

In comprehensive metagenomic studies, homology-based methods typically follow contig assembly and often complement ab initio gene prediction. As illustrated in Figure 1, the process begins with quality-filtered metagenomic contigs that undergo initial gene calling, after which the predicted protein sequences are searched against reference databases. Recent advances emphasize the importance of lineage-specific approaches, where taxonomic assignment of contigs informs subsequent database selection and search parameters [10]. This strategy has been shown to increase the landscape of captured microbial proteins by 78.9% compared to non-specific approaches [10].

Figure 1. Workflow for homology-based gene annotation in metagenomic analysis. The process begins with assembled contigs, proceeds through gene prediction and translation to protein sequences, followed by BLASTp analysis against reference databases, and culminates in functional annotation based on significant homologs.

Materials and Equipment

Research Reagent Solutions

Table 1: Essential computational tools and databases for homology-based gene prediction

| Tool/Database | Type | Primary Function | Application Notes |
|---|---|---|---|
| BLAST+ Suite [47] [48] | Software Package | Command-line tools for sequence similarity searching | Includes blastp for protein-protein comparisons; essential for high-throughput analysis |
| NCBI nr Database | Protein Database | Comprehensive non-redundant protein sequence collection | General-purpose database; may require filtering for specific taxonomic groups |
| UniProtKB/TrEMBL | Protein Database | Automatically annotated and non-reviewed protein sequences | Larger but less curated than Swiss-Prot; useful for discovering novel homologs [53] |
| Meta4 | Web Application | Sharing and annotating metagenomic gene predictions | Provides dynamic interface with web services for up-to-date BLAST annotations [53] |
| Kaiju | Taxonomy Analysis Tool | Taxonomic classification of metagenomic sequences | Protein-level classification; useful for contig-directed annotation strategies [50] |
| Prodigal | Gene Predictor | Prokaryotic gene prediction in metagenomes | Often used prior to BLAST analysis to generate protein queries from contigs [45] |

Computational Requirements

The computational resources required for homology-based analysis vary significantly based on dataset size and database selection. For typical metagenomic studies containing thousands of contigs, a multi-core server with substantial RAM (≥64 GB) is recommended. BLASTp searches against comprehensive databases like NCBI nr can be computationally intensive, with search time proportional to both query and database sizes. For large-scale projects, consider distributed computing approaches or optimized BLAST implementations such as DIAMOND for accelerated processing.

Experimental Protocol

Preliminary Gene Prediction

Before performing homology searches, protein-coding regions must be identified on metagenomic contigs:

- Contig Preparation: Ensure contigs are in FASTA format. Quality filter based on length and coverage thresholds appropriate to your study.

- Gene Calling: Apply prokaryotic gene prediction tools to identify open reading frames (ORFs). For metagenomic data, Prodigal is often used to generate protein queries from contigs (see Table 1) [45].

- Protein Translation: Extract predicted protein sequences in FASTA format. Maintain careful tracking of nucleotide-to-protein correspondence for downstream validation.
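The contig preparation step can be sketched as a simple length filter. The 500 bp cut-off is an illustrative assumption, not a threshold from this protocol, and coverage filtering is omitted because it depends on how coverage is recorded by a given assembler. The function names are hypothetical.

```python
def read_fasta(lines):
    """Yield (header, sequence) pairs from an iterable of FASTA lines."""
    header, chunks = None, []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            if header is not None:
                yield header, "".join(chunks)
            header, chunks = line[1:], []
        else:
            chunks.append(line)
    if header is not None:
        yield header, "".join(chunks)

def filter_by_length(records, min_len=500):
    """Keep only records whose sequence is at least `min_len` bases long."""
    return [(h, s) for h, s in records if len(s) >= min_len]

# Two toy contigs of 800 bp and 200 bp; only the first passes the filter.
example = [">contig_1", "ACGT" * 200, ">contig_2", "ACGT" * 50]
kept = filter_by_length(read_fasta(example), min_len=500)
print([h for h, _ in kept])
```

Keeping the original FASTA headers intact at this stage makes it easier to trace each predicted protein back to its source contig later.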

BLASTp Database Selection and Preparation

Database selection critically impacts annotation accuracy and computational efficiency:

Database Options:

- Full comprehensive databases (e.g., NCBI nr, UniProtKB) offer broad coverage but increased search time and potential for ambiguous matches

- Taxonomically restricted databases focused on expected clades improve specificity and speed [50]

- Custom databases compiled from specific reference genomes provide highest precision for targeted studies

Database Download and Configuration:
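For a custom or taxonomically restricted database, configuration amounts to formatting a protein FASTA file with makeblastdb from the BLAST+ suite. The following is a minimal sketch under assumptions: the file and database names are placeholders, and makeblastdb_command is a hypothetical helper that only assembles the argument list.

```python
import shlex

def makeblastdb_command(protein_fasta, db_name, title=None):
    """Build the argument list that formats a protein FASTA file as a BLAST database."""
    cmd = [
        "makeblastdb",
        "-in", protein_fasta,  # reference proteins in FASTA format
        "-dbtype", "prot",     # protein database, as required by blastp
        "-out", db_name,       # base name of the database files
    ]
    if title:
        cmd += ["-title", title]
    return cmd

# Placeholder names; run with subprocess.run(db_cmd, check=True).
db_cmd = makeblastdb_command("reference_proteins.faa", "reference_db")
print(shlex.join(db_cmd))
```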

BLASTp Execution and Parameter Optimization

Execute BLASTp searches with parameters optimized for metagenomic data:

Basic Command Structure:
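A basic blastp invocation can be sketched as follows. The file names, thread count, and column selection are illustrative assumptions, and blastp_command is a hypothetical helper; the E-value default follows the 1e-5 threshold discussed in this protocol.

```python
import shlex

# Columns requested from tabular output; qcovs and qcovhsp are not in the default set.
OUTFMT_COLUMNS = ["qseqid", "sseqid", "pident", "length",
                  "evalue", "bitscore", "qcovs", "qcovhsp"]

def blastp_command(query, db, out, evalue=1e-5, threads=4):
    """Build the argument list for a tabular blastp search."""
    return [
        "blastp",
        "-query", query,                             # predicted proteins (FASTA)
        "-db", db,                                   # formatted protein database
        "-out", out,                                 # tab-separated results
        "-evalue", str(evalue),                      # report hits at or below this E-value
        "-outfmt", "6 " + " ".join(OUTFMT_COLUMNS),  # tabular format with chosen columns
        "-num_threads", str(threads),
    ]

# Placeholder names; run with subprocess.run(blast_cmd, check=True).
blast_cmd = blastp_command("proteins.faa", "reference_db", "hits.tsv")
print(shlex.join(blast_cmd))
```

Requesting the coverage columns explicitly at search time avoids having to recompute query coverage from alignment coordinates afterwards.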

Critical Parameter Settings:

- E-value threshold: Set between 1e-3 and 1e-5 based on desired stringency [52]

- Output format: Use tabular format (outfmt 6) with specific columns for downstream processing

- Coverage filters: Implement query coverage thresholds (qcovs) to avoid partial matches

Advanced Filtering: For homology inference, apply post-processing filters:

- Minimum percent identity (typically 30-90% depending on evolutionary distance)

- Minimum query coverage (≥70% recommended to avoid domain-only matches)

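- Bit score thresholds chosen with the database size in mind (the bit score itself does not depend on database size, but the score required for significance increases as the search space grows)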
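The post-processing filters above can be sketched as a small function over tabular BLAST output. The column order is an assumption that must match the columns requested at search time, the default cut-offs are taken from the ranges given above (30% identity, 70% query coverage, E-value 1e-5), and the bit score filter is omitted for brevity. The function name and example rows are hypothetical.

```python
import csv
import io

# Assumed column order of the tab-separated BLAST output.
COLUMNS = ["qseqid", "sseqid", "pident", "length",
           "evalue", "bitscore", "qcovs", "qcovhsp"]

def filter_hits(handle, min_pident=30.0, min_qcovs=70.0, max_evalue=1e-5):
    """Return hits (as dicts) that pass identity, coverage, and E-value cut-offs."""
    kept = []
    for row in csv.reader(handle, delimiter="\t"):
        if not row:
            continue
        hit = dict(zip(COLUMNS, row))
        if (float(hit["pident"]) >= min_pident
                and float(hit["qcovs"]) >= min_qcovs
                and float(hit["evalue"]) <= max_evalue):
            kept.append(hit)
    return kept

# Two made-up rows: a strong full-length hit and a weak partial hit.
example = io.StringIO(
    "gene_1\tref_A\t85.2\t310\t1e-120\t350\t98\t98\n"
    "gene_2\tref_B\t28.0\t90\t2e-4\t41.2\t35\t35\n"
)
passing = filter_hits(example)
print([h["qseqid"] for h in passing])
```

In practice the identity cut-off would be raised or lowered according to the evolutionary distance expected between the sample and the reference database.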

Table 2: Key BLASTp output fields and their interpretation for homology assessment

| Output Field | Description | Interpretation Guidelines |
|---|---|---|
| pident | Percentage identity | >90% suggests recent common ancestry; 30-50% may indicate distant homology |
| evalue | Expect value | Lower values indicate greater significance; <0.00001 recommended for homologs |
| qcovs | Query coverage | Percentage of query sequence covered by all HSPs; high values (>80%) indicate full-length matches |
| qcovhsp | HSP coverage | Coverage by single High-scoring Segment Pair; reveals potential multi-domain structure |
| bitscore | Normalized score | Alignment quality independent of database size; useful for comparing matches across databases |

Results Interpretation and Homology Assessment

Proper interpretation of BLAST results requires integrating multiple metrics:

- Multi-HSP Analysis: Examine the arrangement of High-scoring Segment Pairs. Multiple disjoint HSPs may indicate multi-domain proteins or gene fusion/fission events [52].

- Taxonomic Consistency: Assess whether top hits originate from phylogenetically coherent groups, which increases confidence in homology calls.

- Consensus Annotation: Transfer functional annotations from the best significant hits, giving preference to experimentally characterized proteins in well-studied model organisms.
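As a starting point for annotation transfer, the best significant hit per query can be selected by bit score. This is a deliberately simplified sketch: it does not weigh taxonomic consistency or whether a subject protein is experimentally characterized, both of which the guidelines above recommend considering. The function name and example hits are hypothetical.

```python
def best_hit_per_query(hits):
    """Map each query ID to its highest-bit-score hit.

    `hits` is an iterable of (qseqid, sseqid, bitscore) tuples that have
    already passed significance filtering.
    """
    best = {}
    for qseqid, sseqid, bitscore in hits:
        if qseqid not in best or bitscore > best[qseqid][1]:
            best[qseqid] = (sseqid, bitscore)
    return best

# Made-up filtered hits: gene_1 has two candidate subjects.
hits = [
    ("gene_1", "ref_A", 350.0),
    ("gene_1", "ref_C", 120.5),
    ("gene_2", "ref_B", 88.1),
]
annotations = best_hit_per_query(hits)
print(annotations)
```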

Applications and Case Studies

Novel Viral Genome Characterization

In a recent study profiling human gut viromes, homology-based methods were instrumental in characterizing 1,090 high-quality viral genomes. After initial gene prediction, BLASTp analysis revealed seven core genes (antB, dnaB, DNMT1, DUT, xlyAB, xtmB, and xtmA) associated with metabolism and fundamental viral processes. Additionally, homology searches identified genes for virulence, host-takeover, drug resistance, tRNA, tmRNA, and CRISPR elements, providing functional insights into previously uncharacterized viral diversity [49].

Enhanced Metaproteomic Analysis