From High-Dimensional Data to Biological Insights: A Practical Guide to PCA for Gene Expression Analysis



Abstract

This guide provides a comprehensive framework for projecting gene expression data onto principal components, a fundamental technique for exploring high-dimensional transcriptomic data. Tailored for researchers and bioinformaticians, it covers the foundational theory of Principal Component Analysis (PCA) and the curse of dimensionality, then details a complete methodological workflow for performing PCA in R, including data preprocessing, normalization, and visualization. The article further addresses critical troubleshooting areas such as batch effect correction and data quality control (the 'garbage in, garbage out' principle), and concludes with validation strategies and a comparative look at when to use PCA versus other dimensionality reduction methods like t-SNE. By integrating foundational knowledge with practical application and troubleshooting, this resource empowers scientists to confidently use PCA to uncover patterns, identify outliers, and generate robust biological hypotheses from complex gene expression datasets.

Understanding the 'Why': PCA as a Solution to the Curse of Dimensionality in Transcriptomics

In gene expression analysis, dimensions correspond to the individual features or variables measured for each biological sample. In a typical RNA-sequencing or microarray experiment, each gene or transcript represents one dimension. This results in an extremely high-dimensional data space where the number of measured features (p), often 20,000 to 40,000 genes, far exceeds the number of biological samples (n), creating the characteristic "large p, small n" problem [1] [2]. This high-dimensional landscape presents both challenges and opportunities for biological discovery, requiring specialized analytical approaches to extract meaningful patterns.

The concept of dimensionality extends beyond simply counting genes. It encompasses the complex relationships and interactions between genes, pathways, and regulatory networks. Each dimension (gene) contains information about its expression level across different samples, conditions, or time points. When analyzed collectively, these high-dimensional measurements provide a comprehensive snapshot of cellular state, but simultaneously introduce significant computational and statistical challenges that must be addressed through dimensionality reduction techniques like Principal Component Analysis (PCA) [1].

Quantitative Characterization of Gene Expression Data

The high-dimensional nature of gene expression data can be quantitatively described through several key characteristics. The following table summarizes the core dimensional properties of typical genomic datasets:

Table 1: Dimensional Characteristics of Gene Expression Data

| Characteristic | Typical Range | Description | Implication |
|---|---|---|---|
| Number of Features (Genes) | 20,000 - 40,000 | Individual genes or transcripts measured per sample | Creates ultra-high-dimensional space that exceeds sample count |
| Sample Size | Dozens to hundreds | Biological replicates or conditions | Leads to "curse of dimensionality" with more features than samples |
| Data Sparsity | High | Many genes show little to no variation across samples | Majority of dimensions may contain noise rather than biological signal |
| Intrinsic Dimensionality | 3 - 10+ | Number of components needed to explain major variation | True biological signal may be concentrated in fewer dimensions [3] |
| Noise Level | Variable | Technical and biological variability | Can obscure true biological signals in high-dimensional space |

The intrinsic dimensionality represents a particularly important concept, referring to the minimum number of dimensions needed to capture the essential biological variation in the data. While gene expression datasets contain tens of thousands of measured dimensions, research suggests that the true biological signal may be effectively captured in a much lower-dimensional space. Studies applying PCA to large compendia of gene expression data have found that the first few principal components often capture identifiable biological patterns, such as separation by tissue type, cellular lineage, or experimental conditions [3].

Table 2: Variance Explained by Principal Components in Genomic Studies

| Principal Component | Typical Variance Explained | Common Biological Interpretation | Reproducibility |
|---|---|---|---|
| PC1 | 10-25% | Major cell types/tissues (e.g., hematopoietic vs. neural) | High across studies |
| PC2 | 8-15% | Secondary biological processes (e.g., malignancy, proliferation) | Moderate to high |
| PC3 | 5-12% | Tertiary processes (e.g., specific tissue subtypes) | Variable |
| PC4+ | Decreasing with each component | Tissue-specific signals or technical artifacts | Study-dependent [3] |

The Computational and Statistical Challenge

The Curse of Dimensionality

The "curse of dimensionality" profoundly impacts the analysis of gene expression data. As the number of dimensions increases, the data becomes increasingly sparse, with samples distributed thinly throughout the high-dimensional space. This sparsity makes it difficult to identify true biological patterns: distances between samples become less informative, and the chance of finding spurious correlations rises rapidly as more features are tested against the same small set of samples [2]. In practical terms, this means that traditional statistical methods often fail or require modification to handle genomic data appropriately.

The dimensionality problem is further compounded by data sparsity and noise. Many genes exhibit minimal variation across samples or show expression patterns dominated by technical artifacts rather than biological signals. Genomics data can be highly noisy due to biological variability, errors during data collection, or limitations in sequencing technology. This noise creates a scenario analogous to "trying to hear someone whisper in a crowded room," making it challenging to distinguish true biological signals from background noise [2].
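
To make the spurious-correlation risk concrete, the following minimal sketch uses synthetic noise only. The sample size of 20 and the helper name `max_abs_correlation` are illustrative assumptions, not taken from the cited studies. It measures the strongest correlation between a random outcome and pure-noise "genes" as the number of features grows.

```python
import numpy as np

rng = np.random.default_rng(0)
n_samples = 20  # assumed small cohort, typical of the "large p, small n" setting


def max_abs_correlation(n_features):
    """Largest |Pearson r| between a random outcome and pure-noise features."""
    X = rng.standard_normal((n_samples, n_features))
    y = rng.standard_normal(n_samples)
    # Standardize with the population standard deviation so that the
    # scaled dot product below equals the Pearson correlation.
    Xz = (X - X.mean(axis=0)) / X.std(axis=0)
    yz = (y - y.mean()) / y.std()
    r = Xz.T @ yz / n_samples
    return np.abs(r).max()


for p in (10, 1000, 20000):
    print(f"{p:>6} noise features -> max |r| = {max_abs_correlation(p):.2f}")
```

Because every feature here is noise, any strong correlation is spurious; the maximum tends to rise as features are added while the sample count stays fixed.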

Impact on Analysis and Interpretation

High dimensionality directly impacts analytical outcomes through several mechanisms. Overfitting occurs when models learn patterns from noise rather than true biological signals, performing well on training data but failing to generalize to new datasets. The risk of false discoveries increases as the number of hypotheses tested (across thousands of genes) grows without appropriate statistical correction. Additionally, visualization and interpretation become challenging, as the human capacity for pattern recognition is limited to three dimensions, necessitating dimensionality reduction for effective data exploration [1].

Principal Component Analysis as a Solution

Theoretical Foundation of PCA

Principal Component Analysis addresses high-dimensional challenges by constructing new composite variables (principal components) that are linear combinations of the original genes. These components are calculated to capture the maximum possible variance in the data, with the first component (PC1) representing the direction of greatest variance, the second component (PC2) capturing the next highest variance while being orthogonal to the first, and so on [1] [4]. Mathematically, PCA works by performing eigenvalue decomposition of the covariance matrix of the centered (and optionally standardized) gene expression data, identifying the dominant directions of variation in the high-dimensional space.

The process can be understood geometrically as rotating the coordinate system to align with the natural axes of variation in the data. Imagine a cloud of points in 15-dimensional space (representing 15 genes): PCA finds the optimal rotation such that the new axes (principal components) are ordered by the amount of variance they explain, with the first axis (PC1) aligned along the direction of maximum spread of the data points [4]. This rotation preserves all original information while reorganizing it to emphasize the most prominent patterns.
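
The rotation picture can be checked numerically. The sketch below is an illustration on synthetic data (8 hypothetical samples, 15 genes, arbitrary random values), not an analysis from the cited sources. It rotates the centered data onto the eigenvectors of its covariance matrix and confirms that distances between samples are unchanged while variance is concentrated in the leading axes.

```python
import numpy as np

rng = np.random.default_rng(1)
# Synthetic data: 8 samples x 15 correlated "genes" (assumed values).
X = rng.standard_normal((8, 15)) @ rng.standard_normal((15, 15))
Xc = X - X.mean(axis=0)

cov = np.cov(Xc, rowvar=False)            # 15 x 15 covariance matrix
eigvals, eigvecs = np.linalg.eigh(cov)    # eigh returns ascending order
order = np.argsort(eigvals)[::-1]         # reorder to descending variance
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

scores = Xc @ eigvecs                     # coordinates in the rotated axes


def pairwise_sq_dists(A):
    """Squared Euclidean distance between every pair of rows."""
    diff = A[:, None, :] - A[None, :, :]
    return (diff ** 2).sum(axis=-1)


print("distances preserved:",
      np.allclose(pairwise_sq_dists(Xc), pairwise_sq_dists(scores)))
print("variance along first three axes:", np.round(eigvals[:3], 2))
```

Information is only lost when trailing axes are dropped; keeping all axes is a pure change of coordinates.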

Workflow for PCA Application

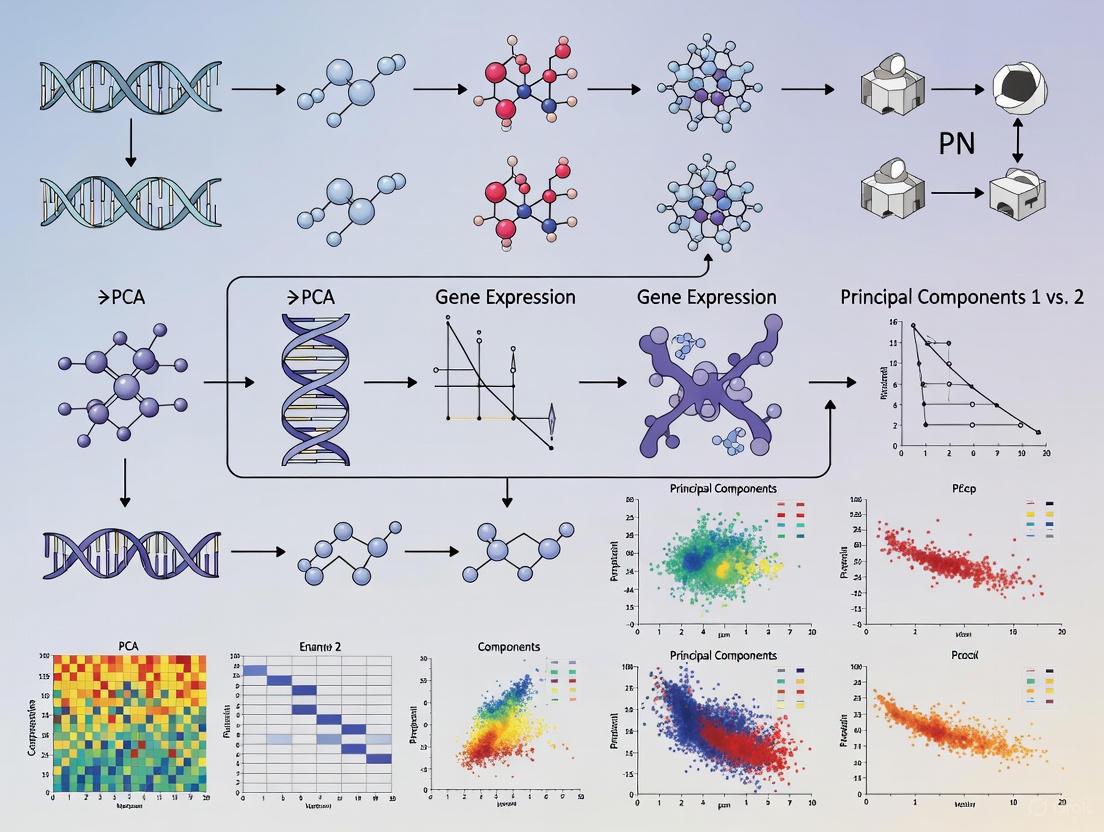

The application of PCA to gene expression data follows a systematic workflow that transforms raw expression measurements into interpretable lower-dimensional representations. The following diagram illustrates the key steps in this process:

PCA Analysis Workflow

Interpretation of PCA Results

Interpreting PCA results requires understanding both the mathematical output and its biological significance. The principal components themselves represent patterns of co-expressed genes, often referred to as "metagenes" or "super genes" [1]. Samples can be projected onto these components, creating low-dimensional visualizations where distances reflect similarity in expression profiles. Samples with similar expression patterns cluster together in the PCA plot, while biologically distinct samples separate along the principal components.

The weights (or loadings) of individual genes on each principal component provide insight into which genes drive the observed separation. Genes with large absolute weights on a specific component have strong influence on that dimension's pattern. Biologically, these gene sets often correspond to coordinated biological processes, such as cell proliferation, metabolic pathways, or tissue-specific functions. By examining these loadings, researchers can move from abstract mathematical dimensions to concrete biological mechanisms [1] [4].

Experimental Protocols for Dimensionality Analysis

RNA-seq Data Processing Protocol

Before applying PCA, gene expression data must be properly generated and processed. The following protocol outlines the key steps for RNA-sequencing data analysis using the RumBall pipeline, which provides a reproducible framework for processing raw sequencing data into analyzable expression values [5]:

Software Setup and Installation

- Install Docker following platform-specific instructions

- Download RumBall Docker image: `docker pull rnakato/rumball`

- Verify installation: `docker run --rm -it rnakato/rumball star.sh`

Data Acquisition and Quality Control

- Obtain FASTQ files from sequencing facilities or public repositories (e.g., GEO, SRA)

- Create project directory: `mkdir project_name && cd project_name`

- Perform quality control using built-in tools (FastQC equivalent)

- Generate quality report in JSON and CSV formats

Read Mapping and Quantification

- Align reads to reference genome using STAR aligner

- Trim reads longer than 200 bases due to reference genome limitations

- Generate aligned BAM files as output

- For specific version use: `docker run --rm -it rnakato/rumball:<version> ls`

Expression Matrix Generation

- Extract gene-level counts from BAM files using GenomicAlignments

- Create count matrix with genes as rows and samples as columns

- Output results as CSV file for downstream analysis

This protocol generates the essential expression matrix that serves as input for PCA, with samples as columns and genes as rows, representing the high-dimensional starting point for dimensionality reduction.

PCA Application Protocol

Once expression data is prepared, the following protocol details the specific steps for performing and interpreting PCA:

Data Preprocessing

- Filter genes with low expression across samples

- Apply a log2 transformation to stabilize variance: `log2(count + 1)`

- Center data by subtracting mean expression for each gene

- Optionally scale to unit variance for each gene
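
The preprocessing steps above can be sketched as follows. This is a minimal illustration, assuming a genes x samples count matrix. The function name `preprocess_counts` and the filter threshold `min_total=10` are hypothetical choices, not values prescribed by the protocol.

```python
import numpy as np


def preprocess_counts(counts, min_total=10, scale=False):
    """Filter, log-transform, center, and optionally scale a count matrix.

    counts: genes x samples array of raw counts.
    Returns (samples x genes matrix ready for PCA, boolean mask of kept genes).
    """
    counts = np.asarray(counts, dtype=float)
    keep = counts.sum(axis=1) >= min_total          # assumed low-expression filter
    logged = np.log2(counts[keep] + 1.0)            # log2(count + 1)
    centered = logged - logged.mean(axis=1, keepdims=True)
    if scale:
        sd = logged.std(axis=1, ddof=1, keepdims=True)
        sd[sd == 0] = 1.0                           # leave constant genes at zero
        centered = centered / sd
    return centered.T, keep


# Toy example: 4 genes x 4 samples.
counts = np.array([[0, 0, 1, 0],
                   [10, 20, 30, 40],
                   [100, 100, 100, 100],
                   [5, 50, 500, 5000]])
X, kept = preprocess_counts(counts)
print(X.shape, kept)
```

The output is transposed to samples x genes because most PCA routines expect observations in rows.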

PCA Computation

- Compute covariance matrix of preprocessed expression matrix

- Perform eigenvalue decomposition of this matrix, or equivalently apply singular value decomposition (SVD) directly to the preprocessed data matrix

- Extract principal components, eigenvalues, and gene loadings

- In R: use the `prcomp()` function; in Python: use `sklearn.decomposition.PCA`
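
As a rough sketch of what the computation step involves, the function below performs PCA through an SVD of the centered matrix using NumPy only. The name `pca_svd` and the random test matrix are illustrative assumptions. In practice the library routines listed above would normally be used instead.

```python
import numpy as np


def pca_svd(X, n_components=2):
    """PCA of a samples x genes matrix via SVD of the centered data."""
    Xc = X - X.mean(axis=0)
    U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    explained_variance = s ** 2 / (X.shape[0] - 1)     # eigenvalues of the covariance
    ratio = explained_variance / explained_variance.sum()
    scores = U[:, :n_components] * s[:n_components]    # sample coordinates
    loadings = Vt[:n_components].T                     # gene weights, one column per PC
    return scores, loadings, explained_variance[:n_components], ratio[:n_components]


rng = np.random.default_rng(0)
X = rng.standard_normal((10, 100))     # 10 samples, 100 genes (synthetic)
scores, loadings, variances, ratio = pca_svd(X)
print(scores.shape, loadings.shape, np.round(ratio, 3))
```

Working from the SVD avoids forming the genes x genes covariance matrix, which matters when there are tens of thousands of genes and only a few samples.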

Result Interpretation

- Examine scree plot to determine number of meaningful components

- Project samples onto first 2-3 components for visualization

- Identify clusters and outliers in the PCA plot

- Correlate principal components with sample metadata (e.g., treatment, tissue type)

- Analyze gene loadings to interpret biological meaning of components
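
Two of the interpretation steps above, correlating a component with metadata and ranking genes by loading, are sketched below on synthetic data. The group labels, the effect size of 8, and the choice of genes 0 to 4 as responders are all assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(42)
n_samples, n_genes = 12, 50
group = np.array([0] * 6 + [1] * 6)       # hypothetical metadata: control vs. treated

X = rng.standard_normal((n_samples, n_genes))
X[group == 1, :5] += 8.0                  # genes 0-4 respond to the treatment

Xc = X - X.mean(axis=0)
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
pc1_scores = U[:, 0] * s[0]
pc1_loadings = Vt[0]

# Correlate PC1 with the sample annotation.
r = np.corrcoef(pc1_scores, group)[0, 1]

# Rank genes by absolute loading on PC1.
top_genes = np.argsort(np.abs(pc1_loadings))[::-1][:5]

print(f"|correlation of PC1 with group| = {abs(r):.2f}")
print("top-loading genes on PC1:", sorted(top_genes.tolist()))
```

The sign of a component is arbitrary, so the absolute value of the correlation and of the loadings is what carries meaning here.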

Validation and Follow-up

- Assess robustness through cross-validation or bootstrapping

- Conduct differential expression analysis within identified clusters

- Perform pathway enrichment on genes with high loadings on key components

Research Reagent Solutions and Computational Tools

Successful analysis of high-dimensional gene expression data requires both experimental reagents and computational tools. The following table outlines essential resources for generating and analyzing dimensional genomic data:

Table 3: Research Reagent Solutions for Dimensional Genomics

| Resource Type | Specific Tool/Reagent | Function/Purpose | Application Context |
|---|---|---|---|
| Sequencing Platform | RNA-sequencing | Genome-wide transcript quantification | Generating high-dimensional expression data |
| Analysis Pipeline | RumBall [5] | Reproducible RNA-seq analysis from FASTQ to counts | Data preprocessing for PCA input |
| Analysis Package | exvar R package [6] | Integrated gene expression and variant analysis | Differential expression and variant calling |
| Dimensionality Reduction | prcomp (R), PCA (Python sklearn) | Principal component analysis | Core dimensionality reduction method |
| Visualization Package | ggplot2, ClusterProfiler [6] | Data visualization and enrichment analysis | Plotting PCA results and interpreting components |
| Reference Databases | GENCODE, Ensembl | Gene annotation and functional information | Biological interpretation of PCA components |
| Containerization | Docker [5] | Reproducible computational environments | Ensuring consistent analysis across systems |

These resources collectively support the entire workflow from experimental data generation through dimensional analysis and biological interpretation. The exvar package, in particular, provides integrated functionality for multiple analysis types, supporting eight species including Homo sapiens, Mus musculus, and Arabidopsis thaliana [6].

Advanced Considerations and Future Directions

While PCA remains a foundational approach for handling high-dimensional gene expression data, several advanced considerations merit attention. The intrinsic dimensionality of biological data appears to be higher than initially thought, with studies suggesting that more than three to four principal components may contain biologically relevant information, particularly for distinguishing between closely related cell types or conditions [3]. The information captured by PCA is also highly dependent on sample composition, with dataset-specific factors influencing which biological patterns emerge in the principal components.

Emerging methods address limitations of standard PCA for genomic applications. Sparse PCA incorporates regularization to produce principal components with fewer non-zero loadings, improving interpretability by focusing on smaller gene sets. Supervised PCA incorporates outcome variables to guide dimensionality reduction, potentially enhancing relevance for predictive modeling. Functional PCA extends the approach to time-course gene expression data, capturing dynamic patterns across multiple time points [1]. These advanced techniques, combined with ongoing developments in machine learning and single-cell genomics, continue to expand our ability to navigate the high-dimensional landscape of gene expression data, transforming overwhelming complexity into biological insight.

Principal Component Analysis (PCA) is a foundational statistical technique for dimensionality reduction, addressing the "curse of dimensionality" common in bioinformatics where datasets often contain thousands of variables (e.g., gene expressions) but relatively few samples [1] [7]. This high-dimensionality creates significant challenges for computational analysis, visualization, and statistical modeling [7]. PCA operates by transforming the original variables into a new set of uncorrelated variables called Principal Components (PCs), which are linear combinations of the original variables designed to capture maximum variance in the data [1] [8].

In gene expression analysis, PCA has become indispensable for exploring high-throughput genomic data, where it helps researchers identify patterns, reduce noise, and visualize complex datasets [1]. The resulting principal components are often referred to as "metagenes," "super genes," or "latent genes" as they represent consolidated patterns of gene activity across multiple biological variables [1].

Theoretical Foundation: The Mathematics of Maximum Variance

Core Mathematical Principle

The fundamental objective of PCA is to identify a sequence of orthogonal linear transformations that maximize the retained variance in the data [8]. Mathematically, given a data matrix \( \mathbf{X} \) with \( n \) observations (samples) and \( p \) variables (genes), where each variable is centered to have zero mean, PCA seeks a set of weight vectors \( \mathbf{w}_{(k)} = (w_1, \dots, w_p)_{(k)} \) that transform the original variables into new components [8]:

\[ t_{k(i)} = \mathbf{x}_{(i)} \cdot \mathbf{w}_{(k)} \quad \text{for} \quad i=1,\dots,n \quad k=1,\dots,l \]

The first weight vector \( \mathbf{w}_{(1)} \) must satisfy:

\[ \mathbf{w}_{(1)} = \arg\max_{\|\mathbf{w}\|=1} \left\{ \sum_i (\mathbf{x}_{(i)} \cdot \mathbf{w})^2 \right\} = \arg\max_{\|\mathbf{w}\|=1} \left\{ \mathbf{w}^T \mathbf{X}^T \mathbf{X} \mathbf{w} \right\} \]

This optimization problem can be solved through eigendecomposition of \( \mathbf{X}^T\mathbf{X} \) (which, for centered data, is proportional to the sample covariance matrix) or through singular value decomposition (SVD) of the data matrix \( \mathbf{X} \) itself [8]. The eigenvectors of the covariance matrix define the principal component directions, while the eigenvalues indicate the amount of variance captured by each component [8].
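
The link between the two routes can be written out in one line. The derivation below assumes a thin SVD of the centered data matrix; the symbols \( \mathbf{U} \), \( \boldsymbol{\Sigma} \), \( \mathbf{W} \), \( \sigma_k \), and \( \lambda_k \) are introduced here for illustration and do not appear in the text above.

\[ \mathbf{X} = \mathbf{U}\boldsymbol{\Sigma}\mathbf{W}^T \;\Rightarrow\; \mathbf{X}^T\mathbf{X} = \mathbf{W}\boldsymbol{\Sigma}\mathbf{U}^T\mathbf{U}\boldsymbol{\Sigma}\mathbf{W}^T = \mathbf{W}\boldsymbol{\Sigma}^2\mathbf{W}^T, \qquad \lambda_k = \frac{\sigma_k^2}{n-1} \]

Here the columns of \( \mathbf{W} \) are the weight vectors \( \mathbf{w}_{(k)} \), \( \sigma_k \) is the \( k \)-th singular value, and \( \lambda_k \) is the corresponding eigenvalue of the sample covariance matrix \( \mathbf{X}^T\mathbf{X}/(n-1) \), that is, the variance of the \( k \)-th component. The step \( \mathbf{U}^T\mathbf{U} = \mathbf{I} \) is what makes the two decompositions agree.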

Key Properties of Principal Components

Principal components possess several mathematically important properties [1]:

- Orthogonality: All PCs are mutually perpendicular (\( \mathbf{w}_{(i)} \cdot \mathbf{w}_{(j)} = 0 \) for \( i \neq j \)), ensuring they capture non-redundant information.

- Variance Ordering: The first PC captures the largest possible variance, the second PC captures the next largest variance while being orthogonal to the first, and so on.

- Variance Preservation: The total variance in the original data equals the sum of variances of all PCs.

- Dimensionality Reduction: Often, a small number of PCs can explain the majority of total variance, enabling significant dimensionality reduction.

Practical Implementation for Gene Expression Data

Preprocessing and Data Preparation

Proper data preprocessing is critical for successful PCA application to gene expression data:

- Data Normalization: Normalize gene expression measurements to account for technical variations between samples or platforms [1].

- Centering: Subtract the mean from each variable so all features are centered around zero [1] [8].

- Scaling: Consider scaling variables to unit variance if genes have different measurement scales [1].

- Quality Control: Remove samples with poor quality and filter out genes with minimal expression variation.

Computational Protocol

The following protocol details PCA implementation for gene expression data:

Table 1: Software Tools for PCA Implementation

| Software | Function/Command | Key Features | Application Context |
|---|---|---|---|
| R Statistical Software | `prcomp()` or `princomp()` | Comprehensive statistical analysis, visualization with ggplot2 | Academic research, bioinformatics [1] |
| Python (scikit-learn) | `sklearn.decomposition.PCA()` | Integration with machine learning pipelines | Large-scale data analysis, predictive modeling |
| SAS | `PROC PRINCOMP` | Enterprise-level statistical analysis | Pharmaceutical industry, clinical research [1] |
| MATLAB | `pca()` function (`princomp()` in older releases) | Numerical computation, engineering applications | Academic research, engineering [1] |
| SPSS | Factor analysis (Data Reduction) | User-friendly interface | Social sciences, preliminary data exploration [1] |

Component Selection Criteria

Determining the optimal number of principal components is crucial for balancing dimensionality reduction with information retention:

- Scree Plot Analysis: Plot eigenvalues in descending order and identify the "elbow point" where the curve flattens [8].

- Cumulative Variance Threshold: Retain enough components to explain a predetermined percentage of total variance (typically 70-90%) [9].

- Kaiser Criterion: Retain components with eigenvalues greater than 1 (when using correlation matrix).

- Cross-Validation: Use computational methods to assess how well components predict out-of-sample data.
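
Two of the criteria above reduce to a few lines of arithmetic. The sketch below uses made-up eigenvalues, and the helper names `n_components_for_variance` and `n_components_kaiser` are hypothetical. The 80% threshold is one value within the range quoted above, not a recommendation.

```python
import numpy as np


def n_components_for_variance(eigenvalues, threshold=0.8):
    """Smallest number of PCs whose cumulative variance reaches the threshold."""
    ev = np.sort(np.asarray(eigenvalues, dtype=float))[::-1]
    cumulative = np.cumsum(ev) / ev.sum()
    k = int(np.searchsorted(cumulative, threshold) + 1)
    return min(k, ev.size)


def n_components_kaiser(eigenvalues):
    """Number of PCs with eigenvalue > 1 (meaningful for correlation-matrix PCA)."""
    return int(np.sum(np.asarray(eigenvalues, dtype=float) > 1.0))


eigenvalues = [4.0, 2.0, 1.0, 0.5, 0.5]           # illustrative values, total = 8
print(n_components_for_variance(eigenvalues))      # cumulative 0.50, 0.75, 0.875 -> 3
print(n_components_kaiser(eigenvalues))            # 4.0 and 2.0 exceed 1 -> 2
```

The two rules can disagree, as they do here, which is one reason to inspect the scree plot rather than rely on a single cutoff.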

Research Reagent Solutions for PCA in Genomics

Table 2: Essential Research Reagents and Computational Tools

| Reagent/Tool | Function | Example Applications |
|---|---|---|
| Gene Expression Microarrays | Genome-wide expression profiling | Pre-PCA data generation; measures ~40,000 probes simultaneously [1] |
| RNA-seq Platforms | High-throughput transcriptome sequencing | Alternative to microarrays for gene expression quantification [7] |
| Normalization Algorithms | Technical variation correction | RPKM, TPM, or DESeq2 methods for count data [1] |
| Quality Control Metrics | Data quality assessment | RNA integrity number (RIN), sequencing depth evaluation [1] |
| Computational Environments | Data processing and analysis | R, Python, or specialized bioinformatics pipelines [1] |

Applications in Drug Discovery and Biomedical Research

PCA serves as a powerful "hypothesis-generating" tool that enables researchers to explore complex biological datasets without strong a priori assumptions [10]. Its applications in pharmaceutical sciences include:

Compound Optimization and Profiling

In drug discovery, PCA helps identify key molecular characteristics that influence pharmacological properties. A recent study on quercetin analogues used PCA to identify molecular descriptors contributing to blood-brain barrier permeability, revealing that intrinsic solubility and lipophilicity (logP) were primary factors determining permeation capability [11]. The analysis successfully clustered four trihydroxyflavone compounds with the highest predicted BBB permeability, guiding future synthetic efforts for neuroprotective agents [11].

High-Throughput Screening Analysis

PCA enables efficient analysis of large-scale drug screening datasets by reducing thousands of compound descriptors to a manageable number of components. Researchers applied PCA to quantum mechanical descriptors of oxindole derivatives, achieving approximately 85% accuracy in predicting anticancer and antimicrobial activities based on structural properties [12]. This approach facilitates rapid prioritization of lead compounds for further investigation.

Biomarker Identification and Disease Stratification

PCA can identify patterns in clinical and biomarker data to predict disease progression and treatment response. In feline sporotrichosis research, PCA of hematological and biochemical analytes revealed that total plasma protein concentration serves as an independent predictor for the dissemination of cutaneous lesions [13]. This finding provides veterinarians with a readily measurable biomarker for disease prognosis.

Advanced PCA Applications in Genomics

Beyond standard applications, several specialized PCA approaches have been developed for genomic studies:

Pathway-Based PCA: Instead of applying PCA to all genes simultaneously, this approach conducts separate PCA on genes within predefined biological pathways, with PCs representing pathway effects [1]. This method accommodates biological hierarchy and facilitates interpretation.

Network Module PCA: PCA is applied to groups of genes within the same network modules, where PCs represent effects of tightly connected gene clusters [1]. This approach leverages known biological networks to enhance pattern discovery.

Supervised PCA: Incorporates response variable information to guide component selection, often demonstrating superior predictive performance compared to standard PCA [1].

Sparse PCA: Modifies the standard algorithm to produce components with many zero loadings, improving interpretability by focusing on smaller gene subsets [1].

Functional PCA: Specifically designed for analyzing time-course gene expression data, capturing patterns in trajectories rather than static measurements [1].

Protocol: PCA-Based Analysis of Pathway Interactions in Genomics

Experimental Workflow for Pathway Interaction Analysis

Recent methodological advances enable PCA to accommodate interactions between biological pathways:

Pathway Definition: Identify two or more biologically relevant gene pathways containing \( p_1 \) and \( p_2 \) genes respectively [1].

Individual Pathway PCA: Conduct PCA separately on genes within each pathway:

- Denote \( U_1, U_2, \dots, U_{m_1} \) as PCs for pathway 1

- Denote \( V_1, V_2, \dots, V_{m_2} \) as PCs for pathway 2

Interaction Modeling: Two alternative approaches:

- Approach A1: Include both sets of PCs (\( U \) and \( V \)) and their cross-products (\( U_i \times V_j \)) as covariates in downstream regression analysis [1].

- Approach A2: For each pathway, conduct PCA on the expanded set containing original gene expressions and their second-order interactions, then use resulting PCs as covariates [1].

Validation: Assess model performance using cross-validation and biological validation experiments.

This approach enables researchers to investigate pathway-level interactions while maintaining computational feasibility, overcoming limitations of analyzing all possible gene-gene interactions in high-dimensional data [1].
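
A minimal sketch of Approach A1 follows, assuming two synthetic pathway expression matrices and two PCs per pathway. The function names `top_pc_scores` and `interaction_design` are hypothetical, and the downstream regression itself is left out.

```python
import numpy as np


def top_pc_scores(X, m):
    """Scores of the first m principal components of a samples x genes matrix."""
    Xc = X - X.mean(axis=0)
    U, s, _ = np.linalg.svd(Xc, full_matrices=False)
    return U[:, :m] * s[:m]


def interaction_design(X1, X2, m1=2, m2=2):
    """Covariates for Approach A1: pathway PCs plus all cross-products U_i * V_j."""
    U = top_pc_scores(X1, m1)                       # PCs of pathway 1
    V = top_pc_scores(X2, m2)                       # PCs of pathway 2
    cross = (U[:, :, None] * V[:, None, :]).reshape(len(U), m1 * m2)
    return np.hstack([U, V, cross])


rng = np.random.default_rng(5)
pathway1 = rng.standard_normal((30, 10))            # 30 samples, 10 genes (synthetic)
pathway2 = rng.standard_normal((30, 8))             # 30 samples, 8 genes (synthetic)
design = interaction_design(pathway1, pathway2)
print(design.shape)                                  # m1 + m2 + m1*m2 columns
```

With two PCs per pathway the design has 8 columns, compared with the 80 pairwise gene-gene products that the raw pathways would require.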

Covariance vs. Correlation Matrix Selection

The choice between using covariance matrices or correlation matrices for PCA depends on data characteristics and research goals:

Covariance Matrix (PCA-COV): Preserves the original scaling of variables, giving higher weight to variables with larger variances [9]. Particularly beneficial when analyzing dichotomous variables, as it prioritizes variables with more balanced representation.

Correlation Matrix (PCA-COR): Standardizes all variables to unit variance, giving equal weight to all variables regardless of their original scales [9]. Appropriate when variables are measured on different scales.

In large-scale educational assessments, PCA-COV has demonstrated advantages in reducing estimation bias and mean squared error, particularly with dichotomous contextual variables [9]. This approach weights variables with respect to their variance, potentially improving estimation stability in high-dimensional settings.
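
The practical difference between the two matrices shows up clearly in a two-variable toy case. The data below are synthetic, with one variable deliberately given a scale 100 times larger. The helper `first_loading` is an illustrative name.

```python
import numpy as np

rng = np.random.default_rng(3)
z1, z2 = rng.standard_normal((2, 200))
# Two correlated variables on very different scales (assumed for illustration).
X = np.column_stack([100.0 * z1, 0.8 * z1 + 0.6 * z2])


def first_loading(matrix):
    """Eigenvector belonging to the largest eigenvalue of a symmetric matrix."""
    vals, vecs = np.linalg.eigh(matrix)
    return vecs[:, np.argmax(vals)]


cov_loading = first_loading(np.cov(X, rowvar=False))       # PCA-COV
cor_loading = first_loading(np.corrcoef(X, rowvar=False))  # PCA-COR

print("PCA-COV PC1 loadings:", np.round(np.abs(cov_loading), 3))
print("PCA-COR PC1 loadings:", np.round(np.abs(cor_loading), 3))
```

Under the covariance matrix PC1 is almost entirely the large-scale variable, while under the correlation matrix both variables receive equal weight.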

Principal Component Analysis remains a cornerstone technique for dimensionality reduction in gene expression studies and drug discovery research. By transforming original variables into a new set of orthogonal components that capture maximum variance, PCA enables researchers to overcome the challenges of high-dimensional data while preserving essential biological information. The continued development of specialized PCA variants—including supervised, sparse, and functional PCA—ensures its ongoing relevance in extracting meaningful patterns from increasingly complex genomic datasets. As biomedical research continues to generate high-dimensional data, PCA's ability to reduce dimensionality, visualize complex relationships, and generate biological hypotheses will maintain its position as an essential tool in the bioinformatics toolkit.

Principal Component Analysis (PCA) is a foundational technique for exploring high-dimensional biological data, such as gene expression datasets. By transforming complex data into a simplified structure of orthogonal principal components (PCs), PCA allows researchers to identify dominant patterns, reduce dimensionality, and visualize sample relationships. This Application Note provides a structured guide to interpreting the three core outputs of PCA—scores, loadings, and variance explained—within the context of gene expression analysis. We detail protocols for projecting data onto principal components and validating the biological relevance of the resulting models, supported by practical examples from transcriptomic research.

In the field of genomics, researchers frequently encounter datasets where the number of measured variables (genes) vastly exceeds the number of observations (samples). Principal Component Analysis (PCA) serves as a powerful multivariate statistical technique to address this challenge by systematically reducing data dimensionality while preserving essential patterns [14] [8]. PCA achieves this by transforming the original variables into a new set of uncorrelated variables, the principal components (PCs), which are ordered such that the first few retain most of the variation present in the original dataset [8] [15].

When applied to gene expression data, PCA summarizes the biological state of profiled samples using derived gene signatures [14]. The first principal component (PC1) represents the direction of maximum variance in the data, often corresponding to the most dominant biological signal, such as a proliferation signature in tumor datasets [14]. Subsequent components capture progressively smaller, orthogonal sources of variation. The interpretation of a PCA model relies on understanding three fundamental outputs: the variance explained by each component, the scores which position samples in the new PC space, and the loadings which connect the original variables to the components [16] [17]. Proper interpretation of these elements is critical for drawing biologically meaningful conclusions from transcriptomic studies.

Core Concepts: The Three Pillars of PCA Output

Variance Explained

The variance explained by a principal component quantifies its importance in representing the original data. It is derived from the eigenvalues of the data's covariance matrix [18] [19].

- Mathematical Basis: For a centered data matrix `X`, the covariance matrix is computed as `C = (X^T X)/(n-1)`. The eigenvalues `λ₁, λ₂, ..., λ_p` of this matrix represent the variances of successive principal components [18] [19]. The total variance in the data is the sum of all eigenvalues, which equals the trace of the covariance matrix [19].

- Calculation of Proportion: The proportion of total variance explained by the `i`-th PC is calculated as `λ_i / Σ(λ)`. The cumulative variance explained by the first `k` PCs is the sum of their individual proportions [16] [19].

- Visualization with Scree Plots: A scree plot displays eigenvalues in descending order against component number. It helps identify a "kink" or elbow, indicating where subsequent components contribute less significantly and can often be discarded without major information loss [18] [16].
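
These quantities can be computed directly from the definitions. The sketch below uses a small synthetic matrix (20 samples, 6 variables with assumed scales) so that the covariance matrix stays small; it is an illustration, not the RNA-seq example summarized in the table that follows.

```python
import numpy as np

rng = np.random.default_rng(7)
# Synthetic data: 20 samples x 6 variables with decreasing spread (assumed).
X = rng.standard_normal((20, 6)) * np.array([5, 3, 2, 1, 1, 0.5])
Xc = X - X.mean(axis=0)
n = Xc.shape[0]

C = Xc.T @ Xc / (n - 1)                      # covariance matrix
eigvals = np.linalg.eigvalsh(C)[::-1]        # eigenvalues, largest first

proportion = eigvals / eigvals.sum()         # lambda_i / sum(lambda)
cumulative = np.cumsum(proportion)

print("total variance equals trace:", np.isclose(eigvals.sum(), np.trace(C)))
for i, (p, c) in enumerate(zip(proportion, cumulative), start=1):
    print(f"PC{i}: {p:6.1%} explained, {c:6.1%} cumulative")
```

Plotting `eigvals` against component number gives the scree plot described above.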

Table 1: Example of Variance Explained by Principal Components

| Principal Component | Eigenvalue (Variance) | Proportion of Variance Explained | Cumulative Proportion |
|---|---|---|---|
| PC1 | 351.0 | 38.8% | 38.8% |
| PC2 | 147.0 | 16.3% | 55.2% |
| PC3 | 76.7 | 8.5% | 63.7% |
| ... | ... | ... | ... |
| PC36 | < 1.0 | < 0.1% | 100.0% |

Data adapted from an RNA-seq PCA example [16].

Scores

Scores are the coordinates of the samples in the new, low-dimensional space defined by the principal components [17]. They are obtained by projecting the original data onto the principal components [8].

- Geometric Interpretation: Geometrically, scores result from rotating the original coordinate axes to align with the directions of maximum variance [17]. The score for a sample on PC1 is its coordinate along this new primary axis.

- Usage in Analysis: Score plots (e.g., PC1 vs. PC2) allow for visual assessment of sample clustering and patterns. Similar samples will group together in this space, potentially revealing biological subgroups, batch effects, or outliers [16] [20].

Figure 1: The conceptual workflow of how PCA scores are generated and used. Samples are transformed from a high-dimensional gene space to a low-dimensional PCA space for easier visualization and interpretation.

Loadings

Loadings (or eigenvectors) are the weights assigned to each original variable (gene) in a principal component [14] [17]. They define the direction of the PC in the original high-dimensional space.

- Mathematical Definition: Loadings are the eigenvectors of the covariance matrix. Each loading vector is a unit vector, and all loading vectors are mutually orthogonal [18] [8].

- Geometric Interpretation: A loading value is the cosine of the angle between the original variable's axis and the principal component axis [17]. A loading close to +1 or -1 indicates the variable strongly influences that PC's direction. The sign indicates whether the variable's contribution is positive or negative [17].

- Interpretation: Genes with high absolute loadings on a PC are the major drivers of the variance that PC captures. Analyzing these top-loading genes can provide biological meaning to a component (e.g., revealing that PC1 represents a gene signature related to cellular proliferation) [14].

Table 2: Interpreting PCA Loadings for a Hypothetical 2-Gene System

| Original Variable | Loading on PC1 | Interpretation |

|---|---|---|

| Gene A | 0.500 | Gene A contributes positively to PC1. Its influence is moderate. |

| Gene B | 0.866 | Gene B contributes positively to PC1. It is a stronger driver of PC1 than Gene A. |

The loadings show that PC1 is more aligned with the direction of Gene B, which has a higher loading value [17].
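
The geometric reading of Table 2 can be checked numerically. The short Python sketch below uses only the two loading values from the table; the variable names are illustrative.

```python
import numpy as np

# Loadings of Gene A and Gene B on PC1, taken from Table 2.
loading_pc1 = np.array([0.500, 0.866])

# A loading vector has (approximately) unit length: 0.5^2 + 0.866^2 is close to 1.
norm = np.linalg.norm(loading_pc1)

# Each loading is the cosine of the angle between the gene's axis and PC1.
angles_deg = np.degrees(np.arccos(loading_pc1))

print(f"vector length: {norm:.3f}")
print(f"angle between PC1 and Gene A axis: {angles_deg[0]:.1f} degrees")
print(f"angle between PC1 and Gene B axis: {angles_deg[1]:.1f} degrees")
```

The smaller angle for Gene B is what "more aligned with the direction of Gene B" means in practice.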

Protocols for Projecting Gene Expression Data onto Principal Components

This protocol details the steps for performing PCA on gene expression data, from preprocessing to projection and validation.

Data Preprocessing and Normalization

The choice of normalization method profoundly impacts the correlation structure of the data and, consequently, the PCA model and its biological interpretation [21].

- Step 1: Data Matrix Construction: Create a genes-by-samples matrix of expression values (e.g., FPKM, TPM, or normalized counts from RNA-seq).

- Step 2: Normalization: Apply a robust normalization method to remove technical artifacts. A comprehensive evaluation of 12 methods showed that the choice of normalization affects gene ranking and pathway interpretation in PCA [21]. Methods like TMM (Trimmed Mean of M-values) or DESeq2's median-of-ratios are common starting points.

- Step 3: Transformation and Scaling: For gene expression data, a log₂ transformation is often applied to stabilize variance. Centering (subtracting each gene's mean across samples) is essential for PCA. Scaling each gene to unit variance (dividing by its standard deviation) is recommended when genes are on different scales, to prevent high-expression genes from dominating the model [16] [15].
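
As a rough illustration of Step 3, the following Python sketch applies a log₂ transform, centering, and scaling to a small made-up matrix. The expression values and the pseudocount of 1 are assumptions chosen for the example; genes with zero variance would need to be removed before the division.

```python
import numpy as np

# Hypothetical normalized expression values: 4 genes (rows) x 3 samples (columns).
expression = np.array([
    [1000.0, 2000.0, 4000.0],
    [10.0, 20.0, 40.0],
    [5.0, 5.0, 20.0],
    [300.0, 150.0, 75.0],
])

# log2 transform with a pseudocount of 1 (an assumed, commonly used choice) to avoid log2(0).
log_expr = np.log2(expression + 1.0)

# Transpose so samples are rows and genes are columns, then center and scale each gene.
X = log_expr.T
gene_means = X.mean(axis=0)
gene_sds = X.std(axis=0, ddof=1)        # sample standard deviation (n - 1 denominator)
X_scaled = (X - gene_means) / gene_sds

print(np.round(X_scaled, 3))
```

After this step every gene has mean 0 and standard deviation 1 across samples, regardless of its original expression level.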

PCA Computation and Projection

The core computational process can be efficiently performed in R using the prcomp() function.

- Step 1: Compute PCA: Use pca_result <- prcomp(t(expression_matrix), center = TRUE, scale. = TRUE). Transposing the matrix ensures samples are rows and genes are columns, as required by prcomp [16].

- Step 2: Extract Outputs:

  - Scores: pca_scores <- pca_result$x

  - Loadings: pca_loadings <- pca_result$rotation

  - Standard Deviations: pca_sdev <- pca_result$sdev

  - Variances: eigenvalues <- (pca_result$sdev)^2 [16]

- Step 3: Project Data: The projection is performed automatically during the prcomp() call. The scores are the result of the matrix multiplication: Scores = Scaled_Data × Loadings [8].

Figure 2: A streamlined workflow for performing Principal Component Analysis on gene expression data, from raw input to interpretable output.
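
For readers who want to see what happens inside such a call, here is a minimal NumPy sketch of SVD-based PCA that returns quantities analogous to sdev, rotation, and x. It is an illustration written for this guide, not the prcomp source code; the function name pca_svd and the simulated input are assumptions.

```python
import numpy as np

def pca_svd(X, scale=True):
    """PCA of a samples x genes matrix via SVD.

    Returns (sdev, rotation, scores, X_prepared), where X_prepared is the
    centered (and optionally scaled) matrix that was decomposed.
    """
    Xp = X - X.mean(axis=0)
    if scale:
        Xp = Xp / Xp.std(axis=0, ddof=1)
    # Xp = U S Vt; the rows of Vt are the loading vectors.
    _, S, Vt = np.linalg.svd(Xp, full_matrices=False)
    sdev = S / np.sqrt(X.shape[0] - 1)   # standard deviations of the PCs
    rotation = Vt.T                      # genes x PCs (loadings)
    scores = Xp @ rotation               # samples x PCs: Scaled_Data x Loadings
    return sdev, rotation, scores, Xp

rng = np.random.default_rng(1)
X = rng.normal(size=(8, 5))              # 8 hypothetical samples, 5 hypothetical genes
sdev, rotation, scores, X_scaled = pca_svd(X)
eigenvalues = sdev ** 2
print(np.round(eigenvalues / eigenvalues.sum(), 3))
```

The signs of individual components are arbitrary in any PCA implementation, so a column of scores or loadings may be flipped relative to another tool's output without changing the interpretation.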

Validation of the PCA Model

Before biological interpretation, validating that the PCA model is robust and describes intended biology is critical, especially when applying a pre-defined gene signature to a new dataset [14].

- Coherence: Assess if genes within the signature are correlated beyond chance in the target dataset. This can be done by comparing the variance explained by the signature's first PC to that of thousands of randomly generated gene sets [14].

- Uniqueness: Ensure the signature's primary signal is not merely a reflection of a dominant, general direction in the data (e.g., a proliferation bias in tumor datasets). The signature's first PC should be distinguishable from the first PC of the entire transcriptome [14].

- Robustness: The biological signal should be strong and distinct. This can be tested by evaluating the variance captured in the first PC relative to the second; a sharp drop (as seen in a scree plot) indicates a strong, dominant signal [14] [18].

- Transferability: The PCA-based gene signature score must describe the same biology in the target dataset as in the training dataset. This involves checking the correlation of loadings or the biological function of top-loading genes [14].
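
The coherence check lends itself to a simple permutation-style sketch. The Python example below simulates a dataset with one correlated gene signature and compares the variance explained by the signature's first PC against random gene sets of the same size. Everything here (the simulated data, the signature indices, the 1,000 random sets, the helper name pc1_fraction) is an assumption for illustration and not the procedure of [14].

```python
import numpy as np

def pc1_fraction(X):
    """Fraction of total variance captured by PC1 of a samples x genes matrix."""
    Xc = X - X.mean(axis=0)
    Xc = Xc / Xc.std(axis=0, ddof=1)
    s = np.linalg.svd(Xc, compute_uv=False)
    return s[0] ** 2 / np.sum(s ** 2)

rng = np.random.default_rng(42)
n_samples, n_genes, sig_size = 30, 200, 10

# Simulated expression: independent noise, plus a shared latent factor in the signature genes.
expr = rng.normal(size=(n_samples, n_genes))
signature = np.arange(sig_size)                  # hypothetical signature: the first 10 genes
latent = rng.normal(size=n_samples)
expr[:, signature] += 2.0 * latent[:, None]

observed = pc1_fraction(expr[:, signature])

# Null distribution: PC1 fraction for random gene sets of the same size.
n_random = 1000
null = np.array([
    pc1_fraction(expr[:, rng.choice(n_genes, size=sig_size, replace=False)])
    for _ in range(n_random)
])

# Empirical p-value with a +1 correction so it can never be exactly zero.
p_value = (1 + np.sum(null >= observed)) / (1 + n_random)
print(f"observed PC1 fraction={observed:.2f}, null mean={null.mean():.2f}, p={p_value:.4f}")
```

A signature whose PC1 fraction sits well inside the null distribution would fail the coherence criterion in the target dataset.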

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for PCA in Gene Expression Analysis

| Tool / Resource | Function | Application Note |

|---|---|---|

| R Statistical Environment | Open-source platform for statistical computing and graphics. | The primary environment for running PCA and downstream analyses. |

| prcomp() / PCA() functions | R functions for performing PCA. | prcomp() is preferred for numerical accuracy using SVD [16]. |

| MDAnalysis Python Toolkit | Object-oriented toolkit for analyzing molecular dynamics trajectories. | Can be adapted for analysis of protein dynamics from structural data [20]. |

| VolSurf+ | Mathematical platform for computing molecular descriptors from 3D structures. | Used to derive descriptors for permeability and solubility in drug discovery [11]. |

Application Example: PCA in Neuroprotective Drug Discovery

A study on quercetin analogues, which aimed to identify compounds with improved blood-brain barrier (BBB) permeability, used PCA to identify key molecular descriptors [11].

- Objective: Understand which molecular descriptors (e.g., logP, solubility parameters) primarily govern BBB permeation in a set of 34 quercetin analogues.

- Method: PCA was applied to a matrix of multiple computed molecular descriptors for all analogues. The resulting scores and loadings were analyzed.

- Result: The PCA model successfully reduced the descriptor space. The first few PCs captured the majority of the variance. Analysis of loadings revealed that descriptors related to intrinsic solubility and lipophilicity (logP) were the main drivers of the first principal components, meaning they were most responsible for the clustering pattern seen in the scores plot [11].

- Conclusion: This PCA output provided a clear, data-driven direction for future synthetic efforts: focus on modifying analogues to optimize lipophilicity and solubility to enhance BBB penetration [11].

Interpreting PCA outputs is a critical skill for extracting meaningful biological insights from high-dimensional gene expression data. A rigorous approach involves not just visualizing sample scores for clusters, but also quantitatively assessing the variance explained to determine the significance of components and interrogating the loadings to assign biological meaning to the patterns observed. By following the standardized protocols for projection and validation outlined in this guide, researchers can ensure their PCA models are robust, reproducible, and truly reflective of underlying biology, thereby reliably informing subsequent drug discovery and development decisions.

In the analysis of high-dimensional genomic data, exploratory techniques are not merely a preliminary step but a critical foundation for ensuring robust and biologically meaningful conclusions. Gene expression studies, characterized by their "large d, small n" paradigm (a large number of genes profiled over a relatively small number of samples), present unique challenges in visualizing data structure and identifying anomalous observations [1]. This document, framed within a broader thesis on projecting gene expression data onto principal components, outlines detailed application notes and protocols for key exploratory analyses. We focus on the pivotal roles of Principal Component Analysis (PCA) for visualizing sample similarity and multivariate methods for detecting outliers, providing scientists and drug development professionals with the practical toolkit necessary to navigate the complexity of transcriptomic data.

Principal Component Analysis: A Dimensionality Reduction Powerhouse

Core Concepts and Rationale

Principal Component Analysis (PCA) is a classic dimension reduction approach that constructs new, uncorrelated variables called principal components (PCs), which are linear combinations of the original gene expressions [1]. The core objective is to project high-dimensional data into a lower-dimensional space while preserving the maximal amount of variance. This is achieved by identifying axes of greatest variance (principal components), where the first PC captures the most variation, the second PC (orthogonal to the first) captures the next most, and so on [22].

In the context of bioinformatics, the first few PCs are assumed to capture dominant biological signals and major factors of heterogeneity, whereas later PCs are often associated with random technical or biological noise [22]. This makes PCA invaluable for data compaction, noise reduction, and the generation of intuitive visualizations that reveal underlying sample relationships.

Quantitative and Qualitative Applications in Genomics

PCA serves multiple critical functions in genomic data analysis:

- Exploratory Analysis and Data Visualization: It enables the projection of gene expression profiles spanning thousands of dimensions onto a 2- or 3-dimensional space (e.g., PC1 vs. PC2), allowing researchers to visually assess sample clustering, identify batch effects, and observe broad patterns [1] [23].

- Clustering Analysis: The first few PCs, which capture most of the variation, can be used as input for clustering genes or samples, offering a denoised representation of the data [1].

- Regression Analysis: In studies aiming to predict clinical outcomes, the top PCs can be used as covariates in regression models, effectively circumventing the high-dimensionality problem and avoiding collinearity [1].

Visualizing Sample Similarity through PCA and Clustering

Assessing how samples relate to each other—whether replicates are consistent or treatment groups are distinct—is a fundamental question in genomics [24]. PCA is an intuitive way to identify sample clusters in a reduced-dimensionality plot [23].

Protocol: Sample Similarity Analysis via PCA

Objective: To reduce the dimensionality of a gene expression matrix and visualize sample relationships based on principal components.

Input: A normalized gene expression matrix (e.g., log-counts) with rows representing genes and columns representing samples.

- Feature Selection: Select a subset of genes for PCA to reduce noise and computational load. Typically, this involves using the top 2000 genes with the highest biological variance (e.g., highly variable genes, or HVGs) [22].

- Data Scaling: Standardize the expression values for each gene to a mean of zero and a standard deviation of one (z-scores). This ensures that genes with high expression levels do not dominate the variance calculation simply due to their scale [24].

- PCA Computation: Perform PCA on the scaled and subsetted expression matrix using a singular value decomposition (SVD) algorithm. By default, the first 50 PCs are often computed and stored [22].

- Determination of PCs: Choose the number of top principal components (d) to retain for downstream analysis. While this can be data-driven, a common practice is to use an arbitrary but reasonable number, often between 10 and 50, as the later PCs explain minimal variance [22].

- Visualization: Create a scatter plot of the samples using the first two (or three) PCs. Samples with similar gene expression profiles will cluster together in this projected space [22].

Complementary Technique: Hierarchical Clustering

An alternative or complementary method for assessing sample similarity is hierarchical clustering, which creates a tree structure (dendrogram) showing the relationship between individual data points and clusters [24].

Protocol: Hierarchical Clustering of Samples

- Distance Calculation: Compute a distance matrix between all pairs of samples. Common metrics include Euclidean distance or correlation distance (1 - correlation coefficient) [24].

- Clustering Algorithm: Perform hierarchical clustering on the distance matrix using a linkage method. "Ward's minimum variance method" is often recommended as it aims to find compact, spherical clusters [24].

- Visualization: Visualize the results as a dendrogram and often in tandem with a heatmap, which color-codes the original expression values to reveal patterns driving the cluster formation [24].

Table 1: Common Distance Metrics for Sample Clustering

| Metric Name | Formula | Use Case and Properties |

|---|---|---|

| Euclidean Distance | d_AB = √[Σ(e_Ai - e_Bi)²] | The default "ordinary" distance; can be heavily influenced by outliers [24]. |

| Manhattan Distance | d_AB = Σ\|e_Ai - e_Bi\| | Less sensitive to outliers compared to Euclidean distance [24]. |

| Correlation Distance | d_AB = 1 - ρ (ρ = Pearson correlation) | Measures similarity in expression pattern, independent of scale [24]. |
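
To make the three metrics concrete, the Python sketch below evaluates them for two hypothetical samples whose expression profiles have the same shape but differ twofold in magnitude. The sample values are invented for the example.

```python
import numpy as np

# Two hypothetical samples measured over four genes; sample_b is exactly 2 x sample_a.
sample_a = np.array([1.0, 2.0, 3.0, 4.0])
sample_b = np.array([2.0, 4.0, 6.0, 8.0])

euclidean = np.sqrt(np.sum((sample_a - sample_b) ** 2))
manhattan = np.sum(np.abs(sample_a - sample_b))
correlation = 1.0 - np.corrcoef(sample_a, sample_b)[0, 1]   # 1 - Pearson correlation

print(f"Euclidean:   {euclidean:.3f}")
print(f"Manhattan:   {manhattan:.3f}")
print(f"Correlation: {correlation:.3f}")
```

The Euclidean and Manhattan distances are clearly non-zero, while the correlation distance is essentially zero, which is the "independent of scale" property noted in the table.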

Detecting Multivariate Outliers

In high-dimensional data, an outlier may not be extreme in any single dimension but can be an unusual combination of multiple variables [25]. These multivariate outliers can heavily influence model fitting and lead to incorrect biological inferences.

Protocol: Multivariate Outlier Detection using Mahalanobis Distance

Objective: To identify samples that are multivariate outliers based on their principal component scores.

Input: The matrix of principal component scores from the PCA analysis (typically the top d PCs).

- Calculate Mahalanobis Distance: For each sample, compute the Mahalanobis distance of its PC score vector from the centroid of all sample scores. The Mahalanobis distance accounts for the covariance structure of the data, unlike Euclidean distance [25] [26].

- Assess Statistical Significance: Compare the calculated Mahalanobis distances to a Chi-square (χ²) distribution. The degrees of freedom (df) for this distribution are equal to the number of PCs used in the calculation [26].

- Identify Outliers: Compute the right-tail p-value for each distance (1 - CDF.CHISQ(mahal_dist, df)). Samples with a p-value below a significance threshold (e.g., .001) are flagged as potential multivariate outliers [26].
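
The three steps can be sketched in Python with NumPy as follows. To keep the example dependency-free it uses only the top 2 PCs, for which the chi-square right-tail probability has the closed form exp(-d²/2); with more PCs a chi-square survival function from a statistics library would be needed. The simulated scores and the planted outlier are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(7)

# Hypothetical PC scores for 50 samples on the top 2 PCs, plus one planted outlier (index 50).
scores = rng.normal(size=(50, 2)) * np.array([3.0, 1.0])
scores = np.vstack([scores, [12.0, -6.0]])

# Step 1: squared Mahalanobis distance of every sample from the centroid.
centroid = scores.mean(axis=0)
cov_inv = np.linalg.inv(np.cov(scores, rowvar=False))
diff = scores - centroid
d2 = np.einsum("ij,jk,ik->i", diff, cov_inv, diff)

# Step 2: right-tail chi-square p-value; for df = 2 this equals exp(-d2 / 2).
p_values = np.exp(-d2 / 2.0)

# Step 3: flag samples below the significance threshold.
outliers = np.flatnonzero(p_values < 0.001)
print("flagged sample indices:", outliers)
```

Because the centroid and covariance here are estimated from all samples, including the outlier, this is the classical (non-robust) variant and can understate how extreme an outlier is.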

Alternative Outlier Detection Methods

While Mahalanobis distance is a common parametric approach, other powerful methods exist.

Table 2: Methods for Multivariate Outlier Detection

| Method | Brief Description | Key Considerations |

|---|---|---|

| Mahalanobis Distance | Measures the distance of a point from the data centroid, accounting for covariance [25] [26]. | Sensitive to the presence of outliers itself, as they can distort the mean and covariance estimates. |

| Robust Mahalanobis | Uses robust estimates of the centroid and covariance matrix (e.g., minimum covariance determinant) [25]. | More reliable for outlier detection as it is less influenced by the outliers themselves. |

| Principal Component Analysis (PCA) | Visually inspect the first few PCs for samples that lie far from the main cloud of data [27]. | A simple, intuitive approach, but may miss outliers that do not manifest in the first two PCs. |

| Isolation Forest | A model-based method that isolates anomalies by randomly selecting features and split values [27]. | Effective for high-dimensional data and does not rely on assumptions of data distribution. |

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Key Research Reagent Solutions for Exploratory Analysis

| Item / Software Package | Function in Analysis |

|---|---|

| R Statistical Environment | A comprehensive platform for statistical computing and graphics. The prcomp function is a standard tool for performing PCA [1]. |

| scran / scater (R/Bioconductor) | Specialized packages for single-cell RNA-seq data analysis. They provide optimized functions like fixedPCA() for efficient and scalable PCA computation [22]. |

| Python (scikit-learn) | A general-purpose programming language with extensive data science libraries. sklearn.decomposition.PCA is a widely used implementation of PCA [28]. |

| Mahalanobis Distance (in R/SPSS) | A statistical distance metric used for multivariate outlier detection. Can be computed in R via mahalanobis() or in SPSS via the Linear Regression menu with the "Save Mahalanobis distances" option [26]. |

| Hierarchical Clustering (hclust in R) | A standard function for performing hierarchical cluster analysis on a distance matrix, essential for generating dendrograms of sample relationships [24]. |

| Heatmap Visualization (pheatmap in R) | A function for drawing annotated heatmaps, often combined with clustering to visualize gene expression patterns across samples [24]. |

A Step-by-Step Pipeline: Executing PCA on Your Gene Expression Matrix

In gene expression analysis, techniques like RNA-sequencing (RNA-seq) and microarrays generate high-dimensional data where the number of measured genes (variables, P) vastly exceeds the number of biological samples (observations, N). This structure, often called a "P ≫ N" scenario, presents significant challenges for analysis and visualization due to the curse of dimensionality [7]. Principal Component Analysis (PCA) is a powerful unsupervised machine learning method essential for exploring such data, revealing patterns, identifying outliers, and visualizing sample relationships [21] [29] [30]. The accuracy and biological interpretability of PCA, however, are profoundly influenced by how the raw count matrix is prepared and normalized prior to analysis. This protocol details the critical steps for structuring a gene expression count matrix—with samples as rows and genes as columns—to ensure robust and meaningful PCA outcomes within a gene expression research thesis.

Background & Key Concepts

The Curse of Dimensionality in Transcriptomic Data

Transcriptomic datasets commonly profile the expression of over 20,000 genes (P) across fewer than 100 samples (N). In this high-dimensional space, data points become sparse, making analysis and visualization computationally challenging and mathematically unstable. Dimensionality reduction techniques like PCA are essential to mitigate these effects [7].

The Role of PCA in Gene Expression Analysis

PCA transforms the original high-dimensional data into a new set of uncorrelated variables, the principal components (PCs), which are linear combinations of the original genes. The first PC captures the direction of maximum variance in the data, with each subsequent component capturing the next highest variance under the constraint of orthogonality [31] [30]. This transformation is pivotal for:

- Exploratory Data Analysis (EDA): Assessing data quality, identifying batch effects, and detecting sample outliers [21] [29].

- Visualization: Projecting samples into a 2D or 3D space defined by the top PCs to visualize inherent clustering or trends [30] [32].

- Downstream Analysis: Providing a de-noised, lower-dimensional representation for clustering or as input to non-linear dimensionality reduction techniques like t-SNE and UMAP [29] [32].

Experimental Protocol: Data Preparation Workflow

The following workflow outlines the standardized procedure for preparing a gene expression count matrix for PCA. The accompanying diagram visualizes this multi-stage process.

Protocol 3.1: Data Curation and Initial Quality Control

Objective: To filter the raw count matrix for low-quality samples and uninformative genes.

- Remove Low-Quality Samples: Calculate the total library size (sum of counts) per sample. Exclude samples with an extremely low library size, which may indicate failed libraries. The specific threshold is experiment-dependent.

- Filter Lowly Expressed Genes: Remove genes with zero or near-zero counts across the majority of samples. A common filter is to keep only genes with a count per million (CPM) above a threshold (e.g., 1 CPM) in at least n samples, where n is the size of the smallest experimental group.

- Address Missing Data: Identify genes with missing values. Common strategies include imputation using methods like scikit-learn's SimpleImputer or removal of genes with excessive missingness.
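
The CPM-based gene filter can be sketched in NumPy as shown below. The count matrix, the two-samples-per-group design, and the 1 CPM threshold are assumptions chosen so that the arithmetic is easy to follow (every library sums to one million reads, so CPM equals the raw count).

```python
import numpy as np

# Hypothetical raw counts: 4 genes (rows) x 4 samples (columns); two groups of two samples.
counts = np.array([
    [500.0, 450.0, 520.0, 480.0],                 # expressed in every sample
    [0.0, 1.0, 0.0, 0.0],                         # essentially unexpressed
    [30.0, 25.0, 0.0, 0.0],                       # expressed in the first group only
    [999470.0, 999524.0, 999480.0, 999520.0],     # remainder of each library
])

library_size = counts.sum(axis=0)                 # total counts per sample
cpm = counts / library_size * 1e6                 # counts per million

min_group_size = 2                                # size of the smallest experimental group
keep = (cpm > 1.0).sum(axis=1) >= min_group_size  # CPM above 1 in at least n samples
filtered = counts[keep]

print("library sizes:", library_size)
print("genes kept:", keep)
```

The third gene is retained even though it is silent in half of the samples, because it passes the threshold in one complete group; this is why the rule is tied to the smallest group size.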

Protocol 3.2: Data Normalization

Objective: To remove technical variation (e.g., sequencing depth, sample-specific biases) while preserving biological signal.

Normalization is a critical step, and the choice of method can significantly impact the PCA results and their biological interpretation [21]. The table below summarizes common and advanced methods.

Table 1: Overview of Gene Expression Data Normalization Methods

| Method Category | Specific Method | Key Principle | Suitability for PCA |

|---|---|---|---|

| Library Size Scaling | Total Count | Scales counts by total library size per sample. | Basic method; can be confounded by highly expressed genes. |

| Reference Gene-Based | Housekeeping Genes [33] | Uses stably expressed internal reference genes for scaling. | Dependent on validated, stable reference genes for the tissue/condition. |

| Reference Gene-Based | Global Mean (GM) [33] | Uses the global mean expression of all profiled genes as a baseline. | Effective when profiling >55 genes; outperforms multiple RGs in some studies [33]. |

| Reference Gene-Based | Non-Differentially Expressed Genes (NDEG) [34] | Uses genes identified as statistically non-differential (high p-value from ANOVA) for scaling. | Improves cross-platform (microarray/RNA-seq) model performance [34]. |

| Statistical Modeling | SCTransform | Uses a regularized negative binomial model. | May remove spatial domain signals in spatial transcriptomics [35]. |

| Spatially-Aware | SpaNorm [35] | A GLM-based method that concurrently models and segregates library size effects from biology using spatial information. | Superior for spatial transcriptomics; retains spatial domain information and improves SVG detection [35]. |

Procedure:

- Select a Method: Choose a normalization method appropriate for your data type (e.g., bulk RNA-seq, spatial transcriptomics) and experimental design.

- Apply Normalization: Use dedicated software packages (e.g., scikit-learn, R packages, or specialized tools like SpaNorm) to apply the chosen normalization, transforming the raw count matrix.

Protocol 3.3: Feature Selection for Dimensionality Reduction

Objective: To reduce noise and computational load by selecting a subset of informative genes for PCA.

- Variance-Based Selection: A common approach is to select the top N genes (e.g., 1000-5000) with the highest variance across samples. This targets genes that are most likely to contribute to the separation of samples in the PCA space.

- Differentially Expressed Genes (DEGs): Alternatively, use statistical tests (e.g., ANOVA [34]) to identify genes significantly associated with the experimental conditions. Using DEGs for PCA can sharpen the separation of pre-defined groups.
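
Variance-based selection is straightforward to express in NumPy. The sketch below is a minimal version that ranks genes by plain sample variance; the helper name top_variable_genes and the simulated data are assumptions, and dedicated packages often model the mean-variance trend instead of using raw variance.

```python
import numpy as np

def top_variable_genes(X, n_top):
    """Column indices of the n_top highest-variance genes in a samples x genes matrix."""
    variances = X.var(axis=0, ddof=1)
    order = np.argsort(variances)[::-1]       # gene indices from highest to lowest variance
    return np.sort(order[:n_top])

rng = np.random.default_rng(3)

# 20 hypothetical samples x 100 genes; genes 0-4 are given a much larger spread.
X = rng.normal(size=(20, 100))
X[:, :5] *= 10.0

selected = top_variable_genes(X, n_top=5)
X_hvg = X[:, selected]                        # reduced matrix passed on to scaling and PCA
print("selected gene indices:", selected)
```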

Protocol 3.4: Data Scaling

Objective: To standardize the data so that each gene contributes equally to the PCA, preventing highly expressed genes from dominating the principal components.

- Standardization (Z-score): For each gene, subtract the mean expression and divide by the standard deviation. This results in all genes having a mean of 0 and a standard deviation of 1.

  - Implementation: Use StandardScaler from scikit-learn.

- Apply Scaling: Fit the scaler on the normalized and filtered matrix and transform the data. The output is the final, pre-processed matrix ready for PCA.

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item / Software | Function / Purpose | Example / Note |

|---|---|---|

| Reference Genes (RGs) | Endogenous controls for normalizing qPCR or RNA-seq data. | Must be validated for stability. RPS5, RPL8, HMBS are stable in canine GI tissue [33]. |

| Non-Differentially Expressed Genes (NDEGs) | A set of stable genes identified from the dataset itself for normalization. | Selected based on high p-value (e.g., p>0.85) from ANOVA [34]. |

| scikit-learn (Python) | A comprehensive machine learning library. | Provides PCA, StandardScaler, and various utilities for the entire workflow. |

| Single-Cell / Spatial Packages | Specialized tools for modern transcriptomics data. | scran [35] for scRNA-seq; SpaNorm [35] for spatial transcriptomics. |

| Stable Algorithms (e.g., IRLBA) | Memory-efficient and fast PCA implementations for large datasets. | Crucial for large-scale single-cell RNA-sequencing data [29]. |

Anticipated Results and Interpretation

After applying PCA to the prepared matrix, the following outputs are standard:

- Explained Variance Ratio: The proportion of the total variance captured by each principal component. This is used to determine the number of components to retain, often by looking for an "elbow" in a scree plot or by retaining enough components to explain a set threshold (e.g., 95%) of the variance [31] [30].

- PCA Loadings: The coefficients (pca.components_ in scikit-learn) representing the contribution of each original gene to each principal component. High-loading genes drive the separation seen along that PC and can be biologically interpreted.

- PCA Scores: The coordinates of the samples in the new PC space (pca.transform()). These are plotted to visualize sample clustering.
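
The threshold rule for choosing the number of components can be illustrated with a few lines of NumPy. The eigenvalues below are hypothetical and chosen only to make the cumulative sums easy to read.

```python
import numpy as np

# Hypothetical eigenvalues (variances of the PCs), already sorted in descending order.
eigenvalues = np.array([5.0, 3.0, 1.0, 0.6, 0.25, 0.15])

explained_variance_ratio = eigenvalues / eigenvalues.sum()
cumulative = np.cumsum(explained_variance_ratio)

threshold = 0.95
# Position of the first component at which the cumulative ratio reaches the threshold.
n_components = int(np.argmax(cumulative >= threshold)) + 1

print("cumulative variance:", np.round(cumulative, 3))
print("components retained:", n_components)
```

With real expression data the first PCs usually explain far less than in this toy case, so a fixed 95% threshold can retain many components; the elbow criterion is a common alternative.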

A well-prepared matrix should yield a PCA plot where biological replicates cluster together, and separation between experimental groups aligns with the hypothesis. Conversely, strong batch effects or clustering driven by technical artifacts indicates potential issues with the normalization or data curation steps.

In the analysis of high-dimensional gene expression data, Principal Component Analysis (PCA) serves as a fundamental technique for exploratory data analysis and visualization. The reliability of its output, however, is profoundly dependent on appropriate data preprocessing. Normalization, scaling, and centering are not merely optional steps but critical transformations that determine whether PCA reveals true biological signals or amplifies technical artifacts and noise. This application note examines the impact of these preprocessing steps within the context of projecting gene expression data onto principal components, providing structured protocols and analyses for research scientists and drug development professionals.

Research demonstrates that although PCA score plots may appear similar across different normalization methods, the biological interpretation of these models can vary dramatically based on the preprocessing approach used [21]. Without proper centering, PCA captures variance around zero instead of the mean expression, causing highly expressed housekeeping genes to dominate the first principal component regardless of their biological relevance to the experimental conditions [36]. Similarly, without scaling, genes with large numeric ranges and high expression levels can disproportionately influence the analysis, potentially obscuring more meaningful but subtle expression patterns [36].

Background and Key Concepts

The Curse of Dimensionality in Gene Expression Data

Gene expression data from technologies like RNA-sequencing typically manifests in a classic high-dimensional structure where the number of variables (genes, P) vastly exceeds the number of observations (samples, N). It is common to analyze more than 20,000 genes across fewer than 100 samples, creating significant computational and statistical challenges for visualization, analysis, and mathematical operations [7]. This "curse of dimensionality" necessitates effective dimensionality reduction strategies like PCA to make biological interpretation feasible.

The Mathematics of PCA and Preprocessing

PCA operates by identifying orthogonal directions of maximal variance in the dataset, with the first principal component capturing the greatest variance, the second the next greatest, and so on [37]. The algorithm is sensitive to the scale and distribution of variables, making preprocessing decisions fundamental to its output:

- Centering involves subtracting the mean expression of each gene across all samples. This ensures that PCA measures variance around the mean expression rather than around zero, preventing highly expressed genes from dominating components simply due to their abundance rather than biologically relevant variation [36].

- Scaling standardizes the variance of each gene, typically by dividing by the standard deviation after centering (Z-scoring). This gives equal weight to all genes in the analysis, preventing those with naturally large expression ranges from overwhelming the variance structure [36].
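
The effect of centering can be demonstrated on a two-gene toy dataset. In the Python sketch below, one gene is highly expressed but nearly constant and the other is lowly expressed but differs between two groups; all values are simulated assumptions.

```python
import numpy as np

rng = np.random.default_rng(5)
n_samples = 40

# Gene 0: highly expressed, nearly constant "housekeeping" gene.
# Gene 1: lowly expressed gene that differs between two groups of samples.
group = np.repeat([0.0, 1.0], n_samples // 2)
X = np.column_stack([
    100.0 + rng.normal(scale=0.1, size=n_samples),
    1.0 + 2.0 * group + rng.normal(scale=0.1, size=n_samples),
])

def first_direction(M):
    """First right singular vector of M (the PC1 loading vector when M is centered)."""
    return np.linalg.svd(M, full_matrices=False)[2][0]

pc1_uncentered = first_direction(X)
pc1_centered = first_direction(X - X.mean(axis=0))

print("uncentered, |loadings|:", np.round(np.abs(pc1_uncentered), 3))
print("centered,   |loadings|:", np.round(np.abs(pc1_centered), 3))
```

Without centering, the first direction points almost entirely along the abundant housekeeping gene; after centering, it points along the gene that actually separates the groups.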

Table 1: Critical Preprocessing Steps for PCA

| Processing Step | Mathematical Operation | Impact on PCA | When to Use |

|---|---|---|---|

| Centering | Subtract gene-wise mean | Shifts focus from mean expression to variation around mean | Almost always for gene expression |

| Scaling (Z-score) | (Value - Mean)/Standard Deviation | Equalizes influence of all genes regardless of expression level | When comparing expression patterns across genes |

| Library Size Normalization | Adjust for total sequencing depth (e.g., TMM, CPM) | Enables cross-sample comparison by removing technical variation | Essential for RNA-seq data before centering and scaling |

Normalization Methods for RNA-Seq Data

RNA-sequencing data requires specialized normalization to address technical artifacts including sequencing depth and library composition before conducting PCA. The raw count matrix generated from RNA-seq processing cannot be directly compared between samples because the number of reads mapped to a gene depends not only on its expression level but also on the total number of sequencing reads obtained for that sample [38].

Common Normalization Techniques

- Counts Per Million (CPM): A simple normalization where raw read counts for each gene are divided by the total number of reads in the library, then multiplied by one million. This method corrects for sequencing depth but remains sensitive to highly expressed genes that consume a large fraction of reads [38].

- Trimmed Mean of M-values (TMM): A more sophisticated method that uses a weighted trimmed mean of the log expression ratios to account for differences in library composition [39]. TMM normalization specifically addresses situations where a few highly expressed genes in one condition can skew the representation of other genes [39] [40].

- Median-of-Ratios: Used in DESeq2, this method calculates a reference expression level for each gene and then a size factor for each sample to adjust for sequencing depth while considering library composition [38].
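
The difference between depth-only and composition-aware normalization can be seen in a small worked example. The Python sketch below computes CPM and a simplified median-of-ratios size factor on a made-up count matrix; it illustrates the idea only and is not DESeq2's implementation (for instance, it assumes no zero counts). TMM is not shown.

```python
import numpy as np

# Hypothetical raw counts: 4 genes (rows) x 3 samples (columns).
# Sample 2 is sample 1 sequenced twice as deeply; in sample 3 only the last gene is induced.
counts = np.array([
    [100.0, 200.0, 100.0],
    [50.0, 100.0, 50.0],
    [30.0, 60.0, 30.0],
    [20.0, 40.0, 2000.0],
])

# CPM: divide by library size and multiply by one million.
cpm = counts / counts.sum(axis=0) * 1e6

# Median-of-ratios: the reference for each gene is its geometric mean across samples;
# a sample's size factor is the median ratio of its counts to that reference.
log_counts = np.log(counts)
log_reference = log_counts.mean(axis=1)
size_factors = np.exp(np.median(log_counts - log_reference[:, None], axis=0))
normalized = counts / size_factors

print("size factors:", np.round(size_factors, 3))
print("CPM of gene 1:", np.round(cpm[0], 0))
print("median-of-ratios normalized gene 1:", np.round(normalized[0], 1))
```

In this toy case the induced gene inflates the library size of sample 3, so CPM pushes the unchanged genes down, whereas the median-based size factor treats sample 3 like sample 1 for those genes.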

Impact on Downstream PCA

Different normalization methods directly impact the PCA results and their biological interpretation. A comprehensive evaluation of 12 normalization methods for transcriptomics data found that although PCA score plots often appear similar regardless of normalization, the biological interpretation of the models depends heavily on the method applied [21]. Specifically, the choice of normalization affects:

- Model complexity in PCA

- Quality of sample clustering in low-dimensional space

- Gene ranking in the model fit

- Pathway enrichment results during biological interpretation [21]

Table 2: Comparison of RNA-Seq Normalization Methods for PCA

| Method | Sequencing Depth Correction | Library Composition Correction | Impact on PCA | Best Use Cases |
|---|---|---|---|---|
| CPM | Yes | No | May be dominated by highly expressed genes; can distort sample relationships | Initial exploration, but not recommended for final analysis |
| TMM | Yes | Yes | Reduces composition bias; improves biological signal recovery | Differential expression analysis; multi-tissue comparisons [40] |
| Median-of-Ratios | Yes | Yes | Robust to expression shifts; improves cluster separation | Differential expression analysis with DESeq2 |
| TPM | Yes | Partial | Adjusts for gene length; reduces composition bias | Within-sample comparisons and visualizations |

Experimental Protocols

Comprehensive Workflow for RNA-Seq Normalization and PCA

The following workflow outlines a robust methodology for preprocessing RNA-seq data prior to PCA, incorporating quality control, normalization, and batch effect correction.

Workflow: RNA-Seq Preprocessing for PCA

Protocol 1: TMM Normalization with Centering and Scaling

This protocol details the specific steps for implementing TMM normalization followed by centering and scaling prior to PCA, adapted from the GTEx_Pro pipeline [40].

Materials and Reagents

- Raw count matrix from RNA-seq alignment/quantification

- R statistical software (version 4.0 or higher)

- edgeR package for TMM normalization

- Metadata file containing sample information

Methodology

Data Input and Filtering

- Import raw count data into R using read.table() or fread() (from the data.table package)

- Filter out genes with low expression: keep only genes with counts per million (CPM) > 1 in at least X samples, where X equals the smallest group size

- This step reduces noise and computational complexity

TMM Normalization

- Create a DGEList object using edgeR::DGEList()

- Calculate normalization factors: calcNormFactors(object, method = "TMM")

- The TMM method trims extreme log-fold-changes and absolute expression levels (default: trim 30% of M values and 5% of A values) to robustly estimate scaling factors [39]

CPM Transformation

- Apply the edgeR::cpm() function with log = FALSE to convert normalized counts to the counts-per-million scale

- This standardizes for sequencing depth across samples
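The filtering, TMM, and CPM steps might be combined as in the sketch below. The count matrix and group labels are simulated placeholders, the filter is written in base R so its rule is explicit, and the edgeR calls run only if the package is installed.

```r
set.seed(1)
# Simulated raw counts (hypothetical data): 500 genes x 6 samples, two groups of 3
counts <- matrix(rnbinom(500 * 6, mu = 50, size = 1), nrow = 500,
                 dimnames = list(paste0("gene", 1:500), paste0("s", 1:6)))
group <- factor(rep(c("ctrl", "trt"), each = 3))

# Filtering: keep genes with CPM > 1 in at least X samples,
# where X is the size of the smallest group
cpm_raw <- sweep(counts, 2, colSums(counts), "/") * 1e6
keep <- rowSums(cpm_raw > 1) >= min(table(group))
filtered <- counts[keep, , drop = FALSE]

# TMM factors and TMM-normalized CPM (skipped when edgeR is not available)
if (requireNamespace("edgeR", quietly = TRUE)) {
  dge <- edgeR::DGEList(counts = filtered, group = group)
  dge <- edgeR::calcNormFactors(dge, method = "TMM")
  # cpm() on a DGEList uses the effective library size (lib.size * norm.factors)
  tmm_cpm <- edgeR::cpm(dge, log = FALSE)
}
```

Filtering before building the DGEList means the library sizes are computed from the retained genes only. Whether that is preferable to keeping the original library sizes is a judgment call that depends on how many reads the filter removes.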

Batch Effect Correction (Optional but Recommended)

- Identify batch effects using surrogate variable analysis (SVA) with the sva package

- Include known batch variables and surrogate variables in the normalization model

- The GTEx_Pro pipeline demonstrated that SVA following TMM+CPM normalization substantially improves tissue-specific clustering in PCA [40]

Centering and Scaling

- Center the data: Subtract the mean expression for each gene across all samples

- Scale the data: Divide by the standard deviation for each gene

- This can be accomplished in R using the scale() function, which operates column-wise, so genes must be in columns (samples in rows)
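A minimal sketch of the centering and scaling step, assuming a normalized matrix with genes in rows (the values are simulated). It also drops zero-variance genes first, a precaution not listed in the protocol, because dividing by a standard deviation of zero would produce NaN.

```r
set.seed(2)
# Hypothetical normalized expression: 50 genes (rows) x 8 samples (columns)
expr <- matrix(rlnorm(50 * 8, meanlog = 3), nrow = 50,
               dimnames = list(paste0("gene", 1:50), paste0("s", 1:8)))

# scale() works column-wise, so transpose to samples x genes first
expr_t <- t(expr)

# Drop genes with zero variance across samples
gene_sd <- apply(expr_t, 2, sd)
expr_t <- expr_t[, gene_sd > 0, drop = FALSE]

# Subtract each gene's mean and divide by its standard deviation
scaled <- scale(expr_t, center = TRUE, scale = TRUE)
```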

Principal Component Analysis

- Perform PCA using the prcomp() function in R

- Visualize results using score plots and loading plots

- Evaluate the proportion of variance explained by each component
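The PCA step could look like the following sketch. The input is simulated with an artificial group effect, the object names are placeholders, and the plots are drawn only in an interactive session.

```r
set.seed(3)
# Hypothetical input: 10 samples (rows) x 100 genes (columns), two groups of 5
group <- rep(c("A", "B"), each = 5)
x <- matrix(rnorm(10 * 100), nrow = 10,
            dimnames = list(paste0("s", 1:10), paste0("gene", 1:100)))
x[group == "B", 1:40] <- x[group == "B", 1:40] + 3  # inject a group effect

pca <- prcomp(x, center = TRUE, scale. = TRUE)

# Proportion of variance explained by each component
var_explained <- pca$sdev^2 / sum(pca$sdev^2)

# Genes with the largest absolute loadings on PC1
top_genes <- head(sort(abs(pca$rotation[, 1]), decreasing = TRUE), 5)

if (interactive()) {
  # Score plot (PC1 vs PC2) and scree plot
  plot(pca$x[, 1], pca$x[, 2], pch = 19,
       col = ifelse(group == "A", "blue", "red"), xlab = "PC1", ylab = "PC2")
  barplot(var_explained[1:5], names.arg = paste0("PC", 1:5),
          ylab = "Proportion of variance")
}
```

With more genes than samples, prcomp() returns at most as many components as there are samples, so var_explained has length 10 here.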

Protocol 2: Evaluation of Normalization Impact

This protocol provides a systematic approach to evaluate how different normalization methods affect PCA outcomes.

Experimental Design

- Apply multiple normalization methods (CPM, TMM, Median-of-Ratios) to the same raw count matrix

- Perform PCA on each normalized dataset

- Compare results using both quantitative metrics and qualitative assessments
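One way to set up such a comparison is sketched below in base R. The counts are simulated with sample-specific depth multipliers, a pseudocount of 1 is added to avoid zeros, and the median-of-ratios function is a hand-written approximation of the DESeq2 approach. All of these are illustrative assumptions, not part of the protocol.

```r
set.seed(4)
n_genes <- 300
n_samples <- 8
depth <- runif(n_samples, 0.5, 2)              # hypothetical depth multipliers
mu <- rgamma(n_genes, shape = 2, rate = 0.02)  # per-gene baseline means
# Genes x samples matrix; +1 is a pseudocount so that logs are finite
counts <- sapply(depth, function(d) rpois(n_genes, mu * d)) + 1

normalize <- list(
  raw = function(m) m,
  cpm = function(m) sweep(m, 2, colSums(m), "/") * 1e6,
  median_of_ratios = function(m) {
    log_geo_mean <- rowMeans(log(m))
    sf <- apply(m, 2, function(s) exp(median(log(s) - log_geo_mean)))
    sweep(m, 2, sf, "/")
  }
)

# Share of variance captured by PC1 under each normalization
pc1_share <- sapply(normalize, function(f) {
  pca <- prcomp(t(log2(f(counts))), center = TRUE, scale. = FALSE)
  pca$sdev[1]^2 / sum(pca$sdev^2)
})
round(pc1_share, 3)
```

In this simulation the only systematic difference between samples is sequencing depth, so PC1 of the raw data mostly reflects depth and its share drops once either normalization is applied.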

Evaluation Metrics

Cluster Quality Metrics

- Calculate the Davies-Bouldin Index (DBI) to assess separation and compactness of sample clusters in PCA space

- Lower DBI values indicate better clustering [40]
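The Davies-Bouldin index can be computed directly from PCA scores and group labels. The function below is a base-R sketch under the usual definition (mean distance to centroid as within-cluster scatter, Euclidean distance between centroids). The function name and toy coordinates are illustrative and are not taken from the GTEx_Pro pipeline.

```r
davies_bouldin <- function(scores, labels) {
  labels <- factor(labels)
  groups <- levels(labels)
  # One centroid per cluster (clusters x dimensions)
  centroids <- do.call(rbind, lapply(groups, function(g) {
    colMeans(scores[labels == g, , drop = FALSE])
  }))
  # Within-cluster scatter: mean distance of points to their centroid
  scatter <- sapply(seq_along(groups), function(i) {
    pts <- scores[labels == groups[i], , drop = FALSE]
    mean(sqrt(rowSums(sweep(pts, 2, centroids[i, ])^2)))
  })
  between <- as.matrix(dist(centroids))
  ratio <- outer(scatter, scatter, "+") / between
  diag(ratio) <- -Inf               # ignore self-comparisons
  mean(apply(ratio, 1, max))        # worst-case ratio per cluster, averaged
}

# Toy scores: two tight clusters far apart on the first axis
scores <- rbind(c(0, 0), c(2, 0), c(10, 0), c(12, 0))
labels <- c("A", "A", "B", "B")
dbi <- davies_bouldin(scores, labels)
```

In practice, scores would be the first few columns of the x element returned by prcomp(), and labels the known tissue or treatment groups.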

Variance Explained

- Compare the proportion of variance captured by the first several principal components across normalization methods

- Note that normalization often reduces the total variance explained by early PCs by diminishing the influence of technical artifacts

Biological Concordance

- Assess whether known biological groups (e.g., tissue types, treatment conditions) separate appropriately in PCA space

- Validate with established housekeeping genes and tissue-specific markers [40]

Case Study: Multi-Tissue Gene Expression Analysis

The GTEx_Pro pipeline provides a compelling case study on the importance of robust normalization for PCA of gene expression data across multiple tissues [40].

Methods and Implementation

Researchers analyzed GTEx v8 RNA-sequencing data comprising 54 tissues with 17,235 total samples. The pipeline implemented:

- TMM + CPM normalization to address library size differences and compositional biases

- Surrogate Variable Analysis (SVA) to correct for batch effects related to donor demographics and tissue processing

- PCA to visualize sample relationships before and after processing

Results and Interpretation

- Raw Data PCA: 3D PCA of raw count data showed substantial overlap between different tissues, with clustering driven primarily by technical variation rather than biological signal [40]

- After TMM + CPM Normalization: PCA revealed pronounced tissue-specific clustering, with biologically related tissues (e.g., heart chambers, skin regions) grouping together

- After SVA Correction: Further improvement in tissue separation with increased Euclidean distances between distinct tissue clusters

- Quantitative Improvement: The Davies-Bouldin index decreased after SVA correction, indicating better clustering quality, while overall variance in the first two principal components increased by 1.5% [40]

The Scientist's Toolkit

Table 3: Essential Tools for RNA-Seq Normalization and PCA

| Tool/Resource | Function | Application Context |
|---|---|---|
| edgeR | TMM normalization implementation | RNA-seq data normalization prior to differential expression and PCA |
| DESeq2 | Median-of-ratios normalization | Alternative normalization approach with robust composition adjustment |
| SVA Package | Batch effect correction | Identifying and adjusting for surrogate variables representing batch effects |
| FastQC | Quality control assessment | Initial evaluation of raw sequencing data quality |
| GTEx_Pro Pipeline | Integrated normalization workflow | Comprehensive processing for multi-tissue gene expression data [40] |

Preprocessing decisions, particularly normalization, centering, and scaling, fundamentally shape the results of Principal Component Analysis for gene expression data. The systematic evaluation of normalization methods demonstrates that while visual similarities may exist in PCA projections across methods, the biological interpretation varies significantly [21]. Researchers should select normalization approaches based on their specific data characteristics and biological questions, implement careful centering and scaling to focus on biologically relevant variation, and always validate their preprocessing choices through quantitative metrics and biological plausibility checks. The provided protocols offer a robust foundation for generating reliable, interpretable PCA results from high-dimensional gene expression data.

Principal Component Analysis (PCA) is a foundational dimensionality reduction technique in computational biology, indispensable for analyzing high-dimensional gene expression data. By transforming vast datasets into a lower-dimensional space defined by principal components, PCA facilitates the identification of patterns, outliers, and key sources of biological variation. This protocol provides a detailed, step-by-step guide for implementing PCA in R using the prcomp() function, with a specific focus on applications in genomic research. We cover the complete workflow—from data preprocessing and model fitting to the interpretation and projection of new data—enabling researchers and drug development professionals to efficiently extract meaningful insights from complex transcriptomic profiles.

The analysis of gene expression data, such as from RNA-seq experiments, presents a classic challenge of high dimensionality. A typical dataset contains expression levels for thousands of genes (variables, P) across far fewer samples or cells (observations, N), a scenario often described as the "curse of dimensionality" [7]. This P≫N situation complicates visualization, increases computational cost, and risks model overfitting.

PCA addresses this by performing a linear transformation of the original correlated variables (gene expression levels) into a new set of uncorrelated variables called principal components (PCs). These PCs are ordered such that the first few capture the greatest proportion of variance present in the original dataset [37]. In genomics, this allows researchers to:

- Visualize major sample groupings or gradients (e.g., by cell type, treatment, or disease state) in 2D or 3D plots.

- Identify technical artifacts or batch effects that dominate data variance.

- Reduce noise by focusing downstream analyses (like clustering) on the most informative components.

- Prepare data for further machine learning tasks by creating a lower-dimensional, denoised representation.

The prcomp() function in R is a preferred method for PCA due to its numerical stability, ease of use, and direct integration into the bioinformatics ecosystem [41] [42].

Materials: The Researcher's Toolkit

Software and Computing Environment

| Resource | Specification | Function |
|---|---|---|
| R Programming Language | Version 4.0.0 or higher | Provides the core computational environment for statistical analysis and execution of the prcomp() function [41]. |
| RStudio IDE | (Recommended) | Offers an integrated development environment for R, facilitating script writing, visualization, and data management. |
| Core R Packages | stats (includes prcomp) | Performs the principal component analysis [41] [42]. |
| Visualization Packages | ggplot2, factoextra | Creates high-quality static plots, including scree plots and PCA scatter plots [43]. |
| Hardware | Minimum 8GB RAM | Sufficient memory is critical for handling large gene expression matrices, which can exceed several gigabytes in size. |

Data Requirements

A gene expression data matrix is the primary input. The data should be structured as an N x P matrix, where rows (N) represent individual observations (e.g., cells, patient samples), and columns (P) represent variables (e.g., measured gene expression levels) [7]. The matrix must contain numeric values only (e.g., normalized read counts, FPKM, TPM). Categorical metadata (e.g., sample species, treatment group) is stored separately and used for coloring points in visualizations.

Methodology

The following diagram illustrates the complete PCA workflow for gene expression analysis, from raw data to the projection of new samples.

Protocol: A Step-by-Step Guide

This section provides a detailed, hands-on protocol for performing PCA on a typical gene expression dataset.

Step 1: Data Preprocessing and Preparation

Proper preprocessing is critical for a biologically meaningful PCA.

Load Data: Begin by reading your gene expression matrix into R. The matrix should have genes as columns and samples as rows.

Subset and Clean: Ensure the data contains only numeric values. Remove any non-numeric columns (e.g., sample identifiers should be stored as row names, not as a separate column).

Center and Scale: Center the data (subtract the mean of each gene) to ensure the first PC captures the direction of maximum variance, not the mean. Scaling (dividing by the standard deviation of each gene) is equally important, as it gives all genes equal weight. This prevents highly expressed genes from dominating the PCs purely due to their magnitude [43].
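The three preprocessing steps might be sketched as follows. To keep the example runnable as-is, it first writes a small invented table to a temporary file. The file path, the sample_id column name, and the gene names are all placeholders.

```r
set.seed(5)
# Write a small hypothetical expression table so the example is self-contained
demo <- data.frame(sample_id = paste0("s", 1:6),
                   matrix(rexp(6 * 4, rate = 0.1), nrow = 6,
                          dimnames = list(NULL, paste0("gene", 1:4))))
path <- tempfile(fileext = ".csv")
write.csv(demo, path, row.names = FALSE)

# Load: samples in rows, genes in columns, sample IDs become row names
expr <- read.csv(path, row.names = "sample_id")

# Clean: keep numeric columns only and convert to a matrix
expr <- as.matrix(expr[, sapply(expr, is.numeric), drop = FALSE])

# Center and scale each gene (column)
expr_scaled <- scale(expr, center = TRUE, scale = TRUE)
```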

Step 2: Performing PCA with prcomp()

Execute the PCA using the prcomp() function from the stats package.
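A sketch of the call, assuming the matrix has already been centered and scaled. The input is simulated, and expr_scaled is a placeholder name for the preprocessed samples-by-genes matrix.

```r
set.seed(6)
# Hypothetical pre-scaled matrix: 12 samples (rows) x 30 genes (columns)
expr_scaled <- scale(matrix(rnorm(12 * 30), nrow = 12))

# The data are already centered and scaled, so both options are switched off
pca_result <- prcomp(expr_scaled, center = FALSE, scale. = FALSE)
```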

Note: The center and scale. parameters are set to FALSE because we have already performed these operations in the previous step [41].

Step 3: Interpreting the PCA Output

The pca_result object contains several key elements [41]:

- sdev: The standard deviations of the principal components. Squaring these gives the eigenvalues (variances).

- rotation: The matrix of variable loadings (eigenvectors). Each column corresponds to a PC and indicates the contribution (weight) of each original gene to that PC.

- x: The coordinates of the original data projected into the principal component space (the PCA scores).

Use summary() to obtain a quick overview of the importance of each component.
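The relationships between these elements can be checked on a small simulated example. The data and object names below are illustrative only.

```r
set.seed(7)
# Hypothetical pre-scaled matrix: 12 samples (rows) x 30 genes (columns)
expr_scaled <- scale(matrix(rnorm(12 * 30), nrow = 12,
                            dimnames = list(paste0("s", 1:12),
                                            paste0("gene", 1:30))))
pca_result <- prcomp(expr_scaled, center = FALSE, scale. = FALSE)

eigenvalues <- pca_result$sdev^2   # variance of each PC
loadings <- pca_result$rotation    # genes x PCs
scores <- pca_result$x             # samples x PCs

# Scores are the input matrix projected onto the loadings
projection_error <- max(abs(scores - expr_scaled %*% loadings))

# Standard deviation, proportion, and cumulative proportion of variance
importance <- summary(pca_result)$importance
importance[, 1:5]
```

The importance matrix holds the same three rows that summary() prints, so it is a convenient source for scree plots or for choosing how many components to keep.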

The following table quantifies the importance of the first five principal components from a hypothetical gene expression analysis, showing the cumulative variance reaching over 80%.

Table 1: Importance of Principal Components in a Hypothetical Gene Expression Dataset