From Patterns to Pathways: A Practical Guide to Integrating Heatmap Findings with Functional Enrichment Analysis


Abstract

This article provides a comprehensive guide for researchers and bioinformaticians on integrating heatmap visualization with functional enrichment analysis to extract robust biological meaning from complex omics data. It covers foundational concepts of heatmap clustering and enrichment principles, details step-by-step methodologies using current tools like Functional Heatmap, clusterProfiler, and Cytoscape, and addresses common troubleshooting scenarios. By exploring advanced validation techniques and comparative frameworks for multi-omics data, this resource empowers scientists in drug development and biomedical research to move beyond simple visualization towards mechanistic insight and hypothesis generation, ultimately accelerating discovery in functional genomics and translational medicine.

Decoding the Language of Data: Core Principles of Heatmaps and Functional Enrichment

Heatmaps are graphical representations that depict values for a main variable of interest across two axis variables as a grid of colored squares [1]. The axis variables are divided into ranges, and each cell's color indicates the value of the main variable in the corresponding cell range, creating an intuitive visual summary of complex data patterns [1]. In scientific research, particularly in drug development and functional genomics, heatmaps let researchers visualize relationships among experimental conditions, gene expression patterns, protein interactions, and other multidimensional data. These visual patterns are the starting point for linking heatmap findings to functional enrichment results.

The fundamental components of a heatmap include:

- Axis Variables: Represent the two dimensions being analyzed (e.g., genes vs. experimental conditions)

- Color Encoding: Uses a color gradient to represent the magnitude of the main variable

- Grid Structure: Organized matrix where each cell corresponds to a specific data point

- Legend: Essential for interpreting how colors map to numeric values [1]

For scientific applications, heatmaps serve as more than simple visualization tools—they provide a framework for identifying patterns, clusters, and outliers in high-dimensional data, forming a critical bridge between raw experimental results and biological interpretation through functional enrichment analysis.

Technical Specifications and Data Requirements

Data Structure Formats

Heatmap data can be structured in two primary formats, each with distinct advantages for research applications:

Matrix Format: Data is organized in a two-dimensional grid where rows typically represent features (e.g., genes, proteins) and columns represent samples or experimental conditions. This format is ideal for direct visualization and is computationally efficient for large datasets [1].

Three-Column Format: Each cell in the heatmap is associated with one row in a data table, where the first two columns specify the 'coordinates' of the heatmap cell, and the third column indicates the cell's value [1]. This long-form structure is particularly useful for sparse data or when working with statistical software for advanced analysis.
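The relationship between the two formats can be made concrete with a small conversion. The following is a minimal sketch using only the Python standard library; the function name `long_to_matrix`, the gene and sample labels, and the choice of `None` for missing cells are illustrative assumptions, not part of any tool described here.

```python
def long_to_matrix(records, fill=None):
    """Pivot (row_label, column_label, value) triples into a dense matrix.

    Row and column order follow first appearance in `records`.
    Cells that never appear in the long table are set to `fill`.
    """
    rows = list(dict.fromkeys(r for r, _, _ in records))
    cols = list(dict.fromkeys(c for _, c, _ in records))
    row_idx = {label: i for i, label in enumerate(rows)}
    col_idx = {label: j for j, label in enumerate(cols)}
    matrix = [[fill] * len(cols) for _ in rows]
    for r, c, value in records:
        matrix[row_idx[r]][col_idx[c]] = value
    return rows, cols, matrix


# Hypothetical three-column data: (gene, sample, expression value).
long_records = [
    ("GeneA", "ctrl", 1.2),
    ("GeneA", "treated", 3.4),
    ("GeneB", "ctrl", 0.5),  # GeneB/treated is absent, as in sparse data
]

rows, cols, matrix = long_to_matrix(long_records)
for label, values in zip(rows, matrix):
    print(label, values)
```

The missing cell stays explicit rather than being silently set to zero, which matters because most clustering routines need a deliberate decision about how to impute or drop missing values.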

Color Interpretation Standards

Proper color selection is fundamental to accurate heatmap interpretation. The table below outlines standard color palettes and their appropriate applications in scientific research:

Table: Color Palette Specifications for Scientific Heatmaps

| Palette Type | Color Sequence | Research Application | Data Characteristics |
|---|---|---|---|
| Sequential | Single color increasing in intensity (e.g., light to dark blue) | Gene expression levels, Protein abundance | Unidirectional data (low to high) |
| Diverging | Two contrasting colors with neutral midpoint (e.g., blue-white-red) | Fold-change, Correlation coefficients, Z-scores | Data with meaningful center point |
| Qualitative | Distinct, unrelated colors | Categorical data, Sample groups | Non-ordered categories |

The accessibility and interpretability of heatmaps depend heavily on color contrast. Web Content Accessibility Guidelines (WCAG) recommend a minimum contrast ratio of 3:1 for graphical components [2] [3]. This is particularly important when presenting research findings to ensure that all viewers, including those with color vision deficiencies, can accurately interpret the data.
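The 3:1 threshold can be checked numerically. The sketch below follows the relative luminance and contrast ratio definitions published in WCAG 2.x for 8-bit sRGB colors; the function names and the example colors are illustrative choices.

```python
def _linearize(channel_8bit):
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb):
    """Relative luminance of an (R, G, B) tuple with 0-255 channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(rgb_a, rgb_b):
    """Contrast ratio between two colors, from 1.0 (identical) to 21.0."""
    la, lb = relative_luminance(rgb_a), relative_luminance(rgb_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)


def meets_graphics_minimum(rgb_a, rgb_b, minimum=3.0):
    """True if the pair reaches the 3:1 ratio cited for graphical components."""
    return contrast_ratio(rgb_a, rgb_b) >= minimum


# Example: a light grey cell border against a white background.
print(round(contrast_ratio((200, 200, 200), (255, 255, 255)), 2))
print(meets_graphics_minimum((200, 200, 200), (255, 255, 255)))
```

A passing contrast ratio addresses luminance separation only; checking the palette in a color vision deficiency simulator remains a separate step.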

Clustering Methodologies and Algorithms

Clustering Fundamentals

Clustered heatmaps represent an advanced variation where both rows and columns are reordered based on similarity patterns, creating associations between both the data points and their features [1]. This technique enables researchers to identify which experimental samples are similar to each other and which measured variables demonstrate correlated patterns, with profound implications for identifying functional relationships in omics data.

The core clustering process involves:

- Distance Calculation: Computing pairwise distances between all rows and all columns using metrics such as Euclidean, Manhattan, or correlation distance

- Linkage Method: Determining how distances between clusters are calculated (complete, average, or single linkage)

- Tree Formation: Creating dendrograms that visually represent the clustering hierarchy

- Reordering: Rearranging rows and columns based on the clustering results to group similar elements together
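The four steps above can be sketched with SciPy's hierarchical clustering routines. This is a minimal illustration that assumes NumPy and SciPy are installed; the toy expression matrix, the choice of correlation distance for rows and Euclidean distance for columns, and the cut into two clusters are all assumptions made for the example.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, leaves_list, linkage
from scipy.spatial.distance import pdist

# Toy matrix: 4 genes (rows) x 4 samples (columns).
expr = np.array([
    [1.0, 2.0, 3.0, 4.0],  # gene 0: rising profile
    [2.0, 4.0, 6.0, 8.0],  # gene 1: rising, same shape as gene 0
    [4.0, 3.0, 2.0, 1.0],  # gene 2: falling profile
    [8.0, 6.0, 4.0, 2.0],  # gene 3: falling, same shape as gene 2
])

# 1. Distance calculation (correlation distance = 1 - Pearson r).
#    Clip guards against tiny negative values from floating-point error.
row_dist = np.clip(pdist(expr, metric="correlation"), 0.0, None)
col_dist = pdist(expr.T, metric="euclidean")

# 2. Linkage method and 3. tree formation.
row_tree = linkage(row_dist, method="average")
col_tree = linkage(col_dist, method="average")

# 4. Reordering: leaf order of each dendrogram gives the display order.
row_order = leaves_list(row_tree)
col_order = leaves_list(col_tree)
reordered = expr[np.ix_(row_order, col_order)]

# Cut the row dendrogram into two clusters for downstream use.
row_clusters = fcluster(row_tree, t=2, criterion="maxclust")
print(row_clusters)
```

Correlation distance groups genes by profile shape regardless of magnitude, which is why genes 0 and 1 fall together here even though their absolute values differ.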

Experimental Protocol: Hierarchical Clustering for Gene Expression Analysis

Purpose: To identify groups of co-expressed genes and similar experimental conditions in transcriptomics data.

Materials and Reagents:

- Normalized gene expression matrix (genes × samples)

- Computational environment (R, Python, or specialized tools)

- Clustering algorithms (hierarchical clustering with multiple linkage options)

- Distance metric options (Euclidean, correlation-based)

Methodology:

1. Data Preprocessing: Apply appropriate normalization to remove technical artifacts and ensure comparability across samples. Log-transform count data for RNA-seq experiments to stabilize variance.

2. Distance Matrix Computation:

- For gene-based clustering: Calculate pairwise distances between all genes across all samples using the selected distance metric.

- For sample-based clustering: Calculate pairwise distances between all samples across all genes.

3. Clustering Execution:

- Apply hierarchical clustering using the calculated distance matrices.

- Implement multiple linkage methods (ward.D, complete, average) to compare results.

- Generate dendrograms to visualize clustering relationships.

4. Optimal Cluster Determination:

- Apply the gap statistic or elbow method to identify an appropriate number of clusters.

- Cut the dendrogram at the determined height to assign cluster membership.

5. Visualization Integration:

- Create a heatmap with dendrograms displaying both gene and sample clustering.

- Annotate with sample metadata and gene set information.

- Implement color scaling that accurately represents expression dynamics.

Clustering Workflow: Standard hierarchical clustering pipeline for genomic data.
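The preprocessing step of this protocol is often followed by per-gene scaling so that the heatmap colors reflect relative rather than absolute expression. The sketch below uses only the standard library; the pseudocount of 1 and the use of the sample standard deviation are common conventions chosen here as assumptions, and dedicated variance-stabilizing transformations from RNA-seq packages are a reasonable alternative.

```python
import math
import statistics


def log2_transform(counts, pseudocount=1.0):
    """Apply log2(count + pseudocount) to every cell of a genes x samples matrix."""
    return [[math.log2(v + pseudocount) for v in row] for row in counts]


def row_zscores(matrix):
    """Center and scale each row (gene) to mean 0 and sample SD 1.

    Rows with zero variance are returned as all zeros instead of
    dividing by zero.
    """
    scaled = []
    for row in matrix:
        mean = statistics.fmean(row)
        sd = statistics.stdev(row)
        scaled.append([(v - mean) / sd if sd > 0 else 0.0 for v in row])
    return scaled


# Hypothetical counts: 2 genes x 3 samples.
counts = [
    [0, 1, 3],  # varies across samples
    [7, 7, 7],  # constant gene
]
logged = log2_transform(counts)
scaled = row_zscores(logged)
print(scaled)
```

Row z-scores pair naturally with a diverging palette centered at zero, since positive and negative values then mean above and below that gene's own average.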

Color Interpretation and Quantitative Analysis

Color Scale Selection Criteria

The interpretation of heatmaps depends critically on understanding the color scale and mapping between colors and values [4]. Scientific heatmaps typically employ two primary gradient types:

Sequential Gradients use a single color that increases in intensity from light to dark, ideal for displaying data that progresses from low to high values, such as gene expression levels, protein concentrations, or phosphorylation states [4]. These gradients assume a directional relationship where one extreme is biologically more significant than the other.

Diverging Gradients utilize two contrasting colors with a neutral midpoint (often white or yellow), perfect for highlighting deviation from a reference value, such as fold-changes, z-scores, or correlation coefficients [4]. This approach effectively visualizes both positive and negative deviations, which is crucial for identifying up-regulated and down-regulated biological processes.
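A diverging gradient only reads correctly when the neutral color sits exactly on the reference value. The following is a minimal sketch of that mapping with symmetric limits around zero; the three RGB anchor colors are illustrative picks for a blue-white-red scheme, and the function names are hypothetical.

```python
def symmetric_limit(values):
    """Largest absolute value, used as +/- limit so that zero maps to the midpoint."""
    return max(abs(v) for v in values)


def diverging_color(value, limit,
                    low=(33, 102, 172),     # assumed blue anchor
                    mid=(255, 255, 255),    # neutral midpoint
                    high=(178, 24, 43)):    # assumed red anchor
    """Map a value in [-limit, +limit] to an RGB tuple by linear interpolation.

    Values outside the range are clamped to the nearest extreme color.
    """
    if limit <= 0:
        raise ValueError("limit must be positive")
    t = max(-1.0, min(1.0, value / limit))
    target = high if t > 0 else low
    return tuple(round(m + (c - m) * abs(t)) for m, c in zip(mid, target))


# Example: log2 fold-changes for a handful of genes.
fold_changes = [-2.0, -0.5, 0.0, 1.0, 2.0]
limit = symmetric_limit(fold_changes)
for fc in fold_changes:
    print(fc, diverging_color(fc, limit))
```

Using the same limit on both sides keeps equal up- and down-regulation equally saturated, even when the data range itself is asymmetric.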

Experimental Protocol: Color Optimization for Scientific Visualization

Purpose: To establish a color scheme that accurately represents quantitative relationships while maintaining accessibility for all viewers, including those with color vision deficiencies.

Materials:

- Experimental dataset with known value ranges

- Color palette tools (ColorBrewer, Viridis)

- Contrast checking software (WebAIM Contrast Checker)

- Multiple display devices for validation

Methodology:

1. Data Range Assessment:

- Determine minimum, maximum, and critical threshold values in the dataset

- Identify whether data distribution is symmetric or skewed

- Establish biologically meaningful breakpoints for color transitions

2. Palette Selection:

- For sequential data: Choose perceptually uniform colormaps that maintain consistent visual weight across the value range

- For diverging data: Select two hues with sufficient lightness difference to distinguish positive and negative values

- Avoid rainbow colormaps due to non-linear perceptual characteristics [4]

3. Accessibility Validation:

- Verify that all color transitions meet minimum 3:1 contrast ratio for graphical elements [2]

- Test visualization using color blindness simulators

- Ensure legibility under various lighting conditions and display types

4. Quantitative Accuracy Assessment:

- Conduct user studies to evaluate interpretation accuracy

- Compare numerical estimates from color decoding across multiple observers

- Optimize legend design to facilitate precise value estimation

Table: Color Interpretation Guidelines for Scientific Communications

| Color Scheme | Value Representation | Biological Application | Accessibility Considerations |
|---|---|---|---|
| Viridis | Sequential luminance progression | RNA-seq expression values | Colorblind-safe, perceptually uniform |
| Red-Blue Diverging | Negative-zero-positive continuum | Fold change visualization | Generally distinguishable under red-green color vision deficiency; red-green schemes are the problematic case |
| Magma/Plasma | High-contrast sequential | Feature importance scores | Good luminance progression |
| Custom Qualitative | Distinct categorical groups | Sample type annotation | Minimum 3:1 contrast between adjacent colors |

Integration with Functional Enrichment Analysis

Analytical Framework

The integration of heatmap findings with functional enrichment results represents a critical workflow in systems biology and drug discovery. This approach connects observed patterns in high-dimensional data (e.g., gene expression clusters) with biological meaning through established knowledge bases such as GO, KEGG, and Reactome.

The analytical pipeline involves:

- Pattern Identification: Using clustered heatmaps to identify groups of co-regulated genes, proteins, or metabolites

- Cluster Extraction: Isolating feature sets from distinct heatmap clusters

- Functional Enrichment: Statistically testing extracted feature sets for over-representation in biological pathways, processes, or functions

- Interpretive Synthesis: Relating pattern characteristics to biological mechanisms and therapeutic hypotheses
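The functional enrichment step in this pipeline usually reduces to a one-sided hypergeometric test per annotation term. The sketch below computes that tail probability with the standard library; the parameter names and the numbers in the example are invented for illustration.

```python
from math import comb


def ora_pvalue(universe_size, term_size, cluster_size, overlap):
    """Over-representation p-value: P(X >= overlap) under the hypergeometric null.

    universe_size: number of background genes (N)
    term_size:     background genes annotated to the term (K)
    cluster_size:  genes in the heatmap cluster being tested (n)
    overlap:       cluster genes annotated to the term (k)
    """
    max_overlap = min(term_size, cluster_size)
    total = comb(universe_size, cluster_size)
    tail = sum(
        comb(term_size, k) * comb(universe_size - term_size, cluster_size - k)
        for k in range(overlap, max_overlap + 1)
    )
    return tail / total


# Hypothetical case: 40 of 200 cluster genes hit a 500-gene term
# in a 10,000-gene background (expected overlap by chance: 10).
p = ora_pvalue(universe_size=10_000, term_size=500, cluster_size=200, overlap=40)
print(f"{p:.3e}")
```

The choice of background matters as much as the test itself: the universe should be the genes that could have appeared in the heatmap, not the whole genome.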

Experimental Protocol: Integrated Heatmap and Enrichment Analysis

Purpose: To establish a reproducible workflow connecting heatmap-derived clusters with functional enrichment results for biological interpretation.

Materials:

- Omics data matrix (e.g., transcriptomics, proteomics)

- Functional annotation databases (GO, KEGG, Reactome)

- Statistical analysis environment (R/Bioconductor, Python)

- Visualization tools (ComplexHeatmap, clustermap)

Methodology:

1. Comprehensive Heatmap Analysis:

- Perform quality control and appropriate normalization of raw data

- Execute bidirectional clustering (samples and features) using multiple distance metrics

- Identify robust clusters through consensus clustering approaches

- Document cluster characteristics and distinguishing patterns

2. Cluster-Based Functional Enrichment:

- Extract feature lists from each significant cluster

- Perform over-representation analysis using hypergeometric test or Fisher's exact test

- Apply multiple testing correction (Benjamini-Hochberg FDR control)

- Calculate enrichment scores and significance values

3. Results Integration and Visualization:

- Create integrated visualization displaying both heatmap clusters and enrichment results

- Implement side annotations to indicate functional assignments

- Develop interactive capabilities for exploring cluster-function relationships

Heatmap-Enrichment Integration: Workflow for connecting clustering results with biological context.
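Because every cluster is tested against many annotation terms, the multiple testing correction named in the protocol is what keeps the final term list trustworthy. The following is a minimal standard-library sketch of the Benjamini-Hochberg adjustment; the p-values in the example are invented.

```python
def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the input order.

    Each raw p-value is scaled by m / rank, then a running minimum from
    the largest rank downward enforces monotonicity.
    """
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


# Hypothetical raw enrichment p-values for four terms from one cluster.
raw = [0.01, 0.04, 0.03, 0.20]
adj = benjamini_hochberg(raw)
for p_raw, p_adj in zip(raw, adj):
    print(f"raw={p_raw:.2f}  adjusted={p_adj:.4f}")
```

Whether to correct within each cluster or across all clusters at once is a design decision; correcting across everything tested is the more conservative option.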

Research Reagent Solutions and Computational Tools

Table: Essential Research Reagents and Computational Tools for Heatmap Analysis

| Category | Specific Tool/Reagent | Research Application | Key Features |
|---|---|---|---|
| Bioinformatics Platforms | R/Bioconductor | Comprehensive statistical analysis | ComplexHeatmap, pheatmap, heatmap.2 packages for advanced customization |
| Bioinformatics Platforms | Python SciPy/Matplotlib | Computational biology workflows | clustermap function in seaborn, extensive statistical libraries |
| Commercial Analytics | VWO Insights | Web analytics and user behavior | Clickmaps, scrollmaps, rage click identification [5] |
| Commercial Analytics | Hotjar | User experience research | Anonymous visitor tracking, session recording [6] |
| Commercial Analytics | FullSession | Behavioral analytics | Session recordings, funnel analysis, customer feedback [6] |
| Functional Annotation | Gene Ontology (GO) | Biological process enrichment | Standardized vocabulary, hierarchical structure |
| Functional Annotation | KEGG PATHWAY | Pathway mapping and analysis | Curated pathway diagrams, disease associations |
| Functional Annotation | MSigDB | Gene set enrichment analysis | Curated collections, computational signatures |

Comparative Analysis of Heatmap Applications

Methodological Comparison

Different research questions demand specific heatmap configurations and analytical approaches. The table below compares primary heatmap types used in scientific research:

Table: Heatmap Typology and Research Applications

| Heatmap Type | Data Structure | Research Context | Interpretation Focus |
|---|---|---|---|
| Clustered Heatmap | Feature × sample matrix | Genomic profiling, Drug response studies | Identification of co-regulated feature groups and sample subtypes |
| Correlation Heatmap | Pairwise correlation matrix | Network analysis, Functional relationships | Detection of positively/negatively associated variable pairs [4] |
| Time Series Heatmap | Temporal × condition matrix | Longitudinal studies, Treatment kinetics | Pattern progression over time and across conditions [5] |
| Cohort Analysis Heatmap | Cohort × time point matrix | Patient stratification, Clinical outcomes | Retention patterns, subgroup behavior over time [5] |

Validation Frameworks

Robust interpretation of heatmap results requires systematic validation through multiple approaches:

Statistical Validation:

- Apply resampling methods (bootstrapping, jackknifing) to assess cluster stability

- Implement consensus clustering to identify robust patterns across algorithms

- Calculate silhouette widths to quantify cluster cohesion and separation

Biological Validation:

- Test enrichment significance of cluster-derived feature sets in independent datasets

- Verify functional predictions through experimental follow-up

- Correlate cluster assignments with clinical outcomes or phenotypic measurements

Technical Validation:

- Assess sensitivity to normalization methods and data transformation

- Evaluate reproducibility across technical replicates

- Determine robustness to parameter choices in clustering algorithms
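Of the statistical checks listed above, the silhouette width is the simplest to compute directly. The sketch below implements the standard definition, s = (b - a) / max(a, b), with the standard library; Euclidean distance, the toy points, and the convention of assigning 0 to singleton clusters are assumptions of this example.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two equal-length coordinate tuples."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def silhouette_widths(points, labels, distance=euclidean):
    """Silhouette width per point.

    a = mean distance to the other members of the point's own cluster
    b = smallest mean distance to the members of any other cluster
    """
    clusters = {}
    for i, lab in enumerate(labels):
        clusters.setdefault(lab, []).append(i)
    if len(clusters) < 2:
        raise ValueError("silhouette needs at least two clusters")

    widths = []
    for i, lab in enumerate(labels):
        own = [j for j in clusters[lab] if j != i]
        if not own:  # singleton cluster: width set to 0 by convention
            widths.append(0.0)
            continue
        a = sum(distance(points[i], points[j]) for j in own) / len(own)
        b = min(
            sum(distance(points[i], points[j]) for j in members) / len(members)
            for other, members in clusters.items()
            if other != lab
        )
        denom = max(a, b)
        widths.append((b - a) / denom if denom > 0 else 0.0)
    return widths


# Two well-separated toy groups, labelled correctly and then incorrectly.
points = [(0, 0), (0, 1), (10, 0), (10, 1)]
good = silhouette_widths(points, [0, 0, 1, 1])
bad = silhouette_widths(points, [0, 1, 0, 1])
print([round(w, 3) for w in good])
print([round(w, 3) for w in bad])
```

Widths near 1 indicate compact, well-separated clusters, while negative widths flag features that sit closer to a neighbouring cluster than to their own.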

Advanced Applications in Drug Development

Heatmap methodologies have evolved into indispensable tools throughout the drug development pipeline, from target identification to clinical biomarker stratification. In preclinical development, clustered heatmaps enable researchers to identify mechanism-of-action signatures by clustering compounds based on transcriptomic or proteomic responses, facilitating drug repositioning and combination therapy design.

In clinical development, heatmaps integrated with functional enrichment analysis support patient stratification efforts by identifying molecular subtypes with distinct therapeutic responses. This approach is particularly valuable in precision oncology, where heatmap visualizations help translate complex molecular profiles into clinically actionable classifications.

The continuing evolution of heatmap methodologies—including interactive visualization, integration with machine learning approaches, and real-time analytical capabilities—promises to further enhance their utility in accelerating therapeutic discovery and development.

Functional enrichment analysis serves as a critical bridge in genomics, connecting statistically significant gene lists with biologically meaningful context. This process transforms inert lists of differentially expressed genes into functional insights about underlying biological processes, molecular functions, and cellular components. Researchers across diverse fields—from basic molecular biology to applied drug development—routinely employ these methods to extract meaning from high-throughput experimental data. The fundamental challenge lies not merely in identifying enriched terms but in accurately interpreting the resulting functional profiles, which often contain dozens or hundreds of overlapping biological categories.

The field has evolved substantially from early methods that focused primarily on statistical over-representation. While Gene Set Enrichment Analysis (GSEA) and over-representation analysis (ORA) remain cornerstone approaches, recent computational advances have introduced more sophisticated frameworks that address critical limitations in interpretation, sensitivity, and visualization. These newer methods particularly excel at handling the coordinated but subtle expression changes that characterize complex biological phenomena and at integrating quantitative enrichment metrics with visual analytics to support biological discovery. This guide provides an objective comparison of current methodologies, focusing on their performance characteristics, interpretive capabilities, and applicability to different research scenarios in functional genomics.

Performance Comparison of Functional Enrichment Tools

Selecting an appropriate functional enrichment tool requires careful consideration of multiple performance metrics. The table below summarizes key characteristics of recently developed methods based on published experimental evaluations.

Table 1: Performance Comparison of Functional Enrichment Tools

| Tool | Primary Methodology | Key Advantages | Computational Efficiency | Interpretive Output |
|---|---|---|---|---|
| GOREA | Combined binary cut & hierarchical clustering | Incorporates GO hierarchy; ranks clusters by quantitative metrics (NES/overlap) | ~2.88s clustering + ~9.98s representative terms | Heatmap with broad GO terms & cluster representatives |
| FRoGS | Deep learning functional representation | Captures weak pathway signals; superior sensitivity for sparse gene sets | Moderate (neural network processing) | Functional similarity scores; 2D projection visualizations |
| DMEA | Adapted GSEA for drug mechanisms | Groups drugs by MOA; increases on-target signal | Fast (GSEA-based algorithm) | Volcano plots; mountain plots for MOA enrichment |
| simplifyEnrichment | Binary cut clustering | Standard approach for GO term simplification | ~1.01s clustering + ~118s word clouds | Word clouds for cluster representation |

Recent benchmarking studies demonstrate that GOREA provides a substantial improvement over existing approaches by integrating quantitative metrics directly into the interpretation workflow [7]. Its combined clustering approach demonstrates significantly lower difference scores than binary cut methods (Wilcoxon signed-rank test, P = 3.47e−07), indicating improved clustering precision [8]. In practical applications, GOREA successfully identified distinct immune-related clusters such as "defense response to other organism," "response to cytokine," and "antigen processing and presentation of peptide antigen," while previous methods grouped these into a single, broad cluster [8].

For weak signal detection, FRoGS significantly outperforms traditional identity-based methods, particularly when pathway signals are sparse [9]. In simulation studies with weak signals (λ = 5 pathway genes), FRoGS maintained superior performance while Fisher's exact test—representing popular gene identity-based similarity measurements—demonstrated markedly reduced sensitivity [9]. This capability makes FRoGS particularly valuable for analyzing gene signatures derived from emerging single-cell technologies or rare cell populations.

Experimental Protocols and Methodologies

GOREA Clustering and Interpretation Workflow

The GOREA methodology employs a structured approach to overcome the fragmentation and generality that often limits biological interpretation of enrichment results.

Table 2: Key Research Reagent Solutions for Functional Enrichment

| Research Reagent | Function in Analysis | Application Context |
|---|---|---|
| ComplexHeatmap R Package | Visualizes clustered enrichment results | GOREA output visualization |
| GOxploreR R Package | Provides GO term hierarchy levels | Representative term identification in GOREA |
| Wallenius Noncentral Hypergeometric | Accounts for selection bias in target genes | Regulatory element analysis in GeneCodis4 |
| Siamese Neural Network | Computes similarity between signature vectors | FRoGS compound-target prediction |

Experimental Protocol for GOREA Evaluation:

1. Input Preparation: Significant GO Biological Process terms with either overlap proportion or normalized enrichment score (NES)

2. Clustering Phase:

- Apply combined binary cut and hierarchical clustering

- Calculate difference scores to evaluate cluster separation

3. Representative Term Identification:

- Identify highest-level common ancestor terms encompassing subsets of input GOBP terms

- Repeat for remaining terms not covered by initially identified representatives

4. Visualization & Ranking:

- Generate heatmap with representative terms using ComplexHeatmap

- Sort clusters by average gene overlap proportion or NES

- Add panel of broad GOBP terms labeled by percentage of included child terms

This protocol was applied to immune-related data and cancer hallmark gene sets, demonstrating GOREA's ability to capture specific biological processes with enhanced interpretability compared to existing tools [7] [8]. The computational efficiency of the approach enables researchers to perform iterative optimization more effectively, accelerating the biological interpretation workflow.

FRoGS Functional Representation Methodology

The FRoGS approach addresses a fundamental limitation in traditional gene signature comparisons: the treatment of genes as independent identifiers without considering their functional relationships.

Experimental Protocol for FRoGS Evaluation:

1. Gene Embedding Training:

- Map individual human genes to high-dimensional coordinates encoding functions

- Train deep learning model to assign coordinates based on GO annotations and ARCHS4 expression correlations

2. Signature Vector Generation:

- Aggregate vectors for individual gene members into single signature vector

3. Similarity Assessment:

- Implement Siamese neural network to compute similarity between perturbation signatures

4. Performance Validation:

- Simulate experimentally derived signature pairs with varying pathway signal strength (λ values)

- Compare with state-of-the-art methods (OPA2Vec, Gene2vec, clusDCA, Fisher's exact test)

- Evaluate using one-sided Wilcoxon signed-rank test across 460 Reactome pathways

This methodology demonstrated that FRoGS remained superior across the entire range of λ values, particularly under conditions of weak pathway signals where traditional gene identity-based algorithms struggle [9]. The approach effectively functions as a "word2vec for bioinformatics," capturing functional similarities between genes that share biological roles despite different identities.
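The contrast between identity-based and function-based comparison can be illustrated with a toy example. The sketch below is not the FRoGS implementation: the three-dimensional embeddings and gene names are invented, mean aggregation stands in for the signature vector step, and cosine similarity stands in for the trained Siamese network.

```python
import math

# Hypothetical functional embeddings, invented purely for illustration.
TOY_EMBEDDINGS = {
    "KINASE_1": (0.90, 0.10, 0.00),
    "KINASE_2": (0.80, 0.20, 0.00),
    "KINASE_3": (0.85, 0.15, 0.05),
    "RIBO_1": (0.00, 0.10, 0.90),
    "RIBO_2": (0.10, 0.00, 0.95),
}


def signature_vector(genes, embeddings):
    """Average the embedding vectors of the genes in a signature."""
    vectors = [embeddings[g] for g in genes if g in embeddings]
    if not vectors:
        raise ValueError("no gene in the signature has an embedding")
    dim = len(vectors[0])
    return tuple(sum(v[d] for v in vectors) / len(vectors) for d in range(dim))


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm


def jaccard(genes_a, genes_b):
    """Identity-based overlap between two gene lists."""
    a, b = set(genes_a), set(genes_b)
    return len(a & b) / len(a | b)


sig_a = ["KINASE_1", "KINASE_2"]
sig_b = ["KINASE_3"]            # shares no gene with sig_a
sig_c = ["RIBO_1", "RIBO_2"]

vec_a, vec_b, vec_c = (signature_vector(s, TOY_EMBEDDINGS) for s in (sig_a, sig_b, sig_c))
print("identity overlap a-b:", jaccard(sig_a, sig_b))
print("functional similarity a-b:", round(cosine_similarity(vec_a, vec_b), 3))
print("functional similarity a-c:", round(cosine_similarity(vec_a, vec_c), 3))
```

Signatures a and b share no gene identifiers, so any identity-based measure scores them as unrelated, yet their aggregated vectors point in nearly the same direction.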

Integration of Heatmap Visualization with Enrichment Analysis

The integration of advanced visualization techniques represents a significant advancement in functional enrichment interpretation. GOREA specifically addresses this need through its implementation of hierarchical clustering results combined with quantitative enrichment metrics. The tool generates a comprehensive visual output that includes both the clustered heatmap of enrichment terms and a panel of broad GOBP terms that provide biological context at multiple levels of specificity [8].

This visualization approach enables researchers to simultaneously observe:

- Global patterns across all enriched terms through the heatmap structure

- Cluster-specific biology through representative terms for each cluster

- Quantitative prioritization through sorting based on NES or gene overlap proportions

- Hierarchical context through the broad GO term panel

The method stands in contrast to earlier approaches like simplifyEnrichment, which produced fragmented keyword representations that often failed to capture specific biological context [7] [8]. By incorporating the underlying GO hierarchy directly into the visualization, GOREA maintains the biological relationships between terms while reducing redundancy in the output.

Applications in Drug Discovery and Development

Functional enrichment methodologies have found particularly valuable applications in drug discovery, where understanding mechanism of action and detecting subtle biological effects is critical. The Drug Mechanism Enrichment Analysis (DMEA) approach adapts the GSEA algorithm to group drugs with shared mechanisms of action, then evaluates their collective enrichment in drug sensitivity profiles or perturbagen signatures [10].

Experimental Protocol for DMEA Application:

1. Drug List Preparation: Rank-ordered drugs from sensitivity scores, perturbagen signatures, or molecular classification

2. MOA Set Definition: Group drugs by annotated mechanism of action (minimum 6 drugs per MOA)

3. Enrichment Analysis:

- Calculate enrichment score as maximum deviation of weighted Kolmogorov-Smirnov-like statistic

- Estimate p-value using empirical permutation test (1000 permutations)

- Compute normalized enrichment score (NES) and false discovery rate (FDR)

4. Visualization: Generate volcano plots of all MOA NES and mountain plots for significant MOAs

This approach has demonstrated improved prioritization of drug repurposing candidates by increasing on-target signal and reducing off-target effects compared to individual drug analysis [10]. In validation studies, DMEA successfully identified expected mechanisms of action as well as other relevant MOAs across multiple data types, including drug sensitivity scores from high-throughput cancer cell line screening and molecular classification scores of drug resistance.
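The enrichment step of this protocol can be sketched as a GSEA-style running sum over a ranked drug list. The code below is a simplified illustration and not the DMEA package itself: drug names and scores are invented, the weight exponent of 1 and the same-sign permutation comparison are assumptions, and normalization to NES and FDR estimation are omitted.

```python
import random


def enrichment_score(ranked, members, weight=1.0):
    """Maximum deviation from zero of a weighted KS-like running sum.

    ranked:  list of (name, score) sorted from highest to lowest score
    members: set of names belonging to the mechanism-of-action set
    """
    hit_weights = [abs(s) ** weight if n in members else 0.0 for n, s in ranked]
    total_hit = sum(hit_weights)
    n_hits = sum(1 for n, _ in ranked if n in members)
    n_miss = len(ranked) - n_hits
    if n_hits == 0 or n_miss == 0:
        raise ValueError("set must contain some, but not all, ranked items")

    running, best = 0.0, 0.0
    for (name, _), w in zip(ranked, hit_weights):
        if name in members:
            # Fall back to equal steps if every member has a score of zero.
            running += w / total_hit if total_hit > 0 else 1.0 / n_hits
        else:
            running -= 1.0 / n_miss
        if abs(running) > abs(best):
            best = running
    return best


def permutation_pvalue(ranked, members, n_perm=1000, seed=0):
    """Empirical p-value from random sets of the same size (same-sign tail)."""
    rng = random.Random(seed)
    observed = enrichment_score(ranked, members)
    names = [n for n, _ in ranked]
    extreme = 0
    for _ in range(n_perm):
        null = enrichment_score(ranked, set(rng.sample(names, len(members))))
        if (observed >= 0 and null >= observed) or (observed < 0 and null <= observed):
            extreme += 1
    return observed, (extreme + 1) / (n_perm + 1)


# Hypothetical ranked list of 12 drugs; the MOA set holds the 6 top-ranked ones.
scores = [6, 5, 4, 3, 2, 1, -1, -2, -3, -4, -5, -6]
ranked = [(f"drug_{i + 1:02d}", float(s)) for i, s in enumerate(scores)]
moa_set = {name for name, _ in ranked[:6]}

es, p = permutation_pvalue(ranked, moa_set)
print(round(es, 3), round(p, 4))
```

Adding 1 to both numerator and denominator of the empirical p-value avoids reporting an exact zero, which a finite number of permutations cannot support.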

The evolving landscape of functional enrichment analysis offers researchers multiple pathways for extracting biological meaning from gene lists. The choice of method depends critically on the specific research context and analytical needs. For traditional gene set enrichment analysis with enhanced interpretation, GOREA provides specific advantages in clustering precision, computational efficiency, and biological interpretability through its integrated visualization approach. For applications involving weak or sparse pathway signals, FRoGS offers superior sensitivity by capturing functional relationships beyond simple gene identity matching. In drug discovery contexts, DMEA enhances prioritization of therapeutic candidates by aggregating signals across drugs with shared mechanisms of action.

Each method addresses specific limitations in earlier approaches: GOREA tackles the fragmentation and generality of clustered enrichment terms; FRoGS overcomes the sparseness problem in experimental gene signatures; and DMEA resolves the interpretation challenges of long candidate drug lists. Together, these tools represent significant advancements in the critical task of transforming gene lists into biological meaning, ultimately accelerating discovery across basic research and translational applications.

In modern bioinformatics, particularly in multi-omics studies, heatmaps and functional enrichment analysis are not merely sequential tools but deeply interconnected components of a single analytical engine. Heatmaps provide a powerful, visual summary of complex data matrices, such as gene expression across multiple samples, allowing researchers to instantly identify patterns, clusters, and outliers [11]. These visual patterns, however, gain their true biological meaning when interpreted through the lens of functional enrichment analysis, which maps the identified gene sets to known biological pathways, processes, and functions [12]. This relationship is synergistic: heatmap patterns guide enrichment analysis by highlighting candidate genes of interest, while the results of enrichment analysis provide a functional context that explains and validates the patterns observed in the heatmaps. This guide objectively compares computational frameworks that formalize this synergy, with a focus on methodologies for integrating heterogeneous datasets and adhering to visualization standards that ensure accessibility and clarity for all readers, including those with color vision deficiencies [11].

Comparative Analysis of Integration Methodologies

Various computational methods have been developed to integrate the pattern-detection capabilities of heatmaps with the functional interpretation of enrichment analysis. The table below compares two distinct approaches: one focused on directional gene prioritization and another on optimizing visual contrast for pattern recognition.

Table 1: Comparison of Multi-Omics and Visualization-Focused Integration Methods

| Feature | DPM (Directional P-value Merging) | Accessibility-First Heatmap Optimization |
|---|---|---|
| Primary Objective | Gene prioritization and pathway enrichment from multiple omics datasets using directional constraints [12] | Improving heatmap interpretability and accessibility for users with color vision deficiencies [11] |
| Core Methodology | Statistical fusion of P-values and directional changes (e.g., fold-change signs) based on a user-defined constraints vector [12] | Application of WCAG 2.1 (Level AA) contrast standards (minimum 3:1 for graphics) and use of dual encodings (textures, text, shapes) [11] |
| Key Inputs | Gene/protein P-values and directional signs from multiple omics datasets (e.g., transcriptomics, proteomics) [12] | A data matrix and a color palette that meets contrast requirements, often leveraging dark themes for a wider range of compliant shades [11] |
| Handling of Data Conflict | Penalizes genes with significant but directionally inconsistent changes across datasets [12] | Uses outlines and borders that meet contrast ratios while employing lighter fills to maintain visual focus on key metrics [11] |
| Advantages | Yields detailed mechanistic insights by testing specific directional hypotheses; reduces false-positive findings [12] | Creates visualizations that are usable by a wider audience; improves glanceability by reducing visual noise and focusing attention [11] |
| Limitations | Requires well-defined directional hypotheses and carefully processed upstream statistical data [12] | May require manual color curation and can involve a trade-off between strict contrast compliance and optimal color differentiation [11] |
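The 3:1 contrast requirement in the table can be checked programmatically before a palette is applied to a heatmap. The sketch below follows the relative-luminance and contrast-ratio definitions published with WCAG 2.x; the function names and the example colors are illustrative assumptions, not part of any cited tool.

```python
def _linearize(channel_8bit):
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel_8bit / 255.0
    # WCAG 2.x text uses 0.03928 as the breakpoint; the sRGB standard uses 0.04045.
    # For 8-bit inputs both thresholds give the same result.
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb):
    """Relative luminance of an (R, G, B) tuple with 0-255 channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(rgb_a, rgb_b):
    """Contrast ratio between two colors, ranging from 1:1 to 21:1."""
    la, lb = relative_luminance(rgb_a), relative_luminance(rgb_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)


def meets_graphics_contrast(rgb_a, rgb_b, minimum=3.0):
    """True when the pair satisfies the 3:1 minimum for graphical objects."""
    return contrast_ratio(rgb_a, rgb_b) >= minimum
```

A typical use is to test each cell border or annotation color against the heatmap background and flag any pair that falls below the threshold.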

Experimental Protocol for Directional Multi-Omics Integration

The following protocol details the methodology for employing the DPM framework, as cited in recent research [12], to integrate heatmap-derived patterns from multiple omics datasets into a functionally enriched pathway map.

1. Upstream Data Processing:

- Input Data Preparation: Begin with pre-processed omics datasets (e.g., from RNA-Seq, proteomics, DNA methylation arrays). For each dataset, perform the appropriate statistical analysis (e.g., differential expression) to generate two key matrices covering every gene or protein:

- A P-value matrix indicating the statistical significance of the change.

- A directional matrix indicating the sign of the change (e.g., +1 for up-regulation, -1 for down-regulation, based on log fold-change or correlation coefficients) [12].

- Pathway Database Curation: Collect current pathway and gene set information from databases such as Gene Ontology (GO) or Reactome [12].

2. Define Directional Constraints:

- Formulate a Constraints Vector (CV) based on the biological hypothesis or experimental design. This vector defines the expected directional relationship between datasets. For example:

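- Integrating mRNA and protein expression under the "central dogma" might use a CV of [+1, +1], prioritizing genes whose mRNA and protein levels change in the same direction (for example, upregulated at both levels).

- Integrating promoter methylation and mRNA expression might use a CV of [-1, +1], prioritizing genes whose methylation and expression change in opposite directions (for example, lower promoter methylation, which relieves repression, together with higher expression) [12].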
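The role of the constraints vector can be illustrated with a small consistency check. This is a minimal sketch that assumes the CV encodes relative directions between datasets, so that a gene agrees with the CV when its observed signs match it up to a global sign flip; the function name is hypothetical and is not part of the ActivePathways API.

```python
def agrees_with_constraints(observed, constraints):
    """Return True if observed signs match the constraints vector.

    Assumption: the constraints vector describes relative directions, so
    observed signs that match it exactly or after flipping every sign are
    both treated as consistent. Zeros (no direction) are ignored.
    """
    if len(observed) != len(constraints):
        raise ValueError("observed and constraints must have the same length")
    products = {o * c for o, c in zip(observed, constraints) if o != 0 and c != 0}
    # One distinct product means every dataset agrees (all +1) or every
    # dataset is flipped together (all -1); two products signal a conflict.
    return len(products) <= 1
```

For a CV of [-1, +1], a gene with lower methylation and higher expression (observed [-1, +1]) passes, and so does its mirror image ([+1, -1]), while a gene that moves the same way in both datasets does not.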

3. Execute Directional P-value Merging (DPM):

- For each gene, compute the directionally weighted score (X_DPM) using the formula defined in the cited study, which incorporates the P-values, observed directions, and the constraints vector [12].

- Calculate a merged P-value (P'_DPM) for each gene that reflects its joint significance and directional consistency across all input datasets. This step results in a prioritized gene list.
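The exact X_DPM formula is not reproduced in this article, so the following is only an illustrative sketch of the idea rather than the published definition. It assumes a Fisher-style statistic in which each log P-value is signed by the observed direction and the constraint, so that directionally conflicting evidence cancels, and it evaluates the statistic against a chi-square distribution with 2k degrees of freedom. All function names are hypothetical.

```python
import math


def chi2_sf_even_df(x, df):
    """Chi-square survival function for even degrees of freedom (closed form)."""
    if df <= 0 or df % 2:
        raise ValueError("df must be a positive even integer")
    if x <= 0:
        return 1.0
    half = x / 2.0
    term, total = 1.0, 1.0
    for i in range(1, df // 2):
        term *= half / i
        total += term
    return min(1.0, math.exp(-half) * total)


def directional_merge(pvalues, observed, constraints):
    """Merge P-values for one gene, penalizing directional conflicts.

    Assumed form: statistic = 2 * |sum(ln(P_i) * o_i * e_i)|, which reduces
    to Fisher's method when every dataset agrees with the constraints.
    """
    if not (len(pvalues) == len(observed) == len(constraints)):
        raise ValueError("inputs must have the same length")
    if any(p <= 0 or p > 1 for p in pvalues):
        raise ValueError("P-values must lie in (0, 1]")
    signed = sum(math.log(p) * o * e
                 for p, o, e in zip(pvalues, observed, constraints))
    statistic = 2.0 * abs(signed)
    return chi2_sf_even_df(statistic, 2 * len(pvalues))
```

Under this assumed form, two datasets with P = 0.01 that agree with the constraints merge to a smaller P-value than either alone, whereas the same two P-values with opposing directions cancel and yield a merged P-value of 1.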

4. Perform Integrated Pathway Enrichment:

- Use the merged gene list from DPM as input for a pathway enrichment analysis tool. The cited research uses the ActivePathways method, which employs a ranked hypergeometric test to identify significantly enriched pathways and also identifies which input omics datasets contributed evidence to each enriched pathway [12].

5. Visualize and Interpret Results:

- Visualize the final list of enriched pathways as an enrichment map, a network diagram where nodes represent pathways and edges represent shared genes [12].

- This map reveals functional themes and highlights the directional evidence from the original omics datasets, completing the cycle from heatmap pattern to biological insight.
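The enrichment-map idea in step 5, with pathways as nodes and shared genes as edges, can be sketched in a few lines. The example below uses the Jaccard index as the overlap measure and a hypothetical similarity cutoff of 0.25; both are assumptions chosen for illustration, and the pathway and gene names are placeholders.

```python
from itertools import combinations


def jaccard(genes_a, genes_b):
    """Jaccard index of two gene collections (0.0 when both are empty)."""
    a, b = set(genes_a), set(genes_b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def enrichment_map_edges(gene_sets, min_similarity=0.25):
    """Return (pathway_1, pathway_2, similarity) for pairs sharing enough genes."""
    edges = []
    for (name_1, genes_1), (name_2, genes_2) in combinations(sorted(gene_sets.items()), 2):
        similarity = jaccard(genes_1, genes_2)
        if similarity >= min_similarity:
            edges.append((name_1, name_2, round(similarity, 3)))
    return edges


# Placeholder pathways; real input would be the significant gene sets.
example_sets = {
    "pathway_A": {"G1", "G2", "G3", "G4"},
    "pathway_B": {"G3", "G4", "G5"},
    "pathway_C": {"G9"},
}
example_edges = enrichment_map_edges(example_sets)
```

The resulting edge list can be loaded into a network tool, where densely connected groups of pathways correspond to functional themes.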

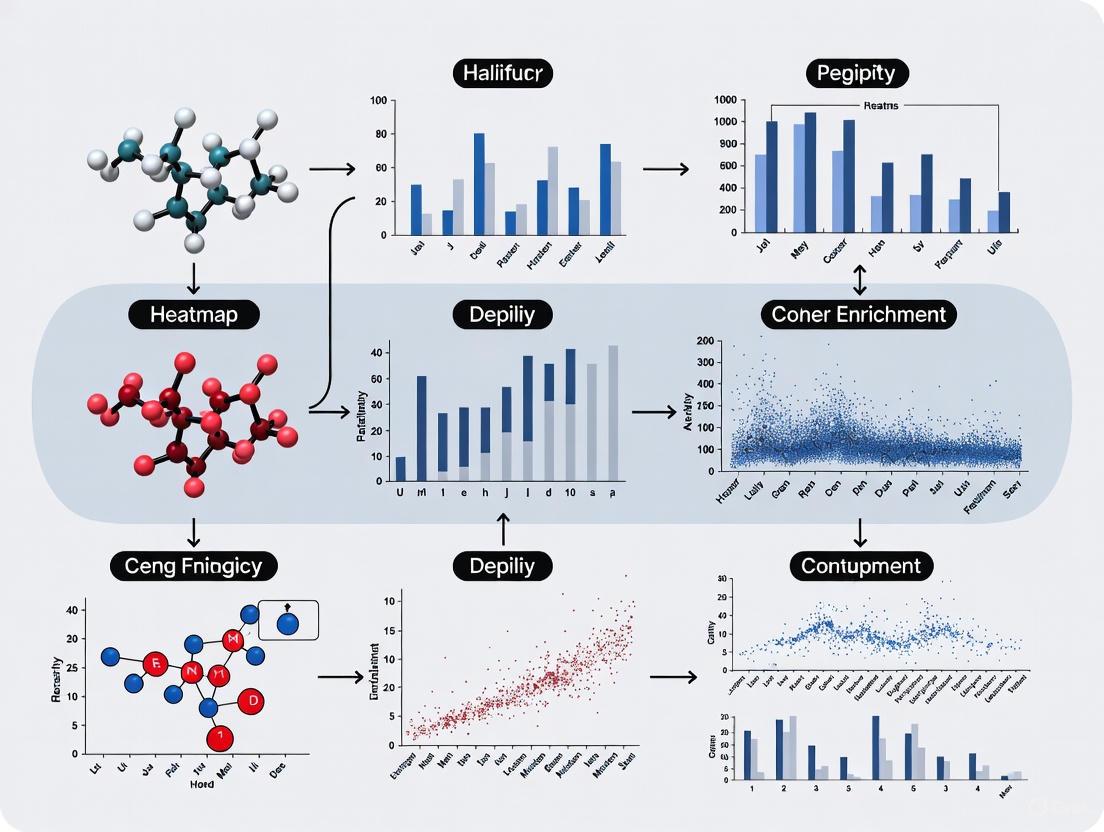

Visualizing the Workflow: From Data to Biological Insight

The following diagram illustrates the logical workflow of the directional integration process, from raw data to biological interpretation.

Diagram 1: Directional multi-omics integration workflow.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful integration of heatmap patterns and enrichment analysis relies on a foundation of robust computational tools and resources. The table below lists key solutions mentioned in the supporting research.

Table 2: Key Research Reagent Solutions for Integrated Analysis

| Research Reagent / Tool | Function in Analysis | Source / Implementation |
|---|---|---|
| ActivePathways R Package | Serves as the primary tool for performing both direction-aware data fusion (DPM) and subsequent pathway enrichment analysis [12]. | Available in the CRAN repository for R [12]. |
| Directional P-value Merging (DPM) | The core algorithm for statistically integrating P-values and directional signs from multiple omics datasets to prioritize genes [12]. | Implemented within the ActivePathways package [12]. |
| Web Content Accessibility Guidelines (WCAG) | Provides the standard for color contrast (3:1 for graphics) and the requirement for dual encodings to ensure visualizations are accessible [11]. | Public standard published by the W3C [13]. |
| Viz Palette Tool | An evaluation tool used to generate color reports and visualize the just-noticeable difference (JND) between colors in a palette, helping to diagnose differentiation issues [14]. | Open-source tool created by Susie Lu and Elijah Meeks [14]. |
| Urban Institute R Theme (urbnthemes) | An example of a domain-specific visualization package that applies a standardized style, including color palettes, to charts created in R for consistent and accessible publication-ready graphics [15]. | Available via GitHub for the R programming language [15]. |

Functional enrichment analysis is a cornerstone of modern bioinformatics, essential for extracting biological meaning from high-throughput gene expression data. The core of this analysis relies on comparing a query dataset (a list or rank of genes) against annotated databases, which provide the biological context for interpretation. Among the most established methods for this purpose are Gene Set Enrichment Analysis (GSEA) and Over-Representation Analysis (ORA) [8]. These methods help researchers determine whether defined sets of genes (pathways) show statistically significant differences in expression between experimental conditions.

The value of any enrichment analysis is directly dependent on the quality and comprehensiveness of the underlying pathway database used. However, these databases are not created equal; they differ in content, structure, curation methods, and biological scope. This guide provides an objective comparison of four essential pathway resources—Gene Ontology (GO), KEGG, Reactome, and WikiPathways—focusing on their application in functional enrichment studies, particularly those integrated with heatmap visualization of results. Understanding their distinct characteristics enables researchers to select the most appropriate database for their specific biological context and analytical goals.

Pathway databases organize biological knowledge into computable units, but they originate from different philosophies and serve complementary roles. Below is a detailed comparison of their core attributes.

Table 1: Core Characteristics of Major Pathway Databases

| Database | Primary Focus | Curation Model | Hierarchical Structure | Key Strengths |
|---|---|---|---|---|
| Gene Ontology (GO) | Gene functions (BP, MF, CC) [8] | Consortium & Automated | Yes (Directed Acyclic Graph) [16] | Extensive functional annotations, well-defined hierarchy |
| KEGG | Metabolic & Signaling Pathways [16] | Expert Curation | No (Flat List) | Standardized pathway diagrams, strong in metabolism |
| Reactome | Detailed Biological Reactions [16] | Expert Curation | Yes (Reaction Hierarchy) | High detail, expert-curated reactions, extensive annotations |
| WikiPathways | Diverse Biological Pathways [17] | Community Curation | No (Flat List) | Rapidly updated, broad coverage of novel pathways |

- Gene Ontology (GO): Unlike pathway-centric databases, GO provides a structured, controlled vocabulary (ontology) for gene function across three domains: Biological Process (BP), Molecular Function (MF), and Cellular Component (CC). Its hierarchical nature allows for reasoning at different levels of biological specificity, from broad to fine-grained terms [8] [16].

- KEGG (Kyoto Encyclopedia of Genes and Genomes): One of the oldest and most widely cited resources, KEGG provides static flow diagrams of metabolic and signaling pathways. Its style is iconic and frequently reproduced in biomedical literature [16].

- Reactome: This open-source database offers highly detailed, expert-curated representations of biological reactions. It functions as a reaction knowledgebase, providing a deep, hierarchical view of biological processes from large-scale processes down to individual molecular events [16].

- WikiPathways: Adopting a wiki model, this database allows the research community to contribute and update pathway content. This fosters rapid incorporation of newly discovered pathways and is particularly strong in areas of active research like cancer and immunology [17] [16].

Quantitative Benchmarking and Performance Comparison

The choice of database significantly impacts the results of statistical enrichment analysis and subsequent biological interpretation. Studies have demonstrated that equivalent pathways from different databases can yield disparate enrichment results due to variations in gene set composition and curation focus [18].

Statistical Enrichment Performance

Benchmarking analyses using datasets from The Cancer Genome Atlas (TCGA) have quantified the performance differences across databases when applying common enrichment methods like the hypergeometric test, GSEA, and Signaling Pathway Impact Analysis (SPIA) [18].

Table 2: Database Performance in Enrichment Analysis and Predictive Modeling

| Database | Pathway Count (Human) | Avg. Genes per Pathway | Impact on Machine Learning Performance | Typical Use Case |
|---|---|---|---|---|
| GO Biological Process | > 14,000 terms [8] | Varies by term specificity | High, but can be noisy | Comprehensive functional profiling |
| KEGG | ~300 pathways [18] | Relatively high | Dataset-dependent; can be high | Core metabolism and signaling |
| Reactome | ~2,170 pathways [16] | Varies (detailed reactions) | Dataset-dependent | Detailed mechanistic studies |
| WikiPathways | ~600 pathways [18] | Varies | Dataset-dependent | Novel pathways and active research areas |

Key findings from these benchmarks include:

- No single database is comprehensively sufficient for all analyses, and their performance varies depending on the dataset and biological context under investigation [18].

- KEGG is used far more often than other databases in published -omics studies, and this overrepresentation may bias the literature toward its pathway definitions [18].

- Integrative approaches that combine multiple databases, such as in the MPath resource, can sometimes improve prediction performance and yield more consistent, biologically plausible results in enrichment analyses by capturing a more complete picture of the pathway landscape [18].

Experimental Protocols for Enrichment Analysis

Integrating pathway analysis with heatmap visualization requires a structured workflow. The following protocol outlines key steps using common tools and databases.

Protocol 1: Standard Functional Enrichment Workflow

Objective: To identify significantly enriched pathways from a gene list and prepare results for visualization.

Materials and Reagents:

- Input Data: A ranked list of genes (e.g., by fold change and p-value from a differential expression analysis).

- Pathway Database: A GMT file from a chosen database (KEGG, Reactome, WikiPathways, or GO).

- Software Tools: GSEA software or R packages (e.g., clusterProfiler).

Methodology:

- Data Preparation: Prepare your input gene list. For ORA, this is a list of significant differentially expressed genes. For GSEA, it is a ranked list of all genes.

- Database Selection: Choose one or more pathway databases appropriate for your biological question. Consider using an integrative resource like Pathway Commons for a broader search [19].

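- Enrichment Analysis: Run the statistical test that matches the input: a hypergeometric test for ORA on a gene list, or the GSEA procedure on a ranked list. Correct for multiple testing and apply a stringent significance cutoff (e.g., FDR q-value < 0.05) to minimize false positives.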

- Result Export: Export the enrichment results, including key metrics like the Normalized Enrichment Score (NES), p-value, and the list of genes contributing to the enrichment score for each significant pathway [8].
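The ORA branch of Protocol 1 can be sketched with the standard library alone. The example below computes a one-sided hypergeometric P-value for each gene set and applies Benjamini-Hochberg adjustment; the function names are illustrative assumptions, and in practice a package such as clusterProfiler would handle these steps.

```python
from math import comb


def hypergeom_sf(k, population, successes, draws):
    """P(X >= k) for a hypergeometric variable (over-representation test)."""
    upper = min(successes, draws)
    total = comb(population, draws)
    tail = sum(comb(successes, i) * comb(population - successes, draws - i)
               for i in range(k, upper + 1))
    return tail / total


def benjamini_hochberg(pvalues):
    """Benjamini-Hochberg adjusted P-values, returned in the input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest P-value down
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


def over_representation(query, background, gene_sets):
    """Map each gene set name to (p_value, fdr) for the query gene list."""
    background = set(background)
    query = set(query) & background
    names, pvals = [], []
    for name, members in gene_sets.items():
        members = set(members) & background
        overlap = len(query & members)
        names.append(name)
        pvals.append(hypergeom_sf(overlap, len(background), len(members), len(query)))
    return dict(zip(names, zip(pvals, benjamini_hochberg(pvals))))
```

Restricting both the query and each gene set to the background, as done here, keeps the test honest about which genes could have been detected at all.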

Protocol 2: Reducing Redundancy and Integrating with Heatmaps

Objective: To cluster redundant enriched pathways and create an interpretable heatmap visualization.

Materials and Reagents:

- Input Data: The list of significantly enriched pathways from Protocol 1.

- Software Tools: GOREA R script [8] or the Enrichment Map Cytoscape App [19].

- Visualization Packages: R ComplexHeatmap package (for GOREA) or Cytoscape (for Enrichment Map).

Methodology:

- Pathway Clustering: Input the enriched pathways into a tool like GOREA, which uses a combined binary cut and hierarchical clustering approach to group pathways based on the similarity of their gene sets [8].

- Define Representative Terms: For each cluster, algorithms like those in GOREA identify a human-readable, representative term by incorporating information on ancestor terms from the GO hierarchy, moving beyond simple keyword extraction [8].

- Heatmap Generation: Visualize the results as a heatmap where rows represent pathways, columns can represent different conditions or analyses, and color intensity represents the quantitative enrichment metric (e.g., NES or gene overlap proportion). GOREA natively supports this via the ComplexHeatmap package [8].

- Interpretation: Prioritize clusters of pathways based on their average NES or gene overlap proportion. The integrated panel of broad GO terms and specific representative terms provided by tools like GOREA aids in moving from general to specific biological insights [8].
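The clustering and prioritization steps of Protocol 2 can be approximated with a much simpler stand-in. The sketch below does not implement GOREA's binary cut and hierarchical clustering; it groups pathways by connected components of a Jaccard-similarity graph (an assumed simplification with a hypothetical 0.3 cutoff) and then ranks the clusters by mean NES.

```python
def cluster_pathways(gene_sets, threshold=0.3):
    """Group pathways whose gene sets overlap (connected components).

    Simplified stand-in for dedicated clustering tools: two pathways are
    linked when their Jaccard index is at least `threshold`.
    """
    names = sorted(gene_sets)
    parent = {name: name for name in names}

    def find(name):
        while parent[name] != name:
            parent[name] = parent[parent[name]]  # path compression
            name = parent[name]
        return name

    for i, first in enumerate(names):
        for second in names[i + 1:]:
            a, b = set(gene_sets[first]), set(gene_sets[second])
            union = a | b
            if union and len(a & b) / len(union) >= threshold:
                parent[find(second)] = find(first)

    clusters = {}
    for name in names:
        clusters.setdefault(find(name), []).append(name)
    return sorted(clusters.values())


def rank_clusters_by_mean_nes(clusters, nes):
    """Return (mean_nes, members) pairs, highest mean NES first."""
    scored = [(sum(nes[p] for p in members) / len(members), members)
              for members in clusters]
    return sorted(scored, key=lambda item: item[0], reverse=True)
```

The ranked clusters give the row order for a summary heatmap, with one block of rows per cluster and the mean NES as a natural sorting key.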

Visualization Tools for Interpreting Enriched Pathways

A significant challenge in enrichment analysis is managing the large number of resulting pathways. Visualization tools are critical for overcoming this redundancy and interpreting results.

- Enrichment Map (Cytoscape App): This method simplifies enrichment results by creating a similarity network where nodes are gene sets and edges connect sets that share genes. The thickness of the edge is proportional to the degree of overlap. This allows researchers to quickly identify major functional themes as clusters within the network, rather than reviewing a long, flat list [19].

- GOREA Heatmap Visualization: GOREA provides an alternative visualization by summarizing Gene Ontology Biological Process (GOBP) terms into a heatmap. It clusters terms, identifies representative labels, and sorts clusters based on quantitative metrics like NES. A key feature is the addition of a panel showing broad GOBP terms, providing both a general overview and specific details in a single image [8]. This direct integration of clustered enrichment results with a heatmap is particularly suited for functional enrichment research.

Table 3: Key Software Tools and Platforms for Pathway Analysis

| Tool/Resource | Type | Primary Function | Access |
|---|---|---|---|
| GOREA | R Script | Clusters enriched GOBP terms and generates an interpretable heatmap [8]. | https://github.com/KuChoiLab/GOREA |
| Enrichment Map | Cytoscape App | Visualizes enriched gene sets as a similarity network to reduce redundancy [19]. | Cytoscape App Store |
| WikiPathways App | Cytoscape App | Imports, visualizes, and maps data directly from WikiPathways [20]. | Cytoscape App Store |
| Pathway Commons | Meta-Database | Searches for pathways across multiple databases using genes or pathway names [16]. | https://www.pathwaycommons.org |
| MSigDB | Gene Set Database | Extensive collection of gene sets for GSEA, including pathways from multiple resources [18]. | http://software.broadinstitute.org/gsea/msigdb |
| reactome.db | R Package | Provides access to Reactome pathway annotations within R/Bioconductor [16]. | Bioconductor |

The landscape of pathway databases is diverse, and the choice of resource is not neutral. Based on the comparative data and experimental protocols presented, we recommend the following best practices:

- Do Not Rely on a Single Database: Given that no single database is comprehensive and results vary, using multiple databases provides a more robust and complete biological interpretation [18].

- Use Integrative Resources or Combine Results: Leverage meta-databases like Pathway Commons or MSigDB, or build integrated resources like MPath, to mitigate the biases inherent in any single database [18].

- Employ Advanced Clustering and Visualization: Move beyond simple ranked lists of pathways. Use tools like GOREA [8] and Enrichment Map [19] to cluster redundant terms and create insightful visualizations that integrate quantitative enrichment scores with pathway relationships. This is essential for effectively connecting heatmap findings with functional enrichment results.

- Select Databases Based on Context: Choose databases that fit your biological question. KEGG is strong for core metabolism, Reactome for detailed mechanistic studies, WikiPathways for novel and community-driven content, and GO for broad functional characterization.

Ultimately, a deliberate, multi-database strategy combined with sophisticated visualization is key to unlocking the full potential of functional enrichment analysis in genomic research.

From Data to Discovery: A Step-by-Step Workflow for Integrated Analysis

The integration of heatmap findings with functional enrichment results represents a powerful paradigm in modern bioinformatics, enabling researchers to transition from observing patterns in complex omics data to understanding their biological significance. This process is pivotal in fields like precision oncology, where accurately stratifying diseases based on multi-omics data can suggest biological mechanisms and potential targeted therapies [21] [22]. The reliability of these insights, however, is fundamentally dependent on the quality and appropriateness of data preprocessing steps applied before clustering and enrichment analysis.

Functional enrichment analysis serves as an essential bridge, allowing scientists to extract biological meaning from gene expression data by identifying overrepresented biological processes, pathways, or molecular functions within their datasets [7] [23]. These analyses come in several forms, including Over-representation Analysis (ORA), which tests for statistically significant associations between a gene list and predefined gene sets; Functional Class Scoring (FCS), which considers the entire dataset using rank-based methods like Gene Set Enrichment Analysis (GSEA); and Pathway Topology (PT) methods, which incorporate structural information about pathways [23] [24]. Each approach has distinct strengths and makes different assumptions about the input data, necessitating specific preprocessing considerations to generate valid biological interpretations.
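To make the contrast between ORA and rank-based scoring concrete, the sketch below computes a GSEA-style running-sum enrichment score for one gene set. It is a simplified illustration: it omits the permutation step needed to obtain a normalized enrichment score and a P-value, and the function name and `weight` parameter are assumptions for this example.

```python
def enrichment_score(ranked_genes, scores, gene_set, weight=1.0):
    """Running-sum enrichment score over a ranked gene list.

    ranked_genes: genes ordered from most up- to most down-regulated.
    scores: mapping of gene -> ranking metric (e.g., a signed statistic).
    Returns the running-sum value with the largest absolute deviation from 0.
    """
    gene_set = set(gene_set)
    hits = [gene in gene_set for gene in ranked_genes]
    n_hits = sum(hits)
    n_miss = len(ranked_genes) - n_hits
    if n_hits == 0 or n_miss == 0:
        raise ValueError("gene set must contain some, but not all, ranked genes")
    hit_total = sum(abs(scores[g]) ** weight
                    for g, is_hit in zip(ranked_genes, hits) if is_hit)
    if hit_total == 0:
        raise ValueError("ranking metric is zero for every gene in the set")

    running, best = 0.0, 0.0
    for gene, is_hit in zip(ranked_genes, hits):
        if is_hit:
            running += abs(scores[gene]) ** weight / hit_total  # step up on a hit
        else:
            running -= 1.0 / n_miss                              # step down on a miss
        if abs(running) > abs(best):
            best = running
    return best
```

A gene set concentrated at the top of the ranking produces a score near +1, one concentrated at the bottom produces a score near -1, and a set scattered through the list stays close to 0. ORA, by contrast, only sees which genes passed a cutoff.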

Core Preprocessing Workflows: From Raw Data to Analysis-Ready Sets

The journey from raw omics data to results ready for clustering and enrichment analysis follows a structured pathway with critical decision points at each stage. The entire workflow, from raw data processing through to biological interpretation, can be visualized as an integrated system with multiple interconnected components.

Figure 1: Integrated workflow for omics data preprocessing, clustering, and enrichment analysis.

Quality Control and Normalization

Quality control establishes the foundation for all subsequent analyses by identifying technical artifacts and low-quality measurements. For single-cell RNA sequencing (scRNA-seq) data, this typically involves filtering cells based on metrics like the number of detected genes, total counts, and mitochondrial percentage [25]. In bulk RNA-seq, quality assessment might focus on sample-level metrics such as sequencing depth, GC content, and adapter contamination. Following quality control, normalization addresses technical variability between samples, enabling meaningful biological comparisons. Different omics modalities require distinct normalization approaches—for instance, transcriptomic data often benefits from methods that account for library size differences, while proteomic data may require variance-stabilizing transformations.
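As a minimal illustration of these two steps, the sketch below filters a single cell on detected genes and mitochondrial fraction, and normalizes a sample by library size to log2 counts per million. The thresholds and function names are placeholder assumptions; appropriate cutoffs depend on the tissue and protocol.

```python
import math


def passes_qc(cell_counts, mito_genes, min_genes=200, max_mito_fraction=0.2):
    """Basic per-cell QC on a gene -> count mapping.

    The default thresholds are illustrative only and should be tuned
    to the dataset at hand.
    """
    total = sum(cell_counts.values())
    if total == 0:
        return False
    detected = sum(1 for count in cell_counts.values() if count > 0)
    mito = sum(count for gene, count in cell_counts.items() if gene in mito_genes)
    return detected >= min_genes and mito / total <= max_mito_fraction


def log_cpm(counts, pseudocount=1.0):
    """Library-size normalization: log2(counts per million + pseudocount)."""
    total = sum(counts)
    if total == 0:
        raise ValueError("sample has no counts")
    return [math.log2(count / total * 1e6 + pseudocount) for count in counts]
```

Library-size scaling of this kind suits count-based transcriptomic data; other modalities, such as proteomics, generally need a different transformation.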

Feature Selection and Dimensionality Reduction

Feature selection identifies the most biologically informative variables for downstream analysis, reducing noise and computational burden. In differential expression analysis, this typically involves selecting genes based on statistical thresholds (e.g., p-values, false discovery rates) and effect sizes (e.g., fold changes) [25]. For clustering applications, highly variable features that drive population heterogeneity are often selected. Dimensionality reduction then projects the high-dimensional omics data into a lower-dimensional space while preserving the relative relationships between samples or cells [26]. This step is crucial for effective visualization and clustering, as it helps to mitigate the "curse of dimensionality" that can obscure meaningful biological patterns in the original high-dimensional space.
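Both selection strategies described above are simple to express in code. The sketch below selects differentially expressed genes by FDR and absolute log2 fold change, and separately ranks features by sample variance for clustering; the cutoffs and function names are illustrative assumptions.

```python
from statistics import variance


def select_de_genes(results, max_fdr=0.05, min_abs_log2fc=1.0):
    """Keep genes passing both the significance and effect-size thresholds.

    results: mapping of gene -> (log2_fold_change, fdr).
    """
    return sorted(gene for gene, (log2fc, fdr) in results.items()
                  if fdr <= max_fdr and abs(log2fc) >= min_abs_log2fc)


def top_variable_features(expression, n_top):
    """Return the n_top features with the highest sample variance.

    expression: mapping of feature -> list of values across samples or cells.
    Ties are broken alphabetically so the result is deterministic.
    """
    ranked = sorted(expression, key=lambda g: (-variance(expression[g]), g))
    return ranked[:n_top]
```

The first function yields the input list for ORA, while the second yields the feature set typically passed on to dimensionality reduction and clustering.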

Comparative Analysis of Preprocessing Tools and Their Performance

Dimensionality Reduction Tools for Single-Cell Omics

Dimensionality reduction represents a critical preprocessing step for single-cell omics data, with significant implications for downstream clustering and interpretation. Different algorithms demonstrate substantial variation in their computational efficiency and resource requirements, factors that become increasingly important with growing dataset sizes.

Table 1: Performance comparison of dimensionality reduction tools for single-cell omics data

| Tool | Algorithm Type | Scalability | Memory Usage (200k cells) | Runtime (200k cells) | Primary Applications |
|---|---|---|---|---|---|
| SnapATAC2 | Matrix-free spectral embedding | Linear | 21 GB | 13.4 minutes | scATAC-seq, scRNA-seq, scHi-C |
| ArchR | Latent Semantic Indexing (LSI) | Linear | Moderate | Fast | scATAC-seq |
| Signac | Latent Semantic Indexing (LSI) | Linear | Moderate | Fast | scATAC-seq |
| EpiScanpy | Principal Component Analysis (PCA) | Linear | Moderate | Fast | scATAC-seq |
| cisTopic | Latent Dirichlet Allocation (LDA) | Poor | High | Slow (hours-days) | scATAC-seq |
| PeakVI | Deep neural network | Linear (slow) | GPU-dependent | ~4 hours | scATAC-seq |
| Original SnapATAC | Spectral embedding | Quadratic | >500 GB (fails >80k cells) | Slow | scATAC-seq |

SnapATAC2 introduces a particularly efficient approach through its matrix-free spectral embedding algorithm, which utilizes the Lanczos algorithm to derive eigenvectors without constructing a full similarity matrix [26]. This innovation enables linear scaling of both time and memory usage with the number of cells, making it feasible to process datasets containing millions of cells without heuristic approximations. The tool demonstrates exceptional versatility across diverse single-cell omics modalities, including scATAC-seq, scRNA-seq, single-cell DNA methylation, and scHi-C data [26].

Multi-Omics Integration and Functional Enrichment Tools

The landscape of tools for multi-omics integration and functional enrichment analysis has expanded considerably, with solutions targeting different aspects of the analytical workflow from data integration to biological interpretation.

Table 2: Comparison of multi-omics integration and enrichment tools

| Tool | Primary Function | Integration Method | Enrichment Support | Key Features |
|---|---|---|---|---|
| GOREA | Enrichment result interpretation | Binary cut + hierarchical clustering | GSEA, ORA | Integrates quantitative metrics (NES), reduces fragmentation |
| clusterProfiler 4.0 | Functional enrichment | Universal interface | ORA, GSEA | Supports thousands of species, compares multiple gene lists |
| Flexynesis | Multi-omics integration | Deep learning | N/A | Modular, deployable, supports classification and survival |
| Φ-Space | Cell type annotation | Phenotype space mapping | N/A | Continuous phenotyping, handles bulk and single-cell references |
| simplifyEnrichment | Enrichment result clustering | Binary cut clustering | GSEA, ORA | Predecessor to GOREA with more general clustering |

GOREA addresses a specific challenge in functional enrichment analysis—the interpretation of large numbers of enriched Gene Ontology Biological Process (GOBP) terms. It improves upon earlier tools like simplifyEnrichment by integrating binary cut and hierarchical clustering approaches while incorporating GOBP term hierarchy to define representative terms [7]. By leveraging quantitative metrics such as normalized enrichment scores (NES) or gene overlap proportions, GOREA generates more specific and interpretable clusters while significantly reducing computational time compared to its predecessors [7].

Flexynesis represents a comprehensive deep learning framework for bulk multi-omics integration that addresses common limitations in existing tools, including lack of transparency, modularity, and deployability [22]. It streamlines data processing, feature selection, and hyperparameter tuning while supporting diverse analytical tasks including regression, classification, and survival modeling. The platform enables both single-task and multi-task modeling, where multiple multi-layer perceptrons are attached to the encoder networks, allowing the embedding space to be shaped by multiple clinically relevant variables simultaneously [22].

Experimental Protocols for Preprocessing Benchmarking

Benchmarking Dimensionality Reduction Methods

To evaluate the performance of dimensionality reduction tools, researchers can implement a standardized benchmarking protocol using synthetic or real-world datasets with known cellular compositions. The following protocol outlines key steps for systematic comparison:

Dataset Preparation: Generate or obtain scATAC-seq datasets with varying cell numbers (e.g., 10,000, 50,000, 100,000, 200,000 cells) to assess scalability. The datasets should represent biologically diverse cell populations with established marker genes.

Processing Pipeline: Apply each dimensionality reduction method to the same datasets using recommended parameters. For SnapATAC2, this involves using the matrix-free spectral embedding algorithm [26]. For neural network methods like PeakVI, scBasset, and SCALE, utilize GPU acceleration with a fixed number of epochs (e.g., 50) for fair comparison.

Performance Metrics: Track computational resources including runtime and memory usage across different dataset sizes. Assess biological utility by measuring how well the low-dimensional embeddings separate known cell types using metrics such as silhouette score and adjusted Rand index.

Visualization Quality: Generate two-dimensional visualizations using UMAP or t-SNE applied to the embeddings produced by each method. Qualitatively assess whether the visualization preserves known biological relationships and clearly separates distinct cell populations.
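Of the metrics named in the protocol, the adjusted Rand index is straightforward to compute from label vectors alone. The sketch below is a standard-library implementation based on pair counts from the contingency table; in practice an established implementation (for example, in scikit-learn) would normally be used, and the function here is only meant to show what the metric measures.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels_true, labels_pred):
    """Adjusted Rand index between known cell types and cluster labels.

    1.0 means identical partitions (label names do not matter); values near
    0 indicate chance-level agreement; negative values are worse than chance.
    """
    if len(labels_true) != len(labels_pred):
        raise ValueError("label vectors must have the same length")
    n = len(labels_true)
    if n < 2:
        raise ValueError("at least two observations are required")

    # Count pairs of cells that fall together in both, one, or the other partition.
    sum_cells = sum(comb(c, 2) for c in Counter(zip(labels_true, labels_pred)).values())
    sum_rows = sum(comb(c, 2) for c in Counter(labels_true).values())
    sum_cols = sum(comb(c, 2) for c in Counter(labels_pred).values())

    expected = sum_rows * sum_cols / comb(n, 2)
    max_index = (sum_rows + sum_cols) / 2
    if max_index == expected:  # degenerate case, e.g. a single cluster in both
        return 1.0
    return (sum_cells - expected) / (max_index - expected)
```

Computing this score on cluster assignments derived from each tool's embedding, against the known cell-type labels, gives a single comparable number per method and dataset size.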

This protocol was implemented in a recent comprehensive benchmarking study that compared SnapATAC2 against other widely used dimensionality reduction algorithms including LSI (used by ArchR and Signac), LDA (used by cisTopic), PCA (used by EpiScanpy), and classic spectral embedding [26]. The benchmarks were conducted on a Linux server utilizing four cores of a 2.6 GHz Intel Xeon Platinum 8358 CPU, with neural network methods additionally evaluated using an A100 GPU [26].

Protocol for Integrated Clustering and Enrichment Analysis

The integration of clustering results with functional enrichment analysis enables the biological interpretation of identified groups. The following protocol outlines a standardized approach for this integrated analysis:

Data Preprocessing and Clustering: Begin with quality-controlled and normalized omics data. Perform clustering using the preprocessed data, selecting an appropriate algorithm based on data characteristics and research questions. For multi-omics data, consider integration methods like concatenated clustering, clustering of clusters, or interactive clustering [21].

Differential Analysis: Identify features (genes, peaks, etc.) that are significantly different between clusters. For gene expression data, this typically involves differential expression analysis using methods like Wilcoxon rank-sum test, with subsequent filtering based on effect size and statistical significance [25].

Functional Enrichment: Input the differential features into functional enrichment tools. For ORA methods, use statistically significant differential features as input. For GSEA, use the ranked list of all features based on their association with biological differences [23] [24].

Result Interpretation: Use tools like GOREA to interpret and cluster the enrichment results. GOREA incorporates Gene Ontology term hierarchy and quantitative metrics to define representative terms and rank clusters based on biological importance [7].

Visualization Integration: Create heatmaps that simultaneously display both the expression patterns of key genes across clusters and the associated enriched biological processes. This integrated visualization helps establish direct connections between molecular patterns and their functional implications.
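The final visualization step usually involves two small data-preparation tasks: scaling each gene across samples so that rows are comparable, and attaching enriched terms to rows as annotations. The sketch below shows both, assuming row-wise z-scores with the sample standard deviation; the function names and term labels are hypothetical.

```python
from statistics import mean, stdev


def zscore_rows(matrix):
    """Scale each row (gene) to mean 0 and sample standard deviation 1.

    Rows with no variation are returned as zeros rather than dividing by 0.
    Each row needs at least two values.
    """
    scaled = []
    for row in matrix:
        mu, sd = mean(row), stdev(row)
        scaled.append([0.0 if sd == 0 else (value - mu) / sd for value in row])
    return scaled


def annotate_rows(genes, term_to_genes):
    """For each gene (heatmap row), list the enriched terms that contain it."""
    return [sorted(term for term, members in term_to_genes.items() if gene in members)
            for gene in genes]
```

The scaled matrix supplies the heatmap colors, and the per-row term lists can be rendered as a side annotation so that expression blocks and their enriched processes are read together.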

Essential Research Reagent Solutions for Omics Preprocessing

Successful preprocessing of omics data for clustering and enrichment analysis relies on both computational tools and curated biological knowledge bases. The table below outlines essential resources across different categories.

Table 3: Essential research reagents and resources for omics data preprocessing and analysis

| Resource Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| Annotation Databases | Gene Ontology (GO), KEGG, Reactome, MSigDB | Provide structured biological knowledge | Functional enrichment analysis for interpretation |
| Reference Datasets | TCGA, CCLE, DICE, Stemformatics atlases | Offer annotated reference data | Cell type annotation, reference mapping |
| Programming Frameworks | R/Bioconductor, Python/scverse, Rust | Provide computational infrastructure | Implementing preprocessing pipelines |
| Enrichment Tools | clusterProfiler, WebGestalt, Enrichr, g:Profiler | Perform ORA, GSEA, other enrichment types | Functional interpretation of gene lists |
| Multi-omics Integration Tools | iCluster, moCluster, jNMF, SNF | Integrate multiple data types | Multi-omics clustering |

Gene Ontology (GO) represents a cornerstone resource for functional enrichment analysis, providing a structured, hierarchical vocabulary that systematically describes gene functions across three domains: biological process, molecular function, and cellular component [25] [24]. The GO graph structure, where each term is a node and edges represent relationships between terms, enables more sophisticated enrichment analyses that account for term relationships [24]. Similarly, KEGG (Kyoto Encyclopedia of Genes and Genomes) provides pathway information that integrates genomic knowledge with chemical and systems-level information, offering valuable context for interpreting omics data within established biological pathways [24].

Reference datasets like The Cancer Genome Atlas (TCGA) and the Cancer Cell Line Encyclopedia (CCLE) provide essential benchmarking resources and annotated references for method development and validation [22]. These resources enable approaches like Φ-Space, which performs continuous phenotyping of single-cell multi-omics data by characterizing query cell identity in a low-dimensional phenotype space defined by reference phenotypes [27]. The ability to leverage these comprehensively annotated references significantly enhances the accuracy and biological relevance of clustering and enrichment analyses.

Integration of Heatmap Findings with Enrichment Results

The true power of omics data analysis emerges when combining visualization techniques like heatmaps with functional enrichment results. This integration creates a bidirectional analytical flow where clustering patterns suggest biological hypotheses through enrichment analysis, while enrichment results inform the interpretation of visualized patterns. GOREA facilitates this integration by visualizing enrichment results as a heatmap accompanied by a panel of broad GOBP terms and representative terms for each cluster, providing both general and specific biological insights [7].

This integrated approach enables researchers to move beyond simple observation of expression patterns to understanding their functional consequences. For example, a heatmap revealing distinct clustering of patient samples could be linked with enrichment analysis showing differential activation of immune response pathways, potentially stratifying patients into clinically relevant subgroups [7] [21]. Similarly, in single-cell analyses, clustering identified through dimensionality reduction can be interpreted by enrichment analysis of marker genes specific to each cluster, revealing the biological identity and functional properties of distinct cell populations [26] [27].

The relationship between data preprocessing, clustering, visualization, and biological interpretation forms a continuous cycle that drives discovery in omics research, as illustrated below.

Figure 2: Integrated analytical workflow connecting preprocessing, clustering, visualization, and biological interpretation.

Effective preprocessing of omics data establishes the essential foundation for meaningful clustering and biological interpretation through functional enrichment analysis. As the field advances, several emerging trends are shaping the future of omics data preprocessing. Scalable algorithms that maintain linear time and space complexity with growing dataset sizes, like the matrix-free spectral embedding in SnapATAC2, are becoming increasingly crucial for handling the massive datasets generated by modern single-cell technologies [26]. The development of universal enrichment tools such as clusterProfiler 4.0, which supports functional analysis for thousands of species with up-to-date gene annotation, addresses the critical need for tools that can keep pace with the expanding genomic resources [28].

The integration of multiple omics modalities represents another frontier, with tools like Flexynesis providing flexible deep learning frameworks for bulk multi-omics integration [22], while methods like Φ-Space enable continuous phenotyping of single-cell multi-omics data by projecting query cells into a reference-defined phenotype space [27]. These approaches facilitate a more comprehensive understanding of biological systems by leveraging complementary information from different molecular layers. As these technologies evolve, the emphasis remains on developing methods that are not only computationally efficient but also biologically interpretable, enabling researchers to extract meaningful insights from complex omics data and ultimately advance human health and disease understanding.

In biomedical research, clustering analysis serves as a fundamental computational technique for identifying patterns in high-dimensional omics data. Grouping data points by their similarity enables researchers to uncover hidden structures within complex biological datasets, facilitating pattern recognition and anomaly detection. When integrated with visualization techniques like heatmaps and downstream functional enrichment analysis, clustering becomes a powerful approach for extracting meaningful biological insights from large-scale experimental data. This integration is particularly valuable in drug development, where understanding the functional implications of clustered gene or protein expression patterns can accelerate therapeutic discovery.

The application of these methods has proven instrumental in critical research areas, including the study of host responses to pathogens and the identification of potential drug candidates. For instance, integrative analysis of clustering and functional enrichment has been applied to study drugs against SARS-CoV-2, helping researchers understand drug effects on gene expression in different cell lines and identify potential therapeutic options through drug-target network analysis [29]. This demonstrates how clustering serves as a foundational step in complex bioinformatics workflows for drug discovery and mechanism understanding.

Comparative Analysis of Clustering Algorithms

Core Methodologies and Mechanisms

K-means Clustering operates through an iterative process that partitions data points into a pre-specified number (K) of roughly spherical clusters based on a distance metric, typically Euclidean distance. The algorithm begins with random centroid initialization and alternates between two main steps: (1) Assignment Step, where each data point is assigned to the nearest centroid, and (2) Update Step, where new centroids are calculated as the mean of all data points assigned to each cluster [30]. This process continues until centroid positions stabilize, at which point the algorithm has converged to a local optimum that is not guaranteed to be the global one. A key characteristic of K-means is that the cluster number (K) must be specified in advance, which often necessitates auxiliary techniques such as the Elbow method to choose it.
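The two alternating steps can be sketched in a few lines of NumPy. This is a minimal illustration rather than a production implementation: the function name `kmeans`, the toy data, and the choice to initialize centroids from randomly selected data points are assumptions made for the example.

```python
import numpy as np

def kmeans(X, k, n_iter=100, seed=0):
    """Minimal K-means sketch: alternate assignment and update steps."""
    rng = np.random.default_rng(seed)
    # Random initialization: pick k distinct data points as starting centroids.
    centroids = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(n_iter):
        # Assignment step: each point goes to its nearest centroid (Euclidean).
        dists = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        # Update step: each centroid becomes the mean of its assigned points.
        # An empty cluster keeps its previous centroid.
        new_centroids = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        # Stop once the centroid positions have stabilized.
        if np.allclose(new_centroids, centroids):
            break
        centroids = new_centroids
    return labels, centroids

# Toy data (hypothetical): two well-separated groups in a 2-feature space.
data_rng = np.random.default_rng(1)
X = np.vstack([data_rng.normal(0, 0.3, (20, 2)),
               data_rng.normal(5, 0.3, (20, 2))])
labels, centroids = kmeans(X, k=2)
```

Because the result depends on the random starting centroids, a common practice is to run the algorithm with several seeds and keep the solution with the lowest within-cluster sum of squares.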

Hierarchical Clustering creates a tree-like structure of nested clusters either through a bottom-up (agglomerative) or top-down (divisive) approach. Agglomerative methods begin by treating each data point as an individual cluster and successively merge the closest pairs until only one cluster remains [31]. The results are typically visualized through a dendrogram, which illustrates the sequence of merges and allows researchers to determine appropriate cluster cutpoints by interpreting the hierarchical relationships [30]. Unlike K-means, this method doesn't require pre-specifying the number of clusters and can reveal relationships at multiple levels of granularity.
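As a rough sketch of the agglomerative approach, the example below uses SciPy's `linkage` to build the merge tree and `fcluster` to cut it at different levels. The toy data and the choice of average linkage with Euclidean distance are assumptions for illustration; other linkage methods and metrics may suit a given dataset better.

```python
import numpy as np
from scipy.cluster.hierarchy import linkage, fcluster

# Toy data (hypothetical): three well-separated groups of 10 points each.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0, 0.3, (10, 2)),
               rng.normal(4, 0.3, (10, 2)),
               rng.normal(8, 0.3, (10, 2))])

# Agglomerative clustering: start from singletons and merge the closest pair
# at each step. Z has n - 1 rows, one per merge, which is the information
# a dendrogram draws.
Z = linkage(X, method="average", metric="euclidean")

# The same tree can be cut at several levels of granularity without
# re-running the clustering.
labels_k3 = fcluster(Z, t=3, criterion="maxclust")
labels_k2 = fcluster(Z, t=2, criterion="maxclust")
```

Passing `Z` to `scipy.cluster.hierarchy.dendrogram` draws the tree that is typically placed alongside the rows or columns of a clustered heatmap.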

DBSCAN (Density-Based Spatial Clustering of Applications with Noise) operates on fundamentally different principles, identifying clusters as high-density regions separated by low-density areas. The algorithm categorizes points as core points (with at least MinPts neighbors within radius ε), border points (within ε of a core point but without sufficient neighbors), and noise points (neither core nor border points) [30]. This density-based approach allows DBSCAN to discover clusters of arbitrary shapes and automatically identify outliers without requiring pre-specified cluster numbers.
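The three point categories can be made concrete with a short NumPy sketch. It covers only the classification step, not the full cluster-expansion procedure, and it assumes that a point counts itself among its own neighbors; implementations differ on that convention. The function name `classify_points` and the toy coordinates are hypothetical.

```python
import numpy as np

def classify_points(X, eps, min_pts):
    """Label each point as core, border, or noise (DBSCAN definitions)."""
    # Pairwise Euclidean distances between all points.
    dists = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    # neighbors[i, j] is True when point j lies within eps of point i.
    # Assumption: a point is counted as its own neighbor.
    neighbors = dists <= eps
    is_core = neighbors.sum(axis=1) >= min_pts
    # A non-core point is a border point if some core point is within eps.
    near_core = (neighbors & is_core[None, :]).any(axis=1)
    is_border = ~is_core & near_core
    is_noise = ~is_core & ~near_core
    return is_core, is_border, is_noise

# Toy data (hypothetical): a dense run of points plus one isolated point.
X = np.array([[0.0, 0.0], [0.5, 0.0], [1.0, 0.0],
              [1.5, 0.0], [2.4, 0.0], [10.0, 10.0]])
is_core, is_border, is_noise = classify_points(X, eps=1.0, min_pts=4)
```

In this toy example the three middle points of the dense run are core points, its two end points are border points, and the isolated point is noise.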

Comparative Performance Evaluation

Table 1: Comparative Analysis of Clustering Algorithms

| Parameter | K-means | Hierarchical Clustering | DBSCAN |
|---|---|---|---|
| Cluster Shape Assumption | Hyper-spherical shapes [31] | Can handle various shapes but works best when hierarchical structure exists [31] | Arbitrary shapes based on data density [30] |
| Prior Knowledge Requirement | Requires advance knowledge of K (number of clusters) [31] | No need to specify cluster number; can determine by interpreting dendrogram [31] | Requires parameters ε (epsilon) and MinPts [30] |
| Computational Complexity | Less computationally intensive; suitable for very large datasets [31] | Requires computation of n×n distance matrix; expensive for large datasets [31] | Efficient for large datasets with appropriate indexing structures |
| Noise Handling | Sensitive to outliers; all points assigned to clusters | Sensitive to outliers; all points assigned to clusters | Explicitly identifies noise points as outliers [30] |
| Result Stability | Results may differ between runs due to random centroid initialization [31] | Reproducible results due to deterministic algorithm [31] | Deterministic results with fixed parameters |
| Key Advantage | Computational efficiency for large datasets [30] | Reveals hierarchical relationships and multiple granularity levels [30] | Identifies arbitrary-shaped clusters and outliers automatically [30] |

Experimental Data and Performance Metrics

In practical applications, these algorithms demonstrate distinct performance characteristics. When applied to the Iris dataset as a benchmark, K-means with k=3 produces visibly distinct clusters when visualized on principal components, separating the three species to the extent that the underlying data conform to spherical distributions [30]. Because the cost of each K-means iteration grows linearly with the number of data points, the algorithm is particularly suitable for large-scale omics studies where computational efficiency is crucial.

Hierarchical clustering applied to the same dataset generates a dendrogram that suggests an appropriate cutpoint at three clusters, aligning with biological reality [30]. However, the method requires computing and storing an n×n distance matrix, which becomes computationally expensive for large genomic datasets containing thousands of genes or samples [31]. This limitation necessitates careful consideration when designing analyses of transcriptomic or proteomic data.
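A quick back-of-the-envelope calculation shows how fast this storage cost grows. The sketch below assumes 8-byte floating-point distances stored in condensed form, that is, only the n(n-1)/2 unique pairs; the function name and the example sizes are illustrative.

```python
def condensed_distance_bytes(n, bytes_per_value=8):
    """Bytes needed to store the n*(n-1)/2 unique pairwise distances."""
    return n * (n - 1) // 2 * bytes_per_value

# Example sizes (hypothetical): a small sample set, a gene-level matrix,
# and a large single-cell dataset.
for n in (1_000, 20_000, 100_000):
    gib = condensed_distance_bytes(n) / 2**30
    print(f"{n:>7} items -> {gib:.2f} GiB")
```

Since the cost is quadratic in n, clustering a few hundred samples is cheap, while clustering tens of thousands of genes or cells can exhaust memory unless the features are filtered or the data are subsampled first.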

DBSCAN demonstrates particular strength in identifying clusters with non-spherical geometries and automatically detecting outliers, which is valuable for quality control in experimental data. However, its performance is sensitive to the ε and MinPts parameters, requiring careful tuning to appropriately model cluster density [30]. This algorithm excels in detecting novel subpopulations in single-cell sequencing data where the number and shape of clusters aren't known in advance.

Integration with Heatmap Visualization

Principles of Effective Heatmap Design

Heatmaps provide a powerful visualization method for representing clustered data, where color intensity corresponds to values in a data matrix. Effective heatmap design follows specific color principles to ensure accurate interpretation. The fundamental rules include: (1) representing degrees in heatmaps by shading, using a single color blended with white, black, or grey, and (2) using distinct colors to represent qualitative differences [32]. This approach aligns with how human visual perception naturally interprets color gradients, making the visualizations more intuitive.

Color selection critically impacts interpretation accuracy. While rainbow color schemes are common, they can lead to misinterpretation when their colors do not follow a natural ordering [32]. For example, a temperature-based scheme (blue to red) naturally communicates low to high values, as our brains are conditioned to associate blue with cooler/lower values and red with warmer/higher values [33]. In specialized applications like gene expression heatmaps, diverging color schemes (e.g., blue-white-red) effectively represent down-regulation, baseline, and up-regulation, respectively, providing immediate visual cues about direction and magnitude of change.
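For a diverging scheme to read correctly, the neutral color has to sit at the baseline value. One common way to arrange this, sketched below, is to scale each gene (row) to z-scores and use color limits that are symmetric around zero. Row-wise z-scoring is an assumption here rather than something the sources prescribe, and the helper names and toy matrix are hypothetical.

```python
import numpy as np

def row_zscore(expr):
    """Scale each row (gene) to mean 0 and unit sample standard deviation."""
    mean = expr.mean(axis=1, keepdims=True)
    std = expr.std(axis=1, ddof=1, keepdims=True)
    std[std == 0] = 1.0  # flat rows stay at zero instead of dividing by zero
    return (expr - mean) / std

def symmetric_limits(z):
    """Color limits centered on zero so the midpoint color marks baseline."""
    bound = float(np.abs(z).max())
    return -bound, bound

# Toy expression matrix (hypothetical): 3 genes x 4 samples.
expr = np.array([[1.0, 2.0, 3.0, 10.0],
                 [5.0, 5.0, 5.0, 5.0],
                 [8.0, 6.0, 4.0, 2.0]])
z = row_zscore(expr)
vmin, vmax = symmetric_limits(z)
```

The resulting limits can be passed as `vmin` and `vmax` to a plotting call such as matplotlib's `imshow` together with a diverging colormap like `"RdBu_r"`, so that white corresponds to a z-score of zero.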

Interpreting Heatmap Patterns

Reading heatmaps requires understanding how color correlates with underlying values. In most scientific heatmaps, warmer colors (reds, oranges) represent higher values, while cooler colors (blues, greens) represent lower values [33]. The specific interpretation depends on context: in gene expression analysis, red might indicate up-regulation; in protein-protein interaction networks, it might represent stronger binding affinity.

Different heatmap types serve distinct analytical purposes. Scroll heatmaps, used in web analytics, show the percentage of users who scroll to each depth level of a web page, with warmer colors indicating higher visibility areas [33]. Similarly, in scientific contexts, attention heatmaps can highlight regions of interest in microscopic images or areas of significant change in differential expression analyses. The key to interpretation lies in understanding the color legend and how it maps to the underlying data scale.

Research Workflow: From Clustering to Functional Interpretation

Integrated Analytical Pipeline

The integration of clustering results with functional enrichment analysis represents a powerful workflow in biomedical research. This pipeline typically begins with data preprocessing and normalization, followed by application of appropriate clustering algorithms to identify patterns in the data. The resulting clusters are then subjected to functional enrichment analysis to determine whether specific biological functions, pathways, or diseases are statistically over-represented.
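The over-representation step is commonly framed as a one-sided hypergeometric test: given a background of N genes, of which K are annotated to a pathway, how surprising is it to find at least k pathway genes in a cluster of n genes? The sketch below computes that tail probability with the standard library only. The function name and the example counts are hypothetical, and a real analysis would also correct for multiple testing across pathways.

```python
from math import comb

def ora_pvalue(k, n, K, N):
    """One-sided hypergeometric p-value P(X >= k).

    k: pathway genes found in the cluster
    n: genes in the cluster
    K: pathway genes in the background
    N: genes in the background
    """
    total = comb(N, n)
    upper = min(n, K)
    # Sum the probability of every overlap at least as large as the one seen.
    tail = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return tail / total

# Hypothetical example: a 100-gene heatmap cluster shares 10 genes with a
# 200-gene pathway, against a background of 20,000 genes.
p_cluster = ora_pvalue(k=10, n=100, K=200, N=20_000)
```

With these example counts only about one overlapping gene would be expected by chance, so an overlap of ten yields a very small p-value, which is the kind of signal that enrichment tools report for each cluster and pathway pair.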

Advanced tools like Flame (v2.0) exemplify this integrated approach, offering combinatorial analysis through merging and visualizing results from multiple functional enrichment applications [34]. This web tool utilizes aGOtool, g:Profiler, WebGestalt, and Enrichr pipelines, presenting their outputs through interactive visualizations including parameterizable networks, heatmaps, barcharts, and scatter plots [34]. Such platforms enable researchers to move seamlessly from cluster identification to biological interpretation.

Research workflow from data to biological interpretation

Application in Drug Discovery Research