From Sequence to Function: Validating Gene Predictions with Modern Proteomics

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on integrating proteomic data to validate and refine computational gene predictions. It covers the foundational principles of why protein-level evidence is crucial, detailing methodological workflows from LC-MS/MS to data analysis. The content addresses common challenges in experimental design and data interpretation, offering optimization strategies from recent large-scale studies. Furthermore, it explores advanced applications of validated targets in biomarker discovery and therapeutic development, highlighting how proteomic validation strengthens the link between genetic discoveries and clinical applications.

The Critical Link: Why Protein-Level Validation is Non-Negotiable for Gene Models

In silico gene prediction tools have become indispensable in modern genomic research and therapeutic development, offering a scalable method to interpret the vast landscape of human genetic variation. These computational models leverage artificial intelligence and machine learning to predict the functional impact of genetic variants, potentially accelerating precision medicine and drug target discovery [1] [2]. However, as these tools proliferate, a critical gap persists between computational predictions and biological reality—a disconnect that can significantly impact diagnostic accuracy and therapeutic decisions.

The fundamental challenge lies in the inherent complexity of genotype-phenotype relationships. While sequence-based AI models show great potential for high-resolution variant effect prediction, their practical value depends heavily on rigorous validation against experimental evidence [1] [3]. This review systematically assesses the limitations of current in silico prediction methods through the lens of proteomic and functional validation, providing researchers with a critical framework for evaluating these essential bioinformatic tools.

Performance Benchmarking: Quantitative Comparisons of Prediction Tools

Variant Effect Predictors in Human Traits

Independent benchmarking using population-scale biobanks has provided unbiased evaluations of computational variant effect predictors by assessing their ability to correlate with actual human traits. These studies circumvent the circularity concerns that plague many evaluations, as they utilize data not included in model training [2].

Table 1: Performance of Variant Effect Predictors in Human Cohort Studies

| Predictor | Performance in UK Biobank | Performance in All of Us | Key Strengths |
|---|---|---|---|
| AlphaMissense | Best or tied in 132/140 gene-trait combinations [2] | Consistent top performer [2] | Superior rare variant interpretation |
| VARITY | Not statistically different from AlphaMissense for some traits [2] | Strong correlation with human phenotypes [2] | Robust performance across diverse traits |
| ESM-1v | Tied with AlphaMissense for some binary traits [2] | Independent validation pending | Strong for specific variant classes |
| MPC | Competitive for medication use prediction [2] | Independent validation pending | Effective for pharmacogenomic applications |

In a comprehensive assessment of 24 predictors across 140 gene-trait associations in the UK Biobank, AlphaMissense significantly outperformed most other predictors, demonstrating the highest correlation with human traits based on rare missense variants [2]. This performance was subsequently confirmed in the independent All of Us cohort, establishing a robust benchmark for predictor selection in clinical and research settings.

Splicing Variant Prediction Tools

The accurate prediction of splicing variants presents particular challenges, as these may occur deep within introns or exons away from canonical splice sites. Benchmarking against the largest set of functionally assessed variants of uncertain significance (VUSs) revealed substantial variability in tool performance [4].

Table 2: Performance Comparison of Splicing Prediction Algorithms

| Tool | AUC | Sensitivity | Specificity | Optimal Application |
|---|---|---|---|---|
| SpliceAI | Highest single AUC (0.20 threshold) [4] | 89% | 86% | Deep intronic & canonical variants |
| Consensus Approach | Similar to SpliceAI (4/8 tools threshold) [4] | 91% | 85% | Comprehensive variant assessment |
| Weighted Combination | Potentially superior to single tools [4] | 93% | 87% | Critical clinical applications |
| CADD | Lower than SpliceAI [4] | 67% | 82% | Region-specific performance varies |

SpliceAI emerged as the best single algorithm, correctly prioritizing variants that impact splicing with high accuracy. However, a consensus approach combining multiple tools achieved similar performance, while a novel weighted approach incorporating relative scores from multiple algorithms showed potential for even greater accuracy, though this requires further validation [4].
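
The consensus rule described above (a variant is flagged when at least 4 of 8 tools call it) can be sketched as a simple vote count. This is a minimal illustration rather than the benchmarked pipeline: only the SpliceAI cutoff of 0.20 comes from the text, while the other seven tool names, their cutoffs, and the example scores are placeholders.

```python
from typing import Dict, List, Tuple


def consensus_call(scores: Dict[str, float],
                   thresholds: Dict[str, float],
                   min_votes: int = 4) -> bool:
    """Return True when at least `min_votes` tools score at or above their cutoff."""
    votes = sum(1 for tool, score in scores.items() if score >= thresholds[tool])
    return votes >= min_votes


def sensitivity_specificity(calls: List[bool], truth: List[bool]) -> Tuple[float, float]:
    """Compare predicted calls with functional assay results (True = splicing affected)."""
    tp = sum(1 for c, t in zip(calls, truth) if c and t)
    fn = sum(1 for c, t in zip(calls, truth) if not c and t)
    tn = sum(1 for c, t in zip(calls, truth) if not c and not t)
    fp = sum(1 for c, t in zip(calls, truth) if c and not t)
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one positive and one negative variant")
    return tp / (tp + fn), tn / (tn + fp)


# Only SpliceAI's 0.20 cutoff is taken from the text; tool_2..tool_8 are placeholders.
THRESHOLDS = {"SpliceAI": 0.20, **{f"tool_{i}": 0.5 for i in range(2, 9)}}

# Hypothetical scores for a single variant.
variant_scores = {"SpliceAI": 0.35, "tool_2": 0.7, "tool_3": 0.6, "tool_4": 0.55,
                  "tool_5": 0.1, "tool_6": 0.2, "tool_7": 0.3, "tool_8": 0.4}
flagged = consensus_call(variant_scores, THRESHOLDS)
```

Raising `min_votes` trades sensitivity for specificity, which is one way to tune such a rule to a given diagnostic setting.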

Long-Range DNA Interaction Modeling

The prediction of long-range genomic interactions represents a particularly challenging frontier, as functional elements may influence gene regulation across megabase-scale distances. The DNALONGBENCH suite systematically evaluates this capability across five critical tasks [5].

Table 3: Performance on Long-Range Genomic Tasks (Scale: 0-1)

| Task | Expert Models | DNA Foundation Models | CNN | Most Effective Model |
|---|---|---|---|---|
| Enhancer-Target Gene Interaction | 0.841 [5] | 0.789-0.801 [5] | 0.762 [5] | ABC Model |
| eQTL Prediction | 0.721 [5] | 0.632-0.658 [5] | 0.601 [5] | Enformer |
| 3D Genome Organization | 0.841 [5] | 0.512-0.523 [5] | 0.488 [5] | Akita |
| Regulatory Sequence Activity | 0.712 [5] | 0.521-0.538 [5] | 0.498 [5] | Enformer |
| Transcription Initiation Signals | 0.733 [5] | 0.108-0.132 [5] | 0.042 [5] | Puffin-D |

Across all tasks, highly parameterized and specialized expert models consistently outperformed both DNA foundation models and simpler convolutional neural networks. The performance gap was especially pronounced for regression tasks such as contact map prediction and transcription initiation signal prediction, suggesting that current foundation models struggle with capturing sparse real-valued signals across long DNA contexts [5].

Experimental Validation Protocols: Bridging the In Silico-In Vivo Gap

Proteomic Validation of Genetic Findings

Proteomics provides a crucial intermediate validation layer between genetic predictions and phenotypic outcomes, offering direct evidence of functional molecular consequences. Recent advances demonstrate how machine learning applied to proteomic data can improve disease risk prediction while simultaneously validating potential drug targets [6] [7].

The Explainable Boosting Machine (EBM) framework has shown particular promise, achieving an AUROC of 0.785 for 10-year cardiovascular disease risk prediction by integrating proteomic data with clinical features [7]. This represents a significant improvement over traditional equation-based risk scores like PREVENT (AUROC: 0.767 with proteomics alone) and provides both global and local explanations for predictions, enabling researchers to identify which proteins contribute most to individual risk assessments [7].
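
For readers comparing AUROC values such as those above, the metric can be computed directly from its definition: the probability that a randomly chosen case receives a higher risk score than a randomly chosen non-case, with ties counted as one half. The sketch below is a plain-Python illustration with made-up labels and scores; it is not the evaluation code from the cited study.

```python
from typing import Sequence


def auroc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUROC as the fraction of (case, non-case) pairs ranked correctly; ties count 0.5."""
    pos = [s for label, s in zip(labels, scores) if label == 1]
    neg = [s for label, s in zip(labels, scores) if label == 0]
    if not pos or not neg:
        raise ValueError("both classes must be present")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical outcomes (1 = event within follow-up) and predicted risks.
example_auroc = auroc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
```

The pairwise loop is quadratic in cohort size, so a rank-based implementation would be preferable for biobank-scale data.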

Functional Splicing Assays

For splicing variants, experimental validation typically involves functional analyses to directly observe impacts on mRNA processing. The largest study of its kind functionally assessed 249 variants of uncertain significance (VUSs) from diagnostic testing, finding that 80 (32%) significantly impacted splicing, potentially enabling reclassification as "likely pathogenic" [4].

The experimental workflow typically includes:

- RNA extraction from patient-derived cells or appropriate tissue models

- Reverse transcription PCR to convert mRNA to cDNA

- Fragment analysis to detect abnormal splicing patterns

- Sanger sequencing to identify specific exon skipping, intron retention, or cryptic splice site usage

- Quantification of aberrant transcript proportions compared to normal controls

This functional evidence provides the highest level of validation for splicing predictions, though cell- and tissue-specific factors may influence results and require consideration in experimental design [4].
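
The final quantification step in the workflow above can be illustrated as a ratio of signal from aberrant products to total signal, compared between patient and control. This is a minimal sketch: the function name, the event labels, and the peak areas are hypothetical, and real fragment-analysis data would need size and baseline correction first.

```python
from typing import Dict


def aberrant_fraction(normal_signal: float, aberrant_signals: Dict[str, float]) -> float:
    """Fraction of total transcript signal (e.g. fragment peak area) from aberrant products."""
    aberrant_total = sum(aberrant_signals.values())
    total = normal_signal + aberrant_total
    if total <= 0:
        raise ValueError("total signal must be positive")
    return aberrant_total / total


# Hypothetical peak areas for a patient sample and a normal control.
patient = aberrant_fraction(600.0, {"exon_skipping": 300.0, "intron_retention": 100.0})
control = aberrant_fraction(980.0, {"exon_skipping": 20.0})
excess_aberrant = patient - control  # difference attributable to the variant, under these assumptions
```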

Longitudinal Proteomic Validation

Longitudinal study designs provide particularly powerful validation by capturing dynamic protein expression changes over time, offering more statistical power than cross-sectional approaches to detect true biological differences [8]. The Robust Longitudinal Differential Expression (RolDE) method was specifically developed to address the unique characteristics of proteomics data, including prevalent missing values and technical noise [8].

In comprehensive benchmarking using over 3000 semi-simulated spike-in datasets, RolDE achieved superior performance (IQR mean pAUC: 0.977) compared to other methods, demonstrating particular strength in handling missing values and diverse expression patterns [8]. This approach enables researchers to more confidently distinguish true longitudinal differential expression from technical artifacts when validating in silico predictions.

Critical Gaps and Limitations in Current Methodologies

Context Specificity and Generalizability

A fundamental limitation of many in silico prediction tools is their limited ability to account for biological context, including cell type, tissue specificity, and developmental stage. This is particularly problematic for regulatory variants, where effects may be highly context-dependent [1]. As noted in plant breeding applications—where these tools show promise but face similar limitations—"the accuracy and generalizability of sequence models heavily depend on the training data, highlighting the need for validation experiments" [1].

This challenge extends to human genomics, where models trained on bulk tissue data may fail to capture cell-type-specific regulatory effects, potentially leading to false positives or negatives in specific physiological or pathological contexts.

The Long-Range Challenge

As demonstrated in the DNALONGBENCH evaluation, capturing dependencies across very long genomic distances remains a major computational hurdle [5]. While specialized expert models like Enformer and Akita show reasonable performance for specific tasks, general-purpose DNA foundation models struggle with long-range interactions, particularly for predicting 3D genome organization and transcription initiation [5].

This limitation has direct implications for interpreting non-coding variation, as enhancers may regulate gene expression across megabase-scale distances, and current tools may miss these functional connections.

Data Quality and Technical Artifacts

Proteomic validation introduces its own technical challenges, as data quality significantly impacts validation reliability. Benchmarking studies of data-independent acquisition (DIA) mass spectrometry workflows—increasingly used for proteomic validation—reveal substantial variability in identification and quantification performance across different analysis tools [9].

For instance, in single-cell proteomic simulations, Spectronaut's directDIA workflow quantified 3,066 ± 68 proteins per run, compared to 2,753 ± 47 for PEAKS and fewer for DIA-NN under similar conditions [9]. These technical differences in validation methodologies can directly impact the apparent performance of in silico gene predictions.

A Framework for Robust Validation

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Experimental Resources for Validation Studies

| Resource Type | Specific Examples | Applications & Functions |
|---|---|---|
| Spectral Libraries | Sample-specific DDALib, PublicLib, AlphaPeptDeep predicted libraries [9] | Peptide identification in proteomic validation |
| Proteomic Platforms | Olink Explore Platform, TIMS-DIA (diaPASEF) [9] [7] | High-throughput protein quantification |
| Analysis Software | DIA-NN, Spectronaut, PEAKS Studio [9] | DIA mass spectrometry data processing |
| Functional Assay Systems | Patient-derived xenografts, Organoids, Tumoroids [10] | Experimental validation in biologically relevant models |
| Longitudinal Analysis Tools | RolDE, Limma, MaSigPro [8] | Detecting differential expression over time |
| Splicing Assay Systems | Mini-gene constructs, RT-PCR protocols [4] | Functional assessment of splicing variants |

Integrated Validation Workflow

To overcome the limitations of individual prediction tools, we propose a tiered validation framework:

- Computational Cross-Validation: Employ multiple complementary algorithms with different underlying architectures and training data. Consensus approaches consistently outperform individual tools [4].

- Proteomic Corroboration: Utilize quantitative proteomics to validate predicted molecular consequences, acknowledging both the power and limitations of current mass spectrometry methods [9] [7].

- Functional Characterization: Implement targeted experiments (splicing assays, CRISPR-based functional studies) for high-priority predictions, particularly those with potential clinical implications [4].

- Longitudinal Confirmation: Where possible, incorporate longitudinal designs to capture dynamic effects and enhance statistical power for detecting true biological signals [8].

In silico gene prediction tools have revolutionized genomic research but remain imperfect proxies for biological reality. Through rigorous benchmarking against proteomic and functional validation data, we can identify their strengths and limitations, enabling more informed tool selection and interpretation.

The most promising developments lie in integrated approaches that combine multiple computational strategies with experimental validation—such as the weighted combination method for splicing prediction that outperforms individual tools [4], or the explainable machine learning frameworks that simultaneously predict disease risk and identify biologically plausible biomarkers [7].

As these tools continue to evolve, maintaining a critical perspective on their limitations—particularly regarding context specificity, long-range interactions, and technical validation constraints—will be essential for translating computational predictions into meaningful biological insights and clinical applications. The gap between in silico predictions and biological reality is narrowing, but bridging it completely will require continued development of both computational and experimental methodologies alongside rigorous, multi-modal validation frameworks.

The Central Dogma of molecular biology outlines a straightforward flow of genetic information: from DNA to RNA to protein. In laboratory practice, this principle often leads to the use of mRNA abundance as a convenient proxy for protein levels. However, a growing body of evidence reveals that this relationship is far from linear, with mRNA levels frequently diverging from the functional effector molecules they encode [11] [12].

This discrepancy presents a significant challenge for validating gene predictions against proteomic data. While transcriptomic methods like RNA-Seq have become routine and reproducible, proteomic analyses remain more technically challenging [11]. Consequently, many studies are forced to extrapolate conclusions from mRNA to protein, an approach that often proves unjustified [11]. Understanding the mechanisms underlying this discordance is crucial for researchers, scientists, and drug development professionals who rely on accurate gene expression data for discovery and validation workflows.

Key Biological Mechanisms Driving Divergence

The relationship between mRNA and protein abundance is governed by a complex series of regulatory steps, each offering potential points of divergence.

Post-Transcriptional Regulation

After mRNA is synthesized, multiple mechanisms influence whether and how it becomes translated into protein:

- Translation Efficiency: The rate of ribosome movement along mRNA and the availability of free ribosomes significantly impact protein synthesis [11].

- tRNA Availability and Codon Usage: The abundance of specific tRNAs and codon optimization affects translation efficiency and protein yield [11].

- RNA Secondary Structure: Complex structures in the transcript itself can hinder or facilitate ribosomal binding and progression [11].

Post-Translational Regulation

Once synthesized, proteins undergo further processing that dissociates their abundance from initial mRNA levels:

- Protein Degradation: Proteins have widely varying half-lives regulated by degradation mechanisms like the ubiquitin-proteasome system [13].

- Post-Translational Modifications (PTMs): Phosphorylation, acetylation, ubiquitination, and glycosylation significantly alter protein function and stability without changing mRNA abundance [13].

- Protein Complex Formation: The assembly of proteins into complexes can influence their degradation kinetics and functional availability [14].
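
One way to see why these mechanisms decouple protein from mRNA abundance is a first-order kinetic model, in which protein is synthesized in proportion to mRNA and degraded in proportion to its own level. This model is an assumption introduced here for illustration, not something taken from the cited studies, and the rate values are arbitrary. At steady state, protein abundance equals the translation rate times mRNA abundance divided by the degradation rate.

```python
import math


def steady_state_protein(mrna: float, k_translation: float, half_life_h: float) -> float:
    """Steady state of dP/dt = k_translation * mRNA - k_deg * P, with k_deg = ln(2) / half-life."""
    if half_life_h <= 0:
        raise ValueError("half-life must be positive")
    k_deg = math.log(2) / half_life_h
    return k_translation * mrna / k_deg


# Two hypothetical genes with identical mRNA levels and translation rates
# but different protein half-lives (2 h versus 20 h).
short_lived = steady_state_protein(mrna=10.0, k_translation=5.0, half_life_h=2.0)
long_lived = steady_state_protein(mrna=10.0, k_translation=5.0, half_life_h=20.0)
fold_difference = long_lived / short_lived
```

Under this model a tenfold difference in half-life yields a tenfold difference in protein abundance with no change in mRNA.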

Evolutionary and Compensatory Mechanisms

Recent phylogenetic analyses across mammalian species reveal that protein abundances evolve under strong stabilizing selection, while mRNA abundances show greater divergence [15]. This suggests an evolutionary buffering system where:

- Mutations affecting mRNA abundances often have minimal impact on protein abundances [15]

- mRNA abundances adapt faster than protein abundances due to greater mutational opportunity [15]

- Compensatory evolution maintains protein abundance stability despite transcriptional changes [15]

Quantitative Comparison of mRNA and Protein Levels

Correlation Coefficients Across Studies

Table 1: Reported mRNA-Protein Correlation Coefficients Across Organisms and Conditions

| Study System | Correlation (metric) | Sample / Data Description | Measurement Technique |
|---|---|---|---|
| Mouse Liver Tissues [11] | 0.27 (Pearson) | 100 mice | RNA-Seq + LC-MS |
| Yeast [11] | 0.58 (R²) | Log-transformed data | Multi-platform |
| S. cerevisiae [11] | 0.73 (R²) | Averaged technologies | Combined datasets |
| Rice and Maize [11] | <0.4 (Pearson) | Plant tissues | RNA-Seq + MS |
| Mammalian Cells [16] | ~0.40 (Pearson) | Multiple datasets | RNA-Seq + MS |
| Mouse Inner Ear Tissues [16] | 0.58 (Average) | Cochlea/vestibule | RNA-Seq + MS |
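
The table mixes Pearson r and R² values, often computed on log-transformed abundances, so it helps to be explicit about how each is obtained. The sketch below computes Pearson r on log2-transformed data and squares it to get R²; the pseudocount of 1 and the example abundances are assumptions chosen for illustration.

```python
import math
from typing import Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with at least two values")
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(vx * vy)


def log2_pearson(mrna: Sequence[float], protein: Sequence[float],
                 pseudocount: float = 1.0) -> float:
    """Pearson r after log2(x + pseudocount); the pseudocount avoids log(0)."""
    log_mrna = [math.log2(v + pseudocount) for v in mrna]
    log_protein = [math.log2(v + pseudocount) for v in protein]
    return pearson(log_mrna, log_protein)


# Hypothetical per-gene abundances.
r = log2_pearson([1.0, 3.0, 7.0], [3.0, 7.0, 15.0])
r_squared = r ** 2
```

Because R² is the square of r, an R² of 0.58 corresponds to an r of about 0.76, so the rows of the table are not directly comparable without converting to a common metric.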

Protein-to-mRNA Ratios Across Tissues and Conditions

Table 2: Protein Conservation vs. mRNA Divergence Across Biological Contexts

| Dataset | Observation | Statistical Significance | Biological Interpretation |
|---|---|---|---|
| EAR (Mouse inner ear) [16] | Protein correlation between cochlea/vestibule: 0.97 vs mRNA: 0.94 | Higher protein conservation | Buffering maintains protein homeostasis across similar tissues |
| PRIMATE (Lymphoblastoid cells) [16] | 3/3 pairs showed higher protein correlation | Consistent pattern | Evolutionary conservation of protein levels across species |
| MMT (Mouse tissues) [16] | 9/10 tissue pairs showed higher protein correlation | p = 2.9×10⁻³ (Wilcoxon test) | Compensatory mechanisms operate across diverse tissues |
| NCI60 (Cancer cell lines) [16] | 24/36 cancer types showed higher protein correlation | p = 8.0×10⁻³ (Wilcoxon test) | Buffering persists but is less consistent in cancer |
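
The paired comparisons in this table (protein correlation versus mRNA correlation for the same tissue pair) are the kind of data a Wilcoxon signed-rank test is designed for. The sketch below implements an exact two-sided version by enumerating all sign assignments, which is practical only for small numbers of pairs. Whether the cited study used a one-sided or two-sided test, or an exact or approximate one, is not stated here, so the example is not meant to reproduce the reported p-values, and the input values are invented.

```python
from itertools import product
from typing import Sequence, Tuple


def wilcoxon_signed_rank_exact(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Exact two-sided Wilcoxon signed-rank test; returns (W+, p-value). Zero differences are dropped."""
    diffs = [a - b for a, b in zip(x, y) if a != b]
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")
    # Rank absolute differences, giving tied values their average rank.
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1
    total = sum(ranks)
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    observed = min(w_plus, total - w_plus)
    # Under the null, each rank carries a + or - sign with equal probability.
    extreme = 0
    for signs in product((0, 1), repeat=n):
        w = sum(r for r, s in zip(ranks, signs) if s)
        if min(w, total - w) <= observed:
            extreme += 1
    return w_plus, extreme / 2 ** n


# Hypothetical paired values in which the first member always exceeds the second.
w_plus, p_value = wilcoxon_signed_rank_exact([5, 7, 9, 11, 13], [4, 5, 6, 7, 8])
```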

Experimental Protocols for Parallel mRNA-Protein Analysis

Simultaneous Single-Cell mRNA-Protein Quantification

Recent methodological advances enable simultaneous measurement of mRNA and protein in the same cells, removing the technical variability that arises when the two are measured in separate samples:

Proximity Sequencing (Prox-seq) Protocol [17]:

- Principle: Combines proximity ligation assay with single-cell sequencing to measure proteins, protein complexes, and mRNAs simultaneously

- Workflow:

  - Target proteins with specific antibodies conjugated to DNA oligonucleotides

  - When antibodies are in proximity (<40 nm), perform proximity ligation

  - Sequence resulting DNA products to identify interacting proteins and complexes

  - Simultaneously sequence transcriptome from the same single cells

- Applications: Identifying cell types, detecting protein complexes, discovering novel interactions in immune signaling

- Validation: Successfully identified naïve CD8+ T cells displaying CD8-CD9 complex and TLR signaling complexes

Dual Fluorescent Reporter System in Yeast [14]:

- Principle: CRISPR-based system for simultaneous quantification of mRNA and protein via dual fluorescent reporters

- Workflow:

  - Engineer fluorescent transcriptional and translational reporters for genes of interest

  - Image live cells to quantify both reporters simultaneously

  - Map trans-acting loci affecting expression

- Key Finding: <20% of trans-acting loci had concordant effects on mRNA and protein

- Advantage: Eliminates environmental confounders and technical biases between separate measurements

Mass Spectrometry-Based Proteomics with RNA-Seq

For population-level studies, paired omics measurements provide complementary insights:

Matched Transcriptome-Proteome Analysis in Mammalian Systems [15] [16]:

- Tissue Collection: Standardized sampling procedures across multiple species (e.g., mammalian skin fibroblasts)

- RNA Sequencing: Standard RNA-seq protocols with quality controls

- Proteome Analysis: Liquid chromatography coupled with data-independent acquisition tandem mass spectrometry (DIA-MS)

- Key Consideration: Use standardized experimental protocols across all samples to minimize technical variation

- Phylogenetic Framework: Apply evolutionary models to distinguish mutational and selective influences on expression divergence

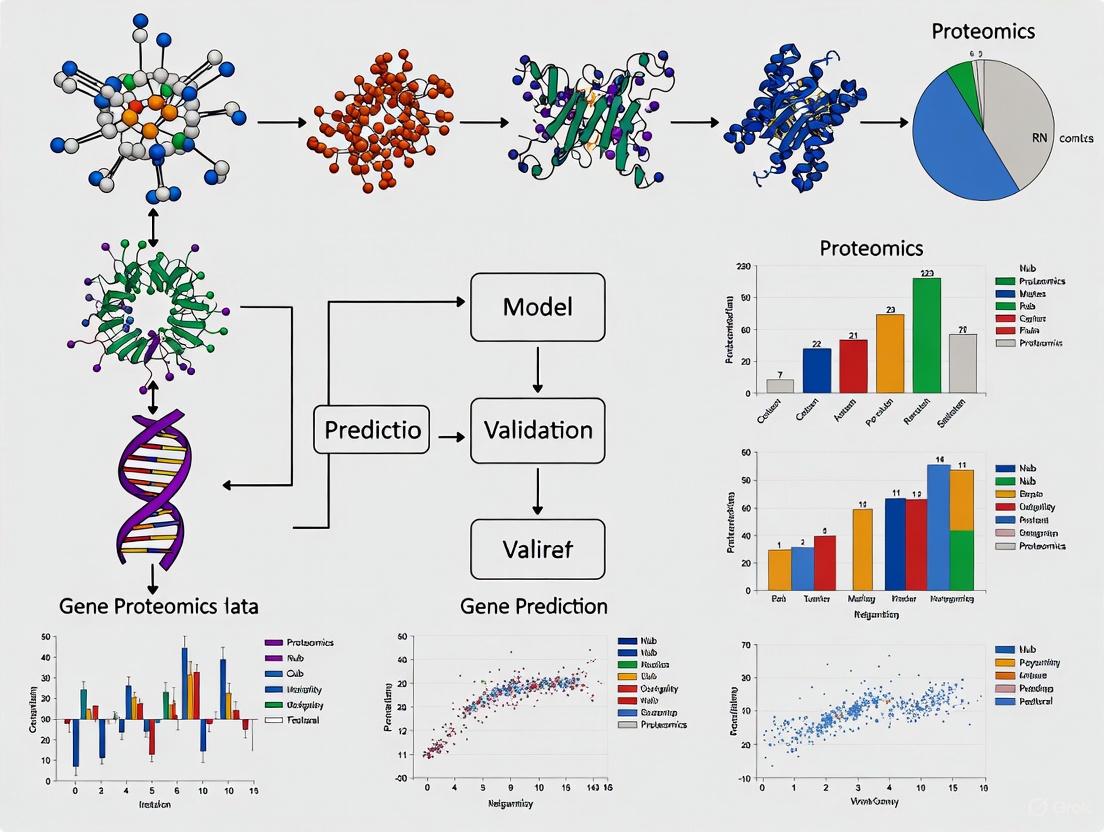

Figure 1: Experimental workflows for simultaneous mRNA-protein quantification. Three complementary approaches enable researchers to capture expression relationships at different biological scales and resolutions.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagents for mRNA-Protein Correlation Studies

| Reagent/Solution | Function | Application Examples |
|---|---|---|
| Antibody-DNA Oligo Conjugates [17] | Target proteins for proximity ligation assays | Prox-seq protein detection and complex identification |
| Dual Fluorescent Reporters [14] | Simultaneous monitoring of transcription and translation | Live-cell imaging of mRNA and protein dynamics |
| Data-Independent Acquisition (DIA) Reagents [15] | Comprehensive peptide quantification in mass spectrometry | Proteome analysis across multiple species |
| CRISPR-Cas9 Editing Tools [14] | Precise genetic manipulation | Engineering reporter systems and functional validation |
| Liquid Chromatography Columns [15] [11] | Peptide separation prior to mass spectrometry | Proteomic sample preparation |
| RNA-Seq Library Prep Kits [11] | Transcriptome library construction | mRNA abundance quantification |
| Protein Degradation Inhibitors | Preserve protein abundance profiles | Sample collection for accurate proteomics |
| Cross-linking Reagents [17] | Stabilize protein complexes | Studying protein interactions and complexes |

Implications for Drug Development and Therapeutic Discovery

The discordance between mRNA and protein levels has profound implications for pharmaceutical research and development:

Target Identification and Validation

Genetic studies increasingly integrate proteomic data to improve therapeutic target identification. A recent cross-population genome-wide association study of atrial fibrillation demonstrated that integrating genomic data with proteomic profiling significantly enhanced disease risk prediction and identified potential drug targets [18]. The study identified 28 circulating proteins with potential causal associations with AF, with protein risk scores outperforming traditional polygenic risk scores [18].

Pharmacogenomics and Biomarker Development

The move toward proteomics-driven precision medicine recognizes that proteins, as the primary effector molecules, provide more direct insight into disease mechanisms and treatment responses [13]. Several key considerations emerge:

- Post-translational modifications create functional protein diversity not predictable from mRNA [13]

- Protein-protein interactions and complex formation influence therapeutic efficacy [17] [13]

- FDA-approved biomarkers increasingly rely on protein rather than RNA measurements [13]

Figure 2: Relationship between mRNA-protein divergence mechanisms and therapeutic applications. Understanding biological causes enables methodological innovations that directly impact drug development success.

The divergence between mRNA and protein levels represents a fundamental consideration rather than a technical limitation in molecular biology. Quantitative comparisons reveal generally modest correlations (typically R=0.3-0.6) that vary by biological context, with protein levels often showing greater conservation across tissues and species than their corresponding mRNAs [16].

These findings carry significant implications for validating gene predictions against proteomic data. Researchers should prioritize:

- Direct protein measurement whenever possible for functional validation

- Simultaneous mRNA-protein quantification methods to eliminate technical variability

- Evolutionary perspectives that recognize stabilizing selection on protein abundances

- Multi-omics integration that accounts for post-transcriptional and post-translational regulation

As proteomic technologies continue advancing in accessibility and scalability [13], the research community moves closer to realizing proteomics-driven precision medicine that fully acknowledges the complex relationship between genetic information and its functional effectors.

The sequencing of a genome produces a vast list of predicted gene models, but this structural annotation is merely a starting point. The critical next step is functional annotation—linking these genomic elements to biological function [19] [20]. While computational predictions provide initial functional clues, they require experimental validation to confirm biological relevance. Proteomics, the large-scale study of proteins, has emerged as a powerful tool for bridging this gap, providing direct experimental evidence for the existence of predicted gene products and enabling more accurate functional characterization [21]. This guide examines the central role of proteomics in functional annotation workflows, objectively comparing its performance against alternative approaches and detailing the experimental methodologies that make it indispensable for genome annotation projects.

Proteomics as a Validation Tool for Gene Predictions

From In Silico Prediction to Experimental Confirmation

Structural annotation of newly sequenced genomes begins with electronic prediction of open reading frames (ORFs), which are typically released into public databases without experimental validation [19] [20]. These predicted proteins account for the majority of data for newly sequenced species but face a significant annotation challenge: highly curated databases like UniProtKB often exclude predicted gene products until experimental evidence confirms their in vivo expression [19] [20].

Proteomics addresses this limitation by providing direct experimental support for gene model predictions. In a landmark chicken genome study, researchers analyzed eight tissues and provided experimental confirmation for 7,809 computationally predicted proteins, corresponding to 51% of the chicken predicted proteins in NCBI at the time [19] [20]. This demonstrated the utility of high-throughput expression proteomics for rapid experimental structural annotation of a newly sequenced eukaryote genome [19] [20]. Importantly, this approach identified 30 proteins that were only electronically predicted or hypothetical translations in human, highlighting its power for cross-species validation [19] [20].

Orthology-Based Functional Annotation Transfer

Once protein expression is experimentally confirmed, proteomics data enables functional annotation through orthology mapping. By identifying human or mouse orthologs of experimentally supported proteins, Gene Ontology (GO) functional annotations can be transferred from the characterized orthologs to the newly confirmed proteins [19] [20]. In the chicken genome study, researchers identified orthologs for 77% (6,008) of the confirmed chicken proteins, then used this orthology to produce 8,213 GO annotations—representing an 8% increase in available chicken GO annotations and a doubling of non-IEA (Inferred from Electronic Annotation) annotations [19] [20].
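
The transfer step described above amounts to a lookup: for each experimentally confirmed protein, find its ortholog in a well-annotated species and copy that ortholog's GO terms. The sketch below shows the idea with plain dictionaries. All identifiers and GO assignments are hypothetical placeholders, and a real pipeline would also record an evidence code and the source ortholog for each transferred term.

```python
from typing import Dict, Iterable, Set


def transfer_go_annotations(confirmed: Iterable[str],
                            orthologs: Dict[str, str],
                            go_by_ortholog: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """Copy GO terms from annotated orthologs onto experimentally confirmed proteins."""
    transferred: Dict[str, Set[str]] = {}
    for protein in confirmed:
        ortholog = orthologs.get(protein)
        if ortholog is None:
            continue  # no ortholog found, so nothing can be transferred
        terms = go_by_ortholog.get(ortholog, set())
        if terms:
            transferred[protein] = set(terms)
    return transferred


# Hypothetical identifiers for illustration only.
confirmed_proteins = ["chk_P1", "chk_P2", "chk_P3"]
ortholog_map = {"chk_P1": "hs_A", "chk_P2": "hs_B"}
human_go = {"hs_A": {"GO:0000001", "GO:0000002"}, "hs_B": set()}
annotations = transfer_go_annotations(confirmed_proteins, ortholog_map, human_go)

# Coverage reported in the text: 6,008 orthologs for 7,809 confirmed proteins.
ortholog_coverage_pct = round(6008 / 7809 * 100)
```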

Table 1: Performance Metrics for Functional Annotation Methods

| Annotation Method | Evidence Basis | Coverage | Accuracy | Limitations |
|---|---|---|---|---|
| Proteomics + Orthology Transfer | Experimental protein detection + evolutionary conservation | Moderate (e.g., 77% ortholog identification in chicken study) | High (direct protein confirmation + conserved function) | Limited to expressed proteins; requires related annotated species |
| Transcriptomics Co-expression | mRNA expression patterns | High (most transcribed genes) | Moderate (subject to transcriptional noise) | Poor correlation with protein abundance; accidental covariation [22] |
| Electronic Annotation (IEA) | Sequence similarity, functional motifs | Very High (can be automated genome-wide) | Variable (depends on motif specificity) | High false positive rate; no experimental support [19] |
| Genomic Context | Chromosomal colocalization, operon structure | Variable | Lower for eukaryotes | More reliable for prokaryotes; indirect functional inference |

Performance Comparison: Proteomics Versus Transcriptomics

While mRNA profiling has been the dominant approach for studying gene expression, proteome profiling provides distinct advantages for functional annotation. A systematic comparison of mRNA and protein coexpression networks for three cancer types revealed marked differences in wiring between these networks [22].

Protein coexpression was driven primarily by functional similarity between coexpressed genes, whereas mRNA coexpression was driven by both cofunction and chromosomal colocalization of the genes [22]. This fundamental difference has significant implications for function prediction: functionally coherent mRNA modules were more likely to have their edges preserved in corresponding protein networks than functionally incoherent mRNA modules [22].

The study concluded that proteomics strengthens the link between gene expression and function for at least 75% of Gene Ontology biological processes and 90% of KEGG pathways, demonstrating that proteome profiling outperforms transcriptome profiling for coexpression-based gene function prediction [22].

Table 2: Direct Performance Comparison of Proteomics vs. Transcriptomics for Function Prediction

| Performance Metric | Proteomics Approach | Transcriptomics Approach | Performance Advantage |
|---|---|---|---|
| Driver of Coexpression | Functional similarity between genes [22] | Cofunction + chromosomal colocalization [22] | Proteomics provides more specific functional signals |
| Function Prediction Accuracy | Higher link to known functions | Lower specificity | Proteomics strengthens function links for 75% GO processes, 90% KEGG pathways [22] |
| Biological Relevance | Direct measurement of functional molecules | Proxy measurement (mRNA) | Proteomics directly detects functional entities |
| Functional Coherence | Higher in coexpressed modules | Lower coherence in coexpressed modules | Functionally coherent mRNA modules preserved in protein networks [22] |

Experimental Protocols and Workflows

Mass Spectrometry-Based Protein Identification

The core experimental methodology for proteomic validation is liquid chromatography-mass spectrometry (LC-MS)-based analysis [21]. The standard workflow encompasses:

- Sample Preparation: Protein extraction from tissues or cells, potentially using Differential Detergent Fractionation (DDF) to enhance protein identification [19] [20]

- Protein Digestion: Cleavage into peptides using trypsin or similar proteases

- LC-MS/MS Analysis: Separation via liquid chromatography followed by mass spectrometry analysis

- Database Searching: Matching acquired spectra against theoretical spectra from predicted protein databases

In the chicken genome study, this approach identified 48,583 peptides with a false discovery rate (FDR) of 0.9%, providing high-confidence support for protein existence [19] [20]. Although 58% of protein identifications were based on single-peptide matches, the low FDR and independent identification in multiple tissues provided strong evidence for in vivo expression [19] [20].
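
A peptide-level FDR such as the one quoted above is commonly estimated with a target-decoy search. The sketch below shows one simple form of that estimate, assuming reversed-sequence decoys and the ratio of decoy to target hits above a score cutoff. The function names and the toy scores are hypothetical, and real search engines apply their own scoring and corrections.

```python
def decoy_sequence(protein_seq):
    """Build a decoy by reversing the target protein sequence."""
    return protein_seq[::-1]


def fdr_at_threshold(psms, threshold):
    """Estimate FDR as (decoy hits) / (target hits) at or above a score threshold.

    psms: list of (score, is_decoy) tuples, one per peptide-spectrum match.
    """
    targets = sum(1 for score, is_decoy in psms if score >= threshold and not is_decoy)
    decoys = sum(1 for score, is_decoy in psms if score >= threshold and is_decoy)
    if targets == 0:
        return 0.0
    return decoys / targets


def lowest_threshold_for_fdr(psms, max_fdr=0.01):
    """Lowest observed score whose estimated FDR stays within max_fdr, else None."""
    passing = [score for score, _ in psms if fdr_at_threshold(psms, score) <= max_fdr]
    return min(passing) if passing else None


# Toy search results: (score, is_decoy). Values are made up.
psms = [(90, False), (85, False), (80, False), (75, True), (70, False), (60, True)]

strict_cutoff = lowest_threshold_for_fdr(psms, max_fdr=0.01)
relaxed_cutoff = lowest_threshold_for_fdr(psms, max_fdr=0.30)
```

With these toy scores, a 1% FDR keeps only the three best target matches, while a relaxed 30% FDR lowers the cutoff to 70.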

Differential Expression Analysis Workflows

For quantitative proteomics applications, differential expression analysis workflows typically encompass five key steps [23]:

- Raw Data Quantification: Using tools like MaxQuant or FragPipe

- Expression Matrix Construction

- Matrix Normalization

- Missing Value Imputation (MVI)

- Differential Expression Analysis with statistical methods

Optimizing these workflows is crucial for accurate results. A comprehensive study evaluating 34,576 combinatoric experiments revealed that optimal workflows are setting-specific: normalization and the DEA statistical method exert the greatest influence for label-free DDA and TMT data, while matrix type is additionally important for DIA data [23].

High-performing workflows for label-free data are enriched for directLFQ intensity, no normalization (referring to distribution correction methods not embedded with particular settings), and specific imputation methods (SeqKNN, Impseq, or MinProb), while eschewing simple statistical tools like ANOVA, SAM, and t-test [23].
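
The sketch below illustrates only the structure of the matrix-level steps (transformation, normalization, imputation, statistical test). It deliberately uses the simplest choice at each step: median centering, global-minimum imputation, and Welch's t statistic. These are assumptions for illustration and are not the high-performing choices named above, and the protein names and intensities are made up.

```python
import math
import statistics


def log2_matrix(matrix):
    """Log2-transform intensities; None marks a missing value and is kept."""
    return [[math.log2(v) if v is not None else None for v in row] for row in matrix]


def median_center(matrix):
    """Subtract each sample's (column's) median so sample medians align at zero."""
    n_samples = len(matrix[0])
    medians = []
    for j in range(n_samples):
        observed = [row[j] for row in matrix if row[j] is not None]
        medians.append(statistics.median(observed))
    return [[v - medians[j] if v is not None else None for j, v in enumerate(row)]
            for row in matrix]


def impute_min(matrix):
    """Replace missing values with the global minimum observed value."""
    floor = min(v for row in matrix for v in row if v is not None)
    return [[v if v is not None else floor for v in row] for row in matrix]


def welch_t(a, b):
    """Welch's t statistic for two groups with possibly unequal variances."""
    va, vb = statistics.variance(a), statistics.variance(b)
    return (statistics.mean(a) - statistics.mean(b)) / math.sqrt(va / len(a) + vb / len(b))


# Toy intensity matrix: rows are proteins, columns are 3 control then 3 treated samples.
raw = {
    "P1": [1000, 1100, 900, 4000, 4400, 3600],
    "P2": [500, 520, 480, 510, 490, None],
    "P3": [200, 210, 190, 205, 195, 200],
    "P4": [800, 760, 840, 790, 810, 805],
}
names = list(raw)
processed = impute_min(median_center(log2_matrix([raw[n] for n in names])))
t_stats = {n: welch_t(row[:3], row[3:]) for n, row in zip(names, processed)}
```

Swapping any single function (for example a different imputation rule) changes the downstream statistics, which is the kind of sensitivity the benchmarking in [23] quantifies.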

Handling Missing Values in Proteomics Data

Missing values present a significant challenge in proteomics, as they can limit statistical power for comparisons between experimental groups. Traditional approaches include:

- Removal of high-missingness proteins (typically 50-80% missingness thresholds)

- Statistical imputation using methods like k-nearest neighbors (kNN) or random forest
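
The two traditional approaches can be sketched as follows. This is a simplified illustration, not a reference implementation: the 50% cutoff, the distance definition (root mean square difference over samples observed in both proteins), and all names and values are assumptions.

```python
def missing_fraction(values):
    """Fraction of entries that are missing (None) for one protein."""
    return sum(v is None for v in values) / len(values)


def filter_by_missingness(matrix, max_missing=0.5):
    """Keep proteins whose missing fraction does not exceed max_missing."""
    return {protein: values for protein, values in matrix.items()
            if missing_fraction(values) <= max_missing}


def knn_impute(matrix, k=2):
    """Fill each missing entry with the mean of that sample's value in the k
    most similar proteins that were observed in that sample."""
    def distance(a, b):
        shared = [(x, y) for x, y in zip(a, b) if x is not None and y is not None]
        if not shared:
            return float("inf")
        return (sum((x - y) ** 2 for x, y in shared) / len(shared)) ** 0.5

    imputed = {}
    for protein, values in matrix.items():
        filled = list(values)
        for j, v in enumerate(values):
            if v is not None:
                continue
            # Candidate donors must have an observed value in sample j.
            donors = sorted(
                (distance(values, other), other[j])
                for name, other in matrix.items()
                if name != protein and other[j] is not None
            )[:k]
            if donors:
                filled[j] = sum(val for _, val in donors) / len(donors)
        imputed[protein] = filled
    return imputed


# Toy log-scale abundance matrix (made-up values); None marks a missing value.
matrix = {
    "P1": [10.0, 10.2, None, 10.1],
    "P2": [10.1, 10.3, 10.2, 10.0],
    "P3": [10.0, 10.1, 10.3, 10.2],
    "P4": [None, None, None, 5.0],
    "P5": [20.0, 20.5, 20.2, 20.1],
}
kept = filter_by_missingness(matrix, max_missing=0.5)
imputed = knn_impute(kept, k=2)
```

Here P4 is removed because 75% of its values are missing, and the gap in P1 is filled from its two nearest neighbors, P2 and P3.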

Recent innovations include retention time (RT) boundary imputation rather than quantitation imputation. For each missing value, RT boundaries are imputed, then quantitation is obtained by integrating the chromatographic signal within the imputed boundaries [24]. This approach, implemented in tools like Nettle, yields more accurate quantitations than traditional proteomics imputation methods and increases the number of peptides with quantitations, leading to enhanced statistical power [24].
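
The following sketch illustrates the general idea of imputing boundaries and then integrating the signal. It is not the Nettle implementation: averaging the boundaries seen in other runs and using trapezoidal integration without edge interpolation are assumptions made for illustration, as are the function names and the toy trace.

```python
def impute_boundaries(observed_boundaries):
    """Impute (start, end) RT boundaries as the mean of boundaries observed in other runs.

    The averaging rule is an illustrative assumption.
    """
    starts = [start for start, _ in observed_boundaries]
    ends = [end for _, end in observed_boundaries]
    return sum(starts) / len(starts), sum(ends) / len(ends)


def integrate_within_boundaries(rt, intensity, rt_start, rt_end):
    """Trapezoidal area of a chromatographic trace between two RT boundaries.

    Only points inside [rt_start, rt_end] are used; edges are not interpolated.
    """
    points = [(t, i) for t, i in zip(rt, intensity) if rt_start <= t <= rt_end]
    area = 0.0
    for (t0, i0), (t1, i1) in zip(points, points[1:]):
        area += 0.5 * (i0 + i1) * (t1 - t0)
    return area


# Boundaries (minutes) detected for the same peptide in two other runs (made-up values).
other_runs = [(10.0, 11.5), (11.0, 11.5)]
rt_start, rt_end = impute_boundaries(other_runs)

# Extracted chromatogram for the run in which the peptide was not picked.
rt = [10.0, 10.5, 11.0, 11.5, 12.0]
intensity = [0.0, 100.0, 400.0, 100.0, 0.0]
quantity = integrate_within_boundaries(rt, intensity, rt_start, rt_end)
```

The quantity is derived from signal that was actually recorded in the run, which is the conceptual difference from filling in a number drawn from other samples.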

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for Proteomics-Based Functional Annotation

| Reagent/Resource | Function | Application Notes |

|---|---|---|

| Differential Detergent Fractionation (DDF) Kits | Sequential extraction of cellular compartments | Enhances protein identification; critical for membrane-associated proteins [19] [20] |

| Trypsin/Lys-C Protease | Protein digestion into peptides | Essential sample preparation step for LC-MS/MS analysis |

| iTRAQ/TMT Labeling Reagents | Multiplexed protein quantification | Enables simultaneous analysis of multiple samples; improves throughput [22] [23] |

| Universal Proteomics Standard (UPS) Sets | Spike-in controls for quantification | Provides internal standards for differential expression studies [23] |

| Spectral Libraries (.blib files) | Reference databases for peptide identification | Critical for DIA-NN and Skyline analysis; can be enhanced with imputation [24] |

| Orthology Prediction Tools | Mapping genes between species | Enables functional annotation transfer (e.g., Homologene, Inparanoid, Treefam) [19] [20] |

| Functional Annotation Pipelines | Automated annotation workflows | Tools like FA-nf integrate multiple approaches for comprehensive annotation [25] |

Integrated Annotation Workflows

Effective functional annotation typically requires integrating multiple complementary approaches. Pipeline tools like FA-nf, implemented in Nextflow, provide containerized workflows that integrate different annotation approaches including NCBI BLAST+, DIAMOND, InterProScan, and KEGG [25]. These pipelines begin with protein sequence FASTA files and optionally structural annotation in GFF format, producing comprehensive annotation reports including GO assignments [25].

Similarly, the AgBase functional annotation workflow employs three annotation tools in concert: GOanna (for BLAST-based GO annotation transfer), InterProScan (for protein family and domain identification), and KOBAS (for KEGG Orthology terms and pathway annotation) [26].

Proteomics provides an essential bridge between genomic sequence data and biological understanding by experimentally validating predicted gene models and enabling accurate functional annotation. The experimental evidence generated through mass spectrometry-based proteomics addresses critical limitations of purely computational predictions, while orthology-based annotation transfer leverages evolutionary conservation to assign biological meaning.

When compared to transcriptomic approaches, proteomics demonstrates superior performance for function prediction, with protein coexpression networks more specifically reflecting functional relationships than mRNA coexpression networks. As proteomics technologies continue to advance in sensitivity, throughput, and quantification accuracy, their role in functional annotation workflows will become increasingly central to extracting biological insight from genomic sequences.

For researchers engaged in genome annotation projects, integrating proteomic validation provides the critical path "from candidate to confirmation"—transforming in silico predictions into biologically validated functional elements.

The high failure rate in clinical drug development, estimated at 90%, is often attributed to inadequate target validation. Within this challenging landscape, human genetic evidence has emerged as a powerful tool for establishing the causal role of genes in human disease, with drug mechanisms supported by such evidence demonstrating a 2.6 times greater probability of success from clinical development to approval [27]. This review systematically compares how different genetic and proteomic validation methodologies perform in prioritizing drug targets, with a specific focus on validating gene predictions against experimental proteomics data.

Table 1: Performance Comparison of Genetic and Proteomic Validation Approaches

Table 1 summarizes the key performance metrics of different genetic and proteomic validation methods as presented in recent literature.

| Methodology | Primary Function | Key Performance Metrics | Advantages | Limitations |

|---|---|---|---|---|

| Gene-Disease Level DOE Prediction [28] | Predicts direction of therapeutic effect for gene-disease pairs | Macro-averaged AUROC: 0.59 (improves with genetic evidence) | Incorporates genetic associations across allele frequency spectrum; models dose-response. | Performance is currently modest and highly dependent on available genetic data. |

| Gene-Level DOE-Specific Druggability [28] | Predicts suitability for activation/inhibition across all diseases | Macro-averaged AUROC: 0.95 for activator/inhibitor druggability | Leverages gene/protein embeddings; outperforms existing druggability predictors. | Disease-agnostic; does not guarantee therapeutic utility for a specific indication. |

| Sparse Plasma Protein Signatures [29] | Predicts 10-year disease risk for drug target indication | Median ΔC-index: +0.07 over clinical models; Detection Rate at 10% FPR: 45.5% | Clinically useful prediction for 67 diseases; points directly to druggable protein targets. | Predictive power varies by disease pathology; enrichment for hematological/immunological diseases. |

| Proteogenomic Causal Inference (pQTL MR/Colocalization) [30] | Establishes causal links between protein abundance and disease | Identified 43 colocalizing associations with posterior probability >80% | Provides high-confidence causal inference; instruments novel proteins like LTK for T2D. | Requires large sample sizes for robust pQTL discovery; can be confounded by pleiotropy. |

Experimental Protocols for Genetic and Proteomic Validation

Protocol for Direction of Effect (DOE) Prediction and Validation

Objective: To predict whether a therapeutic should activate or inhibit a target protein for a given disease.

Methodology Summary: A multi-level machine learning framework integrates diverse data inputs [28]:

- Input Features:

  - Genetic Associations: Effect directions from variants across the allelic series (common, rare, ultrarare) to model dose-response relationships [28].

  - Gene and Protein Embeddings: Continuous representations from GenePT (NCBI gene summaries) and ProtT5 (amino acid sequences) [28].

  - Tabular Features: Gene-level characteristics such as LOEUF (constraint), dosage sensitivity, and mode of inheritance [28].

- Model Training: Three distinct models are trained:

  - DOE-specific druggability for 19,450 protein-coding genes.

  - Isolated DOE among 2,553 known druggable genes.

  - Gene-disease-specific DOE for 47,822 gene-disease pairs.

- Validation: Model performance is assessed via AUROC and calibration plots, with successful predictions shown to be associated with clinical trial success [28].
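
The macro-averaged AUROC used to report performance can be sketched as below. This is a generic illustration, not the evaluation code of [28]: AUROC is computed from pairwise comparisons of positive and negative scores, and the macro average is the unweighted mean of one-vs-rest AUROCs. Class labels and scores are made up.

```python
def auroc(labels, scores):
    """AUROC as the probability that a random positive outscores a random negative
    (ties count as one half)."""
    pos = [s for label, s in zip(labels, scores) if label == 1]
    neg = [s for label, s in zip(labels, scores) if label == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative example")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def macro_auroc(true_classes, class_scores):
    """Unweighted mean of one-vs-rest AUROCs across classes."""
    per_class = []
    for cls, scores in class_scores.items():
        labels = [1 if t == cls else 0 for t in true_classes]
        per_class.append(auroc(labels, scores))
    return sum(per_class) / len(per_class)


# Toy example: known direction of effect for four targets and model scores per class.
true_classes = ["activator", "inhibitor", "inhibitor", "activator"]
class_scores = {
    "activator": [0.9, 0.2, 0.4, 0.6],
    "inhibitor": [0.1, 0.8, 0.3, 0.7],
}
macro = macro_auroc(true_classes, class_scores)
```

Because every class contributes equally, a macro average is not dominated by the more frequent class, which matters when activator and inhibitor targets are imbalanced.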

Protocol for Proteogenomic Causal Inference via pQTLs

Objective: To establish a causal relationship between genetically predicted plasma protein levels and disease risk, thereby validating the protein as a therapeutic target.

Methodology Summary: This workflow uses Mendelian randomization (MR) and colocalization, as exemplified in a Scottish cohort study [30]:

- Step 1: Protein Quantitative Trait Locus (pQTL) Discovery

  - Proteomic Profiling: Measure thousands of plasma proteins (e.g., 6,432 via SomaLogic v4.1 aptamer-based technology) in a large cohort [30].

  - Genome-Wide Association Analysis: Perform GWAS for each protein to identify genetic variants (pQTLs) associated with its abundance levels. Use significance thresholds of P < 5×10⁻⁸ for cis-pQTLs (within 1 Mb of the gene) and a more stringent P < 6.6×10⁻¹² for trans-pQTLs [30].

- Step 2: Causal Inference via Mendelian Randomization

  - Instrument Variable Selection: Use the identified, independent pQTLs as genetic instruments for the protein of interest.

  - MR Analysis: Perform a two-sample MR analysis to estimate the causal effect of the protein on the disease outcome of interest, using summary statistics from large disease GWAS.

- Step 3: Colocalization Analysis

  - Statistical Colocalization: Apply Bayesian colocalization methods (e.g., COLOC) to calculate the posterior probability (PP > 80% is a common threshold) that the pQTL and the disease GWAS signal in a locus share a single causal variant [30]. This step is critical to rule out confounding by distinct, but physically close, causal variants.

- Output: High-confidence, colocalized associations suggest that modifying the protein will directly alter disease risk, providing strong genetic support for target prioritization [30].
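
Two pieces of the protocol above lend themselves to a short sketch: applying the cis and trans significance thresholds, and computing a single-instrument MR estimate. The code is illustrative only. Measuring the 1 Mb window from the gene body, the Wald ratio as the MR estimator, its first-order standard error, and all coordinates and effect sizes are assumptions rather than details taken from [30].

```python
CIS_WINDOW_BP = 1_000_000      # cis window: within 1 Mb of the gene, per the text
CIS_P_THRESHOLD = 5e-8         # threshold for cis-pQTLs
TRANS_P_THRESHOLD = 6.6e-12    # stricter threshold for trans-pQTLs


def classify_pqtl(variant_chrom, variant_pos, gene_chrom, gene_start, gene_end, p_value):
    """Label an association as 'cis' or 'trans', or None if it is not significant."""
    is_cis = (
        variant_chrom == gene_chrom
        and gene_start - CIS_WINDOW_BP <= variant_pos <= gene_end + CIS_WINDOW_BP
    )
    threshold = CIS_P_THRESHOLD if is_cis else TRANS_P_THRESHOLD
    if p_value >= threshold:
        return None
    return "cis" if is_cis else "trans"


def wald_ratio(beta_exposure, beta_outcome, se_outcome):
    """Single-instrument MR estimate: variant-outcome effect over variant-protein effect.

    The standard error is the first-order delta-method approximation.
    """
    estimate = beta_outcome / beta_exposure
    se = se_outcome / abs(beta_exposure)
    return estimate, se


# Hypothetical gene on chromosome 1 and three candidate associations.
gene = ("1", 2_000_000, 2_050_000)
near_hit = classify_pqtl("1", 1_500_000, *gene, p_value=1e-9)
far_weak = classify_pqtl("2", 1_500_000, *gene, p_value=1e-9)
far_strong = classify_pqtl("2", 1_500_000, *gene, p_value=1e-13)

# Hypothetical effect sizes: pQTL on protein level (exposure) and on disease (outcome).
mr_estimate, mr_se = wald_ratio(beta_exposure=0.5, beta_outcome=0.1, se_outcome=0.02)
```

The same P value of 1e-9 qualifies as a cis-pQTL but fails the trans threshold, which shows why the two classes are handled separately.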

Visualizing Experimental Workflows

Diagram 1: Proteogenomic Causal Inference Workflow

Diagram 2: From Genetic Association to Therapeutic Direction

The Scientist's Toolkit: Essential Research Reagents & Platforms

Table 2 lists key reagents, technologies, and databases essential for implementing the genetic and proteomic validation protocols described above.

| Tool / Reagent | Type | Primary Function in Validation | Key Features / Examples |

|---|---|---|---|

| SomaScan Platform [31] [30] | Proteomics Technology (Aptamer-based) | High-throughput quantification of thousands of plasma proteins for pQTL discovery. | SomaScan v4.1 measures ~7,000 proteins; used in large consortia (GNPC) and cohort studies [31]. |

| Olink Explore Platform [29] | Proteomics Technology (Antibody-based) | High-sensitivity proteomic profiling for disease prediction models. | Olink Explore 1536+Expansion targets 2,923 proteins; used in UK Biobank Pharma Proteomics Project [29]. |

| GWAS Catalog [32] | Database | Foundational resource for identifying coincident genetic associations between traits and diseases. | Contains ~29,500 genome-wide significant associations; enables hypothesis generation for target identification [32]. |

| COLOC / Co-localization Software [32] | Statistical Software Package | Tests whether two traits (e.g., pQTL and disease GWAS) share a single causal variant in a genomic locus. | Critical for confirming a shared genetic mechanism and strengthening causal inference in MR studies [32]. |

| Gene & Protein Embeddings (e.g., GenePT, ProtT5) [28] | AI-Derived Feature Set | Provides deep, contextual representations of gene/protein function for machine learning models. | Improves performance of gene-level models predicting druggability and direction of effect [28]. |

| Large-Scale Biobanks (e.g., UK Biobank) [32] [29] | Cohort Resource | Provides integrated genetic, proteomic, and phenotypic data on a massive scale for discovery. | Enables systematic pQTL mapping and agnostic discovery of protein-disease links with high statistical power [29]. |

The integration of genetic evidence and proteomic validation represents a paradigm shift in target validation for drug discovery. Quantitative comparisons demonstrate that proteogenomic frameworks like pQTL-based causal inference provide among the highest levels of validation confidence by linking genetic variants to specific, measurable protein effects on disease. Furthermore, sparse protein signatures derived from large-scale proteomics offer a direct path to clinically actionable biomarkers and targets, particularly for conditions like multiple myeloma and motor neuron disease. As these data-driven approaches mature, their systematic application, supported by the essential research tools detailed herein, promises to de-risk therapeutic development and usher in a new era of precision medicine.

The Validation Pipeline: LC-MS/MS Workflows and Bioinformatics Analysis

Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) has emerged as a cornerstone technology for untargeted proteomics, enabling the comprehensive identification and quantification of proteins within complex biological samples. Within the specific context of validating gene predictions against experimental data, LC-MS/MS provides prima facie evidence for the existence of predicted genes by confirming their translation into proteins [33]. This orthogonal validation is critical, as transcriptomic data alone can confirm gene expression but not translation, and computational predictions frequently generate multiple candidate gene models for a single genomic locus [33]. The integration of experimental proteomic data directly into genomic annotation pipelines significantly enhances the quality and reliability of genome annotation, much as expressed sequence tag (EST) data has done historically [33]. This guide objectively compares the performance of LC-MS/MS with other proteomic technologies, providing the experimental data and protocols essential for researchers engaged in gene prediction validation and systems biology.

Core Principles of LC-MS/MS in Untargeted Proteomics

Untargeted LC-MS/MS proteomics aims to identify and quantify as many proteins as possible from a sample without prior selection. The typical workflow involves digesting proteins into peptides, separating them via liquid chromatography, and then analyzing them with a tandem mass spectrometer. In the data-dependent acquisition (DDA) mode described here, the instrument automatically selects the most abundant precursor ions for fragmentation to generate MS/MS spectra [34]. These spectra are subsequently matched against theoretical spectra derived from a protein sequence database to achieve identification [35].

Quantification can be achieved through label-free methods or by using isobaric chemical labels (e.g., Tandem Mass Tags, TMT). The power of this approach for genome annotation was demonstrated in a study on Aspergillus niger, where 405 identified peptide sequences were mapped to 214 different genomic loci. This data provided direct experimental support for specific gene models, and in 6% of these loci, the proteomic evidence suggested that a model other than the annotators' chosen "best" model was the correct one [33].

Technology Performance Comparison

The selection of a proteomic technology involves critical trade-offs between coverage, specificity, and throughput. The following table provides a structured comparison of LC-MS/MS with the proximity extension assay (PEA), a leading affinity-based technology, based on recent large-scale evaluations [35].

Table 1: Comparative Performance of LC-MS/MS and Affinity-Based Proteomics

| Performance Metric | LC-MS/MS | Olink PEA | Technical and Biological Implications |

|---|---|---|---|

| Detection Principle | Direct detection of peptide mass/charge [35] | Indirect detection via antibody binding [35] | MS provides direct sequence evidence; PEA relies on binder specificity. |

| Typical Proteome Coverage | ~2,500-2,600 proteins [35] | ~2,900 proteins [35] | Coverage is complementary; combined use covers >60% of reference plasma proteome [35]. |

| Protein Abundance Range | Mid to high-abundance proteins [35] | Superior for low-abundance proteins (e.g., cytokines) [35] | MS may miss key signaling molecules; PEA may miss high-abundance structural proteins. |

| Precision (Median CV) | 6.8% [35] | 6.3% [35] | Both platforms demonstrate high and comparable technical precision. |

| Key Strengths | • Direct peptide evidence for gene validation [33] • Discovery of novel proteins [35] • No affinity reagents required | • High sensitivity for low-abundance targets • Excellent throughput • Simplified data analysis | MS is superior for confirming gene models and ORFs; PEA for high-throughput biomarker screening. |

| Key Limitations | • Complex sample preparation • Lower throughput • Limited sensitivity for very low-abundance proteins | • Limited to pre-defined protein targets • Potential for antibody cross-reactivity • No direct sequence information | MS is not ideal for rapid, targeted screening; PEA is less suited for exploratory research in poorly characterized organisms. |
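
The precision row in the table reports a median coefficient of variation (CV). A minimal sketch of that summary statistic is shown below, assuming linear-scale intensities and repeated measurements of the same sample. The protein names and values are made up, and platform-specific details (for example log-scale readouts) are not handled.

```python
import statistics


def coefficient_of_variation(values):
    """CV in percent: sample standard deviation divided by the mean."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


def median_cv(replicate_measurements):
    """Median CV across proteins, each measured in several technical replicates."""
    cvs = [coefficient_of_variation(v) for v in replicate_measurements.values()]
    return statistics.median(cvs)


# Toy replicate intensities for three proteins (made-up values).
replicates = {
    "ALB": [100.0, 110.0, 90.0],
    "APOA1": [50.0, 52.0, 48.0],
    "CRP": [10.0, 10.5, 9.5],
}
platform_cv = median_cv(replicates)
```

Reporting the median rather than the mean keeps a few poorly measured proteins from dominating the platform-level figure.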

Beyond this direct comparison, the specific configuration of the LC-MS/MS workflow itself greatly impacts performance. A landmark study evaluating 34,576 combinatoric workflows found that optimal workflows are highly specific to the quantification setting (e.g., label-free DDA, DIA, or TMT) [23]. Key steps like data normalization and the choice of differential expression analysis statistical method were identified as having an outsized influence on final results for most data types [23].

Experimental Protocols for Gene Model Validation

Sample Preparation and Protein Extraction

The foundation of a successful LC-MS/MS experiment is robust and reproducible sample preparation. For microbial or fungal cells, such as A. niger, a typical protocol is as follows [33]:

- Cell Lysis: Grind harvested mycelia (e.g., 100 mg) using a pestle and mortar under liquid nitrogen. Subsequently, lyse the cells via mechanical disruption with glass beads.

- Protein Precipitation: Precipitate proteins from the lysate using trichloroacetic acid (TCA) to remove contaminants and concentrate the protein.

- Protein Quantification: Determine the protein concentration of the resulting extract using a standardized assay like micro-BCA.

For complex samples like blood plasma or serum, where a few high-abundance proteins dominate, an additional high-abundance protein depletion step is critical. This expands the dynamic range, allowing for the detection of lower-abundance proteins [36]. This can be achieved using affinity columns designed to remove specific abundant proteins (e.g., albumin, IgG) [36].

Gel Electrophoresis and In-Gel Digestion

- Separation: Separate protein extracts using one-dimensional SDS-PAGE (e.g., 10%, 12%, and 15% gels). Stain the gels with Coomassie R250 to visualize protein bands.

- Band Excision: Excise gel bands from top to bottom of the lane.

- Tryptic Digestion: Perform in-gel digestion with trypsin, a protocol that involves steps to reduce, alkylate, and enzymatically cleave proteins into peptides [33].

- Peptide Extraction: Extract the resulting peptides from the gel pieces using acetonitrile and dry them prior to LC-MS/MS analysis [33].
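
The peptides expected from a tryptic digest can be predicted in silico, which is also how search engines build the theoretical peptide list for each predicted protein. The sketch below applies the commonly used rule that trypsin cleaves after lysine (K) or arginine (R) unless the next residue is proline (P). The function name, the minimum length filter, and the example sequence are assumptions for illustration.

```python
import re


def tryptic_digest(protein_seq, missed_cleavages=0, min_length=6):
    """In silico trypsin digestion: cleave after K or R unless the next residue is P."""
    # Split at zero-width positions that follow K/R and are not followed by P.
    fragments = [f for f in re.split(r"(?<=[KR])(?!P)", protein_seq) if f]
    peptides = set()
    for i in range(len(fragments)):
        # Join up to `missed_cleavages` additional neighboring fragments.
        last = min(i + missed_cleavages, len(fragments) - 1)
        for j in range(i, last + 1):
            peptide = "".join(fragments[i:j + 1])
            if len(peptide) >= min_length:
                peptides.add(peptide)
    return peptides


# Made-up protein sequence used only to exercise the rule.
sequence = "MKWVTFISLLLLFSSAYSRGVFRRDTHKPSEVAHR"
fully_cleaved = tryptic_digest(sequence)
with_one_missed = tryptic_digest(sequence, missed_cleavages=1)
```

Note that the K in `DTHKPSEVAHR` is not cleaved because it is followed by P, and that short fragments such as `MK` or `GVFR` fall below the length filter.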

LC-MS/MS Analysis and Data Processing

- Chromatography: Reconstitute dried peptides and load them onto a nanoflow HPLC system equipped with a trapping column for desalting. Separate the peptides on a reversed-phase analytical column (e.g., C18, 75 µm i.d., 15 cm length) using a long, shallow acetonitrile gradient (e.g., 5–90% solvent B over 1 hour) [33].

- Mass Spectrometry: The HPLC system is coupled online to a tandem mass spectrometer (e.g., a Q-TOF instrument). Data acquisition is performed in data-dependent acquisition (DDA) mode: the instrument first performs an MS1 scan to measure peptide precursor ions, then automatically selects the most intense ions for fragmentation (MS2) to generate sequence spectra [33].

- Peptide Identification: Generate peak lists from the raw data and search them against a protein sequence database using search engines like Mascot [33]. The database should include both forward and reversed sequences to facilitate false discovery rate (FDR) calculation. Techniques like Average Peptide Scoring (APS) can be used to iteratively calculate peptide filters and improve confident protein identification [33].

- Mapping to Genome: Map the confidently identified peptide sequences back to the genomic loci and the available predicted gene models. This provides direct experimental evidence for the existence and structure of the predicted genes [33].
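
The final mapping step can be sketched as a comparison of identified peptides against the translations of competing gene models at one locus. This is a simplified illustration under stated assumptions: matching is by exact substring, isobaric leucine/isoleucine ambiguity is ignored, ties are reported as undecided, and the model IDs and sequences are invented.

```python
def peptide_support(peptides, gene_models):
    """For each candidate gene model, list identified peptides found in its translation."""
    return {
        model_id: sorted(p for p in peptides if p in protein_seq)
        for model_id, protein_seq in gene_models.items()
    }


def best_supported_model(peptides, gene_models):
    """Model ID matched by the most peptides, or None if nothing matches or there is a tie."""
    support = peptide_support(peptides, gene_models)
    ranked = sorted(support.items(), key=lambda item: len(item[1]), reverse=True)
    if not ranked or not ranked[0][1]:
        return None
    if len(ranked) > 1 and len(ranked[1][1]) == len(ranked[0][1]):
        return None  # ambiguous: the peptides do not discriminate between models
    return ranked[0][0]


# Two hypothetical gene models predicted for the same locus (invented sequences).
gene_models = {
    "model_1": "MSTAVLENPGLGRKLSDFGQETSYIEDNSNQNGAISLIFSLK",
    "model_2": "MSTAVLENPGLGRAAPLQWERTYKLSDFGQETSYIEDNK",
}
identified = {"LSDFGQETSYIEDNK", "AAPLQWER"}

support = peptide_support(identified, gene_models)
winner = best_supported_model(identified, gene_models)
```

In this toy locus both peptides match only `model_2`, the situation in which proteomic evidence can favor a model other than the annotators' first choice.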

The following diagram illustrates this multi-step workflow, from the initial biological sample to validated gene models.

Optimized Data Analysis and Workflow Integration

The identification of differentially expressed proteins is a multi-step process, and the choice of methods at each step significantly impacts the results. An extensive benchmarking study identified that high-performing workflows for label-free data are often characterized by the use of directLFQ intensity, no normalization (or specific normalization methods), and specific imputation algorithms like SeqKNN, Impseq, or MinProb [23].

To maximize proteome coverage and resolve inconsistencies, ensemble inference—integrating results from multiple top-performing individual workflows—has been shown to be beneficial. This approach can lead to gains in performance metrics like partial area under the curve (pAUC) by up to 4.61% [23]. This is particularly powerful when integrating results from different quantification approaches (e.g., topN, directLFQ, MaxLFQ), as they provide complementary information [23].
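
One simple way to combine results from several workflows is rank aggregation, sketched below. This is not the ensemble procedure used in [23]. Averaging per-workflow ranks and restricting the comparison to proteins reported by every workflow are simplifying assumptions, and the workflow names and p-values are made up.

```python
def rank_proteins(p_values):
    """Rank proteins by ascending p-value (1 = most significant); ties share the mean rank."""
    ordered = sorted(p_values, key=p_values.get)
    ranks = {}
    i = 0
    while i < len(ordered):
        j = i
        while j + 1 < len(ordered) and p_values[ordered[j + 1]] == p_values[ordered[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for protein in ordered[i:j + 1]:
            ranks[protein] = mean_rank
        i = j + 1
    return ranks


def ensemble_ranking(workflow_results):
    """Order proteins by their mean rank across workflows (shared proteins only)."""
    shared = set.intersection(*(set(r) for r in workflow_results.values()))
    per_workflow = [rank_proteins({p: r[p] for p in shared})
                    for r in workflow_results.values()]
    mean_rank = {p: sum(ranks[p] for ranks in per_workflow) / len(per_workflow)
                 for p in shared}
    return sorted(shared, key=lambda p: (mean_rank[p], p))


# Toy p-values from three quantification approaches (made-up values).
workflow_results = {
    "directLFQ": {"P1": 0.001, "P2": 0.2, "P3": 0.03},
    "MaxLFQ": {"P1": 0.004, "P2": 0.5, "P3": 0.01, "P4": 0.9},
    "topN": {"P1": 0.002, "P2": 0.04, "P3": 0.05},
}
consensus = ensemble_ranking(workflow_results)
```

A production ensemble would also need a rule for proteins quantified by only some workflows, since recovering those is part of the coverage benefit.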

Table 2: Key Steps and High-Performing Method Choices in Differential Expression Analysis

| Workflow Step | Description | High-Performing Method Examples |

|---|---|---|

| Quantification Setting | Defines the experimental platform and data type (e.g., DDA, DIA, TMT). | Workflow performance is highly setting-specific [23]. |

| Expression Matrix Construction | Defines how peptide-level data is summarized into a protein-level matrix. | directLFQ intensity, MaxLFQ, topN intensities [23]. |

| Normalization | Corrects for technical variation between samples. | "No normalization" (for specific settings), specific distribution correction methods [23]. |

| Missing Value Imputation (MVI) | Replaces missing data points, a common issue in proteomics. | SeqKNN, Impseq, MinProb (probabilistic minimum) [23]. |

| Differential Expression Analysis | Statistical method to identify significant protein abundance changes. | Methods like limma; simple tests (t-test, ANOVA) are often lower-performing [23]. |

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents and materials required for implementing the LC-MS/MS protocols described in this guide.

Table 3: Essential Reagents and Materials for LC-MS/MS Proteomics Workflows

| Item Name | Function / Application | Specific Example |

|---|---|---|

| Trypsin/Lys-C Mix (MS-grade) | Enzymatic digestion of proteins into peptides for MS analysis. | Promega (Madison, WI, USA) [36]. |

| Depletion Column | Removal of high-abundance proteins from serum/plasma to enhance detection of low-abundance proteins. | Agilent Human 14 multiple affinity removal column [36]. |

| Mass Spectrometry-Grade Solvents | Sample preparation and mobile phases for LC-MS/MS to minimize background contamination. | Acetonitrile (ACN), Water (H₂O), Formic Acid (FA) from Fisher Scientific [36]. |

| Buffers and Additives for Digestion | Create optimal conditions for enzymatic digestion and protein handling. | Ammonium Bicarbonate (ABC), Dithiothreitol (DTT), Iodoacetamide (IAA) from Sigma-Aldrich [36]. |

| Internal Standards | Monitoring instrument stability and performance during the LC-MS/MS run. | Stable isotope-labeled compounds (e.g., caffeine-13C3, L-Leucine-D7) added to the extraction solvent [34]. |

| LC Column | Chromatographic separation of peptides prior to mass spectrometry. | Reversed-phase C18 column (e.g., Waters ACQUITY Premier HSS T3) [34]. |

LC-MS/MS-based proteomics stands as an indispensable, orthogonal method for validating computational gene predictions, providing direct experimental evidence of translation that is not available from transcriptomic data alone. While affinity-based platforms like Olink offer superior throughput and sensitivity for specific low-abundance proteins, LC-MS/MS provides unmatched specificity, the ability to discover novel proteins, and does not rely on pre-defined affinity reagents [33] [35]. The performance of an LC-MS/MS workflow is not monolithic but depends on a synergistic combination of steps from sample preparation to data analysis. By adopting optimized and, where appropriate, ensemble workflows, researchers can robustly leverage this powerful technology to refine genome annotations, confirm gene structures, and build a more accurate understanding of biological systems.

In the context of validating gene predictions against proteomics data, the accuracy of protein-level evidence is paramount. Gene modulation tools like CRISPR and siRNA alter genomic or transcriptomic sequences, but their functional consequences must be confirmed by observing changes in the actual protein output [37]. Among the various proteomic workflows available, GeLC-MS/MS—which combines protein separation via SDS-PAGE with liquid chromatography-tandem mass spectrometry—provides a robust, reproducible, and accessible platform for this critical validation step [38] [39]. This guide objectively compares the performance of GeLC-MS/MS with alternative proteomic methods and provides detailed experimental protocols to implement this technique effectively in gene prediction validation research.

The GeLC-MS/MS workflow integrates classical biochemical separation with modern mass spectrometry, creating a powerful tool for protein identification and characterization. This method is particularly valuable for researchers studying the proteomic effects of gene manipulations, as it provides visible assessment of protein samples and deep proteome coverage without absolute dependence on specific antibodies [39] [37].

Figure 1: GeLC-MS/MS workflow for proteomic analysis. The process begins with protein extraction and proceeds through fractionation, digestion, and final LC-MS/MS analysis, enabling comprehensive protein identification and quantification.

Detailed Experimental Protocols

Protein Sample Preparation

Efficient protein extraction and preparation are critical for obtaining an accurate representation of the proteome under study. Proteins can be prepared from various sources including tissues, bodily fluids, or cell cultures, with preparation methods often involving mechanical lysis, solubilization in buffer, and subcellular fractionation [38].

Reduction and Alkylation: Add 5 mM TCEP to the sample and incubate at room temperature for 20 minutes to reduce disulfide bonds. Then add 10 mM iodoacetamide (IAA) to alkylate free cysteines, incubating in the dark at room temperature for 20 minutes. Quench the reaction with 10 mM DTT, incubating for another 20 minutes in the dark [39].
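
The reagent additions above are specified as final concentrations, so the volume to pipette depends on the stock concentration. The helper below is a small worked calculation: it solves stock × v = final × (sample + v) for v. The stock concentrations (500 mM) and the 100 µL sample volume are hypothetical and not taken from the protocol.

```python
def stock_volume_ul(sample_volume_ul, stock_mm, final_mm):
    """Volume of stock (µL) to add so the reagent reaches final_mm in the mixed sample.

    Solves stock_mm * v = final_mm * (sample_volume_ul + v) for v.
    """
    if stock_mm <= final_mm:
        raise ValueError("stock must be more concentrated than the target")
    return final_mm * sample_volume_ul / (stock_mm - final_mm)


# Hypothetical 100 µL sample and 500 mM stocks; each addition increases the volume.
volume = 100.0
tcep_ul = stock_volume_ul(volume, stock_mm=500.0, final_mm=5.0)
volume += tcep_ul
iaa_ul = stock_volume_ul(volume, stock_mm=500.0, final_mm=10.0)
volume += iaa_ul
dtt_ul = stock_volume_ul(volume, stock_mm=500.0, final_mm=10.0)
```

Each later addition slightly dilutes the reagents added before it, which is negligible at these stock concentrations but worth checking when using dilute stocks.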

Protein Precipitation: For samples >500 μg/mL, use methanol-chloroform precipitation: Dilute sample to ~100 μL, add 400 μL methanol and vortex, add 100 μL chloroform and vortex, then add 300 μL water and vortex. Centrifuge at 14,000 × g for 1 minute, remove aqueous and organic layers, retaining the middle protein disk. Add 400 μL methanol, vortex, and centrifuge for 2 minutes [39].

SDS-PAGE Separation and In-Gel Digestion

Gel Electrophoresis: Use precast Bis-Tris 4-12% gradient gels. Add LDS sample buffer (4×) to protein samples with reducing agent and heat at 70°C for 10 minutes. Centrifuge at 2,400 × g for 30 seconds to remove insoluble material before loading [38].

Whole Gel Processing: After electrophoresis and Coomassie staining, destain the entire gel. Perform washing, reduction, and alkylation steps on the intact gel before slicing into 5-20 equal segments based on pre-stained molecular weight markers. This "whole gel" approach significantly reduces processing time compared to conventional methods where each slice is processed individually [40].

In-Gel Digestion: Destain gel pieces with 25 mM ammonium bicarbonate/50% acetonitrile. Add trypsin (10 ng/μL in 25 mM ammonium bicarbonate) and incubate overnight at 37°C. Extract peptides with 1% formic acid, then desalt using StageTips or similar methods before LC-MS/MS analysis [38] [39].

LC-MS/MS Analysis

Chromatography Setup: Use a trap column (ZORBAX 300SB-C18, 5 × 0.3 mm, 5 μm) and a self-packed analytical column (100 μm i.d. × 150 mm fused silica packed with C18 resin). Employ gradient elution with Solvent A (0.1% formic acid in water) and Solvent B (0.1% formic acid in acetonitrile) [38].

Mass Spectrometry Parameters: Use high-resolution mass spectrometers (e.g., LTQ Orbitrap) with data-dependent acquisition. For quantitative analyses, consider stable isotope dimethyl labeling to improve accuracy by enabling precise comparison between samples within a single LC-MS run [41].

Performance Comparison with Alternative Methods

Depth of Proteome Coverage

GeLC-MS/MS provides significant advantages for in-depth proteome coverage compared to simpler fractionation approaches, particularly for complex samples.

Table 1: Comparison of protein and peptide identification across fractionation methods

| Method | Proteome Depth | Unique Advantages | Limitations |

|---|---|---|---|

| GeLC-MS/MS (2-D/repetitive) | Moderate protein identifications [42] | Visual QC, removes interferents, compatible with detergents [38] [40] | Limited high MW protein recovery [38] |

| 3-D Fractionation (Protein-level) | Substantially more unique peptides and proteins, including low-abundance species [42] | Highest proteome depth, overcomes MS undersampling [42] | More complex, potential sample loss [42] |

| Solution Digestion (MudPIT) | High peptide identifications [40] | Amenable to automation, higher throughput [40] | Less effective for abundant protein depletion [38] |

Quantitative Performance and Reproducibility

GeLC-MS/MS shows excellent performance for quantitative proteomics, particularly when combined with stable isotope labeling strategies.

Table 2: Quantitative performance characteristics of GeLC-MS/MS

| Parameter | Performance | Experimental Context |
|---|---|---|
| Identification Reproducibility | >88% overlap between technical replicates [40] | Triplicate analysis of HCT116 cell lysate and FFPE tissue |
| Quantification Precision | CV <20% on protein quantitation [40] | Label-free spectral counting |
| Quantification Accuracy | High accuracy with stable isotope dimethyl labeling [41] | Comparative analysis between samples |
| Correlation with Conventional Method | R² = 0.94 for spectral counts [40] | Comparison of whole gel vs. in-gel digestion procedures |
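The reproducibility and precision figures in the table can be computed from replicate data along the following lines. This is a minimal sketch rather than the procedure used in [40]: the protein identifiers and spectral counts are hypothetical, and the overlap definition (mean pairwise intersection over union) is an assumption, since the source does not state how overlap was calculated.

```python
from itertools import combinations
from statistics import mean, stdev


def pairwise_overlap(replicates):
    """Mean pairwise overlap (intersection / union) of identified protein sets."""
    scores = [len(a & b) / len(a | b) for a, b in combinations(replicates, 2)]
    return mean(scores)


def percent_cv(values):
    """Coefficient of variation in percent: sample SD / mean * 100."""
    return stdev(values) / mean(values) * 100.0


# Hypothetical identifications from three technical replicates.
rep_ids = [
    {"P1", "P2", "P3", "P4"},
    {"P1", "P2", "P3", "P5"},
    {"P1", "P2", "P3", "P4"},
]

# Hypothetical spectral counts per protein across the same replicates.
spectral_counts = {
    "P1": [40, 44, 42],
    "P2": [10, 12, 11],
    "P3": [5, 9, 7],
}

overlap = pairwise_overlap(rep_ids)
cvs = {protein: percent_cv(counts) for protein, counts in spectral_counts.items()}

print(f"Mean pairwise identification overlap: {overlap:.1%}")
for protein, cv in cvs.items():
    flag = "ok" if cv < 20 else "above 20% CV"
    print(f"{protein}: CV = {cv:.1f}% ({flag})")
```

Low-count proteins such as the hypothetical P3 tend to show the highest CV in spectral counting, so a minimum-count filter is often applied before reporting precision.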

Applications in Gene Prediction Validation

Connecting Genomic and Proteomic Data

For researchers validating gene predictions, GeLC-MS/MS provides a direct link between genetic manipulations and their protein-level consequences. When gene modulation tools like siRNA or CRISPR are employed, mRNA and protein levels may not always correlate, making protein-level verification essential [37]. In one application, researchers used LC-MS/MS proteomics to confirm protein expression changes in cells treated with in-house designed siRNA targeting the epidermal growth factor receptor (EGFR), identifying 73 significantly differentially expressed proteins [37].

Figure 2: Role of GeLC-MS/MS in validating gene predictions. The method provides critical protein-level validation between transcript analysis and biological interpretation, confirming the functional effects of gene modulation.

Biomarker Discovery and Verification

GeLC-MS/MS plays a crucial role in biomarker verification pipelines. The method enables the detection of protein forms that may result from gene mutations or alternative splicing events, providing critical information for selecting appropriate surrogate peptides for targeted assays [43]. In one workflow, GeLC-MS/MS characterization allowed visualization of different forms of a protein in cerebrospinal fluid, informing peptide selection for subsequent assay development [43].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key reagents and materials for GeLC-MS/MS experiments

| Reagent/Material | Function | Examples/Specifications |
|---|---|---|
| Precast Gels | Protein fractionation by molecular weight | NuPAGE Bis-Tris 4-12% gradient gels [39] |
| Reducing Agents | Break protein disulfide bonds | TCEP, DTT [38] [39] |
| Alkylating Agents | Prevent reformation of disulfide bonds | Iodoacetamide [38] [39] |
| Protease | Digest proteins into peptides | Sequencing-grade trypsin [38] [39] |
| LC Columns | Peptide separation | C18 trap and analytical columns [38] |
| Mass Spectrometer | Peptide identification and quantification | High-resolution instruments (e.g., LTQ Orbitrap) [38] [43] |

GeLC-MS/MS represents a robust, versatile platform for proteomic analysis that balances practical considerations with analytical performance. For researchers validating gene predictions, this method provides the critical protein-level evidence needed to confirm the functional consequences of genetic manipulations. While alternative methods may offer advantages in specific scenarios such as ultimate proteome depth or throughput, GeLC-MS/MS remains an excellent choice for comprehensive protein identification and quantification, particularly when analyzing complex samples or when visual assessment of protein quality is desirable. The continuous development of streamlined protocols and quantitative enhancements ensures that GeLC-MS/MS will remain a cornerstone technique in functional proteomics and gene validation research.

In the field of proteomics, the validation of gene predictions relies heavily on robust data processing workflows for protein identification, quantification, and differential expression analysis. As proteomics technologies advance, researchers require clear guidance on selecting appropriate bioinformatic tools that deliver accurate and reproducible results. This guide provides an objective comparison of leading software platforms, evaluates their performance based on published benchmark studies, and outlines standardized experimental protocols to ensure data integrity. The focus on practical implementation aims to equip researchers with the knowledge needed to effectively connect genomic predictions with protein-level evidence, thereby strengthening multi-omics integration in biomedical research and drug development.

Comparative Performance of Proteomics Software

Objective evaluation of proteomics software requires examination of key performance metrics including proteome coverage, quantitative accuracy, precision, and completeness of data. Independent benchmarking studies provide crucial insights beyond vendor claims, enabling researchers to select optimal tools for their specific applications, particularly for validating gene predictions against experimental proteomics data.

Performance Benchmarking of DIA Analysis Tools

Data-Independent Acquisition (DIA) mass spectrometry has emerged as a powerful technique for comprehensive protein quantification, especially in single-cell proteomics. A recent benchmarking study evaluated three prominent software tools—DIA-NN, Spectronaut, and PEAKS Studio—using simulated single-cell samples consisting of mixed proteomes from human, yeast, and E. coli cells at 200 pg total input levels [44].

Table 1: Performance Comparison of DIA Analysis Software in Single-Cell Proteomics

| Software | Quantification Strategy | Proteins Quantified (Mean ± SD) | Peptides Quantified (Mean ± SD) | Quantitative Precision (Median CV) | Quantitative Accuracy |
|---|---|---|---|---|---|
| Spectronaut | directDIA (library-free) | 3066 ± 68 | 12,082 ± 610 | 22.2–24.0% | High accuracy |
| DIA-NN | Library-free with deep learning | 2607 (at 50% completeness) | 11,348 ± 730 | 16.5–18.4% | Highest accuracy |
| PEAKS Studio | Sample-specific library | 2753 ± 47 | Not specifically reported | 27.5–30.0% | Comparable accuracy |

The study revealed significant differences in software performance. Spectronaut's directDIA workflow demonstrated the highest detection capabilities, quantifying the greatest number of proteins and peptides [44]. However, DIA-NN achieved superior quantitative precision with lower median coefficients of variation (CV) and outperformed other tools in quantitative accuracy, as measured by closeness of experimental fold-change values to theoretical expectations in ground-truth samples [44]. PEAKS Studio showed intermediate performance in proteome coverage but somewhat lower precision in quantification [44].

Comparison of MS1-Based Label-Free Quantification Tools

For label-free quantification approaches, a systematic evaluation compared MaxQuant and Proteome Discoverer using spiked-in human proteins (UPS1) in a yeast background across a wide dynamic range [45]. This study assessed six different MS1-based quantification methods; Table 2 summarizes four of them:

Table 2: Performance of MS1-Based Quantification Methods in MaxQuant and Proteome Discoverer

| Software | Quantification Method | Dynamic Range | Reproducibility | Sensitivity for Differential Analysis | Specificity/Accuracy |
|---|---|---|---|---|---|
| Proteome Discoverer | Normalized Intensity (PD-nI) | Wide | High | Highest sensitivity for narrow abundance ratios | High accuracy |
| Proteome Discoverer | Normalized Area (PD-nA) | Wide | High | High sensitivity | High accuracy |
| MaxQuant | LFQ (normalized intensity) | Moderate | Moderate | Slightly lower sensitivity | Highest specificity |
| MaxQuant | Raw intensity (MQ-I) | Moderate | Moderate | Lower sensitivity | High specificity |

The investigation found that Proteome Discoverer, particularly with normalized quantification methods (PD-nI and PD-nA), outperformed MaxQuant in quantification yield, dynamic range, and reproducibility [45]. PD's normalized methods were most accurate in estimating abundance ratios between groups and most sensitive when comparing samples with narrow abundance ratios. Conversely, when proteins were classified as changed or unchanged in the differential analysis, MaxQuant methods generally achieved slightly higher specificity, accuracy, and precision values [45]. The study also demonstrated that applying optimized log ratio-based thresholds could maximize specificity, accuracy, and precision in differential analysis.
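The log ratio-based thresholding described above can be illustrated with a small sketch. It is not the procedure from [45]: the protein names, log2 ratios, and the threshold of 1.0 are hypothetical. The code only shows how sensitivity, specificity, accuracy, and precision follow from a ground-truth spike-in design.

```python
def confusion_metrics(log2_ratios, truly_changed, threshold):
    """Call a protein 'changed' when |log2 ratio| >= threshold, then score
    the calls against the known ground truth."""
    tp = fp = tn = fn = 0
    for protein, ratio in log2_ratios.items():
        called = abs(ratio) >= threshold
        actual = protein in truly_changed
        if called and actual:
            tp += 1
        elif called:
            fp += 1
        elif actual:
            fn += 1
        else:
            tn += 1
    nan = float("nan")
    return {
        "sensitivity": tp / (tp + fn) if tp + fn else nan,
        "specificity": tn / (tn + fp) if tn + fp else nan,
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "precision": tp / (tp + fp) if tp + fp else nan,
    }


# Hypothetical log2 ratios: spiked-in proteins should change,
# background yeast proteins should not.
log2_ratios = {
    "UPS_A": 2.1, "UPS_B": 1.8, "UPS_C": 0.6,
    "YEAST_1": 0.1, "YEAST_2": -0.2, "YEAST_3": 1.2, "YEAST_4": 0.05,
}
truly_changed = {"UPS_A", "UPS_B", "UPS_C"}

metrics = confusion_metrics(log2_ratios, truly_changed, threshold=1.0)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

Raising the threshold trades sensitivity for specificity, which is why a threshold tuned on ground-truth data can shift the balance between the two tools.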

Key Selection Criteria for Proteomics Software

Beyond performance metrics, several practical factors influence software selection for proteomics workflows [46]:

- Compatibility: Support for vendor-specific raw data formats (Thermo .raw, SCIEX .wiff, Bruker .d) or open formats (mzML, mzXML)

- Quantification Strategy: Specialization in label-free (LFQ), isobaric tagging (TMT/iTRAQ), metabolic labeling (SILAC), or DIA methods

- Usability: Graphical user interface (GUI) versus command-line operation, with GUIs being more accessible for beginners

- Reproducibility and Transparency: Open-source or freely available tools (Skyline, MaxQuant, OpenMS, FragPipe, DIA-NN) offer greater transparency, in some cases including full code visibility, while commercial software (Proteome Discoverer, Spectronaut) offers professional support

- Cost and Licensing: Free academic tools versus commercial licenses with associated costs

- Integration Capabilities: Compatibility with downstream statistical analysis tools and visualization platforms

Experimental Protocols for Benchmarking Studies

Standardized experimental protocols are essential for generating reproducible and comparable data in proteomics. The methodologies described below are derived from published benchmark studies and can be adapted for evaluating proteomics software performance in specific research contexts.

Benchmarking Protocol for DIA-Based Single-Cell Proteomics

The benchmarking framework for DIA-based single-cell proteomics involved several critical steps [44]:

Sample Preparation:

- Simulated single-cell samples were created using tryptic digests of human HeLa cells, yeast, and Escherichia coli proteins mixed in defined proportions

- A reference sample (S3) contained 50% human, 25% yeast, and 25% E. coli proteins

- Test samples (S1, S2, S4, S5) maintained equivalent human protein abundance while varying yeast and E. coli proportions with expected ratios from 0.4 to 1.6 relative to reference

- Total protein input was maintained at 200 pg to mimic single-cell protein levels
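The ground-truth ratios implied by this design can be worked out with a short calculation. The sketch below assumes that E. coli protein makes up whatever fraction is left after the fixed human share and the scaled yeast share. That assumption is consistent with the stated 0.4 to 1.6 range but is not spelled out in the protocol, and the function names are illustrative.

```python
import math

# Reference mixture S3 from the protocol, as fractions of total protein mass.
REFERENCE = {"human": 0.50, "yeast": 0.25, "ecoli": 0.25}


def mixture_for_yeast_ratio(yeast_ratio, reference=REFERENCE):
    """Build a test mixture with human held constant and yeast scaled by
    yeast_ratio; E. coli fills the remainder (an assumption, see lead-in)."""
    yeast = reference["yeast"] * yeast_ratio
    ecoli = 1.0 - reference["human"] - yeast
    if ecoli < 0:
        raise ValueError("yeast_ratio leaves no room for E. coli protein")
    return {"human": reference["human"], "yeast": yeast, "ecoli": ecoli}


def expected_log2_fc(sample, reference=REFERENCE):
    """Expected log2 fold change of each organism relative to the reference."""
    return {org: math.log2(sample[org] / reference[org]) for org in reference}


s_low = mixture_for_yeast_ratio(0.4)   # yeast at 0.4x the reference
fc_low = expected_log2_fc(s_low)

total_pg = 200  # total input per injection, from the protocol
for org, frac in s_low.items():
    print(f"{org}: {frac * total_pg:.0f} pg, expected log2 FC = {fc_low[org]:+.2f}")
```

Under this assumption, a yeast ratio of 0.4 forces an E. coli ratio of 1.6, which reproduces both ends of the stated range in a single sample.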

Mass Spectrometry Analysis:

- Samples were analyzed using diaPASEF on a timsTOF Pro 2 mass spectrometer

- Six technical replicates (repeated injections) were performed for each sample to assess reproducibility

- Trapped ion mobility spectrometry (TIMS) was utilized to enhance sensitivity by excluding singly charged contaminating ions

Data Analysis Workflow:

- Multiple analysis strategies were evaluated including library-free and library-based approaches

- Sample-specific spectral libraries (DDALib) were generated from DDA injections of individual organisms (2 ng) on the same LC-MS/MS system

- Public spectral libraries (PublicLib) were compiled from community resources using timsTOF data of HeLa, yeast, and E. coli digests (200 ng) with high-pH reversed-phase fractionation

- Predicted spectral libraries were generated using AlphaPeptDeep for whole-proteome scale prediction

Performance Evaluation Metrics:

- Identification metrics: Number of proteins and peptides quantified, data completeness across replicates

- Precision: Coefficient of variation (CV) of protein quantities among technical replicates

- Accuracy: Deviation of measured fold-change values from expected ratios in ground-truth mixtures

- Statistical significance: T-test p-values and Cohen's d effect sizes for comparing fold-change distributions
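Two of the metrics listed above, accuracy and effect size, can be sketched as follows. The fold-change values and tool labels are hypothetical, and the pooled-SD form of Cohen's d is an assumption, as the source does not say which variant was used.

```python
import math
from statistics import mean, median, stdev


def log2_fc_errors(measured_fc, expected_fc):
    """Absolute deviation of each measured log2 fold change from the expected one."""
    target = math.log2(expected_fc)
    return [abs(math.log2(fc) - target) for fc in measured_fc]


def cohens_d(a, b):
    """Cohen's d for two independent samples, using the pooled standard deviation."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * stdev(a) ** 2 + (nb - 1) * stdev(b) ** 2) / (na + nb - 2)
    return (mean(a) - mean(b)) / math.sqrt(pooled_var)


# Hypothetical measured fold changes for proteins with an expected ratio of 0.4.
tool_a = [0.42, 0.38, 0.45, 0.36]
tool_b = [0.55, 0.30, 0.60, 0.28]

err_a = log2_fc_errors(tool_a, expected_fc=0.4)
err_b = log2_fc_errors(tool_b, expected_fc=0.4)
d = cohens_d(err_a, err_b)

print(f"Median |log2 FC error|, tool A: {median(err_a):.3f}")
print(f"Median |log2 FC error|, tool B: {median(err_b):.3f}")
print(f"Cohen's d (A vs B): {d:.2f}")
```

A negative d here means the first tool's errors are smaller on average. The effect size complements a p-value because it does not grow simply with the number of proteins compared.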

DIA Benchmarking Workflow: This diagram illustrates the key steps in benchmarking DIA analysis software, from sample preparation to performance evaluation.

Protocol for Comparing MS1-Based Label-Free Quantification

The comparative evaluation of MaxQuant and Proteome Discoverer followed a rigorous methodology [45]:

Sample Design and Data Sets:

- Primary data set: UPS1 standard (48 human proteins) spiked at nine different amounts (100 to 0.1 fmol) into a constant background of yeast cell lysate (2 μg)

- Secondary data set: Yeast background with (YH) and without (Y) 25 fmol spiked-in human proteins for specificity assessment

- Triplicate or quadruplicate runs for each condition to enable statistical analysis

Mass Spectrometry Parameters:

- LC-MS/MS analysis using nanoRS UHPLC system coupled to LTQ-Orbitrap Velos mass spectrometer

- Data-dependent acquisition mode with survey scans at 60,000 resolution

- Top 20 most intense ions selected for CID fragmentation

- 75-minute gradient for peptide separation

Protein Identification and Quantification:

- Database searching against combined Saccharomyces cerevisiae and UPS1 human protein databases

- False discovery rate (FDR) set to 1% for both peptide and protein identifications

- Six quantification methods compared:

  - MaxQuant: Raw intensity (MQ-I) and normalized LFQ intensity (MQ-L)

  - Proteome Discoverer: Intensity (PD-I), normalized intensity (PD-nI), area (PD-A), and normalized area (PD-nA)

- Chromatographic peak alignment with 10-minute time windows

Statistical Analysis:

- Correlation analysis between measured and expected protein abundance values

- Calculation of sensitivity, specificity, accuracy, and precision for differential analysis