GATK Somatic Calling: Complete Guide to Input BAM Requirements and Best Practices

Abstract

This comprehensive guide details the critical input BAM file requirements for successful somatic variant discovery with GATK's Mutect2. Covering foundational principles, methodological workflows for tumor-normal, tumor-only, and mitochondrial analyses, common troubleshooting scenarios, and validation strategies using benchmark datasets, it provides researchers and clinicians with the essential knowledge to optimize their sequencing data for accurate detection of cancer mutations and somatic mosaicism.

Understanding the Role of BAM Files in GATK Somatic Variant Discovery

GATK Mutect2 is a sophisticated computational tool designed for the discovery of somatic short variants, including single nucleotide variants (SNVs) and insertions or deletions (indels), from high-throughput sequencing data [1] [2]. Developed by the Broad Institute, it combines the DREAM challenge-winning somatic genotyping engine of the original MuTect with the assembly-based machinery of HaplotypeCaller, providing a powerful solution for detecting somatic mutations in cancer and other contexts [2].

Mutect2 operates using a Bayesian somatic genotyping model that differs from the original MuTect approach, performing local assembly of haplotypes to identify variants with high sensitivity and specificity [1]. This tool is featured prominently in the Somatic Short Mutation Calling Best Practice Workflow and has evolved significantly since its inception, with versions from v4.1.0.0 onwards enabling joint analysis of multiple samples and accommodating extreme high depths up to 20,000X [1].

Beyond its primary application in cancer genomics, Mutect2 has proven adaptable to other research contexts, including mitochondrial variant calling and detection of somatic mosaicism [1]. Its powerful processing engine and high-performance computing features make it capable of handling projects of any scale, from targeted panels to whole genomes.

Key Operational Modes

Tumor with Matched Normal

The tumor-normal mode represents the most robust configuration for somatic variant calling, where a tumor sample is analyzed alongside a matched normal sample from the same individual. In this mode, Mutect2 employs specific logic to avoid emitting variants that are clearly present in the germline based on the evidence from the matched normal, conserving computational resources by pre-filtering obvious germline events at an early stage [1]. When a variant's germline status is borderline, Mutect2 will emit the variant to the callset for subsequent filtering by FilterMutectCalls and manual review [1].

The basic command structure for this mode is:
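The Python helper below is a hedged sketch. It assembles a tumor-normal Mutect2 command line of the form shown in the GATK 4.1+ documentation as an argument list that could be passed to `subprocess.run`. The function name and all file and sample names are hypothetical placeholders. Note that `-normal` takes the normal's SM read-group name, not a file path. Because the helper accepts lists, it also covers multi-sample joint calling.

```python
from typing import List, Optional, Sequence


def mutect2_tumor_normal_cmd(
    reference: str,
    tumor_bams: Sequence[str],
    normal_bams: Sequence[str],
    normal_sample_names: Sequence[str],
    output_vcf: str,
    germline_resource: Optional[str] = None,
    panel_of_normals: Optional[str] = None,
) -> List[str]:
    """Build a Mutect2 tumor-normal command as an argv list (not executed)."""
    if not tumor_bams:
        raise ValueError("at least one tumor BAM is required")
    cmd = ["gatk", "Mutect2", "-R", reference]
    # Every BAM, tumor or normal, is passed with -I
    for bam in [*tumor_bams, *normal_bams]:
        cmd += ["-I", bam]
    # Normals are identified by their SM read-group sample name
    for name in normal_sample_names:
        cmd += ["-normal", name]
    # Optional but strongly recommended resources
    if germline_resource:
        cmd += ["--germline-resource", germline_resource]
    if panel_of_normals:
        cmd += ["--panel-of-normals", panel_of_normals]
    return cmd + ["-O", output_vcf]


if __name__ == "__main__":
    cmd = mutect2_tumor_normal_cmd(
        "reference.fa", ["tumor.bam"], ["normal.bam"], ["normal_sample"],
        "somatic.vcf.gz",
        germline_resource="af-only-gnomad.vcf.gz",
        panel_of_normals="pon.vcf.gz",
    )
    print(" ".join(cmd))  # or: subprocess.run(cmd, check=True)
```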

Starting with v4.1, Mutect2 supports joint calling of multiple tumor and normal samples from the same individual. Each additional BAM is supplied with -I, and each additional normal sample is named with -normal [1]. This multi-sample approach genotypes all tumor samples jointly, allowing them to share statistical power. That is particularly useful when analyzing several samples with low coverage or low variant allele fractions [3].

Tumor-Only Mode

When a matched normal sample is unavailable, Mutect2 can operate in tumor-only mode using a single sample's alignment data. This approach is inherently more challenging because the evidence from normal reads is missing, and the powerful normal artifact filter is not available [3]. The tool must rely more heavily on population germline resources and panels of normals to distinguish somatic variants from germline polymorphisms.

The basic command for tumor-only calling is:
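Here is a minimal sketch using a hypothetical helper and placeholder file names. Tumor-only mode is the same invocation with a single `-I` and no `-normal`, which is why the germline resource and panel of normals carry more weight here.

```python
from typing import List, Optional


def mutect2_tumor_only_cmd(reference: str, tumor_bam: str, output_vcf: str,
                           germline_resource: Optional[str] = None,
                           panel_of_normals: Optional[str] = None) -> List[str]:
    """Tumor-only Mutect2: one -I and no -normal argument (argv list, not run)."""
    cmd = ["gatk", "Mutect2", "-R", reference, "-I", tumor_bam]
    # Without a matched normal, these resources do most of the work of
    # separating germline variants and recurrent artifacts from somatic calls
    if germline_resource:
        cmd += ["--germline-resource", germline_resource]
    if panel_of_normals:
        cmd += ["--panel-of-normals", panel_of_normals]
    return cmd + ["-O", output_vcf]
```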

For creating a panel of normals (PoN), researchers first call variants on each normal sample individually in tumor-only mode, then use CreateSomaticPanelOfNormals to generate the composite PoN [1] [2]. After FilterMutectCalls filtering, additional filtering by functional significance with Funcotator is recommended for tumor-only callsets [1].
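The PoN workflow can be sketched as three stages. This sketch follows the GATK 4.1+ pattern: per-normal calls are consolidated with GenomicsDBImport, and CreateSomaticPanelOfNormals then reads them back through a `gendb://` URI. The helper name, output naming scheme, workspace name and interval file are illustrative assumptions. `--max-mnp-distance 0` is included because the GATK documentation's PoN example disables MNP merging, since GenomicsDBImport does not support MNPs.

```python
from typing import List, Sequence


def pon_commands(reference: str, normal_bams: Sequence[str], intervals: str,
                 germline_resource: str, workspace: str = "pon_db",
                 pon_vcf: str = "pon.vcf.gz") -> List[List[str]]:
    """Return the PoN-building commands as argv lists, in run order."""
    cmds: List[List[str]] = []
    normal_vcfs: List[str] = []
    # Step 1: call each normal on its own, in tumor-only mode
    for bam in normal_bams:
        vcf = bam.rsplit(".", 1)[0] + ".vcf.gz"  # normal1.bam -> normal1.vcf.gz
        normal_vcfs.append(vcf)
        cmds.append(["gatk", "Mutect2", "-R", reference, "-I", bam,
                     "--max-mnp-distance", "0", "-O", vcf])
    # Step 2: consolidate the per-normal VCFs into one GenomicsDB workspace
    import_cmd = ["gatk", "GenomicsDBImport", "-R", reference, "-L", intervals,
                  "--genomicsdb-workspace-path", workspace]
    for vcf in normal_vcfs:
        import_cmd += ["-V", vcf]
    cmds.append(import_cmd)
    # Step 3: aggregate recurrent sites into the panel of normals
    cmds.append(["gatk", "CreateSomaticPanelOfNormals", "-R", reference,
                 "--germline-resource", germline_resource,
                 "-V", f"gendb://{workspace}", "-O", pon_vcf])
    return cmds
```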

Mitochondrial Mode

Mutect2 offers specialized processing for mitochondrial DNA through its mitochondrial mode, activated with the --mitochondria-mode flag. This mode sets parameters suited to calling variants on mitochondria: --initial-tumor-lod to 0, --tumor-lod-to-emit to 0, --af-of-alleles-not-in-resource to 4e-3, and the advanced parameter --pruning-lod-threshold to -4 [1]. This configuration enhances sensitivity for detecting variants in high-depth mitochondrial sequencing data.

The command structure for mitochondrial calling is:
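Below is a hedged sketch of the mitochondrial invocation using a hypothetical helper. The contig name is an assumption that depends on the reference build (`chrM` in UCSC-style hg38, `MT` in b37). The list of BAMs reflects the fact that one sample may be split across several files.

```python
from typing import List, Sequence


def mutect2_mito_cmd(reference: str, bams: Sequence[str], output_vcf: str,
                     contig: str = "chrM") -> List[str]:
    """Mitochondrial-mode Mutect2; all BAMs must come from ONE sample."""
    cmd = ["gatk", "Mutect2", "-R", reference, "-L", contig,
           "--mitochondria-mode"]
    for bam in bams:  # a single sample may be spread over several files
        cmd += ["-I", bam]
    return cmd + ["-O", output_vcf]
```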

This mode accepts only a single sample, though it can be provided in multiple files [1].

Force-Calling Mode

For scenarios where specific variants require investigation regardless of whether they would normally be detected, Mutect2 provides a force-calling mode that genotypes all alleles specified in a VCF file in addition to any other variants discovered during normal operation [1]. This is particularly useful for clinical applications where known variants of interest must be comprehensively assessed.

The command includes the -alleles parameter:
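As a sketch with a hypothetical helper and placeholder file names, the force-calling variant only adds the alleles VCF, written here with the long-form `--alleles` argument name. Variants discovered outside that VCF are still emitted as usual.

```python
from typing import List


def mutect2_force_call_cmd(reference: str, bam: str, alleles_vcf: str,
                           output_vcf: str) -> List[str]:
    """Mutect2 with force-calling: every allele in alleles_vcf is genotyped
    in addition to whatever normal discovery finds."""
    return ["gatk", "Mutect2", "-R", reference, "-I", bam,
            "--alleles", alleles_vcf, "-O", output_vcf]
```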

For samples suspected to exhibit orientation bias artifacts (such as FFPE tumor samples), researchers can add an --f1r2-tar-gz argument to collect F1R2 metrics for subsequent analysis with LearnReadOrientationModel [1].
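The orientation-bias workflow fed by the F1R2 output can be sketched as three commands:

1. Mutect2 writes the F1R2 counts.
2. LearnReadOrientationModel fits artifact priors from those counts.
3. FilterMutectCalls consumes the priors via `--ob-priors`.

The helper and file-naming scheme are assumptions for illustration. The germline resource and PoN are omitted for brevity.

```python
from typing import List


def orientation_bias_commands(reference: str, tumor_bam: str,
                              prefix: str) -> List[List[str]]:
    """Mutect2 (with F1R2 collection) -> LearnReadOrientationModel -> FilterMutectCalls."""
    f1r2 = f"{prefix}.f1r2.tar.gz"
    unfiltered = f"{prefix}.unfiltered.vcf.gz"
    model = f"{prefix}.read-orientation-model.tar.gz"
    filtered = f"{prefix}.filtered.vcf.gz"
    return [
        # 1. Call variants and collect F1R2 counts in the same pass
        ["gatk", "Mutect2", "-R", reference, "-I", tumor_bam,
         "--f1r2-tar-gz", f1r2, "-O", unfiltered],
        # 2. Learn orientation-bias artifact priors from the counts
        ["gatk", "LearnReadOrientationModel", "-I", f1r2, "-O", model],
        # 3. Supply the learned priors when filtering
        ["gatk", "FilterMutectCalls", "-R", reference, "-V", unfiltered,
         "--ob-priors", model, "-O", filtered],
    ]
```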

Performance Characteristics and Benchmarking

Performance Across Sequencing Depths and Mutation Frequencies

Extensive benchmarking studies have systematically evaluated Mutect2's performance across different sequencing depths and variant allele frequencies (VAF). The relationship between these parameters and detection performance follows predictable patterns that should inform experimental design decisions.

Table 1: Performance Metrics of Mutect2 Across Different Sequencing Depths

| Sequencing Depth | Recall Rate Range | Precision Rate Range | F-score Range | Recommended Use Cases |
|---|---|---|---|---|
| 100X | 23-96% | >95% (in most samples) | 0.374-0.96 | Limited applications due to variable sensitivity |
| 200X | 48-97% | >95% | 0.63-0.96 | Standard for mutation frequency ≥20% |
| 300X | 50-97% | >95% | 0.65-0.96 | Moderate improvement over 200X |
| 500X | 60-97% | 93-95% | 0.70-0.96 | Beneficial for low-frequency mutations (5-10%) |
| 800X | 70-97% | 93-95% | 0.75-0.96 | Optimal performance across all frequencies |

Higher sequencing depths generally improve recall rates, with increases of 0.6-44% observed when depth increases from 100X to 200-800X [4]. However, this comes with a marginal decrease in precision (less than 0.7%) when sequencing depth exceeds 200X [4]. For most applications targeting higher mutation frequencies (≥20%), sequencing depths of 200X provide sufficient sensitivity to call 95% of mutations [4].

Table 2: Mutect2 Performance Across Different Mutation Frequencies

| Mutation Frequency | Recall Rate | Precision | F-score | Recommended Depth |
|---|---|---|---|---|
| 1% (Very Low) | 2.7-34.5% | 68.9-100% | 0.05-0.51 | 500-800X |
| 5-10% (Low) | 48-96% | 95.5-95.9% | 0.65-0.95 | ≥500X |
| 20-40% (High) | 92-97% | >95% | 0.94-0.96 | ≥200X |

Mutation frequency strongly influences how much is gained from increasing sequencing depth [4]. At low mutation frequencies (1%), detection performance remains poor even at high depths, with recall between 2.7% and 34.5% across all depths and tools [4]. At moderate frequencies (5-10%), recall improves substantially to 48-96%. High mutation frequencies (20-40%) reach recall of 92-97% [4].
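A simple sampling argument helps explain why 1% variants stay hard to detect: at 100X, a 1% variant is supported by only one read on average. The sketch below treats the number of variant-supporting reads as Binomial(depth, VAF) and asks how often at least k such reads are observed. This is a deliberate simplification, not Mutect2's likelihood model. It ignores sequencing error, tumor purity and mapping bias. The threshold k = 5 is an arbitrary illustrative choice, not a Mutect2 parameter.

```python
from math import comb


def prob_at_least_k_alt_reads(depth: int, vaf: float, k: int) -> float:
    """P(X >= k) for X ~ Binomial(depth, vaf).

    Models read sampling only: no sequencing error, purity or mapping effects.
    """
    return 1.0 - sum(comb(depth, i) * vaf**i * (1 - vaf) ** (depth - i)
                     for i in range(k))


if __name__ == "__main__":
    K = 5  # illustrative read-support threshold, not a Mutect2 parameter
    for depth in (100, 200, 500, 800):
        row = [prob_at_least_k_alt_reads(depth, vaf, K) for vaf in (0.01, 0.05, 0.20)]
        print(f"{depth:>4}X", " ".join(f"{p:.3f}" for p in row))
```

Under this toy model, a 1% variant almost never reaches five supporting reads at 100X but usually does at 800X. This is consistent with the direction, though not the exact values, of the benchmark results above.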

Comparative Performance with Strelka2

Benchmarking studies have compared Mutect2 against other somatic callers, particularly Strelka2, revealing context-dependent performance differences:

Table 3: Performance Comparison Between Mutect2 and Strelka2

| Performance Metric | Mutation Frequency ≥20% | Mutation Frequency 5-10% | Mutation Frequency 1% |
|---|---|---|---|
| Mutect2 Precision | >95% | 95.5-95.9% | Variable |
| Strelka2 Precision | >95% | 96.2-96.5% | Variable |
| Mutect2 Recall | >90% | 50-96% | 2.7-34.5% |
| Strelka2 Recall | >90% | 48-93% | 2.7-34.5% |
| Relative Performance | Comparable (difference <1%) | Mutect2 has slightly better F-score | Mutect2 superior at 500X+ |
| Computational Speed | Strelka2 17-22 times faster | Strelka2 17-22 times faster | Strelka2 17-22 times faster |

For higher mutation frequencies (≥20%), both tools perform excellently with minimal differences (less than 1%) in precision, recall, and F-score [4]. At moderate mutation frequencies (5-10%), Strelka2 demonstrates slightly higher precision (96.2-96.5% vs. 95.5-95.9%) while Mutect2 achieves higher recall (50-96% vs. 48-93%), resulting in better overall F-scores for Mutect2 [4]. At the lowest mutation frequency (1%), Mutect2 surpasses Strelka2 when sequencing depth increases to 500X or higher [4]. Notably, Strelka2 is significantly faster, processing data 17-22 times quicker on average [4].

Input BAM Requirements and Preprocessing

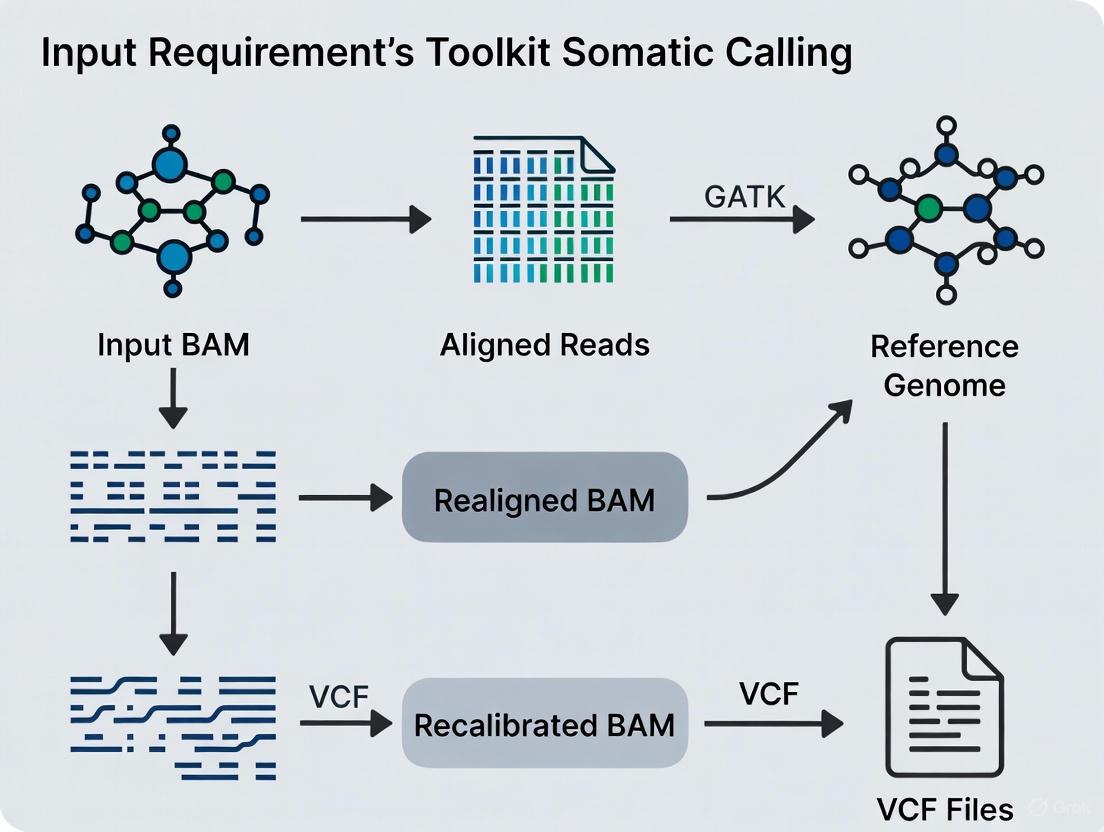

BAM File Preparation Workflow

Proper preparation of input BAM files is crucial for optimal Mutect2 performance. The preprocessing workflow ensures that sequencing artifacts are minimized and data quality is maximized before variant calling.

Critical BAM Quality Metrics

For reliable somatic variant calling, input BAM files must meet specific quality standards. Several key metrics determine whether sequencing data is appropriate for Mutect2 analysis.

Sequence Alignment: The initial alignment of raw sequencing reads to a reference genome is the foundation for accurate variant calling [5]. Recommended aligners include BWA-MEM, which efficiently handles NGS short-read data [5]. The resulting alignments are stored in BAM format. BAM has become the standard for storing and sharing NGS data because of its compressed file size, indexed access and standardized format [5].

Duplicate Marking: PCR duplicates, representing 5-15% of sequencing reads in a typical exome, should be identified and marked using tools such as Picard or Sambamba [5]. These redundant reads originate from the same original DNA fragment and can be identified from alignment position and read-pairing information. Marking duplicates, rather than removing them, keeps the reads available for downstream QC metrics while letting variant callers ignore them. This prevents artificial inflation of coverage and variant evidence.
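The position-based identification described above can be sketched as follows. The sketch is loosely modeled on the idea behind Picard MarkDuplicates: group pairs by the unclipped 5' positions and orientations of both ends, keep the pair with the highest summed base quality, and flag the rest. It is not Picard's implementation. It ignores libraries, optical duplicates, unpaired reads and mates on different contigs. The `ReadPair` type is hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class ReadPair:
    name: str
    chrom: str
    five_prime_r1: int      # unclipped 5' reference position of read 1
    reverse_r1: bool
    five_prime_r2: int      # unclipped 5' reference position of read 2
    reverse_r2: bool
    base_quality_sum: int   # summed base qualities of both reads
    is_duplicate: bool = False


def mark_duplicates(pairs: List[ReadPair]) -> List[ReadPair]:
    """Flag all but the best-scoring pair within each identical alignment signature."""
    groups: Dict[Tuple, List[ReadPair]] = defaultdict(list)
    for p in pairs:
        # Sort the two ends so the key does not depend on which mate is "read 1"
        ends = sorted([(p.five_prime_r1, p.reverse_r1), (p.five_prime_r2, p.reverse_r2)])
        groups[(p.chrom, *ends)].append(p)
    for group in groups.values():
        best = max(group, key=lambda p: p.base_quality_sum)
        for p in group:
            p.is_duplicate = p is not best  # marked, not removed
    return pairs
```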

Base Quality Score Recalibration (BQSR): This optional but recommended step adjusts the base quality scores of sequencing reads using an empirical error model [5]. While evaluations of variant calling accuracy before and after BQSR suggest improvements are marginal, it may help correct systematic errors in base quality scores [5].

Quality Control: Comprehensive QC of analysis-ready BAMs should be performed prior to variant calling to evaluate key sequencing metrics, verify sufficient sequencing coverage was achieved, and check samples for evidence of contamination [5]. For family studies and paired samples (e.g., tumor-normal), expected sample relationships should be confirmed with tools for relationship inference such as the KING algorithm [5].

Resource and Panel Requirements

Mutect2 does not strictly require a germline resource or panel of normals (PoN) to run, but both are strongly recommended for optimal performance [1]. The tool uses these resources to prefilter sites, saving computational resources and improving specificity.

Germline Resources: Population VCFs such as gnomAD provide allele frequencies that serve as prior probabilities for germline variation [1] [3]. When a variant is absent from the germline resource, Mutect2 uses an imputed allele frequency set by the --af-of-alleles-not-in-resource parameter [1]. The default value of this parameter depends on the calling mode: 5e-8 for tumor-only calling, 1e-6 for tumor-normal calling and 4e-3 in mitochondrial mode [1].
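The fallback logic can be summarized in a few lines. In this hypothetical sketch, a plain dictionary keyed by (contig, position, ref, alt) stands in for the AF values of a gnomAD-style VCF. The defaults are the mode-dependent values given above. The `override` argument plays the role of an explicit --af-of-alleles-not-in-resource setting. The function and mode names are illustrative, not GATK identifiers.

```python
from typing import Dict, Optional, Tuple

Allele = Tuple[str, int, str, str]  # (contig, 1-based position, ref, alt)

# Mode-dependent defaults for --af-of-alleles-not-in-resource, as described above
DEFAULT_AF_NOT_IN_RESOURCE = {
    "tumor_only": 5e-8,
    "tumor_normal": 1e-6,
    "mitochondria": 4e-3,
}


def germline_population_af(allele: Allele, resource: Dict[Allele, float],
                           mode: str, override: Optional[float] = None) -> float:
    """Population allele frequency used as the germline prior for one allele."""
    if allele in resource:
        return resource[allele]  # observed in the population resource
    if override is not None:
        return override  # user-supplied imputed frequency
    return DEFAULT_AF_NOT_IN_RESOURCE[mode]  # mode-dependent default
```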

Panel of Normals (PoN): The PoN captures technical artifacts and common germline variants present in a population of normal samples [3]. Mutect2 marks variants found in the PoN with the "PON" info field, which FilterMutectCalls then uses for filtering [3]. Most PON sites are silently pre-filtered without ending up in the output, though some may be emitted if they appear in active regions containing non-PON sites [3].

Advanced Filtering and Artifact Detection

Comprehensive Filtering Workflow

Raw variant calls from Mutect2 require sophisticated filtering to separate true somatic variants from technical artifacts and residual germline polymorphisms. The complete filtering workflow involves multiple specialized tools and considerations.

Orientation Bias Artifact Filtering

Sequence context-dependent artifacts represent a significant challenge in somatic variant calling, particularly for certain sample types. GATK provides specialized tools to address these artifacts.

Common Artifact Types:

- OxoG Artifacts: Stem from oxidation of guanine to 8-oxoguanine, resulting in G to T transversions [6]. These are particularly common in certain sample preparation protocols.

- FFPE Artifacts: Result from formaldehyde-induced deamination of cytosines in formalin-fixed paraffin-embedded samples, causing C to T transitions [6]. These are prevalent in archival clinical specimens.

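The FilterByOrientationBias tool provides specialized filtering for these artifacts and should be run after FilterMutectCalls [6]. It requires pre-adapter detail metrics calculated by Picard's CollectSequencingArtifactMetrics and a specification of the base substitutions to consider for orientation bias [6]. This describes the GATK 4.0 workflow. Later GATK 4 releases supersede FilterByOrientationBias with the LearnReadOrientationModel approach, which uses F1R2 counts collected by Mutect2 (see the FFPE note earlier).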

The workflow for orientation bias filtering involves:

- Running CollectSequencingArtifactMetrics on the sample BAM

- Processing Mutect2 calls through FilterMutectCalls

- Applying FilterByOrientationBias with the appropriate artifact modes

Example command for OxoG artifact filtering:

For FFPE samples, researchers would specify --artifact-modes 'C/T' to address formalin-induced deamination artifacts [6].

Key Filtering Parameters

FilterMutectCalls employs a sophisticated statistical framework to distinguish true somatic variants from various false positive scenarios:

Normal Artifact LOD: Whereas the normal log odds (NLOD) is calculated assuming a diploid genotype, the normal-artifact LOD uses the same variable-ploidy assumption as the tumor LOD [2]. If the normal-artifact log odds is large, FilterMutectCalls applies the "artifact-in-normal" filter [2]. If the matched normal is contaminated with tumor cells, consider increasing the normal-artifact-lod threshold so that genuine somatic variants also present at low levels in the normal are not filtered [2].

Tumor LOD: The tumor log odds is calculated independently of any matched normal and determines whether a tumor variant is filtered [2]. Variants whose tumor LOD exceeds the threshold pass this filter [2].

Sensitivity Adjustment: The --f-score-beta parameter in FilterMutectCalls sets the relative weight of recall versus precision when the filtering threshold is chosen [3]. Increasing it above its default value of 1 enhances sensitivity at the expense of precision. This may be appropriate for screening applications where missed variants are costlier than false positives [3].
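The standard F-beta formula, F = (1 + β²)PR / (β²P + R), shows the trade-off that the F-score beta parameter controls. In the sketch below, the two precision/recall pairs are made-up operating points, not benchmark results. With β = 1 the high-precision point scores better (about 0.877 vs 0.856). With β = 2 the high-recall point wins (about 0.893 vs 0.829). As we understand it, FilterMutectCalls applies this idea to its own model-based estimates of precision and recall when choosing a threshold. The code illustrates only the metric.

```python
def f_beta(precision: float, recall: float, beta: float = 1.0) -> float:
    """F-beta score: weighted harmonic mean of precision and recall.

    beta > 1 weights recall (sensitivity) more heavily; beta = 1 gives F1.
    """
    if precision <= 0 and recall <= 0:
        return 0.0
    b2 = beta * beta
    return (1 + b2) * precision * recall / (b2 * precision + recall)


if __name__ == "__main__":
    # Two hypothetical filtering thresholds, as (precision, recall)
    strict, lenient = (0.97, 0.80), (0.80, 0.92)
    for beta in (1.0, 2.0):
        print(beta, round(f_beta(*strict, beta), 3), round(f_beta(*lenient, beta), 3))
```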

Research Reagent Solutions

Table 4: Essential Research Reagents and Resources for Mutect2 Analysis

| Resource Type | Specific Examples | Purpose and Function | Criticality |
|---|---|---|---|
| Germline Resource | af-only-gnomad.hg38.vcf | Provides population allele frequencies as prior probabilities for germline variation | Highly Recommended |
| Panel of Normals | 1000g_pon.hg38.vcf, Mutect2-WGS-panel-b37.vcf | Identifies technical artifacts and common germline variants present in normal populations | Highly Recommended |
| Reference Genome | GRCh38, hg19 | Standardized reference sequence for read alignment and variant calling | Required |
| Benchmarking Resources | GIAB Gold Standard Datasets (HG001-7) | Enables performance validation and optimization through comparison against truth sets | Recommended for QC |
| Alignment Tools | BWA-MEM | Efficient mapping of sequencing reads to reference genome | Required |
| Duplicate Marking | Picard MarkDuplicates | Identifies PCR duplicates to prevent coverage inflation | Required |
| Variant Filtering | FilterMutectCalls, FilterByOrientationBias | Removes false positives and technical artifacts from raw variant calls | Required |

These research reagents form the foundation of reproducible somatic variant detection. The germline resource, typically derived from gnomAD, provides population allele frequencies that serve as prior probabilities in Mutect2's statistical model [1] [3]. When a variant is absent from this resource, Mutect2 uses a small imputed allele frequency based on the --af-of-alleles-not-in-resource parameter [1]. The Panel of Normals captures systematic artifacts specific to sequencing protocols and analysis methods [3]. Publicly available PoNs include the 1000 Genomes Project panel for hg38 and Broad Institute-generated panels for both whole-genome and exome sequencing [3].

Benchmarking resources such as the Genome in a Bottle (GIAB) consortium's gold standard datasets enable objective performance assessment and pipeline optimization [5]. These resources provide high-confidence variant calls based on the consensus of multiple technologies and variant calling methods, establishing "ground truth" for evaluation [5]. For somatic calling, synthetic datasets or carefully characterized cell line mixtures can provide similar benchmarking capabilities.

Critical Importance of Properly Processed Input BAM Files

In genomic research, particularly in somatic variant discovery for cancer and drug development, the quality of the input data fundamentally determines the validity of all subsequent findings. Properly processed Binary Alignment Map (BAM) files represent the essential foundation upon which accurate variant identification rests. The Genome Analysis Toolkit (GATK), the industry standard for genomic analysis, imposes stringent requirements on input BAM files to ensure analytical validity [7]. Somatic variant calling with tools like Mutect2 requires BAM files that have undergone extensive pre-processing to correct for technical artifacts and biases inherent in sequencing technologies [8] [9]. When BAM files fail to meet these specifications, researchers risk introducing false positives, overlooking genuine somatic mutations, and ultimately generating unreliable results that can misdirect critical research pathways and therapeutic development programs.

The complex journey from raw sequencing reads to analysis-ready BAM files involves multiple computationally intensive steps, each designed to address specific technical challenges [9]. This whitepaper provides researchers and drug development professionals with a comprehensive technical framework for understanding, creating, and validating BAM files that meet the exacting standards required for robust somatic variant discovery using GATK's best practices workflow.

BAM File Specifications and GATK Requirements

Structural and Compositional Mandates

GATK maintains precise specifications for input BAM files to ensure compatibility and analytical accuracy. These requirements span file structure, content organization, and metadata completeness. According to GATK documentation, all SAM, BAM, or CRAM files must be accompanied by an index, which can be generated by Picard's BuildBamIndex tool [7]. The header must contain read groups with sample names, and every read in a BAM must belong to a read group listed in the header [7]. This read group information is critical for tracking the provenance of sequencing data and for downstream analysis steps.

A particularly critical requirement is that reads be sorted in coordinate order, not by queryname and not "unsorted" [7]. Coordinate sorting enables efficient processing of genomic regions and is essential for GATK's variant discovery tools to function properly. The sort order must be declared explicitly in the header with the SO:coordinate tag [7]. Contig ordering must also be consistent across all files in an analysis, including FASTA and VCF files, and must match the sequence dictionary of the reference genome FASTA [7].
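The sketch below illustrates these header-level requirements. It checks a SAM header in text form (for example, the output of `samtools view -H`) for three things: a coordinate sort order, read groups carrying SM tags, and @SQ contigs in the same order as the reference sequence dictionary. It is a hypothetical helper, not a substitute for Picard's ValidateSamFile. It does not check that every read's RG tag appears in the header, nor contig lengths or index presence.

```python
from typing import Dict, List, Sequence


def check_sam_header(header_text: str, reference_contigs: Sequence[str]) -> List[str]:
    """Return problems found in a SAM header (an empty list means none found)."""
    problems: List[str] = []
    hd_tags: Dict[str, str] = {}
    rg_lines: List[Dict[str, str]] = []
    sq_names: List[str] = []
    for line in header_text.splitlines():
        fields = line.split("\t")
        # TAG:VALUE fields after the record type, e.g. "SO:coordinate"
        tags = dict(f.split(":", 1) for f in fields[1:] if ":" in f)
        if fields[0] == "@HD":
            hd_tags = tags
        elif fields[0] == "@RG":
            rg_lines.append(tags)
        elif fields[0] == "@SQ":
            sq_names.append(tags.get("SN", ""))
    if hd_tags.get("SO") != "coordinate":
        problems.append(f"sort order is {hd_tags.get('SO', 'missing')!r}, expected 'coordinate'")
    if not rg_lines:
        problems.append("no @RG read groups in header")
    for rg in rg_lines:
        if not rg.get("SM"):
            problems.append(f"read group {rg.get('ID', '?')!r} has no SM (sample) tag")
    if sq_names != list(reference_contigs):
        problems.append("@SQ contigs or their order differ from the reference dictionary")
    return problems
```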

Table: Essential BAM File Requirements for GATK Somatic Variant Calling

| Requirement Category | Specific Specification | Purpose/Rationale |
|---|---|---|
| File Format | BAM (preferred) or CRAM | CRAM observed to have performance issues; BAM recommended for analysis [7] |
| Sort Order | Coordinate-sorted (SO:coordinate in header) | Enables efficient regional processing; required by GATK tools [7] |
| Indexing | Must have accompanying index (.bai file) | Permits random access to genomic regions; required by GATK [7] |
| Read Groups | Required with sample names (SM tags) | Essential for sample tracking and downstream analysis [7] |
| Validation | Must pass ValidateSamFile (Picard) | Ensures file integrity and compliance with specifications [7] |
| Contig Order | Consistent with reference dictionary | Prevents analytical errors from coordinate mismatches [7] |

Technical Composition of BAM Files

Understanding the internal structure of BAM files is essential for troubleshooting and quality assurance. BAM files are the binary, compressed form of SAM (Sequence Alignment Map) files, which are tab-delimited text files containing alignment information [10]. The basic structure consists of a header section and an alignment section. Header lines begin with the '@' character and include critical metadata such as @SQ lines for reference sequences and @RG lines for read groups [10].

The alignment section contains one line per read, with 11 mandatory fields that provide comprehensive mapping information [10]. Key fields include:

- the SAM flag, which encodes multiple properties of the alignment in a single numerical value;
- the mapping quality (MAPQ);
- the CIGAR string, which describes the alignment as a series of operations such as aligned bases, insertions, deletions, skipped reference regions and clipping [10]. The standard M operation covers both matches and mismatches.

The CIGAR string is particularly important for variant discovery because it enables the identification of insertion and deletion events.
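The sketch below decodes a FLAG value into the properties defined in the SAM specification, to make the bit-packed flag concrete. The bit values are standard; the short names are informal labels. Bit 0x400 is the one MarkDuplicates sets on duplicates, which lets downstream tools skip those reads without deleting them.

```python
from typing import List

# Bit values from the SAM specification; the names are informal labels
SAM_FLAG_BITS = {
    0x1: "paired",
    0x2: "proper_pair",
    0x4: "unmapped",
    0x8: "mate_unmapped",
    0x10: "reverse_strand",
    0x20: "mate_reverse_strand",
    0x40: "first_in_pair",
    0x80: "second_in_pair",
    0x100: "secondary",
    0x200: "qc_fail",
    0x400: "duplicate",
    0x800: "supplementary",
}


def decode_sam_flag(flag: int) -> List[str]:
    """Return the names of all bits set in a SAM FLAG value, lowest bit first."""
    return [name for bit, name in SAM_FLAG_BITS.items() if flag & bit]


if __name__ == "__main__":
    print(decode_sam_flag(99))    # first-in-pair read on the forward strand, mate reversed
    print(decode_sam_flag(1123))  # the same read after duplicate marking (99 + 1024)
```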

The BAM Pre-processing Pipeline for Somatic Variant Discovery

Systematic Workflow from Raw Data to Analysis-Ready BAM

The transformation of raw sequencing data into analysis-ready BAM files requires a multi-stage pre-processing pipeline designed to correct systematic errors and prepare data for variant discovery. The GATK best practices workflow for data pre-processing involves three principal phases: mapping to a reference genome, duplicate marking, and base quality score recalibration [9]. Each stage addresses distinct technical challenges and introduces specific corrections to enhance data quality.

Mapping to Reference represents the initial processing step, performed per-read group, where individual read pairs are aligned to a reference genome [9]. This provides the fundamental coordinate system for all subsequent genomic analyses. Because the mapping algorithm processes each read pair in isolation, this step can be massively parallelized to increase throughput. The resulting file is in SAM/BAM format sorted by coordinate [9].
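A per-read-group mapping step might look like the sketch below. It attaches the read group, including the SM sample name that GATK requires, at alignment time and streams BWA-MEM output into `samtools sort` to produce a coordinate-sorted BAM. This is a common pattern rather than the exact GATK Best Practices pipeline, which maps reads from unmapped BAMs and merges alignments with MergeBamAlignment. The helper names, the platform value and the thread count are assumptions.

```python
import subprocess
from typing import List, Tuple


def bwa_mem_sort_cmds(reference: str, fastq_r1: str, fastq_r2: str,
                      rg_id: str, sample: str, library: str, out_bam: str,
                      threads: int = 8) -> Tuple[List[str], List[str]]:
    """Return (bwa mem argv, samtools sort argv) for one read group."""
    # Raw f-string: bwa itself expands the literal "\t" escapes into tabs
    read_group = rf"@RG\tID:{rg_id}\tSM:{sample}\tLB:{library}\tPL:ILLUMINA"
    align = ["bwa", "mem", "-t", str(threads), "-R", read_group,
             reference, fastq_r1, fastq_r2]
    sort = ["samtools", "sort", "-o", out_bam, "-"]  # "-" = read SAM from stdin
    return align, sort


def run_pipeline(align: List[str], sort: List[str]) -> None:
    """Equivalent of `align | sort` in a shell, failing if either step fails."""
    aligner = subprocess.Popen(align, stdout=subprocess.PIPE)
    sorter = subprocess.Popen(sort, stdin=aligner.stdout)
    aligner.stdout.close()  # lets bwa receive SIGPIPE if samtools exits early
    sort_rc, align_rc = sorter.wait(), aligner.wait()
    if sort_rc or align_rc:
        raise RuntimeError(f"pipeline failed (bwa={align_rc}, samtools={sort_rc})")
```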

Marking Duplicates constitutes the second processing step, performed per-sample, where read pairs likely originating from duplicates of the same original DNA fragments are identified [9]. These represent non-independent observations that can bias variant calling, so the program tags all but a single read pair within each set of duplicates, causing the marked pairs to be ignored during variant discovery. GATK recommends using MarkDuplicatesSpark for this step, which utilizes Apache Spark to parallelize what has historically been a performance bottleneck [9].

Base Quality Score Recalibration (BQSR) forms the third and most computationally sophisticated pre-processing step, performed per-sample [9]. This procedure applies machine learning to detect and correct patterns of systematic errors in the base quality scores, which are confidence scores emitted by the sequencer for each base call. Since variant calling algorithms rely heavily on these quality scores, correcting systematic biases is essential for accurate variant discovery [9]. Biases can originate from various sources, including biochemical processes during library preparation, manufacturing defects in sequencing chips, or instrumentation defects in the sequencer.
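The core idea of BQSR is to compare reported qualities with observed mismatch rates at sites not expected to vary. The sketch below does this for a single covariate and is a deliberate simplification. GATK's BQSR also models read group, machine cycle and sequence context, and uses a more elaborate (Bayesian) estimate. Here a simple +1/+2 pseudocount stands in for that, and the input format is hypothetical.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def empirical_qualities(observations: Iterable[Tuple[int, bool]]) -> Dict[int, float]:
    """Map each reported base quality to an empirical Phred-scaled quality.

    observations: (reported_quality, is_mismatch) for each aligned base,
    collected only at positions absent from known-variant resources so that
    real polymorphisms are not counted as sequencing errors.
    """
    counts: Dict[int, List[int]] = defaultdict(lambda: [0, 0])  # q -> [mismatches, bases]
    for qual, mismatch in observations:
        counts[qual][0] += int(mismatch)
        counts[qual][1] += 1
    result = {}
    for qual, (mismatches, bases) in counts.items():
        error_rate = (mismatches + 1) / (bases + 2)  # pseudocounts avoid log(0)
        result[qual] = -10 * math.log10(error_rate)
    return result


if __name__ == "__main__":
    # Hypothetical bin: 1,000 bases reported at Q30, 9 of them mismatches
    obs = [(30, False)] * 991 + [(30, True)] * 9
    print(round(empirical_qualities(obs)[30], 1))  # Q30 calls behave like ~Q20
```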

Specialized Processing for Specific Applications

While the core pre-processing workflow remains consistent across applications, certain data types require specialized processing steps. For RNA sequencing data, an additional SplitNCigarReads step is necessary to split reads that contain Ns in their CIGAR string, which typically represent reads spanning splicing events [11]. This specialized processing ensures that variant calling algorithms can properly interpret splicing-aware alignments.
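The sketch below shows the coordinate bookkeeping behind splitting at N operations. Each N, a skipped reference region that is typically an intron, ends one piece and shifts the reference start of the next. This is an illustration only, not GATK's SplitNCigarReads, which also handles read sequences, qualities and overhanging bases. The function name is hypothetical.

```python
import re
from typing import List, Tuple

CIGAR_OP = re.compile(r"(\d+)([MIDNSHP=X])")
REF_CONSUMING = set("MDN=X")  # operations that advance the reference position


def split_on_n(pos: int, cigar: str) -> List[Tuple[int, str]]:
    """Split one spliced alignment into (reference_start, cigar) pieces at each N.

    pos is the 1-based leftmost aligned reference position (SAM POS).
    """
    pieces: List[Tuple[int, str]] = []
    current: List[str] = []
    start = ref = pos
    for length_str, op in CIGAR_OP.findall(cigar):
        length = int(length_str)
        if op == "N":
            if current:
                pieces.append((start, "".join(current)))
            current = []
            ref += length  # skip the intron on the reference
            start = ref    # next piece begins after it
            continue
        current.append(f"{length}{op}")
        if op in REF_CONSUMING:
            ref += length
    if current:
        pieces.append((start, "".join(current)))
    return pieces
```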

For tumor-normal paired analyses, the pre-processing pipeline must be applied consistently to both sample types, with particular attention to potential artifacts in formalin-fixed paraffin-embedded (FFPE) samples, which often exhibit specific sequencing artifacts that require specialized handling in the Mutect2 workflow [8] [12].

Quality Control and Validation of Processed BAM Files

Comprehensive Quality Assessment Metrics

Rigorous quality control of processed BAM files is essential before proceeding to variant discovery. Multiple quality assessment tools provide complementary perspectives on BAM file quality. Picard's CollectMultipleMetrics (also available through GATK) and external tools like Qualimap offer comprehensive evaluation frameworks [11] [13]. These tools generate a suite of metrics that together characterize the technical quality of the sequencing data and its suitability for somatic variant calling.

Alignment metrics provide fundamental information about mapping success, including the total number of reads, mapping rate, and read distribution across genomic regions [13]. For targeted sequencing approaches, the percentage of reads on-target represents a critical quality indicator. Coverage metrics characterize the depth and uniformity of sequencing across the genome or target regions, with mean coverage, coverage uniformity, and the percentage of bases achieving minimum coverage thresholds (typically 20-30X for somatic variants) representing key quality benchmarks [13].
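The coverage metrics above reduce to simple arithmetic over per-base depths. This sketch assumes the depths cover every target base, including zero-coverage ones (for example, the third column of `samtools depth -a` restricted to the targets). The 20X and 30X thresholds mirror the typical values cited above.

```python
from typing import Dict, Sequence


def coverage_summary(depths: Sequence[int],
                     thresholds: Sequence[int] = (20, 30)) -> Dict[str, float]:
    """Mean depth and percentage of bases at or above each depth threshold."""
    if not depths:
        raise ValueError("no per-base depths supplied")
    n = len(depths)
    summary = {"mean_coverage": sum(depths) / n}
    for t in thresholds:
        summary[f"pct_bases_ge_{t}x"] = 100.0 * sum(d >= t for d in depths) / n
    return summary
```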

Library complexity metrics help identify potential issues with amplification bias, with duplication rates serving as a primary indicator [13]. Exceptionally high duplication rates may suggest insufficient starting material or amplification artifacts that could compromise variant detection. Insert size metrics provide information about library preparation quality, with deviations from expected insert size distributions potentially indicating fragmentation issues or other library preparation artifacts [13].

Table: Essential Quality Control Metrics for Processed BAM Files

| Metric Category | Specific Metrics (example thresholds) | Purpose |
|---|---|---|
| Alignment Quality | Mapping rate (>90%), Properly paired rate, Mean mapping quality (>Q30) | Indicates successful alignment to reference |
| Coverage Statistics | Mean coverage (≥30X for somatic), Uniformity, % bases at ≥20X (>85%) | Ensures sufficient depth for variant detection |
| Library Complexity | Duplication rate (varies by protocol), Estimated library size | Identifies potential amplification biases |
| Base Quality | Pre- and post-recalibration quality scores, Error rates | Confirms effective error correction |
| Sequence Content | GC distribution, Nucleotide composition | Detects sequence-specific biases |
| Insert Size | Mean insert size, Standard deviation | Verifies library preparation quality |

Specialized QC for Somatic Variant Discovery

Beyond standard quality metrics, BAM files intended for somatic variant discovery require additional, specialized quality assessments. Cross-sample contamination represents a particular concern in somatic analyses, as even low levels of contamination can generate false positive variant calls [8]. GATK provides specialized tools including GetPileupSummaries and CalculateContamination specifically designed to estimate contamination fractions, with CalculateContamination engineered to perform well even in samples with significant copy number variation [8].
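The sketch below shows how these tools chain together, following the GATK 4.1+ documentation:

1. GetPileupSummaries summarizes reads at common biallelic germline sites.
2. CalculateContamination estimates the contamination fraction, optionally using a matched normal via `-matched`, and writes a tumor segmentation.
3. FilterMutectCalls receives both tables.

The helper, file names and common-sites VCF are assumptions.

```python
from typing import List, Optional


def contamination_commands(reference: str, tumor_bam: str, common_sites_vcf: str,
                           unfiltered_vcf: str, prefix: str,
                           normal_bam: Optional[str] = None) -> List[List[str]]:
    """GetPileupSummaries -> CalculateContamination -> FilterMutectCalls argv lists."""
    def pileups(bam: str, out: str) -> List[str]:
        # -V supplies the sites and their allele frequencies; -L restricts to them
        return ["gatk", "GetPileupSummaries", "-I", bam,
                "-V", common_sites_vcf, "-L", common_sites_vcf, "-O", out]

    tumor_table = f"{prefix}.tumor-pileups.table"
    contamination = f"{prefix}.contamination.table"
    segments = f"{prefix}.segments.table"
    cmds = [pileups(tumor_bam, tumor_table)]
    calc = ["gatk", "CalculateContamination", "-I", tumor_table,
            "-O", contamination, "--tumor-segmentation", segments]
    if normal_bam:  # optional matched normal
        normal_table = f"{prefix}.normal-pileups.table"
        cmds.append(pileups(normal_bam, normal_table))
        calc += ["-matched", normal_table]
    cmds.append(calc)
    cmds.append(["gatk", "FilterMutectCalls", "-R", reference, "-V", unfiltered_vcf,
                 "--contamination-table", contamination,
                 "--tumor-segmentation", segments,
                 "-O", f"{prefix}.filtered.vcf.gz"])
    return cmds
```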

For analyses involving FFPE-derived samples or those sequenced on platforms prone to specific artifacts, read orientation bias assessment becomes critical [12]. The Mutect2 workflow includes specialized tools (LearnReadOrientationModel) to detect and correct for substitution errors that occur predominantly on a single strand before sequencing [12]. This specialized quality control step is essential for minimizing false positive calls in challenging sample types.

Integration with GATK Mutect2 Somatic Variant Calling

Direct Pipeline Connectivity

Properly processed BAM files serve as the direct input to the GATK Mutect2 somatic variant calling workflow, which identifies somatic short variants (SNVs and Indels) via local assembly of haplotypes [8] [1]. The Mutect2 caller uses a Bayesian somatic genotyping model that differs from the original MuTect algorithm and employs assembly-based machinery similar to HaplotypeCaller [1]. When Mutect2 encounters regions showing evidence of somatic variation, it completely reassembles reads in that region to generate candidate variant haplotypes, then aligns each read to these haplotypes to obtain likelihood matrices [8].

The critical dependency between BAM quality and Mutect2 performance manifests in multiple aspects of the algorithm. The base quality scores directly influence the Bayesian genotyping model, with inaccurately calibrated quality scores potentially skewing variant likelihood calculations [9]. The presence of duplicate reads can artificially inflate evidence for variant alleles if not properly flagged [9]. Improperly sorted BAM files or those with incorrect read groups can disrupt the regional processing approach used by Mutect2, leading to analysis failures or incomplete variant calling [7].

Advanced Filtering Dependencies

The downstream filtering steps in the Mutect2 workflow exhibit direct dependencies on properly processed BAM files. FilterMutectCalls, which applies sophisticated filters to distinguish true somatic variants from artifacts, relies on multiple BAM-derived metrics [8] [12]. The contamination estimation derived from GetPileupSummaries and CalculateContamination directly influences filtering stringency [8]. The read orientation model learned from the BAM file data enables detection of strand-specific artifacts common in FFPE samples [12].

Recent advances in Mutect2 have introduced a somatic clustering model that leverages allele fraction patterns across variants to distinguish true somatic events from artifacts [12]. This model uses a Dirichlet process binomial mixture model to identify subclonal allele fraction clusters, which depends on accurate read alignment and quality calibration in the input BAM files to properly characterize allele fractions [12].

Successful somatic variant discovery requires not only proper BAM files but also a suite of reference resources and analytical tools that collectively ensure analytical validity. These reagents provide the necessary context for distinguishing true somatic variants from technical artifacts and population polymorphisms.

Table: Essential Research Reagents for Somatic Variant Discovery

| Resource Type | Purpose/Function | Examples/Sources |
|---|---|---|
| Reference Genome | Coordinate system for alignment and variant calling | GRCh37/hg19, GRCh38/hg38 (used consistently, including any decoy contigs) |
| Germline Resource | Provides population allele frequencies to distinguish rare germline variants from somatic variants | gnomAD, 1000 Genomes, population-specific databases |
| Panel of Normals (PON) | Identifies recurrent technical artifacts across normal samples | Created from 40+ normal samples using CreateSomaticPanelOfNormals [12] |
| Known Sites Resources | Enables base quality recalibration and contamination assessment | dbSNP, Mills indel gold standard, known indels sets [9] |
| Functional Annotation Databases | Provides biological context for identified variants | COSMIC, dbSNP, GENCODE, ClinVar [8] |

The germline resource, typically derived from large population databases like gnomAD, provides allele frequencies that enable Mutect2 to distinguish potential germline polymorphisms from true somatic events [1]. The Panel of Normals (PON) is particularly critical for tumor-only analyses, as it captures technical artifacts that recur across normal samples, allowing these to be filtered from the final variant call set [12]. Creation of a high-quality PON requires Mutect2 to be run on each normal sample in tumor-only mode, followed by GenomicsDBImport and CreateSomaticPanelOfNormals to aggregate artifacts [12].
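The three PoN stages can be sketched as a small Python helper that assembles the GATK command lines as argument lists (suitable for `subprocess.run`). The helper name `pon_commands`, the file names, and the interval list are hypothetical; `--max-mnp-distance 0` follows the GATK tool documentation's PoN example, but the flags should be checked against the GATK version in use.

```python
from __future__ import annotations


def pon_commands(ref: str, normal_bams: list[str], intervals: str, germline: str,
                 workspace: str = "pon_db", out: str = "pon.vcf.gz") -> list[list[str]]:
    """Assemble (but do not run) the commands for building a panel of normals."""
    cmds: list[list[str]] = []
    normal_vcfs = []
    for bam in normal_bams:
        vcf = bam.removesuffix(".bam") + ".vcf.gz"
        normal_vcfs.append(vcf)
        # Stage 1: call each normal on its own (tumor-only mode), MNP merging disabled
        cmds.append(["gatk", "Mutect2", "-R", ref, "-I", bam,
                     "--max-mnp-distance", "0", "-O", vcf])
    # Stage 2: consolidate the per-normal VCFs into one GenomicsDB workspace
    import_cmd = ["gatk", "GenomicsDBImport", "-R", ref, "-L", intervals,
                  "--genomicsdb-workspace-path", workspace]
    for vcf in normal_vcfs:
        import_cmd += ["-V", vcf]
    cmds.append(import_cmd)
    # Stage 3: aggregate sites that recur across normals into the PoN VCF
    cmds.append(["gatk", "CreateSomaticPanelOfNormals", "-R", ref,
                 "--germline-resource", germline,
                 "-V", f"gendb://{workspace}", "-O", out])
    return cmds


for cmd in pon_commands("ref.fasta", ["normal1.bam", "normal2.bam"],
                        "targets.interval_list", "af-only-gnomad.vcf.gz"):
    print(" ".join(cmd))
```

Returning argument lists rather than shell strings avoids quoting problems and makes the commands easy to inspect or log before execution.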

Properly processed BAM files represent the non-negotiable foundation for robust somatic variant discovery in cancer research and therapeutic development. The intricate dependencies between BAM file quality, pre-processing methodologies, and downstream variant calling algorithms necessitate rigorous attention to each stage of the data preparation pipeline. From initial read alignment through duplicate marking, base quality recalibration, and comprehensive quality assessment, each step introduces specific corrections that collectively enable accurate distinction of true somatic mutations from technical artifacts.

The GATK Mutect2 workflow incorporates sophisticated statistical models and filtering approaches that presume properly processed, analysis-ready BAM files as input. Deviations from best practices in BAM processing propagate through the analytical pipeline, potentially compromising the sensitivity and specificity of variant detection. For researchers in drug development and clinical applications, where variant calls may inform therapeutic decisions and clinical trial design, adherence to these stringent BAM processing standards is not merely methodological refinement—it is an essential requirement for generating clinically actionable insights.

As somatic variant detection extends into increasingly challenging contexts, including low allele fractions, complex structural variants, and heterogeneous sample types, the importance of optimized BAM processing will only grow. Future methodological advances will likely introduce additional preprocessing refinements, but the fundamental principle will endure: reliable somatic variant discovery depends on properly processed input BAM files.

Within the framework of somatic variant discovery for cancer research and therapeutic development, data pre-processing constitutes the foundational phase that transforms raw sequencing data into analysis-ready aligned files. This process is particularly critical for drug development pipelines where accurate detection of somatic mutations directly informs target identification, patient stratification, and treatment response monitoring. The Genome Analysis Toolkit (GATK) Best Practices workflow establishes a standardized methodology for pre-processing sequence data prior to variant detection, ensuring optimal input quality for somatic callers like Mutect2 [9] [8]. For research teams in pharmaceutical and biotechnology settings, rigorous implementation of these steps reduces false positive rates in variant calling, thereby increasing confidence in identified biomarkers and therapeutic targets.

The pre-processing phase specifically addresses technical artifacts and systematic biases introduced during sequencing library preparation and instrumentation [9]. Through three coordinated procedures—read alignment, duplicate marking, and base quality score recalibration—the workflow produces BAM files that accurately represent the true biological sequence of the tumor and normal samples. This technical foundation is indispensable for subsequent somatic variant calling, where distinguishing true somatic mutations from sequencing artifacts requires carefully calibrated quality metrics [14]. The computational reproducibility afforded by standardized pre-processing directly supports regulatory requirements in clinical trial sequencing and diagnostic development.

Core Pre-processing Steps

Sequence Alignment to Reference

The initial pre-processing step involves aligning raw sequencing reads from FASTQ or unmapped BAM (uBAM) files to a reference genome, establishing genomic coordinates for all subsequent analyses [9]. This process utilizes optimized aligners such as BWA-MEM, which handles the mapping of individual read pairs to the reference genome in a highly parallelizable manner [15]. For somatic variant discovery in cancer research, consistent alignment across tumor-normal pairs is essential to ensure that observed differences reflect true biological variation rather than technical inconsistencies in mapping.

The alignment step is performed per read group, which corresponds to the intersection of library preparation and sequencing lane [16]. Each read group must be assigned a unique identifier (ID) and a sample name (SM) so that technical replicates of one biological sample can be distinguished from different samples. Following alignment, the Picard MergeBamAlignment tool integrates mapping information with the original read data to produce coordinate-sorted BAM files [9]. For multi-library or multiplexed sequencing designs, the Broad Institute's production pipeline processes each read group individually before merging, ensuring optimal handling of lane-specific effects while maintaining sample integrity [16].
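Read group tags are usually attached at alignment time. The hypothetical helper `bwa_read_group` below builds the `-R` argument for `bwa mem`, which accepts a literal `\t` escape between fields and converts it to a tab in the output header. The example values are borrowed from the read group table elsewhere in this guide; the field set and order are illustrative choices, not a requirement.

```python
def bwa_read_group(rg_id: str, sample: str, library: str,
                   platform_unit: str, platform: str = "ILLUMINA") -> str:
    """Build the @RG line passed to `bwa mem -R`, using literal "\\t" escapes."""
    fields = {"ID": rg_id, "SM": sample, "LB": library,
              "PL": platform, "PU": platform_unit}
    for tag, value in fields.items():
        # Empty values or embedded whitespace would corrupt the tab-delimited header
        if not value or any(ch.isspace() for ch in value):
            raise ValueError(f"invalid {tag} value: {value!r}")
    return "@RG\\t" + "\\t".join(f"{tag}:{value}" for tag, value in fields.items())


rg = bwa_read_group("H0164.2", "NA12878", "Solexa-272222", "H0164ALXX140820.2")
print(rg)  # @RG\tID:H0164.2\tSM:NA12878\tLB:Solexa-272222\tPL:ILLUMINA\tPU:H0164ALXX140820.2
```

The resulting string would then be passed as, for example, `bwa mem -R "$RG" ref.fasta reads_1.fq reads_2.fq`, once per read group.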

Table 1: Key Alignment Tools and Their Applications in Somatic Research

| Tool | Primary Function | Role in Somatic Analysis |
|---|---|---|
| BWA-MEM | Read alignment to reference | Maps tumor and normal reads to common coordinate system |
| MergeBamAlignment | Integrates mapping with original read data | Preserves original read information while adding mapping coordinates |
| Samtools | BAM file manipulation and indexing | Provides utilities for file processing and quality checks [14] |

Duplicate Marking

Duplicate marking identifies non-independent observations arising from the same original DNA fragments, most commonly through PCR amplification during library preparation [9]. These artifacts artificially inflate coverage metrics and can mislead variant allele frequency calculations—a critical parameter in somatic variant calling where subclonal mutations may exist at low frequencies. The GATK workflow employs MarkDuplicatesSpark (or MarkDuplicates followed by SortSam) to identify and tag duplicate read pairs based on their alignment coordinates and pairing information [9].

For cancer genome analysis, duplicate marking is particularly important in tumor samples that often originate from limited biological material requiring significant amplification. The process distinguishes between PCR duplicates (occurring during library preparation) and optical duplicates (from sequencing cluster imaging), with the former being more prevalent in amplified samples [9]. Modern implementations perform duplicate marking after merging all read groups for a sample, enabling detection of duplicates that occur across different sequencing lanes [16]. This approach recognizes that the same DNA fragment might be sequenced in multiple lanes in multiplexed designs, and only cross-lane duplicate marking can identify these artifacts.
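The grouping logic can be sketched in a few lines of Python. This is a simplified model, not Picard's implementation: read pairs are reduced to hypothetical records holding the library, chromosome, each mate's unclipped 5' position and strand, and a summed base quality. Pairs sharing all of these form one group; the highest-quality pair is kept as the representative (similar in spirit to Picard's default sum-of-base-qualities scoring) and the rest are flagged. Keying on library rather than lane is what allows duplicates to be found across lanes. Mates on different chromosomes, optical duplicate detection, and unpaired reads are ignored.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass


@dataclass
class ReadPair:
    """Hypothetical, stripped-down view of one aligned read pair."""
    name: str
    library: str        # LB read group tag
    chrom: str          # simplification: both mates on the same chromosome
    five_prime_1: int   # unclipped 5' position of mate 1
    strand_1: str       # "+" or "-"
    five_prime_2: int   # unclipped 5' position of mate 2
    strand_2: str
    base_qual_sum: int  # summed base qualities of both mates


def mark_duplicates(pairs: list[ReadPair]) -> set[str]:
    """Return the names of read pairs that would be flagged as duplicates."""
    groups: dict[tuple, list[ReadPair]] = defaultdict(list)
    for p in pairs:
        # Sort the two ends so that which mate is "first" does not change the key
        ends = tuple(sorted([(p.five_prime_1, p.strand_1), (p.five_prime_2, p.strand_2)]))
        groups[(p.library, p.chrom, ends)].append(p)
    duplicates: set[str] = set()
    for members in groups.values():
        # Keep the highest-quality pair as the representative; flag all others
        best = max(members, key=lambda p: p.base_qual_sum)
        duplicates.update(p.name for p in members if p is not best)
    return duplicates


pairs = [
    ReadPair("p1", "Solexa-272222", "chr1", 1000, "+", 1300, "-", 1800),
    ReadPair("p2", "Solexa-272222", "chr1", 1300, "-", 1000, "+", 1500),  # same fragment, mates swapped
    ReadPair("p3", "Solexa-999999", "chr1", 1000, "+", 1300, "-", 1700),  # different library
]
print(mark_duplicates(pairs))  # {'p2'}
```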

Diagram 1: Duplicate marking process flow. The algorithm compares alignment coordinates to identify duplicate reads before marking all but one representative read.

Base Quality Score Recalibration

Base quality score recalibration (BQSR) corrects systematic biases in the quality scores assigned by sequencing instruments [9]. These quality scores represent confidence values for each base call and profoundly influence variant calling algorithms, which use them as weights when evaluating evidence for or against potential variants [9]. BQSR employs machine learning to detect patterns of systematic errors based on covariates such as sequencing cycle, base composition, and machine characteristics, then adjusts quality scores to better reflect empirical error rates.

The BQSR process occurs in two distinct phases: BaseRecalibrator builds a recalibration model by analyzing all base calls in the dataset and identifying systematic errors, while ApplyBQSR applies the model to adjust quality scores in the BAM file [9]. For somatic variant discovery, this step is crucial because tumors often exhibit mutation patterns that can be confused with sequencing artifacts without proper quality adjustment. The procedure is performed per read group, recognizing that different lanes and libraries may exhibit distinct error profiles [16]. This granular approach ensures that lane-specific artifacts from sequencing chips or instrumentation defects are properly corrected.
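The core of the counting phase can be sketched as follows. This is a deliberately simplified model under stated assumptions: each base is reduced to a hypothetical tuple (read group, reported quality, machine cycle, whether it mismatches the reference, whether it lies at a known variant site), only three covariates are used, and the empirical error rate uses simple add-one smoothing. BaseRecalibrator uses more covariates (including sequence context) and a different estimator, so its numbers will not match.

```python
import math
from collections import defaultdict


def empirical_qualities(bases):
    """Map each (read_group, reported_q, cycle) bin to an empirical Phred quality."""
    counts = defaultdict(lambda: [0, 0])  # bin -> [bases observed, mismatches]
    for read_group, reported_q, cycle, is_mismatch, at_known_site in bases:
        if at_known_site:
            continue  # known polymorphisms are masked so they are not counted as errors
        key = (read_group, reported_q, cycle)
        counts[key][0] += 1
        counts[key][1] += int(is_mismatch)
    qualities = {}
    for key, (observed, mismatches) in counts.items():
        # Add-one smoothing avoids an infinite quality for bins with zero mismatches
        error_rate = (mismatches + 1) / (observed + 2)
        qualities[key] = -10 * math.log10(error_rate)
    return qualities


bases = ([("rg1", 30, 5, False, False)] * 998    # a well-behaved lane reporting Q30
         + [("rg1", 30, 5, True, True)]          # mismatch at a known site: ignored
         + [("rg2", 30, 5, True, False)] * 4     # a noisy lane also reporting Q30
         + [("rg2", 30, 5, False, False)] * 4)
print(empirical_qualities(bases))
```

In this toy input, rg1's empirical quality comes out at about Q30, matching what the instrument reported, while rg2's drops to roughly Q3; recalibration would rewrite rg2's qualities accordingly, which is why the model is kept per read group.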

Table 2: Base Quality Score Recalibration Components

| Component | Function | Technical Implementation |
|---|---|---|
| BaseRecalibrator | Builds error model from covariate data | Collects statistics by sequencing cycle, read group, and sequence context [9] |
| Recalibration Model | Mathematical representation of systematic errors | Applies machine learning to detect patterns across covariates [9] |
| ApplyBQSR | Adjusts base quality scores in BAM | Modifies QUAL fields based on model predictions [15] |
| AnalyzeCovariates (Optional) | Visualizes recalibration effects | Generates plots comparing pre- and post-recalibration quality metrics [9] |

Diagram 2: Base quality score recalibration workflow. The process involves analyzing systematic errors, building a correction model, and applying quality score adjustments.

Experimental Design Considerations

Multiplexed Sequencing and Multi-Library Designs

Complex experimental designs involving multiplexed sequencing or multiple libraries per sample require specific adaptations to the standard pre-processing workflow. For drug development studies analyzing large cohorts of tumor samples, multiplexing enables cost-effective sequencing but necessitates careful data handling [16]. The recommended approach processes each read group (representing a unique library-lane combination) individually through alignment and sorting before merging read groups belonging to the same sample during duplicate marking [16].

This strategy efficiently handles technical variability while ensuring biological consistency within samples. When multiple libraries are prepared from the same biological sample, they must maintain distinct read group identifiers to preserve their technical origin throughout analysis [16]. Base quality recalibration subsequently processes the merged BAM files while still distinguishing read groups internally, applying lane-specific corrections where necessary [16]. This balanced approach acknowledges that some artifacts are lane-specific while biological phenomena should be consistent across technical replicates.

Resource Planning for Large-Scale Studies

For pharmaceutical companies conducting large-scale cancer genomics studies, computational resource planning is essential for timely processing of hundreds or thousands of tumor-normal pairs. The GATK Best Practices reference implementations provide scalable solutions optimized for cloud execution [9]. The MarkDuplicatesSpark tool specifically addresses performance bottlenecks in duplicate marking by leveraging Apache Spark for parallel processing, significantly accelerating this computationally intensive step [9].

Base quality recalibration can be scattered across genomic regions (typically by chromosome) during the initial statistics collection phase, then gathered into a genome-wide model [9]. This parallelization strategy enables efficient processing of whole-genome sequencing data from large clinical trials. For organizations with established high-performance computing infrastructure, the GATK workflows can be distributed across clusters to meet the computational demands of drug discovery timelines.
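Scatter-gather works for this phase because the statistics collected per region are plain counts, which can be summed exactly. The sketch below merges hypothetical per-region shards of (observations, mismatches) counts keyed by covariate bin; in the GATK pipeline the equivalent step is performed by the GatherBQSRReports tool, whose table format is not modeled here.

```python
from collections import Counter


def gather_recal_counts(shards):
    """Sum per-region (observations, mismatches) counts into one genome-wide table."""
    observed, mismatched = Counter(), Counter()
    for shard in shards:
        for key, (n_obs, n_mm) in shard.items():
            observed[key] += n_obs
            mismatched[key] += n_mm
    return {key: (observed[key], mismatched[key]) for key in observed}


chr1_shard = {("rg1", 30, 5): (100, 1), ("rg1", 20, 7): (40, 2)}
chr2_shard = {("rg1", 30, 5): (50, 2)}
print(gather_recal_counts([chr1_shard, chr2_shard]))
# {('rg1', 30, 5): (150, 3), ('rg1', 20, 7): (40, 2)}
```

Empirical qualities should be derived only after the counts are merged; averaging per-shard qualities instead would give sparsely covered regions the same weight as densely covered ones.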

Integration with Somatic Variant Discovery

Input Requirements for Mutect2

The Mutect2 somatic variant caller, which forms the core of the GATK somatic discovery workflow, requires properly pre-processed BAM files as input [8]. The pre-processing steps directly impact Mutect2's performance by ensuring that alignment artifacts do not masquerade as somatic variants and that base quality scores accurately reflect error probabilities [8] [1]. Specifically, duplicate marking prevents over-represented fragments from inflating variant evidence, while base quality recalibration enables the Bayesian caller to properly weigh evidence for and against potential mutations [9].

For tumor-normal analyses, both samples must undergo identical pre-processing procedures to ensure comparability [8]. The GATK somatic short variant discovery workflow explicitly states that "input BAMs should be pre-processed as described in the GATK Best Practices for data pre-processing" before variant calling with Mutect2 [8]. This consistency is particularly important for detecting subclonal variants with low allele frequencies, where subtle technical differences between tumor and normal processing could obscure true biological signals.

Quality Control Metrics

Rigorous quality control following pre-processing is essential before proceeding to variant discovery. The GATK Best Practices recommend verifying key sequencing metrics, assessing coverage uniformity, checking for sample contamination, and confirming expected sample relationships in family studies or tumor-normal pairs [14]. Tools such as Picard and BEDTools provide standardized metrics for evaluating pre-processing outcomes [14].

For cancer studies, additional checks should include comparing the overall quality profiles of tumor and normal samples, verifying expected contamination levels in tumor samples, and ensuring adequate coverage in genomic regions of therapeutic interest [14]. These QC measures provide confidence that pre-processed BAM files meet the quality standards required for reliable somatic variant detection, particularly in clinical contexts where results may inform treatment decisions.

Research Reagent Solutions

Table 3: Essential Computational Tools for Pre-processing in Somatic Variant Studies

| Tool/Resource | Function | Application Context |
|---|---|---|
| BWA-MEM | Read alignment to reference | Standard aligner for Illumina sequencing data [14] [15] |
| GATK MarkDuplicatesSpark | Identify and tag duplicate reads | Handles duplicate marking efficiently across multiple cores [9] |
| GATK BaseRecalibrator | Build base quality recalibration model | Detects patterns of systematic errors in sequencing data [9] [15] |
| GATK ApplyBQSR | Apply base quality adjustments | Implements model to correct systematic quality score biases [15] |
| Samtools | BAM file manipulation and indexing | Provides utilities for file processing, sorting, and indexing [14] |
| Picard Tools | Sequence data processing metrics | Generates quality control metrics for aligned reads [14] |

The pre-processing workflow encompassing alignment, duplicate marking, and base quality recalibration establishes the essential foundation for accurate somatic variant discovery in cancer research. By systematically addressing technical artifacts and biases inherent to sequencing technologies, these steps transform raw data into analysis-ready BAM files suitable for variant calling with Mutect2. For pharmaceutical and biotechnology researchers, rigorous implementation of these standardized procedures ensures the reliability of variant calls used to identify therapeutic targets, develop biomarkers, and stratify patient populations. The integration of these pre-processing steps within the broader context of somatic variant discovery represents a critical methodology supporting the advancing field of precision oncology.

In somatic variant analysis with the Genome Analysis Toolkit (GATK), the fidelity of the final variant callset is inextricably linked to the quality and correctness of the input alignment (BAM) files. Adherence to stringent input requirements is not merely a preliminary step but a critical determinant of success, directly affecting the sensitivity and specificity of mutation detection. This technical guide details the core input specifications (sample type, read group formatting, and alignment quality) within the framework of the GATK Best Practices workflow for somatic short variant discovery. It provides researchers and drug development professionals with practical protocols and quality control measures for generating reliable, reproducible results in cancer genomics and therapeutic development.

Sample Type and Experimental Design

The foundational step in somatic variant analysis is the appropriate selection and pairing of samples. The GATK somatic short variant discovery workflow is designed to identify somatically acquired mutations by comparing sequence data from a tumor sample against a matched normal sample from the same individual [8]. The matched normal sample is crucial for identifying and filtering out germline variants present in the patient's constitutive DNA, thereby isolating the true somatic changes that have occurred in the tumor tissue.

The workflow offers flexibility to accommodate different research scenarios [1]:

- Tumor-Normal Matched Pairs: This is the standard and most recommended approach. It involves processing both the tumor BAM and the matched normal BAM simultaneously. In this mode, Mutect2, the primary variant calling engine in GATK, uses the normal sample to aggressively filter out germline events at an early stage, conserving computational resources and improving the purity of the initial somatic callset [1].

- Tumor-Only Analysis: It is possible to run Mutect2 on a single tumor sample without a matched normal. However, this approach requires the use of a Panel of Normals (PoN) and a germline resource (e.g., gnomAD) to help identify and remove common technical artifacts and population germline polymorphisms, respectively [1]. The resulting callset typically requires more stringent post-calling filtration and annotation.
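The difference between the two modes comes down to a few arguments. The hypothetical helper below assembles a Mutect2 command line for either mode; all file names are placeholders, and `normal_sample` must match the SM value in the normal BAM's read group header. The `--f1r2-tar-gz` output is included because the downstream orientation-bias step consumes it.

```python
from typing import List, Optional


def mutect2_command(ref: str, tumor_bam: str, germline: str, pon: str, out_vcf: str,
                    normal_bam: Optional[str] = None,
                    normal_sample: Optional[str] = None,
                    f1r2: str = "f1r2.tar.gz") -> List[str]:
    """Build (not run) a Mutect2 command; tumor-only when no normal BAM is given."""
    cmd = ["gatk", "Mutect2", "-R", ref, "-I", tumor_bam]
    if normal_bam is not None:
        if normal_sample is None:
            raise ValueError("tumor-normal mode needs the normal sample's SM value")
        # Tumor-normal mode: the named normal lets Mutect2 drop germline events early
        cmd += ["-I", normal_bam, "-normal", normal_sample]
    # Germline resource and PoN are used in both modes; they are essential for tumor-only
    cmd += ["--germline-resource", germline, "--panel-of-normals", pon,
            "--f1r2-tar-gz", f1r2, "-O", out_vcf]
    return cmd


paired = mutect2_command("ref.fasta", "tumor.bam", "af-only-gnomad.vcf.gz", "pon.vcf.gz",
                         "unfiltered.vcf.gz", normal_bam="normal.bam", normal_sample="NORMAL_SM")
tumor_only = mutect2_command("ref.fasta", "tumor.bam", "af-only-gnomad.vcf.gz", "pon.vcf.gz",
                             "unfiltered.vcf.gz")
print(" ".join(paired))
print(" ".join(tumor_only))
```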

The choice of sequencing strategy—whole genome (WGS), whole exome (WES), or targeted gene panels—also influences input requirements and expected outcomes. Each strategy offers a different balance of breadth, depth, and cost, affecting the types of variants that can be reliably detected [14].

Table 1: Comparison of Sequencing Strategies for Somatic Variant Calling

| Strategy | Target Space | Typical Read Depth | Strengths for Somatic Calling | Key Input & Resource Considerations |
|---|---|---|---|---|
| Whole Genome (WGS) | ~3200 Mbp | 30-60x | Comprehensive SNV/Indel detection; enables CNV/SV calling [14] | Large BAM file size; requires a germline resource for optimal filtering. |
| Whole Exome (WES) | ~50 Mbp | 100-150x | Cost-effective SNV/Indel discovery in coding regions [14] | BAM files must be processed with the target interval list; a PoN is highly recommended. |
| Targeted Panel | ~0.5 Mbp | 500-1000x | Excellent for detecting low-VAF variants due to high depth [14] | High-depth BAMs; accurate target region BED file is critical for QC. |

Read Group Specifications and Metadata Integrity

Read groups are a fundamental, non-negotiable component of GATK analysis. They are sets of reads derived from a single sequencing run on a single lane of a flow cell, and their metadata tags allow the GATK engine to account for technical batch effects and sample identity during processing [17]. The GATK requires specific read group fields to be present and correctly specified in the header of input BAM files.

Table 2: Mandatory and Critical Read Group Fields for GATK Analysis

| Field Tag | Description | Importance in Somatic Workflow | Example Value |
|---|---|---|---|
| ID | Unique read group identifier. | Differentiates instrument runs for technical artifacts; used in Base Quality Score Recalibration (BQSR) [17]. | H0164.2 |
| SM | Sample name. | Critically important. All read groups with the same SM are treated as the same sample. This name populates the sample column in the final VCF and must be consistent and accurate [17]. | NA12878 |
| PL | Sequencing platform/technology. | Informs the error model used by the GATK. Must be a standardized value (e.g., ILLUMINA, PACBIO) [17]. | ILLUMINA |
| LB | DNA preparation library identifier. | Used by MarkDuplicates to identify reads from the same library that were sequenced on multiple lanes, allowing for accurate duplicate marking [17]. | Solexa-272222 |
| PU | Platform Unit. | A more granular identifier than ID, often containing {FLOWCELL_BARCODE}.{LANE}.{SAMPLE_BARCODE}. Takes precedence over ID for BQSR if present [17]. | H0164ALXX140820.2 |

To view the read group information in a BAM file, use the command: samtools view -H sample.bam | grep '^@RG' [17]. If the read group fields are missing or incorrect, Picard's AddOrReplaceReadGroups tool must be used to add or correct them prior to any GATK analysis.
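The same check can be automated. The sketch below parses `@RG` lines from header text (for example, the output of `samtools view -H`) and reports which of the five fields listed in the read group table are missing. Treating all five as required is this sketch's assumption; `REQUIRED_TAGS` can be adjusted to a pipeline's own policy.

```python
from __future__ import annotations

REQUIRED_TAGS = ("ID", "SM", "PL", "LB", "PU")  # assumption: the five fields from the table


def parse_read_groups(header_text: str) -> list[dict[str, str]]:
    """Return one tag->value dict per @RG line in a SAM header."""
    groups = []
    for line in header_text.splitlines():
        if line.startswith("@RG\t"):
            # Fields are tab-separated TAG:VALUE pairs; split on the first colon only
            fields = line.split("\t")[1:]
            groups.append(dict(f.split(":", 1) for f in fields if ":" in f))
    return groups


def missing_tags(groups: list[dict[str, str]]) -> dict[str, list[str]]:
    """Map each read group ID to the required tags it lacks (empty dict = all present)."""
    problems = {}
    for i, rg in enumerate(groups):
        absent = [tag for tag in REQUIRED_TAGS if not rg.get(tag)]
        if absent:
            problems[rg.get("ID", f"<read group {i}>")] = absent
    return problems


header = ("@HD\tVN:1.6\tSO:coordinate\n"
          "@RG\tID:H0164.2\tSM:NA12878\tPL:ILLUMINA\tLB:Solexa-272222\tPU:H0164ALXX140820.2\n"
          "@RG\tID:H0164.3\tSM:NA12878\tPL:ILLUMINA\n")
print(missing_tags(parse_read_groups(header)))  # {'H0164.3': ['LB', 'PU']}
```

In practice the header text could be obtained with `subprocess.run(["samtools", "view", "-H", "sample.bam"], capture_output=True, text=True).stdout`.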

Alignment Quality and Pre-processing

Analysis-ready BAM files are the product of a rigorous pre-processing pipeline. The quality of the alignment and the application of several data cleanup steps are paramount for reducing technical artifacts that can manifest as false positive variant calls.

The standard data pre-processing protocol, as outlined in the GATK Best Practices, includes [18] [14]:

- Alignment to Reference: Mapping sequencing reads from FASTQ files to a reference genome using an aligner such as BWA-MEM [14].

- Duplicate Marking: Identifying and flagging PCR duplicates (reads that originate from the same DNA fragment) using tools such as Picard MarkDuplicates or Sambamba. Flagged duplicates are excluded from variant calling to prevent over-representation of individual molecules [14].

- Base Quality Score Recalibration (BQSR): A GATK-specific step that empirically adjusts the base quality scores in the sequencing data. Known variant sites are masked so that genuine polymorphisms are not counted as errors, and the remaining mismatches are used to model systematic technical errors in the reported quality scores, leading to more accurate variant calls [14].

Routine quality control of the final BAM files is essential before proceeding to variant calling. This involves checking key sequencing metrics to ensure sufficient coverage and data integrity [14]. Tools like Alfred can compute comprehensive multi-sample QC metrics, including insert size distribution, GC bias, sequencing error rates, and, for capture assays, on-target rate and coverage uniformity [19].

The Somatic Variant Discovery Workflow: From BAM to VCF

The GATK Best Practices workflow for somatic short variants is a multi-step process that takes the prepared BAM files and applies a series of specialized tools to generate a high-confidence somatic callset [8]. The following diagram illustrates the logical flow and the critical role of input BAM requirements at each stage.

The workflow consists of two main stages: generating candidate variants and then applying rigorous filtering [8]. The key steps are:

- Call Candidate Variants with Mutect2: Mutect2 performs local de novo assembly of haplotypes in active regions to call SNVs and indels simultaneously. It applies a Bayesian somatic likelihoods model to calculate the log odds of a variant being somatic versus a sequencing error [8] [1].

- Calculate Contamination: The GetPileupSummaries and CalculateContamination tools estimate the fraction of reads in the tumor sample resulting from cross-sample contamination. The estimate works without a matched normal and remains reliable in samples with significant copy number variation [8].

- Learn Orientation Bias Artifacts: Using optional output from Mutect2 (the F1R2 counts), LearnReadOrientationModel calculates prior probabilities for single-stranded substitution errors. This step is particularly critical for FFPE tumor samples, which are prone to such artifacts [8].

- Filter Variants with FilterMutectCalls: This tool applies hard filters and probabilistic models to account for correlated errors, strand bias, polymerase slippage, germline variants, and contamination. It refines the initial Mutect2 calls and automatically sets a filtering threshold to optimize the balance between sensitivity and precision [8].

- Annotate Variants with Funcotator: Finally, Funcotator adds functional annotations to each variant, such as the affected gene, amino acid change, and links to external databases (e.g., GENCODE, dbSNP, COSMIC). It outputs a VCF or MAF file ready for downstream biological interpretation [8].
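For a tumor-normal pair, the filtering stages after Mutect2 can be sketched as the command sequence below. The helper name, file names, and the common-variant sites VCF used for pileups are placeholders; the arguments follow the GATK tool documentation as best understood here and should be verified against the GATK version in use. FilterMutectCalls also expects the `.stats` file that Mutect2 writes next to its output VCF. Funcotator is omitted.

```python
from typing import List


def filtering_commands(ref: str, tumor_bam: str, normal_bam: str, common_sites: str,
                       unfiltered_vcf: str, f1r2: str = "f1r2.tar.gz",
                       out_vcf: str = "filtered.vcf.gz") -> List[List[str]]:
    """Build (not run) the contamination, orientation-bias, and filtering commands."""
    return [
        # Pileups at common germline sites, for the tumor and for its matched normal
        ["gatk", "GetPileupSummaries", "-I", tumor_bam, "-V", common_sites,
         "-L", common_sites, "-O", "tumor-pileups.table"],
        ["gatk", "GetPileupSummaries", "-I", normal_bam, "-V", common_sites,
         "-L", common_sites, "-O", "normal-pileups.table"],
        # Contamination fraction plus a tumor segmentation reused by the filter
        ["gatk", "CalculateContamination", "-I", "tumor-pileups.table",
         "-matched", "normal-pileups.table", "-O", "contamination.table",
         "--tumor-segmentation", "segments.table"],
        # Orientation-bias priors learned from the F1R2 counts Mutect2 emitted
        ["gatk", "LearnReadOrientationModel", "-I", f1r2,
         "-O", "read-orientation-model.tar.gz"],
        # Final filtering combines all of the above
        ["gatk", "FilterMutectCalls", "-R", ref, "-V", unfiltered_vcf,
         "--contamination-table", "contamination.table",
         "--tumor-segmentation", "segments.table",
         "--ob-priors", "read-orientation-model.tar.gz", "-O", out_vcf],
    ]


for cmd in filtering_commands("ref.fasta", "tumor.bam", "normal.bam",
                              "common_biallelic.vcf.gz", "unfiltered.vcf.gz"):
    print(" ".join(cmd))
```

Without a matched normal, the second GetPileupSummaries call and the `-matched` argument would simply be dropped.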

The Scientist's Toolkit: Essential Research Reagents and Tools

A successful somatic variant calling project relies on a suite of bioinformatics tools and curated genomic resources. The following table catalogs the essential components of the analysis pipeline.

Table 3: Essential Tools and Resources for Somatic Variant Analysis

| Tool / Resource | Category | Function in Workflow |
|---|---|---|
| BWA-MEM [14] | Alignment | Aligns sequencing reads from FASTQ format to a reference genome. |
| Picard Tools [14] | Pre-processing | A collection of utilities, with MarkDuplicates and AddOrReplaceReadGroups being critical for BAM preparation. |
| GATK [8] [1] | Variant Discovery | The core toolkit. Mutect2 calls somatic variants; BQSR recalibrates base qualities; Funcotator adds annotations. |
| Samtools [14] | Utility | A versatile suite for manipulating and viewing SAM/BAM/CRAM files; the companion BCFtools package provides basic variant calling. |
| Alfred [19] | Quality Control | Computes comprehensive, multi-sample QC metrics from BAM files, including GC bias, insert size, and coverage. |
| Panel of Normals (PoN) [8] | Resource | A VCF of artifacts common in normal samples, used by Mutect2 to filter technical false positives in tumor samples. |
| Germline Resource (e.g., gnomAD) [1] | Resource | A VCF of population allele frequencies, used to estimate the prior probability that an allele is a germline variant. |
| Funcotator Data Sources [8] | Resource | Configurable datasources (GENCODE, dbSNP, COSMIC, etc.) used to annotate variants with biological information. |

The path to a high-quality somatic variant callset is paved with meticulous attention to input data quality. The requirements for sample type specification, precise read group tagging, and stringent alignment quality control are not arbitrary hurdles but scientifically grounded prerequisites. By rigorously adhering to these protocols—ensuring accurate sample metadata in read groups, verifying alignment metrics, and leveraging the complete GATK somatic workflow with all recommended resources—researchers can achieve the sensitivity and specificity required for robust cancer genomic studies. This foundational rigor ensures that subsequent biological insights and clinical interpretations are based on reliable and accurate mutation data.

Somatic short mutation calling is a critical bioinformatics process designed to identify acquired genetic alterations, specifically single nucleotide variants (SNVs) and small insertions or deletions (indels), within tumor samples. This workflow is essential for characterizing the cancer genome, understanding tumor evolution, and informing personalized cancer therapy strategies. The complete process transforms raw sequencing data from tumor and matched normal samples into a refined set of high-confidence somatic variants, providing insights into clonal and sub-clonal mutations that may be present at very low allele fractions [20]. The reliability of this workflow is heavily dependent on the quality and proper preparation of input data, particularly the Binary Alignment/Map (BAM) files, which must undergo rigorous pre-processing to ensure accurate variant discovery [8] [9].

Input BAM Requirements and Pre-processing

Fundamental BAM File Specifications

The somatic short variant discovery workflow requires analysis-ready BAM files for each input tumor and normal sample. These files must be generated through a comprehensive pre-processing pipeline to correct for technical biases and ensure data suitability for variant calling [8] [9].

Table 1: Essential Pre-processing Steps for BAM File Preparation

| Processing Step | Tools Involved | Purpose | Key Output |
|---|---|---|---|
| Read Alignment | BWA-MEM, Minimap2, MergeBamAlignment | Map sequence reads to reference genome | Coordinate-sorted SAM/BAM file [9] [14] |
| Duplicate Marking | MarkDuplicatesSpark, MarkDuplicates + SortSam | Identify and tag non-independent reads from PCR amplification | BAM file with duplicates marked for exclusion [9] |
| Base Quality Score Recalibration (BQSR) | BaseRecalibrator, ApplyBQSR | Correct systematic errors in base quality scores | Recalibrated BAM file with adjusted quality scores [9] |

Critical Pre-processing Considerations

The pre-processing phase is obligatory and must precede all variant discovery efforts. Input BAMs must conform to specific requirements, including proper read group tags, which are essential for downstream analysis stages. Duplicate marking is particularly crucial because it mitigates biases introduced by data generation steps such as PCR amplification; duplicate reads can represent 5-15% of sequencing reads in a typical exome [9] [14]. While local realignment around indels was previously recommended to reduce false-positive variant calls caused by alignment artifacts, recent evaluations suggest that the improvement from this step may be marginal, so it may be treated as optional given its high computational cost [14].

Core Somatic Variant Discovery Workflow

Primary Variant Calling with Mutect2

The cornerstone of somatic short variant discovery is Mutect2, a Bayesian-based caller that identifies SNVs and indels simultaneously via local de novo assembly of haplotypes in active regions [8] [1]. When Mutect2 encounters regions showing evidence of somatic variation, it discards existing mapping information and completely reassembles reads in that region to generate candidate variant haplotypes. The tool then applies a Bayesian somatic likelihoods model to obtain log odds for alleles to be somatic variants versus sequencing errors [8].
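The idea of a somatic log odds score can be illustrated with the simpler per-site likelihood ratio used by the original MuTect, which compares the reads under an alt allele at the observed fraction against a no-variant model containing only sequencing errors. This is a sketch under stated assumptions: each read is reduced to (supports_alt, base_quality), substitution errors are spread evenly over the three other bases, and the allele fraction is fixed at its observed value. Mutect2 instead scores reads against assembled haplotypes and models the allele fraction differently, so its values will not match.

```python
import math


def read_likelihood(supports_alt: bool, base_q: int, f: float) -> float:
    """P(observed base | alt allele fraction f) for one read, MuTect-style."""
    e = 10 ** (-base_q / 10)  # Phred quality -> error probability
    # Errors are assumed to land uniformly on the three other bases
    p_if_alt_haplotype = (1 - e) if supports_alt else e / 3
    p_if_ref_haplotype = e / 3 if supports_alt else (1 - e)
    return f * p_if_alt_haplotype + (1 - f) * p_if_ref_haplotype


def tumor_lod(reads) -> float:
    """log10 odds of 'alt at observed fraction' versus 'no variant, errors only'."""
    if not reads:
        return 0.0
    f_hat = sum(1 for supports_alt, _ in reads if supports_alt) / len(reads)
    ll_variant = sum(math.log10(read_likelihood(a, q, f_hat)) for a, q in reads)
    ll_null = sum(math.log10(read_likelihood(a, q, 0.0)) for a, q in reads)
    return ll_variant - ll_null


reads = [(True, 30)] * 5 + [(False, 30)] * 45  # 5 alt-supporting reads out of 50
print(tumor_lod(reads))
```

Because each read's contribution is weighted by its base quality, miscalibrated qualities in the input BAM translate directly into over- or under-confident log odds.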

Mutect2 operates in several distinct modes tailored to different experimental designs:

- Tumor-Normal Mode: Analyzes a tumor sample against a matched normal to identify somatic variants while filtering germline events [1]

- Tumor-Only Mode: Runs on a single sample, useful for creating panels of normals or when no matched normal is available [1]

- Mitochondrial Mode: Optimized parameters for calling variants on mitochondrial DNA with high sensitivity [1]

- Force-Calling Mode: Genotypes a specific set of alleles provided in a VCF file in addition to de novo discovery [1]

Contamination Assessment

Following initial variant calling, the workflow estimates cross-sample contamination using a two-step process:

- GetPileupSummaries: Collects summary information from the tumor BAM file [8]

- CalculateContamination: Emits an estimate of the fraction of reads due to cross-sample contamination for each tumor sample [8]

This step is particularly valuable as it functions effectively without a matched normal, even in samples with significant copy number variation, and makes no assumptions about the number of contaminating samples [8].
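The intuition behind the estimate can be sketched with sites where the sample looks homozygous for the alt allele: there, ref-supporting reads are best explained by contaminating DNA, and a contaminant read carries the ref allele with probability one minus the population alt allele frequency. This is a simplified sketch, not CalculateContamination's model, which also handles site selection, copy number segmentation, and uncertainty; the site tuples and their values are hypothetical.

```python
def estimate_contamination(hom_alt_sites):
    """Rough contamination fraction from (ref_count, alt_count, population_alt_af) tuples.

    At a true hom-alt site a read is ref only if it came from a contaminant (sequencing
    error is ignored), and a contaminant read is ref with probability 1 - af.
    """
    ref_reads = sum(ref for ref, _, _ in hom_alt_sites)
    # Ref reads expected if *every* read at these sites came from a contaminant
    expected_if_all_contaminant = sum((ref + alt) * (1 - af) for ref, alt, af in hom_alt_sites)
    if expected_if_all_contaminant == 0:
        return 0.0
    return ref_reads / expected_if_all_contaminant


sites = [(2, 98, 0.6), (1, 99, 0.8)]
print(estimate_contamination(sites))  # approximately 0.05
```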

Artifact Detection and Filtering

A critical component for ensuring specificity involves detecting and filtering technical artifacts:

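- LearnReadOrientationModel: Identifies orientation bias artifacts by learning prior probabilities of single-stranded substitution errors (such as FFPE-induced deamination or oxidative damage) from read-orientation (F1R2) counts collected during calling. This is especially important for FFPE tumor samples [8]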

- FilterMutectCalls: Applies sophisticated filtering to account for correlated errors through several hard filters and probabilistic models for strand bias, orientation bias, polymerase slippage artifacts, germline variants, and contamination [8]
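The sketch below shows what an orientation bias looks like in the data. It applies a simple per-site test to ALT read counts split by read orientation (F1R2 versus F2R1). It is a heuristic for intuition only and is not how LearnReadOrientationModel works. That tool learns artifact priors across many sites from F1R2 counts that Mutect2 can write out via its --f1r2-tar-gz argument. The function name is hypothetical.

```python
from math import comb


def orientation_bias_pvalue(alt_f1r2, alt_f2r1):
    """Two-sided exact binomial test that ALT reads are split evenly between
    F1R2 and F2R1 orientations. A tiny p-value means the ALT allele is seen
    almost only in one orientation, the pattern of single-strand artifacts."""
    n = alt_f1r2 + alt_f2r1
    if n == 0:
        return 1.0
    k = min(alt_f1r2, alt_f2r1)
    # P(X <= k) for X ~ Binomial(n, 0.5); doubled for a two-sided test, capped at 1.
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


print(orientation_bias_pvalue(10, 0))  # 0.001953125
print(orientation_bias_pvalue(5, 5))   # 1.0
```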

FilterMutectCalls automatically sets a filtering threshold to optimize the F-score, representing the harmonic mean of sensitivity and precision, and uses a Bayesian model for the overall SNV and indel mutation rate and allele fraction spectrum of the tumor to refine the initial log odds generated by Mutect2 [8].
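The idea of choosing a threshold that maximizes the F-score can be sketched as follows. The sketch assumes each call comes with a posterior probability of being a real somatic variant. Expected true positives, false positives and false negatives are then sums of those probabilities. This illustrates the principle only and is not FilterMutectCalls' implementation; the function name and inputs are hypothetical.

```python
def best_threshold(posteriors):
    """Pick the posterior-probability cutoff that maximizes expected F1.

    Each posterior is treated as the probability that a call is real. Calls with
    posterior >= cutoff pass; expected TP, FP and FN are sums of probabilities.
    """
    ps = sorted(posteriors, reverse=True)
    expected_real = sum(ps)  # expected number of real variants (TP + FN)
    best_cutoff, best_f1 = 1.0, 0.0
    tp = 0.0
    for i, p in enumerate(ps):
        tp += p
        if i + 1 < len(ps) and ps[i + 1] == p:
            continue  # tied posteriors pass or fail together
        passed = i + 1  # expected TP + FP among passing calls
        f1 = 2 * tp / (passed + expected_real)  # 2TP / (2TP + FP + FN)
        if f1 > best_f1:
            best_cutoff, best_f1 = p, f1
    return best_cutoff, best_f1


cutoff, f1 = best_threshold([0.99, 0.95, 0.9, 0.5, 0.1, 0.01])
print(cutoff, round(f1, 3))  # 0.5 0.897
```

Because 2TP + FP + FN equals (calls passed) + (expected real variants), the score can be updated in one pass over the sorted posteriors.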

Functional Annotation

The final analytical step involves annotating discovered variants with biological context using tools such as Funcotator [8]. This functional annotation tool:

- Labels each variant with one of twenty-three distinct variant classifications

- Provides gene information (affected gene, predicted variant amino acid sequence)

- Associates variants with information from datasources including GENCODE, dbSNP, gnomAD, and COSMIC

- Produces either a VCF file (with annotations in the INFO field) or a Mutation Annotation Format (MAF) file [8]
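A Funcotator run can be assembled as below. The sketch assumes a downloaded Funcotator data-sources directory and an hg38 reference. All file names are placeholders, and only the MAF/VCF output choice described above is exposed; the helper name is hypothetical.

```python
import shlex


def funcotator_command(reference, vcf, output, data_sources,
                       ref_version="hg38", output_format="MAF"):
    """Assemble a Funcotator command line (paths are placeholders)."""
    if output_format not in ("MAF", "VCF"):
        raise ValueError("Funcotator writes either MAF or VCF output")
    return ["gatk", "Funcotator",
            "-R", reference,
            "-V", vcf,
            "-O", output,
            "--data-sources-path", data_sources,  # unpacked Funcotator data sources
            "--ref-version", ref_version,          # must match the reference build
            "--output-file-format", output_format]


cmd = funcotator_command("reference.fa", "filtered.vcf.gz", "annotated.maf",
                         "funcotator_dataSources")
print(shlex.join(cmd))
```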

Diagram 1: Complete GATK Somatic Short Variant Discovery Workflow illustrating the sequential steps from input BAM processing through final variant annotation, with color-coded functional groups.

Experimental Design Considerations

Sequencing Strategies and Performance

The choice of sequencing strategy significantly impacts variant calling performance, with different approaches offering distinct advantages for somatic mutation detection.

Table 2: Sequencing Strategy Comparison for Somatic Variant Detection

| Strategy | Target Space | Typical Depth | SNV/Indel Detection | Low VAF Sensitivity |
|---|---|---|---|---|
| Gene Panel | ~0.5 Mbp | 500-1000× | ++ | ++ |
| Whole Exome | ~50 Mbp | 100-150× | ++ | + |
| Whole Genome | ~3200 Mbp | 30-60× | ++ | + |

Performance indicators: + (good), ++ (outstanding) [14]
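The depth column explains much of the low-VAF sensitivity column. The sketch below uses an idealized binomial model that ignores sequencing error, mapping issues and tumor purity. It gives the probability of seeing enough ALT reads to call a variant at a given depth and VAF. The minimum ALT read count is a hypothetical caller requirement, not a Mutect2 parameter.

```python
from math import comb


def detection_probability(depth, vaf, min_alt_reads):
    """P(at least min_alt_reads ALT reads) with ALT reads ~ Binomial(depth, vaf)."""
    below = sum(comb(depth, k) * vaf ** k * (1 - vaf) ** (depth - k)
                for k in range(min_alt_reads))
    return 1.0 - below


# Depths loosely matching the table (WGS, exome, panel); VAF 5%, 5 ALT reads required.
for depth in (30, 100, 500):
    print(depth, round(detection_probability(depth, 0.05, 5), 3))
```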

Computational Requirements

Implementing a somatic variant calling workflow requires substantial computational resources, particularly for whole-genome sequencing data. As one reference point, the EPI2ME somatic variation workflow (a separate pipeline from Oxford Nanopore Technologies) recommends at least 16 CPUs and 48 GB RAM, with optimal performance at 64 CPUs and 256 GB RAM [21]. Run time varies considerably with sequencing modality and coverage. A complete analysis of a 60X/30X tumor/normal pair takes approximately 6.5 hours with the recommended resources [21].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Analytical Tools and Resources for Somatic Variant Calling

| Tool/Resource | Category | Function | Application Context |
|---|---|---|---|
| Mutect2 | Variant Caller | Core somatic SNV/indel detection via local assembly | Primary variant discovery [8] [1] |
| Panel of Normals (PoN) | Reference Resource | Identifies recurrent technical artifacts | False positive reduction [8] [1] |
| Germline Resource | Reference Resource | Distinguishes somatic from germline variants | Population allele frequency context [1] |
| Funcotator | Annotation Tool | Adds biological context to variants | Functional interpretation [8] |
| SComatic | Specialized Caller | Detects somatic mutations in single-cell data | scRNA-seq/scATAC-seq analysis [22] |

Validation and Benchmarking Approaches

Rigorous validation is essential for verifying somatic variant calls. Recommended approaches include:

- Down-sampling: Uses subsets of reads from primary sequencing data of validated somatic mutations to measure sensitivity at different coverage depths [20]

- Virtual Tumors: Creates synthetic datasets by mixing reads from different samples to generate truth sets with known mutations for comprehensive sensitivity and specificity assessment [20]

- Orthogonal Validation: Employs independent sequencing technologies or experimental methods to confirm putative somatic variants
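For virtual tumors, the expected allele fraction of each spiked-in variant follows from the mixing proportions. The sketch below assumes both samples contribute comparable coverage at the locus, so a read-level mixing fraction translates directly into VAF. The function names are hypothetical.

```python
def mixture_vaf(fraction_a, vaf_a, vaf_b=0.0):
    """Expected VAF when a fraction fraction_a of reads comes from sample A
    and the rest from sample B (same locus, comparable coverage assumed)."""
    if not 0.0 <= fraction_a <= 1.0:
        raise ValueError("fraction_a must be in [0, 1]")
    return fraction_a * vaf_a + (1.0 - fraction_a) * vaf_b


def fraction_for_target_vaf(target_vaf, vaf_a, vaf_b=0.0):
    """Mixing fraction of sample A needed to reach target_vaf (inverse of mixture_vaf)."""
    if vaf_a == vaf_b:
        raise ValueError("samples must differ at this site")
    fraction = (target_vaf - vaf_b) / (vaf_a - vaf_b)
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("target VAF not reachable with these samples")
    return fraction


# A heterozygous variant (VAF 0.5) in sample A, absent from sample B:
print(mixture_vaf(0.2, 0.5))               # 0.1
print(fraction_for_target_vaf(0.05, 0.5))  # 0.1
```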

The Genome in a Bottle (GIAB) and Platinum Genomes datasets provide benchmark resources with established "ground truth" variant calls for systematic pipeline validation [14]. These resources define "high-confidence" regions of the human genome where variant calls can be reliably benchmarked. However, they may carry inherent biases, because they were constructed with many of the same technologies being evaluated [14].
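Restricting a comparison to high-confidence regions can be sketched as below. Both call sets are limited to BED-style intervals before computing recall and precision. The sketch matches variants as exact (chrom, pos, ref, alt) tuples. Dedicated comparison tools such as hap.py or RTG Tools vcfeval also reconcile different representations of the same variant. The function names and example data are hypothetical.

```python
from bisect import bisect_right


def in_regions(chrom, pos, regions):
    """True if 1-based VCF position pos lies in a BED-style (0-based, half-open)
    interval. regions maps chrom -> sorted, non-overlapping (start, end) tuples."""
    intervals = regions.get(chrom, [])
    offset = pos - 1  # convert 1-based VCF position to 0-based
    # Rebuilding the start list per call keeps the sketch short; cache it for real use.
    i = bisect_right([start for start, _ in intervals], offset) - 1
    return i >= 0 and offset < intervals[i][1]


def benchmark(calls, truth, regions):
    """Recall and precision of exact (chrom, pos, ref, alt) matches inside regions."""
    calls = {v for v in calls if in_regions(v[0], v[1], regions)}
    truth = {v for v in truth if in_regions(v[0], v[1], regions)}
    tp = len(calls & truth)
    recall = tp / len(truth) if truth else float("nan")
    precision = tp / len(calls) if calls else float("nan")
    return recall, precision


regions = {"chr1": [(100, 200)]}
truth = {("chr1", 150, "A", "T"), ("chr1", 160, "G", "C"), ("chr1", 500, "C", "G")}
calls = {("chr1", 150, "A", "T"), ("chr1", 170, "T", "A"), ("chr1", 500, "C", "G")}
print(benchmark(calls, truth, regions))  # (0.5, 0.5)
```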

Advanced Applications and Emerging Methodologies

The field of somatic variant detection continues to evolve with several advanced applications:

- Single-Cell Mutation Detection: Tools like SComatic enable detection of somatic mutations in single-cell RNA-seq and ATAC-seq data without requiring matched DNA sequencing, facilitating the study of clonal heterogeneity at single-cell resolution [22]

- Liquid Biopsy Analysis: Specialized parameters for detecting somatic variants in circulating tumor DNA (ctDNA), which typically exhibits very low variant allele fractions [21]

- Multi-sample Analysis: Joint calling of multiple tumor and normal samples from the same individual to improve detection power [1]

These advanced applications demonstrate the expanding utility of somatic variant calling workflows across diverse research contexts, from basic cancer genomics to clinical translational studies.

Practical Guide: Configuring Mutect2 BAM Inputs for Different Analysis Modes

This guide details the requirements for specifying input BAM files and correctly labeling normal samples in the GATK Mutect2 somatic variant calling workflow. Correct configuration of these inputs is fundamental to accurate detection of single nucleotide variants (SNVs) and insertion-deletion variants (indels) in tumor samples. It therefore underpins any downstream analysis, including work aimed at therapeutic development. The guide synthesizes best practices from established somatic variant discovery pipelines into explicit specifications for data preparation and analysis configuration.

In somatic variant analysis with GATK's Mutect2, correctly formatted and organized input files are a prerequisite for accurate mutation detection. The core inputs are aligned reads in BAM format, a reference genome sequence, and sample identification metadata. The alignment files should have gone through standard pre-processing according to GATK Best Practices, including duplicate marking and base quality score recalibration [8]. Indel realignment, a GATK3-era step, is not required, because Mutect2 reassembles reads in active regions. Each BAM file must contain read groups with sample-specific information so that Mutect2 can identify the tumor and normal components and process them separately.

A basic Mutect2 command requires a reference genome sequence (-R), one or more input BAM files (-I) and an output path (-O). When a matched normal is included, its sample name is also supplied with -normal. Additional resources such as a germline variant resource and a panel of normals substantially improve calling accuracy but are optional [2]. Input settings should stay consistent throughout the pipeline, because downstream tools such as FilterMutectCalls consume the VCF and the accompanying statistics file written by Mutect2.

BAM File Specification Methodology

Input Specification Syntax

The Mutect2 tool accepts BAM, SAM, or CRAM files containing aligned sequencing reads through the --input or -I argument [2]. Multiple input files can be specified by repeating the -I argument followed by the file path. This capability enables processing of multiple BAM files simultaneously, which is particularly useful when handling separately sequenced tumor regions or technical replicates.
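The command structure described above can be assembled programmatically: a reference, one -I per input file, an optional -normal name, an optional germline resource and PoN. The helper below is hypothetical, and all paths and sample names are placeholders. It only builds the argument list; it does not run GATK.

```python
import shlex


def mutect2_command(reference, inputs, output, normal_samples=(),
                    germline_resource=None, panel_of_normals=None):
    """Assemble a Mutect2 command line (hypothetical helper; paths are placeholders)."""
    if not inputs:
        raise ValueError("at least one input BAM/SAM/CRAM is required")
    cmd = ["gatk", "Mutect2", "-R", reference]
    for path in inputs:            # one -I per file, e.g. several tumor regions
        cmd += ["-I", path]
    for name in normal_samples:    # SM tag values, not file names
        cmd += ["-normal", name]
    if germline_resource:          # optional, but improves germline filtering
        cmd += ["--germline-resource", germline_resource]
    if panel_of_normals:           # optional, flags recurrent technical artifacts
        cmd += ["--panel-of-normals", panel_of_normals]
    cmd += ["-O", output]
    return cmd


cmd = mutect2_command("reference.fa",
                      ["tumor_region1.bam", "tumor_region2.bam", "normal.bam"],
                      "results.vcf.gz", normal_samples=["NORMAL_001"],
                      germline_resource="af-only-gnomad.vcf.gz")
print(shlex.join(cmd))
```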

Table 1: Required Input Files for Mutect2 Analysis

| File Type | Description | Format Specification |
|---|---|---|
| Reference Genome | Reference sequence file | FASTA format with associated index and dictionary files |
| Tumor BAM | Aligned sequencing reads from tumor sample | BAM/SAM/CRAM format with index |
| Normal BAM | Aligned sequencing reads from matched normal | BAM/SAM/CRAM format with index |
| Germline Resource | Population allele frequencies | VCF format compressed and indexed |
| Panel of Normals | Technical artifacts database | VCF format compressed and indexed |

Technical Specifications for Alignment Files

Input BAM files must meet specific technical standards to be compatible with the Mutect2 processing engine. The files should be coordinate-sorted, with index files (.bai for BAM, .crai for CRAM) present alongside them [23]. The sequence dictionary in the BAM header (its @SQ lines) must be compatible with the reference genome's sequence dictionary to prevent validation errors. For whole-genome sequencing data, the average coverage depth should be documented. Recommended minimums are 30x for normal samples and 60x for tumor samples to ensure sufficient sensitivity for variant detection [24]. Tumors with substantial heterogeneity may need higher depths (≥100x) to detect subclonal populations.
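The sorting and dictionary requirements can be checked before a long Mutect2 run. The sketch below parses header text, either as printed by samtools view -H or from the reference's .dict file, which is itself a SAM-style header, and reports problems. The strict equality test on @SQ names, lengths and order is a conservative stand-in for GATK's own compatibility rules. The function names are hypothetical.

```python
def parse_header(header_text):
    """Return (sort order from @HD SO, list of (SN, LN) from @SQ lines)."""
    sort_order, contigs = None, []
    for line in header_text.splitlines():
        fields = line.split("\t")
        if fields[0] not in ("@HD", "@SQ"):
            continue
        tags = dict(field.split(":", 1) for field in fields[1:] if ":" in field)
        if fields[0] == "@HD":
            sort_order = tags.get("SO")
        else:
            contigs.append((tags["SN"], int(tags["LN"])))
    return sort_order, contigs


def check_alignment_header(bam_header, reference_dict):
    """List problems that would likely break Mutect2 input validation."""
    problems = []
    sort_order, bam_contigs = parse_header(bam_header)
    if sort_order != "coordinate":
        problems.append(f"sort order is {sort_order!r}, expected 'coordinate'")
    _, ref_contigs = parse_header(reference_dict)
    if bam_contigs != ref_contigs:
        problems.append("@SQ names/lengths/order differ from the reference dictionary")
    return problems


bam_header = "@HD\tVN:1.6\tSO:coordinate\n@SQ\tSN:chr1\tLN:1000\n@RG\tID:rg1\tSM:TUMOR_001"
ref_dict = "@HD\tVN:1.6\n@SQ\tSN:chr1\tLN:1000\tUR:reference.fa"
print(check_alignment_header(bam_header, ref_dict))  # []
```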

Mutect2 can handle a wide range of coverage depths, although its documentation notes that extreme depths above 1000X may require further parameter tuning [2]. Where the assay allows, alignment files should come from PCR-free library preparation to minimize amplification artifacts. Capture- and amplicon-based assays still rely on PCR, and the pipeline includes filters for common sequencing- and amplification-derived errors.

Normal Sample Labeling Protocols

Sample Identification in BAM Headers

Each input BAM file must contain read groups whose sample identifiers (@RG SM tags) correspond to the labels used in the Mutect2 command. In particular, the name passed to -normal (and, in older GATK4 releases, to -tumor) is an SM value from the read groups, not a file name. Consistent sample labeling ensures proper sample tracking throughout variant calling and prevents sample mix-ups that could compromise analysis integrity.

The standard approach is to supply every BAM file with its own -I argument and to identify the matched normal by passing its SM value to -normal [2]. This explicit designation enables Mutect2 to apply appropriate statistical models for somatic versus germline variation.
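Before launching Mutect2, the SM values can be read from each BAM header to confirm that they are present and match the intended labels. The header can come, for example, from the output of samtools view -H. GATK's GetSampleName tool serves a similar purpose. A minimal Python sketch, with a hypothetical function name:

```python
def read_group_samples(header_text):
    """Map each @RG ID to its SM value; fail loudly if either tag is missing."""
    samples = {}
    for line in header_text.splitlines():
        if not line.startswith("@RG\t"):
            continue
        tags = dict(field.split(":", 1) for field in line.split("\t")[1:] if ":" in field)
        if "ID" not in tags or "SM" not in tags:
            raise ValueError(f"read group without ID or SM: {line!r}")
        samples[tags["ID"]] = tags["SM"]
    return samples


normal_header = ("@HD\tVN:1.6\tSO:coordinate\n"
                 "@RG\tID:lane1\tSM:NORMAL_001\tPL:ILLUMINA\n"
                 "@RG\tID:lane2\tSM:NORMAL_001\tPL:ILLUMINA")
print(set(read_group_samples(normal_header).values()))  # {'NORMAL_001'}
```

Several read groups (for example one per lane) may share one SM value; what matters is that all read groups of the normal BAM carry the name later passed to -normal.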

Normal Sample Labeling Syntax

Normal samples are specified by sample name rather than by file. Using example values, a minimal matched tumor-normal command takes the form:

gatk Mutect2 -R reference.fa -I tumor.bam -I normal.bam -normal NORMAL_001 -O results.vcf.gz

Each -I argument supplies one BAM file, and -normal names the sample to treat as the matched normal. Argument order does not link a file to a role. Mutect2 matches the name given to -normal against the SM tags in the read groups of all inputs and treats every remaining sample as tumor [2]. The name passed to -normal must therefore correspond exactly to the sample identifier embedded in the normal BAM's read groups. This linkage ensures that Mutect2 applies its somatic likelihoods model, which distinguishes somatic mutations from germline variants, to the correct samples.
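In current GATK4 releases, Mutect2 treats the samples named with -normal as normals and every other sample found in the inputs as tumor. The sketch below mirrors that assignment rule so that a misspelled -normal name can be caught early. It is an illustration, not GATK code, and the function name is hypothetical.

```python
def assign_roles(sample_names, normal_samples):
    """Split samples into tumor and normal: names given via -normal are normals,
    and every other sample in the inputs is treated as tumor."""
    samples = set(sample_names)
    normals = set(normal_samples)
    missing = normals - samples
    if missing:
        raise ValueError(f"-normal names not found in any read group: {sorted(missing)}")
    tumors = samples - normals
    if not tumors:
        raise ValueError("no tumor sample left after removing normals")
    return sorted(tumors), sorted(normals)


print(assign_roles({"TUMOR_001", "NORMAL_001"}, ["NORMAL_001"]))
# (['TUMOR_001'], ['NORMAL_001'])
```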

Table 2: Mutect2 Arguments for Sample Specification

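| Argument | Function | Required | Example |
|---|---|---|---|
| -I | Input BAM/SAM/CRAM file (repeat for each input) | Yes | -I tumor.bam |
| -tumor | Tumor sample name; not required in GATK 4.1 and later, which infer tumor samples from the read groups | No (older releases only) | -tumor TUMOR_001 |
| -normal | Normal sample name (must match the normal BAM's SM tag) | Only with a matched normal | -normal NORMAL_001 |
| -R | Reference genome | Yes | -R reference.fa |
| -O | Output VCF | Yes | -O results.vcf.gz |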

Tumor-Only Analysis Mode

For studies lacking matched normal samples, Mutect2 supports a tumor-only mode. In this configuration, only the tumor BAM is supplied and no -normal argument is given [2]. The command structure simplifies to:

gatk Mutect2 -R reference.fa -I tumor.bam -O results.vcf.gz

In tumor-only mode, the unfiltered calls will contain both somatic and germline variants. Additional filtering, for example with a germline resource and a panel of normals, is therefore needed to distinguish true somatic mutations. The GATK Best Practices recommend creating a panel of normals (PoN) to capture technical artifacts common to the sequencing platform and methodology [12]. A PoN is generated in three steps:

- Run Mutect2 in tumor-only mode on multiple normal samples.
- Consolidate the resulting VCFs with GenomicsDBImport.
- Build the panel with CreateSomaticPanelOfNormals.
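The panel-of-normals steps can be sketched as an ordered list of command lines, assuming a recent GATK4 release. Each normal is called in tumor-only mode with --max-mnp-distance 0. The calls are consolidated with GenomicsDBImport over an interval list, and the panel is built from the GenomicsDB workspace. File names and the helper name are placeholders.

```python
import shlex


def pon_commands(reference, normal_bams, intervals, germline_resource,
                 workspace="pon_db", output="pon.vcf.gz"):
    """Ordered command lines for building a panel of normals (placeholder paths)."""
    commands, normal_vcfs = [], []
    for i, bam in enumerate(normal_bams, start=1):
        vcf = f"normal{i}.vcf.gz"
        normal_vcfs.append(vcf)
        # Tumor-only call on each normal; adjacent SNVs are kept as separate records.
        commands.append(["gatk", "Mutect2", "-R", reference, "-I", bam,
                         "--max-mnp-distance", "0", "-O", vcf])
    consolidate = ["gatk", "GenomicsDBImport", "-R", reference, "-L", intervals,
                   "--genomicsdb-workspace-path", workspace]
    for vcf in normal_vcfs:
        consolidate += ["-V", vcf]
    commands.append(consolidate)
    commands.append(["gatk", "CreateSomaticPanelOfNormals", "-R", reference,
                     "--germline-resource", germline_resource,
                     "-V", f"gendb://{workspace}", "-O", output])
    return commands


for argv in pon_commands("reference.fa", ["normal_a.bam", "normal_b.bam"],
                         "targets.interval_list", "af-only-gnomad.vcf.gz"):
    print(shlex.join(argv))
```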

Integrated Analysis Workflow

Complete Mutect2 Somatic Variant Calling Pipeline

The full somatic variant discovery workflow extends beyond initial variant calling with Mutect2 to include contamination assessment, artifact identification, and comprehensive variant filtering. The complete pipeline incorporates multiple analytical stages that build upon the properly specified BAM inputs and sample labels [8].
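The stages can be chained as below. This sketch only assembles command lines in order, assuming a recent GATK4 release. All file names are placeholders, including the common-sites VCF used by GetPileupSummaries, and the function name is hypothetical. Passing each argv to subprocess.run(argv, check=True) would execute the steps sequentially.

```python
import shlex


def somatic_pipeline(reference, tumor_bam, normal_bam, normal_name,
                     germline_resource, pon, common_sites):
    """Ordered (step, argv) pairs for a tumor-normal run; file names are placeholders."""
    return [
        ("call", ["gatk", "Mutect2", "-R", reference,
                  "-I", tumor_bam, "-I", normal_bam, "-normal", normal_name,
                  "--germline-resource", germline_resource,
                  "--panel-of-normals", pon,
                  "--f1r2-tar-gz", "f1r2.tar.gz",   # input for the orientation model
                  "-O", "unfiltered.vcf.gz"]),      # stats file is written alongside
        ("orientation", ["gatk", "LearnReadOrientationModel",
                         "-I", "f1r2.tar.gz", "-O", "read-orientation-model.tar.gz"]),
        ("pileups_tumor", ["gatk", "GetPileupSummaries", "-I", tumor_bam,
                           "-V", common_sites, "-L", common_sites,
                           "-O", "tumor-pileups.table"]),
        ("pileups_normal", ["gatk", "GetPileupSummaries", "-I", normal_bam,
                            "-V", common_sites, "-L", common_sites,
                            "-O", "normal-pileups.table"]),
        ("contamination", ["gatk", "CalculateContamination",
                           "-I", "tumor-pileups.table", "-matched", "normal-pileups.table",
                           "--tumor-segmentation", "segments.table",
                           "-O", "contamination.table"]),
        ("filter", ["gatk", "FilterMutectCalls", "-R", reference,
                    "-V", "unfiltered.vcf.gz",
                    "--contamination-table", "contamination.table",
                    "--tumor-segmentation", "segments.table",
                    "--ob-priors", "read-orientation-model.tar.gz",
                    "-O", "filtered.vcf.gz"]),
    ]


steps = somatic_pipeline("reference.fa", "tumor.bam", "normal.bam", "NORMAL_001",
                         "af-only-gnomad.vcf.gz", "pon.vcf.gz", "common_sites.vcf.gz")
for name, argv in steps:
    print(name, shlex.join(argv))
```

Ordering the steps as data keeps the dependencies visible. The orientation model and the contamination table must both exist before FilterMutectCalls runs.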

The following diagram illustrates the relationship between input BAM files and the key processing stages in the complete somatic variant discovery workflow:

Somatic Variant Calling Workflow

Contamination Estimation Procedures