Genome vs. Transcriptome Alignment: A Comprehensive Guide for Biomedical Researchers

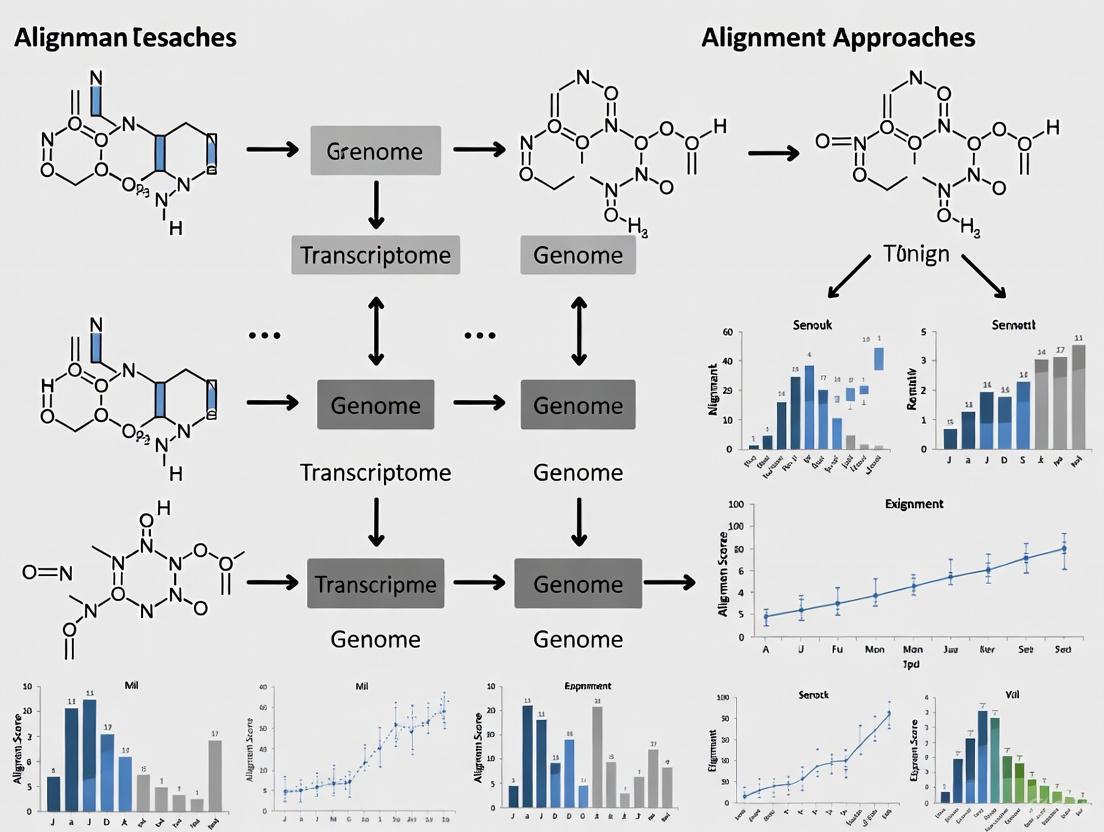

This article provides a detailed comparison of genome and transcriptome alignment approaches, essential for accurate RNA-seq data analysis.

Abstract

This article provides a detailed comparison of genome and transcriptome alignment approaches, essential for accurate RNA-seq data analysis. We explore the foundational principles, including the distinct goals of aligning reads to a reference genome versus a transcriptome. The piece covers established and cutting-edge methodologies, addresses common challenges like ambiguous mapping and complex gene families, and presents validation strategies from recent consortium benchmarks. Aimed at researchers and drug development professionals, this guide offers practical insights for selecting and optimizing alignment tools to maximize data fidelity in diverse applications, from basic research to clinical biomarker discovery.

Core Concepts: Understanding the Fundamental Goals of Genome and Transcriptome Alignment

In the field of genomics and transcriptomics, the choice of alignment strategy—mapping sequencing reads to a complete genome or to a spliced transcriptome—represents a fundamental decision that directly impacts the accuracy, efficiency, and biological relevance of downstream analyses. The genome contains all DNA present in a cell, while the transcriptome comprises the complete set of RNA molecules, including messenger RNA molecules derived from genes [1]. This distinction creates different coordinate systems and analytical challenges for read alignment.

Recent methodological advances and benchmarking studies have clarified the strengths and limitations of each approach across diverse applications. This guide provides an objective comparison of genome versus transcriptome alignment methodologies, synthesizing current experimental data to help researchers and drug development professionals select optimal strategies for their specific research contexts.

Key Comparison: Genome vs. Transcriptome Alignment

Table 1: Comparative overview of genome and transcriptome alignment approaches

| Feature | Genome Alignment | Transcriptome Alignment |
|---|---|---|
| Reference Basis | Complete DNA sequence of an organism [1] | Collection of all expressed transcript sequences [1] |
| Primary Tools | HISAT2, STAR [2] | Kallisto, Salmon [2] [3] |
| Splice Handling | Must be splice-aware; detects novel junctions [2] | Built into reference; limited to annotated isoforms |
| Computational Demand | Higher resource requirements [2] | Faster processing; lower memory footprint [2] |
| Multi-mapped Reads | Challenging for gene families & complex regions [4] | Discarded or proportionally assigned [2] |
| Quantification Accuracy | Strong for gene-level; depends on annotation [2] | Excellent for transcript-level with sufficient depth [5] |
| Novel Transcript Detection | Possible with appropriate assemblers [2] | Limited to predefined transcriptome |
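
The "proportionally assigned" entry for multi-mapped reads in Table 1 can be illustrated with a toy expectation-maximization loop. This is a minimal sketch under stated assumptions, not the algorithm as implemented in Kallisto or Salmon: the function name `em_abundance`, the input layout, and the omission of effective transcript lengths and bias models are all simplifications introduced here for illustration.

```python
def em_abundance(read_compat, n_transcripts, n_iter=200):
    """Toy EM that splits each read across its compatible transcripts.

    read_compat: list of lists; each inner list holds the indices of the
        transcripts a read is compatible with (hypothetical input layout).
    Returns relative abundances that sum to 1.
    Assumption: all transcripts have equal length, so no length correction.
    """
    theta = [1.0 / n_transcripts] * n_transcripts
    for _ in range(n_iter):
        counts = [0.0] * n_transcripts
        # E-step: assign each read fractionally, weighted by current abundances.
        for compat in read_compat:
            total = sum(theta[t] for t in compat)
            if total == 0.0:
                continue
            for t in compat:
                counts[t] += theta[t] / total
        assigned = sum(counts)
        if assigned == 0.0:
            break
        # M-step: renormalize the fractional counts into abundances.
        theta = [c / assigned for c in counts]
    return theta


if __name__ == "__main__":
    # Three reads map uniquely to transcript 0; one read is ambiguous.
    reads = [[0], [0], [0], [0, 1]]
    print(em_abundance(reads, n_transcripts=2))
```

In this example the ambiguous read is pulled almost entirely toward transcript 0, because the uniquely mapped reads are the only evidence that separates the two transcripts.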

Performance Benchmarking Across Applications

Transcript Identification and Quantification

The Long-read RNA-Seq Genome Annotation Assessment Project (LRGASP) consortium conducted a comprehensive evaluation of long-read RNA sequencing methods, revealing that libraries with longer, more accurate sequences produce more accurate transcripts than those with increased read depth, whereas greater read depth improved quantification accuracy [5]. In well-annotated genomes, tools based on reference sequences demonstrated the best performance, with alignment-based approaches generally outperforming de novo methods for transcript reconstruction.

Single-Cell RNA-Seq Applications

A 2025 evaluation of single-cell RNA-seq technologies from 10× Genomics, Parse Biosciences, and HIVE demonstrated varying capabilities for capturing challenging cell types such as neutrophils, which have low RNA content and high RNase levels [6]. The study found that fixed RNA methods (10× Genomics Flex and Parse Biosciences Evercode) showed strong concordance with flow cytometry and established reliable workflows for clinical biomarker studies, with Flex offering a simplified sample collection protocol suitable for clinical site implementation [6].

Viral Genome Analysis

For viral genomics, Vclust represents a recent advancement in genome alignment, using Lempel-Ziv parsing-based algorithms to achieve superior accuracy and efficiency compared to existing tools [7]. This approach can cluster millions of viral genomes into virus operational taxonomic units (vOTUs) in hours on mid-range workstations, demonstrating approximately 40,000× faster processing than VIRIDIC while maintaining high agreement with International Committee on Taxonomy of Viruses standards [7].

Differential Expression Analysis

A comprehensive comparison of six popular RNA-seq analysis procedures revealed that computational requirements and performance characteristics vary significantly across pipelines [2]. Cufflinks-Cuffdiff demanded the highest computing resources while Kallisto-Sleuth required the least. HISAT2-StringTie-Ballgown demonstrated higher sensitivity to genes with low expression levels, whereas Kallisto-Sleuth performed best for medium-to-high abundance genes [2].

Table 2: Quantitative performance comparison of alignment and analysis methods

| Method/Tool | Application Context | Key Performance Metrics | Comparative Findings |
|---|---|---|---|
| Vclust [7] | Viral genome clustering | Mean absolute error: 0.3%; Speed: >40,000× faster than VIRIDIC | 95% agreement with ICTV taxonomy after correcting inconsistencies |
| Kallisto [3] | Transcript quantification | Runtime: Fastest among tested methods; Memory: Low footprint | Produced similar quantifications to genome alignment; suitable for most applications |
| HISAT2-StringTie-Ballgown [2] | Differential expression | Sensitivity: High for low-expression genes | More sensitive to low-expression genes than Kallisto-Sleuth |
| 10× Genomics Flex [6] | Single-cell RNA-seq (neutrophils) | Data quality: Low mitochondrial genes (0-8%); Cell capture: Effective for neutrophils | Simplified protocol suitable for clinical trials; strong concordance with flow cytometry |
| Enzymatic Methyl-seq (EM-seq) [8] | DNA methylation profiling | Concordance: High with WGBS; DNA preservation: Superior to bisulfite methods | Robust alternative to WGBS with more uniform coverage and lower DNA input requirements |

Experimental Protocols and Workflows

Standard RNA-Seq Analysis Pipeline

The following diagram illustrates the core steps in RNA-seq analysis, highlighting phases where methodological choices between genome and transcriptome alignment significantly impact results:

Diagram 1: Core RNA-seq analysis workflow with key decision points

Specialized Alignment Pipeline for Complex Genomic Regions

Recent research has highlighted limitations in standard "one-size-fits-all" alignment approaches for complex genomic regions such as major histocompatibility complex (MHC) and killer immunoglobulin-like receptors [4]. The nimble pipeline addresses these challenges through a supplemental approach:

Diagram 2: Supplemental alignment pipeline for complex genomic regions

Detailed Methodologies from Key Studies

LRGASP Consortium Protocol [5]: The consortium generated over 427 million long-read sequences from complementary DNA and direct RNA datasets across human, mouse, and manatee species. Developers utilized these data to address challenges in transcript isoform detection, quantification, and de novo transcript detection. Libraries were prepared using different protocols and sequenced on multiple platforms including PacBio and Oxford Nanopore Technologies. Bioinformatics tools were then evaluated for their performance in transcript reconstruction, quantification accuracy, and novel transcript detection.

Single-Cell RNA-seq Method Comparison [6]: Blood was drawn from healthy donors and divided into aliquots for testing using Flex, Evercode, and Chromium Single-Cell 3' Gene Expression v.3.1. For each donor, flow cytometry characterized cells into major types for comparison with scRNA-seq clustering. Analysis was limited to 18,532 genes captured in the Flex probe set to enable direct cross-technology comparison. A minimum threshold of 50 genes and 50 unique molecular identifiers was applied across all samples to ensure neutrophil inclusion.
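
The filtering step described above (restrict to a shared gene set, then keep cells with at least 50 genes and 50 UMIs) can be sketched in a few lines. This is an illustrative sketch only: the function name `filter_cells` and the nested-dictionary layout are assumptions made here, not the study's actual code, which would normally operate on a sparse count matrix.

```python
def filter_cells(cells, probe_genes, min_genes=50, min_umis=50):
    """Keep cells that pass gene and UMI thresholds within a shared gene set.

    cells: dict mapping cell barcode -> dict of gene -> UMI count
        (hypothetical layout chosen for readability).
    probe_genes: set of gene names shared across technologies.
    """
    kept = {}
    for barcode, gene_counts in cells.items():
        # Restrict to the shared gene set and drop zero counts.
        restricted = {
            gene: count
            for gene, count in gene_counts.items()
            if gene in probe_genes and count > 0
        }
        n_genes = len(restricted)
        n_umis = sum(restricted.values())
        if n_genes >= min_genes and n_umis >= min_umis:
            kept[barcode] = restricted
    return kept


if __name__ == "__main__":
    probe = {f"g{i}" for i in range(100)}
    cells = {
        "cell_pass": {f"g{i}": 1 for i in range(60)},
        "cell_few_genes": {f"g{i}": 5 for i in range(40)},
    }
    print(sorted(filter_cells(cells, probe)))
```

The thresholds are deliberately permissive; as the text notes, a low cutoff is what keeps low-RNA cells such as neutrophils in the analysis.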

DNA Methylation Profiling Comparison [8]: Researchers evaluated four DNA methylation detection approaches—whole-genome bisulfite sequencing, Illumina methylation microarray, enzymatic methyl-sequencing, and Oxford Nanopore Technologies sequencing—across three human genome samples derived from tissue, cell line, and whole blood. They systematically compared methods in terms of resolution, genomic coverage, methylation calling accuracy, cost, time, and practical implementation, with EM-seq showing the highest concordance with WGBS.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key research reagents and computational tools for alignment studies

| Tool/Reagent | Function | Application Context |
|---|---|---|
| Chromium Single-Cell 3' Gene Expression Flex [6] | Fixed RNA profiling for single-cell analysis | Clinical trial biomarker studies; sensitive cell types like neutrophils |
| Evercode WT Mini v.2 [6] | Combinatorial barcoding for single-cell RNA-seq | Studies requiring high gene detection sensitivity; sample multiplexing |
| HIVE scRNA-seq v.1 [6] | Nanowell-based single-cell capture | RBC-depleted samples; neutrophil isolation |
| Nimble Pipeline [4] | Targeted quantification of complex gene families | Immune genotyping; MHC allele-specific regulation; viral RNA detection |
| Vclust [7] | Viral genome clustering and ANI calculation | Large-scale viromics; taxonomic classification of viral sequences |
| Kallisto [3] | Transcriptome pseudoalignment for quantification | Fast transcript quantification; bulk and single-cell RNA-seq analysis |
| EM-seq Kit [8] | Enzymatic methylation conversion | DNA methylation profiling with minimal DNA degradation |

The choice between genome and transcriptome alignment approaches depends heavily on research goals, sample types, and computational resources. Genome alignment excels at novel transcript discovery and splice variant detection, while transcriptome alignment provides superior speed and efficiency for quantification of annotated genes. Recent methodological developments—including fixed RNA profiling for sensitive cell types, long-read sequencing for complete isoform resolution, and specialized tools for complex genomic regions—continue to expand our analytical capabilities across diverse research contexts. For most applications, a hybrid approach leveraging the complementary strengths of both strategies provides the most comprehensive solution for modern genomic and transcriptomic studies.

The field of biological sequence alignment is built upon classical algorithms that solved the fundamental problem of comparing two sequences to find their optimal alignment. The Needleman-Wunsch algorithm, introduced in 1970, was the first to solve the problem of global sequence alignment using dynamic programming, ensuring the optimal alignment of two sequences from end to end [9] [10]. This was followed by the Smith-Waterman algorithm in 1981, which introduced a similar dynamic programming approach but for local alignment, enabling the identification of regions of high similarity within longer sequences [9].

These algorithms established the core dynamic programming framework that remains influential today. They work by building a matrix of alignment scores where each cell represents the optimal alignment score up to that position in the sequences. The recurrence relation for Needleman-Wunsch can be expressed as:

$$
F_{i,j} = \max \begin{cases}
F_{i-1,j} + G & \text{skip a position of } x \\
F_{i,j-1} + G & \text{skip a position of } y \\
F_{i-1,j-1} + S_{x[i],y[j]} & \text{match/mismatch}
\end{cases}
$$

Where $F_{i,j}$ is the score at position $(i,j)$, $G$ is the gap penalty, and $S_{x[i],y[j]}$ is the substitution score [9]. This foundational approach, while computationally intensive for modern datasets, established the precision standard against which all subsequent alignment methods would be measured.
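
The recurrence translates almost line for line into code. The following is a minimal sketch that returns only the optimal global score (no traceback); the function name and the default scores (match +1, mismatch -1, gap -2) are illustrative assumptions rather than values taken from the cited sources.

```python
def needleman_wunsch_score(x, y, match=1, mismatch=-1, gap=-2):
    """Optimal global alignment score via the Needleman-Wunsch recurrence.

    gap is the (negative) penalty G; match/mismatch stand in for the
    substitution score S. Runs in O(nm) time and memory.
    """
    n, m = len(x), len(y)
    F = [[0] * (m + 1) for _ in range(n + 1)]
    # Boundary: aligning a prefix against the empty string costs only gaps.
    for i in range(1, n + 1):
        F[i][0] = i * gap
    for j in range(1, m + 1):
        F[0][j] = j * gap
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            s = match if x[i - 1] == y[j - 1] else mismatch
            F[i][j] = max(
                F[i - 1][j] + gap,      # skip a position of x
                F[i][j - 1] + gap,      # skip a position of y
                F[i - 1][j - 1] + s,    # match/mismatch
            )
    return F[n][m]


if __name__ == "__main__":
    print(needleman_wunsch_score("ACGT", "AGT"))
```

Recovering the alignment itself would additionally require storing, for each cell, which of the three cases produced the maximum and walking back from the bottom-right corner.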

Table 1: Core Characteristics of Foundational Alignment Algorithms

| Algorithm | Type | Year | Key Innovation | Computational Complexity |
|---|---|---|---|---|
| Needleman-Wunsch | Global | 1970 | First dynamic programming for biological sequences | O(nm) |
| Smith-Waterman | Local | 1981 | Local alignment with traceback | O(nm) |
| Levenshtein Distance | Edit Distance | 1965 | Minimum edit operations | O(nm) |

The Evolutionary Bridge: From Classical to Modern Methods

As genomic datasets expanded exponentially, the computational demands of classical O(nm) algorithms became prohibitive, spurring innovation in both algorithmic efficiency and implementation. Key developments included the introduction of heuristic methods that sacrificed theoretical optimality for practical speed, and implementation optimizations that leveraged modern hardware capabilities [10].

Myers (1986) and Ukkonen (1985) made crucial algorithmic improvements with the diagonal transition method, achieving O(n+s²) complexity in expectation, where s is the edit distance between sequences [10]. This is particularly efficient for similar sequences, where s is small. Equally important were implementation advances such as bitpacking (Myers, 1999), which packs 64 adjacent states of the dynamic programming matrix into two 64-bit machine words, providing up to a 64× speedup [10]. As hardware advanced, the same idea was extended to SIMD instructions operating on registers up to 512 bits wide, providing up to another 8× speedup [10].
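
The diagonal transition idea can be sketched for unit-cost edit distance as follows. Instead of filling the whole matrix, the code tracks, for each diagonal and each edit count s, the furthest position reachable, and greedily extends along runs of matching characters. This is a simplified illustration written for this guide (the function name and the dictionary-based bookkeeping are assumptions), not the implementation of any tool cited above.

```python
def edit_distance_diagonal(a, b):
    """Unit-cost edit distance via furthest-reaching points per diagonal.

    Diagonal k holds the cells with i - j == k, where i indexes a and j
    indexes b. fr[k] is the largest i reachable on diagonal k with the
    current number of edits s. Worst-case work is O(n * s).
    """
    n, m = len(a), len(b)
    missing = -10**9  # sentinel for diagonals not reached yet

    def extend(i, k):
        # Slide along the diagonal for free while characters match.
        j = i - k
        while i < n and j < m and a[i] == b[j]:
            i += 1
            j += 1
        return i

    target = n - m  # diagonal containing the bottom-right corner (n, m)
    fr = {0: extend(0, 0)}
    s = 0
    while fr.get(target, -1) < n:
        s += 1
        nxt = {}
        for k in range(max(-s, -m), min(s, n) + 1):
            cand = max(
                fr.get(k, missing) + 1,      # substitution: stay on diagonal
                fr.get(k - 1, missing) + 1,  # consume a character of a only
                fr.get(k + 1, missing),      # consume a character of b only
            )
            i = min(cand, n, m + k)  # clamp to the matrix boundary
            if i < 0 or i - k < 0:
                continue
            nxt[k] = extend(i, k)
        fr = nxt
    return s


if __name__ == "__main__":
    print(edit_distance_diagonal("kitten", "sitting"))
```

Only 2s + 1 diagonals are touched in round s, which is why the method is fast when the two sequences are similar.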

The introduction of banded alignment strategies represented another significant optimization, restricting computation to a diagonal band of the full matrix under the assumption that optimal alignments would not deviate too far from the main diagonal [10]. For RNA-seq and other specialized applications, tools like STAR implemented sophisticated strategies such as spliced alignment, which could efficiently handle introns by detecting splice junctions without the computational cost of full dynamic programming [11].
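
Banded alignment can be sketched for unit-cost edit distance by filling only cells within a fixed distance of the main diagonal. The sketch below also doubles the band until the result is provably exact; both function names are hypothetical, and the dictionary-per-row storage is chosen for clarity rather than speed.

```python
def banded_edit_distance(a, b, band):
    """Edit distance restricted to cells with |i - j| <= band.

    Returns None if the corner cell lies outside the band. The result is
    an upper bound on the true distance, and is exact whenever it is
    less than or equal to band.
    """
    n, m = len(a), len(b)
    if abs(n - m) > band:
        return None
    inf = float("inf")
    prev = {j: j for j in range(0, min(m, band) + 1)}  # row i = 0
    for i in range(1, n + 1):
        cur = {}
        for j in range(max(0, i - band), min(m, i + band) + 1):
            if j == 0:
                cur[j] = i
                continue
            best = inf
            if j - 1 in prev:  # match or substitution
                best = min(best, prev[j - 1] + (a[i - 1] != b[j - 1]))
            if j in prev:      # skip a character of a
                best = min(best, prev[j] + 1)
            if j - 1 in cur:   # skip a character of b
                best = min(best, cur[j - 1] + 1)
            cur[j] = best
        prev = cur
    return prev[m]


def edit_distance_band_doubling(a, b):
    """Exact edit distance found by doubling the band until it suffices."""
    band = max(1, abs(len(a) - len(b)))
    while True:
        d = banded_edit_distance(a, b, band)
        if d is not None and d <= band:
            return d
        band *= 2


if __name__ == "__main__":
    print(edit_distance_band_doubling("intention", "execution"))
```

The stopping rule relies on the fact that an alignment with at most `band` edits can never stray more than `band` cells from the main diagonal, so a banded result within that limit cannot be beaten by a path outside the band.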

Diagram 1: Evolution from classical to modern alignment methods

Performance Benchmarking: Quantitative Comparisons

Modern alignment tools exhibit significant variation in performance characteristics, with specialized algorithms optimized for specific data types and applications. Benchmarking studies reveal that the choice of alignment methodology substantially impacts downstream analytical outcomes, particularly in transcriptomic studies where alignment accuracy directly influences transcript abundance estimation [11].

In assessments of long-read sequencing aligners, tools displayed markedly different performance profiles. Minimap2 and Winnowmap2 were computationally lightweight enough for use at scale, while NGMLR required substantially more resources but produced consistent alignments [12]. Notably, different alignment tools widely disagreed on which reads to leave unaligned, affecting genome coverage and structural variant discovery [12]. For short-read RNA-seq data, studies demonstrate that lightweight mapping approaches can lead to considerably different abundance estimates compared to traditional alignment methods, affecting downstream differential expression analysis [11].

Table 2: Performance Comparison of Modern Alignment Tools

| Tool | Best Application | Speed (10M reads) | Memory Efficiency | Key Strength |
|---|---|---|---|---|
| HISAT2 | RNA-seq | ~700 sec [13] | High | Balanced speed/accuracy |
| STAR | Spliced RNA-seq | ~850 sec [13] | Moderate | Spliced alignment |
| BWA | WGS | ~980 sec [13] | Moderate | Proven accuracy |
| Bowtie2 | ChIP-seq/Short reads | ~1000 sec [13] | High | Flexibility |
| Minimap2 | Long-read alignment | Fast [12] | High | Scalability |
| Winnowmap2 | Long-read alignment | Fast [12] | High | Repetitive regions |
| NGMLR | Long-read alignment | Slow [12] | Low | SV detection |

The LRGASP consortium benchmark (2024) revealed that for transcript identification, libraries with longer, more accurate sequences produced more accurate transcripts than those with increased read depth, whereas greater read depth improved quantification accuracy [5]. In well-annotated genomes, tools based on reference sequences demonstrated the best performance, though moderate agreement among bioinformatics tools highlighted variations in analytical goals [5].

Experimental Protocols and Methodologies

Benchmarking Alignment Tools for Long-Read Sequencing

Comprehensive evaluation of alignment algorithms requires standardized methodologies across diverse data types. For long-read sequencing platforms, recent benchmarks employed publicly-available data from the Joint Initiative for Metrology in Biology's Genome in a Bottle Initiative, specifically samples NA12878 sequenced with nanopore technology and NA24385 sequenced with Pacific Biosciences CCS technology [12].

Tool Selection Criteria: Studies evaluated platform-agnostic alignment tools including GraphMap2, LRA, Minimap2, NGMLR, and Winnowmap2, focusing on their suitability for whole-genome experiments and ability to produce standard SAM/BAM output [12]. Tools were assessed based on recommendations from platform developers and searches of specialized databases like Long-Read Tools.

Evaluation Metrics: Key performance measures included computational performance (peak memory utilization, CPU time, file storage requirements), genome depth and basepair coverage, and the number of reads left unaligned [12]. To assess practical utility for variant discovery, researchers ran the structural variant caller Sniffles on alignment outputs to compare breakpoint identification.
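
One of the metrics above, the number of reads left unaligned, can be computed directly from SAM text output using the FLAG field defined in the SAM specification (bit 0x4 marks an unmapped read; bits 0x100 and 0x800 mark secondary and supplementary records). The helper below is a minimal sketch with a hypothetical name; it is not taken from the benchmark's code, and a production pipeline would more likely use an existing tool or library for this.

```python
def count_unaligned(sam_lines):
    """Count primary records and unmapped reads in SAM-formatted lines.

    Returns (n_reads, n_unaligned). Secondary (0x100) and supplementary
    (0x800) records are skipped so each read is counted once. For
    paired-end data, each mate is counted as its own record.
    """
    n_reads = 0
    n_unaligned = 0
    for line in sam_lines:
        if not line.strip() or line.startswith("@"):
            continue  # skip blank lines and header lines
        fields = line.rstrip("\n").split("\t")
        flag = int(fields[1])
        if flag & 0x900:
            continue
        n_reads += 1
        if flag & 0x4:
            n_unaligned += 1
    return n_reads, n_unaligned


if __name__ == "__main__":
    example = [
        "@HD\tVN:1.6",
        "r1\t0\tchr1\t100\t60\t10M\t*\t0\t0\tACGTACGTAC\t*",
        "r2\t4\t*\t0\t0\t*\t*\t0\t0\tACGT\t*",
    ]
    print(count_unaligned(example))
```

Running the same function over the output of each aligner gives a like-for-like count, which is useful precisely because, as noted above, tools disagree on which reads to leave unaligned.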

Experimental Findings: The benchmark revealed that no single alignment tool independently resolved all large structural variants present in established databases, suggesting that a combined approach using multiple aligners provides the most comprehensive view of genomic variability [12]. Specifically, researchers recommended using both Minimap2 and Winnowmap2 as lightweight complementary approaches, with NGMLR or LRA as additional options depending on computational resources and specific research questions.

Transcriptome Alignment Assessment

For transcriptome studies, the influence of mapping methodology on quantification accuracy has been systematically evaluated using both simulated and experimental data [11]. These studies typically compare three categories of mapping strategies:

- Unspliced alignment of RNA-seq reads directly to the transcriptome (e.g., Bowtie2)

- Spliced alignment of RNA-seq reads to the annotated genome with projection to transcriptome (e.g., STAR)

- Lightweight mapping of RNA-seq reads directly to the transcriptome (e.g., quasi-mapping)

Methodologies maintain consistency by using the same quantification engine (e.g., Salmon) while varying only the alignment methodology, thus isolating the effect of alignment on downstream results [11]. Studies have introduced selective alignment as an improved mapping algorithm that maintains speed while eliminating many mapping errors of lightweight approaches through alignment scoring to differentiate between mapping loci [11].

Diagram 2: RNA-seq alignment workflow for transcript quantification

Table 3: Key Research Reagents and Computational Resources

| Resource | Type | Function | Example Applications |
|---|---|---|---|
| Spike-in RNA Controls | Experimental Reagent | Normalization and quality control | ERCC, Sequin, SIRVs [14] |
| Reference Genomes | Computational Resource | Alignment template | GRCh38, CHM13, pan-genomes [12] [15] |
| Truth Sets | Validation Resource | Benchmarking accuracy | GIAB variants [12] |
| Gold Standard Alignments | Validation Resource | MSA method validation | Structure-based alignments [16] |
| Decoy Sequences | Computational Resource | Reduce false mappings | Genome-derived decoys [11] |

Implications for Transcriptome vs. Genome Alignment Approaches

The evolution of alignment algorithms has significant implications for the choice between transcriptome and genome alignment approaches in modern genomics research. Each strategy presents distinct advantages that must be considered within specific research contexts.

Genome-guided approaches generally produce longer contigs and are less computationally demanding than de novo assembly, particularly when a high-quality reference genome is available [17]. However, using a closely related reference genome to guide transcriptome assembly can generate biased contig sequences [17]. For long-read RNA-seq data, recent evaluations indicate that in well-annotated genomes, tools based on reference sequences demonstrate the best performance for transcript identification [5].

Transcriptome alignment approaches face different challenges. Lightweight mapping methods, while fast, may suffer from spurious mappings leading to decreased quantification accuracy compared to alignment-based approaches [11]. This has driven the development of hybrid methods like selective alignment that maintain speed while incorporating alignment scoring to avoid false mappings [11].

The emergence of pan-transcriptome resources represents a promising direction, addressing limitations of single-reference approaches. For example, PanBaRT20, a comprehensive pan-transcriptome for barley, demonstrated an average mapping efficiency of 87.3% for RNA-seq read alignment during transcript quantification, representing an 11.1% improvement over previous single-reference datasets [15]. Such approaches better capture species-wide transcriptional diversity but require more sophisticated computational infrastructure.

The evolution of sequence alignment continues with emerging approaches that build upon the legacy of classical algorithms while addressing contemporary challenges. The A*PA algorithm attempts to break the O(s²) complexity boundary by implementing the A* search algorithm with a gap-chaining seed heuristic, achieving near-linear scaling in practice when errors are uniformly distributed [10]. A*PA2 further combines this with band-doubling and bit-packing, running up to 1000× faster per visited state than previous exact methods [10].

Future progress will likely focus on multi-platform approaches, as evidenced by recommendations to leverage multiple alignment tools to generate a complete picture of genomic variability [12]. The development of consensus meta-methods like M-Coffee for multiple sequence alignment provides a framework for combining the output of various methods, offering improved accuracy and local reliability estimation [16]. Template-based alignment methods that incorporate structural and homology data represent another promising direction, moving beyond purely sequence-based approaches to achieve greater biological accuracy [16].

As sequencing technologies continue to evolve toward longer reads and more complex analytical questions, the fundamental principles established by Needleman-Wunsch and Smith-Waterman remain remarkably relevant. Their dynamic programming framework continues to inform new algorithms that balance the competing demands of accuracy, speed, and scalability in the era of pangenomics and single-cell multi-omics.

In genomics research, the choice between short-read and long-read sequencing technologies represents a fundamental dichotomy, forcing researchers to balance the high accuracy of short reads against the superior genomic context provided by long reads. Next-generation sequencing (NGS) technologies have revolutionized biological research and clinical diagnostics, yet each platform carries distinct advantages and limitations rooted in their underlying biochemical principles and technical workflows. Short-read technologies, predominantly led by Illumina's sequencing-by-synthesis approach, typically generate reads of 50-300 base pairs with exceptional accuracy exceeding 99.9% [18] [19]. In contrast, long-read technologies from Pacific Biosciences (PacBio) and Oxford Nanopore Technologies (ONT) routinely produce reads spanning thousands to hundreds of thousands of bases, with some ultra-long reads exceeding megabase lengths, albeit with different error profiles and cost considerations [20] [21]. This guide provides an objective comparison of these platforms, focusing on their performance in genome and transcriptome analyses, supported by experimental data and detailed methodologies to inform researchers and drug development professionals.

Short-Read Sequencing Technologies

Illumina's Sequencing-by-Synthesis forms the foundation of most short-read sequencing platforms. This technology involves fragmenting DNA into short pieces, attaching them to a flow cell surface, and amplifying them to create clusters. Through iterative cycles of fluorescently-labeled nucleotide incorporation and imaging, the sequence is determined with high precision [18] [22]. The method boasts exceptionally high throughput, with modern instruments like the NovaSeq X Series capable of generating terabases of data per run, enabling large-scale studies and population-level sequencing projects [18].

Other notable short-read platforms include Element Biosciences' AVITI System, which uses avidity-based sequencing chemistry, and Ion Torrent, which detects nucleotide incorporation through pH changes rather than optical signals [18]. MGI's DNBSEQ platforms, based on DNA nanoball technology, offer competitive operating costs but can be more labor-intensive [18]. These platforms collectively dominate the sequencing market due to their established workflows, extensive analytical tools, and proven reliability for numerous applications including variant calling, gene expression profiling, and targeted sequencing.

Long-Read Sequencing Technologies

Pacific Biosciences Single Molecule Real-Time (SMRT) Sequencing utilizes a unique approach where DNA polymerase is immobilized at the bottom of microscopic wells called zero-mode waveguides. As the polymerase incorporates fluorescently-labeled nucleotides, the detection system records these events in real-time, generating long reads typically ranging from 10-20 kilobases [23] [18]. The platform's circular consensus sequencing (CCS) mode enables multiple passes of the same template, producing highly accurate HiFi reads with accuracy exceeding 99.9% [18]. PacBio's recent Revio system has dramatically increased throughput while reducing costs, making long-read sequencing more accessible for large-scale projects.

Oxford Nanopore Technologies employs a fundamentally different approach based on the modulation of ionic current as DNA or RNA molecules pass through protein nanopores embedded in a membrane [18] [20]. The technology directly sequences native nucleic acids without requiring amplification, preserving base modifications and enabling ultra-long reads that can exceed 4 megabases in exceptional cases [21]. Unlike other technologies, Nanopore devices range from portable MinION units to high-throughput PromethION platforms, offering flexibility for diverse applications from field sequencing to comprehensive genome assembly projects.

Table 1: Core Sequencing Technology Comparison

| Feature | Short-Read (Illumina) | Long-Read (PacBio) | Long-Read (Nanopore) |
|---|---|---|---|
| Typical Read Length | 50-300 bp | 10-20 kb (HiFi); up to 50 kb | 1 kb - >4 Mb |
| Raw Accuracy | >99.9% | ~99.9% (HiFi mode) | ~98-99% (dependent on basecaller) |
| Throughput | Very high (terabases) | Medium-high | Configurable (low to high) |
| Key Advantage | High accuracy, low cost per base | Long accurate reads, epigenetic detection | Ultra-long reads, real-time analysis |
| Primary Limitation | Limited resolution in repetitive regions | Higher DNA input requirements | Higher error rate for single passes |

Performance Comparison: Experimental Data and Benchmarking

Genome Assembly and Structural Variant Detection

Long-read technologies demonstrate superior performance in resolving complex genomic regions and detecting structural variations. Experimental data from maize genome assembly reveals that both read length and sequencing depth critically impact assembly completeness. At 20× coverage with 11 kb reads, only 68.0% of benchmarking universal single-copy orthologs (BUSCO) were completely assembled, while 30× coverage with 21 kb reads achieved 95.5% completeness, with minimal improvements at higher depths [24]. This highlights a critical threshold for resource allocation in genome projects.
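
Coverage figures such as 20× or 30× follow from simple arithmetic: mean depth is the total number of sequenced bases divided by the genome size. The helpers below are a hedged sketch of that calculation; the function names are introduced here, and the 2.3 Gb genome size in the usage example is an assumed round figure for illustration, not a value reported in the cited study.

```python
def mean_depth(n_reads, mean_read_len, genome_size):
    """Mean sequencing depth = total bases sequenced / genome size."""
    return n_reads * mean_read_len / genome_size


def reads_needed(genome_size, target_depth, mean_read_len):
    """Smallest whole number of reads whose bases reach the target depth."""
    total_bases = genome_size * target_depth
    return -(-total_bases // mean_read_len)  # ceiling division on integers


if __name__ == "__main__":
    genome = 2_300_000_000  # assumed genome size in bp (illustrative only)
    print(reads_needed(genome, target_depth=30, mean_read_len=21_000))
    print(reads_needed(genome, target_depth=20, mean_read_len=11_000))
```

Under these assumptions the 30× design with 21 kb reads needs fewer reads than the 20× design with 11 kb reads, even though it sequences more bases in total, which is one way to see why read length and depth have to be budgeted together.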

In human genomics, a comparative study of colorectal cancer samples demonstrated Nanopore's enhanced ability to resolve large and complex rearrangements with consistently high precision across different structural variant types [22]. Long reads have been reported to detect approximately five times more structural variants in the human genome than short-read approaches, a notable difference given that 34% of disease variants are associated with structural variations [21]. This capability is crucial for molecular diagnostics, where short-read technologies often miss clinically relevant variants in repetitive or complex genomic regions.

The completion of the first truly gapless human genome assembly exemplifies the unique value of ultra-long reads. The Telomere-to-Telomere (T2T) consortium utilized Oxford Nanopore ultra-long reads exceeding 100 kb to resolve approximately 8% of the human genome that had remained inaccessible to short-read technologies for decades, primarily in centromeres and segmental duplications [21]. This achievement underscores how read length directly determines the biological questions that can be addressed through sequencing.

Transcriptome Analysis and Isoform Resolution

In transcriptomics, the read-length dichotomy profoundly impacts isoform discovery and quantification. Short-read RNA-seq struggles to resolve complete transcript isoforms because the reads are shorter than most mRNAs, requiring complex assembly algorithms that often incorrectly reconstruct splicing patterns [23]. In contrast, long-read technologies can capture full-length transcripts in single reads, dramatically simplifying isoform identification and quantification.

A methodological comparison in single-cell RNA sequencing revealed that both approaches recover a large proportion of cells and transcripts with high correlation, but platform-specific biases affect the results [25]. Short-read sequencing provided higher sequencing depth, while long-read sequencing enabled identification of truncated cDNA artifacts and retained transcripts shorter than 500 bp that were missed by short-read protocols [25]. The ability to sequence full-length cDNA molecules makes long-read approaches particularly valuable for characterizing alternative splicing, fusion transcripts, and complex transcriptional events in cancer and developmental biology.

Table 2: Performance Comparison in Key Applications

| Application | Short-Read Performance | Long-Read Performance | Experimental Evidence |
|---|---|---|---|
| SNP/Small Variant Calling | Excellent (>99.9% accuracy) | Good (improving with HiFi) | Kolmogorov et al., 2023 [21] |
| Structural Variant Detection | Limited, especially in repeats | Excellent (5× more SVs detected) | Kolmogorov et al., 2023 [21] |
| De Novo Assembly | Fragmented, especially in repeats | Highly contiguous assemblies | Chen et al., 2023 (maize) [21] |
| Transcript Isoform Discovery | Limited, requires inference | Direct observation of full-length isoforms | PMC article on scRNA-seq [25] |
| Methylation/Epigenetic Detection | Requires special protocols | Direct detection (Nanopore) or inherent (PacBio) | CRC study showing preserved signals [22] |

Experimental Design: Methodologies and Protocols

Representative Genome Analysis Protocol

A comprehensive comparison of short- and long-read sequencing technologies requires careful experimental design. The colorectal cancer study methodology provides an exemplary approach for cross-platform benchmarking [22]:

Sample Preparation and Sequencing:

- Extract high-molecular-weight DNA from matched normal-tumor pairs (e.g., colorectal cancer samples)

- For short-read sequencing: Prepare Illumina whole-exome libraries using standard protocols (fragmentation, end repair, A-tailing, adapter ligation, and PCR amplification)

- For long-read sequencing: Prepare Nanopore libraries using the Ligation Sequencing Kit without fragmentation to preserve long molecules

- Sequence Illumina libraries to high depth (>100× coverage) on NovaSeq 6000 systems

- Sequence Nanopore libraries on PromethION flow cells to achieve >20× coverage

Data Processing and Analysis:

- Process Illumina data through standard BWA-MEM alignment and GATK variant calling pipeline

- Basecall Nanopore data using Guppy or Dorado, then align with minimap2

- Call structural variants using long-read specific tools (e.g., Sniffles, cuteSV)

- Filter Nanopore data to exonic regions using BED files for direct comparison with exome data

- Validate key mutations (e.g., KRAS, BRAF) using orthogonal methods like digital PCR

This methodology enables direct comparison of variant calling performance, coverage distribution, and detection of different variant types across platforms.
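The exonic filtering step in the list above can be sketched in Python. This is a minimal illustration under stated assumptions, not the study's actual pipeline: the function names `load_bed` and `overlaps_exon` and the toy coordinates are hypothetical, target regions are assumed to come from a BED file (0-based, half-open intervals), and variant positions are assumed to be 1-based, as in VCF.

```python
import bisect
from collections import defaultdict


def load_bed(lines):
    """Parse BED lines into {chrom: sorted, merged list of (start, end)}."""
    by_chrom = defaultdict(list)
    for line in lines:
        if not line.strip() or line.startswith(("#", "track", "browser")):
            continue
        chrom, start, end = line.split()[:3]
        by_chrom[chrom].append((int(start), int(end)))

    merged = {}
    for chrom, intervals in by_chrom.items():
        intervals.sort()
        out = [intervals[0]]
        for start, end in intervals[1:]:
            last_start, last_end = out[-1]
            if start <= last_end:
                # Overlapping or touching interval: extend the previous one.
                out[-1] = (last_start, max(last_end, end))
            else:
                out.append((start, end))
        merged[chrom] = out
    return merged


def overlaps_exon(regions, chrom, pos_1based):
    """Return True if a 1-based position falls inside any BED interval."""
    intervals = regions.get(chrom, [])
    pos0 = pos_1based - 1  # convert to the 0-based BED coordinate system
    # Index of the last interval whose start is <= pos0.
    i = bisect.bisect_right(intervals, (pos0, float("inf"))) - 1
    return i >= 0 and intervals[i][0] <= pos0 < intervals[i][1]


# Hypothetical exome targets and long-read variant calls (chrom, 1-based pos).
toy_bed = ["chr12\t100\t200", "chr12\t150\t250", "chr7\t10\t20"]
regions = load_bed(toy_bed)
calls = [("chr12", 101), ("chr12", 251), ("chr7", 15), ("chr1", 5)]
exonic_calls = [c for c in calls if overlaps_exon(regions, *c)]
print(exonic_calls)
```

Merging intervals up front keeps each lookup to a single binary search, which matters when millions of long-read alignments or calls are tested against an exome target file.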

Transcriptome Analysis Workflow

For transcriptome studies, the single-cell comparison protocol offers a robust framework for evaluating both technologies [25]:

Library Preparation and Sequencing:

- Prepare single-cell suspensions from tissue samples (e.g., patient-derived organoids)

- Generate full-length cDNA using 10x Genomics Chromium Single Cell 3' Reagent Kits

- Split the same cDNA sample for both short-read and long-read sequencing

- For Illumina: Fragment cDNA, add adapters, and sequence on NovaSeq 6000 with paired-end reads (28 bp for read 1, 91 bp for read 2)

- For PacBio: Prepare MAS-ISO-seq libraries to concatenate transcripts, sequence on Sequel IIe system

Data Processing and Comparison:

- Process Illumina data using Cell Ranger standard pipeline for gene counting

- Process PacBio data using Iso-Seq pipeline for transcriptome analysis

- Match molecules between platforms using cell barcodes and unique molecular identifiers (UMIs)

- Compare gene detection rates, UMI recovery, and isoform identification

This approach enables direct molecule-to-molecule comparison, revealing platform-specific biases and capabilities in transcript recovery and quantification.
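The molecule-matching step can be illustrated with a short Python sketch. It assumes each platform's output has already been reduced to records carrying a corrected cell barcode and UMI; the record layout and the function names `molecule_keys` and `compare_platforms` are hypothetical and are not taken from Cell Ranger or the Iso-Seq pipeline.

```python
def molecule_keys(records):
    """Collapse records to the set of (cell barcode, UMI) pairs they contain."""
    return {(r["barcode"], r["umi"]) for r in records}


def compare_platforms(short_records, long_records):
    """Split molecules into shared, short-read-only, and long-read-only sets."""
    short_keys = molecule_keys(short_records)
    long_keys = molecule_keys(long_records)
    shared = short_keys & long_keys
    return {
        "shared": shared,
        "short_only": short_keys - long_keys,
        "long_only": long_keys - short_keys,
        # Fraction of long-read molecules that were also seen by short reads.
        "long_recovered_by_short": len(shared) / len(long_keys) if long_keys else 0.0,
    }


# Toy records; barcodes and UMIs are made up for illustration.
short_reads = [
    {"barcode": "AAAC", "umi": "GGTT", "gene": "GENE1"},
    {"barcode": "AAAC", "umi": "CCAA", "gene": "GENE2"},
    {"barcode": "TTTG", "umi": "ACAC", "gene": "GENE1"},
]
long_reads = [
    {"barcode": "AAAC", "umi": "GGTT", "isoform": "GENE1-201"},
    {"barcode": "TTTG", "umi": "GTGT", "isoform": "GENE3-202"},
]
result = compare_platforms(short_reads, long_reads)
print(result["shared"], result["long_recovered_by_short"])
```

Because both libraries derive from the same barcoded cDNA, a shared (barcode, UMI) pair is treated here as the same original molecule. Real data would also need barcode and UMI error correction before matching.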

Diagram 1: Comparative Transcriptome Analysis Workflow. The same cDNA sample is processed through both short-read and long-read paths enabling direct comparison.

Advanced Applications: Integrated Approaches and Emerging Methods

Hybrid Sequencing Strategies

Recognizing the complementary strengths of both technologies, researchers have developed hybrid approaches that integrate short- and long-read data. Joint processing of Illumina and Nanopore data using deep learning models like hybrid DeepVariant demonstrates improved variant detection accuracy compared to single-technology methods [26]. This approach leverages short reads' base-level accuracy while incorporating long reads' superior coverage of complex regions, potentially reducing overall sequencing costs while improving results.

Shallow hybrid sequencing—combining moderate coverage from both technologies—can match or surpass the variant detection accuracy of deep sequencing using a single technology [26]. This strategy is particularly promising for clinical applications where comprehensive variant detection is essential but cost constraints exist. The hybrid approach enables detection of both small variants and large structural variations from the same experiment, providing a more complete mutational profile for cancer genomics and rare disease diagnosis.

Multi-Omics Integration

Long-read technologies uniquely enable simultaneous collection of genomic and epigenomic information from the same molecule. PacBio's SMRT sequencing detects base modifications through kinetic signatures, while Oxford Nanopore directly identifies DNA and RNA modifications through current deviations [20]. This capability permits integrated analysis of genetic variation and epigenetic states, revealing mechanisms of gene regulation in development and disease.

In cancer research, the combined assessment of mutation profiles, structural variations, and methylation patterns from long-read data provides unprecedented insights into tumor evolution and heterogeneity [22]. The preservation of methylation signals in PCR-free Nanopore protocols enables researchers to connect genetic alterations with epigenetic changes, offering a more comprehensive view of oncogenic processes.

Diagram 2: Hybrid Variant Calling Workflow. Integrated processing of short and long reads improves detection of both small and large variants.

Essential Research Reagent Solutions

Table 3: Key Research Reagents and Their Applications

| Reagent/Kit | Primary Function | Application Context |
|---|---|---|
| 10x Genomics Chromium Single Cell 3' Reagent Kits | Partitioning cells into GEMs for barcoding | Single-cell RNA sequencing for both short- and long-read platforms [25] |
| PacBio MAS-ISO-seq for 10x Genomics | Concatenating transcripts into longer arrays | Increasing throughput for single-cell long-read transcriptomics [25] |
| Oxford Nanopore Ligation Sequencing Kit | Preparing genomic DNA libraries | Standard long-read genome sequencing across various input types [21] |
| Oxford Nanopore Ultra-Long DNA Sequencing Kit | Specialized protocol for ultra-long reads | Resolving complex repeats, centromeres, structural variants [21] |
| SPRI Beads | Size selection and clean-up | Library preparation across all platforms [25] |
| MyOne SILANE Dynabeads | cDNA capture after GEM generation | Single-cell protocols for transcriptome analysis [25] |

The read-length dichotomy presents researchers not with a binary choice, but with a strategic decision based on specific research questions, sample types, and resource constraints. Short-read technologies remain the workhorse for applications requiring high accuracy and throughput at lower costs, such as variant calling in well-characterized genomic regions, population studies, and expression quantification. Long-read technologies excel in resolving structural variations, assembling complex genomes, characterizing transcript isoforms, and detecting epigenetic modifications. Rather than competing solutions, these technologies increasingly serve as complementary approaches that, when combined, provide a more comprehensive view of genomic architecture and function. As both technologies continue evolving—with short-read platforms increasing throughput and long-read platforms enhancing accuracy and reducing costs—the future of genomic research lies in strategic integration of multiple sequencing modalities to address biological questions with unprecedented resolution and context.

How Alignment Choice Affects Variant Detection, Isoform Discovery, and Gene Expression Quantification

In the analysis of next-generation sequencing data, the choice of alignment strategy is a foundational decision that profoundly influences downstream biological interpretations. This comparison guide objectively assesses the impact of genome versus transcriptome alignment approaches on three critical areas: variant detection, isoform discovery, and gene expression quantification. The selection between aligning sequencing reads to a genome or directly to a transcriptome is not merely a procedural detail; it involves distinct computational paradigms that can yield meaningfully different results [27]. This guide synthesizes recent experimental evidence to help researchers and drug development professionals navigate these methodological choices, providing clear performance comparisons and detailed protocols to inform analytical workflows.

Alignment Fundamentals: Genome vs. Transcriptome Approaches

Sequence alignment serves as the critical first step in converting raw sequencing reads into biologically interpretable information. The two predominant strategies—genome and transcriptome alignment—leverage different reference sequences and algorithmic techniques, each with distinct implications for accuracy, computational efficiency, and analytical focus.

Genome Alignment involves mapping reads to a reference genome, requiring specialized spliced aligners (e.g., STAR) that can recognize exon-exon junctions by handling large gaps in the alignment to account for introns [27]. This approach allows for the discovery of novel transcripts and isoforms not present in existing annotations, while also enabling the detection of variants in non-coding regions.

Transcriptome Alignment maps reads directly to a reference transcriptome using unspliced aligners (e.g., Bowtie2) [27]. This method is computationally efficient but constrained by existing transcript annotations, potentially missing novel isoforms or generating spurious mappings when reads originate from unannotated genomic loci.

An emerging hybrid approach, selective alignment, enhances traditional methods by performing sensitive lightweight mapping followed by alignment scoring. It can be augmented with decoy sequences from the genome to reduce false mappings while maintaining speed [27].
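The idea of lightweight mapping followed by alignment scoring, with decoys absorbing reads that do not truly come from the transcriptome, can be sketched as a toy Python example. This is not Salmon's implementation: the k-mer lookup, the ungapped identity score, the `min_score` threshold, the rule that a read is discarded when a decoy scores strictly better than every transcript, and all sequences are simplifying assumptions made for illustration.

```python
from collections import defaultdict


def kmers(seq, k):
    """Return the set of all k-mers in a sequence."""
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}


def build_index(references, k):
    """Map each k-mer to the names of the references that contain it."""
    index = defaultdict(set)
    for name, seq in references.items():
        for kmer in kmers(seq, k):
            index[kmer].add(name)
    return index


def best_ungapped_identity(read, ref):
    """Slide the read along the reference; return the best match fraction."""
    best = 0.0
    for offset in range(len(ref) - len(read) + 1):
        window = ref[offset:offset + len(read)]
        matches = sum(a == b for a, b in zip(read, window))
        best = max(best, matches / len(read))
    return best


def selective_align(read, references, index, k, decoys, min_score=0.9):
    """Lightweight candidate lookup, then scoring, then decoy filtering."""
    # Step 1 (lightweight mapping): any reference sharing a k-mer is a candidate.
    candidates = set()
    for kmer in kmers(read, k):
        candidates |= index.get(kmer, set())
    # Step 2 (alignment scoring): keep candidates that pass the score threshold.
    scores = {n: best_ungapped_identity(read, references[n]) for n in candidates}
    scores = {n: s for n, s in scores.items() if s >= min_score}
    best_tx = max((s for n, s in scores.items() if n not in decoys), default=None)
    best_decoy = max((s for n, s in scores.items() if n in decoys), default=None)
    # Step 3 (decoys): drop the read if a decoy explains it better.
    if best_tx is None or (best_decoy is not None and best_decoy > best_tx):
        return None
    return sorted(n for n, s in scores.items() if n not in decoys and s == best_tx)[0]


references = {
    "tx1": "ACGTACGTTAGCCGATAGGCTAAC",   # annotated transcript (toy)
    "decoy1": "GGGACGTTAGCAGATTTT",      # similar genomic locus, not a transcript
}
index = build_index(references, k=5)
read_from_tx = "ACGTTAGCCGAT"     # exact substring of tx1
read_from_decoy = "ACGTTAGCAGAT"  # exact substring of decoy1, 1 mismatch to tx1
print(selective_align(read_from_tx, references, index, 5, {"decoy1"}))
print(selective_align(read_from_decoy, references, index, 5, {"decoy1"}))
```

In this toy case the second read shares k-mers with `tx1` and would be assigned to it by k-mer lookup alone. Scoring both candidates shows that the decoy is the better explanation, so the read is discarded instead of inflating the transcript's count.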

Key Technical Concepts and Terminology

Table 1: Essential Alignment Terminology

| Term | Definition |
|---|---|
| Spliced Alignment | Alignment capable of identifying exon-exon junctions by creating gaps in read placement to account for introns [27]. |
| Lightweight Mapping | Fast mapping that avoids full sequence alignment, using instead exact match signatures but potentially missing suboptimal mappings [27]. |
| Spurious Mappings | Incorrect read alignments to loci with sequence similarity but not the true origin, a risk with lightweight methods [27]. |
| Quantification | The process of counting reads associated with specific genomic or transcriptomic features to determine expression levels [28]. |
| Meta-alignment | A post-processing approach that integrates multiple independent alignment results to produce more accurate consensus alignments [29]. |

Impact on Gene Expression Quantification

Gene expression quantification represents one of the most common applications of RNA-seq data, and alignment methodology significantly influences its accuracy. Studies systematically comparing alternative pipelines have demonstrated that alignment choice can introduce substantial variability in expression estimates.

Experimental Evidence from Comparative Studies

A comprehensive study evaluating 192 analysis pipelines—constructed from combinations of trimming algorithms, aligners, counting methods, and normalization approaches—found that alignment selection significantly affected both raw gene expression quantification and differential expression results [30]. The research utilized two human multiple myeloma cell lines (KMS12-BM and JJN-3) under drug treatments, with validation performed via qRT-PCR on 32 genes.

Further investigation revealed that lightweight mapping approaches (e.g., quasi-mapping), while demonstrating high concordance with traditional alignment in simulated data, produced meaningfully different abundance estimates in experimental data [27]. These differences stem from their tendency to return distinct, sometimes disjoint, mapping loci for certain reads compared to alignment-based methods.

Performance Comparison of Alignment Strategies

Table 2: Gene Expression Quantification Accuracy Across Methods

| Alignment Method | Representative Tool | Key Characteristics | Performance Findings |
|---|---|---|---|
| Genome Alignment | STAR | Spliced alignment; projects alignments to transcriptome [27] | High agreement with qRT-PCR validation; effective for annotated transcript quantification [30] |
| Transcriptome Alignment | Bowtie2 | Unspliced alignment to transcriptome only [27] | Good performance but constrained by annotation completeness [27] |
| Lightweight Mapping | Salmon (quasi-mode) | Fast k-mer based mapping without alignment scoring [27] | Faster but prone to spurious mappings; reduced accuracy in experimental data [27] |
| Selective Alignment | Salmon (selective) | Lightweight mapping + alignment scoring with decoys [27] | Improved concordance with traditional alignment; addresses spurious mapping [27] |

Detailed Methodology: Selective Alignment Protocol

The selective alignment method was benchmarked against other approaches using simulated and experimental RNA-seq datasets [27]. Below is the detailed experimental protocol:

Index Preparation: Generate a transcriptome index using Salmon with optional decoy sequences. Decoys can be either:

Read Mapping and Quantification:

- Process RNA-seq reads using Salmon in selective alignment mode with the command:

salmon quant -i transcriptome_index -l A -1 reads_1.fastq -2 reads_2.fastq -p 8 --validateMappings -o quantification_output

- The --validateMappings parameter enables the alignment scoring framework that distinguishes selective alignment from pure lightweight mapping [27].

Comparison Framework:

Figure 1: Experimental workflow for comparing alignment methods in expression quantification.

Impact on Isoform Discovery and Characterization

Alignment methodology critically influences the detection and analysis of transcript isoforms, with genome-alignment approaches generally providing superior capability for discovering novel isoforms, while transcriptome-alignment methods offer efficiency for quantifying annotated isoforms.

Genome Alignment for Comprehensive Isoform Detection

Spliced alignment to the genome enables identification of novel splice junctions and unannotated transcripts, as the reference genome contains the complete transcriptional potential of an organism, unlike curated transcriptome references which are often incomplete [27]. This approach is particularly valuable in disease research where novel isoforms may play important pathological roles.

Studies of rare diseases demonstrate how transcriptome-wide outlier patterns from genome-aligned RNA-seq data can diagnose conditions like minor spliceopathies. By applying splicing outlier detection methods (FRASER and FRASER2) to blood samples from 385 individuals, researchers identified five individuals with excess intron retention in minor intron-containing genes, all harboring rare variants in minor spliceosome components [31].

Technical Considerations for Isoform Analysis

Alignment Parameter Sensitivity: The detection of splice junctions heavily depends on aligner parameters. For instance, using "strict" parameters that disallow insertions, deletions, and soft-clipping (as recommended in RSEM) can improve splice junction precision but potentially reduce sensitivity for novel junctions [27].

Reference Preparation: Genome alignment for isoform discovery benefits from comprehensive annotation files, though the algorithm can identify junctions extending beyond annotated boundaries. Tools like STAR generate splice junction databases that catalog both known and novel splicing events [27].
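A short Python sketch shows how known and novel junctions might be separated once alignment has finished. It assumes the nine-column, tab-separated layout of STAR's SJ.out.tab file as described in the STAR manual (chromosome, intron start, intron end, strand code, motif code, annotation flag, unique read count, multi-mapping read count, maximum overhang). The function names, the read-support threshold, and the example rows are hypothetical.

```python
from collections import namedtuple

Junction = namedtuple(
    "Junction",
    "chrom start end strand motif annotated unique_reads multi_reads max_overhang",
)

# Strand codes as assumed from the STAR manual: 0 undefined, 1 plus, 2 minus.
STRAND = {0: ".", 1: "+", 2: "-"}


def parse_sj_line(line):
    """Parse one SJ.out.tab row into a Junction (intron coordinates are 1-based)."""
    f = line.rstrip("\n").split("\t")
    return Junction(
        chrom=f[0],
        start=int(f[1]),
        end=int(f[2]),
        strand=STRAND[int(f[3])],
        motif=int(f[4]),          # 0 means a non-canonical intron motif
        annotated=(f[5] == "1"),  # 1 means the junction is in the supplied annotation
        unique_reads=int(f[6]),
        multi_reads=int(f[7]),
        max_overhang=int(f[8]),
    )


def novel_junctions(lines, min_unique_reads=3):
    """Return unannotated junctions with enough uniquely mapped read support."""
    junctions = (parse_sj_line(line) for line in lines if line.strip())
    return [
        j for j in junctions
        if not j.annotated and j.unique_reads >= min_unique_reads
    ]


# Made-up rows for illustration only.
toy_rows = [
    "chr1\t14830\t14969\t2\t2\t1\t25\t4\t38",
    "chr1\t20500\t21750\t1\t1\t0\t7\t0\t41",
    "chr2\t1000\t1500\t1\t0\t0\t1\t0\t12",
]
for j in novel_junctions(toy_rows):
    print(j.chrom, j.start, j.end, j.strand, j.unique_reads)
```

Requiring several uniquely mapped reads is a common precaution, since weakly supported novel junctions are often alignment artifacts. The appropriate threshold depends on sequencing depth.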

Impact on Variant Detection

Variant detection from RNA-seq data presents unique challenges that are differentially addressed by genome and transcriptome alignment approaches. The choice of alignment strategy affects variant calling accuracy, particularly in non-coding regions and alternatively spliced exons.

Alignment-Related Artifacts in Variant Calling

A critical consideration in variant detection is the problem of spurious alignments to non-native references. When analyzing multiple strains or closely related species, mapping reads to a common reference can introduce false positives in variant calls and differential expression analysis [32]. This occurs because sequences absent in the reference genome but present in the sample may be incorrectly aligned to similar regions in the reference, generating apparent variants that represent technical artifacts rather than biological reality.

Research demonstrates that identifying regions most affected by non-native alignments is essential for minimizing false variant calls. The recommended approach involves identifying orthology between heterologous strains, aligning reads to both reference genomes, and using orthology mapping information to compile accurate alignment counts [32].

Strategic Recommendations for Variant Detection

For variant detection applications, genome alignment with appropriate parameters generally provides more comprehensive coverage of variant types, including:

- Splice-region variants that affect RNA splicing

- Non-coding variants in regulatory regions

- Structural variants with breakpoints in intronic regions

However, transcriptome alignment may offer advantages for detecting expressed sequence variants with reduced computational requirements, particularly when focusing on coding regions with well-established transcript annotations.

Integrated Analysis: Multi-Alignment Framework

Given the significant impact of alignment choice across different analytical applications, researchers have developed frameworks that systematically compare multiple alignment approaches. The Multi-Alignment Framework (MAF) provides a user-friendly platform for running several alignment programs on the same dataset, enabling comprehensive analysis of subtle to significant differences in results [28].

Implementation and Workflow

MAF is specifically designed for Linux environments and uses Bash scripts to integrate alignment and post-processing programs into a unified workflow. The framework includes three main scripts: 30_se_mrna.sh for single-end mRNA analysis, 30_pe_mrna.sh for paired-end mRNA analysis, and 30_se_mir.sh for small RNA analysis [28].

The standard workflow encompasses:

- Quality control of raw sequencing data

- Adapter trimming and read preprocessing

- Parallel alignment using multiple aligners (STAR, Bowtie2, BBMap)

- Post-processing including UMI deduplication

- Quantitative comparison of alignment results

Performance Findings from MAF

In microRNA analysis applications, MAF demonstrated that STAR and Bowtie2 alignment programs were more effective than BBMap. Combining STAR with Salmon quantifier emerged as the most reliable approach, with Samtools quantification also performing well with some limitations [28].

Figure 2: Multi-Alignment Framework workflow for comprehensive method comparison.

Table 3: Key Experimental Resources for Alignment Methodology Research

| Resource Category | Specific Tools/Solutions | Primary Function | Application Context |
|---|---|---|---|
| Spliced Aligners | STAR [27], BBMap [28] | Map RNA-seq reads to genome, handling exon junctions | Genome alignment for novel isoform discovery |
| Unspliced Aligners | Bowtie2 [27] [30] | Efficient alignment to transcriptome | Expression quantification of annotated transcripts |
| Lightweight Mappers | Salmon (quasi-mode) [27] | Rapid mapping without full alignment | Fast expression quantification |
| Alignment Frameworks | Multi-Alignment Framework (MAF) [28] | Compare multiple aligners on same dataset | Method evaluation and optimization |
| Quality Assessment | FASTQC [30], FRASER [31] | Evaluate read quality and splicing patterns | Data QC and outlier detection |
| Reference Sequences | GENCODE, RefSeq | Provide genome and transcriptome references | Foundation for all alignment approaches |
| Validation Methods | qRT-PCR [30] | Experimental validation of expression | Benchmarking alignment accuracy |

The choice between genome and transcriptome alignment approaches involves significant trade-offs that affect research outcomes across variant detection, isoform discovery, and gene expression quantification. Genome alignment (e.g., with STAR) generally provides more comprehensive detection of novel isoforms and variants, particularly in non-coding regions, while transcriptome alignment (e.g., with Bowtie2) offers computational efficiency for quantifying annotated features. Emerging hybrid approaches like selective alignment in Salmon demonstrate promising ability to balance speed and accuracy while minimizing spurious mappings.

For researchers and drug development professionals, the optimal alignment strategy depends on specific research goals, annotation completeness of the studied organism, and available computational resources. When possible, employing multi-alignment frameworks that compare several methods provides the most robust approach for ensuring reliable biological conclusions. As sequencing technologies continue to evolve, alignment methodologies will likewise advance to address new challenges in transcriptomic analysis.

Toolkits and Techniques: A Practical Guide to Alignment Pipelines and Their Applications

The accurate alignment of RNA sequencing (RNA-seq) reads is a foundational step in transcriptomic studies, enabling the quantification of gene expression and the discovery of novel splicing events [33]. Splice-aware aligners must map short sequencing reads back to a reference genome even when a single read spans two or more exons that are separated in the genome by large introns, which were spliced out of the mature mRNA [34]. This challenge is particularly pronounced in eukaryotic genomes, where genes contain numerous introns: human protein-coding genes average 9.4 introns each [35]. The fundamental objective of RNA-seq aligners is to produce sensitive and accurate alignments that tolerate sequencing errors while keeping the computational workload low, so that mapped reads can be aggregated into meaningful biological data for downstream analysis [33] [36].

The choice between genome and transcriptome alignment approaches represents a significant methodological decision in RNA-seq analysis pipelines. Genome alignment involves mapping reads to the reference genome, requiring aligners to identify splice junctions de novo or with the aid of annotation databases. This approach enables discovery of novel splicing events but demands more sophisticated and computationally heavier splice-aware algorithms. In contrast, transcriptome alignment maps reads directly to a reference set of transcribed sequences, simplifying the process but potentially missing unannotated transcripts or splicing variants [37]. Most alignment tools are pre-tuned on human or prokaryotic data and may therefore be poorly suited to other organisms, such as plants, which makes it important to select and tune tools for the specific research context [33] [36].

Performance Comparison of Major Aligners

Base-Level and Junction-Level Accuracy

Comprehensive benchmarking studies reveal significant differences in how aligners perform across various accuracy metrics. In a systematic evaluation using Arabidopsis thaliana data, researchers assessed five popular RNA-Seq alignment tools at both base-level and junction-level resolutions [33] [36]. The results demonstrated that while some aligners excel at overall read mapping, others show superior performance for specific aspects of alignment.

Table 1: Base-Level Alignment Accuracy Across Platforms

| Aligner | Overall Accuracy | Strengths | Limitations |
|---|---|---|---|
| STAR | >90% | Superior base-level accuracy, robust splice junction detection | Higher computational resource requirements |
| HISAT2 | ~85-90% | Efficient memory usage, handles known SNPs well | Prone to misalignment in repetitive regions |
| SubRead | >80% (junction bases) | Excellent junction base-level accuracy | Less accurate for base-level alignment |
| DeepSAP | 97.1% (F1 score) | Best-in-class splice junction detection | Complex workflow with multiple components |

At the read base-level assessment, the overall performance of STAR was superior to other aligners, with overall accuracy reaching over 90% under different test conditions [33]. This aligns with findings from studies on human clinical samples, where STAR generated more precise alignments compared to HISAT2, especially for challenging samples like early neoplasia [37]. STAR's accuracy stems from its sophisticated two-step algorithm that first locates maximal mappable prefixes (seeds) and then performs clustering, stitching, and scoring of these seeds to reconstruct accurate alignments across splice junctions [33] [36].
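The seed-finding step described above, locating maximal mappable prefixes, can be illustrated with a deliberately naive Python sketch. STAR itself searches an uncompressed suffix array and then clusters, stitches, and scores seeds. The version below replaces the suffix array with plain substring search and omits the stitching and scoring stage, so it only shows how a read crossing a splice junction naturally breaks into two seeds. The function names and sequences are hypothetical.

```python
def maximal_mappable_prefix(read, genome, start):
    """Longest prefix of read[start:] that occurs exactly in the genome.

    Returns (length, genome_position); length is 0 if even one base fails.
    """
    best_len, best_pos = 0, -1
    for end in range(start + 1, len(read) + 1):
        pos = genome.find(read[start:end])
        if pos == -1:
            break  # a longer prefix cannot match if this one does not
        best_len, best_pos = end - start, pos
    return best_len, best_pos


def find_seeds(read, genome):
    """Greedily cover the read with maximal mappable prefixes (seeds).

    Each seed is (read_offset, genome_position, length).
    """
    seeds, start = [], 0
    while start < len(read):
        length, pos = maximal_mappable_prefix(read, genome, start)
        if length == 0:
            start += 1  # skip a base that matches nowhere (e.g. an error)
            continue
        seeds.append((start, pos, length))
        start += length
    return seeds


# Toy locus: two exons separated by a 14-base intron.
exon1, intron, exon2 = "TTGACCGATC", "GTAAGTCTCTCTAG", "AGGCTTACGA"
genome = exon1 + intron + exon2
read = exon1[-6:] + exon2[:6]  # a read spanning the exon1/exon2 junction

seeds = find_seeds(read, genome)
print(seeds)
# The gap between consecutive seeds on the genome is the implied intron.
(_, pos1, len1), (_, pos2, _) = seeds
print("implied intron length:", pos2 - (pos1 + len1))
```

The first seed stops exactly where the read leaves exon 1, because the next read base no longer matches the intron. The search then restarts from that point, which is why this strategy can find junctions without prior annotation.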

For junction base-level assessment, which specifically evaluates accuracy in identifying splice junction boundaries, SubRead emerged as the most promising aligner with overall accuracy over 80% under most test conditions [33]. This specialized performance highlights how different algorithmic approaches favor different aspects of alignment accuracy. However, the recently developed DeepSAP method demonstrates a remarkable mean F1 score of 0.971 for splice junction detection, far outperforming established tools like STAR and HISAT2 [38].

Computational Resource Requirements

Beyond accuracy, computational efficiency represents a critical practical consideration when selecting an alignment tool, particularly for large-scale studies or resource-limited environments.

Table 2: Computational Resource Requirements

| Aligner | Memory Usage | Speed | Indexing Requirements |
|---|---|---|---|
| STAR | High | Fast alignment but requires significant memory | Generates large genome indices |
| HISAT2 | Moderate | ~3-fold faster than other aligners | Uses hierarchical FM indexing for efficiency |
| SubRead | Low to Moderate | Competitive speed | Efficient memory mapping algorithms |

HISAT2 demonstrates approximately 3-fold faster runtime compared to the next fastest aligner, making it particularly attractive for projects with computational constraints [34]. This efficiency stems from its use of hierarchical indexing, which employs multiple small FM indices for rapid local alignment combined with a whole-genome FM index for anchoring alignments [33] [34]. In contrast, while STAR offers excellent accuracy, it requires substantial memory resources, particularly during the indexing phase [39]. This trade-off between accuracy and resource consumption represents a key consideration for researchers selecting alignment tools.
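To make the FM index concrete, the following Python sketch builds a single, flat FM index over a toy sequence and runs the standard backward search for exact matches. It is not HISAT2's hierarchical scheme, which combines one global index with many small local indices. The construction here sorts suffixes directly, which is only practical for tiny inputs, and the function names are hypothetical.

```python
from collections import Counter


def build_fm_index(text):
    """Build suffix array, C table, and Occ table for text plus a '$' sentinel."""
    text += "$"  # sentinel sorts before A, C, G, T
    sa = sorted(range(len(text)), key=lambda i: text[i:])
    # Burrows-Wheeler transform: the character preceding each sorted suffix.
    bwt = "".join(text[i - 1] for i in sa)
    counts = Counter(text)
    # c_table[ch]: number of characters in the text strictly smaller than ch.
    c_table, total = {}, 0
    for ch in sorted(counts):
        c_table[ch] = total
        total += counts[ch]
    # occ[ch][i]: number of occurrences of ch in bwt[:i].
    occ = {ch: [0] * (len(bwt) + 1) for ch in counts}
    for i, b in enumerate(bwt):
        for ch in occ:
            occ[ch][i + 1] = occ[ch][i] + (1 if b == ch else 0)
    return sa, c_table, occ


def backward_search(pattern, sa, c_table, occ):
    """Return sorted start positions of exact matches of pattern in the text."""
    lo, hi = 0, len(sa)  # current suffix-array interval [lo, hi)
    for ch in reversed(pattern):
        if ch not in c_table:
            return []
        lo = c_table[ch] + occ[ch][lo]
        hi = c_table[ch] + occ[ch][hi]
        if lo >= hi:
            return []
    return sorted(sa[i] for i in range(lo, hi))


sa, c_table, occ = build_fm_index("ACGTACGA")
print(backward_search("ACG", sa, c_table, occ))
print(backward_search("GT", sa, c_table, occ))
```

Each pattern character costs a constant number of table lookups regardless of genome size, which is the property that makes FM-index aligners memory- and time-efficient. Extending a match inside a small local index, as the hierarchical design does, keeps those lookups within a compact data structure.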

Experimental Protocols and Benchmarking Methodologies

Benchmarking Workflows for Alignment Validation

Robust evaluation of aligner performance requires carefully designed experimental protocols and benchmarking workflows. The following diagram illustrates a standardized pipeline for assessing alignment accuracy:

Standardized Alignment Benchmarking Workflow

This workflow begins with generating simulated RNA-seq reads from a reference genome and annotation database, creating datasets with known ground truth for validation [33]. Tools like Polyester can simulate sequencing reads with biological replicates and specified differential expression signaling [33] [36]. The simulated reads are then aligned using each aligner under evaluation, producing alignment files that undergo both base-level and junction-level assessment against known splice sites. Finally, performance metrics are computed for comparative analysis of alignment accuracy [33].
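The junction-level assessment at the end of this workflow reduces to comparing the set of junctions an aligner reports with the set known from the simulation. A minimal Python sketch follows, assuming junctions are represented as (chromosome, intron start, intron end) tuples and that only exact coordinate matches count as correct. The function name and the toy junctions are hypothetical.

```python
def junction_metrics(predicted, truth):
    """Return (precision, recall, F1) for exact-match splice junctions."""
    predicted, truth = set(predicted), set(truth)
    true_positives = len(predicted & truth)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(truth) if truth else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Ground-truth junctions from a simulation and junctions reported by an aligner.
truth = [("chr1", 100, 200), ("chr1", 300, 450), ("chr2", 50, 90), ("chr2", 500, 800)]
predicted = [("chr1", 100, 200), ("chr1", 300, 450), ("chr2", 50, 90), ("chr2", 510, 800)]

precision, recall, f1 = junction_metrics(predicted, truth)
print(f"precision={precision:.3f} recall={recall:.3f} F1={f1:.3f}")
```

The last predicted junction is off by ten bases at its start and therefore counts as both a false positive and a missed true junction. This is why small boundary errors can lower junction-level scores even when base-level accuracy looks high.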

Specialized Protocols for Specific Applications

Different research contexts require tailored benchmarking approaches. For clinical samples, particularly formalin-fixed paraffin-embedded (FFPE) tissues, specialized protocols have been developed to address challenges like RNA degradation and decreased poly(A) binding affinity [37]. Studies comparing aligner performance on FFPE samples have revealed significant differences, with STAR demonstrating superior alignment precision for degraded samples [37].

For plant genomics, where intron structures differ significantly from mammalian systems—with Arabidopsis introns being significantly shorter than human introns—benchmarking must account for these biological differences [33] [36]. Most alignment tools are pre-tuned for human genomes, potentially limiting their effectiveness for plant transcriptomic analysis without appropriate parameter adjustments [36].

Emerging Methods and Advanced Approaches

Deep Learning-Enhanced Alignment

Recent advances in splice-aware alignment integrate deep learning models to improve accuracy, particularly for challenging junction detection. DeepSAP combines traditional transcriptome-guided alignment with transformer-based splice junction scoring [38]. The method first aligns reads with GSNAP in its transcriptome-guided genomic alignment (TGGA) mode, then applies a fine-tuned DNABERT transformer model to score splice junctions, recalibrating mapping quality scores for multi-mapped reads and soft-clipping alignments at splice junctions with low transformer scores [38].

Similarly, minisplice employs a one-dimensional convolutional neural network (1D-CNN) to learn splice signals, capturing conserved splice patterns across species [35]. This approach models splice sites with 7,026 parameters for vertebrate and insect genomes, revealing biologically relevant patterns like GC-rich introns specific to mammals and birds [35]. Evaluation on human long-read RNA-seq data shows that such deep learning approaches significantly improve junction accuracy, especially for noisy long RNA-seq reads and proteins of distant homology [35].

Error Detection and Correction Methods

Despite improvements in alignment algorithms, systematic errors persist, particularly in regions with repetitive sequences. EASTR (Emending Alignments of Spliced Transcript Reads) addresses this by detecting and removing falsely spliced alignments through analysis of sequence similarity between intron-flanking regions [40]. The EASTR study shows that widely used splice-aware aligners can introduce erroneous spliced alignments between repeated sequences, leading to the inclusion of falsely spliced transcripts in RNA-seq experiments [40].

EASTR employs a multi-step strategy to identify spurious splice junctions, focusing on sequence similarity between flanking regions and their occurrence frequency in the reference genome [40]. Applications across diverse species including human, maize, and Arabidopsis thaliana demonstrate that EASTR substantially improves alignment accuracy by detecting and correcting alignment artifacts that can even make their way into reference annotation databases [40].
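The central intuition, that a junction is suspicious when the sequence around its donor site closely resembles the sequence around its acceptor site, can be sketched in Python. This is a toy illustration and not EASTR's algorithm: it uses a position-by-position identity over fixed windows instead of a real pairwise alignment, it ignores how often the flanking sequence occurs elsewhere in the genome, and the function names, window size, and threshold are assumptions.

```python
def flank_identity(genome, intron_start, intron_end, window=6):
    """Identity between the sequence contexts of the two intron boundaries.

    The intron is the 0-based, half-open interval [intron_start, intron_end).
    Each context spans `window` bases on both sides of a boundary.
    """
    if intron_start - window < 0 or intron_end + window > len(genome):
        raise ValueError("window extends past the end of the sequence")
    donor_context = genome[intron_start - window:intron_start + window]
    acceptor_context = genome[intron_end - window:intron_end + window]
    matches = sum(a == b for a, b in zip(donor_context, acceptor_context))
    return matches / len(donor_context)


def looks_spurious(genome, intron_start, intron_end, window=6, min_identity=0.8):
    """Flag junctions whose two boundaries sit in near-identical sequence."""
    return flank_identity(genome, intron_start, intron_end, window) >= min_identity


# Toy sequence with the same 16-base element repeated twice.
repeat = "ACGTTGCAAGCTAGGA"
genome = "TTT" + repeat + "CACACACA" + repeat + "GGG"

# A "junction" joining the same offset in both repeat copies: likely an artifact.
print(looks_spurious(genome, 11, 35))
# A junction whose acceptor lies in unrelated sequence: not flagged.
print(looks_spurious(genome, 11, 22))
```

When both boundaries lie in copies of the same repeat, a read from one contiguous copy can be split across the two copies with no mismatches, producing a spliced alignment that does not reflect real splicing.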

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagents and Computational Tools

| Item | Function | Example Applications |
|---|---|---|
| Polyester | RNA-seq read simulation | Generating benchmark datasets with known ground truth [33] |
| EASTR | Alignment error correction | Detecting falsely spliced alignments in repetitive regions [40] |
| SpliceAI | Splice site prediction | Scoring splice junctions using deep learning models [40] |
| FeatureCounts | Read quantification | Counting reads overlapping genomic features [37] |
| StringTie2 | Transcript assembly | Reconstructing transcripts from aligned reads [40] |
| GTF/GFF Files | Genomic annotation | Providing known splice sites for alignment guidance [37] |

This toolkit comprises essential computational resources for conducting comprehensive alignment studies. Polyester simulates RNA-seq data with biological replicates and differential expression signaling, enabling controlled benchmarking studies [33]. EASTR and SpliceAI provide complementary approaches to validation—EASTR by detecting alignment errors in repetitive regions, and SpliceAI by predicting splice site likelihood using machine learning models [40]. FeatureCounts and StringTie2 facilitate downstream analysis after alignment, enabling read quantification and transcript assembly respectively [37] [40].

The comparative analysis of splice-aware genomic aligners reveals a complex landscape where tool selection must be guided by specific research objectives and constraints. For applications demanding maximum base-level alignment accuracy, particularly in human transcriptomic studies, STAR remains a strong candidate despite its computational intensity [33] [37]. For projects with limited computational resources or those focusing on plant genomes where default parameters may be suboptimal, HISAT2 offers an attractive balance of efficiency and accuracy [33] [34]. For the most challenging junction detection tasks, particularly in clinical or evolutionary contexts where splice site prediction is critical, emerging deep learning methods like DeepSAP and minisplice demonstrate superior performance [38] [35].

The integration of genome and transcriptome alignment approaches, complemented by error detection tools like EASTR, represents a promising direction for comprehensive transcriptomic analysis. As sequencing technologies continue to evolve toward longer reads and single-cell applications, alignment methods must similarly advance, likely through increased incorporation of machine learning and species-specific modeling to address the unique challenges of splice-aware alignment across diverse biological contexts.

In the analysis of RNA sequencing (RNA-seq) data, the traditional approach has relied on computationally intensive base-by-base alignment of sequencing reads to a reference genome. This process, while informative, is slow and resource-heavy, creating a bottleneck for processing large datasets. Pseudoalignment represents a paradigm shift by bypassing exact alignment in favor of rapidly determining which transcripts in a reference collection could have generated the sequenced reads [41]. The core idea is that for quantifying gene expression, the crucial information is not the precise genomic coordinates of a read, but the set of compatible transcripts it could originate from [42]. Two tools at the forefront of this innovation are Kallisto and Salmon. They leverage this principle to achieve orders-of-magnitude speed improvements over traditional alignment-based methods like Tophat/Cufflinks while maintaining high accuracy, making them indispensable for modern transcriptomics studies [42] [41] [43].

Core Algorithmic Principles: How Kallisto and Salmon Work

The Kallisto Algorithm

Kallisto, introduced by Bray et al. in 2016, operates using a novel pseudoalignment process. Its workflow is built around a transcriptome de Bruijn graph (T-DBG) constructed from all k-mers in the transcriptome. When a read is processed, Kallisto breaks it into k-mers and queries them against the T-DBG index. Each k-mer maps to the set of transcripts that contain it, and the intersection of these sets (the transcripts that contain every k-mer of the read) is the read's pseudoalignment. This allows Kallisto to skip the traditional, slow alignment step entirely. The tool then runs an expectation-maximization (EM) algorithm on these pseudoalignments to estimate transcript abundances [42]. A key feature is its use of equivalence classes, which group reads that are compatible with the same set of transcripts; this simplifies the model and improves computational efficiency [42]. The entire process is exceptionally fast, enabling Kallisto to quantify 20 million reads in under five minutes on a standard laptop [42].
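The workflow described above can be sketched in a few lines of Python. This is a toy model under stated assumptions, not Kallisto's implementation: a plain dictionary from k-mer to transcript set stands in for the T-DBG index, the EM step ignores transcript length and sequencing errors, and the function names, toy transcripts, and k = 4 are all hypothetical.

```python
from collections import Counter, defaultdict


def kmers(seq, k):
    """All overlapping k-mers of seq, in order."""
    return [seq[i:i + k] for i in range(len(seq) - k + 1)]


def build_index(transcripts, k):
    """Map each k-mer to the set of transcript names that contain it."""
    index = defaultdict(set)
    for name, seq in transcripts.items():
        for km in kmers(seq, k):
            index[km].add(name)
    return index


def pseudoalign(read, index, k):
    """Intersect the transcript sets of every k-mer in the read."""
    compatible = None
    for km in kmers(read, k):
        hits = index.get(km, set())
        compatible = set(hits) if compatible is None else compatible & hits
        if not compatible:
            return frozenset()  # no transcript explains all k-mers
    return frozenset(compatible or ())


def equivalence_classes(reads, index, k):
    """Count reads per compatible transcript set, dropping unmapped reads."""
    counts = Counter(pseudoalign(r, index, k) for r in reads)
    counts.pop(frozenset(), None)
    return counts


def em_abundances(classes, transcripts, n_iter=100):
    """EM over equivalence classes (length normalization omitted for brevity)."""
    names = sorted(transcripts)
    alpha = {t: 1.0 / len(names) for t in names}
    total = sum(classes.values())
    if total == 0:
        return alpha
    for _ in range(n_iter):
        assigned = dict.fromkeys(names, 0.0)
        for ec, n in classes.items():
            denom = sum(alpha[t] for t in ec)
            if denom == 0:
                continue
            for t in ec:  # E-step: split the class count by current abundance
                assigned[t] += n * alpha[t] / denom
        alpha = {t: assigned[t] / total for t in names}  # M-step
    return alpha


# Two toy transcripts sharing the region CCCGGG.
transcripts = {"T1": "AAACCCGGG", "T2": "CCCGGGTTT"}
index = build_index(transcripts, k=4)
reads = ["AAACCC"] * 3 + ["GGGTTT"] + ["CCCGGG"] * 4 + ["TTTTTT"]
classes = equivalence_classes(reads, index, k=4)
abundances = em_abundances(classes, transcripts)
```

Here the four ambiguous reads end up split in proportion to the evidence from uniquely assigned reads, so the estimates converge to 0.75 for T1 and 0.25 for T2. Working on three equivalence classes rather than eight individual reads is the efficiency gain the text refers to.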

The Salmon Algorithm

Salmon, developed by Patro et al., employs a similar overall strategy but introduces distinct features. Its approach is often termed "quasi-mapping." While also highly efficient, Salmon's mapping procedure typically tracks the position and orientation of mapped fragments by default, using this information to inform a more complex probabilistic model [43]. A fundamental architectural difference is Salmon's dual-phase inference algorithm, which consists of an online phase and an offline phase. The online phase uses a variant of stochastic, collapsed variational Bayesian inference to produce initial abundance estimates and learn parameters of sample-specific bias models. The offline phase then refines these estimates using the rich equivalence classes constructed during the online phase [43]. This two-step process allows Salmon to incorporate more contextual information about the data.

Performance Comparison: Accuracy, Speed, and Robustness

Benchmarking on Experimental and Simulated Data

Independent benchmarking studies provide critical insights for tool selection. The table below summarizes key quantitative comparisons between Kallisto and Salmon, alongside traditional methods, from several independent studies.

Table 1: Comparative Performance of RNA-seq Quantification Tools

| Metric | Kallisto | Salmon | Traditional Alignment-Based (e.g., Tophat-HTSeq) | Notes & Source |
|---|---|---|---|---|
| Speed (22M PE reads) | ~3.5 minutes [42] | ~8 minutes [42] | Hours to days [42] | Single core, 8GB RAM. |
| Correlation with Cufflinks (r) | 0.941 [42] | 0.939 [42] | 1.0 (self) | Measures consistency with an established method. |
| Expression Correlation with qPCR (R²) | 0.839 [44] | 0.845 [44] | 0.827 (Tophat-HTSeq) [44] | Higher correlation indicates better accuracy. |
| Fold Change Correlation with qPCR (R²) | 0.930 [44] | 0.929 [44] | 0.934 (Tophat-HTSeq) [44] | Measures DE analysis accuracy. |
| Fraction of Non-concordant DE genes with qPCR | ~16.5% (estimated) [44] | ~19.4% [44] | ~15.1% (Tophat-HTSeq) [44] | Lower is better. |
| Impact on DE Analysis | High sensitivity and specificity [44] [45] | Can reduce false positives in DE [43] | Varies by tool | Salmon's GC bias correction improves DE reliability [43]. |

Key Differentiating Factors in Performance

- Accuracy on Simulated vs. Real Data: On idealized simulated data without technical biases, Kallisto, Salmon, RSEM, and Cufflinks show the highest accuracy [45]. However, on more realistic data incorporating variants, sequencing errors, and non-uniform coverage, their performance advantage over simpler methods is less dramatic, though they remain top performers [45].

- Bias Modeling: A significant differentiator for Salmon is its incorporation of sample-specific bias models. It can correct for fragment GC content bias, positional biases, and sequence-specific biases [43]. This has been shown to substantially improve the accuracy of abundance estimates and the reliability of subsequent differential expression analysis, reducing false positives and instances of inferred isoform switching [43].

- Performance on Low-Abundance Transcripts: A study comparing analysis workflows noted that Kallisto-Sleuth may be most useful for evaluating genes with medium to high abundance, while methods like HISAT2-StringTie-Ballgown can be more sensitive to genes with low expression levels [2].

Experimental Protocols for Benchmarking

To ensure the validity of tool comparisons, rigorous and standardized benchmarking protocols are essential. The following outlines a typical methodology derived from cited independent studies.

Data Source and Preparation

Benchmarks often use two types of data:

- Real RNA-seq Datasets: Well-characterized reference RNA samples are used, such as the MAQCA (Universal Human Reference RNA) and MAQCB (Human Brain Reference RNA) samples [44]. These provide a realistic biological context.

- Simulated Data: Tools like the BEERS simulator are used to generate RNA-seq reads in silico from a known transcriptome [45]. This approach provides an exact ground truth for evaluating quantification accuracy. Simulations can be "idealized" or incorporate real-world complexities like polymorphisms, intron signal, and non-uniform coverage [45].

Quantification and Alignment Workflow

The experimental workflow for a typical benchmarking study involves processing the same dataset through multiple pipelines in parallel.

Validation and Performance Metrics

The outputs from each pipeline are compared against the ground truth using several metrics:

- Expression Correlation: The squared Pearson or Spearman correlation (R²) between the estimated expression values (e.g., TPM) and the validation data (qPCR or simulated truth) is calculated [44]. This measures accuracy in absolute quantification.

- Fold Change Correlation: The correlation of log-fold changes between conditions (e.g., MAQCA vs. MAQCB) is a critical metric for assessing performance in differential expression analysis [44].

- Concordance in Differential Expression (DE): Genes are classified based on whether they are called as differentially expressed by both the tool and the validation method (concordant) or only by one (non-concordant) [44].

- Computational Resource Usage: Memory (RAM) consumption and total runtime are measured and compared [2] [45].
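The first three metrics in the list above can be computed with a few short functions. The sketch below is a minimal illustration, not the protocol of any cited study: the pseudocount, the absolute log2 fold change threshold of 1 used to call a gene differentially expressed, and the rule that a gene is non-concordant when exactly one method calls it are all assumptions made for the example.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def log2_fold_changes(cond_a, cond_b, pseudocount=1.0):
    """Per-gene log2(A / B); the pseudocount avoids division by zero."""
    return [math.log2((a + pseudocount) / (b + pseudocount))
            for a, b in zip(cond_a, cond_b)]


def non_concordant_fraction(tool_lfc, ref_lfc, threshold=1.0):
    """Fraction of genes called DE by exactly one of the two methods.

    A gene is 'called DE' here when |log2 fold change| >= threshold,
    which is a simplifying assumption for this sketch.
    """
    disagreements = sum(
        (abs(t) >= threshold) != (abs(r) >= threshold)
        for t, r in zip(tool_lfc, ref_lfc)
    )
    return disagreements / len(tool_lfc)


# Hypothetical values for four genes.
tool_expr = [1.0, 2.0, 3.0, 4.0]
qpcr_expr = [2.0, 4.0, 6.0, 8.0]
r_squared = pearson_r(tool_expr, qpcr_expr) ** 2

lfc = log2_fold_changes([3.0, 7.0], [1.0, 1.0])
fraction = non_concordant_fraction([2.0, 0.1, -1.5, 0.2],
                                   [1.8, 1.2, -0.3, 0.0])
```

In practice expression values are usually log-transformed before correlation, and a rank-based (Spearman) coefficient can be obtained by applying the same function to ranks.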

Table 2: Key Research Reagents and Computational Resources for RNA-seq Quantification

| Item / Resource | Function / Purpose | Example / Note |
|---|---|---|
| Reference Transcriptome | Set of known transcript sequences used for pseudoalignment. | Ensembl cDNA files (e.g., Homo_sapiens.GRCh38.cdna.all.fa). |
| Reference Genome | Used for traditional alignment-based pipelines and annotation. | Ensembl genome assembly (e.g., GRCh38 for human). |
| Alignment-Based Pipelines | Serves as a benchmark for evaluating new tools. | HISAT2 (alignment) + HTSeq/StringTie (quantification). |
| Validation Data (qPCR) | Gold-standard experimental method for validating expression levels. | Used on a subset of genes to assess quantification accuracy [44]. |
| Validation Data (Spike-in Controls) | RNA molecules of known concentration added to the sample. | Provides an external standard for absolute quantification. |
| Simulation Software | Generates RNA-seq data with known transcript abundances. | BEERS [45], Polyester [43], RSEM-sim [43]. |
| High-Performance Computing | Necessary for processing large-scale RNA-seq datasets. | Multi-core servers for parallel execution of Salmon/Kallisto. |

Advanced Applications and Future Directions

The principles of pseudoalignment are now being adapted to overcome challenges in emerging sequencing technologies. A prominent example is lr-kallisto, an adaptation of the kallisto algorithm for long-read sequencing data from platforms like Oxford Nanopore Technologies (ONT) [46]. Long-read technologies can sequence full-length transcripts but have higher error rates (~0.5%) compared to short-read technologies. Lr-kallisto demonstrates that pseudoalignment is feasible and accurate even with these higher error rates, retaining the efficiency of kallisto while being robust to the error profiles of long-read data [46]. Furthermore, both Kallisto and Salmon have been successfully applied to single-cell RNA-seq (scRNA-seq) data, where their computational efficiency is critical for handling the massive datasets generated from thousands of individual cells [46] [41].

Kallisto and Salmon have fundamentally changed the landscape of RNA-seq analysis by making rapid and accurate transcript quantification accessible. The choice between them depends on the specific needs of the study.

- Choose Kallisto when your priority is maximum speed and simplicity for standard differential expression analysis on well-annotated model organisms. It is a robust, near-optimal tool for fast profiling [42] [41].

- Choose Salmon when your analysis requires sophisticated bias correction (e.g., for GC content), or when working with data types where such biases are a known concern. Its rich model can improve the reliability of differential expression calls, particularly in complex scenarios [41] [43].

The diagram below summarizes the decision workflow for selecting an analysis tool based on common research goals.

For the vast majority of users, both tools represent a superior choice over traditional alignment-based methods for the specific task of transcript quantification, offering a compelling blend of speed and accuracy that is well-suited for the scale of modern genomics.
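For readers who want to try both tools on paired-end data, the sketch below assembles typical command lines in Python. The file names, index names, output directories, and thread count are placeholders, and the flag choices (automatic library type detection and GC bias correction for Salmon) are one reasonable configuration rather than a recommendation from the cited studies. Check the flags against the installed versions before running.

```python
import subprocess


def kallisto_commands(transcriptome, reads_1, reads_2, out_dir, threads=4):
    """Build the index and quant commands for a paired-end Kallisto run."""
    index = "transcripts.kallisto.idx"  # hypothetical index file name
    return [
        ["kallisto", "index", "-i", index, transcriptome],
        ["kallisto", "quant", "-i", index, "-o", out_dir,
         "-t", str(threads), reads_1, reads_2],
    ]


def salmon_commands(transcriptome, reads_1, reads_2, out_dir,
                    threads=4, gc_bias=True):
    """Build the index and quant commands for a paired-end Salmon run."""
    index = "transcripts.salmon.idx"  # hypothetical index directory name
    quant = ["salmon", "quant", "-i", index, "-l", "A",  # A = infer library type
             "-1", reads_1, "-2", reads_2,
             "-p", str(threads), "-o", out_dir]
    if gc_bias:
        quant.append("--gcBias")  # enable fragment GC bias correction
    return [["salmon", "index", "-t", transcriptome, "-i", index], quant]


def run_all(commands):
    """Execute each command in order, stopping on the first failure."""
    for cmd in commands:
        subprocess.run(cmd, check=True)


# Placeholder inputs; replace with real paths before calling run_all().
fasta = "Homo_sapiens.GRCh38.cdna.all.fa"
kallisto_plan = kallisto_commands(fasta, "sample_1.fastq.gz",
                                  "sample_2.fastq.gz", "kallisto_out")
salmon_plan = salmon_commands(fasta, "sample_1.fastq.gz",
                              "sample_2.fastq.gz", "salmon_out")
```

Building the commands as lists keeps them inspectable before execution; calling `run_all(kallisto_plan)` or `run_all(salmon_plan)` requires the corresponding tool to be installed and on the PATH.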

The bioinformatic processing of RNA sequencing (RNA-seq) data typically involves aligning short sequence reads to a single reference genome and quantifying gene expression using a uniform set of rules across all genes [4] [47]. While this standardized approach works well for most genomic regions, it proves systematically inadequate for complex gene families with high polymorphism, segmental duplications, or incomplete reference genome representation [4] [48]. The Major Histocompatibility Complex (MHC) and Killer-cell Immunoglobulin-like Receptors (KIR) regions exemplify this challenge, as balancing selection has generated polygenic gene families not accurately represented in standard "one-size-fits-all" reference genomes [4] [47].

These limitations manifest as several technical problems: genes missing from reference annotations result in absent expression data; highly similar genes create alignment ambiguity where reads map to multiple locations; and genetic polymorphism across populations means a single reference genome cannot capture species diversity [4]. For immunology research, these shortcomings are particularly problematic because accurately quantifying expression of MHC and KIR genes is critical for understanding antigen recognition and immune responses [48]. This article examines specialized computational pipelines designed to address these challenges, focusing on their performance compared to standard approaches.

Tool Comparison: Nimble as a Supplemental Pipeline