How PCA Reduces Gene Expression Data Dimensionality: A Guide for Biomedical Researchers



Abstract

This article provides a comprehensive exploration of Principal Component Analysis (PCA) and its pivotal role in simplifying high-dimensional gene expression data. Tailored for researchers, scientists, and drug development professionals, it covers foundational concepts from its statistical origins to its function as a 'data summary' technique. The piece details methodological applications across transcriptomics and spatial transcriptomics, addresses critical troubleshooting aspects like overfitting and noise sensitivity, and validates PCA's utility through performance comparisons with alternative methods. By synthesizing theory and practical application, this guide empowers professionals to leverage PCA for efficient, interpretable analysis in complex biomedical datasets.

The Core of PCA: From Statistical Theory to Genomic Data Summary

Defining the High-Dimensionality Problem in Gene Expression Data

Gene expression data, particularly from high-throughput technologies like RNA sequencing (RNA-seq) and single-cell RNA sequencing (scRNA-seq), is inherently high-dimensional. A typical experiment generates expression measurements for tens of thousands of genes (features) across a much smaller number of biological samples (observations). This "high-dimension, low-sample size" (HDLSS) scenario presents significant challenges for statistical analysis, visualization, and biological interpretation [1] [2]. The core problem lies in the curse of dimensionality, where the vast feature space leads to data sparsity, increased computational complexity, and elevated risks of overfitting and identifying spurious correlations. Within this context, dimensionality reduction techniques—particularly Principal Component Analysis (PCA)—have become indispensable tools for making high-dimensional gene expression data computationally manageable and biologically interpretable. This technical guide examines the high-dimensionality problem and elucidates how PCA and related methods provide solutions within gene expression research, equipping scientists with practical frameworks for analyzing complex transcriptomic datasets.

The Nature of High-Dimensionality in Gene Expression Data

Fundamental Characteristics and Statistical Challenges

In bioinformatics, high-dimensionality refers to datasets where the number of features (p) vastly exceeds the number of observations (n). A standard RNA-seq experiment might profile 20,000-30,000 genes across only a dozen or so samples, creating a 20,000×12 data matrix [1]. This structure violates the fundamental assumptions of many classical statistical tests and machine learning algorithms designed for contexts where n >> p. The resulting data sparsity means that as dimensionality increases, data points become increasingly distant from each other, making it difficult to detect true clusters or patterns. Furthermore, the high feature-to-sample ratio dramatically increases the multiple testing burden in differential expression analysis, requiring stringent correction methods that can obscure genuinely significant findings [3].
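The distance-concentration effect described above can be illustrated with a small simulation. This is a minimal sketch, assuming standard-normal toy data rather than real expression values; the function name `relative_contrast` and the chosen dimensions are illustrative only.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed so the sketch is repeatable

def relative_contrast(n_samples: int, n_features: int) -> float:
    """(max - min) / min over all pairwise Euclidean distances."""
    x = rng.standard_normal((n_samples, n_features))
    # Squared distances from the Gram matrix (avoids an n x n x p intermediate).
    sq_norms = (x ** 2).sum(axis=1)
    sq_dists = sq_norms[:, None] + sq_norms[None, :] - 2.0 * x @ x.T
    upper = np.triu_indices(n_samples, k=1)  # count each pair once
    dists = np.sqrt(np.clip(sq_dists[upper], 0.0, None))
    return float((dists.max() - dists.min()) / dists.min())

# 12 samples, as in the RNA-seq example, with a growing number of features.
contrast = {p: relative_contrast(12, p) for p in (2, 200, 20_000)}
for p, value in contrast.items():
    print(f"p={p:>6}: relative contrast = {value:.3f}")
```

As the number of features grows, the nearest and farthest pairs of samples become almost equally distant, which is one reason distance-based clustering struggles on untransformed high-dimensional data.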

Consequences for Analysis and Interpretation

The high-dimensional nature of gene expression data introduces several specific analytical challenges that impact biological interpretation. Table 1 summarizes the core problems and their implications for transcriptomic research.

Table 1: Key Challenges in High-Dimensional Gene Expression Data Analysis

| Challenge | Description | Impact on Analysis |
|---|---|---|
| Data Sparsity | As dimensions increase, data points become increasingly distant in feature space, occupying only a tiny fraction of the possible space. | Reduces effectiveness of clustering algorithms; makes it difficult to detect true patterns and relationships. |
| Computational Burden | Processing and storing large matrices (e.g., 30,000 genes × 1,000 samples) demands significant memory and processing power. | Slows down analysis; requires high-performance computing resources for large-scale studies. |
| Risk of Overfitting | Models with excessive parameters relative to observations may fit noise rather than true biological signal. | Produces models that fail to generalize to new datasets; leads to false discoveries. |
| Multiple Testing Problem | Evaluating thousands of genes simultaneously dramatically increases the chance of false positives. | Requires stringent statistical corrections (e.g., FDR) that may obscure true biological signals. |
| Visualization Difficulty | Humans cannot directly perceive relationships in spaces with thousands of dimensions. | Hinders exploratory data analysis and intuitive understanding of dataset structure. |

Principal Component Analysis (PCA): A Mathematical Foundation for Dimensionality Reduction

Core Conceptual Framework

Principal Component Analysis (PCA) is a linear dimensionality reduction technique that identifies the most informative directions of variance in high-dimensional data [2]. Mathematically, PCA performs an orthogonal transformation of the potentially correlated original variables (gene expression levels) into a new set of linearly uncorrelated variables called principal components (PCs). These components are ordered such that the first PC (PC1) captures the largest possible variance in the data, the second PC (PC2) captures the second largest variance while being orthogonal to the first, and so on. The resulting low-dimensional representation preserves the most significant patterns in the data while filtering out noise.

Mathematical Formulation

Given a centered gene expression matrix X with n samples (rows) and p genes (columns), PCA is typically solved via eigendecomposition of the p × p covariance matrix C = (1/(n-1))XᵀX. The principal components are the eigenvectors of C, and the variance explained by each component equals its corresponding eigenvalue. Alternatively, PCA can be computed via Singular Value Decomposition (SVD) of the centered data matrix: X = UΣVᵀ, where the columns of V are the principal component directions (loadings), and the product UΣ gives the principal component scores [2]. The two routes agree because XᵀX = VΣ²Vᵀ, so each eigenvalue of C is the corresponding squared singular value divided by n-1. The loadings indicate the contribution of each original gene to each PC, while the scores represent the projection of each sample onto the new components, enabling low-dimensional visualization of sample relationships.
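The agreement between the covariance route and the SVD route can be checked numerically. The following is a minimal sketch on random toy data (6 samples, 10 genes, both sizes chosen arbitrarily), using only NumPy.

```python
import numpy as np

rng = np.random.default_rng(1)
n, p = 6, 10                      # toy sizes: 6 samples, 10 genes (p > n)
X = rng.standard_normal((n, p))
X = X - X.mean(axis=0)            # center each gene (column)

# Route 1: eigendecomposition of the p x p covariance matrix C.
C = X.T @ X / (n - 1)
eigvals, eigvecs = np.linalg.eigh(C)          # eigh returns ascending order
order = np.argsort(eigvals)[::-1]
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

# Route 2: thin SVD of the centered data, X = U S V^T.
U, S, Vt = np.linalg.svd(X, full_matrices=False)
svd_variances = S ** 2 / (n - 1)              # should match the eigenvalues of C
scores = U * S                                # identical to X @ Vt.T

k = n - 1   # centering leaves at most n - 1 components with nonzero variance
print("variances agree:", np.allclose(eigvals[:k], svd_variances[:k]))
# Directions agree up to sign, so compare absolute inner products.
print("directions agree:",
      np.allclose(np.abs(eigvecs[:, :k].T @ Vt[:k].T), np.eye(k), atol=1e-6))
```

In the p >> n setting typical of expression data, the SVD route avoids forming the p × p covariance matrix at all, which is why most implementations prefer it.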

PCA in Practice: An Experimental Protocol for Gene Expression Analysis

Standardized Workflow for RNA-seq Data

Implementing PCA effectively requires a structured approach to data preprocessing, computation, and interpretation. The following protocol outlines key steps for applying PCA to RNA-seq count data, integrating best practices from established bioinformatics pipelines [1] [4].

Table 2: Key Research Reagent Solutions for PCA-Based Gene Expression Analysis

| Tool/Resource | Function | Implementation |
|---|---|---|
| DESeq2 | Normalizes raw count data to account for library size and RNA composition biases. | R/Bioconductor package |
| pcaExplorer | Interactive exploration of PCA results; generates publication-ready graphs and reports. | R/Bioconductor package |
| SingleCellExperiment | S4 class for storing and manipulating single-cell expression data. | R/Bioconductor package |
| Scanpy | Preprocessing, PCA, and visualization for large-scale single-cell data. | Python package |
| Factoextra | Visualizes PCA results, including scree plots and sample biplots. | R package |
| ideal | Interactive differential expression analysis integrating PCA for quality assessment. | R/Bioconductor package |

Step 1: Data Input and Normalization

Begin with a raw count matrix (genes as rows, samples as columns) and associated sample metadata. Normalize the counts to correct for technical variations like library size and RNA composition. While PCA can be applied to raw counts, variance-stabilizing transformations (e.g., via DESeq2) or log-transformation of normalized counts (e.g., Counts Per Million) are often recommended to prevent highly expressed genes from dominating the variance structure [1] [5].
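As an illustration of the simpler of the two options, the sketch below computes log2 counts per million on a tiny hand-made count matrix. It is not the DESeq2 variance-stabilizing transformation; the pseudocount of 1 is an assumption made here to avoid taking the logarithm of zero.

```python
import numpy as np

# Toy raw counts: rows are genes, columns are samples (as in Step 1).
counts = np.array([
    [ 10,   20,   5],
    [200,  400, 100],
    [  0,    3,   1],
    [790, 1577, 394],
], dtype=float)

library_sizes = counts.sum(axis=0)      # total counts per sample
cpm = counts / library_sizes * 1e6      # counts per million
log_cpm = np.log2(cpm + 1.0)            # pseudocount of 1 avoids log2(0)

print("library sizes:", library_sizes)
print(np.round(log_cpm, 2))
```

After this step every sample sums to one million on the CPM scale, so differences in sequencing depth no longer inflate the variance seen by PCA.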

Step 2: Feature Selection and Data Scaling

Select the most variable genes (e.g., top 500-5000) before performing PCA to reduce noise and computational load. Center the data (ensure each gene has a mean of zero) as PCA is sensitive to the mean. Scaling (setting variance to unity for each gene) is debated; it prevents high-expression genes from dominating but may inflate the influence of low-expression noise genes. The decision should align with the biological question [2].
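A minimal sketch of this step is shown below, assuming a log-scale genes × samples matrix as input. The helper name `select_and_center` and its defaults are hypothetical, and ranking genes by plain variance is only one of several ways to define "most variable".

```python
import numpy as np

def select_and_center(log_expr, n_top=2000, scale=False):
    """Pick the n_top most variable genes, then center (and optionally scale).

    log_expr: genes x samples array on a log scale.
    Returns a samples x genes matrix and the indices of the kept genes.
    """
    gene_var = log_expr.var(axis=1, ddof=1)
    n_top = min(n_top, log_expr.shape[0])
    top = np.argsort(gene_var)[::-1][:n_top]   # most variable first
    x = log_expr[top].T                        # samples x selected genes
    x = x - x.mean(axis=0)                     # each gene now has mean zero
    if scale:
        sd = x.std(axis=0, ddof=1)
        sd[sd == 0] = 1.0                      # leave constant genes untouched
        x = x / sd
    return x, top

rng = np.random.default_rng(2)
# Toy data: 100 genes x 8 samples with gene-specific spread.
toy = rng.standard_normal((100, 8)) * np.linspace(0.1, 3.0, 100)[:, None]
x_centered, kept = select_and_center(toy, n_top=10)
x_scaled, _ = select_and_center(toy, n_top=10, scale=True)
print(x_centered.shape, x_scaled.std(axis=0, ddof=1).round(2))
```

Keeping `scale` as an explicit switch mirrors the point made above: whether to give every gene unit variance is a choice to make per analysis, not a default.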

Step 3: PCA Computation and Dimension Selection

Perform PCA using optimized functions (prcomp in R, scanpy.tl.pca in Python). The output includes sample scores (coordinates in PC space) and gene loadings (contributions to each PC). Determine the number of meaningful components to retain using a scree plot (variance vs. component number) or the elbow method, selecting components that capture significant biological variance [1] [3].

Step 4: Visualization and Interpretation

Visualize samples in 2D or 3D plots using the first few PCs (e.g., PC1 vs. PC2) to assess sample clustering, batch effects, or outliers. Color points by experimental conditions from the metadata. Examine the gene loadings to identify which genes drive the separation observed along each component, linking patterns back to biology [1] [4].
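Reading loadings can be sketched on simulated data in which the answer is known. In the example below, the group labels, the effect size, and the choice of genes 0 to 4 as the responding genes are all assumptions made purely for illustration.

```python
import numpy as np

rng = np.random.default_rng(3)
n_samples, n_genes = 20, 50
group = np.repeat([0, 1], n_samples // 2)          # hypothetical metadata: control vs treated
expr = rng.standard_normal((n_samples, n_genes))   # samples x genes, noise only
expr[group == 1, :5] += 5.0                        # genes 0-4 respond to treatment

centered = expr - expr.mean(axis=0)
U, S, Vt = np.linalg.svd(centered, full_matrices=False)
scores = U * S                                     # sample coordinates in PC space
pc1_loadings = Vt[0]                               # one weight per gene

# Genes with the largest absolute loading drive the separation along PC1.
top_genes = np.argsort(np.abs(pc1_loadings))[::-1][:5]
mean_ctrl = scores[group == 0, 0].mean()
mean_trt = scores[group == 1, 0].mean()
print("genes driving PC1:", sorted(top_genes.tolist()))
print("mean PC1 score, control vs treated:", round(mean_ctrl, 2), round(mean_trt, 2))
```

The sign of a component is arbitrary, so only the relative position of the groups and the absolute size of the loadings carry meaning.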

Step 5: Functional and Reproducibility Analysis

Interpret the biological meaning of components by performing gene set enrichment analysis on genes with high absolute loadings for each significant PC. Ensure reproducibility by generating automated reports with tools like pcaExplorer, which captures the analysis state and parameters [1] [6].

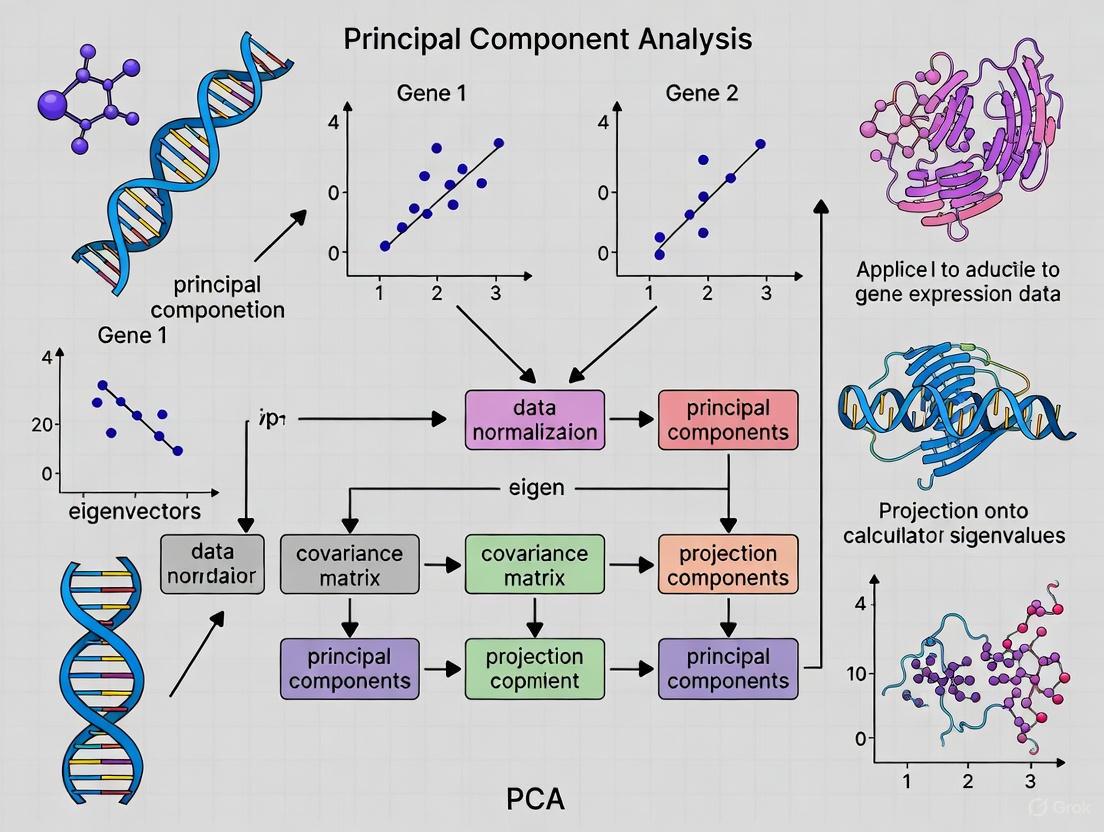

The following diagram illustrates the logical relationships and workflow between these key steps.

Beyond Linearity: Advanced and Complementary Dimensionality Reduction Techniques

Expanding the Methodological Arsenal

While PCA is powerful for capturing global linear structures, gene expression data often contains complex nonlinear relationships. Several advanced techniques address this limitation, offering complementary approaches to dimensionality reduction [2] [7].

t-Distributed Stochastic Neighbor Embedding (t-SNE) focuses on preserving local neighborhood structures, often creating visually compelling cluster separations. However, t-SNE is computationally intensive and its global structure preservation can be sensitive to parameter tuning [3]. Uniform Manifold Approximation and Projection (UMAP) often provides a superior balance, preserving more of the global data structure than t-SNE while maintaining strong local clustering and offering faster computation [4] [2]. For the most complex patterns, Autoencoders (AEs) and Variational Autoencoders (VAEs) use deep learning to learn nonlinear latent representations, providing exceptional flexibility for capturing intricate hierarchical structures in large-scale datasets like scRNA-seq [8] [7].

Table 3: Comparison of Key Dimensionality Reduction Methods for Gene Expression Data

| Method | Type | Key Strength | Key Limitation | Typical Use Case |
|---|---|---|---|---|
| PCA [1] [2] | Linear | Computationally efficient; preserves global variance; interpretable. | Cannot capture nonlinear relationships. | Initial exploratory analysis; quality control; detecting batch effects. |
| t-SNE [3] | Nonlinear | Excellent at visualizing local clusters and complex groupings. | Computationally heavy; loses global structure. | Visualizing cell types or subtypes in scRNA-seq. |
| UMAP [4] [2] | Nonlinear | Better preservation of global structure than t-SNE; faster. | Can be sensitive to parameters; less interpretable than PCA. | Large-scale single-cell data visualization; trajectory inference. |
| Autoencoders [8] [7] | Nonlinear (DL) | Highly flexible; can model complex, hierarchical patterns. | "Black-box" nature; requires large datasets and expertise. | Analyzing very large, complex datasets (e.g., million-cell datasets). |

Method Selection and Hybrid Approaches

Choosing the appropriate method depends on the data scale and biological question. PCA remains the optimal starting point for most analyses due to its speed and interpretability. For detailed visualization of cellular heterogeneity in single-cell studies, UMAP or t-SNE are preferred. A powerful strategy involves combining methods: using PCA for initial noise reduction before applying UMAP or t-SNE, or employing PCA on a nonlinear latent space discovered by an autoencoder [2] [3]. Recent innovations like the Boosting Autoencoder (BAE) further enhance interpretability by identifying small, biologically relevant gene sets that explain latent dimensions, directly linking patterns back to specific genes and pathways [7].

The relationships between these methods, from linear to nonlinear and from traditional to deep learning-based, are visualized below.

The high-dimensionality of gene expression data presents fundamental challenges that are effectively addressed through dimensionality reduction. PCA stands as a cornerstone technique, providing a computationally efficient, interpretable framework for exploratory analysis, quality control, and initial data compression. Its linear nature offers a transparent foundation for understanding major sources of variation. However, the complexity of biological systems often necessitates complementary nonlinear methods like UMAP and deep learning approaches such as autoencoders for uncovering intricate patterns. The ongoing innovation in this space, including hybrid models and interpretable deep learning, continues to enhance our capacity to extract meaningful biological insights from increasingly complex genomic datasets. By strategically implementing these techniques within a structured analytical workflow, researchers can transform the high-dimensionality problem from an analytical obstacle into a source of discovery.

Principal Component Analysis (PCA) stands as a cornerstone multivariate technique in modern data science, with profound implications for dimensionality reduction in gene expression research. This whitepaper traces the methodological foundations of PCA to Karl Pearson's seminal 1901 work, elucidates its core statistical mechanics, and provides detailed experimental protocols for its application in transcriptomic data analysis. Within the context of gene expression research, PCA enables researchers to transform high-dimensional genomic data into lower-dimensional spaces, preserving essential biological signals while mitigating computational challenges. We demonstrate how proper application of PCA facilitates exploratory data analysis, outlier detection, and biological interpretation in pharmaceutical development and basic research.

Historical Foundations: The Pearsonian Revolution

Karl Pearson's Geometrical Innovation

The genesis of Principal Component Analysis dates to Karl Pearson's seminal 1901 paper "On Lines and Planes of Closest Fit to Systems of Points in Space," which introduced a fundamental shift in statistical reasoning [9]. Pearson addressed a critical limitation of traditional least-squares regression, which treated independent variables (x) as error-free and dependent variables (y) as subject to deviation. He recognized that in biological and physical systems, both variables often contain inherent uncertainty, necessitating a symmetrical approach to error minimization [9].

Pearson's novel conceptualization defined the core objective of PCA: to identify the "best-fitting" linear subspaces that represent multidimensional data. His approach minimized perpendicular distances from data points to the model subspace (Figure 1), in contrast to conventional regression that minimized vertical distances assuming certainty only in the y-variable. This geometrical innovation established the foundation for extracting principal components as the directions of maximum variance in correlated data [9].
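The contrast between vertical and perpendicular error minimization can be made concrete in two dimensions. The sketch below uses simulated points with noise in both coordinates (the noise levels and the true slope of 2 are arbitrary assumptions) and compares an ordinary least-squares line with the first principal component.

```python
import numpy as np

rng = np.random.default_rng(4)
true_x = np.linspace(0.0, 10.0, 50)
x = true_x + rng.normal(scale=1.0, size=true_x.size)        # noise in x
y = 2.0 * true_x + rng.normal(scale=1.0, size=true_x.size)  # noise in y
centered = np.column_stack([x, y])
centered = centered - centered.mean(axis=0)

# Ordinary least squares of y on x: minimizes vertical residuals.
ols_slope = (centered[:, 0] @ centered[:, 1]) / (centered[:, 0] @ centered[:, 0])

# Line of closest fit: the leading right singular vector (PC1).
_, _, Vt = np.linalg.svd(centered, full_matrices=False)
pca_slope = Vt[0, 1] / Vt[0, 0]

def perpendicular_ss(slope):
    """Sum of squared perpendicular distances to a line through the centroid."""
    direction = np.array([1.0, slope]) / np.hypot(1.0, slope)
    residual = centered - np.outer(centered @ direction, direction)
    return float((residual ** 2).sum())

def vertical_ss(slope):
    """Sum of squared vertical (y-direction) residuals for the same line."""
    return float(((centered[:, 1] - slope * centered[:, 0]) ** 2).sum())

for name, slope in (("OLS", ols_slope), ("PC1", pca_slope)):
    print(f"{name}: slope={slope:.3f}  vertical SS={vertical_ss(slope):.1f}  "
          f"perpendicular SS={perpendicular_ss(slope):.1f}")
```

Each line wins on its own criterion: the regression line has the smaller vertical error, while the PC1 line has the smaller perpendicular error, which is the quantity Pearson proposed to minimize.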

From Pearson to Modern Multivariate Analysis

Pearson's initial framework has evolved through various methodological traditions under different names—including Factor Analysis, Singular Value Decomposition, Karhunen-Loève transformation, and Essential Dynamics—while retaining the core principles he established [9]. This cross-disciplinary dissemination underscores PCA's versatility as a "hypothesis-generating tool" that creates a statistical mechanics framework for biological systems modeling without requiring strong a priori theoretical assumptions [9]. In the era of high-throughput genomics, Pearson's approach provides precisely the methodological foundation needed to explore complex biological datasets, particularly in gene expression analysis where numerous correlated variables (gene expression levels) must be reduced to their essential features.

Statistical and Geometrical Basis of PCA

The Mathematics of Dimensionality Reduction

PCA operates on a fundamental principle: transforming correlated variables into a set of uncorrelated principal components that are linear combinations of the original variables. These components are derived in sequence, with each subsequent component capturing the maximum remaining variance while being orthogonal to all previous components [10].

Mathematically, principal components are constructed according to the formula: PC = aX₁ + bX₂ + cX₃ + ... + kXₙ where X₁–Xₙ represent the original variables (e.g., gene expression values) and the coefficients a, b, c,..., k are determined through eigen decomposition to maximize variance capture [9]. The resulting components provide the "best summary" of information in the original data in a least-squares sense while eliminating multicollinearity [9].

The PCA Workflow: A Step-by-Step Statistical Procedure

The implementation of PCA follows a systematic workflow that operationalizes Pearson's geometrical insight:

Standardization and Centering - Variables are transformed to comparable scales by subtracting means and dividing by standard deviations, ensuring that variables with larger ranges do not dominate the analysis [10].

Covariance Matrix Computation - The covariance matrix captures inter-variable relationships, with diagonal elements representing variances and off-diagonal elements representing covariances between variable pairs [10].

Eigen Decomposition - Eigenvectors and eigenvalues of the covariance matrix are computed, where eigenvectors define the directions of principal components (new axes), and eigenvalues quantify the variance captured by each component [10].

Component Selection - Components are ranked by decreasing eigenvalues, and a subset is selected based on variance contribution, typically using scree plots or variance retention thresholds (e.g., 95% cumulative variance) [11].

Data Projection - The original data is projected onto the selected component axes, creating a transformed dataset with reduced dimensionality [10].

The following diagram illustrates this workflow in the context of gene expression analysis:

Figure 1: PCA Workflow for Gene Expression Data

Variance Interpretation and Component Selection

The information content of principal components is quantified through eigenvalues, with larger eigenvalues indicating components that capture more variance. The proportion of total variance explained by each component is calculated as the ratio of its eigenvalue to the sum of all eigenvalues [10]. In gene expression studies, the cumulative variance approach typically retains components that collectively explain 70-95% of total variance, though biological interpretability often supersedes strict percentage thresholds [11].
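The ratio and the cumulative-variance rule translate directly into a few lines of code. This is a minimal sketch with made-up eigenvalues; the function names and the 0.85 threshold are illustrative assumptions, not recommended settings.

```python
import numpy as np

def explained_variance_ratio(eigenvalues):
    """Each eigenvalue divided by the sum of all eigenvalues, largest first."""
    eigenvalues = np.sort(np.asarray(eigenvalues, dtype=float))[::-1]
    return eigenvalues / eigenvalues.sum()

def n_components_for(eigenvalues, threshold=0.90):
    """Smallest k whose cumulative explained variance reaches `threshold`."""
    cumulative = np.cumsum(explained_variance_ratio(eigenvalues))
    k = int(np.searchsorted(cumulative, threshold)) + 1
    return min(k, cumulative.size)   # guard against floating-point shortfall

toy_eigenvalues = [5.0, 3.0, 1.0, 0.5, 0.5]
ratios = explained_variance_ratio(toy_eigenvalues)
print("explained variance ratio:", ratios)
print("cumulative:", np.cumsum(ratios))
print("components for 85%:", n_components_for(toy_eigenvalues, 0.85))
```

A threshold is only a starting point: as noted above, components below the cut-off may still be worth keeping if they align with known biology.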

PCA in Gene Expression Data Analysis

The Dimensionality Challenge in Transcriptomics

Modern transcriptomic datasets present substantial analytical challenges with tens of thousands of genes measured across multiple samples, creating high-dimensional spaces where traditional analysis methods struggle [11]. The "curse of dimensionality" manifests through data sparsity, increased risk of overfitting, and computational inefficiency [11]. PCA addresses these issues by identifying the dominant patterns of variation, effectively reducing thousands of gene expression measurements to a manageable number of composite variables while preserving essential biological information [12].

Critical Considerations for Normalization

The application of PCA to RNA-sequencing data requires careful normalization prior to analysis. Different normalization methods significantly impact PCA results and biological interpretation [13]. A comprehensive evaluation of twelve normalization methods for RNA-seq data demonstrated that while PCA score plots may appear similar across normalization techniques, the biological interpretation of components can vary substantially [13]. Without proper normalization, technical artifacts such as sequencing depth can dominate the first principal component, obscuring biological signals [12].
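The effect of sequencing depth on PC1 can be reproduced with simulated counts. In the sketch below every sample shares the same underlying expression profile and differs only in a hypothetical depth factor, so any structure PCA finds is technical; the Poisson model and all parameter values are assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(5)
n_genes, n_samples = 500, 12
base = rng.uniform(50, 5000, size=n_genes)     # one shared expression profile
depth = np.linspace(0.5, 4.0, n_samples)       # hypothetical depth factors
counts = rng.poisson(base[:, None] * depth[None, :]).astype(float)  # genes x samples

def first_pc(log_expr):
    """Return PC1 sample scores and PC1 variance for a genes x samples matrix."""
    x = log_expr.T - log_expr.T.mean(axis=0)   # samples x genes, gene-centered
    U, S, _ = np.linalg.svd(x, full_matrices=False)
    return U[:, 0] * S[0], S[0] ** 2 / (x.shape[0] - 1)

raw_scores, raw_var = first_pc(np.log2(counts + 1.0))
cpm = counts / counts.sum(axis=0) * 1e6
cpm_scores, cpm_var = first_pc(np.log2(cpm + 1.0))

r_raw = abs(np.corrcoef(raw_scores, np.log2(depth))[0, 1])
print(f"unnormalized: |corr(PC1, log depth)| = {r_raw:.3f}, PC1 variance = {raw_var:.1f}")
print(f"log-CPM:      PC1 variance = {cpm_var:.3f}")
```

Without normalization PC1 is essentially a readout of library size; after CPM scaling the variance attributed to PC1 collapses because only sampling noise remains in this simulation.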

Table 1: Variance Explained by Principal Components in Gene Expression Studies

| Principal Component | Percentage of Variance Explained | Typical Biological Interpretation |
|---|---|---|
| PC1 | 15-30% | Sequencing depth, major tissue type, or dominant biological factor |
| PC2 | 8-15% | Secondary biological factor, batch effects |
| PC3 | 5-10% | Tertiary biological factor, additional batch effects |
| PC4+ | <5% each | Subtle biological signals, noise |
| Cumulative (PC1-PC10) | 40-70% | Total variance captured for downstream analysis |

Experimental Protocol: PCA for Transcriptomic Data

The following protocol provides a standardized approach for applying PCA to RNA-sequencing data, incorporating critical considerations for normalization and interpretation:

Data Preprocessing

- Data Acquisition: Obtain raw count data from RNA-sequencing experiments. Publicly available sources include TCGA, GTEx, and ICGC [14].

- Filtering: Remove genes with zero counts across all samples and apply expression thresholding (e.g., counts per million > 1 in at least 20% of samples) [14].

- Normalization: Apply appropriate normalization method to address sequencing depth and compositional effects. Options include:

  - Quantile normalization [14]

  - TMM (Trimmed Mean of M-values)

  - DESeq2's median of ratios

- Transformation: Convert normalized counts to log2 scale to stabilize variance [14].

- Standardization: Center each gene to zero mean and scale it to unit variance [10].
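The filtering rule in the list above (CPM > 1 in at least 20% of samples) can be sketched as a boolean mask over genes. The function name `filter_genes` and the toy matrix are hypothetical; the thresholds are the example values quoted in the protocol.

```python
import numpy as np

def filter_genes(counts, min_cpm=1.0, min_fraction=0.2):
    """Return a boolean mask of genes to keep.

    counts: genes x samples raw count matrix.
    A gene is kept if its CPM exceeds min_cpm in at least
    min_fraction of the samples.
    """
    counts = np.asarray(counts, dtype=float)
    cpm = counts / counts.sum(axis=0) * 1e6
    # Small epsilon guards against float artifacts such as 0.2 * 15 = 3.0000000000000004.
    min_samples = int(np.ceil(min_fraction * counts.shape[1] - 1e-9))
    return (cpm > min_cpm).sum(axis=1) >= min_samples

# Toy matrix: 4 genes x 10 samples.
toy_counts = np.zeros((4, 10))
toy_counts[1, 0] = 5            # expressed in 1 of 10 samples: below the 20% rule
toy_counts[2, [0, 1]] = 5       # expressed in 2 of 10 samples: meets the rule
toy_counts[3, :] = 1_000_000    # abundant gene that sets the library size

keep = filter_genes(toy_counts)
print(keep)
```

Genes with zero counts everywhere fail the CPM test automatically, so the two filters listed in the protocol collapse into this single mask.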

PCA Implementation

- Covariance Matrix Computation: Calculate the gene-by-gene covariance matrix on the preprocessed data [10].

- Eigen Decomposition: Perform eigendecomposition of the covariance matrix (or, equivalently, singular value decomposition of the centered data matrix) to obtain eigenvectors (principal components) and eigenvalues (variances) [11].

- Component Selection:

  - Generate a scree plot of the eigenvalues

  - Calculate the cumulative variance explained

  - Select components that collectively explain >70% of the variance, or retain only components with eigenvalue >1 (Kaiser criterion, which assumes genes were scaled to unit variance)

- Result Interpretation:

  - Examine component loadings to identify genes contributing most to each PC

  - Project samples into PC space for visualization

  - Correlate PC scores with sample metadata (e.g., tissue type, treatment)
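The last interpretation step, correlating PC scores with sample metadata, can be sketched on simulated data with two known sources of variation. The covariate names, effect sizes, and gene ranges below are assumptions for illustration; with real data, categorical covariates with more than two levels would need a different association measure than a simple correlation.

```python
import numpy as np

rng = np.random.default_rng(6)
n_samples, n_genes = 24, 120
treatment = np.tile([0.0, 1.0], n_samples // 2)    # hypothetical binary covariate
batch = np.repeat([0.0, 1.0], n_samples // 2)      # hypothetical batch label
expr = rng.standard_normal((n_samples, n_genes))   # samples x genes
expr[:, :40] += 3.0 * treatment[:, None]           # strong treatment effect on 40 genes
expr[:, 60:100] += 2.0 * batch[:, None]            # weaker batch effect on 40 other genes

x = expr - expr.mean(axis=0)
U, S, _ = np.linalg.svd(x, full_matrices=False)
scores = U[:, :3] * S[:3]                          # first three PC scores

metadata = {"treatment": treatment, "batch": batch}
assoc = {
    name: [abs(float(np.corrcoef(scores[:, k], values)[0, 1])) for k in range(3)]
    for name, values in metadata.items()
}
for name, values in assoc.items():
    print(name, [round(v, 2) for v in values])
```

A table of this kind makes it easy to see which component tracks a biological factor and which tracks a technical one before deciding how many components to carry forward.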

Validation and Downstream Analysis

- Stability Assessment: Perform cross-validation to evaluate component stability [15].

- Biological Interpretation: Conduct gene set enrichment analysis on high-loading genes for each component [13].

- Visualization: Generate biplots to simultaneously visualize samples and variables in reduced space [15].
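One simple way to probe component stability is to recompute PC1 on random subsets of samples and compare the loadings with those from the full dataset. The sketch below is a subsampling check under assumed settings (50 rounds, 80% of samples per round), not the specific cross-validation procedure of [15]; all function names are hypothetical.

```python
import numpy as np

def pc1_loadings(x):
    """Unit-length PC1 loading vector of a samples x genes matrix."""
    x = x - x.mean(axis=0)
    _, _, Vt = np.linalg.svd(x, full_matrices=False)
    return Vt[0]

def pc1_stability(x, n_rounds=50, keep_fraction=0.8, seed=0):
    """Mean absolute cosine similarity between full-data and subsample PC1."""
    rng = np.random.default_rng(seed)
    reference = pc1_loadings(x)
    n_keep = max(3, int(round(keep_fraction * x.shape[0])))
    sims = []
    for _ in range(n_rounds):
        idx = rng.choice(x.shape[0], size=n_keep, replace=False)
        # Absolute value because the sign of a component is arbitrary.
        sims.append(abs(float(reference @ pc1_loadings(x[idx]))))
    return float(np.mean(sims))

rng = np.random.default_rng(7)
group = np.repeat([0.0, 1.0], 15)
structured = rng.standard_normal((30, 100))
structured[:, :30] += 4.0 * group[:, None]     # real group effect on 30 genes
pure_noise = rng.standard_normal((30, 100))    # no structure at all

stab_structured = pc1_stability(structured)
stab_noise = pc1_stability(pure_noise)
print(f"structured data: {stab_structured:.3f}   pure noise: {stab_noise:.3f}")
```

A component driven by real structure keeps pointing in nearly the same direction when samples are dropped, whereas a noise-driven component tends to rotate from one subset to the next.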

Information Content in Higher Components

While early transcriptomic studies suggested limited utility beyond the first 2-3 principal components, recent evidence indicates important biological signals reside in higher components. Research on large heterogeneous gene expression datasets (7,100 samples) revealed that while the first three components explained approximately 36% of variance, significant tissue-specific information remained in higher components [16]. When analyzing specific tissue subsets (e.g., brain regions), components beyond the first three captured biologically relevant differentiation [16].

The following diagram illustrates how PCA reveals hierarchical biological structure in gene expression data:

Figure 2: Hierarchical Biological Structure in PCA of Gene Expression Data

Applications in Drug Discovery and Development

Quantitative Structure-Activity Relationships (QSAR)

PCA has become an indispensable tool in QSAR studies, where it analyzes matrices of molecular descriptors to identify dominant structural properties influencing biological activity [17]. In a case study on CCR5 antagonists, PCA enabled researchers to navigate complex descriptor spaces, identify outliers, and select appropriate modeling approaches for further analysis [17]. By reducing hundreds of molecular descriptors to a few principal components, PCA facilitates the understanding of structure-activity relationships and guides rational drug design.

Network Pharmacology and Systems Biology

The emergence of network pharmacology requires methods that can capture system-level responses to therapeutic interventions. PCA provides a framework for analyzing complex correlation structures in biological networks, identifying latent factors that underlie observed phenotypic responses [9]. This approach aligns with Pearson's original vision of capturing system behavior through correlation structure analysis, enabling researchers to move beyond reductionist models to more comprehensive representations of biological complexity [9].

Table 2: PCA Applications in Drug Discovery Pipeline

| Application Area | PCA Function | Impact on Drug Development |
|---|---|---|
| Target Identification | Analysis of gene expression patterns across tissues and conditions | Identifies disease-relevant pathways and potential therapeutic targets |
| Lead Optimization | Reduction of molecular descriptor space in QSAR | Prioritizes compound series with desirable properties, reduces synthetic effort |
| Toxicology Assessment | Pattern recognition in metabolomic and transcriptomic data | Identifies safety liabilities early in development process |
| Biomarker Discovery | Identification of dominant variation patterns in patient populations | Stratifies patient populations, identifies predictive biomarkers |
| Clinical Trial Analysis | Multidimensional assessment of efficacy endpoints | Provides comprehensive view of drug effects, supports regulatory decisions |

Methodological Considerations and Best Practices

The Critical Impact of Data Preprocessing

The choice of data preprocessing methods significantly influences PCA outcomes in transcriptomic studies. Research comparing RNA-Seq data preprocessing pipelines demonstrated that normalization, batch effect correction, and scaling decisions affect downstream classification performance [14]. Interestingly, while batch effect correction improved performance when applied to certain datasets (TCGA to GTEx), it worsened performance with other independent test sets (ICGC/GEO) [14]. This underscores the context-dependent nature of preprocessing decisions and the need for careful pipeline validation.

Alternative Dimensionality Reduction Techniques

While PCA remains the most widely used linear dimensionality reduction technique, several alternatives offer complementary approaches:

Table 3: Comparison of Dimensionality Reduction Methods for Biological Data

| Method | Input Data | Distance Measure | Best Application Context |
|---|---|---|---|
| PCA | Original feature matrix | Covariance/Euclidean | Linear data, feature extraction, exploratory analysis |
| PCoA | Distance matrix | Any distance metric (Bray-Curtis, Jaccard) | Ecological distances, beta-diversity analysis |
| NMDS | Distance matrix | Rank-order preservation | Complex datasets, non-linear relationships |
| t-SNE | Similarity matrix | Probability distributions | Visualization of high-dimensional data |
| UMAP | Nearest neighbor graph | Topological preservation | Visualization, clustering preservation |

PCA is particularly effective for linear data structures and serves as an excellent first step in exploratory analysis, while PCoA and NMDS offer advantages when analyzing ecological distances or non-linear relationships [18]. The selection of method should align with data characteristics and research objectives.
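The relationship between the first two rows of the table can be checked numerically: when PCoA (classical multidimensional scaling) is given plain Euclidean distances, it recovers the PCA scores up to sign. The sketch below uses random toy data; it illustrates this special case only and says nothing about non-Euclidean metrics such as Bray-Curtis.

```python
import numpy as np

rng = np.random.default_rng(8)
x = rng.standard_normal((8, 5))                # 8 samples, 5 features
x = x - x.mean(axis=0)

# PCA scores computed from the feature matrix.
U, S, _ = np.linalg.svd(x, full_matrices=False)
pca_scores = U[:, :2] * S[:2]

# PCoA computed from the Euclidean distance matrix alone.
sq_dist = ((x[:, None, :] - x[None, :, :]) ** 2).sum(axis=2)
n = sq_dist.shape[0]
J = np.eye(n) - np.ones((n, n)) / n            # centering matrix
B = -0.5 * J @ sq_dist @ J                     # double-centered Gram matrix
vals, vecs = np.linalg.eigh(B)
top = np.argsort(vals)[::-1][:2]               # two largest eigenvalues
pcoa_scores = vecs[:, top] * np.sqrt(vals[top])

# Axis signs are arbitrary, so compare absolute coordinates.
same = bool(np.allclose(np.abs(pca_scores), np.abs(pcoa_scores), atol=1e-8))
print("PCoA on Euclidean distances matches PCA:", same)
```

The practical difference between the two methods therefore lies in the input: PCoA becomes useful precisely when a distance other than Euclidean is the natural way to compare samples.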

Table 4: Essential Computational Tools for PCA in Gene Expression Analysis

| Tool/Resource | Function | Application Context |
|---|---|---|
| R Statistical Environment | Programming platform for statistical computing | Primary analysis environment with specialized packages |
| Python (scikit-learn) | Machine learning library with PCA implementation | Integrative analysis pipelines, machine learning workflows |
| TCGAbiolinks | Bioconductor package for TCGA data access | Standardized retrieval of cancer transcriptomic data |
| DESeq2/edgeR | Differential expression analysis | Normalization and preprocessing of RNA-seq count data |
| ggplot2/plotly | Visualization libraries | Generation of publication-quality PCA score plots |
| FactoMineR | Multivariate analysis package | Comprehensive PCA implementation with enhanced diagnostics |
| Enrichr/clusterProfiler | Gene set enrichment analysis | Biological interpretation of principal components |

Karl Pearson's century-old geometrical insight continues to fuel modern scientific discovery, particularly in the high-dimensional landscape of gene expression research. PCA provides an indispensable framework for reducing dimensionality while preserving essential biological information, enabling researchers to navigate complex transcriptomic datasets and extract meaningful patterns. As genomic technologies generate increasingly large and complex datasets, Pearson's legacy endures through the ongoing evolution and application of principal component analysis in biological research and drug development. The method's unique combination of mathematical elegance and practical utility ensures its continued relevance in an era of data-intensive biology.

Principal Component Analysis (PCA) stands as a foundational dimensionality reduction technique in computational biology, transforming high-dimensional gene expression data into a lower-dimensional space of synthetic variables known as principal components (PCs). Within gene expression analysis, these PCs function conceptually as "metagenes"—linear combinations of original genes that capture coordinated expression patterns across biological samples. This whitepaper provides an in-depth technical examination of PCA's mathematical framework, its implementation as a feature extraction method in transcriptomics, and its critical role in elucidating biological structure through metagenes. We further present standardized protocols for applying PCA to single-cell RNA sequencing (scRNA-Seq) data, benchmark performance against alternative methods, and discuss both interpretative advantages and limitations relevant to drug discovery and biomedical research.

Modern genomic technologies, particularly single-cell RNA sequencing (scRNA-Seq), generate data of extreme dimensionality, where each of thousands or millions of cells is characterized by expression measurements for 20,000-30,000 genes [19]. This high-dimensional space presents significant challenges for computational analysis, visualization, and biological interpretation—a phenomenon known as the "curse of dimensionality" [20].

In this context, dimensionality reduction techniques like PCA become essential preprocessing steps that address multiple analytical challenges:

- Computational efficiency: Reducing dataset size enables faster downstream analysis [21]

- Noise reduction: Filtering out technical variance and highlighting biologically meaningful signals [19]

- Visualization: Enabling exploration of data structure in 2D or 3D plots [10] [22]

- Overfitting prevention: Mitigating the risk of models memorizing noise rather than learning biological patterns [21]

The concept of "metagenes" emerges naturally from PCA's mathematical foundation, representing latent variables that capture coordinated biological programs across cell populations or experimental conditions [19].

Mathematical Foundation of PCA

Core Principles

PCA is an orthogonal linear transformation that converts correlated variables into a set of uncorrelated principal components, ordered by the amount of variance they explain from the original data [22]. The transformation is designed such that:

- The first principal component (PC1) captures the maximum possible variance in the data

- Each subsequent component captures the maximum remaining variance while being orthogonal to previous components [10] [22]

Mathematically, given a mean-centered data matrix ( X ) with dimensions ( n \times p ) (where ( n ) is the number of samples and ( p ) is the number of genes), PCA seeks unit-length weight vectors ( w ), the first of which satisfies: [ w_{(1)} = \arg\max_{\|w\|=1} \left\{ \sum_i (x_{(i)} \cdot w)^2 \right\} ] where ( x_{(i)} ) is the expression profile of sample ( i ) and ( w_{(1)} ) is the direction of the first principal component [22].

The Five Steps of PCA Implementation

The practical implementation of PCA follows a standardized five-step process [10]:

Standardization: Centering and scaling each variable to mean=0 and variance=1, ensuring equal contribution from all genes regardless of their original expression ranges [10]

Covariance Matrix Computation: Constructing a ( p × p ) symmetric matrix that captures how all pairs of genes vary together, with entries indicating:

- Positive covariance: Genes increase or decrease together

- Negative covariance: One gene increases as the other decreases

- Zero covariance: No linear relationship between genes [10]

Eigen Decomposition: Calculating eigenvectors and eigenvalues of the covariance matrix, where:

- Eigenvectors represent the principal components (directions of maximum variance)

- Eigenvalues indicate the amount of variance carried by each component [10]

Feature Selection: Sorting eigenvectors by decreasing eigenvalues and selecting the top ( k ) components that capture sufficient cumulative variance [10]

Data Projection: Transforming the original data into the new subspace via matrix multiplication ( T = XW ), where ( W ) contains the selected eigenvectors [10]
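The five steps above can be sketched in a few lines of NumPy. This is a minimal illustration rather than a reference implementation: the function name `pca_five_steps`, the simulated matrix, and the choice of `k` are assumptions made for the example, and library routines typically use an SVD instead of forming the covariance matrix explicitly.

```python
import numpy as np

def pca_five_steps(X, k):
    """PCA on an (n samples x p genes) matrix X, keeping k components."""
    # 1. Standardization: mean 0 and variance 1 for every gene.
    mu = X.mean(axis=0)
    sd = X.std(axis=0, ddof=1)
    sd[sd == 0] = 1.0  # constant genes stay at zero after centering
    Z = (X - mu) / sd

    # 2. Covariance matrix (p x p) of the standardized data.
    C = np.cov(Z, rowvar=False)

    # 3. Eigendecomposition; eigh handles symmetric matrices and
    #    returns eigenvalues in ascending order.
    eigvals, eigvecs = np.linalg.eigh(C)

    # 4. Sort by decreasing eigenvalue and keep the top k components.
    order = np.argsort(eigvals)[::-1][:k]
    eigvals, W = eigvals[order], eigvecs[:, order]

    # 5. Projection T = Z W (scores of each sample on each component).
    T = Z @ W
    return T, W, eigvals

# Simulated data (hypothetical): 50 samples, 8 genes, two of them correlated.
rng = np.random.default_rng(0)
X = rng.normal(size=(50, 8))
X[:, 1] = 2.0 * X[:, 0] + rng.normal(scale=0.1, size=50)
T, W, eigvals = pca_five_steps(X, k=3)
```

In this sketch the variance of each column of `T` equals the corresponding eigenvalue, which is why eigenvalues can be read directly as "variance carried by each component".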

Metagenes as Biological Constructs

In the context of gene expression data, each principal component constitutes a metagene—a weighted linear combination of original genes where loadings (eigenvector entries) indicate each gene's contribution to the component [19]. These metagenes represent latent expression programs that may correspond to:

- Coregulated gene modules under common transcriptional control

- Biological pathways activated in specific cell states

- Technical artifacts or batch effects requiring statistical correction

Table 1: Interpretation of PCA Outputs in Gene Expression Studies

| PCA Output | Mathematical Meaning | Biological Interpretation |

|---|---|---|

| Eigenvectors | Direction of maximum variance | "Metagenes" - coordinated expression programs |

| Eigenvalues | Variance along each component | Importance of each expression program |

| Projections (Scores) | Sample coordinates in new space | Sample expression profile based on metagenes |

| Loadings | Gene contributions to components | Individual gene weights in each metagene |
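To make the rows of Table 1 concrete, the sketch below simulates one co-regulated gene module and reads it back from the PCA outputs. The gene labels (`gene_0`, ...), module size, and noise levels are hypothetical choices for illustration only.

```python
import numpy as np

rng = np.random.default_rng(1)
n_samples, n_genes = 40, 12
gene_names = [f"gene_{j}" for j in range(n_genes)]  # hypothetical labels

# One hidden expression program drives genes 0-3; the rest are noise.
program = rng.normal(size=n_samples)
X = rng.normal(scale=0.3, size=(n_samples, n_genes))
X[:, :4] += 2.0 * program[:, None]

Xc = X - X.mean(axis=0)
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)

loadings = Vt.T                       # genes x components (eigenvectors)
scores = U * s                        # samples x components (projections)
eigenvalues = s**2 / (n_samples - 1)  # variance along each component

# Genes with the largest absolute loading define the first "metagene".
top = np.argsort(np.abs(loadings[:, 0]))[::-1][:4]
top_genes = sorted(gene_names[j] for j in top)

# The PC1 score of each sample tracks the hidden program (up to sign).
program_corr = abs(np.corrcoef(scores[:, 0], program)[0, 1])
```

The sign of a component is arbitrary, so the comparison with the simulated program uses an absolute correlation.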

PCA in Single-Cell RNA Sequencing Workflows

Standardized Protocol for scRNA-Seq Data

The following experimental protocol details PCA implementation specifically for single-cell transcriptomics:

Input Requirements:

- Normalized count matrix (cells × genes) with batch effects mitigated

- Minimum quality control: Removal of low-quality cells, dead cells, and empty droplets

- Recommended: Log-transformation and normalization for sequencing depth

Procedure:

- Feature Selection: Identify highly variable genes (HVGs) across the cell population

- Data Scaling: Center and scale HVG expression values to mean=0, variance=1

- PCA Execution: Perform eigendecomposition of the covariance matrix of the scaled expression data (equivalently, SVD of the scaled matrix itself)

- Component Selection: Determine significant PCs using elbow method or statistical tests

- Downstream Application: Use PC embeddings for clustering, visualization, or trajectory inference
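The procedure above can be sketched with NumPy alone. This is a deliberately simplified stand-in, not the Seurat or Scanpy implementation: the function `scrna_pca` is hypothetical, highly variable genes are picked by raw variance of log-normalized values (real toolkits use mean-adjusted variability measures), and the count matrix is simulated.

```python
import numpy as np

def scrna_pca(counts, n_hvg=200, n_pcs=10):
    """Simplified scRNA-seq PCA on a (cells x genes) count matrix."""
    # Depth normalization to 10,000 counts per cell, then log1p.
    depth = counts.sum(axis=1, keepdims=True)
    norm = np.log1p(counts / np.maximum(depth, 1) * 1e4)

    # Feature selection: keep the n_hvg genes with the highest variance
    # (an assumption made for brevity).
    var = norm.var(axis=0, ddof=1)
    hvg = np.argsort(var)[::-1][:n_hvg]
    sub = norm[:, hvg]

    # Scaling: mean 0, variance 1 per selected gene.
    sd = sub.std(axis=0, ddof=1)
    sd[sd == 0] = 1.0
    Z = (sub - sub.mean(axis=0)) / sd

    # PCA via SVD of the scaled matrix.
    U, s, _ = np.linalg.svd(Z, full_matrices=False)
    embedding = U[:, :n_pcs] * s[:n_pcs]
    var_ratio = (s**2 / np.sum(s**2))[:n_pcs]
    return embedding, hvg, var_ratio

# Simulated counts: 300 cells, 1000 genes, first 150 cells over-express
# a block of 50 genes (a crude stand-in for a second cell type).
rng = np.random.default_rng(2)
counts = rng.poisson(lam=1.0, size=(300, 1000)).astype(float)
counts[:150, :50] += rng.poisson(lam=5.0, size=(150, 50))
embedding, hvg, var_ratio = scrna_pca(counts)
group_gap = abs(embedding[:150, 0].mean() - embedding[150:, 0].mean())
```

The resulting `embedding` is what would be passed to clustering, visualization, or trajectory inference in the final step of the procedure.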

Component Selection Criteria:

- Elbow method: Visual identification of variance explained "elbow" in scree plot [19]

- Statistical approaches: Tracy-Widom test for significant deviation from random noise [23]

- Arbitrary thresholds: Retain components explaining >90% cumulative variance [19]
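Two of these criteria are easy to sketch in code. The helper names and the example eigenvalues below are hypothetical, and the elbow rule shown (the point on the scree curve farthest below the straight line joining its first and last points) is only one of several heuristics used to formalize a visual elbow; the Tracy-Widom test is not covered here.

```python
import numpy as np

def n_components_for_variance(eigenvalues, threshold=0.90):
    """Smallest k whose cumulative explained variance reaches threshold."""
    ratio = np.asarray(eigenvalues, dtype=float)
    ratio = ratio / ratio.sum()
    cumulative = np.cumsum(ratio)
    return int(np.searchsorted(cumulative, threshold) + 1)

def n_components_at_elbow(eigenvalues):
    """Heuristic elbow: scree point farthest from the first-to-last chord."""
    y = np.asarray(eigenvalues, dtype=float)
    x = np.arange(len(y), dtype=float)
    x0, y0, x1, y1 = x[0], y[0], x[-1], y[-1]
    # Proportional to the vertical gap between the chord and the curve.
    gap = np.abs((y1 - y0) * x - (x1 - x0) * y + x1 * y0 - y1 * x0)
    return int(np.argmax(gap)) + 1

# Hypothetical eigenvalues, already sorted in decreasing order.
eigenvalues = [5.0, 2.5, 1.2, 0.6, 0.4, 0.3]
k90 = n_components_for_variance(eigenvalues, threshold=0.90)
k_elbow = n_components_at_elbow(eigenvalues)
```

The two rules need not agree, which is one reason to report the criterion used alongside the number of retained components.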

The following workflow diagram illustrates the standard PCA pipeline in scRNA-Seq analysis:

Research Reagents and Computational Tools

Table 2: Essential Research Reagents and Software for PCA in Genomic Studies

| Resource | Type | Function/Purpose |

|---|---|---|

| Cell Ranger [19] | Software Pipeline | Preprocessing of 10x Genomics scRNA-Seq data |

| EIGENSOFT/SmartPCA [23] | Specialized Software | Population genetics-focused PCA implementation |

| Seurat / Scanpy [19] | Analysis Toolkit | Comprehensive scRNA-Seq analysis including PCA |

| Scikit-learn [10] | Python Library | General-purpose PCA implementation |

| UMI Filtering [19] | Molecular Technology | Unique Molecular Identifiers for accurate gene counting |

Benchmarking PCA Against Alternative Dimensionality Reduction Methods

Performance Comparison in Biological Contexts

Recent benchmarking studies have evaluated PCA alongside numerous linear and nonlinear dimensionality reduction techniques specifically for transcriptomic data [24]. The table below summarizes key findings:

Table 3: Benchmarking of Dimensionality Reduction Methods for Transcriptomic Data

| Method | Type | Key Strengths | Limitations in Genomic Context |

|---|---|---|---|

| PCA [24] | Linear | Computational efficiency, interpretability, preservation of global structure | Limited capture of nonlinear relationships |

| t-SNE [24] | Nonlinear | Excellent local structure preservation, effective clustering | Computational intensity, loss of global structure |

| UMAP [24] | Nonlinear | Balance of local/global structure, runtime efficiency | Parameter sensitivity, less interpretable |

| PaCMAP [24] | Nonlinear | Strong performance without parameter tuning | Less established in biological domains |

| PHATE [24] | Nonlinear | Superior capture of branching trajectories | Specialized primarily for trajectory inference |

In drug discovery contexts, PCA has demonstrated particular utility for analyzing drug-induced transcriptomic changes in resources like the Connectivity Map (CMap), where it effectively groups compounds with similar mechanisms of action while separating those with distinct targets [24].

Theoretical Foundations: The Genealogical Interpretation

A fundamental theoretical framework connects PCA to population genetics concepts, demonstrating that for single nucleotide polymorphism (SNP) data, sample projections onto principal components directly reflect average coalescence times between pairs of haploid genomes [25]. This genealogical interpretation provides a population-genetic foundation for understanding PCA outputs:

[ \textbf{E}[X_{ij}] = \textbf{E}[\delta_{is} - \mu_s] = \textbf{Cov}(\delta_{is}, \delta_{js}) - \textbf{Cov}(\delta_{is}, \mu_s) - \textbf{Cov}(\delta_{js}, \mu_s) + \textbf{Var}(\mu_s) ]

where ( \delta_{is} ) represents allele frequency differences and ( \mu_s ) represents population means [25].

This framework enables interpretation of PCA results in terms of underlying demographic processes, including:

- Population migration and admixture events

- Geographical isolation and genetic drift

- Historical bottlenecks and founder effects

The following diagram illustrates the mathematical relationships underlying the genealogical interpretation of PCA:

Critical Considerations and Limitations

Methodological Artifacts and Biases

Despite its widespread application, PCA presents significant limitations that researchers must consider:

Sensitivity to Data Composition: PCA outcomes are strongly influenced by sample composition, where inclusion or exclusion of specific populations can dramatically alter component orientations [23]. In one comprehensive evaluation, PCA demonstrated vulnerability to generating "artifacts of the data" rather than true biological structure [23].

Variance Interpretation Challenges: In population genetics, the proportion of variance explained by principal components may not reliably reflect biological importance, as major axes of variation can represent technical artifacts or evolutionarily neutral structure [23].

Linearity Assumption: PCA's fundamental limitation as a linear method constrains its ability to capture complex nonlinear relationships common in biological systems, potentially necessitating complementary nonlinear approaches [24].

Validation and Interpretation Guidelines

To ensure robust biological interpretation of PCA results:

Technical Validation:

- Assess batch effects across experimental conditions

- Perform sensitivity analysis with sample subsets

- Compare multiple normalization strategies

Biological Corroboration:

- Integrate with complementary methods (e.g., clustering, trajectory inference)

- Correlate metagene expression with known biological markers

- Validate findings through experimental perturbation

Statistical Rigor:

- Apply appropriate significance testing for component selection

- Avoid overinterpretation of low-variance components

- Report variance explained alongside visualization
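The "sensitivity analysis with sample subsets" item can be sketched as a simple resampling check: refit PCA on random subsets of samples and compare the first component with the full-data result. The helper names, the 80% subset fraction, and the simulated matrices are assumptions for illustration, not a prescribed protocol.

```python
import numpy as np

def pc1_direction(X):
    """First principal direction (unit vector over genes) via SVD."""
    Xc = X - X.mean(axis=0)
    return np.linalg.svd(Xc, full_matrices=False)[2][0]

def pc1_stability(X, n_rounds=50, frac=0.8, seed=0):
    """Absolute cosine similarity between full-data and subset PC1."""
    rng = np.random.default_rng(seed)
    reference = pc1_direction(X)
    n = X.shape[0]
    m = int(frac * n)
    sims = []
    for _ in range(n_rounds):
        idx = rng.choice(n, size=m, replace=False)
        # abs() because the sign of a component is arbitrary.
        sims.append(abs(float(reference @ pc1_direction(X[idx]))))
    return np.array(sims)

rng = np.random.default_rng(6)
n, p = 60, 20

# Structured data: one strong program shared by the first five genes.
structured = rng.normal(scale=0.5, size=(n, p))
structured[:, :5] += rng.normal(scale=3.0, size=n)[:, None]

# Unstructured data: isotropic noise with no preferred direction.
noise_only = rng.normal(size=(n, p))

sims_structured = pc1_stability(structured)
sims_noise = pc1_stability(noise_only)
```

Similarities close to 1 across subsets suggest that a component reflects stable structure; widely varying values suggest it depends on which samples happen to be included.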

Future Directions and Advanced Applications

Integrative Approaches

Emerging methodologies combine PCA with other analytical frameworks to enhance feature selection and interpretation:

PCA-MCDM Fusion: Integration of PCA with Multi-Criteria Decision-Making (MCDM) methods provides a robust framework for unsupervised feature selection in bioinformatics, evaluating genes against multiple criteria simultaneously [26].

Deep Learning Extensions: Variational autoencoders and other neural architectures extend PCA concepts to capture nonlinear relationships while maintaining interpretability [19].

Specialized Applications in Drug Development

PCA-derived metagenes enable several advanced applications in pharmaceutical research:

Mechanism of Action Prediction: Metagenes representing conserved transcriptional programs facilitate classification of novel compounds by mechanism of action through pattern matching against reference databases [24].

Dose-Response Modeling: Subtle transcriptomic changes across drug concentrations can be captured through specialized application of PCA and related methods [24].

Biomarker Discovery: Metagenes representing coordinated pathway activity serve as robust biomarkers for patient stratification and treatment response prediction.

Principal Component Analysis serves as an essential transformation in the genomic data analysis pipeline, converting high-dimensional gene expression measurements into interpretable metagenes that capture coordinated biological programs. While limitations regarding sensitivity to data composition and linearity assumptions necessitate complementary approaches, PCA's computational efficiency, mathematical elegance, and interpretability ensure its continued relevance. For drug development professionals and biomedical researchers, understanding both the theoretical foundations and practical implementation of PCA remains crucial for extracting meaningful biological insights from complex transcriptomic datasets. Future methodological developments will likely focus on hybrid approaches that maintain PCA's interpretability while capturing the nonlinear relationships inherent in biological systems.

In the analysis of gene expression data, researchers are frequently confronted with the "large d, small n" problem, where the number of genes (d) vastly exceeds the number of samples (n) [27]. Principal Component Analysis (PCA) addresses this challenge by performing a linear dimensionality reduction that transforms the high-dimensional gene expressions into a lower-dimensional set of orthogonal principal components (PCs), often referred to as "metagenes" or "super genes" in bioinformatics literature [27]. This transformation allows researchers to project samples with tens of thousands of gene expressions onto just two or three dimensions for visualization, identify sample clusters, and detect batch effects [28]. The technique is computationally simple and has become fundamental to bioinformatics data analysis, enabling researchers to overcome the curse of dimensionality while preserving essential patterns in the data.

Mathematical Foundations of PCA

Core Mathematical Framework

PCA is fundamentally an orthogonal linear transformation that maps data to a new coordinate system [22]. Consider a gene expression dataset represented as an ( n \times p ) matrix ( \mathbf{X} ), where ( n ) is the number of samples and ( p ) is the number of genes. Each element ( x_{ij} ) represents the expression level of gene ( j ) in sample ( i ). PCA seeks a set of new variables (principal components) that are linear combinations of the original genes:

[ PC_k = w_{1k}x_1 + w_{2k}x_2 + \cdots + w_{pk}x_p ]

where ( PC_k ) is the ( k )-th principal component, and ( \mathbf{w}_{(k)} = (w_{1k}, w_{2k}, \dots, w_{pk}) ) is the weight vector for the ( k )-th component [22]. The first weight vector ( \mathbf{w}_{(1)} ) must satisfy:

[ \mathbf{w}_{(1)} = \arg\max_{\|\mathbf{w}\|=1} \left\{ \sum_i (t_1)_{(i)}^2 \right\} = \arg\max_{\|\mathbf{w}\|=1} \left\{ \sum_i (\mathbf{x}_{(i)} \cdot \mathbf{w})^2 \right\} ]

where ( t_1 ) represents the scores of the first principal component [22]. This optimization problem can be solved via eigendecomposition of the sample covariance matrix, which for mean-centered data is proportional to ( \mathbf{X}^T\mathbf{X} ), or via singular value decomposition (SVD) of the centered data matrix ( \mathbf{X} ) itself [27] [22].
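The equivalence of the two routes can be checked numerically. The sketch below uses a small simulated matrix (an assumption for illustration) and compares the eigenvalues and eigenvectors of the sample covariance matrix with the singular values and right singular vectors of the centered data.

```python
import numpy as np

rng = np.random.default_rng(3)
X = rng.normal(size=(30, 5)) @ rng.normal(size=(5, 5))  # correlated columns
Xc = X - X.mean(axis=0)                                 # mean-center genes
n = Xc.shape[0]

# Route 1: eigendecomposition of the sample covariance matrix.
S = Xc.T @ Xc / (n - 1)
eigvals, eigvecs = np.linalg.eigh(S)
eigvals, eigvecs = eigvals[::-1], eigvecs[:, ::-1]      # descending order

# Route 2: SVD of the centered data matrix.
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
svd_eigvals = s**2 / (n - 1)

# Directions agree up to sign, so compare absolute inner products.
alignment = np.abs(np.sum(eigvecs * Vt.T, axis=0))
```

SVD is usually preferred when the number of genes is large, because it avoids forming the p × p covariance matrix.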

Geometric Interpretation and Variance Maximization

Geometrically, PCA can be understood as fitting a p-dimensional ellipsoid to the data, where each axis of the ellipsoid represents a principal component [22]. The principal components are the directions along which the data exhibits maximum variance. The process begins with mean-centering each variable in the dataset, followed by computation of the covariance matrix. The eigenvectors of this covariance matrix form the principal components, while the corresponding eigenvalues represent the amount of variance explained by each component [22]. The proportion of total variance explained by the ( k )-th principal component is calculated as:

[ \text{Proportion}_k = \frac{\lambda_k}{\sum_{j=1}^p \lambda_j} ]

where ( \lambda_k ) is the eigenvalue corresponding to the ( k )-th principal component [29]. In practical terms, PCA rotates the original set of ( p ) axes (corresponding to the ( p ) measured variables) to find a new axis (the first principal component) that explains as much of the total variance as possible [30]. Subsequent components are then found sequentially, each explaining the maximum possible residual variance while being orthogonal to all previous components.

Figure 1: Geometric Interpretation of PCA as Axis Rotation

Core Mathematical Concepts: Loadings, Scores, and Variance

Loadings: The Building Blocks of Components

Loadings (also called eigenvectors or weights) define the orientation of the principal components relative to the original variables [30] [29]. Mathematically, the loadings are the coefficients ( w_{1k}, w_{2k}, \dots, w_{pk} ) in the linear combination that defines each principal component. These coefficients indicate the relative contribution of each original variable to that specific principal component.

For a dataset with two original variables, the loadings for the first principal component are defined by the cosine of its angle of rotation relative to each of the original axes [30]. If PC1 has a rotation angle of θ relative to variable 1, then its loading for variable 1 is cos(θ), and for variable 2 is cos(90° - θ) (or sin(θ)) [30]. Since individual loadings are defined by a cosine function, they are limited to the range -1 to +1 [30]. The sign of the loading indicates the direction of a variable's contribution: with a positive loading, higher values of the variable raise the component score, while with a negative loading, higher values lower it [30].
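The two-variable case can be verified directly. In the sketch below, a point cloud is simulated along a direction at 30° to the first axis (the angle, spread, and sample size are assumptions for illustration), and the PC1 loadings recovered by PCA are compared with cos(θ) and sin(θ).

```python
import numpy as np

theta = np.deg2rad(30.0)
rng = np.random.default_rng(4)

# Elongated 2D cloud: large spread along theta, small spread across it.
along = rng.normal(scale=3.0, size=500)
across = rng.normal(scale=0.3, size=500)
direction = np.array([np.cos(theta), np.sin(theta)])
normal = np.array([-np.sin(theta), np.cos(theta)])
X = along[:, None] * direction + across[:, None] * normal

Xc = X - X.mean(axis=0)
pc1 = np.linalg.svd(Xc, full_matrices=False)[2][0]
if pc1[0] < 0:          # fix the arbitrary sign for comparison
    pc1 = -pc1

recovered_angle = np.degrees(np.arctan2(pc1[1], pc1[0]))
loading_norm = np.linalg.norm(pc1)
```

Because the loadings are cos(θ) and sin(θ), their squares sum to 1, which is the two-dimensional case of the unit-length constraint on every weight vector.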

Scores: The Projected Coordinates

Scores represent the coordinates of the original data points in the new principal component space [30]. After determining the principal component axes (loadings), the score for a given sample on the k-th principal component is calculated as the linear combination of its original variable values weighted by the loadings:

[ t_k(i) = x_{(i)} \cdot w_{(k)} = w_{1k}x_{1(i)} + w_{2k}x_{2(i)} + \cdots + w_{pk}x_{p(i)} ]

where ( t_k(i) ) is the score of sample i on the k-th principal component, ( x_{j(i)} ) is the value of variable j for sample i, and ( w_{jk} ) is the loading of variable j on component k [22]. In gene expression analysis, these scores allow researchers to visualize high-dimensional data in a reduced space (typically 2D or 3D), enabling the identification of sample clusters, outliers, and patterns [27] [30].

Variance Explained: Measuring Information Content

The variance explained by each principal component quantifies its importance in capturing the variability within the dataset [29]. The total variance in the data is the sum of the variances of all original variables; when variables are standardized to unit variance, this sum equals the number of variables ( p ). The eigenvalue ( \lambda_k ) corresponding to the k-th principal component represents the variance captured by that component [29]. The proportion of total variance explained by the k-th component is:

[ \text{Proportion}_k = \frac{\lambda_k}{\sum_{j=1}^p \lambda_j} ]

The cumulative variance explained by the first m components is the sum of their individual proportions [29]. In practice, researchers often retain only the first few principal components that explain a sufficient amount of variance (e.g., 70-90%), effectively reducing dimensionality while preserving most meaningful information [27] [29].

Table 1: Interpretation of PCA Outputs in Gene Expression Studies

| Component | Loadings Interpretation | Scores Interpretation | Variance Explained |

|---|---|---|---|

| PC1 | Genes with highest absolute loadings contribute most; reveals primary expression pattern | Positions samples along dominant expression pattern; reveals major sample groupings | Typically explains largest variance percentage; may represent major biological factor |

| PC2 | Genes orthogonal to PC1 pattern; reveals secondary expression signature | Positions samples along secondary axis; may represent subtler biological effects | Usually less than PC1; combined with PC1 often explains substantial total variance |

| Subsequent PCs | Progressively capture residual patterns; may represent noise or subtle biological signals | Finer sample discrimination; may correlate with minor biological factors or technical artifacts | Diminishing returns; later components often discarded as noise |

PCA Workflow for Gene Expression Data

Standard Analytical Procedure

The application of PCA to gene expression data follows a systematic workflow designed to transform raw expression measurements into meaningful biological insights. A comprehensive protocol includes the following steps:

Data Preprocessing: Gene expression data should be properly normalized before PCA. Typically, expression values are centered to mean zero, and sometimes scaled to unit variance to make genes more comparable [27]. This step is crucial as PCA is sensitive to variable scales.

Covariance Matrix Computation: Calculate the sample variance-covariance matrix based on the normalized expression values across all samples [27]. For p genes, this produces a p × p covariance matrix that captures the relationships between all gene pairs.

Eigendecomposition: Perform eigendecomposition of the covariance matrix to extract eigenvalues and eigenvectors [27] [22]. This can be achieved using standard singular value decomposition (SVD) techniques available in most statistical software packages [27].

Component Selection: Sort the eigenvectors by decreasing magnitude of their corresponding eigenvalues [29]. The eigenvector with the largest eigenvalue becomes the first principal component, and so on. Determine how many components to retain based on eigenvalues (>1 according to the Kaiser criterion, which presumes variables standardized to unit variance), scree plot analysis, or cumulative variance explained (typically 70-90%) [29].

Score Calculation: Compute principal component scores for each sample by projecting the original data onto the selected components [30] [29]. These scores serve as new coordinates for samples in the reduced-dimensional space.

Interpretation and Visualization: Create biplots showing both sample scores and variable loadings, scree plots displaying variance explained by each component, and score plots colored by experimental conditions to identify patterns and clusters [27] [22].

Figure 2: PCA Workflow for Gene Expression Data Analysis

Research Reagents and Computational Tools

Table 2: Essential Computational Tools for PCA in Bioinformatics

| Tool/Software | Specific Function/Package | Application Context |

|---|---|---|

| R Statistical Environment | prcomp(), princomp(), FactoMineR | Comprehensive PCA implementation with extensive visualization capabilities |

| Python Scientific Stack | scikit-learn (decomposition.PCA), SciPy, NumPy | Custom analysis pipelines and integration with machine learning workflows |

| SAS | Procedures PRINCOMP and FACTOR | Enterprise-level statistical analysis with robust validation |

| SPSS | Factor function (Data Reduction menu) | User-friendly interface for basic PCA applications |

| MATLAB | pca() function (princomp() in older releases) | High-performance numerical computation for large-scale datasets |

| Specialized Bioinformatics Tools | NIA Array Analysis Tool | Domain-specific implementations for genomic data |

Advanced PCA Applications in Drug Discovery

Network and Pathway-Based PCA

Traditional PCA applied to entire gene expression datasets has evolved into more sophisticated approaches that incorporate biological domain knowledge. Recent methodologies conduct PCA on genes within predefined biological pathways or network modules rather than across all genes simultaneously [27]. In this framework, PCA is performed separately on genes within each pathway, and the resulting principal components are used as covariates in downstream analyses [27]. These components effectively represent the coordinated activity of entire pathways, providing a more biologically interpretable reduction of dimensionality.

This pathway-based PCA approach offers significant advantages for drug discovery applications. First, it aligns dimensionality reduction with biologically meaningful units (pathways) known to be important in disease mechanisms. Second, it naturally accommodates interaction effects between pathways. For instance, when studying two pathways containing different gene sets, researchers can conduct PCA on each pathway separately and include interaction terms between the resulting components in predictive models [27]. This enables the detection of non-additive effects between biological pathways that might be missed by conventional analyses.
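A minimal sketch of this idea follows, assuming two simulated pathways and an outcome that depends on their product. The pathway sizes, effect sizes, and helper names are hypothetical; in a real analysis the gene sets would come from a pathway database and the outcome from the study design.

```python
import numpy as np

def first_pc_score(X):
    """Score of each sample on the first principal component of X."""
    Xc = X - X.mean(axis=0)
    U, s, _ = np.linalg.svd(Xc, full_matrices=False)
    return U[:, 0] * s[0]

def r_squared(design, y):
    """Fraction of outcome variance explained by a least-squares fit."""
    beta, *_ = np.linalg.lstsq(design, y, rcond=None)
    residual = y - design @ beta
    return 1.0 - residual.var() / y.var()

rng = np.random.default_rng(5)
n = 200
activity_a = rng.normal(size=n)   # hidden activity of pathway A
activity_b = rng.normal(size=n)   # hidden activity of pathway B

# Each pathway's genes share that pathway's activity plus gene-level noise.
pathway_a = activity_a[:, None] + rng.normal(scale=0.5, size=(n, 10))
pathway_b = activity_b[:, None] + rng.normal(scale=0.5, size=(n, 15))

# Outcome with a non-additive (interaction) effect between pathways.
y = (activity_a + 0.5 * activity_b + 2.0 * activity_a * activity_b
     + rng.normal(scale=0.5, size=n))

# PCA is run separately per pathway; PC1 scores become covariates.
pc_a = first_pc_score(pathway_a)
pc_b = first_pc_score(pathway_b)
ones = np.ones(n)
additive = np.column_stack([ones, pc_a, pc_b])
with_interaction = np.column_stack([ones, pc_a, pc_b, pc_a * pc_b])

r2_additive = r_squared(additive, y)
r2_interaction = r_squared(with_interaction, y)
```

In this simulation the additive model misses most of the signal, while adding the product of the two pathway components recovers it, which illustrates the kind of non-additive effect described above.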

Supervised and Sparse PCA Variations

Standard PCA is an unsupervised technique that doesn't incorporate sample labels or outcomes. Supervised PCA extends the method by incorporating response variable information to guide the dimension reduction, often resulting in components with better predictive performance for specific biological outcomes [27]. This approach is particularly valuable in drug discovery when trying to relate gene expression patterns to drug response phenotypes.
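One common way to realize this idea is to screen genes by their association with the outcome and then run ordinary PCA on the retained genes. The sketch below follows that screening formulation, which is an assumption here since the source does not specify an algorithm; the function name, `n_keep`, and the simulated data are hypothetical.

```python
import numpy as np

def supervised_pc1(X, y, n_keep):
    """PC1 scores computed only from the genes most correlated with y."""
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    denom = np.linalg.norm(Xc, axis=0) * np.linalg.norm(yc)
    corr = np.abs(Xc.T @ yc) / np.where(denom == 0, 1.0, denom)
    keep = np.sort(np.argsort(corr)[::-1][:n_keep])
    U, s, _ = np.linalg.svd(Xc[:, keep], full_matrices=False)
    return U[:, 0] * s[0], keep

rng = np.random.default_rng(7)
n, p = 100, 50
X = rng.normal(scale=0.5, size=(n, p))

# Genes 0-9: high-variance nuisance program unrelated to the outcome.
X[:, :10] += rng.normal(scale=3.0, size=n)[:, None]

# Genes 10-14: lower-variance program that drives the outcome.
response_program = rng.normal(size=n)
X[:, 10:15] += response_program[:, None]
y = response_program + rng.normal(scale=0.3, size=n)

# Unsupervised PC1 follows the largest variance (the nuisance program).
Xc = X - X.mean(axis=0)
U, s, _ = np.linalg.svd(Xc, full_matrices=False)
unsupervised_corr = abs(np.corrcoef(U[:, 0] * s[0], y)[0, 1])

scores, kept_genes = supervised_pc1(X, y, n_keep=5)
supervised_corr = abs(np.corrcoef(scores, y)[0, 1])
```

Because gene screening uses the outcome, the screening step should be repeated inside each cross-validation fold when predictive performance is evaluated; otherwise the overfitting risk noted for supervised PCA is understated.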

Sparse PCA addresses another limitation of traditional PCA: the fact that each principal component typically includes nonzero loadings for all genes, making biological interpretation challenging [27]. Sparse PCA introduces regularization constraints that force many loadings to exactly zero, resulting in components defined by smaller, more interpretable gene sets. This enhances the ability to identify specific genes driving the observed patterns, which is crucial for identifying potential drug targets.
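The effect of such a constraint can be illustrated with a soft-thresholded power iteration, a simple heuristic chosen here for brevity and not the specific algorithm of any cited work. The penalty `lam`, the function names, and the simulated module are assumptions for the example.

```python
import numpy as np

def soft_threshold(v, lam):
    """Shrink entries toward zero; entries with |v| <= lam become exactly 0."""
    return np.sign(v) * np.maximum(np.abs(v) - lam, 0.0)

def sparse_pc1(X, lam, n_iter=200):
    """Sparse first loading vector via thresholded power iteration."""
    Xc = X - X.mean(axis=0)
    S = Xc.T @ Xc / (Xc.shape[0] - 1)
    w = np.linalg.eigh(S)[1][:, -1]        # start from the ordinary PC1
    for _ in range(n_iter):
        v = soft_threshold(S @ w, lam)
        norm = np.linalg.norm(v)
        if norm == 0:
            raise ValueError("lam too large: all loadings shrunk to zero")
        w = v / norm
    return w

rng = np.random.default_rng(9)
n, p = 100, 20
X = rng.normal(scale=0.5, size=(n, p))
X[:, :5] += 2.0 * rng.normal(size=n)[:, None]   # module: genes 0-4

Xc = X - X.mean(axis=0)
dense_w = np.linalg.svd(Xc, full_matrices=False)[2][0]
sparse_w = sparse_pc1(X, lam=1.0)

dense_nonzero = int(np.count_nonzero(dense_w))
sparse_genes = np.flatnonzero(sparse_w)
```

The ordinary loading vector assigns a nonzero weight to every gene, whereas the thresholded version keeps only the module genes. The penalty is a tuning parameter, and its choice determines how many genes survive.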

Table 3: Comparison of PCA Variants in Pharmaceutical Research

| Method | Key Features | Advantages | Limitations |

|---|---|---|---|

| Standard PCA | Unsupervised; Maximizes variance; All genes contribute to all components | Computational simplicity; Widely implemented; Preserves global data structure | Difficult biological interpretation; No incorporation of outcome variables |

| Supervised PCA | Incorporates response variables; Outcome-guided dimension reduction | Improved predictive performance; Enhanced relevance to specific phenotypes | Risk of overfitting; More complex implementation |

| Sparse PCA | Regularization forces zero loadings; Sparse component structure | More interpretable results; Identifies key driver genes | Additional tuning parameters; May miss subtle coordinated effects |

| Functional PCA | Designed for time-course data; Models temporal patterns | Captures dynamic biological processes; Suitable for longitudinal studies | Increased mathematical complexity; Requires careful experimental design |

Biomarker Identification and Drug Response Prediction

PCA-based approaches have demonstrated significant utility in identifying biomarkers and predicting drug response in pharmaceutical applications. In transcriptomic studies of drug response, PCA and other dimensionality reduction methods have been benchmarked for their ability to capture drug-induced gene expression changes [31]. These methods help in separating distinct drug responses and grouping drugs with similar molecular mechanisms of action, facilitating drug repositioning and mechanism elucidation [31].

Beyond transcriptomic data, PCA finds applications in structural biology and molecular dynamics simulations relevant to drug discovery. When analyzing molecular dynamics trajectories of protein-ligand complexes, PCA can reduce the high-dimensional atomic coordinate data to reveal essential collective motions [32]. This helps in understanding how different ligands influence protein conformational sampling, which has direct implications for rational drug design and optimizing compound selectivity.

Principal Component Analysis provides a powerful mathematical framework for addressing the high-dimensionality challenges inherent in gene expression research and drug discovery. Through the decomposition of data into orthogonal components defined by loadings, scores, and explained variance, PCA enables researchers to distill complex genomic information into interpretable patterns. The continuous evolution of PCA methodologies—including supervised, sparse, and pathway-aware variants—ensures its ongoing relevance in extracting biologically meaningful insights from increasingly complex datasets. As pharmaceutical research continues to embrace systems-level approaches, PCA's ability to reveal latent structures in high-dimensional data will remain indispensable for connecting molecular profiles to therapeutic outcomes.

Within high-dimensional biological research, particularly gene expression analysis, the challenge of modeling relationships amidst numerous variables is paramount. This article delineates a fundamental paradigm shift between Principal Component Analysis (PCA), a dimensionality reduction technique, and Classical Regression. While classical regression models an outcome variable based on predictor variables, explicitly modeling and minimizing prediction error, PCA adopts a fundamentally different approach. It is an unsupervised method designed to reduce data dimensionality by creating new, uncorrelated variables that successively capture maximum variance from the entire dataset without reference to a specific outcome [33]. Framed within the context of single-cell RNA sequencing (scRNA-seq) data analysis, this whitepaper explores how PCA's variance-centric model, in contrast to the error-minimization model of regression, provides a powerful foundation for interpreting complex genomic data, enhancing computational efficiency, and facilitating downstream discovery in drug and therapeutic development.

The advent of technologies like single-cell RNA sequencing (scRNA-seq) has revolutionized genomics by enabling the measurement of gene expression at the resolution of individual cells. However, this power comes with a significant challenge: the "curse of dimensionality." scRNA-seq data is inherently high-dimensional and sparse, where each of the thousands of genes represents a dimension, and each cell is a data point in this vast space [34] [35]. This high-dimensionality, compounded by technical artifacts like "dropout events" (an abundance of zero counts for truly expressed genes), poses major obstacles for visualization, analysis, and interpretation [35].

In this context, dimensionality reduction is not merely a preprocessing step but a critical necessity to transform data into a lower-dimensional space that retains essential biological information [35]. It mitigates computational load, reduces noise, and enables the identification of latent structures, such as novel cell types or states, which are crucial for understanding disease mechanisms and identifying drug targets [34] [36]. Principal Component Analysis (PCA) stands as one of the most widely used techniques for this purpose, but its core philosophy represents a significant departure from the classical statistical modeling approach of regression.

Theoretical Foundations: Error Modeling vs. Variance Capture

Classical Regression: Predicting Outcomes and Minimizing Error

Classical linear regression is a supervised learning technique. Its primary goal is to model the relationship between a set of independent variables (predictors) and a dependent variable (outcome) to predict future outcomes. The model takes the form:

Y = Xβ + ε

Here, Y is the dependent variable, X is the matrix of independent variables, β represents the unknown regression coefficients, and ε represents the error term [37]. The model is fundamentally concerned with this error term; parameter estimation, typically via ordinary least squares (OLS), aims to find the coefficients β that minimize the sum of squared errors between the observed and predicted values of Y [37]. The entire framework is built around accurately predicting a specific target and quantifying the error in that prediction.

Principal Component Analysis: Capturing Data Structure through Variance

PCA, in contrast, is an unsupervised technique. It requires no outcome variable and is instead concerned with the internal structure of the entire dataset X. Its objective is not prediction but exploratory data analysis and dimensionality reduction [33]. PCA seeks a set of new, uncorrelated variables—the principal components (PCs)—which are linear combinations of the original variables. These components are ordered such that the first PC (PC1) captures the direction of maximum variance in the data, the second PC (PC2) captures the next greatest variance while being orthogonal to the first, and so on [11] [33].

Mathematically, this is achieved by solving an eigenvalue-eigenvector problem on the covariance matrix S of the data:

Sa = λa

The eigenvectors a_k are the PC loadings, defining the directions of the new feature space, and the eigenvalues λ_k represent the amount of variance captured by each component [33]. The projected data points in this new space are the PC scores. Thus, the fundamental "model" of PCA is one of variance decomposition, not error minimization.
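The contrast between the two models can be made concrete on one simulated dataset. In the sketch below (all values are assumptions for illustration), regression uses both X and Y to estimate β, while PCA solves Sa = λa using X alone.

```python
import numpy as np

rng = np.random.default_rng(8)
n = 200
X = rng.normal(size=(n, 3))
X[:, 1] = 0.8 * X[:, 0] + 0.2 * rng.normal(size=n)   # correlated predictors
beta_true = np.array([1.5, 0.0, -2.0])
Y = X @ beta_true + rng.normal(scale=0.1, size=n)    # Y = X beta + error

# Classical regression: choose beta to minimize the squared error in Y.
beta_hat, *_ = np.linalg.lstsq(X, Y, rcond=None)

# PCA: eigendecomposition of the covariance matrix S of X; Y is never used.
S = np.cov(X, rowvar=False)
lam, A = np.linalg.eigh(S)
lam, A = lam[::-1], A[:, ::-1]                       # descending variance

# Check the eigenvalue equation S a = lambda a for the first component.
eigen_residual = S @ A[:, 0] - lam[0] * A[:, 0]

# Eigenvalues partition the total variance of X.
total_variance = np.trace(S)
explained_ratio = lam / total_variance
```

The regression output is a coefficient vector tied to Y, whereas the PCA output is a set of directions and variances that would be identical for any choice of outcome.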

The Paradigm Shift Summarized

The table below summarizes the core differences between these two approaches, highlighting the fundamental shift in their objectives and methodologies.

Table 1: Core Philosophical Differences Between Classical Regression and PCA

| Feature | Classical Regression | Principal Component Analysis (PCA) |
|---|---|---|
| Primary Goal | Predict a specific outcome variable [37] | Reduce dimensionality; explore data structure [33] |
| Model Core | Minimizes prediction error (ε) [37] | Maximizes captured variance (λ) [33] |
| Variable Role | Distinction between dependent (Y) and independent (X) variables | No distinction; analyzes all variables collectively |
| Learning Type | Supervised | Unsupervised |
| Output | A predictive equation for Y | A set of principal components (loadings and scores) |
| Key Metric | R-squared, p-values | Explained variance ratio [11] |

This shift in objective from explaining an outcome to explaining the structure of the predictor set itself is what makes PCA uniquely suited for the initial exploration of high-dimensional genomic data, where a single, pre-specified outcome of interest may not exist.

PCA in Practice: Dimensionality Reduction for Gene Expression Data

The Standard PCA Workflow in scRNA-seq Analysis

In scRNA-seq analysis, where the data matrix X consists of cells (observations) and genes (variables), PCA is applied to reduce the thousands of gene dimensions into a smaller set of latent variables [35]. The standard workflow, as implemented in pipelines such as those in Seurat or Scanpy [36], involves several key steps. The following diagram illustrates this sequential process.

Figure 1: The Standard PCA Workflow for scRNA-seq Data.

- Data Preprocessing: The raw gene count matrix is normalized and scaled to account for differences in sequencing depth and other technical variations between cells [35].

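- Centering: The data matrix is column-centered, meaning the mean expression of each gene is subtracted from that gene's values. This is crucial because PCA is sensitive to the mean of the variables [33]: without centering, the leading component tends to track the mean rather than the direction of greatest variance.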

- Covariance Matrix: The covariance matrix S of the centered data is computed. This matrix encodes how the expression levels of all genes vary with one another.

- Eigendecomposition: The covariance matrix is decomposed into its eigenvalues and eigenvectors. The eigenvectors define the principal components (the new axes, whose entries are the gene loadings), and each eigenvalue gives the variance explained by its PC.

- Component Selection: The top k eigenvectors (PCs) are selected based on their eigenvalues, often by looking for an "elbow" in a scree plot (a plot of eigenvalues in descending order) or by retaining enough components to capture a pre-defined percentage (e.g., 95%) of the total variance [35].

- Projection: The original data is projected onto the selected PCs to obtain the PC scores matrix T, which is the low-dimensional representation of the data used for downstream tasks like clustering and visualization [34].
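The six steps above can be sketched end to end in NumPy. This is a simplified illustration on simulated Poisson counts, not the Seurat or Scanpy implementation; the function name `pca_workflow`, the scaling factor of 10,000, and the 90% variance target are assumptions chosen for the example.

```python
import numpy as np

def pca_workflow(counts, var_target=0.90):
    """Cells x genes counts -> (scores T, loadings, explained variance ratio)."""
    counts = np.asarray(counts, dtype=float)
    # 1. Preprocessing: library-size normalisation followed by log1p.
    depth = counts.sum(axis=1, keepdims=True)
    norm = np.log1p(counts / depth * 1e4)
    # 2. Centering: subtract each gene's mean.
    centered = norm - norm.mean(axis=0)
    # 3. Covariance matrix of the genes.
    S = np.cov(centered, rowvar=False)
    # 4. Eigendecomposition, sorted by decreasing eigenvalue.
    eigvals, eigvecs = np.linalg.eigh(S)
    order = np.argsort(eigvals)[::-1]
    eigvals = np.clip(eigvals[order], 0.0, None)   # guard against tiny negatives
    eigvecs = eigvecs[:, order]
    # 5. Component selection by cumulative explained variance.
    ratio = eigvals / eigvals.sum()
    k = min(int(np.searchsorted(np.cumsum(ratio), var_target)) + 1, len(ratio))
    # 6. Projection onto the top-k PCs.
    T = centered @ eigvecs[:, :k]
    return T, eigvecs[:, :k], ratio[:k]

# Simulated counts (assumption): 300 cells, 50 genes with gene-specific rates.
rng = np.random.default_rng(0)
counts = rng.poisson(lam=rng.gamma(2.0, 2.0, size=50), size=(300, 50))

scores, loadings, ratio = pca_workflow(counts)
print("cells x retained PCs:", scores.shape)
print("variance captured:", round(float(ratio.sum()), 3))
```

Forming the full gene-by-gene covariance matrix is only practical for a modest number of genes; production pipelines typically restrict the input to highly variable genes and use a truncated SVD instead.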

Key Advantages for Genomic Data

- Noise Reduction: By discarding the trailing, low-variance principal components, which often capture technical noise or irrelevant biological variation, PCA effectively denoises the data [11].

- Computational Efficiency: Reducing dimensionality drastically lowers the computational cost of subsequent analyses, such as clustering, which is essential for large-scale scRNA-seq datasets containing tens of thousands of cells [34] [38].

- Preservation of Variance: The retained components are, by construction, the directions of greatest variance, so biological signals that dominate the variance in the data are carried over into the lower-dimensional space.

Experimental Benchmarking and Protocol

Benchmarking PCA in Genomic Studies

The performance of PCA is often benchmarked against other dimensionality reduction techniques in terms of computational efficiency and the quality of downstream analysis, such as clustering. A recent large-scale benchmarking study on scRNA-seq data provides quantitative insights into PCA's performance [34].

Table 2: Performance Benchmark of Dimensionality Reduction Methods on scRNA-seq Data [34]

| Method | Type | Key Strength | Computational Speed | Clustering Quality (Example Metrics) |
|---|---|---|---|---|
| PCA (Full SVD) | Linear | Maximizes variance capture | Slower on very large datasets | High, serves as a common baseline |
| Randomized SVD | Linear | Approximation of full PCA | Faster than full SVD | Comparable to full PCA |
| Gaussian Random Projection (GRP) | Random | Distance preservation | Very Fast | Rivals/Potentially exceeds PCA in some cases |
| Sparse Random Projection (SRP) | Random | Memory efficiency | Very Fast | Competes well with PCA |
| t-SNE | Non-linear | Preserves local structure | Slow | High for visualization, separates clusters |
| UMAP | Non-linear | Preserves local/global structure | Moderate-Fast | High for visualization and clustering |

This benchmarking demonstrates that while PCA remains a robust and interpretable standard, random projection methods have emerged as strong competitors, sometimes surpassing PCA in speed and rivaling it in clustering effectiveness [34]. This is particularly relevant for ultra-large-scale datasets in drug discovery.
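To make two of the alternatives in Table 2 concrete, the sketch below implements a basic randomized SVD (a randomized range finder with a few power iterations) and a Gaussian random projection in plain NumPy. It is not the benchmark code from [34]; the function names, the oversampling and iteration settings, and the simulated low-rank data are all assumptions for illustration.

```python
import numpy as np

def randomized_pca(Xc, k, n_oversample=10, n_iter=4, seed=0):
    """Approximate the top-k PCs of a centred matrix with a randomized range finder."""
    rng = np.random.default_rng(seed)
    omega = rng.normal(size=(Xc.shape[1], k + n_oversample))
    Q, _ = np.linalg.qr(Xc @ omega)              # orthonormal basis for the sampled range
    for _ in range(n_iter):                      # power iterations sharpen the basis
        Q, _ = np.linalg.qr(Xc.T @ Q)
        Q, _ = np.linalg.qr(Xc @ Q)
    U_small, s, Vt = np.linalg.svd(Q.T @ Xc, full_matrices=False)
    scores = (Q @ U_small[:, :k]) * s[:k]
    return scores, Vt[:k], s[:k]

def gaussian_random_projection(Xc, k, seed=0):
    """Project onto k random Gaussian directions, scaled to roughly preserve distances."""
    rng = np.random.default_rng(seed)
    R = rng.normal(size=(Xc.shape[1], k)) / np.sqrt(k)
    return Xc @ R

# Simulated data (assumption): 300 cells, 1000 genes, 5 strong latent factors plus noise.
rng = np.random.default_rng(2)
X = 3.0 * rng.normal(size=(300, 5)) @ rng.normal(size=(5, 1000)) + rng.normal(size=(300, 1000))
Xc = X - X.mean(axis=0)

s_full = np.linalg.svd(Xc, compute_uv=False)     # exact singular values for reference
scores_r, loadings_r, s_rand = randomized_pca(Xc, k=5)
Z = gaussian_random_projection(Xc, k=50)

rel_err = np.max(np.abs(s_rand - s_full[:5]) / s_full[:5])
dist_ratio = (np.linalg.norm(Z[:150] - Z[150:], axis=1)
              / np.linalg.norm(Xc[:150] - Xc[150:], axis=1))
print("max relative error of top-5 singular values:", rel_err)
print("mean projected/original distance ratio:", dist_ratio.mean())
```

The randomized SVD still yields ordered, interpretable components, whereas the random projection trades interpretability for speed: its axes have no variance ordering, and it only aims to keep pairwise distances approximately intact.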

Detailed Experimental Protocol: Applying PCA to an scRNA-seq Dataset

The following is a generalized protocol for applying PCA to a typical scRNA-seq dataset, as described in the literature [34] [36] [35].

Objective: To reduce the dimensionality of a scRNA-seq gene expression matrix for downstream cell clustering and population identification.

Materials and Reagents (Computational):

Table 3: Essential Research Reagent Solutions for scRNA-seq PCA Analysis

| Reagent / Tool | Function / Explanation | Example |
|---|---|---|
| scRNA-seq Data Matrix | The input data; rows = cells, columns = genes. | UMI count matrix from 10x Genomics [35]. |
| High-Performance Computing (HPC) Cluster | Provides the computational power needed for large matrix operations. | Local server or cloud computing environment. |
| Normalization Software | Corrects for technical variation (e.g., sequencing depth). | scran package in R or scanpy.pp.normalize_total in Python. |
| PCA Implementation | The algorithm that performs the principal component analysis. | prcomp() in R or sklearn.decomposition.PCA in Python [36]. |
| Visualization Package | For generating scree plots and 2D PCA plots. | ggplot2 in R or matplotlib in Python [11]. |

Methodology:

Data Input and Quality Control (QC):

- Load the gene-cell count matrix. A common starting point is a matrix with dimensions [3,000 cells x 20,000 genes].

- Perform QC to filter out low-quality cells (e.g., based on high mitochondrial gene percentage) and poorly detected genes.

Normalization and Transformation:

- Normalize the counts to correct for library size differences between cells (e.g., counts per million (CPM) or log-normalization).

- Identify Highly Variable Genes (HVGs): To reduce noise and focus on biologically informative genes, select a subset of genes (e.g., 1,000-5,000) that exhibit the highest cell-to-cell variation. This step is critical for enhancing the signal in PCA.

Scaling and Centering:

- Center the data: subtract the mean expression for each gene so that each gene has a mean of zero.

- Scale the data (optional but often recommended): divide each gene's expression by its standard deviation. This puts all genes on the same scale, preventing highly expressed genes from dominating the PCs simply due to their magnitude.
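The normalization, HVG selection, centering, and scaling steps can be sketched as follows. This is a deliberately simplified stand-in: genes are ranked by the plain variance of their log-normalized expression, whereas Seurat and Scanpy use mean-adjusted dispersion measures. The function name `preprocess` and its parameters are hypothetical.

```python
import numpy as np

def preprocess(counts, n_hvg=2000, target_sum=1e4):
    """Log-normalise, keep the most variable genes, then centre and scale them."""
    counts = np.asarray(counts, dtype=float)
    # Library-size normalisation and log transform.
    depth = counts.sum(axis=1, keepdims=True)
    lognorm = np.log1p(counts / depth * target_sum)
    # HVG selection (simplified assumption): top genes by raw variance.
    gene_var = lognorm.var(axis=0, ddof=1)
    hvg_idx = np.sort(np.argsort(gene_var)[::-1][:n_hvg])
    sub = lognorm[:, hvg_idx]
    # Centre each gene to mean 0 and scale to unit standard deviation.
    std = sub.std(axis=0, ddof=1)
    std[std == 0] = 1.0                      # leave constant genes unscaled
    scaled = (sub - sub.mean(axis=0)) / std
    return scaled, hvg_idx

# Simulated counts (assumption): 200 cells x 500 genes.
rng = np.random.default_rng(0)
counts = rng.poisson(lam=rng.gamma(0.5, 4.0, size=500), size=(200, 500))

scaled, hvg_idx = preprocess(counts, n_hvg=100)
print("preprocessed matrix:", scaled.shape)
```

The returned `hvg_idx` keeps the column indices of the selected genes so that loadings can later be mapped back to gene names.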

Perform PCA:

- Apply the PCA algorithm to the preprocessed (normalized, HVG-selected, and scaled) data matrix.

- The output includes:

  - Loadings (A_k): The eigenvectors, showing the contribution (weight) of each original gene to each PC.

  - Scores (T): The projected coordinates of each cell in the new PC space.

  - Explained Variance Ratio (λ_k / Σλ): The proportion of total variance explained by each PC.

Component Selection:

- Plot the explained variance ratio for each PC (Scree Plot).

- Identify the "elbow" point where the marginal gain in explained variance drops significantly. Alternatively, retain enough PCs to capture ~80-90% of the total cumulative variance. For a typical scRNA-seq dataset, this might be the top 20-50 PCs.
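Both selection rules can be expressed in a few lines. The sketch below uses a made-up eigenvalue spectrum; the elbow rule shown (the point furthest below the chord joining the first and last eigenvalue) is one common heuristic among several, and both function names are hypothetical.

```python
import numpy as np

def n_components_for_variance(eigvals, target=0.90):
    """Smallest k whose cumulative explained variance ratio reaches `target`."""
    eigvals = np.asarray(eigvals, dtype=float)
    cumulative = np.cumsum(eigvals / eigvals.sum())
    k = int(np.searchsorted(cumulative, target)) + 1
    return min(k, len(eigvals))

def elbow_components(eigvals):
    """Heuristic elbow: number of PCs before the point furthest below the chord."""
    eigvals = np.asarray(eigvals, dtype=float)
    idx = np.arange(len(eigvals))
    chord = eigvals[0] + (eigvals[-1] - eigvals[0]) * idx / (len(eigvals) - 1)
    return int(np.argmax(chord - eigvals))

# Illustrative spectrum (assumption), sorted in descending order.
eigvals = [10.0, 6.0, 3.0, 0.5, 0.3, 0.2]
print(n_components_for_variance(eigvals, target=0.90))  # 3
print(elbow_components(eigvals))                         # 3
```

The two rules agree here, but on real scRNA-seq spectra they often differ, so the scree plot is still worth inspecting by eye.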

Downstream Analysis:

- Use the PC scores matrix T (containing only the top k PCs) as input for graph-based clustering algorithms (e.g., Louvain, Leiden) to identify cell populations.

- Visualize the first two PCs in a scatter plot to observe broad separation of cell types or states.

Discussion: Implications for Research and Drug Development

The variance-capture model of PCA has profound implications for biomedical research. For scientists and drug development professionals, PCA is not an endpoint but a critical enabling technology. By transforming high-dimensional gene expression data into a lower-dimensional latent space, PCA facilitates the discovery of novel cell subtypes associated with disease, the identification of biomarker genes (by examining PC loadings), and the understanding of developmental trajectories [34] [35].

The relationship between PCA and regression is not purely antagonistic; they can be synergistic. Principal Component Regression (PCR) is a technique that uses the principal components as new regressors for an outcome variable, elegantly combining the two philosophies [37]. This approach helps overcome multicollinearity in high-dimensional datasets, as the PCs are, by definition, uncorrelated.
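A minimal sketch of PCR, assuming simulated data with more predictors than samples: the predictors are compressed to k PC scores, the outcome is regressed on those scores, and the coefficients are mapped back to the original variables. The data, the choice of k = 3, and all variable names are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(3)

# Simulated data (assumption): 60 samples, 200 collinear predictors, 3 latent factors.
n, p, k = 60, 200, 3
latent = rng.normal(size=(n, k))
X = latent @ rng.normal(size=(k, p)) + 0.1 * rng.normal(size=(n, p))
y = latent @ np.array([1.5, -2.0, 0.5]) + 0.1 * rng.normal(size=n)

Xc = X - X.mean(axis=0)
yc = y - y.mean()

# Step 1 (PCA): scores on the first k principal components via SVD.
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
T = U[:, :k] * s[:k]

# Step 2 (regression): OLS of the centred outcome on the uncorrelated scores.
gamma, *_ = np.linalg.lstsq(T, yc, rcond=None)

# Map the score coefficients back to one coefficient per original predictor.
beta_pcr = Vt[:k].T @ gamma
y_hat = y.mean() + Xc @ beta_pcr
r2 = 1 - np.sum((y - y_hat) ** 2) / np.sum((y - y.mean()) ** 2)
print("in-sample R^2 with", k, "components:", round(float(r2), 3))
```

Ordinary least squares on the raw 200 predictors would be underdetermined with only 60 samples; regressing on three orthogonal score columns sidesteps that problem.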

Looking forward, the field continues to evolve. While PCA remains a cornerstone, benchmarks show that random projection methods offer compelling advantages in speed for very large datasets [34]. Furthermore, non-linear methods like UMAP are superior for final visualization, as they can capture complex manifold structures that linear PCA cannot [36] [39]. However, PCA's mathematical transparency, computational efficiency, and strength as a variance-preserving first step ensure it will remain an indispensable tool in the computational biologist's and drug developer's toolkit for the foreseeable future.

This whitepaper has articulated the fundamental conceptual shift from the error-minimizing framework of classical regression to the variance-maximizing model of Principal Component Analysis. Within the domain of gene expression research, PCA's unsupervised, variance-centric approach is uniquely suited to tackle the curse of dimensionality inherent in modern genomic technologies like scRNA-seq. It provides a robust, mathematically sound foundation for data compression, noise reduction, and exploratory analysis. By enabling the visualization and clustering of cells in a reduced space that captures the most significant biological signals, PCA directly empowers researchers to uncover novel biology, characterize disease states, and ultimately accelerate the pipeline of drug discovery and development.

Implementing PCA in Practice: From scRNA-seq to Spatial Transcriptomics

Principal Component Analysis (PCA) stands as a cornerstone dimensionality reduction technique in computational biology, enabling researchers to distill high-dimensional gene expression data into its core components of variation. This whitepaper details the standardized PCA workflow—encompassing critical data normalization, eigenvector-based transformation, and biological interpretation of components—within the broader thesis of how PCA effectively reduces data dimensionality to uncover latent biological patterns. By providing a rigorous methodological framework and benchmarking against emerging techniques, this guide equips researchers and drug development professionals with the foundational knowledge to implement PCA in genomic studies, thereby facilitating the identification of novel biomarkers and therapeutic targets.

The advent of high-throughput technologies has rendered genomic data, particularly from single-cell RNA sequencing (scRNA-seq), both high-dimensional and sparse. A typical dataset may measure the expression levels of over 20,000 genes (variables, P) across only hundreds of samples or cells (observations, N), creating a scenario where P ≫ N [20]. This "curse of dimensionality" presents significant challenges for analysis, including computational intractability, difficulty in visualization, and an increased risk that machine learning models will capture noise rather than biological signal, leading to overfitting [40] [41].

Dimensionality reduction techniques mitigate these issues by transforming the data into a lower-dimensional space while preserving essential biological information. PCA achieves this by identifying new, uncorrelated variables—the principal components—that are linear combinations of the original genes and that sequentially capture the maximum possible variance in the data [41] [42]. This process simplifies the data structure, making it amenable to visualization, clustering, and further downstream analysis, which is crucial for tasks like identifying distinct cell populations or characterizing gene expression dynamics in disease research [34].
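One consequence of the P ≫ N setting is easy to verify numerically: after centering, a matrix with N samples has at most N - 1 principal components with non-zero variance, regardless of how many genes were measured. The sketch below checks this on simulated data; the matrix sizes and the numerical tolerance are assumptions.

```python
import numpy as np

rng = np.random.default_rng(4)

# Simulated expression matrix (assumption): 30 samples, 2000 genes, so P >> N.
n_samples, n_genes = 30, 2000
X = rng.normal(size=(n_samples, n_genes))
Xc = X - X.mean(axis=0)                       # centring removes one degree of freedom

s = np.linalg.svd(Xc, compute_uv=False)       # singular values, descending
n_nonzero = int(np.sum(s > 1e-10 * s[0]))     # components with non-negligible variance

print("genes measured:", n_genes)
print("components with non-zero variance:", n_nonzero)
```

In practice this means the effective dimensionality of such a dataset is bounded by the number of samples, which is part of why a few dozen PCs can summarize tens of thousands of genes.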

Mathematical Foundations of PCA

At its core, PCA is a statistical procedure that leverages linear algebra to redefine a dataset in terms of its directions of maximum variance.

Key Concepts and Linear Algebra