How to Select Genes for a Differential Expression Heatmap: A Strategic Guide for Biomedical Researchers


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on strategically selecting genes for differential expression heatmaps, a critical step in transcriptomic data analysis. It covers foundational concepts of heatmaps and dendrograms, methodological approaches using established tools like DESeq2 and edgeR, troubleshooting of common pitfalls such as false positives and normalization errors, and validation techniques to ensure robust, reproducible findings. By integrating current best practices and addressing key challenges such as accounting for donor effects and choosing appropriate statistical methods, this guide aims to enhance the biological interpretability and clinical relevance of gene expression visualizations.

Understanding Heatmaps and the 'Why' Behind Gene Selection

Differential expression heatmaps are visualization tools that turn complex transcriptomic data into color-coded matrices, showing how gene regulation differs across experimental conditions. They bridge raw sequencing data and biological interpretation, helping researchers identify co-regulated genes, discern functional pathways, and evaluate experimental hypotheses. This protocol details methods for constructing biologically meaningful differential expression heatmaps, focusing on strategic gene selection, the step that most strongly determines the analytical depth and biological validity of the resulting visualization. We provide step-by-step protocols for data processing, normalization, statistical analysis, and visualization, using both established and newer computational tools. Designed for researchers and drug development professionals, these guidelines emphasize reproducible analysis workflows and highlight common pitfalls in visual representation and biological interpretation.

Differential expression (DE) analysis identifies genes whose expression levels change significantly between biological conditions, such as treatment versus control or healthy versus diseased states. While statistical tests output numerical results, the differential expression heatmap serves as an indispensable tool for visualizing these patterns across multiple samples and genes simultaneously. A well-constructed heatmap does more than display data; it reveals underlying biological narratives, including co-regulated gene clusters, sample-to-sample relationships, and potential biomarkers.

The process begins with raw count data from RNA sequencing (RNA-seq) or intensity data from microarray platforms. Strategic gene selection for heatmap visualization is paramount, because including all measured genes tends to bury meaningful patterns in noise. Instead, researchers typically focus on statistically significant differentially expressed genes (DEGs) or genes exhibiting high variability across conditions, as these are most likely to drive the biological phenomena of interest. When properly executed, the resulting visualization provides an intuitive summary of a complex dataset, facilitating hypothesis generation and experimental validation.

Data Preparation and Processing

Input Data Formats and Quality Control

The foundation of a reliable differential expression heatmap is high-quality input data. Analysis typically begins with a count matrix, where rows represent genes and columns represent samples, with integer values indicating read counts aligned to each gene [1] [2]. For Nanostring GeoMx DSP data, raw data comes in DCC files, a PKC file, and an XLSX annotation file, which are consolidated into a "GeoMxSet Object" for analysis [3].

Essential Quality Control (QC) Steps:

- Library Size Assessment: Check total read counts per sample to identify potential outliers.

- Distribution Examination: Use boxplots and density plots to visualize expression value distributions across samples.

- Sample-Level Clustering: Perform hierarchical clustering and Principal Component Analysis (PCA) to assess overall similarity between samples and identify batch effects or outliers [2].

PCA is particularly valuable for identifying major sources of variation in the dataset. In ideal experiments, replicates for each sample group cluster together, while sample groups separate clearly, with the experimental condition representing a primary source of variation [2].

Normalization Methods

Normalization accounts for technical variations (e.g., sequencing depth, RNA composition) to enable accurate biological comparisons. The table below summarizes common normalization methods and their appropriate applications:

Table 1: Common Normalization Methods for Gene Expression Data

| Normalization Method | Description | Accounted Factors | Recommended Use |
|---|---|---|---|
| CPM (Counts Per Million) | Counts scaled by total number of reads | Sequencing depth | Gene count comparisons between replicates of the same sample group; NOT for DE analysis |
| TPM (Transcripts Per Million) | Counts divided by transcript length (kb), then rescaled so that each sample sums to one million | Sequencing depth and gene length | Gene count comparisons within a sample or between samples of the same group; NOT for DE analysis |
| RPKM/FPKM | Similar to TPM | Sequencing depth and gene length | Not recommended for between-sample comparisons due to incomparable total normalized counts between samples [2] |
| DESeq2's Median of Ratios | Counts divided by sample-specific size factors | Sequencing depth and RNA composition | Recommended for DE analysis and comparisons between samples [2] |
| edgeR's TMM (Trimmed Mean of M-values) | Weighted trimmed mean of log expression ratios between samples | Sequencing depth and RNA composition | Recommended for DE analysis and comparisons between samples [2] |
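To make the difference between CPM and TPM concrete, the Python sketch below computes both from a toy count matrix. It is illustrative only: the dictionary layout, the function names `cpm` and `tpm`, and the gene lengths are assumptions, not part of any package cited here. TPM divides by gene length first and then rescales each sample to sum to one million, which is why TPM column totals are identical across samples.

```python
def cpm(counts):
    """Counts per million: scale each sample by its total read count."""
    n_samples = len(next(iter(counts.values())))
    totals = [sum(row[j] for row in counts.values()) for j in range(n_samples)]
    return {gene: [row[j] / totals[j] * 1e6 for j in range(n_samples)]
            for gene, row in counts.items()}


def tpm(counts, lengths_bp):
    """Transcripts per million: normalize by gene length (kb) first,
    then rescale each sample so its values sum to one million."""
    n_samples = len(next(iter(counts.values())))
    # Reads per kilobase for every gene and sample.
    rpk = {gene: [c / (lengths_bp[gene] / 1000) for c in row]
           for gene, row in counts.items()}
    # Per-sample scaling factor so that each column sums to 1e6.
    scale = [sum(row[j] for row in rpk.values()) / 1e6 for j in range(n_samples)]
    return {gene: [row[j] / scale[j] for j in range(n_samples)]
            for gene, row in rpk.items()}


# Toy data (hypothetical): three genes, two samples, raw counts and lengths in bp.
counts = {"GeneA": [100, 200], "GeneB": [300, 600], "GeneC": [600, 1200]}
lengths = {"GeneA": 1000, "GeneB": 2000, "GeneC": 3000}
print(cpm(counts))
print(tpm(counts, lengths))
```

Neither value corrects for RNA composition, which is why the table reserves DE analysis for the last two methods.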

For differential expression analysis specifically, DESeq2's median of ratios and edgeR's TMM are the most appropriate methods, because they account for both sequencing depth and RNA composition, which is crucial when samples have different expression profiles [2].
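The median-of-ratios idea fits in a few lines of Python. This is a simplified illustration that assumes a small in-memory dictionary of raw counts; it is not DESeq2's implementation (which is written in R and handles more edge cases), and the function names are hypothetical. Each sample's size factor is the median, over genes, of the ratio between that sample's count and the gene's geometric mean across all samples.

```python
import math
import statistics


def size_factors(counts):
    """Median-of-ratios size factors (simplified sketch of the DESeq2 idea).

    counts: dict {gene: [raw counts per sample]}.
    Genes with a zero in any sample are skipped because their geometric
    mean is zero and the log ratio is undefined.
    """
    n_samples = len(next(iter(counts.values())))
    log_ratios = [[] for _ in range(n_samples)]
    for row in counts.values():
        if min(row) <= 0:
            continue
        # Pseudo-reference sample: the gene's geometric mean (on the log scale).
        log_geo_mean = sum(math.log(c) for c in row) / n_samples
        for j, c in enumerate(row):
            log_ratios[j].append(math.log(c) - log_geo_mean)
    # Size factor = exp(median of the log ratios) for each sample.
    return [math.exp(statistics.median(r)) for r in log_ratios]


def normalize(counts, factors):
    """Divide each sample's counts by its size factor."""
    return {g: [c / f for c, f in zip(row, factors)] for g, row in counts.items()}


# Toy data (hypothetical): sample 2 was sequenced twice as deeply as sample 1.
counts = {"GeneA": [10, 20], "GeneB": [50, 100], "GeneC": [30, 60], "GeneD": [0, 5]}
sf = size_factors(counts)
print(sf, normalize(counts, sf))
```

Because every usable gene has exactly twice as many reads in the second sample, its size factor comes out twice as large and the normalized counts for each gene become equal across samples.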

Differential Expression Analysis

Differential expression analysis identifies genes with statistically significant expression changes between conditions. Multiple statistical frameworks are available:

- limma-voom: Uses linear models with precision weights for RNA-seq data [3] [4]

- DESeq2: Employs negative binomial generalized linear models with shrinkage estimators for fold changes [3] [1] [4]

- edgeR: Utilizes negative binomial models with empirical Bayes estimation [3]

These tools generate statistical outputs including p-values and log2 fold changes, which form the basis for selecting genes to include in heatmap visualizations.

Table 2: Key Differential Expression Analysis Tools

| Tool | Statistical Approach | Primary Application |
|---|---|---|
| limma | Linear models with empirical Bayes moderation | Microarray data; RNA-seq data (with voom transformation) [4] |
| DESeq2 | Negative binomial generalized linear models | RNA-seq data [4] |
| edgeR | Negative binomial models with empirical Bayes estimation | RNA-seq data [3] |

Gene Selection Strategies for Heatmaps

Strategic gene selection is crucial for creating informative heatmaps that reveal meaningful biological patterns rather than visual noise.

Statistical Significance-Based Selection

The most common approach selects genes based on statistical significance thresholds:

- Adjusted p-value: Typically < 0.05 or < 0.01 after false discovery rate correction (e.g., Benjamini-Hochberg)

- Log2 fold change: Often > 1 or < -1 (at least a two-fold change) to focus on biologically relevant effects

Many analysis tools, including GEO2R, provide ranked lists of differentially expressed genes based on these statistical measures, facilitating the selection process [4].
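The two criteria are usually applied together. The sketch below assumes DE results are available as (gene, log2 fold change, raw p-value) tuples, applies the Benjamini-Hochberg adjustment, and then filters on both thresholds. DESeq2, edgeR, and limma already report adjusted p-values, so in practice only the filtering step would be needed; the function names and toy values are illustrative.

```python
def benjamini_hochberg(pvals):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvals[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


def select_degs(results, padj_cutoff=0.05, lfc_cutoff=1.0):
    """results: list of (gene, log2FC, pvalue); keep genes passing both filters."""
    padj = benjamini_hochberg([p for _, _, p in results])
    return [gene for (gene, lfc, _), q in zip(results, padj)
            if q < padj_cutoff and abs(lfc) > lfc_cutoff]


# Hypothetical DE results for four genes.
results = [("G1", 2.0, 0.01), ("G2", -1.5, 0.04), ("G3", 0.5, 0.03), ("G4", 3.0, 0.5)]
print(select_degs(results))
```

Here G2 has a raw p-value below 0.05 but fails after adjustment, which illustrates why heatmap gene lists should be built from adjusted rather than raw p-values.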

Alternative Selection Methods

- Top N Most Variable Genes: Selects genes with highest variance across samples, useful for exploratory analysis when specific hypotheses are lacking [3]

- Pathway or Functional Group-Based Selection: Focuses on genes belonging to specific biological pathways or functional categories relevant to the research question

- Custom Gene Sets: Researcher-defined lists based on prior knowledge or specific hypotheses
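A minimal sketch of top-N variance selection follows, assuming expression values have already been normalized and log-transformed (on raw counts, variance grows with the mean, so highly expressed genes would dominate). The data layout and function name are assumptions for illustration.

```python
import statistics


def top_variable_genes(expr, n):
    """Return the n genes with the highest sample variance.

    expr: dict {gene: [normalized, log-scale expression per sample]}.
    """
    ranked = sorted(expr, key=lambda g: statistics.variance(expr[g]), reverse=True)
    return ranked[:n]


# Hypothetical log-scale values for three genes across four samples.
expr = {
    "GeneA": [5.0, 5.1, 4.9, 5.0],
    "GeneB": [2.0, 8.0, 2.5, 7.5],
    "GeneC": [3.0, 4.0, 3.5, 4.5],
}
print(top_variable_genes(expr, 2))
```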

For specialized applications like single-cell RNA-seq, methods like scRDEN convert unstable gene expression values into stable gene-gene interactions and differential expression orders, providing alternative selection criteria [5].

Heatmap Generation and Visualization Protocols

Data Transformation and Scaling

Before visualization, normalized count data usually undergo two transformations, typically in this order:

- Log Transformation: Applied to normalized counts (usually with a pseudocount, e.g., log2(count + 1)) to moderate variance and improve visualization of expression differences

- Z-score Scaling: Calculated for each gene across samples (mean-centered and divided by standard deviation) to emphasize expression patterns relative to the gene's average [3]
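Both transformations are short to implement. The sketch below assumes normalized counts stored per gene, applies log2 with a pseudocount of 1 (an assumed, commonly used value), and then z-scores each gene across samples. Genes with zero variance carry no pattern, so they are set to zero instead of dividing by zero; the function names are hypothetical.

```python
import math
import statistics


def log2_transform(expr, pseudocount=1.0):
    """log2(value + pseudocount) for every gene and sample."""
    return {g: [math.log2(v + pseudocount) for v in vals] for g, vals in expr.items()}


def row_zscore(expr):
    """Center each gene on its mean and divide by its standard deviation."""
    out = {}
    for g, vals in expr.items():
        mu = statistics.mean(vals)
        sd = statistics.stdev(vals)
        out[g] = [0.0] * len(vals) if sd == 0 else [(v - mu) / sd for v in vals]
    return out


# Hypothetical normalized counts for two genes across three samples.
normalized = {"GeneA": [0, 1, 3], "GeneB": [7, 7, 7]}
print(row_zscore(log2_transform(normalized)))
```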

Clustering Analysis

Clustering organizes genes and samples with similar expression patterns:

- K-means Clustering: Partitions genes into k clusters based on expression similarity; the optimal k is often determined using the "elbow method" [3]

- Hierarchical Clustering: Builds a tree structure of similarities using distance metrics (e.g., Euclidean, Manhattan) and linkage methods (e.g., complete, average)

The DgeaHeatmap package implements Z-score scaling with k-means clustering to generate customizable heatmaps [3].
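The elbow method can be illustrated with a plain Lloyd's k-means. This sketch treats each gene's (z-scored) profile as a point and records the within-cluster sum of squares (WSS) for each k; the elbow is the k after which WSS stops dropping sharply. The deterministic farthest-point initialization is an assumption chosen for reproducibility; DgeaHeatmap and R's kmeans() use their own initialization, so results may differ.

```python
def _sq_dist(a, b):
    """Squared Euclidean distance between two profiles."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points, k, max_iter=100):
    """Lloyd's algorithm; returns (labels, within-cluster sum of squares)."""
    # Deterministic farthest-point initialization (an assumption, for reproducibility).
    centroids = [list(points[0])]
    while len(centroids) < k:
        farthest = max(points, key=lambda p: min(_sq_dist(p, c) for c in centroids))
        centroids.append(list(farthest))
    labels = None
    for _ in range(max_iter):
        # Assignment step: each point goes to its nearest centroid.
        new_labels = [min(range(k), key=lambda c: _sq_dist(p, centroids[c])) for p in points]
        if new_labels == labels:
            break  # converged
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    wss = sum(_sq_dist(p, centroids[lab]) for p, lab in zip(points, labels))
    return labels, wss


def elbow_curve(points, k_max):
    """WSS for k = 1..k_max; look for the k where the curve flattens."""
    return {k: kmeans(points, k)[1] for k in range(1, k_max + 1)}


# Hypothetical 2-D gene profiles forming three obvious groups.
profiles = [[0, 0], [0, 1], [10, 10], [10, 11], [20, 0], [21, 0]]
for k, wss in elbow_curve(profiles, 4).items():
    print(k, round(wss, 2))
```

For these toy profiles the WSS drops steeply up to k = 3 and barely changes afterwards, so k = 3 would be chosen.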

Color Scheme Selection

Appropriate color selection is critical for accurate data interpretation:

- Sequential Color Scales: Single-hue progressions from light to dark, ideal for non-negative values like raw TPM [6]

- Diverging Color Scales: Progress from one hue through a neutral color to a second hue, suitable for standardized values showing up-regulation and down-regulation [6]

Color Selection Guidelines:

- Avoid rainbow scales due to inconsistent perception and misleading magnitude representation [6]

- Use color-blind-friendly combinations (e.g., blue & orange, blue & red, blue & brown) [6]

- Ensure sufficient color contrast (minimum 3:1 ratio) for accessibility [7] [8]

- Maintain simplicity with 3-4 consecutive hues from a basic color wheel [6]
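The 3:1 threshold refers to a contrast ratio, which can be computed from the WCAG relative-luminance formula. The sketch below checks a pair of hex colors; the function names are illustrative, and the formula follows the WCAG 2.x definition for sRGB colors.

```python
def relative_luminance(hex_color):
    """WCAG 2.x relative luminance of an sRGB color given as '#RRGGBB'."""
    hex_color = hex_color.lstrip("#")
    channels = []
    for i in (0, 2, 4):
        c = int(hex_color[i:i + 2], 16) / 255
        # Undo the sRGB transfer curve (linearize the channel).
        channels.append(c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4)
    r, g, b = channels
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(color1, color2):
    """(L_lighter + 0.05) / (L_darker + 0.05), ranging from 1 to 21."""
    l1, l2 = sorted((relative_luminance(color1), relative_luminance(color2)), reverse=True)
    return (l1 + 0.05) / (l2 + 0.05)


# Example: does pure blue on a white background meet the 3:1 minimum?
print(round(contrast_ratio("#0000FF", "#FFFFFF"), 2))
```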

Experimental Protocols

Protocol 1: Basic Differential Expression Heatmap Using DgeaHeatmap

Application: Creating publication-quality heatmaps from normalized count data

Materials and Reagents:

- Normalized count matrix (CSV format)

- R statistical environment (version 4.0 or higher)

- DgeaHeatmap R package [3]

- RStudio IDE (recommended)

Procedure:

- Install and load the required packages.

- Import and prepare the count data.

- Filter and preprocess the data.

- Determine the optimal number of clusters.

- Generate the heatmap.

Troubleshooting Tip: If heatmap patterns appear weak, adjust the number of top variable genes or apply more stringent filtering based on statistical significance.

Protocol 2: End-to-End Analysis of Nanostring GeoMx DSP Data

Application: Processing raw Nanostring GeoMx data through to heatmap visualization

Materials and Reagents:

- Nanostring GeoMx DCC files

- PKC (probe kit configuration) file

- XLSX sample annotation file

- R environment with GeomxTools and DgeaHeatmap packages [3]

Procedure:

- Load the raw data files.

- Create and preprocess the GeoMxSet object.

- Extract the count and annotation data.

- Perform differential expression analysis.

- Generate the annotated heatmap.

Note: For experiments with limited sample material, consider targeted capture approaches to enrich for transcripts of interest, which can improve detection of differentially expressed genes [9].

Protocol 3: Interactive Analysis with GEO2R and GENAVi

Application: Web-based differential expression analysis and heatmap generation

Materials and Reagents:

- GEO dataset accession number (e.g., GSEXXXXX)

- GENAVi web application or local installation [10]

Procedure for GEO2R [4]:

- Navigate to NCBI GEO and enter the Series accession number

- Define sample groups (e.g., "treatment" and "control")

- Assign samples to the appropriate groups

- Click "Analyze" with default parameters

- Download the full results table and extract the significant DEGs

- Use the selected DEGs for heatmap generation in other tools

Procedure for GENAVi [10]:

- Access GENAVi: Visit https://junkdnalab.shinyapps.io/GENAVi/ or run locally

- Upload data: Provide count matrix and metadata table

- Select normalization: Choose appropriate method (e.g., DESeq2's median of ratios)

- Perform DE analysis: Configure statistical parameters and run analysis

- Generate visualizations: Create heatmaps using significant DEGs

Advantage: Web-based tools like GENAVi provide accessible interfaces for researchers without programming expertise, while offering flexibility through multiple normalization and analysis options [10].

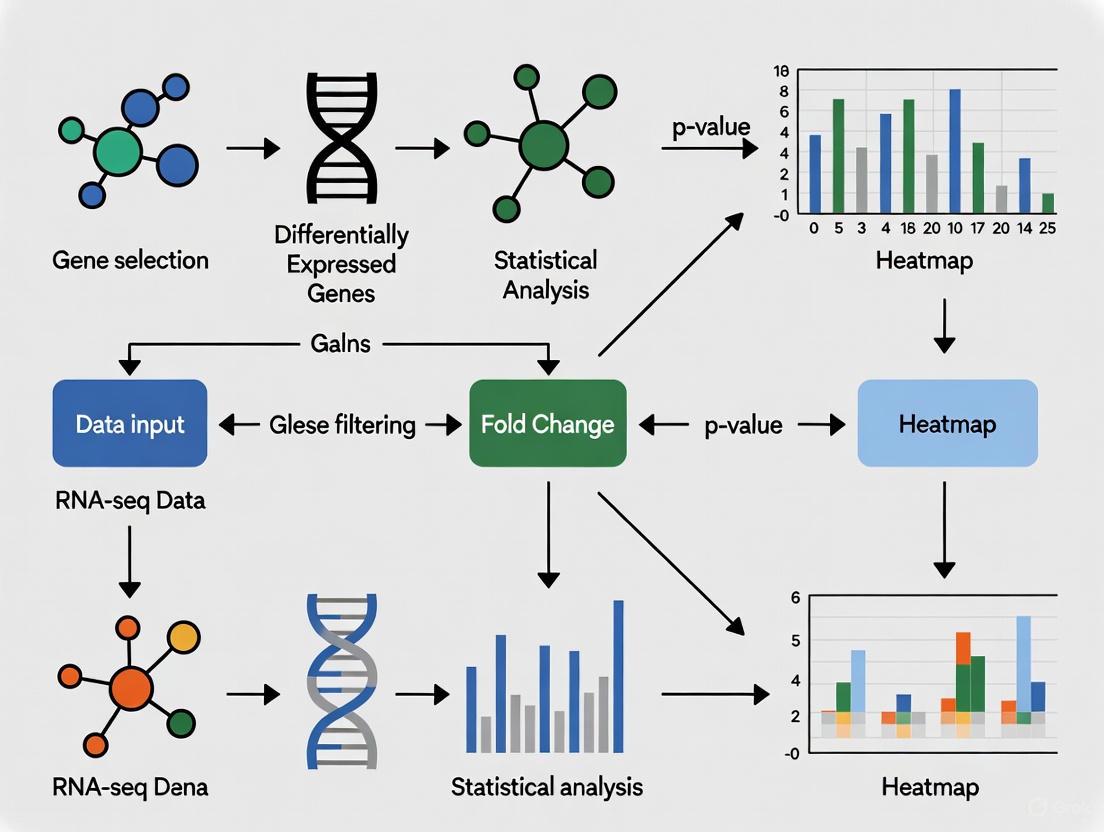

Visualization and Workflow Diagrams

Differential Expression Heatmap Workflow

Diagram 1: Differential expression heatmap workflow from raw data to biological interpretation.

Gene Selection Decision Framework

Diagram 2: Gene selection strategy decision framework based on analysis goals.

Research Reagent Solutions

Table 3: Essential Reagents and Computational Tools for Differential Expression Heatmap Analysis

| Category | Item | Specification/Function | Application Notes |
|---|---|---|---|
| RNA-seq Platforms | Illumina Sequencing | High-throughput mRNA sequencing | Generate raw read data; polyA-selection for eukaryotic mRNA [9] |
| Spatial Transcriptomics | Nanostring GeoMx DSP | Spatial profiling of RNA in tissue sections | Combine with DgeaHeatmap for spatial gene expression analysis [3] |
| rRNA Depletion Kits | NEBNext rRNA Depletion Kit | Remove ribosomal RNA | Essential for prokaryotic samples or host-pathogen studies [9] |
| Targeted Enrichment | Custom Capture Panels | Hybridization-based transcript enrichment | Critical for low-abundance organisms in multi-species studies [9] |
| Differential Expression Tools | DESeq2 R Package | Negative binomial models for DE analysis | Recommended for RNA-seq count data [1] [4] [2] |
| Differential Expression Tools | limma R Package | Linear models for microarray/RNA-seq | Suitable for microarray data and RNA-seq (with voom) [4] |
| Visualization Packages | DgeaHeatmap R Package | Specialized heatmap generation | Optimized for Nanostring GeoMx data [3] |
| Web-Based Tools | GEO2R | Online differential expression analysis | Access through NCBI GEO; uses DESeq2 for RNA-seq [4] |
| Web-Based Tools | GENAVi | Shiny app for normalization and DE analysis | User-friendly interface; multiple normalization methods [10] |

Differential expression heatmaps serve as powerful tools for transforming complex transcriptomic data into biologically interpretable visual patterns. The critical step of gene selection—whether based on statistical significance, variability, or functional relevance—directly determines the analytical insights that can be derived from these visualizations. By following the detailed protocols outlined in this document, researchers can systematically progress from raw data to meaningful biological interpretations, avoiding common pitfalls in visualization and analysis. The integration of established statistical methods with appropriate visualization techniques ensures that differential expression heatmaps reveal genuine biological patterns rather than technical artifacts, ultimately supporting robust conclusions in transcriptomics research and drug development.

In differential gene expression research, the heatmap serves as a critical visual tool for analyzing complex transcriptomic data. It transforms a numerical matrix of gene expression values into a color-shaded matrix display, allowing researchers to quickly identify patterns of up-regulation and down-regulation across experimental conditions [11]. The core components—color scale, dendrograms, and clusters—work in concert to reveal the underlying biological narrative. When properly configured, these elements can identify novel cell sub-populations, elucidate differentiation pathways, and pinpoint potential marker genes, thereby forming an indispensable part of the modern genomic scientist's toolkit [5].

The Color Scale: A Visual Legend for Gene Expression

Function and Implementation

The color scale translates continuous gene expression values (e.g., log-fold changes) or normalized counts into a spectrum of colors, creating an intuitive visual representation of the data. This translation allows for the immediate identification of genes with high (often upregulated) and low (often downregulated) expression levels across different samples or conditions [11].

The selection of an appropriate color scale is paramount. A typical and effective scheme uses a divergent color palette, where one color (e.g., red) represents high expression values, another color (e.g., blue) represents low expression values, and a neutral color (e.g., white or black) represents median or baseline expression [5] [12]. This approach helps in quickly distinguishing genes that are over-expressed or under-expressed in specific sample groups.

Table 1: Common Color Schemes for Differential Expression Heatmaps

| Color Scheme | Best Use Case | Data Representation | Example |
|---|---|---|---|
| Sequential (Single-Hue) | Representing expression levels or magnitude (e.g., from low to high). | Intensity increases from light to dark shades of one color. | d3.interpolateBuGn [13] |
| Divergent (Dual-Hue) | Highlighting deviation from a median or zero point (e.g., log-fold change). | Two distinct hues represent positive and negative deviations from a neutral center. | Red (High) -- White (Mid) -- Blue (Low) [12] |
| Spectral (Multi-Hue) | Displaying a wide range of values or categories. | Uses multiple colors from the visual spectrum. | d3.schemeRdYlBu [12] |

Technical Protocol: Creating a Robust Color Scale

Procedure:

- Data Normalization: Normalize the expression matrix (e.g., Z-score normalization per row/gene) to ensure colors represent relative expression across samples.

- Define the Scale Domain: Calculate the minimum and maximum values in the normalized dataset. For log-fold change data, center the domain around zero.

- Select a Color Palette: Choose a perceptually uniform and colorblind-friendly palette. Tools like d3-scale-chromatic provide predefined, robust schemes [12] [13].

- Create the Scale Function: Use a function like d3.scaleSequential() or d3.scaleDiverging() to map the numerical domain to the color range.

- Include a Legend: Always render a legend showing the color gradient and its corresponding numerical values to enable accurate interpretation [13].
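The same domain-then-scale logic can be sketched outside D3. The Python function below mimics what a diverging scale does: it centers the domain on zero, clips values to a symmetric range, and linearly interpolates between a blue, a near-white, and a red endpoint. The specific RGB endpoints and the plain RGB interpolation are assumptions for illustration; RGB interpolation is not perceptually uniform, so a library palette is preferable for publication figures.

```python
def diverging_color(value, vmax,
                    low=(33, 102, 172), mid=(247, 247, 247), high=(178, 24, 43)):
    """Map value to a hex color on a blue-white-red scale centered on zero.

    The domain is [-vmax, vmax]; values outside it are clipped.
    Endpoint colors are illustrative assumptions.
    """
    if vmax <= 0:
        return "#{:02X}{:02X}{:02X}".format(*mid)
    t = max(-1.0, min(1.0, value / vmax))  # position in [-1, 1]
    end = high if t >= 0 else low
    f = abs(t)
    # Linear interpolation in RGB between the neutral midpoint and the chosen end.
    rgb = [round(m + (e - m) * f) for m, e in zip(mid, end)]
    return "#{:02X}{:02X}{:02X}".format(*rgb)


def symmetric_domain(matrix):
    """Largest absolute value, so that zero maps to the neutral color."""
    return max(abs(v) for row in matrix for v in row)


# Hypothetical z-scored matrix (two genes x three samples).
zscores = [[-2.1, 0.3, 1.8], [0.0, -0.7, 2.4]]
vmax = symmetric_domain(zscores)
print([[diverging_color(v, vmax) for v in row] for row in zscores])
```

Using the largest absolute value for both ends keeps zero at the neutral color, so equal distances above and below the gene mean receive equally intense colors.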

Figure 1: Workflow for creating and applying a color scale to a gene expression matrix.

Dendrograms: Revealing the Hierarchical Structure

Function and Interpretation

A dendrogram, or tree diagram, is the visual output of a hierarchical clustering algorithm. It reveals the relatedness of rows (genes) and/or columns (samples) in the dataset [11]. In differential expression analysis, dendrograms are crucial for identifying sample subgroups with similar expression profiles and genes that exhibit co-expression patterns, potentially indicating co-regulation or shared functional pathways.

The height at which two branches join represents the distance or dissimilarity between the clusters being merged. Clusters that join low in the tree are more similar to each other, while clusters that join near the top are more dissimilar [11]. This structure helps reveal the natural groupings within the data, which is foundational for identifying distinct cell types, disease subtypes, or functional gene modules.

Technical Protocol: Constructing Dendrograms

Procedure:

- Calculate Distance Matrix: For both rows (genes) and columns (samples), compute a pairwise distance matrix. Common distance metrics include:

  - Euclidean: Straight-line distance between points.

  - Manhattan: Sum of absolute differences.

  - Correlation: 1 - Pearson correlation coefficient between profiles.

- Perform Hierarchical Clustering: Apply a clustering algorithm to the distance matrix. Key choices for the linkage function (which defines how the distance between clusters is calculated) are:

  - Complete Linkage: The distance between two clusters is the maximum distance between any pair of points drawn from the two clusters. Tends to create compact clusters.

  - Average Linkage: The distance is the average over all pairs of points in the two clusters. A balanced approach.

  - Ward's Method: Merges the pair of clusters that gives the smallest increase in total within-cluster variance. Often produces evenly sized clusters.

- Visualize the Dendrogram: The resulting hierarchical tree structure is drawn alongside the heatmap, typically above the columns and/or to the left of the rows.
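These steps can be sketched with the standard library alone. This illustration assumes gene profiles stored in a dictionary, uses the correlation distance with average linkage, records the height of every merge (the information a dendrogram draws), and replays the merges to obtain k clusters. It is a naive, unoptimized version meant for understanding; in practice R's hclust() or an equivalent library function would be used. Constant profiles must be removed first, because their correlation is undefined.

```python
import math


def pearson(a, b):
    """Pearson correlation between two equal-length profiles."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    sa = math.sqrt(sum((x - ma) ** 2 for x in a))
    sb = math.sqrt(sum((y - mb) ** 2 for y in b))
    return cov / (sa * sb)  # fails (ZeroDivisionError) for constant profiles


def average_linkage(profiles):
    """Agglomerative clustering; returns the merge history [(a, b, height), ...]."""
    names = list(profiles)
    dist = {(a, b): 1.0 - pearson(profiles[a], profiles[b])
            for a in names for b in names if a != b}

    def linkage(c1, c2):
        # Average of all pairwise distances between members of the two clusters.
        return sum(dist[(a, b)] for a in c1 for b in c2) / (len(c1) * len(c2))

    clusters = [frozenset([n]) for n in names]
    merges = []
    while len(clusters) > 1:
        pairs = [(i, j) for i in range(len(clusters)) for j in range(i + 1, len(clusters))]
        i, j = min(pairs, key=lambda ij: linkage(clusters[ij[0]], clusters[ij[1]]))
        merges.append((clusters[i], clusters[j], linkage(clusters[i], clusters[j])))
        merged = clusters[i] | clusters[j]
        clusters = [c for idx, c in enumerate(clusters) if idx not in (i, j)] + [merged]
    return merges


def cut_tree(names, merges, k):
    """Replay merges until k clusters remain.

    Average-linkage merge heights never decrease, so this matches a horizontal cut.
    """
    clusters = [frozenset([n]) for n in names]
    for a, b, _ in merges:
        if len(clusters) <= k:
            break
        clusters = [c for c in clusters if c not in (a, b)] + [a | b]
    return clusters


# Hypothetical profiles: g1/g2 rise together, g3/g4 fall together.
genes = {"g1": [1, 2, 3, 4], "g2": [2, 4, 6, 8], "g3": [4, 3, 2, 1], "g4": [8, 6, 4, 2]}
tree = average_linkage(genes)
print(cut_tree(list(genes), tree, 2))
```

Correlation distance groups g1 with g2 even though their magnitudes differ, because it compares the shape of the profiles rather than their absolute levels.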

Table 2: Common Hierarchical Clustering Linkage Methods

| Linkage Method | Description | Impact on Cluster Shape | Use Case in Genomics |
|---|---|---|---|
| Complete | Uses the maximum distance between objects in two clusters. | Tends to find compact, spherical clusters. | Identifying very distinct sample groups or gene sets. |
| Average | Uses the average distance between all pairs of objects in two clusters. | A compromise method; finds clusters of various shapes. | General-purpose clustering for samples and genes. |
| Ward's | Minimizes the total within-cluster variance. | Tends to find clusters of relatively equal size and shape. | Clustering when expecting similarly sized groups (e.g., cell types). |

Figure 2: The procedural workflow for generating a dendrogram from an expression matrix.

Clusters: Defining Biological Groups and Patterns

Function and Biological Significance

Clusters are the final output of the cluster analysis process, representing groups of objects (genes or samples) that are more similar to each other than to objects in other groups [14]. In the context of a gene expression heatmap, the combination of dendrograms and color scaling reveals these clusters as contiguous blocks of color.

Clusters carry biological meaning at two levels:

- Sample Clusters (Column Clusters): Often represent distinct biological states, such as different cell types (e.g., monocyte, dendritic cell progenitors, pre-dendritic cells) [5], disease subtypes, or responses to treatment.

- Gene Clusters (Row Clusters): Represent sets of co-expressed genes. These genes frequently participate in common biological pathways or are regulated by the same transcriptional mechanisms, providing direct candidates for further functional validation [5] [11]. For instance, a cluster of genes showing high expression in one sample group may serve as a marker gene set for that specific cell population or condition.

Technical Protocol: Extracting and Interpreting Clusters

Procedure:

- Cut the Dendrogram: The hierarchical tree from clustering is "cut" at a specific height to define discrete clusters. The choice of where to cut determines the number and granularity of the clusters. This can be informed by:

  - Biological Knowledge: Cutting to obtain a known number of cell types.

  - Statistical Heuristics: Using methods like the "elbow method" on the within-cluster sum of squares.

  - Dynamic Tree Cutting: Algorithms that allow for flexible cluster shapes and sizes.

- Validate Clusters: Assess the stability and quality of the clusters. Techniques include:

  - Silhouette Analysis: Measures how similar an object is to its own cluster compared to other clusters.

  - Functional Enrichment Analysis: Use tools like DAVID or Enrichr to test if the genes in a cluster are significantly enriched for specific Gene Ontology (GO) terms or KEGG pathways. This is a critical step for biological interpretation [5].

- Profile and Annotate: Characterize each cluster by its average expression profile and identify potential key driver genes (e.g., transcription factors) or marker genes within the cluster.
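Silhouette analysis is easy to compute directly. For each object, a is its mean distance to the other members of its own cluster and b its mean distance to the nearest other cluster; s = (b - a) / max(a, b) ranges from -1 to 1, and values near 1 indicate well-separated clusters. The sketch below uses Euclidean distance, assumes at least two clusters, and gives singleton clusters a score of 0, following the usual convention; names and data are illustrative.

```python
import math


def silhouette_scores(points, labels):
    """Per-object silhouette values using Euclidean distance (needs >= 2 clusters)."""
    scores = []
    for i, (p, own) in enumerate(zip(points, labels)):
        same = [math.dist(p, q) for j, (q, lab) in enumerate(zip(points, labels))
                if lab == own and j != i]
        if not same:
            scores.append(0.0)  # singleton cluster: s(i) = 0 by convention
            continue
        a = sum(same) / len(same)  # mean distance within own cluster
        b = float("inf")  # mean distance to the nearest other cluster
        for other in set(labels) - {own}:
            ds = [math.dist(p, q) for q, lab in zip(points, labels) if lab == other]
            b = min(b, sum(ds) / len(ds))
        denom = max(a, b)
        scores.append(0.0 if denom == 0 else (b - a) / denom)
    return scores


# Hypothetical profiles: a sensible clustering versus a scrambled one.
points = [[0, 0], [0, 1], [10, 10], [10, 11]]
good = silhouette_scores(points, [0, 0, 1, 1])
bad = silhouette_scores(points, [0, 1, 0, 1])
print(sum(good) / len(good), sum(bad) / len(bad))
```

A mean silhouette close to 1 supports the chosen cut, while negative values flag objects that sit closer to another cluster than to their own.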

Figure 3: The process of defining and biologically interpreting clusters from a dendrogram.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Differential Expression Heatmap Analysis

| Item / Reagent | Function / Application |
|---|---|
| Single-Cell RNA-Seq Kit (e.g., 10x Genomics) | Generation of the primary gene expression matrix from complex tissues by measuring transcriptomes in individual cells [5]. |
| Clustering Algorithm Software (e.g., hclust in R, scRDEN) | Performs the hierarchical clustering of genes and samples to identify patterns and groups within the expression data [14] [5]. |
| Color Palette Library (e.g., d3-scale-chromatic, viridis) | Provides perceptually uniform and accessible color schemes for accurately encoding expression values in the heatmap [12] [13]. |
| Functional Enrichment Tool (e.g., DAVID, Enrichr) | Statistically tests clusters of genes for over-representation of biological pathways, providing functional interpretation [5]. |
| Visualization Library (e.g., ggplot2, ComplexHeatmap, D3.js) | Creates the final, publication-quality heatmap visualization, integrating the color scale, dendrograms, and cluster annotations [15] [13]. |

Differential gene expression analysis is a cornerstone of modern molecular biology, enabling researchers to decipher the functional changes underlying biological processes. The selection of genes for a heatmap is a critical step that directly determines the clarity and impact of the research findings. This protocol provides a structured framework for defining analytical goals and selecting appropriate gene subsets for differential expression heatmaps based on three common research objectives: biomarker discovery, treatment effect demonstration, and novel biological insight generation. By aligning gene selection criteria with specific research questions, investigators can enhance the biological relevance and interpretability of their transcriptional data visualizations. The following sections outline experimental strategies, data processing protocols, and visualization standards for rigorous and reproducible heatmap design.

Application Notes & Experimental Protocols

Goal 1: Finding Reproducible Biomarkers

Experimental Protocol for Biomarker Discovery

Dataset Collection & Pre-processing: Collect multiple independent datasets from public repositories (e.g., GEO, ArrayExpress) or generate new datasets with consistent experimental conditions. For microarray data, perform robust multi-array average (RMA) normalization, which outputs log2-scale expression values, to minimize technical variability [16]. For RNA-seq data, implement count normalization using methods such as the "median of ratios" to account for differences in sequencing depth and RNA composition [17].
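RMA combines background correction, quantile normalization across arrays, and median-polish summarization of probes into probe sets. The sketch below illustrates only the quantile-normalization step, which forces every array to share the same intensity distribution; ties are broken arbitrarily, and the function name and data layout are assumptions. For real data, the RMA implementations in Bioconductor's affy or oligo packages should be used.

```python
def quantile_normalize(columns):
    """columns: list of samples, each a list of intensities for the same probes.

    Each value is replaced by the mean, across samples, of the values
    that share its rank.
    """
    n = len(columns[0])
    sorted_cols = [sorted(col) for col in columns]
    # Reference distribution: mean of the r-th smallest value across samples.
    rank_means = [sum(col[r] for col in sorted_cols) / len(columns) for r in range(n)]
    out = []
    for col in columns:
        order = sorted(range(n), key=lambda i: col[i])  # ties broken arbitrarily
        new = [0.0] * n
        for r, i in enumerate(order):
            new[i] = rank_means[r]
        out.append(new)
    return out


# Hypothetical log2 intensities for three probes on two arrays.
arrays = [[5.0, 2.0, 3.0], [4.0, 1.0, 6.0]]
print(quantile_normalize(arrays))
```

After normalization both arrays contain exactly the same set of values, while each probe keeps its rank within its own array.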

Differential Expression Analysis: Conduct meta-analysis across all datasets to identify consistently dysregulated genes. Apply cross-validation techniques (e.g., leave-one-out cross-validation) to assess the stability of identified gene signatures and minimize overfitting [16]. For single-cell RNA-seq data, perform pseudobulk analysis by aggregating counts per individual and per cell type before differential testing to account for within-individual correlations [18].
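Pseudobulk aggregation amounts to summing raw counts within each individual-by-cell-type group, so that each donor contributes one sample per cell type to the downstream count model (e.g., DESeq2 or edgeR). The minimal sketch below assumes per-cell records of (individual, cell type, counts); the record layout, function name, and gene values are hypothetical.

```python
from collections import defaultdict


def pseudobulk(cells):
    """Sum raw counts per (individual, cell type).

    cells: iterable of (individual, cell_type, {gene: count}) records.
    Returns {(individual, cell_type): {gene: summed count}}.
    """
    bulk = defaultdict(lambda: defaultdict(int))
    for individual, cell_type, counts in cells:
        for gene, count in counts.items():
            bulk[(individual, cell_type)][gene] += count
    return {key: dict(genes) for key, genes in bulk.items()}


# Hypothetical single-cell records from two donors.
cells = [
    ("donor1", "T cell", {"CD3E": 3, "MS4A1": 0}),
    ("donor1", "T cell", {"CD3E": 2}),
    ("donor2", "B cell", {"MS4A1": 5}),
]
print(pseudobulk(cells))
```

Summing raw counts (rather than averaging normalized values) keeps the output compatible with negative binomial models, which expect integer counts.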

Biomarker Validation: Validate candidate biomarkers using orthogonal methods such as quantitative real-time PCR (qPCR). Use the 2^(−ΔΔCt) method to calculate relative fold changes in gene expression, with GAPDH or ACTB typically serving as reference genes [16]. Establish significance thresholds (e.g., p-value < 0.05 and fold change > 2) for declaring biomarker candidacy.
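The 2^(−ΔΔCt) calculation can be written as a short function. The sketch assumes Ct values from technical replicates are averaged and, like the method itself, assumes near-100% amplification efficiency for both the target and reference genes. Function and argument names are illustrative.

```python
import statistics


def fold_change_ddct(ct_target_treated, ct_ref_treated, ct_target_control, ct_ref_control):
    """Relative expression (treated vs. control) by the 2^(-ddCt) method.

    Each argument is a list of Ct values (e.g., technical replicates).
    """
    # dCt: target Ct minus reference-gene Ct within each condition.
    d_ct_treated = statistics.mean(ct_target_treated) - statistics.mean(ct_ref_treated)
    d_ct_control = statistics.mean(ct_target_control) - statistics.mean(ct_ref_control)
    # ddCt: treated dCt minus control dCt; lower Ct means more template.
    dd_ct = d_ct_treated - d_ct_control
    return 2 ** (-dd_ct)


# Hypothetical Ct values: target gene vs. a GAPDH reference.
print(fold_change_ddct([21.9, 22.1], [18.0, 18.0], [24.0, 24.0], [18.0, 18.0]))
```

For example, a target gene with a mean Ct of 22 in treated samples and 24 in controls, against a reference gene at Ct 18 in both, gives ΔΔCt = (22 − 18) − (24 − 18) = −2 and a fold change of 2² = 4.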

Table 1: Exemplar Biomarker Genes from Hypertension Research

| Gene Symbol | Protein Name | Expression Direction | Reported Fold Change | Biological Function |
|---|---|---|---|---|
| ADM | Adrenomedullin | Upregulated | >2.0 | Vasodilation, cardiovascular homeostasis |
| EDN1 | Endothelin-1 | Upregulated | >2.0 | Vasoconstriction, vascular smooth muscle cell proliferation |
| ANGPTL4 | Angiopoietin-related protein 4 | Upregulated | >2.0 | Lipid metabolism, angiogenesis |
| CEBPD | CCAAT/enhancer-binding protein delta | Downregulated | Variable | Transcription factor, inflammatory response |

Goal 2: Showing Treatment Effects

Experimental Protocol for Treatment Effect Analysis

Experimental Design: Implement a controlled intervention study with appropriate sample sizes calculated for sufficient statistical power. Include randomized treatment and control groups, preferably with replicate measurements (recommended n ≥ 3 biological replicates per group) to account for biological variability.

Quality Control & Normalization: Perform rigorous quality control on raw expression data. For RNA-seq data, utilize visualization tools such as parallel coordinate plots or scatterplot matrices from the R package bigPint to identify potential normalization issues, batch effects, or outlier samples that could confound treatment effect interpretations [19] [20].

Focused Gene Selection: Prioritize genes from pathways known to be mechanistically related to the treatment. For example, when studying antihypertensive compounds, focus on genes within the renin-angiotensin-aldosterone system (RAAS) pathway such as ACE, AGT, and AGTR1 [16]. This targeted approach increases the signal-to-noise ratio for detecting bona fide treatment effects.

Statistical Analysis & Interpretation: Apply appropriate statistical models (e.g., negative binomial models for RNA-seq count data) to identify genes with significant expression changes between treatment and control groups. Adjust for multiple testing using false discovery rate (FDR) control methods (e.g., Benjamini-Hochberg procedure). Report both statistical significance (adjusted p-value) and biological magnitude (fold change).

Goal 3: Unveiling New Biology

Experimental Protocol for Novel Biological Insight

Unbiased Clustering & Subpopulation Identification: Process single-cell RNA-seq data using pipelines such as DIscBIO, which integrates clustering algorithms (k-means or model-based clustering) to identify novel cellular subpopulations without pre-existing markers [17]. Determine the optimal number of clusters (k) as the smallest cluster number at which the gap statistic saturates [17].

Differential Expression & Functional Enrichment: Perform differential expression analysis between newly identified clusters to find marker genes. Subsequently, conduct gene enrichment analysis using tools like Enrichr to infer biological knowledge by comparing the gene set to annotated gene sets from over 160 libraries [17].
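The core of most over-representation tests is a one-sided hypergeometric (Fisher's exact) test. The sketch below computes the probability of observing at least k annotated genes in a cluster of n genes drawn from N background genes, of which K carry the annotation. The exact statistics and corrections used by Enrichr or DAVID differ in detail, so this illustrates the principle rather than reimplementing either tool; p-values across many gene sets would still need multiple-testing correction.

```python
from math import comb


def hypergeom_enrichment_p(k, n, K, N):
    """P(X >= k) for X ~ Hypergeometric(N background, K annotated, n drawn).

    k: annotated genes observed in the cluster
    n: cluster size
    K: annotated genes in the background
    N: background size
    """
    upper = min(K, n)
    hits = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return hits / comb(N, n)


# Hypothetical example: 8 of 40 cluster genes belong to a 100-gene pathway,
# against a background of 10,000 genes (expected overlap is only 0.4).
print(hypergeom_enrichment_p(8, 40, 100, 10000))
```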

Network Analysis & Visualization: Construct protein-protein interaction networks using resources such as STRING to identify hub genes and functional modules within the differentially expressed gene set [17]. Visualize results using dimensionality reduction techniques (t-SNE, UMAP) to reveal underlying biological structures.

Validation Through Meta-Analysis: Apply non-parametric meta-analysis methods such as SumRank across multiple independent datasets to prioritize genes exhibiting reproducible differential expression patterns, thereby distinguishing robust biological signals from study-specific noise [18].

Table 2: Analytical Approaches for Different Research Goals

| Research Goal | Primary Gene Selection Strategy | Optimal Analysis Method | Key Validation Approach |
|---|---|---|---|
| Biomarker Discovery | Cross-study reproducible DEGs | Meta-analysis of multiple datasets | Orthogonal validation (qPCR) & cross-validation |
| Treatment Effects | Known pathway-focused genes | Controlled intervention analysis | Statistical testing with FDR correction |
| New Biology | Unbiased clustering-based markers | Single-cell clustering & DEG analysis | Functional enrichment & independent cohort validation |

Visualization Guidelines for Differential Expression Heatmaps

Color Scale Selection

Sequential vs. Diverging Scales: Use sequential color scales (e.g., ColorBrewer Blues or Viridis) when representing raw expression values (all non-negative). Use diverging color scales when displaying standardized values (e.g., z-scores) to effectively distinguish between up-regulated and down-regulated genes, with a neutral color representing the baseline or average value [6].
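A diverging scale for z-scores is easiest to interpret when it is symmetric around zero, so that zero always maps to the neutral color. The minimal sketch below makes some assumptions: it uses the blue-white-red triple #2166AC / #F7F7F7 / #B2182B, clips at |z| = 2 (an illustrative choice), and interpolates linearly in RGB, which is simple but not perceptually uniform.

```python
from typing import Tuple


def hex_to_rgb(color: str) -> Tuple[int, int, int]:
    color = color.lstrip("#")
    return tuple(int(color[i:i + 2], 16) for i in (0, 2, 4))


def rgb_to_hex(rgb: Tuple[int, int, int]) -> str:
    return "#{:02X}{:02X}{:02X}".format(*rgb)


def diverging_color(z: float, limit: float = 2.0,
                    low: str = "#2166AC", mid: str = "#F7F7F7", high: str = "#B2182B") -> str:
    """Map a z-score to a blue-white-red color; |z| >= limit saturates."""
    t = max(-1.0, min(1.0, z / limit))  # symmetric scale, so z = 0 is the neutral color
    end = high if t >= 0 else low
    a, b = hex_to_rgb(mid), hex_to_rgb(end)
    frac = abs(t)
    # Linear interpolation in RGB space between the neutral and the end color
    return rgb_to_hex(tuple(round(x + (y - x) * frac) for x, y in zip(a, b)))


for z in (-3.0, -1.0, 0.0, 1.0, 3.0):
    print(z, diverging_color(z))
```

Clipping at a fixed limit keeps a few extreme genes from washing out the rest of the heatmap. The limit should be stated in the figure legend.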

Color-Blind-Friendly Palettes: Implement accessible color combinations such as blue & orange or blue & red to accommodate color vision deficiencies. Avoid problematic combinations including red-green, green-brown, and blue-purple [6].

Avoid Rainbow Scales: Refrain from using "rainbow" color scales as they create misperceptions of data magnitude through non-uniform luminance and artificially abrupt transitions between hues [6].

Interactive Visualization Implementation

Utilize specialized R packages such as Rvisdiff to create interactive heatmaps that enable exploratory data analysis. These tools allow researchers to connect differential expression results with source expression data, facilitating the identification of patterns and potential artifacts through features like zooming, hovering, and dynamic linking between visualizations [21].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Computational Tools for Differential Expression Analysis

| Reagent/Tool | Function/Application | Example/Source |
|---|---|---|
| Affymetrix Microarrays | Genome-wide expression profiling (oligonucleotide arrays) for biomarker discovery | Human Genome U133 Plus 2.0 Array [16] |
| ERCC Spike-In Controls | External RNA controls for accounting for cell-to-cell technical variation in scRNA-seq | Thermo Fisher Scientific ERCC RNA Spike-In Mix [17] |
| DESeq2 | Differential expression analysis of RNA-seq count data using negative binomial distribution | Bioconductor Package [18] [21] |
| DIscBIO | Integrated pipeline for single-cell RNA-seq analysis including clustering and biomarker discovery | R Package on CRAN [17] |
| bigPint | Interactive visualization of differential expression results with quality control plots | R Package on GitHub [19] [20] |
| Rvisdiff | Interactive visualization and exploration of differential expression results | Bioconductor Package [21] |
| STRING Database | Online resource for protein-protein interaction network analysis | string-db.org [17] |
| EnrichR | Gene set enrichment analysis against annotated libraries from over 160 sources | Ma'ayan Lab [17] |

In differential expression (DE) analysis, the heatmap serves as a fundamental tool for visualizing complex gene expression patterns across multiple samples. However, the biological validity and visual clarity of a heatmap are entirely dependent on the initial step of gene selection. Choosing genes based on arbitrary statistical thresholds or convenience often leads to uninterpretable or, worse, biologically misleading conclusions. This article details a rigorous, hypothesis-driven framework for selecting genes for DE heatmaps, ensuring that the resulting visualizations accurately reflect the underlying biology and provide genuine insight for research and drug development.

Core Principles of Gene Selection

The process of gene selection bridges raw statistical output from DE analysis and a meaningful biological story. The following principles are critical for making informed choices.

Defining the Selection Objective

Before selecting any genes, explicitly define the goal of the heatmap. Common objectives include:

- Cell Type or Cluster Annotation: Identifying a minimal set of genes that robustly define and distinguish biological cell types present in the data [22].

- Pathway or Process Visualization: Selecting genes that belong to a specific biological pathway (e.g., glycolysis, apoptosis) or have a known functional relationship to interrogate their coordinated response.

- Phenotype Association: Highlighting genes that are associated with a specific phenotype, such as treatment response, disease severity, or survival.

A clearly defined objective dictates the subsequent selection strategy, preventing a "garbage-in, garbage-out" scenario.

Moving Beyond Arbitrary Thresholding

Traditional gene selection often involves applying universal thresholds for significance (e.g., adjusted p-value < 0.05) and effect size (e.g., |log2 fold change| > 1). While useful for initial filtering, this approach has significant limitations:

- Bias Toward Highly Variable Genes: It tends to favor genes with inherently high expression variance, potentially overlooking constrained genes where even small changes are biologically critical [23].

- Ignoring Biological Context: A uniform fold-change threshold may be too stringent for key regulatory genes and too lenient for noisy, highly expressed genes.

Advanced recalibration methods, such as weighting a gene's observed fold change against its natural dosage variation in the human population, can help prioritize functionally relevant genes over merely variable ones [23].

Gene Selection Methodologies and Protocols

This section provides actionable protocols for the primary gene selection strategies.

Protocol 1: Selection for Cell Type or Cluster Annotation

This protocol is designed to identify a concise marker gene set that defines the cellular identities within a dataset, suitable for annotation and validation.

Experimental Workflow: Cell Type Marker Selection

1. Differential Expression Testing:

- Method: Apply a statistical test comparing each cluster to all other clusters (one-vs-rest). Benchmarking studies indicate that the Wilcoxon rank-sum test and Student's t-test are highly effective for this task [22].

- Tools: Execute using standard frameworks like Seurat or Scanpy.

2. Statistical Filtering:

- Apply an adjusted p-value threshold (e.g., Benjamini-Hochberg < 0.05) to account for multiple testing.

- Implement a minimum log2 fold-change filter (e.g., |log2FC| > 0.5) to focus on genes with meaningful effect sizes.

3. Gene Ranking and Selection:

- Rank genes within each cluster based on a combination of statistical significance (p-value) and effect size (log2FC).

- Select the top N genes (typically 5-20) per cluster. The exact number depends on the complexity of the dataset and the need for a minimal yet definitive gene set.

4. Specificity Evaluation (Optional but Recommended):

- Calculate metrics like the area under the ROC curve (AUC) to quantify how well each gene distinguishes the target cluster from all others.

- Tools like Cepo can identify genes that are consistently expressed in a specific cell type [22].
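The filtering, ranking, and AUC steps of this protocol can be sketched as follows. This is a minimal illustration with hypothetical one-vs-rest statistics. Several choices are assumptions: it keeps only up-regulated genes as positive markers, ranks by adjusted p-value with fold change as the tie-breaker (one of many reasonable combinations), and computes AUC by direct pairwise comparison, which is fine for illustration but slow for large clusters.

```python
from typing import Dict, List, Sequence, Tuple


def auc(target: Sequence[float], rest: Sequence[float]) -> float:
    """ROC AUC: chance a random target-cluster cell outranks a random other cell (ties = 0.5)."""
    wins = 0.0
    for x in target:
        for y in rest:
            wins += 1.0 if x > y else 0.5 if x == y else 0.0
    return wins / (len(target) * len(rest))


def top_markers(stats: Dict[str, Tuple[float, float]], n: int = 5,
                max_padj: float = 0.05, min_lfc: float = 0.5) -> List[str]:
    """stats maps gene -> (adjusted p-value, log2FC) for one cluster vs. the rest."""
    # Keep up-regulated genes passing both filters (positive markers)
    passing = [g for g, (padj, lfc) in stats.items() if padj < max_padj and lfc > min_lfc]
    # Rank by significance, breaking ties with the larger fold change, then by name
    passing.sort(key=lambda g: (stats[g][0], -stats[g][1], g))
    return passing[:n]


# Hypothetical one-vs-rest results for a single cluster
stats = {
    "G1": (0.001, 2.0),
    "G2": (0.001, 3.0),
    "G3": (0.200, 4.0),
    "G4": (0.010, 0.3),
    "G5": (0.030, 1.0),
}
print(top_markers(stats, n=2))
print(auc([3.1, 2.8, 4.0], [0.2, 0.0, 1.5, 2.9]))
```

An AUC near 1 means the gene cleanly separates the target cluster from all others. Values near 0.5 indicate little discriminative power, even when the gene is statistically significant.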

Protocol 2: Hypothesis-Driven Selection for Functional Analysis

This protocol is used when investigating a predefined biological question, such as the behavior of a specific pathway.

1. Define the Biological Hypothesis:

- Formulate a clear question (e.g., "How is the TGF-beta signaling pathway altered in treated versus control samples?").

2. Gene Set Compilation:

- Source: Obtain relevant gene lists from curated databases such as MSigDB, KEGG, or Reactome.

- Custom Lists: Use genes identified from prior experiments or literature reviews.

3. Filter and Prioritize:

- Intersect the predefined gene list with the results of the DE analysis.

- Filter for genes that show statistically significant changes under the experimental conditions.

- Prioritize genes based on the magnitude of change and their known central role in the pathway.
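The intersect, filter, and prioritize steps above reduce to a few list operations. In this minimal sketch, P1-P4 are placeholder identifiers standing in for a curated set (e.g. from MSigDB or Reactome), and the DE values are hypothetical. Ranking by |log2FC| covers the "magnitude" criterion only. Judging a gene's central role in the pathway remains a manual, knowledge-based step.

```python
from typing import Dict, List, Tuple


def prioritize_pathway_genes(pathway: List[str], de: Dict[str, Tuple[float, float]],
                             max_padj: float = 0.05) -> List[str]:
    """de maps gene -> (adjusted p-value, log2FC) from the DE analysis."""
    tested = [g for g in pathway if g in de]                  # intersect with DE output
    significant = [g for g in tested if de[g][0] < max_padj]  # significant in this comparison
    # Largest absolute change first; gene name breaks ties deterministically
    return sorted(significant, key=lambda g: (-abs(de[g][1]), g))


# Placeholder identifiers: P1-P4 stand in for members of a curated pathway set
pathway = ["P1", "P2", "P3", "P4"]
de = {"P1": (0.010, -0.8), "P2": (0.001, 2.5), "P3": (0.300, 3.0), "X9": (0.0001, 5.0)}
print(prioritize_pathway_genes(pathway, de))
```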

Protocol 3: Machine-Learning Assisted Selection

For discovery-based research, machine learning (ML) can help identify minimal gene sets with high predictive power for a condition of interest.

1. Feature Selection:

- Tool: Utilize a library like gSELECT, which is designed for evaluating the classification performance of gene sets [24].

- Input: Provide the expression matrix and corresponding group labels (e.g., disease vs. healthy).

2. Model Training and Evaluation:

- gSELECT can use mutual information ranking, random sampling, or custom gene lists as a starting point.

- It employs multilayer perceptrons with cross-validation to assess how well a gene set separates the conditions [24].

3. Identify Minimal Gene Combinations:

- Use the tool's "greedy" or "exhaustive" strategies to find the smallest number of genes that yield high predictive accuracy, providing a powerful, compact set for visualization [24].
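The idea behind a "greedy" search can be shown without any ML library. This sketch is not gSELECT and does not use its API. It replaces the multilayer perceptron with a nearest-centroid classifier scored by leave-one-out accuracy, and at each step it adds the gene (column) that most improves accuracy. The data are hypothetical. Because genes are chosen and scored on the same samples, the reported accuracy is optimistic; a nested cross-validation would be needed for an honest estimate.

```python
from statistics import mean
from typing import List, Sequence, Tuple


def loo_accuracy(X: List[List[float]], y: Sequence[str], features: Sequence[int]) -> float:
    """Leave-one-out accuracy of a nearest-centroid classifier on the given gene columns."""
    correct = 0
    for i in range(len(X)):
        centroids = {}
        for label in set(y):
            rows = [X[j] for j in range(len(X)) if j != i and y[j] == label]
            if rows:  # a class may vanish when its only sample is held out
                centroids[label] = [mean(r[f] for r in rows) for f in features]
        point = [X[i][f] for f in features]

        def sq_dist(label: str) -> float:
            return sum((p - c) ** 2 for p, c in zip(point, centroids[label]))

        predicted = min(sorted(centroids), key=sq_dist)  # sorted() makes ties deterministic
        correct += int(predicted == y[i])
    return correct / len(X)


def greedy_forward_selection(X: List[List[float]], y: Sequence[str], max_genes: int = 3,
                             target: float = 1.0) -> Tuple[List[int], float]:
    """Add one gene (column index) at a time, keeping the one that most improves accuracy."""
    selected: List[int] = []
    best = 0.0
    remaining = list(range(len(X[0])))
    while remaining and len(selected) < max_genes and best < target:
        scored = [(loo_accuracy(X, y, selected + [g]), -g) for g in remaining]
        acc, neg_g = max(scored)  # highest accuracy; lowest column index on ties
        if acc <= best:
            break  # no remaining gene improves accuracy
        best = acc
        selected.append(-neg_g)
        remaining.remove(-neg_g)
    return selected, best


# Hypothetical expression: rows are samples, columns are genes 0-2 (gene 1 separates groups)
X = [
    [5.0, 1.0, 3.0],
    [1.0, 1.2, 2.0],
    [3.0, 0.8, 4.0],
    [4.0, 5.0, 2.5],
    [2.0, 5.2, 3.5],
    [5.0, 4.8, 3.0],
]
y = ["ctrl", "ctrl", "ctrl", "case", "case", "case"]
print(greedy_forward_selection(X, y))
```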

Quantitative Benchmarking of Selection Methods

The choice of statistical method for marker gene selection significantly impacts the quality of the resulting gene list. The table below summarizes the performance of commonly used methods based on a comprehensive benchmark of 59 methods across 14 real and 170 simulated scRNA-seq datasets [22].

Table 1: Benchmarking of Common Marker Gene Selection Methods

| Method | Overall Efficacy | Key Characteristics | Best Use-Case |
|---|---|---|---|
| Wilcoxon Rank-Sum Test | High | Robust to outliers, effective for one-vs-rest comparisons. | General-purpose cluster annotation. |
| Student's t-test | High | Powerful for normally distributed data. | Cell type annotation with large, well-separated clusters. |
| Logistic Regression | High | Models log-odds of cluster membership, can adjust for covariates. | When technical confounders (e.g., batch) are a concern. |
| Cepo | High | Identifies consistent marker genes for cell type identity. | Defining stable cell type signatures. |
| NSForest | Medium | Uses random forest to identify minimal marker sets. | Selecting a minimal gene panel for experimental validation. |

Visualization and Interpretation Guidelines

A poorly constructed heatmap can obscure the patterns that careful gene selection sought to reveal. Adhere to the following best practices for visualization.

Color Palette Selection for Accessibility

Color is the primary channel for encoding expression data in a heatmap. Its misuse is a major source of interpretability failure.

- Avoid Non-Accessible Palettes: The common red-green colormap is indistinguishable for individuals with red-green color vision deficiency (CVD), which affects up to 8% of men [25] [26].

- Adopt Accessible Schemes: Use a blue-white-red diverging palette. For individuals with CVD, blue and red remain distinguishable [27]. Alternatively, a single-hue sequential palette (e.g., light to dark blue) is universally safe [26].

- Leverage Light vs. Dark: If using red and green is mandatory, ensure significant contrast in lightness (value) between them—for example, a dark red and a light green [25].

Table 2: Colorblind-Friendly Heatmap Color Palettes

| Palette Type | Example Colors (Low, Mid, High) | CVD-Friendly? | Use Case |
|---|---|---|---|
| Diverging | #2166AC, #F7F7F7, #B2182B | Yes (Blue/Red) | Showing up/down-regulation from a central point. |
| Sequential | #F1F3F4, #FBBC05, #EA4335 | Yes (Light to Dark) | Displaying expression levels from low to high. |
| Sequential (Single Hue) | #DEEBFF, #4292F4, #08306B | Yes | Emphasizing a magnitude of change. |

Data Scaling and Clustering

- Row Scaling (Z-score): Normalize gene expression (rows) to a Z-score (mean=0, standard deviation=1). This ensures the color scale represents relative up- or down-regulation of each gene, making patterns across different expression levels comparable.

- Clustering: Apply hierarchical clustering to both rows (genes) and columns (samples) to group entities with similar expression profiles. This reveals co-expressed gene modules and sample subtypes that might otherwise be hidden [28]. Always check that the resulting dendrograms make biological sense.
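Row scaling can be sketched as follows. This version uses the sample standard deviation (n - 1 denominator), as R's `scale()` does, and it maps a constant row to zeros rather than dividing by zero (a design choice of this sketch). The input is assumed to be log-transformed normalized expression, and the values are hypothetical.

```python
from statistics import mean, stdev
from typing import Dict, List


def zscore_rows(matrix: Dict[str, List[float]]) -> Dict[str, List[float]]:
    """Center each gene (row) to mean 0 and scale to standard deviation 1."""
    scaled = {}
    for gene, values in matrix.items():
        mu = mean(values)
        sd = stdev(values)  # sample SD (n - 1 denominator), as R's scale() uses
        # A constant row has nothing to show; map it to zeros instead of dividing by 0
        scaled[gene] = [0.0] * len(values) if sd == 0 else [(v - mu) / sd for v in values]
    return scaled


# Hypothetical log2-normalized expression: rows are genes, columns are samples
log_expr = {"G1": [2.0, 4.0, 6.0], "G2": [5.0, 5.0, 5.0], "G3": [10.0, 10.5, 13.0]}
for gene, row in zscore_rows(log_expr).items():
    print(gene, [round(v, 2) for v in row])
```

After scaling, a highly expressed gene and a lowly expressed gene with the same relative pattern receive the same colors. This is why absolute expression differences between genes can no longer be read from a row-scaled heatmap.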

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Gene Selection and Heatmap Generation

| Tool / Resource | Function | Application Context |
|---|---|---|
| Seurat / Scanpy | Comprehensive scRNA-seq analysis frameworks. | Performing initial DE testing (Wilcoxon test) and basic marker gene selection [22]. |
| gSELECT | Machine-learning based gene set evaluation. | Identifying minimal, high-performance gene combinations for classification [24]. |
| ColorBrewer / Paul Tol's Schemes | Curated colorblind-friendly palettes. | Selecting accessible color schemes for heatmaps and all data visualizations [26]. |
| DESeq2 / edgeR | DGE analysis tools for bulk RNA-seq. | Performing rigorous DE analysis on bulk RNA-seq data to generate input gene lists [29]. |
| FeatureCounts / HTSeq | Read quantification. | Generating the raw count matrices that are the input for DE analysis [29]. |

A Practical Pipeline: From DGE Analysis to Heatmap Visualization

Selecting genes for a differential expression heatmap is a foundational step in transcriptomic research, with implications for understanding disease mechanisms and identifying therapeutic targets. The choice of differential gene expression (DGE) tool directly influences which genes are selected as significant, thereby shaping subsequent biological interpretations. While DESeq2 and edgeR have long been the established methods for bulk RNA-seq analysis, recent evidence suggests these tools may produce exaggerated false positives in large-sample studies, raising concerns about their reliability for population-level datasets [30]. Meanwhile, non-parametric methods like the Wilcoxon rank-sum test are gaining attention for their robust false discovery rate (FDR) control in these same scenarios [30].

This Application Note examines the performance characteristics of DESeq2, edgeR, and the Wilcoxon test within the context of heatmap research, providing structured comparisons and detailed protocols to guide researchers toward robust, interpretable results. We frame this discussion within a broader thesis on gene selection, emphasizing methodological choices that ensure biological signatures visualized in heatmaps reflect true signals rather than statistical artifacts.

Performance Comparison and Key Considerations

Table 1: Comparative Performance of DGE Tools on Large Population RNA-seq Datasets

| Method | Typical FDR Control (5% Target) | Power | Robustness to Outliers | Key Assumptions |
|---|---|---|---|---|
| DESeq2 | Often exceeded (actual FDR sometimes >20%) [30] | High but potentially artificial due to FDR inflation | Low; severely affected by outliers violating negative binomial model [30] [31] | Negative binomial distribution |
| edgeR | Often exceeded (actual FDR sometimes >20%) [30] | High but potentially artificial due to FDR inflation | Low; severely affected by outliers violating negative binomial model [30] [31] | Negative binomial distribution |
| Wilcoxon Rank-Sum Test | Consistently maintained across sample sizes [30] | Comparable or better when actual FDRs are equal [30] | High; uses rank-based approach less sensitive to extreme values [30] | None; non-parametric |

Critical Findings from Recent Studies

Recent benchmarking on population-level RNA-seq datasets (n=100-1376) revealed that DESeq2 and edgeR can exhibit severely inflated false discovery rates. In one analysis of an immunotherapy dataset (51 pre-nivolumab vs. 58 on-nivolumab patients), the two methods showed surprisingly low agreement, with only 8% concordance in the differentially expressed genes they identified [30]. Permutation analysis confirmed high false positive rates: DESeq2 and edgeR mistakenly identified 30 and 233 genes, respectively, as differentially expressed in 50% of permuted datasets, in which no true differences existed [30].

The Wilcoxon rank-sum test consistently controlled FDR at target levels across sample sizes in the same evaluations [30]. While it requires larger sample sizes (n>8 per condition) to achieve sufficient power—an expected limitation for non-parametric methods—it outperformed parametric methods when sample sizes exceeded this threshold [30].

A key reason for DESeq2 and edgeR's performance issues in large datasets appears to be violation of the negative binomial model assumption, particularly due to outliers [30] [31]. As sample sizes increase, so does the probability of encountering outlier values that violate distributional assumptions, leading to spurious significance calls.

Impact on Heatmap Interpretation and Gene Selection

When selecting genes for differential expression heatmaps, improper FDR control directly impacts biological interpretation. Researchers investigating immune response differences in the immunotherapy dataset found that spurious DEGs identified by DESeq2 and edgeR in permuted data showed enrichment in immune-related GO terms [30]. This demonstrates how methodological artifacts can masquerade as biologically plausible findings, potentially misdirecting research efforts and experimental validation.

Heatmaps visualize patterns across selected genes and samples, with clustering grouping genes with similar expression profiles and samples with similar expression patterns [28] [32]. When a method with poor FDR control selects genes for heatmap inclusion, the resulting visualization may highlight technical artifacts rather than biological truth, compromising downstream analyses.

Figure 1: Decision workflow for selecting a DGE tool for heatmap research

Experimental Protocols

Protocol 1: Wilcoxon Rank-Sum Test for Large-Sample Studies

Purpose: To identify differentially expressed genes while maintaining robust FDR control in studies with large sample sizes (n>50 per group).

Materials:

- RNA-seq count data: Raw read counts for genes across all samples

- Sample metadata: Condition labels for each sample

- R statistical environment (v4.0 or higher)

- R packages: stats (wilcox.test for the Wilcoxon test, p.adjust for Benjamini-Hochberg correction); optionally qvalue (for q-value based FDR estimation)

Procedure:

Data Preprocessing:

- Filter out lowly expressed genes using filterByExpr from edgeR or a similar approach

- No normalization is applied to the counts before running the Wilcoxon test [30]

Differential Expression Testing:

- For each gene, perform Wilcoxon rank-sum test comparing expression values between conditions

- Extract p-values for all tested genes

- Apply Benjamini-Hochberg procedure to control false discovery rate

Gene Selection for Heatmap:

- Select genes with FDR < 0.05 (or appropriate threshold for your study)

- Consider adding a fold-change threshold if biological relevance requires it

Validation (Recommended):

- Compare results with alternative methods

- Perform permutation testing to verify FDR control in your specific dataset
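The per-gene testing step can be sketched with the standard library. The sketch computes a two-sided rank-sum p-value using the normal approximation with tie and continuity corrections, and, following the procedure above, it runs on filtered but unnormalized counts (hypothetical values). It should closely track R's `wilcox.test(x, y, exact = FALSE)`, but it is not guaranteed to match in every edge case. By default, R switches to an exact p-value for small samples without ties. The raw p-values would then go through the Benjamini-Hochberg step.

```python
from math import sqrt
from statistics import NormalDist
from typing import Dict, List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share the mean of their rank positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def rank_sum_p(x: Sequence[float], y: Sequence[float]) -> float:
    """Two-sided Wilcoxon rank-sum p-value (normal approximation, tie and continuity corrected)."""
    n1, n2 = len(x), len(y)
    if n1 == 0 or n2 == 0:
        raise ValueError("both groups need at least one sample")
    combined = list(x) + list(y)
    n = n1 + n2
    u = sum(average_ranks(combined)[:n1]) - n1 * (n1 + 1) / 2  # Mann-Whitney U for x
    tie_counts: Dict[float, int] = {}
    for v in combined:
        tie_counts[v] = tie_counts.get(v, 0) + 1
    tie_term = sum(t ** 3 - t for t in tie_counts.values())
    var = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    if var <= 0:
        return 1.0  # every value tied: no evidence of a shift
    z = max(abs(u - n1 * n2 / 2) - 0.5, 0.0) / sqrt(var)  # continuity correction, floored at 0
    return 2 * (1 - NormalDist().cdf(z))


# Hypothetical filtered raw counts: gene -> values per sample, plus condition labels
counts = {
    "G1": [120, 135, 150, 160, 141, 20, 25, 31, 18, 22],
    "G2": [50, 52, 49, 51, 48, 50, 53, 47, 52, 49],
}
labels = ["case"] * 5 + ["ctrl"] * 5
pvals = {}
for gene, row in counts.items():
    case = [v for v, lab in zip(row, labels) if lab == "case"]
    ctrl = [v for v, lab in zip(row, labels) if lab == "ctrl"]
    pvals[gene] = rank_sum_p(case, ctrl)
print(pvals)  # raw p-values; adjust with Benjamini-Hochberg before selecting genes
```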

Technical Notes: Because the Wilcoxon test is non-parametric, it is robust to outliers and does not rely on distributional assumptions, but it requires an adequate sample size (n>8 per condition) for sufficient power [30].

Protocol 2: Winsorization-Enhanced DESeq2/edgeR Analysis

Purpose: To utilize DESeq2 or edgeR while mitigating false positives through outlier handling.

Materials:

- RNA-seq count data: Raw read counts for genes across all samples

- Sample metadata: Condition labels for each sample

- R statistical environment (v4.0 or higher)

- R packages: DESeq2 or edgeR, dplyr

Procedure:

Winsorization Preprocessing:

- Calculate size factors using estimateSizeFactors (DESeq2) or calcNormFactors (edgeR)

- Divide raw counts by size factors to obtain normalized counts

- For each gene, identify values exceeding the 93rd-95th percentile

- Replace extreme values with the chosen percentile value (93rd recommended for edgeR, 95th for DESeq2) [31]

- Multiply winsorized normalized counts by size factors and round to the nearest whole number

Differential Expression Analysis:

- Input winsorized counts to DESeq2 or edgeR using standard pipelines

- Follow established workflows for model fitting and hypothesis testing

FDR Validation:

- Apply permutation testing by randomly shuffling condition labels

- Confirm that the number of significant findings in permuted data approximates expected FDR

Gene Selection for Heatmap:

- Apply standard significance thresholds (FDR < 0.05)

- Include winsorization parameters in method documentation
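The winsorization preprocessing can be sketched as follows. The sketch makes several assumptions: size factors are taken as given (for example, from DESeq2's estimateSizeFactors), each gene is capped at its own percentile computed across samples, only the upper tail is capped, and the percentile uses linear interpolation (R's default quantile type 7), since the exact rule used in [31] is not stated here. The default `q=0.95` corresponds to the DESeq2 recommendation above; 0.93 would match the edgeR recommendation.

```python
from math import floor
from typing import List, Sequence


def percentile(values: Sequence[float], q: float) -> float:
    """Linearly interpolated quantile (the rule R's quantile() uses by default, type 7)."""
    s = sorted(values)
    h = (len(s) - 1) * q
    lo = floor(h)
    hi = min(lo + 1, len(s) - 1)
    return s[lo] + (h - lo) * (s[hi] - s[lo])


def winsorize_counts(counts: List[List[int]], size_factors: Sequence[float],
                     q: float = 0.95) -> List[List[int]]:
    """counts is genes x samples; each gene is capped at its own q-th percentile."""
    out = []
    for row in counts:
        normalized = [c / s for c, s in zip(row, size_factors)]
        cap = percentile(normalized, q)  # upper tail only
        capped = [min(v, cap) for v in normalized]
        # Return to the raw-count scale and round, since DESeq2/edgeR expect integers
        out.append([int(round(v * s)) for v, s in zip(capped, size_factors)])
    return out


# Hypothetical counts (genes x samples); q=0.75 only to make the cap visible on 5 samples
counts = [[10, 12, 11, 9, 200], [5, 7, 6, 8, 6]]
size_factors = [1.0, 1.0, 1.0, 1.0, 1.0]
print(winsorize_counts(counts, size_factors, q=0.75))
```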

Technical Notes: Winsorization substantially reduces false positives for DESeq2 and edgeR, with edgeR demonstrating better FDR control post-winsorization [31]. The optimal percentile may vary by dataset and can be tuned using permutation studies.

Protocol 3: Permutation Testing for FDR Validation

Purpose: To empirically validate false discovery rate control for any DGE method.

Materials:

- Processed RNA-seq data (normalized or raw counts depending on method)

- Condition labels

- Computing resources capable of handling multiple iterations

Procedure:

Data Preparation:

- Prepare dataset exactly as intended for primary analysis

- Preserve all normalization and filtering steps

Label Permutation:

- Randomly shuffle condition labels among samples

- Maintain original sample sizes for each condition

- Repeat 100-1000 times to generate permuted datasets

Analysis of Permuted Data:

- Apply your DGE method to each permuted dataset

- Record the number of significant genes identified for each permutation

FDR Estimation:

- Calculate the average number of significant genes found in permuted datasets

- Divide this by the number of significant genes in the original analysis

- This ratio provides an empirical estimate of the FDR
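The permutation estimate above can be sketched independently of any specific DE tool. Here `count_significant` is a hypothetical callback that wraps your full pipeline and returns the number of genes called significant for a given label vector. The toy rule in the example (mean difference > 5) exists only to make the sketch runnable. Shuffling a copy of the labels preserves the number of samples per condition, and a fixed seed makes the estimate reproducible.

```python
import random
from statistics import mean
from typing import Callable, List, Sequence


def permutation_fdr(labels: Sequence[str],
                    count_significant: Callable[[List[str]], int],
                    n_perm: int = 100, seed: int = 0) -> float:
    """Mean number of hits under shuffled labels divided by the observed number of hits."""
    observed = count_significant(list(labels))
    if observed == 0:
        return float("nan")  # nothing was called, so the ratio is undefined
    rng = random.Random(seed)  # fixed seed for reproducibility
    null_hits = []
    for _ in range(n_perm):
        shuffled = list(labels)
        rng.shuffle(shuffled)  # shuffling keeps the number of samples per condition
        null_hits.append(count_significant(shuffled))
    return mean(null_hits) / observed


# Toy stand-in for a full DE pipeline (hypothetical rule and data)
expr = {
    "G1": [20, 22, 21, 5, 6, 4],
    "G2": [10, 11, 9, 10, 12, 11],
    "G3": [30, 28, 31, 12, 10, 11],
}


def count_significant(labels: List[str]) -> int:
    hits = 0
    for row in expr.values():
        a = [v for v, lab in zip(row, labels) if lab == "A"]
        b = [v for v, lab in zip(row, labels) if lab == "B"]
        hits += int(abs(mean(a) - mean(b)) > 5)
    return hits


labels = ["A", "A", "A", "B", "B", "B"]
print(permutation_fdr(labels, count_significant, n_perm=200))
```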

Technical Notes: For methods incorporating normalization, ensure permutation occurs after normalization or normalize after permutation to avoid creating artificial differences [33]. This protocol is particularly valuable when applying methods to new data types or when extending established methods to larger sample sizes.

Research Reagent Solutions

Table 2: Essential Tools for Differential Expression Analysis

| Tool/Resource | Function | Application Notes |
|---|---|---|
| DESeq2 | Parametric DGE analysis based on negative binomial distribution | Use with winsorization pre-processing for large samples; provides interpretable log2 fold changes [31] |
| edgeR | Parametric DGE analysis using negative binomial models | Shows better FDR control than DESeq2 after winsorization; 93rd percentile recommended [31] |
| Wilcoxon Rank-Sum Test | Non-parametric DGE analysis | Preferred for large-sample studies (>50 per group); robust to outliers and distribution violations [30] |
| dearseq | Non-parametric DGE analysis using variance component score test | Handles complex designs; competitive performance for large human population samples [33] |
| DElite | Integrated framework combining multiple DGE tools | Provides unified output from DESeq2, edgeR, limma, dearseq, and Wilcoxon; enables method comparison [34] |
| Winsorization | Outlier replacement technique | Critical pre-processing step for parametric methods; replaces extreme values with percentile cutoffs [31] |
| TMM Normalization | Between-sample normalization method | Accounts for composition biases; used by edgeR, limma, and dearseq [35] [34] |
| Median of Ratios | Between-sample normalization method | Default in DESeq2; based on geometric means [34] |

Integrating DGE Results with Heatmap Visualization

From Gene Selection to Heatmap Creation

The genes selected through differential expression analysis become the input for heatmap visualization, which displays expression patterns across samples and conditions [28] [32]. In a standard differential expression heatmap, each row represents a gene, each column represents a sample, and color intensity represents normalized expression values [28] [32].

Clustered heatmaps group genes with similar expression profiles together, revealing co-regulated genes and biological patterns [28] [32]. This clustering can identify biological signatures associated with particular conditions, making methodological choices in the preceding DGE analysis critical for generating meaningful visualizations.

Methodological Recommendations for Heatmap Research

Figure 2: Complete workflow from raw data to biological interpretation

Based on current evidence, we recommend the following approach for selecting genes for differential expression heatmaps:

For population-level studies with large sample sizes (n>50 per group):

- Use the Wilcoxon rank-sum test as the primary method for its robust FDR control

- Validate findings with winsorization-enhanced edgeR or DESeq2

For studies with moderate sample sizes (n=10-50 per group):

- Apply winsorization-enhanced edgeR (93rd percentile) or DESeq2 (95th percentile)

- Compare results with Wilcoxon test when sample size permits

For all studies:

- Implement permutation testing to empirically verify FDR control

- Document methodological choices transparently

- Consider using integrated frameworks like DElite to compare multiple methods [34]

The selection of DGE methodology fundamentally shapes which biological signals emerge in downstream visualizations and interpretations. By matching methodological choices to study characteristics—particularly sample size and data quality—researchers can ensure that their differential expression heatmaps highlight biologically meaningful patterns rather than statistical artifacts.

The selection of genes for differential expression heatmaps is a critical step that bridges statistical rigor with biological interpretation in transcriptomic research. This protocol details a structured methodology for integrating false discovery rate (FDR), p-values, and log2 fold-change thresholds to identify biologically meaningful genes while controlling for false positives. We provide implementable workflows using established R packages and validation techniques to ensure the selected gene sets robustly represent underlying biological phenomena for visualization and further analysis.

In high-throughput transcriptomic studies, reliably detecting differentially expressed genes (DEGs) requires a careful balance between statistical significance and biological relevance [19]. While statistical tests effectively identify genes with non-zero expression changes, they often lack inherent mechanisms to ensure these changes are large enough to be biologically meaningful [36]. This challenge is particularly acute when selecting genes for visualization in heatmaps, where an inappropriate threshold can either obscure true biological signals with excessive noise or exclude potentially important findings.

The disconnect between mathematical and biological concepts of differential expression necessitates formal approaches that incorporate both statistical confidence and magnitude of change [36]. Early microarray studies utilized simple fold-change cutoffs, but these ignored variability and reproducibility concerns. Modern approaches combine statistical tests with fold-change thresholds to identify more biologically relevant gene sets [36]. This protocol synthesizes current best practices for establishing these dual-threshold criteria, with particular emphasis on their application to heatmap visualization for drug development and basic research.

Threshold Selection Strategies

Statistical Significance Foundations

Statistical testing in differential expression analysis typically evaluates whether the true differential expression differs from zero. The most widely adopted approaches include:

- P-values: Measure the probability of observing data at least as extreme as those actually observed, assuming the null hypothesis (no differential expression) is true

- False Discovery Rate (FDR): Controls the expected proportion of false positives among statistically significant results, with Benjamini-Hochberg being the most common implementation

However, these statistical measures alone permit genes with arbitrarily small fold-changes to be declared statistically significant, potentially identifying mathematically valid but biologically irrelevant changes [36].

Incorporating Magnitude Thresholds

The TREAT (t-tests relative to a threshold) methodology provides a formal statistical framework for testing whether differential expression exceeds a predefined log-fold-change threshold [36]. This approach tests the thresholded null hypothesis H0: |β_g| ≤ τ against the alternative H1: |β_g| > τ, where β_g is the true log-fold-change of gene g and τ is a pre-specified threshold below which differential expression is not considered biologically meaningful.
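Because the thresholded null is composite, a p-value can be computed at its boundary, |β_g| = τ. The sketch below assumes that the estimate \(\hat\beta_g\) has standard error \(s_g\) and that the standardized statistic follows a t-distribution with \(d\) degrees of freedom. Summing the two tails at β_g = τ then gives:

```latex
\begin{aligned}
p_g &= \Pr\!\left(\hat\beta_g \ge |\hat\beta_g^{\mathrm{obs}}| \,\middle|\, \beta_g = \tau\right)
     + \Pr\!\left(\hat\beta_g \le -|\hat\beta_g^{\mathrm{obs}}| \,\middle|\, \beta_g = \tau\right) \\
    &= \Pr\!\left(T \ge \frac{|\hat\beta_g^{\mathrm{obs}}| - \tau}{s_g}\right)
     + \Pr\!\left(T \ge \frac{|\hat\beta_g^{\mathrm{obs}}| + \tau}{s_g}\right),
\qquad T \sim t_d
\end{aligned}
```

Setting τ = 0 recovers the usual two-sided p-value, \(2\Pr(T \ge |\hat\beta_g^{\mathrm{obs}}|/s_g)\). For τ > 0, a gene whose estimated change lies only slightly above τ no longer reaches significance. The limma implementation may differ in detail, for example because it uses moderated variance estimates for \(s_g\).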

Table 1: Common Threshold Combinations for DEG Selection

| Application Context | P-value/FDR Threshold | Log2FC Threshold | Rationale |
|---|---|---|---|
| Exploratory Discovery | FDR < 0.05 | 1.0-1.5 (2-2.8-fold) | Balanced sensitivity for novel findings |
| Targeted Validation | FDR < 0.01 | 1.5-2.0 (2.8-4-fold) | Higher stringency for confirmation |
| Clinical Biomarker Identification | FDR < 0.001 | ≥2.0 (≥4-fold) | Maximum specificity for translational applications |
| Pathway-Focused Analysis | FDR < 0.1 | 0.5-1.0 (1.4-2-fold) | Context-dependent for coordinated small changes |

Practical Implementation Considerations

The biological significance of a given fold-change threshold depends on both the gene and experimental context [36]. However, it is reasonable to assume a minimum fold-change threshold below which differential expression is unlikely to be biologically interesting for any gene. Combination approaches that apply both statistical significance and fold-change cutoffs typically identify more biologically meaningful gene sets than p-values alone [36].

For studies with very small sample sizes (e.g., n=3 per group), statistical power is inherently limited, and non-parametric tests like Mann-Whitney U may be preferable if data are not normally distributed [37].

Experimental Protocol: Integrated DEG Identification

Data Preprocessing and Quality Control

Materials:

- Raw RNA-seq count data or normalized expression data

- Sample metadata with experimental group assignments

- R statistical environment with appropriate packages

Procedure:

- Quality Assessment: Perform standard RNA-seq QC checks including examination of read distributions, outlier detection, and batch effect assessment

- Normalization: Apply appropriate normalization method (e.g., TMM for edgeR, median-of-ratios for DESeq2, or quantile normalization for microarray data)

- Data Filtering: Remove genes with low expression across samples (e.g., genes with counts <10 in >90% of samples)
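Two of the preprocessing steps above can be sketched with the standard library: median-of-ratios size factors and the example low-expression filter. The first function follows the median-of-ratios idea used by DESeq2's default size-factor estimation. It skips genes with a zero count in any sample (whose geometric mean would be zero) and takes the median on the log scale. It is an illustration, not a replacement for the package. The counts are hypothetical.

```python
from math import exp, log
from statistics import median
from typing import List


def median_of_ratios(counts: List[List[int]]) -> List[float]:
    """counts is genes x samples (raw). Returns one size factor per sample."""
    n_samples = len(counts[0])
    log_ratios: List[List[float]] = [[] for _ in range(n_samples)]
    for row in counts:
        if min(row) == 0:
            continue  # geometric mean would be 0, so the gene cannot serve as a reference
        log_geo_mean = sum(log(c) for c in row) / n_samples
        for j, c in enumerate(row):
            log_ratios[j].append(log(c) - log_geo_mean)
    # Median taken on the log scale, then exponentiated
    return [exp(median(r)) for r in log_ratios]


def filter_low_expression(counts: List[List[int]], min_count: int = 10,
                          max_fraction_below: float = 0.9) -> List[List[int]]:
    """Drop genes with count < min_count in more than max_fraction_below of samples."""
    return [row for row in counts
            if sum(c < min_count for c in row) / len(row) <= max_fraction_below]


raw = [[10, 20], [30, 60], [5, 10], [0, 3]]
print(median_of_ratios(raw))  # the gene with a zero count is skipped
print(filter_low_expression(raw))
```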

Differential Expression Analysis

Method 1: Standard DESeq2/edgeR Workflow

- Fit appropriate statistical model accounting for experimental design

- Calculate p-values and FDR-adjusted p-values (Benjamini-Hochberg)

- Apply independent filtering to remove low-count genes

- Extract results including log2 fold-changes and statistical metrics

Method 2: TREAT Implementation

- Specify biologically relevant log-fold-change threshold (τ) based on experimental context

- Perform thresholded hypothesis testing using TREAT methodology [36]

- Obtain p-values specifically testing for differential expression beyond the threshold

Gene Selection for Heatmap Visualization

- Primary Filtering: Apply simultaneous thresholds for statistical significance (FDR < 0.05) and magnitude (|log2FC| > 1)

- Secondary Prioritization: For large gene lists, apply more stringent criteria (e.g., FDR < 0.01 and |log2FC| > 1.5)

- Cluster-Based Selection: When using tools like DgeaHeatmap, select top variable genes within expression clusters [3]
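The primary and secondary filters can be expressed as one function with two parameter settings. This is a minimal sketch over hypothetical results. Ordering the hits by |log2FC| is an assumption made here so that truncating a long list keeps the largest changes.

```python
from typing import Dict, List, Optional, Tuple


def select_heatmap_genes(results: Dict[str, Tuple[float, float]], max_fdr: float = 0.05,
                         min_abs_lfc: float = 1.0, max_genes: Optional[int] = None) -> List[str]:
    """results maps gene -> (FDR, log2FC). Both thresholds must pass."""
    hits = [g for g, (fdr, lfc) in results.items()
            if fdr < max_fdr and abs(lfc) > min_abs_lfc]
    # Largest |log2FC| first, then smaller FDR, then name for a stable order
    hits.sort(key=lambda g: (-abs(results[g][1]), results[g][0], g))
    return hits if max_genes is None else hits[:max_genes]


results = {
    "G1": (0.001, 2.0),
    "G2": (0.030, -1.2),
    "G3": (0.040, 0.8),
    "G4": (0.200, 3.0),
    "G5": (0.005, -1.8),
}
primary = select_heatmap_genes(results)                                   # FDR < 0.05, |log2FC| > 1
secondary = select_heatmap_genes(results, max_fdr=0.01, min_abs_lfc=1.5)  # stricter cut for long lists
print(primary, secondary)
```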

Diagram 1: Gene selection workflow for heatmap visualization

Visualization and Validation Workflow

Heatmap Generation with DgeaHeatmap

The DgeaHeatmap R package provides streamlined functions for creating publication-ready heatmaps from transcriptomic data [3]. Key features include:

- Support for both normalized and raw count data from platforms including Nanostring GeoMx DSP

- Z-score scaling and k-means clustering for pattern identification

- Customizable annotation and server-independent analysis

Implementation Protocol:

- Data Input: Load normalized expression matrix for selected DEGs

- Data Transformation: Apply Z-score scaling across genes or samples as appropriate

- Clustering Determination: Generate elbow plot to determine optimal cluster number (k)

- Heatmap Generation: Create an annotated heatmap using the adv_heatmap function

- Pattern Identification: Identify gene clusters with coherent expression patterns
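DgeaHeatmap performs Z-score scaling and k-means clustering internally. The generic Python sketch below is not the package's code, and its function names are hypothetical. It shows what those steps compute:

- each gene (row) is scaled to mean 0 and standard deviation 1 across samples
- Lloyd's k-means is run for a range of k
- the total within-cluster sum of squares is returned for an elbow plot

Centroids are seeded with the first k rows so results are reproducible. This is an assumption: real implementations typically use random or k-means++ starts with several restarts.

```python
import math
import statistics

def zscore_rows(matrix):
    """Scale each gene (row) to mean 0 and SD 1 across samples; constant rows become 0."""
    scaled = {}
    for gene, row in matrix.items():
        mu, sd = statistics.mean(row), statistics.stdev(row)
        scaled[gene] = [(x - mu) / sd if sd > 0 else 0.0 for x in row]
    return scaled

def _assign(points, centroids):
    """Index of the nearest centroid (Euclidean distance) for every point."""
    return [min(range(len(centroids)), key=lambda c: math.dist(p, centroids[c])) for p in points]

def kmeans(points, k, iters=100):
    """Lloyd's algorithm; returns (labels, within-cluster sum of squares)."""
    centroids = [list(p) for p in points[:k]]
    for _ in range(iters):
        labels = _assign(points, centroids)
        new = []
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            # Keep the old centroid if a cluster ends up empty.
            new.append([statistics.mean(col) for col in zip(*members)] if members else centroids[c])
        if new == centroids:
            break
        centroids = new
    labels = _assign(points, centroids)
    wss = sum(math.dist(p, centroids[lab]) ** 2 for p, lab in zip(points, labels))
    return labels, wss

def elbow(points, max_k):
    """Within-cluster sum of squares for k = 1..max_k, for an elbow plot."""
    return {k: kmeans(points, k)[1] for k in range(1, max_k + 1)}
```

The k at which the curve stops dropping steeply is a reasonable candidate for the number of gene clusters to display.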

Visual Validation Techniques

Parallel Coordinate Plots: Visualize expression patterns of selected genes across all samples to verify consistent patterns within experimental groups and identify potential outliers [19].

Scatterplot Matrices: Compare variability between replicates with variability between treatments to confirm the expected data structure, in which replicate pairs correlate more tightly than pairs drawn from different treatments [19].
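A quick numeric complement to the scatterplot matrix compares average sample-to-sample correlations within and between experimental groups. This is a minimal Python sketch, not part of bigPint. It assumes each sample's normalized expression is stored as a list in a shared gene order and that group labels come from the sample metadata. The helper names are hypothetical.

```python
import itertools
import math
import statistics

def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = statistics.mean(x), statistics.mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def within_vs_between(expr, groups):
    """Mean correlation of sample pairs in the same group vs. in different groups.

    expr: dict sample -> list of expression values (same gene order for all samples).
    groups: dict sample -> experimental group label.
    """
    within, between = [], []
    for a, b in itertools.combinations(expr, 2):
        r = pearson(expr[a], expr[b])
        (within if groups[a] == groups[b] else between).append(r)
    return statistics.mean(within), statistics.mean(between)
```

If the within-group mean is not clearly higher than the between-group mean, inspect individual samples for outliers before trusting the heatmap.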

Diagram 2: Multi-perspective visualization validation

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item | Function/Application | Implementation Notes |
|---|---|---|
| DgeaHeatmap R Package | Streamlined heatmap generation from transcriptomic data | Supports Z-score scaling, k-means clustering; compatible with Nanostring GeoMx DSP data [3] |
| TREAT Algorithm | Formal testing of differential expression relative to fold-change threshold | Available in limma package; specifies biologically relevant τ threshold [36] |
| bigPint Package | Interactive visualization of RNA-seq data | Identifies normalization issues, DEG designation problems through parallel coordinate plots [19] |
| DESeq2/edgeR | Statistical testing for differential expression | Standard tools for RNA-seq analysis; require raw count data for normalization factors [3] |
| exvar R Package | Integrated gene expression and genetic variation analysis | User-friendly interface with visualization apps; supports multiple species [38] |

Troubleshooting and Optimization

Common Challenges and Solutions

- Too Few Genes Passing Thresholds: Consider relaxing FDR threshold to 0.1 or reducing log2FC requirement; investigate potential normalization issues

- Too Many Genes for Clear Visualization: Apply more stringent thresholds (FDR < 0.01, higher log2FC) or select top variable genes within clusters

- Poor Cluster Separation in Heatmap: Re-evaluate threshold choices; consider whether biological effect sizes warrant current thresholds

- Inconsistent Replicate Patterns: Examine raw data quality and potential confounding factors; use parallel coordinate plots to identify outlier samples [19]

Threshold Optimization Strategy

- Begin with standard thresholds (FDR < 0.05, |log2FC| > 1)

- Assess resulting gene set size and composition

- Adjust thresholds based on biological context and visualization requirements

- Validate selected genes through complementary visualizations (volcano plots, MA plots)

- Document rationale for final threshold selections for reproducibility
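The "assess, adjust, document" loop above is easier to justify when the gene counts for several threshold combinations are tabulated together. This Python sketch assumes results are (adjusted p-value, log2FC) pairs, with None for missing adjusted p-values. The grid values are illustrative and are not recommendations.

```python
def threshold_grid(results, fdrs=(0.1, 0.05, 0.01), lfcs=(0.5, 1.0, 1.5, 2.0)):
    """Count genes passing each (FDR, |log2FC|) combination.

    results: iterable of (padj, log2fc) pairs; padj may be None.
    Returns dict (fdr, lfc) -> number of genes passing both thresholds.
    """
    results = list(results)
    table = {}
    for fdr in fdrs:
        for lfc in lfcs:
            table[(fdr, lfc)] = sum(
                1 for padj, l2fc in results
                if padj is not None and padj < fdr and abs(l2fc) > lfc)
    return table
```

Saving this table alongside the final choice records why a given cut-off was chosen, which supports the reproducibility step above.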

Effective gene selection for differential expression heatmaps requires integrating statistical rigor with biological relevance. By applying the dual-threshold approach outlined in this protocol, which combines a controlled false discovery rate with a biologically informed fold-change cutoff, researchers can generate heatmaps that faithfully represent meaningful patterns in transcriptomic data. The workflows above, built on established tools such as DgeaHeatmap and TREAT, offer a reproducible path from raw data to interpretable visualizations suitable for publication and for hypothesis generation in drug development and basic research.

In differential gene expression (DGE) analysis, researchers face a critical strategic decision: whether to prioritize genes based solely on statistical significance (the "top DEGs" approach) or to focus on a pre-defined set of biological interest (the "genes of interest" approach). This choice fundamentally influences subsequent analyses, including the creation of differential expression heatmaps for research publication. With growing concerns about the reproducibility of DEGs identified in individual studies, particularly in complex diseases like Alzheimer's and schizophrenia [18], establishing a robust gene shortlisting strategy has never been more important. This protocol provides a structured framework for making this strategic decision and executing the chosen approach effectively.

Core Concepts and Definitions

Differentially Expressed Genes (DEGs) are genes identified through statistical testing as exhibiting significant expression differences between experimental conditions, such as case versus control groups. The "Top DEGs" approach selects genes based on statistical metrics like p-values or false discovery rates (FDR), typically choosing a predetermined number of the most significantly altered genes. In contrast, the "Genes of Interest" approach focuses on a biologically relevant gene set selected prior to analysis based on existing literature, known pathways, or specific hypotheses.
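The two shortlisting strategies differ mainly in where the gene list comes from, as this Python sketch shows. It assumes the same hypothetical result records used elsewhere in this guide (dictionaries with "gene", "padj" and "log2fc"). Breaking ties by larger |log2FC| is an illustrative choice, not a standard rule.

```python
def top_degs(results, n):
    """'Top DEGs': the n genes with the smallest adjusted p-values.

    Ties are broken by larger |log2FC|; genes without an adjusted p-value are skipped.
    """
    ranked = sorted((r for r in results if r["padj"] is not None),
                    key=lambda r: (r["padj"], -abs(r["log2fc"])))
    return [r["gene"] for r in ranked[:n]]

def genes_of_interest(results, panel):
    """'Genes of interest': keep a predefined panel in panel order, regardless of significance.

    Returns (panel genes found in the results, panel genes missing from them).
    """
    measured = {r["gene"] for r in results}
    present = [g for g in panel if g in measured]
    missing = [g for g in panel if g not in measured]
    return present, missing
```

Reporting the missing panel genes is useful because a pre-defined gene set may include genes that were not measured or were filtered out upstream.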

Decision Framework: Choosing Your Shortlisting Strategy

The choice between these approaches depends on multiple factors, including research objectives, data characteristics, and validation resources. The following table outlines key decision criteria:

Table 1: Strategic Decision Framework for Gene Shortlisting Approaches

| Decision Factor | Prioritize Top DEGs | Focus on Genes of Interest |
|---|---|---|
| Primary Research Goal | Discovery-based research; hypothesis generation | Targeted validation; pathway-focused analysis |
| Data Characteristics | Large sample sizes; high statistical power | Smaller datasets; limited statistical power |
| Available Prior Knowledge | Limited field knowledge; exploratory studies | Substantial existing literature; established pathways |
| Downstream Analysis | Unbiased clustering; novel biomarker identification | Directed validation; mechanistic studies |
| Validation Resources | Ample resources for extensive follow-up | Limited resources requiring focused experimentation |

Quantitative Comparison of Method Performance

Recent studies have quantified the performance of different gene prioritization strategies, particularly highlighting the power of integrated approaches:

Table 2: Performance Metrics of Gene Prioritization Methods Across Studies

| Method Category | Representative Methods | Reported Performance | Application Context |
|---|---|---|---|
| Single-omics Analysis | t-test; SAM; CATT [39] | High false-positive rates; limited reproducibility [39] [18] | Initial screening; resource-limited studies |
| Meta-analysis | SumRank [18] | Substantially improved predictive power (AUC: 0.68-0.85) vs. individual studies [18] | Neurodegenerative diseases; COVID-19 studies |
| Multi-omics Integration | Improved TOPSIS; CNN models [39] [40] | Precision: 72.9%; Recall: 73.5%; F1-Measure: 73.4% in swine model [40] | Complex traits; non-model organisms |
| Rank-based Integration | Rank Product [39] | Identifies consistently ranked genes across datasets | Cross-study validation |
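Rank-based integration can be illustrated with a simplified Rank Product calculation:

- within each study, genes are ranked by log2 fold change (rank 1 = most up-regulated)
- each gene's score is the geometric mean of its ranks across studies

Genes that rank consistently near the top therefore receive the lowest scores. This Python sketch makes three assumptions. The input is a list of per-study dictionaries. Only genes measured in every study are scored. The permutation-based significance estimate of the published method is not included.

```python
import math

def rank_product(log2fc_per_study):
    """Geometric mean of within-study ranks (1 = most up-regulated); lower is better.

    log2fc_per_study: list of dicts, gene -> log2 fold change, one dict per study.
    """
    # Sorting the gene names first makes tie-breaking deterministic.
    common = sorted(set.intersection(*(set(s) for s in log2fc_per_study)))
    log_rank_sum = {g: 0.0 for g in common}
    for study in log2fc_per_study:
        ranked = sorted(common, key=lambda g: study[g], reverse=True)
        for rank, gene in enumerate(ranked, start=1):
            log_rank_sum[gene] += math.log(rank)
    k = len(log2fc_per_study)
    return {g: math.exp(s / k) for g, s in log_rank_sum.items()}
```

For down-regulated genes, the same calculation is run with ranks assigned in ascending order of log2 fold change.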

Experimental Protocols

Protocol 1: Top DEGs Identification Using RumBall RNA-seq Platform

This protocol enables identification of statistically significant DEGs starting from FASTQ files [41].

Materials and Reagents

- Computational Resources: Computer with at least 8 GB of RAM and 100 GB of storage

- Software Dependencies: Docker, RumBall platform, command line interface

- Reference Data: Species-appropriate reference genome and annotation files

Step-by-Step Procedure

- Environment Setup: Install Docker and download the RumBall container image following developer documentation.

- Data Acquisition: Place FASTQ files in designated input directory. Use built-in scripts to obtain external reference datasets and annotations if needed.

- Read Mapping and Quantification: Execute the primary RumBall workflow, which internally uses established tools for:

- Read alignment to reference genome

- Transcript assembly and quantification

- Generation of count matrices

- Differential Expression Analysis: Run statistical modeling within RumBall to identify DEGs with parameters including:

- Minimum fold-change threshold (typically 1.5-2x)

- FDR cutoff (typically 0.05-0.1)

- Result Interpretation: Generate visualization plots (MA plots, volcano plots) and DEG tables for downstream analysis.

- Functional Enrichment: Perform gene ontology (GO) and pathway enrichment analysis on significant DEGs.

Technical Notes

- The entire process from FASTQ files to DEG identification can be completed in a few steps within the self-contained Docker system [41].

- RumBall internally utilizes popular RNA-seq analysis tools, providing a comprehensive yet accessible analysis pipeline.

Protocol 2: Gene Prioritization Through Multi-omics Integration

This meta-analysis strategy integrates evidence from multiple genomic resources to improve prioritization of biologically relevant DEGs [39].

Materials

- Multi-omics Data: Gene expression profiles, SNP genotype data, eQTL data

- Computational Tools: R or Python environment with statistical packages

- Reference Databases: Pathway databases (KEGG, Reactome), functional annotation resources

Step-by-Step Procedure

Data Collection and Preprocessing:

- Obtain gene expression data (e.g., microarray or RNA-seq)

- Acquire SNP genotype data from GWAS studies

- Gather eQTL data linking genetic variations to expression changes

Individual Resource Scoring:

- For gene expression data: Calculate significance using t-test or SAM

- For SNP data: Apply Cochran-Armitage trend test (CATT)

- For eQTL data: Map SNPs to biologically relevant genes
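The Cochran-Armitage trend test used for SNP scoring can be written out directly for a 2 x 3 table of case and control genotype counts. This Python sketch uses the standard asymptotic form with additive weights (0, 1, 2) and a normal approximation for the p-value. The weights and the function name are assumptions for illustration.

```python
import math
from statistics import NormalDist

def cochran_armitage(case_counts, control_counts, weights=(0, 1, 2)):
    """Cochran-Armitage trend test; returns (Z statistic, two-sided p-value).

    case_counts / control_counts: genotype counts in the same order, e.g. (AA, Aa, aa).
    """
    r1, r2 = sum(case_counts), sum(control_counts)  # total cases, total controls
    n = r1 + r2
    cols = [a + b for a, b in zip(case_counts, control_counts)]  # genotype totals
    k = len(cols)
    t = sum(w * (a * r2 - b * r1) for w, a, b in zip(weights, case_counts, control_counts))
    var = sum(weights[i] ** 2 * cols[i] * (n - cols[i]) for i in range(k))
    var -= 2 * sum(weights[i] * weights[j] * cols[i] * cols[j]
                   for i in range(k) for j in range(i + 1, k))
    var *= r1 * r2 / n
    z = t / math.sqrt(var)
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p
```

The resulting Z or -log10(p) values can then serve as the SNP-resource column of the gene score matrix used in the integration step.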

Score Normalization and Integration:

- Normalize scores from different resources using Euclidean normalization

- Apply improved TOPSIS method to calculate integrated gene scores:

- Construct gene score matrix (genes × resources)

- Identify positive and negative ideal solutions

- Calculate relative distances for each gene

- Alternative integration methods: Rank Product or Fisher's method
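The TOPSIS steps listed above can be sketched for a genes × resources score matrix. This is classical TOPSIS, not the improved variant cited in [39]: the missing-value handling of that variant is not reproduced. The sketch also assumes:

- all scores are non-negative, with higher values meaning stronger evidence (for example -log10 p-values)
- every resource is weighted equally

```python
import math

def topsis(scores):
    """Classical TOPSIS relative closeness for each gene (higher = better).

    scores: dict gene -> list of non-negative evidence scores, one per resource.
    All resources are treated as benefit criteria with equal weights.
    """
    genes = list(scores)
    m = len(scores[genes[0]])
    # Euclidean (vector) normalization of each resource column; avoid dividing by zero.
    norms = [math.sqrt(sum(scores[g][j] ** 2 for g in genes)) or 1.0 for j in range(m)]
    norm = {g: [scores[g][j] / norms[j] for j in range(m)] for g in genes}
    positive_ideal = [max(norm[g][j] for g in genes) for j in range(m)]
    negative_ideal = [min(norm[g][j] for g in genes) for j in range(m)]
    closeness = {}
    for g in genes:
        d_pos = math.dist(norm[g], positive_ideal)
        d_neg = math.dist(norm[g], negative_ideal)
        closeness[g] = d_neg / (d_pos + d_neg) if d_pos + d_neg > 0 else 0.0
    return closeness
```

Sorting genes by decreasing closeness gives the integrated ranking used for candidate gene selection.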

Candidate Gene Selection:

- Select top-ranking genes based on integrated scores

- Apply additional filtering based on biological relevance

Technical Notes

- The improved TOPSIS method is particularly effective as it is not affected by missing values in the score matrix [39].

- This approach has demonstrated superior performance in identifying disease-related genes for complex conditions like prostate and lung cancers [39].

Visual Decision Workflow

Diagram 3: Strategic decision process for gene shortlisting

Table 3: Key Research Reagent Solutions for Gene Prioritization Studies

| Resource Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| Analysis Platforms | RumBall [41]; ISwine [40] | Integrated DEG identification and prioritization | RNA-seq analysis; multi-omics integration |
| Statistical Tools | DESeq2; t-test; CATT [39] [18] | Differential expression analysis; statistical testing | Single-omics analysis; GWAS studies |
| Multi-omics Databases | ISwine knowledgebase [40]; GTEx | Housing genomic, transcriptomic, and QTX data | Cross-validation; meta-analysis |
| Integration Algorithms | Improved TOPSIS [39]; SumRank [18] | Combining evidence from multiple resources | Enhanced reproducibility; reduced false positives |
| Visualization Tools | ggplot2; pheatmap; ComplexHeatmap | Generating publication-quality heatmaps | Data interpretation; result communication |

Advanced Integration Strategy Diagram

Diagram 4: Integrated approach combining both shortlisting strategies, for studies requiring maximum reproducibility

Implementation Guidelines

When Reproducibility is Paramount

For studies where reproducibility is a primary concern, particularly in complex neurodegenerative diseases like Alzheimer's and Parkinson's disease, the SumRank meta-analysis method has demonstrated substantial improvements in identifying DEGs with predictive power [18]. This non-parametric approach prioritizes genes showing consistent relative differential expression ranks across multiple datasets rather than relying on significance thresholds from individual studies.
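The general idea of rewarding consistent relative ranks across datasets can be illustrated with a generic sum-of-relative-ranks aggregation. This Python sketch is written in the spirit of the description above. It is not the published SumRank implementation, and it omits that method's significance assessment. The input layout, the handling of genes absent from a dataset (they receive the worst relative rank, 1.0), and the function name are all assumptions.

```python
def sum_relative_ranks(pvalues_per_dataset):
    """Aggregate per-dataset relative ranks; lower totals = more consistent DE evidence.

    pvalues_per_dataset: list of dicts, gene -> p-value, one dict per dataset.
    Within each dataset, rank / number of genes makes datasets of different
    sizes comparable. Returns (gene, total) pairs sorted from best to worst.
    """
    all_genes = set().union(*pvalues_per_dataset)
    totals = {g: 0.0 for g in all_genes}
    for ds in pvalues_per_dataset:
        # Sort names first so ties in p-value are broken deterministically.
        ordered = sorted(sorted(ds), key=lambda g: ds[g])
        n = len(ordered)
        relative = {g: (i + 1) / n for i, g in enumerate(ordered)}
        for g in all_genes:
            totals[g] += relative.get(g, 1.0)
    return sorted(totals.items(), key=lambda kv: (kv[1], kv[0]))
```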

For Non-Model Organisms

In non-model organisms where annotated gene sets may be limited, deep learning approaches integrating multi-omics data have shown promising results. Convolutional neural network models achieving >70% precision and recall in swine demonstrate the potential of these methods despite limited prior biological knowledge [40].