Integrating Multi-Omics Datasets: A Comprehensive Guide from Foundational Principles to Advanced Clinical Applications

Abstract

This article provides a comprehensive roadmap for researchers and drug development professionals navigating the complex landscape of multi-omics data integration. It covers foundational principles, from defining omics layers and their interactions to exploring the latest spatial multi-omics technologies. The guide delves into contemporary methodological approaches, including statistical frameworks like MOFA+ and deep learning models such as Graph Neural Networks, with concrete applications in cancer subtyping and neurodegenerative disease research. It addresses critical troubleshooting challenges like data heterogeneity and missing values, and offers solutions for optimization. Finally, it presents rigorous validation and comparative analysis frameworks to evaluate method performance and ensure biological relevance, empowering scientists to leverage integrated multi-omics for transformative discoveries in precision medicine.

Demystifying Multi-Omics: Core Concepts, Technologies, and Exploratory Data Analysis

The completion of the human genome sequence marked a paradigm shift in biological sciences, enabling the development of technologies that generate massive molecular datasets from a single biological sample [1] [2]. These fields, collectively known as "omics," provide unprecedented resolution for characterizing biological systems at multiple molecular levels. Omics technologies share the suffix "-omics" and study collective sets of biological molecules, or "-omes," such as the genome, proteome, and metabolome [2]. The primary omics fields include genomics, transcriptomics, proteomics, metabolomics, and epigenomics, each focusing on different molecular layers that define cellular function and physiological states [1].

In translational medicine, integrating multiple omics datasets has proven powerful for detecting disease-associated molecular patterns, identifying patient subtypes, improving diagnosis and prognosis, predicting drug response, and understanding regulatory processes [3]. This integrated approach, known as multi-omics, recognizes that biological systems cannot be fully understood by studying any single molecular layer in isolation. The following sections define each omics field, detail their experimental protocols, and demonstrate their integration within a comprehensive research framework.

Defining the Omics Fields: Core Concepts and Technologies

The table below summarizes the key characteristics, molecular targets, and dominant technologies for the five major omics fields.

Table 1: Core Omics Fields: Definitions, Molecular Targets, and Primary Technologies

| Omics Field | Core Definition | Molecule Class Studied | Primary Analytical Technologies |
|---|---|---|---|
| Genomics | The systematic study of an organism's complete DNA sequence [1] [2]. | DNA (genes, non-coding regions, structural variants) [1]. | DNA sequencing (e.g., Next-Generation Sequencing), SNP chips [1]. |
| Epigenomics | The study of reversible, chemical modifications to DNA or DNA-associated proteins that regulate gene expression without changing the DNA sequence [1]. | DNA methylation, histone modifications, chromatin structure [1] [2]. | Bisulfite sequencing, ChIP-seq [1]. |
| Transcriptomics | The study of the complete set of RNA transcripts in a cell or tissue [1]. | mRNA, rRNA, tRNA, miRNA, and other non-coding RNAs [1]. | Microarrays, RNA sequencing (RNA-Seq) [1]. |
| Proteomics | The study of the complete set of proteins expressed by a cell, tissue, or organism, including their structures and functions [1] [2]. | Proteins, including post-translational modifications (e.g., phosphorylation, glycosylation) [1]. | Mass spectrometry, protein microarrays [1]. |
| Metabolomics | The study of the complete set of small-molecule metabolites within a biological sample [1] [2]. | Metabolic intermediates, hormones, signaling molecules, lipids (<1 kDa) [1] [2]. | Mass spectrometry, Nuclear Magnetic Resonance (NMR) spectroscopy [1] [2]. |

Experimental Protocols for Omics Data Generation

Transcriptomics Profiling Workflow

Gene expression profiling identifies and quantifies the mixture of mRNA transcripts in a biological sample, reflecting active genes under specific conditions [2].

Protocol: RNA Sequencing (RNA-Seq)

- Sample Collection & RNA Extraction: Homogenize tissue or lyse cells. Extract total RNA using a guanidinium thiocyanate-phenol-chloroform-based method (e.g., TRIzol). Assess RNA integrity and purity using an Agilent Bioanalyzer (RIN > 8.0 recommended).

- Library Preparation: Deplete ribosomal RNA or enrich for mRNA using poly-A selection. Fragment RNA chemically or enzymatically. Synthesize complementary DNA (cDNA) using reverse transcriptase. Ligate sequencing adapters to cDNA fragments. Amplify the library via PCR.

- Sequencing: Load the library onto a next-generation sequencer (e.g., Illumina NovaSeq). Perform paired-end sequencing (e.g., 2x150 bp) to a minimum depth of 30 million reads per sample.

- Bioinformatic Analysis:

  - Quality Control: Use FastQC to assess read quality.

  - Alignment: Map reads to a reference genome (e.g., GRCh38) using a splice-aware aligner like STAR.

  - Quantification: Generate a count matrix of reads per gene using featureCounts.

  - Differential Expression: Identify statistically significant changes in gene expression between experimental groups using R/Bioconductor packages such as DESeq2 or edgeR.
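To make the quantification and differential-expression steps more concrete, the sketch below scales a toy count matrix to counts per million (CPM) and computes per-gene log2 fold changes. It is an illustration only: the gene names and counts are hypothetical, and DESeq2 and edgeR use their own normalization and statistical models rather than this simple ratio.

```python
import math

def counts_per_million(counts):
    """Scale one sample's raw gene counts to counts per million (CPM)."""
    total = sum(counts.values())
    return {gene: c * 1e6 / total for gene, c in counts.items()}

def log2_fold_change(treated, control, pseudocount=1.0):
    """Per-gene log2(treated / control); the pseudocount avoids log(0)."""
    return {
        gene: math.log2((treated[gene] + pseudocount) / (control[gene] + pseudocount))
        for gene in treated
    }

# Hypothetical toy counts for two samples (gene -> read count).
control_counts = {"GENE_A": 100, "GENE_B": 300, "GENE_C": 600}
treated_counts = {"GENE_A": 400, "GENE_B": 300, "GENE_C": 300}

control_cpm = counts_per_million(control_counts)
treated_cpm = counts_per_million(treated_counts)
lfc = log2_fold_change(treated_cpm, control_cpm)

for gene, value in lfc.items():
    print(f"{gene}: log2FC = {value:+.2f}")
```

A fold change alone says nothing about significance; replicate-aware tools such as those named above are still needed for that.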

Proteomics and PTM Analysis Workflow

Proteomics provides insights into the functional molecules of the cell, capturing protein expression, interactions, and post-translational modifications (PTMs) that cannot be predicted from mRNA abundance alone [1] [2].

Protocol: Mass Spectrometry-Based Proteomics

- Sample Preparation: Lyse cells or tissue in a denaturing buffer (e.g., 8 M urea). Reduce disulfide bonds with dithiothreitol (DTT) and alkylate with iodoacetamide. Digest proteins into peptides using a sequence-specific protease (typically trypsin).

- Liquid Chromatography (LC): Desalt and separate peptides using reverse-phase C18 LC columns coupled to a high-performance LC system (e.g., NanoLC). Elute peptides with an acetonitrile gradient.

- Mass Spectrometry (MS) Analysis: Analyze eluted peptides using a high-resolution tandem MS instrument (e.g., Thermo Orbitrap Exploris). Operate in Data-Dependent Acquisition (DDA) mode to select the most abundant precursor ions for fragmentation (MS2).

- Data Processing and Protein Identification: Search spectra against a protein sequence database (e.g., Swiss-Prot) using a search engine such as MaxQuant or Proteome Discoverer to identify peptides and infer proteins. If the chosen engine cannot read vendor raw files, first convert them to an open format (e.g., .mgf). For PTM analysis, include variable modifications (e.g., oxidation, phosphorylation) in the search parameters. Use label-free (MaxLFQ) or isobaric tagging (TMT) methods for quantification.
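The digestion step can be illustrated with a minimal in silico sketch. It assumes the commonly used trypsin rule (cleave C-terminal to K or R unless the next residue is P) and an optional number of missed cleavages. The function name and the example sequence are hypothetical, and real search engines apply additional filters such as peptide length limits.

```python
def tryptic_digest(sequence, missed_cleavages=0):
    """Return tryptic peptides: cleave after K/R unless followed by P."""
    # Cleavage sites are the indices just after each qualifying K or R.
    sites = [0]
    for i, residue in enumerate(sequence[:-1]):
        if residue in "KR" and sequence[i + 1] != "P":
            sites.append(i + 1)
    sites.append(len(sequence))

    peptides = []
    for start in range(len(sites) - 1):
        # Join up to `missed_cleavages` additional adjacent fragments.
        last = min(start + 2 + missed_cleavages, len(sites))
        for end in range(start + 1, last):
            peptides.append(sequence[sites[start]:sites[end]])
    return peptides

# Hypothetical sequence; the R at position 7 is followed by P and is not cleaved.
example = "MKWVTFRPSLKAA"
fully_cleaved = tryptic_digest(example)
with_one_missed = tryptic_digest(example, missed_cleavages=1)
print(fully_cleaved)
print(with_one_missed)
```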

Metabolomics Profiling Workflow

Metabolomics studies the dynamic complement of small molecules, providing a snapshot of the physiological state influenced by genetics, environment, and diet [1] [2].

Protocol: Untargeted Metabolomics via LC-MS

- Sample Collection and Quenching: Rapidly collect and freeze biofluids (e.g., plasma) or snap-freeze cell cultures in liquid nitrogen to halt metabolic activity.

- Metabolite Extraction: Thaw samples on ice. For plasma, precipitate proteins by adding cold methanol (1:3 sample:methanol ratio), vortex, and centrifuge. Transfer the metabolite-containing supernatant to a new vial and dry under a nitrogen stream.

- LC-MS Analysis: Reconstitute the dried extract in a solvent compatible with the LC phase (e.g., water or acetonitrile). Separate metabolites using reverse-phase or hydrophilic interaction liquid chromatography (HILIC). Analyze with a high-resolution mass spectrometer in both positive and negative electrospray ionization modes to maximize metabolite coverage.

- Data Processing: Use software (e.g., XCMS, MS-DIAL) for peak picking, alignment, and annotation. Normalize peak intensities to internal standards and sample protein content. Annotate metabolites by matching accurate mass and fragmentation spectra against databases (e.g., HMDB, METLIN).
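Accurate-mass annotation, as described in the data processing step, usually comes down to comparing an observed m/z with a theoretical value within a parts-per-million tolerance. The sketch below assumes singly protonated [M+H]+ ions and a 5 ppm window. The two-entry candidate dictionary is a stand-in for a real database query, and the function names are illustrative.

```python
PROTON_MASS = 1.007276  # Da

def ppm_error(observed, theoretical):
    """Signed mass error in parts per million."""
    return (observed - theoretical) / theoretical * 1e6

def annotate_peak(observed_mz, candidates, tolerance_ppm=5.0):
    """Match an [M+H]+ peak against neutral monoisotopic masses."""
    hits = []
    for name, neutral_mass in candidates.items():
        theoretical_mz = neutral_mass + PROTON_MASS
        error = ppm_error(observed_mz, theoretical_mz)
        if abs(error) <= tolerance_ppm:
            hits.append((name, round(error, 2)))
    return hits

# Neutral monoisotopic masses (Da); a tiny local lookup used for illustration.
candidates = {"glucose": 180.06339, "caffeine": 194.08038}

caffeine_hits = annotate_peak(195.0877, candidates)
glucose_hits = annotate_peak(181.0707, candidates)
no_hits = annotate_peak(190.0000, candidates)
print(caffeine_hits, glucose_hits, no_hits)
```

Mass alone rarely gives a unique identity, which is why the protocol also matches fragmentation spectra.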

Integration of Multi-Omics Datasets for Translational Research

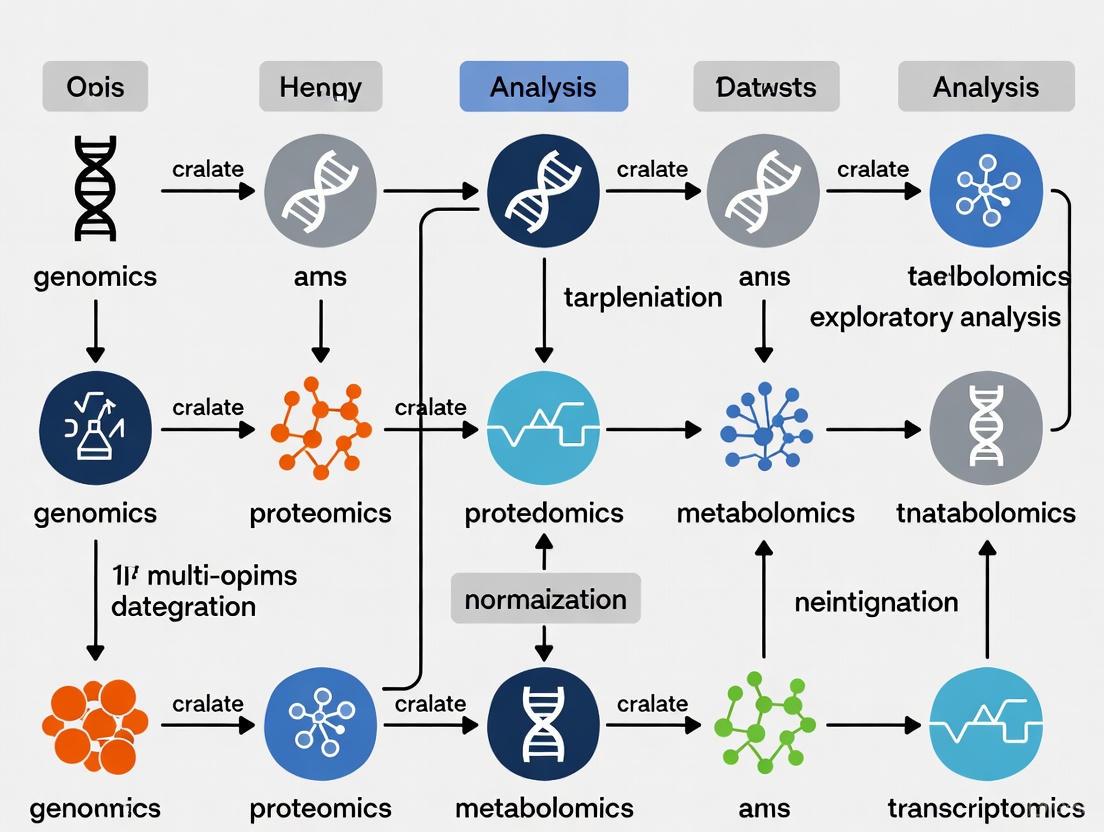

Multi-omics integration leverages complementary information from different molecular layers to build a more comprehensive model of biological systems and disease pathology. A representative framework for this process is illustrated below.

Diagram 1: A generalized multi-omics integration analysis workflow.

Case Study: AI-Driven Multi-Omics Integration for Schizophrenia Biomarker Discovery

A recent study demonstrated a robust framework for integrating plasma proteomics, post-translational modifications (PTMs), and metabolomics data to identify peripheral biomarkers for schizophrenia (SCZ) risk stratification [4]. The protocol below details the key steps.

Protocol: Automated Multi-Omics Integration with Machine Learning

Data Collection and Harmonization:

- Data Source: Utilize a publicly available dataset (e.g., PMC9054664) comprising quantitative profiles from 105 individuals (54 SCZ patients, 51 non-psychiatric controls) [4].

- Data Matrices: Construct standardized expression profile matrices for 742 proteins, 2289 PTMs, and 1535 metabolites.

- Preprocessing: Impute missing values using the missForest R package. Perform rigorous normalization. Retain only features shared across all three omics datasets to create a harmonized dataset [4].

Model Training and Benchmarking:

- Model Selection: Employ a diverse set of 17 machine learning and deep learning models. Use automated machine learning pipelines (e.g., AutoGluon) for traditional models (Random Forest, XGBoost, LightGBM) [4].

- Deep Learning Architectures: Develop specialized models (CNNBiLSTM, Transformer, SimpleNN) to capture nonlinear dependencies and hierarchical feature representations [4].

- Performance Evaluation: Assess classification performance using Receiver Operating Characteristic (ROC) and Precision-Recall (PR) curves. Calculate the Area Under the Curve (AUC) with 95% confidence intervals [4].
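The evaluation step can be sketched as follows: the AUC is computed from its rank interpretation (the probability that a positive sample scores higher than a negative one), and a 95% confidence interval is estimated with a percentile bootstrap. This is an assumed procedure for illustration, since the source does not state how the intervals in [4] were derived, and the labels and scores are toy values.

```python
import random

def roc_auc(labels, scores):
    """AUC as P(positive score > negative score); ties count as 0.5."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def bootstrap_auc_ci(labels, scores, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the AUC."""
    rng = random.Random(seed)
    n = len(labels)
    aucs = []
    while len(aucs) < n_boot:
        idx = [rng.randrange(n) for _ in range(n)]
        y = [labels[i] for i in idx]
        if len(set(y)) < 2:
            continue  # resample lacked one class; draw again
        aucs.append(roc_auc(y, [scores[i] for i in idx]))
    aucs.sort()
    lower = aucs[int((alpha / 2) * n_boot)]
    upper = aucs[int((1 - alpha / 2) * n_boot) - 1]
    return lower, upper

# Toy data: 1 = case, 0 = control, with hypothetical classifier scores.
labels = [1, 1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.7, 0.4, 0.6, 0.3, 0.2, 0.1]

auc = roc_auc(labels, scores)
lower, upper = bootstrap_auc_ci(labels, scores)
print(f"AUC = {auc:.4f} (95% CI: {lower:.4f}-{upper:.4f})")
```

With small cohorts the interval is wide and often reaches the upper bound of 1.0, a pattern also visible in the intervals reported in Table 2.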

Interpretable Feature Prioritization and Functional Analysis:

- Feature Importance: Apply interpretability frameworks like Shapley Additive Explanations (SHAP) to identify top discriminative molecular features (e.g., specific protein PTMs) [4].

- Functional Enrichment: Perform pathway enrichment analysis (e.g., using KEGG, GO databases) on prioritized features to identify implicated biological processes (e.g., complement activation, platelet signaling) [4].

- Network Analysis: Construct protein-protein interaction networks to reveal central molecular hubs (e.g., coagulation factors F2, F10) [4].
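Pathway enrichment of prioritized features is commonly assessed with an over-representation test. The sketch below computes the upper-tail hypergeometric probability of seeing at least a given number of pathway members among the selected features. It is a generic illustration, not necessarily the test used in [4], and the numbers in the example are invented.

```python
from math import comb

def enrichment_p_value(hits, selected, pathway_size, background):
    """P(X >= hits) for a hypergeometric draw.

    background   : total number of measured features
    pathway_size : features in the background annotated to the pathway
    selected     : number of prioritized features
    hits         : prioritized features that belong to the pathway
    """
    max_hits = min(selected, pathway_size)
    total = comb(background, selected)
    tail = sum(
        comb(pathway_size, k) * comb(background - pathway_size, selected - k)
        for k in range(hits, max_hits + 1)
    )
    return tail / total

# Invented example: 3 prioritized features out of 10 measured, 2 of them in a
# pathway that has 4 members among the measured features.
p_small = enrichment_p_value(hits=2, selected=3, pathway_size=4, background=10)
print(f"p = {p_small:.4f}")
```

When many pathways are tested, the resulting p-values should be corrected for multiple testing.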

Table 2: Performance of Selected Machine Learning Models in a Multi-Omics Study of Schizophrenia [4]

| Omics Data Type | Best Performing Model | Classification Performance (AUC) | Key Discriminative Features Identified |
|---|---|---|---|
| Multi-Omics (Integrated) | LightGBMXT | 0.9727 (95% CI: 0.8889–1.000) | Carbamylation of IGKC_K20 and IGHG1_K8; Oxidation of F10_M8 |
| Proteomics Alone | CNNBiLSTM | 0.9636 (95% CI: 0.8636–1.0000) | Proteins involved in immune and coagulation pathways |
| PTMs Alone | CNNBiLSTM | 0.8818 (95% CI: 0.6731–1.000) | Site-specific modifications on immunoglobulins and coagulation factors |
| Metabolomics Alone | Not specified in results | Lower than multi-omics and proteomics | Metabolites linked to gut microbiota-associated metabolism |

Successful multi-omics research relies on a suite of high-quality reagents, analytical platforms, and bioinformatic resources.

Table 3: Essential Research Reagent Solutions for Multi-Omics Studies

| Category / Item | Function / Application | Specific Examples / Notes |
|---|---|---|
| Nucleic Acid Analysis | | |
| RNA Extraction Kit | Isolation of high-integrity total RNA for transcriptomics. | Kits based on guanidinium thiocyanate-phenol (e.g., TRIzol). Assess quality with Bioanalyzer. |
| Poly-A Selection Beads | Enrichment of messenger RNA (mRNA) from total RNA for RNA-Seq. | Magnetic beads coated with oligo(dT) nucleotides. |
| Protein & Metabolite Analysis | | |
| Trypsin, Sequencing Grade | Proteolytic digestion of proteins into peptides for mass spectrometry. | Highly purified to minimize autolysis. |
| Urea, Mass Spec Grade | Protein denaturation in sample lysis buffers. | High-purity grade to avoid carbamylation artifacts. |
| Internal Standards (IS) | Quantification and quality control in metabolomics. | Stable isotope-labeled compounds for LC-MS. |
| Bioinformatic Resources | | |
| SRMAtlas | Public resource for targeted proteomics assay development. | Provides pre-validated mass spectra for peptides [1]. |
| Human Protein Atlas | Tissue-specific expression data for human proteins. | Antibody-based findings for over 12,000 proteins [1]. |
| Metabolomics Databases | Annotation of small molecules from MS data. | Human Metabolome Database (HMDB), METLIN. |

The omics landscape provides a multi-layered view of biology, from genetic blueprint (genomics) to functional endpoints (proteomics, metabolomics). As demonstrated, the integration of these layers through advanced computational frameworks is a cornerstone of modern translational research, enabling the discovery of robust biomarkers and providing deeper insights into complex disease mechanisms like schizophrenia. The standardized protocols and resources outlined herein offer a practical guide for researchers embarking on multi-omics studies aimed at exploratory analysis and therapeutic development.

The integration of multi-omics datasets represents a transformative approach in modern biological research and drug development, enabling a systems-level understanding of health and disease. Exploratory analysis of these combined datasets can uncover complex biological mechanisms that remain invisible when examining a single molecular layer. Next-Generation Sequencing (NGS), Mass Spectrometry (MS), and Nuclear Magnetic Resonance (NMR) spectroscopy form the technological foundation for generating comprehensive genomics, proteomics, and metabolomics data [5] [6]. The convergence of these technologies provides unprecedented insights into the multi-layered regulation of biological systems, from genetic blueprint to functional metabolic activity.

The paradigm has shifted from isolated analysis to integrated multi-omics, where the synergistic interpretation of data from multiple analytical platforms provides a more holistic view of biological systems [7] [5]. This integration faces significant challenges, including the management of massive dataset volumes, the development of specialized computational tools for cross-omics analysis, and the need for standardized protocols to ensure reproducibility [5] [6]. However, the potential rewards are substantial, with applications spanning from the discovery of novel biomarkers and therapeutic targets to the advancement of personalized medicine strategies based on a complete molecular profile of individual patients [5].

Next-Generation Sequencing (NGS) Platforms

Next-Generation Sequencing (NGS) encompasses a suite of high-throughput technologies that have revolutionized genomics by enabling the rapid and cost-effective sequencing of millions to billions of DNA fragments in a single experiment [8] [9]. These technologies represent a fundamental shift from first-generation Sanger sequencing, utilizing massively parallel sequencing strategies to achieve extraordinary throughput and scale [9] [10]. The core principle shared by most NGS platforms involves the amplification of DNA fragments followed by sequential biochemical reactions that detect nucleotide incorporations, generating vast numbers of short or long DNA sequences (reads) that are computationally reconstructed into a complete genomic sequence [8] [10].

The applications of NGS extend far beyond whole genome sequencing to include targeted region sequencing, transcriptomics (RNA-Seq) to quantify gene expression, epigenomic analysis of DNA methylation and DNA-protein interactions, cancer genomics for identifying somatic mutations, microbiome studies, and pathogen discovery [8]. The versatility of NGS has made it an indispensable tool across diverse research areas, from basic biological investigation to clinical diagnostics and therapeutic development [9] [10]. The continuous evolution of NGS technologies has driven dramatic reductions in cost while simultaneously increasing data output and quality, making large-scale genomic studies increasingly accessible [10].

Comparative Analysis of NGS Platforms

Table 4: Comparison of Major NGS Platforms and Their Characteristics

| Platform | Technology Type | Amplification Method | Read Length | Key Applications | Primary Limitations |
|---|---|---|---|---|---|
| Illumina | Sequencing by Synthesis | Bridge Amplification | 36-300 bp (short) | Whole genome sequencing, transcriptomics, epigenomics, targeted sequencing | Signal crowding and overlapping can increase error rate to ~1% [9] |
| Ion Torrent (Thermo Fisher) | Semiconductor sequencing | Emulsion PCR | 200-400 bp (short) | Cancer research, inherited diseases, infectious diseases | Homopolymer sequences can lead to signal strength loss [9] [10] |
| PacBio SMRT | Single-molecule real-time sequencing | Without PCR | 10,000-25,000 bp (long) | Structural variant detection, haplotype phasing, genome assembly | Higher cost per sample compared to short-read platforms [9] |
| Oxford Nanopore | Nanopore sensing | Without PCR | 10,000-30,000 bp (long) | Real-time sequencing, field applications, metagenomics | Error rate can be as high as 15%, requiring computational correction [9] |
| PacBio Onso System | Sequencing by binding | Optional PCR | 100-200 bp (short) | Targeted sequencing, medical genomics | Higher cost compared to other short-read platforms [9] |

Detailed NGS Experimental Protocol

A standard NGS workflow consists of three fundamental steps: library preparation, sequencing, and data analysis [8]. The protocol below outlines a representative workflow for whole genome sequencing using Illumina technology, which dominates the current NGS market [10].

Library Preparation Protocol:

- DNA Fragmentation: Use mechanical shearing (e.g., ultrasonication) or enzymatic digestion to fragment genomic DNA into desired sizes (typically 200-500 bp for short-read sequencing).

- End Repair and A-Tailing: Convert the fragmented DNA into blunt-ended fragments using a combination of polymerase and exonuclease activities, then add a single adenosine nucleotide to the 3' ends to facilitate adapter ligation.

- Adapter Ligation: Ligate platform-specific sequencing adapters to both ends of the DNA fragments. These adapters contain sequences complementary to the flow cell oligos and barcodes for sample multiplexing.

- Size Selection and Purification: Use magnetic bead-based cleanups or gel electrophoresis to select library fragments of the desired size range and remove adapter dimers.

- Library Quantification and Quality Control: Precisely quantify the final library using fluorometric methods (e.g., Qubit) and validate library quality using capillary electrophoresis (e.g., Bioanalyzer or TapeStation).

Sequencing Protocol (Illumina Platform):

- Cluster Generation: Denature the adapter-ligated library into single strands and load onto a flow cell. Through bridge amplification, each fragment is amplified into a clonal cluster containing ~1000 copies.

- Sequencing by Synthesis: Employ reversible dye-terminator chemistry. Fluorescently labeled nucleotides are incorporated one at a time, with imaging after each incorporation to determine the base identity.

- Base Calling: Convert the fluorescence images into nucleotide sequences (base calls) using the instrument's onboard software. Generate sequencing reads in FASTQ format, which contain both the nucleotide sequences and associated quality scores.
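The FASTQ output mentioned in the base-calling step stores each read as four lines: a header starting with `@`, the sequence, a `+` separator, and a quality string. The sketch below parses such records and decodes the quality characters, assuming the Phred+33 encoding used by current Illumina instruments. The read shown is a made-up example.

```python
import io

def parse_fastq(handle):
    """Yield (read_id, sequence, phred_scores) from 4-line FASTQ records."""
    while True:
        header = handle.readline().strip()
        if not header:
            break
        sequence = handle.readline().strip()
        handle.readline()  # '+' separator line
        quality = handle.readline().strip()
        # Phred+33: quality score = ASCII code of the character minus 33.
        scores = [ord(ch) - 33 for ch in quality]
        yield header[1:], sequence, scores

def error_probability(phred):
    """Convert a Phred score Q to the base-call error probability 10^(-Q/10)."""
    return 10 ** (-phred / 10)

# Made-up single-read FASTQ content.
fastq_text = "@read1\nACGT\n+\nII5!\n"
records = list(parse_fastq(io.StringIO(fastq_text)))

for read_id, seq, quals in records:
    print(read_id, seq, quals, [error_probability(q) for q in quals])
```

A score of 30 corresponds to an error probability of 1 in 1,000, which is why Q30 is a common quality threshold.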

Data Analysis Protocol:

- Quality Control: Assess raw read quality using tools like FastQC to identify issues with base quality, adapter contamination, or GC bias.

- Read Alignment: Map sequencing reads to a reference genome using aligners such as BWA (for short reads) or Minimap2 (for long reads).

- Variant Calling: Identify genetic variants (SNPs, indels) relative to the reference using callers like GATK or DeepVariant.

- Annotation and Interpretation: Annotate variants with functional information (gene context, predicted impact, population frequency) using tools like ANNOVAR or SnpEff, then prioritize potentially clinically significant variants.

Mass Spectrometry in Proteomics

Mass spectrometry has emerged as the cornerstone technology for proteomic analysis, enabling the high-throughput identification and quantification of proteins in complex biological samples [11]. Modern MS-based proteomics provides unprecedented insights into protein expression, post-translational modifications, protein-protein interactions, and structural characteristics [11]. The fundamental principle involves ionizing protein or peptide molecules and measuring their mass-to-charge ratio (m/z), generating spectra that can be interpreted to determine molecular identity and abundance [11]. Two primary acquisition strategies dominate contemporary proteomics: Data-Dependent Acquisition (DDA) and Data-Independent Acquisition (DIA), each with distinct advantages for different experimental applications [12].

The integration of MS-based proteomics with other omics technologies, particularly genomics and transcriptomics, is essential for comprehensive multi-omics studies [5] [6]. While genomic data reveals potential molecular capabilities, proteomic analysis reveals the functional executants of cellular processes, providing a more direct understanding of phenotypic manifestations [5]. This integration helps bridge the gap between genotype and phenotype, particularly in complex diseases like cancer where transcript levels often poorly correlate with protein abundance due to post-transcriptional regulation [5] [6]. Advances in MS instrumentation, sample preparation methodologies, and computational analysis have dramatically expanded the scope and precision of proteomic investigations, making MS an indispensable tool for systems biology and drug development [11] [12].
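As a worked illustration of the mass-to-charge principle described above, the sketch below computes the theoretical m/z of an unmodified peptide from standard monoisotopic residue masses, adding one water for the termini and one proton per charge. The function name is illustrative, and modifications are deliberately ignored.

```python
# Monoisotopic residue masses in Da (standard values, unmodified residues).
RESIDUE_MASS = {
    "G": 57.02146, "A": 71.03711, "S": 87.03203, "P": 97.05276,
    "V": 99.06841, "T": 101.04768, "C": 103.00919, "L": 113.08406,
    "I": 113.08406, "N": 114.04293, "D": 115.02694, "Q": 128.05858,
    "K": 128.09496, "E": 129.04259, "M": 131.04049, "H": 137.05891,
    "F": 147.06841, "R": 156.10111, "Y": 163.06333, "W": 186.07931,
}
WATER = 18.010565   # Da, added once for the N- and C-termini
PROTON = 1.007276   # Da, added once per positive charge

def peptide_mz(sequence, charge=1):
    """Theoretical m/z of an unmodified peptide ion [M + zH]z+."""
    neutral_mass = sum(RESIDUE_MASS[aa] for aa in sequence) + WATER
    return (neutral_mass + charge * PROTON) / charge

mz_1 = peptide_mz("PEPTIDE", charge=1)
mz_2 = peptide_mz("PEPTIDE", charge=2)
print(f"[M+H]+   = {mz_1:.4f}")
print(f"[M+2H]2+ = {mz_2:.4f}")
```

The same peptide therefore appears at different m/z values depending on its charge state, which search engines account for when matching precursors.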

Detailed Mass Spectrometry Experimental Protocol

Sample Preparation Protocol (Based on Palumbos et al., 2025):

- Protein Extraction: Solubilize cell or tissue pellets in 50 μL of extraction buffer containing 5% sodium dodecyl sulfate (SDS), 50 mM TEAB (pH 8.5), and a protease inhibitor cocktail [11].

- Sample Shearing and Clarification: Sonicate samples for 10 minutes at 20°C in a focused-ultrasonicator (e.g., Covaris R230) to shear DNA and ensure complete solubilization. Centrifuge samples at 3,000 × g for 10 minutes to clarify the lysate [11].

- Protein Quantification: Determine protein concentration by intrinsic tryptophan fluorescence excited at 280 nm and read at 350 nm, using a standard curve on a microplate reader [11].

- Reduction and Alkylation (S-Trap): Digest 10 μg of each sample using S-Trap Micro columns. Reduce proteins in 5 mM TCEP, alkylate in 20 mM iodoacetamide, then acidify with phosphoric acid to a final concentration of 1.2% [11].

- Digestion and Peptide Elution: Add a 1:10 ratio (enzyme:protein) of Trypsin and LysC suspended in 50 mM TEAB and digest for 18 hours at 37°C in a humidity chamber. Elute peptides with 50 mM TEAB, followed by 0.1% trifluoroacetic acid (TFA) in water, and finally 50/50 acetonitrile:water in 0.1% TFA [11].

- Peptide Cleanup: Combine eluates, dry via vacuum centrifugation, and desalt using an Oasis HLB μElution plate. Condition wells with acetonitrile, equilibrate with 0.1% TFA, apply samples, wash with 0.1% TFA, and elute with 50:50 acetonitrile:water [11].

- Sample Reconstitution: Dry eluates by vacuum centrifugation and reconstitute in 0.1% TFA containing iRT peptides. Determine peptide concentration at OD280 and adjust to 400 ng/μL for injection [11].

Mass Spectrometry Data Acquisition Protocol (DIA Method):

- Chromatographic Separation: Load 5 μL of sample onto a trap column (e.g., Acclaim PepMap 100 75 μm × 2 cm) at 5 μL/min, then separate by reverse-phase HPLC on a nanocapillary column (e.g., 75 μm id × 50 cm 2 μm PepMap RSLC C18) [11].

- Mobile Phase Gradient: Elute peptides with a 105-minute gradient from 3% mobile phase B (0.1% formic acid/acetonitrile) to 45% B at a flow rate of 300 nL/min [11].

- Mass Spectrometry Settings: Use the following parameters on an Exploris 480 or similar instrument:

  - One full MS scan at 120,000 resolution, with a scan range of 350-1200 m/z

  - Normalized automatic gain control (AGC) target of 300%

  - Variable DIA isolation windows

  - MS2 scans at 30,000 resolution

  - Normalized AGC target of 1000%

  - Default charge state of 3

  - Normalized collision energy set at 27 [11]

Data Analysis Protocol:

- Database Searching: Process DIA raw files using Spectronaut 18.7 in direct DIA mode with a species-appropriate database (e.g., UniProt) supplemented with common protein contaminants and iRT peptides [11].

- Search Parameters: Set enzyme specificity to trypsin with allowance for two potential missed cleavages. Specify fixed modification as carbamidomethyl of cysteine, with protein N-terminal acetylation and oxidation of methionine as variable modifications [11].

- Quality Control: Apply a false discovery rate limit of 1% for precursors, peptides, and proteins identification [11].

- Differential Analysis: Conduct statistical analysis in R using MS2 intensity values. Log2-transform the data and normalize by subtracting each sample's median value. Use the moderated t-test from the limma package to identify proteins with differential abundance, and visualize the results with volcano plots [11] [12].
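The log2 transformation and per-sample median centering described in the differential analysis step can be sketched as below. The source performs this in R; Python is used here only for illustration, and the sample names, protein names, and intensities are hypothetical.

```python
import math
from statistics import median

def log2_median_normalize(intensities):
    """Log2-transform and median-center each sample.

    intensities: {sample: {protein: raw MS2 intensity}}
    Non-positive intensities are dropped before the log transform.
    """
    normalized = {}
    for sample, proteins in intensities.items():
        logged = {p: math.log2(v) for p, v in proteins.items() if v > 0}
        center = median(logged.values())
        normalized[sample] = {p: x - center for p, x in logged.items()}
    return normalized

# Hypothetical data: sample_2 has exactly twice the loading of sample_1.
raw = {
    "sample_1": {"P1": 100.0, "P2": 200.0, "P3": 400.0},
    "sample_2": {"P1": 200.0, "P2": 400.0, "P3": 800.0},
}
norm = log2_median_normalize(raw)
print(norm)
```

After centering, the two samples have identical profiles, showing that a global loading difference is removed while relative differences between proteins are preserved.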

Nuclear Magnetic Resonance (NMR) Spectroscopy

Nuclear Magnetic Resonance (NMR) spectroscopy serves as a powerful analytical technique for structural elucidation of organic compounds and biomolecules, with growing applications in metabolomics and integrative multi-omics studies [13] [14]. The fundamental principle of NMR involves exposing atomic nuclei with non-zero spin (such as ¹H, ¹³C, ¹⁵N) to a strong external magnetic field, which causes alignment of nuclear spins, followed by application of radiofrequency pulses that perturb this alignment [13]. As nuclei return to equilibrium, they emit radiofrequency signals that provide detailed information about molecular structure, dynamics, and interactions [14]. The chemical shift (measured in ppm), coupling constants, signal intensity, and relaxation parameters collectively offer a comprehensive view of molecular properties and behavior.

Recent technological advances have significantly enhanced the capabilities of NMR in omics research, particularly through the development of high-field NMR spectrometers and cryogenically cooled probe technology [13]. The spectral resolution of NMR increases proportionally with magnetic field strength (B₀), while the signal-to-noise ratio improves with the field strength raised to the power of three-halves [13]. Cryoprobes dramatically reduce system noise by cooling the coils and preamplifiers with cold helium or nitrogen, substantially improving detection sensitivity [13]. These advancements have made NMR particularly valuable for metabolomic studies, where it enables the simultaneous identification and quantification of numerous metabolites in complex biological samples, providing complementary data to genomic and proteomic analyses for comprehensive multi-omics integration [14].
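The field-strength scaling stated above can be turned into a quick estimate. The sketch below compares two spectrometers by their proton frequencies, which are proportional to the field strength, so the ratio is the same. The 600 and 800 MHz values are example inputs, and the scan-count estimate relies on the standard assumption that signal-to-noise grows with the square root of the number of averaged scans.

```python
def resolution_gain(field_new, field_old):
    """Resolution scales linearly with field strength."""
    return field_new / field_old

def snr_gain(field_new, field_old):
    """Signal-to-noise ratio scales with field strength to the power 3/2."""
    return (field_new / field_old) ** 1.5

def relative_scans_for_equal_snr(field_new, field_old):
    """Fraction of scans needed at the new field to match the old SNR,
    assuming SNR grows with the square root of the number of scans."""
    return 1.0 / snr_gain(field_new, field_old) ** 2

# Example: moving from a 600 MHz to an 800 MHz instrument.
res = resolution_gain(800, 600)
snr = snr_gain(800, 600)
scans = relative_scans_for_equal_snr(800, 600)
print(f"resolution x{res:.2f}, SNR x{snr:.2f}, scans needed x{scans:.2f}")
```

Under these assumptions the higher-field instrument reaches the same signal-to-noise ratio in well under half the acquisition time, before any cryoprobe gain is considered.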

Key NMR Applications in Multi-Omics Research

NMR spectroscopy contributes several unique capabilities to multi-omics research pipelines. In metabolomics, NMR provides robust, reproducible analysis of biofluids (blood, urine, cerebrospinal fluid) and tissue extracts, enabling the identification of metabolic biomarkers associated with disease states [14]. The technique is particularly valuable for detecting and quantifying small-molecular-weight metabolites (under 1500 Da) and linking these metabolic profiles to clinical data [14]. Unlike mass spectrometry-based metabolomics, NMR requires minimal sample preparation, is non-destructive, and provides exceptional reproducibility, making it ideal for large-scale clinical studies [14].

Structural biology applications include protein-ligand interaction studies using techniques such as Saturation Transfer Difference (STD) and transferred NOEs, which can map binding interfaces and characterize conformational changes upon binding [14]. NMR also plays a crucial role in flux analysis through stable isotope tracing experiments, enabling the tracking of metabolic pathways and quantification of metabolic fluxes in living cells [14]. The quantitative nature of NMR, combined with its ability to simultaneously detect diverse compound classes without separation, makes it particularly powerful for exploring metabolic alterations in disease states and response to therapeutics [14].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 5: Essential Research Reagents and Materials for Multi-Omics Technologies

| Reagent/Material | Application | Function | Example Products/Suppliers |
|---|---|---|---|
| Trypsin/LysC Mix | Mass Spectrometry | Enzymatic digestion of proteins into peptides for LC-MS/MS analysis | Promega Trypsin, Wako LysC [11] |
| S-Trap Micro Columns | Mass Spectrometry | Efficient digestion and cleanup of protein samples, especially for membrane proteins | Protifi S-Trap Micro [11] |
| iRT Peptides | Mass Spectrometry | Retention time calibration standards for LC-MS systems | Biognosys iRT Kit [11] |
| TCEP | Mass Spectrometry | Reduction of disulfide bonds in proteins | Thermo Scientific TCEP [11] |
| Iodoacetamide | Mass Spectrometry | Alkylation of cysteine residues to prevent reformation of disulfide bonds | Sigma-Aldrich Iodoacetamide [11] |
| NGS Library Prep Kits | Next-Generation Sequencing | Preparation of DNA or RNA libraries for sequencing on various platforms | Illumina DNA Prep, Thermo Fisher Ion Torrent Oncomine [8] |
| NGS Adapters with Barcodes | Next-Generation Sequencing | Sample multiplexing and platform-specific sequence requirements | Illumina TruSeq Adapters, IDT for Illumina [8] |
| Deuterated Solvents | NMR Spectroscopy | Solvent for NMR samples; deuterium provides signal lock | Cambridge Isotope Laboratories deuterated solvents [14] |
| TMS or DSS Reference | NMR Spectroscopy | Chemical shift reference compound for NMR spectra | Sigma-Aldrich TMS, DSS [14] |

Multi-Omics Integration: Approaches and Analytical Frameworks

Data Integration Strategies

The integration of multi-omics datasets presents both unprecedented opportunities and significant computational challenges [6]. Data-driven integration approaches can be broadly categorized into three main strategies: statistical-based methods, multivariate approaches, and machine learning/artificial intelligence techniques [6]. Statistical methods, particularly correlation analysis (Pearson's or Spearman's), represent the most straightforward approach, quantifying relationships between different molecular entities across omics layers [6]. These methods can identify coordinated changes in gene expression, protein abundance, and metabolite levels, revealing potential regulatory relationships and functional connections [6].

Multivariate methods enable the simultaneous analysis of many variables across omics datasets. Principal Component Analysis (PCA) identifies latent structures that explain the greatest variance within the data, while Partial Least Squares (PLS) regression identifies those that explain the greatest covariance between molecular features and phenotypic outcomes [6]. More advanced network-based integration approaches, such as Weighted Gene Co-expression Network Analysis (WGCNA), identify modules of highly correlated molecular features across different data types, which can then be related to clinical traits or experimental conditions [6]. Machine learning and AI techniques represent the most sophisticated approach, capable of detecting complex, non-linear patterns in high-dimensional multi-omics data that may elude traditional statistical methods [7] [5]. These computational frameworks enable the construction of predictive models for disease classification, treatment response, and patient stratification based on integrated molecular profiles [5] [6].

Integrated Multi-Omics Analysis Protocol

Data Preprocessing and Quality Control:

- Data Normalization: Apply appropriate normalization methods for each omics data type (e.g., TPM for RNA-Seq, median normalization for proteomics, probabilistic quotient normalization for metabolomics).

- Batch Effect Correction: Use ComBat or similar algorithms to remove technical variability introduced by different processing batches.

- Missing Value Imputation: Apply data-type specific imputation methods (e.g., KNN imputation for proteomics data, minimum value replacement for metabolomics).
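
The preprocessing steps above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not a reference pipeline: the function names are hypothetical, the toy matrices are made up, "median normalization" is taken to mean dividing each sample by its median, and "minimum value replacement" is taken to mean the per-feature observed minimum. Batch-effect correction (e.g., ComBat) is not reproduced here.

```python
import numpy as np

def tpm(counts, gene_lengths_kb):
    """Transcripts per million: length-normalize, then scale each sample to 1e6.

    counts is genes x samples; gene_lengths_kb has one entry per gene.
    """
    rate = counts / gene_lengths_kb[:, None]          # reads per kilobase
    return rate / rate.sum(axis=0, keepdims=True) * 1e6

def median_normalize(intensities):
    """Divide each sample (column) by its median so sample medians align at 1."""
    med = np.nanmedian(intensities, axis=0, keepdims=True)
    return intensities / med

def impute_min(values):
    """Replace missing entries in each feature (row) with that row's observed minimum."""
    filled = values.copy()
    row_min = np.nanmin(values, axis=1, keepdims=True)
    mask = np.isnan(filled)
    filled[mask] = np.broadcast_to(row_min, filled.shape)[mask]
    return filled

# Toy data (made up for illustration only).
rna_counts = np.array([[10.0, 20.0], [30.0, 60.0], [60.0, 20.0]])
gene_lengths_kb = np.array([1.0, 2.0, 3.0])
rna_tpm = tpm(rna_counts, gene_lengths_kb)

protein = np.array([[100.0, 220.0], [200.0, 400.0], [400.0, 900.0]])
protein_norm = median_normalize(protein)

metabolites = np.array([[5.0, np.nan, 2.0], [1.0, 3.0, np.nan]])
metabolites_filled = impute_min(metabolites)
```

Each omics layer keeps its own normalization here on purpose: the layers are only brought onto a common scale later, at the integration step.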

Correlation-Based Integration (Statistical Approach):

- Differential Expression Analysis: Identify significantly altered features in each omics dataset separately using appropriate statistical tests (e.g., DESeq2 for RNA-Seq, limma for proteomics).

- Pairwise Correlation Analysis: Compute correlation coefficients (Pearson or Spearman) between significantly altered features across different omics platforms.

- Network Construction: Build correlation networks using tools like xMWAS, retaining edges that meet specific thresholds for correlation coefficient (e.g., R² > 0.7) and statistical significance (p-value < 0.05) [6].

- Module Detection: Apply community detection algorithms (e.g., multilevel community detection) to identify highly interconnected groups of molecules across omics layers [6].
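
As a rough illustration of the pairwise correlation and network construction steps, the sketch below computes Pearson correlations between every feature of one omics block and every feature of another, then keeps pairs whose squared correlation exceeds a threshold. It is not the xMWAS implementation; the function names, feature names, and simulated data are assumptions made for the example, and the significance filter and community detection steps are left out.

```python
import numpy as np

def cross_correlation(block_a, block_b):
    """Pearson r between every feature of block_a and every feature of block_b.

    Both blocks are samples x features and must share the same sample order.
    """
    a = (block_a - block_a.mean(axis=0)) / block_a.std(axis=0)
    b = (block_b - block_b.mean(axis=0)) / block_b.std(axis=0)
    return a.T @ b / block_a.shape[0]

def correlation_edges(r, names_a, names_b, r2_threshold=0.7):
    """List (feature_a, feature_b, r) for pairs whose squared correlation passes the threshold."""
    edges = []
    for i, j in zip(*np.where(r ** 2 > r2_threshold)):
        edges.append((names_a[i], names_b[j], float(r[i, j])))
    return edges

# Simulated example: one transcript and one protein share a common driver.
rng = np.random.default_rng(0)
n_samples = 50
driver = rng.normal(size=n_samples)
transcripts = np.column_stack([driver + 0.1 * rng.normal(size=n_samples),
                               rng.normal(size=n_samples)])
proteins = np.column_stack([2 * driver + 0.1 * rng.normal(size=n_samples),
                            rng.normal(size=n_samples)])

r = cross_correlation(transcripts, proteins)
edges = correlation_edges(r, ["gene_1", "gene_2"], ["protein_1", "protein_2"])
```

In practice a p-value (with multiple-testing correction) would be computed for each pair before an edge is retained, and the resulting edge list would be passed to a graph library for module detection.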

Multivariate Integration Approach:

- Data Concatenation: Combine preprocessed omics datasets into a single multi-block matrix, ensuring proper scaling of different data types.

- Dimension Reduction: Apply multi-block PCA or PLS methods to identify latent variables that explain covariance between omics blocks.

- Model Interpretation: Examine loadings and variable importance in projection (VIP) scores to identify features that contribute most to the integrated model.
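
A minimal sketch of the concatenation and dimension reduction steps follows, assuming a simple block-scaling rule (z-score each feature, then divide by the square root of the block's feature count so that no block dominates by size alone). It uses plain PCA via singular value decomposition on simulated data; it is not a multi-block PLS implementation, VIP scores are PLS-specific and are not computed, and all names are hypothetical.

```python
import numpy as np

def scale_block(block):
    """Z-score each feature, then weight the block by 1/sqrt(n_features)."""
    z = (block - block.mean(axis=0)) / block.std(axis=0)
    return z / np.sqrt(block.shape[1])

def multiblock_pca(blocks, n_components=2):
    """Concatenate scaled blocks (samples x features) and run PCA via SVD."""
    x = np.hstack([scale_block(b) for b in blocks])
    u, s, vt = np.linalg.svd(x, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]    # sample coordinates
    loadings = vt[:n_components].T                     # feature contributions
    explained = (s ** 2 / np.sum(s ** 2))[:n_components]
    return scores, loadings, explained

# Simulated example: both blocks are driven by one shared latent factor.
rng = np.random.default_rng(1)
latent = rng.normal(size=40)
transcriptomics = latent[:, None] + 0.5 * rng.normal(size=(40, 100))
proteomics = latent[:, None] + 0.5 * rng.normal(size=(40, 20))

scores, loadings, explained = multiblock_pca([transcriptomics, proteomics])
```

Features with large absolute loadings on the first component are the ones that contribute most to the shared structure, which is the quantity the interpretation step inspects.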

Machine Learning Integration Approach:

- Feature Selection: Apply recursive feature elimination or regularization methods (LASSO) to identify the most informative molecular features across all omics layers.

- Model Training: Train ensemble methods (random forests, gradient boosting) or neural networks on the integrated multi-omics dataset.

- Model Validation: Use rigorous cross-validation and external validation sets to assess model performance and prevent overfitting.

- Biological Interpretation: Apply model interpretation techniques (SHAP values, permutation importance) to identify key molecular drivers of the model predictions.
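
The validation and interpretation steps can be illustrated without any machine learning library. The sketch below swaps in a deliberately simple nearest-centroid classifier (an assumption for illustration, standing in for the ensemble models and neural networks named above), estimates accuracy by k-fold cross-validation, and computes permutation importance on held-out samples. The data are simulated and all function names are hypothetical.

```python
import numpy as np

def fit_centroids(x, y):
    """Store one mean profile (centroid) per class."""
    classes = np.unique(y)
    return classes, np.array([x[y == c].mean(axis=0) for c in classes])

def predict(model, x):
    """Assign each sample to the class with the nearest centroid."""
    classes, centroids = model
    dist = ((x[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return classes[dist.argmin(axis=1)]

def cross_val_accuracy(x, y, k=5, seed=0):
    """Mean accuracy over k folds; the model is refit on each training split."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)
    accuracies = []
    for test_idx in folds:
        train_idx = np.setdiff1d(np.arange(len(y)), test_idx)
        model = fit_centroids(x[train_idx], y[train_idx])
        accuracies.append(np.mean(predict(model, x[test_idx]) == y[test_idx]))
    return float(np.mean(accuracies))

def permutation_importance(model, x, y, n_repeats=20, seed=0):
    """Average drop in accuracy when one feature at a time is shuffled."""
    rng = np.random.default_rng(seed)
    baseline = np.mean(predict(model, x) == y)
    drops = np.zeros(x.shape[1])
    for j in range(x.shape[1]):
        for _ in range(n_repeats):
            shuffled = x.copy()
            shuffled[:, j] = rng.permutation(shuffled[:, j])
            drops[j] += baseline - np.mean(predict(model, shuffled) == y)
    return drops / n_repeats

# Simulated data: only feature 0 carries class signal.
rng = np.random.default_rng(42)
n = 100
y = np.repeat([0, 1], n // 2)
x = rng.normal(size=(n, 6))
x[:, 0] += 3.0 * y

cv_accuracy = cross_val_accuracy(x, y)
order = rng.permutation(n)
train, held_out = order[:70], order[70:]
model = fit_centroids(x[train], y[train])
importance = permutation_importance(model, x[held_out], y[held_out])
```

If feature selection is part of the pipeline, it should be repeated inside each training fold; selecting features on the full dataset first leaks information and inflates the cross-validated estimate.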

The integration of NGS, mass spectrometry, and NMR technologies provides an unprecedentedly comprehensive view of biological systems across multiple molecular layers. As these technologies continue to evolve, becoming more sensitive, affordable, and accessible, their application in both basic research and clinical settings will expand considerably [7] [5]. The future of multi-omics research lies not merely in the parallel application of these technologies, but in their genuine integration through advanced computational methods that can extract meaningful biological insights from these complex, high-dimensional datasets [5] [6].

Current trends indicate a movement toward single-cell multi-omics, which will enable the resolution of cellular heterogeneity in complex tissues; spatial omics, preserving the architectural context of molecular measurements; and real-time analytical capabilities, particularly through advances in long-read sequencing and miniaturized NMR technologies [7] [5]. The successful implementation of multi-omics approaches will require continued development of standardized protocols, robust computational infrastructure for data management and analysis, and interdisciplinary collaboration between biologists, chemists, computational scientists, and clinicians [5] [6]. By embracing these integrated approaches, the scientific community can accelerate the translation of molecular discoveries into clinical applications, ultimately advancing personalized medicine and improving patient outcomes across a wide spectrum of diseases.

The pursuit of a holistic understanding of health and disease necessitates moving beyond isolated biological observations to an integrated view of the entire biological system. Multi-omics data integration represents a paradigm shift in biomedical research, combining diverse datasets—genomics, transcriptomics, proteomics, metabolomics, and clinical records—to create a complete picture of a patient’s health and disease [15]. This approach enables researchers to decipher the complex flow of information from genetic blueprint to functional manifestation, revealing how genes, proteins, and metabolites interact to drive disease processes [15].

The field has seen explosive growth, with PubMed citations of the terms "Multiomics" and "Multi-omics" increasing from 307 in 2018 to 3,933 in 2023 [16]. This surge reflects the recognition that single-omics approaches provide only a limited, partial view of hidden biology, while multi-omics integration can illuminate the interplay of different biomolecules, clarify relationships across multiple layers, and help bridge the critical gap between genotype and phenotype [16]. By measuring multiple analyte types within a pathway, researchers can better pinpoint biological dysregulation to single reactions, which helps identify actionable targets for therapeutic intervention [5].

Key Integration Strategies & Methodological Framework

The integration of disparate omics layers presents significant computational and analytical challenges due to data heterogeneity, scale, and complexity [15]. Researchers typically employ three primary strategies, classified based on the timing of integration relative to the analysis [15] [16].

Table 1: Multi-Omics Data Integration Strategies

| Integration Strategy | Timing | Key Advantages | Primary Challenges |

|---|---|---|---|

| Early Integration (Low-Level) | Before analysis | Captures all cross-omics interactions; preserves raw information | Extremely high dimensionality; computationally intensive; adds noise [15] [16] |

| Intermediate Integration (Mid-Level) | During analysis | Reduces complexity; incorporates biological context; improved signal-to-noise ratio [15] [16] | Requires domain knowledge; may lose some raw information; can lack interpretability [16] |

| Late Integration (High-Level) | After individual analysis | Handles missing data well; computationally efficient; works with unique distribution of each omics type [15] [16] | May miss subtle cross-omics interactions; potential loss of biological information through individual modeling [15] [16] |
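
The three strategies in Table 1 differ mainly in where the omics blocks are combined. The sketch below shows only that data flow, under simplifying assumptions: early integration is plain concatenation, intermediate integration is represented by a per-block PCA projection followed by concatenation, and late integration averages per-omics prediction scores. The function names, matrix sizes, and scores are illustrative and not taken from the cited sources.

```python
import numpy as np

def early_integration(blocks):
    """Concatenate all features before any modeling (samples x all features)."""
    return np.hstack(blocks)

def intermediate_integration(blocks, n_latent=2):
    """Project each block onto its own top principal components, then concatenate."""
    latents = []
    for block in blocks:
        centered = block - block.mean(axis=0)
        u, s, _ = np.linalg.svd(centered, full_matrices=False)
        latents.append(u[:, :n_latent] * s[:n_latent])
    return np.hstack(latents)

def late_integration(per_omics_scores):
    """Average the prediction scores produced by separate per-omics models."""
    return np.mean(per_omics_scores, axis=0)

# Toy blocks for six samples (random values, for shape illustration only).
rng = np.random.default_rng(7)
rna = rng.normal(size=(6, 100))
protein = rng.normal(size=(6, 30))
metabolite = rng.normal(size=(6, 10))
blocks = [rna, protein, metabolite]

early = early_integration(blocks)
intermediate = intermediate_integration(blocks)
late = late_integration(np.array([[0.9, 0.2], [0.7, 0.4], [0.8, 0.3]]))
```

The shapes make the trade-off in the table concrete: the early matrix has 140 columns for six samples, the intermediate matrix only six, and the late approach never builds a joint feature matrix at all.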

A Practical Integration Protocol

A robust protocol is essential for generating reliable and interpretable results from multi-omics studies. The following step-by-step guide outlines the critical phases of integration.

Pre-Integration Phase

- Research Question Definition: Clearly articulate the specific biological or clinical question. Example: "What are the changes in protein expression and metabolite profiles that correlate with treatment response?" [16].

- Omics Technology Selection: Identify the most relevant omics technologies (e.g., genomics and transcriptomics for cancer biology; proteomics and metabolomics for therapeutic response) based on the research question and available resources [16].

- Data Quality Assurance: Ensure data reliability through careful experimental design, consistent sample collection, and established quality control (QC) protocols to minimize batch effects. Implement technology-specific QC metrics [16]:

  - Genomics/Transcriptomics: Assess read quality scores, sequencing depth, and alignment quality.

  - Proteomics: Evaluate peak intensity distribution, mass accuracy, and protein identification false discovery rate.

  - Metabolomics: Analyze signal-to-noise ratio and metabolite identification quality.

Data Preprocessing & Analysis

- Data Preprocessing:

  - Overlapping Samples: Include only samples present across all omics datasets to ensure proper integration [16].

  - Missing Value Imputation: Handle missing data using statistical or machine learning methods (e.g., Least-Squares Adaptive method), rather than removal, especially with limited samples. Exclude variables with a high percentage (>25-30%) of missing values [16].

  - Standardization: Perform data transformation (e.g., logarithmic, centering, scaling) to ensure consistent feature scaling and prevent domination by features with larger inherent effects [16].

- Dimensionality Reduction: Apply techniques like PCA or autoencoders to compress high-dimensional omics data into a lower-dimensional "latent space," making integration computationally feasible while preserving key biological patterns [15] [16].

- Integrated Analysis: Apply chosen integration strategy (early, intermediate, late) using appropriate statistical models and machine learning algorithms to extract insights from the combined data landscape.
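
The sample-overlap, missing-value filtering, and standardization steps above can be sketched as follows. The helper names, sample identifiers, and the 30% cutoff are assumptions for illustration (the cutoff sits at the upper end of the range quoted above), and imputation itself is not shown.

```python
import numpy as np

def align_samples(datasets):
    """Keep only samples present in every dataset, in one shared order.

    datasets maps an omics name to (sample_ids, samples x features array).
    """
    shared = sorted(set.intersection(*(set(ids) for ids, _ in datasets.values())))
    aligned = {}
    for name, (ids, values) in datasets.items():
        position = {sample: i for i, sample in enumerate(ids)}
        aligned[name] = values[[position[sample] for sample in shared]]
    return shared, aligned

def drop_sparse_features(values, max_missing=0.3):
    """Remove features (columns) whose fraction of missing values exceeds max_missing."""
    missing_fraction = np.isnan(values).mean(axis=0)
    return values[:, missing_fraction <= max_missing]

def log_standardize(values):
    """Log2-transform, then center and scale each feature, ignoring missing values."""
    logged = np.log2(values + 1.0)
    return (logged - np.nanmean(logged, axis=0)) / np.nanstd(logged, axis=0)

# Toy inputs (sample IDs and values are made up).
datasets = {
    "transcriptomics": (["P1", "P2", "P3", "P4"],
                        np.array([[1.0], [2.0], [3.0], [4.0]])),
    "proteomics": (["P3", "P1", "P5", "P4"],
                   np.array([[30.0], [10.0], [50.0], [40.0]])),
}
shared_samples, aligned = align_samples(datasets)

with_gaps = np.array([[1.0, np.nan], [2.0, np.nan], [np.nan, 3.0], [4.0, 5.0]])
filtered = drop_sparse_features(with_gaps)

standardized = log_standardize(np.array([[1.0, 3.0], [3.0, 7.0], [7.0, 15.0]]))
```

Aligning by sample identifier rather than by row position guards against the common failure mode in which two omics tables list the same patients in different orders.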

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful multi-omics studies rely on a suite of specialized reagents and technologies designed for specific molecular layers.

Table 2: Essential Research Reagents & Platforms for Multi-Omics Studies

| Reagent/Platform | Function | Application Note |

|---|---|---|

| SOMAscan Aptamer-Based Assay | Multiplexed proteomic analysis using slow off-rate modified aptamers to measure protein abundances [17]. | Used in large-scale pQTL studies for high-throughput plasma protein quantification; enabled analysis of 4,907 circulating proteins [17]. |

| Mass Spectrometry Systems | Identify and quantify proteins and metabolites based on mass-to-charge ratio [15] [16]. | Workhorse for proteomics and metabolomics; assess quality via peak intensity, mass accuracy, and signal-to-noise ratio [16]. |

| Next-Generation Sequencing (NGS) | High-throughput DNA and RNA sequencing to assess genomic variation and transcript expression [15] [5]. | Foundation for genomics, transcriptomics, and epigenomics; requires QC of read quality, depth, and alignment metrics [16]. |

| Single-Cell RNA Sequencing (scRNA-seq) | Profile gene expression at individual cell resolution to uncover cellular heterogeneity [17] [5]. | Critical for mapping core hub genes to specific cell types (e.g., endothelial cells, monocytes); requires specialized cell isolation protocols [17]. |

| Liquid Biopsy Platforms | Non-invasive isolation and analysis of circulating biomarkers (e.g., ctDNA, exosomes) from blood [5] [18]. | Emerging tool for real-time monitoring; advancements increasing sensitivity/specificity for early disease detection [18]. |

Application Note: Ulcerative Colitis Biomarker Discovery

Experimental Protocol & Workflow

A 2025 study demonstrates the practical application of multi-omics integration to identify diagnostic and therapeutic biomarkers for ulcerative colitis (UC), a complex inflammatory bowel disease [17]. The workflow integrated data from genomics, transcriptomics, and proteomics.

Step-by-Step Protocol:

Data Acquisition & Causal Inference:

- Data Sources: Acquired microarray data (GSE87466, GSE92415) from GEO database as a training set, with GSE75214 as a validation set. Obtained pQTL data from a study of 35,559 individuals (4,907 plasma proteins) and UC GWAS data from the IEU Open GWAS project (1,579 cases, 335,620 controls) [17].

- Mendelian Randomization (MR): Performed proteome-wide MR analysis using the "TwoSampleMR" R package to identify plasma proteins with a causal association with UC. Used cis-pQTLs as instrumental variables under genome-wide significance (P < 5 × 10⁻⁸), independence (LD r² < 0.001), and F-statistic > 10 criteria [17].
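
To make the instrument criteria and the causal estimate more concrete, the sketch below applies the significance and F-statistic filters to per-variant summary statistics and then computes a fixed-effect inverse-variance weighted (IVW) estimate. It is written in Python for illustration and does not reproduce the TwoSampleMR R package; the per-variant F-statistic uses the common approximation (beta / SE)², LD-based independence filtering is omitted because it needs a reference panel, and every number below is made up.

```python
import numpy as np

def select_instruments(beta_exposure, se_exposure, p_exposure,
                       p_threshold=5e-8, min_f=10.0):
    """Boolean mask of variants passing the significance and instrument-strength filters."""
    f_stat = (beta_exposure / se_exposure) ** 2      # single-variant approximation
    return (p_exposure < p_threshold) & (f_stat > min_f)

def wald_ratio(beta_exposure, beta_outcome, se_outcome):
    """Per-variant causal estimate and its first-order standard error."""
    return beta_outcome / beta_exposure, se_outcome / np.abs(beta_exposure)

def ivw_estimate(beta_exposure, beta_outcome, se_outcome):
    """Fixed-effect inverse-variance weighted combination of the Wald ratios."""
    weights = beta_exposure ** 2 / se_outcome ** 2
    ratios = beta_outcome / beta_exposure
    estimate = np.sum(weights * ratios) / np.sum(weights)
    standard_error = np.sqrt(1.0 / np.sum(weights))
    return float(estimate), float(standard_error)

# Hypothetical summary statistics for three variants near one protein-coding gene.
beta_x = np.array([0.50, 0.40, 0.05])     # variant effect on protein level (pQTL)
se_x = np.array([0.05, 0.04, 0.04])
p_x = np.array([1e-20, 1e-18, 0.2])
beta_y = np.array([0.10, 0.08, 0.50])     # variant effect on disease (GWAS)
se_y = np.array([0.02, 0.02, 0.02])

keep = select_instruments(beta_x, se_x, p_x)
mr_estimate, mr_se = ivw_estimate(beta_x[keep], beta_y[keep], se_y[keep])
```

When only a single cis-pQTL survives filtering, the IVW estimate reduces to the Wald ratio for that variant.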

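Differential Expression & Data Intersection:

- Identified differentially expressed genes (DEGs) in the expression data and intersected them with the MR-prioritized proteins to obtain overlapping candidate genes [17].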

Machine Learning for Biomarker Selection:

- Employed three machine learning algorithms—Random Forest (RF), Support Vector Machine-Recursive Feature Elimination (SVM-RFE), and XGBoost—on the training set to screen core hub genes from the overlapping genes [17].

- Identified four core hub genes (EIF5A2, IDO1, CDH5, and MYL5) as robust diagnostic biomarkers [17].
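
The summary above does not state how the outputs of the three algorithms were combined. One common convention, assumed here purely for illustration, is to keep the features selected by every algorithm. The sketch uses placeholder gene names and made-up selections, not the study's results.

```python
def consensus_features(selections):
    """Return the features chosen by every algorithm, sorted alphabetically."""
    feature_sets = [set(features) for features in selections.values()]
    return sorted(set.intersection(*feature_sets))

def vote_counts(selections):
    """Count how many algorithms selected each feature."""
    counts = {}
    for features in selections.values():
        for feature in set(features):
            counts[feature] = counts.get(feature, 0) + 1
    return counts

# Placeholder selections (hypothetical, for illustration only).
selections = {
    "random_forest": ["GENE_A", "GENE_B", "GENE_D", "GENE_E"],
    "svm_rfe": ["GENE_B", "GENE_C", "GENE_D"],
    "xgboost": ["GENE_B", "GENE_D", "GENE_F"],
}

core_genes = consensus_features(selections)
votes = vote_counts(selections)
```

A strict intersection favors robustness over sensitivity; a majority-vote rule based on the counts is a looser alternative when the intersection turns out to be empty.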

Validation & Mechanistic Exploration:

- Constructed a diagnostic nomogram model with the four genes and validated its predictive performance in the external validation dataset [17].

- Utilized single-cell RNA sequencing data (GSE214695) to explore expression profiles of core hub genes across different cell types, revealing specific cellular localization (e.g., CDH5 in endothelial cells, IDO1 in monocytes) [17].

- Performed immune infiltration analysis (CIBERSORT), functional enrichment (GSEA), and constructed mRNA-miRNA-lncRNA regulatory networks [17].

- Validated expression changes in a dextran sulfate sodium (DSS)-induced UC mouse model using RT-qPCR, confirming consistency with the bioinformatics predictions [17].

Key Findings & Quantitative Results

The integrated analysis linked genomic predisposition to functional pathophysiology, identifying four core hub genes supported by both genetic (causal) and expression-level evidence in UC.

Table 3: Key Findings from the Ulcerative Colitis Multi-Omics Study

| Analysis Stage | Key Result | Biological/Clinical Implication |

|---|---|---|

| Mendelian Randomization | 168 plasma proteins identified with causal association to UC [17]. | Prioritized potential therapeutic targets from a massive proteomic dataset using genetic evidence, minimizing confounding. |

| Differential Expression & Intersection | 1,011 DEGs found; intersection with MR results yielded 12 overlapping genes [17]. | Narrowed candidate list to genes with both causal (genetic) and correlative (expression) evidence of involvement in UC. |

| Machine Learning Feature Selection | 4 core hub genes identified: EIF5A2, IDO1, CDH5, MYL5 [17]. | Provided a minimal, robust gene signature for diagnostic model development. |

| Single-Cell Sequencing | Revealed cell-specific expression: CDH5 (endothelial), EIF5A2 (stem/T-cells), IDO1 (monocytes), MYL5 (epithelial/endothelial) [17]. | Uncovered cellular heterogeneity and suggested specific cell types involved in UC pathogenesis for targeted therapy. |

| Diagnostic Model | Nomogram demonstrated strong predictive performance, validated externally [17]. | Offered a potential clinical tool for stratifying UC patients based on their molecular profile. |

Discussion & Future Perspectives

The integration of multi-omics data is fundamentally transforming biomedical research from a siloed, single-layer perspective to a holistic, systems-level understanding. As the Ulcerative Colitis case study demonstrates, this approach powerfully bridges genetic predisposition and functional pathophysiology, enabling the discovery of causal biomarkers and therapeutic targets [17]. The convergence of advanced technologies and sophisticated computational methods is paving the way for a new era in precision medicine.

Looking ahead, several key trends are poised to shape the future of multi-omics. The rise of single-cell multi-omics will allow researchers to move beyond tissue-level averages and understand cellular heterogeneity, providing unprecedented resolution in mapping disease mechanisms [5]. Furthermore, liquid biopsies are expected to expand beyond oncology, offering a non-invasive method for dynamic monitoring of disease progression and treatment response across a wider range of conditions by analyzing biomarkers like cell-free DNA, RNA, and proteins [5] [18]. Finally, the growing integration of Artificial Intelligence and Machine Learning will be crucial, with AI-driven algorithms revolutionizing predictive analytics, automating data interpretation, and facilitating the development of truly personalized treatment plans based on complex, integrated molecular profiles [15] [18]. These advancements, coupled with ongoing efforts in standardization and the establishment of robust regulatory frameworks, will ensure that multi-omics integration continues to drive innovations in diagnostics, therapeutics, and ultimately, improved patient outcomes [18].

Spatially resolved multi-omics represents a paradigm shift in biological research, enabling the simultaneous measurement of multiple molecular layers within the native tissue architecture [19]. This approach addresses a critical limitation of traditional single-cell omics, which, while powerful, loses the spatial context essential for understanding cellular function, communication, and tissue organization [20]. The ability to perform multi-modal analysis on the same tissue section is particularly transformative, as it eliminates spatial misalignment and facilitates direct, cell-to-cell comparisons across molecular classes such as the transcriptome and proteome [21] [22]. This protocol outlines the integrated workflow for generating and analyzing spatially resolved transcriptomic and proteomic data from a single tissue section, a methodology recently demonstrated in human lung cancer and liver disease studies [21] [20]. By preserving the spatial context of multiple molecular readouts, researchers can now uncover novel insights into disease heterogeneity, immune-microenvironment interactions, and the complex regulatory networks governing biological systems.

Key Principles and Biological Significance

Spatially resolved multi-omics on a single section provides a holistic view of cellular machinery by capturing complementary data layers in their precise histological context. This is crucial because biological functions emerge from complex, spatially organized interactions. For instance, in the human liver, metabolic functions are zonated across the lobule axis due to gradients of oxygen, nutrients, and hormones [20]. Similarly, in cardiovascular disease, the myocardium is zoned into distinct spatial domains of injury after myocardial infarction [19].

A key finding reinforced by single-section multi-omics is the frequent discordance between transcript and protein abundances within individual cells. Studies have consistently observed systematically low correlations between mRNA and corresponding protein levels, a phenomenon now resolvable at cellular resolution [21] [22]. This highlights the complex post-transcriptional regulation and emphasizes the necessity of measuring both molecular layers for complete functional understanding.

The tumor microenvironment exemplifies where spatial multi-omics provides unique insights. Cellular function is profoundly influenced by positional context—proximity to blood vessels, immune cell infiltrates, and stromal components. Single-section multi-omics enables the dissection of these cell-cell interactions and regional-specific expression patterns without the ambiguity introduced by analyzing separate sections [21].

Table 1: Advantages of Single-Section Multi-Omics Approach

| Feature | Traditional Multi-Section Approach | Single-Section Approach |

|---|---|---|

| Spatial Context | Misalignment between sections | Perfect spatial registration |

| Morphological Consistency | Variable between sections | Preserved across modalities |

| Cell-to-Cell Comparison | Indirect and statistical | Direct at single-cell level |

| Data Quality | Potential section-to-section variation | Consistent tissue morphology |

| Regional Analysis | Approximate alignment required | Precise region-of-interest mapping |

Experimental Workflow and Protocol

The following section details a proven wet-lab and computational framework for performing and integrating spatial transcriptomics (ST) and spatial proteomics (SP) from the same tissue section, as demonstrated on human lung carcinoma samples [21] [22].

Sample Preparation and Tissue Processing

Materials:

- Formalin-fixed paraffin-embedded (FFPE) tissue sections (5 µm)

- Xenium In Situ Gene Expression reagents (10x Genomics)

- COMET hyperplex immunohistochemistry platform (Lunaphore)

- Primary antibodies for targets of interest (40 markers recommended)

- Fluorophore-conjugated secondary antibodies

- DAPI counterstain (Thermo Fisher Scientific)

Protocol:

- Mount FFPE sections on Xenium slides within the designated reaction region (12 mm × 24 mm).

- Perform deparaffinization and decrosslinking following manufacturer's instructions.

- For spatial transcriptomics: Hybridize DNA probes to target RNA sequences, followed by ligation and amplification of gene-specific barcodes.

- Load slides into the Xenium Analyzer for cyclic probe hybridization, imaging, and removal to generate optical signatures for each barcode.

- For spatial proteomics: Following Xenium analysis, perform heat-induced epitope retrieval (HIER) using the PT module (Epredia).

- Mount slides with microfluidic chips (9 mm × 9 mm acquisition region) on the COMET platform.

- Perform sequential immunofluorescence staining using off-the-shelf primary antibodies, fluorophore-conjugated secondary antibodies, and DAPI counterstain.

- The COMET platform conducts cyclical staining, imaging, and elution, generating a final stacked fluorescence image with multiple channels including DAPI.

- Perform background subtraction using Horizon software (v2.2.0.1, Lunaphore Technologies) before exporting images for analysis.

- Conduct manual hematoxylin and eosin (H&E) staining on the post-Xenium post-COMET sections and image using a slide scanner (e.g., Zeiss Axioscan 7).

Table 2: Key Research Reagent Solutions

| Reagent/Category | Specific Examples | Function |

|---|---|---|

| Spatial Transcriptomics | Xenium In Situ Gene Expression (10x Genomics) | High-resolution spatial RNA detection |

| Spatial Proteomics | COMET hyperplex IHC (Lunaphore) | Multiplexed protein detection from same section |

| Gene Panels | 289-gene human lung cancer panel | Targeted transcriptome profiling |

| Antibody Panels | 40-plex antibody panels | Multiplexed protein quantification |

| Nuclear Staining | DAPI counterstain | Cell segmentation and nuclear identification |

| Tissue Staining | Hematoxylin and Eosin (H&E) | Histological context and pathology annotation |

Computational Data Integration and Analysis

Image Registration and Alignment:

- Use computational registration software (e.g., Weave) for automated non-rigid alignment of DAPI images from Xenium and COMET acquisitions to the H&E reference image [22].

- Apply spline-based algorithms to achieve precise spatial matching across modalities, accounting for potential tissue deformation.

Cell Segmentation:

- For Xenium data: Perform cell segmentation based on DAPI nuclear expansion using the 10x Genomics pipeline.

- For COMET data: Utilize deep learning-based methods (e.g., CellSAM) integrating both nuclear (DAPI) and membrane (e.g., PanCK) markers for improved segmentation accuracy.

- Match cells between the two segmentation methods to compare morphological and molecular features.

Multi-Omics Data Integration:

- Apply cell segmentation masks to calculate mean intensity of each protein marker and transcript count per gene per cell.

- Generate an integrated dataset containing both gene expression and protein abundance measurements within the same cellular compartments.

- Create interactive web-based visualizations (e.g., using Weave) incorporating full-resolution H&E microscopy images with pathology annotations, COMET protein images, Xenium gene transcripts, and cell segmentation results.
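
The per-cell aggregation step can be sketched with a labeled segmentation mask, where 0 marks background and positive integers mark cells. This is a minimal NumPy illustration with hypothetical function names and a made-up 4 × 4 image; it assumes transcript coordinates have already been registered to the same pixel grid as the protein image.

```python
import numpy as np

def mean_intensity_per_cell(mask, image):
    """Mean pixel intensity of one protein channel within each labeled cell."""
    n_cells = int(mask.max())
    sums = np.bincount(mask.ravel(), weights=image.ravel(), minlength=n_cells + 1)
    areas = np.bincount(mask.ravel(), minlength=n_cells + 1)
    with np.errstate(invalid="ignore", divide="ignore"):
        means = sums / areas                 # NaN for labels with no pixels
    return means[1:]                         # drop the background label 0

def transcript_counts_per_cell(mask, rows, cols, gene_idx, n_genes):
    """Count detected transcripts per gene within each labeled cell."""
    n_cells = int(mask.max())
    cell_of_transcript = mask[rows, cols]
    counts = np.zeros((n_cells + 1, n_genes), dtype=int)
    np.add.at(counts, (cell_of_transcript, gene_idx), 1)
    return counts[1:]                        # drop transcripts on background

# Toy segmentation with two cells.
mask = np.array([[1, 1, 0, 0],
                 [1, 1, 0, 2],
                 [0, 0, 2, 2],
                 [0, 0, 2, 2]])
protein_image = np.arange(16, dtype=float).reshape(4, 4)

# Five detected transcripts: pixel row, pixel column, and gene index.
rows = np.array([0, 1, 2, 3, 0])
cols = np.array([0, 1, 3, 3, 3])
gene_idx = np.array([0, 0, 1, 1, 1])

protein_means = mean_intensity_per_cell(mask, protein_image)
rna_counts = transcript_counts_per_cell(mask, rows, cols, gene_idx, n_genes=2)
```

Because both modalities are summarized over the same mask, row i of the protein table and row i of the transcript table describe the same cell, which is what makes direct per-cell comparison possible.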

[Figure: Spatial Multi-Omics Experimental Workflow]

Data Analysis and Interpretation

Multi-Omics Correlation Analysis

With integrated transcriptomic and proteomic data from the same cells, researchers can perform cross-modal correlation analysis to examine relationships between RNA transcripts and their corresponding protein products [22].

Protocol:

- Exclude cells with a total transcript count below 20 to ensure data quality.

- Normalize gene expression data using total count normalization followed by log transformation.

- Calculate Spearman correlation coefficients between transcript counts and mean immunofluorescence intensity for paired RNA-protein markers.

- Visualize correlation patterns separately for tumor and non-tumor regions to identify compartment-specific regulatory differences.
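
The first three steps of this protocol can be sketched as below. The scaling target of 10,000 counts per cell is an assumed convention rather than a value from the source, Spearman correlation is implemented directly as the Pearson correlation of tie-averaged ranks, and the toy counts and intensities are made up.

```python
import numpy as np

def normalize_log(counts, target_sum=1e4):
    """Total-count normalization per cell (row), followed by log(1 + x)."""
    totals = counts.sum(axis=1, keepdims=True)
    return np.log1p(counts / totals * target_sum)

def average_ranks(x):
    """Ranks starting at 1, with tied values sharing their average rank."""
    x = np.asarray(x, dtype=float)
    order = np.argsort(x, kind="mergesort")
    ranks = np.empty(len(x), dtype=float)
    ranks[order] = np.arange(1, len(x) + 1)
    _, inverse, group_sizes = np.unique(x, return_inverse=True, return_counts=True)
    rank_sums = np.bincount(inverse, weights=ranks)
    return rank_sums[inverse] / group_sizes[inverse]

def spearman(x, y):
    """Spearman correlation as the Pearson correlation of the ranks."""
    return float(np.corrcoef(average_ranks(x), average_ranks(y))[0, 1])

# Toy data: five cells, two genes, and the protein paired with gene 0.
counts = np.array([[30, 5], [2, 3], [10, 40], [25, 25], [0, 60]], dtype=float)
protein_intensity = np.array([0.9, 0.1, 0.3, 0.6, 0.05])

keep = counts.sum(axis=1) >= 20              # exclude cells with fewer than 20 transcripts
expression = normalize_log(counts[keep])
rho = spearman(expression[:, 0], protein_intensity[keep])
```

To compare compartments, the same calculation would be repeated on the subsets of cells annotated as tumor and non-tumor.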

Dimension Reduction and Cell Clustering

Protocol:

- Combine normalized transcript counts and protein intensities into a unified feature matrix.

- Perform dimensionality reduction using UMAP (Uniform Manifold Approximation and Projection).

- Construct neighbor graphs using 15 nearest neighbors and cosine similarity metrics.

- Apply Louvain clustering to identify cell populations based on combined transcriptomic and proteomic signatures.

- Annotate cell types using known marker genes and proteins, validating with pathological annotations from H&E staining.
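
Of the steps above, the neighbor graph is the easiest to show directly; UMAP embedding and Louvain clustering are normally delegated to dedicated libraries and are not reproduced here. The sketch below builds a 15-nearest-neighbor graph under cosine similarity from a combined feature matrix. The function name and the simulated two-population data are assumptions for illustration.

```python
import numpy as np

def cosine_knn(features, k=15):
    """For each cell (row), return the indices and similarities of its k most similar cells."""
    unit = features / np.linalg.norm(features, axis=1, keepdims=True)
    similarity = unit @ unit.T
    np.fill_diagonal(similarity, -np.inf)            # a cell is not its own neighbor
    neighbors = np.argsort(-similarity, axis=1)[:, :k]
    return neighbors, np.take_along_axis(similarity, neighbors, axis=1)

# Simulated combined RNA + protein features for two cell populations of 20 cells each.
rng = np.random.default_rng(3)
population_a = np.array([5.0, 5.0, 0.0, 0.0]) + 0.3 * rng.normal(size=(20, 4))
population_b = np.array([0.0, 0.0, 5.0, 5.0]) + 0.3 * rng.normal(size=(20, 4))
features = np.vstack([population_a, population_b])

neighbors, similarities = cosine_knn(features, k=15)
```

In typical pipelines this graph is computed first and then reused by both the embedding and the clustering step, so the choice of k and of the distance metric affects both outputs.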

Region-Specific Analysis and Cell-Cell Interactions

Leverage the precise spatial registration to investigate region-specific expression patterns and potential cell-cell communication [20] [19].

Protocol:

- Transfer pathological annotations (e.g., tumor regions, fibrotic areas) from H&E images to the multi-omics dataset.

- Perform differential expression analysis between anatomical or pathological regions.

- Identify spatially variable features using spatial autocorrelation statistics (e.g., Moran's I).

- Map ligand-receptor interactions between neighboring cells using specialized tools (e.g., CellChat, NicheNet).
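
Moran's I, mentioned above as a spatial autocorrelation statistic, is defined as I = (N / W) · Σᵢⱼ wᵢⱼ (xᵢ − x̄)(xⱼ − x̄) / Σᵢ (xᵢ − x̄)², where wᵢⱼ are spatial weights and W is their sum. The sketch below computes it with binary k-nearest-neighbor weights, which is one of several possible weighting choices and is assumed here for simplicity; function names and the grid example are illustrative, and no significance test is included.

```python
import numpy as np

def knn_weights(coords, k=4):
    """Binary spatial weights: w[i, j] = 1 if j is among the k nearest neighbors of i."""
    dist = np.linalg.norm(coords[:, None, :] - coords[None, :, :], axis=2)
    np.fill_diagonal(dist, np.inf)
    nearest = np.argsort(dist, axis=1)[:, :k]
    weights = np.zeros_like(dist)
    weights[np.arange(len(coords))[:, None], nearest] = 1.0
    return weights

def morans_i(values, weights):
    """Moran's I: positive for spatially clustered values, negative for alternating ones."""
    z = values - values.mean()
    return float(len(values) / weights.sum() * (z @ weights @ z) / (z @ z))

# Cells on a 6 x 6 grid with two contrasting expression patterns.
grid_x, grid_y = np.meshgrid(np.arange(6), np.arange(6))
coords = np.column_stack([grid_x.ravel(), grid_y.ravel()]).astype(float)
weights = knn_weights(coords, k=4)

gradient = coords[:, 0]                          # smooth left-to-right gradient
checkerboard = (coords[:, 0] + coords[:, 1]) % 2  # alternating neighbors

i_gradient = morans_i(gradient, weights)
i_checkerboard = morans_i(checkerboard, weights)
```

A zonated marker would behave like the gradient pattern and score well above zero, whereas a feature with no spatial structure would score near zero.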

Applications in Translational Research

The integrated spatial multi-omics approach has demonstrated significant utility across various research applications:

Drug Discovery and Development: Network-based integration of multi-omics data has shown particular promise in drug discovery, enabling drug target identification, drug response prediction, and drug repurposing [23]. By capturing the complex interactions between drugs and their multiple targets within the tissue context, these approaches can better predict clinical efficacy and identify novel therapeutic opportunities.

Disease Mechanism Elucidation: In metabolic dysfunction-associated steatotic liver disease (MASLD), spatial multi-omics revealed microphthalmia-associated transcription factor (MITF) as a key regulator of the lipid-handling capacity of lipid-associated macrophages [20]. The study also uncovered a hepatoprotective role of these macrophages mediated through hepatocyte growth factor secretion, demonstrating how spatial context reveals novel biological insights.

Biomarker Discovery: The technology enables identification of spatially-informed biomarkers that may be missed in bulk analyses. For example, in cardiovascular research, spatial multi-omics has identified distinct mechano-sensing genes in the border zone of myocardial infarcts that regulate remodeling processes [19].

Technical Considerations and Troubleshooting

Experimental Design:

- Include control sections processed without Xenium but with COMET and H&E staining to assess potential effects of the transcriptomics workflow on protein detection.

- Carefully titrate antibody concentrations for hyperplex immunohistochemistry to minimize background while maintaining signal intensity.

- Ensure proper tissue fixation to preserve both RNA integrity and protein epitopes.

Computational Challenges:

- Address data heterogeneity through appropriate normalization strategies that account for technical variations between platforms.

- Develop standardized evaluation frameworks for method performance comparison [23].

- Implement scalable computational approaches to handle large-scale multi-omics datasets efficiently.

Data Visualization: Utilize specialized visualization tools (e.g., Spatial-Live, Weave) that enable interactive exploration of integrated multi-omics datasets in both 2D and 3D perspectives [22] [24]. These tools facilitate the interpretation of complex spatial relationships across molecular modalities.

Spatially resolved multi-omics on single tissue sections represents a groundbreaking advancement in biomedical research, offering unprecedented insights into cellular organization and function within native tissue contexts. The integrated workflow presented here—combining spatial transcriptomics, spatial proteomics, and histology from the same section—provides a robust framework for investigating complex biological systems. As computational methods for data integration continue to evolve and spatial technologies become more accessible, this approach holds tremendous potential for revolutionizing our understanding of disease mechanisms, identifying novel biomarkers, and accelerating therapeutic development across diverse pathological conditions.

The integration of multi-omics data has revolutionized cancer research, enabling a holistic view of the molecular mechanisms driving oncogenesis. Large-scale public data repositories provide comprehensive molecular profiles across genomics, transcriptomics, proteomics, and epigenomics, allowing researchers to move beyond single-layer analyses. These resources have become indispensable for biomarker discovery, disease subtyping, and understanding therapeutic vulnerabilities [25]. The Cancer Genome Atlas (TCGA), Clinical Proteomic Tumor Analysis Consortium (CPTAC), International Cancer Genome Consortium (ICGC), and Omics Discovery Index (OmicsDI) represent cornerstone initiatives in this landscape, together housing molecular data for tens of thousands of patients across diverse cancer types [25] [26].

These repositories have catalyzed groundbreaking discoveries by providing the research community with standardized, high-quality data. For instance, multi-omics studies have revealed that somatic mutations in only three genes (TP53, PIK3CA, and GATA3) were responsible for signaling pathways deregulated in 30% of breast cancers, while chromosome 20q amplicon was associated with significant global changes at both mRNA and protein levels in colorectal cancers [27] [25]. Such insights demonstrate the power of integrative approaches over single-omics analyses, highlighting complex rearrangements at genetic, transcriptional, and proteomic levels that drive oncogenesis through clonal selection and treatment resistance [28].

Repository Characteristics and Data Types

Comparative Analysis of Major Repositories

Table 1: Core Characteristics of Public Multi-Omics Repositories

| Repository | Primary Focus | Key Data Types | Sample Scale | Access Type |
|---|---|---|---|---|
| TCGA | Pan-cancer molecular profiling | DNA-Seq, RNA-Seq, miRNA-Seq, SNV, CNV, DNA methylation, RPPA [25] | >20,000 tumors across 33 cancer types [28] | Open & Controlled [26] |
| CPTAC | Cancer proteomics | Global proteome, phosphoproteome, glycoproteome via mass spectrometry [27] | Proteomic data for TCGA cohorts [25] | Open Access |
| ICGC | International cancer genomics | WGS, WES, transcriptomics, epigenomics [25] | 76 projects across 21 cancer sites from 20,383 donors [25] | Open & Restricted [26] |
| OmicsDI | Cross-repository discovery | Consolidated datasets from 11 repositories [25] | Unified framework for multi-omics data [25] | Open Access |
| CCLE | Cancer cell lines | Gene expression, copy number, sequencing, drug sensitivity [25] [28] | ~1,000 cancer cell lines [26] | Open Access |
| TARGET | Pediatric cancers | Gene expression, miRNA, copy number, sequencing [25] | 24 molecular types of childhood cancer [25] | Controlled Access |

Data Harmonization and Availability

The repositories employ different data generation and processing strategies. TCGA provides both "legacy" data (original genome builds) and "harmonized" data (reprocessed using GRCh38 alignment and standardized workflows) [26]. This harmonization process ensures consistency across datasets, with the Genomic Data Commons (GDC) generating derived data including normal and tumor variant calls, gene expression profiles, and splice junction quantification [26]. CPTAC complements TCGA by analyzing cancer biospecimens using mass spectrometry to characterize and quantify their proteomes, with data available in multiple formats including raw mass spectrometry spectra and processed peptide spectrum matches [26].

ICGC coordinates a global network of research groups with data available through distributed repositories, including whole genome sequencing and RNA sequencing data from the PanCancer Analysis of Whole Genomes (PCAWG) study analyzed using common alignment and variant calling workflows [26]. OmicsDI serves as a meta-resource, providing a uniform framework to discover datasets across multiple repositories, significantly enhancing the findability of relevant multi-omics data [25].
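As an illustration of how harmonized TCGA files might be located programmatically, the sketch below assembles a query URL for the GDC `files` endpoint. It only builds the request and does not contact the server. The project ID, field names, and filter values shown are assumptions based on the public GDC API and should be checked against the current GDC documentation before use.

```python
import json
from urllib.parse import urlencode

# Public GDC API endpoint for file metadata (assumed; verify against GDC docs).
GDC_FILES_ENDPOINT = "https://api.gdc.cancer.gov/files"


def build_gdc_file_query(project_id, data_type, size=10):
    """Return a files-endpoint URL filtered by project, data type, and open access."""
    filters = {
        "op": "and",
        "content": [
            {"op": "in", "content": {"field": "cases.project.project_id",
                                     "value": [project_id]}},
            {"op": "in", "content": {"field": "data_type",
                                     "value": [data_type]}},
            {"op": "in", "content": {"field": "access", "value": ["open"]}},
        ],
    }
    params = {
        "filters": json.dumps(filters),
        "fields": "file_id,file_name,data_type,access",
        "format": "JSON",
        "size": str(size),
    }
    return GDC_FILES_ENDPOINT + "?" + urlencode(params)


# Example: open-access expression quantification files for the breast cancer project.
url = build_gdc_file_query("TCGA-BRCA", "Gene Expression Quantification")
```

The resulting URL can be passed to any HTTP client. Controlled-access files would additionally require an authentication token, which this sketch deliberately leaves out.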

Experimental Protocols for Data Utilization

Protocol 1: Multi-Omics Cancer Subtyping Analysis

Objective: Identify molecular subtypes across cancer types using integrated genomic, transcriptomic, and proteomic data.

Materials and Reagents:

- Multi-omics data from TCGA and CPTAC

- Computational environment (R/Python)

- Data integration tools (MOFA+, iCluster)

Procedure:

- Data Acquisition: Download matched genomic, transcriptomic, and proteomic data for breast invasive carcinoma (BRCA) or colon adenocarcinoma (COAD) from TCGA and CPTAC data portals [27] [26].

- Data Preprocessing:

  - Normalize RNA-seq data to FPKM or TPM

  - Quantify proteomics data using MaxLFQ or a similar intensity-based method

  - Filter features based on variance (select the top 10% most variable features) [29]

- Data Integration: Apply integrative clustering using iCluster or MOFA+ to combine multi-omics layers [29].

- Subtype Validation: Evaluate clusters using survival analysis and clinical feature correlation.

Expected Results: Identification of 4-5 robust subtypes with distinct molecular profiles and clinical outcomes, as demonstrated in the CPTAC-TCGA breast cancer study, which revealed subtypes (Luminal A, Luminal B, Basal-like, HER2-enriched) with differential therapeutic vulnerabilities [27].
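The preprocessing steps in Protocol 1 can be sketched in a few lines. The example below is a minimal, dependency-free illustration of TPM normalization and variance-based filtering. The function names, the toy matrix, and the gene labels are hypothetical, and a real pipeline would typically operate on log-transformed values in a data-frame library.

```python
from statistics import pvariance


def counts_to_tpm(counts, lengths_bp):
    """Convert one sample's raw read counts to TPM given gene lengths in base pairs."""
    # Reads per kilobase corrects for gene length before depth scaling.
    rates = [c / (length / 1000.0) for c, length in zip(counts, lengths_bp)]
    total = sum(rates)
    return [r / total * 1e6 for r in rates]


def top_variable_features(matrix, feature_names, fraction=0.10):
    """Return the names of the most variable features (matrix is samples x features)."""
    n_keep = max(1, int(len(feature_names) * fraction))
    variances = [pvariance(col) for col in zip(*matrix)]
    ranked = sorted(range(len(feature_names)), key=lambda j: variances[j], reverse=True)
    return [feature_names[j] for j in sorted(ranked[:n_keep])]


# Toy data: three samples, ten hypothetical genes; only gene_3 varies strongly.
demo = [
    [1.0, 5.0, 2.0, 0.0, 3.0, 1.0, 2.0, 4.0, 1.0, 2.0],
    [1.1, 5.0, 2.0, 9.0, 3.0, 1.0, 2.0, 4.0, 1.0, 2.0],
    [0.9, 5.0, 2.0, 18.0, 3.0, 1.0, 2.0, 4.0, 1.0, 2.0],
]
names = [f"gene_{j}" for j in range(10)]
selected = top_variable_features(demo, names)
```

With the default `fraction=0.10`, one of the ten toy genes is retained. The same call applied per omics layer yields the reduced matrices passed to the integration step.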

Protocol 2: Proteogenomic Integration for Driver Gene Prioritization

Objective: Integrate genomic and proteomic data to identify and prioritize cancer driver genes.

Materials and Reagents:

- Somatic mutation data from TCGA

- Global proteome data from CPTAC

- Proteogenomic integration tools (LinkedOmics)

Procedure:

- Data Collection: Obtain somatic mutation data and proteomic profiles for colorectal cancer (COAD/READ) samples [27].

- Mutation Impact Analysis: Identify genes with recurrent non-synonymous mutations.

- Proteomic Correlation: Assess protein abundance changes associated with mutational status.

- Multi-omics Integration: Use tools like LinkedOmics to identify phosphorylation events or pathway alterations downstream of mutated genes [30].

- Functional Validation: Correlate findings with drug sensitivity data from cell line screens (e.g., CTRP or GDSC) [31].

Expected Results: Prioritization of driver genes with functional impact at protein level, such as identification of potential 20q candidates in colorectal cancer including HNF4A, TOMM34, and SRC through proteogenomic integration [25].
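The proteomic correlation step of Protocol 2 asks whether protein abundance differs between mutated and wild-type samples. One simple way to test this, shown here as a sketch rather than the method used in the cited studies, is a two-sided permutation test on the difference in means. The abundance values are made up for illustration.

```python
import random
from statistics import mean


def permutation_test_mean_diff(mutant, wild_type, n_permutations=5000, seed=0):
    """Two-sided permutation test for a difference in mean protein abundance."""
    rng = random.Random(seed)
    observed = mean(mutant) - mean(wild_type)
    pooled = list(mutant) + list(wild_type)
    n_mut = len(mutant)
    hits = 0
    for _ in range(n_permutations):
        # Shuffle sample labels and recompute the group difference.
        rng.shuffle(pooled)
        diff = mean(pooled[:n_mut]) - mean(pooled[n_mut:])
        if abs(diff) >= abs(observed):
            hits += 1
    # Add-one correction keeps the estimate away from an exact zero.
    p_value = (hits + 1) / (n_permutations + 1)
    return observed, p_value


# Hypothetical log-ratio abundances of one protein, split by mutation status.
mutant_abundance = [2.1, 2.4, 1.9, 2.6, 2.3]
wild_type_abundance = [0.2, -0.1, 0.4, 0.0, 0.3, 0.1]
effect, p = permutation_test_mean_diff(mutant_abundance, wild_type_abundance)
```

When the test is repeated across many proteins, the resulting p-values would need multiple-testing correction before genes are prioritized.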

Protocol 3: Cross-Cohort Validation Analysis

Objective: Validate findings across multiple cancer types and cohorts.

Materials and Reagents:

- Multi-omics data from ICGC and TCGA

- Cloud computing platform (Cancer Genomics Cloud)

- Cross-validation frameworks

Procedure:

- Discovery Cohort: Perform initial analysis in TCGA cohort (e.g., LUAD - lung adenocarcinoma).

- Validation Cohort: Replicate analysis in corresponding ICGC cohort.

- Meta-Analysis: Use OmicsDI to identify additional independent datasets for validation.

- Pan-Cancer Assessment: Extract pan-cancer signatures across 32 cancer types using LinkedOmics [30].

Expected Results: Identification of robust molecular signatures conserved across cohorts and cancer types, with potential clinical applications as biomarkers or therapeutic targets.
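A minimal sketch of the discovery and validation logic in Protocol 3 follows. It assumes each cohort has already been summarized as a per-feature effect size and p-value. The feature names, values, and the replication rule (significant in both cohorts with the same effect direction) are illustrative assumptions, not a prescribed standard.

```python
def replicated_features(discovery, validation, alpha=0.05):
    """Return features significant in discovery and confirmed in validation.

    Each input maps feature name -> (effect_size, p_value).
    """
    confirmed = []
    for feature, (d_effect, d_p) in discovery.items():
        if d_p >= alpha or feature not in validation:
            continue
        v_effect, v_p = validation[feature]
        # Require the same effect direction, not just significance.
        if d_effect * v_effect > 0 and v_p < alpha:
            confirmed.append(feature)
    return sorted(confirmed)


# Hypothetical summary statistics for a discovery and a validation cohort.
discovery_stats = {"GENE_A": (1.2, 0.001), "GENE_B": (-0.8, 0.01), "GENE_C": (0.3, 0.40)}
validation_stats = {"GENE_A": (0.9, 0.02), "GENE_B": (0.5, 0.03), "GENE_C": (0.4, 0.01)}
robust = replicated_features(discovery_stats, validation_stats)
```

Here only `GENE_A` replicates: `GENE_B` changes direction between cohorts and `GENE_C` is not significant in the discovery cohort.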

Visualization and Analysis Workflows

Multi-Omics Data Integration Workflow

Diagram 1: Multi-omics data integration workflow depicting the flow from raw data to biological insights.

LinkedOmics Analysis Modules

Diagram 2: LinkedOmics analysis modules for exploring cancer multi-omics data.

The Scientist's Toolkit

Essential Research Reagents and Computational Tools

Table 2: Key Analytical Tools for Multi-Omics Data Integration

| Tool/Resource | Function | Application Context | Repository Compatibility |
|---|---|---|---|
| LinkedOmics [30] | Multi-omics data analysis within and across cancer types | Association analysis, comparison, pathway enrichment | TCGA, CPTAC (32 cancer types) |
| MOFA+ [28] | Unsupervised integration of multi-omics data | Dimension reduction, factor analysis for patient stratification | General purpose (all repositories) |
| oncoPredict [31] | Drug response prediction from genomic features | Biomarker discovery, therapy response prediction | TCGA, CCLE, GDSC |
| CellMinerCDB [31] | Cross-database genomics and pharmacogenomics | Cell line analysis, drug-gene interplay | NCI-60, GDSC, CCLE, CTRP |
| CARE [31] | Biomarker identification from drug target interactions | Multivariate modeling with interaction terms | Drug screening data |
| iCluster [29] | Integrative clustering of multi-omics data | Cancer subtype identification | TCGA, ICGC |
| CPTAC Common Data Analysis Pipeline [27] | Proteomics data processing | Peptide spectrum matching, protein quantification | CPTAC data |

Best Practices and Guidelines

Multi-Omics Study Design Considerations

Comprehensive benchmarking studies point to several factors that influence the success of multi-omics integration projects. For robust analysis, studies should include at least 26 samples per class, retain fewer than 10% of omics features through careful feature selection, keep the sample ratio between groups under 3:1, and keep noise levels below 30% [29]. In benchmark tests, feature selection improved clustering performance by up to 34% [29].
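These thresholds lend themselves to a quick pre-analysis check. The sketch below encodes the four guideline values from [29] as pass/fail flags. The function name and the example cohort are hypothetical, and how the noise fraction is estimated is left to the analyst.

```python
def check_study_design(class_sizes, n_features_total, n_features_selected, noise_fraction):
    """Return pass/fail flags for the benchmark-derived design guidelines."""
    smallest = min(class_sizes.values())
    largest = max(class_sizes.values())
    return {
        "min_26_samples_per_class": smallest >= 26,
        "feature_fraction_below_10pct": n_features_selected / n_features_total < 0.10,
        "class_balance_under_3_to_1": largest / smallest < 3.0,
        "noise_below_30pct": noise_fraction < 0.30,
    }


# Hypothetical cohort: three subtypes, 1,500 of 20,000 features kept, 35% estimated noise.
report = check_study_design(
    class_sizes={"subtype_1": 60, "subtype_2": 30, "subtype_3": 28},
    n_features_total=20000,
    n_features_selected=1500,
    noise_fraction=0.35,
)
```

In this example every flag passes except the noise criterion, which would suggest additional quality control before integration.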

The choice of integration strategy should align with research objectives. Early integration (feature concatenation) works well for closely related data types, while middle integration (using machine learning models) effectively captures complex relationships across diverse data types [28]. Late integration (separate analysis with merged results) provides flexibility for highly heterogeneous data sources. Studies comparing 10 clustering methods across TCGA datasets demonstrate that no single method universally outperforms others, highlighting the importance of method selection based on specific data characteristics and research questions [29].
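The difference between early and late integration can be made concrete with a small sketch. The example below standardizes and concatenates two omics blocks (early integration) and merges per-layer class predictions by majority vote (late integration). The helper names and toy values are hypothetical, and the voting scheme assumes all layers predict the same set of class labels.

```python
from collections import Counter
from statistics import mean, pstdev


def zscore_columns(matrix):
    """Standardize each feature (column) of a samples x features matrix."""
    scaled_cols = []
    for col in zip(*matrix):
        mu, sd = mean(col), pstdev(col)
        scaled_cols.append([(v - mu) / sd if sd > 0 else 0.0 for v in col])
    return [list(row) for row in zip(*scaled_cols)]


def early_integration(*omics_matrices):
    """Concatenate standardized feature blocks sample by sample."""
    scaled = [zscore_columns(m) for m in omics_matrices]
    return [sum((block[i] for block in scaled), []) for i in range(len(scaled[0]))]


def late_integration(*per_layer_predictions):
    """Merge per-layer class predictions by majority vote for each sample."""
    return [Counter(votes).most_common(1)[0][0] for votes in zip(*per_layer_predictions)]


# Two samples measured on two hypothetical layers.
rna = [[1.0, 2.0], [3.0, 4.0]]
protein = [[10.0], [20.0]]
fused = early_integration(rna, protein)

# Three layers each predict a class label per sample.
consensus = late_integration(["A", "B"], ["A", "A"], ["B", "A"])
```

Middle integration, which learns a joint representation with a model, sits between these two extremes and is not shown here.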

Data Access and Computational Considerations

Accessing controlled data requires authorization through the NIH dbGaP system, while open access data is immediately available upon registration [26]. Cloud-based platforms such as the Cancer Genomics Cloud (CGC) provide powerful environments for querying, filtering, and analyzing large multi-omics datasets alongside private research data [26]. These platforms typically update their data within 30 days of GDC releases, ensuring access to the most current versions [26].

For computational efficiency, benchmarking studies recommend considering runtime and memory requirements when selecting integration methods. Methods like MOFA+ and iCluster show favorable performance in cancer type classification and drug response prediction tasks, with varying efficiency across different sample sizes and feature dimensions [28]. The integration of proteomics data alongside genomic and transcriptomic data has proven particularly valuable for prioritizing driver genes and understanding functional impacts of molecular alterations [25].

The integration of multi-omics data from public repositories represents a transformative approach to cancer research, enabling discoveries that transcend the limitations of single-omics analyses. TCGA, CPTAC, ICGC, and OmicsDI provide complementary resources that collectively offer unprecedented insights into the molecular architecture of cancer. As machine learning methodologies continue to evolve and datasets expand, these repositories will play an increasingly vital role in advancing personalized cancer medicine, drug discovery, and clinical trial design. The protocols and guidelines presented here provide a foundation for researchers to leverage these powerful resources effectively, with the ultimate goal of translating molecular insights into improved patient outcomes.

Methodologies in Action: Statistical, Deep Learning, and Workflow Strategies for Multi-Omics Integration

Integrating multi-omics data is crucial for a comprehensive understanding of complex biological systems and diseases. The heterogeneity and high-dimensionality of data types such as transcriptomics, epigenomics, and proteomics pose significant computational challenges. This framework compares two dominant methodological paradigms for this integration: the statistical approach, represented by Multi-Omics Factor Analysis (MOFA+), and deep learning-based approaches, represented by Graph Convolutional Networks (GCNs) and Autoencoders (AEs). The choice between these approaches significantly impacts the biological insights gained, the interpretability of results, and the resources required for analysis [32] [33] [34].

Core Principles and Data Flow

Statistical Approach (MOFA+)

MOFA+ is an unsupervised Bayesian framework that uses factor analysis to infer a set of latent factors that capture the principal sources of variation across multiple omics datasets. It decomposes each omics data matrix into a shared factor matrix and view-specific weight matrices, effectively performing a multi-omics generalization of principal component analysis (PCA) [35].
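In matrix form, this decomposition can be written as follows. The notation is introduced here for illustration and follows the usual factor-analysis convention: N samples, M omics views, D_m features in view m, and K latent factors.

```latex
\mathbf{Y}^{(m)} = \mathbf{Z}\,\mathbf{W}^{(m)\top} + \boldsymbol{\varepsilon}^{(m)},
\qquad m = 1, \dots, M,
\qquad
\mathbf{Y}^{(m)} \in \mathbb{R}^{N \times D_m},\;
\mathbf{Z} \in \mathbb{R}^{N \times K},\;
\mathbf{W}^{(m)} \in \mathbb{R}^{D_m \times K}
```

The factor matrix Z is shared by all views, while each view has its own weight matrix W^(m) and residual noise term. With a single view and no priors on the factors or weights, the model reduces to an ordinary linear factor model, which is the sense in which it generalizes PCA.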

Deep Learning Approaches

- Autoencoders (AEs): These are neural networks designed for dimensionality reduction. They learn to encode high-dimensional input data into a compressed latent representation and then decode this representation to reconstruct the original input. In multi-omics integration, AEs can learn a joint latent space from concatenated or separate omics inputs [34].

- Graph Convolutional Networks (GCNs): Methods like MoGCN model multi-omics data as graphs, where nodes can represent samples or biological features. These models leverage graph structures, such as patient similarity networks or biological knowledge graphs, and use message-passing mechanisms to learn powerful, non-linear representations that integrate information from a node's neighbors [36] [37].
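The message-passing idea behind graph convolution can be illustrated with a single layer written in plain Python. The sketch uses the widely cited symmetric-normalization rule, ReLU(D^-1/2 (A + I) D^-1/2 H W). It is a generic formulation and is not claimed to match the exact architecture of MoGCN. The two-node graph and weight values are toy assumptions.

```python
import math


def normalized_adjacency(adj):
    """Add self-loops and apply symmetric degree normalization."""
    n = len(adj)
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_hat]
    return [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def gcn_layer(adj, features, weights):
    """One graph-convolution step: ReLU(A_norm @ features @ weights)."""
    propagated = matmul(normalized_adjacency(adj), features)
    return [[max(0.0, v) for v in row] for row in matmul(propagated, weights)]


# Two patients connected in a similarity network, one input feature each.
adjacency = [[0.0, 1.0], [1.0, 0.0]]
node_features = [[1.0], [3.0]]
layer_weights = [[1.0, -1.0]]  # maps 1 input feature to 2 hidden units
hidden = gcn_layer(adjacency, node_features, layer_weights)
```

Each patient's new representation averages its own feature with its neighbor's before the learned weights are applied, which is how information from similar samples is shared.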

The table below summarizes the fundamental characteristics of these approaches.

Table 1: Core Methodological Characteristics of Multi-Omics Integration Approaches

| Characteristic | Statistical (MOFA+) | Deep Learning (GCNs & AEs) |
|---|---|---|
| Core Principle | Unsupervised Bayesian factor analysis | Non-linear function approximation via neural networks |
| Integration Strategy | Intermediate (latent factors) | Early, Intermediate, or Late (model-dependent) |
| Model Assumptions | Linear relationships between variables | Minimal assumptions; can capture complex non-linearities |
| Primary Output | Latent factors and feature loadings | Latent embeddings and predicted labels or clusters |
| Interpretability | High; factors and loadings are directly interpretable | Lower; often considered a "black box," requires post-hoc explanation |

Performance and Application Comparison

A direct comparative analysis on a breast cancer dataset comprising 960 samples with transcriptomics, epigenomics, and microbiomics data provides quantitative performance insights [32]. After selecting the top 100 features from each omics layer using MOFA+ and MoGCN (a deep learning method using Graph Convolutional Networks and autoencoders), the features were evaluated using linear and nonlinear classifiers.
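Selecting the top features per omics layer can be sketched as a ranking by absolute weight. The source does not state exactly how the ranking was computed in [32], so the rule below (absolute loading on a single factor or importance score) is an assumption, and the feature names and weights are invented.

```python
def top_features_by_weight(weights, k=100):
    """Rank features by absolute weight and return the k highest-ranked names."""
    ranked = sorted(weights.items(), key=lambda item: abs(item[1]), reverse=True)
    return [name for name, _ in ranked[:k]]


# Hypothetical loadings of five transcripts on one latent factor.
factor_weights = {
    "tx_1": 0.05,
    "tx_2": -0.92,
    "tx_3": 0.40,
    "tx_4": -0.10,
    "tx_5": 0.77,
}
top_three = top_features_by_weight(factor_weights, k=3)
```

Using the absolute value keeps strongly negative loadings, which are as informative as strongly positive ones.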

Table 2: Empirical Performance Comparison on Breast Cancer Subtyping [32]

| Evaluation Metric | Statistical (MOFA+) | Deep Learning (MoGCN) |
|---|---|---|
| F1-Score (Non-linear Model) | 0.75 | Lower than MOFA+ |
| Number of Enriched Pathways Identified | 121 | 100 |
| Key Pathways Identified | Fc gamma R-mediated phagocytosis, SNARE pathway | Not Specified |
| Clustering Quality (Calinski-Harabasz Index; higher is better) | Higher | Lower |
| Clustering Quality (Davies-Bouldin Index; lower is better) | Lower | Higher |
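The two clustering indices in the table point in opposite directions: a higher Calinski-Harabasz value and a lower Davies-Bouldin value both indicate tighter, better-separated clusters. The sketch below implements the standard definitions in plain Python on a toy one-dimensional example. It is meant to show what the indices measure, not to reproduce the values from [32].

```python
import math
from collections import defaultdict


def _centroid(points):
    return [sum(dim) / len(points) for dim in zip(*points)]


def _group(points, labels):
    clusters = defaultdict(list)
    for point, label in zip(points, labels):
        clusters[label].append(point)
    return clusters


def calinski_harabasz(points, labels):
    """Between- over within-cluster dispersion; higher means better separation."""
    clusters = _group(points, labels)
    n, k = len(points), len(clusters)
    overall = _centroid(points)
    between = within = 0.0
    for members in clusters.values():
        center = _centroid(members)
        between += len(members) * math.dist(center, overall) ** 2
        within += sum(math.dist(p, center) ** 2 for p in members)
    return (between / (k - 1)) / (within / (n - k))


def davies_bouldin(points, labels):
    """Average worst-case cluster similarity; lower means better separation."""
    clusters = _group(points, labels)
    centers = {lab: _centroid(m) for lab, m in clusters.items()}
    scatter = {lab: sum(math.dist(p, centers[lab]) for p in m) / len(m)
               for lab, m in clusters.items()}
    worst = [max((scatter[i] + scatter[j]) / math.dist(centers[i], centers[j])
                 for j in clusters if j != i)
             for i in clusters]
    return sum(worst) / len(worst)


# Two well-separated toy clusters on a line.
toy_points = [[0.0], [2.0], [10.0], [12.0]]
toy_labels = [0, 0, 1, 1]
ch_score = calinski_harabasz(toy_points, toy_labels)
db_score = davies_bouldin(toy_points, toy_labels)
```

Both functions assume at least two clusters and at least one cluster with more than one member; otherwise the ratios are undefined.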

Experimental Protocols

Protocol 1: Unsupervised Integration with MOFA+

This protocol details the steps for using MOFA+ to identify latent sources of variation in a multi-omics cohort [32] [35].

1. Input Data Preparation

- Data Collection: Obtain matched multi-omics data (e.g., TCGA data via cBioPortal). The example study used 960 breast cancer samples with transcriptomics, epigenomics, and microbiomics data [32].